Unnamed: 0 (int64, 0 to 832k) | id (float64, 2.49B to 32.1B) | type (string, 1 class) | created_at (string, length 19) | repo (string, length 5 to 112) | repo_url (string, length 34 to 141) | action (string, 3 classes) | title (string, length 1 to 1k) | labels (string, length 4 to 1.38k) | body (string, length 1 to 262k) | index (string, 16 classes) | text_combine (string, length 96 to 262k) | label (string, 2 classes) | text (string, length 96 to 252k) | binary_label (int64, 0 to 1) |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

447,290 | 12,887,574,345 | IssuesEvent | 2020-07-13 11:29:40 | crestic-urca/remotelabz | https://api.github.com/repos/crestic-urca/remotelabz | closed | Start VM does not work on a stopped machine | normal priority | In GitLab by @fnolot on Feb 19, 2011, 19:00

If a user stops a VM with a halt or a shutdown under Windows,

right-clicking Start does nothing. Reboot, however, does restart

the VM.

Start should do the same.

*(from redmine: issue id 13, created on 2011-02-19)* | 1.0 | Start VM does not work on a stopped machine - In GitLab by @fnolot on Feb 19, 2011, 19:00

If a user stops a VM with a halt or a shutdown under Windows,

right-clicking Start does nothing. Reboot, however, does restart

the VM.

Start should do the same.

*(from redmine: issue id 13, created on 2011-02-19)* | priority | start vm does not work on a stopped machine in gitlab by fnolot on feb if a user stops a vm with a halt or a shutdown under windows right clicking start does nothing reboot however does restart the vm start should do the same from redmine issue id created on | 1 |

89,894 | 25,920,670,477 | IssuesEvent | 2022-12-15 21:37:05 | brtnfld/cgnsjira | https://api.github.com/repos/brtnfld/cgnsjira | opened | [CGNS-254] CGNS has several problems building and running on Mac M1. Bugs identified in this report. | bug To Do Build Critical |

> This issue has been migrated from the Forge. Read the [original ticket here](https://cgnsorg.atlassian.net/browse/CGNS-254).

- _**Reporter:**_ None

- _**Created at:**_ Sun, 27 Jun 2021 08:42:08 -0500

<p>I installed `cgns` on a Mac M1 Mini using `homebrew`. The library had errors, including a difficult to understand error in the subroutine `cg_conn_write_f`, and a ''missing'' subroutine in the cgns library (`cgns_f`). I installed `cgns`<br/>

from the GitHub repository and built the library on my Mac and had similar issues. Additionally, when I built `cgns` and tested it using `make test`, some of the tests failed. I found the following errors in the distribution:</p>

<p>1. In the header file `fortran_macros.h`, `STR_PLEN` needs to be redefined for the Mac M1 Mini. Fortran appends hidden default-integer arguments to a subroutine's argument list, giving the lengths of its character arguments. In the subroutine `cg_conn_write_f`, there are two character strings. On the Mac Mini, these hidden integer variables are `long`, not `int`. It appears from some debug statements in `cg_conn_write_f` that this is a problem that has been previously worked on but not solved.</p>

<p>2. The subroutine `cg_conn_write_f` was ''missing'' from the cgns library. This subroutine is a C subroutine, not a Fortran subroutine, but the interface in `cgns_f.F90` had the `BIND` statement commented out. I uncommented it, and this fixed the problem.</p>

<p>3. Not all the `utilities` built correctly. I added `binaryio.o`, which is needed by `p3dfout.o`, to `utilities/Makefile.unix`, which corrected the problem. All `make test` passed.</p>

<p>Environment variables<br/>

=====================</p>

<p>This section shows environment variables used to build `cgns` on Mac M1 Mini.</p>

<p>~~~<br/>

FC=gfortran<br/>

FCFLAGS=-I/opt/homebrew/opt/libx11/include -fallow-argument-mismatch -std=gnu<br/>

LIBS=-L/opt/homebrew/Cellar/tcl-tk/8.6.11_1/lib/ -L/opt/homebrew/opt/libx11/lib<br/>

CPPFLAGS=-I/opt/homebrew/include/<br/>

CFLAGS=-I/opt/homebrew/include/<br/>

~~~</p>

<p>Config flags<br/>

============</p>

<p>This section shows config flags used to build `cgns` on Mac M1 Mini.</p>

<p>~~~<br/>

./configure --enable-gcc --with-fortran=LOWERCASE_ --enable-cgnstools --enable-64bit<br/>

~~~</p>

<p>Edits to code<br/>

=============</p>

<p>This section shows edits used to fix build.</p>

<p>src/cgnstools/utilities/Makefile.unix<br/>

---------------------------------</p>

<p>~~~<br/>

src % diff cgnstools/utilities/Makefile.unix ../../CGNS-master/src/cgnstools/utilities/Makefile.unix <br/>

82c82<br/>

< p3dfout.$(O) binaryio.$(O)<br/>

---<br/>

> p3dfout.$(O)<br/>

84c84<br/>

< getargs.$(O) p3dfout.$(O) binaryio.$(O) $(LDLIST)<br/>

---<br/>

> getargs.$(O) p3dfout.$(O) $(LDLIST)<br/>

~~~</p>

<p>src/fortran_macros.h<br/>

--------------------</p>

<p>~~~<br/>

src % diff fortran_macros.h ../../CGNS-master/src/fortran_macros.h <br/>

141c141<br/>

< # define STR_PLEN(str) , long CONCATENATE(Len,str)<br/>

---<br/>

> # define STR_PLEN(str) , int CONCATENATE(Len,str)<br/>

~~~</p>

<p>src/cgns_f.F90<br/>

-------------</p>

<p>~~~<br/>

src % diff cgns_f.F90 ../../CGNS-master/src/cgns_f.F90<br/>

3021c3021<br/>

< SUBROUTINE cg_state_size_f(size, ier) BIND(C, NAME="cg_state_size_f")<br/>

---<br/>

> SUBROUTINE cg_state_size_f(size, ier) !BIND(C, NAME="cg_state_size_f")<br/>

~~~</p>

| 1.0 | [CGNS-254] CGNS has several problems building and running on Mac M1. Bugs identified in this report. -

> This issue has been migrated from the Forge. Read the [original ticket here](https://cgnsorg.atlassian.net/browse/CGNS-254).

- _**Reporter:**_ None

- _**Created at:**_ Sun, 27 Jun 2021 08:42:08 -0500

<p>I installed `cgns` on a Mac M1 Mini using `homebrew`. The library had errors, including a difficult to understand error in the subroutine `cg_conn_write_f`, and a ''missing'' subroutine in the cgns library (`cgns_f`). I installed `cgns`<br/>

from the GitHub repository and built the library on my Mac and had similar issues. Additionally, when I built `cgns` and tested it using `make test`, some of the tests failed. I found the following errors in the distribution:</p>

<p>1. In the header file `fortran_macros.h`, `STR_PLEN` needs to be redefined for the Mac M1 Mini. Fortran appends hidden default-integer arguments to a subroutine's argument list, giving the lengths of its character arguments. In the subroutine `cg_conn_write_f`, there are two character strings. On the Mac Mini, these hidden integer variables are `long`, not `int`. It appears from some debug statements in `cg_conn_write_f` that this is a problem that has been previously worked on but not solved.</p>

<p>2. The subroutine `cg_conn_write_f` was ''missing'' from the cgns library. This subroutine is a C subroutine, not a Fortran subroutine, but the interface in `cgns_f.F90` had the `BIND` statement commented out. I uncommented it, and this fixed the problem.</p>

<p>3. Not all the `utilities` built correctly. I added `binaryio.o`, which is needed by `p3dfout.o`, to `utilities/Makefile.unix`, which corrected the problem. All `make test` passed.</p>

<p>Environment variables<br/>

=====================</p>

<p>This section shows environment variables used to build `cgns` on Mac M1 Mini.</p>

<p>~~~<br/>

FC=gfortran<br/>

FCFLAGS=-I/opt/homebrew/opt/libx11/include -fallow-argument-mismatch -std=gnu<br/>

LIBS=-L/opt/homebrew/Cellar/tcl-tk/8.6.11_1/lib/ -L/opt/homebrew/opt/libx11/lib<br/>

CPPFLAGS=-I/opt/homebrew/include/<br/>

CFLAGS=-I/opt/homebrew/include/<br/>

~~~</p>

<p>Config flags<br/>

============</p>

<p>This section shows config flags used to build `cgns` on Mac M1 Mini.</p>

<p>~~~<br/>

./configure --enable-gcc --with-fortran=LOWERCASE_ --enable-cgnstools --enable-64bit<br/>

~~~</p>

<p>Edits to code<br/>

=============</p>

<p>This section shows edits used to fix build.</p>

<p>src/cgnstools/utilities/Makefile.unix<br/>

---------------------------------</p>

<p>~~~<br/>

src % diff cgnstools/utilities/Makefile.unix ../../CGNS-master/src/cgnstools/utilities/Makefile.unix <br/>

82c82<br/>

< p3dfout.$(O) binaryio.$(O)<br/>

---<br/>

> p3dfout.$(O)<br/>

84c84<br/>

< getargs.$(O) p3dfout.$(O) binaryio.$(O) $(LDLIST)<br/>

---<br/>

> getargs.$(O) p3dfout.$(O) $(LDLIST)<br/>

~~~</p>

<p>src/fortran_macros.h<br/>

--------------------</p>

<p>~~~<br/>

src % diff fortran_macros.h ../../CGNS-master/src/fortran_macros.h <br/>

141c141<br/>

< # define STR_PLEN(str) , long CONCATENATE(Len,str)<br/>

---<br/>

> # define STR_PLEN(str) , int CONCATENATE(Len,str)<br/>

~~~</p>

<p>src/cgns_f.F90<br/>

-------------</p>

<p>~~~<br/>

src % diff cgns_f.F90 ../../CGNS-master/src/cgns_f.F90<br/>

3021c3021<br/>

< SUBROUTINE cg_state_size_f(size, ier) BIND(C, NAME="cg_state_size_f")<br/>

---<br/>

> SUBROUTINE cg_state_size_f(size, ier) !BIND(C, NAME="cg_state_size_f")<br/>

~~~</p>

| non_priority | cgns has several problems building and running on mac bugs identified in this report this issue has been migrated from the forge read the reporter none created at sun jun i installed cgns on a mac mini using homebrew the library had errors including a difficult to understand error in the subroutine cg conn write f and a missing subroutine in the cgns library cgns f i installed cgns from the github repository and made the library on my mac and had similar issues additionally whene i built cgns and tested it using make test some of the tests failed i found the following errors in the distribution in the header file fortran macros h str plen needs to be redefined for the mac mini fortran appends to subroutine passed arguments hidden default integer giveing the length of character arguments in the subroutine cg conn write f there are two character strings on the mac mini these hidden integer variables are long not int it appears from some debug statements in cg conn write f that this is a problem that has been previously worked on but not solved the subroutine cg conn write f was missing from the cgns library this subroutine is a c subroutine not a fortran subroutine but the interface in cgns f had the bind statement commented out i uncommented and this fixed the problem not all the utilities built correctly i added binaryio o which is needed by o to utilities makefile unix which corrected the problem all make test passed environment variables this section shows environment variables used to build cgns on mac mini fc gfortran fcflags i opt homebrew opt include fallow argument mismatch std gnu libs l opt homebrew cellar tcl tk lib l opt homebrew opt lib cppflags i opt homebrew include cflags i opt homebrew include config flags this section shows config flags used to build cgns on mac mini configure enable gcc with fortran lowercase enable cgnstools enable edits to code this section shows edits used to fix build src cgnstools utilities makefile unix src 
diff cgnstools utilities makefile unix cgns master src cgnstools utilities makefile unix o getargs o o ldlist src fortran macros h src diff fortran macros h cgns master src fortran macros h define str plen str int concatenate len str src cgns f src diff cgns f cgns master src cgns f subroutine cg state size f size ier bind c name cg state size f | 0 |

118,167 | 25,265,390,485 | IssuesEvent | 2022-11-16 03:54:57 | Azure/autorest.python | https://api.github.com/repos/Azure/autorest.python | closed | LRO basics from CADL | DPG DPG/RLC v2.0b2 Epic: Parity with DPG 1.0 WS: Code Generation | First level of support for LRO in CADL, is to consider the presence of `@Azure.Core.pollingOperation` as a boolean switch to generate LRO code, like `x-ms-long-running-operation: true` was doing in Swagger:

```

@Azure.Core.pollingOperation(Authoring.createProjectStatusMonitor)

createProject is Azure.Core.LongRunningResourceCreateWithServiceProvidedName<Project>;

```

([full example](https://cadlplayground.z22.web.core.windows.net/cadl-azure/?c=aW1wb3J0ICJAY2FkbC1sYW5nL3ZlcnNpb25pbmciOwrJIGF6dXJlLXRvb2xzL8UsxhFjb3JlIjsKCkBzZXJ2aWNlVGl0bGUoIlRleHQgYXV0aG9yxEgpCi8vQFbJWC7HY2VkKFPGN8cccykKbmFtZXNwYWNlIMREQchDOwoKZW51bSDPMCB7CiAgdjE6ICIyMDIxLTDEAyIsxBQyxhQyLTA5LTEyIiwKfQoKQENhZGwuUmVzdC5yZXNvdXJjZSgicHJvamVjdHMiKQptb2RlbCBQxhHFW0BrZXnEB3Zpc2liaWxpdHkoInJlYWQiKQogIGlkOiBzdOYAniAgZGVzY3JpcHRpb24%2FyhgKICDkANLLEn0KCmludGVyZuQA5OkA4MVuY3JlYXRl5wCAU3RhdHVzTW9uaXRvciBpcyBDdXN0b21Db3JlLlBvbGxpbmdPcGVyYcRxPMc0PsVxQEHkAZsuxSpwzyooyXIu2m7kAN3NHsR%2Fy1VMb25nUnVu5AHLUucBSEPFL1dpdGjnAaJQcm92aWRlZE5hbWXuAJ9kZWxl2FrIT0TFJMs4ICBsaXN0xxBz1zZMaXN0zTRkZXBsb3nea0Fj5QFCCiAgIOgB%2B%2BQCQSDlAZLEAXNsb3TkAMbsAe0gIH3GJccyCiAgxXFnZegApNduUmVhZOsAo30K6wLx6gHhxXzrAq5wYXJlbnTIRChU5AGh9QLNb%2BgB6OQCzyAg5gLR6QH95gHlyEc8VD7vAKVGb3VuZMY%2FLs815wEO5wMQICDJaUntAwV95QKA5QCaSHR0cC5yb3V08gCXb3Ag8QLCVOYA%2FvAAk%2B0BOPkAycc85QFR)) | 1.0 | LRO basics from CADL - First level of support for LRO in CADL, is to consider the presence of `@Azure.Core.pollingOperation` as a boolean switch to generate LRO code, like `x-ms-long-running-operation: true` was doing in Swagger:

```

@Azure.Core.pollingOperation(Authoring.createProjectStatusMonitor)

createProject is Azure.Core.LongRunningResourceCreateWithServiceProvidedName<Project>;

```

([full example](https://cadlplayground.z22.web.core.windows.net/cadl-azure/?c=aW1wb3J0ICJAY2FkbC1sYW5nL3ZlcnNpb25pbmciOwrJIGF6dXJlLXRvb2xzL8UsxhFjb3JlIjsKCkBzZXJ2aWNlVGl0bGUoIlRleHQgYXV0aG9yxEgpCi8vQFbJWC7HY2VkKFPGN8cccykKbmFtZXNwYWNlIMREQchDOwoKZW51bSDPMCB7CiAgdjE6ICIyMDIxLTDEAyIsxBQyxhQyLTA5LTEyIiwKfQoKQENhZGwuUmVzdC5yZXNvdXJjZSgicHJvamVjdHMiKQptb2RlbCBQxhHFW0BrZXnEB3Zpc2liaWxpdHkoInJlYWQiKQogIGlkOiBzdOYAniAgZGVzY3JpcHRpb24%2FyhgKICDkANLLEn0KCmludGVyZuQA5OkA4MVuY3JlYXRl5wCAU3RhdHVzTW9uaXRvciBpcyBDdXN0b21Db3JlLlBvbGxpbmdPcGVyYcRxPMc0PsVxQEHkAZsuxSpwzyooyXIu2m7kAN3NHsR%2Fy1VMb25nUnVu5AHLUucBSEPFL1dpdGjnAaJQcm92aWRlZE5hbWXuAJ9kZWxl2FrIT0TFJMs4ICBsaXN0xxBz1zZMaXN0zTRkZXBsb3nea0Fj5QFCCiAgIOgB%2B%2BQCQSDlAZLEAXNsb3TkAMbsAe0gIH3GJccyCiAgxXFnZegApNduUmVhZOsAo30K6wLx6gHhxXzrAq5wYXJlbnTIRChU5AGh9QLNb%2BgB6OQCzyAg5gLR6QH95gHlyEc8VD7vAKVGb3VuZMY%2FLs815wEO5wMQICDJaUntAwV95QKA5QCaSHR0cC5yb3V08gCXb3Ag8QLCVOYA%2FvAAk%2B0BOPkAycc85QFR)) | non_priority | lro basics from cadl first level of support for lro in cadl is to consider the presence of azure core pollingoperation as a boolean switch to generate lro code like x ms long running operation true was doing in swagger azure core pollingoperation authoring createprojectstatusmonitor createproject is azure core longrunningresourcecreatewithserviceprovidedname | 0 |

100,618 | 11,200,485,496 | IssuesEvent | 2020-01-03 21:56:31 | tomapper/tomapper | https://api.github.com/repos/tomapper/tomapper | opened | Come up with a library concept | documentation help wanted | ### Languages

- Java 1.7+

### Description

The library makes it easy to convert one class to another using annotations or stream APIs.

### TODO

- Create an algorithm of work

- Create prototype | 1.0 | Come up with a library concept - ### Languages

- Java 1.7+

### Description

The library makes it easy to convert one class to another using annotations or stream APIs.

### TODO

- Create an algorithm of work

- Create prototype | non_priority | come up with a library concept languages java description the library makes it easy to convert one class to another using annotations or stream apis todo create an algorithm of work create prototype | 0 |

253,550 | 8,057,567,141 | IssuesEvent | 2018-08-02 15:43:56 | SUSE/DeepSea | https://api.github.com/repos/SUSE/DeepSea | closed | Exercise stage.5 ( removal ) in functional/system tests | QA enhancement priority | ### Description of Issue/Question

After hitting a bug in stage.5 it's time to create a test to exercise the code in stage.5

Potential issues:

- Teuthology only deploys _one_ node which can't be removed without bringing down the cluster.

| 1.0 | Exercise stage.5 ( removal ) in functional/system tests - ### Description of Issue/Question

After hitting a bug in stage.5 it's time to create a test to exercise the code in stage.5

Potential issues:

- Teuthology only deploys _one_ node which can't be removed without bringing down the cluster.

| priority | exercise stage removal in functional system tests description of issue question after hitting a bug in stage it s time to create a test to exercise the code in stage potential issues teuthology only deploys one node which can t be removed without bringing down the cluster | 1 |

202,703 | 7,051,503,881 | IssuesEvent | 2018-01-03 12:05:45 | chingu-voyage3/bears-31-api | https://api.github.com/repos/chingu-voyage3/bears-31-api | closed | Write login endpoint logic | api feature high priority | Implement the functionality to find a user by the username passed in the request body and compare the hash of the submitted password with the stored one.

If the credentials are correct, generate a new JWT token with a payload containing *only* the username, then return the token.

If the credentials are incorrect, return an error message saying so. | 1.0 | Write login endpoint logic - Implement the functionality to find a user by the username passed in the request body and compare the hash of the submitted password with the stored one.

If the credentials are correct, generate a new JWT token with a payload containing *only* the username, then return the token.

If the credentials are incorrect, return an error message saying so. | priority | write login endpoint logic implement the functionality to find a user with the username passed in the request body and comparing the hash of the password with the one stored if the credentials are correct generate a new jwt token with a payload containing only the username then return the token if the credentials were incorrect return an error message specifying it | 1 |
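
The login flow described in this issue could be sketched roughly as below. This is only an illustration under stated assumptions: the issue does not say which language, hash function, or JWT library the project uses, so the sketch uses Python's standard library, PBKDF2 for password hashing, and a hand-built HS256 token. `SECRET`, `USERS`, `hash_password`, `make_token`, and `login` are hypothetical names, not part of the project.

```python
import base64
import hashlib
import hmac
import json
import os

SECRET = b"change-me"  # hypothetical signing key; a real service would load it from config


def hash_password(password: str, salt: bytes) -> bytes:
    """Derive a password hash with PBKDF2 (an assumed choice of algorithm)."""
    return hashlib.pbkdf2_hmac("sha256", password.encode(), salt, 100_000)


def _b64url(data: bytes) -> str:
    """Base64url-encode without padding, as JWT segments require."""
    return base64.urlsafe_b64encode(data).rstrip(b"=").decode()


def make_token(username: str) -> str:
    """Build an HS256-signed JWT whose payload contains only the username."""
    header = _b64url(json.dumps({"alg": "HS256", "typ": "JWT"}).encode())
    payload = _b64url(json.dumps({"username": username}).encode())
    signing_input = f"{header}.{payload}".encode()
    signature = _b64url(hmac.new(SECRET, signing_input, hashlib.sha256).digest())
    return f"{header}.{payload}.{signature}"


# Hypothetical in-memory user store standing in for the real database.
_salt = os.urandom(16)
USERS = {"alice": {"salt": _salt, "hash": hash_password("s3cret", _salt)}}


def login(body: dict) -> dict:
    """Return {"token": ...} for valid credentials, else {"error": ...}."""
    user = USERS.get(body.get("username", ""))
    if user is None:
        return {"error": "Invalid username or password"}
    candidate = hash_password(body.get("password", ""), user["salt"])
    # Constant-time comparison avoids leaking how many bytes matched.
    if not hmac.compare_digest(candidate, user["hash"]):
        return {"error": "Invalid username or password"}
    return {"token": make_token(body["username"])}
```

Returning the same error message for an unknown user and a wrong password is a design choice in this sketch, so that the response does not reveal which usernames exist.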

813,892 | 30,478,284,729 | IssuesEvent | 2023-07-17 18:13:14 | yugabyte/yugabyte-db | https://api.github.com/repos/yugabyte/yugabyte-db | opened | [DDL][Post-Upgrade] Not found: Unable to find schema name for YSQL table system_postgres.sequences_data due to error | kind/bug area/ysql priority/low status/awaiting-triage jira-originated | Jira Link: [DB-7269](https://yugabyte.atlassian.net/browse/DB-7269)

| 1.0 | [DDL][Post-Upgrade] Not found: Unable to find schema name for YSQL table system_postgres.sequences_data due to error - Jira Link: [DB-7269](https://yugabyte.atlassian.net/browse/DB-7269)

| priority | not found unable to find schema name for ysql table system postgres sequences data due to error jira link | 1 |

722,914 | 24,878,368,325 | IssuesEvent | 2022-10-27 21:23:58 | WowRarity/Rarity | https://api.github.com/repos/WowRarity/Rarity | closed | Holiday reminders aren't displayed after logging in, until the main window is opened (?) | Priority: Low Status: Duplicate Type: Bug Complexity: TBD Category: GUI | Source: [Discord](https://discord.com/channels/788119147740790854/788119314909626388/1034115793572614154)

> Mine holiday reminder only shows me when i mouse over the mini rarity icon, then the text shows up in the middle of the screen, it dosent show when i log in on new character only when i mouse over it. How do i fix it?

| 1.0 | Holiday reminders aren't displayed after logging in, until the main window is opened (?) - Source: [Discord](https://discord.com/channels/788119147740790854/788119314909626388/1034115793572614154)

> Mine holiday reminder only shows me when i mouse over the mini rarity icon, then the text shows up in the middle of the screen, it dosent show when i log in on new character only when i mouse over it. How do i fix it?

| priority | holiday reminders aren t displayed after logging in until the main window is opened source mine holiday reminder only shows me when i mouse over the mini rarity icon then the text shows up in the middle of the screen it dosent show when i log in on new character only when i mouse over it how do i fix it | 1 |

275,277 | 8,575,543,323 | IssuesEvent | 2018-11-12 17:34:11 | aowen87/TicketTester | https://api.github.com/repos/aowen87/TicketTester | closed | Curve2D reader cannot read double-precision values | Bug Likelihood: 3 - Occasional Priority: Normal Severity: 2 - Minor Irritation | I created an ultra file with values > FLT_MAX.

The curve reader could not read the file.

I've attached the file.

-----------------------REDMINE MIGRATION-----------------------

This ticket was migrated from Redmine. As such, not all

information was able to be captured in the transition. Below is

a complete record of the original redmine ticket.

Ticket number: 1819

Status: Resolved

Project: VisIt

Tracker: Bug

Priority: Normal

Subject: Curve2D reader cannot read double-precision values

Assigned to: Kathleen Biagas

Category:

Target version: 2.8

Author: Kathleen Biagas

Start: 04/22/2014

Due date:

% Done: 0

Estimated time:

Created: 04/22/2014 01:14 pm

Updated: 07/16/2014 02:34 pm

Likelihood: 3 - Occasional

Severity: 2 - Minor Irritation

Found in version: 2.7.1

Impact:

Expected Use:

OS: All

Support Group: Any

Description:

I created an ultra file with values > FLT_MAX.

The curve reader could not read the file.

I've attached the file.

Comments:

Made an attempt to fix the reader, and it can be made to use doubles and pass them along. However, the curve will not be rendered. I double-checked avtOpenGLCurveRenderer, and the values being passed to glVertex3dv are correct. Perhaps we should scale the values down before rendering?

Modified the reader to serve up doubles. Very large values or very small values won't be rendered correctly without scaling. I added data and a test demonstrating this. Svn revision 23772-4.

| 1.0 | Curve2D reader cannot read double-precision values - I created an ultra file with values > FLT_MAX.

The curve reader could not read the file.

I've attached the file.

-----------------------REDMINE MIGRATION-----------------------

This ticket was migrated from Redmine. As such, not all

information was able to be captured in the transition. Below is

a complete record of the original redmine ticket.

Ticket number: 1819

Status: Resolved

Project: VisIt

Tracker: Bug

Priority: Normal

Subject: Curve2D reader cannot read double-precision values

Assigned to: Kathleen Biagas

Category:

Target version: 2.8

Author: Kathleen Biagas

Start: 04/22/2014

Due date:

% Done: 0

Estimated time:

Created: 04/22/2014 01:14 pm

Updated: 07/16/2014 02:34 pm

Likelihood: 3 - Occasional

Severity: 2 - Minor Irritation

Found in version: 2.7.1

Impact:

Expected Use:

OS: All

Support Group: Any

Description:

I created an ultra file with values > FLT_MAX.

The curve reader could not read the file.

I've attached the file.

Comments:

Made an attempt to fix the reader, and it can be made to use doubles and pass them along. However, the curve will not be rendered. I double-checked avtOpenGLCurveRenderer, and the values being passed to glVertex3dv are correct. Perhaps we should scale the values down before rendering?

Modified the reader to serve up doubles. Very large values or very small values won't be rendered correctly without scaling. I added data and a test demonstrating this. Svn revision 23772-4.

| priority | reader cannot read double precision values i created an ultra file with values flt max the curve reader could not read the file i ve attached the file redmine migration this ticket was migrated from redmine as such not all information was able to be captured in the transition below is a complete record of the original redmine ticket ticket number status resolved project visit tracker bug priority normal subject reader cannot read double precision values assigned to kathleen biagas category target version author kathleen biagas start due date done estimated time created pm updated pm likelihood occasional severity minor irritation found in version impact expected use os all support group any description i created an ultra file with values flt max the curve reader could not read the file i ve attached the file comments made an attempt to fix the reader and it can be made to use doubles and pass them along however the curve will not be rendered i double checked avtopenglcurverenderer and the values being passed to are correct perhaps we should scale the values down before rendering modified the reader to server up double very large values or very small values won t be rendered correctly without scaling i added data and a test demonstrating this svn revision | 1 |
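
The scaling idea raised in the ticket comments can be illustrated with a small sketch. It is not VisIt code: `fits_in_float32` and `scale_for_rendering` are hypothetical helpers, and normalizing by the largest magnitude is just one assumed way to bring values back into a range that single-precision rendering can handle.

```python
# Largest finite single-precision value (FLT_MAX in C's <float.h>).
FLT_MAX = 3.4028234663852886e38


def fits_in_float32(value: float) -> bool:
    """True if the magnitude of value does not exceed FLT_MAX."""
    return abs(value) <= FLT_MAX


def scale_for_rendering(values):
    """Divide every value by the largest magnitude in the list.

    Returns the scaled values (all within [-1, 1]) and the scale factor,
    so the original values can be recovered as scaled * factor.
    """
    peak = max(abs(v) for v in values)
    if peak == 0:
        return list(values), 1.0
    return [v / peak for v in values], peak


if __name__ == "__main__":
    curve = [1e300, -5e299, 2.5e299]  # double-precision values beyond FLT_MAX
    print([fits_in_float32(v) for v in curve])
    scaled, factor = scale_for_rendering(curve)
    print(all(fits_in_float32(v) for v in scaled), factor)
```

Keeping the scale factor alongside the scaled values means axis labels could still report the original magnitudes.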

263,874 | 8,302,828,444 | IssuesEvent | 2018-09-21 15:35:35 | Vhoyon/Discord-Bot | https://api.github.com/repos/Vhoyon/Discord-Bot | opened | Host a permanent version of our bot to have an online alias of our work | !Priority: Medium | Here are our options (more may be edited in) :

- [Heroku](https://www.heroku.com) - _possibility of using a [GitHub Student Pack account](https://education.github.com/pack) to get access to 2 years worth of non-sleeping dynos ([Hobby Dyno](https://devcenter.heroku.com/articles/dyno-types))_;

- Self-Hosted on a Raspberry-Pi;

- Buying some third-party server to run it so we don't have to deal with setting up the Raspberry-Pi with ssh and outside secure access (SSH, static IP address, etc). | 1.0 | Host a permanent version of our bot to have an online alias of our work - Here are our options (more may be edited in) :

- [Heroku](https://www.heroku.com) - _possibility of using a [GitHub Student Pack account](https://education.github.com/pack) to get access to 2 years worth of non-sleeping dynos ([Hobby Dyno](https://devcenter.heroku.com/articles/dyno-types))_;

- Self-Hosted on a Raspberry-Pi;

- Buying some third-party server to run it so we don't have to deal with setting up the Raspberry-Pi with ssh and outside secure access (SSH, static IP address, etc). | priority | host a permanent version of our bot to have an online alias of our work here are our options more may be edited in possibility of using a to get access to years worth of non sleeping dynos self hosted on a raspberry pi buying some third party server to run it so we don t have to deal with setting up the raspberry pi with ssh and outside secure access ssh static ip address etc | 1 |

103,859 | 22,490,830,917 | IssuesEvent | 2022-06-23 01:21:25 | gitpod-io/gitpod | https://api.github.com/repos/gitpod-io/gitpod | closed | [insiders] emit console.log on workspace boot if person is using insiders | meta: stale editor: code (browser) team: IDE aspect: error-handling | ## Is your feature request related to a problem? Please describe

If a person is using insiders emit a 🚨🚨🚨🚨🚨🚨🚨🚨🚨🚨🚨 informing them that they are running insiders:

- if someone is looking at console log it is likely because Gitpod isn't working for them

- if someone is using insiders then the console.log should nudge people to switch to stable

- inform person if problem persists please raise a support case

Related to https://github.com/gitpod-io/gitpod/pull/6221

## Additional context

https://www.gitpodstatus.com/incidents/7b5z20kf0c3j | 1.0 | [insiders] emit console.log on workspace boot if person is using insiders - ## Is your feature request related to a problem? Please describe

If a person is using insiders emit a 🚨🚨🚨🚨🚨🚨🚨🚨🚨🚨🚨 informing them that they are running insiders:

- if someone is looking at console log it is likely because Gitpod isn't working for them

- if someone is using insiders then the console.log should nudge people to switch to stable

- inform person if problem persists please raise a support case

Related to https://github.com/gitpod-io/gitpod/pull/6221

## Additional context

https://www.gitpodstatus.com/incidents/7b5z20kf0c3j | non_priority | emit console log on workspace boot if person is using insiders is your feature request related to a problem please describe if a person is using insiders emit a 🚨🚨🚨🚨🚨🚨🚨🚨🚨🚨🚨 informing them that they are running insiders if someone is looking at console log it is likely because gitpod isn t working for them if someone is using insiders then the console log should nudge people to switch to stable inform person if problem persists please raise a support case related to additional context | 0 |

83,847 | 3,643,871,346 | IssuesEvent | 2016-02-15 06:13:11 | kubernetes/kubernetes | https://api.github.com/repos/kubernetes/kubernetes | closed | In-container client config doesn't work for many common use cases because no namespace available | area/usability priority/P1 team/control-plane team/CSI | This is kind of last minute, but I'd like to consider this for 1.2 because we've had a recent rash of users who want to script this and are effectively blocked.

Today, by default service accounts inject one of their secrets into `/var/run/secrets/kubernetes.io/default`. That secret enables API access. We have support in the client config to read that value and provide a default client connection using that service account, which means `kubectl` inside of the container can effectively perform operations as the service account and is easily scriptable. The one gap today is that `kubectl` does not have access to a default namespace. Since the point of this injection is to enable easy use of the API by a pod, and most of our APIs that a pod would mutate are namespace scoped, and the service account is scoped to a namespace (although it may have other privileges), the fact that the secret comes with no identifying information as to the namespace means that in order to do "easy" integration with the API, the caller also has to inject the pod namespace via the downward API.

I would like to add a `namespace` key to each service account token secret, and change in-cluster-config to check for that file and use its value as a default namespace for kubectl and other kubectl like tools. The secret is a "default injected convention" and the convention is meant to enable easy API integration. Auto-injecting a downward API volume / env is more complex.

This enables scenarios like:

* Create a pod that has the command `/bin/sh -c "kubectl rolling-update $SOURCE $DESTINATION && curl -X POST https://myserver/deployment/successful"`

* Fetch endpoints for the current service from any pod that has kubectl: `kubectl get endpoints db -o jsonpath={.endpoints.subset.addresses}`

| 1.0 | In-container client config doesn't work for many common use cases because no namespace available - This is kind of last minute, but I'd like to consider this for 1.2 because we've had a recent rash of users who want to script this and are effectively blocked.

Today, by default service accounts inject one of their secrets into `/var/run/secrets/kubernetes.io/default`. That secret enables API access. We have support in the client config to read that value and provide a default client connection using that service account, which means `kubectl` inside of the container can effectively perform operations as the service account and is easily scriptable. The one gap today is that `kubectl` does not have access to a default namespace. Since the point of this injection is to enable easy use of the API by a pod, and most of our APIs that a pod would mutate are namespace scoped, and the service account is scoped to a namespace (although it may have other privileges), the fact that the secret comes with no identifying information as to the namespace means that in order to do "easy" integration with the API, the caller also has to inject the pod namespace via the downward API.

I would like to add a `namespace` key to each service account token secret, and change in-cluster-config to check for that file and use its value as a default namespace for kubectl and other kubectl like tools. The secret is a "default injected convention" and the convention is meant to enable easy API integration. Auto-injecting a downward API volume / env is more complex.

This enables scenarios like:

* Create a pod that has the command `/bin/sh -c "kubectl rolling-update $SOURCE $DESTINATION && curl -X POST https://myserver/deployment/successful"`

* Fetch endpoints for the current service from any pod that has kubectl: `kubectl get endpoints db -o jsonpath={.endpoints.subset.addresses}`

| priority | in container client config doesn t work for many common use cases because no namespace available this is kind of last minute but i d like to consider this for because we ve had a recent rash of users who want to script this and are effectively blocked today by default service accounts inject one of their secrets into var run secrets kubernetes io default that secret enables api access we have support in the client config to read that value and provide a default client connection using that service account which means kubectl inside of the container can effectively perform operations as the service account and is easily scriptable the one gap today is that kubectl does not have access to a default namespace since the point of this injection is to enable easy use of the api by a pod and most of our apis that a pod would mutate are namespace scoped and the service account is scoped to a namespace although it may have other privileges the fact that the secret comes with no identifying information as to the namespace means that in order to do easy integration with the api the caller also has to inject the pod namespace via the downward api i would like to add a namespace key to each service account token secret and change in cluster config to check for that file and use its value as a default namespace for kubectl and other kubectl like tools the secret is a default injected convention and the convention is meant to enable easy api integration auto injecting a downward api volume env is more complex this enables scenarios like create a pod that has the command bin sh c kubectl rolling upgrade source destination curl x post fetch endpoints for the current service from any pod that has kubectl kubectl get endpoints db o jsonpath endpoints subset addresses | 1 |

356,723 | 10,596,827,170 | IssuesEvent | 2019-10-09 22:19:14 | InfiniteFlightAirportEditing/Airports | https://api.github.com/repos/InfiniteFlightAirportEditing/Airports | closed | VAID-Devi Ahilya Bai Holkar International/Indore Airport-MADHYA PRADESH-INDIA | Being Redone Low Priority | # Airport Name

Devi Ahilya Bai Holkar International Airport

# Country?

India

# Improvements that need to be made?

from scratch

# Are you working on this airport?

yes

# Airport Priority? (IF Event, 10000ft+ Runway, World/US Capital, Low)

Low priority

| 1.0 | VAID-Devi Ahilya Bai Holkar International/Indore Airport-MADHYA PRADESH-INDIA - # Airport Name

Devi Ahilya Bai Holkar International Airport

# Country?

India

# Improvements that need to be made?

from scratch

# Are you working on this airport?

yes

# Airport Priority? (IF Event, 10000ft+ Runway, World/US Capital, Low)

Low priority

| priority | vaid devi ahilya bai holkar international indore airport madhya pradesh india airport name devi ahilya bai holkar international airport country india improvements that need to be made from scratch are you working on this airport yes airport priority if event runway world us capital low low priority | 1 |

462,902 | 13,256,006,041 | IssuesEvent | 2020-08-20 11:58:20 | webcompat/web-bugs | https://api.github.com/repos/webcompat/web-bugs | closed | www.transfermarkt.de - The country flag overlaps the site's logo | browser-fenix engine-gecko priority-normal severity-minor type-css-moz-document | <!-- @browser: Firefox Mobile 68.0 -->

<!-- @ua_header: Mozilla/5.0 (Android 9; Mobile; rv:68.0) Gecko/68.0 Firefox/68.0 -->

<!-- @reported_with: -->

<!-- @extra_labels: browser-fenix -->

**URL**: https://www.transfermarkt.de/

**Browser / Version**: Firefox Mobile 68.0

**Operating System**: Android

**Tested Another Browser**: Yes

**Problem type**: Design is broken

**Description**: language selection at the top is broken (logos interfering with each other)

**Steps to Reproduce**:

<details>

<summary>Browser Configuration</summary>

<ul>

<li>None</li>

</ul>

</details>

_From [webcompat.com](https://webcompat.com/) with ❤️_ | 1.0 | www.transfermarkt.de - The country flag overlaps the site's logo - <!-- @browser: Firefox Mobile 68.0 -->

<!-- @ua_header: Mozilla/5.0 (Android 9; Mobile; rv:68.0) Gecko/68.0 Firefox/68.0 -->

<!-- @reported_with: -->

<!-- @extra_labels: browser-fenix -->

**URL**: https://www.transfermarkt.de/

**Browser / Version**: Firefox Mobile 68.0

**Operating System**: Android

**Tested Another Browser**: Yes

**Problem type**: Design is broken

**Description**: language selection at the top is broken (logos interfering with each other)

**Steps to Reproduce**:

<details>

<summary>Browser Configuration</summary>

<ul>

<li>None</li>

</ul>

</details>

_From [webcompat.com](https://webcompat.com/) with ❤️_ | priority | the country flag overlaps the site s logo url browser version firefox mobile operating system android tested another browser yes problem type design is broken description language selection at the top is broken logos interfering with each other steps to reproduce browser configuration none from with ❤️ | 1 |

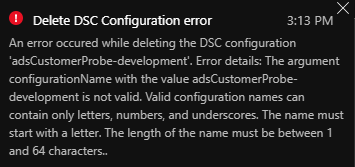

10,800 | 9,104,562,527 | IssuesEvent | 2019-02-20 18:30:11 | Azure/azure-powershell | https://api.github.com/repos/Azure/azure-powershell | closed | Azure Automation cmdlets allow illegal characters (hyphens) in DSC configuration name. GUI prevents deletion of resources with hyphens in name. | Automation Service Attention automation-dsc | ### Description

<!-- Please provide a description of the issue you are facing -->

### Script/Steps for Reproduction

<!-- Please provide the necessary script(s) that reproduce the issue -->

```powershell

Import-AzAutomationDscConfiguration @AAParams -sourcepath configuration-with-hypens.ps1

```

Then try to delete it from the GUI.

### Module Version

0.4.0

### Workaround

Use underscores, which are a supported character.

### Recommended Fix

Add the input validation to the Azure Automation API backend; don't rely on clients to do the input validation (the GUI does it, the Az cmdlets don't).
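
As a rough sketch of what backend-side validation could look like, the snippet below rejects names containing hyphens before anything is stored. The exact naming rule is an assumption made for illustration (a letter followed by letters, digits, or underscores); the issue only states that hyphens are illegal and underscores are supported, and `validate_configuration_name` is a hypothetical helper, not an Azure API.

```python
import re

# Assumed rule for illustration only: a letter followed by letters,
# digits, or underscores. The real service rule may differ.
_NAME_PATTERN = re.compile(r"[A-Za-z][A-Za-z0-9_]*")


def validate_configuration_name(name):
    """Return `name` unchanged if it is acceptable, else raise ValueError."""
    if not _NAME_PATTERN.fullmatch(name):
        raise ValueError(
            f"invalid configuration name {name!r}: "
            "use letters, digits, and underscores only"
        )
    return name
```

Running the check on the server means every client (GUI, cmdlets, raw REST calls) gets the same answer, so a resource that cannot be deleted later is never created in the first place.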

| 1.0 | Azure Automation cmdlets allow illegal characters (hyphens) in DSC configuration name. GUI prevents deletion of resources with hyphens in name. - ### Description

<!-- Please provide a description of the issue you are facing -->

### Script/Steps for Reproduction

<!-- Please provide the necessary script(s) that reproduce the issue -->

```powershell

Import-AzAutomationDscConfiguration @AAParams -sourcepath configuration-with-hypens.ps1

```

Then try to delete it from the GUI.

### Module Version

0.4.0

### Workaround

Use underscores, which are a supported character.

### Recommended Fix

Add the input validation to the Azure Automation API backend; don't rely on clients to do the input validation (the GUI does it, the Az cmdlets don't).

| non_priority | azure automation cmdlets allows illegal characters hypens in dsc configuration name gui prevents deletion of resources with hypens in name description script steps for reproduction powershell import azautomationdscconfiguration aaparams sourcepath configuration with hypens then try to delete it from the gui module version workaround use underscores which are a supported character recommended fix add the input validation to the azure automation api backend don t rely on clients to do the input validation gui does it az cmdlets don t | 0 |

284,473 | 21,427,654,866 | IssuesEvent | 2022-04-23 00:01:48 | BCDevOps/developer-experience | https://api.github.com/repos/BCDevOps/developer-experience | opened | Update CrunchyDB example to fit in the default namespace quota | documentation team/DXC | **Describe the issue**

The default namespace has a very small quota, and the default resources don't really fit in it. Add resource configs for all the containers to the example so that it fits better in an HA config.

**Additional context**

https://github.com/bcgov/how-to-workshops/blob/master/crunchydb/high-availablility/PostgresCluster.yaml

```

oc explain postgrescluster.spec.monitoring.pgmonitor.exporter.resources

oc explain postgrescluster.spec.proxy.pgBouncer.resources

oc explain postgrescluster.spec.backups.pgbackrest.repoHost.resources

oc explain postgrescluster.spec.instances.resources

```

**Definition of done**

Example HA PostgresCluster can be run in the default quota namespace

| 1.0 | Update CrunchyDB example to fit in the default namespace quota - **Describe the issue**

The default namespace has a very small quota, and the default resources don't really fit in it. Add resource configs for all the containers to the example so that it fits better in an HA config.

**Additional context**

https://github.com/bcgov/how-to-workshops/blob/master/crunchydb/high-availablility/PostgresCluster.yaml

```

oc explain postgrescluster.spec.monitoring.pgmonitor.exporter.resources

oc explain postgrescluster.spec.proxy.pgBouncer.resources

oc explain postgrescluster.spec.backups.pgbackrest.repoHost.resources

oc explain postgrescluster.spec.instances.resources

```

**Definition of done**

Example HA PostgresCluster can be run in the default quota namespace

| non_priority | update crunchydb example to fit in the default namespace quota describe the issue the default namespace has a very small quota and the defaults resources don t really fit in there add to the example resource configs for all the containers so that it fits better in an ha config additional context oc explain postgrescluster spec monitoring pgmonitor exporter resources oc explain postgrescluster spec proxy pgbouncer resources oc explain postgrescluster spec backups pgbackrest repohost resources oc explain postgrescluster spec instances resources definition of done example ha postgrescluster can be run in the default quota namespace | 0 |

374,249 | 26,108,282,205 | IssuesEvent | 2022-12-27 15:57:55 | TrixiEther/SmileBot | https://api.github.com/repos/TrixiEther/SmileBot | closed | Command processing mechanism | documentation enhancement | **Develop a bot management mechanism on the server**

Command template:

![bot control word] [main commands] [flags]

Implement (for now):

1. Bot initialization on the server

2. Removing server-related information

3. Bot reinitialization on the server

4. Emoji usage statistics (type, used in message, used as reaction, summary)
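
The command template above could be parsed along these lines. This is a minimal sketch under assumptions: the control word `smile`, the example command names, and the convention that flags start with `-` are all hypothetical, since the issue does not specify them.

```python
from dataclasses import dataclass, field


@dataclass
class BotCommand:
    command: str                      # main command, e.g. "init" or "stats"
    flags: list = field(default_factory=list)


def parse_command(message, control_word="smile"):
    """Parse `!<control word> <main command> [flags]`.

    Returns None when the message is not addressed to the bot or has no
    main command. The control word and the dash prefix for flags are
    assumptions made for this sketch.
    """
    tokens = message.split()
    if not tokens or tokens[0] != "!" + control_word:
        return None
    rest = tokens[1:]
    if not rest or rest[0].startswith("-"):
        return None
    flags = [t for t in rest[1:] if t.startswith("-")]
    return BotCommand(command=rest[0], flags=flags)
```

For example, `parse_command("!smile stats --reactions")` would yield a `BotCommand` with command `stats` and one flag, which a dispatcher could map to the statistics feature listed above.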

| 1.0 | Command processing mechanism - **Develop a bot management mechanism on the server**

Command template:

![bot control word] [main commands] [flags]

Implement (for now):

1. Bot initialization on the server

2. Removing server-related information

3. Bot reinitialization on the server

4. Emoji usage statistics (type, used in message, used as reaction, summary)

| non_priority | command processing mechanism develop a bot management mechanism on the server comand template implement for now bot initialization on the server removing server related information bot reinitialization on the server emoji usage statistics type used in message used as reaction summary | 0 |

118,457 | 9,991,276,978 | IssuesEvent | 2019-07-11 10:42:25 | mozilla-mobile/firefox-ios | https://api.github.com/repos/mozilla-mobile/firefox-ios | opened | [Sync Integration] Tear down method is failing when removing the fxaccount | Test Automation :robot: | The tests are passing but the final status reported is red due to this FxA [issue](https://github.com/mozilla/fxa/issues/1740)

If it is not solved soon, we will disable that last part so that the status after tests is correct. | 1.0 | [Sync Integration] Tear down method is failing when removing the fxaccount - The tests are passing but the final status reported is red due to this FxA [issue](https://github.com/mozilla/fxa/issues/1740)

If it is not solved soon, we will disable that last part so that the status after tests is correct. | non_priority | tear down method is failing when removing the fxaccount the tests are passing but the final status reported is red due to this fxa if it is not solved soon we will disable that last part so that the status after tests is correct | 0 |

208,084 | 7,135,642,845 | IssuesEvent | 2018-01-23 02:11:53 | cssconf/2018.cssconf.eu | https://api.github.com/repos/cssconf/2018.cssconf.eu | closed | Frontpage: Add "Arena, Eichenstraße 4" | priority: high | Below the city, this should have an extra line with the venue, as people keep asking about it.

The line should also be a link to a marker on Google Maps.

I suggest to use the same font-size and green color.

| 1.0 | Frontpage: Add "Arena, Eichenstraße 4" - Below the city, this should have an extra line with the venue, as people keep asking about it.

The line should also be a link to a marker on Google Maps.

I suggest to use the same font-size and green color.

| priority | frontpage add arena eichenstraße below the city this should have and extra line with the venue as people keep asking about it the line should also be a link to a marker on googlemaps i suggest to use the same font size and green color | 1 |

649,963 | 21,330,775,027 | IssuesEvent | 2022-04-18 08:03:30 | ZapSquared/Quaver | https://api.github.com/repos/ZapSquared/Quaver | closed | Every time Quaver gets disconnected or gets an error from Lavalink, it treats it as a crash. | type:bug released on @next affects:functionality priority:p0 | **Describe the bug**

What isn't working as intended, and what does it affect?

https://github.com/ZapSquared/Quaver/blob/21b864d268e172f02bf7bdd4bb9522ced5807e7d/events/music/error.js#L1-L11

Lavalink disconnection and error listeners and how it shuts down.

Affects: User experience, functionality

**Affected versions**

What versions are affected by this bug? (e.g. >=3.0.1, 2.5.1-2.6.3, >=1.2.0)

3.4.0-next.10 and so on.

**Steps to reproduce**

Steps to reproduce the behavior. (e.g. click on a button, enter a value, etc. and see error)

1. Run Quaver

2. Run Lavalink

3. Play a track on Quaver on stage or voice channel

4. While a track is running, stop your Lavalink instance.

**Expected behavior**

What is expected to happen?

Quaver should not treat Lavalink disconnections or errors as crashes, since they originate outside Quaver.

**Actual behavior**

What actually happens? Attach or add errors or screenshots here as well.

When Lavalink encounters an error, Quaver's listener `bot.music.on('error', err` is triggered.

It then shuts down the process with an eventType of nothing.

`shuttingDown` does not filter that case, so it treats it as a crash.

https://github.com/ZapSquared/Quaver/blob/21b864d268e172f02bf7bdd4bb9522ced5807e7d/main.js#L120 | 1.0 | Every time Quaver gets disconnected or gets an error from Lavalink, it treats it as a crash. - **Describe the bug**

What isn't working as intended, and what does it affect?

https://github.com/ZapSquared/Quaver/blob/21b864d268e172f02bf7bdd4bb9522ced5807e7d/events/music/error.js#L1-L11

Lavalink disconnection and error listeners and how it shuts down.

Affects: User experience, functionality

**Affected versions**

What versions are affected by this bug? (e.g. >=3.0.1, 2.5.1-2.6.3, >=1.2.0)

3.4.0-next.10 and so on.

**Steps to reproduce**

Steps to reproduce the behavior. (e.g. click on a button, enter a value, etc. and see error)

1. Run Quaver

2. Run Lavalink

3. Play a track on Quaver on stage or voice channel

4. While a track is running, stop your Lavalink instance.

**Expected behavior**

What is expected to happen?

Quaver should not treat Lavalink disconnections or errors as crashes, since they originate outside Quaver.

**Actual behavior**

What actually happens? Attach or add errors or screenshots here as well.

When Lavalink encounters an error, Quaver's listener `bot.music.on('error', err` is triggered.

It then shuts down the process with an eventType of nothing.

`shuttingDown` does not filter that case, so it treats it as a crash.

https://github.com/ZapSquared/Quaver/blob/21b864d268e172f02bf7bdd4bb9522ced5807e7d/main.js#L120 | priority | everytime quaver gets disconnected or gets an error from lavalink treats it as a crash describe the bug what isn t working as intended and what does it affect lavalink disconnection and error listeners and how it shuts down affects user experience functionality affected versions what versions are affected by this bug e g next and so on steps to reproduce steps to reproduce the behavior e g click on a button enter a value etc and see error run quaver run lavalink play a track on quaver on stage or voice channel while a track is running stop your lavalink instance expected behavior what is expected to happen not making quaver treat lavalink disconnection or errors as crashes since it s outside quaver actual behavior what actually happens attach or add errors or screenshots here as well as lavalink encounters an error quaver has a listener bot music on error err it will shut down the process with an eventtype of nothing shuttingdown does not filter that so treats it as a crash | 1 |

18,072 | 10,879,151,278 | IssuesEvent | 2019-11-16 22:58:50 | Azure/azure-sdk-for-net | https://api.github.com/repos/Azure/azure-sdk-for-net | closed | Create Options type to hold showStats and modelVersion | Client Cognitive Services TextAnalytics | Instead of taking both of these as parameters to the "kitchen sink" methods, we should have an options type. | 1.0 | Create Options type to hold showStats and modelVersion - Instead of taking both of these as parameters to the "kitchen sink" methods, we should have an options type. | non_priority | create options type to hold showstats and modelversion instead of taking both of these as parameters to the kitchen sink methods we should have an options type | 0 |

396,235 | 27,108,398,399 | IssuesEvent | 2023-02-15 13:46:28 | cilium/cilium | https://api.github.com/repos/cilium/cilium | closed | Document that `install-egress-gateway-routes` is specific to EKS | area/documentation good-first-issue feature/egress-gateway | The `install-egress-gateway-routes` flag, aka the `egressGateway.installRoutes` Helm value, is needed on EKS's ENI mode to steer traffic through the proper interfaces.

It is [documented in the egress gateway guide](https://docs.cilium.io/en/stable/gettingstarted/egress-gateway/#compatibility-with-cloud-environments), but we never mention that it's only meant for ENI mode. We should fix that as it can break things on other setups (e.g., known to cause issues on GKE). | 1.0 | Document that `install-egress-gateway-routes` is specific to EKS - The `install-egress-gateway-routes` flag, aka the `egressGateway.installRoutes` Helm value, is needed on EKS's ENI mode to steer traffic through the proper interfaces.

It is [documented in the egress gateway guide](https://docs.cilium.io/en/stable/gettingstarted/egress-gateway/#compatibility-with-cloud-environments), but we never mention that it's only meant for ENI mode. We should fix that as it can break things on other setups (e.g., known to cause issues on GKE). | non_priority | document that install egress gateway routes is specific to eks the install egress gateway routes flag aka the egressgateway installroutes helm value is needed on eks s eni mode to steer traffic through the proper interfaces it is but we never mention that it s only meant for eni mode we should fix that as it can break things on other setups e g known to cause issues on gke | 0 |

162,075 | 6,147,325,582 | IssuesEvent | 2017-06-27 15:30:32 | angular/angular-cli | https://api.github.com/repos/angular/angular-cli | closed | ERROR in Maximum call stack size exceeded : Feature module circular dependency | freq2: medium priority: 2 (required) type: bug | <!--

IF YOU DON'T FILL OUT THE FOLLOWING INFORMATION YOUR ISSUE MIGHT BE CLOSED WITHOUT INVESTIGATING

-->

### Bug Report or Feature Request (mark with an `x`)

```

- [X] bug report -> please search issues before submitting

- [ ] feature request

```

### Versions.

<!--

Output from: `ng --version`.

If nothing, output from: `node --version` and `npm --version`.

Windows (7/8/10). Linux (incl. distribution). macOS (El Capitan? Sierra?)

-->

> @angular/cli: 1.0.0-rc.2

node: 6.10.0

os: linux x64

@angular/common: 2.4.10

@angular/compiler: 2.4.10

@angular/core: 2.4.10

@angular/forms: 2.4.10

@angular/http: 2.4.10

@angular/platform-browser: 2.4.10

@angular/platform-browser-dynamic: 2.4.10

@angular/router: 3.4.10

@angular/cli: 1.0.0-rc.2

@angular/compiler-cli: 2.4.10

### Repro steps.

<!--

Simple steps to reproduce this bug.

Please include: commands run, packages added, related code changes.

A link to a sample repo would help too.

-->

**STEPS TO REPRODUCE**

See git repo

https://github.com/vaibhavbparikh/circular-routes

### The log given by the failure.

<!-- Normally this include a stack trace and some more information. -->

> ** NG Live Development Server is running on http://localhost:4200 **

Hash: f669143e24ec08ff9586

Time: 23223ms

chunk {0} polyfills.bundle.js, polyfills.bundle.js.map (polyfills) 232 kB {4} [initial] [rendered]

chunk {1} main.bundle.js, main.bundle.js.map (main) 332 kB {3} [initial] [rendered]

chunk {2} styles.bundle.js, styles.bundle.js.map (styles) 304 kB {4} [initial] [rendered]

chunk {3} vendor.bundle.js, vendor.bundle.js.map (vendor) 5.64 MB [initial] [rendered]

chunk {4} inline.bundle.js, inline.bundle.js.map (inline) 0 bytes [entry] [rendered]

ERROR in Maximum call stack size exceeded

webpack: Failed to compile.

However, when I change any file and save it again, angular-cli recompiles and the app then runs fine.

### Desired functionality.

<!--

What would like to see implemented?

What is the usecase?

-->

This behaviour should not create a cyclic dependency; there should be a mechanism to detect the cycle and break it.

Either it should not compile at all, or it should not throw an error on the first compile.

** USE CASE **

> I have 3 feature modules in the application. Each feature module contains 3 or more sub-feature modules with their own components and, in particular, their own **routes** with a router module.

> Now consider the scenario below.

> I have the following modules.

>

> - Feature 1

> - Feature1Sub Feature 1

> - Feature1Sub Feature 2

> - Feature 2

> - Feature2Sub Feature 1

> - Feature2Sub Feature 2

> - Feature 3

> - Feature3Sub Feature 1

> - Feature3Sub Feature 2

>

> Now consider the flow below.

> I am loading Feature 1 -> Feature1Sub Feature 1. I want to load Feature2Sub Feature 1 directly from Feature1Sub Feature 1, without loading the whole Feature 2 module. I also need to maintain the navigation flow with the back button.

> So I will need to write routes and load that module with the loadChildren feature in the module of Feature1Sub Feature 1.

> In the same way, I want to load Feature1Sub Feature 1 directly from Feature2Sub Feature 1 without loading the whole Feature 2 module. So again I will need to write routes in the Feature1Sub Feature 1 module.

>

In a large, complex application, there may be a need to create flows where two or more modules need each other through routing.

### Mention any other details that might be useful.

<!-- Please include a link to the repo if this is related to an OSS project. -->

| 1.0 | ERROR in Maximum call stack size exceeded : Feature module circular dependency - <!--

IF YOU DON'T FILL OUT THE FOLLOWING INFORMATION YOUR ISSUE MIGHT BE CLOSED WITHOUT INVESTIGATING

-->

### Bug Report or Feature Request (mark with an `x`)

```

- [X] bug report -> please search issues before submitting

- [ ] feature request

```

### Versions.

<!--

Output from: `ng --version`.

If nothing, output from: `node --version` and `npm --version`.

Windows (7/8/10). Linux (incl. distribution). macOS (El Capitan? Sierra?)

-->

> @angular/cli: 1.0.0-rc.2

node: 6.10.0

os: linux x64

@angular/common: 2.4.10

@angular/compiler: 2.4.10

@angular/core: 2.4.10

@angular/forms: 2.4.10

@angular/http: 2.4.10

@angular/platform-browser: 2.4.10

@angular/platform-browser-dynamic: 2.4.10

@angular/router: 3.4.10

@angular/cli: 1.0.0-rc.2

@angular/compiler-cli: 2.4.10

### Repro steps.

<!--

Simple steps to reproduce this bug.

Please include: commands run, packages added, related code changes.

A link to a sample repo would help too.

-->

**STEPS TO REPRODUCE**

See git repo

https://github.com/vaibhavbparikh/circular-routes

### The log given by the failure.

<!-- Normally this include a stack trace and some more information. -->

> ** NG Live Development Server is running on http://localhost:4200 **

Hash: f669143e24ec08ff9586

Time: 23223ms

chunk {0} polyfills.bundle.js, polyfills.bundle.js.map (polyfills) 232 kB {4} [initial] [rendered]

chunk {1} main.bundle.js, main.bundle.js.map (main) 332 kB {3} [initial] [rendered]

chunk {2} styles.bundle.js, styles.bundle.js.map (styles) 304 kB {4} [initial] [rendered]

chunk {3} vendor.bundle.js, vendor.bundle.js.map (vendor) 5.64 MB [initial] [rendered]

chunk {4} inline.bundle.js, inline.bundle.js.map (inline) 0 bytes [entry] [rendered]

ERROR in Maximum call stack size exceeded

webpack: Failed to compile.

However, when I change any file and save it again, angular-cli recompiles and the app then runs fine.

### Desired functionality.

<!--

What would like to see implemented?

What is the usecase?

-->

This behaviour should not create a cyclic dependency; there should be a mechanism to detect the cycle and break it.

Either it should not compile at all, or it should not throw an error on the first compile.

** USE CASE **

> I have 3 feature modules in the application. Each feature module contains 3 or more sub-feature modules with their own components and, in particular, their own **routes** with a router module.

> Now consider the scenario below.

> I have the following modules.

>

> - Feature 1

> - Feature1Sub Feature 1

> - Feature1Sub Feature 2

> - Feature 2

> - Feature2Sub Feature 1

> - Feature2Sub Feature 2

> - Feature 3

> - Feature3Sub Feature 1

> - Feature3Sub Feature 2

>

> Now consider the flow below.

> I am loading Feature 1 -> Feature1Sub Feature 1. I want to load Feature2Sub Feature 1 directly from Feature1Sub Feature 1, without loading the whole Feature 2 module. I also need to maintain the navigation flow with the back button.

> So I will need to write routes and load that module with the loadChildren feature in the module of Feature1Sub Feature 1.

> In the same way, I want to load Feature1Sub Feature 1 directly from Feature2Sub Feature 1 without loading the whole Feature 2 module. So again I will need to write routes in the Feature1Sub Feature 1 module.

>

In a large, complex application, there may be a need to create flows where two or more modules need each other through routing.

### Mention any other details that might be useful.

<!-- Please include a link to the repo if this is related to an OSS project. -->

| priority | error in maximum call stack size exceeded feature module circular dependency if you don t fill out the following information your issue might be closed without investigating bug report or feature request mark with an x bug report please search issues before submitting feature request versions output from ng version if nothing output from node version and npm version windows linux incl distribution macos el capitan sierra angular cli rc node os linux angular common angular compiler angular core angular forms angular http angular platform browser angular platform browser dynamic angular router angular cli rc angular compiler cli repro steps simple steps to reproduce this bug please include commands run packages added related code changes a link to a sample repo would help too steps to reproduce see git repo the log given by the failure ng live development server is running on hash time chunk polyfills bundle js polyfills bundle js map polyfills kb chunk main bundle js main bundle js map main kb chunk styles bundle js styles bundle js map styles kb chunk vendor bundle js vendor bundle js map vendor mb chunk inline bundle js inline bundle js map inline bytes error in maximum call stack size exceeded webpack failed to compile however when i do any changes in file and save it again angular cli compiles it again and it runs fine then desired functionality what would like to see implemented what is the usecase this behaviour shall not create cyclic dependency there should be mechanism to detect this and break either it shall not compile at all or it shall not throw error at first compile use case i have feature modules in the application each feature modules contains or more sub feature modules with their own components and specially routes with router module now consider below scenario i have modules as below feature feature feature feature feature feature feature feature feature now consider below flow i am loading feature feature i want to load feature 
directly from feature without loading whole feature module also i need to maintain navigation flow with back button so i will need to write routes and load that module with loadchildren feature in module of feature same way i want to load feature directly from without loading whole feature module so again i will need to write routes in feature module as a complex and large application there might be need to create flow where two or more modules needs each other with routing mention any other details that might be useful | 1 |

8,944 | 4,364,784,070 | IssuesEvent | 2016-08-03 08:23:11 | CartoDB/cartodb | https://api.github.com/repos/CartoDB/cartodb | closed | Implement endpoint to store the builder onboarding seen status | backend Builder | For the new builder onboarding (see https://github.com/CartoDB/cartodb/issues/8887) we need a new endpoint to store the seen status of the screen. This way, if the user checks the `Never show me this message` option, we'll set a flag to true in the user model, and we won't disturb them again. | 1.0 | Implement endpoint to store the builder onboarding seen status - For the new builder onboarding (see https://github.com/CartoDB/cartodb/issues/8887) we need a new endpoint to store the seen status of the screen. This way, if the user checks the `Never show me this message` option, we'll set a flag to true in the user model, and we won't disturb them again. | non_priority | implement endpoint to store the builder onboarding seen status for the new builder onboarding see we need a new endpoint to store the seen status of the screen this way if the user checks the never show me this message option we ll set certain flag to true in the user model and we won t disturb he or her again | 0 |

15,600 | 3,476,591,721 | IssuesEvent | 2015-12-27 02:56:38 | PolarisSS13/Polaris | https://api.github.com/repos/PolarisSS13/Polaris | closed | Z8 Carbine Grenade Launcher | BS12 Bug Needs Retesting | Doesn't work, the grenade option won't shoot the grenade, and acts as the semi-automatic option. | 1.0 | Z8 Carbine Grenade Launcher - Doesn't work, the grenade option won't shoot the grenade, and acts as the semi-automatic option. | non_priority | carbine grenade launcher doesn t work the grenade option won t shoot the grenade and acts as the semi automatic option | 0 |

5,897 | 2,580,630,259 | IssuesEvent | 2015-02-13 18:57:51 | GoogleCloudPlatform/kubernetes | https://api.github.com/repos/GoogleCloudPlatform/kubernetes | closed | Return 201 when objects are created | api/cluster area/usability priority/P2 status/help-wanted team/UX | Currently we return 200 when a new object is created, spec:

> If a resource has been created on the origin server, the response SHOULD be 201 (Created) and contain an entity which describes the status of the request and refers to the new resource

Extracted from #1782 | 1.0 | Return 201 when objects are created - Currently we return 200 when a new object is created, spec:

> If a resource has been created on the origin server, the response SHOULD be 201 (Created) and contain an entity which describes the status of the request and refers to the new resource

Extracted from #1782 | priority | return when objects are created currently we return when a new object is created spec if a resource has been created on the origin server the response should be created and contain an entity which describes the status of the request and refers to the new resource extracted from | 1 |
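
The behaviour requested in the issue above (201 rather than 200 when an object is created, with a reference to the new resource) could be sketched as follows. This is a framework-neutral illustration, not the API server's code: the function name, the tuple shape, and the use of a `Location` header to refer to the new resource are assumptions.

```python
from http import HTTPStatus


def write_response(resource_path, body, created):
    """Return (status, headers, body) for a write request.

    Hypothetical helper: answers 201 Created with a Location header
    pointing at the new resource when `created` is true, and 200 OK when
    an existing object was updated.
    """
    if created:
        return HTTPStatus.CREATED, {"Location": resource_path}, body
    return HTTPStatus.OK, {}, body
```

Clients that currently check for 200 after a create would need to accept 201 as well, so a change like this is usually announced as an API behaviour change.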

257,241 | 27,561,820,778 | IssuesEvent | 2023-03-07 22:48:27 | samqws-marketing/coursera_naptime | https://api.github.com/repos/samqws-marketing/coursera_naptime | closed | CVE-2016-10540 (High) detected in npm-2.11.2.jar - autoclosed | Mend: dependency security vulnerability | ## CVE-2016-10540 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>npm-2.11.2.jar</b></p></summary>

<p>WebJar for npm</p>

<p>Library home page: <a href="http://webjars.org">http://webjars.org</a></p>

<p>Path to vulnerable library: /home/wss-scanner/.ivy2/cache/org.webjars/npm/jars/npm-2.11.2.jar</p>

<p>

Dependency Hierarchy:

- sbt-plugin-2.4.4.jar (Root Library)

- sbt-js-engine-1.1.3.jar

- npm_2.10-1.1.1.jar

- :x: **npm-2.11.2.jar** (Vulnerable Library)

<p>Found in HEAD commit: <a href="https://github.com/samqws-marketing/coursera_naptime/commit/95750513b615ecf0ea9b7e14fb5f71e577d01a1f">95750513b615ecf0ea9b7e14fb5f71e577d01a1f</a></p>

<p>Found in base branch: <b>master</b></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

Minimatch is a minimal matching utility that works by converting glob expressions into JavaScript `RegExp` objects. The primary function, `minimatch(path, pattern)` in Minimatch 3.0.1 and earlier is vulnerable to ReDoS in the `pattern` parameter.

<p>Publish Date: 2018-05-31

<p>URL: <a href=https://www.mend.io/vulnerability-database/CVE-2016-10540>CVE-2016-10540</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>7.5</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: None

- Integrity Impact: None

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://nvd.nist.gov/vuln/detail/CVE-2016-10540">https://nvd.nist.gov/vuln/detail/CVE-2016-10540</a></p>

<p>Release Date: 2018-05-31</p>

<p>Fix Resolution: Pvc.Browserify - 0.0.1.1;JetBrains.Rider.Frontend4 - 203.0.20201014.202610-eap04;JetBrains.Rider.Frontend5 - 203.0.20201006.200056-eap03,213.0.20211008.154703-eap03;Bridge.AWS - 0.3.30.36;tslint - 3.11.0;MIDIator.WebClient - 1.0.105;BumperLane.Public.Service.Contracts - 0.23.35.214-prerelease;ng-grid - 2.0.4;minimatch - 3.0.2;Virteom.Tenant.Mobile.Bluetooth - 0.21.29.159-prerelease;ShowingVault.DotNet.Sdk - 0.13.41.190-prerelease;Triarc.Web.Build - 1.3.0;Virteom.Tenant.Mobile.Framework.UWP - 0.20.41.103-prerelease;Virteom.Tenant.Mobile.Framework.iOS - 0.20.41.103-prerelease;BumperLane.Public.Api.V2.ClientModule - 0.23.35.214-prerelease;Virteom.Tenant.Mobile.Bluetooth.iOS - 0.20.41.103-prerelease;Virteom.Public.Utilities - 0.23.37.212-prerelease;Mustache.Reports.Data - 1.2.2;Virteom.Tenant.Mobile.Framework - 0.21.29.159-prerelease;Virteom.Tenant.Mobile.Bluetooth.Android - 0.20.41.103-prerelease;z4a-dotnet-scaffold - 1.0.0.2;Raml.Parser - 2.0.0,1.0.2;AntData.ORM - 1.2.9;ApiExplorer.HelpPage - 1.0.0-alpha3;SitecoreMaster.TrueDynamicPlaceholders - 1.0.3;Virteom.Tenant.Mobile.Framework.Android - 0.20.41.103-prerelease;BumperLane.Public.Api.Client - 0.23.35.214-prerelease</p>

</p>

</details>

<p></p>

| True | CVE-2016-10540 (High) detected in npm-2.11.2.jar - autoclosed - ## CVE-2016-10540 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>npm-2.11.2.jar</b></p></summary>

<p>WebJar for npm</p>

<p>Library home page: <a href="http://webjars.org">http://webjars.org</a></p>

<p>Path to vulnerable library: /home/wss-scanner/.ivy2/cache/org.webjars/npm/jars/npm-2.11.2.jar</p>

<p>

Dependency Hierarchy:

- sbt-plugin-2.4.4.jar (Root Library)

- sbt-js-engine-1.1.3.jar

- npm_2.10-1.1.1.jar

- :x: **npm-2.11.2.jar** (Vulnerable Library)

<p>Found in HEAD commit: <a href="https://github.com/samqws-marketing/coursera_naptime/commit/95750513b615ecf0ea9b7e14fb5f71e577d01a1f">95750513b615ecf0ea9b7e14fb5f71e577d01a1f</a></p>

<p>Found in base branch: <b>master</b></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

Minimatch is a minimal matching utility that works by converting glob expressions into JavaScript `RegExp` objects. The primary function, `minimatch(path, pattern)` in Minimatch 3.0.1 and earlier is vulnerable to ReDoS in the `pattern` parameter.

<p>Publish Date: 2018-05-31

<p>URL: <a href=https://www.mend.io/vulnerability-database/CVE-2016-10540>CVE-2016-10540</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>7.5</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: None

- Integrity Impact: None

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://nvd.nist.gov/vuln/detail/CVE-2016-10540">https://nvd.nist.gov/vuln/detail/CVE-2016-10540</a></p>

<p>Release Date: 2018-05-31</p>

<p>Fix Resolution: Pvc.Browserify - 0.0.1.1;JetBrains.Rider.Frontend4 - 203.0.20201014.202610-eap04;JetBrains.Rider.Frontend5 - 203.0.20201006.200056-eap03,213.0.20211008.154703-eap03;Bridge.AWS - 0.3.30.36;tslint - 3.11.0;MIDIator.WebClient - 1.0.105;BumperLane.Public.Service.Contracts - 0.23.35.214-prerelease;ng-grid - 2.0.4;minimatch - 3.0.2;Virteom.Tenant.Mobile.Bluetooth - 0.21.29.159-prerelease;ShowingVault.DotNet.Sdk - 0.13.41.190-prerelease;Triarc.Web.Build - 1.3.0;Virteom.Tenant.Mobile.Framework.UWP - 0.20.41.103-prerelease;Virteom.Tenant.Mobile.Framework.iOS - 0.20.41.103-prerelease;BumperLane.Public.Api.V2.ClientModule - 0.23.35.214-prerelease;Virteom.Tenant.Mobile.Bluetooth.iOS - 0.20.41.103-prerelease;Virteom.Public.Utilities - 0.23.37.212-prerelease;Mustache.Reports.Data - 1.2.2;Virteom.Tenant.Mobile.Framework - 0.21.29.159-prerelease;Virteom.Tenant.Mobile.Bluetooth.Android - 0.20.41.103-prerelease;z4a-dotnet-scaffold - 1.0.0.2;Raml.Parser - 2.0.0,1.0.2;AntData.ORM - 1.2.9;ApiExplorer.HelpPage - 1.0.0-alpha3;SitecoreMaster.TrueDynamicPlaceholders - 1.0.3;Virteom.Tenant.Mobile.Framework.Android - 0.20.41.103-prerelease;BumperLane.Public.Api.Client - 0.23.35.214-prerelease</p>

</p>

</details>

<p></p>

| non_priority | cve high detected in npm jar autoclosed cve high severity vulnerability vulnerable library npm jar webjar for npm library home page a href path to vulnerable library home wss scanner cache org webjars npm jars npm jar dependency hierarchy sbt plugin jar root library sbt js engine jar npm jar x npm jar vulnerable library found in head commit a href found in base branch master vulnerability details minimatch is a minimal matching utility that works by converting glob expressions into javascript regexp objects the primary function minimatch path pattern in minimatch and earlier is vulnerable to redos in the pattern parameter publish date url a href cvss score details base score metrics exploitability metrics attack vector network attack complexity low privileges required none user interaction none scope unchanged impact metrics confidentiality impact none integrity impact none availability impact high for more information on scores click a href suggested fix type upgrade version origin a href release date fix resolution pvc browserify jetbrains rider jetbrains rider bridge aws tslint midiator webclient bumperlane public service contracts prerelease ng grid minimatch virteom tenant mobile bluetooth prerelease showingvault dotnet sdk prerelease triarc web build virteom tenant mobile framework uwp prerelease virteom tenant mobile framework ios prerelease bumperlane public api clientmodule prerelease virteom tenant mobile bluetooth ios prerelease virteom public utilities prerelease mustache reports data virteom tenant mobile framework prerelease virteom tenant mobile bluetooth android prerelease dotnet scaffold raml parser antdata orm apiexplorer helppage sitecoremaster truedynamicplaceholders virteom tenant mobile framework android prerelease bumperlane public api client prerelease | 0 |

109,943 | 23,846,434,993 | IssuesEvent | 2022-09-06 14:21:22 | arturo-lang/arturo | https://api.github.com/repos/arturo-lang/arturo | closed | [VM/bytecode] replace zippy with already existing functions? | enhancement vm todo performance bytecode | [VM/bytecode] replace zippy with already existing functions?

we could somehow use some of the existing miniz functions, to avoid the extra dependency

https://github.com/arturo-lang/arturo/blob/f194d4353079ce45693f443e9c9ec43f62c2fc74/src/vm/bytecode.nim#L16

```text

import streams

# TODO(VM/bytecode) replace zippy with already existing functions?

# we could somehow use some of the existing miniz functions, to avoid the extra dependency

# labels: vm, bytecode, enhancement, performance

import extras/zippy

import os

# when not defined(NOUNZIP):

# import extras/miniz

import helpers/bytes as bytesHelper

```

165f61c38ddee10401677fe8ce026b9c7c93c435 | 1.0 | [VM/bytecode] replace zippy with already existing functions? - [VM/bytecode] replace zippy with already existing functions?

we could somehow use some of the existing miniz functions, to avoid the extra dependency

https://github.com/arturo-lang/arturo/blob/f194d4353079ce45693f443e9c9ec43f62c2fc74/src/vm/bytecode.nim#L16

```text

import streams

# TODO(VM/bytecode) replace zippy with already existing functions?

# we could somehow use some of the existing miniz functions, to avoid the extra dependency

# labels: vm, bytecode, enhancement, performance

import extras/zippy

import os

# when not defined(NOUNZIP):

# import extras/miniz

import helpers/bytes as bytesHelper

```

165f61c38ddee10401677fe8ce026b9c7c93c435 | non_priority | replace zippy with already existing functions replace zippy with already existing functions we could somehow use some of the existing miniz functions to avoid the extra dependency text import streams todo vm bytecode replace zippy with already existing functions we could somehow use some of the existing miniz functions to avoid the extra dependency labels vm bytecode enhancement performance import extras zippy import os when not defined nounzip import extras miniz import helpers bytes as byteshelper | 0 |

688,897 | 23,599,309,968 | IssuesEvent | 2022-08-23 23:06:07 | RobotLocomotion/drake | https://api.github.com/repos/RobotLocomotion/drake | opened | Create CalcSpatialInertiaAboutPoint() to mimic related functions in multibody_plant.h and multibody_tree.h. | type: feature request priority: medium component: multibody plant type: usability release notes: feature | The implementation of a helper function ComputeCompositeInertia() arose during work in an Anzu [PR](https://reviewable.shared-services.aws.tri.global/reviews/robotics/anzu/9323#-).

The documentation and signature in that Anzu PR is:

/// Returns `M_CFo_F` spatial inertia of `C` (composition of `bodies`)