Unnamed: 0 (int64, 0 to 832k) | id (float64, 2.49B to 32.1B) | type (stringclasses, 1 value) | created_at (stringlengths, 19 to 19) | repo (stringlengths, 5 to 112) | repo_url (stringlengths, 34 to 141) | action (stringclasses, 3 values) | title (stringlengths, 1 to 1k) | labels (stringlengths, 4 to 1.38k) | body (stringlengths, 1 to 262k) | index (stringclasses, 16 values) | text_combine (stringlengths, 96 to 262k) | label (stringclasses, 2 values) | text (stringlengths, 96 to 252k) | binary_label (int64, 0 to 1) |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

520,233 | 15,082,093,977 | IssuesEvent | 2021-02-05 14:07:39 | OpenSRP/opensrp-client-reveal | https://api.github.com/repos/OpenSRP/opensrp-client-reveal | closed | Mark residential structures as ineligible | Android Client Pilot Critical Priority: High enhancement | The Thailand team would like the ability to mark an unenumerated residential structure as ineligible so that they can state the location was visited and is not eligible for the intervention. An ineligible structure is a valid structure that is ineligible to participate in an intervention for a valid reason (e.g., a res... | 1.0 | Mark residential structures as ineligible - The Thailand team would like the ability to mark an unenumerated residential structure as ineligible so that they can state the location was visited and is not eligible for the intervention. An ineligible structure is a valid structure that is ineligible to participate in an ... | priority | mark residential structures as ineligible the thailand team would like the ability to mark an unenumerated residential structure as ineligible so that they can state the location was visited and is not eligible for the intervention an ineligible structure is a valid structure that is ineligible to participate in an ... | 1 |

300,499 | 9,211,291,451 | IssuesEvent | 2019-03-09 14:08:34 | qgisissuebot/QGIS | https://api.github.com/repos/qgisissuebot/QGIS | closed | 'processing' extension cannot be loaded. | Bug Priority: normal Regression | ---

Author Name: **Robin Schwemmle** (Robin Schwemmle)

Original Redmine Issue: 21411, https://issues.qgis.org/issues/21411

Original Assignee: Denis Rouzaud

---

The processing toolbox is not available. I followed the instructions in the README and added the path of the GDAL framework, but this doesn't help. It seem... | 1.0 | 'processing' extension cannot be loaded. - ---

Author Name: **Robin Schwemmle** (Robin Schwemmle)

Original Redmine Issue: 21411, https://issues.qgis.org/issues/21411

Original Assignee: Denis Rouzaud

---

The processing toolbox is not available. I followed the instructions in the README and added the path of the GDA... | priority | processing extension cannot be loaded author name robin schwemmle robin schwemmle original redmine issue original assignee denis rouzaud the processing toolbox is not available i followed the instructions in the readme and added the path of the gdal framework but this doesn t help it ... | 1 |

26,464 | 2,684,555,930 | IssuesEvent | 2015-03-29 03:30:59 | gtcasl/gpuocelot | https://api.github.com/repos/gtcasl/gpuocelot | closed | ret instruction for global entry kernel not supported in llvm bacend (cuda 4.1) | bug imported Priority-High | _From [wangjin....@gmail.com](https://code.google.com/u/115604721152422776435/) on January 21, 2012 19:42:26_

What steps will reproduce the problem? 1.Use cuda toolkit 4.1

2.Compile helloWorld.cu attached with nvcc, then link against libocelot

3.Set llvm as backend in configure.ocelot

4.Run helloWolrd What is the e... | 1.0 | ret instruction for global entry kernel not supported in llvm bacend (cuda 4.1) - _From [wangjin....@gmail.com](https://code.google.com/u/115604721152422776435/) on January 21, 2012 19:42:26_

What steps will reproduce the problem? 1.Use cuda toolkit 4.1

2.Compile helloWorld.cu attached with nvcc, then link against li... | priority | ret instruction for global entry kernel not supported in llvm bacend cuda from on january what steps will reproduce the problem use cuda toolkit compile helloworld cu attached with nvcc then link against libocelot set llvm as backend in configure ocelot run hellowolrd what is ... | 1 |

425,608 | 12,342,984,881 | IssuesEvent | 2020-05-15 02:32:33 | Matteas-Eden/roll-for-reaction | https://api.github.com/repos/Matteas-Eden/roll-for-reaction | closed | Add more spells to the spellbook | Medium Priority enhancement | **User Story**

As a game designer, I'd like to offer spellcasting players a wide variety of spells and provide an element of progression with those spells.

**Acceptance Criteria**

- More spells are added to the spellbook

- Spells are unlocked based on level

**Notes**

---

**Why is this feature needed? P... | 1.0 | Add more spells to the spellbook - **User Story**

As a game designer, I'd like to offer spellcasting players a wide variety of spells and provide an element of progression with those spells.

**Acceptance Criteria**

- More spells are added to the spellbook

- Spells are unlocked based on level

**Notes**

---... | priority | add more spells to the spellbook user story as a game designer i d like to offer spellcasting players a wide variety of spells and provide an element of progression with those spells acceptance criteria more spells are added to the spellbook spells are unlocked based on level notes ... | 1 |

465,182 | 13,358,227,770 | IssuesEvent | 2020-08-31 11:18:19 | webpack-contrib/file-loader | https://api.github.com/repos/webpack-contrib/file-loader | closed | Pass additional assetInfo object when calling emitFile | flag: Community help wanted priority: 5 (nice to have) semver: Minor type: Feature | <!--

Issues are so 🔥

If you remove or skip this template, you'll make the 🐼 sad and the mighty god

of Github will appear and pile-drive the close button from a great height

while making animal noises.

👉🏽 Need support, advice, or help? Don't open an issue!

Head to StackOverflow or https://gitte... | 1.0 | Pass additional assetInfo object when calling emitFile - <!--

Issues are so 🔥

If you remove or skip this template, you'll make the 🐼 sad and the mighty god

of Github will appear and pile-drive the close button from a great height

while making animal noises.

👉🏽 Need support, advice, or help? Don't... | priority | pass additional assetinfo object when calling emitfile issues are so 🔥 if you remove or skip this template you ll make the 🐼 sad and the mighty god of github will appear and pile drive the close button from a great height while making animal noises 👉🏽 need support advice or help don t... | 1 |

92,995 | 11,730,391,184 | IssuesEvent | 2020-03-10 21:16:39 | ibm-openbmc/dev | https://api.github.com/repos/ibm-openbmc/dev | closed | GUI : Design : Revisit Header | GUI UI Design | ## Stakeholders

**SME**: n/a

**Design Researcher**: @nicoleconser

**UX Designer**: TBD

**FED**: TBD

## Use Case

Through talking with end users, several questions involving the header have surfaced, including:

• **Firmware Level** — "It's very helpful that the ASMI interface displays the firmware level o... | 1.0 | GUI : Design : Revisit Header - ## Stakeholders

**SME**: n/a

**Design Researcher**: @nicoleconser

**UX Designer**: TBD

**FED**: TBD

## Use Case

Through talking with end users, several questions involving the header have surfaced, including:

• **Firmware Level** — "It's very helpful that the ASMI interfa... | non_priority | gui design revisit header stakeholders sme n a design researcher nicoleconser ux designer tbd fed tbd use case through talking with end users several questions involving the header have surfaced including • firmware level — it s very helpful that the asmi interfa... | 0 |

420,838 | 12,244,511,322 | IssuesEvent | 2020-05-05 11:16:44 | minio/mc | https://api.github.com/repos/minio/mc | closed | [Proposal] Support STS based access to MinIO | priority: medium stale | To access MinIO via the STS API, we can add a command to mc such as:

`mc sts ENDPOINT <sts-type> <sts-specific-args>`

to print out access key, secret key and session token.

For example for LDAP, this could be:

`mc sts ENDPOINT ldap ldapuser=myusername ldappasswd=yyy`

In addition to this, we can add a mod... | 1.0 | [Proposal] Support STS based access to MinIO - To access MinIO via the STS API, we can add a command to mc such as:

`mc sts ENDPOINT <sts-type> <sts-specific-args>`

to print out access key, secret key and session token.

For example for LDAP, this could be:

`mc sts ENDPOINT ldap ldapuser=myusername ldappassw... | priority | support sts based access to minio to access minio via the sts api we can add a command to mc such as mc sts endpoint to print out access key secret key and session token for example for ldap this could be mc sts endpoint ldap ldapuser myusername ldappasswd yyy in addition to this we ca... | 1 |

28,514 | 23,307,862,799 | IssuesEvent | 2022-08-08 04:25:55 | APSIMInitiative/ApsimX | https://api.github.com/repos/APSIMInitiative/ApsimX | closed | Create a system for deriving a pmf model from a generic base model. | newfeature interface/infrastructure | There are a lot of plant models in APSIM that are (or should be) very similar to other models. e.g. barley and oats should be similar to wheat. At the moment we don't know what differences there are between wheat/barley/oats or between the different legume models. Some consistency is needed.

We would like to create ... | 1.0 | Create a system for deriving a pmf model from a generic base model. - There are a lot of plant models in APSIM that are (or should be) very similar to other models. e.g. barley and oats should be similar to wheat. At the moment we don't know what differences there are between wheat/barley/oats or between the different ... | non_priority | create a system for deriving a pmf model from a generic base model there are a lot of plant models in apsim that are or should be very similar to other models e g barley and oats should be similar to wheat at the moment we don t know what differences there are between wheat barley oats or between the different ... | 0 |

246,298 | 7,894,388,593 | IssuesEvent | 2018-06-28 21:18:22 | minetest/minetest | https://api.github.com/repos/minetest/minetest | closed | Android 0.4.17.1: Google Play Store build crash while loading singleplayer world | Android Bug High priority | ##### Issue type

<!-- Pick one below and delete others -->

- Bug report

##### Minetest version

<!--

Paste Minetest version between quotes below

If you are on a devel version, please add git commit hash

You can use `minetest --version` to find it.

-->

Google Play Store store build of `0.4.17.1` (4a48cd57, A... | 1.0 | Android 0.4.17.1: Google Play Store build crash while loading singleplayer world - ##### Issue type

<!-- Pick one below and delete others -->

- Bug report

##### Minetest version

<!--

Paste Minetest version between quotes below

If you are on a devel version, please add git commit hash

You can use `minetest --... | priority | android google play store build crash while loading singleplayer world issue type bug report minetest version paste minetest version between quotes below if you are on a devel version please add git commit hash you can use minetest version to find it google play st... | 1 |

14,022 | 5,536,632,959 | IssuesEvent | 2017-03-21 20:07:38 | SFTtech/openage | https://api.github.com/repos/SFTtech/openage | closed | Normalize install prefixes in cmake | buildsystem easy improvement just do it | Please normalize the paths that are shown after the buildsystem configuration step:

observed:

```

install prefix | /usr/local/../asdf/../test

py install prefix | /usr/lib/python-exec/python3.5/../../../lib64/python3.5/site-packages

```

expected:

```

install prefix | /usr/test

py install prefix | /usr... | 1.0 | Normalize install prefixes in cmake - Please normalize the paths that are shown after the buildsystem configuration step:

observed:

```

install prefix | /usr/local/../asdf/../test

py install prefix | /usr/lib/python-exec/python3.5/../../../lib64/python3.5/site-packages

```

expected:

```

install prefix... | non_priority | normalize install prefixes in cmake please normalize the paths that are shown after the buildsystem configuration step observed install prefix usr local asdf test py install prefix usr lib python exec site packages expected install prefix usr test py... | 0 |

609,532 | 18,876,064,299 | IssuesEvent | 2021-11-14 02:16:26 | massenergize/api | https://api.github.com/repos/massenergize/api | closed | Sandbox for all communities page | bug priority 1 | Make ?sandbox=true show non-published communities on all communities page | 1.0 | Sandbox for all communities page - Make ?sandbox=true show non-published communities on all communities page | priority | sandbox for all communities page make sandbox true show non published communities on all communities page | 1 |

72,438 | 9,592,942,391 | IssuesEvent | 2019-05-09 10:09:38 | gama-platform/gama | https://api.github.com/repos/gama-platform/gama | opened | introduce ?help in interactive console to bring help into console | > Enhancement Affects Usability Concerns Documentation Concerns GAML Concerns Interface Concerns Modeling | **Is your request related to a problem? Please describe.**

Currently, the search box in GAMA can be used to lookup for operators and hovering over the results would show @doc text in a small purple window. This window can be missed by the user because it does not appear close to the search result and neither does it s... | 1.0 | introduce ?help in interactive console to bring help into console - **Is your request related to a problem? Please describe.**

Currently, the search box in GAMA can be used to lookup for operators and hovering over the results would show @doc text in a small purple window. This window can be missed by the user because... | non_priority | introduce help in interactive console to bring help into console is your request related to a problem please describe currently the search box in gama can be used to lookup for operators and hovering over the results would show doc text in a small purple window this window can be missed by the user because... | 0 |

238,914 | 7,784,454,938 | IssuesEvent | 2018-06-06 13:20:24 | mozilla/addons-server | https://api.github.com/repos/mozilla/addons-server | closed | The notifications API endpoint returns the notification named "announcements" twice | component: api priority: p2 | ### Describe the problem and steps to reproduce it:

From: https://github.com/mozilla/addons-server/issues/8286#issuecomment-392537562

Retrieve the user notifications for any user.

### What happened?

The notifications API endpoint returns the notification named "announcements" twice.

### What did you expe... | 1.0 | The notifications API endpoint returns the notification named "announcements" twice - ### Describe the problem and steps to reproduce it:

From: https://github.com/mozilla/addons-server/issues/8286#issuecomment-392537562

Retrieve the user notifications for any user.

### What happened?

The notifications API e... | priority | the notifications api endpoint returns the notification named announcements twice describe the problem and steps to reproduce it from retrieve the user notifications for any user what happened the notifications api endpoint returns the notification named announcements twice what ... | 1 |

440,052 | 30,727,279,021 | IssuesEvent | 2023-07-27 20:49:11 | Nalini1998/Database | https://api.github.com/repos/Nalini1998/Database | closed | What is a NULL value? | documentation enhancement help wanted good first issue question | ** A. A value that represents missing or unknown data.

B. A known value.

C. An outdated value.

D. A numerical value. | 1.0 | What is a NULL value? - ** A. A value that represents missing or unknown data.

B. A known value.

C. An outdated value.

D. A numerical value. | non_priority | what is a null value a a value that represents missing or unknown data b a known value c an outdated value d a numerical value | 0 |

16,168 | 20,605,072,275 | IssuesEvent | 2022-03-06 21:13:27 | hoprnet/hoprnet | https://api.github.com/repos/hoprnet/hoprnet | opened | Update development processes | new issue processes | <!--- Please DO NOT remove the automatically added 'new issue' label -->

<!--- Provide a general summary of the issue in the Title above -->

After our call on 07/03/22 the new development processes were announced, update the development processes.

Updates: TBA | 1.0 | Update development processes - <!--- Please DO NOT remove the automatically added 'new issue' label -->

<!--- Provide a general summary of the issue in the Title above -->

After our call on 07/03/22 the new development processes were announced, update the development processes.

Updates: TBA | non_priority | update development processes after our call on the new development processes were announced update the development processes updates tba | 0 |

573,805 | 17,023,711,206 | IssuesEvent | 2021-07-03 03:26:04 | tomhughes/trac-tickets | https://api.github.com/repos/tomhughes/trac-tickets | closed | [roads] Render passage ways | Component: mapnik Priority: major Resolution: fixed Type: enhancement | **[Submitted to the original trac issue database at 7.57am, Wednesday, 11th May 2011]**

passage ways are ways that go through buildings on the same level as the ground and are therefore not tunnels. example: http://wiki.openstreetmap.org/wiki/File:Durchgang.jpg

according to the (german) wiki page for "how to map". ... | 1.0 | [roads] Render passage ways - **[Submitted to the original trac issue database at 7.57am, Wednesday, 11th May 2011]**

passage ways are ways that go through buildings on the same level as the ground and are therefore not tunnels. example: http://wiki.openstreetmap.org/wiki/File:Durchgang.jpg

according to the (german... | priority | render passage ways passage ways are ways that go through buildings on the same level as the ground and are therefore not tunnels example according to the german wiki page for how to map those passages hausdurchfahrten should be tagged with covered yes for the part that goes through the build... | 1 |

653,611 | 21,608,508,394 | IssuesEvent | 2022-05-04 07:35:21 | bounswe/bounswe2022group2 | https://api.github.com/repos/bounswe/bounswe2022group2 | opened | Practice App: Set up the base structure of the back-end | priority-medium status-new practice-app practice-app:back-end | ### Issue Description

I will set up the base structure of the back-end of the practice app project. We decided to use Node.js and Express.js in our back-end. So, I will integrate the required packages and set up the base project. Afterward, we will develop the endpoints as the whole team one by one.

### Step Details

... | 1.0 | Practice App: Set up the base structure of the back-end - ### Issue Description

I will set up the base structure of the back-end of the practice app project. We decided to use Node.js and Express.js in our back-end. So, I will integrate the required packages and set up the base project. Afterward, we will develop the ... | priority | practice app set up the base structure of the back end issue description i will set up the base structure of the back end of the practice app project we decided to use node js and express js in our back end so i will integrate the required packages and set up the base project afterward we will develop the ... | 1 |

9,853 | 3,973,458,515 | IssuesEvent | 2016-05-04 18:39:51 | teotidev/guide | https://api.github.com/repos/teotidev/guide | closed | MetaData - use sub scroll pane and do not scroll the path label | code work | - make the label one size larger | 1.0 | MetaData - use sub scroll pane and do not scroll the path label - - make the label one size larger | non_priority | metadata use sub scroll pane and do not scroll the path label make the label one size larger | 0 |

238,739 | 7,782,751,724 | IssuesEvent | 2018-06-06 07:44:42 | javaee/servlet-spec | https://api.github.com/repos/javaee/servlet-spec | closed | Async Request parameters | Component: I/O Priority: Major Type: Improvement | Section 9.7.2 describes a set of request attributes that contain the path values of the original request, so that they may be accessed by a servlet called as a result of a AsyncContext.dispatch(...)

However, this implies that these attributes are only set after a AsyncContext.dispatch(...), which means that they are n... | 1.0 | Async Request parameters - Section 9.7.2 describes a set of request attributes that contain the path values of the original request, so that they may be accessed by a servlet called as a result of a AsyncContext.dispatch(...)

However, this implies that these attributes are only set after a AsyncContext.dispatch(...), ... | priority | async request parameters section describes a set of request attributes that contain the path values of the original request so that they may be accessed by a servlet called as a result of a asynccontext dispatch however this implies that these attributes are only set after a asynccontext dispatch ... | 1 |

641,712 | 20,832,780,130 | IssuesEvent | 2022-03-19 18:18:48 | Frontesque/VueTube | https://api.github.com/repos/Frontesque/VueTube | closed | Keyboard Enter to search not working | bug Priority: Low | **Describe the bug**

The enter key on the keyboard should allow for the same function as the search button

**To Reproduce**

1. Go to search

2. Type in search

3. Press enter and nothing will happen

**Expected behavior**

1. Go to search

2. Type in search

3. Press enter and search result should be retuned

... | 1.0 | Keyboard Enter to search not working - **Describe the bug**

The enter key on the keyboard should allow for the same function as the search button

**To Reproduce**

1. Go to search

2. Type in search

3. Press enter and nothing will happen

**Expected behavior**

1. Go to search

2. Type in search

3. Press ente... | priority | keyboard enter to search not working describe the bug the enter key on the keyboard should allow for the same function as the search button to reproduce go to search type in search press enter and nothing will happen expected behavior go to search type in search press ente... | 1 |

121,576 | 25,994,735,429 | IssuesEvent | 2022-12-20 10:36:08 | Open-Telecoms-Data/open-fibre-data-standard | https://api.github.com/repos/Open-Telecoms-Data/open-fibre-data-standard | closed | Multiple network providers per span and node | Schema Codelist | Currently, `Span.networkProvider` and `Node.networkProvider` are objects, i.e. there is a 1:1 relationship between `Span`/`Node` and `networkProvider`.

Therefore, to represent a span with many network providers (a common situation), implementers would need to publish multiple spans - one for each network provider.

... | 1.0 | Multiple network providers per span and node - Currently, `Span.networkProvider` and `Node.networkProvider` are objects, i.e. there is a 1:1 relationship between `Span`/`Node` and `networkProvider`.

Therefore, to represent a span with many network providers (a common situation), implementers would need to publish mu... | non_priority | multiple network providers per span and node currently span networkprovider and node networkprovider are objects i e there is a relationship between span node and networkprovider therefore to represent a span with many network providers a common situation implementers would need to publish mu... | 0 |

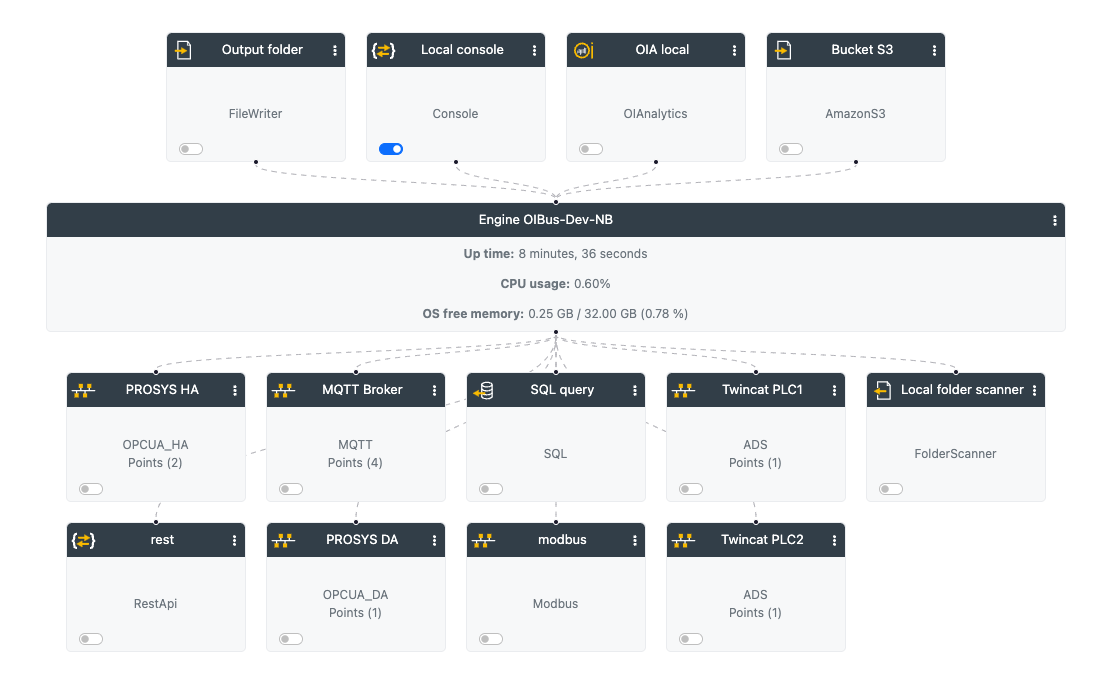

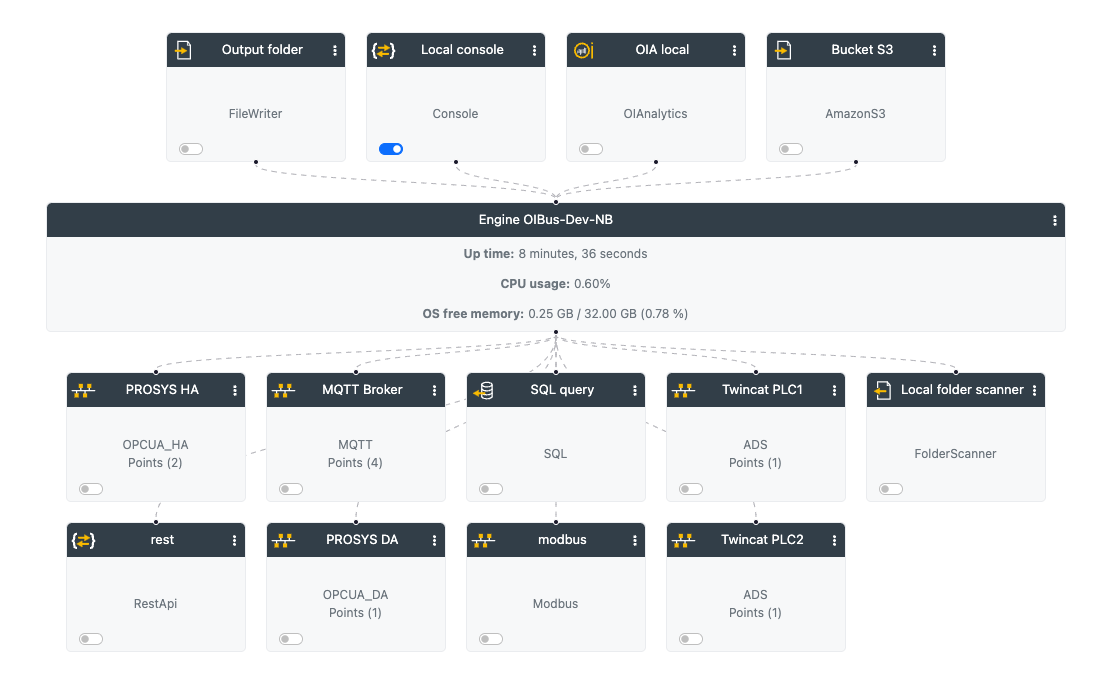

710,237 | 24,411,738,197 | IssuesEvent | 2022-10-05 12:57:20 | OptimistikSAS/OIBus | https://api.github.com/repos/OptimistikSAS/OIBus | closed | [UI - Home page] - Remove connection to engine when a connector is disabled | enhancement good first issue priority:high | When a connector is disabled, remove its connection to the engine

| 1.0 | [UI - Home page] - Remove connection to engine when a connector is disabled - When a connector is disabled, remove its connection to the engine

| priority | remove connection to engine when a connector is disabled when a connector is disabled remove its connection to the engine | 1 |

77,090 | 21,665,229,133 | IssuesEvent | 2022-05-07 04:07:14 | flutter/flutter | https://api.github.com/repos/flutter/flutter | closed | Document packaging for Windows builds | tool platform-windows a: desktop documentation a: build P4 | See https://github.com/google/flutter-desktop-embedding/issues/587 for earlier discussion.

We should very likely either:

- switch the templates for apps and plugins to use the static runtime, or

- update the app bundling script to copy in the runtime libraries into the output directory for ease of packaging.

Fr... | 1.0 | Document packaging for Windows builds - See https://github.com/google/flutter-desktop-embedding/issues/587 for earlier discussion.

We should very likely either:

- switch the templates for apps and plugins to use the static runtime, or

- update the app bundling script to copy in the runtime libraries into the outpu... | non_priority | document packaging for windows builds see for earlier discussion we should very likely either switch the templates for apps and plugins to use the static runtime or update the app bundling script to copy in the runtime libraries into the output directory for ease of packaging from my initial investi... | 0 |

151,033 | 19,648,308,952 | IssuesEvent | 2022-01-10 01:24:28 | ThomasRuemmler/openspaceplanner | https://api.github.com/repos/ThomasRuemmler/openspaceplanner | closed | WS-2022-0007 (Medium) detected in node-forge-0.9.0.tgz - autoclosed | security vulnerability | ## WS-2022-0007 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>node-forge-0.9.0.tgz</b></p></summary>

<p>JavaScript implementations of network transports, cryptography, ciphers, PKI... | True | WS-2022-0007 (Medium) detected in node-forge-0.9.0.tgz - autoclosed - ## WS-2022-0007 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>node-forge-0.9.0.tgz</b></p></summary>

<p>JavaSc... | non_priority | ws medium detected in node forge tgz autoclosed ws medium severity vulnerability vulnerable library node forge tgz javascript implementations of network transports cryptography ciphers pki message digests and various utilities library home page a href path to dep... | 0 |

777,914 | 27,297,519,485 | IssuesEvent | 2023-02-23 21:47:06 | bcgov/entity | https://api.github.com/repos/bcgov/entity | closed | NameX - Restoration/Reinstatement NRs should be 1 year 56 days for Societies | bug Priority2 ENTITY SRE | We probably missed this last time when we fixed the expiry dates for other entity types. | 1.0 | NameX - Restoration/Reinstatement NRs should be 1 year 56 days for Societies - We probably missed this last time when we fixed the expiry dates for other entity types. | priority | namex restoration reinstatement nrs should be year days for societies we probably missed this last time when we fixed the expiry dates for other entity types | 1 |

74,906 | 3,452,191,069 | IssuesEvent | 2015-12-17 02:03:37 | YetiForceCompany/YetiForceCRM | https://api.github.com/repos/YetiForceCompany/YetiForceCRM | closed | [PL][BUG] Calendar - completed items are displayed as planned | Label::Logic Priority::#2 Normal Type::Bug | When adding a record to the calendar, previously added tasks that are scheduled for the same day are shown, but completed items are displayed there as well.

E.g.:

![image](https://cloud.githubusercontent.com/assets/8431493/11820517/dff60e74-a36c-11e5-8ef1-ee32ae2c08c3.png) | 1.0 | [PL][BUG] Calendar - completed items are displayed as planned - When adding a record to the calendar, previously added tasks that are scheduled for the same day are shown, but completed items are displayed there as well.

E.g.:

* BLT version: 8.7.0

For reference:

- [Using Ubuntu Bash in Windows Creators' Update with Vagrant](https://www.jeffgeerling.com/blog/2017/using-ubuntu-bash-windows-creators-update-vagrant)... | 1.0 | Update WSL/Ubuntu Bash-based documentation to account for Creators Update - My system information:

* Operating system type: Windows 10

* Operating system version: Creators Update (April 11, 2017)

* BLT version: 8.7.0

For reference:

- [Using Ubuntu Bash in Windows Creators' Update with Vagrant](https://www.j... | non_priority | update wsl ubuntu bash based documentation to account for creators update my system information operating system type windows operating system version creators update april blt version for reference we may be able to tighten up our docs for windows users a bit ... | 0 |

620,339 | 19,559,559,865 | IssuesEvent | 2022-01-03 14:28:56 | bounswe/2021SpringGroup12 | https://api.github.com/repos/bounswe/2021SpringGroup12 | opened | Implementation of privacy policy and terms and conditions | priority: high frontend | **Description**

- Add privacy policy and terms and conditions forms to the register page

**Deadline**

- 04.01.2022

| 1.0 | Implementation of privacy policy and terms and conditions - **Description**

- Add privacy policy and terms and conditions forms to the register page

**Deadline**

- 04.01.2022

| priority | implementation of privacy policy and terms and conditions description add privacy policy and terms and conditions forms to the register page deadline | 1 |

303,615 | 26,218,325,637 | IssuesEvent | 2023-01-04 12:55:18 | cockroachdb/cockroach | https://api.github.com/repos/cockroachdb/cockroach | closed | roachtest: follower-reads/mixed-version/single-region failed | C-test-failure O-robot O-roachtest branch-master release-blocker T-kv | roachtest.follower-reads/mixed-version/single-region [failed](https://teamcity.cockroachdb.com/buildConfiguration/Cockroach_Nightlies_RoachtestNightlyGceBazel/8153407?buildTab=log) with [artifacts](https://teamcity.cockroachdb.com/buildConfiguration/Cockroach_Nightlies_RoachtestNightlyGceBazel/8153407?buildTab=artifact... | 2.0 | roachtest: follower-reads/mixed-version/single-region failed - roachtest.follower-reads/mixed-version/single-region [failed](https://teamcity.cockroachdb.com/buildConfiguration/Cockroach_Nightlies_RoachtestNightlyGceBazel/8153407?buildTab=log) with [artifacts](https://teamcity.cockroachdb.com/buildConfiguration/Cockroa... | non_priority | roachtest follower reads mixed version single region failed roachtest follower reads mixed version single region with on master test artifacts and logs in artifacts follower reads mixed version single region run follower reads go verifyhighfollowerreadratios too many intervals with more ... | 0 |

105,119 | 16,624,126,435 | IssuesEvent | 2021-06-03 07:25:48 | Thanraj/OpenSSL_1.0.1b | https://api.github.com/repos/Thanraj/OpenSSL_1.0.1b | opened | CVE-2014-3566 (Low) detected in multiple libraries | security vulnerability | ## CVE-2014-3566 - Low Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>opensslOpenSSL_1_0_1b</b>, <b>opensslOpenSSL_1_0_1b</b>, <b>opensslOpenSSL_1_0_1b</b>, <b>opensslOpenSSL_1_0_1b</b>, <b... | True | CVE-2014-3566 (Low) detected in multiple libraries - ## CVE-2014-3566 - Low Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>opensslOpenSSL_1_0_1b</b>, <b>opensslOpenSSL_1_0_1b</b>, <b>openss... | non_priority | cve low detected in multiple libraries cve low severity vulnerability vulnerable libraries opensslopenssl opensslopenssl opensslopenssl opensslopenssl opensslopenssl opensslopenssl opensslopenssl opensslopenssl opensslopenss... | 0 |

367,368 | 25,734,822,690 | IssuesEvent | 2022-12-07 23:34:32 | hashicorp/terraform | https://api.github.com/repos/hashicorp/terraform | closed | Mistake/ Manage Resources in Terraform State Doc | documentation new | ### Terraform Version

```shell

terraform -v

Terraform v1.3.6

on linux_amd64

```

### Affected Pages

https://developer.hashicorp.com/terraform/tutorials/certification-associate-tutorials-003/state-cli

### What is the docs issue?

was released a few days ago but still doesn't appear on cdnjs. Is this pending review or is there some other reason it doesn't appear on the site?

/cc @jlukic | 1.0 | Add latest semantic-ui v2.4.0 - [Semantic UI v2.4.0](https://github.com/Semantic-Org/Semantic-UI/releases/tag/2.4.0) was released a few days ago but still doesn't appear on cdnjs. Is this pending review or is there some other reason it doesn't appear on the site?

/cc @jlukic | non_priority | add latest semantic ui was released a few days ago but still doesn t appear on cdnjs is this pending review or is there some other reason it doesn t appear on the site cc jlukic | 0 |

16,648 | 9,479,363,683 | IssuesEvent | 2019-04-20 07:41:47 | apache/incubator-mxnet | https://api.github.com/repos/apache/incubator-mxnet | closed | `mx.gluon.data.DataLoader` is slow when the size of batch is large | Gluon Performance | Hi, there.

I found `mx.gluon.data.DataLoader` is slow when the size of batch is large, since it calls `pickle.load` in `__next__` method of `_MultiWorkerIter` class. [[Code]](https://github.com/apache/incubator-mxnet/blob/master/python/mxnet/gluon/data/dataloader.py#L450)

I will upload the reproduction code. | True | `mx.gluon.data.DataLoader` is slow when the size of batch is large - Hi, there.

I found `mx.gluon.data.DataLoader` is slow when the size of batch is large, since it calls `pickle.load` in `__next__` method of `_MultiWorkerIter` class. [[Code]](https://github.com/apache/incubator-mxnet/blob/master/python/mxnet/gluon/... | non_priority | mx gluon data dataloader is slow when the size of batch is large hi there i found mx gluon data dataloader is slow when the size of batch is large since it calls pickle load in next method of multiworkeriter class i will upload the reproduction code | 0 |
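
The serialization cost described in the report above can be estimated outside MXNet. The sketch below is not the `DataLoader` code path. It only times one `pickle` round trip on a synthetic batch, a list of `bytes` objects standing in for samples, to show how that cost might grow with batch size. The function name `pickle_roundtrip_seconds`, the sample size, and the batch sizes are assumptions chosen for illustration.

```python
import pickle
import time


def pickle_roundtrip_seconds(batch_size, sample_bytes=4096):
    """Return (seconds, restored_batch) for one pickle round trip.

    The batch is synthetic: `batch_size` byte strings of `sample_bytes`
    bytes each. It is a stand-in for real samples, not MXNet data.
    """
    batch = [bytes(sample_bytes) for _ in range(batch_size)]
    start = time.perf_counter()
    # Serialize and immediately deserialize, as a worker/consumer pair would.
    payload = pickle.dumps(batch, protocol=pickle.HIGHEST_PROTOCOL)
    restored = pickle.loads(payload)
    elapsed = time.perf_counter() - start
    return elapsed, restored


if __name__ == "__main__":
    # Hypothetical batch sizes; timings depend on the machine.
    for size in (32, 256, 2048):
        seconds, _ = pickle_roundtrip_seconds(size)
        print(f"batch_size={size:5d}  round trip: {seconds * 1e3:.3f} ms")
```

Timings from a sketch like this only indicate a trend. Real batches of arrays may serialize quite differently from plain byte strings.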

532,615 | 15,560,086,220 | IssuesEvent | 2021-03-16 12:19:56 | AY2021S2-CS2103-T14-3/tp | https://api.github.com/repos/AY2021S2-CS2103-T14-3/tp | closed | Update User Guide | priority.Medium | Update user guide to change all appointment-related command.

1. remove `to/TIME_TO`

2. make `l/LOCATION compulsory` | 1.0 | Update User Guide - Update user guide to change all appointment-related command.

1. remove `to/TIME_TO`

2. make `l/LOCATION compulsory` | priority | update user guide update user guide to change all appointment related command remove to time to make l location compulsory | 1 |

326,042 | 9,942,063,804 | IssuesEvent | 2019-07-03 13:07:17 | cypress-io/cypress | https://api.github.com/repos/cypress-io/cypress | closed | Remove snap CI provider, add GoCD provider | difficulty: 1️⃣ first-timers-only pkg/server priority: low 🎗 stage: work in progress type: bug | Snap CI is no longer available: https://snap-ci.com/

They recommend looking at GoCD: https://www.gocd.org

Should be updated in our CI Provider file here: https://github.com/cypress-io/cypress/blob/develop/packages/server/lib/util/ci_provider.coffee#L31

Standard GoCD environment variables listed here: https://d... | 1.0 | Remove snap CI provider, add GoCD provider - Snap CI is no longer available: https://snap-ci.com/

They recommend looking at GoCD: https://www.gocd.org

Should be updated in our CI Provider file here: https://github.com/cypress-io/cypress/blob/develop/packages/server/lib/util/ci_provider.coffee#L31

Standard GoCD... | priority | remove snap ci provider add gocd provider snap ci is no longer available they recommend looking at gocd should be updated in our ci provider file here standard gocd environment variables listed here | 1 |
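
The change requested above could look roughly like the following. This is a Python sketch, not the CoffeeScript in `ci_provider.coffee`, and the function name and lookup table are hypothetical. It assumes `GO_JOB_NAME` is among the environment variables a GoCD agent sets for a job. `TRAVIS` and `CIRCLECI` appear only as familiar examples of other providers.

```python
import os

# Hypothetical lookup table: provider name -> environment variable whose
# presence suggests that provider. Snap CI is deliberately absent.
CI_PROVIDER_ENV_VARS = {
    "circle": "CIRCLECI",
    "goCD": "GO_JOB_NAME",  # assumption: set by GoCD agents for each job
    "travis": "TRAVIS",
}


def detect_ci_provider(env=None):
    """Return the name of the first matching provider, or None."""
    env = os.environ if env is None else env
    for name, variable in CI_PROVIDER_ENV_VARS.items():
        if env.get(variable):
            return name
    return None
```

Passing the environment as a parameter keeps the lookup testable without touching the real process environment.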

302,157 | 22,792,060,866 | IssuesEvent | 2022-07-10 06:25:37 | ohmtech-rdi/eurorack-blocks | https://api.github.com/repos/ohmtech-rdi/eurorack-blocks | closed | Make an overview video and "Getting Started" guide | documentation | The current state of the project doesn't include a call-to-action that would lead to a successful feeling in the 5-minutes and first-hour of evaluation milestones.

- Direct the user to first watch an overview video and then read the "Getting Started" guide,

- Add a less than 5 minutes overview video to show the end... | 1.0 | Make an overview video and "Getting Started" guide - The current state of the project doesn't include a call-to-action that would lead to a successful feeling in the 5-minutes and first-hour of evaluation milestones.

- Direct the user to first watch an overview video and then read the "Getting Started" guide,

- Add... | non_priority | make an overview video and getting started guide the current state of the project doesn t include a call to action that would lead to a successful feeling in the minutes and first hour of evaluation milestones direct the user to first watch an overview video and then read the getting started guide add... | 0 |

766,771 | 26,898,084,224 | IssuesEvent | 2023-02-06 13:53:39 | anoma/juvix | https://api.github.com/repos/anoma/juvix | closed | Special syntax for `case` | enhancement syntax priority:medium | As we discussed, we want to add the syntax:

```haskell

case v of

| p1 := b1

| p2 := b2

```

or

```haskell

case v of {

p1 := b1;

p2 := b2;

}

```

where one doesn't need parentheses around complex patterns on the left-hand side.

- The first version works better with #1639, I think.

| 1.0 | Special syntax for `case` - As we discussed, we want to add the syntax:

```haskell

case v of

| p1 := b1

| p2 := b2

```

or

```haskell

case v of {

p1 := b1;

p2 := b2;

}

```

where one doesn't need parentheses around complex patterns on the left-hand side.

- The first version works better with #1639, I ... | priority | special syntax for case as we discussed we want to add the syntax haskell case v of or haskell case v of where one doesn t need parentheses around complex patterns on the left hand side the first version works better with i think | 1 |

68,444 | 21,664,559,307 | IssuesEvent | 2022-05-07 01:46:00 | vector-im/element-web | https://api.github.com/repos/vector-im/element-web | closed | Impossible to backfill over federation on joining a room on a new server | T-Defect P1 S-Major A-Timeline A-Federation | Repro steps (I hope):

* A room exists like #seaglass:matrix.org

* A user joins it from a virginal HS (e.g. `@neil:sandbox.modular.im`)

* They do not yet backpaginate any history for that room over federation for whatever reason

* Another user joins the room from the same HS (e.g. `@matthew:sandbox.modular.im`... | 1.0 | Impossible to backfill over federation on joining a room on a new server - Repro steps (I hope):

* A room exists like #seaglass:matrix.org

* A user joins it from a virginal HS (e.g. `@neil:sandbox.modular.im`)

* They do not yet backpaginate any history for that room over federation for whatever reason

* Anoth... | non_priority | impossible to backfill over federation on joining a room on a new server repro steps i hope a room exists like seaglass matrix org a user joins it from a virginal hs e g neil sandbox modular im they do not yet backpaginate any history for that room over federation for whatever reason anoth... | 0 |

68,211 | 3,284,911,315 | IssuesEvent | 2015-10-28 18:20:36 | cs2103aug2015-t10-3j/main | https://api.github.com/repos/cs2103aug2015-t10-3j/main | closed | A user can view the history of a past task (completed or not) | priority.low type.story | ...so that the user can look at it for auditing and tracking purposes.

| 1.0 | A user can view the history of a past task (completed or not) - ...so that the user can look at it for auditing and tracking purposes.

| priority | a user can view the history of a past task completed or not so that the user can look at it for auditing and tracking purposes | 1 |

170,722 | 14,268,494,082 | IssuesEvent | 2020-11-20 22:35:21 | Titane73/it115-a5-group2-stackproject | https://api.github.com/repos/Titane73/it115-a5-group2-stackproject | opened | Install NPM | NPM documentation | Lets install NPM which will help us install the rest

https://linuxconfig.org/install-the-mean-stack-on-ubuntu-18-04-bionic-beaver-linux

if it works lets document it!

Thanks | 1.0 | Install NPM - Lets install NPM which will help us install the rest

https://linuxconfig.org/install-the-mean-stack-on-ubuntu-18-04-bionic-beaver-linux

if it works lets document it!

Thanks | non_priority | install npm lets install npm which will help us install the rest if it works lets document it thanks | 0 |

605,121 | 18,725,213,584 | IssuesEvent | 2021-11-03 15:38:57 | kubernetes/release | https://api.github.com/repos/kubernetes/release | closed | Collaborative Release Notes review | kind/feature sig/release lifecycle/rotten area/release-eng needs-priority | <!-- Please only use this template for submitting feature requests -->

### Intro

[Initial design document](https://docs.google.com/document/d/13SCA5HfjdJy5ZiEMPfhhZ2w25sqMS_QOcsxrJEwSLn4/edit?usp=sharing) was shared to `#release-notes`.

#### What would you like to be added:

Ability for release notes review and ... | 1.0 | Collaborative Release Notes review - <!-- Please only use this template for submitting feature requests -->

### Intro

[Initial design document](https://docs.google.com/document/d/13SCA5HfjdJy5ZiEMPfhhZ2w25sqMS_QOcsxrJEwSLn4/edit?usp=sharing) was shared to `#release-notes`.

#### What would you like to be added:

... | priority | collaborative release notes review intro was shared to release notes what would you like to be added ability for release notes review and workflow to be more collaborative instead of individuals running the tool and reviewing by themselves why is this needed increasing collab... | 1 |

245,770 | 7,890,693,403 | IssuesEvent | 2018-06-28 09:35:28 | aowen87/BAR | https://api.github.com/repos/aowen87/BAR | opened | viewer process still running after exit | Bug Likelihood: 3 - Occasional OS: All Priority: High Severity: 5 - Very Serious Support Group: Any version: 2.8.0 | I notice this most when using the cli. I've seen it on trunk and 2.8RC. Still working on a reliable reproducer and will post a script as soon as I can.

In the meantime, developers may want to check for runaway processes when finished with a VisIt session.

-----------------------REDMINE MIGRATION------------------... | 1.0 | viewer process still running after exit - I notice this most when using the cli. I've seen it on trunk and 2.8RC. Still working on a reliable reproducer and will post a script as soon as I can.

In the meantime, developers may want to check for runaway processes when finished with a VisIt session.

----------------... | priority | viewer process still running after exit i notice this most when using the cli i ve seen it on trunk and still working on a reliable reproducer and will post a script as soon as i can in the meantime developers may want to check for runaway processes when finished with a visit session ... | 1 |

778,446 | 27,316,866,506 | IssuesEvent | 2023-02-24 16:21:42 | grpc/grpc | https://api.github.com/repos/grpc/grpc | closed | in c++ async stream mode, can write and read run simultaneously | kind/question priority/P3 untriaged | PLEASE DO NOT POST A QUESTION HERE.

This form is for bug reports and feature requests ONLY!

For general questions and troubleshooting, please ask/look for answers at StackOverflow, with "grpc" tag: https://stackoverflow.com/questions/tagged/grpc

For questions that specifically need to be answered by gRPC team me... | 1.0 | in c++ async stream mode, can write and read run simultaneously - PLEASE DO NOT POST A QUESTION HERE.

This form is for bug reports and feature requests ONLY!

For general questions and troubleshooting, please ask/look for answers at StackOverflow, with "grpc" tag: https://stackoverflow.com/questions/tagged/grpc

F... | priority | in c async stream mode can write and read run simultaneously please do not post a question here this form is for bug reports and feature requests only for general questions and troubleshooting please ask look for answers at stackoverflow with grpc tag for questions that specifically need to be answ... | 1 |

329,080 | 10,012,181,912 | IssuesEvent | 2019-07-15 12:37:12 | webcompat/web-bugs | https://api.github.com/repos/webcompat/web-bugs | closed | www.reddit.com - video or audio doesn't play | browser-fenix engine-gecko priority-critical | <!-- @browser: Firefox Mobile 68.0 -->

<!-- @ua_header: Mozilla/5.0 (Android 8.1.0; Mobile; rv:68.0) Gecko/68.0 Firefox/68.0 -->

<!-- @reported_with: -->

<!-- @extra_labels: browser-fenix -->

**URL**: https://www.reddit.com/r/interestingasfuck/comments/cd70m2/red_bull_just_made_the_fastest_pit_stop_in_f1/

**Browser ... | 1.0 | www.reddit.com - video or audio doesn't play - <!-- @browser: Firefox Mobile 68.0 -->

<!-- @ua_header: Mozilla/5.0 (Android 8.1.0; Mobile; rv:68.0) Gecko/68.0 Firefox/68.0 -->

<!-- @reported_with: -->

<!-- @extra_labels: browser-fenix -->

**URL**: https://www.reddit.com/r/interestingasfuck/comments/cd70m2/red_bull_ju... | priority | video or audio doesn t play url browser version firefox mobile operating system android tested another browser yes problem type video or audio doesn t play description no video with supported format and mime type found steps to reproduce the video wil... | 1 |

342,208 | 10,313,377,077 | IssuesEvent | 2019-08-29 22:34:23 | metabase/metabase | https://api.github.com/repos/metabase/metabase | opened | X-axis on saved charts is obscured by the footer until it's resized | Priority:P2 Type:Bug Visualization/ | Whenever I open a saved question on `release-0.33.x` if it's an x/y chart of any kind, the x-axis labels are covered up by the footer UI elements until I either resize the browser window or cause the chart to rerender by opening up a sidebar.

detected in node-sass-4.14.1.tgz | security vulnerability | ## CVE-2018-19827 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>node-sass-4.14.1.tgz</b></p></summary>

<p>Wrapper around libsass</p>

<p>Library home page: <a href="https://registry.n... | True | CVE-2018-19827 (High) detected in node-sass-4.14.1.tgz - ## CVE-2018-19827 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>node-sass-4.14.1.tgz</b></p></summary>

<p>Wrapper around libs... | non_priority | cve high detected in node sass tgz cve high severity vulnerability vulnerable library node sass tgz wrapper around libsass library home page a href dependency hierarchy gulp sass tgz root library x node sass tgz vulnerable library foun... | 0 |

221,490 | 7,389,146,901 | IssuesEvent | 2018-03-16 07:19:36 | llmhyy/microbat | https://api.github.com/repos/llmhyy/microbat | opened | [Instrumentation] StackOverflowError | high priority | hi @lylytran

Please kindly check the following command line, I generate a lot of include classes, it wind up with an exception as `Exception: java.lang.StackOverflowError thrown from the UncaughtExceptionHandler in thread "Thread-0"`. The bug happens for Closure-14. Would you please kindly have a check? Thanks!

... | 1.0 | [Instrumentation] StackOverflowError - hi @lylytran

Please kindly check the following command line, I generate a lot of include classes, it wind up with an exception as `Exception: java.lang.StackOverflowError thrown from the UncaughtExceptionHandler in thread "Thread-0"`. The bug happens for Closure-14. Would you... | priority | stackoverflowerror hi lylytran please kindly check the following command line i generate a lot of include classes it wind up with an exception as exception java lang stackoverflowerror thrown from the uncaughtexceptionhandler in thread thread the bug happens for closure would you please kindly ha... | 1 |

325,653 | 9,934,165,028 | IssuesEvent | 2019-07-02 13:55:53 | webcompat/web-bugs | https://api.github.com/repos/webcompat/web-bugs | closed | auth.geeksforgeeks.org - site is not usable | browser-fenix engine-gecko priority-important | <!-- @browser: Firefox Mobile 68.0 -->

<!-- @ua_header: Mozilla/5.0 (Android 7.1.2; Mobile; rv:68.0) Gecko/68.0 Firefox/68.0 -->

<!-- @reported_with: -->

<!-- @extra_labels: browser-fenix -->

**URL**: https://auth.geeksforgeeks.org/fb-login.php?to=https://auth.geeksforgeeks.org/?to=https://practice.geeksforgeeks.org/... | 1.0 | auth.geeksforgeeks.org - site is not usable - <!-- @browser: Firefox Mobile 68.0 -->

<!-- @ua_header: Mozilla/5.0 (Android 7.1.2; Mobile; rv:68.0) Gecko/68.0 Firefox/68.0 -->

<!-- @reported_with: -->

<!-- @extra_labels: browser-fenix -->

**URL**: https://auth.geeksforgeeks.org/fb-login.php?to=https://auth.geeksforgee... | priority | auth geeksforgeeks org site is not usable url browser version firefox mobile operating system android tested another browser yes problem type site is not usable description i cannot sign up steps to reproduce browser configuration none ... | 1 |

43,927 | 5,577,655,990 | IssuesEvent | 2017-03-28 10:13:01 | khartec/waltz | https://api.github.com/repos/khartec/waltz | closed | Change initiative id selector factory should support identity selector | fixed (test & close) | To go from change initiative to change initiative

Useful for creating survey runs for a single change initiative | 1.0 | Change initiative id selector factory should support identity selector - To go from change initiative to change initiative

Useful for creating survey runs for a single change initiative | non_priority | change initiative id selector factory should support identity selector to go from change initiative to change initiative useful for creating survey runs for a single change initiative | 0 |

398,863 | 27,215,444,463 | IssuesEvent | 2023-02-20 21:09:49 | mawada-sweis/Clustering-Analysis | https://api.github.com/repos/mawada-sweis/Clustering-Analysis | closed | Readme file | documentation enhancement | # The Citywide Mobility Survey (CMS)

The Citywide Mobility Survey (CMS) is a survey conducted by the New York City Department of Transportation (DOT) to gather information about the travel behavior, preferences, and attitudes of New York City residents. The survey is conducted periodically, and the data collected is u... | 1.0 | Readme file - # The Citywide Mobility Survey (CMS)

The Citywide Mobility Survey (CMS) is a survey conducted by the New York City Department of Transportation (DOT) to gather information about the travel behavior, preferences, and attitudes of New York City residents. The survey is conducted periodically, and the data ... | non_priority | readme file the citywide mobility survey cms the citywide mobility survey cms is a survey conducted by the new york city department of transportation dot to gather information about the travel behavior preferences and attitudes of new york city residents the survey is conducted periodically and the data ... | 0 |

281,762 | 24,416,981,670 | IssuesEvent | 2022-10-05 16:45:19 | dotnet/source-build | https://api.github.com/repos/dotnet/source-build | closed | Test template smoke test failures in CI | area-ci-testing untriaged | All test template smoke-test projects are failing in CI (https://dev.azure.com/dnceng/internal/_build/results?buildId=2008513&view=results). This is likely related to https://github.com/dotnet/installer/pull/14634.

```

System.InvalidOperationException : Failed to execute /tarball/test/Microsoft.DotNet.SourceBuild.... | 1.0 | Test template smoke test failures in CI - All test template smoke-test projects are failing in CI (https://dev.azure.com/dnceng/internal/_build/results?buildId=2008513&view=results). This is likely related to https://github.com/dotnet/installer/pull/14634.

```

System.InvalidOperationException : Failed to execute ... | non_priority | test template smoke test failures in ci all test template smoke test projects are failing in ci this is likely related to system invalidoperationexception failed to execute tarball test microsoft dotnet sourcebuild smoketests bin release dotnet dotnet test bl tarball test microsoft dotnet sou... | 0 |

167,458 | 13,026,028,405 | IssuesEvent | 2020-07-27 14:24:51 | flutter/flutter | https://api.github.com/repos/flutter/flutter | closed | Update rendering/localized_fonts golden images | a: internationalization a: tests a: text input framework | When #17700 is fixed, these golden files will need to be updated:

bin/cache/pkg/goldens/packages/flutter/test/rendering/localized_fonts.rich_text.styled_text_span.png

bin/cache/pkg/goldens/packages/flutter/test/rendering/localized_fonts.text_ambient_locale.chars.png

bin/cache/pkg/goldens/packages/flutter/test/rend... | 1.0 | Update rendering/localized_fonts golden images - When #17700 is fixed, these golden files will need to be updated:

bin/cache/pkg/goldens/packages/flutter/test/rendering/localized_fonts.rich_text.styled_text_span.png

bin/cache/pkg/goldens/packages/flutter/test/rendering/localized_fonts.text_ambient_locale.chars.png

... | non_priority | update rendering localized fonts golden images when is fixed these golden files will need to be updated bin cache pkg goldens packages flutter test rendering localized fonts rich text styled text span png bin cache pkg goldens packages flutter test rendering localized fonts text ambient locale chars png bin... | 0 |

217,806 | 24,351,601,595 | IssuesEvent | 2022-10-03 01:00:39 | billmcchesney1/hadoop | https://api.github.com/repos/billmcchesney1/hadoop | opened | CVE-2022-42004 (Medium) detected in jackson-databind-2.9.10.1.jar | security vulnerability | ## CVE-2022-42004 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jackson-databind-2.9.10.1.jar</b></p></summary>

<p>General data-binding functionality for Jackson: works on core str... | True | CVE-2022-42004 (Medium) detected in jackson-databind-2.9.10.1.jar - ## CVE-2022-42004 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jackson-databind-2.9.10.1.jar</b></p></summary>

... | non_priority | cve medium detected in jackson databind jar cve medium severity vulnerability vulnerable library jackson databind jar general data binding functionality for jackson works on core streaming api library home page a href path to vulnerable library hadoop yarn project... | 0 |

123,831 | 4,876,737,864 | IssuesEvent | 2016-11-16 13:49:10 | odalic/odalic-ui | https://api.github.com/repos/odalic/odalic-ui | opened | No processing results display for the first result paged until i hit Next and then Previous to get back | priority: High | This needs to be fixed. | 1.0 | No processing results display for the first result paged until i hit Next and then Previous to get back - This needs to be fixed. | priority | no processing results display for the first result paged until i hit next and then previous to get back this needs to be fixed | 1 |

28,607 | 2,708,056,175 | IssuesEvent | 2015-04-08 05:26:51 | leo-project/leofs | https://api.github.com/repos/leo-project/leofs | closed | leo_gateway can't stop (kill -0) properly on OSX. | Priority-LOW _leo_gateway | leo_gateway can't stop properly on OSX.

This is used ``kill -0`` command, but it does not work on OSX.

leo_gateway/bin/leo_gateway line 157.

```bash

134 stop)

135 # Wait for the node to completely stop...

136 case `uname -s` in

137 Linux|Darwin|FreeBSD|Drag... | 1.0 | leo_gateway can't stop (kill -0) properly on OSX. - leo_gateway can't stop properly on OSX.

This is used ``kill -0`` command, but it does not work on OSX.

leo_gateway/bin/leo_gateway line 157.

```bash

134 stop)

135 # Wait for the node to completely stop...

136 case `uname -... | priority | leo gateway can t stop kill properly on osx leo gateway can t stop properly on osx this is used kill command but it does not work on osx leo gateway bin leo gateway line bash stop wait for the node to completely stop case uname s in ... | 1 |

186,267 | 21,920,324,108 | IssuesEvent | 2022-05-22 13:21:54 | rengert/bolzplatzarena.blog | https://api.github.com/repos/rengert/bolzplatzarena.blog | reopened | CVE-2018-8292 (High) detected in system.net.http.4.3.0.nupkg | security vulnerability | ## CVE-2018-8292 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>system.net.http.4.3.0.nupkg</b></p></summary>

<p>Provides a programming interface for modern HTTP applications, includi... | True | CVE-2018-8292 (High) detected in system.net.http.4.3.0.nupkg - ## CVE-2018-8292 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>system.net.http.4.3.0.nupkg</b></p></summary>

<p>Provide... | non_priority | cve high detected in system net http nupkg cve high severity vulnerability vulnerable library system net http nupkg provides a programming interface for modern http applications including http client components that library home page a href path to dependency file ... | 0 |

8,150 | 2,869,058,542 | IssuesEvent | 2015-06-05 22:59:55 | dart-lang/mock | https://api.github.com/repos/dart-lang/mock | closed | Mocks Package is not friendly to use | AsDesigned bug | <a href="https://github.com/TedSander"><img src="https://avatars.githubusercontent.com/u/5643740?v=3" align="left" width="96" height="96"hspace="10"></img></a> **Issue by [TedSander](https://github.com/TedSander)**

_Originally opened as dart-lang/sdk#16147_

----

The mocks package is not user friendly. It can't be use... | 1.0 | Mocks Package is not friendly to use - <a href="https://github.com/TedSander"><img src="https://avatars.githubusercontent.com/u/5643740?v=3" align="left" width="96" height="96"hspace="10"></img></a> **Issue by [TedSander](https://github.com/TedSander)**

_Originally opened as dart-lang/sdk#16147_

----

The mocks packag... | non_priority | mocks package is not friendly to use issue by originally opened as dart lang sdk the mocks package is not user friendly it can t be used with code completion and refactoring is difficult with it an interface much like mockito would be ideal as it stands i tend to avoid mocking in dart becaus... | 0 |

105,129 | 22,916,206,009 | IssuesEvent | 2022-07-17 01:56:52 | Arcanorum/rogueworld | https://api.github.com/repos/Arcanorum/rogueworld | closed | Damage particles triggered when a creature sprite goes off screen | bug good first issue code | When a creature goes outside of the player view bounds, it is destroyed, which is also causing the death effect of spraying a bunch of damage particles from the point the entity was destroyed.

Need some check in the Character destroy event for if the entity was actually killed or not.

Hint:

https://github.com/Ar... | 1.0 | Damage particles triggered when a creature sprite goes off screen - When a creature goes outside of the player view bounds, it is destroyed, which is also causing the death effect of spraying a bunch of damage particles from the point the entity was destroyed.

Need some check in the Character destroy event for if th... | non_priority | damage particles triggered when a creature sprite goes off screen when a creature goes outside of the player view bounds it is destroyed which is also causing the death effect of spraying a bunch of damage particles from the point the entity was destroyed need some check in the character destroy event for if th... | 0 |

489,109 | 14,101,302,735 | IssuesEvent | 2020-11-06 06:32:24 | webcompat/web-bugs | https://api.github.com/repos/webcompat/web-bugs | closed | open.spotify.com - design is broken | browser-fixme ml-needsdiagnosis-false ml-probability-high priority-critical | <!-- @browser: Dragon 65.0.2 -->

<!-- @ua_header: Mozilla/5.0 (Windows NT 10.0; Win64; x64; rv:65.0) Gecko/20100101 Firefox/65.0 IceDragon/65.0.2 -->

<!-- @reported_with: desktop-reporter -->

<!-- @public_url: https://github.com/webcompat/web-bugs/issues/61193 -->

**URL**: https://open.spotify.com/

**Browser / Versio... | 1.0 | open.spotify.com - design is broken - <!-- @browser: Dragon 65.0.2 -->

<!-- @ua_header: Mozilla/5.0 (Windows NT 10.0; Win64; x64; rv:65.0) Gecko/20100101 Firefox/65.0 IceDragon/65.0.2 -->

<!-- @reported_with: desktop-reporter -->

<!-- @public_url: https://github.com/webcompat/web-bugs/issues/61193 -->

**URL**: https:/... | priority | open spotify com design is broken url browser version dragon operating system windows tested another browser yes edge problem type design is broken description images not loaded steps to reproduce view the screenshot img alt screenshot ... | 1 |

372,192 | 11,010,776,542 | IssuesEvent | 2019-12-04 15:10:14 | geosolutions-it/geotools | https://api.github.com/repos/geosolutions-it/geotools | closed | Parse user provided mapping and store it in an appropriate structure | C009-2016-MONGODB Priority: Medium enhancement in progress | Using the user provided schema and provided mapping we should store that information in a way that can be easily used to build a complex feature type, app-schema already existing support for this should be used (including their defined DSL).

| 1.0 | Parse user provided mapping and store it in an appropriate structure - Using the user provided schema and provided mapping we should store that information in a way that can be easily used to build a complex feature type, app-schema already existing support for this should be used (including their defined DSL).

| priority | parse user provided mapping and store it in an appropriate structure using the user provided schema and provided mapping we should store that information in a way that can be easily used to build a complex feature type app schema already existing support for this should be used including their defined dsl | 1 |

620,532 | 19,564,321,929 | IssuesEvent | 2022-01-03 21:06:42 | stake-house/eth-wizard | https://api.github.com/repos/stake-house/eth-wizard | closed | Uncaught exception with Teku on Windows | bug priority - high Windows 10 | Handle this better with eth-wizard 0.7.3.

```

2022-01-03 13:18:13,924 - ethwizard.platforms.windows10 - CRITICAL - Uncaught exception

Traceback (most recent call last):

File "C:\Users\Test\AppData\Local\Temp\7zS805612E6\httpx\_transports\default.py", line 62, in map_httpcore_exceptions

yield

File "C:\Us... | 1.0 | Uncaught exception with Teku on Windows - Handle this better with eth-wizard 0.7.3.

```

2022-01-03 13:18:13,924 - ethwizard.platforms.windows10 - CRITICAL - Uncaught exception

Traceback (most recent call last):

File "C:\Users\Test\AppData\Local\Temp\7zS805612E6\httpx\_transports\default.py", line 62, in map_htt... | priority | uncaught exception with teku on windows handle this better with eth wizard ethwizard platforms critical uncaught exception traceback most recent call last file c users test appdata local temp httpx transports default py line in map httpcore exceptions yield ... | 1 |

188,703 | 15,168,151,276 | IssuesEvent | 2021-02-12 18:56:06 | department-of-veterans-affairs/va.gov-team | https://api.github.com/repos/department-of-veterans-affairs/va.gov-team | opened | [Github] Request for changes to /products/identity-personalization/direct-deposit folder | direct deposit documentation-support vsa-authenticated-exp | ## Background

We need some restructuring in the `/products/identity-personalization/direct-deposit` folder in order to better account for different versions of direct deposit and to improve for overall organization.

## Tasks

Please help us with the following:

- [ ] Under `/products/identity-personalization/... | 1.0 | [Github] Request for changes to /products/identity-personalization/direct-deposit folder - ## Background

We need some restructuring in the `/products/identity-personalization/direct-deposit` folder in order to better account for different versions of direct deposit and to improve for overall organization.

## Task... | non_priority | request for changes to products identity personalization direct deposit folder background we need some restructuring in the products identity personalization direct deposit folder in order to better account for different versions of direct deposit and to improve for overall organization tasks pl... | 0 |

297,771 | 9,181,501,010 | IssuesEvent | 2019-03-05 10:22:41 | zephyrproject-rtos/zephyr | https://api.github.com/repos/zephyrproject-rtos/zephyr | closed | CONFIG_BT_HCI_TX_STACK_SIZE is too small | area: Bluetooth bug priority: low | **Describe the bug**

I have pca10059 configured as central (running ncs nrf_desktop). The device crashes during connection attempt with a peripheral.

`CONFIG_BT_HCI_TX_STACK_SIZE` is set to 640B in Zephyr. If I extend it to 1024B, the program works (I have not tried lower values yet).

We have not seen it in the past. The... | 1.0 | CONFIG_BT_HCI_TX_STACK_SIZE is too small - **Describe the bug**

I have pca10059 configured as central (running ncs nrf_desktop). The device crashes during connection attempt with a peripheral.

`CONFIG_BT_HCI_TX_STACK_SIZE` is set to 640B in Zephyr. If I extend it to 1024B, the program works (I have not tried lower values ye... | priority | config bt hci tx stack size is too small describe the bug i have configured as central running ncs nrf desktop the device crashes during connection attempt with a peripheral config bt hci tx stack size is set to in zephyr if i extend it to the program works i have not tried lower values yet we have... | 1 |

392,274 | 11,589,334,328 | IssuesEvent | 2020-02-24 01:38:44 | omou-org/mainframe | https://api.github.com/repos/omou-org/mainframe | closed | POST Instructor Availability Not Working | 3 hours bug priority | When we post an instructor availability, the response we get is "null" for all the times

<img width="941" alt="Screen Shot 2020-01-16 at 10 55 39 PM" src="https://user-images.githubusercontent.com/12959959/72590756-5daad680-38b3-11ea-8fc7-cf960847d88e.png">

| 1.0 | POST Instructor Availability Not Working - When we post an instructor availability, the response we get is "null" for all the times

<img width="941" alt="Screen Shot 2020-01-16 at 10 55 39 PM" src="https://user-images.githubusercontent.com/12959959/72590756-5daad680-38b3-11ea-8fc7-cf960847d88e.png">

| priority | post instructor availability not working when we post an instructor availability the response we get is null for all the times img width alt screen shot at pm src | 1 |

500,667 | 14,503,816,856 | IssuesEvent | 2020-12-11 23:30:04 | googleapis/nodejs-recommender | https://api.github.com/repos/googleapis/nodejs-recommender | closed | Synthesis failed for nodejs-recommender | api: recommender autosynth failure priority: p1 type: bug | Hello! Autosynth couldn't regenerate nodejs-recommender. :broken_heart:

Here's the output from running `synth.py`:

```

e7e855aed5a29e6e83c39beba2a

2020-12-11 21:37:59,042 autosynth [DEBUG] > Running: git log -1 --pretty=%at c2de32114ec484aa708d32012d1fa8d75232daf5

2020-12-11 21:37:59,045 autosynth [DEBUG] > Running: ... | 1.0 | Synthesis failed for nodejs-recommender - Hello! Autosynth couldn't regenerate nodejs-recommender. :broken_heart:

Here's the output from running `synth.py`:

```

e7e855aed5a29e6e83c39beba2a

2020-12-11 21:37:59,042 autosynth [DEBUG] > Running: git log -1 --pretty=%at c2de32114ec484aa708d32012d1fa8d75232daf5

2020-12-11 ... | priority | synthesis failed for nodejs recommender hello autosynth couldn t regenerate nodejs recommender broken heart here s the output from running synth py autosynth running git log pretty at autosynth running git log pretty at autosynth r... | 1 |

312,122 | 9,543,589,544 | IssuesEvent | 2019-05-01 10:49:10 | aau-giraf/weekplanner | https://api.github.com/repos/aau-giraf/weekplanner | closed | Change buttons on ConfirmDialog | priority: high type: refactor | The buttons on GirafConfirmDialog should be a button with gradient colors.

Button can be refactored after issue #85 has been merged.

| 1.0 | Change buttons on ConfirmDialog - The buttons on GirafConfirmDialog should be a button with gradient colors.

Button can be refactored after issue #85 has been merged.

| priority | change buttons on confirmdialog the buttons on girafconfirmdialog should be a button with gradient colors button can be refactored after issue has been merged | 1 |

66,821 | 16,725,814,689 | IssuesEvent | 2021-06-10 12:53:28 | opengeospatial/ogcapi-features | https://api.github.com/repos/opengeospatial/ogcapi-features | closed | CQL: requirement /req/filter/filter-crs-param: HTTP status code | API building blocks Part 3: CQL | Requirement /req/filter/filter-crs-param, subrequirement C:

> The server SHALL return an error, if it does not support the CRS identified in `filter-crs` for the resource.

Should that be the following in order to be consistent with /req/crs/fc-bbox-crs-valid-value and /req/crs/fc-crs-valid-value?

The server S... | 1.0 | CQL: requirement /req/filter/filter-crs-param: HTTP status code - Requirement /req/filter/filter-crs-param, subrequirement C:

> The server SHALL return an error, if it does not support the CRS identified in `filter-crs` for the resource.

Should that be the following in order to be consistent with /req/crs/fc-bbo... | non_priority | cql requirement req filter filter crs param http status code requirement req filter filter crs param subrequirement c the server shall return an error if it does not support the crs identified in filter crs for the resource should that be the following in order to be consistent with req crs fc bbo... | 0 |

379,980 | 11,252,614,164 | IssuesEvent | 2020-01-11 10:04:34 | webcompat/web-bugs | https://api.github.com/repos/webcompat/web-bugs | closed | www.facebook.com - video or audio doesn't play | browser-firefox engine-gecko form-v2-experiment priority-critical | <!-- @browser: Firefox 72.0 -->

<!-- @ua_header: Mozilla/5.0 (Windows NT 10.0; Win64; x64; rv:72.0) Gecko/20100101 Firefox/72.0 -->

<!-- @reported_with: -->

<!-- @extra_labels: form-v2-experiment -->

**URL**: http://www.facebook.com

**Browser / Version**: Firefox 72.0

**Operating System**: Windows 10

**Tested Anothe... | 1.0 | www.facebook.com - video or audio doesn't play - <!-- @browser: Firefox 72.0 -->

<!-- @ua_header: Mozilla/5.0 (Windows NT 10.0; Win64; x64; rv:72.0) Gecko/20100101 Firefox/72.0 -->

<!-- @reported_with: -->

<!-- @extra_labels: form-v2-experiment -->

**URL**: http://www.facebook.com

**Browser / Version**: Firefox 72.0... | priority | video or audio doesn t play url browser version firefox operating system windows tested another browser yes other problem type video or audio doesn t play description the video or audio does not play steps to reproduce video not playing properly at any cost ... | 1 |

52,865 | 3,030,585,955 | IssuesEvent | 2015-08-04 18:13:19 | Financial-Times/next-front-page | https://api.github.com/repos/Financial-Times/next-front-page | closed | Mobile News/fastFT toggle styling wrong | bug HIGH PRIORITY | - wrong font family

- missing the initial status underline | 1.0 | Mobile News/fastFT toggle styling wrong - - wrong font family

- missing the initial status underline | priority | mobile news fastft toggle styling wrong wrong font family missing the initial status underline | 1 |

805,762 | 29,667,387,041 | IssuesEvent | 2023-06-11 00:53:08 | davidfstr/Crystal-Web-Archiver | https://api.github.com/repos/davidfstr/Crystal-Web-Archiver | opened | Allow treating URLs found inside <script> tags with an image file extension as NON-embedded | priority-low type-feature topic-sitespecific | URLs found inside `<script>` tags with an image file extension like .jpg or .png are assumed by Crystal to be **embedded** by default, meaning that they will be automatically downloaded as an embedded-subresource when its referencing HTML resource is downloaded.

Sometimes when downloading a particular Resource Group wi... | 1.0 | Allow treating URLs found inside <script> tags with an image file extension as NON-embedded - URLs found inside `<script>` tags with an image file extension like .jpg or .png are assumed by Crystal to be **embedded** by default, meaning that they will be automatically downloaded as an embedded-subresource when its referen... | priority | allow treating urls found inside tags with an image file extension as non embedded urls found inside tags with an image file extension like jpg or png are assumed by crystal to be embedded by default meaning that they will be automatically downloaded as an embedded subresource when its referencing html reso... | 1 |

276,665 | 24,009,581,629 | IssuesEvent | 2022-09-14 17:32:55 | onc-healthit/onc-certification-g10-test-kit | https://api.github.com/repos/onc-healthit/onc-certification-g10-test-kit | closed | Add practitioner-scoped EHR Launch tests | g10-test-kit add constraint v3.0.0 | The SMART App Launch 2.0.0 IG [contains the following language](http://hl7.org/fhir/smart-app-launch/STU2/scopes-and-launch-context.html#apps-that-launch-from-the-ehr) in relation to both EHR and standalone launches:

> If an application requests a clinical scope which is restricted to a single patient (e.g., patient... | 1.0 | Add practitioner-scoped EHR Launch tests - The SMART App Launch 2.0.0 IG [contains the following language](http://hl7.org/fhir/smart-app-launch/STU2/scopes-and-launch-context.html#apps-that-launch-from-the-ehr) in relation to both EHR and standalone launches:

> If an application requests a clinical scope which is re... | non_priority | add practitioner scoped ehr launch tests the smart app launch ig in relation to both ehr and standalone launches if an application requests a clinical scope which is restricted to a single patient e g patient rs and the authorization results in the ehr is granting that scope the ehr shall esta... | 0 |

469,810 | 13,526,390,103 | IssuesEvent | 2020-09-15 14:11:39 | mozilla/addons-server | https://api.github.com/repos/mozilla/addons-server | closed | Optimize the storage in mlbf directory | priority: p4 | ### Describe the problem and steps to reproduce it:

Currently dumped blocked/notblocked json files are saved in `<storage_root>/mlbf` directory. In chatting with @eviljeff [[1]](https://mozilla.slack.com/archives/C4MTGNZ7S/p1589560549015900), we think that there are two possible issues with the current design.

Firs... | 1.0 | Optimize the storage in mlbf directory - ### Describe the problem and steps to reproduce it:

Currently dumped blocked/notblocked json files are saved in `<storage_root>/mlbf` directory. In chatting with @eviljeff [[1]](https://mozilla.slack.com/archives/C4MTGNZ7S/p1589560549015900), we think that there are two possibl... | priority | optimize the storage in mlbf directory describe the problem and steps to reproduce it currently dumped blocked notblocked json files are saved in mlbf directory in chatting with eviljeff we think that there are two possible issues with the current design first is that there is no hierarchy under ... | 1 |

112,307 | 9,560,075,560 | IssuesEvent | 2019-05-03 18:31:47 | rancher/rancher | https://api.github.com/repos/rancher/rancher | closed | Monitoring - can't completely clean up after disabling cluster monitoring | area/monitoring kind/bug status/ready-for-review status/resolved status/to-test status/triaged team/cn | **What kind of request is this (question/bug/enhancement/feature request):**

Bug

**Steps to reproduce (least amount of steps as possible):**

1. Enable Monitoring with large size requirement CPU & RAM

1. Disable Monitoring without enabling Monitoring completely

**Result:**

Can't clean up the `cluster-monitoring` App o... | 1.0 | Monitoring - can't completely clean up after disabling cluster monitoring - **What kind of request is this (question/bug/enhancement/feature request):**

Bug

**Steps to reproduce (least amount of steps as possible):**

1. Enable Monitoring with large size requirement CPU & RAM

1. Disable Monitoring without enabling Moni... | non_priority | monitoring can t completely clean up after disabling cluster monitoring what kind of request is this question bug enhancement feature request bug steps to reproduce least amount of steps as possible enable monitoring with large size requirement cpu ram disable monitoring without enabling moni... | 0 |

113,070 | 11,786,994,136 | IssuesEvent | 2020-03-17 13:18:59 | scholaempirica/reschola | https://api.github.com/repos/scholaempirica/reschola | closed | Update install instructions for drat repo | bug documentation | install.packages("reschola", repos = "https://scholaempirica.github.io/drat/", dependencies = T)

install.packages treats dependencies differently if repos is set | 1.0 | Update install instructions for drat repo - install.packages("reschola", repos = "https://scholaempirica.github.io/drat/", dependencies = T)