Unnamed: 0 int64 0 832k | id float64 2.49B 32.1B | type stringclasses 1 value | created_at stringlengths 19 19 | repo stringlengths 5 112 | repo_url stringlengths 34 141 | action stringclasses 3 values | title stringlengths 1 1k | labels stringlengths 4 1.38k | body stringlengths 1 262k | index stringclasses 16 values | text_combine stringlengths 96 262k | label stringclasses 2 values | text stringlengths 96 252k | binary_label int64 0 1 |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

299,570 | 9,205,621,911 | IssuesEvent | 2019-03-08 11:07:15 | qissue-bot/QGIS | https://api.github.com/repos/qissue-bot/QGIS | closed | Grayscale Band Min Max Values Not Saved | Category: Rasters Component: Affected QGIS version Component: Crashes QGIS or corrupts data Component: Easy fix? Component: Operating System Component: Pull Request or Patch supplied Component: Regression? Component: Resolution Priority: Low Project: QGIS Application Status: Closed Tracker: Bug report | ---

Author Name: **David A- Riggs -** (David A- Riggs -)

Original Redmine Issue: 945, https://issues.qgis.org/issues/945

Original Assignee: ersts -

---

1. Add a new raster layer, grayscale .tiff (DEM from ftp://ftp.wvgis.wvu.edu/pub/Clearinghouse/DEM/3m/Tiff/ )

2. Edit Properties: Select Grayscale Band Scaling "C... | 1.0 | Grayscale Band Min Max Values Not Saved - ---

Author Name: **David A- Riggs -** (David A- Riggs -)

Original Redmine Issue: 945, https://issues.qgis.org/issues/945

Original Assignee: ersts -

---

1. Add a new raster layer, grayscale .tiff (DEM from ftp://ftp.wvgis.wvu.edu/pub/Clearinghouse/DEM/3m/Tiff/ )

2. Edit Pr... | priority | grayscale band min max values not saved author name david a riggs david a riggs original redmine issue original assignee ersts add a new raster layer grayscale tiff dem from ftp ftp wvgis wvu edu pub clearinghouse dem tiff edit properties select grayscale band scal... | 1 |

281,985 | 30,889,065,524 | IssuesEvent | 2023-08-04 02:11:23 | panasalap/linux-4.1.15 | https://api.github.com/repos/panasalap/linux-4.1.15 | opened | CVE-2018-5873 (High) detected in linux-yocto-devv4.2.8 | Mend: dependency security vulnerability | ## CVE-2018-5873 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linux-yocto-devv4.2.8</b></p></summary>

<p>

<p>Linux Embedded Kernel - tracks the next mainline release</p>

<p>Library ... | True | CVE-2018-5873 (High) detected in linux-yocto-devv4.2.8 - ## CVE-2018-5873 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linux-yocto-devv4.2.8</b></p></summary>

<p>

<p>Linux Embedded ... | non_priority | cve high detected in linux yocto cve high severity vulnerability vulnerable library linux yocto linux embedded kernel tracks the next mainline release library home page a href found in base branch master vulnerable source files fs nsfs ... | 0 |

399,950 | 27,263,071,008 | IssuesEvent | 2023-02-22 16:06:51 | nest-desktop/nest-desktop | https://api.github.com/repos/nest-desktop/nest-desktop | opened | Docs: Incorrect rendering in the Snap installation guide | bug documentation | **Describe the bug**

The setup for Snap (.../user/setup/index.html#snap-linux) in the docs is not rendered correctly.

**To Reproduce**

Steps to reproduce the behavior:

1. Visit the Snap page

2. See error

**Expected behavior**

The rendering should be correct.

**Screenshots**

in the docs is not rendered correctly.

**To Reproduce**

Steps to reproduce the behavior:

1. Visit the Snap page

2. See error

**Expected behavior**

The rendering should ... | non_priority | docs incorrect rendering in the snap installation guide describe the bug the setup for snap user setup index html snap linux in the docs is not rendered correctly to reproduce steps to reproduce the behavior visit the snap page see error expected behavior the rendering should ... | 0 |

580,617 | 17,262,450,003 | IssuesEvent | 2021-07-22 09:30:01 | skni-kod/TournamentAppFrontend | https://api.github.com/repos/skni-kod/TournamentAppFrontend | closed | Tournament Info Map Bug | High priority | Fix the map-related errors that are printed to the console when navigating to Tournament Info - possibly also check whether the error still appears if the map data (just the link) were loaded dynamically from the database.

The error on the yellow background does not always appear - find out why.

.

The error on the yellow background does not always appear - find out why.

docs. | 1.0 | [Docs][API] Add CREATE TABLESPACE to YSQL API docs - ### Description

Add CREATE TABLESPACES to [YSQL API](https://docs.yugabyte.com/latest/api/ysql/the-sql-language/statements/) docs. | non_priority | add create tablespace to ysql api docs description add create tablespaces to docs | 0 |

557,142 | 16,502,015,240 | IssuesEvent | 2021-05-25 15:20:35 | NeuraLegion/nexploit-cli | https://api.github.com/repos/NeuraLegion/nexploit-cli | closed | Ignore remote scripts if local scripts are used | Priority: high Type: enhancement | Allow configure from a cloud of script settings, but if a local script is selected they should be ignored once the repeater is connected

| 1.0 | Ignore remote scripts if local scripts are used - Allow configure from a cloud of script settings, but if a local script is selected they should be ignored once the repeater is connected

| priority | ignore remote scripts if local scripts are used allow configure from a cloud of script settings but if a local script is selected they should be ignored once the repeater is connected | 1 |

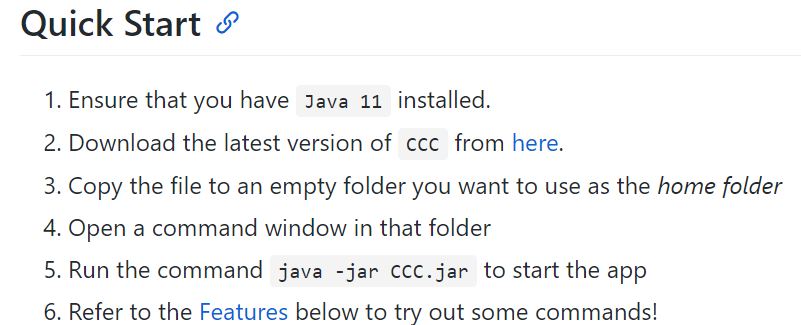

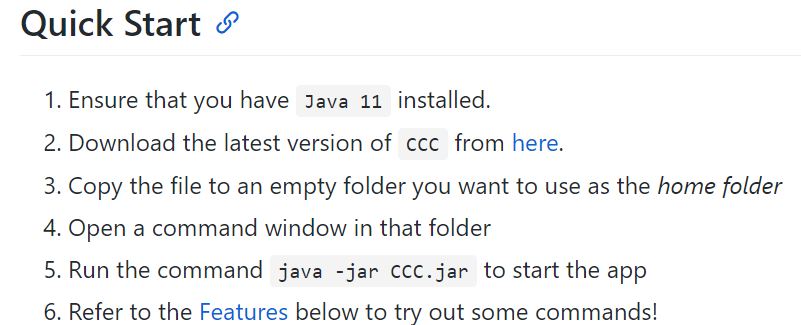

346,826 | 24,887,271,592 | IssuesEvent | 2022-10-28 08:51:55 | brian-vb/ped | https://api.github.com/repos/brian-vb/ped | opened | Incorrect Instruction for Step 5 of UG (Quick Start) | severity.VeryLow type.DocumentationBug |

The downloaded jar file is tp.jar

<!--session: 1666946577070-1af66f5c-5dec-424b-aed2-3d4e50698581-->

<!--Version: Web v3.4.4--> | 1.0 | Incorrect Instruction for Step 5 of UG (Quick Start) -

The downloaded jar file is tp.jar

<!--session: 1666946577070-1af66f5c-5dec-424b-aed2-3d4e50698581-->

<!--Version: Web v3.4.4--> | non_priority | incorrect instruction for step of ug quick start the downloaded jar file is tp jar | 0 |

125,326 | 4,955,587,017 | IssuesEvent | 2016-12-01 20:51:27 | caitlynmayers/dukes | https://api.github.com/repos/caitlynmayers/dukes | opened | Shopping Cart: Create Account Alignment | Low Priority Style | Upon checking out, "create an account" is on a separate line than the check box

<img width="189" alt="screen shot 2016-12-01 at 3 47 42 pm" src="https://cloud.githubusercontent.com/assets/24302252/20812010/fac027ea-b7dd-11e6-9e92-bb4a97466f82.png">

| 1.0 | Shopping Cart: Create Account Alignment - Upon checking out, "create an account" is on a separate line than the check box

<img width="189" alt="screen shot 2016-12-01 at 3 47 42 pm" src="https://cloud.githubusercontent.com/assets/24302252/20812010/fac027ea-b7dd-11e6-9e92-bb4a97466f82.png">

| priority | shopping cart create account alignment upon checking out create an account is on a separate line than the check box img width alt screen shot at pm src | 1 |

17,492 | 2,615,145,540 | IssuesEvent | 2015-03-01 06:21:26 | chrsmith/html5rocks | https://api.github.com/repos/chrsmith/html5rocks | closed | Need hook script for site update | auto-migrated Maintenance Milestone-2 Priority-Low Type-Enhancement | ```

- one for updating/building the slides cache manifest

- one for .zip'ing the studio samples

First is done, but should be put into a .sh script on in place of running

appcfg.py update.

```

Original issue reported on code.google.com by `ericbide...@html5rocks.com` on 2 Aug 2010 at 4:52 | 1.0 | Need hook script for site update - ```

- one for updating/building the slides cache manifest

- one for .zip'ing the studio samples

First is done, but should be put into a .sh script on in place of running

appcfg.py update.

```

Original issue reported on code.google.com by `ericbide...@html5rocks.com` on 2 Aug 2010 a... | priority | need hook script for site update one for updating building the slides cache manifest one for zip ing the studio samples first is done but should be put into a sh script on in place of running appcfg py update original issue reported on code google com by ericbide com on aug at | 1 |

39,392 | 10,335,278,628 | IssuesEvent | 2019-09-03 10:11:53 | tensorflow/tensorflow | https://api.github.com/repos/tensorflow/tensorflow | closed | Protocol "https" not supported or disabled in libcurl | stat:awaiting response subtype: ubuntu/linux type:build/install | Hi Guys,

can anyone help me with the following issue

**System information**

- OS Platform and Distribution (e.g., Linux Ubuntu 16.04):

- TensorFlow installed from source.

- TensorFlow version: r1.11

- Python version: 2.7.12

- GCC/Compiler version (if compiling from source): GNU 5.4.0

**Describe the problem... | 1.0 | Protocol "https" not supported or disabled in libcurl - Hi Guys,

can anyone help me with the following issue

**System information**

- OS Platform and Distribution (e.g., Linux Ubuntu 16.04):

- TensorFlow installed from source.

- TensorFlow version: r1.11

- Python version: 2.7.12

- GCC/Compiler version (if c... | non_priority | protocol https not supported or disabled in libcurl hi guys can anyone help me with the following issue system information os platform and distribution e g linux ubuntu tensorflow installed from source tensorflow version python version gcc compiler version if compil... | 0 |

408,669 | 11,950,644,763 | IssuesEvent | 2020-04-03 15:32:04 | cloudfoundry-incubator/kubecf | https://api.github.com/repos/cloudfoundry-incubator/kubecf | closed | Disable SITS and rotate tests in the KubeCF pipeline | Priority: Critical Status: Accepted Type: CI | **Is your feature request related to a problem? Please describe.**

SITS and rotation tests are often failing, and they are gating tests that are passing

**Describe the solution you'd like**

Disable SITS and rotation tests until they prove to be reliable.

**Describe alternatives you've considered**

N/A

**Add... | 1.0 | Disable SITS and rotate tests in the KubeCF pipeline - **Is your feature request related to a problem? Please describe.**

SITS and rotation tests are often failing, and they are gating tests that are passing

**Describe the solution you'd like**

Disable SITS and rotation tests until they prove to be reliable.

**... | priority | disable sits and rotate tests in the kubecf pipeline is your feature request related to a problem please describe sits and rotation tests are often failing and they are gating tests that are passing describe the solution you d like disable sits and rotation tests until they prove to be reliable ... | 1 |

56,624 | 15,226,712,518 | IssuesEvent | 2021-02-18 09:15:33 | galasa-dev/projectmanagement | https://api.github.com/repos/galasa-dev/projectmanagement | closed | Galasa 3270 locking up during local runs | Manager: zOS 3270 defect | It appears that galasa seems to be locking up during local runs | 1.0 | Galasa 3270 locking up during local runs - It appears that galasa seems to be locking up during local runs | non_priority | galasa locking up during local runs it appears that galasa seems to be locking up during local runs | 0 |

26,511 | 4,733,369,539 | IssuesEvent | 2016-10-19 10:56:30 | sfepy/sfepy | https://api.github.com/repos/sfepy/sfepy | closed | "Latest snapshot" broken link | defect easy-to-fix | In the [website](http://sfepy.org/doc-devel/downloads.html) appears the following link

http://github.com/sfepy/sfepy/archives/master

But it leads to nowhere.

| 1.0 | "Latest snapshot" broken link - In the [website](http://sfepy.org/doc-devel/downloads.html) appears the following link

http://github.com/sfepy/sfepy/archives/master

But it leads to nowhere.

| non_priority | latest snapshot broken link in the appears the following link but it leads to nowhere | 0 |

322,847 | 9,829,323,639 | IssuesEvent | 2019-06-15 19:40:11 | marklogic/marklogic-data-hub | https://api.github.com/repos/marklogic/marklogic-data-hub | closed | Duplicate indices being created by QuickStart in entity-config/databases/final-database.json and staging-database.json | 2.0 3.0 4.0 BackLog better-errors bug priority:medium | [final-database.json.txt](https://github.com/marklogic-community/marklogic-data-hub/files/1471693/final-database.json.txt)

[staging-database.json.txt](https://github.com/marklogic-community/marklogic-data-hub/files/1471694/staging-database.json.txt)

#### The issue

Short description of the problem:

Duplicate indices b... | 1.0 | Duplicate indices being created by QuickStart in entity-config/databases/final-database.json and staging-database.json - [final-database.json.txt](https://github.com/marklogic-community/marklogic-data-hub/files/1471693/final-database.json.txt)

[staging-database.json.txt](https://github.com/marklogic-community/marklogic... | priority | duplicate indices being created by quickstart in entity config databases final database json and staging database json the issue short description of the problem duplicate indices being created by quickstart in entity config databases final database json and staging database json what behavior are... | 1 |

649,907 | 21,329,970,371 | IssuesEvent | 2022-04-18 06:55:57 | webcompat/web-bugs | https://api.github.com/repos/webcompat/web-bugs | closed | az1.qualtrics.com - design is broken | priority-important browser-fenix engine-gecko | <!-- @browser: Firefox Mobile 101.0 -->

<!-- @ua_header: Mozilla/5.0 (Android 11; Mobile; rv:101.0) Gecko/101.0 Firefox/101.0 -->

<!-- @reported_with: android-components-reporter -->

<!-- @public_url: https://github.com/webcompat/web-bugs/issues/102794 -->

<!-- @extra_labels: browser-fenix -->

**URL**: https://az1.qua... | 1.0 | az1.qualtrics.com - design is broken - <!-- @browser: Firefox Mobile 101.0 -->

<!-- @ua_header: Mozilla/5.0 (Android 11; Mobile; rv:101.0) Gecko/101.0 Firefox/101.0 -->

<!-- @reported_with: android-components-reporter -->

<!-- @public_url: https://github.com/webcompat/web-bugs/issues/102794 -->

<!-- @extra_labels: brow... | priority | qualtrics com design is broken url browser version firefox mobile operating system android tested another browser yes internet explorer problem type design is broken description items are overlapped steps to reproduce my kids are missing they tampered with... | 1 |

206,098 | 7,108,570,063 | IssuesEvent | 2018-01-17 00:48:27 | craftercms/craftercms | https://api.github.com/repos/craftercms/craftercms | closed | [craftercms] The gradlew test stops to display the log messages send by test cases | bug priority: high | ### Expected behavior

The gradlew should not stops to displays log messages until the end of the execution.

### Actual behavior

After a time (6mins aprox) the gradlew stops to display the log messages send by each test cases and verbose of testNG. However, the automation continues executing in background.

And for... | 1.0 | [craftercms] The gradlew test stops to display the log messages send by test cases - ### Expected behavior

The gradlew should not stops to displays log messages until the end of the execution.

### Actual behavior

After a time (6mins aprox) the gradlew stops to display the log messages send by each test cases and ... | priority | the gradlew test stops to display the log messages send by test cases expected behavior the gradlew should not stops to displays log messages until the end of the execution actual behavior after a time aprox the gradlew stops to display the log messages send by each test cases and verbose of test... | 1 |

38,441 | 10,196,547,258 | IssuesEvent | 2019-08-12 21:02:43 | NuGet/Home | https://api.github.com/repos/NuGet/Home | closed | Lock file missing entries when RuntimeIdentifier is specified for netcoreapp 3.0 projects | Area:RepeatableBuild Area:Restore ClosedAs:Duplicate Style:PackageReference Type:Bug | ## Details about Problem

NuGet product used: dotnet.exe

dotnet.exe --version: 3.0.100-preview7-012821

Worked before? If so, with which NuGet version: yes. Seems to work even with current sdk if targeting netcoreapp2.0

## Detailed repro steps so we can see the same problem

### Automated repro

Download [r... | 1.0 | Lock file missing entries when RuntimeIdentifier is specified for netcoreapp 3.0 projects - ## Details about Problem

NuGet product used: dotnet.exe

dotnet.exe --version: 3.0.100-preview7-012821

Worked before? If so, with which NuGet version: yes. Seems to work even with current sdk if targeting netcoreapp2.0

... | non_priority | lock file missing entries when runtimeidentifier is specified for netcoreapp projects details about problem nuget product used dotnet exe dotnet exe version worked before if so with which nuget version yes seems to work even with current sdk if targeting detailed repro step... | 0 |

156,532 | 24,624,567,437 | IssuesEvent | 2022-10-16 10:52:22 | dotnet/efcore | https://api.github.com/repos/dotnet/efcore | closed | Dictinct not working for custom select | closed-by-design | Dictinct not add in SQL

For query:

```

var queryable = this.dbSet.Where(

x =>

x.Translation.StartsWith(model.Word) &&

(x.Localization == model.From || x.Localization == model.Dest)).Distinct()

.Select(x => new Dict... | 1.0 | Dictinct not working for custom select - Dictinct not add in SQL

For query:

```

var queryable = this.dbSet.Where(

x =>

x.Translation.StartsWith(model.Word) &&

(x.Localization == model.From || x.Localization == model.Dest)).Distinct... | non_priority | dictinct not working for custom select dictinct not add in sql for query var queryable this dbset where x x translation startswith model word x localization model from x localization model dest distinct... | 0 |

202,781 | 7,054,973,431 | IssuesEvent | 2018-01-04 04:59:10 | Marri/glowfic | https://api.github.com/repos/Marri/glowfic | opened | Move from gmail address to glowfic.com addresses for email | 3. medium priority 8. medium type: enhancement v. planned | We have now verified glowfic.com with Amazon SES, and should send mail from our domain instead of the Gmail account. | 1.0 | Move from gmail address to glowfic.com addresses for email - We have now verified glowfic.com with Amazon SES, and should send mail from our domain instead of the Gmail account. | priority | move from gmail address to glowfic com addresses for email we have now verified glowfic com with amazon ses and should send mail from our domain instead of the gmail account | 1 |

706,316 | 24,264,732,117 | IssuesEvent | 2022-09-28 04:34:55 | hackforla/expunge-assist | https://api.github.com/repos/hackforla/expunge-assist | opened | Sweeping layout change desktop top content padding | role: development priority: high | During the dev + design meeting when dev displayed the large distance between the progress bar and the start of the content on the final page of the letter generator, the design team agreed there was too much white space and the distance should be shortened to 56px. For consistency, we would like all pages on the deskt... | 1.0 | Sweeping layout change desktop top content padding - During the dev + design meeting when dev displayed the large distance between the progress bar and the start of the content on the final page of the letter generator, the design team agreed there was too much white space and the distance should be shortened to 56px.... | priority | sweeping layout change desktop top content padding during the dev design meeting when dev displayed the large distance between the progress bar and the start of the content on the final page of the letter generator the design team agreed there was too much white space and the distance should be shortened to fo... | 1 |

558,677 | 16,540,347,719 | IssuesEvent | 2021-05-27 16:02:27 | CredentialEngine/CredentialRegistry | https://api.github.com/repos/CredentialEngine/CredentialRegistry | closed | Getting a count of total results in SPARQL is extremely slow | Blocker High Priority | What we're seeing currently:

- Queries like "all of the credentials with a given SOC code" take about 45 seconds

- I took the query apart into its two pieces, "all credentials" and "things with a given SOC code", and:

- "all credentials" takes about a full minute(!) to execute

- "things with a given SOC code"... | 1.0 | Getting a count of total results in SPARQL is extremely slow - What we're seeing currently:

- Queries like "all of the credentials with a given SOC code" take about 45 seconds

- I took the query apart into its two pieces, "all credentials" and "things with a given SOC code", and:

- "all credentials" takes about ... | priority | getting a count of total results in sparql is extremely slow what we re seeing currently queries like all of the credentials with a given soc code take about seconds i took the query apart into its two pieces all credentials and things with a given soc code and all credentials takes about a... | 1 |

168,267 | 14,144,494,291 | IssuesEvent | 2020-11-10 16:30:55 | dimosp/CineFriends | https://api.github.com/repos/dimosp/CineFriends | opened | Add the new post API endpoint functionality to the Documentation | documentation | Update Swagger and POSTMAN collection.

We have to include:

// photo

router.get('/posts/photo/:postId', photo);

// post routes

router.put('/posts/:postId', requireSignin, isPoster, updatePost);

router.delete('/posts/:postId', requireSignin, isPoster, deletePost); | 1.0 | Add the new post API endpoint functionality to the Documentation - Update Swagger and POSTMAN collection.

We have to include:

// photo

router.get('/posts/photo/:postId', photo);

// post routes

router.put('/posts/:postId', requireSignin, isPoster, updatePost);

router.delete('/posts/:postId', requireSignin, isP... | non_priority | add the new post api endpoint functionality to the documentation update swagger and postman collection we have to include photo router get posts photo postid photo post routes router put posts postid requiresignin isposter updatepost router delete posts postid requiresignin isp... | 0 |

47,987 | 13,300,530,352 | IssuesEvent | 2020-08-25 11:31:05 | rammatzkvosky/biojava | https://api.github.com/repos/rammatzkvosky/biojava | opened | CVE-2019-20330 (High) detected in jackson-databind-2.9.9.3.jar | security vulnerability | ## CVE-2019-20330 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jackson-databind-2.9.9.3.jar</b></p></summary>

<p>General data-binding functionality for Jackson: works on core stream... | True | CVE-2019-20330 (High) detected in jackson-databind-2.9.9.3.jar - ## CVE-2019-20330 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jackson-databind-2.9.9.3.jar</b></p></summary>

<p>Gen... | non_priority | cve high detected in jackson databind jar cve high severity vulnerability vulnerable library jackson databind jar general data binding functionality for jackson works on core streaming api library home page a href path to dependency file tmp ws scm biojava biojava... | 0 |

75,669 | 7,480,280,267 | IssuesEvent | 2018-04-04 16:53:52 | rancher/rancher | https://api.github.com/repos/rancher/rancher | closed | Deletion of GKE cluster results in repeated errors logs in server relating to cluster not found. | kind/bug status/resolved status/to-test version/2.0 | Rancher versions: Build from master

Steps to reproduce the problem:

Created a GKE cluster.

Added 1 workload.

Deleted this cluster.

This results in repeated errors logs in server relating to cluster not found.

```

E0404 02:04:48.149453 1 reflector.go:205] github.com/rancher/rancher/vendor/github.com/ra... | 1.0 | Deletion of GKE cluster results in repeated errors logs in server relating to cluster not found. - Rancher versions: Build from master

Steps to reproduce the problem:

Created a GKE cluster.

Added 1 workload.

Deleted this cluster.

This results in repeated errors logs in server relating to cluster not found.

`... | non_priority | deletion of gke cluster results in repeated errors logs in server relating to cluster not found rancher versions build from master steps to reproduce the problem created a gke cluster added workload deleted this cluster this results in repeated errors logs in server relating to cluster not found ... | 0 |

509,143 | 14,713,799,564 | IssuesEvent | 2021-01-05 10:55:37 | wso2/devstudio-tooling-ei | https://api.github.com/repos/wso2/devstudio-tooling-ei | closed | Validations are not working when creating a new API with a swagger definition | Priority/High Severity/Major Swagger Type/Bug | **Description:**

In the Integration Studio, we can create an API with a swagger definition. However, it throws NPE if the swagger definition is not in the correct format. We need to handle this tooling itself. Also, in **Create new API** wizard we can create an API without pointing a swagger definition file. It always... | 1.0 | Validations are not working when creating a new API with a swagger definition - **Description:**

In the Integration Studio, we can create an API with a swagger definition. However, it throws NPE if the swagger definition is not in the correct format. We need to handle this tooling itself. Also, in **Create new API** w... | priority | validations are not working when creating a new api with a swagger definition description in the integration studio we can create an api with a swagger definition however it throws npe if the swagger definition is not in the correct format we need to handle this tooling itself also in create new api w... | 1 |

58,588 | 24,495,964,021 | IssuesEvent | 2022-10-10 08:42:12 | elastic/kibana | https://api.github.com/repos/elastic/kibana | closed | [Unified search]- line details expand query editor and SQL reference missing dark theme | bug Team:AppServicesSv impact:low Feature:Unified search | **Kibana version:** 8.5.0 BC2

**Elasticsearch version:** 8.5.0 BC2

**Server OS version:** darwin_x86_64

**Browser version:** chrome latest

**Browser OS version:** OS X

**Original install method (e.g. download page, yum, from source, etc.):** from staging

**Describe the bug:** When user switches to S... | 1.0 | [Unified search]- line details expand query editor and SQL reference missing dark theme - **Kibana version:** 8.5.0 BC2

**Elasticsearch version:** 8.5.0 BC2

**Server OS version:** darwin_x86_64

**Browser version:** chrome latest

**Browser OS version:** OS X

**Original install method (e.g. download page,... | non_priority | line details expand query editor and sql reference missing dark theme kibana version elasticsearch version server os version darwin browser version chrome latest browser os version os x original install method e g download page yum from source etc... | 0 |

52,839 | 27,800,942,100 | IssuesEvent | 2023-03-17 15:47:38 | modin-project/modin | https://api.github.com/repos/modin-project/modin | opened | Evaluate the pros and cons of lazy functions submission (via `partition.add_to_apply_calls`) | Performance 🚀 | > Do we want to make this lazy? Since `split_row_partitions` is in effect the properly partitioned dataframe, we can transform to col partitions, and then `add_to_apply_calls` the sort instead, and defer metadata materialization till it's needed?

_Originally posted by @RehanSD in https://github.com/modin-project/mod... | True | Evaluate the pros and cons of lazy functions submission (via `partition.add_to_apply_calls`) - > Do we want to make this lazy? Since `split_row_partitions` is in effect the properly partitioned dataframe, we can transform to col partitions, and then `add_to_apply_calls` the sort instead, and defer metadata materializat... | non_priority | evaluate the pros and cons of lazy functions submission via partition add to apply calls do we want to make this lazy since split row partitions is in effect the properly partitioned dataframe we can transform to col partitions and then add to apply calls the sort instead and defer metadata materializat... | 0 |

298,594 | 25,837,939,961 | IssuesEvent | 2022-12-12 21:17:14 | pytorch/pytorch | https://api.github.com/repos/pytorch/pytorch | closed | DISABLED test_fn_grad_linalg_lu_factor_cuda_complex128 (__main__.TestBwdGradientsCUDA) | module: autograd triaged module: flaky-tests module: linear algebra skipped module: unknown | Platforms: linux

This test was disabled because it is failing in CI. See [recent examples](https://hud.pytorch.org/flakytest?name=test_fn_grad_linalg_lu_factor_cuda_complex128&suite=TestBwdGradientsCUDA) and the most recent trunk [workflow logs](https://github.com/pytorch/pytorch/runs/9289278980).

Over the past 3 hou... | 1.0 | DISABLED test_fn_grad_linalg_lu_factor_cuda_complex128 (__main__.TestBwdGradientsCUDA) - Platforms: linux

This test was disabled because it is failing in CI. See [recent examples](https://hud.pytorch.org/flakytest?name=test_fn_grad_linalg_lu_factor_cuda_complex128&suite=TestBwdGradientsCUDA) and the most recent trunk ... | non_priority | disabled test fn grad linalg lu factor cuda main testbwdgradientscuda platforms linux this test was disabled because it is failing in ci see and the most recent trunk over the past hours it has been determined flaky in workflow s with failures and successes debugging instructions af... | 0 |

101,181 | 4,108,738,053 | IssuesEvent | 2016-06-06 17:06:32 | WordPress/meta-environment | https://api.github.com/repos/WordPress/meta-environment | opened | WordCamp.dev: Make sites HTTP-only | priority: medium type: enhancement | Having them use SSL just adds confusion for people, people they run into the certificate errors. There may be some code that expects HTTPS, but we can probably work around that. | 1.0 | WordCamp.dev: Make sites HTTP-only - Having them use SSL just adds confusion for people, people they run into the certificate errors. There may be some code that expects HTTPS, but we can probably work around that. | priority | wordcamp dev make sites http only having them use ssl just adds confusion for people people they run into the certificate errors there may be some code that expects https but we can probably work around that | 1 |

79,500 | 3,535,965,498 | IssuesEvent | 2016-01-16 22:37:52 | proveit-js/proveit | https://api.github.com/repos/proveit-js/proveit | closed | Support AFC psuedo-namespace and Draft namespace | enhancement imported Priority-Medium | _From [matthew.flaschen@gatech.edu](https://code.google.com/u/108647890027017428365/) on January 04, 2014 16:10:36_

Show ProveIt in the AFC psuedo-namespace (Wikipedia talk:Articles for creation) and the Draft namespace.

_Original issue: http://code.google.com/p/proveit-js/issues/detail?id=179_ | 1.0 | Support AFC psuedo-namespace and Draft namespace - _From [matthew.flaschen@gatech.edu](https://code.google.com/u/108647890027017428365/) on January 04, 2014 16:10:36_

Show ProveIt in the AFC psuedo-namespace (Wikipedia talk:Articles for creation) and the Draft namespace.

_Original issue: http://code.google.com/p/prov... | priority | support afc psuedo namespace and draft namespace from on january show proveit in the afc psuedo namespace wikipedia talk articles for creation and the draft namespace original issue | 1 |

534,992 | 15,679,991,392 | IssuesEvent | 2021-03-25 01:49:51 | Landry333/Big-Owl | https://api.github.com/repos/Landry333/Big-Owl | closed | US-52: As a supervised user, I would like to respond to an attendance supervision request | medium priority user story | Details:

- The request can be either rejected or accepted

- This is the point when the user is added to the list of users that will be supervised by the user monitoring them | 1.0 | US-52: As a supervised user, I would like to respond to an attendance supervision request - Details:

- The request can be either rejected or accepted

- This is the point when the user is added to the list of users that will be supervised by the user monitoring them | priority | us as a supervised user i would like to respond to an attendance supervision request details the request can be either rejected or accepted this is the point when the user is added to the list of users that will be supervised by the user monitoring them | 1 |

403,596 | 27,425,121,603 | IssuesEvent | 2023-03-01 19:40:05 | nasa-jpl/stellar | https://api.github.com/repos/nasa-jpl/stellar | closed | Make the distinction between stellar and react-stellar clear | documentation | Make sure we properly document that Stellar is just CSS + icons and that React-Stellar builds on top of Stellar and provides general interactive components for React. Each repository should have a list of the components from Figma Stellar and indicate which components are supported by the repo. | 1.0 | Make the distinction between stellar and react-stellar clear - Make sure we properly document that Stellar is just CSS + icons and that React-Stellar builds on top of Stellar and provides general interactive components for React. Each repository should have a list of the components from Figma Stellar and indicate which... | non_priority | make the distinction between stellar and react stellar clear make sure we properly document that stellar is just css icons and that react stellar builds on top of stellar and provides general interactive components for react each repository should have a list of the components from figma stellar and indicate which... | 0 |

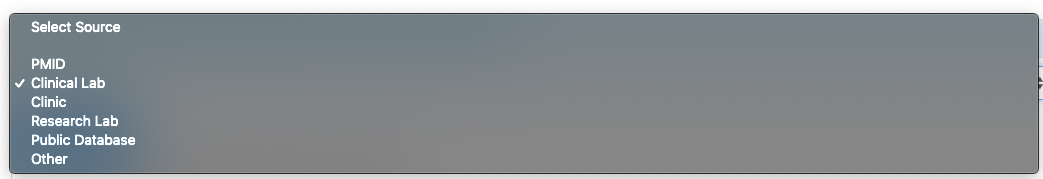

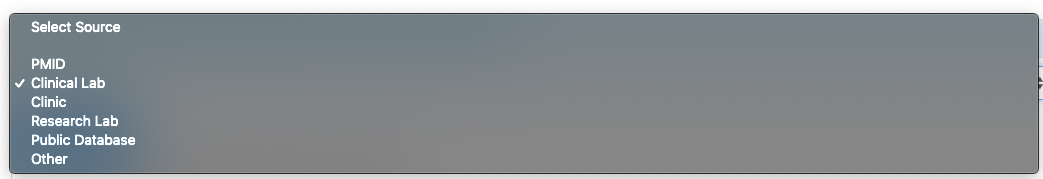

589,848 | 17,761,839,143 | IssuesEvent | 2021-08-29 21:00:25 | ClinGen/clincoded | https://api.github.com/repos/ClinGen/clincoded | closed | Case/Seg - guidance text for selecting evidence source type | priority: high VCI colleague request requires specification dependency | Add in guidance text for selecting evidence source type in Case/Seg tab.

| 1.0 | Case/Seg - guidance text for selecting evidence source type - Add in guidance text for selecting evidence source type in Case/Seg tab.

| priority | case seg guidance text for selecting evidence source type add in guidance text for selecting evidence source type in case seg tab | 1 |

34,265 | 14,351,492,937 | IssuesEvent | 2020-11-30 01:25:00 | Azure/azure-rest-api-specs | https://api.github.com/repos/Azure/azure-rest-api-specs | closed | SecurityInsights: multiple usages of same-named discriminator | SecurityInsights Service Attention | The discriminator `Scheduled` is both used in [`ScheduledAlertRule`](https://github.com/Azure/azure-rest-api-specs/blob/d50ea7233b80f662cb9e19883fb0083e653339ca/specification/securityinsights/resource-manager/Microsoft.SecurityInsights/preview/2019-01-01-preview/SecurityInsights.json#L8705) and [`ScheduledAlertRuleTemp... | 1.0 | SecurityInsights: multiple usages of same-named discriminator - The discriminator `Scheduled` is both used in [`ScheduledAlertRule`](https://github.com/Azure/azure-rest-api-specs/blob/d50ea7233b80f662cb9e19883fb0083e653339ca/specification/securityinsights/resource-manager/Microsoft.SecurityInsights/preview/2019-01-01-p... | non_priority | securityinsights multiple usages of same named discriminator the discriminator scheduled is both used in and the same problem exists for microsoftsecurityincidentcreation and fusion the issue exists in both and | 0 |

121,868 | 10,196,645,977 | IssuesEvent | 2019-08-12 21:19:32 | cockroachdb/cockroach | https://api.github.com/repos/cockroachdb/cockroach | closed | roachtest: splits/largerange/size=10GiB,nodes=3 failed | C-test-failure O-roachtest O-robot | SHA: https://github.com/cockroachdb/cockroach/commits/a850466dfc4f8eed9e7f758a61f60b120798410f

Parameters:

To repro, try:

```

# Don't forget to check out a clean suitable branch and experiment with the

# stress invocation until the desired results present themselves. For example,

# using stress instead of stressrace... | 2.0 | roachtest: splits/largerange/size=10GiB,nodes=3 failed - SHA: https://github.com/cockroachdb/cockroach/commits/a850466dfc4f8eed9e7f758a61f60b120798410f

Parameters:

To repro, try:

```

# Don't forget to check out a clean suitable branch and experiment with the

# stress invocation until the desired results present them... | non_priority | roachtest splits largerange size nodes failed sha parameters to repro try don t forget to check out a clean suitable branch and experiment with the stress invocation until the desired results present themselves for example using stress instead of stressrace and passing the p stressflag wh... | 0 |

429,681 | 30,085,235,840 | IssuesEvent | 2023-06-29 08:08:43 | bokeh/dataviz-fundamentals | https://api.github.com/repos/bokeh/dataviz-fundamentals | closed | Introduction files | tag: documentation tag: notebook | The `Introduction.ipynb` and `Introduction.html` files till contain the old file paths. I have to modify them to refelct the new file paths and also reflect the style guide for subsequent posts. | 1.0 | Introduction files - The `Introduction.ipynb` and `Introduction.html` files till contain the old file paths. I have to modify them to refelct the new file paths and also reflect the style guide for subsequent posts. | non_priority | introduction files the introduction ipynb and introduction html files till contain the old file paths i have to modify them to refelct the new file paths and also reflect the style guide for subsequent posts | 0 |

53,943 | 23,115,105,131 | IssuesEvent | 2022-07-27 15:58:23 | cityofaustin/atd-data-tech | https://api.github.com/repos/cityofaustin/atd-data-tech | closed | Revert automated Data Tracker Fulcrum updates | Workgroup: AMD Service: Apps Need: 2-Should Have | <!-- Email -->

<!-- billy.bolander@austintexas.gov -->

> What application are you using?

Other / Not Sure

> Describe the problem.

At this time Fulcrum is updating Tracker when a PM has been completed. This was by my request in the past. Due to a change in our internal procedure I request this to be reversed. Please... | 1.0 | Revert automated Data Tracker Fulcrum updates - <!-- Email -->

<!-- billy.bolander@austintexas.gov -->

> What application are you using?

Other / Not Sure

> Describe the problem.

At this time Fulcrum is updating Tracker when a PM has been completed. This was by my request in the past. Due to a change in our internal... | non_priority | revert automated data tracker fulcrum updates what application are you using other not sure describe the problem at this time fulcrum is updating tracker when a pm has been completed this was by my request in the past due to a change in our internal procedure i request this to be reversed please di... | 0 |

408,003 | 11,940,532,178 | IssuesEvent | 2020-04-02 16:52:21 | eclipse-ee4j/jaxb-api | https://api.github.com/repos/eclipse-ee4j/jaxb-api | closed | XMLGregorianCalendar needs a getXmlSchemaType() method | Component: datatypes Priority: Major Type: Improvement | Submitted by David Bau, entered initially by Joe Fialli

There should be a method to say "which one of the XML Schema Date

types does this correspond to"? based on the set fields, e.g., have an

enumeration for gYear, gMonth, gMonthYear, as well as no-type, and then have

a getXmlSchemaType() method. What's the use case?... | 1.0 | XMLGregorianCalendar needs a getXmlSchemaType() method - Submitted by David Bau, entered initially by Joe Fialli

There should be a method to say "which one of the XML Schema Date

types does this correspond to"? based on the set fields, e.g., have an

enumeration for gYear, gMonth, gMonthYear, as well as no-type, and th... | priority | xmlgregoriancalendar needs a getxmlschematype method submitted by david bau entered initially by joe fialli there should be a method to say which one of the xml schema date types does this correspond to based on the set fields e g have an enumeration for gyear gmonth gmonthyear as well as no type and th... | 1 |

60,698 | 8,454,311,895 | IssuesEvent | 2018-10-21 01:17:28 | Naoghuman/app-notification | https://api.github.com/repos/Naoghuman/app-notification | closed | [doc] Create a basic layout concept for the application gui. | documentation | [doc] Create a basic layout concept for the application gui.

* The main gui is primaly a TabPane where the user can configure the single notification topics. | 1.0 | [doc] Create a basic layout concept for the application gui. - [doc] Create a basic layout concept for the application gui.

* The main gui is primaly a TabPane where the user can configure the single notification topics. | non_priority | create a basic layout concept for the application gui create a basic layout concept for the application gui the main gui is primaly a tabpane where the user can configure the single notification topics | 0 |

80,193 | 23,138,188,430 | IssuesEvent | 2022-07-28 15:54:11 | Kotlin/multik | https://api.github.com/repos/Kotlin/multik | opened | _concat_fortran_string | build native | deal with the dependence to calculate eigenvalues and vectors. get rid of `quadmath` dependency and build for all platforms. | 1.0 | _concat_fortran_string - deal with the dependence to calculate eigenvalues and vectors. get rid of `quadmath` dependency and build for all platforms. | non_priority | concat fortran string deal with the dependence to calculate eigenvalues and vectors get rid of quadmath dependency and build for all platforms | 0 |

300,841 | 22,699,540,417 | IssuesEvent | 2022-07-05 09:24:17 | AlbertoCuadra/combustion_toolbox | https://api.github.com/repos/AlbertoCuadra/combustion_toolbox | closed | Validation: include validations in the docs - part I | documentation validation | - [x] Test TP: Equilibrium composition at defined T and p

- [x] Test HP: Adiabatic T and composition at constant p

- [x] Test SHOCK_R: Planar reflected shock wave

- [x] Test DET: Chapman-Jouget Detonation (CJ upper state)

- [x] Test IONIZATION

- [x] Test SHOCK_POLAR | 1.0 | Validation: include validations in the docs - part I - - [x] Test TP: Equilibrium composition at defined T and p

- [x] Test HP: Adiabatic T and composition at constant p

- [x] Test SHOCK_R: Planar reflected shock wave

- [x] Test DET: Chapman-Jouget Detonation (CJ upper state)

- [x] Test IONIZATION

- [x] Test SHOCK... | non_priority | validation include validations in the docs part i test tp equilibrium composition at defined t and p test hp adiabatic t and composition at constant p test shock r planar reflected shock wave test det chapman jouget detonation cj upper state test ionization test shock polar | 0 |

595,698 | 18,073,301,882 | IssuesEvent | 2021-09-21 06:55:11 | webcompat/web-bugs | https://api.github.com/repos/webcompat/web-bugs | closed | www.beatport.com - site is not usable | os-ios browser-firefox-ios priority-normal device-tablet | <!-- @browser: Firefox -->

<!-- @ua_header: Mozilla/5.0 (Macintosh; Intel Mac OS X 10_15_4) AppleWebKit/605.1.15 (KHTML, like Gecko) Version/13.1 Safari/605.1.15 -->

<!-- @reported_with: mobile-reporter -->

<!-- @public_url: https://github.com/webcompat/web-bugs/issues/87255 -->

<!-- @extra_labels: browser-firefox-ios,... | 1.0 | www.beatport.com - site is not usable - <!-- @browser: Firefox -->

<!-- @ua_header: Mozilla/5.0 (Macintosh; Intel Mac OS X 10_15_4) AppleWebKit/605.1.15 (KHTML, like Gecko) Version/13.1 Safari/605.1.15 -->

<!-- @reported_with: mobile-reporter -->

<!-- @public_url: https://github.com/webcompat/web-bugs/issues/87255 -->

... | priority | site is not usable url browser version firefox operating system i pad tested another browser yes chrome problem type site is not usable description buttons or links not working steps to reproduce der play butten funktioniert nicht view the screens... | 1 |

15,483 | 19,693,135,301 | IssuesEvent | 2022-01-12 09:23:03 | bisq-network/bisq | https://api.github.com/repos/bisq-network/bisq | closed | Improve user experience once mediation has been accepted by both parties | in:gui a:feature in:trade-process | <!--

SUPPORT REQUESTS: This is for reporting bugs in the Bisq app.

If you have a support request, please join #support on Bisq's

Keybase team over at https://keybase.io/team/Bisq

-->

### Description

When mediation for a given trade is accepted by both parties the trade moves from 'open trades' to 'hi... | 1.0 | Improve user experience once mediation has been accepted by both parties - <!--

SUPPORT REQUESTS: This is for reporting bugs in the Bisq app.

If you have a support request, please join #support on Bisq's

Keybase team over at https://keybase.io/team/Bisq

-->

### Description

When mediation for a given ... | non_priority | improve user experience once mediation has been accepted by both parties support requests this is for reporting bugs in the bisq app if you have a support request please join support on bisq s keybase team over at description when mediation for a given trade is accepted by both p... | 0 |

683,499 | 23,384,600,029 | IssuesEvent | 2022-08-11 12:45:19 | TheYellowArchitect/doubledamnation | https://api.github.com/repos/TheYellowArchitect/doubledamnation | opened | Remake Enemy Behaviour | enemy ai low priority | It is no secret that the code for the enemies is **not** well-designed. After all, it was made back in 2018, I didn't even know a design pattern back then.

I didn't read on AI, it was quite literally handmade without any guidance, I still remember the "breakthroughs" and how making the AI felt like exploring, happy ti... | 1.0 | Remake Enemy Behaviour - It is no secret that the code for the enemies is **not** well-designed. After all, it was made back in 2018, I didn't even know a design pattern back then.

I didn't read on AI, it was quite literally handmade without any guidance, I still remember the "breakthroughs" and how making the AI felt... | priority | remake enemy behaviour it is no secret that the code for the enemies is not well designed after all it was made back in i didn t even know a design pattern back then i didn t read on ai it was quite literally handmade without any guidance i still remember the breakthroughs and how making the ai felt li... | 1 |

327,130 | 24,119,379,419 | IssuesEvent | 2022-09-20 17:16:28 | The-Mycelium-Network/bookclub | https://api.github.com/repos/The-Mycelium-Network/bookclub | opened | chore: add some notes for chapter 1 of JavaScript the definitive guide | documentation chore | I have some notes I want to add for the first chapter of JavaScript, the definitive guide | 1.0 | chore: add some notes for chapter 1 of JavaScript the definitive guide - I have some notes I want to add for the first chapter of JavaScript, the definitive guide | non_priority | chore add some notes for chapter of javascript the definitive guide i have some notes i want to add for the first chapter of javascript the definitive guide | 0 |

153,817 | 5,903,993,436 | IssuesEvent | 2017-05-19 08:36:15 | metasfresh/metasfresh | https://api.github.com/repos/metasfresh/metasfresh | closed | HU Transform - split out some TUs from LU does not work correctly with custom LUs | priority:high release:candidate status:integrated type:bug | ### Is this a bug or feature request?

Bug

### What is the current behavior?

#### Which are the steps to reproduce?

Consider following setup and test case (you can have it with different numbers):

* have a product which has the CU-TU capacity: 7 Kg per TU

* receive an LU with 10 TUs each of the TU shall have... | 1.0 | HU Transform - split out some TUs from LU does not work correctly with custom LUs - ### Is this a bug or feature request?

Bug

### What is the current behavior?

#### Which are the steps to reproduce?

Consider following setup and test case (you can have it with different numbers):

* have a product which has th... | priority | hu transform split out some tus from lu does not work correctly with custom lus is this a bug or feature request bug what is the current behavior which are the steps to reproduce consider following setup and test case you can have it with different numbers have a product which has th... | 1 |

329,120 | 24,209,219,680 | IssuesEvent | 2022-09-25 17:05:19 | pha4ge/hAMRonization | https://api.github.com/repos/pha4ge/hAMRonization | closed | Update README | documentation enhancement p3 | - [ ] Improve installation instructions

- [ ] Update parsers included

- [ ] Add wiki with small tutorial | 1.0 | Update README - - [ ] Improve installation instructions

- [ ] Update parsers included

- [ ] Add wiki with small tutorial | non_priority | update readme improve installation instructions update parsers included add wiki with small tutorial | 0 |

487,892 | 14,061,025,708 | IssuesEvent | 2020-11-03 07:20:35 | bryntum/support | https://api.github.com/repos/bryntum/support | closed | Export to MSP - Add support for version MSP 2019 | bug high-priority resolved | Reference: https://github.com/bryntum/support/issues/1250

- [x] Make work for version MSP 2019

- [x] Export Assignments with percent work complete

- [x] Export CalendarUID for resources

Version 2013:

detected in kafka-clients-3.0.1.jar - autoclosed | security vulnerability | ## CVE-2022-34917 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>kafka-clients-3.0.1.jar</b></p></summary>

<p></p>

<p>Library home page: <a href="https://kafka.apache.org">https://kaf... | True | CVE-2022-34917 (High) detected in kafka-clients-3.0.1.jar - autoclosed - ## CVE-2022-34917 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>kafka-clients-3.0.1.jar</b></p></summary>

<p>... | non_priority | cve high detected in kafka clients jar autoclosed cve high severity vulnerability vulnerable library kafka clients jar library home page a href path to dependency file build gradle path to vulnerable library home wss scanner gradle caches modules files or... | 0 |

189,943 | 22,047,158,449 | IssuesEvent | 2022-05-30 04:01:00 | madhans23/linux-4.1.15 | https://api.github.com/repos/madhans23/linux-4.1.15 | closed | CVE-2017-12153 (Medium) detected in linux-stable-rtv4.1.33 - autoclosed | security vulnerability | ## CVE-2017-12153 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linux-stable-rtv4.1.33</b></p></summary>

<p>

<p>Julia Cartwright's fork of linux-stable-rt.git</p>

<p>Library home p... | True | CVE-2017-12153 (Medium) detected in linux-stable-rtv4.1.33 - autoclosed - ## CVE-2017-12153 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linux-stable-rtv4.1.33</b></p></summary>

<p... | non_priority | cve medium detected in linux stable autoclosed cve medium severity vulnerability vulnerable library linux stable julia cartwright s fork of linux stable rt git library home page a href found in head commit a href found in base branch master vulner... | 0 |

20,886 | 10,556,689,872 | IssuesEvent | 2019-10-04 03:01:36 | istio/istio | https://api.github.com/repos/istio/istio | closed | Secure coding & testing practices that affect all new work -code-mauve style | area/security kind/need more info | (NOTE: This is used to report product bugs:

To report a security vulnerability, please visit <https://istio.io/about/security-vulnerabilities/>

To ask questions about how to use Istio, please visit <https://discuss.istio.io>

)

**Bug description**

This task is a spin off of #13618 , the goal here might be to ... | True | Secure coding & testing practices that affect all new work -code-mauve style - (NOTE: This is used to report product bugs:

To report a security vulnerability, please visit <https://istio.io/about/security-vulnerabilities/>

To ask questions about how to use Istio, please visit <https://discuss.istio.io>

)

**Bu... | non_priority | secure coding testing practices that affect all new work code mauve style note this is used to report product bugs to report a security vulnerability please visit to ask questions about how to use istio please visit bug description this task is a spin off of the goal here might be ... | 0 |

72,130 | 8,705,492,248 | IssuesEvent | 2018-12-05 22:36:08 | Opentrons/opentrons | https://api.github.com/repos/Opentrons/opentrons | closed | Blow out destination options default, source well, and dest well | feature medium protocol designer | Related to issue #1676

As a user I'd like better control over where blowout occurs. Currently my options are source plate A1, destination, trash.

As a user I'd like a surfaced default selection, so I can't select blow out and then nothing in the destination dropdown

## Acceptance Criteria

- [ ] Blow out dest... | 1.0 | Blow out destination options default, source well, and dest well - Related to issue #1676

As a user I'd like better control over where blowout occurs. Currently my options are source plate A1, destination, trash.

As a user I'd like a surfaced default selection, so I can't select blow out and then nothing in the ... | non_priority | blow out destination options default source well and dest well related to issue as a user i d like better control over where blowout occurs currently my options are source plate destination trash as a user i d like a surfaced default selection so i can t select blow out and then nothing in the dest... | 0 |

93,019 | 26,837,688,595 | IssuesEvent | 2023-02-02 20:59:21 | uselagoon/lagoon | https://api.github.com/repos/uselagoon/lagoon | closed | Invalid YAML in pvc annotations | bug 2-build-deploy | # Describe the bug

The YAML generated by Helm is invalid for `pvc` in the `nginx-php-persistent` template

```yaml

apiVersion: v1

kind: PersistentVolumeClaim

metadata:

name: nginx-php

labels:

helm.sh/chart: nginx-php-persistent-0.1.0

app.kubernetes.io/name: nginx-php-persistent

app.kubernet... | 1.0 | Invalid YAML in pvc annotations - # Describe the bug

The YAML generated by Helm is invalid for `pvc` in the `nginx-php-persistent` template

```yaml

apiVersion: v1

kind: PersistentVolumeClaim

metadata:

name: nginx-php

labels:

helm.sh/chart: nginx-php-persistent-0.1.0

app.kubernetes.io/name: ngin... | non_priority | invalid yaml in pvc annotations describe the bug the yaml generated by helm is invalid for pvc in the nginx php persistent template yaml apiversion kind persistentvolumeclaim metadata name nginx php labels helm sh chart nginx php persistent app kubernetes io name nginx... | 0 |

197,066 | 6,952,042,980 | IssuesEvent | 2017-12-06 16:14:56 | vmware/vic-product | https://api.github.com/repos/vmware/vic-product | opened | Clarify NFS docs | component/vic-engine kind/user-doc priority/high pub/vsphere | Per Slack chat with @zjs, @jooskim, @matthewavery:

- The API is just a passthrough to the library, but the UI seems to have a dropdown for selecting a datastore (which is a good thing), but no way to turn that into a free-form text box to supply an NFS server. I think it's an easy thing to add post-1.3

- nfs volume... | 1.0 | Clarify NFS docs - Per Slack chat with @zjs, @jooskim, @matthewavery:

- The API is just a passthrough to the library, but the UI seems to have a dropdown for selecting a datastore (which is a good thing), but no way to turn that into a free-form text box to supply an NFS server. I think it's an easy thing to add pos... | priority | clarify nfs docs per slack chat with zjs jooskim matthewavery the api is just a passthrough to the library but the ui seems to have a dropdown for selecting a datastore which is a good thing but no way to turn that into a free form text box to supply an nfs server i think it s an easy thing to add pos... | 1 |

274,051 | 23,806,529,684 | IssuesEvent | 2022-09-04 05:37:25 | cockroachdb/cockroach | https://api.github.com/repos/cockroachdb/cockroach | opened | roachtest: schemachange/mixed-versions failed | C-test-failure O-robot O-roachtest branch-master release-blocker | roachtest.schemachange/mixed-versions [failed](https://teamcity.cockroachdb.com/buildConfiguration/Cockroach_Nightlies_RoachtestNightlyGceBazel/6341855?buildTab=log) with [artifacts](https://teamcity.cockroachdb.com/buildConfiguration/Cockroach_Nightlies_RoachtestNightlyGceBazel/6341855?buildTab=artifacts#/schemachange... | 2.0 | roachtest: schemachange/mixed-versions failed - roachtest.schemachange/mixed-versions [failed](https://teamcity.cockroachdb.com/buildConfiguration/Cockroach_Nightlies_RoachtestNightlyGceBazel/6341855?buildTab=log) with [artifacts](https://teamcity.cockroachdb.com/buildConfiguration/Cockroach_Nightlies_RoachtestNightlyG... | non_priority | roachtest schemachange mixed versions failed roachtest schemachange mixed versions with on master txnrbk opok ... | 0 |

321,404 | 23,853,006,552 | IssuesEvent | 2022-09-06 19:52:09 | Requisitos-de-Software/2022.1-TikTok | https://api.github.com/repos/Requisitos-de-Software/2022.1-TikTok | closed | Atualizar versionamento no documento de rich picture | documentation | ## Descrição

Adicionar datas no versionamento do documento de rich picture

## Tarefas

- [x] Adicionar datas

## Critérios de aceitação

- [x] Documento atualizado no repositório | 1.0 | Atualizar versionamento no documento de rich picture - ## Descrição

Adicionar datas no versionamento do documento de rich picture

## Tarefas

- [x] Adicionar datas

## Critérios de aceitação

- [x] Documento atualizado no repositório | non_priority | atualizar versionamento no documento de rich picture descrição adicionar datas no versionamento do documento de rich picture tarefas adicionar datas critérios de aceitação documento atualizado no repositório | 0 |

23,907 | 16,680,735,138 | IssuesEvent | 2021-06-07 23:11:37 | votingworks/vxsuite | https://api.github.com/repos/votingworks/vxsuite | closed | Share `jest.config.js` settings | [zube]: In Progress infrastructure | This can likely be accomplished with a `jest.shared.config.js` at the root that the others just `require` and override as needed. | 1.0 | Share `jest.config.js` settings - This can likely be accomplished with a `jest.shared.config.js` at the root that the others just `require` and override as needed. | non_priority | share jest config js settings this can likely be accomplished with a jest shared config js at the root that the others just require and override as needed | 0 |

92,006 | 8,336,264,068 | IssuesEvent | 2018-09-28 07:08:38 | owncloud/core | https://api.github.com/repos/owncloud/core | closed | Add automated phpcs checks in drone for acceptance test code | QA-team dev:acceptance-tests | The acceptance test code has been conforming to a proposed "standard" that was implemented some time ago but not formally enforced. It requires a bunch of PHP doc block standards, for example. See ``phpcs.xml`` in the root folder of the core repo.

The check is done with:

```

phpcs --standard=phpcs.xml tests/accept... | 1.0 | Add automated phpcs checks in drone for acceptance test code - The acceptance test code has been conforming to a proposed "standard" that was implemented some time ago but not formally enforced. It requires a bunch of PHP doc block standards, for example. See ``phpcs.xml`` in the root folder of the core repo.

The ch... | non_priority | add automated phpcs checks in drone for acceptance test code the acceptance test code has been conforming to a proposed standard that was implemented some time ago but not formally enforced it requires a bunch of php doc block standards for example see phpcs xml in the root folder of the core repo the ch... | 0 |

251,222 | 8,001,531,157 | IssuesEvent | 2018-07-23 03:41:56 | alipay/sofa-mosn | https://api.github.com/repos/alipay/sofa-mosn | closed | route wildcard domain match rule: longest wildcard suffix match | area/router kind/bug priority/P1 status/done | two questions:

1.

golang map's iteration order is not specified and is not guaranteed to be the same from one iteration to the next.

to longest wildcard suffix match, we need to sort key in wildcardVirtualHostSuffixes.

2.

Only unique values for domains are permitted, even if it is wildcard domain.

| 1.0 | route wildcard domain match rule: longest wildcard suffix match - two questions:

1.

golang map's iteration order is not specified and is not guaranteed to be the same from one iteration to the next.

to longest wildcard suffix match, we need to sort key in wildcardVirtualHostSuffixes.

2.

Only unique values for ... | priority | route wildcard domain match rule longest wildcard suffix match two questions golang map s iteration order is not specified and is not guaranteed to be the same from one iteration to the next to longest wildcard suffix match we need to sort key in wildcardvirtualhostsuffixes only unique values for ... | 1 |

190,698 | 6,821,649,267 | IssuesEvent | 2017-11-07 17:23:12 | vmware/vic | https://api.github.com/repos/vmware/vic | closed | Implement IP lookup for VCH create endpoint | area/ui priority/high team/lifecycle | **Details:**

We need to implement the findings from this issue https://github.com/vmware/vic/issues/6551 regarding the H5C VCH wizard being able find the correct IP of vic-machine-service.

**Acceptance Criteria:**

Demonstrate ability to deploy OVA with vic-machine-service and have the H5C vch wizard be able to fin... | 1.0 | Implement IP lookup for VCH create endpoint - **Details:**

We need to implement the findings from this issue https://github.com/vmware/vic/issues/6551 regarding the H5C VCH wizard being able find the correct IP of vic-machine-service.

**Acceptance Criteria:**

Demonstrate ability to deploy OVA with vic-machine-serv... | priority | implement ip lookup for vch create endpoint details we need to implement the findings from this issue regarding the vch wizard being able find the correct ip of vic machine service acceptance criteria demonstrate ability to deploy ova with vic machine service and have the vch wizard be able to f... | 1 |

35,950 | 9,691,079,929 | IssuesEvent | 2019-05-24 10:12:00 | Lundalogik/lip | https://api.github.com/repos/Lundalogik/lip | opened | Format lip.json so that it looks properly formatted | enhancement package builder | Today, everything ends up in one loooong line.

Write to several lines and indent it properly. | 1.0 | Format lip.json so that it looks properly formatted - Today, everything ends up in one loooong line.

Write to several lines and indent it properly. | non_priority | format lip json so that it looks properly formatted today everything ends up in one loooong line write to several lines and indent it properly | 0 |

91,284 | 3,851,440,429 | IssuesEvent | 2016-04-06 02:02:45 | cs2103jan2016-w10-3j/main | https://api.github.com/repos/cs2103jan2016-w10-3j/main | closed | A user can be alerted when events are close to expiration | priority.medium type.story | So the user wouldn't forget to do them. | 1.0 | A user can be alerted when events are close to expiration - So the user wouldn't forget to do them. | priority | a user can be alerted when events are close to expiration so the user wouldn t forget to do them | 1 |

100,798 | 30,777,243,885 | IssuesEvent | 2023-07-31 07:31:11 | SigNoz/signoz | https://api.github.com/repos/SigNoz/signoz | closed | FE(Dashboard): Migrate current dashboard api into v3 query range | enhancement frontend query-builder | ## Is your feature request related to a problem?

*Please describe.*

Right now in the dashboard we are using v2 we need to migrate it to v3 | 1.0 | FE(Dashboard): Migrate current dashboard api into v3 query range - ## Is your feature request related to a problem?

*Please describe.*

Right now in the dashboard we are using v2 we need to migrate it to v3 | non_priority | fe dashboard migrate current dashboard api into query range is your feature request related to a problem please describe right now in the dashboard we are using we need to migrate it to | 0 |

776,595 | 27,264,466,529 | IssuesEvent | 2023-02-22 16:59:41 | ascheid/itsg33-pbmm-issue-gen | https://api.github.com/repos/ascheid/itsg33-pbmm-issue-gen | opened | AC-20: Use Of External Information Systems | Priority: P2 Class: Technical Suggested Assignment: IT Security Function ITSG-33 Control: AC-20 | # Control Definition

(A) The organization establishes terms and conditions, consistent with any trust relationships established with other organizations owning, operating, and/or maintaining external information systems, allowing authorized individuals to access the information system from external information systems.... | 1.0 | AC-20: Use Of External Information Systems - # Control Definition

(A) The organization establishes terms and conditions, consistent with any trust relationships established with other organizations owning, operating, and/or maintaining external information systems, allowing authorized individuals to access the informat... | priority | ac use of external information systems control definition a the organization establishes terms and conditions consistent with any trust relationships established with other organizations owning operating and or maintaining external information systems allowing authorized individuals to access the informati... | 1 |

241,404 | 20,120,647,589 | IssuesEvent | 2022-02-08 01:40:57 | free-tomorrow/free-tomorrow | https://api.github.com/repos/free-tomorrow/free-tomorrow | closed | FE: Cypress Iteration 1: | feature FE testing | Write tests to confirm that:

- [ ] The header elements display correctly, and any links redirect to the correct Routes

- [ ] The homepage elements display correctly and any buttons redirect to the correct Routes | 1.0 | FE: Cypress Iteration 1: - Write tests to confirm that:

- [ ] The header elements display correctly, and any links redirect to the correct Routes

- [ ] The homepage elements display correctly and any buttons redirect to the correct Routes | non_priority | fe cypress iteration write tests to confirm that the header elements display correctly and any links redirect to the correct routes the homepage elements display correctly and any buttons redirect to the correct routes | 0 |

780,854 | 27,411,010,206 | IssuesEvent | 2023-03-01 10:31:25 | horizon-efrei/HorizonBot | https://api.github.com/repos/horizon-efrei/HorizonBot | closed | Feature pour délégués: TODO-list de devoirs | type: feature difficulty: complex status: awaiting approval scope: class groups priority: lowest | Ensemble de commandes pour gérer de façon semi-automatique une liste de devoirs à faire pour les délégués dans les classes

Commandes à prévoir: !todo create <liste des matières>, !todo add <matière> <intitulé> <date due>, !todo remove <matière/intitulé>, !todo edit <intitulé>, !todo archive

Exemple de layout (de... | 1.0 | Feature pour délégués: TODO-list de devoirs - Ensemble de commandes pour gérer de façon semi-automatique une liste de devoirs à faire pour les délégués dans les classes

Commandes à prévoir: !todo create <liste des matières>, !todo add <matière> <intitulé> <date due>, !todo remove <matière/intitulé>, !todo edit <inti... | priority | feature pour délégués todo list de devoirs ensemble de commandes pour gérer de façon semi automatique une liste de devoirs à faire pour les délégués dans les classes commandes à prévoir todo create todo add todo remove todo edit todo archive exemple de layout devoirs à faire de... | 1 |

249,654 | 26,968,376,070 | IssuesEvent | 2023-02-09 01:14:25 | turkdevops/icu | https://api.github.com/repos/turkdevops/icu | opened | cryptography-3.4.7-cp36-abi3-manylinux2014_x86_64.whl: 1 vulnerabilities (highest severity is: 4.8) | security vulnerability | <details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>cryptography-3.4.7-cp36-abi3-manylinux2014_x86_64.whl</b></p></summary>

<p>cryptography is a package which provides cryptographic recipes and primitives to Python dev... | True | cryptography-3.4.7-cp36-abi3-manylinux2014_x86_64.whl: 1 vulnerabilities (highest severity is: 4.8) - <details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>cryptography-3.4.7-cp36-abi3-manylinux2014_x86_64.whl</b></p></su... | non_priority | cryptography whl vulnerabilities highest severity is vulnerable library cryptography whl cryptography is a package which provides cryptographic recipes and primitives to python developers library home page a href path to dependency file tools commit che... | 0 |

44,324 | 5,796,349,355 | IssuesEvent | 2017-05-02 19:14:35 | scrisenbery/dotfiles | https://api.github.com/repos/scrisenbery/dotfiles | closed | Brew directory configuration | area/apps area/install branch/master issue/new OS/mac type/bug type/design type/question | From #1

gmac has brew all done in $HOME. The gmac in particular needs this but it probably doesn't make sense on the general scale to use that config for other computers. Will open new issue for gmac-specific branch as well.

| 1.0 | Brew directory configuration - From #1

gmac has brew all done in $HOME. The gmac in particular needs this but it probably doesn't make sense on the general scale to use that config for other computers. Will open new issue for gmac-specific branch as well.

| non_priority | brew directory configuration from gmac has brew all done in home the gmac in particular needs this but it probably doesn t make sense on the general scale to use that config for other computers will open new issue for gmac specific branch as well | 0 |

250,614 | 7,979,146,271 | IssuesEvent | 2018-07-17 20:41:58 | conveyal/analysis-ui | https://api.github.com/repos/conveyal/analysis-ui | closed | Support email should not be hard-coded | low priority small task | We may want to specify the support email in the same place we specify API keys, instead of https://github.com/conveyal/analysis-ui/blob/c9a53e9f74386de40450d5d9e4d3b0f852b14b0a/lib/components/application.js#L245

This would make sure that our support email address only shows up for our supported deployments. | 1.0 | Support email should not be hard-coded - We may want to specify the support email in the same place we specify API keys, instead of https://github.com/conveyal/analysis-ui/blob/c9a53e9f74386de40450d5d9e4d3b0f852b14b0a/lib/components/application.js#L245

This would make sure that our support email address only shows u... | priority | support email should not be hard coded we may want to specify the support email in the same place we specify api keys instead of this would make sure that our support email address only shows up for our supported deployments | 1 |

101,705 | 4,128,779,295 | IssuesEvent | 2016-06-10 08:16:04 | pixelhumain/communecter | https://api.github.com/repos/pixelhumain/communecter | closed | Remove resized usage => prefere profilThumbImageUrl on every object | enhancement priority 2 | @oceatoon @Kgneo @clement59

The use of the resizer is deprecated.

We can now use the profilThumbImageUrl property on every object.

This property is set on new profil image or with new objects.

With old objects, the thumb is not generated yet. Change the image to have it.

Keep it in mind when you see a resizer c... | 1.0 | Remove resized usage => prefere profilThumbImageUrl on every object - @oceatoon @Kgneo @clement59

The use of the resizer is deprecated.

We can now use the profilThumbImageUrl property on every object.

This property is set on new profil image or with new objects.

With old objects, the thumb is not generated yet. ... | priority | remove resized usage prefere profilthumbimageurl on every object oceatoon kgneo the use of the resizer is deprecated we can now use the profilthumbimageurl property on every object this property is set on new profil image or with new objects with old objects the thumb is not generated yet change t... | 1 |

470,732 | 13,543,433,083 | IssuesEvent | 2020-09-16 18:57:16 | zephyrproject-rtos/zephyr | https://api.github.com/repos/zephyrproject-rtos/zephyr | closed | [Coverity CID :212426] Unrecoverable parse warning in drivers/wifi/eswifi/eswifi_socket_offload.c | Coverity bug priority: low |

Static code scan issues found in file:

https://github.com/zephyrproject-rtos/zephyr/tree/66bd06a7d1f9e4682faafbc551046af695fa1060/drivers/wifi/eswifi/eswifi_socket_offload.c#L502

Category: Parse warnings

Function: ``

Component: Drivers

CID: [212426](https://scan9.coverity.com/reports.htm#v29726/p12996/mergedDefectId... | 1.0 | [Coverity CID :212426] Unrecoverable parse warning in drivers/wifi/eswifi/eswifi_socket_offload.c -

Static code scan issues found in file:

https://github.com/zephyrproject-rtos/zephyr/tree/66bd06a7d1f9e4682faafbc551046af695fa1060/drivers/wifi/eswifi/eswifi_socket_offload.c#L502

Category: Parse warnings

Function: ``

... | priority | unrecoverable parse warning in drivers wifi eswifi eswifi socket offload c static code scan issues found in file category parse warnings function component drivers cid details const struct fd op vtable eswifi socket fd op vtable ... | 1 |

67,190 | 12,886,307,491 | IssuesEvent | 2020-07-13 09:17:00 | khochaynhalam-dev/khochaynhalam-dev.github.io | https://api.github.com/repos/khochaynhalam-dev/khochaynhalam-dev.github.io | closed | Build - [khochaynhalam] - Build Pages | code enhancement | Dear @khochaynhalam-dev/khochaynhalam-team

Task Build Pages contains the following subtasks:

- [ ] #2

- [ ] #3

- [ ] #4

- [ ] #5

- [ ] #7

Please help me do it.

Thanks,

TrungNhan

| 1.0 | Build - [khochaynhalam] - Build Pages - Dear @khochaynhalam-dev/khochaynhalam-team

Task Build Pages contains the following subtasks:

- [ ] #2

- [ ] #3

- [ ] #4

- [ ] #5

- [ ] #7

Please help me do it.

Thanks,

TrungNhan

| non_priority | build build pages dear khochaynhalam dev khochaynhalam team task build pages contains the following subtasks please help me do it thanks trungnhan | 0 |

218,262 | 7,330,874,043 | IssuesEvent | 2018-03-05 11:27:04 | wso2/product-apim | https://api.github.com/repos/wso2/product-apim | closed | Exclude httpclient_4.3.1.wso2v2.jar from the product and add the updated version : httpclient_4.3.6.wso2v1.jar | 2.2.0 Priority/Highest Resolution/Fixed Type/Task | **Description:**

Release an **openid4java** orbit bundle by fixing the import version range and exclude httpclient_4.3.1.wso2v2.jar from the product.

And then remove the exclusion of httpclient_4.3.6.wso2v1.jar to make it available in the product to make available the latest fixes to the httpclient.

**Suggested L... | 1.0 | Exclude httpclient_4.3.1.wso2v2.jar from the product and add the updated version : httpclient_4.3.6.wso2v1.jar - **Description:**

Release an **openid4java** orbit bundle by fixing the import version range and exclude httpclient_4.3.1.wso2v2.jar from the product.

And then remove the exclusion of httpclient_4.3.6.wso2... | priority | exclude httpclient jar from the product and add the updated version httpclient jar description release an orbit bundle by fixing the import version range and exclude httpclient jar from the product and then remove the exclusion of httpclient jar to make it available in... | 1 |

806,219 | 29,806,536,787 | IssuesEvent | 2023-06-16 12:08:16 | penrose/penrose | https://api.github.com/repos/penrose/penrose | opened | feat: Make it possible to "bake" diagrams | kind:enhancement system:optimization system:style kind:usability priority:feature request layout priority:low | ### Issue

Penrose uses random sampling to initialize variables (e.g., those marked `?` in a Style program, or shape properties that are not explicitly specified). If a user is happy with a particular instance of a diagram, they would like to be able to reproduce this diagram later down the road. For this reason, w... | 2.0 | feat: Make it possible to "bake" diagrams - ### Issue

Penrose uses random sampling to initialize variables (e.g., those marked `?` in a Style program, or shape properties that are not explicitly specified). If a user is happy with a particular instance of a diagram, they would like to be able to reproduce this diag... | priority | feat make it possible to bake diagrams issue penrose uses random sampling to initialize variables e g those marked in a style program or shape properties that are not explicitly specified if a user is happy with a particular instance of a diagram they would like to be able to reproduce this diag... | 1 |

25,553 | 2,683,840,932 | IssuesEvent | 2015-03-28 11:22:51 | ConEmu/old-issues | https://api.github.com/repos/ConEmu/old-issues | closed | ConEmu 2009.10.1 - Не работает символ "c" | 2–5 stars bug imported Priority-Medium | _From [skonys...@gmail.com](https://code.google.com/u/113491849526375923289/) on October 13, 2009 10:18:24_

Версия ОС: WinXP Professional SP3

Не работает символ "c" в запущеном cmd.exe. При этом в фаре работает.