Unnamed: 0 (int64, 0 to 832k) | id (float64, 2.49B to 32.1B) | type (string, 1 class) | created_at (string, length 19) | repo (string, length 5 to 112) | repo_url (string, length 34 to 141) | action (string, 3 classes) | title (string, length 1 to 1k) | labels (string, length 4 to 1.38k) | body (string, length 1 to 262k) | index (string, 16 classes) | text_combine (string, length 96 to 262k) | label (string, 2 classes) | text (string, length 96 to 252k) | binary_label (int64, 0 to 1) |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

388,944 | 26,789,794,461 | IssuesEvent | 2023-02-01 07:28:36 | mozilla-it/ctms-api | https://api.github.com/repos/mozilla-it/ctms-api | closed | CTMS code comparison over Time-Ranges: | documentation | ----

Compare main to...

- 1 day ago: https://github.com/mozilla-it/ctms-api/compare/main%40{1.day.ago}...main

- 2 weeks ago: https://github.com/mozilla-it/ctms-api/compare/main%40%7B2.weeks.ago%7D...main

- 1 month ago: https://github.com/mozilla-it/ctms-api/compare/main%40%7B1.month.ago%7D...main

- 6 months ago: h... | 1.0 | CTMS code comparison over Time-Ranges: - ----

Compare main to...

- 1 day ago: https://github.com/mozilla-it/ctms-api/compare/main%40{1.day.ago}...main

- 2 weeks ago: https://github.com/mozilla-it/ctms-api/compare/main%40%7B2.weeks.ago%7D...main

- 1 month ago: https://github.com/mozilla-it/ctms-api/compare/main%40%7... | non_priority | ctms code comparison over time ranges compare main to day ago weeks ago month ago months ago year ago provided for reference of progress work code over time | 0 |

221,282 | 24,608,423,745 | IssuesEvent | 2022-10-14 18:37:34 | adoptium/ci-jenkins-pipelines | https://api.github.com/repos/adoptium/ci-jenkins-pipelines | closed | Request to make Jenkins job permissions configurable | enhancement security | https://github.com/adoptium/ci-jenkins-pipelines/pull/154

https://github.com/adoptium/ci-jenkins-pipelines/pull/155

These 2 PRs introduced changes specific to Adopt that do not apply to other consumers of the Jenkins pipelines. Can these be reworked to be configurable via the defaults.json file? Minimum would be a ... | True | Request to make Jenkins job permissions configurable - https://github.com/adoptium/ci-jenkins-pipelines/pull/154

https://github.com/adoptium/ci-jenkins-pipelines/pull/155

These 2 PRs introduced changes specific to Adopt that do not apply to other consumers of the Jenkins pipelines. Can these be reworked to be confi... | non_priority | request to make jenkins job permissions configurable these prs introduced changes specific to adopt that do not apply to other consumers of the jenkins pipelines can these be reworked to be configurable via the defaults json file minimum would be a boolean but ideally also moving the hardcoded team name s... | 0 |

23,890 | 11,983,204,926 | IssuesEvent | 2020-04-07 14:06:58 | elastic/kibana | https://api.github.com/repos/elastic/kibana | closed | [APM] Service map refresh failing to remove absent services | Feature:Service Maps [zube]: In Progress apm-test-plan-7.7.0 bug v7.7.0 | In the service map, when applying short time filters (e.g. `now-1s`), services are only added, making the map larger with each refresh, when it should omit those services which are not returned in the API results applying the short range.

This is due to the fact that services are rendered in an additive way, when it... | 1.0 | [APM] Service map refresh failing to remove absent services - In the service map, when applying short time filters (e.g. `now-1s`), services are only added, making the map larger with each refresh, when it should omit those services which are not returned in the API results applying the short range.

This is due to ... | non_priority | service map refresh failing to remove absent services in the service map when applying short time filters e g now services are only added making the map larger with each refresh when it should omit those services which are not returned in the api results applying the short range this is due to the f... | 0 |

15,027 | 18,740,545,908 | IssuesEvent | 2021-11-04 13:08:51 | qgis/QGIS | https://api.github.com/repos/qgis/QGIS | reopened | Computed Variables for Algorithm Outputs are NULL in Graphical Modeler | Processing Bug Modeller | ### What is the bug or the crash?

I have tried to use the computed variables for an algorithm output (e.g. `@Reproject_layer_OUTPUT_minx `) in a `Pre-calculated value` field in a subsequent algorithm. All but those coming from the input layer are always NULL.

### Steps to reproduce the issue

1. Start Graphical... | 1.0 | Computed Variables for Algorithm Outputs are NULL in Graphical Modeler - ### What is the bug or the crash?

I have tried to use the computed variables for an algorithm output (e.g. `@Reproject_layer_OUTPUT_minx `) in a `Pre-calculated value` field in a subsequent algorithm. All but those coming from the input layer a... | non_priority | computed variables for algorithm outputs are null in graphical modeler what is the bug or the crash i have tried to use the computed variables for an algorithm output e g reproject layer output minx in a pre calculated value field in a subsequent algorithm all but those coming from the input layer a... | 0 |

199,091 | 15,733,470,931 | IssuesEvent | 2021-03-29 19:36:25 | albin-johansson/centurion | https://api.github.com/repos/albin-johansson/centurion | closed | Overhaul documentation. | documentation | - [X] Overhaul Doxygen documentation.

- [ ] Create RtD documentation.

- [X] `area`

- [X] `base_path`

- [ ] `basic_joystick`

- [x] `basic_renderer`

- [x] `basic_window`

- [x] `battery`

- [x] `blend_mode`

- [x] `button_state`

- [x] `library`, `config`

- [x] Clipboard

- [x] `color`

- [... | 1.0 | Overhaul documentation. - - [X] Overhaul Doxygen documentation.

- [ ] Create RtD documentation.

- [X] `area`

- [X] `base_path`

- [ ] `basic_joystick`

- [x] `basic_renderer`

- [x] `basic_window`

- [x] `battery`

- [x] `blend_mode`

- [x] `button_state`

- [x] `library`, `config`

- [x] Clipboa... | non_priority | overhaul documentation overhaul doxygen documentation create rtd documentation area base path basic joystick basic renderer basic window battery blend mode button state library config clipboard color ... | 0 |

391,345 | 11,572,220,994 | IssuesEvent | 2020-02-20 23:25:13 | googleapis/python-texttospeech | https://api.github.com/repos/googleapis/python-texttospeech | closed | Synthesis failed for python-texttospeech | api: texttospeech autosynth failure priority: p1 type: bug | Hello! Autosynth couldn't regenerate python-texttospeech. :broken_heart:

Here's the output from running `synth.py`:

```

Cloning into 'working_repo'...

Switched to branch 'autosynth'

Running synthtool

['/tmpfs/src/git/autosynth/env/bin/python3', '-m', 'synthtool', 'synth.py', '--']

synthtool > Executing /tmpfs/src/git... | 1.0 | Synthesis failed for python-texttospeech - Hello! Autosynth couldn't regenerate python-texttospeech. :broken_heart:

Here's the output from running `synth.py`:

```

Cloning into 'working_repo'...

Switched to branch 'autosynth'

Running synthtool

['/tmpfs/src/git/autosynth/env/bin/python3', '-m', 'synthtool', 'synth.py',... | priority | synthesis failed for python texttospeech hello autosynth couldn t regenerate python texttospeech broken heart here s the output from running synth py cloning into working repo switched to branch autosynth running synthtool synthtool executing tmpfs src git autosynth working repo synth py on ... | 1 |

238,000 | 18,215,194,034 | IssuesEvent | 2021-09-30 02:46:38 | portainer/portainer | https://api.github.com/repos/portainer/portainer | closed | Is the user's password supposed to be returned via Portainer API? | kind/enhancement area/documentation internal/jira | Hi guys,

Actually, my question is practically reflected in a $subject

Recently I tried to retrieve the password of a certain user via API (using GET request to the ``/users`` or ``/users/{id}`` API points) for further use in my code.

But with no luck. I just got a response:

```

'response' => {

... | 1.0 | Is the user's password supposed to be returned via Portainer API? - Hi guys,

Actually, my question is practically reflected in a $subject

Recently I tried to retrieve the password of a certain user via API (using GET request to the ``/users`` or ``/users/{id}`` API points) for further use in my code.

But with no ... | non_priority | is the user s password supposed to be returned via portainer api hi guys actually my question is practically reflected in a subject recently i tried to retrieve the password of a certain user via api using get request to the users or users id api points for further use in my code but with no ... | 0 |

426,566 | 12,374,199,793 | IssuesEvent | 2020-05-19 00:49:37 | kubeflow/pipelines | https://api.github.com/repos/kubeflow/pipelines | closed | mlpipeline-metrics not found | area/backend kind/bug priority/p0 status/triaged | ### What steps did you take:

I just install v1.0 and kick off Exit-Handler pipeline.

### What happened:

Checking pipeline logs, I get

```

I0224 21:55:00.245907 1 interceptor.go:29] /api.RunService/ReadArtifact handler starting

I0224 21:55:00.248584 1 error.go:218] ResourceNotFoundError: artifact ... | 1.0 | mlpipeline-metrics not found - ### What steps did you take:

I just install v1.0 and kick off Exit-Handler pipeline.

### What happened:

Checking pipeline logs, I get

```

I0224 21:55:00.245907 1 interceptor.go:29] /api.RunService/ReadArtifact handler starting

I0224 21:55:00.248584 1 error.go:218] R... | priority | mlpipeline metrics not found what steps did you take i just install and kick off exit handler pipeline what happened checking pipeline logs i get interceptor go api runservice readartifact handler starting error go resourcenotfounderror artifa... | 1 |

87,054 | 25,018,905,776 | IssuesEvent | 2022-11-03 21:38:18 | aws-amplify/amplify-hosting | https://api.github.com/repos/aws-amplify/amplify-hosting | closed | Different build command for different branch | question frontend-builds response-requested | **Please describe which feature you have a question about?**

Configuring Build Setting

**Provide additional details**

I have 1 app with two different environments and domains. each environment has different domain. for example:

- env `blue` will run in `app.blue` domain (for dev)

- env `red` will rub in `app.red... | 1.0 | Different build command for different branch - **Please describe which feature you have a question about?**

Configuring Build Setting

**Provide additional details**

I have 1 app with two different environments and domains. each environment has different domain. for example:

- env `blue` will run in `app.blue` dom... | non_priority | different build command for different branch please describe which feature you have a question about configuring build setting provide additional details i have app with two different environments and domains each environment has different domain for example env blue will run in app blue dom... | 0 |

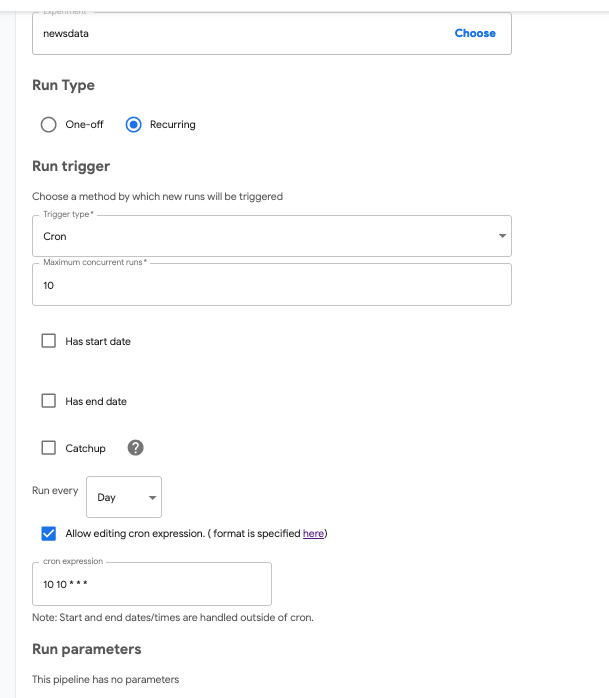

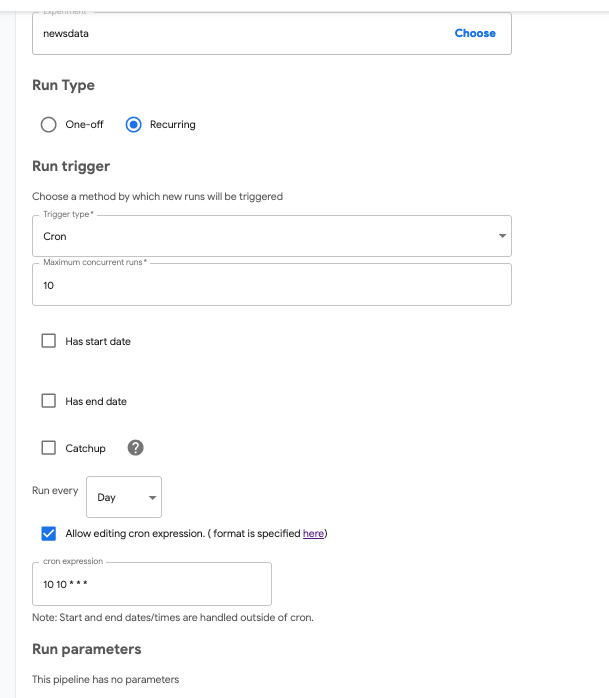

514,007 | 14,931,396,151 | IssuesEvent | 2021-01-25 05:41:19 | kubeflow/kubeflow | https://api.github.com/repos/kubeflow/kubeflow | closed | Improve the messaging around Scheduled Workflow Cron schedule | area/front-end area/pipelines kind/feature lifecycle/stale priority/p2 | /kind feature

**Why you need this feature:**

We were having a problem where we couldn't immediately figure out why the Cron wasn't working as we expected when giving it the following syntax.

```

10 10 ... | 1.0 | Improve the messaging around Scheduled Workflow Cron schedule - /kind feature

**Why you need this feature:**

We were having a problem where we couldn't immediately figure out why the Cron wasn't working as... | priority | improve the messaging around scheduled workflow cron schedule kind feature why you need this feature we were having a problem where we couldn t immediately figure out why the cron wasn t working as we expected when giving it the following syntax we expected it to run at ... | 1 |

26,328 | 12,965,372,157 | IssuesEvent | 2020-07-20 22:13:09 | JuliaLang/julia | https://api.github.com/repos/JuliaLang/julia | closed | Feature request: Multithreaded sparse matrix vector product | linear algebra performance sparse | I have a large sparse matrix which I need to multiply with dense vectors. The types of the elements in the vectors are UInt256 and UInt8 in the matrix. Currently each product takes about 45 seconds using SparseArrays.

It would be great if this could be sped up using multithreading. As far as I can see no such l... | True | Feature request: Multithreaded sparse matrix vector product - I have a large sparse matrix which I need to multiply with dense vectors. The types of the elements in the vectors are UInt256 and UInt8 in the matrix. Currently each product takes about 45 seconds using SparseArrays.

It would be great if this could b... | non_priority | feature request multithreaded sparse matrix vector product i have a large sparse matrix which i need to multiply with dense vectors the types of the elements in the vectors are and in the matrix currently each product takes about seconds using sparsearrays it would be great if this could be sped up u... | 0 |

544,578 | 15,894,731,289 | IssuesEvent | 2021-04-11 11:21:47 | marcusolsson/grafana-hourly-heatmap-panel | https://api.github.com/repos/marcusolsson/grafana-hourly-heatmap-panel | closed | Color option for null values | priority/low type/enhancement | If there is a null value in the data, the heatmap prints black color. It would be nice to have a color palette to select a color for null values. | 1.0 | Color option for null values - If there is a null value in the data, the heatmap prints black color. It would be nice to have a color palette to select a color for null values. | priority | color option for null values if there is a null value in the data the heatmap prints black color it would be nice to have a color palette to select a color for null values | 1 |

212,357 | 7,236,179,340 | IssuesEvent | 2018-02-13 05:23:42 | yanis333/SOEN341_Website | https://api.github.com/repos/yanis333/SOEN341_Website | closed | Create Jquery function to validate textboxes (if empty or not). | High value Priority3 Risk2 feature sprint 2 | [sp] = 1

This is a feature for issue #59 | 1.0 | Create Jquery function to validate textboxes (if empty or not). - [sp] = 1

This is a feature for issue #59 | priority | create jquery function to validate textboxes if empty or not this is a feature for issue | 1 |

45,582 | 18,762,337,112 | IssuesEvent | 2021-11-05 18:01:47 | elastic/kibana | https://api.github.com/repos/elastic/kibana | opened | [Actions] Implement generic support for OAuth JWT authentication flow for the alerting connectors. | enhancement Team:Alerting Services Feature:Alerting/RuleActions estimate:medium | Inspired by the research issue https://github.com/elastic/kibana/issues/79372 results where we did a couple of POCs for the comparison of the different OAuth flows which is supported by the existing connectors integrations and the most urgent OAuth implementation requirements (for ServiceNow).

Current solution is to a... | 1.0 | [Actions] Implement generic support for OAuth JWT authentication flow for the alerting connectors. - Inspired by the research issue https://github.com/elastic/kibana/issues/79372 results where we did a couple of POCs for the comparison of the different OAuth flows which is supported by the existing connectors integrati... | non_priority | implement generic support for oauth jwt authentication flow for the alerting connectors inspired by the research issue results where we did a couple of pocs for the comparison of the different oauth flows which is supported by the existing connectors integrations and the most urgent oauth implementation requirem... | 0 |

690,061 | 23,644,547,129 | IssuesEvent | 2022-08-25 20:32:43 | WarwickAI/wai-platform-v2 | https://api.github.com/repos/WarwickAI/wai-platform-v2 | closed | Make user role an array instead of one value | enhancement med-priority | For example, if a user is an `exec` they should also have the `member` role.

Will allow greater security, for example with the merch page, to only allow certain people to create and edit merch items. | 1.0 | Make user role an array instead of one value - For example, if a user is an `exec` they should also have the `member` role.

Will allow greater security, for example with the merch page, to only allow certain people to create and edit merch items. | priority | make user role an array instead of one value for example if a user is an exec they should also have the member role will allow greater security for example with the merch page to only allow certain people to create and edit merch items | 1 |

267,402 | 28,509,009,892 | IssuesEvent | 2023-04-19 01:27:34 | dpteam/RK3188_TABLET | https://api.github.com/repos/dpteam/RK3188_TABLET | closed | CVE-2019-15219 (Medium) detected in linuxv3.0 - autoclosed | Mend: dependency security vulnerability | ## CVE-2019-15219 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linuxv3.0</b></p></summary>

<p>

<p>Linux kernel source tree</p>

<p>Library home page: <a href=https://github.com/ver... | True | CVE-2019-15219 (Medium) detected in linuxv3.0 - autoclosed - ## CVE-2019-15219 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linuxv3.0</b></p></summary>

<p>

<p>Linux kernel source ... | non_priority | cve medium detected in autoclosed cve medium severity vulnerability vulnerable library linux kernel source tree library home page a href found in head commit a href found in base branch master vulnerable source files drivers usb misc ... | 0 |

427,640 | 12,397,936,784 | IssuesEvent | 2020-05-21 00:13:28 | eclipse-ee4j/glassfish | https://api.github.com/repos/eclipse-ee4j/glassfish | closed | Consider better use of start levels in conjuction with autostart dir | Component: OSGi ERR: Assignee Priority: Major Stale Type: Improvement | We hardcode a number of module names in osgi.properties file as core.bundles. We should consider having different startlevels in modules/autostart and moving those bundles to that dir.

#### Affected Versions

[4.0] | 1.0 | Consider better use of start levels in conjuction with autostart dir - We hardcode a number of module names in osgi.properties file as core.bundles. We should consider having different startlevels in modules/autostart and moving those bundles to that dir.

#### Affected Versions

[4.0] | priority | consider better use of start levels in conjuction with autostart dir we hardcode a number of module names in osgi properties file as core bundles we should consider having different startlevels in modules autostart and moving those bundles to that dir affected versions | 1 |

19,384 | 13,940,317,003 | IssuesEvent | 2020-10-22 17:43:49 | ONRR/nrrd | https://api.github.com/repos/ONRR/nrrd | opened | Clarify the difference between compare and nationwide sections | Explore Data P3: Medium usability | Four participants weren't clear that there was a difference between the nationwide and compare sections on Explore Data. Maybe put a US map on the headers for the nationwide sections. | True | Clarify the difference between compare and nationwide sections - Four participants weren't clear that there was a difference between the nationwide and compare sections on Explore Data. Maybe put a US map on the headers for the nationwide sections. | non_priority | clarify the difference between compare and nationwide sections four participants weren t clear that there was a difference between the nationwide and compare sections on explore data maybe put a us map on the headers for the nationwide sections | 0 |

104,282 | 22,621,474,808 | IssuesEvent | 2022-06-30 06:50:49 | FerretDB/FerretDB | https://api.github.com/repos/FerretDB/FerretDB | closed | Investigate fuzzing failure | code/bug not ripe | https://github.com/FerretDB/FerretDB/runs/4726383278

```

fuzz: elapsed: 15s, execs: 12535 (1444/sec), new interesting: 3 (total: 3)

fuzz: elapsed: 16s, execs: 12535 (0/sec), new interesting: 3 (total: 3)

--- FAIL: FuzzArray (16.40s)

fuzzing process hung or terminated unexpectedly while minimizing: EOF

F... | 1.0 | Investigate fuzzing failure - https://github.com/FerretDB/FerretDB/runs/4726383278

```

fuzz: elapsed: 15s, execs: 12535 (1444/sec), new interesting: 3 (total: 3)

fuzz: elapsed: 16s, execs: 12535 (0/sec), new interesting: 3 (total: 3)

--- FAIL: FuzzArray (16.40s)

fuzzing process hung or terminated unexpectedl... | non_priority | investigate fuzzing failure fuzz elapsed execs sec new interesting total fuzz elapsed execs sec new interesting total fail fuzzarray fuzzing process hung or terminated unexpectedly while minimizing eof failing input written to testdata fuzz f... | 0 |

19,735 | 5,923,729,695 | IssuesEvent | 2017-05-23 08:42:46 | akvo/akvo-flow-mobile | https://api.github.com/repos/akvo/akvo-flow-mobile | closed | Refactor dependencies management | Code Refactoring | # Overview

Although this is not a priority right now, we should consider refactoring this component at some point, aiming at having a simpler, more maintainable codebase.

| 1.0 | Refactor dependencies management - # Overview

Although this is not a priority right now, we should consider refactoring this component at some point, aiming at having a simpler, more maintainable codebase.

| non_priority | refactor dependencies management overview although this is not a priority right now we should consider refactoring this component at some point aiming at having a simpler more maintainable codebase | 0 |

255,785 | 27,504,281,018 | IssuesEvent | 2023-03-06 01:03:45 | snykiotcubedev/reactos-0.4.13-release | https://api.github.com/repos/snykiotcubedev/reactos-0.4.13-release | opened | CVE-2022-4645 (Medium) detected in reactosReactOS-0.4.13-release-28-g5724391-src | security vulnerability | ## CVE-2022-4645 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>reactosReactOS-0.4.13-release-28-g5724391-src</b></p></summary>

<p>

<p>An operating system based on the best Windows ... | True | CVE-2022-4645 (Medium) detected in reactosReactOS-0.4.13-release-28-g5724391-src - ## CVE-2022-4645 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>reactosReactOS-0.4.13-release-28-g5... | non_priority | cve medium detected in reactosreactos release src cve medium severity vulnerability vulnerable library reactosreactos release src an operating system based on the best windows nt design principles library home page a href found in base branch main ... | 0 |

274,649 | 20,862,380,549 | IssuesEvent | 2022-03-22 01:01:58 | benstream/CS372 | https://api.github.com/repos/benstream/CS372 | closed | Week 3 Iteration Plan (March 14th – 18th, 2022) | documentation | - [x] #12

- [x] #15

- [x] #19

- [x] #16

- [x] #14: high to low priority completion (viewer's perspective)

- [x] #20 | 1.0 | Week 3 Iteration Plan (March 14th – 18th, 2022) - - [x] #12

- [x] #15

- [x] #19

- [x] #16

- [x] #14: high to low priority completion (viewer's perspective)

- [x] #20 | non_priority | week iteration plan march – high to low priority completion viewer s perspective | 0 |

29,091 | 4,160,167,388 | IssuesEvent | 2016-06-17 12:13:01 | GSE-Project/SS2016-group1 | https://api.github.com/repos/GSE-Project/SS2016-group1 | closed | Request custom stop - Change Component Diagram | -> June 20 designIF | depends on #60

- [x] Change diagram

- [x] add traceability information | 1.0 | Request custom stop - Change Component Diagram - depends on #60

- [x] Change diagram

- [x] add traceability information | non_priority | request custom stop change component diagram depends on change diagram add traceability information | 0 |

20,725 | 11,495,998,002 | IssuesEvent | 2020-02-12 06:45:25 | Azure/azure-cli | https://api.github.com/repos/Azure/azure-cli | closed | Azure ARC Extension - No module named google.auth | AKS Service Attention |

### **This is autogenerated. Please review and update as needed.**

## Describe the bug

**Command Name**

`az connectedk8s connect`

**Errors:**

```

No module named google.auth

Traceback (most recent call last):

python2.7/site-packages/knack/cli.py, ln 206, in invoke

cmd_result = self.invocation.execu... | 1.0 | Azure ARC Extension - No module named google.auth -

### **This is autogenerated. Please review and update as needed.**

## Describe the bug

**Command Name**

`az connectedk8s connect`

**Errors:**

```

No module named google.auth

Traceback (most recent call last):

python2.7/site-packages/knack/cli.py, ln 20... | non_priority | azure arc extension no module named google auth this is autogenerated please review and update as needed describe the bug command name az connect errors no module named google auth traceback most recent call last site packages knack cli py ln in invoke c... | 0 |

252,829 | 8,042,486,294 | IssuesEvent | 2018-07-31 08:19:05 | webcompat/web-bugs | https://api.github.com/repos/webcompat/web-bugs | closed | www.google.com - site is not usable | browser-firefox-mobile priority-critical | <!-- @browser: Firefox Mobile 63.0 -->

<!-- @ua_header: Mozilla/5.0 (Android 8.1.0; Mobile; rv:63.0) Gecko/63.0 Firefox/63.0 -->

<!-- @reported_with: mobile-reporter -->

**URL**: https://www.google.com/search?q=galileo+telephone+portable

**Browser / Version**: Firefox Mobile 63.0

**Operating System**: Android 8.1.0

*... | 1.0 | www.google.com - site is not usable - <!-- @browser: Firefox Mobile 63.0 -->

<!-- @ua_header: Mozilla/5.0 (Android 8.1.0; Mobile; rv:63.0) Gecko/63.0 Firefox/63.0 -->

<!-- @reported_with: mobile-reporter -->

**URL**: https://www.google.com/search?q=galileo+telephone+portable

**Browser / Version**: Firefox Mobile 63.0... | priority | site is not usable url browser version firefox mobile operating system android tested another browser yes problem type site is not usable description it says no internet connection while it works with focus steps to reproduce from with ❤️ | 1 |

168,122 | 20,742,230,691 | IssuesEvent | 2022-03-14 18:51:41 | snowflakedb/snowflake-jdbc | https://api.github.com/repos/snowflakedb/snowflake-jdbc | opened | CVE-2020-36518 (High) detected in jackson-databind-2.9.8.jar, jackson-databind-2.12.1.jar | security vulnerability | ## CVE-2020-36518 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>jackson-databind-2.9.8.jar</b>, <b>jackson-databind-2.12.1.jar</b></p></summary>

<p>

<details><summary><b>jackson-da... | True | CVE-2020-36518 (High) detected in jackson-databind-2.9.8.jar, jackson-databind-2.12.1.jar - ## CVE-2020-36518 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>jackson-databind-2.9.8.ja... | non_priority | cve high detected in jackson databind jar jackson databind jar cve high severity vulnerability vulnerable libraries jackson databind jar jackson databind jar jackson databind jar general data binding functionality for jackson works on core streami... | 0 |

92,501 | 3,871,643,981 | IssuesEvent | 2016-04-11 10:40:52 | osm2vectortiles/osm2vectortiles | https://api.github.com/repos/osm2vectortiles/osm2vectortiles | opened | Implement network and shield field | low-priority | - [ ] Implement field shield of layer road_label

- [ ] Implement field network of layer rail_station_label

- The OSM key [network](http://wiki.openstreetmap.org/wiki/Key:network) can be used to determine in which country/region (network) the road or railway is. | 1.0 | Implement network and shield field - - [ ] Implement field shield of layer road_label

- [ ] Implement field network of layer rail_station_label

- The OSM key [network](http://wiki.openstreetmap.org/wiki/Key:network) can be used to determine in which country/region (network) the road or railway is. | priority | implement network and shield field implement field shield of layer road label implement field network of layer rail station label the osm key can be used to determine in which country region network the road or railway is | 1 |

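712,053 | 24,483,321,679 | IssuesEvent | 2022-10-09 05:07:18 | hengband/hengband | https://api.github.com/repos/hengband/hengband | closed | Move object-kind.cpp/h from object/ to system/ and rename it | refactor Priority:MIDDLE | Related ticket to #2628

It was too messy to notice at the time of the refactoring, but this file represents the contents of k\_info.txt (plus assorted mutable state)

So the folder named in the title is the right place for it

A name like item-template-type-definition.cpp/h might be good

Note to self: the quark index has changed to std::string, so update the comments too | 1.0 | Move object-kind.cpp/h from object/ to system/ and rename it - Related ticket to #2628

It was too messy to notice at the time of the refactoring, but this file represents the contents of k\_info.txt (plus assorted mutable state)

So the folder named in the title is the right place for it

A name like item-template-type-definition.cpp/h might be good

Note to self: the quark index has changed to std::string, so update the comments too | priority | move object kind cpp h from object to system and rename it related ticket to it was too messy to notice at the time of the refactoring but this file represents the contents of k info txt plus assorted mutable state so the folder named in the title is the right place for it a name like item template type definition cpp h might be good note to self the quark index has changed to std string so update the comments too | 1 |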

156,396 | 12,309,069,281 | IssuesEvent | 2020-05-12 08:21:24 | dso-toolkit/dso-toolkit | https://api.github.com/repos/dso-toolkit/dso-toolkit | closed | Line-height of headings only works inside class="dso-rich-content" | status:testable type:bug | With the toolkit upgrade to 10.3.0 I created a task to check the line-height of the headings. It now turns out that it is applied only inside class="dso-rich-content". I believe the intent was to apply it everywhere.

In the user applications (of team PR12), nowhere is class="dso... | 1.0 | Line-height of headings only works inside class="dso-rich-content" - With the toolkit upgrade to 10.3.0 I created a task to check the line-height of the headings. It now turns out that it is applied only inside class="dso-rich-content". I believe the intent was to apply it everywhere.

... | non_priority | line height of headings only works inside class dso rich content with the toolkit upgrade to i created a task to check the line height of the headings it now turns out that it is applied only inside class dso rich content i believe the intent was to apply it everywhere i... | 0 |

289,098 | 8,854,938,884 | IssuesEvent | 2019-01-09 03:45:51 | visit-dav/issues-test | https://api.github.com/repos/visit-dav/issues-test | closed | viewercore introduces _ser library dependencies into simV2runtime_par. | bug likelihood medium priority reviewed severity medium | The viewercore library contains the core parts of the viewer and it is used to add viewer functionality to libsim. Since viewercore relies on some AVT stuff and was destined for the viewer, it ends up linking with some _ser avt libraries. When viewercore is linked into the parallel simV2runtime_par library, it brings a... | 1.0 | viewercore introduces _ser library dependencies into simV2runtime_par. - The viewercore library contains the core parts of the viewer and it is used to add viewer functionality to libsim. Since viewercore relies on some AVT stuff and was destined for the viewer, it ends up linking with some _ser avt libraries. When vie... | priority | viewercore introduces ser library dependencies into par the viewercore library contains the core parts of the viewer and it is used to add viewer functionality to libsim since viewercore relies on some avt stuff and was destined for the viewer it ends up linking with some ser avt libraries when viewercore is ... | 1 |

358,422 | 25,191,246,299 | IssuesEvent | 2022-11-12 01:30:45 | MitchTurner/naumachia | https://api.github.com/repos/MitchTurner/naumachia | closed | Architectural diagram of Naumachia | documentation | Let's help people understand the structure and why things work the way they do. It might still change a lot, but at least we can document those changes and stuff if we have a baseline. | 1.0 | Architectural diagram of Naumachia - Let's help people understand the structure and why things work the way they do. It might still change a lot, but at least we can document those changes and stuff if we have a baseline. | non_priority | architectural diagram of naumachia let s help people understand the structure and why things work the way they do it might still change a lot but at least we can document those changes and stuff if we have a baseline | 0 |

265,395 | 8,353,777,639 | IssuesEvent | 2018-10-02 11:13:26 | handsontable/handsontable-pro | https://api.github.com/repos/handsontable/handsontable-pro | closed | [Multi-column sorting] Click on the top-left overlay will throw an exception | Priority: high Regression Status: Released Type: Bug | ### Description

As the title says, when the `multiColumnSorting` plugin is enabled.

### Your environment

* Handsontable version: 6.0.0

| 1.0 | [Multi-column sorting] Click on the top-left overlay will throw an exception - ### Description

As the title says, when the `multiColumnSorting` plugin is enabled.

### Your environment

* Handsontable ver... | priority | click on the top left overlay will throw an exception description as the title says when the multicolumnsorting plugin is enabled your environment handsontable version | 1 |

52,674 | 3,026,141,409 | IssuesEvent | 2015-08-03 13:33:34 | twosigma/beaker-notebook | https://api.github.com/repos/twosigma/beaker-notebook | closed | Add automated memory tests | enhancement Priority 1 | ##### Two types of interactions to test at first:

* Adding and removing plots

* Adding and removing graphs | 1.0 | Add automated memory tests - ##### Two types of interactions to test at first:

* Adding and removing plots

* Adding and removing graphs | priority | add automated memory tests two types of interactions to test at first adding and removing plots adding and removing graphs | 1 |

362,417 | 25,374,377,936 | IssuesEvent | 2022-11-21 13:03:23 | rabbitmq/khepri | https://api.github.com/repos/rabbitmq/khepri | closed | Make it easy to find the import/export guide in the documentation | documentation | Currently there is no obvious link in the main documentation menu and no paragraph in the Overview page. Therefore it is difficult to know that everything is explained in the `khepri_import_export` module.

This was [reported by *Ipil* on the Erlang forums](https://erlangforums.com/t/khepri-a-tree-like-replicated-on-... | 1.0 | Make it easy to find the import/export guide in the documentation - Currently there is no obvious link in the main documentation menu and no paragraph in the Overview page. Therefore it is difficult to know that everything is explained in the `khepri_import_export` module.

This was [reported by *Ipil* on the Erlang ... | non_priority | make it easy to find the import export guide in the documentation currently there is no obvious link in the main documentation menu and no paragraph in the overview page therefore it is difficult to know that everything is explained in the khepri import export module this was | 0 |

783,171 | 27,521,009,707 | IssuesEvent | 2023-03-06 15:02:37 | fractal-analytics-platform/fractal-server | https://api.github.com/repos/fractal-analytics-platform/fractal-server | closed | Update common after new validators | High Priority | See https://github.com/fractal-analytics-platform/fractal-common/issues/18 (and partly #525).

The idea here is that fractal-common should be the only source for the Pydantic schemas that we use to encode endpoint requests and responses. Note that using Pydantic validators (instead of more advanced typing, e.g. using... | 1.0 | Update common after new validators - See https://github.com/fractal-analytics-platform/fractal-common/issues/18 (and partly #525).

The idea here is that fractal-common should be the only source for the Pydantic schemas that we use to encode endpoint requests and responses. Note that using Pydantic validators (instea... | priority | update common after new validators see and partly the idea here is that fractal common should be the only source for the pydantic schemas that we use to encode endpoint requests and responses note that using pydantic validators instead of more advanced typing e g using min length for strings or ... | 1 |

22,300 | 30,854,055,368 | IssuesEvent | 2023-08-02 19:03:30 | cohenlabUNC/clpipe | https://api.github.com/repos/cohenlabUNC/clpipe | closed | Add 3dTProject Implementation as Alternate Filtering Step | postprocess2 1.8.1 Req large | For now we don't have a scrub file available, so you'll need to alter the filtering workflow to be capable of running without a scrub file.

After this is done and 3dTproject is registered as an available implementation of filtering, we'll need a test to make sure that the filtering works without scrubbing.

Once s... | 1.0 | Add 3dTProject Implementation as Alternate Filtering Step - For now we don't have a scrub file available, so you'll need to alter the filtering workflow to be capable of running without a scrub file.

After this is done and 3dTproject is registered as an available implementation of filtering, we'll need a test to mak... | non_priority | add implementation as alternate filtering step for now we don t have a scrub file available so you ll need to alter the filtering workflow to be capable of running without a scrub file after this is done and is registered as an available implementation of filtering we ll need a test to make sure that the fi... | 0 |

171,810 | 20,998,532,108 | IssuesEvent | 2022-03-29 15:20:38 | gdcorp-action-public-forks/maven-settings-xml-action | https://api.github.com/repos/gdcorp-action-public-forks/maven-settings-xml-action | closed | CVE-2021-3807 (High) detected in ansi-regex-5.0.0.tgz, ansi-regex-4.1.0.tgz - autoclosed | security vulnerability | ## CVE-2021-3807 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>ansi-regex-5.0.0.tgz</b>, <b>ansi-regex-4.1.0.tgz</b></p></summary>

<p>

<details><summary><b>ansi-regex-5.0.0.tgz</b>... | True | CVE-2021-3807 (High) detected in ansi-regex-5.0.0.tgz, ansi-regex-4.1.0.tgz - autoclosed - ## CVE-2021-3807 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>ansi-regex-5.0.0.tgz</b>, <... | non_priority | cve high detected in ansi regex tgz ansi regex tgz autoclosed cve high severity vulnerability vulnerable libraries ansi regex tgz ansi regex tgz ansi regex tgz regular expression for matching ansi escape codes library home page a href pat... | 0 |

70,843 | 8,583,890,906 | IssuesEvent | 2018-11-13 21:04:46 | kids-first/kf-ui-release-coordinator | https://api.github.com/repos/kids-first/kf-ui-release-coordinator | closed | Planner Page | Design | page layout shouldn't be wrapped in a card

- move the `this is a major release` to the top as that's an important item that needs to be made more prevalent, could be a toggle

Studies section: study id tags could reflect the status of that study

Services to be run for this release could follow the same recomm... | 1.0 | Planner Page - page layout shouldn't be wrapped in a card

- move the `this is a major release` to the top as that's an important item that needs to be made more prevalent, could be a toggle

Studies section: study id tags could reflect the status of that study

Services to be run for this release could follow ... | non_priority | planner page page layout shouldn t be wrapped in a card move the this is a major release to the top as that s an important item that needs to be made more prevalent could be a toggle studies section study id tags could reflect the status of that study services to be run for this release could follow ... | 0 |

684,837 | 23,434,786,871 | IssuesEvent | 2022-08-15 08:35:28 | mayuNishikawa/CALL_ME | https://api.github.com/repos/mayuNishikawa/CALL_ME | closed | Step3: Team creation feature | enhancement higi priority | - [x] Create the teams and assigns tables

- [x] Add validation, associations, and methods to the team model.

- [x] Add associations to the assigns model

- [x] Create the team creation, detail, and edit screens

- [x] Implement team deletion | 1.0 | Step3: Team creation feature - - [x] Create the teams and assigns tables

- [x] Add validation, associations, and methods to the team model.

- [x] Add associations to the assigns model

- [x] Create the team creation, detail, and edit screens

- [x] Implement team deletion | priority | team creation feature create the teams and assigns tables add validation associations and methods to the team model add associations to the assigns model create the team creation detail and edit screens implement team deletion | 1 |

757,133 | 26,497,698,750 | IssuesEvent | 2023-01-18 07:42:51 | RobotLocomotion/drake | https://api.github.com/repos/RobotLocomotion/drake | closed | Using Eigen::Array<Polynomial, Dynamic, Dynamic> for PolynomialMatrix in PiecewisePolynomial | priority: backlog component: mathematical program | Currently we use `Eigen::Matrix<Polynomial, Eigen::Dynamic, Eigen::Dynamic>` as the type of `PolynomialMatrix` inside `PiecewisePolynomial` class. But this can cause confusion since we are doing element-wise product, when multiplying two piecewise polynomials, as we have seen in #4854

I think the solution is to use... | 1.0 | Using Eigen::Array<Polynomial, Dynamic, Dynamic> for PolynomialMatrix in PiecewisePolynomial - Currently we use `Eigen::Matrix<Polynomial, Eigen::Dynamic, Eigen::Dynamic>` as the type of `PolynomialMatrix` inside `PiecewisePolynomial` class. But this can cause confusion since we are doing element-wise product, when mul... | priority | using eigen array for polynomialmatrix in piecewisepolynomial currently we use eigen matrix as the type of polynomialmatrix inside piecewisepolynomial class but this can cause confusion since we are doing element wise product when multiplying two piecewise polynomials as we have seen in i think th... | 1 |
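
The confusion described in the Drake row above comes from the difference between an element-wise product and a matrix product. A minimal sketch of that difference follows, in plain Python rather than Eigen or Drake code. The integer entries stand in for polynomial entries, and both helper names are hypothetical.

```python
def elementwise_product(a, b):
    """Multiply matching entries, as an array-style type would."""
    return [[x * y for x, y in zip(row_a, row_b)] for row_a, row_b in zip(a, b)]


def matrix_product(a, b):
    """Standard row-by-column product, as a matrix-style type would."""
    columns_of_b = list(zip(*b))
    return [[sum(x * y for x, y in zip(row, col)) for col in columns_of_b]
            for row in a]


a = [[1, 2], [3, 4]]
b = [[5, 6], [7, 8]]

print(elementwise_product(a, b))  # entries multiplied pairwise
print(matrix_product(a, b))       # rows combined with columns
```

The two results differ for the same inputs, which is why a type whose `*` reads as a matrix product can mislead when the operation actually performed is element-wise.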

438,923 | 12,663,474,754 | IssuesEvent | 2020-06-18 01:29:11 | vmware/clarity | https://api.github.com/repos/vmware/clarity | closed | Datagrid memory leak and DOM elements leak. | component: datagrid flag: has workaround priority: 1 low status: needs investigation type: bug | ```

[x] bug

[ ] feature request

[ ] enhancement

```

### Expected behavior

Datagrid not to leak memory and DOM elements.

### Actual behavior

After constantly updating the table using a socket, memory leaks and element leaks are created.

### Reproduction of behavior

- https://stackblitz.com/edit/angular-4... | 1.0 | Datagrid memory leak and DOM elements leak. - ```

[x] bug

[ ] feature request

[ ] enhancement

```

### Expected behavior

Datagrid not to leak memory and DOM elements.

### Actual behavior

After constantly updating the table using a socket, memory leaks and element leaks are created.

### Reproduction of beh... | priority | datagrid memory leak and dom elements leak bug feature request enhancement expected behavior datagrid not to leak memory and dom elements actual behavior after constantly updating the table using a socket memory leaks and element leaks are created reproduction of behavior ... | 1 |

461,312 | 13,228,302,908 | IssuesEvent | 2020-08-18 05:50:43 | hazelcast/hazelcast | https://api.github.com/repos/hazelcast/hazelcast | closed | SQL math expressions | Estimation: S Internal breaking change Module: SQL Priority: High Source: Internal Team: Core Type: Enhancement | We need to implement base math expressions:

1. `COS`/`SIN`/`TAN`/`COT`/`ACOS`/`ASIN`/`ATAN`

1. `EXP`/`LN`/`LOG10`

1. `RAND`

1. `ABS`

1. `SIGN`

1. `DEGREES`/`RADIANS`

1. `ROUND`/`TRUNCATE`

1. `CEIL`/`FLOOR` | 1.0 | SQL math expressions - We need to implement base math expressions:

1. `COS`/`SIN`/`TAN`/`COT`/`ACOS`/`ASIN`/`ATAN`

1. `EXP`/`LN`/`LOG10`

1. `RAND`

1. `ABS`

1. `SIGN`

1. `DEGREES`/`RADIANS`

1. `ROUND`/`TRUNCATE`

1. `CEIL`/`FLOOR` | priority | sql math expressions we need to implement base math expressions cos sin tan cot acos asin atan exp ln rand abs sign degrees radians round truncate ceil floor | 1 |
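
As a rough illustration of what a few of the functions listed in the row above usually compute, here is a sketch using Python's standard `math` module. It is not Hazelcast's implementation. The helper names `cot`, `sign`, and `truncate` are hypothetical, and details such as null handling or the exact rounding rules of `ROUND`/`TRUNCATE` differ between SQL engines.

```python
import math


def cot(x):
    """Cotangent as the reciprocal of the tangent (undefined where tan(x) == 0)."""
    return 1.0 / math.tan(x)


def sign(x):
    """Return -1, 0, or 1 depending on the sign of x."""
    return (x > 0) - (x < 0)


def truncate(x, digits=0):
    """Drop digits beyond `digits` decimal places without rounding (toward zero)."""
    factor = 10 ** digits
    return math.trunc(x * factor) / factor


print(cot(math.pi / 4))        # close to 1.0
print(sign(-3.5))              # negative input
print(math.degrees(math.pi))   # DEGREES: radians to degrees
print(math.radians(180.0))     # RADIANS: degrees to radians
print(truncate(2.789, 2))      # truncation, not rounding
```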

370,447 | 10,932,421,002 | IssuesEvent | 2019-11-23 17:35:56 | collinbarrett/FilterLists | https://api.github.com/repos/collinbarrett/FilterLists | closed | The changes from #1124 don't seem to have gone live yet | bug high priority ops | This is most visible in how the ABP versions of *1hosts* aren't shown on the landing page, neither are any of the other new lists.

Is there any particular reason for it? | 1.0 | The changes from #1124 don't seem to have gone live yet - This is most visible in how the ABP versions of *1hosts* aren't shown on the landing page, neither are any of the other new lists.

Is there any particular reason for it? | priority | the changes from don t seem to have gone live yet this is most visible in how the abp versions of aren t shown on the landing page neither are any of the other new lists is there any particular reason for it | 1 |

476,734 | 13,749,245,407 | IssuesEvent | 2020-10-06 10:11:57 | brave/brave-browser | https://api.github.com/repos/brave/brave-browser | closed | Missing translations for Fingerprint settings | OS/Android QA/Yes l10n priority/P1 release-notes/exclude release/blocking | <!-- Have you searched for similar issues? Before submitting this issue, please check the open issues and add a note before logging a new issue.

PLEASE USE THE TEMPLATE BELOW TO PROVIDE INFORMATION ABOUT THE ISSUE.

INSUFFICIENT INFO WILL GET THE ISSUE CLOSED. IT WILL ONLY BE REOPENED AFTER SUFFICIENT INFO IS PROV... | 1.0 | Missing translations for Fingerprint settings - <!-- Have you searched for similar issues? Before submitting this issue, please check the open issues and add a note before logging a new issue.

PLEASE USE THE TEMPLATE BELOW TO PROVIDE INFORMATION ABOUT THE ISSUE.

INSUFFICIENT INFO WILL GET THE ISSUE CLOSED. IT WI... | priority | missing translations for fingerprint settings have you searched for similar issues before submitting this issue please check the open issues and add a note before logging a new issue please use the template below to provide information about the issue insufficient info will get the issue closed it wi... | 1 |

266,383 | 8,366,801,073 | IssuesEvent | 2018-10-04 10:11:26 | architecture-building-systems/CityEnergyAnalyst | https://api.github.com/repos/architecture-building-systems/CityEnergyAnalyst | closed | pyliburo is replaced by py4design, thus the installation of the environment needs to take this change into account | Priority 1 bug | pyliburo is renamed as py4design, so the change needs to be made. Also, the folder structure in py4design is different than what it used to be for pyliburo. This impacts the radiation scripts in CEA.

The problem is faced when we are creating the CEA environment afresh | 1.0 | pyliburo is replaced by py4design, thus the installation of the environment needs to take this change into account - pyliburo is renamed as py4design, so the change needs to be made. Also, the folder structure in py4design is different than what it used to be for pyliburo. This impacts the radiation scripts in CEA.

... | priority | pyliburo is replaced by thus the installation of the environment needs to take this change into account pyliburo is renamed as so the change needs to be made also the folder structure in is different than what it used to be for pyliburo this impacts the radiation scripts in cea the problem is faced wh... | 1 |

48,901 | 13,425,014,074 | IssuesEvent | 2020-09-06 08:19:56 | searchboy-sudo/headless-wp-nuxt | https://api.github.com/repos/searchboy-sudo/headless-wp-nuxt | opened | CVE-2019-6286 (Medium) detected in node-sass-v4.13.1, node-sass-4.13.1.tgz | security vulnerability | ## CVE-2019-6286 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>node-sass-4.13.1.tgz</b></p></summary>

<p>

<details><summary><b>node-sass-4.13.1.tgz</b></p></summary>

<p>Wrapper ... | True | CVE-2019-6286 (Medium) detected in node-sass-v4.13.1, node-sass-4.13.1.tgz - ## CVE-2019-6286 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>node-sass-4.13.1.tgz</b></p></summary>

... | non_priority | cve medium detected in node sass node sass tgz cve medium severity vulnerability vulnerable libraries node sass tgz node sass tgz wrapper around libsass library home page a href path to dependency file tmp ws scm headless wp nuxt package json pa... | 0 |

51,679 | 10,710,978,758 | IssuesEvent | 2019-10-25 04:31:05 | eclipse/vorto | https://api.github.com/repos/eclipse/vorto | closed | Generation of models slow due to deep structured mapping resolving | Code Generators Mappings | This problem occurs, that generator invocation is extremely slow for bigger models. The mappings for the generator are always resolved unnecessarily upon every invocation which impacts performance. | 1.0 | Generation of models slow due to deep structured mapping resolving - This problem occurs, that generator invocation is extremely slow for bigger models. The mappings for the generator are always resolved unnecessarily upon every invocation which impacts performance. | non_priority | generation of models slow due to deep structured mapping resolving this problem occurs that generator invocation is extremely slow for bigger models the mappings for the generator are always resolved unnecessarily upon every invocation which impacts performance | 0 |

15,531 | 10,317,092,124 | IssuesEvent | 2019-08-30 11:47:56 | MicrosoftDocs/azure-docs | https://api.github.com/repos/MicrosoftDocs/azure-docs | closed | Ruby error | Pri2 cognitive-services/svc cxp face-api/subsvc product-question triaged | I create a new file, name it faceDetection.rb and paste the above code. Then I changed the key and the west central server to West US and I keep getting a Failed to open TCP connection error. Anyone else is having troubles?

---

#### Document Details

⚠ *Do not edit this section. It is required for docs.microsoft.com... | 1.0 | Ruby error - I create a new file, name it faceDetection.rb and paste the above code. Then I changed the key and the west central server to West US and I keep getting a Failed to open TCP connection error. Anyone else is having troubles?

---

#### Document Details

⚠ *Do not edit this section. It is required for docs.... | non_priority | ruby error i create a new file name it facedetection rb and paste the above code then i changed the key and the west central server to west us and i keep getting a failed to open tcp connection error anyone else is having troubles document details ⚠ do not edit this section it is required for docs ... | 0 |

137,494 | 11,139,015,683 | IssuesEvent | 2019-12-21 01:14:37 | rancher/rancher | https://api.github.com/repos/rancher/rancher | closed | Forward port: Permissions are not updated in cluster when a cluster role is modified | [zube]: To Test team/ca | Forward port of: https://github.com/rancher/rancher/issues/23292

Addressed by https://github.com/rancher/rancher/pull/24661/commits/6dee0d8cae16a5020f752e7de5526614e520850c | 1.0 | Forward port: Permissions are not updated in cluster when a cluster role is modified - Forward port of: https://github.com/rancher/rancher/issues/23292

Addressed by https://github.com/rancher/rancher/pull/24661/commits/6dee0d8cae16a5020f752e7de5526614e520850c | non_priority | forward port permissions are not updated in cluster when a cluster role is modified forward port of addressed by | 0 |

438,120 | 12,619,567,073 | IssuesEvent | 2020-06-13 01:16:00 | skylight-hq/skylight.digital | https://api.github.com/repos/skylight-hq/skylight.digital | closed | Auto generate pages for blog post authors, blog post tags, and project team members | priority:low | Right now each page has to be set up manually. | 1.0 | Auto generate pages for blog post authors, blog post tags, and project team members - Right now each page has to be set up manually. | priority | auto generate pages for blog post authors blog post tags and project team members right now each page has to be set up manually | 1 |

5,861 | 4,047,281,829 | IssuesEvent | 2016-05-23 03:57:52 | PowerShell/platyPS | https://api.github.com/repos/PowerShell/platyPS | opened | Cmdlet Design Review notes | roadmap usability | ### Notes from cmdlet design review

We did a review with @JamesWTruher @BrucePay @jongeller @joeyaiello @SteveL-MSFT

These items were not addressed in v0.4.0 release.

I am keeping them in a separate issue for future releases

* [ ] Metadata hashtable should validate types [string] of Key-Values

* [ ] OnlineV... | True | Cmdlet Design Review notes - ### Notes from cmdlet design review

We did a review with @JamesWTruher @BrucePay @jongeller @joeyaiello @SteveL-MSFT

These items were not addressed in v0.4.0 release.

I am keeping them in a separate issue for future releases

* [ ] Metadata hashtable should validate types [string] o... | non_priority | cmdlet design review notes notes from cmdlet design review we did a review with jameswtruher brucepay jongeller joeyaiello stevel msft these items were not addressed in release i am keeping them in a separate issue for future releases metadata hashtable should validate types of key valu... | 0 |

88,873 | 25,524,160,668 | IssuesEvent | 2022-11-28 23:47:42 | liu-benny/web-services-project | https://api.github.com/repos/liu-benny/web-services-project | closed | Implement GET method for /clinics/{clinic_id}/patients/{patient_id}/appointments | Build #2 | Add in AppointmentModel for the GET method in appointments_routes | 1.0 | Implement GET method for /clinics/{clinic_id}/patients/{patient_id}/appointments - Add in AppointmentModel for the GET method in appointments_routes | non_priority | implement get method for clinics clinic id patients patient id appointments add in appointmentmodel for the get method in appointments routes | 0 |

41,953 | 10,833,777,216 | IssuesEvent | 2019-11-11 13:41:49 | trellis-ldp/trellis | https://api.github.com/repos/trellis-ldp/trellis | closed | Update relevant javax.* dependencies to jakarta.* | area/api build | Ideally all of the javax.* dependencies will start using jakarta.*, but the priority should be for `inject` and `cdi-api` | 1.0 | Update relevant javax.* dependencies to jakarta.* - Ideally all of the javax.* dependencies will start using jakarta.*, but the priority should be for `inject` and `cdi-api` | non_priority | update relevant javax dependencies to jakarta ideally all of the javax dependencies will start using jakarta but the priority should be for inject and cdi api | 0 |

26,657 | 13,087,115,434 | IssuesEvent | 2020-08-02 10:22:06 | scylladb/scylla | https://api.github.com/repos/scylladb/scylla | closed | Scylla node performance degrades over time with a constant workload | User Request performance | This is Scylla's bug tracker, to be used for reporting bugs only.

If you have a question about Scylla, and not a bug, please ask it in

our mailing-list at scylladb-dev@googlegroups.com or in our slack channel.

- [X] I have read the disclaimer above, and I am reporting a suspected malfunction in Scylla.

*Install... | True | Scylla node performance degrades over time with a constant workload - This is Scylla's bug tracker, to be used for reporting bugs only.

If you have a question about Scylla, and not a bug, please ask it in

our mailing-list at scylladb-dev@googlegroups.com or in our slack channel.

- [X] I have read the disclaimer ab... | non_priority | scylla node performance degrades over time with a constant workload this is scylla s bug tracker to be used for reporting bugs only if you have a question about scylla and not a bug please ask it in our mailing list at scylladb dev googlegroups com or in our slack channel i have read the disclaimer abov... | 0 |

129,392 | 10,573,223,526 | IssuesEvent | 2019-10-07 11:27:32 | olehan/kek | https://api.github.com/repos/olehan/kek | closed | Create a proper benchmark tests | test | Not really sure when I can do it, but I plan to rent a dedicated server and create some gud benchmarks, so you could see the clear performance of the lib. | 1.0 | Create a proper benchmark tests - Not really sure when I can do it, but I plan to rent a dedicated server and create some gud benchmarks, so you could see the clear performance of the lib. | non_priority | create a proper benchmark tests not really sure when i can do it but i plan to rent a dedicated server and create some gud benchmarks so you could see the clear performance of the lib | 0 |

256,216 | 8,127,038,622 | IssuesEvent | 2018-08-17 06:17:52 | aowen87/BAR | https://api.github.com/repos/aowen87/BAR | closed | Append version number onto build_visit and visit-install that we put on the Web. | Expected Use: 3 - Occasional Feature Impact: 2 - Low Priority: Normal Support Group: DOE/ASC | cq-id: VisIt00008818

cq-submitter: Brad Whitlock

cq-submit-date: 12/01/08

A couple of external users have become confused with build_visit and visit-install and have ended up using them with wrong versions of the binary distributions and source code. The users both suggested that we append the version number to th... | 1.0 | Append version number onto build_visit and visit-install that we put on the Web. - cq-id: VisIt00008818

cq-submitter: Brad Whitlock

cq-submit-date: 12/01/08

A couple of external users have become confused with build_visit and visit-install and have ended up using them with wrong versions of the binary distribution... | priority | append version number onto build visit and visit install that we put on the web cq id cq submitter brad whitlock cq submit date a couple of external users have become confused with build visit and visit install and have ended up using them with wrong versions of the binary distributions and source co... | 1 |

696,611 | 23,907,166,861 | IssuesEvent | 2022-09-09 02:55:08 | philhawksworth/the-united-effort-orginization | https://api.github.com/repos/philhawksworth/the-united-effort-orginization | closed | Pull data from both housing and units Airtables | internal cleanup Priority 3 | We currently pull all data from the `Units` table in Airtable. There also exists a `Housing Database` table that has property-level data such as address or contact info. The two tables are linked, which makes data entry much easier. However, we do not get data directly from the `Housing Database` table and instead r... | 1.0 | Pull data from both housing and units Airtables - We currently pull all data from the `Units` table in Airtable. There also exists a `Housing Database` table that has property-level data such as address or contact info. The two tables are linked, which makes data entry much easier. However, we do not get data direct... | priority | pull data from both housing and units airtables we currently pull all data from the units table in airtable there also exists a housing database table that has property level data such as address or contact info the two tables are linked which makes data entry much easier however we do not get data direct... | 1 |
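
The row above describes two linked tables, one with unit-level data and one with property-level data. A minimal sketch of combining them after fetching both is shown below. It does not call the Airtable API. The record IDs, field names, and the `join_units_with_housing` helper are all hypothetical.

```python
def join_units_with_housing(units, housing_by_id):
    """Attach property-level fields to each unit via its linked housing ID."""
    joined = []
    for unit in units:
        parent = housing_by_id.get(unit["housing_id"], {})
        # Unit fields win if both records share a field name.
        joined.append({**parent, **unit})
    return joined


housing_by_id = {
    "recHousing1": {"name": "Example Apartments", "address": "1 Example St"},
}
units = [
    {"housing_id": "recHousing1", "unit_type": "1BR"},
    {"housing_id": "recHousing1", "unit_type": "2BR"},
]

rows = join_units_with_housing(units, housing_by_id)
print(rows[0]["address"], rows[0]["unit_type"])
```

Fetching each table once and joining in memory keeps the property-level fields in a single place, so they do not have to be mirrored onto every unit record.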

167,792 | 26,552,718,277 | IssuesEvent | 2023-01-20 09:20:45 | WordPress/gutenberg | https://api.github.com/repos/WordPress/gutenberg | closed | Add a shortcut for template browsing | Needs Design Feedback [Feature] Full Site Editing [Feature] Site Editor | The template list screen we've been working on for https://github.com/WordPress/gutenberg/issues/36597 will serve well as a centralised management screen with searching, bulk actions, and other such features in the future.

It serves less effectively as a navigational tool. Moving from one template to another is quit... | 1.0 | Add a shortcut for template browsing - The template list screen we've been working on for https://github.com/WordPress/gutenberg/issues/36597 will serve well as a centralised management screen with searching, bulk actions, and other such features in the future.

It serves less effectively as a navigational tool. Movi... | non_priority | add a shortcut for template browsing the template list screen we ve been working on for will serve well as a centralised management screen with searching bulk actions and other such features in the future it serves less effectively as a navigational tool moving from one template to another is quite a tedious... | 0 |

99,302 | 4,052,307,141 | IssuesEvent | 2016-05-24 01:39:35 | nvs/gem | https://api.github.com/repos/nvs/gem | opened | A health bar remains for an invisible unit | Priority: Later Status: Not Started Type: Bug | Happens fairly regularly, and just annoys the hell out of me. | 1.0 | A health bar remains for an invisible unit - Happens fairly regularly, and just annoys the hell out of me. | priority | a health bar remains for an invisible unit happens fairly regularly and just annoys the hell out of me | 1 |

625,536 | 19,751,917,507 | IssuesEvent | 2022-01-15 06:12:13 | DISSINET/InkVisitor | https://api.github.com/repos/DISSINET/InkVisitor | opened | Finalize discussion on Statement fragments | data model priority | - Statement-type composite Concepts such as "priests are greedy": should they be C decomposed just linearly into components "priests", "is", and "greedy", or should they be S entities?

- If S, it would be some special S' not sitting under any T and not attached to R.

- If S, TBD whether it should not be the same thin... | 1.0 | Finalize discussion on Statement fragments - - Statement-type composite Concepts such as "priests are greedy": should they be C decomposed just linearly into components "priests", "is", and "greedy", or should they be S entities?

- If S, it would be some special S' not sitting under any T and not attached to R.

- If ... | priority | finalize discussion on statement fragments statement type composite concepts such as priests are greedy should they be c decomposed just linearly into components priests is and greedy or should they be s entities if s it would be some special s not sitting under any t and not attached to r if ... | 1 |

36,632 | 2,806,369,063 | IssuesEvent | 2015-05-15 01:37:28 | duckduckgo/zeroclickinfo-spice | https://api.github.com/repos/duckduckgo/zeroclickinfo-spice | closed | Transit Switzerland: API not always providing platform, leads to bad result | Bug Priority: High | https://duckduckgo.com/?q=next+train+to+geneva+from+paris&ia=swisstrains

The footer in the IA shows "Platform " with no number because the API response doesn't contain one. We should check to make sure we have a platform, or show that one has not been assigned yet (I'm assuming that's the case?)

https://duckduckg... | 1.0 | Transit Switzerland: API not always providing platform, leads to bad result - https://duckduckgo.com/?q=next+train+to+geneva+from+paris&ia=swisstrains

The footer in the IA shows "Platform " with no number because the API response doesn't contain one. We should check to make sure we have a platform, or show that one ... | priority | transit switzerland api not always providing platform leads to bad result the footer in the ia shows platform with no number because the api response doesn t contain one we should check to make sure we have a platform or show that one has not been assigned yet i m assuming that s the case cc ... | 1 |
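
The check suggested in the row above, making sure a platform exists before rendering it, might look like the following sketch. It is written in Python for illustration and is not the Instant Answer's actual code. The `platform` key, the `platform_label` helper, and the fallback wording are assumptions, and whether a missing value really means "not yet assigned" is left open in the issue itself.

```python
def platform_label(connection):
    """Return footer text, falling back when the API sends no platform."""
    value = connection.get("platform")
    text = "" if value is None else str(value).strip()
    if text:
        return f"Platform {text}"
    return "Platform not yet assigned"


print(platform_label({"platform": "7"}))
print(platform_label({"platform": ""}))
print(platform_label({}))
```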

94,587 | 27,238,584,179 | IssuesEvent | 2023-02-21 18:16:40 | hyperledger/caliper | https://api.github.com/repos/hyperledger/caliper | closed | remove node as the chaincode language for fabric integration testing | enhancement build | Couple of reasons for this

1. Fabric doesn't pin exact versions of its dependencies which means that changes to the dependency tree outside of our control (in fact it's outside of fabric's control because they don't pin dependencies) can break the code. This has happened today with grpc-js 1.8.1->1.8.2 breaking all n... | 1.0 | remove node as the chaincode language for fabric integration testing - Couple of reasons for this

1. Fabric doesn't pin exact versions of its dependencies which means that changes to the dependency tree outside of our control (in fact it's outside of fabric's control because they don't pin dependencies) can break the... | non_priority | remove node as the chaincode language for fabric integration testing couple of reasons for this fabric doesn t pin exact versions of it s dependencies which means that changes to the dependency tree outside of our control in fact it s outside of fabric s control because they don t pin dependencies can break the... | 0 |

247,932 | 26,761,986,255 | IssuesEvent | 2023-01-31 07:48:21 | billmcchesney1/Hangar | https://api.github.com/repos/billmcchesney1/Hangar | closed | CVE-2022-23529 (High) detected in jsonwebtoken-8.5.1.tgz - autoclosed | security vulnerability | ## CVE-2022-23529 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jsonwebtoken-8.5.1.tgz</b></p></summary>

<p>JSON Web Token implementation (symmetric and asymmetric)</p>

<p>Library ho... | True | CVE-2022-23529 (High) detected in jsonwebtoken-8.5.1.tgz - autoclosed - ## CVE-2022-23529 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jsonwebtoken-8.5.1.tgz</b></p></summary>

<p>JS... | non_priority | cve high detected in jsonwebtoken tgz autoclosed cve high severity vulnerability vulnerable library jsonwebtoken tgz json web token implementation symmetric and asymmetric library home page a href path to dependency file package json path to vulnerable library ... | 0 |

88,439 | 15,800,924,637 | IssuesEvent | 2021-04-03 02:00:04 | AlexRogalskiy/github-action-tag-replacer | https://api.github.com/repos/AlexRogalskiy/github-action-tag-replacer | opened | CVE-2020-11022 (Medium) detected in jquery-1.8.1.min.js | security vulnerability | ## CVE-2020-11022 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jquery-1.8.1.min.js</b></p></summary>

<p>JavaScript library for DOM operations</p>

<p>Library home page: <a href="ht... | True | CVE-2020-11022 (Medium) detected in jquery-1.8.1.min.js - ## CVE-2020-11022 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jquery-1.8.1.min.js</b></p></summary>

<p>JavaScript librar... | non_priority | cve medium detected in jquery min js cve medium severity vulnerability vulnerable library jquery min js javascript library for dom operations library home page a href path to dependency file github action tag replacer node modules redeyed examples browser index html p... | 0 |

77,680 | 15,569,825,243 | IssuesEvent | 2021-03-17 01:04:45 | veshitala/flask-blogger | https://api.github.com/repos/veshitala/flask-blogger | opened | CVE-2020-10177 (Medium) detected in Pillow-5.4.1-cp27-cp27mu-manylinux1_x86_64.whl | security vulnerability | ## CVE-2020-10177 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>Pillow-5.4.1-cp27-cp27mu-manylinux1_x86_64.whl</b></p></summary>

<p>Python Imaging Library (Fork)</p>

<p>Library hom... | True | CVE-2020-10177 (Medium) detected in Pillow-5.4.1-cp27-cp27mu-manylinux1_x86_64.whl - ## CVE-2020-10177 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>Pillow-5.4.1-cp27-cp27mu-manylin... | non_priority | cve medium detected in pillow whl cve medium severity vulnerability vulnerable library pillow whl python imaging library fork library home page a href path to vulnerable library flask blogger requirements txt dependency hierarchy x pil... | 0 |

231,500 | 17,691,570,247 | IssuesEvent | 2021-08-24 10:35:52 | SimoneGasperini/cms-cmSim | https://api.github.com/repos/SimoneGasperini/cms-cmSim | closed | Start documenting the code | documentation | I suggest to start working on code documentation.

I'd begin with simple docstrings. Personally I prefer Google style docstrings as described [here](https://google.github.io/styleguide/pyguide.html#38-comments-and-docstrings). | 1.0 | Start documenting the code - I suggest to start working on code documentation.

I'd begin with simple docstrings. Personally I prefer Google style docstrings as described [here](https://google.github.io/styleguide/pyguide.html#38-comments-and-docstrings). | non_priority | start documenting the code i suggest to start working on code documentation i d begin with simple docstrings personally i prefer google style docstrings as described | 0 |

80,715 | 15,557,509,526 | IssuesEvent | 2021-03-16 09:13:50 | Regalis11/Barotrauma | https://api.github.com/repos/Regalis11/Barotrauma | closed | Signal Check components can't send spaces | Bug Code | - [x] I have searched the issue tracker to check if the issue has already been reported.

**Description**

a value of " " (one or more spaces) doesn't get sent in signal check outputs

**Steps To Reproduce**

put space (one or more) as signal check's outputs (false/true)

**Version**

0.12

**Additional informat... | 1.0 | Signal Check components can't send spaces - - [x] I have searched the issue tracker to check if the issue has already been reported.

**Description**

a value of " " (one or more spaces) doesn't get sent in signal check outputs

**Steps To Reproduce**

put space (one or more) as signal check's outputs (false/true)

... | non_priority | signal check components can t send spaces i have searched the issue tracker to check if the issue has already been reported description a value of one or more space doesn t get sent in signal check outputs steps to reproduce put space one or more as signal check s outputs false true ... | 0 |

671,447 | 22,761,544,955 | IssuesEvent | 2022-07-07 21:47:20 | markmac99/UKmon-shared | https://api.github.com/repos/markmac99/UKmon-shared | closed | Add container support for cartopy | enhancement Low priority | This would allow OS maps to be used as the backdrop for the ground-track maps.

However cartopy is poorly supported and has to be built from source, along with GEOS, PROJ4 and SQLITE3, which makes it quite a task. | 1.0 | Add container support for cartopy - This would allow OS maps to be used as the backdrop for the ground-track maps.

However cartopy is poorly supported and has to be built from source, along with GEOS, PROJ4 and SQLITE3, which makes it quite a task. | priority | add container support for cartopy this would allow os maps to be used as the backdrop for the ground track maps however cartopy is poorly supported and has to be built from source along with geos and which makes it quite a task | 1 |

74,419 | 3,439,718,874 | IssuesEvent | 2015-12-14 11:00:32 | ceylon/ceylon-ide-eclipse | https://api.github.com/repos/ceylon/ceylon-ide-eclipse | closed | Eclipse unexpected behavior | bug on last release high priority | hi, I get an exception "`The ExpressionVisitor caused an exception visiting a InvocationExpression node: "com.redhat.ceylon.model.loader.ModelResolutionException: JDT reference binding without a JDT IType element : com.google.common.cache.Cache" at com.redhat.ceylon.eclipse.core.model.JDTModelLoader.toType(JDTModelLoad... | 1.0 | Eclipse unexpected behavior - hi, I get an exception "`The ExpressionVisitor caused an exception visiting a InvocationExpression node: "com.redhat.ceylon.model.loader.ModelResolutionException: JDT reference binding without a JDT IType element : com.google.common.cache.Cache" at com.redhat.ceylon.eclipse.core.model.JDTM... | priority | eclipse unexpected behavior hi i get an exception the expressionvisitor caused an exception visiting a invocationexpression node com redhat ceylon model loader modelresolutionexception jdt reference binding without a jdt itype element com google common cache cache at com redhat ceylon eclipse core model jdtm... | 1 |

600,061 | 18,288,623,083 | IssuesEvent | 2021-10-05 13:06:41 | brave/brave-browser | https://api.github.com/repos/brave/brave-browser | closed | Pending tips for Gemini creators not sent when switching from Uphold to Gemini | feature/rewards priority/P3 QA/Yes release-notes/exclude OS/Desktop feature/gemini-wallet feature/Uphold-wallet | <!-- Have you searched for similar issues? Before submitting this issue, please check the open issues and add a note before logging a new issue.

PLEASE USE THE TEMPLATE BELOW TO PROVIDE INFORMATION ABOUT THE ISSUE.

INSUFFICIENT INFO WILL GET THE ISSUE CLOSED. IT WILL ONLY BE REOPENED AFTER SUFFICIENT INFO IS PROV... | 1.0 | Pending tips for Gemini creators not sent when switching from Uphold to Gemini - <!-- Have you searched for similar issues? Before submitting this issue, please check the open issues and add a note before logging a new issue.

PLEASE USE THE TEMPLATE BELOW TO PROVIDE INFORMATION ABOUT THE ISSUE.

INSUFFICIENT INFO ... | priority | pending tips for gemini creators not sent when switching from uphold to gemini have you searched for similar issues before submitting this issue please check the open issues and add a note before logging a new issue please use the template below to provide information about the issue insufficient info ... | 1 |

29,254 | 2,714,206,549 | IssuesEvent | 2015-04-10 00:43:08 | hamiltont/clasp | https://api.github.com/repos/hamiltont/clasp | opened | Option for custom Android system, boot, and kernel images. | Low priority | _From @bamos on September 24, 2014 15:33_

Specifically to run nbd -- http://bamos.github.io/2014/09/08/nbd-android/

_Copied from original issue: hamiltont/attack#53_ | 1.0 | Option for custom Android system, boot, and kernel images. - _From @bamos on September 24, 2014 15:33_

Specifically to run nbd -- http://bamos.github.io/2014/09/08/nbd-android/

_Copied from original issue: hamiltont/attack#53_ | priority | option for custom android system boot and kernel images from bamos on september specifically to run nbd copied from original issue hamiltont attack | 1 |

275,555 | 8,576,942,762 | IssuesEvent | 2018-11-12 22:02:15 | webcompat/web-bugs | https://api.github.com/repos/webcompat/web-bugs | closed | store.hp.com - site is not usable | browser-firefox-mobile priority-important | <!-- @browser: Firefox Mobile 64.0 -->

<!-- @ua_header: Mozilla/5.0 (Android 9; Mobile; rv:64.0) Gecko/64.0 Firefox/64.0 -->

<!-- @reported_with: mobile-reporter -->

**URL**: https://store.hp.com/us/en/pdp/hp-pavilion-laptop-15z-touch-optional-3je92av-1?source=aw

**Browser / Version**: Firefox Mobile 64.0

**Operating... | 1.0 | store.hp.com - site is not usable - <!-- @browser: Firefox Mobile 64.0 -->

<!-- @ua_header: Mozilla/5.0 (Android 9; Mobile; rv:64.0) Gecko/64.0 Firefox/64.0 -->

<!-- @reported_with: mobile-reporter -->

**URL**: https://store.hp.com/us/en/pdp/hp-pavilion-laptop-15z-touch-optional-3je92av-1?source=aw

**Browser / Versio... | priority | store hp com site is not usable url browser version firefox mobile operating system android tested another browser no problem type site is not usable description unable to use drop down menu for specifications features etc steps to reproduce browser confi... | 1 |

228,531 | 7,552,579,968 | IssuesEvent | 2018-04-19 01:09:32 | OperationCode/operationcode_backend | https://api.github.com/repos/OperationCode/operationcode_backend | closed | Rake task to tag community leaders | Priority: Low Status: In Progress Type: Feature | <!-- Please fill out one of the sections below based on the type of issue you're creating -->

# Feature

## Why is this feature being added?

<!-- What problem is it solving? What value does it add? -->

Now that https://github.com/OperationCode/operationcode_backend/pull/292 is merged, we need a rake task to initiall... | 1.0 | Rake task to tag community leaders - <!-- Please fill out one of the sections below based on the type of issue you're creating -->

# Feature

## Why is this feature being added?

<!-- What problem is it solving? What value does it add? -->

Now that https://github.com/OperationCode/operationcode_backend/pull/292 is me... | priority | rake task to tag community leaders feature why is this feature being added now that is merged we need a rake task to initially tag the appropriate users in prod with the community leader tag what should your feature do collaborate with hollomancer or whomever he deems appropriate to... | 1 |