Unnamed: 0 (int64, 0 to 832k) | id (float64, 2.49B to 32.1B) | type (string, 1 class) | created_at (string, length 19) | repo (string, length 5 to 112) | repo_url (string, length 34 to 141) | action (string, 3 classes) | title (string, length 1 to 1k) | labels (string, length 4 to 1.38k) | body (string, length 1 to 262k) | index (string, 16 classes) | text_combine (string, length 96 to 262k) | label (string, 2 classes) | text (string, length 96 to 252k) | binary_label (int64, 0 to 1) |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

754,683 | 26,398,359,989 | IssuesEvent | 2023-01-12 21:46:49 | yugabyte/yugabyte-db | https://api.github.com/repos/yugabyte/yugabyte-db | closed | [YSQL] "ERROR: Invalid argument: Invalid column number 2" after adding a new column | kind/bug area/ysql priority/medium status/awaiting-triage | Jira Link: [DB-3756](https://yugabyte.atlassian.net/browse/DB-3756)

### Description

Steps to reproduce:

1) Connect to one host, say 127.0.0.1:

```

yugabyte=# CREATE TABLE t (id int);

yugabyte=# INSERT INTO t VALUES (1), (2);

```

This populates the table caches for table "t" in TServer 1

End this connection... | 1.0 | [YSQL] "ERROR: Invalid argument: Invalid column number 2" after adding a new column - Jira Link: [DB-3756](https://yugabyte.atlassian.net/browse/DB-3756)

### Description

Steps to reproduce:

1) Connect to one host, say 127.0.0.1:

```

yugabyte=# CREATE TABLE t (id int);

yugabyte=# INSERT INTO t VALUES (1), (2)... | priority | error invalid argument invalid column number after adding a new column jira link description steps to reproduce connect to one host say yugabyte create table t id int yugabyte insert into t values this populates the table caches for table t in tse... | 1 |

567,249 | 16,851,639,274 | IssuesEvent | 2021-06-20 16:25:21 | GastonGit/Hot-Twitch-Clips | https://api.github.com/repos/GastonGit/Hot-Twitch-Clips | closed | TMI.JS :: DISCONNECT :: | bug high priority | Problem(https://github.com/GastonGit/Hot-Twitch-Clips/issues/126) still exists after fix with disconnects happening with no reason attached to disconnect. | 1.0 | TMI.JS :: DISCONNECT :: - Problem(https://github.com/GastonGit/Hot-Twitch-Clips/issues/126) still exists after fix with disconnects happening with no reason attached to disconnect. | priority | tmi js disconnect problem still exists after fix with disconnects happening with no reason attached to disconnect | 1 |

114,533 | 9,741,910,592 | IssuesEvent | 2019-06-02 12:59:31 | aiidateam/aiida_core | https://api.github.com/repos/aiidateam/aiida_core | closed | Random failing tests `verdi quicksetup` on SqlAlchemy python 3 | aiida-core 1.x priority/quality-of-life topic/testing topic/verdi type/bug | I have noticed this test failing multiple times the recent days, always on python 3 and SqlAlchemy. Not sure where it is coming from:

```

======================================================================

FAIL: test_quicksetup_from_config_file (aiida.backends.tests.cmdline.commands.test_setup.TestVerdiSetup)

--... | 1.0 | Random failing tests `verdi quicksetup` on SqlAlchemy python 3 - I have noticed this test failing multiple times the recent days, always on python 3 and SqlAlchemy. Not sure where it is coming from:

```

======================================================================

FAIL: test_quicksetup_from_config_file (aii... | non_priority | random failing tests verdi quicksetup on sqlalchemy python i have noticed this test failing multiple times the recent days always on python and sqlalchemy not sure where it is coming from fail test quicksetup from config file aii... | 0 |

76,619 | 15,496,152,308 | IssuesEvent | 2021-03-11 02:09:21 | jinuem/React-Type-Script-Starter | https://api.github.com/repos/jinuem/React-Type-Script-Starter | opened | CVE-2020-7608 (Medium) detected in multiple libraries | security vulnerability | ## CVE-2020-7608 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>yargs-parser-2.4.1.tgz</b>, <b>yargs-parser-4.2.1.tgz</b>, <b>yargs-parser-5.0.0.tgz</b>, <b>yargs-parser-7.0.0.tgz<... | True | CVE-2020-7608 (Medium) detected in multiple libraries - ## CVE-2020-7608 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>yargs-parser-2.4.1.tgz</b>, <b>yargs-parser-4.2.1.tgz</b>, <... | non_priority | cve medium detected in multiple libraries cve medium severity vulnerability vulnerable libraries yargs parser tgz yargs parser tgz yargs parser tgz yargs parser tgz yargs parser tgz the mighty option parser used by yargs library home page ... | 0 |

249,702 | 7,964,659,940 | IssuesEvent | 2018-07-13 22:38:25 | huridocs/uwazi | https://api.github.com/repos/huridocs/uwazi | closed | Display a tool-tip asking user to change the default admin password | Priority: Low | Users aren't changing the default password for admin. We could add a tool-tip for instances with default password so they are inclined to do so. | 1.0 | Display a tool-tip asking user to change the default admin password - Users aren't changing the default password for admin. We could add a tool-tip for instances with default password so they are inclined to do so. | priority | display a tool tip asking user to change the default admin password users aren t changing the default password for admin we could add a tool tip for instances with default password so they are inclined to do so | 1 |

171,398 | 27,112,795,828 | IssuesEvent | 2023-02-15 16:24:18 | hackforla/expunge-assist | https://api.github.com/repos/hackforla/expunge-assist | closed | [Re-organize Figma: Archive] Archive FAQ page | role: design priority: medium role: UX content writing size: 1pt | ### Overview

Extension of #807

### Action Items

- [x] Read through comments and determine if they can be marked as "resolved"

- [x] For comments that cannot be marked as resolved, add a box on the side of the wireframe where the comment is as and copy and paste the comment/thread of comments to the side.

- [x... | 1.0 | [Re-organize Figma: Archive] Archive FAQ page - ### Overview

Extension of #807

### Action Items

- [x] Read through comments and determine if they can be marked as "resolved"

- [x] For comments that cannot be marked as resolved, add a box on the side of the wireframe where the comment is as and copy and paste th... | non_priority | archive faq page overview extension of action items read through comments and determine if they can be marked as resolved for comments that cannot be marked as resolved add a box on the side of the wireframe where the comment is as and copy and paste the comment thread of comments to t... | 0 |

72,050 | 3,371,408,300 | IssuesEvent | 2015-11-23 19:00:13 | aaroneiche/do-want | https://api.github.com/repos/aaroneiche/do-want | closed | Implement OAuth | enhancement imported Priority-Low | _Original author: aaron.ei...@gmail.com (September 06, 2012 16:41:34)_

Do-Want should support OAuth authentication through popular services like google, facebook, and twitter.

_Original issue: http://code.google.com/p/do-want/issues/detail?id=16_ | 1.0 | Implement OAuth - _Original author: aaron.ei...@gmail.com (September 06, 2012 16:41:34)_

Do-Want should support OAuth authentication through popular services like google, facebook, and twitter.

_Original issue: http://code.google.com/p/do-want/issues/detail?id=16_ | priority | implement oauth original author aaron ei gmail com september do want should support oauth authentication through popular services like google facebook and twitter original issue | 1 |

35,801 | 2,793,080,605 | IssuesEvent | 2015-05-11 08:31:47 | handsontable/handsontable | https://api.github.com/repos/handsontable/handsontable | closed | Creating ContextMenu items on demand pr cell | Feature Guess: a day or more Priority: normal | Is there any way to dynamically set the items of a context menu based on the cell that is right clicked? I need to show a different set of context menu items for each column, and ideally for each cell.

In my case, I need access to the underlying data of the current row and the column of the clicked cell to determin... | 1.0 | Creating ContextMenu items on demand pr cell - Is there any way to dynamically set the items of a context menu based on the cell that is right clicked? I need to show a different set of context menu items for each column, and ideally for each cell.

In my case, I need access to the underlying data of the current row... | priority | creating contextmenu items on demand pr cell is there any way to dynamically set the items of a context menu based on the cell that is right clicked i need to show a different set of context menu items for each column and ideally for each cell in my case i need access to the underlying data of the current row... | 1 |

202,608 | 23,077,569,693 | IssuesEvent | 2022-07-26 02:12:45 | YJSoft/macos-plist | https://api.github.com/repos/YJSoft/macos-plist | opened | CVE-2021-35065 (High) detected in glob-parent-5.1.2.tgz | security vulnerability | ## CVE-2021-35065 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>glob-parent-5.1.2.tgz</b></p></summary>

<p>Extract the non-magic parent path from a glob string.</p>

<p>Library home p... | True | CVE-2021-35065 (High) detected in glob-parent-5.1.2.tgz - ## CVE-2021-35065 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>glob-parent-5.1.2.tgz</b></p></summary>

<p>Extract the non-m... | non_priority | cve high detected in glob parent tgz cve high severity vulnerability vulnerable library glob parent tgz extract the non magic parent path from a glob string library home page a href path to dependency file package json path to vulnerable library node modules glob ... | 0 |

239,396 | 19,862,317,367 | IssuesEvent | 2022-01-22 02:39:03 | Exiled-Team/EXILED | https://api.github.com/repos/Exiled-Team/EXILED | closed | [BUG] Scp-049 can revive SCP (like SCP-049-2) | requires-testing | **Describe the bug**

A clear and concise description of what the bug is.

**To Reproduce**

Steps to reproduce the behavior:

1. Have an Dead SCP

2. Respawn it

3. And you have the problem

**Expected behavior**

SCP-049 can revive SCP

**Server logs**

Please include a pastebin of your localadmin log file (or bo... | 1.0 | [BUG] Scp-049 can revive SCP (like SCP-049-2) - **Describe the bug**

A clear and concise description of what the bug is.

**To Reproduce**

Steps to reproduce the behavior:

1. Have an Dead SCP

2. Respawn it

3. And you have the problem

**Expected behavior**

SCP-049 can revive SCP

**Server logs**

Please inclu... | non_priority | scp can revive scp like scp describe the bug a clear and concise description of what the bug is to reproduce steps to reproduce the behavior have an dead scp respawn it and you have the problem expected behavior scp can revive scp server logs please include a paste... | 0 |

252,198 | 27,233,114,173 | IssuesEvent | 2023-02-21 14:34:57 | billmcchesney1/linkerd2 | https://api.github.com/repos/billmcchesney1/linkerd2 | opened | CVE-2021-23440 (High) detected in set-value-2.0.1.tgz | security vulnerability | ## CVE-2021-23440 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>set-value-2.0.1.tgz</b></p></summary>

<p>Create nested values and any intermediaries using dot notation (`'a.b.c'`) pa... | True | CVE-2021-23440 (High) detected in set-value-2.0.1.tgz - ## CVE-2021-23440 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>set-value-2.0.1.tgz</b></p></summary>

<p>Create nested values ... | non_priority | cve high detected in set value tgz cve high severity vulnerability vulnerable library set value tgz create nested values and any intermediaries using dot notation a b c paths library home page a href path to dependency file web app package json path to vulnera... | 0 |

124,354 | 4,912,468,036 | IssuesEvent | 2016-11-23 09:11:41 | bespokeinteractive/mchapp | https://api.github.com/repos/bespokeinteractive/mchapp | opened | ANC clinic: There's an error when trying to update tetanus vaccine given to an ANC patient | bug High Priority | ANC clinic: There's an error when trying to update tetanus vaccine given to an ANC patient

Task

-----------

-Ensure user is able to update Tetanus administered to a patient

line of code.

The ClientMessageReader is basically copying data from one buffer to another buffer.

The source buffer (src in the code) is bytes received direc... | 1.0 | ClientMessage frame read for a message may be broken in case of large messages - Please see [this](https://github.com/hazelcast/hazelcast/blob/v5.1.1/hazelcast/src/main/java/com/hazelcast/client/impl/protocol/ClientMessageReader.java#L68) line of code.

The ClientMessageReader is basically copying data from one buffe... | non_priority | clientmessage frame read for a message may be broken in case of large messages please see line of code the clientmessagereader is basically copying data from one buffer to another buffer the source buffer src in the code is bytes received directly from the client socket connection the destination buffer... | 0 |

628,466 | 19,986,647,596 | IssuesEvent | 2022-01-30 19:11:46 | CodersCamp2021-HK/CodersCamp2021.Project.React | https://api.github.com/repos/CodersCamp2021-HK/CodersCamp2021.Project.React | opened | Fix routing | priority: high 🟠 type: ♻️ refactor | Right now, when we open gh, it says the page does not exist; a prefix needs to be added to every route | 1.0 | Fix routing - Right now, when we open gh, it says the page does not exist; a prefix needs to be added to every route | priority | fix routing right now when we open gh it says the page does not exist a prefix needs to be added to every route | 1 |

94,339 | 11,863,873,051 | IssuesEvent | 2020-03-25 20:33:04 | RoboJackets/igvc-software | https://api.github.com/repos/RoboJackets/igvc-software | opened | Joystick changes | level ➤ medium type ➤ design document type ➤ new feature type ➤ refactor | Our current joystick node's control style, ie. tank drive, is hard to drive around with. It could do some upgrading and improvements.

AC: Better joystick node. | 1.0 | Joystick changes - Our current joystick node's control style, ie. tank drive, is hard to drive around with. It could do some upgrading and improvements.

AC: Better joystick node. | non_priority | joystick changes our current joystick node s control style ie tank drive is hard to drive around with it could do some upgrading and improvements ac better joystick node | 0 |

137,782 | 18,764,965,415 | IssuesEvent | 2021-11-05 21:53:45 | zbmowrey/zbmowrey-com | https://api.github.com/repos/zbmowrey/zbmowrey-com | closed | CVE-2018-20676 (Medium) detected in bootstrap-3.3.1.min.js - autoclosed | security vulnerability | ## CVE-2018-20676 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>bootstrap-3.3.1.min.js</b></p></summary>

<p>The most popular front-end framework for developing responsive, mobile f... | True | CVE-2018-20676 (Medium) detected in bootstrap-3.3.1.min.js - autoclosed - ## CVE-2018-20676 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>bootstrap-3.3.1.min.js</b></p></summary>

<... | non_priority | cve medium detected in bootstrap min js autoclosed cve medium severity vulnerability vulnerable library bootstrap min js the most popular front end framework for developing responsive mobile first projects on the web library home page a href path to dependency file ... | 0 |

44,513 | 13,057,546,121 | IssuesEvent | 2020-07-30 07:32:29 | stefanfreitag/anti-spaghetti-workshop | https://api.github.com/repos/stefanfreitag/anti-spaghetti-workshop | opened | CVE-2018-19839 (Medium) detected in node-sass-4.14.1.tgz, CSS::Sass-v3.4.11 | security vulnerability | ## CVE-2018-19839 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>node-sass-4.14.1.tgz</b></p></summary>

<p>

<details><summary><b>node-sass-4.14.1.tgz</b></p></summary>

<p>Wrapper... | True | CVE-2018-19839 (Medium) detected in node-sass-4.14.1.tgz, CSS::Sass-v3.4.11 - ## CVE-2018-19839 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>node-sass-4.14.1.tgz</b></p></summary... | non_priority | cve medium detected in node sass tgz css sass cve medium severity vulnerability vulnerable libraries node sass tgz node sass tgz wrapper around libsass library home page a href path to dependency file tmp ws scm anti spaghetti workshop package js... | 0 |

176,463 | 6,559,882,231 | IssuesEvent | 2017-09-07 07:00:17 | gdgphilippines/devfest | https://api.github.com/repos/gdgphilippines/devfest | opened | Change loading of content for each page | enhancement Priority Medium ready | In relation to gdgphilippines/devfestphfiles-2017

Need to change the parsing of files | 1.0 | Change loading of content for each page - In relation to gdgphilippines/devfestphfiles-2017

Need to change the parsing of files | priority | change loading of content for each page in relation to gdgphilippines devfestphfiles need to change the parsing of files | 1 |

708,255 | 24,335,462,602 | IssuesEvent | 2022-10-01 02:46:44 | AY2223S1-CS2103T-W12-3/tp | https://api.github.com/repos/AY2223S1-CS2103T-W12-3/tp | opened | Refactoring packages | priority.High type.Chore | Rename the packages listed below

- [ ] Seedu.Address -> RC4HDB

- [ ] Parser class -> Parser class + CommandParser class | 1.0 | Refactoring packages - Rename the packages listed below

- [ ] Seedu.Address -> RC4HDB

- [ ] Parser class -> Parser class + CommandParser class | priority | refactoring packages rename the packages listed below seedu address parser class parser class commandparser class | 1 |

20,953 | 2,632,574,930 | IssuesEvent | 2015-03-08 08:31:46 | prestaconcept/open-source-management | https://api.github.com/repos/prestaconcept/open-source-management | closed | [Deployment] Handle migration on install | priority-minor | When you have to install an application with migration on a new server, migration will fail cause shema is already uptodate.

Use the command to fill migration table | 1.0 | [Deployment] Handle migration on install - When you have to install an application with migration on a new server, migration will fail cause shema is already uptodate.

Use the command to fill migration table | priority | handle migration on install when you have to install an application with migration on a new server migration will fail cause shema is already uptodate use the command to fill migration table | 1 |

377,115 | 11,163,994,480 | IssuesEvent | 2019-12-27 02:20:13 | StrangeLoopGames/EcoIssues | https://api.github.com/repos/StrangeLoopGames/EcoIssues | closed | Unable to add "Missing" resources from a table when unowned | Medium Priority | Found an issue where I could not add the missing resources to any table after the job has started working if I am not the owner of it. In this case, I attempted to make a mint using an Unowned Anvil. I had the bricks and lumber handy, but because I couldn't hold both at once I had to start with one. Afterwards, however... | 1.0 | Unable to add "Missing" resources from a table when unowned - Found an issue where I could not add the missing resources to any table after the job has started working if I am not the owner of it. In this case, I attempted to make a mint using an Unowned Anvil. I had the bricks and lumber handy, but because I couldn't ... | priority | unable to add missing resources from a table when unowned found an issue where i could not add the missing resources to any table after the job has started working if i am not the owner of it in this case i attempted to make a mint using an unowned anvil i had the bricks and lumber handy but because i couldn t ... | 1 |

401,555 | 11,795,117,290 | IssuesEvent | 2020-03-18 08:17:40 | thaliawww/concrexit | https://api.github.com/repos/thaliawww/concrexit | closed | Sync mailinglists using Google Admin SDK | feature priority: medium | In GitLab by @se-bastiaan on Oct 7, 2019, 13:02

### One-sentence description

Sync mailinglists using Google Admin SDK

### Motivation

Because we switch to GSuite

### Desired functionality

Sync the mailinglists to

### Suggested implementation

We currently have a syncmailinglists scripts in the serverconfig repo ... | 1.0 | Sync mailinglists using Google Admin SDK - In GitLab by @se-bastiaan on Oct 7, 2019, 13:02

### One-sentence description

Sync mailinglists using Google Admin SDK

### Motivation

Because we switch to GSuite

### Desired functionality

Sync the mailinglists to

### Suggested implementation

We currently have a syncmai... | priority | sync mailinglists using google admin sdk in gitlab by se bastiaan on oct one sentence description sync mailinglists using google admin sdk motivation because we switch to gsuite desired functionality sync the mailinglists to suggested implementation we currently have a syncmailingl... | 1 |

778,842 | 27,331,370,835 | IssuesEvent | 2023-02-25 17:19:26 | EnsembleUI/ensemble | https://api.github.com/repos/EnsembleUI/ensemble | closed | Markdown links color to match primary color | enhancement HIGHEST Priority | Currently links in a markdown widget are blue. They should match the theme's primary color.

```yaml

View:

Column:

children:

- Markdown:

text: Here is an [inline links](https://ensembleui.com)

``` | 1.0 | Markdown links color to match primary color - Currently links in a markdown widget are blue. They should match the theme's primary color.

```yaml

View:

Column:

children:

- Markdown:

text: Here is an [inline links](https://ensembleui.com)

``` | priority | markdown links color to match primary color currently links in a markdown widget are blue they should match the theme s primary color yaml view column children markdown text here is an | 1 |

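48,277 | 7,398,674,675 | IssuesEvent | 2018-03-19 07:34:05 | PaddlePaddle/Paddle | https://api.github.com/repos/PaddlePaddle/Paddle | opened | API doc problems of reshape | PythonAPI documentation | #### When fixing the problems below, please also adjust the wording of the surrounding content

#### Spelling, omission, and grammar problems are not listed; please watch for them when making changes

*Please refer to the [API comment standard](https://github.com/PaddlePaddle/Paddle/blob/develop/doc/fluid/dev/api_doc_std_cn.md)!*

------

Source file: https://github.com/PaddlePaddle/Paddle/blob/develop/python/paddle/fluid/layers/ops.py

- reshape

http://www.paddlepaddle.org/docs/develop/api/en/f... | 1.0 | API doc problems of reshape - #### When fixing the problems below, please also adjust the wording of the surrounding content

#### Spelling, omission, and grammar problems are not listed; please watch for them when making changes

*Please refer to the [API comment standard](https://github.com/PaddlePaddle/Paddle/blob/develop/doc/fluid/dev/api_doc_std_cn.md)!*

------

Source file: https://github.com/PaddlePaddle/Paddle/blob/develop/python/paddle/fluid/layers/ops.py

- reshape

http://www.paddlepa... | non_priority | api doc problems of reshape when fixing the problems below please also adjust the wording of the surrounding content spelling omission and grammar problems are not listed please watch for them when making changes please refer to source file reshape missing examples wording needs improvement formatting problems data type descriptions etc | 0 |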

822,123 | 30,854,183,933 | IssuesEvent | 2023-08-02 19:09:46 | calcom/cal.com | https://api.github.com/repos/calcom/cal.com | closed | [CAL-2120] Org owner/admin can't see the teams created by other owner/admin | 🐛 bug linear High priority organizations | He should be able to see all teams so that he can add himself to any team he wants.

He shouldn't be able to see a team's event-types unless he has added him to the team.

<sub>From [SyncLinear.com](https://synclinear.com) | [CAL-2120](https://linear.app/calcom/issue/CAL-2120/org-owneradmin-cant-see-the-teams-created-by... | 1.0 | [CAL-2120] Org owner/admin can't see the teams created by other owner/admin - He should be able to see all teams so that he can add himself to any team he wants.

He shouldn't be able to see a team's event-types unless he has added him to the team.

<sub>From [SyncLinear.com](https://synclinear.com) | [CAL-2120](https:/... | priority | org owner admin can t see the teams created by other owner admin he should be able to see all teams so that he can add himself to any team he wants he shouldn t be able to see a team s event types unless he has added him to the team from | 1 |

350,781 | 10,508,486,341 | IssuesEvent | 2019-09-27 08:46:08 | woocommerce/woocommerce-gateway-paypal-express-checkout | https://api.github.com/repos/woocommerce/woocommerce-gateway-paypal-express-checkout | closed | Synchronise Renewals Not Supported ATM | Priority: Low [Type] Enhancement | Hello,

I am delighted to see that PayPal Subscriptions for download/virtual products are now supported. However, I noticed that apparently Synchronise renewals are not a thing with PayPal? Is this something that can be added?

I tried this with the same product and switching the setting to sync to 1st of the month... | 1.0 | Synchronise Renewals Not Supported ATM - Hello,

I am delighted to see that PayPal Subscriptions for download/virtual products are now supported. However, I noticed that apparently Synchronise renewals are not a thing with PayPal? Is this something that can be added?

I tried this with the same product and switchin... | priority | synchronise renewals not supported atm hello i am delighted to see that paypal subscriptions for download virtual products are now supported however i noticed that apparently synchronise renewals are not a thing with paypal is this something that can be added i tried this with the same product and switchin... | 1 |

71,773 | 18,872,083,421 | IssuesEvent | 2021-11-13 11:08:42 | test-st-petersburg/DocTemplates | https://api.github.com/repos/test-st-petersburg/DocTemplates | opened | Unable to install the required nuget modules | bug build dependencies | ## Expected behavior

`Invoke-PSDepend` should run without errors.

## Current behavior

When installing on a "bare" machine (one on which nuget has not been configured), none of the nuget modules can be installed.

## Possible solution

- [ ] the repository needs to be added to the nuget configuration, or explicitly ... | 1.0 | Unable to install the required nuget modules - ## Expected behavior

`Invoke-PSDepend` should run without errors.

## Current behavior

When installing on a "bare" machine (one on which nuget has not been configured), none of the nuget modules can be installed.

## Possible solution

- [ ] it is necessary to add... | non_priority | unable to install the required nuget modules expected behavior invoke psdepend should run without errors current behavior when installing on a bare machine on which nuget has not been configured none of the nuget modules can be installed possible solution it is necessary to add... | 0 |

68,670 | 7,107,108,862 | IssuesEvent | 2018-01-16 18:48:52 | webcompat/webcompat.com | https://api.github.com/repos/webcompat/webcompat.com | closed | Add optional "browsers" argument for functional tests | in progress type: testing | I had already done that while trying to get tests to run in Safari, but didn't merge it in since those tests failed. I think this is helpful, specially when working with the tests, troubleshooting, etc.

My idea is to add the `browsers` argument and if the user doesn't specify anything, just run all the available one... | 1.0 | Add optional "browsers" argument for functional tests - I had already done that while trying to get tests to run in Safari, but didn't merge it in since those tests failed. I think this is helpful, specially when working with the tests, troubleshooting, etc.

My idea is to add the `browsers` argument and if the user ... | non_priority | add optional browsers argument for functional tests i had already done that while trying to get tests to run in safari but didn t merge it in since those tests failed i think this is helpful specially when working with the tests troubleshooting etc my idea is to add the browsers argument and if the user ... | 0 |

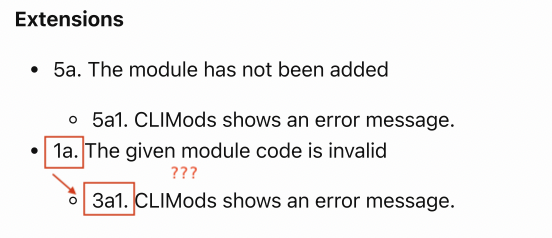

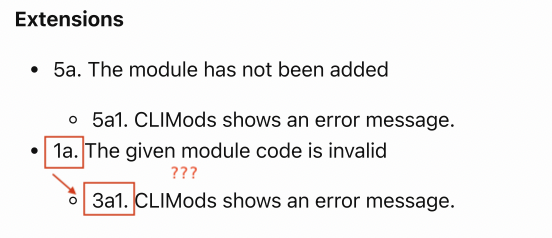

356,722 | 25,176,249,748 | IssuesEvent | 2022-11-11 09:31:07 | seelengxd/pe | https://api.github.com/repos/seelengxd/pe | opened | Use cases: wrong extension numbering? | severity.VeryLow type.DocumentationBug | Selecting lesson slot extension (DG - Use Cases)

<!--session: 1668153014144-6643e22d-f7c4-4d37-ba3e-b9b7512b6d35-->

<!--Version: Web v3.4.4--> | 1.0 | Use cases: wrong extension numbering? - Selecting lesson slot extension (DG - Use Cases)

<!--session: 1668153014144-6643e22d-f7c4-4d37-ba3e-b9b7512b6d35-->

<!--Version: Web v3.4.4--> | non_priority | use cases wrong extension numbering selecting lesson slot extension dg use cases | 0 |

524,212 | 15,208,989,908 | IssuesEvent | 2021-02-17 04:08:41 | brave/qa-resources | https://api.github.com/repos/brave/qa-resources | closed | iOS 1.23 release | iOS 12 iOS 13 iOS 14 priority/P1 | ### <img src="https://www.rebron.org/wordpress/wp-content/uploads/2019/06/ios-1.png">`iOS 1.23 Release`

#### Release Date/Target:

* Release Date: **`February 12, 2021`**

#### Summary:

Includes the following features/fixes:

* Security fixes

* Shields fixes

* Brave-Today Enhancements

* UI/UX Enhancement... | 1.0 | iOS 1.23 release - ### <img src="https://www.rebron.org/wordpress/wp-content/uploads/2019/06/ios-1.png">`iOS 1.23 Release`

#### Release Date/Target:

* Release Date: **`February 12, 2021`**

#### Summary:

Includes the following features/fixes:

* Security fixes

* Shields fixes

* Brave-Today Enhancements

... | priority | ios release img src release release date target release date february summary includes the following features fixes security fixes shields fixes brave today enhancements ui ux enhancements milestone current progress ipho... | 1 |

238,454 | 19,721,397,130 | IssuesEvent | 2022-01-13 15:41:43 | 389ds/389-ds-base | https://api.github.com/repos/389ds/389-ds-base | closed | ns-slapd crash in CI test plugins test_dna_interval_with_different_values | closed: duplicate CI test needs triage priority_high | **Issue Description**

ns-slapd crash in CI test plugins test_dna_interval_with_different_values

because the operation modifiers is corrupted when trying to log the operation

According to the operation we are handling modify operation on uid=interval3,ou=People,dc=example,dc=com replace uidNumber: -1

Crash is syste... | 1.0 | ns-slapd crash in CI test plugins test_dna_interval_with_different_values - **Issue Description**

ns-slapd crash in CI test plugins test_dna_interval_with_different_values

because the operation modifiers is corrupted when trying to log the operation

According to the operation we are handling modify operation on uid... | non_priority | ns slapd crash in ci test plugins test dna interval with different values issue description ns slapd crash in ci test plugins test dna interval with different values because the operation modifiers is corrupted when trying to log the operation according to the operation we are handling modify operation on uid... | 0 |

680,944 | 23,291,273,102 | IssuesEvent | 2022-08-05 23:34:12 | GoogleContainerTools/skaffold | https://api.github.com/repos/GoogleContainerTools/skaffold | closed | [v2][verify] skaffold verify docker.io images that have a "/" in them - ex: "alpine/curl:latest" | kind/bug priority/p1 2.0.0 area/verify | Currently `skaffold verify` is not able to properly parse skaffold docker.io images (with the repo ommitted) that have a "/" in them - ex: "alpine/curl:latest". For example:

working `verify` stanza

```

- name: alpine-wget

container:

name: alpine-wget

image: alpine:3.15.4

command: ["/bin/sh"]

... | 1.0 | [v2][verify] skaffold verify docker.io images that have a "/" in them - ex: "alpine/curl:latest" - Currently `skaffold verify` is not able to properly parse skaffold docker.io images (with the repo omitted) that have a "/" in them - ex: "alpine/curl:latest". For example:

working `verify` stanza

```

- name: alpin... | priority | skaffold verify docker io images that have a in them ex alpine curl latest currently skaffold verify is not able to properly parse skaffold docker io images with the repo omitted that have a in them ex alpine curl latest for example working verify stanza name alpine wget ... | 1 |
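
The parsing problem in the skaffold row above can be illustrated with a minimal sketch of how short Docker Hub image names are commonly expanded to fully qualified ones. This is not skaffold's code: `normalize_image` is a hypothetical helper, and the rule it applies (a first path component counts as a registry only if it contains `.` or `:` or equals `localhost`) is an assumption based on Docker's usual reference conventions.

```python
def normalize_image(image: str) -> str:
    """Expand a short image name to a fully qualified one (hypothetical helper)."""
    first, sep, _rest = image.partition("/")
    # Treat the first component as a registry host only if it looks like one.
    has_registry = bool(sep) and ("." in first or ":" in first or first == "localhost")
    if has_registry:
        return image
    if not sep:
        # Single-component names such as "alpine:3.15.4" live under "library/".
        return f"docker.io/library/{image}"
    # Names such as "alpine/curl:latest" have a namespace but no registry.
    return f"docker.io/{image}"
```

Under these assumptions, both `alpine:3.15.4` and `alpine/curl:latest` resolve to a `docker.io` reference, while names that already carry a registry host are returned unchanged.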

48,828 | 12,247,171,740 | IssuesEvent | 2020-05-05 15:29:57 | dotnet/runtime | https://api.github.com/repos/dotnet/runtime | closed | Hitting restore timeouts with NuGet | area-Infrastructure blocking-clean-ci blocking-official-build untriaged | Hitting timeout issues with nuget.org.

```

Restore completed in 5.75 sec for /Users/runner/runners/2.166.2/work/1/s/src/VS.Web.CG.Msbuild/VS.Web.CG.Msbuild.csproj.

Retrying 'FindPackagesByIdAsync' for source 'https://api.nuget.org/v3-flatcontainer/microsoft.codeanalysis.common/index.json'.

The HTTP request... | 1.0 | Hitting restore timeouts with NuGet - Hitting timeout issues with nuget.org.

```

Restore completed in 5.75 sec for /Users/runner/runners/2.166.2/work/1/s/src/VS.Web.CG.Msbuild/VS.Web.CG.Msbuild.csproj.

Retrying 'FindPackagesByIdAsync' for source 'https://api.nuget.org/v3-flatcontainer/microsoft.codeanalysis.c... | non_priority | hitting restore timeouts with nuget hitting timeout issues with nuget org restore completed in sec for users runner runners work s src vs web cg msbuild vs web cg msbuild csproj retrying findpackagesbyidasync for source the http request to get has timed out after retrying... | 0 |

224,551 | 24,781,646,492 | IssuesEvent | 2022-10-24 05:56:34 | sast-automation-dev/openmrs-core-43 | https://api.github.com/repos/sast-automation-dev/openmrs-core-43 | opened | standard-1.1.2.jar: 1 vulnerabilities (highest severity is: 7.3) | security vulnerability | <details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>standard-1.1.2.jar</b></p></summary>

<p></p>

<p>Path to dependency file: /webapp/pom.xml</p>

<p>Path to vulnerable library: /home/wss-scanner/.m2/repository/taglibs/s... | True | standard-1.1.2.jar: 1 vulnerabilities (highest severity is: 7.3) - <details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>standard-1.1.2.jar</b></p></summary>

<p></p>

<p>Path to dependency file: /webapp/pom.xml</p>

<p>Pat... | non_priority | standard jar vulnerabilities highest severity is vulnerable library standard jar path to dependency file webapp pom xml path to vulnerable library home wss scanner repository taglibs standard standard jar itory taglibs standard standard jar ... | 0 |

822,212 | 30,858,147,197 | IssuesEvent | 2023-08-02 22:53:11 | Memmy-App/memmy | https://api.github.com/repos/Memmy-App/memmy | closed | [Bug]: comment jump button will always jump to first comment on first press, even after scrolling past it | bug high priority in progress | ### Check for open issues

- [X] I have verified that another issue for this is not open, or it has been closed and has not been fixed.

### Minimal reproducible example

Scroll past top comment by any length, press comment jump button

### Expected Behavior

Jump to the next comment down

### Version

0.6.0 (1)

### A... | 1.0 | [Bug]: comment jump button will always jump to first comment on first press, even after scrolling past it - ### Check for open issues

- [X] I have verified that another issue for this is not open, or it has been closed and has not been fixed.

### Minimal reproducible example

Scroll past top comment by any length, pr... | priority | comment jump button will always jump to first comment on first press even after scrolling past it check for open issues i have verified that another issue for this is not open or it has been closed and has not been fixed minimal reproducible example scroll past top comment by any length press co... | 1 |
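
The expected behavior in the Memmy row above (jump to the next comment below the current position rather than always to the first one) can be sketched as a lookup over sorted comment offsets. This is only an illustration, not the app's code: `next_comment_index`, `comment_offsets`, and `scroll_offset` are hypothetical names, and the offsets are assumed to be sorted in ascending order.

```python
from bisect import bisect_right


def next_comment_index(comment_offsets, scroll_offset):
    """Return the index of the first comment strictly below the scroll position, or None."""
    # bisect_right skips every comment at or above the current scroll offset.
    i = bisect_right(comment_offsets, scroll_offset)
    return i if i < len(comment_offsets) else None
```

With this approach the first press depends on where the user has scrolled to, so scrolling past the top comment and pressing the button would select the following comment instead of the first.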

226,669 | 17,358,651,095 | IssuesEvent | 2021-07-29 17:21:21 | rokmoln/support-firecloud | https://api.github.com/repos/rokmoln/support-firecloud | opened | bats FTW | documentation enhancement | some shell scripts could really use some testing, and BATS is the tool for the job

* https://bats-core.readthedocs.io/en/latest/index.html

* https://opensource.com/article/19/2/testing-bash-bats

* https://medium.com/@pimterry/testing-your-shell-scripts-with-bats-abfca9bdc5b9

* https://github.com/dmlond/how_to_bat... | 1.0 | bats FTW - some shell scripts could really use some testing, and BATS is the tool for the job

* https://bats-core.readthedocs.io/en/latest/index.html

* https://opensource.com/article/19/2/testing-bash-bats

* https://medium.com/@pimterry/testing-your-shell-scripts-with-bats-abfca9bdc5b9

* https://github.com/dmlond... | non_priority | bats ftw some shell scripts could really use some testing and bats is the tool for the job | 0 |

58,102 | 24,330,395,640 | IssuesEvent | 2022-09-30 18:49:40 | hashicorp/terraform-provider-aws | https://api.github.com/repos/hashicorp/terraform-provider-aws | closed | AWS VPC Traffic Mirroring - enable GWLB as a valid traffic mirror target | enhancement good first issue service/vpc | <!--- Please keep this note for the community --->

### Community Note

* Please vote on this issue by adding a 👍 [reaction](https://blog.github.com/2016-03-10-add-reactions-to-pull-requests-issues-and-comments/) to the original issue to help the community and maintainers prioritize this request

* Please do not l... | 1.0 | AWS VPC Traffic Mirroring - enable GWLB as a valid traffic mirror target - <!--- Please keep this note for the community --->

### Community Note

* Please vote on this issue by adding a 👍 [reaction](https://blog.github.com/2016-03-10-add-reactions-to-pull-requests-issues-and-comments/) to the original issue to he... | non_priority | aws vpc traffic mirroring enable gwlb as a valid traffic mirror target community note please vote on this issue by adding a 👍 to the original issue to help the community and maintainers prioritize this request please do not leave or other comments that do not add relevant new information ... | 0 |

245,633 | 26,549,314,034 | IssuesEvent | 2023-01-20 05:31:06 | nidhi7598/linux-3.0.35_CVE-2022-45934 | https://api.github.com/repos/nidhi7598/linux-3.0.35_CVE-2022-45934 | opened | CVE-2019-15922 (Medium) detected in linuxlinux-3.0.49 | security vulnerability | ## CVE-2019-15922 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linuxlinux-3.0.49</b></p></summary>

<p>

<p>The Linux Kernel</p>

<p>Library home page: <a href=https://mirrors.edge.k... | True | CVE-2019-15922 (Medium) detected in linuxlinux-3.0.49 - ## CVE-2019-15922 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linuxlinux-3.0.49</b></p></summary>

<p>

<p>The Linux Kernel<... | non_priority | cve medium detected in linuxlinux cve medium severity vulnerability vulnerable library linuxlinux the linux kernel library home page a href found in head commit a href found in base branch master vulnerable source files drivers bloc... | 0 |

808,519 | 30,085,857,026 | IssuesEvent | 2023-06-29 08:35:09 | red-hat-storage/ocs-ci | https://api.github.com/repos/red-hat-storage/ocs-ci | opened | Undo the early return in `conftest.py::nb_ensure_endpoint_count` once underlying issue is resolved | High Priority workaround MCG Squad/Red | Due to https://url.corp.redhat.com/2eba588, we had to give up on ensuring the minimum of two noobaa-endpoint pods in MCG tests via https://github.com/red-hat-storage/ocs-ci/pull/7922.

While this allows the acceptance tests to pass, we can expect this to fail more demanding tests in the CI, so we should undo the comp... | 1.0 | Undo the early return in `conftest.py::nb_ensure_endpoint_count` once underlying issue is resolved - Due to https://url.corp.redhat.com/2eba588, we had to give up on ensuring the minimum of two noobaa-endpoint pods in MCG tests via https://github.com/red-hat-storage/ocs-ci/pull/7922.

While this allows the acceptance... | priority | undo the early return in conftest py nb ensure endpoint count once underlying issue is resolved due to we had to give up on ensuring the minimum of two noobaa endpoint pods in mcg tests via while this allows the acceptance tests to pass we can expect this to fail more demanding tests in the ci so we shoul... | 1 |

196,357 | 15,591,230,802 | IssuesEvent | 2021-03-18 10:12:53 | VoidStoneDev/GradleMinecraftPluginHelper | https://api.github.com/repos/VoidStoneDev/GradleMinecraftPluginHelper | closed | Changing Brand Name To GradleMinecraftPluginHelper | documentation | Changing Brand Name To 'GradleMinecraftPluginHelper' Because Of Support For Non-Bukkit API Plugins | 1.0 | Changing Brand Name To GradleMinecraftPluginHelper - Changing Brand Name To 'GradleMinecraftPluginHelper' Because Of Support For Non-Bukkit API Plugins | non_priority | changing brand name to gradleminecraftpluginhelper changing brand name to gradleminecraftpluginhelper because of support for non bukkit api plugins | 0 |

236,117 | 19,514,507,037 | IssuesEvent | 2021-12-29 07:48:17 | microsoft/AzureStorageExplorer | https://api.github.com/repos/microsoft/AzureStorageExplorer | opened | Fail to add an entity that contains a boolean/int32 property in one emulator table | 🧪 testing :gear: tables :beetle: regression | **Storage Explorer Version:** 1.23.0-dev

**Build Number:** 20211220.7

**Branch:** main

**Platform/OS:** Windows 10

**Architecture:** ia32

**How Found:** AD hoc testing

**Regression From:** Previous release (1.21.3)

## Steps to Reproduce ##

1. Install and start Azurite 3.14.0.

2. Launch Storage Explorer -> ... | 1.0 | Fail to add an entity that contains a boolean/int32 property in one emulator table - **Storage Explorer Version:** 1.23.0-dev

**Build Number:** 20211220.7

**Branch:** main

**Platform/OS:** Windows 10

**Architecture:** ia32

**How Found:** AD hoc testing

**Regression From:** Previous release (1.21.3)

## Steps to... | non_priority | fail to add an entity that contains a boolean property in one emulator table storage explorer version dev build number branch main platform os windows architecture how found ad hoc testing regression from previous release steps to reproduce ... | 0 |

428,157 | 12,403,911,563 | IssuesEvent | 2020-05-21 14:41:30 | OpenNebula/one | https://api.github.com/repos/OpenNebula/one | closed | Put NSX info into NSX tab | Category: Sunstone Priority: Normal Status: Accepted Type: Feature | **Description**

Sunstone should show NSX info into NSX tab

**Use case**

To view more easily that information

**Interface Changes**

Sunstone

**Additional Context**

Add any other context or screenshots about the feature request here. Or any other alternative you have considered to addressed this new feature... | 1.0 | Put NSX info into NSX tab - **Description**

Sunstone should show NSX info into NSX tab

**Use case**

To view more easily that information

**Interface Changes**

Sunstone

**Additional Context**

Add any other context or screenshots about the feature request here. Or any other alternative you have considered t... | priority | put nsx info into nsx tab description sunstone should show nsx info into nsx tab use case to view more easily that information interface changes sunstone additional context add any other context or screenshots about the feature request here or any other alternative you have considered t... | 1 |

106,450 | 4,272,690,282 | IssuesEvent | 2016-07-13 15:11:17 | fgpv-vpgf/rcs | https://api.github.com/repos/fgpv-vpgf/rcs | opened | Permit recursive flag on Esri Map Services | improvements: enhancement needs: estimate priority: high | #45 was targeting only Group Layers, modify RCS to also handle Map Service listings of layers. This will involve some complexity as separate calls will be needed to pull out the nested layers beneath Group Layers.

Sample registration:

```

{

"service_url": "http://geoappext.nrcan.gc.ca/arcgis/rest/services/Nor... | 1.0 | Permit recursive flag on Esri Map Services - #45 was targeting only Group Layers, modify RCS to also handle Map Service listings of layers. This will involve some complexity as separate calls will be needed to pull out the nested layers beneath Group Layers.

Sample registration:

```

{

"service_url": "http://g... | priority | permit recursive flag on esri map services was targeting only group layers modify rcs to also handle map service listings of layers this will involve some complexity as separate calls will be needed to pull out the nested layers beneath group layers sample registration service url recu... | 1 |

32,349 | 7,529,463,182 | IssuesEvent | 2018-04-14 05:11:02 | joomla/joomla-cms | https://api.github.com/repos/joomla/joomla-cms | closed | Request for standard form field type for all media in library | J3 Issue No Code Attached Yet | Please could we have a Standard Form Field Type which provides modal access to all the files in the media library.

This would be like the current "media" form field type, but wouldn't be restricted to just images.

The new field type should allow filtering, so that you could specify the types of files (eg audio / ... | 1.0 | Request for standard form field type for all media in library - Please could we have a Standard Form Field Type which provides modal access to all the files in the media library.

This would be like the current "media" form field type, but wouldn't be restricted to just images.

The new field type should allow filt... | non_priority | request for standard form field type for all media in library please could we have a standard form field type which provides modal access to all the files in the media library this would be like the current media form field type but wouldn t be restricted to just images the new field type should allow filt... | 0 |

302,340 | 26,139,861,719 | IssuesEvent | 2022-12-29 16:49:52 | apache/beam | https://api.github.com/repos/apache/beam | closed | Deploy PerfKit Explorer for Beam | tests P3 bug |

Imported from Jira [BEAM-1596](https://issues.apache.org/jira/browse/BEAM-1596). Original Jira may contain additional context.

Reported by: jaku. | 1.0 | Deploy PerfKit Explorer for Beam -

Imported from Jira [BEAM-1596](https://issues.apache.org/jira/browse/BEAM-1596). Original Jira may contain additional context.

Reported by: jaku. | non_priority | deploy perfkit explorer for beam imported from jira original jira may contain additional context reported by jaku | 0 |

328,605 | 28,127,430,457 | IssuesEvent | 2023-03-31 19:03:23 | flutter/flutter | https://api.github.com/repos/flutter/flutter | reopened | integration_test doesn't measure CPU, memory, or GPU utilization | integration_test P4 | This makes the numbers fairly limited compared to what driver can do. | 1.0 | integration_test doesn't measure CPU, memory, or GPU utilization - This makes the numbers fairly limited compared to what driver can do. | non_priority | integration test doesn t measure cpu memory or gpu utilization this makes the numbers fairly limited compared to what driver can do | 0 |

53,580 | 13,850,391,324 | IssuesEvent | 2020-10-15 01:05:16 | kenferrara/highcharts | https://api.github.com/repos/kenferrara/highcharts | opened | CVE-2020-11979 (High) detected in ant-1.8.2.jar | security vulnerability | ## CVE-2020-11979 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>ant-1.8.2.jar</b></p></summary>

<p>master POM</p>

<p>Path to vulnerable library: highcharts/tools/apache-ant/lib/ant.j... | True | CVE-2020-11979 (High) detected in ant-1.8.2.jar - ## CVE-2020-11979 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>ant-1.8.2.jar</b></p></summary>

<p>master POM</p>

<p>Path to vulnera... | non_priority | cve high detected in ant jar cve high severity vulnerability vulnerable library ant jar master pom path to vulnerable library highcharts tools apache ant lib ant jar dependency hierarchy x ant jar vulnerable library vulnerability details ... | 0 |

431,685 | 12,484,735,596 | IssuesEvent | 2020-05-30 16:11:11 | spectrochempy/test_issues_migration_from_redmine | https://api.github.com/repos/spectrochempy/test_issues_migration_from_redmine | closed | Check for new version at the program start up | Category: Deployment Priority: Minor Project: SpectroChemPy Status: Closed Tracker: Feature | Author: Christian Fernandez (Christian Fernandez )

Redmine Issue: 29, https://redmine.spectrochempy.fr/issues/29

---

Make a function call when the library is loaded to indicate whether or not a new version is present on PyPI and Conda.

| 1.0 | Check for new version at the program start up - Author: Christian Fernandez (Christian Fernandez )

Redmine Issue: 29, https://redmine.spectrochempy.fr/issues/29

---

Make a function call when the library is loaded to indicate whether or not a new version is present on PyPI and Conda.

| priority | check for new version at the program start up author christian fernandez christian fernandez redmine issue make a function call when the library is loaded to indicate whether or not a new version is present on pypi and conda | 1 |

742,340 | 25,849,995,158 | IssuesEvent | 2022-12-13 09:44:08 | l7mp/stunner | https://api.github.com/repos/l7mp/stunner | closed | stunnerd stops responding to filesystem events | priority: low status: cannot reproduce type: bug | The `stunnerd` pod seems to hang, with the following logs:

```

14:09:00.748041 main.go:148: stunnerd WARNING: unhnadled notify op on config file "/etc/stunnerd/stunnerd.conf" (ignoring): CHMOD

14:09:00.748077 main.go:133: stunnerd WARNING: config file deleted "REMOVE", disabling watcher

14:09:00.748090 main.go:138... | 1.0 | stunnerd stops responding to filesystem events - The `stunnerd` pod seems to hang, with the following logs:

```

14:09:00.748041 main.go:148: stunnerd WARNING: unhnadled notify op on config file "/etc/stunnerd/stunnerd.conf" (ignoring): CHMOD

14:09:00.748077 main.go:133: stunnerd WARNING: config file deleted "REMOVE... | priority | stunnerd stops responding to filesystem events the stunnerd pod seems to hang with the following logs main go stunnerd warning unhnadled notify op on config file etc stunnerd stunnerd conf ignoring chmod main go stunnerd warning config file deleted remove disabling watcher... | 1 |

337,905 | 30,271,068,967 | IssuesEvent | 2023-07-07 15:23:08 | BoBAdministration/QA-Bug-Reports | https://api.github.com/repos/BoBAdministration/QA-Bug-Reports | closed | World border kill zone jutting out into area within the border | Fixed-PendingTesting | **Describe the Bug**

In some spots, the kill zone that is supposed to be outside the world border is inside the world border, making it kill players who aren't doing anything wrong. This seems to happen exclusively around corners that jut into the playable area

**To Reproduce**

1. log into any titania server

2. telepo... | 1.0 | World border kill zone jutting out into area within the border - **Describe the Bug**

In some spots, the kill zone that is supposed to be outside the world border is inside the world border, making it kill players who aren't doing anything wrong. This seems to happen exclusively around corners that jut into the playable ar... | non_priority | world border kill zone jutting out into area within the border describe the bug in some spots the kill zone that is supposed to be outside the world border is inside the world border making it kill players who arent doing anything wrong this seems to exclusively be around corners that jut into the playable ar... | 0 |

2,142 | 4,272,560,830 | IssuesEvent | 2016-07-13 14:50:46 | IBM-Bluemix/logistics-wizard | https://api.github.com/repos/IBM-Bluemix/logistics-wizard | opened | Use transaction in ERP simulator to avoid corruption of data in concurrent API calls | erp-service story | Aim of this story is to review the ERP simulator and use Loopback transactions whenever needed.

Typically in demo.js, shipment.js, some remote methods alter multiple tables and objects at the same time. These methods should be protected against concurrent API calls that could potentially corrupt the data - as exampl... | 1.0 | Use transaction in ERP simulator to avoid corruption of data in concurrent API calls - Aim of this story is to review the ERP simulator and use Loopback transactions whenever needed.

Typically in demo.js, shipment.js, some remote methods alter multiple tables and objects at the same time. These methods should be pro... | non_priority | use transaction in erp simulator to avoid corruption of data in concurrent api calls aim of this story is to review the erp simulator and use loopback transactions whenever needed typically in demo js shipment js some remote methods alter multiple tables and objects at the same time these methods should be pro... | 0 |

43,735 | 2,891,935,225 | IssuesEvent | 2015-06-15 09:37:44 | geometalab/osmaxx | https://api.github.com/repos/geometalab/osmaxx | opened | Production and development suitable docker-compose.yml | priority:high | As a developer, I want to deploy the docker containers as simple or simpler than on the local machine.

Write a simple docker-compose file, that can be adapted/copied if used in production or in development, while still having both environments stick very close to each other. | 1.0 | Production and development suitable docker-compose.yml - As a developer, I want to deploy the docker containers as simple or simpler than on the local machine.

Write a simple docker-compose file, that can be adapted/copied if used in production or in development, while still having both environments stick very close... | priority | production and development suitable docker compose yml as a developer i want to deploy the docker containers as simple or simpler than on the local machine write a simple docker compose file that can be adapted copied if used in production or in development while still having both environments stick very close... | 1 |

61,972 | 25,813,186,113 | IssuesEvent | 2022-12-12 01:28:59 | fga-eps-mds/2022-2-MeasureSoftGram-Doc | https://api.github.com/repos/fga-eps-mds/2022-2-MeasureSoftGram-Doc | opened | US10: View the correlation `heatmap` between releases | Frontend Service US | ## Issue description

<!-- Describe the issue succinctly and, if needed, the additional information required to carry it out. -->

As a **Software Researcher/QA/Tech lead**, I would like to see a **heatmap** showing the correlation between releases.

## Tasks:

<!-- - [ ] Task 1

- [ ] Task 2

- ... | 1.0 | US10: View the correlation `heatmap` between releases - ## Issue description

<!-- Describe the issue succinctly and, if needed, the additional information required to carry it out. -->

As a **Software Researcher/QA/Tech lead**, I would like to see a **heatmap** showing the correlation between rele... | non_priority | view the correlation heatmap between releases issue description as a software researcher qa tech lead i would like to see a heatmap showing the correlation between releases tasks task task task acceptance criteria criterion ... | 0 |

80,118 | 3,550,920,478 | IssuesEvent | 2016-01-21 00:11:20 | washingtontrails/vms | https://api.github.com/repos/washingtontrails/vms | closed | Request higher Salesforce API call quota | High Priority Salesforce VMS BUDGET | @WaBirder57, we had discussed requesting a temporary increase in Salesforce's limit on API calls for the first few weeks that the live copy of VMS is running, until we have a better idea of what typical usage looks like and can figure out if we need to pay for more calls permanently or optimize the system.

Can you m... | 1.0 | Request higher Salesforce API call quota - @WaBirder57, we had discussed requesting a temporary increase in Salesforce's limit on API calls for the first few weeks that the live copy of VMS is running, until we have a better idea of what typical usage looks like and can figure out if we need to pay for more calls perma... | priority | request higher salesforce api call quota we had discussed requesting a temporary increase in salesforce s limit on api calls for the first few weeks that the live copy of vms is running until we have a better idea of what typical usage looks like and can figure out if we need to pay for more calls permanently or... | 1 |

589,511 | 17,703,531,333 | IssuesEvent | 2021-08-25 03:13:19 | woowa-techcamp-2021/store-6 | https://api.github.com/repos/woowa-techcamp-2021/store-6 | closed | [FE] Improve useDebounce | refactor high priority | ## :hammer: Feature description

### Improve useDebounce

## 📑 Completion criteria

- [x] Change it to accept a callback as the argument instead of a value.

## :thought_balloon: Related Backlog

> [Major category] - [Subcategory] - [Backlog name]

Refactoring - useDebounce | 1.0 | [FE] Improve useDebounce - ## :hammer: Feature description

### Improve useDebounce

## 📑 Completion criteria

- [x] Change it to accept a callback as the argument instead of a value.

## :thought_balloon: Related Backlog

> [Major category] - [Subcategory] - [Backlog name]

Refactoring - useDebounce | priority | improve usedebounce hammer feature description improve usedebounce 📑 completion criteria change it to accept a callback as the argument instead of a value thought balloon related backlog refactoring usedebounce | 1 |
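
The change requested in the useDebounce row above, debouncing a callback rather than a value, can be sketched outside React as a plain wrapper function. This is a rough Python analogue under stated assumptions, not the project's hook: `debounce`, `callback`, and `delay` are hypothetical names, and a timer thread stands in for the browser timer a real hook would use.

```python
import threading


def debounce(callback, delay):
    """Return a wrapper that runs `callback` only after `delay` seconds without new calls."""
    timer = None
    lock = threading.Lock()

    def debounced(*args, **kwargs):
        nonlocal timer
        with lock:
            # Cancel the pending invocation, if any, and schedule a fresh one.
            if timer is not None:
                timer.cancel()
            timer = threading.Timer(delay, callback, args=args, kwargs=kwargs)
            timer.daemon = True
            timer.start()

    return debounced
```

Taking a callback means only the last call in a burst triggers the work, with the arguments of that last call, instead of the caller having to watch a debounced value for changes.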

44,003 | 9,530,755,758 | IssuesEvent | 2019-04-29 14:33:26 | jwilm/alacritty | https://api.github.com/repos/jwilm/alacritty | closed | Chinese character selection width | S - selection S - unicode help wanted | A single Chinese character requires two default widths to be fully selected. Otherwise only half can be selected. Just like this:

Running in Archlinux, Xorg environment. Alacritty version is `0.2.9`

| 1.0 | Chinese character selection width - A single Chinese character requires two default widths to be fully selected. Otherwise only half can be selected. Just like this:

Running in Archlinux, Xorg environmen... | non_priority | chinese character selection width a single chinese character requires two default widths to be fully selected otherwise only half can be selected just like this running in archlinux xorg environment alacritty version is | 0 |

55,407 | 14,008,903,983 | IssuesEvent | 2020-10-29 00:55:36 | mwilliams7197/ksa | https://api.github.com/repos/mwilliams7197/ksa | closed | WS-2018-0021 (Medium) detected in bootstrap-2.1.0.js - autoclosed | security vulnerability | ## WS-2018-0021 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>bootstrap-2.1.0.js</b></p></summary>

<p>The most popular front-end framework for developing responsive, mobile first p... | True | WS-2018-0021 (Medium) detected in bootstrap-2.1.0.js - autoclosed - ## WS-2018-0021 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>bootstrap-2.1.0.js</b></p></summary>

<p>The most p... | non_priority | ws medium detected in bootstrap js autoclosed ws medium severity vulnerability vulnerable library bootstrap js the most popular front end framework for developing responsive mobile first projects on the web library home page a href path to vulnerable library ksa ks... | 0 |

55,406 | 14,008,903,714 | IssuesEvent | 2020-10-29 00:55:33 | mwilliams7197/ksa | https://api.github.com/repos/mwilliams7197/ksa | closed | WS-2017-0330 (Medium) detected in mime-1.2.4.tgz - autoclosed | security vulnerability | ## WS-2017-0330 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>mime-1.2.4.tgz</b></p></summary>

<p>A comprehensive library for mime-type mapping</p>

<p>Library home page: <a href="h... | True | WS-2017-0330 (Medium) detected in mime-1.2.4.tgz - autoclosed - ## WS-2017-0330 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>mime-1.2.4.tgz</b></p></summary>

<p>A comprehensive li... | non_priority | ws medium detected in mime tgz autoclosed ws medium severity vulnerability vulnerable library mime tgz a comprehensive library for mime type mapping library home page a href path to dependency file ksa ksa web root ksa web src main webapp rs bootstrap package json ... | 0 |

520,722 | 15,091,782,005 | IssuesEvent | 2021-02-06 16:53:42 | ridhambhat/SlateVim | https://api.github.com/repos/ridhambhat/SlateVim | opened | Add React Router routing for group editing | priority.Medium type.Enhancement | Use `React Router` and have a route to something like `groups/:id` to allow for different editing "rooms" | 1.0 | Add React Router routing for group editing - Use `React Router` and have a route to something like `groups/:id` to allow for different editing "rooms" | priority | add react router routing for group editing use react router and have a route to something like groups id to allow for different editing rooms | 1 |

162,317 | 6,150,799,408 | IssuesEvent | 2017-06-27 23:50:02 | chaos/pdsh | https://api.github.com/repos/chaos/pdsh | closed | Support user defined variables in pdsh | auto-migrated Priority-Low Type-Enhancement | ```

Hi!

It would be nice having user defined variables per host in pdsh.

This could be the first "execute different command by host (I see a wishlist

bug here talking about)" implementation:

Like %h is hostname.

We could define:

$ cat ~/.pdsh/vars/host01:

ports="8080 8081"

$ cat ~/.pdsh/vars/host02:

ports="8080"

... | 1.0 | Support user defined variables in pdsh - ```

Hi!

It would be nice having user defined variables per host in pdsh.

This could be the first "execute different command by host (I see a wishlist

bug here talking about)" implementation:

Like %h is hostname.

We could define:

$ cat ~/.pdsh/vars/host01:

ports="8080 8081"... | priority | support user defined variables in pdsh hi it would be nice having user defined variables per host in pdsh this could be the first execute different command by host i see a wishlist bug here talking about implementation like h is hostname we could define cat pdsh vars ports cat p... | 1 |

186,843 | 6,743,025,513 | IssuesEvent | 2017-10-20 10:11:20 | CS2103AUG2017-W09-B3/main | https://api.github.com/repos/CS2103AUG2017-W09-B3/main | closed | As a forgetful user, I want to find specific contacts by attributes other than name | priority.high status.completed type.enhancement | ...so that I can find out the contact’s name and other details.

- Command class implementation

- Update Parser

- JUnit test | 1.0 | As a forgetful user, I want to find specific contacts by attributes other than name - ...so that I can find out the contact’s name and other details.

- Command class implementation

- Update Parser

- JUnit test | priority | as a forgetful user i want to find specific contacts by attributes other than name so that i can find out the contact’s name and other details command class implementation update parser junit test | 1 |

15,539 | 8,954,696,862 | IssuesEvent | 2019-01-26 00:07:42 | vim/vim | https://api.github.com/repos/vim/vim | closed | `rop=type:directx` in combination with `encoding=utf-8` slows down global command | patch performance platform-windows | Os: Windows 10 x64 (v1709)

Vim version:

```

VIM - Vi IMproved 8.0 (2016 Sep 12, compiled Mar 11 2018 23:08:09)

MS-Windows 32-bit GUI version with OLE support

Included patches: 1-1599

Compiled by appveyor@APPVYR-WIN

```

Steps to reproduce:

`gvim --clean +"set rop=type:directx encoding=utf-8" +"norm Itest" +... | True | `rop=type:directx` in combination with `encoding=utf-8` slows down global command - Os: Windows 10 x64 (v1709)

Vim version:

```

VIM - Vi IMproved 8.0 (2016 Sep 12, compiled Mar 11 2018 23:08:09)

MS-Windows 32-bit GUI version with OLE support

Included patches: 1-1599

Compiled by appveyor@APPVYR-WIN

```

Steps t... | non_priority | rop type directx in combination with encoding utf slows down global command os windows vim version vim vi improved sep compiled mar ms windows bit gui version with ole support included patches compiled by appveyor appvyr win steps to reproduce gvim ... | 0 |

207,603 | 7,131,595,064 | IssuesEvent | 2018-01-22 11:35:44 | GreenDelta/Sophena | https://api.github.com/repos/GreenDelta/Sophena | opened | Introduction of a fuel group and assignment of heat generators to it | enhancement medium priority | Existing heat generators cannot use recorded fuels. | 1.0 | Introduction of a fuel group and assignment of heat generators to it - Existing heat generators cannot use recorded fuels. | priority | introduction of a fuel group and assignment of heat generators to it existing heat generators cannot use recorded fuels | 1 |

173,272 | 6,522,819,979 | IssuesEvent | 2017-08-29 05:28:19 | Templarian/MaterialDesign | https://api.github.com/repos/Templarian/MaterialDesign | closed | Floorplan / blueprint icon | Contribution High Priority Home Assistant Icon Request | As a home assistant home automation user I found the need for a floorplan icon, but there is nothing suitable in MDI.

Here's one I rolled. It would be nice if it can be added to MDI.

[floorplan.zip](https://github.com/Templarian/MaterialDesign/files/1213052/floorplan.zip)

| 1.0 | Floorplan / blueprint icon - As a home assistant home automation user I found the need for a floorplan icon, but there is nothing suitable in MDI.

Here's one I rolled. It would be nice if it can be added to MDI.

[floorplan.zip](https://github.com/Templarian/MaterialDesign/files/1213052/floorplan.zip)

| priority | floorplan blueprint icon as a home assistant home automation user i found the need for a floorplan icon but there is nothing suitable in mdi here s one i rolled it would be nice if it can be added to mdi | 1 |

161,536 | 25,358,047,356 | IssuesEvent | 2022-11-20 15:21:10 | dotnet/fsharp | https://api.github.com/repos/dotnet/fsharp | closed | FSharpChecker.CompileToDynamicAssembly | Resolution-By Design Needs-Triage | I used this function to make in-memory dynamic assemblies from fsx scripts in 41.0.7

Does this function just no longer exist?

Is there a replacement?

I can't find a single thing about it in the notes | 1.0 | FSharpChecker.CompileToDynamicAssembly - I used this function to make in-memory dynamic assemblies from fsx scripts in 41.0.7

Does this function just no longer exist?

Is there a replacement?

I can't find a single thing about it in the notes | non_priority | fsharpchecker compiletodynamicassembly i used this function to make in memory dynamic assemblies from fsx scripts in does this function just no longer exist is there a replacement i cant find a single thing about it in the notes | 0 |

375,553 | 26,167,915,526 | IssuesEvent | 2023-01-01 14:28:06 | appium/appium | https://api.github.com/repos/appium/appium | closed | 'css selector' is not supported for this session | Documentation Help Needed | I am following the code from http://appium.io/docs/en/commands/element/actions/click/

`$('#SomeId').click();`

"webdriverio": "^5.18.5"

| 1.0 | 'css selector' is not supported for this session - I am following the code from http://appium.io/docs/en/commands/element/actions/click/

`$('#SomeId').click();`

"webdriverio": "^5.18.5"

| non_priority | css selector is not supported for this session i am following the code from someid click webdriverio | 0 |

224,622 | 17,762,237,766 | IssuesEvent | 2021-08-29 22:43:35 | pytorch/pytorch | https://api.github.com/repos/pytorch/pytorch | closed | test/test_op_aliases.py generates illegal test method names | module: tests triaged | Note the double period:

```

TestOpNormalizationCPU.test_jit_op_alias_normalization_linalg.det_cpu

```

cc @mruberry @VitalyFedyunin @walterddr

| 1.0 | test/test_op_aliases.py generates illegal test method names - Note the double period:

```

TestOpNormalizationCPU.test_jit_op_alias_normalization_linalg.det_cpu

```

cc @mruberry @VitalyFedyunin @walterddr

| non_priority | test test op aliases py generates illegal test method names note the double period testopnormalizationcpu test jit op alias normalization linalg det cpu cc mruberry vitalyfedyunin walterddr | 0 |
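
The double period in the name above presumably comes from embedding a dotted operator name such as `linalg.det` directly in the generated method name. A minimal sketch of one way to avoid that is shown below. It is not PyTorch's actual fix, and `make_test_name` is a hypothetical helper invented for this illustration.

```python
import re


def make_test_name(base, op_name, device):
    """Build a test method name that is a valid Python identifier.

    Any character in the operator name that is not a letter, digit or
    underscore (for example the '.' in 'linalg.det') becomes '_'.
    """
    safe_op = re.sub(r"\W", "_", op_name)
    return f"{base}_{safe_op}_{device}"
```

A name built this way can be selected on the command line as `Class.method` without the extra period being read as another level of attribute access.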

115 | 3,298,962,295 | IssuesEvent | 2015-11-02 16:38:24 | magnumripper/JohnTheRipper | https://api.github.com/repos/magnumripper/JohnTheRipper | closed | clang build for Raspberry Pi 2 fails | portability | A regular autoconf build works, with and without openmp. But using clang instead of gcc doesn't.

```

$ clang --version

Raspbian clang version 3.5.0-10+rpi1 (tags/RELEASE_350/final) (based on LLVM 3.5.0)

Target: arm-unknown-linux-gnueabihf

Thread model: posix

$ clang -dumpversion

4.2.1

$ uname -a

Linux rasp... | True | clang build for Raspberry Pi 2 fails - A regular autoconf build works, with and without openmp. But using clang instead of gcc doesn't.

```

$ clang --version

Raspbian clang version 3.5.0-10+rpi1 (tags/RELEASE_350/final) (based on LLVM 3.5.0)

Target: arm-unknown-linux-gnueabihf

Thread model: posix

$ clang -dum... | non_priority | clang build for raspberry pi fails a regular autoconf build works with and without openmp but using clang instead of gcc doesn t clang version raspbian clang version tags release final based on llvm target arm unknown linux gnueabihf thread model posix clang dumpversi... | 0 |

35,761 | 14,876,816,111 | IssuesEvent | 2021-01-20 01:42:47 | Azure/azure-sdk-for-net | https://api.github.com/repos/Azure/azure-sdk-for-net | closed | [FEATURE REQ] Way to log the client information for messages being read | Client Service Attention Service Bus customer-reported feature-request needs-team-attention | **Library or service name.**

Microsoft.Azure.ServiceBus

**Is your feature request related to a problem? Please describe.**

We are using the Azure service bus for message processing. We host one or more App Service instances(subscribers) that would be connected to the same Topic and subscription.

After a message... | 2.0 | [FEATURE REQ] Way to log the client information for messages being read - **Library or service name.**

Microsoft.Azure.ServiceBus

**Is your feature request related to a problem? Please describe.**

We are using the Azure service bus for message processing. We host one or more App Service instances(subscribers) tha... | non_priority | way to log the client information for messages being read library or service name microsoft azure servicebus is your feature request related to a problem please describe we are using the azure service bus for message processing we host one or more app service instances subscribers that would be c... | 0 |

66,137 | 12,728,322,340 | IssuesEvent | 2020-06-25 02:12:01 | joomla/joomla-cms | https://api.github.com/repos/joomla/joomla-cms | closed | [4.0] Filter options in private messaging layout | No Code Attached Yet | ### Steps to reproduce the issue

Click filter options in private messaging in admin

### Actual result

Note the uber wide dropdown for a tiny field content

<img width="1650" alt="Screenshot 2020-06-09 at 21 44 37" src="https://user-images.githubusercontent.com/400092/84197856-87e77580-aa9a-11ea-8634-a... | 1.0 | [4.0] Filter options in private messaging layout - ### Steps to reproduce the issue

Click filter options in private messaging in admin

### Actual result

Note the uber wide dropdown for a tiny field content

<img width="1650" alt="Screenshot 2020-06-09 at 21 44 37" src="https://user-images.githubuserc... | non_priority | filter options in private messaging layout steps to reproduce the issue click filter options in private messaging in admin actual result note the uber wide dropdown for a tiny field content img width alt screenshot at src | 0 |

99,724 | 8,709,493,936 | IssuesEvent | 2018-12-06 14:07:42 | edenlabllc/ehealth.api | https://api.github.com/repos/edenlabllc/ehealth.api | closed | Validate managing_organization==token.client_id on Update episode | [zube]: In Test kind/task priority/high project/medical_events status/test | On *Update Episode* validate ME.patient.episode.managing_organization==client_id

throw error *403* "User is not allowed to perform actions with an episode that belongs to another legal entity"

edenlabllc/ehealth.api#3241

| 2.0 | Validate managing_organization==token.client_id on Update episode - On *Update Episode* validate ME.patient.episode.managing_organization==client_id

throw error *403* "User is not allowed to perform actions with an episode that belongs to another legal entity"

edenlabllc/ehealth.api#3241

| non_priority | validate managing organization token client id on update episode on update episode validate me patient episode managing organization client id throw error user is not allowed to perform actions with an episode that belongs to another legal entity edenlabllc ehealth api | 0 |
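
The check described in this issue can be sketched roughly as follows. This is only an illustration, not the ehealth.api implementation: it assumes the episode is a plain dictionary whose `managing_organization` field holds the legal entity id directly, and `ForbiddenError` and `validate_managing_organization` are hypothetical names.

```python
class ForbiddenError(Exception):
    """Hypothetical error type carrying the HTTP status to return."""

    status = 403


def validate_managing_organization(episode, client_id):
    """Reject an update when the episode belongs to another legal entity."""
    # Assumption: managing_organization is stored as a bare id string.
    if episode.get("managing_organization") != client_id:
        raise ForbiddenError(
            "User is not allowed to perform actions with an episode "
            "that belongs to another legal entity"
        )
```

Running the check before any other update logic keeps a request from a different legal entity from touching the episode at all.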

6,019 | 8,678,681,370 | IssuesEvent | 2018-11-30 20:46:06 | isawnyu/isaw.web | https://api.github.com/repos/isawnyu/isaw.web | closed | make "filter results" functionality on search accessible: 3 | deploy requirement | > The “filter the results” option should not automatically update the results on the selection of the

checkbox to exclude the item type. This option should require a submit option or should have

the same submit button as the search text field and be placed visually before the search submit.

![screen shot 2018-06-0... | 1.0 | make "filter results" functionality on search accessible: 3 - > The “filter the results” option should not automatically update the results on the selection of the

checkbox to exclude the item type. This option should require a submit option or should have

the same submit button as the search text field and be placed... | non_priority | make filter results functionality on search accessible the “filter the results” option should not automatically update the results on the selection of the checkbox to exclude the item type this option should require a submit option or should have the same submit button as the search text field and be placed... | 0 |

198,947 | 22,674,199,161 | IssuesEvent | 2022-07-04 01:26:52 | Techini/WebGoat | https://api.github.com/repos/Techini/WebGoat | closed | CVE-2021-24122 (Medium) detected in tomcat-embed-core-9.0.27.jar - autoclosed | security vulnerability | ## CVE-2021-24122 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>tomcat-embed-core-9.0.27.jar</b></p></summary>

<p>Core Tomcat implementation</p>

<p>Library home page: <a href="http... | True | CVE-2021-24122 (Medium) detected in tomcat-embed-core-9.0.27.jar - autoclosed - ## CVE-2021-24122 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>tomcat-embed-core-9.0.27.jar</b></p><... | non_priority | cve medium detected in tomcat embed core jar autoclosed cve medium severity vulnerability vulnerable library tomcat embed core jar core tomcat implementation library home page a href path to dependency file webgoat integration tests pom xml path to vulnerable libra... | 0 |

718,547 | 24,722,100,130 | IssuesEvent | 2022-10-20 11:31:00 | googleapis/release-please | https://api.github.com/repos/googleapis/release-please | opened | Support interactive editing PR | type: feature request priority: p3 | **Is your feature request related to a problem? Please describe.**

Listen for PR events to react to any changes.

**Describe the solution you'd like**

1. React when the version in the PR title is edited, which means that the version to be released will change.

2. React when the PR body is edited, which means th... | 1.0 | Support interactive editing PR - **Is your feature request related to a problem? Please describe.**

Listen for PR events to react to any changes.

**Describe the solution you'd like**

1. React when the version in the PR title is edited, which means that the version to be released will change.