Unnamed: 0 (int64, 0 to 832k) | id (float64, 2.49B to 32.1B) | type (string, 1 class) | created_at (string, length 19 to 19) | repo (string, length 5 to 112) | repo_url (string, length 34 to 141) | action (string, 3 classes) | title (string, length 1 to 1k) | labels (string, length 4 to 1.38k) | body (string, length 1 to 262k) | index (string, 16 classes) | text_combine (string, length 96 to 262k) | label (string, 2 classes) | text (string, length 96 to 252k) | binary_label (int64, 0 to 1) |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

168,314 | 14,145,530,523 | IssuesEvent | 2020-11-10 17:52:22 | noah-francis/SpaceNet_Software | https://api.github.com/repos/noah-francis/SpaceNet_Software | closed | Restart Versions? | documentation question | like:

*_V_3_1.mlapp --> *_V_1_1.mlapp

and just start from there again? | 1.0 | Restart Versions? - like:

*_V_3_1.mlapp --> *_V_1_1.mlapp

and just start from there again? | non_priority | restart versions like v mlapp v mlapp and just start from there again | 0 |

214,528 | 7,274,151,125 | IssuesEvent | 2018-02-21 08:59:00 | STEP-tw/battleship-phoenix | https://api.github.com/repos/STEP-tw/battleship-phoenix | closed | View settings | Low Priority small | As a _player_

I want to _have settings_

So that I may personalize language and settings_

**Additional Details**

User has landed on homepage.

Settings should be below about game option

**Acceptance Criteria**

- [x] Criteria 1

- Given _settings option_

- When _I click on settings_

- ... | 1.0 | View settings - As a _player_

I want to _have settings_

So that I may personalize language and settings_

**Additional Details**

User has landed on homepage.

Settings should be below about game option

**Acceptance Criteria**

- [x] Criteria 1

- Given _settings option_

- When _I click on... | priority | view settings as a player i want to have settings so that i may personalize language and settings additional details user has landed on homepage settings should be below about game option acceptance criteria criteria given settings option when i click on s... | 1 |

201,690 | 15,218,190,837 | IssuesEvent | 2021-02-17 17:30:29 | hazelcast/hazelcast | https://api.github.com/repos/hazelcast/hazelcast | closed | QueueSplitBrainProtectionReadTest element_splitBrainProtection[splitBrainProtectionType:READ] | Source: Internal Team: Core Type: Test-Failure | - Fails on `Hazelcast-4.master-sonar`

- Fails on [Build #698 (Nov 24, 2020 9:17:00 PM)](http://jenkins.hazelcast.com/view/Official%20Builds/job/Hazelcast-4.master-sonar/698/testReport/junit/com.hazelcast.splitbrainprotection.queue/QueueSplitBrainProtectionReadTest/element_splitBrainProtection_splitBrainProtectionType_... | 1.0 | QueueSplitBrainProtectionReadTest element_splitBrainProtection[splitBrainProtectionType:READ] - - Fails on `Hazelcast-4.master-sonar`

- Fails on [Build #698 (Nov 24, 2020 9:17:00 PM)](http://jenkins.hazelcast.com/view/Official%20Builds/job/Hazelcast-4.master-sonar/698/testReport/junit/com.hazelcast.splitbrainprotectio... | non_priority | queuesplitbrainprotectionreadtest element splitbrainprotection fails on hazelcast master sonar fails on error queue is empty stacktrace java util nosuchelementexception queue is empty at com hazelcast collection impl queue queueproxyimpl element queueproxyimpl java at co... | 0 |

522,211 | 15,158,179,786 | IssuesEvent | 2021-02-12 00:31:08 | NOAA-GSL/MATS | https://api.github.com/repos/NOAA-GSL/MATS | closed | Adjust appearance of curve show/hide buttons | Priority: Medium Project: MATS Status: Closed Type: Feature | ---

Author Name: **molly.b.smith** (@mollybsmith-noaa)

Original Redmine Issue: 63802, https://vlab.ncep.noaa.gov/redmine/issues/63802

Original Date: 2019-05-10

Original Assignee: molly.b.smith

---

From the user feedback session:

*****Hide/show buttons: invert colors, background white, text color of curve. Add ... | 1.0 | Adjust appearance of curve show/hide buttons - ---

Author Name: **molly.b.smith** (@mollybsmith-noaa)

Original Redmine Issue: 63802, https://vlab.ncep.noaa.gov/redmine/issues/63802

Original Date: 2019-05-10

Original Assignee: molly.b.smith

---

From the user feedback session:

*****Hide/show buttons: invert color... | priority | adjust appearance of curve show hide buttons author name molly b smith mollybsmith noaa original redmine issue original date original assignee molly b smith from the user feedback session hide show buttons invert colors background white text color of curve add space be... | 1 |

9,509 | 29,145,126,091 | IssuesEvent | 2023-05-18 01:43:09 | MicaelJarniac/cookiecutter-python-project | https://api.github.com/repos/MicaelJarniac/cookiecutter-python-project | opened | Separate type-checking deps for Nox session | enhancement automation | Installing all dev deps takes too long for the type-checking session. | 1.0 | Separate type-checking deps for Nox session - Installing all dev deps takes too long for the type-checking session. | non_priority | separate type checking deps for nox session installing all dev deps takes too long for the type checking session | 0 |

219,135 | 16,819,213,279 | IssuesEvent | 2021-06-17 11:04:15 | yewstack/yew | https://api.github.com/repos/yewstack/yew | closed | Document `VRef` usage | documentation | We have an example of `VRef` usage here: https://github.com/yewstack/yew/blob/v0.12.0/examples/inner_html/src/lib.rs#L36 but we don't document this pattern anywhere. It's a common question, for example: https://gitter.im/yewframework/Lobby?at=5e5b905b8e04426dd016384a | 1.0 | Document `VRef` usage - We have an example of `VRef` usage here: https://github.com/yewstack/yew/blob/v0.12.0/examples/inner_html/src/lib.rs#L36 but we don't document this pattern anywhere. It's a common question, for example: https://gitter.im/yewframework/Lobby?at=5e5b905b8e04426dd016384a | non_priority | document vref usage we have an example of vref usage here but we don t document this pattern anywhere it s a common question for example | 0 |

586,138 | 17,570,600,695 | IssuesEvent | 2021-08-14 16:01:31 | tahmid02016/tahmid02016.github.io | https://api.github.com/repos/tahmid02016/tahmid02016.github.io | closed | The header's margin along the Y axis needs to be increased. | enhancement priority:medium | To make the top section appear larger on computer screens, the margin along the Y axis needs to be increased further. | 1.0 | The header's margin along the Y axis needs to be increased. - To make the top section appear larger on computer screens, the margin along the Y axis needs to be increased further. | priority | the header s margin along the y axis needs to be increased to make the top section appear larger on computer screens the margin along the y axis needs to be increased further | 1 |

15,087 | 2,611,066,796 | IssuesEvent | 2015-02-27 00:31:13 | alistairreilly/andors-trail | https://api.github.com/repos/alistairreilly/andors-trail | closed | Ask to overwrite the savegame if the name differs. | auto-migrated Milestone-0.6.10 Priority-Medium Type-Enhancement | ```

qasur stated here:

http://andors.techby2guys.com/viewtopic.php?f=4&t=844

Can we get an "Are you sure?" button when you're about to overwrite an existing

saved file?

It should ask you if the character name being saved differs from the saved game

you're about to erase.

```

Original issue reported on code.google.... | 1.0 | Ask to overwrite the savegame if the name differs. - ```

qasur stated here:

http://andors.techby2guys.com/viewtopic.php?f=4&t=844

Can we get an "Are you sure?" button when you're about to overwrite an existing

saved file?

It should ask you if the character name being saved differs from the saved game

you're about t... | priority | ask to overwrite the savegame if the name differs qasur stated here can we get an are you sure button when you re about to overwrite an existing saved file it should ask you if the character name being saved differs from the saved game you re about to erase original issue reported on code google... | 1 |

55,784 | 14,681,837,777 | IssuesEvent | 2020-12-31 14:30:44 | TykTechnologies/tyk-operator | https://api.github.com/repos/TykTechnologies/tyk-operator | closed | creating 2 ingress resources results in an api definition being deleted | defect ingress | Template

```

apiVersion: tyk.tyk.io/v1alpha1

kind: ApiDefinition

metadata:

name: myapideftemplate

labels:

template: "true"

spec:

name: foo

protocol: http

use_keyless: true

use_standard_auth: false

active: true

proxy:

target_url: http://example.com

strip_listen_path: true

... | 1.0 | creating 2 ingress resources results in an api definition being deleted - Template

```

apiVersion: tyk.tyk.io/v1alpha1

kind: ApiDefinition

metadata:

name: myapideftemplate

labels:

template: "true"

spec:

name: foo

protocol: http

use_keyless: true

use_standard_auth: false

active: true

... | non_priority | creating ingress resources results in an api definition being deleted template apiversion tyk tyk io kind apidefinition metadata name myapideftemplate labels template true spec name foo protocol http use keyless true use standard auth false active true proxy ... | 0 |

660,007 | 21,948,305,781 | IssuesEvent | 2022-05-24 04:44:35 | DeFiCh/wallet | https://api.github.com/repos/DeFiCh/wallet | closed | Price Rates - order of token display | triage/accepted kind/feature area/ui-ux priority/low | <!-- Please only use this template for submitting enhancement/feature requests -->

#### What would you like to be added:

The order of the token displayed in price rates should be aligned with https://defiscan.live/

Follow the order of the pairing. If it's dBTC-DFI, then dBTC should be displayed first.

Align with ... | 1.0 | Price Rates - order of token display - <!-- Please only use this template for submitting enhancement/feature requests -->

#### What would you like to be added:

The order of the token displayed in price rates should be aligned with https://defiscan.live/

Follow the order of the pairing. If it's dBTC-DFI, then dBTC ... | priority | price rates order of token display what would you like to be added the order of the token displayed in price rates should be aligned with follow the order of the pairing if it s dbtc dfi then dbtc should be displayed first align with scan and go with the non dusd dfi token as the “primary” token ... | 1 |

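277,031 | 24,042,810,408 | IssuesEvent | 2022-09-16 04:43:47 | Tencent/bk-ci | https://api.github.com/repos/Tencent/bk-ci | closed | bug: if the latest version within a plugin's major version is delisted, the agent downloads that delisted version | kind/bug for gray for test done area/ci/backend tested | **Problem:** If the latest version within a plugin's major version is delisted, the agent downloads that delisted version

**Solution**: The agent should download the latest published version | 2.0 | bug: if the latest version within a plugin's major version is delisted, the agent downloads that delisted version - **Problem:** If the latest version within a plugin's major version is delisted, the agent downloads that delisted version

**Solution**: The agent should download the latest published version | non_priority | bug if the latest version within a plugin s major version is delisted the agent downloads that delisted version problem: if the latest version within a plugin s major version is delisted the agent downloads that delisted version solution : the agent should download the latest published version | 0 |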

464,713 | 13,338,552,876 | IssuesEvent | 2020-08-28 11:15:36 | RxJellyBot/Jelly-Bot | https://api.github.com/repos/RxJellyBot/Jelly-Bot | closed | Message Stats - Top Active Member Message Count | Priority: 7 Type: Task | List the daily message count of the below members in the corresponding section.

- Top 3

- Top 10

- Top 20

These tops could have different member for each days.

For example:

- 1st day Top 3: A B C

- 2nd day Top 3: A B D | 1.0 | Message Stats - Top Active Member Message Count - List the daily message count of the below members in the corresponding section.

- Top 3

- Top 10

- Top 20

These tops could have different member for each days.

For example:

- 1st day Top 3: A B C

- 2nd day Top 3: A B D | priority | message stats top active member message count list the daily message count of the below members in the corresponding section top top top these tops could have different member for each days for example day top a b c day top a b d | 1 |

65,490 | 7,882,454,734 | IssuesEvent | 2018-06-26 22:49:02 | quicwg/base-drafts | https://api.github.com/repos/quicwg/base-drafts | closed | CFIN only recoverable with timeout | -tls -transport design stream0 | If we're sending CFIN and 1-RTT data in the same flight, and only the CFIN is lost, the client can only use the handshake timeout to recover the FIN and not any threshold based recovery.

We don't allow the server to use the 1-RTT keys until it receives the CFIN. This makes it so that even if the server has received ... | 1.0 | CFIN only recoverable with timeout - If we're sending CFIN and 1-RTT data in the same flight, and only the CFIN is lost, the client can only use the handshake timeout to recover the FIN and not any threshold based recovery.

We don't allow the server to use the 1-RTT keys until it receives the CFIN. This makes it so ... | non_priority | cfin only recoverable with timeout if we re sending cfin and rtt data in the same flight and only the cfin is lost the client can only use the handshake timeout to recover the fin and not any threshold based recovery we don t allow the server to use the rtt keys until it receives the cfin this makes it so ... | 0 |

264,157 | 19,989,485,517 | IssuesEvent | 2022-01-31 03:24:18 | eslutz/Space-Adventure-Text-Game | https://api.github.com/repos/eslutz/Space-Adventure-Text-Game | reopened | Update/Add Documentation | documentation | Create or update the following files:

- [ ] README

- [ ] CHANGELOG

- [ ] SECURITY

- [ ] CODEOWNER

- [x] Issue Templates | 1.0 | Update/Add Documentation - Create or update the following files:

- [ ] README

- [ ] CHANGELOG

- [ ] SECURITY

- [ ] CODEOWNER

- [x] Issue Templates | non_priority | update add documentation create or update the following files readme changelog security codeowner issue templates | 0 |

559,003 | 16,547,269,920 | IssuesEvent | 2021-05-28 02:36:47 | swlegion/tts | https://api.github.com/repos/swlegion/tts | closed | Issues to fix for re-landing Spawnv2 | priority 1: high 🐛 bug | Make sure to test with this [`cis-spam.json`](https://gist.githubusercontent.com/matanlurey/8fcf4fc55250cc1b68eabdb093a0517c/raw/fe6d79f78dd469cafd8420f8fad32618bbfaeb6b/cis-spam.json).

- Add collider data for all of the assetbundle-based minis

- Larger units (>5 minis?) spawn models stacked

- Units not spawning a... | 1.0 | Issues to fix for re-landing Spawnv2 - Make sure to test with this [`cis-spam.json`](https://gist.githubusercontent.com/matanlurey/8fcf4fc55250cc1b68eabdb093a0517c/raw/fe6d79f78dd469cafd8420f8fad32618bbfaeb6b/cis-spam.json).

- Add collider data for all of the assetbundle-based minis

- Larger units (>5 minis?) spawn... | priority | issues to fix for re landing make sure to test with this add collider data for all of the assetbundle based minis larger units minis spawn models stacked units not spawning at all in the back row when they do spawn models are being scattered across the x axis | 1 |

224,646 | 17,767,048,199 | IssuesEvent | 2021-08-30 08:53:28 | ckeditor/ckeditor4 | https://api.github.com/repos/ckeditor/ckeditor4 | closed | Link and image are not displayed properly by print plugin | type:bug status:confirmed type:failingtest status:blocked | ## Type of report

Bug

## Provide detailed reproduction steps (if any)

1. Go to `/tests/plugins/print/manual/print`

2. Click `Print` button

3. Check out preview

### Expected result

Preview content matches editor content exactly.

### Actual result

9/10 tries image is missing and link is displayed a... | 1.0 | Link and image are not displayed properly by print plugin - ## Type of report

Bug

## Provide detailed reproduction steps (if any)

1. Go to `/tests/plugins/print/manual/print`

2. Click `Print` button

3. Check out preview

### Expected result

Preview content matches editor content exactly.

### Actual r... | non_priority | link and image are not displayed properly by print plugin type of report bug provide detailed reproduction steps if any go to tests plugins print manual print click print button check out preview expected result preview content matches editor content exactly actual r... | 0 |

269,531 | 23,447,627,793 | IssuesEvent | 2022-08-15 21:26:07 | common-fate/granted-approvals | https://api.github.com/repos/common-fate/granted-approvals | opened | Add tests for snowflake | tests | Currently snowflake is implemented and functional, however we do not have adequate tests in place.

https://github.com/common-fate/granted-approvals/commit/a2c38f95e9ca835f5be342de3b50141124962191 | 1.0 | Add tests for snowflake - Currently snowflake is implemented and functional, however we do not have adequate tests in place.

https://github.com/common-fate/granted-approvals/commit/a2c38f95e9ca835f5be342de3b50141124962191 | non_priority | add tests for snowflake currently snowflake is implemented and functional however we do not have adequate tests in place | 0 |

118,679 | 17,598,790,955 | IssuesEvent | 2021-08-17 09:11:30 | ghc-dev/Gary-Parks | https://api.github.com/repos/ghc-dev/Gary-Parks | opened | CVE-2021-21290 (Medium) detected in netty-handler-4.1.39.Final.jar, netty-codec-http-4.1.39.Final.jar | security vulnerability | ## CVE-2021-21290 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>netty-handler-4.1.39.Final.jar</b>, <b>netty-codec-http-4.1.39.Final.jar</b></p></summary>

<p>

<details><summary><... | True | CVE-2021-21290 (Medium) detected in netty-handler-4.1.39.Final.jar, netty-codec-http-4.1.39.Final.jar - ## CVE-2021-21290 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>netty-handl... | non_priority | cve medium detected in netty handler final jar netty codec http final jar cve medium severity vulnerability vulnerable libraries netty handler final jar netty codec http final jar netty handler final jar netty is an asynchronous event driven networ... | 0 |

300,790 | 9,212,466,921 | IssuesEvent | 2019-03-10 00:58:14 | gravityview/GravityView | https://api.github.com/repos/gravityview/GravityView | closed | Editing an entry strips the labels from product calculation fields, nulls totals | Bug Core: Edit Entry Core: Fields Difficulty: Medium Priority: High | The labels get stripped from the receipt table after editing in Edit Entry; probably due to bad serialization?

Also look into whether it's the deleting of the entry meta on `GravityView_Field_Product::clear_product_info_cache()` method

See [HS#10931](https://secure.h... | 1.0 | Editing an entry strips the labels from product calculation fields, nulls totals - The labels get stripped from the receipt table after editing in Edit Entry; probably due to bad serialization?

Also look into whether it's the deleting of the entry meta on `GravityView_Field_Product::clear_product_info_cache()` method

... | priority | editing an entry strips the labels from product calculation fields nulls totals the labels get stripped from the receipt table after editing in edit entry probably due to bad serialization also look into whether it s the deleting of the entry meta on gravityview field product clear product info cache method ... | 1 |

84,710 | 24,393,514,444 | IssuesEvent | 2022-10-04 17:07:22 | expo/expo | https://api.github.com/repos/expo/expo | closed | Create development build using EAS with Notifee - Could not determine the dependencies of task ':app:compileDebugJavaWithJavac'. | needs review Development Builds | ### Summary

Create development build using EAS.

I've followed the [ Notifee Expo support workflow ](https://notifee.app/react-native/docs/installation#expo-support) running

```

expo prebuild

eas build --profile development --platform android

```

with the latest the `expo-dev-client` package, `expo-cli`, `eas... | 1.0 | Create development build using EAS with Notifee - Could not determine the dependencies of task ':app:compileDebugJavaWithJavac'. - ### Summary

Create development build using EAS.

I've followed the [ Notifee Expo support workflow ](https://notifee.app/react-native/docs/installation#expo-support) running

```

expo p... | non_priority | create development build using eas with notifee could not determine the dependencies of task app compiledebugjavawithjavac summary create development build using eas i ve followed the running expo prebuild eas build profile development platform android with the latest the expo d... | 0 |

610,722 | 18,922,574,887 | IssuesEvent | 2021-11-17 04:47:30 | CMPUT301F21T20/HabitTracker | https://api.github.com/repos/CMPUT301F21T20/HabitTracker | closed | 6.1 Habit Event Location | priority: medium Habit Events Geolocation and Maps complexity: high | User Story: As a doer, I want a habit event to have an optional location to record where it happened.

When a user creates a habit event we need to give them the option to save their location. This requires asking for location permission and saving the location along with the other habit event info in Firestore.

T... | 1.0 | 6.1 Habit Event Location - User Story: As a doer, I want a habit event to have an optional location to record where it happened.

When a user creates a habit event we need to give them the option to save their location. This requires asking for location permission and saving the location along with the other habit ev... | priority | habit event location user story as a doer i want a habit event to have an optional location to record where it happened when a user creates a habit event we need to give them the option to save their location this requires asking for location permission and saving the location along with the other habit ev... | 1 |

9,235 | 27,776,174,150 | IssuesEvent | 2023-03-16 17:22:24 | gchq/gaffer-docker | https://api.github.com/repos/gchq/gaffer-docker | opened | Update image tagging to work with new versioning | Docker automation | The changes in #275 add the Accumulo version to the version tag for certain images. The GitHub Actions workflow requires updating to work correctly with this new tag format.

This is also a good opportunity to upgrade the workflows to make greater use of Actions instead of scripts and to also push images to GHCR. | 1.0 | Update image tagging to work with new versioning - The changes in #275 add the Accumulo version to the version tag for certain images. The GitHub Actions workflow requires updating to work correctly with this new tag format.

This is also a good opportunity to upgrade the workflows to make greater use of Actions inst... | non_priority | update image tagging to work with new versioning the changes in add the accumulo version to the version tag for certain images the github actions workflow requires updating to work correctly with this new tag format this is also a good opportunity to upgrade the workflows to make greater use of actions instea... | 0 |

257,355 | 8,136,301,283 | IssuesEvent | 2018-08-20 07:56:31 | ow2-proactive/scheduling-portal | https://api.github.com/repos/ow2-proactive/scheduling-portal | opened | When a user doesn't have the right to execute scripts from the Resource Manager portal, a Http 500 error is reported | priority:low severity:minor type:bug | When a user doesn't have the right to execute scripts from the RM Portal, the portal reports a Http 500 error. This error message is confusing. Better replace it by a message like "You are not authorised to execute scripts on this node, please contact the node's administrator." | 1.0 | When a user doesn't have the right to execute scripts from the Resource Manager portal, a Http 500 error is reported - When a user doesn't have the right to execute scripts from the RM Portal, the portal reports a Http 500 error. This error message is confusing. Better replace it by a message like "You are not authoris... | priority | when a user doesn t have the right to execute scripts from the resource manager portal a http error is reported when a user doesn t have the right to execute scripts from the rm portal the portal reports a http error this error message is confusing better replace it by a message like you are not authorised t... | 1 |

553,064 | 16,342,922,199 | IssuesEvent | 2021-05-13 01:27:40 | knative/eventing | https://api.github.com/repos/knative/eventing | closed | Make dynamically created resources configurable | kind/feature-request lifecycle/stale priority/important-soon | **Problem**

Most of the resources created dynamically are not configurable. For instance see how the [PingSource receive adapter](https://github.com/knative/eventing/blob/master/pkg/reconciler/pingsource/resources/mt_receive_adapter.go#L49) is created.

**[Persona:](https://github.com/knative/eventing/blob/master/do... | 1.0 | Make dynamically created resources configurable - **Problem**

Most of the resources created dynamically are not configurable. For instance see how the [PingSource receive adapter](https://github.com/knative/eventing/blob/master/pkg/reconciler/pingsource/resources/mt_receive_adapter.go#L49) is created.

**[Persona:](... | priority | make dynamically created resources configurable problem most of the resources created dynamically are not configurable for instance see how the is created which persona is this feature for operator exit criteria a measurable binary test that would indicate that the problem has been r... | 1 |

46,026 | 13,055,840,532 | IssuesEvent | 2020-07-30 02:53:37 | icecube-trac/tix2 | https://api.github.com/repos/icecube-trac/tix2 | opened | const-correctness in icetray (Trac #459) | Incomplete Migration Migrated from Trac combo reconstruction defect | Migrated from https://code.icecube.wisc.edu/ticket/459

```json

{

"status": "closed",

"changetime": "2013-03-20T21:11:36",

"description": "I found small bugs related to const methods. These are trivially corrected by adding a 'const' keyword.\n\nin icetray/private/icetray/PythonModule.h:\n\n\n{{{\n- const... | 1.0 | const-correctness in icetray (Trac #459) - Migrated from https://code.icecube.wisc.edu/ticket/459

```json

{

"status": "closed",

"changetime": "2013-03-20T21:11:36",

"description": "I found small bugs related to const methods. These are trivially corrected by adding a 'const' keyword.\n\nin icetray/private... | non_priority | const correctness in icetray trac migrated from json status closed changetime description i found small bugs related to const methods these are trivially corrected by adding a const keyword n nin icetray private icetray pythonmodule h n n n n const getc... | 0 |

585,703 | 17,515,238,334 | IssuesEvent | 2021-08-11 05:31:50 | GIST-Petition-Site-Project/GIST-petition-web | https://api.github.com/repos/GIST-Petition-Site-Project/GIST-petition-web | opened | Refactor the sign-up page code and improve the UI for real users | Type: Feature/UI Type: Feature/Function Status: To Do Priority: Medium | ## Feature description

- Declare state as a single object instead of declaring each value individually

- The UI is currently built for test users; once the email verification API is implemented, the UI should be improved for real users

### Use cases

## Benefits

For whom and why.

## Requirements

- pages/SignUp.js

## Links / references

| 1.0 | Refactor the sign-up page code and improve the UI for real users - ## Feature description

- Declare state as a single object instead of declaring each value individually

- The UI is currently built for test users; once the email verification API is implemented, the UI should be improved for real users

### Use cases

## Benefits

For whom and why.

## Requirements

- pages/SignUp.js

## Links / references

| priority | refactor the sign up page code and improve the ui for real users feature description declare state as a single object instead of declaring each value individually the ui is currently built for test users once the email verification api is implemented the ui should be improved for real users use cases benefits for whom and why requirements pages signup js links references | 1 |

54,332 | 3,066,352,687 | IssuesEvent | 2015-08-18 00:46:55 | theminted/lesswrong-migrated | https://api.github.com/repos/theminted/lesswrong-migrated | opened | WebApp Error: <type 'exceptions.AttributeError'>: _id not found | bug imported Priority-Medium | _From [wjmo...@gmail.com](https://code.google.com/u/117567618910921056910/) on October 22, 2013 09:17:03_

Error occurred accessing http://lesswrong.com/comments/ See attached exception email.

**Attachment:** [_id not found.pdf](http://code.google.com/p/lesswrong/issues/detail?id=408)

_Original issue: http://code.goo... | 1.0 | WebApp Error: <type 'exceptions.AttributeError'>: _id not found - _From [wjmo...@gmail.com](https://code.google.com/u/117567618910921056910/) on October 22, 2013 09:17:03_

Error occurred accessing http://lesswrong.com/comments/ See attached exception email.

**Attachment:** [_id not found.pdf](http://code.google.com/p... | priority | webapp error id not found from on october error occurred accessing see attached exception email attachment original issue | 1 |

542,779 | 15,866,617,088 | IssuesEvent | 2021-04-08 15:56:33 | ESCOMP/CTSM | https://api.github.com/repos/ESCOMP/CTSM | closed | external munging with cdeps and fox | priority: low tag: next type: -external type: enhancement | ### Brief summary of bug

The following generates an unclean state in components/cdeps that I'm not quite sure how to clean out:

```

git clone git@github.com:ESCOMP/CTSM.git ctsm-test-cdeps

cd ctsm-test-cdeps/

git remote add ckoven_repo git@github.com:ckoven/CTSM.git

git fetch ckoven_repo

./manage_externals/c... | 1.0 | external munging with cdeps and fox - ### Brief summary of bug

The following generates an unclean state in components/cdeps that I'm not quite sure how to clean out:

```

git clone git@github.com:ESCOMP/CTSM.git ctsm-test-cdeps

cd ctsm-test-cdeps/

git remote add ckoven_repo git@github.com:ckoven/CTSM.git

git f... | priority | external munging with cdeps and fox brief summary of bug the following generates an unclean state in components cdeps that i m not quite sure how to clean out git clone git github com escomp ctsm git ctsm test cdeps cd ctsm test cdeps git remote add ckoven repo git github com ckoven ctsm git git f... | 1 |

175,335 | 6,549,787,806 | IssuesEvent | 2017-09-05 08:29:32 | rogerthat-platform/rogerthat-backend | https://api.github.com/repos/rogerthat-platform/rogerthat-backend | opened | Sending message to deactivated user caused Exception instead of CanOnlySendToFriendsException | priority_minor type_bug | ```

Unknown exception occurred: error id bc0bc0ac-f532-4144-8011-d9a3e873fc6d (/base/data/home/apps/e~rogerthat-server/36.403870270423677127/add_1_monkey_patches.py:111)

Traceback (most recent call last):

File "/base/data/home/apps/e~rogerthat-server/36.403870270423677127/rogerthat/rpc/service.py", line 570, in _e... | 1.0 | Sending message to deactivated user caused Exception instead of CanOnlySendToFriendsException - ```

Unknown exception occurred: error id bc0bc0ac-f532-4144-8011-d9a3e873fc6d (/base/data/home/apps/e~rogerthat-server/36.403870270423677127/add_1_monkey_patches.py:111)

Traceback (most recent call last):

File "/base/da... | priority | sending message to deactivated user caused exception instead of canonlysendtofriendsexception unknown exception occurred error id base data home apps e rogerthat server add monkey patches py traceback most recent call last file base data home apps e rogerthat server rogerthat r... | 1 |

44,216 | 2,900,282,469 | IssuesEvent | 2015-06-17 15:41:30 | rasmi/civic-graph | https://api.github.com/repos/rasmi/civic-graph | closed | Only show three items by default in overflowing lists. | enhancement priority-medium | See `$scope.itemsShownDefault` in `app/static/js/app.js` to set defaults. | 1.0 | Only show three items by default in overflowing lists. - See `$scope.itemsShownDefault` in `app/static/js/app.js` to set defaults. | priority | only show three items by default in overflowing lists see scope itemsshowndefault in app static js app js to set defaults | 1 |

133,090 | 5,196,815,538 | IssuesEvent | 2017-01-23 14:02:10 | fgpv-vpgf/fgpv-vpgf | https://api.github.com/repos/fgpv-vpgf/fgpv-vpgf | closed | "Help" non responsive in Firefox | browser: Foxes bug-type: broken use case priority: high problem: bug v1.5.0 | Tested URL :http://fgpv.cloudapp.net/demo/v1.5.0-1/prod/samples/index-fgp-en.html?keys=JOSM,EcoAction,CESI_Other,NPRI_CO,Railways,mb_colour,Airports,Barley

"Help" option from the left hand side panel or from the right side panel do not work. Help window does not load in both cases. Below is the error I see in the co... | 1.0 | "Help" non responsive in Firefox - Tested URL :http://fgpv.cloudapp.net/demo/v1.5.0-1/prod/samples/index-fgp-en.html?keys=JOSM,EcoAction,CESI_Other,NPRI_CO,Railways,mb_colour,Airports,Barley

"Help" option from the left hand side panel or from the right side panel do not work. Help window does not load in both cases.... | priority | help non responsive in firefox tested url help option from the left hand side panel or from the right side panel do not work help window does not load in both cases below is the error i see in the console please investigate | 1 |

827,175 | 31,758,274,094 | IssuesEvent | 2023-09-12 01:38:00 | googleapis/python-dns | https://api.github.com/repos/googleapis/python-dns | opened | tests.unit.test__http.TestConnection: test_build_api_url_w_extra_query_params failed | type: bug priority: p1 flakybot: issue | Note: #85 was also for this test, but it was closed more than 10 days ago. So, I didn't mark it flaky.

----

commit: 7bf0386cffba77c0a0b14865d28944f476e9c5e2

buildURL: [Build Status](https://source.cloud.google.com/results/invocations/00af0d43-6328-4db5-8d99-3d1dbed23cb2), [Sponge](http://sponge2/00af0d43-6328-4db5-8d... | 1.0 | tests.unit.test__http.TestConnection: test_build_api_url_w_extra_query_params failed - Note: #85 was also for this test, but it was closed more than 10 days ago. So, I didn't mark it flaky.

----

commit: 7bf0386cffba77c0a0b14865d28944f476e9c5e2

buildURL: [Build Status](https://source.cloud.google.com/results/invocatio... | priority | tests unit test http testconnection test build api url w extra query params failed note was also for this test but it was closed more than days ago so i didn t mark it flaky commit buildurl status failed test output self def test build api url w extra query params self ... | 1 |

52,285 | 10,817,491,366 | IssuesEvent | 2019-11-08 09:51:42 | MoonchildProductions/UXP | https://api.github.com/repos/MoonchildProductions/UXP | closed | Remove DiskSpaceWatcher | App: Toolkit Code Cleanup Fixed Low Risk | This component was only ever relevant for space-restricted devices (i.e. GONK/Firefox OS) and has never been enabled on any other target. This is unmaintained code with an unknown working state.

It lives in `/toolkit/components/diskspacewatcher` and has some call sites from DOM (for local storage restrictions if the... | 1.0 | Remove DiskSpaceWatcher - This component was only ever relevant for space-restricted devices (i.e. GONK/Firefox OS) and has never been enabled on any other target. This is unmaintained code with an unknown working state.

It lives in `/toolkit/components/diskspacewatcher` and has some call sites from DOM (for local s... | non_priority | remove diskspacewatcher this component was only ever relevant for space restricted devices i e gonk firefox os and has never been enabled on any other target this is unmaintained code with an unknown working state it lives in toolkit components diskspacewatcher and has some call sites from dom for local s... | 0 |

62,780 | 12,240,706,310 | IssuesEvent | 2020-05-05 01:16:49 | microsoft/AdaptiveCards | https://api.github.com/repos/microsoft/AdaptiveCards | closed | [UWP][Accessibility] Focusable sibling elements must not have same and localized control type. | Bug Status-In Code Review Triage-Approved for Fix | # Platform

* UWP

# Version of SDK

master

# Details

Repro Steps:

1. Launch the application.

2. New screen starts appearing. Navigate to Left pane JSON files.

3. Now select "ExpenseReport.JSON" button. It will update the Middle and Right Pane.

4. Navigate to amount section like (Air Travel Expense $300,... | 1.0 | [UWP][Accessibility] Focusable sibling elements must not have same and localized control type. - # Platform

* UWP

# Version of SDK

master

# Details

Repro Steps:

1. Launch the application.

2. New screen starts appearing. Navigate to Left pane JSON files.

3. Now select "ExpenseReport.JSON" button. It will... | non_priority | focusable sibling elements must not have same and localized control type platform uwp version of sdk master details repro steps launch the application new screen starts appearing navigate to left pane json files now select expensereport json button it will update the middle... | 0 |

207,178 | 7,125,398,021 | IssuesEvent | 2018-01-19 22:50:56 | sul-dlss/preservation_catalog | https://api.github.com/repos/sul-dlss/preservation_catalog | closed | (C2M) SQL query for looping through catalog entries | high priority in progress | (See PR #459 if it hasn't been merged yet)

We will be selecting PreservedCopy objects by storage_dir / endpoint and by last_version_audit date. We want a SQL query to get us the correct PreservedCopy objects, but it must be done in a scalable way (i.e. chunked).

@jmartin-sul says: "re: the C2M query, i think you co... | 1.0 | (C2M) SQL query for looping through catalog entries - (See PR #459 if it hasn't been merged yet)

We will be selecting PreservedCopy objects by storage_dir / endpoint and by last_version_audit date. We want a SQL query to get us the correct PreservedCopy objects, but it must be done in a scalable way (i.e. chunked).

... | priority | sql query for looping through catalog entries see pr if it hasn t been merged yet we will be selecting preservedcopy objects by storage dir endpoint and by last version audit date we want a sql query to get us the correct preservedcopy objects but it must be done in a scalable way i e chunked jm... | 1 |

70,717 | 13,527,485,773 | IssuesEvent | 2020-09-15 15:28:28 | Perl/perl5 | https://api.github.com/repos/Perl/perl5 | closed | study_chunk recursion | Needs Triage meta-regexp-code | This is a placeholder ticket for consideration of a theoretically possible bug.

In #16947 we found that study_chunk reinvokes itself in two ways - by simple recursion, and by enframing. In some cases that involves restudying regexp ops multiple times, whereas in other cases the reinvocation is the only time the rele... | 1.0 | study_chunk recursion - This is a placeholder ticket for consideration of a theoretically possible bug.

In #16947 we found that study_chunk reinvokes itself in two ways - by simple recursion, and by enframing. In some cases that involves restudying regexp ops multiple times, whereas in other cases the reinvocation i... | non_priority | study chunk recursion this is a placeholder ticket for consideration of a theoretically possible bug in we found that study chunk reinvokes itself in two ways by simple recursion and by enframing in some cases that involves restudying regexp ops multiple times whereas in other cases the reinvocation is th... | 0 |

375,669 | 11,115,104,711 | IssuesEvent | 2019-12-18 10:03:47 | ooni/probe-engine | https://api.github.com/repos/ooni/probe-engine | closed | QA for Telegram in Go | cycle backlog effort/M priority/high technical task | This issue is about doing a final round of QA for Telegram in Go and replace the C++ implementation in MK with the implementation in Go, if we're satisfied. | 1.0 | QA for Telegram in Go - This issue is about doing a final round of QA for Telegram in Go and replace the C++ implementation in MK with the implementation in Go, if we're satisfied. | priority | qa for telegram in go this issue is about doing a final round of qa for telegram in go and replace the c implementation in mk with the implementation in go if we re satisfied | 1 |

659,251 | 21,920,721,036 | IssuesEvent | 2022-05-22 14:27:53 | stax76/mpv.net | https://api.github.com/repos/stax76/mpv.net | closed | The autoload function is not working normally | feature request priority medium | **Describe the bug**

When files are recovered not through the menu but through other scripts, other files in the same directory cannot be loaded automatically. For example, this restriction will be triggered when using the script function of [SmartHistory.lua](https://github.com/Eisa01/mpv-scripts#smarthistory-script)... | 1.0 | The autoload function is not working normally - **Describe the bug**

When files are recovered not through the menu but through other scripts, other files in the same directory cannot be loaded automatically. For example, this restriction will be triggered when using the script function of [SmartHistory.lua](https://gi... | priority | the autoload function is not working normally describe the bug when files are recovered not through the menu but through other scripts other files in the same directory cannot be loaded automatically for example this restriction will be triggered when using the script function of or but the works... | 1 |

139,434 | 12,856,996,219 | IssuesEvent | 2020-07-09 08:35:19 | xarial/docify | https://api.github.com/repos/xarial/docify | opened | Add merge option to code snippet plugin | documentation library | This option should merge the snippets created as the result of excluding regions or multiple regions into a single snippet without jagged lines | 1.0 | Add merge option to code snippet plugin - This option should merge the snippets created as the result of excluding regions or multiple regions into a single snippet without jagged lines | non_priority | add merge option to code snippet plugin this option should merge the snippets created as the result of excluding regions or multiple regions into a single snippet without jagged lines | 0 |

20,296 | 26,933,368,383 | IssuesEvent | 2023-02-07 18:30:50 | scverse/anndata | https://api.github.com/repos/scverse/anndata | opened | Remove workaround from test_concat_size_0_dim once upstream bug fixed | upstream topic: combining Bug 🐛 dev process | * https://github.com/scikit-hep/awkward/issues/2209

Remove marked workaround from `test_concat_size_0_dim` once the upstream bug from awkward is fixed, and a release made. | 1.0 | Remove workaround from test_concat_size_0_dim once upstream bug fixed - * https://github.com/scikit-hep/awkward/issues/2209

Remove marked workaround from `test_concat_size_0_dim` once the upstream bug from awkward is fixed, and a release made. | non_priority | remove workaround from test concat size dim once upstream bug fixed remove marked workaround from test concat size dim once the upstream bug from awkward is fixed and a release made | 0 |

275,204 | 20,912,436,470 | IssuesEvent | 2022-03-24 10:31:39 | JoGorska/bonsai-shop | https://api.github.com/repos/JoGorska/bonsai-shop | opened | DOCS: html validation | documentation | - validate all pages

- download prof of validation

- update readme with validation result

| 1.0 | DOCS: html validation - - validate all pages

- download prof of validation

- update readme with validation result

| non_priority | docs html validation validate all pages download prof of validation update readme with validation result | 0 |

376,635 | 11,149,594,234 | IssuesEvent | 2019-12-23 19:16:32 | texas-justice-initiative/website-nextjs | https://api.github.com/repos/texas-justice-initiative/website-nextjs | opened | make change to Eva Ruth's bio | high priority | Please make the following change to Eva Ruth's bio on "About Us" (no longer writing the book, thankfully!!)

> Executive Director and co-founder Eva Ruth Moravec is a 2018 John Jay/Harry Frank Guggenheim Criminal Justice Reporting fellow, **and a** freelance reporter `<del>`and the author of a forthcoming book that ... | 1.0 | make change to Eva Ruth's bio - Please make the following change to Eva Ruth's bio on "About Us" (no longer writing the book, thankfully!!)

> Executive Director and co-founder Eva Ruth Moravec is a 2018 John Jay/Harry Frank Guggenheim Criminal Justice Reporting fellow, **and a** freelance reporter `<del>`and the au... | priority | make change to eva ruth s bio please make the following change to eva ruth s bio on about us no longer writing the book thankfully executive director and co founder eva ruth moravec is a john jay harry frank guggenheim criminal justice reporting fellow and a freelance reporter and the author of... | 1 |

832,392 | 32,078,554,066 | IssuesEvent | 2023-09-25 12:37:33 | AY2324S1-CS2103T-T10-2/tp | https://api.github.com/repos/AY2324S1-CS2103T-T10-2/tp | opened | As an Event Planner, I can search for my contacts | type.Story priority.High | ... so that I can quickly locate the details of contact I want.

Tasks:

- [ ] Implement searching of contacts `findPerson` @jiakai-17

- [ ] Add `findPerson` to User Guide @jiakai-17 | 1.0 | As an Event Planner, I can search for my contacts - ... so that I can quickly locate the details of contact I want.

Tasks:

- [ ] Implement searching of contacts `findPerson` @jiakai-17

- [ ] Add `findPerson` to User Guide @jiakai-17 | priority | as an event planner i can search for my contacts so that i can quickly locate the details of contact i want tasks implement searching of contacts findperson jiakai add findperson to user guide jiakai | 1 |

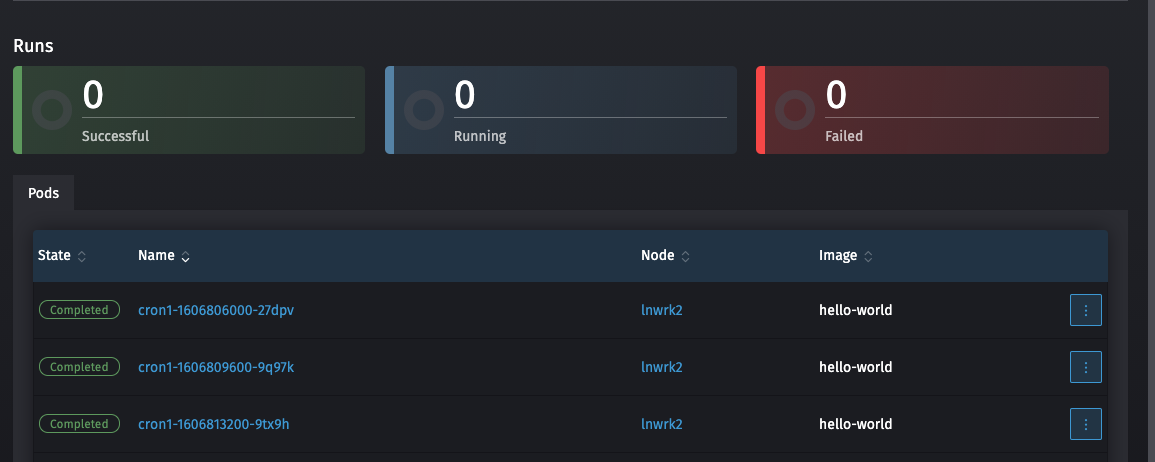

203,018 | 15,339,770,912 | IssuesEvent | 2021-02-27 03:29:30 | rancher/dashboard | https://api.github.com/repos/rancher/dashboard | closed | Cronjob is not counting successful amount in details | [zube]: To Test area/workloads kind/bug | Steps:

1. Create a cronjob that will fire every few minutes.

2. After about 10 minutes go to the details page

Results: The job fires successfully, but the count still says 0

| 1.0 | Cronjob is not counting successful amount in details - Steps:

1. Create a cronjob that will fire every few minutes.

2. After about 10 minutes go to the details page

Results: The job fires successfully, but the count still says 0

`

- [x] `h_labels()`

- [x] `fit_mmrm()`

- [x] `g_mmrm_diagnostic()1

- [x] document

- [x] Add tests | 1.0 | Add `weights` option to `fit_mmrm` etc. - Idea: Expose the `weights` option from `mmrm`.

To do:

- [x] `h_assert_data()`

- [x] `h_labels()`

- [x] `fit_mmrm()`

- [x] `g_mmrm_diagnostic()1

- [x] document

- [x] Add tests | priority | add weights option to fit mmrm etc idea expose the weights option from mmrm to do h assert data h labels fit mmrm g mmrm diagnostic document add tests | 1 |

568,323 | 16,964,751,154 | IssuesEvent | 2021-06-29 09:30:52 | webcompat/web-bugs | https://api.github.com/repos/webcompat/web-bugs | closed | mail.yahoo.com - design is broken | browser-firefox engine-gecko os-mac priority-critical | <!-- @browser: Firefox 90.0 -->

<!-- @ua_header: Mozilla/5.0 (Macintosh; Intel Mac OS X 10.15; rv:90.0) Gecko/20100101 Firefox/90.0 -->

<!-- @reported_with: desktop-reporter -->

<!-- @public_url: https://github.com/webcompat/web-bugs/issues/78239 -->

**URL**: https://mail.yahoo.com/d/folders/1/messages/38474

**Browse... | 1.0 | mail.yahoo.com - design is broken - <!-- @browser: Firefox 90.0 -->

<!-- @ua_header: Mozilla/5.0 (Macintosh; Intel Mac OS X 10.15; rv:90.0) Gecko/20100101 Firefox/90.0 -->

<!-- @reported_with: desktop-reporter -->

<!-- @public_url: https://github.com/webcompat/web-bugs/issues/78239 -->

**URL**: https://mail.yahoo.com/... | priority | mail yahoo com design is broken url browser version firefox operating system mac os x tested another browser yes safari problem type design is broken description items not fully visible steps to reproduce yahoo mail preview screen will not load the entire do... | 1 |

143,051 | 19,142,713,398 | IssuesEvent | 2021-12-02 01:56:51 | azmathasan92/concourse-ci-cd | https://api.github.com/repos/azmathasan92/concourse-ci-cd | opened | CVE-2021-22096 (Medium) detected in spring-webflux-5.0.8.RELEASE.jar, spring-web-5.0.8.RELEASE.jar | security vulnerability | ## CVE-2021-22096 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>spring-webflux-5.0.8.RELEASE.jar</b>, <b>spring-web-5.0.8.RELEASE.jar</b></p></summary>

<p>

<details><summary><b>s... | True | CVE-2021-22096 (Medium) detected in spring-webflux-5.0.8.RELEASE.jar, spring-web-5.0.8.RELEASE.jar - ## CVE-2021-22096 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>spring-webflux... | non_priority | cve medium detected in spring webflux release jar spring web release jar cve medium severity vulnerability vulnerable libraries spring webflux release jar spring web release jar spring webflux release jar spring webflux library home page a href... | 0 |

72,239 | 8,712,605,268 | IssuesEvent | 2018-12-06 22:48:46 | kowhai-2018/Final-Project | https://api.github.com/repos/kowhai-2018/Final-Project | closed | Design a prototype/mockup of app using design guides | client design documentation | Have a wiki page dedicated to the design specs including all the different types of design guides, colours etc from #2 .

Relates to #2 | 1.0 | Design a prototype/mockup of app using design guides - Have a wiki page dedicated to the design specs including all the different types of design guides, colours etc from #2 .

Relates to #2 | non_priority | design a prototype mockup of app using design guides have a wiki page dedicated to the design specs including all the different types of design guides colours etc from relates to | 0 |

120,595 | 10,129,799,477 | IssuesEvent | 2019-08-01 15:30:20 | w3c/webrtc-pc | https://api.github.com/repos/w3c/webrtc-pc | closed | callback-based https://w3c.github.io/webrtc-pc/#method-extensions are not covered by WPT tests | Needs Test question | Looking at WPT webrtc tests, I do not find any testing for callback-based createOffer and addIceCandidate.

Are there already tests covering these somewhere?

It would be nice to add such WPT tests if not already covered. | 1.0 | callback-based https://w3c.github.io/webrtc-pc/#method-extensions are not covered by WPT tests - Looking at WPT webrtc tests, I do not find any testing for callback-based createOffer and addIceCandidate.

Are there already tests covering these somewhere?

It would be nice to add such WPT tests if not already covered. | non_priority | callback based are not covered by wpt tests looking at wpt webrtc tests i do not find any testing for callback based createoffer and addicecandidate are there already tests covering these somewhere it would be nice to add such wpt tests if not already covered | 0 |

469,289 | 13,505,002,390 | IssuesEvent | 2020-09-13 20:40:00 | apexcharts/apexcharts.js | https://api.github.com/repos/apexcharts/apexcharts.js | reopened | Chart height on mobile chrome/brave not correct (depending on number of lines in legend) | bug high-priority legend mobile | ## Codepen

https://codepen.io/guybrush-the-bold/full/LoXdgN

## Explanation

- What is the behavior you expect?

The chart should look the same on all devices

- What is happening instead?

On chrome (and brave) the height of the chart-plot is much less than on other devices - depending on the amount of line... | 1.0 | Chart height on mobile chrome/brave not correct (depending on number of lines in legend) - ## Codepen

https://codepen.io/guybrush-the-bold/full/LoXdgN

## Explanation

- What is the behavior you expect?

The chart should look the same on all devices

- What is happening instead?

On chrome (and brave) the he... | priority | chart height on mobile chrome brave not correct depending on number of lines in legend codepen explanation what is the behavior you expect the chart should look the same on all devices what is happening instead on chrome and brave the height of the chart plot is much less than on oth... | 1 |

633,803 | 20,266,206,910 | IssuesEvent | 2022-02-15 12:19:20 | tendermint/starport | https://api.github.com/repos/tendermint/starport | opened | network: stabilize HTTP tunneling | priority/high network | The feature first implemented by https://github.com/tendermint/starport/pull/2055.

There is a connectivity problem with more than two nodes when Gitpod and VM nodes used together.

@ivanovpetr also reported that:

> And I also found out that gitpod node id changes every time when it stopped

Let's ensure that

-... | 1.0 | network: stabilize HTTP tunneling - The feature first implemented by https://github.com/tendermint/starport/pull/2055.

There is a connectivity problem with more than two nodes when Gitpod and VM nodes used together.

@ivanovpetr also reported that:

> And I also found out that gitpod node id changes every time whe... | priority | network stabilize http tunneling the feature first implemented by there is a connectivity problem with more than two nodes when gitpod and vm nodes used together ivanovpetr also reported that and i also found out that gitpod node id changes every time when it stopped let s ensure that we can ru... | 1 |

177,781 | 21,509,183,168 | IssuesEvent | 2022-04-28 01:13:31 | rgordon95/advanced-react-demo | https://api.github.com/repos/rgordon95/advanced-react-demo | opened | WS-2021-0153 (High) detected in ejs-2.5.6.tgz | security vulnerability | ## WS-2021-0153 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>ejs-2.5.6.tgz</b></p></summary>

<p>Embedded JavaScript templates</p>

<p>Library home page: <a href="https://registry.npm... | True | WS-2021-0153 (High) detected in ejs-2.5.6.tgz - ## WS-2021-0153 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>ejs-2.5.6.tgz</b></p></summary>

<p>Embedded JavaScript templates</p>

<p>... | non_priority | ws high detected in ejs tgz ws high severity vulnerability vulnerable library ejs tgz embedded javascript templates library home page a href path to dependency file advanced react demo package json path to vulnerable library node modules ejs package json depend... | 0 |

37,765 | 18,764,850,412 | IssuesEvent | 2021-11-05 21:40:21 | Azure/azure-functions-host | https://api.github.com/repos/Azure/azure-functions-host | closed | Dynamic Concurrency Support | performance | ## Overview

This epic item tracks the work across the various repos for enabling **Dynamic Concurrency** (DC). The idea for DC is to define a collaborative model between the host and extensions to allow extensions to support a dynamic concurrency mode. In this mode, rather than the user manually configuring host lev... | True | Dynamic Concurrency Support - ## Overview

This epic item tracks the work across the various repos for enabling **Dynamic Concurrency** (DC). The idea for DC is to define a collaborative model between the host and extensions to allow extensions to support a dynamic concurrency mode. In this mode, rather than the user... | non_priority | dynamic concurrency support overview this epic item tracks the work across the various repos for enabling dynamic concurrency dc the idea for dc is to define a collaborative model between the host and extensions to allow extensions to support a dynamic concurrency mode in this mode rather than the user... | 0 |

39,833 | 9,670,953,544 | IssuesEvent | 2019-05-21 21:14:51 | bridgedotnet/Bridge | https://api.github.com/repos/bridgedotnet/Bridge | closed | await on an already completed Task can abort async method | area-task defect | When using await on a Task object that is already completed, the javascript code nevertheless calls the function "continueWith" on the Task. This function then in turn immediately calls back to execute the continuation. This causes an ever-deeper nesting of function calls until some overflow happens and the execution t... | 1.0 | await on an already completed Task can abort async method - When using await on a Task object that is already completed, the javascript code nevertheless calls the function "continueWith" on the Task. This function then in turn immediately calls back to execute the continuation. This causes an ever-deeper nesting of fu... | non_priority | await on an already completed task can abort async method when using await on a task object that is already completed the javascript code nevertheless calls the function continuewith on the task this function then in turn immediately calls back to execute the continuation this causes an ever deeper nesting of fu... | 0 |

470,656 | 13,542,353,983 | IssuesEvent | 2020-09-16 17:12:35 | googleapis/python-storage | https://api.github.com/repos/googleapis/python-storage | closed | Hashes are no longer returned for partial downloads | api: storage needs more info priority: p2 | #204 Changed behavior for hashes for partial downloads.

When a partial request is made, we do not return the 'X-Goog-Hash' headers. As a result,

[blob.py:812](https://github.com/googleapis/python-storage/pull/204/files#diff-5d3277a5f0f072a447a1eb89f9fa1ae0R812) overwrites the `blob.crc32c` value with its default, `N... | 1.0 | Hashes are no longer returned for partial downloads - #204 Changed behavior for hashes for partial downloads.

When a partial request is made, we do not return the 'X-Goog-Hash' headers. As a result,

[blob.py:812](https://github.com/googleapis/python-storage/pull/204/files#diff-5d3277a5f0f072a447a1eb89f9fa1ae0R812) o... | priority | hashes are no longer returned for partial downloads changed behavior for hashes for partial downloads when a partial request is made we do not return the x goog hash headers as a result overwrites the blob value with its default none overwrites the blob hash value with its default n... | 1 |

93,303 | 3,898,884,202 | IssuesEvent | 2016-04-17 11:39:25 | CoderDojo/community-platform | https://api.github.com/repos/CoderDojo/community-platform | closed | Links to Charter & privacy statement broken when logged in | bug high priority | Charter link in nav and privacy statement link in footer seems to break when a user is logged in but works fine when no one is logged in.

1. Log in your account in zen

2. Click privacy statement - will give 404

3. Click charter - will go to https://zen.coderdojo.com/undefined

if you skip step 2, charter works... | 1.0 | Links to Charter & privacy statement broken when logged in - Charter link in nav and privacy statement link in footer seems to break when a user is logged in but works fine when no one is logged in.

1. Log in your account in zen

2. Click privacy statement - will give 404

3. Click charter - will go to https://zen... | priority | links to charter privacy statement broken when logged in charter link in nav and privacy statement link in footer seems to break when a user is logged in but works fine when no one is logged in log in your account in zen click privacy statement will give click charter will go to if you s... | 1 |

297,833 | 25,766,690,557 | IssuesEvent | 2022-12-09 02:49:57 | PalisadoesFoundation/talawa-api | https://api.github.com/repos/PalisadoesFoundation/talawa-api | closed | Resolvers: Create Tests for getDonationByOrgId | good first issue unapproved parent points 02 test | The Talawa-API code base needs to be 100% reliable. This means we need to have 100% test code coverage.

Tests need to be written for file `lib/resolvers/donation_query/getDonationByOrgId.js`

- We will need the API to be refactored for all methods, classes and/or functions found in this file for testing to be corr... | 1.0 | Resolvers: Create Tests for getDonationByOrgId - The Talawa-API code base needs to be 100% reliable. This means we need to have 100% test code coverage.

Tests need to be written for file `lib/resolvers/donation_query/getDonationByOrgId.js`

- We will need the API to be refactored for all methods, classes and/or fu... | non_priority | resolvers create tests for getdonationbyorgid the talawa api code base needs to be reliable this means we need to have test code coverage tests need to be written for file lib resolvers donation query getdonationbyorgid js we will need the api to be refactored for all methods classes and or functi... | 0 |

47,207 | 19,573,728,087 | IssuesEvent | 2022-01-04 13:09:51 | hashicorp/terraform-provider-aws | https://api.github.com/repos/hashicorp/terraform-provider-aws | closed | resource/aws_vpc_ipam_organization_admin_account | enhancement new-resource service/ec2 | <!--- Please keep this note for the community --->

### Community Note

* Please vote on this issue by adding a 👍 [reaction](https://blog.github.com/2016-03-10-add-reactions-to-pull-requests-issues-and-comments/) to the original issue to help the community and maintainers prioritize this request

* Please do not l... | 1.0 | resource/aws_vpc_ipam_organization_admin_account - <!--- Please keep this note for the community --->

### Community Note

* Please vote on this issue by adding a 👍 [reaction](https://blog.github.com/2016-03-10-add-reactions-to-pull-requests-issues-and-comments/) to the original issue to help the community and ma... | non_priority | resource aws vpc ipam organization admin account community note please vote on this issue by adding a 👍 to the original issue to help the community and maintainers prioritize this request please do not leave or other comments that do not add relevant new information or questions they gene... | 0 |

7,800 | 19,409,914,676 | IssuesEvent | 2021-12-20 08:23:21 | TerriaJS/terriajs | https://api.github.com/repos/TerriaJS/terriajs | closed | MobX - Inconsistent Feature Highlighting | T-Architecture/refactor P-DroughtMap Version 8 | Not yet ported to MobX (TBC):

In some of the features when you select the layer the selected polygon is not being highlighted e.g., if you select Murray Darling Region in the Australian Drainage Division Layer then you can see the feature information pop-ups but the boundary is not been selected; https://uat.drought.g... | 1.0 | MobX - Inconsistent Feature Highlighting - Not yet ported to MobX (TBC):

In some of the features when you select the layer the selected polygon is not being highlighted e.g., if you select Murray Darling Region in the Australian Drainage Division Layer then you can see the feature information pop-ups but the boundary ... | non_priority | mobx inconsistent feature highlighting not yet ported to mobx tbc in some of the features when you select the layer the selected polygon is not being highlighted e g if you select murray darling region in the australian drainage division layer then you can see the feature information pop ups but the boundary ... | 0 |

119,610 | 25,546,149,006 | IssuesEvent | 2022-11-29 18:59:07 | dotnet/interactive | https://api.github.com/repos/dotnet/interactive | closed | [EXTERNAL] Python kernel with C# cell doesn't execute with C# kernel | bug Area-VS Code Extension waiting-on-feedback External Area-VS Code Jupyter Extension Interop | ### Describe the bug

Can't execute C# code into ipynb file in vscode stable. But with jupyter-lab I can:

### Please complete the following:

**Which version of .NET Interactive are you using?** There are... | 2.0 | [EXTERNAL] Python kernel with C# cell doesn't execute with C# kernel - ### Describe the bug

Can't execute C# code into ipynb file in vscode stable. But with jupyter-lab I can:

### Please complete the follo... | non_priority | python kernel with c cell doesn t execute with c kernel describe the bug can t execute c code into ipynb file in vscode stable but with jupyter lab i can please complete the following which version of net interactive are you using there are a few ways to find this out i... | 0 |

18,793 | 6,643,132,545 | IssuesEvent | 2017-09-27 10:04:48 | magicDGS/ReadTools | https://api.github.com/repos/magicDGS/ReadTools | closed | Pre-Sorted parts handling with DistmapPartDownloader | build/developer enhancement error prone GATK | Because the current implementation of `ReadsDataSource` from GATK assumes that the merged header is always `coordinate` sorted, it does not allow to check for already sorted batches.

If they support to have the merged header with the real sort order from all the files included, it will be nice to speed-up things in ... | 1.0 | Pre-Sorted parts handling with DistmapPartDownloader - Because the current implementation of `ReadsDataSource` from GATK assumes that the merged header is always `coordinate` sorted, it does not allow to check for already sorted batches.

If they support to have the merged header with the real sort order from all the... | non_priority | pre sorted parts handling with distmappartdownloader because the current implementation of readsdatasource from gatk assumes that the merged header is always coordinate sorted it does not allow to check for already sorted batches if they support to have the merged header with the real sort order from all the... | 0 |

333,417 | 29,582,992,332 | IssuesEvent | 2023-06-07 07:26:13 | nodejs/jenkins-alerts | https://api.github.com/repos/nodejs/jenkins-alerts | closed | test-rackspace-win2012r2_vs2019-x64-2 has low disk space | potential-incident test-ci | :warning: The machine `test-rackspace-win2012r2_vs2019-x64-2` has low space in Disk (used 91%).

Please refer to the [Jenkins Dashboard](https://ci.nodejs.org/manage/computer/test-rackspace-win2012r2_vs2019-x64-2) to check its status.

_This issue has been auto-generated by [UlisesGascon/jenkins-status-alerts-and-repo... | 1.0 | test-rackspace-win2012r2_vs2019-x64-2 has low disk space - :warning: The machine `test-rackspace-win2012r2_vs2019-x64-2` has low space in Disk (used 91%).

Please refer to the [Jenkins Dashboard](https://ci.nodejs.org/manage/computer/test-rackspace-win2012r2_vs2019-x64-2) to check its status.

_This issue has been aut... | non_priority | test rackspace has low disk space warning the machine test rackspace has low space in disk used please refer to the to check its status this issue has been auto generated by | 0 |

130,266 | 5,113,277,670 | IssuesEvent | 2017-01-06 14:49:53 | duhow/ProfesorOak | https://api.github.com/repos/duhow/ProfesorOak | closed | [IDEA] Módulo Git | enhancement priority-normal requiere otra | Pues al final me lo pensaré para no tener movidas por aquí...

Hacer plugin que haga git pull en el server cuando haga cambios, de esta forma se puede mantener el código actualizado en ambos lados. Igualar cambios necesarios. | 1.0 | [IDEA] Módulo Git - Pues al final me lo pensaré para no tener movidas por aquí...

Hacer plugin que haga git pull en el server cuando haga cambios, de esta forma se puede mantener el código actualizado en ambos lados. Igualar cambios necesarios. | priority | módulo git pues al final me lo pensaré para no tener movidas por aquí hacer plugin que haga git pull en el server cuando haga cambios de esta forma se puede mantener el código actualizado en ambos lados igualar cambios necesarios | 1 |

50,773 | 10,554,639,350 | IssuesEvent | 2019-10-03 19:56:31 | MicrosoftDocs/visualstudio-docs | https://api.github.com/repos/MicrosoftDocs/visualstudio-docs | closed | Visual Studio 2017 v15.9 will generate C26455 instead of C26439 for default constructors | Pri2 area - C++ area - code analysis doc-bug visual-studio-dev15/prod vs-ide-code-analysis/tech | The behavior in Visual Studio 2017 v15.9 has been changed:

https://developercommunity.visualstudio.com/content/problem/236762/c-code-analysis-warning-c26439-this-kind-of-functi.html

C26455 is now generated for default constructors not marked noexcept, which may be suppressed by adding noexcept or an explicit noexcept(... | 2.0 | Visual Studio 2017 v15.9 will generate C26455 instead of C26439 for default constructors - The behavior in Visual Studio 2017 v15.9 has been changed:

https://developercommunity.visualstudio.com/content/problem/236762/c-code-analysis-warning-c26439-this-kind-of-functi.html

C26455 is now generated for default constructo... | non_priority | visual studio will generate instead of for default constructors the behavior in visual studio has been changed is now generated for default constructors not marked noexcept which may be suppressed by adding noexcept or an explicit noexcept false noexcept false default is now also sup... | 0 |

83,707 | 24,125,725,894 | IssuesEvent | 2022-09-21 00:02:28 | xamarin/xamarin-android | https://api.github.com/repos/xamarin/xamarin-android | closed | The "GenerateResourceDesigner" task failed unexpectedly with NullReferenceException | Area: App+Library Build possibly-stale | ### Steps to Reproduce

1. Create a Xamarin.Forms project that references a custom .NETStandard library and an SDK extras project

2. Try to build the Xamarin.Forms Android platform project

3. Android build fails with the above error message.

<!--

If you have a repro project, you may drag & drop the .zip/etc. on... | 1.0 | The "GenerateResourceDesigner" task failed unexpectedly with NullReferenceException - ### Steps to Reproduce

1. Create a Xamarin.Forms project that references a custom .NETStandard library and an SDK extras project

2. Try to build the Xamarin.Forms Android platform project

3. Android build fails with the above err... | non_priority | the generateresourcedesigner task failed unexpectedly with nullreferenceexception steps to reproduce create a xamarin forms project that references a custom netstandard library and an sdk extras project try to build the xamarin forms android platform project android build fails with the above err... | 0 |

613,913 | 19,101,534,315 | IssuesEvent | 2021-11-29 23:21:34 | CMPUT301F21T21/detes | https://api.github.com/repos/CMPUT301F21T21/detes | closed | US 06.01.02 Geolocation and Maps | Final checkpoint High Risk Medium Priority New | As a doer, I want the location for a habit event to be specified using a map within the app, with the current phone position as the default location.

**Clarification:** The user would like the ability to specify a location of habit by using the app once the habit has been completed. This will all be accomplished in ... | 1.0 | US 06.01.02 Geolocation and Maps - As a doer, I want the location for a habit event to be specified using a map within the app, with the current phone position as the default location.

**Clarification:** The user would like the ability to specify a location of habit by using the app once the habit has been completed... | priority | us geolocation and maps as a doer i want the location for a habit event to be specified using a map within the app with the current phone position as the default location clarification the user would like the ability to specify a location of habit by using the app once the habit has been completed t... | 1 |

135,879 | 5,266,289,552 | IssuesEvent | 2017-02-04 10:46:23 | japanesemediamanager/ShokoServer | https://api.github.com/repos/japanesemediamanager/ShokoServer | closed | multiple db entries linking to same file | Bug - Low Priority Most Likely Fixed - Need Confirmation | I have file renaming, but it's failing sometimes.

1.Is there a way to avoid creating multiple database entries which link to same file? Check before overwriting a file?

2.Where does CRC come from? Anidb? Can it be calculated locally when missing?

JMM Desktop and Server 3.6.1.0

detected in jszip-2.4.0.tgz | security vulnerability | ## CVE-2021-23413 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jszip-2.4.0.tgz</b></p></summary>

<p>Create, read and edit .zip files with Javascript http://stuartk.com/jszip</p>

<p>... | True | CVE-2021-23413 (High) detected in jszip-2.4.0.tgz - ## CVE-2021-23413 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jszip-2.4.0.tgz</b></p></summary>

<p>Create, read and edit .zip fi... | non_priority | cve high detected in jszip tgz cve high severity vulnerability vulnerable library jszip tgz create read and edit zip files with javascript library home page a href path to dependency file zotero docker client zotero build xpi package json path to vulnerable library... | 0 |

186,948 | 14,426,868,438 | IssuesEvent | 2020-12-06 00:28:37 | kalexmills/github-vet-tests-dec2020 | https://api.github.com/repos/kalexmills/github-vet-tests-dec2020 | closed | zhengqiangzheng/vscode_code: go/pkg/mod/golang.org/x/tools@v0.0.0-20181130195746-895048a75ecf/go/internal/gccgoimporter/gccgoinstallation_test.go; 10 LoC | fresh test tiny |

Found a possible issue in [zhengqiangzheng/vscode_code](https://www.github.com/zhengqiangzheng/vscode_code) at [go/pkg/mod/golang.org/x/tools@v0.0.0-20181130195746-895048a75ecf/go/internal/gccgoimporter/gccgoinstallation_test.go](https://github.com/zhengqiangzheng/vscode_code/blob/d60b6126f7e6112ee54647b3aee81690b0c0f... | 1.0 | zhengqiangzheng/vscode_code: go/pkg/mod/golang.org/x/tools@v0.0.0-20181130195746-895048a75ecf/go/internal/gccgoimporter/gccgoinstallation_test.go; 10 LoC -

Found a possible issue in [zhengqiangzheng/vscode_code](https://www.github.com/zhengqiangzheng/vscode_code) at [go/pkg/mod/golang.org/x/tools@v0.0.0-20181130195746... | non_priority | zhengqiangzheng vscode code go pkg mod golang org x tools go internal gccgoimporter gccgoinstallation test go loc found a possible issue in at below is the message reported by the analyzer for this snippet of code beware that the analyzer only reports the first issue it finds so please do no... | 0 |

357,294 | 25,176,361,479 | IssuesEvent | 2022-11-11 09:36:49 | LokQiJun/pe | https://api.github.com/repos/LokQiJun/pe | opened | Wrong formatting of variables on page 13 of DG | type.DocumentationBug severity.VeryLow | Format should be variable_name:variable_type

<!--session: 1668153579868-cea4df5c-4c6d-4f1d-ae65-e376ecbc9cc3-->

<!--Version: Web v3.4.4--> | 1.0 | Wrong formatting of variables on page 13 of DG - Format should be variable_name:variable_type

<!--session: 1668153579868-cea4df5c-4c6d-4f1d-ae65-e376ecbc9cc3-->

<!--Version: Web v3.4.4--> | non_priority | wrong formatting of variables on page of dg format should be variable name variable type | 0 |

157,013 | 19,911,268,247 | IssuesEvent | 2022-01-25 17:23:36 | ghc-dev/Michael-Jones | https://api.github.com/repos/ghc-dev/Michael-Jones | closed | CVE-2017-16138 (High) detected in mime-1.3.4.tgz - autoclosed | security vulnerability | ## CVE-2017-16138 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>mime-1.3.4.tgz</b></p></summary>

<p>A comprehensive library for mime-type mapping</p>

<p>Library home page: <a href="h... | True | CVE-2017-16138 (High) detected in mime-1.3.4.tgz - autoclosed - ## CVE-2017-16138 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>mime-1.3.4.tgz</b></p></summary>

<p>A comprehensive li... | non_priority | cve high detected in mime tgz autoclosed cve high severity vulnerability vulnerable library mime tgz a comprehensive library for mime type mapping library home page a href path to dependency file package json path to vulnerable library node modules mime package j... | 0 |

608,708 | 18,846,601,704 | IssuesEvent | 2021-11-11 15:35:40 | metabase/metabase | https://api.github.com/repos/metabase/metabase | closed | Dashboard filters overflow the screen making it not possible to use them on IE11 | Type:Bug .Won't Fix Priority:P2 Reporting/Dashboards Browser:IE Querying/Parameters & Variables | **Describe the bug**

Dashboard filters overflow the screen making it not possible to use them on IE11 - there's no horizontal scrollbar available.

Temporary workaround: Use the page zoom to zoom out (`CTRL`+`-`), set the filters and then reset the zoom level (`CTRL`+`0`)

Recommendation: Use the new Edge browser,... | 1.0 | Dashboard filters overflow the screen making it not possible to use them on IE11 - **Describe the bug**

Dashboard filters overflow the screen making it not possible to use them on IE11 - there's no horizontal scrollbar available.

Temporary workaround: Use the page zoom to zoom out (`CTRL`+`-`), set the filters and ... | priority | dashboard filters overflow the screen making it not possible to use them on describe the bug dashboard filters overflow the screen making it not possible to use them on there s no horizontal scrollbar available temporary workaround use the page zoom to zoom out ctrl set the filters and then r... | 1 |

11,244 | 9,291,965,633 | IssuesEvent | 2019-03-22 00:46:49 | Azure/azure-sdk-for-js | https://api.github.com/repos/Azure/azure-sdk-for-js | opened | [Service Bus] detached() on clients should not be a public method | Client Service Bus | _From API review notes in https://github.com/Azure/azure-sdk-for-js/issues/1481_

The `detached()` method on the QueueClient/TopicClient/SubscriptionClient is currently a public method.

This function is internally used when the library tries to recover from network issues.

All it does is call `detached()` on each... | 1.0 | [Service Bus] detached() on clients should not be a public method - _From API review notes in https://github.com/Azure/azure-sdk-for-js/issues/1481_

The `detached()` method on the QueueClient/TopicClient/SubscriptionClient is currently a public method.