| Unnamed: 0 (int64, 0 to 832k) | id (float64, 2.49B to 32.1B) | type (stringclasses, 1 value) | created_at (stringlengths, 19 to 19) | repo (stringlengths, 7 to 112) | repo_url (stringlengths, 36 to 141) | action (stringclasses, 3 values) | title (stringlengths, 1 to 744) | labels (stringlengths, 4 to 574) | body (stringlengths, 9 to 211k) | index (stringclasses, 10 values) | text_combine (stringlengths, 96 to 211k) | label (stringclasses, 2 values) | text (stringlengths, 96 to 188k) | binary_label (int64, 0 to 1) |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
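The column header lists only types and lengths. Judging from the rows in this excerpt alone, `text_combine` appears to be `title`, then ` - `, then `body`; `binary_label` is 1 exactly when `label` is `process`; and `text` looks like a lowercased copy of `text_combine` with URLs, HTML/XML-style tags, square-bracketed tags, ASCII punctuation and digit-bearing tokens removed. The Python sketch below encodes that reading. The function names are hypothetical and the normalization steps are an assumption, not the dataset's documented pipeline, so it is unlikely to reproduce every row exactly.

```python
import re
import string

# Hypothetical helpers reconstructed from the rows shown in this excerpt;
# they are an assumption, not the dataset's documented preprocessing code.

_URL = re.compile(r"https?://\S+")
_TAG = re.compile(r"<[^>]+>")            # HTML/XML-style tags such as <reltable>
_BRACKETED = re.compile(r"\[[^\]]*\]")   # bracketed tags such as [PM] or [PATCH]
_ASCII_PUNCT = re.compile("[" + re.escape(string.punctuation) + "]+")


def make_text_combine(title: str, body: str) -> str:
    """text_combine appears to be the title and body joined by ' - '."""
    return f"{title} - {body}"


def make_binary_label(label: str) -> int:
    """In every row shown, binary_label is 1 exactly when label == 'process'."""
    return 1 if label == "process" else 0


def make_text(text_combine: str) -> str:
    """Approximate the normalized `text` column (assumed steps)."""
    s = _URL.sub(" ", text_combine)          # drop links
    s = _TAG.sub(" ", s)                     # drop markup tags, keep inner text
    s = _BRACKETED.sub(" ", s)               # drop [..] tags and checkboxes
    s = _ASCII_PUNCT.sub(" ", s.lower())     # ASCII punctuation becomes a space
    # Tokens that still contain a digit (versions, dates, counts) are dropped whole.
    tokens = [t for t in s.split() if not any(ch.isdigit() for ch in t)]
    return " ".join(tokens)
```

As a check, applying `make_text` to `make_text_combine("Suggested Enhancement: Add mob health bar", "Mob health bar/health number. e.g. 7.4/10 or (////// )")` returns `suggested enhancement add mob health bar mob health bar health number e g or`, which matches the stored `text` of the NarcoWars row. Dropping whole tokens that contain digits, rather than only the digits, is what makes `arm64` disappear entirely instead of leaving `arm`.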

19,459

| 5,887,867,273

|

IssuesEvent

|

2017-05-17 08:41:48

|

Jakobtottrup/OptekSemester2

|

https://api.github.com/repos/Jakobtottrup/OptekSemester2

|

closed

|

Required fields must have a red *

|

Codework

|

It was removed some time ago, but it had been implemented earlier.

|

1.0

|

Required fields must have a red * - It was removed some time ago, but it had been implemented earlier.

|

non_process

|

required fields must have a red it was removed some time ago but it had been implemented earlier

| 0

|

12,389

| 14,908,707,486

|

IssuesEvent

|

2021-01-22 06:31:02

|

GoogleCloudPlatform/fda-mystudies

|

https://api.github.com/repos/GoogleCloudPlatform/fda-mystudies

|

closed

|

[PM] Site participant registry > Invited tab > if user disables the participants then user is navigating to 'New' tab

|

Bug P1 Participant manager Process: Fixed Process: Tested QA Process: Tested dev

|

Scenario 1: New to Disable

Steps

1. Closed study > Site participant registry > go to the invited tab

2. Select the participants email in New tab

3. Click on the disable invitation button

4. Observe the navigation of the user

AR : User is navigated to 'New' tab

ER : User should be navigated to Disable tab

Scenario 2: Invited to Disable

1. Closed study > Site participant registry > go to the invited tab

2. Select the participants email in the invited tab

3. Click on the disable invitation button

4. Observe the navigation of the user

AR : User is navigated to 'New' tab

ER : User should be navigated to Disable tab

Scenario 3: Disable to enable invitation

1. Closed study > Site participant registry > go to the invited tab

2. Select the participants email in the disable tab

3. Click on the enable invitation button

4. Observe the navigation of the user

ER : User should be navigated to New tab

|

3.0

|

[PM] Site participant registry > Invited tab > if user disables the participants then user is navigating to 'New' tab - Scenario 1: New to Disable

Steps

1. Closed study > Site participant registry > go to the invited tab

2. Select the participants email in New tab

3. Click on the disable invitation button

4. Observe the navigation of the user

AR : User is navigated to 'New' tab

ER : User should be navigated to Disable tab

Scenario 2: Invited to Disable

1. Closed study > Site participant registry > go to the invited tab

2. Select the participants email in the invited tab

3. Click on the disable invitation button

4. Observe the navigation of the user

AR : User is navigated to 'New' tab

ER : User should be navigated to Disable tab

Scenario 3: Disable to enable invitation

1. Closed study > Site participant registry > go to the invited tab

2. Select the participants email in the disable tab

3. Click on the enable invitation button

4. Observe the navigation of the user

ER : User should be navigated to New tab

|

process

|

site participant registry invited tab if user disables the participants then user is navigating to new tab scenario new to disable steps closed study site participant registry go to the invited tab select the participants email in new tab click on the disable invitation button observe the navigation of the user ar user is navigated to new tab er user should be navigated to disable tab scenario invited to disable closed study site participant registry go to the invited tab select the participants email in the invited tab click on the disable invitation button observe the navigation of the user ar user is navigated to new tab er user should be navigated to disable tab scenario disable to enable invitation closed study site participant registry go to the invited tab select the participants email in the disable tab click on the enable invitation button observe the navigation of the user er user should be navigated to new tab

| 1

|

13,842

| 16,602,738,757

|

IssuesEvent

|

2021-06-01 22:00:36

|

ivanbukhtiyarov/elevators

|

https://api.github.com/repos/ivanbukhtiyarov/elevators

|

closed

|

RI-04-01: Command validation

|

analysis enhancement in process

|

Implement the methods is_source_valid, is_action_valid and is_value_valid in app.py according to RI-04-01. For source and action, check that the value belongs to the list of possible values. Value depends on action, so the allowed values of value must be handled separately for each action. The requirements may need to be clarified.

|

1.0

|

RI-04-01: Command validation - Implement the methods is_source_valid, is_action_valid and is_value_valid in app.py according to RI-04-01. For source and action, check that the value belongs to the list of possible values. Value depends on action, so the allowed values of value must be handled separately for each action. The requirements may need to be clarified.

|

process

|

ri command validation implement the methods is source valid is action valid and is value valid in app py according to ri for source and action check that the value belongs to the list of possible values value depends on action so the allowed values of value must be handled separately for each action the requirements may need to be clarified

| 1

|

267,999

| 8,401,611,450

|

IssuesEvent

|

2018-10-11 02:01:41

|

CS2103-AY1819S1-F11-4/main

|

https://api.github.com/repos/CS2103-AY1819S1-F11-4/main

|

closed

|

timetable Ui

|

feature.Timetable priority.High severity.Low status.Ongoing

|

-need test for browser panel

-~~waiting on information on whether is doing ui with java graded.~~

|

1.0

|

timetable Ui - -need test for browser panel

-~~waiting on information on whether is doing ui with java graded.~~

|

non_process

|

timetable ui need test for browser panel waiting on information on whether is doing ui with java graded

| 0

|

12,229

| 14,743,572,355

|

IssuesEvent

|

2021-01-07 14:06:37

|

kdjstudios/SABillingGitlab

|

https://api.github.com/repos/kdjstudios/SABillingGitlab

|

closed

|

Dyad - Swift MD - failed payments (but actually processed?)

|

anc-process anp-0.5 ant-bug ant-parent/primary has attachment

|

In GitLab by @kdjstudios on Aug 28, 2019, 08:49

**Submitted by:** "Shonda Medwatz" <shonda.smith@answernet.com>

**Helpdesk:** http://www.servicedesk.answernet.com/profiles/ticket/9164557

**Server:** Internal

**Client/Site:** Dyad

**Account:** Swift MD

**Issue:**

The Dyad has their biggest client Swift MD who tried making a payment several times on the portal on Saturday 8/23/19 and the transactions showed as failed.

We received word today that the payment actually did go through; please see the screenshot below. However, it was not applied in SAB. Can someone please take a look at this for me ASAP, as the client wants this resolved ASAP.

|

1.0

|

Dyad - Swift MD - failed payments (but actually processed?) - In GitLab by @kdjstudios on Aug 28, 2019, 08:49

**Submitted by:** "Shonda Medwatz" <shonda.smith@answernet.com>

**Helpdesk:** http://www.servicedesk.answernet.com/profiles/ticket/9164557

**Server:** Internal

**Client/Site:** Dyad

**Account:** Swift MD

**Issue:**

The Dyad has their biggest client Swift MD who tried making a payment several times on the portal on Saturday 8/23/19 and the transactions showed as failed.

We received word today that the payment actually did go through; please see the screenshot below. However, it was not applied in SAB. Can someone please take a look at this for me ASAP, as the client wants this resolved ASAP.

|

process

|

dyad swift md failed payments but actually processed in gitlab by kdjstudios on aug submitted by shonda medwatz helpdesk server internal client site dyad account swift md issue the dyad has their biggest client swift md who tried making a payment several times on the portal on saturday and the transactions showed as failed we received word today that the payment actually did go through please see the screenshot below however it was not applied in sab can someone please take a look at this for me asap as the client wants this resolved asap uploads image png

| 1

|

3,480

| 5,916,681,408

|

IssuesEvent

|

2017-05-22 11:12:01

|

support-project/knowledge

|

https://api.github.com/repos/support-project/knowledge

|

closed

|

tag Link encode

|

[Status] 3.merged [Type] 1.requirement

|

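When I click a tag in the post list or on a post detail page, the link is not encoded. Could you make it encoded?

When I search for a tag with the keyword search, the link is encoded, so the difference feels a little odd.

|

1.0

|

tag Link encode - When I click a tag in the post list or on a post detail page, the link is not encoded. Could you make it encoded?

When I search for a tag with the keyword search, the link is encoded, so the difference feels a little odd.

|

non_process

|

tag link encode when i click a tag in the post list or on a post detail page the link is not encoded could you make it encoded when i search for a tag with the keyword search the link is encoded so the difference feels a little odd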

| 0

|

121,780

| 4,821,245,957

|

IssuesEvent

|

2016-11-05 07:25:13

|

artfcl-intlgnce/NarcoWars

|

https://api.github.com/repos/artfcl-intlgnce/NarcoWars

|

closed

|

Suggested Enhancement: Add mob health bar

|

Engineering Team enhancement Priority 3

|

Mob health bar/health number. e.g. 7.4/10 or (////// )

|

1.0

|

Suggested Enhancement: Add mob health bar - Mob health bar/health number. e.g. 7.4/10 or (////// )

|

non_process

|

suggested enhancement add mob health bar mob health bar health number e g or

| 0

|

15,860

| 20,035,607,586

|

IssuesEvent

|

2022-02-02 11:32:19

|

plazi/community

|

https://api.github.com/repos/plazi/community

|

opened

|

African Invertebrates: Jason Londt project

|

Zenodo process request

|

Via Torsten Dikow

I have set up a Dropbox folder with 28 publications by Jason Londt in African Invertebrates. Two of these are faunistic studies, so they might be of less taxonomic importance, but I thought I would include them.

I can provide bibliographic data as well. The earliest of these publications don’t have a DOI (or at least didn’t get one assigned initially) but were incorporated/accessible from the Sabinet African Journal Archive. I can obtain the unique URLs (I think they use the handle system now) for all of these earlier publications if that would help.

The folder can be accessed here: https://www.dropbox.com/sh/xmzrruu00xn5cd8/AACj3w9fSCMD8jSz_4QObeXVa?dl=0 .

How can I help in marking these articles up?

Thanks for all of your help, Torsten

|

1.0

|

African Invertebrates: Jason Londt project - Via Torsten Dikow

I have set up a Dropbox folder with 28 publications by Jason Londt in African Invertebrates. Two of these are faunistic studies, so they might be of less taxonomic importance, but I thought I would include them.

I can provide bibliographic data as well. The earliest of these publications don’t have a DOI (or at least didn’t get one assigned initially) but were incorporated/accessible from the Sabinet African Journal Archive. I can obtain the unique URLs (I think they use the handle system now) for all of these earlier publications if that would help.

The folder can be accessed here: https://www.dropbox.com/sh/xmzrruu00xn5cd8/AACj3w9fSCMD8jSz_4QObeXVa?dl=0 .

How can I help in marking these articles up?

Thanks for all of your help, Torsten

|

process

|

african invertebrates jason londt project via torsten dikow i have set up a dropbox folder with publications by jason londt in african invertebrates two of these are faunistic studies so they might be of less taxonomic importance but i thought i would include them i can provide bibliographic data as well the earliest of these publications don’t have a doi or at least didn’t get one assigned initially but were incorporated accessible from the sabinet african journal archive i can obtain the unique urls i think they use the handle system now for all of these earlier publications if that would help the folder can be accessed here how can i help in marking these articles up thanks for all of your help torsten

| 1

|

5,991

| 8,805,374,822

|

IssuesEvent

|

2018-12-26 19:14:04

|

dita-ot/dita-ot

|

https://api.github.com/repos/dita-ot/dita-ot

|

closed

|

Keyref breaks with peer topic, relative path, topic in sub-directory

|

bug preprocess/keyref stale

|

I've got a key definition with `@scope="peer"`, linking to a topic with a relative path. I've also got a topic in a sub-directory.

When the key is referenced in a `<reltable>` and pushes a link into the subdirectory, the path is adjusted properly, link resolves. When the key is referenced directly from that topic, the link is not adjusted, and is broken.

To reproduce, add this to the end of `hierarchy.ditamap`:

```

<keydef keys="peerhtml" href="../peerdir/file.html" navtitle="peer html file in peerdir" scope="peer" format="html"/>

<keydef keys="peerdita" href="../peerdir/file.dita" navtitle="peer DITA file in peerdir" scope="peer" format="dita"/>

<reltable>

<relrow>

<relcell>

<topicref href="tasks/garagetaskoverview.xml" type="concept"/>

</relcell>

<relcell>

<topicref keyref="peerhtml"/>

<topicref keyref="peerdita"/>

</relcell>

</relrow>

</reltable>

```

Then add this reference into `tasks\garagetaskoverview.xml`:

```

<related-links>

<linklist>

<title>links in the topic (broken paths)</title>

<link keyref="peerdita"/>

<link keyref="peerhtml"/>

</linklist>

</related-links>

```

|

1.0

|

Keyref breaks with peer topic, relative path, topic in sub-directory - I've got a key definition with `@scope="peer"`, linking to a topic with a relative path. I've also got a topic in a sub-directory.

When the key is referenced in a `<reltable>` and pushes a link into the subdirectory, the path is adjusted properly, link resolves. When the key is referenced directly from that topic, the link is not adjusted, and is broken.

To reproduce, add this to the end of `hierarchy.ditamap`:

```

<keydef keys="peerhtml" href="../peerdir/file.html" navtitle="peer html file in peerdir" scope="peer" format="html"/>

<keydef keys="peerdita" href="../peerdir/file.dita" navtitle="peer DITA file in peerdir" scope="peer" format="dita"/>

<reltable>

<relrow>

<relcell>

<topicref href="tasks/garagetaskoverview.xml" type="concept"/>

</relcell>

<relcell>

<topicref keyref="peerhtml"/>

<topicref keyref="peerdita"/>

</relcell>

</relrow>

</reltable>

```

Then add this reference into `tasks\garagetaskoverview.xml`:

```

<related-links>

<linklist>

<title>links in the topic (broken paths)</title>

<link keyref="peerdita"/>

<link keyref="peerhtml"/>

</linklist>

</related-links>

```

|

process

|

keyref breaks with peer topic relative path topic in sub directory i ve got a key definition with scope peer linking to a topic with a relative path i ve also got a topic in a sub directory when the key is referenced in a and pushes a link into the subdirectory the path is adjusted properly link resolves when the key is referenced directly from that topic the link is not adjusted and is broken to reproduce add this to the end of hierarchy ditamap then add this reference into tasks garagetaskoverview xml links in the topic broken paths

| 1

|

602,395

| 18,468,266,436

|

IssuesEvent

|

2021-10-17 09:22:24

|

ohtuprojekti-Kierratysavustin/Kierratysavustin

|

https://api.github.com/repos/ohtuprojekti-Kierratysavustin/Kierratysavustin

|

opened

|

Recycling competition

|

enhancement Size 9999 Priority Low

|

# User story

**As a user**

I want **to create, join, or invite people to a playful recycling competition**

So that **we can raise the recycling rate**

## Acceptance criteria

### Functional

- [ ] If *[ User scenario ]*

When *[ Environment ]*

Then *[ Consequences ]*

### Non-functional

- [ ] [ *How* ]

- [ ] [ *Performance* ]

- [ ] [ *Security* ]

- [ ] [ *Data usage* ]

- [ ] [ *Documentation update* ]

## Dependencies

**Can be implemented after:** [ *Issue* ]

**Must be implemented before:** [ *Issue* ]

**Related to:** [ *Issue* ]

**Alternative solution:** [ *Issue* ]

## Done when:

- [ ] The feature is implemented according to the acceptance criteria and works

- [ ] The feature has been tested against the acceptance criteria of the user story and the tests pass

- [ ] All documentation related to the feature has been written

- [ ] The code follows the agreed coding style

- [ ] The code has been reviewed: the review was done by at least 2 people other than the person who coded the feature

- [ ] The feature is merged into main and running in the staging environment

- [ ] The feature is running in the production environment

|

1.0

|

Recycling competition - # User story

**As a user**

I want **to create, join, or invite people to a playful recycling competition**

So that **we can raise the recycling rate**

## Acceptance criteria

### Functional

- [ ] If *[ User scenario ]*

When *[ Environment ]*

Then *[ Consequences ]*

### Non-functional

- [ ] [ *How* ]

- [ ] [ *Performance* ]

- [ ] [ *Security* ]

- [ ] [ *Data usage* ]

- [ ] [ *Documentation update* ]

## Dependencies

**Can be implemented after:** [ *Issue* ]

**Must be implemented before:** [ *Issue* ]

**Related to:** [ *Issue* ]

**Alternative solution:** [ *Issue* ]

## Done when:

- [ ] The feature is implemented according to the acceptance criteria and works

- [ ] The feature has been tested against the acceptance criteria of the user story and the tests pass

- [ ] All documentation related to the feature has been written

- [ ] The code follows the agreed coding style

- [ ] The code has been reviewed: the review was done by at least 2 people other than the person who coded the feature

- [ ] The feature is merged into main and running in the staging environment

- [ ] The feature is running in the production environment

|

non_process

|

recycling competition user story as a user i want to create join or invite people to a playful recycling competition so that we can raise the recycling rate acceptance criteria functional if when then non functional dependencies can be implemented after must be implemented before related to alternative solution done when the feature is implemented according to the acceptance criteria and works the feature has been tested against the acceptance criteria of the user story and the tests pass all documentation related to the feature has been written the code follows the agreed coding style the code has been reviewed the review was done by at least people other than the person who coded the feature the feature is merged into main and running in the staging environment the feature is running in the production environment

| 0

|

11,277

| 14,077,948,820

|

IssuesEvent

|

2020-11-04 12:51:04

|

MEDEAEditions/DEPCHA

|

https://api.github.com/repos/MEDEAEditions/DEPCHA

|

opened

|

TORDF

|

preprocessing

|

All tasks, bugs, and to-dos related to **TORDF.xsl** (Ingest XML/TEI into GAMS)

- [ ] `<bk:unit>LITERAL</bk:unit>` to `<bk:unit rdf:resource="https://gams.uni-graz.at/o:depcha.wheaton.1#unit.1">` if `<om:Unit>` exists; otherwise `<bk:unit>LITERAL</bk:unit>`

- [ ] Remove `@rdf:seeAlso` in `bk:EconomicGood`; references to other LOD are in `<skos:Concept>`

|

1.0

|

TORDF - All tasks, bugs, and to-dos related to **TORDF.xsl** (Ingest XML/TEI into GAMS)

- [ ] `<bk:unit>LITERAL</bk:unit>` to `<bk:unit rdf:resource="https://gams.uni-graz.at/o:depcha.wheaton.1#unit.1">` if `<om:Unit>` exists; otherwise `<bk:unit>LITERAL</bk:unit>`

- [ ] Remove `@rdf:seeAlso` in `bk:EconomicGood`; references to other LOD are in `<skos:Concept>`

|

process

|

tordf all tasks bugs and to dos related to tordf xsl ingest xml tei into gams literal to exists otherwise literal remove rdf seealso in bk economicgood references to other lod are in

| 1

|

382,409

| 11,305,826,770

|

IssuesEvent

|

2020-01-18 09:11:08

|

kubernetes/minikube

|

https://api.github.com/repos/kubernetes/minikube

|

opened

|

Investigate compression methods on the minikube.iso

|

area/guest-vm area/performance kind/feature priority/important-longterm

|

The compression method to use is a trade-off between size and speed...

We could investigate changing to a slightly bigger ISO, that boots faster.

|

1.0

|

Investigate compression methods on the minikube.iso - The compression method to use is a trade-off between size and speed...

We could investigate changing to a slightly bigger ISO, that boots faster.

|

non_process

|

investigate compression methods on the minikube iso the compression method to use is a trade off between size and speed we could investigate changing to a slightly bigger iso that boots faster

| 0

|

768,779

| 26,979,861,203

|

IssuesEvent

|

2023-02-09 12:19:20

|

aquasecurity/trivy

|

https://api.github.com/repos/aquasecurity/trivy

|

closed

|

Unable to open JAR files

|

kind/bug priority/important-soon

|

## Description

Java scanning may lead to the following error.

```

failed to analyze file: failed to analyze usr/lib/jvm/java-1.8-openjdk/lib/tools.jar: unable to open usr/lib/jvm/java-1.8-openjdk/lib/tools.jar: failed to open: unable to read the file: stream error: stream ID 9; PROTOCOL_ERROR; received from peer

```

Currently, we're investigating this issue. As a temporary mitigation, you may be able to avoid this issue by downloading the Java DB in advance. `--download-java-db-only` is available in v0.37.0+.

```

$ trivy image --download-java-db-only

2023-02-01T16:57:04.322+0900 INFO Downloading the Java DB...

$ trivy image [YOUR_JAVA_IMAGE]

```

|

1.0

|

Unable to open JAR files - ## Description

Java scanning may lead to the following error.

```

failed to analyze file: failed to analyze usr/lib/jvm/java-1.8-openjdk/lib/tools.jar: unable to open usr/lib/jvm/java-1.8-openjdk/lib/tools.jar: failed to open: unable to read the file: stream error: stream ID 9; PROTOCOL_ERROR; received from peer

```

Currently, we're investigating this issue. As a temporary mitigation, you may be able to avoid this issue by downloading the Java DB in advance. `--download-java-db-only` is available in v0.37.0+.

```

$ trivy image --download-java-db-only

2023-02-01T16:57:04.322+0900 INFO Downloading the Java DB...

$ trivy image [YOUR_JAVA_IMAGE]

```

|

non_process

|

unable to open jar files description java scanning may lead to the following error failed to analyze file failed to analyze usr lib jvm java openjdk lib tools jar unable to open usr lib jvm java openjdk lib tools jar failed to open unable to read the file stream error stream id protocol error received from peer currently we re investigating this issue as a temporary mitigation you may be able to avoid this issue by downloading the java db in advance download java db only is available in trivy image download java db only info downloading the java db trivy image

| 0

|

75,404

| 7,470,857,601

|

IssuesEvent

|

2018-04-03 07:14:18

|

rancher/rancher

|

https://api.github.com/repos/rancher/rancher

|

closed

|

Support yaml file for pipeline

|

area/pipeline kind/enhancement status/resolved status/to-test team/cn version/2.0

|

1. Support exporting a YAML file from an existing pipeline.

2. Support creating a pipeline by importing a YAML file.

3. Support creating a pipeline by specifying a repo that contains the YAML file.

|

1.0

|

Support yaml file for pipeline - 1. Support exporting a YAML file from an existing pipeline.

2. Support creating a pipeline by importing a YAML file.

3. Support creating a pipeline by specifying a repo that contains the YAML file.

|

non_process

|

support yaml file for pipeline support exporting a yaml file from an existing pipeline support creating a pipeline by importing a yaml file support creating a pipeline by specifying a repo that contains the yaml file

| 0

|

570,270

| 17,023,076,848

|

IssuesEvent

|

2021-07-03 00:16:53

|

tomhughes/trac-tickets

|

https://api.github.com/repos/tomhughes/trac-tickets

|

closed

|

[PATCH] slippy maps page should use static viewer for non-javascript types

|

Component: website Priority: trivial Resolution: fixed Type: enhancement

|

**[Submitted to the original trac issue database at 12.06pm, Friday, 11th November 2005]**

The old static viewer should be in the page when it loads. If the user

has javascript and a compatible browser the tiles code should replace

the viewer with tiles and hide the zoom/pan links.

|

1.0

|

[PATCH] slippy maps page should use static viewer for non-javascript types - **[Submitted to the original trac issue database at 12.06pm, Friday, 11th November 2005]**

The old static viewer should be in the page when it loads. If the user

has javascript and a compatible browser the tiles code should replace

the viewer with tiles and hide the zoom/pan links.

|

non_process

|

slippy maps page should use static viewer for non javascript types the old static viewer should be in the page when it loads if the user has javascript and a compatible browser the tiles code should replace the viewer with tiles and hide the zoom pan links

| 0

|

14,634

| 17,768,239,356

|

IssuesEvent

|

2021-08-30 10:18:36

|

pystatgen/sgkit

|

https://api.github.com/repos/pystatgen/sgkit

|

opened

|

Support macOS arm64 processors

|

process + tools

|

I have a new Mac Mini with an Apple M1 chip. It would be good to be able to run (and develop) sgkit on this architecture.

|

1.0

|

Support macOS arm64 processors - I have a new Mac Mini with an Apple M1 chip. It would be good to be able to run (and develop) sgkit on this architecture.

|

process

|

support macos processors i have a new mac mini with an apple chip it would be good to be able to run and develop sgkit on this architecture

| 1

|

17,557

| 6,474,590,788

|

IssuesEvent

|

2017-08-17 18:26:45

|

habitat-sh/habitat

|

https://api.github.com/repos/habitat-sh/habitat

|

closed

|

Configurable publish phases in `builder.toml`

|

A-builder C-feature

|

## User Story

As a user of builder's builds, I need a way to declare configurable publish phases which will be performed by a builder-worker after the initial build phase, so I can consume my built artifact in various post-processed formats such as a Docker container, an AMI, etc

## Requirements

The `builder.toml` which resides next to a `plan.sh`/`plan.ps1` is the configuration that a builder-worker reads to understand additional steps. A refactored version of this may look something like this (with thanks to @tduffield)

```toml

[[publish]]

type = "docker-registry"

registry = "index.docker.io" # default

image_name = "{{pkg_origin}}/{{pkg_name}}" # default

tags = [ "{{pkg_version}}-{{pkg_release}}" ] # default

secret_key = "ENCRYPTED_VALUE" # placeholder for encrypted creds (if needed)

[[publish]]

type = "s3"

secret_key = "ENCRYPTED_VALUE"

[[publish]]

type = "artifactory"

secret_key = "ENCRYPTED_VALUE"

```

Some things to note:

* The `type` key/value represents the exporter/publisher to use for the phase

* We support an array of tables so multiple exporters of a single type can be used

* We have dropped the current configuration for publishing to the depot itself. This will become a requirement of the system

* Mustache can be used for string substitution for variables generated by the build phase such as pkg name, version, origin, and release

|

1.0

|

Configurable publish phases in `builder.toml` - ## User Story

As a user of builder's builds, I need a way to declare configurable publish phases which will be performed by a builder-worker after the initial build phase, so I can consume my built artifact in various post-processed formats such as a Docker container, an AMI, etc

## Requirements

The `builder.toml` which resides next to a `plan.sh`/`plan.ps1` is the configuration that a builder-worker reads to understand additional steps. A refactored version of this may look something like this (with thanks to @tduffield)

```toml

[[publish]]

type = "docker-registry"

registry = "index.docker.io" # default

image_name = "{{pkg_origin}}/{{pkg_name}}" # default

tags = [ "{{pkg_version}}-{{pkg_release}}" ] # default

secret_key = "ENCRYPTED_VALUE" # placeholder for encrypted creds (if needed)

[[publish]]

type = "s3"

secret_key = "ENCRYPTED_VALUE"

[[publish]]

type = "artifactory"

secret_key = "ENCRYPTED_VALUE"

```

Some things to note:

* The `type` key/value represents the exporter/publisher to use for the phase

* We support an array of tables so multiple exporters of a single type can be used

* We have dropped the current configuration for publishing to the depot itself. This will become a requirement of the system

* Mustache can be used for string substitution for variables generated by the build phase such as pkg name, version, origin, and release

|

non_process

|

configurable publish phases in builder toml user story as a user of builder s builds i need a way to declare configurable publish phases which will be performed by a builder worker after the initial build phase so i can consume my built artifact in various post processed formats such as a docker container an ami etc requirements the builder toml which resides next to a plan sh plan is the configuration that a builder worker reads to understand additional steps a refactored version of this may look something like this with thanks to tduffield toml type docker registry registry index docker io default image name pkg origin pkg name default tags default secret key encrypted value placeholder for encrypted creds if needed type secret key encrypted value type artifactory secret key encrypted value some things to note the type key value represents the exporter publisher to use for the phase we support an array of tables so multiple exporters of a single type can be used we have dropped the current configuration for publishing to the depot itself this will become a requirement of the system mustache can be used for string substitution for variables generated by the build phase such as pkg name version origin and release

| 0

|

74,949

| 20,515,138,925

|

IssuesEvent

|

2022-03-01 10:56:16

|

tensorflow/tensorflow

|

https://api.github.com/repos/tensorflow/tensorflow

|

opened

|

AttributeError: module 'tensorflow' has no attribute 'reduce_sum'

|

type:build/install

|

Hi while running

python -c "import tensorflow as tf;print(tf.reduce_sum(tf.random.normal([1000, 1000])))"

To verify my Tensorflow installation I get AttributeError;

AttributeError: module 'tensorflow' has no attribute 'reduce_sum'

tensorflow version: Version: 2.5.0

|

1.0

|

AttributeError: module 'tensorflow' has no attribute 'reduce_sum' - Hi while running

python -c "import tensorflow as tf;print(tf.reduce_sum(tf.random.normal([1000, 1000])))"

To verify my Tensorflow installation I get AttributeError;

AttributeError: module 'tensorflow' has no attribute 'reduce_sum'

tensorflow version: Version: 2.5.0

|

non_process

|

attributeerror module tensorflow has no attribute reduce sum hi while running python c import tensorflow as tf print tf reduce sum tf random normal to verify my tensorflow installation i get attributeerror attributeerror module tensorflow has no attribute reduce sum tensorflow version version

| 0

|

13,078

| 15,420,036,992

|

IssuesEvent

|

2021-03-05 10:59:04

|

geneontology/go-ontology

|

https://api.github.com/repos/geneontology/go-ontology

|

closed

|

'multi-species biofilm formation' has double inheritance

|

multi-species process

|

This class currently has ‘symbiotic process’ and ‘biofilm formation’ as parent classes. I would recommend removing the **subClassOf 'symbiotic process'** axiom since this process does not directly involve the interaction between the host and the symbiont. You can always add an axiom that asserts that this is part of some ‘symbiotic process’ if you want to relate it back to that class.

|

1.0

|

'multi-species biofilm formation' has double inheritance - This class currently has ‘symbiotic process’ and ‘biofilm formation’ as parent classes. I would recommend removing the **subClassOf 'symbiotic process'** axiom since this process does not directly involve the interaction between the host and the symbiont. You can always add an axiom that asserts that this is part of some ‘symbiotic process’ if you want to relate it back to that class.

|

process

|

multi species biofilm formation has double inheritance this class currently has ‘symbiotic process’ and ‘biofilm formation’ as parent classes i would recommend removing the subclassof symbiotic process axiom since this process does not directly involve the interaction between the host and the symbiont you can always add an axiom that asserts that this is part of some ‘symbiotic process’ if you want to relate it back to that class

| 1

|

11,389

| 14,224,466,938

|

IssuesEvent

|

2020-11-17 19:43:03

|

kubernetes/minikube

|

https://api.github.com/repos/kubernetes/minikube

|

closed

|

update kubernets version script does not generate unit tests

|

kind/process priority/important-longterm

|

in this PR https://github.com/kubernetes/minikube/pull/9693

I used the script

```

cd hack/update/kubernetes_version

go run update_kubernetes_version.go

```

it changed the constants.go and the docs but it did not change these files and didn't add a folder of unit test data

```

pkg/minikube/bootstrapper/bsutil/kubeadm_test.go

pkg/minikube/bootstrapper/bsutil/testdata/v1.20.0-beta.1

```

|

1.0

|

update kubernets version script does not generate unit tests - in this PR https://github.com/kubernetes/minikube/pull/9693

I used the script

```

cd hack/update/kubernetes_version

go run update_kubernetes_version.go

```

it changed the constants.go and the docs but it did not change these files and didn't add a folder of unit test data

```

pkg/minikube/bootstrapper/bsutil/kubeadm_test.go

pkg/minikube/bootstrapper/bsutil/testdata/v1.20.0-beta.1

```

|

process

|

update kubernets version script does not generate unit tests in this pr i used the script cd hack update kubernetes version go run update kubernetes version go it changed the constants go and the docs but it did not change these files and didn t add a folder of unit test data pkg minikube bootstrapper bsutil kubeadm test go pkg minikube bootstrapper bsutil testdata beta

| 1

|

479,234

| 13,793,254,062

|

IssuesEvent

|

2020-10-09 14:42:18

|

rokwire/safer-illinois-app

|

https://api.github.com/repos/rokwire/safer-illinois-app

|

closed

|

[BUG] The 2 different guidelines is displayed for the same county "Champaign Illinois"

|

Priority: High Type: Bug

|

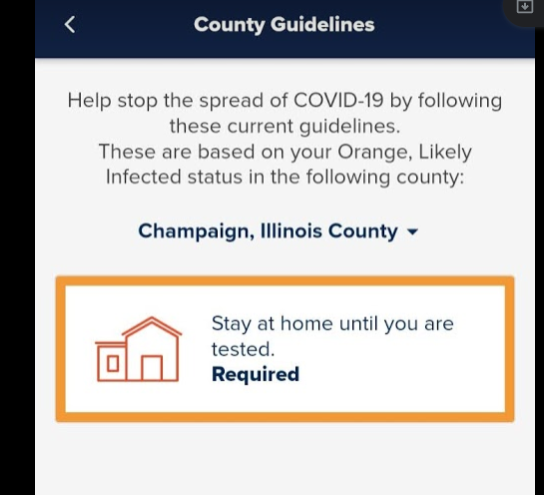

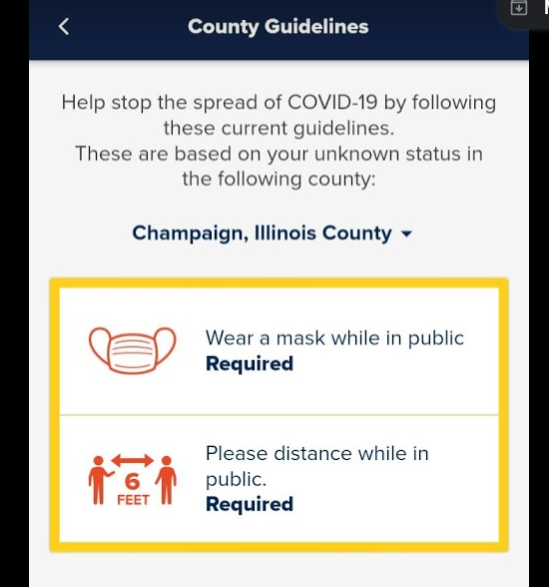

**Describe the bug**

Two different guidelines are displayed same county "Champaign, Illinois"

**To Reproduce**

Steps to reproduce the behavior:

1. Install Safer Illinois app

2. Complete the COVID onboarding process

3. User logged in as a University Student on Who are you screen and verified his identity

4. Complete the COVID onboarding steps with Exposure notification and test result Consent to YES

5. COVID set up is done. COVID - 19 screen is displayed

6. Users current status is "Orange, Likely infected"

7. Tap on County Guidelines. County Guidelines - "Champaign, Illinois county" is displayed for status "Orange".

8. Tap on the drop-down arrow displayed next to "Champaign, Illinois county" and select "Champaign, Illinois"

**Actual Result**

Now the county Guidelines is displayed for the status Yellow color

**Expected behavior**

The 2 different guidelines should not display for the same county

**Screenshots**

If applicable, add screenshots to help explain your problem.

**Smartphone (please complete the following information):**

Device: [e.g. Android]

- Version [e.g. 2.6.13]

**Additional context**

Add any other context about the problem here.

|

1.0

|

[BUG] The 2 different guidelines is displayed for the same county "Champaign Illinois" - **Describe the bug**

Two different guidelines are displayed same county "Champaign, Illinois"

**To Reproduce**

Steps to reproduce the behavior:

1. Install Safer Illinois app

2. Complete the COVID onboarding process

3. User logged in as a University Student on Who are you screen and verified his identity

4. Complete the COVID onboarding steps with Exposure notification and test result Consent to YES

5. COVID set up is done. COVID - 19 screen is displayed

6. Users current status is "Orange, Likely infected"

7. Tap on County Guidelines. County Guidelines - "Champaign, Illinois county" is displayed for status "Orange".

8. Tap on the drop-down arrow displayed next to "Champaign, Illinois county" and select "Champaign, Illinois"

**Actual Result**

Now the county Guidelines is displayed for the status Yellow color

**Expected behavior**

The 2 different guidelines should not display for the same county

**Screenshots**

If applicable, add screenshots to help explain your problem.

**Smartphone (please complete the following information):**

Device: [e.g. Android]

- Version [e.g. 2.6.13]

**Additional context**

Add any other context about the problem here.

|

non_process

|

the different guidelines is displayed for the same county champaign illinois describe the bug two different guidelines are displayed same county champaign illinois to reproduce steps to reproduce the behavior install safer illinois app complete the covid onboarding process user logged in as a university student on who are you screen and verified his identity complete the covid onboarding steps with exposure notification and test result consent to yes covid set up is done covid screen is displayed users current status is orange likely infected tap on county guidelines county guidelines champaign illinois county is displayed for status orange tap on the drop down arrow displayed next to champaign illinois county and select champaign illinois actual result now the county guidelines is displayed for the status yellow color expected behavior the different guidelines should not display for the same county screenshots if applicable add screenshots to help explain your problem smartphone please complete the following information device version additional context add any other context about the problem here

| 0

|

164,031

| 12,754,374,919

|

IssuesEvent

|

2020-06-28 05:00:21

|

microsoft/azure-tools-for-java

|

https://api.github.com/repos/microsoft/azure-tools-for-java

|

closed

|

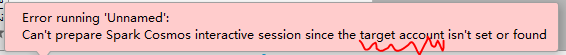

[intelliJ][Spark on Cosmos]Warning use account when config with cosmos configurartion.

|

HDInsight IntelliJ Internal Test fixed

|

Build:

azure-toolkit-for-intellij-2018.3.develop.1036.03-20-2019

Repro Steps:

1. Create a **Apache Spark on Cosmos configuration** **without Spark Clusters** and save it.

2. Right click LogQuery and run Spark livy Interactive Session Console(Scala).

3. Click Continue anyway.

Result:

Warning message use "account" rather than "cluster".

|

1.0

|

[intelliJ][Spark on Cosmos]Warning use account when config with cosmos configurartion. - Build:

azure-toolkit-for-intellij-2018.3.develop.1036.03-20-2019

Repro Steps:

1. Create a **Apache Spark on Cosmos configuration** **without Spark Clusters** and save it.

2. Right click LogQuery and run Spark livy Interactive Session Console(Scala).

3. Click Continue anyway.

Result:

Warning message use "account" rather than "cluster".

|

non_process

|

warning use account when config with cosmos configurartion build azure toolkit for intellij develop repro steps create a apache spark on cosmos configuration without spark clusters and save it right click logquery and run spark livy interactive session console scala click continue anyway result warning message use account rather than cluster

| 0

|

131,887

| 12,493,235,050

|

IssuesEvent

|

2020-06-01 08:52:44

|

kubernetes-sigs/cluster-api

|

https://api.github.com/repos/kubernetes-sigs/cluster-api

|

closed

|

Document that dollar mark needs to be escaped for variable expansion in clusterctl

|

help wanted kind/documentation kind/feature lifecycle/stale

|

Just a small todo / note to self.

When using env vars, for example a password, if it contains a dollar symbol, in addition to being single quoted, it needs to be doubled, .e.g.:

The password foo$bar must be represented as:

PASSWORD='foo$$bar'

Looks like some variable expansion happening in viper, but can get hit.

/kind feature

/area documentation

|

1.0

|

Document that dollar mark needs to be escaped for variable expansion in clusterctl - Just a small todo / note to self.

When using env vars, for example a password, if it contains a dollar symbol, in addition to being single quoted, it needs to be doubled, .e.g.:

The password foo$bar must be represented as:

PASSWORD='foo$$bar'

Looks like some variable expansion happening in viper, but can get hit.

/kind feature

/area documentation

|

non_process

|

document that dollar mark needs to be escaped for variable expansion in clusterctl just a small todo note to self when using env vars for example a password if it contains a dollar symbol in addition to being single quoted it needs to be doubled e g the password foo bar must be represented as password foo bar looks like some variable expansion happening in viper but can get hit kind feature area documentation

| 0

|

11,070

| 13,906,034,008

|

IssuesEvent

|

2020-10-20 10:41:24

|

pystatgen/sgkit

|

https://api.github.com/repos/pystatgen/sgkit

|

closed

|

Simulate genotypes for unit tests

|

core operations process + tools

|

I'm trying to simulate some genotype calls for unit tests using a simple model from `msprime`. At @jeromekelleher's suggestion, I tried this:

```python

# Using 1.x code, not yet released

!pip install git+https://github.com/tskit-dev/msprime.git#egg=msprime

import msprime

model = msprime.Demography.island_model(2, migration_rate=1e-3, Ne=10**4)

samples = model.sample(10, 10) # 10 haploids from each pop

ts = msprime.simulate(

samples=samples,

demography=model, # what should this be?

mutation_rate=1e-8,

recombination_rate=1e-8

)

list(ts.variants())

[]

```

What is `demography` supposed to be? I saw a "FIXME" for documenting it in `simulate` but I wasn't sure what to set it to if not the `Demography` instance that comes back from `island_model`.

Also, is this why I'm not getting any variants back out?

I am ultimately trying to simulate hardy weinberg equilibrium. I'd like to have some fraction of the variants be in or near perfect equilibrium and the remainder way out of it. If you get a chance, could I get an assist @jeromekelleher?

|

1.0

|

Simulate genotypes for unit tests - I'm trying to simulate some genotype calls for unit tests using a simple model from `msprime`. At @jeromekelleher's suggestion, I tried this:

```python

# Using 1.x code, not yet released

!pip install git+https://github.com/tskit-dev/msprime.git#egg=msprime

import msprime

model = msprime.Demography.island_model(2, migration_rate=1e-3, Ne=10**4)

samples = model.sample(10, 10) # 10 haploids from each pop

ts = msprime.simulate(

samples=samples,

demography=model, # what should this be?

mutation_rate=1e-8,

recombination_rate=1e-8

)

list(ts.variants())

[]

```

What is `demography` supposed to be? I saw a "FIXME" for documenting it in `simulate` but I wasn't sure what to set it to if not the `Demography` instance that comes back from `island_model`.

Also, is this why I'm not getting any variants back out?

I am ultimately trying to simulate hardy weinberg equilibrium. I'd like to have some fraction of the variants be in or near perfect equilibrium and the remainder way out of it. If you get a chance, could I get an assist @jeromekelleher?

|

process

|

simulate genotypes for unit tests i m trying to simulate some genotype calls for unit tests using a simple model from msprime at jeromekelleher s suggestion i tried this python using x code not yet released pip install git import msprime model msprime demography island model migration rate ne samples model sample haploids from each pop ts msprime simulate samples samples demography model what should this be mutation rate recombination rate list ts variants what is demography supposed to be i saw a fixme for documenting it in simulate but i wasn t sure what to set it to if not the demography instance that comes back from island model also is this why i m not getting any variants back out i am ultimately trying to simulate hardy weinberg equilibrium i d like to have some fraction of the variants be in or near perfect equilibrium and the remainder way out of it if you get a chance could i get an assist jeromekelleher

| 1

|

12,416

| 14,921,184,108

|

IssuesEvent

|

2021-01-23 08:52:08

|

threefoldfoundation/tft-stellar

|

https://api.github.com/repos/threefoldfoundation/tft-stellar

|

closed

|

Possibility for the unlock service to use an external storage like etcd or mongo

|

process_wontfix type_feature

|

Currently, if the node running the unlock service fails or the image is updated, the data inside the image is also lost. In order to achieve ha, rolling upgrades and node failure must be tolerated.

|

1.0

|

Possibility for the unlock service to use an external storage like etcd or mongo - Currently, if the node running the unlock service fails or the image is updated, the data inside the image is also lost. In order to achieve ha, rolling upgrades and node failure must be tolerated.

|

process

|

possibility for the unlock service to use an external storage like etcd or mongo currently if the node running the unlock service fails or the image is updated the data inside the image is also lost in order to achieve ha rolling upgrades and node failure must be tolerated

| 1

|

73,995

| 24,897,916,559

|

IssuesEvent

|

2022-10-28 17:38:41

|

matrix-org/synapse

|

https://api.github.com/repos/matrix-org/synapse

|

closed

|

Mautrix Bridges are not bridging messages since upgrading to version 1.70.0

|

A-Application-Service S-Major T-Defect X-Regression O-Occasional

|

### Description

Since upgrading to version 1.70.0, mautrix-instagram, mautrix-discord, mautrix-facebook and mautrix-telegram are not bridging mesaging from Matrix to the requested service. Any communication with the bot is also impossible (`help`, `ping`, etc). Maubot is unaffected.

### Steps to reproduce

- install and configure a mautrix-based bridge (instagram, facebook, discord or telegram) on 1.69

- upgrade to 1.70

- send a message from matrix in a bridged channel

### Homeserver

Privately hosted homeserver

### Synapse Version

1.70.0 (Python 3.9.2)

### Installation Method

Synapse is installed with `pip install --upgrade matrix-synapse[all]`, which compiles the project from sources.

### Platform

Bridges are manually installed from their git repo.

Host server is a Raspberry Pi 3 running Debian 11.5.

### Relevant log output

```shell

No logs seems to point to an error.

```

### Anything else that would be useful to know?

Reverting to synapse 1.69 fixes the issue.

|

1.0

|

Mautrix Bridges are not bridging messages since upgrading to version 1.70.0 - ### Description

Since upgrading to version 1.70.0, mautrix-instagram, mautrix-discord, mautrix-facebook and mautrix-telegram are not bridging mesaging from Matrix to the requested service. Any communication with the bot is also impossible (`help`, `ping`, etc). Maubot is unaffected.

### Steps to reproduce

- install and configure a mautrix-based bridge (instagram, facebook, discord or telegram) on 1.69

- upgrade to 1.70

- send a message from matrix in a bridged channel

### Homeserver

Privately hosted homeserver

### Synapse Version

1.70.0 (Python 3.9.2)

### Installation Method

Synapse is installed with `pip install --upgrade matrix-synapse[all]`, which compiles the project from sources.

### Platform

Bridges are manually installed from their git repo.

Host server is a Raspberry Pi 3 running Debian 11.5.

### Relevant log output

```shell

No logs seems to point to an error.

```

### Anything else that would be useful to know?

Reverting to synapse 1.69 fixes the issue.

|

non_process

|

mautrix bridges are not bridging messages since upgrading to version description since upgrading to version mautrix instagram mautrix discord mautrix facebook and mautrix telegram are not bridging mesaging from matrix to the requested service any communication with the bot is also impossible help ping etc maubot is unaffected steps to reproduce install and configure a mautrix based bridge instagram facebook discord or telegram on upgrade to send a message from matrix in a bridged channel homeserver privately hosted homeserver synapse version python installation method synapse is installed with pip install upgrade matrix synapse which compiles the project from sources platform bridges are manually installed from their git repo host server is a raspberry pi running debian relevant log output shell no logs seems to point to an error anything else that would be useful to know reverting to synapse fixes the issue

| 0

|

20,442

| 27,100,573,846

|

IssuesEvent

|

2023-02-15 08:19:03

|

billingran/Newsletter

|

https://api.github.com/repos/billingran/Newsletter

|

closed

|

Éviter d'enregistrer plusieurs fois le même utilisateur

|

processing... Brief 2

|

- [ ] Éviter d'enregistrer plusieurs fois le même utilisateur lorsqu'on rafraichit la page après avoir validé le formulaire avec succès.

|

1.0

|

Éviter d'enregistrer plusieurs fois le même utilisateur - - [ ] Éviter d'enregistrer plusieurs fois le même utilisateur lorsqu'on rafraichit la page après avoir validé le formulaire avec succès.

|

process

|

éviter d enregistrer plusieurs fois le même utilisateur éviter d enregistrer plusieurs fois le même utilisateur lorsqu on rafraichit la page après avoir validé le formulaire avec succès

| 1

|

17,813

| 23,741,281,329

|

IssuesEvent

|

2022-08-31 12:39:45

|

km4ack/patmenu2

|

https://api.github.com/repos/km4ack/patmenu2

|

closed

|

Add WL2K_MOBILES

|

enhancement in process

|

add WL2K_MOBILES to the position report section of Pat Menu. Send the request to INQUIRY with a subject of REQUEST. This will request a list of 100 position reports instead of the 30 delivered when WL2K_USERS is requested.

|

1.0

|

Add WL2K_MOBILES - add WL2K_MOBILES to the position report section of Pat Menu. Send the request to INQUIRY with a subject of REQUEST. This will request a list of 100 position reports instead of the 30 delivered when WL2K_USERS is requested.

|

process

|

add mobiles add mobiles to the position report section of pat menu send the request to inquiry with a subject of request this will request a list of position reports instead of the delivered when users is requested

| 1

|

17,850

| 23,795,761,483

|

IssuesEvent

|

2022-09-02 19:27:43

|

ncbo/bioportal-project

|

https://api.github.com/repos/ncbo/bioportal-project

|

closed

|

New submission creation for AIO fails with duplicate resource ID error

|

ontology processing problem

|

End user reported on the support list that they were unable to create a new submission for the [AIO ontology](https://bioportal.bioontology.org/ontologies/AIO).

Steps to reproduce:

* Navigate to the AIO [summary page](https://bioportal.bioontology.org/ontologies/AIO).

* Click the Add submission button

* On the [resulting form](https://bioportal.bioontology.org/ontologies/AIO/submissions/new), click the Add submission button

Form fails to submit with the following error displayed:

Plain text error:

```

[:error, #<OpenStruct links=nil, context=nil, proc_naming=#<OpenStruct links=nil, context=nil, duplicate="There is already a persistent resource with id `http://data.bioontology.org/ontologies/AIO/submissions/2`">>]

```

|

1.0

|

New submission creation for AIO fails with duplicate resource ID error - End user reported on the support list that they were unable to create a new submission for the [AIO ontology](https://bioportal.bioontology.org/ontologies/AIO).

Steps to reproduce:

* Navigate to the AIO [summary page](https://bioportal.bioontology.org/ontologies/AIO).

* Click the Add submission button

* On the [resulting form](https://bioportal.bioontology.org/ontologies/AIO/submissions/new), click the Add submission button

Form fails to submit with the following error displayed:

Plain text error:

```

[:error, #<OpenStruct links=nil, context=nil, proc_naming=#<OpenStruct links=nil, context=nil, duplicate="There is already a persistent resource with id `http://data.bioontology.org/ontologies/AIO/submissions/2`">>]

```

|

process

|

new submission creation for aio fails with duplicate resource id error end user reported on the support list that they were unable to create a new submission for the steps to reproduce navigate to the aio click the add submission button on the click the add submission button form fails to submit with the following error displayed plain text error

| 1

|

409,539

| 27,742,443,708

|

IssuesEvent

|

2023-03-15 15:04:46

|

scylladb/scylla-operator

|

https://api.github.com/repos/scylladb/scylla-operator

|

closed

|

Update docs version

|

kind/documentation priority/important-longterm

|

We should bump our sphinx theme https://sphinx-theme.scylladb.com/stable/upgrade/1-2-to-1-3.html and also bump pytest to at least 7.2.0 when at it. Also note that some of the upgrade instruction don't apply to our repo or need to be modified based on your judgment to maintain CI invariants.

this should help you bootstrap

```

podman run -it --rm -v="$( pwd ):/go/$( go list -m )" --workdir="/go/$( go list -m )/docs" -p 5500:5500 ubuntu:20.04 bash -c 'apt-get update && apt-get install -y curl python3 python3-distutils make git && ./hack/install-poetry.sh && $HOME/.poetry/bin/poetry update && make multiversion && make -C docs multiversionpreview'

```

|

1.0

|

Update docs version - We should bump our sphinx theme https://sphinx-theme.scylladb.com/stable/upgrade/1-2-to-1-3.html and also bump pytest to at least 7.2.0 when at it. Also note that some of the upgrade instruction don't apply to our repo or need to be modified based on your judgment to maintain CI invariants.

this should help you bootstrap

```

podman run -it --rm -v="$( pwd ):/go/$( go list -m )" --workdir="/go/$( go list -m )/docs" -p 5500:5500 ubuntu:20.04 bash -c 'apt-get update && apt-get install -y curl python3 python3-distutils make git && ./hack/install-poetry.sh && $HOME/.poetry/bin/poetry update && make multiversion && make -C docs multiversionpreview'

```

|

non_process

|

update docs version we should bump our sphinx theme and also bump pytest to at least when at it also note that some of the upgrade instruction don t apply to our repo or need to be modified based on your judgment to maintain ci invariants this should help you bootstrap podman run it rm v pwd go go list m workdir go go list m docs p ubuntu bash c apt get update apt get install y curl distutils make git hack install poetry sh home poetry bin poetry update make multiversion make c docs multiversionpreview

| 0

|

18,404

| 24,543,476,302

|

IssuesEvent

|

2022-10-12 06:51:42

|

home-climate-control/dz

|

https://api.github.com/repos/home-climate-control/dz

|

closed

|

Feature #2: replace bang-bang control mechanism with PI controller

|

enhancement usability fault tolerance process control reactive-only

|

### Expected Behavior

Control process emits signal producing reasonable HVAC unit uptimes and downtimes.

### Actual Behavior

Jitter is unbound. This will damage older or cheaper HVAC units that don't have internal protection from control short cycling.

### Mitigation

Replace bang-bang with PI controller (PID is not necessary).

|

1.0

|

Feature #2: replace bang-bang control mechanism with PI controller - ### Expected Behavior

Control process emits signal producing reasonable HVAC unit uptimes and downtimes.

### Actual Behavior

Jitter is unbound. This will damage older or cheaper HVAC units that don't have internal protection from control short cycling.

### Mitigation

Replace bang-bang with PI controller (PID is not necessary).

|

process

|

feature replace bang bang control mechanism with pi controller expected behavior control process emits signal producing reasonable hvac unit uptimes and downtimes actual behavior jitter is unbound this will damage older or cheaper hvac units that don t have internal protection from control short cycling mitigation replace bang bang with pi controller pid is not necessary

| 1

|

759,878

| 26,616,019,026

|

IssuesEvent

|

2023-01-24 07:10:11

|

robolaunch/central-orchestrator

|

https://api.github.com/repos/robolaunch/central-orchestrator

|

opened

|

user operations refactor

|

refactor medium priority

|

### What would you like to be added?

Refactor the all user, organization, and team operations.

### Why is this needed?

Ensurance

|

1.0

|

user operations refactor - ### What would you like to be added?

Refactor the all user, organization, and team operations.

### Why is this needed?

Ensurance

|

non_process

|

user operations refactor what would you like to be added refactor the all user organization and team operations why is this needed ensurance

| 0

|

7,453

| 10,560,782,972

|

IssuesEvent

|

2019-10-04 14:34:23

|

johang88/triton

|

https://api.github.com/repos/johang88/triton

|

opened

|

Add content type to content meta data

|

content processor

|

This will allow collision meshes to be created without hacks.

|

1.0

|

Add content type to content meta data - This will allow collision meshes to be created without hacks.

|

process

|

add content type to content meta data this will allow collision meshes to be created without hacks

| 1

|

20,759

| 27,492,774,604

|

IssuesEvent

|

2023-03-04 20:38:42

|

Azure/azure-sdk-tools

|

https://api.github.com/repos/Azure/azure-sdk-tools

|

closed

|

Define role, responsibilities, and more for Service buddy

|

Engagement Experience WS: Process Tools & Automation

|

The purpose of this Epic is to focus on defining the roles and responsibilities, tools for people to do their job, and mechanisms to build in accountability for the service buddy for Cadl

|

1.0

|

Define role, responsibilities, and more for Service buddy - The purpose of this Epic is to focus on defining the roles and responsibilities, tools for people to do their job, and mechanisms to build in accountability for the service buddy for Cadl

|

process

|

define role responsibilities and more for service buddy the purpose of this epic is to focus on defining the roles and responsibilities tools for people to do their job and mechanisms to build in accountability for the service buddy for cadl

| 1

|

35,955

| 2,793,980,912

|

IssuesEvent

|

2015-05-11 14:26:26

|

mozilla/marketplace-tests

|

https://api.github.com/repos/mozilla/marketplace-tests

|

closed

|

Add a setup.cfg file to configure the behaviour of flake8

|

Community difficulty beginner priority medium

|

This is a simple task:

1. Add a new file to the repo called `setup.cfg`. The file should look just like the file found at https://github.com/mozilla/mcom-tests/blob/master/setup.cfg

2. Update the `.travis.yml` file so the `script:` line reads:

```yml

script: "flake8 ."

```

|

1.0

|

Add a setup.cfg file to configure the behaviour of flake8 - This is a simple task:

1. Add a new file to the repo called `setup.cfg`. The file should look just like the file found at https://github.com/mozilla/mcom-tests/blob/master/setup.cfg

2. Update the `.travis.yml` file so the `script:` line reads:

```yml

script: "flake8 ."

```

|

non_process

|

add a setup cfg file to configure the behaviour of this is a simple task add a new file to the repo called setup cfg the file should look just like the file found at update the travis yml file so the script line reads yml script

| 0

|

15,768

| 19,913,877,896

|

IssuesEvent

|

2022-01-25 20:09:55

|

input-output-hk/high-assurance-legacy

|

https://api.github.com/repos/input-output-hk/high-assurance-legacy

|

closed

|

Make all small types possible as data types

|

type: enhancement reason: wontfix language: isabelle topic: process calculus

|

At its core, our process calculus implementation is untyped in the sense that there is a single type for channels, `chan`, and a single type for values, `val`. However, we have a typed layer, which allows us to use typed channels (using the type constructor `channel`) as well as data of various types.

Typed channels and other data are encoded as untyped channels and values. As a result, the sizes of the types we’re using are restricted by the sizes of `chan` and `val`. Currently, we force types `'a channel` to be countable and require other data types to be countable as well. This has the annoying consequence that we can treat neither real numbers nor functions on data as data, meaning we cannot communicate them over channels.

With the new AFP entry [`ZFC_in_HOL`][zfc-in-hol], we have a very good tool for relaxing this restriction. We plan to change the requirement of data types being countable to the requirement of them being _small_ in the sense of `ZFC_in_HOL`. The type of real numbers is certainly small and, more importantly, small types are closed under function space construction; so this change would solve the above described problem. It would not allow us to treat the type `V` of Zermelo–Fraenkel sets as a data type though, but then again our point is to support rich typing, while ZFC is untyped.

[zfc-in-hol]:

https://www.isa-afp.org/entries/ZFC_in_HOL.html

"Zermelo Fraenkel Set Theory in Higher-Order Logic"

|

1.0

|

Make all small types possible as data types - At its core, our process calculus implementation is untyped in the sense that there is a single type for channels, `chan`, and a single type for values, `val`. However, we have a typed layer, which allows us to use typed channels (using the type constructor `channel`) as well as data of various types.

Typed channels and other data are encoded as untyped channels and values. As a result, the sizes of the types we’re using are restricted by the sizes of `chan` and `val`. Currently, we force types `'a channel` to be countable and require other data types to be countable as well. This has the annoying consequence that we can treat neither real numbers nor functions on data as data, meaning we cannot communicate them over channels.

With the new AFP entry [`ZFC_in_HOL`][zfc-in-hol], we have a very good tool for relaxing this restriction. We plan to change the requirement of data types being countable to the requirement of them being _small_ in the sense of `ZFC_in_HOL`. The type of real numbers is certainly small and, more importantly, small types are closed under function space construction; so this change would solve the above described problem. It would not allow us to treat the type `V` of Zermelo–Fraenkel sets as a data type though, but then again our point is to support rich typing, while ZFC is untyped.

[zfc-in-hol]:

https://www.isa-afp.org/entries/ZFC_in_HOL.html

"Zermelo Fraenkel Set Theory in Higher-Order Logic"

|

process

|

make all small types possible as data types at its core our process calculus implementation is untyped in the sense that there is a single type for channels chan and a single type for values val however we have a typed layer which allows us to use typed channels using the type constructor channel as well as data of various types typed channels and other data are encoded as untyped channels and values as a result the sizes of the types we’re using are restricted by the sizes of chan and val currently we force types a channel to be countable and require other data types to be countable as well this has the annoying consequence that we can treat neither real numbers nor functions on data as data meaning we cannot communicate them over channels with the new afp entry we have a very good tool for relaxing this restriction we plan to change the requirement of data types being countable to the requirement of them being small in the sense of zfc in hol the type of real numbers is certainly small and more importantly small types are closed under function space construction so this change would solve the above described problem it would not allow us to treat the type v of zermelo–fraenkel sets as a data type though but then again our point is to support rich typing while zfc is untyped zermelo fraenkel set theory in higher order logic

| 1

|

30,416

| 11,824,944,561

|

IssuesEvent

|

2020-03-21 09:53:40

|

mchrapek/studia-projekt-zespolowy-backend

|

https://api.github.com/repos/mchrapek/studia-projekt-zespolowy-backend

|

closed

|

Ability for the administrator to block a user

|

core function security

|

[Acceptance criteria]

As an administrator, I can block a user, which prevents that user from logging in.

|

True

|

Ability for the administrator to block a user - [Acceptance criteria]

As an administrator, I can block a user, which prevents that user from logging in.

|

non_process

|

ability for the administrator to block a user as an administrator i can block a user which prevents that user from logging in

| 0

|

17,761

| 23,690,934,550

|

IssuesEvent

|

2022-08-29 10:43:17

|

apache/arrow-rs

|

https://api.github.com/repos/apache/arrow-rs

|

closed

|

Release Arrow `21.0.0` (next release after `20.0.0`)

|

development-process

|

Follow on from https://github.com/apache/arrow-rs/issues/2172

* Planned Release Candidate: ~2022-08-19~ 2022-08-18

* Planned Release and Publish to crates.io: ~2022-08-22~ 2022-08-22

Items:

- [x] https://github.com/apache/arrow-rs/issues/2338

- [x] https://github.com/apache/arrow-rs/pull/2339

- [x] https://github.com/apache/arrow-rs/pull/2483

- [x] https://github.com/apache/arrow-rs/pull/2506

- [x] https://lists.apache.org/thread/g4nzgd57w6rbt7o4tkqpbdmrqg8tqrxz

- [x] Release candidate approved

- [x] Release to crates.io

- [ ] Draft update to DataFusion:

See full list here:

https://github.com/apache/arrow-rs/compare/20.0.0...master

|

1.0

|

Release Arrow `21.0.0` (next release after `20.0.0`) - Follow on from https://github.com/apache/arrow-rs/issues/2172

* Planned Release Candidate: ~2022-08-19~ 2022-08-18

* Planned Release and Publish to crates.io: ~2022-08-22~ 2022-08-22

Items:

- [x] https://github.com/apache/arrow-rs/issues/2338

- [x] https://github.com/apache/arrow-rs/pull/2339

- [x] https://github.com/apache/arrow-rs/pull/2483

- [x] https://github.com/apache/arrow-rs/pull/2506

- [x] https://lists.apache.org/thread/g4nzgd57w6rbt7o4tkqpbdmrqg8tqrxz

- [x] Release candidate approved

- [x] Release to crates.io

- [ ] Draft update to DataFusion:

See full list here:

https://github.com/apache/arrow-rs/compare/20.0.0...master

|

process

|

release arrow next release after follow on from planned release candidate planned release and publish to crates io items release candidate approved release to crates io draft update to datafusion see full list here

| 1

|

269,131

| 8,432,286,133

|

IssuesEvent

|

2018-10-17 01:10:46

|

alan345/nacho

|

https://api.github.com/repos/alan345/nacho

|

closed

|

Minimize API documentation

|

High priority

|

Remove everything except signupDate and trialEndDate from POST.

Remove all other Optional fields from definitions in introduction.

|

1.0

|

Minimize API documentation - Remove everything except signupDate and trialEndDate from POST.

Remove all other Optional fields from definitions in introduction.

|

non_process

|

minimize api documentation remove everything except signupdate and trialenddate from post remove all other optional fields from definitions in introduction

| 0

|

10,252

| 13,105,618,185

|

IssuesEvent

|

2020-08-04 12:31:02

|

Explore-AI/test-repo

|

https://api.github.com/repos/Explore-AI/test-repo

|

opened

|

JHC-TAG-TEST-PP

|

bug content-type:pre-processing student-submitted unread

|

Content Type: Pre-Processing

Content Name: JHC-TAG-TEST-PP

Problem: The title is weird

Additional Details:

I think this is a piece of test content that we shouldn't be able to see

Supporting Files:

Reported by: jacob@explore-ai.net

|

1.0

|

JHC-TAG-TEST-PP - Content Type: Pre-Processing

Content Name: JHC-TAG-TEST-PP

Problem: The title is weird

Additional Details:

I think this is a piece of test content that we shouldn't be able to see

Supporting Files:

Reported by: jacob@explore-ai.net

|

process

|

jhc tag test pp content type pre processing content name jhc tag test pp problem the title is weird additional details i think this is a piece of test content that we shouldn t be able to see supporting files reported by jacob explore ai net

| 1

|

4,197

| 7,156,581,005

|

IssuesEvent

|

2018-01-26 16:45:08

|

HDLOfficial/Dark-Cave

|

https://api.github.com/repos/HDLOfficial/Dark-Cave

|

closed

|

Spelling Error in file README.md

|

Processing Issue

|

last line first word says "Pyscic" should say Psychic

(sorry to be nitpicky)

By the way, I am interested in making a dialogue storyboard

|

1.0

|

Spelling Error in file README.md - last line first word says "Pyscic" should say Psychic

(sorry to be nitpicky)

By the way, I am interested in making a dialogue storyboard

|

process

|

spelling error in file readme md last line first word says pyscic should say psychic sorry to be nit picky by the way i am interested in making a dialogue storyboard

| 1

|

49,620

| 20,824,857,111

|

IssuesEvent

|

2022-03-18 19:27:52

|

mitin20/bb-upptime

|

https://api.github.com/repos/mitin20/bb-upptime

|

closed

|

🛑 Promerica-renditionservice/qa is down

|

status promerica-renditionservice-qa

|

In [`d14bf76`](https://github.com/mitin20/bb-upptime/commit/d14bf760937660e259da40b8a648e68682f14a9a), Promerica-renditionservice/qa (https://edbsqa.pfcti.com/api/portal/actuator/health) was **down**:

- HTTP code: 500

- Response time: 16 ms

|

1.0

|

🛑 Promerica-renditionservice/qa is down - In [`d14bf76`](https://github.com/mitin20/bb-upptime/commit/d14bf760937660e259da40b8a648e68682f14a9a), Promerica-renditionservice/qa (https://edbsqa.pfcti.com/api/portal/actuator/health) was **down**:

- HTTP code: 500