| Unnamed: 0 (int64, 0 to 832k) | id (float64, 2.49B to 32.1B) | type (string, 1 class) | created_at (string, length 19) | repo (string, length 7 to 112) | repo_url (string, length 36 to 141) | action (string, 3 classes) | title (string, length 1 to 744) | labels (string, length 4 to 574) | body (string, length 9 to 211k) | index (string, 10 classes) | text_combine (string, length 96 to 211k) | label (string, 2 classes) | text (string, length 96 to 188k) | binary_label (int64, 0 to 1) |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

15,185

| 18,955,159,595

|

IssuesEvent

|

2021-11-18 19:20:28

|

googleapis/repo-automation-bots

|

https://api.github.com/repos/googleapis/repo-automation-bots

|

opened

|

unpin probot for owl-bot

|

type: process bot: owl-bot

|

#2912 added a pin on probot dependency to `12.1.0` because `12.1.1` gives me a compilation error.

|

1.0

|

unpin probot for owl-bot - #2912 added a pin on probot dependency to `12.1.0` because `12.1.1` gives me a compilation error.

|

process

|

unpin probot for owl bot added a pin on probot dependency to because gives me a compilation error

| 1

|

4,532

| 2,559,371,772

|

IssuesEvent

|

2015-02-05 00:11:17

|

tvkanters/Dopestreamer

|

https://api.github.com/repos/tvkanters/Dopestreamer

|

opened

|

Add viewer count

|

enhancement low-priority

|

There should be an viewer count in the main stream which sums the Hitbox, Twitch, Vacker live and Vacker restream viewcounts.

|

1.0

|

Add viewer count - There should be an viewer count in the main stream which sums the Hitbox, Twitch, Vacker live and Vacker restream viewcounts.

|

non_process

|

add viewer count there should be an viewer count in the main stream which sums the hitbox twitch vacker live and vacker restream viewcounts

| 0

|

6,765

| 9,888,280,189

|

IssuesEvent

|

2019-06-25 11:09:59

|

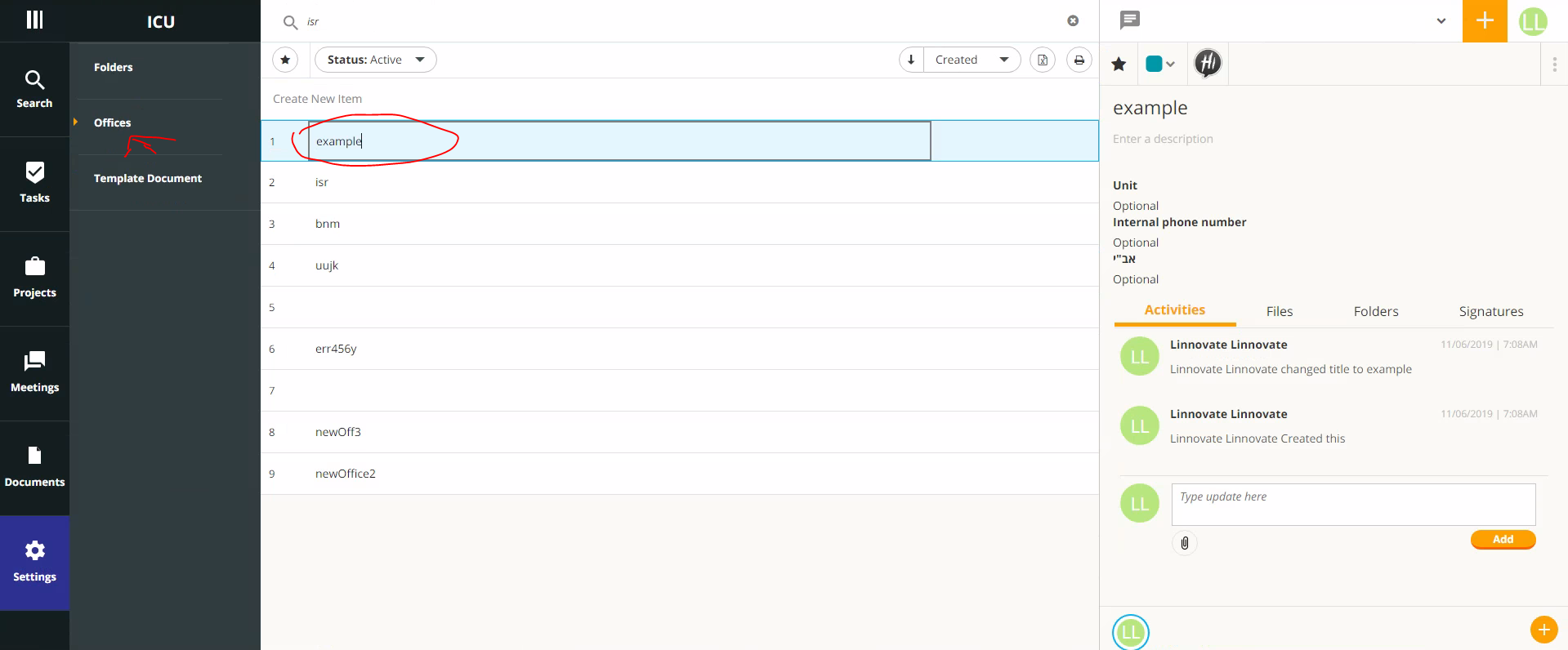

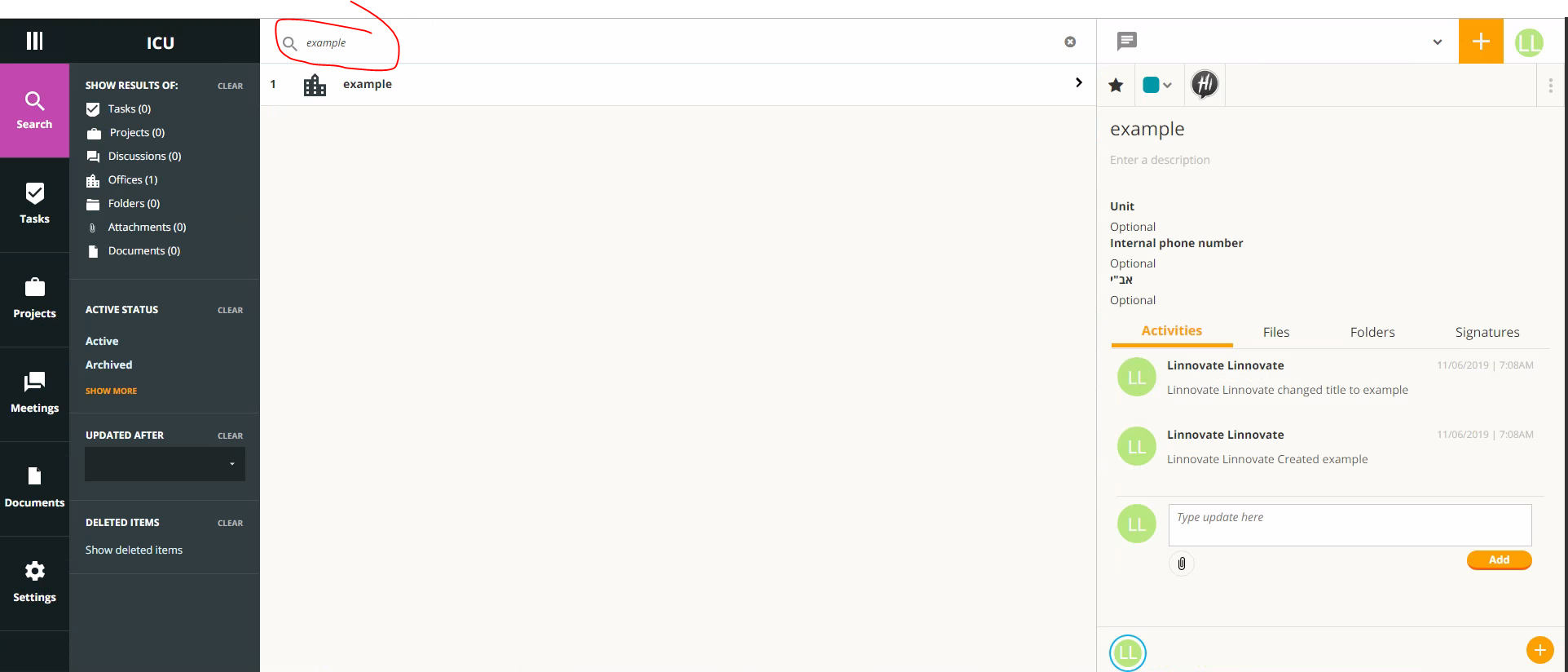

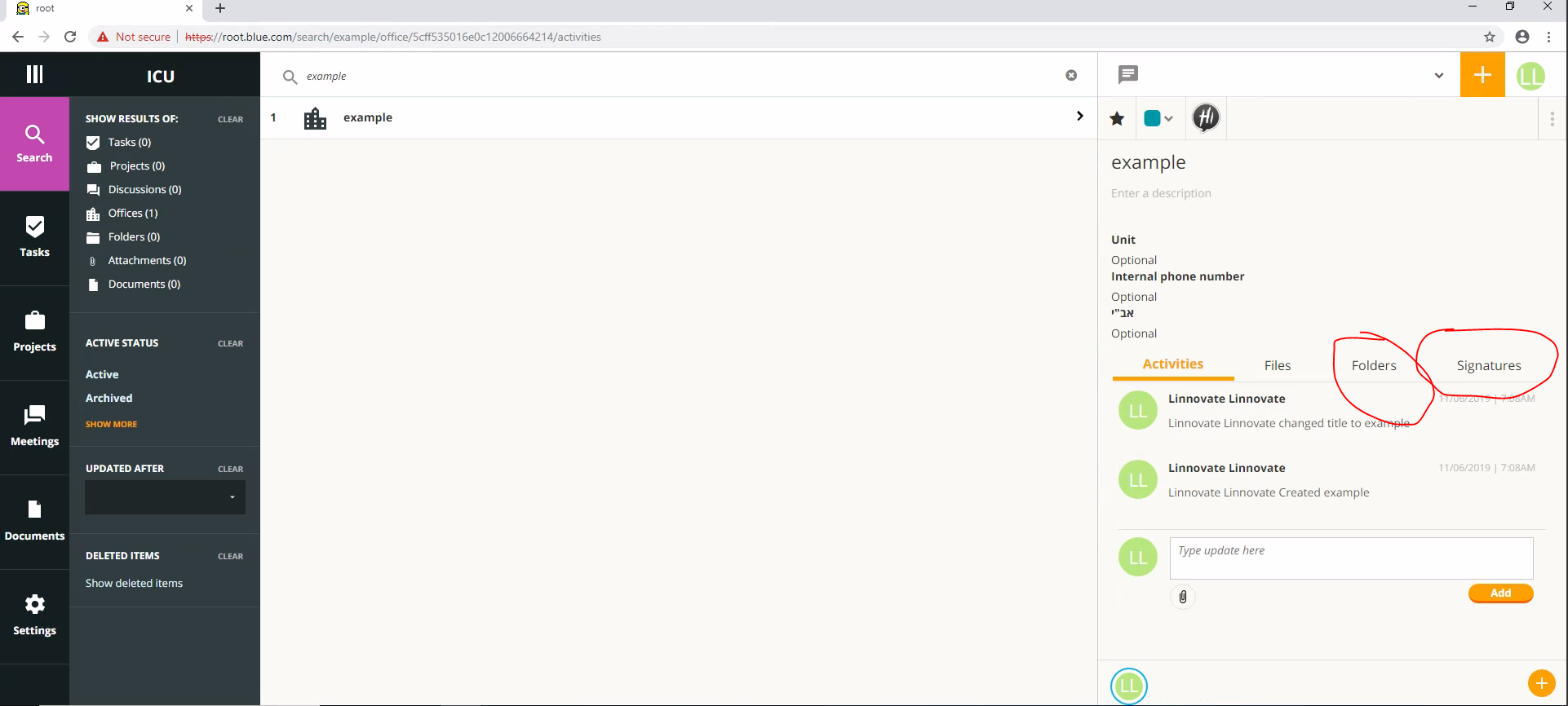

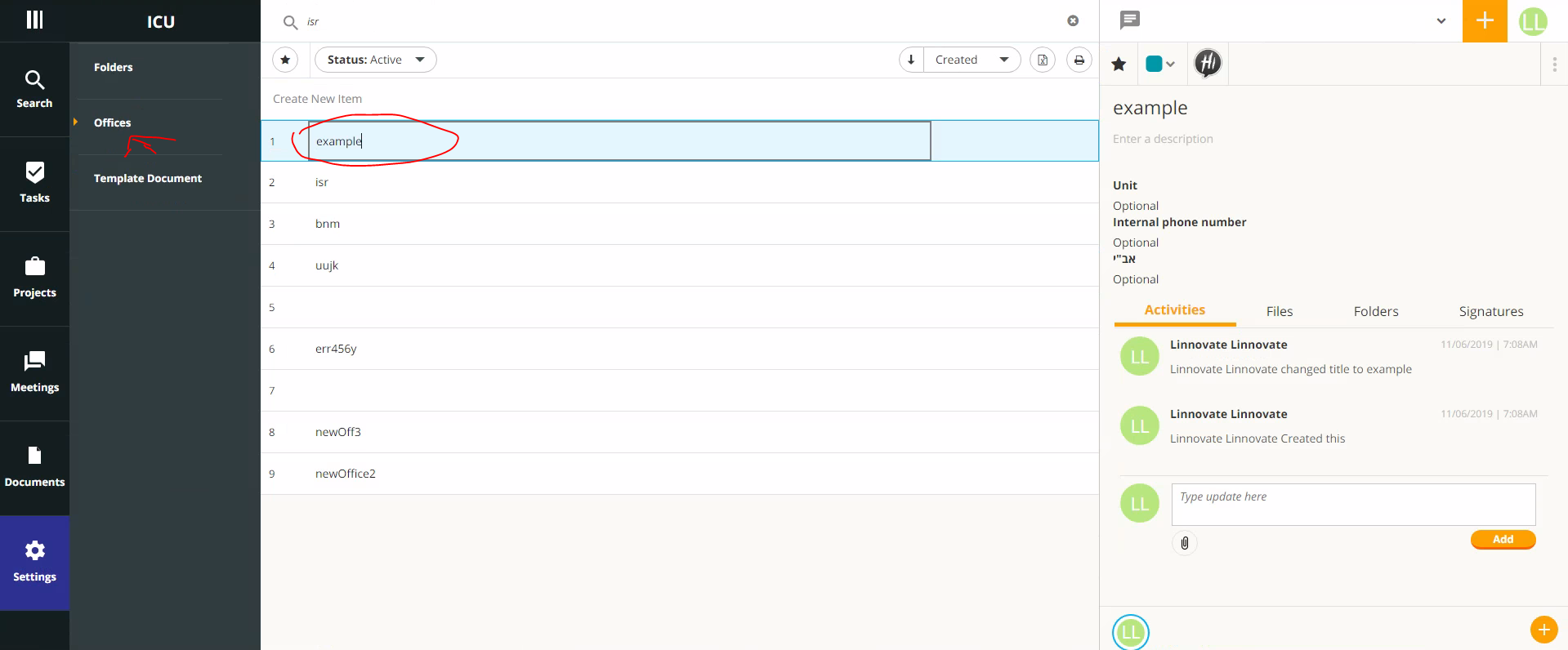

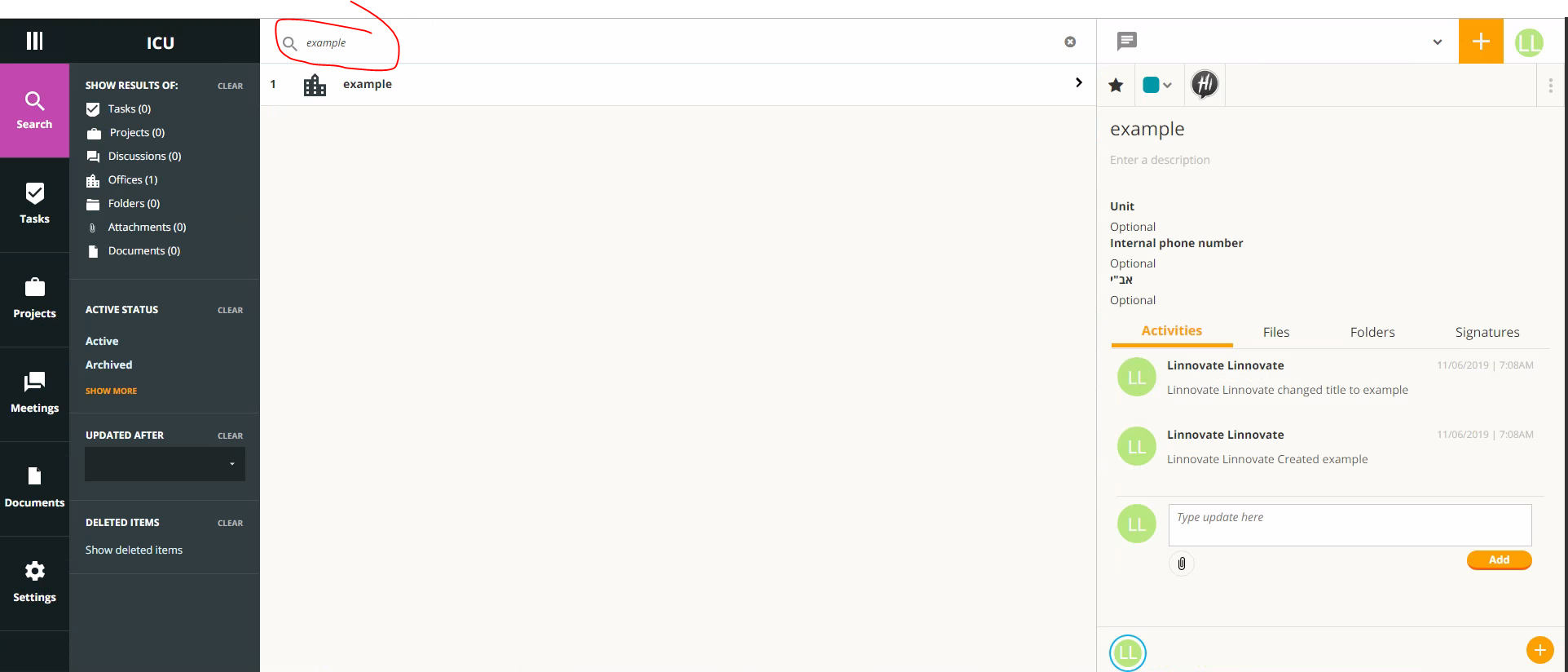

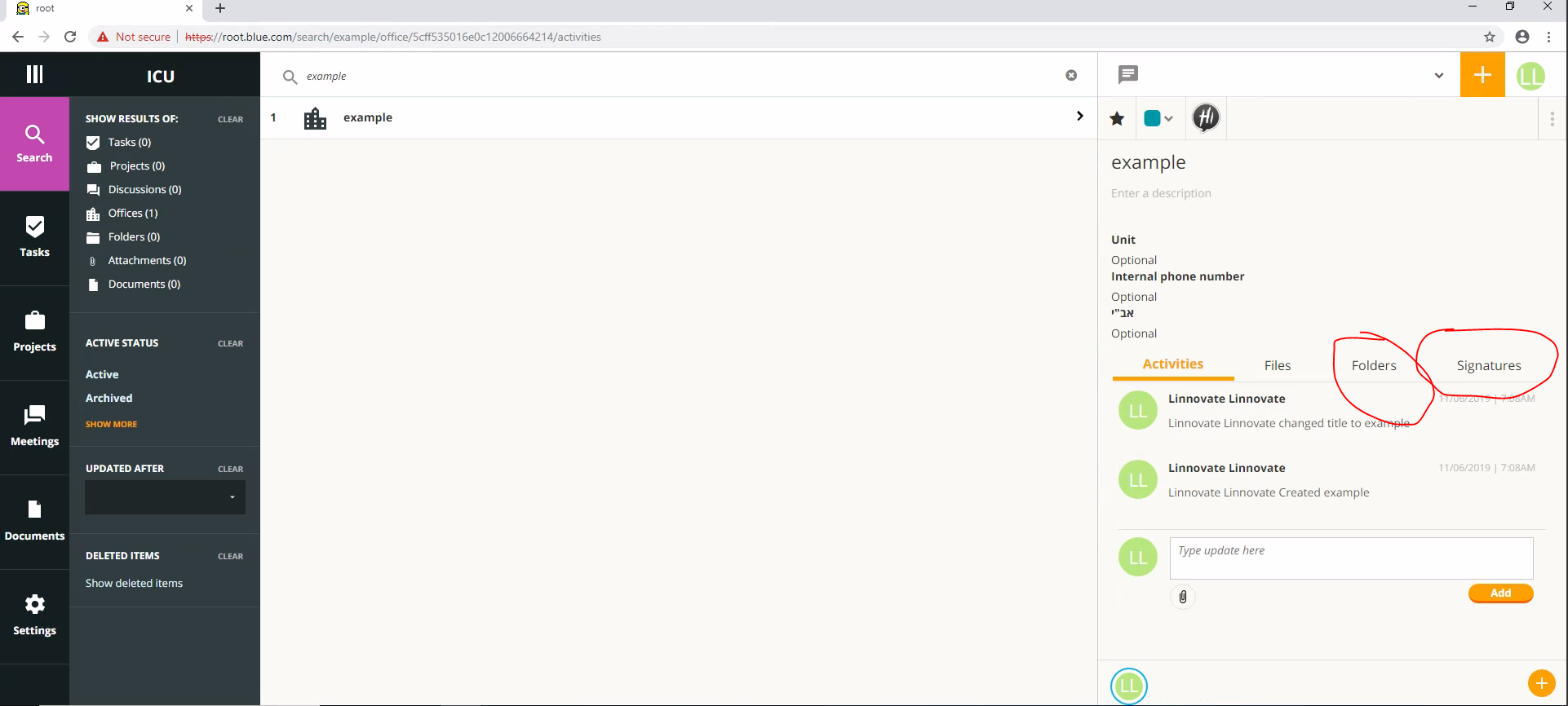

linnovate/root

|

https://api.github.com/repos/linnovate/root

|

closed

|

search office :can not click folder and signature

|

2.0.7 Fixed Process bug Search

|

go to offices

open new office

go to search and search for this office

Click on the office, and then click Folder or Signature

result : clicking on a signature or folder does not work

go to offices

open new office:

go to search and search for this office

Click on the office, and then click Folder or Signature

|

1.0

|

search office :can not click folder and signature - go to offices

open new office

go to search and search for this office

Click on the office, and then click Folder or Signature

result : clicking on a signature or folder does not work

go to offices

open new office:

go to search and search for this office

Click on the office, and then click Folder or Signature

|

process

|

search office can not click folder and signature go to offices open new office go to search and search for this office click on the office and then click folder or signature result clicking on a signature or folder does not work go to offices open new office go to search and search for this office click on the office and then click folder or signature

| 1

|

81,509

| 23,479,804,250

|

IssuesEvent

|

2022-08-17 09:33:20

|

godotengine/godot-proposals

|

https://api.github.com/repos/godotengine/godot-proposals

|

reopened

|

Tree-shaking compiler to reduce size and load time of exported games

|

archived topic:buildsystem

|

### Describe the project you are working on

Many small HTML5 and iOS/Android games, where it's crucial to have fast download and load times.

### Describe the problem or limitation you are having in your project

Godot has an ever-growing bundle of amazing functionality, which is hugely helpful for making games. However, most of this functionality is not used, leading to bloated game bundles that take longer for the player to download. For instance, even the simplest HTML5 games made with Godot need 15 MB for the engine, whereas the game logic and assets might only be 2-3 MB.

These simple games can also take 10+ seconds to load on old devices because the device has to load a lot of unneeded functionality into memory. This will likely become an increasingly important issue as more functionality is added to Godot.

### Describe the feature / enhancement and how it helps to overcome the problem or limitation

Add [tree-shaking](https://en.wikipedia.org/wiki/Tree_shaking) functionality to the compiler so only the part of the Godot engine that are actually used by the game are included. This would dramatically reduce size and load times.

### Describe how your proposal will work, with code, pseudo-code, mock-ups, and/or diagrams

This would work similar to [webpack's tree-shaking](https://webpack.js.org/guides/tree-shaking/) in that Godot would identify which functions are being used and remove the rest from the exported bundle.

This is similar to [disabling 3D mentioned in the docs](https://docs.godotengine.org/en/stable/development/compiling/optimizing_for_size.html#disabling-3d), but more refined and with much bigger benefit because it would disable lots of other unused functionality besides 3D. That being said, it might be cool to just do a simple initial version that just automatically detects if 3D is used and disables it otherwise.

Implementing tree-shaking would likely take quite a bit of effort, but would improve the bundle size and load time of every single game exported with Godot (there’s no game that uses all the functionality).

### If this enhancement will not be used often, can it be worked around with a few lines of script?

No.

### Is there a reason why this should be core and not an add-on in the asset library?

AFAIK this would only be possible to do in core.

|

1.0

|

Tree-shaking compiler to reduce size and load time of exported games - ### Describe the project you are working on

Many small HTML5 and iOS/Android games, where it's crucial to have fast download and load times.

### Describe the problem or limitation you are having in your project

Godot has an ever-growing bundle of amazing functionality, which is hugely helpful for making games. However, most of this functionality is not used, leading to bloated game bundles that take longer for the player to download. For instance, even the simplest HTML5 games made with Godot need 15 MB for the engine, whereas the game logic and assets might only be 2-3 MB.

These simple games can also take 10+ seconds to load on old devices because the device has to load a lot of unneeded functionality into memory. This will likely become an increasingly important issue as more functionality is added to Godot.

### Describe the feature / enhancement and how it helps to overcome the problem or limitation

Add [tree-shaking](https://en.wikipedia.org/wiki/Tree_shaking) functionality to the compiler so only the part of the Godot engine that are actually used by the game are included. This would dramatically reduce size and load times.

### Describe how your proposal will work, with code, pseudo-code, mock-ups, and/or diagrams

This would work similar to [webpack's tree-shaking](https://webpack.js.org/guides/tree-shaking/) in that Godot would identify which functions are being used and remove the rest from the exported bundle.

This is similar to [disabling 3D mentioned in the docs](https://docs.godotengine.org/en/stable/development/compiling/optimizing_for_size.html#disabling-3d), but more refined and with much bigger benefit because it would disable lots of other unused functionality besides 3D. That being said, it might be cool to just do a simple initial version that just automatically detects if 3D is used and disables it otherwise.

Implementing tree-shaking would likely take quite a bit of effort, but would improve the bundle size and load time of every single game exported with Godot (there’s no game that uses all the functionality).

### If this enhancement will not be used often, can it be worked around with a few lines of script?

No.

### Is there a reason why this should be core and not an add-on in the asset library?

AFAIK this would only be possible to do in core.

|

non_process

|

tree shaking compiler to reduce size and load time of exported games describe the project you are working on many small and ios android games where it s crucial to have fast download and load times describe the problem or limitation you are having in your project godot has an ever growing bundle of amazing functionality which is hugely helpful for making games however most of this functionality is not used leading to bloated game bundles that take longer for the player to download for instance even the simplest games made with godot need mb for the engine whereas the game logic and assets might only be mb these simple games can also take seconds to load on old devices because the device has to load a lot of unneeded functionality into memory this will likely become an increasingly important issue as more functionality is added to godot describe the feature enhancement and how it helps to overcome the problem or limitation add functionality to the compiler so only the part of the godot engine that are actually used by the game are included this would dramatically reduce size and load times describe how your proposal will work with code pseudo code mock ups and or diagrams this would work similar to in that godot would identify which functions are being used and remove the rest from the exported bundle this is similar to but more refined and with much bigger benefit because it would disable lots of other unused functionality besides that being said it might be cool to just do a simple initial version that just automatically detects if is used and disables it otherwise implementing tree shaking would likely take quite a bit of effort but would improve the bundle size and load time of every single game exported with godot there’s no game that uses all the functionality if this enhancement will not be used often can it be worked around with a few lines of script no is there a reason why this should be core and not an add on in the asset library afaik this would only be 
possible to do in core

| 0

|

649,766

| 21,320,384,885

|

IssuesEvent

|

2022-04-17 01:25:57

|

WeaponMechanics/MechanicsMain

|

https://api.github.com/repos/WeaponMechanics/MechanicsMain

|

closed

|

Add Vanilla Command Arguments/Validation

|

priority: low will add working on it

|

### Link to code

https://github.com/WeaponMechanics/MechanicsMain/tree/master/MechanicsCore/src/main/java/me/deecaad/core/commands

### Related Issues

_No response_

### Improvements

Lets say you are making a CSGO or Valorant or similar server. You need to give weapons to each member of a team. You cannot do this using `/wm give` since it doesn't accept command arguments like `@a[type=PLAYER,team=blue]`. However, in vanilla MC, commands handle this automatically.

MC also shows validation through colors, making command usage more responsive on the fly. To handle this,

you need an NMS based command api.

Existing:

* https://github.com/JorelAli/CommandAPI

* https://github.com/lucko/commodore

|

1.0

|

Add Vanilla Command Arguments/Validation - ### Link to code

https://github.com/WeaponMechanics/MechanicsMain/tree/master/MechanicsCore/src/main/java/me/deecaad/core/commands

### Related Issues

_No response_

### Improvements

Lets say you are making a CSGO or Valorant or similar server. You need to give weapons to each member of a team. You cannot do this using `/wm give` since it doesn't accept command arguments like `@a[type=PLAYER,team=blue]`. However, in vanilla MC, commands handle this automatically.

MC also shows validation through colors, making command usage more responsive on the fly. To handle this,

you need an NMS based command api.

Existing:

* https://github.com/JorelAli/CommandAPI

* https://github.com/lucko/commodore

|

non_process

|

add vanilla command arguments validation link to code related issues no response improvements lets say you are making a csgo or valorant or similar server you need to give weapons to each member of a team you cannot do this using wm give since it doesn t accept command arguments like a however in vanilla mc commands handle this automatically mc also shows validation through colors making command usage more responsive on the fly to handle this you need an nms based command api existing

| 0

|

296,191

| 25,535,616,349

|

IssuesEvent

|

2022-11-29 11:44:54

|

ToolJet/ToolJet

|

https://api.github.com/repos/ToolJet/ToolJet

|

closed

|

Add data-cy for table column edit options.

|

test cypress

|

### Specify the kind of test

<!--

Provide the kind of test

-->

Cypress E2E

### Describe the test

<!--

Provide a clear description of the test

-->

Add data -cy to help test cases for column edit options.

|

1.0

|

Add data-cy for table column edit options. - ### Specify the kind of test

<!--

Provide the kind of test

-->

Cypress E2E

### Describe the test

<!--

Provide a clear description of the test

-->

Add data -cy to help test cases for column edit options.

|

non_process

|

add data cy for table column edit options specify the kind of test provide the kind of test cypress describe the test provide a clear description of the test add data cy to help test cases for column edit options

| 0

|

7,139

| 10,281,285,005

|

IssuesEvent

|

2019-08-26 08:07:07

|

qgis/QGIS

|

https://api.github.com/repos/qgis/QGIS

|

closed

|

Add the "-overwrite" flag to the "clip raster by mask" GDAL tool

|

Feature Request Processing

|

<!--

Bug fixing and feature development is a community responsibility, and not the responsibility of the QGIS project alone.

If this bug report or feature request is high-priority for you, we suggest engaging a QGIS developer or support organisation and financially sponsoring a fix

Checklist before submitting

- [ ] Search through existing issue reports and gis.stackexchange.com to check whether the issue already exists

- [ ] Test with a [clean new user profile](https://docs.qgis.org/testing/en/docs/user_manual/introduction/qgis_configuration.html?highlight=profile#working-with-user-profiles).

- [ ] Create a light and self-contained sample dataset and project file which demonstrates the issue

If the issue concerns a **third party plugin**, then it **cannot** be fixed by the QGIS team. Please raise your issue in the dedicated bug tracker for that specific plugin (as listed in the plugin's description). -->

**Describe the bug**

<!-- A clear and concise description of what the bug is. -->

When atempting to overwrite a previous raster (in this case) using clip raster by mask, it is not over written even when QGIS prompts you to overwrite.

**How to Reproduce**

Raster > Extraction > Clip Raster by Mask layer

Save the file to a directory

Go through the same process, save as same file name, and agree to overwrite the file.

Run clipping process again.

See error message below.

<!-- Steps, sample datasets and qgis project file to reproduce the behavior. Screencasts or screenshots welcome

1. Go to '...'

2. Click on '....'

3. Scroll down to '....'

4. See error -->

**QGIS and OS versions**

QGIS: 3.4.10-Madeira

OS: Ubuntu Xenial

GDAL/OGR: 2.2.2

<!-- In the QGIS menu help/about, click in the dialog, Ctrl+A and then Ctrl+C. Finally paste here -->

**Additional context**

<!-- Add any other context about the problem here. -->

Error Message:

```

Clip Raster by Mask Layer

ERROR 1: Output dataset /filename_clipped.tif exists,

but some command line options were provided indicating a new dataset

should be created. Please delete existing dataset and run again.

```

I think here when running the tool the ```-overwrite``` needs to be specified?

|

1.0

|

Add the "-overwrite" flag to the "clip raster by mask" GDAL tool - <!--

Bug fixing and feature development is a community responsibility, and not the responsibility of the QGIS project alone.

If this bug report or feature request is high-priority for you, we suggest engaging a QGIS developer or support organisation and financially sponsoring a fix

Checklist before submitting

- [ ] Search through existing issue reports and gis.stackexchange.com to check whether the issue already exists

- [ ] Test with a [clean new user profile](https://docs.qgis.org/testing/en/docs/user_manual/introduction/qgis_configuration.html?highlight=profile#working-with-user-profiles).

- [ ] Create a light and self-contained sample dataset and project file which demonstrates the issue

If the issue concerns a **third party plugin**, then it **cannot** be fixed by the QGIS team. Please raise your issue in the dedicated bug tracker for that specific plugin (as listed in the plugin's description). -->

**Describe the bug**

<!-- A clear and concise description of what the bug is. -->

When atempting to overwrite a previous raster (in this case) using clip raster by mask, it is not over written even when QGIS prompts you to overwrite.

**How to Reproduce**

Raster > Extraction > Clip Raster by Mask layer

Save the file to a directory

Go through the same process, save as same file name, and agree to overwrite the file.

Run clipping process again.

See error message below.

<!-- Steps, sample datasets and qgis project file to reproduce the behavior. Screencasts or screenshots welcome

1. Go to '...'

2. Click on '....'

3. Scroll down to '....'

4. See error -->

**QGIS and OS versions**

QGIS: 3.4.10-Madeira

OS: Ubuntu Xenial

GDAL/OGR: 2.2.2

<!-- In the QGIS menu help/about, click in the dialog, Ctrl+A and then Ctrl+C. Finally paste here -->

**Additional context**

<!-- Add any other context about the problem here. -->

Error Message:

```

Clip Raster by Mask Layer

ERROR 1: Output dataset /filename_clipped.tif exists,

but some command line options were provided indicating a new dataset

should be created. Please delete existing dataset and run again.

```

I think here when running the tool the ```-overwrite``` needs to be specified?

|

process

|

add the overwrite flag to the clip raster by mask gdal tool bug fixing and feature development is a community responsibility and not the responsibility of the qgis project alone if this bug report or feature request is high priority for you we suggest engaging a qgis developer or support organisation and financially sponsoring a fix checklist before submitting search through existing issue reports and gis stackexchange com to check whether the issue already exists test with a create a light and self contained sample dataset and project file which demonstrates the issue if the issue concerns a third party plugin then it cannot be fixed by the qgis team please raise your issue in the dedicated bug tracker for that specific plugin as listed in the plugin s description describe the bug when atempting to overwrite a previous raster in this case using clip raster by mask it is not over written even when qgis prompts you to overwrite how to reproduce raster extraction clip raster by mask layer save the file to a directory go through the same process save as same file name and agree to overwrite the file run clipping process again see error message below steps sample datasets and qgis project file to reproduce the behavior screencasts or screenshots welcome go to click on scroll down to see error qgis and os versions qgis madeira os ubuntu xenial gdal ogr additional context error message clip raster by mask layer error output dataset filename clipped tif exists but some command line options were provided indicating a new dataset should be created please delete existing dataset and run again i think here when running the tool the overwrite needs to be specified

| 1

|

10,810

| 13,609,288,898

|

IssuesEvent

|

2020-09-23 04:50:19

|

googleapis/java-accessapproval

|

https://api.github.com/repos/googleapis/java-accessapproval

|

closed

|

Dependency Dashboard

|

api: accessapproval type: process

|

This issue contains a list of Renovate updates and their statuses.

## Open

These updates have all been created already. Click a checkbox below to force a retry/rebase of any.

- [ ] <!-- rebase-branch=renovate/com.google.cloud-google-cloud-accessapproval-1.x -->chore(deps): update dependency com.google.cloud:google-cloud-accessapproval to v1

- [ ] <!-- rebase-branch=renovate/com.google.cloud-libraries-bom-10.x -->chore(deps): update dependency com.google.cloud:libraries-bom to v10

---

- [ ] <!-- manual job -->Check this box to trigger a request for Renovate to run again on this repository

|

1.0

|

Dependency Dashboard - This issue contains a list of Renovate updates and their statuses.

## Open

These updates have all been created already. Click a checkbox below to force a retry/rebase of any.

- [ ] <!-- rebase-branch=renovate/com.google.cloud-google-cloud-accessapproval-1.x -->chore(deps): update dependency com.google.cloud:google-cloud-accessapproval to v1

- [ ] <!-- rebase-branch=renovate/com.google.cloud-libraries-bom-10.x -->chore(deps): update dependency com.google.cloud:libraries-bom to v10

---

- [ ] <!-- manual job -->Check this box to trigger a request for Renovate to run again on this repository

|

process

|

dependency dashboard this issue contains a list of renovate updates and their statuses open these updates have all been created already click a checkbox below to force a retry rebase of any chore deps update dependency com google cloud google cloud accessapproval to chore deps update dependency com google cloud libraries bom to check this box to trigger a request for renovate to run again on this repository

| 1

|

5,870

| 8,691,508,758

|

IssuesEvent

|

2018-12-04 01:40:39

|

knative/serving

|

https://api.github.com/repos/knative/serving

|

closed

|

We should only use knative-releases for public released artifacts

|

area/test-and-release kind/feature kind/process

|

<!--

Pro-tip: You can leave this block commented, and it still works!

Select the appropriate areas for your issue:

/area test-and-release

Classify what kind of issue this is:

/kind feature

/kind process

/assign @adrcunha

-->

## Expected Behavior

We should knative-releases bucket exclusively for public releases (vX.Y.Z releases) to avoid accident cleanups of nightly and other ephemeral releases.

Nightly should be published to knative-nightly bucket.

## Actual Behavior

Nightly is currently published to knative-releases GCS/GCR buckets.

|

1.0

|

We should only use knative-releases for public released artifacts - <!--

Pro-tip: You can leave this block commented, and it still works!

Select the appropriate areas for your issue:

/area test-and-release

Classify what kind of issue this is:

/kind feature

/kind process

/assign @adrcunha

-->

## Expected Behavior

We should knative-releases bucket exclusively for public releases (vX.Y.Z releases) to avoid accident cleanups of nightly and other ephemeral releases.

Nightly should be published to knative-nightly bucket.

## Actual Behavior

Nightly is currently published to knative-releases GCS/GCR buckets.

|

process

|

we should only use knative releases for public released artifacts pro tip you can leave this block commented and it still works select the appropriate areas for your issue area test and release classify what kind of issue this is kind feature kind process assign adrcunha expected behavior we should knative releases bucket exclusively for public releases vx y z releases to avoid accident cleanups of nightly and other ephemeral releases nightly should be published to knative nightly bucket actual behavior nightly is currently published to knative releases gcs gcr buckets

| 1

|

432,333

| 30,278,937,578

|

IssuesEvent

|

2023-07-07 23:12:07

|

houghj16/ShareX

|

https://api.github.com/repos/houghj16/ShareX

|

opened

|

Update the routing for the OneDrive

|

documentation

|

#3

#6

@houghj16

Description is very important

```[tasklist]

### Tasks

- [ ] #7

```

|

1.0

|

Update the routing for the OneDrive - #3

#6

@houghj16

Description is very important

```[tasklist]

### Tasks

- [ ] #7

```

|

non_process

|

update the routing for the onedrive description is very important tasks

| 0

|

173,030

| 14,399,550,475

|

IssuesEvent

|

2020-12-03 11:05:01

|

kubernetes-sigs/external-dns

|

https://api.github.com/repos/kubernetes-sigs/external-dns

|

closed

|

documentation for fast dns changed

|

kind/bug kind/documentation

|

<!-- Please use this template while reporting a bug and provide as much info as possible. Not doing so may result in your bug not being addressed in a timely manner. Thanks!

-->

**What happened**:

Documentation for dast dns changes as it become lagacy so old links in https://github.com/kubernetes-sigs/external-dns/blob/master/docs/tutorials/akamai-fastdns.md broken

**What you expected to happen**:

Fastdns documentation shoud redirect to correct documentation page

|

1.0

|

documentation for fast dns changed - <!-- Please use this template while reporting a bug and provide as much info as possible. Not doing so may result in your bug not being addressed in a timely manner. Thanks!

-->

**What happened**:

Documentation for dast dns changes as it become lagacy so old links in https://github.com/kubernetes-sigs/external-dns/blob/master/docs/tutorials/akamai-fastdns.md broken

**What you expected to happen**:

Fastdns documentation shoud redirect to correct documentation page

|

non_process

|

documentation for fast dns changed please use this template while reporting a bug and provide as much info as possible not doing so may result in your bug not being addressed in a timely manner thanks what happened documentation for dast dns changes as it become lagacy so old links in broken what you expected to happen fastdns documentation shoud redirect to correct documentation page

| 0

|

302,930

| 26,174,182,533

|

IssuesEvent

|

2023-01-02 07:15:55

|

BoBAdministration/QA-Bug-Reports

|

https://api.github.com/repos/BoBAdministration/QA-Bug-Reports

|

closed

|

Tapping E makes you auto drink

|

Fixed-PendingTesting

|

**Describe the Bug**

When you tap E (or use, whatever keybind you have) at a water source you can infinitely drink.

**To Reproduce**

1. Logged onto a test server

2. Go on any creture

3. Go to any water source

4. Tap the button to drink, not hold

**Expected behavior**

Tapping the use button while trying to drink should make it that you only drink for a second

**Actual behavior**

Tapping the use button at water makes you drink forever

**Screenshots & Video**

Showed Pred on stream

**Branch Version**

Tester and Live

**Additional Information**

I first thought you could drain ponds with the bug but turns out it only takes water until you're full water/sat but you stay in the drinking animation, so not as huge of an issue in that case.

|

1.0

|

Tapping E makes you auto drink - **Describe the Bug**

When you tap E (or use, whatever keybind you have) at a water source you can infinitely drink.

**To Reproduce**

1. Logged onto a test server

2. Go on any creture

3. Go to any water source

4. Tap the button to drink, not hold

**Expected behavior**

Tapping the use button while trying to drink should make it that you only drink for a second

**Actual behavior**

Tapping the use button at water makes you drink forever

**Screenshots & Video**

Showed Pred on stream

**Branch Version**

Tester and Live

**Additional Information**

I first thought you could drain ponds with the bug but turns out it only takes water until you're full water/sat but you stay in the drinking animation, so not as huge of an issue in that case.

|

non_process

|

tapping e makes you auto drink describe the bug when you tap e or use whatever keybind you have at a water source you can infinitely drink to reproduce logged onto a test server go on any creture go to any water source tap the button to drink not hold expected behavior tapping the use button while trying to drink should make it that you only drink for a second actual behavior tapping the use button at water makes you drink forever screenshots video showed pred on stream branch version tester and live additional information i first thought you could drain ponds with the bug but turns out it only takes water until you re full water sat but you stay in the drinking animation so not as huge of an issue in that case

| 0

|

8,378

| 11,525,777,586

|

IssuesEvent

|

2020-02-15 10:55:57

|

dotnet/runtime

|

https://api.github.com/repos/dotnet/runtime

|

closed

|

Make it possible to start a process suspened, and later resume it.

|

api-suggestion area-System.Diagnostics.Process

|

This would make it a lot easier to (for example) attach it to a job.

|

1.0

|

Make it possible to start a process suspened, and later resume it. - This would make it a lot easier to (for example) attach it to a job.

|

process

|

make it possible to start a process suspened and later resume it this would make it a lot easier to for example attach it to a job

| 1

|

80,989

| 15,613,862,545

|

IssuesEvent

|

2021-03-19 17:00:02

|

CliMA/RRTMGP.jl

|

https://api.github.com/repos/CliMA/RRTMGP.jl

|

closed

|

Add code coverage back in

|

code quality

|

It looks like code-coverage is no longer being reported, and we should add this back in.

|

1.0

|

Add code coverage back in - It looks like code-coverage is no longer being reported, and we should add this back in.

|

non_process

|

add code coverage back in it looks like code coverage is no longer being reported and we should add this back in

| 0

|

172,153

| 13,263,670,777

|

IssuesEvent

|

2020-08-21 01:16:58

|

omegaup/omegaup

|

https://api.github.com/repos/omegaup/omegaup

|

closed

|

[FEATURE] Hacer obligatorio el campo de lenguaje cuando creas un concurso

|

UX Task feature-request omegaUp for Contests

|

En https://omegaup.com/contest/new/:

* Por default todos los lenguajes deben de estar seleccionados.

* Si el usuario desmarca todos los lenguajes se le muestra un error.

|

1.0

|

[FEATURE] Hacer obligatorio el campo de lenguaje cuando creas un concurso - En https://omegaup.com/contest/new/:

* Por default todos los lenguajes deben de estar seleccionados.

* Si el usuario desmarca todos los lenguajes se le muestra un error.

|

non_process

|

hacer obligatorio el campo de lenguaje cuando creas un concurso en por default todos los lenguajes deben de estar seleccionados si el usuario desmarca todos los lenguajes se le muestra un error

| 0

|

506,413

| 14,664,483,700

|

IssuesEvent

|

2020-12-29 12:06:56

|

eXpandFramework/eXpand

|

https://api.github.com/repos/eXpandFramework/eXpand

|

closed

|

How can I debug this?

|

Priority Question ❤ Backer

|

From time to time I see this exception in our production environment (a web app). Any help debugging it?

System.ArgumentException: Duplicate type name within an assembly.

at System.Reflection.Emit.ModuleBuilder.CheckTypeNameConflict(String strTypeName, Type enclosingType)

at System.Reflection.Emit.AssemblyBuilderData.CheckTypeNameConflict(String strTypeName, TypeBuilder enclosingType)

at System.Reflection.Emit.TypeBuilder.Init(String fullname, TypeAttributes attr, Type parent, Type[] interfaces, ModuleBuilder module, PackingSize iPackingSize, Int32 iTypeSize, TypeBuilder enclosingType)

at System.Reflection.Emit.ModuleBuilder.DefineType(String name, TypeAttributes attr, Type parent)

at Xpand.XAF.Modules.Reactive.Services.Actions.ActionsService.NewControllerType[T](String id) in D:\a\1\s\src\Modules\Reactive\Services\Actions\RegisterAction.cs:line 170

at Xpand.XAF.Modules.Reactive.Services.Actions.ActionsService.RegisterAction[TController,TAction](ApplicationModulesManager applicationModulesManager, String id, Func`2 actionBase) in D:\a\1\s\src\Modules\Reactive\Services\Actions\RegisterAction.cs:line 147

at Xpand.XAF.Modules.PositionInListview.SwapPositionInListViewService.<>c__DisplayClass4_0.b__0(String actionnId) in D:\a\1\s\src\Modules\PositionInListview\SwapPositionInListViewService.cs:line 33

at System.Reactive.Linq.ObservableImpl.SelectMany`2.ObservableSelector._.OnNext(TSource value)

WEB

Void CheckTypeNameConflict(System.String, System.Type)

at System.Reflection.Emit.ModuleBuilder.CheckTypeNameConflict(String strTypeName, Type enclosingType)

at System.Reflection.Emit.AssemblyBuilderData.CheckTypeNameConflict(String strTypeName, TypeBuilder enclosingType)

at System.Reflection.Emit.TypeBuilder.Init(String fullname, TypeAttributes attr, Type parent, Type[] interfaces, ModuleBuilder module, PackingSize iPackingSize, Int32 iTypeSize, TypeBuilder enclosingType)

at System.Reflection.Emit.ModuleBuilder.DefineType(String name, TypeAttributes attr, Type parent)

at Xpand.XAF.Modules.Reactive.Services.Actions.ActionsService.NewControllerType[T](String id) in D:\a\1\s\src\Modules\Reactive\Services\Actions\RegisterAction.cs:line 170

at Xpand.XAF.Modules.Reactive.Services.Actions.ActionsService.RegisterAction[TController,TAction](ApplicationModulesManager applicationModulesManager, String id, Func`2 actionBase) in D:\a\1\s\src\Modules\Reactive\Services\Actions\RegisterAction.cs:line 147

at Xpand.XAF.Modules.PositionInListview.SwapPositionInListViewService.<>c__DisplayClass4_0.b__0(String actionnId) in D:\a\1\s\src\Modules\PositionInListview\SwapPositionInListViewService.cs:line 33

at System.Reactive.Linq.ObservableImpl.SelectMany`2.ObservableSelector._.OnNext(TSource value)

|

1.0

|

How can I debug this? - From time to time I see this exception in our production environment (a web app). Any help debugging it?

System.ArgumentException: Duplicate type name within an assembly.

at System.Reflection.Emit.ModuleBuilder.CheckTypeNameConflict(String strTypeName, Type enclosingType)

at System.Reflection.Emit.AssemblyBuilderData.CheckTypeNameConflict(String strTypeName, TypeBuilder enclosingType)

at System.Reflection.Emit.TypeBuilder.Init(String fullname, TypeAttributes attr, Type parent, Type[] interfaces, ModuleBuilder module, PackingSize iPackingSize, Int32 iTypeSize, TypeBuilder enclosingType)

at System.Reflection.Emit.ModuleBuilder.DefineType(String name, TypeAttributes attr, Type parent)

at Xpand.XAF.Modules.Reactive.Services.Actions.ActionsService.NewControllerType[T](String id) in D:\a\1\s\src\Modules\Reactive\Services\Actions\RegisterAction.cs:line 170

at Xpand.XAF.Modules.Reactive.Services.Actions.ActionsService.RegisterAction[TController,TAction](ApplicationModulesManager applicationModulesManager, String id, Func`2 actionBase) in D:\a\1\s\src\Modules\Reactive\Services\Actions\RegisterAction.cs:line 147

at Xpand.XAF.Modules.PositionInListview.SwapPositionInListViewService.<>c__DisplayClass4_0.b__0(String actionnId) in D:\a\1\s\src\Modules\PositionInListview\SwapPositionInListViewService.cs:line 33

at System.Reactive.Linq.ObservableImpl.SelectMany`2.ObservableSelector._.OnNext(TSource value)

WEB

Void CheckTypeNameConflict(System.String, System.Type)

at System.Reflection.Emit.ModuleBuilder.CheckTypeNameConflict(String strTypeName, Type enclosingType)

at System.Reflection.Emit.AssemblyBuilderData.CheckTypeNameConflict(String strTypeName, TypeBuilder enclosingType)

at System.Reflection.Emit.TypeBuilder.Init(String fullname, TypeAttributes attr, Type parent, Type[] interfaces, ModuleBuilder module, PackingSize iPackingSize, Int32 iTypeSize, TypeBuilder enclosingType)

at System.Reflection.Emit.ModuleBuilder.DefineType(String name, TypeAttributes attr, Type parent)

at Xpand.XAF.Modules.Reactive.Services.Actions.ActionsService.NewControllerType[T](String id) in D:\a\1\s\src\Modules\Reactive\Services\Actions\RegisterAction.cs:line 170

at Xpand.XAF.Modules.Reactive.Services.Actions.ActionsService.RegisterAction[TController,TAction](ApplicationModulesManager applicationModulesManager, String id, Func`2 actionBase) in D:\a\1\s\src\Modules\Reactive\Services\Actions\RegisterAction.cs:line 147

at Xpand.XAF.Modules.PositionInListview.SwapPositionInListViewService.<>c__DisplayClass4_0.b__0(String actionnId) in D:\a\1\s\src\Modules\PositionInListview\SwapPositionInListViewService.cs:line 33

at System.Reactive.Linq.ObservableImpl.SelectMany`2.ObservableSelector._.OnNext(TSource value)

|

non_process

|

how can i debug this from time to time i found this exception in production environment web app any help to debug it system argumentexception nombre de tipo duplicado en un ensamblado en system reflection emit modulebuilder checktypenameconflict string strtypename type enclosingtype en system reflection emit assemblybuilderdata checktypenameconflict string strtypename typebuilder enclosingtype en system reflection emit typebuilder init string fullname typeattributes attr type parent type interfaces modulebuilder module packingsize ipackingsize itypesize typebuilder enclosingtype en system reflection emit modulebuilder definetype string name typeattributes attr type parent en xpand xaf modules reactive services actions actionsservice newcontrollertype string id en d a s src modules reactive services actions registeraction cs línea en xpand xaf modules reactive services actions actionsservice registeraction applicationmodulesmanager applicationmodulesmanager string id func actionbase en d a s src modules reactive services actions registeraction cs línea en xpand xaf modules positioninlistview swappositioninlistviewservice c b string actionnid en d a s src modules positioninlistview swappositioninlistviewservice cs línea en system reactive linq observableimpl selectmany observableselector onnext tsource value web void checktypenameconflict system string system type en system reflection emit modulebuilder checktypenameconflict string strtypename type enclosingtype en system reflection emit assemblybuilderdata checktypenameconflict string strtypename typebuilder enclosingtype en system reflection emit typebuilder init string fullname typeattributes attr type parent type interfaces modulebuilder module packingsize ipackingsize itypesize typebuilder enclosingtype en system reflection emit modulebuilder definetype string name typeattributes attr type parent en xpand xaf modules reactive services actions actionsservice newcontrollertype string id en d a s src modules 
reactive services actions registeraction cs línea en xpand xaf modules reactive services actions actionsservice registeraction applicationmodulesmanager applicationmodulesmanager string id func actionbase en d a s src modules reactive services actions registeraction cs línea en xpand xaf modules positioninlistview swappositioninlistviewservice c b string actionnid en d a s src modules positioninlistview swappositioninlistviewservice cs línea en system reactive linq observableimpl selectmany observableselector onnext tsource value

| 0

|

12,550

| 14,976,333,471

|

IssuesEvent

|

2021-01-28 07:53:00

|

GoogleCloudPlatform/fda-mystudies

|

https://api.github.com/repos/GoogleCloudPlatform/fda-mystudies

|

closed

|

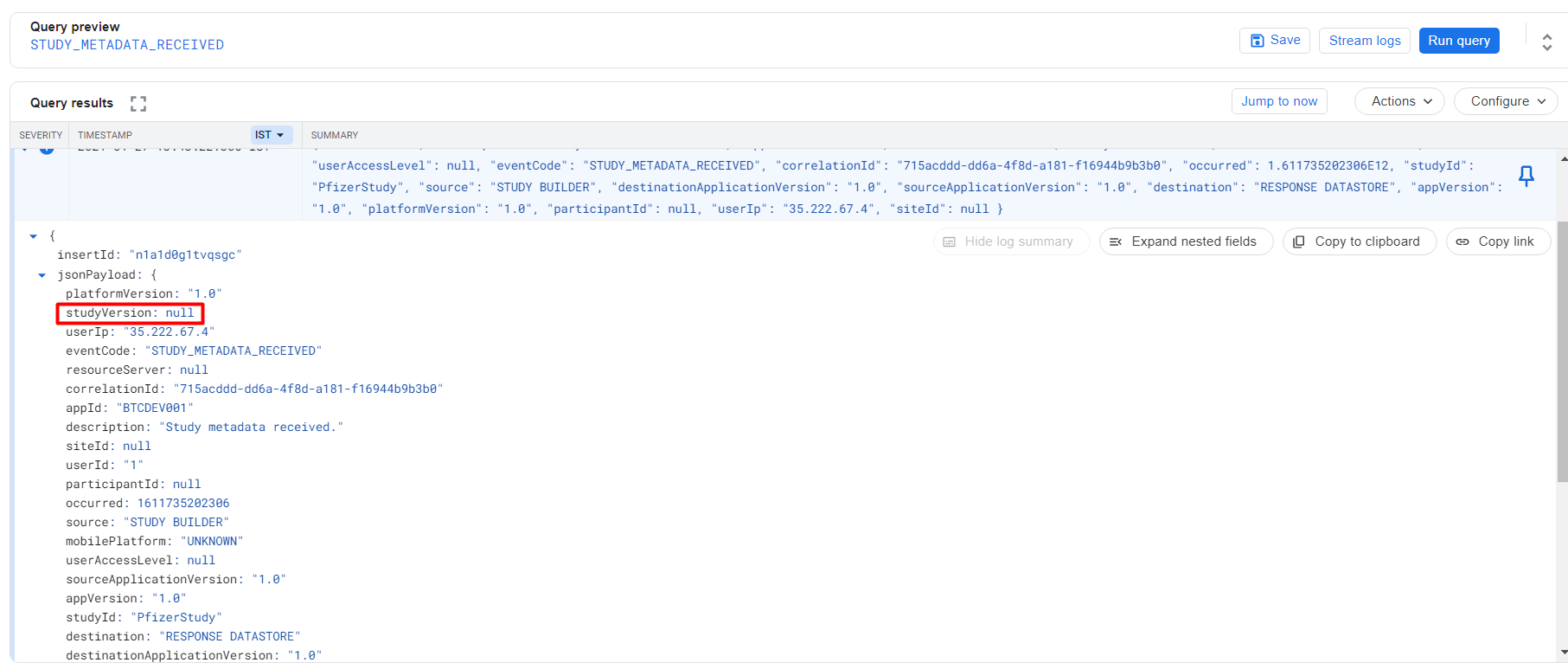

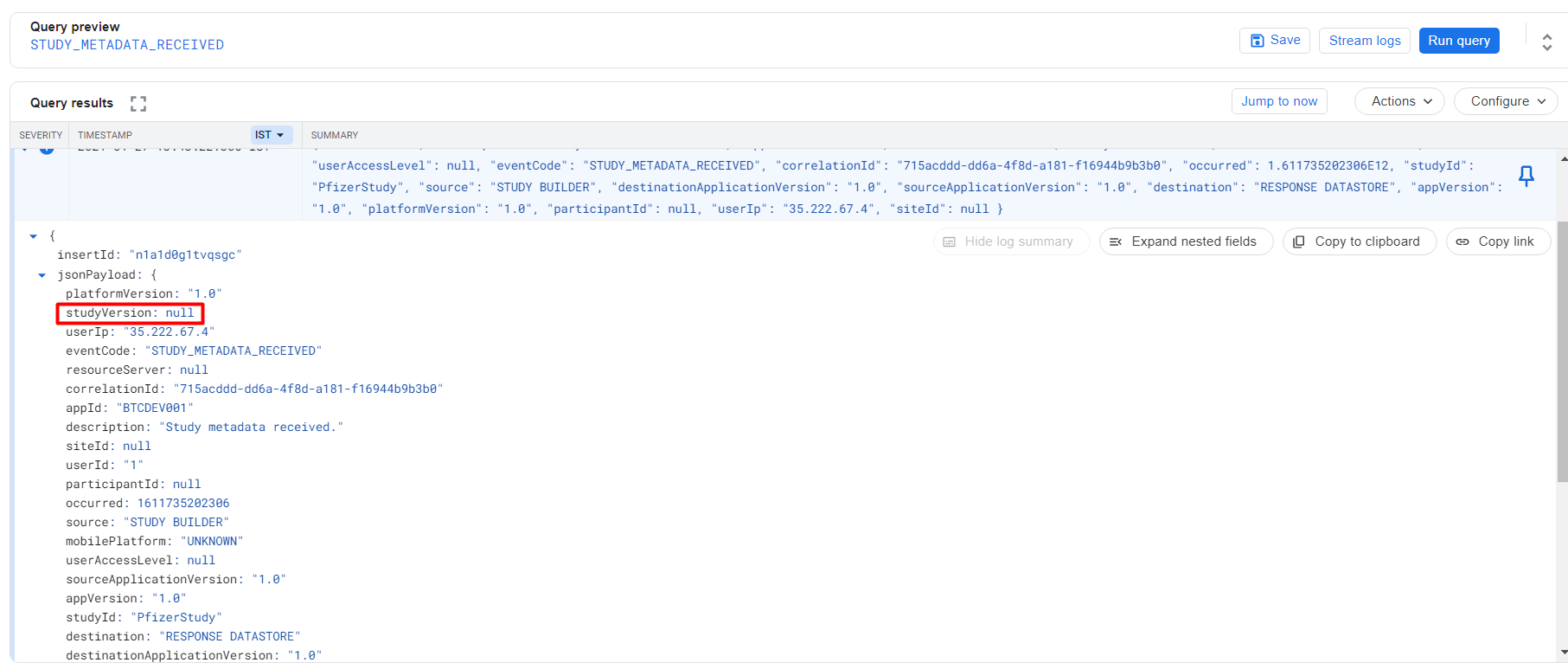

[Audit Logs] "studyVersion" is displayed null for the event in Response module

|

Bug P2 Process: Fixed Response datastore

|

Event:

STUDY_METADATA_RECEIVED

|

1.0

|

[Audit Logs] "studyVersion" is displayed null for the event in Response module - Event:

STUDY_METADATA_RECEIVED

|

process

|

studyversion is displayed null for the event in response module event study metadata received

| 1

|

11,640

| 14,496,618,192

|

IssuesEvent

|

2020-12-11 13:04:05

|

didi/mpx

|

https://api.github.com/repos/didi/mpx

|

closed

|

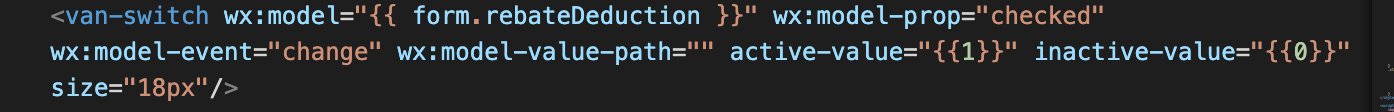

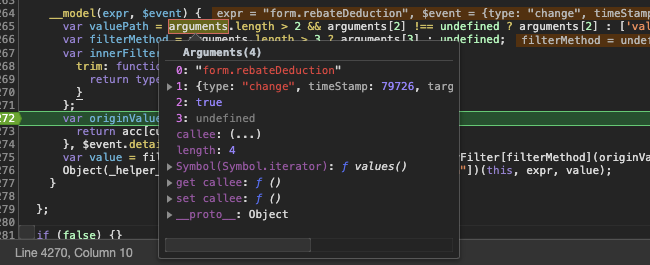

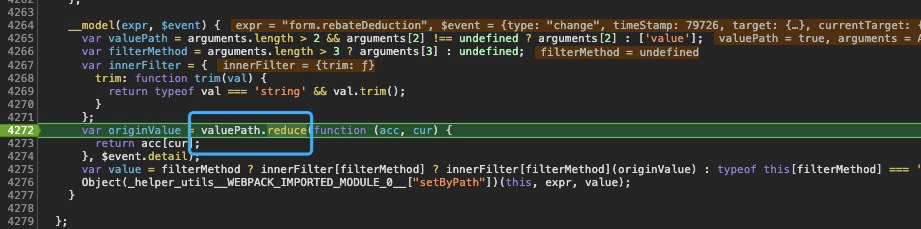

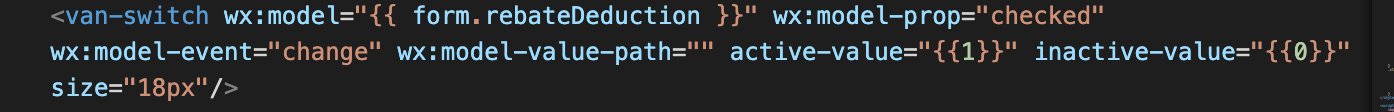

[Bug report] wx:model-value-path="" throws an error

|

processing

|

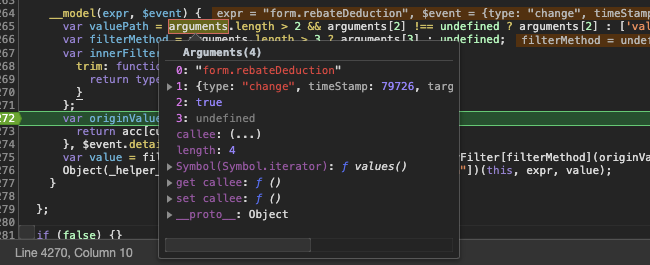

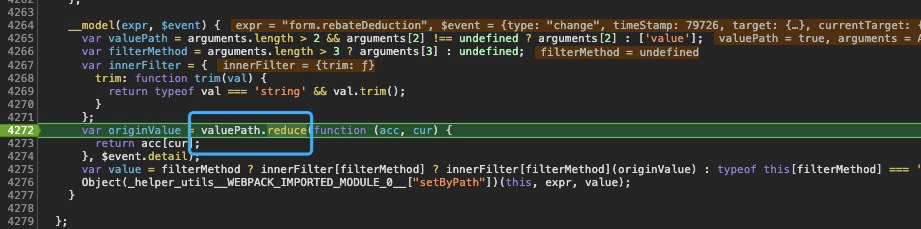

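As the screenshots show, valuepath is `true` here, so calling reduce on it throws an error. wx:model-value-path="[]" works fine.

I also suggest that the wx:model-value-path entry in the API docs link to the corresponding section of the guide.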
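
The failure described above (calling reduce on a value that is `true` rather than an array) can be guarded against by normalizing the attribute before walking the path. The following is a minimal sketch only, not the mpx implementation: `normalizeValuePath` and `readPath` are hypothetical names, and the assumption that an empty attribute arrives as `true` or `""` is taken from the report, not verified against the mpx source.

```typescript
// Hypothetical helpers for illustration; these are not part of mpx.
type PathSegment = string | number;

// Turn whatever the template compiler hands over into an array of path segments.
// Assumption: wx:model-value-path="" may arrive as `true` or as an empty string.
function normalizeValuePath(raw: unknown): PathSegment[] {
  if (raw === true || raw === "" || raw == null) return [];
  if (Array.isArray(raw)) return raw as PathSegment[];
  if (typeof raw === "string") {
    try {
      const parsed: unknown = JSON.parse(raw);
      if (Array.isArray(parsed)) return parsed as PathSegment[];
    } catch {
      // Not JSON: fall through and treat the string as a single key.
    }
    return [raw];
  }
  return [];
}

// Walk the path with reduce; an empty path returns the source unchanged.
function readPath(source: unknown, path: PathSegment[]): unknown {
  return path.reduce<unknown>(
    (current, key) =>
      current == null ? undefined : (current as Record<PathSegment, unknown>)[key],
    source
  );
}
```

With this shape, an empty attribute behaves the same as `"[]"`: the path is empty and the event value is returned as-is.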

|

1.0

|

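[Bug report] wx:model-value-path="" throws an error -

As the screenshots show, valuepath is `true` here, so calling reduce on it throws an error. wx:model-value-path="[]" works fine.

I also suggest that the wx:model-value-path entry in the API docs link to the corresponding section of the guide.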

|

process

|

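wx model value path throws an error as the screenshots show valuepath is true here so calling reduce on it throws an error wx model value path works fine i also suggest that the wx model value path entry in the api docs link to the corresponding section of the guide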

| 1

|

287,675

| 8,818,181,568

|

IssuesEvent

|

2018-12-31 09:36:41

|

Veil-Project/veil

|

https://api.github.com/repos/Veil-Project/veil

|

opened

|

Daemon Crashes and corrupts wallet while mining.

|

core high priority wallet

|

ok you can get the debug log here https://veil.suprnova.cc/debug.log.2

and the wallet here https://veil.suprnova.cc/wallet.dat.2

just try to start the daemon with this wallet and it won't work

it will go to 100% cpu usage and simply do nothing

the only obvious errors i see in the logs are

2018-12-31T07:18:12Z ERROR: FindTx: Deserialize or I/O error - ReadCompactSize(): size too large: iostream error

2018-12-31T07:18:12Z ERROR: FindTx: Deserialize or I/O error - ReadCompactSize(): size too large: iostream error

2018-12-31T07:18:12Z ERROR: FindTx: Deserialize or I/O error - ReadCompactSize(): size too large: iostream error

2018-12-31T07:18:12Z UpdateTip: new best=f74862d1fbbd29087c606b7d7ea0333045d4015b52ab30cc2c7999c1f3a597cd height=9702 version=0x20000000 log2_work=60.34344 tx=16463 date='2018-12-31T06:34:48Z' progress=0.999463 cache=0.4MiB(2315txo)

2018-12-31T07:18:12Z ERROR: FindTx: txid mismatch

2018-12-31T07:18:12Z ERROR: FindTx: txid mismatch

|

1.0

|

Daemon Crashes and corrupts wallet while mining. - ok you can get the debug log here https://veil.suprnova.cc/debug.log.2

and the wallet here https://veil.suprnova.cc/wallet.dat.2

just try to start the daemon with this wallet and it won't work

it will go to 100% cpu usage and simply do nothing

the only obvious errors i see in the logs are

2018-12-31T07:18:12Z ERROR: FindTx: Deserialize or I/O error - ReadCompactSize(): size too large: iostream error

2018-12-31T07:18:12Z ERROR: FindTx: Deserialize or I/O error - ReadCompactSize(): size too large: iostream error

2018-12-31T07:18:12Z ERROR: FindTx: Deserialize or I/O error - ReadCompactSize(): size too large: iostream error

2018-12-31T07:18:12Z UpdateTip: new best=f74862d1fbbd29087c606b7d7ea0333045d4015b52ab30cc2c7999c1f3a597cd height=9702 version=0x20000000 log2_work=60.34344 tx=16463 date='2018-12-31T06:34:48Z' progress=0.999463 cache=0.4MiB(2315txo)

2018-12-31T07:18:12Z ERROR: FindTx: txid mismatch

2018-12-31T07:18:12Z ERROR: FindTx: txid mismatch

|

non_process

|

daemon crashes and corrupts wallet while mining ok you can get the debug log here and the wallet here just try to start the daemon with this wallet and it won t work it will go to cpu usage and simply do nothing the only obvious errors i see in the logs are error findtx deserialize or i o error readcompactsize size too large iostream error error findtx deserialize or i o error readcompactsize size too large iostream error error findtx deserialize or i o error readcompactsize size too large iostream error updatetip new best height version work tx date progress cache error findtx txid mismatch error findtx txid mismatch

| 0

|

12,273

| 3,061,890,203

|

IssuesEvent

|

2015-08-16 01:12:01

|

oppia/oppia

|

https://api.github.com/repos/oppia/oppia

|

closed

|

Bring the "featured exploration" flow within the site

|

feature: important ref: frontend/editor TODO: design doc

|

```

What steps will reproduce the problem?

1. Create a new exploration and publish it.

2. Click on "Nominate for featured status".

What is the expected output? What do you see instead?

A modal pops up and says "please write to this forum". This seems like too much

hassle and is a bit of a weird flow.

Instead it would be nicer for the nomination to be recorded in the moderator

queue, and an email to be automatically sent to moderators, when someone clicks

the button. There should also be a mechanism for the person who clicked the

button to get a reply from the moderator.

```

Original issue reported on code.google.com by `s...@seanlip.org` on 1 Dec 2014 at 5:02

|

1.0

|

Bring the "featured exploration" flow within the site - ```

What steps will reproduce the problem?

1. Create a new exploration and publish it.

2. Click on "Nominate for featured status".

What is the expected output? What do you see instead?

A modal pops up and says "please write to this forum". This seems like too much

hassle and is a bit of a weird flow.

Instead it would be nicer for the nomination to be recorded in the moderator

queue, and an email to be automatically sent to moderators, when someone clicks

the button. There should also be a mechanism for the person who clicked the

button to get a reply from the moderator.

```

Original issue reported on code.google.com by `s...@seanlip.org` on 1 Dec 2014 at 5:02

|

non_process

|

bring the featured exploration flow within the site what steps will reproduce the problem create a new exploration and publish it click on nominate for featured status what is the expected output what do you see instead a modal pops up and says please write to this forum this seems like too much hassle and is a bit of a weird flow instead it would be nicer for the nomination to be recorded in the moderator queue and an email to be automatically sent to moderators when someone clicks the button there should also be a mechanism for the person who clicked the button to get a reply from the moderator original issue reported on code google com by s seanlip org on dec at

| 0

|

7,370

| 10,512,610,703

|

IssuesEvent

|

2019-09-27 18:21:40

|

openopps/openopps-platform

|

https://api.github.com/repos/openopps/openopps-platform

|

closed

|

Bug: Experience dates display incorrectly on application review page

|

Apply Process Bug State Dept.

|

Environment: Production

Issue: Work experience start and end dates are displaying incorrectly on the application review page

Steps to reproduce:

Jot down work experience start and end dates in USAJOBS

Apply for an internship in Open Opps

Go to the review page of your application and the start and end dates display one month off

Related ticket: 3947
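
One common source of a one-month offset in JavaScript code is the zero-based month index of `Date` (0 is January). Whether that is the cause here is an assumption; the report does not confirm it. The sketch below uses hypothetical helper names (`toDate`, `formatMonthYear`) to show the conversion in both directions.

```typescript
// Hypothetical helpers; not taken from the openopps-platform code base.

// Build a UTC date from a human-readable (1-based) month.
// Date.UTC expects a 0-based month, so subtract 1.
function toDate(year: number, month1Based: number): Date {
  return new Date(Date.UTC(year, month1Based - 1, 1));
}

// Format as MM/YYYY. getUTCMonth() is 0-based, so add 1 for display.
function formatMonthYear(date: Date): string {
  const month = date.getUTCMonth() + 1;
  const padded = month < 10 ? "0" + month : String(month);
  return padded + "/" + date.getUTCFullYear();
}
```

Using the UTC accessors on both sides also avoids a second class of off-by-one errors, where a date stored at midnight UTC is rendered in a local time zone behind UTC.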

|

1.0

|

Bug: Experience dates display incorrectly on application review page - Environment: Production

Issue: Work experience start and end dates are displaying incorrectly on the application review page

Steps to reproduce:

Jot down work experience start and end dates in USAJOBS

Apply for an internship in Open Opps

Go to the review page of your application and the start and end dates display one month off

Related ticket: 3947

|

process

|

bug experience dates display incorrectly on application review page environment production issue work experience start and end dates are displaying incorrectly on the application review page steps to reproduce jot down work experience start and end dates in usajobs apply for an internship in open opps go to the review page of your application and the start and end dates display one month off related ticket

| 1

|

5,341

| 8,167,601,147

|

IssuesEvent

|

2018-08-26 01:10:17

|

MobileOrg/mobileorg

|

https://api.github.com/repos/MobileOrg/mobileorg

|

opened

|

Fastlane tools

|

development process

|

Use fastlane.tools for build/release to automate more. Releasing to testflight should be fully automated.

* https://fastlane.tools/

|

1.0

|

Fastlane tools - Use fastlane.tools for build/release to automate more. Releasing to testflight should be fully automated.

* https://fastlane.tools/

|

process

|

fastlane tools use fastlane tools for build release to automate more releasing to testflight should be fully automated

| 1

|

5,444

| 8,306,330,490

|

IssuesEvent

|

2018-09-22 17:33:11

|

emacs-ess/ESS

|

https://api.github.com/repos/emacs-ess/ESS

|

closed

|

turn off undo for process buffer

|

process

|

I always get a warning when running loops that print something:

`Warning (undo): Buffer `*julia*' undo info was 12560552 bytes long.

The undo info was discarded because it exceeded `undo-outer-limit'.`

I cannot think of a case where undoing something in the process buffer would be useful. So why not disable undo there completely? This also speeds things up if printing a lot into the REPL.

This can easily be done with `(add-hook 'inferior-ess-mode-hook 'buffer-disable-undo)` in `.emacs`, but I think ESS should offer an option (or at least documentation) to do this automatically for process buffers.

|

1.0

|

turn off undo for process buffer - I always get a warning when running loops that print something:

`Warning (undo): Buffer `*julia*' undo info was 12560552 bytes long.

The undo info was discarded because it exceeded `undo-outer-limit'.`

I cannot think of a case where undoing something in the process buffer would be useful. So why not disable undo there completely? This also speeds things up if printing a lot into the REPL.

This can easily be done with `(add-hook 'inferior-ess-mode-hook 'buffer-disable-undo)` in `.emacs`, but I think ESS should offer an option (or at least documentation) to do this automatically for process buffers.

|

process

|

turn off undo for process buffer i always get a warning when running loops that print something warning undo buffer julia undo info was bytes long the undo info was discarded because it exceeded undo outer limit i cannot think of a case where undoing something in the process buffer would be useful so why not disable undo there completely this also speeds things up if printing a lot into the repl this can easily be done by add hook inferior ess mode hook buffer disable undo in the emacs but i think there should be an option documentation in ess to do this automatically for process buffers

| 1

|

1,184

| 3,687,011,074

|

IssuesEvent

|

2016-02-25 05:22:41

|

dita-ot/dita-ot

|

https://api.github.com/repos/dita-ot/dita-ot

|

closed

|

Keyscope + branch filtering potential bug

|

bug needs-reproduction P2 preprocess/filtering

|

Related to this discussion I had on the DITA specs list:

https://lists.oasis-open.org/archives/dita-comment/201602/msg00001.html

So in one place of the DITA Map I have:

<topichead navtitle="Test" collection-type="choice">

<ditavalref href="topics/getting_started/install_windows.ditaval">

<ditavalmeta>

<dvrResourcePrefix>windows-</dvrResourcePrefix>

<dvrKeyscopePrefix>windows_scope-</dvrKeyscopePrefix>

</ditavalmeta>

</ditavalref>

<topicref href="topics/install.dita"

keys="topicref_install"

keyscope="scope_install"/>

</topichead>

and in some other place I have a keyref like:

<topicref

keyref="windows_scope-scope_install.topicref_install"/>

From what I understand the keyref is invalid because the "windows_scope-" prefix must only be applied on the @keyscope attribute specified on the topichead in which the ditavalref is placed.

But the DITA OT 2.x processes the keyref as valid and finds the target topic.

As the @keyscope is missing on the topichead, according to the specs the @keyscope on the topichead will become "windows-" so the proper way to have the keyref would be:

<topicref

keyref="windows_scope-.scope_install_select_parallel.topicref_install_select_parallel"/>

which currently does not work using DITA OT 2.x.

What's your opinion on this?

|

1.0

|

Keyscope + branch filtering potential bug - Related to this discussion I had on the DITA specs list:

https://lists.oasis-open.org/archives/dita-comment/201602/msg00001.html

So in one place of the DITA Map I have:

<topichead navtitle="Test" collection-type="choice">

<ditavalref href="topics/getting_started/install_windows.ditaval">

<ditavalmeta>

<dvrResourcePrefix>windows-</dvrResourcePrefix>

<dvrKeyscopePrefix>windows_scope-</dvrKeyscopePrefix>

</ditavalmeta>

</ditavalref>

<topicref href="topics/install.dita"

keys="topicref_install"

keyscope="scope_install"/>

</topichead>

and in some other place I have a keyref like:

<topicref

keyref="windows_scope-scope_install.topicref_install"/>

From what I understand the keyref is invalid because the "windows_scope-" prefix must only be applied on the @keyscope attribute specified on the topichead in which the ditavalref is placed.

But the DITA OT 2.x processes the keyref as valid and finds the target topic.

As the @keyscope is missing on the topichead, according to the specs the @keyscope on the topichead will become "windows-" so the proper way to have the keyref would be:

<topicref

keyref="windows_scope-.scope_install_select_parallel.topicref_install_select_parallel"/>

which currently does not work using DITA OT 2.x.

What's your opinion on this?

|

process

|

keyscope branch filtering potential bug related to this discussion i had no the dita specs list so in one place of the dita map i have windows windows scope topicref href topics install dita keys topicref install keyscope scope install and in some other place i have a keyref like topicref keyref windows scope scope install topicref install from what i understand the keyref is invalid because the windows scope prefix must only be applied on the keyscope attribute specified on the topichead in which the ditavalref is placed but the dita ot x processes the keyref as valid and finds the target topic as the keyscope is missing on the topichead according to the specs the keyscope on the topichead will become windows so the proper way to have the keyref would be topicref keyref windows scope scope install select parallel topicref install select parallel which currently does not work using dita ot x what s your opinion on this

| 1

|

180,557

| 13,937,210,133

|

IssuesEvent

|

2020-10-22 13:53:32

|

root-project/root

|

https://api.github.com/repos/root-project/root

|

closed

|

Unable to install pytest on MacOS with python2

|

bug in:Testing

|

We removed the pytest shipped with roottest because the source code was from 2014 and incompatible with py3.9 (see #6597). However, this now poses a problem on macOS with python2, because we have to install pytest ourselves. Without a virtual environment, macOS does not allow installing packages with pip. Since roottest fails at the configuration stage without pytest, roottest is currently broken in this configuration.

@axel @oshadura What should we do? Our CI always runs roottest against python3, so we currently don't see the issue in our infrastructure. I see three options:

1. Ditch testing of python2 on MacOS and rely on the test coverage of other platforms (python2 is anyway dead)

2. Use a venv overlay in Jenkins for the MacOS nodes (haven't tested but it should work and is binary compatible with the system python)

3. We change the hard failure of roottest to a soft failure.

|

1.0

|

Unable to install pytest on MacOS with python2 - We removed the pytest shipped with roottest because the source code was from 2014 and incompatible with py3.9 (see #6597). However, this now poses a problem on macOS with python2, because we have to install pytest ourselves. Without a virtual environment, macOS does not allow installing packages with pip. Since roottest fails at the configuration stage without pytest, roottest is currently broken in this configuration.

@axel @oshadura What should we do? Our CI always runs roottest against python3, so we currently don't see the issue in our infrastructure. I see three options:

1. Ditch testing of python2 on MacOS and rely on the test coverage of other platforms (python2 is anyway dead)

2. Use a venv overlay in Jenkins for the MacOS nodes (haven't tested but it should work and is binary compatible with the system python)

3. We change the hard failure of roottest to a soft failure.

|

non_process

|

unable to install pytest on macos with we removed the pytest shipped with roottest because the source code was from and incompatible with see however this poses now the issue on macos with that we have to install pytest without a virtual environment macos does not allow to pip packages since roottest fails on configuration level without pytest roottest is currently broken in this configuration axel oshadura what should we do our ci always runs roottest against so we currently don t see the issue in our infrastructure i see three options ditch testing of on macos and rely on the test coverage of other platforms is anyway dead use a venv overlay in jenkins for the macos nodes haven t tested but it should work and is binary compatible with the system python we change the hard failure of roottest to a soft failure

| 0

|

537,762

| 15,736,848,622

|

IssuesEvent

|

2021-03-30 01:34:35

|

musescore/MuseScore

|

https://api.github.com/repos/musescore/MuseScore

|

opened

|

[MU4 Issue] Scores should open on first page even if previous score was closed on a different page

|

Low Priority

|

**Describe the bug**

If the user closes a score on the second or a later page and then opens another score, the new score opens on the page that the previously closed score was on.

**To Reproduce**

Steps to reproduce the behavior:

1. Create a score with at least 2 pages

2. Close the score on the 2nd page or later

3. Open a new score with at least 2 pages > the score opens on the 2nd page

**Expected behavior**

Scores should open on first page even if previous score was closed on a different page

**Screenshots**

If applicable, add screenshots to help explain your problem.

**Desktop (please complete the following information):**

MacOS

**Additional context**

Add any other context about the problem here.

|

1.0

|

[MU4 Issue] Scores should open on first page even if previous score was closed on a different page - **Describe the bug**

If the user closes a score on the second or a later page and then opens another score, the new score opens on the page that the previously closed score was on.

**To Reproduce**

Steps to reproduce the behavior:

1. Create a score with at least 2 pages

2. Close the score on the 2nd page or later

3. Open a new score with at least 2 pages > the score opens on the 2nd page

**Expected behavior**

Scores should open on first page even if previous score was closed on a different page

**Screenshots**

If applicable, add screenshots to help explain your problem.

**Desktop (please complete the following information):**

MacOS

**Additional context**

Add any other context about the problem here.

|

non_process

|

scores should open on first page even if previous score was closed on a different page describe the bug if user closes score on the second or upper page and then open another score it will be opened from those page which was opened on a previous closed score to reproduce steps to reproduce the behavior create a score with at least pages close the score on the nd page or higher open new score with at least pages score will be opened on the nd page expected behavior scores should open on first page even if previous score was closed on a different page screenshots if applicable add screenshots to help explain your problem desktop please complete the following information macos additional context add any other context about the problem here

| 0

|

14,681

| 17,797,906,223

|

IssuesEvent

|

2021-09-01 02:01:23

|

Leviatan-Analytics/LA-data-processing

|

https://api.github.com/repos/Leviatan-Analytics/LA-data-processing

|

closed

|

Improve ward detection model [5]

|

Data Processing Week 4 Sprint 3

|

Relabel images and find ways to improve the ward detection model's metrics:

- Processing time

- Model accuracy

|

1.0

|

Improve ward detection model [5] - Relabel images and find ways to improve the ward detection model's metrics:

- Processing time

- Model accuracy

|

process

|

improve ward detection model re label images and find ways to improve ward detection model metrics processing time model accuracy

| 1

|

69,026

| 7,122,171,911

|

IssuesEvent

|

2018-01-19 10:50:38

|

rancher/rancher

|

https://api.github.com/repos/rancher/rancher

|

closed

|

rancher-cli install creates empty stack without services

|

area/cli status/resolved status/to-test

|

I have a local Rancher installation. I've created an environment and an API key for it. I also have a custom catalog. If I now try to create a new stack using the following command line:

``

rancher catalog install customcat/testitem:1.0 --name test

``

the stack is successfully created, but does not contain any services. If I do the same using the GUI, I get the expected services in my stack. Using --debug doesn't give any useful additional information, and the logs are empty as well.

Am I missing something?

Rancher-CLI Version: 0.6.2 (current version offered as download)

---

| Useful | Info |

| :-- | :-- |

|Versions|Rancher `v1.6.0` Cattle: `v0.179.7` UI: `v1.6.1` Rancher-CLI: `v0.6.2`|

|Access|`ldap` `admin`|

|Orchestration|`Cattle`|

|Route|`stacks.index`|

|

1.0

|

rancher-cli install creates empty stack without services - I have a local Rancher installation. I've created an environment and an API key for it. I also have a custom catalog. If I now try to create a new stack using the following command line:

``

rancher catalog install customcat/testitem:1.0 --name test

``

the stack is successfully created, but does not contain any services. If I do the same using the GUI, I get the expected services in my stack. Using --debug doesn't give any useful additional information, and the logs are empty as well.

Am I missing something?

Rancher-CLI Version: 0.6.2 (current version offered as download)

---

| Useful | Info |

| :-- | :-- |

|Versions|Rancher `v1.6.0` Cattle: `v0.179.7` UI: `v1.6.1` Rancher-CLI: `v0.6.2`|

|Access|`ldap` `admin`|

|Orchestration|`Cattle`|

|Route|`stacks.index`|

|

non_process

|

rancher cli install creates empty stack without services i ve a local rancher installation i ve created an evironment and a api key for it i also have a custom catalog if i now try to create a new stack using the following command line rancher catalog install customcat testitem name test the stack is successfully created but does not contain any services if i do the same using the gui i get the expected services in my stack using debug doesn t give any useful additional informations and the logs are empty either am i missing something rancher cli version current version offered as download useful info versions rancher cattle ui rancher cli access ldap admin orchestration cattle route stacks index

| 0

|

9,594

| 12,543,045,137

|

IssuesEvent

|

2020-06-05 14:56:21

|

prisma/prisma

|

https://api.github.com/repos/prisma/prisma

|

opened

|

Improve validation message for @@unique

|

kind/improvement process/candidate topic: errors

|

```prisma

// Specify a multi-field unique attribute that includes a relation field

model Post {

id Int @default(autoincrement())

author User @relation(fields: [authorId], references: [id])

authorId Int

title String

published Boolean @default(false)

@@unique([author, title])

}

model User {

id Int @id @default(autoincrement())

email String @unique

posts Post[]

}

```

Current message for this schema

`Error validating model "Post": The unique index definition refers to the relation fields author. Index definitions must reference only scalar fields.`

Here the fix is to replace `@@unique([author, title])` with `@@unique([authorId, title])`, so perhaps the message could suggest exactly that?

Discussion with @do4gr https://prisma-company.slack.com/archives/C5Z9TH6N9/p1591348814019400
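
To make the suggestion concrete, here is a rough sketch of how such a message could be assembled. It is illustrative only and not Prisma's validator: `RelationField`, `findRelation`, and `uniqueIndexError` are hypothetical names, and the "Did you mean" wording is an assumption rather than an agreed message.

```typescript
// Hypothetical shape for a relation field; this is not a Prisma API.
interface RelationField {
  name: string;           // relation field name, e.g. "author"
  scalarFields: string[]; // from @relation(fields: [...]), e.g. ["authorId"]
}

function findRelation(name: string, relations: RelationField[]): RelationField | undefined {
  for (const relation of relations) {
    if (relation.name === name) return relation;
  }
  return undefined;
}

// Returns null when the index only uses scalar fields, otherwise an error
// message that also proposes the index with relation fields swapped for
// their underlying scalar fields.
function uniqueIndexError(
  model: string,
  indexFields: string[],
  relations: RelationField[]
): string | null {
  const offending: string[] = [];
  const suggested: string[] = [];
  for (const field of indexFields) {
    const relation = findRelation(field, relations);
    if (relation) {
      offending.push(field);
      for (const scalar of relation.scalarFields) suggested.push(scalar);
    } else {
      suggested.push(field);
    }
  }
  if (offending.length === 0) return null;
  return (
    `Error validating model "${model}": The unique index definition refers to ` +
    `the relation fields ${offending.join(", ")}. Index definitions must reference ` +
    `only scalar fields. Did you mean \`@@unique([${suggested.join(", ")}])\`?`
  );
}
```

For the schema above, calling `uniqueIndexError("Post", ["author", "title"], [{ name: "author", scalarFields: ["authorId"] }])` would produce the current message followed by a hint to use `@@unique([authorId, title])`.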

|

1.0

|

Improve validation message for @@unique - ```prisma

// Specify a multi-field unique attribute that includes a relation field

model Post {

id Int @default(autoincrement())

author User @relation(fields: [authorId], references: [id])

authorId Int

title String

published Boolean @default(false)

@@unique([author, title])

}

model User {

id Int @id @default(autoincrement())

email String @unique

posts Post[]

}

```

Current message for this schema

`Error validating model "Post": The unique index definition refers to the relation fields author. Index definitions must reference only scalar fields.`

Here the fix is to replace `@@unique([author, title])` with `@@unique([authorId, title])`, so perhaps the message could suggest that?

Discussion with @do4gr https://prisma-company.slack.com/archives/C5Z9TH6N9/p1591348814019400

|

process

|

improve validation message for unique prisma specify a multi field unique attribute that includes a relation field model post id int default autoincrement author user relation fields references authorid int title string published boolean default false unique model user id int id default autoincrement email string unique posts post current message for this schema error validating model post the unique index definition refers to the relation fields author index definitions must reference only scalar fields here the fix is to replace unique by unique so the message could mention it maybe discussion with

| 1

|

89,670

| 18,019,568,097

|

IssuesEvent

|

2021-09-16 17:36:22

|

WordPress/openverse-frontend

|

https://api.github.com/repos/WordPress/openverse-frontend

|

closed

|

[Bug] Managing playback of multiple media files

|

🟧 priority: high 🛠 goal: fix 💻 aspect: code

|

## Description

<!-- Concisely describe the bug. -->

The current setup allows for multiple audio files to be played concurrently, which is a bad user experience.

## Reproduction

<!-- Provide detailed steps to reproduce the bug. -->

1. View any page with multiple audio players

2. Press play on multiple audio players

3. Listen to the resulting 'chaos orchestra'

## Expectation

<!-- Concisely describe what you expected to happen. -->

When pressing 'play' on an audio file, if there is _already_ an active audio file it should be paused.
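
One possible way to get this behaviour, as a minimal sketch and not necessarily what #183 proposes: keep a single coordinator that remembers the active player and pauses it when a different one starts. `Pausable`, `PlaybackCoordinator`, `notifyPlay` and `notifyStop` are hypothetical names used only for illustration.

```typescript
// Minimal stand-in for anything that can be paused, e.g. an HTMLAudioElement.
interface Pausable {
  pause(): void;
}

// Hypothetical coordinator: tracks the currently active player and pauses it
// whenever a different player starts playing.
class PlaybackCoordinator {
  private active: Pausable | null = null;

  // Call from each player's "play" handler.
  notifyPlay(player: Pausable): void {
    if (this.active !== null && this.active !== player) {
      this.active.pause();
    }
    this.active = player;
  }

  // Call when a player pauses or ends, so a stale reference is not kept.
  notifyStop(player: Pausable): void {
    if (this.active === player) {
      this.active = null;
    }
  }
}
```

In the browser this could be wired up with something like `audio.addEventListener('play', () => coordinator.notifyPlay(audio))` for every audio element on the page, with `notifyStop` attached to the `pause` and `ended` events.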

## Screenshots

<!-- Add screenshots to show the problem; or delete the section entirely. -->

## Resolution

<!-- Replace the [ ] with [x] to check the box. -->

I have proposed a solution in #183.

- [ ] 🙋 I would be interested in resolving this bug.

|

1.0

|

[Bug] Managing playback of multiple media files - ## Description

<!-- Concisely describe the bug. -->

The current setup allows for multiple audio files to be played concurrently, which is a bad user experience.

## Reproduction

<!-- Provide detailed steps to reproduce the bug. -->

1. View any page with multiple audio players

2. Press play on multiple audio players

3. Listen to the resulting 'chaos orchestra'

## Expectation

<!-- Concisely describe what you expected to happen. -->

When pressing 'play' on an audio file, if there is _already_ an active audio file it should be paused.

## Screenshots

<!-- Add screenshots to show the problem; or delete the section entirely. -->

## Resolution

<!-- Replace the [ ] with [x] to check the box. -->

I have proposed a solution in #183.

- [ ] 🙋 I would be interested in resolving this bug.

|

non_process

|

managing playback of multiple media files description the current setup allows for multiple audio files to be played concurrently which is a bad user experience reproduction view any page with multiple audio players press play on multiple audio players listen to the resulting chaos orchestra expectation when pressing play on an audio file if there is already an active audio file it should be paused screenshots resolution i have proposed a solution in 🙋 i would be interested in resolving this bug

| 0

|

7,806

| 10,960,891,835

|

IssuesEvent

|

2019-11-27 14:25:50

|

codeuniversity/smag-mvp

|

https://api.github.com/repos/codeuniversity/smag-mvp

|

opened

|

Figure out favorite bands of users and find associated photos

|

Image Processing

|

As part of the interests, it would be nice to find musicians the user is interested in and get representative pictures of them.

|

1.0

|

Figure out favorite bands of users and find associated photos - As part of the interests, it would be nice to find musicians the user is interested in and get representative pictures of them.

|

process

|

figure out favorite bands of users and find associated photos as part of the interests it would be nice to find musicians the user is interested and get representative pictures of them

| 1

|

57,596

| 14,163,858,424

|

IssuesEvent

|

2020-11-12 03:26:55

|

woocommerce/woocommerce-admin

|

https://api.github.com/repos/woocommerce/woocommerce-admin

|

closed

|

e2e Testing: Set up Puppeteer master issue

|

Build [Type] Task [estimate] 13

|

End-to-end (e2e) testing automates user flows through an app by simulating clicks and selections.

Add Puppeteer infrastructure and tests so that we can check that reports, pages, and filtering are functioning as they should.

Why Puppeteer? Core will eventually migrate in that direction (p7bje6-1ne-p2).

### Tasks

- [x] Implement initial Puppeteer architecture #4343

- [x] Write a simple test to test Puppeteer config #4343

- [x] Integrate [Gutenberg’s WP util functions package](https://github.com/WordPress/gutenberg/tree/master/packages/e2e-test-utils) #4343

- [x] Write documentation on e2e test suite config #4343

- [ ] Create baseline tests for each report and page. Ensure elements are loading correctly.

- [ ] Set up testing in different browsers

- [x] Integrate with Travis CI #4343

- [ ] Integrate with Slack/email/(something else?) to deliver a notice with screenshot of failed test

- [ ] Identify and create tests for complex flows, e.g. filtering or settings manipulation.
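
As a rough sketch of what the baseline tests could look like, assuming each report page renders a known root element once it has loaded. The report paths, URL layout and selector below are hypothetical placeholders, and `PageLike` is a small structural subset of Puppeteer's `Page` (`goto` and `$`), so a real Puppeteer page should be usable in its place.

```typescript
// Structural subset of Puppeteer's Page used by this sketch.
interface PageLike {
  goto(url: string): Promise<unknown>;
  // Puppeteer's page.$ resolves to null when nothing matches the selector.
  $(selector: string): Promise<unknown>;
}

// Hypothetical report paths and selector, for illustration only.
const REPORT_PATHS: string[] = ["revenue", "orders", "products"];
const REPORT_SELECTOR = ".report-root";

// Visits each report and returns the paths where the expected element is missing.
async function findBrokenReports(
  page: PageLike,
  baseUrl: string
): Promise<string[]> {
  const broken: string[] = [];
  for (const path of REPORT_PATHS) {
    await page.goto(baseUrl + "/analytics/" + path);
    const element = await page.$(REPORT_SELECTOR);
    if (element === null) {
      broken.push(path);
    }
  }
  return broken;
}
```

A test would then assert that `findBrokenReports(page, baseUrl)` resolves to an empty array.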

|

1.0

|

e2e Testing: Set up Puppeteer master issue - End-to-end (e2e) testing automates user flows through an app by simulating clicks and selections.

Add Puppeteer infrastructure and tests so that we can check that reports, pages, and filtering are functioning as they should.

Why Puppeteer? Core will eventually migrate in that direction (p7bje6-1ne-p2).

### Tasks

- [x] Implement initial Puppeteer architecture #4343

- [x] Write a simple test to test Puppeteer config #4343

- [x] Integrate [Gutenberg’s WP util functions package](https://github.com/WordPress/gutenberg/tree/master/packages/e2e-test-utils) #4343

- [x] Write documentation on e2e test suite config #4343

- [ ] Create baseline tests for each report and page. Ensure elements are loading correctly.

- [ ] Set up testing in different browsers

- [x] Integrate with Travis CI #4343

- [ ] Integrate with Slack/email/(something else?) to deliver a notice with screenshot of failed test

- [ ] Identify and create tests for complex flows, e.g. filtering or settings manipulation.

|

non_process

|

testing set up puppeteer master issue end to end testing automates user flows of navigating apps by simulating clicks and selections add puppeteer infrastructure and tests so that we can check that reports pages and filtering are functioning as they should why puppeteer core will eventually migrate in that direction tasks implement initial puppeteer architecture write a simple test to test puppeteer config integrate write documentation on test suite config create baseline tests for each report and page ensure elements are loading correctly set up testing in different browsers integrate with travis ci integrate with slack email something else to deliver a notice with screenshot of failed test identify and create tests for complex flows ie filtering or settings manipulation

| 0

|

182,691

| 21,673,922,027

|

IssuesEvent

|

2022-05-08 12:05:29

|

turkdevops/vscode

|

https://api.github.com/repos/turkdevops/vscode

|

closed

|

WS-2018-0069 (High) detected in is-my-json-valid-2.16.1.tgz - autoclosed

|

security vulnerability

|

## WS-2018-0069 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>is-my-json-valid-2.16.1.tgz</b></p></summary>

<p>A JSONSchema validator that uses code generation to be extremely fast</p>

<p>Library home page: <a href="https://registry.npmjs.org/is-my-json-valid/-/is-my-json-valid-2.16.1.tgz">https://registry.npmjs.org/is-my-json-valid/-/is-my-json-valid-2.16.1.tgz</a></p>

<p>Path to dependency file: /extensions/emmet/package.json</p>