| Unnamed: 0 (int64, 0 to 832k) | id (float64, 2.49B to 32.1B) | type (string, 1 class) | created_at (string, length 19) | repo (string, length 7 to 112) | repo_url (string, length 36 to 141) | action (string, 3 classes) | title (string, length 1 to 744) | labels (string, length 4 to 574) | body (string, length 9 to 211k) | index (string, 10 classes) | text_combine (string, length 96 to 211k) | label (string, 2 classes) | text (string, length 96 to 188k) | binary_label (int64, 0 to 1) |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

501,294

| 14,525,182,313

|

IssuesEvent

|

2020-12-14 12:34:15

|

StrangeLoopGames/EcoIssues

|

https://api.github.com/repos/StrangeLoopGames/EcoIssues

|

opened

|

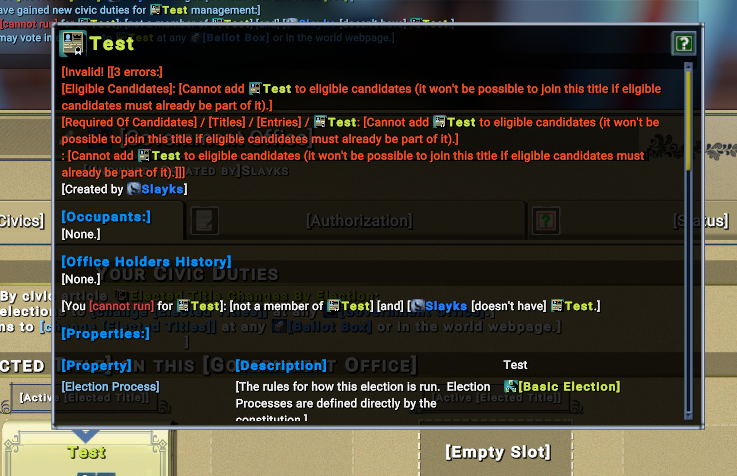

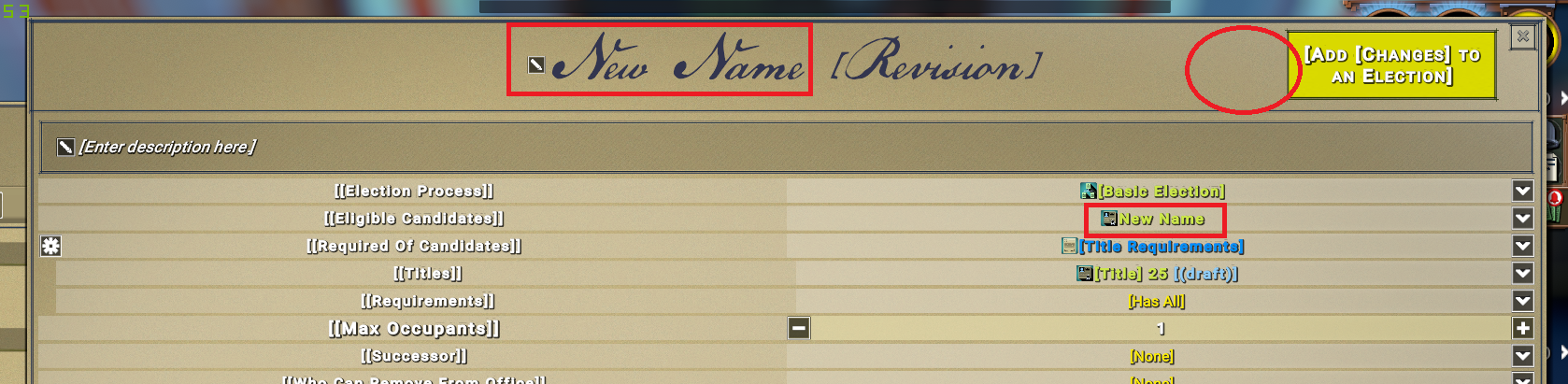

[0.9.2 staging-1872] Can make title with errors.

|

Category: Laws Priority: High

|

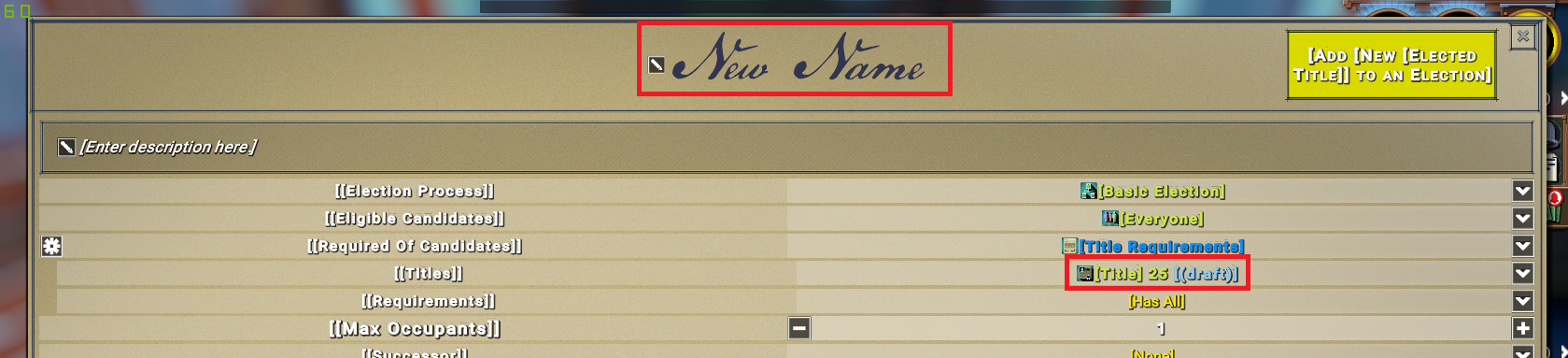

Step to reproduce:

- [ ] 1. Error with title requirement:

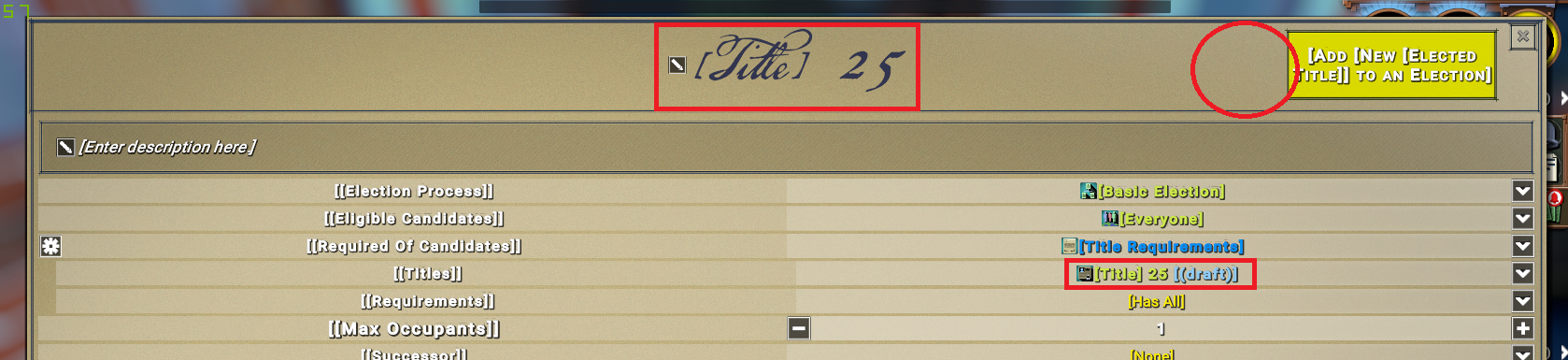

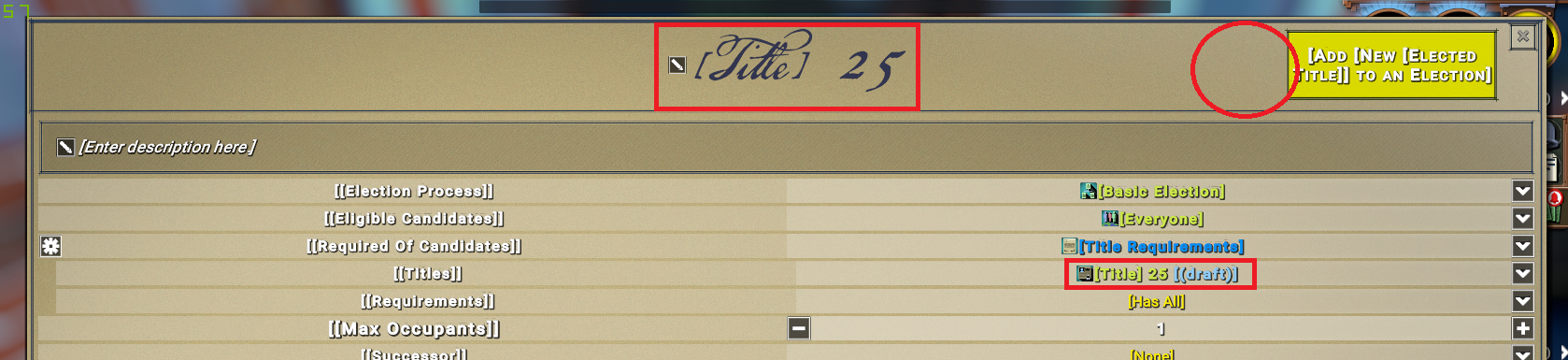

- Start to create a new title. Don't change name:

- add this title 25(draft) to title requirement, I should have exclamation mark, but I don't have:

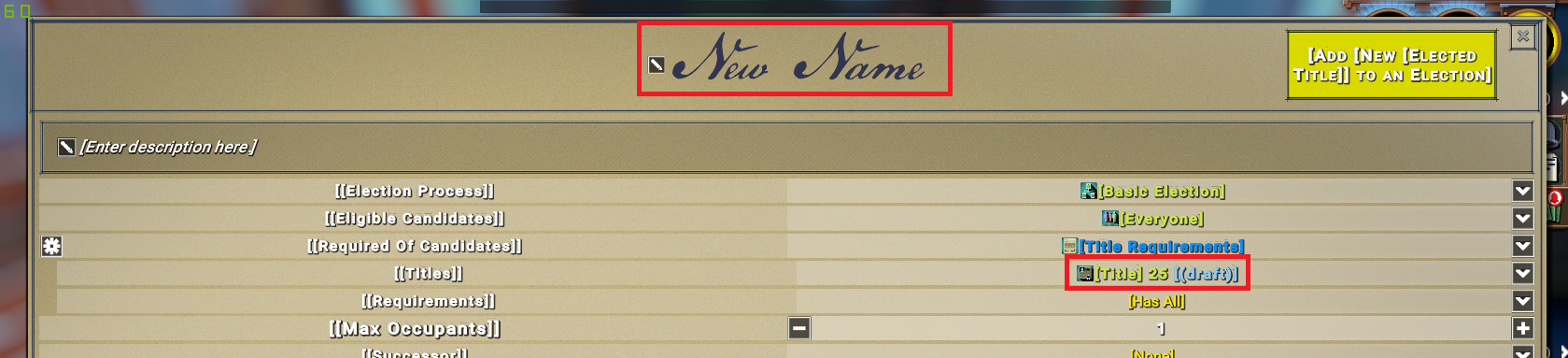

- A slightly different problem, but I think it's related - Rename this title, The title will change but will not change in the Title requirements:

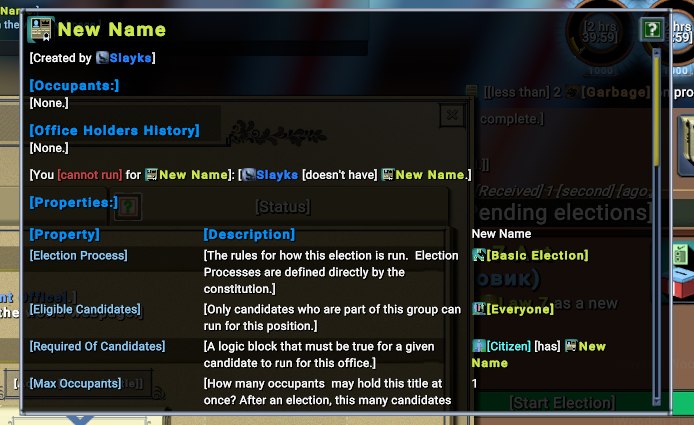

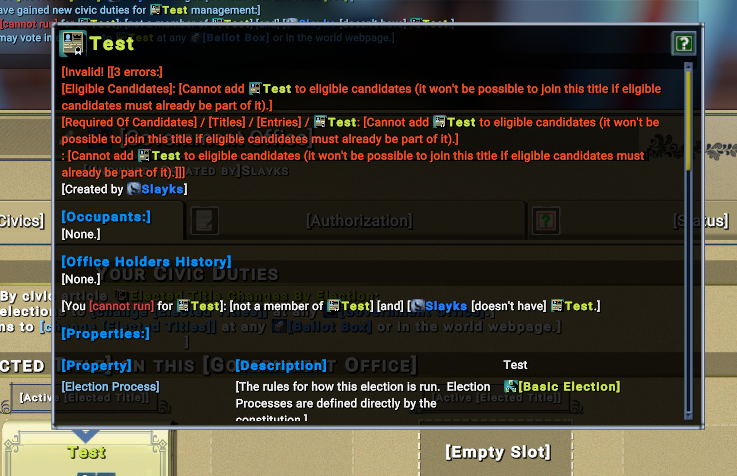

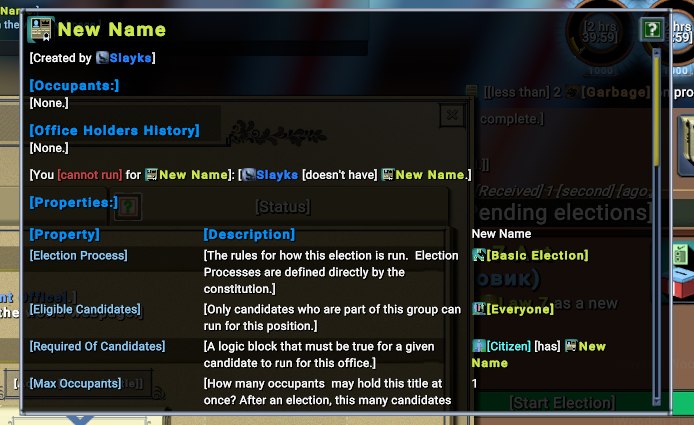

- start election and win, we have this title:

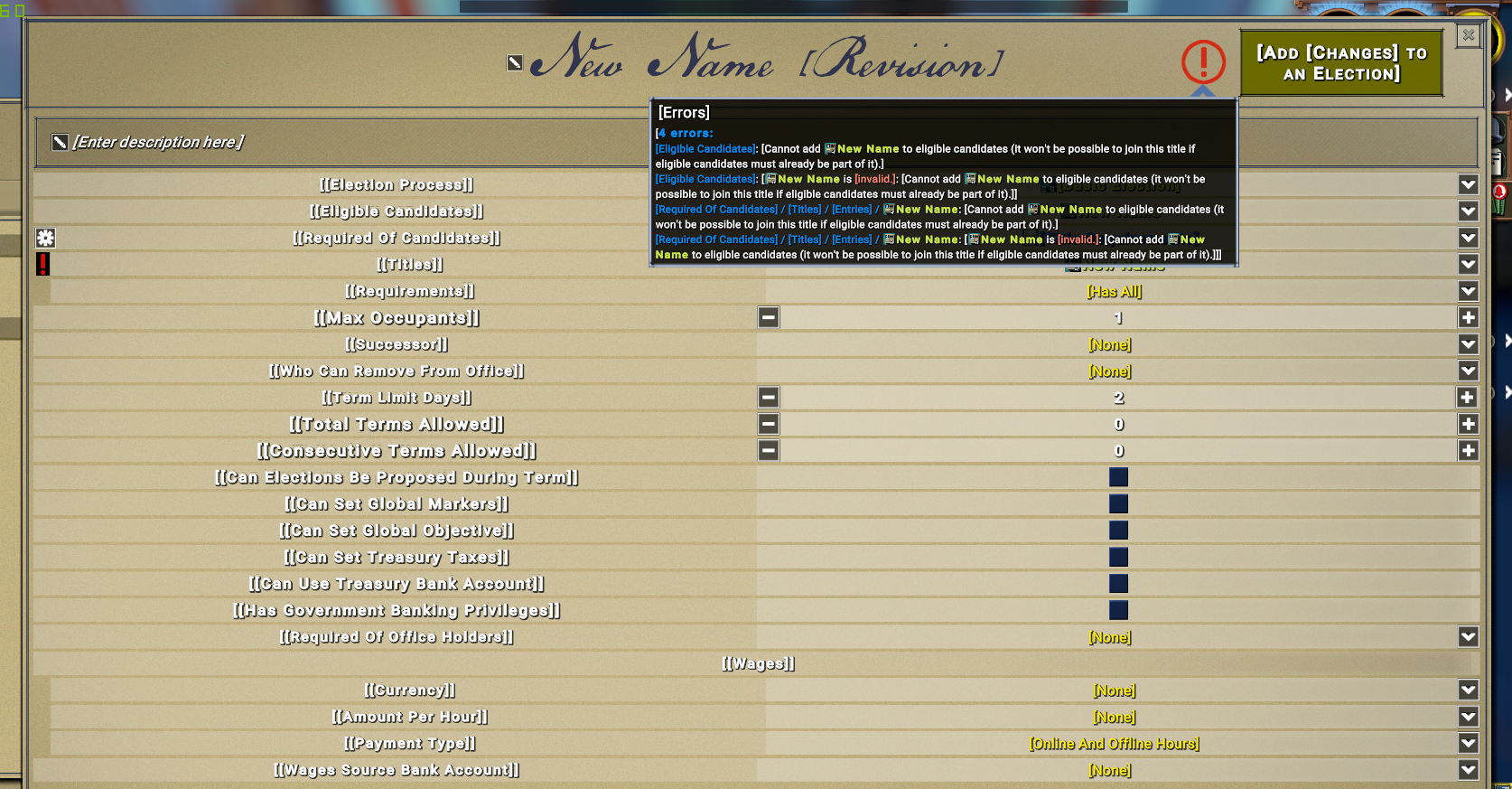

- [ ] 2. Error with revision in Eligable Candidates:

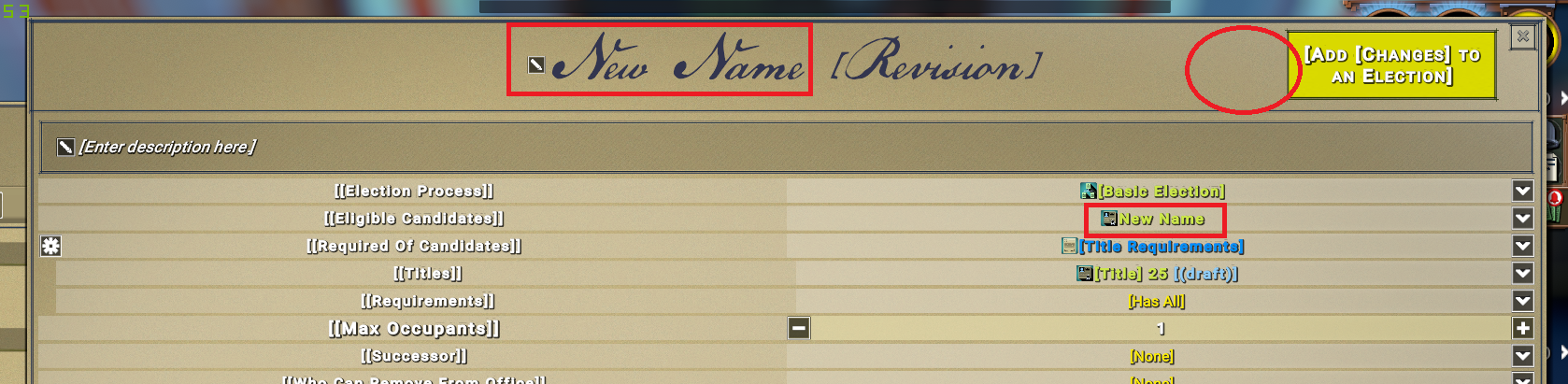

- Revise New title, still have problem with title 25 (draft):

- change eligible candidates to this title (not draft):

I should have exclamation mark again, but I don't have:

- start election and win it.

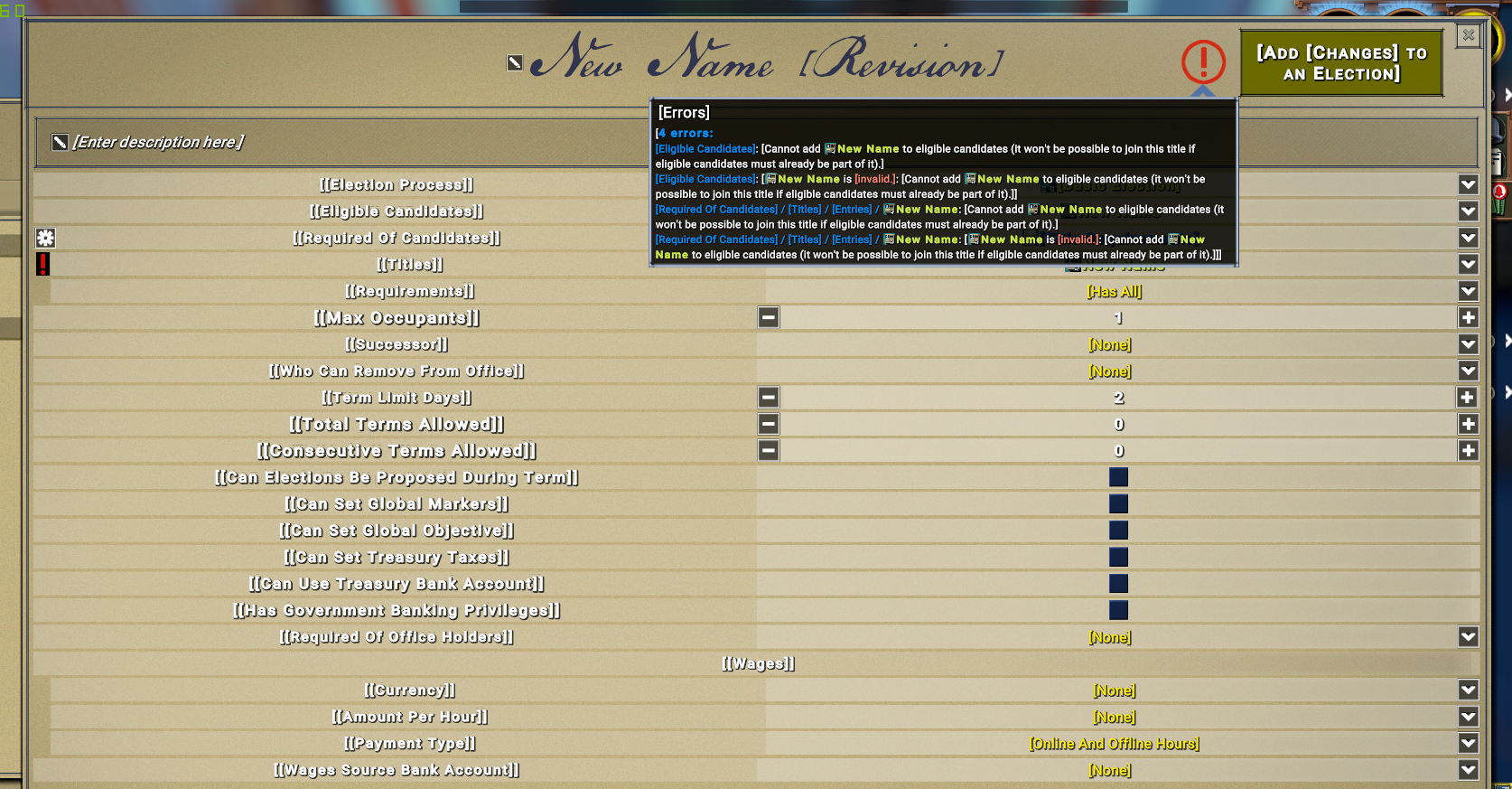

- start to revise again. Now I have 4 errors:

|

1.0

|

[0.9.2 staging-1872] Can make title with errors. -

Steps to reproduce:

- [ ] 1. Error with title requirement:

- Start creating a new title. Don't change its name:

- Add this title, 25 (draft), to the title requirement. An exclamation mark should appear, but it doesn't:

- A slightly different problem, but I think it's related: rename this title. The title itself changes, but the name shown in the title requirements does not:

- Start an election and win it. We now have this title:

- [ ] 2. Error with revision in Eligible Candidates:

- Revise the new title. The problem with title 25 (draft) is still there:

- Change the eligible candidates to this title (not the draft):

An exclamation mark should appear again, but it doesn't:

- Start an election and win it.

- Start revising again. Now I have 4 errors:

|

non_process

|

can make title with errors step to reproduce error with title requirement start to create a new title don t change name add this title draft to title requirement i should have exclamation mark but i don t have a slightly different problem but i think it s related rename this title the title will change but will not change in the title requirements start election and win we have this title error with revision in eligable candidates revise new title still have problem with title draft change eligible candidates to this title not draft i should have exclamation mark again but i don t have start election and win it start to revise again now i have errors

| 0

|

18,648

| 24,581,012,053

|

IssuesEvent

|

2022-10-13 15:36:41

|

GoogleCloudPlatform/fda-mystudies

|

https://api.github.com/repos/GoogleCloudPlatform/fda-mystudies

|

closed

|

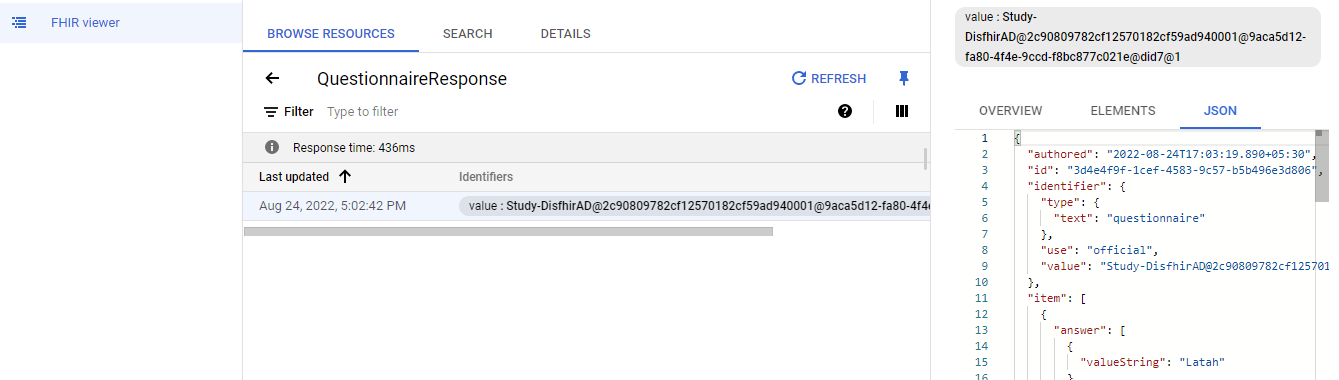

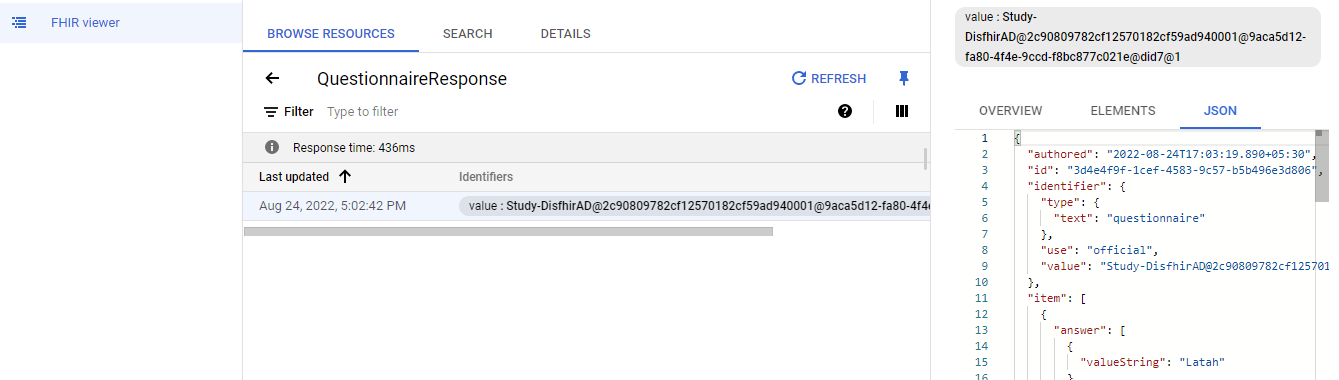

[FHIR] All the created 'Questionnaires' in the study builder are not mapping into the FHIR datastore

|

Bug Blocker P0 Process: Fixed Process: Tested dev

|

**Steps:**

1. Create multiple questionnaires in SB and launch the study [Created around 15 to 20 questionnaires with all response types in SB]

2. Go to the google cloud console

3. Search for FHIR viewer

4. Click on the particular dataset and click on the FHIR datastore

5. Search for the Questionnaire and click on it

6. Observe

**AR:** All the created 'Questionnaires' in the study builder are not mapping into the FHIR datastore

**ER:** All the created 'Questionnaires' in the study builder should be mapped into the FHIR datastore

|

2.0

|

[FHIR] All the created 'Questionnaires' in the study builder are not mapping into the FHIR datastore - **Steps:**

1. Create multiple questionnaires in SB and launch the study [Created around 15 to 20 questionnaires with all response types in SB]

2. Go to the google cloud console

3. Search for FHIR viewer

4. Click on the particular dataset and click on the FHIR datastore

5. Search for the Questionnaire and click on it

6. Observe

**AR:** All the created 'Questionnaires' in the study builder are not mapping into the FHIR datastore

**ER:** All the created 'Questionnaires' in the study builder should be mapped into the FHIR datastore

|

process

|

all the created questionnaires in the study builder are not mapping into the fhir datastore steps create multiple questionnaires in sb and launch the study go to the google cloud console search for fhir viewer click on the particular dataset and click on the fhir datastore search for the questionnaire and click on it observe ar all the created questionnaires in the study builder are not mapping into the fhir datastore er all the created questionnaires in the study builder should be mapped into the fhir datastore

| 1

|

5,185

| 7,965,383,863

|

IssuesEvent

|

2018-07-14 07:30:55

|

vtloc/grokking-links

|

https://api.github.com/repos/vtloc/grokking-links

|

opened

|

Building Pinterest’s A/B testing platform

|

Company-Pinterest Software Process Assessment and Improvement Software Testing

|

A/B testing is not a new technique. When applying A/B testing, we typically create 2 (or more) different versions of an interface and deploy them to 2 (or more) different sets of users, then collect data to evaluate which interface better meets the stated criteria.

However, if your website has as many as 1000 places where you want to run A/B tests, how do you do it efficiently, and how do you manage the collected data and the configuration? That is what the Pinterest team shares in this article.

https://medium.com/@Pinterest_Engineering/building-pinterests-a-b-testing-platform-ab4934ace9f4

|

1.0

|

Building Pinterest’s A/B testing platform - A/B testing is not a new technique. When applying A/B testing, we typically create 2 (or more) different versions of an interface and deploy them to 2 (or more) different sets of users, then collect data to evaluate which interface better meets the stated criteria.

However, if your website has as many as 1000 places where you want to run A/B tests, how do you do it efficiently, and how do you manage the collected data and the configuration? That is what the Pinterest team shares in this article.

https://medium.com/@Pinterest_Engineering/building-pinterests-a-b-testing-platform-ab4934ace9f4

|

process

|

building pinterest’s a b testing platform a b testing is not a new technique when applying a b testing we typically create or more different versions of an interface and deploy them to or more different sets of users then collect data to evaluate which interface better meets the stated criteria however if your website has as many as places where you want to run a b tests how do you do it efficiently and how do you manage the collected data and the configuration that is what the pinterest team shares in this article

| 1

|

9,809

| 12,822,900,131

|

IssuesEvent

|

2020-07-06 10:39:14

|

prisma/prisma

|

https://api.github.com/repos/prisma/prisma

|

opened

|

Lifecycle of denylist

|

kind/improvement process/candidate

|

As of now, during `prisma generate`, the Prisma Client generator checks its denylist of disallowed model names and fails if the schema uses one of them. This is already a good safeguard, but it can break the flow when coming from introspection:

1. Introspect, get `schema.prisma`

2. Run `prisma generate` - get error that model name is on denylist.

Instead, `introspect` could already rename models which are on the denylist.
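
The renaming step proposed above could look roughly like the following. This is a minimal sketch in Python, not Prisma's implementation: the function name `rename_denied_models`, the `Renamed` prefix, and the sample denylist entries are all hypothetical and chosen only for illustration.

```python
def rename_denied_models(model_names, denylist, prefix="Renamed"):
    """Return {original_name: safe_name} for every introspected model.

    Hypothetical helper: models that are not on the denylist keep their name,
    denied ones get a prefix until the result is neither denied nor taken.
    """
    taken = set(model_names)
    mapping = {}
    for name in model_names:
        if name not in denylist:
            mapping[name] = name
            continue
        candidate = f"{prefix}{name}"
        # Keep prefixing until the candidate collides with nothing.
        while candidate in denylist or candidate in taken:
            candidate = f"{prefix}{candidate}"
        taken.add(candidate)
        mapping[name] = candidate
    return mapping


# Illustrative input only; these are not real denylist entries.
print(rename_denied_models(["User", "Client"], {"Client"}))
```

A renamed model would presumably still need to point at the original table or collection, for example through the schema's `@@map` attribute, so that only the generated client name changes.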

|

1.0

|

Lifecycle of denylist - As of now, during `prisma generate`, the Prisma Client generator checks its denylist of disallowed model names and fails if the schema uses one of them. This is already a good safeguard, but it can break the flow when coming from introspection:

1. Introspect, get `schema.prisma`

2. Run `prisma generate` - get error that model name is on denylist.

Instead, `introspect` could already rename models which are on the denylist.

|

process

|

lifecycle of denylist as of now during prisma generate the prisma client generator checks in its denylist which model names are disallowed and fails if not possible this is a good safe guard already but can break the flow when coming from introspection introspect get schema prisma run prisma generate get error that model name is on denylist instead introspect could already rename models which are on the denylist

| 1

|

472

| 2,909,953,318

|

IssuesEvent

|

2015-06-21 08:24:36

|

sebastianbergmann/phpunit

|

https://api.github.com/repos/sebastianbergmann/phpunit

|

closed

|

Merging Pull Requests

|

process

|

Recently came across http://blog.spreedly.com/2014/06/24/merge-pull-request-considered-harmful/ but still need to think about it. What do you think on the topic, @whatthejeff?

|

1.0

|

Merging Pull Requests - Recently came across http://blog.spreedly.com/2014/06/24/merge-pull-request-considered-harmful/ but still need to think about it. What do you think on the topic, @whatthejeff?

|

process

|

merging pull requests recently came across but still need to think about it what do you think on the topic whatthejeff

| 1

|

4,190

| 7,136,358,338

|

IssuesEvent

|

2018-01-23 06:40:10

|

symfony/symfony

|

https://api.github.com/repos/symfony/symfony

|

closed

|

Add a ProcessSignaledException

|

Feature Process

|

| Q | A

| ---------------- | -----

| Bug report? | no

| Feature request? | yes

| BC Break report? | no

| RFC? | no

| Symfony version | v3.4.3

As with `ProcessTimedOutException`, it would be great to have an exception for when a signal has been sent to the sub-process.

Basically, on this line: https://github.com/symfony/symfony/blob/1df45e43563a37633b50d4a36478090361a0b9de/src/Symfony/Component/Process/Process.php#L389-L391

This would make it possible to catch a signaled sub-process at a higher level of the code and, when running many processes, to retrieve the affected one through the exception's process property.
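
The shape of such an exception can be sketched outside Symfony. The Python below is an analogy under stated assumptions, not Symfony's API: `ProcessSignaledError` and `raise_if_signaled` are hypothetical names, and the check relies on the POSIX convention in Python's `subprocess` module that a child terminated by signal N reports a return code of -N.

```python
class ProcessSignaledError(RuntimeError):
    """Hypothetical exception that carries the signaled process with it."""

    def __init__(self, process, signal_number):
        super().__init__(
            f"process {process.args!r} was terminated by signal {signal_number}"
        )
        self.process = process              # lets callers find the affected process
        self.signal_number = signal_number


def raise_if_signaled(process):
    """Raise if the finished process was killed by a signal, else return its code."""
    code = process.returncode
    if code is not None and code < 0:       # negative return code: killed by signal -code
        raise ProcessSignaledError(process, -code)
    return code
```

With a real `subprocess.Popen` object, a caller supervising several children could call `raise_if_signaled(proc)` after `proc.wait()` and read `exc.process` in a single `except` block to see which one was signaled.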

|

1.0

|

Add a ProcessSignaledException - | Q | A

| ---------------- | -----

| Bug report? | no

| Feature request? | yes

| BC Break report? | no

| RFC? | no

| Symfony version | v3.4.3

As with `ProcessTimedOutException`, it would be great to have an exception for when a signal has been sent to the sub-process.

Basically, on this line: https://github.com/symfony/symfony/blob/1df45e43563a37633b50d4a36478090361a0b9de/src/Symfony/Component/Process/Process.php#L389-L391

This would make it possible to catch a signaled sub-process at a higher level of the code and, when running many processes, to retrieve the affected one through the exception's process property.

|

process

|

add a processsignaledexception q a bug report no feature request yes bc break report no rfc no symfony version as for processtimedoutexception it would be great to have an exception when a signal has been sent to the sub process basically on this line this would allow to catch signaled sub process on a higher code level and retrieve the concerned process when running many thanks to the process property of the exception

| 1

|

11,529

| 3,493,846,562

|

IssuesEvent

|

2016-01-05 06:15:49

|

swisnl/jQuery-contextMenu

|

https://api.github.com/repos/swisnl/jQuery-contextMenu

|

closed

|

Custom icons

|

Documentation

|

How do I use a custom icon in 2.0?

There are no complete examples, no documentation. And I can't figure it out from the source code.

I'm so frustrated already, it's like it's not even possible anymore.

I had a custom icon before but who knows what version I was using. It used to be as simple as adding a single class to my css file pointing to an icon file, done. Now wtf.

I only upgraded because $('el').contextMenu() was not working in the version I was using but I need that now. If that was added before 2.0 can you tell me what version has that and not the new icon system and where can I get it?

|

1.0

|

Custom icons - How do I use a custom icon in 2.0?

There are no complete examples, no documentation. And I can't figure it out from the source code.

I'm so frustrated already, it's like it's not even possible anymore.

I had a custom icon before but who knows what version I was using. It used to be as simple as adding a single class to my css file pointing to an icon file, done. Now wtf.

I only upgraded because $('el').contextMenu() was not working in the version I was using but I need that now. If that was added before 2.0 can you tell me what version has that and not the new icon system and where can I get it?

|

non_process

|

custom icons how do i use a custom icon in there are no complete examples no documentation and i can t figure it out from the source code i m so frustrated already it s like it s not even possible anymore i had a custom icon before but who knows what version i was using it used to be as simple as adding a single class to my css file pointing to an icon file done now wtf i only upgraded because el contextmenu was not working in the version i was using but i need that now if that was added before can you tell me what version has that and not the new icon system and where can i get it

| 0

|

15,766

| 19,913,049,475

|

IssuesEvent

|

2022-01-25 19:14:46

|

input-output-hk/high-assurance-legacy

|

https://api.github.com/repos/input-output-hk/high-assurance-legacy

|

closed

|

Call facts that make an equivalence a congruence “compatibility laws”

|

language: isabelle topic: process calculus type: improvement

|

Currently, we call facts that make an equivalence a congruence “preservation laws”. For example, there is the fact named `basic_parallel_preservation`. This is confusing, as we also call homomorphisms “preservation laws”, which can be seen in the existence of fact names like `lift_composition_preservation`. Our goal is to use the term “compatibility laws” for laws of the former kind.

|

1.0

|

Call facts that make an equivalence a congruence “compatibility laws” - Currently, we call facts that make an equivalence a congruence “preservation laws”. For example, there is the fact named `basic_parallel_preservation`. This is confusing, as we also call homomorphisms “preservation laws”, which can be seen in the existence of fact names like `lift_composition_preservation`. Our goal is to use the term “compatibility laws” for laws of the former kind.

|

process

|

call facts that make an equivalence a congruence “compatibility laws” currently we call facts that make an equivalence a congruence “preservation laws” for example there is the fact named basic parallel preservation this is confusing as we also call homomorphisms “preservation laws” which can be seen in the existence of fact names like lift composition preservation our goal is to use the term “compatibility laws” for laws of the former kind

| 1

|

188,014

| 14,436,306,905

|

IssuesEvent

|

2020-12-07 09:57:47

|

kalexmills/github-vet-tests-dec2020

|

https://api.github.com/repos/kalexmills/github-vet-tests-dec2020

|

closed

|

allchain/eth: swarm/pss/forwarding_test.go; 3 LoC

|

fresh test tiny

|

Found a possible issue in [allchain/eth](https://www.github.com/allchain/eth) at [swarm/pss/forwarding_test.go](https://github.com/allchain/eth/blob/9d627ab0d5d40aa5829f455e98ee686f52b66d76/swarm/pss/forwarding_test.go#L234-L236)

Below is the message reported by the analyzer for this snippet of code. Beware that the analyzer only reports the first

issue it finds, so please do not limit your consideration to the contents of the below message.

> function call which takes a reference to c at line 235 may start a goroutine

[Click here to see the code in its original context.](https://github.com/allchain/eth/blob/9d627ab0d5d40aa5829f455e98ee686f52b66d76/swarm/pss/forwarding_test.go#L234-L236)

<details>

<summary>Click here to show the 3 line(s) of Go which triggered the analyzer.</summary>

```go

for _, c := range testCases {

testForwardMsg(t, ps, &c)

}

```

</details>

Leave a reaction on this issue to contribute to the project by classifying this instance as a **Bug** :-1:, **Mitigated** :+1:, or **Desirable Behavior** :rocket:

See the descriptions of the classifications [here](https://github.com/github-vet/rangeclosure-findings#how-can-i-help) for more information.

commit ID: 9d627ab0d5d40aa5829f455e98ee686f52b66d76

|

1.0

|

allchain/eth: swarm/pss/forwarding_test.go; 3 LoC -

Found a possible issue in [allchain/eth](https://www.github.com/allchain/eth) at [swarm/pss/forwarding_test.go](https://github.com/allchain/eth/blob/9d627ab0d5d40aa5829f455e98ee686f52b66d76/swarm/pss/forwarding_test.go#L234-L236)

Below is the message reported by the analyzer for this snippet of code. Beware that the analyzer only reports the first

issue it finds, so please do not limit your consideration to the contents of the below message.

> function call which takes a reference to c at line 235 may start a goroutine

[Click here to see the code in its original context.](https://github.com/allchain/eth/blob/9d627ab0d5d40aa5829f455e98ee686f52b66d76/swarm/pss/forwarding_test.go#L234-L236)

<details>

<summary>Click here to show the 3 line(s) of Go which triggered the analyzer.</summary>

```go

for _, c := range testCases {

testForwardMsg(t, ps, &c)

}

```

</details>

Leave a reaction on this issue to contribute to the project by classifying this instance as a **Bug** :-1:, **Mitigated** :+1:, or **Desirable Behavior** :rocket:

See the descriptions of the classifications [here](https://github.com/github-vet/rangeclosure-findings#how-can-i-help) for more information.

commit ID: 9d627ab0d5d40aa5829f455e98ee686f52b66d76

|

non_process

|

allchain eth swarm pss forwarding test go loc found a possible issue in at below is the message reported by the analyzer for this snippet of code beware that the analyzer only reports the first issue it finds so please do not limit your consideration to the contents of the below message function call which takes a reference to c at line may start a goroutine click here to show the line s of go which triggered the analyzer go for c range testcases testforwardmsg t ps c leave a reaction on this issue to contribute to the project by classifying this instance as a bug mitigated or desirable behavior rocket see the descriptions of the classifications for more information commit id

| 0

|

373,921

| 26,091,839,205

|

IssuesEvent

|

2022-12-26 12:42:57

|

ajwalkiewicz/cochar

|

https://api.github.com/repos/ajwalkiewicz/cochar

|

closed

|

Update contribution page

|

documentation

|

1. In one part we still refer to MIT License instead of GPL

2. Add info about using black formatter

|

1.0

|

Update contribution page - 1. In one part we still refer to MIT License instead of GPL

2. Add info about using black formatter

|

non_process

|

update contribution page in one part we still refer to mit license instead of gpl add info about using black formatter

| 0

|

18,712

| 24,603,797,003

|

IssuesEvent

|

2022-10-14 14:32:54

|

GoogleCloudPlatform/fda-mystudies

|

https://api.github.com/repos/GoogleCloudPlatform/fda-mystudies

|

closed

|

[FHIR] [Discard FHIR after DID enabled] The response records are shown in the FHIR datastore

|

Bug P1 Response datastore Process: Fixed Process: Tested dev

|

AR: Some response records are shown in the FHIR datastore even though 'Discard FHIR after DID' is enabled

ER: Records should not be shown in the FHIR datastore if discard FHIR after DID flag is enabled

Note: Issue observed only for the below study id

Study id: Study-DisfhirAD

|

2.0

|

[FHIR] [Discard FHIR after DID enabled] The response records are shown in the FHIR datastore - AR: Some response records are shown in the FHIR datastore even though 'Discard FHIR after DID' is enabled

ER: Records should not be shown in the FHIR datastore if discard FHIR after DID flag is enabled

Note: Issue observed only for the below study id

Study id: Study-DisfhirAD

|

process

|

the response records are shown in the fhir datastore ar some response records are shown in the fhir datastore even though discard fhir after did is enabled er records should not be shown in the fhir datastore if discard fhir after did flag is enabled note issue observed only for the below study id study id study disfhirad

| 1

|

28,182

| 11,598,212,840

|

IssuesEvent

|

2020-02-24 22:33:11

|

gate5/test2

|

https://api.github.com/repos/gate5/test2

|

opened

|

CVE-2019-20330 (High) detected in jackson-databind-2.0.4.jar

|

security vulnerability

|

## CVE-2019-20330 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jackson-databind-2.0.4.jar</b></p></summary>

<p>General data-binding functionality for Jackson: works on core streaming API</p>

<p>Path to dependency file: /tmp/ws-scm/test2/pom.xml</p>

<p>Path to vulnerable library: downloadResource_89ee8ce3-8ef6-4d02-ab97-eac4907f0dea/20200224223234/jackson-databind-2.0.4.jar</p>

<p>

Dependency Hierarchy:

- :x: **jackson-databind-2.0.4.jar** (Vulnerable Library)

<p>Found in HEAD commit: <a href="https://github.com/gate5/test2/commit/e86f5967b2903a7cc16251883e91ff56ccdcadc5">e86f5967b2903a7cc16251883e91ff56ccdcadc5</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

FasterXML jackson-databind 2.x before 2.9.10.2 lacks certain net.sf.ehcache blocking.

<p>Publish Date: 2020-01-05

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2019-20330>CVE-2019-20330</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>9.8</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: High

- Integrity Impact: High

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://github.com/FasterXML/jackson-databind/tree/jackson-databind-2.9.10.2">https://github.com/FasterXML/jackson-databind/tree/jackson-databind-2.9.10.2</a></p>

<p>Release Date: 2020-01-03</p>

<p>Fix Resolution: com.fasterxml.jackson.core:jackson-databind:2.9.10.2</p>

</p>

</details>

<p></p>

|

True

|

CVE-2019-20330 (High) detected in jackson-databind-2.0.4.jar - ## CVE-2019-20330 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jackson-databind-2.0.4.jar</b></p></summary>

<p>General data-binding functionality for Jackson: works on core streaming API</p>

<p>Path to dependency file: /tmp/ws-scm/test2/pom.xml</p>

<p>Path to vulnerable library: downloadResource_89ee8ce3-8ef6-4d02-ab97-eac4907f0dea/20200224223234/jackson-databind-2.0.4.jar</p>

<p>

Dependency Hierarchy:

- :x: **jackson-databind-2.0.4.jar** (Vulnerable Library)

<p>Found in HEAD commit: <a href="https://github.com/gate5/test2/commit/e86f5967b2903a7cc16251883e91ff56ccdcadc5">e86f5967b2903a7cc16251883e91ff56ccdcadc5</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

FasterXML jackson-databind 2.x before 2.9.10.2 lacks certain net.sf.ehcache blocking.

<p>Publish Date: 2020-01-05

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2019-20330>CVE-2019-20330</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>9.8</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: High

- Integrity Impact: High

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://github.com/FasterXML/jackson-databind/tree/jackson-databind-2.9.10.2">https://github.com/FasterXML/jackson-databind/tree/jackson-databind-2.9.10.2</a></p>

<p>Release Date: 2020-01-03</p>

<p>Fix Resolution: com.fasterxml.jackson.core:jackson-databind:2.9.10.2</p>

</p>

</details>

<p></p>

|

non_process

|

cve high detected in jackson databind jar cve high severity vulnerability vulnerable library jackson databind jar general data binding functionality for jackson works on core streaming api path to dependency file tmp ws scm pom xml path to vulnerable library downloadresource jackson databind jar dependency hierarchy x jackson databind jar vulnerable library found in head commit a href vulnerability details fasterxml jackson databind x before lacks certain net sf ehcache blocking publish date url a href cvss score details base score metrics exploitability metrics attack vector network attack complexity low privileges required none user interaction none scope unchanged impact metrics confidentiality impact high integrity impact high availability impact high for more information on scores click a href suggested fix type upgrade version origin a href release date fix resolution com fasterxml jackson core jackson databind

| 0

|

9,761

| 12,743,413,195

|

IssuesEvent

|

2020-06-26 10:21:21

|

SQFvm/vm

|

https://api.github.com/repos/SQFvm/vm

|

opened

|

Warn on unused PreProcessor arg

|

PreProcessor enhancement

|

1.

// Should create a warning because `unused` is not being used in the macro but present in the define

#define foo(unused) bar

foo(something)

2.

// Should not create a warning as define contents are empty

#define foo(unused)

foo(something)
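
The two cases above amount to a small rule that can be sketched as follows. This is an illustrative Python sketch, not SQF-VM's code: the function name `unused_macro_params` is hypothetical, and it assumes macro parameters are plain identifiers that count as used only when they appear as a whole token in the body.

```python
import re

IDENTIFIER = re.compile(r"[A-Za-z_][A-Za-z0-9_]*")


def unused_macro_params(params, body):
    """Return the parameters that should trigger an 'unused argument' warning."""
    if not body.strip():
        # Case 2: an empty define never warns, whatever its parameters are.
        return []
    tokens = set(IDENTIFIER.findall(body))
    # Case 1: warn for every parameter that is not a token of the body.
    return [p for p in params if p not in tokens]


print(unused_macro_params(["unused"], "bar"))  # case 1
print(unused_macro_params(["unused"], ""))     # case 2
```

Matching whole identifier tokens rather than substrings keeps a parameter named `a` from being treated as used just because the body contains `bar`.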

|

1.0

|

Warn on unused PreProcessor arg - 1.

// Should create a warning because `unused` is not being used in the macro but present in the define

#define foo(unused) bar

foo(something)

2.

// Should not create a warning as define contents are empty

#define foo(unused)

foo(something)

|

process

|

warn on unused preprocessor arg should create a warning because unused is not being used in the macro but present in the define define foo unused bar foo something should not create a warning as define contents are empty define foo unused foo something

| 1

|

16,498

| 21,480,391,895

|

IssuesEvent

|

2022-04-26 17:08:53

|

metabase/metabase

|

https://api.github.com/repos/metabase/metabase

|

closed

|

Ability to access fields in JSON Dicts in Postgres driver

|

Priority:P1 Database/Postgres Querying/Processor Administration/Metadata & Sync Type:New Feature .Completeness

|

⬇️ **Please click the 👍 reaction instead of leaving a `+1` or 👍 comment**

|

1.0

|

Ability to access fields in JSON Dicts in Postgres driver - ⬇️ **Please click the 👍 reaction instead of leaving a `+1` or 👍 comment**

|

process

|

ability to access fields in json dicts in postgres driver ⬇️ please click the 👍 reaction instead of leaving a or 👍 comment

| 1

|

9,377

| 12,374,420,179

|

IssuesEvent

|

2020-05-19 01:31:35

|

GoogleCloudPlatform/python-docs-samples

|

https://api.github.com/repos/GoogleCloudPlatform/python-docs-samples

|

closed

|

testing: silence pytest

|

testing type: process

|

Locally I started to see the following warning:

```

.nox/py-3-7/lib/python3.7/site-packages/_pytest/junitxml.py:436

/usr/local/google/home/tmatsuo/work/python-docs-samples/run/markdown-preview/.nox/py-3-7/lib/python3.7/site-packages/_pytest/junitxml.py:436: PytestDeprecationWarning: The 'junit_family' default value will change to 'xunit2' in pytest 6.0.

Add 'junit_family=legacy' to your pytest.ini file to silence this warning and make your suite compatible.

_issue_warning_captured(deprecated.JUNIT_XML_DEFAULT_FAMILY, config.hook, 2)

```

I think we can just add a command line option to pytest.

|

1.0

|

testing: silence pytest - Locally I started to see the following warning:

```

.nox/py-3-7/lib/python3.7/site-packages/_pytest/junitxml.py:436

/usr/local/google/home/tmatsuo/work/python-docs-samples/run/markdown-preview/.nox/py-3-7/lib/python3.7/site-packages/_pytest/junitxml.py:436: PytestDeprecationWarning: The 'junit_family' default value will change to 'xunit2' in pytest 6.0.

Add 'junit_family=legacy' to your pytest.ini file to silence this warning and make your suite compatible.

_issue_warning_captured(deprecated.JUNIT_XML_DEFAULT_FAMILY, config.hook, 2)

```

I think we can just add a command line option to pytest.

|

process

|

testing silence pytest locally i started to see the following warning nox py lib site packages pytest junitxml py usr local google home tmatsuo work python docs samples run markdown preview nox py lib site packages pytest junitxml py pytestdeprecationwarning the junit family default value will change to in pytest add junit family legacy to your pytest ini file to silence this warning and make your suite compatible issue warning captured deprecated junit xml default family config hook i think we can just add a command line option to pytest

| 1

|

376,807

| 26,218,202,104

|

IssuesEvent

|

2023-01-04 12:49:30

|

littlewhitecloud/CustomTkinterTitlebar

|

https://api.github.com/repos/littlewhitecloud/CustomTkinterTitlebar

|

closed

|

Window move too laggy

|

🐞 bug 📖 documentation ✨ enhancement 📗 help wanted ❌ invalid 💬 question ⚙need more test

|

It improved thickframe with window and make window resizable

But it is too laggy.

Like this:

https://user-images.githubusercontent.com/71159641/209633321-23340f83-01db-4af9-8bdb-c5aacecdff46.mp4

I don't know whether this only happens on my computer.

|

1.0

|

Window move too laggy - It improved thickframe with window and make window resizable

But it is too laggy.

Like this:

https://user-images.githubusercontent.com/71159641/209633321-23340f83-01db-4af9-8bdb-c5aacecdff46.mp4

I don't know whether this only happens on my computer.

|

non_process

|

window move too laggy it improved thickframe with window and make window resizable but it is too laggy like this i don t know does it only happened on my computer

| 0

|

176,451

| 6,559,680,648

|

IssuesEvent

|

2017-09-07 05:48:10

|

architecture-building-systems/CityEnergyAnalyst

|

https://api.github.com/repos/architecture-building-systems/CityEnergyAnalyst

|

opened

|

NN4GA

|

Priority 2

|

A surrogate model is intended for the multi-objective optimization algorithm.

The goal is to place the calculation burden entirely on the estimator so that the optimization algorithm can produce generations rapidly.

|

1.0

|

NN4GA - A surrogate model is intended for the multi-objective optimization algorithm.

The goal is to shift the calculation burden entirely onto the estimator so that generations of the optimization algorithm can be evaluated rapidly.

|

non_process

|

a surrogate model is intended for the multi objective optimization algorithm the goal is to lay the calculation burden entirely on the estimator so that rapid generations for the optimization algorithm is facilitated

| 0

|

16,495

| 21,471,281,202

|

IssuesEvent

|

2022-04-26 09:43:04

|

prisma/prisma

|

https://api.github.com/repos/prisma/prisma

|

opened

|

Native types of relations `fields` and `references` are not validated

|

bug/1-unconfirmed kind/bug process/candidate topic: validation team/schema

|

https://github.com/prisma/prisma/blob/99b3e2ca2be862ccb7a232a34b617155c6a03e40/packages/client/src/__tests__/integration/happy/exhaustive-schema-mongo/schema.prisma

```prisma

model Post {

id String @id @default(auto()) @map("_id") @db.ObjectId

createdAt DateTime @default(now())

title String

content String?

published Boolean @default(false)

author User @relation(fields: [authorId], references: [id])

authorId String

}

model User {

id String @id @default(auto()) @map("_id") @db.ObjectId

email String @unique

int Int

optionalInt Int?

[...]

}

```

Note how `Post.authorId` and `User.id` have a different native type, but still validate.

(Might also apply to other connectors, not sure - just noticed for this test.)

|

1.0

|

Native types of relations `fields` and `references` are not validated - https://github.com/prisma/prisma/blob/99b3e2ca2be862ccb7a232a34b617155c6a03e40/packages/client/src/__tests__/integration/happy/exhaustive-schema-mongo/schema.prisma

```prisma

model Post {

id String @id @default(auto()) @map("_id") @db.ObjectId

createdAt DateTime @default(now())

title String

content String?

published Boolean @default(false)

author User @relation(fields: [authorId], references: [id])

authorId String

}

model User {

id String @id @default(auto()) @map("_id") @db.ObjectId

email String @unique

int Int

optionalInt Int?

[...]

}

```

Note how `Post.authorId` and `User.id` have a different native type, but still validate.

(Might also apply to other connectors, not sure - just noticed for this test.)

|

process

|

native types of relations fields and references are not validated prisma model post id string id default auto map id db objectid createdat datetime default now title string content string published boolean default false author user relation fields references authorid string model user id string id default auto map id db objectid email string unique int int optionalint int note how post authorid and user id have a different native type but still validate might also apply to other connectors not sure just noticed for this test

| 1

|

7,519

| 10,596,311,587

|

IssuesEvent

|

2019-10-09 20:57:10

|

geneontology/go-ontology

|

https://api.github.com/repos/geneontology/go-ontology

|

closed

|

def of 'symbiotic process' GO:0044403

|

multi-species process

|

Per our discussion at GOC meeting - broaden definition of 'symbiotic process' GO:0044403

so that it includes both gene products from symbiont and host. Current def specifies symbiont.

Here is a suggestion - Change first sentence

FROM old sentence:

"A process carried out by symbiont gene products that enables the interaction between two organisms living together in more or less intimate association."

TO new sentence:

"A process carried out by gene products in an organism that enable the organism to engage in a symbiotic relationship, a more or less intimate association, with another organism."

@ValWood @pgaudet @nsuvarnaiari @pmasson55 What do you think?

|

1.0

|

def of 'symbiotic process' GO:0044403 - Per our discussion at GOC meeting - broaden definition of 'symbiotic process' GO:0044403

so that it includes both gene products from symbiont and host. Current def specifies symbiont.

Here is a suggestion - Change first sentence

FROM old sentence:

"A process carried out by symbiont gene products that enables the interaction between two organisms living together in more or less intimate association."

TO new sentence:

"A process carried out by gene products in an organism that enable the organism to engage in a symbiotic relationship, a more or less intimate association, with another organism."

@ValWood @pgaudet @nsuvarnaiari @pmasson55 What do you think?

|

process

|

def of symbiotic process go per our discussion at goc meeting broaden definition of symbiotic process go so that it includes both gene products from symbiont and host current def specifies symbiont here is a suggestion change first sentence from old sentence a process carried out by symbiont gene products that enables the interaction between two organisms living together in more or less intimate association to new sentence a process carried out by gene products in an organism that enable the organism to engage in a symbiotic relationship a more or less intimate association with another organism valwood pgaudet nsuvarnaiari what do you think

| 1

|

102,646

| 8,851,450,381

|

IssuesEvent

|

2019-01-08 15:47:31

|

hzi-braunschweig/SORMAS-Project

|

https://api.github.com/repos/hzi-braunschweig/SORMAS-Project

|

opened

|

Add user role "External Lab User"

|

Sample Lab Testing api-change sormas-api sormas-ui

|

Should only be allowed to see samples assigned to the user's lab. No cases or any other data.

Needed for Dakar lab - see #878

|

1.0

|

Add user role "External Lab User" - Should only be allowed to see samples assigned to the user's lab. No cases or any other data.

Needed for Dakar lab - see #878

|

non_process

|

add user role external lab user should only be allowed to see samples assigned to the user s lab no cases or any other data needed for dakar lab see

| 0

|

11,080

| 13,921,156,652

|

IssuesEvent

|

2020-10-21 11:31:09

|

DO-CV/sara

|

https://api.github.com/repos/DO-CV/sara

|

closed

|

BUG: Fix scale normalization in DoH pyramid and Harris pyramid.

|

Image Processing

|

cf. Tony Lindeberg's paper for details:

http://www.diva-portal.org/smash/get/diva2:453064/FULLTEXT01.pdf

|

1.0

|

BUG: Fix scale normalization in DoH pyramid and Harris pyramid. - cf. Tony Lindeberg's paper for details:

http://www.diva-portal.org/smash/get/diva2:453064/FULLTEXT01.pdf

|

process

|

bug fix scale normalization in doh pyramid and harris pyramid cf tony lindeberg s paper for details

| 1

|

9,623

| 12,560,779,244

|

IssuesEvent

|

2020-06-07 23:20:21

|

qgis/QGIS

|

https://api.github.com/repos/qgis/QGIS

|

closed

|

Performance issue and large file size when creating vector tiles

|

Bug Processing Vector tiles

|

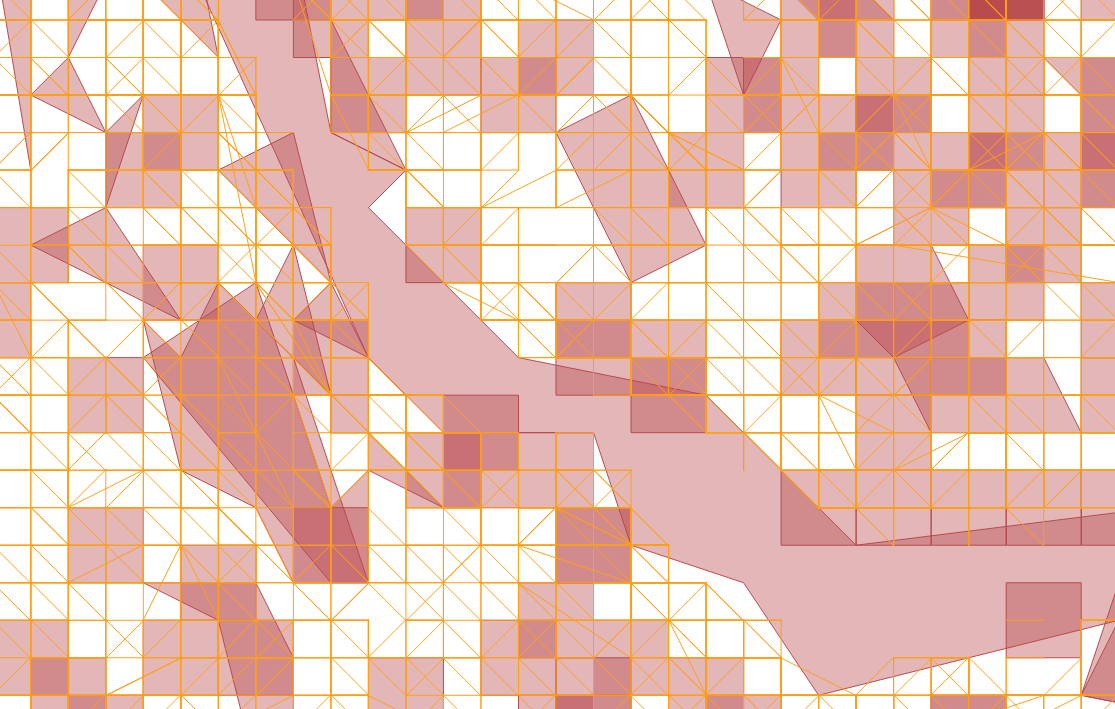

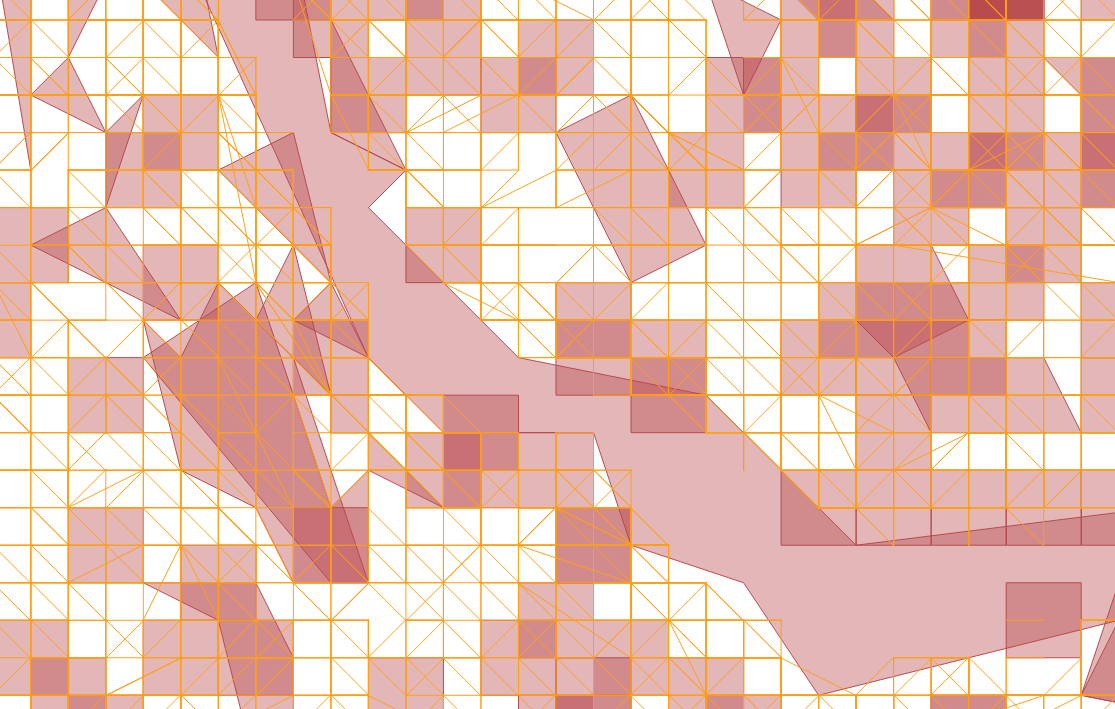

I ran some benchmarks comparing the new QGIS native tool for generating vector tiles with third-party tools. I picked [tippecanoe from Mapbox](https://github.com/mapbox/tippecanoe) for the comparison.

Here are the results:

| | QGIS | tippecanoe |

| ------------- | ------------- | ------------- |

| Time | 105.34 s | 10.16 s |

| File size | 11.4 MB | 908.0 KB |

Additional info:

min zoom level=0

max zoom level=3

Input layers: water, landuse and roads (see attached)

In addition to the performance and file size, I have noticed that Tippecanoe preserves the geometries better than QGIS.

Output from QGIS:

Output from Tippecanoe:

[input_data.zip](https://github.com/qgis/QGIS/files/4725350/input_data.zip)

[output_data.zip](https://github.com/qgis/QGIS/files/4725372/output_data.zip)

|

1.0

|

Performance issue and large file size when creating vector tiles - I ran some benchmarks comparing the new QGIS native tool for generating vector tiles with third-party tools. I picked [tippecanoe from Mapbox](https://github.com/mapbox/tippecanoe) for the comparison.

Here are the results:

| | QGIS | tippecanoe |

| ------------- | ------------- | ------------- |

| Time | 105.34 s | 10.16 s |

| File size | 11.4 MB | 908.0 KB |

Additional info:

min zoom level=0

max zoom level=3

Input layers: water, landuse and roads (see attached)

In addition to the performance and file size, I have noticed that Tippecanoe preserves the geometries better than QGIS.

Output from QGIS:

Output from Tippecanoe:

[input_data.zip](https://github.com/qgis/QGIS/files/4725350/input_data.zip)

[output_data.zip](https://github.com/qgis/QGIS/files/4725372/output_data.zip)

|

process

|

performance issue and large file size when creating vector tiles i have run some benchmark comparing the new qgis native tool to generate vector tile with other party tools i picked to run some benchmarks here are the result qgis tippecanoe time s s file size mb kb additional info min zoom level max zoom level input layers water landuse and roads see attached in addition to the performance and file size i have noticed that tippecanoe preserves the geometries better than qgis output from qgis output from tippecanoe

| 1

|

1,025

| 3,485,766,457

|

IssuesEvent

|

2015-12-31 11:12:38

|

e-government-ua/iBP

|

https://api.github.com/repos/e-government-ua/iBP

|

closed

|

Ternopil Oblast - Provision of one-time financial assistance

|

In process of testing in work

|

Extension of the old process for the ODA (Regional State Administration), adding districts and incorporating the latest developments, plus a name change.

https://docs.google.com/spreadsheets/d/13MpThVlD-h4WO9cT1M9BSTTR-P2fR4HknGmxc2Rs1Kc/edit?ts=564dc8c3#gid=0

|

1.0

|

Ternopil Oblast - Provision of one-time financial assistance - Extension of the old process for the ODA (Regional State Administration), adding districts and incorporating the latest developments, plus a name change.

https://docs.google.com/spreadsheets/d/13MpThVlD-h4WO9cT1M9BSTTR-P2fR4HknGmxc2Rs1Kc/edit?ts=564dc8c3#gid=0

|

process

|

ternopil oblast provision of one time financial assistance extension of the old process for the oda regional state administration adding districts and incorporating the latest developments plus a name change

| 1

|

114,123

| 17,189,280,006

|

IssuesEvent

|

2021-07-16 08:37:17

|

elastic/kibana

|

https://api.github.com/repos/elastic/kibana

|

closed

|

[Security Solution]Success message on the toaster is getting cropped on attaching an alert with a New Case.

|

QA:Validated Team: SecuritySolution Team:Threat Hunting bug fixed impact:low v7.14.0

|

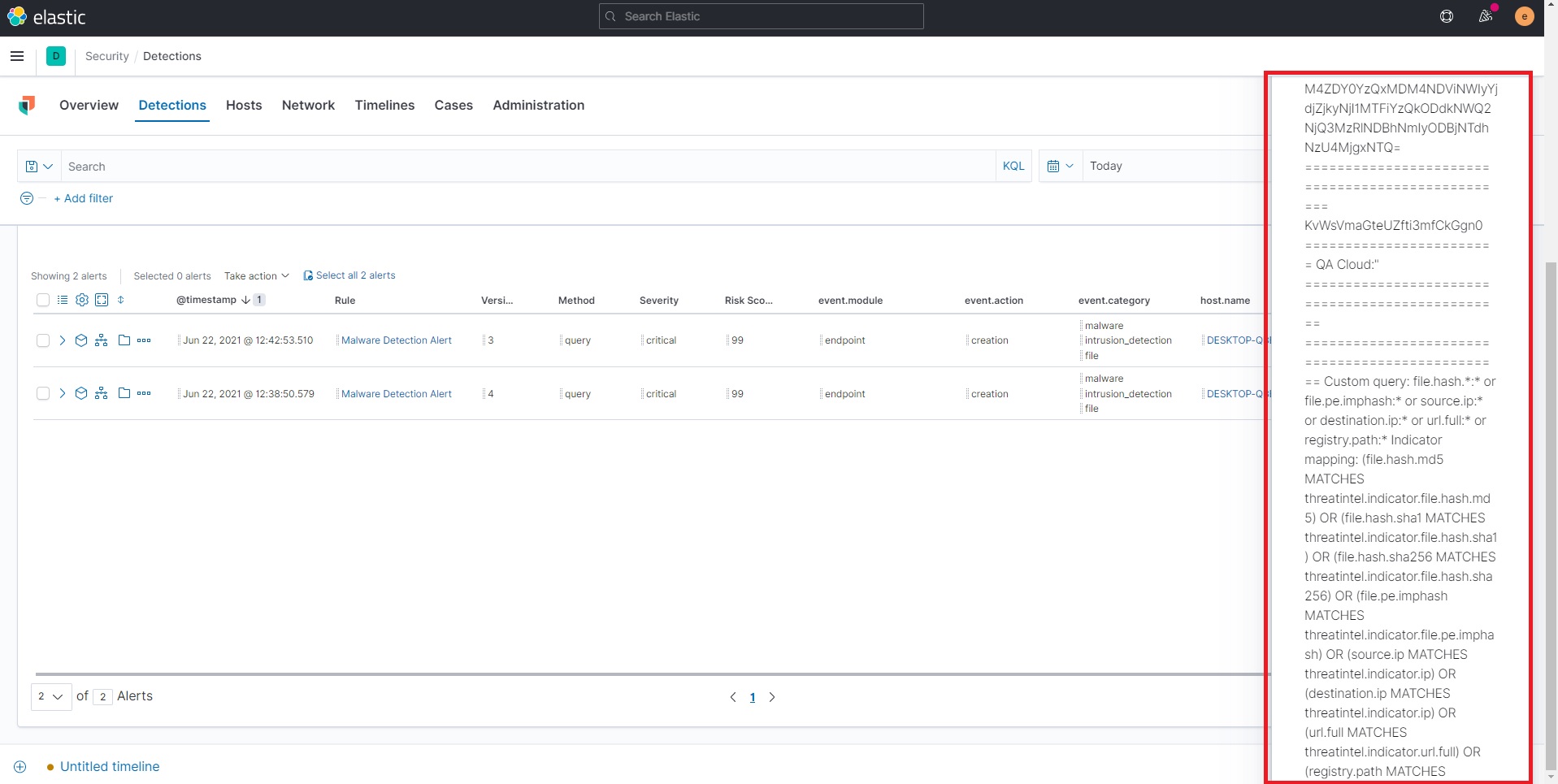

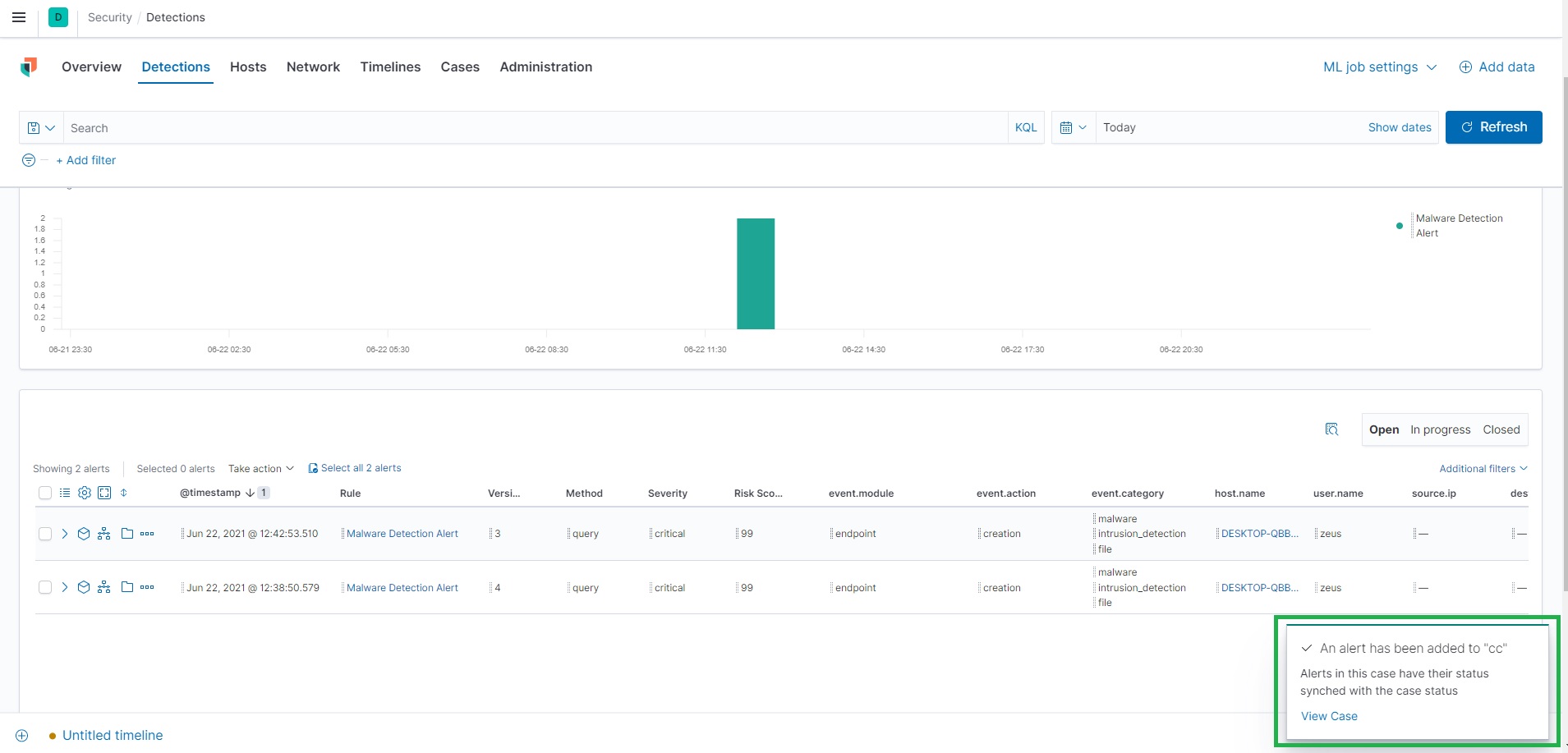

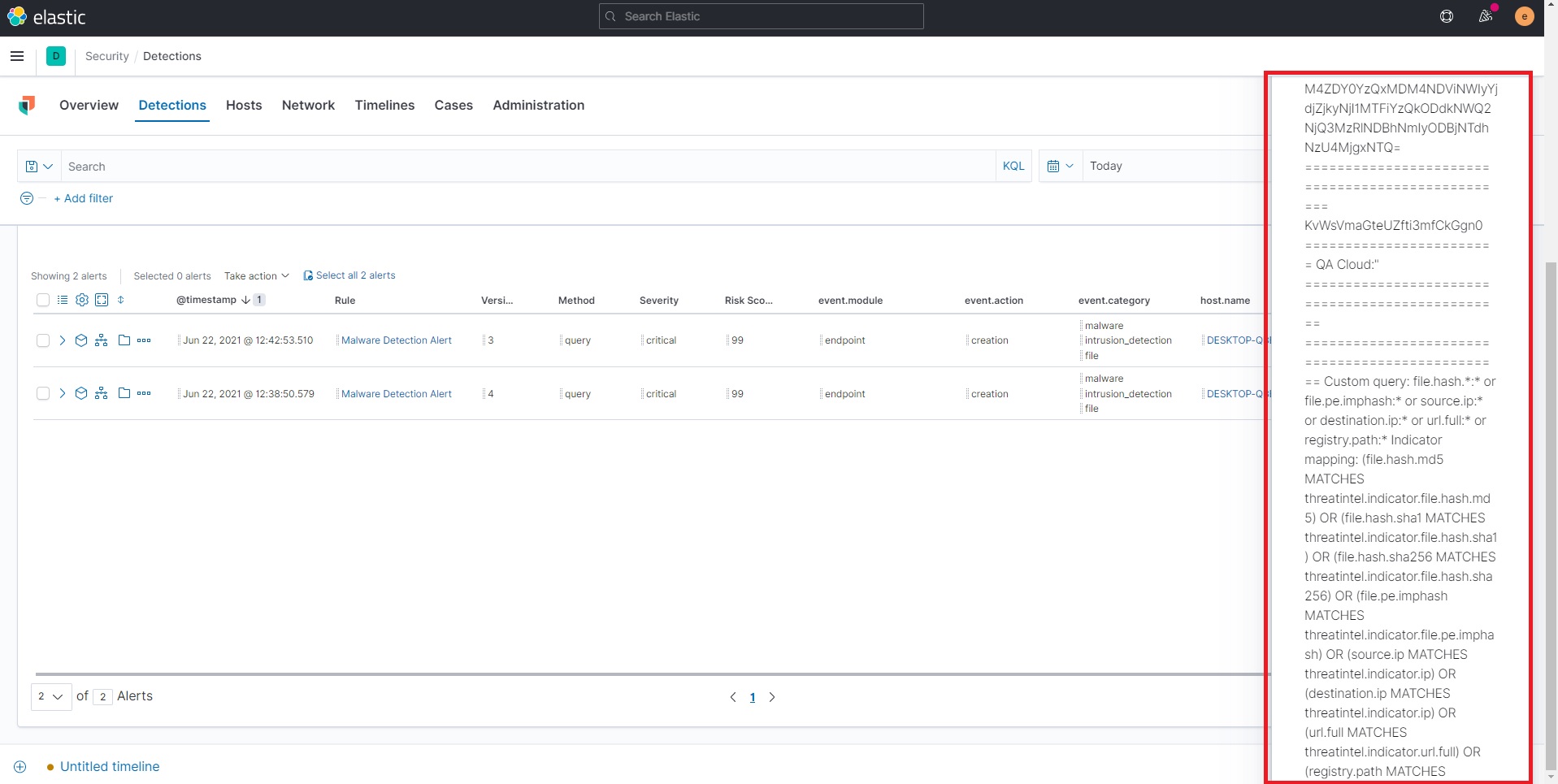

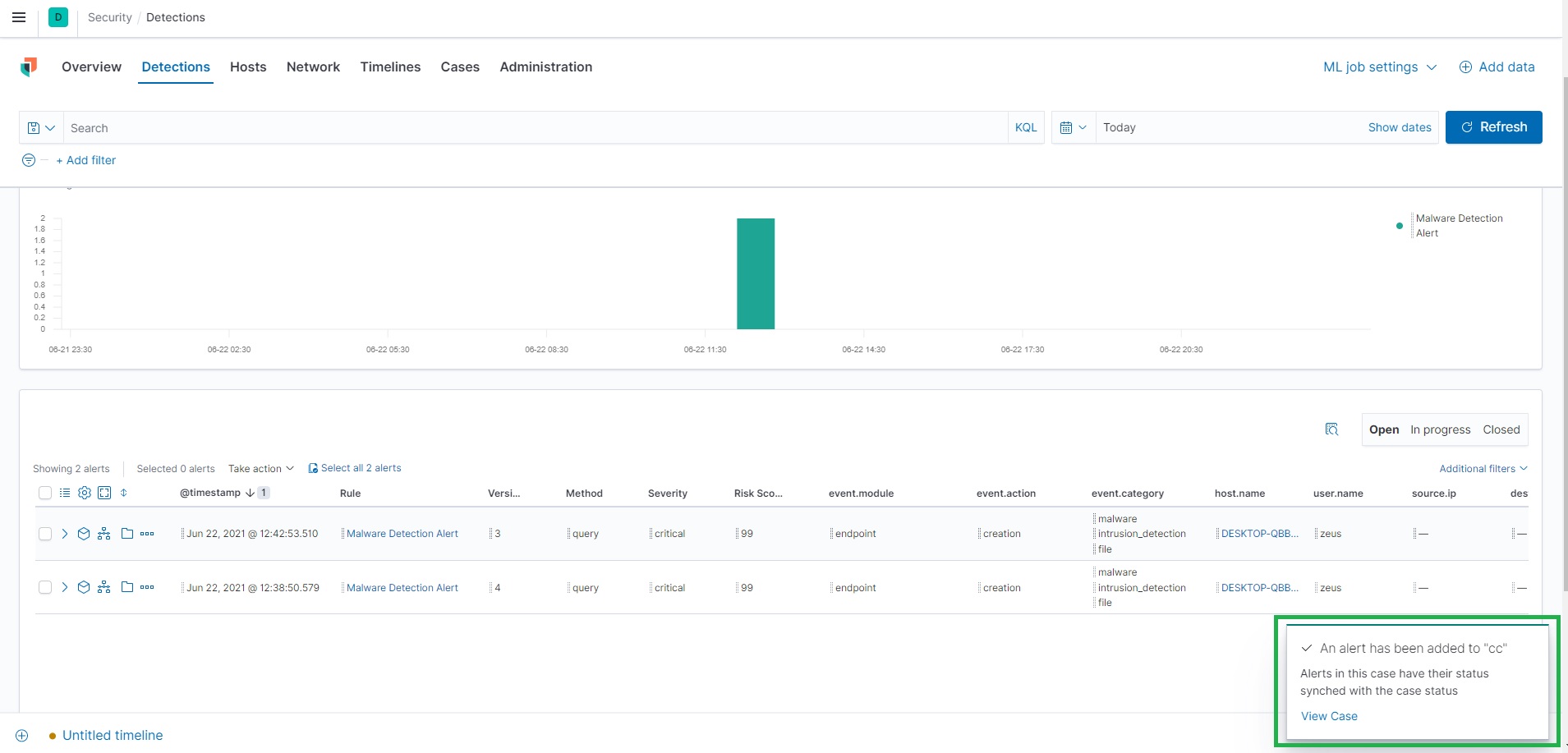

**Description:**

Success message on the toaster is getting cropped on attaching an alert with a New Case.

**Build Details:**

Version: 7.14.0 snapshot

Build: 41498

Commit: 5cab87e2069dd31848490f83964291fc802d6889

Artifact link: https://artifacts-api.elastic.co/v1/search/7.14.0-SNAPSHOT

**Browser Details:**

All

**Preconditions:**

- Kibana Environment should exist.

- Endpoint security and Elastic Agent should be installed

- Detection alerts should be generated

**Steps to Reproduce:**

1. Navigate to 'Detections' tab under Security App.

2. Click on 'Add to New Case' button.

3. Provide long content in 'Case Name' field and fill out all the mandatory fields.

4. Now, click on 'Create Case' button and Observe that Success message is getting cropped and showing incomplete message on Success toaster.

**Note: Please find the text for long name below:**

What is Global Warming? A term that we commonly encounter today is global warming. Our acquaintance with the term is limited to our textbooks and its negative consequences that we read about. But what global warming really is so much more than a theoretical concept. Global Warming refers to the phenomenon of the gradual heating of the earth because of the trapping of heat primarily due to human activities. A major consequence of global warming is that it will increase the Earth’s temperature which will have severe negative effects like melting of polar ice caps, extreme climates and thereby disruption of normal functioning. Its perils are not restricted to only some aspects but are all-encompassing and can put the existence of life on earth in danger. Although there are multiple causes of global warming, some major causes contribute more than others. These factors accelerate its rate: Excessive burning of fossil fuels to meet energy.

**Impacted Test case:**

N/A

**Actual Result:**

Success message is getting cropped on attaching an alert with New Case.

**Screen-Shot:**

**Expected Result:**

- Complete Success message should be displayed on attaching an alert with New Case.

- Correct message should be displayed :

**An alert has been added to "Case Name"

Alerts in this case have their status synched with the case status**

**What's not working:**

- This issue is not occurring if case name is of shorter length

**Screen-Shot:**

**What's working:**

- This issue also occurs if the user attaches an alert to an already existing case that has a long name.

|

True

|

[Security Solution]Success message on the toaster is getting cropped on attaching an alert with a New Case. - **Description:**

Success message on the toaster is getting cropped on attaching an alert with a New Case.

**Build Details:**

Version: 7.14.0 snapshot

Build: 41498

Commit: 5cab87e2069dd31848490f83964291fc802d6889

Artifact link: https://artifacts-api.elastic.co/v1/search/7.14.0-SNAPSHOT

**Browser Details:**

All

**Preconditions:**

- Kibana Environment should exist.

- Endpoint security and Elastic Agent should be installed

- Detection alerts should be generated

**Steps to Reproduce:**

1. Navigate to 'Detections' tab under Security App.

2. Click on 'Add to New Case' button.

3. Provide long content in 'Case Name' field and fill out all the mandatory fields.

4. Now, click on 'Create Case' button and Observe that Success message is getting cropped and showing incomplete message on Success toaster.

**Note: Please find the text for long name below:**

What is Global Warming? A term that we commonly encounter today is global warming. Our acquaintance with the term is limited to our textbooks and its negative consequences that we read about. But what global warming really is so much more than a theoretical concept. Global Warming refers to the phenomenon of the gradual heating of the earth because of the trapping of heat primarily due to human activities. A major consequence of global warming is that it will increase the Earth’s temperature which will have severe negative effects like melting of polar ice caps, extreme climates and thereby disruption of normal functioning. Its perils are not restricted to only some aspects but are all-encompassing and can put the existence of life on earth in danger. Although there are multiple causes of global warming, some major causes contribute more than others. These factors accelerate its rate: Excessive burning of fossil fuels to meet energy.

**Impacted Test case:**

N/A

**Actual Result:**

Success message is getting cropped on attaching an alert with New Case.

**Screen-Shot:**

**Expected Result:**

- Complete Success message should be displayed on attaching an alert with New Case.

- Correct message should be displayed :

**An alert has been added to "Case Name"

Alerts in this case have their status synched with the case status**

**What's not working:**

- This issue is not occurring if case name is of shorter length

**Screen-Shot:**

**What's working:**

- This issue also occurs if the user attaches an alert to an already existing case that has a long name.

|

non_process

|

success message on the toaster is getting cropped on attaching an alert with a new case description success message on the toaster is getting cropped on attaching an alert with a new case build details version snapshot build commit artifact link browser details all preconditions kibana environment should exist endpoint security and elastic agent should be installed detection alerts should be generated steps to reproduce navigate to detections tab under security app click on add to new case button provide long content in case name field and fill out all the mandatory fields now click on create case button and observe that success message is getting cropped and showing incomplete message on success toaster note please find the text for long name below what is global warming a term that we commonly encounter today is global warming our acquaintance with the term is limited to our textbooks and its negative consequences that we read about but what global warming really is so much more than a theoretical concept global warming refers to the phenomenon of the gradual heating of the earth because of the trapping of heat primarily due to human activities a major consequence of global warming is that it will increase the earth’s temperature which will have severe negative effects like melting of polar ice caps extreme climates and thereby disruption of normal functioning its perils are not restricted to only some aspects but are all encompassing and can put the existence of life on earth in danger although there are multiple causes of global warming some major causes contribute more than others these factors accelerate its rate excessive burning of fossil fuels to meet energy impacted test case n a actual result success message is getting cropped on attaching an alert with new case screen shot expected result complete success message should be displayed on attaching an alert with new case correct message should be displayed an alert has been added to case name alerts in 
this case have their status synched with the case status what s not working this issue is not occurring if case name is of shorter length screen shot what s working this issue is also occurring if user attach an alert to already existing case having a long name

| 0

|

8,557

| 11,731,053,414

|

IssuesEvent

|

2020-03-10 22:55:07

|

gearboxworks/gearbox

|

https://api.github.com/repos/gearboxworks/gearbox

|

closed

|

Setup Docker auto-builds.

|

discuss process-docker process-workflow

|

All GitHub docker repos should trigger a DockerHub build on release.

|

2.0

|

Setup Docker auto-builds. - All GitHub docker repos should trigger a DockerHub build on release.

|

process

|

setup docker auto builds all github docker repos should trigger a dockerhub build on release

| 1

|

16,819

| 22,060,937,047

|

IssuesEvent

|

2022-05-30 17:43:32

|

bitPogo/kmock

|

https://api.github.com/repos/bitPogo/kmock

|

closed

|

Support Receivers

|

bug enhancement kmock-processor

|

## Description

<!--- Provide a detailed introduction to the issue itself, and why you consider it to be a bug -->

Currently KMock generates members with receivers as normal members.

Acceptance Criteria:

* Receiver member proxies are accessible like normal proxies

* Receiver members are generated as receivers, not as normal members

|

1.0

|

Support Receivers - ## Description

<!--- Provide a detailed introduction to the issue itself, and why you consider it to be a bug -->

Currently KMock generates members with receivers as normal members.

Acceptance Criteria:

* Receiver member proxies are accessible like normal proxies

* Receiver members are generated as receivers, not as normal members

|

process

|

support receivers description currently kmock generates members for receivers normal members acceptance criteria receiver member proxies are accessable like normal proxies receiver members are generated as receivers not as normal members

| 1

|

11,825

| 14,652,931,928

|

IssuesEvent

|

2020-12-28 03:56:46

|

initialshl/history-tree

|

https://api.github.com/repos/initialshl/history-tree

|

reopened

|

Organize URLs relationships using TransitionTypes

|

process

|

Blocked by #10

Seems like a difficult problem

|

1.0

|

Organize URLs relationships using TransitionTypes - Blocked by #10

Seems like a difficult problem

|

process

|

organize urls relationships using transitiontypes blocked by seems like a difficult problem

| 1

|

15,045

| 18,762,488,465

|

IssuesEvent

|

2021-11-05 18:13:37

|

GoogleCloudPlatform/cloudml-samples

|

https://api.github.com/repos/GoogleCloudPlatform/cloudml-samples

|

closed

|

Python 3.5 build failing

|

type: process

|

All Python 3.5 builds are failing with this error:

`FileNotFoundError: [Errno 2] No such file or directory: '/tmpfs/src/envs/python3.5/venv'`

[Example failed PR](https://github.com/GoogleCloudPlatform/cloudml-samples/pull/501)

|

1.0

|

Python 3.5 build failing - All Python 3.5 builds are failing with this error:

`FileNotFoundError: [Errno 2] No such file or directory: '/tmpfs/src/envs/python3.5/venv'`

[Example failed PR](https://github.com/GoogleCloudPlatform/cloudml-samples/pull/501)

|

process

|

python build failing all python builds are failing with this error filenotfounderror no such file or directory tmpfs src envs venv

| 1

|

286,307

| 24,740,693,311

|

IssuesEvent

|

2022-10-21 04:40:49

|

wpfoodmanager/wp-food-manager

|

https://api.github.com/repos/wpfoodmanager/wp-food-manager

|

closed

|

Food types should be able to have an icon set, like menu icons

|

In Testing

|

Food types should be able to have an icon set, like menu icons.

<img width="1728" alt="Screenshot 2022-10-14 at 00 00 04" src="https://user-images.githubusercontent.com/15089059/195718875-ae075941-085f-4a23-95d1-7f86a7f34ece.png">

|

1.0

|

Food types should be able to have an icon set, like menu icons - Food types should be able to have an icon set, like menu icons.

<img width="1728" alt="Screenshot 2022-10-14 at 00 00 04" src="https://user-images.githubusercontent.com/15089059/195718875-ae075941-085f-4a23-95d1-7f86a7f34ece.png">

|

non_process

|

food types can able to set icon same like menu icon food types can able to set icon same like menu icon img width alt screenshot at src

| 0

|

374,758

| 11,095,088,179

|

IssuesEvent

|

2019-12-16 08:16:17

|

RaenonX/Jelly-Bot

|

https://api.github.com/repos/RaenonX/Jelly-Bot

|

opened

|

User activity report page

|

priority-5 type-task

|

Have a page (not yet determined) to display a user's:

- Message activity across the channels

- Bot feature usage activity across the channels

- The user can determine if they want these to be public

|

1.0

|

User activity report page - Have a page (not yet determined) to display a user's:

- Message activity across the channels

- Bot feature usage activity across the channels

- The user can determine if they want these to be public

|

non_process

|

user activity report page have a page not yet determined to display a user s message activity across the channels bot feature usage activity across the channels the user can determine if they want these to be public

| 0

|

256,238

| 27,556,743,597

|

IssuesEvent

|

2023-03-07 18:32:02

|

lotus-web3/client-contract

|

https://api.github.com/repos/lotus-web3/client-contract

|

closed

|

Hardening AuthenticateMessage

|

security

|

Validate the below fields

- [ ] verified deal

- [ ] storage price

- [ ] client collateral

|

True

|

Hardening AuthenticateMessage - Validate the below fields

- [ ] verified deal

- [ ] storage price

- [ ] client collateral

|

non_process

|

hardening authenticatemessage validate the below fields verified deal storage price client collateral

| 0

|

714,375

| 24,559,614,393

|

IssuesEvent

|

2022-10-12 19:00:02

|

virtualcell/vcell

|

https://api.github.com/repos/virtualcell/vcell

|

closed

|

Reserved parameters get duplicated during repeated round trips.

|

bug High Priority VCell-7.5.0

|

During the first round trip, we export the reserved symbols, and when we import the SBML parameters we add the param_ prefix to create the VCell reserved parameter name.

During the second round trip, we export the param_xxx parameters as globals and again export the reserved symbols. During import, we import the param_xxx parameters as they are and also add the param_ prefix to _T_, _F_, and so on. Hence, we get duplicated param_xxx parameters.
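
One possible way to make the import step idempotent is sketched below. This is only an illustration under stated assumptions: the function names `to_vcell_name` and `import_parameters` and the `RESERVED_SYMBOLS` set are hypothetical and are not taken from the VCell code base; only the `param_` prefix and the `_T_` / `_F_` symbols come from the description above.

```python
RESERVED_PREFIX = "param_"
# Hypothetical set of reserved symbols, for illustration only.
RESERVED_SYMBOLS = {"_T_", "_F_"}


def to_vcell_name(sbml_name: str) -> str:
    """Map an SBML parameter name to a VCell name without double-prefixing."""
    if sbml_name.startswith(RESERVED_PREFIX):
        # Already prefixed during an earlier round trip: keep as is.
        return sbml_name
    if sbml_name in RESERVED_SYMBOLS:
        return RESERVED_PREFIX + sbml_name
    return sbml_name


def import_parameters(sbml_names):
    """Return the unique VCell names, preserving first-seen order."""
    seen = {}
    for name in sbml_names:
        # A dict keeps insertion order and drops repeated names.
        seen.setdefault(to_vcell_name(name), None)
    return list(seen)
```

With this sketch, a second import that receives both `param__T_` and `_T_` produces a single `param__T_` entry instead of a duplicate.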

|

1.0

|

Reserved parameters get duplicated during repeated round trips. - During the first round trip, we export the reserved symbols, and when we import the SBML parameters we add the param_ prefix to create the VCell reserved parameter name.

During the second round trip, we export the param_xxx parameters as globals and again export the reserved symbols. During import, we import the param_xxx parameters as they are and also add the param_ prefix to _T_, _F_, and so on. Hence, we get duplicated param_xxx parameters.

|

non_process

|

reserved parameters get duplicated during repeated round trips during the first round trip we export reserved symbols and when we import the sbml parameters we add the param prefix to create the vcell ewserved param name during the second round pe export the param xxx as globals and again we export the reserved symbols during import we import the param xxx as they are and also add the param to the t f aso hence we get duplicated param xxx

| 0

|

307,482

| 9,417,751,485

|

IssuesEvent

|

2019-04-10 17:30:23

|

mflores31/TestZen

|

https://api.github.com/repos/mflores31/TestZen

|

opened

|

HU2-Thought Log

|

priority: high type: HU

|

As a user, I want to be able to write down my thoughts so that they are saved

|

1.0

|

HU2-Thought Log - As a user, I want to be able to write down my thoughts so that they are saved

|

non_process

|

thought log as a user i want to be able to write down my thoughts so that they are saved

| 0

|

19,576

| 25,897,053,258

|

IssuesEvent

|

2022-12-14 23:52:00

|

biocodellc/localcontexts_db

|

https://api.github.com/repos/biocodellc/localcontexts_db

|

closed

|

Registration: set up validation to make sure email can only be used once per user

|

registration process

|

To prevent user profile duplication, set up checks at registration to make sure email is not already being used.
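
A minimal sketch of such a check, in plain Python rather than the project's actual framework code. The names `normalize_email`, `register_user` and `EmailAlreadyRegistered` are hypothetical, and comparing addresses case-insensitively is an assumption, not something stated in this issue.

```python
class EmailAlreadyRegistered(ValueError):
    """Raised when a registration reuses an email that is already taken."""


def normalize_email(email: str) -> str:
    # Assumption: addresses are compared after trimming and lowercasing.
    return email.strip().lower()


def register_user(registered_emails: set, email: str) -> str:
    """Add the email to the registry, or raise if it is already in use."""
    normalized = normalize_email(email)
    if normalized in registered_emails:
        raise EmailAlreadyRegistered(f"{normalized} is already registered")
    registered_emails.add(normalized)
    return normalized
```

In a real application the same rule would usually also be enforced with a unique constraint in the database, so that concurrent registrations cannot bypass the check.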

|

1.0

|

Registration: set up validation to make sure email can only be used once per user - To prevent user profile duplication, set up checks at registration to make sure email is not already being used.

|

process

|

registration set up validation to make sure email can only be used once per user to prevent user profile duplication set up checks at registration to make sure email is not already being used

| 1

|

7,271

| 24,552,966,394

|

IssuesEvent

|

2022-10-12 13:55:04

|

Accenture/sfmc-devtools

|

https://api.github.com/repos/Accenture/sfmc-devtools

|

closed

|

[BUG] automation docs broken when file trigger is used

|

bug c/automation NEW

|

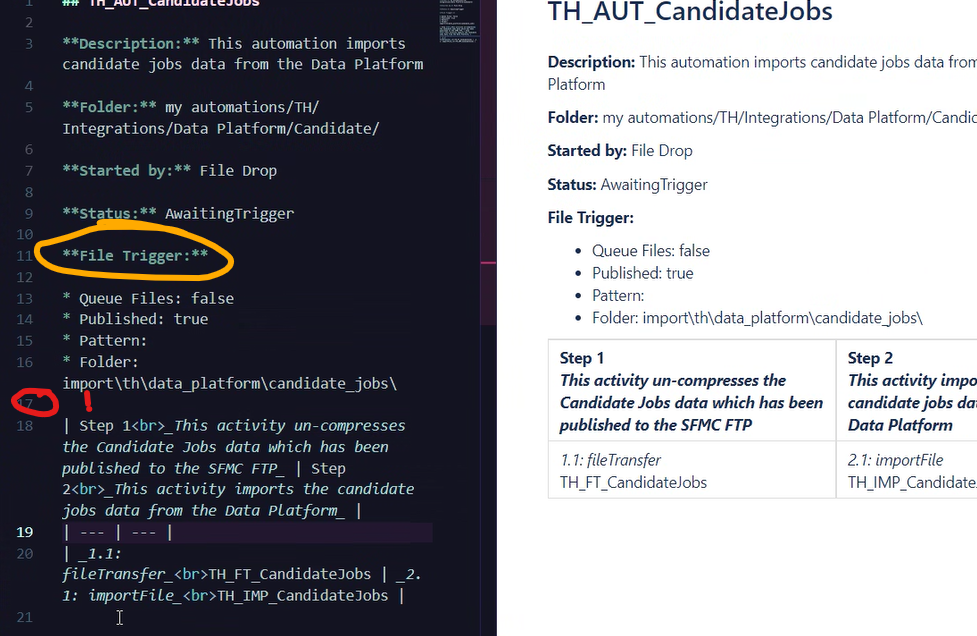

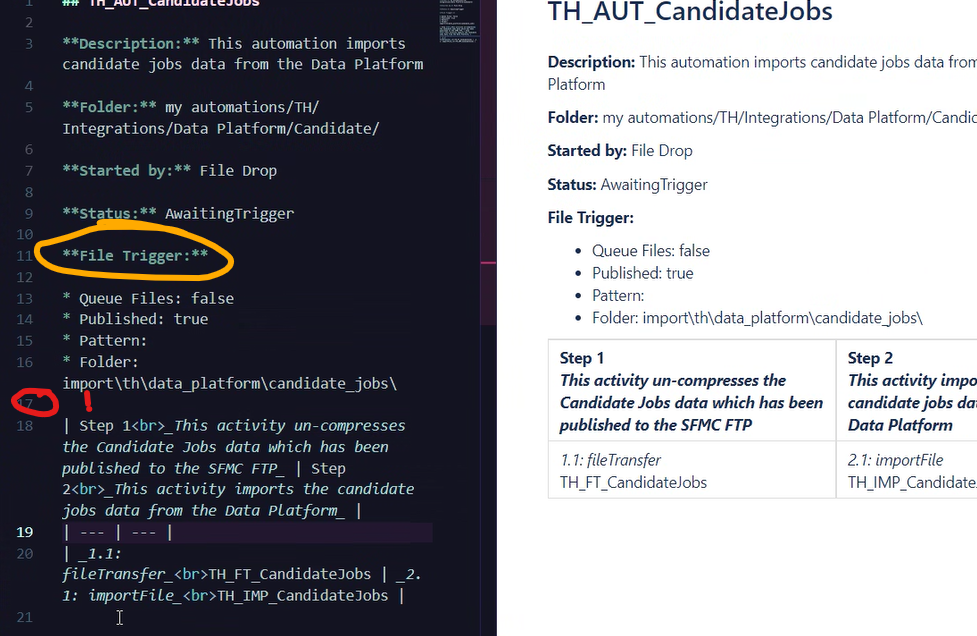

### Is there an existing issue for this?

- [X] I have searched the existing issues

### Current Behavior

The table for steps looks fine when no trigger or schedule is used,

but when a file trigger is specified it does not have the line break shown here on line 17:

### Expected Behavior

add another line break after file trigger section

### Steps To Reproduce

1. Go to '...'

2. Click on '....'

3. Run '...'

4. See error...

### Version

4.0.0

### Environment

- OS:

- Node:

- npm:

### Participation

- [X] I am willing to submit a pull request for this issue.

### Additional comments

_No response_

|

1.0

|

[BUG] automation docs broken when file trigger is used - ### Is there an existing issue for this?

- [X] I have searched the existing issues

### Current Behavior

The table for steps looks fine when no trigger or schedule is used,

but when a file trigger is specified it does not have the line break shown here on line 17:

### Expected Behavior

add another line break after file trigger section

### Steps To Reproduce

1. Go to '...'

2. Click on '....'

3. Run '...'

4. See error...

### Version

4.0.0

### Environment

- OS:

- Node:

- npm:

### Participation

- [X] I am willing to submit a pull request for this issue.

### Additional comments

_No response_

|

non_process

|

automation docs broken when file trigger is used is there an existing issue for this i have searched the existing issues current behavior table for steps look fine when no trigger or schedule is used but when file trigger is specified it does not have the line break shown here on line expected behavior add another line break after file trigger section steps to reproduce go to click on run see error version environment os node npm participation i am willing to submit a pull request for this issue additional comments no response

| 0

|

5,830

| 7,346,549,693

|

IssuesEvent

|

2018-03-07 21:06:41

|

Microsoft/vscode-cpptools

|

https://api.github.com/repos/Microsoft/vscode-cpptools

|

closed

|

VSCode C/C++ Extension(ms-vscode.cpptools) can be cached?

|

Language Service bug

|

I usually open two or more Linux kernel source projects in vscode, and they are very large.

When I open vscode, ms-vscode.cpptools automatically starts preparing C/C++ IntelliSense features such as symbol searching, go to definition, and so on.

But as I said, a kernel source project is very large, so ms-vscode.cpptools takes a long time to finish. Every time I open vscode, this preparation job runs again and takes a long time.

I think ms-vscode.cpptools should add cache support for a project.

|

1.0

|

VSCode C/C++ Extension(ms-vscode.cpptools) can be cached? - I usually open two or more Linux kernel source projects in vscode, and they are very large.

When I open vscode, ms-vscode.cpptools automatically starts preparing C/C++ IntelliSense features such as symbol searching, go to definition, and so on.

But as I said, a kernel source project is very large, so ms-vscode.cpptools takes a long time to finish. Every time I open vscode, this preparation job runs again and takes a long time.

I think ms-vscode.cpptools should add cache support for a project.

|

non_process

|

vscode c c extension ms vscode cpptools can be cached as my usage i usually open or more linux kernel source projects in vscode it s very huge when i open vscode ms vscode cpptools will auto start preparation of c c intellisense such as symbol search ing goto defination and so on but as i say the project of kernel source code is very huge and ms vscode cpptools will take a lot of time to finish every time i open vscode this preparation job will run again and take a lot of time i m thinking that ms vscode cpptools should add cache support for a project

| 0

|

19,659

| 26,020,093,785

|

IssuesEvent

|

2022-12-21 11:52:38

|

0xPolygonMiden/miden-vm

|

https://api.github.com/repos/0xPolygonMiden/miden-vm

|

closed

|

Memoization of hash execution traces

|

enhancement processor v0.3

|

Currently, [Hasher](https://github.com/maticnetwork/miden/blob/next/processor/src/hasher/mod.rs) component of the processor always builds traces for hash computations from scratch. This happens even in cases when computing hashes of the same values more than once.

We can improve on this by keeping track of the hashes which have already been computed, and just copying the sections of the trace with minimal modifications. Specifically, the only thing that needs to be updated when computing hash for previously hashed values is the row address column of the trace - everything else would remain the same.

This can also be done at a higher level. For example, we could keep track of sections of a trace used for Merkle path verification and then, if the same Merkle path was verified more than once, we can just copy the relevant sections of the trace (again, with minimal modifications).
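
The idea can be illustrated with a small Python sketch. It is not the Miden implementation: `HasherTraceCache` and `toy_build_trace` are hypothetical names, the "hash" is a toy accumulator, and the trace is modeled as a list of rows whose first column is the row address.

```python
def toy_build_trace(inputs):
    """Stand-in for the expensive trace construction (toy arithmetic only)."""
    toy_build_trace.calls += 1
    acc = 0
    rows = []
    for value in inputs:
        acc = (acc * 31 + value) % 1_000_003
        rows.append((value, acc))  # columns other than the row address
    return rows


toy_build_trace.calls = 0


class HasherTraceCache:
    """Memoize trace sections; only the row address column is rewritten."""

    def __init__(self, build_trace):
        self._build_trace = build_trace
        self._cache = {}   # inputs -> address-independent rows
        self.trace = []    # full trace: [row_address, *other_columns]

    def hash(self, inputs):
        key = tuple(inputs)
        section = self._cache.get(key)
        if section is None:
            # First time these inputs are hashed: build and remember the rows.
            section = self._build_trace(key)
            self._cache[key] = section
        start = len(self.trace)
        for offset, row in enumerate(section):
            # Copy the cached row, updating only the address column.
            self.trace.append([start + offset, *row])
        return start
```

Hashing the same inputs twice calls the builder once; the second section is a copy of the first with shifted row addresses. The same pattern could be keyed on a whole Merkle path rather than on a single hash input.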

|

1.0

|

Memoization of hash execution traces - Currently, [Hasher](https://github.com/maticnetwork/miden/blob/next/processor/src/hasher/mod.rs) component of the processor always builds traces for hash computations from scratch. This happens even in cases when computing hashes of the same values more than once.

We can improve on this by keeping track of the hashes which have already been computed, and just copying the sections of the trace with minimal modifications. Specifically, the only thing that needs to be updated when computing hash for previously hashed values is the row address column of the trace - everything else would remain the same.

This can also be done at a higher level. For example, we could keep track of sections of a trace used for Merkle path verification and then, if the same Merkle path was verified more than once, we can just copy the relevant sections of the trace (again, with minimal modifications).

|

process

|

memoization of hash execution traces currently component of the processor always builds traces for hash computations from scratch this happens even in cases when computing hashes of the same values more than once we can improve on this by keeping track of the hashes which have already been computed and just copying the sections of the trace with minimal modifications specifically the only thing that needs to be updated when computing hash for previously hashed values is the row address column of the trace everything else would remain the same this can also be done at a higher level for example we could keep track of sections of a trace used for merkle path verification and then if the same merkle path was verified more than once we can just copy the relevant sections of the trace again with minimal modifications

| 1

|

11,020

| 13,806,577,438

|

IssuesEvent

|

2020-10-11 18:16:29

|

Mikts/Infobserve

|

https://api.github.com/repos/Mikts/Infobserve

|

closed

|

`_get_file_sources` also returns directories

|

bug component/processing priority/medium

|

## Description

When I specify something like `"yara_rules/*"`, it also yields directories, which breaks the runtime.

I think it should recurse into everything under the given path and return files only. The runtime should break *only* if a file is not a valid rule, in which case it is proper to let the yara lib handle this.
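
A rough sketch of the proposed behaviour, assuming the inputs are glob patterns. The function name `get_file_sources` and its signature are hypothetical and do not reflect the project's actual `_get_file_sources`. Directories matched by a pattern are walked recursively and only regular files are yielded, so rule validation stays with the yara library.

```python
import glob
import os
from typing import Iterable, Iterator


def get_file_sources(patterns: Iterable[str]) -> Iterator[str]:
    """Yield regular files matched by the patterns, recursing into directories."""
    for pattern in patterns:
        for match in sorted(glob.glob(pattern)):
            if os.path.isfile(match):
                yield match
            elif os.path.isdir(match):
                # Descend instead of yielding the directory itself.
                for root, dirs, files in os.walk(match):
                    dirs.sort()  # deterministic traversal order
                    for name in sorted(files):
                        yield os.path.join(root, name)
```

With this shape, a pattern such as `yara_rules/*` would produce every file below `yara_rules/`, and a file that is not a valid rule would still fail later, when it is compiled.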

|

1.0

|

`_get_file_sources` also returns directories - ## Description

When I specify something like `"yara_rules/*"`, it also yields directories, which breaks the runtime.

I think it should recurse into everything under the given path and return files only. The runtime should break *only* if a file is not a valid rule, in which case it is proper to let the yara lib handle this.

|

process

|

get file sources also returns directories description when i specify something like yara rules it yields directories also which breaks the runtime i think it should recurse into everything under the path given and return os files only and only if the file is not a valid rule in which case it is proper to break the runtime and let the yara lib handle this

| 1

|

7,989

| 11,184,599,186

|

IssuesEvent

|

2019-12-31 19:05:13

|

openopps/openopps-platform

|

https://api.github.com/repos/openopps/openopps-platform

|

closed

|

Update Process: navigation circles should be all green and allow you to click around

|

Apply Process State Dept.

|

Who: Interns updating their applications

What: ability to skip to the last step of the apply process

Why: in order to confirm they submit

Acceptance Criteria:

- Currently, if an intern updates their application they are required to click through to the end to submit.

- If a job is updated in USAJOBS, the app guide opens with all of the steps filled in green and allows you to click right to the last step.

- Update Open Opportunities to have the same functionality on an update, allowing the intern to skip to the end.

Screen shot from USAJOBS:

|

1.0

|

Update Process: navigation circles should be all green and allow you to click around - Who: Interns updating their applications

What: ability to skip to the last step of the apply process

Why: in order to confirm they submit

Acceptance Criteria:

- Currently, if an intern updates their application they are required to click through to the end to submit.

- If a job is updated in USAJOBS, the app guide opens with all of the steps filled in green and allows you to click right to the last step.

- Update Open Opportunities to have the same functionality on an update, allowing the intern to skip to the end.

Screen shot from USAJOBS:

|

process

|

update process navigation circles should be all green and allow you to click around who interns updating their applications what ability to skip to the last step of the apply process why in order to confirm they submit acceptance criteria currently if an intern updates their application they are required to click through to the end to submit if a job is updated in usajobs the app guide opens with all of the steps filled in green and allows you to click right to the last step update open opportunities to have the same functionality on an update to allow the intern to skip to the end screen shot from usajobs

| 1

|

196,509

| 14,876,491,331

|

IssuesEvent

|

2021-01-20 00:59:30

|

markddrake/YADAMU---Yet-Another-DAta-Migration-Utility

|

https://api.github.com/repos/markddrake/YADAMU---Yet-Another-DAta-Migration-Utility

|

closed

|

MsSQL Error 6104 when attempting to Kill Connection

|

Disconnect Testing MsSQLDBI YadamuQA bug

|

"Msg 6104, Level 16, State 1, Line 1 Cannot use KILL to kill your own process" is intermittently reported when a pooled connection is used to issue KILL request

|

1.0

|

MsSQL Error 6104 when attempting to Kill Connection - "Msg 6104, Level 16, State 1, Line 1 Cannot use KILL to kill your own process" is intermittently reported when a pooled connection is used to issue KILL request

|

non_process

|

mssql error when attempting to kill connection msg level state line cannot use kill to kill your own process is intermittently reported when a pooled connection is used to issue kill request

| 0

|

12,752

| 15,109,753,765

|

IssuesEvent

|

2021-02-08 18:16:17

|

MicrosoftDocs/azure-devops-docs

|

https://api.github.com/repos/MicrosoftDocs/azure-devops-docs

|

closed

|

Note on specifying demands for manually queued builds

|

Pri3 devops-cicd-process/tech devops/prod doc-enhancement help wanted ready-to-doc

|

There's a tip regarding specifying demands at queue time:

> When you manually queue a build you can change the demands for that run.

However, it seems that this is only true for classic non-YAML build definitions – could be worth pointing out. It confused me a few minutes until I figured out the cause.

---

#### Document Details

⚠ *Do not edit this section. It is required for docs.microsoft.com ➟ GitHub issue linking.*

* ID: e7541ee6-d2bb-84c0-fead-1aa8ee7d2372

* Version Independent ID: 5cf7c51e-37e1-6c67-e6c6-80262c4eb662

* Content: [Demands - Azure Pipelines](https://docs.microsoft.com/en-us/azure/devops/pipelines/process/demands?view=azure-devops&tabs=yaml)

* Content Source: [docs/pipelines/process/demands.md](https://github.com/MicrosoftDocs/azure-devops-docs/blob/master/docs/pipelines/process/demands.md)

* Product: **devops**

* Technology: **devops-cicd-process**

* GitHub Login: @steved0x

* Microsoft Alias: **sdanie**

|

1.0

|

Note on specifying demands for manually queued builds - There's a tip regarding specifying demands at queue time:

> When you manually queue a build you can change the demands for that run.