Unnamed: 0 (int64, 0 to 832k) | id (float64, 2.49B to 32.1B) | type (stringclasses, 1 value) | created_at (stringlengths, 19 to 19) | repo (stringlengths, 7 to 112) | repo_url (stringlengths, 36 to 141) | action (stringclasses, 3 values) | title (stringlengths, 1 to 744) | labels (stringlengths, 4 to 574) | body (stringlengths, 9 to 211k) | index (stringclasses, 10 values) | text_combine (stringlengths, 96 to 211k) | label (stringclasses, 2 values) | text (stringlengths, 96 to 188k) | binary_label (int64, 0 to 1) |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

39,075

| 5,037,388,063

|

IssuesEvent

|

2016-12-17 16:50:13

|

eloipuertas/ES2016F

|

https://api.github.com/repos/eloipuertas/ES2016F

|

closed

|

[A] Animation model saruman

|

Animations Design Team-A

|

3.21 As a graphic designer, I want a set of animations for Saruman, to give movement to the character. [Low priority]

|

1.0

|

[A] Animation model saruman - 3.21 As a graphic designer, I want a set of animations for Saruman, to give movement to the character. [Low priority]

|

non_process

|

animation model saruman as a graphic designer i want a set of animations for saruman to give movement to the character

| 0

|

4,320

| 7,214,021,250

|

IssuesEvent

|

2018-02-08 00:00:22

|

lvergergsk/BibGallery-FrontEnd

|

https://api.github.com/repos/lvergergsk/BibGallery-FrontEnd

|

closed

|

Using SQL to check free space for a user

|

Data Processing

|

## Oracle

* Possible way 1

```

SELECT

ts.tablespace_name,

TO_CHAR(SUM(NVL(fs.bytes,0))/1024/1024, '99,999,990.99') AS MB_FREE

FROM

user_free_space fs,

user_tablespaces ts,

user_users us

WHERE

fs.tablespace_name(+) = ts.tablespace_name

AND ts.tablespace_name(+) = us.default_tablespace

GROUP BY

ts.tablespace_name;

```

* Possible way 2

```

SELECT table_name as Table_Name, row_cnt as Row_Count, SUM(mb) as Size_MB

FROM

(SELECT in_tbl.table_name, to_number(extractvalue(xmltype(dbms_xmlgen.getxml('select count(*) c from ' ||ut.table_name)),'/ROWSET/ROW/C')) AS row_cnt , mb

FROM

(SELECT CASE WHEN lob_tables IS NULL THEN table_name WHEN lob_tables IS NOT NULL THEN lob_tables END AS table_name , mb

FROM (SELECT ul.table_name AS lob_tables, us.segment_name AS table_name , us.bytes/1024/1024 MB FROM user_segments us

LEFT JOIN user_lobs ul ON us.segment_name = ul.segment_name ) ) in_tbl INNER JOIN user_tables ut ON in_tbl.table_name = ut.table_name ) GROUP BY table_name, row_cnt ORDER BY 3 DESC;

```

* Possible way 3

```

SELECT SUM(bytes)

FROM user_segments

```

## MySQL

```

select table_schema, sum((data_length+index_length)/1024/1024) AS MB from information_schema.tables group by 1;

```

|

1.0

|

Using SQL to check free space for a user - ## Oracle

* Possible way 1

```

SELECT

ts.tablespace_name,

TO_CHAR(SUM(NVL(fs.bytes,0))/1024/1024, '99,999,990.99') AS MB_FREE

FROM

user_free_space fs,

user_tablespaces ts,

user_users us

WHERE

fs.tablespace_name(+) = ts.tablespace_name

AND ts.tablespace_name(+) = us.default_tablespace

GROUP BY

ts.tablespace_name;

```

* Possible way 2

```

SELECT table_name as Table_Name, row_cnt as Row_Count, SUM(mb) as Size_MB

FROM

(SELECT in_tbl.table_name, to_number(extractvalue(xmltype(dbms_xmlgen.getxml('select count(*) c from ' ||ut.table_name)),'/ROWSET/ROW/C')) AS row_cnt , mb

FROM

(SELECT CASE WHEN lob_tables IS NULL THEN table_name WHEN lob_tables IS NOT NULL THEN lob_tables END AS table_name , mb

FROM (SELECT ul.table_name AS lob_tables, us.segment_name AS table_name , us.bytes/1024/1024 MB FROM user_segments us

LEFT JOIN user_lobs ul ON us.segment_name = ul.segment_name ) ) in_tbl INNER JOIN user_tables ut ON in_tbl.table_name = ut.table_name ) GROUP BY table_name, row_cnt ORDER BY 3 DESC;

```

* Possible way 3

```

SELECT SUM(bytes)

FROM user_segments

```

## MySQL

```

select table_schema, sum((data_length+index_length)/1024/1024) AS MB from information_schema.tables group by 1;

```

|

process

|

using sql to check free space for a user oracle possible way select ts tablespace name to char sum nvl fs bytes as mb free from user free space fs user tablespaces ts user users us where fs tablespace name ts tablespace name and ts tablespace name us default tablespace group by ts tablespace name possible way select table name as table name row cnt as row count sum mb as size mb from select in tbl table name to number extractvalue xmltype dbms xmlgen getxml select count c from ut table name rowset row c as row cnt mb from select case when lob tables is null then table name when lob tables is not null then lob tables end as table name mb from select ul table name as lob tables us segment name as table name us bytes mb from user segments us left join user lobs ul on us segment name ul segment name in tbl inner join user tables ut on in tbl table name ut table name group by table name row cnt order by desc possible way select sum bytes from user segments mysql select table schema sum data length index length as mb from information schema tables group by

| 1

|

6,905

| 10,056,518,350

|

IssuesEvent

|

2019-07-22 09:20:31

|

CymChad/BaseRecyclerViewAdapterHelper

|

https://api.github.com/repos/CymChad/BaseRecyclerViewAdapterHelper

|

closed

|

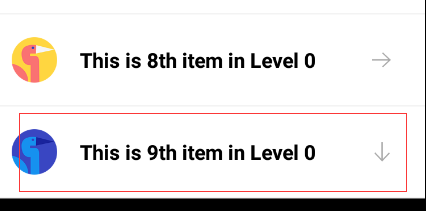

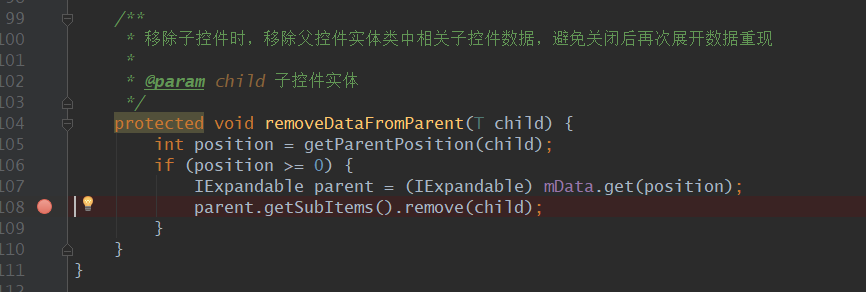

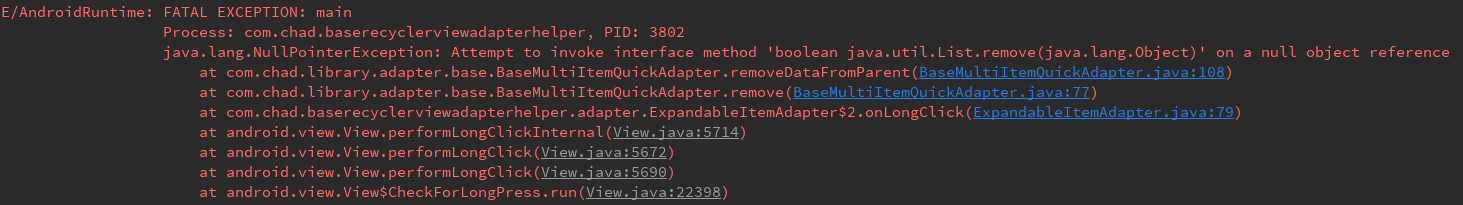

Tree list deletion bug

|

processing

|

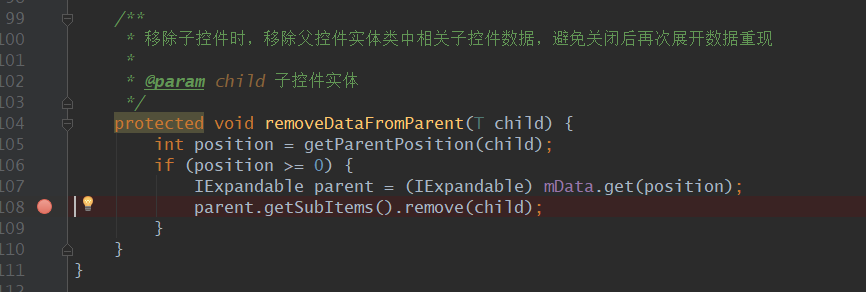

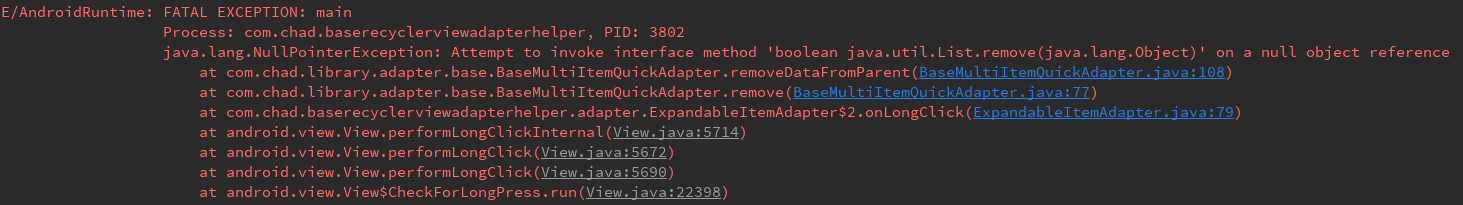

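In a tree list, if a first-level directory has no subdirectories, calling remove(int position) in BaseMultiItemQuickAdapter to delete it crashes the app. The same happens with the example you wrote.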

|

1.0

|

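Tree list deletion bug -

In a tree list, if a first-level directory has no subdirectories, calling remove(int position) in BaseMultiItemQuickAdapter to delete it crashes the app. The same happens with the example you wrote.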

|

process

|

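tree list deletion bug in a tree list if a first level directory has no subdirectories calling remove int position in basemultiitemquickadapter to delete it crashes the app the same happens with the example you wrote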

| 1

|

262,022

| 8,249,166,046

|

IssuesEvent

|

2018-09-11 20:42:16

|

craftercms/craftercms

|

https://api.github.com/repos/craftercms/craftercms

|

opened

|

[studio] Non-site members have access to site and full permissions

|

bug priority: high

|

### Expected behavior

Non-site members shouldn't have access to sites

### Actual behavior

Everyone has full access to everything (seems that all groups are being returned for a user).

### Steps to reproduce the problem

* Create a user and a site, but don't assign the user to any groups

* Login as that user

* See the site

### Log/stack trace (use https://gist.github.com)

### Specs

#### Version

```

Studio Version Number: 3.1.0-SNAPSHOT-0a0946

Build Number: 0a0946d689773516fda8075b4058b38e67f8920c

Build Date/Time: 09-11-2018 13:57:45 -0400

```

#### OS

Any

#### Browser

Any

|

1.0

|

[studio] Non-site members have access to site and full permissions - ### Expected behavior

Non-site members shouldn't have access to sites

### Actual behavior

Everyone has full access to everything (seems that all groups are being returned for a user).

### Steps to reproduce the problem

* Create a user and a site, but don't assign the user to any groups

* Login as that user

* See the site

### Log/stack trace (use https://gist.github.com)

### Specs

#### Version

```

Studio Version Number: 3.1.0-SNAPSHOT-0a0946

Build Number: 0a0946d689773516fda8075b4058b38e67f8920c

Build Date/Time: 09-11-2018 13:57:45 -0400

```

#### OS

Any

#### Browser

Any

|

non_process

|

non site members have access to site and full permissions expected behavior non site members shouldn t have access to sites actual behavior everyone has full access to everything seems that all groups are being returned for a user steps to reproduce the problem create a user and a site but don t assign the user to any groups login as that user see the site log stack trace use specs version studio version number snapshot build number build date time os any browser any

| 0

|

44,091

| 13,048,237,902

|

IssuesEvent

|

2020-07-29 12:11:54

|

jgeraigery/imhotep

|

https://api.github.com/repos/jgeraigery/imhotep

|

opened

|

WS-2018-0125 (Medium) detected in jackson-core-2.2.3.jar, jackson-core-2.6.7.jar

|

security vulnerability

|

## WS-2018-0125 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>jackson-core-2.2.3.jar</b>, <b>jackson-core-2.6.7.jar</b></p></summary>

<p>

<details><summary><b>jackson-core-2.2.3.jar</b></p></summary>

<p>Core Jackson abstractions, basic JSON streaming API implementation</p>

<p>Path to dependency file: /tmp/ws-scm/imhotep/imhotep-archive/pom.xml</p>

<p>Path to vulnerable library: /home/wss-scanner/.m2/repository/com/fasterxml/jackson/core/jackson-core/2.2.3/jackson-core-2.2.3.jar</p>

<p>

Dependency Hierarchy:

- hadoop-client-2.6.0-cdh5.4.11.jar (Root Library)

- hadoop-aws-2.6.0-cdh5.4.11.jar

- jackson-databind-2.2.3.jar

- :x: **jackson-core-2.2.3.jar** (Vulnerable Library)

</details>

<details><summary><b>jackson-core-2.6.7.jar</b></p></summary>

<p>Core Jackson abstractions, basic JSON streaming API implementation</p>

<p>Library home page: <a href="https://github.com/FasterXML/jackson-core">https://github.com/FasterXML/jackson-core</a></p>

<p>Path to dependency file: /tmp/ws-scm/imhotep/imhotep-server/pom.xml</p>

<p>Path to vulnerable library: /home/wss-scanner/.m2/repository/com/fasterxml/jackson/core/jackson-core/2.6.7/jackson-core-2.6.7.jar</p>

<p>

Dependency Hierarchy:

- jackson-databind-2.6.7.1.jar (Root Library)

- :x: **jackson-core-2.6.7.jar** (Vulnerable Library)

</details>

<p>Found in HEAD commit: <a href="https://github.com/jgeraigery/imhotep/commit/4432df39a5fc652b4512ad35a6db8f1a3776b771">4432df39a5fc652b4512ad35a6db8f1a3776b771</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

OutOfMemoryError when writing BigDecimal in Jackson Core before version 2.7.7.

When the WRITE_BIGDECIMAL_AS_PLAIN setting is enabled, Jackson will attempt to write out the whole number, no matter how large the exponent.

<p>Publish Date: 2016-08-25

<p>URL: <a href=https://github.com/FasterXML/jackson-core/issues/315>WS-2018-0125</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 2 Score Details (<b>5.5</b>)</summary>

<p>

Base Score Metrics not available</p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://github.com/FasterXML/jackson-core/releases/tag/jackson-core-2.7.7">https://github.com/FasterXML/jackson-core/releases/tag/jackson-core-2.7.7</a></p>

<p>Release Date: 2016-08-25</p>

<p>Fix Resolution: com.fasterxml.jackson.core:jackson-core:2.7.7</p>

</p>

</details>

<p></p>

<!-- <REMEDIATE>{"isOpenPROnVulnerability":true,"isPackageBased":true,"isDefaultBranch":true,"packages":[{"packageType":"Java","groupId":"com.fasterxml.jackson.core","packageName":"jackson-core","packageVersion":"2.2.3","isTransitiveDependency":true,"dependencyTree":"org.apache.hadoop:hadoop-client:2.6.0-cdh5.4.11;org.apache.hadoop:hadoop-aws:2.6.0-cdh5.4.11;com.fasterxml.jackson.core:jackson-databind:2.2.3;com.fasterxml.jackson.core:jackson-core:2.2.3","isMinimumFixVersionAvailable":true,"minimumFixVersion":"com.fasterxml.jackson.core:jackson-core:2.7.7"},{"packageType":"Java","groupId":"com.fasterxml.jackson.core","packageName":"jackson-core","packageVersion":"2.6.7","isTransitiveDependency":true,"dependencyTree":"com.fasterxml.jackson.core:jackson-databind:2.6.7.1;com.fasterxml.jackson.core:jackson-core:2.6.7","isMinimumFixVersionAvailable":true,"minimumFixVersion":"com.fasterxml.jackson.core:jackson-core:2.7.7"}],"vulnerabilityIdentifier":"WS-2018-0125","vulnerabilityDetails":"OutOfMemoryError when writing BigDecimal In Jackson Core before version 2.7.7.\nWhen enabled the WRITE_BIGDECIMAL_AS_PLAIN setting, Jackson will attempt to write out the whole number, no matter how large the exponent.","vulnerabilityUrl":"https://github.com/FasterXML/jackson-core/issues/315","cvss2Severity":"medium","cvss2Score":"5.5","extraData":{}}</REMEDIATE> -->

|

True

|

WS-2018-0125 (Medium) detected in jackson-core-2.2.3.jar, jackson-core-2.6.7.jar - ## WS-2018-0125 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>jackson-core-2.2.3.jar</b>, <b>jackson-core-2.6.7.jar</b></p></summary>

<p>

<details><summary><b>jackson-core-2.2.3.jar</b></p></summary>

<p>Core Jackson abstractions, basic JSON streaming API implementation</p>

<p>Path to dependency file: /tmp/ws-scm/imhotep/imhotep-archive/pom.xml</p>

<p>Path to vulnerable library: /home/wss-scanner/.m2/repository/com/fasterxml/jackson/core/jackson-core/2.2.3/jackson-core-2.2.3.jar</p>

<p>

Dependency Hierarchy:

- hadoop-client-2.6.0-cdh5.4.11.jar (Root Library)

- hadoop-aws-2.6.0-cdh5.4.11.jar

- jackson-databind-2.2.3.jar

- :x: **jackson-core-2.2.3.jar** (Vulnerable Library)

</details>

<details><summary><b>jackson-core-2.6.7.jar</b></p></summary>

<p>Core Jackson abstractions, basic JSON streaming API implementation</p>

<p>Library home page: <a href="https://github.com/FasterXML/jackson-core">https://github.com/FasterXML/jackson-core</a></p>

<p>Path to dependency file: /tmp/ws-scm/imhotep/imhotep-server/pom.xml</p>

<p>Path to vulnerable library: /home/wss-scanner/.m2/repository/com/fasterxml/jackson/core/jackson-core/2.6.7/jackson-core-2.6.7.jar</p>

<p>

Dependency Hierarchy:

- jackson-databind-2.6.7.1.jar (Root Library)

- :x: **jackson-core-2.6.7.jar** (Vulnerable Library)

</details>

<p>Found in HEAD commit: <a href="https://github.com/jgeraigery/imhotep/commit/4432df39a5fc652b4512ad35a6db8f1a3776b771">4432df39a5fc652b4512ad35a6db8f1a3776b771</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

OutOfMemoryError when writing BigDecimal in Jackson Core before version 2.7.7.

When the WRITE_BIGDECIMAL_AS_PLAIN setting is enabled, Jackson will attempt to write out the whole number, no matter how large the exponent.

<p>Publish Date: 2016-08-25

<p>URL: <a href=https://github.com/FasterXML/jackson-core/issues/315>WS-2018-0125</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 2 Score Details (<b>5.5</b>)</summary>

<p>

Base Score Metrics not available</p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://github.com/FasterXML/jackson-core/releases/tag/jackson-core-2.7.7">https://github.com/FasterXML/jackson-core/releases/tag/jackson-core-2.7.7</a></p>

<p>Release Date: 2016-08-25</p>

<p>Fix Resolution: com.fasterxml.jackson.core:jackson-core:2.7.7</p>

</p>

</details>

<p></p>

<!-- <REMEDIATE>{"isOpenPROnVulnerability":true,"isPackageBased":true,"isDefaultBranch":true,"packages":[{"packageType":"Java","groupId":"com.fasterxml.jackson.core","packageName":"jackson-core","packageVersion":"2.2.3","isTransitiveDependency":true,"dependencyTree":"org.apache.hadoop:hadoop-client:2.6.0-cdh5.4.11;org.apache.hadoop:hadoop-aws:2.6.0-cdh5.4.11;com.fasterxml.jackson.core:jackson-databind:2.2.3;com.fasterxml.jackson.core:jackson-core:2.2.3","isMinimumFixVersionAvailable":true,"minimumFixVersion":"com.fasterxml.jackson.core:jackson-core:2.7.7"},{"packageType":"Java","groupId":"com.fasterxml.jackson.core","packageName":"jackson-core","packageVersion":"2.6.7","isTransitiveDependency":true,"dependencyTree":"com.fasterxml.jackson.core:jackson-databind:2.6.7.1;com.fasterxml.jackson.core:jackson-core:2.6.7","isMinimumFixVersionAvailable":true,"minimumFixVersion":"com.fasterxml.jackson.core:jackson-core:2.7.7"}],"vulnerabilityIdentifier":"WS-2018-0125","vulnerabilityDetails":"OutOfMemoryError when writing BigDecimal In Jackson Core before version 2.7.7.\nWhen enabled the WRITE_BIGDECIMAL_AS_PLAIN setting, Jackson will attempt to write out the whole number, no matter how large the exponent.","vulnerabilityUrl":"https://github.com/FasterXML/jackson-core/issues/315","cvss2Severity":"medium","cvss2Score":"5.5","extraData":{}}</REMEDIATE> -->

|

non_process

|

ws medium detected in jackson core jar jackson core jar ws medium severity vulnerability vulnerable libraries jackson core jar jackson core jar jackson core jar core jackson abstractions basic json streaming api implementation path to dependency file tmp ws scm imhotep imhotep archive pom xml path to vulnerable library home wss scanner repository com fasterxml jackson core jackson core jackson core jar dependency hierarchy hadoop client jar root library hadoop aws jar jackson databind jar x jackson core jar vulnerable library jackson core jar core jackson abstractions basic json streaming api implementation library home page a href path to dependency file tmp ws scm imhotep imhotep server pom xml path to vulnerable library home wss scanner repository com fasterxml jackson core jackson core jackson core jar dependency hierarchy jackson databind jar root library x jackson core jar vulnerable library found in head commit a href vulnerability details outofmemoryerror when writing bigdecimal in jackson core before version when enabled the write bigdecimal as plain setting jackson will attempt to write out the whole number no matter how large the exponent publish date url a href cvss score details base score metrics not available suggested fix type upgrade version origin a href release date fix resolution com fasterxml jackson core jackson core isopenpronvulnerability true ispackagebased true isdefaultbranch true packages vulnerabilityidentifier ws vulnerabilitydetails outofmemoryerror when writing bigdecimal in jackson core before version nwhen enabled the write bigdecimal as plain setting jackson will attempt to write out the whole number no matter how large the exponent vulnerabilityurl

| 0

|

11,872

| 14,673,243,470

|

IssuesEvent

|

2020-12-30 12:38:14

|

GoogleCloudPlatform/fda-mystudies

|

https://api.github.com/repos/GoogleCloudPlatform/fda-mystudies

|

closed

|

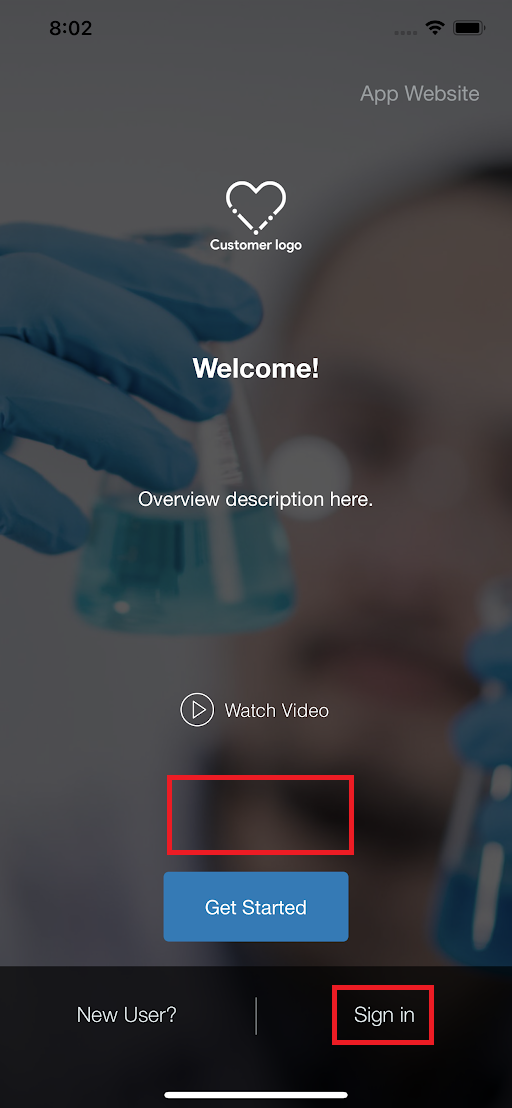

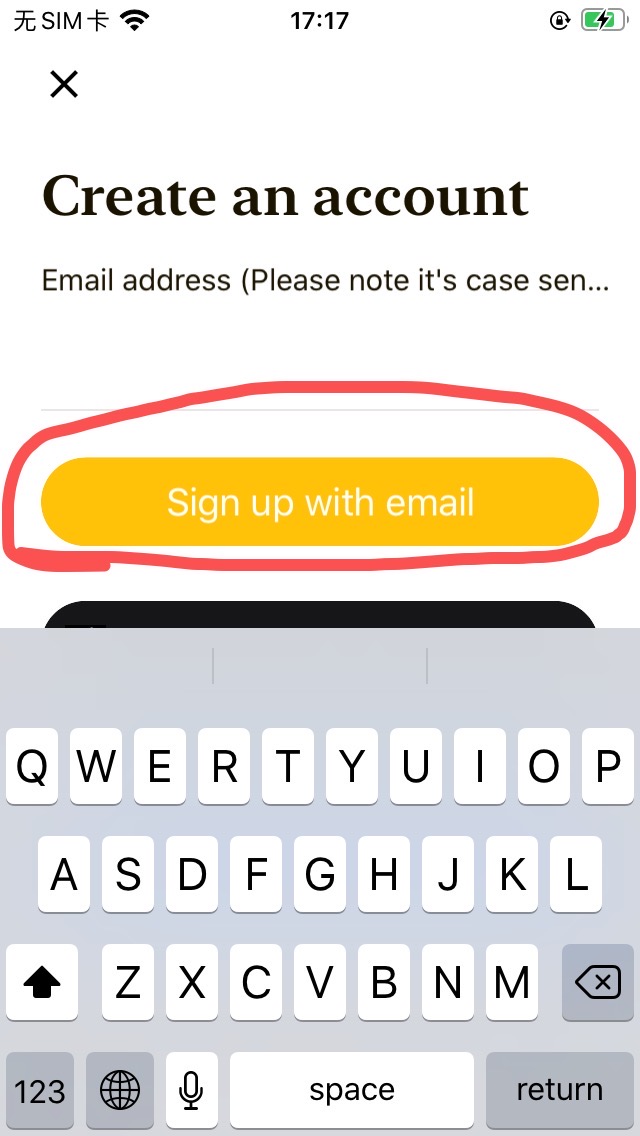

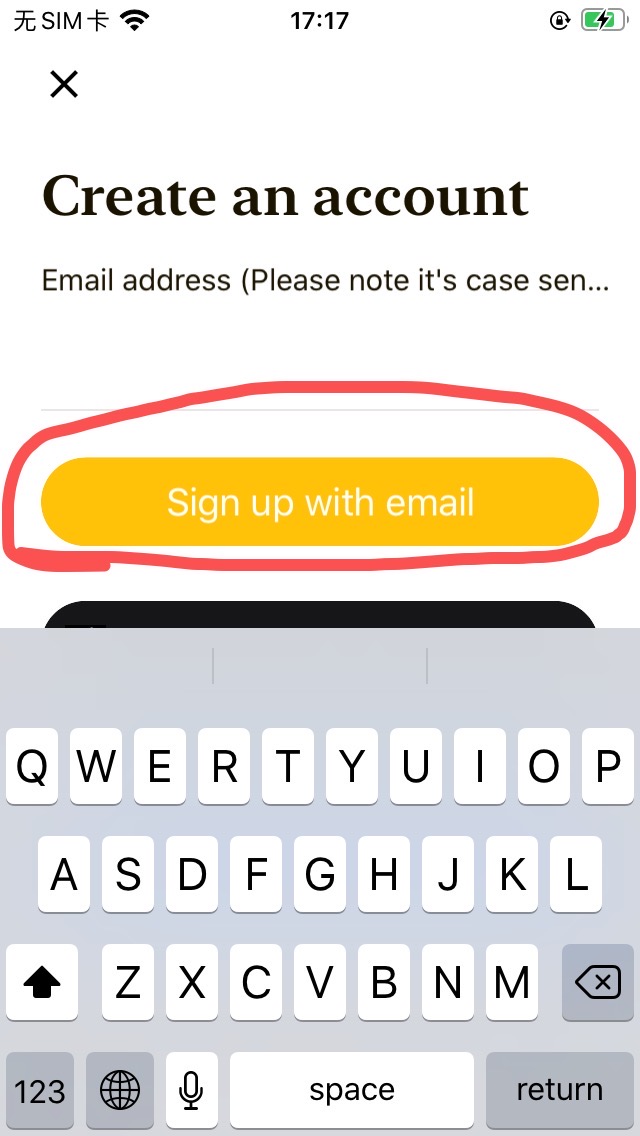

[iOS] UI Issues in Overview screen

|

Bug P2 Process: Tested dev iOS

|

Steps:

1. Launch the app

2. Observe the below issues in Overview screen

Actual result

1. 'Sign in' text is displayed

2. Pagination button is missing above 'Get Started' button

Expected result

1. 'Sign In' text should be displayed

2. Pagination button should be displayed above 'Get Started' button

|

1.0

|

[iOS] UI Issues in Overview screen - Steps:

1. Launch the app

2. Observe the below issues in Overview screen

Actual result

1. 'Sign in' text is displayed

2. Pagination button is missing above 'Get Started' button

Expected result

1. 'Sign In' text should be displayed

2. Pagination button should be displayed above 'Get Started' button

|

process

|

ui issues in overview screen steps launch the app observe the below issues in overview screen actual result sign in text is displayed pagination button is missing above get started button expected result sign in text should be displayed pagination button should be displayed above get started button

| 1

|

678,661

| 23,205,980,180

|

IssuesEvent

|

2022-08-02 05:22:44

|

phetsims/axon

|

https://api.github.com/repos/phetsims/axon

|

closed

|

Can we get rid of getListenerCount?

|

priority:2-high dev:typescript

|

From https://github.com/phetsims/axon/issues/402, @marlitas and I would like to remove getListenerCount from the Emitter and Property interfaces. Current usages seem to only be in tests. Can we get rid of the tests? If not, perhaps subclass and make that method public?

|

1.0

|

Can we get rid of getListenerCount? - From https://github.com/phetsims/axon/issues/402, @marlitas and I would like to remove getListenerCount from the Emitter and Property interfaces. Current usages seem to only be in tests. Can we get rid of the tests? If not, perhaps subclass and make that method public?

|

non_process

|

can we get rid of getlistenercount from marlitas and i would like to remove getlistenercount from the emitter and property interfaces current usages seem to only be in tests can we get rid of the tests if not perhaps subclass and make that method public

| 0

|

172,550

| 27,297,208,425

|

IssuesEvent

|

2023-02-23 21:28:58

|

AlaskaAirlines/auro-nav

|

https://api.github.com/repos/AlaskaAirlines/auro-nav

|

closed

|

Left navigation design

|

auro-nav Type: Design

|

# Blueprint

[Documentation & research

](https://www.figma.com/file/e5SFMd5WwEB27iG2rcdPcU/Navigation?node-id=1%3A9088)

[Desktop design](https://www.figma.com/file/vgBapdyc1pqZGONvOOvmcJ/Left-Navigation?node-id=1908%3A8814&t=7L24WZtyFeK5ziZf-1)

[Mobile design](https://www.figma.com/file/vgBapdyc1pqZGONvOOvmcJ/Left-Navigation?node-id=1952%3A35184&t=7L24WZtyFeK5ziZf-1)

## Outline tasks

- [x] anatomy

- [x] color

- [x] typography

- [x] layout

- [x] spacing

- [x] animation/behavior

- [x] variants

- [x] states (hover, focus, active, focus-visible)

- [x] a11y

## Optional

- [x] Competitive analysis

- [x] research

- [x] site audit

- [x] usage audit

- [x] inspirational work

|

1.0

|

Left navigation design - # Blueprint

[Documentation & research

](https://www.figma.com/file/e5SFMd5WwEB27iG2rcdPcU/Navigation?node-id=1%3A9088)

[Desktop design](https://www.figma.com/file/vgBapdyc1pqZGONvOOvmcJ/Left-Navigation?node-id=1908%3A8814&t=7L24WZtyFeK5ziZf-1)

[Mobile design](https://www.figma.com/file/vgBapdyc1pqZGONvOOvmcJ/Left-Navigation?node-id=1952%3A35184&t=7L24WZtyFeK5ziZf-1)

## Outline tasks

- [x] anatomy

- [x] color

- [x] typography

- [x] layout

- [x] spacing

- [x] animation/behavior

- [x] variants

- [x] states (hover, focus, active, focus-visible)

- [x] a11y

## Optional

- [x] Competitive analysis

- [x] research

- [x] site audit

- [x] usage audit

- [x] inspirational work

|

non_process

|

left navigation design blueprint documentation research outline tasks anatomy color typography layout spacing animation behavior variants states hover focus active focus visible optional competitive analysis research site audit usage audit inspirational work

| 0

|

77,453

| 3,506,387,130

|

IssuesEvent

|

2016-01-08 06:22:01

|

OregonCore/OregonCore

|

https://api.github.com/repos/OregonCore/OregonCore

|

closed

|

A RAID of the 5 into the team, can enter the 40 game player (BB #498)

|

migrated Priority: Medium Type: Bug

|

This issue was migrated from bitbucket.

**Original Reporter:**

**Original Date:** 24.02.2014 12:42:32 GMT+0000

**Original Priority:** major

**Original Type:** bug

**Original State:** invalid

**Direct Link:** https://bitbucket.org/oregon/oregoncore/issues/498

<hr>

A RAID of the 5 into the team, can enter the 40 game player

I'm from china, and my english is not good.

|

1.0

|

A RAID of the 5 into the team, can enter the 40 game player (BB #498) - This issue was migrated from bitbucket.

**Original Reporter:**

**Original Date:** 24.02.2014 12:42:32 GMT+0000

**Original Priority:** major

**Original Type:** bug

**Original State:** invalid

**Direct Link:** https://bitbucket.org/oregon/oregoncore/issues/498

<hr>

A RAID of the 5 into the team, can enter the 40 game player

I'm from china, and my english is not good.

|

non_process

|

a raid of the into the team can enter the game player bb this issue was migrated from bitbucket original reporter original date gmt original priority major original type bug original state invalid direct link a raid of the into the team can enter the game player i m from china and my english is not good

| 0

|

51,966

| 7,739,946,667

|

IssuesEvent

|

2018-05-28 18:32:09

|

ProjectEvergreen/project-evergreen

|

https://api.github.com/repos/ProjectEvergreen/project-evergreen

|

closed

|

Create a basic Todo App example

|

documentation enhancement todo-mvc website

|

based on [TodoMVC](http://todomvc.com/)

<img width="621" alt="screen shot 2018-05-22 at 3 40 20 pm" src="https://user-images.githubusercontent.com/895923/40385989-7bd92968-5dd6-11e8-9cf0-c18a6f4bed5f.png">

1. Basic workflows

1. Basic CRUD functionality

1. Some basic styles / examples of things like Web Components, CSS Grid, etc

|

1.0

|

Create a basic Todo App example - based on [TodoMVC](http://todomvc.com/)

<img width="621" alt="screen shot 2018-05-22 at 3 40 20 pm" src="https://user-images.githubusercontent.com/895923/40385989-7bd92968-5dd6-11e8-9cf0-c18a6f4bed5f.png">

1. Basic workflows

1. Basic CRUD functionality

1. Some basic styles / examples of things like Web Components, CSS Grid, etc

|

non_process

|

create a basic todo app example based img width alt screen shot at pm src basic workflows basic crud functionality some basic styles examples of things like web components css grid etc

| 0

|

350,016

| 10,477,244,674

|

IssuesEvent

|

2019-09-23 20:27:36

|

wherebyus/general-tasks

|

https://api.github.com/repos/wherebyus/general-tasks

|

closed

|

events planner beta

|

Added After Sprint Planning Priority: High Product: Events Type: Bug UX: Validated

|

## Feature or problem

can't enter date-says invalid date

## UX Validation

Validated

### Suggested priority

High

### Stakeholders

*Submitted:* cristina349

### Definition of done

How will we know when this feature is complete?

### Subtasks

A detailed list of changes that need to be made or subtasks. One checkbox per.

- [ ] Brew the coffee

## Developer estimate

To help the team accurately estimate the complexity of this task,

take a moment to walk through this list and estimate each item. At the end, you can total

the estimates and round to the nearest prime number.

If any of these are at a `5` or higher, or if the total is above a `5`, consider breaking

this issue into multiple smaller issues.

- [ ] Changes to the database ()

- [ ] Changes to the API ()

- [ ] Testing Changes to the API ()

- [ ] Changes to Application Code ()

- [ ] Adding or updating unit tests ()

- [ ] Local developer testing ()

### Total developer estimate: 0

## Additional estimate

- [ ] Code review ()

- [ ] QA Testing ()

- [ ] Stakeholder Sign-off ()

- [ ] Deploy to Production ()

### Total additional estimate:

## QA Notes

Detailed instructions for testing, one checkbox per test to be completed.

### Contextual tests

- [ ] Accessibility check

- [ ] Cross-browser check (Edge, Chrome, Firefox)

- [ ] Responsive check

|

1.0

|

events planner beta - ## Feature or problem

can't enter date-says invalid date

## UX Validation

Validated

### Suggested priority

High

### Stakeholders

*Submitted:* cristina349

### Definition of done

How will we know when this feature is complete?

### Subtasks

A detailed list of changes that need to be made or subtasks. One checkbox per.

- [ ] Brew the coffee

## Developer estimate

To help the team accurately estimate the complexity of this task,

take a moment to walk through this list and estimate each item. At the end, you can total

the estimates and round to the nearest prime number.

If any of these are at a `5` or higher, or if the total is above a `5`, consider breaking

this issue into multiple smaller issues.

- [ ] Changes to the database ()

- [ ] Changes to the API ()

- [ ] Testing Changes to the API ()

- [ ] Changes to Application Code ()

- [ ] Adding or updating unit tests ()

- [ ] Local developer testing ()

### Total developer estimate: 0

## Additional estimate

- [ ] Code review ()

- [ ] QA Testing ()

- [ ] Stakeholder Sign-off ()

- [ ] Deploy to Production ()

### Total additional estimate:

## QA Notes

Detailed instructions for testing, one checkbox per test to be completed.

### Contextual tests

- [ ] Accessibility check

- [ ] Cross-browser check (Edge, Chrome, Firefox)

- [ ] Responsive check

|

non_process

|

events planner beta feature or problem can t enter date says invalid date ux validation validated suggested priority high stakeholders submitted definition of done how will we know when this feature is complete subtasks a detailed list of changes that need to be made or subtasks one checkbox per brew the coffee developer estimate to help the team accurately estimate the complexity of this task take a moment to walk through this list and estimate each item at the end you can total the estimates and round to the nearest prime number if any of these are at a or higher or if the total is above a consider breaking this issue into multiple smaller issues changes to the database changes to the api testing changes to the api changes to application code adding or updating unit tests local developer testing total developer estimate additional estimate code review qa testing stakeholder sign off deploy to production total additional estimate qa notes detailed instructions for testing one checkbox per test to be completed contextual tests accessibility check cross browser check edge chrome firefox responsive check

| 0

|

12,854

| 15,239,399,279

|

IssuesEvent

|

2021-02-19 04:22:27

|

topcoder-platform/community-app

|

https://api.github.com/repos/topcoder-platform/community-app

|

opened

|

Extract Member Skills History for past challenges

|

ShapeupProcess challenge- recommender-tool enhancement

|

Extract Member Skills History for past challenges.

|

1.0

|

Extract Member Skills History for past challenges - Extract Member Skills History for past challenges.

|

process

|

extract member skills history for past challenges extract member skills history for past challenges

| 1

|

665,014

| 22,296,061,695

|

IssuesEvent

|

2022-06-13 01:48:25

|

TencentBlueKing/bk-iam-saas

|

https://api.github.com/repos/TencentBlueKing/bk-iam-saas

|

closed

|

[RBAC] open api: check whether a user is a member of a given user group

|

Type: Enhancement Layer: Backend Priority: High Size: S backlog

|

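Support batches of user groups, with a limit on the batch size.

Add this to the current API, but keep in mind that checking whether the relationship `exists` requires querying a large table.

-----------------

The current list of endpoints needs to be reviewed: if 蓝盾 switches over and the dependency is removed, can some of the older endpoints be retired?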

|

1.0

|

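[RBAC] open api: check whether a user is a member of a given user group - Support batches of user groups, with a limit on the batch size.

Add this to the current API, but keep in mind that checking whether the relationship `exists` requires querying a large table.

-----------------

The current list of endpoints needs to be reviewed: if 蓝盾 switches over and the dependency is removed, can some of the older endpoints be retired?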

|

non_process

|

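open api check whether a user is a member of a given user group support batches of user groups with a limit on the batch size add this to the current api but keep in mind that checking whether the relationship exists requires querying a large table the current list of endpoints needs to be reviewed if 蓝盾 switches over and the dependency is removed can some of the older endpoints be retired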

| 0

|

217,391

| 16,855,762,320

|

IssuesEvent

|

2021-06-21 06:23:37

|

pingcap/tidb

|

https://api.github.com/repos/pingcap/tidb

|

closed

|

DATA_RACE:runtime.mapassign_fast64() failed

|

component/test

|

DATA_RACE:runtime.mapassign_fast64()

```

[2020-11-12T05:22:56.562Z] WARNING: DATA RACE

[2020-11-12T05:22:56.562Z] runtime.mapassign_fast64()

[2020-11-12T05:22:56.562Z] /usr/local/go/src/runtime/map_fast64.go:92 +0x0

[2020-11-12T05:22:56.562Z] github.com/pingcap/parser/terror.ErrClass.NewStdErr()

[2020-11-12T05:22:56.562Z] /home/jenkins/agent/workspace/tidb_ghpr_unit_test/go/pkg/mod/github.com/pingcap/parser@v0.0.0-20201109022253-d384bee1451e/terror/terror.go:163 +0x19a

[2020-11-12T05:22:56.562Z] github.com/pingcap/tidb/util/hint.checkInsertStmtHintDuplicated()

[2020-11-12T05:22:56.562Z] /home/jenkins/agent/workspace/tidb_ghpr_unit_test/go/src/github.com/pingcap/tidb/util/dbterror/terror.go:55 +0x4be

[2020-11-12T05:22:56.562Z] github.com/pingcap/tidb/util/hint.ExtractTableHintsFromStmtNode()

[2020-11-12T05:22:56.562Z] /home/jenkins/agent/workspace/tidb_ghpr_unit_test/go/src/github.com/pingcap/tidb/util/hint/hint_processor.go:76 +0x108

[2020-11-12T05:22:56.562Z] github.com/pingcap/tidb/planner.Optimize()

[2020-11-12T05:22:56.562Z] /home/jenkins/agent/workspace/tidb_ghpr_unit_test/go/src/github.com/pingcap/tidb/planner/optimize.go:108 +0x1aa

[2020-11-12T05:22:56.562Z] github.com/pingcap/tidb/executor.(*Compiler).Compile()

[2020-11-12T05:22:56.562Z] /home/jenkins/agent/workspace/tidb_ghpr_unit_test/go/src/github.com/pingcap/tidb/executor/compiler.go:62 +0x2fa

[2020-11-12T05:22:56.562Z] github.com/pingcap/tidb/session.(*session).ExecuteStmt()

[2020-11-12T05:22:56.562Z] /home/jenkins/agent/workspace/tidb_ghpr_unit_test/go/src/github.com/pingcap/tidb/session/session.go:1207 +0x270

[2020-11-12T05:22:56.562Z] github.com/pingcap/tidb/util/testkit.(*TestKit).Exec()

[2020-11-12T05:22:56.562Z] /home/jenkins/agent/workspace/tidb_ghpr_unit_test/go/src/github.com/pingcap/tidb/util/testkit/testkit.go:170 +0x2f1

[2020-11-12T05:22:56.562Z] github.com/pingcap/tidb/util/testkit.(*TestKit).MustExec()

[2020-11-12T05:22:56.562Z] /home/jenkins/agent/workspace/tidb_ghpr_unit_test/go/src/github.com/pingcap/tidb/util/testkit/testkit.go:216 +0x91

[2020-11-12T05:22:56.562Z] github.com/pingcap/tidb/session_test.(*testSessionSuite2).TestStmtHints()

[2020-11-12T05:22:56.562Z] /home/jenkins/agent/workspace/tidb_ghpr_unit_test/go/src/github.com/pingcap/tidb/session/session_test.go:3097 +0xd1f

[2020-11-12T05:22:56.562Z] runtime.call32()

[2020-11-12T05:22:56.562Z] /usr/local/go/src/runtime/asm_amd64.s:539 +0x3a

[2020-11-12T05:22:56.562Z] reflect.Value.Call()

[2020-11-12T05:22:56.562Z] /usr/local/go/src/reflect/value.go:321 +0xd3

[2020-11-12T05:22:56.562Z] github.com/pingcap/check.(*suiteRunner).forkTest.func1()

[2020-11-12T05:22:56.562Z] /home/jenkins/agent/workspace/tidb_ghpr_unit_test/go/pkg/mod/github.com/pingcap/check@v0.0.0-20200212061837-5e12011dc712/check.go:850 +0x9aa

[2020-11-12T05:22:56.562Z] github.com/pingcap/check.(*suiteRunner).forkCall.func1()

[2020-11-12T05:22:56.562Z] /home/jenkins/agent/workspace/tidb_ghpr_unit_test/go/pkg/mod/github.com/pingcap/check@v0.0.0-20200212061837-5e12011dc712/check.go:739 +0x113

[2020-11-12T05:22:56.562Z]

[2020-11-12T05:22:56.562Z] Previous write at 0x00c0001e0420 by goroutine 456:

[2020-11-12T05:22:56.562Z] runtime.mapassign_fast64()

[2020-11-12T05:22:56.562Z] /usr/local/go/src/runtime/map_fast64.go:92 +0x0

[2020-11-12T05:22:56.562Z] github.com/pingcap/parser/terror.ErrClass.NewStdErr()

[2020-11-12T05:22:56.562Z] /home/jenkins/agent/workspace/tidb_ghpr_unit_test/go/pkg/mod/github.com/pingcap/parser@v0.0.0-20201109022253-d384bee1451e/terror/terror.go:163 +0x19a

[2020-11-12T05:22:56.562Z] github.com/pingcap/tidb/executor.(*InsertValues).handleErr()

[2020-11-12T05:22:56.562Z] /home/jenkins/agent/workspace/tidb_ghpr_unit_test/go/src/github.com/pingcap/tidb/executor/insert_common.go:300 +0xa54

[2020-11-12T05:22:56.562Z] github.com/pingcap/tidb/executor.(*InsertValues).fastEvalRow()

[2020-11-12T05:22:56.562Z] /home/jenkins/agent/workspace/tidb_ghpr_unit_test/go/src/github.com/pingcap/tidb/executor/insert_common.go:373 +0x569

[2020-11-12T05:22:56.562Z] github.com/pingcap/tidb/executor.(*InsertValues).fastEvalRow-fm()

[2020-11-12T05:22:56.562Z] /home/jenkins/agent/workspace/tidb_ghpr_unit_test/go/src/github.com/pingcap/tidb/executor/insert_common.go:359 +0xaa

[2020-11-12T05:22:56.562Z] github.com/pingcap/tidb/executor.insertRows()

[2020-11-12T05:22:56.562Z] /home/jenkins/agent/workspace/tidb_ghpr_unit_test/go/src/github.com/pingcap/tidb/executor/insert_common.go:233 +0x3b6

[2020-11-12T05:22:56.562Z] github.com/pingcap/tidb/executor.(*InsertExec).Next()

[2020-11-12T05:22:56.562Z] /home/jenkins/agent/workspace/tidb_ghpr_unit_test/go/src/github.com/pingcap/tidb/executor/insert.go:288 +0x117

[2020-11-12T05:22:56.562Z] github.com/pingcap/tidb/executor.Next()

[2020-11-12T05:22:56.562Z] /home/jenkins/agent/workspace/tidb_ghpr_unit_test/go/src/github.com/pingcap/tidb/executor/executor.go:268 +0x27d

[2020-11-12T05:22:56.562Z] github.com/pingcap/tidb/executor.(*ExecStmt).handleNoDelayExecutor()

[2020-11-12T05:22:56.562Z] /home/jenkins/agent/workspace/tidb_ghpr_unit_test/go/src/github.com/pingcap/tidb/executor/adapter.go:522 +0x38e

[2020-11-12T05:22:56.562Z] github.com/pingcap/tidb/executor.(*ExecStmt).handleNoDelay()

[2020-11-12T05:22:56.562Z] /home/jenkins/agent/workspace/tidb_ghpr_unit_test/go/src/github.com/pingcap/tidb/executor/adapter.go:404 +0x254

[2020-11-12T05:22:56.562Z] github.com/pingcap/tidb/executor.(*ExecStmt).Exec()

[2020-11-12T05:22:56.562Z] /home/jenkins/agent/workspace/tidb_ghpr_unit_test/go/src/github.com/pingcap/tidb/executor/adapter.go:354 +0x3f6

[2020-11-12T05:22:56.562Z] github.com/pingcap/tidb/session.runStmt()

[2020-11-12T05:22:56.562Z] /home/jenkins/agent/workspace/tidb_ghpr_unit_test/go/src/github.com/pingcap/tidb/session/session.go:1285 +0x2c1

[2020-11-12T05:22:56.562Z] github.com/pingcap/tidb/session.(*session).ExecuteStmt()

[2020-11-12T05:22:56.562Z] /home/jenkins/agent/workspace/tidb_ghpr_unit_test/go/src/github.com/pingcap/tidb/session/session.go:1229 +0xa57

[2020-11-12T05:22:56.562Z] github.com/pingcap/tidb/util/testkit.(*TestKit).Exec()

[2020-11-12T05:22:56.562Z] /home/jenkins/agent/workspace/tidb_ghpr_unit_test/go/src/github.com/pingcap/tidb/util/testkit/testkit.go:170 +0x2f1

[2020-11-12T05:22:56.562Z] github.com/pingcap/tidb/session_test.(*testSessionSuite).TestPrepareZero()

[2020-11-12T05:22:56.562Z] /home/jenkins/agent/workspace/tidb_ghpr_unit_test/go/src/github.com/pingcap/tidb/session/session_test.go:1021 +0x209

[2020-11-12T05:22:56.562Z] runtime.call32()

[2020-11-12T05:22:56.562Z] /usr/local/go/src/runtime/asm_amd64.s:539 +0x3a

[2020-11-12T05:22:56.562Z] reflect.Value.Call()

[2020-11-12T05:22:56.562Z] /usr/local/go/src/reflect/value.go:321 +0xd3

[2020-11-12T05:22:56.562Z] github.com/pingcap/check.(*suiteRunner).forkTest.func1()

[2020-11-12T05:22:56.562Z] /home/jenkins/agent/workspace/tidb_ghpr_unit_test/go/pkg/mod/github.com/pingcap/check@v0.0.0-20200212061837-5e12011dc712/check.go:850 +0x9aa

[2020-11-12T05:22:56.563Z] github.com/pingcap/check.(*suiteRunner).forkCall.func1()

[2020-11-12T05:22:56.563Z] /home/jenkins/agent/workspace/tidb_ghpr_unit_test/go/pkg/mod/github.com/pingcap/check@v0.0.0-20200212061837-5e12011dc712/check.go:739 +0x113

[2020-11-12T05:22:56.563Z]

[2020-11-12T05:22:56.563Z] Goroutine 459 (running) created at:

[2020-11-12T05:22:56.563Z] github.com/pingcap/check.(*suiteRunner).forkCall()

[2020-11-12T05:22:56.563Z] /home/jenkins/agent/workspace/tidb_ghpr_unit_test/go/pkg/mod/github.com/pingcap/check@v0.0.0-20200212061837-5e12011dc712/check.go:734 +0x4a3

[2020-11-12T05:22:56.563Z] github.com/pingcap/check.(*suiteRunner).forkTest()

[2020-11-12T05:22:56.563Z] /home/jenkins/agent/workspace/tidb_ghpr_unit_test/go/pkg/mod/github.com/pingcap/check@v0.0.0-20200212061837-5e12011dc712/check.go:832 +0x1b9

[2020-11-12T05:22:56.563Z] github.com/pingcap/check.(*suiteRunner).doRun()

[2020-11-12T05:22:56.563Z] /home/jenkins/agent/workspace/tidb_ghpr_unit_test/go/pkg/mod/github.com/pingcap/check@v0.0.0-20200212061837-5e12011dc712/check.go:666 +0x13a

[2020-11-12T05:22:56.563Z] github.com/pingcap/check.(*suiteRunner).asyncRun.func1()

[2020-11-12T05:22:56.563Z] /home/jenkins/agent/workspace/tidb_ghpr_unit_test/go/pkg/mod/github.com/pingcap/check@v0.0.0-20200212061837-5e12011dc712/check.go:650 +0xf7

[2020-11-12T05:22:56.563Z]

[2020-11-12T05:22:56.563Z] Goroutine 456 (running) created at:

[2020-11-12T05:22:56.563Z] github.com/pingcap/check.(*suiteRunner).forkCall()

[2020-11-12T05:22:56.563Z] /home/jenkins/agent/workspace/tidb_ghpr_unit_test/go/pkg/mod/github.com/pingcap/check@v0.0.0-20200212061837-5e12011dc712/check.go:734 +0x4a3

[2020-11-12T05:22:56.563Z] github.com/pingcap/check.(*suiteRunner).forkTest()

[2020-11-12T05:22:56.563Z] /home/jenkins/agent/workspace/tidb_ghpr_unit_test/go/pkg/mod/github.com/pingcap/check@v0.0.0-20200212061837-5e12011dc712/check.go:832 +0x1b9

[2020-11-12T05:22:56.563Z] github.com/pingcap/check.(*suiteRunner).doRun()

[2020-11-12T05:22:56.563Z] /home/jenkins/agent/workspace/tidb_ghpr_unit_test/go/pkg/mod/github.com/pingcap/check@v0.0.0-20200212061837-5e12011dc712/check.go:666 +0x13a

[2020-11-12T05:22:56.563Z] github.com/pingcap/check.(*suiteRunner).asyncRun.func1()

[2020-11-12T05:22:56.563Z] /home/jenkins/agent/workspace/tidb_ghpr_unit_test/go/pkg/mod/github.com/pingcap/check@v0.0.0-20200212061837-5e12011dc712/check.go:650 +0xf7

[2020-11-12T05:22:56.563Z] ==================

```

Latest failed builds:

https://internal.pingcap.net/idc-jenkins/job/tidb_ghpr_unit_test/57742/display/redirect

|

1.0

|

DATA_RACE:runtime.mapassign_fast64() failed - DATA_RACE:runtime.mapassign_fast64()

```

[2020-11-12T05:22:56.562Z] WARNING: DATA RACE

[2020-11-12T05:22:56.562Z] runtime.mapassign_fast64()

[2020-11-12T05:22:56.562Z] /usr/local/go/src/runtime/map_fast64.go:92 +0x0

[2020-11-12T05:22:56.562Z] github.com/pingcap/parser/terror.ErrClass.NewStdErr()

[2020-11-12T05:22:56.562Z] /home/jenkins/agent/workspace/tidb_ghpr_unit_test/go/pkg/mod/github.com/pingcap/parser@v0.0.0-20201109022253-d384bee1451e/terror/terror.go:163 +0x19a

[2020-11-12T05:22:56.562Z] github.com/pingcap/tidb/util/hint.checkInsertStmtHintDuplicated()

[2020-11-12T05:22:56.562Z] /home/jenkins/agent/workspace/tidb_ghpr_unit_test/go/src/github.com/pingcap/tidb/util/dbterror/terror.go:55 +0x4be

[2020-11-12T05:22:56.562Z] github.com/pingcap/tidb/util/hint.ExtractTableHintsFromStmtNode()

[2020-11-12T05:22:56.562Z] /home/jenkins/agent/workspace/tidb_ghpr_unit_test/go/src/github.com/pingcap/tidb/util/hint/hint_processor.go:76 +0x108

[2020-11-12T05:22:56.562Z] github.com/pingcap/tidb/planner.Optimize()

[2020-11-12T05:22:56.562Z] /home/jenkins/agent/workspace/tidb_ghpr_unit_test/go/src/github.com/pingcap/tidb/planner/optimize.go:108 +0x1aa

[2020-11-12T05:22:56.562Z] github.com/pingcap/tidb/executor.(*Compiler).Compile()

[2020-11-12T05:22:56.562Z] /home/jenkins/agent/workspace/tidb_ghpr_unit_test/go/src/github.com/pingcap/tidb/executor/compiler.go:62 +0x2fa

[2020-11-12T05:22:56.562Z] github.com/pingcap/tidb/session.(*session).ExecuteStmt()

[2020-11-12T05:22:56.562Z] /home/jenkins/agent/workspace/tidb_ghpr_unit_test/go/src/github.com/pingcap/tidb/session/session.go:1207 +0x270

[2020-11-12T05:22:56.562Z] github.com/pingcap/tidb/util/testkit.(*TestKit).Exec()

[2020-11-12T05:22:56.562Z] /home/jenkins/agent/workspace/tidb_ghpr_unit_test/go/src/github.com/pingcap/tidb/util/testkit/testkit.go:170 +0x2f1

[2020-11-12T05:22:56.562Z] github.com/pingcap/tidb/util/testkit.(*TestKit).MustExec()

[2020-11-12T05:22:56.562Z] /home/jenkins/agent/workspace/tidb_ghpr_unit_test/go/src/github.com/pingcap/tidb/util/testkit/testkit.go:216 +0x91

[2020-11-12T05:22:56.562Z] github.com/pingcap/tidb/session_test.(*testSessionSuite2).TestStmtHints()

[2020-11-12T05:22:56.562Z] /home/jenkins/agent/workspace/tidb_ghpr_unit_test/go/src/github.com/pingcap/tidb/session/session_test.go:3097 +0xd1f

[2020-11-12T05:22:56.562Z] runtime.call32()

[2020-11-12T05:22:56.562Z] /usr/local/go/src/runtime/asm_amd64.s:539 +0x3a

[2020-11-12T05:22:56.562Z] reflect.Value.Call()

[2020-11-12T05:22:56.562Z] /usr/local/go/src/reflect/value.go:321 +0xd3

[2020-11-12T05:22:56.562Z] github.com/pingcap/check.(*suiteRunner).forkTest.func1()

[2020-11-12T05:22:56.562Z] /home/jenkins/agent/workspace/tidb_ghpr_unit_test/go/pkg/mod/github.com/pingcap/check@v0.0.0-20200212061837-5e12011dc712/check.go:850 +0x9aa

[2020-11-12T05:22:56.562Z] github.com/pingcap/check.(*suiteRunner).forkCall.func1()

[2020-11-12T05:22:56.562Z] /home/jenkins/agent/workspace/tidb_ghpr_unit_test/go/pkg/mod/github.com/pingcap/check@v0.0.0-20200212061837-5e12011dc712/check.go:739 +0x113

[2020-11-12T05:22:56.562Z]

[2020-11-12T05:22:56.562Z] Previous write at 0x00c0001e0420 by goroutine 456:

[2020-11-12T05:22:56.562Z] runtime.mapassign_fast64()

[2020-11-12T05:22:56.562Z] /usr/local/go/src/runtime/map_fast64.go:92 +0x0

[2020-11-12T05:22:56.562Z] github.com/pingcap/parser/terror.ErrClass.NewStdErr()

[2020-11-12T05:22:56.562Z] /home/jenkins/agent/workspace/tidb_ghpr_unit_test/go/pkg/mod/github.com/pingcap/parser@v0.0.0-20201109022253-d384bee1451e/terror/terror.go:163 +0x19a

[2020-11-12T05:22:56.562Z] github.com/pingcap/tidb/executor.(*InsertValues).handleErr()

[2020-11-12T05:22:56.562Z] /home/jenkins/agent/workspace/tidb_ghpr_unit_test/go/src/github.com/pingcap/tidb/executor/insert_common.go:300 +0xa54

[2020-11-12T05:22:56.562Z] github.com/pingcap/tidb/executor.(*InsertValues).fastEvalRow()

[2020-11-12T05:22:56.562Z] /home/jenkins/agent/workspace/tidb_ghpr_unit_test/go/src/github.com/pingcap/tidb/executor/insert_common.go:373 +0x569

[2020-11-12T05:22:56.562Z] github.com/pingcap/tidb/executor.(*InsertValues).fastEvalRow-fm()

[2020-11-12T05:22:56.562Z] /home/jenkins/agent/workspace/tidb_ghpr_unit_test/go/src/github.com/pingcap/tidb/executor/insert_common.go:359 +0xaa

[2020-11-12T05:22:56.562Z] github.com/pingcap/tidb/executor.insertRows()

[2020-11-12T05:22:56.562Z] /home/jenkins/agent/workspace/tidb_ghpr_unit_test/go/src/github.com/pingcap/tidb/executor/insert_common.go:233 +0x3b6

[2020-11-12T05:22:56.562Z] github.com/pingcap/tidb/executor.(*InsertExec).Next()

[2020-11-12T05:22:56.562Z] /home/jenkins/agent/workspace/tidb_ghpr_unit_test/go/src/github.com/pingcap/tidb/executor/insert.go:288 +0x117

[2020-11-12T05:22:56.562Z] github.com/pingcap/tidb/executor.Next()

[2020-11-12T05:22:56.562Z] /home/jenkins/agent/workspace/tidb_ghpr_unit_test/go/src/github.com/pingcap/tidb/executor/executor.go:268 +0x27d

[2020-11-12T05:22:56.562Z] github.com/pingcap/tidb/executor.(*ExecStmt).handleNoDelayExecutor()

[2020-11-12T05:22:56.562Z] /home/jenkins/agent/workspace/tidb_ghpr_unit_test/go/src/github.com/pingcap/tidb/executor/adapter.go:522 +0x38e

[2020-11-12T05:22:56.562Z] github.com/pingcap/tidb/executor.(*ExecStmt).handleNoDelay()

[2020-11-12T05:22:56.562Z] /home/jenkins/agent/workspace/tidb_ghpr_unit_test/go/src/github.com/pingcap/tidb/executor/adapter.go:404 +0x254

[2020-11-12T05:22:56.562Z] github.com/pingcap/tidb/executor.(*ExecStmt).Exec()

[2020-11-12T05:22:56.562Z] /home/jenkins/agent/workspace/tidb_ghpr_unit_test/go/src/github.com/pingcap/tidb/executor/adapter.go:354 +0x3f6

[2020-11-12T05:22:56.562Z] github.com/pingcap/tidb/session.runStmt()

[2020-11-12T05:22:56.562Z] /home/jenkins/agent/workspace/tidb_ghpr_unit_test/go/src/github.com/pingcap/tidb/session/session.go:1285 +0x2c1

[2020-11-12T05:22:56.562Z] github.com/pingcap/tidb/session.(*session).ExecuteStmt()

[2020-11-12T05:22:56.562Z] /home/jenkins/agent/workspace/tidb_ghpr_unit_test/go/src/github.com/pingcap/tidb/session/session.go:1229 +0xa57

[2020-11-12T05:22:56.562Z] github.com/pingcap/tidb/util/testkit.(*TestKit).Exec()

[2020-11-12T05:22:56.562Z] /home/jenkins/agent/workspace/tidb_ghpr_unit_test/go/src/github.com/pingcap/tidb/util/testkit/testkit.go:170 +0x2f1

[2020-11-12T05:22:56.562Z] github.com/pingcap/tidb/session_test.(*testSessionSuite).TestPrepareZero()

[2020-11-12T05:22:56.562Z] /home/jenkins/agent/workspace/tidb_ghpr_unit_test/go/src/github.com/pingcap/tidb/session/session_test.go:1021 +0x209

[2020-11-12T05:22:56.562Z] runtime.call32()

[2020-11-12T05:22:56.562Z] /usr/local/go/src/runtime/asm_amd64.s:539 +0x3a

[2020-11-12T05:22:56.562Z] reflect.Value.Call()

[2020-11-12T05:22:56.562Z] /usr/local/go/src/reflect/value.go:321 +0xd3

[2020-11-12T05:22:56.562Z] github.com/pingcap/check.(*suiteRunner).forkTest.func1()

[2020-11-12T05:22:56.562Z] /home/jenkins/agent/workspace/tidb_ghpr_unit_test/go/pkg/mod/github.com/pingcap/check@v0.0.0-20200212061837-5e12011dc712/check.go:850 +0x9aa

[2020-11-12T05:22:56.563Z] github.com/pingcap/check.(*suiteRunner).forkCall.func1()

[2020-11-12T05:22:56.563Z] /home/jenkins/agent/workspace/tidb_ghpr_unit_test/go/pkg/mod/github.com/pingcap/check@v0.0.0-20200212061837-5e12011dc712/check.go:739 +0x113

[2020-11-12T05:22:56.563Z]

[2020-11-12T05:22:56.563Z] Goroutine 459 (running) created at:

[2020-11-12T05:22:56.563Z] github.com/pingcap/check.(*suiteRunner).forkCall()

[2020-11-12T05:22:56.563Z] /home/jenkins/agent/workspace/tidb_ghpr_unit_test/go/pkg/mod/github.com/pingcap/check@v0.0.0-20200212061837-5e12011dc712/check.go:734 +0x4a3

[2020-11-12T05:22:56.563Z] github.com/pingcap/check.(*suiteRunner).forkTest()

[2020-11-12T05:22:56.563Z] /home/jenkins/agent/workspace/tidb_ghpr_unit_test/go/pkg/mod/github.com/pingcap/check@v0.0.0-20200212061837-5e12011dc712/check.go:832 +0x1b9

[2020-11-12T05:22:56.563Z] github.com/pingcap/check.(*suiteRunner).doRun()

[2020-11-12T05:22:56.563Z] /home/jenkins/agent/workspace/tidb_ghpr_unit_test/go/pkg/mod/github.com/pingcap/check@v0.0.0-20200212061837-5e12011dc712/check.go:666 +0x13a

[2020-11-12T05:22:56.563Z] github.com/pingcap/check.(*suiteRunner).asyncRun.func1()

[2020-11-12T05:22:56.563Z] /home/jenkins/agent/workspace/tidb_ghpr_unit_test/go/pkg/mod/github.com/pingcap/check@v0.0.0-20200212061837-5e12011dc712/check.go:650 +0xf7

[2020-11-12T05:22:56.563Z]

[2020-11-12T05:22:56.563Z] Goroutine 456 (running) created at:

[2020-11-12T05:22:56.563Z] github.com/pingcap/check.(*suiteRunner).forkCall()

[2020-11-12T05:22:56.563Z] /home/jenkins/agent/workspace/tidb_ghpr_unit_test/go/pkg/mod/github.com/pingcap/check@v0.0.0-20200212061837-5e12011dc712/check.go:734 +0x4a3

[2020-11-12T05:22:56.563Z] github.com/pingcap/check.(*suiteRunner).forkTest()

[2020-11-12T05:22:56.563Z] /home/jenkins/agent/workspace/tidb_ghpr_unit_test/go/pkg/mod/github.com/pingcap/check@v0.0.0-20200212061837-5e12011dc712/check.go:832 +0x1b9

[2020-11-12T05:22:56.563Z] github.com/pingcap/check.(*suiteRunner).doRun()

[2020-11-12T05:22:56.563Z] /home/jenkins/agent/workspace/tidb_ghpr_unit_test/go/pkg/mod/github.com/pingcap/check@v0.0.0-20200212061837-5e12011dc712/check.go:666 +0x13a

[2020-11-12T05:22:56.563Z] github.com/pingcap/check.(*suiteRunner).asyncRun.func1()

[2020-11-12T05:22:56.563Z] /home/jenkins/agent/workspace/tidb_ghpr_unit_test/go/pkg/mod/github.com/pingcap/check@v0.0.0-20200212061837-5e12011dc712/check.go:650 +0xf7

[2020-11-12T05:22:56.563Z] ==================

```

Latest failed builds:

https://internal.pingcap.net/idc-jenkins/job/tidb_ghpr_unit_test/57742/display/redirect

|

non_process

|

data race runtime mapassign failed data race runtime mapassign warning data race runtime mapassign usr local go src runtime map go github com pingcap parser terror errclass newstderr home jenkins agent workspace tidb ghpr unit test go pkg mod github com pingcap parser terror terror go github com pingcap tidb util hint checkinsertstmthintduplicated home jenkins agent workspace tidb ghpr unit test go src github com pingcap tidb util dbterror terror go github com pingcap tidb util hint extracttablehintsfromstmtnode home jenkins agent workspace tidb ghpr unit test go src github com pingcap tidb util hint hint processor go github com pingcap tidb planner optimize home jenkins agent workspace tidb ghpr unit test go src github com pingcap tidb planner optimize go github com pingcap tidb executor compiler compile home jenkins agent workspace tidb ghpr unit test go src github com pingcap tidb executor compiler go github com pingcap tidb session session executestmt home jenkins agent workspace tidb ghpr unit test go src github com pingcap tidb session session go github com pingcap tidb util testkit testkit exec home jenkins agent workspace tidb ghpr unit test go src github com pingcap tidb util testkit testkit go github com pingcap tidb util testkit testkit mustexec home jenkins agent workspace tidb ghpr unit test go src github com pingcap tidb util testkit testkit go github com pingcap tidb session test teststmthints home jenkins agent workspace tidb ghpr unit test go src github com pingcap tidb session session test go runtime usr local go src runtime asm s reflect value call usr local go src reflect value go github com pingcap check suiterunner forktest home jenkins agent workspace tidb ghpr unit test go pkg mod github com pingcap check check go github com pingcap check suiterunner forkcall home jenkins agent workspace tidb ghpr unit test go pkg mod github com pingcap check check go previous write at by goroutine runtime mapassign usr local go src runtime map go github com 
pingcap parser terror errclass newstderr home jenkins agent workspace tidb ghpr unit test go pkg mod github com pingcap parser terror terror go github com pingcap tidb executor insertvalues handleerr home jenkins agent workspace tidb ghpr unit test go src github com pingcap tidb executor insert common go github com pingcap tidb executor insertvalues fastevalrow home jenkins agent workspace tidb ghpr unit test go src github com pingcap tidb executor insert common go github com pingcap tidb executor insertvalues fastevalrow fm home jenkins agent workspace tidb ghpr unit test go src github com pingcap tidb executor insert common go github com pingcap tidb executor insertrows home jenkins agent workspace tidb ghpr unit test go src github com pingcap tidb executor insert common go github com pingcap tidb executor insertexec next home jenkins agent workspace tidb ghpr unit test go src github com pingcap tidb executor insert go github com pingcap tidb executor next home jenkins agent workspace tidb ghpr unit test go src github com pingcap tidb executor executor go github com pingcap tidb executor execstmt handlenodelayexecutor home jenkins agent workspace tidb ghpr unit test go src github com pingcap tidb executor adapter go github com pingcap tidb executor execstmt handlenodelay home jenkins agent workspace tidb ghpr unit test go src github com pingcap tidb executor adapter go github com pingcap tidb executor execstmt exec home jenkins agent workspace tidb ghpr unit test go src github com pingcap tidb executor adapter go github com pingcap tidb session runstmt home jenkins agent workspace tidb ghpr unit test go src github com pingcap tidb session session go github com pingcap tidb session session executestmt home jenkins agent workspace tidb ghpr unit test go src github com pingcap tidb session session go github com pingcap tidb util testkit testkit exec home jenkins agent workspace tidb ghpr unit test go src github com pingcap tidb util testkit testkit go github com 
pingcap tidb session test testsessionsuite testpreparezero home jenkins agent workspace tidb ghpr unit test go src github com pingcap tidb session session test go runtime usr local go src runtime asm s reflect value call usr local go src reflect value go github com pingcap check suiterunner forktest home jenkins agent workspace tidb ghpr unit test go pkg mod github com pingcap check check go github com pingcap check suiterunner forkcall home jenkins agent workspace tidb ghpr unit test go pkg mod github com pingcap check check go goroutine running created at github com pingcap check suiterunner forkcall home jenkins agent workspace tidb ghpr unit test go pkg mod github com pingcap check check go github com pingcap check suiterunner forktest home jenkins agent workspace tidb ghpr unit test go pkg mod github com pingcap check check go github com pingcap check suiterunner dorun home jenkins agent workspace tidb ghpr unit test go pkg mod github com pingcap check check go github com pingcap check suiterunner asyncrun home jenkins agent workspace tidb ghpr unit test go pkg mod github com pingcap check check go goroutine running created at github com pingcap check suiterunner forkcall home jenkins agent workspace tidb ghpr unit test go pkg mod github com pingcap check check go github com pingcap check suiterunner forktest home jenkins agent workspace tidb ghpr unit test go pkg mod github com pingcap check check go github com pingcap check suiterunner dorun home jenkins agent workspace tidb ghpr unit test go pkg mod github com pingcap check check go github com pingcap check suiterunner asyncrun home jenkins agent workspace tidb ghpr unit test go pkg mod github com pingcap check check go latest failed builds

| 0

|

19,847

| 26,247,923,079

|

IssuesEvent

|

2023-01-05 16:40:57

|

python/cpython

|

https://api.github.com/repos/python/cpython

|

closed

|

Insecure MD5 usage in Multiprocessing.connection

|

type-feature type-security expert-multiprocessing

|

# Feature or enhancement

Remove insecure use of md5 in Multiprocessing.connection

# Pitch

We discovered uses of the md5 hash, which has been proven insecure for more than a decade, in the Multiprocessing.connection library in the methods `deliver_challenge` and `answer_challenge`. This usage was apparently added in 2013 since the default implicit hashing mode for `hmac.new` was deprecated at that time. `hmac.new` previously defaulted to MD5 if a hashing algorithm was not specified. The 2013 change brings the code back to consistency with its prior use, but that use is insecure. It should be trivial to change the two uses in this library to a SHA2/3 secure hashing function (e.g., SHA512).

Failure to update the hashing algorithm may require organizations to fully cease use of the Multiprocessing library or components of the library to meet industry security standards with respect to acceptable uses of hashing algorithms.
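
As a rough illustration of the change being pitched, the snippet below computes and checks a challenge response with `hmac.new` and an explicitly named SHA-256 digest. It is only a sketch, not the actual `multiprocessing.connection` code or the patch in the linked PR; the helper names `make_response` and `verify_response`, the shared key, and the 32-byte challenge are all hypothetical.

```python
import hmac
import os


def make_response(authkey: bytes, challenge: bytes, digest: str = "sha256") -> bytes:
    """Return the HMAC of `challenge` under `authkey`, naming the digest explicitly."""
    return hmac.new(authkey, challenge, digest).digest()


def verify_response(authkey: bytes, challenge: bytes, response: bytes,
                    digest: str = "sha256") -> bool:
    """Recompute the expected HMAC and compare it in constant time."""
    expected = make_response(authkey, challenge, digest)
    return hmac.compare_digest(expected, response)


# Hypothetical values, for illustration only.
authkey = b"hypothetical-shared-secret"
challenge = os.urandom(32)
response = make_response(authkey, challenge)
```

Passing the digest name on every call avoids depending on any implicit default, and `hmac.compare_digest` keeps the comparison constant-time.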

<!-- gh-linked-prs -->

### Linked PRs

* gh-100772

<!-- /gh-linked-prs -->

|

1.0

|

Insecure MD5 usage in Multiprocessing.connection - # Feature or enhancement

Remove insecure use of md5 in Multiprocessing.connection

# Pitch

We discovered uses of the md5 hash, which has been proven insecure for more than a decade, in the Multiprocessing.connection library in the methods `deliver_challenge` and `answer_challenge`. This usage was apparently added in 2013 since the default implicit hashing mode for `hmac.new` was deprecated at that time. `hmac.new` previously defaulted to MD5 if a hashing algorithm was not specified. The 2013 change brings the code back to consistency with its prior use, but that use is insecure. It should be trivial to change the two uses in this library to a SHA2/3 secure hashing function (e.g., SHA512).

Failure to update the hashing algorithm may require organizations to fully cease use of the Multiprocessing library or components of the library to meet industry security standards with respect to acceptable uses of hashing algorithms.

<!-- gh-linked-prs -->

### Linked PRs

* gh-100772

<!-- /gh-linked-prs -->

|

process

|

insecure usage in multiprocessing connection feature or enhancement remove insecure use of in multiprocessing connection pitch we discovered uses of the hash which has been proven insecure for more than a decade in the multiprocessing connection library in the methods deliver challenge and answer challenge this usage was apparently added in since the default implicit hashing mode for hmac new was deprecated at that time hmac new previously defaulted to if a hashing algorithm was not specified the change brings the code back to consistency with its prior use but that use is insecure it should be trivial to change the two uses in this library to a secure hashing function e g failure to update the hashing algorithm may require organizations to fully cease use of the multiprocessing library or components of the library to meet industry security standards with respect to acceptable uses of hashing algorithms linked prs gh

| 1

|

3,809

| 6,795,273,248

|

IssuesEvent

|

2017-11-01 15:12:12

|

coala/projects

|

https://api.github.com/repos/coala/projects

|

opened

|

Relicense text

|

process/pending_review

|

AGPL is not ideal for large chunks of text.

I suggest that we use CC-BY-SA 4.0

|

1.0

|

Relicense text - AGPL is not ideal for large chunks of text.

I suggest that we use CC-BY-SA 4.0

|

process

|

relicense text agpl is not ideal for large chunks of text i suggest that we use cc by sa

| 1

|

167,307

| 26,484,054,017

|

IssuesEvent

|

2023-01-17 16:37:54

|

influxdata/ui

|

https://api.github.com/repos/influxdata/ui

|

closed

|

Script Editor SQL Adjustments

|

needs/design team/automation release/marty-23.01

|

**Task Description**

<p></p>

We need to do some UI Re-Design to make the script editor SQL experience better:

<p></p>

1 \- Separate the Bucket Selection from the rest of the schema browser so it is more obvious to the user that the bucket selection is required, and that the schema browser is informational only \(see design from Julia\)\.

<p></p>

2 \- Change the text wording to indicate that the user needs to select a database\/bucket first\. See design from Julia\.

<p></p>

3 \- When a user runs a SQL query without a bucket selected, the user will be shown an error message that tells them they must select a database\.

<p></p>

4 \- The raw text that is in the editor should go away once the user clicks into the box and starts typing \(they shouldn't have to delete it manually\)\.

<p></p>

5 \- Remove the time range selection as it will not apply to SQL queries right now\.

### Figma

https://www.figma.com/file/0qAntPk5LVangAHguWT74X/Query-Experience-Project?node-id=2651%3A112467&t=tGT6F3oSYXF2KSZz-1

|

1.0

|

Script Editor SQL Adjustments - **Task Description**

<p></p>

We need to do some UI Re-Design to make the script editor SQL experience better:

<p></p>

1 \- Separate the Bucket Selection from the rest of the schema browser so it is more obvious to the user that the bucket selection is required, and that the schema browser is informational only \(see design from Julia\)\.

<p></p>

2 \- Change the text wording to indicate that the user needs to select a database\/bucket first\. See design from Julia\.

<p></p>

3 \- When a user runs a SQL query without a bucket selected, the user will be shown an error message that tells them they must select a database\.

<p></p>

4 \- The raw text that is in the editor should go away once the user clicks into the box and starts typing \(they shouldn't have to delete it manually\)\.

<p></p>

5 \- Remove the time range selection as it will not apply to SQL queries right now\.

### Figma

https://www.figma.com/file/0qAntPk5LVangAHguWT74X/Query-Experience-Project?node-id=2651%3A112467&t=tGT6F3oSYXF2KSZz-1

|

non_process

|

script editor sql adjustments task description we need to do some ui re design to make the script editor sql experience better separate the bucket selection from the rest of the schema browser so it is more obvious to the user that the bucket selection is required and that the schema browser is informational only see design from julia change the text wording to indicate that the user needs to select a database bucket first see design from julia when a user runs a sql query without a bucket selected the user will be shown an error message that tells them they must select a database the raw text that is in the editor should go away once the user clicks into the box and starts typing they shouldn t have to delete it manually remove the time range selection as it will not apply to sql queries right now figma

| 0

|

139,823

| 11,287,214,652

|

IssuesEvent

|

2020-01-16 03:32:31

|

opentracing-contrib/java-specialagent

|

https://api.github.com/repos/opentracing-contrib/java-specialagent

|

closed

|

`spring-kafka` test failing after refactor

|

.25 bug test

|

@malafeev, would you be able to help me with a test? I have refactored the module structure under `/test`, and for some reason the `spring-kafka` test is failing. Could you take a look at it? The work is in [`circleci` branch](https://github.com/opentracing-contrib/java-specialagent/blob/circleci/test/spring-kafka/spring-kafka-2.3.3/pom.xml), and here's the log of the spring-kafka tests:

https://api.travis-ci.org/v3/job/637261261/log.txt

|

1.0

|

`spring-kafka` test failing after refactor - @malafeev, would you be able to help me with a test? I have refactored the module structure under `/test`, and for some reason the `spring-kafka` test is failing. Could you take a look at it? The work is in [`circleci` branch](https://github.com/opentracing-contrib/java-specialagent/blob/circleci/test/spring-kafka/spring-kafka-2.3.3/pom.xml), and here's the log of the spring-kafka tests:

https://api.travis-ci.org/v3/job/637261261/log.txt

|

non_process

|

spring kafka test failing after refactor malafeev would you be able to help me with a test i have refactored the module structure under test and for some reason the spring kafka test is failing could you take a look at it the work is in and here s the log of the spring kafka tests

| 0

|

130,758

| 12,462,194,340

|

IssuesEvent

|

2020-05-28 08:29:47

|

adriens/covid19-action-plan-nc

|

https://api.github.com/repos/adriens/covid19-action-plan-nc

|

closed

|

Dashboard test scenario for crowd testing

|

PRODUCTION Tableau de Bord documentation enhancement

|

# Context

The quality of our application depends on:

1 - The accuracy of the data: i.e., does it match what has been announced and the reality on the ground?

2 - The quality of the display: typos, unreadable text, poorly sized images, charts that are too large or too small

A blockchain-based crowdtesting platform has been launched in New Caledonia.

# Resources

- [Dedicated article on the Hightest blog](https://hightest.nc/blog/posts/le-crowdtesting-met-il-en-danger-les-testeurs-professionnels)

# Planned scenarios

## Latest data

Cases where the displayed figures do not match the day's data.

## Dates

- Check that the day name and the day number match (i.e., that this date actually exists in the current year)

## Typos

Any typo, spelling, or grammar error that harms the readability of the site

|

1.0

|

Dashboard test scenario for crowd testing - # Context

The quality of our application depends on:

1 - The accuracy of the data: i.e., does it match what has been announced and the reality on the ground?

2 - The quality of the display: typos, unreadable text, poorly sized images, charts that are too large or too small

A blockchain-based crowdtesting platform has been launched in New Caledonia.

# Resources

- [Dedicated article on the Hightest blog](https://hightest.nc/blog/posts/le-crowdtesting-met-il-en-danger-les-testeurs-professionnels)

# Planned scenarios

## Latest data

Cases where the displayed figures do not match the day's data.

## Dates

- Check that the day name and the day number match (i.e., that this date actually exists in the current year)

## Typos

Any typo, spelling, or grammar error that harms the readability of the site

|

non_process

|

dashboard test scenario for crowd testing context the quality of our application depends on the accuracy of the data i e does it match what has been announced and the reality on the ground the quality of the display typos unreadable text poorly sized images charts that are too large or too small a blockchain based crowdtesting platform has been launched in new caledonia resources planned scenarios latest data cases where the displayed figures do not match the day s data dates check that the day name and the day number match i e that this date actually exists in the current year typos any typo spelling or grammar error that harms the readability of the site

| 0

|

55,544

| 13,639,169,004

|

IssuesEvent

|

2020-09-25 10:32:28

|

astropy/astropy

|

https://api.github.com/repos/astropy/astropy

|

opened

|

Astropy does not build on MacOSX with xcode 12.0.0

|

build

|

<!-- These comments are hidden when you submit the issue,

so you do not need to remove them! -->

<!-- Please be sure to check out our contributing guidelines,

https://github.com/astropy/astropy/blob/master/CONTRIBUTING.md .

Please be sure to check out our code of conduct,

https://github.com/astropy/astropy/blob/master/CODE_OF_CONDUCT.md . -->

<!-- Please have a search on our GitHub repository to see if a similar

issue has already been posted.

If a similar issue is closed, have a quick look to see if you are satisfied

by the resolution.

If not please go ahead and open an issue! -->

<!-- Please check that the development version still produces the same bug.

You can install development version with

pip install git+https://github.com/astropy/astropy

command. -->

### Description

<!-- Provide a general description of the bug. -->

When I try to build astropy on my Mac (Mojave + Xcode 12.0) it fails with the following error:

```

cextern/cfitsio/lib/group.c:5664:8: error: implicit declaration of function 'getcwd' is invalid in C99 [-Werror,-Wimplicit-function-declaration]

if (!getcwd(buff,FLEN_FILENAME))

^

1 warning and 1 error generated.

error: command 'gcc' failed with exit status 1

(astropy) ➜ astropy-tmp git:(master) gcc --version

Configured with: --prefix=/Applications/Xcode.app/Contents/Developer/usr --with-gxx-include-dir=/Applications/Xcode.app/Contents/Developer/Platforms/MacOSX.platform/Developer/SDKs/MacOSX.sdk/usr/include/c++/4.2.1

Apple clang version 12.0.0 (clang-1200.0.32.2)

Target: x86_64-apple-darwin19.5.0

Thread model: posix

InstalledDir: /Applications/Xcode.app/Contents/Developer/Toolchains/XcodeDefault.xctoolchain/usr/bin

```

Xcode 12.0 was just released Sep. 16 and I'm guessing that it was automatically updated on my Mac since I did not request an update. Astropy is apparently not alone, e.g. https://gitlab.com/graphviz/graphviz/-/issues/1826.

There is a long slack thread with helpful inputs from @saimn and @manodeep here: https://astropy.slack.com/archives/C067V74GK/p1600984862007700

A workaround is:

```

CFLAGS=-Wno-error=implicit-function-declaration pip install -e .

```
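For anyone who wants to script this workaround, here is a minimal Python sketch of the same idea. The helper names `with_workaround_cflags` and `install_editable` are hypothetical and not part of astropy or pip. The sketch only assumes that the build honors the `CFLAGS` environment variable, as the shell command above does, and that any existing `CFLAGS` value is a plain space-separated list of flags.

```python
import os
import subprocess
import sys

# Flag taken from the workaround above: it downgrades the
# implicit-function-declaration error back to a warning.
WORKAROUND_FLAG = "-Wno-error=implicit-function-declaration"


def with_workaround_cflags(base_env=None):
    """Return a copy of the environment with the workaround flag in CFLAGS.

    Hypothetical helper: existing CFLAGS entries are kept, and the flag
    is appended only if it is not already present.
    """
    env = dict(os.environ if base_env is None else base_env)
    flags = env.get("CFLAGS", "").split()
    if WORKAROUND_FLAG not in flags:
        flags.append(WORKAROUND_FLAG)
    env["CFLAGS"] = " ".join(flags)
    return env


def install_editable(path="."):
    """Run `pip install -e <path>` with the adjusted environment.

    Hypothetical helper; it is defined here but not called, so that
    importing this sketch has no side effects.
    """
    cmd = [sys.executable, "-m", "pip", "install", "-e", path]
    return subprocess.run(cmd, env=with_workaround_cflags(), check=True)
```

Calling `install_editable()` from the root of the astropy checkout should be equivalent to the one-line shell command, while leaving the shell's own environment unchanged.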

### Expected behavior

<!-- What did you expect to happen. -->

Astropy builds and runs tests.

### Actual behavior

<!-- What actually happened. -->

<!-- Was the output confusing or poorly described? -->

Build failed as shown.

### Steps to Reproduce

<!-- Ideally a code example could be provided so we can run it ourselves. -->

<!-- If you are pasting code, use triple backticks (```) around

your code snippet. -->

<!-- If necessary, sanitize your screen output to be pasted so you do not

reveal secrets like tokens and passwords. -->

1. Checkout astropy at master, `git clean -fxd`

2. `tox -e test` or `pip install -e .`

### System Details

<!-- Even if you do not think this is necessary, it is useful information for the maintainers.

Please run the following snippet and paste the output below:

import platform; print(platform.platform())

import sys; print("Python", sys.version)

import numpy; print("Numpy", numpy.__version__)

import astropy; print("astropy", astropy.__version__)

import scipy; print("Scipy", scipy.__version__)

import matplotlib; print("Matplotlib", matplotlib.__version__)

-->

```

Darwin-19.5.0-x86_64-i386-64bit

Python 3.7.7 (default, Mar 26 2020, 10:32:53)

[Clang 4.0.1 (tags/RELEASE_401/final)]

Numpy 1.18.1

astropy 4.2.dev715+gfaccb8b41

Scipy 1.4.1

Matplotlib 3.1.3

```

|

1.0

|

Astropy does not build on MacOSX with xcode 12.0.0 - <!-- These comments are hidden when you submit the issue,

so you do not need to remove them! -->

<!-- Please be sure to check out our contributing guidelines,

https://github.com/astropy/astropy/blob/master/CONTRIBUTING.md .

Please be sure to check out our code of conduct,

https://github.com/astropy/astropy/blob/master/CODE_OF_CONDUCT.md . -->

<!-- Please have a search on our GitHub repository to see if a similar

issue has already been posted.

If a similar issue is closed, have a quick look to see if you are satisfied

by the resolution.

If not please go ahead and open an issue! -->

<!-- Please check that the development version still produces the same bug.

You can install development version with

pip install git+https://github.com/astropy/astropy

command. -->

### Description

<!-- Provide a general description of the bug. -->

When I try to build astropy on my Mac (Mojave + Xcode 12.0) it fails with the following error:

```

cextern/cfitsio/lib/group.c:5664:8: error: implicit declaration of function 'getcwd' is invalid in C99 [-Werror,-Wimplicit-function-declaration]

if (!getcwd(buff,FLEN_FILENAME))

^

1 warning and 1 error generated.

error: command 'gcc' failed with exit status 1

(astropy) ➜ astropy-tmp git:(master) gcc --version

Configured with: --prefix=/Applications/Xcode.app/Contents/Developer/usr --with-gxx-include-dir=/Applications/Xcode.app/Contents/Developer/Platforms/MacOSX.platform/Developer/SDKs/MacOSX.sdk/usr/include/c++/4.2.1

Apple clang version 12.0.0 (clang-1200.0.32.2)

Target: x86_64-apple-darwin19.5.0

Thread model: posix

InstalledDir: /Applications/Xcode.app/Contents/Developer/Toolchains/XcodeDefault.xctoolchain/usr/bin

```

Xcode 12.0 was just released Sep. 16 and I'm guessing that it was automatically updated on my Mac since I did not request an update. Astropy is apparently not alone, e.g. https://gitlab.com/graphviz/graphviz/-/issues/1826.

There is a long slack thread with helpful inputs from @saimn and @manodeep here: https://astropy.slack.com/archives/C067V74GK/p1600984862007700

A workaround is:

```

CFLAGS=-Wno-error=implicit-function-declaration pip install -e .

```