Unnamed: 0 (int64, 0 to 832k) | id (float64, 2.49B to 32.1B) | type (string, 1 class) | created_at (string, length 19) | repo (string, length 7 to 112) | repo_url (string, length 36 to 141) | action (string, 3 classes) | title (string, length 1 to 744) | labels (string, length 4 to 574) | body (string, length 9 to 211k) | index (string, 10 classes) | text_combine (string, length 96 to 211k) | label (string, 2 classes) | text (string, length 96 to 188k) | binary_label (int64, 0 to 1) |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

85,565 | 15,755,047,511 | IssuesEvent | 2021-03-31 01:05:20 | RG4421/skyux-forms | https://api.github.com/repos/RG4421/skyux-forms | opened | CVE-2021-23358 (High) detected in underscore-1.7.0.tgz | security vulnerability | ## CVE-2021-23358 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>underscore-1.7.0.tgz</b></p></summary>

<p>JavaScript's functional programming helper library.</p>

<p>Library home page... | True | CVE-2021-23358 (High) detected in underscore-1.7.0.tgz - ## CVE-2021-23358 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>underscore-1.7.0.tgz</b></p></summary>

<p>JavaScript's functi... | non_process | cve high detected in underscore tgz cve high severity vulnerability vulnerable library underscore tgz javascript s functional programming helper library library home page a href path to dependency file skyux forms package json path to vulnerable library skyux forms ... | 0 |

19,773 | 26,149,785,809 | IssuesEvent | 2022-12-30 11:45:38 | firebase/firebase-cpp-sdk | https://api.github.com/repos/firebase/firebase-cpp-sdk | reopened | [C++] Nightly Integration Testing Report for Firestore | type: process nightly-testing |

<hidden value="integration-test-status-comment"></hidden>

### ✅ [build against repo] Integration test succeeded!

Requested by @sunmou99 on commit b07793ae015b4a69f2ec68e1c8f46206f9fac0c7

Last updated: Thu Dec 29 03:40 PST 2022

**[View integration test log & download artifacts](https://github.com/firebase/fire... | 1.0 | [C++] Nightly Integration Testing Report for Firestore -

<hidden value="integration-test-status-comment"></hidden>

### ✅ [build against repo] Integration test succeeded!

Requested by @sunmou99 on commit b07793ae015b4a69f2ec68e1c8f46206f9fac0c7

Last updated: Thu Dec 29 03:40 PST 2022

**[View integration test l... | process | nightly integration testing report for firestore ✅ nbsp integration test succeeded requested by on commit last updated thu dec pst ✅ nbsp integration test succeeded requested by firebase workflow trigger on commit last updated thu dec pst ... | 1 |

13,495 | 15,933,793,035 | IssuesEvent | 2021-04-14 07:54:37 | ckeditor/ckeditor5 | https://api.github.com/repos/ckeditor/ckeditor5 | closed | Document lists research: Lists model structure | domain:v4-compatibility package:list squad:compat type:task | The biggest question is about their structure in the model. We should consider this together with the support for ul/ol attributes as this topic is really tricky today too. Another topic that we should consider is support for having two subsequent lists.

The current completely flat structure works great from the per... | True | Document lists research: Lists model structure - The biggest question is about their structure in the model. We should consider this together with the support for ul/ol attributes as this topic is really tricky today too. Another topic that we should consider is support for having two subsequent lists.

The current c... | non_process | document lists research lists model structure the biggest question is about their structure in the model we should consider this together with the support for ul ol attributes as this topic is really tricky today too another topic that we should consider is support for having two subsequent lists the current c... | 0 |

1,700 | 4,349,707,300 | IssuesEvent | 2016-07-30 19:04:52 | pwittchen/prefser | https://api.github.com/repos/pwittchen/prefser | closed | Release 2.0.6 | release process | **Initial release notes**:

- bumped RxJava to v. 1.1.8

- bumped Gson to 2.7

- bumped Truth to 0.28

- updated dependencies in sample apps

**Things to do**:

- [x] bump library version to 2.0.6

- [x] upload Archives to Maven Central Repository

- [x] close and release artifact on Nexus

- [x] update `CHANGELOG.md... | 1.0 | Release 2.0.6 - **Initial release notes**:

- bumped RxJava to v. 1.1.8

- bumped Gson to 2.7

- bumped Truth to 0.28

- updated dependencies in sample apps

**Things to do**:

- [x] bump library version to 2.0.6

- [x] upload Archives to Maven Central Repository

- [x] close and release artifact on Nexus

- [x] upda... | process | release initial release notes bumped rxjava to v bumped gson to bumped truth to updated dependencies in sample apps things to do bump library version to upload archives to maven central repository close and release artifact on nexus update chang... | 1 |

6,118 | 8,989,680,615 | IssuesEvent | 2019-02-01 00:58:43 | hashicorp/packer | https://api.github.com/repos/hashicorp/packer | closed | googlecompute-export - doesn't support sharedVPCs | enhancement post-processor/googlecompute-export | **- Packer version from `packer version`**

Packer v1.3.2

**- Host platform**

Google Cloud

**- Debug log output**

To much company/private data to scrub from entire output - pertinent info pasted

https://gist.github.com/obviousboy/cb5e104846ac6091c94d5ed0b9d38dd5

**Code**

https://gist.github.com/obviousboy/... | 1.0 | googlecompute-export - doesn't support sharedVPCs - **- Packer version from `packer version`**

Packer v1.3.2

**- Host platform**

Google Cloud

**- Debug log output**

To much company/private data to scrub from entire output - pertinent info pasted

https://gist.github.com/obviousboy/cb5e104846ac6091c94d5ed0b9d38... | process | googlecompute export doesn t support sharedvpcs packer version from packer version packer host platform google cloud debug log output to much company private data to scrub from entire output pertinent info pasted code issue builder googlecompute is able t... | 1 |

5,172 | 7,954,618,456 | IssuesEvent | 2018-07-12 08:09:39 | GoogleCloudPlatform/google-cloud-dotnet | https://api.github.com/repos/GoogleCloudPlatform/google-cloud-dotnet | closed | Generate smoke test projects automatically | type: process | When #2305 is merged, the codegen won't be generating project files for smoke tests any more. That doesn't affect existing APIs, but when we add an API with a smoke test we'll end up missing a project file. It should be simple enough to generate it though... | 1.0 | Generate smoke test projects automatically - When #2305 is merged, the codegen won't be generating project files for smoke tests any more. That doesn't affect existing APIs, but when we add an API with a smoke test we'll end up missing a project file. It should be simple enough to generate it though... | process | generate smoke test projects automatically when is merged the codegen won t be generating project files for smoke tests any more that doesn t affect existing apis but when we add an api with a smoke test we ll end up missing a project file it should be simple enough to generate it though | 1 |

23,109 | 11,844,179,422 | IssuesEvent | 2020-03-24 04:55:33 | goharbor/harbor | https://api.github.com/repos/goharbor/harbor | closed | Children artifacts of a manifest list can be scanned via UI hack | area/interrogation-service kind/bug priority/high target/2.0.0 |

<img width="1173" alt="Screen Shot 2020-03-24 at 12 15 13" src="https://user-images.githubusercontent.com/5753287/77387771-3bf53080-6dc9-11ea-9b0e-f03e16ad561e.png">

| 1.0 | Children artifacts of a manifest list can be scanned via UI hack -

<img width="1173" alt="Screen Shot 2020-03-24 at 12 15 13" src="https://user-images.githubusercontent.com/5753287/77387771-3bf53080-6dc9-11ea-9b0e-f03e16ad561e.png">

| non_process | children artifacts of a manifest list can be scanned via ui hack img width alt screen shot at src | 0 |

250,604 | 18,895,715,872 | IssuesEvent | 2021-11-15 17:39:17 | mzapatal2/Proyecto-CDA-Grupo-10 | https://api.github.com/repos/mzapatal2/Proyecto-CDA-Grupo-10 | closed | Construir modelo predictivo | documentation | Dos modelos de predicción para el problema con el reporte de los respectivos parametros | 1.0 | Construir modelo predictivo - Dos modelos de predicción para el problema con el reporte de los respectivos parametros | non_process | construir modelo predictivo dos modelos de predicción para el problema con el reporte de los respectivos parametros | 0 |

17,909 | 23,894,135,877 | IssuesEvent | 2022-09-08 13:39:58 | w3c/webauthn | https://api.github.com/repos/w3c/webauthn | opened | Dependencies section is out of date and duplicates terms index | type:editorial type:process | [§3. Dependencies](https://w3c.github.io/webauthn/#sctn-dependencies) states that

>This specification relies on several other underlying specifications, listed below and in [Terms defined by reference](https://w3c.github.io/webauthn/#index-defined-elsewhere).

It also states, for example:

>**HTML**

>The concep... | 1.0 | Dependencies section is out of date and duplicates terms index - [§3. Dependencies](https://w3c.github.io/webauthn/#sctn-dependencies) states that

>This specification relies on several other underlying specifications, listed below and in [Terms defined by reference](https://w3c.github.io/webauthn/#index-defined-else... | process | dependencies section is out of date and duplicates terms index states that this specification relies on several other underlying specifications listed below and in it also states for example html the concepts of and are defined in but the section lists... | 1 |

12,467 | 9,796,654,308 | IssuesEvent | 2019-06-11 08:10:47 | fossas/dri | https://api.github.com/repos/fossas/dri | opened | Automatically upload test coverage to Codecov | type: infrastructure | Codecov's uploader doesn't understand the HPC format and the only user-provided uploader I could find (https://github.com/guillaume-nargeot/codecov-haskell) seems abandoned. A lot of the steps are already done here, but we'll need to convert HPC into a format that Codecov understands.

- [x] Set up a Codecov project ... | 1.0 | Automatically upload test coverage to Codecov - Codecov's uploader doesn't understand the HPC format and the only user-provided uploader I could find (https://github.com/guillaume-nargeot/codecov-haskell) seems abandoned. A lot of the steps are already done here, but we'll need to convert HPC into a format that Codecov... | non_process | automatically upload test coverage to codecov codecov s uploader doesn t understand the hpc format and the only user provided uploader i could find seems abandoned a lot of the steps are already done here but we ll need to convert hpc into a format that codecov understands set up a codecov project set up... | 0 |

4,859 | 7,746,553,598 | IssuesEvent | 2018-05-29 22:11:24 | Maximus5/ConEmu | https://api.github.com/repos/Maximus5/ConEmu | closed | csudo doesn't work in WSL | processes | ### Versions

ConEmu build: 180506 preview x64

OS version: Windows 10 16299.431 x64

Used shell: WSL and powershell

### Problem description

csudo does not launch consent.exe (does not elevate) when executed in WSL. This worked in the stable build, however.

On powershell (or maybe cmd as well, but untested), G... | 1.0 | csudo doesn't work in WSL - ### Versions

ConEmu build: 180506 preview x64

OS version: Windows 10 16299.431 x64

Used shell: WSL and powershell

### Problem description

csudo does not launch consent.exe (does not elevate) when executed in WSL. This worked in the stable build, however.

On powershell (or maybe c... | process | csudo doesn t work in wsl versions conemu build preview os version windows used shell wsl and powershell problem description csudo does not launch consent exe does not elevate when executed in wsl this worked in the stable build however on powershell or maybe cmd as well but ... | 1 |

46,244 | 19,013,454,254 | IssuesEvent | 2021-11-23 11:54:05 | Azure/Industrial-IoT | https://api.github.com/repos/Azure/Industrial-IoT | closed | OPC Publisher and REST API alone deployment | Services | **Describe the bug**

We want to deploy only the OPC Publisher edge module and the Publisher REST API, but we don't know how to deploy it alone without deploying all the infrastructure.

How to deploy and configure the edge module and the API Rest to connect each other.

Any documentation for deploying individual... | 1.0 | OPC Publisher and REST API alone deployment - **Describe the bug**

We want to deploy only the OPC Publisher edge module and the Publisher REST API, but we don't know how to deploy it alone without deploying all the infrastructure.

How to deploy and configure the edge module and the API Rest to connect each other... | non_process | opc publisher and rest api alone deployment describe the bug we want to deploy only the opc publisher edge module and the publisher rest api but we don t know how to deploy it alone without deploying all the infrastructure how to deploy and configure the edge module and the api rest to connect each other... | 0 |

767,735 | 26,938,359,693 | IssuesEvent | 2023-02-07 22:55:47 | kubernetes-sigs/gateway-api | https://api.github.com/repos/kubernetes-sigs/gateway-api | opened | Cleaning up listenersMatch helper in conformance utils | kind/feature good first issue help wanted priority/backlog kind/cleanup area/conformance go | The `listenersMatch` function in `conformance/utils/kubernetes/helpers.go` has a few `TODO` comments on it about improvements that can be made, and generally could use some refactoring and rewriting.

In particular, based partially on TODO comments therein:

- [ ] possible refactor using generics?

- [ ] allow for ... | 1.0 | Cleaning up listenersMatch helper in conformance utils - The `listenersMatch` function in `conformance/utils/kubernetes/helpers.go` has a few `TODO` comments on it about improvements that can be made, and generally could use some refactoring and rewriting.

In particular, based partially on TODO comments therein:

... | non_process | cleaning up listenersmatch helper in conformance utils the listenersmatch function in conformance utils kubernetes helpers go has a few todo comments on it about improvements that can be made and generally could use some refactoring and rewriting in particular based partially on todo comments therein ... | 0 |

76,115 | 3,481,500,020 | IssuesEvent | 2015-12-29 16:32:57 | MinetestForFun/server-minetestforfun-creative | https://api.github.com/repos/MinetestForFun/server-minetestforfun-creative | closed | [xdecor] mod crashed the server | Modding ➤ BugFix Priority: High Server crash Upstream | http://zerobin.qbuissondebon.info/?2f4107122468a700#dTES6ucMyW/iPbjYqqbAAbrNr1LTvHEak7SH0DRBMUc=

```

2015-12-29 16:58:14: ACTION[ServerThread]: cycyl [???.???.???.???] joins game.

2015-12-29 16:58:14: ACTION[ServerThread]: cycyl joins game. List of players: cycyl

2015-12-29 16:58:14: ACTION[ServerThread]: Update... | 1.0 | [xdecor] mod crashed the server - http://zerobin.qbuissondebon.info/?2f4107122468a700#dTES6ucMyW/iPbjYqqbAAbrNr1LTvHEak7SH0DRBMUc=

```

2015-12-29 16:58:14: ACTION[ServerThread]: cycyl [???.???.???.???] joins game.

2015-12-29 16:58:14: ACTION[ServerThread]: cycyl joins game. List of players: cycyl

2015-12-29 16:5... | non_process | mod crashed the server action cycyl joins game action cycyl joins game list of players cycyl action updated online player file error unrecoverable error occurred stopping server please fix the following error error lua... | 0 |

2,633 | 5,411,672,300 | IssuesEvent | 2017-03-01 12:26:38 | paulkornikov/Pragonas | https://api.github.com/repos/paulkornikov/Pragonas | closed | Processus hebdo de production des opés déductibles impôts | a-new feature processus workload III | depuis le début de l'année jusqu'à la date du jour

avec envoi d'email avec fichier attaché ou stockage sur le cloud. | 1.0 | Processus hebdo de production des opés déductibles impôts - depuis le début de l'année jusqu'à la date du jour

avec envoi d'email avec fichier attaché ou stockage sur le cloud. | process | processus hebdo de production des opés déductibles impôts depuis le début de l année jusqu à la date du jour avec envoi d email avec fichier attaché ou stockage sur le cloud | 1 |

10,092 | 13,044,162,069 | IssuesEvent | 2020-07-29 03:47:29 | tikv/tikv | https://api.github.com/repos/tikv/tikv | closed | UCP: Migrate scalar function `AddTimeDurationNull` from TiDB | challenge-program-2 component/coprocessor difficulty/easy sig/coprocessor |

## Description

Port the scalar function `AddTimeDurationNull` from TiDB to coprocessor.

## Score

* 50

## Mentor(s)

* @lonng

## Recommended Skills

* Rust programming

## Learning Materials

Already implemented expressions ported from TiDB

- https://github.com/tikv/tikv/tree/master/components/tidb_query/src/r... | 2.0 | UCP: Migrate scalar function `AddTimeDurationNull` from TiDB -

## Description

Port the scalar function `AddTimeDurationNull` from TiDB to coprocessor.

## Score

* 50

## Mentor(s)

* @lonng

## Recommended Skills

* Rust programming

## Learning Materials

Already implemented expressions ported from TiDB

- https... | process | ucp migrate scalar function addtimedurationnull from tidb description port the scalar function addtimedurationnull from tidb to coprocessor score mentor s lonng recommended skills rust programming learning materials already implemented expressions ported from tidb ... | 1 |

22,550 | 31,758,590,044 | IssuesEvent | 2023-09-12 02:00:08 | lizhihao6/get-daily-arxiv-noti | https://api.github.com/repos/lizhihao6/get-daily-arxiv-noti | opened | New submissions for Mon, 11 Sep 23 | event camera white balance isp compression image signal processing image signal process raw raw image events camera color contrast events AWB | ## Keyword: events

### C-CLIP: Contrastive Image-Text Encoders to Close the Descriptive-Commentative Gap

- **Authors:** William Theisen, Walter Scheirer

- **Subjects:** Computer Vision and Pattern Recognition (cs.CV)

- **Arxiv link:** https://arxiv.org/abs/2309.03921

- **Pdf link:** https://arxiv.org/pdf/2309.0392... | 2.0 | New submissions for Mon, 11 Sep 23 - ## Keyword: events

### C-CLIP: Contrastive Image-Text Encoders to Close the Descriptive-Commentative Gap

- **Authors:** William Theisen, Walter Scheirer

- **Subjects:** Computer Vision and Pattern Recognition (cs.CV)

- **Arxiv link:** https://arxiv.org/abs/2309.03921

- **Pdf li... | process | new submissions for mon sep keyword events c clip contrastive image text encoders to close the descriptive commentative gap authors william theisen walter scheirer subjects computer vision and pattern recognition cs cv arxiv link pdf link abstract the inte... | 1 |

12,045 | 14,738,799,049 | IssuesEvent | 2021-01-07 05:45:14 | kdjstudios/SABillingGitlab | https://api.github.com/repos/kdjstudios/SABillingGitlab | closed | Reusing old account numbers | anc-external anc-ops anc-process anp-1 ant-bug ant-support | In GitLab by @kdjstudios on Jul 31, 2018, 08:24

**Submitted by:** Saskia Keener <saskia@keenercom.net>

**Helpdesk:** http://www.servicedesk.answernet.com/profiles/ticket/2018-07-28-56282/conversation

**Server:** External

**Client/Site:** Keener

**Account:** Saskia

**Issue:**

I went to add a new client using acc... | 1.0 | Reusing old account numbers - In GitLab by @kdjstudios on Jul 31, 2018, 08:24

**Submitted by:** Saskia Keener <saskia@keenercom.net>

**Helpdesk:** http://www.servicedesk.answernet.com/profiles/ticket/2018-07-28-56282/conversation

**Server:** External

**Client/Site:** Keener

**Account:** Saskia

**Issue:**

I went... | process | reusing old account numbers in gitlab by kdjstudios on jul submitted by saskia keener helpdesk server external client site keener account saskia issue i went to add a new client using account but i received an error notification that the account number was alr... | 1 |

796,133 | 28,099,774,290 | IssuesEvent | 2023-03-30 18:30:46 | umajho/dicexp | https://api.github.com/repos/umajho/dicexp | opened | 一些数据结构 | kind:feature priority:low | ## Set

可以只作为内部实现细节,隐式地与列表互相转换。

## Map

可以以「原子(Atom)」作为键。与字符串不同,原子只能是字面量。

Dicexp 的使用场景不至于复杂到需要操纵字符串。 | 1.0 | 一些数据结构 - ## Set

可以只作为内部实现细节,隐式地与列表互相转换。

## Map

可以以「原子(Atom)」作为键。与字符串不同,原子只能是字面量。

Dicexp 的使用场景不至于复杂到需要操纵字符串。 | non_process | 一些数据结构 set 可以只作为内部实现细节,隐式地与列表互相转换。 map 可以以「原子(atom)」作为键。与字符串不同,原子只能是字面量。 dicexp 的使用场景不至于复杂到需要操纵字符串。 | 0 |

132,340 | 10,741,781,313 | IssuesEvent | 2019-10-29 20:58:48 | microsoft/vscode-python | https://api.github.com/repos/microsoft/vscode-python | closed | Discovered tests may have problematic filenames. | feature-testing needs decision reason-preexisting type-bug | The testing adapter returns the following data for discovery (one item per root):

```json

[

{

"rootid": "<string>",

"root": "<dirname>",

"parents": [

{

"id": "<string>",

"kind": "<enum-ish>",

"name": "<string>",

... | 1.0 | Discovered tests may have problematic filenames. - The testing adapter returns the following data for discovery (one item per root):

```json

[

{

"rootid": "<string>",

"root": "<dirname>",

"parents": [

{

"id": "<string>",

"kind": "<enum-i... | non_process | discovered tests may have problematic filenames the testing adapter returns the following data for discovery one item per root json rootid root parents id kind name ... | 0 |

5,797 | 21,162,244,304 | IssuesEvent | 2022-04-07 10:24:55 | tuist/tuist | https://api.github.com/repos/tuist/tuist | opened | Automate release of https://github.com/tuist/ProjectAutomation and https://github.com/tuist/ProjectDescription | domain:automation | Right now the repos must be updated manually when releasing tuist, but it should be taken care of by the PR | 1.0 | Automate release of https://github.com/tuist/ProjectAutomation and https://github.com/tuist/ProjectDescription - Right now the repos must be updated manually when releasing tuist, but it should be taken care of by the PR | non_process | automate release of and right now the repos must be updated manually when releasing tuist but it should be taken care of by the pr | 0 |

15,521 | 19,703,268,826 | IssuesEvent | 2022-01-12 18:52:27 | googleapis/java-gke-connect-gateway | https://api.github.com/repos/googleapis/java-gke-connect-gateway | opened | Your .repo-metadata.json file has a problem 🤒 | type: process repo-metadata: lint | You have a problem with your .repo-metadata.json file:

Result of scan 📈:

* release_level must be equal to one of the allowed values in .repo-metadata.json

* api_shortname 'gke-connect-gateway' invalid in .repo-metadata.json

☝️ Once you correct these problems, you can close this issue.

Reach out to **go/github-aut... | 1.0 | Your .repo-metadata.json file has a problem 🤒 - You have a problem with your .repo-metadata.json file:

Result of scan 📈:

* release_level must be equal to one of the allowed values in .repo-metadata.json

* api_shortname 'gke-connect-gateway' invalid in .repo-metadata.json

☝️ Once you correct these problems, you ca... | process | your repo metadata json file has a problem 🤒 you have a problem with your repo metadata json file result of scan 📈 release level must be equal to one of the allowed values in repo metadata json api shortname gke connect gateway invalid in repo metadata json ☝️ once you correct these problems you ca... | 1 |

149,428 | 23,475,102,449 | IssuesEvent | 2022-08-17 04:42:48 | DeliverBle/deliverble-frontend | https://api.github.com/repos/DeliverBle/deliverble-frontend | opened | [learn_detail] 학습 상세 페이지 ver.2 반영 | 💅 design | ## ✨ Description

학습 상세 페이지 ver.2 반영

## ✅ To Do List

- [ ] 상단에 글로벌 네비게이션 바 추가

- [ ] 스크립트, 메모 공간이 길어지면서 전체적으로 세로 길이가 늘어남

- [ ] "아나운서의 목소리를 듣고, 스크립트를 보며 따라 말해보세요." 문구 삭제

- [ ] 물음표 버튼 사라짐

- [ ] 좌: 스크립트, 우: 영상 + 메모로 레이아웃 바뀜

- [ ] 학습법 설명 버튼 생김

- [ ] 닫기 버튼 디자인 및 위치 바뀜 | 1.0 | [learn_detail] 학습 상세 페이지 ver.2 반영 - ## ✨ Description

학습 상세 페이지 ver.2 반영

## ✅ To Do List

- [ ] 상단에 글로벌 네비게이션 바 추가

- [ ] 스크립트, 메모 공간이 길어지면서 전체적으로 세로 길이가 늘어남

- [ ] "아나운서의 목소리를 듣고, 스크립트를 보며 따라 말해보세요." 문구 삭제

- [ ] 물음표 버튼 사라짐

- [ ] 좌: 스크립트, 우: 영상 + 메모로 레이아웃 바뀜

- [ ] 학습법 설명 버튼 생김

- [ ] 닫기 버튼 디자인 및 위치 바뀜 | non_process | 학습 상세 페이지 ver 반영 ✨ description 학습 상세 페이지 ver 반영 ✅ to do list 상단에 글로벌 네비게이션 바 추가 스크립트 메모 공간이 길어지면서 전체적으로 세로 길이가 늘어남 아나운서의 목소리를 듣고 스크립트를 보며 따라 말해보세요 문구 삭제 물음표 버튼 사라짐 좌 스크립트 우 영상 메모로 레이아웃 바뀜 학습법 설명 버튼 생김 닫기 버튼 디자인 및 위치 바뀜 | 0 |

211,061 | 16,167,478,122 | IssuesEvent | 2021-05-01 19:54:02 | cockroachdb/cockroach | https://api.github.com/repos/cockroachdb/cockroach | opened | roachtest: gorm failed | C-test-failure O-roachtest O-robot branch-master release-blocker | roachtest.gorm [failed](https://teamcity.cockroachdb.com/viewLog.html?buildId=2944550&tab=buildLog) with [artifacts](https://teamcity.cockroachdb.com/viewLog.html?buildId=2944550&tab=artifacts#/gorm) on master @ [684e753c15f3fc58df79b6ea70e7b6715eae4835](https://github.com/cockroachdb/cockroach/commits/684e753c15f3fc58... | 2.0 | roachtest: gorm failed - roachtest.gorm [failed](https://teamcity.cockroachdb.com/viewLog.html?buildId=2944550&tab=buildLog) with [artifacts](https://teamcity.cockroachdb.com/viewLog.html?buildId=2944550&tab=artifacts#/gorm) on master @ [684e753c15f3fc58df79b6ea70e7b6715eae4835](https://github.com/cockroachdb/cockroach... | non_process | roachtest gorm failed roachtest gorm with on master the test failed on branch master cloud gce test artifacts and logs in home agent work go src github com cockroachdb cockroach artifacts gorm run gorm go test runner go no gorm blocklist defined for cockroach version alpha ... | 0 |

41,193 | 12,831,725,363 | IssuesEvent | 2020-07-07 06:09:32 | rvvergara/json-server-for-react-native-blog | https://api.github.com/repos/rvvergara/json-server-for-react-native-blog | closed | CVE-2020-8116 (High) detected in dot-prop-4.2.0.tgz | security vulnerability | ## CVE-2020-8116 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>dot-prop-4.2.0.tgz</b></p></summary>

<p>Get, set, or delete a property from a nested object using a dot path</p>

<p>Lib... | True | CVE-2020-8116 (High) detected in dot-prop-4.2.0.tgz - ## CVE-2020-8116 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>dot-prop-4.2.0.tgz</b></p></summary>

<p>Get, set, or delete a pro... | non_process | cve high detected in dot prop tgz cve high severity vulnerability vulnerable library dot prop tgz get set or delete a property from a nested object using a dot path library home page a href path to dependency file tmp ws scm json server for react native blog package ... | 0 |

194,255 | 6,892,968,356 | IssuesEvent | 2017-11-22 23:55:50 | grpc/grpc | https://api.github.com/repos/grpc/grpc | closed | New Failure: py35.test.reflection._reflection_servicer_test.ReflectionServicerTest | infra/New Failure lang/Python priority/P0/RELEASE BLOCKER | - Test: py35.test.reflection._reflection_servicer_test.ReflectionServicerTest

- Poll Strategy: None

- URL: https://kokoro2.corp.google.com/job/grpc/job/windows/job/master/job/grpc_basictests_dbg/114 | 1.0 | New Failure: py35.test.reflection._reflection_servicer_test.ReflectionServicerTest - - Test: py35.test.reflection._reflection_servicer_test.ReflectionServicerTest

- Poll Strategy: None

- URL: https://kokoro2.corp.google.com/job/grpc/job/windows/job/master/job/grpc_basictests_dbg/114 | non_process | new failure test reflection reflection servicer test reflectionservicertest test test reflection reflection servicer test reflectionservicertest poll strategy none url | 0 |

263,186 | 28,026,467,493 | IssuesEvent | 2023-03-28 09:27:13 | Dima2021/cargo-audit | https://api.github.com/repos/Dima2021/cargo-audit | closed | CVE-2022-4450 (High) detected in openssl-src-111.14.0+1.1.1j.crate - autoclosed | Mend: dependency security vulnerability | ## CVE-2022-4450 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>openssl-src-111.14.0+1.1.1j.crate</b></p></summary>

<p>Source of OpenSSL and logic to build it.

</p>

<p>Library home pa... | True | CVE-2022-4450 (High) detected in openssl-src-111.14.0+1.1.1j.crate - autoclosed - ## CVE-2022-4450 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>openssl-src-111.14.0+1.1.1j.crate</b><... | non_process | cve high detected in openssl src crate autoclosed cve high severity vulnerability vulnerable library openssl src crate source of openssl and logic to build it library home page a href dependency hierarchy rustsec crate root library ca... | 0 |

11,205 | 13,957,703,770 | IssuesEvent | 2020-10-24 08:14:01 | alexanderkotsev/geoportal | https://api.github.com/repos/alexanderkotsev/geoportal | opened | ES: Update harvesting | ES - Spain Geoportal Harvesting process | Dear Angelo,

we have configurate the "Recaching frequency " in the platform "daily" but the last harvesting was the 22 of April . ¿is there any problem?

We have update information in some capabilites service. ¿was it possible to harverst our metadata ?

Thanks you!

Best Regards

Alejandr... | 1.0 | ES: Update harvesting - Dear Angelo,

we have configurate the "Recaching frequency " in the platform "daily" but the last harvesting was the 22 of April . ¿is there any problem?

We have update information in some capabilites service. ¿was it possible to harverst our metadata ?

Thanks yo... | process | es update harvesting dear angelo we have configurate the quot recaching frequency quot in the platform quot daily quot but the last harvesting was the of april iquest is there any problem we have update information in some capabilites service iquest was it possible to harverst our metadata thanks you... | 1 |

5,959 | 8,784,232,324 | IssuesEvent | 2018-12-20 09:14:53 | linnovate/root | https://api.github.com/repos/linnovate/root | opened | partners permission bugs in offices | 2.0.6 Process bug bug | offices: after adding a partner with commenter or editor roles to the office and then entering the folders tab from manage folders, only the one opened the office show up in the folder. | 1.0 | partners permission bugs in offices - offices: after adding a partner with commenter or editor roles to the office and then entering the folders tab from manage folders, only the one opened the office show up in the folder. | process | partners permission bugs in offices offices after adding a partner with commenter or editor roles to the office and then entering the folders tab from manage folders only the one opened the office show up in the folder | 1 |

763 | 3,250,698,338 | IssuesEvent | 2015-10-19 03:27:56 | t3kt/vjzual2 | https://api.github.com/repos/t3kt/vjzual2 | opened | add source-based mask redux masking | enhancement video processing | use a video source to switch between the two different redux levels | 1.0 | add source-based mask redux masking - use a video source to switch between the two different redux levels | process | add source based mask redux masking use a video source to switch between the two different redux levels | 1 |

15,321 | 19,432,084,654 | IssuesEvent | 2021-12-21 13:11:20 | symfony/symfony | https://api.github.com/repos/symfony/symfony | closed | [Process] Passing standard IO streams to executed process AKA calling an interactive application with cross-platform support | Bug Process Status: Needs Review Stalled | **Symfony version(s) affected**: 5.2.0

**Description**

I would like to call an interactive application using the symfony process component without relying on any non-cross-platform functionality such as the allocation of a tty.

The support for this is indicated in the docs as "Using PHP Streams as the Standard... | 1.0 | [Process] Passing standard IO streams to executed process AKA calling an interactive application with cross-platform support - **Symfony version(s) affected**: 5.2.0

**Description**

I would like to call an interactive application using the symfony process component without relying on any non-cross-platform functi... | process | passing standard io streams to executed process aka calling an interactive application with cross platform support symfony version s affected description i would like to call an interactive application using the symfony process component without relying on any non cross platform functionality ... | 1 |

16,765 | 21,939,941,546 | IssuesEvent | 2022-05-23 17:00:11 | MicrosoftDocs/azure-devops-docs | https://api.github.com/repos/MicrosoftDocs/azure-devops-docs | reopened | Ability to select package resource from Azure Artifact | doc-enhancement devops/prod Pri1 devops-cicd-process/tech |

We are working on some complex deployment pipelines where artifacts are generated by different ci pipelines. We wanted to have ability to select **Package** resource from the azure artifact (nuget packages) however it only supports github packages.

is there any way to use azure artifact?

---

#### Document De... | 1.0 | Ability to select package resource from Azure Artifact -

We are working on some complex deployment pipelines where artifacts are generated by different ci pipelines. We wanted to have ability to select **Package** resource from the azure artifact (nuget packages) however it only supports github packages.

is there ... | process | ability to select package resource from azure artifact we are working on some complex deployment pipelines where artifacts are generated by different ci pipelines we wanted to have ability to select package resource from the azure artifact nuget packages however it only supports github packages is there ... | 1 |

9,844 | 12,836,505,372 | IssuesEvent | 2020-07-07 14:27:06 | checkifcovid/data-science-experiments | https://api.github.com/repos/checkifcovid/data-science-experiments | opened | Data Preprocessing – Fix Gender | bug machine learning preprocessing | Gender is having case issues when mapping to existing models.

```

'gender_Female': 0.0, 'gender_female': 0.06, 'gender_male': 0.02, 'gender_nan': 0.0,

```

**Easy solution:** *Consider a regex match for existing columns?*

**Best solution**: *Consider a regex algorithm for existing columns or columnar data?* | 1.0 | Data Preprocessing – Fix Gender - Gender is having case issues when mapping to existing models.

```

'gender_Female': 0.0, 'gender_female': 0.06, 'gender_male': 0.02, 'gender_nan': 0.0,

```

**Easy solution:** *Consider a regex match for existing columns?*

**Best solution**: *Consider a regex algorithm for existin... | process | data preprocessing – fix gender gender is having case issues when mapping to existing models gender female gender female gender male gender nan easy solution consider a regex match for existing columns best solution consider a regex algorithm for existing ... | 1 |
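
The case mismatch described in this issue (`gender_Female` next to `gender_female`) can be avoided by normalizing categorical values before the one-hot columns are built. The following is a minimal sketch under stated assumptions, not the project's actual preprocessing code: `normalize_category` and `one_hot_columns` are hypothetical helper names, and mapping missing values to `nan` is assumed from the `gender_nan` column shown in the issue.

```python
import re


def normalize_category(value):
    """Trim, collapse whitespace and lowercase a categorical value.

    Missing or empty values map to the string 'nan' (an assumption that
    mirrors the 'gender_nan' column in the issue).
    """
    if value is None:
        return "nan"
    text = re.sub(r"\s+", " ", str(value)).strip().lower()
    return text or "nan"


def one_hot_columns(values, prefix="gender"):
    """Return the sorted one-hot column names produced after normalization."""
    return sorted({f"{prefix}_{normalize_category(v)}" for v in values})


if __name__ == "__main__":
    raw = ["Female", "female", "male", None, " MALE "]
    # 'Female' and 'female' now collapse into a single column.
    print(one_hot_columns(raw))
```

Normalizing the values once, before encoding, is usually simpler than matching existing column names with a regex afterwards, because the trained model and new data then agree on one spelling.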

352,206 | 25,049,562,635 | IssuesEvent | 2022-11-05 18:03:39 | gunrock/gunrock | https://api.github.com/repos/gunrock/gunrock | closed | Gunrock app porting guide | 📋 documentation refactor | How do you port a Gunrock app from old-API to new-API? We need a documentation page that answers this question.

Assigning this to @sgpyc, cc: @neoblizz. I will edit this and make comments for you. Assign it to me when you need a read. I would also like to port one app and will be the guinea pig (at least I hope I ca... | 1.0 | Gunrock app porting guide - How do you port a Gunrock app from old-API to new-API? We need a documentation page that answers this question.

Assigning this to @sgpyc, cc: @neoblizz. I will edit this and make comments for you. Assign it to me when you need a read. I would also like to port one app and will be the guin... | non_process | gunrock app porting guide how do you port a gunrock app from old api to new api we need a documentation page that answers this question assigning this to sgpyc cc neoblizz i will edit this and make comments for you assign it to me when you need a read i would also like to port one app and will be the guin... | 0 |

271,138 | 8,476,587,079 | IssuesEvent | 2018-10-24 22:32:44 | FServais/BoardgameWE | https://api.github.com/repos/FServais/BoardgameWE | closed | The same board game can be added several time in the database | component/backend priority/medium sev/bug sev/resolved | Need to add a unique constraint on the bgg_id key. | 1.0 | The same board game can be added several time in the database - Need to add a unique constraint on the bgg_id key. | non_process | the same board game can be added several time in the database need to add a unique constraint on the bgg id key | 0 |
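
The fix proposed in this issue, a unique constraint on `bgg_id`, can be illustrated with a small sketch. It uses Python's built-in `sqlite3` module purely for demonstration; the project's real database and ORM are not stated in the issue, and the table name `board_games`, its columns, and the helpers `make_db` and `add_board_game` are hypothetical.

```python
import sqlite3


def make_db():
    """Create an in-memory database whose bgg_id column must be unique."""
    conn = sqlite3.connect(":memory:")
    conn.execute(
        "CREATE TABLE board_games ("
        " id INTEGER PRIMARY KEY,"
        " bgg_id INTEGER NOT NULL UNIQUE,"
        " name TEXT NOT NULL)"
    )
    return conn


def add_board_game(conn, bgg_id, name):
    """Insert a game; return False when the bgg_id is already present."""
    try:
        with conn:  # commits on success, rolls back on error
            conn.execute(
                "INSERT INTO board_games (bgg_id, name) VALUES (?, ?)",
                (bgg_id, name),
            )
        return True
    except sqlite3.IntegrityError:
        # The UNIQUE constraint rejected a duplicate bgg_id.
        return False


if __name__ == "__main__":
    db = make_db()
    print(add_board_game(db, 13, "Catan"))
    print(add_board_game(db, 13, "Catan"))
```

Enforcing uniqueness in the schema, rather than only checking in application code, also protects against two concurrent requests inserting the same game.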

287,233 | 8,805,891,239 | IssuesEvent | 2018-12-26 23:03:13 | osulp/Scholars-Archive | https://api.github.com/repos/osulp/Scholars-Archive | opened | Replace conference_section predicate on all works needed | Content Priority: High | ### Descriptive summary

Once #1781 is completed, find all works with the old, wrong predicate for conference_section: `https://w2id.org/scholarlydata/ontology/conference-ontology.owl#Track`

Can't rely on just Solr.

Replace with correct predicate on those works and save.

### Related work

#1781 | 1.0 | Replace conference_section predicate on all works needed - ### Descriptive summary

Once #1781 is completed, find all works with the old, wrong predicate for conference_section: `https://w2id.org/scholarlydata/ontology/conference-ontology.owl#Track`

Can't rely on just Solr.

Replace with correct predicate on tho... | non_process | replace conference section predicate on all works needed descriptive summary once is completed find all works with the old wrong predicate for conference section can t rely on just solr replace with correct predicate on those works and save related work | 0 |

16,521 | 21,529,153,285 | IssuesEvent | 2022-04-28 21:54:05 | zotero/zotero | https://api.github.com/repos/zotero/zotero | opened | Quick Format dialog can't be dismissed if Google Doc has been closed | Word Processor Integration Bug | 1. Activate "Add/edit citation" in Google Docs.

2. Leave the quick format dialog open in the background, go back to the document, and close it.

3. Switch back to Zotero and try to dismiss the quick format dialog by pressing Escape.

Dialog should close, but it doesn't. The only way to get it to close is by quitting... | 1.0 | Quick Format dialog can't be dismissed if Google Doc has been closed - 1. Activate "Add/edit citation" in Google Docs.

2. Leave the quick format dialog open in the background, go back to the document, and close it.

3. Switch back to Zotero and try to dismiss the quick format dialog by pressing Escape.

Dialog shoul... | process | quick format dialog can t be dismissed if google doc has been closed activate add edit citation in google docs leave the quick format dialog open in the background go back to the document and close it switch back to zotero and try to dismiss the quick format dialog by pressing escape dialog shoul... | 1 |

22,091 | 30,613,558,025 | IssuesEvent | 2023-07-23 22:39:47 | solop-develop/frontend-core | https://api.github.com/repos/solop-develop/frontend-core | closed | Report/Process: Running without permissions gives the user no message | bug (PRC) Processes (RPT) Reports (SE) Security | When a process/report is run that the user does not have access to, an error is generated with no message to help the user identify what happened.

This case is hard to identify/reproduce because a process/report you do not have access to is not shown in the menu, or as an associated process.

However, in the forms... | 1.0 | Report/Process: Running without permissions gives the user no message - When a process/report is run that the user does not have access to, an error is generated with no message to help the user identify what happened.

This case is hard to identify/reproduce because a process/report you do not have access to is not shown ... | process | report process running without permissions gives the user no message when a process report is run that the user does not have access to an error is generated with no message to help the user identify what happened this case is hard to identify reproduce because a process report you do not have access to is not shown ... | 1 |

30,656 | 7,239,282,593 | IssuesEvent | 2018-02-13 17:00:23 | MvvmCross/MvvmCross | https://api.github.com/repos/MvvmCross/MvvmCross | reopened | Plugin architecture suggestion | Code improvement Feature request Needs investigation Plugins | Looking at some plugins if they were converted to bait and switch they wouldn't actually depend on mvvmcross. I'm proposing that we convert to a xamarin/jamesmontemagno model of having each plugin in its own repo and versioned separately from mvvmcross. This is something we could experiment with in the Hackathon. | 1.0 | Plugin architecture suggestion - Looking at some plugins if they were converted to bait and switch they wouldn't actually depend on mvvmcross. I'm proposing that we convert to a xamarin/jamesmontemagno model of having each plugin in its own repo and versioned separately from mvvmcross. This is something we could experi... | non_process | plugin architecture suggestion looking at some plugins if they were converted to bait and switch they wouldn t actually depend on mvvmcross i m proposing that we convert to a xamarin jamesmontemagno model of having each plugin in its own repo and versioned separately from mvvmcross this is something we could experi... | 0 |

33,023 | 2,761,423,617 | IssuesEvent | 2015-04-28 17:09:37 | kendraio/kendra_hub | https://api.github.com/repos/kendraio/kendra_hub | closed | Setting up Kendra Hub trial users... | High priority | - [ ] It doesn't seem right that a Kendra Hub trial user should be able to edit their own "Status" in their account:

http://hub.kendra.io/user/me/edit - substitute "me" for userid.

- [ ] "Asset actions" buttons are not displayed in a song view:

http://hub.kendra.io/content/stairway-heaven

* Add a contribution

* ... | 1.0 | Setting up Kendra Hub trial users... - - [ ] It doesn't seem right that a Kendra Hub trial user should be able to edit their own "Status" in their account:

http://hub.kendra.io/user/me/edit - substitute "me" for userid.

- [ ] "Asset actions" buttons are not displayed in a song view:

http://hub.kendra.io/content/st... | non_process | setting up kendra hub trial users it doesn t seem right that a kendra hub trial user should be able to edit their own status in their account substitute me for userid asset actions buttons are not displayed in a song view add a contribution new embedded asset sub clip embed a... | 0 |

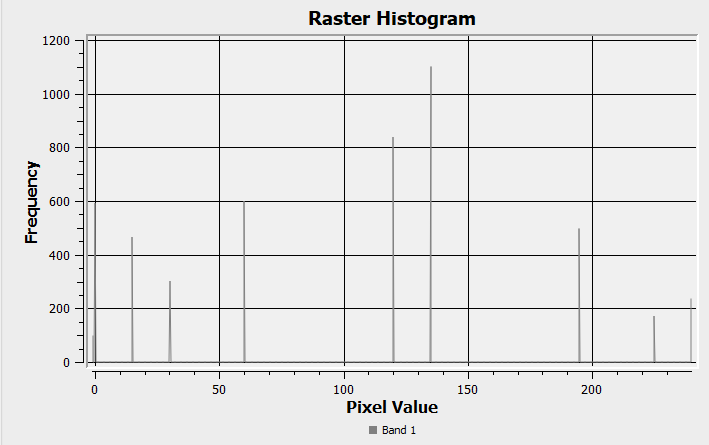

12,491 | 14,958,697,154 | IssuesEvent | 2021-01-27 01:22:06 | qgis/QGIS | https://api.github.com/repos/qgis/QGIS | closed | [Processing] Rescale Raster gives bad results. | Bug Processing | The Rescale Raster tool gives nonsensical results in some instances.

I have a raster (float64) whose value are ranging from -1 to 255.

As those should represent a direction (originally from r.terraflow... | 1.0 | [Processing] Rescale Raster gives bad results. - The Rescale Raster tool gives nonsensical results in some instances.

I have a raster (float64) whose value are ranging from -1 to 255.

As those should r... | process | rescale raster gives bad results the rescale raster tool gives nonsensical results in some instances i have a raster whose value are ranging from to as those should represent a direction originally from r terraflow i want to turn them into quadrants when i process the data to rescale ... | 1 |

261,943 | 22,781,938,041 | IssuesEvent | 2022-07-08 20:58:05 | phetsims/friction | https://api.github.com/repos/phetsims/friction | closed | CT cannot set state while setting state | dev:phet-io type:automated-testing | ```

friction : phet-io-state-fuzz : unbuilt

https://bayes.colorado.edu/continuous-testing/ct-snapshots/1654295157038/phet-io-wrappers/state/?sim=friction&phetioDebug=true&fuzz&wrapperContinuousTest=%7B%22test%22%3A%5B%22friction%22%2C%22phet-io-state-fuzz%22%2C%22unbuilt%22%5D%2C%22snapshotName%22%3A%22snapshot-16542... | 1.0 | CT cannot set state while setting state - ```

friction : phet-io-state-fuzz : unbuilt

https://bayes.colorado.edu/continuous-testing/ct-snapshots/1654295157038/phet-io-wrappers/state/?sim=friction&phetioDebug=true&fuzz&wrapperContinuousTest=%7B%22test%22%3A%5B%22friction%22%2C%22phet-io-state-fuzz%22%2C%22unbuilt%22%5... | non_process | ct cannot set state while setting state friction phet io state fuzz unbuilt uncaught error uncaught error assertion failed cannot set state while setting state error assertion failed cannot set state while setting state at window assertions assertfunction at assert phetiostateengine js ... | 0 |

4,769 | 3,442,897,393 | IssuesEvent | 2015-12-15 01:01:22 | jeff1evesque/machine-learning | https://api.github.com/repos/jeff1evesque/machine-learning | reopened | Ensure compiled jsx files are not versioned | build new feature | We need to determine an implementation that prevents compiled [jsx files](https://github.com/jeff1evesque/machine-learning/tree/master/src/jsx) (javascript), in [`/vagrant/src/js`](https://github.com/jeff1evesque/machine-learning/tree/master/src/js) to not be versioned. | 1.0 | Ensure compiled jsx files are not versioned - We need to determine an implementation that prevents compiled [jsx files](https://github.com/jeff1evesque/machine-learning/tree/master/src/jsx) (javascript), in [`/vagrant/src/js`](https://github.com/jeff1evesque/machine-learning/tree/master/src/js) to not be versioned. | non_process | ensure compiled jsx files are not versioned we need to determine an implementation that prevents compiled javascript in to not be versioned | 0 |

4,978 | 7,488,340,914 | IssuesEvent | 2018-04-06 00:37:16 | openopps/openopps-platform | https://api.github.com/repos/openopps/openopps-platform | opened | Career Field Filter Needed | FAI Requirements Ready | **User Story:** As a user, I'd like to refine my Open Opportunities search by keywords and other information that opportunity creators have entered.

**Acceptance Criteria:**

Filter name/text: Career field

o Entries are generated by user typing and Open Opps using Autocomplete

o As an item is selected, will add a ... | 1.0 | Career Field Filter Needed - **User Story:** As a user, I'd like to refine my Open Opportunities search by keywords and other information that opportunity creators have entered.

**Acceptance Criteria:**

Filter name/text: Career field

o Entries are generated by user typing and Open Opps using Autocomplete

o As an ... | non_process | career field filter needed user story as a user i d like to refine my open opportunities search by keywords and other information that opportunity creators have entered acceptance criteria filter name text career field o entries are generated by user typing and open opps using autocomplete o as an ... | 0 |

9,733 | 12,730,469,297 | IssuesEvent | 2020-06-25 07:36:50 | hashicorp/packer | https://api.github.com/repos/hashicorp/packer | closed | [HCL2] Vagrant post-processor: keep_input_artifact does not work | bug hcl2 post-processor/vagrant track-internal |

#### Overview of the Issue

[HCL2] Vagrant post-processor: keep_input_artifact does not work

#### Reproduction Steps

Vagrant post-processor in Packer HCL template:

```hcl

post-processor "vagrant" {

name = "vagrant-box"

output = "output-${var.build_number}/packer-ubuntu1404-${var.build_nu... | 1.0 | [HCL2] Vagrant post-processor: keep_input_artifact does not work -

#### Overview of the Issue

[HCL2] Vagrant post-processor: keep_input_artifact does not work

#### Reproduction Steps

Vagrant post-processor in Packer HCL template:

```hcl

post-processor "vagrant" {

name = "vagrant-box"

ou... | process | vagrant post processor keep input artifact does not work overview of the issue vagrant post processor keep input artifact does not work reproduction steps vagrant post processor in packer hcl template hcl post processor vagrant name vagrant box output ou... | 1 |

10,590 | 13,400,733,396 | IssuesEvent | 2020-09-03 16:14:09 | MicrosoftDocs/azure-devops-docs | https://api.github.com/repos/MicrosoftDocs/azure-devops-docs | closed | Pass variables between stages | Pri1 devops-cicd-process/tech devops/prod doc-enhancement | Please, add docs on how to pass and refer variables between stages in a Multi-Stage Pipeline.

stageDependencies.<stageName>.<jobName>.outputs['<taskName>.<variableName>']

Thanks

---

#### Document Details

⚠ *Do not edit this section. It is required for docs.microsoft.com ➟ GitHub issue linking.... | 1.0 | Pass variables between stages - Please, add docs on how to pass and refer variables between stages in a Multi-Stage Pipeline.

stageDependencies.<stageName>.<jobName>.outputs['<taskName>.<variableName>']

Thanks

---

#### Document Details

⚠ *Do not edit this section. It is required for docs.micro... | process | pass variables between stages please add docs on how to pass and refer variables between stages in a multi stage pipeline stagedependencies lt stagename gt lt jobname gt outputs thanks document details ⚠ do not edit this section it is required for docs microsoft com ➟ github issue linking id ... | 1 |

18,763 | 24,664,306,487 | IssuesEvent | 2022-10-18 09:08:29 | geneontology/go-ontology | https://api.github.com/repos/geneontology/go-ontology | closed | Are "food intake" and "energy expenditure" BP? | Other term-related request organism-level process wont fix | homeostasis is a BP. https://www.ebi.ac.uk/QuickGO/search/homeostasis

"energy homeostasis" is a BP (it is not included in GO though). wikipedia says "... is a biological process that involves the coordinated homeostatic regulation of food intake (energy inflow) and energy expenditure (energy outflow)."

So should ... | 1.0 | Are "food intake" and "energy expenditure" BP? - homeostasis is a BP. https://www.ebi.ac.uk/QuickGO/search/homeostasis

"energy homeostasis" is a BP (it is not included in GO though). wikipedia says "... is a biological process that involves the coordinated homeostatic regulation of food intake (energy inflow) and en... | process | are food intake and energy expenditure bp homeostasis is a bp energy homeostasis is a bp it is not included in go though wikipedia says is a biological process that involves the coordinated homeostatic regulation of food intake energy inflow and energy expenditure energy outflow so shoul... | 1 |

562,488 | 16,661,829,527 | IssuesEvent | 2021-06-06 13:16:38 | episphere/dashboard | https://api.github.com/repos/episphere/dashboard | closed | Remove filter and sort icons from Participant Summary page | Priority | These up/down arrows and filter icon on the left hand side of the Participant Summary page won't be active for launch so please remove. | 1.0 | Remove filter and sort icons from Participant Summary page - These up/down arrows and filter icon on the left hand side of the Participant Summary page won't be active for launch so please remove. | non_process | remove filter and sort icons from participant summary page these up down arrows and filter icon on the left hand side of the participant summary page won t be active for launch so please remove | 0 |

751,077 | 26,229,672,976 | IssuesEvent | 2023-01-04 22:24:39 | redhat-developer/vscode-openshift-tools | https://api.github.com/repos/redhat-developer/vscode-openshift-tools | opened | Find the way to install extension from vsix file going to be released and run unit tests on it | priority/critical kind/task quality | Current release build script runs unit tests from the source first, then run build for vsix files. Would be good to run unit tests, if possible, on extension installed from vsix going to be released to make sure nothing got missing in final vsix assembly. | 1.0 | Find the way to install extension from vsix file going to be released and run unit tests on it - Current release build script runs unit tests from the source first, then run build for vsix files. Would be good to run unit tests, if possible, on extension installed from vsix going to be released to make sure nothing go... | non_process | find the way to install extension from vsix file going to be released and run unit tests on it current release build script runs unit tests from the source first then run build for vsix files would be good to run unit tests if possible on extension installed from vsix going to be released to make sure nothing go... | 0 |

3,772 | 6,742,996,056 | IssuesEvent | 2017-10-20 10:04:01 | inasafe/inasafe-realtime | https://api.github.com/repos/inasafe/inasafe-realtime | closed | Realtime Volcanic Ash Testing | bug ready realtime processor web page | See original ticket at https://github.com/inasafe/inasafe/issues/3285 for further discussion

The old ticket in https://github.com/inasafe/inasafe/issues/2491 has become too long to track. Now that we are in the testing stage, I propose we move the discussion regarding the testing to this new ticket. I will keep the ol... | 1.0 | Realtime Volcanic Ash Testing - See original ticket at https://github.com/inasafe/inasafe/issues/3285 for further discussion

The old ticket in https://github.com/inasafe/inasafe/issues/2491 has become too long to track. Now that we are in the testing stage, I propose we move the discussion regarding the testing to thi... | process | realtime volcanic ash testing see original ticket at for further discussion the old ticket in has become too long to track now that we are in the testing stage i propose we move the discussion regarding the testing to this new ticket i will keep the old ticket open as future reference old ticket link ... | 1 |

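22,348 | 31,027,446,691 | IssuesEvent | 2023-08-10 10:05:34 | DxytJuly3/gitalk_blog | https://api.github.com/repos/DxytJuly3/gitalk_blog | opened | [Linux] What is the process address space? How is code inherited between parent and child processes? How is a program loaded into a process? Why is a process address space needed? - July.cc Blogs | Gitalk /posts/Linux-Process-Addr-Space | https://www.julysblog.cn/posts/Linux-Process-Addr-Space

When introducing memory management in C++, I used a diagram like this to roughly describe a program's address space, and also mentioned that this space occupies memory. On Linux, however, this diagram needs a slight adjustment | 1.0 | [Linux] What is the process address space? How is code inherited between parent and child processes? How is a program loaded into a process? Why is a process address space needed? - July.cc Blogs - https://www.julysblog.cn/posts/Linux-Process-Addr-Space

When introducing memory management in C++, I used a diagram like this to roughly describe a program's address space, and also mentioned that this space occupies memory. On Linux, however, this diagram needs a slight adjustment | process | what is the process address space how is code inherited between parent and child processes how is a program loaded into a process why is a process address space needed july cc blogs when introducing memory management in c i used a diagram like this to roughly describe a program s address space and also mentioned that this space occupies memory on linux however this diagram needs a slight adjustment | 1 |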

12,924 | 15,295,185,236 | IssuesEvent | 2021-02-24 04:13:21 | topcoder-platform/community-app | https://api.github.com/repos/topcoder-platform/community-app | opened | Missing x-total when access from frontend | ShapeupProcess challenge- recommender-tool | - We face weird situation, we see the x-total when testing from Postman. but not from frontend (headers return empty).

- Maybe this is related to backend api about CORS_ALLOW_HEADERS custom headers to have necessary headers (x-total, x-pages..). Check reference here: https://pypi.org/project/django-cors-headers/

<i... | 1.0 | Missing x-total when access from frontend - - We face weird situation, we see the x-total when testing from Postman. but not from frontend (headers return empty).

- Maybe this is related to backend api about CORS_ALLOW_HEADERS custom headers to have necessary headers (x-total, x-pages..). Check reference here: https:/... | process | missing x total when access from frontend we face weird situation we see the x total when testing from postman but not from frontend headers return empty maybe this is related to backend api about cors allow headers custom headers to have necessary headers x total x pages check reference here i... | 1 |
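
The symptom in this issue (headers visible in Postman but empty in the browser) is what one would expect when custom response headers are not exposed through CORS. Assuming the backend really is a Django service using `django-cors-headers`, as the linked package suggests, the relevant setting for response headers is `CORS_EXPOSE_HEADERS`, whereas `CORS_ALLOW_HEADERS` governs request headers. The sketch below shows the setting plus a small, purely illustrative simulation of the browser rule; `headers_visible_to_script` is a hypothetical helper, not part of any library.

```python
# settings.py fragment (sketch, assuming django-cors-headers is installed):
# response headers that browser scripts are allowed to read.
CORS_EXPOSE_HEADERS = ["x-total", "x-pages"]

# Response headers that scripts can always read on a cross-origin response,
# even when nothing is explicitly exposed.
SAFELISTED = {
    "cache-control",
    "content-language",
    "content-length",
    "content-type",
    "expires",
    "last-modified",
    "pragma",
}


def headers_visible_to_script(response_headers, exposed):
    """Simulate which response headers frontend code can read under CORS."""
    allowed = SAFELISTED | {name.lower() for name in exposed}
    return {k: v for k, v in response_headers.items() if k.lower() in allowed}


if __name__ == "__main__":
    response = {"Content-Type": "application/json", "X-Total": "42", "X-Pages": "3"}
    # Without exposure, only the safelisted header survives.
    print(headers_visible_to_script(response, []))
    # With exposure, the pagination headers become readable.
    print(headers_visible_to_script(response, CORS_EXPOSE_HEADERS))
```

Postman is not subject to the browser's CORS filtering, which would explain why the same request shows `x-total` there.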

121,079 | 15,836,812,121 | IssuesEvent | 2021-04-06 19:52:31 | department-of-veterans-affairs/va.gov-team | https://api.github.com/repos/department-of-veterans-affairs/va.gov-team | opened | [MCP] Veteran codesign research plan | MCP design research vsa vsa-benefits-2 | ## Issue Description

We need to do a codesign workshop with Veterans in order to better understand how they think of both benefit and copay debt.

---

## Tasks

- [ ] Create a research plan for codesign with Veterans around MCP

- [ ] Upload research plan to GH and link to this ticket

## Acceptance Criteria

- [ ] Resear... | 1.0 | [MCP] Veteran codesign research plan - ## Issue Description

We need to do a codesign workshop with Veterans in order to better understand how they think of both benefit and copay debt.

---

## Tasks

- [ ] Create a research plan for codesign with Veterans around MCP

- [ ] Upload research plan to GH and link to this tick... | non_process | veteran codesign research plan issue description we need to do a codesign workshop with veterans in order to better understand how they think of both benefit and copay debt tasks create a research plan for codesign with veterans around mcp upload research plan to gh and link to this ticket a... | 0 |

513,166 | 14,916,923,639 | IssuesEvent | 2021-01-22 18:59:54 | canonical-web-and-design/ubuntu.com | https://api.github.com/repos/canonical-web-and-design/ubuntu.com | closed | GA impressions not working with async takeovers | Priority: High | The [script that sends the takeover event](https://github.com/canonical-web-and-design/ubuntu.com/blob/master/static/js/src/navigation.js#L142) needs to be moved to the client side rendering of the takeover. | 1.0 | GA impressions not working with async takeovers - The [script that sends the takeover event](https://github.com/canonical-web-and-design/ubuntu.com/blob/master/static/js/src/navigation.js#L142) needs to be moved to the client side rendering of the takeover. | non_process | ga impressions not working with async takeovers the needs to be moved to the client side rendering of the takeover | 0 |

252,749 | 8,041,227,839 | IssuesEvent | 2018-07-31 01:40:28 | allenlol/zSpigot-Issues | https://api.github.com/repos/allenlol/zSpigot-Issues | closed | Problem with pinging players | bug low priority | You can use /ping it works fine and you can do /ping yourign and will work with no problems but when you do /ping someone the message won't pop up. | 1.0 | Problem with pinging players - You can use /ping it works fine and you can do /ping yourign and will work with no problems but when you do /ping someone the message won't pop up. | non_process | problem with pinging players you can use ping it works fine and you can do ping yourign and will work with no problems but when you do ping someone the message won t pop up | 0 |

20,193 | 26,762,334,791 | IssuesEvent | 2023-01-31 08:06:16 | bazelbuild/bazel | https://api.github.com/repos/bazelbuild/bazel | closed | Have more C++ integration tests | P4 type: process team-Rules-CPP | One option would be to port the relevant part of our internal C++ test battery.

Now that we have include pruning, there is a significantly higher chance of breakage so this'd be a good time.

I'm not extremely worried (thus the P3 label) because our internal test battery is pretty extensive, but Bazel works a bit... | 1.0 | Have more C++ integration tests - One option would be to port the relevant part of our internal C++ test battery.

Now that we have include pruning, there is a significantly higher chance of breakage so this'd be a good time.

I'm not extremely worried (thus the P3 label) because our internal test battery is pret... | process | have more c integration tests one option would be to port the relevant part of our internal c test battery now that we have include pruning there is a significantly higher chance of breakage so this d be a good time i m not extremely worried thus the label because our internal test battery is prett... | 1 |

309,662 | 23,302,343,304 | IssuesEvent | 2022-08-07 14:08:56 | hai-vr/av3-animator-as-code | https://api.github.com/repos/hai-vr/av3-animator-as-code | opened | Make an AAC "getting started" first steps, as in how to create a MonoBehaviour, etc. | documentation | Issue stemming from conversation on Discord | 1.0 | Make an AAC "getting started" first steps, as in how to create a MonoBehaviour, etc. - Issue stemming from conversation on Discord | non_process | make an aac getting started first steps as in how to create a monobehaviour etc issue stemming from conversation on discord | 0 |

44,544 | 23,673,632,521 | IssuesEvent | 2022-08-27 18:57:31 | tailscale/tailscale | https://api.github.com/repos/tailscale/tailscale | closed | wgengine/wgcfg: optimize FromUAPI | optimization L3 Some users T3 Performance/Debugging T0 New feature | Lots of allocations, generally heavy-weight, and we use it a lot. | True | wgengine/wgcfg: optimize FromUAPI - Lots of allocations, generally heavy-weight, and we use it a lot. | non_process | wgengine wgcfg optimize fromuapi lots of allocations generally heavy weight and we use it a lot | 0 |

410,952 | 12,004,366,271 | IssuesEvent | 2020-04-09 11:24:37 | vvMv/rpgplus | https://api.github.com/repos/vvMv/rpgplus | closed | Worldguard region checking error | Priority: High Status: Abandoned Type: Bug | **Description of the bug**

An error occurs when checking region | 1.0 | Worldguard region checking error - **Description of the bug**

An error occurs when checking region | non_process | worldguard region checking error description of the bug an error occurs when checking region | 0 |

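10,450 | 13,228,159,979 | IssuesEvent | 2020-08-18 05:27:44 | didi/mpx | https://api.github.com/repos/didi/mpx | closed | Question about using a local image for inline-style background-image in the docs | processing | In practice, an inline style that uses a local image as the background image displays in the developer tools but not on a real device, for example the code below

Most sources online say backgrounds do not support local images, while a few say inline-style backgrounds can use local images, and the mpx docs also show this usage

Most sources online say backgrounds do not support local images, while a few say inline-style backgrounds can use local images, and the mpx docs also show this usage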

(import_node_process8.default.execPath, [import_node_path3.default.join(__dirname2, \"check.js\"), JSON.stringify(this.#options)], {\n detached: true,\n stdio: \"ignore\"\n }).unref();","location":"package/dist/utils/cli.j... | 1.0 | @yamada-ui/cli 0.4.0 has 1 guarddog issues - ```{"npm-silent-process-execution":[{"code":" (0, import_node_child_process.spawn)(import_node_process8.default.execPath, [import_node_path3.default.join(__dirname2, \"check.js\"), JSON.stringify(this.#options)], {\n detached: true,\n stdio: \"ignore\"\n }).u... | process | yamada ui cli has guarddog issues npm silent process execution n detached true n stdio ignore n unref location package dist utils cli js message this package is silently executing another executable | 1 |

581 | 3,060,127,960 | IssuesEvent | 2015-08-14 18:50:41 | Microsoft/poshtools | https://api.github.com/repos/Microsoft/poshtools | closed | Prevent Users From Using Remote Attach to Attach to a Local Process | Process Attaching task | Ideally users should only be using remote attaching to attach to remote processes, not local processes. As such, we should not treat "localhost", "127.0.0.1" or anything similar as a proper qualifier. | 1.0 | Prevent Users From Using Remote Attach to Attach to a Local Process - Ideally users should only be using remote attaching to attach to remote processes, not local processes. As such, we should not treat "localhost", "127.0.0.1" or anything similar as a proper qualifier. | process | prevent users from using remote attach to attach to a local process ideally users should only be using remote attaching to attach to remote processes not local processes as such we should not treat localhost or anything similar as a proper qualifier | 1 |
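
The check requested in this issue, rejecting `localhost`, `127.0.0.1` and similar values as remote qualifiers, could look roughly like the sketch below. It is written in Python for illustration only and is not the extension's actual code; `is_local_qualifier` is a hypothetical name, and the sketch deliberately does not compare against the machine's own hostname, which a real implementation would probably also need.

```python
import ipaddress

# Names treated as "this machine" regardless of DNS (assumed list).
LOCAL_NAMES = {"localhost"}


def is_local_qualifier(qualifier):
    """Return True when the qualifier refers to the local machine."""
    name = qualifier.strip().lower()
    if name in LOCAL_NAMES:
        return True
    try:
        # Covers 127.0.0.0/8 and the IPv6 loopback address ::1.
        return ipaddress.ip_address(name.strip("[]")).is_loopback
    except ValueError:
        # Not an IP literal, so assume it names a remote host.
        return False


if __name__ == "__main__":
    for candidate in ["localhost", "127.0.0.1", "::1", "build-server-01"]:
        print(candidate, is_local_qualifier(candidate))
```

A remote-attach dialog could call such a check before connecting and direct the user to the local attach path when it returns `True`.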

8,023 | 11,207,885,093 | IssuesEvent | 2020-01-06 05:48:13 | nodejs/node | https://api.github.com/repos/nodejs/node | opened | Flaky test: parallel/test-net-listen-after-destroying-stdin | CI / flaky test arm net process | * **Version**: master

* **Platform**: arm

* **Subsystem**: process, net

[Executed on `test-requireio_williamkapke-debian10-arm64_pi3-1`](https://ci.nodejs.org/job/node-test-binary-arm-12+/3679/RUN_SUBSET=0,label=pi3-docker/console):

```

00:27:37 not ok 686 parallel/test-net-listen-after-destroying-stdin

00:27... | 1.0 | Flaky test: parallel/test-net-listen-after-destroying-stdin - * **Version**: master

* **Platform**: arm

* **Subsystem**: process, net

[Executed on `test-requireio_williamkapke-debian10-arm64_pi3-1`](https://ci.nodejs.org/job/node-test-binary-arm-12+/3679/RUN_SUBSET=0,label=pi3-docker/console):

```

00:27:37 not... | process | flaky test parallel test net listen after destroying stdin version master platform arm subsystem process net not ok parallel test net listen after destroying stdin duration ms severity fail exitcode stack ... | 1 |

13,339 | 15,801,016,081 | IssuesEvent | 2021-04-03 02:29:50 | PyCQA/flake8 | https://api.github.com/repos/PyCQA/flake8 | closed | 5-6x speed regression on large files (flake8 2.6.2 vs flake8 3.0.4) | component:multiprocessing component:performance has attachment help wanted priority:high | In GitLab by @asottile on Oct 3, 2016, 14:21

If you need more information please ask! I think this report is pretty thorough and I've been able to reproduce. The fake file I create is not the actual file that is causing problems, but a representative file (the actual file is causing a nearly 20x slowdown)

## Version... | 1.0 | 5-6x speed regression on large files (flake8 2.6.2 vs flake8 3.0.4) - In GitLab by @asottile on Oct 3, 2016, 14:21

If you need more information please ask! I think this report is pretty thorough and I've been able to reproduce. The fake file I create is not the actual file that is causing problems, but a representati... | process | speed regression on large files vs in gitlab by asottile on oct if you need more information please ask i think this report is pretty thorough and i ve been able to reproduce the fake file i create is not the actual file that is causing problems but a representative file the act... | 1 |

1,268 | 3,798,739,825 | IssuesEvent | 2016-03-23 13:47:14 | DevExpress/testcafe-hammerhead | https://api.github.com/repos/DevExpress/testcafe-hammerhead | closed | window.FontFace is not overriden | !IMPORTANT! AREA: client COMPLEXITY: easy SYSTEM: URL processing TYPE: bug | Specification - https://drafts.csswg.org/css-font-loading/#FontFace-interface

Reproduced on https://badoo.com/ as script error in Console:

```javascript

Font from origin 'https://badoocdn.com' has been blocked from loading by Cross-Origin Resource Sharing policy: The 'Access-Control-Allow-Origin' header has a value ... | 1.0 | window.FontFace is not overriden - Specification - https://drafts.csswg.org/css-font-loading/#FontFace-interface

Reproduced on https://badoo.com/ as script error in Console:

```javascript

Font from origin 'https://badoocdn.com' has been blocked from loading by Cross-Origin Resource Sharing policy: The 'Access-Contro... | process | window fontface is not overriden specification reproduced on as script error in console javascript font from origin has been blocked from loading by cross origin resource sharing policy the access control allow origin header has a value that is not equal to the supplied origin origin is th... | 1 |

9,145 | 7,842,264,520 | IssuesEvent | 2018-06-18 22:37:39 | Seaal/Pug | https://api.github.com/repos/Seaal/Pug | closed | Add a core module | area: infrastructure effort: low priority: high refactoring | A core module is seen as best practice in the angular style guide.

Cross-cutting services should be included in this module. | 1.0 | Add a core module - A core module is seen as best practice in the angular style guide.

Cross-cutting services should be included in this module. | non_process | add a core module a core module is seen as best practice in the angular style guide cross cutting services should be included in this module | 0 |

133,192 | 18,843,935,032 | IssuesEvent | 2021-11-11 12:55:05 | Geonovum/KP-APIs | https://api.github.com/repos/Geonovum/KP-APIs | opened | API-17: Publishing language - Feedback Publieke Consultatie | API design rules (normatief) Consultatie | Original message from the Province of Zuid-Holland:

```

API-17: Publish documentation in Dutch unless there is existing documentation in English

See the remark on API-04. There are situations where Dutch is not desirable. In a development project you do not yet have English-language documentation, but that is ... | 1.0 | API-17: Publishing language - Feedback Publieke Consultatie - Original message from the Province of Zuid-Holland:

```

API-17: Publish documentation in Dutch unless there is existing documentation in English

See the remark on API-04. There are situations where Dutch is not desirable. In a development project ... | non_process | api publishing language feedback publieke consultatie original message from the province of zuid holland api publish documentation in dutch unless there is existing documentation in english see the remark on api there are situations where dutch is not desirable in a development project ... | 0 |

530 | 2,999,841,630 | IssuesEvent | 2015-07-23 21:08:51 | zhengj2007/BFO-test | https://api.github.com/repos/zhengj2007/BFO-test | opened | How to solve issue 31 pertaining to use of underscores in relations | imported Type-BFO2-Process | _From [mcour...@gmail.com](https://code.google.com/u/116795168307825520406/) on May 21, 2012 00:15:12_

BFO2 developers disagree as to whether relations labels should contain underscores or spaces as word separators, see https://code.google.com/p/bfo/issues/detail?id=31 . It is unclear how to solve this. One proposal i... | 1.0 | How to solve issue 31 pertaining to use of underscores in relations - _From [mcour...@gmail.com](https://code.google.com/u/116795168307825520406/) on May 21, 2012 00:15:12_

BFO2 developers disagree as to whether relations labels should contain underscores or spaces as word separators, see https://code.google.com/p/bfo... | process | how to solve issue pertaining to use of underscores in relations from on may developers disagree as to whether relations labels should contain underscores or spaces as word separators see it is unclear how to solve this one proposal is to poll the bfo community as one argument relies on s... | 1 |

45,028 | 9,666,271,857 | IssuesEvent | 2019-05-21 10:24:00 | atomist/sdm-core | https://api.github.com/repos/atomist/sdm-core | reopened | Code Inspection: npm audit on master | code-inspection | ### graphql-code-generator:>=0

- _(error)_ [Insecure Default Configuration](https://npmjs.com/advisories/834) _No fix is currently available. Consider using an alternative module until a fix is made available._

- `graphql-code-generator:0.16.1`:

- `@atomist/automation-client>graphql-code-generator`

### handle... | 1.0 | Code Inspection: npm audit on master - ### graphql-code-generator:>=0

- _(error)_ [Insecure Default Configuration](https://npmjs.com/advisories/834) _No fix is currently available. Consider using an alternative module until a fix is made available._

- `graphql-code-generator:0.16.1`:

- `@atomist/automation-cli... | non_process | code inspection npm audit on master graphql code generator error no fix is currently available consider using an alternative module until a fix is made available graphql code generator atomist automation client graphql code generator handlebars ... | 0 |

3,939 | 6,882,247,775 | IssuesEvent | 2017-11-21 02:46:10 | dotnet/corefx | https://api.github.com/repos/dotnet/corefx | closed | Linux Process.StartTime is in the future | area-System.Diagnostics.Process bug | TestStartTimeProperty failed on my laptop:

```

System.Diagnostics.Tests.ProcessThreadTests.TestStartTimeProperty [FAIL]

Assert.InRange() Failure

Range: (11/17/17 3:21:16 AM - 11/16/17 12:10:45 PM)

Actual: 11/17/17 3:21:17 AM

Stack Trace:

/home/tmds/repos/corefx/src/System.Dia... | 1.0 | Linux Process.StartTime is in the future - TestStartTimeProperty failed on my laptop:

```

System.Diagnostics.Tests.ProcessThreadTests.TestStartTimeProperty [FAIL]

Assert.InRange() Failure

Range: (11/17/17 3:21:16 AM - 11/16/17 12:10:45 PM)

Actual: 11/17/17 3:21:17 AM

Stack Trace:

... | process | linux process starttime is in the future teststarttimeproperty failed on my laptop system diagnostics tests processthreadtests teststarttimeproperty assert inrange failure range am pm actual am stack trace home tmds repos... | 1 |

17,372 | 23,197,832,834 | IssuesEvent | 2022-08-01 18:11:57 | vectordotdev/vector | https://api.github.com/repos/vectordotdev/vector | closed | Ability to drop old and future data | type: enhancement meta: idea have: nice domain: processing | Vector needs the ability to drop old and future data. This helps protect against errors that could result in a high number of downstream indexes. For example, if a date is incorrectly extracted this could result in many dates spanning many days. This would subsequently create many downstream indexes/partitions which wo... | 1.0 | Ability to drop old and future data - Vector needs the ability to drop old and future data. This helps protect against errors that could result in a high number of downstream indexes. For example, if a date is incorrectly extracted this could result in many dates spanning many days. This would subsequently create many ... | process | ability to drop old and future data vector needs the ability to drop old and future data this helps protect against errors that could result in a high number of downstream indexes for example if a date is incorrectly extracted this could result in many dates spanning many days this would subsequently create many ... | 1 |
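
The idea in the row above, dropping events whose timestamp is too old or too far in the future, can be sketched as a simple window check. This is a minimal sketch under stated assumptions, not Vector's implementation or configuration: the function name `within_window` and the default limits of one day back and ten minutes ahead are hypothetical values chosen for illustration.

```python
from datetime import datetime, timedelta, timezone


def within_window(
    timestamp: datetime,
    now: datetime,
    max_age: timedelta = timedelta(days=1),
    max_future: timedelta = timedelta(minutes=10),
) -> bool:
    """Return True if the event timestamp falls inside the accepted window.

    Events outside [now - max_age, now + max_future] would be dropped.
    """
    return now - max_age <= timestamp <= now + max_future


# Example: keep only the events inside the window.
now = datetime(2022, 8, 1, 18, 0, tzinfo=timezone.utc)
events = [
    now - timedelta(hours=2),    # recent, kept
    now - timedelta(days=400),   # badly parsed old date, dropped
    now + timedelta(days=30),    # far in the future, dropped
]
kept = [ts for ts in events if within_window(ts, now)]
```

Bounding the window this way limits how many date-based downstream indexes or partitions a single mis-extracted timestamp can create.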

8,635 | 11,786,481,734 | IssuesEvent | 2020-03-17 12:22:03 | threefoldtech/jumpscaleX_core | https://api.github.com/repos/threefoldtech/jumpscaleX_core | closed | readonly bcdb | process_wontfix type_bug | ```

JSX> j.servers.threebot.default.stop()

* stop: lapis

Mon 02 10:40:12 ata/peewee/peewee.py -3069 - execute_sql : EXCEPTION:

Operational

Mon 02 10:40:12 ata/peewee/peewee.py -3069 - execute_sql : EXCEPTION:

OperationalError('attempt to write a readonly da... | 1.0 | readonly bcdb - ```

JSX> j.servers.threebot.default.stop()

* stop: lapis

Mon 02 10:40:12 ata/peewee/peewee.py -3069 - execute_sql : EXCEPTION:

Operational

Mon 02 10:40:12 ata/peewee/peewee.py -3069 - execute_sql : EXCEPTION:

OperationalError('attempt to wri... | process | readonly bcdb jsx j servers threebot default stop stop lapis mon ata peewee peewee py execute sql exception operational mon ata peewee peewee py execute sql exception operationalerror attempt to write a readonly ... | 1 |

5,586 | 8,442,226,625 | IssuesEvent | 2018-10-18 12:41:30 | kiwicom/orbit-components | https://api.github.com/repos/kiwicom/orbit-components | closed | Textarea: provide default height | enhancement processing | It would be nice if the textarea component could take initial height as props. We currently need it on tequila tbh.

| 1.0 | Textarea: provide default height - It would be nice if the textarea component could take initial height as props. We currently need it on tequila tbh.

| process | textarea provide default height it would be nice if the textarea component could take initial height as props we currently need it on tequila tbh | 1 |

15,232 | 19,102,107,454 | IssuesEvent | 2021-11-30 00:20:53 | varabyte/kobweb | https://api.github.com/repos/varabyte/kobweb | closed | Remove server's reference to anyHost | process | The default kotr templates set you up with anyHost but recommend disabling it. Now that we're getting close to hosting an actual site, we should find out what we're supposed to put there. | 1.0 | Remove server's reference to anyHost - The default kotr templates set you up with anyHost but recommend disabling it. Now that we're getting close to hosting an actual site, we should find out what we're supposed to put there. | process | remove server s reference to anyhost the default kotr templates set you up with anyhost but recommend disabling it now that we re getting close to hosting an actual site we should find out what we re supposed to put there | 1 |

10,357 | 13,181,524,544 | IssuesEvent | 2020-08-12 14:27:05 | bluePlatinum/pitCrewTelemetry | https://api.github.com/repos/bluePlatinum/pitCrewTelemetry | opened | add testing capabilities | testing/process | add testing capabilities and include them into the CI (Travis CI) process | 1.0 | add testing capabilities - add testing capabilities and include them into the CI (Travis CI) process | process | add testing capabilities add testing capabilities and include them into the ci travis ci process | 1 |

282,452 | 21,315,490,445 | IssuesEvent | 2022-04-16 07:39:12 | zhongfu/pe | https://api.github.com/repos/zhongfu/pe | opened | Inconsistent formatting in command summary | type.DocumentationBug severity.Low | The "Command Format" section specifies that words in `UPPER_CASE` can be substituted for user-specified parameters; however, the command summary uses the lowercase `keyword` and `more keywords` for what is actually supposed to be user-specified parameters.