Unnamed: 0 int64 0 832k | id float64 2.49B 32.1B | type stringclasses 1 value | created_at stringlengths 19 19 | repo stringlengths 7 112 | repo_url stringlengths 36 141 | action stringclasses 3 values | title stringlengths 1 744 | labels stringlengths 4 574 | body stringlengths 9 211k | index stringclasses 10 values | text_combine stringlengths 96 211k | label stringclasses 2 values | text stringlengths 96 188k | binary_label int64 0 1 |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

22,454 | 31,224,994,714 | IssuesEvent | 2023-08-19 01:33:37 | h4sh5/npm-auto-scanner | https://api.github.com/repos/h4sh5/npm-auto-scanner | opened | school-task-tester 1.0.15 has 2 guarddog issues | npm-install-script npm-silent-process-execution | ```{"npm-install-script":[{"code":" \"postinstall\": \"node preinstall.js\",","location":"package/package.json:11","message":"The package.json has a script automatically running when the package is installed"}],"npm-silent-process-execution":[{"code":"const child = spawn('node', ['index.js'], {\n detached: true,\n ... | 1.0 | school-task-tester 1.0.15 has 2 guarddog issues - ```{"npm-install-script":[{"code":" \"postinstall\": \"node preinstall.js\",","location":"package/package.json:11","message":"The package.json has a script automatically running when the package is installed"}],"npm-silent-process-execution":[{"code":"const child = s... | process | school task tester has guarddog issues npm install script npm silent process execution n detached true n stdio ignore n location package preinstall js message this package is silently executing another executable | 1 |

6,607 | 9,693,108,283 | IssuesEvent | 2019-05-24 15:17:21 | openopps/openopps-platform | https://api.github.com/repos/openopps/openopps-platform | closed | Community - who can view applicants | Apply Process Approved Requirements Ready State Dept. | Who: Student internship users

What: ability to view applicants

Why: in order to limit who can see who has applied to the internship

Acceptance Criteria:

1) Applicants will not see who else has applied to an opportunity - hide right rail on internship opportunity

2) Bureau Internship coordinators will not be ab... | 1.0 | Community - who can view applicants - Who: Student internship users

What: ability to view applicants

Why: in order to limit who can see who has applied to the internship

Acceptance Criteria:

1) Applicants will not see who else has applied to an opportunity - hide right rail on internship opportunity

2) Bureau ... | process | community who can view applicants who student internship users what ability to view applicants why in order to limit who can see who has applied to the internship acceptance criteria applicants will not see who else has applied to an opportunity hide right rail on internship opportunity bureau ... | 1 |

279,794 | 21,184,367,034 | IssuesEvent | 2022-04-08 11:08:28 | CarmenMariaMP/UStudy | https://api.github.com/repos/CarmenMariaMP/UStudy | closed | Compilation of adverse situations | documentation prio: low | ADVERSE SITUATIONS (2 OPTIONS)

1. SITUATIONS SINCE THE START OF DEVELOPMENT

Adverse situations must include: status, lessons learned, proposed actions, the goal to achieve and whether it has been achieved, what other actions are available in case the main one does not work, how we check whether the action works, and how much time is ... | 1.0 | Compilation of adverse situations - ADVERSE SITUATIONS (2 OPTIONS)

1. SITUATIONS SINCE THE START OF DEVELOPMENT

Adverse situations must include: status, lessons learned, proposed actions, the goal to achieve and whether it has been achieved, what other actions are available in case the main one does not work, how we check ... | non_process | compilation of adverse situations adverse situations options situations since the start of development adverse situations must include status lessons learned proposed actions the goal to achieve and whether it has been achieved what other actions are available in case the main one does not work how we check ... | 0 |

279,920 | 21,189,400,565 | IssuesEvent | 2022-04-08 15:43:22 | borgbackup/borg | https://api.github.com/repos/borgbackup/borg | closed | borg wants to re-add everything after relocating repo | question documentation | ## Have you checked borgbackup docs, FAQ, and open Github issues?

Yes

## Is this a BUG / ISSUE report or a QUESTION?

bug (I think, or maybe I did too many weird things at once, or hopefully you can point out a mistake)

## System information. For client/server mode post info for both machines.

client:

Linu... | 1.0 | borg wants to re-add everything after relocating repo - ## Have you checked borgbackup docs, FAQ, and open Github issues?

Yes

## Is this a BUG / ISSUE report or a QUESTION?

bug (I think, or maybe I did too many weird things at once, or hopefully you can point out a mistake)

## System information. For client... | non_process | borg wants to re add everything after relocating repo have you checked borgbackup docs faq and open github issues yes is this a bug issue report or a question bug i think or maybe i did too many weird things at once or hopefully you can point out a mistake system information for client... | 0 |

15,027 | 18,740,545,908 | IssuesEvent | 2021-11-04 13:08:51 | qgis/QGIS | https://api.github.com/repos/qgis/QGIS | reopened | Computed Variables for Algorithm Outputs are NULL in Graphical Modeler | Processing Bug Modeller | ### What is the bug or the crash?

I have tried to use the computed variables for an algorithm output (e.g. `@Reproject_layer_OUTPUT_minx `) in a `Pre-calculated value` field in a subsequent algorithm. All but those coming from the input layer are always NULL.

### Steps to reproduce the issue

1. Start Graphical... | 1.0 | Computed Variables for Algorithm Outputs are NULL in Graphical Modeler - ### What is the bug or the crash?

I have tried to use the computed variables for an algorithm output (e.g. `@Reproject_layer_OUTPUT_minx `) in a `Pre-calculated value` field in a subsequent algorithm. All but those coming from the input layer a... | process | computed variables for algorithm outputs are null in graphical modeler what is the bug or the crash i have tried to use the computed variables for an algorithm output e g reproject layer output minx in a pre calculated value field in a subsequent algorithm all but those coming from the input layer a... | 1 |

14,509 | 17,605,327,901 | IssuesEvent | 2021-08-17 16:19:36 | openservicemesh/osm-docs | https://api.github.com/repos/openservicemesh/osm-docs | closed | mirror the v0.8 release docs | process | Add a v0.8 mirror of the docs as they existed in https://github.com/openservicemesh/osm/tree/release-v0.8/docs (available in a drop-down and not updated). | 1.0 | mirror the v0.8 release docs - Add a v0.8 mirror of the docs as they existed in https://github.com/openservicemesh/osm/tree/release-v0.8/docs (available in a drop-down and not updated). | process | mirror the release docs add a mirror of the docs as they existed in available in a drop down and not updated | 1 |

92,310 | 10,741,046,683 | IssuesEvent | 2019-10-29 19:25:06 | eriq-augustine/test-issue-copy | https://api.github.com/repos/eriq-augustine/test-issue-copy | opened | [CLOSED] External Functions with a Single Argument | Documentation - Question Type - Bug | <a href="https://github.com/eriq-augustine"><img src="https://avatars0.githubusercontent.com/u/337857?v=4" align="left" width="96" height="96" hspace="10"></img></a> **Issue by [eriq-augustine](https://github.com/eriq-augustine)**

_Saturday Nov 12, 2016 at 19:28 GMT_

_Originally opened as https://github.com/eriq-august... | 1.0 | [CLOSED] External Functions with a Single Argument - <a href="https://github.com/eriq-augustine"><img src="https://avatars0.githubusercontent.com/u/337857?v=4" align="left" width="96" height="96" hspace="10"></img></a> **Issue by [eriq-augustine](https://github.com/eriq-augustine)**

_Saturday Nov 12, 2016 at 19:28 GMT_... | non_process | external functions with a single argument issue by saturday nov at gmt originally opened as as i recall there is an issue with external functions that only take a single argument check to see if this is true | 0 |

11,281 | 14,078,588,830 | IssuesEvent | 2020-11-04 13:46:57 | alphagov/govuk-design-system | https://api.github.com/repos/alphagov/govuk-design-system | opened | Run through 'tech & ops health check' | process | ## What

Run through the 'tech & ops health check' checklist - 'a handy list of good practices for a team to self evaluate against'.

The health check covers the technical and operational aspects of a service only. It does not cover user-centered design or agile ways of working.

## Why

The tech & ops health c... | 1.0 | Run through 'tech & ops health check' - ## What

Run through the 'tech & ops health check' checklist - 'a handy list of good practices for a team to self evaluate against'.

The health check covers the technical and operational aspects of a service only. It does not cover user-centered design or agile ways of worki... | process | run through tech ops health check what run through the tech ops health check checklist a handy list of good practices for a team to self evaluate against the health check covers the technical and operational aspects of a service only it does not cover user centered design or agile ways of worki... | 1 |

25,137 | 12,500,116,248 | IssuesEvent | 2020-06-01 21:31:40 | flutter/flutter | https://api.github.com/repos/flutter/flutter | opened | [cocoon] Create a handler that pushes benchmark to Metrics Center | severe: performance team: infra | Per discussion with @liyuqian we'd like to push the devicelab benchmark data to metrics center, which transfers the data to skia perf later. So that skia perf can be utilized to view the devicelab benchmark results.

We will need to integrate https://github.com/liyuqian/metrics_center with Cocoon backend. And add a c... | True | [cocoon] Create a handler that pushes benchmark to Metrics Center - Per discussion with @liyuqian we'd like to push the devicelab benchmark data to metrics center, which transfers the data to skia perf later. So that skia perf can be utilized to view the devicelab benchmark results.

We will need to integrate https:/... | non_process | create a handler that pushes benchmark to metrics center per discussion with liyuqian we d like to push the devicelab benchmark data to metrics center which transfers the data to skia perf later so that skia perf can be utilized to view the devicelab benchmark results we will need to integrate with cocoon ... | 0 |

11,707 | 14,545,541,156 | IssuesEvent | 2020-12-15 19:48:36 | MicrosoftDocs/azure-devops-docs | https://api.github.com/repos/MicrosoftDocs/azure-devops-docs | closed | Define artifact from feed in resources | Pri2 devops-cicd-process/tech devops/prod support-request | Hello,

I am switching from using build artifacts to publishing them as NuGet packages in an artifacts feed. I have read in various places that this is also a recommendation and good practice.

However, I wanted to define it in YAML pipeline as `resources packages`, but I guess it is only relevant to GitHub artifacts.

... | 1.0 | Define artifact from feed in resources - Hello,

I am switching from using build artifacts to publishing them as NuGet packages in an artifacts feed. I have read in various places that this is also a recommendation and good practice.

However, I wanted to define it in YAML pipeline as `resources packages`, but I guess ... | process | define artifact from feed in resources hello i am switching from using builds artifacts to publishing them as nuget packages in artifacts feed i have read in various places that it is also a recommendation and good practice however i wanted to define it in yaml pipeline as resources packages but i guess ... | 1 |

17,162 | 22,739,148,923 | IssuesEvent | 2022-07-07 00:44:42 | MPMG-DCC-UFMG/C01 | https://api.github.com/repos/MPMG-DCC-UFMG/C01 | closed | Error code 1006 when collecting some pages | [1] Bug [2] Baixa Prioridade [0] Desenvolvimento [3] Processamento Dinâmico | # Expected Behavior

Perform the collections without any errors.

# Current Behavior

The collections show the error code = 1006. In most cases this error does not prevent the collector from working.

Here is an example log for this problem:

`2021-08-03 19:55:04 [websockets.protocol] DEBUG: client - event = eof_receiv... | 1.0 | Error code 1006 when collecting some pages - # Expected Behavior

Perform the collections without any errors.

# Current Behavior

The collections show the error code = 1006. In most cases this error does not prevent the collector from working.

Here is an example log for this problem:

`2021-08-03 19:55:04 [websocket... | process | error code when collecting some pages expected behavior perform the collections without any errors current behavior the collections show the error code in most cases this error does not prevent the collector from working here is an example log for this problem debug client event ... | 1 |

138,434 | 20,518,376,687 | IssuesEvent | 2022-03-01 14:09:34 | sul-dlss/argo | https://api.github.com/repos/sul-dlss/argo | closed | (new Argo interface) Show the default object rights on the APO page | design needed | The default object rights should be displayed on the APO page, per Astrid's design.

<img width="635" alt="Screen Shot 2022-01-28 at 12 01 09 AM" src="https://user-images.githubusercontent.com/8975583/151509501-e164fbed-e6d2-4c5d-b0e3-43414cb00977.png">

This may come in a later phase of the Argo UI, depending on p... | 1.0 | (new Argo interface) Show the default object rights on the APO page - The default object rights should be displayed on the APO page, per Astrid's design.

<img width="635" alt="Screen Shot 2022-01-28 at 12 01 09 AM" src="https://user-images.githubusercontent.com/8975583/151509501-e164fbed-e6d2-4c5d-b0e3-43414cb00977.... | non_process | new argo interface show the default object rights on the apo page the default object rights should be displayed on the apo page per astrid s design img width alt screen shot at am src this may come in a later phase of the argo ui depending on prioritization | 0 |

17,375 | 23,199,252,116 | IssuesEvent | 2022-08-01 19:37:39 | googleapis/google-cloud-go | https://api.github.com/repos/googleapis/google-cloud-go | closed | all: add static analysis presubmit | type: process | When I recently re-imported the storage module into third_party, static analysis presubmits there caught a number of minor bugs such as unused err values, use of deprecated types, and nil dereferences.

Not all of these checks are available in OSS world, but we should at least consider running [staticcheck](https://s... | 1.0 | all: add static analysis presubmit - When I recently re-imported the storage module into third_party, static analysis presubmits there caught a number of minor bugs such as unused err values, use of deprecated types, and nil dereferences.

Not all of these checks are available in OSS world, but we should at least con... | process | all add static analysis presubmit when i recently re imported the storage module into third party static analysis presubmits there caught a number of minor bugs such as unused err values use of deprecated types and nil dereferences not all of these checks are available in oss world but we should at least con... | 1 |

278,833 | 30,702,409,869 | IssuesEvent | 2023-07-27 01:27:46 | yunexMasters/jdk | https://api.github.com/repos/yunexMasters/jdk | closed | CVE-2023-2004 (Medium) detected in freetypefreetype-2.10.4 - autoclosed | Mend: dependency security vulnerability | ## CVE-2023-2004 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>freetypefreetype-2.10.4</b></p></summary>

<p>

<p>A free, high-quality, and portable font engine</p>

<p>Library home p... | True | CVE-2023-2004 (Medium) detected in freetypefreetype-2.10.4 - autoclosed - ## CVE-2023-2004 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>freetypefreetype-2.10.4</b></p></summary>

<p... | non_process | cve medium detected in freetypefreetype autoclosed cve medium severity vulnerability vulnerable library freetypefreetype a free high quality and portable font engine library home page a href found in base branch master vulnerable source files ... | 0 |

19,241 | 25,402,217,654 | IssuesEvent | 2022-11-22 12:58:28 | FOLIO-FSE/folio_migration_tools | https://api.github.com/repos/FOLIO-FSE/folio_migration_tools | closed | Rewrite the extra data process to not rely on logging | simplify_migration_process | We rely on logging in order to create the additional objects that need to be created as part of a transformation. Since people using the tools as a library instead of running them from the command line will use other types of logging, these objects will not be created as expected in these cases.

Examples of objects ... | 1.0 | Rewrite the extra data process to not rely on logging - We rely on logging in order to create the additional objects that need to be created as part of a transformation. Since people using the tools as a library instead of running them from the command line will use other types of logging, these objects will not be cre... | process | rewrite the extra data process to not rely on logging we rely on logging in order to create the additional objects that need to be created as part of a transformation since people using the tools as a library instead of running them from the command line will use other types of logging these objects will not be cre... | 1 |

315,654 | 27,092,800,908 | IssuesEvent | 2023-02-14 22:43:50 | eclipse-openj9/openj9 | https://api.github.com/repos/eclipse-openj9/openj9 | closed | jdk20 OpenJDK jdk/internal/misc/ThreadFlock/WithScopedValue UnsatisfiedLinkError Thread.scopedValueCache | comp:vm test failure project:loom jdk20 | https://openj9-jenkins.osuosl.org/job/Test_openjdk20_j9_sanity.openjdk_aarch64_linux_OpenJDK20/1/

All platforms, not platform specific.

jdk_lang

jdk/internal/misc/ThreadFlock/WithScopedValue.java

```

10:30:29 STDOUT:

10:30:29 test WithScopedValue.testInheritsScopedValue(java.util.concurrent.Executors$DefaultThr... | 1.0 | jdk20 OpenJDK jdk/internal/misc/ThreadFlock/WithScopedValue UnsatisfiedLinkError Thread.scopedValueCache - https://openj9-jenkins.osuosl.org/job/Test_openjdk20_j9_sanity.openjdk_aarch64_linux_OpenJDK20/1/

All platforms, not platform specific.

jdk_lang

jdk/internal/misc/ThreadFlock/WithScopedValue.java

```

10:30:29... | non_process | openjdk jdk internal misc threadflock withscopedvalue unsatisfiedlinkerror thread scopedvaluecache all platforms not platform specific jdk lang jdk internal misc threadflock withscopedvalue java stdout test withscopedvalue testinheritsscopedvalue java util concurrent executors defaultth... | 0 |

253,398 | 19,100,267,713 | IssuesEvent | 2021-11-29 21:34:29 | SandraScherer/EntertainmentInfothek | https://api.github.com/repos/SandraScherer/EntertainmentInfothek | opened | Add runtime information to series | documentation enhancement database program | - [ ] Add table Series_Runtime to database

- [ ] Add/adapt class Runtime in EntertainmentDB.dll

- [ ] Add tests to EntertainmentDB.Tests

- [ ] Add/adapt ContentCreator classes in WikiPageCreator

- [ ] Add tests to WikiPageCreator.Tests

- [ ] Update documentation

- [ ] EntertainmentInfothek_Database.vpp

- [... | 1.0 | Add runtime information to series - - [ ] Add table Series_Runtime to database

- [ ] Add/adapt class Runtime in EntertainmentDB.dll

- [ ] Add tests to EntertainmentDB.Tests

- [ ] Add/adapt ContentCreator classes in WikiPageCreator

- [ ] Add tests to WikiPageCreator.Tests

- [ ] Update documentation

- [ ] Entert... | non_process | add runtime information to series add table series runtime to database add adapt class runtime in entertainmentdb dll add tests to entertainmentdb tests add adapt contentcreator classes in wikipagecreator add tests to wikipagecreator tests update documentation entertainmentinfothe... | 0 |

17,687 | 24,382,651,517 | IssuesEvent | 2022-10-04 09:08:06 | Noaaan/MythicMetals | https://api.github.com/repos/Noaaan/MythicMetals | closed | [1.19.2] (0.16.1) Possible incompatibility with Terralith (Feature order cycle) | bug compatibility | Hello o/

im struggling to add Mythic Metals to my modlist because it crashes on world creation. Using the latest 1.19.2 versions of this mod and Alloy Forgery. Modlist works fine without Mythic Metals.

<details><summary>Here's the crash report</summary>

<p>

```

---- Minecraft Crash Report ----

// Surpri... | True | [1.19.2] (0.16.1) Possible incompatibility with Terralith (Feature order cycle) - Hello o/

im struggling to add Mythic Metals to my modlist because it crashes on world creation. Using the latest 1.19.2 versions of this mod and Alloy Forgery. Modlist works fine without Mythic Metals.

<details><summary>Here's the c... | non_process | possible incompatibility with terralith feature order cycle hello o im struggling to add mythic metals to my modlist because it crashes on world creation using the latest versions of this mod and alloy forgery modlist works fine without mythic metals here s the crash report ... | 0 |

5,582 | 8,441,959,213 | IssuesEvent | 2018-10-18 11:53:24 | kiwicom/orbit-components | https://api.github.com/repos/kiwicom/orbit-components | closed | Label in Checkbox component should be optional | enhancement processing | **Is your feature request related to a problem? Please describe.**

We have a checkbox with a link to our terms and condition.

**Describe the solution you'd like**

Label for Checkbox component should be optional.

**Describe alternatives you've considered**

- Empty string in label :]

**Additional context**

I... | 1.0 | Label in Checkbox component should be optional - **Is your feature request related to a problem? Please describe.**

We have a checkbox with a link to our terms and condition.

**Describe the solution you'd like**

Label for Checkbox component should be optional.

**Describe alternatives you've considered**

- Empt... | process | label in checkbox component should be optional is your feature request related to a problem please describe we have a checkbox with a link to our terms and condition describe the solution you d like label for checkbox component should be optional describe alternatives you ve considered empt... | 1 |

301,811 | 22,776,260,146 | IssuesEvent | 2022-07-08 14:44:04 | towhee-io/towhee | https://api.github.com/repos/towhee-io/towhee | closed | [Documentation]: | kind/documentation | ### Is there an existing issue for this?

- [X] I have searched the existing issues

### What kind of documentation would you like added or changed?

Hi, can you please provide an example on how to run this:

```

with towhee.api['file']() as api:

app_insert = (

api.image_load['file', 'img']()

... | 1.0 | [Documentation]: - ### Is there an existing issue for this?

- [X] I have searched the existing issues

### What kind of documentation would you like added or changed?

Hi, can you please provide an example on how to run this:

```

with towhee.api['file']() as api:

app_insert = (

api.image_load['file... | non_process | is there an existing issue for this i have searched the existing issues what kind of documentation would you like added or changed hi can you please provide an example on how to run this with towhee api as api app insert api image load save image dir... | 0 |

180,349 | 13,930,114,114 | IssuesEvent | 2020-10-22 01:32:47 | OpenMined/PySyft | https://api.github.com/repos/OpenMined/PySyft | closed | Add torch.Tensor.copy_ to allowlist and test suite | Priority: 2 - High :cold_sweat: Severity: 3 - Medium :unamused: Status: Available :wave: Type: New Feature :heavy_plus_sign: Type: Testing :test_tube: |

# Description

This issue is a part of Syft 0.3.0 Epic 2: https://github.com/OpenMined/PySyft/issues/3696

In this issue, you will be adding support for remote execution of the torch.Tensor.copy_

method or property. This might be a really small project (literally a one-liner) or

it might require adding significant fun... | 2.0 | Add torch.Tensor.copy_ to allowlist and test suite -

# Description

This issue is a part of Syft 0.3.0 Epic 2: https://github.com/OpenMined/PySyft/issues/3696

In this issue, you will be adding support for remote execution of the torch.Tensor.copy_

method or property. This might be a really small project (literally a ... | non_process | add torch tensor copy to allowlist and test suite description this issue is a part of syft epic in this issue you will be adding support for remote execution of the torch tensor copy method or property this might be a really small project literally a one liner or it might require adding signific... | 0 |

16,368 | 21,056,725,977 | IssuesEvent | 2022-04-01 04:38:39 | fei-protocol/fei-protocol-core | https://api.github.com/repos/fei-protocol/fei-protocol-core | closed | Git Flow | devops/process | The Problem: Our git flow is not very analogous to what actually happens, especially around dao proposals, and often causes 3-4 day periods where we expect integration tests to fail. Also, we often merge code to our develop/working branch that never hits mainnet.

Let's discuss potential git flows that are more suite... | 1.0 | Git Flow - The Problem: Our git flow is not very analogous to what actually happens, especially around dao proposals, and often causes 3-4 day periods where we expect integration tests to fail. Also, we often merge code to our develop/working branch that never hits mainnet.

Let's discuss potential git flows that are... | process | git flow the problem our git flow is not very analogous to what actually happens especially around dao proposals and often causes day periods where we expect integration tests to fail also we often merge code to our develop working branch that never hits mainnet let s discuss potential git flows that are... | 1 |

18,983 | 24,975,152,912 | IssuesEvent | 2022-11-02 07:00:25 | geneontology/go-ontology | https://api.github.com/repos/geneontology/go-ontology | closed | MF in BP ontology | multi-species process | GO:0052054

negative regulation by symbiont of host peptidase activity

Any process in which an organism stops, prevents, or reduces the frequency, rate or extent of host protease activity, the catalysis of the hydrolysis of peptide bonds in a protein. The host is defined as the larger of the organisms involved in a ... | 1.0 | MF in BP ontology - GO:0052054

negative regulation by symbiont of host peptidase activity

Any process in which an organism stops, prevents, or reduces the frequency, rate or extent of host protease activity, the catalysis of the hydrolysis of peptide bonds in a protein. The host is defined as the larger of the orga... | process | mf in bp ontology go negative regulation by symbiont of host peptidase activity any process in which an organism stops prevents or reduces the frequency rate or extent of host protease activity the catalysis of the hydrolysis of peptide bonds in a protein the host is defined as the larger of the organisms ... | 1 |

111,711 | 14,138,883,750 | IssuesEvent | 2020-11-10 09:05:09 | milanbargiel/kulturgenerator | https://api.github.com/repos/milanbargiel/kulturgenerator | closed | [App] Finalize content of info page | wait for design | ### Requirements

- Text on info & submission page https://staging.kulturgenerator.org/about is final

- It has been decided, which statistics are to be presented (e.g. Time since existence, number of artworks, number of sold artworks

- Statistics are based on real values from backend

- The layout of the info page lo... | 1.0 | [App] Finalize content of info page - ### Requirements

- Text on info & submission page https://staging.kulturgenerator.org/about is final

- It has been decided, which statistics are to be presented (e.g. Time since existence, number of artworks, number of sold artworks

- Statistics are based on real values from bac... | non_process | finalize content of info page requirements text on info submission page is final it has been decided which statistics are to be presented e g time since existence number of artworks number of sold artworks statistics are based on real values from backend the layout of the info page looks li... | 0 |

14,285 | 17,260,784,111 | IssuesEvent | 2021-07-22 07:17:29 | GoogleCloudPlatform/fda-mystudies | https://api.github.com/repos/GoogleCloudPlatform/fda-mystudies | closed | [Android] User should be redirected to Password help screen for the following scenario | Android Bug P2 Process: Fixed Process: Tested QA Process: Tested dev | **Steps**

1. Install mobile app in the device

2. Open the app

3. Click on Sign up

4. Enter all the required field and click on submit

5. After navigating to the verification step, without entering the verification code kill the app

6. Open the app

7. Click on forgot password

8. Enter a valid email and click on ... | 3.0 | [Android] User should be redirected to Password help screen for the following scenario - **Steps**

1. Install mobile app in the device

2. Open the app

3. Click on Sign up

4. Enter all the required field and click on submit

5. After navigating to the verification step, without entering the verification code kill th... | process | user should be redirected to password help screen for the following scenario steps install mobile app in the device open the app click on sign up enter all the required field and click on submit after navigating to the verification step without entering the verification code kill the app ... | 1 |

11,770 | 14,600,141,990 | IssuesEvent | 2020-12-21 06:10:27 | cypress-io/cypress-documentation | https://api.github.com/repos/cypress-io/cypress-documentation | closed | Speed up Cypress tests by splitting the dominant spec | process: ci | We have one spec that dominates the duration of the run.

<img width="1091" alt="Screen Shot 2020-12-15 at 10 39 42 AM" src="https://user-images.githubusercontent.com/2212006/102236742-da7a7200-3ec1-11eb-8ddd-3fcc4f4fa7ff.png">

We should split this spec to speed things up. | 1.0 | Speed up Cypress tests by splitting the dominant spec - We have one spec that dominates the duration of the run.

<img width="1091" alt="Screen Shot 2020-12-15 at 10 39 42 AM" src="https://user-images.githubusercontent.com/2212006/102236742-da7a7200-3ec1-11eb-8ddd-3fcc4f4fa7ff.png">

We should split this spec to sp... | process | speed up cypress tests by splitting the dominant spec we have one spec that dominates the duration of the run img width alt screen shot at am src we should split this spec to speed things up | 1 |

6,470 | 9,546,700,656 | IssuesEvent | 2019-05-01 20:43:31 | openopps/openopps-platform | https://api.github.com/repos/openopps/openopps-platform | closed | Department of State: Experience | Apply Process Approved Requirements Ready State Dept. | Who: Student Applicant

What: Experience and references page

Why: As a student applicant I want to provide my experience in my application

A/C

- The information will be populated from the users USAJOBS profile

- "All fields are required unless otherwise noted" (right margin)

- There will be a header: Experience ... | 1.0 | Department of State: Experience - Who: Student Applicant

What: Experience and references page

Why: As a student applicant I want to provide my experience in my application

A/C

- The information will be populated from the users USAJOBS profile

- "All fields are required unless otherwise noted" (right margin)

- T... | process | department of state experience who student applicant what experience and references page why as a student applicant i want to provide my experience in my application a c the information will be populated from the users usajobs profile all fields are required unless otherwise noted right margin t... | 1 |

229,079 | 25,288,921,589 | IssuesEvent | 2022-11-16 21:55:21 | github/roadmap | https://api.github.com/repos/github/roadmap | closed | Enterprise-level security overview (server beta) | beta github advanced security security & compliance server GHES 3.5 | ### Summary

Security overview aggregates security and compliance results. This data is already visible at the organization level - the next step is to make it available at the [enterprise level](https://docs.github.com/en/enterprise-server@3.3/admin/overview/about-enterprise-accounts).

In its initial (beta) relea... | True | Enterprise-level security overview (server beta) - ### Summary

Security overview aggregates security and compliance results. This data is already visible at the organization level - the next step is to make it available at the [enterprise level](https://docs.github.com/en/enterprise-server@3.3/admin/overview/about-e... | non_process | enterprise level security overview server beta summary security overview aggregates security and compliance results this data is already visible at the organization level the next step is to make it available at the in its initial beta release the enterprise level security overview will include a... | 0 |

654,032 | 21,634,599,523 | IssuesEvent | 2022-05-05 13:12:04 | gama-platform/gama | https://api.github.com/repos/gama-platform/gama | closed | No display on Wayland backend (like on Ubuntu 22.04) | 😱 Bug OS Linux 🖥 Display OpenGL Priority High 📺 Display Java2D V. 1.8.2 | **Describe the bug**

The new release of Ubuntu (22.04) switched the default window manager from X11 to Wayland.

Unfortunately, this completely changes all the low-level graphical logic, and:

- Java2D displays don't work

- **Describe the bug**

The new release of Ubuntu (22.04) switched the default window manager from X11 to Wayland.

Unfortunately, this completely changes all the low-level graphical logic, and:

- Java2D displays don't work

and got the following warning:

```/mnt/ufs18/rs-004/edgerpat_lab/Scotty/TE_Density/transposon/gene_data.py:112: PerformanceWarning: your performance may suffer as PyTables will pickle object types that it cannot map directly to c-typ... | 1.0 | Pytables Pickle warning for gene data - Copied from PR #117

I ran the Arabidopsis genome set (the same one you did above) and got the following warning:

```/mnt/ufs18/rs-004/edgerpat_lab/Scotty/TE_Density/transposon/gene_data.py:112: PerformanceWarning: your performance may suffer as PyTables will pickle object ty... | non_process | pytables pickle warning for gene data copied from pr i ran the arabidopsis genome set the same one you did above and got the following warning mnt rs edgerpat lab scotty te density transposon gene data py performancewarning your performance may suffer as pytables will pickle object types that i... | 0 |

406,184 | 27,555,002,288 | IssuesEvent | 2023-03-07 17:16:30 | cweagans/composer-patches | https://api.github.com/repos/cweagans/composer-patches | closed | New documentation website is missing the ignore-patches | documentation | ### Verification

- [X] I have searched existing issues _and_ discussions for my problem.

### Which file do you have feedback about? (leave blank if not applicable)

_No response_

### Feedback

I was searching for the documentation about ignoring patches but was presented with the new and great looking new docs: http... | 1.0 | New documentation website is missing the ignore-patches - ### Verification

- [X] I have searched existing issues _and_ discussions for my problem.

### Which file do you have feedback about? (leave blank if not applicable)

_No response_

### Feedback

I was searching for the documentation about ignoring patches but w... | non_process | new documentation website is missing the ignore patches verification i have searched existing issues and discussions for my problem which file do you have feedback about leave blank if not applicable no response feedback i was searching for the documentation about ignoring patches but was... | 0 |

18,753 | 24,656,578,554 | IssuesEvent | 2022-10-18 00:30:20 | openxla/stablehlo | https://api.github.com/repos/openxla/stablehlo | opened | Automatically generate status.md | Process | As discussed in #331, it would be useful to be able to automatically generate status.md to avoid it diverging from the actual status of StableHLO. The comments on the linked pull request summarize the challenges and propose some ideas for how to go about this. | 1.0 | Automatically generate status.md - As discussed in #331, it would be useful to be able to automatically generate status.md to avoid it diverging from the actual status of StableHLO. The comments on the linked pull request summarize the challenges and propose some ideas for how to go about this. | process | automatically generate status md as discussed in it would be useful to be able to automatically generate status md to avoid it diverging from the actual status of stablehlo the comments on the linked pull request summarize the challenges and propose some ideas for how to go about this | 1 |

286,361 | 31,561,149,682 | IssuesEvent | 2023-09-03 09:14:51 | SovereignCloudStack/issues | https://api.github.com/repos/SovereignCloudStack/issues | opened | IaaS Internal Pentesting (Gray-Box) | IaaS security | Assessing the security of internal resources and components hosted within a SCS testbed deployment. This will be done from a Gray-box perspective, which involves a mix of knowledge about the environment, similar to internal personnel, combined with external information that an attacker might gather.

(Related to #391)

... | True | IaaS Internal Pentesting (Gray-Box) - Assessing the security of internal resources and components hosted within a SCS testbed deployment. This will be done from a Gray-box perspective, which involves a mix of knowledge about the environment, similar to internal personnel, combined with external information that an atta... | non_process | iaas internal pentesting gray box assessing the security of internal resources and components hosted within a scs testbed deployment this will be done from a gray box perspective which involves a mix of knowledge about the environment similar to internal personnel combined with external information that an atta... | 0 |

387,719 | 26,734,747,862 | IssuesEvent | 2023-01-30 08:35:53 | mobxjs/mobx | https://api.github.com/repos/mobxjs/mobx | closed | observable.set triggers a refresh whenever any value in the set changes, even if that value was not accessed in the observer | ❔ question 📖 documentation | **Intended outcome:**

I am using observable.set with many often-changing values. I also have many small observers observing the presence of single values in that set.

I would expect each observer to only refresh if the value it is observing is changing, e.g. if `observable.has("mainValue")` switches from false to... | 1.0 | observable.set triggers a refresh whenever any value in the set changes, even if that value was not accessed in the observer - **Intended outcome:**

I am using observable.set with many often-changing values. I also have many small observers observing the presence of single values in that set.

I would expect each ... | non_process | observable set triggers a refresh whenever any value in the set changes even if that value was not accessed in the observer intended outcome i am using observable set with many often changing values i also have many small observers observing the presence of single values in that set i would expect each ... | 0 |

34,449 | 6,334,903,979 | IssuesEvent | 2017-07-26 17:41:20 | sinonjs/sinon | https://api.github.com/repos/sinonjs/sinon | closed | Documentation: useFakeTimers options | Documentation Help wanted Needs investigation | > the documentation for useFakeTimers says that you can list functions to fake but doesn't explain what that would mean if you do vs don't

Improvements should be made to https://github.com/sinonjs/sinon/blob/master/docs/release-source/release/fake-timers.md, and all the versions for 2.x. | 1.0 | Documentation: useFakeTimers options - > the documentation for useFakeTimers says that you can list functions to fake but doesn't explain what that would mean if you do vs don't

Improvements should be made to https://github.com/sinonjs/sinon/blob/master/docs/release-source/release/fake-timers.md, and all the version... | non_process | documentation usefaketimers options the documentation for usefaketimers says that you can list functions to fake but doesn t explain what that would mean if you do vs don t improvements should be made to and all the versions for x | 0 |

36,979 | 5,097,282,582 | IssuesEvent | 2017-01-03 20:59:20 | hashcat/hashcat | https://api.github.com/repos/hashcat/hashcat | closed | hashcat benchmark on windows 10 results in fopen() error | needs testing | I've just downloaded the hashcat binary and tried to run the benchmark which results in an error:

ERROR: inc_cipher_256aes.cl: fopen(): No such file or directory

The file it speaks of is in the same directory as the binary and I get the same error using both 64 and 32-bit versions. It also results in the same error i... | 1.0 | hashcat benchmark on windows 10 results in fopen() error - I've just downloaded the hashcat binary and tried to run the benchmark which results in an error:

ERROR: inc_cipher_256aes.cl: fopen(): No such file or directory

The file it speaks of is in the same directory as the binary and I get the same error using both ... | non_process | hashcat benchmark on windows results in fopen error i ve just downloaded the hashcat binary and tried to run the benchmark which results in an error error inc cipher cl fopen no such file or directory the file it speaks of is in the same directory as the binary and i get the same error using both and ... | 0 |

64,418 | 15,882,707,571 | IssuesEvent | 2021-04-09 16:22:44 | GoogleCloudPlatform/fda-mystudies | https://api.github.com/repos/GoogleCloudPlatform/fda-mystudies | closed | [SB] Questionnaires/Active task >'Anchor date based' radio button is disabled in the following scenario | Bug P1 Process: Fixed Process: Tested QA Process: Tested dev Study builder | Steps

1. Create/edit study

2. Study settings page > Select 'Yes' under 'Use participant enrollment date as anchor date?' section

3. Click on the Mark as completed button

4. Go to the Study information section and change the study ID and complete the section

5. Go to Questionnaire/Active tasks

6. Switch the sched... | 1.0 | [SB] Questionnaires/Active task >'Anchor date based' radio button is disabled in the following scenario - Steps

1. Create/edit study

2. Study settings page > Select 'Yes' under 'Use participant enrollment date as anchor date?' section

3. Click on the Mark as completed button

4. Go to the Study information section ... | non_process | questionnaires active task anchor date based radio button is disabled in the following scenario steps create edit study study settings page select yes under use participant enrollment date as anchor date section click on the mark as completed button go to the study information section and... | 0 |

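6,745 | 9,873,262,882 | IssuesEvent | 2019-06-22 12:54:47 | hyj378/EmojiRecommand | https://api.github.com/repos/hyj378/EmojiRecommand | closed | Data preprocessing | Preprocessing | https://colab.research.google.com/drive/13eF9lBVJD0b1pzJgMSzMjVmI5uZom1iX

This is the data preprocessing code.

original_csv: the data you crawled

preprocessed: the name under which to save the version with special characters removed

eng_ver: the name under which to save the translated version

Just fill those in~~~

Note that it takes a really long time, so it is probably best to leave it running and go to sleep... haha | 1.0 | Data preprocessing - https://colab.research.google.com/drive/13eF9lBVJD0b1pzJgMSzMjVmI5uZom1iX

This is the data preprocessing code.

original_csv: the data you crawled

preprocessed: the name under which to save the version with special characters removed

eng_ver: the name under which to save the translated version

Just fill those in~~~

Note that it takes a really long time, so it is probably best to leave it running and go to sleep... haha | process | data preprocessing this is the data preprocessing code original csv the data you crawled preprocessed the name under which to save the version with special characters removed eng ver the name under which to save the translated version just fill those in note that it takes a really long time so it is probably best to leave it running and go to sleep haha | 1 |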

10,468 | 13,245,872,735 | IssuesEvent | 2020-08-19 14:57:01 | LibraryOfCongress/concordia | https://api.github.com/repos/LibraryOfCongress/concordia | closed | "Find another page" in review track should go to a review page | CM WIP review process user experience | At the moment when I click "find a new page" while I'm reviewing, it takes me to a page needing transcription. We want to keep people in the review stream if that's what they've chosen to work on. | 1.0 | "Find another page" in review track should go to a review page - At the moment when I click "find a new page" while I'm reviewing, it takes me to a page needing transcription. We want to keep people in the review stream if that's what they've chosen to work on. | process | find another page in review track should go to a review page at the moment when i click find a new page while i m reviewing it takes me to a page needing transcription we want to keep people in the review stream if that s what they ve chosen to work on | 1 |

60,460 | 3,129,471,528 | IssuesEvent | 2015-09-09 01:30:34 | girlcodeakl/girlcodeapp | https://api.github.com/repos/girlcodeakl/girlcodeapp | closed | Make the log-in system only log you in if you have an account | medium Priority 2 wontfix | This ticket is for after #11 and #12 are done.

- [ ] On the server (index.js), make it so you can only log in if you are logging into an account that actually exists!

- [ ] if the account doesn't exist, you don't get a cookie

- [ ] Send some kind of bad response using the 'res' object.

- [ ] in login.html, displa... | 1.0 | Make the log-in system only log you in if you have an account - This ticket is for after #11 and #12 are done.

- [ ] On the server (index.js), make it so you can only log in if you are logging into an account that actually exists!

- [ ] if the account doesn't exist, you don't get a cookie

- [ ] Send some kind of b... | non_process | make the log in system only log you in if you have an account this ticket is for after and are done on the server index js make it so you can only log in if you are logging into an account that actually exists if the account doesn t exist you don t get a cookie send some kind of bad respo... | 0 |

9,145 | 12,203,195,906 | IssuesEvent | 2020-04-30 10:11:11 | MHRA/products | https://api.github.com/repos/MHRA/products | closed | UAT script | EPIC - Auto Batch Process :oncoming_automobile: | ## User want

As a business stakeholder

I want to ensure that the Stellent batch processing replacement fulfills the business requirements

So that I can sign off the business case

## Acceptance Criteria

A document that captures tests that will confirm that the business requirements have been met

### Customer... | 1.0 | UAT script - ## User want

As a business stakeholder

I want to ensure that the Stellent batch processing replacement fulfills the business requirements

So that I can sign off the business case

## Acceptance Criteria

A document that captures tests that will confirm that the business requirements have been met

... | process | uat script user want as a business stakeholder i want to ensure that the stellent batch processing replacement fulfills the business requirements so that i can sign off the business case acceptance criteria a document that captures tests that will confirm that the business requirements have been met ... | 1 |

656 | 3,126,521,343 | IssuesEvent | 2015-09-08 09:47:51 | robotology/yarp | https://api.github.com/repos/robotology/yarp | reopened | look again at ebottle? | Component: YARP_OS Type: Process | Some years back, Danilo Tardioli wrote a reimplementation of the Bottle class, with efficiency in mind. It couldn't be integrated with YARP at the time due to its use of STL header files that we were avoiding. These days that is no longer an issue, so it might be worth revisiting this. Links:

* http://robots.unizar.... | 1.0 | look again at ebottle? - Some years back, Danilo Tardioli wrote a reimplementation of the Bottle class, with efficiency in mind. It couldn't be integrated with YARP at the time due to its use of STL header files that we were avoiding. These days that is no longer an issue, so it might be worth revisiting this. Links:

... | process | look again at ebottle some years back danilo tardioli wrote a reimplementation of the bottle class with efficiency in mind it couldn t be integrated with yarp at the time due to its use of stl header files that we were avoiding these days that is no longer an issue so it might be worth revisiting this links ... | 1 |

19,004 | 6,664,062,520 | IssuesEvent | 2017-10-02 18:43:14 | habitat-sh/habitat | https://api.github.com/repos/habitat-sh/habitat | closed | Integrate new docker publish into builder-worker | A-builder C-feature | Now that we've finished the new `hab pkg export docker` command we'll need to wire this into the Builder-Worker. Creds will need to be recorded on the origin and assigned to the project through the public API for them to be made available to the worker.

Related: #3259, #3109 | 1.0 | Integrate new docker publish into builder-worker - Now that we've finished the new `hab pkg export docker` command we'll need to wire this into the Builder-Worker. Creds will need to be recorded on the origin and assigned to the project through the public API for them to be made available to the worker.

Related: #32... | non_process | integrate new docker publish into builder worker now that we ve finished the new hab pkg export docker command we ll need to wire this into the builder worker creds will need to be recorded on the origin and assigned to the project through the public api for them to be made available to the worker related ... | 0 |

275,715 | 30,288,342,094 | IssuesEvent | 2023-07-09 00:43:12 | temporalio/sdk-typescript | https://api.github.com/repos/temporalio/sdk-typescript | closed | nyc-test-coverage-1.8.0.tgz: 1 vulnerabilities (highest severity is: 5.3) - autoclosed | Mend: dependency security vulnerability | <details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>nyc-test-coverage-1.8.0.tgz</b></p></summary>

<p></p>

<p>Path to dependency file: /packages/test/package.json</p>

<p>Path to vulnerable library: /node_modules/semver/... | True | nyc-test-coverage-1.8.0.tgz: 1 vulnerabilities (highest severity is: 5.3) - autoclosed - <details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>nyc-test-coverage-1.8.0.tgz</b></p></summary>

<p></p>

<p>Path to dependency f... | non_process | nyc test coverage tgz vulnerabilities highest severity is autoclosed vulnerable library nyc test coverage tgz path to dependency file packages test package json path to vulnerable library node modules semver package json found in head commit a href vulnerabi... | 0 |

20,281 | 26,912,279,894 | IssuesEvent | 2023-02-07 01:27:55 | googleapis/google-cloud-node | https://api.github.com/repos/googleapis/google-cloud-node | closed | GA release of Nodejs Analytics Data | type: process api: analyticsdata | Package name: @google-analytics/data

Current release: beta

Proposed release: GA

Instructions

Check the lists below, adding tests / documentation as required. Once all the "required" boxes are ticked, please create a release and close this issue.

Required

28 days elapsed since last beta release with new API s... | 1.0 | GA release of Nodejs Analytics Data - Package name: @google-analytics/data

Current release: beta

Proposed release: GA

Instructions

Check the lists below, adding tests / documentation as required. Once all the "required" boxes are ticked, please create a release and close this issue.

Required

28 days elapsed ... | process | ga release of nodejs analytics data package name google analytics data current release beta proposed release ga instructions check the lists below adding tests documentation as required once all the required boxes are ticked please create a release and close this issue required days elapsed s... | 1 |

51,214 | 7,686,870,179 | IssuesEvent | 2018-05-17 01:55:38 | pallets/click | https://api.github.com/repos/pallets/click | closed | Option name alias / custom option name mapping | documentation good first issue | Here's my code:

```

@click.command()

@click.option("--max")

def main(max):

# ...

```

This works, but the problem is that the `max` parameter shadows the built-in function. I would like to change the function parameter name, but I don't want to change the option name. Is there a way to change the mapping?... | 1.0 | Option name alias / custom option name mapping - Here's my code:

```

@click.command()

@click.option("--max")

def main(max):

# ...

```

This works, but the problem is that the `max` parameter shadows the built-in function. I would like to change the function parameter name, but I don't want to change the o... | non_process | option name alias custom option name mapping here s my code click command click option max def main max this works but the problem is that the max parameter shadows the built in function i would like to change the function parameter name but i don t want to change the o... | 0 |

19,633 | 25,995,132,378 | IssuesEvent | 2022-12-20 10:55:13 | Graylog2/graylog2-server | https://api.github.com/repos/Graylog2/graylog2-server | closed | Changes to pipeline not applied but simulator works | processing bug to-verify | <!--- Provide a general summary of the issue in the Title above -->

## Expected Behavior

Changing the referenced grok expression should alter the result of my pipeline.

Even replacing `set_field("original_message", message_field);` with `set_field("event.original", message_field);` does not change the result.

... | 1.0 | Changes to pipeline not applied but simulator works - <!--- Provide a general summary of the issue in the Title above -->

## Expected Behavior

Changing the referenced grok expression should alter the result of my pipeline.

Even replacing `set_field("original_message", message_field);` with `set_field("event.orig... | process | changes to pipeline not applied but simulator works expected behavior changing the referenced grok expression should alter the result of my pipeline even replacing set field original message message field with set field event original message field does not change the result current ... | 1 |

5,912 | 8,735,532,909 | IssuesEvent | 2018-12-11 17:00:46 | googleapis/google-cloud-node | https://api.github.com/repos/googleapis/google-cloud-node | closed | Update all samples to use async/await | help wanted type: process | Most of our samples are using promises for async programming, which is great. Now that Node 8 is the latest LTS, and 10 is about to go out - we should start converting all the samples to async/await. Let's use this as a high-level tracking bug.

- [x] [googleapis/google-api-nodejs-client](https://github.com/google... | 1.0 | Update all samples to use async/await - Most of our samples are using promises for async programming, which is great. Now that Node 8 is the latest LTS, and 10 is about to go out - we should start converting all the samples to async/await. Let's use this as a high-level tracking bug.

- [x] [googleapis/google-api-... | process | update all samples to use async await most of our samples are using promises for async programming which is great now that node is the latest lts and is about to go out we should start converting all the samples to async await lets use this as a high level tracking bug ... | 1 |

1,939 | 4,769,001,760 | IssuesEvent | 2016-10-26 11:01:53 | nolanjian/Cawler | https://api.github.com/repos/nolanjian/Cawler | opened | TP0021 Interface Improvement | In Processing urgent | replace stl obj in interface with C mode or other, just for save and set treat warning as error | 1.0 | TP0021 Interface Improvement - replace stl obj in interface with C mode or other, just for save and set treat warning as error | process | interface improvement replace stl obj in interface with c mode or other just for save and set treat warning as error | 1 |

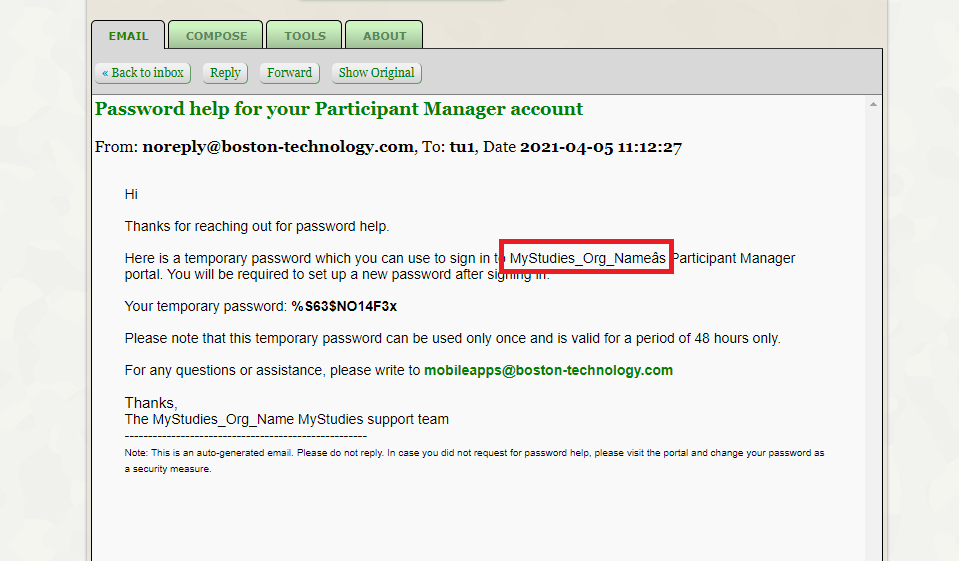

13,695 | 16,451,149,848 | IssuesEvent | 2021-05-21 06:02:16 | GoogleCloudPlatform/fda-mystudies | https://api.github.com/repos/GoogleCloudPlatform/fda-mystudies | closed | [PM] Password help email template issue | Bug P2 Participant manager Process: Fixed | AR : Password help email template > Some other character is displaying instead of ' icon

ER : It should display Org Name's

[Note: Issue observed only in master branch]

| 1.0 | [PM] Password help email template issue - AR : Password help email template > Some other character is displaying instead of ' icon

ER : It should display Org Name's

[Note: Issue observed only in master branch]

.

- [X] I have confirmed this bug exists on the [latest version](https://github.com/pycaret/pycaret/releases) of pycaret.

- [ ] I have confirmed this bug exists on the master... | 1.0 | [BUG]: clustering.setup does not accept 'iterative' in imputation_type - ### pycaret version checks

- [X] I have checked that this issue has not already been reported [here](https://github.com/pycaret/pycaret/issues).

- [X] I have confirmed this bug exists on the [latest version](https://github.com/pycaret/pycaret/r... | process | clustering setup does not accept iterative in imputation type pycaret version checks i have checked that this issue has not already been reported i have confirmed this bug exists on the of pycaret i have confirmed this bug exists on the master branch of pycaret pip install u git ... | 1 |

2,166 | 5,013,340,502 | IssuesEvent | 2016-12-13 14:17:59 | opentrials/opentrials | https://api.github.com/repos/opentrials/opentrials | closed | Add unique constraint to "records.source_url" | 3. In Development API data cleaning Processors | On #532 we found a few records with repeated `source_url` values, and found out that was a bug. We then added a functionality to the `record_remover` processor to remove trials with the same `source_url`. Now we need to make sure that doesn't happen again by adding a unique constraint on that table. After that's done a... | 1.0 | Add unique constraint to "records.source_url" - On #532 we found a few records with repeated `source_url` values, and found out that was a bug. We then added a functionality to the `record_remover` processor to remove trials with the same `source_url`. Now we need to make sure that doesn't happen again by adding a uniq... | process | add unique constraint to records source url on we found a few records with repeated source url values and found out that was a bug we then added a functionality to the record remover processor to remove trials with the same source url now we need to make sure that doesn t happen again by adding a unique... | 1 |

1,899 | 4,726,734,212 | IssuesEvent | 2016-10-18 11:14:27 | openvstorage/blktap | https://api.github.com/repos/openvstorage/blktap | closed | Support for blktap3 | process_wontfix | The current implementation only supports blktap2. We might have to fix some bugs to also support blktap3 | 1.0 | Support for blktap3 - The current implementation only supports blktap2. We might have to fix some bugs to also support blktap3 | process | support for the current implementation only supports we might have to fix some bugs to also support | 1 |

62,112 | 6,776,258,221 | IssuesEvent | 2017-10-27 17:08:30 | emfoundation/ce100-app | https://api.github.com/repos/emfoundation/ce100-app | closed | Match challenges with challenges | bug please-test priority-2 T2h T4h user-story | As a primary user, I get an overview of which of my challenges matches other member’s challenges, So that I can see how many other members share a related challenge and may be potential collaborators in solving it.

| 1.0 | Match challenges with challenges - As a primary user, I get an overview of which of my challenges matches other member’s challenges, So that I can see how many other members share a related challenge and may be potential collaborators in solving it.

| non_process | match challenges with challenges as a primary user i get an overview of which of my challenges matches other member’s challenges so that i can see how many other members share a related challenge and may be potential collaborators in solving it | 0 |

174,594 | 27,694,768,022 | IssuesEvent | 2023-03-14 00:41:09 | waldreg/waldreg-client | https://api.github.com/repos/waldreg/waldreg-client | closed | [Feat] Navigation | design feat | ### Purpose

> Implement global navigation

### Task details

- [x] Navigation routing

- [x] Detail page accordion

- [x] Collapse on click

- [ ] Visibility scope

- [x] Animation

| 1.0 | [Feat] Navigation - ### Purpose

> Implement global navigation

### Task details

- [x] Navigation routing

- [x] Detail page accordion

- [x] Collapse on click

- [ ] Visibility scope

- [x] Animation

| non_process | navigation purpose implement global navigation task details navigation routing detail page accordion collapse on click visibility scope animation | 0 |

317,964 | 9,672,091,737 | IssuesEvent | 2019-05-22 01:54:04 | codeforboston/communityconnect | https://api.github.com/repos/codeforboston/communityconnect | closed | Add & use new Category Filter icon in Mobile View | CfB - good first issue low priority | get file from slack here: https://cfb-public.slack.com/archives/CC85SAJ0Z/p1549501248024600

looks something like 👇 , but use the file in slack ☝️

| 1.0 | Add & use new Category Filter icon in Mobile View - get file from slack here: https://cfb-public.slack.com/archives/CC85SAJ0Z/p1549501248024600

looks something like 👇 , but use the file in slack ☝️

| non_process | add use new category filter icon in mobile view get file from slack here looks something like 👇 but use the file in slack ☝️ | 0 |

168,947 | 6,392,535,671 | IssuesEvent | 2017-08-04 03:06:14 | minio/minio-go | https://api.github.com/repos/minio/minio-go | closed | optimalPartInfo() default partSize results in running out of memory | priority: medium | Like #404, I'm streaming data which could be over 1GB and trying to use PutObjectStreaming(), but this results in optimalPartInfo(-1) being called and a partSize of 603979776 being returned.

The problem I face is that this partSize is used as, essentially, the size of the read buffer, and I'm running out of memory (... | 1.0 | optimalPartInfo() default partSize results in running out of memory - Like #404, I'm streaming data which could be over 1GB and trying to use PutObjectStreaming(), but this results in optimalPartInfo(-1) being called and a partSize of 603979776 being returned.

The problem I face is that this partSize is used as, ess... | non_process | optimalpartinfo default partsize results in running out of memory like i m streaming data which could be over and trying to use putobjectstreaming but this results in optimalpartinfo being called and a partsize of being returned the problem i face is that this partsize is used as essentially th... | 0 |

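14,274 | 17,227,209,810 | IssuesEvent | 2021-07-20 04:45:50 | e4exp/paper_manager_abstract | https://api.github.com/repos/e4exp/paper_manager_abstract | reopened | Deduplicating Training Data Makes Language Models Better | Analysis Dataset Natural Language Processing | - https://arxiv.org/abs/2107.06499

- 2021

We found that existing language model datasets contain many duplicated examples and long repeated passages.

As a result, we found that more than 1% of the unprompted output of language models trained on these datasets is copied verbatim from the training data.

We developed two tools for removing duplicates from training datasets.

For example, they can remove from C4 a 61-word English sentence that is repeated more than 60,000 times.

Deduplication reduces the frequency of emitting memorized text to one tenth, and makes it possible to train models that reach the same or better accuracy in fewer training steps... | 1.0 | Deduplicating Training Data Makes Language Models Better - - https://arxiv.org/abs/2107.06499

- 2021

We found that existing language model datasets contain many duplicated examples and long repeated passages.

As a result, we found that more than 1% of the unprompted output of language models trained on these datasets is copied verbatim from the training data.

We developed two tools for removing duplicates from training datasets.

For example, they can remove from C4 a 61-word English sentence that is repeated more than 60,000 times.

With deduplication, memori... | process | deduplicating training data makes language models better we found that existing language model datasets contain many duplicated examples and long repeated passages as a result we found that more than is copied verbatim from the training data we for example deduplication and makes it possible to train models that reach the same or better accuracy in fewer training steps it also reduces the train test overlap that affects more than % allowing more accurate evaluation code to reproduce our work and deduplicate datasets is available at htt... | 1 |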

815,681 | 30,567,440,583 | IssuesEvent | 2023-07-20 18:56:39 | upsonp/dart | https://api.github.com/repos/upsonp/dart | closed | Time|Position mission | bug Priority | Diana asked, "What happens if for some reason the GPS scraper on the ship doesn't get the Time|Position values and Time|Position appears as 'N/A' in the elog file?"

Well. It turns out, nothing good. An error is thrown in the elog.py process_attachments_actions_time_location stating there's an issue with the MID objec... | 1.0 | Time|Position mission - Diana asked, "What happens if for some reason the GPS scraper on the ship doesn't get the Time|Position values and Time|Position appears as 'N/A' in the elog file?"

Well. It turns out, nothing good. An error is thrown in the elog.py process_attachments_actions_time_location stating there's an ... | non_process | time position mission diana asked what happens if for some reason the gps scraper on the ship doesn t get the time position values and time position appears as n a in the elog file well it turns out nothing good an error is thrown in the elog py process attachments actions time location stating there s an ... | 0 |
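
One defensive way to handle a field that may arrive as 'N/A' is to convert placeholder values to `None` before any further processing. The sketch below is hypothetical: the function name `clean_elog_value` and the set of placeholder markers are assumptions for illustration and are not taken from the project's `elog.py`.

```python
from typing import Optional

# Placeholder strings treated as "no value" (an assumed list).
MISSING_MARKERS = {"", "n/a", "na", "none"}


def clean_elog_value(raw: Optional[str]) -> Optional[str]:
    """Return the stripped value, or None if the field is missing or a placeholder."""
    if raw is None:
        return None
    value = raw.strip()
    if value.lower() in MISSING_MARKERS:
        return None
    return value


if __name__ == "__main__":
    for sample in ["N/A", "  2023-07-20 18:56:39  ", None]:
        print(repr(clean_elog_value(sample)))
```

A caller could then check for `None` and record a validation message for that entry instead of letting a later step raise an error.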

49,457 | 7,517,378,905 | IssuesEvent | 2018-04-12 03:13:49 | StarChart-Labs/alloy | https://api.github.com/repos/StarChart-Labs/alloy | opened | Document Migration From Guava Functions to Java 8 Lambdas | documentation | [Guava Functions](https://github.com/google/guava/blob/master/guava/src/com/google/common/base/Functions.java) is completely deprecated in favor of Java 8's StandardCharSets - document this migration path in the Guava -> StarChart migration documentation | 1.0 | Document Migration From Guava Functions to Java 8 Lambdas - [Guava Functions](https://github.com/google/guava/blob/master/guava/src/com/google/common/base/Functions.java) is completely deprecated in favor of Java 8's StandardCharSets - document this migration path in the Guava -> StarChart migration documentation | non_process | document migration from guava functions to java lambdas is completely deprecated in favor of java s standardcharsets document this migration path in the guava starchart migration documentation | 0 |

121,043 | 15,833,887,717 | IssuesEvent | 2021-04-06 16:07:42 | cagov/ui-claim-tracker | https://api.github.com/repos/cagov/ui-claim-tracker | reopened | Coordinate with EDD to understand required pre-launch testing/review processes | Design Design Ops Product Size: M | ### Task

Find out what's involved in required pre-launch testing/review processes (e.g. accessibility testing, usability review, user acceptance testing, security assessment and authorization) -- especially timelines.

- @dianagriffin will take point on confirming process/expectations for security assessment (Todd I... | 2.0 | Coordinate with EDD to understand required pre-launch testing/review processes - ### Task

Find out what's involved in required pre-launch testing/review processes (e.g. accessibility testing, usability review, user acceptance testing, security assessment and authorization) -- especially timelines.

- @dianagriffin w... | non_process | coordinate with edd to understand required pre launch testing review processes task find out what s involved in required pre launch testing review processes e g accessibility testing usability review user acceptance testing security assessment and authorization especially timelines dianagriffin w... | 0 |

13,973 | 16,745,700,550 | IssuesEvent | 2021-06-11 15:14:50 | dtcenter/MET | https://api.github.com/repos/dtcenter/MET | opened | Enhance PB2NC to derive Mixed-Layer CAPE (MLCAPE). | MET: Grid PreProcessing Tools alert: NEED ACCOUNT KEY alert: NEED PROJECT ASSIGNMENT priority: high requestor: NOAA/EMC type: enhancement | ## Describe the Enhancement ##

On 6/8/21, NOAA/EMC requested that the PB2NC tool be enhanced to derive additional variations of CAPE. They are most interested in mixed-layer CAPE (representing the average characteristics of the boundary layer). Mixed-layer is the "flavor" of CAPE that was recommended to be verified in... | 1.0 | Enhance PB2NC to derive Mixed-Layer CAPE (MLCAPE). - ## Describe the Enhancement ##

On 6/8/21, NOAA/EMC requested that the PB2NC tool be enhanced to derive additional variations of CAPE. They are most interested in mixed-layer CAPE (representing the average characteristics of the boundary layer). Mixed-layer is the "f... | process | enhance to derive mixed layer cape mlcape describe the enhancement on noaa emc requested that the tool be enhanced to derive additional variations of cape they are most interested in mixed layer cape representing the average characteristics of the boundary layer mixed layer is the flavor of... | 1 |

22,048 | 30,570,680,477 | IssuesEvent | 2023-07-20 21:52:39 | UnitTestBot/UTBotJava | https://api.github.com/repos/UnitTestBot/UTBotJava | closed | Convert `UtExecutionInstrumentation` from object to class | ctg-enhancement comp-instrumented-process comp-spring | **Description**

`UtExecutionInstrumentation` is not a singleton by design.

However, it was implemented like this because of some problems with serialization.

They need to be fixed now because we introduce `SpringUtExecutionInstrumentation` and using singletons becomes much more inconvenient.

| 1.0 | Convert `UtExecutionInstrumentation` from object to class - **Description**

`UtExecutionInstrumentation` is not a singleton by design.

However, it was implemented like this because of some problems with serialization.

They need to be fixed now because we introduce `SpringUtExecutionInstrumentation` and using singl... | process | convert utexecutioninstrumentation from object to class description utexecutioninstrumentation is not a singleton by design however it was implemented like this because of some problems with serialization they need to be fixed now because we introduce springutexecutioninstrumentation and using singl... | 1 |

7,876 | 11,046,129,164 | IssuesEvent | 2019-12-09 16:18:52 | prisma/prisma2 | https://api.github.com/repos/prisma/prisma2 | closed | Lift and dev, popup to create a new database missing | bug/2-confirmed kind/bug kind/regression process/candidate | ```

divyendusingh [footybot]$ prisma2 --version

prisma2@2.0.0-preview017.2, binary version: 6159bf3a263921c3c28ee68e2c9e130b5a69c293

```

To reproduce:

1. Create a schema file with a DB url where the database does not exist

2. Run lift save or dev

```

divyendusingh [footybot]$ prisma2 lift save --name ini... | 1.0 | Lift and dev, popup to create a new database missing - ```

divyendusingh [footybot]$ prisma2 --version

prisma2@2.0.0-preview017.2, binary version: 6159bf3a263921c3c28ee68e2c9e130b5a69c293

```

To reproduce:

1. Create a schema file with a DB url where the database does not exist

2. Run lift save or dev

```

... | process | lift and dev popup to create a new database missing divyendusingh version binary version to reproduce create a schema file with a db url where the database does not exist run lift save or dev divyendusingh lift save name init error could not connect t... | 1 |

130,299 | 18,155,709,597 | IssuesEvent | 2021-09-27 01:05:41 | snowdensb/Leo | https://api.github.com/repos/snowdensb/Leo | opened | CVE-2021-36374 (Medium) detected in ant-1.8.1.jar | security vulnerability | ## CVE-2021-36374 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>ant-1.8.1.jar</b></p></summary>

<p>Apache Ant</p>

<p>Library home page: <a href="http://ant.apache.org/">http://ant.... | True | CVE-2021-36374 (Medium) detected in ant-1.8.1.jar - ## CVE-2021-36374 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>ant-1.8.1.jar</b></p></summary>

<p>Apache Ant</p>

<p>Library hom... | non_process | cve medium detected in ant jar cve medium severity vulnerability vulnerable library ant jar apache ant library home page a href path to dependency file leo core pom xml path to vulnerable library home wss scanner repository org apache ant ant ant jar ... | 0 |

10,263 | 13,111,155,202 | IssuesEvent | 2020-08-04 22:14:58 | googleapis/nodejs-translate | https://api.github.com/repos/googleapis/nodejs-translate | closed | Move automl samples to googleapis/nodejs-automl | api: translate type: process | We have `automl` samples sitting in this repo. While they're samples about translation, they're unrelated to this npm module. We should move these samples over to googleapis/nodejs-automl, so that they're tested as part of the release process of that module. | 1.0 | Move automl samples to googleapis/nodejs-automl - We have `automl` samples sitting in this repo. While they're samples about translation, they're unrelated to this npm module. We should move these samples over to googleapis/nodejs-automl, so that they're tested as part of the release process of that module. | process | move automl samples to googleapis nodejs automl we have automl samples sitting in this repo while they re samples about translation they re unrelated to this npm module we should move these samples over to googleapis nodejs automl so that they re tested as part of the release process of that module | 1 |

18,137 | 24,182,358,717 | IssuesEvent | 2022-09-23 10:04:50 | apache/arrow-rs | https://api.github.com/repos/apache/arrow-rs | closed | Figure out and document the versioning and release cadence for object_store | development-process object-store | in https://github.com/apache/arrow-rs/issues/2030 we incorporated the [object_store](https://crates.io/crates/object_store) crate into the arrow-rs repository and under the governance of the Apache Arrow project.

Now we need to figure out:

- [ ] What should trigger a release of a `object_store` (on a schedule, w... | 1.0 | Figure out and document the versioning and release cadence for object_store - in https://github.com/apache/arrow-rs/issues/2030 we incorporated the [object_store](https://crates.io/crates/object_store) crate into the arrow-rs repository and under the governance of the Apache Arrow project.

Now we need to figure out... | process | figure out and document the versioning and release cadence for object store in we incorporated the crate into the arrow rs repository and under the governance of the apache arrow project now we need to figure out what should trigger a release of a object store on a schedule when someone wants ... | 1 |

64,133 | 8,712,142,824 | IssuesEvent | 2018-12-06 21:19:16 | zendframework/zend-view | https://api.github.com/repos/zendframework/zend-view | closed | Issues with Cycle View Helper docs | bug documentation | Can someone explain to me what is this: {{{PHP2}}} ?

Also later in the same doc, there is section about:

> You can also cycle in reverse, using the `prev()` method instead of `next()`:

However in the example there is neither a `prev()` nor `next()` method used.

```

<table>

<?php foreach ($this->books ... | 1.0 | Issues with Cycle View Helper docs - Can someone explain to me what is this: {{{PHP2}}} ?

Also later in the same doc, there is section about:

> You can also cycle in reverse, using the `prev()` method instead of `next()`:

However in the example there is neither a `prev()` nor `next()` method used.

```

<tab... | non_process | issues with cycle view helper docs can someone explain to me what is this also later in the same doc there is section about you can also cycle in reverse using the prev method instead of next however in the example there is neither a prev nor next method used ... | 0 |

8,334 | 11,494,280,170 | IssuesEvent | 2020-02-12 01:06:28 | kubeflow/kfserving | https://api.github.com/repos/kubeflow/kfserving | closed | Add licenses for dependencies in KFServing images for Kubeflow 1.0 | area/inference kind/process priority/p0 | Related to kubeflow/testing#539 https://github.com/kubeflow/kubeflow/issues/4061

Need to add all license content for third-party dependencies in KFServing controller images.

For Golang images, you may find these instructions helpful to extract all license files:

https://github.com/kubeflow/metadata/blob/master... | 1.0 | Add licenses for dependencies in KFServing images for Kubeflow 1.0 - Related to kubeflow/testing#539 https://github.com/kubeflow/kubeflow/issues/4061

Need to add all license content for third-party dependencies in KFServing controller images.

For Golang images, you may find these instructions helpful to extract a... | process | add licenses for dependencies in kfserving images for kubeflow related to kubeflow testing need to add all license content for thrid party dependencies in kfserving controller images for golang images you may find these instructions helpful to extract all license files | 1 |

2,269 | 5,103,054,593 | IssuesEvent | 2017-01-04 20:11:17 | P0cL4bs/WiFi-Pumpkin | https://api.github.com/repos/P0cL4bs/WiFi-Pumpkin | closed | no Internet Connection on Victim-device | in process priority solved | ## What's the problem (or question)?

The Target-Device (Android Phone) doesn't have internet, when connected to PumpAP.

#### Please tell us details about your environment.

Wifi: Atheros Communications, Inc. AR9271 802.11n