Unnamed: 0 (int64, 0 to 832k) | id (float64, 2.49B to 32.1B) | type (string, 1 class) | created_at (string, length 19) | repo (string, length 7 to 112) | repo_url (string, length 36 to 141) | action (string, 3 classes) | title (string, length 1 to 744) | labels (string, length 4 to 574) | body (string, length 9 to 211k) | index (string, 10 classes) | text_combine (string, length 96 to 211k) | label (string, 2 classes) | text (string, length 96 to 188k) | binary_label (int64, 0 to 1) |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

759,915 | 26,618,479,466 | IssuesEvent | 2023-01-24 09:23:43 | quadratic-funding/mpc-phase2-suite | https://api.github.com/repos/quadratic-funding/mpc-phase2-suite | closed | Github Authentication Token Expiration | Enhancement 🥋 Low Priority 🍏 | Test how this would affect the tool, and document usage for coordinator setup | 1.0 | Github Authentication Token Expiration - Test how this would affect the tool, and document usage for coordinator setup | non_process | github authentication token expiration test how this would affect the tool and document usage for coordinator setup | 0 |

10,519 | 13,303,094,831 | IssuesEvent | 2020-08-25 15:04:29 | GoogleCloudPlatform/dotnet-docs-samples | https://api.github.com/repos/GoogleCloudPlatform/dotnet-docs-samples | opened | [Firestore]: Fix and reactivate flaky tests. | api: firestore priority: p1 type: process | - FirestoreTests.GoogleCloudSamples.FirestoreTests.ListenMultipleTest

- FirestoreTests.GoogleCloudSamples.FirestoreTests.ListenDocumentTest

Build result [here](https://source.cloud.google.com/results/invocations/2d685bfb-f67c-48aa-9bb2-927b6f120d6f/targets/github%2Fdotnet-docs-samples%2Ffirestore%2Fapi%2FFirestoreT... | 1.0 | [Firestore]: Fix and reactivate flaky tests. - - FirestoreTests.GoogleCloudSamples.FirestoreTests.ListenMultipleTest

- FirestoreTests.GoogleCloudSamples.FirestoreTests.ListenDocumentTest

Build result [here](https://source.cloud.google.com/results/invocations/2d685bfb-f67c-48aa-9bb2-927b6f120d6f/targets/github%2Fdot... | process | fix and reactivate flaky tests firestoretests googlecloudsamples firestoretests listenmultipletest firestoretests googlecloudsamples firestoretests listendocumenttest build result | 1 |

3,723 | 6,732,907,156 | IssuesEvent | 2017-10-18 13:15:33 | lockedata/rcms | https://api.github.com/repos/lockedata/rcms | opened | Manage registration | attendee osem processes | ## Detailed task

- Edit your information

- Get a refund on your ticker

## Assessing the task

Try to perform the task. Use google and the system documentation to help - part of what we're trying to assess how easy it is for people to work out how to do tasks.

Use a 👍 (`:+1:`) reaction to this task if you were ... | 1.0 | Manage registration - ## Detailed task

- Edit your information

- Get a refund on your ticker

## Assessing the task

Try to perform the task. Use google and the system documentation to help - part of what we're trying to assess how easy it is for people to work out how to do tasks.

Use a 👍 (`:+1:`) reaction to ... | process | manage registration detailed task edit your information get a refund on your ticker assessing the task try to perform the task use google and the system documentation to help part of what we re trying to assess how easy it is for people to work out how to do tasks use a 👍 reaction to ... | 1 |

20,075 | 26,570,078,340 | IssuesEvent | 2023-01-21 02:52:05 | bitfocus/companion-module-requests | https://api.github.com/repos/bitfocus/companion-module-requests | closed | Server Window Won't Launch/Open | NOT YET PROCESSED | I have updated to the newest stable version of Companion but when I click on it the Server Window will not open.

TBH it never has and am not sure why

| 1.0 | Server Window Won't Launch/Open - I have updated to the newest stable version of Companion but when I click on it the Server Window will not open.

TBH it never has and am not sure why

| process | server window won t launch open i have updated to the newest stable version of companion but when i click on it the server window will not open tbh it never has and am not sure why | 1 |

1,002 | 2,594,430,658 | IssuesEvent | 2015-02-20 03:17:52 | BALL-Project/ball | https://api.github.com/repos/BALL-Project/ball | opened | Bond order calculation not correct 2 | C: VIEW P: minor T: defect | **Reported by mkonietzko on 7 Jan 42142795 17:23 UTC**

When the bond order is calculated, a molecule is proposed first. Clicking "Nächste Lösung berechnen" (compute next solution) then proposes correct molecules and applies them to the current molecule, but the hydrogen atoms are not corrected. Even if... | 1.0 | Bond order calculation not correct 2 - **Reported by mkonietzko on 7 Jan 42142795 17:23 UTC**

When the bond order is calculated, a molecule is proposed first. Clicking "Nächste Lösung berechnen" (compute next solution) then proposes correct molecules and applies them to the current molecule, but the... | non_process | bond order calculation not correct reported by mkonietzko on jan utc when the bond order is calculated a molecule is proposed first clicking nächste lösung berechnen compute next solution then proposes correct molecules and applies them to the current molecule but the hydrogen... | 0 |

710,405 | 24,416,906,078 | IssuesEvent | 2022-10-05 16:41:13 | ufs-community/regional_workflow | https://api.github.com/repos/ufs-community/regional_workflow | closed | Add the RRFS DA and cycling components to the develop branch of regional_workflow | enhancement medium priority | ## Description

Add @hu5970's RRFS-based DA and cycling additions to the develop branch of regional_workflow.

## Solution

Once the authoritative RRFS branch has been created in regional_workflow, Incrementally introduce PRs into the develop branch to implement both DA and cycling functionality. Individual componen... | 1.0 | Add the RRFS DA and cycling components to the develop branch of regional_workflow - ## Description

Add @hu5970's RRFS-based DA and cycling additions to the develop branch of regional_workflow.

## Solution

Once the authoritative RRFS branch has been created in regional_workflow, Incrementally introduce PRs into the... | non_process | add the rrfs da and cycling components to the develop branch of regional workflow description add s rrfs based da and cycling additions to the develop branch of regional workflow solution once the authoritative rrfs branch has been created in regional workflow incrementally introduce prs into the deve... | 0 |

78,873 | 9,807,612,290 | IssuesEvent | 2019-06-12 14:03:24 | djgroen/FabSim3 | https://api.github.com/repos/djgroen/FabSim3 | closed | FabSim / VECMAtk logo | design-only | Guys... I think it is time we think of designing one. Suggestions are welcome for logos of either type (I think we should have both ;)). | 1.0 | FabSim / VECMAtk logo - Guys... I think it is time we think of designing one. Suggestions are welcome for logos of either type (I think we should have both ;)). | non_process | fabsim vecmatk logo guys i think it is time we think of designing one suggestions are welcome for logos of either type i think we should have both | 0 |

9,380 | 12,378,912,211 | IssuesEvent | 2020-05-19 11:33:39 | prisma/prisma-client-js | https://api.github.com/repos/prisma/prisma-client-js | opened | Datasource override from client constructor doesn't match the datasource block from the schema.prisma file | kind/improvement process/candidate topic: dx | ## Problem

prisma.schema

```prisma

datasource myawesomedb {

provider = "mysql"

url = "mysql://......"

}

```

The shape of the input in the constructor is not the same as the datasource block in the schema:

```ts

const client = new PrismaClient({

datasources: {

myawesomedb: "mysql://...... | 1.0 | Datasource override from client constructor doesn't match the datasource block from the schema.prisma file - ## Problem

prisma.schema

```prisma

datasource myawesomedb {

provider = "mysql"

url = "mysql://......"

}

```

The shape of the input in the constructor is not the same as the datasource block in... | process | datasource override from client constructor doesn t match the datasource block from the schema prisma file problem prisma schema prisma datasource myawesomedb provider mysql url mysql the shape of the input in the constructor is not the same as the datasource block in... | 1 |

25,324 | 2,679,331,255 | IssuesEvent | 2015-03-26 16:09:58 | BladeRunnerJS/brjs | https://api.github.com/repos/BladeRunnerJS/brjs | closed | McAffee virus scanner makes the app take 20+ mins to load | bug CaplinSupport high-priority | In the process of upgrading to CT4 / Motif 2.1.1. When running ./brjs serve and navigating to localhost:7070/dashboard it never loads. After around 25mins the browser will redirect to localhost:7070/dashboard/en but the dashboard still does not load. Navigating directly to /dashboard/en also has the some result. CPU pr... | 1.0 | McAffee virus scanner makes the app take 20+ mins to load - In the process of upgrading to CT4 / Motif 2.1.1. When running ./brjs serve and navigating to localhost:7070/dashboard it never loads. After around 25mins the browser will redirect to localhost:7070/dashboard/en but the dashboard still does not load. Navigatin... | non_process | mcaffee virus scanner makes the app take mins to load in the process of upgrading to motif when running brjs serve and navigating to localhost dashboard it never loads after around the browser will redirect to localhost dashboard en but the dashboard still does not load navigating directly to ... | 0 |

21,635 | 30,052,114,419 | IssuesEvent | 2023-06-28 02:00:10 | lizhihao6/get-daily-arxiv-noti | https://api.github.com/repos/lizhihao6/get-daily-arxiv-noti | opened | New submissions for Wed, 28 Jun 23 | event camera white balance isp compression image signal processing image signal process raw raw image events camera color contrast events AWB | ## Keyword: events

### Self-supervised Learning of Event-guided Video Frame Interpolation for Rolling Shutter Frames

- **Authors:** Yunfan Lu, Guoqiang Liang, Lin Wang

- **Subjects:** Computer Vision and Pattern Recognition (cs.CV); Robotics (cs.RO)

- **Arxiv link:** https://arxiv.org/abs/2306.15507

- **Pdf link:*... | 2.0 | New submissions for Wed, 28 Jun 23 - ## Keyword: events

### Self-supervised Learning of Event-guided Video Frame Interpolation for Rolling Shutter Frames

- **Authors:** Yunfan Lu, Guoqiang Liang, Lin Wang

- **Subjects:** Computer Vision and Pattern Recognition (cs.CV); Robotics (cs.RO)

- **Arxiv link:** https://arx... | process | new submissions for wed jun keyword events self supervised learning of event guided video frame interpolation for rolling shutter frames authors yunfan lu guoqiang liang lin wang subjects computer vision and pattern recognition cs cv robotics cs ro arxiv link pdf li... | 1 |

8,230 | 7,299,005,779 | IssuesEvent | 2018-02-26 18:44:05 | dart-lang/sdk | https://api.github.com/repos/dart-lang/sdk | closed | Gerrit doesn't check for CLAs | P1 high area-infrastructure | On github every PR had an automatic check for CLA. The same thing should happen on Gerrit, so that we can more easily accept external patches through Gerrit. | 1.0 | Gerrit doesn't check for CLAs - On github every PR had an automatic check for CLA. The same thing should happen on Gerrit, so that we can more easily accept external patches through Gerrit. | non_process | gerrit doesn t check for clas on github every pr had an automatic check for cla the same thing should happen on gerrit so that we can more easily accept external patches through gerrit | 0 |

319,920 | 23,795,386,886 | IssuesEvent | 2022-09-02 19:00:18 | 7-Stories-Above-Ponce/yelpApp | https://api.github.com/repos/7-Stories-Above-Ponce/yelpApp | closed | README File | documentation | As a DEVELOPER, I want to develop a README in order to provide insight to the user about the app. | 1.0 | README File - As a DEVELOPER, I want to develop a README in order to provide insight to the user about the app. | non_process | readme file as a developer i want to develop a readme in order to provide insight to the user about the app | 0 |

7,858 | 11,033,436,132 | IssuesEvent | 2019-12-06 23:00:27 | shirou/gopsutil | https://api.github.com/repos/shirou/gopsutil | closed | [process][darwin] Panic when calling process.CreateTime() | os:darwin package:process | Am trying to list all the processes and print the names as follows:

```

func listAllProcesses() {

processes, err := process.Processes()

if err != nil {

fmt.Println("Could not get a list of processes!")

return

}

for _, process := range processes {

name, err := process.Cmdline()

if e... | 1.0 | [process][darwin] Panic when calling process.CreateTime() - Am trying to list all the processes and print the names as follows:

```

func listAllProcesses() {

processes, err := process.Processes()

if err != nil {

fmt.Println("Could not get a list of processes!")

return

}

for _, process := ran... | process | panic when calling process createtime am trying to list all the processes and print the names as follows func listallprocesses processes err process processes if err nil fmt println could not get a list of processes return for process range processes ... | 1 |

5,923 | 8,743,165,179 | IssuesEvent | 2018-12-12 18:21:49 | googleapis/google-cloud-python | https://api.github.com/repos/googleapis/google-cloud-python | closed | [Firestore] Field Path escaping in python doesn't match other languages | api: firestore triaged for GA type: process | There are no FieldPath overloads in the SDK. Instead, there is a field_path() method that returns an encoded string. While the Python SDK expects fully escaped field paths,

other SDKS escape user-provided field paths by default and split on dots for this escaping.

| 1.0 | [Firestore] Field Path escaping in python doesn't match other languages - There are no FieldPath overloads in the SDK. Instead, there is a field_path() method that returns an encoded string. While the Python SDK expects fully escaped field paths,

other SDKS escape user-provided field paths by default and split on dot... | process | field path escaping in python doesn t match other languages there are no fieldpath overloads in the sdk instead there is a field path method that returns an encoded string while the python sdk expects fully escaped field paths other sdks escape user provided field paths by default and split on dots for this... | 1 |
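The escaping behavior this issue describes for the other SDKs can be sketched roughly as below. This is a minimal illustration, not the python-firestore implementation: the helper names `escape_segment` and `build_field_path` are hypothetical, and the rule that non-identifier segments are wrapped in backticks with backslashes and backticks escaped is an assumption about Firestore's field path convention.

```python
import re

# Assumption: a segment that looks like a plain identifier needs no quoting.
_SIMPLE_FIELD_NAME = re.compile(r"^[_a-zA-Z][_a-zA-Z0-9]*$")


def escape_segment(name: str) -> str:
    """Quote one field-path segment if it is not a simple identifier.

    Hypothetical helper for illustration only.
    """
    if _SIMPLE_FIELD_NAME.match(name):
        return name
    # Escape backslashes first, then backticks, and wrap in backticks.
    escaped = name.replace("\\", "\\\\").replace("`", "\\`")
    return "`" + escaped + "`"


def build_field_path(*segments: str) -> str:
    """Join already-split segments into one dotted, escaped field path."""
    return ".".join(escape_segment(segment) for segment in segments)


if __name__ == "__main__":
    print(build_field_path("address", "city"))
    print(build_field_path("a.b", "c"))
```

Taking segments as separate arguments avoids guessing whether a dot inside a user-provided string is a separator or part of a field name, which is the ambiguity the issue points at.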

274,476 | 20,834,165,028 | IssuesEvent | 2022-03-19 23:25:27 | SE701-T1/frontend | https://api.github.com/repos/SE701-T1/frontend | closed | Add Code Owners | Status: Available Type: Documentation Priority: Low | **Describe the task that needs to be done.**

Code owners need to be added so that the right people are automatically requested for review when PRs are made in directories relevant to them.

**Describe how a solution to your proposed task might look like (and any alternatives considered).**

Split the `src` directory... | 1.0 | Add Code Owners - **Describe the task that needs to be done.**

Code owners need to be added so that the right people are automatically requested for review when PRs are made in directories relevant to them.

**Describe how a solution to your proposed task might look like (and any alternatives considered).**

Split t... | non_process | add code owners describe the task that needs to be done code owners need to be added so that the right people are automatically requested for review when prs are made in directories relevant to them describe how a solution to your proposed task might look like and any alternatives considered split t... | 0 |

344,884 | 24,832,992,928 | IssuesEvent | 2022-10-26 06:18:06 | tensorchord/envd | https://api.github.com/repos/tensorchord/envd | closed | bug(doc): unclear chart when change to night mode | type/bug 🐛 type/documentation 📄 | <img width="879" alt="Screen Shot 2022-10-25 at 22 23 39" src="https://user-images.githubusercontent.com/3927355/197800195-71decaa2-2b45-438d-a8ac-383d6142b56e.png">

| 1.0 | bug(doc): unclear chart when change to night mode - <img width="879" alt="Screen Shot 2022-10-25 at 22 23 39" src="https://user-images.githubusercontent.com/3927355/197800195-71decaa2-2b45-438d-a8ac-383d6142b56e.png">

| non_process | bug doc unclear chart when change to night mode img width alt screen shot at src | 0 |

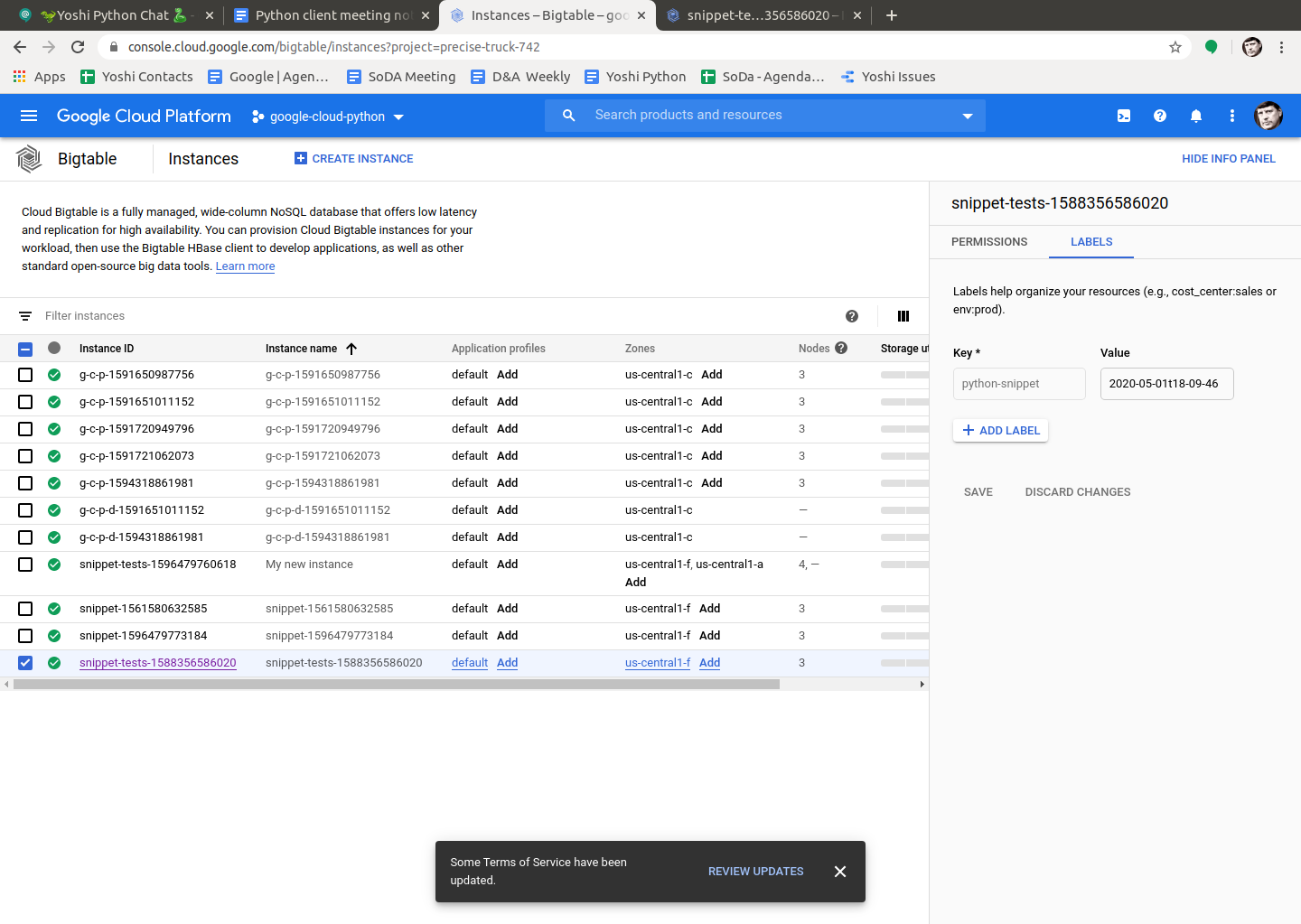

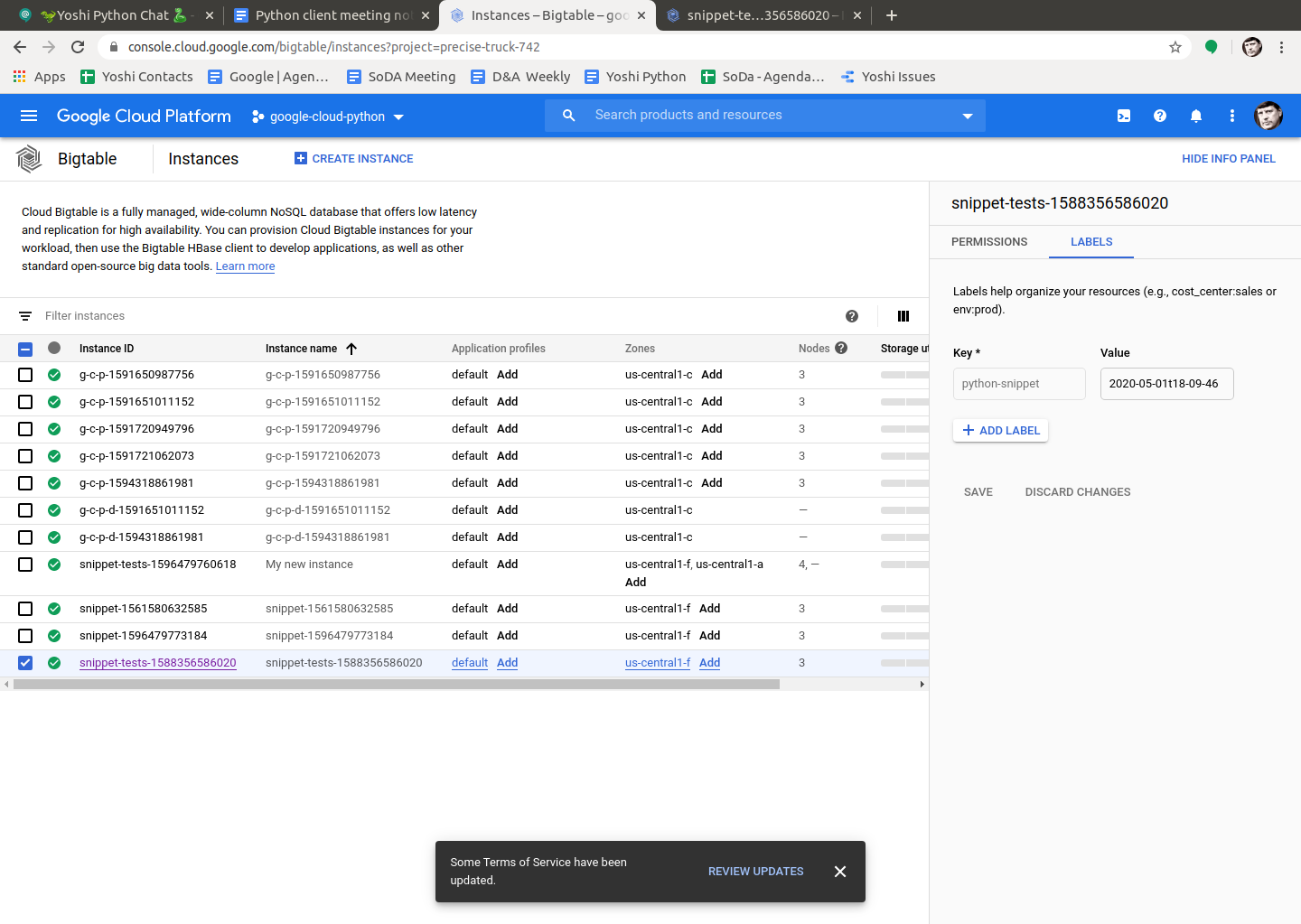

10,237 | 13,098,106,842 | IssuesEvent | 2020-08-03 18:47:20 | googleapis/python-bigtable | https://api.github.com/repos/googleapis/python-bigtable | opened | systests / snippets leaking instances | testing type: process |

| Instance ID | Creation Date |

| --- | --- |

| g-c-p-1591650987756 | 2020-06-08t21-16-27 |

| g-c-p-1591651011152 | 2020-06-08t21-16-51 |

| g-c-p-1591720949796 | 2020-06-09t1... | 1.0 | systests / snippets leaking instances -

| Instance ID | Creation Date |

| --- | --- |

| g-c-p-1591650987756 | 2020-06-08t21-16-27 |

| g-c-p-1591651011152 | 2020-06-08t21-16-51... | process | systests snippets leaking instances instance id creation date g c p g c p g c p g c p g c p g c p d g c p d snippet tests snippet ... | 1 |

449,342 | 31,839,462,084 | IssuesEvent | 2023-09-14 15:22:32 | CodeSystem2022/ERROR-404-Trabajo-Final | https://api.github.com/repos/CodeSystem2022/ERROR-404-Trabajo-Final | opened | -Run the tests and document them | documentation enhancement | - As the project's tester, you must run the tests, document them, and pass the link to that document to Sonia so she can add it to the README. | 1.0 | -Run the tests and document them - - As the project's tester, you must run the tests, document them, and pass the link to that document to Sonia so she can add it to the README. | non_process | run the tests and document them as the project s tester you must run the tests document them and pass the link to that document to sonia so she can add it to the readme | 0 |

38,943 | 10,268,641,211 | IssuesEvent | 2019-08-23 06:58:53 | tensorflow/tensorflow | https://api.github.com/repos/tensorflow/tensorflow | reopened | error: 'is_final' is not a member of 'std' | subtype:bazel type:build/install | With

bazel 0.26.1

gcc 7.4

cuda 10.1 update 2

and the latest git clone of tensorflow, I hit the following error at the bazel build command

```

ERROR: /home/mh.naderan/.cache/bazel/_bazel_mh.naderan/dacf7a124fc721f30ac789c201b3b139/external/llvm/BUILD.bazel:201:1: C++ compilation of rule '@llvm//:llvm-tb... | 1.0 | error: 'is_final' is not a member of 'std' - With

bazel 0.26.1

gcc 7.4

cuda 10.1 update 2

and the latest git clone of tensorflow, I hit the following error at the bazel build command

```

ERROR: /home/mh.naderan/.cache/bazel/_bazel_mh.naderan/dacf7a124fc721f30ac789c201b3b139/external/llvm/BUILD.bazel:20... | non_process | error is final is not a member of std with bazel gcc cuda update and the latest git clone of tensorflow i hit the following error at the bazel build command error home mh naderan cache bazel bazel mh naderan external llvm build bazel c compilation of rule llvm... | 0 |

32,651 | 13,903,557,148 | IssuesEvent | 2020-10-20 07:25:29 | elastic/beats | https://api.github.com/repos/elastic/beats | closed | System module - Strange behavior when disabled metricsets will still collect metrics | Team:Services bug v7.10.0 | Events from other metricsets coming in although they have been disabled.

- when I run the cpu metricset even though I disable process and network in the system.yml config file metricset they are still running.

Example config:

```

- module: system

period: 10s

metricsets:

- cpu

#- load

#- memory... | 1.0 | System module - Strange behavior when disabled metricsets will still collect metrics - Events from other metricsets coming in although they have been disabled.

- when I run the cpu metricset even though I disable process and network in the system.yml config file metricset they are still running.

Example config:

```... | non_process | system module strange behavior when disabled metricsets will still collect metrics events from other metricsets coming in although they have been disabled when i run the cpu metricset even though i disable process and network in the system yml config file metricset they are still running example config ... | 0 |

9,220 | 12,256,874,814 | IssuesEvent | 2020-05-06 12:50:59 | MicrosoftDocs/azure-docs | https://api.github.com/repos/MicrosoftDocs/azure-docs | closed | Output in Process{} block is not shown - By design, or undocumented "feature"? | Pri2 automation/svc cxp process-automation/subsvc product-question triaged | Using a ```Process{}``` block inside a Automation Account runbook does not give any output to terminal/ output streams.

```Begin{}``` and ```End{}``` shows output though.

Sample code:

```powershell

[OutputType($null)]

Param ()

Begin {

Write-Output -InputObject 'Begin -> Write-Output'

Write-Verbose -Message... | 1.0 | Output in Process{} block is not shown - By design, or undocumented "feature"? - Using a ```Process{}``` block inside a Automation Account runbook does not give any output to terminal/ output streams.

```Begin{}``` and ```End{}``` shows output though.

Sample code:

```powershell

[OutputType($null)]

Param ()

Begin {

... | process | output in process block is not shown by design or undocumented feature using a process block inside a automation account runbook does not give any output to terminal output streams begin and end shows output though sample code powershell param begin write output in... | 1 |

2,140 | 4,982,505,263 | IssuesEvent | 2016-12-07 11:35:11 | jlm2017/jlm-video-subtitles | https://api.github.com/repos/jlm2017/jlm-video-subtitles | opened | [subtitles] [FR] Le libre-échange détruit la sidérurgie française - Invervention au Parlement européen | Language: French Process: [0] Awaiting subtitles | # Video title

Le libre-échange détruit la sidérurgie française - Intervention au Parlement européen

# URL

https://www.youtube.com/watch?v=hwveRPW9Doc&index=12&list=PLnAm9o_Xn_3DU-PS1cGkVoMthELDzFVRT

# Youtube subtitles language

Français

# Duration

1:05

# URL subtitles

https://www.youtube.com/... | 1.0 | [subtitles] [FR] Le libre-échange détruit la sidérurgie française - Invervention au Parlement européen - # Video title

Le libre-échange détruit la sidérurgie française - Intervention au Parlement européen

# URL

https://www.youtube.com/watch?v=hwveRPW9Doc&index=12&list=PLnAm9o_Xn_3DU-PS1cGkVoMthELDzFVRT

# Yo... | process | le libre échange détruit la sidérurgie française invervention au parlement européen video title le libre échange détruit la sidérurgie française intervention au parlement européen url youtube subtitles language français duration url subtitles | 1 |

380,192 | 11,255,131,854 | IssuesEvent | 2020-01-12 06:29:45 | AugurProject/augur | https://api.github.com/repos/AugurProject/augur | opened | The interest sweep doesn't work during global shutdown. | Priority: Medium V2 Audit | https://github.com/AugurProject/augur/blob/5235fda53bd586efe3b2f0c11f0812d5eb72bd98/packages/augur-core/source/contracts/reporting/Universe.sol#L725

When Maker goes into global shutdown, DAI cannot be minted anymore, Pot DAI can only be turned into Vat DAI. The `sweepInterest` function correctly withdraws Pot DAI t... | 1.0 | The interest sweep doesn't work during global shutdown. - https://github.com/AugurProject/augur/blob/5235fda53bd586efe3b2f0c11f0812d5eb72bd98/packages/augur-core/source/contracts/reporting/Universe.sol#L725

When Maker goes into global shutdown, DAI cannot be minted anymore, Pot DAI can only be turned into Vat DAI. ... | non_process | the interest sweep doesn t work during global shutdown when maker goes into global shutdown dai cannot be minted anymore pot dai can only be turned into vat dai the sweepinterest function correctly withdraws pot dai to vat dai during global shutdown and it correctly transfers vat dai when necessary but ... | 0 |

69,914 | 30,500,784,505 | IssuesEvent | 2023-07-18 13:50:14 | Azure/azure-cli | https://api.github.com/repos/Azure/azure-cli | reopened | learn the ETA of the latest Version CLI | Service Attention Network - DNS customer-reported Auto-Assign Auto-Resolve | Hi Team,

Hope things are going well.

I would like to learn the ETA of the latest Version CLI.

Recently, Some customers meet similar issue in using Windows CLI to export/import DNS zone

az network dns zone import -g eitb_dns -n eitb.eus --file eitb.eus.txt

az network private-dns zone export -g myresource... | 1.0 | learn the ETA of the latest Version CLI - Hi Team,

Hope things are going well.

I would like to learn the ETA of the latest Version CLI.

Recently, Some customers meet similar issue in using Windows CLI to export/import DNS zone

az network dns zone import -g eitb_dns -n eitb.eus --file eitb.eus.txt

az net... | non_process | learn the eta of the latest version cli hi team hope things are going well i would like to learn the eta of the latest version cli recently some customers meet similar issue in using windows cli to export import dns zone az network dns zone import g eitb dns n eitb eus file eitb eus txt az net... | 0 |

22,144 | 30,684,658,557 | IssuesEvent | 2023-07-26 11:31:00 | MicrosoftDocs/azure-docs | https://api.github.com/repos/MicrosoftDocs/azure-docs | closed | How to establish "Connect-PnPOnline" connection in PowerShell runbook using managed identity? | automation/svc triaged assigned-to-author product-question process-automation/subsvc Pri2 |

How to establish "Connect-PnPOnline" connection in PowerShell runbook using managed identity?

Connect-PnPOnline -ManagedIdentity

Seems the above command is not working.

---

#### Document Details

⚠ *Do not edit this section. It is required for docs.microsoft.com ➟ GitHub issue linking.*

* ID: 8a8470c7... | 1.0 | How to establish "Connect-PnPOnline" connection in PowerShell runbook using managed identity? -

How to establish "Connect-PnPOnline" connection in PowerShell runbook using managed identity?

Connect-PnPOnline -ManagedIdentity

Seems the above command is not working.

---

#### Document Details

⚠ *Do not edi... | process | how to establish connect pnponline connection in powershell runbook using managed identity how to establish connect pnponline connection in powershell runbook using managed identity connect pnponline managedidentity seems the above command is not working document details ⚠ do not edi... | 1 |

7,906 | 11,089,904,168 | IssuesEvent | 2019-12-14 22:00:58 | dita-ot/dita-ot | https://api.github.com/repos/dita-ot/dita-ot | closed | Conkeyrefs not working correctly when map in sub-directory | needs reproduction preprocess/keyref stale | Similar to #2680, if the ditamap is in a sub-directory below my project root, for example:

|-- maps

root-- |

|-- topics

|--images

Then defining standard text keys and using conkeyrefs doesn't work. The pulled in text is left blank, because the file isn't being found. I have attache... | 1.0 | Conkeyrefs not working correctly when map in sub-directory - Similar to #2680, if the ditamap is in a sub-directory below my project root, for example:

|-- maps

root-- |

|-- topics

|--images

Then defining standard text keys and using conkeyrefs doesn't work. The pulled in text is l... | process | conkeyrefs not working correctly when map in sub directory similar to if the ditamap is in a sub directory below my project root for example maps root topics images then defining standard text keys and using conkeyrefs doesn t work the pulled in text is left... | 1 |

26,712 | 4,777,627,620 | IssuesEvent | 2016-10-27 16:49:16 | gbif/ipt | https://api.github.com/repos/gbif/ipt | closed | Deleting unregistered resource says resource is registered in dialog box | bug Component-i18n Component-UI Priority-High Type-Defect | When I want to delete an **un**registered resource, I get:

Seems like the dialog box doesn't make a distinction between registered and unregistered, but mentions `registered` anyway? | 1.0 | Deleting unregistered resource says resource is registered in dialog box - When I want to delete an **un**registered resource, I get:

Seems like the dialog box doesn't make a distinction between registered ... | non_process | deleting unregistered resource says resource is registered in dialog box when i want to delete an un registered resource i get seems like the dialog box doesn t make a distinction between registered and unregistered but mentions registered anyway | 0 |

10,447 | 13,224,963,366 | IssuesEvent | 2020-08-17 20:12:33 | googleapis/python-storage | https://api.github.com/repos/googleapis/python-storage | opened | Replace unsafe 'timestamp.mktemp' with 'timestamp.{Named,}TemporaryFile' in systests | testing type: process | Pydoc says:

```

Help on function mktemp in tempfile:

tempfile.mktemp = mktemp(suffix='', prefix='tmp', dir=None)

User-callable function to return a unique temporary file name. The

file is not created.

Arguments are as for mkstemp, except that the 'text' argument is

not accepted.

... | 1.0 | Replace unsafe 'timestamp.mktemp' with 'timestamp.{Named,}TemporaryFile' in systests - Pydoc says:

```

Help on function mktemp in tempfile:

tempfile.mktemp = mktemp(suffix='', prefix='tmp', dir=None)

User-callable function to return a unique temporary file name. The

file is not created.

Arg... | process | replace unsafe timestamp mktemp with timestamp named temporaryfile in systests pydoc says help on function mktemp in tempfile tempfile mktemp mktemp suffix prefix tmp dir none user callable function to return a unique temporary file name the file is not created arg... | 1 |
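The replacement this issue asks for might look like the sketch below. It is a minimal illustration, assuming the system tests only need a scratch file to write to and read back; the helper name `write_scratch_file` is hypothetical and not taken from python-storage. Note that the quoted pydoc output refers to the standard-library `tempfile` module, so the `timestamp.mktemp` in the title presumably means `tempfile.mktemp`.

```python
import os
import tempfile


def write_scratch_file(payload: bytes) -> str:
    """Write payload to a securely created temporary file and return its path.

    Hypothetical helper for illustration; not part of python-storage.
    """
    # NamedTemporaryFile creates and opens the file in one step, which avoids
    # the gap between picking a name and creating the file that mktemp leaves.
    # delete=False keeps the file on disk after the handle is closed.
    with tempfile.NamedTemporaryFile(suffix=".bin", delete=False) as handle:
        handle.write(payload)
        return handle.name


if __name__ == "__main__":
    path = write_scratch_file(b"hello")
    try:
        with open(path, "rb") as stream:
            print(stream.read())
    finally:
        # With delete=False the caller is responsible for cleanup.
        os.remove(path)
```

When the file is only needed inside a single `with` block, the default `delete=True` (or `tempfile.TemporaryFile`) removes it automatically and no explicit cleanup is required.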

348,259 | 24,909,968,505 | IssuesEvent | 2022-10-29 18:41:15 | AY2223S1-CS2103T-W11-3/tp | https://api.github.com/repos/AY2223S1-CS2103T-W11-3/tp | closed | [PE-D][Tester D] Invalid command error when user follows format in UG for addcom | type.DocumentationBug | The User Guide (UG) gives the example command `addcom n/Tokyo Ghoul Kaneki f/50 d/2022-10-15` which follows the format for "Adding a commission: `addcom`".

However, this com... | 1.0 | [PE-D][Tester D] Invalid command error when user follows format in UG for addcom - The User Guide (UG) gives the example command `addcom n/Tokyo Ghoul Kaneki f/50 d/2022-10-15` which follows the format for "Adding a commission: `addcom`".

Gecko/20100101 Firefox/78.0 -->

<!-- @reported_with: desktop-reporter -->

<!-- @public_url: https://github.com/webcompat/web-bugs/issues/117679 -->

**URL**: https://www.notion.so/signup

**Browser / Version**: Firefox 78.0

**Oper... | 1.0 | www.notion.so - site is not usable - <!-- @browser: Firefox 78.0 -->

<!-- @ua_header: Mozilla/5.0 (X11; Linux x86_64; rv:78.0) Gecko/20100101 Firefox/78.0 -->

<!-- @reported_with: desktop-reporter -->

<!-- @public_url: https://github.com/webcompat/web-bugs/issues/117679 -->

**URL**: https://www.notion.so/signup

**Bro... | non_process | site is not usable url browser version firefox operating system linux tested another browser yes opera problem type site is not usable description page not loading correctly steps to reproduce when i try to login into notion the site won t load same issue ap... | 0 |

15,764 | 19,913,027,286 | IssuesEvent | 2022-01-25 19:13:19 | input-output-hk/high-assurance-legacy | https://api.github.com/repos/input-output-hk/high-assurance-legacy | closed | Remove the `output_rest` interpretation of the `residual` locale | language: isabelle topic: process calculus type: improvement | Currently, the `residual` locale has an interpretation for output rests, although output rests aren’t morally residuals. The reason is that the manual definition of `proper_lift` and the manually conducted proofs of its properties referred to `output_rest_lift` and its properties. Meanwhile, we use Isabelle’s support f... | 1.0 | Remove the `output_rest` interpretation of the `residual` locale - Currently, the `residual` locale has an interpretation for output rests, although output rests aren’t morally residuals. The reason is that the manual definition of `proper_lift` and the manually conducted proofs of its properties referred to `output_re... | process | remove the output rest interpretation of the residual locale currently the residual locale has an interpretation for output rests although output rests aren’t morally residuals the reason is that the manual definition of proper lift and the manually conducted proofs of its properties referred to output re... | 1 |

15,770 | 19,915,010,904 | IssuesEvent | 2022-01-25 21:28:47 | medic/cht-core | https://api.github.com/repos/medic/cht-core | closed | Release 3.13.1 | Type: Internal process | # Planning - Product Manager

- [x] Create a GH Milestone and add this issue to it.

- [x] Add all the issues to be worked on to the Milestone.

# Development - Release Engineer

When development is ready to begin one of the engineers should be nominated as a Release Engineer. They will be responsible for making... | 1.0 | Release 3.13.1 - # Planning - Product Manager

- [x] Create a GH Milestone and add this issue to it.

- [x] Add all the issues to be worked on to the Milestone.

# Development - Release Engineer

When development is ready to begin one of the engineers should be nominated as a Release Engineer. They will be respo... | process | release planning product manager create a gh milestone and add this issue to it add all the issues to be worked on to the milestone development release engineer when development is ready to begin one of the engineers should be nominated as a release engineer they will be responsibl... | 1 |

24,815 | 12,152,856,281 | IssuesEvent | 2020-04-24 23:38:11 | Azure/azure-sdk-for-js | https://api.github.com/repos/Azure/azure-sdk-for-js | closed | Service Bus Send Reliability | Client Service Bus customer-reported | - **Package Name**: azure/service-bus

- **Package Version**: 1.1.1

- **Operating system**: linux node:8.16-slim

**Describe the bug**

Sending messages to service bus will often encounter errors during platform service bus updates, deployments and other transient issues.

1. Sends taking too long, There appears t... | 1.0 | Service Bus Send Reliability - - **Package Name**: azure/service-bus

- **Package Version**: 1.1.1

- **Operating system**: linux node:8.16-slim

**Describe the bug**

Sending messages to service bus will often encounter errors during platform service bus updates, deployments and other transient issues.

1. Sends t... | non_process | service bus send reliability package name azure service bus package version operating system linux node slim describe the bug sending messages to service bus will often encounter errors during platform service bus updates deployments and other transient issues sends ta... | 0 |

22,614 | 31,841,352,959 | IssuesEvent | 2023-09-14 16:32:28 | h4sh5/pypi-auto-scanner | https://api.github.com/repos/h4sh5/pypi-auto-scanner | opened | metaflow-netflixext 1.0.2 has 1 GuardDog issues | guarddog silent-process-execution | https://pypi.org/project/metaflow-netflixext

https://inspector.pypi.io/project/metaflow-netflixext

```{

"dependency": "metaflow-netflixext",

"version": "1.0.2",

"result": {

"issues": 1,

"errors": {},

"results": {

"silent-process-execution": [

{

"location": "metaflow-netflixext-... | 1.0 | metaflow-netflixext 1.0.2 has 1 GuardDog issues - https://pypi.org/project/metaflow-netflixext

https://inspector.pypi.io/project/metaflow-netflixext

```{

"dependency": "metaflow-netflixext",

"version": "1.0.2",

"result": {

"issues": 1,

"errors": {},

"results": {

"silent-process-execution": [

... | process | metaflow netflixext has guarddog issues dependency metaflow netflixext version result issues errors results silent process execution location metaflow netflixext metaflow extensions netflix ext plugins... | 1 |

1,645 | 4,269,584,495 | IssuesEvent | 2016-07-13 01:18:29 | ParsePlatform/parse-server | https://api.github.com/repos/ParsePlatform/parse-server | closed | Group chat for repository (Gitter) | in-process | I noticed that a lot of tasks are created just to discuss or ask some question. It would be probably better to use group chat for that, something like Gitter or Slack. I think Gitter would be better, because it stores chat logs forever.

I checked that you were asked for having Gitter chat already in #566 and #1366.

... | 1.0 | Group chat for repository (Gitter) - I noticed that a lot of tasks are created just to discuss or ask some question. It would be probably better to use group chat for that, something like Gitter or Slack. I think Gitter would be better, because it stores chat logs forever.

I checked that you were asked for having Gi... | process | group chat for repository gitter i noticed that a lot of tasks are created just to discuss or ask some question it would be probably better to use group chat for that something like gitter or slack i think gitter would be better because it stores chat logs forever i checked that you were asked for having gi... | 1 |

3,991 | 6,918,519,960 | IssuesEvent | 2017-11-29 12:31:19 | DevExpress/testcafe-hammerhead | https://api.github.com/repos/DevExpress/testcafe-hammerhead | closed | Should emulate native browser behaviour related with trailing slashes for location properties | !IMPORTANT! AREA: client SYSTEM: URL processing TYPE: bug | Related with https://testcafe-discuss.devexpress.com/t/getting-infinite-angular-digest-cycles-only-with-testcafe/557/7

Code to reproduce:

```js

Page location is 'http://example.com'

Without proxying:

location.href: 'https://example.com/'

With proxying:

location.href: 'https://example.com'

``` | 1.0 | Should emulate native browser behaviour related with trailing slashes for location properties - Related with https://testcafe-discuss.devexpress.com/t/getting-infinite-angular-digest-cycles-only-with-testcafe/557/7

Code to reproduce:

```js

Page location is 'http://example.com'

Without proxying:

location.href: '... | process | should emulate native browser behaviour related with trailing slashes for location properties related with code to reproduce js page location is without proxying location href with proxying location href | 1 |

20,780 | 27,516,889,060 | IssuesEvent | 2023-03-06 12:35:59 | alphagov/govuk-design-system | https://api.github.com/repos/alphagov/govuk-design-system | closed | Build a publishing plan for Exit this Page | process | ## What

Scope remaining stories for mvp and build a publishing plan.

## Why

So the team and stakeholders know what's left to do and we can give ourselves healthy targets to work towards.

## Who needs to work on

Kelly

## Who needs to review this

Steve, Katrina, Ciandelle, David, Owen, Beeps, Calvin

## Do... | 1.0 | Build a publishing plan for Exit this Page - ## What

Scope remaining stories for mvp and build a publishing plan.

## Why

So the team and stakeholders know what's left to do and we can give ourselves healthy targets to work towards.

## Who needs to work on

Kelly

## Who needs to review this

Steve, Katrina, C... | process | build a publishing plan for exit this page what scope remaining stories for mvp and build a publishing plan why so the team and stakeholders know what s left to do and we can give ourselves healthy targets to work towards who needs to work on kelly who needs to review this steve katrina c... | 1 |

131,496 | 18,247,955,725 | IssuesEvent | 2021-10-01 21:22:36 | turkdevops/grafana | https://api.github.com/repos/turkdevops/grafana | closed | CVE-2018-14042 (Medium) detected in bootstrap-3.3.7.js - autoclosed | security vulnerability | ## CVE-2018-14042 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>bootstrap-3.3.7.js</b></p></summary>

<p>The most popular front-end framework for developing responsive, mobile first... | True | CVE-2018-14042 (Medium) detected in bootstrap-3.3.7.js - autoclosed - ## CVE-2018-14042 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>bootstrap-3.3.7.js</b></p></summary>

<p>The mo... | non_process | cve medium detected in bootstrap js autoclosed cve medium severity vulnerability vulnerable library bootstrap js the most popular front end framework for developing responsive mobile first projects on the web library home page a href path to dependency file grafana ... | 0 |

1,069 | 3,536,080,960 | IssuesEvent | 2016-01-17 00:34:43 | MaretEngineering/MROV | https://api.github.com/repos/MaretEngineering/MROV | closed | Add a vertical thrust label | enhancement Processing | Somewhere near the right trigger and left trigger but make it look nice. It should have the actual thrust value being sent. | 1.0 | Add a vertical thrust label - Somewhere near the right trigger and left trigger but make it look nice. It should have the actual thrust value being sent. | process | add a vertical thrust label somewhere near the right trigger and left trigger but make it look nice it should have the actual thrust value being sent | 1 |

14,802 | 18,102,902,911 | IssuesEvent | 2021-09-22 15:54:37 | yandali-damian/LIM015-social-network | https://api.github.com/repos/yandali-damian/LIM015-social-network | opened | Second sprint corrections | pending Process | >-[ ] Change the logo image on login and signup

>-[ ] Validate email and password on login

>-[ ] Implement the Google button functionality on signup.

>-[ ] Catch the error for an already registered email.

>-[ ] Validate the password confirmation so that it does not redirect without it. | 1.0 | Second sprint corrections - >-[ ] Change the logo image on login and signup

>-[ ] Validate email and password on login

>-[ ] Implement the Google button functionality on signup.

>-[ ] Catch the error for an already registered email.

>-[ ] Validate the password confirmation so that it does not redirect without it. | process | second sprint corrections change the logo image on login and signup validate email and password on login implement the google button functionality on signup catch the error for an already registered email validate the password confirmation so that it does not redirect without it | 1 |

1,839 | 2,671,760,210 | IssuesEvent | 2015-03-24 09:39:56 | McStasMcXtrace/McCode | https://api.github.com/repos/McStasMcXtrace/McCode | closed | missing argument of an outf | bug C: McCode kernel P: minor | **Reported by erkn on 11 Oct 2012 21:05 UTC**

there is an ID_PRE too few at line 1059 in src/cogen.c.in | 1.0 | missing argument of an outf - **Reported by erkn on 11 Oct 2012 21:05 UTC**

there is an ID_PRE too few at line 1059 in src/cogen.c.in | non_process | missing argument of an outf reported by erkn on oct utc there is an id pre too few at line in src cogen c in | 0 |

23,247 | 10,863,197,541 | IssuesEvent | 2019-11-14 14:43:09 | benchmarkdebricked/groovy | https://api.github.com/repos/benchmarkdebricked/groovy | opened | CVE-2017-5645 (High) detected in log4j-core-2.8.jar | security vulnerability | ## CVE-2017-5645 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>log4j-core-2.8.jar</b></p></summary>

<p>The Apache Log4j Implementation</p>

<p>Library home page: <a href="https://logg... | True | CVE-2017-5645 (High) detected in log4j-core-2.8.jar - ## CVE-2017-5645 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>log4j-core-2.8.jar</b></p></summary>

<p>The Apache Log4j Implemen... | non_process | cve high detected in core jar cve high severity vulnerability vulnerable library core jar the apache implementation library home page a href path to vulnerable library groovy build gradle groovy build gradle dependency hierarchy x core jar vuln... | 0 |

14,243 | 17,172,627,600 | IssuesEvent | 2021-07-15 07:26:22 | qgis/QGIS-Documentation | https://api.github.com/repos/qgis/QGIS-Documentation | closed | New standalone console tool for running processing algorithms (Request in QGIS) | 3.14 Processing Tools | ### Request for documentation

From pull request QGIS/qgis#34617

Author: @nyalldawson

QGIS version: 3.14 (Feature)

**New standalone console tool for running processing algorithms**

### PR Description:

**UPDATE**: the final tool is called `qgis_process`, all examples here have been updated to reflect the change

This... | 1.0 | New standalone console tool for running processing algorithms (Request in QGIS) - ### Request for documentation

From pull request QGIS/qgis#34617

Author: @nyalldawson

QGIS version: 3.14 (Feature)

**New standalone console tool for running processing algorithms**

### PR Description:

**UPDATE**: the final tool is called... | process | new standalone console tool for running processing algorithms request in qgis request for documentation from pull request qgis qgis author nyalldawson qgis version feature new standalone console tool for running processing algorithms pr description update the final tool is called qgi... | 1 |

25,451 | 25,206,981,785 | IssuesEvent | 2022-11-13 20:02:44 | bevyengine/bevy | https://api.github.com/repos/bevyengine/bevy | opened | Add an additional constructor method to `ManualEventReader`, which starts at the current frame's events0 | A-ECS C-Usability | ## What problem does this solve or what need does it fill?

#5730 introduced a generally helpful error message,

> 2022-11-13T19:35:54.363800Z WARN bevy_ecs::event: Missed 345 `bevy_input::mouse::MouseMotion` events. Consider reading from the `EventReader` more often (generally the best solution) or calling Event... | True | Add an additional constructor method to `ManualEventReader`, which starts at the current frame's events0 - ## What problem does this solve or what need does it fill?

#5730 introduced a generally helpful error message,

> 2022-11-13T19:35:54.363800Z WARN bevy_ecs::event: Missed 345 `bevy_input::mouse::MouseMotion... | non_process | add an additional constructor method to manualeventreader which starts at the current frame s what problem does this solve or what need does it fill introduced a generally helpful error message warn bevy ecs event missed bevy input mouse mousemotion events consider reading ... | 0 |

19,406 | 13,226,727,554 | IssuesEvent | 2020-08-18 00:50:52 | microsoft/react-native-windows | https://api.github.com/repos/microsoft/react-native-windows | closed | E2ETest: VisitAllPages instability | Area: Test Infrastructure bug | We're seeing an instability in the new VisitAllPages test. Log snippet from one of the failures:

2020-08-07T08:09:13.1994088Z [0-7] loading page PlatformColor

2020-08-07T08:09:13.1995129Z 2020-08-07T08:09:13.193Z INFO webdriver: COMMAND findElement("accessibility id", "PlatformColor")

2020-08-07T08:09:13.1996630... | 1.0 | E2ETest: VisitAllPages instability - We're seeing an instability in the new VisitAllPages test. Log snippet from one of the failures:

2020-08-07T08:09:13.1994088Z [0-7] loading page PlatformColor

2020-08-07T08:09:13.1995129Z 2020-08-07T08:09:13.193Z INFO webdriver: COMMAND findElement("accessibility id", "Platfo... | non_process | visitallpages instability we re seeing an instability in the new visitallpages test log snippet from one of the failures loading page platformcolor info webdriver command findelement accessibility id platformcolor info webdriver ... | 0 |

290,159 | 32,037,271,526 | IssuesEvent | 2023-09-22 16:16:39 | DimaMend/DemoCorp | https://api.github.com/repos/DimaMend/DemoCorp | opened | underscore-1.8.3.tgz: 1 vulnerabilities (highest severity is: 7.2) | Mend: dependency security vulnerability | <details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>underscore-1.8.3.tgz</b></p></summary>

<p>JavaScript's functional programming helper library.</p>

<p>Library home page: <a href="https://registry.npmjs.org/underscore... | True | underscore-1.8.3.tgz: 1 vulnerabilities (highest severity is: 7.2) - <details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>underscore-1.8.3.tgz</b></p></summary>

<p>JavaScript's functional programming helper library.</p>... | non_process | underscore tgz vulnerabilities highest severity is vulnerable library underscore tgz javascript s functional programming helper library library home page a href path to dependency file package json path to vulnerable library node modules underscore package json f... | 0 |

13,068 | 15,397,125,055 | IssuesEvent | 2021-03-03 21:39:47 | qgis/QGIS | https://api.github.com/repos/qgis/QGIS | closed | Processing algorithms silently fail to load when psycopg2 is missing | Bug Processing |

So [this thread](https://lists.osgeo.org/pipermail/qgis-developer/2021-March/063199.html) on the mailing list raised what I think is the same issue I encountered some time ago when setting up a new computer. All algorithms except native QGIS ones were silently failing to load. I was able to do the following to unders... | 1.0 | Processing algorithms silently fail to load when psycopg2 is missing -

So [this thread](https://lists.osgeo.org/pipermail/qgis-developer/2021-March/063199.html) on the mailing list raised what I think is the same issue I encountered some time ago when setting up a new computer. All algorithms except native QGIS ones ... | process | processing algorithms silently fail to load when is missing so on the mailing list raised what i think is the same issue i encountered some time ago when setting up a new computer all algorithms except native qgis ones were silently failing to load i was able to do the following to understand what was going... | 1 |

6,178 | 9,086,886,748 | IssuesEvent | 2019-02-18 12:13:51 | FACK1/ReservationSystem | https://api.github.com/repos/FACK1/ReservationSystem | reopened | login Authentication | inProcess technical | - [x] check if the user logged then redirect the user to the book event page. | 1.0 | login Authentication - - [x] check if the user logged then redirect the user to the book event page. | process | login authentication check if the user logged then redirect the user to the book event page | 1 |

16,795 | 22,044,126,588 | IssuesEvent | 2022-05-29 20:10:56 | bow-simulation/virtualbow | https://api.github.com/repos/bow-simulation/virtualbow | closed | Update dependencies | area: software process type: improvement | * Eigen: No need for using the master branch anymore since 3.9.0

* Json: Use `nlohmann::ordered_json` in combination with the new serialization macros

* Boost, Catch: No particular reason, but easy to update

* Qt: Stay on 5.15 as long as Qt 6 is not widely packaged on Linux yet

* NLopt: Not needed anymore | 1.0 | Update dependencies - * Eigen: No need for using the master branch anymore since 3.9.0

* Json: Use `nlohmann::ordered_json` in combination with the new serialization macros

* Boost, Catch: No particular reason, but easy to update

* Qt: Stay on 5.15 as long as Qt 6 is not widely packaged on Linux yet

* NLopt: Not ne... | process | update dependencies eigen no need for using the master branch anymore since json use nlohmann ordered json in combination with the new serialization macros boost catch no particular reason but easy to update qt stay on as long as qt is not widely packaged on linux yet nlopt not nee... | 1 |

4,346 | 7,247,360,450 | IssuesEvent | 2018-02-15 02:19:02 | amigosdapoli/donation-system | https://api.github.com/repos/amigosdapoli/donation-system | closed | System admin is able to download the list of donations through admin interface | admin-processes | Single list with columns differentiating recurring from one-off and payment methods as well | 1.0 | System admin is able to download the list of donations through admin interface - Single list with columns differentiating recurring from one-off and payment methods as well | process | system admin is able to download the list of donations through admin interface single list with columns differentiating recurring from one off and payment methods as well | 1 |

1,863 | 4,691,155,569 | IssuesEvent | 2016-10-11 09:32:07 | CERNDocumentServer/cds | https://api.github.com/repos/CERNDocumentServer/cds | closed | Process: initiate processes per video | avc_processing review | For each video we should be able to initiate process with the payload

``` json

{

"bucket_id": "xxx",

"depid": "xxx",

...

}

``` | 1.0 | Process: initiate processes per video - For each video we should be able to initiate process with the payload

``` json

{

"bucket_id": "xxx",

"depid": "xxx",

...

}

``` | process | process initiate processes per video for each video we should be able to initiate process with the payload json bucket id xxx depid xxx | 1 |

13,471 | 15,962,950,830 | IssuesEvent | 2021-04-16 02:39:08 | rubberduck-vba/Rubberduck | https://api.github.com/repos/rubberduck-vba/Rubberduck | closed | Rubberduck doesn't seem to load twinBASIC types? | bug typeinfo-processing | ## ⏩ To Summarize

**A quick description of the problem:**

This could be a bug in Rubberduck's library loader, or just something weird with my twinBASIC library or my computer, or it could be that twinBASIC type libraries are [currently?] structured in a way that somehow sets them apart from other libraries, but it ... | 1.0 | Rubberduck doesn't seem to load twinBASIC types? - ## ⏩ To Summarize

**A quick description of the problem:**

This could be a bug in Rubberduck's library loader, or just something weird with my twinBASIC library or my computer, or it could be that twinBASIC type libraries are [currently?] structured in a way that so... | process | rubberduck doesn t seem to load twinbasic types ⏩ to summarize a quick description of the problem this could be a bug in rubberduck s library loader or just something weird with my twinbasic library or my computer or it could be that twinbasic type libraries are structured in a way that somehow sets ... | 1 |

16 | 2,496,242,720 | IssuesEvent | 2015-01-06 18:05:05 | vivo-isf/vivo-isf-ontology | https://api.github.com/repos/vivo-isf/vivo-isf-ontology | closed | memory consolidation | biological_process imported | _From [fcold...@eagle-i.org](https://code.google.com/u/113677139039624182507/) on November 30, 2012 13:33:25_

\<b>**** Use the form below to request a new term ****</b>

\<b>**** Scroll down to see a term request example ****</b>

\<b>Please indicate the label for the proposed term:</b>

memory consolidation ... | 1.0 | memory consolidation - _From [fcold...@eagle-i.org](https://code.google.com/u/113677139039624182507/) on November 30, 2012 13:33:25_

\<b>**** Use the form below to request a new term ****</b>

\<b>**** Scroll down to see a term request example ****</b>

\<b>Please indicate the label for the proposed term:</b>

me... | process | memory consolidation from on november use the form below to request a new term scroll down to see a term request example please indicate the label for the proposed term memory consolidation please provide a textual definition with source memory co... | 1 |

11,090 | 13,931,788,719 | IssuesEvent | 2020-10-22 06:05:07 | sebastianbergmann/phpunit | https://api.github.com/repos/sebastianbergmann/phpunit | closed | Process isolation under phpdbg throws exceptions | feature/process-isolation type/bug | <!--

- Please do not report an issue for a version of PHPUnit that is no longer supported. A list of currently supported versions of PHPUnit is available at https://phpunit.de/supported-versions.html.

- Please do not report an issue if you are using a version of PHP that is not supported by the version of PHPUnit you... | 1.0 | Process isolation under phpdbg throws exceptions - <!--

- Please do not report an issue for a version of PHPUnit that is no longer supported. A list of currently supported versions of PHPUnit is available at https://phpunit.de/supported-versions.html.

- Please do not report an issue if you are using a version of PHP ... | process | process isolation under phpdbg throws exceptions please do not report an issue for a version of phpunit that is no longer supported a list of currently supported versions of phpunit is available at please do not report an issue if you are using a version of php that is not supported by the version of ph... | 1 |

15,043 | 18,762,447,034 | IssuesEvent | 2021-11-05 18:10:23 | googleapis/python-datacatalog | https://api.github.com/repos/googleapis/python-datacatalog | closed | Samples that depend on BigQuery are not compatible with Python 3.10 | api: datacatalog type: process | `google-cloud-bigquery` does not yet support Python 3.10. When it does, the 3.10 samples check should turn green. For now, it is OK to merge PRs with a failing 3.10 samples check (the status check is intentionally optional).

See https://github.com/googleapis/python-bigquery/issues/1006 for the status of python 3.10... | 1.0 | Samples that depend on BigQuery are not compatible with Python 3.10 - `google-cloud-bigquery` does not yet support Python 3.10. When it does, the 3.10 samples check should turn green. For now, it is OK to merge PRs with a failing 3.10 samples check (the status check is intentionally optional).

See https://github.co... | process | samples that depend on bigquery are not compatible with python google cloud bigquery does not yet support python when it does the samples check should turn green for now it is ok to merge prs with a failing samples check the status check is intentionally optional see for the status of p... | 1 |

389 | 2,838,438,097 | IssuesEvent | 2015-05-27 07:40:59 | ChelseaStats/issues | https://api.github.com/repos/ChelseaStats/issues | closed | PA_dugout May 26 2015 at 11:27PM | to process tweet ★ priority-medium | <blockquote class="twitter-tweet">

<p lang="en" dir="ltr" xml:lang="en">Chelsea boss Jose Mourinho has been named LMA Premier League Manager of the Year <a href="http://u.thechels.uk/1RlWytj">http://pic.twitter.com/SWXfywrgT9</a></p>

— PA Dugout (@PA_dugout) <a href="https://twitter.com/PA_dugout/status/603326563... | 1.0 | PA_dugout May 26 2015 at 11:27PM - <blockquote class="twitter-tweet">

<p lang="en" dir="ltr" xml:lang="en">Chelsea boss Jose Mourinho has been named LMA Premier League Manager of the Year <a href="http://u.thechels.uk/1RlWytj">http://pic.twitter.com/SWXfywrgT9</a></p>

— PA Dugout (@PA_dugout) <a href="https://twi... | process | pa dugout may at chelsea boss jose mourinho has been named lma premier league manager of the year a href mdash pa dugout pa dugout may at via twitter | 1 |

14,948 | 18,428,165,489 | IssuesEvent | 2021-10-14 02:35:58 | qgis/QGIS | https://api.github.com/repos/qgis/QGIS | closed | Polygon to line algorithm does not recognise smooth output in Graphical modeler | Processing Bug Modeller | ### What is the bug or the crash?

In graphical modeler, I want to smooth polygons then extract the lines of these smoothed polygons.

The algorithm "polygon to lines" does not recognise the output from "smooth algorithm"

### Steps to reproduce the issue

In graphical modeler:

Create Smooth algorithm .

The... | 1.0 | Polygon to line algorithm does not recognise smooth output in Graphical modeler - ### What is the bug or the crash?

In graphical modeler, I want to smooth polygons then extract the lines of these smoothed polygons.

The algorithm "polygon to lines" does not recognise the output from "smooth algorithm"

### Steps... | process | polygon to line algorithm does not recognise smooth output in graphical modeler what is the bug or the crash in graphical modeler i want to smooth polygons then extract the lines of these smoothed polygons the algorithm polygon to lines does not recognise the output from smooth algorithm steps... | 1 |

21,056 | 28,005,361,411 | IssuesEvent | 2023-03-27 14:57:23 | pytorch/pytorch | https://api.github.com/repos/pytorch/pytorch | closed | Random hangs and failures when sending tensors that are split using torch.split in a JoinableQueue | high priority module: multiprocessing triaged | ### 🐛 Random hangs and failures when sending tensors that are split using torch.split in a JoinableQueue

Splitting tensors using torch.split and sending them to processes using a JoinableQueue seems to cause random errors and hangs in 2.0.0.dev20230130+cu116, while works perfectly fine on 1.9.1+cu102

I tried to ... | 1.0 | Random hangs and failures when sending tensors that are split using torch.split in a JoinableQueue - ### 🐛 Random hangs and failures when sending tensors that are split using torch.split in a JoinableQueue

Splitting tensors using torch.split and sending them to processes using a JoinableQueue seems to cause random... | process | random hangs and failures when sending tensors that are split using torch split in a joinablequeue 🐛 random hangs and failures when sending tensors that are split using torch split in a joinablequeue splitting tensors using torch split and sending them to processes using a joinablequeue seems to cause random... | 1 |

11,552 | 14,435,151,346 | IssuesEvent | 2020-12-07 08:18:59 | MelissaMorales13/4a | https://api.github.com/repos/MelissaMorales13/4a | closed | fill_size_estimating_template | process-dashboard | -fill in the lines-of-code estimation template in Process Dashboard

-run the PROBE wizard | 1.0 | fill_size_estimating_template - -fill in the lines-of-code estimation template in Process Dashboard

-run the PROBE wizard | process | fill size estimating template fill in the lines of code estimation template in process dashboard run the probe wizard | 1 |

205,283 | 15,964,836,815 | IssuesEvent | 2021-04-16 06:54:19 | icytornado/pe | https://api.github.com/repos/icytornado/pe | closed | edit selected command confusing/ not working | severity.Medium type.DocumentationBug | steps to reproduce:

1.edit selected - p 84311456

expected the selected is edited

actual: No selected person(s) to edit warning.

Cannot select a person. Do you mean selecting the person by clicking on it? How do I select a person exactly? State clearly in the UG please

![Screenshot 2021-04-16 at 2.44.47 PM.png]... | 1.0 | edit selected command confusing/ not working - steps to reproduce:

1.edit selected - p 84311456

expected the selected is edited

actual: No selected person(s) to edit warning.

Cannot select a person. Do you mean selecting the person by clicking on it? How do I select a person exactly? State clearly in the UG pleas... | non_process | edit selected command confusing not working steps to reproduce edit selected p expected the selected is edited actual no selected person s to edit warning cannot select a person do you mean selecting the person by clicking on it how do i select a person exactly state clearly in the ug please ... | 0 |

109,716 | 23,812,026,163 | IssuesEvent | 2022-09-04 22:23:24 | files-community/Files | https://api.github.com/repos/files-community/Files | opened | Nullable reference types annotations | codebase quality triage approved good first issue | We are migrating to WASDK and adopting .NET 6, so we get built-in nullable reference types support.

We should annotate our code to eliminate thousands of warnings about NRT.

This is a large one, but we can do it progressively. | 1.0 | Nullable reference types annotations - We are migrating to WASDK and adopting .NET 6, so we get built-in nullable reference types support.

We should annotate our code to eliminate thousands of warnings about NRT.

This is a large one, but we can do it progressively. | non_process | nullable reference types annotations we are migrating to wasdk and adopting net so we get built in nullable reference types support we should annotate our code to eliminate thousands of warnings about nrt this is a large one but we can do it progressively | 0 |

20,955 | 4,650,991,076 | IssuesEvent | 2016-10-03 08:15:58 | syl20bnr/spacemacs | https://api.github.com/repos/syl20bnr/spacemacs | closed | Mismatch of "package.el" and "LAYERS.org" | - Bug tracker - Documentation ✏ Fixed in develop | #### Description

When using "configuration-layer/create-layer" to create a new layer, the "package.el" uses a keyword "defconst", while "LAYERS.org" uses "setq"

#### Reproduction guide

- Start Emacd:/home/cyd/.spacemacs.private/my-cygwin/

#### System Info

- OS: windows-nt

- Emacs: 24.5.1

- Spacemacs: 0.105.2... | 1.0 | Mismatch of "package.el" and "LAYERS.org" - #### Description

When using "configuration-layer/create-layer" to create a new layer, the "package.el" uses a keyword "defconst", while "LAYERS.org" uses "setq"

#### Reproduction guide

- Start Emacd:/home/cyd/.spacemacs.private/my-cygwin/

#### System Info

- OS: windo... | non_process | mismatch of package el and layers org description when using configuration layer create layer to create a new layer the package el uses a keyword defconst while layers org uses setq reproduction guide start emacd home cyd spacemacs private my cygwin system info os windo... | 0 |

4,846 | 7,739,846,889 | IssuesEvent | 2018-05-28 17:56:22 | codeforireland2/transparentwater_backend_new | https://api.github.com/repos/codeforireland2/transparentwater_backend_new | opened | Enable jsdoc for all code | development process | This is already set up for the mobile app.

We should enable js doc in eslint to force documentation of all functions where relevant. | 1.0 | Enable jsdoc for all code - This is already set up for the mobile app.

We should enable js doc in eslint to force documentation of all functions where relevant. | process | enable jsdoc for all code this is already set up for the mobile app we should enable js doc in eslint to force documentation of all functions where relevant | 1 |

320,592 | 9,783,326,853 | IssuesEvent | 2019-06-08 08:59:41 | ballerina-platform/ballerina-lang | https://api.github.com/repos/ballerina-platform/ballerina-lang | closed | Cannot run not-Nil returning function if that function body contains a lambda function reference | Component/jBallerina Priority/Blocker Type/Bug | Please consider the following code

```ballerina

import ballerina/io;

public function main() {

io:println("Test");

}

function testStreamPublishingAndSubscriptionForAssignableTupleTypeStream(string s1) returns int {

test("lambda", zzz);

return 0;

}

function test(string val, function (string)... | 1.0 | Cannot run not-Nil returning function if that function body contains a lambda function reference - Please consider the following code

```ballerina

import ballerina/io;

public function main() {

io:println("Test");

}

function testStreamPublishingAndSubscriptionForAssignableTupleTypeStream(string s1) retur... | non_process | cannot run not nil returning function if that function body contains a lambda function reference please consider the following code ballerina import ballerina io public function main io println test function teststreampublishingandsubscriptionforassignabletupletypestream string return... | 0 |

8,780 | 11,901,098,941 | IssuesEvent | 2020-03-30 11:51:36 | geneontology/go-ontology | https://api.github.com/repos/geneontology/go-ontology | reopened | mutualism | multi-species process |

Finds this term

GO:0085030 | symbiotic process benefiting host

defined

GO:0085030 JSON

Definition (GO:0085030 GONUTS page)

A process carried out by symbiont gene products that enables a symbiotic interaction with a host organism, that is beneficial to the host organism.

related 'mutualism'

So, there ... | 1.0 | mutualism -

Finds this term

GO:0085030 | symbiotic process benefiting host

defined

GO:0085030 JSON

Definition (GO:0085030 GONUTS page)

A process carried out by symbiont gene products that enables a symbiotic interaction with a host organism, that is beneficial to the host organism.

related 'mutualism'

... | process | mutualism finds this term go symbiotic process benefiting host defined go json definition go gonuts page a process carried out by symbiont gene products that enables a symbiotic interaction with a host organism that is beneficial to the host organism related mutualism so there isn t ... | 1 |

7,737 | 10,855,062,665 | IssuesEvent | 2019-11-13 17:35:09 | codeuniversity/smag-mvp | https://api.github.com/repos/codeuniversity/smag-mvp | opened | [Images] Object recognition model to analyse interests | Image Processing | To receive interests from photos, we need an additional general object recognition model. | 1.0 | [Images] Object recognition model to analyse interests - To receive interests from photos, we need an additional general object recognition model. | process | object recognition model to analyse interests to receive interests from photos we need an additional general object recognition model | 1 |

518,029 | 15,022,722,723 | IssuesEvent | 2021-02-01 17:17:46 | godaddy-wordpress/coblocks | https://api.github.com/repos/godaddy-wordpress/coblocks | closed | Gist Block: Console error message | [Priority] Low [Type] Bug | ### Describe the bug:

Add the Gist Block to a page or post. It produces an error in the console when you enter some random text instead of a GitHub URL.

### To reproduce:

1. Go to 'Gist block'

2. Click on 'Textbox provided by gist block'

3. Now try to add some random text in that

4. See err... | 1.0 | Gist Block: Console error message - ### Describe the bug:

Add the Gist Block to a page or post. It produces an error in the console when you enter some random text instead of a GitHub URL.

### To reproduce:

1. Go to 'Gist block'

2. Click on 'Textbox provided by gist block'

3. Now try to add ... | non_process | gist block console error message describe the bug add the gist block to page or post and it will produce an error in the console when you will try to add some random text rather than adding github url to reproduce go to gist block click on textbox provided by gist block now try to add ... | 0 |

13,508 | 16,047,418,597 | IssuesEvent | 2021-04-22 15:03:39 | JitenPalaparthi/readyGo | https://api.github.com/repos/JitenPalaparthi/readyGo | closed | lint and fix the code | continuous process improvement | golangci-lint is a good static analysis tool. It seems to report a lot of issues, all of which should be fixed. | 1.0 | lint and fix the code - golangci-lint is a good static analysis tool. It seems to report a lot of issues, all of which should be fixed. | process | lint and fix the code golangci lint is a good static analysis tool there seems to be lot of issues all to be fixed | 1 |

5,459 | 8,320,090,531 | IssuesEvent | 2018-09-25 19:07:34 | GoogleCloudPlatform/google-cloud-python | https://api.github.com/repos/GoogleCloudPlatform/google-cloud-python | opened | BigQuery: 'test_load_table_from_uri_then_dump_table' flakes w/ 429 in bucket teardown | api: bigquery flaky testing type: process | From: https://circleci.com/gh/GoogleCloudPlatform/google-cloud-python/8387

```python

____________ TestBigQuery.test_load_table_from_uri_then_dump_table _____________

self = <tests.system.TestBigQuery testMethod=test_load_table_from_uri_then_dump_table>

def tearDown(self):

from google.cloud.storag... | 1.0 | BigQuery: 'test_load_table_from_uri_then_dump_table' flakes w/ 429 in bucket teardown - From: https://circleci.com/gh/GoogleCloudPlatform/google-cloud-python/8387

```python

____________ TestBigQuery.test_load_table_from_uri_then_dump_table _____________

self = <tests.system.TestBigQuery testMethod=test_load_tabl... | process | bigquery test load table from uri then dump table flakes w in bucket teardown from python testbigquery test load table from uri then dump table self def teardown self from google cloud storage import bucket from google cloud exceptions impor... | 1 |
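
The row above reports a teardown step that intermittently fails with an HTTP 429 (too many requests). One common way to make such a step less flaky is to retry it with exponential backoff. The following is only a minimal sketch of that idea, not the fix used in the repository: `TooManyRequests` here is a local stand-in class defined for illustration, and `retry_on_429`, `max_attempts`, and `base_delay` are hypothetical names.

```python
import time


class TooManyRequests(Exception):
    """Local stand-in for an HTTP 429 error (hypothetical, for illustration only)."""


def retry_on_429(operation, max_attempts=5, base_delay=1.0, sleep=time.sleep):
    """Call operation(), retrying with exponential backoff on TooManyRequests.

    The sleep function is injectable so the helper can be exercised without waiting.
    """
    for attempt in range(max_attempts):
        try:
            return operation()
        except TooManyRequests:
            if attempt == max_attempts - 1:
                raise  # out of attempts: surface the original error
            # Wait base_delay, then 2x, 4x, ... before the next attempt.
            sleep(base_delay * 2 ** attempt)
```

In a test teardown, the rate-limited call (for example, a bucket deletion) would be passed in as `operation`; in real code the exception class raised by the client library would replace the stand-in.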

4,624 | 7,468,696,503 | IssuesEvent | 2018-04-02 19:56:09 | syndesisio/syndesis | https://api.github.com/repos/syndesisio/syndesis | closed | UX: Add navigation to the UX Tracker | cat/process group/uxd | ## This is a...

<!-- Check one of the following options with "x" -->

<pre><code>

[ ] Feature request

[ ] Regression (a behavior that used to work and stopped working in a new release)

[ ] Bug report <!-- Please search GitHub for a similar issue or PR before submitting -->

[x] Documentation issue or request

</co... | 1.0 | UX: Add navigation to the UX Tracker - ## This is a...

<!-- Check one of the following options with "x" -->

<pre><code>

[ ] Feature request

[ ] Regression (a behavior that used to work and stopped working in a new release)

[ ] Bug report <!-- Please search GitHub for a similar issue or PR before submitting -->

[... | process | ux add navigation to the ux tracker this is a feature request regression a behavior that used to work and stopped working in a new release bug report documentation issue or request the problem the ux work tracker should include two items on the nav bar in addition to t... | 1 |

9,736 | 12,731,824,960 | IssuesEvent | 2020-06-25 09:25:15 | prisma/prisma | https://api.github.com/repos/prisma/prisma | closed | Strange @@relation error message when running prisma generate | bug/2-confirmed kind/bug process/candidate team/engines topic: schema validation | <!--

Thanks for helping us improve Prisma! 🙏 Please follow the sections in the template and provide as much information as possible about your problem, e.g. by setting the `DEBUG="*"` environment variable and enabling additional logging output in Prisma Client.

Learn more about writing proper bug reports here: ht... | 1.0 | Strange @@relation error message when running prisma generate - <!--

Thanks for helping us improve Prisma! 🙏 Please follow the sections in the template and provide as much information as possible about your problem, e.g. by setting the `DEBUG="*"` environment variable and enabling additional logging output in Prism... | process | strange relation error message when running prisma generate thanks for helping us improve prisma 🙏 please follow the sections in the template and provide as much information as possible about your problem e g by setting the debug environment variable and enabling additional logging output in prism... | 1 |

5,800 | 8,641,448,378 | IssuesEvent | 2018-11-24 17:52:02 | carloseduardov8/Viajato | https://api.github.com/repos/carloseduardov8/Viajato | closed | Run system tests | Priority:High Process:Run Test Case | Verify that each scenario was adequately implemented, make the necessary corrections, and produce an artifact summarizing the results obtained. | 1.0 | Run system tests - Verify that each scenario was adequately implemented, make the necessary corrections, and produce an artifact summarizing the results obtained. | process | run system tests verify that each scenario was adequately implemented make the necessary corrections and produce an artifact summarizing the results obtained | 1 |

19,109 | 25,162,644,304 | IssuesEvent | 2022-11-10 17:59:31 | GoogleCloudPlatform/golang-samples | https://api.github.com/repos/GoogleCloudPlatform/golang-samples | closed | kokoro: run_tests.sh always exits 0 | priority: p1 type: process samples | https://github.com/GoogleCloudPlatform/golang-samples/blob/d2f99a1ba256d31151ffe895c0e966712e09729f/testing/kokoro/system_tests.sh#L169-L172

...

https://github.com/GoogleCloudPlatform/golang-samples/blob/d2f99a1ba256d31151ffe895c0e966712e09729f/testing/kokoro/system_tests.sh#L247

There are no updates to `exit_... | 1.0 | kokoro: run_tests.sh always exits 0 - https://github.com/GoogleCloudPlatform/golang-samples/blob/d2f99a1ba256d31151ffe895c0e966712e09729f/testing/kokoro/system_tests.sh#L169-L172

...

https://github.com/GoogleCloudPlatform/golang-samples/blob/d2f99a1ba256d31151ffe895c0e966712e09729f/testing/kokoro/system_tests.sh#... | process | kokoro run tests sh always exits there are no updates to exit code so it seems this will always exit | 1 |
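
The row above describes a test runner whose `exit_code` variable is never updated, so the run reports success even when a test command fails. The actual script is shell; the sketch below only illustrates the general pattern in Python, under the assumption that the runner should keep going after a failure and report it at the end. `run_all` is a hypothetical name, not something from the repository.

```python
import subprocess


def run_all(commands):
    """Run every command and return 0 only if all of them succeeded."""
    exit_code = 0
    for cmd in commands:
        result = subprocess.run(cmd)
        if result.returncode != 0:
            # Record the failure, but keep running the remaining commands.
            exit_code = 1
    return exit_code
```

The caller would then pass the returned value to `sys.exit`, so that a CI system sees a non-zero status whenever any command failed.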

939 | 3,408,432,845 | IssuesEvent | 2015-12-04 10:36:20 | triplea-game/triplea | https://api.github.com/repos/triplea-game/triplea | closed | Maps in Github | Closed if no Further Comment Process Improvement | This issue is to discuss how to organize maps into github repositories, whether to group maps into 3 or 4 repositories, or whether to put one map per repository.

With multiple maps per repository there are a number of problems that come up:

- more difficult to write scripts that work for each repository and every m... | 1.0 | Maps in Github - This issue is to discuss how to organize maps into github repositories, whether to group maps into 3 or 4 repositories, or whether to put one map per repository.

With multiple maps per repository there are a number of problems that come up: