| Unnamed: 0 (int64, 0–832k) | id (float64, 2.49B–32.1B) | type (stringclasses, 1 value) | created_at (stringlengths, 19–19) | repo (stringlengths, 7–112) | repo_url (stringlengths, 36–141) | action (stringclasses, 3 values) | title (stringlengths, 1–744) | labels (stringlengths, 4–574) | body (stringlengths, 9–211k) | index (stringclasses, 10 values) | text_combine (stringlengths, 96–211k) | label (stringclasses, 2 values) | text (stringlengths, 96–188k) | binary_label (int64, 0–1) |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

17,438

| 23,263,025,328

|

IssuesEvent

|

2022-08-04 14:55:57

|

googleapis/java-shared-dependencies

|

https://api.github.com/repos/googleapis/java-shared-dependencies

|

closed

|

GitHub Check to find linkage errors

|

type: process

|

The current setup of Linkage Monitor does not detect linkage errors between the member of the shared dependencies BOM. This is because Linkage Monitor detects conflicts in the libraries BOM and the Libraries BOM does not import the shared dependencies BOM.

Jeff came up with an idea in running Linkage Monitor in the similar way as the existing "downstream" checks. I'll explore that option.

|

1.0

|

GitHub Check to find linkage errors - The current setup of Linkage Monitor does not detect linkage errors between the member of the shared dependencies BOM. This is because Linkage Monitor detects conflicts in the libraries BOM and the Libraries BOM does not import the shared dependencies BOM.

Jeff came up with an idea in running Linkage Monitor in the similar way as the existing "downstream" checks. I'll explore that option.

|

process

|

github check to find linkage errors the current setup of linkage monitor does not detect linkage errors between the member of the shared dependencies bom this is because linkage monitor detects conflicts in the libraries bom and the libraries bom does not import the shared dependencies bom jeff came up with an idea in running linkage monitor in the similar way as the existing downstream checks i ll explore that option

| 1

|

96,605

| 12,144,906,570

|

IssuesEvent

|

2020-04-24 08:24:34

|

se701-group6/A2

|

https://api.github.com/repos/se701-group6/A2

|

closed

|

Webapp can be used without logging in.

|

approved bug design front-end

|

**Describe the bug**

I can access any page without having to login first.

**To Reproduce**

Steps to reproduce the behavior:

1. Start the webapp

2. Go to login page and login

3. Copy the URL

4. Go to a different browser or private browsing (of the same browser)

5. Paste the URL

6. Go to the URL

OR

1. Start the webapp

2. Go to localhost:3000/#/home/split

3. Create a bill

4. Press "Split Bill"

**Severity**

Mark with an x.

[x]: Minor effect eg. graphical

[]: Functional error eg. App does not function correctly

[]: Severe eg. Crashing

**Reproducibility**

Mark with an x.

[x]: Consistent

[]: Occasional

[]: Cannot reproduce at the moment eg. unsure

**Expected behavior**

I should be redirected to the login screen if I haven't already logged in on the browser.

**Screenshots, Images and Traces**

**Conditions**

I used Ubuntu OS with FireFox, FireFox Private Browsing and Chromium. I have not tested this on Windows OS.

**Additional context**

* The backend will return a message saying "User not logged in", meaning it is responding correctly to not logging in.

* Using the second set of instructions the new bill isn't saved due to the above stated error message.

|

1.0

|

Webapp can be used without logging in. - **Describe the bug**

I can access any page without having to login first.

**To Reproduce**

Steps to reproduce the behavior:

1. Start the webapp

2. Go to login page and login

3. Copy the URL

4. Go to a different browser or private browsing (of the same browser)

5. Paste the URL

6. Go to the URL

OR

1. Start the webapp

2. Go to localhost:3000/#/home/split

3. Create a bill

4. Press "Split Bill"

**Severity**

Mark with an x.

[x]: Minor effect eg. graphical

[]: Functional error eg. App does not function correctly

[]: Severe eg. Crashing

**Reproducibility**

Mark with an x.

[x]: Consistent

[]: Occasional

[]: Cannot reproduce at the moment eg. unsure

**Expected behavior**

I should be redirected to the login screen if I haven't already logged in on the browser.

**Screenshots, Images and Traces**

**Conditions**

I used Ubuntu OS with FireFox, FireFox Private Browsing and Chromium. I have not tested this on Windows OS.

**Additional context**

* The backend will return a message saying "User not logged in", meaning it is responding correctly to not logging in.

* Using the second set of instructions the new bill isn't saved due to the above stated error message.

|

non_process

|

webapp can be used without logging in describe the bug i can access any page without having to login first to reproduce steps to reproduce the behavior start the webapp go to login page and login copy the url go to a different browser or private browsing of the same browser paste the url go to the url or start the webapp go to localhost home split create a bill press split bill severity mark with an x minor effect eg graphical functional error eg app does not function correctly severe eg crashing reproducibility mark with an x consistent occasional cannot reproduce at the moment eg unsure expected behavior i should be redirected to the login screen if i haven t already logged in on the browser screenshots images and traces conditions i used ubuntu os with firefox firefox private browsing and chromium i have not tested this on windows os additional context the backend will return a message saying user not logged in meaning it is responding correctly to not logging in using the second set of instructions the new bill isn t saved due to the above stated error message

| 0

|

98,158

| 29,497,233,802

|

IssuesEvent

|

2023-06-02 18:06:00

|

openvinotoolkit/openvino

|

https://api.github.com/repos/openvinotoolkit/openvino

|

closed

|

ie_precision.hpp defines == operator but not !=

|

category: inference category: build platform: win32

|

See https://github.com/openvinotoolkit/openvino/blob/031f2cc7d1a9aa10fb8a242057735d7ef1fd7f71/src/inference/include/ie/ie_precision.hpp#L162

I just upgraded to Visual Studio 17.6 and in our codebase this header file generates error [C2666](https://learn.microsoft.com/en-us/cpp/error-messages/compiler-errors-2/compiler-error-c2666?view=msvc-170) . We are using `/std:c++latest`. I believe it disregards the == operator because of a missing != operator. Indeed adding

```c++

bool operator!=(const Precision& p) const noexcept { return !(*this == p); }

```

fixes our code.

I'm not in a position to compile OpenVino itself at the moment, otherwise I'd have done a PR with this suggestion, or is there a reason not to add the != operator?

|

1.0

|

ie_precision.hpp defines == operator but not != - See https://github.com/openvinotoolkit/openvino/blob/031f2cc7d1a9aa10fb8a242057735d7ef1fd7f71/src/inference/include/ie/ie_precision.hpp#L162

I just upgraded to Visual Studio 17.6 and in our codebase this header file generates error [C2666](https://learn.microsoft.com/en-us/cpp/error-messages/compiler-errors-2/compiler-error-c2666?view=msvc-170) . We are using `/std:c++latest`. I believe it disregards the == operator because of a missing != operator. Indeed adding

```c++

bool operator!=(const Precision& p) const noexcept { return !(*this == p); }

```

fixes our code.

I'm not in a position to compile OpenVino itself at the moment, otherwise I'd have done a PR with this suggestion, or is there a reason not to add the != operator?

|

non_process

|

ie precision hpp defines operator but not see i just upgraded to visual studio and in our codebase this header file generates error we are using std c latest i believe it disregards the operator because of a missing operator indeed adding c bool operator const precision p const noexcept return this p fixes our code i m not in a position to compile openvino itself at the moment otherwise i d have done a pr with this suggestion or is there a reason not to add the operator

| 0

|

12,606

| 15,008,151,099

|

IssuesEvent

|

2021-01-31 08:44:21

|

threefoldtech/js-sdk

|

https://api.github.com/repos/threefoldtech/js-sdk

|

closed

|

Expiration dates do not match payment plans.

|

process_duplicate type_bug

|

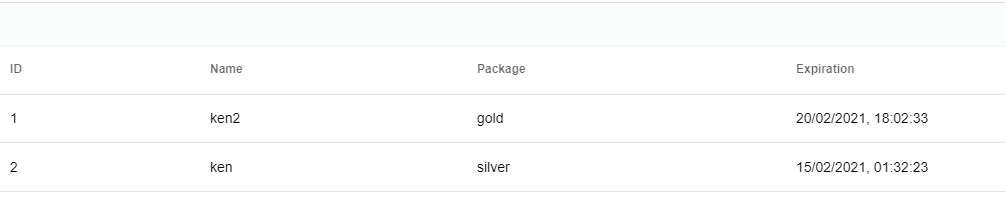

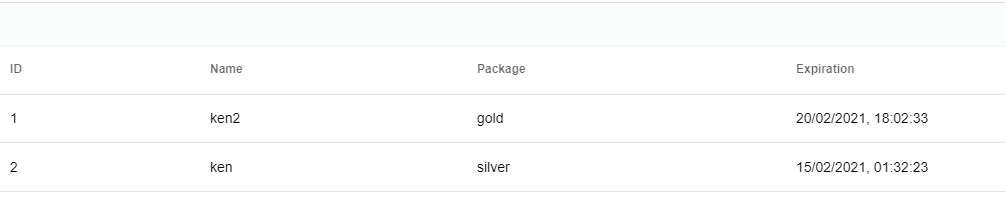

1) I deployed Two VDCs today, 28/01/2021

2) One with 15 TFT on testnet ( silver ) one with 30 TFT on testnet ( gold )

I would expect both these to be valid until 28/02/2021 ( or at least 30 days ) based on what was said when I picked the specific plans.

Reality is =>

|

1.0

|

Expiration dates do not match payment plans. - 1) I deployed Two VDCs today, 28/01/2021

2) One with 15 TFT on testnet ( silver ) one with 30 TFT on testnet ( gold )

I would expect both these to be valid until 28/02/2021 ( or at least 30 days ) based on what was said when I picked the specific plans.

Reality is =>

|

process

|

expiration dates do not match payment plans i deployed two vdcs today one with tft on testnet silver one with tft on testnet gold i would expect both these to be valid until or at least days based on what was said when i picked the specific plans reality is

| 1

|

3,077

| 3,321,485,916

|

IssuesEvent

|

2015-11-09 09:15:30

|

gama-platform/gama

|

https://api.github.com/repos/gama-platform/gama

|

opened

|

Remember the gama workspace after a crash of the application

|

> Question Affects Usability

|

It should be nice to remember the workspace after a crash of the application. Maybe to save the workspace after each file closing/opening should be better than to save the workspace after a clean application closing...

|

True

|

Remember the gama workspace after a crash of the application - It should be nice to remember the workspace after a crash of the application. Maybe to save the workspace after each file closing/opening should be better than to save the workspace after a clean application closing...

|

non_process

|

remember the gama workspace after a crash of the application it should be nice to remember the workspace after a crash of the application maybe to save the workspace after each file closing opening should be better than to save the workspace after a clean application closing

| 0

|

19,289

| 25,466,313,287

|

IssuesEvent

|

2022-11-25 05:00:31

|

GoogleCloudPlatform/fda-mystudies

|

https://api.github.com/repos/GoogleCloudPlatform/fda-mystudies

|

closed

|

[GCI] [PM] Getting 'An internal error has occurred' message in participant manager when tried to sign in

|

Bug Blocker P0 Participant manager Process: Fixed Process: Tested QA Process: Tested dev

|

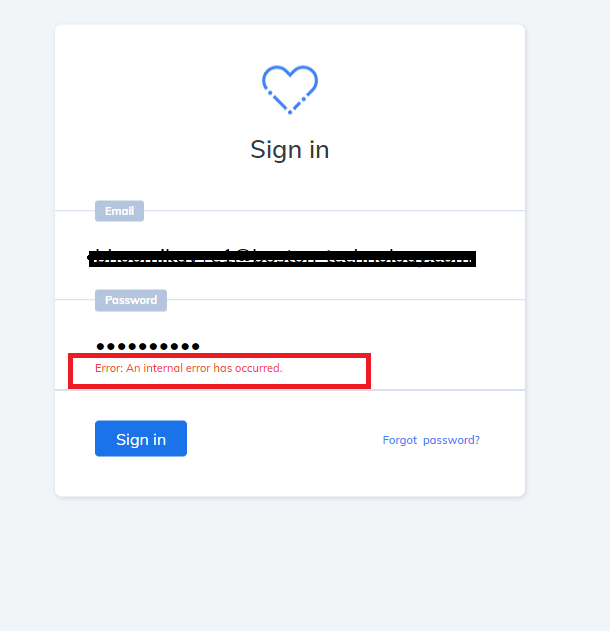

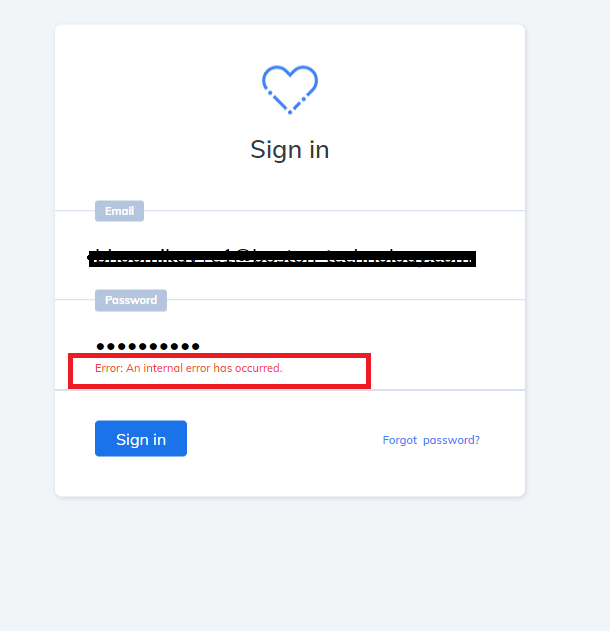

**Steps:**

1. Add organizational user in the participant manager

2. Complete set up your account process

3. Sign in with registered credentials and Verify

**AR:** Getting an internal error has occurred message when tried to sign in

**ER:** Admin's should be able to sign without any error's

**Participant manager**

|

3.0

|

[GCI] [PM] Getting 'An internal error has occurred' message in participant manager when tried to sign in - **Steps:**

1. Add organizational user in the participant manager

2. Complete set up your account process

3. Sign in with registered credentials and Verify

**AR:** Getting an internal error has occurred message when tried to sign in

**ER:** Admin's should be able to sign without any error's

**Participant manager**

|

process

|

getting an internal error has occurred message in participant manager when tried to sign in steps add organizational user in the participant manager complete set up your account process sign in with registered credentials and verify ar getting an internal error has occurred message when tried to sign in er admin s should be able to sign without any error s participant manager

| 1

|

641,880

| 20,842,760,139

|

IssuesEvent

|

2022-03-21 03:44:52

|

patternfly/patternfly-elements

|

https://api.github.com/repos/patternfly/patternfly-elements

|

closed

|

Add UTC example to pfe-datetime

|

priority: low update component request

|

# pfe-datetime

Add an example of how to specify the time-zone at UTC for `pfe-datetime`.

|

1.0

|

Add UTC example to pfe-datetime - # pfe-datetime

Add an example of how to specify the time-zone at UTC for `pfe-datetime`.

|

non_process

|

add utc example to pfe datetime pfe datetime add an example of how to specify the time zone at utc for pfe datetime

| 0

|

117,117

| 15,055,665,158

|

IssuesEvent

|

2021-02-03 19:06:25

|

ctoec/data-collection

|

https://api.github.com/repos/ctoec/data-collection

|

opened

|

Redesign Reporting Period select

|

design-team

|

As an ECE Reporter user, when I choose reporting periods:

- I can more easily find and select the reporting period that accurately represents the child's enrollment

- I am not confused by a long drop-down with many different options

|

1.0

|

Redesign Reporting Period select - As an ECE Reporter user, when I choose reporting periods:

- I can more easily find and select the reporting period that accurately represents the child's enrollment

- I am not confused by a long drop-down with many different options

|

non_process

|

redesign reporting period select as an ece reporter user when i choose reporting periods i can more easily find and select the reporting period that accurately represents the child s enrollment i am not confused by a long drop down with many different options

| 0

|

10,610

| 13,435,983,847

|

IssuesEvent

|

2020-09-07 13:43:53

|

googleapis/python-pubsub

|

https://api.github.com/repos/googleapis/python-pubsub

|

closed

|

Transition the library to the new microgenerator

|

api: pubsub type: process

|

With the new code generator ready to be rolled out, we can make the transition here in PubSub. **This implies dropping support for Pythom 2.7 and 3.5!**

|

1.0

|

Transition the library to the new microgenerator - With the new code generator ready to be rolled out, we can make the transition here in PubSub. **This implies dropping support for Pythom 2.7 and 3.5!**

|

process

|

transition the library to the new microgenerator with the new code generator ready to be rolled out we can make the transition here in pubsub this implies dropping support for pythom and

| 1

|

6,581

| 9,661,648,962

|

IssuesEvent

|

2019-05-20 18:36:42

|

AmpersandTarski/Ampersand

|

https://api.github.com/repos/AmpersandTarski/Ampersand

|

closed

|

On Travis CI: Temp database creation failed!

|

priority:high software process

|

## What happens?

Lately we get a failure when testing on Travis CI, with this error: [Here is an example of such a build](https://travis-ci.org/AmpersandTarski/Ampersand/jobs/511197166).

Because of this, we get false negatives as a build result.

## Diagnosis

I have seen several locations where different tests go wrong. It seems to occur with random test cases.

|

1.0

|

On Travis CI: Temp database creation failed! - ## What happens?

Lately we get a failure when testing on Travis CI, with this error: [Here is an example of such a build](https://travis-ci.org/AmpersandTarski/Ampersand/jobs/511197166).

Because of this, we get false negatives as a build result.

## Diagnosis

I have seen several locations where different tests go wrong. It seems to occur with random test cases.

|

process

|

on travis ci temp database creation failed what happens lately we get a failure when testing on travis ci with this error because of this we get false negatives as a build result diagnosis i have seen several locations where different tests go wrong it seems to occur with random test cases

| 1

|

27,411

| 5,346,105,901

|

IssuesEvent

|

2017-02-17 18:46:31

|

bridgedotnet/Bridge

|

https://api.github.com/repos/bridgedotnet/Bridge

|

opened

|

Update documentation, Global Configuration

|

documentation-required

|

Add new global option to https://github.com/bridgedotnet/Bridge/wiki/global-configuration

## disabledAnnotatedFunctionNames

**Type:** `boolean`

True to disable function name adding if Name attribute is applied to a C# method. False is default value.

#### Example

C# method example

```cs

[Name("sm")]

public void SomeMethod();

```

Generated code js code for `false`

```js

sm: function sm () ...

```

Generated code js code for `true`

```js

sm: function () ...

```

#### Example of `bridge.json`

```js

"disabledAnnotatedFunctionNames": true

```

|

1.0

|

Update documentation, Global Configuration - Add new global option to https://github.com/bridgedotnet/Bridge/wiki/global-configuration

## disabledAnnotatedFunctionNames

**Type:** `boolean`

True to disable function name adding if Name attribute is applied to a C# method. False is default value.

#### Example

C# method example

```cs

[Name("sm")]

public void SomeMethod();

```

Generated code js code for `false`

```js

sm: function sm () ...

```

Generated code js code for `true`

```js

sm: function () ...

```

#### Example of `bridge.json`

```js

"disabledAnnotatedFunctionNames": true

```

|

non_process

|

update documentation global configuration add new global option to disabledannotatedfunctionnames type boolean true to disable function name adding if name attribute is applied to a c method false is default value example c method example cs public void somemethod generated code js code for false js sm function sm generated code js code for true js sm function example of bridge json js disabledannotatedfunctionnames true

| 0

|

8,821

| 11,937,527,816

|

IssuesEvent

|

2020-04-02 12:20:24

|

ComposableWeb/poolbase

|

https://api.github.com/repos/ComposableWeb/poolbase

|

opened

|

feat(plugin): process known formats with a plugin

|

epic: processing

|

Example: process cooking recipe from known sites or from microformat

|

1.0

|

feat(plugin): process known formats with a plugin - Example: process cooking recipe from known sites or from microformat

|

process

|

feat plugin process known formats with a plugin example process cooking recipe from known sites or from microformat

| 1

|

10,870

| 13,640,424,138

|

IssuesEvent

|

2020-09-25 12:43:59

|

timberio/vector

|

https://api.github.com/repos/timberio/vector

|

closed

|

New `truncate` remap function

|

domain: mapping domain: processing type: feature

|

The `truncate` function truncates a string to the provided length.

## Example

Given the following event:

```js

{

"message": "Supercalifragilisticexpialidocious"

}

```

### Default

And the following remap instruction set:

```

.message = truncate(.message, 5)

```

Would result in:

```js

{

"message": "Super"

}

```

### Ellipsis

And the following remap instruction set:

```

.message = truncate(.message, 5, true)

```

Would result in:

```js

{

"message": "Super..."

}

```

Or, if the limit exceeds the length of the string, then no `...` is added:

```

.message = truncate(.message, 9999999, true)

```

Would result in:

```js

{

"message": "Supercalifragilisticexpialidocious"

}

```

|

1.0

|

New `truncate` remap function - The `truncate` function truncates a string to the provided length.

## Example

Given the following event:

```js

{

"message": "Supercalifragilisticexpialidocious"

}

```

### Default

And the following remap instruction set:

```

.message = truncate(.message, 5)

```

Would result in:

```js

{

"message": "Super"

}

```

### Ellipsis

And the following remap instruction set:

```

.message = truncate(.message, 5, true)

```

Would result in:

```js

{

"message": "Super..."

}

```

Or, if the limit exceeds the length of the string, then no `...` is added:

```

.message = truncate(.message, 9999999, true)

```

Would result in:

```js

{

"message": "Supercalifragilisticexpialidocious"

}

```

|

process

|

new truncate remap function the truncate function truncates a string to the provided length example given the following event js message supercalifragilisticexpialidocious default and the following remap instruction set message truncate message would result in js message super ellipsis and the following remap instruction set message truncate message true would result in js message super or if the limit exceeds the length of the string then no is added message truncate message true would result in js message supercalifragilisticexpialidocious

| 1

|

20,571

| 27,231,130,187

|

IssuesEvent

|

2023-02-21 13:19:45

|

dotnet/runtime

|

https://api.github.com/repos/dotnet/runtime

|

closed

|

PS7.3 (using .NET 7) locks up when used with screen on Linux and macOS

|

area-System.Diagnostics.Process untriaged in-pr

|

### Description

When using PS7.2 (built on .NET 6) with screen, it works fine on Linux (still seems to lock up on macOS).

PS7.3 (built on .NET 7) with screen will lock up (seems to be waiting on stdin) after running a native command:

```bash

screen ./pwsh

ls

```

Running cmdlets (like `get-childitem`) works fine. Only when executing a native command does it seem to be blocking and because the process doesn't finish, the PS prompt never comes.

I did rebuild 7.2 (which works with screen on Linux) against .NET 7 and that also exhibits the same locking behavior, so it seems that it's due to a change in .NET 7.

Using `set DOTNET_SYSTEM_CONSOLE_USENET6COMPATREADKEY=1` does not resolve the issue, so it seems unrelated to those changes.

### Reproduction Steps

I tried creating a simple repl that starts exes, but it doesn't repro the issue. PS7 does more complex work reading keyboard and handling starting exes.

```bash

screen ./pwsh

ls

```

### Expected behavior

`ls` to output and PS prompt to come back

### Actual behavior

`ls` shows output, and process is blocked

### Regression?

From .NET 6

### Known Workarounds

_No response_

### Configuration

Specifically tested under Ubuntu 22.04 using WSL

### Other information

_No response_

|

1.0

|

PS7.3 (using .NET 7) locks up when used with screen on Linux and macOS - ### Description

When using PS7.2 (built on .NET 6) with screen, it works fine on Linux (still seems to lock up on macOS).

PS7.3 (built on .NET 7) with screen will lock up (seems to be waiting on stdin) after running a native command:

```bash

screen ./pwsh

ls

```

Running cmdlets (like `get-childitem`) works fine. Only when executing a native command does it seem to be blocking and because the process doesn't finish, the PS prompt never comes.

I did rebuild 7.2 (which works with screen on Linux) against .NET 7 and that also exhibits the same locking behavior, so it seems that it's due to a change in .NET 7.

Using `set DOTNET_SYSTEM_CONSOLE_USENET6COMPATREADKEY=1` does not resolve the issue, so it seems unrelated to those changes.

### Reproduction Steps

I tried creating a simple repl that starts exes, but it doesn't repro the issue. PS7 does more complex work reading keyboard and handling starting exes.

```bash

screen ./pwsh

ls

```

### Expected behavior

`ls` to output and PS prompt to come back

### Actual behavior

`ls` shows output, and process is blocked

### Regression?

From .NET 6

### Known Workarounds

_No response_

### Configuration

Specifically tested under Ubuntu 22.04 using WSL

### Other information

_No response_

|

process

|

using net locks up when used with screen on linux and macos description when using built on net with screen it works fine on linux still seems to lock up on macos built on net with screen will lock up seems to be waiting on stdin after running a native command bash screen pwsh ls running cmdlets like get childitem works fine only when executing a native command does it seem to be blocking and because the process doesn t finish the ps prompt never comes i did rebuild which works with screen on linux against net and that also exhibits the same locking behavior so it seems that it s due to a change in net using set dotnet system console does not resolve the issue so it seems unrelated to those changes reproduction steps i tried creating a simple repl that starts exes but it doesn t repro the issue does more complex work reading keyboard and handling starting exes bash screen pwsh ls expected behavior ls to output and ps prompt to come back actual behavior ls shows output and process is blocked regression from net known workarounds no response configuration specifically tested under ubuntu using wsl other information no response

| 1

|

5,264

| 12,288,694,738

|

IssuesEvent

|

2020-05-09 17:54:41

|

kubernetes/kubernetes

|

https://api.github.com/repos/kubernetes/kubernetes

|

closed

|

avoid major-version package names

|

area/code-organization kind/feature lifecycle/rotten priority/important-longterm sig/api-machinery sig/architecture

|

Packages in this repository with paths like `k8s.io/api/core/v1` are named according to their major version (`package v1`) instead of a more semantically meaningful part of the path (`package core`).

That forces users of these packages to pretty much always rename them upon import.

Unfortunately, fixing the package names for existing packages would be a breaking change.

However, for future packages, it might be a good idea to use more descriptive package names in the [package clause](https://golang.org/ref/spec#Package_clause).

See also https://blog.golang.org/package-names.

|

1.0

|

avoid major-version package names - Packages in this repository with paths like `k8s.io/api/core/v1` are named according to their major version (`package v1`) instead of a more semantically meaningful part of the path (`package core`).

That forces users of these packages to pretty much always rename them upon import.

Unfortunately, fixing the package names for existing packages would be a breaking change.

However, for future packages, it might be a good idea to use more descriptive package names in the [package clause](https://golang.org/ref/spec#Package_clause).

See also https://blog.golang.org/package-names.

|

non_process

|

avoid major version package names packages in this repository with paths like io api core are named according to their major version package instead of a more semantically meaningful part of the path package core that forces users of these packages to pretty much always rename them upon import unfortunately fixing the package names for existing packages would be a breaking change however for future packages it might be a good idea to use more descriptive package names in the see also

| 0

|

351,240

| 31,990,316,766

|

IssuesEvent

|

2023-09-21 05:05:13

|

redpanda-data/redpanda

|

https://api.github.com/repos/redpanda-data/redpanda

|

closed

|

CI Failure (TimeoutError) in `UpgradeFromPriorFeatureVersionCloudStorageTest.test_rolling_upgrade`

|

kind/bug ci-failure area/cloud-storage ci-disabled-test sev/high

|

<!-- Before creating an issue look through existing ci-issues to avoid duplicates

https://github.com/redpanda-data/redpanda/issues?q=is%3Aissue+is%3Aopen+label%3Aci-failure -->

https://buildkite.com/redpanda/redpanda/builds/36165#018a4cc9-65ad-4b5a-a56e-08ff1a45006a

<!-- Copy the summary from the "Failed Tests" section of report.html -->

```

Module: rptest.tests.upgrade_test

Class: UpgradeFromPriorFeatureVersionCloudStorageTest

Method: test_rolling_upgrade

Arguments:

{

"cloud_storage_type": 1

}

```

<!-- Copy the summary from report.txt if it's too vague to differentiate key symptoms (e.g. TimeoutError is thrown for multiple reason so it makes to dig deeper) - fetch the tarball, check debug and redpanda logs -->

```

test_id: rptest.tests.upgrade_test.UpgradeFromPriorFeatureVersionCloudStorageTest.test_rolling_upgrade.cloud_storage_type=CloudStorageType.S3

status: FAIL

run time: 1 minute 43.579 seconds

TimeoutError('New manifest was not uploaded post upgrade')

Traceback (most recent call last):

File "/usr/local/lib/python3.10/dist-packages/ducktape/tests/runner_client.py", line 135, in run

data = self.run_test()

File "/usr/local/lib/python3.10/dist-packages/ducktape/tests/runner_client.py", line 227, in run_test

return self.test_context.function(self.test)

File "/usr/local/lib/python3.10/dist-packages/ducktape/mark/_mark.py", line 481, in wrapper

return functools.partial(f, *args, **kwargs)(*w_args, **w_kwargs)

File "/root/tests/rptest/services/cluster.py", line 82, in wrapped

r = f(self, *args, **kwargs)

File "/root/tests/rptest/tests/upgrade_test.py", line 582, in test_rolling_upgrade

wait_until(insync_offset_advanced,

File "/usr/local/lib/python3.10/dist-packages/ducktape/utils/util.py", line 57, in wait_until

raise TimeoutError(err_msg() if callable(err_msg) else err_msg) from last_exception

ducktape.errors.TimeoutError: New manifest was not uploaded post upgrade

```

|

1.0

|

CI Failure (TimeoutError) in `UpgradeFromPriorFeatureVersionCloudStorageTest.test_rolling_upgrade` - <!-- Before creating an issue look through existing ci-issues to avoid duplicates

https://github.com/redpanda-data/redpanda/issues?q=is%3Aissue+is%3Aopen+label%3Aci-failure -->

https://buildkite.com/redpanda/redpanda/builds/36165#018a4cc9-65ad-4b5a-a56e-08ff1a45006a

<!-- Copy the summary from the "Failed Tests" section of report.html -->

```

Module: rptest.tests.upgrade_test

Class: UpgradeFromPriorFeatureVersionCloudStorageTest

Method: test_rolling_upgrade

Arguments:

{

"cloud_storage_type": 1

}

```

<!-- Copy the summary from report.txt if it's too vague to differentiate key symptoms (e.g. TimeoutError is thrown for multiple reason so it makes to dig deeper) - fetch the tarball, check debug and redpanda logs -->

```

test_id: rptest.tests.upgrade_test.UpgradeFromPriorFeatureVersionCloudStorageTest.test_rolling_upgrade.cloud_storage_type=CloudStorageType.S3

status: FAIL

run time: 1 minute 43.579 seconds

TimeoutError('New manifest was not uploaded post upgrade')

Traceback (most recent call last):

File "/usr/local/lib/python3.10/dist-packages/ducktape/tests/runner_client.py", line 135, in run

data = self.run_test()

File "/usr/local/lib/python3.10/dist-packages/ducktape/tests/runner_client.py", line 227, in run_test

return self.test_context.function(self.test)

File "/usr/local/lib/python3.10/dist-packages/ducktape/mark/_mark.py", line 481, in wrapper

return functools.partial(f, *args, **kwargs)(*w_args, **w_kwargs)

File "/root/tests/rptest/services/cluster.py", line 82, in wrapped

r = f(self, *args, **kwargs)

File "/root/tests/rptest/tests/upgrade_test.py", line 582, in test_rolling_upgrade

wait_until(insync_offset_advanced,

File "/usr/local/lib/python3.10/dist-packages/ducktape/utils/util.py", line 57, in wait_until

raise TimeoutError(err_msg() if callable(err_msg) else err_msg) from last_exception

ducktape.errors.TimeoutError: New manifest was not uploaded post upgrade

```

|

non_process

|

ci failure timeouterror in upgradefrompriorfeatureversioncloudstoragetest test rolling upgrade before creating an issue look through existing ci issues to avoid duplicates module rptest tests upgrade test class upgradefrompriorfeatureversioncloudstoragetest method test rolling upgrade arguments cloud storage type test id rptest tests upgrade test upgradefrompriorfeatureversioncloudstoragetest test rolling upgrade cloud storage type cloudstoragetype status fail run time minute seconds timeouterror new manifest was not uploaded post upgrade traceback most recent call last file usr local lib dist packages ducktape tests runner client py line in run data self run test file usr local lib dist packages ducktape tests runner client py line in run test return self test context function self test file usr local lib dist packages ducktape mark mark py line in wrapper return functools partial f args kwargs w args w kwargs file root tests rptest services cluster py line in wrapped r f self args kwargs file root tests rptest tests upgrade test py line in test rolling upgrade wait until insync offset advanced file usr local lib dist packages ducktape utils util py line in wait until raise timeouterror err msg if callable err msg else err msg from last exception ducktape errors timeouterror new manifest was not uploaded post upgrade

| 0

|

176,373

| 13,638,673,846

|

IssuesEvent

|

2020-09-25 09:44:10

|

Scholar-6/brillder

|

https://api.github.com/repos/Scholar-6/brillder

|

closed

|

Only show 'Choose more than one option' text during Review (not Investigation)

|

Betatester Request Input Brick

|

<img width="475" alt="Screenshot 2020-09-24 at 15 31 14" src="https://user-images.githubusercontent.com/59654112/94151805-20074000-fe7b-11ea-91bc-17b8e22df85b.png">

|

1.0

|

Only show 'Choose more than one option' text during Review (not Investigation) - <img width="475" alt="Screenshot 2020-09-24 at 15 31 14" src="https://user-images.githubusercontent.com/59654112/94151805-20074000-fe7b-11ea-91bc-17b8e22df85b.png">

|

non_process

|

only show choose more than one option text during review not investigation img width alt screenshot at src

| 0

|

2,324

| 7,655,810,795

|

IssuesEvent

|

2018-05-10 14:25:20

|

City-Bureau/city-scrapers

|

https://api.github.com/repos/City-Bureau/city-scrapers

|

closed

|

New Spider Request: CPS Community Action Council

|

architecture: spiders good first issue new spider needed priority: normal (important to have)

|

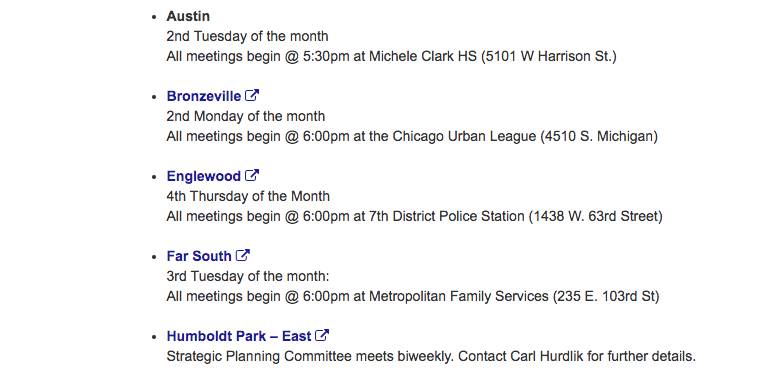

Link to the site is here: http://cps.edu/FACE/Pages/CAC.aspx

Looks like a pretty straightforward spider with one main exception—meetings aren't listed individually, they're listed on a recurring schedule:

|

1.0

|

New Spider Request: CPS Community Action Council - Link to the site is here: http://cps.edu/FACE/Pages/CAC.aspx

Looks like a pretty straightforward spider with one main exception—meetings aren't listed individually, they're listed on a recurring schedule:

|

non_process

|

new spider request cps community action council link to the site is here looks like a pretty straightforward spider with one main exception—meetings aren t listed individually they re listed on a recurring schedule

| 0

|

81,870

| 10,263,320,759

|

IssuesEvent

|

2019-08-22 14:09:00

|

angristan/openvpn-install

|

https://api.github.com/repos/angristan/openvpn-install

|

closed

|

Need "#security-and-encryption" section in README.md

|

documentation

|

Hello,

```

Do you want to customize encryption settings?

Unless you know what you're doing, you should stick with the default parameters provided by the script.

Note that whatever you choose, all the choices presented in the script are safe. (Unlike OpenVPN's defaults)

See https://github.com/angristan/openvpn-install#security-and-encryption to learn more.

```

Link: https://github.com/angristan/openvpn-install#security-and-encryption

At: https://github.com/angristan/openvpn-install/blob/master/README.md

Best,

Max Base

|

1.0

|

Need "#security-and-encryption" section in README.md - Hello,

```

Do you want to customize encryption settings?

Unless you know what you're doing, you should stick with the default parameters provided by the script.

Note that whatever you choose, all the choices presented in the script are safe. (Unlike OpenVPN's defaults)

See https://github.com/angristan/openvpn-install#security-and-encryption to learn more.

```

Link: https://github.com/angristan/openvpn-install#security-and-encryption

At: https://github.com/angristan/openvpn-install/blob/master/README.md

Best,

Max Base

|

non_process

|

need security and encryption section in readme md hello do you want to customize encryption settings unless you know what you re doing you should stick with the default parameters provided by the script note that whatever you choose all the choices presented in the script are safe unlike openvpn s defaults see to learn more link at best max base

| 0

|

22,803

| 4,839,084,763

|

IssuesEvent

|

2016-11-09 07:55:30

|

baidu/Paddle

|

https://api.github.com/repos/baidu/Paddle

|

closed

|

Need to rewrite English document on Distributed Training

|

documentation help welcome

|

New documents created in https://github.com/baidu/Paddle/pull/293 are extensions to the [SSH-based distributed training document](https://github.com/baidu/Paddle/blob/develop/doc/cluster/opensource/cluster_train.md). To make the new document preferable, we need to polish the latter.

|

1.0

|

Need to rewrite English document on Distributed Training - New documents created in https://github.com/baidu/Paddle/pull/293 are extensions to the [SSH-based distributed training document](https://github.com/baidu/Paddle/blob/develop/doc/cluster/opensource/cluster_train.md). To make the new document preferable, we need to polish the latter.

|

non_process

|

need to rewrite english document on distributed training new documents created in are extensions to the to make the new document preferable we need to polish the latter

| 0

|

482,175

| 13,902,101,689

|

IssuesEvent

|

2020-10-20 04:33:44

|

canonical-web-and-design/maas-ui

|

https://api.github.com/repos/canonical-web-and-design/maas-ui

|

closed

|

Settings table headers are squashed

|

Bug 🐛 Priority: High

|

The table headers for all the tables in settings are squashed:

<img width="958" alt="Screen Shot 2020-10-14 at 6 07 29 pm" src="https://user-images.githubusercontent.com/361637/95955327-64697880-0e48-11eb-96dc-289f1442a5ad.png">

|

1.0

|

Settings table headers are squashed - The table headers for all the tables in settings are squashed:

<img width="958" alt="Screen Shot 2020-10-14 at 6 07 29 pm" src="https://user-images.githubusercontent.com/361637/95955327-64697880-0e48-11eb-96dc-289f1442a5ad.png">

|

non_process

|

settings table headers are squashed the table headers for all the tables in settings are squashed img width alt screen shot at pm src

| 0

|

10,375

| 13,192,163,463

|

IssuesEvent

|

2020-08-13 13:22:11

|

kubeflow/kubeflow

|

https://api.github.com/repos/kubeflow/kubeflow

|

closed

|

Update release guide for website docs

|

area/docs kind/feature kind/process priority/p2

|

/kind process

Filing this issue to ensure we update the release guide for the website to include improvements to the release process from 1.1.

I think we should make running @RFMVasconcelos's script to add outdated banners to all pages a part of the process (see kubeflow/website#2066).

I think this is a good way to ensure that every page gets at least a once-over every 60-90 days.

Per kubeflow/website#2067 I think we want to make it the platform owners' responsibility to update the shortcodes.

/assign @RFMVasconcelos @8bitmp3

|

1.0

|

Update release guide for website docs - /kind process

Filing this issue to ensure we update the release guide for the website to include improvements to the release process from 1.1.

I think we should make running @RFMVasconcelos's script to add outdated banners to all pages a part of the process (see kubeflow/website#2066).

I think this is a good way to ensure that every page gets at least a once-over every 60-90 days.

Per kubeflow/website#2067 I think we want to make it the platform owners' responsibility to update the shortcodes.

/assign @RFMVasconcelos @8bitmp3

|

process

|

update release guide for website docs kind process filing this issue to ensure we update the release guide for the website to include improvements to the release process from i think we should making running rfmvasconcelos s script to add outdated banners to all pages a part of the process see kubeflow website i think this is a good way to ensure that every page gets at least a once over every days per kubeflow website i think we want to make it platform owners responsibility to update the shortcodes assign rfmvasconcelos

| 1

|

5,319

| 4,892,351,902

|

IssuesEvent

|

2016-11-18 19:27:31

|

statsmodels/statsmodels

|

https://api.github.com/repos/statsmodels/statsmodels

|

opened

|

SUMM/ENH: RLM/GLM nonlinear function optimization

|

comp-genmod comp-robust Performance type-enh

|

We would like to extend RLM #3261 and GLM #2179 to nonlinear mean functions.

several other related issues that I didn't look for

We can use the default scipy optimizers. But it might be more performant to have something more specific, e.g. based on the new scipy leastsq functions. This would be something like a nonlinear equivalent of IRLS.

(parking this reference)

Gay, David M., and Roy E. Welsch. 1988. “Maximum Likelihood and Quasi-Likelihood for Nonlinear Exponential Family Regression Models.” Journal of the American Statistical Association 83 (404): 990–98. doi:10.2307/2290125.

They discuss approximating the "messy" part of the Hessian. This sounds a bit similar to OIM versus EIM (observed versus expected information matrix) and the expensive part of the current GLM hessian.

I'm a bit surprised by how few iterations their estimator needs to converge, but they use mostly very small sample sizes.

Also, for RLM we still need to get score_obs and hessian or the `..._factor` versions if applicable.

|

True

|

SUMM/ENH: RLM/GLM nonlinear function optimization - We would like to extend RLM #3261 and GLM #2179 to nonlinear mean functions.

several other related issues that I didn't look for

We can use the default scipy optimizers. But it might be more performant to have something more specific, e.g. based on the new scipy leastsq functions. This would be something like a nonlinear equivalent of IRLS.

(parking this reference)

Gay, David M., and Roy E. Welsch. 1988. “Maximum Likelihood and Quasi-Likelihood for Nonlinear Exponential Family Regression Models.” Journal of the American Statistical Association 83 (404): 990–98. doi:10.2307/2290125.

They discuss approximating the "messy" part of the Hessian. This sounds a bit similar to OIM versus EIM (observed versus expected information matrix) and the expensive part of the current GLM hessian.

I'm a bit surprised by how few iterations their estimator needs to converge, but they use mostly very small sample sizes.

Also, for RLM we still need to get score_obs and hessian or the `..._factor` versions if applicable.

|

non_process

|

summ enh rlm glm nonlinear function optimization we would like to extend rlm and glm to nonlinear mean functions several other related issues that i didn t look for we can use the default scipy optimizers but it might be more performant to have something more specific e g based on the new scipy leastsq functions this would be something like a nonlinear equivalent of irls parking this reference gay david m and roy e welsch “maximum likelihood and quasi likelihood for nonlinear exponential family regression models ” journal of the american statistical association – doi they discuss approximating the messy part of the hessian this sounds a bit similar to oim versus eim observed versus expected information matrix and the expensive part of the current glm hessian i m a bit surprised in how few iterations their estimator converges but they use mostly very small sample sizes also for rlm we still need to get score obs and hessian or the factor versions if applicable

| 0

|

164,154

| 13,936,338,063

|

IssuesEvent

|

2020-10-22 12:48:59

|

DeepRegNet/DeepReg

|

https://api.github.com/repos/DeepRegNet/DeepReg

|

opened

|

Integration with 3DSlicer

|

documentation enhancement

|

## Subject of the feature

It would be nice to have integration (either through a scripted module or otherwise) with 3D Slicer to enable the underlying DeepReg models to be used for inference within a more user-friendly/less-technical avenue.

|

1.0

|

Integration with 3DSlicer - ## Subject of the feature

It would be nice to have integration (either through a scripted module or otherwise) with 3D Slicer to enable the underlying DeepReg models to be used for inference within a more user-friendly/less-technical avenue.

|

non_process

|

integration with subject of the feature it would be nice to have integration either through a scripted module or otherwise with slicer to enable the underlying deepreg models to be used for inference within a more user friendly less technical avenue

| 0

|

18,903

| 24,842,910,945

|

IssuesEvent

|

2022-10-26 13:57:31

|

MicrosoftDocs/azure-docs

|

https://api.github.com/repos/MicrosoftDocs/azure-docs

|

closed

|

Possible mistake in this page

|

automation/svc triaged cxp doc-enhancement process-automation/subsvc Pri2

|

I think there is a mistake in this document. In this section https://learn.microsoft.com/en-us/azure/automation/automation-secure-asset-encryption#use-of-customer-managed-keys-for-an-automation-account, it states "The managed identity is available only after the storage account is created." I think this should read "The managed identity is available only after the automation account is created."

---

#### Document Details

⚠ *Do not edit this section. It is required for learn.microsoft.com ➟ GitHub issue linking.*

* ID: b96959e5-3ca5-7725-4f06-4d2abbf4a48e

* Version Independent ID: 377c1f35-a67a-5a79-5dc2-d58c3fbba7ef

* Content: [Encryption of secure assets in Azure Automation](https://learn.microsoft.com/en-us/azure/automation/automation-secure-asset-encryption)

* Content Source: [articles/automation/automation-secure-asset-encryption.md](https://github.com/MicrosoftDocs/azure-docs/blob/main/articles/automation/automation-secure-asset-encryption.md)

* Service: **automation**

* Sub-service: **process-automation**

* GitHub Login: @snehithm

* Microsoft Alias: **snmuvva**

|

1.0

|

Possible mistake in this page -

I think there is a mistake in this document. In this section https://learn.microsoft.com/en-us/azure/automation/automation-secure-asset-encryption#use-of-customer-managed-keys-for-an-automation-account, it states "The managed identity is available only after the storage account is created." I think this should read "The managed identity is available only after the automation account is created."

---

#### Document Details

⚠ *Do not edit this section. It is required for learn.microsoft.com ➟ GitHub issue linking.*

* ID: b96959e5-3ca5-7725-4f06-4d2abbf4a48e

* Version Independent ID: 377c1f35-a67a-5a79-5dc2-d58c3fbba7ef

* Content: [Encryption of secure assets in Azure Automation](https://learn.microsoft.com/en-us/azure/automation/automation-secure-asset-encryption)

* Content Source: [articles/automation/automation-secure-asset-encryption.md](https://github.com/MicrosoftDocs/azure-docs/blob/main/articles/automation/automation-secure-asset-encryption.md)

* Service: **automation**

* Sub-service: **process-automation**

* GitHub Login: @snehithm

* Microsoft Alias: **snmuvva**

|

process

|

possible mistake in this page i think there is a mistake in this document in this section it states the managed identity is available only after the storage account is created i think this should read the managed identity is available only after the automation account is created document details ⚠ do not edit this section it is required for learn microsoft com ➟ github issue linking id version independent id content content source service automation sub service process automation github login snehithm microsoft alias snmuvva

| 1

|

5,521

| 8,381,046,629

|

IssuesEvent

|

2018-10-07 20:46:27

|

MichiganDataScienceTeam/googleanalytics

|

https://api.github.com/repos/MichiganDataScienceTeam/googleanalytics

|

opened

|

Preprocess: u'geoNetwork.country', u'geoNetwork.latitude', u'geoNetwork.longitude', u'geoNetwork.metro',

|

easy preprocessing

|

Preprocess the following features:

u'geoNetwork.country',

u'geoNetwork.latitude',

u'geoNetwork.longitude',

u'geoNetwork.metro',

1. Standardization: [http://scikit-learn.org/stable/modules/preprocessing.html#standardization-or-mean-removal-and-variance-scaling](http://scikit-learn.org/stable/modules/preprocessing.html#standardization-or-mean-removal-and-variance-scaling)

2. Impute missing values: [http://scikit-learn.org/stable/modules/impute.html](http://scikit-learn.org/stable/modules/impute.html)

3. Normalization: [http://scikit-learn.org/stable/modules/preprocessing.html#normalization](http://scikit-learn.org/stable/modules/preprocessing.html#normalization)

4. Encode categorical features (optional): [http://scikit-learn.org/stable/modules/preprocessing.html#encoding-categorical-features](http://scikit-learn.org/stable/modules/preprocessing.html#encoding-categorical-features)

5. Discretization (optional): [http://scikit-learn.org/stable/modules/preprocessing.html#discretization](http://scikit-learn.org/stable/modules/preprocessing.html#discretization)

[http://scikit-learn.org/stable/modules/preprocessing.html](http://scikit-learn.org/stable/modules/preprocessing.html)
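
As a rough illustration of steps 1, 2, and 4 above, here is a minimal standard-library sketch. It is not the team's pipeline: the sample values and the helper names (`impute_mean`, `standardize`, `one_hot`) are assumptions made up for this example, and in practice the scikit-learn classes linked above (for instance `SimpleImputer`, `StandardScaler`, `OneHotEncoder`) would likely be used instead. Imputation is applied before standardization here because the scaling step cannot handle missing values.

```python
from statistics import mean, pstdev


def impute_mean(values):
    """Replace None entries with the mean of the observed values."""
    observed = [v for v in values if v is not None]
    fill = mean(observed)
    return [fill if v is None else v for v in values]


def standardize(values):
    """Scale values to zero mean and unit (population) variance."""
    mu = mean(values)
    sigma = pstdev(values)
    if sigma == 0:
        # A constant column carries no spread; map it to zeros.
        return [0.0 for _ in values]
    return [(v - mu) / sigma for v in values]


def one_hot(values):
    """Encode a categorical column as one 0/1 column per category (sorted)."""
    categories = sorted(set(values))
    return [[1 if v == c else 0 for c in categories] for v in values]


# Hypothetical sample rows; the real columns come from the dataset.
latitudes = [42.28, None, 37.77, 40.71]
countries = ["United States", "India", "United States", "Canada"]

lat_scaled = standardize(impute_mean(latitudes))
country_encoded = one_hot(countries)
```

A high-cardinality column such as `geoNetwork.metro` may be better served by a different encoding than one-hot; that choice is left open here.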

|

1.0

|

Preprocess: u'geoNetwork.country', u'geoNetwork.latitude', u'geoNetwork.longitude', u'geoNetwork.metro', - Preprocess the following features:

u'geoNetwork.country',

u'geoNetwork.latitude',

u'geoNetwork.longitude',

u'geoNetwork.metro',

1. Standardization: [http://scikit-learn.org/stable/modules/preprocessing.html#standardization-or-mean-removal-and-variance-scaling](http://scikit-learn.org/stable/modules/preprocessing.html#standardization-or-mean-removal-and-variance-scaling)

2. Impute missing values: [http://scikit-learn.org/stable/modules/impute.html](http://scikit-learn.org/stable/modules/impute.html)

3. Normalization: [http://scikit-learn.org/stable/modules/preprocessing.html#normalization](http://scikit-learn.org/stable/modules/preprocessing.html#normalization)

4. Encode categorical features (optional): [http://scikit-learn.org/stable/modules/preprocessing.html#encoding-categorical-features](http://scikit-learn.org/stable/modules/preprocessing.html#encoding-categorical-features)

5. Discretization (optional): [http://scikit-learn.org/stable/modules/preprocessing.html#discretization](http://scikit-learn.org/stable/modules/preprocessing.html#discretization)

[http://scikit-learn.org/stable/modules/preprocessing.html](http://scikit-learn.org/stable/modules/preprocessing.html)

|

process

|

preprocess u geonetwork country u geonetwork latitude u geonetwork longitude u geonetwork metro preprocess the following features u geonetwork country u geonetwork latitude u geonetwork longitude u geonetwork metro standardization impute missing values normalization encode categorical features optional discretization optional

| 1

|

100,278

| 11,184,839,610

|

IssuesEvent

|

2019-12-31 20:32:38

|

garbagemule/MobArena

|

https://api.github.com/repos/garbagemule/MobArena

|

closed

|

Modernize all existing documentation

|

documentation enhancement high priority

|

# Summary

* This issue is a…

* [ ] Bug report

* [ ] Feature request

* [X] Documentation request

* [ ] Other issue

* [ ] Question

* **Describe the issue / feature in 1-2 sentences**: Update all documentation for the new [ReadTheDocs site](https://mobarena.readthedocs.io/en/latest/), modernize documentation for current codebase where needed

# Background

**What does it do?**: Improves usability of plugin and makes it more user-friendly

This ticket is more of a tracking ticket for the existing effort of modernizing documentation. I'll try to update this ticket as I progress through the documentation. Modernized documentation is the first critical part of my [community revitalization proposal](https://docs.google.com/document/d/1kEhNl9XedF8fIIpDzCG7816VLrPFTi0tYAZjq78Mens/edit?usp=sharing).

# Details

* [X] Announcements (#400)

* [X] Arena setup (#401)

* [ ] Class chests (#402)

* [ ] Commands (#413)

* [ ] Getting started

* [x] Item and reward syntax (#416)

* [ ] Leaderboards

* [ ] Monster types

* [ ] Permissions

* [ ] Setting up config file

* [ ] Setting up waves

* [ ] Using MobArena

* [ ] Wave formulas

|

1.0

|

Modernize all existing documentation - # Summary

* This issue is a…

* [ ] Bug report

* [ ] Feature request

* [X] Documentation request

* [ ] Other issue

* [ ] Question

* **Describe the issue / feature in 1-2 sentences**: Update all documentation for the new [ReadTheDocs site](https://mobarena.readthedocs.io/en/latest/), modernize documentation for current codebase where needed

# Background

**What does it do?**: Improves usability of plugin and makes it more user-friendly

This ticket is more of a tracking ticket for the existing effort of modernizing documentation. I'll try to update this ticket as I progress through the documentation. Modernized documentation is the first critical part of my [community revitalization proposal](https://docs.google.com/document/d/1kEhNl9XedF8fIIpDzCG7816VLrPFTi0tYAZjq78Mens/edit?usp=sharing).

# Details

* [X] Announcements (#400)

* [X] Arena setup (#401)

* [ ] Class chests (#402)

* [ ] Commands (#413)

* [ ] Getting started

* [x] Item and reward syntax (#416)

* [ ] Leaderboards

* [ ] Monster types

* [ ] Permissions

* [ ] Setting up config file

* [ ] Setting up waves

* [ ] Using MobArena

* [ ] Wave formulas

|

non_process

|

modernize all existing documentation summary this issue is a… bug report feature request documentation request other issue question describe the issue feature in sentences update all documentation for the new modernize documentation for current codebase where needed background what does it do improves usability of plugin and makes it more user friendly this ticket is more of a tracking ticket for the existing effort of modernizing documentation i ll try to update this ticket as i progress through the documentation modernized documentation is the first critical part of my details announcements arena setup class chests commands getting started item and reward syntax leaderboards monster types permissions setting up config file setting up waves using mobarena wave formulas

| 0

|

22,620

| 31,844,667,016

|

IssuesEvent

|

2023-09-14 18:56:32

|

sqlc-dev/sqlc

|

https://api.github.com/repos/sqlc-dev/sqlc

|

closed

|

Support YAML anchors in sqlc.yaml

|

bug panic :gear: process

|

### What do you want to change?

Currently I am working on a microservices project using SQLC. All is fine, but I find myself repeating the same configuration options for each codegen section. Using [YAML anchors](https://yaml.org/spec/1.2.2/#3222-anchors-and-aliases) this would be trivial and very nice, but this is currently not supported.

Then I could do something like the following.

```yaml

sql:

- schema: database

queries: services/a

engine: postgresql

codegen:

- out: gateway/src/gateway/services/organization

plugin: py

options: &base-options

query_parameter_limit: 1

package: gateway

output_models_file_name: null

emit_module: true

emit_generators: false

emit_async: true

- schema: database

queries: services/b

engine: postgresql

codegen:

- out: gateway/src/gateway/services/project

plugin: py

options: *base-options

```

https://play.sqlc.dev/p/32c6b52785ce13ddfeb2311a2a835800d2578b56bf1addd95887e3fe45d2ace0

### What database engines need to be changed?

_No response_

### What programming language backends need to be changed?

_No response_

|

1.0

|

Support YAML anchors in sqlc.yaml - ### What do you want to change?

Currently I am working on a microservices project using SQLC. All is fine, but I find myself repeating the same configuration options for each codegen section. Using [YAML anchors](https://yaml.org/spec/1.2.2/#3222-anchors-and-aliases) this would be trivial and very nice, but this is currently not supported.

Then I could do something like the following.

```yaml

sql:

- schema: database

queries: services/a

engine: postgresql

codegen:

- out: gateway/src/gateway/services/organization

plugin: py

options: &base-options

query_parameter_limit: 1

package: gateway

output_models_file_name: null

emit_module: true

emit_generators: false

emit_async: true

- schema: database

queries: services/b

engine: postgresql

codegen:

- out: gateway/src/gateway/services/project

plugin: py

options: *base-options

```

https://play.sqlc.dev/p/32c6b52785ce13ddfeb2311a2a835800d2578b56bf1addd95887e3fe45d2ace0

### What database engines need to be changed?

_No response_

### What programming language backends need to be changed?

_No response_

|

process

|

support yaml anchors in sqlc yaml what do you want to change currently i am working on a microservices project using sqlc all is fine but i find myself repeating the same configuration options for each codegen section using this would be trivial and very nice but this is currently not supported then i could do something like the following yaml sql schema database queries services a engine postgresql codegen out gateway src gateway services organization plugin py options base options query parameter limit package gateway output models file name null emit module true emit generators false emit async true schema database queries services b engine postgresql codegen out gateway src gateway services project plugin py options base options what database engines need to be changed no response what programming language backends need to be changed no response

| 1

|

9,656

| 7,781,071,770

|

IssuesEvent

|

2018-06-05 22:17:48

|

paypal/NNAnalytics

|

https://api.github.com/repos/paypal/NNAnalytics

|

opened

|

Add Auth0 support for authentication

|

enhancement security

|

Today NNA only supports LDAP for authentication.

It would be nice to add Auth0 protocol support for those running SSO (Single-Sign-On).

pac4j should support it: http://www.pac4j.org/docs/clients/oauth.html

|

True

|

Add Auth0 support for authentication - Today NNA only supports LDAP for authentication.

It would be nice to add Auth0 protocol support for those running SSO (Single-Sign-On).

pac4j should support it: http://www.pac4j.org/docs/clients/oauth.html

|

non_process

|

add support for authentication today nna only supports authentication support for ldap it would be nice to add protocol support for those running sso single sign on should support it

| 0

|

159,764

| 20,085,909,328

|

IssuesEvent

|

2022-02-05 01:10:50

|

DavidSpek/pipelines

|

https://api.github.com/repos/DavidSpek/pipelines

|

opened

|

CVE-2022-21733 (Medium) detected in tensorflow-1.15.0-cp27-cp27mu-manylinux2010_x86_64.whl

|

security vulnerability

|

## CVE-2022-21733 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>tensorflow-1.15.0-cp27-cp27mu-manylinux2010_x86_64.whl</b></p></summary>

<p>TensorFlow is an open source machine learning framework for everyone.</p>

<p>Library home page: <a href="https://files.pythonhosted.org/packages/ec/98/f968caf5f65759e78873b900cbf0ae20b1699fb11268ecc0f892186419a7/tensorflow-1.15.0-cp27-cp27mu-manylinux2010_x86_64.whl">https://files.pythonhosted.org/packages/ec/98/f968caf5f65759e78873b900cbf0ae20b1699fb11268ecc0f892186419a7/tensorflow-1.15.0-cp27-cp27mu-manylinux2010_x86_64.whl</a></p>

<p>Path to dependency file: /contrib/components/openvino/ovms-deployer/containers/requirements.txt</p>

<p>Path to vulnerable library: /contrib/components/openvino/ovms-deployer/containers/requirements.txt,/pipelines/samples/core/ai_platform/training</p>

<p>

Dependency Hierarchy:

- :x: **tensorflow-1.15.0-cp27-cp27mu-manylinux2010_x86_64.whl** (Vulnerable Library)

<p>Found in base branch: <b>master</b></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

TensorFlow is an open source machine learning framework. The implementation of `StringNGrams` can be used to trigger a denial of service attack by causing an out of memory condition after an integer overflow. We are missing a validation on `pad_width`, and that results in computing a negative value for `ngram_width`, which is later used to allocate parts of the output. The fix will be included in TensorFlow 2.8.0. We will also cherrypick this commit on TensorFlow 2.7.1, TensorFlow 2.6.3, and TensorFlow 2.5.3, as these are also affected and still in supported range.

<p>Publish Date: 2022-02-03

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2022-21733>CVE-2022-21733</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>4.3</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: Low

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: None

- Integrity Impact: None

- Availability Impact: Low

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://github.com/tensorflow/tensorflow/security/advisories/GHSA-98j8-c9q4-r38g">https://github.com/tensorflow/tensorflow/security/advisories/GHSA-98j8-c9q4-r38g</a></p>

<p>Release Date: 2022-02-03</p>

<p>Fix Resolution: tensorflow - 2.5.3,2.6.3,2.7.1;tensorflow-cpu - 2.5.3,2.6.3,2.7.1;tensorflow-gpu - 2.5.3,2.6.3,2.7.1</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with WhiteSource [here](https://www.whitesourcesoftware.com/full_solution_bolt_github)

|

True

|

CVE-2022-21733 (Medium) detected in tensorflow-1.15.0-cp27-cp27mu-manylinux2010_x86_64.whl - ## CVE-2022-21733 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>tensorflow-1.15.0-cp27-cp27mu-manylinux2010_x86_64.whl</b></p></summary>

<p>TensorFlow is an open source machine learning framework for everyone.</p>

<p>Library home page: <a href="https://files.pythonhosted.org/packages/ec/98/f968caf5f65759e78873b900cbf0ae20b1699fb11268ecc0f892186419a7/tensorflow-1.15.0-cp27-cp27mu-manylinux2010_x86_64.whl">https://files.pythonhosted.org/packages/ec/98/f968caf5f65759e78873b900cbf0ae20b1699fb11268ecc0f892186419a7/tensorflow-1.15.0-cp27-cp27mu-manylinux2010_x86_64.whl</a></p>

<p>Path to dependency file: /contrib/components/openvino/ovms-deployer/containers/requirements.txt</p>

<p>Path to vulnerable library: /contrib/components/openvino/ovms-deployer/containers/requirements.txt,/pipelines/samples/core/ai_platform/training</p>

<p>

Dependency Hierarchy:

- :x: **tensorflow-1.15.0-cp27-cp27mu-manylinux2010_x86_64.whl** (Vulnerable Library)

<p>Found in base branch: <b>master</b></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

TensorFlow is an open source machine learning framework. The implementation of `StringNGrams` can be used to trigger a denial of service attack by causing an out of memory condition after an integer overflow. We are missing a validation on `pad_width`, and that results in computing a negative value for `ngram_width`, which is later used to allocate parts of the output. The fix will be included in TensorFlow 2.8.0. We will also cherrypick this commit on TensorFlow 2.7.1, TensorFlow 2.6.3, and TensorFlow 2.5.3, as these are also affected and still in supported range.

<p>Publish Date: 2022-02-03

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2022-21733>CVE-2022-21733</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>4.3</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: Low

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: None

- Integrity Impact: None

- Availability Impact: Low

</p>

<p>For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://github.com/tensorflow/tensorflow/security/advisories/GHSA-98j8-c9q4-r38g">https://github.com/tensorflow/tensorflow/security/advisories/GHSA-98j8-c9q4-r38g</a></p>

<p>Release Date: 2022-02-03</p>

<p>Fix Resolution: tensorflow - 2.5.3,2.6.3,2.7.1;tensorflow-cpu - 2.5.3,2.6.3,2.7.1;tensorflow-gpu - 2.5.3,2.6.3,2.7.1</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with WhiteSource [here](https://www.whitesourcesoftware.com/full_solution_bolt_github)

|

non_process

|

cve medium detected in tensorflow whl cve medium severity vulnerability vulnerable library tensorflow whl tensorflow is an open source machine learning framework for everyone library home page a href path to dependency file contrib components openvino ovms deployer containers requirements txt path to vulnerable library contrib components openvino ovms deployer containers requirements txt pipelines samples core ai platform training dependency hierarchy x tensorflow whl vulnerable library found in base branch master vulnerability details tensorflow is an open source machine learning framework the implementation of stringngrams can be used to trigger a denial of service attack by causing an out of memory condition after an integer overflow we are missing a validation on pad witdh and that result in computing a negative value for ngram width which is later used to allocate parts of the output the fix will be included in tensorflow we will also cherrypick this commit on tensorflow tensorflow and tensorflow as these are also affected and still in supported range publish date url a href cvss score details base score metrics exploitability metrics attack vector network attack complexity low privileges required low user interaction none scope unchanged impact metrics confidentiality impact none integrity impact none availability impact low for more information on scores click a href suggested fix type upgrade version origin a href release date fix resolution tensorflow tensorflow cpu tensorflow gpu step up your open source security game with whitesource

| 0

|

334,329

| 29,831,092,803

|

IssuesEvent

|

2023-06-18 09:30:43

|

unifyai/ivy

|

https://api.github.com/repos/unifyai/ivy

|

reopened

|

Fix raw_ops.test_tensorflow_Shape

|

TensorFlow Frontend Sub Task Failing Test

|

| | |

|---|---|

|numpy|<a href="https://github.com/unifyai/ivy/actions/runs/5302683745/jobs/9597784377"><img src=https://img.shields.io/badge/-failure-red></a>

|jax|<a href="https://github.com/unifyai/ivy/actions/runs/5302683745/jobs/9597784377"><img src=https://img.shields.io/badge/-success-success></a>

|tensorflow|<a href="https://github.com/unifyai/ivy/actions/runs/5302683745/jobs/9597784377"><img src=https://img.shields.io/badge/-success-success></a>

|torch|<a href="https://github.com/unifyai/ivy/actions/runs/5302683745/jobs/9597784377"><img src=https://img.shields.io/badge/-success-success></a>

|paddle|<a href="https://github.com/unifyai/ivy/actions/runs/5302683745/jobs/9597784377"><img src=https://img.shields.io/badge/-success-success></a>

|

1.0

|

Fix raw_ops.test_tensorflow_Shape - | | |

|---|---|

|numpy|<a href="https://github.com/unifyai/ivy/actions/runs/5302683745/jobs/9597784377"><img src=https://img.shields.io/badge/-failure-red></a>

|jax|<a href="https://github.com/unifyai/ivy/actions/runs/5302683745/jobs/9597784377"><img src=https://img.shields.io/badge/-success-success></a>

|tensorflow|<a href="https://github.com/unifyai/ivy/actions/runs/5302683745/jobs/9597784377"><img src=https://img.shields.io/badge/-success-success></a>

|torch|<a href="https://github.com/unifyai/ivy/actions/runs/5302683745/jobs/9597784377"><img src=https://img.shields.io/badge/-success-success></a>

|paddle|<a href="https://github.com/unifyai/ivy/actions/runs/5302683745/jobs/9597784377"><img src=https://img.shields.io/badge/-success-success></a>

|

non_process

|

fix raw ops test tensorflow shape numpy a href src jax a href src tensorflow a href src torch a href src paddle a href src

| 0

|

1,065

| 3,536,070,913

|

IssuesEvent

|

2016-01-17 00:23:29

|

t3kt/vjzual2

|

https://api.github.com/repos/t3kt/vjzual2

|

closed

|

add compositing modes to the linked transform module

|

enhancement video processing

|

an extension of #260.

it should support compositing with the master module's dry input as well as over the linked module's input

|

1.0

|

add compositing modes to the linked transform module - an extension of #260.

it should support compositing with the master module's dry input as well as over the linked module's input

|

process

|

add compositing modes to the linked transform module an extension of it should support compositing with the master module s dry input as well as over the linked module s input

| 1

|

147,420

| 19,522,792,508

|

IssuesEvent

|

2021-12-29 22:21:58

|

swagger-api/swagger-codegen

|

https://api.github.com/repos/swagger-api/swagger-codegen

|

opened

|

WS-2018-0124 (Medium) detected in multiple libraries

|

security vulnerability

|

## WS-2018-0124 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>jackson-core-2.7.8.jar</b>, <b>jackson-core-2.4.5.jar</b>, <b>jackson-core-2.6.4.jar</b></summary>

<p>

<details><summary><b>jackson-core-2.7.8.jar</b></summary>

<p>Core Jackson abstractions, basic JSON streaming API implementation</p>

<p>Library home page: <a href="https://github.com/FasterXML/jackson-core">https://github.com/FasterXML/jackson-core</a></p>

<p>Path to vulnerable library: /home/wss-scanner/.ivy2/cache/com.fasterxml.jackson.core/jackson-core/bundles/jackson-core-2.7.8.jar</p>

<p>

Dependency Hierarchy:

- lagom-scaladsl-api_2.11-1.3.8.jar (Root Library)

- lagom-api_2.11-1.3.8.jar

- play_2.11-2.5.13.jar

- jackson-databind-2.7.8.jar

- :x: **jackson-core-2.7.8.jar** (Vulnerable Library)

</details>

<details><summary><b>jackson-core-2.4.5.jar</b></summary>

<p>Core Jackson abstractions, basic JSON streaming API implementation</p>

<p>Library home page: <a href="https://github.com/FasterXML/jackson">https://github.com/FasterXML/jackson</a></p>

<p>Path to dependency file: /samples/client/petstore/scala/build.gradle</p>