| Unnamed: 0 (int64, 0 to 832k) | id (float64, 2.49B to 32.1B) | type (stringclasses, 1 value) | created_at (stringlengths, 19 to 19) | repo (stringlengths, 7 to 112) | repo_url (stringlengths, 36 to 141) | action (stringclasses, 3 values) | title (stringlengths, 1 to 744) | labels (stringlengths, 4 to 574) | body (stringlengths, 9 to 211k) | index (stringclasses, 10 values) | text_combine (stringlengths, 96 to 211k) | label (stringclasses, 2 values) | text (stringlengths, 96 to 188k) | binary_label (int64, 0 to 1) |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

18,498

| 24,551,122,177

|

IssuesEvent

|

2022-10-12 12:41:34

|

GoogleCloudPlatform/fda-mystudies

|

https://api.github.com/repos/GoogleCloudPlatform/fda-mystudies

|

closed

|

[iOS] [Offline indicator] Toast message is displayed instead of pop-up message in below scenario

|

Bug P2 iOS Process: Fixed Process: Tested dev

|

**Steps:**

1. Install the app

2. Don't sign in/signup

3. Click on any study

4. Switch off the internet

5. Click on 'Participate'

6. Observe

**Actual:** Toast message 'You are offline' is displayed in sign in screen

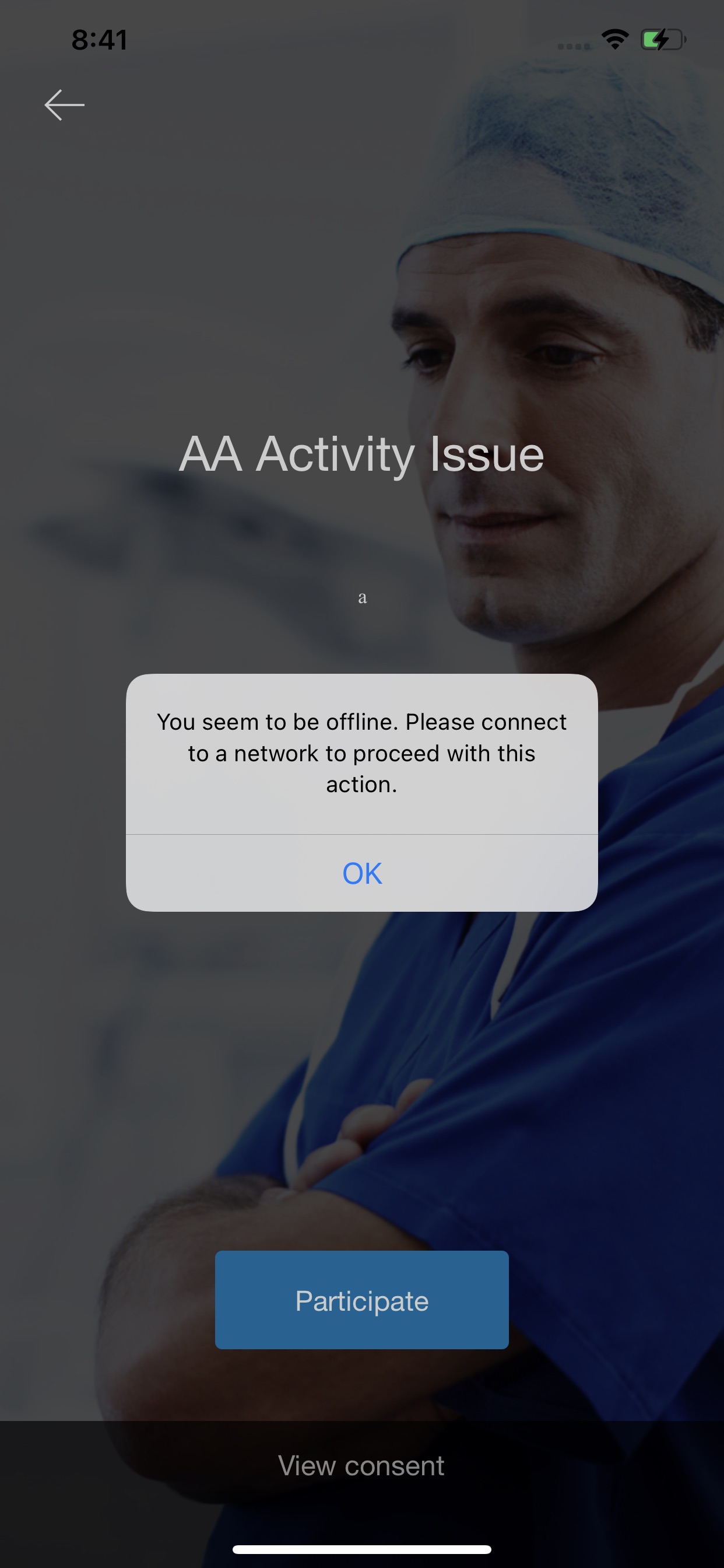

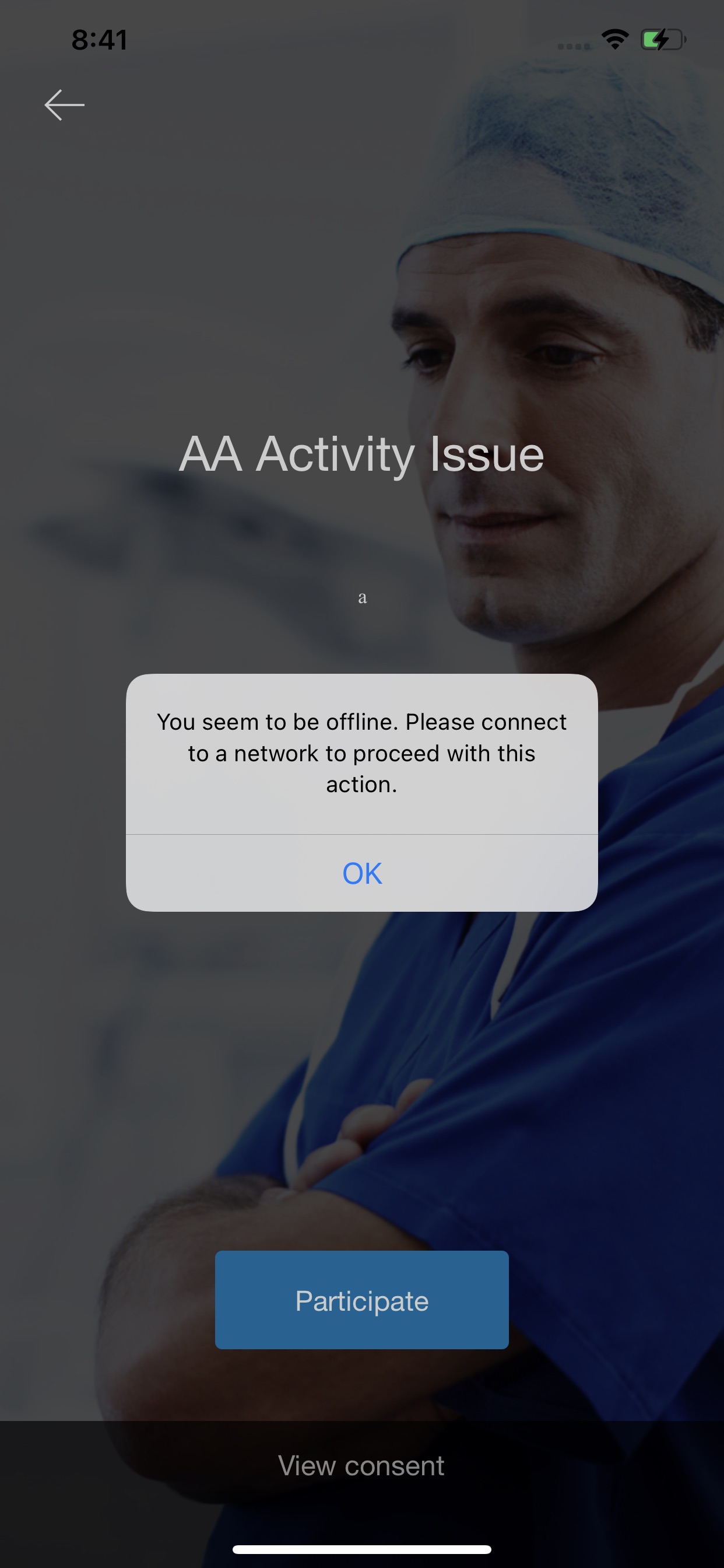

**Expected:** Pop-up message 'You seem to be offline. Please connect to a network to proceed with this action.' pop-up should be displayed

**Issue not observed for logged in users**

Actual:

Expected pop-up screen:

|

2.0

|

[iOS] [Offline indicator] Toast message is displayed instead of pop-up message in below scenario - **Steps:**

1. Install the app

2. Don't sign in/signup

3. Click on any study

4. Switch off the internet

5. Click on 'Participate'

6. Observe

**Actual:** Toast message 'You are offline' is displayed in sign in screen

**Expected:** Pop-up message 'You seem to be offline. Please connect to a network to proceed with this action.' pop-up should be displayed

**Issue not observed for logged in users**

Actual:

Expected pop-up screen:

|

process

|

toast message is displayed instead of pop up message in below scenario steps install the app don t sign in signup click on any study switch off the internet click on participate observe actual toast message you are offline is displayed in sign in screen expected pop up message you seem to be offline please connect to a network to proceed with this action pop up should be displayed issue not observed for logged in users actual expected pop up screen

| 1

|

94,072

| 11,846,407,413

|

IssuesEvent

|

2020-03-24 10:08:36

|

zilahir/teleprompter

|

https://api.github.com/repos/zilahir/teleprompter

|

closed

|

need design for error states

|

:nail_care: design :rocket: waiting for deploy

|

both of them in the header modals:

1) login error (error mesage: `email or password is not correct`

2) registration error (error message a) `password mismatch` b) `email is in use`

|

1.0

|

need design for error states - both of them in the header modals:

1) login error (error mesage: `email or password is not correct`

2) registration error (error message a) `password mismatch` b) `email is in use`

|

non_process

|

need design for error states both of them in the header modals login error error mesage email or password is not correct registration error error message a password mismatch b email is in use

| 0

|

120,342

| 4,788,151,579

|

IssuesEvent

|

2016-10-30 12:12:02

|

cyclejs/cyclejs

|

https://api.github.com/repos/cyclejs/cyclejs

|

closed

|

cycle/dom: cannot use the same isolate scope for parent and child components

|

issue is bug priority 4 (must) scope: dom

|

**Code to reproduce the issue:**

```js

import Cycle from '@cycle/xstream-run';

import {div, button, makeDOMDriver} from '@cycle/dom';

import isolate from '@cycle/isolate';

import xs from 'xstream';

function Item(sources, count) {

const childVdom$ = count > 0 ?

isolate(Item, '0')(sources, count-1).DOM : // REMOVE SCOPE '0' AND IT WILL WORK AS INTENDED

xs.of(undefined);

const highlight$ = sources.DOM.select('button').events('click')

.fold((x, _) => !x, false);

const vdom$ = xs.combine(childVdom$, highlight$)

.map(([childVdom, highlight]) =>

div({style: {'margin': '10px', border: '1px solid #999'}}, [

button({style: {'background-color': highlight ? '#f00' : '#fff'}}, 'click me'),

childVdom

])

);

return { DOM: vdom$ };

}

function main(sources) {

const vdom$ = Item(sources, 3).DOM;

return { DOM: vdom$ };

}

Cycle.run(main, {

DOM: makeDOMDriver('#main')

});

```

**Expected behavior:**

Each button is toggled red/white when clicked.

**Actual behavior:**

The inner `Item`s are not isolated from each other. Clicking any button toggles all the others.

**Versions of packages used:**

@cycle/dom: 12.2.5

@cycle/xstream-run: 3.1.0

@cycle/isolate: 1.4.0

xstream: 6.1.0

**Why anyone would want to do this:**

Because of cycle-onionify. In onionify components in a list are isolated from each other by their index (0, 1, 2, ...). Thus if you make a list of lists of lists... (i.e. a tree) of the same component, you'll end up with many parent-child-grandchild-... with the same numerical isolate scope, like in the example above.

|

1.0

|

cycle/dom: cannot use the same isolate scope for parent and child components - **Code to reproduce the issue:**

```js

import Cycle from '@cycle/xstream-run';

import {div, button, makeDOMDriver} from '@cycle/dom';

import isolate from '@cycle/isolate';

import xs from 'xstream';

function Item(sources, count) {

const childVdom$ = count > 0 ?

isolate(Item, '0')(sources, count-1).DOM : // REMOVE SCOPE '0' AND IT WILL WORK AS INTENDED

xs.of(undefined);

const highlight$ = sources.DOM.select('button').events('click')

.fold((x, _) => !x, false);

const vdom$ = xs.combine(childVdom$, highlight$)

.map(([childVdom, highlight]) =>

div({style: {'margin': '10px', border: '1px solid #999'}}, [

button({style: {'background-color': highlight ? '#f00' : '#fff'}}, 'click me'),

childVdom

])

);

return { DOM: vdom$ };

}

function main(sources) {

const vdom$ = Item(sources, 3).DOM;

return { DOM: vdom$ };

}

Cycle.run(main, {

DOM: makeDOMDriver('#main')

});

```

**Expected behavior:**

Each button is toggled red/white when clicked.

**Actual behavior:**

The inner `Item`s are not isolated from each other. Clicking any button toggles all the others.

**Versions of packages used:**

@cycle/dom: 12.2.5

@cycle/xstream-run: 3.1.0

@cycle/isolate: 1.4.0

xstream: 6.1.0

**Why anyone would want to do this:**

Because of cycle-onionify. In onionify components in a list are isolated from each other by their index (0, 1, 2, ...). Thus if you make a list of lists of lists... (i.e. a tree) of the same component, you'll end up with many parent-child-grandchild-... with the same numerical isolate scope, like in the example above.

|

non_process

|

cycle dom cannot use the same isolate scope for parent and child components code to reproduce the issue js import cycle from cycle xstream run import div button makedomdriver from cycle dom import isolate from cycle isolate import xs from xstream function item sources count const childvdom count isolate item sources count dom remove scope and it will work as intended xs of undefined const highlight sources dom select button events click fold x x false const vdom xs combine childvdom highlight map div style margin border solid button style background color highlight fff click me childvdom return dom vdom function main sources const vdom item sources dom return dom vdom cycle run main dom makedomdriver main expected behavior each button is toggled red white when clicked actual behavior the inner item s are not isolated from each other clicking any button toggles all the others versions of packages used cycle dom cycle xstream run cycle isolate xstream why anyone would want to do this because of cycle onionify in onionify components in a list are isolated from each other by their index thus if you make a list of lists of lists i e a tree of the same component you ll end up with many parent child grandchild with the same numerical isolate scope like in the example above

| 0

|

199,564

| 6,991,686,464

|

IssuesEvent

|

2017-12-15 01:28:58

|

kubernetes/kubernetes

|

https://api.github.com/repos/kubernetes/kubernetes

|

closed

|

Problem with replacing nodes

|

lifecycle/stale priority/backlog sig/api-machinery

|

When I run kubectl replace command follow [here](http://kubernetes.io/v1.0/docs/admin/cluster-management.html), I got the below error

```

kubectl replace nodes 10.162.64.136 --patch='{"apiVersion": "v1", "spec": {"unschedulable": true}}'

Error: unknown flag: --patch

Run 'kubectl help' for usage.

```

|

1.0

|

Problem with replacing nodes - When I run kubectl replace command follow [here](http://kubernetes.io/v1.0/docs/admin/cluster-management.html), I got the below error

```

kubectl replace nodes 10.162.64.136 --patch='{"apiVersion": "v1", "spec": {"unschedulable": true}}'

Error: unknown flag: --patch

Run 'kubectl help' for usage.

```

|

non_process

|

problem with replacing nodes when i run kubectl replace command follow i got the below error kubectl replace nodes patch apiversion spec unschedulable true error unknown flag patch run kubectl help for usage

| 0

|

225,537

| 7,482,406,736

|

IssuesEvent

|

2018-04-05 01:10:51

|

SETI/pds-opus

|

https://api.github.com/repos/SETI/pds-opus

|

opened

|

Handling of ert_sec is totally broken

|

Bug Effort-Easy Priority 3

|

See apps/metadata/views.py around line 288. The original version works only for obs_general TIME fields (normal observation time), not for ert, which occurs in BOTH Cassini and Voyage mission tables.

|

1.0

|

Handling of ert_sec is totally broken - See apps/metadata/views.py around line 288. The original version works only for obs_general TIME fields (normal observation time), not for ert, which occurs in BOTH Cassini and Voyage mission tables.

|

non_process

|

handling of ert sec is totally broken see apps metadata views py around line the original version works only for obs general time fields normal observation time not for ert which occurs in both cassini and voyage mission tables

| 0

|

6,188

| 9,102,663,928

|

IssuesEvent

|

2019-02-20 14:17:50

|

googleapis/google-cloud-cpp

|

https://api.github.com/repos/googleapis/google-cloud-cpp

|

closed

|

Document how to `make install`.

|

type: process

|

Our README file just describes how to compile the code, but not how to install it. Installing it requires having the dependencies installed themselves, and some of them do not document well how to do this.

|

1.0

|

Document how to `make install`. - Our README file just describes how to compile the code, but not how to install it. Installing it requires having the dependencies installed themselves, and some of them do not document well how to do this.

|

process

|

document how to make install our readme file just describes how to compile the code but not how to install it installing it requires having the dependencies installed themselves and some of them do not document well how to do this

| 1

|

11,121

| 13,957,685,612

|

IssuesEvent

|

2020-10-24 08:08:41

|

alexanderkotsev/geoportal

|

https://api.github.com/repos/alexanderkotsev/geoportal

|

opened

|

MT: Harvesting Process

|

Geoportal Harvesting process MT - Malta

|

Dear Geoportal Team,

On Friday 8th November 2019 I started a harvesting request but it seems that this request failed along the process. Is something wrong with the process or is it an issue from our side?

Regards,

Rene

|

1.0

|

MT: Harvesting Process - Dear Geoportal Team,

On Friday 8th November 2019 I started a harvesting request but it seems that this request failed along the process. Is something wrong with the process or is it an issue from our side?

Regards,

Rene

|

process

|

mt harvesting process dear geoportal team on friday november i started a harvesting request but it seems that this request failed along the process is something wrong with the process or is it an issue from our side regards rene

| 1

|

59,223

| 14,369,086,787

|

IssuesEvent

|

2020-12-01 09:18:44

|

ignatandrei/stankins

|

https://api.github.com/repos/ignatandrei/stankins

|

closed

|

CVE-2019-6283 (Medium) detected in multiple libraries

|

security vulnerability

|

## CVE-2019-6283 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>node-sass-4.10.0.tgz</b>, <b>node-sass-4.9.3.tgz</b></p></summary>

<p>

<details><summary><b>node-sass-4.10.0.tgz</b></p></summary>

<p>Wrapper around libsass</p>

<p>Library home page: <a href="https://registry.npmjs.org/node-sass/-/node-sass-4.10.0.tgz">https://registry.npmjs.org/node-sass/-/node-sass-4.10.0.tgz</a></p>

<p>Path to dependency file: /tmp/ws-scm/stankins/stankinsv2/solution/StankinsV2/StankinsDataWebAngular/package.json</p>

<p>Path to vulnerable library: /tmp/ws-scm/stankins/stankinsv2/solution/StankinsV2/StankinsDataWebAngular/node_modules/node-sass/package.json</p>

<p>

Dependency Hierarchy:

- build-angular-0.11.4.tgz (Root Library)

- :x: **node-sass-4.10.0.tgz** (Vulnerable Library)

</details>

<details><summary><b>node-sass-4.9.3.tgz</b></p></summary>

<p>Wrapper around libsass</p>

<p>Library home page: <a href="https://registry.npmjs.org/node-sass/-/node-sass-4.9.3.tgz">https://registry.npmjs.org/node-sass/-/node-sass-4.9.3.tgz</a></p>

<p>Path to dependency file: /tmp/ws-scm/stankins/stankinsv2/solution/StankinsV2/StankinsAliveAngular/package.json</p>

<p>Path to vulnerable library: /tmp/ws-scm/stankins/stankinsv2/solution/StankinsV2/StankinsAliveAngular/node_modules/node-sass/package.json</p>

<p>

Dependency Hierarchy:

- build-angular-0.10.7.tgz (Root Library)

- :x: **node-sass-4.9.3.tgz** (Vulnerable Library)

</details>

<p>Found in HEAD commit: <a href="https://github.com/ignatandrei/stankins/commit/525550ef1e023c62d5d53d2f2bce03d5d168d46e">525550ef1e023c62d5d53d2f2bce03d5d168d46e</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

In LibSass 3.5.5, a heap-based buffer over-read exists in Sass::Prelexer::parenthese_scope in prelexer.hpp.

<p>Publish Date: 2019-01-14

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2019-6283>CVE-2019-6283</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>6.5</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: Required

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: None

- Integrity Impact: None

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2019-6284">https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2019-6284</a></p>

<p>Release Date: 2019-08-06</p>

<p>Fix Resolution: LibSass - 3.6.0</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with WhiteSource [here](https://www.whitesourcesoftware.com/full_solution_bolt_github)

|

True

|

CVE-2019-6283 (Medium) detected in multiple libraries - ## CVE-2019-6283 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>node-sass-4.10.0.tgz</b>, <b>node-sass-4.9.3.tgz</b></p></summary>

<p>

<details><summary><b>node-sass-4.10.0.tgz</b></p></summary>

<p>Wrapper around libsass</p>

<p>Library home page: <a href="https://registry.npmjs.org/node-sass/-/node-sass-4.10.0.tgz">https://registry.npmjs.org/node-sass/-/node-sass-4.10.0.tgz</a></p>

<p>Path to dependency file: /tmp/ws-scm/stankins/stankinsv2/solution/StankinsV2/StankinsDataWebAngular/package.json</p>

<p>Path to vulnerable library: /tmp/ws-scm/stankins/stankinsv2/solution/StankinsV2/StankinsDataWebAngular/node_modules/node-sass/package.json</p>

<p>

Dependency Hierarchy:

- build-angular-0.11.4.tgz (Root Library)

- :x: **node-sass-4.10.0.tgz** (Vulnerable Library)

</details>

<details><summary><b>node-sass-4.9.3.tgz</b></p></summary>

<p>Wrapper around libsass</p>

<p>Library home page: <a href="https://registry.npmjs.org/node-sass/-/node-sass-4.9.3.tgz">https://registry.npmjs.org/node-sass/-/node-sass-4.9.3.tgz</a></p>

<p>Path to dependency file: /tmp/ws-scm/stankins/stankinsv2/solution/StankinsV2/StankinsAliveAngular/package.json</p>

<p>Path to vulnerable library: /tmp/ws-scm/stankins/stankinsv2/solution/StankinsV2/StankinsAliveAngular/node_modules/node-sass/package.json</p>

<p>

Dependency Hierarchy:

- build-angular-0.10.7.tgz (Root Library)

- :x: **node-sass-4.9.3.tgz** (Vulnerable Library)

</details>

<p>Found in HEAD commit: <a href="https://github.com/ignatandrei/stankins/commit/525550ef1e023c62d5d53d2f2bce03d5d168d46e">525550ef1e023c62d5d53d2f2bce03d5d168d46e</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

In LibSass 3.5.5, a heap-based buffer over-read exists in Sass::Prelexer::parenthese_scope in prelexer.hpp.

<p>Publish Date: 2019-01-14

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2019-6283>CVE-2019-6283</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>6.5</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: Required

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: None

- Integrity Impact: None

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2019-6284">https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2019-6284</a></p>

<p>Release Date: 2019-08-06</p>

<p>Fix Resolution: LibSass - 3.6.0</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with WhiteSource [here](https://www.whitesourcesoftware.com/full_solution_bolt_github)

|

non_process

|

cve medium detected in multiple libraries cve medium severity vulnerability vulnerable libraries node sass tgz node sass tgz node sass tgz wrapper around libsass library home page a href path to dependency file tmp ws scm stankins solution stankinsdatawebangular package json path to vulnerable library tmp ws scm stankins solution stankinsdatawebangular node modules node sass package json dependency hierarchy build angular tgz root library x node sass tgz vulnerable library node sass tgz wrapper around libsass library home page a href path to dependency file tmp ws scm stankins solution stankinsaliveangular package json path to vulnerable library tmp ws scm stankins solution stankinsaliveangular node modules node sass package json dependency hierarchy build angular tgz root library x node sass tgz vulnerable library found in head commit a href vulnerability details in libsass a heap based buffer over read exists in sass prelexer parenthese scope in prelexer hpp publish date url a href cvss score details base score metrics exploitability metrics attack vector network attack complexity low privileges required none user interaction required scope unchanged impact metrics confidentiality impact none integrity impact none availability impact high for more information on scores click a href suggested fix type upgrade version origin a href release date fix resolution libsass step up your open source security game with whitesource

| 0

|

54,064

| 29,502,783,405

|

IssuesEvent

|

2023-06-03 01:04:42

|

jqlang/jq

|

https://api.github.com/repos/jqlang/jq

|

closed

|

`first` definition is extremely complicated

|

performance

|

In the file [builtin.jq](https://github.com/stedolan/jq/blob/master/src/builtin.jq) I find the definition of `limit` extremely complicated:

```

def first(g): label $out | foreach g as $item ([false, null]; if .[0]==true then break $out else [true, $item] end; .[1]) ;

```

I thik this one will do the work, or I'm missing something?

```

def first(g): label $pipe | g | ., break $pipe ;

```

|

True

|

`first` definition is extremely complicated - In the file [builtin.jq](https://github.com/stedolan/jq/blob/master/src/builtin.jq) I find the definition of `limit` extremely complicated:

```

def first(g): label $out | foreach g as $item ([false, null]; if .[0]==true then break $out else [true, $item] end; .[1]) ;

```

I thik this one will do the work, or I'm missing something?

```

def first(g): label $pipe | g | ., break $pipe ;

```

|

non_process

|

first definition is extremely complicated in the file i find the definition of limit extremely complicated def first g label out foreach g as item if true then break out else end i thik this one will do the work or i m missing something def first g label pipe g break pipe

| 0

|

603,663

| 18,670,399,361

|

IssuesEvent

|

2021-10-30 15:49:08

|

AY2122S1-CS2103-T14-1/tp

|

https://api.github.com/repos/AY2122S1-CS2103-T14-1/tp

|

closed

|

[PE-D] Formatting of Input Parameter details

|

priority.High type.Document type.Duplicate

|

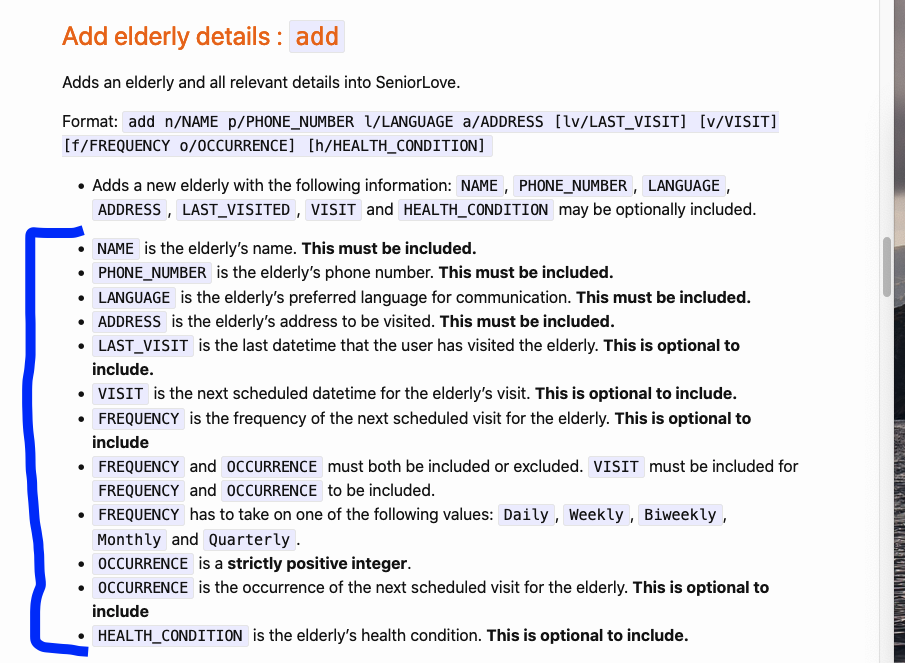

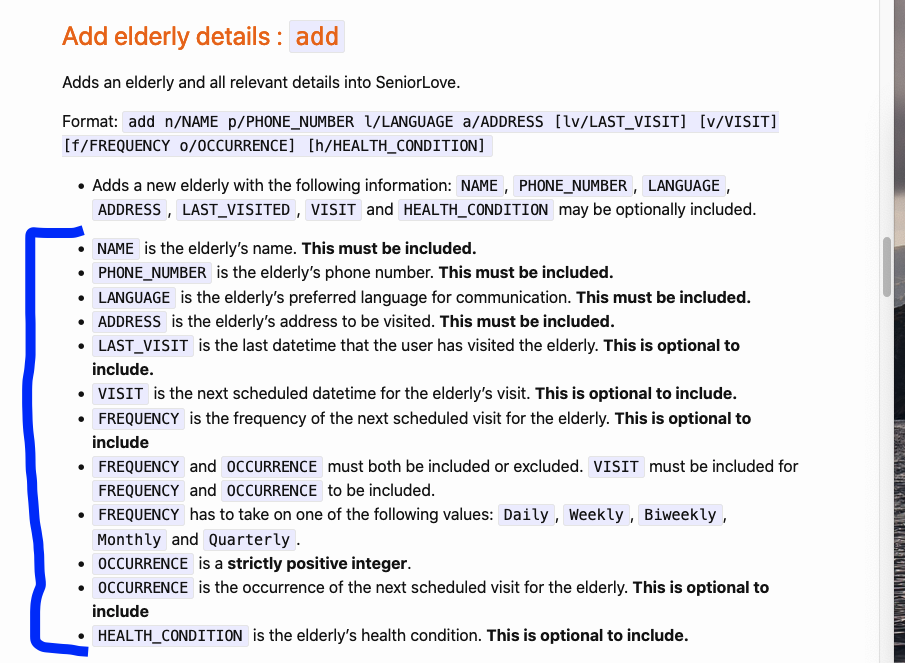

The annotated section is a slightly difficult to read and follow (especially from point 7 - `FREQUENCY` onwards). Perhaps it could be formatted into a table?

Nit - there is a line break between points 1 and 2.

<!--session: 1635494469100-6c9cee70-f4f6-40a9-8e5b-7874850be29d-->

<!--Version: Web v3.4.1-->

-------------

Labels: `severity.Low` `type.DocumentationBug`

original: shaliniseshadri/ped#5

|

1.0

|

[PE-D] Formatting of Input Parameter details -

The annotated section is a slightly difficult to read and follow (especially from point 7 - `FREQUENCY` onwards). Perhaps it could be formatted into a table?

Nit - there is a line break between points 1 and 2.

<!--session: 1635494469100-6c9cee70-f4f6-40a9-8e5b-7874850be29d-->

<!--Version: Web v3.4.1-->

-------------

Labels: `severity.Low` `type.DocumentationBug`

original: shaliniseshadri/ped#5

|

non_process

|

formatting of input parameter details the annotated section is a slightly difficult to read and follow especially from point frequency onwards perhaps it could be formatted into a table nit there is a line break between points and labels severity low type documentationbug original shaliniseshadri ped

| 0

|

7,570

| 10,684,603,698

|

IssuesEvent

|

2019-10-22 10:51:08

|

aiidateam/aiida-core

|

https://api.github.com/repos/aiidateam/aiida-core

|

closed

|

Except submitted processes whose class cannot be loaded

|

priority/nice-to-have topic/engine topic/processes type/accepted feature

|

Currently the task is just acknowledged if the node cannot be loaded. This is necessary because often the reason for the node failure is that it no longer exists. Since the node cannot be loaded, its state can also not be changed. However, in the case where it is the loading of the class that fails, the node _can_ be loaded. In this case it is better to set the process state to excepted instead of leaving it in created. This will depend on [this issue in plumpy](https://github.com/aiidateam/plumpy/issues/123) to be fixed such that the specific import error can be caught.

|

1.0

|

Except submitted processes whose class cannot be loaded - Currently the task is just acknowledged if the node cannot be loaded. This is necessary because often the reason for the node failure is that it no longer exists. Since the node cannot be loaded, its state can also not be changed. However, in the case where it is the loading of the class that fails, the node _can_ be loaded. In this case it is better to set the process state to excepted instead of leaving it in created. This will depend on [this issue in plumpy](https://github.com/aiidateam/plumpy/issues/123) to be fixed such that the specific import error can be caught.

|

process

|

except submitted processes whose class cannot be loaded currently the task is just acknowledged if the node cannot be loaded this is necessary because often the reason for the node failure is that it no longer exists since the node cannot be loaded its state can also not be changed however in the case where it is the loading of the class that fails the node can be loaded in this case it is better to set the process state to excepted instead of leaving it in created this will depend on to be fixed such that the specific import error can be caught

| 1

|

149,994

| 13,307,049,285

|

IssuesEvent

|

2020-08-25 21:21:57

|

clastix/capsule

|

https://api.github.com/repos/clastix/capsule

|

closed

|

Fix typos in the docs

|

documentation good first issue help wanted

|

Pretty straightforward, there are several typos in the documentation that sounds terrible in the English language.

Help is wanted, so please don't hesitate to refer to this issue while contributing to fixing small typos on Capsule! :tada:

|

1.0

|

Fix typos in the docs - Pretty straightforward, there are several typos in the documentation that sounds terrible in the English language.

Help is wanted, so please don't hesitate to refer to this issue while contributing to fixing small typos on Capsule! :tada:

|

non_process

|

fix typos in the docs pretty straightforward there are several typos in the documentation that sounds terrible in the english language help is wanted so please don t hesitate to refer to this issue while contributing to fixing small typos on capsule tada

| 0

|

119,968

| 17,644,002,799

|

IssuesEvent

|

2021-08-20 01:26:10

|

AkshayMukkavilli/Analyzing-the-Significance-of-Structure-in-Amazon-Review-Data-Using-Machine-Learning-Approaches

|

https://api.github.com/repos/AkshayMukkavilli/Analyzing-the-Significance-of-Structure-in-Amazon-Review-Data-Using-Machine-Learning-Approaches

|

opened

|

CVE-2021-29528 (Medium) detected in tensorflow-1.13.1-cp27-cp27mu-manylinux1_x86_64.whl

|

security vulnerability

|

## CVE-2021-29528 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>tensorflow-1.13.1-cp27-cp27mu-manylinux1_x86_64.whl</b></p></summary>

<p>TensorFlow is an open source machine learning framework for everyone.</p>

<p>Library home page: <a href="https://files.pythonhosted.org/packages/d2/ea/ab2c8c0e81bd051cc1180b104c75a865ab0fc66c89be992c4b20bbf6d624/tensorflow-1.13.1-cp27-cp27mu-manylinux1_x86_64.whl">https://files.pythonhosted.org/packages/d2/ea/ab2c8c0e81bd051cc1180b104c75a865ab0fc66c89be992c4b20bbf6d624/tensorflow-1.13.1-cp27-cp27mu-manylinux1_x86_64.whl</a></p>

<p>Path to dependency file: /FinalProject/requirements.txt</p>

<p>Path to vulnerable library: teSource-ArchiveExtractor_8b9e071c-3b11-4aa9-ba60-cdeb60d053b7/20190525011350_65403/20190525011256_depth_0/9/tensorflow-1.13.1-cp27-cp27mu-manylinux1_x86_64/tensorflow-1.13.1.data/purelib/tensorflow</p>

<p>

Dependency Hierarchy:

- :x: **tensorflow-1.13.1-cp27-cp27mu-manylinux1_x86_64.whl** (Vulnerable Library)

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

TensorFlow is an end-to-end open source platform for machine learning. An attacker can trigger a division by 0 in `tf.raw_ops.QuantizedMul`. This is because the implementation(https://github.com/tensorflow/tensorflow/blob/55900e961ed4a23b438392024912154a2c2f5e85/tensorflow/core/kernels/quantized_mul_op.cc#L188-L198) does a division by a quantity that is controlled by the caller. The fix will be included in TensorFlow 2.5.0. We will also cherrypick this commit on TensorFlow 2.4.2, TensorFlow 2.3.3, TensorFlow 2.2.3 and TensorFlow 2.1.4, as these are also affected and still in supported range.

<p>Publish Date: 2021-05-14

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2021-29528>CVE-2021-29528</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>5.5</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Local

- Attack Complexity: Low

- Privileges Required: Low

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: None

- Integrity Impact: None

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://github.com/tensorflow/tensorflow/security/advisories/GHSA-6f84-42vf-ppwp">https://github.com/tensorflow/tensorflow/security/advisories/GHSA-6f84-42vf-ppwp</a></p>

<p>Release Date: 2021-05-14</p>

<p>Fix Resolution: tensorflow - 2.5.0, tensorflow-cpu - 2.5.0, tensorflow-gpu - 2.5.0</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with WhiteSource [here](https://www.whitesourcesoftware.com/full_solution_bolt_github)

|

True

|

CVE-2021-29528 (Medium) detected in tensorflow-1.13.1-cp27-cp27mu-manylinux1_x86_64.whl - ## CVE-2021-29528 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>tensorflow-1.13.1-cp27-cp27mu-manylinux1_x86_64.whl</b></p></summary>

<p>TensorFlow is an open source machine learning framework for everyone.</p>

<p>Library home page: <a href="https://files.pythonhosted.org/packages/d2/ea/ab2c8c0e81bd051cc1180b104c75a865ab0fc66c89be992c4b20bbf6d624/tensorflow-1.13.1-cp27-cp27mu-manylinux1_x86_64.whl">https://files.pythonhosted.org/packages/d2/ea/ab2c8c0e81bd051cc1180b104c75a865ab0fc66c89be992c4b20bbf6d624/tensorflow-1.13.1-cp27-cp27mu-manylinux1_x86_64.whl</a></p>

<p>Path to dependency file: /FinalProject/requirements.txt</p>

<p>Path to vulnerable library: teSource-ArchiveExtractor_8b9e071c-3b11-4aa9-ba60-cdeb60d053b7/20190525011350_65403/20190525011256_depth_0/9/tensorflow-1.13.1-cp27-cp27mu-manylinux1_x86_64/tensorflow-1.13.1.data/purelib/tensorflow</p>

<p>

Dependency Hierarchy:

- :x: **tensorflow-1.13.1-cp27-cp27mu-manylinux1_x86_64.whl** (Vulnerable Library)

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

TensorFlow is an end-to-end open source platform for machine learning. An attacker can trigger a division by 0 in `tf.raw_ops.QuantizedMul`. This is because the implementation(https://github.com/tensorflow/tensorflow/blob/55900e961ed4a23b438392024912154a2c2f5e85/tensorflow/core/kernels/quantized_mul_op.cc#L188-L198) does a division by a quantity that is controlled by the caller. The fix will be included in TensorFlow 2.5.0. We will also cherrypick this commit on TensorFlow 2.4.2, TensorFlow 2.3.3, TensorFlow 2.2.3 and TensorFlow 2.1.4, as these are also affected and still in supported range.

<p>Publish Date: 2021-05-14

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2021-29528>CVE-2021-29528</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>5.5</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Local

- Attack Complexity: Low

- Privileges Required: Low

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: None

- Integrity Impact: None

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://github.com/tensorflow/tensorflow/security/advisories/GHSA-6f84-42vf-ppwp">https://github.com/tensorflow/tensorflow/security/advisories/GHSA-6f84-42vf-ppwp</a></p>

<p>Release Date: 2021-05-14</p>

<p>Fix Resolution: tensorflow - 2.5.0, tensorflow-cpu - 2.5.0, tensorflow-gpu - 2.5.0</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with WhiteSource [here](https://www.whitesourcesoftware.com/full_solution_bolt_github)

|

non_process

|

cve medium detected in tensorflow whl cve medium severity vulnerability vulnerable library tensorflow whl tensorflow is an open source machine learning framework for everyone library home page a href path to dependency file finalproject requirements txt path to vulnerable library tesource archiveextractor depth tensorflow tensorflow data purelib tensorflow dependency hierarchy x tensorflow whl vulnerable library vulnerability details tensorflow is an end to end open source platform for machine learning an attacker can trigger a division by in tf raw ops quantizedmul this is because the implementation does a division by a quantity that is controlled by the caller the fix will be included in tensorflow we will also cherrypick this commit on tensorflow tensorflow tensorflow and tensorflow as these are also affected and still in supported range publish date url a href cvss score details base score metrics exploitability metrics attack vector local attack complexity low privileges required low user interaction none scope unchanged impact metrics confidentiality impact none integrity impact none availability impact high for more information on scores click a href suggested fix type upgrade version origin a href release date fix resolution tensorflow tensorflow cpu tensorflow gpu step up your open source security game with whitesource

| 0

|

20,269

| 26,897,828,892

|

IssuesEvent

|

2023-02-06 13:43:26

|

microsoft/vscode

|

https://api.github.com/repos/microsoft/vscode

|

closed

|

VSCode integrated terminal no longer loads bash .profile on startup

|

confirmation-pending terminal-process new release

|

Type: <b>Bug</b>

When using a custom ~/.profile in WSL, the initial load of the integrated terminal won't load (It seems to be ignoring `terminal.integrated.profiles.linux` settings, since the custom icon I set is not working either) but subsequent terminal creation (not with split/add but with the dropdown clicking on the profile directly) loads the profile fine.

VS Code version: Code 1.75.0 (e2816fe719a4026ffa1ee0189dc89bdfdbafb164, 2023-02-01T15:23:45.584Z)

OS version: Windows_NT x64 10.0.19044

Modes:

Sandboxed: Yes

Remote OS version: Linux x64 5.15.79.1-microsoft-standard-WSL2

<details>

<summary>System Info</summary>

|Item|Value|

|---|---|

|CPUs|AMD Ryzen 9 5900X 12-Core Processor (24 x 3700)|

|GPU Status|2d_canvas: enabled<br>canvas_oop_rasterization: disabled_off<br>direct_rendering_display_compositor: disabled_off_ok<br>gpu_compositing: enabled<br>multiple_raster_threads: enabled_on<br>opengl: enabled_on<br>rasterization: enabled<br>raw_draw: disabled_off_ok<br>skia_renderer: enabled_on<br>video_decode: enabled<br>video_encode: enabled<br>vulkan: disabled_off<br>webgl: enabled<br>webgl2: enabled<br>webgpu: disabled_off|

|Load (avg)|undefined|

|Memory (System)|63.92GB (39.72GB free)|

|Process Argv|--log=trace --folder-uri=vscode-remote://wsl+Ubuntu/home/wunder/repos/Farm-Smart --remote=wsl+Ubuntu --crash-reporter-id 0fe24daf-6a84-45c5-8bd5-d3682756f872|

|Screen Reader|no|

|VM|0%|

|Item|Value|

|---|---|

|Remote|WSL: Ubuntu|

|OS|Linux x64 5.15.79.1-microsoft-standard-WSL2|

|CPUs|AMD Ryzen 9 5900X 12-Core Processor (24 x 3700)|

|Memory (System)|31.30GB (28.66GB free)|

|VM|0%|

</details><details><summary>Extensions (62)</summary>

Extension|Author (truncated)|Version

---|---|---

vsc-material-theme|Equ|33.6.0

vsc-material-theme-icons|equ|2.5.0

remotehub|Git|0.50.0

vscode-graphql-syntax|Gra|1.0.6

vscode-cloak|joh|0.5.0

vscode-peacock|joh|4.2.2

edgedb|mag|0.1.5

theme-1|Mar|1.2.6

dotenv|mik|1.0.1

jupyter-keymap|ms-|1.0.0

remote-containers|ms-|0.275.0

remote-ssh|ms-|0.96.0

remote-ssh-edit|ms-|0.84.0

remote-wsl|ms-|0.75.1

azure-repos|ms-|0.26.0

remote-explorer|ms-|0.2.0

remote-repositories|ms-|0.28.0

material-icon-theme|PKi|4.23.1

vscode-caniuse|aga|0.5.0

vscode-color-picker|Ant|0.0.4

vscode-tailwindcss|bra|0.9.7

turbo-console-log|Cha|2.6.2

hide-node-modules|chr|1.1.4

regex|chr|0.4.0

vscode-eslint|dba|2.2.6

vscode-html-css|ecm|1.13.1

prettier-vscode|esb|9.10.4

vscode-highlight|fab|1.7.2

vscode-todo-plus|fab|4.19.1

copilot|Git|1.71.8269

vscode-pull-request-github|Git|0.58.0

go|gol|0.37.1

vscode-graphql|Gra|0.8.5

vscode-graphql-syntax|Gra|1.0.6

vscode-sort|hen|0.2.5

vscode-edit-csv|jan|0.7.2

svg|joc|1.5.0

launchdarkly|lau|3.0.6

gitless|maa|11.7.2

rainbow-csv|mec|3.5.0

template-string-converter|meg|0.6.0

inline-fold|moa|0.2.2

vscode-docker|ms-|1.23.3

isort|ms-|2022.8.0

python|ms-|2023.2.0

vscode-pylance|ms-|2023.2.10

jupyter|ms-|2023.1.2000312134

jupyter-keymap|ms-|1.0.0

jupyter-renderers|ms-|1.0.14

vscode-jupyter-cell-tags|ms-|0.1.6

vscode-jupyter-slideshow|ms-|0.1.5

powershell|ms-|2023.1.0

vscode-typescript-next|ms-|5.0.202302010

vetur|oct|0.36.1

heroku-command|pko|0.0.8

prisma|Pri|4.9.0

rust-analyzer|rus|0.3.1386

vs-code-prettier-eslint|rve|5.0.4

vscode-javascript-booster|sbu|14.0.1

even-better-toml|tam|0.19.0

tauri-vscode|tau|0.2.1

vscode-js-console-utils|wht|0.7.0

(8 theme extensions excluded)

</details><details>

<summary>A/B Experiments</summary>

```

vsliv368cf:30146710

vsreu685:30147344

python383cf:30185419

vspor879:30202332

vspor708:30202333

vspor363:30204092

vslsvsres303:30308271

pythonvspyl392:30443607

vserr242:30382549

pythontb:30283811

vsjup518:30340749

pythonptprofiler:30281270

vsdfh931cf:30280410

vshan820:30294714

vstes263:30335439

pythondataviewer:30285071

vscod805cf:30301675

binariesv615:30325510

bridge0708:30335490

bridge0723:30353136

cmake_vspar411:30581797

vsaa593:30376534

pythonvs932:30410667

cppdebug:30492333

vsclangdc:30486549

c4g48928:30535728

dsvsc012cf:30540253

azure-dev_surveyone:30548225

vscccc:30610679

pyindex848:30577860

nodejswelcome1cf:30587006

282f8724:30602487

pyind779:30657576

89544117:30613380

pythonsymbol12:30657548

vscsb:30628654

```

</details>

<!-- generated by issue reporter -->

|

1.0

|

VSCode integrated terminal no longer loads bash .profile on startup - Type: <b>Bug</b>

When using a custom ~/.profile in WSL, the initial load of the integrated terminal won't load (It seems to be ignoring `terminal.integrated.profiles.linux` settings, since the custom icon I set is not working either) but subsequent terminal creation (not with split/add but with the dropdown clicking on the profile directly) loads the profile fine.

VS Code version: Code 1.75.0 (e2816fe719a4026ffa1ee0189dc89bdfdbafb164, 2023-02-01T15:23:45.584Z)

OS version: Windows_NT x64 10.0.19044

Modes:

Sandboxed: Yes

Remote OS version: Linux x64 5.15.79.1-microsoft-standard-WSL2

<details>

<summary>System Info</summary>

|Item|Value|

|---|---|

|CPUs|AMD Ryzen 9 5900X 12-Core Processor (24 x 3700)|

|GPU Status|2d_canvas: enabled<br>canvas_oop_rasterization: disabled_off<br>direct_rendering_display_compositor: disabled_off_ok<br>gpu_compositing: enabled<br>multiple_raster_threads: enabled_on<br>opengl: enabled_on<br>rasterization: enabled<br>raw_draw: disabled_off_ok<br>skia_renderer: enabled_on<br>video_decode: enabled<br>video_encode: enabled<br>vulkan: disabled_off<br>webgl: enabled<br>webgl2: enabled<br>webgpu: disabled_off|

|Load (avg)|undefined|

|Memory (System)|63.92GB (39.72GB free)|

|Process Argv|--log=trace --folder-uri=vscode-remote://wsl+Ubuntu/home/wunder/repos/Farm-Smart --remote=wsl+Ubuntu --crash-reporter-id 0fe24daf-6a84-45c5-8bd5-d3682756f872|

|Screen Reader|no|

|VM|0%|

|Item|Value|

|---|---|

|Remote|WSL: Ubuntu|

|OS|Linux x64 5.15.79.1-microsoft-standard-WSL2|

|CPUs|AMD Ryzen 9 5900X 12-Core Processor (24 x 3700)|

|Memory (System)|31.30GB (28.66GB free)|

|VM|0%|

</details><details><summary>Extensions (62)</summary>

Extension|Author (truncated)|Version

---|---|---

vsc-material-theme|Equ|33.6.0

vsc-material-theme-icons|equ|2.5.0

remotehub|Git|0.50.0

vscode-graphql-syntax|Gra|1.0.6

vscode-cloak|joh|0.5.0

vscode-peacock|joh|4.2.2

edgedb|mag|0.1.5

theme-1|Mar|1.2.6

dotenv|mik|1.0.1

jupyter-keymap|ms-|1.0.0

remote-containers|ms-|0.275.0

remote-ssh|ms-|0.96.0

remote-ssh-edit|ms-|0.84.0

remote-wsl|ms-|0.75.1

azure-repos|ms-|0.26.0

remote-explorer|ms-|0.2.0

remote-repositories|ms-|0.28.0

material-icon-theme|PKi|4.23.1

vscode-caniuse|aga|0.5.0

vscode-color-picker|Ant|0.0.4

vscode-tailwindcss|bra|0.9.7

turbo-console-log|Cha|2.6.2

hide-node-modules|chr|1.1.4

regex|chr|0.4.0

vscode-eslint|dba|2.2.6

vscode-html-css|ecm|1.13.1

prettier-vscode|esb|9.10.4

vscode-highlight|fab|1.7.2

vscode-todo-plus|fab|4.19.1

copilot|Git|1.71.8269

vscode-pull-request-github|Git|0.58.0

go|gol|0.37.1

vscode-graphql|Gra|0.8.5

vscode-graphql-syntax|Gra|1.0.6

vscode-sort|hen|0.2.5

vscode-edit-csv|jan|0.7.2

svg|joc|1.5.0

launchdarkly|lau|3.0.6

gitless|maa|11.7.2

rainbow-csv|mec|3.5.0

template-string-converter|meg|0.6.0

inline-fold|moa|0.2.2

vscode-docker|ms-|1.23.3

isort|ms-|2022.8.0

python|ms-|2023.2.0

vscode-pylance|ms-|2023.2.10

jupyter|ms-|2023.1.2000312134

jupyter-keymap|ms-|1.0.0

jupyter-renderers|ms-|1.0.14

vscode-jupyter-cell-tags|ms-|0.1.6

vscode-jupyter-slideshow|ms-|0.1.5

powershell|ms-|2023.1.0

vscode-typescript-next|ms-|5.0.202302010

vetur|oct|0.36.1

heroku-command|pko|0.0.8

prisma|Pri|4.9.0

rust-analyzer|rus|0.3.1386

vs-code-prettier-eslint|rve|5.0.4

vscode-javascript-booster|sbu|14.0.1

even-better-toml|tam|0.19.0

tauri-vscode|tau|0.2.1

vscode-js-console-utils|wht|0.7.0

(8 theme extensions excluded)

</details><details>

<summary>A/B Experiments</summary>

```

vsliv368cf:30146710

vsreu685:30147344

python383cf:30185419

vspor879:30202332

vspor708:30202333

vspor363:30204092

vslsvsres303:30308271

pythonvspyl392:30443607

vserr242:30382549

pythontb:30283811

vsjup518:30340749

pythonptprofiler:30281270

vsdfh931cf:30280410

vshan820:30294714

vstes263:30335439

pythondataviewer:30285071

vscod805cf:30301675

binariesv615:30325510

bridge0708:30335490

bridge0723:30353136

cmake_vspar411:30581797

vsaa593:30376534

pythonvs932:30410667

cppdebug:30492333

vsclangdc:30486549

c4g48928:30535728

dsvsc012cf:30540253

azure-dev_surveyone:30548225

vscccc:30610679

pyindex848:30577860

nodejswelcome1cf:30587006

282f8724:30602487

pyind779:30657576

89544117:30613380

pythonsymbol12:30657548

vscsb:30628654

```

</details>

<!-- generated by issue reporter -->

|

process

|

vscode integrated terminal no longer loads bash profile on startup type bug when using a custom profile in wsl the initial load of the integrated terminal won t load it seems to be ignoring terminal integrated profiles linux settings since the custom icon i set is not working either but subsequent terminal creation not with split add but with the dropdown clicking on the profile directly loads the profile fine vs code version code os version windows nt modes sandboxed yes remote os version linux microsoft standard system info item value cpus amd ryzen core processor x gpu status canvas enabled canvas oop rasterization disabled off direct rendering display compositor disabled off ok gpu compositing enabled multiple raster threads enabled on opengl enabled on rasterization enabled raw draw disabled off ok skia renderer enabled on video decode enabled video encode enabled vulkan disabled off webgl enabled enabled webgpu disabled off load avg undefined memory system free process argv log trace folder uri vscode remote wsl ubuntu home wunder repos farm smart remote wsl ubuntu crash reporter id screen reader no vm item value remote wsl ubuntu os linux microsoft standard cpus amd ryzen core processor x memory system free vm extensions extension author truncated version vsc material theme equ vsc material theme icons equ remotehub git vscode graphql syntax gra vscode cloak joh vscode peacock joh edgedb mag theme mar dotenv mik jupyter keymap ms remote containers ms remote ssh ms remote ssh edit ms remote wsl ms azure repos ms remote explorer ms remote repositories ms material icon theme pki vscode caniuse aga vscode color picker ant vscode tailwindcss bra turbo console log cha hide node modules chr regex chr vscode eslint dba vscode html css ecm prettier vscode esb vscode highlight fab vscode todo plus fab copilot git vscode pull request github git go gol vscode graphql gra vscode graphql syntax gra vscode sort hen vscode edit csv jan svg joc launchdarkly lau gitless maa 
rainbow csv mec template string converter meg inline fold moa vscode docker ms isort ms python ms vscode pylance ms jupyter ms jupyter keymap ms jupyter renderers ms vscode jupyter cell tags ms vscode jupyter slideshow ms powershell ms vscode typescript next ms vetur oct heroku command pko prisma pri rust analyzer rus vs code prettier eslint rve vscode javascript booster sbu even better toml tam tauri vscode tau vscode js console utils wht theme extensions excluded a b experiments pythontb pythonptprofiler pythondataviewer cmake cppdebug vsclangdc azure dev surveyone vscccc vscsb

| 1

|

79,442

| 15,586,152,136

|

IssuesEvent

|

2021-03-18 01:17:26

|

jrshutske/unit-conversion-api

|

https://api.github.com/repos/jrshutske/unit-conversion-api

|

opened

|

CVE-2017-18640 (High) detected in snakeyaml-1.23.jar

|

security vulnerability

|

## CVE-2017-18640 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>snakeyaml-1.23.jar</b></p></summary>

<p>YAML 1.1 parser and emitter for Java</p>

<p>Library home page: <a href="http://www.snakeyaml.org">http://www.snakeyaml.org</a></p>

<p>Path to dependency file: /unit-conversion-api/pom.xml</p>

<p>Path to vulnerable library: /root/.m2/repository/org/yaml/snakeyaml/1.23/snakeyaml-1.23.jar</p>

<p>

Dependency Hierarchy:

- spring-boot-starter-actuator-2.1.2.RELEASE.jar (Root Library)

- spring-boot-starter-2.1.2.RELEASE.jar

- :x: **snakeyaml-1.23.jar** (Vulnerable Library)

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

The Alias feature in SnakeYAML 1.18 allows entity expansion during a load operation, a related issue to CVE-2003-1564.

<p>Publish Date: 2019-12-12

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2017-18640>CVE-2017-18640</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>7.5</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: None

- Integrity Impact: None

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2017-18640">https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2017-18640</a></p>

<p>Release Date: 2019-12-12</p>

<p>Fix Resolution: org.yaml:snakeyaml:1.26</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with WhiteSource [here](https://www.whitesourcesoftware.com/full_solution_bolt_github)

|

True

|

CVE-2017-18640 (High) detected in snakeyaml-1.23.jar - ## CVE-2017-18640 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>snakeyaml-1.23.jar</b></p></summary>

<p>YAML 1.1 parser and emitter for Java</p>

<p>Library home page: <a href="http://www.snakeyaml.org">http://www.snakeyaml.org</a></p>

<p>Path to dependency file: /unit-conversion-api/pom.xml</p>

<p>Path to vulnerable library: /root/.m2/repository/org/yaml/snakeyaml/1.23/snakeyaml-1.23.jar</p>

<p>

Dependency Hierarchy:

- spring-boot-starter-actuator-2.1.2.RELEASE.jar (Root Library)

- spring-boot-starter-2.1.2.RELEASE.jar

- :x: **snakeyaml-1.23.jar** (Vulnerable Library)

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

The Alias feature in SnakeYAML 1.18 allows entity expansion during a load operation, a related issue to CVE-2003-1564.

<p>Publish Date: 2019-12-12

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2017-18640>CVE-2017-18640</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>7.5</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: None

- Integrity Impact: None

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2017-18640">https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2017-18640</a></p>

<p>Release Date: 2019-12-12</p>

<p>Fix Resolution: org.yaml:snakeyaml:1.26</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with WhiteSource [here](https://www.whitesourcesoftware.com/full_solution_bolt_github)

|

non_process

|

cve high detected in snakeyaml jar cve high severity vulnerability vulnerable library snakeyaml jar yaml parser and emitter for java library home page a href path to dependency file unit conversion api pom xml path to vulnerable library root repository org yaml snakeyaml snakeyaml jar dependency hierarchy spring boot starter actuator release jar root library spring boot starter release jar x snakeyaml jar vulnerable library vulnerability details the alias feature in snakeyaml allows entity expansion during a load operation a related issue to cve publish date url a href cvss score details base score metrics exploitability metrics attack vector network attack complexity low privileges required none user interaction none scope unchanged impact metrics confidentiality impact none integrity impact none availability impact high for more information on scores click a href suggested fix type upgrade version origin a href release date fix resolution org yaml snakeyaml step up your open source security game with whitesource

| 0

|

62,569

| 3,188,927,689

|

IssuesEvent

|

2015-09-29 01:01:53

|

ddurieux/redminetest

|

https://api.github.com/repos/ddurieux/redminetest

|

closed

|

there should be no dependencies from this plugin on the snmp plugin

|

Component: For junior contributor Component: Found in version Priority: Normal Status: Closed Tracker: Bug

|

---

Author Name: **Fabrice Flore-Thebault** (@themr0c)

Original Redmine Issue: 482, http://forge.fusioninventory.org/issues/482

Original Date: 2010-11-10

Original Assignee: David Durieux

---

when installing @fusinvinventory@ plugin without the @fusinvsnmp@ plugin, the whole plugins page is blocked in glpi and i get this error :

```

PHP Warning: require_once(../plugins/fusinvsnmp/inc/communicationsnmp.class.php) [function.require-once]: failed to open stream: No such file or directory in /var/www/glpi/plugins/fusinvinventory/inc/import_controller.class.php at line 45

Fatal error: require_once() [function.require]: Failed opening required '../plugins/fusinvsnmp/inc/communicationsnmp.class.php' (include_path='.:/usr/share/php:/usr/share/pear') in /var/www/glpi/plugins/fusinvinventory/inc/import_controller.class.php on line 45

```

there should be no such dependencies between plugins, and such an error should not block the listing of the plugins in glpi ...

|

1.0

|

there should be no dependencies from this plugin on the snmp plugin - ---

Author Name: **Fabrice Flore-Thebault** (@themr0c)

Original Redmine Issue: 482, http://forge.fusioninventory.org/issues/482

Original Date: 2010-11-10

Original Assignee: David Durieux

---

when installing @fusinvinventory@ plugin without the @fusinvsnmp@ plugin, the whole plugins page is blocked in glpi and i get this error :

```

PHP Warning: require_once(../plugins/fusinvsnmp/inc/communicationsnmp.class.php) [function.require-once]: failed to open stream: No such file or directory in /var/www/glpi/plugins/fusinvinventory/inc/import_controller.class.php at line 45

Fatal error: require_once() [function.require]: Failed opening required '../plugins/fusinvsnmp/inc/communicationsnmp.class.php' (include_path='.:/usr/share/php:/usr/share/pear') in /var/www/glpi/plugins/fusinvinventory/inc/import_controller.class.php on line 45

```

there should be no such dependencies between plugins, and such an error should not block the listing of the plugins in glpi ...

|

non_process

|

there should be no dependencies from this plugin on the snmp plugin author name fabrice flore thebault original redmine issue original date original assignee david durieux when installing fusinvinventory plugin without the fusinvsnmp plugin the whole plugins page is blocked in glpi and i get this error php warning require once plugins fusinvsnmp inc communicationsnmp class php failed to open stream no such file or directory in var www glpi plugins fusinvinventory inc import controller class php at line fatal error require once failed opening required plugins fusinvsnmp inc communicationsnmp class php include path usr share php usr share pear in var www glpi plugins fusinvinventory inc import controller class php on line there should be no such dependencies between plugins and such an error should not block the listing of the plugins in glpi

| 0

|

106,223

| 13,256,369,094

|

IssuesEvent

|

2020-08-20 12:35:07

|

raiden-network/light-client

|

https://api.github.com/repos/raiden-network/light-client

|

closed

|

Change the header according to the new design throughout the dApp

|

Design 🎨 dApp 📱

|

## Description

Within #1460 @sashseurat made a nice proposal to change the header of the dApp.

https://www.figma.com/file/3UX5wudtGolsdh5QVyuoTS/LC-Header?node-id=0%3A1

## Acceptance criteria

-

## Tasks

- [ ]

|

1.0

|

Change the header according to the new design throughout the dApp - ## Description

Within #1460 @sashseurat made a nice proposal to change the header of the dApp.

https://www.figma.com/file/3UX5wudtGolsdh5QVyuoTS/LC-Header?node-id=0%3A1

## Acceptance criteria

-

## Tasks

- [ ]

|

non_process

|

change the header according to the new design throughout the dapp description within sashseurat made a nice proposal to change the header of the dapp acceptance criteria tasks

| 0

|

16,006

| 20,188,222,097

|

IssuesEvent

|

2022-02-11 01:19:18

|

savitamittalmsft/WAS-SEC-TEST

|

https://api.github.com/repos/savitamittalmsft/WAS-SEC-TEST

|

opened

|

Establish an incident response plan and perform periodically a simulated execution

|

WARP-Import WAF FEB 2021 Security Performance and Scalability Capacity Management Processes Operational Procedures Incident Response

|

<a href="https://info.microsoft.com/rs/157-GQE-382/images/EN-US-CNTNT-emergency-doc-digital.pdf">Establish an incident response plan and perform periodically a simulated execution</a>

<p><b>Why Consider This?</b></p>

Actions executed during an incident and response investigation could impact application availability or performance. It is recommended to define these processes and align them with the responsible (and in most cases central) SecOps team. The impact of such an investigation on the application has to be analyzed.

<p><b>Context</b></p>

<p><span>It is important for an organization to accept the fact that compromises occur, and being prepared is a necessity.&nbsp; Preparation categories include the technical, operational, legal, and communication aspects of a major cybersecurity incident.</p><p>Lastly, simulating the execution of the incident response plan is paramount in ensuring the organization can be confident that the plan contains all necessary components, and that the intended outcomes are achieved.&nbsp; </span></p>

<p><b>Suggested Actions</b></p>

<p><span>Develop or enhance existing incident response plan to ensure it is comprehensive, tested, and receives continual updates based on the current threat landscape.</span></p>

<p><b>Learn More</b></p>

<p><a href="https://github.com/MarkSimos/MicrosoftSecurity/blob/master/IR%20Reference%20Guide.pdf" target="_blank"><span>Incident response reference guide</span></a><span /></p>

|

1.0

|

Establish an incident response plan and perform periodically a simulated execution - <a href="https://info.microsoft.com/rs/157-GQE-382/images/EN-US-CNTNT-emergency-doc-digital.pdf">Establish an incident response plan and perform periodically a simulated execution</a>

<p><b>Why Consider This?</b></p>

Actions executed during an incident and response investigation could impact application availability or performance. It is recommended to define these processes and align them with the responsible (and in most cases central) SecOps team. The impact of such an investigation on the application has to be analyzed.

<p><b>Context</b></p>

<p><span>It is important for an organization to accept the fact that compromises occur, and being prepared is a necessity.&nbsp; Preparation categories include the technical, operational, legal, and communication aspects of a major cybersecurity incident.</p><p>Lastly, simulating the execution of the incident response plan is paramount in ensuring the organization can be confident that the plan contains all necessary components, and that the intended outcomes are achieved.&nbsp; </span></p>

<p><b>Suggested Actions</b></p>

<p><span>Develop or enhance existing incident response plan to ensure it is comprehensive, tested, and receives continual updates based on the current threat landscape.</span></p>

<p><b>Learn More</b></p>

<p><a href="https://github.com/MarkSimos/MicrosoftSecurity/blob/master/IR%20Reference%20Guide.pdf" target="_blank"><span>Incident response reference guide</span></a><span /></p>

|

process

|

establish an incident response plan and perform periodically a simulated execution why consider this actions executed during an incident and response investigation could impact application availability or performance it is recommended to define these processes and align them with the responsible and in most cases central secops team the impact of such an investigation on the application has to be analyzed context it is important for an organization to accept the fact that compromises occur and being prepared is a necessity nbsp preparation categories include the technical operational legal and communication aspects of a major cybersecurity incident lastly simulating the execution of the incident response plan is paramount in ensuring the organization can be confident that the plan contains all necessary components and that the intended outcomes are achieved nbsp suggested actions develop or enhance existing incident response plan to ensure it is comprehensive tested and receives continual updates based on the current threat landscape learn more incident response reference guide

| 1

|

15,851

| 20,031,989,637

|

IssuesEvent

|

2022-02-02 07:36:14

|

googleapis/gax-dotnet

|

https://api.github.com/repos/googleapis/gax-dotnet

|

closed

|

Check all TODOs in Google.Api.Gax.Grpc.Rest before GA

|

type: process

|

There are currently 14 TODO comments in Google.Api.Gax.Grpc.Rest.

We don't need to necessarily implement everything, but we should check each one.

|

1.0

|

Check all TODOs in Google.Api.Gax.Grpc.Rest before GA - There are currently 14 TODO comments in Google.Api.Gax.Grpc.Rest.

We don't need to necessarily implement everything, but we should check each one.

|

process

|

check all todos in google api gax grpc rest before ga there are currently todo comments in google api gax grpc rest we don t need to necessarily implement everything but we should check each one

| 1

|

13,823

| 16,587,868,162

|

IssuesEvent

|

2021-06-01 01:24:09

|

lsmacedo/spotifyt-back-end

|

https://api.github.com/repos/lsmacedo/spotifyt-back-end

|

opened

|

Rodar scripts de pre-commit e pre-push

|

process

|

Pre-commit: rodar lint

Pre-push: rodar testes unitários e E2E

|

1.0

|

Rodar scripts de pre-commit e pre-push - Pre-commit: rodar lint

Pre-push: rodar testes unitários e E2E

|

process

|

rodar scripts de pre commit e pre push pre commit rodar lint pre push rodar testes unitários e

| 1

|

201,565

| 7,033,658,585

|

IssuesEvent

|

2017-12-27 12:13:22

|

meetalva/alva

|

https://api.github.com/repos/meetalva/alva

|

closed

|

App is rendered useless when attempting to add component to playground

|

priority: high type: bug

|

Every time I try to add a component to the Playground the screen blanks to white, the component isn't editable and I can no longer add any elements. This is just using the newly created space. I'm on Mac OSX High Sierra. Turning on developer tools yields this error stack:

```

/Applications/Alva.app/Contents/Resources/app.asar/node_modules/fbjs/lib/warning.js:33 Warning: Each child in an array or iterator should have a unique "key" prop.

Check the render method of `PatternListContainer`. See https://fb.me/react-warning-keys for more information.

in PatternList (created by PatternListContainer)

in PatternListContainer (created by App)

in div (created by styled.div)

in styled.div (created by PatternsPane)

in PatternsPane (created by App)

in div (created by styled.div)

in styled.div (created by Styled(styled.div))

in Styled(styled.div)

in Unknown (created by App)

in div (created by styled.div)

in styled.div (created by Styled(styled.div))

in Styled(styled.div)

in Unknown (created by App)

in div (created by styled.div)

in styled.div (created by Layout)

in Layout (created by App)

in App

```

|

1.0

|

App is rendered useless when attempting to add component to playground - Every time I try to add a component to the Playground the screen blanks to white, the component isn't editable and I can no longer add any elements. This is just using the newly created space. I'm on Mac OSX High Sierra. Turning on developer tools yields this error stack:

```
/Applications/Alva.app/Contents/Resources/app.asar/node_modules/fbjs/lib/warning.js:33 Warning: Each child in an array or iterator should have a unique "key" prop.
Check the render method of `PatternListContainer`. See https://fb.me/react-warning-keys for more information.
    in PatternList (created by PatternListContainer)
    in PatternListContainer (created by App)
    in div (created by styled.div)
    in styled.div (created by PatternsPane)
    in PatternsPane (created by App)
    in div (created by styled.div)
    in styled.div (created by Styled(styled.div))
    in Styled(styled.div)
    in Unknown (created by App)
    in div (created by styled.div)
    in styled.div (created by Styled(styled.div))
    in Styled(styled.div)
    in Unknown (created by App)
    in div (created by styled.div)
    in styled.div (created by Layout)
    in Layout (created by App)
    in App
```

|

non_process

|

app is rendered useless when attempting to add component to playground every time i try to add a component to the playground the screen blanks to white the component isn t editable and i can no longer add any elements this is just using the newly created space i m on mac osx high sierra turning on developer tools yields this error stack applications alva app contents resources app asar node modules fbjs lib warning js warning each child in an array or iterator should have a unique key prop check the render method of patternlistcontainer see for more information in patternlist created by patternlistcontainer in patternlistcontainer created by app in div created by styled div in styled div created by patternspane in patternspane created by app in div created by styled div in styled div created by styled styled div in styled styled div in unknown created by app in div created by styled div in styled div created by styled styled div in styled styled div in unknown created by app in div created by styled div in styled div created by layout in layout created by app in app

| 0

|

78,799

| 10,089,376,014

|

IssuesEvent

|

2019-07-26 08:46:20

|

JeongChaeEun/2019study

|

https://api.github.com/repos/JeongChaeEun/2019study

|

closed

|

Golang program using windows command 'tasklist'

|

documentation

|

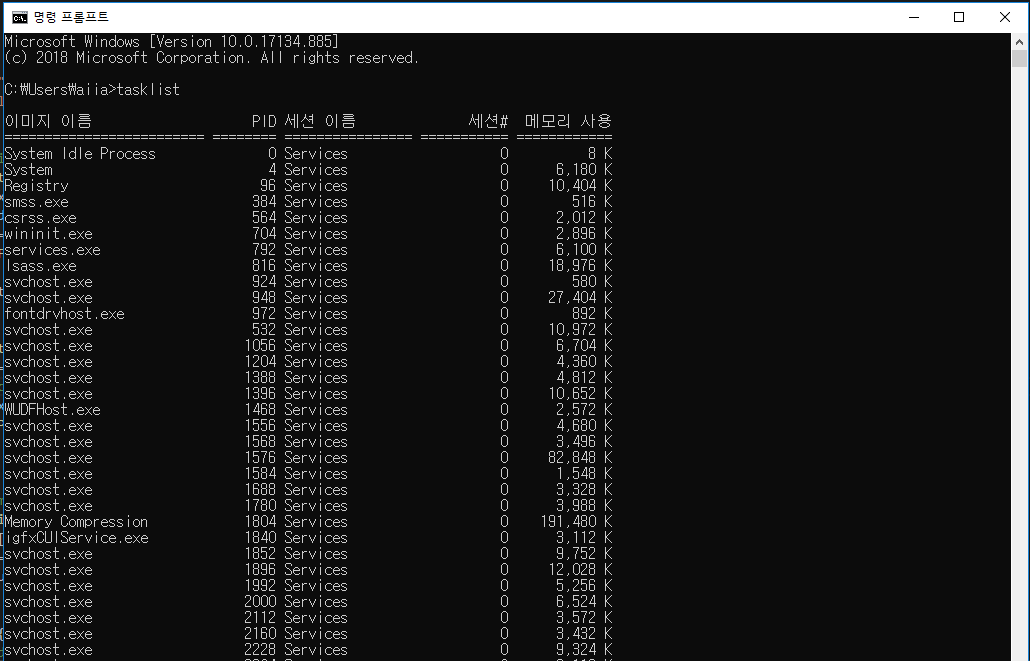

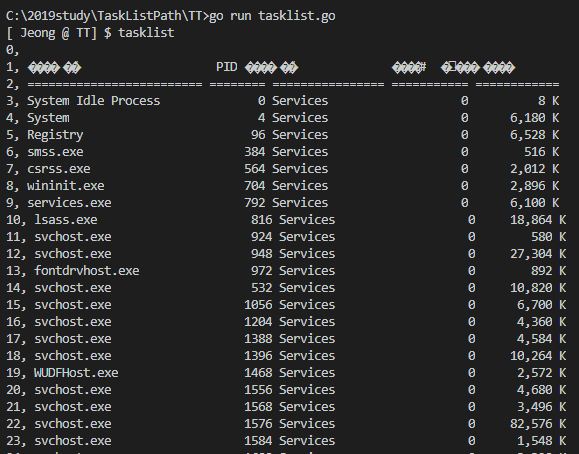

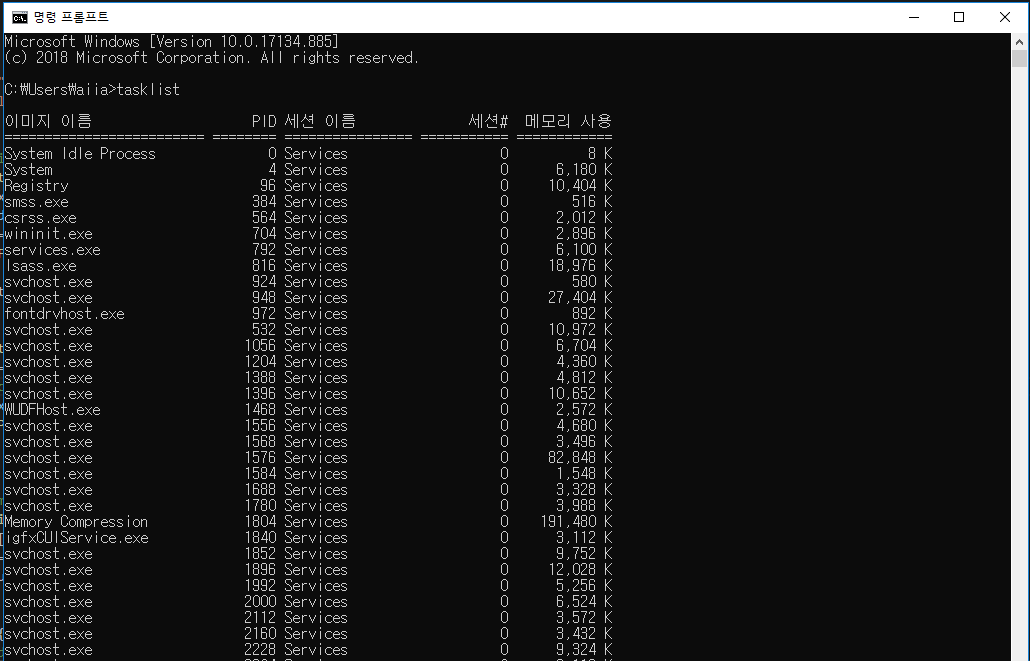

# Tasklist on the Windows cmd prompt

- Typing `tasklist` at the cmd prompt lists many running tasks, as in the screenshot.

- Using Go, I wrote a program that does the same thing.

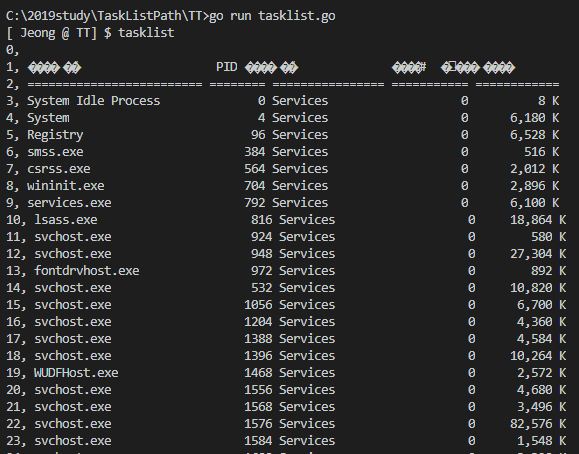

## Result: TaskListPath/TT/tasklist.go

- code

~~~

package main

import (
	"bufio"
	"bytes"
	"fmt"
	"log"
	"os"
	"os/exec"
	"path/filepath"
	"strings"
)

// tasklistCommand does the same as typing 'tasklist' at the cmd prompt.
func tasklistCommand() []byte {
	cmd := exec.Command("tasklist.exe")
	// CombinedOutput runs the command and returns its combined standard output and standard error.
	stdoutStderr, err := cmd.CombinedOutput()
	if err != nil {
		log.Fatal(err)
	}
	return stdoutStderr
}

func printOutput(outs []byte) {
	output := byteSplitbyNewLine(outs)
	// Print each task's line, prefixed with its index.
	for index, element := range output {
		fmt.Printf("%d, %s\n", index, string(element))
	}
}

// byteSplitbyNewLine splits outs at every newline character and trims the
// trailing '\r' that Windows CRLF line endings leave on each line.
func byteSplitbyNewLine(outs []byte) [][]byte {
	output := bytes.Split(outs, []byte{'\n'})
	for i, line := range output {
		output[i] = bytes.TrimSuffix(line, []byte{'\r'})
	}
	return output
}

func main() {
	// Read whole input lines so that an empty line or extra words cannot
	// leave stale values from the previous command behind.
	scanner := bufio.NewScanner(os.Stdin)
	for {
		// Print the current directory name as part of the prompt.
		path, _ := os.Getwd()
		_, file := filepath.Split(path)
		fmt.Print("[ Jeong @ ", file, "] $ ")

		if !scanner.Scan() {
			return // end of input
		}
		args := strings.Fields(scanner.Text())
		if len(args) == 0 {
			continue // empty line: show the prompt again
		}

		switch args[0] {
		case "tasklist":
			stdout := tasklistCommand()
			printOutput(stdout)
		case "exit":
			os.Exit(0) // a requested exit is a success, so status 0
		default:
			fmt.Println("not command")
		}
	}
}

~~~
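If each task is needed as structured data rather than as a printed line, `tasklist` can also print CSV with `tasklist /FO CSV /NH` (`/NH` omits the header row). The following is only an illustrative sketch, not part of the original program: the `Task` struct and `parseTasklistCSV` helper are hypothetical names, it assumes the default English-locale column order (Image Name, PID, Session Name, Session#, Mem Usage), and it parses a sample string so it runs without Windows.

~~~go
package main

import (
	"encoding/csv"
	"fmt"
	"strconv"
	"strings"
)

// Task is a hypothetical struct holding one row of `tasklist /FO CSV /NH` output.
type Task struct {
	ImageName   string
	PID         int
	SessionName string
	Session     int
	MemUsage    string
}

// parseTasklistCSV parses CSV rows, assuming the five default columns in order.
// encoding/csv removes the '\r' of Windows CRLF line endings automatically.
func parseTasklistCSV(data string) ([]Task, error) {
	r := csv.NewReader(strings.NewReader(data))
	r.FieldsPerRecord = 5 // every row must have exactly five fields
	records, err := r.ReadAll()
	if err != nil {
		return nil, err
	}
	tasks := make([]Task, 0, len(records))
	for _, rec := range records {
		pid, err := strconv.Atoi(rec[1])
		if err != nil {
			return nil, fmt.Errorf("bad PID %q: %w", rec[1], err)
		}
		session, err := strconv.Atoi(rec[3])
		if err != nil {
			return nil, fmt.Errorf("bad session number %q: %w", rec[3], err)
		}
		tasks = append(tasks, Task{rec[0], pid, rec[2], session, rec[4]})
	}
	return tasks, nil
}

func main() {
	// Sample input in the assumed format (made-up rows, not real tasklist output).
	sample := "\"System Idle Process\",\"0\",\"Services\",\"0\",\"8 K\"\r\n" +
		"\"notepad.exe\",\"4242\",\"Console\",\"1\",\"12,345 K\"\r\n"
	tasks, err := parseTasklistCSV(sample)
	if err != nil {
		fmt.Println("error:", err)
		return
	}
	for _, t := range tasks {
		fmt.Printf("%d\t%s\t%s\n", t.PID, t.ImageName, t.MemUsage)
	}
}
~~~

On Windows, the sample string could be replaced with the output of `exec.Command("tasklist.exe", "/FO", "CSV", "/NH").Output()`. Using `encoding/csv` instead of splitting on commas matters here because the Mem Usage field can itself contain a comma, as in `12,345 K`.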

- Result screenshot

|

1.0

|

Golang program using windows command 'tasklist' - # Tasklist on the Windows cmd prompt

- Typing `tasklist` at the cmd prompt lists many running tasks, as in the screenshot.

- Using Go, I wrote a program that does the same thing.

## Result: TaskListPath/TT/tasklist.go

- code

~~~

package main

import (
	"bufio"
	"bytes"
	"fmt"
	"log"
	"os"
	"os/exec"
	"path/filepath"
	"strings"
)

// tasklistCommand does the same as typing 'tasklist' at the cmd prompt.
func tasklistCommand() []byte {
	cmd := exec.Command("tasklist.exe")
	// CombinedOutput runs the command and returns its combined standard output and standard error.
	stdoutStderr, err := cmd.CombinedOutput()
	if err != nil {
		log.Fatal(err)
	}
	return stdoutStderr
}

func printOutput(outs []byte) {
	output := byteSplitbyNewLine(outs)
	// Print each task's line, prefixed with its index.
	for index, element := range output {
		fmt.Printf("%d, %s\n", index, string(element))
	}
}

// byteSplitbyNewLine splits outs at every newline character and trims the
// trailing '\r' that Windows CRLF line endings leave on each line.
func byteSplitbyNewLine(outs []byte) [][]byte {
	output := bytes.Split(outs, []byte{'\n'})
	for i, line := range output {
		output[i] = bytes.TrimSuffix(line, []byte{'\r'})
	}
	return output
}

func main() {
	// Read whole input lines so that an empty line or extra words cannot
	// leave stale values from the previous command behind.
	scanner := bufio.NewScanner(os.Stdin)
	for {
		// Print the current directory name as part of the prompt.
		path, _ := os.Getwd()
		_, file := filepath.Split(path)
		fmt.Print("[ Jeong @ ", file, "] $ ")

		if !scanner.Scan() {
			return // end of input
		}
		args := strings.Fields(scanner.Text())
		if len(args) == 0 {
			continue // empty line: show the prompt again
		}

		switch args[0] {
		case "tasklist":
			stdout := tasklistCommand()
			printOutput(stdout)
		case "exit":
			os.Exit(0) // a requested exit is a success, so status 0
		default:
			fmt.Println("not command")
		}
	}
}

~~~

- Result screenshot

|

non_process

|

golang program using windows command tasklist tasklist on windows s cmd prompt if i typed the tasklist as command on cmd prompt can see many tasks like this screenshot using go language i made a program doing like this result tasklistpath tt tasklist go code package main import bytes fmt log os os exec path filepath this function is same as tasklist command on cmd prompt func tasklistcommand byte cmd exec command tasklist exe stdoutstderr err cmd combinedoutput combinedoutput runs the command and returns its combined standard output and standard error if err nil log fatal err return stdoutstderr func printoutput outs byte output bytesplitbynewline outs get each task s line using for loop for index element range output fmt printf d s n index string element split parameter type of byte every newlines character func bytesplitbynewline outs byte byte join byte n output bytes split outs join return output func main command input variable initialized args string for printing path on prompt for path os getwd file filepath split path fmt print fmt scanln args args args switch args case tasklist stdout tasklistcommand printoutput stdout case exit os exit default fmt println not command result screenshot

| 0

|

610,522

| 18,910,638,930

|

IssuesEvent

|

2021-11-16 13:48:17

|

gazprom-neft/consta-uikit

|

https://api.github.com/repos/gazprom-neft/consta-uikit

|

closed

|

DatePicker: date-range - move the component's icon in Storybook

|

🔥🔥 priority improvement

|

**Bug description**