| Unnamed: 0 (int64, 0 to 832k) | id (float64, 2.49B to 32.1B) | type (stringclasses, 1 value) | created_at (stringlengths, 19 to 19) | repo (stringlengths, 7 to 112) | repo_url (stringlengths, 36 to 141) | action (stringclasses, 3 values) | title (stringlengths, 1 to 744) | labels (stringlengths, 4 to 574) | body (stringlengths, 9 to 211k) | index (stringclasses, 10 values) | text_combine (stringlengths, 96 to 211k) | label (stringclasses, 2 values) | text (stringlengths, 96 to 188k) | binary_label (int64, 0 to 1) |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

71,904

| 18,923,046,729

|

IssuesEvent

|

2021-11-17 05:43:44

|

ARM-software/armnn

|

https://api.github.com/repos/ARM-software/armnn

|

closed

|

Compile error for TFlite Delegate

|

Documentation issue Build issue

|

Aim: **Build the TfLite Delegate (Stand-Alone)**

Reference: https://github.com/ARM-software/armnn/blob/branches%2Farmnn_21_02/delegate/BuildGuideNative.md

Environment:

**1) Ubuntu 18.04 (x86)**

**2) TensorFlow 2.3.1: build succeeded**

**3) Flatbuffers 1.12.0: arm64 build succeeded**

**4) Compute Library 21.02: build succeeded**

`scons arch=arm64-v8a neon=1 opencl=1 embed_kernels=1 extra_cxx_flags="-fPIC" benchmark_tests=0 validation_tests=0 -j8 internal_only=0`

**5) Arm NN 21.02: build succeeded**

```

CXX=arrch64-linux-gnu-g++ CC=aarch64-linux-gnu-gcc cmake .. -DARMCOMPUTE_ROOT=$BASEDIR/ComputeLibrary -DARMCOMPUTENEON=1 -DBUILD_UNIT_TESTS=0

make

```

**6) Build the TfLite delegate**

```

CXX=arrch64-linux-gnu-g++ CC=aarch64-linux-gnu-gcc cmake .. -DTENSORFLOW_LIB_DIR=$BASEDIR/tensorflow/bazel-bin -DTENSORFLOW_ROOT=$BASEDIR/tensorflow -DTFLITE_LIB_ROOT=$BASEDIR/tensorflow/bazel-bin -DFLATBUFFERS_ROOT=$BASEDIR/flatbuffers/install -DArmnn_DIR=$BASEDIR/armnn/build -DARMNN_SOURCE_DIR=$BASEDIR/armnn

make

```

/usr/lib/gcc-cross/aarch64-linux-gnu/7/../../../aarch64-linux-gnu/bin/ld:skipping incompatible /home/***/delegate/tensorflow/bazel-bin/libtensorflow_lite_all.so when searching for -ltensorflow_lite_all

/usr/lib/gcc-cross/aarch64-linux-gnu/7/../../../aarch64-linux-gnu/bin/ld: cannot find -ltensorflow_lite_all

Looking at the directory, libtensorflow_lite_all.so does exist:

```

ls ../../../tensorflow-bazel-bin

libtensorflow_lite_all.so

```

Please give me some advice on how to solve it. Thanks.
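GNU ld prints `skipping incompatible` when it finds a library whose format or architecture does not match the link target. Since TensorFlow was built with bazel on the x86 host, a plausible cause is that `libtensorflow_lite_all.so` is an x86-64 binary rather than an aarch64 one. `file libtensorflow_lite_all.so` reports this directly; the minimal sketch below does the same check in Python by reading the `e_machine` field of the ELF header. The helper name `elf_machine` is hypothetical, and only a few common machine codes are mapped.

```python
import struct
import sys

# A few e_machine values from the ELF specification (not an exhaustive list).
MACHINES = {3: "x86", 40: "ARM", 62: "x86-64", 183: "AArch64"}


def elf_machine(path: str) -> str:
    """Return the target architecture recorded in an ELF file's header."""
    with open(path, "rb") as f:
        header = f.read(20)
    if len(header) < 20 or header[:4] != b"\x7fELF":
        raise ValueError(f"{path} is not an ELF file")
    # e_ident[EI_DATA] (byte 5): 1 = little-endian, 2 = big-endian.
    endian = "<" if header[5] == 1 else ">"
    # e_machine is a 16-bit field at offset 18 in both 32- and 64-bit ELF files.
    (machine,) = struct.unpack_from(endian + "H", header, 18)
    return MACHINES.get(machine, f"unknown (e_machine={machine})")


if __name__ == "__main__":
    for p in sys.argv[1:]:
        print(p, elf_machine(p))
```

Run against `libtensorflow_lite_all.so`, it should print `AArch64` for the aarch64 cross linker to accept the library; if it prints `x86-64`, the library would need to be cross-compiled for arm64 first.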

|

1.0

|

Compile error for TFlite Delegate - Aim: **Build the TfLite Delegate (Stand-Alone)**

Reference: https://github.com/ARM-software/armnn/blob/branches%2Farmnn_21_02/delegate/BuildGuideNative.md

Environment:

**1) Ubuntu 18.04 (x86)**

**2) TensorFlow 2.3.1: build succeeded**

**3) Flatbuffers 1.12.0: arm64 build succeeded**

**4) Compute Library 21.02: build succeeded**

`scons arch=arm64-v8a neon=1 opencl=1 embed_kernels=1 extra_cxx_flags="-fPIC" benchmark_tests=0 validation_tests=0 -j8 internal_only=0`

**5) Arm NN 21.02: build succeeded**

```

CXX=arrch64-linux-gnu-g++ CC=aarch64-linux-gnu-gcc cmake .. -DARMCOMPUTE_ROOT=$BASEDIR/ComputeLibrary -DARMCOMPUTENEON=1 -DBUILD_UNIT_TESTS=0

make

```

**6) Build the TfLite delegate**

```

CXX=arrch64-linux-gnu-g++ CC=aarch64-linux-gnu-gcc cmake .. -DTENSORFLOW_LIB_DIR=$BASEDIR/tensorflow/bazel-bin -DTENSORFLOW_ROOT=$BASEDIR/tensorflow -DTFLITE_LIB_ROOT=$BASEDIR/tensorflow/bazel-bin -DFLATBUFFERS_ROOT=$BASEDIR/flatbuffers/install -DArmnn_DIR=$BASEDIR/armnn/build -DARMNN_SOURCE_DIR=$BASEDIR/armnn

make

```

/usr/lib/gcc-cross/aarch64-linux-gnu/7/../../../aarch64-linux-gnu/bin/ld:skipping incompatible /home/***/delegate/tensorflow/bazel-bin/libtensorflow_lite_all.so when searching for -ltensorflow_lite_all

/usr/lib/gcc-cross/aarch64-linux-gnu/7/../../../aarch64-linux-gnu/bin/ld: cannot find -ltensorflow_lite_all

Looking at the directory, libtensorflow_lite_all.so does exist:

```

ls ../../../tensorflow-bazel-bin

libtensorflow_lite_all.so

```

Please give me some advice on how to solve it. Thanks.

|

non_process

|

compile error for tflite delegate aim at build the tflite delegate stand alone ref from env tensorflow build success flatbuffers build success compute library build success scons arch neon opencl embed kernels extra cxx flags fpic benchmark tests validation tests internal only build armnn success cxx linux gnu g cc linux gnu gcc cmake darmcompute root basedir computelibrary darmcomputeneon dbuild unit tests make build tflte delegate cxx linux gnu g cc linux gnu gcc cmake dtensorflow lib dir basedir tensorflow bazel bin dtensorflow root basedir tensorflow dtflite lib root basedir tensorflow bazel bin dflatbuffers root basedir flatbuffers install darmnn dir basedir armnn build darmnn source dir basedir armnn make usr lib gcc cross linux gnu linux gnu bin ld skipping incompatible home delegate tensorflow bazel bin libtensorflow lite all so when searching for ltensorflow lite all usr lib gcc cross linux gnu linux gnu bin ld cannot find ltensorflow lite all look at dirctory,libtensorflow lite all so is exist: ls tensorflow bazel bin libtensorflow lite all so please give me some advice to slove it,thanks。

| 0

|

8,270

| 11,430,788,728

|

IssuesEvent

|

2020-02-04 10:46:25

|

Graylog2/graylog2-server

|

https://api.github.com/repos/Graylog2/graylog2-server

|

closed

|

Grok pattern extractors crash and drop logs

|

bug processing

|

After I upgraded Graylog to 3.2, I found that one extractor containing a grok pattern was constantly crashing, and the affected logs were lost for good.

## Expected Behavior

When I add an extractor with a grok pattern for syslog and select "Always try to extract", Graylog should not drop the logs on which the extraction failed!

## Current Behavior

I added the following extractor:

And I can see in the graylog-server logs:

```

2020-02-04T10:16:09.713+01:00 WARN [ProcessBufferProcessor] Unable to process message <fb393704-472e-11ea-bb88-4256e21dfc8e>: java.lang.ClassCastException: Cannot cast java.lang.String to org.joda.time.DateTime

2020-02-04T10:16:09.713+01:00 WARN [ProcessBufferProcessor] Unable to process message <fb393705-472e-11ea-bb88-4256e21dfc8e>: java.lang.ClassCastException: Cannot cast java.lang.String to org.joda.time.DateTime

2020-02-04T10:16:09.713+01:00 WARN [ProcessBufferProcessor] Unable to process message <fb393706-472e-11ea-bb88-4256e21dfc8e>: java.lang.ClassCastException: Cannot cast java.lang.String to org.joda.time.DateTime

2020-02-04T10:16:09.958+01:00 WARN [ProcessBufferProcessor] Unable to process message <fb5e7245-472e-11ea-bb88-4256e21dfc8e>: java.lang.ClassCastException: Cannot cast java.lang.String to org.joda.time.DateTime

2020-02-04T10:16:09.958+01:00 WARN [ProcessBufferProcessor] Unable to process message <fb5e7244-472e-11ea-bb88-4256e21dfc8e>: java.lang.ClassCastException: Cannot cast java.lang.String to org.joda.time.DateTime

2020-02-04T10:16:09.958+01:00 WARN [ProcessBufferProcessor] Unable to process message <fb5e7243-472e-11ea-bb88-4256e21dfc8e>: java.lang.ClassCastException: Cannot cast java.lang.String to org.joda.time.DateTime

2020-02-04T10:16:10.076+01:00 WARN [ProcessBufferProcessor] Unable to process message <fb709ab4-472e-11ea-bb88-4256e21dfc8e>: java.lang.ClassCastException: Cannot cast java.lang.String to org.joda.time.DateTime

2020-02-04T10:16:10.077+01:00 WARN [ProcessBufferProcessor] Unable to process message <fb70c1c0-472e-11ea-bb88-4256e21dfc8e>: java.lang.ClassCastException: Cannot cast java.lang.String to org.joda.time.DateTime

```

When this happened, I lost a lot of logs that were never indexed in Graylog.

When I delete the extractor, everything works smoothly.

## Possible Solution

Catch the error so that the logs can still be indexed even though the extractor failed?
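The suggested behavior, keeping the message when an extractor fails, can be sketched as follows. This is plain Python for illustration, not Graylog's Java code; `run_extractors`, `normalize_timestamp`, and the `extractor_errors` field are hypothetical. The sketch converts a string `timestamp` into a datetime explicitly instead of assuming the extractor already produced one (the mismatch the `ClassCastException` points to), and it records a failure on the message rather than discarding the message.

```python
from datetime import datetime
from typing import Callable, Dict, List

Message = Dict[str, object]
Extractor = Callable[[Message], Dict[str, object]]


def normalize_timestamp(value: object) -> datetime:
    """Accept a datetime or an ISO 8601 string; raise for anything else."""
    if isinstance(value, datetime):
        return value
    if isinstance(value, str):
        return datetime.fromisoformat(value)  # ValueError if the string is unparsable
    raise TypeError(f"cannot convert {type(value).__name__} to datetime")


def run_extractors(message: Message, extractors: List[Extractor]) -> Message:
    """Apply each extractor in turn; a failing extractor never drops the message."""
    result = dict(message)
    errors: List[str] = []
    for extract in extractors:
        try:
            fields = dict(extract(result))
            if "timestamp" in fields:
                # Convert explicitly instead of assuming the value is already a datetime.
                fields["timestamp"] = normalize_timestamp(fields["timestamp"])
            result.update(fields)
        except Exception as exc:
            # Keep the fields gathered so far and record why this extractor failed.
            errors.append(f"{getattr(extract, '__name__', 'extractor')}: {exc}")
    if errors:
        result["extractor_errors"] = errors
    return result
```

The design choice is that extraction is best-effort: the message is always returned, and failures become data that can be searched for later.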

## Steps to Reproduce (for bugs)

1. Run Graylog 3.2

2. Add an extractor with the Grok Pattern from above

3. Add logs that do not match the Grok Pattern to Graylog

## Context

This bug automatically dropped logs without warning.

## Your Environment

<!--- Include as many relevant details about the environment you experienced the bug in -->

* Graylog Version: 3.2

* Elasticsearch Version:

* MongoDB Version:

* Operating System: Linux/Debian

* Browser version: Firefox

|

1.0

|

Grok pattern extractors crash and drop logs - After I upgraded Graylog to 3.2, I found that one extractor containing a grok pattern was constantly crashing, and the affected logs were lost for good.

## Expected Behavior

When I add an extractor with a grok pattern for syslog and select "Always try to extract", Graylog should not drop the logs on which the extraction failed!

## Current Behavior

I added the following extractor:

And I can see in the graylog-server logs:

```

2020-02-04T10:16:09.713+01:00 WARN [ProcessBufferProcessor] Unable to process message <fb393704-472e-11ea-bb88-4256e21dfc8e>: java.lang.ClassCastException: Cannot cast java.lang.String to org.joda.time.DateTime

2020-02-04T10:16:09.713+01:00 WARN [ProcessBufferProcessor] Unable to process message <fb393705-472e-11ea-bb88-4256e21dfc8e>: java.lang.ClassCastException: Cannot cast java.lang.String to org.joda.time.DateTime

2020-02-04T10:16:09.713+01:00 WARN [ProcessBufferProcessor] Unable to process message <fb393706-472e-11ea-bb88-4256e21dfc8e>: java.lang.ClassCastException: Cannot cast java.lang.String to org.joda.time.DateTime

2020-02-04T10:16:09.958+01:00 WARN [ProcessBufferProcessor] Unable to process message <fb5e7245-472e-11ea-bb88-4256e21dfc8e>: java.lang.ClassCastException: Cannot cast java.lang.String to org.joda.time.DateTime

2020-02-04T10:16:09.958+01:00 WARN [ProcessBufferProcessor] Unable to process message <fb5e7244-472e-11ea-bb88-4256e21dfc8e>: java.lang.ClassCastException: Cannot cast java.lang.String to org.joda.time.DateTime

2020-02-04T10:16:09.958+01:00 WARN [ProcessBufferProcessor] Unable to process message <fb5e7243-472e-11ea-bb88-4256e21dfc8e>: java.lang.ClassCastException: Cannot cast java.lang.String to org.joda.time.DateTime

2020-02-04T10:16:10.076+01:00 WARN [ProcessBufferProcessor] Unable to process message <fb709ab4-472e-11ea-bb88-4256e21dfc8e>: java.lang.ClassCastException: Cannot cast java.lang.String to org.joda.time.DateTime

2020-02-04T10:16:10.077+01:00 WARN [ProcessBufferProcessor] Unable to process message <fb70c1c0-472e-11ea-bb88-4256e21dfc8e>: java.lang.ClassCastException: Cannot cast java.lang.String to org.joda.time.DateTime

```

When this happened, I lost a lot of logs that were never indexed in Graylog.

When I delete the extractor, everything works smoothly.

## Possible Solution

Catch the error so that the logs can still be indexed even though the extractor failed?

## Steps to Reproduce (for bugs)

1. Run Graylog 3.2

2. Add an extractor with the Grok Pattern from above

3. Add logs that do not match the Grok Pattern to Graylog

## Context

This bug automatically dropped logs without warning.

## Your Environment

<!--- Include as many relevant details about the environment you experienced the bug in -->

* Graylog Version: 3.2

* Elasticsearch Version:

* MongoDB Version:

* Operating System: Linux/Debian

* Browser version: Firefox

|

process

|

grok pattern extractors crashes and drop logs after i upgraded graylog to i found that one extractor containing a grok pattern was constantly crashing the logs were then forever lost expected behavior when i add an extractor with a grok pattern for syslog and select always try to extract graylog should not drop the logs that failed current behavior i added the following extractor and i can see in graylog server logs warn unable to process message java lang classcastexception cannot cast java lang string to org joda time datetime warn unable to process message java lang classcastexception cannot cast java lang string to org joda time datetime warn unable to process message java lang classcastexception cannot cast java lang string to org joda time datetime warn unable to process message java lang classcastexception cannot cast java lang string to org joda time datetime warn unable to process message java lang classcastexception cannot cast java lang string to org joda time datetime warn unable to process message java lang classcastexception cannot cast java lang string to org joda time datetime warn unable to process message java lang classcastexception cannot cast java lang string to org joda time datetime warn unable to process message java lang classcastexception cannot cast java lang string to org joda time datetime when this happened i have lost a lot of logs that were not indexed in graylog when i delete the extractor everything work smoothly possible solution catch the error so that the logs can be indexed even tho the extractor failed steps to reproduce for bugs graylog in add an extractor with the grok pattern from above add logs that do not match the grok pattern to graylog context this bug automatically dropped logs without warning your environment graylog version elasticsearch version mongodb version operating system linux debian browser version firefox

| 1

|

8,542

| 11,714,422,489

|

IssuesEvent

|

2020-03-09 12:19:13

|

nodejs/node

|

https://api.github.com/repos/nodejs/node

|

closed

|

docs: needs clarification: "terminal raw mode" (signal handling)

|

doc process tty

|

About the `SIGINT` event on `process` it is said in the docs that

> It is not generated when terminal raw mode is enabled.

https://github.com/nodejs/node/blame/3ec4b21b1c438255df6f1652377011080dc28052/doc/api/process.md#L504

In the example program I was playing around with, the SIGINT handler was not firing. I assumed I was running in "terminal raw mode", so I tried to understand what that is and how to disable it.

A web search for `nodejs "terminal raw mode"` didn't yield anything useful, though. I also explored `node --help` and didn't see anything obvious.

I think we should clarify in docs what "terminal raw mode" is and then cross-link to that place from the SIGINT doc I linked above.
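For reference, "raw mode" is a property of the terminal (the termios settings of the TTY), not a Node.js command-line flag, which is why `node --help` does not mention it. In Node it is switched on by calling `setRawMode(true)` on a TTY stream such as `process.stdin`. Raw mode clears the `ISIG` flag, so the terminal driver stops translating Ctrl+C into SIGINT and delivers the byte `0x03` as ordinary input instead. The Python sketch below (Python only for illustration; `isig_enabled` is a hypothetical helper) shows the flag being cleared on a pseudo-terminal.

```python
import os
import pty
import termios
import tty


def isig_enabled(fd: int) -> bool:
    """True if the terminal on fd turns Ctrl+C and similar keys into signals."""
    lflag = termios.tcgetattr(fd)[3]  # index 3 holds the local-mode flags (c_lflag)
    return bool(lflag & termios.ISIG)


if __name__ == "__main__":
    master, slave = pty.openpty()  # a pseudo-terminal stands in for a real TTY
    try:
        print("default mode, ISIG set:", isig_enabled(slave))
        tty.setraw(slave)  # comparable to process.stdin.setRawMode(true) in Node
        print("raw mode, ISIG set:", isig_enabled(slave))
    finally:
        os.close(master)
        os.close(slave)
```

A program that needs Ctrl+C while in raw mode therefore has to watch its own input for the `0x03` byte.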

|

1.0

|

docs: needs clarification: "terminal raw mode" (signal handling) - About the `SIGINT` event on `process` it is said in the docs that

> It is not generated when terminal raw mode is enabled.

https://github.com/nodejs/node/blame/3ec4b21b1c438255df6f1652377011080dc28052/doc/api/process.md#L504

In the example program I was playing around with, the SIGINT handler was not firing. I assumed I was running in "terminal raw mode", so I tried to understand what that is and how to disable it.

A web search for `nodejs "terminal raw mode"` didn't yield anything useful, though. I also explored `node --help` and didn't see anything obvious.

I think we should clarify in docs what "terminal raw mode" is and then cross-link to that place from the SIGINT doc I linked above.

|

process

|

docs needs clarification terminal raw mode signal handling about the sigint event on process it is said in the docs that it is not generated when terminal raw mode is enabled in the example program i play around with the sigint handler was not firing i assumed that i was testing with the terminal raw mode so i was looking into understanding what that is and how to disable it a web search for nodejs terminal raw mode didn t yield anything useful though i also explored node help and didn t see anything obvious i think we should clarify in docs what terminal raw mode is and then cross link to that place from the sigint doc i linked above

| 1

|

2,634

| 5,412,258,728

|

IssuesEvent

|

2017-03-01 14:07:31

|

neuropoly/spinalcordtoolbox

|

https://api.github.com/repos/neuropoly/spinalcordtoolbox

|

opened

|

NURBS are unstable when calculated in voxel coordinates

|

bug fix:minor priority: high sct_process_segmentation

|

especially when voxel spacing in one direction is very large.

This instability causes fluctuations in the derivatives, which lead to wrong angle calculations. This has a strong effect on CSA computation, as demonstrated in the example below.

Data: ` `

Command:

```

sct_process_segmentation -i t2s_segm.nii.gz -p csa

```

Results:

```

# Slice (z),CSA (mm^2),Angle with respect to the I-S direction (degrees)

0,63.5923927147,18.6837707469

1,79.2658753014,8.26795055912

2,88.2874975915,5.12747654869

3,87.9995780207,6.03282331933

4,-8.61862123509,95.2875209491

5,89.2278470889,4.91847828944

6,95.8647986243,9.45846253778

7,84.0700371176,4.83530051485

8,84.7877521872,1.7651865876

9,81.1628827015,0.530803473898

10,82.0784541216,0.515470049064

11,80.383388821,1.2822922865

12,83.1066671944,1.84459163675

```
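The effect of anisotropic voxels on the angle can be illustrated with a finite-difference tangent in place of a NURBS fit (a deliberate simplification). The sketch assumes the voxel spacing is given as (x, y, z) in mm and that the angle is measured between the centerline tangent and the I-S (z) axis; `tangent_angles` is a hypothetical helper. Scaling indices by the spacing before differentiating gives the physical angle, while the same computation on raw indices exaggerates the in-plane component when the slice spacing is large.

```python
import math
from typing import List, Sequence, Tuple

Point = Tuple[float, float, float]


def tangent_angles(points: Sequence[Point], spacing: Point = (1.0, 1.0, 1.0)) -> List[float]:
    """Angle (degrees) between each centerline segment and the z (I-S) axis.

    points are voxel indices (x, y, z); spacing is the voxel size in mm.
    With the default spacing the computation happens in voxel coordinates.
    Consecutive points must differ, otherwise the segment has no direction.
    """
    # Convert voxel indices to physical coordinates before differentiating.
    phys = [tuple(c * s for c, s in zip(p, spacing)) for p in points]
    angles = []
    for a, b in zip(phys, phys[1:]):
        dx, dy, dz = (b[i] - a[i] for i in range(3))
        norm = math.sqrt(dx * dx + dy * dy + dz * dz)
        cos_angle = max(-1.0, min(1.0, dz / norm))  # clamp against rounding error
        angles.append(math.degrees(math.acos(cos_angle)))
    return angles


if __name__ == "__main__":
    # Centerline drifting one voxel in x per slice, with 0.5 x 0.5 x 5 mm voxels.
    pts = [(float(i), 0.0, float(i)) for i in range(4)]
    print("voxel coords:   ", [round(a, 2) for a in tangent_angles(pts)])
    print("physical coords:", [round(a, 2) for a in tangent_angles(pts, (0.5, 0.5, 5.0))])
```

Here every segment comes out at 45 degrees in voxel coordinates but about 5.7 degrees in physical coordinates. If, as the numbers in the table suggest, the reported CSA is the cross-sectional area multiplied by the cosine of this angle, an angle above 90 degrees (slice 4) yields a negative CSA, which is why angle errors show up so strongly in the CSA column.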

|

1.0

|

NURBS are unstable when calculated in voxel coordinates - especially when voxel spacing in one direction is very large.

This instability causes fluctuations in the derivatives, which lead to wrong angle calculations. This has a strong effect on CSA computation, as demonstrated in the example below.

Data: ` `

Command:

```

sct_process_segmentation -i t2s_segm.nii.gz -p csa

```

Results:

```

# Slice (z),CSA (mm^2),Angle with respect to the I-S direction (degrees)

0,63.5923927147,18.6837707469

1,79.2658753014,8.26795055912

2,88.2874975915,5.12747654869

3,87.9995780207,6.03282331933

4,-8.61862123509,95.2875209491

5,89.2278470889,4.91847828944

6,95.8647986243,9.45846253778

7,84.0700371176,4.83530051485

8,84.7877521872,1.7651865876

9,81.1628827015,0.530803473898

10,82.0784541216,0.515470049064

11,80.383388821,1.2822922865

12,83.1066671944,1.84459163675

```

|

process

|

nurbs are unstable when calculated in voxel coordinates especially when voxel spacing in one direction is very large this instability causes fluctuations of the derivatives which induces wrong calculations of the angles this has an strong effect on csa computation as demonstrated in the example below data command sct process segmentation i segm nii gz p csa results slice z csa mm angle with respect to the i s direction degrees

| 1

|

14,811

| 18,143,492,276

|

IssuesEvent

|

2021-09-25 02:39:25

|

qgis/QGIS

|

https://api.github.com/repos/qgis/QGIS

|

closed

|

Crash when clipping a larger layer with a small layer

|

Feedback stale Processing Bug Crash/Data Corruption Upstream

|

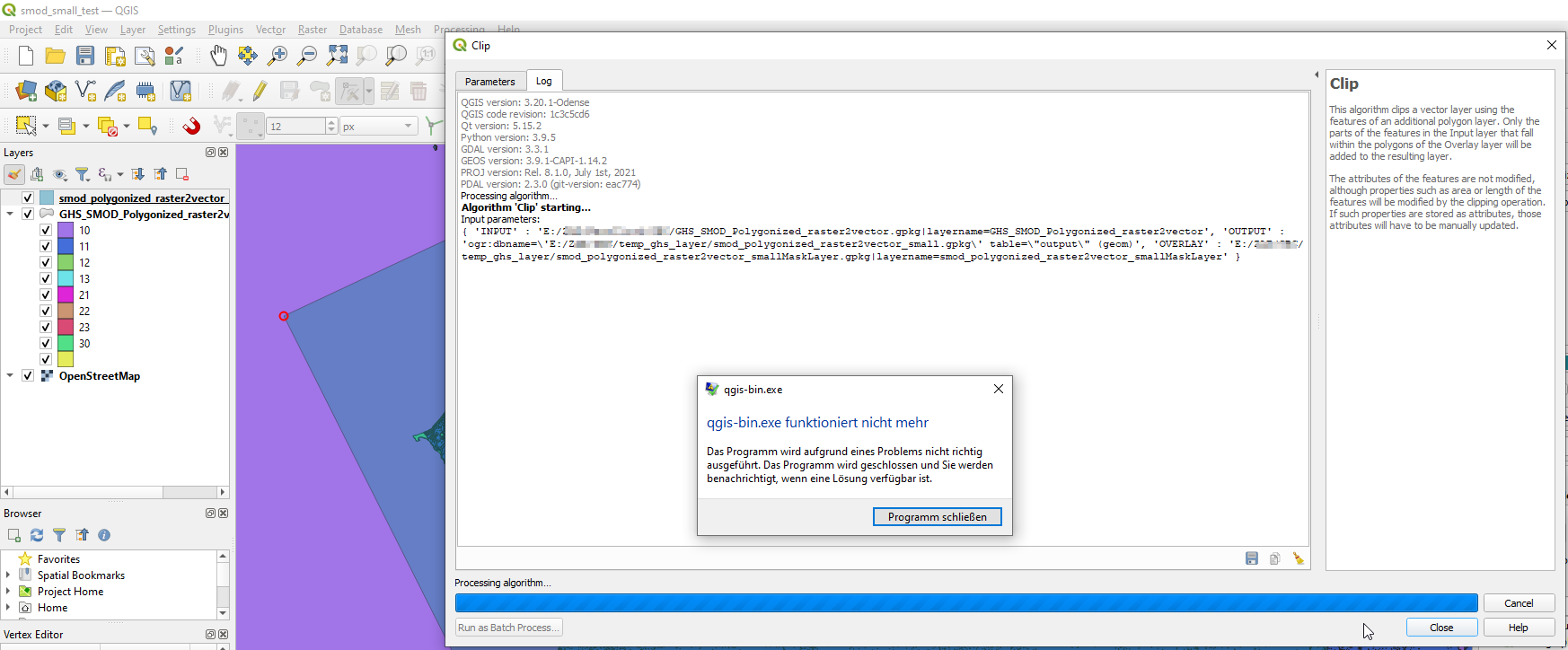

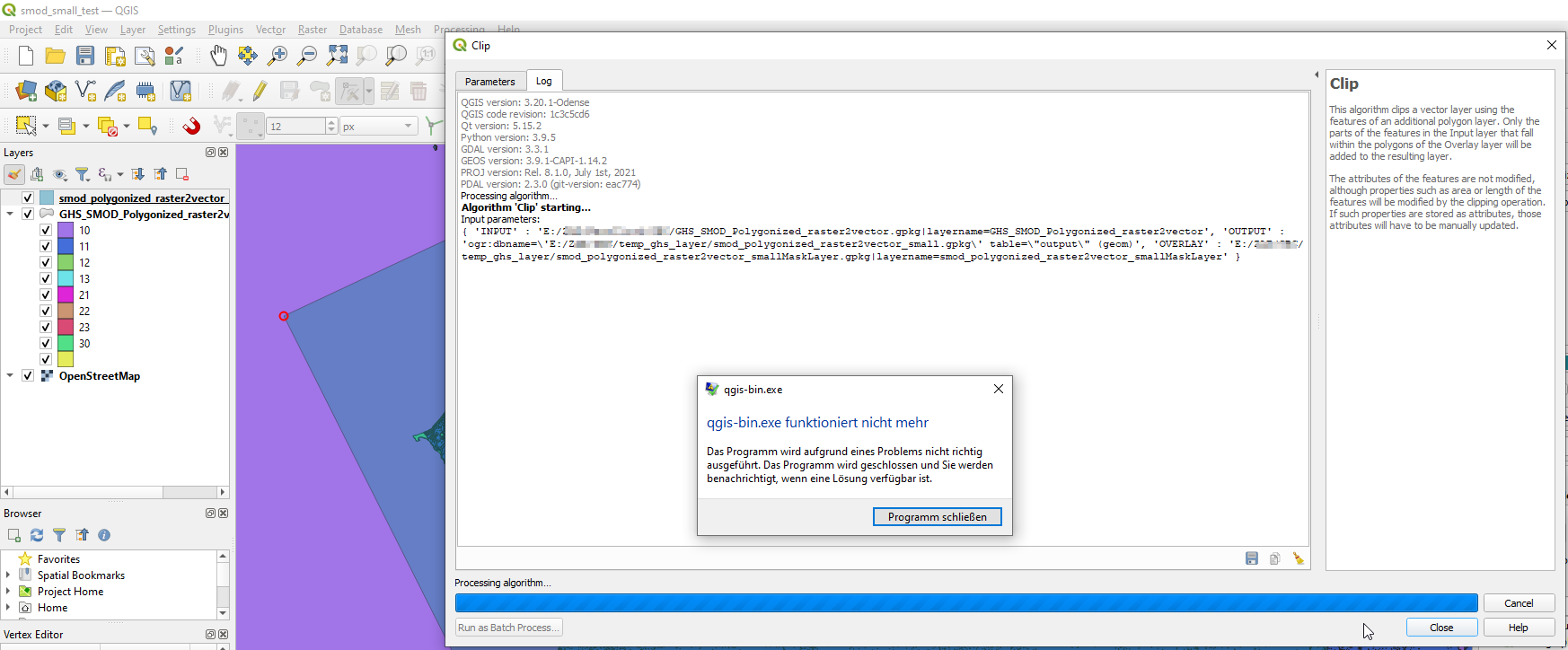

### What is the bug or the crash?

QGIS crashes after approximately 10 s when clipping a larger layer with a small overlay layer. Restarting QGIS or rebooting the computer doesn't help.

Input layer (Feature count: 2.923.688, CRS ESRI:54009 - World_Mollweide):

[GHS_SMOD_Polygonized_raster2vector.zip](https://drive.google.com/file/d/1QJqkm585TIgtG7J8skGN3nLj7E7SLELO/view?usp=sharing)

Overlay layer (Feature count: 1, CRS EPSG:4326 - WGS 84 ):

[smod_polygonized_raster2vector_smallMaskLayer.zip](https://github.com/qgis/QGIS/files/6960914/smod_polygonized_raster2vector_smallMaskLayer.zip)

### Steps to reproduce the issue

1. Import both layers into a new QGIS project

2. Processing tools -> Clip

3. Choose the layers as shown in the screenshots

4. A crash occurs after several seconds

### Versions

QGIS version | 3.20.1-Odense | QGIS code revision | 1c3c5cd6
-- | -- | -- | --
Qt version | 5.15.2
Python version | 3.9.5
GDAL/OGR version | 3.3.1
PROJ version | 8.1.0
EPSG Registry database version | v10.027 (2021-06-17)
GEOS version | 3.9.1-CAPI-1.14.2
SQLite version | 3.35.2
PDAL version | 2.3.0
PostgreSQL client version | 13.0
SpatiaLite version | 5.0.1
QWT version | 6.1.3
QScintilla2 version | 2.11.5
OS version | Windows 10 Version 2009
Active Python plugins | GroupStats, latlontools, nominatim, QuickOSM, db_manager, processing

### Additional context

_No response_

|

1.0

|

Crash when clipping a larger layer with a small layer - ### What is the bug or the crash?

QGIS crashes after approximately 10 s when clipping a larger layer with a small overlay layer. Restarting QGIS or rebooting the computer doesn't help.

Input layer (Feature count: 2.923.688, CRS ESRI:54009 - World_Mollweide):

[GHS_SMOD_Polygonized_raster2vector.zip](https://drive.google.com/file/d/1QJqkm585TIgtG7J8skGN3nLj7E7SLELO/view?usp=sharing)

Overlay layer (Feature count: 1, CRS EPSG:4326 - WGS 84 ):

[smod_polygonized_raster2vector_smallMaskLayer.zip](https://github.com/qgis/QGIS/files/6960914/smod_polygonized_raster2vector_smallMaskLayer.zip)

### Steps to reproduce the issue

1. Import both layers into a new QGIS project

2. Processing tools -> Clip

3. Choose the layers as shown in the screenshots

4. A crash occurs after several seconds

### Versions

QGIS version | 3.20.1-Odense | QGIS code revision | 1c3c5cd6
-- | -- | -- | --
Qt version | 5.15.2
Python version | 3.9.5
GDAL/OGR version | 3.3.1
PROJ version | 8.1.0
EPSG Registry database version | v10.027 (2021-06-17)
GEOS version | 3.9.1-CAPI-1.14.2
SQLite version | 3.35.2
PDAL version | 2.3.0
PostgreSQL client version | 13.0
SpatiaLite version | 5.0.1
QWT version | 6.1.3
QScintilla2 version | 2.11.5
OS version | Windows 10 Version 2009
Active Python plugins | GroupStats, latlontools, nominatim, QuickOSM, db_manager, processing

### Additional context

_No response_

|

process

|

crash when clipping a larger layer with a small layer what is the bug or the crash qgis crashes after approx when clipping a larger layer with a small overlay layer restarting qgis or rebooting the computer doesn t help input layer feature count crs esri world mollweide overlay layer feature count crs epsg wgs steps to reproduce the issue import both layers into a new qgis project processing tools clip choose layers according to the screenshot a crash occurs after several seconds versions qgis version odense qgis code revision qt version python version gdal ogr version proj version epsg registry database version geos version capi sqlite version pdal version postgresql client version spatialite version qwt version version os version windows version active python plugins groupstatslatlontoolsnominatimquickosmdb managerprocessing additional context no response

| 1

|

100,908

| 30,813,173,077

|

IssuesEvent

|

2023-08-01 11:51:38

|

assistant-ai/jess

|

https://api.github.com/repos/assistant-ai/jess

|

reopened

|

Cloudbuild: use Windows container for `go build` with `CGO_ENABLED=1`

|

feature build

|

Maybe this is the best container.

https://hub.docker.com/layers/library/golang/windowsservercore-ltsc2022/images/sha256-647b841b8cc8b449ebd00e2774b7fcc8753d7053dd83227c11c306d956662f00?context=explore

|

1.0

|

Cloudbuild: use Windows container for `go build` with `CGO_ENABLED=1` - Maybe this is the best container.

https://hub.docker.com/layers/library/golang/windowsservercore-ltsc2022/images/sha256-647b841b8cc8b449ebd00e2774b7fcc8753d7053dd83227c11c306d956662f00?context=explore

|

non_process

|

cloudbuild use windows container for go build with cgo enabled maybe it is the best container

| 0

|

303,720

| 23,037,141,865

|

IssuesEvent

|

2022-07-22 20:14:23

|

stoplightio/prism

|

https://api.github.com/repos/stoplightio/prism

|

closed

|

Add protocol requirement to prism proxy URLs

|

documentation

|

This topic:

https://meta.stoplight.io/docs/prism/72d69fb629de0-validation-proxy#validation-proxy

has an incorrect example:

`prism proxy reference/backend/openapi.yaml localhost:3000 --errors`

It should be:

`prism proxy reference/backend/openapi.yaml http://localhost:3000 --errors`
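A tool can catch this mistake up front by requiring an explicit scheme on the upstream URL. The check below is a hypothetical Python helper, not part of Prism, and it relies only on the standard `urllib.parse.urlparse`. Without `http://`, a string like `localhost:3000` parses with no network location, so it is rejected.

```python
from urllib.parse import urlparse


def require_http_url(url: str) -> str:
    """Return url unchanged if it has an http(s) scheme and a host; else raise."""
    parsed = urlparse(url)
    if parsed.scheme not in ("http", "https") or not parsed.netloc:
        raise ValueError(
            f"upstream URL {url!r} must include a protocol, e.g. http://{url}"
        )
    return url
```

Failing early with a message that shows the corrected form is friendlier than letting a scheme-less host be misread later.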

|

1.0

|

Add protocol requirement to prism proxy URLs - This topic:

https://meta.stoplight.io/docs/prism/72d69fb629de0-validation-proxy#validation-proxy

has an incorrect example:

`prism proxy reference/backend/openapi.yaml localhost:3000 --errors`

It should be:

`prism proxy reference/backend/openapi.yaml http://localhost:3000 --errors`

|

non_process

|

add protocol requirement to prism proxy urls this topic has an incorrect example prism proxy reference backend openapi yaml localhost errors should be prism proxy reference backend openapi yaml errors

| 0

|

16,511

| 21,519,625,306

|

IssuesEvent

|

2022-04-28 13:10:55

|

symfony/symfony

|

https://api.github.com/repos/symfony/symfony

|

closed

|

[Process] Allow running multiple commands at once

|

Feature Process Stalled

|

**Description**

When running many small shell commands one after another, the overhead of creating the underlying processes becomes noticeable. It would be handy if the Process component supported chaining commands.

Working directly on a Linux/Windows shell you would typically do this:

```shell

# Linux

$ foo; bar; baz

$ foo && bar && baz

# Windows

$ foo & bar & baz

$ foo && bar && baz

```

(Yes, there are other chaining operators as well, like `||`.)

This is currently not possible with the process component.

**API Example**

I like that `Symfony\Component\Process\Process` became somewhat immutable now (the command line is baked in after the constructor ran), so the API could maybe look like this:

```php

use Symfony\Component\Process\Process;

$process = new Process(['foo']);

// foo; bar

$sequential = $process->withChainedCommand(['bar']); // returns a new process instance

// foo && bar

$conditional = $process->withAndChainedCommand(['bar']); // returns a new process instance

```
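The proposal amounts to joining several argument lists into one shell command line, so that the parent spawns a single shell for the whole chain instead of one process per command. A rough Python equivalent is sketched below; `chain_commands` and `run_chain` are hypothetical, and quoting uses `shlex.quote`, which targets POSIX shells only, so the Windows `&` form is not covered. In Symfony itself a similar single-shell effect is already possible with a hand-written command line passed to `Process::fromShellCommandline()`, with the quoting left to the caller.

```python
import shlex
import subprocess
from typing import Sequence


def chain_commands(commands: Sequence[Sequence[str]], operator: str = ";") -> str:
    """Join argv lists into one POSIX shell command line.

    ";" runs every command, "&&" stops at the first failure,
    and "||" runs the next command only if the previous one failed.
    """
    if operator not in (";", "&&", "||"):
        raise ValueError(f"unsupported operator {operator!r}")
    parts = [" ".join(shlex.quote(arg) for arg in argv) for argv in commands]
    return f" {operator} ".join(parts)


def run_chain(commands: Sequence[Sequence[str]], operator: str = ";") -> subprocess.CompletedProcess:
    # A single shell is spawned for the whole chain; the shell still starts each command.
    return subprocess.run(chain_commands(commands, operator), shell=True,
                          capture_output=True, text=True)
```

For example, `chain_commands([["echo", "a"], ["echo", "b c"]], "&&")` builds `echo a && echo 'b c'`.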

What do you think? Is this a worthwhile addition?

|

1.0

|

[Process] Allow running multiple commands at once - **Description**

When running many small shell commands one after another, the overhead of creating the underlying processes becomes noticeable. It would be handy if the Process component supported chaining commands.

Working directly on a Linux/Windows shell you would typically do this:

```shell

# Linux

$ foo; bar; baz

$ foo && bar && baz

# Windows

$ foo & bar & baz

$ foo && bar && baz

```

(Yes, there are other chaining operators as well, like `||`.)

This is currently not possible with the process component.

**API Example**

I like that `Symfony\Component\Process\Process` became somewhat immutable now (the command line is baked in after the constructor ran), so the API could maybe look like this:

```php

use Symfony\Component\Process\Process;

$process = new Process(['foo']);

// foo; bar

$sequential = $process->withChainedCommand(['bar']); // returns a new process instance

// foo && bar

$conditional = $process->withAndChainedCommand(['bar']); // returns a new process instance

```

What do you think? Is this a worthwhile addition?

|

process

|

allow running multiple commands at once description when running a lot of small shell commands after each other the overhead of creating the underlying processes becomes noticeable it would be handy if the process component would support chaining commands working directly on a linux windows shell you would typically do this shell linux foo bar baz foo bar baz windows foo bar baz foo bar baz yes there are other chaining operators as well like this is currently not possible with the process component api example i like that symfony component process process became somewhat immutable now the command line is baked in after the constructor ran so the api could maybe look like this php process new process foo bar process process withchainedcommand returns a new process instance foo bar process process withandchainedcommand returns a new process instance what do you think is this a worthwhile addition

| 1

|

67,592

| 27,958,769,316

|

IssuesEvent

|

2023-03-24 14:14:59

|

MicrosoftDocs/azure-docs

|

https://api.github.com/repos/MicrosoftDocs/azure-docs

|

closed

|

Chat Completion API

|

cognitive-services/svc triaged cxp doc-enhancement Pri1

|

Hello, I would like to know if "Chat Completion API" means "an API for [chat completion](https://platform.openai.com/docs/guides/chat)" (not a proper noun), or if it's the name of an API, which will remain in English, even for the other languages (which means this "Chat Completion API" name should not be translated). Thanks!

Best regards,

François

---

#### Document Details

⚠ *Do not edit this section. It is required for learn.microsoft.com ➟ GitHub issue linking.*

* ID: 13994f3e-a96a-8804-4deb-7e0adad7f479

* Version Independent ID: e087d8ce-bf3a-f12c-41dc-3ea924c6f089

* Content: [How to work with the ChatGPT and GPT-4 models (preview) - Azure OpenAI Service](https://learn.microsoft.com/en-us/azure/cognitive-services/openai/how-to/chatgpt?pivots=programming-language-chat-completions#working-with-the-chat-completion-api)

* Content Source: [articles/cognitive-services/openai/how-to/chatgpt.md](https://github.com/MicrosoftDocs/azure-docs/blob/main/articles/cognitive-services/openai/how-to/chatgpt.md)

* Service: **cognitive-services**

* GitHub Login: @mrbullwinkle

* Microsoft Alias: **mbullwin**

|

1.0

|

Chat Completion API -

Hello, I would like to know if "Chat Completion API" means "an API for [chat completion](https://platform.openai.com/docs/guides/chat)" (not a proper noun), or if it's the name of an API, which will remain in English, even for the other languages (which means this "Chat Completion API" name should not be translated). Thanks!

Best regards,

François

---

#### Document Details

⚠ *Do not edit this section. It is required for learn.microsoft.com ➟ GitHub issue linking.*

* ID: 13994f3e-a96a-8804-4deb-7e0adad7f479

* Version Independent ID: e087d8ce-bf3a-f12c-41dc-3ea924c6f089

* Content: [How to work with the ChatGPT and GPT-4 models (preview) - Azure OpenAI Service](https://learn.microsoft.com/en-us/azure/cognitive-services/openai/how-to/chatgpt?pivots=programming-language-chat-completions#working-with-the-chat-completion-api)

* Content Source: [articles/cognitive-services/openai/how-to/chatgpt.md](https://github.com/MicrosoftDocs/azure-docs/blob/main/articles/cognitive-services/openai/how-to/chatgpt.md)

* Service: **cognitive-services**

* GitHub Login: @mrbullwinkle

* Microsoft Alias: **mbullwin**

|

non_process

|

chat completion api hello i would like to know if chat completion api means an api for not a proper noun or if it s the name of an api which will remain in english even for the other languages which means this chat completion api name should not be translated thanks best regards françois document details ⚠ do not edit this section it is required for learn microsoft com ➟ github issue linking id version independent id content content source service cognitive services github login mrbullwinkle microsoft alias mbullwin

| 0

|

22,198

| 30,755,628,325

|

IssuesEvent

|

2023-07-29 02:40:47

|

open-telemetry/opentelemetry-collector-contrib

|

https://api.github.com/repos/open-telemetry/opentelemetry-collector-contrib

|

closed

|

[processor/resourcedetection] No system attributes set if `host.id` cannot be fetched

|

bug priority:p1 processor/resourcedetection

|

### Component(s)

_No response_

### What happened?

After https://github.com/open-telemetry/opentelemetry-collector-contrib/pull/24239, instead of setting an empty `host.id`, we started returning an error and dropping all the other important system detector attributes: `host.name`, `os.type`, `host.arch`.

We need to set the other system attributes even if `host.id` cannot be fetched.

Also, this happens even when `host.id` is disabled, which is now the default behavior. https://github.com/open-telemetry/opentelemetry-collector-contrib/issues/24369 will solve that particular problem, but it is less important than this one.
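The requested behavior is to detect each attribute independently, so that one failure (such as `host.id`) is reported without discarding the rest, and a disabled attribute is never fetched at all. The collector is written in Go; the sketch below is Python purely for illustration, and `detect_system_attributes` and the detector callables are hypothetical, not the processor's real API.

```python
from typing import Callable, Dict, List, Tuple

Detector = Callable[[], str]


def detect_system_attributes(
    detectors: Dict[str, Detector], enabled: Dict[str, bool]
) -> Tuple[Dict[str, str], List[str]]:
    """Run each enabled detector on its own; failures are collected, not fatal."""
    attrs: Dict[str, str] = {}
    errors: List[str] = []
    for name, detect in detectors.items():
        if not enabled.get(name, True):
            continue  # a disabled attribute is never fetched, so it cannot fail
        try:
            attrs[name] = detect()
        except Exception as exc:
            errors.append(f"{name}: {exc}")
    return attrs, errors
```

The caller can log the collected errors while still attaching every attribute that was detected successfully.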

### Collector version

0.82.0 (binaries have not been released yet)

|

1.0

|

[processor/resourcedetection] No system attributes set if `host.id` cannot be fetched - ### Component(s)

_No response_

### What happened?

After https://github.com/open-telemetry/opentelemetry-collector-contrib/pull/24239, instead of setting an empty `host.id`, we started returning an error and dropping all the other important system detector attributes: `host.name`, `os.type`, `host.arch`.

We need to set the other system attributes even if `host.id` cannot be fetched.

Also, this happens even when `host.id` is disabled, which is now the default behavior. https://github.com/open-telemetry/opentelemetry-collector-contrib/issues/24369 will solve that particular problem, but it is less important than this one.

### Collector version

0.82.0 (binaries have not been released yet)

|

process

|

no system attributes set if host id cannot be fetched component s no response what happened after instead of setting empty host id we started throwing an error and dropping all other important system detector attributes host name os type host arch we need set other system resources even if host id cannot be fetched also it s happening even if the host id is disabled which is now the default behavior will solve this particular issue but it s not as important as this one collector version binaries are not released yet

| 1

|

12,209

| 14,742,829,363

|

IssuesEvent

|

2021-01-07 12:58:01

|

kdjstudios/SABillingGitlab

|

https://api.github.com/repos/kdjstudios/SABillingGitlab

|

closed

|

68 Portland - SAB Latency

|

anc-process anp-2 ant-support

|

In GitLab by @kdjstudios on Jun 7, 2019, 09:22

**Submitted by:** "Grant Crymes" <grant.crymes@answernet.com>

**Helpdesk:** http://www.servicedesk.answernet.com/profiles/ticket/8513066 - CLOSED

**Helpdesk:** http://www.servicedesk.answernet.com/profiles/ticket/10208596

**Server:** Internal

**Client/Site:** Portland

**Account:** NA

**Issue:**

We are experiencing latency when posting checks in SAB. It’s taking approximately 8-20 seconds to advance through each screen which is really slowing the payment processing down. This latency issue started a month or so ago but has been getting progressively worse.

|

1.0

|

68 Portland - SAB Latency - In GitLab by @kdjstudios on Jun 7, 2019, 09:22

**Submitted by:** "Grant Crymes" <grant.crymes@answernet.com>

**Helpdesk:** http://www.servicedesk.answernet.com/profiles/ticket/8513066 - CLOSED

**Helpdesk:** http://www.servicedesk.answernet.com/profiles/ticket/10208596

**Server:** Internal

**Client/Site:** Portland

**Account:** NA

**Issue:**

We are experiencing latency when posting checks in SAB. It’s taking approximately 8-20 seconds to advance through each screen which is really slowing the payment processing down. This latency issue started a month or so ago but has been getting progressively worse.

|

process

|

portland sab latency in gitlab by kdjstudios on jun submitted by grant crymes helpdesk closed helpdesk server internal client site portland account na issue we are experiencing latency when posting checks in sab it’s taking approximately seconds to advance through each screen which is really slowing the payment processing down this latency issue started a month or so ago but has been getting progressively worse

| 1

|

22,089

| 7,113,517,689

|

IssuesEvent

|

2018-01-17 20:46:12

|

dotnet/buildtools

|

https://api.github.com/repos/dotnet/buildtools

|

closed

|

PushToBlobFeed manifest creation should use VSTS default variables from env as a fallback

|

area-building-support

|

For Core repos (and maybe more) these values don't match what we need, but adding this fallback would make the implementation in some places have less code. (See https://github.com/dotnet/core-eng/issues/2404#issuecomment-358389341.)

https://docs.microsoft.com/en-us/vsts/build-release/concepts/definitions/build/variables

* `BUILD_REPOSITORY_NAME`

* `BUILD_SOURCEVERSION`

* `BUILD_SOURCEBRANCHNAME`

* In my experience this has chopped off the `release` part of `release/2.0` so it might not work. `BUILD_SOURCEBRANCH` contains more info than needed but might be better.

* (Edit) Confirmed that in a CoreCLR `release/2.0.0` build, this var is `2.0.0`. SOURCEBRANCH is `refs/heads/release/2.0.0`.

* `BUILD_BUILDID`

/cc @tmat @tannergooding

|

1.0

|

PushToBlobFeed manifest creation should use VSTS default variables from env as a fallback - For Core repos (and maybe more) these values don't match what we need, but adding this fallback would make the implementation in some places have less code. (See https://github.com/dotnet/core-eng/issues/2404#issuecomment-358389341.)

https://docs.microsoft.com/en-us/vsts/build-release/concepts/definitions/build/variables

* `BUILD_REPOSITORY_NAME`

* `BUILD_SOURCEVERSION`

* `BUILD_SOURCEBRANCHNAME`

* In my experience this has chopped off the `release` part of `release/2.0` so it might not work. `BUILD_SOURCEBRANCH` contains more info than needed but might be better.

* (Edit) Confirmed that in a CoreCLR `release/2.0.0` build, this var is `2.0.0`. SOURCEBRANCH is `refs/heads/release/2.0.0`.

* `BUILD_BUILDID`

/cc @tmat @tannergooding

|

non_process

|

pushtoblobfeed manifest creation should use vsts default variables from env as a fallback for core repos and maybe more these values don t match what we need but adding this fallback would make the implementation in some places have less code see build repository name build sourceversion build sourcebranchname in my experience this has chopped off the release part of release so it might not work build sourcebranch contains more info than needed but might be better edit confirmed that in a coreclr release build this var is sourcebranch is refs heads release build buildid cc tmat tannergooding

| 0

|

170,768

| 14,269,525,516

|

IssuesEvent

|

2020-11-21 01:51:17

|

AzureAD/microsoft-authentication-library-for-js

|

https://api.github.com/repos/AzureAD/microsoft-authentication-library-for-js

|

opened

|

Modified copy of msal-angular code inside samples/msal-angular-v2-samples/angular10-browser-sample?

|

documentation question

|

## Library

- [x] `@azure/msal-browser@2.x.x`

- [x] `@azure/msal-angular@2.x.x`

## Documentation location

- [x] Documentation does not exist

## Description

The Angular 10 example does not use the code from `@azure/msal-angular@2.x.x`. Instead it has copied and modified the code, see https://github.com/AzureAD/microsoft-authentication-library-for-js/tree/dev/samples/msal-angular-v2-samples/angular10-browser-sample/src/app/msal.

Why is that? Does it mean we can't use the official `@azure/msal-angular@2.x.x` library, but instead have to copy the code from the example? What happens with bug fixes? Will they be committed to both, `@azure/msal-angular@2.x.x` and the example project?

|

1.0

|

Modified copy of msal-angular code inside samples/msal-angular-v2-samples/angular10-browser-sample? - ## Library

- [x] `@azure/msal-browser@2.x.x`

- [x] `@azure/msal-angular@2.x.x`

## Documentation location

- [x] Documentation does not exist

## Description

The Angular 10 example does not use the code from `@azure/msal-angular@2.x.x`. Instead it has copied and modified the code, see https://github.com/AzureAD/microsoft-authentication-library-for-js/tree/dev/samples/msal-angular-v2-samples/angular10-browser-sample/src/app/msal.

Why is that? Does it mean we can't use the official `@azure/msal-angular@2.x.x` library, but instead have to copy the code from the example? What happens with bug fixes? Will they be committed to both, `@azure/msal-angular@2.x.x` and the example project?

|

non_process

|

modified copy of msal angular code inside samples msal angular samples browser sample library azure msal browser x x azure msal angular x x documentation location documentation does not exist description the angular example does not use the code from azure msal angular x x instead it has copied and modified the code see why is that does it mean we can t use the official azure msal angular x x library but instead have to copy the code from the example what happens with bug fixes will they be committed to both azure msal angular x x and the example project

| 0

|

22,552

| 31,761,984,305

|

IssuesEvent

|

2023-09-12 06:09:47

|

bazelbuild/bazel

|

https://api.github.com/repos/bazelbuild/bazel

|

closed

|

[Mirror] rules_graalvm@v0.10.2

|

P2 type: process team-OSS mirror request

|

### Please list the URLs of the archives you'd like to mirror:

https://github.com/sgammon/rules_graalvm/releases/download/v0.10.2/rules_graalvm-0.10.2.zip

https://github.com/graalvm/graalvm-ce-builds/releases/download/graal-23.0.1/native-image-installable-svm-java20-windows-amd64-23.0.1.jar

https://github.com/graalvm/graalvm-ce-builds/releases/download/jdk-20.0.2/graalvm-community-jdk-20.0.2_windows-x64_bin.zip

https://github.com/graalvm/graalvm-ce-builds/releases/download/graal-23.0.1/native-image-installable-svm-java20-linux-amd64-23.0.1.jar

https://github.com/graalvm/graalvm-ce-builds/releases/download/jdk-20.0.2/graalvm-community-jdk-20.0.2_linux-x64_bin.tar.gz

https://github.com/graalvm/graalvm-ce-builds/releases/download/graal-23.0.1/native-image-installable-svm-java20-darwin-aarch64-23.0.1.jar

https://github.com/graalvm/graalvm-ce-builds/releases/download/jdk-20.0.2/graalvm-community-jdk-20.0.2_macos-aarch64_bin.tar.gz

https://github.com/graalvm/graalvm-ce-builds/releases/download/graal-23.0.1/native-image-installable-svm-java20-darwin-amd64-23.0.1.jar

https://github.com/graalvm/graalvm-ce-builds/releases/download/jdk-20.0.2/graalvm-community-jdk-20.0.2_macos-x64_bin.tar.gz

|

1.0

|

[Mirror] rules_graalvm@v0.10.2 - ### Please list the URLs of the archives you'd like to mirror:

https://github.com/sgammon/rules_graalvm/releases/download/v0.10.2/rules_graalvm-0.10.2.zip

https://github.com/graalvm/graalvm-ce-builds/releases/download/graal-23.0.1/native-image-installable-svm-java20-windows-amd64-23.0.1.jar

https://github.com/graalvm/graalvm-ce-builds/releases/download/jdk-20.0.2/graalvm-community-jdk-20.0.2_windows-x64_bin.zip

https://github.com/graalvm/graalvm-ce-builds/releases/download/graal-23.0.1/native-image-installable-svm-java20-linux-amd64-23.0.1.jar

https://github.com/graalvm/graalvm-ce-builds/releases/download/jdk-20.0.2/graalvm-community-jdk-20.0.2_linux-x64_bin.tar.gz

https://github.com/graalvm/graalvm-ce-builds/releases/download/graal-23.0.1/native-image-installable-svm-java20-darwin-aarch64-23.0.1.jar

https://github.com/graalvm/graalvm-ce-builds/releases/download/jdk-20.0.2/graalvm-community-jdk-20.0.2_macos-aarch64_bin.tar.gz

https://github.com/graalvm/graalvm-ce-builds/releases/download/graal-23.0.1/native-image-installable-svm-java20-darwin-amd64-23.0.1.jar

https://github.com/graalvm/graalvm-ce-builds/releases/download/jdk-20.0.2/graalvm-community-jdk-20.0.2_macos-x64_bin.tar.gz

|

process

|

rules graalvm please list the urls of the archives you d like to mirror

| 1

|

2,878

| 5,833,394,325

|

IssuesEvent

|

2017-05-09 01:24:04

|

codefordenver/org

|

https://api.github.com/repos/codefordenver/org

|

closed

|

Create Mission Statement and upload to all appropriate places

|

Process

|

Create mission statement to aid fundraising marketing campaign and general understanding of organization.

Get sign-off from heads.

|

1.0

|

Create Mission Statement and upload to all appropriate places - Create mission statement to aid fundraising marketing campaign and general understanding of organization.

Get sign-off from heads.

|

process

|

create mission statement and upload to all appropriate places create mission statement to aid fundraising marketing campaign and general understanding of organization get sign off from heads

| 1

|

133,771

| 18,353,242,454

|

IssuesEvent

|

2021-10-08 14:51:52

|

symfony/symfony-docs

|

https://api.github.com/repos/symfony/symfony-docs

|

closed

|

[Security] Automatically register custom authenticator as entry_point (…

|

Security

|

| Q | A

| ------------ | ---

| Feature PR | symfony/symfony#39153

| PR author(s) | @wouterj

| Merged in | 5.2

|

True

|

[Security] Automatically register custom authenticator as entry_point (… - | Q | A

| ------------ | ---

| Feature PR | symfony/symfony#39153

| PR author(s) | @wouterj

| Merged in | 5.2

|

non_process

|

automatically register custom authenticator as entry point … q a feature pr symfony symfony pr author s wouterj merged in

| 0

|

216,706

| 7,311,092,383

|

IssuesEvent

|

2018-02-28 16:44:53

|

EthereumCommonwealth/etherwallet

|

https://api.github.com/repos/EthereumCommonwealth/etherwallet

|

closed

|

Add more networks.

|

low_priority

|

### Description

Just add custom nodes from https://github.com/kvhnuke/etherwallet/blob/mercury/app/scripts/nodes.js

- Tomo Coin network.

- Ella network.

- POA network.

NOTE: It may be necessary to configure corresponding colorings for each of the networks.

|

1.0

|

Add more networks. - ### Description

Just add custom nodes from https://github.com/kvhnuke/etherwallet/blob/mercury/app/scripts/nodes.js

- Tomo Coin network.

- Ella network.

- POA network.

NOTE: It may be necessary to configure corresponding colorings for each of the networks.

|

non_process

|

add more networks description just add custom nodes from tomo coin network ella network poa network note it may be necessary to configure corresponding colorings for each of the networks

| 0

|

178,030

| 29,486,349,611

|

IssuesEvent

|

2023-06-02 10:01:28

|

OfficeDev/TeamsFx

|

https://api.github.com/repos/OfficeDev/TeamsFx

|

closed

|

TeamsFx spfx tab project Env's replicating each other

|

needs more info *as-designed TA:E2E no recent activity

|

**Describe the bug**

Hi, I have created a spfx tab project from teamfx cli. i have created two env's dev & test which i am managing from teams toolkit exstension. when i deploy new update in dev env and approve it at MS teams admin portal it's automatically reflecting me at both dev & test env app. Also, can't use .env file in spfx tab project.

**To Reproduce**

Steps to reproduce the behavior:

1. Create new spfx tab project from teamsfx cli.

2. Create new env's and provision them.

3. Deploy both env's app and approve at MS teams admin

4. Create new update for dev env and deploy it.

5. It reflects at both dev & test env apps.

**Expected behavior**

Dev update should not be reflect at other env if we deploy new update only at dev env.

**VS Code Extension Information (please complete the following information):**

- Teams Toolkit extension verion: 4.1.3

**CLI Information (please complete the following information):**

- Teamsfx-cli version: 1.2.4

|

1.0

|

TeamsFx spfx tab project Env's replicating each other - **Describe the bug**

Hi, I have created a spfx tab project from teamfx cli. i have created two env's dev & test which i am managing from teams toolkit exstension. when i deploy new update in dev env and approve it at MS teams admin portal it's automatically reflecting me at both dev & test env app. Also, can't use .env file in spfx tab project.

**To Reproduce**

Steps to reproduce the behavior:

1. Create new spfx tab project from teamsfx cli.

2. Create new env's and provision them.

3. Deploy both env's app and approve at MS teams admin

4. Create new update for dev env and deploy it.

5. It reflects at both dev & test env apps.

**Expected behavior**

Dev update should not be reflect at other env if we deploy new update only at dev env.

**VS Code Extension Information (please complete the following information):**

- Teams Toolkit extension verion: 4.1.3

**CLI Information (please complete the following information):**

- Teamsfx-cli version: 1.2.4

|

non_process

|

teamsfx spfx tab project env s replicating each other describe the bug hi i have created a spfx tab project from teamfx cli i have created two env s dev test which i am managing from teams toolkit exstension when i deploy new update in dev env and approve it at ms teams admin portal it s automatically reflecting me at both dev test env app also can t use env file in spfx tab project to reproduce steps to reproduce the behavior create new spfx tab project from teamsfx cli create new env s and provision them deploy both env s app and approve at ms teams admin create new update for dev env and deploy it it reflects at both dev test env apps expected behavior dev update should not be reflect at other env if we deploy new update only at dev env vs code extension information please complete the following information teams toolkit extension verion cli information please complete the following information teamsfx cli version

| 0

|

50,256

| 13,187,403,496

|

IssuesEvent

|

2020-08-13 03:18:23

|

icecube-trac/tix3

|

https://api.github.com/repos/icecube-trac/tix3

|

closed

|

make_tarball_rootsys.sh.in bugfix (Trac #393)

|

Migrated from Trac combo core defect

|

cmake/make_tarball_rootsys.sh.in needs a recursive copy flag '-r' since new ROOT lib dir contains subdirectories.

Line 14:

- cp -P @ROOTSYS@/lib/* @CMAKE_INSTALL_PREFIX@/cernroot/lib

+ cp -r -P @ROOTSYS@/lib/* @CMAKE_INSTALL_PREFIX@/cernroot/lib

<details>

<summary>_Migrated from https://code.icecube.wisc.edu/ticket/393

, reported by juancarlos and owned by blaufuss_</summary>

<p>

```json

{

"status": "closed",

"changetime": "2012-06-03T04:44:16",

"description": "cmake/make_tarball_rootsys.sh.in needs a recursive copy flag '-r' since new ROOT lib dir contains subdirectories.\n\nLine 14:\n\n- cp -P @ROOTSYS@/lib/* @CMAKE_INSTALL_PREFIX@/cernroot/lib\n+ cp -r -P @ROOTSYS@/lib/* @CMAKE_INSTALL_PREFIX@/cernroot/lib",

"reporter": "juancarlos",

"cc": "",

"resolution": "fixed",

"_ts": "1338698656000000",

"component": "combo core",

"summary": "make_tarball_rootsys.sh.in bugfix",

"priority": "normal",

"keywords": "cmake",

"time": "2012-05-16T21:21:39",

"milestone": "",

"owner": "blaufuss",

"type": "defect"

}

```

</p>

</details>

|

1.0

|

make_tarball_rootsys.sh.in bugfix (Trac #393) - cmake/make_tarball_rootsys.sh.in needs a recursive copy flag '-r' since new ROOT lib dir contains subdirectories.

Line 14:

- cp -P @ROOTSYS@/lib/* @CMAKE_INSTALL_PREFIX@/cernroot/lib

+ cp -r -P @ROOTSYS@/lib/* @CMAKE_INSTALL_PREFIX@/cernroot/lib

<details>

<summary>_Migrated from https://code.icecube.wisc.edu/ticket/393

, reported by juancarlos and owned by blaufuss_</summary>

<p>

```json

{

"status": "closed",

"changetime": "2012-06-03T04:44:16",

"description": "cmake/make_tarball_rootsys.sh.in needs a recursive copy flag '-r' since new ROOT lib dir contains subdirectories.\n\nLine 14:\n\n- cp -P @ROOTSYS@/lib/* @CMAKE_INSTALL_PREFIX@/cernroot/lib\n+ cp -r -P @ROOTSYS@/lib/* @CMAKE_INSTALL_PREFIX@/cernroot/lib",

"reporter": "juancarlos",

"cc": "",

"resolution": "fixed",

"_ts": "1338698656000000",

"component": "combo core",

"summary": "make_tarball_rootsys.sh.in bugfix",

"priority": "normal",

"keywords": "cmake",

"time": "2012-05-16T21:21:39",

"milestone": "",

"owner": "blaufuss",

"type": "defect"

}

```

</p>

</details>

|

non_process

|

make tarball rootsys sh in bugfix trac cmake make tarball rootsys sh in needs a recursive copy flag r since new root lib dir contains subdirectories line cp p rootsys lib cmake install prefix cernroot lib cp r p rootsys lib cmake install prefix cernroot lib migrated from reported by juancarlos and owned by blaufuss json status closed changetime description cmake make tarball rootsys sh in needs a recursive copy flag r since new root lib dir contains subdirectories n nline n n cp p rootsys lib cmake install prefix cernroot lib n cp r p rootsys lib cmake install prefix cernroot lib reporter juancarlos cc resolution fixed ts component combo core summary make tarball rootsys sh in bugfix priority normal keywords cmake time milestone owner blaufuss type defect

| 0

|

19,874

| 26,288,037,800

|

IssuesEvent

|

2023-01-08 03:17:44

|

fdhhhdjd/Web-Online-School-Libary-Book

|

https://api.github.com/repos/fdhhhdjd/Web-Online-School-Libary-Book

|

opened

|

Read File Excel ( Back-End )

|

DEV Processing

|

**** Read File Excel ****

- Library node-xlsx

- Code performance equal buffer.

- convert data JSON.

|

1.0

|

Read File Excel ( Back-End ) - **** Read File Excel ****

- Library node-xlsx

- Code performance equal buffer.

- convert data JSON.

|

process

|

read file excel back end read file excel library node xlsx code performance equal buffer convert data json

| 1

|

21,524

| 29,806,279,230

|

IssuesEvent

|

2023-06-16 11:56:57

|

parca-dev/parca-agent

|

https://api.github.com/repos/parca-dev/parca-agent

|

closed

|

Normalization issues

|

P0 area/process-mapping

|

i.e. while running `kubectl run -n parca debug --image=python:latest -it`

aka "the cockroachdb bug" (still present in `main`)

|

1.0

|

Normalization issues - i.e. while running `kubectl run -n parca debug --image=python:latest -it`

aka "the cockroachdb bug" (still present in `main`)

|

process

|

normalization issues i e while running kubectl run n parca debug image python latest it aka the cockroachdb bug still present in main

| 1

|

296,626

| 22,310,482,100

|

IssuesEvent

|

2022-06-13 16:31:04

|

minetest/minetest

|

https://api.github.com/repos/minetest/minetest

|

closed

|

moveresult.touching_ground value is incorrect or misleading

|

@ Documentation Question

|

##### Minetest version

<!--

Paste Minetest version between quotes below

If you are on a devel version, please add git commit hash

You can use `minetest --version` to find it.

-->

```

Minetest 5.3.0 (Linux)

Using Irrlicht 1.8.4

BUILD_TYPE=Release

RUN_IN_PLACE=0

USE_GETTEXT=1

USE_SOUND=1

USE_CURL=1

USE_FREETYPE=1

USE_LUAJIT=1

STATIC_SHAREDIR="/nix/store/lyl5b6q7rvv7pp6szn0krddy7102fnx6-minetest-5.3.0/share/minetest"

STATIC_LOCALEDIR="/nix/store/lyl5b6q7rvv7pp6szn0krddy7102fnx6-minetest-5.3.0/share/locale"

```

I've also tested with commit `4fcd000e20a26120349184cb9d40342b7876e6b8` from January 21st.

##### First of all

Thanks for all the hard work. I'm loving writing mods for minetest.

##### Summary

<!-- Describe your problem here -->

The collision info table received during the `on_step` function has a wrong or misleading value for `touching_ground`. This value only seems to be `true` if the entity has just moved. For instance, you can spawn a physical entity in the air and set it's velocity to negative y. It will fall and `touching_ground` will be `false`, which is correct. Once it touches the ground, `touching_ground` becomes `true`, which is also correct, and the velocity seems to be reset to 0 because of the collision. The problem is that on the next `on_step`, `touching_ground` is now `false`, even though the entity is literally on the ground.

My guess is that collisions were not calculated because there is no movement, but somehow the engine didn't account for the fact that the entity is *already* on the ground.

This seems like a weird behavior to me, and I couldn't find a good way to determine that an entity is on the ground from Lua. Am I missing something here? If there's a good solution for this, I'm happy to write documentation about it.

|

1.0

|

moveresult.touching_ground value is incorrect or misleading - ##### Minetest version

<!--

Paste Minetest version between quotes below

If you are on a devel version, please add git commit hash

You can use `minetest --version` to find it.

-->

```

Minetest 5.3.0 (Linux)

Using Irrlicht 1.8.4

BUILD_TYPE=Release

RUN_IN_PLACE=0

USE_GETTEXT=1

USE_SOUND=1

USE_CURL=1

USE_FREETYPE=1

USE_LUAJIT=1

STATIC_SHAREDIR="/nix/store/lyl5b6q7rvv7pp6szn0krddy7102fnx6-minetest-5.3.0/share/minetest"

STATIC_LOCALEDIR="/nix/store/lyl5b6q7rvv7pp6szn0krddy7102fnx6-minetest-5.3.0/share/locale"

```

I've also tested with commit `4fcd000e20a26120349184cb9d40342b7876e6b8` from January 21st.

##### First of all

Thanks for all the hard work. I'm loving writing mods for minetest.

##### Summary

<!-- Describe your problem here -->

The collision info table received during the `on_step` function has a wrong or misleading value for `touching_ground`. This value only seems to be `true` if the entity has just moved. For instance, you can spawn a physical entity in the air and set it's velocity to negative y. It will fall and `touching_ground` will be `false`, which is correct. Once it touches the ground, `touching_ground` becomes `true`, which is also correct, and the velocity seems to be reset to 0 because of the collision. The problem is that on the next `on_step`, `touching_ground` is now `false`, even though the entity is literally on the ground.

My guess is that collisions were not calculated because there is no movement, but somehow the engine didn't account for the fact that the entity is *already* on the ground.

This seems like a weird behavior to me, and I couldn't find a good way to determine that an entity is on the ground from Lua. Am I missing something here? If there's a good solution for this, I'm happy to write documentation about it.

|

non_process

|

moveresult touching ground value is incorrect or misleading minetest version paste minetest version between quotes below if you are on a devel version please add git commit hash you can use minetest version to find it minetest linux using irrlicht build type release run in place use gettext use sound use curl use freetype use luajit static sharedir nix store minetest share minetest static localedir nix store minetest share locale i ve also tested with commit from january first of all thanks for all the hard work i m loving writing mods for minetest summary the collision info table received during the on step function has a wrong or misleading value for touching ground this value only seems to be true if the entity has just moved for instance you can spawn a physical entity in the air and set it s velocity to negative y it will fall and touching ground will be false which is correct once it touches the ground touching ground becomes true which is also correct and the velocity seems to be reset to because of the collision the problem is that on the next on step touching ground is now false even though the entity is literally on the ground my guess is that collisions were not calculated because there is no movement but somehow the engine didn t account for the fact that the entity is already on the ground this seems like a weird behavior to me and i couldn t find a good way to determine that an entity is on the ground from lua am i missing something here if there s a good solution for this i m happy to write documentation about it

| 0

|

6,508

| 6,490,278,376

|

IssuesEvent

|

2017-08-21 06:38:15

|

camptocamp/c2cgeoportal

|

https://api.github.com/repos/camptocamp/c2cgeoportal

|

closed

|

Suspicious relativ path to build the project standalone

|

Infrastructure Ready

|

Appears on c2cgeoportal master (20 feb 2017)

I've tried to to a make build on the project but these two lines make issues:

- https://github.com/camptocamp/c2cgeoportal/blob/daca42c1b3469531b7dc176ed3ffa5d368ede658/Makefile#L1 (`/build` shouldn't be `build` ?)

- https://github.com/camptocamp/c2cgeoportal/blob/daca42c1b3469531b7dc176ed3ffa5d368ede658/Makefile#L61 ?

Absolute path... that's not false ?

|

1.0

|

Suspicious relativ path to build the project standalone - Appears on c2cgeoportal master (20 feb 2017)

I've tried to to a make build on the project but these two lines make issues:

- https://github.com/camptocamp/c2cgeoportal/blob/daca42c1b3469531b7dc176ed3ffa5d368ede658/Makefile#L1 (`/build` shouldn't be `build` ?)

- https://github.com/camptocamp/c2cgeoportal/blob/daca42c1b3469531b7dc176ed3ffa5d368ede658/Makefile#L61 ?

Absolute path... that's not false ?

|

non_process

|

suspicious relativ path to build the project standalone appears on master feb i ve tried to to a make build on the project but these two lines make issues build shouldn t be build absolute path that s not false

| 0

|

411,031

| 27,811,102,130

|

IssuesEvent

|

2023-03-18 05:33:39

|

Real-Dev-Squad/website-api-contracts

|

https://api.github.com/repos/Real-Dev-Squad/website-api-contracts

|

closed

|

API Contract for GET Idle users/members

|

documentation

|

### AC

- Create an API contract for `/users/idle` and `/users/idle?members=true`

### Link with

Issue [#635](https://github.com/Real-Dev-Squad/website-backend/issues/635)

|

1.0

|

API Contract for GET Idle users/members - ### AC

- Create an API contract for `/users/idle` and `/users/idle?members=true`

### Link with

Issue [#635](https://github.com/Real-Dev-Squad/website-backend/issues/635)

|

non_process

|

api contract for get idle users members ac create an api contract for users idle and users idle members true link with issue

| 0

|

163

| 2,583,797,330

|

IssuesEvent

|

2015-02-16 10:17:32

|

luc-github/Repetier-Firmware-0.92

|

https://api.github.com/repos/luc-github/Repetier-Firmware-0.92

|

closed

|

Build fails on Arduino Nightly (1.6.0) Jan 14

|

enhancement Waiting to be processed

|

It looks like the current nightly builds of Arduino have incompatible changes with variants.cpp. I was able to build by copying the "hardware" directory from 1.5.8.

|

1.0

|

Build fails on Arduino Nightly (1.6.0) Jan 14 - It looks like the current nightly builds of Arduino have incompatible changes with variants.cpp. I was able to build by copying the "hardware" directory from 1.5.8.

|

process

|

build fails on arduino nightly jan it looks like the current nightly builds of arduino have incompatible changes with variants cpp i was able to build by copying the hardware directory from

| 1

|

4,394

| 7,285,884,163

|

IssuesEvent

|

2018-02-23 07:12:33

|

muflihun/residue

|

https://api.github.com/repos/muflihun/residue

|

closed

|

Remove plain log request support

|

area: log-processing type: improvement

|

This is a security concern + extra instructions for potentially unused feature

We will remove it in 1.5.0

|

1.0

|

Remove plain log request support - This is a security concern + extra instructions for potentially unused feature

We will remove it in 1.5.0

|

process

|

remove plain log request support this is a security concern extra instructions for potentially unused feature we will remove it in

| 1

|

115,529

| 14,799,011,046

|

IssuesEvent

|

2021-01-13 01:13:01

|

vmware-tanzu/antrea

|

https://api.github.com/repos/vmware-tanzu/antrea

|

opened

|

Replace hack/netpol/ with new upstream NetworkPolicy test suite

|

area/test/community kind/design priority/important-longterm

|

The netpol test suite that we protoyped in Antrea (https://github.com/vmware-tanzu/antrea/tree/master/hack/netpol) was ported upstream by @jayunit100 and others: https://github.com/kubernetes/kubernetes/tree/master/test/e2e/network/netpol

As a result it now makes sense to remove the `hack/netpol/` directory altogether and instead start running the upstream version of the test suite as part of Antrea CI. I think we can probably move from a Kind CI job to a Jenkins job on VMC (VMware on AWS), to avoid some known issues with Kind / the Open vSwitch netdev datapath (see https://github.com/vmware-tanzu/antrea/issues/897 for an example).

My preference would be to simply run it as part of the existing `jenkins-networkpolicy` job:

* because it makes sense :)

* to avoid introducing yet another job

* because the test suite is supposed to run pretty fast and should not add too much time compared to the current job

I am hoping we can simply update https://github.com/vmware-tanzu/antrea/blob/master/ci/run-k8s-e2e-tests.sh to avoid increasing the number of CI scripts we have to maintain.

The only issue I see is that at this time (01/12), there is no named tag of the `k8s.gcr.io/conformance` image which includes the upstream netpol tests. The latest tag seems to be `v1.21.0-alpha.0`, and it doesn't include them.

As a result, we can either:

1. wait for a version of the `k8s.gcr.io/conformance` image with support for the netpol tests

2. build the `k8s.gcr.io/conformance` image from the K8s source (https://github.com/kubernetes/kubernetes/blob/master/cluster/images/conformance/Makefile) and use it in `run-k8s-e2e-tests.sh`

I don't really have a preference for either. If someone wants to start working on this issue in the near future, then they can go with the second solution.

|

1.0

|

Replace hack/netpol/ with new upstream NetworkPolicy test suite - The netpol test suite that we protoyped in Antrea (https://github.com/vmware-tanzu/antrea/tree/master/hack/netpol) was ported upstream by @jayunit100 and others: https://github.com/kubernetes/kubernetes/tree/master/test/e2e/network/netpol

As a result it now makes sense to remove the `hack/netpol/` directory altogether and instead start running the upstream version of the test suite as part of Antrea CI. I think we can probably move from a Kind CI job to a Jenkins job on VMC (VMware on AWS), to avoid some known issues with Kind / the Open vSwitch netdev datapath (see https://github.com/vmware-tanzu/antrea/issues/897 for an example).

My preference would be to simply run it as part of the existing `jenkins-networkpolicy` job:

* because it makes sense :)

* to avoid introducing yet another job

* because the test suite is supposed to run pretty fast and should not add too much time compared to the current job

I am hoping we can simply update https://github.com/vmware-tanzu/antrea/blob/master/ci/run-k8s-e2e-tests.sh to avoid increasing the number of CI scripts we have to maintain.

The only issue I see is that at this time (01/12), there is no named tag of the `k8s.gcr.io/conformance` image which includes the upstream netpol tests. The latest tag seems to be `v1.21.0-alpha.0`, and it doesn't include them.

As a result, we can either:

1. wait for a version of the `k8s.gcr.io/conformance` image with support for the netpol tests

2. build the `k8s.gcr.io/conformance` image from the K8s source (https://github.com/kubernetes/kubernetes/blob/master/cluster/images/conformance/Makefile) and use it in `run-k8s-e2e-tests.sh`

I don't really have a preference for either. If someone wants to start working on this issue in the near future, then they can go with the second solution.

|

non_process

|

replace hack netpol with new upstream networkpolicy test suite the netpol test suite that we protoyped in antrea was ported upstream by and others as a result it now makes sense to remove the hack netpol directory altogether and instead start running the upstream version of the test suite as part of antrea ci i think we can probably move from a kind ci job to a jenkins job on vmc vmware on aws to avoid some known issues with kind the open vswitch netdev datapath see for an example my preference would be to simply run it as part of the existing jenkins networkpolicy job because it makes sense to avoid introducing yet another job because the test suite is supposed to run pretty fast and should not add too much time compared to the current job i am hoping we can simply update to avoid increasing the number of ci scripts we have to maintain the only issue i see is that at this time there is no named tag of the gcr io conformance image which includes the upstream netpol tests the latest tag seems to be alpha and it doesn t include them as a result we can either wait for a version of the gcr io conformance image with support for the netpol tests build the gcr io conformance image from the source and use it in run tests sh i don t really have a preference for either if someone wants to start working on this issue in the near future then they can go with the second solution

| 0

|

615,216

| 19,250,014,456

|

IssuesEvent

|

2021-12-09 03:17:19

|

matrixorigin/matrixone

|

https://api.github.com/repos/matrixorigin/matrixone

|

opened

|

add AOE RFC documents

|

component/aoe priority/high kind/feature severity/critical

|

1. Overall architecture

2. WAL

3. Buffer manager

4. Metadata

5. Data and index management

6. MVCC

7. Logstore

|

1.0

|

add AOE RFC documents - 1. Overall architecture

2. WAL

3. Buffer manager

4. Metadata

5. Data and index management

6. MVCC

7. Logstore

|

non_process

|

add aoe rfc documents overall architecture wal buffer manager metadata data and index management mvcc logstore

| 0

|

412,455

| 27,859,147,553

|

IssuesEvent

|

2023-03-21 03:34:54

|

CodeforHawaii/HIERR

|

https://api.github.com/repos/CodeforHawaii/HIERR

|

closed

|

Define user stories

|

documentation High Priority

|

This is for defining what user stories there are. Once this list is defined, we will send to Scott for prioritization.

|

1.0

|

Define user stories - This is for defining what user stories there are. Once this list is defined, we will send to Scott for prioritization.

|

non_process

|

define user stories this is for defining what user stories there are once this list is defined we will send to scott for prioritization

| 0

|

372,020

| 11,007,923,144

|

IssuesEvent

|

2019-12-04 09:31:36

|

webcompat/web-bugs

|

https://api.github.com/repos/webcompat/web-bugs

|

closed

|

t.co - see bug description

|

browser-focus-geckoview engine-gecko priority-critical

|

<!-- @browser: Firefox Mobile 71.0 -->

<!-- @ua_header: Mozilla/5.0 (Android 9; Mobile; rv:71.0) Gecko/71.0 Firefox/71.0 -->

<!-- @reported_with: -->

<!-- @extra_labels: browser-focus-geckoview -->

**URL**: https://t.co/YDbDt5izbJ?amp=1

**Browser / Version**: Firefox Mobile 71.0

**Operating System**: Android

**Tested Another Browser**: Yes

**Problem type**: Something else

**Description**: Firefox Focus does not trust user-level HTTPS certificates, which breaks AdGuard HTTPS filtering.

**Steps to Reproduce**:

Firefox Focus does not trust user-level HTTPS certificates, which breaks AdGuard HTTPS filtering.

<details>

<summary>Browser Configuration</summary>

<ul>

<li>None</li>

</ul>

</details>

_From [webcompat.com](https://webcompat.com/) with ❤️_

|

1.0

|

t.co - see bug description - <!-- @browser: Firefox Mobile 71.0 -->

<!-- @ua_header: Mozilla/5.0 (Android 9; Mobile; rv:71.0) Gecko/71.0 Firefox/71.0 -->

<!-- @reported_with: -->

<!-- @extra_labels: browser-focus-geckoview -->

**URL**: https://t.co/YDbDt5izbJ?amp=1

**Browser / Version**: Firefox Mobile 71.0

**Operating System**: Android

**Tested Another Browser**: Yes

**Problem type**: Something else

**Description**: Firefox Focus does not trust user-level HTTPS certificates, which breaks AdGuard HTTPS filtering.

**Steps to Reproduce**:

Firefox Focus does not trust user-level HTTPS certificates, which breaks AdGuard HTTPS filtering.

<details>

<summary>Browser Configuration</summary>

<ul>

<li>None</li>

</ul>

</details>

_From [webcompat.com](https://webcompat.com/) with ❤️_

|

non_process

|

t co see bug description url browser version firefox mobile operating system android tested another browser yes problem type something else description firefox focus does not trust user level https certificates making adguard https filtering broken steps to reproduce firefox focus does not trust user level https certificates making adguard https filtering broken browser configuration none from with ❤️

| 0

|

10,448

| 13,227,205,896

|

IssuesEvent

|

2020-08-18 02:25:35

|

googlemaps/v3-utility-library

|

https://api.github.com/repos/googlemaps/v3-utility-library

|

closed

|

markerclustererplus unit tests

|

help wanted priority: p3 stale type: process

|

We recently had issues such as #549 that could have been caught through unit tests.

|

1.0

|

markerclustererplus unit tests - We recently had issues such as #549 that could have been caught through unit tests.

|

process

|

markerclustererplus unit tests recently had issues such as that could have been caught through unittests

| 1

|

191

| 2,519,739,944

|

IssuesEvent

|

2015-01-18 09:00:56

|

mbunkus/mtx-trac-import-test

|

https://api.github.com/repos/mbunkus/mtx-trac-import-test

|

opened

|

mkvmerge will freeze if used with many tracks

|

C: mkvmerge P: normal R: fixed T: defect

|

**Reported by moritz on 9 Aug 2003 20:40 UTC**

Liisachan reports:

I tested 3 samples made by MatroskaMuxer

(1) Xvid + 1 Vorbis + 5 SSA = 7 tracks

(2) Xvid + 1 Vorbis + 16 SSA = 18 tracks

(3) Xvid + 1 Vorbis + 17 SSA = 19 tracks

Command line is like:

mkvmerge -o "out.mkv" --language 1:rus --language 2:rus

--language 3:eng --language 4:jpn --language 5:fre --language