Unnamed: 0 (int64, 0 to 832k) | id (float64, 2.49B to 32.1B) | type (string, 1 class) | created_at (string, length 19) | repo (string, length 7 to 112) | repo_url (string, length 36 to 141) | action (string, 3 classes) | title (string, length 1 to 744) | labels (string, length 4 to 574) | body (string, length 9 to 211k) | index (string, 10 classes) | text_combine (string, length 96 to 211k) | label (string, 2 classes) | text (string, length 96 to 188k) | binary_label (int64, 0 to 1) |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

15,337 | 19,472,409,961 | IssuesEvent | 2021-12-24 05:01:21 | home-climate-control/dz | https://api.github.com/repos/home-climate-control/dz | opened | Ignore 1-Wire sensor departure, just react to timeout | fault tolerance 1-wire reactive process control | ### Existing Behavior

1-Wire sensor departure immediately propagates to the control system and makes it act prematurely, as jitter and short blackouts are possible.

### Desired Behavior

If/when a 1-Wire sensor departs, the departure is not propagated down the control flow immediately, but only after a given timeou... | 1.0 | Ignore 1-Wire sensor departure, just react to timeout - ### Existing Behavior

1-Wire sensor departure immediately propagates to the control system and makes it act prematurely, as jitter and short blackouts are possible.

### Desired Behavior

If/when a 1-Wire sensor departs, the departure is not propagated down the... | process | ignore wire sensor departure just react to timeout existing behavior wire sensor departure immediately propagates to the control system and makes it act prematurely as jitter and short blackouts are possible desired behavior if when a wire sensor departs the departure is not propagated down the... | 1 |
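
The behavior this issue asks for can be sketched as a small debouncer: a departure notice alone changes nothing, and a sensor is reported as gone only once no reading has arrived for a given timeout. This is a minimal Python illustration, not code from the `dz` project; the class name, method names, and the sensor address are hypothetical.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class DepartureDebouncer:
    """Reports a sensor as gone only after `timeout` seconds without a reading."""

    timeout: float
    last_seen: Dict[str, float] = field(default_factory=dict)

    def on_sample(self, address: str, now: float) -> None:
        # Any reading (re)arms the timer for that sensor.
        self.last_seen[address] = now

    def on_departure(self, address: str, now: float) -> None:
        # Deliberately a no-op: jitter and short blackouts must not
        # reach the control logic. Only the timeout below matters.
        pass

    def expired(self, now: float) -> List[str]:
        # Sensors whose last reading is older than the timeout.
        return sorted(a for a, t in self.last_seen.items() if now - t > self.timeout)
```

With a 30 second timeout, a sensor that departs and reappears within that window never shows up in `expired()`, so the control loop never sees the blackout.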

18,918 | 24,865,200,149 | IssuesEvent | 2022-10-27 11:22:38 | alphagov/govuk-design-system | https://api.github.com/repos/alphagov/govuk-design-system | closed | Secure community facilitators for Design System Day 2022 workshops and breakout sessions | 🕔 weeks process shared ownership | ## What

Approach the community to facilitate workshops and breakout sessions on Design System Day 2022.

### Workshops

90-minute sessions, usually involves a presentation and audience activities

5-7 facilitators needed to run their workshop on both days

### Breakout sessions

50-minute sessions, freeform — can ... | 1.0 | Secure community facilitators for Design System Day 2022 workshops and breakout sessions - ## What

Approach the community to facilitate workshops and breakout sessions on Design System Day 2022.

### Workshops

90-minute sessions, usually involves a presentation and audience activities

5-7 facilitators needed to ru... | process | secure community facilitators for design system day workshops and breakout sessions what approach the community to facilitate workshops and breakout sessions on design system day workshops minute sessions usually involves a presentation and audience activities facilitators needed to run their... | 1 |

8,048 | 11,220,764,528 | IssuesEvent | 2020-01-07 16:25:43 | code4romania/expert-consultation-api | https://api.github.com/repos/code4romania/expert-consultation-api | opened | Unable to load document path that contains spaces | bug document processing documents good first issue help wanted | When uploading a new document in the platform, if the document URL contains spaces, the document upload fails with the following message:

{

"i18nErrors": null,

"i18nFieldErrors": null,

**"additionalInfo": "Index 0 out of bounds for length 0"**

}

The initial DTO for creating the new document is the... | 1.0 | Unable to load document path that contains spaces - When uploading a new document in the platform, if the document URL contains spaces, the document upload fails with the following message:

{

"i18nErrors": null,

"i18nFieldErrors": null,

**"additionalInfo": "Index 0 out of bounds for length 0"**

}

... | process | unable to load document path that contains spaces when uploading a new document in the platform of the document url contains spaces the document upload fails with the following message null null additionalinfo index out of bounds for length the initial dto for cre... | 1 |
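
The issue text is truncated here, so the actual root cause is not visible. One common way to make a URL with spaces safe before it is parsed is to percent-encode its path, sketched below in Python. The function name and the example URL are hypothetical, and this is not taken from the project's code.

```python
from urllib.parse import quote, urlsplit, urlunsplit


def encode_document_url(url: str) -> str:
    """Percent-encode the path of a URL so that spaces become %20."""
    parts = urlsplit(url)
    # "/" stays a path separator; "%" is kept as-is so that an
    # already-encoded URL is not encoded a second time.
    safe_path = quote(parts.path, safe="/%")
    return urlunsplit((parts.scheme, parts.netloc, safe_path, parts.query, parts.fragment))
```

Keeping `%` in the safe set is a deliberate trade-off: it makes the function idempotent, at the cost of not escaping a literal percent sign in a file name.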

15,731 | 19,905,560,906 | IssuesEvent | 2022-01-25 12:26:03 | prometheus-community/windows_exporter | https://api.github.com/repos/prometheus-community/windows_exporter | closed | How to write a process restart alarm rule? | question collector/process | A process restart can be indicated by windows_process_start_time. If the value of this indicator changes, it indicates that the process is restarted. For example: windows_process_start_time{process="ACDG",instance="10.35.236.40"}, what if this cannot be greater than or less than? | 1.0 | How to write a process restart alarm rule? - A process restart can be indicated by windows_process_start_time. If the value of this indicator changes, it indicates that the process is restarted. For example: windows_process_start_time{process="ACDG",instance="10.35.236.40"}, what if this cannot be greater than or less ... | process | how to write a process restart alarm rule a process restart can be indicated by windows process start time if the value of this indicator changes it indicates that the process is restarted for example windows process start time process acdg instance what if this cannot be greater than or less than | 1 |
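
The question above comes down to detecting a change in a value rather than comparing it against a threshold. PromQL has a `changes()` function that counts value changes over a range, which is one way to express such a rule. The Python sketch below shows the same logic on a plain list of samples; the function name and the 5 minute window in the comment are assumptions.

```python
from typing import Sequence


def count_restarts(start_times: Sequence[float]) -> int:
    """Count how often the reported process start time changed between samples.

    Roughly what a PromQL expression such as
        changes(windows_process_start_time{process="ACDG"}[5m]) > 0
    evaluates over its window (the 5m window is an arbitrary choice).
    """
    return sum(1 for prev, cur in zip(start_times, start_times[1:]) if cur != prev)
```

A result above zero means the start time moved at least once within the window, so the process was restarted.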

75,790 | 9,886,000,605 | IssuesEvent | 2019-06-25 05:07:27 | getgauge/taiko | https://api.github.com/repos/getgauge/taiko | closed | Need to fix taiko doc | documentation |

Observation:

1. Under overview there are a few texts which appear as links that we need to rectify. Example: sample project

<img width="894" alt="Screen Shot 2019-06-14 at 3 46 31 PM" src="https://user-images.githubusercontent.com/46309201/59582980-88536780-90f7-11e9-8c23-b4ad51b2519b.png">

2. cosmetic issue:

<img w... | 1.0 | Need to fix taiko doc -

Observation:

1. Under overview there are a few texts which appear as links that we need to rectify. Example: sample project

<img width="894" alt="Screen Shot 2019-06-14 at 3 46 31 PM" src="https://user-images.githubusercontent.com/46309201/59582980-88536780-90f7-11e9-8c23-b4ad51b2519b.png">

2.... | non_process | need to fix taiko doc observation under overview there are few texts which appears as link we need to rectify example sample project img width alt screen shot at pm src cosmetic issue img width alt screen shot at pm src taiko emulate network ... | 0 |

15,624 | 19,770,228,787 | IssuesEvent | 2022-01-17 09:17:19 | skellig-framework/skellig-core | https://api.github.com/repos/skellig-framework/skellig-core | closed | Modify date and time from current date | processing | Add ability to modify current date and time, by adding a function which can go after `now()`, ex:

`now().plusDays(1)` | 1.0 | Modify date and time from current date - Add ability to modify current date and time, by adding a function which can go after `now()`, ex:

`now().plusDays(1)` | process | modify date and time from current date add ability to modify current date and time by adding a function which can go after now ex now plusdays | 1 |
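
The chained call requested above could take roughly this shape. This is a Python sketch rather than the framework's own language; `Moment`, `plus_days`, and the injectable `clock` parameter are hypothetical names used only for illustration.

```python
from datetime import datetime, timedelta
from typing import Callable


class Moment:
    """Immutable wrapper around a datetime that supports chained modifiers."""

    def __init__(self, value: datetime) -> None:
        self.value = value

    def plus_days(self, days: int) -> "Moment":
        # Return a new Moment so that calls can be chained safely.
        return Moment(self.value + timedelta(days=days))


def now(clock: Callable[[], datetime] = datetime.now) -> Moment:
    # The clock is injectable so that tests can pin the current time.
    return Moment(clock())
```

Returning a new object from each modifier keeps `now()` reusable and leaves room for further modifiers without changing existing ones.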

45,635 | 24,147,423,805 | IssuesEvent | 2022-09-21 20:08:35 | dotnet/runtime | https://api.github.com/repos/dotnet/runtime | closed | Expression.Compile performance regression on .NET7 RC1 | tenet-performance area-VM-coreclr | <!--This is just a template - feel free to delete any and all of it and replace as appropriate.-->

### Description

Running simple benchmark to test performance of Expression.Compile shows regressions on .NET7 RC1

<details>

<summary>Benchmark code</summary>

```c#

using System;

using System.Linq.Expressi... | True | Expression.Compile performance regression on .NET7 RC1 - <!--This is just a template - feel free to delete any and all of it and replace as appropriate.-->

### Description

Running simple benchmark to test performance of Expression.Compile shows regressions on .NET7 RC1

<details>

<summary>Benchmark code</summa... | non_process | expression compile performance regression on description running simple benchmark to test performance of expression compile shows regressions on benchmark code c using system using system linq expressions using benchmarkdotnet attributes using benchmarkdotnet configs ... | 0 |

195,180 | 6,904,978,482 | IssuesEvent | 2017-11-27 03:45:06 | AIE-2017-Yr1-Group1/cultist-game | https://api.github.com/repos/AIE-2017-Yr1-Group1/cultist-game | closed | Cultists are firing at Demorial's feet | bug priority-1 | Probably because the lookat fix for offset fireballs is looking at Demorial position, which is 0,0,0 at his feet. | 1.0 | Cultists are firing at Demorial's feet - Probably because the lookat fix for offset fireballs is looking at Demorial position, which is 0,0,0 at his feet. | non_process | cultists are firing at demorial s feet probably because the lookat fix for offset fireballs is looking at demorial position which is at his feet | 0 |
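
If the cause is what the report suspects, the usual remedy is to aim at a point raised above the target's origin instead of at the origin itself. The Python sketch below shows that arithmetic only. It assumes a y-up coordinate system and a known target height, neither of which the issue states, and the names are hypothetical.

```python
from typing import Tuple

Vec3 = Tuple[float, float, float]


def aim_point(target_position: Vec3, target_height: float, fraction: float = 0.5) -> Vec3:
    """Return a point partway up the target, assuming y is the up axis."""
    x, y, z = target_position
    # fraction=0.5 aims at mid-body; 0.0 would reproduce the "feet" behavior.
    return (x, y + target_height * fraction, z)
```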

160,052 | 20,092,552,107 | IssuesEvent | 2022-02-06 01:28:25 | PGreaneyLYIT/easybuggy4django | https://api.github.com/repos/PGreaneyLYIT/easybuggy4django | opened | CVE-2019-8331 (Medium) detected in bootstrap-3.3.7.min.js | security vulnerability | ## CVE-2019-8331 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>bootstrap-3.3.7.min.js</b></p></summary>

<p>The most popular front-end framework for developing responsive, mobile fi... | True | CVE-2019-8331 (Medium) detected in bootstrap-3.3.7.min.js - ## CVE-2019-8331 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>bootstrap-3.3.7.min.js</b></p></summary>

<p>The most popu... | non_process | cve medium detected in bootstrap min js cve medium severity vulnerability vulnerable library bootstrap min js the most popular front end framework for developing responsive mobile first projects on the web library home page a href path to dependency file templates ba... | 0 |

4,361 | 7,260,514,255 | IssuesEvent | 2018-02-18 10:53:23 | qgis/QGIS-Documentation | https://api.github.com/repos/qgis/QGIS-Documentation | closed | [FEATURE] [processing] New algorithm to compute geometry by expression | Processing User Manual | This algorithm updates existing geometries (or creates new geometries) for input features by use of a QGIS expression. This allows complex geometry modifications which can utilise all the

flexibility of the QGIS expression engine to manipulate and create geometries for output features.

see https://github.com/qgis/Q... | 1.0 | [FEATURE] [processing] New algorithm to compute geometry by expression - This algorithm updates existing geometries (or creates new geometries) for input features by use of a QGIS expression. This allows complex geometry modifications which can utilise all the

flexibility of the QGIS expression engine to manipulate a... | process | new algorithm to compute geometry by expression this algorithm updates existing geometries or creates new geometries for input features by use of a qgis expression this allows complex geometry modifications which can utilise all the flexibility of the qgis expression engine to manipulate and create geometrie... | 1 |

776,863 | 27,264,724,273 | IssuesEvent | 2023-02-22 17:09:43 | ascheid/itsg33-pbmm-issue-gen | https://api.github.com/repos/ascheid/itsg33-pbmm-issue-gen | opened | SA-11(5): Developer Security Testing And Evaluation | Penetration Testing / Analysis | Priority: P3 ITSG-33 Suggested Assignment: IT Projects Class: Management Control: SA-11 | # Control Definition

DEVELOPER SECURITY TESTING AND EVALUATION | PENETRATION TESTING / ANALYSIS

The organization requires the developer of the information system, system component, or information system service to perform penetration testing at [Assignment: organization-defined breadth/depth] and with [Assignment: org... | 1.0 | SA-11(5): Developer Security Testing And Evaluation | Penetration Testing / Analysis - # Control Definition

DEVELOPER SECURITY TESTING AND EVALUATION | PENETRATION TESTING / ANALYSIS

The organization requires the developer of the information system, system component, or information system service to perform penetratio... | non_process | sa developer security testing and evaluation penetration testing analysis control definition developer security testing and evaluation penetration testing analysis the organization requires the developer of the information system system component or information system service to perform penetration... | 0 |

5,848 | 8,672,991,394 | IssuesEvent | 2018-11-30 00:14:57 | googleapis/google-cloud-java | https://api.github.com/repos/googleapis/google-cloud-java | closed | Setup and fix Java 11 tests | type: process | Java 11 is an LTS version scheduled for GA 2018-09-25.

Also, we need to fix the build on Java 11. | 1.0 | Setup and fix Java 11 tests - Java 11 is an LTS version scheduled for GA 2018-09-25.

Also, we need to fix the build on Java 11. | process | setup and fix java tests java is an lts version scheduled for ga also we need to fix the build on java | 1 |

768,699 | 26,976,316,511 | IssuesEvent | 2023-02-09 09:49:40 | MattTheLegoman/RealmsInExile | https://api.github.com/repos/MattTheLegoman/RealmsInExile | opened | GUI in culture screen to show descriptions | priority: low gui | Tweak GUI in culture screen to show cultural descriptions. | 1.0 | GUI in culture screen to show descriptions - Tweak GUI in culture screen to show cultural descriptions. | non_process | gui in culture screen to show descriptions tweak gui in culture screen to show cultural descriptions | 0 |

674,165 | 23,041,692,335 | IssuesEvent | 2022-07-23 08:31:37 | tsunamods-codes/7th-Heaven | https://api.github.com/repos/tsunamods-codes/7th-Heaven | closed | Incorrect folder priority because of naming. | help wanted priority/P2 | I have been doing some tests. I use my Models Fusion mod which includes Field/Battle/World models, normally with all the files needed to create the model. I decided to add Ninostyle Chibi models (IRO) v0.245 also to 7H. So, my order is:

- Ninostyle Chibis (for Field)

- Models Fusion (basically are Chaos models, for ... | 1.0 | Incorrect folder priority because of naming. - I have been doing some tests. I use my Models Fusion mod which includes Field/Battle/World models, normally with all the files needed to create the model. I decided to add Ninostyle Chibi models (IRO) v0.245 also to 7H. So, my order is:

- Ninostyle Chibis (for Field)

- ... | non_process | incorrect folder priority because of naming i have been doing some tests i use my models fusion mod which includes field battle world models normally with al the files needed to create the model i decided to add ninostyle chibi models iro also to so my order is ninostyle chibis for field mode... | 0 |

5,222 | 8,026,315,844 | IssuesEvent | 2018-07-27 03:13:45 | turnkeylinux/tracker | https://api.github.com/repos/turnkeylinux/tracker | opened | Develop user friendly instructions (script?) for updating 3rd party PHP packages | mambo processmaker sitracker ushahidi vtiger | A few TurnKey appliances that include 3rd party PHP apps are not compatible with PHP7. In those instances, we have included PHP5.6 from Ondřej Surý's [third party Debian repo](https://deb.sury.org/).

That's all well and good, but we have not included these third party repos in the auto security updates. Whilst we ar... | 1.0 | Develop user friendly instructions (script?) for updating 3rd party PHP packages - A few TurnKey appliances that include 3rd party PHP apps are not compatible with PHP7. In those instances, we have included PHP5.6 from Ondřej Surý's [third party Debian repo](https://deb.sury.org/).

That's all well and good, but we h... | process | develop user friendly instructions script for updating party php packages a few turnkey appliances that include party php apps are not compatible with in those instances we have included from ondřej surý s that s all well and good but we have not included these third party repos in the auto sec... | 1 |

47,334 | 24,956,753,360 | IssuesEvent | 2022-11-01 12:28:39 | deeplearning4j/deeplearning4j | https://api.github.com/repos/deeplearning4j/deeplearning4j | closed | DL4J: implement MLKDNNLSTMHelper.backpropGradient + enable helper | Performance DL4J | https://github.com/eclipse/deeplearning4j/blob/master/deeplearning4j/deeplearning4j-nn/src/main/java/org/deeplearning4j/nn/layers/mkldnn/MKLDNNLSTMHelper.java#L34-L42

Now that LSTMLayer backprop has been merged (with MKLDNN support), we should finally add this to libnd4j and enable the helper in the DL4J LSTM layer:... | True | DL4J: implement MLKDNNLSTMHelper.backpropGradient + enable helper - https://github.com/eclipse/deeplearning4j/blob/master/deeplearning4j/deeplearning4j-nn/src/main/java/org/deeplearning4j/nn/layers/mkldnn/MKLDNNLSTMHelper.java#L34-L42

Now that LSTMLayer backprop has been merged (with MKLDNN support), we should final... | non_process | implement mlkdnnlstmhelper backpropgradient enable helper now that lstmlayer backprop has been merged with mkldnn support we should finally add this to and enable the helper in the lstm layer | 0 |

54,258 | 23,217,111,397 | IssuesEvent | 2022-08-02 14:53:53 | cityofaustin/atd-data-tech | https://api.github.com/repos/cityofaustin/atd-data-tech | closed | Complete Citybase integration with Knack action items | Service: Dev Service: Apps Product: Banners | Complete [Standard Checkout document](https://docs.google.com/document/d/1jl6j3CHr1UWnCmfe3uyJK7cuPUqJIuZC/edit)

**Action items from session 1**

- [x] Section 1: Service Fees- Mateo/Diana to confirm fees are passed to customer at time of payment, or if Austin is paying fees for customers.

In issue #8268 we doc... | 2.0 | Complete Citybase integration with Knack action items - Complete [Standard Checkout document](https://docs.google.com/document/d/1jl6j3CHr1UWnCmfe3uyJK7cuPUqJIuZC/edit)

**Action items from session 1**

- [x] Section 1: Service Fees- Mateo/Diana to confirm fees are passed to customer at time of payment, or if Austi... | non_process | complete citybase integration with knack action items complete action items from session section service fees mateo diana to confirm fees are passed to customer at time of payment or if austin is paying fees for customers in issue we documented the stakeholders comments having the... | 0 |

215,110 | 24,126,433,296 | IssuesEvent | 2022-09-21 01:09:57 | smb-h/Estates-price-prediction | https://api.github.com/repos/smb-h/Estates-price-prediction | opened | CVE-2022-36027 (Medium) detected in tensorflow-2.6.3-cp37-cp37m-manylinux2010_x86_64.whl | security vulnerability | ## CVE-2022-36027 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>tensorflow-2.6.3-cp37-cp37m-manylinux2010_x86_64.whl</b></p></summary>

<p>TensorFlow is an open source machine learn... | True | CVE-2022-36027 (Medium) detected in tensorflow-2.6.3-cp37-cp37m-manylinux2010_x86_64.whl - ## CVE-2022-36027 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>tensorflow-2.6.3-cp37-cp37... | non_process | cve medium detected in tensorflow whl cve medium severity vulnerability vulnerable library tensorflow whl tensorflow is an open source machine learning framework for everyone library home page a href path to dependency file requirements txt path ... | 0 |

11,794 | 14,620,804,822 | IssuesEvent | 2020-12-22 20:24:48 | pacificclimate/quail | https://api.github.com/repos/pacificclimate/quail | closed | Get available indices by name | process | ## Description

This function takes a climdexInput object as input and returns the names of all the indices which may be computed or, if \code{get.function.names} is TRUE (the default), the names of the functions corresponding to the indices.

## Function to wrap

[`climdex.get.available.indices`](https://github.com/... | 1.0 | Get available indices by name - ## Description

This function takes a climdexInput object as input and returns the names of all the indices which may be computed or, if \code{get.function.names} is TRUE (the default), the names of the functions corresponding to the indices.

## Function to wrap

[`climdex.get.availab... | process | get available indices by name description this function takes a climdexinput object as input and returns the names of all the indices which may be computed or if code get function names is true the default the names of the functions corresponding to the indices function to wrap | 1 |

230,319 | 25,464,164,546 | IssuesEvent | 2022-11-25 01:01:37 | pactflow/example-bi-directional-provider-restassured | https://api.github.com/repos/pactflow/example-bi-directional-provider-restassured | opened | CVE-2022-45868 (High) detected in h2-1.4.200.jar | security vulnerability | ## CVE-2022-45868 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>h2-1.4.200.jar</b></p></summary>

<p>H2 Database Engine</p>

<p>Library home page: <a href="https://h2database.com">http... | True | CVE-2022-45868 (High) detected in h2-1.4.200.jar - ## CVE-2022-45868 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>h2-1.4.200.jar</b></p></summary>

<p>H2 Database Engine</p>

<p>Libra... | non_process | cve high detected in jar cve high severity vulnerability vulnerable library jar database engine library home page a href path to dependency file build gradle path to vulnerable library m jar dependency hierarchy x jar ... | 0 |

22,501 | 31,494,396,015 | IssuesEvent | 2023-08-31 00:15:37 | darktable-org/darktable | https://api.github.com/repos/darktable-org/darktable | closed | multiple parametric masks | feature: new scope: UI scope: image processing controversial no-issue-activity | **Is your feature request related to a problem? Please describe.**

Occasionally one would like to select a union of various properties that can be described by parametric masks (eg skin color and blue for eyes, nothing else).

**Describe the solution you'd like**

Multiple parametric masks in a module instance, ... | 1.0 | multiple parametric masks - **Is your feature request related to a problem? Please describe.**

Occasionally one would like to select a union of various properties that can be described by parametric masks (eg skin color and blue for eyes, nothing else).

**Describe the solution you'd like**

Multiple parametric ... | process | multiple parametric masks is your feature request related to a problem please describe occasionally one would like to select a union of various properties that can be described by parametric masks eg skin color and blue for eyes nothing else describe the solution you d like multiple parametric ... | 1 |

47,117 | 7,307,492,269 | IssuesEvent | 2018-02-28 03:03:57 | adafruit/circuitpython | https://api.github.com/repos/adafruit/circuitpython | closed | Fix warnings in sphinx documentation build | documentation | There are unconnected pages, ambiguous references, and warnings about the new `templates/replace.inc` file, which came in with MPy v1.9.2 | 1.0 | Fix warnings in sphinx documentation build - There are unconnected pages, ambiguous references, and warnings about the new `templates/replace.inc` file, which came in with MPy v1.9.2 | non_process | fix warnings in sphinx documentation build there are unconnected pages ambiguous references and warnings about the new templates replace inc file which came in with mpy | 0 |

95,315 | 10,877,574,960 | IssuesEvent | 2019-11-16 11:21:04 | tobiasanker/SakuraTree | https://api.github.com/repos/tobiasanker/SakuraTree | closed | Add usage of multiple blossom-subtypes | documentation feature / enhancement | ## Feature-request

### Description

Blossoms can have only one subtype at the moment. It is only a problem of the compiler, because it's not completely updated for the current parser-version.

### Possible Implementation

Update `convertBlossom`-method in `SakuraCompiler` to iterate over the subtypes.

| 1.0 | Add usage of multiple blossom-subtypes - ## Feature-request

### Description

Blossoms can have only one subtype at the moment. It is only a problem of the compiler, because it's not completely updated for the current parser-version.

### Possible Implementation

Update `convertBlossom`-method in `SakuraCompiler... | non_process | add usage of multiple blossom subtypes feature request description blossoms can have only one subtype at the moment it is only a problem of the compiler because its not completely updated for the current parser version possible implementation update convertblossom method in sakuracompiler... | 0 |

257,865 | 19,533,836,895 | IssuesEvent | 2021-12-30 23:38:20 | mjskay/ggdist | https://api.github.com/repos/mjskay/ggdist | closed | Use new @examplesIf tag in roxygen examples | documentation | For conditional examples (e.g. ones depending on suggested packages), switch to using `@examplesIf` | 1.0 | Use new @examplesIf tag in roxygen examples - For conditional examples (e.g. ones depending on suggested packages), switch to using `@examplesIf` | non_process | use new examplesif tag in roxygen examples for conditional examples e g ones depending on suggested packages switch to using examplesif | 0 |

2,317 | 5,139,972,878 | IssuesEvent | 2017-01-12 02:18:01 | vuejs/vue-loader | https://api.github.com/repos/vuejs/vue-loader | closed | External Pug template not working if its filename is the same as the component's one. | pre-processor | I got this code in a `Hello.vue` component:

```

<template lang="pug" src="./Hello..pug"></template>

<script>

export default {

name: 'hello',

.....

}

}

</script>

<style lang='sass' src='./style.sass'></style>

```

That doesn't work.

I noticed that it only happens when template name is the exact s... | 1.0 | External Pug template not working if its filename is the same as the component's one. - I got this code in a `Hello.vue` component:

```

<template lang="pug" src="./Hello..pug"></template>

<script>

export default {

name: 'hello',

.....

}

}

</script>

<style lang='sass' src='./style.sass'></style>

... | process | external pug template not working if it s filename is the same as the component s one i got this code in a hello vue component export default name hello that doesn t work i noticed that it only appens when template name is the exact same as the compone... | 1 |

20,862 | 27,645,510,056 | IssuesEvent | 2023-03-10 22:29:14 | cse442-at-ub/project_s23-cinco | https://api.github.com/repos/cse442-at-ub/project_s23-cinco | opened | Create routing within React to navigate different pages when the corresponding button is clicked | Processing Task Sprint 2 | *Task Test*

test 1:

-go to the homepage: in your editor of choice, in the project folder, type "npm start" in the terminal to open up the homepage in the browser.

- click on the login button and ensure it takes you to the login page.

test 2:

-go to the homepage: in your editor of choice, in the project folder, type "... | 1.0 | Create routing within React to navigate different pages when the corresponding button is clicked - *Task Test*

test 1:

-go to the homepage: in your editor of choice, in the project folder, type "npm start" in the terminal to open up the homepage in the browser.

- click on the login button and ensure it takes you to th... | process | create routing within react to navigate different pages when the corresponding button is clicked task test test go to the homepage in your editor of choice in the project folder type npm start in the terminal to open up the homepage in the browser click on the login button and ensure it takes you to th... | 1 |

7,697 | 18,893,353,318 | IssuesEvent | 2021-11-15 15:23:41 | Krakenus00/EzNote | https://api.github.com/repos/Krakenus00/EzNote | opened | Unite entities at the Domain-Layer | architecture | It's a change in architecture. We need to move the same entities from Business-Layer and Data-Access to Domain-Layer | 1.0 | Unite entities at the Domain-Layer - It's a change in architecture. We need to move the same entities from Business-Layer and Data-Access to Domain-Layer | non_process | unite entities at the domain layer it s some changes in architecture we need to move the same entities from business layer and data access to domain layer | 0 |

9,089 | 4,413,688,691 | IssuesEvent | 2016-08-13 01:02:30 | facebook/osquery | https://api.github.com/repos/facebook/osquery | closed | Flaky tests: DaemonTests::test_5_daemon_sigint variance in return code | build/test test error | See:

```

5/9 Test #5: python_test_osqueryd .............***Failed 33.75 sec

.I0721 12:22:59.882045 26907 options.cpp:61] Verbose logging enabled by config option

I0721 12:22:59.882532 26907 daemon.cpp:38] Not starting the distributed query service: Distributed query service not enabled.

...FI0721 12:23:29.781241... | 1.0 | Flaky tests: DaemonTests::test_5_daemon_sigint variance in return code - See:

```

5/9 Test #5: python_test_osqueryd .............***Failed 33.75 sec

.I0721 12:22:59.882045 26907 options.cpp:61] Verbose logging enabled by config option

I0721 12:22:59.882532 26907 daemon.cpp:38] Not starting the distributed query s... | non_process | flaky tests daemontests test daemon sigint variance in return code see test python test osqueryd failed sec options cpp verbose logging enabled by config option daemon cpp not starting the distributed query service distributed query service no... | 0 |

243,852 | 26,290,654,331 | IssuesEvent | 2023-01-08 11:20:39 | yaeljacobs67/fs-agent | https://api.github.com/repos/yaeljacobs67/fs-agent | opened | WS-2016-7112 (Medium) detected in spring-context-4.3.1.RELEASE.jar | security vulnerability | ## WS-2016-7112 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>spring-context-4.3.1.RELEASE.jar</b></p></summary>

<p>Spring Context</p>

<p>Library home page: <a href="https://github... | True | WS-2016-7112 (Medium) detected in spring-context-4.3.1.RELEASE.jar - ## WS-2016-7112 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>spring-context-4.3.1.RELEASE.jar</b></p></summary>... | non_process | ws medium detected in spring context release jar ws medium severity vulnerability vulnerable library spring context release jar spring context library home page a href path to dependency file fs agent pom xml path to vulnerable library root repository org spring... | 0 |

7,148 | 10,289,323,736 | IssuesEvent | 2019-08-27 15:35:53 | Holmeyoung/blog-comment | https://api.github.com/repos/Holmeyoung/blog-comment | opened | Python multithreading and multiprocessing | 一只羊的碎碎念 | /python-threading-multiprocessing/ Gitalk | https://www.holmeyoung.com/python-threading-multiprocessing/

Multiprocessing: blocking and non-blocking process pools. Asynchronous process pool (non-blocking): from multiprocessing import Pool def test(i): print (i) if __name__=="__main__": pool = Pool(processes=10) for i in xrange(500): ''' Steps executed in the for loop: | 1.0 | Python multithreading and multiprocessing | 一只羊的碎碎念 - https://www.holmeyoung.com/python-threading-multiprocessing/

Multiprocessing: blocking and non-blocking process pools. Asynchronous process pool (non-blocking): from multiprocessing import Pool def test(i): print (i) if __name__=="__main__": pool = Pool(processes=10) for i in xrange(500): ''' Steps executed in the for loop: | process | python multithreading and multiprocessing 一只羊的碎碎念 multiprocessing blocking and non-blocking process pools asynchronous process pool (non-blocking) multiprocessing import pooldef test i print i if name main pool pool processes for i in xrange steps executed in the for loop: | 1 |
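
The snippet quoted in this row is cut off and written for Python 2 (`xrange`). A minimal Python 3 sketch of the same idea, a non-blocking process pool, might look like the following. The worker `square` and the helper `run_pool` are hypothetical names, not taken from the blog post.

```python
from multiprocessing import Pool
from typing import List


def square(i: int) -> int:
    # The worker must live at module level so child processes can import it.
    return i * i


def run_pool(n: int, processes: int = 4) -> List[int]:
    with Pool(processes=processes) as pool:
        # apply_async returns at once with an AsyncResult; the loop
        # does not wait for one task before submitting the next.
        pending = [pool.apply_async(square, (i,)) for i in range(n)]
        # get() blocks until that particular task has finished.
        return [result.get() for result in pending]


if __name__ == "__main__":
    print(run_pool(5))
```

The blocking counterpart is `pool.apply`, which waits for each task before returning, so tasks submitted in a loop run one after another.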

111,677 | 14,121,332,300 | IssuesEvent | 2020-11-09 01:39:42 | rohmishra/personal_website | https://api.github.com/repos/rohmishra/personal_website | closed | General improvement and changes. | Design Needed enhancement help wanted no-issue-activity | **This report is tracking multiple changes to improve the design of website.**

1. ~Fix "My projects" text on dark mode chrome. #38~ ( Fixed in #43 )

2. Change background design for designs pages

3. Improve the cards for projects.

4. Show more details for each project (pending design)

5. Prepare for transition to... | 1.0 | General improvement and changes. - **This report is tracking multiple changes to improve the design of website.**

1. ~Fix "My projects" text on dark mode chrome. #38~ ( Fixed in #43 )

2. Change background design for designs pages

3. Improve the cards for projects.

4. Show more details for each project (pending de... | non_process | general improvement and changes this report is tracking multiple changes to improve the design of website fix my projects text on dark mode chrome fixed in change background design for designs pages improve the cards for projects show more details for each project pending desi... | 0 |

303,827 | 26,230,700,791 | IssuesEvent | 2023-01-04 23:44:26 | ImagingDataCommons/IDC-WebApp | https://api.github.com/repos/ImagingDataCommons/IDC-WebApp | closed | In test and prod tier, There is a warning message for BMI when a cohorts with BMI filters is saved. | bug testing needed testing passed | Note - I was unable to determine if this issue is actually a bug (We discussed this in our weekly meeting and i was suggested to open a ticket). Please feel free to delete the ticket if its not a bug.

Summary - On the IDC webapp, When I select TCGA collection and BMI filter to create a cohort, I see the message that... | 2.0 | In test and prod tier, There is a warning message for BMI when a cohorts with BMI filters is saved. - Note - I was unable to determine if this issue is actually a bug (We discussed this in our weekly meeting and i was suggested to open a ticket). Please feel free to delete the ticket if its not a bug.

Summary - On ... | non_process | in test and prod tier there is a warning message for bmi when a cohorts with bmi filters is saved note i was unable to determine if this issue is actually a bug we discussed this in our weekly meeting and i was suggested to open a ticket please feel free to delete the ticket if its not a bug summary on ... | 0 |

6,748 | 6,584,002,898 | IssuesEvent | 2017-09-13 08:35:08 | vmware/docker-volume-vsphere | https://api.github.com/repos/vmware/docker-volume-vsphere | closed | Improve CI/CD log collection | component/ci-infrastructure kind/enhancement | - [ ] Dump relevant information for both successful and failed runs

- [ ] Post logs to a location where developers can download it

- [ ] Improve logging output in CI/CD | 1.0 | Improve CI/CD log collection - - [ ] Dump relevant information for both successful and failed runs

- [ ] Post logs to a location where developers can download it

- [ ] Improve logging output in CI/CD | non_process | improve ci cd log collection dump relevant information for both successful and failed runs post logs to a location where developers can download it improve logging output in ci cd | 0 |

5,105 | 7,883,610,503 | IssuesEvent | 2018-06-27 06:08:31 | ropensci/onboarding-meta | https://api.github.com/repos/ropensci/onboarding-meta | closed | Submitters not using reviews | process | What do we do if submitters don't want to make changes that reviewers/editors suggest?

I think we have to be firm about this. If submitter gives good reasons for not making changes reviewers/editors suggest that's fine (and it should be item by item response too), but if there's a blanket statement that they just... | 1.0 | Submitters not using reviews - What do we do if submitters don't want to make changes that reviewers/editors suggest?

I think we have to be firm about this. If submitter gives good reasons for not making changes reviewers/editors suggest that's fine (and it should be item by item response too), but if there's a b... | process | submitters not using reviews what do we do if submitters don t want to make changes that reviewers editors suggest i think we have to be firm about this if submitter gives good reasons for not making changes reviewers editors suggest that s fine and it should be item by item response too but if there s a b... | 1 |

17,312 | 23,134,091,686 | IssuesEvent | 2022-07-28 13:01:41 | bazelbuild/bazel | https://api.github.com/repos/bazelbuild/bazel | opened | Cross compile qnx, Bazel does not produce shared libraries. Release 5.2.0 - $MONTH $YEAR | P1 type: process release team-OSS | **Description of the bug:**

When I cross compile QNX, no dynamic library is generated. At this time, after I add

`feature( name = "supports_dynamic_linker", enabled = True, )`, it prompts me that ld cannot be found. then i create a ld soft connection `ln aarch64-unknown-nto-qnx7.1.0-ld ld`, this problem is solved... | 1.0 | Cross compile qnx, Bazel does not produce shared libraries. Release 5.2.0 - $MONTH $YEAR - **Description of the bug:**

When I cross compile QNX, no dynamic library is generated. At this time, after I add

`feature( name = "supports_dynamic_linker", enabled = True, )`, it prompts me that ld cannot be found. then i c... | process | cross compile qnx bazel does not produce shared libraries release month year description of the bug when i cross compile qnx no dynamic library is generated at this time after i add feature name supports dynamic linker enabled true , it prompts me that ld cannot be found then i c... | 1 |

7,132 | 10,278,464,312 | IssuesEvent | 2019-08-25 14:39:06 | nextmoov/nextmoov | https://api.github.com/repos/nextmoov/nextmoov | closed | Standard entrypoint: yarn start, yarn start:production | #Dev Tools & Processes | # Standard EntryPoint

```yarn start``` Run the project in *Development*

```yarn build``` Build the static assets to be served in production in /dist

# Archived Discussion

I would like to standardize the entrypoint for Front & Back project, for both Production & Development.

I hereby propose that all our p... | 1.0 | Standard entrypoint: yarn start, yarn start:production - # Standard EntryPoint

```yarn start``` Run the project in *Development*

```yarn build``` Build the static assets to be served in production in /dist

# Archived Discussion

I would like to standardize the entrypoint for Front & Back project, for both Pro... | process | standard entrypoint yarn start yarn start production standard entrypoint yarn start run the project in development yarn build build the static assets to be served in production in dist archived discussion i would like to standardize the entrypoint for front back project for both pro... | 1 |

54,708 | 3,071,123,078 | IssuesEvent | 2015-08-19 09:58:13 | pavel-pimenov/flylinkdc-r5xx | https://api.github.com/repos/pavel-pimenov/flylinkdc-r5xx | closed | Moving a folder in the download queue works incorrectly | bug Component-Logic imported Priority-Critical Usability | _From [rain.bipper@gmail.com](https://code.google.com/u/rain.bipper@gmail.com/) on November 06, 2009 12:26:45_

If you choose to "move" a folder in the download queue (I stress: a

folder, not files), then after the move the destination folder contains all the files

from the source folder, but the folder itself is not created.

For example: ... | 1.0 | Moving a folder in the download queue works incorrectly - _From [rain.bipper@gmail.com](https://code.google.com/u/rain.bipper@gmail.com/) on November 06, 2009 12:26:45_

If you choose to "move" a folder in the download queue (I stress: a

folder, not files), then after the move the destination folder contains all the files

и... | non_process | moving a folder in the download queue works incorrectly from on november if you choose to move a folder in the download queue i stress a folder not files then after the move the destination folder contains all the files from the source folder but the folder itself is not created for example the queue contains кот... | 0 |

12,638 | 15,016,625,529 | IssuesEvent | 2021-02-01 09:49:39 | threefoldtech/js-sdk | https://api.github.com/repos/threefoldtech/js-sdk | closed | newly deployed solutions are not listed on 'marketplace' deployed solutions. | process_wontfix type_bug | just deployed taiga and its not listed on marketplace deployed solution

<img width="1433" alt="Screenshot 2021-01-28 at 13 42 40" src="https://user-images.githubusercontent.com/43240801/106140257-d0a98700-616e-11eb-90dd-543a786022ea.png">

although it's shown on taiga/my workloads

<img width="1404" alt="Screens... | 1.0 | newly deployed solutions are not listed on 'marketplace' deployed solutions. - just deployed taiga and its not listed on marketplace deployed solution

<img width="1433" alt="Screenshot 2021-01-28 at 13 42 40" src="https://user-images.githubusercontent.com/43240801/106140257-d0a98700-616e-11eb-90dd-543a786022ea.png">

... | process | newly deployed solutions are not listed on marketplace deployed solutions just deployed taiga and its not listed on marketplace deployed solution img width alt screenshot at src although it s shown on taiga my workloads img width alt screenshot at src | 1 |

20,053 | 26,541,065,203 | IssuesEvent | 2023-01-19 19:18:31 | Azure/azure-sdk-tools | https://api.github.com/repos/Azure/azure-sdk-tools | closed | APIView integration from Cadl PR | Epic APIView Central-EngSys Cadl WS: Process Tools & Automation | When a user creates a PR in the specs repo and is using Cadl, make sure there is an APIView created for it (as we currently do for SDK changes) | 1.0 | APIView integration from Cadl PR - When a user creates a PR in the specs repo and is using Cadl, make sure there is an APIView created for it (as we currently do for SDK changes) | process | apiview integration from cadl pr when a user creates a pr in the specs repo and is using cadl make sure there is an apiview created for it as we currently do for sdk changes | 1 |

107,706 | 13,503,651,480 | IssuesEvent | 2020-09-13 14:30:52 | rubyforgood/casa | https://api.github.com/repos/rubyforgood/casa | closed | Make edit profile accessible by dropdown menu in All CASA Admin dashboard | :cityscape: Multitenancy :globe_with_meridians: All CASA Admin :paintbrush: Design Good First Issue Help Wanted Priority: High Ruby For Good 🎃 Fall 2020 | Part of epic #527

**What type of user is this for? [volunteer/admin/supervisor/all or All CASA Admin]**

All CASA Admin

**Where does/should this occur?**

In the All CASA Admin dashboard (dependent on #628)

**Description**

All CASA Admin needs an entry point to their Edit Profile page. It should be accessible... | 1.0 | Make edit profile accessible by dropdown menu in All CASA Admin dashboard - Part of epic #527

**What type of user is this for? [volunteer/admin/supervisor/all or All CASA Admin]**

All CASA Admin

**Where does/should this occur?**

In the All CASA Admin dashboard (dependent on #628)

**Description**

All CASA Ad... | non_process | make edit profile accessible by dropdown menu in all casa admin dashboard part of epic what type of user is this for all casa admin where does should this occur in the all casa admin dashboard dependent on description all casa admin needs an entry point to their edit profile page ... | 0 |

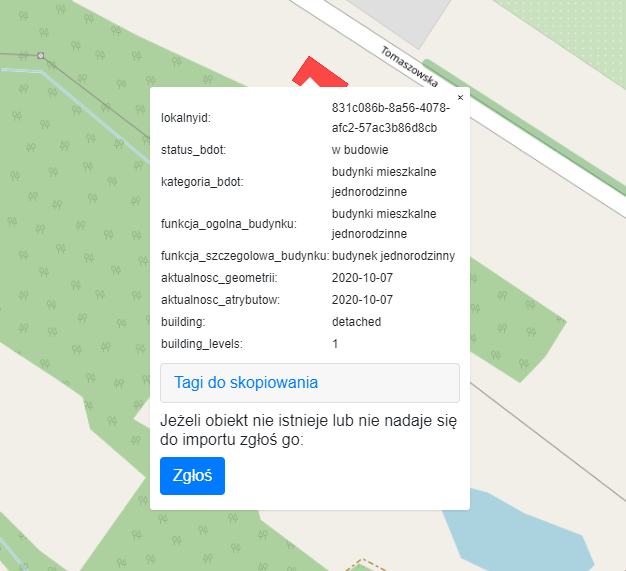

14,772 | 18,049,104,580 | IssuesEvent | 2021-09-19 12:29:34 | openstreetmap-polska/gugik2osm | https://api.github.com/repos/openstreetmap-polska/gugik2osm | closed | Buildings under construction | data_processing | Currently, buildings under construction are imported as completed buildings.

I suggest tagging them as building=construction, or alternatively skipping them.

| enhancement vscode api adoption | <!-- Please search existing issues to avoid creating duplicates. -->

<!-- Describe the feature you'd like. -->

Just like https://github.com/alefragnani/vscode-bookmarks/issues/430

The extension will have **limited** support, not allowing **indicate the formatter app path** on untrusted workspaces. | 1.0 | [FEATURE] - Support Workspace Trust API (limited) - <!-- Please search existing issues to avoid creating duplicates. -->

<!-- Describe the feature you'd like. -->

Just like https://github.com/alefragnani/vscode-bookmarks/issues/430

The extension will have **limited** support, not allowing **indicate the formatte... | non_process | support workspace trust api limited just like the extension will have limited support not allowing indicate the formatter app path on untrusted workspaces | 0 |

93,613 | 3,906,621,301 | IssuesEvent | 2016-04-19 09:31:57 | leoncastillejos/sonar | https://api.github.com/repos/leoncastillejos/sonar | closed | Sonar Web authentication and encryption | action:in_progress priority:high type:enhancement | Right now, Sonar web is cleartext, and does not ask for a password, therefore anyone is able to create, delete, view and modify machines and alerts. This is not ideal. | 1.0 | Sonar Web authentication and encryption - Right now, Sonar web is cleartext, and does not ask for a password, therefore anyone is able to create, delete, view and modify machines and alerts. This is not ideal. | non_process | sonar web authentication and encryption right now sonar web is cleartext and does not ask for a password therefore anyone is able to create delete view and modify machines and alerts this is not ideal | 0 |

70 | 2,523,723,824 | IssuesEvent | 2015-01-20 13:06:53 | sysown/proxysql-0.2 | https://api.github.com/repos/sysown/proxysql-0.2 | opened | Implement table stats_mysql_commands_counters to store counters about commands | ADMIN cxx_db cxx_pa development enhancement MYSQL PROTOCOL QUERY PROCESSOR STATISTICS | Table needs to be created in statsdb

Optionally it can also store total execution time | 1.0 | Implement table stats_mysql_commands_counters to store counters about commands - Table needs to be created in statsdb

Optionally it can also store total execution time | process | implement table stats mysql commands counters to store counters about commands table needs to be created in statsdb optionally it can also store total execution time | 1 |

518,850 | 15,035,991,207 | IssuesEvent | 2021-02-02 14:46:04 | StatCan/daaas | https://api.github.com/repos/StatCan/daaas | closed | Support Construction Starts project by investigating running fastai/pytorch jobs in distributed multi-GPU setup | component/kubeflow current-sprint kind/task priority/soon size/XL triage/support | Goals:

* demonstrate running pytorch jobs in distributed multi-gpu setup on platform

* demonstrate running fastai jobs in distributed multi-gpu setup on platform

* advise construction starts project on how to achieve above multi-gpu setups to scale up their network training

* build a tutorial/guide notebooks for di... | 1.0 | Support Construction Starts project by investigating running fastai/pytorch jobs in distributed multi-GPU setup - Goals:

* demonstrate running pytorch jobs in distributed multi-gpu setup on platform

* demonstrate running fastai jobs in distributed multi-gpu setup on platform

* advise construction starts project on h... | non_process | support construction starts project by investigating running fastai pytorch jobs in distributed multi gpu setup goals demonstrate running pytorch jobs in distributed multi gpu setup on platform demonstrate running fastai jobs in distributed multi gpu setup on platform advise construction starts project on h... | 0 |

14,258 | 9,221,057,571 | IssuesEvent | 2019-03-11 19:00:00 | OriginProtocol/origin | https://api.github.com/repos/OriginProtocol/origin | opened | Shipping Information | dapp discussion html/css javascript p1 security transaction flow ui/ux | This is a placeholder for discussing the long-requested ability for a buyer to add a shipping address when making an offer. This hasn't been done yet because we obviously don't want to store unencrypted, sensitive information publicly. And the messaging infrastructure was not stable enough to rely on for this use. | True | Shipping Information - This is a placeholder for discussing the long-requested ability for a buyer to add a shipping address when making an offer. This hasn't been done yet because we obviously don't want to store unencrypted, sensitive information publicly. And the messaging infrastructure was not stable enough to rel... | non_process | shipping information this is a placeholder for discussing the long requested ability for a buyer to add a shipping address when making an offer this hasn t been done yet because we obviously don t want to store unencrypted sensitive information publicly and the messaging infrastructure was not stable enough to rel... | 0 |

199,284 | 22,693,267,196 | IssuesEvent | 2022-07-05 01:05:37 | PGreaneyLYIT/easybuggy4django | https://api.github.com/repos/PGreaneyLYIT/easybuggy4django | opened | CVE-2022-34265 (High) detected in Django-2.0.3-py3-none-any.whl | security vulnerability | ## CVE-2022-34265 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>Django-2.0.3-py3-none-any.whl</b></p></summary>

<p>A high-level Python Web framework that encourages rapid development... | True | CVE-2022-34265 (High) detected in Django-2.0.3-py3-none-any.whl - ## CVE-2022-34265 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>Django-2.0.3-py3-none-any.whl</b></p></summary>

<p>A... | non_process | cve high detected in django none any whl cve high severity vulnerability vulnerable library django none any whl a high level python web framework that encourages rapid development and clean pragmatic design library home page a href path to dependency file tmp ws ... | 0 |

664,690 | 22,285,284,120 | IssuesEvent | 2022-06-11 14:22:09 | magento/magento2 | https://api.github.com/repos/magento/magento2 | closed | jQuery UI Slider and SelectMenu Mapping is not correct in requirejs-config.js | Issue: Confirmed Reproduced on 2.4.x Progress: PR in progress Priority: P2 Area: Framework Reported on 2.4.4 | <!---

Thank you for contributing to Magento.

To help us process this issue we recommend that you add the following information:

- Summary of the issue,

- Information on your environment,

- Steps to reproduce,

- Expected and actual results,

Fields marked with (*) are required. Plea... | 1.0 | jQuery UI Slider and SelectMenu Mapping is not correct in requirejs-config.js - <!---

Thank you for contributing to Magento.

To help us process this issue we recommend that you add the following information:

- Summary of the issue,

- Information on your environment,

- Steps to reproduce,

... | non_process | jquery ui slider and selectmenu mapping is not correct in requirejs config js thank you for contributing to magento to help us process this issue we recommend that you add the following information summary of the issue information on your environment steps to reproduce ... | 0 |

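2,055 | 2,603,975,613 | IssuesEvent | 2015-02-24 19:01:25 | chrsmith/nishazi6 | https://api.github.com/repos/chrsmith/nishazi6 | opened | How long does it take to treat genital warts in Shenyang | auto-migrated Priority-Medium Type-Defect | ```

How long does it take to treat genital warts in Shenyang〓Shenyang Military Region Political Department Hospital, STD department〓TEL:

024-31023308〓Founded in 1946, with 68 years devoted to the research and treatment of sexually transmitted

diseases. Located at No. 32 Erwei Road, Shenhe District, Shenyang. A hospital founded alongside New China and sharing

its glory, with a long history, advanced equipment, authoritative expertise, and many specialists; it combines prevention,

health care, medical treatment, research, and rehabilitation in one comprehensive hospital. It is among the country's first public

Grade-A military hospitals and the first designated units for standardized medical practice nationwide, and it is a teaching hospital

for the Fourth Military Medical University, Southeast University, and other well-known universities. It was named an advanced unit

for health work by the Health Department of the Logistics Department of the People's Liberation Army Air Force, and has twice been

awarded a collective second-class merit.

```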

-----

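Original issue reported on code.google.com by `q96... | 1.0 | How long does it take to treat genital warts in Shenyang - ```

How long does it take to treat genital warts in Shenyang〓Shenyang Military Region Political Department Hospital, STD department〓TEL:

024-31023308〓Founded in 1946, with 68 years devoted to the research and treatment of sexually transmitted

diseases. Located at No. 32 Erwei Road, Shenhe District, Shenyang. A hospital founded alongside New China and sharing

its glory, with a long history, advanced equipment, authoritative expertise, and many specialists; it combines prevention,

health care, medical treatment, research, and rehabilitation in one comprehensive hospital. It is among the country's first public

Grade-A military hospitals and the first designated units for standardized medical practice nationwide, and it is a teaching hospital

for the Fourth Military Medical University, Southeast University, and other well-known universities. It was named an advanced unit

for health work by the Health Department of the Logistics Department of the People's Liberation Army Air Force, and has twice been

awarded a collective second-class merit.

```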

-----

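Original issue reported on code.goo... | non_process | how long does it take to treat genital warts in shenyang how long does it take to treat genital warts in shenyang〓shenyang military region political department hospital std department〓tel 〓 a hospital founded alongside new china and sharing its glory with a long history advanced equipment authoritative expertise and many specialists it combines prevention health care medical treatment research and rehabilitation in one comprehensive hospital it is among the country s first public grade a military hospitals and the first designated units for standardized medical practice nationwide and it is a teaching hospital for the fourth military medical university southeast university and other well known universities it was named an advanced unit for health work by the health department of the logistics department of the people s liberation army air force and has twice been awarded a collective second class merit original issue reported on code google com by gmail com on jun at ... | 0 |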

52,893 | 10,959,744,161 | IssuesEvent | 2019-11-27 12:06:05 | Regalis11/Barotrauma | https://api.github.com/repos/Regalis11/Barotrauma | closed | [0.9.5.1] [Issue] [Graphics] Volume Icons on crewlist don't indicate volume. | Bug Code | Right now the new voice chat VOIP volume icon is a dot that extends into a bunch of curved lines to indicate volume. While it properly works in the lobby, only the dot shows up in the ingame crew list in the top left. Someone probably just forgot to port over the new system. | 1.0 | [0.9.5.1] [Issue] [Graphics] Volume Icons on crewlist don't indicate volume. - Right now the new voice chat VOIP volume icon is a dot that extends into a bunch of curved lines to indicate volume. While it properly works in the lobby, only the dot shows up in the ingame crew list in the top left. Someone probably just f... | non_process | volume icons on crewlist don t indicate volume right now the new voice chat voip volume icon is a dot that extends into a bunch of curved lines to indicate volume while it properly works in the lobby only the dot shows up in the ingame crew list in the top left someone probably just forgot to port over the ... | 0 |

20,672 | 27,335,245,132 | IssuesEvent | 2023-02-26 05:18:32 | python/cpython | https://api.github.com/repos/python/cpython | closed | Process and Thread resource recycling issue | type-bug expert-multiprocessing | # Summary

<!--

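If a child process is created to provide a service, and the parent of that child process creates a thread to poll the service, the current default process exit mechanism releases the child process first, causing the polling thread to raise an Error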

-->

For a child process with daemon=False creates the multiprocessing.Manager object, if it creates a thread for polling SyncManager-created Lock/Event objects, the default recycling mechanism will first release the gr... | 1.0 | Process and Thread resource recycling issue - # Summary

<!--

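If a child process is created to provide a service, and the parent of that child process creates a thread to poll the service, the current default process exit mechanism releases the child process first, causing the polling thread to raise an Error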

-->

For a child process with daemon=False creates the multiprocessing.Manager object, if it creates a thread for polling SyncManager-created Lock/Event objects, the default... | process | process and thread resource recycling issue summary if a child process is created to provide a service and the parent of that child process creates a thread to poll the service the current default process exit mechanism releases the child process first causing the polling thread to raise error for a child process with daemon false creates the multiprocessing manager object if it creates a thread for polling syncmanager created lock event objects the default... | 1 |

7,039 | 10,197,375,067 | IssuesEvent | 2019-08-13 00:04:22 | Ultimate-Hosts-Blacklist/whitelist | https://api.github.com/repos/Ultimate-Hosts-Blacklist/whitelist | closed | False positive | whitelisting process | *@macuser666 commented on Aug 12, 2019, 12:33 PM UTC:*

hi

the following site is blocked

[www.bcee.lu](http://www.bcee.lu)

bcee.lu

It's a legitimate bank website of Banque et Caisse d'Épargne de l'État.

*This issue was moved by [funilrys](https://github.com/funilrys) from [mitchellkrogza/Ultimate.Hosts.Blacklist#519](ht... | 1.0 | False positive - *@macuser666 commented on Aug 12, 2019, 12:33 PM UTC:*

hi

the following site is blocked

[www.bcee.lu](http://www.bcee.lu)

bcee.lu

It's a legitimate bank website of Banque et Caisse d'Épargne de l'État.

*This issue was moved by [funilrys](https://github.com/funilrys) from [mitchellkrogza/Ultimate.Hosts.... | process | false positive commented on aug pm utc hi the following site is blocked bcee lu it s a legitimate bank website of banque et caisse d épargne de l état this issue was moved by from | 1 |

197,833 | 14,944,836,392 | IssuesEvent | 2021-01-26 02:28:28 | pytorch/pytorch | https://api.github.com/repos/pytorch/pytorch | closed | test_cholesky_solve_batched_many_batches_cuda_complex128 has cuda illegal memory access | high priority module: crash module: linear algebra module: tests triaged | ## 🐛 Bug

test_cholesky_solve_batched_many_batches_cuda_complex128 has cuda illegal memory access. https://github.com/pytorch/pytorch/pull/47047 might be related.

## To Reproduce

Steps to reproduce the behavior:

```

$ PYTORCH_TEST_WITH_SLOW=1 python test/test_linalg.py -v -k test_cholesky_solve_batched_many... | 1.0 | test_cholesky_solve_batched_many_batches_cuda_complex128 has cuda illegal memory access - ## 🐛 Bug

test_cholesky_solve_batched_many_batches_cuda_complex128 has cuda illegal memory access. https://github.com/pytorch/pytorch/pull/47047 might be related.

## To Reproduce

Steps to reproduce the behavior:

```

$ ... | non_process | test cholesky solve batched many batches cuda has cuda illegal memory access 🐛 bug test cholesky solve batched many batches cuda has cuda illegal memory access might be related to reproduce steps to reproduce the behavior pytorch test with slow python test test linalg py v k test... | 0 |

163,811 | 12,745,623,589 | IssuesEvent | 2020-06-26 14:33:47 | eclipse/openj9 | https://api.github.com/repos/eclipse/openj9 | closed | samplingObjectAllocation.soae001 failed, expected 1+ but got: 0 | comp:gc test failure | https://ci.eclipse.org/openj9/job/Test_openjdk11_j9_sanity.functional_x86-64_windows_OpenJDK11_testList_0/3

https://ci.eclipse.org/openj9/job/Test_openjdk11_j9_sanity.functional_x86-64_windows_Nightly_testList_0/10

cmdLineTester_jvmtitests_soae_3

```

Testing: soae001

Test start time: 2020/06/05 04:04:16 Central St... | 1.0 | samplingObjectAllocation.soae001 failed, expected 1+ but got: 0 - https://ci.eclipse.org/openj9/job/Test_openjdk11_j9_sanity.functional_x86-64_windows_OpenJDK11_testList_0/3

https://ci.eclipse.org/openj9/job/Test_openjdk11_j9_sanity.functional_x86-64_windows_Nightly_testList_0/10

cmdLineTester_jvmtitests_soae_3

```

... | non_process | samplingobjectallocation failed expected but got cmdlinetester jvmtitests soae testing test start time central standard time running command c users jenkins workspace test sanity functional windows testlist openjdkbinary image bin java xcompressedrefs xj... | 0 |

401,361 | 11,789,200,533 | IssuesEvent | 2020-03-17 16:45:42 | aces/Loris | https://api.github.com/repos/aces/Loris | closed | [New Module] Electrophysiology browser | Feature Priority: High | List of bugs found in the current set up:

- Task Name seems to be hardcoded to FaceHousCheck. Fix that so it takes that information from the physiological_parameter_file table (TaskName is one of the JSON file field). In jsx/components/eeg_session_panels.js and js/eeg_session_view.js

| 1.0 | [New Module] Electrophysiology browser - List of bugs found in the current set up:

- Task Name seems to be hardcoded to FaceHousCheck. Fix that so it takes that information from the physiological_parameter_file table (TaskName is one of the JSON file field). In jsx/components/eeg_session_panels.js and js/eeg_session_v... | non_process | electrophysiology browser list of bugs found in the current set up task name seems to be hardcoded to facehouscheck fix that so it takes that information from the physiological parameter file table taskname is one of the json file field in jsx components eeg session panels js and js eeg session view js | 0 |

17,102 | 22,622,771,145 | IssuesEvent | 2022-06-30 08:03:13 | 2i2c-org/infrastructure | https://api.github.com/repos/2i2c-org/infrastructure | closed | Define some first-line and second-line support processes | :label: team-process | ### Background and proposal

There are often cases where our support process is under-documented. For example, a few questions that people weren't sure how to answer:

- How should we prioritize support requests?

- What should we do if a request is not immediately "closeable"? Or if it requires ongoing follow-up w... | 1.0 | Define some first-line and second-line support processes - ### Background and proposal

There are often cases where our support process is under-documented. For example, a few questions that people weren't sure how to answer:

- How should we prioritize support requests?

- What should we do if a request is not imm... | process | define some first line and second line support processes background and proposal there are often cases where our support process is under documented for example a few questions that people weren t sure how to answer how should we prioritize support requests what should we do if a request is not imm... | 1 |

136,184 | 18,722,443,415 | IssuesEvent | 2021-11-03 13:17:12 | ioana-github-enterprise/simplebuild | https://api.github.com/repos/ioana-github-enterprise/simplebuild | opened | CVE-2019-16335 (High) detected in jackson-databind-2.7.9.jar | security vulnerability | ## CVE-2019-16335 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jackson-databind-2.7.9.jar</b></p></summary>

<p>General data-binding functionality for Jackson: works on core streamin... | True | CVE-2019-16335 (High) detected in jackson-databind-2.7.9.jar - ## CVE-2019-16335 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jackson-databind-2.7.9.jar</b></p></summary>

<p>General... | non_process | cve high detected in jackson databind jar cve high severity vulnerability vulnerable library jackson databind jar general data binding functionality for jackson works on core streaming api library home page a href path to dependency file simplebuild build gradle path... | 0 |

14,433 | 17,483,498,373 | IssuesEvent | 2021-08-09 07:52:05 | dotnet/runtime | https://api.github.com/repos/dotnet/runtime | closed | [API Proposal]: System.Diagnostics.Process.WaitForExit() should return the Process to allow chaining of calls | api-suggestion area-System.Diagnostics.Process untriaged | ### Background and motivation

This would allow method calls to be chained and reduce the number of lines of code required for certain actions.

### API Proposal

Before

```C#

namespace System.Diagnostics

{

public class Process

{

public void WaitForExit();

}

}

```

After:

```C#

... | 1.0 | [API Proposal]: System.Diagnostics.Process.WaitForExit() should return the Process to allow chaining of calls - ### Background and motivation

This would allow method calls to be chained and reduce the number of lines of code required for certain actions.

### API Proposal

Before

```C#

namespace System.Diagnostics

... | process | system diagnostics process waitforexit should return the process to allow chaining of calls background and motivation this would allow method calls to be chained and reduce the number of lines of code required for certain actions api proposal before c namespace system diagnostics publi... | 1 |

99,941 | 16,470,788,375 | IssuesEvent | 2021-05-23 11:19:40 | axe-api/axe-api | https://api.github.com/repos/axe-api/axe-api | closed | Relationship queries | enhancement security | We need to add `with` feature to the **Queries**.

## Examples

`api/users?with=posts{id|title|comments{content}}`

## Dependencies

We should update the documentations. | True | Relationship queries - We need to add `with` feature to the **Queries**.

## Examples

`api/users?with=posts{id|title|comments{content}}`

## Dependencies

We should update the documentations. | non_process | relationship queries we need to add with feature to the queries examples api users with posts id title comments content dependencies we should update the documentations | 0 |

518,712 | 15,033,172,642 | IssuesEvent | 2021-02-02 11:07:54 | YangCatalog/backend | https://api.github.com/repos/YangCatalog/backend | closed | Reading log from .gz files | Priority: Critical bug | since we are compressing log files on daily basis we need to be able to read them back programmatically as well | 1.0 | Reading log from .gz files - since we are compressing log files on daily basis we need to be able to read them back programmatically as well | non_process | reading log from gz files since we are compressing log files on daily basis we need to be able to read them back programmatically as well | 0 |

41,853 | 10,683,695,202 | IssuesEvent | 2019-10-22 08:54:49 | jOOQ/jOOQ | https://api.github.com/repos/jOOQ/jOOQ | closed | Regression in Firebird's SUBSTRING() implementation | C: DB: Firebird C: Functionality E: All Editions P: High R: Fixed T: Defect | We're currently generating bad SQL for `substring` in Firebird:

```sql

select

substring('abcde' from 1),

substring('abcde' from 1 for2),

substring('abcde' from 3),

substring('abcde' from 3 for2)

from RDB$DATABASE

```

The regression was introduced by this, or a related change:

https://github.co... | 1.0 | Regression in Firebird's SUBSTRING() implementation - We're currently generating bad SQL for `substring` in Firebird:

```sql

select

substring('abcde' from 1),

substring('abcde' from 1 for2),

substring('abcde' from 3),

substring('abcde' from 3 for2)

from RDB$DATABASE

```

The regression was intro... | non_process | regression in firebird s substring implementation we re currently generating bad sql for substring in firebird sql select substring abcde from substring abcde from substring abcde from substring abcde from from rdb database the regression was introduced ... | 0 |

760,717 | 26,653,997,110 | IssuesEvent | 2023-01-25 15:34:47 | KingGizzard/Ballotbox | https://api.github.com/repos/KingGizzard/Ballotbox | closed | Build Agent 3 | high priority | Agent 3 is the data request agent!

You can see the dynamics of agent 3 in the [figma diagram here](https://www.figma.com/file/SkUdZjkenjeh5WQvJIe9vz/BallotBox?node-id=0%3A1&t=Gy4phXY2zIQ3Scjy-0)

Agent 3 should be able to:

- Request data from the Ballotbox.sol contract, and provide an email to be contacted thro... | 1.0 | Build Agent 3 - Agent 3 is the data request agent!

You can see the dynamics of agent 3 in the [figma diagram here](https://www.figma.com/file/SkUdZjkenjeh5WQvJIe9vz/BallotBox?node-id=0%3A1&t=Gy4phXY2zIQ3Scjy-0)

Agent 3 should be able to:

- Request data from the Ballotbox.sol contract, and provide an email to b... | non_process | build agent agent is the data request agent you can see the dynamics of agent in the agent should be able to request data from the ballotbox sol contract and provide an email to be contacted through access ipfs data and save data locally when the encryption methods for the and ... | 0 |

12,246 | 14,744,109,121 | IssuesEvent | 2021-01-07 14:51:23 | kdjstudios/SABillingGitlab | https://api.github.com/repos/kdjstudios/SABillingGitlab | closed | Holiday Fee Bug - Child accounts | anc-process anp-0.5 ant-bug ant-enhancement has attachment | In GitLab by @kdjstudios on Jan 2, 2020, 14:15

**Submitted by:** Kyle

**Helpdesk:** http://www.servicedesk.answernet.com/profiles/ticket/10125583

**Server:** ALL

**Client/Site:** NA

**Account:** NA

**Issue:**

During a phone call with Leah we believe we found an issue with the holiday fee settings with parent ch... | 1.0 | Holiday Fee Bug - Child accounts - In GitLab by @kdjstudios on Jan 2, 2020, 14:15

**Submitted by:** Kyle

**Helpdesk:** http://www.servicedesk.answernet.com/profiles/ticket/10125583

**Server:** ALL

**Client/Site:** NA

**Account:** NA

**Issue:**

During a phone call with Leah we believe we found an issue with the ... | process | holiday fee bug child accounts in gitlab by kdjstudios on jan submitted by kyle helpdesk server all client site na account na issue during a phone call with leah we believe we found an issue with the holiday fee settings with parent child accounts we setup a pare... | 1 |

23,672 | 2,660,221,062 | IssuesEvent | 2015-03-19 04:05:47 | dartsim/dart | https://api.github.com/repos/dartsim/dart | closed | Bug in FreeJoint helper | Kind: Bug Priority: High | * File: dart/dynamics/FreeJoint.cpp

* Functions compromised: convertToPositions (line 61) and convertToTransform (line 70)

* Problem description: I am generating a set of transformations with rotations around Z with angles in the range [0,2*PI], increasing at each step. I use these Transformations to set the joint po... | 1.0 | Bug in FreeJoint helper - * File: dart/dynamics/FreeJoint.cpp

* Functions compromised: convertToPositions (line 61) and convertToTransform (line 70)

* Problem description: I am generating a set of transformations with rotations around Z with angles in the range [0,2*PI], increasing at each step. I use these Transform... | non_process | bug in freejoint helper file dart dynamics freejoint cpp functions compromised converttopositions line and convertotransform line problem description i am generating a set of transformations with rotations around z with angles in the range increasing at each step i use these transformations to... | 0 |

16,500 | 21,481,344,694 | IssuesEvent | 2022-04-26 18:03:37 | department-of-veterans-affairs/va.gov-team | https://api.github.com/repos/department-of-veterans-affairs/va.gov-team | opened | Iterate on intake form | intake-process-pct | # User story

As a Platform team member, I need a way of submitting a request to the Content team, so that I can request Platform Content team support for Platform website pages.

# Tasks

- [ ] Revise intake form in YAML

- [ ] Confirm that form submissions are appearing on documentation triage board

# Acceptance crit... | 1.0 | Iterate on intake form - # User story

As a Platform team member, I need a way of submitting a request to the Content team, so that I can request Platform Content team support for Platform website pages.

# Tasks

- [ ] Revise intake form in YAML

- [ ] Confirm that form submissions are appearing on documentation triage... | process | iterate on intake form user story as a platform team member i need a way of submitting a request to the content team so that i can request platform content team support for platform website pages tasks revise intake form in yaml confirm that form submissions are appearing on documentation triage boa... | 1 |

362,443 | 25,376,305,556 | IssuesEvent | 2022-11-21 14:21:26 | redhat-plumbers-in-action/advanced-issue-labeler | https://api.github.com/repos/redhat-plumbers-in-action/advanced-issue-labeler | opened | Make it more visible in README which options don't work together :memo: | type: documentation | ### Type of issue

other

### Description

_No response_

### Describe the solution you'd like

_No response_ | 1.0 | Make it more visible in README which options don't work together :memo: - ### Type of issue

other

### Description

_No response_

### Describe the solution you'd like

_No response_ | non_process | make it more visible in readme which options don t work together memo type of issue other description no response describe the solution you d like no response | 0 |

68,199 | 17,191,958,982 | IssuesEvent | 2021-07-16 12:19:54 | angular/angular-cli | https://api.github.com/repos/angular/angular-cli | closed | ng serve fails after some modification to the source code - module.buildInfo.jsonData TypeError: Cannot read property 'jsonData' of undefined | comp: devkit/build-angular freq1: low severity3: broken type: bug/fix | <!--🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅

Oh hi there! 😄

To expedite issue processing please search open and closed issues before submitting a new one.

Existing issues often contain information about workarounds, resolution, or progress updates.

🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅... | 1.0 | ng serve fails after some modification to the source code - module.buildInfo.jsonData TypeError: Cannot read property 'jsonData' of undefined - <!--🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅

Oh hi there! 😄

To expedite issue processing please search open and closed issues before submitting a ... | non_process | ng serve fails after some modification to the source code module buildinfo jsondata typeerror cannot read property jsondata of undefined 🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅 oh hi there 😄 to expedite issue processing please search open and closed issues before submitting a ... | 0 |

219,553 | 7,343,624,460 | IssuesEvent | 2018-03-07 12:02:53 | trimstray/pvimport | https://api.github.com/repos/trimstray/pvimport | opened | Limiting disk usage during import. | Priority: Critical Status: In Progress Type: Maintenance | Next Release: **[testing](https://github.com/trimstray/pvimport/tree/testing)**

Status: **In Progress**

Limiting disk usage during import:

- use **CFQ scheduler**

- use **ionice** to change io scheduling class and priority | 1.0 | Limiting disk usage during import. - Next Release: **[testing](https://github.com/trimstray/pvimport/tree/testing)**

Status: **In Progress**

Limiting disk usage during import:

- use **CFQ scheduler**

- use **ionice** to change io scheduling class and priority | non_process | limiting disk usage during import next release status in progress limiting disk usage during import use cfq scheduler use ionice to change io scheduling class and priority | 0 |

431,055 | 12,474,556,762 | IssuesEvent | 2020-05-29 09:52:05 | StrangeLoopGames/EcoIssues | https://api.github.com/repos/StrangeLoopGames/EcoIssues | closed | Don't show removed titles in lists | Priority: Medium Status: Fixed |

1) It clutters up the list without any use.

2) In case of transferring a deed by mistake to one of the removed titles, an admin needs to reclaim it, as it's stuck otherwise.

I'd have them removed from al... | 1.0 | Don't show removed titles in lists -

1) It clutters up the list without any use.

2) In case of transferring a deed by mistake to one of the removed titles, an admin needs to reclaim it, as it's stuck otherw... | non_process | don t show removed titles in lists it clutters up the list without any use in case of transfering a deed by mistake to one of the removed titles an admin needs to reclaim it as it s stuck otherwise i d have them removed from all such lists actually | 0 |

14,187 | 17,070,591,552 | IssuesEvent | 2021-07-07 12:54:05 | opensrp/web | https://api.github.com/repos/opensrp/web | opened | [FHIR Road Map] Descriptive error messages for all forms | FHIR compatibility | ## Issue Description

Add descriptive error messages to forms. Replace generic 'error occurred' messages in all forms with descriptive messages.

## Resources

### FHIR Resources to reference

## Please share any other relevant information about the change request

---

Remember:

1. To alert the team in t... | True | [FHIR Road Map] Descriptive error messages for all forms - ## Issue Description

Add descriptive error messages to forms. Replace generic 'error occurred' messages in all forms with descriptive messages.

## Resources

### FHIR Resources to reference

## Please share any other relevant information about the cha... | non_process | descriptive error messages for all forms issue description add descriptive error messages to forms replace generic error occurred messages in all forms with descriptive messages resources fhir resources to reference please share any other relevant information about the change request ... | 0 |

8,791 | 11,908,170,982 | IssuesEvent | 2020-03-31 00:10:51 | qgis/QGIS | https://api.github.com/repos/qgis/QGIS | closed | Change location of temporary executables for geoprocessing tools | Feature Request Processing | **Feature description.**

<!-- A clear and concise description of what you want to happen. Ex. QGIS would rock even more if [...] -->

I am not sure if I missed something somewhere, but it would be amazing to add the option to decide where the intermediate geoprocessing executables are stored and executed.

We woul... | 1.0 | Change location of temporary executables for geoprocessing tools - **Feature description.**

<!-- A clear and concise description of what you want to happen. Ex. QGIS would rock even more if [...] -->

I am not sure if I missed something somewhere, but it would be amazing to add the option to decide where the interme... | process | change location of temporary executables for geoprocessing tools feature description i am not sure if i missed something somewhere but it would be amazing to add the option to decide where the intermediate geoprocessing executables are stored and executed we would like to use qgis with saga grass ... | 1 |