Unnamed: 0 (int64, 0 to 832k) | id (float64, 2.49B to 32.1B) | type (stringclasses, 1 value) | created_at (stringlengths, 19 to 19) | repo (stringlengths, 7 to 112) | repo_url (stringlengths, 36 to 141) | action (stringclasses, 3 values) | title (stringlengths, 1 to 744) | labels (stringlengths, 4 to 574) | body (stringlengths, 9 to 211k) | index (stringclasses, 10 values) | text_combine (stringlengths, 96 to 211k) | label (stringclasses, 2 values) | text (stringlengths, 96 to 188k) | binary_label (int64, 0 to 1) |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

16,406 | 21,189,410,565 | IssuesEvent | 2022-04-08 15:43:55 | cypress-io/cypress | https://api.github.com/repos/cypress-io/cypress | closed | Remove bundled typescript from packages/server | stage: needs investigating type: performance 🏃♀️ process: dependencies | ### Current behavior:

TypeScript is bundled in `packages/server` because of `dependency-tree`. It takes about 35MB of space. It should be removed to reduce the bundle size.

### Desired behavior:

TypeScript should be removed from the bundle. We need to find ways to replace `dependency-tree`.

### Steps to repr... | 1.0 | Remove bundled typescript from packages/server - ### Current behavior:

TypeScript is bundled in `packages/server` because of `dependency-tree`. It takes about 35MB of space. It should be removed to reduce the bundle size.

### Desired behavior:

TypeScript should be removed from the bundle. We need to find ways t... | process | remove bundled typescript from packages server current behavior typescript is bundled in packages server because of dependency tree it takes about of space i should be removed to reduce the bundle size desired behavior typescript should be removed from the bundle we need to find ways to r... | 1 |

13,958 | 16,738,408,552 | IssuesEvent | 2021-06-11 06:41:53 | largecats/blog | https://api.github.com/repos/largecats/blog | opened | Stream Processing 101 | /blog/2021/06/10/stream-processing-101/ Gitalk | https://largecats.github.io/blog/2021/06/10/stream-processing-101/

This post is based on a sharing I did on general concepts in stream processing. | 1.0 | Stream Processing 101 - https://largecats.github.io/blog/2021/06/10/stream-processing-101/

This post is based on a sharing I did on general concepts in stream processing. | process | stream processing this post is based on a sharing i did on general concepts in stream processing | 1 |

21,968 | 30,463,807,084 | IssuesEvent | 2023-07-17 08:56:49 | open-telemetry/opentelemetry-collector-contrib | https://api.github.com/repos/open-telemetry/opentelemetry-collector-contrib | closed | [processor/resourcedetection] Docker detector doesn't set any attributes | bug priority:p1 processor/resourcedetection | ### What happened?

After migrating the detection processor to the new config interface in https://github.com/open-telemetry/opentelemetry-collector-contrib/pull/23253, the docker detector stopped setting any attributes

### Collector version

0.81.0

| 1.0 | [processor/resourcedetection] Docker detector doesn't set any attributes - ### What happened?

After migrating the detection processor to the new config interface in https://github.com/open-telemetry/opentelemetry-collector-contrib/pull/23253, the docker detector stopped setting any attributes

### Collector version... | process | docker detector doesn t et any attributes what happened after migrating the detection processor to the new config interface in the docker detector stopped setting any attributes collector version | 1 |

489,683 | 14,111,391,483 | IssuesEvent | 2020-11-07 00:05:28 | AY2021S1-CS2113-T13-3/tp | https://api.github.com/repos/AY2021S1-CS2113-T13-3/tp | closed | Improve CompareCommand such that same user should not be able to compare with himself | priority.High severity.High type.Enhancement | Currently, `CompareCommand` allows same user to compare with him- or herself, which should not be the case.

Also, the current user should not appear in the userlist for comparison. | 1.0 | Improve CompareCommand such that same user should not be able to compare with himself - Currently, `CompareCommand` allows same user to compare with him- or herself, which should not be the case.

Also, the current user should not appear in the userlist for comparison. | non_process | improve comparecommand such that same user should not be able to compare with himself currently comparecommand allows same user to compare with him or herself which should not be the case also the current user should not appear in the userlist for comparison | 0 |

15,496 | 19,703,235,530 | IssuesEvent | 2022-01-12 18:50:13 | googleapis/google-auth-library-python-httplib2 | https://api.github.com/repos/googleapis/google-auth-library-python-httplib2 | opened | Your .repo-metadata.json file has a problem 🤒 | type: process repo-metadata: lint | You have a problem with your .repo-metadata.json file:

Result of scan 📈:

* client_documentation must match pattern "^https://.*" in .repo-metadata.json

☝️ Once you correct these problems, you can close this issue.

Reach out to **go/github-automation** if you have any questions. | 1.0 | Your .repo-metadata.json file has a problem 🤒 - You have a problem with your .repo-metadata.json file:

Result of scan 📈:

* client_documentation must match pattern "^https://.*" in .repo-metadata.json

☝️ Once you correct these problems, you can close this issue.

Reach out to **go/github-automation** if you have a... | process | your repo metadata json file has a problem 🤒 you have a problem with your repo metadata json file result of scan 📈 client documentation must match pattern in repo metadata json ☝️ once you correct these problems you can close this issue reach out to go github automation if you have any questio... | 1 |

4,921 | 3,104,778,026 | IssuesEvent | 2015-08-31 17:36:54 | teeworlds/teeworlds | https://api.github.com/repos/teeworlds/teeworlds | closed | game layer tile set picker + brush manipulation | code review editor | In `editor.cpp` in `DoMapEditor` where the tile set picker gets active it should also copy the current layer's `m_Game` to have a proper rotate and flip behaviour:

`m_TilesetPicker.m_Game = t->m_Game;` | 1.0 | game layer tile set picker + brush manipulation - In `editor.cpp` in `DoMapEditor` where the tile set picker gets active it should also copy the current layer's `m_Game` to have a proper rotate and flip behaviour:

`m_TilesetPicker.m_Game = t->m_Game;` | non_process | game layer tile set picker brush manipulation in editor cpp in domapeditor where the tile set picker gets active it should also copy the current layer s m game to have a proper rotate and flip behaviour m tilesetpicker m game t m game | 0 |

352,244 | 10,533,836,035 | IssuesEvent | 2019-10-01 13:47:51 | jenkins-x/jx | https://api.github.com/repos/jenkins-x/jx | closed | Only release the jx binary once versions has fully passed | area/fox area/quality area/versions backlog kind/enhancement priority/critical | Right now we release the jx binary on every PR. We should make these "pre-releases" and do the final release once versions has passed. This will need to update github, chocolatey, the website, brew dockerhub and dev-env | 1.0 | Only release the jx binary once versions has fully passed - Right now we release the jx binary on every PR. We should make these "pre-releases" and do the final release once versions has passed. This will need to update github, chocolatey, the website, brew dockerhub and dev-env | non_process | only release the jx binary once versions has fully passed right now we release the jx binary on every pr we should make these pre releases and do the final release once versions has passed this will need to update github chocolatey the website brew dockerhub and dev env | 0 |

14,421 | 17,470,591,885 | IssuesEvent | 2021-08-07 03:58:35 | qgis/QGIS | https://api.github.com/repos/qgis/QGIS | closed | Raster Layer Zonal Stats columns issue when raster has no CRS | Processing Bug | ### What is the bug or the crash?

When a raster without any defined CRS is used, the second column normally used to store the area of each region is not always well defined (obviously...) which causes problem depending on the output format:

- **Temporary output**: the _area_ column name is not present thus all fo... | 1.0 | Raster Layer Zonal Stats columns issue when raster has no CRS - ### What is the bug or the crash?

When a raster without any defined CRS is used, the second column normally used to store the area of each region is not always well defined (obviously...) which causes problem depending on the output format:

- **Tempo... | process | raster layer zonal stats columns issue when raster has no crs what is the bug or the crash when a raster without any defined crs is used the second column normally used to store the area of each region is not always well defined obviously which causes problem depending on the output format tempo... | 1 |

26,037 | 4,553,048,623 | IssuesEvent | 2016-09-13 02:17:10 | cakephp/cakephp | https://api.github.com/repos/cakephp/cakephp | closed | getMockForModel uses default datasource when cacheMethods are disabled | Defect testing | When cacheMethods are disabled in CakePHP 2.7.*, getMockForModel uses the default datasource at least once instead of the test datasource. | 1.0 | getMockForModel uses default datasource when cacheMethods are disabled - When cacheMethods are disabled in CakePHP 2.7.*, getMockForModel uses the default datasource at least once instead of the test datasource. | non_process | getmockformodel uses default datasource when cachemethods are disabled when cachemethods are disabled in cakephp getmockformodel makes atleast once use of default datasource instead of test datasource | 0 |

7,412 | 10,534,345,177 | IssuesEvent | 2019-10-01 14:40:13 | codurance/open-space | https://api.github.com/repos/codurance/open-space | closed | Create tslint and .idea code style config | 🐁 1 📊 process | Don't spend too much time just pick default for intellij idea and utilize a standard for tslint (like AirBnB) | 1.0 | Create tslint and .idea code style config - Don't spend too much time just pick default for intellij idea and utilize a standard for tslint (like AirBnB) | process | create tslint and idea code style config don t spend too much time just pick default for intellij idea and utilize a standard for tslint like airbnb | 1 |

12,188 | 3,588,208,773 | IssuesEvent | 2016-01-30 21:24:07 | JayNewstrom/ScreenSwitcher | https://api.github.com/repos/JayNewstrom/ScreenSwitcher | closed | Readme updates | Documentation | - Document `Screen` lifecycle

- Comparison to fragments

- What the goal of the project is (small, focused, back stack, configurable) (no presenter, etc)

- Document that you can do awesome custom transitions using the Animations api | 1.0 | Readme updates - - Document `Screen` lifecycle

- Comparison to fragments

- What the goal of the project is (small, focused, back stack, configurable) (no presenter, etc)

- Document that you can do awesome custom transitions using the Animations api | non_process | readme updates document screen lifecycle comparison to fragments what the goal of the project is small focused back stack configurable no presenter etc document that you can do awesome custom transitions using the animations api | 0 |

19,557 | 25,878,528,262 | IssuesEvent | 2022-12-14 09:39:52 | pytorch/pytorch | https://api.github.com/repos/pytorch/pytorch | closed | DISABLED test_terminate_exit (__main__.ForkTest) | module: multiprocessing triaged module: flaky-tests skipped | Platforms: linux

This test was disabled because it is failing in CI. See [recent examples](https://hud.pytorch.org/flakytest?name=test_terminate_exit&suite=ForkTest&file=test_multiprocessing_spawn.py) and the most recent trunk [workflow logs](https://github.com/pytorch/pytorch/runs/7313915078).

Over the past 3 hours,... | 1.0 | DISABLED test_terminate_exit (__main__.ForkTest) - Platforms: linux

This test was disabled because it is failing in CI. See [recent examples](https://hud.pytorch.org/flakytest?name=test_terminate_exit&suite=ForkTest&file=test_multiprocessing_spawn.py) and the most recent trunk [workflow logs](https://github.com/pytorc... | process | disabled test terminate exit main forktest platforms linux this test was disabled because it is failing in ci see and the most recent trunk over the past hours it has been determined flaky in workflow s with red and green cc vitalyfedyunin | 1 |

290,214 | 21,872,322,505 | IssuesEvent | 2022-05-19 06:58:42 | fragcolor-xyz/chainblocks | https://api.github.com/repos/fragcolor-xyz/chainblocks | opened | Document how to set up and use the Chainblocks-Unity integration plugin | Project: Documentation | **Pitch**

As a game developer interested in using Fragnova assets in the Unity game engine, I want to know how to set up and use the Chainblocks-Unity plugin so that I may be able to load/save Fragnova game assets in my Unity projects.

**Acceptance criteria**

A brief guide on how to set up and use the Chainblocks-... | 1.0 | Document how to set up and use the Chainblocks-Unity integration plugin - **Pitch**

As a game developer interested in using Fragnova assets in the Unity game engine, I want to know how to set up and use the Chainblocks-Unity plugin so that I may be able to load/save Fragnova game assets in my Unity projects.

**Acce... | non_process | document how to set up and use the chainblocks unity integration plugin pitch as a game developer interested in using fragnova assets in the unity game engine i want to know how to set up and use the chainblocks unity plugin so that i may be able to load save fragnova game assets in my unity projects acce... | 0 |

373,007 | 11,031,739,987 | IssuesEvent | 2019-12-06 18:28:32 | PREreview/rapid-prereview | https://api.github.com/repos/PREreview/rapid-prereview | closed | data for chrome and firefox webstores | priority | - [x] icon (@halmos) must respect the exact width and height. See screenshot below and https://developer.chrome.com/webstore/images#icons

<img width="1213" alt="Screen Shot 2019-12-01 at 11 56 44 AM" src="https://user-images.githubusercontent.com/1141327/69919456-ccca8980-1431-11ea-8d8a-f0a9da0df144.png">

- [x] det... | 1.0 | data for chrome and firefox webstores - - [x] icon (@halmos) must respect the exact width and height. See screenshot below and https://developer.chrome.com/webstore/images#icons

<img width="1213" alt="Screen Shot 2019-12-01 at 11 56 44 AM" src="https://user-images.githubusercontent.com/1141327/69919456-ccca8980-1431-1... | non_process | data for chrome and firefox webstores icon halmos must respect the exact width and height see screenshot below and img width alt screen shot at am src detailed description dasaderi or majohansson see screenshot below for instructions img width alt screen shot ... | 0 |

3,542 | 6,583,927,215 | IssuesEvent | 2017-09-13 08:16:56 | DynareTeam/dynare | https://api.github.com/repos/DynareTeam/dynare | closed | Block histval from accepting positive lags | bug preprocessor | `histval` serves for setting past values, not future ones. The Matlab-files are not able to handle this case. The code

```

% Test whether preprocessor recognizes state variables introduced by optimal policy Github #1193

var pai, c, n, r, a;

varexo u;

parameters beta, rho, epsilon, omega, phi, gamma;

... | 1.0 | Block histval from accepting positive lags - `histval` serves for setting past values, not future ones. The Matlab-files are not able to handle this case. The code

```

% Test whether preprocessor recognizes state variables introduced by optimal policy Github #1193

var pai, c, n, r, a;

varexo u;

paramete... | process | block histval from accepting positive lags histval serves for setting past values not future ones the matlab files are not able to handle this case the code test whether preprocessor recognizes state variables introduced by optimal policy github var pai c n r a varexo u parameters ... | 1 |

294,914 | 9,050,393,574 | IssuesEvent | 2019-02-12 08:33:29 | Activiti/Activiti | https://api.github.com/repos/Activiti/Activiti | closed | modeling UI does not show all process variables | priority1 | I loaded some [example process variable json](https://github.com/Activiti/activiti-cloud-runtime-bundle-service/blob/develop/activiti-cloud-starter-runtime-bundle/src/test/resources/processes/variable-mapping-extensions.json#L11) into the modeling UI.

After [changing all the values of the variables to be strings (wh... | 1.0 | modeling UI does not show all process variables - I loaded some [example process variable json](https://github.com/Activiti/activiti-cloud-runtime-bundle-service/blob/develop/activiti-cloud-starter-runtime-bundle/src/test/resources/processes/variable-mapping-extensions.json#L11) into the modeling UI.

After [changing... | non_process | modeling ui does not show all process variables i loaded some into the modeling ui after then i was able to set up the variables and save them but i can t then see them all in the ui only a subset if i then use the button to add another then that one appears in the json but also not in t... | 0 |

20,949 | 27,808,697,648 | IssuesEvent | 2023-03-17 23:23:03 | hsmusic/hsmusic-data | https://api.github.com/repos/hsmusic/hsmusic-data | opened | Three in the Morning (4 1/3 Hours Late Remix)/(Ngame's Bowmix) fixes | scope: fandom type: data fix type: involved process what: art tags what: lyrics what: references & leitmotifs/samples | (4 1/3 Hours Late Remix):

- missing reference to Michael Bowman Remix

(Ngame's Bowmix):

- missing lyrics

- missing reference to Three in the Morning (4 1/3 Hours Late Remix; CaNon edit)

- missing reference/sample to newly included material

Both:

- remove Snowman art tag

Prereq (makes things easier):

- #... | 1.0 | Three in the Morning (4 1/3 Hours Late Remix)/(Ngame's Bowmix) fixes - (4 1/3 Hours Late Remix):

- missing reference to Michael Bowman Remix

(Ngame's Bowmix):

- missing lyrics

- missing reference to Three in the Morning (4 1/3 Hours Late Remix; CaNon edit)

- missing reference/sample to newly included material

... | process | three in the morning hours late remix ngame s bowmix fixes hours late remix missing reference to michael bowman remix ngame s bowmix missing lyrics missing reference to three in the morning hours late remix canon edit missing reference sample to newly included material ... | 1 |

4,518 | 7,360,194,192 | IssuesEvent | 2018-03-10 16:05:35 | ODiogoSilva/assemblerflow | https://api.github.com/repos/ODiogoSilva/assemblerflow | opened | Add better error detection and handling for assembler processes | bug process | As of now, failure in the assembler processes may trigger an error in the `.status` file but it does not trigger the error for the nextflow process. This leads to the assembly not being performed due to some reason and the resume cannot repeat the process, since it terminated successfully as far as nextflow knows.

T... | 1.0 | Add better error detection and handling for assembler processes - As of now, failure in the assembler processes may trigger an error in the `.status` file but it does not trigger the error for the nextflow process. This leads to the assembly not being performed due to some reason and the resume cannot repeat the proce... | process | add better error detection and handling for assembler processes as of now failure in the assembler processes may trigger an error in the status file but it does not trigger the error for the nextflow process this leads to the assembly not being performed due to some reason and the resume cannot repeat the proce... | 1 |

10,970 | 12,992,127,640 | IssuesEvent | 2020-07-23 06:02:10 | ValveSoftware/Proton | https://api.github.com/repos/ValveSoftware/Proton | closed | BATTLETECH (637090) | .NET Game compatibility - Unofficial | I wish to throw this out there to see if anyone knows why this Unity3D game fails to launch when forced to use proton?

At present it crashes and spits out an error log similar to the one linked below. I know there is a native version but it uses OpenGL and has significant performance issues, so I want to test the d3... | True | BATTLETECH (637090) - I wish to throw this out there to see if anyone knows why this Unity3D game fails to launch when forced to use proton?

At present it crashes and spits out an error log similar to the one linked below. I know there is a native version but it uses OpenGL and has significant performance issues, so... | non_process | battletech i wish to throw this out there to see if anyone knows why this game fails to launch when forced to use proton at present it crashes and spits out a error log similar to the one linked below i know there is a native version but it uses opengl and has significant performance issues so i want to ... | 0 |

584,145 | 17,407,536,261 | IssuesEvent | 2021-08-03 08:10:26 | ballerina-platform/ballerina-dev-website | https://api.github.com/repos/ballerina-platform/ballerina-dev-website | closed | Enable Pushing Selected Changes to the Ballerina Live Site | Area/Backend Area/Docs Priority/Highest Type/NewFeature | **Description:**

Need to find and implement the optimal way to enable pushing selected changes to the Ballerina live site.

**Describe your problem(s)**

**Describe your solution(s)**

**Related Issues (optional):**

<!-- Any related issues such as sub tasks, issues reported in other repositories (e.g component ... | 1.0 | Enable Pushing Selected Changes to the Ballerina Live Site - **Description:**

Need to find and implement the optimal way to enable pushing selected changes to the Ballerina live site.

**Describe your problem(s)**

**Describe your solution(s)**

**Related Issues (optional):**

<!-- Any related issues such as sub... | non_process | enable pushing selected changes to the ballerina live site description need to find and implement the optimal way to enable pushing selected changes to the ballerina live site describe your problem s describe your solution s related issues optional suggested labels optional ... | 0 |

137,007 | 20,027,412,224 | IssuesEvent | 2022-02-01 23:11:28 | Hyperobjekt/crs | https://api.github.com/repos/Hyperobjekt/crs | opened | Mockup map with client edits | Design | **Copy changes**

“Schoolboard” → “School District”

“Severity” → “Progress”

“Action” → “Activity”

**Shading + Key**

Binary: introduced, passed (change terminology of severity)

Don't have the same shape for school board and state level in key

**Federal Icon**

Incorporate, have a line connecting it to D.C.

| 1.0 | Mockup map with client edits - **Copy changes**

“Schoolboard” → “School District”

“Severity” → “Progress”

“Action” → “Activity”

**Shading + Key**

Binary: introduced, passed (change terminology of severity)

Don't have the same shape for school board and state level in key

**Federal Icon**

Incorporate, have a line conn... | non_process | mockup map with client edits copy changes “schoolboard” → “school district” “severity” → “progress” “action” → “activity” shading key binary introduced passed change terminology of severity don t have the same shape for school board and state level in key federal icon incorporate have a line conn... | 0 |

17,804 | 23,728,978,274 | IssuesEvent | 2022-08-30 22:49:54 | googleapis/gapic-generator-python | https://api.github.com/repos/googleapis/gapic-generator-python | opened | Implement Showcase integration tests for transport=rest | type: process priority: p1 | Implement Showcase integration tests and add them to CI for transport=REST:

* with numeric enums turned on

* with numeric enums turned off

This is arguably a GA blocker for GA'ing REGAPIC.

(This issue filed as a refinement of #1404) | 1.0 | Implement Showcase integration tests for transport=rest - Implement Showcase integration tests and add them to CI for transport=REST:

* with numeric enums turned on

* with numeric enums turned off

This is arguably a GA blocker for GA'ing REGAPIC.

(This issue filed as a refinement of #1404) | process | implement showcase integration tests for transport rest implement showcase integration tests and add them to ci for transport rest with numeric enums turned on with numeric enums turned off this is arguably a ga blocker for ga ing regapic this issue filed as a refinement of | 1 |

272 | 2,700,749,578 | IssuesEvent | 2015-04-04 14:39:00 | cs2103jan2015-f10-3c/main | https://api.github.com/repos/cs2103jan2015-f10-3c/main | closed | data processing support to show dates on top | component.DataProcessor priority.high type.task | e.g:

display this month

Tuesday, 24 Mar 2015

1. 07:00-08:00 goes to school

2. 12:00-13:00 lunch w OG mates

Wednesday, 25 Mar 2015

1. 18:00-19:00 early dinner w besties

2. 21:00-22:00 night movies | 1.0 | data processing support to show dates on top - e.g:

display this month

Tuesday, 24 Mar 2015

1. 07:00-08:00 goes to school

2. 12:00-13:00 lunch w OG mates

Wednesday, 25 Mar 2015

1. 18:00-19:00 early dinner w besties

2. 21:00-22:00 night movies | process | data processing support to show dates on top e g display this month tuesday mar goes to school lunch w og mates wednesday mar early dinner w besties night movies | 1 |

812,988 | 30,441,299,745 | IssuesEvent | 2023-07-15 04:58:54 | MDAnalysis/mdanalysis | https://api.github.com/repos/MDAnalysis/mdanalysis | closed | [docs] core/topologyattrs page format is broken | help wanted Component-Docs Priority-Critical | ## Expected behavior ##

All docs pages are well-formatted.

## Actual behavior ##

Doc page [11.3.3. Topology attribute objects — [MDAnalysis.core.topologyattrs](https://docs.mdanalysis.org/stable/documentation_pages/core/topologyattrs.html) is not formatted correctly (see screen shot, sorry, long)

- footer with ... | 1.0 | [docs] core/topologyattrs page format is broken - ## Expected behavior ##

All docs pages are well-formatted.

## Actual behavior ##

Doc page [11.3.3. Topology attribute objects — [MDAnalysis.core.topologyattrs](https://docs.mdanalysis.org/stable/documentation_pages/core/topologyattrs.html) is not formatted correctl... | non_process | core topologyattrs page format is broken expected behavior all docs pages are well formatted actual behavior doc page is not formatted correctly see screen shot sorry long footer with authors on the side classes and functions are partially hideen by side menu and at end instead of ... | 0 |

18,271 | 24,350,499,475 | IssuesEvent | 2022-10-02 21:58:33 | qgis/QGIS | https://api.github.com/repos/qgis/QGIS | closed | 'Delete holes' bug when 'area' argument is used | Processing Bug | ### What is the bug or the crash?

I discovered that 'Delete holes' does not work reliably when you use the 'area' argument, i.e. when you want to delete all holes smaller than a specified area.

Sometimes holes larger than the specified area are incorrectly removed.

Therefore I have created a small example in an attac... | 1.0 | 'Delete holes' bug when 'area' argument is used - ### What is the bug or the crash?

I discovered that 'Delete holes' does not work reliably when you use the 'area' argument, i.e. when you want to delete all holes smaller than a specified area.

Sometimes holes larger than the specified area are incorrectly removed.

Th... | process | delete holes bug when area argument is used what is the bug or the crash i discovered that delete holes does not work reliable when you use the area argument i e when you want to delete all holes smaller than a specified area sometimes holes larger than the specified area are incorrectly removed th... | 1 |

67,373 | 27,820,781,289 | IssuesEvent | 2023-03-19 07:45:44 | aws/aws-sdk-go-v2 | https://api.github.com/repos/aws/aws-sdk-go-v2 | closed | rds data api- need way to get all exceptions from BatchExecuteStatement | feature-request service-api | ### Describe the feature

When using BatchExecuteStatement using rdsdata api, a single error from the batch is returned in the error object, with the message stating:

`. Call getNextException to see other errors in the batch.`

There seems to be no way to actually call getNextException or access the rest of the er... | 1.0 | rds data api- need way to get all exceptions from BatchExecuteStatement - ### Describe the feature

When using BatchExecuteStatement using rdsdata api, a single error from the batch is returned in the error object, with the message stating:

`. Call getNextException to see other errors in the batch.`

There seems t... | non_process | rds data api need way to get all exceptions from batchexecutestatement describe the feature when using batchexecutestatement using rdsdata api a single error from the batch is returned in the error object with the message stating call getnextexception to see other errors in the batch there seems t... | 0 |

553,081 | 16,343,374,561 | IssuesEvent | 2021-05-13 02:36:43 | volcano-sh/volcano | https://api.github.com/repos/volcano-sh/volcano | reopened | Can Volcano job support volumeClaimTemplates | area/controllers kind/feature lifecycle/stale priority/important-soon | <!-- This form is for bug reports and feature requests ONLY!

If you're looking for a help then check our [Slack Channel](http://volcano-sh.slack.com/) or have a look at our [dev mailing](https://groups.google.com/forum/#!forum/volcano-sh)

-->

**Is this a BUG REPORT or FEATURE REQUEST?**:

/kind feature

**W... | 1.0 | Can Volcano job support volumeClaimTemplates - <!-- This form is for bug reports and feature requests ONLY!

If you're looking for a help then check our [Slack Channel](http://volcano-sh.slack.com/) or have a look at our [dev mailing](https://groups.google.com/forum/#!forum/volcano-sh)

-->

**Is this a BUG REPORT... | non_process | can volcano job support volumeclaimtemplates this form is for bug reports and feature requests only if you re looking for a help then check our or have a look at our is this a bug report or feature request kind feature what happened old version hope to keep... | 0 |

590,173 | 17,772,692,585 | IssuesEvent | 2021-08-30 15:19:46 | GoogleCloudPlatform/golang-samples | https://api.github.com/repos/GoogleCloudPlatform/golang-samples | closed | vision/product_search: TestGetSimilarProductsURIWithFilter failed | type: bug priority: p2 api: vision samples flakybot: issue | Note: #2189 was also for this test, but it was closed more than 10 days ago. So, I didn't mark it flaky.

----

commit: 88b2a1089882340cbb1a55b67718645c5ee9af99

buildURL: [Build Status](https://source.cloud.google.com/results/invocations/5217657a-08aa-4983-b787-650d10e2e338), [Sponge](http://sponge2/5217657a-08aa-4983-... | 1.0 | vision/product_search: TestGetSimilarProductsURIWithFilter failed - Note: #2189 was also for this test, but it was closed more than 10 days ago. So, I didn't mark it flaky.

----

commit: 88b2a1089882340cbb1a55b67718645c5ee9af99

buildURL: [Build Status](https://source.cloud.google.com/results/invocations/5217657a-08aa-... | non_process | vision product search testgetsimilarproductsuriwithfilter failed note was also for this test but it was closed more than days ago so i didn t mark it flaky commit buildurl status failed test output get similar products uri with filter test go getsimilarproductsuri productsearch ... | 0 |

3,465 | 6,545,833,444 | IssuesEvent | 2017-09-04 07:41:12 | threefoldfoundation/app_backend | https://api.github.com/repos/threefoldfoundation/app_backend | closed | Page with signed ITO agreements | process_duplicate type_feature | Only visible for payment-admins (IYO sub org, next to admins).

Similar as node orders, grouped by status, but with a `Mark as paid` button. Also show how much money they need to transfer (`currency_rate * token_count + " " + currency`) | 1.0 | Page with signed ITO agreements - Only visible for payment-admins (IYO sub org, next to admins).

Similar as node orders, grouped by status, but with a `Mark as paid` button. Also show how much money they need to transfer (`currency_rate * token_count + " " + currency`) | process | page with signed ito agreements only visible for payment admins iyo sub org next to admins similar as node orders grouped by status but with a mark as paid button also show how much money they need to transfer currency rate token count currency | 1 |

31,575 | 11,954,307,292 | IssuesEvent | 2020-04-03 23:11:35 | authelia/authelia | https://api.github.com/repos/authelia/authelia | closed | Add an option to disable "keep me logged in". | P3 Security | From a security point of view, an `allow_keep_me_logged_in` boolean parameter in Authelia configuration file to toggle this feature on or off would be useful. | True | Add an option to disable "keep me logged in". - From a security point of view, an `allow_keep_me_logged_in` boolean parameter in Authelia configuration file to toggle this feature on or off would be useful. | non_process | add an option to disable keep me logged in from a security point of view an allow keep me logged in boolean parameter in authelia configuration file to toggle this feature on or off would be useful | 0 |

493,508 | 14,233,858,757 | IssuesEvent | 2020-11-18 12:47:25 | GSG-G9/IS-Autocomplete | https://api.github.com/repos/GSG-G9/IS-Autocomplete | opened | HEROKU deploy | priority-1 | the project should be deployed on Heroku, and making sure that it is viewed properly | 1.0 | HEROKU deploy - the project should be deployed on Heroku, and making sure that it is viewed properly | non_process | heroku deploy the project should be deployed on heroku and making sure that it is viewed properly | 0 |

161,232 | 13,808,247,551 | IssuesEvent | 2020-10-12 01:44:43 | z3t0/Arduino-IRremote | https://api.github.com/repos/z3t0/Arduino-IRremote | closed | Tiny85: Configure different send pin? | Documentation | **Board:** Adafruit Trinket 5V

**Library Version:** 2.1.0

**Protocol:** Any

The Trinket uses a Tiny85 and the remote library is configured to use pin 1. How can I configure it to use a different output pin? Pin 1 is connected to the builtin LED which is pulsed on startup.

| 1.0 | Tiny85: Configure different send pin? - **Board:** Adafruit Trinket 5V

**Library Version:** 2.1.0

**Protocol:** Any

The Trinket uses a Tiny85 and the remote library is configured to use pin 1. How can I configure it to use a different output pin? Pin 1 is connected to the builtin LED which is pulsed on startup.

... | non_process | configure different send pin board adafruit trinket library version protocol any the trinket uses a and the remote library is configured to use pin how can i configure it to use a different output pin pin is connected to the builtin led which is pulsed on startup | 0 |

15,878 | 20,068,377,959 | IssuesEvent | 2022-02-04 01:17:50 | nion-software/nionswift | https://api.github.com/repos/nion-software/nionswift | opened | Rectangle masks does not draw consistently when partially or entirely out of bounds | type - bug level - easy f - processing f - filtering/masking | - Ellipses seem to work

- Rotated rectangles mostly work, but may throw exceptions

- Rectangles mostly fail when moved off left/top edges

| 1.0 | Rectangle masks does not draw consistently when partially or entirely out of bounds - - Ellipses seem to work

- Rotated rectangles mostly work, but may throw exceptions

- Rectangles mostly fail when moved off left/top edges

| process | rectangle masks does not draw consistently when partially or entirely out of bounds ellipses seem to work rotated rectangles mostly work but may throw exceptions rectangles mostly fail when moved off left top edges | 1 |

10,729 | 3,420,704,747 | IssuesEvent | 2015-12-08 15:52:47 | WP-API/WP-API | https://api.github.com/repos/WP-API/WP-API | closed | "Philosophy Lesson" in "Extending" should live in Philosophy | Documentation Enhancement | It's a more human-readable version of why you should use WP-API, and why not. | 1.0 | "Philosophy Lesson" in "Extending" should live in Philosophy - It's a more human-readable version of why you should use WP-API, and why not. | non_process | philosophy lesson in extending should live in philosophy it s a more human readable version of why you should use wp api and why not | 0 |

16,253 | 20,813,208,625 | IssuesEvent | 2022-03-18 06:59:29 | equinor/MAD-VSM-WEB | https://api.github.com/repos/equinor/MAD-VSM-WEB | closed | Verify what code is currently running | back-end front-end process improvement | ### User-story

As a tester,

I need to verify what features are currently running in the browser,

So that I can do a good test and test the right thing.

### Background

This is important to get in because of browser caching. How can someone verify that they are testing the correct build?

We need to be confident t... | 1.0 | Verify what code is currently running - ### User-story

As a tester,

I need to verify what features are currently running in the browser,

So that I can do a good test and test the right thing.

### Background

This is important to get in because of browser caching. How can someone verify that they are testing the c... | process | verify what code is currently running user story as a tester i need to verify what features are currently running in the browser so that i can do a good test and test the right thing background this is important to get in because of browser caching how can someone verify that they are testing the c... | 1 |

256,265 | 22,042,416,501 | IssuesEvent | 2022-05-29 15:01:41 | SAA-SDT/eac-cpf-schema | https://api.github.com/repos/SAA-SDT/eac-cpf-schema | closed | <relationType> | Element Tested by Schema Team Comments period | ## Relation Type

- add new optional, repeatable text element `<relationType>` as child element of `<relation>`

- add **optional attributes**

`@audience`

`@conventationDeclarationReference`

`@id`

`@languageOfElement`

`@localType`

`@localTypeDeclarationReference`

`@maintenanceEventReference`

`@scriptOfEl... | 1.0 | <relationType> - ## Relation Type

- add new optional, repeatable text element `<relationType>` as child element of `<relation>`

- add **optional attributes**

`@audience`

`@conventationDeclarationReference`

`@id`

`@languageOfElement`

`@localType`

`@localTypeDeclarationReference`

`@maintenanceEventReferen... | non_process | relation type add new optional repeatable text element as child element of add optional attributes audience conventationdeclarationreference id languageofelement localtype localtypedeclarationreference maintenanceeventreference scriptofelement sourcer... | 0 |

5,833 | 8,665,884,428 | IssuesEvent | 2018-11-29 01:21:41 | cityofaustin/techstack | https://api.github.com/repos/cityofaustin/techstack | closed | Draft process page for How to Start a Farmers Market Business | Content Type: Process Page Department: Public Health Site Content Size: S Team: Content | Current: chrome-extension://oemmndcbldboiebfnladdacbdfmadadm/http://austintexas.gov/sites/default/files/files/Health/Environmental/Food/How_to_Start_a_Farmers_Market_Booth_Business_2018.pdf

Drive: | 1.0 | Draft process page for How to Start a Farmers Market Business - Current: chrome-extension://oemmndcbldboiebfnladdacbdfmadadm/http://austintexas.gov/sites/default/files/files/Health/Environmental/Food/How_to_Start_a_Farmers_Market_Booth_Business_2018.pdf

Drive: | process | draft process page for how to start a farmers market business current chrome extension oemmndcbldboiebfnladdacbdfmadadm drive | 1 |

279,172 | 30,702,459,793 | IssuesEvent | 2023-07-27 01:32:00 | nidhi7598/linux-3.0.35_CVE-2018-13405 | https://api.github.com/repos/nidhi7598/linux-3.0.35_CVE-2018-13405 | closed | CVE-2020-29368 (High) detected in linux-stable-rtv3.8.6 - autoclosed | Mend: dependency security vulnerability | ## CVE-2020-29368 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linux-stable-rtv3.8.6</b></p></summary>

<p>

<p>Julia Cartwright's fork of linux-stable-rt.git</p>

<p>Library home page... | True | CVE-2020-29368 (High) detected in linux-stable-rtv3.8.6 - autoclosed - ## CVE-2020-29368 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linux-stable-rtv3.8.6</b></p></summary>

<p>

<p>... | non_process | cve high detected in linux stable autoclosed cve high severity vulnerability vulnerable library linux stable julia cartwright s fork of linux stable rt git library home page a href found in head commit a href found in base branch master vulnerable... | 0 |

210,804 | 7,194,830,636 | IssuesEvent | 2018-02-04 10:27:15 | elor/tuvero | https://api.github.com/repos/elor/tuvero | closed | KO tree: corrected results do not yet change the subsequent games | backend priority 5: feature | Changed results in the KO tree are saved, but they do not yet affect the subsequent games they enable.

The user would expect to be able to correct a game that has just finished and to undo a game in progress.

An elegant solution would be to ... all subsequent games as... | 1.0 | KO tree: corrected results do not yet change the subsequent games - Changed results in the KO tree are saved, but they do not yet affect the subsequent games they enable.

The user would expect to be able to correct a game that has just finished and a game in progress... | non_process | ko tree corrected results do not yet change the subsequent games changed results in the ko tree are saved but they do not yet affect the subsequent games they enable the user would expect to be able to correct a game that has just finished and a game in progress... | 0 |

55,658 | 14,619,844,349 | IssuesEvent | 2020-12-22 18:35:42 | department-of-veterans-affairs/va.gov-team | https://api.github.com/repos/department-of-veterans-affairs/va.gov-team | opened | 508-defect-2 [KEYBOARD]: Focus MUST be returned to the Edit button when Update page is pressed or clicked | 508-defect-2 508-issue-focus-mgmt 508/Accessibility HLR vsa | # [508-defect-2](https://github.com/department-of-veterans-affairs/va.gov-team/blob/master/platform/accessibility/guidance/defect-severity-rubric.md#508-defect-2)

<!--

Enter an issue title using the format [ERROR TYPE]: Brief description of the problem

---

[SCREENREADER]: Edit buttons need aria-label for context

... | 1.0 | 508-defect-2 [KEYBOARD]: Focus MUST be returned to the Edit button when Update page is pressed or clicked - # [508-defect-2](https://github.com/department-of-veterans-affairs/va.gov-team/blob/master/platform/accessibility/guidance/defect-severity-rubric.md#508-defect-2)

<!--

Enter an issue title using the format [E... | non_process | defect focus must be returned to the edit button when update page is pressed or clicked enter an issue title using the format brief description of the problem edit buttons need aria label for context add another user link will not receive keyboard focus heading levels should ... | 0 |

496,096 | 14,332,232,484 | IssuesEvent | 2020-11-27 01:45:53 | dotnetcore/Natasha | https://api.github.com/repos/dotnetcore/Natasha | closed | Recognition of static and new static is not supported | low-priority | parent class:

```csharp

public class T1

{

public static void A() { }

public virtual void B() { }

public virtual void C() { }

public virtual void D() { }

public void E() { }

}

```

child class:

```csharp

public class T2 : T1

{

public... | 1.0 | Recognition of static and new static is not supported - parent class:

```csharp

public class T1

{

public static void A() { }

public virtual void B() { }

public virtual void C() { }

public virtual void D() { }

public void E() { }

}

```

child class:

```csharp

public class T2 ... | non_process | recognition of static and new static is not supported parent class csharp public class public static void a public virtual void b public virtual void c public virtual void d public void e child class csharp public class ... | 0 |

310,352 | 26,711,620,452 | IssuesEvent | 2023-01-28 01:14:18 | stockmann-lab/shim_amp_hardware_linear | https://api.github.com/repos/stockmann-lab/shim_amp_hardware_linear | opened | Amp ctrl (D3)/power (D1) testing of design changes | testing needed | Finish testing changes before next prototyping round (ctrl board D4, power board D2)

- [ ] New offset calibration circuit

- Test the offset cal on all channels (inc. forcing the offset-cal on while OPA548 is enabled, to see where current flows through the collector resistors)

- On "shutdown logic test" board, ... | 1.0 | Amp ctrl (D3)/power (D1) testing of design changes - Finish testing changes before next prototyping round (ctrl board D4, power board D2)

- [ ] New offset calibration circuit

- Test the offset cal on all channels (inc. forcing the offset-cal on while OPA548 is enabled, to see where current flows through the collect... | non_process | amp ctrl power testing of design changes finish testing changes before next prototyping round ctrl board power board new offset calibration circuit test the offset cal on all channels inc forcing the offset cal on while is enabled to see where current flows through the collector resistor... | 0 |

147,605 | 19,522,851,883 | IssuesEvent | 2021-12-29 22:31:46 | swagger-api/swagger-codegen | https://api.github.com/repos/swagger-api/swagger-codegen | opened | CVE-2021-20190 (High) detected in multiple libraries | security vulnerability | ## CVE-2021-20190 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>jackson-databind-2.4.5.jar</b>, <b>jackson-databind-2.8.9.jar</b>, <b>jackson-databind-2.8.8.jar</b>, <b>jackson-data... | True | CVE-2021-20190 (High) detected in multiple libraries - ## CVE-2021-20190 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>jackson-databind-2.4.5.jar</b>, <b>jackson-databind-2.8.9.jar<... | non_process | cve high detected in multiple libraries cve high severity vulnerability vulnerable libraries jackson databind jar jackson databind jar jackson databind jar jackson databind jar jackson databind jar jackson databind jar general data bi... | 0 |

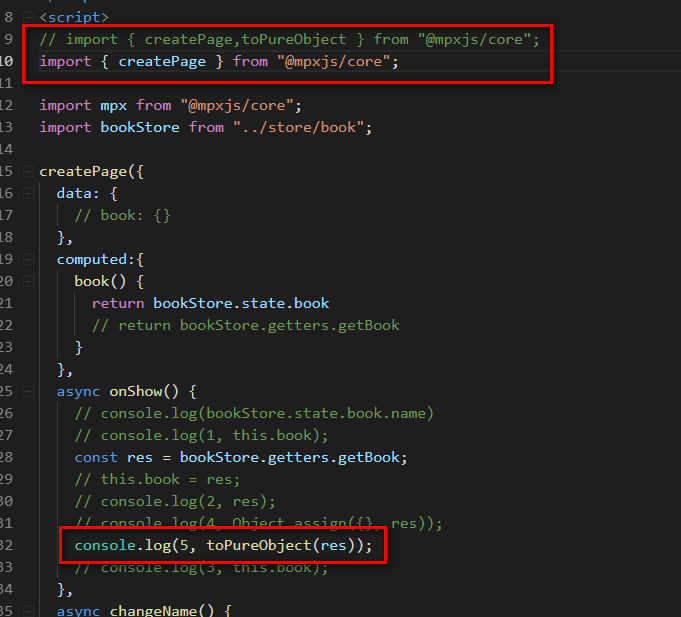

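6,753 | 9,880,372,549 | IssuesEvent | 2019-06-24 12:28:18 | didi/mpx | https://api.github.com/repos/didi/mpx | closed | Errors in a function are no longer thrown once it is marked async | processing | As the screenshot shows, toPureObject is not imported, so an error should be thrown. But once async is added in front of onShow, the error disappears and the code does not continue executing. Removing async makes the error get thrown again.

| 1.0 | Errors in a function are no longer thrown once it is marked async - As the screenshot shows, toPureObject is not imported, so an error should be thrown. But once async is added in front of onShow, the error disappears and the code does not continue executing. Removing async makes the error get thrown again.

| process | errors in a function are no longer thrown once it is marked async as the screenshot shows topureobject is not imported so an error should be thrown but once async is added in front of onshow the error disappears and the code does not continue executing removing async makes the error get thrown again | 1 |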

89,510 | 15,830,754,911 | IssuesEvent | 2021-04-06 12:53:39 | rsoreq/zenbot | https://api.github.com/repos/rsoreq/zenbot | reopened | CVE-2019-20149 (High) detected in kind-of-6.0.2.tgz | security vulnerability | ## CVE-2019-20149 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>kind-of-6.0.2.tgz</b></p></summary>

<p>Get the native type of a value.</p>

<p>Library home page: <a href="https://regi... | True | CVE-2019-20149 (High) detected in kind-of-6.0.2.tgz - ## CVE-2019-20149 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>kind-of-6.0.2.tgz</b></p></summary>

<p>Get the native type of a ... | non_process | cve high detected in kind of tgz cve high severity vulnerability vulnerable library kind of tgz get the native type of a value library home page a href path to dependency file zenbot package json path to vulnerable library zenbot node modules snapdragon node node mo... | 0 |

8,965 | 12,069,922,403 | IssuesEvent | 2020-04-16 16:47:36 | googleapis/google-cloud-go | https://api.github.com/repos/googleapis/google-cloud-go | opened | monitoring: re-enable generation after breaking change comes through | api: monitoring type: process | There is a breaking change coming to this library. We will need to:

- stop generation

- Mark v1(of apiv3) as deprecated

- re-enable generation under v2 after breaking change is merged

- Update deprecation notice to let users know they should use v2 | 1.0 | monitoring: re-enable generation after breaking change comes through - There is a breaking change coming to this library. We will need to:

- stop generation

- Mark v1(of apiv3) as deprecated

- re-enable generation under v2 after breaking change is merged

- Update deprecation notice to let users know they should ... | process | monitoring re enable generation after breaking change comes through there is a breaking change coming to this library we will need to stop generation mark of as deprecated re enable generation under after breaking change is merged update deprecation notice to let users know they should use | 1 |

10,878 | 13,649,116,510 | IssuesEvent | 2020-09-26 12:53:53 | timberio/vector | https://api.github.com/repos/timberio/vector | closed | New `format_timestamp` remap function | domain: mapping domain: processing transform: remap type: feature | A `format_timestamp` function takes a timestamp as argument and formats it according to [Rust's strftime format](https://docs.rs/chrono/0.3.1/chrono/format/strftime/index.html).

Note, we explicitly opted not to use the `strftime` function name, since our remap syntax function naming rules use the `format_` prefix fo... | 1.0 | New `format_timestamp` remap function - A `format_timestamp` function takes a timestamp as argument and formats it according to [Rust's strftime format](https://docs.rs/chrono/0.3.1/chrono/format/strftime/index.html).

Note, we explicitly opted not to use the `strftime` function name, since our remap syntax function ... | process | new format timestamp remap function a format timestamp function takes a timestamp as argument and formats it according to note we explicitly opted not to use the strftime function name since our remap syntax function naming rules use the format prefix for formatting string example ... | 1 |
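
The behaviour described in this issue, taking a timestamp and rendering it with a strftime-style format string, can be sketched as follows. This is not Vector's remap implementation: it uses Python's `datetime.strftime` as a stand-in for the Rust formatter the issue links to, and it assumes only the common specifiers shown here behave the same in both.

```python
from datetime import datetime, timezone


def format_timestamp(ts: datetime, fmt: str) -> str:
    """Render a timestamp using a strftime-style format string."""
    return ts.strftime(fmt)


# Hypothetical input: the creation time of this issue, taken as UTC.
ts = datetime(2020, 9, 26, 12, 53, 53, tzinfo=timezone.utc)

print(format_timestamp(ts, "%Y-%m-%d %H:%M:%S"))  # date and time
print(format_timestamp(ts, "%d"))                 # day of month only
```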

9,891 | 12,890,275,957 | IssuesEvent | 2020-07-13 15:45:05 | MicrosoftDocs/azure-devops-docs | https://api.github.com/repos/MicrosoftDocs/azure-devops-docs | closed | Token for the current date seems missing | Pri2 devops-cicd-process/tech devops/prod doc-enhancement | Please also include a token for the current day. We already have a token for yyyymmdd. I don't know if its not supported or missing in documentation but we needed a token for just the day (dd) component of the date.

[Enter feedback here]

---

#### Document Details

⚠ *Do not edit this section. It is required fo... | 1.0 | Token for the current date seems missing - Please also include a token for the current day. We already have a token for yyyymmdd. I don't know if its not supported or missing in documentation but we needed a token for just the day (dd) component of the date.

[Enter feedback here]

---

#### Document Details

⚠ *... | process | token for the current date seems missing please also include a token for the current day we already have a token for yyyymmdd i don t know if its not supported or missing in documentation but we needed a token for just the day dd component of the date document details ⚠ do not edit this sec... | 1 |

1,694 | 4,345,741,284 | IssuesEvent | 2016-07-29 13:49:09 | openvstorage/framework-alba-plugin | https://api.github.com/repos/openvstorage/framework-alba-plugin | closed | Speed up listing ALBA backends and presets during add vPool | priority_urgent process_wontfix type_bug | When you create a vPool and load the local backend it takes ages before the data is shown. We should make the vPool creation wizard much snappier.

Some suggestions:

* Load only the presets of the selected backend.

* Don't always check if policies are in use

* Only fetch the name of the preset instead of the preset ... | 1.0 | Speed up listing ALBA backends and presets during add vPool - When you create a vPool and load the local backend it takes ages before the data is shown. We should make the vPool creation wizard much snappier.

Some suggestions:

* Load only the presets of the selected backend.

* Don't always check if policies are in u... | process | speed up listing alba backends and presets during add vpool when you create a vpool and load the local backend it takes ages before the data is shown we should make the vpool creation wizard much snappier some suggestions load only the presets of the selected backend don t always check if policies are in u... | 1 |

7,742 | 10,863,261,944 | IssuesEvent | 2019-11-14 14:49:49 | openopps/openopps-platform | https://api.github.com/repos/openopps/openopps-platform | opened | Change EST to ET | Apply Process State Dept. | Who: All users

What: Change EST to ET

Why: To be consistent and avoid confusion

Acceptance Criteria:

Change instances of EST to be ET

Reason: EST is only half of the year as well as EDT so ET should be used

Changes should be made:

- State: Next steps page

- State: What's next page

- State: Update application page

- S... | 1.0 | Change EST to ET - Who: All users

What: Change EST to ET

Why: To be consistent and avoid confusion

Acceptance Criteria:

Change instances of EST to be ET

Reason: EST is only half of the year as well as EDT so ET should be used

Changes should be made:

- State: Next steps page

- State: What's next page

- State: Update a... | process | change est to et who all users what change est to et why to be consistent and avoid confusion acceptance criteria change instances of est to be et reason est is only half of the year as well as edt so et should be used changes should be made state next steps page state what s next page state update a... | 1 |
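
The reasoning in this issue, that EST only applies for part of the year and so the generic "ET" is the safer label, can be illustrated with a small sketch. It assumes the US daylight-saving rules in force since 2007 (second Sunday in March to first Sunday in November) and works at whole-day granularity, ignoring the 2 a.m. switch. The helper names are hypothetical.

```python
from datetime import date, timedelta


def nth_sunday(year: int, month: int, n: int) -> date:
    """Return the n-th Sunday of the given month."""
    first = date(year, month, 1)
    # weekday(): Monday is 0 and Sunday is 6.
    days_until_sunday = (6 - first.weekday()) % 7
    return first + timedelta(days=days_until_sunday + 7 * (n - 1))


def eastern_abbreviation(day: date) -> str:
    """Return 'EDT' during daylight-saving time, otherwise 'EST'."""
    dst_start = nth_sunday(day.year, 3, 2)   # second Sunday in March
    dst_end = nth_sunday(day.year, 11, 1)    # first Sunday in November
    return "EDT" if dst_start <= day < dst_end else "EST"


print(eastern_abbreviation(date(2019, 7, 1)))
print(eastern_abbreviation(date(2019, 11, 14)))
```

A label that has to be correct on every date either needs this kind of lookup or, as the issue proposes, the season-independent "ET".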

118,945 | 17,602,834,027 | IssuesEvent | 2021-08-17 13:49:52 | harrinry/nifi | https://api.github.com/repos/harrinry/nifi | opened | CVE-2020-9548 (High) detected in multiple libraries | security vulnerability | ## CVE-2020-9548 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>jackson-databind-2.9.7.jar</b>, <b>jackson-databind-2.9.6.jar</b>, <b>jackson-databind-2.9.5.jar</b>, <b>jackson-datab... | True | CVE-2020-9548 (High) detected in multiple libraries - ## CVE-2020-9548 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>jackson-databind-2.9.7.jar</b>, <b>jackson-databind-2.9.6.jar</b... | non_process | cve high detected in multiple libraries cve high severity vulnerability vulnerable libraries jackson databind jar jackson databind jar jackson databind jar jackson databind jar jackson databind jar jackson databind jar jackson databind ... | 0 |

234,470 | 7,721,504,575 | IssuesEvent | 2018-05-24 05:47:35 | WordImpress/Give | https://api.github.com/repos/WordImpress/Give | closed | fix(donation): prevent WP users from being created if donation fails | 3-reported high-priority urgent | ## User Story

As a `Give Admin`, I am experiencing a lot of spam user registrations through my Give donation forms. I want to prevent that from happening.

## Current Behavior

I currently am using both the Akismet integration and also the functionality plugin [Stop Donor Spam](https://github.com/mathetos/Stop-Donor... | 1.0 | fix(donation): prevent WP users from being created if donation fails - ## User Story

As a `Give Admin`, I am experiencing a lot of spam user registrations through my Give donation forms. I want to prevent that from happening.

## Current Behavior

I currently am using both the Akismet integration and also the functi... | non_process | fix donation prevent wp users from being created if donation fails user story as a give admin i am experiencing a lot of spam user registrations through my give donation forms i want to prevent that from happening current behavior i currently am using both the akismet integration and also the functi... | 0 |

327,733 | 28,080,369,903 | IssuesEvent | 2023-03-30 05:39:39 | cockroachdb/cockroach | https://api.github.com/repos/cockroachdb/cockroach | opened | roachtest: sqlsmith/setup=seed/setting=no-ddl failed | C-test-failure O-robot O-roachtest branch-release-23.1 | roachtest.sqlsmith/setup=seed/setting=no-ddl [failed](https://teamcity.cockroachdb.com/buildConfiguration/Cockroach_Nightlies_RoachtestNightlyGceBazel/9329923?buildTab=log) with [artifacts](https://teamcity.cockroachdb.com/buildConfiguration/Cockroach_Nightlies_RoachtestNightlyGceBazel/9329923?buildTab=artifacts#/sqlsm... | 2.0 | roachtest: sqlsmith/setup=seed/setting=no-ddl failed - roachtest.sqlsmith/setup=seed/setting=no-ddl [failed](https://teamcity.cockroachdb.com/buildConfiguration/Cockroach_Nightlies_RoachtestNightlyGceBazel/9329923?buildTab=log) with [artifacts](https://teamcity.cockroachdb.com/buildConfiguration/Cockroach_Nightlies_Roa... | non_process | roachtest sqlsmith setup seed setting no ddl failed roachtest sqlsmith setup seed setting no ddl with on release test artifacts and logs in artifacts sqlsmith setup seed setting no ddl run sqlsmith go error pq internal error regproc resolvefunction unimplemented stmt with ... | 0 |

303,806 | 23,040,578,371 | IssuesEvent | 2022-07-23 04:31:46 | wp-graphql/wp-graphql | https://api.github.com/repos/wp-graphql/wp-graphql | closed | Have docs site pull extension docs from their repo README files | Documentation :rocket: Actionable | The docs.wpgraphql.com site should pull the README from each extension as the content for the docs shown for that extension. This way, each extension can maintain their canonical docs in their repo and be reflected in the searchable docs site.

Additional docs required for extensions should be maintained separately ... | 1.0 | Have docs site pull extension docs from their repo README files - The docs.wpgraphql.com site should pull the README from each extension as the content for the docs shown for that extension. This way, each extension can maintain their canonical docs in their repo and be reflected in the searchable docs site.

Additi... | non_process | have docs site pull extension docs from their repo readme files the docs wpgraphql com site should pull the readme from each extension as the content for the docs shown for that extension this way each extension can maintain their canonical docs in their repo and be reflected in the searchable docs site additi... | 0 |

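307,805 | 23,217,324,426 | IssuesEvent | 2022-08-02 15:02:52 | woowa-techcamp-2022/android-accountbook-12 | https://api.github.com/repos/woowa-techcamp-2022/android-accountbook-12 | closed | Settings screen (+ payment method and category entry screens): organize components and implement detailed features | documentation💡 feature🔥 refactor🛠 진연주🍿 | ### 📌 Description

Split the Settings screen and the payment method and income/expense category entry screens into components, and implement additional detailed features

### 🛠 ToDo

- [x] Split the payment method input screen into components

- [x] Split the income/expense category input screen into components

- [x] Split the settings screen into components

- [x] Check for duplicate names when entering a payment method

- [x] Check for duplicate names when entering income/expense

- [x] When the name is a duplicate, notify the user with a snackbar

- [x] When entering a payment method or income/expense, because of the soft keyboard... | 1.0 | Settings screen (+ payment method and category entry screens): organize components and implement detailed features - ### 📌 Description

Split the Settings screen and the payment method and income/expense category entry screens into components, and implement additional detailed features

### 🛠 ToDo

- [x] Split the payment method input screen into components

- [x] Split the income/expense category input screen into components

- [x] Split the settings screen into components

- [x] Check for duplicate names when entering a payment method

- [x] Check for duplicate names when entering income/expense

- [x] When the name is a duplicate ... | non_process | settings screen payment method and category entry screens organize components and implement detailed features 📌 description split the settings screen and the payment method and income expense category entry screens into components and implement additional detailed features 🛠 todo split the payment method input screen into components split the income expense category input screen into components split the settings screen into components check for duplicate names when entering a payment method check for duplicate names when entering income expense when the name is a duplicate notify via snackbar... | 0 |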

34,726 | 30,322,347,820 | IssuesEvent | 2023-07-10 20:17:38 | ProjectPythia/projectpythia.github.io | https://api.github.com/repos/ProjectPythia/projectpythia.github.io | closed | Issue with dataset | infrastructure | Do the CESM2_sst_data.nc and CESM2_grid_variables.nc in https://github.com/ProjectPythia/pythia-datasets/tree/main/data need to be updated. The format for date and time seem to have an issue | 1.0 | Issue with dataset - Do the CESM2_sst_data.nc and CESM2_grid_variables.nc in https://github.com/ProjectPythia/pythia-datasets/tree/main/data need to be updated. The format for date and time seem to have an issue | non_process | issue with dataset do the sst data nc and grid variables nc in need to be updated the format for date and time seem to have an issue | 0 |

350,894 | 25,005,369,491 | IssuesEvent | 2022-11-03 11:23:23 | tech-acs/chimera-docs | https://api.github.com/repos/tech-acs/chimera-docs | opened | Fill in sections of 'Users' documentation | documentation enhancement | Introduction section and its sub-sections

User interface section and all its sub-sections

| 1.0 | Fill in sections of 'Users' documentation - Introduction section and its sub-sections

User interface section and all its sub-sections

| non_process | fill in sections of users documentation introduction section and its sub sections user interface section and all its sub sections | 0 |

2,184 | 5,032,365,606 | IssuesEvent | 2016-12-16 10:57:57 | DevExpress/testcafe-hammerhead | https://api.github.com/repos/DevExpress/testcafe-hammerhead | opened | Figure out how we can emulate meta[name="referrer"] tag behavior | AREA: client AREA: server SYSTEM: URL processing TYPE: bug | We have a problem with it on https://login.yahoo.com

This page contains a `meta[name="referrer"]` tag, because of which the referrer has the value `http://localhost:1401`

`<meta name="referrer" content... | 1.0 | Figure out how we can emulate meta[name="referrer"] tag behavior - We have a problem with it on https://login.yahoo.com

This page contains a `meta[name="referrer"]` tag, because of which the refe... | process | figure out how we can emulate meta tag behavior we have a problem with it on this page contains a meta tag because of which the referrer has the value | 1 |

5,375 | 8,203,286,127 | IssuesEvent | 2018-09-02 19:33:24 | EasyEngine/easyengine | https://api.github.com/repos/EasyEngine/easyengine | opened | Look into static analysis tools for EE | component/development-process | We can use something like - [PHPMetrics](http://www.phpmetrics.org/) or [SonarQube](https://github.com/SonarSource/sonar-php) and integrate it with our CI servers to continually monitor our code quality and other metrics like cyclometric complexity etc... so that we're aware which part of our codebase is more bloated a... | 1.0 | Look into static analysis tools for EE - We can use something like - [PHPMetrics](http://www.phpmetrics.org/) or [SonarQube](https://github.com/SonarSource/sonar-php) and integrate it with our CI servers to continually monitor our code quality and other metrics like cyclometric complexity etc... so that we're aware whi... | process | look into static analysis tools for ee we can use something like or and integrate it with our ci servers to continually monitor our code quality and other metrics like cyclometric complexity etc so that we re aware which part of our codebase is more bloated and needs refactoring | 1 |

21,512 | 29,799,390,948 | IssuesEvent | 2023-06-16 06:51:13 | open-telemetry/opentelemetry-collector-contrib | https://api.github.com/repos/open-telemetry/opentelemetry-collector-contrib | closed | Metrics in k8s processor needs refactor | bug help wanted good first issue processor/k8sattributes | **Describe the bug**

Metrics exposed by k8s processor lack meaningful descriptions and have an invalid format.

**Additional context**

As mentioned in comment https://github.com/open-telemetry/opentelemetry-collector-contrib/pull/2199#discussion_r573754727 there is need to provide some additional information about ... | 1.0 | Metrics in k8s processor needs refactor - **Describe the bug**

Metrics exposed by k8s processor lack meaningful descriptions and have an invalid format.

**Additional context**

As mentioned in comment https://github.com/open-telemetry/opentelemetry-collector-contrib/pull/2199#discussion_r573754727 there is need to ... | process | metrics in processor needs refactor describe the bug metrics exposed by processor lack meaningful descriptions and have an invalid format additional context as mentioned in comment there is need to provide some additional information about meaning of those metrics also need to change their format... | 1 |

202,622 | 15,287,850,778 | IssuesEvent | 2021-02-23 16:12:41 | pangeo-data/climpred | https://api.github.com/repos/pangeo-data/climpred | opened | resolve skipped tests | testing | currently there are 17 skipped/silent tests. Ideally, there should be none. | 1.0 | resolve skipped tests - currently there are 17 skipped/silent tests. Ideally, there should be none. | non_process | resolve skipped tests currently there are skipped silent tests ideally there should be none | 0 |

9,065 | 12,138,658,265 | IssuesEvent | 2020-04-23 17:36:08 | GoogleCloudPlatform/python-docs-samples | https://api.github.com/repos/GoogleCloudPlatform/python-docs-samples | closed | remove gcp-devrel-py-tools from kms/api-client/requirements-test.txt | priority: p2 remove-gcp-devrel-py-tools type: process | remove gcp-devrel-py-tools from kms/api-client/requirements-test.txt | 1.0 | remove gcp-devrel-py-tools from kms/api-client/requirements-test.txt - remove gcp-devrel-py-tools from kms/api-client/requirements-test.txt | process | remove gcp devrel py tools from kms api client requirements test txt remove gcp devrel py tools from kms api client requirements test txt | 1 |

14,944 | 8,711,287,270 | IssuesEvent | 2018-12-06 18:46:40 | szeged/webrender | https://api.github.com/repos/szeged/webrender | closed | (Re)use the Descriptor sets more efficiently | dx12 enhancement in progress performance | Currently we are allocating a lot of descriptor sets in a pool, and DX12 does not like this very much. But without it we can run out of them easily.

We should be more conservative with the allocation and reuse more of the sets. | True | (Re)use the Descriptor sets more efficiently - Currently we are allocating a lot of descriptor sets in a pool, and DX12 does not like this very much. But without it we can run out of them easily.

We should be more conservative with the allocation and reuse more of the sets. | non_process | re use the descriptor sets more efficiently currently we are allocating a lot of descriptor sets in a pool and does not like this very much but without it we can run out of them easily we should be more conservative with the allocation and reuse more of the sets | 0 |

22,289 | 30,842,316,315 | IssuesEvent | 2023-08-02 11:32:45 | NationalSecurityAgency/ghidra | https://api.github.com/repos/NationalSecurityAgency/ghidra | closed | Decompiler Ignores Possibility of NaN in Floating Point Comparison | Feature: Processor/x86 Status: Internal | **Describe the bug**

The decompiler incorrectly decompiles a floating point comparison of the form:

```

ucomisd %xmm0,%xmm0

setp %al

ret

```

to assume that the result is always false, discounting the possibility of xmm0 containing NaN.

**To Reproduce**

Steps to reproduce the behavior:

1. Open `is_nan.o` in ... | 1.0 | Decompiler Ignores Possibility of NaN in Floating Point Comparison - **Describe the bug**

The decompiler incorrectly decompiles a floating point comparison of the form:

```

ucomisd %xmm0,%xmm0

setp %al

ret

```

to assume that the result is always false, discounting the possibility of xmm0 containing NaN.

**To ... | process | decompiler ignores possibility of nan in floating point comparison describe the bug the decompiler incorrectly decompiles a floating point comparison of the form ucomisd setp al ret to assume that the result is always false discounting the possibility of containing nan to reproduce... | 1 |

42,089 | 17,027,035,110 | IssuesEvent | 2021-07-03 18:50:32 | aws/aws-cdk | https://api.github.com/repos/aws/aws-cdk | closed | (ecs-service-extensions): The ability to create the taskRole | @aws-cdk-containers/ecs-service-extensions effort/small feature-request feature/pattern p2 | We need the ability to create the taskRole of a taskDefinition ahead of time and not rely on the auto-generated taskRole because we deploy our CDK applications in 2 stacks (infrastructure and service). We create the infrastructure before the service so when I create things like S3 buckets with resource policies in the ... | 1.0 | (ecs-service-extensions): The ability to create the taskRole - We need the ability to create the taskRole of a taskDefinition ahead of time and not rely on the auto-generated taskRole because we deploy our CDK applications in 2 stacks (infrastructure and service). We create the infrastructure before the service so when... | non_process | ecs service extensions the ability to create the taskrole we need the ability to create the taskrole of a taskdefinition ahead of time and not rely on the auto generated taskrole because we deploy our cdk applications in stacks infrastructure and service we create the infrastructure before the service so when... | 0 |

55,875 | 8,033,397,819 | IssuesEvent | 2018-07-29 05:11:18 | BitcoinCashKit/BitcoinCashKit | https://api.github.com/repos/BitcoinCashKit/BitcoinCashKit | opened | Add About section to README | documentation | # Describe what you've considered?

Add `About` section to describe who maintains BitcoinCashKit

# Code sample / Spec

The section will be like this. But the website should be in English.

```

## About

BitcoinCashKit is maintained by [Yenom Inc](https://yenom.tech).

``` | 1.0 | Add About section to README - # Describe what you've considered?

Add `About` section to describe who maintains BitcoinCashKit

# Code sample / Spec

The section will be like this. But the website should be in English.

```

## About

BitcoinCashKit is maintained by [Yenom Inc](https://yenom.tech).

``` | non_process | add about section to readme describe what you ve considered add about section to describe who maintains bitcoincashkit code sample spec the section will be like this but the website should be in english about bitcoincashkit is maintained by | 0 |

9,739 | 12,733,030,627 | IssuesEvent | 2020-06-25 11:31:53 | prisma/prisma-client-js | https://api.github.com/repos/prisma/prisma-client-js | closed | Multiple quick `count` requests result in `Invalid prisma.web_hooks.count invocation` | bug/2-confirmed kind/bug process/next-milestone team/engines topic: broken query | ## Bug description

After introspecting our Discourse database (credentials on 1Password), and running Studio, Studio crashes. I ran the faulty query in a separate script and that crashes too, so I might have found a Prisma Client issue.

## How to reproduce

1. Introspect our Discourse DB (credentials on 1Passwo... | 1.0 | Multiple quick `count` requests result in `Invalid prisma.web_hooks.count invocation` - ## Bug description

After introspecting our Discourse database (credentials on 1Password), and running Studio, Studio crashes. I ran the faulty query in a separate script and that crashes too, so I might have found a Prisma Client... | process | multiple quick count requests result in invalid prisma web hooks count invocation bug description after introspecting our discourse database credentials on and running studio studio crashes i ran the faulty query in a separate script and that crashes too so i might have found a prisma client issue ... | 1 |

8,918 | 12,025,366,877 | IssuesEvent | 2020-04-12 08:50:12 | Arch666Angel/mods | https://api.github.com/repos/Arch666Angel/mods | closed | crafting time for fish water | Angels Bio Processing Enhancement | check ratio for adv chem plant vs fish tank, seems like it's 1:2 (?) it should require less chem plants, so maybe increase amount/recipe a bit if this is the case | 1.0 | crafting time for fish water - check ratio for adv chem plant vs fish tank, seems like it's 1:2 (?) it should require less chem plants, so maybe increase amount/recipe a bit if this is the case | process | crafting time for fish water check ratio for adv chem plant vs fish tank seems like it s it should require less chem plants so maybe increase amount recipe a bit if this is the case | 1 |

6,219 | 9,160,219,918 | IssuesEvent | 2019-03-01 06:30:07 | ga4gh/dockstore | https://api.github.com/repos/ga4gh/dockstore | closed | Complete NIH insider training | process | See https://wiki.oicr.on.ca/display/DOC/Notes+on+NIH+Training for accompanying notes

┆Issue is synchronized with this [Jira Story](https://ucsc-cgl.atlassian.net/browse/DOCK-227)

┆Issue Number: DOCK-227

| 1.0 | Complete NIH insider training - See https://wiki.oicr.on.ca/display/DOC/Notes+on+NIH+Training for accompanying notes

┆Issue is synchronized with this [Jira Story](https://ucsc-cgl.atlassian.net/browse/DOCK-227)

┆Issue Number: DOCK-227

| process | complete nih insider training see for accompanying notes ┆issue is synchronized with this ┆issue number dock | 1 |

98,363 | 16,373,809,966 | IssuesEvent | 2021-05-15 17:39:10 | hugh-whitesource/NodeGoat-1 | https://api.github.com/repos/hugh-whitesource/NodeGoat-1 | opened | WS-2018-0084 (High) detected in sshpk-1.10.1.tgz | security vulnerability | ## WS-2018-0084 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>sshpk-1.10.1.tgz</b></p></summary>

<p>A library for finding and using SSH public keys</p>

<p>Library home page: <a href=... | True | WS-2018-0084 (High) detected in sshpk-1.10.1.tgz - ## WS-2018-0084 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>sshpk-1.10.1.tgz</b></p></summary>

<p>A library for finding and using... | non_process | ws high detected in sshpk tgz ws high severity vulnerability vulnerable library sshpk tgz a library for finding and using ssh public keys library home page a href path to dependency file nodegoat package json path to vulnerable library nodegoat node modules npm n... | 0 |

7,656 | 10,740,478,463 | IssuesEvent | 2019-10-29 18:15:58 | brucemiller/LaTeXML | https://api.github.com/repos/brucemiller/LaTeXML | opened | Pluggable and configurable post-processing | enhancement postprocessing | This issue targets specifically the post-processing pipeline of latexml, as part of a larger configuration effort for latexml.

We want to make it possible to write plugins with additional post-processors, and have them execute in the correct order. For this we need to provide several pieces of specification and docu... | 1.0 | Pluggable and configurable post-processing - This issue targets specifically the post-processing pipeline of latexml, as part of a larger configuration effort for latexml.

We want to make it possible to write plugins with additional post-processors, and have them execute in the correct order. For this we need to pro... | process | pluggable and configurable post processing this issue targets specifically the post processing pipeline of latexml as part of a larger configuration effort for latexml we want to make it possible to write plugins with additional post processors and have them execute in the correct order for this we need to pro... | 1 |

7,242 | 10,410,295,279 | IssuesEvent | 2019-09-13 10:59:39 | googleapis/google-cloud-dotnet | https://api.github.com/repos/googleapis/google-cloud-dotnet | closed | Update MultiRegional and Regional to Standard | type: docs type: process | (I don't have assign privileges in this repository but this should be assigned to @dopiera )

Previously, we implemented the concept of "Location Type" to move the concept of bucket location away from storage class. It is no longer considered correct to use "MultiRegional" or "Regional" as a storage class, instead th... | 1.0 | Update MultiRegional and Regional to Standard - (I don't have assign privileges in this repository but this should be assigned to @dopiera )

Previously, we implemented the concept of "Location Type" to move the concept of bucket location away from storage class. It is no longer considered correct to use "MultiRegion... | process | update multiregional and regional to standard i don t have assign privileges in this repository but this should be assigned to dopiera previously we implemented the concept of location type to move the concept of bucket location away from storage class it is no longer considered correct to use multiregion... | 1 |