Unnamed: 0 (int64) | id (float64) | type (string) | created_at (string) | repo (string) | repo_url (string) | action (string) | title (string) | labels (string) | body (string) | index (string) | text_combine (string) | label (string) | text (string) | binary_label (int64) |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

17,714 | 23,610,025,980 | IssuesEvent | 2022-08-24 11:37:10 | bjorkgard/public-secretary | https://api.github.com/repos/bjorkgard/public-secretary | closed | Export address list | enhancement Publisher in process | A user with the appropriate permission should be able to export an address list

- Complete (default)

- Active only

- Contact persons only

- With emergency contacts

Sort orders

- Alphabetical order

- Group order (default)

Format (default is the congregation's setting):

- PDF

- Excel | 1.0 | Export address list - A user with the appropriate permission should be able to export an address list

- Complete (default)

- Active only

- Contact persons only

- With emergency contacts

Sort orders

- Alphabetical order

- Group order (default)

Format (default is the congregation's setting):

- PDF

- Excel | process | export address list a user with the appropriate permission should be able to export an address list complete default active only contact persons only with emergency contacts sort orders alphabetical order group order default format default is the congregation s setting pdf excel | 1 |

11,527 | 9,222,883,795 | IssuesEvent | 2019-03-12 00:52:30 | dotnet/corefx | https://api.github.com/repos/dotnet/corefx | closed | All libcurl-related tests failing on Ubuntu.1804.Arm64.Open-arm64-Release | area-Infrastructure | System.Net.Http.Native can't be loaded, I'm assuming because it's unable to load libcurl:

```

Unhandled Exception of Type System.TypeInitializationException

Message :

System.TypeInitializationException : The type initializer for 'System.Net.Http.CurlHandler' threw an exception.

---- System.TypeInitializationExcept... | 1.0 | All libcurl-related tests failing on Ubuntu.1804.Arm64.Open-arm64-Release - System.Net.Http.Native can't be loaded, I'm assuming because it's unable to load libcurl:

```

Unhandled Exception of Type System.TypeInitializationException

Message :

System.TypeInitializationException : The type initializer for 'System.Net... | non_process | all libcurl related tests failing on ubuntu open release system net http native can t be loaded i m assuming because it s unable to load libcurl unhandled exception of type system typeinitializationexception message system typeinitializationexception the type initializer for system net http curlh... | 0 |

51,811 | 21,884,317,472 | IssuesEvent | 2022-05-19 17:00:58 | emergenzeHack/ukrainehelp.emergenzehack.info_segnalazioni | https://api.github.com/repos/emergenzeHack/ukrainehelp.emergenzehack.info_segnalazioni | opened | Help desk dedicated to the Ukraine emergency | Services Legal hospitality healthcare | <pre><yamldata>

servicetypes:

materialGoods: false

hospitality: true

transport: false

healthcare: true

Legal: true

translation: false

job: false

psychologicalSupport: false

Children: false

disability: false

women: false

education: false

offerFromWho: Comune di San Donato Milanese

title: Sportell... | 1.0 | Help desk dedicated to the Ukraine emergency - <pre><yamldata>

servicetypes:

materialGoods: false

hospitality: true

transport: false

healthcare: true

Legal: true

translation: false

job: false

psychologicalSupport: false

Children: false

disability: false

women: false

education: false

offerFromWho: Co... | non_process | help desk dedicated to the ukraine emergency servicetypes materialgoods false hospitality true transport false healthcare true legal true translation false job false psychologicalsupport false children false disability false women false education false offerfromwho comune di san d... | 0 |

19,352 | 3,193,613,674 | IssuesEvent | 2015-09-30 07:00:25 | netty/netty | https://api.github.com/repos/netty/netty | closed | Adding DefaultHttpHeaders to itself creates infinite loop | defect | Example:

public void test() {

HttpHeaders headers = new DefaultHttpHeaders();

headers.add("foo", "bar");

headers.add(headers);

// This will never end

headers.forEach(entry -> {});

}

| 1.0 | Adding DefaultHttpHeaders to itself creates infinite loop - Example:

public void test() {

HttpHeaders headers = new DefaultHttpHeaders();

headers.add("foo", "bar");

headers.add(headers);

// This will never end

headers.forEach(entry -> {});

}

| non_process | adding defaulthttpheaders to itself creates infinite loop example public void test httpheaders headers new defaulthttpheaders headers add foo bar headers add headers this will never end headers foreach entry | 0 |

542,150 | 15,856,053,044 | IssuesEvent | 2021-04-08 01:24:22 | sonia-auv/octopus-telemetry | https://api.github.com/repos/sonia-auv/octopus-telemetry | closed | Side bar | Priority: High Type: Feature | **Warning :** Before creating an issue or task, make sure that it does not already exist in the [issue tracker](../). Thank you.

## Context

Add side bar to drop module

## Changes

<!-- Give a brief description of the components that need to change and how -->

## Comments

<!-- Add further comments if needed ... | 1.0 | Side bar - **Warning :** Before creating an issue or task, make sure that it does not already exist in the [issue tracker](../). Thank you.

## Context

Add side bar to drop module

## Changes

<!-- Give a brief description of the components that need to change and how -->

## Comments

<!-- Add further comments... | non_process | side bar warning before creating an issue or task make sure that it does not already exist in the thank you context add side bar to drop module changes comments | 0 |

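2,111 | 2,603,976,485 | IssuesEvent | 2015-02-24 19:01:37 | chrsmith/nishazi6 | https://api.github.com/repos/chrsmith/nishazi6 | opened | Shenyang: granulation on the glans | auto-migrated Priority-Medium Type-Defect | ```

Shenyang: granulation on the glans 〓 Shenyang Military Region Political Department Hospital, sexually transmitted diseases 〓 TEL: 024-31023308

Founded in 1946, with 68 years devoted to the research and treatment of sexually transmitted diseases. Located at No. 32 Erwei Road, Shenhe District, Shenyang. A hospital founded alongside the People's Republic of China, with a long history, advanced equipment, authoritative expertise, and a large team of specialists; a general hospital that combines prevention, health care, medical treatment, research, and rehabilitation.

It is among the first public Grade-A military hospitals in the country and among the first nationally designated units for standardized medical practice, and is a teaching hospital for the Fourth Military Medical University, Southeast University, and other well-known universities.

It was named an advanced unit for health work by the Health Department of the Logistics Department of the People's Liberation Army Air Force and has twice been awarded a collective second-class merit.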

```

-----

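Original issue reported on code.google.com by `q964105.... | 1.0 | Shenyang: granulation on the glans - ```

Shenyang: granulation on the glans 〓 Shenyang Military Region Political Department Hospital, sexually transmitted diseases 〓 TEL: 024-31023308

Founded in 1946, with 68 years devoted to the research and treatment of sexually transmitted diseases. Located at No. 32 Erwei Road, Shenhe District, Shenyang. A hospital founded alongside the People's Republic of China, with a long history, advanced equipment, authoritative expertise, and a large team of specialists; a general hospital that combines prevention, health care, medical treatment, research, and rehabilitation.

It is among the first public Grade-A military hospitals in the country and among the first nationally designated units for standardized medical practice, and is a teaching hospital for the Fourth Military Medical University, Southeast University, and other well-known universities.

It was named an advanced unit for health work by the Health Department of the Logistics Department of the People's Liberation Army Air Force and has twice been awarded a collective second-class merit.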

```

-----

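Original issue reported on code.google.com by... | non_process | shenyang granulation on the glans shenyang granulation on the glans〓shenyang military region political department hospital sexually transmitted diseases〓tel founded in with years devoted to the research and treatment of sexually transmitted diseases located at no erwei road shenhe district shenyang a hospital founded alongside the people s republic of china with a long history advanced equipment authoritative expertise and a large team of specialists a general hospital that combines prevention health care medical treatment research and rehabilitation it is among the first public grade a military hospitals in the country and among the first nationally designated units for standardized medical practice and is a teaching hospital for the fourth military medical university southeast university and other well known universities it was named an advanced unit for health work by the health department of the logistics department of the people s liberation army air force and has twice been awarded a collective second class merit original issue reported on code google com by gmail com on jun at | 0 |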

26,018 | 12,341,137,200 | IssuesEvent | 2020-05-14 21:16:50 | microsoft/vscode-cpptools | https://api.github.com/repos/microsoft/vscode-cpptools | closed | Intellisense Progressively Gets Slower | Language Service bug performance | **Type: LanguageService**

OS and Version: Windows 10 - Home Edition

VS Code Version: Latest

C/C++ Extension Version: Latest

**Details**

When I first open VSCode, intellisense runs perfectly fine and takes almost no time to come up. As changes to files are made, and as I build and rebuild the source, intellis... | 1.0 | Intellisense Progressively Gets Slower - **Type: LanguageService**

OS and Version: Windows 10 - Home Edition

VS Code Version: Latest

C/C++ Extension Version: Latest

**Details**

When I first open VSCode, intellisense runs perfectly fine and takes almost no time to come up. As changes to files are made, and as... | non_process | intellisense progressively gets slower type languageservice os and version windows home edition vs code version latest c c extension version latest details when i first open vscode intellisense runs perfectly fine and takes almost no time to come up as changes to files are made and as ... | 0 |

4,734 | 7,573,646,163 | IssuesEvent | 2018-04-23 18:25:07 | resin-io/etcher | https://api.github.com/repos/resin-io/etcher | opened | Publish Debian packages to Bintray through Resin CI on every commit | Process priority:low | So we can easily test Etcher Pro updates. | 1.0 | Publish Debian packages to Bintray through Resin CI on every commit - So we can easily test Etcher Pro updates. | process | publish debian packages to bintray through resin ci on every commit so we can easily test etcher pro updates | 1 |

116,722 | 9,882,072,091 | IssuesEvent | 2019-06-24 15:58:58 | microsoft/vscode-remote-release | https://api.github.com/repos/microsoft/vscode-remote-release | opened | Test: Alpine support | containers testplan-item | #54

- [ ] Windows

- [ ] anyOS

Complexity: 4

Check that the container definitions for Alpine work and try some typical actions depending on the definition. (Like compile, dynamically forward a port, IntelliSense.)

TODO @chrmarti: More details. | 1.0 | Test: Alpine support - #54

- [ ] Windows

- [ ] anyOS

Complexity: 4

Check that the container definitions for Alpine work and try some typical actions depending on the definition. (Like compile, dynamically forward a port, IntelliSense.)

TODO @chrmarti: More details. | non_process | test alpine support windows anyos complexity check that the container definitions for alpine work and try some typical actions depending on the definition like compile dynamically forward a port intellisense todo chrmarti more details | 0 |

184,005 | 21,784,782,477 | IssuesEvent | 2022-05-14 01:18:01 | mgh3326/railbook | https://api.github.com/repos/mgh3326/railbook | closed | WS-2021-0152 (High) detected in color-string-1.5.3.tgz - autoclosed | security vulnerability | ## WS-2021-0152 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>color-string-1.5.3.tgz</b></p></summary>

<p>Parser and generator for CSS color strings</p>

<p>Library home page: <a href... | True | WS-2021-0152 (High) detected in color-string-1.5.3.tgz - autoclosed - ## WS-2021-0152 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>color-string-1.5.3.tgz</b></p></summary>

<p>Parser... | non_process | ws high detected in color string tgz autoclosed ws high severity vulnerability vulnerable library color string tgz parser and generator for css color strings library home page a href path to dependency file package json path to vulnerable library node modules col... | 0 |

22,159 | 30,700,952,387 | IssuesEvent | 2023-07-26 23:19:01 | esmero/ami | https://api.github.com/repos/esmero/ami | opened | CSV exporter might fail if the CID used by the temporary storage surpasses the max. DB length | bug Find and Replace VBO Actions CSV Processing | # What?

Unheard of before. But I should have known better because I saw (and fixed) something similar while building the LoD reconciliation service.

During a CSV export, to keep the order of children/parents in place we generate a Batch that uses temporary storage. Temporary storage requires a unique ID per item, and... | 1.0 | CSV exporter might fail if the CID used by the temporary storage surpasses the max. DB length - # What?

Unheard of before. But I should have known better because I saw (and fixed) something similar while building the LoD reconciliation service.

During a CSV export, to keep the order of children/parents in place we g... | process | csv exporter might fail if the cid used by the temporary storage surpasses the max db length what unheard of before but i should have known better because i saw and fixed something similar while building the lod reconciliation service during a csv export to keep the order of children parents in place we g... | 1 |

52,294 | 22,141,777,031 | IssuesEvent | 2022-06-03 07:42:55 | klubcoin/lcn-mobile | https://api.github.com/repos/klubcoin/lcn-mobile | opened | [Chat Service] Store chat message in server | Chat / Messaging Services | ### **Description:**

- Store chat message in server.

- Add API for user can delete their message in a conversation

- Add API for user can edit their message in a conversation

- Add field/API for user reply a message in a conversation | 1.0 | [Chat Service] Store chat message in server - ### **Description:**

- Store chat message in server.

- Add API for user can delete their message in a conversation

- Add API for user can edit their message in a conversation

- Add field/API for user reply a message in a conversation | non_process | store chat message in server description store chat message in server add api for user can delete their message in a conversation add api for user can edit their message in a conversation add field api for user reply a message in a conversation | 0 |

12,674 | 15,043,448,926 | IssuesEvent | 2021-02-03 00:44:23 | allinurl/goaccess | https://api.github.com/repos/allinurl/goaccess | closed | Segfault on --log-format=CADDY with additional non-JSON fields | bug log-processing log/date/time format | With the following standard log output from CADDY (I just anonymized host and IP)

```

2021/02/02 12:22:53.394 error http.log.access.log0 handled request {"request": {"remote_addr": "11.22.33.44:5678", "proto": "HTTP/2.0", "method": "GET", "host": "example.com", "uri": "/favicon.ico", "headers": {"Cache-Control... | 1.0 | Segfault on --log-format=CADDY with additional non-JSON fields - With the following standard log output from CADDY (I just anonymized host and IP)

```

2021/02/02 12:22:53.394 error http.log.access.log0 handled request {"request": {"remote_addr": "11.22.33.44:5678", "proto": "HTTP/2.0", "method": "GET", "host":... | process | segfault on log format caddy with additional non json fields with the following standard log output from caddy i just anonymized host and ip error http log access handled request request remote addr proto http method get host example com uri... | 1 |
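
For the Caddy row above, a minimal Python sketch of one possible workaround: separating the non-JSON prefix from the JSON object before the line reaches a JSON log parser. This is not taken from the issue or from goaccess. The function name `split_caddy_line` is hypothetical, the sample line is shortened from the one quoted in the row, and the sketch assumes the JSON payload begins at the first `{` on the line.

```python
import json


def split_caddy_line(line):
    """Split a log line into (prefix, parsed JSON object).

    Assumes the JSON payload starts at the first '{' on the line.
    Returns (line, None) when no decodable JSON object is found.
    """
    start = line.find("{")
    if start == -1:
        return line.rstrip(), None
    try:
        payload = json.loads(line[start:])
    except json.JSONDecodeError:
        # Truncated or otherwise malformed JSON: hand the line back unchanged.
        return line.rstrip(), None
    return line[:start].rstrip(), payload


# Shortened version of the log line quoted in the issue.
sample = (
    '2021/02/02 12:22:53.394 error http.log.access.log0 handled request '
    '{"request": {"remote_addr": "11.22.33.44:5678", "proto": "HTTP/2.0", '
    '"method": "GET", "host": "example.com", "uri": "/favicon.ico"}}'
)
prefix, payload = split_caddy_line(sample)
```

With this split, `prefix` holds the timestamp, level, logger name, and message, while `payload` holds the decoded request object. Whether feeding only the JSON part to goaccess avoids the reported segfault is not confirmed by the row.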

31,113 | 11,871,950,892 | IssuesEvent | 2020-03-26 15:07:09 | brave/brave-ios | https://api.github.com/repos/brave/brave-ios | closed | Updating Package-Lock.json | Epic: Security QA/Yes bug release-notes/exclude sec-low security | ### Description:

- `https://github.com/brave/brave-ios/network/alert/package-lock.json/minimist/open`

- Just related to outdated libraries being used across all platforms for millions of applications.

- We updated the packages so we need to make sure stuff still works.

### Steps to Reproduce

1. N/A

**Expe... | True | Updating Package-Lock.json - ### Description:

- `https://github.com/brave/brave-ios/network/alert/package-lock.json/minimist/open`

- Just related to outdated libraries being used across all platforms for millions of applications.

- We updated the packages so we need to make sure stuff still works.

### Steps to R... | non_process | updating package lock json description just related to outdated libraries being used across all platforms for millions of applications we updated the packages so we need to make sure stuff still works steps to reproduce n a expected result sync should work brave ver... | 0 |

693,185 | 23,766,105,083 | IssuesEvent | 2022-09-01 12:56:24 | Laravel-Backpack/CRUD | https://api.github.com/repos/Laravel-Backpack/CRUD | closed | [Bug] DataTable row colors are changed and a little broken now | Bug triage Priority: MUST Minor Bug | # Bug report

### What I did

Updated to latest CRUD & PRO.

### What I expected to happen

DataTable look and work the same.

### What happened

Notice:

- the colors... | 1.0 | [Bug] DataTable row colors are changed and a little broken now - # Bug report

### What I did

Updated to latest CRUD & PRO.

### What I expected to happen

DataTable look and work the same.

### What happened

: add labels to page | documentation enhancement | ### Description

I want to add labels to the markdown page for arsp to make filtering/searching better

### Acceptance Criteria

- [ ] add `wip` label

- [ ] add `math` label

- [ ] add `research` label

### Related Issues

_No response_ | 1.0 | story(arsp): add labels to page - ### Description

I want to add labels to the markdown page for arsp to make filtering/searching better

### Acceptance Criteria

- [ ] add `wip` label

- [ ] add `math` label

- [ ] add `research` label

### Related Issues

_No response_ | non_process | story arsp add labels to page description i want to add labels to the markdown page for arsp to make filtering searching better acceptance criteria add wip label add math label add research label related issues no response | 0 |

18,769 | 24,674,296,521 | IssuesEvent | 2022-10-18 15:47:03 | keras-team/keras-cv | https://api.github.com/repos/keras-team/keras-cv | closed | [Design] Video support for augmentation layers | preprocessing | Some major considerations:

- augmentations like jitter, shear, etc should be consistent (or slowly change) throughout videos.

- performance

- consistency with our ecosystem | 1.0 | [Design] Video support for augmentation layers - Some major considerations:

- augmentations like jitter, shear, etc should be consistent (or slowly change) throughout videos.

- performance

- consistency with our ecosystem | process | video support for augmentation layers some major considerations augmentations like jitter shear etc should be consistent or slowly change throughout videos performance consistency with our ecosystem | 1 |

109,338 | 23,749,362,263 | IssuesEvent | 2022-08-31 19:02:12 | unicode-org/icu4x | https://api.github.com/repos/unicode-org/icu4x | closed | Fix typo: to_code_point_invesion_list | T-bug C-unicode S-tiny | There is a function `to_code_point_invesion_list`. Fix the typo. | 1.0 | Fix typo: to_code_point_invesion_list - There is a function `to_code_point_invesion_list`. Fix the typo. | non_process | fix typo to code point invesion list there is a function to code point invesion list fix the typo | 0 |

43,473 | 11,233,140,908 | IssuesEvent | 2020-01-09 00:07:16 | apache/incubator-mxnet | https://api.github.com/repos/apache/incubator-mxnet | closed | Fail to build mxnet from source | Bug Build CMake | ## Description

Following the steps at https://mxnet.apache.org/get_started/ubuntu_setup

I got the following error:

### Error Message

```

OSError: /usr/lib/liblapack.so.3: undefined symbol: gotoblas

```

## To Reproduce

```

rm -rf build

mkdir -p build && cd build

cmake -GNinja \

-DUS... | 1.0 | Fail to build mxnet from source - ## Description

Following the steps at https://mxnet.apache.org/get_started/ubuntu_setup

I got the following error:

### Error Message

```

OSError: /usr/lib/liblapack.so.3: undefined symbol: gotoblas

```

## To Reproduce

```

rm -rf build

mkdir -p build && cd build

... | non_process | fail to build mxnet from source description following the steps at i got the following error error message oserror usr lib liblapack so undefined symbol gotoblas to reproduce rm rf build mkdir p build cd build cmake gninja duse cuda off ... | 0 |

9,571 | 12,521,697,370 | IssuesEvent | 2020-06-03 17:50:48 | googleapis/synthtool | https://api.github.com/repos/googleapis/synthtool | closed | Node.js, add environment variable file to .kokoro | type: process | We should figure out a reasonable approach to managing environment variables, such that new environment variables can be added as needed [see](https://github.com/googleapis/nodejs-secret-manager/pull/34).

The [nodejs-docs-samples use a common build.sh file](

https://github.com/GoogleCloudPlatform/nodejs-docs-sample... | 1.0 | Node.js, add environment variable file to .kokoro - We should figure out a reasonable approach to managing environment variables, such that new environment variables can be added as needed [see](https://github.com/googleapis/nodejs-secret-manager/pull/34).

The [nodejs-docs-samples use a common build.sh file](

https... | process | node js add environment variable file to kokoro we should figure out a reasonable approach to managing environment variables such that new environment variables can be added as needed the i think we could do something similar perhaps it s enough to simply allow folks to start adding some additional e... | 1 |

647,686 | 21,133,953,278 | IssuesEvent | 2022-04-06 03:30:03 | lowRISC/opentitan | https://api.github.com/repos/lowRISC/opentitan | closed | [cleanup] Add assertion check for all output enables which are tied to 1 | Priority:P2 Type:Cleanup | This is an item from Sysrst_Ctrl review.

We can consider updating the checklist as well.

In this [ASSERT_KNOWN_ADDED](https://github.com/lowRISC/opentitan/blob/master/doc/project/checklist.md#assert_known_added), if it's tied to a fixed value, instead of checking it's a known value, we can check it with the actual... | 1.0 | [cleanup] Add assertion check for all output enables which are tied to 1 - This is an item from Sysrst_Ctrl review.

We can consider updating the checklist as well.

In this [ASSERT_KNOWN_ADDED](https://github.com/lowRISC/opentitan/blob/master/doc/project/checklist.md#assert_known_added), if it's tied to a fixed val... | non_process | add assertion check for all output enables which are tied to this is an item from sysrst ctrl review we can consider updating the checklist as well in this if it s tied to a fixed value instead of checking it s a known value we can check it with the actual value | 0 |

19,865 | 26,277,601,970 | IssuesEvent | 2023-01-07 00:46:35 | AssetRipper/AssetRipper | https://api.github.com/repos/AssetRipper/AssetRipper | opened | Child State Machines | enhancement animation processing | ### Describe the new feature or enhancement

Currently, complex animator controllers get exported like this:

Ideally, they should be more like this:

Ideally, they should be more like this:

or a Windows des... | 1.0 | appUriHandlers and ShellExecuteEx - Hi there, I was wondering if someone could clarify what this section is trying to say, referring to setting up an http(s) app URI handler:

> This feature works whenever your app is a UWP app launched with [LaunchUriAsync](https://docs.microsoft.com/en-us/uwp/api/windows.system.launc... | process | appurihandlers and shellexecuteex hi there i was wondering if someone could clarify what this section is trying to say referring to setting up an http s app uri handler this feature works whenever your app is a uwp app launched with or a windows desktop app launched with if the url corresponds to a reg... | 1 |

13,443 | 15,882,621,551 | IssuesEvent | 2021-04-09 16:15:42 | plazi/arcadia-project | https://api.github.com/repos/plazi/arcadia-project | closed | processing: The head anatomy of Protanilla lini (Hymenoptera: Formicidae: Lep | processing input | can you please process this article:

https://myrmecologicalnews.org/cms/index.php?option=com_download&view=download&filename=volume31/mn31_85-114_printable.pdf&format=raw

not urgent (within a week)

Level: subsection, upload to BLR | 1.0 | processing: The head anatomy of Protanilla lini (Hymenoptera: Formicidae: Lep - can you please process this article:

https://myrmecologicalnews.org/cms/index.php?option=com_download&view=download&filename=volume31/mn31_85-114_printable.pdf&format=raw

not urgent (within a week)

Level: subsection, upload to BLR | process | processing the head anatomy of protanilla lini hymenoptera formicidae lep can you please process this article not urgent within a week level subsection upload to blr | 1 |

19,517 | 25,828,899,204 | IssuesEvent | 2022-12-12 14:49:36 | microsoft/vscode | https://api.github.com/repos/microsoft/vscode | closed | "Create New Integrated Terminal (Local)" does not apply the correct path to the shell executable | bug remote terminal-process | <!-- Please search existing issues to avoid creating duplicates, and review our troubleshooting tips: https://code.visualstudio.com/docs/remote/troubleshooting -->

<!-- Please attach logs to help us diagnose your issue. Learn more here: https://code.visualstudio.com/docs/remote/troubleshooting#_reporting-issues -->

<... | 1.0 | "Create New Integrated Terminal (Local)" does not apply the correct path to the shell executable - <!-- Please search existing issues to avoid creating duplicates, and review our troubleshooting tips: https://code.visualstudio.com/docs/remote/troubleshooting -->

<!-- Please attach logs to help us diagnose your issue. ... | process | create new integrated terminal local does not apply the correct path to the shell executable vscode version local os version darwin macos monterey remote os version arch linux remote extension connection type ssh steps to reproduce from a local macos vs co... | 1 |

348,233 | 31,475,178,674 | IssuesEvent | 2023-08-30 10:11:05 | risingwavelabs/risingwave | https://api.github.com/repos/risingwavelabs/risingwave | opened | Integration test with cloud-hosted system | type/feature component/test | ### Is your feature request related to a problem? Please describe.

Right now we only have the integration test with upstream and downstream systems deployed via docker compose. But we also support sources and sinks of cloud-hosted systems, e.g. AWS MSK, AWS RDS, etc. But we haven't set up an integration test environment for t... | 1.0 | Integration test with cloud-hosted system - ### Is your feature request related to a problem? Please describe.

Right now we only have the integration test with upstream and downstream systems deployed via docker compose. But we also support sources and sinks of cloud-hosted systems, e.g. AWS MSK, AWS RDS, etc. But we haven... | non_process | integration test with cloud hosted system is your feature request related to a problem please describe right now we only have the integration test with upstream and downstream systems deployed via docker compose but we also support sources and sinks of cloud hosted systems e g aws msk aws rds etc but we haven... | 0 |

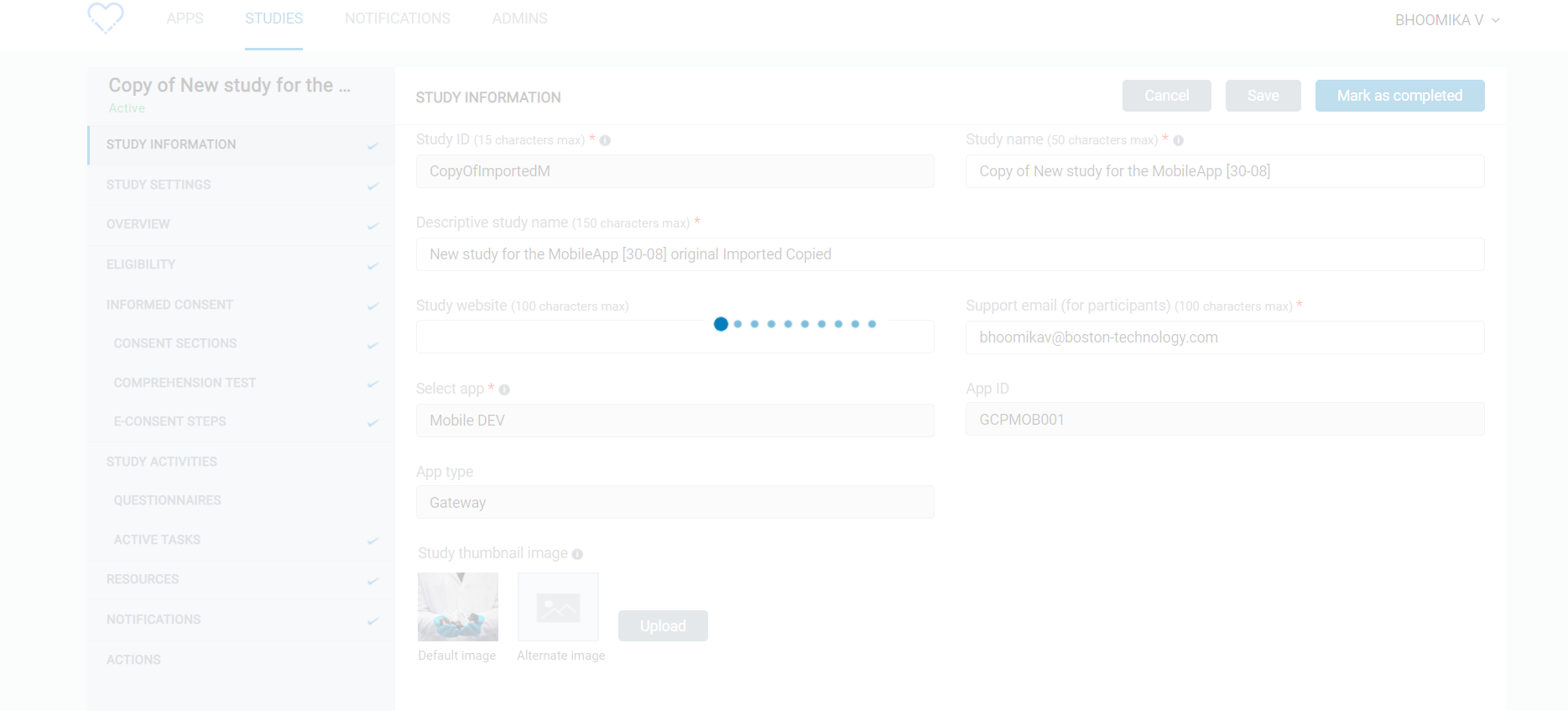

85,215 | 24,543,682,410 | IssuesEvent | 2022-10-12 07:03:00 | GoogleCloudPlatform/fda-mystudies | https://api.github.com/repos/GoogleCloudPlatform/fda-mystudies | closed | [SB] Questionnaires / Active tasks > Screen is loading continuously when clicked on Questionnaires / Active tasks section | Bug Blocker P0 Study builder Process: Fixed Process: Tested dev | Questionnaires / Active tasks > Screen is loading continuously when clicked on Questionnaires / Active tasks section in the study builder

| 1.0 | [SB] Questionnaires / Active tasks > Screen is loading continuously when clicked on Questionnaires / Active tasks section - Questionnaires / Active tasks > Screen is loading continuously when clicked on Questionnaires / Active tasks section in the study builder

detected in express-fileupload-0.4.0.tgz | security vulnerability | ## CVE-2020-7699 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>express-fileupload-0.4.0.tgz</b></p></summary>

<p>Simple express file upload middleware that wraps around Busboy</p>

<p... | True | CVE-2020-7699 (High) detected in express-fileupload-0.4.0.tgz - ## CVE-2020-7699 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>express-fileupload-0.4.0.tgz</b></p></summary>

<p>Simpl... | non_process | cve high detected in express fileupload tgz cve high severity vulnerability vulnerable library express fileupload tgz simple express file upload middleware that wraps around busboy library home page a href path to dependency file dvna package json path to vulnerable l... | 0 |

8,384 | 11,544,898,871 | IssuesEvent | 2020-02-18 12:24:58 | ESMValGroup/ESMValCore | https://api.github.com/repos/ESMValGroup/ESMValCore | closed | extract_levels preprocessor only supports 1D coordinates | preprocessor | Currently, `extract_levels` assumes that levels are identical at every point and timestep. This is not always true, e.g. when using model levels. The preprocessor function must be enhanced to allow processing this kind of variable | 1.0 | extract_levels preprocessor only supports 1D coordinates - Currently, `extract_levels` assumes that levels are identical at every point and timestep. This is not always true, e.g. when using model levels. The preprocessor function must be enhanced to allow processing this kind of variable | process | extract levels preprocessor only supports coordinates currently extract levels assumes that levels are identical at every point and timestep this is not always true e g when using model levels the preprocessor function must be enhanced to allow processing this kind of variable | 1 |

2,531 | 5,289,878,727 | IssuesEvent | 2017-02-08 18:28:25 | MikePopoloski/slang | https://api.github.com/repos/MikePopoloski/slang | closed | Check whether keywords are actually eligible given current PP state | area-preprocessor easy | SystemVerilog has a preprocessor feature that enables and disables various sets of keywords. We should take into account that state and downgrade keyword tokens back into simple identifier tokens. | 1.0 | Check whether keywords are actually eligible given current PP state - SystemVerilog has a preprocessor feature that enables and disables various sets of keywords. We should take into account that state and downgrade keyword tokens back into simple identifier tokens. | process | check whether keywords are actually eligible given current pp state systemverilog has a preprocessor feature that enables and disables various sets of keywords we should take into account that state and downgrade keyword tokens back into simple identifier tokens | 1 |

186,988 | 6,744,123,483 | IssuesEvent | 2017-10-20 14:38:04 | MoveOnOrg/Spoke | https://api.github.com/repos/MoveOnOrg/Spoke | closed | Access data warehouse | priority: 2 project | - [ ] As an admin, I want to have direct access to my own internal data warehouse from the application without uploading a CSV to create texting lists

- [ ] As an admin, I want to sync information gathered from a campaign to the data warehouse. Currently, an admin is able to export data to an S3 bucket with proper c... | 1.0 | Access data warehouse - - [ ] As an admin, I want to have direct access to my own internal data warehouse from the application without uploading a CSV to create texting lists

- [ ] As an admin, I want to sync information gathered from a campaign to the data warehouse. Currently, an admin is able to export data to an... | non_process | access data warehouse as an admin i want to have direct access to my own internal data warehouse from the application without uploading a csv to create texting lists as an admin i want to sync information gathered from a campaign to the data warehouse currently an admin is able to export data to an b... | 0 |

297 | 2,732,611,760 | IssuesEvent | 2015-04-17 07:55:40 | tomchristie/django-rest-framework | https://api.github.com/repos/tomchristie/django-rest-framework | opened | Standard response to usage questions. | Process | I think we should probably have a standard response to usage questions.

@jpadilla's wording here seems suitably brief and appropriate...

> The [discussion group](https://groups.google.com/forum/#!forum/django-rest-framework) is the best place to take this discussion and other usage questions. Thanks!

/cc @carl... | 1.0 | Standard response to usage questions. - I think we should probably have a standard response to usage questions.

@jpadilla's wording here seems suitably brief and appropriate...

> The [discussion group](https://groups.google.com/forum/#!forum/django-rest-framework) is the best place to take this discussion and oth... | process | standard response to usage questions i think we should probably have a standard response to usage questions jpadilla s wording here seems suitably brief and appropriate the is the best place to take this discussion and other usage questions thanks cc carltongibson xordoquy kevin brown jpad... | 1 |

2,600 | 5,356,208,512 | IssuesEvent | 2017-02-20 15:05:45 | AllenFang/react-bootstrap-table | https://api.github.com/repos/AllenFang/react-bootstrap-table | closed | Uncaught TypeError: Cannot read property 'slice' of undefined at new BootstrapTable | enhancement inprocess | Im getting this error when passing my data in the prop "data={}" for the BootstrapTable.

My data structure is just an array of objects passed from an Apollo Provider. Anyone else have this issue?

`Uncaught TypeError: Cannot read property 'slice' of undefined

at new BootstrapTable` | 1.0 | Uncaught TypeError: Cannot read property 'slice' of undefined at new BootstrapTable - Im getting this error when passing my data in the prop "data={}" for the BootstrapTable.

My data structure is just an array of objects passed from an Apollo Provider. Anyone else have this issue?

`Uncaught TypeError: Cannot r... | process | uncaught typeerror cannot read property slice of undefined at new bootstraptable im getting this error when passing my data in the prop data for the bootstraptable my data structure is just an array of objects passed from an apollo provider anyone else have this issue uncaught typeerror cannot r... | 1 |

11,126 | 13,957,687,407 | IssuesEvent | 2020-10-24 08:09:14 | alexanderkotsev/geoportal | https://api.github.com/repos/alexanderkotsev/geoportal | opened | PT: registered Discovery service not reachable | Geoportal Harvesting process PT - Portugal | Dear Marta,

since yesterday we are currently experiencing difficulties to contact your registered endpoint.

https://catalogosnig.dgterritorio.gov.pt/geoportal/csw/discovery?service=CSW&request=GetCapabilities

At the moment, it returns "Página não encontrada" (HTTP 404).

Could you please in... | 1.0 | PT: registered Discovery service not reachable - Dear Marta,

since yesterday we are currently experiencing difficulties to contact your registered endpoint.

https://catalogosnig.dgterritorio.gov.pt/geoportal/csw/discovery?service=CSW&request=GetCapabilities

At the moment, it returns "Página não... | process | pt registered discovery service not reachable dear marta since yesterday we are currently experiencing difficulties to contact your registered endpoint at the moment it returns quot p aacute gina n atilde o encontrada quot http could you please investigate the issue and let us know for the time being ... | 1 |

189,744 | 14,520,598,490 | IssuesEvent | 2020-12-14 05:48:33 | kalexmills/github-vet-tests-dec2020 | https://api.github.com/repos/kalexmills/github-vet-tests-dec2020 | closed | mindreframer/golang-devops-stuff: src/github.com/hashicorp/terraform/helper/ssh/communicator_test.go; 12 LoC | fresh small test |

Found a possible issue in [mindreframer/golang-devops-stuff](https://www.github.com/mindreframer/golang-devops-stuff) at [src/github.com/hashicorp/terraform/helper/ssh/communicator_test.go](https://github.com/mindreframer/golang-devops-stuff/blob/bb6c6c16ff0ae7892cd0ed0715b8a6b769052f9f/src/github.com/hashicorp/terraf... | 1.0 | mindreframer/golang-devops-stuff: src/github.com/hashicorp/terraform/helper/ssh/communicator_test.go; 12 LoC -

Found a possible issue in [mindreframer/golang-devops-stuff](https://www.github.com/mindreframer/golang-devops-stuff) at [src/github.com/hashicorp/terraform/helper/ssh/communicator_test.go](https://github.com... | non_process | mindreframer golang devops stuff src github com hashicorp terraform helper ssh communicator test go loc found a possible issue in at below is the message reported by the analyzer for this snippet of code beware that the analyzer only reports the first issue it finds so please do not limit your conside... | 0 |

14,729 | 17,946,801,706 | IssuesEvent | 2021-09-12 00:19:21 | beecorrea/midas | https://api.github.com/repos/beecorrea/midas | closed | [PROCESS] Transfer money | processes | # Description

Draws money from an account and deposits it in another.

# Rules involved

- Does the account exist?

- Is the requester the owner of this account?

- Is this account open?

- Can this amount be drawn from this account?

# Steps

1. Draw money from sender/origin account.

2. Deposit money into recei... | 1.0 | [PROCESS] Transfer money - # Description

Draws money from an account and deposits it in another.

# Rules involved

- Does the account exist?

- Is the requester the owner of this account?

- Is this account open?

- Can this amount be drawn from this account?

# Steps

1. Draw money from sender/origin account.

... | process | transfer money description draws money from an account and deposits it in another rules involved does the account exist is the requester the owner of this account is this account open can this amount be drawn from this account steps draw money from sender origin account depos... | 1 |
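The rules and steps listed in the transfer-money issue above could be sketched as below. This is only an illustration under stated assumptions: the `Account` fields, the `TransferError` type, and the `transfer` signature are hypothetical and do not come from the midas repository, and the open-account check is applied to both accounts even though the issue does not say which account it means.

```python
from dataclasses import dataclass


class TransferError(Exception):
    """Raised when one of the transfer rules is violated."""


@dataclass
class Account:
    owner: str
    balance: int  # amount in minor units (for example cents)
    is_open: bool = True


def transfer(accounts, requester, origin_id, destination_id, amount):
    """Move `amount` from the origin account to the destination account."""
    origin = accounts.get(origin_id)
    destination = accounts.get(destination_id)

    # Rule: does the account exist?
    if origin is None or destination is None:
        raise TransferError("account does not exist")
    # Rule: is the requester the owner of this account?
    if origin.owner != requester:
        raise TransferError("requester does not own the origin account")
    # Rule: is this account open?
    if not origin.is_open or not destination.is_open:
        raise TransferError("account is not open")
    # Rule: can this amount be drawn from this account?
    if amount <= 0 or amount > origin.balance:
        raise TransferError("amount cannot be drawn")

    # Step 1: draw money from the sender/origin account.
    origin.balance -= amount
    # Step 2: deposit money into the receiving account.
    destination.balance += amount
```

All checks run before either balance changes, so a rejected transfer leaves both accounts untouched.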

22,306 | 30,859,915,637 | IssuesEvent | 2023-08-03 01:29:19 | emily-writes-poems/emily-writes-poems-processing | https://api.github.com/repos/emily-writes-poems/emily-writes-poems-processing | closed | react: collection page | processing | have these at least functioning from the react page:

- [x] display all collections list

- [x] create new collection

- technically supported by #4, but to add/edit poems in collection is still TBD (see #9, #5) | 1.0 | react: collection page - have these at least functioning from the react page:

- [x] display all collections list

- [x] create new collection

- technically supported by #4, but to add/edit poems in collection is still TBD (see #9, #5) | process | react collection page have these at least functioning from the react page display all collections list create new collection technically supported by but to add edit poems in collection is still tbd see | 1 |

439,380 | 12,681,938,932 | IssuesEvent | 2020-06-19 16:20:08 | JamieMason/syncpack | https://api.github.com/repos/JamieMason/syncpack | closed | format command sorts "files" property and breaks packages | Priority: High Status: To Do Type: Fix | ## Description

syncpack is reordering the contents of the "files" property which changes the contents of the tarball when using "npm publish" or "npm pack".

example package.json:

```

{

files: [

"dist/",

"!*.test.*",

"!__mocks__"

]

}

```

command:

`npx syncpack format`

r... | 1.0 | format command sorts "files" property and breaks packages - ## Description

syncpack is reordering the contents of the "files" property which changes the contents of the tarball when using "npm publish" or "npm pack".

example package.json:

```

{

files: [

"dist/",

"!*.test.*",

"!__mo... | non_process | format command sorts files property and breaks packages description syncpack is reordering the contents of the files property which changes the contents of the tarball when using npm publish or npm pack example package json files dist test mo... | 0 |

17,635 | 23,456,844,566 | IssuesEvent | 2022-08-16 09:36:49 | MicrosoftDocs/azure-docs | https://api.github.com/repos/MicrosoftDocs/azure-docs | closed | Missing Networking info | automation/svc triaged cxp doc-bug process-automation/subsvc Pri2 | This is missing information about the Networking tab after Advanced.

---

#### Document Details

⚠ *Do not edit this section. It is required for docs.microsoft.com ➟ GitHub issue linking.*

* ID: 9b4440e0-1ff5-0fd3-6983-d5f6ed86e818

* Version Independent ID: 8d6aecae-1a58-83aa-45f7-306fb6c92d38

* Content: [C... | 1.0 | Missing Networking info - This is missing information about the Networking tab after Advanced.

---

#### Document Details

⚠ *Do not edit this section. It is required for docs.microsoft.com ➟ GitHub issue linking.*

* ID: 9b4440e0-1ff5-0fd3-6983-d5f6ed86e818

* Version Independent ID: 8d6aecae-1a58-83aa-45f7-3... | process | missing networking info this is missing information about the networking tab after advanced document details ⚠ do not edit this section it is required for docs microsoft com ➟ github issue linking id version independent id content content source ... | 1 |

19,972 | 26,451,953,070 | IssuesEvent | 2023-01-16 11:55:43 | firebase/firebase-cpp-sdk | https://api.github.com/repos/firebase/firebase-cpp-sdk | reopened | [C++] Nightly Integration Testing Report for Firestore | type: process nightly-testing |

<hidden value="integration-test-status-comment"></hidden>

### ✅ [build against repo] Integration test succeeded!

Requested by @sunmou99 on commit 45f8e3268c2adbabca165ed0a937835f18930d2f

Last updated: Sun Jan 15 04:03 PST 2023

**[View integration test log & download artifacts](https://github.com/firebase/fire... | 1.0 | [C++] Nightly Integration Testing Report for Firestore -

<hidden value="integration-test-status-comment"></hidden>

### ✅ [build against repo] Integration test succeeded!

Requested by @sunmou99 on commit 45f8e3268c2adbabca165ed0a937835f18930d2f

Last updated: Sun Jan 15 04:03 PST 2023

**[View integration test l... | process | nightly integration testing report for firestore ✅ nbsp integration test succeeded requested by on commit last updated sun jan pst ✅ nbsp integration test succeeded requested by firebase workflow trigger on commit last updated sun jan pst ... | 1 |

19,078 | 25,119,367,037 | IssuesEvent | 2022-11-09 06:33:11 | streamnative/flink | https://api.github.com/repos/streamnative/flink | opened | [release] November Release, | compute/data-processing | In this release we need to release the 1.16 as well, need to setup the release pipeline and test the code. | 1.0 | [release] November Release, - In this release we need to release the 1.16 as well, need to setup the release pipeline and test the code. | process | november release in this release we need to release the as well need to setup the release pipeline and test the code | 1 |

515 | 2,989,806,920 | IssuesEvent | 2015-07-21 03:20:36 | codefordenver/org | https://api.github.com/repos/codefordenver/org | opened | Add Project Kickoff worksheet | Process Writing | As a product owner for a new project in partnership with a nonprofit (or other group/individual), I want a clear process / form to identify what tasks for the project should be. | 1.0 | Add Project Kickoff worksheet - As a product owner for a new project in partnership with a nonprofit (or other group/individual), I want a clear process / form to identify what tasks for the project should be. | process | add project kickoff worksheet as a product owner for a new project in partnership with a nonprofit or other group individual i want a clear process form to identify what tasks for the project should be | 1 |

18,111 | 24,143,701,518 | IssuesEvent | 2022-09-21 16:47:26 | googleapis/java-pubsublite-kafka | https://api.github.com/repos/googleapis/java-pubsublite-kafka | closed | Your .repo-metadata.json file has a problem 🤒 | type: process api: pubsublite repo-metadata: lint | You have a problem with your .repo-metadata.json file:

Result of scan 📈:

* api_shortname 'pubsublite-kafka' invalid in .repo-metadata.json

☝️ Once you address these problems, you can close this issue.

### Need help?

* [Schema definition](https://github.com/googleapis/repo-automation-bots/blob/main/packages/repo-m... | 1.0 | Your .repo-metadata.json file has a problem 🤒 - You have a problem with your .repo-metadata.json file:

Result of scan 📈:

* api_shortname 'pubsublite-kafka' invalid in .repo-metadata.json

☝️ Once you address these problems, you can close this issue.

### Need help?

* [Schema definition](https://github.com/googleap... | process | your repo metadata json file has a problem 🤒 you have a problem with your repo metadata json file result of scan 📈 api shortname pubsublite kafka invalid in repo metadata json ☝️ once you address these problems you can close this issue need help lists valid options for each field f... | 1 |

11,021 | 13,806,951,302 | IssuesEvent | 2020-10-11 19:51:13 | km4ack/pi-build | https://api.github.com/repos/km4ack/pi-build | closed | Conky fails to start if dnsmasq.leases is not present | bug in process | per this [post](https://groups.io/g/KM4ACK-Pi/topic/77144861)

simple solution is to touch the dnsmasq.leases file after installing conky | 1.0 | Conky fails to start if dnsmasq.leases is not present - per this [post](https://groups.io/g/KM4ACK-Pi/topic/77144861)

simple solution is to touch the dnsmasq.leases file after installing conky | process | conky fails to start if dnsmasq leases is not present per this simple solution is to touch the dnsmasq leases file after installing conky | 1 |

11,217 | 13,998,587,993 | IssuesEvent | 2020-10-28 09:40:06 | prisma/quaint | https://api.github.com/repos/prisma/quaint | closed | Clean-up of our feature flags | kind/tech process/candidate | Our feature flags are a mess. We should clean them up and run more feature combinations on CI before merging anything. | 1.0 | Clean-up of our feature flags - Our feature flags are a mess. We should clean them up and run more feature combinations on CI before merging anything. | process | clean up of our feature flags our feature flags are a mess we should clean them up and run more feature combinations on ci before merging anything | 1 |

59,399 | 3,109,755,864 | IssuesEvent | 2015-09-02 00:02:29 | The-Stampede/Web-Page | https://api.github.com/repos/The-Stampede/Web-Page | opened | Site Load | difficulty: intermediate priority: important type: research value: performance | Determine that the site load is no longer than 3s average (May be shooting for too low) | 1.0 | Site Load - Determine that the site load is no longer than 3s average (May be shooting for too low) | non_process | site load determine that the site load is no longer than average may be shooting for too low | 0 |

15,749 | 27,820,863,966 | IssuesEvent | 2023-03-19 08:00:57 | renovatebot/renovate | https://api.github.com/repos/renovatebot/renovate | opened | Issue/Discussion template defaults can lead to incorrect submissions | priority-3-medium type:refactor status:requirements | ### Describe the proposed change(s).

I notice sometimes people submitting invalid information, I think this is often caused by them simply leaving the default value, such as:

<img width="511" alt="image" src="https://user-images.githubusercontent.com/6311784/226161755-0636d4eb-4a4c-406f-a5b3-f3891c74b62a.png">

S... | 1.0 | Issue/Discussion template defaults can lead to incorrect submissions - ### Describe the proposed change(s).

I notice sometimes people submitting invalid information, I think this is often caused by them simply leaving the default value, such as:

<img width="511" alt="image" src="https://user-images.githubuserconten... | non_process | issue discussion template defaults can lead to incorrect submissions describe the proposed change s i notice sometimes people submitting invalid information i think this is often caused by them simply leaving the default value such as img width alt image src sometimes we could fix this by sw... | 0 |

17,958 | 23,962,476,855 | IssuesEvent | 2022-09-12 20:29:19 | openxla/stablehlo | https://api.github.com/repos/openxla/stablehlo | closed | Do not run buildAndTest GitHub Action if only markdown changes | Process | ### Request description

We should only run build and test if there is a source change, the definition of _source change_ can become more precise over time, but "not markdown" should resolve this issue for the majority of PRs that have come through (spec changes).

I noticed that #109 triggered a build of the Stable... | 1.0 | Do not run buildAndTest GitHub Action if only markdown changes - ### Request description

We should only run build and test if there is a source change, the definition of _source change_ can become more precise over time, but "not markdown" should resolve this issue for the majority of PRs that have come through (spec ... | process | do not run buildandtest github action if only markdown changes request description we should only run build and test if there is a source change the definition of source change can become more precise over time but not markdown should resolve this issue for the majority of prs that have come through spec ... | 1 |

17,345 | 23,171,040,956 | IssuesEvent | 2022-07-30 18:14:20 | open-ephys/GUI | https://api.github.com/repos/open-ephys/GUI | closed | FileReaderThread isn't compatible with the Open Ephys data format | Processors | At the moment, the FileReaderThread reads data in an arbitrary format: consecutive int16s representing samples from 16 channels. It also uses pauses to approximate the original sample rate, which is clearly not a viable long-term solution.

We need to create a dedicated "FileReader" processor (rather than a DataThread ... | 1.0 | FileReaderThread isn't compatible with the Open Ephys data format - At the moment, the FileReaderThread reads data in an arbitrary format: consecutive int16s representing samples from 16 channels. It also uses pauses to approximate the original sample rate, which is clearly not a viable long-term solution.

We need to ... | process | filereaderthread isn t compatible with the open ephys data format at the moment the filereaderthread reads data in an arbitrary format consecutive representing samples from channels it also uses pauses to approximate the original sample rate which is clearly not a viable long term solution we need to create... | 1 |
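The raw layout described in the FileReaderThread issue above (consecutive int16 samples interleaved across 16 channels) can be decoded with a few lines. This is a sketch only: the little-endian byte order and the `deinterleave` helper are assumptions for illustration, and it does not model the Open Ephys data format itself, which the issue says the reader is not compatible with.

```python
import struct

NUM_CHANNELS = 16  # channel count stated in the issue


def deinterleave(raw, num_channels=NUM_CHANNELS):
    """Split interleaved int16 samples into one list per channel.

    Assumes little-endian samples ordered ch0, ch1, ..., chN-1, ch0, ...
    Trailing bytes that do not form a complete frame are ignored.
    """
    frame_bytes = 2 * num_channels
    usable = len(raw) - (len(raw) % frame_bytes)
    count = usable // 2
    samples = struct.unpack(f"<{count}h", raw[:usable])
    # Every num_channels-th sample, starting at offset ch, belongs to ch.
    return [list(samples[ch::num_channels]) for ch in range(num_channels)]
```

A reader built this way carries no timing information, which matches the issue's point that the sample rate currently has to be approximated with pauses.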

19,443 | 25,713,957,124 | IssuesEvent | 2022-12-07 09:01:24 | googleapis/google-cloud-php | https://api.github.com/repos/googleapis/google-cloud-php | closed | Your .repo-metadata.json files have a problem 🤒 | type: process repo-metadata: lint | You have a problem with your .repo-metadata.json files:

Result of scan 📈:

* client_documentation must match pattern "^https://.*" in AccessApproval/.repo-metadata.json

* release_level must be equal to one of the allowed values in AccessApproval/.repo-metadata.json

* api_shortname field missing from AccessApproval/.r... | 1.0 | Your .repo-metadata.json files have a problem 🤒 - You have a problem with your .repo-metadata.json files:

Result of scan 📈:

* client_documentation must match pattern "^https://.*" in AccessApproval/.repo-metadata.json

* release_level must be equal to one of the allowed values in AccessApproval/.repo-metadata.json

*... | process | your repo metadata json files have a problem 🤒 you have a problem with your repo metadata json files result of scan 📈 client documentation must match pattern in accessapproval repo metadata json release level must be equal to one of the allowed values in accessapproval repo metadata json api short... | 1 |

21,818 | 30,316,653,495 | IssuesEvent | 2023-07-10 16:00:57 | tdwg/dwc | https://api.github.com/repos/tdwg/dwc | closed | Change term - coordinateUncertaintyInMeters | Term - change Class - Location non-normative Process - complete | ## Term change

* Submitter: John Wieczorek

* Efficacy Justification (why is this change necessary?): Outright error, the date Selective Availability was turned off is wrong.

* Demand Justification (if the change is semantic in nature, name at least two organizations that independently need this term): Truth and Ju... | 1.0 | Change term - coordinateUncertaintyInMeters - ## Term change

* Submitter: John Wieczorek

* Efficacy Justification (why is this change necessary?): Outright error, the date Selective Availability was turned off is wrong.

* Demand Justification (if the change is semantic in nature, name at least two organizations th... | process | change term coordinateuncertaintyinmeters term change submitter john wieczorek efficacy justification why is this change necessary outright error the date selective availability was turned off is wrong demand justification if the change is semantic in nature name at least two organizations th... | 1 |

17,718 | 23,619,080,787 | IssuesEvent | 2022-08-24 18:39:59 | Jigsaw-Code/outline-client | https://api.github.com/repos/Jigsaw-Code/outline-client | closed | Push current Linux client to S3 | os/linux release process | It seems the Linux client is not updated on S3, based on what I saw at https://github.com/Jigsaw-Code/outline-client/issues/1089.

We need to:

- [x] Push the current client to S3

- [x] Make sure the release process updates S3

/cc @jyyi1 @daniellacosse | 1.0 | Push current Linux client to S3 - It seems the Linux client is not updated on S3, based on what I saw at https://github.com/Jigsaw-Code/outline-client/issues/1089.

We need to:

- [x] Push the current client to S3

- [x] Make sure the release process updates S3

/cc @jyyi1 @daniellacosse | process | push current linux client to it seems the linux client is not updated on based on what i saw at we need to push the current client to make sure the release process updates cc daniellacosse | 1 |

27,045 | 4,272,490,772 | IssuesEvent | 2016-07-13 14:39:51 | NishantUpadhyay-BTC/BLISS-Issue-Tracking | https://api.github.com/repos/NishantUpadhyay-BTC/BLISS-Issue-Tracking | reopened | #1378 - Guest UI: Availability Table: Graphics Changes | Change Request Deployed to Test | From my recent email to Sherry, Amit, and Nishant.

First:

On the Availability Table - the first one with the red/green blocks - can we have additional rows that separate out the months? So, for instance, as I scroll through June and then get to July, the first row of July would be a different colored row that spans ... | 1.0 | #1378 - Guest UI: Availability Table: Graphics Changes - From my recent email to Sherry, Amit, and Nishant.

First:

On the Availability Table - the first one with the red/green blocks - can we have additional rows that separate out the months? So, for instance, as I scroll through June and then get to July, the fir... | non_process | guest ui availability table graphics changes from my recent email to sherry amit and nishant first on the availability table the first one with the red green blocks can we have additional rows that separate out the months so for instance as i scroll through june and then get to july the first ... | 0 |

17,064 | 22,501,423,960 | IssuesEvent | 2022-06-23 12:11:45 | camunda/zeebe | https://api.github.com/repos/camunda/zeebe | closed | Print out start instructions in compact logger | team/process-automation | **Description**

Log information about start instructions in record logging.

Also add information about start instructions to record interface to unblock https://github.com/camunda/zeebe-process-test/issues/411 | 1.0 | Print out start instructions in compact logger - **Description**

Log information about start instructions in record logging.

Also add information about start instructions to record interface to unblock https://github.com/camunda/zeebe-process-test/issues/411 | process | print out start instructions in compact logger description log information about start instructions in record logging also add information about start instructions to record interface to unblock | 1 |

16,899 | 22,203,055,426 | IssuesEvent | 2022-06-07 12:54:45 | pycaret/pycaret | https://api.github.com/repos/pycaret/pycaret | closed | 3.0.0.rc2 - Hourly data missing imputation error on time_series module | time_series preprocessing impute | ### pycaret version checks

- [X] I have checked that this issue has not already been reported [here](https://github.com/pycaret/pycaret/issues).

- [X] I have confirmed this bug exists on the [latest version](https://github.com/pycaret/pycaret/releases) of pycaret.

- [ ] I have confirmed this bug exists on the develo... | 1.0 | 3.0.0.rc2 - Hourly data missing imputation error on time_series module - ### pycaret version checks

- [X] I have checked that this issue has not already been reported [here](https://github.com/pycaret/pycaret/issues).

- [X] I have confirmed this bug exists on the [latest version](https://github.com/pycaret/pycaret/r... | process | hourly data missing imputation error on time series module pycaret version checks i have checked that this issue has not already been reported i have confirmed this bug exists on the of pycaret i have confirmed this bug exists on the develop branch of pycaret pip install u git... | 1 |

439,392 | 30,694,988,280 | IssuesEvent | 2023-07-26 17:51:50 | pwa-builder/PWABuilder | https://api.github.com/repos/pwa-builder/PWABuilder | opened | [DOCS] Docs about token flow and promotion requirements | documentation | Full documentation for new free Msoft dev promotion:

Checking if your PWA qualifies ➡️ getting your token ➡️ where to use that token.

Also going to experiment with an entry field to take you straight to the validation page. | 1.0 | [DOCS] Docs about token flow and promotion requirements - Full documentation for new free Msoft dev promotion:

Checking if your PWA qualifies ➡️ getting your token ➡️ where to use that token.

Also going to experiment with an entry field to take you straight to the validation page. | non_process | docs about token flow and promotion requirements full documentation for new free msoft dev promotion checking if your pwa qualifies ➡️ getting your token ➡️ where to use that token also going to experiment with an entry field to take you straight to the validation page | 0 |

32,613 | 7,552,531,868 | IssuesEvent | 2018-04-19 00:51:37 | dickschoeller/gedbrowser | https://api.github.com/repos/dickschoeller/gedbrowser | closed | Tests of API controllers, crud classes and helpers | code smell in progress | Right now the tests are:

* all through the controllers

* don't check behaviors well

Fix to:

* Test the helpers directly

* Test the CRUDs directly

* Really check the results

* Don't check the JSON from the controllers

Coverage issues:

* ApiFamily

* SaveController

* ApiSource

* GedWriter

* ApiSubmitter | 1.0 | Tests of API controllers, crud classes and helpers - Right now the tests are:

* all through the controllers

* don't check behaviors well

Fix to:

* Test the helpers directly

* Test the CRUDs directly

* Really check the results

* Don't check the JSON from the controllers

Coverage issues:

* ApiFamily

* SaveController

* ... | non_process | tests of api controllers crud classes and helpers right now the tests are all through the controllers don t check behaviors well fix to test the helpers directly test the cruds directly really check the results don t check the json from the controllers coverage issues apifamily savecontroller ... | 0 |

40,627 | 10,075,724,597 | IssuesEvent | 2019-07-24 14:48:20 | hazelcast/hazelcast | https://api.github.com/repos/hazelcast/hazelcast | closed | jcache onheap structure at non default partition counts data missing | Module: ICache Team: Core Type: Critical Type: Defect | a simple test loading 4 structures (mapBak1HD, mapBak1, cacheBak1HD, cacheBak1)

with 10000 key values each

then testing the size of the structures to be 10000

http://jenkins.hazelcast.com/view/stable/job/stable-partition-data/1/console

http://54.147.27.51/~jenkins/workspace/stable-partition-data/3.12.1/2019_... | 1.0 | jcache onheap structure at non default partition counts data missing - a simple test loading 4 structures (mapBak1HD, mapBak1, cacheBak1HD, cacheBak1)

with 10000 key values each

then testing the size of the structures to be 10000

http://jenkins.hazelcast.com/view/stable/job/stable-partition-data/1/console

ht... | non_process | jcache onheap structure at non default partition counts data missing a simple test loading structures with key values each then testing the size of the structures to be at some non default prime partition counts we see on heap jcach structure fails the test pwd ... | 0 |

69,257 | 3,296,408,608 | IssuesEvent | 2015-11-01 20:41:23 | dwyl/dwyl.github.io | https://api.github.com/repos/dwyl/dwyl.github.io | closed | Incorrect CSS overriding styles for the rest of the page | bug priority-2 | Incorrect CSS here:

<img width="385" alt="screen shot 2015-10-29 at 23 08 01" src="https://cloud.githubusercontent.com/assets/4185328/10834528/f9c1b7d6-7e91-11e5-9450-be2b4c949c48.png">

Is causing all of the paragraph text on the page to be grey (`color: #616161;`). | 1.0 | Incorrect CSS overriding styles for the rest of the page - Incorrect CSS here:

<img width="385" alt="screen shot 2015-10-29 at 23 08 01" src="https://cloud.githubusercontent.com/assets/4185328/10834528/f9c1b7d6-7e91-11e5-9450-be2b4c949c48.png">

Is causing all of the paragraph text on the page to be grey (`color: #6... | non_process | incorrect css overriding styles for the rest of the page incorrect css here img width alt screen shot at src is causing all of the paragraph text on the page to be grey color | 0 |

873 | 3,332,222,412 | IssuesEvent | 2015-11-11 19:11:48 | pwittchen/ReactiveBeacons | https://api.github.com/repos/pwittchen/ReactiveBeacons | closed | Release 0.3.2 | release process | **Initial release notes**:

- bug fix: wrapped `BluetoothManager` inside `isBleSupported()` to avoid `NoClassDefFound` error occurring while instantiating `ReactiveBeacons` object on devices running API < 18 - fixed in PR #30.

**Things to do**:

- [x] bump library version :point_right: PR #32

- [x] upload archives... | 1.0 | Release 0.3.2 - **Initial release notes**:

- bug fix: wrapped `BluetoothManager` inside `isBleSupported()` to avoid `NoClassDefFound` error occurring while instantiating `ReactiveBeacons` object on devices running API < 18 - fixed in PR #30.

**Things to do**:

- [x] bump library version :point_right: PR #32

- [x]... | process | release initial release notes bug fix wrapped bluetoothmanager inside isblesupported to avoid noclassdeffound error occurring while instantiating reactivebeacons object on devices running api fixed in pr things to do bump library version point right pr upload... | 1 |

103,766 | 11,372,210,720 | IssuesEvent | 2020-01-28 01:01:03 | SETI/pds-opus | https://api.github.com/repos/SETI/pds-opus | closed | Need new API Guide | A-Enhancement B-Documentation Effort 2 Medium Priority 3 Important | The current API Guide is based on a horrible YAML infrastructure that limits the kind of formatting we can do. For the February goal we need a new, pretty, and complete API Guide.

| 1.0 | Need new API Guide - The current API Guide is based on a horrible YAML infrastructure that limits the kind of formatting we can do. For the February goal we need a new, pretty, and complete API Guide.

| non_process | need new api guide the current api guide is based on a horrible yaml infrastructure that limits the kind of formatting we can do for the february goal we need a new pretty and complete api guide | 0 |

18,502 | 24,551,247,947 | IssuesEvent | 2022-10-12 12:46:50 | GoogleCloudPlatform/fda-mystudies | https://api.github.com/repos/GoogleCloudPlatform/fda-mystudies | closed | [iOS] Study activities screen > 'No activities found' error message getting displayed for few seconds in the following screen | Bug P1 iOS Process: Fixed Process: Tested dev | Steps:

1. Sign up or sign in to the mobile app

2. Enroll to the study

3. Navigate to study activities screen and observe

AR: 'No activities found' error message getting displayed for few seconds

ER: 'No activities found' error message should not get displayed

[Issue observed when user enters to study activiti... | 2.0 | [iOS] Study activities screen > 'No activities found' error message getting displayed for few seconds in the following screen - Steps:

1. Sign up or sign in to the mobile app

2. Enroll to the study

3. Navigate to study activities screen and observe

AR: 'No activities found' error message getting displayed for few... | process | study activities screen no activities found error message getting displayed for few seconds in the following screen steps sign up or sign in to the mobile app enroll to the study navigate to study activities screen and observe ar no activities found error message getting displayed for few sec... | 1 |

775,402 | 27,233,188,768 | IssuesEvent | 2023-02-21 14:37:41 | k3s-io/k3s | https://api.github.com/repos/k3s-io/k3s | closed | Install script improvements - preflight checks | kind/enhancement priority/important-longterm | Perhaps leverage check-config script or pass a `--preflight-checks` flag to check for any potential issues prior to an install.

For example, check if NetworkManager is utilized, if so warn the user this could cause issues (related to https://github.com/rancher/rke2/issues/786) | 1.0 | Install script improvements - preflight checks - Perhaps leverage check-config script or pass a `--preflight-checks` flag to check for any potential issues prior to an install.

For example, check if NetworkManager is utilized, if so warn the user this could cause issues (related to https://github.com/rancher/rke2/is... | non_process | install script improvements preflight checks perhaps leverage check config script or pass a preflight checks flag to check for any potential issues prior to an install for example check if networkmanager is utilized if so warn the user this could cause issues related to | 0 |

17,103 | 22,624,462,527 | IssuesEvent | 2022-06-30 09:25:03 | pyanodon/pybugreports | https://api.github.com/repos/pyanodon/pybugreports | closed | Deadlock stacks compatibilty | confirmed WIP postprocess-fail crash compatibility | ### Mod source

Github

### Which mod are you having an issue with?

- [ ] pyalienlife

- [ ] pyalternativeenergy

- [ ] pycoalprocessing

- [ ] pyfusionenergy

- [ ] pyhightech

- [ ] pyindustry

- [ ] pypetroleumhandling

- [X] pypostprocessing

- [ ] pyrawores

### Operating system

< Windows 10

### What kind of issue is t... | 1.0 | Deadlock stacks compatibilty - ### Mod source

Github

### Which mod are you having an issue with?

- [ ] pyalienlife

- [ ] pyalternativeenergy

- [ ] pycoalprocessing

- [ ] pyfusionenergy

- [ ] pyhightech

- [ ] pyindustry

- [ ] pypetroleumhandling

- [X] pypostprocessing

- [ ] pyrawores

### Operating system

< Windows... | process | deadlock stacks compatibilty mod source github which mod are you having an issue with pyalienlife pyalternativeenergy pycoalprocessing pyfusionenergy pyhightech pyindustry pypetroleumhandling pypostprocessing pyrawores operating system windows what kind ... | 1 |

8,207 | 11,402,600,084 | IssuesEvent | 2020-01-31 03:55:29 | scala/community-build | https://api.github.com/repos/scala/community-build | closed | dependencies.txt should be in deterministic order | process | because it's annoying for it constantly to show up in `git status` (and not only that, it also leads to me being lazy about checking in the changes when something actually _has_ changed) | 1.0 | dependencies.txt should be in deterministic order - because it's annoying for it constantly to show up in `git status` (and not only that, it also leads to me being lazy about checking in the changes when something actually _has_ changed) | process | dependencies txt should be in deterministic order because it s annoying for it constantly to show up in git status and not only that it also leads to me being lazy about checking in the changes when something actually has changed | 1 |

12,560 | 14,979,675,511 | IssuesEvent | 2021-01-28 12:36:02 | parcel-bundler/parcel | https://api.github.com/repos/parcel-bundler/parcel | closed | JS imported css files are bundled in the wrong order | :bug: Bug :clock1: Waiting CSS Preprocessing ✨ Parcel 2 | <!---

Thanks for filing an issue 😄 ! Before you submit, please read the following:

Search open/closed issues before submitting since someone might have asked the same thing before!

-->

# 🐛 bug report

<!--- Provide a general summary of the issue here -->

I'm importing these two css and scss files in my app... | 1.0 | JS imported css files are bundled in the wrong order - <!---

Thanks for filing an issue 😄 ! Before you submit, please read the following:

Search open/closed issues before submitting since someone might have asked the same thing before!

-->

# 🐛 bug report

<!--- Provide a general summary of the issue here --... | process | js imported css files are bundled in the wrong order thanks for filing an issue 😄 before you submit please read the following search open closed issues before submitting since someone might have asked the same thing before 🐛 bug report i m importing these two css and scss files in my ... | 1 |

5,190 | 7,970,406,036 | IssuesEvent | 2018-07-16 12:41:19 | rubberduck-vba/Rubberduck | https://api.github.com/repos/rubberduck-vba/Rubberduck | opened | COM Collector needs to handle indexed TLBs and referenced, but unregistered type libraries | feature-reference-explorer library-specific parse-tree-processing | Using a command like `ThisWorkbook.VBProject.References.AddFromFile "C:\Program Files (x86)\Common Files\microsoft shared\VBA\VBA7.1\VBE7.dll\3"` (note the index numeral, at the tail of the path), it's possible to add a `Reference` that isn't the default TLB for a file (in this case, the `VBInternal` TLB is referenced)... | 1.0 | COM Collector needs to handle indexed TLBs and referenced, but unregistered type libraries - Using a command like `ThisWorkbook.VBProject.References.AddFromFile "C:\Program Files (x86)\Common Files\microsoft shared\VBA\VBA7.1\VBE7.dll\3"` (note the index numeral, at the tail of the path), it's possible to add a `Refere... | process | com collector needs to handle indexed tlbs and referenced but unregistered type libraries using a command like thisworkbook vbproject references addfromfile c program files common files microsoft shared vba dll note the index numeral at the tail of the path it s possible to add a reference tha... | 1 |

449,124 | 31,828,881,623 | IssuesEvent | 2023-09-14 09:17:58 | integrations/terraform-provider-github | https://api.github.com/repos/integrations/terraform-provider-github | opened | [DOCS]: Document how github_repository_ruleset.rules.required_status_checks.required_check.integration_id can be found | Type: Documentation Status: Triage | ### Describe the need

Its not clear from the documentation how the following property's value can be found so it may be correctly set:

```

github_repository_ruleset.rules.required_status_checks.required_check.integration_id can be found

```

Its also unclear from the GH API documentation, all they say is:

> inte... | 1.0 | [DOCS]: Document how github_repository_ruleset.rules.required_status_checks.required_check.integration_id can be found - ### Describe the need

Its not clear from the documentation how the following property's value can be found so it may be correctly set:

```

github_repository_ruleset.rules.required_status_checks.re... | non_process | document how github repository ruleset rules required status checks required check integration id can be found describe the need its not clear from the documentation how the following property s value can be found so it may be correctly set github repository ruleset rules required status checks require... | 0 |

18,371 | 24,498,294,642 | IssuesEvent | 2022-10-10 10:34:53 | Blazebit/blaze-persistence | https://api.github.com/repos/Blazebit/blaze-persistence | closed | Concurrency issues with the annotation processor | kind: bug worth: medium component: entity-view-annotation-processor | We are using gradle 7.5.1 with java 17, also happened with gradle 6 and java 11. It happened with every BP version since 1.5.x and above.

It does happen in the CI (less often due to the build cache)

When we compile our codebase from scratch, it is about 90% likely that we get this exception/error. It seems to hap... | 1.0 | Concurrency issues with the annotation processor - We are using gradle 7.5.1 with java 17, also happened with gradle 6 and java 11. It happened with every BP version since 1.5.x and above.

It does happen in the CI (less often due to the build cache)

When we compile our codebase from scratch, it is about 90% likel... | process | concurrency issues with the annotation processor we are using gradle with java also happened with gradle and java it happened with every bp version since x and above it does happen in the ci less often due to the build cache when we compile our codebase from scratch it is about likely t... | 1 |

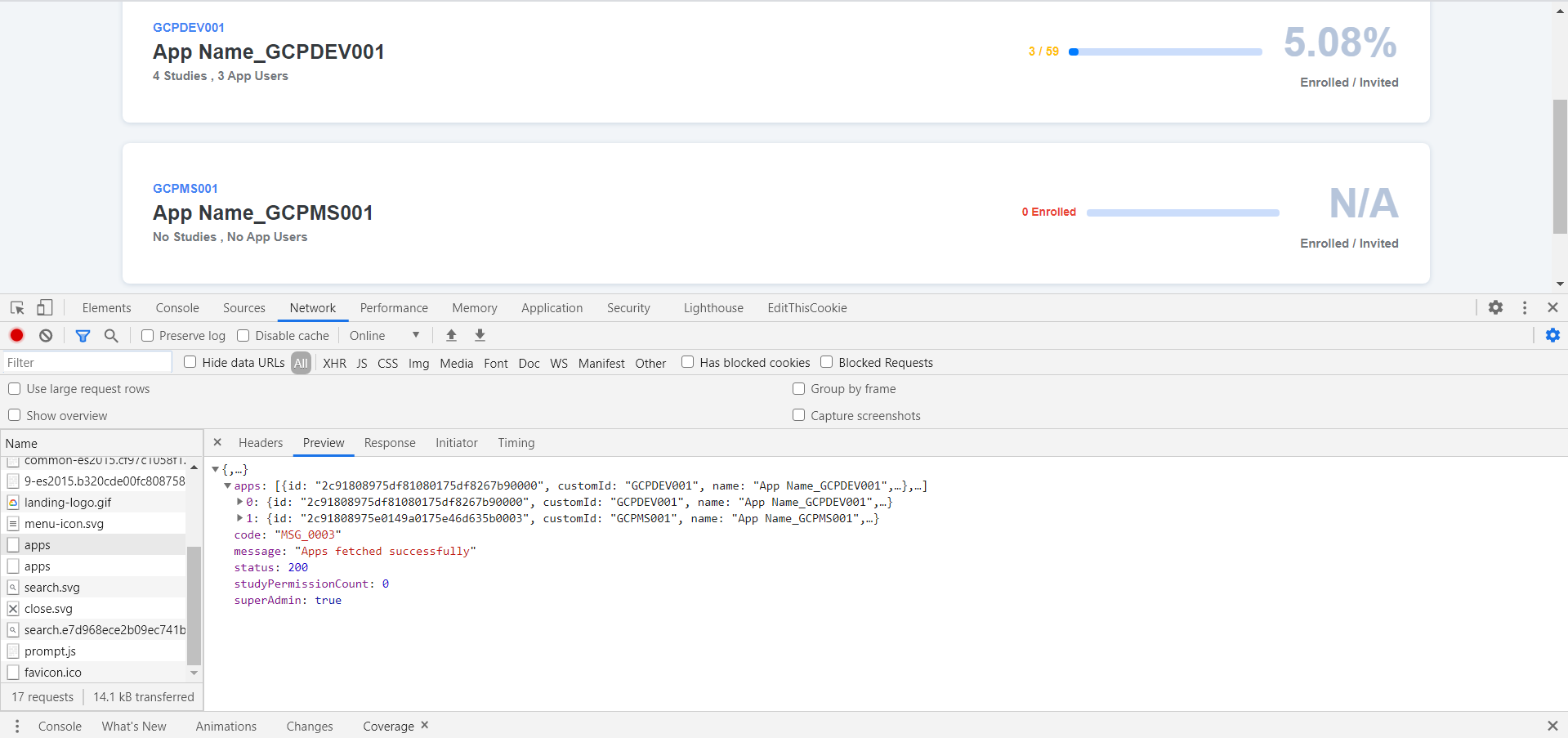

11,772 | 14,601,077,098 | IssuesEvent | 2020-12-21 08:04:16 | GoogleCloudPlatform/fda-mystudies | https://api.github.com/repos/GoogleCloudPlatform/fda-mystudies | closed | [PM] [Dev] Apps > App_GCPMS001 record is displayed even though there are no studies created with this app ID in SB | Bug P1 Participant manager Process: Dev Process: Tested dev | App_GCPMS001 record is displayed even though there are no studies created with this app ID in SB

| 2.0 | [PM] [Dev] Apps > App_GCPMS001 record is displayed even though there are no studies created with this app ID in SB - App_GCPMS001 record is displayed even though there are no studies created with this app ID in SB

.

If I'm commenting on this issue too often, add the `buildcop: quiet` label and

I will stop commenting.

---

commit: fd2a938d45c1d1b5fbd4800723771c5e9de3... | 2.0 | analyzing faces in video: should identify faces in a local file failed - This test failed!

To configure my behavior, see [the Build Cop Bot documentation](https://github.com/googleapis/repo-automation-bots/tree/master/packages/buildcop).

If I'm commenting on this issue too often, add the `buildcop: quiet` label and

I... | non_process | analyzing faces in video should identify faces in a local file failed this test failed to configure my behavior see if i m commenting on this issue too often add the buildcop quiet label and i will stop commenting commit buildurl status failed test output expected waiting for ope... | 0 |

1,859 | 4,682,374,204 | IssuesEvent | 2016-10-09 07:53:23 | sysown/proxysql | https://api.github.com/repos/sysown/proxysql | closed | rule with only digest set always matches on everything | bug QUERY PROCESSOR | I have a rule with only digest value set, and regarding the documentation I expected it will only match if its the identical digest, but seems to match on any query.

> digest - match queries with a specific digest, as returned by stats_mysql_query_digest.digest

```

mysql> select * from runtime_mysql_query_rules ... | 1.0 | rule with only digest set always matches on everything - I have a rule with only digest value set, and regarding the documentation I expected it will only match if its the identical digest, but seems to match on any query.

> digest - match queries with a specific digest, as returned by stats_mysql_query_digest.diges... | process | rule with only digest set always matches on everything i have a rule with only digest value set and regarding the documentation i expected it will only match if its the identical digest but seems to match on any query digest match queries with a specific digest as returned by stats mysql query digest diges... | 1 |

20,675 | 27,342,315,853 | IssuesEvent | 2023-02-26 23:03:30 | JuliaParallel/Dagger.jl | https://api.github.com/repos/JuliaParallel/Dagger.jl | opened | Add more built-in thread-based processor types | enhancement processors | - `ThreadProc` -> `ThreadLockedProc` (no longer default enabled)

- `ThreadProc`: Runs on any thread and allows task migration (default enabled)

- `NUMAPinnedProc`: Pinned to a given NUMA domain, possibly allocated manually (not default enabled) | 1.0 | Add more built-in thread-based processor types - - `ThreadProc` -> `ThreadLockedProc` (no longer default enabled)

- `ThreadProc`: Runs on any thread and allows task migration (default enabled)

- `NUMAPinnedProc`: Pinned to a given NUMA domain, possibly allocated manually (not default enabled) | process | add more built in thread based processor types threadproc threadlockedproc no longer default enabled threadproc runs on any thread and allows task migration default enabled numapinnedproc pinned to a given numa domain possibly allocated manually not default enabled | 1 |

7,298 | 10,442,910,788 | IssuesEvent | 2019-09-18 13:57:21 | allinurl/goaccess | https://api.github.com/repos/allinurl/goaccess | closed | kernel message: goaccess trap divide error | bug log-processing on-disk | When running goaccess 1.3 daemonized, I ran in this issue today.

The web page with realtime stats was empty, on disk the realtime html and database look good.

In the syslog I see :

traps: goaccess[4010] trap divide error ip:559ca6039673 sp:7fff900f9170 error:0 in goaccess[559ca6020000+97000]

traps: goaccess[410... | 1.0 | kernel message: goaccess trap divide error - When running goaccess 1.3 daemonized, I ran in this issue today.

The web page with realtime stats was empty, on disk the realtime html and database look good.

In the syslog I see :