Unnamed: 0 (int64, 0 to 832k) | id (float64, 2.49B to 32.1B) | type (stringclasses, 1 value) | created_at (stringlengths, 19 to 19) | repo (stringlengths, 7 to 112) | repo_url (stringlengths, 36 to 141) | action (stringclasses, 3 values) | title (stringlengths, 1 to 744) | labels (stringlengths, 4 to 574) | body (stringlengths, 9 to 211k) | index (stringclasses, 10 values) | text_combine (stringlengths, 96 to 211k) | label (stringclasses, 2 values) | text (stringlengths, 96 to 188k) | binary_label (int64, 0 to 1) |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
3,073 | 6,077,576,794 | IssuesEvent | 2017-06-16 04:44:51 | dotnet/corefx | https://api.github.com/repos/dotnet/corefx | reopened | Test: System.Diagnostics.Tests.ProcessStartInfoTests/Verbs_GetWithExeExtension_ReturnsExpected failed with "Xunit.Sdk.ContainsException" | area-System.Diagnostics.Process os-windows-uwp test-run-uwp-coreclr | Opened on behalf of @Jiayili1 The test `System.Diagnostics.Tests.ProcessStartInfoTests/Verbs_GetWithExeExtension_ReturnsExpected` has failed. Assert.Contains() Failure\r Not found: open\r In value: String[] [] Stack Trace: at System.Diagnostics.Tests.ProcessStartInfoTests.Verbs_GetWithEx... | 1.0 | Test: System.Diagnostics.Tests.ProcessStartInfoTests/Verbs_GetWithExeExtension_ReturnsExpected failed with "Xunit.Sdk.ContainsException" - Opened on behalf of @Jiayili1 The test `System.Diagnostics.Tests.ProcessStartInfoTests/Verbs_GetWithExeExtension_ReturnsExpected` has failed. Assert.Contains() Failure\r Not f... | process | test system diagnostics tests processstartinfotests verbs getwithexeextension returnsexpected failed with xunit sdk containsexception opened on behalf of the test system diagnostics tests processstartinfotests verbs getwithexeextension returnsexpected has failed assert contains failure r not found o... | 1 |
7,450 | 10,558,824,853 | IssuesEvent | 2019-10-04 09:59:43 | Altinn/altinn-studio | https://api.github.com/repos/Altinn/altinn-studio | closed | Update the handling of workflow in runtime to support to submit/archive directly from fill in form | app-backend process ready-for-specification team-tamagotchi user-story | **Functional architect/designer:** @-mention **Technical architect:** @-mention **Description** As a end user I would like to submit/archive directly from fill in form Relates to #1029 **Sketch (if relevant)** (Screenshot and link to Figma, make sure your sketch is public!) **Navigation from/to (if rele... | 1.0 | Update the handling of workflow in runtime to support to submit/archive directly from fill in form - **Functional architect/designer:** @-mention **Technical architect:** @-mention **Description** As a end user I would like to submit/archive directly from fill in form Relates to #1029 **Sketch (if relevant)... | process | update the handling of workflow in runtime to support to submit archive directly from fill in form functional architect designer mention technical architect mention description as a end user i would like to submit archive directly from fill in form relates to sketch if relevant ... | 1 |
23,768 | 7,374,159,132 | IssuesEvent | 2018-03-13 19:25:56 | ngageoint/hootenanny | https://api.github.com/repos/ngageoint/hootenanny | opened | Omit UI buildInfo.json from archive | Category: Build Category: Services Category: UI Priority: High | In #2241 I removed the `services-build` target when creating the packaging archive. This created a regression when running `make archive`, leading to failures like this: ``` # Copy the buildInfo.json file for hoot services if [ "services" == "services" ]; then mkdir -p hootenanny-0.2.39_20_g672adde/hoot-ui/data; ... | 1.0 | Omit UI buildInfo.json from archive - In #2241 I removed the `services-build` target when creating the packaging archive. This created a regression when running `make archive`, leading to failures like this: ``` # Copy the buildInfo.json file for hoot services if [ "services" == "services" ]; then mkdir -p hooten... | non_process | omit ui buildinfo json from archive in i removed the services build target when creating the packaging archive this created a regression when running make archive leading to failures like this copy the buildinfo json file for hoot services if then mkdir p hootenanny hoot ui data c... | 0 |
11,857 | 14,664,841,606 | IssuesEvent | 2020-12-29 12:57:14 | GoogleCloudPlatform/fda-mystudies | https://api.github.com/repos/GoogleCloudPlatform/fda-mystudies | closed | [PM] [Dev] Studies > All studies are not displayed and loader icon is not shown at end even though there are >10 studies | Bug P0 Participant manager Process: Dev Process: Fixed | Steps: 1. Navigate to Studies tab 2. Scroll till end 3. Observe A/R: Only 9 sets of studies are displayed E/R: 10 studies should be displayed per set and loader icon should be displayed | 2.0 | [PM] [Dev] Studies > All studies are not displayed and loader icon is not shown at end even though there are >10 studies - Steps: 1. Navigate to Studies tab 2. Scroll till end 3. Observe A/R: Only 9 sets of studies are displayed E/R: 10 studies should be displayed per set and loader icon should be displayed | process | studies all studies are not displayed and loader icon is not shown at end even though there are studies steps navigate to studies tab scroll till end observe a r only sets of studies are displayed e r studies should be displayed per set and loader icon should be displayed | 1 |
15,608 | 19,730,027,146 | IssuesEvent | 2022-01-14 00:48:17 | GoogleCloudPlatform/python-docs-samples | https://api.github.com/repos/GoogleCloudPlatform/python-docs-samples | closed | Security Vulnerability for Pillow V8 references | type: process priority: p1 samples | References: https://github.com/advisories/GHSA-xrcv-f9gm-v42c https://github.com/advisories/GHSA-8vj2-vxx3-667w https://github.com/advisories/GHSA-pw3c-h7wp-cvhx There are 3 references to it in this repo. | 1.0 | Security Vulnerability for Pillow V8 references - References: https://github.com/advisories/GHSA-xrcv-f9gm-v42c https://github.com/advisories/GHSA-8vj2-vxx3-667w https://github.com/advisories/GHSA-pw3c-h7wp-cvhx There are 3 references to it in this repo. | process | security vulnerability for pillow references references there are references to it in this repo | 1 |
19,156 | 25,240,360,611 | IssuesEvent | 2022-11-15 06:46:33 | googleapis/sphinx-docfx-yaml | https://api.github.com/repos/googleapis/sphinx-docfx-yaml | opened | Update docfx minimum Python version to 3.9 on client libraries | type: process priority: p1 | Followup to #266: will need to update docfx jobs running with Python 3.9 throughout the client libraries. | 1.0 | Update docfx minimum Python version to 3.9 on client libraries - Followup to #266: will need to update docfx jobs running with Python 3.9 throughout the client libraries. | process | update docfx minimum python version to on client libraries followup to will need to update docfx jobs running with python throughout the client libraries | 1 |
189,275 | 14,497,110,981 | IssuesEvent | 2020-12-11 13:47:37 | kalexmills/github-vet-tests-dec2020 | https://api.github.com/repos/kalexmills/github-vet-tests-dec2020 | closed | anchore/syft: syft/cataloger/java/archive_parser_test.go; 5 LoC | fresh test tiny | Found a possible issue in [anchore/syft](https://www.github.com/anchore/syft) at [syft/cataloger/java/archive_parser_test.go](https://github.com/anchore/syft/blob/52bac6e2fd1adb3d8852f0fab6536a81ec037b89/syft/cataloger/java/archive_parser_test.go#L245-L249) Below is the message reported by the analyzer for this snipp... | 1.0 | anchore/syft: syft/cataloger/java/archive_parser_test.go; 5 LoC - Found a possible issue in [anchore/syft](https://www.github.com/anchore/syft) at [syft/cataloger/java/archive_parser_test.go](https://github.com/anchore/syft/blob/52bac6e2fd1adb3d8852f0fab6536a81ec037b89/syft/cataloger/java/archive_parser_test.go#L245-L... | non_process | anchore syft syft cataloger java archive parser test go loc found a possible issue in at below is the message reported by the analyzer for this snippet of code beware that the analyzer only reports the first issue it finds so please do not limit your consideration to the contents of the below message ... | 0 |
18,139 | 24,183,927,087 | IssuesEvent | 2022-09-23 11:34:48 | cloudfoundry/korifi | https://api.github.com/repos/cloudfoundry/korifi | closed | [Feature]: Developer can push apps using the top-level `instances` field in the manifest | Top-level process config | ### Background **As a** developer **I want** top-level process configuration in manifests to be supported **So that** I can use shortcut `cf push` flags like `-c`, `-i`, `-m` etc. ### Acceptance Criteria * **GIVEN** I have the sources of an application (e.g. `tests/smoke/assets/test-node-app`) **AND** `ma... | 1.0 | [Feature]: Developer can push apps using the top-level `instances` field in the manifest - ### Background **As a** developer **I want** top-level process configuration in manifests to be supported **So that** I can use shortcut `cf push` flags like `-c`, `-i`, `-m` etc. ### Acceptance Criteria * **GIVEN** I ... | process | developer can push apps using the top level instances field in the manifest background as a developer i want top level process configuration in manifests to be supported so that i can use shortcut cf push flags like c i m etc acceptance criteria given i have the... | 1 |
42,644 | 17,225,875,525 | IssuesEvent | 2021-07-20 01:31:53 | dorksquad/artwork | https://api.github.com/repos/dorksquad/artwork | opened | artwork service - streamline apis | artwork service good first issue | 1. condense all apis to just `/artworks` with 2 optional query parameters (instead of path variables). - query parameters will be `name` (name of artwork) and `album` (name of the music album). 2. add all CRUD operations to the `/artworks` api path. 3. clean up service layer get methods to be just one getArtwo... | 1.0 | artwork service - streamline apis - 1. condense all apis to just `/artworks` with 2 optional query parameters (instead of path variables). - query parameters will be `name` (name of artwork) and `album` (name of the music album). 2. add all CRUD operations to the `/artworks` api path. 3. clean up service layer... | non_process | artwork service streamline apis condense all apis to just artworks with optional query parameters instead of path variables query parameters will be name name of artwork and album name of the music album add all crud operations to the artworks api path clean up service layer... | 0 |
794 | 2,545,209,135 | IssuesEvent | 2015-01-29 15:51:09 | slick/slick | https://api.github.com/repos/slick/slick | closed | relative links for API doc references | 1 - Ready improvement topic:documentation | *[Migrated from Assembla ticket [306](https://www.assembla.com/spaces/typesafe-slick/tickets/306) - reported by @cvogt on 2013-08-21 10:43:35]* Could we use relative links for API docs and generate them locally into the right folder? They work locally when you test the docs. We put the scaladoc under ./api anyway, so ... | 1.0 | relative links for API doc references - *[Migrated from Assembla ticket [306](https://www.assembla.com/spaces/typesafe-slick/tickets/306) - reported by @cvogt on 2013-08-21 10:43:35]* Could we use relative links for API docs and generate them locally into the right folder? They work locally when you test the docs. We ... | non_process | relative links for api doc references reported by cvogt on could we use relative links for api docs and generate them locally into the right folder they work locally when you test the docs we put the scaladoc under api anyway so if we could link to it there we could copy or move the gener... | 0 |
95,971 | 12,067,562,051 | IssuesEvent | 2020-04-16 13:34:41 | flutter/flutter | https://api.github.com/repos/flutter/flutter | closed | [Help]I want to create buttonAppNavigation layout like attached image.But, I don't know this way. | f: material design framework | I want to create button app navigation layout like attached image. But, I don't know way to create that this layout. I tried to implement using standard button app navigation and floating action button, centerDocked of floating action button location, but it has totally different layout. So if you know how to im... | 1.0 | [Help]I want to create buttonAppNavigation layout like attached image.But, I don't know this way. - I want to create button app navigation layout like attached image. But, I don't know way to create that this layout. I tried to implement using standard button app navigation and floating action button, centerDocked ... | non_process | i want to create buttonappnavigation layout like attached image but i don t know this way i want to create button app navigation layout like attached image but i don t know way to create that this layout i tried to implement using standard button app navigation and floating action button centerdocked of fl... | 0 |
8,437 | 11,598,899,526 | IssuesEvent | 2020-02-25 00:28:23 | quinngroup/CiliaRepresentation | https://api.github.com/repos/quinngroup/CiliaRepresentation | opened | Obtaining segmentation masks for localized representation learning | datasets processing | Incorporating segmentation masks into representation learning will allow learning localized ciliary patches. Right now, we have segmentation masks on ~20% of entire dataset and appearance module is not targeting cilia regions. For time efficacy, unknown segmentation masks can be computed through 1) Rudimentary threshol... | 1.0 | Obtaining segmentation masks for localized representation learning - Incorporating segmentation masks into representation learning will allow learning localized ciliary patches. Right now, we have segmentation masks on ~20% of entire dataset and appearance module is not targeting cilia regions. For time efficacy, unkno... | process | obtaining segmentation masks for localized representation learning incorporating segmentation masks into representation learning will allow learning localized ciliary patches right now we have segmentation masks on of entire dataset and appearance module is not targeting cilia regions for time efficacy unknow... | 1 |
15,291 | 19,296,149,005 | IssuesEvent | 2021-12-12 16:16:59 | glennl-msft/WAF_PnP_Demo3 | https://api.github.com/repos/glennl-msft/WAF_PnP_Demo3 | opened | Put a solution in place that ensures all VMs are patched in a timely manner and that ensures strong local administrative password management | Security Operational Procedures Patch & Update Process (PNU) | <a href="https://docs.microsoft.com/azure/automation/update-management/overview">Put a solution in place that ensures all VMs are patched in a timely manner and that ensures strong local administrative password management</a> | 1.0 | Put a solution in place that ensures all VMs are patched in a timely manner and that ensures strong local administrative password management - <a href="https://docs.microsoft.com/azure/automation/update-management/overview">Put a solution in place that ensures all VMs are patched in a timely manner and that ensures str... | process | put a solution in place that ensures all vms are patched in a timely manner and that ensures strong local administrative password management | 1 |
17,068 | 22,534,002,474 | IssuesEvent | 2022-06-25 00:57:22 | neudesic/documentation-solution-centers | https://api.github.com/repos/neudesic/documentation-solution-centers | closed | Add Links to Homepage | feature Process | Add links on the homepage Readme for - Onboarding Checklist - Internship Program - Best Practices (Development) - Processes and Guidelines - Solution Center Narrative | 1.0 | Add Links to Homepage - Add links on the homepage Readme for - Onboarding Checklist - Internship Program - Best Practices (Development) - Processes and Guidelines - Solution Center Narrative | process | add links to homepage add links on the homepage readme for onboarding checklist internship program best practices development processes and guidelines solution center narrative | 1 |
57,852 | 8,211,307,221 | IssuesEvent | 2018-09-04 13:29:32 | daostack/access_control | https://api.github.com/repos/daostack/access_control | closed | Making an EIP | documentation | - Figure out what the structure of an EIP is and write one in an `.md` file under the `docs` dir. | 1.0 | Making an EIP - - Figure out what the structure of an EIP is and write one in an `.md` file under the `docs` dir. | non_process | making an eip figure out what the structure of an eip is and write one in an md file under the docs dir | 0 |
237,210 | 26,084,070,710 | IssuesEvent | 2022-12-25 21:18:14 | billmcchesney1/goalert | https://api.github.com/repos/billmcchesney1/goalert | opened | CVE-2022-46175 (High) detected in json5-1.0.1.tgz, json5-2.1.3.tgz | security vulnerability | ## CVE-2022-46175 - High Severity Vulnerability <details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>json5-1.0.1.tgz</b>, <b>json5-2.1.3.tgz</b></p></summary> <p> <details><summary><b>json5-1.0.1.tgz</b></p></summary>... | True | CVE-2022-46175 (High) detected in json5-1.0.1.tgz, json5-2.1.3.tgz - ## CVE-2022-46175 - High Severity Vulnerability <details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>json5-1.0.1.tgz</b>, <b>json5-2.1.3.tgz</b></p><... | non_process | cve high detected in tgz tgz cve high severity vulnerability vulnerable libraries tgz tgz tgz json for humans library home page a href path to dependency file web src package json path to vulnerable library web src node mod... | 0 |

5,561 | 8,403,499,643 | IssuesEvent | 2018-10-11 09:56:31 | kiwicom/orbit-components | https://api.github.com/repos/kiwicom/orbit-components | closed | <Stack />: align option doesn't apply align-items property | bug processing | ## Expected Behavior

`align="end"` to attach `align-items: flex-end`

## Current Behavior

`align="end"` to attaches `align-content: flex-end` but not `align-items: flex-end`

## Steps to Reproduce

`<... | 1.0 | <Stack />: align option doesn't apply align-items property - ## Expected Behavior

`align="end"` to attach `align-items: flex-end`

## Current Behavior

`align="end"` to attaches `align-content: flex-end` but not `align-items: flex-end`

`GAML Syntax > System > RunThread.gaml`

2. Move the mouse over `interval: `

![RunThread gaml - Gama (runtime) 2022-09-26 07-54-... | 1.0 | Popup documentation in editor do not show up for action arguments - **Describe the bug**

In the GAML editor, no documentation show up when moving the mouse over action arguments.

**To Reproduce**

Steps to reproduce the behavior:

1. Open (for instance) `GAML Syntax > System > RunThread.gaml`

2. Move the mouse ov... | non_process | popup documentation in editor do not show up for action arguments describe the bug in the gaml editor no documentation show up when moving the mouse over action arguments to reproduce steps to reproduce the behavior open for instance gaml syntax system runthread gaml move the mouse ov... | 0 |

469,145 | 13,501,998,275 | IssuesEvent | 2020-09-13 05:52:52 | ceochrism/StackOverFlowHackerRankHybrid | https://api.github.com/repos/ceochrism/StackOverFlowHackerRankHybrid | opened | Character Selection/Customization Screen | Priority:Medium Status:On Hold | This would be apart of some sort of settings screen, or the first time the user loads the game. We will have a variety of costumes a user can choose from in order to customize their character. This can be done in mono game or win forms, I believe it may be user in Windows Forms if we utilize something like the ImageLis... | 1.0 | Character Selection/Customization Screen - This would be apart of some sort of settings screen, or the first time the user loads the game. We will have a variety of costumes a user can choose from in order to customize their character. This can be done in mono game or win forms, I believe it may be user in Windows Form... | non_process | character selection customization screen this would be apart of some sort of settings screen or the first time the user loads the game we will have a variety of costumes a user can choose from in order to customize their character this can be done in mono game or win forms i believe it may be user in windows form... | 0 |
98,419 | 16,373,817,026 | IssuesEvent | 2021-05-15 17:40:54 | hugh-whitesource/NodeGoat-1 | https://api.github.com/repos/hugh-whitesource/NodeGoat-1 | opened | WS-2018-0069 (High) detected in is-my-json-valid-2.15.0.tgz | security vulnerability | ## WS-2018-0069 - High Severity Vulnerability <details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>is-my-json-valid-2.15.0.tgz</b></p></summary> <p>A JSONSchema validator that uses code generation to be extremely fast</... | True | WS-2018-0069 (High) detected in is-my-json-valid-2.15.0.tgz - ## WS-2018-0069 - High Severity Vulnerability <details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>is-my-json-valid-2.15.0.tgz</b></p></summary> <p>A JSONSch... | non_process | ws high detected in is my json valid tgz ws high severity vulnerability vulnerable library is my json valid tgz a jsonschema validator that uses code generation to be extremely fast library home page a href path to dependency file nodegoat package json path to vulne... | 0 |
14,758 | 18,040,776,942 | IssuesEvent | 2021-09-18 02:31:17 | ooi-data/RS01SBPS-SF01A-4A-NUTNRA101-streamed-nutnr_a_dark_sample | https://api.github.com/repos/ooi-data/RS01SBPS-SF01A-4A-NUTNRA101-streamed-nutnr_a_dark_sample | opened | 🛑 Processing failed: OSError | process | ## Overview `OSError` found in `processing_task` task during run ended on 2021-09-18T02:31:16.255440. ## Details Flow name: `RS01SBPS-SF01A-4A-NUTNRA101-streamed-nutnr_a_dark_sample` Task name: `processing_task` Error type: `OSError` Error message: [Errno 16] Please reduce your request rate. <details> <summary>Tra... | 1.0 | 🛑 Processing failed: OSError - ## Overview `OSError` found in `processing_task` task during run ended on 2021-09-18T02:31:16.255440. ## Details Flow name: `RS01SBPS-SF01A-4A-NUTNRA101-streamed-nutnr_a_dark_sample` Task name: `processing_task` Error type: `OSError` Error message: [Errno 16] Please reduce your reques... | process | 🛑 processing failed oserror overview oserror found in processing task task during run ended on details flow name streamed nutnr a dark sample task name processing task error type oserror error message please reduce your request rate traceback traceback most... | 1 |
388,293 | 11,485,932,739 | IssuesEvent | 2020-02-11 08:54:58 | DigitalCampus/moodle-block_oppia_mobile_export | https://api.github.com/repos/DigitalCampus/moodle-block_oppia_mobile_export | closed | In server connections save all settings | enhancement low priority | So, for example, can have different cropping, image icons sizes etc for the different servers used | 1.0 | In server connections save all settings - So, for example, can have different cropping, image icons sizes etc for the different servers used | non_process | in server connections save all settings so for example can have different cropping image icons sizes etc for the different servers used | 0 |
1,604 | 4,217,943,607 | IssuesEvent | 2016-06-30 14:36:51 | e-government-ua/iBP | https://api.github.com/repos/e-government-ua/iBP | closed | Київ: Присвоєння поштової адреси | In process of testing in work test | предлагаемая схема процесса - https://www.dropbox.com/s/40wip0wzg16o3gv/%D0%9F%D1%80%D0%B8%D1%81%D0%B2%D0%BE%D1%94%D0%BD%D0%BD%D1%8F%20%D0%BF%D0%BE%D1%88%D1%82%D0%BE%D0%B2%D0%BE%D1%97%20%D0%B0%D0%B4%D1%80%D0%B5%D1%81%D0%B8%20%28%D0%BD%D0%B5%D0%B6%D0%B8%D1%82%D0%BB%D0%BE%D0%B2%D1%96%20%D0%BF%D1%80%D0%B8%D0%BC%D1%96%D1%8... | 1.0 | Київ: Присвоєння поштової адреси - предлагаемая схема процесса - https://www.dropbox.com/s/40wip0wzg16o3gv/%D0%9F%D1%80%D0%B8%D1%81%D0%B2%D0%BE%D1%94%D0%BD%D0%BD%D1%8F%20%D0%BF%D0%BE%D1%88%D1%82%D0%BE%D0%B2%D0%BE%D1%97%20%D0%B0%D0%B4%D1%80%D0%B5%D1%81%D0%B8%20%28%D0%BD%D0%B5%D0%B6%D0%B8%D1%82%D0%BB%D0%BE%D0%B2%D1%96%2... | process | київ присвоєння поштової адреси предлагаемая схема процесса исходные материалы | 1 |
192,882 | 14,631,656,202 | IssuesEvent | 2020-12-23 20:24:50 | github-vet/rangeloop-pointer-findings | https://api.github.com/repos/github-vet/rangeloop-pointer-findings | closed | Noxdew/Knights-Of-Discord: vendor/github.com/mongodb/mongo-go-driver/mongo/crud_spec_test.go; 51 LoC | fresh medium test vendored | Found a possible issue in [Noxdew/Knights-Of-Discord](https://www.github.com/Noxdew/Knights-Of-Discord) at [vendor/github.com/mongodb/mongo-go-driver/mongo/crud_spec_test.go](https://github.com/Noxdew/Knights-Of-Discord/blob/54e2089536ec92da137c78869f0023e47b2ae354/vendor/github.com/mongodb/mongo-go-driver/mongo/crud_... | 1.0 | Noxdew/Knights-Of-Discord: vendor/github.com/mongodb/mongo-go-driver/mongo/crud_spec_test.go; 51 LoC - Found a possible issue in [Noxdew/Knights-Of-Discord](https://www.github.com/Noxdew/Knights-Of-Discord) at [vendor/github.com/mongodb/mongo-go-driver/mongo/crud_spec_test.go](https://github.com/Noxdew/Knights-Of-Disc... | non_process | noxdew knights of discord vendor github com mongodb mongo go driver mongo crud spec test go loc found a possible issue in at below is the message reported by the analyzer for this snippet of code beware that the analyzer only reports the first issue it finds so please do not limit your consideration t... | 0 |
6,700 | 9,814,742,406 | IssuesEvent | 2019-06-13 10:55:25 | qgis/QGIS | https://api.github.com/repos/qgis/QGIS | closed | gdal2tiles very slow compared to QGIS 2.18 | Bug Feedback Processing | Author Name: **Karsten Tebling** (Karsten Tebling) Original Redmine Issue: [21819](https://issues.qgis.org/issues/21819) Affected QGIS version: 3.6.1 Redmine category:processing/gdal --- I tried to generate tiles for zoom levels 10-11 for a roughly 2GB compressed DOP, with QGIS 3.6.1 it took about 581 minutes to fini... | 1.0 | gdal2tiles very slow compared to QGIS 2.18 - Author Name: **Karsten Tebling** (Karsten Tebling) Original Redmine Issue: [21819](https://issues.qgis.org/issues/21819) Affected QGIS version: 3.6.1 Redmine category:processing/gdal --- I tried to generate tiles for zoom levels 10-11 for a roughly 2GB compressed DOP, with... | process | very slow compared to qgis author name karsten tebling karsten tebling original redmine issue affected qgis version redmine category processing gdal i tried to generate tiles for zoom levels for a roughly compressed dop with qgis it took about minutes to finish i also tr... | 1 |
78,777 | 7,668,423,239 | IssuesEvent | 2018-05-14 05:37:39 | rancher/rancher | https://api.github.com/repos/rancher/rancher | closed | Unable to view upgrade status when upgrading charts | area/catalog kind/enhancement status/resolved status/to-test version/2.0 | **Rancher versions:** master 04/27 **Docker version: (`docker version`,`docker info` preferred)** 17.03.2-ce **Operating system and kernel: (`cat /etc/os-release`, `uname -r` preferred)** Ubuntu 16.04.4 LTS 4.4.0-1052-aws **Type/provider of hosts: (VirtualBox/Bare-metal/AWS/GCE/DO)** AWS When I upgraded ... | 1.0 | Unable to view upgrade status when upgrading charts - **Rancher versions:** master 04/27 **Docker version: (`docker version`,`docker info` preferred)** 17.03.2-ce **Operating system and kernel: (`cat /etc/os-release`, `uname -r` preferred)** Ubuntu 16.04.4 LTS 4.4.0-1052-aws **Type/provider of hosts: (Virtua... | non_process | unable to view upgrade status when upgrading charts rancher versions master docker version docker version docker info preferred ce operating system and kernel cat etc os release uname r preferred ubuntu lts aws type provider of hosts virtualbox bare... | 0 |
783 | 3,265,873,236 | IssuesEvent | 2015-10-22 18:10:17 | USC-CSSL/TACIT | https://api.github.com/repos/USC-CSSL/TACIT | opened | TACIT word count: preprocess twitter json fails | bug Preprocessing word count | If you put a json file in to word count (or a corpus), it will run a word count on the entire file. However, if you click preprocess, it will say it created a new preprocessed file, but it wont actually create anything and then it wont give you a word count output. | 1.0 | TACIT word count: preprocess twitter json fails - If you put a json file in to word count (or a corpus), it will run a word count on the entire file. However, if you click preprocess, it will say it created a new preprocessed file, but it wont actually create anything and then it wont give you a word count output. | process | tacit word count preprocess twitter json fails if you put a json file in to word count or a corpus it will run a word count on the entire file however if you click preprocess it will say it created a new preprocessed file but it wont actually create anything and then it wont give you a word count output | 1 |
100,642 | 4,099,777,274 | IssuesEvent | 2016-06-03 13:58:09 | jpppina/migracion-galeno-art-forms11g | https://api.github.com/repos/jpppina/migracion-galeno-art-forms11g | opened | No se visualiza los botones | Aplicación-ART Error Priority-Low | Sellados Provinciales-->Informacion a Entidades Provinciales-->Sellados Prov. Prest. Interfase Usuario: RIALM Pass: desaa002 No se pueden ver los botones | 1.0 | No se visualiza los botones - Sellados Provinciales-->Informacion a Entidades Provinciales-->Sellados Prov. Prest. Interfase Usuario: RIALM Pass: desaa002 No se pueden ver los botones | non_process | no se visualiza los botones sellados provinciales informacion a entidades provinciales sellados prov prest interfase usuario rialm pass no se pueden ver los botones | 0 |
6,137 | 8,999,231,516 | IssuesEvent | 2019-02-03 07:40:10 | SerialLain3170/GAN-papers | https://api.github.com/repos/SerialLain3170/GAN-papers | opened | Controllable Image-to-Video Translation:A Case Study on Facial Expression Generation | Video Processing | # Paper

[Controllable Image-to-Video Translation:A Case Study on Facial Expression Generation](https://arxiv.org/pdf/1808.02992.pdf)

# Summary

- Encoderを2つ用意して片方の出力にフレーム毎の変数を掛けて、もう片方の出力に足したものをDecoderの入力に入れる

- Adversarial lossやReconstruction lossに加え、Temporary loss, Landmark prediction lossを考慮

# Summary

- Encoderを2つ用意して片方の出力にフレーム毎の変数を掛けて、もう片方の出力に足したものをDecoderの入力に入れる

- Adversarial lossやR... | process | controllable image to video translation a case study on facial expression generation paper summary 、もう片方の出力に足したものをdecoderの入力に入れる adversarial lossやreconstruction lossに加え、temporary loss landmark prediction lossを考慮 date | 1 |

15,620 | 19,762,212,131 | IssuesEvent | 2022-01-16 15:46:38 | ForNeVeR/Cesium | https://api.github.com/repos/ForNeVeR/Cesium | opened | C17-compliant preprocessor | kind:feature status:help-wanted area:standard-support area:preprocessor | The section **6.10 Preprocessing directives** of the C standard defines the requirements to the C preprocessor. We should fulfill them. - [ ] 6.10 Preprocessing directives - [ ] 6.10.1 Conditional inclusion - [ ] 6.10.2 Source file inclusion - [ ] 6.10.3 Macro replacement - [ ] 6.10.3.1 Argument subst... | 1.0 | C17-compliant preprocessor - The section **6.10 Preprocessing directives** of the C standard defines the requirements to the C preprocessor. We should fulfill them. - [ ] 6.10 Preprocessing directives - [ ] 6.10.1 Conditional inclusion - [ ] 6.10.2 Source file inclusion - [ ] 6.10.3 Macro replacement ... | process | compliant preprocessor the section preprocessing directives of the c standard defines the requirements to the c preprocessor we should fulfill them preprocessing directives conditional inclusion source file inclusion macro replacement arg... | 1 |
12,784 | 15,166,085,985 | IssuesEvent | 2021-02-12 15:54:12 | geneontology/go-ontology | https://api.github.com/repos/geneontology/go-ontology | closed | cell growth mode switching, bipolar to monopolar TPV? | PomBase cellular processes parent relationship query | I know we have discussed this many times before, but I can't work out if something has changed

and it is now incorrect, or it was always like this and I misremebered

I want to use the term

https://www.ebi.ac.uk/QuickGO/term/GO:0051524

GO:0051524 cell growth mode switching, bipolar to monopolar

(I think t... | 1.0 | cell growth mode switching, bipolar to monopolar TPV? - I know we have discussed this many times before, but I can't work out if something has changed

and it is now incorrect, or it was always like this and I misremebered

I want to use the term

https://www.ebi.ac.uk/QuickGO/term/GO:0051524

GO:0051524 cell g... | process | cell growth mode switching bipolar to monopolar tpv i know we have discussed this many times before but i can t work out if something has changed and it is now incorrect or it was always like this and i misremebered i want to use the term go cell growth mode switching bipolar to monopolar i t... | 1 |

444,333 | 31,033,122,032 | IssuesEvent | 2023-08-10 13:41:28 | stormatics/pg_cirrus | https://api.github.com/repos/stormatics/pg_cirrus | opened | Update HOWTO web page | documentation | Since there have been changes in how pg_cirrus executes, the [HOWTO](https://stormatics.tech/how-to-use-pg_cirrus-to-setup-a-highly-available-postgresql-cluster) web page must be updated. | 1.0 | Update HOWTO web page - Since there have been changes in how pg_cirrus executes, the [HOWTO](https://stormatics.tech/how-to-use-pg_cirrus-to-setup-a-highly-available-postgresql-cluster) web page must be updated. | non_process | update howto web page since there have been changes in how pg cirrus executes the web page must be updated | 0 |

21,807 | 30,316,402,473 | IssuesEvent | 2023-07-10 15:51:39 | tdwg/dwc | https://api.github.com/repos/tdwg/dwc | closed | Change term - countrycode | Term - change Class - Location non-normative Process - complete | Submitter: John Wieczorek (following issue raised by Ian Engelbrecht @ianengelbrecht Issue #221 and tdwg/dwc-qa#141)

Justification (why is this change necessary?): Clarity

Proponents (who needs this change): Everyone

Current Term definition: https://dwc.tdwg.org/list/#dwc_countrycode

Proposed new attributes of th... | 1.0 | Change term - countrycode - Submitter: John Wieczorek (following issue raised by Ian Engelbrecht @ianengelbrecht Issue #221 and tdwg/dwc-qa#141)

Justification (why is this change necessary?): Clarity

Proponents (who needs this change): Everyone

Current Term definition: https://dwc.tdwg.org/list/#dwc_countrycode

P... | process | change term countrycode submitter john wieczorek following issue raised by ian engelbrecht ianengelbrecht issue and tdwg dwc qa justification why is this change necessary clarity proponents who needs this change everyone current term definition proposed new attributes of the term usage ... | 1 |

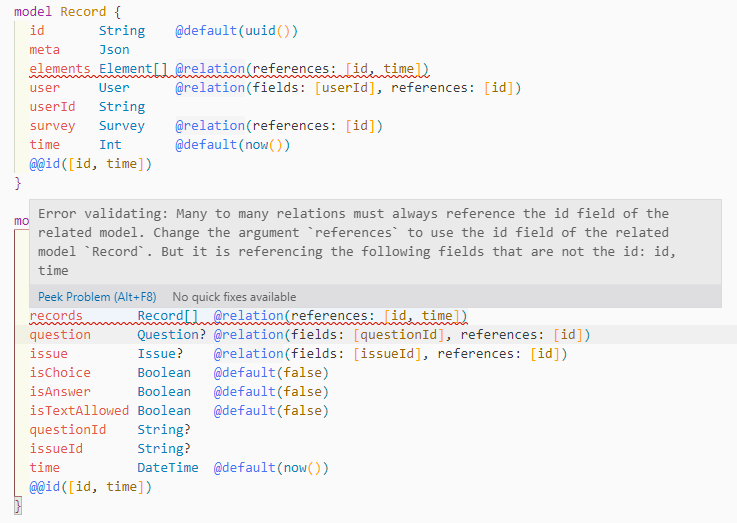

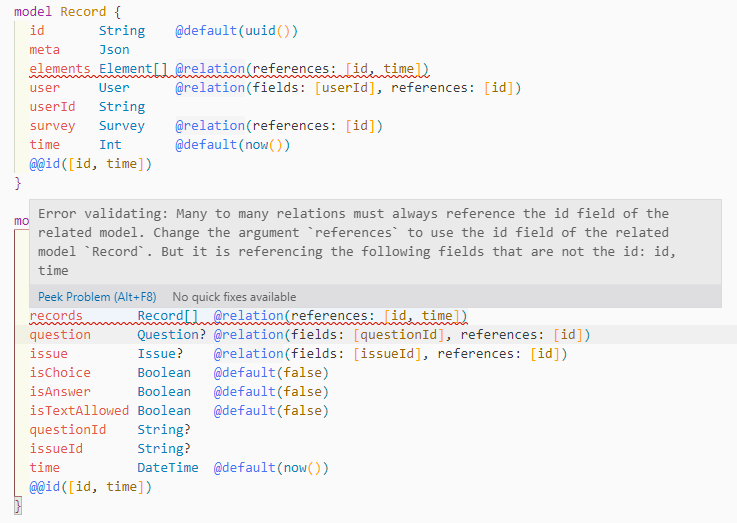

9,745 | 12,733,961,505 | IssuesEvent | 2020-06-25 13:11:22 | prisma/vscode | https://api.github.com/repos/prisma/vscode | closed | Composite keys are not considered valid? | bug/2-confirmed kind/bug process/candidate team/engines |

As the image explains...

Happened with prisma `2.0.1` and vscode extension `2.0.3`. | 1.0 | Composite keys are not considered valid? -

As the image explains...

Happened with prisma `2.0.1` and vscode extension `2.0.3`. | process | composite keys are not considered valid as the image explains happened with prisma and vscode extension | 1 |

9,275 | 12,302,262,844 | IssuesEvent | 2020-05-11 16:41:02 | MicrosoftDocs/azure-devops-docs | https://api.github.com/repos/MicrosoftDocs/azure-devops-docs | closed | Referencing Output Variable from Dependency does not work as described | Pri1 devops-cicd-process/tech devops/prod doc-bug | I'm using the runOnce strategy with the deploy hook, but referencing an output from one of the tasks does not work using this syntax as described here.

$[dependencies.<job-name>.outputs['<lifecycle-hookname>.<step-name>.<variable-name>']]

The only way I was able to get this to work was actual... | 1.0 | Referencing Output Variable from Dependency does not work as described - I'm using the runOnce strategy with the deploy hook, but referencing an output from one of the tasks does not work using this syntax as described here.

$[dependencies.<job-name>.outputs['<lifecycle-hookname>.<step-name>.<var... | process | referencing output variable from dependency does not work as described i m using the runonce strategy with the deploy hook but referencing an output from one of the tasks does not work using this syntax as described here the only way i was able to get this to work was actually by using the following syntax ... | 1 |

13,491 | 16,018,831,373 | IssuesEvent | 2021-04-20 19:39:20 | scikit-learn/scikit-learn | https://api.github.com/repos/scikit-learn/scikit-learn | closed | Change KMeans algorithm for KBinsDiscretizer from 'elkan' (default) to 'full' | Performance module:preprocessing | In KBinsDiscretizer KMeans is used with default parameters (eps=1e-4, algorithm='elkan').

https://github.com/scikit-learn/scikit-learn/blob/8c6a045e46abe94e43a971d4f8042728addfd6a7/sklearn/preprocessing/_discretization.py#L208

But 'full' algotithm works better. Here are timings from two different stations. I also... | 1.0 | Change KMeans algorithm for KBinsDiscretizer from 'elkan' (default) to 'full' - In KBinsDiscretizer KMeans is used with default parameters (eps=1e-4, algorithm='elkan').

https://github.com/scikit-learn/scikit-learn/blob/8c6a045e46abe94e43a971d4f8042728addfd6a7/sklearn/preprocessing/_discretization.py#L208

But 'fu... | process | change kmeans algorithm for kbinsdiscretizer from elkan default to full in kbinsdiscretizer kmeans is used with default parameters eps algorithm elkan but full algotithm works better here are timings from two different stations i also checked two different eps values since discretization d... | 1 |

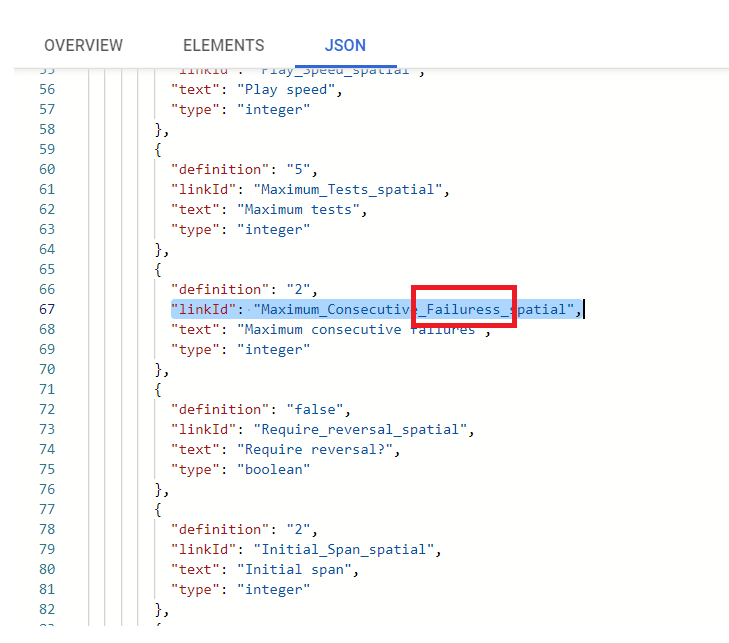

18,675 | 24,594,063,940 | IssuesEvent | 2022-10-14 06:39:11 | GoogleCloudPlatform/fda-mystudies | https://api.github.com/repos/GoogleCloudPlatform/fda-mystudies | closed | [FHIR] Spatial span memory > JSON > Maximum consecutive failures > Need to correct the Spelling(typo) for the linkID | Bug Response datastore P3 Process: Fixed Process: Tested dev | Spatial span memory > JSON > Maximum consecutive failures > Need to correct the Spelling(typo) for the linkID,

linkID should be - Maximum_Consecutive_Failures_spatial

| 2.0 | [FHIR] Spatial span memory > JSON > Maximum consecutive failures > Need to correct the Spelling(typo) for the linkID - Spatial span memory > JSON > Maximum consecutive failures > Need to correct the Spelling(typo) for the linkID,

linkID should be - Maximum_Consecutive_Failures_spatial

. Also the animations will be taken care of, so that's nice.

Noting these resources for myself while I work on this:

Official demo

https://github.com/a... | 1.0 | Use SortedList for RecyclerView file listing - This will make updating the list with new info much easier (e.g. first run of `ls` to get file names, second run of `ls -l` to get detailed info such as file sizes). Also the animations will be taken care of, so that's nice.

Noting these resources for myself while I wor... | non_process | use sortedlist for recyclerview file listing this will make updating the list with new info much easier e g first run of ls to get file names second run of ls l to get detailed info such as file sizes also the animations will be taken care of so that s nice noting these resources for myself while i wor... | 0 |

9,693 | 12,699,160,328 | IssuesEvent | 2020-06-22 14:28:16 | prisma/prisma | https://api.github.com/repos/prisma/prisma | opened | Unify prisma introspect and prisma introspect --url wrt re-introspection | kind/improvement process/candidate topic: re-introspection | The output of `prisma introspect` and `prisma introspect --url <url>` should be the same.

Currently only `prisma introspect` is able to trigger the re-introspection workflow and sends existing schema.prisma to the introspection engine.

```

@prisma/cli : 2.1.0-dev.54

Current platform : darwin

Que... | 1.0 | Unify prisma introspect and prisma introspect --url wrt re-introspection - The output of `prisma introspect` and `prisma introspect --url <url>` should be the same.

Currently only `prisma introspect` is able to trigger the re-introspection workflow and sends existing schema.prisma to the introspection engine.

`... | process | unify prisma introspect and prisma introspect url wrt re introspection the output of prisma introspect and prisma introspect url should be the same currently only prisma introspect is able to trigger the re introspection workflow and sends existing schema prisma to the introspection engine ... | 1 |

6,686 | 9,806,689,958 | IssuesEvent | 2019-06-12 12:04:44 | Open-EO/openeo-api | https://api.github.com/repos/Open-EO/openeo-api | closed | Improvements for process catalogue | process discovery | There are some more nice additions we could integrate into our process catalogue.

- Numeric types could hold an additional unit of measurement for values

- Maybe allow additional schemas than JSON (depending on mime-type?)

Some of these ideas are borrowed from WPS2, which our description is already similar to.

... | 1.0 | Improvements for process catalogue - There are some more nice additions we could integrate into our process catalogue.

- Numeric types could hold an additional unit of measurement for values

- Maybe allow additional schemas than JSON (depending on mime-type?)

Some of these ideas are borrowed from WPS2, which our... | process | improvements for process catalogue there are some more nice additions we could integrate into our process catalogue numeric types could hold an additional unit of measurement for values maybe allow additional schemas than json depending on mime type some of these ideas are borrowed from which our de... | 1 |

12,551 | 14,976,853,583 | IssuesEvent | 2021-01-28 08:41:49 | GoogleCloudPlatform/fda-mystudies | https://api.github.com/repos/GoogleCloudPlatform/fda-mystudies | closed | [Hydra] Account lock functionality is failed in Some scenario | Bug Hydra P0 Process: Fixed Process: Tested QA Process: Tested dev | Steps:-

1. Open the application

2. Navigate to sign in screen

3. Enter valid email and Invalid password for 5 times

4. Verify account is locked

5. Sign in using email and temporary password

6. Once navigated to reset password screen, enter the temp password received and enter old password used in the past in new... | 3.0 | [Hydra] Account lock functionality is failed in Some scenario - Steps:-

1. Open the application

2. Navigate to sign in screen

3. Enter valid email and Invalid password for 5 times

4. Verify account is locked

5. Sign in using email and temporary password

6. Once navigated to reset password screen, enter the temp... | process | account lock functionality is failed in some scenario steps open the application navigate to sign in screen enter valid email and invalid password for times verify account is locked sign in using email and temporary password once navigated to reset password screen enter the temp passw... | 1 |

4,699 | 7,542,414,174 | IssuesEvent | 2018-04-17 12:57:13 | amarbajric/EBUSA-AIM17 | https://api.github.com/repos/amarbajric/EBUSA-AIM17 | opened | Adapt BP based on new Data Model | BP business processes in progress | - Registration Process needs adaption based on User <--> Company Relation

- ProcessValidation should be extended with ProcessModel Upload into the ProcessStore and renamed to "Processupload and Validation"

- ProcessPurchase needs adaption especially for the DEV Team to set the structure, how the procedure should be h... | 1.0 | Adapt BP based on new Data Model - - Registration Process needs adaption based on User <--> Company Relation

- ProcessValidation should be extended with ProcessModel Upload into the ProcessStore and renamed to "Processupload and Validation"

- ProcessPurchase needs adaption especially for the DEV Team to set the struc... | process | adapt bp based on new data model registration process needs adaption based on user company relation processvalidation should be extended with processmodel upload into the processstore and renamed to processupload and validation processpurchase needs adaption especially for the dev team to set the structur... | 1 |

4,972 | 7,807,792,568 | IssuesEvent | 2018-06-11 18:02:36 | decidim/decidim | https://api.github.com/repos/decidim/decidim | closed | User roles and participatory processes privacy | space: processes stale-issue type: discussion wontfix | # This is a Feature Proposal

#### :tophat: Description

Sometimes associations need to have private debates. It would be nice to have user roles and choose which ones could participate at each participatory process.

USER WORKFLOW

* System Admin could view, create, edit and delete organization user roles

*... | 1.0 | User roles and participatory processes privacy - # This is a Feature Proposal

#### :tophat: Description

Sometimes associations need to have private debates. It would be nice to have user roles and choose which ones could participate at each participatory process.

USER WORKFLOW

* System Admin could view, cre... | process | user roles and participatory processes privacy this is a feature proposal tophat description sometimes associations need to have private debates it would be nice to have user roles and choose which ones could participate at each participatory process user workflow system admin could view cre... | 1 |

6,285 | 9,285,377,897 | IssuesEvent | 2019-03-21 06:51:11 | omuskywalker/gitalk-comment | https://api.github.com/repos/omuskywalker/gitalk-comment | closed | XV6 Ch1 OS 組織 | OMU Skywalker | /xv6-1-process/ Gitalk | https://blog.omuskywalker.com/xv6-1-process/

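An OS must provide three capabilities: multiplexing, isolation, and interaction. Kernel organization. Monolithic kernel: the entire OS resides in the kernel, so all system calls execute in the kernel (XV6). Advantages: the designer does not have to decide which parts of the OS do not need full hardware privilege, and it is easier for different parts of the OS to cooperate. Disadvantages: the interfaces between different parts of the OS are usually complex, which makes it easy for developers to make mistakes. | 1.0 | XV6 Ch1 OS 組織 | OMU Skywalker - https://blog.omuskywalker.com/xv6-1-process/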

OS 必須具備三項技能:多工、獨立及交流。 kernel 組織 Monolithic kernel:整個 OS 都位於 kernel 中,如此一來所有 system calls 都會在 kernel 中執行(XV6)。 好處 設計者不須決定 OS 的哪些部份不需要完整的硬體特權。 更方便的讓不同部份的 OS 去合作。 壞處 通常在不同部份的 OS 中的介面是複雜的。 這會容易讓開發者出錯。 | process | os 組織 omu skywalker os 必須具備三項技能:多工、獨立及交流。 kernel 組織 monolithic kernel:整個 os 都位於 kernel 中,如此一來所有 system calls 都會在 kernel 中執行( )。 好處 設計者不須決定 os 的哪些部份不需要完整的硬體特權。 更方便的讓不同部份的 os 去合作。 壞處 通常在不同部份的 os 中的介面是複雜的。 這會容易讓開發者出錯。 | 1 |

165,821 | 6,286,892,845 | IssuesEvent | 2017-07-19 13:57:13 | phil-mansfield/shellfish | https://api.github.com/repos/phil-mansfield/shellfish | opened | Generalize Gadget reader | bug priority: everything is on fire | Generalize Gadget reader so that it can read:

- Standard DMO Gadget snapshots where DM particles are type 1.

- Non-standard DMO Gadget snapshots where DM particles are type 0.

- Non-standard DMO Gadget snapshots where DM particles are multiple types.

- Standard hydro Gadget snapshots which contain gas particles w... | 1.0 | Generalize Gadget reader - Generalize Gadget reader so that it can read:

- Standard DMO Gadget snapshots where DM particles are type 1.

- Non-standard DMO Gadget snapshots where DM particles are type 0.

- Non-standard DMO Gadget snapshots where DM particles are multiple types.

- Standard hydro Gadget snapshots wh... | non_process | generalize gadget reader generalize gadget reader so that it can read standard dmo gadget snapshots where dm particles are type non standard dmo gadget snapshots where dm particles are type non standard dmo gadget snapshots where dm particles are multiple types standard hydro gadget snapshots wh... | 0 |

20,037 | 26,520,585,044 | IssuesEvent | 2023-01-19 02:00:08 | lizhihao6/get-daily-arxiv-noti | https://api.github.com/repos/lizhihao6/get-daily-arxiv-noti | opened | New submissions for Thu, 19 Jan 23 | event camera white balance isp compression image signal processing image signal process raw raw image events camera color contrast events AWB | ## Keyword: events

There is no result

## Keyword: event camera

There is no result

## Keyword: events camera

There is no result

## Keyword: white balance

There is no result

## Keyword: color contrast

There is no result

## Keyword: AWB

There is no result

## Keyword: ISP

### PTA-Det: Point Transformer Associating Po... | 2.0 | New submissions for Thu, 19 Jan 23 - ## Keyword: events

There is no result

## Keyword: event camera

There is no result

## Keyword: events camera

There is no result

## Keyword: white balance

There is no result

## Keyword: color contrast

There is no result

## Keyword: AWB

There is no result

## Keyword: ISP

### PTA-... | process | new submissions for thu jan keyword events there is no result keyword event camera there is no result keyword events camera there is no result keyword white balance there is no result keyword color contrast there is no result keyword awb there is no result keyword isp pta de... | 1 |

5,232 | 8,033,076,877 | IssuesEvent | 2018-07-28 23:37:25 | JonathanBelanger/DECaxp | https://api.github.com/repos/JonathanBelanger/DECaxp | closed | Compile issue. | in process changes | Hello Jonathan!

I am trying to compile DECaxp under FreeBSD. I 've got:

In file included from /emulator/Alpha/prg/DECaxp/src/comutl/AXP_VirtualDisk.c:32:0:

/emulator/Alpha/prg/DECaxp/src/comutl/AXP_VHDX.h:265:5: error: unknown type name 'AXP_BLOCK_DSC'

AXP_BLOCK_DSC header;

^~~~~~~~~~~~~

/emulator/Alpha... | 1.0 | Compile issue. - Hello Jonathan!

I am trying to compile DECaxp under FreeBSD. I 've got:

In file included from /emulator/Alpha/prg/DECaxp/src/comutl/AXP_VirtualDisk.c:32:0:

/emulator/Alpha/prg/DECaxp/src/comutl/AXP_VHDX.h:265:5: error: unknown type name 'AXP_BLOCK_DSC'

AXP_BLOCK_DSC header;

^~~~~~~~~~~~~... | process | compile issue hello jonathan i am trying to compile decaxp under freebsd i ve got in file included from emulator alpha prg decaxp src comutl axp virtualdisk c emulator alpha prg decaxp src comutl axp vhdx h error unknown type name axp block dsc axp block dsc header ... | 1 |

9,422 | 12,416,855,583 | IssuesEvent | 2020-05-22 19:10:24 | NationalSecurityAgency/ghidra | https://api.github.com/repos/NationalSecurityAgency/ghidra | closed | MSVC Float comparison to 0.0 not decompiled properly | Feature: Processor/x86 Type: Bug | **Describe the bug**

On 32 bit x86 architecture (and possibly 64bit), MSVC generates a specific pattern to test equality to zero of floating point numbers (see: https://stackoverflow.com/a/46772747). This pattern is incorrectly decompiled.

**To Reproduce**

decompile this pattern:

```

0f 57 c0 XORPS ... | 1.0 | MSVC Float comparison to 0.0 not decompiled properly - **Describe the bug**

On 32 bit x86 architecture (and possibly 64bit), MSVC generates a specific pattern to test equality to zero of floating point numbers (see: https://stackoverflow.com/a/46772747). This pattern is incorrectly decompiled.

**To Reproduce**

dec... | process | msvc float comparison to not decompiled properly describe the bug on bit architecture and possibly msvc generates a specific pattern to test equality to zero of floating point numbers see this pattern is incorrectly decompiled to reproduce decompile this pattern ... | 1 |

15,432 | 19,622,518,645 | IssuesEvent | 2022-01-07 08:56:42 | symfony/symfony | https://api.github.com/repos/symfony/symfony | closed | [Process] arrays in `$env` result in `Array to string conversion` | Bug Process Status: Needs Review | ### Symfony version(s) affected

4.4.35, 4.4.36

### Description

Since https://github.com/symfony/symfony/commit/11ccbcd24c2e2d3f4e5897f159d1c1d23fc62a67, `Process->run()` prints errors "Array to string conversion" when `$env` contains array.

Before this commit, `$env` was cleaned of arrays, but this got removed. I... | 1.0 | [Process] arrays in `$env` result in `Array to string conversion` - ### Symfony version(s) affected

4.4.35, 4.4.36

### Description

Since https://github.com/symfony/symfony/commit/11ccbcd24c2e2d3f4e5897f159d1c1d23fc62a67, `Process->run()` prints errors "Array to string conversion" when `$env` contains array.

Befor... | process | arrays in env result in array to string conversion symfony version s affected description since process run prints errors array to string conversion when env contains array before this commit env was cleaned of arrays but this got removed in particular introdu... | 1 |

21,420 | 29,359,592,108 | IssuesEvent | 2023-05-28 00:36:56 | devssa/onde-codar-em-salvador | https://api.github.com/repos/devssa/onde-codar-em-salvador | closed | [Remoto] CTO na Coodesh | SALVADOR PJ INFRAESTRUTURA BANCO DE DADOS STARTUP REQUISITOS REMOTO PROCESSOS INOVAÇÃO GITHUB INGLÊS CI EXCEL UMA R LIDERANÇA CLOUD COMPUTING MANUTENÇÃO NEGÓCIOS INTELIGÊNCIA ARTIFICIAL ARQUITETURA DE SOFTWARE CYBER SECURITY Stale | ## Descrição da vaga:

This is a job opening from a partner of the Coodesh platform; by applying, you will have access to complete information about the company and its benefits.

Fique atento ao redirecionamento que vai te levar para uma url [https://coodesh.com](https://coodesh.com/vagas/cto-203939393?utm_source=github&utm_m... | 1.0 | [Remoto] CTO na Coodesh - ## Descrição da vaga:

This is a job opening from a partner of the Coodesh platform; by applying, you will have access to complete information about the company and its benefits.

Fique atento ao redirecionamento que vai te levar para uma url [https://coodesh.com](https://coodesh.com/vagas/cto-2039393... | process | cto na coodesh descrição da vaga esta é uma vaga de um parceiro da plataforma coodesh ao candidatar se você terá acesso as informações completas sobre a empresa e benefícios fique atento ao redirecionamento que vai te levar para uma url com o pop up personalizado de candidatura 👋 a kor solutio... | 1 |

280,753 | 24,330,077,579 | IssuesEvent | 2022-09-30 18:31:08 | elastic/kibana | https://api.github.com/repos/elastic/kibana | closed | Failing Cypress test Mark one alert as acknowledged when more than one open alerts are selected | failed-test skipped-test Team:Detections and Resp Team: SecuritySolution Team:Detection Alerts | *Kibana version:*

8.2

Failing test: https://buildkite.com/elastic/kibana-pull-request/builds/31565#b9dd9c8b-7861-4542-9e5b-f891118c4fc1

[Error message](https://s3.amazonaws.com/buildkiteartifacts.com/e0f3970e-3a75-4621-919f-e6c773e2bb12/0fda5127-f57f-42fb-8e5a-146b3d535916/8825e03b-08f5-4fc0-b6b6-3a00e842b89a/b9... | 2.0 | Failing Cypress test Mark one alert as acknowledged when more than one open alerts are selected - *Kibana version:*

8.2

Failing test: https://buildkite.com/elastic/kibana-pull-request/builds/31565#b9dd9c8b-7861-4542-9e5b-f891118c4fc1

[Error message](https://s3.amazonaws.com/buildkiteartifacts.com/e0f3970e-3a75-4... | non_process | failing cypress test mark one alert as acknowledged when more than one open alerts are selected kibana version failing test skipped here | 0 |

4,460 | 7,329,950,610 | IssuesEvent | 2018-03-05 08:04:00 | UKHomeOffice/dq-aws-transition | https://api.github.com/repos/UKHomeOffice/dq-aws-transition | closed | Update MVT database creds in ACL Python script | DQ Data Ingest DQ Tranche 1 Production SSM processing | Update MVT Database Creds in ACL Python script

- [x] dp1_acl.sh | 1.0 | Update MVT database creds in ACL Python script - Update MVT Database Creds in ACL Python script

- [x] dp1_acl.sh | process | update mvt database creds in acl python script update mvt database creds in acl python script acl sh | 1 |

35,767 | 7,992,814,348 | IssuesEvent | 2018-07-20 03:58:47 | joomla/joomla-cms | https://api.github.com/repos/joomla/joomla-cms | closed | [4.0] Edit profile on frontend, cancel button reloads page, | J4 Issue No Code Attached Yet | ### Steps to reproduce the issue

install 4.0-dev at 79fd94942e1758cff32da7f8f368b5f78cc75c40

install sample data

login on frontend as super admin

click change password in top right module

Click Cancel button

### Expected result

"something" is cancelled, and redirected "somewhere'

I dont have the answer ... | 1.0 | [4.0] Edit profile on frontend, cancel button reloads page, - ### Steps to reproduce the issue

install 4.0-dev at 79fd94942e1758cff32da7f8f368b5f78cc75c40

install sample data

login on frontend as super admin

click change password in top right module

Click Cancel button

### Expected result

"something" is ca... | non_process | edit profile on frontend cancel button reloads page steps to reproduce the issue install dev at install sample data login on frontend as super admin click change password in top right module click cancel button expected result something is cancelled and redirected somewhere i do... | 0 |

20,646 | 27,323,575,885 | IssuesEvent | 2023-02-24 22:33:57 | cse442-at-ub/project_s23-iweatherify | https://api.github.com/repos/cse442-at-ub/project_s23-iweatherify | closed | Think of possible ideas for what the project should be and document them | Processing Task Sprint 1 | **Task Tests**

*Test 1*

1) Open document https://docs.google.com/document/d/1fQDM2_rvD49LgCHpRX-fh-yX0UqBG1fgJzAu0D9KsRQ/edit?usp=sharing

2) Verify that document has at least three ideas and that each idea gives at least a brief description of what it is about and what features it envisions being implemented | 1.0 | Think of possible ideas for what the project should be and document them - **Task Tests**

*Test 1*

1) Open document https://docs.google.com/document/d/1fQDM2_rvD49LgCHpRX-fh-yX0UqBG1fgJzAu0D9KsRQ/edit?usp=sharing

2) Verify that document has at least three ideas and that each idea gives at least a brief description of ... | process | think of possible ideas for what the project should be and document them task tests test open document verify that document has at least three ideas and that each idea gives at least a brief description of what it is about and what features it envisions being implemented | 1 |

364,998 | 25,515,776,602 | IssuesEvent | 2022-11-28 16:13:02 | bergmanlab/ngs_te_mapper | https://api.github.com/repos/bergmanlab/ngs_te_mapper | closed | Update readme to explain inputs and output of system | high priority documentation/usability | - explain which new directories & subdirectories are created

- explain which files are created in each (sub)directory (what they are, what their format is)

| 1.0 | Update readme to explain inputs and output of system - - explain which new directories & subdirectories are created

- explain which files are created in each (sub)directory (what they are, what their format is)

| non_process | update readme to explain inputs and output of system explain which new directories subdirectories are created explain which files are created in each sub directory what they are what their format is | 0 |

344,120 | 24,798,757,750 | IssuesEvent | 2022-10-24 19:41:09 | valkim55/VK-just-tech-news | https://api.github.com/repos/valkim55/VK-just-tech-news | closed | Users can create, read, update, and delete a profile in the database | documentation | - as a user, I can create my own profile that stores information about me

- as a user, I can retrieve my profile data or another user's profile data

- as a user, I can update my profile data

- as a user, I can delete my profile data | 1.0 | Users can create, read, update, and delete a profile in the database - - as a user, I can create my own profile that stores information about me

- as a user, I can retrieve my profile data or another user's profile data

- as a user, I can update my profile data

- as a user, I can delete my profile data | non_process | users can create read update and delete a profile in the database as a user i can create my own profile that stores information about me as a user i can retrieve my profile data or another user s profile data as a user i can update my profile data as a user i can delete my profile data | 0 |

257,789 | 27,563,817,934 | IssuesEvent | 2023-03-08 01:08:41 | billmcchesney1/superagent | https://api.github.com/repos/billmcchesney1/superagent | opened | CVE-2019-1010266 (Medium) detected in multiple libraries | security vulnerability | ## CVE-2019-1010266 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>lodash-2.4.2.tgz</b>, <b>lodash-3.10.1.tgz</b>, <b>lodash-3.2.0.tgz</b>, <b>lodash-2.1.0.tgz</b></p></summary>

<p... | True | CVE-2019-1010266 (Medium) detected in multiple libraries - ## CVE-2019-1010266 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>lodash-2.4.2.tgz</b>, <b>lodash-3.10.1.tgz</b>, <b>lod... | non_process | cve medium detected in multiple libraries cve medium severity vulnerability vulnerable libraries lodash tgz lodash tgz lodash tgz lodash tgz lodash tgz a utility library delivering consistency customization performance extras library h... | 0 |

20,797 | 3,419,228,968 | IssuesEvent | 2015-12-08 08:37:51 | dart-lang/sdk | https://api.github.com/repos/dart-lang/sdk | closed | List.from is fixed length, but loop through list still checks for ioore | area-dart2js dart2js-optimization Priority-Medium triaged Type-Defect | Consider this code:

List<String> fruits = new List.from(['apples', 'oranges']);

void main() {

for (int i = 0; i < fruits.length; i++) {

print(fruits[i]);

}

}

this compiles to the following JS code:

$.main = function() {

var i, t1;

for... | 1.0 | List.from is fixed length, but loop through list still checks for ioore - Consider this code:

List<String> fruits = new List.from(['apples', 'oranges']);

void main() {

for (int i = 0; i < fruits.length; i++) {

print(fruits[i]);

}

}

this compiles to the follow... | non_process | list from is fixed length but loop through list still checks for ioore consider this code list lt string gt fruits new list from void main nbsp nbsp for int i i lt fruits length i nbsp nbsp nbsp nbsp print fruits nbsp nbsp this compiles to the following js code main ... | 0 |

20,212 | 26,803,987,434 | IssuesEvent | 2023-02-01 16:55:53 | metabase/metabase | https://api.github.com/repos/metabase/metabase | closed | Mongo does not handle nested limit | Type:Bug Database/Mongo Querying/Processor .Backend | As part of https://github.com/metabase/metabase/issues/23422 we discovered that

`metabase.query-processor-test.expressions-test/expression-using-aggregation-test` was failing (partly) because the `:limit 3` inside source query was being ignored. | 1.0 | Mongo does not handle nested limit - As part of https://github.com/metabase/metabase/issues/23422 we discovered that

`metabase.query-processor-test.expressions-test/expression-using-aggregation-test` was failing (partly) because the `:limit 3` inside source query was being ignored. | process | mongo does not handle nested limit as part of we discovered that metabase query processor test expressions test expression using aggregation test was failing partly because the limit inside source query was being ignored | 1 |

7,843 | 11,014,444,235 | IssuesEvent | 2019-12-04 22:46:57 | googleapis/java-recommender | https://api.github.com/repos/googleapis/java-recommender | opened | Release a BOM | type: process | Create a google-cloud-recommender-bom artifact that includes the versions of the artifacts released from this library. | 1.0 | Release a BOM - Create a google-cloud-recommender-bom artifact that includes the versions of the artifacts released from this library. | process | release a bom create a google cloud recommender bom artifact that includes the versions of the artifacts released from this library | 1 |

13,211 | 15,683,157,237 | IssuesEvent | 2021-03-25 08:24:03 | ropensci/software-review-meta | https://api.github.com/repos/ropensci/software-review-meta | closed | New editor task after acceptance: check reviewer volunteering form link to authors | process | if they aren't authors yet. Also mention to the author who opened an issue that they should forward the link to other major contributors of the package.

Of course, this will be added to the editor guide only after I updated the survey. 😁 | 1.0 | New editor task after acceptance: check reviewer volunteering form link to authors - if they aren't authors yet. Also mention to the author who opened an issue that they should forward the link to other major contributors of the package.

Of course, this will be added to the editor guide only after I updated the surv... | process | new editor task after acceptance check reviewer volunteering form link to authors if they aren t authors yet also mention to the author who opened an issue that they should forward the link to other major contributors of the package of course this will be added to the editor guide only after i updated the surv... | 1 |

4,621 | 7,467,019,244 | IssuesEvent | 2018-04-02 13:42:46 | agroportal/agroportal_web_ui | https://api.github.com/repos/agroportal/agroportal_web_ui | opened | Biorefinery & Transmat failed to parse | ontology processing problem | Error from parsing log file (Biorefinery): Illegal rdf:nodeID value '_:genid259'

there is an equivalent error for Transmat.

This error seems to have been identified in the NCBO BioPortal: see

- [https://sourceforge.net/p/owlapi/mailman/message/3594402/](url)

- [https://github.com/ncbo/bioportal-project/issues/32#e... | 1.0 | Biorefinery & Transmat failed to parse - Error from parsing log file (Biorefinery): Illegal rdf:nodeID value '_:genid259'

there is an equivalent error for Transmat.

This error seems to have been identified in the NCBO BioPortal: see

- [https://sourceforge.net/p/owlapi/mailman/message/3594402/](url)

- [https://gith... | process | biorefinery transmat failed to parse error from parsing log file biorefinery illegal rdf nodeid value there is an equivalent error for transmat this error seems to have been identified in the ncbo bioportal see url url url jvendetti did you solve this problem | 1 |

215,443 | 16,671,965,140 | IssuesEvent | 2021-06-07 12:04:19 | hazelcast/hazelcast | https://api.github.com/repos/hazelcast/hazelcast | closed | com.hazelcast.jet.impl.JobSummaryTest.when_manyJobs_then_sortedBySubmissionTime | Team: Core Type: Test-Failure | _master_ (commit e3352af34221c58de14ed09dcde0edc9206098c8)

Failed on Oracle JDK 11: http://jenkins.hazelcast.com/view/Official%20Builds/job/Hazelcast-master-OracleJDK11/271/testReport/com.hazelcast.jet.impl/JobSummaryTest/when_manyJobs_then_sortedBySubmissionTime/

Stacktrace:

```

org.junit.ComparisonFailure: ex... | 1.0 | com.hazelcast.jet.impl.JobSummaryTest.when_manyJobs_then_sortedBySubmissionTime - _master_ (commit e3352af34221c58de14ed09dcde0edc9206098c8)

Failed on Oracle JDK 11: http://jenkins.hazelcast.com/view/Official%20Builds/job/Hazelcast-master-OracleJDK11/271/testReport/com.hazelcast.jet.impl/JobSummaryTest/when_manyJobs... | non_process | com hazelcast jet impl jobsummarytest when manyjobs then sortedbysubmissiontime master commit failed on oracle jdk stacktrace org junit comparisonfailure expected but was at org junit assert assertequals assert java at org junit assert assertequals assert java at com hazelcast... | 0 |

219,089 | 16,817,081,341 | IssuesEvent | 2021-06-17 08:40:10 | nilisha-jais/Musicophilia | https://api.github.com/repos/nilisha-jais/Musicophilia | opened | Modification of Navbar. | documentation | I want to modify the navbar , to be more precise the CSS of the navbar to make the site look much better than before, @nilisha-jais please assign this task to me.

| 1.0 | Modification of Navbar. - I want to modify the navbar , to be more precise the CSS of the navbar to make the site look much better than before, @nilisha-jais please assign this task to me.

| non_process | modification of navbar i want to modify the navbar to be more precise the css of the navbar to make the site look much better than before nilisha jais please assign this task to me | 0 |

82,044 | 10,267,756,636 | IssuesEvent | 2019-08-23 03:07:04 | OpenPHDGuiding/phd2 | https://api.github.com/repos/OpenPHDGuiding/phd2 | closed | documentation: need more info about camera gain setting | Type-Documentation | camera gain description should describe how phd2's 0-100 scale maps to camera native gain value | 1.0 | documentation: need more info about camera gain setting - camera gain description should describe how phd2's 0-100 scale maps to camera native gain value | non_process | documentation need more info about camera gain setting camera gain description should describe how s scale maps to camera native gain value | 0 |

241,372 | 26,256,762,171 | IssuesEvent | 2023-01-06 01:55:31 | dkushwah/WhiteSourceTs | https://api.github.com/repos/dkushwah/WhiteSourceTs | opened | CVE-2021-3803 (High) detected in nth-check-1.0.1.tgz | security vulnerability | ## CVE-2021-3803 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>nth-check-1.0.1.tgz</b></p></summary>

<p>performant nth-check parser & compiler</p>

<p>Library home page: <a href="http... | True | CVE-2021-3803 (High) detected in nth-check-1.0.1.tgz - ## CVE-2021-3803 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>nth-check-1.0.1.tgz</b></p></summary>

<p>performant nth-check pa... | non_process | cve high detected in nth check tgz cve high severity vulnerability vulnerable library nth check tgz performant nth check parser compiler library home page a href dependency hierarchy html webpack plugin tgz root library pretty error tgz ... | 0 |

15,670 | 19,847,318,363 | IssuesEvent | 2022-01-21 08:19:47 | ooi-data/RS03AXPS-SF03A-2A-CTDPFA302-streamed-ctdpf_sbe43_sample | https://api.github.com/repos/ooi-data/RS03AXPS-SF03A-2A-CTDPFA302-streamed-ctdpf_sbe43_sample | opened | 🛑 Processing failed: ValueError | process | ## Overview

`ValueError` found in `processing_task` task during run ended on 2022-01-21T08:19:46.430177.

## Details

Flow name: `RS03AXPS-SF03A-2A-CTDPFA302-streamed-ctdpf_sbe43_sample`

Task name: `processing_task`

Error type: `ValueError`

Error message: cannot reshape array of size 1209600 into shape (12500000,)

<... | 1.0 | 🛑 Processing failed: ValueError - ## Overview

`ValueError` found in `processing_task` task during run ended on 2022-01-21T08:19:46.430177.

## Details

Flow name: `RS03AXPS-SF03A-2A-CTDPFA302-streamed-ctdpf_sbe43_sample`

Task name: `processing_task`

Error type: `ValueError`

Error message: cannot reshape array of size... | process | 🛑 processing failed valueerror overview valueerror found in processing task task during run ended on details flow name streamed ctdpf sample task name processing task error type valueerror error message cannot reshape array of size into shape traceback ... | 1 |

42,329 | 9,203,344,164 | IssuesEvent | 2019-03-08 02:02:22 | GSA/datagov-deploy | https://api.github.com/repos/GSA/datagov-deploy | closed | Document: Detail changes | application codeigniter component/dashboard php | Task: Document how repo should be used and how it integrates with its parent repo.

Repo: datagov-deploy-dashboard | 1.0 | Document: Detail changes - Task: Document how repo should be used and how it integrates with its parent repo.

Repo: datagov-deploy-dashboard | non_process | document detail changes task document how repo should be used and how it integrates with its parent repo repo datagov deploy dashboard | 0 |

18,608 | 24,579,075,351 | IssuesEvent | 2022-10-13 14:23:31 | GoogleCloudPlatform/fda-mystudies | https://api.github.com/repos/GoogleCloudPlatform/fda-mystudies | closed | [Auth] Signup email > Support email address is not updated in resend verification code flow | Bug P1 Process: Fixed Process: Tested dev Auth server | **Steps:**

1. Install mobile app

2. Signup. Support email is updated in email body

3. Click on 'Resend verification code'

4. Observe the email body

**Actual:** Support email address is displayed as `{{supportEMail}}` in resend verification code flow

**Expected:** Support email added in SB manage apps section ... | 2.0 | [Auth] Signup email > Support email address is not updated in resend verification code flow - **Steps:**

1. Install mobile app

2. Signup. Support email is updated in email body

3. Click on 'Resend verification code'

4. Observe the email body

**Actual:** Support email address is displayed as `{{supportEMail}}` in... | process | signup email support email address is not updated in resend verification code flow steps install mobile app signup support email is updated in email body click on resend verification code observe the email body actual support email address is displayed as supportemail in rese... | 1 |

255,126 | 19,293,606,281 | IssuesEvent | 2021-12-12 07:43:47 | typedorm/typedorm | https://api.github.com/repos/typedorm/typedorm | closed | Could not resolve primary key on find. | documentation enhancement | Hello,

I'm trying to do a simple find on a GSI but I have the error `"id" was referenced in ITEM#{{id}} but it's value could not be resolved.` even if it's not used in the request

Here is my code

```typescript

import { Attribute, AUTO_GENERATE_ATTRIBUTE_STRATEGY, AutoGenerateAttribute, Entity, INDEX_TYPE } fr... | 1.0 | Could not resolve primary key on find. - Hello,

I'm trying to do a simple find on a GSI but I have the error `"id" was referenced in ITEM#{{id}} but it's value could not be resolved.` even if it's not used in the request

Here is my code

```typescript

import { Attribute, AUTO_GENERATE_ATTRIBUTE_STRATEGY, AutoG... | non_process | could not resolve primary key on find hello i m trying to do a simple find on a gsi but i have the error id was referenced in item id but it s value could not be resolved even if it s not used in the request here is my code typescript import attribute auto generate attribute strategy autog... | 0 |

8,679 | 11,810,650,141 | IssuesEvent | 2020-03-19 16:49:50 | MHRA/products | https://api.github.com/repos/MHRA/products | opened | Move Environment Variable Checks to Bootstrap | EPIC - Auto Batch Process :oncoming_automobile: STORY :book: | ## User want

As an _operator_

I want _the system to fail immediately if environment is configured wrong_

So that _I don't have nasty surprises during runtime_

## Acceptance Criteria

- [ ] All env var checks should happen before we start routing.

## Data - Potential impact

**Size**

**Value**

**Eff... | 1.0 | Move Environment Variable Checks to Bootstrap - ## User want

As an _operator_

I want _the system to fail immediately if environment is configured wrong_

So that _I don't have nasty surprises during runtime_

## Acceptance Criteria

- [ ] All env var checks should happen before we start routing.

## Data - Po... | process | move environment variable checks to bootstrap user want as an operator i want the system to fail immediately if environment is configured wrong so that i don t have nasty surprises during runtime acceptance criteria all env var checks should happen before we start routing data pote... | 1 |

539,130 | 15,783,918,953 | IssuesEvent | 2021-04-01 14:32:55 | wso2/product-apim | https://api.github.com/repos/wso2/product-apim | closed | API Product subscriptions are not displayed under the API subscription page. | API-M 4.0.0 Feature/APIProducts Priority/High Type/Bug | ### Description:

<!-- Describe the issue -->

visible in application's subscriptions section

visible in application's subscriptions section

.

I'm planning to reenable not failing tests and substitute that issue so that only relevant tests are disabled.

<details>

<summary>List of failing tests on uap</summary>

```

ERROR: System.Diagnostics.Tests.Process... | 1.0 | S.D.Process tests are failing on uap - Currently all S.D.Process tests are disabled (https://github.com/dotnet/corefx/issues/20948).

I'm planning to reenable not failing tests and substitute that issue so that only relevant tests are disabled.

<details>

<summary>List of failing tests on uap</summary>

```

ERR... | process | s d process tests are failing on uap currently all s d process tests are disabled i m planning to reenable not failing tests and substitute that issue so that only relevant tests are disabled list of failing tests on uap error system diagnostics tests processcollectiontests testthreadcollec... | 1 |

75,047 | 25,499,093,425 | IssuesEvent | 2022-11-28 01:05:51 | dkfans/keeperfx | https://api.github.com/repos/dkfans/keeperfx | opened | Game crashes after exiting possession after defeat | Type-Defect Priority-High | To reproduce the crash:

1) Have heroes destroy your heart