Unnamed: 0 (int64, 0–832k) | id (float64, 2.49B–32.1B) | type (stringclasses, 1 value) | created_at (stringlengths, 19–19) | repo (stringlengths, 7–112) | repo_url (stringlengths, 36–141) | action (stringclasses, 3 values) | title (stringlengths, 1–744) | labels (stringlengths, 4–574) | body (stringlengths, 9–211k) | index (stringclasses, 10 values) | text_combine (stringlengths, 96–211k) | label (stringclasses, 2 values) | text (stringlengths, 96–188k) | binary_label (int64, 0–1) |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

12,533 | 14,972,386,435 | IssuesEvent | 2021-01-27 22:45:45 | BootBlock/FileSieve | https://api.github.com/repos/BootBlock/FileSieve | opened | Split Source Item pre-scanning across multiple threads | backend-core performance processing | Threaded pre-scanning is partially in-place, but an effective thread initialiser is halting things. It needs to be intelligent so that it can determine the correct number of threads to be used in all cases, from number of files to if files reside on the same or different physical media.

This has the potential for bi... | 1.0 | Split Source Item pre-scanning across multiple threads - Threaded pre-scanning is partially in-place, but an effective thread initialiser is halting things. It needs to be intelligent so that it can determine the correct number of threads to be used in all cases, from number of files to if files reside on the same or d... | process | split source item pre scanning across multiple threads threaded pre scanning is partially in place but an effective thread initialiser is halting things it needs to be intelligent so that it can determine the correct number of threads to be used in all cases from number of files to if files reside on the same or d... | 1 |

477,451 | 13,762,656,004 | IssuesEvent | 2020-10-07 09:25:41 | AY2021S1-CS2103T-T11-1/tp | https://api.github.com/repos/AY2021S1-CS2103T-T11-1/tp | closed | Enhance tag model and add batch operations | priority.High type.Task | Enhance tag model to support tagging of multiple other models and add batch operations on tags. | 1.0 | Enhance tag model and add batch operations - Enhance tag model to support tagging of multiple other models and add batch operations on tags. | non_process | enhance tag model and add batch operations enhance tag model to support tagging of multiple other models and add batch operations on tags | 0 |

215,433 | 16,602,660,969 | IssuesEvent | 2021-06-01 21:54:21 | klatour324/tea_subscription | https://api.github.com/repos/klatour324/tea_subscription | closed | API Contract | documentation good first issue mvp setup | - [x] Plan out endpoints to build

- [x] Create a table within README documentation to showcase the JSON API endpoints for FE plug in | 1.0 | API Contract - - [x] Plan out endpoints to build

- [x] Create a table within README documentation to showcase the JSON API endpoints for FE plug in | non_process | api contract plan out endpoints to build create a table within readme documentation to showcase the json api endpoints for fe plug in | 0 |

3,325 | 2,676,477,875 | IssuesEvent | 2015-03-25 17:54:02 | twosigma/beaker-notebook | https://api.github.com/repos/twosigma/beaker-notebook | closed | python dataframe display | bug UI Design | If from python you output a data frame without wrapping in html the system still detects a table BUT python outputs only a part of the data frame and the last table row contains only dots (....). | 1.0 | python dataframe display - If from python you output a data frame without wrapping in html the system still detects a table BUT python outputs only a part of the data frame and the last table row contains only dots (....). | non_process | python dataframe display if from python you output a data frame without wrapping in html the system still detects a table but python outputs only a part of the data frame and the last table row contains only dots | 0 |

20,664 | 27,334,852,108 | IssuesEvent | 2023-02-26 03:50:55 | cse442-at-ub/project_s23-team-infinity | https://api.github.com/repos/cse442-at-ub/project_s23-team-infinity | closed | Create backend documentation in order to collate and organize instructions for easier on-boarding and general guidance in the backend. | IO Task Processing Task Sprint 1 | **Task Test**

*Test 1*

1) Research what systems will be running on the UB webserver.

2) Research how cheshire will be accessed and how programs will be placed on the server.

3) Research how the database will be created, accessed, updated, and maintained.

4) Create the documentation via Google Docs for easier sharing a... | 1.0 | Create backend documentation in order to collate and organize instructions for easier on-boarding and general guidance in the backend. - **Task Test**

*Test 1*

1) Research what systems will be running on the UB webserver.

2) Research how cheshire will be accessed and how programs will be placed on the server.

3) Resea... | process | create backend documentation in order to collate and organize instructions for easier on boarding and general guidance in the backend task test test research what systems will be running on the ub webserver research how cheshire will be accessed and how programs will be placed on the server resea... | 1 |

5,941 | 7,434,967,399 | IssuesEvent | 2018-03-26 12:53:53 | zin-/Mem | https://api.github.com/repos/zin-/Mem | closed | Update mem | Persistent Service | # Feature

Update mem

# Specification

Implementation method

- [x] On Detail

- [x] On List(mem done)

# Test

- [ ] Update mem | 1.0 | Update mem - # Feature

Update mem

# Specification

Implementation method

- [x] On Detail

- [x] On List(mem done)

# Test

- [ ] Update mem | non_process | update mem feature update mem specification implementation method on detail on list mem done test update mem | 0 |

22,643 | 4,822,299,467 | IssuesEvent | 2016-11-05 19:42:56 | zulip/zulip | https://api.github.com/repos/zulip/zulip | closed | Write more developer tutorials | area: documentation | Expand developer documentation with more tutorials explaining how to do various types of projects.

Ideas:

- add a new translation

- cut a new release

- add a new emoji

- test Zulip with a new browser

- work with a new file format (for uploading and/or thumbnailing in previews)

| 1.0 | Write more developer tutorials - Expand developer documentation with more tutorials explaining how to do various types of projects.

Ideas:

- add a new translation

- cut a new release

- add a new emoji

- test Zulip with a new browser

- work with a new file format (for uploading and/or thumbnailing in previews)

| non_process | write more developer tutorials expand developer documentation with more tutorials explaining how to do various types of projects ideas add a new translation cut a new release add a new emoji test zulip with a new browser work with a new file format for uploading and or thumbnailing in previews | 0 |

739,240 | 25,586,831,931 | IssuesEvent | 2022-12-01 09:58:41 | projectdiscovery/httpx | https://api.github.com/repos/projectdiscovery/httpx | closed | Add option to return base64-encoded response-body on json output | Priority: Low Status: Completed Type: Enhancement | <!--

1. Please make sure to provide a detailed description with all the relevant information that might be required to start working on this feature.

2. In case you are not sure about your request or whether the particular feature is already supported or not, please start a discussion instead.

3. GitHub Discussion: ... | 1.0 | Add option to return base64-encoded response-body on json output - <!--

1. Please make sure to provide a detailed description with all the relevant information that might be required to start working on this feature.

2. In case you are not sure about your request or whether the particular feature is already supported... | non_process | add option to return encoded response body on json output please make sure to provide a detailed description with all the relevant information that might be required to start working on this feature in case you are not sure about your request or whether the particular feature is already supported or n... | 0 |

19,565 | 25,887,139,182 | IssuesEvent | 2022-12-14 15:17:33 | aiidateam/aiida-core | https://api.github.com/repos/aiidateam/aiida-core | opened | Add utility that can easily relaunch a process from a `ProcessNode` with minimal required setup | priority/important type/accepted feature topic/processes topic/utilities type/usability | A big focus of AiiDA is provenance to guarantee reproducibility. Although it does store a significant amount of relevant provenance data, it is currently not very easy to actually reproduce a result that has been generated.

The first step was the introduction of the `ProcessNode.get_builder_restart` method which all... | 1.0 | Add utility that can easily relaunch a process from a `ProcessNode` with minimal required setup - A big focus of AiiDA is provenance to guarantee reproducibility. Although it does store a significant amount of relevant provenance data, it is currently not very easy to actually reproduce a result that has been generated... | process | add utility that can easily relaunch a process from a processnode with minimal required setup a big focus of aiida is provenance to guarantee reproducibility although it does store a significant amount of relevant provenance data it is currently not very easy to actually reproduce a result that has been generated... | 1 |

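431,921 | 30,257,751,272 | IssuesEvent | 2023-07-07 05:15:37 | PLAIF-dev/sw_synthetic_rospkg | https://api.github.com/repos/PLAIF-dev/sw_synthetic_rospkg | closed | Stop tracking unnecessary Git modifications/changes | documentation | ## Description

1. Add wildcards and patterns to .gitignore so that files excluded from modification/change tracking are recognized in bulk.

2. Remove files that should not be included in the project. | 1.0 | Stop tracking unnecessary Git modifications/changes - ## Description

1. Add wildcards and patterns to .gitignore so that files excluded from modification/change tracking are recognized in bulk.

2. Remove files that should not be included in the project. | non_process | stop tracking unnecessary git modifications changes description add wildcards and patterns to gitignore so that files excluded from modification change tracking are recognized in bulk remove files that should not be included in the project | 0 |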

12,945 | 15,308,024,309 | IssuesEvent | 2021-02-24 21:47:45 | cypress-io/cypress-documentation | https://api.github.com/repos/cypress-io/cypress-documentation | closed | Switch from manual deploy to semantic-action | process: deployment | Just need to think how to setup branches `develop` to `staging` and `master` to `production` | 1.0 | Switch from manual deploy to semantic-action - Just need to think how to setup branches `develop` to `staging` and `master` to `production` | process | switch from manual deploy to semantic action just need to think how to setup branches develop to staging and master to production | 1 |

11,917 | 14,702,007,827 | IssuesEvent | 2021-01-04 12:54:03 | threefoldtech/js-sdk | https://api.github.com/repos/threefoldtech/js-sdk | closed | VDC Pricing Does Not Match Resource | process_wontfix type_bug | the smallest flavor of the vdc `Silver` which costs 10 EUR ~= 364 TFT consumes much more resources than what can be bought with this price.

the initial deployment includes the below workloads:

```python

JS-NG> for w in workloads:

2 cloud_units = w.resource_units().cloud_units()

3 print(w.id, w.... | 1.0 | VDC Pricing Does Not Match Resource - the smallest flavor of the vdc `Silver` which costs 10 EUR ~= 364 TFT consumes much more resources than what can be bought with this price.

the initial deployment includes the below workloads:

```python

JS-NG> for w in workloads:

2 cloud_units = w.resource_units().cl... | process | vdc pricing does not match resource the smallest flavor of the vdc silver which costs eur tft consumes much more resources than what can be bought with this price the initial deployment includes the below workloads python js ng for w in workloads cloud units w resource units cloud... | 1 |

8,976 | 12,093,093,522 | IssuesEvent | 2020-04-19 18:12:32 | Ultimate-Hosts-Blacklist/whitelist | https://api.github.com/repos/Ultimate-Hosts-Blacklist/whitelist | closed | [FALSE-POSITIVE?] d1f8f9xcsvx3ha.cloudfront.net d3e1078hs60k37.cloudfront.net | whitelisting process | Looks like we are blocking all of Cloudfront due to Smed79 blocking all of Cloudfront https://github.com/smed79/blacklist/blob/master/hosts/cloudfront.txt

I posted an issue for smed79 to unblacklist Cloudfront, but if he says no we might need to consider other alternatives.

Amazon CloudFront is a fast content del... | 1.0 | [FALSE-POSITIVE?] d1f8f9xcsvx3ha.cloudfront.net d3e1078hs60k37.cloudfront.net - Looks like we are blocking all of Cloudfront due to Smed79 blocking all of Cloudfront https://github.com/smed79/blacklist/blob/master/hosts/cloudfront.txt

I posted an issue for smed79 to unblacklist Cloudfront, but if he says no we might... | process | cloudfront net cloudfront net looks like we are blocking all of cloudfront due to blocking all of cloudfront i posted an issue for to unblacklist cloudfront but if he says no we might need to consider other alternatives amazon cloudfront is a fast content delivery network cdn service that secure... | 1 |

724,838 | 24,943,305,563 | IssuesEvent | 2022-10-31 20:56:59 | bounswe/bounswe2022group4 | https://api.github.com/repos/bounswe/bounswe2022group4 | closed | Frontend: UI Improvement For Navigation Bar | Category - To Do Priority - High Status: In Progress whom: individual Difficulty - Medium Language - CSS Language - React.js Team - Frontend | I need to improve navigation bar ui in order to increase user experience.

Steps:

1) Changing static background color to gradiant one.

2) Improving sign in and sign up button design

3) Changing text colors and put some effects on them

Deadline: 31.10.2022 15.00

Reviewer: @mbatuhan-malazgirt | 1.0 | Frontend: UI Improvement For Navigation Bar - I need to improve navigation bar ui in order to increase user experience.

Steps:

1) Changing static background color to gradiant one.

2) Improving sign in and sign up button design

3) Changing text colors and put some effects on them

Deadline: 31.10.2022 15.00

R... | non_process | frontend ui improvement for navigation bar i need to improve navigation bar ui in order to increase user experience steps changing static background color to gradiant one improving sign in and sign up button design changing text colors and put some effects on them deadline reviewer... | 0 |

10,805 | 13,609,288,192 | IssuesEvent | 2020-09-23 04:50:12 | googleapis/java-securitycenter-settings | https://api.github.com/repos/googleapis/java-securitycenter-settings | closed | Dependency Dashboard | api: securitycenter type: process | This issue contains a list of Renovate updates and their statuses.

## Open

These updates have all been created already. Click a checkbox below to force a retry/rebase of any.

- [ ] <!-- rebase-branch=renovate/com.google.cloud-google-cloud-securitycenter-settings-0.x -->chore(deps): update dependency com.google.clou... | 1.0 | Dependency Dashboard - This issue contains a list of Renovate updates and their statuses.

## Open

These updates have all been created already. Click a checkbox below to force a retry/rebase of any.

- [ ] <!-- rebase-branch=renovate/com.google.cloud-google-cloud-securitycenter-settings-0.x -->chore(deps): update dep... | process | dependency dashboard this issue contains a list of renovate updates and their statuses open these updates have all been created already click a checkbox below to force a retry rebase of any chore deps update dependency com google cloud google cloud securitycenter settings to chore deps ... | 1 |

31 | 2,499,433,347 | IssuesEvent | 2015-01-08 00:26:10 | tinkerpop/tinkerpop3 | https://api.github.com/repos/tinkerpop/tinkerpop3 | opened | Now we can do as('a').out.as('b') in Gremlin-Groovy with SugarPlugin. | enhancement process | @mbroecheler @joshsh @dkuppitz

Now that we have `AnonymousGraphTraversal.Tokens.__`, `match()` looks like this in Gremlin-Groovy (with Sugar).

```groovy

g.V.match('a',

__.as('a').out('created').as('b'),

__.as('b').has('name', 'lop'),

__.as('b').in('created').as('c'),

__.as('c').has('age', 29... | 1.0 | Now we can do as('a').out.as('b') in Gremlin-Groovy with SugarPlugin. - @mbroecheler @joshsh @dkuppitz

Now that we have `AnonymousGraphTraversal.Tokens.__`, `match()` looks like this in Gremlin-Groovy (with Sugar).

```groovy

g.V.match('a',

__.as('a').out('created').as('b'),

__.as('b').has('name', 'lop... | process | now we can do as a out as b in gremlin groovy with sugarplugin mbroecheler joshsh dkuppitz now that we have anonymousgraphtraversal tokens match looks like this in gremlin groovy with sugar groovy g v match a as a out created as b as b has name lop... | 1 |

16,560 | 12,042,753,363 | IssuesEvent | 2020-04-14 11:10:02 | teambit/bit | https://api.github.com/repos/teambit/bit | opened | fix split e2e tests on circle by timing on windows | area/infrastructure type/bug | Currently, if look at the windows_e2e tests on circle under the `write e2e files` step, you will see this warning:

```

Error autodetecting timing type, falling back to weighting by name. Autodetect no matching filename or classname. If file names are used, double check paths for absolute vs relative.

Example input ... | 1.0 | fix split e2e tests on circle by timing on windows - Currently, if look at the windows_e2e tests on circle under the `write e2e files` step, you will see this warning:

```

Error autodetecting timing type, falling back to weighting by name. Autodetect no matching filename or classname. If file names are used, double ... | non_process | fix split tests on circle by timing on windows currently if look at the windows tests on circle under the write files step you will see this warning error autodetecting timing type falling back to weighting by name autodetect no matching filename or classname if file names are used double check ... | 0 |

13,601 | 16,177,450,288 | IssuesEvent | 2021-05-03 09:15:32 | melink14/rikaikun | https://api.github.com/repos/melink14/rikaikun | closed | Split codeql scanning such that it only runs dependabot changes on pull_request | P2 process | dependabot doesn't play well with codeql. During pull requests, the push action fails due to coming too quickly after PR action, and during merge it fails do to being read only.

We should make https://github.com/melink14/rikaikun/blob/master/.github/workflows/codeql-analysis.yml a composite action and have one workf... | 1.0 | Split codeql scanning such that it only runs dependabot changes on pull_request - dependabot doesn't play well with codeql. During pull requests, the push action fails due to coming too quickly after PR action, and during merge it fails do to being read only.

We should make https://github.com/melink14/rikaikun/blob/... | process | split codeql scanning such that it only runs dependabot changes on pull request dependabot doesn t play well with codeql during pull requests the push action fails due to coming too quickly after pr action and during merge it fails do to being read only we should make a composite action and have one workflow... | 1 |

135,844 | 11,019,838,131 | IssuesEvent | 2019-12-05 13:32:01 | dzhw/SLC-IntEr | https://api.github.com/repos/dzhw/SLC-IntEr | closed | Calendars | Modul: Kalendarien Update testing | We need two calendars, one per cohort:

- Cohort 1: period January 2018 to December 2019 with episode types:

o University studies

o Vocational training

o Continuing education, further training (longer-term, at least 70 h in total)

o Casual work

o Employment (including self-employment)

o Internship

o Parental leave/maternity leave, Ha... | 1.0 | Calendars - We need two calendars, one per cohort:

- Cohort 1: period January 2018 to December 2019 with episode types:

o University studies

o Vocational training

o Continuing education, further training (longer-term, at least 70 h in total)

o Casual work

o Employment (including self-employment)

o Internship

o Parental leave/Mu... | non_process | calendars we need two calendars one per cohort cohort period january to december with episode types o university studies o vocational training o continuing education further training longer term at least h in total o casual work o employment including self employment o internship o parental leave muttersch... | 0 |

20,942 | 27,802,341,444 | IssuesEvent | 2023-03-17 16:47:10 | RConsortium/submissions-pilot3-adam | https://api.github.com/repos/RConsortium/submissions-pilot3-adam | closed | renv.lock | submission process | Would it be a possibility to include renv.lock into submission to help reviewers to reconstruct our environment? | 1.0 | renv.lock - Would it be a possibility to include renv.lock into submission to help reviewers to reconstruct our environment? | process | renv lock would it be a possibility to include renv lock into submission to help reviewers to reconstruct our environment | 1 |

53,709 | 11,114,736,307 | IssuesEvent | 2019-12-18 09:19:30 | microsoft/calculator | https://api.github.com/repos/microsoft/calculator | closed | UnitConverter has many instances where code can be simiplified ore made more efficient | codebase quality | - Some raw loops could be made to be range-for loops.

- wstringstream is often used for building a string which has lots of overhead compared to appending to a wstring.

- Some functions take a `wstring` or `const wchar_t*` where a `wstring_view` would be more appropriate.

- `wstring_view` does not require construc... | 1.0 | UnitConverter has many instances where code can be simiplified ore made more efficient - - Some raw loops could be made to be range-for loops.

- wstringstream is often used for building a string which has lots of overhead compared to appending to a wstring.

- Some functions take a `wstring` or `const wchar_t*` where ... | non_process | unitconverter has many instances where code can be simiplified ore made more efficient some raw loops could be made to be range for loops wstringstream is often used for building a string which has lots of overhead compared to appending to a wstring some functions take a wstring or const wchar t where ... | 0 |

279,708 | 8,672,121,030 | IssuesEvent | 2018-11-29 21:10:17 | Davoleo/Metallurgy-4-Reforged | https://api.github.com/repos/Davoleo/Metallurgy-4-Reforged | closed | Typo in Wiki | Priority: Low Wiki bug | In Harvest Level of Metals Block and Ores Block, there are typos in

Ore Harvestability:

1. "Zinco Ore"

4. "Rubracacium Ore"

| 1.0 | Typo in Wiki - In Harvest Level of Metals Block and Ores Block, there are typos in

Ore Harvestability:

1. "Zinco Ore"

4. "Rubracacium Ore"

| non_process | typo in wiki in harvest level of metals block and ores block there are typos in ore harvestability zinco ore rubracacium ore | 0 |

13,066 | 15,396,868,235 | IssuesEvent | 2021-03-03 21:16:30 | opendistro-for-elasticsearch/opendistro-build | https://api.github.com/repos/opendistro-for-elasticsearch/opendistro-build | closed | How are the variables in the values.yaml file defined? | in process | https://github.com/opendistro-for-elasticsearch/opendistro-build/blob/b42c52309f05dd9d28accaaf0abbf7c795d0763f/helm/opendistro-es/values.yaml#L454

Hello,

In the `elasticsearch.config` part of the **values.yaml** file, there is these variables like ${CLUSTER_NAME}, ${NODE_MASTER}, ${HTTP_ENABLE} that are reference... | 1.0 | How are the variables in the values.yaml file defined? - https://github.com/opendistro-for-elasticsearch/opendistro-build/blob/b42c52309f05dd9d28accaaf0abbf7c795d0763f/helm/opendistro-es/values.yaml#L454

Hello,

In the `elasticsearch.config` part of the **values.yaml** file, there is these variables like ${CLUSTER... | process | how are the variables in the values yaml file defined hello in the elasticsearch config part of the values yaml file there is these variables like cluster name node master http enable that are referenced but its unclear to me where these should be defined can you please tell me how and... | 1 |

1,953 | 4,082,945,858 | IssuesEvent | 2016-05-31 14:29:32 | IBM-Bluemix/logistics-wizard | https://api.github.com/repos/IBM-Bluemix/logistics-wizard | closed | New API to delete a Demo environment | erp-service task | DELETE /Demos/{guid}

to delete all users and their related data

related to #11 | 1.0 | New API to delete a Demo environment - DELETE /Demos/{guid}

to delete all users and their related data

related to #11 | non_process | new api to delete a demo environment delete demos guid to delete all users and their related data related to | 0 |

19,154 | 25,234,970,961 | IssuesEvent | 2022-11-14 23:35:50 | rusefi/rusefi_documentation | https://api.github.com/repos/rusefi/rusefi_documentation | closed | What shall we do with legacy wiki? wiki1 | wiki location & process change | wiki1 https://rusefi.com/wiki/

wiki2-human https://github.com/rusefi/rusefi/wiki/

wiki2-technical https://github.com/rusefi/rusefi_documentation

wiki3 wiki.rusefi.com

Problem: there are a few legacy links to pages like

https://rusefi.com/wiki/index.php?title=Main_Page

See http://www.turbobricks.org/forums... | 1.0 | What shall we do with legacy wiki? wiki1 - wiki1 https://rusefi.com/wiki/

wiki2-human https://github.com/rusefi/rusefi/wiki/

wiki2-technical https://github.com/rusefi/rusefi_documentation

wiki3 wiki.rusefi.com

Problem: there are a few legacy links to pages like

https://rusefi.com/wiki/index.php?title=Main_Pa... | process | what shall we do with legacy wiki human technical wiki rusefi com problem there are a few legacy links to pages like see see do we want to ignore and just drop or do we want some nicer solution | 1 |

11,577 | 14,443,245,840 | IssuesEvent | 2020-12-07 19:21:23 | A01731346/4a | https://api.github.com/repos/A01731346/4a | closed | complete_size_estimating_template | process - dashboard | - Complete the LOC estimation form with the actual values obtained | 1.0 | complete_size_estimating_template - - Complete the LOC estimation form with the actual values obtained | process | complete size estimating template complete the loc estimation form with the actual values obtained | 1 |

6,513 | 7,657,286,997 | IssuesEvent | 2018-05-10 19:08:11 | odoo/odoo | https://api.github.com/repos/odoo/odoo | closed | CRM Activities not showing in Calendar | 11.0 Services | Impacted versions: 11

Steps to reproduce: Create an Activity in CRM and then looking for in calendar.

Current behavior: Not showing

Expected behavior: Show my activities in Calendar.

Video/Screenshot link (optional):

| 1.0 | CRM Activities not showing in Calendar - Impacted versions: 11

Steps to reproduce: Create an Activity in CRM and then looking for in calendar.

Current behavior: Not showing

Expected behavior: Show my activities in Calendar.

Video/Screenshot link (optional):

| non_process | crm activities not showing in calendar impacted versions steps to reproduce create an activity in crm and then looking for in calendar current behavior not showing expected behavior show my activities in calendar video screenshot link optional | 0 |

10,962 | 16,017,268,208 | IssuesEvent | 2021-04-20 17:36:12 | NASA-PDS/pds-registry-app | https://api.github.com/repos/NASA-PDS/pds-registry-app | closed | The service shall store metadata for a registered artifact in an underlying metadata store | component:registry requirement requirement-topic:publish-artifacts | Level 4 Requirement:

* 🦄 L4.REG.3

| 2.0 | The service shall store metadata for a registered artifact in an underlying metadata store - Level 4 Requirement:

* 🦄 L4.REG.3

| non_process | the service shall store metadata for a registered artifact in an underlying metadata store level requirement 🦄 reg | 0 |

17,509 | 23,319,868,431 | IssuesEvent | 2022-08-08 15:26:03 | maticnetwork/miden | https://api.github.com/repos/maticnetwork/miden | opened | refactor Hasher lookup handling in the chiplets bus | processor | As discussed in #348, the first version of the hasher handling in the chiplets bus was straightforward and left room for several future optimizations.

In particular, the following should be refactored and optimized in the future:

- how hasher lookup values are computed

- how lookups are requested from the decoder

... | 1.0 | refactor Hasher lookup handling in the chiplets bus - As discussed in #348, the first version of the hasher handling in the chiplets bus was straightforward and left room for several future optimizations.

In particular, the following should be refactored and optimized in the future:

- how hasher lookup values are c... | process | refactor hasher lookup handling in the chiplets bus as discussed in the first version of the hasher handling in the chiplets bus was straightforward and left room for several future optimizations in particular the following should be refactored and optimized in the future how hasher lookup values are com... | 1 |

40,898 | 10,591,146,410 | IssuesEvent | 2019-10-09 10:13:30 | gradle/gradle | https://api.github.com/repos/gradle/gradle | opened | Allow CopySpec to specify a different normalization | @build-cache from:member | While copying files (using `Copy` or `Zip` etc.) we currently treat the sources specified via `CopySpec`s as `PathSensitivity.RELATIVE`. However, sometimes we want to copy things where we know they are going to be used as a classpath, like when including JARs in a WAR file. In those cases we could specify `@Classpath` ... | 1.0 | Allow CopySpec to specify a different normalization - While copying files (using `Copy` or `Zip` etc.) we currently treat the sources specified via `CopySpec`s as `PathSensitivity.RELATIVE`. However, sometimes we want to copy things where we know they are going to be used as a classpath, like when including JARs in a W... | non_process | allow copyspec to specify a different normalization while copying files using copy or zip etc we currently treat the sources specified via copyspec s as pathsensitivity relative however sometimes we want to copy things where we know they are going to be used as a classpath like when including jars in a w... | 0 |

250,950 | 27,127,581,459 | IssuesEvent | 2023-02-16 07:07:01 | monizb/FireShort | https://api.github.com/repos/monizb/FireShort | closed | CVE-2022-25881 (Medium) detected in http-cache-semantics-4.1.0.tgz - autoclosed | security vulnerability | ## CVE-2022-25881 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>http-cache-semantics-4.1.0.tgz</b></p></summary>

<p>Parses Cache-Control and other headers. Helps building correct H... | True | CVE-2022-25881 (Medium) detected in http-cache-semantics-4.1.0.tgz - autoclosed - ## CVE-2022-25881 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>http-cache-semantics-4.1.0.tgz</b><... | non_process | cve medium detected in http cache semantics tgz autoclosed cve medium severity vulnerability vulnerable library http cache semantics tgz parses cache control and other headers helps building correct http caches and proxies library home page a href path to dependency f... | 0 |

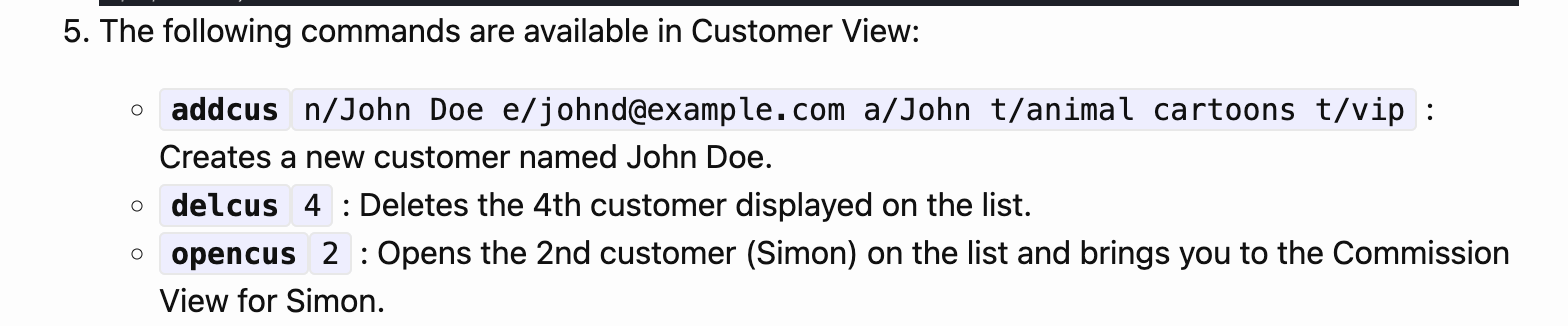

346,364 | 24,886,774,273 | IssuesEvent | 2022-10-28 08:28:11 | carriezhengjr/ped | https://api.github.com/repos/carriezhengjr/ped | opened | Example command provided in UG does not work in actual app | type.DocumentationBug severity.Medium | The User Guide (UG) states that the command `addcus n/John Doe e/johnd@example.com a/John t/animal cartoons t/vip` will creates a new customer named John Doe.

However, when ... | 1.0 | Example command provided in UG does not work in actual app - The User Guide (UG) states that the command `addcus n/John Doe e/johnd@example.com a/John t/animal cartoons t/vip` will creates a new customer named John Doe.

by Aug 9 | Accounting Data/Processes Portfolio Management QBR/Quarterly | Produce accrual with full adjustment (Kelly will then give feedback)

Need to manually accrue for MGA retro and fees

Kelly needs JP's spreadsheet to reconcile premium received

DC project (Walter/Travis) to ingest loan files?

JP tied Freddie - Fannie calc easier

| 1.0 | New accruals process and premium reconciliation (JP spreadsheet) by Aug 9 - Produce accrual with full adjustment (Kelly will then give feedback)

Need to manually accrue for MGA retro and fees

Kelly needs JP's spreadsheet to reconcile premium received

DC project (Walter/Travis) to ingest loan files?

JP tied Freddie ... | process | new accruals process and premium reconciliation jp spreadsheet by aug produce accrual with full adjustment kelly will then give feedback need to manually accrue for mga retro and fees kelly needs jp s spreadsheet to reconcile premium received dc project walter travis to ingest loan files jp tied freddie ... | 1 |

247,036 | 26,670,305,002 | IssuesEvent | 2023-01-26 09:41:41 | eclipse-kanto/kanto | https://api.github.com/repos/eclipse-kanto/kanto | closed | Move to the last Go version | feature security | Currently, the Kanto release workflow is using: 1.17.2. There are new major versions with a bunch of features and bug fixes:

- [1.18](https://go.dev/doc/devel/release#go1.18)

- [1.19](https://go.dev/doc/devel/release#go1.19)

Reminder: All notice files on all components have to be updated to this new version.

La... | True | Move to the last Go version - Currently, the Kanto release workflow is using: 1.17.2. There are new major versions with a bunch of features and bug fixes:

- [1.18](https://go.dev/doc/devel/release#go1.18)

- [1.19](https://go.dev/doc/devel/release#go1.19)

Reminder: All notice files on all components have to be upda... | non_process | move to the last go version currently the kanto release workflow is using there are new major versions with a bunch of features and bug fixes reminder all notice files on all components have to be updated to this new version latest version to use tasks ecl... | 0 |

27,315 | 11,470,793,095 | IssuesEvent | 2020-02-09 06:21:35 | scriptex/webpack-mpa | https://api.github.com/repos/scriptex/webpack-mpa | closed | CVE-2020-8116 (Medium) detected in dot-prop-4.2.0.tgz | security vulnerability | ## CVE-2020-8116 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>dot-prop-4.2.0.tgz</b></p></summary>

<p>Get, set, or delete a property from a nested object using a dot path</p>

<p>L... | True | CVE-2020-8116 (Medium) detected in dot-prop-4.2.0.tgz - ## CVE-2020-8116 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>dot-prop-4.2.0.tgz</b></p></summary>

<p>Get, set, or delete a... | non_process | cve medium detected in dot prop tgz cve medium severity vulnerability vulnerable library dot prop tgz get set or delete a property from a nested object using a dot path library home page a href path to dependency file tmp ws scm webpack mpa package json path to vul... | 0 |

19,373 | 25,499,188,894 | IssuesEvent | 2022-11-28 01:16:23 | hashgraph/hedera-mirror-node | https://api.github.com/repos/hashgraph/hedera-mirror-node | opened | Replace Maven with Gradle in CI | enhancement process | ### Problem

As a developer, I'd like to consolidate build tools to reduce dependabot PRs, reduce newcomer confusion, and take advantage of the benefits that Gradle provides over Maven.

### Solution

* Remove separate module workflows in favor of consolidated Gradle matrix workflow

* Replace use of Maven in CI with ... | 1.0 | Replace Maven with Gradle in CI - ### Problem

As a developer, I'd like to consolidate build tools to reduce dependabot PRs, reduce newcomer confusion, and take advantage of the benefits that Gradle provides over Maven.

### Solution

* Remove separate module workflows in favor of consolidated Gradle matrix workflow

... | process | replace maven with gradle in ci problem as a developer i d like to consolidate build tools to reduce dependabot prs reduce newcomer confusion and take advantage of the benefits that gradle providers over maven solution remove separate module workflows in favor of consolidated gradle matrix workflow ... | 1 |

487,075 | 14,018,791,360 | IssuesEvent | 2020-10-29 17:17:19 | geosolutions-it/MapStore2 | https://api.github.com/repos/geosolutions-it/MapStore2 | closed | Introduce ordering on column for table widget | Accepted Priority: High Project: C040 Widgets | Enable the sorting tool (ascending / descending) of records in table widget (currently not enabled)

as it is currently working for the Attribute Table tool

of records in table widget (currently not enabled)

as it is currently working for the Attribute Table tool ... | non_process | introduce ordering on column for table widget enable the sorting tool ascending descending of records in table widget currently not enabled as it is currently working for the attribute table tool | 0 |

159,606 | 20,074,933,477 | IssuesEvent | 2022-02-04 11:36:14 | finos/cla-bot | https://api.github.com/repos/finos/cla-bot | opened | CVE-2021-23383 (High) detected in handlebars-4.4.2.tgz | security vulnerability | ## CVE-2021-23383 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>handlebars-4.4.2.tgz</b></p></summary>

<p>Handlebars provides the power necessary to let you build semantic templates ... | True | CVE-2021-23383 (High) detected in handlebars-4.4.2.tgz - ## CVE-2021-23383 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>handlebars-4.4.2.tgz</b></p></summary>

<p>Handlebars provides... | non_process | cve high detected in handlebars tgz cve high severity vulnerability vulnerable library handlebars tgz handlebars provides the power necessary to let you build semantic templates effectively with no frustration library home page a href path to dependency file package js... | 0 |

8,409 | 11,575,537,751 | IssuesEvent | 2020-02-21 09:58:26 | MicrosoftDocs/azure-docs | https://api.github.com/repos/MicrosoftDocs/azure-docs | closed | Custom Schedules or Post-Script doesn't work | Pri1 automation/svc cxp process-automation/subsvc product-question triaged | When creating a custom schedule or running the StartStop Parent runbook with the stop action as a post script, the $WhatIf value is inputted as a string rather than a bool causing failure.

---

#### Document Details

⚠ *Do not edit this section. It is required for docs.microsoft.com ➟ GitHub issue linking.*

* ID: 225c... | 1.0 | Custom Schedules or Post-Script doesn't work - When creating a custom schedule or running the StartStop Parent runbook with the stop action as a post script, the $WhatIf value is inputted as a string rather than a bool causing failure.

---

#### Document Details

⚠ *Do not edit this section. It is required for docs.mic... | process | custom schedules or post script doesn t work when creating a custom schedule or running the startstop parent runbook with the stop action as a post script the whatif value is inputted as a string rather than a bool causing failure document details ⚠ do not edit this section it is required for docs mic... | 1 |

21,073 | 28,017,779,645 | IssuesEvent | 2023-03-28 01:07:06 | HaoNguyenNhat/CNPMNC | https://api.github.com/repos/HaoNguyenNhat/CNPMNC | opened | Support request | 8 point DG HUY NN HÀO IN PROCESS | As an official customer, I want to submit an online support request so that my questions are answered and my issues are resolved promptly | 1.0 | Support request - As an official customer, I want to submit an online support request so that my questions are answered and my issues are resolved promptly | process | support request as an official customer i want to submit an online support request so that my questions are answered and my issues are resolved promptly | 1 |

18,121 | 24,151,418,567 | IssuesEvent | 2022-09-22 01:27:50 | neuropsychology/NeuroKit | https://api.github.com/repos/neuropsychology/NeuroKit | reopened | Generic function for 1/f noise | wontfix signal processing :chart_with_upwards_trend: Complexity/Chaos :bomb: inactive 👻 | Would be good to have a generic `signal_1f` function to measure 1/f noise in particular for eeg and ecg signals (many papers have been published on this e.g., [here](https://www.jneurosci.org/content/jneuro/35/38/13257.full.pdf))

To-do:

- [ ] Dissociate the functionalities for our existing [fractal_psdslope](https:... | 1.0 | Generic function for 1/f noise - Would be good to have a generic `signal_1f` function to measure 1/f noise in particular for eeg and ecg signals (many papers have been published on this e.g., [here](https://www.jneurosci.org/content/jneuro/35/38/13257.full.pdf))

To-do:

- [ ] Dissociate the functionalities for our e... | process | generic function for f noise would be good to have a generic signal function to measure f noise in particular for eeg and ecg signals many papers have been published on this e g to do dissociate the functionalities for our existing slope itself should be computed in signal and this c... | 1 |

96,762 | 12,156,481,009 | IssuesEvent | 2020-04-25 17:31:09 | COVID19Tracking/website | https://api.github.com/repos/COVID19Tracking/website | opened | Responsive Navbar Seems weird and crowded. | DESIGN | The mobile navbar seems so crowded and basic. It just doesn't match with the whole layout of the site.

I suggest we either add some space between the navbar items or create a vertical hamburger menu instead.

#### Screenshots

<img src="https://user-images.githubusercontent.com/54989142/80286262-f4572100-8747-11ea... | 1.0 | Responsive Navbar Seems weird and crowded. - The mobile navbar seems so crowded and basic. It just doesn't match with the whole layout of the site.

I suggest we either add some space between the navbar items or create a vertical hamburger menu instead.

#### Screenshots

<img src="https://user-images.githubusercon... | non_process | responsive navbar seems weird and crowded the mobile navbar seems so crowded and basic it just doesn t match with the whole layout of the site i suggest we either add some space between the navbar items or create a vertical hamburger menu instead screenshots nbsp nbsp | 0 |

222,424 | 17,069,249,319 | IssuesEvent | 2021-07-07 11:14:56 | telerik/kendo-react | https://api.github.com/repos/telerik/kendo-react | closed | Editor raises an error on enter key | documentation pkg:editor | The error is "RangeError: Can not convert <> to a Fragment (looks like multiple versions of prosemirror-model were loaded)".

Currently, it happens on the [KendoReact website](https://www.telerik.com/kendo-react-ui/components/editor/plugins/#toc-input-rules). If you open an example in StackBlitz, download it and run ... | 1.0 | Editor raises an error on enter key - The error is "RangeError: Can not convert <> to a Fragment (looks like multiple versions of prosemirror-model were loaded)".

Currently, it happens on the [KendoReact website](https://www.telerik.com/kendo-react-ui/components/editor/plugins/#toc-input-rules). If you open an examp... | non_process | editor raises an error on enter key the error is rangeerror can not convert to a fragment looks like multiple versions of prosemirror model were loaded currently it happens on the if you open an example in stackblitz download it and run it locally you will see that it works as expected the error ha... | 0 |

343,131 | 30,653,288,146 | IssuesEvent | 2023-07-25 10:20:00 | unifyai/ivy | https://api.github.com/repos/unifyai/ivy | reopened | Fix jax_numpy_creation.test_jax_csingle | JAX Frontend Sub Task Failing Test | | | |

|---|---|

|tensorflow|<a href="https://github.com/unifyai/ivy/actions/runs/5497819199/jobs/10018855036"><img src=https://img.shields.io/badge/-success-success></a>

|torch|<a href="https://github.com/unifyai/ivy/actions/runs/5497819199/jobs/10018855036"><img src=https://img.shields.io/badge/-success-success... | 1.0 | Fix jax_numpy_creation.test_jax_csingle - | | |

|---|---|

|tensorflow|<a href="https://github.com/unifyai/ivy/actions/runs/5497819199/jobs/10018855036"><img src=https://img.shields.io/badge/-success-success></a>

|torch|<a href="https://github.com/unifyai/ivy/actions/runs/5497819199/jobs/10018855036"><img src=htt... | non_process | fix jax numpy creation test jax csingle tensorflow a href src torch a href src paddle a href src numpy a href src jax a href src | 0 |

10,093 | 13,044,162,070 | IssuesEvent | 2020-07-29 03:47:29 | tikv/tikv | https://api.github.com/repos/tikv/tikv | closed | UCP: Migrate scalar function `AddDateAndDuration` from TiDB | challenge-program-2 component/coprocessor difficulty/easy sig/coprocessor |

## Description

Port the scalar function `AddDateAndDuration` from TiDB to coprocessor.

## Score

* 50

## Mentor(s)

* @breeswish

## Recommended Skills

* Rust programming

## Learning Materials

Already implemented expressions ported from TiDB

- https://github.com/tikv/tikv/tree/master/components/tidb_query/sr... | 2.0 | UCP: Migrate scalar function `AddDateAndDuration` from TiDB -

## Description

Port the scalar function `AddDateAndDuration` from TiDB to coprocessor.

## Score

* 50

## Mentor(s)

* @breeswish

## Recommended Skills

* Rust programming

## Learning Materials

Already implemented expressions ported from TiDB

- htt... | process | ucp migrate scalar function adddateandduration from tidb description port the scalar function adddateandduration from tidb to coprocessor score mentor s breeswish recommended skills rust programming learning materials already implemented expressions ported from tidb ... | 1 |

264,163 | 23,099,669,271 | IssuesEvent | 2022-07-27 00:24:11 | MPMG-DCC-UFMG/F01 | https://api.github.com/repos/MPMG-DCC-UFMG/F01 | closed | Generalization test for the tag Servidores - Registro da remuneração - Padre Carvalho | generalization test development template-Síntese tecnologia informatica subtag-Dados de Remuneração tag-Servidores | DoD: Run the generalization test of the validator for the tag Servidores - Registro da remuneração for the municipality of Padre Carvalho. | 1.0 | Generalization test for the tag Servidores - Registro da remuneração - Padre Carvalho - DoD: Run the generalization test of the validator for the tag Servidores - Registro da remuneração for the municipality of Padre Carvalho. | non_process | generalization test for the tag servidores registro da remuneração padre carvalho dod run the generalization test of the validator for the tag servidores registro da remuneração for the municipality of padre carvalho | 0 |

508,494 | 14,701,368,389 | IssuesEvent | 2021-01-04 11:45:39 | webcompat/web-bugs | https://api.github.com/repos/webcompat/web-bugs | closed | www.google.com - see bug description | browser-firefox engine-gecko ml-needsdiagnosis-false ml-probability-high priority-critical | <!-- @browser: Firefox 85.0 -->

<!-- @ua_header: Mozilla/5.0 (Windows NT 10.0; Win64; x64; rv:85.0) Gecko/20100101 Firefox/85.0 -->

<!-- @reported_with: desktop-reporter -->

<!-- @public_url: https://github.com/webcompat/web-bugs/issues/64894 -->

**URL**: https://www.google.com/sorry/index?continue=https://www.google.... | 1.0 | www.google.com - see bug description - <!-- @browser: Firefox 85.0 -->

<!-- @ua_header: Mozilla/5.0 (Windows NT 10.0; Win64; x64; rv:85.0) Gecko/20100101 Firefox/85.0 -->

<!-- @reported_with: desktop-reporter -->

<!-- @public_url: https://github.com/webcompat/web-bugs/issues/64894 -->

**URL**: https://www.google.com/s... | non_process | see bug description url browser version firefox operating system windows tested another browser yes chrome problem type something else description recptcha steps to reproduce im not a robot error appear view the screenshot img alt screen... | 0 |

240,999 | 7,807,945,905 | IssuesEvent | 2018-06-11 18:32:36 | michalkowal/Cake.MkDocs | https://api.github.com/repos/michalkowal/Cake.MkDocs | closed | Recommended changes resulting from automated audit | Priority: Medium Status: In Progress Type: Maintenance | We performed an automated audit of your Cake addin and found that it does not follow all the best practices.

We encourage you to make the following modifications:

- [x] You are currently referencing Cake.Core 0.27.2. Please upgrade to 0.28.0

- [ ] The nuget package for your addin should use the cake-contrib icon... | 1.0 | Recommended changes resulting from automated audit - We performed an automated audit of your Cake addin and found that it does not follow all the best practices.

We encourage you to make the following modifications:

- [x] You are currently referencing Cake.Core 0.27.2. Please upgrade to 0.28.0

- [ ] The nuget pa... | non_process | recommended changes resulting from automated audit we performed an automated audit of your cake addin and found that it does not follow all the best practices we encourage you to make the following modifications you are currently referencing cake core please upgrade to the nuget package ... | 0 |

9,763 | 12,744,389,169 | IssuesEvent | 2020-06-26 12:24:54 | MicrosoftDocs/azure-docs | https://api.github.com/repos/MicrosoftDocs/azure-docs | closed | PS equivalent for portal steps | Pri2 automation/svc cxp process-automation/subsvc product-question triaged |

[Enter feedback here]

https://docs.microsoft.com/en-us/azure/automation/automation-hrw-run-runbooks#use-runbook-authentication-with-run-as-account

Is there a Powershell equivalent for executing the above step.

---

#### Document Details

⚠ *Do not edit this section. It is required for docs.microsoft.com ➟ GitH... | 1.0 | PS equivalent for portal steps -

[Enter feedback here]

https://docs.microsoft.com/en-us/azure/automation/automation-hrw-run-runbooks#use-runbook-authentication-with-run-as-account

Is there a Powershell equivalent for executing the above step.

---

#### Document Details

⚠ *Do not edit this section. It is requi... | process | ps equivalent for portal steps is there a powershell equivalent for executing the above step document details ⚠ do not edit this section it is required for docs microsoft com ➟ github issue linking id version independent id content content source ... | 1 |

315,390 | 27,069,826,505 | IssuesEvent | 2023-02-14 05:28:57 | ballerina-platform/ballerina-lang | https://api.github.com/repos/ballerina-platform/ballerina-lang | closed | [Testerina] Jacoco code coverage throws a MethodTooLarge exception for certain services | Type/Bug Team/DevTools Area/TestFramework | **Description:**

This is thrown from the ballerina project after the tests are executed at the code coverage generation phase.

```

java.lang.instrument.IllegalClassFormatException: Error while instrumenting cookie/logging/0_1_0/$value$Service$$service$_2.

at org.jacoco.agent.rt.internal_f3994fa.Coverage... | 1.0 | [Testerina] Jacoco code coverage throws a MethodTooLarge exception for certain services - **Description:**

This is thrown from the ballerina project after the tests are executed at the code coverage generation phase.

```

java.lang.instrument.IllegalClassFormatException: Error while instrumenting cookie/logging/0... | non_process | jacoco code coverage throws a methodtoolarge exception for certain services description this is thrown from the ballerina project after the tests are executed at the code coverage generation phase java lang instrument illegalclassformatexception error while instrumenting cookie logging valu... | 0 |

177,082 | 13,683,105,144 | IssuesEvent | 2020-09-30 00:46:50 | MiqueasAmorim/Pedido | https://api.github.com/repos/MiqueasAmorim/Pedido | closed | CT02 - (ProdutoTest) - The unit value of the product cannot be negative | test case | **Input data:**

- Name: Borracha

- Unit value: -1.5

- Quantity: 10

**Expected result:**

- RuntimeException: "Valor inválido: -1.5"

| 1.0 | CT02 - (ProdutoTest) - The unit value of the product cannot be negative - **Input data:**

- Name: Borracha

- Unit value: -1.5

- Quantity: 10

**Expected result:**

- RuntimeException: "Valor inválido: -1.5"

| non_process | produtotest the unit value of the product cannot be negative input data name borracha unit value quantity expected result runtimeexception valor inválido | 0 |

6,958 | 10,114,086,867 | IssuesEvent | 2019-07-30 18:19:39 | MicrosoftDocs/azure-docs | https://api.github.com/repos/MicrosoftDocs/azure-docs | closed | New-OnPremiseHybridWorker.ps1 needs to be updated | Pri2 assigned-to-author automation/svc process-automation/subsvc triaged | The file New-OnPremiseHybridWorker.ps1 should be updated to use the new PowerShell AZ modules, it's still using AzureRM.

---

#### Document Details

⚠ *Do not edit this section. It is required for docs.microsoft.com ➟ GitHub issue linking.*

* ID: 7b29372c-7bd9-7da2-4cff-9afbb432bccf

* Version Independent ID: 66ce101... | 1.0 | New-OnPremiseHybridWorker.ps1 needs to be updated - The file New-OnPremiseHybridWorker.ps1 should be updated to use the new PowerShell AZ modules, it's still using AzureRM.

---

#### Document Details

⚠ *Do not edit this section. It is required for docs.microsoft.com ➟ GitHub issue linking.*

* ID: 7b29372c-7bd9-7da2... | process | new onpremisehybridworker needs to be updated the file new onpremisehybridworker should be updated to use the new powershell az modules it s still using azurerm document details ⚠ do not edit this section it is required for docs microsoft com ➟ github issue linking id version in... | 1 |

21,699 | 30,195,098,560 | IssuesEvent | 2023-07-04 19:52:39 | metabase/metabase | https://api.github.com/repos/metabase/metabase | closed | [MLv2] [Bug] `replace-clause` is broken with joins | Type:Bug .Backend .metabase-lib .Team/QueryProcessor :hammer_and_wrench: | Failing test:

```clj

(deftest ^:parallel replace-join-test

(let [query (lib.tu/query-with-join)

[original-join] (lib/joins query)]

(is (=? {:stages [{:joins [{:lib/type :mbql/join, :alias "Cat", :fields :all}]}]}

query))

(let [new-join (lib/with-join-fields original-join... | 1.0 | [MLv2] [Bug] `replace-clause` is broken with joins - Failing test:

```clj

(deftest ^:parallel replace-join-test

(let [query (lib.tu/query-with-join)

[original-join] (lib/joins query)]

(is (=? {:stages [{:joins [{:lib/type :mbql/join, :alias "Cat", :fields :all}]}]}

query))

... | process | replace clause is broken with joins failing test clj deftest parallel replace join test let query lib tu query with join lib joins query is stages query let is stages lib replace clause query orig... | 1 |

476,516 | 13,745,938,126 | IssuesEvent | 2020-10-06 04:21:10 | AY2021S1-CS2113T-F12-3/tp | https://api.github.com/repos/AY2021S1-CS2113T-F12-3/tp | closed | Add JSON parsing for questions loaded from file | priority.High type.Task | The file that stores questions in #8 can be a JSON file which will be parsed and put into the topics, questions, answers and hints objects. | 1.0 | Add JSON parsing for questions loaded from file - The file that stores questions in #8 can be a JSON file which will be parsed and put into the topics, questions, answers and hints objects. | non_process | add json parsing for questions loaded from file the file that stores questions in can be a json file which will be parsed and put into the topics questions answers and hints objects | 0 |

522,917 | 15,169,581,154 | IssuesEvent | 2021-02-12 21:25:16 | MaowImpl/Optionals | https://api.github.com/repos/MaowImpl/Optionals | closed | @Optional attributes don't support arrays | annotation enhancement javac low priority | Currently, the attributes in `@Optional` do not include any arrays. This will require a bit more condition checking in the Javac side of things, but it seems doable for now. | 1.0 | @Optional attributes don't support arrays - Currently, the attributes in `@Optional` do not include any arrays. This will require a bit more condition checking in the Javac side of things, but it seems doable for now. | non_process | optional attributes don t support arrays currently the attributes in optional do not include any arrays this will require a bit more condition checking in the javac side of things but it seems doable for now | 0 |

169,578 | 6,411,882,065 | IssuesEvent | 2017-08-08 00:44:51 | projectcalico/calico | https://api.github.com/repos/projectcalico/calico | reopened | General content improvements (style, consistency etc.) | area/docs/ux priority/P2 size/L | A lot of our docs are authored in subtly different ways. Would be good to go through the entire docs and provide a more common use of MD directives.

For example:

- [ ] Consistent use of numbered headings when describing a set of instructions

- [ ] Remove the clickable links and replace with inline bold links at... | 1.0 | General content improvements (style, consistency etc.) - A lot of our docs are authored in subtly different ways. Would be good to go through the entire docs and provide a more common use of MD directives.

For example:

- [ ] Consistent use of numbered headings when describing a set of instructions

- [ ] Remove ... | non_process | general content improvements style consistency etc a lot of our docs are authored in subtly different ways would be good to go through the entire docs and provide a more common use of md directives for example consistent use of numbered headings when describing a set of instructions remove the ... | 0 |

55,949 | 14,074,860,158 | IssuesEvent | 2020-11-04 08:07:10 | teena24/WebGoat | https://api.github.com/repos/teena24/WebGoat | opened | CVE-2018-14040 (Medium) detected in bootstrap-3.3.7.jar, bootstrap-3.1.1.min.js | security vulnerability | ## CVE-2018-14040 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>bootstrap-3.3.7.jar</b>, <b>bootstrap-3.1.1.min.js</b></p></summary>

<p>

<details><summary><b>bootstrap-3.3.7.jar<... | True | CVE-2018-14040 (Medium) detected in bootstrap-3.3.7.jar, bootstrap-3.1.1.min.js - ## CVE-2018-14040 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>bootstrap-3.3.7.jar</b>, <b>boots... | non_process | cve medium detected in bootstrap jar bootstrap min js cve medium severity vulnerability vulnerable libraries bootstrap jar bootstrap min js bootstrap jar webjar for bootstrap library home page a href path to dependency file webgoat webgoat... | 0 |

4,542 | 7,374,707,943 | IssuesEvent | 2018-03-13 21:13:56 | KantaraInitiative/wg-uma | https://api.github.com/repos/KantaraInitiative/wg-uma | closed | Update the Release Notes for UMA 2.0 | V2.0 process | Add a new section to the Release Notes covering the changes in UMA 2.0 by the time it's published:

http://kantarainitiative.org/confluence/display/uma/UMA+Release+Notes

| 1.0 | Update the Release Notes for UMA 2.0 - Add a new section to the Release Notes covering the changes in UMA 2.0 by the time it's published:

http://kantarainitiative.org/confluence/display/uma/UMA+Release+Notes

| process | update the release notes for uma add a new section to the release notes covering the changes in uma by the time it s published | 1 |

448 | 2,883,308,485 | IssuesEvent | 2015-06-11 11:21:38 | kvakulo/Switcheroo | https://api.github.com/repos/kvakulo/Switcheroo | closed | SmartGit window is missing from the window list if a child window is opened | bug in process | I think I saw SmartGit window on one of your screenshots, which is good, because this means you can easily reproduce the bug ;)

1. Open SmartGit

2. Open Switcheroo to verify SmartGit window is correctly displayed in the list by default

3. Switch to SmartGit, hit `Ctrl+R` to display reset window

4. Open Switcheroo... | 1.0 | SmartGit window is missing from the window list if a child window is opened - I think I saw SmartGit window on one of your screenshots, which is good, because this means you can easily reproduce the bug ;)

1. Open SmartGit

2. Open Switcheroo to verify SmartGit window is correctly displayed in the list by default

3... | process | smartgit window is missing from the window list if a child window is opened i think i saw smartgit window on one of your screenshots which is good because this means you can easily reproduce the bug open smartgit open switcheroo to verify smartgit window is correctly displayed in the list by default ... | 1 |

13,719 | 16,484,110,825 | IssuesEvent | 2021-05-24 15:29:37 | frictionlessdata/project | https://api.github.com/repos/frictionlessdata/project | closed | GitHub discussions as potential upgrade of this forum | Topic: Process | GitHub announced discussions in beta in early May. Not yet generally available. Once they are, they are definitely worth investigating. | 1.0 | GitHub discussions as potential upgrade of this forum - GitHub announced discussions in beta in early May. Not yet generally available. Once they are, they are definitely worth investigating. | process | github discussions as potential upgrade of this forum github announced discussions in beta in early may not yet generally available once they are they are definitely worth investigating | 1 |

18,571 | 24,556,218,886 | IssuesEvent | 2022-10-12 16:04:26 | GoogleCloudPlatform/fda-mystudies | https://api.github.com/repos/GoogleCloudPlatform/fda-mystudies | closed | [Android] UI issue for the Scale response type to choose the value | Bug P1 Android Process: Fixed Process: Tested QA Process: Tested dev | Steps:-

1. Configure the Questionnaire activity with a form step and with the response type **Scale(Min&Max values = 1&10 respectively)** for the study in Study builder and publish

2. Install and log in to the mobile app

3. Enroll into the same study which is mentioned in step-1

4. Navigate inside the activity and ... | 3.0 | [Android] UI issue for the Scale responce type to choose the value - Steps:-

1. Configure the Questionnarie activity with form step and with the responce type **Scale(Min&Max values = 1&10 respectively)** for the study in Study builder and publish

2. Install and login into the mobile app

3. Enroll into the same stu... | process | ui issue for the scale responce type to choose the value steps configure the questionnarie activity with form step and with the responce type scale min max values respectively for the study in study builder and publish install and login into the mobile app enroll into the same study which ... | 1 |

74,018 | 19,976,857,465 | IssuesEvent | 2022-01-29 08:05:00 | envoyproxy/envoy | https://api.github.com/repos/envoyproxy/envoy | opened | Newer release available `com_google_protobuf`: v3.19.4 (current: v3.19.3) | area/build no stalebot dependencies |

Package Name: com_google_protobuf

Current Version: v3.19.3@2022-01-11 17:17:30

Available Version: v3.19.4@2022-01-28 03:35:56

Upstream releases: https://github.com/protocolbuffers/protobuf/releases

| 1.0 | Newer release available `com_google_protobuf`: v3.19.4 (current: v3.19.3) -

Package Name: com_google_protobuf

Current Version: v3.19.3@2022-01-11 17:17:30

Available Version: v3.19.4@2022-01-28 03:35:56

Upstream releases: https://github.com/protocolbuffers/protobuf/releases

| non_process | newer release available com google protobuf current package name com google protobuf current version available version upstream releases | 0 |

113,440 | 11,802,848,006 | IssuesEvent | 2020-03-18 22:32:26 | mike-goodwin/owasp-threat-dragon | https://api.github.com/repos/mike-goodwin/owasp-threat-dragon | closed | Which browsers are supported? | documentation help wanted | Having issues creating a basic template. Any recommendation on which browser I can use? | 1.0 | Which browsers are supported? - Having issues creating a basic template. Any recommendation on which browser I can use? | non_process | which browsers are supported having issues creating a basic template any recommendation on which browser i can use | 0 |

13,312 | 15,782,171,683 | IssuesEvent | 2021-04-01 12:26:39 | ooi-data/CE04OSPS-SF01B-3A-FLORTD104-streamed-flort_d_data_record | https://api.github.com/repos/ooi-data/CE04OSPS-SF01B-3A-FLORTD104-streamed-flort_d_data_record | opened | 🛑 Processing failed: ResponseParserError | process | ## Overview

`ResponseParserError` found in `processing_task` task during run ended on 2021-04-01T12:26:38.912793.

## Details

Flow name: `CE04OSPS-SF01B-3A-FLORTD104-streamed-flort_d_data_record`

Task name: `processing_task`

Error type: `ResponseParserError`

Error message: Unable to parse response (no element found: ... | 1.0 | 🛑 Processing failed: ResponseParserError - ## Overview

`ResponseParserError` found in `processing_task` task during run ended on 2021-04-01T12:26:38.912793.

## Details

Flow name: `CE04OSPS-SF01B-3A-FLORTD104-streamed-flort_d_data_record`

Task name: `processing_task`

Error type: `ResponseParserError`

Error message: ... | process | 🛑 processing failed responseparsererror overview responseparsererror found in processing task task during run ended on details flow name streamed flort d data record task name processing task error type responseparsererror error message unable to parse response no elemen... | 1 |

16,476 | 21,411,466,836 | IssuesEvent | 2022-04-22 06:34:04 | ppy/osu-web | https://api.github.com/repos/ppy/osu-web | closed | Hide online offset option for qualified beatmaps from BNs | area:beatmap-processing priority:0 | There is no reason for BNs to have access to this.

1. They can only access this panel for qualified and pending beatmaps.

2. Standard procedure is to disqualify maps with incorrect offset according to [some official wiki source which I lost the link to]:

> Issues that can be mitigated through online offsets, tags ... | 1.0 | Hide online offset option for qualified beatmaps from BNs - There is no reason for BNs to have access to this.

1. They can only access this panel for qualified and pending beatmaps.

2. Standard procedure is to disqualify maps with incorrect offset according to [some official wiki source which I lost the link to]:

... | process | hide online offset option for qualified beatmaps from bns there is no reason for bns to have access to this they can only access this panel for qualified and pending beatmaps standard procedure is to disqualify maps with incorrect offset according to issues that can be mitigated through online offse... | 1 |

218,862 | 16,772,911,485 | IssuesEvent | 2021-06-14 16:54:51 | unitaryfund/mitiq | https://api.github.com/repos/unitaryfund/mitiq | closed | Consider reverting cirq-google until the deprecation warnings disappear. | discussion documentation infrastructure priority/p1 | Since we switched from `cirq` to `cirq-core`, many deprecation warnings appear each time we import something.

E.g. if a user tries to run:

```

python

>>> from mitiq.zne import execute_with_zne

```

The following long message appears:

<details>

--- Logging error ---

Traceback (most recent call last):

Fi... | 1.0 | Consider reverting cirq-google until the deprecation warnings disappear. - Since we switched from `cirq` to `cirq-core`, many deprecation warnings appear each time we import something.

E.g. if a user tries to run:

```

python

>>> from mitiq.zne import execute_with_zne

```

The following long message appears:

... | non_process | consider reverting cirq google until deprecation warnings will disappear when we passed from cirq to cirq core a lot of deprecation warning appear each time we import something e g if a user tries to run python from mitiq zne import execute with zne the following long message appears ... | 0 |

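11,945 | 14,708,853,106 | IssuesEvent | 2021-01-05 00:46:59 | yuta252/startlens_react_frontend | https://api.github.com/repos/yuta252/startlens_react_frontend | closed | Image resizing and Base64 encoding | dev process enhancement | ## Overview

When registering a user's profile image, a large image is expected to cause a significant delay in transfer speed. The frontend therefore resizes the image first, then Base64-encodes it before communicating with the backend API.

## Changes

- [ ] On image upload, fit the longer of the width and height to 500px and resize the other, shorter side at the same scale as the uploaded image.

- [ ] Base64-encode the image so it can be sent to the backend through the API

## Additional tasks

- [ ] Use the File API so that multiple posted images can be uploaded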

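- [ ] Image upload func... | 1.0 | Image resizing and Base64 encoding - ## Overview

When registering a user's profile image, a large image is expected to cause a significant delay in transfer speed. The frontend therefore resizes the image first, then Base64-encodes it before communicating with the backend API.

## Changes

- [ ] On image upload, fit the longer of the width and height to 500px and resize the other, shorter side at the same scale as the uploaded image.

- [ ] Base64-encode the image so it can be sent to the backend through the API

## Additional tasks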

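- [ ] Use the File API so that multiple posted images can be up... | process | overview when registering a user's profile image, a large image is expected to cause a significant delay in transfer speed. . changes , and resize the other, shorter side at the same scale as the uploaded image. additional tasks use the fileapi so that multiple posted images can be uploaded turn the image upload feature into a function so that it can be reused issues references notes generate a canvas to resize the image, . ... | 1 |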
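
The resize-then-encode step described in the image upload issue above can be sketched roughly as follows. This is only an illustration in Python, not the project's frontend code: the helper names `fit_longest_side` and `to_base64` are hypothetical, and the 500px limit is the one value taken from the issue text. Following the issue literally, the longer side is always fitted to 500px, so smaller images would be scaled up as well.

```python
import base64
from typing import Tuple

MAX_SIDE = 500  # longest side in pixels, taken from the issue text


def fit_longest_side(width: int, height: int, max_side: int = MAX_SIDE) -> Tuple[int, int]:
    """Scale (width, height) so the longer side equals max_side, keeping the aspect ratio."""
    if width <= 0 or height <= 0:
        raise ValueError("width and height must be positive")
    scale = max_side / max(width, height)
    # Round to whole pixels and never let a side collapse to zero.
    return max(1, round(width * scale)), max(1, round(height * scale))


def to_base64(data: bytes) -> str:
    """Encode raw image bytes as an ASCII Base64 string suitable for a JSON payload."""
    return base64.b64encode(data).decode("ascii")
```

The dimension calculation is kept separate from the encoding so that whatever performs the actual pixel resize (a canvas in the browser, according to the issue notes) can reuse it.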

3,008 | 6,009,097,216 | IssuesEvent | 2017-06-06 09:38:05 | zero-os/0-orchestrator | https://api.github.com/repos/zero-os/0-orchestrator | closed | Container created with fake directory in init process | process_wontfix state_question | ## Request

## Response

container created !

## SV

resourcepool : a30a8b... | 1.0 | Container created with fake directory in init process - ## Request

## Response

public void testRingbufferOverflow() throws Exception {

int b... | 1.0 | Emitter processor does not satisfy ringbuffer description claimed in class docs - ### Expected behavior

Elements overwrite the oldest entries in the queue when the queue is full.

### Actual behavior

The processor blocks the thread in an infinite loop, waiting for free space in the queue.
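
For reference, the overwrite-oldest semantics described under "Expected behavior" can be illustrated with a small, language-neutral sketch. It is written in Python with `collections.deque` purely to show the semantics; it is not Reactor's implementation, and the helper name `push_all` is hypothetical.

```python
from collections import deque


def push_all(capacity, items):
    """Push items into a bounded buffer that overwrites its oldest entry when full."""
    buffer = deque(maxlen=capacity)
    for item in items:
        # append never blocks: once the buffer is full, the oldest entry is dropped
        buffer.append(item)
    return list(buffer)
```

With a capacity of 3 and five pushed items, only the three most recent items remain, and the producer is never blocked.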

### Steps to reproduce

```

@Test(... | non_process | emitter processor does not satisfy ringbuffer description claimed in class docs expected behavior elements are overriding oldest entries in queue if queue is full actual behavior processor blocks thread in a infinite loop waiting for a free space in queue steps to reproduce test ... | 0 |

4,371 | 7,260,515,787 | IssuesEvent | 2018-02-18 10:54:32 | qgis/QGIS-Documentation | https://api.github.com/repos/qgis/QGIS-Documentation | closed | [FEATURE] Subdivide algorithm for QgsGeometry | Automatic new feature Processing User Manual | Original commit: https://github.com/qgis/QGIS/commit/e74395d95bd326788ce55f2a7caa31435fed9ea9 by nyalldawson

Subdivides the geometry. The returned geometry will be a collection

containing subdivided parts from the original geometry, where no

part has more than the specified maximum number of nodes. | 1.0 | [FEATURE] Subdivide algorithm for QgsGeometry - Original commit: https://github.com/qgis/QGIS/commit/e74395d95bd326788ce55f2a7caa31435fed9ea9 by nyalldawson

Subdivides the geometry. The returned geometry will be a collection

containing subdivided parts from the original geometry, where no

part has more than the specif... | process | subdivide algorithm for qgsgeometry original commit by nyalldawson subdivides the geometry the returned geometry will be a collection containing subdivided parts from the original geometry where no part has more than the specified maximum number of nodes | 1 |

35,236 | 14,654,300,075 | IssuesEvent | 2020-12-28 08:21:03 | Azure/azure-cli | https://api.github.com/repos/Azure/azure-cli | closed | az backup protectable-item list error | Recovery Services Backup Service Attention customer-response-expected |

### **This is autogenerated. Please review and update as needed.**

## Describe the bug

az backup protectable-item list fails with an error when used with workload-type AzureFileshare

**Command Name**

`az backup protectable-item list`

**Errors:**

```

'AzureFileShare'

Traceback (most recent call last):

... | 2.0 | az backup protectable-item list error -

### **This is autogenerated. Please review and update as needed.**

## Describe the bug

az backup protectable-item list fails with an error when used with workload-type AzureFileshare

**Command Name**

`az backup protectable-item list`

**Errors:**

```

'AzureFileShar... | non_process | az backup protectable item list error this is autogenerated please review and update as needed describe the bug az backup protectable item list failes with an error when used with workload type azurefileshare command name az backup protectable item list errors azurefileshar... | 0 |

139,084 | 11,243,391,383 | IssuesEvent | 2020-01-10 02:55:51 | goharbor/harbor | https://api.github.com/repos/goharbor/harbor | closed | [Add Jenkins Job] Performance test needs to be triggered by Jenkins | area/test automation target/2.0.0 | Add Performance test by rally into Jenkins.

| 1.0 | [Add Jenkins Job] Performance test needs to be triggered by Jenkins - Add Performance test by rally into Jenkins.

| non_process | performance test needs to be triggered by jenkins add performance test by rally into jenkins | 0 |

162,375 | 12,663,177,553 | IssuesEvent | 2020-06-18 00:27:30 | dotnet/runtime | https://api.github.com/repos/dotnet/runtime | closed | Test failure: System.Net.Security.Tests.ServerAsyncAuthenticateTest.ServerAsyncAuthenticate_MismatchProtocols_Fails(serverProtocol: Tls12, clientProtocol: Tls, expectedException: typeof(System.Security.Authentication.AuthenticationException)) | arch-x64 area-System.Net.Security disabled-test test-run-core | failed in job: [runtime 20200510.20 ](https://dev.azure.com/dnceng/public/_build/results?buildId=638932&view=ms.vss-test-web.build-test-results-tab&runId=19860426&resultId=150684&paneView=debug)

Error message

~~~

Assert.IsAssignableFrom() Failure

Expected: typeof(System.Security.Authentication.AuthenticationExc... | 2.0 | Test failure: System.Net.Security.Tests.ServerAsyncAuthenticateTest.ServerAsyncAuthenticate_MismatchProtocols_Fails(serverProtocol: Tls12, clientProtocol: Tls, expectedException: typeof(System.Security.Authentication.AuthenticationException)) - failed in job: [runtime 20200510.20 ](https://dev.azure.com/dnceng/public/_... | non_process | test failure system net security tests serverasyncauthenticatetest serverasyncauthenticate mismatchprotocols fails serverprotocol clientprotocol tls expectedexception typeof system security authentication authenticationexception failed in job error message assert isassignablefrom failure ... | 0 |

3,754 | 3,548,067,052 | IssuesEvent | 2016-01-20 12:50:36 | rabbitmq/rabbitmq-management | https://api.github.com/repos/rabbitmq/rabbitmq-management | closed | No error is displayed when policy is created with invalid parameters | effort-low usability | RabbitMQ 3.6.0.

It can be reproduced by putting some random text in 'Definition' fields and submitting the form. Nothing will happen but in the Network console you can see the server responds with a `400 Bad Request`:

currently says

> (AT developer) If there is a bug in AT, file the bug for the AT.

I think it would be good to further track these bugs in this project, both to be able to follow up but also to demonstrate impact. It could also be helpful for AT ... | 1.0 | Process for tracking implementation bugs found by running the tests - The [working mode](https://github.com/w3c/aria-at/wiki/Working-Mode) currently says

> (AT developer) If there is a bug in AT, file the bug for the AT.

I think it would be good to further track these bugs in this project, both to be able to foll... | process | process for tracking implementation bugs found by running the tests the currently says at developer if there is a bug in at file the bug for the at i think it would be good to further track these bugs in this project both to be able to follow up but also to demonstrate impact it could also be helpfu... | 1 |

25,585 | 7,727,653,109 | IssuesEvent | 2018-05-25 04:03:45 | Microsoft/MixedRealityToolkit-Unity | https://api.github.com/repos/Microsoft/MixedRealityToolkit-Unity | reopened | Unable to Deploy from Build Window | Build Tools HoloLens | ## Overview

Unable to deploy to Hololens.

## Expected Behavior

After successfully building a barebones project and building the Appx, the program deploys correctly to the Hololens

## Actual Behavior.

Builds project correctly. Appears to connect to Hololens.

Does not deploy to the Hololens when pressing "Inst... | 1.0 | Unable to Deploy from Build Window - ## Overview

Unable to deploy to Hololens.

## Expected Behavior

After successfully building a barebones project and building the Appx, the program deploys correctly to the Hololens

## Actual Behavior.

Builds project correctly. Appears to connect to Hololens.

Does not deplo... | non_process | unable to deploy from build window overview unable to deploy to hololens expected behavior after successfully building a barebones project and building the appx the program deploys correctly to the hololens actual behavior builds project correctly appears to connect to hololens does not deplo... | 0 |