Unnamed: 0 (int64, 0-832k) | id (float64, 2.49B-32.1B) | type (stringclasses, 1 value) | created_at (stringlengths, 19-19) | repo (stringlengths, 7-112) | repo_url (stringlengths, 36-141) | action (stringclasses, 3 values) | title (stringlengths, 1-744) | labels (stringlengths, 4-574) | body (stringlengths, 9-211k) | index (stringclasses, 10 values) | text_combine (stringlengths, 96-211k) | label (stringclasses, 2 values) | text (stringlengths, 96-188k) | binary_label (int64, 0-1) |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

554,989 | 16,444,785,767 | IssuesEvent | 2021-05-20 18:12:38 | LBNL-ETA/BEDES-Manager | https://api.github.com/repos/LBNL-ETA/BEDES-Manager | opened | multiple copies of same composite term should not be created upon import | bug medium priority | When I import an application mapping using a .csv file, I can have multiple application terms that are mapped to the same composite term (e.g., multiple rows with the same values in the "BEDES Term" and "BEDES Atomic Term Mapping" columns). Currently, when I import such a .csv file, the result is that multiple copies o... | 1.0 | multiple copies of same composite term should not be created upon import - When I import an application mapping using a .csv file, I can have multiple application terms that are mapped to the same composite term (e.g., multiple rows with the same values in the "BEDES Term" and "BEDES Atomic Term Mapping" columns). Curr... | non_process | multiple copies of same composite term should not be created upon import when i import an application mapping using a csv file i can have multiple application terms that are mapped to the same composite term e g multiple rows with the same values in the bedes term and bedes atomic term mapping columns curr... | 0 |

18,265 | 24,346,959,354 | IssuesEvent | 2022-10-02 12:52:03 | MicrosoftDocs/azure-docs | https://api.github.com/repos/MicrosoftDocs/azure-docs | closed | How to monitor files in azure storage account, like blobs or fileshares using this example? Currently it is just watching some windows directory on some vm? | automation/svc triaged cxp product-question process-automation/subsvc Pri2 | How to monitor files in azure storage account, like blobs or fileshares using this example? Currently it is just watching some windows directory on some vm?

---

#### Document Details

⚠ *Do not edit this section. It is required for learn.microsoft.com ➟ GitHub issue linking.*

* ID: 73598b10-eae3-6387-682b-82... | 1.0 | How to monitor files in azure storage account, like blobs or fileshares using this example? Currently it is just watching some windows directory on some vm? - How to monitor files in azure storage account, like blobs or fileshares using this example? Currently it is just watching some windows directory on some vm?

... | process | how to monitor files in azure storage account like blobs or fileshares using this example currently it is just watching some windows directory on some vm how to monitor files in azure storage account like blobs or fileshares using this example currently it is just watching some windows directory on some vm ... | 1 |

264,902 | 28,214,106,786 | IssuesEvent | 2023-04-05 07:39:23 | hshivhare67/platform_device_renesas_kernel_v4.19.72 | https://api.github.com/repos/hshivhare67/platform_device_renesas_kernel_v4.19.72 | closed | CVE-2022-4095 (High) detected in linuxlinux-4.19.279 - autoclosed | Mend: dependency security vulnerability | ## CVE-2022-4095 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linuxlinux-4.19.279</b></p></summary>

<p>

<p>The Linux Kernel</p>

<p>Library home page: <a href=https://mirrors.edge.ke... | True | CVE-2022-4095 (High) detected in linuxlinux-4.19.279 - autoclosed - ## CVE-2022-4095 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linuxlinux-4.19.279</b></p></summary>

<p>

<p>The Li... | non_process | cve high detected in linuxlinux autoclosed cve high severity vulnerability vulnerable library linuxlinux the linux kernel library home page a href found in base branch main vulnerable source files drivers staging cmd c driver... | 0 |

20,547 | 27,197,142,728 | IssuesEvent | 2023-02-20 06:37:56 | rust-lang/rust | https://api.github.com/repos/rust-lang/rust | closed | std::process::Command::output is unacceptably slow | I-slow C-bug O-unix A-process | `std::process::Command::output` is unacceptably slow. Consider this code:

https://play.rust-lang.org/?version=nightly&mode=release&edition=2021&gist=333b57442699553257f0273bb1bba3ac

When trying this code, make sure you have enabled release mode. On my local machine I see this output:

```text

1565 ms

100000000

... | 1.0 | std::process::Command::output is unacceptably slow - `std::process::Command::output` is unacceptably slow. Consider this code:

https://play.rust-lang.org/?version=nightly&mode=release&edition=2021&gist=333b57442699553257f0273bb1bba3ac

When trying this code, make sure you have enabled release mode. On my local mac... | process | std process command output is unacceptably slow std process command output is unacceptably slow consider this code when trying this code make sure you have enabled release mode on my local machine i see this output text ms ms so std process command output is slower ... | 1 |

6,916 | 10,073,232,030 | IssuesEvent | 2019-07-24 09:09:54 | googleapis/gax-dotnet | https://api.github.com/repos/googleapis/gax-dotnet | opened | Revisit platform integration tests | priority: p2 type: process | - Get them working again

- Add Cloud Run support

- Ideally run in Kokoro | 1.0 | Revisit platform integration tests - - Get them working again

- Add Cloud Run support

- Ideally run in Kokoro | process | revisit platform integration tests get them working again add cloud run support ideally run in kokoro | 1 |

21,289 | 28,485,090,640 | IssuesEvent | 2023-04-18 07:16:58 | ethereumclassic/ECIPs | https://api.github.com/repos/ethereumclassic/ECIPs | closed | Remove atoulme from ECIP editors | meta:3 process | Hello all,

I have not been active and given my lack of contributions, I think it is time for me to step down as ECIP editor. Please let me know if this is appropriate and accept my resignation. I will follow steps per process. | 1.0 | Remove atoulme from ECIP editors - Hello all,

I have not been active and given my lack of contributions, I think it is time for me to step down as ECIP editor. Please let me know if this is appropriate and accept my resignation. I will follow steps per process. | process | remove atoulme from ecip editors hello all i have not been active and given my lack of contributions i think it is time for me to step down as ecip editor please let me know if this is appropriate and accept my resignation i will follow steps per process | 1 |

17,811 | 23,739,771,844 | IssuesEvent | 2022-08-31 11:22:57 | cloudfoundry/korifi | https://api.github.com/repos/cloudfoundry/korifi | opened | [Feature]: Developer can push apps using the top-level `health-check-invocation-timeout` field in the manifest | Top-level process config | ### Blockers/Dependencies

_No response_

### Background

**As a** developer

**I want** top-level process configuration in manifests to be supported

**So that** I can use shortcut `cf push` flags like `-c`, `-i`, `-m` etc.

### Acceptance Criteria

* **GIVEN** I have the following node app:

```js

var http = req... | 1.0 | [Feature]: Developer can push apps using the top-level `health-check-invocation-timeout` field in the manifest - ### Blockers/Dependencies

_No response_

### Background

**As a** developer

**I want** top-level process configuration in manifests to be supported

**So that** I can use shortcut `cf push` flags like `-c`... | process | developer can push apps using the top level health check invocation timeout field in the manifest blockers dependencies no response background as a developer i want top level process configuration in manifests to be supported so that i can use shortcut cf push flags like c i ... | 1 |

560,916 | 16,606,328,691 | IssuesEvent | 2021-06-02 04:38:21 | alephdata/memorious | https://api.github.com/repos/alephdata/memorious | closed | Add user authentication and scraper namespacing to Memorious | priority-low | We want other people to run their own scrapers on our platform. Ideally, they will have the permissions to see, run and update their own crawlers. We will have 3 tiers of permissions for users:

- `public`: You can see the public crawlers; but can't run/cancel them

- `authorized`: You can see, run and cancel your own ... | 1.0 | Add user authentication and scraper namespacing to Memorious - We want other people to run their own scrapers on our platform. Ideally, they will have the permissions to see, run and update their own crawlers. We will have 3 tiers of permissions for users:

- `public`: You can see the public crawlers; but can't run/ca... | non_process | add user authentication and scraper namespacing to memorious we want other people to run their own scrapers on our platform ideally they will have the permissions to see run and update their own crawlers we will have tiers of permissions for users public you can see the public crawlers but can t run ca... | 0 |

44,363 | 12,120,791,355 | IssuesEvent | 2020-04-22 08:16:18 | cf-convention/cf-conventions | https://api.github.com/repos/cf-convention/cf-conventions | opened | udunits supports ppm, but documentation states it does not | defect | # Title

udunits supports ppm, but documentation states it does not

# Moderator

None at the moment

# Requirement Summary

The documentation states that udunits does not support dimensionless ratios like parts-per-million. But udunits does support such units.

# Technical Proposal Summary

Change sentence to st... | 1.0 | udunits supports ppm, but documentation states it does not - # Title

udunits supports ppm, but documentation states it does not

# Moderator

None at the moment

# Requirement Summary

The documentation states that udunits does not support dimensionless ratios like parts-per-million. But udunits does support such ... | non_process | udunits supports ppm but documentation states it does not title udunits supports ppm but documentation states it does not moderator none at the moment requirement summary the documentation states that udunits does not support dimensionless ratios like parts per million but udunits does support such ... | 0 |

121,661 | 26,010,359,273 | IssuesEvent | 2022-12-21 00:41:04 | WordPress/openverse-catalog | https://api.github.com/repos/WordPress/openverse-catalog | opened | Alert when a non-dated DAG ingests unexpected amount of data | 🟨 priority: medium ✨ goal: improvement 💻 aspect: code | ## Problem

A non-dated DAG ingests all available data from its provider on each run. Therefore, we expect these DAGs to ingest the same amount _or more_ data on each run. If a non-dated DAG ingests _fewer_ records than it has on previous runs, this is highly unusual and worthy of investigation. (It should be noted t... | 1.0 | Alert when a non-dated DAG ingests unexpected amount of data - ## Problem

A non-dated DAG ingests all available data from its provider on each run. Therefore, we expect these DAGs to ingest the same amount _or more_ data on each run. If a non-dated DAG ingests _fewer_ records than it has on previous runs, this is hi... | non_process | alert when a non dated dag ingests unexpected amount of data problem a non dated dag ingests all available data from its provider on each run therefore we expect these dags to ingest the same amount or more data on each run if a non dated dag ingests fewer records than it has on previous runs this is hi... | 0 |

4,225 | 7,181,155,887 | IssuesEvent | 2018-02-01 03:09:20 | Great-Hill-Corporation/quickBlocks | https://api.github.com/repos/Great-Hill-Corporation/quickBlocks | closed | Performance issue with blocks with a lot of transactions | status-inprocess tools-getBlock type-enhancement | This command

getBlock -r 4200400

is much faster than this one

getBlock -c 4200400

even though the one from the cache is supposed to be significantly faster. Why? | 1.0 | Performance issue with blocks with a lot of transactions - This command

getBlock -r 4200400

is much faster than this one

getBlock -c 4200400

even though the one from the cache is supposed to be significantly faster. Why? | process | performance issue with blocks with a lot of transactions this command getblock r is much faster than this one getblock c even though the one from the cache is supposed to be significantly faster why | 1 |

222,427 | 17,069,301,177 | IssuesEvent | 2021-07-07 11:18:53 | kaiwalyakoparkar/Lilliputian-Bot | https://api.github.com/repos/kaiwalyakoparkar/Lilliputian-Bot | opened | [Doc] Structure the commands offered by the bot. | documentation enhancement good first issue | Create a table instead of putting one below the other and give an external link to the images. The links provided in the readme are of Imgur so can be directly linked as the external source. | 1.0 | [Doc] Structure the commands offered by the bot. - Create a table instead of putting one below the other and give an external link to the images. The links provided in the readme are of Imgur so can be directly linked as the external source. | non_process | structure the commands offered by the bot create a table instead of putting one below the other and give an external link to the images the links provided in the readme are of imgur so can be directly linked as the external source | 0 |

10,173 | 13,044,162,751 | IssuesEvent | 2020-07-29 03:47:35 | tikv/tikv | https://api.github.com/repos/tikv/tikv | closed | UCP: Migrate scalar function `SetVar` from TiDB | challenge-program-2 component/coprocessor difficulty/easy sig/coprocessor |

## Description

Port the scalar function `SetVar` from TiDB to coprocessor.

## Score

* 50

## Mentor(s)

* @iosmanthus

## Recommended Skills

* Rust programming

## Learning Materials

Already implemented expressions ported from TiDB

- https://github.com/tikv/tikv/tree/master/components/tidb_query/src/rpn_expr)... | 2.0 | UCP: Migrate scalar function `SetVar` from TiDB -

## Description

Port the scalar function `SetVar` from TiDB to coprocessor.

## Score

* 50

## Mentor(s)

* @iosmanthus

## Recommended Skills

* Rust programming

## Learning Materials

Already implemented expressions ported from TiDB

- https://github.com/tikv/ti... | process | ucp migrate scalar function setvar from tidb description port the scalar function setvar from tidb to coprocessor score mentor s iosmanthus recommended skills rust programming learning materials already implemented expressions ported from tidb | 1 |

365,291 | 25,527,711,674 | IssuesEvent | 2022-11-29 04:50:18 | howieraem/COMS4156-ASE | https://api.github.com/repos/howieraem/COMS4156-ASE | closed | [DO_NOT_DELETE] Tracker for T5 | documentation | ### About this

A simple tracker to maintain todos across our two repos, i know we can use a GH project instead, but this is easy to use.

### Instructions on using this Tracker

1. add a new item when you find one.

2. if you are owning the item, add `@yourself` in the front of the item.

3. once done, cross it out ... | 1.0 | [DO_NOT_DELETE] Tracker for T5 - ### About this

A simple tracker to maintain todos across our two repos, i know we can use a GH project instead, but this is easy to use.

### Instructions on using this Tracker

1. add a new item when you find one.

2. if you are owning the item, add `@yourself` in the front of the i... | non_process | tracker for about this a simple tracker to maintain todos across our two repos i know we can use a gh project instead but this is easy to use instructions on using this tracker add a new item when you find one if you are owning the item add yourself in the front of the item once d... | 0 |

7,653 | 2,919,576,594 | IssuesEvent | 2015-06-24 14:51:09 | Semantic-Org/Semantic-UI | https://api.github.com/repos/Semantic-Org/Semantic-UI | closed | FormValidate: test if two fields does not Match. | Needs Test Case Read the Readme | Hi, I'm using the formValidate on my website. My form is a sing-up form.

```Javascript

$('#form').form({

ps: {

identifier : 'ps',

rules: [

{

type : 'empty',

prompt : 'Veuillez choisir un pseudonyme.'

}

... | 1.0 | FormValidate: test if two fields does not Match. - Hi, I'm using the formValidate on my website. My form is a sing-up form.

```Javascript

$('#form').form({

ps: {

identifier : 'ps',

rules: [

{

type : 'empty',

prompt : 'Veu... | non_process | formvalidate test if two fields does not match hi i m using the formvalidate on my website my form is a sing up form javascript form form ps identifier ps rules type empty prompt veu... | 0 |

12,300 | 9,682,495,843 | IssuesEvent | 2019-05-23 09:18:12 | GIScience/openrouteservice | https://api.github.com/repos/GIScience/openrouteservice | closed | Use local maven artifact repo for development | Awaiting release infrastructure | Use local maven artifact repo in order to speed up development turnover time. | 1.0 | Use local maven artifact repo for development - Use local maven artifact repo in order to speed up development turnover time. | non_process | use local maven artifact repo for development use local maven artifact repo in order to speed up development turnover time | 0 |

195,610 | 15,531,407,804 | IssuesEvent | 2021-03-13 23:29:05 | MUHC-DP-Project/pbrn-gateway | https://api.github.com/repos/MUHC-DP-Project/pbrn-gateway | closed | First Iteration of the SRS documentation | documentation | # Goal

You will have to do the first iteration of the SRS documentation following the IEEE style.

The Template will be as follow:

## Section I: Introduction

1.1. Purpose ............................................................................................3

1.2. Scope ............................................ | 1.0 | First Iteration of the SRS documentation - # Goal

You will have to do the first iteration of the SRS documentation following the IEEE style.

The Template will be as follow:

## Section I: Introduction

1.1. Purpose ............................................................................................3

1.2. Scop... | non_process | first iteration of the srs documentation goal you will have to do the first iteration of the srs documentation following the ieee style the template will be as follow section i introduction purpose scop... | 0 |

16,015 | 9,669,748,758 | IssuesEvent | 2019-05-21 18:08:58 | PowerShell/Announcements | https://api.github.com/repos/PowerShell/Announcements | opened | Microsoft Security Advisory CVE-2019-0733 - Windows Defender Application Control Security Feature Bypass Vulnerability | PowerShell Security | # Microsoft Security Advisory CVE-2019-0733 - Windows Defender Application Control Security Feature Bypass Vulnerability

## Executive Summary

A security feature bypass vulnerability exists in Windows Defender Application Control (WDAC) which could allow an attacker to bypass WDAC enforcement. An attacker who succ... | True | Microsoft Security Advisory CVE-2019-0733 - Windows Defender Application Control Security Feature Bypass Vulnerability - # Microsoft Security Advisory CVE-2019-0733 - Windows Defender Application Control Security Feature Bypass Vulnerability

## Executive Summary

A security feature bypass vulnerability exists in W... | non_process | microsoft security advisory cve windows defender application control security feature bypass vulnerability microsoft security advisory cve windows defender application control security feature bypass vulnerability executive summary a security feature bypass vulnerability exists in windows defen... | 0 |

5,730 | 8,570,073,523 | IssuesEvent | 2018-11-11 16:51:32 | nodejs/node | https://api.github.com/repos/nodejs/node | closed | Non-UTF-8 env vars | feature request process | * **Version**: v8.8.1

* **Platform**: Linux daurn-z170 4.13.7-1-ARCH #1 SMP PREEMPT Sat Oct 14 20:13:26 CEST 2017 x86_64 GNU/Linux

* **Subsystem**: process

<!-- Enter your issue details below this comment. -->

Node.js doesn't seem to have a way to read environment variables that aren't valid unicode. For both k... | 1.0 | Non-UTF-8 env vars - * **Version**: v8.8.1

* **Platform**: Linux daurn-z170 4.13.7-1-ARCH #1 SMP PREEMPT Sat Oct 14 20:13:26 CEST 2017 x86_64 GNU/Linux

* **Subsystem**: process

<!-- Enter your issue details below this comment. -->

Node.js doesn't seem to have a way to read environment variables that aren't val... | process | non utf env vars version platform linux daurn arch smp preempt sat oct cest gnu linux subsystem process node js doesn t seem to have a way to read environment variables that aren t valid unicode for both keys and values e g env i f ... | 1 |

9,198 | 12,232,396,947 | IssuesEvent | 2020-05-04 09:36:53 | prisma/prisma-client-js | https://api.github.com/repos/prisma/prisma-client-js | closed | Prisma Client removed on adding a new package: Error: "Photon is not initialized yet" | bug/0-needs-info kind/bug process/next-milestone team/typescript | This behavior can be observed in both `npm` and `yarn`, setup a Photon project and run any query with photon to ensure that it exists.

Now add any dependency in `yarn`, `npm` and re-execute photon query. I reproduced this with `yarn add dotenv` or `npm install dotenv`.

It doesn't matter if `dotenv` is already i... | 1.0 | Prisma Client removed on adding a new package: Error: "Photon is not initialized yet" - This behavior can be observed in both `npm` and `yarn`, setup a Photon project and run any query with photon to ensure that it exists.

Now add any dependency in `yarn`, `npm` and re-execute photon query. I reproduced this with `... | process | prisma client removed on adding a new package error photon is not initialized yet this behavior can be observed in both npm and yarn setup a photon project and run any query with photon to ensure that it exists now add any dependency in yarn npm and re execute photon query i reproduced this with ... | 1 |

13,918 | 16,676,385,723 | IssuesEvent | 2021-06-07 16:42:45 | ESMValGroup/ESMValCore | https://api.github.com/repos/ESMValGroup/ESMValCore | closed | Issues with preprocessors that use fx variables | bug preprocessor | I found some small issues with the new implementation of the fx preprocessors in #999 (great job btw there @sloosvel!).

Issues

---------

1. Not directly related to #999, but do we have a list of all preprocessors for which `fx_variables` can be specified? Maybe I'm blind, but I didn't find it in the documentatio... | 1.0 | Issues with preprocessors that use fx variables - I found some small issues with the new implementation of the fx preprocessors in #999 (great job btw there @sloosvel!).

Issues

---------

1. Not directly related to #999, but do we have a list of all preprocessors for which `fx_variables` can be specified? Maybe I... | process | issues with preprocessors that use fx variables i found some small issues with the new implementation of the fx preprocessors in great job btw there sloosvel issues not directly related to but do we have a list of all preprocessors for which fx variables can be specified maybe i m b... | 1 |

16,290 | 20,919,583,805 | IssuesEvent | 2022-03-24 16:10:13 | scikit-learn/scikit-learn | https://api.github.com/repos/scikit-learn/scikit-learn | closed | sklearn.preprocessing.LabelEncoder: new feature to manage unknown value in `transform()` method | New Feature module:preprocessing | ### Describe the workflow you want to enable

Hi, I'm working with `from sklearn.preprocessing import LabelEncoder` and I would find interesting (and useful) to have an option to not get an error in case of when using the `transform()` method there is an unknown label as in this toy example.

Currently:

```pytho... | 1.0 | sklearn.preprocessing.LabelEncoder: new feature to manage unknown value in `transform()` method - ### Describe the workflow you want to enable

Hi, I'm working with `from sklearn.preprocessing import LabelEncoder` and I would find interesting (and useful) to have an option to not get an error in case of when using th... | process | sklearn preprocessing labelencoder new feature to manage unknown value in transform method describe the workflow you want to enable hi i m working with from sklearn preprocessing import labelencoder and i would find interesting and useful to have an option to not get an error in case of when using th... | 1 |

244,594 | 26,436,647,761 | IssuesEvent | 2023-01-15 13:25:26 | MikeGratsas/currency-converter | https://api.github.com/repos/MikeGratsas/currency-converter | closed | CVE-2022-22968 (Medium) detected in spring-context-5.3.12.jar - autoclosed | security vulnerability | ## CVE-2022-22968 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>spring-context-5.3.12.jar</b></p></summary>

<p>Spring Context</p>

<p>Library home page: <a href="https://github.com/... | True | CVE-2022-22968 (Medium) detected in spring-context-5.3.12.jar - autoclosed - ## CVE-2022-22968 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>spring-context-5.3.12.jar</b></p></summa... | non_process | cve medium detected in spring context jar autoclosed cve medium severity vulnerability vulnerable library spring context jar spring context library home page a href path to dependency file pom xml path to vulnerable library home wss scanner repository org spri... | 0 |

114,722 | 14,628,377,804 | IssuesEvent | 2020-12-23 14:03:36 | alpheios-project/alignment-editor-new | https://api.github.com/repos/alpheios-project/alignment-editor-new | closed | decide on text states storage requirements | design | @balmas and @kirlat , I suggest to discuss overall scope of the task here.

**About handling with texts:**

After examinining the requirements I believe that we could define the following states of source/translations text:

1. input text

- it could be uploaded manually, from file, from external API (DTS AP... | 1.0 | decide on text states storage requirements - @balmas and @kirlat , I suggest to discuss overall scope of the task here.

**About handling with texts:**

After examinining the requirements I believe that we could define the following states of source/translations text:

1. input text

- it could be uploaded m... | non_process | decide on text states storage requirements balmas and kirlat i suggest to discuss overall scope of the task here about handling with texts after examinining the requirements i believe that we could define the following states of source translations text input text it could be uploaded m... | 0 |

63,045 | 8,652,998,239 | IssuesEvent | 2018-11-27 09:41:58 | wix/react-native-navigation | https://api.github.com/repos/wix/react-native-navigation | closed | Add this sample app to v2 docs | type: documentation user: looking for contributors | I don't know if this is something you'd like to include in the readme but I built this app with v2 with many features it has over v1 like SplitView etc.

https://github.com/birkir/hekla | 1.0 | Add this sample app to v2 docs - I don't know if this is something you'd like to include in the readme but I built this app with v2 with many features it has over v1 like SplitView etc.

https://github.com/birkir/hekla | non_process | add this sample app to docs i don t know if this is something you d like to include in the readme but i built this app with with many features it has over like splitview etc | 0 |

28,475 | 23,284,985,478 | IssuesEvent | 2022-08-05 15:33:23 | accessibility-exchange/platform | https://api.github.com/repos/accessibility-exchange/platform | closed | Ensure routes, config, and events are cached in production | help wanted infrastructure | These commands should be added to the deployment workflow:

```

php artisan optimize # will cache routes and config

php artisan event:cache

```

The first line above can replace these two for brevity: https://github.com/accessibility-exchange/platform/blob/897da5615b30696f7fb464b62493dfda7c6b4496/.deploy/accessi... | 1.0 | Ensure routes, config, and events are cached in production - These commands should be added to the deployment workflow:

```

php artisan optimize # will cache routes and config

php artisan event:cache

```

The first line above can replace these two for brevity: https://github.com/accessibility-exchange/platform/... | non_process | ensure routes config and events are cached in production these commands should be added to the deployment workflow php artisan optimize will cache routes and config php artisan event cache the first line above can replace these two for brevity | 0 |

6,962 | 10,115,829,811 | IssuesEvent | 2019-07-30 23:10:25 | googleapis/google-cloud-java | https://api.github.com/repos/googleapis/google-cloud-java | closed | Remove unused dependencies | type: process | Running `mvn dependency:analyze` shows that there are a bunch of "Used undeclared dependencies found" and "Unused declared dependencies found". This issue is to clean up the "Unused declared dependencies found" | 1.0 | Remove unused dependencies - Running `mvn dependency:analyze` shows that there are a bunch of "Used undeclared dependencies found" and "Unused declared dependencies found". This issue is to clean up the "Unused declared dependencies found" | process | remove unused dependencies running mvn dependency analyze shows that there are a bunch of used undeclared dependencies found and unused declared dependencies found this issue is to clean up the unused declared dependencies found | 1 |

15,528 | 19,703,291,075 | IssuesEvent | 2022-01-12 18:53:56 | googleapis/nodejs-service-usage | https://api.github.com/repos/googleapis/nodejs-service-usage | opened | Your .repo-metadata.json file has a problem 🤒 | type: process repo-metadata: lint | You have a problem with your .repo-metadata.json file:

Result of scan 📈:

* api_shortname 'service-usage' invalid in .repo-metadata.json

☝️ Once you correct these problems, you can close this issue.

Reach out to **go/github-automation** if you have any questions. | 1.0 | Your .repo-metadata.json file has a problem 🤒 - You have a problem with your .repo-metadata.json file:

Result of scan 📈:

* api_shortname 'service-usage' invalid in .repo-metadata.json

☝️ Once you correct these problems, you can close this issue.

Reach out to **go/github-automation** if you have any questions. | process | your repo metadata json file has a problem 🤒 you have a problem with your repo metadata json file result of scan 📈 api shortname service usage invalid in repo metadata json ☝️ once you correct these problems you can close this issue reach out to go github automation if you have any questions | 1 |

744,739 | 25,953,900,612 | IssuesEvent | 2022-12-18 00:30:24 | zephyrproject-rtos/zephyr | https://api.github.com/repos/zephyrproject-rtos/zephyr | closed | use of __noinit with ecc memory hangs system | bug priority: low Stale | **Describe the bug**

The `__noinit` attribute is used primarily for the purposes listed below:

1. to preserve a section of SRAM across reboots

1. to speedup init time by reducing the size of `.bss`

Some configurations may also use `__noinit` to skip initialization of memory regions initialized by other applicatio... | 1.0 | use of __noinit with ecc memory hangs system - **Describe the bug**

The `__noinit` attribute is used primarily for the purposes listed below:

1. to preserve a section of SRAM across reboots

1. to speedup init time by reducing the size of `.bss`

Some configurations may also use `__noinit` to skip initialization of... | non_process | use of noinit with ecc memory hangs system describe the bug the noinit attribute is used primarily for the purposes listed below to preserve a section of sram across reboots to speedup init time by reducing the size of bss some configurations may also use noinit to skip initialization of... | 0 |

111,092 | 9,498,863,013 | IssuesEvent | 2019-04-24 03:47:26 | stan-dev/math | https://api.github.com/repos/stan-dev/math | closed | Math repo runs no tests on Windows | continuous integration testing windows | Ideally we'd run the unit tests there. I think they might be broken, though.

#### Current Version:

v2.18.0

| 1.0 | Math repo runs no tests on Windows - Ideally we'd run the unit tests there. I think they might be broken, though.

#### Current Version:

v2.18.0

| non_process | math repo runs no tests on windows ideally we d run the unit tests there i think they might be broken though current version | 0 |

111,673 | 9,537,933,552 | IssuesEvent | 2019-04-30 13:42:10 | pints-team/pints | https://api.github.com/repos/pints-team/pints | closed | Functional testing results repo | functional-testing | The [functional-testing-results](https://github.com/pints-team/functional-testing-results) repo is currently 1.6Gb, increasing by the hour.

This is because we seem to be literally versioning png images every time a new test is run, and that's not really a use-case that GitHub is intended for.

We're definitely goi... | 1.0 | Functional testing results repo - The [functional-testing-results](https://github.com/pints-team/functional-testing-results) repo is currently 1.6Gb, increasing by the hour.

This is because we seem to be literally versioning png images every time a new test is run, and that's not really a use-case that GitHub is int... | non_process | functional testing results repo the repo is currently increasing by the hour this is because we seem to be literally versioning png images every time a new test is run and that s not really a use case that github is intended for we re definitely going to hit the github fair use limits either soone... | 0 |

17,910 | 23,898,037,105 | IssuesEvent | 2022-09-08 16:12:38 | hashgraph/hedera-mirror-node | https://api.github.com/repos/hashgraph/hedera-mirror-node | closed | Release checklist 0.63 | enhancement process | ### Problem

We need a checklist to verify the release is rolled out successfully.

### Solution

## Preparation

- [x] Milestone field populated on relevant [issues](https://github.com/hashgraph/hedera-mirror-node/issues?q=is%3Aclosed+no%3Amilestone+sort%3Aupdated-desc)

- [x] Nothing open for [milestone](https://gi... | 1.0 | Release checklist 0.63 - ### Problem

We need a checklist to verify the release is rolled out successfully.

### Solution

## Preparation

- [x] Milestone field populated on relevant [issues](https://github.com/hashgraph/hedera-mirror-node/issues?q=is%3Aclosed+no%3Amilestone+sort%3Aupdated-desc)

- [x] Nothing open f... | process | release checklist problem we need a checklist to verify the release is rolled out successfully solution preparation milestone field populated on relevant nothing open for github checks for branch are passing automated kubernetes deployment successful tag release ... | 1 |

1,016 | 3,478,620,004 | IssuesEvent | 2015-12-28 14:08:48 | pwittchen/prefser | https://api.github.com/repos/pwittchen/prefser | closed | Release 2.0.5 | release process | **Initial release notes**:

- improved error checking in `put(...)` method

- added missing annotations to some tests and reorganized tests

- added missing license info to some classes

- bumped RxAndroid version in `README.md` file

**Things to do**:

- [x] bump library version :arrow_right: https://github.com/pw... | 1.0 | Release 2.0.5 - **Initial release notes**:

- improved error checking in `put(...)` method

- added missing annotations to some tests and reorganized tests

- added missing license info to some classes

- bumped RxAndroid version in `README.md` file

**Things to do**:

- [x] bump library version :arrow_right: https... | process | release initial release notes improved error checking in put method added missing annotations to some tests and reorganized tests added missing license info to some classes bumped rxandroid version in readme md file things to do bump library version arrow right ... | 1 |

14,807 | 18,108,668,400 | IssuesEvent | 2021-09-22 22:46:32 | googleapis/gax-nodejs | https://api.github.com/repos/googleapis/gax-nodejs | closed | Migrate to main branch on GitHub | type: process | We're in the process of migrating to using `main` as the primary branch name on GitHub.

It would be good if `gax-nodejs` was migrated, so that it's consistent with other Node.js repositories.

You will need to be mindful of any automation configured on this repository. | 1.0 | Migrate to main branch on GitHub - We're in the process of migrating to using `main` as the primary branch name on GitHub.

It would be good if `gax-nodejs` was migrated, so that it's consistent with other Node.js repositories.

You will need to be mindful of any automation configured on this repository. | process | migrate to main branch on github we re in the process of migrating to using main as the primary branch name on github it would be good if gax nodejs was migrated so that it s consistent with other node js repositories you will need to be mindful of any automation configured on this repository | 1 |

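40,115 | 5,277,148,908 | IssuesEvent | 2017-02-07 01:51:50 | kakazuo/yimengtech | https://api.github.com/repos/kakazuo/yimengtech | closed | Test the settlement feature | test 测试任务 翡翠厂 | 1. Level-1 company: itemized stock purchase.

2. Set prices.

3. Ship to the level-2 company.

2. Set prices.

3. Ship to the level-2 company.

Select settlement ( ) Document settlement ( ) Settlement return Settlement query: Settlement return query: Conclusion: data correct Time taken: | 0 |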

84,421 | 10,526,595,132 | IssuesEvent | 2019-09-30 17:26:25 | mozilla-lockwise/lockwise-android | https://api.github.com/repos/mozilla-lockwise/lockwise-android | opened | Prevent entry duplication during edit | feature-edit ✏️ needs-design type: enhancement | We should not be saving a duplicate of an existing login when editing an entry. It is possible to edit an entry by changing the username to conflict with one already saved for that `origin`/`formActionOrigin`/`httpRealm` combo.

## Context

### hostname

**has to have http:// or https://**

- We don't have such... | 1.0 | Prevent entry duplication during edit - We should not be saving a duplicate of an existing login when editing an entry. It is possible to edit an entry by changing the username to conflict with one already saved for that `origin`/`formActionOrigin`/`httpRealm` combo.

## Context

### hostname

**has to have htt... | non_process | prevent entry duplication during edit we should not be saving a duplicate of an existing login when editing an entry it is possible to edit an entry by changing the username to conflict with one already saved for that origin formactionorigin httprealm combo context hostname has to have htt... | 0 |

191,922 | 6,845,053,386 | IssuesEvent | 2017-11-13 06:04:50 | SuperTurban/ekm_mobiilposm2ng | https://api.github.com/repos/SuperTurban/ekm_mobiilposm2ng | opened | Show different tabs on leaderboard prompt (7h) | MobApp Priority 2 UC-5.2 | Different tabs for different games leaderboards that are changed by swiping. The first leaderboard is the general one.

[UC-5.2](https://github.com/SuperTurban/ekm_mobiilposm2ng/wiki/Use-cases#uc-52---checking-the-leaderboard-on-the-mobile-app)

Sub task of [https://github.com/SuperTurban/ekm_mobiilposm2ng/issues/86](h... | 1.0 | Show different tabs on leaderboard prompt (7h) - Different tabs for different games leaderboards that are changed by swiping. The first leaderboard is the general one.

[UC-5.2](https://github.com/SuperTurban/ekm_mobiilposm2ng/wiki/Use-cases#uc-52---checking-the-leaderboard-on-the-mobile-app)

Sub task of [https://gith... | non_process | show different tabs on leaderboard prompt different tabs for different games leaderboards that are changed by swiping the first leaderboard is the general one sub task of | 0 |

140,097 | 18,895,231,596 | IssuesEvent | 2021-11-15 17:08:14 | bgoonz/searchAwesome | https://api.github.com/repos/bgoonz/searchAwesome | closed | CVE-2021-23341 (High) detected in prismjs-1.20.0.tgz | security vulnerability | ## CVE-2021-23341 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>prismjs-1.20.0.tgz</b></p></summary>

<p>Lightweight, robust, elegant syntax highlighting. A spin-off project from Dabb... | True | CVE-2021-23341 (High) detected in prismjs-1.20.0.tgz - ## CVE-2021-23341 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>prismjs-1.20.0.tgz</b></p></summary>

<p>Lightweight, robust, el... | non_process | cve high detected in prismjs tgz cve high severity vulnerability vulnerable library prismjs tgz lightweight robust elegant syntax highlighting a spin off project from dabblet library home page a href path to dependency file searchawesome clones awesome wpo website p... | 0 |

12,709 | 15,081,217,043 | IssuesEvent | 2021-02-05 12:53:03 | emacs-ess/ESS | https://api.github.com/repos/emacs-ess/ESS | closed | Current buffer has no process | process process:remote | On Windows 10 / Emacs 27.1, 26.3 / ESS 20210126, I occasionally edit files on network drives, and run into some odd ESS behavior. It's hard to pin down the issue, but after working interactively with R scripts on network drives, the iESS buffer loses connection, and shows `no process` in the modeline.

In the messag... | 2.0 | Current buffer has no process - On Windows 10 / Emacs 27.1, 26.3 / ESS 20210126, I occasionally edit files on network drives, and run into some odd ESS behavior. It's hard to pin down the issue, but after working interactively with R scripts on network drives, the iESS buffer loses connection, and shows `no process` in... | process | current buffer has no process on windows emacs ess i occasionally edit files on network drives and run into some odd ess behavior it s hard to pin down the issue but after working interactively with r scripts on network drives the iess buffer loses connection and shows no process in the model... | 1 |

8,511 | 11,693,662,335 | IssuesEvent | 2020-03-06 01:18:05 | Ultimate-Hosts-Blacklist/whitelist | https://api.github.com/repos/Ultimate-Hosts-Blacklist/whitelist | closed | blocked wss on hqq.tv | whitelisting process | *@Vladislavik commented on Jan 24, 2019, 6:12 PM UTC:*

Hi, filter block p2p function on players from hqq.tv, remove please domain hqq.tv and hqq.watch from list, wss used for p2p on this players.

*This issue was moved by [funilrys](https://github.com/funilrys) from [Ultimate-Hosts-Blacklist/adblock-nocoin-list#2](htt... | 1.0 | blocked wss on hqq.tv - *@Vladislavik commented on Jan 24, 2019, 6:12 PM UTC:*

Hi, filter block p2p function on players from hqq.tv, remove please domain hqq.tv and hqq.watch from list, wss used for p2p on this players.

*This issue was moved by [funilrys](https://github.com/funilrys) from [Ultimate-Hosts-Blacklist/ad... | process | blocked wss on hqq tv vladislavik commented on jan pm utc hi filter block function on players from hqq tv remove please domain hqq tv and hqq watch from list wss used for on this players this issue was moved by from | 1 |

20,392 | 27,049,210,592 | IssuesEvent | 2023-02-13 12:03:11 | notofonts/tamil | https://api.github.com/repos/notofonts/tamil | closed | NotoSerifTamilSlanted-VF.ttf and NotoSerifTamilSlanted-Italic-VF.ttf in tree/main/unhinted/variable-ttf | Script-Tamil Noto-Process-Issue A:Shaping | In tree/main/unhinted/variable-ttf we have both NotoSerifTamilSlanted-VF.ttf and NotoSerifTamilSlanted-Italic-VF.ttf. I think one of them should be removed? (Also in slim-variable-ttf ) | 1.0 | NotoSerifTamilSlanted-VF.ttf and NotoSerifTamilSlanted-Italic-VF.ttf in tree/main/unhinted/variable-ttf - In tree/main/unhinted/variable-ttf we have both NotoSerifTamilSlanted-VF.ttf and NotoSerifTamilSlanted-Italic-VF.ttf. I think one of them should be removed? (Also in slim-variable-ttf ) | process | notoseriftamilslanted vf ttf and notoseriftamilslanted italic vf ttf in tree main unhinted variable ttf in tree main unhinted variable ttf we have both notoseriftamilslanted vf ttf and notoseriftamilslanted italic vf ttf i think one of them should be removed also in slim variable ttf | 1 |

209,951 | 23,730,980,557 | IssuesEvent | 2022-08-31 01:39:29 | ChoeMinji/scikit-learn-0.23.0 | https://api.github.com/repos/ChoeMinji/scikit-learn-0.23.0 | closed | CVE-2021-41496 (High) detected in numpy-1.21.4-cp37-cp37m-manylinux_2_12_x86_64.manylinux2010_x86_64.whl - autoclosed | security vulnerability | ## CVE-2021-41496 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>numpy-1.21.4-cp37-cp37m-manylinux_2_12_x86_64.manylinux2010_x86_64.whl</b></p></summary>

<p>NumPy is the fundamental p... | True | CVE-2021-41496 (High) detected in numpy-1.21.4-cp37-cp37m-manylinux_2_12_x86_64.manylinux2010_x86_64.whl - autoclosed - ## CVE-2021-41496 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b... | non_process | cve high detected in numpy manylinux whl autoclosed cve high severity vulnerability vulnerable library numpy manylinux whl numpy is the fundamental package for array computing with python library home page a href path to dependency ... | 0 |

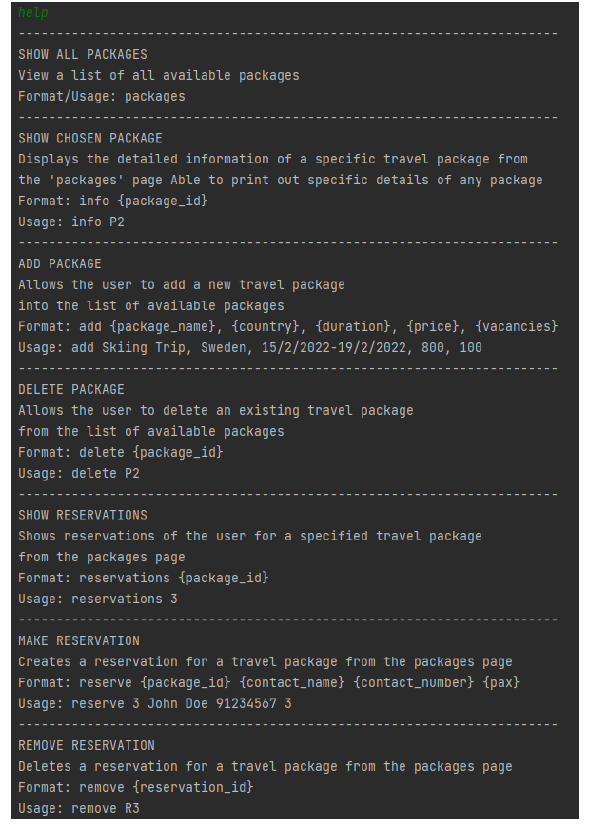

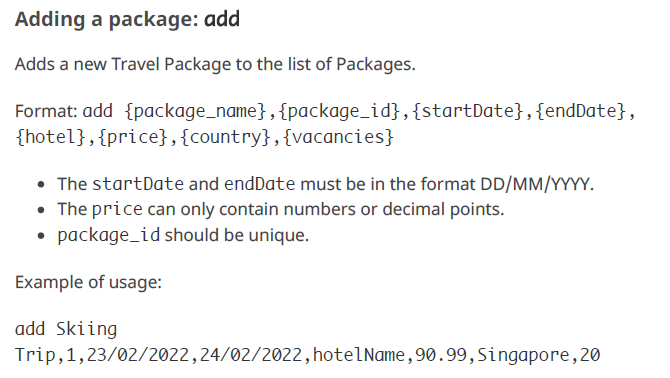

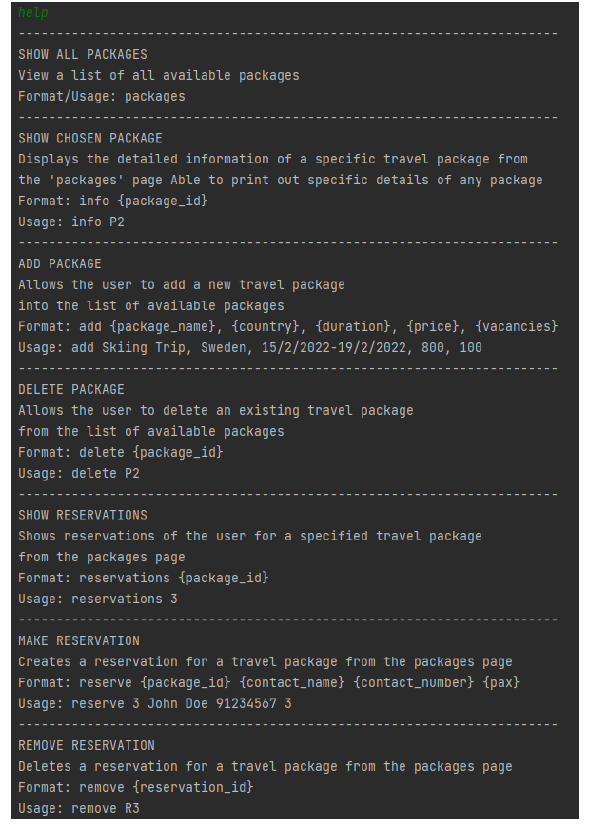

283,030 | 21,316,019,184 | IssuesEvent | 2022-04-16 09:35:11 | Bryan-BC/pe | https://api.github.com/repos/Bryan-BC/pe | opened | Inconsistency in help command in UG and DG | severity.High type.DocumentationBug | The DG has the format as follows (see ADD PACKAGE part):

whereas the UG mentions:

<!--sess... | 1.0 | Inconsistency in help command in UG and DG - The DG has the format as follows (see ADD PACKAGE part):

whereas the UG mentions:

detected in xmlhttprequest-ssl-1.5.5.tgz | security vulnerability | ## CVE-2020-28502 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>xmlhttprequest-ssl-1.5.5.tgz</b></p></summary>

<p>XMLHttpRequest for Node</p>

<p>Library home page: <a href="https://r... | True | CVE-2020-28502 (High) detected in xmlhttprequest-ssl-1.5.5.tgz - ## CVE-2020-28502 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>xmlhttprequest-ssl-1.5.5.tgz</b></p></summary>

<p>XML... | non_process | cve high detected in xmlhttprequest ssl tgz cve high severity vulnerability vulnerable library xmlhttprequest ssl tgz xmlhttprequest for node library home page a href path to dependency file webpack mpa next package json path to vulnerable library webpack mpa next no... | 0 |

50,104 | 7,567,122,072 | IssuesEvent | 2018-04-22 05:53:26 | jeffreydwalter/arlo | https://api.github.com/repos/jeffreydwalter/arlo | closed | Question on setting custom mode | documentation question | Great work. I see in the examples you can arm and disarm. Have you found a way to set a custom mode?

Thanks

Please answer these questions before submitting your issue. Thanks!

### What version of Python are you using (`python -V`)?

### What operating system and processor architecture are you using (`py... | 1.0 | Question on setting custom mode - Great work. I see in the examples you can arm and disarm. Have you found a way to set a custom mode?

Thanks

Please answer these questions before submitting your issue. Thanks!

### What version of Python are you using (`python -V`)?

### What operating system and process... | non_process | question on setting custom mode great work i see in the examples you can arm and disarm have you found a way to set a custom mode thanks please answer these questions before submitting your issue thanks what version of python are you using python v what operating system and process... | 0 |

120,836 | 4,794,973,043 | IssuesEvent | 2016-10-31 22:50:28 | pombase/curation | https://api.github.com/repos/pombase/curation | closed | cellular protein localization | annotation priority high priority | I added this to the "GO terms not to annotate, we should always be able to specify to where"

There are quite a lot of violations from the old annotation (in artemis) which will disappear gradually either by adding specificity or converting to phenotypes.

These are the only ones in canto

SPAC10F6.12c.1 GO:0034613... | 2.0 | cellular protein localization - I added this to the "GO terms not to annotate, we should always be able to specify to where"

There are quite a lot of violations from the old annotation (in artemis) which will disappear gradually either by adding specificity or converting to phenotypes.

These are the only ones in canto... | non_process | cellular protein localization i added this to the go terms not to annotate we should always be able to specify to where there are quite a lot of violations from the old annotation in artemis which will disappear gradually either by adding specificity or converting to phenotypes these are the only ones in canto... | 0 |

4,007 | 6,935,188,952 | IssuesEvent | 2017-12-03 05:03:08 | candango/myfuses | https://api.github.com/repos/candango/myfuses | closed | Builder is being called statically incorrectly. | bug core engine process | We are adding a builder instance in the controller during it's initialization but we never use this instance again.

In another hand we're calling the builder in the life cycle statically. This is not correct and generates depreciated errors when ~E_DEPRECIATED is removed from error_reporting in the php.ini.

Let'... | 1.0 | Builder is being called statically incorrectly. - We are adding a builder instance in the controller during it's initialization but we never use this instance again.

In another hand we're calling the builder in the life cycle statically. This is not correct and generates depreciated errors when ~E_DEPRECIATED is rem... | process | builder is being called statically incorrectly we are adding a builder instance in the controller during it s initialization but we never use this instance again in another hand we re calling the builder in the life cycle statically this is not correct and generates depreciated errors when e depreciated is rem... | 1 |

21,200 | 28,238,777,833 | IssuesEvent | 2023-04-06 04:40:59 | MicrosoftDocs/azure-docs | https://api.github.com/repos/MicrosoftDocs/azure-docs | closed | How do "MS-SR-Update-MobilityServiceForA2AVirtualMachines" jobs get updated to use managed identities? | automation/svc triaged cxp product-question process-automation/subsvc Pri1 |

When a recovery services vault has been configured to hold ASR-replicated VMs, Site Recovery leverages an automation account to manage Site Recovery extensions on all your replicated items and keeps them up-to-date, as seen here:

| 1.0 | Allow searching for parent item title/creator/year in Add Note dialog - https://forums.zotero.org/discussion/99611/inserting-notes-in-word-how-the-search-bar-works

And show all of the item's notes. (Relatedly, we should show the parent item title as well, similar to how we show it in the PDF reader notes pane.) | process | allow searching for parent item title creator year in add note dialog and show all of the item s notes relatedly we should show the parent item title as well similar to how we show it in the pdf reader notes pane | 1 |

9,631 | 12,576,632,610 | IssuesEvent | 2020-06-09 08:13:57 | varys-main/ps-tools | https://api.github.com/repos/varys-main/ps-tools | closed | StartUp - No local NAV installations - Error handling | processing | # User Story

- If the directory C:\NAV does not exist on the computer where the StartUp script is run, this leads to error messages.

- These errors should be replaced by a warning.

# Task

- [x] Check whether C:\NAV exists

- [x] ~~Emit a warning~~

# Implementations

- Is... | 1.0 | StartUp - No local NAV installations - Error handling - # User Story

- If the directory C:\NAV does not exist on the computer where the StartUp script is run, this leads to error messages.

- These errors should be replaced by a warning.

# Task

- [x] Check whether C:\NAV exis... | process | startup no local nav installations error handling user story if the directory c nav does not exist on the computer where the startup script is run this leads to error messages these errors should be replaced by a warning task check whether c nav exist... | 1 |

50,218 | 6,065,846,049 | IssuesEvent | 2017-06-14 17:07:31 | openshift/origin | https://api.github.com/repos/openshift/origin | closed | Extended.[Conformance][registry][migration] manifest migration from etcd to registry storage registry can get access to manifest [local] | dependency/devicemapper kind/test-flake priority/P1 | Flaking out as seen [here](https://ci.openshift.redhat.com/jenkins/job/test_pull_requests_origin_future/710/testReport/junit/(root)/Extended/_Conformance__registry__migration__manifest_migration_from_etcd_to_registry_storage_registry_can_get_access_to_manifest__local_/):

```

/go/src/github.com/openshift/origin/_out... | 1.0 | Extended.[Conformance][registry][migration] manifest migration from etcd to registry storage registry can get access to manifest [local] - Flaking out as seen [here](https://ci.openshift.redhat.com/jenkins/job/test_pull_requests_origin_future/710/testReport/junit/(root)/Extended/_Conformance__registry__migration__manif... | non_process | extended manifest migration from etcd to registry storage registry can get access to manifest flaking out as seen go src github com openshift origin output local go src github com openshift origin test extended registry registry go expected error status message ... | 0 |

244,481 | 26,412,566,976 | IssuesEvent | 2023-01-13 13:29:15 | dmyers87/amundsenfrontendlibrary | https://api.github.com/repos/dmyers87/amundsenfrontendlibrary | opened | CVE-2022-21191 (High) detected in global-modules-path-2.3.0.tgz | security vulnerability | ## CVE-2022-21191 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>global-modules-path-2.3.0.tgz</b></p></summary>

<p>Returns path to globally installed package</p>

<p>Library home page... | True | CVE-2022-21191 (High) detected in global-modules-path-2.3.0.tgz - ## CVE-2022-21191 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>global-modules-path-2.3.0.tgz</b></p></summary>

<p>R... | non_process | cve high detected in global modules path tgz cve high severity vulnerability vulnerable library global modules path tgz returns path to globally installed package library home page a href path to dependency file amundsen application static package json path to vulner... | 0 |

9,303 | 12,312,501,544 | IssuesEvent | 2020-05-12 14:01:27 | bazelbuild/rules_python | https://api.github.com/repos/bazelbuild/rules_python | closed | Clarify ownership of rules_python | P1 type: process | Following up on #290, I've added @andyscott and @thundergolfer as maintainers of rules_python. We now need to define the boundaries between community-owned pieces of this repo and pieces owned by the core Bazel team.

+Cc @lberki and @laurentlb on core Bazel, and @pstradomski who is responsible for the wheel packagin... | 1.0 | Clarify ownership of rules_python - Following up on #290, I've added @andyscott and @thundergolfer as maintainers of rules_python. We now need to define the boundaries between community-owned pieces of this repo and pieces owned by the core Bazel team.

+Cc @lberki and @laurentlb on core Bazel, and @pstradomski who i... | process | clarify ownership of rules python following up on i ve added andyscott and thundergolfer as maintainers of rules python we now need to define the boundaries between community owned pieces of this repo and pieces owned by the core bazel team cc lberki and laurentlb on core bazel and pstradomski who is ... | 1 |

81,191 | 3,587,795,083 | IssuesEvent | 2016-01-30 15:41:55 | fgpv-vpgf/fgpv-vpgf | https://api.github.com/repos/fgpv-vpgf/fgpv-vpgf | opened | chore(build): wet sample page missing resources on fgpcloud site | priority: high type: chore | I didn't want to include wet code in our source, so I linked resourced from a lib folder. Auto-deploy script doesn't copy them over and the wet sample page is currently broken: http://fgpv.cloudapp.net/demo/develop/index-wet.html

What should we do? Include wet in source or update auto-deploy script to copy over wet ... | 1.0 | chore(build): wet sample page missing resources on fgpcloud site - I didn't want to include wet code in our source, so I linked resourced from a lib folder. Auto-deploy script doesn't copy them over and the wet sample page is currently broken: http://fgpv.cloudapp.net/demo/develop/index-wet.html

What should we do? I... | non_process | chore build wet sample page missing resources on fgpcloud site i didn t want to include wet code in our source so i linked resourced from a lib folder auto deploy script doesn t copy them over and the wet sample page is currently broken what should we do include wet in source or update auto deploy script t... | 0 |

9,353 | 12,366,223,065 | IssuesEvent | 2020-05-18 10:04:52 | DiSSCo/user-stories | https://api.github.com/repos/DiSSCo/user-stories | opened | to select all digitized labels from a specific collector | 2. Collection Management 2. University/Research institute 4. Data processing ICEDIG-SURVEY Specimen level | As a Scientist I want to extract handwriting samples of a collector so that I can verify collection localities and collection dates of specimens of a collector for this I need to select all digitized labels from a specific collector | 1.0 | to select all digitized labels from a specific collector - As a Scientist I want to extract handwriting samples of a collector so that I can verify collection localities and collection dates of specimens of a collector for this I need to select all digitized labels from a specific collector | process | to select all digitized labels from a specific collector as a scientist i want to extract handwriting samples of a collector so that i can verify collection localities and collection dates of specimens of a collector for this i need to select all digitized labels from a specific collector | 1 |

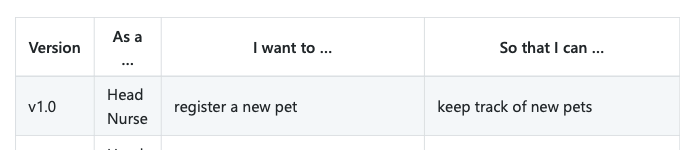

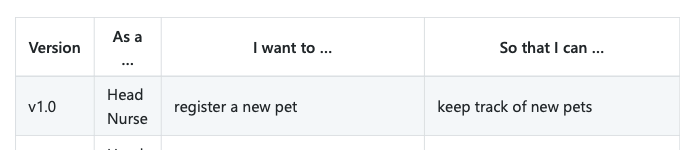

357,634 | 25,176,414,710 | IssuesEvent | 2022-11-11 09:39:32 | markusteim/pe | https://api.github.com/repos/markusteim/pe | opened | User stories parts don't match | type.DocumentationBug severity.Low |

The description of the user story does not match the name of it, it should be that a new pet can be added to the list of pets that are in the hospital, not that you could keep track of them, it sounds more... | 1.0 | User stories parts don't match -

The description of the user story does not match the name of it, it should be that a new pet can be added to the list of pets that are in the hospital, not that you could k... | non_process | user stories parts don t match the description of the user story does not match the name of it it should be that a new pet can be added to the list of pets that are in the hospital not that you could keep track of them it sounds more like viewing the list of pets | 0 |

22,134 | 30,679,612,032 | IssuesEvent | 2023-07-26 08:17:07 | MicrosoftDocs/azure-docs | https://api.github.com/repos/MicrosoftDocs/azure-docs | closed | Deploy a Linux Hybrid Runbook Worker in Docker | automation/svc triaged assigned-to-author doc-idea process-automation/subsvc Pri2 | I would be great to have instructions for deploying to a Linux Docker container also.

---

#### Document Details

⚠ *Do not edit this section. It is required for docs.microsoft.com ➟ GitHub issue linking.*

* ID: e38be5b8-d76d-a4f1-c014-7bf9248be2de

* Version Independent ID: 976e5e90-b28c-d7ba-0495-69d92e62ea46... | 1.0 | Deploy a Linux Hybrid Runbook Worker in Docker - I would be great to have instructions for deploying to a Linux Docker container also.

---

#### Document Details

⚠ *Do not edit this section. It is required for docs.microsoft.com ➟ GitHub issue linking.*

* ID: e38be5b8-d76d-a4f1-c014-7bf9248be2de

* Version Ind... | process | deploy a linux hybrid runbook worker in docker i would be great to have instructions for deploying to a linux docker container also document details ⚠ do not edit this section it is required for docs microsoft com ➟ github issue linking id version independent id c... | 1 |

301,080 | 26,014,510,714 | IssuesEvent | 2022-12-21 07:01:12 | brave/brave-browser | https://api.github.com/repos/brave/brave-browser | opened | Getting STOD (stuck triangle of doom) trying to log in to `account.brave.com` using `Private window` | bug privacy QA/Yes QA/Test-Plan-Specified OS/Desktop feature/vpn | <!-- Have you searched for similar issues? Before submitting this issue, please check the open issues and add a note before logging a new issue.

PLEASE USE THE TEMPLATE BELOW TO PROVIDE INFORMATION ABOUT THE ISSUE.

INSUFFICIENT INFO WILL GET THE ISSUE CLOSED. IT WILL ONLY BE REOPENED AFTER SUFFICIENT INFO IS PROV... | 1.0 | Getting STOD (stuck triangle of doom) trying to log in to `account.brave.com` using `Private window` - <!-- Have you searched for similar issues? Before submitting this issue, please check the open issues and add a note before logging a new issue.

PLEASE USE THE TEMPLATE BELOW TO PROVIDE INFORMATION ABOUT THE ISSUE... | non_process | getting stod stuck triangle of doom trying to log in to account brave com using private window have you searched for similar issues before submitting this issue please check the open issues and add a note before logging a new issue please use the template below to provide information about the issue... | 0 |

1,342 | 3,193,643,515 | IssuesEvent | 2015-09-30 07:15:06 | raml-org/raml-spec | https://api.github.com/repos/raml-org/raml-spec | opened | Allow Defining Configurable New Security Schemes | v1-enhanced-security-schemes | Allow new security schemes to be defined that require certain configuration settings, and allow consuming them in an API definition. The processor of the RAML spec would need to support such custom schemes. | True | Allow Defining Configurable New Security Schemes - Allow new security schemes to be defined that require certain configuration settings, and allow consuming them in an API definition. The processor of the RAML spec would need to support such custom schemes. | non_process | allow defining configurable new security schemes allow new security schemes to be defined that require certain configuration settings and allow consuming them in an api definition the processor of the raml spec would need to support such custom schemes | 0 |

19,616 | 25,970,685,475 | IssuesEvent | 2022-12-19 10:58:41 | prisma/prisma | https://api.github.com/repos/prisma/prisma | opened | Remove DML dependency from MongoDB introspection | process/candidate topic: introspection topic: internal tech/engines/introspection engine team/schema topic: mongodb | We still use DML in MongoDB introspection. Let's get rid of that, and get something similar going what we have in the SQL side. | 1.0 | Remove DML dependency from MongoDB introspection - We still use DML in MongoDB introspection. Let's get rid of that, and get something similar going what we have in the SQL side. | process | remove dml dependency from mongodb introspection we still use dml in mongodb introspection let s get rid of that and get something similar going what we have in the sql side | 1 |

12,488 | 14,952,630,936 | IssuesEvent | 2021-01-26 15:45:27 | ORNL-AMO/AMO-Tools-Desktop | https://api.github.com/repos/ORNL-AMO/AMO-Tools-Desktop | closed | Pre-Release testing of PHAST assessment | Process Heating important | Step through creation of a couple new assessments. Import MEASUR Demo Plant B, and any other frequently used. Anything else is fair game as well. | 1.0 | Pre-Release testing of PHAST assessment - Step through creation of a couple new assessments. Import MEASUR Demo Plant B, and any other frequently used. Anything else is fair game as well. | process | pre release testing of phast assessment step through creation of a couple new assessments import measur demo plant b and any other frequently used anything else is fair game as well | 1 |

302,562 | 22,830,877,431 | IssuesEvent | 2022-07-12 12:50:46 | cambiatus/backend | https://api.github.com/repos/cambiatus/backend | closed | Update the readme to mention the exiftool dependency | 📚 documentation | PR #247 introduced the cleanup of image metadata. To do this we use an external dependency called "exiftool", which must be installed on the machine that will run the backend.

To inform new users and make collaboration easier, we should state this dependency in the directory's readme, just as we do for... | 1.0 | Update the readme to mention the exiftool dependency - PR #247 introduced the cleanup of image metadata. To do this we use an external dependency called "exiftool", which must be installed on the machine that will run the backend.

To inform new users and make collaboration easier, we should state this ... | non_process | update the readme to mention the exiftool dependency pr introduced the cleanup of image metadata to do this we use an external dependency called exiftool which must be installed on the machine that will run the backend to inform new users and make collaboration easier we should state this de... | 0 |

63,022 | 8,652,109,210 | IssuesEvent | 2018-11-27 06:43:35 | aopell/SchoologyPlus | https://api.github.com/repos/aopell/SchoologyPlus | opened | Document grade page features | Documentation Enhancement | We currently have extensive documentation on the theme editor. Given the popularity of the grade page features, we should probably have similar documentation on them. | 1.0 | Document grade page features - We currently have extensive documentation on the theme editor. Given the popularity of the grade page features, we should probably have similar documentation on them. | non_process | document grade page features we currently have extensive documentation on the theme editor given the popularity of the grade page features we should probably have similar documentation on them | 0 |

31,540 | 14,987,670,627 | IssuesEvent | 2021-01-28 23:24:54 | diegodlh/zotero-wikicite | https://api.github.com/repos/diegodlh/zotero-wikicite | opened | Alternative Wikidata search translator for citation target item metadata | enhancement performance wikidata | Methods such as `Citations.syncItemCitationsWithWikidata` rely on `Wikidata.getItems` to get citation target item metadata.

However, `Wikidata.getItems` relies on a custom Wikidata API search translator, which returns a full item. Most of this information will be discarded when saving the citation (#27).

This means... | True | Alternative Wikidata search translator for citation target item metadata - Methods such as `Citations.syncItemCitationsWithWikidata` rely on `Wikidata.getItems` to get citation target item metadata.

However, `Wikidata.getItems` relies on a custom Wikidata API search translator, which returns a full item. Most of this ... | non_process | alternative wikidata search translator for citation target item metadata methods such as citations syncitemcitationswithwikidata rely on wikidata getitems to get citation target item metadata however wikidata getitems relies on a custom wikidata api search translator which returns a full item most of this ... | 0 |

14,387 | 17,403,912,199 | IssuesEvent | 2021-08-03 01:14:27 | googleapis/python-resource-settings | https://api.github.com/repos/googleapis/python-resource-settings | closed | GA Release | api: resourcesettings type: process | [GA release template](https://github.com/googleapis/google-cloud-common/issues/287)

## Required

- [x] 28 days elapsed since last beta release with new API surface. See [release history](https://github.com/googleapis/python-resource-settings/releases).

- [x] Server API is GA. See [API Release Notes](https://cloud.... | 1.0 | GA Release - [GA release template](https://github.com/googleapis/google-cloud-common/issues/287)

## Required

- [x] 28 days elapsed since last beta release with new API surface. See [release history](https://github.com/googleapis/python-resource-settings/releases).

- [x] Server API is GA. See [API Release Notes](h... | process | ga release required days elapsed since last beta release with new api surface see server api is ga see package api is stable and we can commit to backward compatibility all dependencies are ga | 1 |

14,366 | 17,390,123,738 | IssuesEvent | 2021-08-02 05:57:17 | geneontology/go-ontology | https://api.github.com/repos/geneontology/go-ontology | closed | Obsoletion notice: GO:0044825 retroviral strand transfer activity | multi-species process obsoletion | Please provide as much information as you can:

* **GO term ID and Label**

GO:0044825 retroviral strand transfer activity

* **Reason for deprecation**

This represents part of an activity - 'part of' some 'retroviral integrase activity'

* **"Replace by" term (ID and label)**

If all annotations can safel... | 1.0 | Obsoletion notice: GO:0044825 retroviral strand transfer activity - Please provide as much information as you can:

* **GO term ID and Label**

GO:0044825 retroviral strand transfer activity

* **Reason for deprecation**

This represents part of an activity - 'part of' some 'retroviral integrase activity'

... | process | obsoletion notice go retroviral strand transfer activity please provide as much information as you can go term id and label go retroviral strand transfer activity reason for deprecation this represents part of an activity part of some retroviral integrase activity rep... | 1 |

9,292 | 12,306,094,285 | IssuesEvent | 2020-05-12 00:22:55 | bazelbuild/rules_python | https://api.github.com/repos/bazelbuild/rules_python | closed | Release 0.0.2 | P1 type: process | Targeting Bazel 2.0 (or sooner).

This means we want compatibility with any incompatible changes in 2.0. Then we'll aim to be included in the Bazel Federation release that'll come out shortly after Bazel 2.0. | 1.0 | Release 0.0.2 - Targeting Bazel 2.0 (or sooner).

This means we want compatibility with any incompatible changes in 2.0. Then we'll aim to be included in the Bazel Federation release that'll come out shortly after Bazel 2.0. | process | release targeting bazel or sooner this means we want compatibility with any incompatible changes in then we ll aim to be included in the bazel federation release that ll come out shortly after bazel | 1 |

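6,866 | 9,999,249,660 | IssuesEvent | 2019-07-12 10:09:10 | OI-wiki/OI-wiki | https://api.github.com/repos/OI-wiki/OI-wiki | closed | Inconsistent code formatting on the binary indexed tree page | 需要处理 / Need Processing 需要帮助修正格式 / Help needed for format | First of all, you are very welcome to open an issue for OI Wiki. Before submitting, please take the time to read through this template. Thank you for your cooperation!

- [x] Please confirm that you have read the [F.A.Q.](https://oi-wiki.org/intro/faq/) (once confirmed, tick the box / fill it in as `[x]`)

- What problem occurred? (A screenshot is best)

The code formatting on the binary indexed tree page is inconsistent: braces are sometimes placed on a new line and sometimes not.

https://github.com/24OI/OI-wiki/blob/master/docs/ds/bit.md#L72

- Are you already working on a fix?

No

- How can it be reproduced?

| 1.0 | Inconsistent code formatting on the binary indexed tree page - First of all, you are very welcome to open an issue for OI Wiki. Before submitting, please take the time to read through this template. Thank you for your cooperation!

- [x] Please confirm that you have read the [F.A.Q.](https://oi-wiki.org/intro/faq/) (once confirmed, tick the box / fill it in as `[x]`)

- What problem occurred? (A screenshot is best)

The code formatting on the binary indexed tree page is inconsistent: braces are sometimes placed on a new line and sometimes not.

https://github.com/24OI/OI-wiki/blob/master/docs/ds/bit.md#L72

- Are you already working on a fix?

No

- How can it be reproduced?

| process | inconsistent code formatting on the binary indexed tree page first of all you are very welcome to open an issue for oi wiki before submitting please take the time to read through this template thank you for your cooperation please confirm that you have read the fill it in as what problem occurred a screenshot is best the code formatting on the binary indexed tree page is inconsistent braces are sometimes placed on a new line and sometimes not are you already working on a fix no how can it be reproduced | 1 |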

347 | 2,793,293,628 | IssuesEvent | 2015-05-11 09:57:47 | ecodistrict/IDSSDashboard | https://api.github.com/repos/ecodistrict/IDSSDashboard | closed | Tab should be called ‘develop variant’ instead of ‘alternatives’ (right? It is confusing ☺) | form feedback 09102014 process step: develop alternatives | Tab should be called ‘develop variant’ instead of ‘alternatives’ (right? It is confusing ☺) | 1.0 | Tab should be called ‘develop variant’ instead of ‘alternatives’ (right? It is confusing ☺) - Tab should be called ‘develop variant’ instead of ‘alternatives’ (right? It is confusing ☺) | process | tab should be called ‘develop variant’ instead of ‘alternatives’ right it is confusing ☺ tab should be called ‘develop variant’ instead of ‘alternatives’ right it is confusing ☺ | 1 |

22,042 | 30,564,400,832 | IssuesEvent | 2023-07-20 16:38:43 | AvaloniaUI/Avalonia | https://api.github.com/repos/AvaloniaUI/Avalonia | closed | GlyphRun - Index was outside the bounds of the array on Android | bug os-android area-textprocessing | **Describe the bug**

I run my app on an Android mobile device.

I use Material.Avalonia.

When I type in a textbox, the app crashes.

**To Reproduce**

Steps to reproduce the behavior:

Run the app on Android. Type into a textbox.

**Expected behavior**

text in textbox

- OS: Android 13. OneUI 5.1

- Version 11.0.0-rc1

Exce... | 1.0 | GlyphRun - Index was outside the bounds of the array on Android - **Describe the bug**

I run my app on an Android mobile device.

I use Material.Avalonia.

When I type in a textbox, the app crashes.

**To Reproduce**

Steps to reproduce the behavior:

Run the app on Android. Type into a textbox.

**Expected behavior**

text in text... | process | glyphrun index was outside the bounds of the array on android describe the bug i run my app on an android mobile device i use material avalonia when i type in a textbox the app crashes to reproduce steps to reproduce the behavior run the app on android type into a textbox expected behavior text in text... | 1 |

17,387 | 23,205,965,840 | IssuesEvent | 2022-08-02 05:21:24 | u4gbot/status.webodm.net | https://api.github.com/repos/u4gbot/status.webodm.net | closed | spark1.webodm.net was unavailable for 10 minutes on 8/2/22 1am EST for a planned storage upgrade | status processing-network-spark1 | It is now back online. | 1.0 | spark1.webodm.net was unavailable for 10 minutes on 8/2/22 1am EST for a planned storage upgrade - It is now back online. | process | webodm net was unavailable for minutes on est for a planned storage upgrade it is now back online | 1 |

433,100 | 30,312,318,029 | IssuesEvent | 2023-07-10 13:34:09 | dotnet/runtime | https://api.github.com/repos/dotnet/runtime | closed | Example of building and testing libraries doesn't work | documentation area-Infrastructure-libraries needs-further-triage in-pr | https://github.com/dotnet/runtime/blob/66def3a7024d8c860c047a9b1681085c591d46ca/docs/workflow/building/libraries/README.md?plain=1#L40-L48

There are two problems:

1. AFAIK `command1 && command2` runs sequentially but `command1 & command2` runs simultaneously. So the last line would not work as expected.

2. `Sy... | 1.0 | Example of building and testing libraries doesn't work - https://github.com/dotnet/runtime/blob/66def3a7024d8c860c047a9b1681085c591d46ca/docs/workflow/building/libraries/README.md?plain=1#L40-L48

There are two problems:

1. AFAIK `command1 && command2` runs sequentially but `command1 & command2` runs simultaneousl... | non_process | example of building and testing libraries doesn t work there are two problems afaik runs sequentially but runs simultaneously so the last line would not work as expected system text regularexpressions is uncommon that there s not a xxx csproj in directory tests | 0 |

648,042 | 21,163,775,128 | IssuesEvent | 2022-04-07 11:50:35 | woocommerce/woocommerce-ios | https://api.github.com/repos/woocommerce/woocommerce-ios | closed | Remove `axisOfTwoSubviews` helper | type: task good first issue priority: low | unrelated: this doesn't seem to be used in the codebase anymore, we can delete this at some point 🙂

_Originally posted by @jaclync in https://github.com/woocommerce/woocommerce-ios/pull/5656#discussion_r784494673_ | 1.0 | Remove `axisOfTwoSubviews` helper - unrelated: this doesn't seem to be used in the codebase anymore, we can delete this at some point 🙂

_Originally posted by @jaclync in https://github.com/woocommerce/woocommerce-ios/pull/5656#discussion_r784494673_ | non_process | remove axisoftwosubviews helper unrelated this doesn t seem to be used in the codebase anymore we can delete this at some point 🙂 originally posted by jaclync in | 0 |