Unnamed: 0 (int64, 0 to 832k) | id (float64, 2.49B to 32.1B) | type (string, 1 class) | created_at (string, length 19) | repo (string, length 7 to 112) | repo_url (string, length 36 to 141) | action (string, 3 classes) | title (string, length 1 to 744) | labels (string, length 4 to 574) | body (string, length 9 to 211k) | index (string, 10 classes) | text_combine (string, length 96 to 211k) | label (string, 2 classes) | text (string, length 96 to 188k) | binary_label (int64, 0 to 1) |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

11,213 | 13,965,936,536 | IssuesEvent | 2020-10-26 00:40:49 | NixOS/nixpkgs | https://api.github.com/repos/NixOS/nixpkgs | closed | 20.09 Release notes | 0.kind: enhancement 6.topic: release process | This thread is for any release-worthy notes which may have not have made their way into https://github.com/NixOS/nixpkgs/blob/master/nixos/doc/manual/release-notes/rl-2009.xml yet.

Please leave a summary and any relevant links to the items. I will try and go through them before the release to ensure the notes are in... | 1.0 | 20.09 Release notes - This thread is for any release-worthy notes which may have not have made their way into https://github.com/NixOS/nixpkgs/blob/master/nixos/doc/manual/release-notes/rl-2009.xml yet.

Please leave a summary and any relevant links to the items. I will try and go through them before the release to e... | process | release notes this thread is for any release worthy notes which may have not have made their way into yet please leave a summary and any relevant links to the items i will try and go through them before the release to ensure the notes are in order list to make items easier to track was removed ... | 1 |

248,602 | 18,858,095,699 | IssuesEvent | 2021-11-12 09:22:49 | tzejit/pe | https://api.github.com/repos/tzejit/pe | opened | Broken link | severity.Low type.DocumentationBug | Link shown in the diagram on page 11 of the DG does not lead to a valid github page

<!--session: 1636704460468-56fd96ad-880c-43e7-b185-87285ec0f872-->

<!--Version: Web v3.4.1--> | 1.0 | Broken link - Link shown in the diagram on page 11 of the DG does not lead to a valid github page

<!--session: 1636704460468-56fd96ad-880c-43e7-b185-87285ec0f872-->

<!--Version: Web v3.4.1--> | non_process | broken link link shown in the diagram on page of the dg does not lead to a valid github page | 0 |

12,687 | 15,052,413,183 | IssuesEvent | 2021-02-03 15:10:29 | prisma/prisma | https://api.github.com/repos/prisma/prisma | closed | Postgres date types `time` and `date` are not working | bug/2-confirmed kind/bug process/candidate status/needs-action team/migrations topic: native database types topic: types | For example:

SQL

```sql

CREATE TABLE `User` (

id integer PRIMARY KEY,

time time,

);

```

Prisma Schema

```prisma

model User {

id Int @id

time DateTime?

}

```

Code

```ts

const date = new Date('2015-01-01T00:00:00Z')

const data: UserCreateInput = {

id: 1,

time: dat... | 1.0 | Postgres date types `time` and `date` are not working - For example:

SQL

```sql

CREATE TABLE `User` (

id integer PRIMARY KEY,

time time,

);

```

Prisma Schema

```prisma

model User {

id Int @id

time DateTime?

}

```

Code

```ts

const date = new Date('2015-01-01T00:00:00Z')... | process | postgres date types time and date are not working for example sql sql create table user id integer primary key time time prisma schema prisma model user id int id time datetime code ts const date new date const d... | 1 |

120,302 | 4,787,800,973 | IssuesEvent | 2016-10-30 06:53:42 | CS2103AUG2016-T17-C3/main | https://api.github.com/repos/CS2103AUG2016-T17-C3/main | closed | Multi Undo | priority.medium type.command.undo&redo type.enhancement | i.e. able to use command `undo 5` to undo 5 times

consider:

1) if 2 available undos but `undo 4` command given,

Give different feedback to user?

Still proceed with undo?

2) Since can't show all undo steps, change feedback for multi undo? | 1.0 | Multi Undo - i.e. able to use command `undo 5` to undo 5 times

consider:

1) if 2 available undos but `undo 4` command given,

Give different feedback to user?

Still proceed with undo?

2) Since can't show all undo steps, change feedback for multi undo? | non_process | multi undo i e able to use command undo to undo times consider if available undos but undo command given give different feedback to user still proceed with undo since can t show all undo steps change feedback for multi undo | 0 |

3,187 | 6,259,059,621 | IssuesEvent | 2017-07-14 17:07:06 | cyipt/cyipt | https://api.github.com/repos/cyipt/cyipt | closed | Share how quietness algorithm works | data preprocessing | Agreement that greater internal scrutiny of the algorithm is needed before it can be used in CyIPT. The algorithm is not open source but Martin to investigate ways it can be shared internally. | 1.0 | Share how quietness algorithm works - Agreement that greater internal scrutiny of the algorithm is needed before it can be used in CyIPT. The algorithm is not open source but Martin to investigate ways it can be shared internally. | process | share how quietness algorithm works agreement that greater internal scrutiny of the algorithm is needed before it can be used in cyipt the algorithm is not open source but martin to investigate ways it can be shared internally | 1 |

5,646 | 8,507,342,569 | IssuesEvent | 2018-10-30 18:48:52 | easy-software-ufal/annotations_repos | https://api.github.com/repos/easy-software-ufal/annotations_repos | opened | grimmi/TheBelt Configuration keys are named for the properties, not the attributes | C# RPV wrong processing | Issue: `https://github.com/grimmi/TheBelt/issues/1`

PR: `https://github.com/grimmi/TheBelt/commit/ec0f1ac65cd771d29344bae6814d73910e69d04e` | 1.0 | grimmi/TheBelt Configuration keys are named for the properties, not the attributes - Issue: `https://github.com/grimmi/TheBelt/issues/1`

PR: `https://github.com/grimmi/TheBelt/commit/ec0f1ac65cd771d29344bae6814d73910e69d04e` | process | grimmi thebelt configuration keys are named for the properties not the attributes issue pr | 1 |

232,548 | 7,661,282,677 | IssuesEvent | 2018-05-11 13:46:37 | inverse-inc/packetfence | https://api.github.com/repos/inverse-inc/packetfence | closed | Admin Role does not have permission to modify node role when a role is specified in Allowed Node Roles field | Priority: Medium Type: Bug | In the web admin, Configuration -> System Configuration -> Admin Access, a new admin role was created with a role specified under Allowed node roles:

When a user belonging in this admin role tries to assig... | 1.0 | Admin Role does not have permission to modify node role when a role is specified in Allowed Node Roles field - In the web admin, Configuration -> System Configuration -> Admin Access, a new admin role was created with a role specified under Allowed node roles:

* @sticnarf

## Recommended Skills

* Rust programming

## Learning Materials

Already implemented expressions ported from TiDB

- https://github.com/tikv/tikv/tree/master/components/tidb_query/src... | 2.0 | UCP: Migrate scalar function `JsonStorageSizeSig` from TiDB -

## Description

Port the scalar function `JsonStorageSizeSig` from TiDB to coprocessor.

## Score

* 50

## Mentor(s)

* @sticnarf

## Recommended Skills

* Rust programming

## Learning Materials

Already implemented expressions ported from TiDB

- http... | process | ucp migrate scalar function jsonstoragesizesig from tidb description port the scalar function jsonstoragesizesig from tidb to coprocessor score mentor s sticnarf recommended skills rust programming learning materials already implemented expressions ported from tidb ... | 1 |

103,756 | 8,948,408,426 | IssuesEvent | 2019-01-25 02:15:02 | rancher/rancher | https://api.github.com/repos/rancher/rancher | closed | UI links to CLI are broken | area/packaging kind/bug status/resolved status/to-test version/2.0 | **Rancher versions:**

rancher/server or rancher/rancher: v2.1.0

i.e. for Linux:

```

https://releases.rancher.com/cli/V2.0.5/rancher-linux-amd64-V2.0.5.tar.gz

```

Seems that CLI is published under `/cli2/` and we have an uppercase typo (`V` vs `v`)

```

https://releases.rancher.com/cli2/v2.0.5/rancher-linux... | 1.0 | UI links to CLI are broken - **Rancher versions:**

rancher/server or rancher/rancher: v2.1.0

i.e. for Linux:

```

https://releases.rancher.com/cli/V2.0.5/rancher-linux-amd64-V2.0.5.tar.gz

```

Seems that CLI is published under `/cli2/` and we have an uppercase typo (`V` vs `v`)

```

https://releases.rancher.... | non_process | ui links to cli are broken rancher versions rancher server or rancher rancher i e for linux seems that cli is published under and we have an uppercase typo v vs v | 0 |

218,006 | 7,329,934,210 | IssuesEvent | 2018-03-05 07:59:29 | metasfresh/metasfresh | https://api.github.com/repos/metasfresh/metasfresh | closed | Distribution Editor Move HU takes ages | priority:high type:bug | ### Is this a bug or feature request?

Bug

### What is the current behavior?

When selecting a Distribution Orderline and starting the action "move HU" the dropdown list for HU does not come to an end (pending).

#### Which are the steps to reproduce?

Open, try and see.

### What is the expected or desired behavior?

... | 1.0 | Distribution Editor Move HU takes ages - ### Is this a bug or feature request?

Bug

### What is the current behavior?

When selecting a Distribution Orderline and starting the action "move HU" the dropdown list for HU does not come to an end (pending).

#### Which are the steps to reproduce?

Open, try and see.

### W... | non_process | distribution editor move hu takes ages is this a bug or feature request bug what is the current behavior when selecting a distribution orderline and starting the action move hu the dropdown list for hu does not come to an end pending which are the steps to reproduce open try and see w... | 0 |

406,434 | 27,564,781,054 | IssuesEvent | 2023-03-08 02:18:07 | hashicorp/terraform-provider-aws | https://api.github.com/repos/hashicorp/terraform-provider-aws | opened | [Docs]: | documentation needs-triage | ### Documentation Link

https://registry.terraform.io/providers/hashicorp/aws/latest/docs/resources/lb_target_group_attachment

### Description

Hi Team,

I found one loopholes in aws_lb_target_group_attachment documentation, where target id defines instance id, but during launching new aws instance using resource bl... | 1.0 | [Docs]: - ### Documentation Link

https://registry.terraform.io/providers/hashicorp/aws/latest/docs/resources/lb_target_group_attachment

### Description

Hi Team,

I found one loopholes in aws_lb_target_group_attachment documentation, where target id defines instance id, but during launching new aws instance using ... | non_process | documentation link description hi team i found one loopholes in aws lb target group attachment documentation where target id defines instance id but during launching new aws instance using resource block we can t get id attribute as an output then how can we put target id in target attachmen... | 0 |

4,613 | 7,459,524,240 | IssuesEvent | 2018-03-30 15:40:56 | eobermuhlner/big-math | https://api.github.com/repos/eobermuhlner/big-math | closed | Prepare release 2.0.0 | development process | - [x] rename release note

- [x] change version in `gradle.build`

- [x] build and commit javadoc

- [ ] upload artifacts to maven central

- [x] uncomment task `uploadArchives` in `gradle.build`

- [x] run `./gradlew uploadArchives`

- [x] go to https://oss.sonatype.org/

- [x] in tab 'Staging Repositories... | 1.0 | Prepare release 2.0.0 - - [x] rename release note

- [x] change version in `gradle.build`

- [x] build and commit javadoc

- [ ] upload artifacts to maven central

- [x] uncomment task `uploadArchives` in `gradle.build`

- [x] run `./gradlew uploadArchives`

- [x] go to https://oss.sonatype.org/

- [x] in t... | process | prepare release rename release note change version in gradle build build and commit javadoc upload artifacts to maven central uncomment task uploadarchives in gradle build run gradlew uploadarchives go to in tab staging repositories locate own rep... | 1 |

64,928 | 18,960,828,811 | IssuesEvent | 2021-11-19 04:27:25 | vector-im/element-web | https://api.github.com/repos/vector-im/element-web | closed | Facebook's preview of Riot is massive | T-Defect P2 S-Minor S-Tolerable | I'm not sure if this is something that Riot can actually fix, but it is hilariously large for a preview:

| 1.0 | Facebook's preview of Riot is massive - I'm not sure if this is something that Riot can actually fix, but it is hilariously large for a preview:

| non_process | facebook s preview of riot is massive i m not sure if this is something that riot can actually fix but it is hilariously large for a preview | 0 |

7,449 | 10,558,142,139 | IssuesEvent | 2019-10-04 08:23:28 | prisma/photonjs | https://api.github.com/repos/prisma/photonjs | closed | Photon filters `in` prop missing `null` support | process/candidate | The Photon find filters like `StringFilter` have all properties optional with null support but the `in` and `notIn` have only `Enumerable<string>` optional type:

This conflict with generated GraphQL typ... | 1.0 | Photon filters `in` prop missing `null` support - The Photon find filters like `StringFilter` have all properties optional with null support but the `in` and `notIn` have only `Enumerable<string>` optional type:

* Wha... | 1.0 | fuse script puts EXPECT_FATAL_FAILURE into .cc file - ```

EXPECT_FATAL_FAILURE is a define in gtest-spi.h but is put into gtest-all.cc

file after fusing.

This requires to include .cc file into file where EXPECT_FATAL_FAILURE is used,

which is very strange. Also it makes impossible to use EXPECT_FATAL_FAILURE in

two... | non_process | fuse script puts expect fatal failure into cc file expect fatal failure is a define in gtest spi h but is put into gtest all cc file after fusing this requires to include cc file into file where expect fatal failure is used which is very strange also it makes impossible to use expect fatal failure in two... | 0 |

23 | 2,496,263,365 | IssuesEvent | 2015-01-06 18:14:51 | vivo-isf/vivo-isf-ontology | https://api.github.com/repos/vivo-isf/vivo-isf-ontology | closed | Cellular Pluripotency | biological_process imported | _From [rgar...@eagle-i.org](https://code.google.com/u/111247205719752845822/) on March 25, 2013 08:52:48_

\<b>**** Use the form below to request a new term ****</b>

\<b>**** Scroll down to see a term request example ****</b>

\<b>Please indicate the label for the proposed term:</b>

Cellular Pluripotency (child ... | 1.0 | Cellular Pluripotency - _From [rgar...@eagle-i.org](https://code.google.com/u/111247205719752845822/) on March 25, 2013 08:52:48_

\<b>**** Use the form below to request a new term ****</b>

\<b>**** Scroll down to see a term request example ****</b>

\<b>Please indicate the label for the proposed term:</b>

Cellu... | process | cellular pluripotency from on march use the form below to request a new term scroll down to see a term request example please indicate the label for the proposed term cellular pluripotency child of cell potency please provide a textual definition wi... | 1 |

27,342 | 5,340,798,105 | IssuesEvent | 2017-02-17 00:13:38 | scikit-image/scikit-image | https://api.github.com/repos/scikit-image/scikit-image | closed | Active contour does not document that snake length depends on input | difficulty: novice documentation | The output snake length is the same as the input boundary given--no points are added. This should be explicitly documented.

| 1.0 | Active contour does not document that snake length depends on input - The output snake length is the same as the input boundary given--no points are added. This should be explicitly documented.

| non_process | active contour does not document that snake length depends on input the output snake length is the same as the input boundary given no points are added this should be explicitly documented | 0 |

36,381 | 12,405,027,226 | IssuesEvent | 2020-05-21 16:32:12 | wrbejar/Nova8JavaVulnerable | https://api.github.com/repos/wrbejar/Nova8JavaVulnerable | opened | CVE-2018-1000632 (High) detected in dom4j-1.6.1.jar | security vulnerability | ## CVE-2018-1000632 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>dom4j-1.6.1.jar</b></p></summary>

<p>dom4j: the flexible XML framework for Java</p>

<p>Library home page: <a href="h... | True | CVE-2018-1000632 (High) detected in dom4j-1.6.1.jar - ## CVE-2018-1000632 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>dom4j-1.6.1.jar</b></p></summary>

<p>dom4j: the flexible XML f... | non_process | cve high detected in jar cve high severity vulnerability vulnerable library jar the flexible xml framework for java library home page a href path to vulnerable library target javavulnerablelab web inf lib jar depth target javavulnerablelab meta in... | 0 |

51,745 | 12,800,839,450 | IssuesEvent | 2020-07-02 17:54:01 | tomopy/tomopy | https://api.github.com/repos/tomopy/tomopy | closed | Building TomoPy from source on Windows | build question | I'm trying to build TomoPy from source on a fresh python 3.7 install (from python.org) in a Win10 environment. Running into some build issues and wondering if anybody can give me some tips? Here is where I am now:

1. I have installed MinGW and placed the binaries in my PATH following this procedure:

[https://www.... | 1.0 | Building TomoPy from source on Windows - I'm trying to build TomoPy from source on a fresh python 3.7 install (from python.org) in a Win10 environment. Running into some build issues and wondering if anybody can give me some tips? Here is where I am now:

1. I have installed MinGW and placed the binaries in my PATH f... | non_process | building tomopy from source on windows i m trying to build tomopy from source on a fresh python install from python org in a environment running into some build issues and wondering if anybody can give me some tips here is where i am now i have installed mingw and placed the binaries in my path follo... | 0 |

32,148 | 15,241,993,840 | IssuesEvent | 2021-02-19 09:15:40 | wave-harmonic/crest | https://api.github.com/repos/wave-harmonic/crest | closed | Bad performance on PS4 | performance | **Describe the bug**

Dear Crest community, I've ran a few performance tests today (main scene) on the major consoles and found out that Crest performs quite bad on the PS4. Even worse than on the Switch. Tested a few different versions, URP and HDRP and the verdict stays the same (check the screenshot). I remember hav... | True | Bad performance on PS4 - **Describe the bug**

Dear Crest community, I've ran a few performance tests today (main scene) on the major consoles and found out that Crest performs quite bad on the PS4. Even worse than on the Switch. Tested a few different versions, URP and HDRP and the verdict stays the same (check the sc... | non_process | bad performance on describe the bug dear crest community i ve ran a few performance tests today main scene on the major consoles and found out that crest performs quite bad on the even worse than on the switch tested a few different versions urp and hdrp and the verdict stays the same check the screen... | 0 |

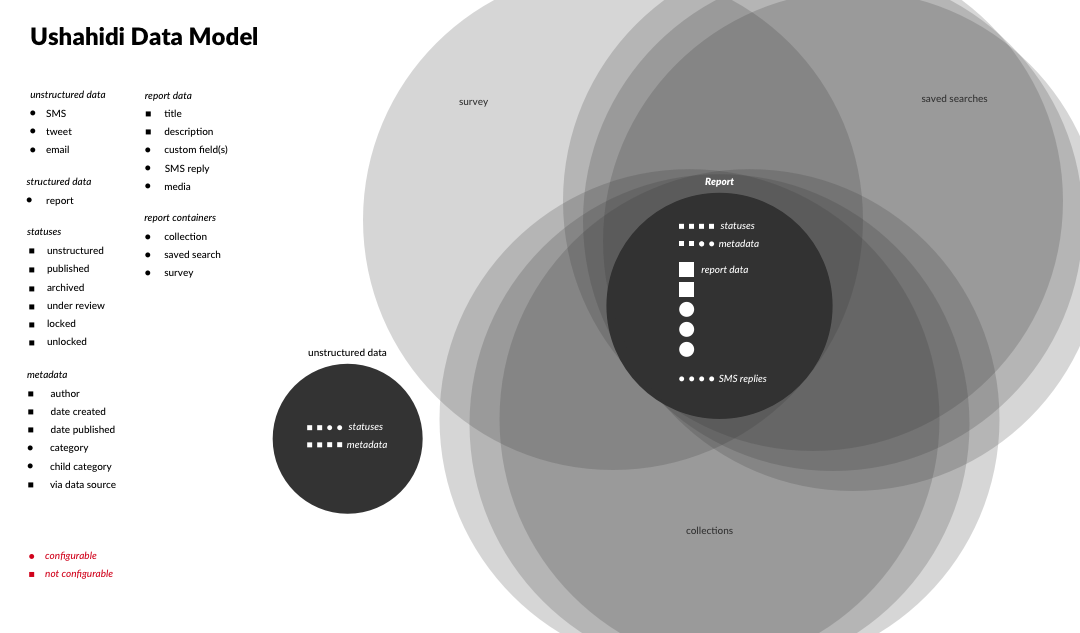

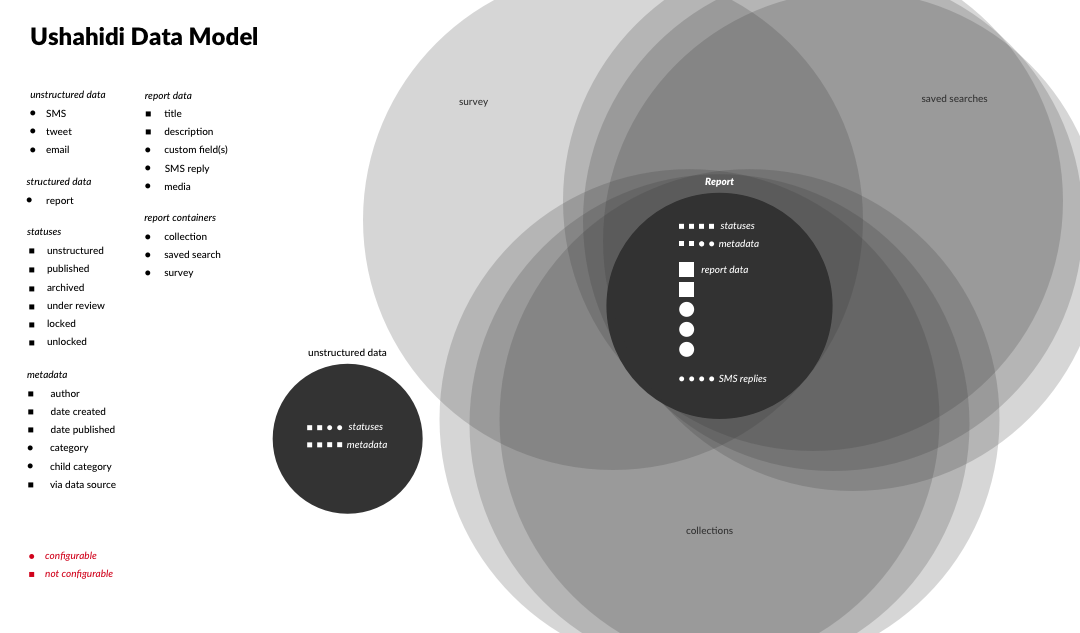

74,645 | 9,795,286,889 | IssuesEvent | 2019-06-11 02:58:48 | ushahidi/platform | https://api.github.com/repos/ushahidi/platform | closed | Data model diagram | Archived documentation | @rjmackay asked me to create a diagram of our data model as I see it now to discuss at the retreat.

Also in PDF form:

[diagram.pdf](https://github.com/ushahidi/platform/files/1591799/diagram.pdf)

I... | 1.0 | Data model diagram - @rjmackay asked me to create a diagram of our data model as I see it now to discuss at the retreat.

Also in PDF form:

[diagram.pdf](https://github.com/ushahidi/platform/files/1591... | non_process | data model diagram rjmackay asked me to create a diagram of our data model as i see it now to discuss at the retreat also in pdf form i tried to represent all the things data can be in the platform and how they relate to each other square things are user configurable circular things are no... | 0 |

153,508 | 13,507,323,137 | IssuesEvent | 2020-09-14 05:41:17 | SAP/fundamental-ngx | https://api.github.com/repos/SAP/fundamental-ngx | closed | error in platform checkbox exampes | bug documentation v.0.21.0 | #### Is this a bug, enhancement, or feature request?

bug

#### Briefly describe your proposal.

go to http://localhost:4200/fundamental-ngx#/platform/checkbox and check the console:

you will see

```

core.js:4196 ERROR Error: ExpressionChangedAfterItHasBeenCheckedError: Expression has changed after it was chec... | 1.0 | error in platform checkbox exampes - #### Is this a bug, enhancement, or feature request?

bug

#### Briefly describe your proposal.

go to http://localhost:4200/fundamental-ngx#/platform/checkbox and check the console:

you will see

```

core.js:4196 ERROR Error: ExpressionChangedAfterItHasBeenCheckedError: Exp... | non_process | error in platform checkbox exampes is this a bug enhancement or feature request bug briefly describe your proposal go to and check the console you will see core js error error expressionchangedafterithasbeencheckederror expression has changed after it was checked previous value ... | 0 |

15,744 | 19,910,559,692 | IssuesEvent | 2022-01-25 16:45:19 | input-output-hk/high-assurance-legacy | https://api.github.com/repos/input-output-hk/high-assurance-legacy | closed | Formally prove lemma `sidetrack_addition` | type: enhancement language: isabelle topic: process calculus | Our goal is to formally prove the `sidetrack_addition` lemma described in #65. | 1.0 | Formally prove lemma `sidetrack_addition` - Our goal is to formally prove the `sidetrack_addition` lemma described in #65. | process | formally prove lemma sidetrack addition our goal is to formally prove the sidetrack addition lemma described in | 1 |

264,861 | 8,320,394,535 | IssuesEvent | 2018-09-25 20:04:59 | webcompat/web-bugs | https://api.github.com/repos/webcompat/web-bugs | closed | internetpf.itau.com.br - see bug description | browser-firefox priority-normal | <!-- @browser: Firefox 63.0b9 -->

<!-- @ua_header: Mozilla/5.0 (Windows NT 10.0; Win64; x64; rv:63.0) Gecko/20100101 Firefox/63.0 -->

<!-- @reported_with: desktop-reporter -->

**URL**: https://internetpf.itau.com.br/banklinepf//GRIPNET/bklcom.dll

**Browser / Version**: Firefox 63.0b9

**Operating System**: Windows 10

... | 1.0 | internetpf.itau.com.br - see bug description - <!-- @browser: Firefox 63.0b9 -->

<!-- @ua_header: Mozilla/5.0 (Windows NT 10.0; Win64; x64; rv:63.0) Gecko/20100101 Firefox/63.0 -->

<!-- @reported_with: desktop-reporter -->

**URL**: https://internetpf.itau.com.br/banklinepf//GRIPNET/bklcom.dll

**Browser / Version**: F... | non_process | internetpf itau com br see bug description url browser version firefox operating system windows tested another browser yes problem type something else description i can not type the password in the window the window turns red and the keyboard does not work in this p... | 0 |

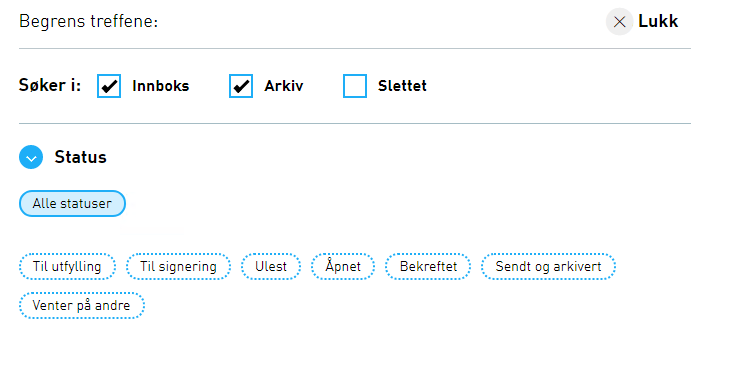

19,539 | 25,853,148,324 | IssuesEvent | 2022-12-13 11:51:14 | Altinn/altinn-storage | https://api.github.com/repos/Altinn/altinn-storage | closed | Extend advanced search to support search on status | kind/user-story solution/sbl area/search area/process area/platform-sbl-integration Epic tm/no | ## Description

Currently we only support "search in": inbox, archive and trash.

But there are also other statuses we can filter searches on.

We should look into supporting these

## Screenshots

... | 1.0 | Extend advanced search to support search on status - ## Description

Currently we only support "search in": inbox, archive and trash.

But there are also other statuses we can filter searches on.

We should look into supporting these

## Screenshots

| problem process test | This is a test issue that should (hopefully) demonstrate the bot. | 1.0 | Hi Igor (test issue to demonstrate how @privacyspy-bot handles our process) - This is a test issue that should (hopefully) demonstrate the bot. | process | hi igor test issue to demonstrate how privacyspy bot handles our process this is a test issue that should hopefully demonstrate the bot | 1 |

14,091 | 16,980,548,719 | IssuesEvent | 2021-06-30 08:17:54 | ESMValGroup/ESMValCore | https://api.github.com/repos/ESMValGroup/ESMValCore | closed | Time preprocessor uses numpy array instead of dask array | preprocessor |

The line is:

https://github.com/ESMValGroup/ESMValCore/blob/bcb384b4417a670d0b7e40c78be8bae05389df7d/esmvalcore/preprocessor/_time.py#L178

It is:

```

ones = np.ones_like(cube.data)

```

but it should be:

```

ones = da.ones(cube.data.shape)

```

Minor change, but this change helps me run a heavy... | 1.0 | Time preprocessor uses numpy array instead of dask array -

The line is:

https://github.com/ESMValGroup/ESMValCore/blob/bcb384b4417a670d0b7e40c78be8bae05389df7d/esmvalcore/preprocessor/_time.py#L178

It is:

```

ones = np.ones_like(cube.data)

```

but it should be:

```

ones = da.ones(cube.data.shape)... | process | time preprocessor uses numpy array instead of dask array the line is it is ones np ones like cube data but it should be ones da ones cube data shape minor change but this change helps me run a heavy recipe on jasmin | 1 |

2,440 | 5,219,714,801 | IssuesEvent | 2017-01-26 19:50:25 | wpninjas/ninja-forms | https://api.github.com/repos/wpninjas/ninja-forms | closed | New radio event before form submission. | Feature Request FRONT: Processing | The before:submit radio message is sent before fields are validated. I think that the expectation should be that this fires only after fields are valid and the form is ready to submit.

This should be moved to after the validation calls, and a new before:validation radio message should be added in the place that befo... | 1.0 | New radio event before form submission. - The before:submit radio message is sent before fields are validated. I think that the expectation should be that this fires only after fields are valid and the form is ready to submit.

This should be moved to after the validation calls, and a new before:validation radio mess... | process | new radio event before form submission the before submit radio message is sent before fields are validated i think that the expectation should be that this fires only after fields are valid and the form is ready to submit this should be moved to after the validation calls and a new before validation radio mess... | 1 |

225,931 | 17,931,142,071 | IssuesEvent | 2021-09-10 09:23:50 | input-output-hk/cardano-wallet | https://api.github.com/repos/input-output-hk/cardano-wallet | opened | Flaky test: `/CLI Specifications/SHELLEY_CLI_TRANSACTIONS/TRANS_DELETE_01` | Test failure | ### Please ensure:

- [X] This is actually a flaky test already present in the code and not caused by your PR.

### Context

https://github.com/input-output-hk/cardano-wallet/pull/2885#issuecomment-916761510

### Job name

https://buildkite.com/input-output-hk/cardano-wallet/builds/16551#ed15249e-3da0-4700-b514-8060721... | 1.0 | Flaky test: `/CLI Specifications/SHELLEY_CLI_TRANSACTIONS/TRANS_DELETE_01` - ### Please ensure:

- [X] This is actually a flaky test already present in the code and not caused by your PR.

### Context

https://github.com/input-output-hk/cardano-wallet/pull/2885#issuecomment-916761510

### Job name

https://buildkite.co... | non_process | flaky test cli specifications shelley cli transactions trans delete please ensure this is actually a flaky test already present in the code and not caused by your pr context job name test case name s cli specifications shelley cli transactions trans delete error message... | 0 |

49,724 | 7,531,249,155 | IssuesEvent | 2018-04-15 02:57:02 | awyand/tactical-mdm | https://api.github.com/repos/awyand/tactical-mdm | opened | Complete mockup/wireframe | documentation | Includes:

1. Screen-by-screen design layouts with annotations

2. Describe all UI/UX components and all data relevant to the screen | 1.0 | Complete mockup/wireframe - Includes:

1. Screen-by-screen design layouts with annotations

2. Describe all UI/UX components and all data relevant to the screen | non_process | complete mockup wireframe includes screen by screen design layouts with annotations describe all ui ux components and all data relevant to the screen | 0 |

17,011 | 22,386,215,533 | IssuesEvent | 2022-06-17 00:51:25 | figlesias221/ProyectoDevOps_Grupo3_IglesiasPerezMolinoloJuan | https://api.github.com/repos/figlesias221/ProyectoDevOps_Grupo3_IglesiasPerezMolinoloJuan | closed | Review Error de lógica en ResortPricingCalulator | task process | Cada product owner debe seguir como guía los escenarios descritos en Gherkin

Esfuerzo en HS-P (por persona):

Estimado: 1

Real: 1 (@matiasmolinolo ) | 1.0 | Review Error de lógica en ResortPricingCalulator - Cada product owner debe seguir como guía los escenarios descritos en Gherkin

Esfuerzo en HS-P (por persona):

Estimado: 1

Real: 1 (@matiasmolinolo ) | process | review error de lógica en resortpricingcalulator cada product owner debe seguir como guía los escenarios descritos en gherkin esfuerzo en hs p por persona estimado real matiasmolinolo | 1 |

6,877 | 10,014,606,770 | IssuesEvent | 2019-07-15 17:57:18 | googleapis/google-cloud-python | https://api.github.com/repos/googleapis/google-cloud-python | opened | Logging: systest teardown flakes with 404. | api: logging flaky testing type: process | From: https://source.cloud.google.com/results/invocations/91034a85-0df7-4b53-bba7-258e166bb3fe/targets/cloud-devrel%2Fclient-libraries%2Fgoogle-cloud-python%2Fpresubmit%2Flogging/log

```python

______________________ TestLogging.test_log_root_handler _______________________

self = <test_system.TestLogging testMetho... | 1.0 | Logging: systest teardown flakes with 404. - From: https://source.cloud.google.com/results/invocations/91034a85-0df7-4b53-bba7-258e166bb3fe/targets/cloud-devrel%2Fclient-libraries%2Fgoogle-cloud-python%2Fpresubmit%2Flogging/log

```python

______________________ TestLogging.test_log_root_handler _____________________... | process | logging systest teardown flakes with from python testlogging test log root handler self def teardown self retry retryerrors notfound toomanyrequests retryerror max tries for doomed in self to delete try ... | 1 |

15,900 | 20,106,264,525 | IssuesEvent | 2022-02-07 10:47:46 | ESMValGroup/ESMValCore | https://api.github.com/repos/ESMValGroup/ESMValCore | closed | Comparing several single model ensembles | enhancement preprocessor | Hi all,

two very common things that users will want to do in ESMValTool are:

1. Compare single model ensemble means.

For instance, I have a HadGEM2-ES 4 member ensemble and UKESM 12 member ensemble. I want to make some time series plots showing the time development of the two single-model ensemble means.

H... | 1.0 | Comparing several single model ensembles - Hi all,

two very common things that users will want to do in ESMValTool are:

1. Compare single model ensemble means.

For instance, I have a HadGEM2-ES 4 member ensemble and UKESM 12 member ensemble. I want to make some time series plots showing the time development of... | process | comparing several single model ensembles hi all two very common things that users will want to do in esmvaltool are compare single model ensemble means for instance i have a es member ensemble and ukesm member ensemble i want to make some time series plots showing the time development of the tw... | 1 |

626,320 | 19,807,691,371 | IssuesEvent | 2022-01-19 08:53:39 | IATI/ckanext-iati | https://api.github.com/repos/IATI/ckanext-iati | opened | Registry API package_search does not return data for xm-dac-3-1 | High priority Q1 | It was reported that the **package_search** does not return data when searching for publisher xm-dac-3-1. For other publishers, data is returned.

Registry API endpoint returning data: https://iatiregistry.org/api/3/action/package_search?q=publisher_iati_id:dk-cvr-26487013

Registry API endpoint that does not return ... | 1.0 | Registry API package_search does not return data for xm-dac-3-1 - It was reported that the **package_search** does not return data when searching for publisher xm-dac-3-1. For other publishers, data is returned.

Registry API endpoint returning data: https://iatiregistry.org/api/3/action/package_search?q=publisher_iat... | non_process | registry api package search does not return data for xm dac it was reported that the package search does not return data when searching for publisher xm dac for other publishers data is returned registry api endpoint returning data registry api endpoint that does not return data | 0 |

714,568 | 24,566,692,817 | IssuesEvent | 2022-10-13 04:11:52 | encorelab/ck-board | https://api.github.com/repos/encorelab/ck-board | opened | Create "Learner Model" UI | enhancement high priority | The Learner Model UI displays graphs of student data (some data manually entered by the teacher and some gathered from the CK Board).

1. For instance, we may add the Learner Model UI as part of the CK Student Monitor UI (below the task monitoring tools)

<img width="212" alt="Screen Shot 2022-10-12 at 11 29 13 PM" s... | 1.0 | Create "Learner Model" UI - The Learner Model UI displays graphs of student data (some data manually entered by the teacher and some gathered from the CK Board).

1. For instance, we may add the Learner Model UI as part of the CK Student Monitor UI (below the task monitoring tools)

<img width="212" alt="Screen Shot ... | non_process | create learner model ui the learner model ui displays graphs of student data some data manually entered by the teacher and some gathered from the ck board for instance we may add the learner model ui as part of the ck student monitor ui below the task monitoring tools img width alt screen shot ... | 0 |

478,895 | 13,787,752,326 | IssuesEvent | 2020-10-09 05:44:34 | wso2/product-is | https://api.github.com/repos/wso2/product-is | closed | [ISSUE] Script issues while source oracle scripts against oracle 12c and 19c with 5.9.0 latest wum | Priority/Highest Severity/Critical bug | **Environment**

wso2is-5.9.0+1585323544240.full

**Steps to Reproduce**

Source dbscripts/oracle.sql against oracle 12c and oracle 19c

**Observation**

Below errors were noticed

```

table REG_CLUSTER_LOCK created.

table REG_LOG created.

sequence REG_LOG_SEQUENCE created.

index REG_LOG_IND_BY_REGLOG cre... | 1.0 | [ISSUE] Script issues while source oracle scripts against oracle 12c and 19c with 5.9.0 latest wum - **Environment**

wso2is-5.9.0+1585323544240.full

**Steps to Reproduce**

Source dbscripts/oracle.sql against oracle 12c and oracle 19c

**Observation**

Below errors were noticed

```

table REG_CLUSTER_LOCK ... | non_process | script issues while source oracle scripts against oracle and with latest wum environment full steps to reproduce source dbscripts oracle sql against oracle and oracle observation below errors were noticed table reg cluster lock created table reg log created... | 0 |

4,026 | 6,961,369,508 | IssuesEvent | 2017-12-08 09:12:11 | nlbdev/pipeline | https://api.github.com/repos/nlbdev/pipeline | closed | braille CSS: translator / oversetter | bug pre-processing Priority:2 - Medium | (norwegian)

*from Trello-board*

NLB-CSS: title page: - «Translated by» should only appear when there is a translator. (Currently it also appears on books that have not been translated) | 1.0 | braille CSS: translator / oversetter - (norwegian)

*from Trello-board*

NLB-CSS: title page: - «Translated by» should only appear when there is a translator. (Currently it also appears on books that have not been translated) | process | braille css translator oversetter norwegian from trello board nlb css title page «translated by» should only appear when there is a translator currently it also appears on books that have not been translated | 1 |

21,207 | 6,132,364,777 | IssuesEvent | 2017-06-25 01:10:20 | ganeti/ganeti | https://api.github.com/repos/ganeti/ganeti | closed | gnt-instance modify --disk add:[...] always like --no-wait-for-sync | imported_from_google_code Status:WontFix | Originally reported on Google Code with ID 768.

```

What software version are you running? Please provide the output of "gnt-

cluster --version", "gnt-cluster version", and "hspace --version".

<b>What distribution are you using?</b>

root@node1 ~ # gnt-cluster --version

gnt-cluster (ganeti v2.9.5) 2.9.5

root@node... | 1.0 | gnt-instance modify --disk add:[...] always like --no-wait-for-sync - Originally reported on Google Code with ID 768.

```

What software version are you running? Please provide the output of "gnt-

cluster --version", "gnt-cluster version", and "hspace --version".

<b>What distribution are you using?</b>

root@node1 ~... | non_process | gnt instance modify disk add always like no wait for sync originally reported of google code with id what software version are you running please provide the output of gnt cluster version gnt cluster version and hspace version what distribution are you using root gnt cluster ... | 0 |

18,249 | 24,330,986,452 | IssuesEvent | 2022-09-30 19:23:27 | benthosdev/benthos | https://api.github.com/repos/benthosdev/benthos | closed | bug: http_client sends duplicate content-type header | bug processors inputs outputs | Just noticed while testing that when I have this line in my configuration:

```

output:

http_client:

headers:

Content-Type: application/json

```

I am seeing this on the receiving end:

```

2022-09-29T21:35:31-04:00 DBG Got request:

2022-09-29T21:35:31-04:00 DBG POST / HTTP/1.1

2022-09-29T... | 1.0 | bug: http_client sends duplicate content-type header - Just noticed while testing that when I have this line in my configuration:

```

output:

http_client:

headers:

Content-Type: application/json

```

I am seeing this on the receiving end:

```

2022-09-29T21:35:31-04:00 DBG Got request:

2022... | process | bug http client sends duplicat content type header just noticed this while testing that when i have this line in my configuration output http client headers content type application json i am seeing this on the receiving end dbg got request db... | 1 |

1,108 | 3,587,971,235 | IssuesEvent | 2016-01-30 18:09:20 | mesosphere/kubernetes-mesos | https://api.github.com/repos/mesosphere/kubernetes-mesos | closed | testing for v0.7.2-v1.1.5 release | process/release | - [x] test ubercontainer failover onto another host when the original host fails (see below)

- [x] pods should keep running on other hosts

- [x] pods on killed host should be restarted (if backed by rc)

- [x] dns resolution should keep working: in busybox container `nslookup kube-ui.kube-system.svc.cluster.local`... | 1.0 | testing for v0.7.2-v1.1.5 release - - [x] test ubercontainer failover onto another host when the original host fails (see below)

- [x] pods should keep running on other hosts

- [x] pods on killed host should be restarted (if backed by rc)

- [x] dns resolution should keep working: in busybox container `nslookup ku... | process | testing for release test ubercontainer failover onto another host when the original host fails see below pods should keep running on other hosts pods on killed host should be restarted if backed by rc dns resolution should keep working in busybox container nslookup kube ui kube... | 1 |

15,565 | 19,703,504,302 | IssuesEvent | 2022-01-12 19:08:03 | googleapis/java-talent | https://api.github.com/repos/googleapis/java-talent | opened | Your .repo-metadata.json file has a problem 🤒 | type: process repo-metadata: lint | You have a problem with your .repo-metadata.json file:

Result of scan 📈:

* release_level must be equal to one of the allowed values in .repo-metadata.json

* api_shortname 'talent' invalid in .repo-metadata.json

☝️ Once you correct these problems, you can close this issue.

Reach out to **go/github-automation** if ... | 1.0 | Your .repo-metadata.json file has a problem 🤒 - You have a problem with your .repo-metadata.json file:

Result of scan 📈:

* release_level must be equal to one of the allowed values in .repo-metadata.json

* api_shortname 'talent' invalid in .repo-metadata.json

☝️ Once you correct these problems, you can close this ... | process | your repo metadata json file has a problem 🤒 you have a problem with your repo metadata json file result of scan 📈 release level must be equal to one of the allowed values in repo metadata json api shortname talent invalid in repo metadata json ☝️ once you correct these problems you can close this ... | 1 |

1,118 | 3,592,220,050 | IssuesEvent | 2016-02-01 15:18:53 | coala-analyzer/coala | https://api.github.com/repos/coala-analyzer/coala | closed | File in SourcePosition should use `abspath` | difficulty/newcomer process/wip type/bug | The file in SourcePosition currently doesn't get normalized using abspath. This is bad because comparison between two SourceRanges wouldn't work: the path given may not be the same (although the files are the same).

Eg:

paths like `/a/b/c` and `/a/d/../b/c` are the same but SourceRange doesn't think they are t... | 1.0 | File in SourcePosition should use `abspath` - The file in SourcePosition currently doesn't get normalized using abspath. This is bad because comparison between two SourceRanges wouldn't work: the path given may not be the same (although the files are the same).

Eg:

paths like `/a/b/c` and `/a/d/../b/c` are the... | process | file in sourceposition should use abspath the file in sourceposition currently doesnt getnormalized using abspath this is bad because when comparison wouldnt work beterrn two sourceranges because the path given may not be the same although the files are same eg paths like a b c and a d b c are the... | 1 |
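The path mismatch described in this record can be illustrated with a short sketch. It assumes only the standard-library `os.path.abspath`; the helper name `same_file_path` is hypothetical and is not part of coala.

```python
import os.path


def same_file_path(path_a, path_b):
    """Compare two paths after normalizing them with abspath.

    abspath collapses components such as "d/.." and makes relative
    paths absolute, so equivalent spellings compare equal.
    """
    return os.path.abspath(path_a) == os.path.abspath(path_b)


raw_equal = "/a/b/c" == "/a/d/../b/c"
normalized_equal = same_file_path("/a/b/c", "/a/d/../b/c")
```

Note that `abspath` works purely on the path string; it does not resolve symbolic links, so two different spellings that reach the same file through a symlink would still compare unequal in this sketch.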

11,667 | 14,529,126,048 | IssuesEvent | 2020-12-14 17:23:54 | googleapis/google-api-dotnet-client | https://api.github.com/repos/googleapis/google-api-dotnet-client | closed | Broken User schema on latest Nuget version | priority: p1 type: process | The new nuget package [Google.Apis.Admin.Directory.directory_v1 version 1.49.0.2161](https://www.nuget.org/packages/Google.Apis.Admin.Directory.directory_v1/1.49.0.2161) for the directory API seems to have a broken User class:

https://github.com/googleapis/google-api-dotnet-client/blob/master/Src/Generated/Google.Ap... | 1.0 | Broken User schema on latest Nuget version - The new nuget package [Google.Apis.Admin.Directory.directory_v1 version 1.49.0.2161](https://www.nuget.org/packages/Google.Apis.Admin.Directory.directory_v1/1.49.0.2161) for the directory API seems to have a broken User class:

https://github.com/googleapis/google-api-dotn... | process | broken user schema on latest nuget version the new nuget package for the directory api seems to have a broken user class version csharp public virtual ilist organizations get set version broken csharp public virtual object organizations get se... | 1 |

21,722 | 30,229,791,743 | IssuesEvent | 2023-07-06 05:45:36 | MikaylaFischler/cc-mek-scada | https://api.github.com/repos/MikaylaFischler/cc-mek-scada | closed | Process Fuel Self Limiting | enhancement supervisor process control | The system should monitor fuel percentages and ramp down the setpoint until the fuel input rate is net positive. | 1.0 | Process Fuel Self Limiting - The system should monitor fuel percentages and ramp down the setpoint until the fuel input rate is net positive. | process | process fuel self limiting the system should monitor fuel percentages and ramp down the setpoint until the fuel input rate is net positive | 1 |

52,982 | 6,668,794,025 | IssuesEvent | 2017-10-03 17:00:30 | Esri/solutions-geoevent-java | https://api.github.com/repos/Esri/solutions-geoevent-java | closed | Transfer labels | 4 - Done A-bug A-feature A-question B-high B-low B-moderate C-L C-M C-S C-XL C-XS E-as designed E-duplicate E-invalid E-no count E-non reproducible E-verified E-won't fix FT-Workflows G-Design G-Development G-Documentation G-Documentation Review G-Research G-Testing HP-Candidate HP-HotFix HP-Patch priority - Showstoppe... | _From @lfunkhouser on October 2, 2017 15:24_

This issue is used to transfer issues to another repo

_Copied from original issue: Esri/solutions-grg-widget#119_ | 2.0 | Transfer labels - _From @lfunkhouser on October 2, 2017 15:24_

This issue is used to transfer issues to another repo

_Copied from original issue: Esri/solutions-grg-widget#119_ | non_process | transfer labels from lfunkhouser on october this issue is used to transfer issues to another repo copied from original issue esri solutions grg widget | 0 |

19,824 | 4,443,140,749 | IssuesEvent | 2016-08-19 15:42:57 | TEIC/TEI | https://api.github.com/repos/TEIC/TEI | closed | Make @select a bit more generalized | Status: Go TEI: Guidelines & Documentation TEI: Schema Type: FeatureRequest | The note on the `@select` attribute at <http://www.tei-c.org/release/doc/tei-p5-doc/en/html/ref-att.global.linking.html> says

>This attribute should be placed on an element which is superordinate to all of the alternants from which the selection is being made.

It would be useful, however, to be able to use `@sele... | 1.0 | Make @select a bit more generalized - The note on the `@select` attribute at <http://www.tei-c.org/release/doc/tei-p5-doc/en/html/ref-att.global.linking.html> says

>This attribute should be placed on an element which is superordinate to all of the alternants from which the selection is being made.

It would be use... | non_process | make select a bit more generalized the note on the select attribute at says this attribute should be placed on an element which is superordinate to all of the alternants from which the selection is being made it would be useful however to be able to use select as the converse of exclude specif... | 0 |

314,138 | 23,508,128,003 | IssuesEvent | 2022-08-18 14:15:37 | timescale/docs | https://api.github.com/repos/timescale/docs | closed | [Docs RFC] Update example of finding job_id in decompress section | documentation enhancement community | # Describe change in content, appearance, or functionality

The example given to find a job ID in the decompression section is outdated. Update accordingly.

# Subject matter expert (SME)

@noctarius

# Deadline

[When does this need to be addressed]

# Any further info

[Link to Community Slack thread](... | 1.0 | [Docs RFC] Update example of finding job_id in decompress section - # Describe change in content, appearance, or functionality

The example given to find a job ID in the decompression section is outdated. Update accordingly.

# Subject matter expert (SME)

@noctarius

# Deadline

[When does this need to be ... | non_process | update example of finding job id in decompress section describe change in content appearance or functionality the example given to find a job id in the decompression section is outdated update accordingly subject matter expert sme noctarius deadline any further info ... | 0 |

116,347 | 11,907,854,379 | IssuesEvent | 2020-03-30 23:19:27 | maryoohhh/homeproj1 | https://api.github.com/repos/maryoohhh/homeproj1 | closed | Update README.md to create instructions on how to clone a repository | documentation | You can also explain what is happening when you clone a repository to your local machine.

Recap:

Why did we create a `dev` directory?

- to keep things organized

what is the `mkdir` command?

- command in Unix used to make a new directory.

what is the `cd` command?

- command in Unix used to make a change to a n... | 1.0 | Update README.md to create instructions on how to clone a repository - You can also explain what is happening when you clone a repository to your local machine.

Recap:

Why did we create a `dev` directory?

- to keep things organized

what is the `mkdir` command?

- command in Unix used to make a new directory.

wh... | non_process | update readme md to create instructions on how to clone a repository you can also explain what is happening when you clone a repository to your local machine recap why did we create a dev directory to keep things organized what is the mkdir command command in unix used to make a new directory wh... | 0 |

67,330 | 20,961,606,045 | IssuesEvent | 2022-03-27 21:48:15 | abedmaatalla/sipdroid | https://api.github.com/repos/abedmaatalla/sipdroid | closed | Contact integration | Priority-Medium Type-Defect auto-migrated | ```

I would like sipdroid to have a menu toggle where the user can choose

whether or not he wants dialer contact list integration.

Most of the time it is troublesome because the dialer list is mostly local mobile

work, friends and family, while sipdroid contacts are people in other countries

and viber ... | 1.0 | Contact integration - ```

I would like sipdroid to have a menu toggle where the user can choose

whether or not he wants dialer contact list integration.

Most of the time it is troublesome because the dialer list is mostly local mobile

work, friends and family, while sipdroid contacts are people in other ... | non_process | contact integration i would like sipdroid to have a menu toggle point where the user can choose if he want or do not want dialer contact list integration or not most of the time it is troublesome because dialer list is mostly local mobile work friend and family while sipdroid contacts are people in other ... | 0 |

8,832 | 11,943,877,762 | IssuesEvent | 2020-04-03 00:38:19 | googleapis/python-storage | https://api.github.com/repos/googleapis/python-storage | closed | Update shared conformance tests | api: storage testing type: process | ~~We need to add a test suite which exercises the cross-language tests and fix anything where we don't pass.~~

We need to *update* the shared conformance tests to match the current spec:

- [Proto spec for the testcase file](https://github.com/googleapis/conformance-tests/blob/master/storage/v1/proto/google/cloud/... | 1.0 | Update shared conformance tests - ~~We need to add a test suite which exercises the cross-language tests and fix anything where we don't pass.~~

We need to *update* the shared conformance tests to match the current spec:

- [Proto spec for the testcase file](https://github.com/googleapis/conformance-tests/blob/mas... | process | update shared conformance tests we need to add a test suite which exercises the cross language tests and fix anything where we don t pass we need to update the shared conformance tests to match the current spec cc frankyn | 1 |

17,469 | 23,294,937,068 | IssuesEvent | 2022-08-06 12:14:18 | apache/arrow-datafusion | https://api.github.com/repos/apache/arrow-datafusion | closed | Lint check fails on master: apache-rat license violation: js/test.ts | bug development-process | **Describe the bug**

A CI check started failing on master:

https://github.com/apache/arrow-datafusion/runs/7698061920?check_suite_focus=true

```

8s

Run archery lint --rat

INFO:archery:Running apache-rat linter

apache-rat license violation: js/test.ts

Error: Process completed with exit code [1](https://github... | 1.0 | Lint check fails on master: apache-rat license violation: js/test.ts - **Describe the bug**

A CI check started failing on master:

https://github.com/apache/arrow-datafusion/runs/7698061920?check_suite_focus=true

```

8s

Run archery lint --rat

INFO:archery:Running apache-rat linter

apache-rat license violation:... | process | lint check fails on master apache rat license violation js test ts describe the bug a ci check stated failing on master run archery lint rat info archery running apache rat linter apache rat license violation js test ts error process completed with exit code to reproduce ... | 1 |

3,619 | 2,694,875,089 | IssuesEvent | 2015-04-01 23:03:56 | mozilla/webmaker-app | https://api.github.com/repos/mozilla/webmaker-app | closed | Create a help / FAQ / Step by Step | design needs discussion | From Bangladesh,

- Users would like a section where there is help, a step by step approach, a FAQ.

" I would love if it guides me when I make my app "

@flukeout @thisandagain @k88hudson | 1.0 | Create a help / FAQ / Step by Step - From Bangladesh,

- Users would like a section where there is help, a step by step approach, a FAQ.

" I would love if it guides me when I make my app "

@flukeout @thisandagain @k88hudson | non_process | create a help faq step by step from bangladesh users would like a section where there is help a step by step approach a faq i would love if it guides me when i make my app flukeout thisandagain | 0 |

14,018 | 2,789,853,620 | IssuesEvent | 2015-05-08 21:56:02 | google/google-visualization-api-issues | https://api.github.com/repos/google/google-visualization-api-issues | opened | Motion chart hangs when you select few countries and play with trails enabled. | Priority-Medium Type-Defect | Original [issue 308](https://code.google.com/p/google-visualization-api-issues/issues/detail?id=308) created by orwant on 2010-06-07T05:50:57.000Z:

Hi,

The issue is with Google Motion Chart trails option.

Here i have more than 3000 rows for datatable.

steps:

Select few countries. say 5 countries in motion char... | 1.0 | Motion chart hangs when you select few countries and play with trails enabled. - Original [issue 308](https://code.google.com/p/google-visualization-api-issues/issues/detail?id=308) created by orwant on 2010-06-07T05:50:57.000Z:

Hi,

The issue is with Google Motion Chart trails option.

Here i have more than 3000 ro... | non_process | motion chart hangs when you select few countries and play with trails enabled original created by orwant on hi the issue is with google motion chart trails option here i have more than rows for datatable steps select few countries say countries in motion chart enable trails optio... | 0 |

8,811 | 6,660,493,041 | IssuesEvent | 2017-10-02 00:46:01 | apache/incubator-mxnet | https://api.github.com/repos/apache/incubator-mxnet | closed | ImageIter is very slow when preprocessing cifar10 in windows | Data-loading Performance | ## Environment info

Operating System:Windows 10

Package used (Python/R/Scala/Julia):Python

MXNet version:0.11(20170930 from [this](https://github.com/yajiedesign/mxnet/releases))

Python version and distribution:Python 3.6.2 Anaconda 4.4.0

CPU: Core i5 6500

GPU: 1080Ti

## Error Message:

### ImageIter is very slo... | True | ImageIter is very slow when preprocessing cifar10 in windows - ## Environment info

Operating System:Windows 10

Package used (Python/R/Scala/Julia):Python

MXNet version:0.11(20170930 from [this](https://github.com/yajiedesign/mxnet/releases))

Python version and distribution:Python 3.6.2 Anaconda 4.4.0

CPU: Core i5 ... | non_process | imageiter is very slow when preprocessing in windows environment info operating system windows package used python r scala julia python mxnet version from python version and distribution python anaconda cpu core gpu error message imageiter is very slow when prepr... | 0 |

18,083 | 12,532,980,025 | IssuesEvent | 2020-06-04 16:48:31 | elastic/kibana | https://api.github.com/repos/elastic/kibana | closed | [ML] Deleting multiple custom-rules requires lots of clicks | :ml Feature:Anomaly Detection usability v7.9.0 | **Kibana version:**

6.4.0

**Describe the bug:**

The "Edit Rule" panel closes after deleting one rule, giving a poor user experience when trying to delete multiple rules.

**Steps to reproduce:**

1. Create job e.g. mean(metricvalue) by metricname

2. Add multiple rules to a single detector

3. Then try to ... | True | [ML] Deleting multiple custom-rules requires lots of clicks - **Kibana version:**

6.4.0

**Describe the bug:**

The "Edit Rule" panel closes after deleting one rule, giving a poor user experience when trying to delete multiple rules.

**Steps to reproduce:**

1. Create job e.g. mean(metricvalue) by metricname... | non_process | deleting multiple custom rules requires lots of clicks kibana version describe the bug the edit rule panel closes after deleting one rule giving a poor user experience when trying to delete multiple rules steps to reproduce create job e g mean metricvalue by metricname ... | 0 |

9,856 | 12,856,115,211 | IssuesEvent | 2020-07-09 06:59:21 | qgis/QGIS | https://api.github.com/repos/qgis/QGIS | closed | Add a Rescale rasters alg | Feature Request Processing | Author Name: **Paolo Cavallini** (@pcav)

Original Redmine Issue: [18208](https://issues.qgis.org/issues/18208)

Redmine category:analysis_library

---

It would be useful to have a new alg rescaling raster values to percent of max.

This would be useful e.g. for probability models, so that we can easily draw lines enco... | 1.0 | Add a Rescale rasters alg - Author Name: **Paolo Cavallini** (@pcav)

Original Redmine Issue: [18208](https://issues.qgis.org/issues/18208)

Redmine category:analysis_library

---

It would be useful to have a new alg rescaling raster values to percent of max.

This would be useful e.g. for probability models, so that w... | process | add a rescale rasters alg author name paolo cavallini pcav original redmine issue redmine category analysis library it would be useful to have a new alg rescaling raster values to percent of max this would be useful e g for probability models so that we can easily draw lines encompassing a cer... | 1 |
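The rescaling requested in this record (values expressed as a percentage of the raster maximum) can be sketched in plain Python. This is only an illustration of the arithmetic, not a QGIS Processing algorithm: the function name, the nested-list raster representation, and the `nodata` handling are all assumptions, and cell values are assumed to be non-negative.

```python
def rescale_to_percent_of_max(raster, nodata=None):
    """Return a copy of `raster` with each cell as a percentage of the maximum.

    `raster` is a list of rows of numbers. Cells equal to `nodata`
    are ignored when finding the maximum and are passed through unchanged.
    """
    valid = [v for row in raster for v in row if v != nodata]
    if not valid:
        raise ValueError("raster has no valid cells")
    peak = max(valid)
    if peak == 0:
        raise ValueError("maximum is zero, cannot rescale")
    return [
        [v if v == nodata else 100.0 * v / peak for v in row]
        for row in raster
    ]


rescaled = rescale_to_percent_of_max([[1, 2], [4, 0]])
with_gaps = rescale_to_percent_of_max([[5, -9999], [10, 0]], nodata=-9999)
```

With the output in percent, a contour at a fixed level (for example 50) corresponds to half of the maximum value regardless of the original units.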

3,201 | 6,262,322,689 | IssuesEvent | 2017-07-15 09:21:42 | nodejs/node | https://api.github.com/repos/nodejs/node | closed | RFE: A way to spawn a foreground process | child_process feature request | I have a module called `foreground-child` that will spawn a child process with inherited stdio, and proxy signals to it, and exit appropriately when the child exits.

However, since it's not _actually_ possible to do an `execvp` without a `fork` in Node, it's always going to be a little bit of a kludge. Because it's... | 1.0 | RFE: A way to spawn a foreground process - I have a module called `foreground-child` that will spawn a child process with inherited stdio, and proxy signals to it, and exit appropriately when the child exits.

However, since it's not _actually_ possible to do an `execvp` without a `fork` in Node, it's always going to... | process | rfe a way to spawn a foreground process i have a module called foreground child that will spawn a child process with inherited stdio and proxy signals to it and exit appropriately when the child exits however since it s not actually possible to do an execvp without a fork in node it s always going to... | 1 |
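The workaround described in this record (spawn a child with inherited stdio, proxy signals to it, and report its exit status) can be sketched in Python for illustration. This is a sketch of the general pattern under stated assumptions, not the `foreground-child` module's implementation: `run_foreground` is a hypothetical name, only SIGINT and SIGTERM are forwarded, and the function must be called from the main thread because it installs signal handlers.

```python
import signal
import subprocess
import sys


def run_foreground(argv):
    """Run `argv` as a child with inherited stdio and return its exit code.

    SIGINT and SIGTERM received by the parent are forwarded to the child
    while it runs; the previous handlers are restored afterwards.
    """
    # With no stdin/stdout/stderr arguments, Popen lets the child
    # inherit the parent's standard streams.
    child = subprocess.Popen(argv)

    def forward(signum, _frame):
        child.send_signal(signum)

    previous = {}
    for signum in (signal.SIGINT, signal.SIGTERM):
        previous[signum] = signal.signal(signum, forward)
    try:
        return child.wait()
    finally:
        for signum, handler in previous.items():
            signal.signal(signum, handler)


exit_code = run_foreground([sys.executable, "-c", "import sys; sys.exit(3)"])
```

A caller would typically finish with `sys.exit(run_foreground(...))`. As the record points out, this remains a wrapper around a forked child rather than a true `execvp` replacement of the current process.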

724,432 | 24,930,624,150 | IssuesEvent | 2022-10-31 11:17:52 | openhab/openhab-android | https://api.github.com/repos/openhab/openhab-android | closed | "Info ssl client cert" tooltip has bright background in dark themes | bug Priority: Low | <!-- Please search the issue, if there is one with your issue -->

### Actual behaviour

"Info ssl client cert" tooltip has bright background in dark themes, which makes the tooltip hard to read.

### Expected behaviour

"Info ssl client cert" tooltip should have a dark background.

### Environment data

####... | 1.0 | "Info ssl client cert" tooltip has bright background in dark themes - <!-- Please search the issue, if there is one with your issue -->

### Actual behaviour

"Info ssl client cert" tooltip has bright background in dark themes, which makes the tooltip hard to read.

### Expected behaviour

"Info ssl client cert" ... | non_process | info ssl client cert tooltip has bright background in dark themes actual behaviour info ssl client cert tooltip has bright background in dark themes which makes the tooltip hard to read expected behaviour info ssl client cert tooltip should have a dark background environment data... | 0 |

11,056 | 13,889,350,474 | IssuesEvent | 2020-10-19 07:46:31 | zerolab-fe/awesome-nodejs | https://api.github.com/repos/zerolab-fe/awesome-nodejs | closed | supervisor | Process management | Fill in the package name in the 👆 Title field, and add the information below:

```json

{

"repoUrl": "https://github.com/petruisfan/node-supervisor",

"description": "Watches for file changes and restarts automatically"

}

```

| 1.0 | supervisor - Fill in the package name in the 👆 Title field, and add the information below:

```json

{

"repoUrl": "https://github.com/petruisfan/node-supervisor",

"description": "Watches for file changes and restarts automatically"

}

```

| process | supervisor fill in the package name in the 👆 title field and add the information below json repourl description watches for file changes and restarts automatically | 1 |

6,988 | 10,134,428,729 | IssuesEvent | 2019-08-02 07:30:07 | cypress-io/cypress | https://api.github.com/repos/cypress-io/cypress | opened | Add Cypress to GitHub package registry | process: release stage: ready for work type: chore | Apparently it's a thing. How many people will use it 🤷♀

Instructions: https://help.github.com/en/articles/configuring-npm-for-use-with-github-package-registry | 1.0 | Add Cypress to GitHub package registry - Apparently it's a thing. How many people will use it 🤷♀

Instructions: https://help.github.com/en/articles/configuring-npm-for-use-with-github-package-registry | process | add cypress to github package registry apparently it s a thing how many people will use it 🤷♀ instructions | 1 |

142,464 | 19,090,531,198 | IssuesEvent | 2021-11-29 11:34:14 | sultanabubaker/NuGet_Project_SDK_NonSDK | https://api.github.com/repos/sultanabubaker/NuGet_Project_SDK_NonSDK | opened | CVE-2017-0247 (High) detected in system.text.encodings.web.4.0.0.nupkg | security vulnerability | ## CVE-2017-0247 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>system.text.encodings.web.4.0.0.nupkg</b></p></summary>

<p>Provides types for encoding and escaping strings for use in ... | True | CVE-2017-0247 (High) detected in system.text.encodings.web.4.0.0.nupkg - ## CVE-2017-0247 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>system.text.encodings.web.4.0.0.nupkg</b></p></... | non_process | cve high detected in system text encodings web nupkg cve high severity vulnerability vulnerable library system text encodings web nupkg provides types for encoding and escaping strings for use in javascript hypertext markup language h library home page a href path t... | 0 |

7,273 | 10,426,808,618 | IssuesEvent | 2019-09-16 18:27:19 | qgis/QGIS | https://api.github.com/repos/qgis/QGIS | closed | pgdump is no longer a recognized format for the GDAL/OGR convert format algorithm | Feature Request Processing | The option to define the output format is normally given by the extension of the output file.

It is no longer possible to specify 'PgDump' as the output format (-f PGDump).

| 1.0 | pgdump is no longer a recognized format for the GDAL/OGR convert format algorithm - The option to define the output format is normally given by the extension of the output file.

It is no longer possible to specify 'PgDump' as the output format (-f PGDump).

| process | pgdump is no longer a recognized format for the gdal ogr convert format algorithm the option to define the output format is normally given by the extension of the output file it is no longer possible to specify pgdump as the output format f pgdump | 1 |

53,085 | 13,260,877,196 | IssuesEvent | 2020-08-20 18:54:58 | icecube-trac/tix4 | https://api.github.com/repos/icecube-trac/tix4 | closed | SLALIB/C needs a real makefile (Trac #677) | Migrated from Trac defect tools/ports | makefile is currently a PoS. needs to be re-done with proper make constructs, and variables. (ie: CC, CFLAGS)

<details>

<summary><em>Migrated from <a href="https://code.icecube.wisc.edu/projects/icecube/ticket/677">https://code.icecube.wisc.edu/projects/icecube/ticket/677</a>, reported by nega and owned by nega</em></... | 1.0 | SLALIB/C needs a real makefile (Trac #677) - makefile is currently a PoS. needs to be re-done with proper make constructs, and variables. (ie: CC, CFLAGS)

<details>

<summary><em>Migrated from <a href="https://code.icecube.wisc.edu/projects/icecube/ticket/677">https://code.icecube.wisc.edu/projects/icecube/ticket/677<... | non_process | slalib c needs a real makefile trac makefile is currently a pos needs to be re done with proper make constructs and variables ie cc cflags migrated from json status closed changetime ts description makefile is currently a pos needs to be... | 0 |

21,981 | 30,472,628,225 | IssuesEvent | 2023-07-17 14:31:29 | USGS-WiM/StreamStats | https://api.github.com/repos/USGS-WiM/StreamStats | closed | Add refresh button to Batch Status and Manage Queue tabs | Batch Processor | Add a refresh button to the Batch Status and Manage Queue tabs so that the user can easily see the latest queue/results | 1.0 | Add refresh button to Batch Status and Manage Queue tabs - Add a refresh button to the Batch Status and Manage Queue tabs so that the user can easily see the latest queue/results | process | add refresh button to batch status and manage queue tabs add a refresh button to the batch status and manage queue tabs so that the user can easily see the latest queue results | 1 |

53,588 | 28,299,291,257 | IssuesEvent | 2023-04-10 03:28:22 | ClickHouse/ClickHouse | https://api.github.com/repos/ClickHouse/ClickHouse | opened | Use `number` as an index for `system.numbers` | feature performance | **Use case**

People want to write queries as follows:

```

SELECT number FROM system.numbers WHERE number BETWEEN 10 AND 100;

SELECT number FROM system.numbers WHERE number IN (123, 456);

```

and expect this query to be smart enough to read only requested ranges.

**Describe the solution you'd like**

Determ... | True | Use `number` as an index for `system.numbers` - **Use case**

People want to write queries as follows:

```

SELECT number FROM system.numbers WHERE number BETWEEN 10 AND 100;

SELECT number FROM system.numbers WHERE number IN (123, 456);

```

and expect this query to be smart enough to read only requested ranges.

... | non_process | use number as an index for system numbers use case people want to write queries as follows select number from system numbers where number between and select number from system numbers where number in and expect this query to be smart enough to read only requested ranges des... | 0 |
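
The request in the ClickHouse issue above (reading only the requested ranges of `system.numbers`) can be illustrated with a small Python sketch. This is not ClickHouse's implementation; the function names `ranges_for_between`, `ranges_for_in`, and `read_numbers` are hypothetical and only show how simple predicates on `number` could be turned into inclusive ranges before any values are generated.

```python
from typing import Iterable, Iterator, List, Tuple

# Inclusive range [lo, hi] of values to produce (hypothetical representation).
Range = Tuple[int, int]


def ranges_for_between(lo: int, hi: int) -> List[Range]:
    """Ranges matching `number BETWEEN lo AND hi` (empty if lo > hi)."""
    lo = max(lo, 0)  # system.numbers starts at zero
    return [(lo, hi)] if lo <= hi else []


def ranges_for_in(values: Iterable[int]) -> List[Range]:
    """Ranges matching `number IN (...)`, merging consecutive values."""
    result: List[Range] = []
    for v in sorted(set(values)):
        if v < 0:
            continue  # negative values can never match an unsigned column
        if result and result[-1][1] + 1 == v:
            result[-1] = (result[-1][0], v)  # extend the previous range
        else:
            result.append((v, v))
    return result


def read_numbers(ranges: List[Range]) -> Iterator[int]:
    """Generate only the numbers inside the given ranges."""
    for lo, hi in ranges:
        yield from range(lo, hi + 1)


if __name__ == "__main__":
    print(list(read_numbers(ranges_for_in([123, 456]))))
    print(len(list(read_numbers(ranges_for_between(10, 100)))))
```

With this shape, a query such as `WHERE number IN (123, 456)` would touch two values instead of scanning an unbounded sequence.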

432,805 | 30,295,598,570 | IssuesEvent | 2023-07-09 20:21:35 | kopia/kopia | https://api.github.com/repos/kopia/kopia | closed | Can you make a video explaining the retention policies in more detail | help wanted question onboarding-experience documentation stale | I'm not sure I understand the retention policies and how they apply to backups and what it means when selecting 4 weekly, 4 monthly etc...

How do these retention policies affect backups? | 1.0 | Can you make a video explaining the retention policies in more detail - I'm not sure I understand the retention policies and how they apply to backups and what it means when selecting 4 weekly, 4 monthly etc...

How do these retention policies affect backups? | non_process | can you make a video explaining the retention policies in more detail i m not sure i understand the retention policies and how they apply to backups and what it means when selecting weekly monthly etc how do these retention policies affect backups | 0 |

16,329 | 20,985,764,704 | IssuesEvent | 2022-03-29 02:51:38 | qgis/QGIS | https://api.github.com/repos/qgis/QGIS | closed | GDAL: "Clip vector by mask layer" takes forever as it does not seem to consider the mask layer's extent | Feedback stale Processing Bug | ### What is the bug or the crash?

I've been testing the "Clip vector by mask layer" and "Clip vector by extent" tools of the GDAL processing suite and encountered very long processing times (>10 min) with "Clip vector by mask layer", which could easily be avoided by considering the clipped layer's extent. I was usin... | 1.0 | GDAL: "Clip vector by mask layer" takes forever as it does not seem to consider the mask layer's extent - ### What is the bug or the crash?

I've been testing the "Clip vector by mask layer" and "Clip vector by extent" tools of the GDAL processing suite and encountered very long processing times (>10 min) with "Clip... | process | gdal clip vector by mask layer takes forever as it does not seem to consider the mask layer s extent what is the bug or the crash i ve been testing the clip vector by mask layer and the clip vector by extent tool of the gdal processing suite i encountered very long processing times min with clip ... | 1 |
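
The optimization suggested in the QGIS issue above, discarding features that cannot intersect the mask before doing any expensive clipping, can be sketched in plain Python. This is an illustration under assumptions, not GDAL or QGIS code: features are modeled as dictionaries with a precomputed `bbox` entry, and `bboxes_intersect` and `prefilter_by_extent` are hypothetical helper names.

```python
from typing import Dict, List, Tuple

# Bounding box as (xmin, ymin, xmax, ymax); an assumed, simplified model.
BBox = Tuple[float, float, float, float]


def bboxes_intersect(a: BBox, b: BBox) -> bool:
    """Return True if two axis-aligned bounding boxes overlap or touch."""
    return a[0] <= b[2] and b[0] <= a[2] and a[1] <= b[3] and b[1] <= a[3]


def prefilter_by_extent(features: List[Dict], mask_extent: BBox) -> List[Dict]:
    """Keep only features whose bounding box meets the mask layer's extent.

    Only the surviving features would then be passed to the exact
    (and much slower) geometric clip against the mask.
    """
    return [f for f in features if bboxes_intersect(f["bbox"], mask_extent)]


if __name__ == "__main__":
    features = [
        {"name": "inside", "bbox": (1.0, 1.0, 2.0, 2.0)},
        {"name": "far_away", "bbox": (50.0, 50.0, 60.0, 60.0)},
        {"name": "overlapping", "bbox": (9.0, 9.0, 12.0, 12.0)},
    ]
    mask_extent = (0.0, 0.0, 10.0, 10.0)
    for feature in prefilter_by_extent(features, mask_extent):
        print(feature["name"])
```

The bounding-box test is a cheap necessary condition only; a feature that passes it may still lie outside the mask geometry, so the exact clip remains required for the candidates that are kept.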

95,901 | 8,580,702,762 | IssuesEvent | 2018-11-13 12:47:41 | sozu-proxy/sozu | https://api.github.com/repos/sozu-proxy/sozu | closed | new configuration messages: add/remove listeners | Configuration enhancement needs testing | the way TCP listeners are managed right now is slightly annoying:

- HTTP and HTTPS proxy can only listen on one socket (this is not a very big deal, most of the time we'll only have HTTP on `0.0.0.0:80` and HTTPS on `0.0.0.0:443`)

- TCP listeners are linked to TCP fronts, so there's a special case to register a lis... | 1.0 | new configuration messages: add/remove listeners - the way TCP listeners are managed right now is slightly annoying:

- HTTP and HTTPS proxy can only listen on one socket (this is not a very big deal, most of the time we'll only have HTTP on `0.0.0.0:80` and HTTPS on `0.0.0.0:443`)

- TCP listeners are linked to TCP ... | non_process | new configuration messages add remove listeners the way tcp listeners are managed right now is slightly annoying http and https proxy can only listen on one socket this is not a very big deal most of the time we ll only have http on and https on tcp listeners are linked to tcp fro... | 0 |

71,458 | 8,657,041,522 | IssuesEvent | 2018-11-27 20:09:00 | cityofaustin/techstack | https://api.github.com/repos/cityofaustin/techstack | closed | Janis v2 Design: breakpoint exploration | Janis 2.0 Resident Interface Size: M Team: Design + Research | Work on meeting USWDS 2.0 [break point criteria](https://v2.designsystem.digital.gov/utilities/layout-grid/):

- [ ] Mobile large ≥480px

- [ ] Tablet ≥640px

- [ ] Desktop ≥ 1024px

| 1.0 | Janis v2 Design: breakpoint exploration - Work on meeting USWDS 2.0 [break point criteria](https://v2.designsystem.digital.gov/utilities/layout-grid/):

- [ ] Mobile large ≥480px

- [ ] Tablet ≥640px

- [ ] Desktop ≥ 1024px

| non_process | janis design breakpoint exploration work on meeting uswds mobile large ≥ tablet ≥ desktop ≥ | 0 |

15,459 | 19,720,539,191 | IssuesEvent | 2022-01-13 14:59:37 | pnp/sp-dev-fx-webparts | https://api.github.com/repos/pnp/sp-dev-fx-webparts | closed | react-birthdays won't build | type:bug-suspected status:node-compatibility status:wrong-author | ### Sample

react-birthdays

### Author(s)

@VesaJuvonen

### What happened?

Receive following error when execute npm install

8344 error code 1

8345 error path c:\react-birthdays\node_modules\deasync

8346 error command failed

8347 error command C:\WINDOWS\system32\cmd.exe /d /s /c node ./build.js

8348 error gyp... | True | react-birthdays won't build - ### Sample

react-birthdays

### Author(s)

@VesaJuvonen

### What happened?

Receive following error when execute npm install

8344 error code 1

8345 error path c:\react-birthdays\node_modules\deasync

8346 error command failed

8347 error command C:\WINDOWS\system32\cmd.exe /d /s /c no... | non_process | react birthdays wont build sample react birthdays author s vesajuvonen what happened receive following error when execute npm install error code error path c react birthdays node modules deasync error command failed error command c windows cmd exe d s c node build js er... | 0 |

3,823 | 6,802,227,549 | IssuesEvent | 2017-11-02 19:25:59 | ncbo/bioportal-project | https://api.github.com/repos/ncbo/bioportal-project | closed | LCMPT: classes page returns "Not Found" | ontology processing problem | ### Issue 1:

LCMPT, a SKOS ontology appears to have parsed correctly, but when navigating to the classes page:

http://bioportal.bioontology.org/ontologies/LCMPT?p=classes

BioPortal returns "The page you are looking for wasn't found. Please try again."

### Issue 2:

The ontology pull location is set to an ... | 1.0 | LCMPT: classes page returns "Not Found" - ### Issue 1:

LCMPT, a SKOS ontology appears to have parsed correctly, but when navigating to the classes page:

http://bioportal.bioontology.org/ontologies/LCMPT?p=classes

BioPortal returns "The page you are looking for wasn't found. Please try again."

### Issue 2:

... | process | lcmpt classes page returns not found issue lcmpt a skos ontology appears to have parsed correctly but when navigating to the classes page bioportal returns the page you are looking for wasn t found please try again issue the ontology pull location is set to an html page inste... | 1 |

18,556 | 24,555,473,162 | IssuesEvent | 2022-10-12 15:32:42 | GoogleCloudPlatform/fda-mystudies | https://api.github.com/repos/GoogleCloudPlatform/fda-mystudies | closed | [Android] [Offline indicator] Delete app account > I do not wish to delete my account button should be disabled in the confirmation screen | Bug P2 Android Process: Fixed Process: Tested QA Process: Tested dev | Delete app account > I do not wish to delete my account button should be disabled in the confirmation screen

| 3.0 | [Android] [Offline indicator] Delete app account > I do not wish to delete my account button should be disabled in the confirmation screen - Delete app account > I do not wish to delete my account button should be disabled in the confirmation screen

- http://blog.mattedgar.com/2015/11/17/design-principles-for-an-enterprising-city/

And there are lots of good ideas and initiatives in http://ny... | 1.0 | Create the Design Principles for a Digital Liverpool - Matt Edgar does a good job of making a start on what he thinks the design principles for a city are (he's particularly thinking of Leeds, but we already share a canal so...) - http://blog.mattedgar.com/2015/11/17/design-principles-for-an-enterprising-city/

And th... | non_process | create the design principles for a digital liverpool matt edgar does a good job of making a start on what he thinks the design principles for a city is he s particularly thinking of leeds but we already share a canal so and there are lots of good ideas and initiatives in richard pope has also listed... | 0 |

3,993 | 6,919,099,119 | IssuesEvent | 2017-11-29 14:30:00 | nlbdev/pipeline | https://api.github.com/repos/nlbdev/pipeline | closed | Info about missing print page numbers in "om boka" | enhancement pre-processing Priority:2 - Medium | autodetect that before starting the conversion and disable page number / page markers if there are no page numbers

make that info available for *om boken* (ingen sidetall fra visuell utgave) | 1.0 | Info about missing print page numbers in "om boka" - autodetect that before starting the conversion and disable page number / page markers if there are no page numbers

make that info available for *om boken* (ingen sidetall fra visuell utgave) | process | info about missing print page numbers in om boka autodetect that before starting the conversion and disable page number page markers if there are no page numbers make that info available for om boken ingen sidetall fra visuell utgave | 1 |

167,714 | 6,345,021,363 | IssuesEvent | 2017-07-27 21:14:42 | vmware/vic | https://api.github.com/repos/vmware/vic | closed | Add mount data from portlayer to replenish persona container cache on VCH restart | area/docker component/docker-api-server component/portlayer priority/medium | As a user of VIC, I expect docker inspect to return mount information for a container after a VCH restarts.

Acceptance Criteria

- [ ] Updated robot scripts to verify that the docker inspect returns valid mount data after a VCH restarts

---

This is the second part of the docker inspect/mount data issue. This ta... | 1.0 | Add mount data from portlayer to replenish persona container cache on VCH restart - As a user of VIC, I expect docker inspect to return mount information for a container after a VCH restarts.

Acceptance Criteria

- [ ] Updated robot scripts to verify that the docker inspect returns valid mount data after a VCH resta... | non_process | add mount data from portlayer to replenish persona container cache on vch restart as a user of vic i expect docker inspect to return mount information for a container after a vch restarts acceptance criteria updated robot scripts to verify that the docker inspect returns valid mount data after a vch restart... | 0 |