Unnamed: 0 (int64, 0 to 832k) | id (float64, 2.49B to 32.1B) | type (string, 1 class) | created_at (string, length 19) | repo (string, length 7 to 112) | repo_url (string, length 36 to 141) | action (string, 3 classes) | title (string, length 1 to 744) | labels (string, length 4 to 574) | body (string, length 9 to 211k) | index (string, 10 classes) | text_combine (string, length 96 to 211k) | label (string, 2 classes) | text (string, length 96 to 188k) | binary_label (int64, 0 to 1) |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

418 | 2,852,501,524 | IssuesEvent | 2015-06-01 13:58:04 | genomizer/genomizer-server | https://api.github.com/repos/genomizer/genomizer-server | closed | File cleanup utility cannot delete all temporary folders after a process execution | Medium priority Processing | The problem could be due to a process holding a file stream that is not closed at the time file cleanup utility is called. | 1.0 | File cleanup utility cannot delete all temporary folders after a process execution - The problem could be due to a process holding a file stream that is not closed at the time file cleanup utility is called. | process | file cleanup utility cannot delete all temporary folders after a process execution the problem could be due to a process holding a file stream that is not closed at the time file cleanup utility is called | 1 |

13,142 | 3,117,397,008 | IssuesEvent | 2015-09-04 00:48:22 | meren/anvio | https://api.github.com/repos/meren/anvio | closed | annotation.db needs to know which tables are populated | bug design | _self_ table in the annotation.db should be updated when any of the papi-populate-*-table scripts are run.

it should be clear to the profiler whether a papi-populate-*-table was run and the results were empty, or it was never run at all. | 1.0 | annotation.db needs to know which tables are populated - _self_ table in the annotation.db should be updated when any of the papi-populate-*-table scripts are run.

it should be clear to the profiler whether a papi-populate-*-table was run and the results were empty, or it was never run at all. | non_process | annotation db needs to know which tables are populated self table in the annotation db should be updated when any of the papi populate table scripts are run it should be clear to the profiler whether a papi populate table was run and the results were empty or it was never run at all | 0 |

168,658 | 14,169,199,961 | IssuesEvent | 2020-11-12 12:53:45 | selectize/selectize.js | https://api.github.com/repos/selectize/selectize.js | closed | Documentation Request: Nested Properties | documentation | Looking at current documentation it notes that sifter.js has the ability to filter results based on nested properties. Looking at docs for both selectize and sifter doesn't give a clear indication of how to do so. If the values you are looking for are not in the top level search fields then no results will be displayed... | 1.0 | Documentation Request: Nested Properties - Looking at current documentation it notes that sifter.js has the ability to filter results based on nested properties. Looking at docs for both selectize and sifter doesn't give a clear indication of how to do so. If the values you are looking for are not in the top level sear... | non_process | documentation request nested properties looking at current documentation it notes that sifter js has the ability to filter results based on nested properties looking at docs for both selectize and sifter doesn t give a clear indication of how to do so if the values you are looking for are not in the top level sear... | 0 |

447,345 | 31,683,961,587 | IssuesEvent | 2023-09-08 04:01:27 | apecloud/kubeblocks | https://api.github.com/repos/apecloud/kubeblocks | opened | [Features] Cost extension | kind/feature area/user-interaction documentation | Problems:

With the development of customer business, there are more and more database clusters running in KubeBlocks, the resources used by database clusters are a black box for users, opening the black box will help users better manage the database, control costs, and increase the added value of KubeBlocks.

Goals:... | 1.0 | [Features] Cost extension - Problems:

With the development of customer business, there are more and more database clusters running in KubeBlocks, the resources used by database clusters are a black box for users, opening the black box will help users better manage the database, control costs, and increase the added va... | non_process | cost extension problems with the development of customer business there are more and more database clusters running in kubeblocks the resources used by database clusters are a black box for users opening the black box will help users better manage the database control costs and increase the added value of ku... | 0 |

2,529 | 5,288,868,862 | IssuesEvent | 2017-02-08 16:06:52 | mesosphere/marathon | https://api.github.com/repos/mesosphere/marathon | closed | scale test better error reporting | Epic:Improve CI and Release Process sprint-3 | hashmap of errors that are in loops,

add errors with counts at the end of loop | 1.0 | scale test better error reporting - hashmap of errors that are in loops,

add errors with counts at the end of loop | process | scale test better error reporting hashmap of errors that are in loops add errors with counts at the end of loop | 1 |

5,624 | 8,481,798,030 | IssuesEvent | 2018-10-25 16:40:32 | googleapis/google-cloud-node | https://api.github.com/repos/googleapis/google-cloud-node | closed | Add Node 11 to the CI config | status: blocked type: process | Note: we need to wait for Node.js 11 to be released, which should happen "soon" ™️ | 1.0 | Add Node 11 to the CI config - Note: we need to wait for Node.js 11 to be released, which should happen "soon" ™️ | process | add node to the ci config note we need to wait for node js to be released which should happen soon ™️ | 1 |

2,492 | 5,267,653,382 | IssuesEvent | 2017-02-05 00:54:14 | coala/teams | https://api.github.com/repos/coala/teams | closed | Core Team Member Application: Rohan VB | process/approved | # Bio

I'm Rohan. I'm currently a 3rd year undergrad student of Maths & Computing in India.

# coala Contributions so far

My main contributions have been to the coala/coala repo, solving and opening issues. I've also reviewed a few PRs in the same repo. Other contributions have been in the coala/documentation re... | 1.0 | Core Team Member Application: Rohan VB - # Bio

I'm Rohan. I'm currently a 3rd year undergrad student of Maths & Computing in India.

# coala Contributions so far

My main contributions have been to the coala/coala repo, solving and opening issues. I've also reviewed a few PRs in the same repo. Other contribution... | process | core team member application rohan vb bio i m rohan i m currently a year undergrad student of maths computing in india coala contributions so far my main contributions have been to the coala coala repo solving and opening issues i ve also reviewed a few prs in the same repo other contributions ... | 1 |

17,116 | 22,635,800,957 | IssuesEvent | 2022-06-30 18:51:03 | qgis/QGIS-Documentation | https://api.github.com/repos/qgis/QGIS-Documentation | closed | Add optional --no-python argument for qgis_process to disable Python support (Request in QGIS) | Processing 3.24 | ### Request for documentation

From pull request QGIS/qgis#46579

Author: @nyalldawson

QGIS version: 3.24

**Add optional --no-python argument for qgis_process to disable Python support**

### PR Description:

Skipping python support when it's not required can result in a significant improvement in qgis_process startup ti... | 1.0 | Add optional --no-python argument for qgis_process to disable Python support (Request in QGIS) - ### Request for documentation

From pull request QGIS/qgis#46579

Author: @nyalldawson

QGIS version: 3.24

**Add optional --no-python argument for qgis_process to disable Python support**

### PR Description:

Skipping python ... | process | add optional no python argument for qgis process to disable python support request in qgis request for documentation from pull request qgis qgis author nyalldawson qgis version add optional no python argument for qgis process to disable python support pr description skipping python suppo... | 1 |

11,104 | 13,941,864,695 | IssuesEvent | 2020-10-22 20:05:21 | googleapis/google-auth-library-python | https://api.github.com/repos/googleapis/google-auth-library-python | closed | Tests broken on Python < 3.8 | testing type: process | ```python

_______ ERROR collecting tests_async/transport/test_aiohttp_requests.py ________

ImportError while importing test module '/home/tseaver/projects/agendaless/Google/src/google-auth/tests_async/transport/test_aiohttp_requests.py'.

Hint: make sure your test modules/packages have valid Python names.

Traceback:... | 1.0 | Tests broken on Python < 3.8 - ```python

_______ ERROR collecting tests_async/transport/test_aiohttp_requests.py ________

ImportError while importing test module '/home/tseaver/projects/agendaless/Google/src/google-auth/tests_async/transport/test_aiohttp_requests.py'.

Hint: make sure your test modules/packages have ... | process | tests broken on python python error collecting tests async transport test aiohttp requests py importerror while importing test module home tseaver projects agendaless google src google auth tests async transport test aiohttp requests py hint make sure your test modules packages have ... | 1 |

206,873 | 23,405,108,157 | IssuesEvent | 2022-08-12 12:03:23 | nexmo-community/phone-number-pool-manager-node | https://api.github.com/repos/nexmo-community/phone-number-pool-manager-node | opened | axios-0.19.2.tgz: 4 vulnerabilities (highest severity is: 7.5) | security vulnerability | <details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>axios-0.19.2.tgz</b></p></summary>

<p>Promise based HTTP client for the browser and node.js</p>

<p>Library home page: <a href="https://registry.npmjs.org/axios/-/axio... | True | axios-0.19.2.tgz: 4 vulnerabilities (highest severity is: 7.5) - <details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>axios-0.19.2.tgz</b></p></summary>

<p>Promise based HTTP client for the browser and node.js</p>

<p>Li... | non_process | axios tgz vulnerabilities highest severity is vulnerable library axios tgz promise based http client for the browser and node js library home page a href path to dependency file package json path to vulnerable library node modules axios package json found in head ... | 0 |

9,787 | 12,804,290,263 | IssuesEvent | 2020-07-03 03:58:53 | OUDcollective/twenty20times | https://api.github.com/repos/OUDcollective/twenty20times | closed | Basic writing and formatting syntax - GitHub Help | workflow-process |

## ON RELATIVE LINKS

---

**Source URL**:

[https://help.github.com/en/github/writing-on-github/basic-writing-and-formatting-syntax](https://help.github.... | 1.0 | Basic writing and formatting syntax - GitHub Help -

## ON RELATIVE LINKS

---

**Source URL**:

[https://help.github.com/en/github/writing-on-github/basic... | process | basic writing and formatting syntax github help on relative links source url browser chrome os windows bit screen size viewport size pixel ratio zoom level | 1 |

16,229 | 20,766,312,302 | IssuesEvent | 2022-03-15 20:58:41 | GSA/CIW | https://api.github.com/repos/GSA/CIW | closed | CIW Bug Fixes: 2022-03 | Type: Bug Topic: Upload/Processing Topic: Validation | Fixing issues resulting from the release of #220, listed below:

- [x] Allow valid childcare CIWs to be passed in with no start or end date

- [x] Update "last updated" and "last updated by" fields whenever a contract's end date is updated by the CIW process | 1.0 | CIW Bug Fixes: 2022-03 - Fixing issues resulting from the release of #220, listed below:

- [x] Allow valid childcare CIWs to be passed in with no start or end date

- [x] Update "last updated" and "last updated by" fields whenever a contract's end date is updated by the CIW process | process | ciw bug fixes fixing issues resulting from the release of listed below allow valid childcare ciws to be passed in with no start or end date update last updated and last updated by fields whenever a contract s end date is updated by the ciw process | 1 |

146,335 | 19,399,935,679 | IssuesEvent | 2021-12-19 01:21:08 | SmartBear/ready-jira-plugin | https://api.github.com/repos/SmartBear/ready-jira-plugin | opened | CVE-2021-45105 (High) detected in log4j-core-2.11.0.jar | security vulnerability | ## CVE-2021-45105 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>log4j-core-2.11.0.jar</b></p></summary>

<p>The Apache Log4j Implementation</p>

<p>Library home page: <a href="https://... | True | CVE-2021-45105 (High) detected in log4j-core-2.11.0.jar - ## CVE-2021-45105 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>log4j-core-2.11.0.jar</b></p></summary>

<p>The Apache Log4j ... | non_process | cve high detected in core jar cve high severity vulnerability vulnerable library core jar the apache implementation library home page a href path to dependency file ready jira plugin pom xml path to vulnerable library ory org apache logging core cor... | 0 |

35,196 | 14,629,917,521 | IssuesEvent | 2020-12-23 16:42:07 | trisacrypto/testnet | https://api.github.com/repos/trisacrypto/testnet | opened | Lookup by common name is returning 404 | bug directory service | On the live server lookup by common name is returning 404 even though lookup by id is working and returning the common name that was requested. | 1.0 | Lookup by common name is returning 404 - On the live server lookup by common name is returning 404 even though lookup by id is working and returning the common name that was requested. | non_process | lookup by common name is returning on the live server lookup by common name is returning even though lookup by id is working and returning the common name that was requested | 0 |

259,279 | 27,621,791,480 | IssuesEvent | 2023-03-10 01:11:56 | artsking/linux-4.19.72 | https://api.github.com/repos/artsking/linux-4.19.72 | opened | CVE-2023-1074 (Medium) detected in linux-yoctov5.4.51 | Mend: dependency security vulnerability | ## CVE-2023-1074 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linux-yoctov5.4.51</b></p></summary>

<p>

<p>Yocto Linux Embedded kernel</p>

<p>Library home page: <a href=https://git... | True | CVE-2023-1074 (Medium) detected in linux-yoctov5.4.51 - ## CVE-2023-1074 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linux-yoctov5.4.51</b></p></summary>

<p>

<p>Yocto Linux Embed... | non_process | cve medium detected in linux cve medium severity vulnerability vulnerable library linux yocto linux embedded kernel library home page a href found in base branch master vulnerable source files net sctp bind addr c net sctp bind add... | 0 |

2,403 | 5,193,025,398 | IssuesEvent | 2017-01-22 15:18:19 | AllenFang/react-bootstrap-table | https://api.github.com/repos/AllenFang/react-bootstrap-table | closed | Wrong header items width | inprocess | The table header's width is not calculated the same way as the table's body, ending up in situations like this (note the header overflowing on the right):

**[Edit: actually it ... | 1.0 | Wrong header items width - The table header's width is not calculated the same way as the table's body, ending up in situations like this (note the header overflowing on the right):

{

__m128 x = _mm_shuffle_ps(v, v, 0x00);

__m128 y = _mm_shuffle_ps(v, v, 0x55);

__m128 z = _mm_... | 1.0 | Decompiler interprets SHUFPS Z values as 0. - **Describe the bug**

A clear and concise description of the bug.

**To Reproduce**

Steps to reproduce the behavior:

1. Compile

```c

#include <cstdio>

#include <xmmintrin.h>

int func(const __m128 v)

{

__m128 x = _mm_shuffle_ps(v, v, 0x00);

__m128 y = _m... | process | decompiler interprets shufps z values as describe the bug a clear and concise description of the bug to reproduce steps to reproduce the behavior compile c include include int func const v x mm shuffle ps v v y mm shuffle ps v v ... | 1 |

247,535 | 26,712,343,796 | IssuesEvent | 2023-01-28 03:14:32 | NileshGule/cloud-native-ninja | https://api.github.com/repos/NileshGule/cloud-native-ninja | closed | spring-boot-starter-web-3.0.2.jar: 1 vulnerabilities (highest severity is: 9.8) - autoclosed | security vulnerability | <details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>spring-boot-starter-web-3.0.2.jar</b></p></summary>

<p></p>

<p>Path to dependency file: /src/java/techtalks-producer/pom.xml</p>

<p>Path to vulnerable library: /home/... | True | spring-boot-starter-web-3.0.2.jar: 1 vulnerabilities (highest severity is: 9.8) - autoclosed - <details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>spring-boot-starter-web-3.0.2.jar</b></p></summary>

<p></p>

<p>Path to ... | non_process | spring boot starter web jar vulnerabilities highest severity is autoclosed vulnerable library spring boot starter web jar path to dependency file src java techtalks producer pom xml path to vulnerable library home wss scanner repository org yaml snakeyaml snakeyaml ... | 0 |

290,145 | 32,035,560,198 | IssuesEvent | 2023-09-22 15:06:15 | flatcar/Flatcar | https://api.github.com/repos/flatcar/Flatcar | closed | update: glibc | security advisory cvss/HIGH | **Name**: glibc

**CVEs**: [CVE-2023-4527](https://nvd.nist.gov/vuln/detail/CVE-2023-4527), [CVE-2023-4806](https://nvd.nist.gov/vuln/detail/CVE-2023-4806)

**CVSSs**: 8.2, n/a

**Action Needed**: update to >= 2.37-r5 or >= 2.38-r2

**Summary**:

* CVE-2023-4527: A flaw was found in glibc. When the getaddrinfo func... | True | update: glibc - **Name**: glibc

**CVEs**: [CVE-2023-4527](https://nvd.nist.gov/vuln/detail/CVE-2023-4527), [CVE-2023-4806](https://nvd.nist.gov/vuln/detail/CVE-2023-4806)

**CVSSs**: 8.2, n/a

**Action Needed**: update to >= 2.37-r5 or >= 2.38-r2

**Summary**:

* CVE-2023-4527: A flaw was found in glibc. When the ... | non_process | update glibc name glibc cves cvsss n a action needed update to or summary cve a flaw was found in glibc when the getaddrinfo function is called with the af unspec address family and the system is configured with no aaaa mode via etc resolv con... | 0 |

1,425 | 3,989,485,766 | IssuesEvent | 2016-05-09 14:11:37 | DynareTeam/dynare | https://api.github.com/repos/DynareTeam/dynare | closed | Crash in preprocessor in unstable branch | bug preprocessor | I get a crash in the preprocessor in the unstable branch, since I rebased my local unstable branch onto the recent commits [pervious preprocessor versions I used date back October 2015 ...] when dealing with our Global Multicountry model.

I get the error both in Windows and MacOS.

In MacOS I get the following me... | 1.0 | Crash in preprocessor in unstable branch - I get a crash in the preprocessor in the unstable branch, since I rebased my local unstable branch onto the recent commits [pervious preprocessor versions I used date back October 2015 ...] when dealing with our Global Multicountry model.

I get the error both in Windows an... | process | crash in preprocessor in unstable branch i get a crash in the preprocessor in the unstable branch since i rebased my local unstable branch onto the recent commits when dealing with our global multicountry model i get the error both in windows and macos in macos i get the following message dynare m ... | 1 |

10,626 | 13,440,696,407 | IssuesEvent | 2020-09-08 01:48:01 | chavarera/python-mini-projects | https://api.github.com/repos/chavarera/python-mini-projects | closed | Convert an image to cartoon usimg opencv | Assigned Image-processing Learn Opencv Project boring-stuffs | **problem statement**

Convert an image to cartoon usimg opencv | 1.0 | Convert an image to cartoon usimg opencv - **problem statement**

Convert an image to cartoon usimg opencv | process | convert an image to cartoon usimg opencv problem statement convert an image to cartoon usimg opencv | 1 |

7,017 | 10,168,222,359 | IssuesEvent | 2019-08-07 20:15:20 | brucemiller/LaTeXML | https://api.github.com/repos/brucemiller/LaTeXML | closed | Postprocessing dies on natbib item with "others" in author field | bug postprocessing | An MWE to reproduce the issue:

main.tex:

```latex

\documentclass{article}

\usepackage{natbib}

\begin{document}

\cite{key}

\bibliography{mybib}

\bibliographystyle{plainnat}

\end{document}

```

mybib.bib:

```bibtex

@article{key,

title={Title},

author={Lastname, Firstname and others},

year={2019}

}

... | 1.0 | Postprocessing dies on natbib item with "others" in author field - An MWE to reproduce the issue:

main.tex:

```latex

\documentclass{article}

\usepackage{natbib}

\begin{document}

\cite{key}

\bibliography{mybib}

\bibliographystyle{plainnat}

\end{document}

```

mybib.bib:

```bibtex

@article{key,

title={Titl... | process | postprocessing dies on natbib item with others in author field an mwe to reproduce the issue main tex latex documentclass article usepackage natbib begin document cite key bibliography mybib bibliographystyle plainnat end document mybib bib bibtex article key title titl... | 1 |

17,535 | 23,345,563,584 | IssuesEvent | 2022-08-09 17:36:58 | AdyanRios-NOAA/SEFSC-MH-Processing | https://api.github.com/repos/AdyanRios-NOAA/SEFSC-MH-Processing | closed | Redundancy check improvements | enhancement icebox Processing | https://github.com/SEFSC-Management-History-Project/SEFSC-MH-Processing/blob/dcd6f518b1ee081472ac2d1a20577386de035c64/MH_process.R#L112-L125

# Notes have been added to explain the redundancy check. However, we can still improve it.

I think that we do not need to create the vector to track change events that later ... | 1.0 | Redundancy check improvements - https://github.com/SEFSC-Management-History-Project/SEFSC-MH-Processing/blob/dcd6f518b1ee081472ac2d1a20577386de035c64/MH_process.R#L112-L125

# Notes have been added to explain the redundancy check. However, we can still improve it.

I think that we do not need to create the vector to... | process | redundancy check improvements notes have been added to explain the redundancy check however we can still improve it i think that we do not need to create the vector to track change events that later gets merged into the cluster i think we could just create a new reg change variable for the entire mh prep... | 1 |

19,779 | 26,162,801,628 | IssuesEvent | 2022-12-31 21:09:01 | RobertCraigie/prisma-client-py | https://api.github.com/repos/RobertCraigie/prisma-client-py | closed | Add support for CockroachDB | topic: types kind/feature process/candidate topic: client level/intermediate priority/high | ## Problem

<!-- A clear and concise description of what the problem is. Ex. I'm always frustrated when [...] -->

We already have pretty good support for CockroachDB but there is one blind spot, the `push` operation for arrays is not supported.

## Suggested solution

<!-- A clear and concise description of wh... | 1.0 | Add support for CockroachDB - ## Problem

<!-- A clear and concise description of what the problem is. Ex. I'm always frustrated when [...] -->

We already have pretty good support for CockroachDB but there is one blind spot, the `push` operation for arrays is not supported.

## Suggested solution

<!-- A clear... | process | add support for cockroachdb problem we already have pretty good support for cockroachdb but there is one blind spot the push operation for arrays is not supported suggested solution we need to disable generating this type additional context | 1 |

158,860 | 20,035,460,204 | IssuesEvent | 2022-02-02 11:23:05 | kapseliboi/crowdfunding-frontend | https://api.github.com/repos/kapseliboi/crowdfunding-frontend | opened | CVE-2020-7793 (High) detected in ua-parser-js-0.7.14.tgz | security vulnerability | ## CVE-2020-7793 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>ua-parser-js-0.7.14.tgz</b></p></summary>

<p>Lightweight JavaScript-based user-agent string parser</p>

<p>Library home ... | True | CVE-2020-7793 (High) detected in ua-parser-js-0.7.14.tgz - ## CVE-2020-7793 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>ua-parser-js-0.7.14.tgz</b></p></summary>

<p>Lightweight Jav... | non_process | cve high detected in ua parser js tgz cve high severity vulnerability vulnerable library ua parser js tgz lightweight javascript based user agent string parser library home page a href path to dependency file package json path to vulnerable library node modules ua ... | 0 |

19,902 | 26,357,040,115 | IssuesEvent | 2023-01-11 10:28:49 | tradingstrategy-ai/trading-strategy | https://api.github.com/repos/tradingstrategy-ai/trading-strategy | opened | The version specifier in the code does not match the actual release version | process priority: P2 size: S | Looking at the [\_\_version\_\_](https://github.com/tradingstrategy-ai/trading-strategy/blob/c2b3cea698c9adb1b0f2d884549b1fdcbe6f8da9/tradingstrategy/__init__.py#L1) marker in the source, it just hardcodes the initial version `0.1.0`, while the actual [latest release](https://pypi.org/project/trading-strategy/) version... | 1.0 | The version specifier in the code does not match the actual release version - Looking at the [\_\_version\_\_](https://github.com/tradingstrategy-ai/trading-strategy/blob/c2b3cea698c9adb1b0f2d884549b1fdcbe6f8da9/tradingstrategy/__init__.py#L1) marker in the source, it just hardcodes the initial version `0.1.0`, while t... | process | the version specifier in the code does not match the actual release version looking at the marker in the source it just hardcodes the initial version while the actual version is at the time of writing the release process needs to be updated so that the version specifier in the code is p... | 1 |

81,768 | 10,256,675,571 | IssuesEvent | 2019-08-21 18:14:52 | ddnexus/pagy | https://api.github.com/repos/ddnexus/pagy | closed | Incorrect documentation on rendering templates? | documentation | The documentation for rendering a customizable template for the navigation links shows:

```

<%== render 'pagy/nav', locals: {pagy: @pagy} %>

```

This did not work for me and I had to change it to:

```

<%== render partial: 'pagy/nav', locals: {pagy: @pagy} %>

```

The [Rails documentation ](https://guides.rubyonr... | 1.0 | Incorrect documentation on rendering templates? - The documentation for rendering a customizable template for the navigation links shows:

```

<%== render 'pagy/nav', locals: {pagy: @pagy} %>

```

This did not work for me and I had to change it to:

```

<%== render partial: 'pagy/nav', locals: {pagy: @pagy} %>

```

... | non_process | incorrect documentation on rendering templates the documentation for rendering a customizable template for the navigation links shows this did not work for me and i had to change it to the says that partial must be explicitly added whenever there are options passed to the render c... | 0 |

594,738 | 18,052,311,070 | IssuesEvent | 2021-09-19 23:49:13 | CraftTweaker/CraftTweaker | https://api.github.com/repos/CraftTweaker/CraftTweaker | closed | [1.15.2] Using .reuse() in CT recipes causes items to duplicate | bug Low Priority | #### Issue Description:

If you make a crafting table recipe with some items having .reuse() on them then it will cause these items to duplicate themselves when crafting with said item. The .reuse() items must have at least 2 items in the stack for this to happen. I have verified that these are not just ghost items.

... | 1.0 | [1.15.2] Using .reuse() in CT recipes causes items to duplicate - #### Issue Description:

If you make a crafting table recipe with some items having .reuse() on them then it will cause these items to duplicate themselves when crafting with said item. The .reuse() items must have at least 2 items in the stack for this ... | non_process | using reuse in ct recipes causes items to duplicate issue description if you make a crafting table recipe with some items having reuse on them then it will cause these items to duplicate themselves when crafting with said item the reuse items must have at least items in the stack for this to happ... | 0 |

15,858 | 20,034,181,257 | IssuesEvent | 2022-02-02 10:06:03 | prisma/prisma | https://api.github.com/repos/prisma/prisma | closed | Allow inline many-to-many relation syntax for mongoDB | bug/2-confirmed kind/bug process/candidate topic: schema validation engines/data model parser team/migrations topic: mongodb team/psl-wg | There is an untested feature on mongodb that the query engine relies on: embedded inline many-to-many relations:

```prisma

model A {

id String @id @map("_id") @db.ObjectId

gql String?

b_ids String[] @db.Array(ObjectId)

bs B[] @relation(fields: [b_ids])

}

model B {

id String... | 1.0 | Allow inline many-to-many relation syntax for mongoDB - There is an untested feature on mongodb that the query engine relies on: embedded inline many-to-many relations:

```prisma

model A {

id String @id @map("_id") @db.ObjectId

gql String?

b_ids String[] @db.Array(ObjectId)

bs B[] @r... | process | allow inline many to many relation syntax for mongodb there is an untested feature on mongodb that the query engine relies on embedded inline many to many relations prisma model a id string id map id db objectid gql string b ids string db array objectid bs b rel... | 1 |

6,726 | 9,829,285,375 | IssuesEvent | 2019-06-15 19:17:59 | ION28/BLUESPAWN | https://api.github.com/repos/ION28/BLUESPAWN | opened | Investigate subscribing to PsSetCreateProcessNotifyRoutine | enhancement hard other os processes | Pssetcreateprocessnotifyroutine for managing process creation and blocking new procs

| 1.0 | Investigate subscribing to PsSetCreateProcessNotifyRoutine - Pssetcreateprocessnotifyroutine for managing process creation and blocking new procs

| process | investigate subscribing to pssetcreateprocessnotifyroutine pssetcreateprocessnotifyroutine for managing process creation and blocking new procs | 1 |

10,350 | 13,175,401,165 | IssuesEvent | 2020-08-12 01:30:25 | googleapis/python-spanner | https://api.github.com/repos/googleapis/python-spanner | closed | docs-presubmit failing | api: spanner priority: p2 type: process | ```

[18:13:16][ERROR] Failed to get build config

com.google.devtools.kokoro.config.ConfigException: Couldn't find build configuration file docs-presubmit.cfg or docs-presubmit.gcl under /tmp/workspace/workspace/cloud-devrel/client-libraries/python/googleapis/python-spanner/docs/docs-presubmit/src/github/python-spanne... | 1.0 | docs-presubmit failing - ```

[18:13:16][ERROR] Failed to get build config

com.google.devtools.kokoro.config.ConfigException: Couldn't find build configuration file docs-presubmit.cfg or docs-presubmit.gcl under /tmp/workspace/workspace/cloud-devrel/client-libraries/python/googleapis/python-spanner/docs/docs-presubmit... | process | docs presubmit failing failed to get build config com google devtools kokoro config configexception couldn t find build configuration file docs presubmit cfg or docs presubmit gcl under tmp workspace workspace cloud devrel client libraries python googleapis python spanner docs docs presubmit src github pyt... | 1 |

123,794 | 12,218,562,484 | IssuesEvent | 2020-05-01 19:37:42 | koala-il/origame-backend | https://api.github.com/repos/koala-il/origame-backend | opened | Plan the UI/UX of the application | documentation | How does the User see customers?

How to save customers?

How to register?

How to set events on the schedule?

| 1.0 | Plan the UI/UX of the application - How does the User see customers?

How to save customers?

How to register?

How to set events on the schedule?

| non_process | plan the ui ux of the application how does the user see customers how to save customers how to register how to set events on the schedule | 0 |

279,286 | 24,211,726,314 | IssuesEvent | 2022-09-26 00:04:20 | envoyproxy/envoy | https://api.github.com/repos/envoyproxy/envoy | closed | ProtocolIntegrationTest.HandleUpstreamSocketFail is flaky | bug area/test flakes stale | The test is flaky in various ways:

Fails at:

```

EXPECT_THAT(waitForAccessLog(access_log_name_),

HasSubstr("upstream_reset_before_response_started{connection_termination}"));

```

with corresponding log observed:

[2022-08-12 18:49:00.193][14][critical][assert] [test/integration/base_integratio... | 1.0 | ProtocolIntegrationTest.HandleUpstreamSocketFail is flaky - The test is flaky in various ways:

Fails at:

```

EXPECT_THAT(waitForAccessLog(access_log_name_),

HasSubstr("upstream_reset_before_response_started{connection_termination}"));

```

with corresponding log observed:

[2022-08-12 18:49:00.... | non_process | protocolintegrationtest handleupstreamsocketfail is flaky the test is flaky in various ways fails at expect that waitforaccesslog access log name hassubstr upstream reset before response started connection termination with corresponding log observed assert failure... | 0 |

9,946 | 12,976,232,683 | IssuesEvent | 2020-07-21 18:24:20 | obinnaokechukwu/internship-2020 | https://api.github.com/repos/obinnaokechukwu/internship-2020 | opened | Update OBS API documentation with info about newly added APIs | process | Update OBS API documentation with info about newly added APIs | 1.0 | Update OBS API documentation with info about newly added APIs - Update OBS API documentation with info about newly added APIs | process | update obs api documentation with info about newly added apis update obs api documentation with info about newly added apis | 1 |

15,742 | 19,910,538,565 | IssuesEvent | 2022-01-25 16:44:12 | input-output-hk/high-assurance-legacy | https://api.github.com/repos/input-output-hk/high-assurance-legacy | closed | Formally prove `bidirectional_bridge` core lemmas | type: enhancement language: isabelle topic: process calculus | Our goal is to formally prove the `bidirectional_bridge` core lemmas described in #35.

Informal proofs of these lemmas are given in https://github.com/input-output-hk/fm-ouroboros/issues/15#issuecomment-486381795. | 1.0 | Formally prove `bidirectional_bridge` core lemmas - Our goal is to formally prove the `bidirectional_bridge` core lemmas described in #35.

Informal proofs of these lemmas are given in https://github.com/input-output-hk/fm-ouroboros/issues/15#issuecomment-486381795. | process | formally prove bidirectional bridge core lemmas our goal is to formally prove the bidirectional bridge core lemmas described in informal proofs of these lemmas are given in | 1 |

285,930 | 21,558,595,107 | IssuesEvent | 2022-04-30 21:06:14 | magidoc-org/magidoc | https://api.github.com/repos/magidoc-org/magidoc | closed | Update documentation with SDL introspection and Rollup-parse-graphql-schema | documentation | Update documentation with SDL introspection and Rollup-parse-graphql-schema. See https://github.com/magidoc-org/magidoc/pull/40 | 1.0 | Update documentation with SDL introspection and Rollup-parse-graphql-schema - Update documentation with SDL introspection and Rollup-parse-graphql-schema. See https://github.com/magidoc-org/magidoc/pull/40 | non_process | update documentation with sdl introspection and rollup parse graphql schema update documentation with sdl introspection and rollup parse graphql schema see | 0 |

552,547 | 16,243,319,885 | IssuesEvent | 2021-05-07 12:11:20 | aiidateam/aiida-quantumespresso | https://api.github.com/repos/aiidateam/aiida-quantumespresso | opened | `PwBandStructureWorkChain`: Deprecate and fix issues | priority/important topic/workflows type/bug type/refactoring | The `PwBandStructureWorkChain` is a legacy version of a band structure work chain with a pre-defined protocol, mostly used for previous tutorials. However, now that the `PwBandsWorkChain` has been equipped with a protocol through the `get_builder_from_protocol()` method, it no longer serves a purpose and should be depr... | 1.0 | `PwBandStructureWorkChain`: Deprecate and fix issues - The `PwBandStructureWorkChain` is a legacy version of a band structure work chain with a pre-defined protocol, mostly used for previous tutorials. However, now that the `PwBandsWorkChain` has been equipped with a protocol through the `get_builder_from_protocol()` m... | non_process | pwbandstructureworkchain deprecate and fix issues the pwbandstructureworkchain is a legacy version of a band structure work chain with a pre defined protocol mostly used for previous tutorials however now that the pwbandsworkchain has been equipped with a protocol through the get builder from protocol m... | 0 |

6,865 | 9,998,709,255 | IssuesEvent | 2019-07-12 08:52:18 | cypress-io/cypress | https://api.github.com/repos/cypress-io/cypress | closed | Changing ZenHub pipeline should change label on the GitHub issue | process: contributing stage: needs investigating type: chore | If we start working on the issue and move it in the pipeline

<img width="440" alt="screen shot 2019-01-23 at 4 03 05 pm" src="https://user-images.githubusercontent.com/2212006/51636950-7abefa80-1f28-11e9-8cef-c70d1654f1e2.png">

it should change the "state: ..." label on that GitHub issue. | 1.0 | Changing ZenHub pipeline should change label on the GitHub issue - If we start working on the issue and move it in the pipeline

<img width="440" alt="screen shot 2019-01-23 at 4 03 05 pm" src="https://user-images.githubusercontent.com/2212006/51636950-7abefa80-1f28-11e9-8cef-c70d1654f1e2.png">

it should change th... | process | changing zenhub pipeline should change label on the github issue if we start working on the issue and move it in the pipeline img width alt screen shot at pm src it should change the state label on that github issue | 1 |

22,018 | 30,523,569,569 | IssuesEvent | 2023-07-19 09:41:14 | h4sh5/npm-auto-scanner | https://api.github.com/repos/h4sh5/npm-auto-scanner | opened | @mitech/onit-cli 3.0.1 has 2 guarddog issues | npm-silent-process-execution | ```{"npm-silent-process-execution":[{"code":" const subprocess = (0, child_process_1.spawn)(pm2exec, ['stop', 'all'], {\n detached: true,\n stdio: 'ignore'\n });","location":"package/dist/bin/serve/_versions/2.0.0/lib/pm2.js:67","message":"This package is silently executing another executable"},{"co... | 1.0 | @mitech/onit-cli 3.0.1 has 2 guarddog issues - ```{"npm-silent-process-execution":[{"code":" const subprocess = (0, child_process_1.spawn)(pm2exec, ['stop', 'all'], {\n detached: true,\n stdio: 'ignore'\n });","location":"package/dist/bin/serve/_versions/2.0.0/lib/pm2.js:67","message":"This package ... | process | mitech onit cli has guarddog issues npm silent process execution n detached true n stdio ignore n location package dist bin serve versions lib js message this package is silently executing another executable code const subprocess spawn ... | 1 |

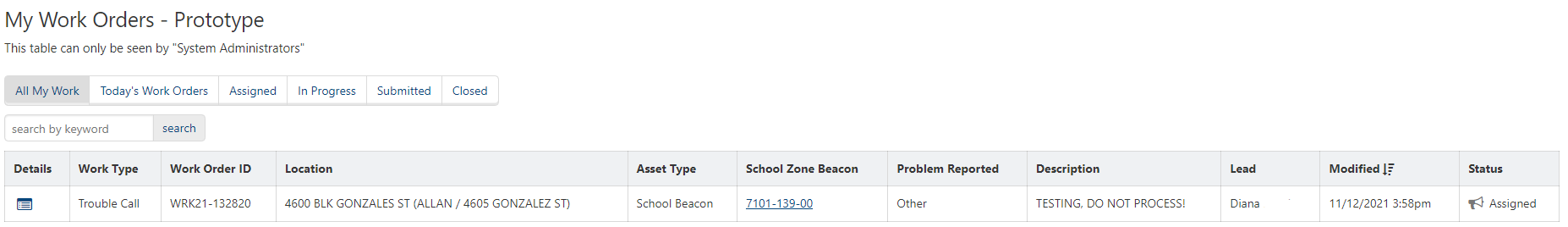

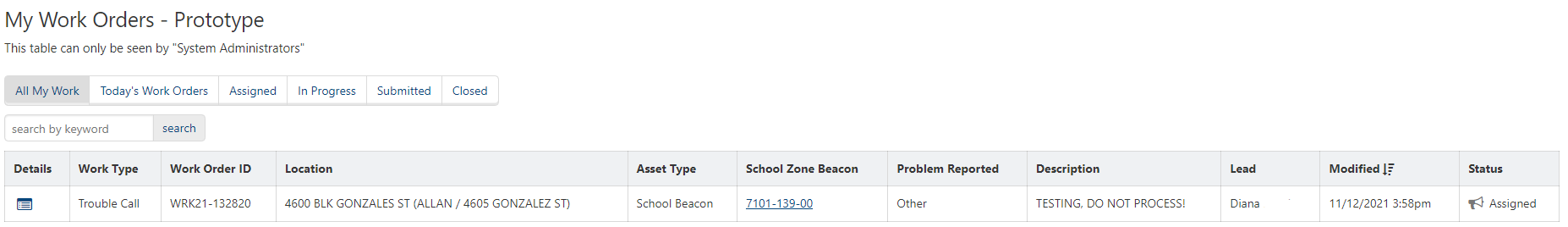

51,181 | 21,582,106,579 | IssuesEvent | 2022-05-02 19:55:32 | cityofaustin/atd-data-tech | https://api.github.com/repos/cityofaustin/atd-data-tech | closed | Create Prototype "My Work Order Details" page | Workgroup: AMD Service: Apps Type: Enhancement Product: AMD Data Tracker | Created a new "My Work Orders - Prototype" table on the `my-work-orders` page

- Hidden to others that do not have the "System Administrator" role

| 1.0 | Create Prototype "My Work Order Details" page - Created a new "My Work Orders - Prototype" table on the `my-work-orders` page

- Hidden to others that do not have the "System Administrator" role

| non_process | create prototype my work order details page created a new my work orders prototype table on the my work orders page hidden to others that do not have the system administrator role | 0 |

420,701 | 28,293,184,910 | IssuesEvent | 2023-04-09 13:19:24 | AY2223S2-CS2113-W15-2/tp | https://api.github.com/repos/AY2223S2-CS2113-W15-2/tp | closed | testing: improve code coverage | type.Documentation | @AY2223S2-CS2113-W15-2/developers: We should aim to minimally achieve 100% LOC coverage (currently 79%) in testing to avoid unexpected bugs surfacing, with the long-term goal of achieving 100% branch coverage.

__Todo:__

- [ ] Achieve 100% line coverage in sub-packages

- [x] Integration testing for `Frontend` and ... | 1.0 | testing: improve code coverage - @AY2223S2-CS2113-W15-2/developers: We should aim to minimally achieve 100% LOC coverage (currently 79%) in testing to avoid unexpected bugs surfacing, with the long-term goal of achieving 100% branch coverage.

__Todo:__

- [ ] Achieve 100% line coverage in sub-packages

- [x] Integr... | non_process | testing improve code coverage developers we should aim to minimally achieve loc coverage currently in testing to avoid unexpected bugs surfacing with the long term goal of achieving branch coverage todo achieve line coverage in sub packages integration testing for fronte... | 0 |

54,938 | 11,352,940,052 | IssuesEvent | 2020-01-24 14:39:20 | Altinn/altinn-studio | https://api.github.com/repos/Altinn/altinn-studio | opened | docker-compose build fails for repos - "max depth exceeded" | kind/bug quality/code solution/studio/repos | ## Describe the bug

After using docker-compose for Altinn Studio with `--build` parameter multiple times, you will eventually get this error, and need to remove the gitea-image.

```

ERROR: Service 'altinn_repositories' failed to build: max depth exceeded

```

This is the same problem as described [here](https:/... | 1.0 | docker-compose build fails for repos - "max depth exceeded" - ## Describe the bug

After using docker-compose for Altinn Studio with `--build` parameter multiple times, you will eventually get this error, and need to remove the gitea-image.

```

ERROR: Service 'altinn_repositories' failed to build: max depth exceede... | non_process | docker compose build fails for repos max depth exceeded describe the bug after using docker compose for altinn studio with build parameter multiple times you will eventually get this error and need to remove the gitea image error service altinn repositories failed to build max depth exceede... | 0 |

18,708 | 24,601,712,149 | IssuesEvent | 2022-10-14 13:03:00 | nodejs/node | https://api.github.com/repos/nodejs/node | closed | child_process.spawn not checking null byte in args | child_process | ### Version

v18.9.1

### Platform

Linux tsc-ubuntu2204 5.15.0-48-generic #54-Ubuntu SMP Fri Aug 26 13:26:29 UTC 2022 x86_64 x86_64 x86_64 GNU/Linux

### Subsystem

child_process

### What steps will reproduce the bug?

```js

//// spawner.js

const {spawn} = require('child_process');

const myArgs = ['dump.js','AAA... | 1.0 | child_process.spawn not checking null byte in args - ### Version

v18.9.1

### Platform

Linux tsc-ubuntu2204 5.15.0-48-generic #54-Ubuntu SMP Fri Aug 26 13:26:29 UTC 2022 x86_64 x86_64 x86_64 GNU/Linux

### Subsystem

child_process

### What steps will reproduce the bug?

```js

//// spawner.js

const {spawn} = requir... | process | child process spawn not checking null byte in args version platform linux tsc generic ubuntu smp fri aug utc gnu linux subsystem child process what steps will reproduce the bug js spawner js const spawn require child process const m... | 1 |

4,524 | 7,187,307,395 | IssuesEvent | 2018-02-02 04:18:26 | dotnet/corefx | https://api.github.com/repos/dotnet/corefx | closed | The HttpClient.SendAsync throws WebException instead of wrapping it with HttpRequestException | area-System.Net.Http bug needs more info tenet-compatibility | The 4.6.1 framework version did not do this.

When moving to .NET Standard 2.0 + .NET Framework 4.7.1, the same exception is no longer wrapped into an HttpRequestException (while the docs still state that it should).

This breaks robustness wrappers and retry mechanisms built around the HttpClient.SendAsync.

Consider ... | True | The HttpClient.SendAsync throws WebException instead of wrapping it with HttpRequestException - The 4.6.1 framework version did not do this.

When moving to .NET Standard 2.0 + .NET Framework 4.7.1, the same exception is no longer wrapped into an HttpRequestException (while the docs still state that it should).

This b... | non_process | the httpclient sendasync throws webexception instead of wrapping it with httprequestexception the framework version did not do this when moving to net standard net framework the same exception is no longer wrapped into an httprequestexception while the docs still state that it should this b... | 0 |

21,179 | 28,149,381,438 | IssuesEvent | 2023-04-02 21:19:32 | AbdElAziz333/Pluto | https://api.github.com/repos/AbdElAziz333/Pluto | closed | Mod causes server connection issues | bug vanilla parity in processing | Game version: Forge 40.1.84 (Minecraft 1.18.2)

Mod version: 0.0.2 (https://www.curseforge.com/minecraft/mc-mods/pluto/files/4049858)

Steps to reproduce:

-Install Pluto on both server and client

-Join server

-Disconnect from server

You will now be unable to reconnect to the server until the client is restart... | 1.0 | Mod causes server connection issues - Game version: Forge 40.1.84 (Minecraft 1.18.2)

Mod version: 0.0.2 (https://www.curseforge.com/minecraft/mc-mods/pluto/files/4049858)

Steps to reproduce:

-Install Pluto on both server and client

-Join server

-Disconnect from server

You will now be unable to reconnect to ... | process | mod causes server connection issues game version forge minecraft mod version steps to reproduce install pluto on both server and client join server disconnect from server you will now be unable to reconnect to the server until the client is restarted | 1 |

6,157 | 9,037,760,463 | IssuesEvent | 2019-02-09 14:00:00 | kmycode/sangokukmy | https://api.github.com/repos/kmycode/sangokukmy | closed | 城壁耐久力の、守兵への置き換え | enhancement process-processing | 新規要素として、都市のパラメータ「城壁耐久力」を削除し、守兵へ置き換えることを検討しています。

## 城壁耐久力とは

原作からあった仕様で、耐久力が高いほど、戦争で城壁を攻め込まれたときの城壁の減りを遅らせることができました。

反面、三国志NET KMY Versionでは能力のインフレが起きやすく、戦争における耐久力はあまり意味をなしていませんでした。

## 修正概要

* 「城壁耐久力」パラメータを削除し、「守兵」に置き換える

* 守兵の最大は、標準で2000とする

* 「守兵募集(仮)」コマンドで守兵を増やすことができる。実行結果は武将の人望に依存する

* 守兵は、都市の技術に応じた兵種(弓系)をもつ(技... | 2.0 | 城壁耐久力の、守兵への置き換え - 新規要素として、都市のパラメータ「城壁耐久力」を削除し、守兵へ置き換えることを検討しています。

## 城壁耐久力とは

原作からあった仕様で、耐久力が高いほど、戦争で城壁を攻め込まれたときの城壁の減りを遅らせることができました。

反面、三国志NET KMY Versionでは能力のインフレが起きやすく、戦争における耐久力はあまり意味をなしていませんでした。

## 修正概要

* 「城壁耐久力」パラメータを削除し、「守兵」に置き換える

* 守兵の最大は、標準で2000とする

* 「守兵募集(仮)」コマンドで守兵を増やすことができる。実行結果は武将の人望に依存する

* 守兵は、都市... | process | 城壁耐久力の、守兵への置き換え 新規要素として、都市のパラメータ「城壁耐久力」を削除し、守兵へ置き換えることを検討しています。 城壁耐久力とは 原作からあった仕様で、耐久力が高いほど、戦争で城壁を攻め込まれたときの城壁の減りを遅らせることができました。 反面、三国志net kmy versionでは能力のインフレが起きやすく、戦争における耐久力はあまり意味をなしていませんでした。 修正概要 「城壁耐久力」パラメータを削除し、「守兵」に置き換える 守兵の最大は、 「守兵募集(仮)」コマンドで守兵を増やすことができる。実行結果は武将の人望に依存する 守兵は、都市の技術に応じた兵種... | 1 |

29,553 | 11,759,835,868 | IssuesEvent | 2020-03-13 18:06:22 | 01binary/elevator | https://api.github.com/repos/01binary/elevator | opened | CVE-2020-8116 (High) detected in dot-prop-4.2.0.tgz | security vulnerability | ## CVE-2020-8116 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>dot-prop-4.2.0.tgz</b></p></summary>

<p>Get, set, or delete a property from a nested object using a dot path</p>

<p>Lib... | True | CVE-2020-8116 (High) detected in dot-prop-4.2.0.tgz - ## CVE-2020-8116 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>dot-prop-4.2.0.tgz</b></p></summary>

<p>Get, set, or delete a pro... | non_process | cve high detected in dot prop tgz cve high severity vulnerability vulnerable library dot prop tgz get set or delete a property from a nested object using a dot path library home page a href path to dependency file tmp ws scm elevator clientapp package json path to ... | 0 |

19,627 | 25,982,546,182 | IssuesEvent | 2022-12-19 20:15:26 | aws/sagemaker-python-sdk | https://api.github.com/repos/aws/sagemaker-python-sdk | closed | FrameworkProcessor is broken with SageMaker Pipelines | bug component: processing component: pipelines | **Describe the bug**

Trying to use any Processor derived from FrameworkProcessor is bugged with SageMaker Pipelines. There is a problem with the `command` and `entrypoint` parameter, where `command` does not pass `python3`, causing the following error:

> `line 2: import: command not found`

**To reproduce**

1. C... | 1.0 | FrameworkProcessor is broken with SageMaker Pipelines - **Describe the bug**

Trying to use any Processor derived from FrameworkProcessor is bugged with SageMaker Pipelines. There is a problem with the `command` and `entrypoint` parameter, where `command` does not pass `python3`, causing the following error:

> `line... | process | frameworkprocessor is broken with sagemaker pipelines describe the bug trying to use any processor derived from frameworkprocessor is bugged with sagemaker pipelines there is a problem with the command and entrypoint parameter where command does not pass causing the following error line im... | 1 |

126,144 | 26,786,983,792 | IssuesEvent | 2023-02-01 04:11:20 | microsoft/vsmarketplace | https://api.github.com/repos/microsoft/vsmarketplace | closed | Extensions malfunction | vscode | Type: <b>Performance Issue</b>

Extensions keep disappearing every time I startup the app.

When I download an extension then close the app, the extensions don't show again when I reopen the app. I have been having this issue for the past one month.

VS Code version: Code 1.74.3 (97dec172d3256f8ca4bfb2143f3f76b503c... | 1.0 | Extensions malfunction - Type: <b>Performance Issue</b>

Extensions keep disappearing every time I startup the app.

When I download an extension then close the app, the extensions don't show again when I reopen the app. I have been having this issue for the past one month.

VS Code version: Code 1.74.3 (97dec172d3... | non_process | extensions malfunction type performance issue extensions keep disappearing every time i startup the app when i download an extension then close the app the extensions don t show again when i reopen the app i have been having this issue for the past one month vs code version code ... | 0 |

283 | 2,722,682,804 | IssuesEvent | 2015-04-14 06:58:14 | mkdocs/mkdocs | https://api.github.com/repos/mkdocs/mkdocs | closed | Create google group for discussions. | Process | Also link to this and the IRC channel in both README and the front page of the docs. | 1.0 | Create google group for discussions. - Also link to this and the IRC channel in both README and the front page of the docs. | process | create google group for discussions also link to this and the irc channel in both readme and the front page of the docs | 1 |

6,197 | 3,770,583,899 | IssuesEvent | 2016-03-16 15:02:26 | rust-lang/rust | https://api.github.com/repos/rust-lang/rust | closed | rustbuild: can't cross compile compiler-rt for ARM | A-rustbuild | rustbuild is using cmake to build compiler-rt instead of Makefiles like the old build system does,

and there are some issues that make the build fail when the target is ARM.

### Background info

For ARM, there 3 floats ABI (see `-mfloat-abi` in [1]):

- `soft`: processor has no Floating Point Unit (FPU), floati... | 1.0 | rustbuild: can't cross compile compiler-rt for ARM - rustbuild is using cmake to build compiler-rt instead of Makefiles like the old build system does,

and there are some issues that make the build fail when the target is ARM.

### Background info

For ARM, there 3 floats ABI (see `-mfloat-abi` in [1]):

- `soft... | non_process | rustbuild can t cross compile compiler rt for arm rustbuild is using cmake to build compiler rt instead of makefiles like the old build system does and there are some issues that make the build fail when the target is arm background info for arm there floats abi see mfloat abi in soft ... | 0 |

10,777 | 13,607,753,721 | IssuesEvent | 2020-09-23 00:21:22 | GoogleCloudPlatform/cloud-ops-sandbox | https://api.github.com/repos/GoogleCloudPlatform/cloud-ops-sandbox | reopened | Secure e2e tests & the test runner since it has many IAM roles | priority: p2 type: process | Our e2e test runner has a lot of permissions in IAM, with ever increasing scope as we add on features. This makes us vulnerable to strangers making malicious PRs to start the test runner and then abusing this access in code.

This is something that really should be resolved at the Github level. At the moment [it isn'... | 1.0 | Secure e2e tests & the test runner since it has many IAM roles - Our e2e test runner has a lot of permissions in IAM, with ever increasing scope as we add on features. This makes us vulnerable to strangers making malicious PRs to start the test runner and then abusing this access in code.

This is something that real... | process | secure tests the test runner since it has many iam roles our test runner has a lot of permissions in iam with ever increasing scope as we add on features this makes us vulnerable to strangers making malicious prs to start the test runner and then abusing this access in code this is something that really s... | 1 |

4,385 | 7,274,977,874 | IssuesEvent | 2018-02-21 11:56:11 | DevExpress/testcafe-hammerhead | https://api.github.com/repos/DevExpress/testcafe-hammerhead | closed | Function wrappers break is-defined checks | AREA: client AREA: server SYSTEM: resource processing TYPE: bug | Our site runs some code in a webworker, using an internal package that in some circumstances accesses `localStorage`:

```javascript

if (typeof (localStorage) !== 'undefined') {

// ...

}

```

However, this completely breaks after Hammerhead (for some reason?) surrounds this with a wrapper.

```javascript

/... | 1.0 | Function wrappers break is-defined checks - Our site runs some code in a webworker, using an internal package that in some circumstances accesses `localStorage`:

```javascript

if (typeof (localStorage) !== 'undefined') {

// ...

}

```

However, this completely breaks after Hammerhead (for some reason?) surrou... | process | function wrappers break is defined checks our site runs some code in a webworker using an internal package that in some circumstances accesses localstorage javascript if typeof localstorage undefined however this completely breaks after hammerhead for some reason surrou... | 1 |

96,841 | 28,028,403,080 | IssuesEvent | 2023-03-28 10:40:47 | yeahitsjan/pawxel | https://api.github.com/repos/yeahitsjan/pawxel | closed | leftover files after `make clean` | linux build | After running `make clean` there are still some `.o` files left, is this expected?

```

$ make clean

rm -f qrc_framelesshelpercore.cpp qrc_resources.cpp

rm -f moc_predefs.h

rm -f moc_application.cpp moc_sniparea.cpp moc_preview_window.cpp moc_editor_view.cpp moc_items.cpp moc_editor_window.cpp moc_preferences.cpp... | 1.0 | leftover files after `make clean` - After running `make clean` there are still some `.o` files left, is this expected?

```

$ make clean

rm -f qrc_framelesshelpercore.cpp qrc_resources.cpp

rm -f moc_predefs.h

rm -f moc_application.cpp moc_sniparea.cpp moc_preview_window.cpp moc_editor_view.cpp moc_items.cpp moc_e... | non_process | leftover files after make clean after running make clean there are still some o files left is this expected make clean rm f qrc framelesshelpercore cpp qrc resources cpp rm f moc predefs h rm f moc application cpp moc sniparea cpp moc preview window cpp moc editor view cpp moc items cpp moc e... | 0 |

1,175 | 2,599,630,398 | IssuesEvent | 2015-02-23 10:23:54 | v-l-m/vlm | https://api.github.com/repos/v-l-m/vlm | closed | Utiliser le redimensionnement auto coté client pour les infos bulles (images) | C: site P: major R: fixed T: defect | **Reported by paparazzia on 4 Oct 2009 10:04 UTC**

* ca vite de faire des accs locaux juste pour lire la dimension de l'image

* c'est de toute faon ncessaire avec la faon de grer les images dynamiques. | 1.0 | Utiliser le redimensionnement auto coté client pour les infos bulles (images) - **Reported by paparazzia on 4 Oct 2009 10:04 UTC**

* ca vite de faire des accs locaux juste pour lire la dimension de l'image

* c'est de toute faon ncessaire avec la faon de grer les images dynamiques. | non_process | utiliser le redimensionnement auto coté client pour les infos bulles images reported by paparazzia on oct utc ca vite de faire des accs locaux juste pour lire la dimension de l image c est de toute faon ncessaire avec la faon de grer les images dynamiques | 0 |

4,904 | 7,783,536,285 | IssuesEvent | 2018-06-06 10:11:19 | gvwilson/teachtogether.tech | https://api.github.com/repos/gvwilson/teachtogether.tech | opened | Ch06 Joel Ostblom | Ch06 Process | - on writing things up in a logical order: this can reinforce the reader's impostor syndrome, since they *aren't* doing things in that order

- "Indistinguishable from what good teachers do using..." are there specific references for this? | 1.0 | Ch06 Joel Ostblom - - on writing things up in a logical order: this can reinforce the reader's impostor syndrome, since they *aren't* doing things in that order

- "Indistinguishable from what good teachers do using..." are there specific references for this? | process | joel ostblom on writing things up in a logical order this can reinforce the reader s impostor syndrome since they aren t doing things in that order indistinguishable from what good teachers do using are there specific references for this | 1 |

9,447 | 12,427,312,279 | IssuesEvent | 2020-05-25 01:53:01 | GetTerminus/terminus-ui | https://api.github.com/repos/GetTerminus/terminus-ui | opened | Look into test component harness | Focus: community Goal: Process Improvement Needs: exploration | Should we use this concept? Is it easier to use or maintain vs standalone functions like we currently support?

- https://material.angular.io/cdk/test-harnesses/overview

- https://material.angular.io/guide/using-component-harnesses

- https://medium.com/@mhamel06/component-test-harnesses-angular-9s-second-best-feature-4... | 1.0 | Look into test component harness - Should we use this concept? Is it easier to use or maintain vs standalone functions like we currently support?

- https://material.angular.io/cdk/test-harnesses/overview

- https://material.angular.io/guide/using-component-harnesses

- https://medium.com/@mhamel06/component-test-harness... | process | look into test component harness should we use this concept is it easier to use or maintain vs standalone functions like we currently support should we leverage this concept what is the path forward | 1 |

294,814 | 9,048,723,164 | IssuesEvent | 2019-02-12 01:17:35 | less/less.js | https://api.github.com/repos/less/less.js | closed | Comments inside ruleset maps are parsed by the each-function | feature request medium priority | Let's say I have the following map and iterate over it with the each-function:

This will result in the following compiled output (with less.js):

This will result in the follo... | non_process | comments inside ruleset maps are parsed by the each function let s say i have the following map and iterate over it with the each function this will result in the following compiled output with less js the lines that are commented out are also parsed and the index is used as the key variable... | 0 |

384,566 | 26,593,402,164 | IssuesEvent | 2023-01-23 10:31:49 | pyodide/pyodide | https://api.github.com/repos/pyodide/pyodide | opened | Detectiong Pyodide, at build time | documentation | ## 📚 Documentation

The current documentation reads:

To detect Pyodide, at build time use,

```

import os

if "PYODIDE" in os.environ:

# building for Pyodide

```

This used to work on my computer using pyodide-build v0.21.

When I tried it using pyodide-build v0.22 however, the test `if "PYODIDE" i... | 1.0 | Detectiong Pyodide, at build time - ## 📚 Documentation

The current documentation reads:

To detect Pyodide, at build time use,

```

import os

if "PYODIDE" in os.environ:

# building for Pyodide

```

This used to work on my computer using pyodide-build v0.21.

When I tried it using pyodide-build v0.... | non_process | detectiong pyodide at build time 📚 documentation the current documentation reads to detect pyodide at build time use import os if pyodide in os environ building for pyodide this used to work on my computer using pyodide build when i tried it using pyodide build h... | 0 |

46,067 | 7,235,638,980 | IssuesEvent | 2018-02-13 01:50:10 | Marak/colors.js | https://api.github.com/repos/Marak/colors.js | opened | Add CONTRIBUTING.md | documentation | It would be good to have a guide on how to propose useful new features/fixes to the repo. In particular, we should be more explicit about what kinds of things we will/won't accept, how to format/test code, etc. We should also make things clear enough that first-time contributors don't feel discouraged from pushing fi... | 1.0 | Add CONTRIBUTING.md - It would be good to have a guide on how to propose useful new features/fixes to the repo. In particular, we should be more explicit about what kinds of things we will/won't accept, how to format/test code, etc. We should also make things clear enough that first-time contributors don't feel disco... | non_process | add contributing md it would be good to have a guide on how to propose useful new features fixes to the repo in particular we should be more explicit about what kinds of things we will won t accept how to format test code etc we should also make things clear enough that first time contributors don t feel disco... | 0 |

273,577 | 20,798,422,606 | IssuesEvent | 2022-03-17 11:35:54 | bkaczkowski/xcore | https://api.github.com/repos/bkaczkowski/xcore | closed | modelGeneExpression improve output description | documentation | Improve modelGeneExpression output description. | 1.0 | modelGeneExpression improve output description - Improve modelGeneExpression output description. | non_process | modelgeneexpression improve output description improve modelgeneexpression output description | 0 |

231,442 | 25,499,199,649 | IssuesEvent | 2022-11-28 01:17:38 | MValle21/Intelehealth-WebApp | https://api.github.com/repos/MValle21/Intelehealth-WebApp | opened | CVE-2022-24999 (Medium) detected in qs-6.5.2.tgz, qs-6.7.0.tgz | security vulnerability | ## CVE-2022-24999 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>qs-6.5.2.tgz</b>, <b>qs-6.7.0.tgz</b></p></summary>

<p>

<details><summary><b>qs-6.5.2.tgz</b></p></summary>

<p>A ... | True | CVE-2022-24999 (Medium) detected in qs-6.5.2.tgz, qs-6.7.0.tgz - ## CVE-2022-24999 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>qs-6.5.2.tgz</b>, <b>qs-6.7.0.tgz</b></p></summary... | non_process | cve medium detected in qs tgz qs tgz cve medium severity vulnerability vulnerable libraries qs tgz qs tgz qs tgz a querystring parser that supports nesting and arrays with a depth limit library home page a href path to dependency file pa... | 0 |

1,541 | 6,572,233,159 | IssuesEvent | 2017-09-11 00:23:20 | ansible/ansible-modules-extras | https://api.github.com/repos/ansible/ansible-modules-extras | closed | Haproxy module doesn't check if the service is present on the given host | affects_2.0 bug_report networking waiting_on_maintainer | ##### ISSUE TYPE

- Bug Report

##### COMPONENT NAME

haproxy

##### ANSIBLE VERSION

2.0.2.0

##### CONFIGURATION

##### OS / ENVIRONMENT

##### SUMMARY

The haproxy module assumes that a given host presents in _all_ proxies, and if it is not true, an error occures.

##### STEPS TO REPRODUCE

haproxy: host=a_backend_host soc... | True | Haproxy module doesn't check if the service is present on the given host - ##### ISSUE TYPE

- Bug Report

##### COMPONENT NAME

haproxy

##### ANSIBLE VERSION

2.0.2.0

##### CONFIGURATION

##### OS / ENVIRONMENT

##### SUMMARY

The haproxy module assumes that a given host presents in _all_ proxies, and if it is not true, a... | non_process | haproxy module doesn t check if the service is present on the given host issue type bug report component name haproxy ansible version configuration os environment summary the haproxy module assumes that a given host presents in all proxies and if it is not true a... | 0 |

830,715 | 32,022,074,870 | IssuesEvent | 2023-09-22 05:52:02 | oceanbase/odc | https://api.github.com/repos/oceanbase/odc | opened | [Feature]: White-screen configuration for integrating bastion user login | type-feature priority-medium module-Bastion integration | ### Is your feature request related to a problem?

ODC support bastion user login integration since 3.x release.

But, the configuration is complex, because you should modifying meta DB.

### Describe the solution you'd like

ODC support a white-screen configuration for integrating bastion user login

### Additional c... | 1.0 | [Feature]: White-screen configuration for integrating bastion user login - ### Is your feature request related to a problem?

ODC support bastion user login integration since 3.x release.

But, the configuration is complex, because you should modifying meta DB.

### Describe the solution you'd like

ODC support a whit... | non_process | white screen configuration for integrating bastion user login is your feature request related to a problem odc support bastion user login integration since x release but the configuration is complex because you should modifying meta db describe the solution you d like odc support a white screen... | 0 |

33,603 | 7,735,752,047 | IssuesEvent | 2018-05-27 18:34:40 | fga-gpp-mds/2018.1_Nexte | https://api.github.com/repos/fga-gpp-mds/2018.1_Nexte | closed | Eu, como usuário, desejo realizar efetivamente meu login. | code eps infrastructure medium refactoring | ## Descrição

Essa issue destina-se a integrar o login ao servidor.

## Critérios de Aceitação

- [ ] Integrar login ao servidor;

- [ ] Fazer login funcionar tanto com informações normais, quanto com o account kit;

- [ ] Atualizar o user do singleton de usuário.

| 1.0 | Eu, como usuário, desejo realizar efetivamente meu login. - ## Descrição

Essa issue destina-se a integrar o login ao servidor.

## Critérios de Aceitação

- [ ] Integrar login ao servidor;

- [ ] Fazer login funcionar tanto com informações normais, quanto com o account kit;

- [ ] Atualizar o user do singleton de usuári... | non_process | eu como usuário desejo realizar efetivamente meu login descrição essa issue destina se a integrar o login ao servidor critérios de aceitação integrar login ao servidor fazer login funcionar tanto com informações normais quanto com o account kit atualizar o user do singleton de usuário | 0 |

9,414 | 12,407,418,306 | IssuesEvent | 2020-05-21 21:03:06 | burnpiro/wod-bike-dataset-generator | https://api.github.com/repos/burnpiro/wod-bike-dataset-generator | closed | Preprocessing for csv files from './data' | data processing | We need to clean and save preprocessed files from `./data` folder | 1.0 | Preprocessing for csv files from './data' - We need to clean and save preprocessed files from `./data` folder | process | preprocessing for csv files from data we need to clean and save preprocessed files from data folder | 1 |

9,381 | 6,277,930,102 | IssuesEvent | 2017-07-18 13:24:04 | giantswarm/cli | https://api.github.com/repos/giantswarm/cli | closed | how to delete orgs and envs is inconsistent | 0 - status/inbox area/consistency area/usability | deleting orgs `swarm org delete <org>` ... and deleting envs `swarm env -d <env>`

Similar issue: https://github.com/giantswarm/cli/issues/137

| True | how to delete orgs and envs is inconsistent - deleting orgs `swarm org delete <org>` ... and deleting envs `swarm env -d <env>`

Similar issue: https://github.com/giantswarm/cli/issues/137

| non_process | how to delete orgs and envs is inconsistent deleting orgs swarm org delete and deleting envs swarm env d similar issue | 0 |

13,937 | 16,704,593,542 | IssuesEvent | 2021-06-09 08:29:12 | hochschule-darmstadt/openartbrowser | https://api.github.com/repos/hochschule-darmstadt/openartbrowser | opened | Blacklist functionality in etl | etl process medium priority | **Reason (Why?)**

If some categories seem inappropriate, there should be a functionality to filter them from the dataset.

**Solution (What?)**

Implementation of a blacklist, which is applied to the crawling process in a performant way. The blacklist should be a json array, containing all QIDs of blacklisteted enti... | 1.0 | Blacklist functionality in etl - **Reason (Why?)**

If some categories seem inappropriate, there should be a functionality to filter them from the dataset.

**Solution (What?)**

Implementation of a blacklist, which is applied to the crawling process in a performant way. The blacklist should be a json array, containi... | process | blacklist functionality in etl reason why if some categories seem inappropriate there should be a functionality to filter them from the dataset solution what implementation of a blacklist which is applied to the crawling process in a performant way the blacklist should be a json array containi... | 1 |

43,706 | 17,633,749,553 | IssuesEvent | 2021-08-19 11:17:09 | hashicorp/terraform-provider-azurerm | https://api.github.com/repos/hashicorp/terraform-provider-azurerm | closed | Support for function_app vnet integration | question service/functions | <!--- Please keep this note for the community --->

### Community Note

* Please vote on this issue by adding a 👍 [reaction](https://blog.github.com/2016-03-10-add-reactions-to-pull-requests-issues-and-comments/) to the original issue to help the community and maintainers prioritize this request

* Please do not l... | 1.0 | Support for function_app vnet integration - <!--- Please keep this note for the community --->

### Community Note

* Please vote on this issue by adding a 👍 [reaction](https://blog.github.com/2016-03-10-add-reactions-to-pull-requests-issues-and-comments/) to the original issue to help the community and maintainer... | non_process | support for function app vnet integration community note please vote on this issue by adding a 👍 to the original issue to help the community and maintainers prioritize this request please do not leave or me too comments they generate extra noise for issue followers and do not help priori... | 0 |

585,345 | 17,485,499,081 | IssuesEvent | 2021-08-09 10:28:04 | nimblehq/nimble-medium-ios | https://api.github.com/repos/nimblehq/nimble-medium-ios | opened | As a user, I can login from the article details screen | type : feature type: integration priority: medium | ## Why

For the existing users of the application that don't login yet, they should be able to see the `Login` screen for being able to login into the application, use more features and manage personal contents.

## Acceptance Criteria

- [ ] When the users tap on the `Login` highlighted text from the `Article Detail... | 1.0 | As a user, I can login from the article details screen - ## Why

For the existing users of the application that don't login yet, they should be able to see the `Login` screen for being able to login into the application, use more features and manage personal contents.

## Acceptance Criteria

- [ ] When the users tap... | non_process | as a user i can login from the article details screen why for the existing users of the application that don t login yet they should be able to see the login screen for being able to login into the application use more features and manage personal contents acceptance criteria when the users tap o... | 0 |

21,948 | 30,449,508,104 | IssuesEvent | 2023-07-16 05:19:30 | open-telemetry/opentelemetry-collector-contrib | https://api.github.com/repos/open-telemetry/opentelemetry-collector-contrib | closed | Add support to attributes with array values in processors that manipulate attributes | enhancement Stale processor/attributes processor/resourcedetection processor/resource priority:needed closed as inactive | ### Is your feature request related to a problem? Please describe.

[The spec states](https://github.com/open-telemetry/opentelemetry-specification/blob/main/specification/common/README.md#attribute) that attribute values can be "An array of primitive type values. The array MUST be homogeneous, i.e., it MUST NOT contai... | 3.0 | Add support to attributes with array values in processors that manipulate attributes - ### Is your feature request related to a problem? Please describe.

[The spec states](https://github.com/open-telemetry/opentelemetry-specification/blob/main/specification/common/README.md#attribute) that attribute values can be "An ... | process | add support to attributes with array values in processors that manipulate attributes is your feature request related to a problem please describe that attribute values can be an array of primitive type values the array must be homogeneous i e it must not contain values of different types the fol... | 1 |

791,706 | 27,873,231,058 | IssuesEvent | 2023-03-21 14:39:03 | labring/laf | https://api.github.com/repos/labring/laf | closed | [bug] Dependency load error after custom port | priority:medium good first issue | ### Search before asking

- [X] I had searched in the [issues](https://github.com/labring/laf/issues?q=is%3Aissue) and found no similar issues.

### Environment

Linux (self-host)

### What happened

Dependency load error after custom port

and found no similar issues.

### Environment

Linux (self-host)

### What happened

Dependency load error after custom port

; Artificial Intelligence (cs.AI); Machine Learning (cs.LG); Robotics (cs.RO)

- **Arxiv link:** https://arxiv.org/abs/2309.12295

... | 2.0 | New submissions for Fri, 22 Sep 23 - ## Keyword: events

### Learning to Drive Anywhere

- **Authors:** Ruizhao Zhu, Peng Huang, Eshed Ohn-Bar, Venkatesh Saligrama

- **Subjects:** Computer Vision and Pattern Recognition (cs.CV); Artificial Intelligence (cs.AI); Machine Learning (cs.LG); Robotics (cs.RO)

- **Arxiv link... | process | new submissions for fri sep keyword events learning to drive anywhere authors ruizhao zhu peng huang eshed ohn bar venkatesh saligrama subjects computer vision and pattern recognition cs cv artificial intelligence cs ai machine learning cs lg robotics cs ro arxiv link ... | 1 |

6,088 | 8,949,629,179 | IssuesEvent | 2019-01-25 08:20:58 | our-city-app/oca-backend | https://api.github.com/repos/our-city-app/oca-backend | closed | Failed to sync events | priority_major process_duplicate type_bug | ```

2019-01-11 01:30:25.575 CET

process_cityapp_uitdatabank_events for service-c0e9e53e-0372-4c5a-9ae7-8a1fe788ace3@rogerth.at page 1 (/base/data/home/apps/e~rogerthat-server/20190109t140721.415280197299602092/solutions/common/cron/events/uitdatabank.py:73)

...

2019-01-11 01:30:26.478 CET

_get_uitdatabank_even... | 1.0 | Failed to sync events - ```

2019-01-11 01:30:25.575 CET

process_cityapp_uitdatabank_events for service-c0e9e53e-0372-4c5a-9ae7-8a1fe788ace3@rogerth.at page 1 (/base/data/home/apps/e~rogerthat-server/20190109t140721.415280197299602092/solutions/common/cron/events/uitdatabank.py:73)

...

2019-01-11 01:30:26.478 CE... | process | failed to sync events cet process cityapp uitdatabank events for service rogerth at page base data home apps e rogerthat server solutions common cron events uitdatabank py cet get uitdatabank events failed with status code and content base data... | 1 |