Unnamed: 0 int64 0 832k | id float64 2.49B 32.1B | type stringclasses 1

value | created_at stringlengths 19 19 | repo stringlengths 7 112 | repo_url stringlengths 36 141 | action stringclasses 3

values | title stringlengths 1 744 | labels stringlengths 4 574 | body stringlengths 9 211k | index stringclasses 10

values | text_combine stringlengths 96 211k | label stringclasses 2

values | text stringlengths 96 188k | binary_label int64 0 1 |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

83,081 | 10,320,328,698 | IssuesEvent | 2019-08-30 20:10:42 | mosdef-hub/foyer | https://api.github.com/repos/mosdef-hub/foyer | opened | Developer-facing flowchart | documentation | **Describe the behavior you would like added to Foyer**

We have this flowchart for the atom-typing process (from the paper):

since there are a lot of strung-together modular/internal functions that actua... | 1.0 | Developer-facing flowchart - **Describe the behavior you would like added to Foyer**

We have this flowchart for the atom-typing process (from the paper):

since there are a lot of strung-together modular/... | non_process | developer facing flowchart describe the behavior you would like added to foyer we have this flowchart for the atom typing process from the paper since there are a lot of strung together modular internal functions that actually implement this algorithm it would be useful to have this annotated with... | 0 |

17,354 | 23,175,977,087 | IssuesEvent | 2022-07-31 12:28:11 | ppy/osu-web | https://api.github.com/repos/ppy/osu-web | closed | Incorrect link in Featured Artist label | area:beatmap-processing | https://osu.ppy.sh/beatmapsets/1703527#taiko/3483886

Featured Artist label links to https://osu.ppy.sh/beatmaps/artists/tracks/4404 - which is on katagiri's Featured Artist listing, but it should direct the user to tokiwa's listing instead. I believe this should instead be tracks/5085?

This might have happened be... | 1.0 | Incorrect link in Featured Artist label - https://osu.ppy.sh/beatmapsets/1703527#taiko/3483886

Featured Artist label links to https://osu.ppy.sh/beatmaps/artists/tracks/4404 - which is on katagiri's Featured Artist listing, but it should direct the user to tokiwa's listing instead. I believe this should instead be t... | process | incorrect link in featured artist label featured artist label links to which is on katagiri s featured artist listing but it should direct the user to tokiwa s listing instead i believe this should instead be tracks this might have happened because this remix is on katagiri s fa listing and is t... | 1 |

187,894 | 22,046,004,341 | IssuesEvent | 2022-05-30 01:49:36 | artsking/linux-4.1.15 | https://api.github.com/repos/artsking/linux-4.1.15 | closed | CVE-2019-16089 (Medium) detected in linux-stable-rtv4.1.33 - autoclosed | security vulnerability | ## CVE-2019-16089 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linux-stable-rtv4.1.33</b></p></summary>

<p>

<p>Julia Cartwright's fork of linux-stable-rt.git</p>

<p>Library home p... | True | CVE-2019-16089 (Medium) detected in linux-stable-rtv4.1.33 - autoclosed - ## CVE-2019-16089 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linux-stable-rtv4.1.33</b></p></summary>

<p... | non_process | cve medium detected in linux stable autoclosed cve medium severity vulnerability vulnerable library linux stable julia cartwright s fork of linux stable rt git library home page a href found in head commit a href found in base branch master vulner... | 0 |

21,023 | 27,969,909,863 | IssuesEvent | 2023-03-25 00:17:13 | darktable-org/darktable | https://api.github.com/repos/darktable-org/darktable | closed | duplicate manager - inconsistent processing | feature: enhancement difficulty: hard scope: UI scope: image processing bug: pending no-issue-activity | Within the darkroom the duplicate manager allows you to click on any of the duplicate images to compare with the current edit. However, the image you get when you click on a duplicate is often not consistent with the image you'd get if you double-clicked to change image and view directly in the darkroom.

For example... | 1.0 | duplicate manager - inconsistent processing - Within the darkroom the duplicate manager allows you to click on any of the duplicate images to compare with the current edit. However, the image you get when you click on a duplicate is often not consistent with the image you'd get if you double-clicked to change image and... | process | duplicate manager inconsistent processing within the darkroom the duplicate manager allows you to click on any of the duplicate images to compare with the current edit however the image you get when you click on a duplicate is often not consistent with the image you d get if you double clicked to change image and... | 1 |

2,094 | 4,931,386,429 | IssuesEvent | 2016-11-28 10:01:30 | tomlutzenberger/frontal-coding-guideline | https://api.github.com/repos/tomlutzenberger/frontal-coding-guideline | opened | GIT Hooks | enhancement process quality suggestion | Konfiguration, die es bei mangelnder Qualität/nicht bestandenen Tests unmöglich macht zu pushen/mergen. | 1.0 | GIT Hooks - Konfiguration, die es bei mangelnder Qualität/nicht bestandenen Tests unmöglich macht zu pushen/mergen. | process | git hooks konfiguration die es bei mangelnder qualität nicht bestandenen tests unmöglich macht zu pushen mergen | 1 |

498,046 | 14,399,332,079 | IssuesEvent | 2020-12-03 10:46:49 | gnosis/conditional-tokens-explorer | https://api.github.com/repos/gnosis/conditional-tokens-explorer | closed | Condition Id field is cleared out when select a position to merge with for a position with 2 conditions | Medium priority QA Passed bug verify in production | Related to #650, #668, #571, #598, #512

**Steps:**

1. create positions with 2 or more conditions

2. Open Merge positions page

3. Select a position with 2 or more conditions in the Select position section

4. Select a positoin in the Merge with section

**AR:** Condition Id field is cleared out. However, user is able ... | 1.0 | Condition Id field is cleared out when select a position to merge with for a position with 2 conditions - Related to #650, #668, #571, #598, #512

**Steps:**

1. create positions with 2 or more conditions

2. Open Merge positions page

3. Select a position with 2 or more conditions in the Select position section

4. Sel... | non_process | condition id field is cleared out when select a position to merge with for a position with conditions related to steps create positions with or more conditions open merge positions page select a position with or more conditions in the select position section select a posi... | 0 |

157,043 | 12,344,134,608 | IssuesEvent | 2020-05-15 06:14:00 | celery/celery | https://api.github.com/repos/celery/celery | closed | kombu.exceptions.OperationalError: Cannot route message for exchange 'reply.celery.pidbox': Table empty or key no longer exists | Status: Needs Testcase ✘ | <!--

Please fill this template entirely and do not erase parts of it.

We reserve the right to close without a response

bug reports which are incomplete.

-->

# Checklist

<!--

To check an item on the list replace [ ] with [x].

-->

- [ ] I have verified that the issue exists against the `master` branch of Celery.... | 1.0 | kombu.exceptions.OperationalError: Cannot route message for exchange 'reply.celery.pidbox': Table empty or key no longer exists - <!--

Please fill this template entirely and do not erase parts of it.

We reserve the right to close without a response

bug reports which are incomplete.

-->

# Checklist

<!--

To check ... | non_process | kombu exceptions operationalerror cannot route message for exchange reply celery pidbox table empty or key no longer exists please fill this template entirely and do not erase parts of it we reserve the right to close without a response bug reports which are incomplete checklist to check ... | 0 |

2,009 | 4,832,728,401 | IssuesEvent | 2016-11-08 08:34:47 | woesterduolf/Mission-reisbureau | https://api.github.com/repos/woesterduolf/Mission-reisbureau | closed | Hotel kiezen pagina | Boekingsprocess priority: highest Type:Feature | **See mockup file (page 3)**

Here we have the screen for the selection of the hotel preferences.

Again, we have the top banner present with the images of the cities. This time however, we do not have the advertisement banner on the left.

On the left there are all the options a customer could want to choose fro... | 1.0 | Hotel kiezen pagina - **See mockup file (page 3)**

Here we have the screen for the selection of the hotel preferences.

Again, we have the top banner present with the images of the cities. This time however, we do not have the advertisement banner on the left.

On the left there are all the options a customer co... | process | hotel kiezen pagina see mockup file page here we have the screen for the selection of the hotel preferences again we have the top banner present with the images of the cities this time however we do not have the advertisement banner on the left on the left there are all the options a customer co... | 1 |

12,508 | 14,962,121,304 | IssuesEvent | 2021-01-27 08:50:10 | laurent-daniel-utt/MeshIneBits | https://api.github.com/repos/laurent-daniel-utt/MeshIneBits | reopened | add a reserved space for each bit in order to be able to hold during cutting | enhancement preprocessor | At bit population stage, each bit should be checked for having a reserved space in order to be able to be hold by prehensor during cutting process. The size of reserved space should be a parameter. At the moment, it will only be used when preprocessing in order to add preprocessing information like a potential cut line... | 1.0 | add a reserved space for each bit in order to be able to hold during cutting - At bit population stage, each bit should be checked for having a reserved space in order to be able to be hold by prehensor during cutting process. The size of reserved space should be a parameter. At the moment, it will only be used when pr... | process | add a reserved space for each bit in order to be able to hold during cutting at bit population stage each bit should be checked for having a reserved space in order to be able to be hold by prehensor during cutting process the size of reserved space should be a parameter at the moment it will only be used when pr... | 1 |

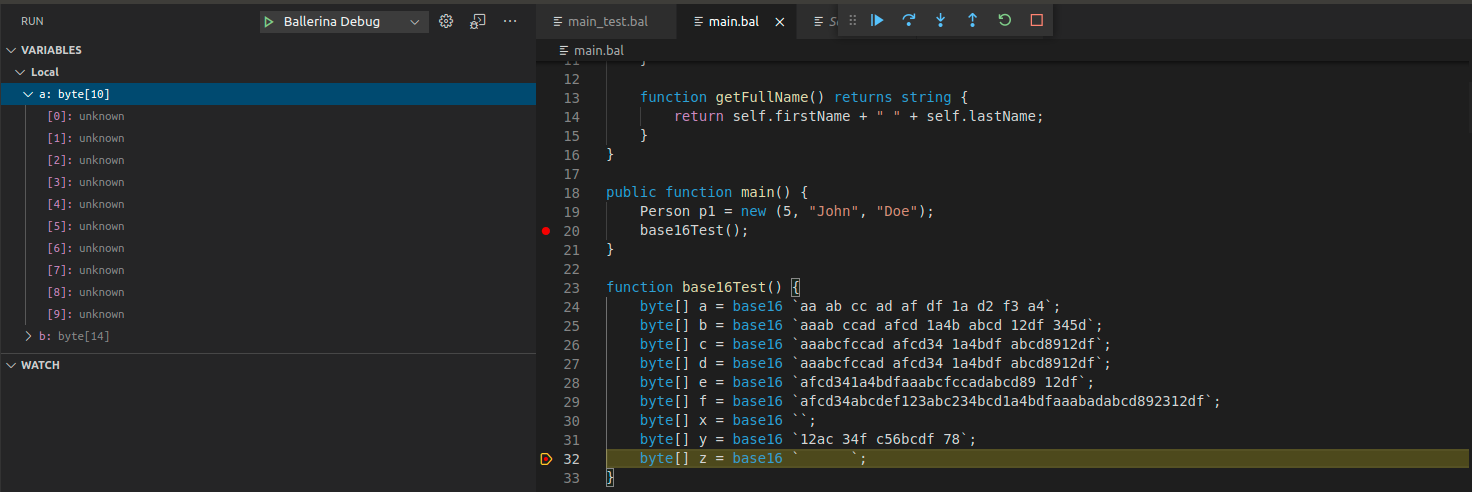

528,569 | 15,369,902,359 | IssuesEvent | 2021-03-02 08:04:29 | ballerina-platform/ballerina-lang | https://api.github.com/repos/ballerina-platform/ballerina-lang | closed | [Debugger] Byte array element values are shown as 'unknown' | Area/Debugger Priority/High Team/DevTools Type/Bug | **Description:**

Please refer the below sample code. Ballerina byte array values are shown as `unkown` in here.

**Steps to reproduce:**

**Affected Versions:**

**OS, ... | 1.0 | [Debugger] Byte array element values are shown as 'unknown' - **Description:**

Please refer the below sample code. Ballerina byte array values are shown as `unkown` in here.

... | non_process | byte array element values are shown as unknown description please refer the below sample code ballerina byte array values are shown as unkown in here steps to reproduce affected versions os db other environment details and versions related issues optional ... | 0 |

650,584 | 21,409,865,991 | IssuesEvent | 2022-04-22 03:55:41 | wso2/product-apim | https://api.github.com/repos/wso2/product-apim | reopened | Removing x-wso2-request-interceptor in API Definition | Type/Bug Priority/Normal | ### Description:

Automatically removing the x-wso2-request-interceptor (in the API level) property from the generated API resource when creating an API by using a swagger file with the **x-wso2-request-interceptor** in the API level.

### Steps to reproduce:

- Create an API by using a small swagger file with the ... | 1.0 | Removing x-wso2-request-interceptor in API Definition - ### Description:

Automatically removing the x-wso2-request-interceptor (in the API level) property from the generated API resource when creating an API by using a swagger file with the **x-wso2-request-interceptor** in the API level.

### Steps to reproduce:

... | non_process | removing x request interceptor in api definition description automatically removing the x request interceptor in the api level property from the generated api resource when creating an api by using a swagger file with the x request interceptor in the api level steps to reproduce create... | 0 |

69,083 | 22,142,689,219 | IssuesEvent | 2022-06-03 08:37:15 | MarcusWolschon/osmeditor4android | https://api.github.com/repos/MarcusWolschon/osmeditor4android | opened | Object search that doesn't return any results creates an error notification | Defect Minor | An object search that doesn't find any objects shows an error toast that then leads to an error notification. An empty result should naturally just be a warning toast without a notification. | 1.0 | Object search that doesn't return any results creates an error notification - An object search that doesn't find any objects shows an error toast that then leads to an error notification. An empty result should naturally just be a warning toast without a notification. | non_process | object search that doesn t return any results creates an error notification an object search that doesn t find any objects shows an error toast that then leads to an error notification an empty result should naturally just be a warning toast without a notification | 0 |

15,773 | 19,915,937,393 | IssuesEvent | 2022-01-25 22:40:17 | medic/cht-core | https://api.github.com/repos/medic/cht-core | opened | Release 3.15.0 | Type: Internal process | # Planning - Product Manager

- [ ] Create a GH Milestone for the release. We use [semver](http://semver.org) so if there are breaking changes increment the major, otherwise if there are new features increment the minor, otherwise increment the service pack. Breaking changes in our case relate to updated software req... | 1.0 | Release 3.15.0 - # Planning - Product Manager

- [ ] Create a GH Milestone for the release. We use [semver](http://semver.org) so if there are breaking changes increment the major, otherwise if there are new features increment the minor, otherwise increment the service pack. Breaking changes in our case relate to upd... | process | release planning product manager create a gh milestone for the release we use so if there are breaking changes increment the major otherwise if there are new features increment the minor otherwise increment the service pack breaking changes in our case relate to updated software requirements ... | 1 |

5,390 | 8,213,358,996 | IssuesEvent | 2018-09-04 19:17:21 | GoogleCloudPlatform/google-cloud-python | https://api.github.com/repos/GoogleCloudPlatform/google-cloud-python | opened | Bigquery: 'test_extract_table' snippet, bucket creation flakes with 500 | api: bigquery flaky testing type: process | Similar to #5746, #5747, #5748, but with a 500 error instead of a 429.

See: https://circleci.com/gh/GoogleCloudPlatform/google-cloud-python/7904 (first error in `snippets-2-7` run).

```python

______________________________ test_extract_table ______________________________

client = <google.cloud.bigquery.clien... | 1.0 | Bigquery: 'test_extract_table' snippet, bucket creation flakes with 500 - Similar to #5746, #5747, #5748, but with a 500 error instead of a 429.

See: https://circleci.com/gh/GoogleCloudPlatform/google-cloud-python/7904 (first error in `snippets-2-7` run).

```python

______________________________ test_extract_tab... | process | bigquery test extract table snippet bucket creation flakes with similar to but with a error instead of a see first error in snippets run python test extract table client to delete def test ext... | 1 |

342,645 | 10,320,047,033 | IssuesEvent | 2019-08-30 19:17:36 | thirtybees/thirtybees | https://api.github.com/repos/thirtybees/thirtybees | closed | PHP translation BUG (maybe?) | Bug Estimate: L Priority: low | Again, I am not sure if to consider it as a bug.

But when you have a space after opening and before closing parentheses, the string won't be recognized as translatable string:

$this->l('Cart block') - will work

But this won't:

$this->l( 'Cart block' ) | 1.0 | PHP translation BUG (maybe?) - Again, I am not sure if to consider it as a bug.

But when you have a space after opening and before closing parentheses, the string won't be recognized as translatable string:

$this->l('Cart block') - will work

But this won't:

$this->l( 'Cart block' ) | non_process | php translation bug maybe again i am not sure if to consider it as a bug but when you have a space after opening and before closing parentheses the string won t be recognized as translatable string this l cart block will work but this won t this l cart block | 0 |

17,164 | 22,742,639,453 | IssuesEvent | 2022-07-07 06:06:17 | geneontology/go-ontology | https://api.github.com/repos/geneontology/go-ontology | closed | Improve GO:0033638 modulation by symbiont of host response to heat | multi-species process | GO:0033638 modulation by symbiont of host response to heat has a single annotation, from a paper that describes how a symbiont changes the temperature range under which its host can live (PMID:17425405, PMID:35350854)

The @geneontology/multiorganism-working-group would like to improve the term label, definition and ... | 1.0 | Improve GO:0033638 modulation by symbiont of host response to heat - GO:0033638 modulation by symbiont of host response to heat has a single annotation, from a paper that describes how a symbiont changes the temperature range under which its host can live (PMID:17425405, PMID:35350854)

The @geneontology/multiorgani... | process | improve go modulation by symbiont of host response to heat go modulation by symbiont of host response to heat has a single annotation from a paper that describes how a symbiont changes the temperature range under which its host can live pmid pmid the geneontology multiorganism working group would lik... | 1 |

2,407 | 5,193,205,819 | IssuesEvent | 2017-01-22 17:02:13 | raphym/Simulation_of_message_routing_by_intelligent_agents | https://api.github.com/repos/raphym/Simulation_of_message_routing_by_intelligent_agents | opened | traceroute betwen quorum with DFS | being processed | I have to find

- the routes between the backbones

-the route in the quorums

i will use the dfs algorythm | 1.0 | traceroute betwen quorum with DFS - I have to find

- the routes between the backbones

-the route in the quorums

i will use the dfs algorythm | process | traceroute betwen quorum with dfs i have to find the routes between the backbones the route in the quorums i will use the dfs algorythm | 1 |

15,180 | 18,953,037,277 | IssuesEvent | 2021-11-18 16:59:52 | Bodmer/TFT_eSPI | https://api.github.com/repos/Bodmer/TFT_eSPI | closed | TFT_eSPI - ESP32-S2 - ST7789 | To do: enhancement Compatibility update New processor variant | Hi,

As Arduino ESP32 Core 2.0.0 is out, I would like to use TFT_eSPI for my ESP32-2 Saola and my [adafruit 240x320 ST7789](https://www.adafruit.com/product/4311).

With the same hardware, I can use Adafruit_ST7789 driver using

```Adafruit_ST7789 tft = Adafruit_ST7789(TFT_CS, TFT_DC, TFT_MOSI, TFT_SCLK, TFT_RST);`... | 1.0 | TFT_eSPI - ESP32-S2 - ST7789 - Hi,

As Arduino ESP32 Core 2.0.0 is out, I would like to use TFT_eSPI for my ESP32-2 Saola and my [adafruit 240x320 ST7789](https://www.adafruit.com/product/4311).

With the same hardware, I can use Adafruit_ST7789 driver using

```Adafruit_ST7789 tft = Adafruit_ST7789(TFT_CS, TFT_DC... | process | tft espi hi as arduino core is out i would like to use tft espi for my saola and my with the same hardware i can use adafruit driver using adafruit tft adafruit tft cs tft dc tft mosi tft sclk tft rst but with tft espi nothing is display i only have a black... | 1 |

15,364 | 19,536,052,360 | IssuesEvent | 2021-12-31 07:09:45 | apache/iotdb | https://api.github.com/repos/apache/iotdb | closed | 希望降频聚合查询补空值支持avg函数 | Module - Query Processing Priority - Middle | 降频聚合查询补空值,现在只支持 last_value 聚合函数。avg函数会报错。

Msg: 411: Meet error in query process: Group By Fill only support last_value function

希望能支持avg函数。 | 1.0 | 希望降频聚合查询补空值支持avg函数 - 降频聚合查询补空值,现在只支持 last_value 聚合函数。avg函数会报错。

Msg: 411: Meet error in query process: Group By Fill only support last_value function

希望能支持avg函数。 | process | 希望降频聚合查询补空值支持avg函数 降频聚合查询补空值,现在只支持 last value 聚合函数。avg函数会报错。 msg meet error in query process group by fill only support last value function 希望能支持avg函数。 | 1 |

9,874 | 12,886,267,575 | IssuesEvent | 2020-07-13 09:13:29 | deepset-ai/haystack | https://api.github.com/repos/deepset-ai/haystack | closed | File upload with Asian languages triggers warning in language detection | preprocessing question | To Author:

I have uploaded the text file with Chinese and Thai, but the system showed "The language for file-uploads/ec87658d3f7c4756a99c986b2f9ab558_Duterte.txt is not one of ['']. The file may not have been decoded in the correct text format." Is that normal ? I just want some suggestions. Thank you!

<img width="401" alt="Screen Shot 2020-04-05 at 10 51 23 AM" src="https://user-images.githubusercontent.com/12788951/78503319-8660a080-772b-11ea-8e2f-23c380074048.png">

| 1.0 | [BUG] Puffer Refugium Graphical Glitch - The Puffer Refugium icon has a magic pipe connection that appears to work across an air gap (for puffer atmosphere input)

<img width="401" alt="Screen Shot 2020-04-05 at 10 51 23 AM" src="https://user-images.githubusercontent.com/12788951/78503319-8660a080-772b-11ea-8e2f-23c3... | process | puffer refugium graphical glitch the puffer refugium icon has a magic pipe connection that appears to work across an air gap for puffer atmosphere input img width alt screen shot at am src | 1 |

238,755 | 7,782,786,226 | IssuesEvent | 2018-06-06 07:51:58 | javaee/servlet-spec | https://api.github.com/repos/javaee/servlet-spec | closed | Need way to track progress of requests; proposal included | Component: Listeners Priority: Major Type: Improvement multipart progressbar upload | Servlet 3.0 added multipart request processing to, in part, make handling file uploads easier (and easier it did make it). Before 3.0, many users used Commons FileUpload to accomplish this task. However, 3.0's multipart processing did not, unfortunately, completely eliminate the need for FileUpload. One of the major fe... | 1.0 | Need way to track progress of requests; proposal included - Servlet 3.0 added multipart request processing to, in part, make handling file uploads easier (and easier it did make it). Before 3.0, many users used Commons FileUpload to accomplish this task. However, 3.0's multipart processing did not, unfortunately, compl... | non_process | need way to track progress of requests proposal included servlet added multipart request processing to in part make handling file uploads easier and easier it did make it before many users used commons fileupload to accomplish this task however s multipart processing did not unfortunately compl... | 0 |

16,971 | 22,333,695,390 | IssuesEvent | 2022-06-14 16:30:52 | GoogleCloudPlatform/cloud-ops-sandbox | https://api.github.com/repos/GoogleCloudPlatform/cloud-ops-sandbox | closed | fix make-release pipeline | type: process priority: p3 | When running make release for v0.7.1, the [push-tags action](https://github.com/GoogleCloudPlatform/cloud-ops-sandbox/blob/main/.github/workflows/push-tags.yml) failed to run. I had to trigger it manually. We should fix this so that the release process is fully automated and reliable | 1.0 | fix make-release pipeline - When running make release for v0.7.1, the [push-tags action](https://github.com/GoogleCloudPlatform/cloud-ops-sandbox/blob/main/.github/workflows/push-tags.yml) failed to run. I had to trigger it manually. We should fix this so that the release process is fully automated and reliable | process | fix make release pipeline when running make release for the failed to run i had to trigger it manually we should fix this so that the release process is fully automated and reliable | 1 |

19,226 | 25,368,890,113 | IssuesEvent | 2022-11-21 09:05:20 | NEARWEEK/CORE | https://api.github.com/repos/NEARWEEK/CORE | opened | Create staking battleplan & start execution | Process | ## 🎉 Subtasks

- [ ] Create strategy for staking node

- [ ] Map all top tiers partners we need to reach out to

- [ ] Start execute plan

## 🤼♂️ Reviewer

@Kisgus

| 1.0 | Create staking battleplan & start execution - ## 🎉 Subtasks

- [ ] Create strategy for staking node

- [ ] Map all top tiers partners we need to reach out to

- [ ] Start execute plan

## 🤼♂️ Reviewer

@Kisgus

| process | create staking battleplan start execution 🎉 subtasks create strategy for staking node map all top tiers partners we need to reach out to start execute plan 🤼♂️ reviewer kisgus | 1 |

155,816 | 12,278,514,415 | IssuesEvent | 2020-05-08 10:07:32 | inf112-v20/legless-crane | https://api.github.com/repos/inf112-v20/legless-crane | closed | Thourough tests of Board.java | Need tests | As we might replace Board.java, don't prioritize this currently

- [x] A test for each type of tile (belt vs cog etc.) | 1.0 | Thourough tests of Board.java - As we might replace Board.java, don't prioritize this currently

- [x] A test for each type of tile (belt vs cog etc.) | non_process | thourough tests of board java as we might replace board java don t prioritize this currently a test for each type of tile belt vs cog etc | 0 |

108,089 | 9,259,587,386 | IssuesEvent | 2019-03-18 00:41:57 | rancher/rancher | https://api.github.com/repos/rancher/rancher | closed | Etcd snapshots have .part file along with the actual snapshot | kind/bug-qa status/blocker status/resolved status/to-test team/ca version/2.0 | Version: Master build from March 14th

Steps:

1. Create a single node cluster with local backup config of 1hr creation time

2. Wait for the first automatic snapshot to be taken

The snapshots have a .part in /opt/rke/etcd-snapshots directory

```

root@soumyasingagn1:/opt/rke/etcd-snapshots# ls -ltr

total 1940

... | 1.0 | Etcd snapshots have .part file along with the actual snapshot - Version: Master build from March 14th

Steps:

1. Create a single node cluster with local backup config of 1hr creation time

2. Wait for the first automatic snapshot to be taken

The snapshots have a .part in /opt/rke/etcd-snapshots directory

```

ro... | non_process | etcd snapshots have part file along with the actual snapshot version master build from march steps create a single node cluster with local backup config of creation time wait for the first automatic snapshot to be taken the snapshots have a part in opt rke etcd snapshots directory root ... | 0 |

14,886 | 18,288,329,750 | IssuesEvent | 2021-10-05 12:50:45 | GoogleCloudPlatform/fda-mystudies | https://api.github.com/repos/GoogleCloudPlatform/fda-mystudies | closed | [Android] User is able to skip the force upgrade pop-up and continue using the app by clicking on 'Forgot passcode?Sign in again' | Bug P2 Android Process: Fixed Process: Tested QA Process: Tested dev | **Steps:**

1. Signup/login to Android app and create a passcode

2. Configure force upgrade from SB and publish app

3. Kill and relaunch the app/minimize the app

4. Click on 'Forgot passcode?Sign in again' link

5. User is navigated to signin.

6. Again signin/signup with new user, user is able to enroll/take act... | 3.0 | [Android] User is able to skip the force upgrade pop-up and continue using the app by clicking on 'Forgot passcode?Sign in again' - **Steps:**

1. Signup/login to Android app and create a passcode

2. Configure force upgrade from SB and publish app

3. Kill and relaunch the app/minimize the app

4. Click on 'Forgot p... | process | user is able to skip the force upgrade pop up and continue using the app by clicking on forgot passcode sign in again steps signup login to android app and create a passcode configure force upgrade from sb and publish app kill and relaunch the app minimize the app click on forgot passcode ... | 1 |

5,670 | 8,556,164,795 | IssuesEvent | 2018-11-08 12:18:18 | kiwicom/orbit-components | https://api.github.com/repos/kiwicom/orbit-components | closed | [Orbit UI v0.29.0] New & renamed illustrations | Illustrations Processing | Almost all illustrations were changed (small tweaks with positioning mostly); a few were renamed and few were added. Hopefully, the full list of changes here:

New illustrations

- Nomad

- Success

- Error

- BusinessTravel

- MobileApp

- PlaceholderTours

- DesktopSearch

Renaming

- no-bookings => NoResults

... | 1.0 | [Orbit UI v0.29.0] New & renamed illustrations - Almost all illustrations were changed (small tweaks with positioning mostly); a few were renamed and few were added. Hopefully, the full list of changes here:

New illustrations

- Nomad

- Success

- Error

- BusinessTravel

- MobileApp

- PlaceholderTours

- DesktopS... | process | new renamed illustrations almost all illustrations were changed small tweaks with positioning mostly a few were renamed and few were added hopefully the full list of changes here new illustrations nomad success error businesstravel mobileapp placeholdertours desktopsearch renami... | 1 |

27,016 | 7,888,123,904 | IssuesEvent | 2018-06-27 20:53:29 | Polymer/tools | https://api.github.com/repos/Polymer/tools | closed | [project-config] Uncompiled presets | Package: build Priority: Medium Status: Available Type: Bug | Hi,

Is there a reason why the `uncompiled-bundled` and the `uncompiled-unbundled` presets have **es2018** in browserCapabilities instead of **es2016** in the [builds.ts](https://github.com/Polymer/tools/blob/dd1c8bbb44f37f67974fbabf878b7a495ffeb6f6/packages/project-config/src/builds.ts#L224-L249) file of project-con... | 1.0 | [project-config] Uncompiled presets - Hi,

Is there a reason why the `uncompiled-bundled` and the `uncompiled-unbundled` presets have **es2018** in browserCapabilities instead of **es2016** in the [builds.ts](https://github.com/Polymer/tools/blob/dd1c8bbb44f37f67974fbabf878b7a495ffeb6f6/packages/project-config/src/bu... | non_process | uncompiled presets hi is there a reason why the uncompiled bundled and the uncompiled unbundled presets have in browsercapabilities instead of in the file of project config package also as these presets are uncompiled should they have module as browser capabilities by example this ... | 0 |

17,651 | 23,471,174,697 | IssuesEvent | 2022-08-16 22:04:22 | pytorch/pytorch | https://api.github.com/repos/pytorch/pytorch | reopened | Three memory copies of every dataloader cpu tensor | module: multiprocessing module: dataloader module: cuda triaged enhancement | ### 🐛 Describe the bug

(from slack discussion with @albanD)

Is it possible to both share_memory and pin_memory for a tensor, for dataloading across shared memory with zero copies?

```

>>> x.share_memory_().pin_memory().is_shared()

False

>>> x.pin_memory().share_memory_().is_pinned()

False

```

It seems l... | 1.0 | Three memory copies of every dataloader cpu tensor - ### 🐛 Describe the bug

(from slack discussion with @albanD)

Is it possible to both share_memory and pin_memory for a tensor, for dataloading across shared memory with zero copies?

```

>>> x.share_memory_().pin_memory().is_shared()

False

>>> x.pin_memory().... | process | three memory copies of every dataloader cpu tensor 🐛 describe the bug from slack discussion with alband is it possible to both share memory and pin memory for a tensor for dataloading across shared memory with zero copies x share memory pin memory is shared false x pin memory ... | 1 |

14,506 | 17,604,363,042 | IssuesEvent | 2021-08-17 15:17:50 | flancast90/Speech-To-Text-in-TW5 | https://api.github.com/repos/flancast90/Speech-To-Text-in-TW5 | closed | Text-editor toolbar button brainstorming | process-description | I open this issue as a reminder that we want to create a text-editor toolbar button that allows inserting the recorded text into the current tiddlers' text field

For that we need some brainstorming and we need to collect information about the tiddlywiki internals so that we know how we can realize this | 1.0 | Text-editor toolbar button brainstorming - I open this issue as a reminder that we want to create a text-editor toolbar button that allows inserting the recorded text into the current tiddlers' text field

For that we need some brainstorming and we need to collect information about the tiddlywiki internals so that we... | process | text editor toolbar button brainstorming i open this issue as a reminder that we want to create a text editor toolbar button that allows inserting the recorded text into the current tiddlers text field for that we need some brainstorming and we need to collect information about the tiddlywiki internals so that we... | 1 |

168,364 | 20,754,724,701 | IssuesEvent | 2022-03-15 11:04:07 | arngrimur/computersaysno | https://api.github.com/repos/arngrimur/computersaysno | closed | CVE-2020-8565 (Medium) detected in github.com/docker/cli-v20.10.11 - autoclosed | security vulnerability | ## CVE-2020-8565 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>github.com/docker/cli-v20.10.11</b></p></summary>

<p>The Docker CLI</p>

<p>

Dependency Hierarchy:

- github.com/ory... | True | CVE-2020-8565 (Medium) detected in github.com/docker/cli-v20.10.11 - autoclosed - ## CVE-2020-8565 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>github.com/docker/cli-v20.10.11</b><... | non_process | cve medium detected in github com docker cli autoclosed cve medium severity vulnerability vulnerable library github com docker cli the docker cli dependency hierarchy github com ory dockertest root library x github com docker cli vulnerabl... | 0 |

29,901 | 14,334,080,586 | IssuesEvent | 2020-11-27 07:24:04 | pandas-dev/pandas | https://api.github.com/repos/pandas-dev/pandas | closed | PERF: regression in Series.asof with single date | Performance Regression | From https://pandas.pydata.org/speed/pandas/#timeseries.AsOf.time_asof_single?p-constructor='Series'&commits=52a17259-24e881d4&x-axis=commit&Cython=0.29.21&python=3.8

Snippet extracted from ASV:

```python

N = 10000

rng = pd.date_range(start="1/1/1990", periods=N, freq="53s")

s = pd.Series(np.random.randn(N), i... | True | PERF: regression in Series.asof with single date - From https://pandas.pydata.org/speed/pandas/#timeseries.AsOf.time_asof_single?p-constructor='Series'&commits=52a17259-24e881d4&x-axis=commit&Cython=0.29.21&python=3.8

Snippet extracted from ASV:

```python

N = 10000

rng = pd.date_range(start="1/1/1990", periods=... | non_process | perf regression in series asof with single date from snippet extracted from asv python n rng pd date range start periods n freq s pd series np random randn n index rng dates pd date range start periods n freq date dates timeit s asof date ... | 0 |

120,133 | 17,644,020,786 | IssuesEvent | 2021-08-20 01:28:54 | mariano72/node | https://api.github.com/repos/mariano72/node | opened | CVE-2020-36048 (High) detected in engine.io-3.4.0.tgz | security vulnerability | ## CVE-2020-36048 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>engine.io-3.4.0.tgz</b></p></summary>

<p>The realtime engine behind Socket.IO. Provides the foundation of a bidirectio... | True | CVE-2020-36048 (High) detected in engine.io-3.4.0.tgz - ## CVE-2020-36048 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>engine.io-3.4.0.tgz</b></p></summary>

<p>The realtime engine b... | non_process | cve high detected in engine io tgz cve high severity vulnerability vulnerable library engine io tgz the realtime engine behind socket io provides the foundation of a bidirectional connection between client and server library home page a href path to dependency file nod... | 0 |

4,727 | 7,571,408,759 | IssuesEvent | 2018-04-23 12:09:50 | dzhw/zofar | https://api.github.com/repos/dzhw/zofar | closed | Bug in Carousel | category: technical.processes et: 3 prio: ? status: testing type: backlog.task type: bug | If two Carousels are on one page. Both change header title simultaneous, when slide in one of the carousels is changed | 1.0 | Bug in Carousel - If two Carousels are on one page. Both change header title simultaneous, when slide in one of the carousels is changed | process | bug in carousel if two carousels are on one page both change header title simultaneous when slide in one of the carousels is changed | 1 |

2,734 | 5,622,580,736 | IssuesEvent | 2017-04-04 13:11:07 | AllenFang/react-bootstrap-table | https://api.github.com/repos/AllenFang/react-bootstrap-table | reopened | Handling duplicate rows in custom modal body | inprocess | Thanks for excellent plugin!

I have a custom modal body for the 'insert row' and validator for the key field. How can I check if the entered key already exists and update the validateState?

Thanks for your help. | 1.0 | Handling duplicate rows in custom modal body - Thanks for excellent plugin!

I have a custom modal body for the 'insert row' and validator for the key field. How can I check if the entered key already exists and update the validateState?

Thanks for your help. | process | handling duplicate rows in custom modal body thanks for excellent plugin i have a custom modal body for the insert row and validator for the key field how can i check if the entered key already exists and update the validatestate thanks for your help | 1 |

17,699 | 23,549,054,045 | IssuesEvent | 2022-08-21 14:59:46 | divertingPan/divertingPan.github.io | https://api.github.com/repos/divertingPan/divertingPan.github.io | opened | 科普 | 电脑里的图片到底是什么样的 | 老潘家的潘老师 | Gitalk /post/basic_digital_image_processing/ | https://divertingpan.github.io/post/basic_digital_image_processing/

使用图片处理软件,就不可避免的要用到计算机(不管是手机还是电脑,原理都是一样)。今天潘老师从科学的角度解剖图片,了解图片背后的一些机制,再使用Photoshop就会更明白些。

今天的文章侧重科普,没有很多操作上的东西。即使读者没有任何计算机... | 1.0 | 科普 | 电脑里的图片到底是什么样的 | 老潘家的潘老师 - https://divertingpan.github.io/post/basic_digital_image_processing/

使用图片处理软件,就不可避免的要用到计算机(不管是手机还是电脑,原理都是一样)。今天潘老师从科学的角度解剖图片,了解图片背后的一些机制,再使用Photoshop就会更明白些。

今天的文章侧重科普,没有很多操作上的东西。即使读者没有任何计算机... | process | 科普 电脑里的图片到底是什么样的 老潘家的潘老师 使用图片处理软件,就不可避免的要用到计算机(不管是手机还是电脑,原理都是一样)。今天潘老师从科学的角度解剖图片,了解图片背后的一些机制,再使用photoshop就会更明白些。 今天的文章侧重科普,没有很多操作上的东西。即使读者没有任何计算机 | 1 |

698,076 | 23,964,747,583 | IssuesEvent | 2022-09-12 23:03:24 | apache/hudi | https://api.github.com/repos/apache/hudi | closed | [SUPPORT]Hudi Inserts and Upserts for MoR and CoW tables are taking very long time. | performance priority:major | **_Tips before filing an issue_**

- Have you gone through our [FAQs](https://cwiki.apache.org/confluence/display/HUDI/FAQ)?

- Join the mailing list to engage in conversations and get faster support at dev-subscribe@hudi.apache.org.

- If you have triaged this as a bug, then file an [issue](https://issues.apache... | 1.0 | [SUPPORT]Hudi Inserts and Upserts for MoR and CoW tables are taking very long time. - **_Tips before filing an issue_**

- Have you gone through our [FAQs](https://cwiki.apache.org/confluence/display/HUDI/FAQ)?

- Join the mailing list to engage in conversations and get faster support at dev-subscribe@hudi.apache.o... | non_process | hudi inserts and upserts for mor and cow tables are taking very long time tips before filing an issue have you gone through our join the mailing list to engage in conversations and get faster support at dev subscribe hudi apache org if you have triaged this as a bug then file an direc... | 0 |

13,345 | 15,801,910,589 | IssuesEvent | 2021-04-03 07:07:01 | PyCQA/flake8 | https://api.github.com/repos/PyCQA/flake8 | closed | Simplify and speed up multiprocessing - [merged] | component:multiprocessing component:performance gitlab merge request | In GitLab by @asottile on Nov 22, 2016, 15:45

_Merges faster -> master_

This is a bit of a WIP, I moved away from Queue (since it seems to be the bottleneck)

From #265 the same test finishes (still slower) but in reasonable time:

```

$ time flake8 -j8 bar

real 0m17.583s

user 0m26.312s

sys 0m2.288s

``` | 1.0 | Simplify and speed up multiprocessing - [merged] - In GitLab by @asottile on Nov 22, 2016, 15:45

_Merges faster -> master_

This is a bit of a WIP, I moved away from Queue (since it seems to be the bottleneck)

From #265 the same test finishes (still slower) but in reasonable time:

```

$ time flake8 -j8 bar

real 0m1... | process | simplify and speed up multiprocessing in gitlab by asottile on nov merges faster master this is a bit of a wip i moved away from queue since it seems to be the bottleneck from the same test finishes still slower but in reasonable time time bar real user sys | 1 |

9,789 | 12,805,470,203 | IssuesEvent | 2020-07-03 07:35:05 | ClickHouse/ClickHouse | https://api.github.com/repos/ClickHouse/ClickHouse | closed | View of MV slower than querying directly? | comp-processors performance v20.3-affected v20.4-affected | ```sql

CREATE MATERIALIZED VIEW player_champ_counts_mv

ENGINE = AggregatingMergeTree() ORDER BY (patch_num, my_account_id, champ_id)

as select patch_num, my_account_id, champ_id, countState() as c_state

from full_info

group by patch_num, my_account_id, champ_id;

create view player_champ_counts as

select patc... | 1.0 | View of MV slower than querying directly? - ```sql

CREATE MATERIALIZED VIEW player_champ_counts_mv

ENGINE = AggregatingMergeTree() ORDER BY (patch_num, my_account_id, champ_id)

as select patch_num, my_account_id, champ_id, countState() as c_state

from full_info

group by patch_num, my_account_id, champ_id;

cre... | process | view of mv slower than querying directly sql create materialized view player champ counts mv engine aggregatingmergetree order by patch num my account id champ id as select patch num my account id champ id countstate as c state from full info group by patch num my account id champ id cre... | 1 |

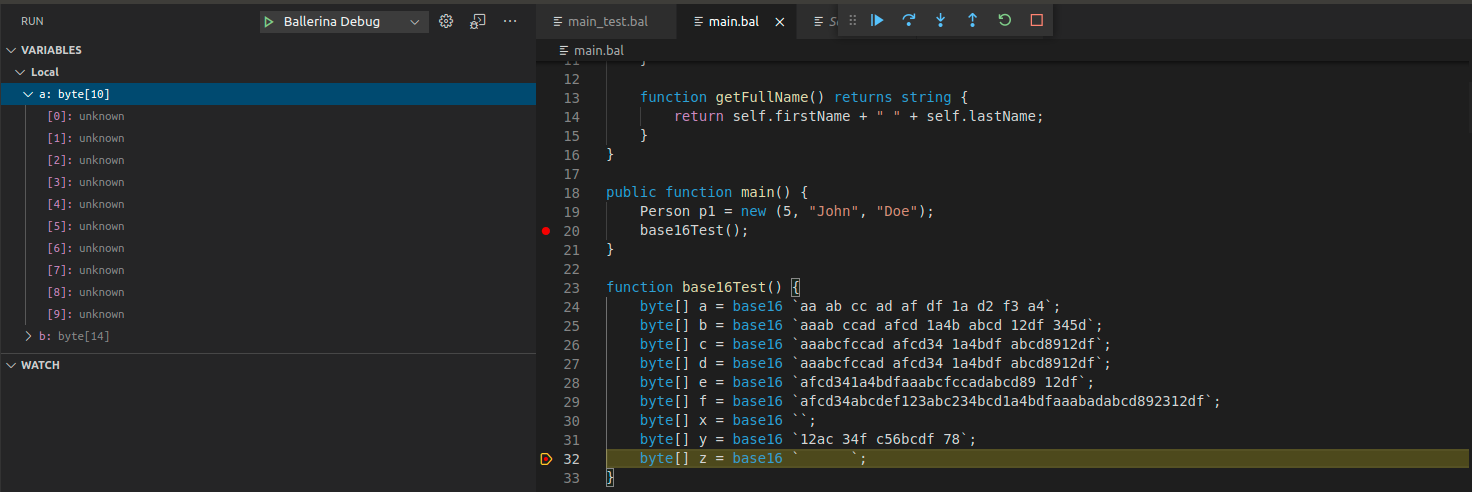

282,453 | 21,315,490,446 | IssuesEvent | 2022-04-16 07:39:12 | Rye-Catcher/pe | https://api.github.com/repos/Rye-Catcher/pe | opened | Wrong command format of `remove` command provided in Command Suumary of UG | type.DocumentationBug severity.VeryLow | This is its format in the `remove` command section

This is its format in the Command Summary section

This is its format in the Command Summary section

.

We need to follow our internal processes/policies to make this happen; this is a process tracking issue.

The [license](https://useast.ensembl.org/info/about/legal/code_licence.html) is [Apache 2.0](http://www.apach... | 1.0 | Public release of VEP docker image. - It turned out that we are the first who need to publish VEP docker images on [gcr.io](http://gcr.io).

We need to follow our internal processes/policies to make this happen; this is a process tracking issue.

The [license](https://useast.ensembl.org/info/about/legal/code_licence.... | process | public release of vep docker image it turned out that we are the first who need to publish vep docker images on we need to follow our internal processes policies to make this happen this is a process tracking issue the is | 1 |

15,908 | 20,113,115,023 | IssuesEvent | 2022-02-07 16:47:07 | pelias/api | https://api.github.com/repos/pelias/api | closed | parser: fails to parse apartment numbers | input parsing processed Q1-2017 libpostal | the address parser is making a mistake parsing `1917/2 Pike Drive`, it ends up thinking that `2` is the housenumber.

``` javascript

"query": {

"text": "1917/2 Pike Drive",

"parsed_text": {

"number": 2,

"street": "Pike Drive",

"regions": []

}

}

``` | 1.0 | parser: fails to parse apartment numbers - the address parser is making a mistake parsing `1917/2 Pike Drive`, it ends up thinking that `2` is the housenumber.

``` javascript

"query": {

"text": "1917/2 Pike Drive",

"parsed_text": {

"number": 2,

"street": "Pike Drive",

"regions": []

}

}

``` | process | parser fails to parse apartment numbers the address parser is making a mistake parsing pike drive it ends up thinking that is the housenumber javascript query text pike drive parsed text number street pike drive regions | 1 |

27,684 | 22,154,710,494 | IssuesEvent | 2022-06-03 20:59:55 | department-of-veterans-affairs/va.gov-team | https://api.github.com/repos/department-of-veterans-affairs/va.gov-team | closed | Redirect COVID chatbot link to the main coronavirus FAQs page | backend operations tools-be-review infrastructure platform-sre console-services team-platform-infrastructure | On May 15, the coronavirus chatbot will be retired. By that date, we will want to redirect users who visit that link over to the main coronavirus FAQs page.

See below:

https://github.com/department-of-veterans-affairs/va-virtual-agent/issues/456

https://app.zenhub.com/workspaces/vft-59c95ae5fda7577a9b3184f8/issue... | 2.0 | Redirect COVID chatbot link to the main coronavirus FAQs page - On May 15, the coronavirus chatbot will be retired. By that date, we will want to redirect users who visit that link over to the main coronavirus FAQs page.

See below:

https://github.com/department-of-veterans-affairs/va-virtual-agent/issues/456

http... | non_process | redirect covid chatbot link to the main coronavirus faqs page on may the coronavirus chatbot will be retired by that date we will want to redirect users who visit that link over to the main coronavirus faqs page see below the virtual agent chatbot team is looking for guidance from sitewide teams t... | 0 |

6,931 | 10,095,831,885 | IssuesEvent | 2019-07-27 12:45:37 | shirou/gopsutil | https://api.github.com/repos/shirou/gopsutil | closed | On CentOS, the value returned by process.Percent() is bigger than 100% | os:linux package:cpu package:process | How I get cpu usage:

```

c.CPUUsage, err = p.Percent(time.Second * 1)

```

Centos Version: 6.4 | 1.0 | On CentOS, the value returned by process.Percent() is bigger than 100% - How I get cpu usage:

```

c.CPUUsage, err = p.Percent(time.Second * 1)

```

Centos Version: 6.4 | process | on centos the value returned by process percent is bigger than how i get cpu usage c cpuusage err p percent time second centos version | 1 |

113,725 | 17,150,887,827 | IssuesEvent | 2021-07-13 20:26:22 | snowdensb/braindump | https://api.github.com/repos/snowdensb/braindump | opened | CVE-2018-16492 (High) detected in extend-3.0.0.tgz | security vulnerability | ## CVE-2018-16492 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>extend-3.0.0.tgz</b></p></summary>

<p>Port of jQuery.extend for node.js and the browser</p>

<p>Library home page: <a h... | True | CVE-2018-16492 (High) detected in extend-3.0.0.tgz - ## CVE-2018-16492 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>extend-3.0.0.tgz</b></p></summary>

<p>Port of jQuery.extend for n... | non_process | cve high detected in extend tgz cve high severity vulnerability vulnerable library extend tgz port of jquery extend for node js and the browser library home page a href path to dependency file braindump package json path to vulnerable library braindump node modules e... | 0 |

20,836 | 27,608,098,833 | IssuesEvent | 2023-03-09 14:18:27 | rusefi/rusefi_documentation | https://api.github.com/repos/rusefi/rusefi_documentation | closed | EPIC: errors in markdown need fixing before merging a change (CI for documentation) | bug IMPORTANT wiki location & process change | Validate Markdown Files With MarkdownLint according to [this ](https://matthewsetter.com/tools-that-make-technical-writing-easier-markdown-linter/)blog post revealed over 7K errors.

To avoid follow-on errors in other tools I'd suggest to fix them.

Luckily most errors can be fixed automatically.

As a follow-up w... | 1.0 | EPIC: errors in markdown need fixing before merging a change (CI for documentation) - Validate Markdown Files With MarkdownLint according to [this ](https://matthewsetter.com/tools-that-make-technical-writing-easier-markdown-linter/)blog post revealed over 7K errors.

To avoid follow-on errors in other tools I'd sugges... | process | epic errors in markdown need fixing before merging a change ci for documentation validate markdown files with markdownlint according to post revealed over errors to avoid follow on errors in other tools i d suggest to fix them luckily most errors can be fixed automatically as a follow up we should... | 1 |

14,098 | 16,987,917,780 | IssuesEvent | 2021-06-30 16:24:07 | CesiumGS/cesium | https://api.github.com/repos/CesiumGS/cesium | closed | Post Processing sandcastle issue | category - post-processing type - bug | Run http://localhost:8080/Apps/Sandcastle/index.html?src=Post%20Processing.html&label=All

1. There is an odd flash full screen flash when you enable each of the effects the first time.

2. I got a random crash the first time I enabled black and white about undefined `v` in a UniformSample or something similar (not s... | 1.0 | Post Processing sandcastle issue - Run http://localhost:8080/Apps/Sandcastle/index.html?src=Post%20Processing.html&label=All

1. There is an odd flash full screen flash when you enable each of the effects the first time.

2. I got a random crash the first time I enabled black and white about undefined `v` in a Unifor... | process | post processing sandcastle issue run there is an odd flash full screen flash when you enable each of the effects the first time i got a random crash the first time i enabled black and white about undefined v in a uniformsample or something similar not sure of the exact details i couldn t reproduce | 1 |

5,488 | 8,359,342,428 | IssuesEvent | 2018-10-03 07:54:51 | geneontology/go-ontology | https://api.github.com/repos/geneontology/go-ontology | closed | insulin secretion - always from pancreatic cells? | cellular processes | ```

[Term]

id: GO:0030073

name: insulin secretion

def: "The regulated release of proinsulin from ***secretory granules (B granules) in the B cells of the pancreas***; accompanied by cleavage of proinsulin to form mature insulin." [GOC:mah, ISBN:0198506732]

is_a: GO:0030072 ! peptide hormone secretion

```

We have a two... | 1.0 | insulin secretion - always from pancreatic cells? - ```

[Term]

id: GO:0030073

name: insulin secretion

def: "The regulated release of proinsulin from ***secretory granules (B granules) in the B cells of the pancreas***; accompanied by cleavage of proinsulin to form mature insulin." [GOC:mah, ISBN:0198506732]

is_a: GO:00... | process | insulin secretion always from pancreatic cells id go name insulin secretion def the regulated release of proinsulin from secretory granules b granules in the b cells of the pancreas accompanied by cleavage of proinsulin to form mature insulin is a go peptide hormone secretion we ... | 1 |

819,383 | 30,732,456,609 | IssuesEvent | 2023-07-28 03:43:07 | TEAM-cafe-in/cafe-in-be | https://api.github.com/repos/TEAM-cafe-in/cafe-in-be | closed | feat: API 명세를 수정한다 | 🔥High priority ❗️refactoring | ### As-is

---

- 프론트의 요구사항에 맞추어 API 수정이 필요합니다

### To-be

---

- [x] 회원의 커피콩을 반환하는 API를 구현한다

- [x] `POST` 요청으로 조회한 카페를 다시 조회할 때의 처리

- [x] 순환 참조 문제를 해결한다

- [x] 구현한 API의 테스트를 작성한다

| 1.0 | feat: API 명세를 수정한다 - ### As-is

---

- 프론트의 요구사항에 맞추어 API 수정이 필요합니다

### To-be

---

- [x] 회원의 커피콩을 반환하는 API를 구현한다

- [x] `POST` 요청으로 조회한 카페를 다시 조회할 때의 처리

- [x] 순환 참조 문제를 해결한다

- [x] 구현한 API의 테스트를 작성한다

| non_process | feat api 명세를 수정한다 as is 프론트의 요구사항에 맞추어 api 수정이 필요합니다 to be 회원의 커피콩을 반환하는 api를 구현한다 post 요청으로 조회한 카페를 다시 조회할 때의 처리 순환 참조 문제를 해결한다 구현한 api의 테스트를 작성한다 | 0 |

19,756 | 6,760,341,633 | IssuesEvent | 2017-10-24 20:17:13 | mapbox/mapbox-gl-native | https://api.github.com/repos/mapbox/mapbox-gl-native | closed | Bitrise should upload releases to GitHub too | build iOS Node.js | Currently Travis uploads prebuilt releases to an s3 directory with no public file listing, [complicating the manual setup story](https://github.com/mapbox/mapbox-gl-native/wiki/Installing-Mapbox-GL-for-iOS/_compare/cbc4f2345a332b69d9f9a01130efffaec4ab87d4...df38e6f10a123c656cdd5d6a7b662fe04f23fb25) for folks who can’t ... | 1.0 | Bitrise should upload releases to GitHub too - Currently Travis uploads prebuilt releases to an s3 directory with no public file listing, [complicating the manual setup story](https://github.com/mapbox/mapbox-gl-native/wiki/Installing-Mapbox-GL-for-iOS/_compare/cbc4f2345a332b69d9f9a01130efffaec4ab87d4...df38e6f10a123c6... | non_process | bitrise should upload releases to github too currently travis uploads prebuilt releases to an directory with no public file listing for folks who can’t use cocoapods we should configure travis to to be listed cc incanus bsudekum | 0 |

385,911 | 26,658,989,583 | IssuesEvent | 2023-01-25 19:17:39 | SKY-ALIN/regta | https://api.github.com/repos/SKY-ALIN/regta | closed | Documentation for v0.3.0 | documentation | - [x] Python 3.11 support

- [x] `regta-period` page

- [x] Scheduling alternatives and comparison with regta (separated page)

- [x] Add a link to the page from README

- [x] Mark benchmarks as not ready

- [x] Explain verbose flag more detailed

- [x] Add `Period` to API Reference | 1.0 | Documentation for v0.3.0 - - [x] Python 3.11 support

- [x] `regta-period` page

- [x] Scheduling alternatives and comparison with regta (separated page)

- [x] Add a link to the page from README

- [x] Mark benchmarks as not ready

- [x] Explain verbose flag more detailed

- [x] Add `Period` to API Reference | non_process | documentation for python support regta period page scheduling alternatives and comparison with regta separated page add a link to the page from readme mark benchmarks as not ready explain verbose flag more detailed add period to api reference | 0 |

147,537 | 19,522,837,715 | IssuesEvent | 2021-12-29 22:29:25 | swagger-api/swagger-codegen | https://api.github.com/repos/swagger-api/swagger-codegen | opened | CVE-2017-16042 (High) detected in growl-1.9.2.tgz, growl-1.8.1.tgz | security vulnerability | ## CVE-2017-16042 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>growl-1.9.2.tgz</b>, <b>growl-1.8.1.tgz</b></p></summary>

<p>

<details><summary><b>growl-1.9.2.tgz</b></p></summary>... | True | CVE-2017-16042 (High) detected in growl-1.9.2.tgz, growl-1.8.1.tgz - ## CVE-2017-16042 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>growl-1.9.2.tgz</b>, <b>growl-1.8.1.tgz</b></p><... | non_process | cve high detected in growl tgz growl tgz cve high severity vulnerability vulnerable libraries growl tgz growl tgz growl tgz growl unobtrusive notifications library home page a href path to dependency file samples client petstore typescrip... | 0 |

779 | 3,258,517,299 | IssuesEvent | 2015-10-20 22:49:28 | HAW-BAI4-SE2/UniKit | https://api.github.com/repos/HAW-BAI4-SE2/UniKit | closed | Anmeldephase implementieren | in process | # Phase 1: Veranstaltungsauswahl

## Komponenten:

* Hibernatekomponente

* Studierendenkomponente

---

## ToDo:

* __Pre__: Dummy-Studentenobjekt, das seine wählbaren Veranstaltungen kennt.

* System zeigt für einen aktuellen Benutzer alle wählbaren Veranstaltungen an.

* Benutzer kann Veranstaltungen wählen und E... | 1.0 | Anmeldephase implementieren - # Phase 1: Veranstaltungsauswahl

## Komponenten:

* Hibernatekomponente

* Studierendenkomponente

---

## ToDo:

* __Pre__: Dummy-Studentenobjekt, das seine wählbaren Veranstaltungen kennt.

* System zeigt für einen aktuellen Benutzer alle wählbaren Veranstaltungen an.

* Benutzer kan... | process | anmeldephase implementieren phase veranstaltungsauswahl komponenten hibernatekomponente studierendenkomponente todo pre dummy studentenobjekt das seine wählbaren veranstaltungen kennt system zeigt für einen aktuellen benutzer alle wählbaren veranstaltungen an benutzer kan... | 1 |

11,172 | 13,957,694,833 | IssuesEvent | 2020-10-24 08:11:21 | alexanderkotsev/geoportal | https://api.github.com/repos/alexanderkotsev/geoportal | opened | MT: Harvest | Geoportal Harvesting process MT - Malta | Dear Angelo,

Can you kindly perform a harvest on the Maltese CSW as we need to check some changes.

Thanks in advance for your help.

Regards,

Rene | 1.0 | MT: Harvest - Dear Angelo,

Can you kindly perform a harvest on the Maltese CSW as we need to check some changes.

Thanks in advance for your help.

Regards,

Rene | process | mt harvest dear angelo can you kindly perform a harvest on the maltese csw as we need to check some changes thanks in advance for your help regards rene | 1 |

279,421 | 21,159,951,569 | IssuesEvent | 2022-04-07 08:28:05 | xtensor-stack/xtensor | https://api.github.com/repos/xtensor-stack/xtensor | closed | Documentation improvement suggestions | Enhancement Documentation | ## A - Replace ``` ``xt::something`` ``` with ``:cpp:func:`xt::something` ``

I suggest replacing inline code that mention a xtensor function or class with the associated sphinx target.

This is [supported by Breathe for classes and functions](https://breathe.readthedocs.io/en/latest/domains.html) and has the advantage... | 1.0 | Documentation improvement suggestions - ## A - Replace ``` ``xt::something`` ``` with ``:cpp:func:`xt::something` ``

I suggest replacing inline code that mention a xtensor function or class with the associated sphinx target.

This is [supported by Breathe for classes and functions](https://breathe.readthedocs.io/en/la... | non_process | documentation improvement suggestions a replace xt something with cpp func xt something i suggest replacing inline code that mention a xtensor function or class with the associated sphinx target this is and has the advantage that the resulting html links to the reference sections wh... | 0 |

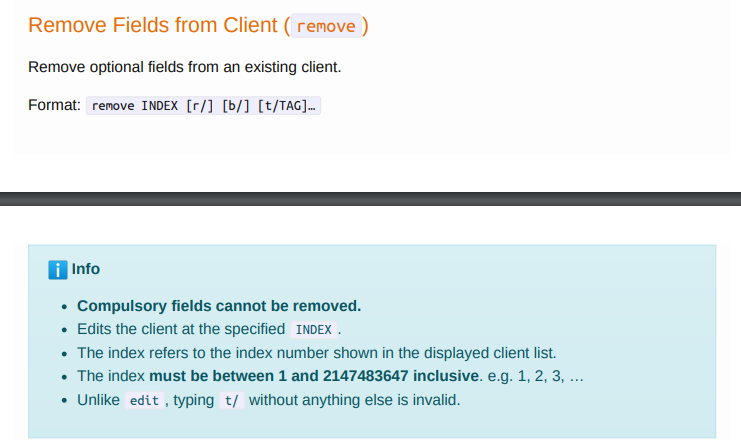

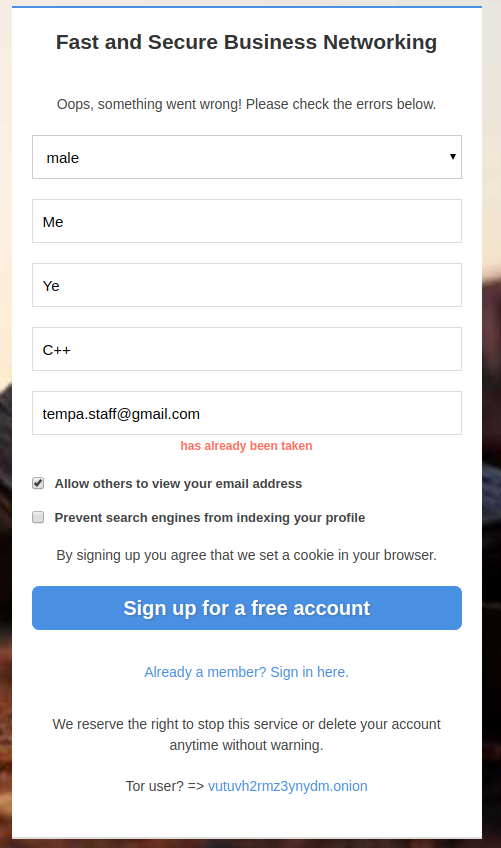

18,203 | 10,217,961,045 | IssuesEvent | 2019-08-15 14:51:39 | vutuv/vutuv | https://api.github.com/repos/vutuv/vutuv | closed | Prevent user enumeration - wording of sign up warnings | Feature Request Security |

Protect users email from hacker, by changing the word email "has already been taken", to something like: "Please check your email to complete the registration".

The email sent to the mailbox is something l... | True | Prevent user enumeration - wording of sign up warnings -

Protect users email from hacker, by changing the word email "has already been taken", to something like: "Please check your email to complete the reg... | non_process | prevent user enumeration wording of sign up warnings protect users email from hacker by changing the word email has already been taken to something like please check your email to complete the registration the email sent to the mailbox is something like you tried to sign up but you already have... | 0 |

120,534 | 25,813,144,885 | IssuesEvent | 2022-12-12 01:25:18 | sec-edgar/sec-edgar | https://api.github.com/repos/sec-edgar/sec-edgar | closed | Add compatibility with EDGAR APIs | enhancement help-wanted code-structure | **Is your feature request related to a problem? Please describe.**

The SEC is currently offering a RESTful API in beta! See more [here](https://www.sec.gov/edgar/sec-api-documentation). Not sure exactly what the best way to integrate this into the package would be, but want to put it out there so that it can be discus... | 1.0 | Add compatibility with EDGAR APIs - **Is your feature request related to a problem? Please describe.**

The SEC is currently offering a RESTful API in beta! See more [here](https://www.sec.gov/edgar/sec-api-documentation). Not sure exactly what the best way to integrate this into the package would be, but want to put i... | non_process | add compatibility with edgar apis is your feature request related to a problem please describe the sec is currently offering a restful api in beta see more not sure exactly what the best way to integrate this into the package would be but want to put it out there so that it can be discussed describ... | 0 |

300,563 | 9,211,457,730 | IssuesEvent | 2019-03-09 15:34:19 | qgisissuebot/QGIS | https://api.github.com/repos/qgisissuebot/QGIS | closed | Raster Symbology, Paletted/Unique values not respecting selected band | Bug Priority: normal | ---

Author Name: **Andrew Harvey** (Andrew Harvey)

Original Redmine Issue: 21505, https://issues.qgis.org/issues/21505

Original Date: 2019-03-07T00:51:46.888Z

Affected QGIS version: 3.6.0

---

I have a 4 band raster loaded into QGIS, under the layer Symbology I've chosen "Paletted/Unique values" and selected Band 3... | 1.0 | Raster Symbology, Paletted/Unique values not respecting selected band - ---

Author Name: **Andrew Harvey** (Andrew Harvey)

Original Redmine Issue: 21505, https://issues.qgis.org/issues/21505

Original Date: 2019-03-07T00:51:46.888Z

Affected QGIS version: 3.6.0

---

I have a 4 band raster loaded into QGIS, under the ... | non_process | raster symbology paletted unique values not respecting selected band author name andrew harvey andrew harvey original redmine issue original date affected qgis version i have a band raster loaded into qgis under the layer symbology i ve chosen paletted unique values ... | 0 |

5,904 | 8,722,791,970 | IssuesEvent | 2018-12-09 15:56:15 | P0cL4bs/WiFi-Pumpkin | https://api.github.com/repos/P0cL4bs/WiFi-Pumpkin | closed | Update new Version 0.8.7 | Feature request in process new version | I'm work in a new version more moduled with @yudevan.

@yudevan list the features bellow: | 1.0 | Update new Version 0.8.7 - I'm work in a new version more moduled with @yudevan.

@yudevan list the features bellow: | process | update new version i m work in a new version more moduled with yudevan yudevan list the features bellow | 1 |

1,568 | 4,165,429,090 | IssuesEvent | 2016-06-19 13:51:54 | sysown/proxysql | https://api.github.com/repos/sysown/proxysql | opened | Disable/enable multiplexing from mysql_query_rules | ADMIN CONNECTION POOL MYSQL PROTOCOL QUERY PROCESSOR | In `mysql_query_rules` we need to add a new variable that defines if multiplexing needs to be disabled or re-enabled.

This can be useful if:

* we want that a specific query disables multiplexing

* we want that multiplexing is re-enabled for example after ProxySQL things that is not safe to use multiplexing (ex, when... | 1.0 | Disable/enable multiplexing from mysql_query_rules - In `mysql_query_rules` we need to add a new variable that defines if multiplexing needs to be disabled or re-enabled.

This can be useful if:

* we want that a specific query disables multiplexing

* we want that multiplexing is re-enabled for example after ProxySQL ... | process | disable enable multiplexing from mysql query rules in mysql query rules we need to add a new variable that defines if multiplexing needs to be disabled or re enabled this can be useful if we want that a specific query disables multiplexing we want that multiplexing is re enabled for example after proxysql ... | 1 |

56 | 2,516,123,386 | IssuesEvent | 2015-01-15 23:29:19 | GsDevKit/gsApplicationTools | https://api.github.com/repos/GsDevKit/gsApplicationTools | opened | GemServerLauncher needs to isolate processes and semaphores ... not commitable | in process | Need to use TransientStackVlue to isolate....discovered while working through interactive debugging of Seaside using gem server. | 1.0 | GemServerLauncher needs to isolate processes and semaphores ... not commitable - Need to use TransientStackVlue to isolate....discovered while working through interactive debugging of Seaside using gem server. | process | gemserverlauncher needs to isolate processes and semaphores not commitable need to use transientstackvlue to isolate discovered while working through interactive debugging of seaside using gem server | 1 |

141,148 | 11,395,812,527 | IssuesEvent | 2020-01-30 12:16:21 | department-of-veterans-affairs/va.gov-team | https://api.github.com/repos/department-of-veterans-affairs/va.gov-team | opened | Test Case and Test Execution for GIDS: Allow user to update crosswalk values for WEAMS data that has an IPED or OPE match #4751 | bah-gids bah-sprint-39 testing | As a member of the BAH development team, I need to make sure that we have traceability between our stories and test case execution so that we can easily see what test cases were executed against our functional work and when those cases were executed.

Assumptions:

1. All test cases pass

2. All defects resulting from t... | 1.0 | Test Case and Test Execution for GIDS: Allow user to update crosswalk values for WEAMS data that has an IPED or OPE match #4751 - As a member of the BAH development team, I need to make sure that we have traceability between our stories and test case execution so that we can easily see what test cases were executed aga... | non_process | test case and test execution for gids allow user to update crosswalk values for weams data that has an iped or ope match as a member of the bah development team i need to make sure that we have traceability between our stories and test case execution so that we can easily see what test cases were executed agains... | 0 |

21,126 | 28,092,826,695 | IssuesEvent | 2023-03-30 14:04:13 | open-telemetry/opentelemetry-collector-contrib | https://api.github.com/repos/open-telemetry/opentelemetry-collector-contrib | closed | cumulativetodelta: initial data points are included for monotonic counters | bug help wanted Stale priority:p2 processor/cumulativetodelta | ### Component(s)

processor/cumulativetodelta

### What happened?

The cumulative-to-delta processor includes the initial delta from zero to the current value as a data point:

https://github.com/open-telemetry/opentelemetry-collector-contrib/blob/34b31da629f65c195125e8db07480574b181477f/processor/cumulativetodeltapr... | 1.0 | cumulativetodelta: initial data points are included for monotonic counters - ### Component(s)

processor/cumulativetodelta

### What happened?

The cumulative-to-delta processor includes the initial delta from zero to the current value as a data point:

https://github.com/open-telemetry/opentelemetry-collector-contri... | process | cumulativetodelta initial data points are included for monotonic counters component s processor cumulativetodelta what happened the cumulative to delta processor includes the initial delta from zero to the current value as a data point even for monotonic counters this is counterintuitive and n... | 1 |

616,564 | 19,306,194,032 | IssuesEvent | 2021-12-13 11:47:11 | kubernetes/release | https://api.github.com/repos/kubernetes/release | closed | General promote-images tool for k8s image maintainers | kind/feature priority/important-longterm sig/release area/release-eng | <!-- Please only use this template for submitting feature requests -->

#### What would you like to be added:

Currently, the krel promote-images tool only works for kubernetes images. It would be great to extend it to other projects in k8s and k8s-sigs that have staging images hosted in k8s.gcr.io.

Slack thread... | 1.0 | General promote-images tool for k8s image maintainers - <!-- Please only use this template for submitting feature requests -->

#### What would you like to be added:

Currently, the krel promote-images tool only works for kubernetes images. It would be great to extend it to other projects in k8s and k8s-sigs that h... | non_process | general promote images tool for image maintainers what would you like to be added currently the krel promote images tool only works for kubernetes images it would be great to extend it to other projects in and sigs that have staging images hosted in gcr io slack thread for context | 0 |

17,082 | 22,587,153,422 | IssuesEvent | 2022-06-28 16:10:12 | open-telemetry/opentelemetry-collector-contrib | https://api.github.com/repos/open-telemetry/opentelemetry-collector-contrib | closed | [processor/transform] Add 'like' capability to WHERE condition | priority:p2 comp: transformprocessor | **Is your feature request related to a problem? Please describe.**

The transform processor's where condition cannot handle checking if 2 string match only in specific positions. It must check the whole string.

**Describe the solution you'd like**

For strings, a condition allows you to use the keyword `like` whic... | 1.0 | [processor/transform] Add 'like' capability to WHERE condition - **Is your feature request related to a problem? Please describe.**

The transform processor's where condition cannot handle checking if 2 string match only in specific positions. It must check the whole string.

**Describe the solution you'd like**

F... | process | add like capability to where condition is your feature request related to a problem please describe the transform processor s where condition cannot handle checking if string match only in specific positions it must check the whole string describe the solution you d like for strings a condit... | 1 |

8,663 | 11,798,104,183 | IssuesEvent | 2020-03-18 13:52:56 | MHRA/products | https://api.github.com/repos/MHRA/products | opened | Observability | Azure monitor | EPIC - Auto Batch Process :oncoming_automobile: | ## User want

As a technical user

I want to visualize doc index updater using Azure monitor

So I can monitor and alert

## Technical acceptance criteria

Azure Monitor should have the tooling to visualise the [golden signals](https://landing.google.com/sre/sre-book/chapters/monitoring-distributed-systems/#xref... | 1.0 | Observability | Azure monitor - ## User want

As a technical user

I want to visualize doc index updater using Azure monitor

So I can monitor and alert

## Technical acceptance criteria

Azure Monitor should have the tooling to visualise the [golden signals](https://landing.google.com/sre/sre-book/chapters/mon... | process | observability azure monitor user want as a technical user i want to visualize doc index updater using azure monitor so i can monitor and alert technical acceptance criteria azure monitor should have the tooling to visualise the latency traffic errors saturation size... | 1 |

187,331 | 22,045,644,279 | IssuesEvent | 2022-05-30 01:09:49 | CodeChung/bobert-ai | https://api.github.com/repos/CodeChung/bobert-ai | opened | CVE-2022-25878 (High) detected in protobufjs-6.8.8.tgz | security vulnerability | ## CVE-2022-25878 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>protobufjs-6.8.8.tgz</b></p></summary>

<p>Protocol Buffers for JavaScript (& TypeScript).</p>

<p>Library home page: <a... | True | CVE-2022-25878 (High) detected in protobufjs-6.8.8.tgz - ## CVE-2022-25878 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>protobufjs-6.8.8.tgz</b></p></summary>

<p>Protocol Buffers fo... | non_process | cve high detected in protobufjs tgz cve high severity vulnerability vulnerable library protobufjs tgz protocol buffers for javascript typescript library home page a href path to dependency file package json path to vulnerable library node modules protobufjs pa... | 0 |

3,899 | 6,821,849,734 | IssuesEvent | 2017-11-07 18:02:54 | rubberduck-vba/Rubberduck | https://api.github.com/repos/rubberduck-vba/Rubberduck | closed | Q: What is Resolver Error? | parse-tree-processing support | In Excel 2010 , or Excel 2013, I open a file with c17500 LOC (excluding comments) VBA. I click "Pending". after a couple of minutes the caption changes to "Resolver Error". Is there a way of finding out why?

| 1.0 | Q: What is Resolver Error? - In Excel 2010 , or Excel 2013, I open a file with c17500 LOC (excluding comments) VBA. I click "Pending". after a couple of minutes the caption changes to "Resolver Error". Is there a way of finding out why?

| process | q what is resolver error in excel or excel i open a file with loc excluding comments vba i click pending after a couple of minutes the caption changes to resolver error is there a way of finding out why | 1 |

551,761 | 16,188,669,497 | IssuesEvent | 2021-05-04 03:44:59 | TerriaJS/neii-viewer | https://api.github.com/repos/TerriaJS/neii-viewer | closed | NEII - Apr 2021 release | Priority high | - [x] update to latest terriajs next

- [x] Global Horizontal Exposure not loading (WMS): https://github.com/TerriaJS/neii-viewer/issues/172

- [x] Wrong error message for NEMSR catalogue item: https://github.com/TerriaJS/neii-viewer/issues/171

- [x] Geofabric datasets errors: https://github.com/TerriaJS/neii-viewer/... | 1.0 | NEII - Apr 2021 release - - [x] update to latest terriajs next

- [x] Global Horizontal Exposure not loading (WMS): https://github.com/TerriaJS/neii-viewer/issues/172

- [x] Wrong error message for NEMSR catalogue item: https://github.com/TerriaJS/neii-viewer/issues/171

- [x] Geofabric datasets errors: https://github... | non_process | neii apr release update to latest terriajs next global horizontal exposure not loading wms wrong error message for nemsr catalogue item geofabric datasets errors see update hydrology and marine points url from ga see | 0 |

140,519 | 11,349,427,874 | IssuesEvent | 2020-01-24 04:53:22 | elastic/kibana | https://api.github.com/repos/elastic/kibana | opened | [test-failed]: Chrome UI Functional Tests.test/functional/apps/home/_sample_data·ts - homepage app sample data dashboard should launch sample logs data set dashboard | failed-test test-cloud | **Version: 7.6**

**Class: Chrome UI Functional Tests.test/functional/apps/home/_sample_data·ts**

**Stack Trace:**

Error: retry.try timeout: TimeoutError: Waiting for element to be located By(css selector, [data-test-subj="launchSampleDataSetlogs"])

Wait timed out after 10017ms

at /var/lib/jenkins/workspace/elastic... | 2.0 | [test-failed]: Chrome UI Functional Tests.test/functional/apps/home/_sample_data·ts - homepage app sample data dashboard should launch sample logs data set dashboard - **Version: 7.6**

**Class: Chrome UI Functional Tests.test/functional/apps/home/_sample_data·ts**

**Stack Trace:**

Error: retry.try timeout: TimeoutErro... | non_process | chrome ui functional tests test functional apps home sample data·ts homepage app sample data dashboard should launch sample logs data set dashboard version class chrome ui functional tests test functional apps home sample data·ts stack trace error retry try timeout timeouterror waiting f... | 0 |

12,097 | 14,740,105,100 | IssuesEvent | 2021-01-07 08:31:29 | kdjstudios/SABillingGitlab | https://api.github.com/repos/kdjstudios/SABillingGitlab | closed | Santa Rosa - SA Billing - Late Fee Account List | anc-process anp-important ant-bug has attachment | In GitLab by @kdjstudios on Oct 4, 2018, 10:43

[Santa_Rosa.xlsx](/uploads/ccbfbeb17d9d8c6f37eacd159efb96b6/Santa_Rosa.xlsx)

HD: http://www.servicedesk.answernet.com/profiles/ticket/2018-10-03-82891/conversation | 1.0 | Santa Rosa - SA Billing - Late Fee Account List - In GitLab by @kdjstudios on Oct 4, 2018, 10:43

[Santa_Rosa.xlsx](/uploads/ccbfbeb17d9d8c6f37eacd159efb96b6/Santa_Rosa.xlsx)

HD: http://www.servicedesk.answernet.com/profiles/ticket/2018-10-03-82891/conversation | process | santa rosa sa billing late fee account list in gitlab by kdjstudios on oct uploads santa rosa xlsx hd | 1 |

14,946 | 18,426,704,083 | IssuesEvent | 2021-10-13 23:28:03 | yandali-damian/LIM015-social-network | https://api.github.com/repos/yandali-damian/LIM015-social-network | closed | USER HISTORY - 004 | pending Process | Como usuario logeado debo poder publicar, visualizar, modificar y eliminar una publicación

- tareas

- [x] estructura HTML de home

> - [x] perfil

> > - [x] Agregar botón para editar foto y nombre

> >> - [x] Modal para cambiar nombre y photo

> > - [x] mostrar los post personales

> - [x] post home

> > - [x] Ag... | 1.0 | USER HISTORY - 004 - Como usuario logeado debo poder publicar, visualizar, modificar y eliminar una publicación

- tareas

- [x] estructura HTML de home

> - [x] perfil

> > - [x] Agregar botón para editar foto y nombre

> >> - [x] Modal para cambiar nombre y photo