Unnamed: 0 (int64, 0 to 832k) | id (float64, 2.49B to 32.1B) | type (string, 1 class) | created_at (string, length 19) | repo (string, length 7 to 112) | repo_url (string, length 36 to 141) | action (string, 3 classes) | title (string, length 1 to 744) | labels (string, length 4 to 574) | body (string, length 9 to 211k) | index (string, 10 classes) | text_combine (string, length 96 to 211k) | label (string, 2 classes) | text (string, length 96 to 188k) | binary_label (int64, 0 to 1) |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

16,695 | 21,793,706,881 | IssuesEvent | 2022-05-15 09:56:02 | bitPogo/kmock | https://api.github.com/repos/bitPogo/kmock | closed | Consider a DummyFactory for data classes | enhancement kmock kmock-processor kmock-gradle | ## Description

<!--- Provide a detailed introduction to the issue itself, and why you consider it to be a bug -->

Once KFixture has been progressed further and is ready to release a much easier support for relaxing will be possible.

However to prepare slowly for a probable plugin a first step should be to provide an... | 1.0 | Consider a DummyFactory for data classes - ## Description

<!--- Provide a detailed introduction to the issue itself, and why you consider it to be a bug -->

Once KFixture has been progressed further and is ready to release a much easier support for relaxing will be possible.

However to prepare slowly for a probable ... | process | consider a dummyfactory for data classes description once kfixture has been progressed further and is ready to release a much easier support for relaxing will be possible however to prepare slowly for a probable plugin a first step should be to provide an entry point for data class dummies acceptance cri... | 1 |

10,085 | 13,044,161,989 | IssuesEvent | 2020-07-29 03:47:28 | tikv/tikv | https://api.github.com/repos/tikv/tikv | closed | UCP: Migrate scalar function `SubTimeStringNull` from TiDB | challenge-program-2 component/coprocessor difficulty/easy sig/coprocessor |

## Description

Port the scalar function `SubTimeStringNull` from TiDB to coprocessor.

## Score

* 50

## Mentor(s)

* @andylokandy

## Recommended Skills

* Rust programming

## Learning Materials

Already implemented expressions ported from TiDB

- https://github.com/tikv/tikv/tree/master/components/tidb_query/s... | 2.0 | UCP: Migrate scalar function `SubTimeStringNull` from TiDB -

## Description

Port the scalar function `SubTimeStringNull` from TiDB to coprocessor.

## Score

* 50

## Mentor(s)

* @andylokandy

## Recommended Skills

* Rust programming

## Learning Materials

Already implemented expressions ported from TiDB

- htt... | process | ucp migrate scalar function subtimestringnull from tidb description port the scalar function subtimestringnull from tidb to coprocessor score mentor s andylokandy recommended skills rust programming learning materials already implemented expressions ported from tidb ... | 1 |

25,189 | 2,677,853,447 | IssuesEvent | 2015-03-26 04:43:01 | JukkaL/mypy | https://api.github.com/repos/JukkaL/mypy | closed | Allow __init__ with signature but no return type | bug priority | Code:

```

class Visitor:

def __init__(self, a: int):

pass

```

Error:

```

x.py: In member "__init__" of class "Visitor":

x.py, line 2: Cannot define return type for "__init__"

```

The return type is `Any` (implicitly), and `Any` should be a valid return type for `__init__`.

This was rep... | 1.0 | Allow __init__ with signature but no return type - Code:

```

class Visitor:

def __init__(self, a: int):

pass

```

Error:

```

x.py: In member "__init__" of class "Visitor":

x.py, line 2: Cannot define return type for "__init__"

```

The return type is `Any` (implicitly), and `Any` should be ... | non_process | allow init with signature but no return type code class visitor def init self a int pass error x py in member init of class visitor x py line cannot define return type for init the return type is any implicitly and any should be ... | 0 |

770,758 | 27,055,236,027 | IssuesEvent | 2023-02-13 15:45:34 | googleapis/python-iam | https://api.github.com/repos/googleapis/python-iam | closed | Samples tests are failing with `PermissionDenied` | api: iam type: bug priority: p2 samples | The sample test for `create_deny_policy` is failing with `PermissionDenied`. See build log [here](https://source.cloud.google.com/results/invocations/57ea4d08-0e28-4a9f-b472-ef3db77dc055/log) and stack trace below:

```

create_deny_policy.py:103: in create_deny_policy

result = policies_client.create_policy(reques... | 1.0 | Samples tests are failing with `PermissionDenied` - The sample test for `create_deny_policy` is failing with `PermissionDenied`. See build log [here](https://source.cloud.google.com/results/invocations/57ea4d08-0e28-4a9f-b472-ef3db77dc055/log) and stack trace below:

```

create_deny_policy.py:103: in create_deny_polic... | non_process | samples tests are failing with permissiondenied the sample test for create deny policy is failing with permissiondenied see build log and stack trace below create deny policy py in create deny policy result policies client create policy request request result google cloud iam s... | 0 |

773,562 | 27,161,881,069 | IssuesEvent | 2023-02-17 12:33:29 | DataverseNO/local.dataverse.no | https://api.github.com/repos/DataverseNO/local.dataverse.no | closed | Add Data Privacy Statement to dataverse.no | enhancement PRIORITY | A link to the DataverseNO Privacy Statement should be added to dataverse.no. See how to in the Dataverse guide: [https://guides.dataverse.org/en/latest/installation/config.html?highlight=footer#applicationprivacypolicyurl](https://guides.dataverse.org/en/latest/installation/config.html?highlight=footer#applicationpriva... | 1.0 | Add Data Privacy Statement to dataverse.no - A link to the DataverseNO Privacy Statement should be added to dataverse.no. See how to in the Dataverse guide: [https://guides.dataverse.org/en/latest/installation/config.html?highlight=footer#applicationprivacypolicyurl](https://guides.dataverse.org/en/latest/installation/... | non_process | add data privacy statement to dataverse no a link to the dataverseno privacy statement should be added to dataverse no see how to in the dataverse guide in the command replace with this should also be reflected in the new cloud based instance of dataverseno sorry for the late notice th... | 0 |

191,362 | 14,594,037,069 | IssuesEvent | 2020-12-20 02:51:18 | github-vet/rangeloop-pointer-findings | https://api.github.com/repos/github-vet/rangeloop-pointer-findings | closed | rootfs/node-fencing: vendor/k8s.io/kubernetes/pkg/controller/job/jobcontroller_test.go; 3 LoC | fresh test tiny vendored |

Found a possible issue in [rootfs/node-fencing](https://www.github.com/rootfs/node-fencing) at [vendor/k8s.io/kubernetes/pkg/controller/job/jobcontroller_test.go](https://github.com/rootfs/node-fencing/blob/b78deb66758bdffcf65efe25d2894b6a6343543c/vendor/k8s.io/kubernetes/pkg/controller/job/jobcontroller_test.go#L259-... | 1.0 | rootfs/node-fencing: vendor/k8s.io/kubernetes/pkg/controller/job/jobcontroller_test.go; 3 LoC -

Found a possible issue in [rootfs/node-fencing](https://www.github.com/rootfs/node-fencing) at [vendor/k8s.io/kubernetes/pkg/controller/job/jobcontroller_test.go](https://github.com/rootfs/node-fencing/blob/b78deb66758bdffc... | non_process | rootfs node fencing vendor io kubernetes pkg controller job jobcontroller test go loc found a possible issue in at below is the message reported by the analyzer for this snippet of code beware that the analyzer only reports the first issue it finds so please do not limit your consideration to the co... | 0 |

21,054 | 28,001,304,207 | IssuesEvent | 2023-03-27 11:58:29 | Ultimate-Hosts-Blacklist/whitelist | https://api.github.com/repos/Ultimate-Hosts-Blacklist/whitelist | opened | [FALSE-POSITIVE?] | whitelisting process | **Domains or links**

<!-- Please list below any domains and links listed here which you believe are a false positive. -->

1. www.rt.com

**More Information**

<!-- How did you discover your web site or domain was listed here? -->

1. tried to go to the website, it doesn't belong to me.

**Have you requested rem... | 1.0 | [FALSE-POSITIVE?] - **Domains or links**

<!-- Please list below any domains and links listed here which you believe are a false positive. -->

1. www.rt.com

**More Information**

<!-- How did you discover your web site or domain was listed here? -->

1. tried to go to the website, it doesn't belong to me.

**H... | process | domains or links more information tried to go to the website it doesn t belong to me have you requested removal from other sources none additional context i copied your host file into my host file under the assumption that this list is not a political ce... | 1 |

11,442 | 14,261,856,663 | IssuesEvent | 2020-11-20 12:01:15 | geneontology/go-ontology | https://api.github.com/repos/geneontology/go-ontology | opened | ntr: replication fork relocation to nuclear pore complex | New term request PomBase cell cycle and DNA processes community curation | We've received a request from community curator Sarah Lambert, to use for PMID:33159083:

name: replication fork relocation to nuclear pore complex

suggested def (cribbed from the paper intro): A cellular process in which a DNA replication fork that has stalled relocates and anchors to a nuclear pore complex, in a p... | 1.0 | ntr: replication fork relocation to nuclear pore complex - We've received a request from community curator Sarah Lambert, to use for PMID:33159083:

name: replication fork relocation to nuclear pore complex

suggested def (cribbed from the paper intro): A cellular process in which a DNA replication fork that has stal... | process | ntr replication fork relocation to nuclear pore complex we ve received a request from community curator sarah lambert to use for pmid name replication fork relocation to nuclear pore complex suggested def cribbed from the paper intro a cellular process in which a dna replication fork that has stalled rel... | 1 |

14,746 | 18,017,074,234 | IssuesEvent | 2021-09-16 14:57:27 | geneontology/go-ontology | https://api.github.com/repos/geneontology/go-ontology | closed | Change label GO:0061765 modulation by virus of host NIK/NF-kappaB signaling & children | multi-species process | * GO:0061765 modulation by virus of host NIK/NF-kappaB signaling

-> change to 'modulation by virus of host NIK/NF-kappaB cascade'

* 'GO:0039644 suppression by virus of host NF-kappaB transcription factor activity'

-> change to 'suppression by virus of host NF-kappaB cascade'

* GO:0039652 activation by vir... | 1.0 | Change label GO:0061765 modulation by virus of host NIK/NF-kappaB signaling & children - * GO:0061765 modulation by virus of host NIK/NF-kappaB signaling

-> change to 'modulation by virus of host NIK/NF-kappaB cascade'

* 'GO:0039644 suppression by virus of host NF-kappaB transcription factor activity'

-> chang... | process | change label go modulation by virus of host nik nf kappab signaling children go modulation by virus of host nik nf kappab signaling change to modulation by virus of host nik nf kappab cascade go suppression by virus of host nf kappab transcription factor activity change to suppression ... | 1 |

153,826 | 19,708,617,916 | IssuesEvent | 2022-01-13 01:45:41 | artsking/linux-4.19.72_CVE-2020-14386 | https://api.github.com/repos/artsking/linux-4.19.72_CVE-2020-14386 | opened | CVE-2020-25220 (High) detected in linux-yoctov5.4.51 | security vulnerability | ## CVE-2020-25220 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linux-yoctov5.4.51</b></p></summary>

<p>

<p>Yocto Linux Embedded kernel</p>

<p>Library home page: <a href=https://git.... | True | CVE-2020-25220 (High) detected in linux-yoctov5.4.51 - ## CVE-2020-25220 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linux-yoctov5.4.51</b></p></summary>

<p>

<p>Yocto Linux Embedde... | non_process | cve high detected in linux cve high severity vulnerability vulnerable library linux yocto linux embedded kernel library home page a href found in base branch master vulnerable source files vulnerability details the lin... | 0 |

965 | 3,422,103,198 | IssuesEvent | 2015-12-08 21:34:43 | darnir/wget | https://api.github.com/repos/darnir/wget | closed | warc.c:612:5: 'HAVE_UUID_CREATE' is not defined, evaluates to 0 | Lexical or Preprocessor Issue Wundef | warc.c:612:5: warning: 'HAVE_UUID_CREATE' is not defined, evaluates to 0 [-Wundef,Lexical or Preprocessor Issue]

| 1.0 | warc.c:612:5: 'HAVE_UUID_CREATE' is not defined, evaluates to 0 - warc.c:612:5: warning: 'HAVE_UUID_CREATE' is not defined, evaluates to 0 [-Wundef,Lexical or Preprocessor Issue]

| process | warc c have uuid create is not defined evaluates to warc c warning have uuid create is not defined evaluates to | 1 |

67,378 | 20,961,608,618 | IssuesEvent | 2022-03-27 21:48:43 | abedmaatalla/sipdroid | https://api.github.com/repos/abedmaatalla/sipdroid | closed | new option for Static wifi IP address | Priority-Medium Type-Defect auto-migrated | ```

According this article, "Sipdroid sends probe packets every minute over WLANs

to detect such changes.". This makes stand time is short under wifi than under

3G.

http://code.google.com/p/sipdroid/wiki/NewStandbyTechnique

but all the time I use wifi at home and my home wifi is static IP address. Can

you add a op... | 1.0 | new option for Static wifi IP address - ```

According this article, "Sipdroid sends probe packets every minute over WLANs

to detect such changes.". This makes stand time is short under wifi than under

3G.

http://code.google.com/p/sipdroid/wiki/NewStandbyTechnique

but all the time I use wifi at home and my home wifi... | non_process | new option for static wifi ip address according this article sipdroid sends probe packets every minute over wlans to detect such changes this makes stand time is short under wifi than under but all the time i use wifi at home and my home wifi is static ip address can you add a option in sipdroid f... | 0 |

1,299 | 3,838,363,391 | IssuesEvent | 2016-04-02 10:37:00 | dita-ot/dita-ot | https://api.github.com/repos/dita-ot/dita-ot | closed | Topichead with @copy-to on it throws NPE | bug obsolete P2 preprocess | If I have in the DITA Map something like:

<topichead navtitle="THEAD" copy-to="topics/copyright.dita"/>

The DITA OT throws an error when processing it:

D:\projects\eXml\frameworks\dita\DITA-OT\plugins\org.dita.base\build_preprocess.xml:35: Failed to run pipeline: [DOTJ012F][FATAL] Failed to parse the... | 1.0 | Topichead with @copy-to on it throws NPE - If I have in the DITA Map something like:

<topichead navtitle="THEAD" copy-to="topics/copyright.dita"/>

The DITA OT throws an error when processing it:

D:\projects\eXml\frameworks\dita\DITA-OT\plugins\org.dita.base\build_preprocess.xml:35: Failed to run pipe... | process | topichead with copy to on it throws npe if i have in the dita map something like the dita ot throws an error when processing it d projects exml frameworks dita dita ot plugins org dita base build preprocess xml failed to run pipeline failed to parse the input file flowers ditamap th... | 1 |

15,599 | 19,723,317,898 | IssuesEvent | 2022-01-13 17:21:47 | open-telemetry/opentelemetry-collector | https://api.github.com/repos/open-telemetry/opentelemetry-collector | closed | Access headers in processor | enhancement priority:p3 spec:trace spec:metrics release:after-ga area:processor | **Is your feature request related to a problem? Please describe.**

I would like to access HTTP headers in the processor pipeline and add attribute to span/resource - e.g. tenant ID.

**Describe the solution you'd like**

Read the headers from the context object in a processor. The headers which should be injecte... | 1.0 | Access headers in processor - **Is your feature request related to a problem? Please describe.**

I would like to access HTTP headers in the processor pipeline and add attribute to span/resource - e.g. tenant ID.

**Describe the solution you'd like**

Read the headers from the context object in a processor. The h... | process | access headers in processor is your feature request related to a problem please describe i would like to access http headers in the processor pipeline and add attribute to span resource e g tenant id describe the solution you d like read the headers from the context object in a processor the h... | 1 |

355,069 | 25,175,518,664 | IssuesEvent | 2022-11-11 08:54:27 | P0tatoChips/pe | https://api.github.com/repos/P0tatoChips/pe | opened | Unneeded Spacing in heading | severity.VeryLow type.DocumentationBug |

As you can see in the pic, the design heading is indented and is at a weird position.

<!--session: 1668152709074-ffebd4e7-c492-47e8-8e19-ad25fc3b6a31-->

<!--Version: Web v3.4.4--> | 1.0 | Unneeded Spacing in heading -

As you can see in the pic, the design heading is indented and is at a weird position.

<!--session: 1668152709074-ffebd4e7-c492-47e8-8e19-ad25fc3b6a31-->

<!--Version: Web v3... | non_process | unneeded spacing in heading as you can see in the pic the design heading is indented and is at a weird position | 0 |

334,950 | 30,000,199,123 | IssuesEvent | 2023-06-26 08:49:01 | elastic/elasticsearch | https://api.github.com/repos/elastic/elasticsearch | opened | [CI] RoleReferenceIntersectionTests testBuildRoleForListOfRoleReferences failing | :Core/Infra/Core >test-failure Team:Core/Infra | Seems that that problem from #93395 came back again. (mockito version needs to be updated?)

Fails regularly since June 20th so I will mute the test for now.

**Build scan:**

https://gradle-enterprise.elastic.co/s/pgqrjtsjap53o/tests/:x-pack:plugin:core:test/org.elasticsearch.xpack.core.security.authz.store.RoleReferen... | 1.0 | [CI] RoleReferenceIntersectionTests testBuildRoleForListOfRoleReferences failing - Seems that that problem from #93395 came back again. (mockito version needs to be updated?)

Fails regularly since June 20th so I will mute the test for now.

**Build scan:**

https://gradle-enterprise.elastic.co/s/pgqrjtsjap53o/tests/:x-... | non_process | rolereferenceintersectiontests testbuildroleforlistofrolereferences failing seems that that problem from came back again mockito version needs to be updated fails regularly since june so i will mute the test for now build scan reproduction line gradlew x pack plugin core test tes... | 0 |

5,721 | 30,249,262,233 | IssuesEvent | 2023-07-06 19:04:48 | carbon-design-system/carbon | https://api.github.com/repos/carbon-design-system/carbon | closed | [Question]: SideNav not closing when clicking links | type: question ❓ status: needs triage 🕵️♀️ status: waiting for maintainer response 💬 status: needs reproduction | ### Question for Carbon

**Package**

@carbon/react

**Browser**

Chrome

**Package version**

@carbon/react: 1.19.0

**React version**

18.2.0

Description

As pointed out in [#3666](https://github.com/carbon-design-system/carbon/issues/3666) the sideNav menu is not collapsing when clicking a link element. The... | True | [Question]: SideNav not closing when clicking links - ### Question for Carbon

**Package**

@carbon/react

**Browser**

Chrome

**Package version**

@carbon/react: 1.19.0

**React version**

18.2.0

Description

As pointed out in [#3666](https://github.com/carbon-design-system/carbon/issues/3666) the sideNav me... | non_process | sidenav not closing when clicking links question for carbon package carbon react browser chrome package version carbon react react version description as pointed out in the sidenav menu is not collapsing when clicking a link element the issue to close the m... | 0 |

404 | 2,848,095,451 | IssuesEvent | 2015-05-29 20:44:48 | mitchellh/packer | https://api.github.com/repos/mitchellh/packer | closed | Atlas post-processor fails with "unexpected EOF" when trying to upload a Vagrant box | bug post-processor/atlas | Crash log here:

https://gist.github.com/KFishner/613d17334b96f6967fe4

Packer template here:

https://gist.github.com/KFishner/1084057e7eb970c513d8 | 1.0 | Atlas post-processor fails with "unexpected EOF" when trying to upload a Vagrant box - Crash log here:

https://gist.github.com/KFishner/613d17334b96f6967fe4

Packer template here:

https://gist.github.com/KFishner/1084057e7eb970c513d8 | process | atlas post processor fails with unexpected eof when trying to upload a vagrant box crash log here packer template here | 1 |

38,290 | 19,090,281,905 | IssuesEvent | 2021-11-29 11:17:43 | ChainSafe/lodestar | https://api.github.com/repos/ChainSafe/lodestar | closed | Optimize fork choice methods that iterate | prio5-medium scope-performance | <!--NOTE: -->

<!--- General questions should go to the discord chat instead of the issue tracker.-->

**Describe the bug**

Some forkchoice methods are way too inefficient, iterating all nodes unnecessarily

**Expected behavior**

Be more optimal | True | Optimize fork choice methods that iterate - <!--NOTE: -->

<!--- General questions should go to the discord chat instead of the issue tracker.-->

**Describe the bug**

Some forkchoice methods are way too inefficient, iterating all nodes unnecessarily

**Expected behavior**

Be more optimal | non_process | optimize fork choice methods that iterate describe the bug some forkchoice methods are way too inefficient iterating all nodes unnecessarily expected behavior be more optimal | 0 |

66,892 | 27,618,837,515 | IssuesEvent | 2023-03-09 21:47:06 | cityofaustin/atd-data-tech | https://api.github.com/repos/cityofaustin/atd-data-tech | closed | Check-in with SMO on Moped first impressions 💓 | Type: Meeting Service: Dev Service: Product Workgroup: SMO Product: Moped Project: Moped v2.0 | > As a follow-up to our introductory meeting, let's take this time to review Smart Mobility's first impressions of Moped.

>

> We hope to learn about how Moped might be of value to your work, and what changes we could bring to the app to make it best fit your needs.

>

> We'll be prepared to give you an update on o... | 2.0 | Check-in with SMO on Moped first impressions 💓 - > As a follow-up to our introductory meeting, let's take this time to review Smart Mobility's first impressions of Moped.

>

> We hope to learn about how Moped might be of value to your work, and what changes we could bring to the app to make it best fit your needs.

... | non_process | check in with smo on moped first impressions 💓 as a follow up to our introductory meeting let s take this time to review smart mobility s first impressions of moped we hope to learn about how moped might be of value to your work and what changes we could bring to the app to make it best fit your needs ... | 0 |

381,853 | 11,296,802,854 | IssuesEvent | 2020-01-17 03:17:59 | Novusphere/discussions-app | https://api.github.com/repos/Novusphere/discussions-app | closed | Unified ID Wallet Integation | enhancement feature high priority | In `nsuid.js` is provided

1) How to go from token symbol & contract --> chain id, see getchains

2) How to go from chainid and wallet key --> balance, see getbalance

3) How to do wallet key --> wallet key (pubk to pubk), see transfer

4) How to do wallet key --> eos account (pubk to eos acc) see withdraw

You ca... | 1.0 | Unified ID Wallet Integation - In `nsuid.js` is provided

1) How to go from token symbol & contract --> chain id, see getchains

2) How to go from chainid and wallet key --> balance, see getbalance

3) How to do wallet key --> wallet key (pubk to pubk), see transfer

4) How to do wallet key --> eos account (pubk to ... | non_process | unified id wallet integation in nsuid js is provided how to go from token symbol contract chain id see getchains how to go from chainid and wallet key balance see getbalance how to do wallet key wallet key pubk to pubk see transfer how to do wallet key eos account pubk to ... | 0 |

250,185 | 27,051,864,980 | IssuesEvent | 2023-02-13 13:48:22 | elastic/cloudbeat | https://api.github.com/repos/elastic/cloudbeat | closed | [CI] CloudFormation templates linter | Team:Cloud Security 8.8 candidate Vulnerability Management | **Motivation**

As decided on a separate ticket (see below), Cloudbeat repository will be in charge of managing CloudFormation templates.

Before we publish the templates to S3 we should assure that they are in the correct form. As part of Cloudbeat's CI, we should validate the structure of the template. Some tools tha... | True | [CI] CloudFormation templates linter - **Motivation**

As decided on a separate ticket (see below), Cloudbeat repository will be in charge of managing CloudFormation templates.

Before we publish the templates to S3 we should assure that they are in the correct form. As part of Cloudbeat's CI, we should validate the st... | non_process | cloudformation templates linter motivation as decided on a separate ticket see below cloudbeat repository will be in charge of managing cloudformation templates before we publish the templates to we should assure that they are in the correct form as part of cloudbeat s ci we should validate the struct... | 0 |

12,700 | 15,077,883,382 | IssuesEvent | 2021-02-05 07:48:10 | cypress-io/cypress | https://api.github.com/repos/cypress-io/cypress | closed | Add Cypress to GitHub package registry | process: release stage: ready for work type: chore | Apparently it's a thing. How many people will use it 🤷♀

Instructions: https://help.github.com/en/articles/configuring-npm-for-use-with-github-package-registry

Add a 👍 here if you use Cypress and would use this. | 1.0 | Add Cypress to GitHub package registry - Apparently it's a thing. How many people will use it 🤷♀

Instructions: https://help.github.com/en/articles/configuring-npm-for-use-with-github-package-registry

Add a 👍 here if you use Cypress and would use this. | process | add cypress to github package registry apparently it s a thing how many people will use it 🤷♀ instructions add a 👍 here if you use cypress and would use this | 1 |

20,377 | 27,031,431,806 | IssuesEvent | 2023-02-12 08:38:48 | open-telemetry/opentelemetry-collector-contrib | https://api.github.com/repos/open-telemetry/opentelemetry-collector-contrib | closed | New component: timestamp processor | processor/transform | ### The purpose and use-cases of the new component

The purpose of this processor is to change the timestamp of all data that it processes (logs, traces, metrics) by adding or removing a static time duration.

### Example configuration for the component

```

processors:

timestamp:

offset: "0h"

timestamp/a... | 1.0 | New component: timestamp processor - ### The purpose and use-cases of the new component

The purpose of this processor is to change the timestamp of all data that it processes (logs, traces, metrics) by adding or removing a static time duration.

### Example configuration for the component

```

processors:

timestam... | process | new component timestamp processor the purpose and use cases of the new component the purpose of this processor is to change the timestamp of all data that it processes logs traces metrics by adding or removing a static time duration example configuration for the component processors timestam... | 1 |

99,596 | 8,705,233,950 | IssuesEvent | 2018-12-05 21:46:22 | Microsoft/vscode | https://api.github.com/repos/Microsoft/vscode | closed | Test automatic extension host profiling | testplan-item | Refs: #60332

- [ ] any @mjbvz

- [x] any @isidorn - will test on os x

Complexity: 4

When an extension takes over the extension host process we now start to profile and to point out those extensions. This is how it works:

* profiling starts when the extension host is unresponsive for 3 seconds already

* pro... | 1.0 | Test automatic extension host profiling - Refs: #60332

- [ ] any @mjbvz

- [x] any @isidorn - will test on os x

Complexity: 4

When an extension takes over the extension host process we now start to profile and to point out those extensions. This is how it works:

* profiling starts when the extension host is... | non_process | test automatic extension host profiling refs any mjbvz any isidorn will test on os x complexity when an extension takes over the extension host process we now start to profile and to point out those extensions this is how it works profiling starts when the extension host is unrespo... | 0 |

14,577 | 17,702,948,545 | IssuesEvent | 2021-08-25 01:57:11 | tdwg/dwc | https://api.github.com/repos/tdwg/dwc | closed | Change term - associatedReferences | Term - change Class - Occurrence Class - ResourceRelationship non-normative Process - complete | ## Change term

* Submitter: John Wieczorek

* Justification (why is this change necessary?): Consistency and clarity

* Proponents (who needs this change): Everyone

Current Term definition: https://dwc.tdwg.org/terms/#dwc:associatedReferences

Proposed new attributes of the term:

* Term name (in lowerCamelCa... | 1.0 | Change term - associatedReferences - ## Change term

* Submitter: John Wieczorek

* Justification (why is this change necessary?): Consistency and clarity

* Proponents (who needs this change): Everyone

Current Term definition: https://dwc.tdwg.org/terms/#dwc:associatedReferences

Proposed new attributes of the ... | process | change term associatedreferences change term submitter john wieczorek justification why is this change necessary consistency and clarity proponents who needs this change everyone current term definition proposed new attributes of the term term name in lowercamelcase associate... | 1 |

16,087 | 20,255,890,389 | IssuesEvent | 2022-02-14 23:10:35 | MicrosoftDocs/azure-devops-docs | https://api.github.com/repos/MicrosoftDocs/azure-devops-docs | closed | non-intuitive pipeline trigger behaviour | devops/prod Pri2 devops-cicd-process/tech |

"Pipeline completion triggers use the [Default branch for manual and scheduled builds](https://docs.microsoft.com/en-us/azure/devops/pipelines/process/pipeline-default-branch?view=azure-devops) setting to determine which branch's version of a YAML pipeline's branch filters to evaluate when determining whether to run ... | 1.0 | non-intuitive pipeline trigger behaviour -

"Pipeline completion triggers use the [Default branch for manual and scheduled builds](https://docs.microsoft.com/en-us/azure/devops/pipelines/process/pipeline-default-branch?view=azure-devops) setting to determine which branch's version of a YAML pipeline's branch filters t... | process | non intuitive pipeline trigger behaviour pipeline completion triggers use the setting to determine which branch s version of a yaml pipeline s branch filters to evaluate when determining whether to run a pipeline as the result of another pipeline completing by default this setting points to the default branch... | 1 |

2,333 | 5,142,704,898 | IssuesEvent | 2017-01-12 14:07:29 | jimbrown75/Permit-Vision-Enhancements | https://api.github.com/repos/jimbrown75/Permit-Vision-Enhancements | opened | Make it mandatory to have a RA for low low risk permits | Further discussion (Shell) Must Fix Process Related | Since we now have the "Permit Exempted Task", i don't think there is a use case for a Low Low risk permit without any Hazards and Controls, so we should make it mandatory for even low low risk permits to have an RA.

There is nothing in the 8 step process that says we don't need a risk assessment for low low risk per... | 1.0 | Make it mandatory to have a RA for low low risk permits - Since we now have the "Permit Exempted Task", i don't think there is a use case for a Low Low risk permit without any Hazards and Controls, so we should make it mandatory for even low low risk permits to have an RA.

There is nothing in the 8 step process that... | process | make it mandatory to have a ra for low low risk permits since we now have the permit exempted task i don t think there is a use case for a low low risk permit without any hazards and controls so we should make it mandatory for even low low risk permits to have an ra there is nothing in the step process that... | 1 |

5,745 | 8,582,915,831 | IssuesEvent | 2018-11-13 18:15:26 | hashicorp/packer | https://api.github.com/repos/hashicorp/packer | closed | vSphere post-processor "\" escaping issue | bug post-processor/vsphere | vSphere username follows the following convention: `domain\username`. When creating a VM and exporting it as an OVA using the ovftool through packer, the following error is received:

`Error exporting virtual machine: exit status 1`

...

`Could not lookup host: domain`

After looking at the vSphere post-processor ... | 1.0 | vSphere post-processor "\" escaping issue - vSphere username follows the following convention: `domain\username`. When creating a VM and exporting it as an OVA using the ovftool through packer, the following error is received:

`Error exporting virtual machine: exit status 1`

...

`Could not lookup host: domain`

... | process | vsphere post processor escaping issue vsphere username follows the following convention domain username when creating a vm and exporting it as an ova using the ovftool through packer the following error is received error exporting virtual machine exit status could not lookup host domain ... | 1 |
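
The `Could not lookup host: domain` message is consistent with the backslash in `domain\username` reaching a URI-style locator unescaped. A minimal sketch of percent-encoding the credentials before building such a locator, assuming a `vi://user:password@host/path` layout. The helper `build_locator` and the sample password, host, and path are hypothetical, and this is not Packer's actual code:

```python
from urllib.parse import quote


def build_locator(username: str, password: str, host: str, path: str) -> str:
    # safe="" percent-encodes every reserved character, so a backslash
    # becomes %5C and an "@" in the password becomes %40.
    user = quote(username, safe="")
    pwd = quote(password, safe="")
    return f"vi://{user}:{pwd}@{host}/{path}"


# Hypothetical values, for illustration only.
locator = build_locator(r"domain\username", "p@ss", "vcenter.example.com", "dc/host/cluster")
print(locator)
```

With the encoded form, the host part of the locator is no longer cut off at the backslash.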

57,634 | 6,551,943,616 | IssuesEvent | 2017-09-05 16:23:28 | NetsBlox/NetsBlox | https://api.github.com/repos/NetsBlox/NetsBlox | closed | Client animation test failing | bug minor testing | The client test making sure it only animates if the stage is selected is failing. It should make sure the stage is not selected initially. | 1.0 | Client animation test failing - The client test making sure it only animates if the stage is selected is failing. It should make sure the stage is not selected initially. | non_process | client animation test failing the client test making sure it only animates if the stage is selected is failing it should make sure the stage is not selected initially | 0 |

6,930 | 6,676,480,240 | IssuesEvent | 2017-10-05 06:00:29 | jorgegil96/All-NBA | https://api.github.com/repos/jorgegil96/All-NBA | closed | Change login WebView to open browser | enhancement security | Currently, when going to _profile_, an activity with a webview is opened where the user enters his Reddit credentials to log in.

A WebView should not be used because the user cannot know if the site being displayed is actually Reddit and not a fake one. Instead, the user should be redirected to the actual web browser (... | True | Change login WebView to open browser - Currently, when going to _profile_, an activity with a webview is opened where the user enters his Reddit credentials to log in.

A WebView should not be used because the user cannot know if the site being displayed is actually Reddit and not a fake one. Instead, the user should be... | non_process | change login webview to open browser currently when going to profile an activity with a webview is opened where the user enters his reddit credentials to log in a webview should not be used because the user cannot know if the site being displayed is actually reddit and not a fake one instead the user should be... | 0 |

456,054 | 13,136,311,772 | IssuesEvent | 2020-08-07 05:44:31 | teamforus/general | https://api.github.com/repos/teamforus/general | reopened | CMS: editing texts, adding images/videos to webshop | Approval: Granted Epic Priority: Must have Type: Improvement Proposal project-100 | Learn more about improvement proposals: https://bit.ly/2xLJT3R

## Current situation

Every webshop implementation requires customisation. This has different perspectives: technical, and content-wise. This CMS is about trying to solve implementation bottlenecks while being able to fulfil customers need more quickly a... | 1.0 | CMS: editing texts, adding images/videos to webshop - Learn more about improvement proposals: https://bit.ly/2xLJT3R

## Current situation

Every webshop implementation requires customisation. This has different perspectives: technical, and content-wise. This CMS is about trying to solve implementation bottlenecks wh... | non_process | cms editing texts adding images videos to webshop learn more about improvement proposals current situation every webshop implementation requires customisation this has different perspectives technical and content wise this cms is about trying to solve implementation bottlenecks while being able to ful... | 0 |

387,038 | 26,711,002,850 | IssuesEvent | 2023-01-27 23:58:32 | ClickHouse/ClickHouse | https://api.github.com/repos/ClickHouse/ClickHouse | opened | Docs: S3Cluster Table Engine | comp-documentation | **Describe the issue**

The S3 Table Engine also has an S3Cluster Table Engine option, which is not currently documented. We please need to add a S3Cluster Table Engine page under Engines->Table Engines->Integrations->S3Cluster (just under the S3 Table Engine)

(alternately, we could include the S3Cluster Table Engin... | 1.0 | Docs: S3Cluster Table Engine - **Describe the issue**

The S3 Table Engine also has an S3Cluster Table Engine option, which is not currently documented. We please need to add a S3Cluster Table Engine page under Engines->Table Engines->Integrations->S3Cluster (just under the S3 Table Engine)

(alternately, we could in... | non_process | docs table engine describe the issue the table engine also has an table engine option which is not currently documented we please need to add a table engine page under engines table engines integrations just under the table engine alternately we could include the table engine on the ... | 0 |

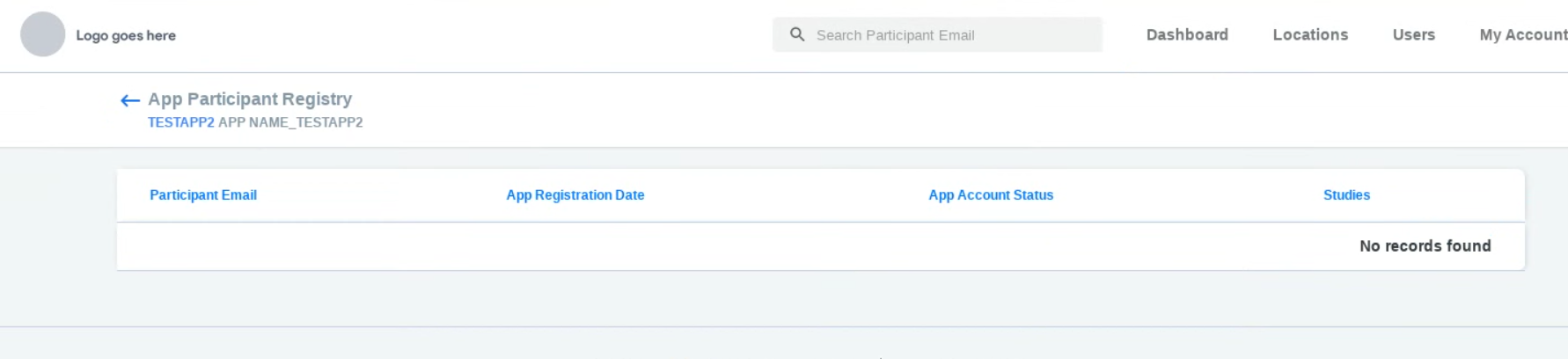

11,748 | 14,583,386,220 | IssuesEvent | 2020-12-18 13:55:35 | GoogleCloudPlatform/fda-mystudies | https://api.github.com/repos/GoogleCloudPlatform/fda-mystudies | closed | Make display of "no records found" consistent across the participant manager | Bug P2 Participant manager Process: Tested QA Process: Tested dev UI | Sometimes the 'no records found' text is left justified, other times it is centered, and here it is right justified. This should be consistent across the application.

| 2.0 | Make display of "no records found" consistent across the participant manager - Sometimes the 'no records found' text is left justified, other times it is centered, and here it is right justified. This should be consistent across the application.

detected in openjpegv2.3.0 - autoclosed | security vulnerability | ## CVE-2022-34266 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>openjpegv2.3.0</b></p></summary>

<p>

<p>Official repository of the OpenJPEG project</p>

<p>Library home page: <a hre... | True | CVE-2022-34266 (Medium) detected in openjpegv2.3.0 - autoclosed - ## CVE-2022-34266 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>openjpegv2.3.0</b></p></summary>

<p>

<p>Official r... | non_process | cve medium detected in autoclosed cve medium severity vulnerability vulnerable library official repository of the openjpeg project library home page a href found in head commit a href found in base branch master vulnerable source files ... | 0 |

389,574 | 26,821,503,208 | IssuesEvent | 2023-02-02 09:48:37 | iti-ict/wakamiti | https://api.github.com/repos/iti-ict/wakamiti | opened | Incluir documentación por versión | documentation enhancement | Sería interesante incluir que la documentación de wakamiti, tanto en el core como en los plugins, estuviera disponible por versión. Un desplegable con las versiones disponibles y que se pueda seleccionar, ya que actualmente solo incluye la última versión. | 1.0 | Incluir documentación por versión - Sería interesante incluir que la documentación de wakamiti, tanto en el core como en los plugins, estuviera disponible por versión. Un desplegable con las versiones disponibles y que se pueda seleccionar, ya que actualmente solo incluye la última versión. | non_process | incluir documentación por versión sería interesante incluir que la documentación de wakamiti tanto en el core como en los plugins estuviera disponible por versión un desplegable con las versiones disponibles y que se pueda seleccionar ya que actualmente solo incluye la última versión | 0 |

244,723 | 20,692,541,963 | IssuesEvent | 2022-03-11 02:55:11 | PalisadoesFoundation/talawa | https://api.github.com/repos/PalisadoesFoundation/talawa | closed | Views: Create tests for edit_profile_page.dart | good first issue test points 01 | The Talawa code base needs to be 100% reliable. This means we need to have 100% test code coverage.

Tests need to be written for file `lib/views/after_auth_screens/profile/edit_profile_page.dart`

- When complete, all methods, classes and/or functions in the file will need to be tested.

- These tests must be pla... | 1.0 | Views: Create tests for edit_profile_page.dart - The Talawa code base needs to be 100% reliable. This means we need to have 100% test code coverage.

Tests need to be written for file `lib/views/after_auth_screens/profile/edit_profile_page.dart`

- When complete, all methods, classes and/or functions in the file wi... | non_process | views create tests for edit profile page dart the talawa code base needs to be reliable this means we need to have test code coverage tests need to be written for file lib views after auth screens profile edit profile page dart when complete all methods classes and or functions in the file will n... | 0 |

38,882 | 10,261,137,166 | IssuesEvent | 2019-08-22 09:08:42 | ShaikASK/Testing | https://api.github.com/repos/ShaikASK/Testing | closed | QA /Production : Safari : New Hire : Emails tab /Activities tab : Extra white space is being displayed against email tab and activities tab | Activities Beta Release #5 Build#4 Defect Emails Initiate On boarding New Hire P3 | Steps To Replicate

1.Launch the URL

2.Sign in as HR admin

3.Create a New Hire and Save it

4.Initiate the above created New Hire

5.Complete the onboarding process from candidate side

6.Check the Emails tab and Activities tab for above initiated New Hire

Experienced Behavior : Observed that Extra white sp... | 1.0 | QA /Production : Safari : New Hire : Emails tab /Activities tab : Extra white space is being displayed against email tab and activities tab - Steps To Replicate

1.Launch the URL

2.Sign in as HR admin

3.Create a New Hire and Save it

4.Initiate the above created New Hire

5.Complete the onboarding process from c... | non_process | qa production safari new hire emails tab activities tab extra white space is being displayed against email tab and activities tab steps to replicate launch the url sign in as hr admin create a new hire and save it initiate the above created new hire complete the onboarding process from c... | 0 |

233,892 | 19,084,048,487 | IssuesEvent | 2021-11-29 01:56:39 | DnD-Montreal/session-tome | https://api.github.com/repos/DnD-Montreal/session-tome | closed | Add cypress test for user registration and login | task test | ## Description

Write E2E Cypress tests for user registration and login.

## Possible Implementation

- Create a user through the registration form

- Log into a user's account through the login form | 1.0 | Add cypress test for user registration and login - ## Description

Write E2E Cypress tests for user registration and login.

## Possible Implementation

- Create a user through the registration form

- Log into a user's account through the login form | non_process | add cypress test for user registration and login description write cypress tests for user registration and login possible implementation create a user through the registration form log into a user s account through the login form | 0 |

4,320 | 10,917,612,138 | IssuesEvent | 2019-11-21 15:27:47 | maSchoeller/JimnyTainment | https://api.github.com/repos/maSchoeller/JimnyTainment | closed | Project distribution and Namespace naming | V0.1 milestone program architecture | # Project distribution

General distribution of the application into 4 projects:

- **JimnyTainment.UI.View**

_The project contains the actual user interface._

- **JimnyTainment.UI.ViewModel**

_Contains the control logic for the user interface._

- **JimnyTainment.Lib**

_Contains various services that are used by t... | 1.0 | Project distribution and Namespace naming - # Project distribution

General distribution of the application into 4 projects:

- **JimnyTainment.UI.View**

_The project contains the actual user interface._

- **JimnyTainment.UI.ViewModel**

_Contains the control logic for the user interface._

- **JimnyTainment.Lib**

_... | non_process | project distribution and namespace naming project distribution general distribution of the application into projects jimnytainment ui view the project contains the actual user interface jimnytainment ui viewmodel contains the control logic for the user interface jimnytainment lib ... | 0 |

15,637 | 19,808,603,178 | IssuesEvent | 2022-01-19 09:46:41 | Blazebit/blaze-persistence | https://api.github.com/repos/Blazebit/blaze-persistence | closed | NPE in entity view annotation processor when @PostLoad is used | kind: bug worth: high component: entity-view-annotation-processor | According to a user report, `ImplementationClassWriter#1877` throws a NPE with version 1.6.4. when having a `@PostLoad` annotated method in an entity view. It seems we used `getPostCreate` accidently whereas it should have been `getPostLoad` on that line. | 1.0 | NPE in entity view annotation processor when @PostLoad is used - According to a user report, `ImplementationClassWriter#1877` throws a NPE with version 1.6.4. when having a `@PostLoad` annotated method in an entity view. It seems we used `getPostCreate` accidently whereas it should have been `getPostLoad` on that line. | process | npe in entity view annotation processor when postload is used according to a user report implementationclasswriter throws a npe with version when having a postload annotated method in an entity view it seems we used getpostcreate accidently whereas it should have been getpostload on that line | 1 |

18,792 | 24,698,096,784 | IssuesEvent | 2022-10-19 13:32:46 | km4ack/pi-build | https://api.github.com/repos/km4ack/pi-build | closed | revert pat to install pkg instead of build from source | enhancement in process | Building from source was done when 64bit Pi OS was released. Now that there are 64bit versions of Pat, there's really no need to build from source and installing the package from the Pat site will speed the install. There may also be an issue with building from source on 64bit machines. See [this post](https://groups.i... | 1.0 | revert pat to install pkg instead of build from source - Building from source was done when 64bit Pi OS was released. Now that there are 64bit versions of Pat, there's really no need to build from source and installing the package from the Pat site will speed the install. There may also be an issue with building from s... | process | revert pat to install pkg instead of build from source building from source was done when pi os was released now that there are versions of pat there s really no need to build from source and installing the package from the pat site will speed the install there may also be an issue with building from source on... | 1 |

19,223 | 25,358,617,032 | IssuesEvent | 2022-11-20 16:34:33 | streamnative/pulsar-spark | https://api.github.com/repos/streamnative/pulsar-spark | closed | [BUG] Spark can't start read stream- NullPointerException in pulsar-spark-connector V3.1.1.2 | type/bug compute/data-processing | **Describe the bug**

While trying to start spark structured streaming read stream, connector throws NullPointerException.

```

pulsar-connector jar - “io.streamnative.connectors:pulsar-spark-connector_2.12:3.1.1.2”

PySpark version = 3.1.2

python version = 3.7

```

Code snippet throwing error is-

```

... | 1.0 | [BUG] Spark can't start read stream- NullPointerException in pulsar-spark-connector V3.1.1.2 - **Describe the bug**

While trying to start spark structured streaming read stream, connector throws NullPointerException.

```

pulsar-connector jar - “io.streamnative.connectors:pulsar-spark-connector_2.12:3.1.1.2”

PySpa... | process | spark can t start read stream nullpointerexception in pulsar spark connector describe the bug while trying to start spark structured streaming read stream connector throws nullpointerexception pulsar connector jar “io streamnative connectors pulsar spark connector ” pyspark ver... | 1 |

16,580 | 21,625,404,041 | IssuesEvent | 2022-05-05 00:57:36 | pytorch/pytorch | https://api.github.com/repos/pytorch/pytorch | opened | DISABLED test_fs (__main__.TestMultiprocessing) | module: multiprocessing module: flaky-tests skipped | Platforms: asan, linux

This test was disabled because it is failing in CI. See [recent examples](http://torch-ci.com/failure/test_fs%2C%20TestMultiprocessing) and the most recent trunk [workflow logs](https://github.com/pytorch/pytorch/runs/6297446390).

Over the past 3 hours, it has been determined flaky in 1 workflo... | 1.0 | DISABLED test_fs (__main__.TestMultiprocessing) - Platforms: asan, linux

This test was disabled because it is failing in CI. See [recent examples](http://torch-ci.com/failure/test_fs%2C%20TestMultiprocessing) and the most recent trunk [workflow logs](https://github.com/pytorch/pytorch/runs/6297446390).

Over the past ... | process | disabled test fs main testmultiprocessing platforms asan linux this test was disabled because it is failing in ci see and the most recent trunk over the past hours it has been determined flaky in workflow s with red and green | 1 |

2,266 | 2,589,945,020 | IssuesEvent | 2015-02-18 16:03:41 | klassebe/klasse-wp-poll-survey | https://api.github.com/repos/klassebe/klasse-wp-poll-survey | closed | Add php to handle sort order changes | All testmodi feature guiRevision | These changes must be passed on to all other versions that are part of the same test collection (parent) | 1.0 | Add php to handle sort order changes - These changes must be passed on to all other versions that are part of the same test collection (parent) | non_process | add php to handle sort order changes these changes must be passed on to all other versions that are part of the same test collection parent | 0 |

102,511 | 12,805,739,227 | IssuesEvent | 2020-07-03 08:07:31 | amalto/platform6-ui-components | https://api.github.com/repos/amalto/platform6-ui-components | closed | Create a form component | Design: Atom Web component enhancement | The component must :

* add the attribute "Novalidate" to prevent automatic validation by the browser.

* find all the "inputs" of the form, native or not.

* validate the form data when submitting it. | 1.0 | Create a form component - The component must :

* add the attribute "Novalidate" to prevent automatic validation by the browser.

* find all the "inputs" of the form, native or not.

* validate the form data when submitting it. | non_process | create a form component the component must add the attribute novalidate to prevent automatic validation by the browser find all the inputs of the form native or not validate the form data when submitting it | 0 |

6,468 | 2,848,025,911 | IssuesEvent | 2015-05-29 20:20:57 | isenseDev/rSENSE | https://api.github.com/repos/isenseDev/rSENSE | closed | Add Indication on Map Markers of Data Sets that Contain Photos | In Testing UI | **General description:** When a data set includes a picture that is visible on the map, it'd be nice to add some type of indication it exists on the markers. Maybe a font awesome photo icon of some sort? Like a camera.

**live/dev/localhost:** live

**iSENSE Version:** v6.3

**Logged in (Y or N):** N

**Admin (Y or N... | 1.0 | Add Indication on Map Markers of Data Sets that Contain Photos - **General description:** When a data set includes a picture that is visible on the map, it'd be nice to add some type of indication it exists on the markers. Maybe a font awesome photo icon of some sort? Like a camera.

**live/dev/localhost:** live

**i... | non_process | add indication on map markers of data sets that contain photos general description when a data set includes a picture that is visible on the map it d be nice to add some type of indication it exists on the markers maybe a font awesome photo icon of some sort like a camera live dev localhost live i... | 0 |

145,413 | 19,339,414,694 | IssuesEvent | 2021-12-15 01:29:01 | hydrogen-dev/molecule-quickstart-app | https://api.github.com/repos/hydrogen-dev/molecule-quickstart-app | opened | CVE-2021-23424 (High) detected in ansi-html-0.0.7.tgz | security vulnerability | ## CVE-2021-23424 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>ansi-html-0.0.7.tgz</b></p></summary>

<p>An elegant lib that converts the chalked (ANSI) text to HTML.</p>

<p>Library ... | True | CVE-2021-23424 (High) detected in ansi-html-0.0.7.tgz - ## CVE-2021-23424 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>ansi-html-0.0.7.tgz</b></p></summary>

<p>An elegant lib that c... | non_process | cve high detected in ansi html tgz cve high severity vulnerability vulnerable library ansi html tgz an elegant lib that converts the chalked ansi text to html library home page a href path to dependency file molecule quickstart app package json path to vulnerable l... | 0 |

18,396 | 24,532,612,834 | IssuesEvent | 2022-10-11 17:46:28 | bridgetownrb/bridgetown | https://api.github.com/repos/bridgetownrb/bridgetown | closed | Future: extract Tailwind support to a community maintained repo | process | With the rapid developments in new and future CSS and seeing how Tailwind has struggled mightily to maintain relevance in the face of it (the `:has` pseudo-class alone has the potential to revolutionize styling, as does cascade layers, new color functions, container queries, and so forth)—even going so far as to invent... | 1.0 | Future: extract Tailwind support to a community maintained repo - With the rapid developments in new and future CSS and seeing how Tailwind has struggled mightily to maintain relevance in the face of it (the `:has` pseudo-class alone has the potential to revolutionize styling, as does cascade layers, new color function... | process | future extract tailwind support to a community maintained repo with the rapid developments in new and future css and seeing how tailwind has struggled mightily to maintain relevance in the face of it the has pseudo class alone has the potential to revolutionize styling as does cascade layers new color function... | 1 |

181,651 | 14,072,794,870 | IssuesEvent | 2020-11-04 02:52:06 | hiltonjp/journey | https://api.github.com/repos/hiltonjp/journey | closed | Unit Testing: Boss battle classes | testing | - [x] HealthBelowCondition

- [x] AttackEngine

- [x] ~~Boss~~ All the complex interactions are delegated to the attack engine anyway.

- [x] BossWeakSpot

- [x] ~~MiniEye~~ Specific to a single boss battle. Probably not worth the effort.

- [x] ~~MegaEye~~ Specific to a single boss battle. Probably not worth the effor... | 1.0 | Unit Testing: Boss battle classes - - [x] HealthBelowCondition

- [x] AttackEngine

- [x] ~~Boss~~ All the complex interactions are delegated to the attack engine anyway.

- [x] BossWeakSpot

- [x] ~~MiniEye~~ Specific to a single boss battle. Probably not worth the effort.

- [x] ~~MegaEye~~ Specific to a single boss ... | non_process | unit testing boss battle classes healthbelowcondition attackengine boss all the complex interactions are delegated to the attack engine anyway bossweakspot minieye specific to a single boss battle probably not worth the effort megaeye specific to a single boss battle prob... | 0 |

37,168 | 5,104,891,123 | IssuesEvent | 2017-01-05 03:48:31 | AeroScripts/QuestieDev | https://api.github.com/repos/AeroScripts/QuestieDev | closed | Quest Item tooltip error. | bug resolved test again | Questie raise error on tooltip on quest item when item withdraw from bank.

How to make this error,

1. Store quest item in bank.

2. withdraw from bank and mouse over quest item.

3. Get error in QuestieNotes.lua line 396.

I changed in QuestieNotes.lua line 395,

> if Questie... | 1.0 | Quest Item tooltip error. - Questie raise error on tooltip on quest item when item withdraw from bank.

How to make this error,

1. Store quest item in bank.

2. withdraw from bank and mouse over quest item.

3. Get error in QuestieNotes.lua line 396.

I changed in QuestieNotes.lua line 395,

> ... | non_process | quest item tooltip error questie raise error on tooltip on quest item when item withdraw from bank how to make this error store quest item in bank withdraw from bank and mouse over quest item get error in questienotes lua line i changed in questienotes lua line ... | 0 |

18,672 | 24,590,723,883 | IssuesEvent | 2022-10-14 01:45:50 | benthosdev/benthos | https://api.github.com/repos/benthosdev/benthos | closed | awk processor decode and assign | question processors | I want to decode the string into base64 in awk processor and assign it to a variable,just like this:

```

#! /bin/bash

astr=MjAyMi0xMC0xMiAxMDoyMTowNg==

bstr=`echo -n $astr|base64 -d`

echo "$bstr"

```

Decode astr with base64 and assign it to bstr

How to implement the above function in awk processor? | 1.0 | awk processor decode and assign - I want to decode the string into base64 in awk processor and assign it to a variable,just like this:

```

#! /bin/bash

astr=MjAyMi0xMC0xMiAxMDoyMTowNg==

bstr=`echo -n $astr|base64 -d`

echo "$bstr"

```

Decode astr with base64 and assign it to bstr

How to implement the above f... | process | awk processor decode and assign i want to decode the string into in awk processor and assign it to a variable,just like this bin bash astr bstr echo n astr d echo bstr decode astr with and assign it to bstr how to implement the above function in awk processor? | 1 |

21,014 | 27,958,013,238 | IssuesEvent | 2023-03-24 13:47:24 | qgis/QGIS | https://api.github.com/repos/qgis/QGIS | closed | Unable to start editing of mesh layer: Face is flat | Feedback Processing Bug Mesh | ### What is the bug or the crash?

I want to edit a mesh layer but get the error message

>Unable to start editing of mesh layer "meshlayername": Face XXX is flat

where XXX is the number of the face.

### Steps to reproduce the issue

First, create a mesh (2dm file) using the "TIN Mesh Creation" tool. Input vecto... | 1.0 | Unable to start editing of mesh layer: Face is flat - ### What is the bug or the crash?

I want to edit a mesh layer but get the error message

>Unable to start editing of mesh layer "meshlayername": Face XXX is flat

where XXX is the number of the face.

### Steps to reproduce the issue

First, create a mesh (2dm... | process | unable to start editing of mesh layer face is flat what is the bug or the crash i want to edit a mesh layer but get the error message unable to start editing of mesh layer meshlayername face xxx is flat where xxx is the number of the face steps to reproduce the issue first create a mesh f... | 1 |

9,378 | 12,375,056,763 | IssuesEvent | 2020-05-19 03:29:18 | tikv/tikv | https://api.github.com/repos/tikv/tikv | closed | UCP: Migrate scalar function `GreatestReal` from TiDB | challenge-program-2 component/coprocessor difficulty/easy sig/coprocessor |

## Description

Port the function `GreatestReal` from TiDB to coprocessor.

## Score

* 50

## Mentor(s)

* @breeswish

## Recommended Skills

* Rust programming

## Learning Materials

Already implemented expressions ported from TiDB

- https://github.com/tikv/tikv/tree/master/components/tidb_query/src/rpn_expr)

... | 2.0 | UCP: Migrate scalar function `GreatestReal` from TiDB -

## Description

Port the function `GreatestReal` from TiDB to coprocessor.

## Score

* 50

## Mentor(s)

* @breeswish

## Recommended Skills

* Rust programming

## Learning Materials

Already implemented expressions ported from TiDB

- https://github.com/tik... | process | ucp migrate scalar function greatestreal from tidb description port the function greatestreal from tidb to coprocessor score mentor s breeswish recommended skills rust programming learning materials already implemented expressions ported from tidb | 1 |

252,741 | 8,041,138,463 | IssuesEvent | 2018-07-31 01:09:17 | Verseghy/website_backend | https://api.github.com/repos/Verseghy/website_backend | closed | Add checkCaching() function to TestsBase | Priority: Low Type: Maintenance | This function should execute a query twice with two dates and check for response codes `200 ok` and `304 not modified`

Usage: `$this->checkCaching($endpoint, $params)`

It should use who dates:

```php

$farDate = 'Mon, 4 Jan 2100 00:00:00';

$oldDate = 'Mon, 5 Jan 1970 00:00:00';

``` | 1.0 | Add checkCaching() function to TestsBase - This function should execute a query twice with two dates and check for response codes `200 ok` and `304 not modified`

Usage: `$this->checkCaching($endpoint, $params)`

It should use who dates:

```php

$farDate = 'Mon, 4 Jan 2100 00:00:00';

$oldDate = 'Mon, 5 Jan 19... | non_process | add checkcaching function to testsbase this function should execute a query twice with two dates and check for response codes ok and not modified usage this checkcaching endpoint params it should use who dates php fardate mon jan olddate mon jan ... | 0 |

15,527 | 19,703,290,628 | IssuesEvent | 2022-01-12 18:53:54 | googleapis/nodejs-service-control | https://api.github.com/repos/googleapis/nodejs-service-control | opened | Your .repo-metadata.json file has a problem 🤒 | type: process repo-metadata: lint | You have a problem with your .repo-metadata.json file:

Result of scan 📈:

* api_shortname 'service-control' invalid in .repo-metadata.json

☝️ Once you correct these problems, you can close this issue.

Reach out to **go/github-automation** if you have any questions. | 1.0 | Your .repo-metadata.json file has a problem 🤒 - You have a problem with your .repo-metadata.json file:

Result of scan 📈:

* api_shortname 'service-control' invalid in .repo-metadata.json

☝️ Once you correct these problems, you can close this issue.

Reach out to **go/github-automation** if you have any questions. | process | your repo metadata json file has a problem 🤒 you have a problem with your repo metadata json file result of scan 📈 api shortname service control invalid in repo metadata json ☝️ once you correct these problems you can close this issue reach out to go github automation if you have any questions | 1 |

21,987 | 30,483,343,143 | IssuesEvent | 2023-07-17 22:31:55 | h4sh5/pypi-auto-scanner | https://api.github.com/repos/h4sh5/pypi-auto-scanner | opened | roblox-pyc 1.16.35 has 2 GuardDog issues | guarddog silent-process-execution | https://pypi.org/project/roblox-pyc

https://inspector.pypi.io/project/roblox-pyc

```{

"dependency": "roblox-pyc",

"version": "1.16.35",

"result": {

"issues": 2,

"errors": {},

"results": {

"silent-process-execution": [

{

"location": "roblox-pyc-1.16.35/src/robloxpy.py:106",

... | 1.0 | roblox-pyc 1.16.35 has 2 GuardDog issues - https://pypi.org/project/roblox-pyc

https://inspector.pypi.io/project/roblox-pyc

```{

"dependency": "roblox-pyc",

"version": "1.16.35",

"result": {

"issues": 2,

"errors": {},

"results": {

"silent-process-execution": [

{

"location": "ro... | process | roblox pyc has guarddog issues dependency roblox pyc version result issues errors results silent process execution location roblox pyc src robloxpy py code subprocess call ... | 1 |

9,273 | 12,302,181,633 | IssuesEvent | 2020-05-11 16:32:46 | hashgraph/hedera-mirror-node | https://api.github.com/repos/hashgraph/hedera-mirror-node | closed | Include 3rd party licenses and source in images | P1 enhancement process | **Problem**

Requirements for Google Cloud Marketplace inclusion mandate that Docker images include the licenses for all 3rd party dependencies (including transitive dependencies). Additionally, for CDDL, GPL and LGPL we may have to include the source code of dependencies. For CDDL/GPL, all our dependencies have the cl... | 1.0 | Include 3rd party licenses and source in images - **Problem**

Requirements for Google Cloud Marketplace inclusion mandate that Docker images include the licenses for all 3rd party dependencies (including transitive dependencies). Additionally, for CDDL, GPL and LGPL we may have to include the source code of dependenci... | process | include party licenses and source in images problem requirements for google cloud marketplace inclusion mandate that docker images include the licenses for all party dependencies including transitive dependencies additionally for cddl gpl and lgpl we may have to include the source code of dependencies ... | 1 |

3,458 | 6,544,149,754 | IssuesEvent | 2017-09-03 12:22:50 | Great-Hill-Corporation/quickBlocks | https://api.github.com/repos/Great-Hill-Corporation/quickBlocks | opened | ethprice initialization | apps-ethPrice status-inprocess type-bug | Remove ~/.quickBlocks folder (i.e. brand new user)

Run test cases for ethprice (they all fail)

| 1.0 | ethprice initialization - Remove ~/.quickBlocks folder (i.e. brand new user)

Run test cases for ethprice (they all fail)

| process | ethprice initialization remove quickblocks folder i e brand new user run test cases for ethprice they all fail | 1 |

3,364 | 6,493,490,941 | IssuesEvent | 2017-08-21 17:19:57 | geneontology/go-ontology | https://api.github.com/repos/geneontology/go-ontology | closed | add F-P link between GO:0016926 protein desumoylation and GO:0016929 SUMO-specific protease activity | cellular processes missing parentage |

GO:0016926 protein desumoylation

--part_of SUMO-specific protease activity | 1.0 | add F-P link between GO:0016926 protein desumoylation and GO:0016929 SUMO-specific protease activity -

GO:0016926 protein desumoylation

--part_of SUMO-specific protease activity | process | add f p link between go protein desumoylation and go sumo specific protease activity go protein desumoylation part of sumo specific protease activity | 1 |

138,343 | 11,199,315,002 | IssuesEvent | 2020-01-03 18:21:58 | sharkwouter/minigalaxy | https://api.github.com/repos/sharkwouter/minigalaxy | closed | Games not detected if their installation folder isn't their exact title | bug needs testing | The GOG installers that you download off their website omit certain characters when creating the installation folder. The characters I have found that have been omitted are : and -. There are quite possibly more characters but I don't have any examples in my library. As these characters don't exist in the folder but... | 1.0 | Games not detected if their installation folder isn't their exact title - The GOG installers that you download off their website omit certain characters when creating the installation folder. The characters I have found that have been omitted are : and -. There are quite possibly more characters but I don't have any ... | non_process | games not detected if their installation folder isn t their exact title the gog installers that you download off their website omit certain characters when creating the installation folder the characters i have found that have been omitted are and there are quite possibly more characters but i don t have any ... | 0 |

28,193 | 11,598,804,034 | IssuesEvent | 2020-02-25 00:11:06 | fufunoyu/WebGoat-Legacy | https://api.github.com/repos/fufunoyu/WebGoat-Legacy | opened | CVE-2019-20330 (High) detected in jackson-databind-2.0.4.jar | security vulnerability | ## CVE-2019-20330 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jackson-databind-2.0.4.jar</b></p></summary>

<p>General data-binding functionality for Jackson: works on core streamin... | True | CVE-2019-20330 (High) detected in jackson-databind-2.0.4.jar - ## CVE-2019-20330 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jackson-databind-2.0.4.jar</b></p></summary>

<p>General... | non_process | cve high detected in jackson databind jar cve high severity vulnerability vulnerable library jackson databind jar general data binding functionality for jackson works on core streaming api path to dependency file tmp ws scm webgoat legacy pom xml path to vulnerable librar... | 0 |

7,134 | 16,659,847,456 | IssuesEvent | 2021-06-06 06:16:29 | OndrejSzekely/metron | https://api.github.com/repos/OndrejSzekely/metron | opened | Add Hydra config framework | Metron architecture | Moving from [Dynaconf](https://dynaconf.readthedocs.io/en/docs_223/) configuration framework to Facebook's [Hydra](https://hydra.cc/) config tool. This allows to have much broader configuration options, which more fit into our scenario. | 1.0 | Add Hydra config framework - Moving from [Dynaconf](https://dynaconf.readthedocs.io/en/docs_223/) configuration framework to Facebook's [Hydra](https://hydra.cc/) config tool. This allows to have much broader configuration options, which more fit into our scenario. | non_process | add hydra config framework moving from configuration framework to facebook s config tool this allows to have much broader configuration options which more fit into our scenario | 0 |

17,605 | 23,427,746,542 | IssuesEvent | 2022-08-14 16:44:44 | vortexntnu/Vortex-CV | https://api.github.com/repos/vortexntnu/Vortex-CV | closed | Create an image processing "core" ROS node (cv_utils) | Future feature Image Processing Utility | **Time estimate:** 5-7 hours

**Description of task:**

Write a template ROS node for image processing that is a clonable code module. It should be a base 'stripped-down' version of a ROS program meant for image processing testing and development. The main purpose is to have an easy way to set up image processing nod... | 1.0 | Create an image processing "core" ROS node (cv_utils) - **Time estimate:** 5-7 hours

**Description of task:**

Write a template ROS node for image processing that is a clonable code module. It should be a base 'stripped-down' version of a ROS program meant for image processing testing and development. The main purpo... | process | create an image processing core ros node cv utils time estimate hours description of task write a template ros node for image processing that is a clonable code module it should be a base stripped down version of a ros program meant for image processing testing and development the main purpo... | 1 |

13,662 | 16,383,867,970 | IssuesEvent | 2021-05-17 07:58:52 | trpo2021/cw-ip-011_keyboardninja | https://api.github.com/repos/trpo2021/cw-ip-011_keyboardninja | opened | Transfer the program to windows | bug in process | Due to the incorrect operation of the program under Linux, it was decided to create an application for the Windows platform. | 1.0 | Transfer the program to windows - Due to the incorrect operation of the program under Linux, it was decided to create an application for the Windows platform. | process | transfer the program to windows due to the incorrect operation of the program under linux it was decided to create an application for the windows platform | 1 |

4,209 | 7,176,092,690 | IssuesEvent | 2018-01-31 08:51:06 | nuclio/nuclio | https://api.github.com/repos/nuclio/nuclio | closed | Support platform configuration, inject to processors | area/processor | A platform level configuration mechanism should be introduced to allow central configuration of:

Logging

Metrics

All processors should be injected this configuration | 1.0 | Support platform configuration, inject to processors - A platform level configuration mechanism should be introduced to allow central configuration of:

Logging

Metrics

All processors should be injected this configuration | process | support platform configuration inject to processors a platform level configuration mechanism should be introduced to allow central configuration of logging metrics all processors should be injected this configuration | 1 |

8,372 | 3,164,468,351 | IssuesEvent | 2015-09-21 04:21:41 | commercialhaskell/stack | https://api.github.com/repos/commercialhaskell/stack | closed | LGPL licensing restrictions on Windows because of integer-gmp | component: documentation | Default version of GHC on Windows produces binaries with integer-gmp linked statically. As integer-gmp's license is LGPL, it means that resulting binaries [should be provided with source code or object files](http://www.gnu.org/licenses/gpl-faq.html#LGPLStaticVsDynamic). I guess that's not a thing everyone would want.

... | 1.0 | LGPL licensing restrictions on Windows because of integer-gmp - Default version of GHC on Windows produces binaries with integer-gmp linked statically. As integer-gmp's license is LGPL, it means that resulting binaries [should be provided with source code or object files](http://www.gnu.org/licenses/gpl-faq.html#LGPLSt... | non_process | lgpl licensing restrictions on windows because of integer gmp default version of ghc on windows produces binaries with integer gmp linked statically as integer gmp s license is lgpl it means that resulting binaries i guess that s not a thing everyone would want on unixes integer gmp is linked dynamically s... | 0 |

14,500 | 17,604,292,690 | IssuesEvent | 2021-08-17 15:13:32 | qgis/QGIS-Documentation | https://api.github.com/repos/qgis/QGIS-Documentation | closed | [Processing][needs-docs] Add incremental field: add modulo option (Request in QGIS) | Processing Alg 3.22 | ### Request for documentation

From pull request QGIS/qgis#44354

Author: @lbartoletti

QGIS version: 3.22

** [Processing][needs-docs] Add incremental field: add modulo option**

### PR Description:

## Description

This algorithm allows to add a column with an integer that will be incremented

from START to the limit, ... | 1.0 | [Processing][needs-docs] Add incremental field: add modulo option (Request in QGIS) - ### Request for documentation

From pull request QGIS/qgis#44354

Author: @lbartoletti

QGIS version: 3.22

** [Processing][needs-docs] Add incremental field: add modulo option**

### PR Description:

## Description

This algorithm allo... | process | add incremental field add modulo option request in qgis request for documentation from pull request qgis qgis author lbartoletti qgis version add incremental field add modulo option pr description description this algorithm allows to add a column with an integer that will be i... | 1 |

22,755 | 32,075,639,229 | IssuesEvent | 2023-09-25 10:48:41 | h4sh5/pypi-auto-scanner | https://api.github.com/repos/h4sh5/pypi-auto-scanner | opened | reprepbuild 1.2.0 has 3 GuardDog issues | guarddog silent-process-execution | https://pypi.org/project/reprepbuild

https://inspector.pypi.io/project/reprepbuild

```{

"dependency": "reprepbuild",

"version": "1.2.0",

"result": {

"issues": 3,

"errors": {},

"results": {

"silent-process-execution": [

{

"location": "RepRepBuild-1.2.0/src/reprepbuild/scripts/la... | 1.0 | reprepbuild 1.2.0 has 3 GuardDog issues - https://pypi.org/project/reprepbuild

https://inspector.pypi.io/project/reprepbuild

```{

"dependency": "reprepbuild",

"version": "1.2.0",

"result": {

"issues": 3,

"errors": {},

"results": {

"silent-process-execution": [

{

"location": "Re... | process | reprepbuild has guarddog issues dependency reprepbuild version result issues errors results silent process execution location reprepbuild src reprepbuild scripts latex py code ... | 1 |

6,260 | 9,218,632,320 | IssuesEvent | 2019-03-11 13:50:07 | geneontology/go-ontology | https://api.github.com/repos/geneontology/go-ontology | closed | NTR and ontology revisions for plant immunity terms | PomBase multi-species process viruses and symbionts | Attention

@lreiser (TAIR)

@ebakker2 (TAIR)

@tberardini (TAIR)

@CuzickA (PHI_Base)

As part of the PHI-Base project we are building an ontology to represent and annotate pathogen host interaction phenotypes. These terms will be logically defined using GO terms to describe 'normal' processes. We will also pro... | 1.0 | NTR and ontology revisions for plant immunity terms - Attention

@lreiser (TAIR)

@ebakker2 (TAIR)

@tberardini (TAIR)

@CuzickA (PHI_Base)