Unnamed: 0 | id | type | created_at | repo | repo_url | action | title | labels | body | index | text_combine | label | text | binary_label |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

2,614 | 5,394,178,293 | IssuesEvent | 2017-02-27 01:38:06 | uccser/kordac | https://api.github.com/repos/uccser/kordac | closed | Implement {video} tag | processor implementation testing | Implement the existing tag used in the CSFG. The function is found within [`markdownsection.py`](https://github.com/uccser/cs-field-guide/blob/develop/generator/markdownsection.py).

```

[video]

regex: ^\{video (?P<args>[^\}]*)\}

function: create_video_html

```

| 1.0 | Implement {video} tag - Implement the existing tag used in the CSFG. The function is found within [`markdownsection.py`](https://github.com/uccser/cs-field-guide/blob/develop/generator/markdownsection.py).

```

[video]

regex: ^\{video (?P<args>[^\}]*)\}

function: create_video_html

```

| process | implement video tag implement the existing tag used in the csfg the function is found within regex video p function create video html | 1 |

762,443 | 26,718,821,243 | IssuesEvent | 2023-01-28 21:58:26 | AlexNav73/Navitski.Crystalized | https://api.github.com/repos/AlexNav73/Navitski.Crystalized | closed | Create LazyCopyCollection/Relation types | enhancement priority-medium | Data should be copied only when it will be modified, now we are copying whole shard even if only one collection would be modified | 1.0 | Create LazyCopyCollection/Relation types - Data should be copied only when it will be modified, now we are copying whole shard even if only one collection would be modified | non_process | create lazycopycollection relation types data should be copied only when it will be modified now we are copying whole shard even if only one collection would be modified | 0 |

99,130 | 4,047,974,555 | IssuesEvent | 2016-05-23 08:32:20 | Taeir/ContextProject-MIGI2 | https://api.github.com/repos/Taeir/ContextProject-MIGI2 | closed | Controller Support | Priority B | As the VR player,

I want to be able use an Xbox 360 controller,

so that I can control the movement of my character with it.

This task has the following subtasks:

#126 - Controller support - link

#127 - Controller support - key bindings | 1.0 | Controller Support - As the VR player,

I want to be able use an Xbox 360 controller,

so that I can control the movement of my character with it.

This task has the following subtasks:

#126 - Controller support - link

#127 - Controller support - key bindings | non_process | controller support as the vr player i want to be able use an xbox controller so that i can control the movement of my character with it this task has the following subtasks controller support link controller support key bindings | 0 |

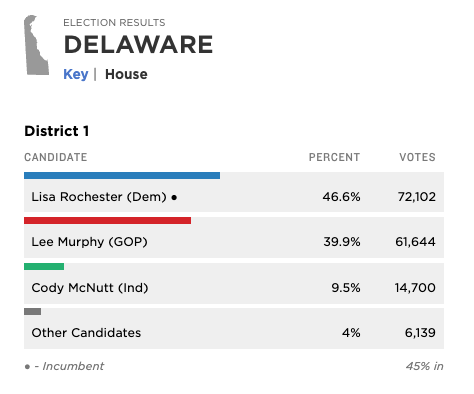

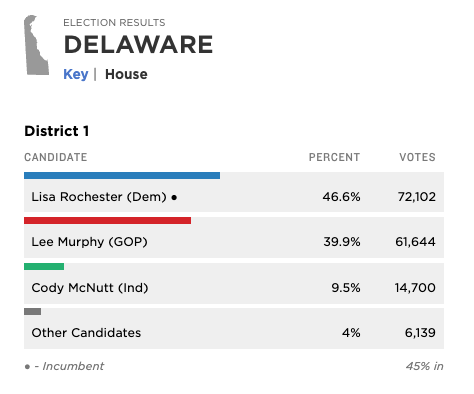

725,645 | 24,969,751,328 | IssuesEvent | 2022-11-01 23:15:53 | nprapps/elections22 | https://api.github.com/repos/nprapps/elections22 | closed | If there's only one race we're following in a state, that should appear by default on the "Key" tab | Priority: Low | thinking in particular of DE, DC but there are may be a handful of others

| 1.0 | If there's only one race we're following in a state, that should appear by default on the "Key" tab - thinking in particular of DE, DC but there are may be a handful of others

| non_process | if there s only one race we re following in a state that should appear by default on the key tab thinking in particular of de dc but there are may be a handful of others | 0 |

7,435 | 10,550,298,142 | IssuesEvent | 2019-10-03 10:43:12 | ESMValGroup/ESMValCore | https://api.github.com/repos/ESMValGroup/ESMValCore | closed | area_weighted regridding fails on several observational datasets | bug help wanted preprocessor | The preprocessor function `regrid` with argument `scheme = 'area_weighted'` fails on several observational datasets. The error thrown is:

```

File "/iris/experimental/regrid.py", line 680, in regrid_area_weighted_rectilinear_src_and_grid

raise ValueError("The horizontal grid coordinates of both the source "

Val... | 1.0 | area_weighted regridding fails on several observational datasets - The preprocessor function `regrid` with argument `scheme = 'area_weighted'` fails on several observational datasets. The error thrown is:

```

File "/iris/experimental/regrid.py", line 680, in regrid_area_weighted_rectilinear_src_and_grid

raise Va... | process | area weighted regridding fails on several observational datasets the preprocessor function regrid with argument scheme area weighted fails on several observational datasets the error thrown is file iris experimental regrid py line in regrid area weighted rectilinear src and grid raise valu... | 1 |

737 | 3,214,323,495 | IssuesEvent | 2015-10-07 00:49:52 | broadinstitute/hellbender-dataflow | https://api.github.com/repos/broadinstitute/hellbender-dataflow | opened | Consider replacing ReferenceShard and VariantShard with SimpleInterval | Dataflow DataflowPreprocessingPipeline | _From @droazen on July 22, 2015 18:58_

_Copied from original issue: broadinstitute/hellbender#703_ | 1.0 | Consider replacing ReferenceShard and VariantShard with SimpleInterval - _From @droazen on July 22, 2015 18:58_

_Copied from original issue: broadinstitute/hellbender#703_ | process | consider replacing referenceshard and variantshard with simpleinterval from droazen on july copied from original issue broadinstitute hellbender | 1 |

6,938 | 10,102,755,739 | IssuesEvent | 2019-07-29 12:03:19 | eaudeweb/ozone | https://api.github.com/repos/eaudeweb/ozone | opened | Custom titles and help text for Transfers and ProcAgent forms | Feature: Process Agents Feature: Transfers Frontend: Small stuff | Right now the ProcAgents form shows the title of the Transfers form:

http://prntscr.com/olgw82

We need to show different texts, depending on the obligation behind the form (including the submission info tab): http://prntscr.com/olgwot

| 1.0 | Custom titles and help text for Transfers and ProcAgent forms - Right now the ProcAgents form shows the title of the Transfers form:

http://prntscr.com/olgw82

We need to show different texts, depending on the obligation behind the form (including the submission info tab): http://prntscr.com/olgwot

| process | custom titles and help text for transfers and procagent forms right now the procagents form shows the title of the transfers form we need to show different texts depending on the obligation behind the form including the submission info tab | 1 |

177,857 | 6,587,917,690 | IssuesEvent | 2017-09-13 23:29:24 | kubernetes/kubernetes | https://api.github.com/repos/kubernetes/kubernetes | closed | failing: [sig-instrumentation] Cluster level logging implemented by Stackdriver should ingest logs | area/platform/gke kind/bug priority/critical-urgent sig/gcp sig/instrumentation | **Is this a BUG REPORT or FEATURE REQUEST?**:

/kind bug

**What happened**:

EDIT: this probably shows it better

https://storage.googleapis.com/k8s-gubernator/triage/index.html?text=AAAAAAAAAAAAAA

I noticed this test started failing across all PR's in the pull-kubernetes-e2e-gce-bazel job this morning.

Firs... | 1.0 | failing: [sig-instrumentation] Cluster level logging implemented by Stackdriver should ingest logs - **Is this a BUG REPORT or FEATURE REQUEST?**:

/kind bug

**What happened**:

EDIT: this probably shows it better

https://storage.googleapis.com/k8s-gubernator/triage/index.html?text=AAAAAAAAAAAAAA

I noticed thi... | non_process | failing cluster level logging implemented by stackdriver should ingest logs is this a bug report or feature request kind bug what happened edit this probably shows it better i noticed this test started failing across all pr s in the pull kubernetes gce bazel job this morning first f... | 0 |

429,676 | 30,085,228,450 | IssuesEvent | 2023-06-29 08:08:23 | Nalini1998/Project_Public | https://api.github.com/repos/Nalini1998/Project_Public | closed | 5. Iterate through the elements in the x and y array | documentation enhancement help wanted good first issue question | Inside the for loop, iterate through the elements in the 'x' and 'y' array to set the 'x[i]' positions to be the canvas's horizontal center and the 'y[i]' positions to be the canvas's vertical center.

**Hint:**

_We can set the horizontal and vertical center of the canvas relative to width and height variables._ | 1.0 | 5. Iterate through the elements in the x and y array - Inside the for loop, iterate through the elements in the 'x' and 'y' array to set the 'x[i]' positions to be the canvas's horizontal center and the 'y[i]' positions to be the canvas's vertical center.

**Hint:**

_We can set the horizontal and vertical center of the... | non_process | iterate through the elements in the x and y array inside the for loop iterate through the elements in the x and y array to set the x positions to be the canvas s horizontal center and the y positions to be the canvas s vertical center hint we can set the horizontal and vertical center of the can... | 0 |

1,110 | 3,588,370,047 | IssuesEvent | 2016-01-30 23:46:33 | sysown/proxysql | https://api.github.com/repos/sysown/proxysql | closed | Implement query digest to monitor performance | ADMIN GLOBAL MYSQL QUERY PROCESSOR STATISTICS | Parent ticket for more tickets.

We need to implement a new table in which queries performances are logged | 1.0 | Implement query digest to monitor performance - Parent ticket for more tickets.

We need to implement a new table in which queries performances are logged | process | implement query digest to monitor performance parent ticket for more tickets we need to implement a new table in which queries performances are logged | 1 |

23,274 | 3,785,839,692 | IssuesEvent | 2016-03-20 19:26:59 | LlmDl/Towny | https://api.github.com/repos/LlmDl/Towny | closed | Townychat Event Priority is having a issue with chatcontrol and deluxechat | Status-New Type-Defect | Originally reported on Google Code with ID 2333

```

Im using chatcontrol And deluxechat with townychat

Deluxechat is a nice formating tool that let me add interactive chat to townychat

The developer of both plugin said that it should be a priority issue.

And chatcontrol is a filter tool to filter key words and to ch... | 1.0 | Townychat Event Priority is having a issue with chatcontrol and deluxechat - Originally reported on Google Code with ID 2333

```

Im using chatcontrol And deluxechat with townychat

Deluxechat is a nice formating tool that let me add interactive chat to townychat

The developer of both plugin said that it should be a pr... | non_process | townychat event priority is having a issue with chatcontrol and deluxechat originally reported on google code with id im using chatcontrol and deluxechat with townychat deluxechat is a nice formating tool that let me add interactive chat to townychat the developer of both plugin said that it should be a prior... | 0 |

16,232 | 10,682,018,046 | IssuesEvent | 2019-10-22 03:24:14 | rabbitmq/rabbitmq-cli | https://api.github.com/repos/rabbitmq/rabbitmq-cli | closed | Erlang formatter not working as expected | bug usability | Reported in [this `rabbitmq-users` discussion.](https://groups.google.com/d/topic/rabbitmq-users/t1DONfY2oEg/discussion)

To reproduce:

* Start up RabbitMQ 3.8.0

* Run `rabbitmqctl cluster_status --formatter erlang`. Instead of the "old style" erlang term output, you get the "new" output stuffed into a binary:

... | True | Erlang formatter not working as expected - Reported in [this `rabbitmq-users` discussion.](https://groups.google.com/d/topic/rabbitmq-users/t1DONfY2oEg/discussion)

To reproduce:

* Start up RabbitMQ 3.8.0

* Run `rabbitmqctl cluster_status --formatter erlang`. Instead of the "old style" erlang term output, you get... | non_process | erlang formatter not working as expected reported in to reproduce start up rabbitmq run rabbitmqctl cluster status formatter erlang instead of the old style erlang term output you get the new output stuffed into a binary cluster status of node rabbit shostakovich ... | 0 |

344,674 | 30,752,140,907 | IssuesEvent | 2023-07-28 20:22:31 | saltstack/salt | https://api.github.com/repos/saltstack/salt | opened | [Increase Test Coverage] Batch 95 | Tests | Increase the code coverage percent on the following files to at least 80%.

Please be aware that currently the percentage might be inaccurate if the module uses salt due to #64696

File | Percent

salt/states/mssql_user.py 13

salt/states/postgres_group.py 79

salt/states/rbac_solaris.py 4

salt/states/win_wua.py 75

salt/ut... | 1.0 | [Increase Test Coverage] Batch 95 - Increase the code coverage percent on the following files to at least 80%.

Please be aware that currently the percentage might be inaccurate if the module uses salt due to #64696

File | Percent

salt/states/mssql_user.py 13

salt/states/postgres_group.py 79

salt/states/rbac_solaris.py... | non_process | batch increase the code coverage percent on the following files to at least please be aware that currently the percentage might be inaccurate if the module uses salt due to file percent salt states mssql user py salt states postgres group py salt states rbac solaris py salt states win wua py sal... | 0 |

20,315 | 26,958,723,196 | IssuesEvent | 2023-02-08 16:35:46 | googleapis/java-core | https://api.github.com/repos/googleapis/java-core | closed | Dependency Dashboard | type: process priority: p4 | This issue lists Renovate updates and detected dependencies. Read the [Dependency Dashboard](https://docs.renovatebot.com/key-concepts/dashboard/) docs to learn more.

## Repository problems

These problems occurred while renovating this repository.

- WARN: RepoCacheS3.getCacheFolder() - appending missing trailing sl... | 1.0 | Dependency Dashboard - This issue lists Renovate updates and detected dependencies. Read the [Dependency Dashboard](https://docs.renovatebot.com/key-concepts/dashboard/) docs to learn more.

## Repository problems

These problems occurred while renovating this repository.

- WARN: RepoCacheS3.getCacheFolder() - append... | process | dependency dashboard this issue lists renovate updates and detected dependencies read the docs to learn more repository problems these problems occurred while renovating this repository warn getcachefolder appending missing trailing slash to pathname open these updates have all been create... | 1 |

638,264 | 20,720,241,090 | IssuesEvent | 2022-03-13 09:17:38 | AY2122S2-CS2103T-T09-2/tp | https://api.github.com/repos/AY2122S2-CS2103T-T09-2/tp | opened | As a Recruiter, I can see what stage of the job application an applicant is at | type.Story priority.High | so that, I can keep track of their progress | 1.0 | As a Recruiter, I can see what stage of the job application an applicant is at - so that, I can keep track of their progress | non_process | as a recruiter i can see what stage of the job application an applicant is at so that i can keep track of their progress | 0 |

741,261 | 25,786,300,926 | IssuesEvent | 2022-12-09 20:51:40 | low-key-code/poorcast | https://api.github.com/repos/low-key-code/poorcast | closed | Design Bills Page | Frontend MEDIUM priority | ### Problem:

Bills page needs design.

### Solution:

See issue #43 and design accordingly through dribble and mocks in Miro.

### Success Criteria:

- [x] Bills page is designed

| 1.0 | Design Bills Page - ### Problem:

Bills page needs design.

### Solution:

See issue #43 and design accordingly through dribble and mocks in Miro.

### Success Criteria:

- [x] Bills page is designed

| non_process | design bills page problem bills page needs design solution see issue and design accordingly through dribble and mocks in miro success criteria bills page is designed | 0 |

3,047 | 4,141,011,830 | IssuesEvent | 2016-06-14 01:59:21 | NixOS/nixpkgs | https://api.github.com/repos/NixOS/nixpkgs | closed | Grsecurity does not work in virtualbox | 6.topic: grsecurity | So, i wanted to test grsecurity in virtualbox, but it does not seem to boot. This is my config

```

security.grsecurity = {

enable = true;

stable = true;

config = {

system = "server";

hardwareVirtualisation = false;

virtualisationSoftware = "virtualbox";

};

... | True | Grsecurity does not work in virtualbox - So, i wanted to test grsecurity in virtualbox, but it does not seem to boot. This is my config

```

security.grsecurity = {

enable = true;

stable = true;

config = {

system = "server";

hardwareVirtualisation = false;

virtuali... | non_process | grsecurity does not work in virtualbox so i wanted to test grsecurity in virtualbox but it does not seem to boot this is my config security grsecurity enable true stable true config system server hardwarevirtualisation false virtuali... | 0 |

171,175 | 27,076,917,065 | IssuesEvent | 2023-02-14 11:15:21 | ZoCom-utbildning/folkans-it-utbildningar | https://api.github.com/repos/ZoCom-utbildning/folkans-it-utbildningar | closed | Questionnaire styling | Design Programmering V: Formulär | - [x] mobile view - card/image component - prototype

- [x] desktop view - card/image component - prototype

- [ ] mobile view - card/image component

- [ ] desktop view - card/image component | 1.0 | Questionnaire styling - - [x] mobile view - card/image component - prototype

- [x] desktop view - card/image component - prototype

- [ ] mobile view - card/image component

- [ ] desktop view - card/image component | non_process | questionnaire styling mobile view card image component prototype desktop view card image component prototype mobile view card image component desktop view card image component | 0 |

76,495 | 15,496,123,921 | IssuesEvent | 2021-03-11 02:06:12 | poojasoude/SmartHotel | https://api.github.com/repos/poojasoude/SmartHotel | opened | CVE-2021-23337 (High) detected in lodash-4.17.10.tgz, lodash-3.10.1.tgz | security vulnerability | ## CVE-2021-23337 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>lodash-4.17.10.tgz</b>, <b>lodash-3.10.1.tgz</b></p></summary>

<p>

<details><summary><b>lodash-4.17.10.tgz</b></p></... | True | CVE-2021-23337 (High) detected in lodash-4.17.10.tgz, lodash-3.10.1.tgz - ## CVE-2021-23337 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>lodash-4.17.10.tgz</b>, <b>lodash-3.10.1.tg... | non_process | cve high detected in lodash tgz lodash tgz cve high severity vulnerability vulnerable libraries lodash tgz lodash tgz lodash tgz lodash modular utilities library home page a href path to dependency file smarthotel website clientapp pack... | 0 |

5,609 | 8,468,914,353 | IssuesEvent | 2018-10-23 21:07:14 | carloseduardov8/Viajato | https://api.github.com/repos/carloseduardov8/Viajato | closed | Create vehicle rental database | Priority:Normal Process: Setup Environment | Define and populate the database with rental companies and the available vehicle types. | 1.0 | Create vehicle rental database - Define and populate the database with rental companies and the available vehicle types. | process | create vehicle rental database define and populate the database with rental companies and the available vehicle types | 1 |

20,583 | 27,244,872,563 | IssuesEvent | 2023-02-22 00:36:50 | emacs-ess/ESS | https://api.github.com/repos/emacs-ess/ESS | closed | Default R session for executing code blocks | process:eval | Hi

In org-mode and with multiple R sessions if I have an R code and execute code with C-cC-c it will use the default R session for that buffer, or section etc.

However, if I go into ess-mode (C-c') and I try to send a block to the R session either with C-cC-c or C-cC-j or any other command it will ask for which R s... | 1.0 | Default R session for executing code blocks - Hi

In org-mode and with multiple R sessions if I have an R code and execute code with C-cC-c it will use the default R session for that buffer, or section etc.

However, if I go into ess-mode (C-c') and I try to send a block to the R session either with C-cC-c or C-cC-j ... | process | default r session for executing code blocks hi in org mode and with multiple r sessions if i have an r code and execute code with c cc c it will use the default r session for that buffer or section etc however if i go into ess mode c c and i try to send a block to the r session either with c cc c or c cc j ... | 1 |

73,066 | 24,438,182,204 | IssuesEvent | 2022-10-06 12:58:47 | vector-im/element-web | https://api.github.com/repos/vector-im/element-web | opened | User can get stuck with sending read receipts disabled if server stopped supporting it | T-Defect A-User-Settings A-Read-Receipts O-Uncommon | - Use a server that supports MSC2285

- Disable sending read receipts

- The server stops supporting MSC2285

- You're stuck with no RRs and no way to clear notifs

It's an edge-case but we should at least provide a way to enable them again | 1.0 | User can get stuck with sending read receipts disabled if server stopped supporting it - - Use a server that supports MSC2285

- Disable sending read receipts

- The server stops supporting MSC2285

- You're stuck with no RRs and no way to clear notifs

It's an edge-case but we should at least provide a way to enable... | non_process | user can get stuck with sending read receipts disabled if server stopped supporting it use a server that supports disable sending read receipts the server stops supporting you re stuck with no rrs and no way to clear notifs it s an edge case but we should at least provide a way to enable them again | 0 |

13,925 | 16,681,803,881 | IssuesEvent | 2021-06-08 01:23:56 | qgis/QGIS | https://api.github.com/repos/qgis/QGIS | closed | Warning before running analyses on too large rasters | Feature Request Feedback Processing | Author Name: **Paolo Cavallini** (@pcav)

Original Redmine Issue: [8249](https://issues.qgis.org/issues/8249)

Redmine category:processing/core

---

If the user does not select an appropriate resolution for the resulting raster(s), it risks to get stuck with an excessive resolution, and too long processing time. I sugg... | 1.0 | Warning before running analyses on too large rasters - Author Name: **Paolo Cavallini** (@pcav)

Original Redmine Issue: [8249](https://issues.qgis.org/issues/8249)

Redmine category:processing/core

---

If the user does not select an appropriate resolution for the resulting raster(s), it risks to get stuck with an exc... | process | warning before running analyses on too large rasters author name paolo cavallini pcav original redmine issue redmine category processing core if the user does not select an appropriate resolution for the resulting raster s it risks to get stuck with an excessive resolution and too long processi... | 1 |

6,297 | 9,303,207,790 | IssuesEvent | 2019-03-24 15:49:05 | shirou/gopsutil | https://api.github.com/repos/shirou/gopsutil | closed | process.PidExists(0) return False on Linux | os:linux package:process | With current version of `gopsutil`:

```

$ cat test.go

cat test.go

package main

import (

"fmt"

"github.com/shirou/gopsutil/process"

)

func main() {

fmt.Println(process.PidExists(0))

}

$ go run test.go

false <nil>

```

On any POSIX system, PID 0 means all process and that check should return True. At lea... | 1.0 | process.PidExists(0) return False on Linux - With current version of `gopsutil`:

```

$ cat test.go

cat test.go

package main

import (

"fmt"

"github.com/shirou/gopsutil/process"

)

func main() {

fmt.Println(process.PidExists(0))

}

$ go run test.go

false <nil>

```

On any POSIX system, PID 0 means all proc... | process | process pidexists return false on linux with current version of gopsutil cat test go cat test go package main import fmt github com shirou gopsutil process func main fmt println process pidexists go run test go false on any posix system pid means all process ... | 1 |

18,157 | 3,029,736,953 | IssuesEvent | 2015-08-04 14:11:29 | idaholab/moose | https://api.github.com/repos/idaholab/moose | closed | RandomMaterial test is not testing anything | C: MOOSE P: normal T: defect | There is a type in the input file, causing the material property not to be output. I'll fix this. | 1.0 | RandomMaterial test is not testing anything - There is a type in the input file, causing the material property not to be output. I'll fix this. | non_process | randommaterial test is not testing anything there is a type in the input file causing the material property not to be output i ll fix this | 0 |

6,953 | 10,113,921,971 | IssuesEvent | 2019-07-30 17:55:09 | material-components/material-components-ios | https://api.github.com/repos/material-components/material-components-ios | closed | [BottomSheet] Update checklist for BottomSheet | [BottomSheet] type:Process | OWNERs should update checklist to fill in missing values.

https://docs.google.com/spreadsheets/d/1H30t5r8rJcXkyG9tNpPSOgVEJ0enxaZlazQJrlfyYSo/edit#gid=0

<!-- Auto-generated content below, do not modify -->

---

#### Internal data

- Associated internal bug: [b/117177634](http://b/117177634) | 1.0 | [BottomSheet] Update checklist for BottomSheet - OWNERs should update checklist to fill in missing values.

https://docs.google.com/spreadsheets/d/1H30t5r8rJcXkyG9tNpPSOgVEJ0enxaZlazQJrlfyYSo/edit#gid=0

<!-- Auto-generated content below, do not modify -->

---

#### Internal data

- Associated internal bug: [b/1171776... | process | update checklist for bottomsheet owners should update checklist to fill in missing values internal data associated internal bug | 1 |

23,590 | 7,346,463,710 | IssuesEvent | 2018-03-07 20:48:50 | BeAPI/bea-media-analytics | https://api.github.com/repos/BeAPI/bea-media-analytics | opened | Envira Gallery support | Builder Support Enhancement | <!--

Thanks for contributing !

Please note:

- These comments won't show up when you submit your issue.

- Please choose a descriptive title, ex. : "On media delete, it's still indexed".

- Try to provide as many details as possible on the below list.

- If requesting a new feature, please explain why you'd like to... | 1.0 | Envira Gallery support - <!--

Thanks for contributing !

Please note:

- These comments won't show up when you submit your issue.

- Please choose a descriptive title, ex. : "On media delete, it's still indexed".

- Try to provide as many details as possible on the below list.

- If requesting a new feature, please ... | non_process | envira gallery support thanks for contributing please note these comments won t show up when you submit your issue please choose a descriptive title ex on media delete it s still indexed try to provide as many details as possible on the below list if requesting a new feature please ... | 0 |

70,348 | 7,183,848,937 | IssuesEvent | 2018-02-01 14:43:15 | openshift/origin | https://api.github.com/repos/openshift/origin | reopened | executing 'oc get secrets -o name --all-namespaces; oc describe appliedclusterresourcequota/for-deads-by-annotation -n quota-bar --as deads' expecting any result and text 'secrets. | kind/test-flake priority/P1 sig/master | https://openshift-gce-devel.appspot.com/build/origin-ci-test/pr-logs/pull/17599/test_pull_request_origin_cmd/6974/

@deads2k you've got your name on it, you own it

/assign deads2k

/kind test-flake

/sig master | 1.0 | executing 'oc get secrets -o name --all-namespaces; oc describe appliedclusterresourcequota/for-deads-by-annotation -n quota-bar --as deads' expecting any result and text 'secrets. - https://openshift-gce-devel.appspot.com/build/origin-ci-test/pr-logs/pull/17599/test_pull_request_origin_cmd/6974/

@deads2k you've got... | non_process | executing oc get secrets o name all namespaces oc describe appliedclusterresourcequota for deads by annotation n quota bar as deads expecting any result and text secrets you ve got your name on it you own it assign kind test flake sig master | 0 |

436 | 2,868,705,560 | IssuesEvent | 2015-06-05 20:31:50 | gremau/NMEG_fluxproc_testing | https://api.github.com/repos/gremau/NMEG_fluxproc_testing | closed | Put VPD in fluxall_qc files | enhancement Gap Filling QC Process | There is no vpd value in the `fluxall_qc` files. Currently we let the MPI gapfiller calculate this for us. We should calculate it ourselves and put it in these files. | 1.0 | Put VPD in fluxall_qc files - There is no vpd value in the `fluxall_qc` files. Currently we let the MPI gapfiller calculate this for us. We should calculate it ourselves and put it in these files. | process | put vpd in fluxall qc files there is no vpd value in the fluxall qc files currently we let the mpi gapfiller calculate this for us we should calculate it ourselves and put it in these files | 1 |

18,785 | 24,690,505,448 | IssuesEvent | 2022-10-19 08:11:44 | deepset-ai/haystack | https://api.github.com/repos/deepset-ai/haystack | closed | Bug: Load PreProcessor with `split_by: None` from config fails | type:bug topic:preprocessing | ### The issue

When trying to load the PreProcessor node with `split_by: None` from config fails.

### Reproduce the issue

To reproduce the issue, execute this script with the latest Haystack version and python 3.8:

```python

from haystack import Pipeline

from haystack.nodes.preprocessor import PreProcessor

... | 1.0 | Bug: Load PreProcessor with `split_by: None` from config fails - ### The issue

When trying to load the PreProcessor node with `split_by: None` from config fails.

### Reproduce the issue

To reproduce the issue, execute this script with the latest Haystack version and python 3.8:

```python

from haystack import P... | process | bug load preprocessor with split by none from config fails the issue when trying to load the preprocessor node with split by none from config fails reproduce the issue to reproduce the issue execute this script with the latest haystack version and python python from haystack import p... | 1 |

5,843 | 8,670,721,096 | IssuesEvent | 2018-11-29 17:09:50 | googleapis/google-cloud-python | https://api.github.com/repos/googleapis/google-cloud-python | closed | [Firestore] FirestoreClient.list_collections() is missing, but is in DocumentReference. | api: firestore triaged for GA type: process | FirestoreClient.list_collections() is missing, but it does exist as part of DocumentReference.

| 1.0 | [Firestore] FirestoreClient.list_collections() is missing, but is in DocumentReference. - FirestoreClient.list_collections() is missing, but it does exist as part of DocumentReference.

| process | firestoreclient list collections is missing but is in documentreference firestoreclient list collections is missing but it does exist as part of documentreference | 1 |

15,796 | 19,986,267,425 | IssuesEvent | 2022-01-30 18:03:25 | processing/processing4 | https://api.github.com/repos/processing/processing4 | closed | Problem with function size(int arg, int arg) in Class | help wanted preprocessor | When the function `void size(int arg, int arg)` is used with two arguments in `Class` that's cause a syntax problem when this function is call directly in `void setup()`

Processing 4.0.2b / OS Monterey

Code to reproduce:

```

Truc truc = new Truc();

void setup() {

size(200,200);

truc.size(1,1); // problem... | 1.0 | Problem with function size(int arg, int arg) in Class - When the function `void size(int arg, int arg)` is used with two arguments in `Class` that's cause a syntax problem when this function is call directly in `void setup()`

Processing 4.0.2b / OS Monterey

Code to reproduce:

```

Truc truc = new Truc();

void s... | process | problem with function size int arg int arg in class when the function void size int arg int arg is used with two arguments in class that s cause a syntax problem when this function is call directly in void setup processing os monterey code to reproduce truc truc new truc void se... | 1 |

20,908 | 27,749,863,053 | IssuesEvent | 2023-03-15 19:50:00 | open-telemetry/opentelemetry-collector-contrib | https://api.github.com/repos/open-telemetry/opentelemetry-collector-contrib | opened | [processor/servicegraph] Deprecate component | processor/servicegraph connector/servicegraph | ### Component(s)

connector/servicegraph, processor/servicegraph

### Describe the issue you're reporting

This is a tracking issue relating to https://github.com/open-telemetry/opentelemetry-collector/issues/7370

The processor has been reimplemented as a connector. (See https://github.com/open-telemetry/opentelemet... | 1.0 | [processor/servicegraph] Deprecate component - ### Component(s)

connector/servicegraph, processor/servicegraph

### Describe the issue you're reporting

This is a tracking issue relating to https://github.com/open-telemetry/opentelemetry-collector/issues/7370

The processor has been reimplemented as a connector. (Se... | process | deprecate component component s connector servicegraph processor servicegraph describe the issue you re reporting this is a tracking issue relating to the processor has been reimplemented as a connector see we should deprecate the processor when the code owners believe the time is appropri... | 1 |

18,947 | 24,908,256,210 | IssuesEvent | 2022-10-29 14:39:42 | calaldees/KaraKara | https://api.github.com/repos/calaldees/KaraKara | closed | Parallel encoding | feature processmedia2 | I have a 64-core server available; ffmpeg has multi-threading but it never seems to use more than a few at once, so to use all the processing power we would need multiple ffmpeg instances. I did a hacky thing of setting encode order to random, commenting out the lock file, and then running multiple processmedia2 docker... | 1.0 | Parallel encoding - I have a 64-core server available; ffmpeg has multi-threading but it never seems to use more than a few at once, so to use all the processing power we would need multiple ffmpeg instances. I did a hacky thing of setting encode order to random, commenting out the lock file, and then running multiple ... | process | parallel encoding i have a core server available ffmpeg has multi threading but it never seems to use more than a few at once so to use all the processing power we would need multiple ffmpeg instances i did a hacky thing of setting encode order to random commenting out the lock file and then running multiple ... | 1 |
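The manual workaround described in this issue (several encoder processes running at once, each on a different file) can be sketched with a small worker pool. This is only a sketch under stated assumptions, not processmedia2's actual code: `build_command`, `encode_all`, the ffmpeg arguments, and the default worker count are all hypothetical names and values chosen for illustration.

```python
import subprocess
from concurrent.futures import ThreadPoolExecutor


def build_command(source, target):
    """Return a hypothetical ffmpeg command line; the real flags would differ."""
    # "-threads 4" caps each ffmpeg instance so several can share the machine.
    return ["ffmpeg", "-y", "-i", source, "-threads", "4", target]


def encode_all(jobs, workers=16, run=subprocess.run):
    """Run one encoder process per (source, target) job, `workers` at a time.

    Returns a dict mapping each source path to the process return code.
    """
    def encode(job):
        source, target = job
        result = run(build_command(source, target))
        return source, result.returncode

    # Threads are enough here: each one only waits on an external process.
    with ThreadPoolExecutor(max_workers=workers) as pool:
        return dict(pool.map(encode, jobs))
```

Because each job is handed to exactly one worker, this kind of pool would not need random ordering or a disabled lock file to avoid two encoders picking the same input.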

228,336 | 18,170,921,835 | IssuesEvent | 2021-09-27 19:56:30 | USAID-OHA-SI/That-is-MSDup | https://api.github.com/repos/USAID-OHA-SI/That-is-MSDup | closed | HTS POS FY19 targets incorrect - age/sex/result plus keypop/result | OU - Cameroon Tech Area - Testing MSD | Cameroon has reported that their FY19 targets are wrong in the PPR. known issue - just adding another country to the list.

for programs that are primarily KP this really screws with their targets (e.g. doubling them in Cameroon's case) | 1.0 | HTS POS FY19 targets incorrect - age/sex/result plus keypop/result - Cameroon has reported that their FY19 targets are wrong in the PPR. known issue - just adding another country to the list.

for programs that are primarily KP this really screws with their targets (e.g. doubling them in Cameroon's case) | non_process | hts pos targets incorrect age sex result plus keypop result cameroon has reported that their targets are wrong in the ppr known issue just adding another country to the list for programs that are primarily kp this really screws with their targets e g doubling them in cameroon s case | 0 |

259,080 | 19,584,842,057 | IssuesEvent | 2022-01-05 04:40:52 | GetBlok-io/Translations | https://api.github.com/repos/GetBlok-io/Translations | closed | Translation Needed - Russian - GB_BLOKBOUNTY | documentation Bounty | 2 ERG Bounty per translation.

Looking for translations in the following languages:

Chinese

Portuguese

Spanish

Russian

German

Arabic

Translations must be true and will be verified (NO GOOGLE TRANSLATE). Please make sure to post a comment if you accept the bounty to avoid duplicative work.

Notify @ArOhBeK ... | 1.0 | Translation Needed - Russian - GB_BLOKBOUNTY - 2 ERG Bounty per translation.

Looking for translations in the following languages:

Chinese

Portuguese

Spanish

Russian

German

Arabic

Translations must be true and will be verified (NO GOOGLE TRANSLATE). Please make sure to post a comment if you accept the boun... | non_process | translation needed russian gb blokbounty erg bounty per translation looking for translations in the following languages chinese portuguese spanish russian german arabic translations must be true and will be verified no google translate please make sure to post a comment if you accept the boun... | 0 |

5,100 | 7,881,645,994 | IssuesEvent | 2018-06-26 19:47:17 | ArctosDB/new-collections | https://api.github.com/repos/ArctosDB/new-collections | closed | Angelo State | Draft MOU in process Fee Reduction Approved Registered at GRBIO | Request for fee reduction received, approved by ASC; need to formally contact to indicate approval of request; new collections manager recently hired with Arctos background from MSB | 1.0 | Angelo State - Request for fee reduction received, approved by ASC; need to formally contact to indicate approval of request; new collections manager recently hired with Arctos background from MSB | process | angelo state request for fee reduction received approved by asc need to formally contact to indicate approval of request new collections manager recently hired with arctos background from msb | 1 |

219 | 2,649,312,944 | IssuesEvent | 2015-03-14 19:56:06 | eiskaltdcpp/eiskaltdcpp | https://api.github.com/repos/eiskaltdcpp/eiskaltdcpp | opened | Translation issues | imported Type-DevProcess | _From [Nickollai](https://code.google.com/u/Nickollai/) on March 23, 2010 16:31:21_

I suggest reporting them in this bug.

1) on the tray icon: "Show/hide window"

2) in the settings: "I'm away. State your business and I might answer later if

you're lucky."

_Original issue: http://code.google.com/p/eiskaltdc/issues/deta... | 1.0 | Translation issues - _From [Nickollai](https://code.google.com/u/Nickollai/) on March 23, 2010 16:31:21_

I suggest reporting them in this bug.

1) on the tray icon: "Show/hide window"

2) in the settings: "I'm away. State your business and I might answer later if

you're lucky."

_Original issue: http://code.google.com/p/... | process | translation issues from on march предлагаю их сообщать в этот баг на иконке в лотке show hide window в настройках i m away state your business and i might answer later if you re lucky original issue | 1 |

266,908 | 23,267,816,053 | IssuesEvent | 2022-08-04 19:14:44 | ValveSoftware/Source-1-Games | https://api.github.com/repos/ValveSoftware/Source-1-Games | closed | [TF2] Lasers on rd_asteroid are broken | Team Fortress 2 Need Retest Animation | On rd_asteroid, env_laser entities are used at both sides of the map and rely on path_track as endpoints of the lasers so they can be displayed. However, since the 7/7/2022 update, path_track entities are now server-side and as such, can't be used as endpoints for env_lasers. Lasers on rd_asteroid are now rendered like... | 1.0 | [TF2] Lasers on rd_asteroid are broken - On rd_asteroid, env_laser entities are used at both sides of the map and rely on path_track as endpoints of the lasers so they can be displayed. However, since the 7/7/2022 update, path_track entities are now server-side and as such, can't be used as endpoints for env_lasers. La... | non_process | lasers on rd asteroid are broken on rd asteroid env laser entities are used at both sides of the map and rely on path track as endpoints of the lasers so they can be displayed however since the update path track entities are now server side and as such can t be used as endpoints for env lasers lasers on... | 0 |

252,277 | 8,034,011,535 | IssuesEvent | 2018-07-29 13:48:14 | Automattic/liveblog | https://api.github.com/repos/Automattic/liveblog | opened | Updates stop when paginating AMP Liveblog | Priority::High confirmed bug | When viewing an AMP Liveblog, it provides a notification of new entries. These cease as soon as pagination is used.

# Steps to reproduce

1. Create a Liveblog with more than one page

2. Open the AMP version

3. Post a new update via the non-AMP liveblog

4. A notification will appear on the AMP liveblog

5. Click... | 1.0 | Updates stop when paginating AMP Liveblog - When viewing an AMP Liveblog, it provides a notification of new entries. These cease as soon as pagination is used.

# Steps to reproduce

1. Create a Liveblog with more than one page

2. Open the AMP version

3. Post a new update via the non-AMP liveblog

4. A notificati... | non_process | updates stop when paginating amp liveblog when viewing an amp liveblog it provides a notification of new entries these cease as soon as pagination is used steps to reproduce create a liveblog with more than one page open the amp version post a new update via the non amp liveblog a notificati... | 0 |

127,694 | 18,018,503,231 | IssuesEvent | 2021-09-16 16:21:08 | harrinry/spark-on-k8s-operator | https://api.github.com/repos/harrinry/spark-on-k8s-operator | opened | CVE-2020-36066 (High) detected in github.com/tidwall/match-v1.0.0 | security vulnerability | ## CVE-2020-36066 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>github.com/tidwall/match-v1.0.0</b></p></summary>

<p>Simple string pattern matcher for Go</p>

<p>

Dependency Hierarch... | True | CVE-2020-36066 (High) detected in github.com/tidwall/match-v1.0.0 - ## CVE-2020-36066 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>github.com/tidwall/match-v1.0.0</b></p></summary>

... | non_process | cve high detected in github com tidwall match cve high severity vulnerability vulnerable library github com tidwall match simple string pattern matcher for go dependency hierarchy github com google go cloud root library github com tidwall sjson ... | 0 |

429,618 | 12,426,523,354 | IssuesEvent | 2020-05-24 21:37:56 | danbooru/danbooru | https://api.github.com/repos/danbooru/danbooru | closed | dmail: Turn nested quote block into expand block | Low Priority | Viewing/previewing dmail only:

Since the Respond link wraps the old message in a quote block, a Respond to that Respond wraps _that_ in a quote block, etc...; you end up with deeply-nested quote blocks taking up a lot of space.

Second level quote blocks should be displayed as expand blocks with the first line in the ... | 1.0 | dmail: Turn nested quote block into expand block - Viewing/previewing dmail only:

Since the Respond link wraps the old message in a quote block, a Respond to that Respond wraps _that_ in a quote block, etc...; you end up with deeply-nested quote blocks taking up a lot of space.

Second level quote blocks should be dis... | non_process | dmail turn nested quote block into expand block viewing previewing dmail only since the respond link wraps the old message in a quote block a respond to that respond wraps that in a quote block etc you end up with deeply nested quote blocks taking up a lot of space second level quote blocks should be dis... | 0 |

66,767 | 12,824,857,265 | IssuesEvent | 2020-07-06 14:07:13 | MeAmAnUsername/pie | https://api.github.com/repos/MeAmAnUsername/pie | opened | remove try-p2j-ast-exp | Component: code base Priority: low Status: specified Type: enhancement | It turns errors at stratego compile time into errors at Java compile time / Java runtime, which is bad. | 1.0 | remove try-p2j-ast-exp - It turns errors at stratego compile time into errors at Java compile time / Java runtime, which is bad. | non_process | remove try ast exp it turns errors at stratego compile time into errors at java compile time java runtime which is bad | 0 |

312,405 | 26,862,348,525 | IssuesEvent | 2023-02-03 19:38:21 | spring-projects/spring-security | https://api.github.com/repos/spring-projects/spring-security | closed | WebTestUtilsTestRuntimeHints should only be invoked for Servlet | in: test type: bug status: forward-port | Forward port of issue #12622 to 6.1.x. | 1.0 | WebTestUtilsTestRuntimeHints should only be invoked for Servlet - Forward port of issue #12622 to 6.1.x. | non_process | webtestutilstestruntimehints should only be invoked for servlet forward port of issue to x | 0 |

9,410 | 12,406,901,466 | IssuesEvent | 2020-05-21 20:01:35 | DevExpress/testcafe-hammerhead | https://api.github.com/repos/DevExpress/testcafe-hammerhead | closed | LocationWrapper can be initialized without `messageSandbox`, but `messageSandbox` optional isn't properly handled | AREA: client SYSTEM: client side processing TYPE: bug | <!--

If you have all reproduction steps with a complete sample app, please share as many details as possible in the sections below.

Make sure that you tried using the latest Hammerhead version (https://github.com/DevExpress/testcafe-hammerhead/releases), where this behavior might have been already addressed.

Bef... | 1.0 | LocationWrapper can be initialized without `messageSandbox`, but `messageSandbox` optional isn't properly handled - <!--

If you have all reproduction steps with a complete sample app, please share as many details as possible in the sections below.

Make sure that you tried using the latest Hammerhead version (https:... | process | locationwrapper can be initialized without messagesandbox but messagesandbox optional isn t properly handled if you have all reproduction steps with a complete sample app please share as many details as possible in the sections below make sure that you tried using the latest hammerhead version wher... | 1 |

311,424 | 9,532,901,324 | IssuesEvent | 2019-04-29 19:50:37 | hackla-engage/engage-client | https://api.github.com/repos/hackla-engage/engage-client | closed | When viewing Item Detail Card, browser "back" button does not behave as expected | Priority: Medium Status: Backlog Type: Bug | when viewing the item detail card, pressing the back button does not take the user back to the feed. As the URL changes when viewing an item detail card (an id query param is added to it with the id of the card in question), and as clicking the back button does change the URL, it should also mimic the behavior of takin... | 1.0 | When viewing Item Detail Card, browser "back" button does not behave as expected - when viewing the item detail card, pressing the back button does not take the user back to the feed. As the URL changes when viewing an item detail card (an id query param is added to it with the id of the card in question), and as click... | non_process | when viewing item detail card browser back button does not behave as expected when viewing the item detail card pressing the back button does not take the user back to the feed as the url changes when viewing an item detail card an id query param is added to it with the id of the card in question and as click... | 0 |

7,670 | 10,758,930,667 | IssuesEvent | 2019-10-31 15:46:22 | googleapis/google-cloud-python | https://api.github.com/repos/googleapis/google-cloud-python | closed | Storage: 'test_bpo_set_unset_preserves_acls' no longer sees expected 'BadRequest'. | api: storage backend testing type: process | From [this Kokoro job](https://source.cloud.google.com/results/invocations/2691ce70-20dd-4f90-ad95-d483b80b4ec2/targets/cloud-devrel%2Fclient-libraries%2Fgoogle-cloud-python%2Fpresubmit%2Fstorage/log):

```python

____________ TestIAMConfiguration.test_bpo_set_unset_preserves_acls ____________

self = <tests.system.T... | 1.0 | Storage: 'test_bpo_set_unset_preserves_acls' no longer sees expected 'BadRequest'. - From [this Kokoro job](https://source.cloud.google.com/results/invocations/2691ce70-20dd-4f90-ad95-d483b80b4ec2/targets/cloud-devrel%2Fclient-libraries%2Fgoogle-cloud-python%2Fpresubmit%2Fstorage/log):

```python

____________ TestIA... | process | storage test bpo set unset preserves acls no longer sees expected badrequest from python testiamconfiguration test bpo set unset preserves acls self def test bpo set unset preserves acls self new bucket name bpo acls unique resource id ... | 1 |

17,359 | 23,185,419,856 | IssuesEvent | 2022-08-01 07:58:13 | streamnative/flink | https://api.github.com/repos/streamnative/flink | closed | [New Connector] No watermarks using new source connector. | compute/data-processing type/bug | will need to update with instructions https://github.com/streamnative/sn-pulsar-flink-workshop

^ can u access this repo? to reproduce

Run docker-compose up pulsar

Run setup.sh

Run this class https://github.com/streamnative/sn-pulsar-flink-workshop/blob/main/src/main/java/io/ipolyzos/compute/v3/EnrichmentStream.java <- ... | 1.0 | [New Connector] No watermarks using new source connector. - will need to update with instructions https://github.com/streamnative/sn-pulsar-flink-workshop

^ can u access this repo? to reproduce

Run docker-compose up pulsar

Run setup.sh

Run this class https://github.com/streamnative/sn-pulsar-flink-workshop/blob/main/sr... | process | no watermarks using new source connector will need to update with instructions can u access this repo to reproduce run docker compose up pulsar run setup sh run this class you don t need a flink cluster they binaries will spin up one with a ui so navigate to the flink ui run both the producers here ... | 1 |

2,352 | 5,164,004,478 | IssuesEvent | 2017-01-17 09:12:10 | jlm2017/jlm-video-subtitles | https://api.github.com/repos/jlm2017/jlm-video-subtitles | closed | [subtitles] [eng] Orlando : « Un meurtre homophobe de masse » | Language: English Process: [6] Approved | # Video title

Orlando : « Un meurtre homophobe de masse »

# URL

https://www.youtube.com/watch?v=0I5nlon7Mn0

# Youtube subtitle language

English

# Duration

3:14

# URL subtitles

https://www.youtube.com/timedtext_editor?ref=player&v=0I5nlon7Mn0&tab=captions&bl=vmp&lang=en&ui=hd&action_mde_edit_f... | 1.0 | [subtitles] [eng] Orlando : « Un meurtre homophobe de masse » - # Video title

Orlando : « Un meurtre homophobe de masse »

# URL

https://www.youtube.com/watch?v=0I5nlon7Mn0

# Youtube subtitle language

English

# Duration

3:14

# URL subtitles

https://www.youtube.com/timedtext_editor?ref=player&v... | process | orlando « un meurtre homophobe de masse » video title orlando « un meurtre homophobe de masse » url youtube subtitle language anglais duration url subtitles | 1 |

112,069 | 4,506,130,931 | IssuesEvent | 2016-09-02 01:46:06 | Aricelio/App-Balancete | https://api.github.com/repos/Aricelio/App-Balancete | closed | Create N-to-N table 'TagLancamento' | higher priority task | The Lancamento table and the Tag table have an N-to-N relationship | 1.0 | Create N-to-N table 'TagLancamento' - The Lancamento table and the Tag table have an N-to-N relationship | non_process | create n to n table taglancamento the lancamento table and the tag table have an n to n relationship | 0 |

17,971 | 23,983,657,651 | IssuesEvent | 2022-09-13 17:03:54 | mdsreq-fga-unb/2022.1-Capita-C | https://api.github.com/repos/mdsreq-fga-unb/2022.1-Capita-C | closed | Requirements Process | requisito ProcessoRequisitos REQ Comentários Professor | In the same way as was done in unit 1, a set of activities is being listed: when they will be done, by whom, etc. Where are these activities positioned, or where will they be positioned, in the work process?

**Source**

https://mdsreq-fga-unb.github.io/2022.1-Capita-C/processoER/

| 1.0 | Requirements Process - In the same way as was done in unit 1, a set of activities is being listed: when they will be done, by whom, etc. Where are these activities positioned, or where will they be positioned, in the work process?

**Source**

https://mdsreq-fga-unb.github.io/2022.1-Capita-C/processoER/

| process | requirements process in the same way as was done in unit a set of activities is being listed when they will be done by whom etc where are these activities positioned or where will they be positioned in the work process source | 1 |

18,511 | 24,551,581,120 | IssuesEvent | 2022-10-12 13:00:49 | GoogleCloudPlatform/fda-mystudies | https://api.github.com/repos/GoogleCloudPlatform/fda-mystudies | closed | [Mobile apps] Sign in screen > Password field > 'Eye' icon should not get displayed in the mobile apps | Bug P1 iOS Android Process: Fixed Process: Tested dev Auth server | Sign in screen > Password field > 'Eye' icon should not get displayed in the mobile apps | 2.0 | [Mobile apps] Sign in screen > Password field > 'Eye' icon should not get displayed in the mobile apps - Sign in screen > Password field > 'Eye' icon should not get displayed in the mobile apps | process | sign in screen password field eye icon should not get displayed in the mobile apps sign in screen password field eye icon should not get displayed in the mobile apps | 1 |

344,397 | 24,812,256,723 | IssuesEvent | 2022-10-25 10:20:21 | postmanlabs/postman-app-support | https://api.github.com/repos/postmanlabs/postman-app-support | closed | Markdown numbered lists clip tens digit when rendered | bug product/documentation | ### Is there an existing issue for this?

- [X] I have searched the tracker for existing similar issues and I know that duplicates will be closed

### Describe the Issue

When I add ten or more items to a numbered markdown list for documentation, the tens digit is clipped when rendered. So the rended tenth element loo... | 1.0 | Markdown numbered lists clip tens digit when rendered - ### Is there an existing issue for this?

- [X] I have searched the tracker for existing similar issues and I know that duplicates will be closed

### Describe the Issue

When I add ten or more items to a numbered markdown list for documentation, the tens digit is... | non_process | markdown numbered lists clip tens digit when rendered is there an existing issue for this i have searched the tracker for existing similar issues and i know that duplicates will be closed describe the issue when i add ten or more items to a numbered markdown list for documentation the tens digit is c... | 0 |

294,814 | 25,407,746,164 | IssuesEvent | 2022-11-22 16:27:58 | tnha22/gPBL-Team2 | https://api.github.com/repos/tnha22/gPBL-Team2 | opened | AnhTNH - Testing System | Testing | Includes:

- Test front-end

- Test APIs

- Test design documents

- Test documents | 1.0 | AnhTNH - Testing System - Includes:

- Test front-end

- Test APIs

- Test design documents

- Test documents | non_process | anhtnh testing system includes test front end test apis test design documents test documents | 0 |

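7,763 | 10,885,389,505 | IssuesEvent | 2019-11-18 10:18:02 | didi/mpx | https://api.github.com/repos/didi/mpx | closed | [Bug report] api-proxy turns non-function properties into functions | bug processing | **Problem description**

api-proxy turns non-function properties into functions

**Steps to reproduce**

Please describe how to reproduce the problem, step by step, as in the example below

1. Access the mpx.env property; a function-type property is returned instead

**Expected behavior**

The original non-function property on mpx is returned

| 1.0 | [Bug report] api-proxy turns non-function properties into functions - **Problem description**

api-proxy turns non-function properties into functions

**Steps to reproduce**

Please describe how to reproduce the problem, step by step, as in the example below

1. Access the mpx.env property; a function-type property is returned instead

**Expected behavior**

The original non-function property on mpx is returned

| process | api proxy turns non function properties into functions problem description api proxy turns non function properties into functions steps to reproduce please describe how to reproduce the problem step by step as in the example below access the mpx env property a function type property is returned instead expected behavior the original non function property on mpx is returned | 1 |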

56,002 | 11,494,520,969 | IssuesEvent | 2020-02-12 01:49:37 | toolbox-team/reddit-moderator-toolbox | https://api.github.com/repos/toolbox-team/reddit-moderator-toolbox | opened | Abstract the context listeners a bit more | code quality | Currently, in order to add an item to the context menu, a lot of logic checking has to be done to add or remove the right elements (for example, [usernotes.js:778-801](https://github.com/toolbox-team/reddit-moderator-toolbox/blob/83613b4e2aaaef637124874be2bcf77ede96682d/extension/data/modules/usernotes.js#L778-L801)). ... | 1.0 | Abstract the context listeners a bit more - Currently, in order to add an item to the context menu, a lot of logic checking has to be done to add or remove the right elements (for example, [usernotes.js:778-801](https://github.com/toolbox-team/reddit-moderator-toolbox/blob/83613b4e2aaaef637124874be2bcf77ede96682d/exten... | non_process | abstract the context listeners a bit more currently in order to add an item to the context menu a lot of logic checking has to be done to add or remove the right elements for example we could greatly simplify this by creating an api something like this js tbui registercontextitem title show r ... | 0 |

10,292 | 13,145,442,257 | IssuesEvent | 2020-08-08 03:30:27 | elastic/beats | https://api.github.com/repos/elastic/beats | closed | Feature Request: Packetbeat in docker network | :Processors Packetbeat Stalled enhancement needs_team | It would be nice if packetbeat were more "docker aware", and if the `add_docker_metadata` could enrich docker container networks.

For example, we have a stack set up on one host, communicating using a bridge network. The packetbeat container is on the same stack, monitoring the host network.

| 1.0 | Feature Request: Packetbeat in docker network - It would be nice if packetbeat were more "docker aware", and if the `add_docker_metadata` could enrich docker container networks.

For example, we have a stack set up on one host, communicating using a bridge network. The packetbeat container is on the same stack, mon... | process | feature request packetbeat in docker network it would be nice if packetbeat were more docker aware and if the add docker metadata could enrich docker container networks for example we have a stack set up on one host communicating using a bridge network the packetbeat container is on the same stack mon... | 1 |

8,891 | 11,986,063,425 | IssuesEvent | 2020-04-07 18:37:33 | hashgraph/hedera-mirror-node | https://api.github.com/repos/hashgraph/hedera-mirror-node | closed | Add Pod Priority Class | P2 enhancement process | **Problem**

When initially installing and when resources are constrained and it needs to preempt pods, Kubernetes does not know which pods are more important than others. [Pod priority class](https://kubernetes.io/docs/concepts/configuration/pod-priority-preemption/) is a critical feature for a stable application to s... | 1.0 | Add Pod Priority Class - **Problem**

When initially installing and when resources are constrained and it needs to preempt pods, Kubernetes does not know which pods are more important than others. [Pod priority class](https://kubernetes.io/docs/concepts/configuration/pod-priority-preemption/) is a critical feature for ... | process | add pod priority class problem when initially installing and when resources are constrained and it needs to preempt pods kubernetes does not know which pods are more important than others is a critical feature for a stable application to set to indicate to kubernetes that missing information solution... | 1 |

17,113 | 22,634,815,018 | IssuesEvent | 2022-06-30 17:51:11 | hashgraph/hedera-json-rpc-relay | https://api.github.com/repos/hashgraph/hedera-json-rpc-relay | opened | Automate deployment of relay in envs | enhancement P2 process | ### Problem

Helm chart deployment capabilities were added.

However, they are currently manual

### Solution

Add automation support to

- [ ] Deploy to integration on every main branch checking

- [ ] Deploy to previewnet on every vx.y.z tag

- [ ] Semi-automated flow to deploy to testnet once test gates have been c... | 1.0 | Automate deployment of relay in envs - ### Problem

Helm chart deployment capabilities were added.

However, they are currently manual

### Solution

Add automation support to

- [ ] Deploy to integration on every main branch checking

- [ ] Deploy to previewnet on every vx.y.z tag

- [ ] Semi-automated flow to deploy... | process | automate deployment of relay in envs problem helm chart deployment capabilities were added however they are currently manual solution add automation support to deploy to integration on every main branch checking deploy to previewnet on every vx y z tag semi automated flow to deploy to te... | 1 |

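3,687 | 6,716,778,075 | IssuesEvent | 2017-10-14 13:11:46 | TraningManagementSystem/tms | https://api.github.com/repos/TraningManagementSystem/tms | closed | Catch up to the latest version of the Gradle plugin that runs SwaggerCodegen | dev process | ### Description

Catch up to the latest version of the Gradle plugin that runs SwaggerCodegen.

----

### Details

Catch up to the latest version of the Gradle plugin that runs SwaggerCodegen.

The latest version is now 2.8.0, and the best practices for writing the configuration have been changing as well, so...

----

### Relation Issue

None

---- | 1.0 | Catch up to the latest version of the Gradle plugin that runs SwaggerCodegen - ### Description

Catch up to the latest version of the Gradle plugin that runs SwaggerCodegen.

----

### Details

Catch up to the latest version of the Gradle plugin that runs SwaggerCodegen.

The latest version is now 2.8.0, and the best practices for writing the configuration have been changing as well, so...

----

### Relation Issue

None

---- | process | catch up to the latest version of the gradle plugin that runs swaggercodegen description catch up to the latest version of the gradle plugin that runs swaggercodegen details catch up to the latest version of the gradle plugin that runs swaggercodegen with the latest version ... relation issue none | 1 |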

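364,732 | 25,499,635,958 | IssuesEvent | 2022-11-28 02:00:47 | Choi-Won-Jun/bankServiceProject | https://api.github.com/repos/Choi-Won-Jun/bankServiceProject | opened | [All] Unify formats | documentation | If the code you are responsible for needs a format like the one below,

1. When expressing an amount such as 100,000 won ---> please standardize on ₩100,000.

If there are other formats that need to be unified, or you have questions about a format, please leave a comment. | 1.0 | [All] Unify formats - If the code you are responsible for needs a format like the one below,

1. When expressing an amount such as 100,000 won ---> please standardize on ₩100,000.

If there are other formats that need to be unified, or you have questions about a format, please leave a comment. | non_process | unify formats if the code you are responsible for needs a format like the one below when expressing an amount such as won please standardize on ₩ if there are other formats that need to be unified or you have questions about a format please leave a comment | 0 |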

81,125 | 15,603,083,463 | IssuesEvent | 2021-03-19 01:09:28 | turkdevops/desktop | https://api.github.com/repos/turkdevops/desktop | opened | CVE-2021-23337 (High) detected in lodash-3.10.1.tgz | security vulnerability | ## CVE-2021-23337 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>lodash-3.10.1.tgz</b></p></summary>

<p>The modern build of lodash modular utilities.</p>

<p>Library home page: <a href... | True | CVE-2021-23337 (High) detected in lodash-3.10.1.tgz - ## CVE-2021-23337 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>lodash-3.10.1.tgz</b></p></summary>

<p>The modern build of lodas... | non_process | cve high detected in lodash tgz cve high severity vulnerability vulnerable library lodash tgz the modern build of lodash modular utilities library home page a href path to dependency file desktop node modules lodash package json path to vulnerable library desktop no... | 0 |

3,847 | 6,808,539,491 | IssuesEvent | 2017-11-04 04:17:44 | Great-Hill-Corporation/quickBlocks | https://api.github.com/repos/Great-Hill-Corporation/quickBlocks | reopened | whenBlock: Date search does not read empty blocks | status-inprocess tools-whenBlock type-bug | Only searches non-empty blocks in date mode

whenBlock 2017-06-01T00: block #3800775 : 1496275190 : 2017-05-31 23:59:50 UTC

whenBlock 2017-06-01T00:01: block #3800779 : 1496275259 : 2017-06-01 00:00:59 UTC

Here, we ask for block numbers with one second in between, but a difference of four blocks is rep... | 1.0 | whenBlock: Date search does not read empty blocks - Only searches non-empty blocks in date mode

whenBlock 2017-06-01T00: block #3800775 : 1496275190 : 2017-05-31 23:59:50 UTC

whenBlock 2017-06-01T00:01: block #3800779 : 1496275259 : 2017-06-01 00:00:59 UTC

Here, we ask for block numbers with one second... | process | whenblock date search does not read empty blocks only searches non empty blocks in date mode whenblock block utc whenblock block utc here we ask for block numbers with one second in between but a difference of four blocks is reported this... | 1 |

553,291 | 16,362,114,475 | IssuesEvent | 2021-05-14 11:08:15 | epam/ketcher | https://api.github.com/repos/epam/ketcher | opened | Blank page and a console error appear while saving | bug priority: high | _Affected versions:_ Remote, Standalone

Tested on Ketcher v2.2.1-102-g430a3502

_Steps to reproduce:_

1. Launch Ketcher.

2. Create any structure (e.g. chain).

3. Click the 'Save As' button.

4. Select any format except MDL Molfile V2000.

_Expected result:_ It's possible to save the structure.

_Actual result:_... | 1.0 | Blank page and a console error appear while saving - _Affected versions:_ Remote, Standalone

Tested on Ketcher v2.2.1-102-g430a3502

_Steps to reproduce:_

1. Launch Ketcher.

2. Create any structure (e.g. chain).

3. Click the 'Save As' button.

4. Select any format except MDL Molfile V2000.

_Expected result:_ ... | non_process | blank page and a console error appear while saving affected versions remote standalone tested on ketcher steps to reproduce launch ketcher create any structure e g chain click the save as button select any format except mdl molfile expected result it s possible t... | 0 |

15,333 | 3,456,148,928 | IssuesEvent | 2015-12-17 23:27:48 | infiniteautomation/ma-core-public | https://api.github.com/repos/infiniteautomation/ma-core-public | closed | Excel Reports - Saving without points quiet failure | Enhancement Ready for Testing | Saving a report with no points produces a validation error message at the top, but no information as to the error. | 1.0 | Excel Reports - Saving without points quiet failure - Saving a report with no points produces a validation error message at the top, but no information as to the error. | non_process | excel reports saving without points quiet failure saving a report with no points produces a validation error message at the top but no information as to the error | 0 |

15,871 | 20,036,623,721 | IssuesEvent | 2022-02-02 12:36:55 | syncfusion/ej2-angular-ui-components | https://api.github.com/repos/syncfusion/ej2-angular-ui-components | closed | It can not use two document editor components in one parent component. | word-processor | HTML elements in document editor have an id property. When using more than one document editor component in one parent component, the app raises warnings:

After destroying the component that contains tw... | 1.0 | It can not use two document editor components in one parent component. - HTML elements in document editor have an id property. When using more than one document editor component in one parent component, the app raises warnings:

.

Use cases:

* Repair a process instance that is stuck (primary)

* Testing activities of a process in... | 1.0 | [Epic] Process Instance Modification - ## Description

As a user, I can modify an active process instance to repair the execution. The execution may be stuck and I want to continue the execution on a different activity (i.e. skip or repeat activities).

Use cases:

* Repair a process instance that is stuck (primary... | process | process instance modification description as a user i can modify an active process instance to repair the execution the execution may be stuck and i want to continue the execution on a different activity i e skip or repeat activities use cases repair a process instance that is stuck primary ... | 1 |

239,277 | 18,267,922,111 | IssuesEvent | 2021-10-04 10:37:21 | marmelab/react-admin | https://api.github.com/repos/marmelab/react-admin | closed | Module not found: Can't resolve 'graphql' in 'C:\Users\me\Desktop\react-admin-example\node_modules\ra-data-graphql-simple\esm' | good first issue documentation |

I followed the instructions [here](https://www.npmjs.com/package/ra-data-graphql-simple) but i got the following error

**node_modules/ra-data-graphql-simple/esm/buildGqlQuery.js

Module not found: Can't resolve 'graphql' in 'C:\Users\Razi\Desktop\react-admin-auth0-example\node_modules\ra-data-graphql-simple\esm'*... | 1.0 | Module not found: Can't resolve 'graphql' in 'C:\Users\me\Desktop\react-admin-example\node_modules\ra-data-graphql-simple\esm' -

I followed the instructions [here](https://www.npmjs.com/package/ra-data-graphql-simple) but i got the following error

**node_modules/ra-data-graphql-simple/esm/buildGqlQuery.js

Module... | non_process | module not found can t resolve graphql in c users me desktop react admin example node modules ra data graphql simple esm i followed the instructions but i got the following error node modules ra data graphql simple esm buildgqlquery js module not found can t resolve graphql in c users razi des... | 0 |

1,635 | 4,254,941,301 | IssuesEvent | 2016-07-09 03:58:35 | pattern-lab/the-spec | https://api.github.com/repos/pattern-lab/the-spec | closed | Discussion & Vote: Developing an Acid Test StarterKit | process-enhancement vote pending / needed |

I need to check if my changes to core affect output in an unexpected way. I also need to make sure my changes meet the needs of spec.

-----

We have no good way to ensure that Node and PHP are developing towards spec or if we are in compliance. We use the demo but it doesn't address all of the various test cases... | 1.0 | Discussion & Vote: Developing an Acid Test StarterKit -

I need to check if my changes to core affect output in an unexpected way. I also need to make sure my changes meet the needs of spec.

-----

We have no good way to ensure that Node and PHP are developing towards spec or if we are in compliance. We use the d... | process | discussion vote developing an acid test starterkit i need to check if my changes to core affect output in an unexpected way i also need to make sure my changes meet the needs of spec we have no good way to ensure that node and php are developing towards spec or if we are in compliance we use the d... | 1 |

18,353 | 6,583,127,035 | IssuesEvent | 2017-09-13 03:24:23 | HypothesisWorks/hypothesis-python | https://api.github.com/repos/HypothesisWorks/hypothesis-python | closed | Keeping Hypothesis current on conda-forge | CI-and-build | Downstream issue: conda-forge/hypothesis-feedstock#8

`conda` is a pip+virtualenv alternative which is very popular for scientific and data python users (including me). The default repos are maintained by Continuum, but there is also an increasingly popular community-run channel called conda-forge (think RHEL: CentO... | 1.0 | Keeping Hypothesis current on conda-forge - Downstream issue: conda-forge/hypothesis-feedstock#8

`conda` is a pip+virtualenv alternative which is very popular for scientific and data python users (including me). The default repos are maintained by Continuum, but there is also an increasingly popular community-run c... | non_process | keeping hypothesis current on conda forge downstream issue conda forge hypothesis feedstock conda is a pip virtualenv alternative which is very popular for scientific and data python users including me the default repos are maintained by continuum but there is also an increasingly popular community run c... | 0 |

104,108 | 8,961,885,762 | IssuesEvent | 2019-01-28 10:51:17 | humera987/FXLabs-Test-Automation | https://api.github.com/repos/humera987/FXLabs-Test-Automation | closed | API Test 1 : ApiV1ProjectsIdSearchAutoSuggestionsSearchStatusGetQueryParamPagesizeSla | API Test 1 API Test 1 | Project : API Test 1

Job : JOB

Env : ENV

Category : null

Tags : null

Severity : null

Region : AliTest

Result : fail

Status Code : 404

Headers : {X-Content-Type-Options=[nosniff], X-XSS-Protection=[1; mode=block], Cache-Control=[no-cache, no-store, max-age=0, must-revalidate], Pragma=[no-ca... | 2.0 | API Test 1 : ApiV1ProjectsIdSearchAutoSuggestionsSearchStatusGetQueryParamPagesizeSla - Project : API Test 1

Job : JOB

Env : ENV

Category : null

Tags : null

Severity : null

Region : AliTest

Result : fail

Status Code : 404

Headers : {X-Content-Type-Options=[nosniff], X-XSS-Protection=[1; m... | non_process | api test project api test job job env env category null tags null severity null region alitest result fail status code headers x content type options x xss protection cache control pragma expires x frame options set cookie content type... | 0 |

16,945 | 22,301,859,557 | IssuesEvent | 2022-06-13 09:26:16 | prisma/prisma | https://api.github.com/repos/prisma/prisma | opened | PostgreSQL should work with array ids | process/candidate kind/improvement tech/engines/migration engine tech/engines/query engine tech/engines/introspection engine tech/engines/datamodel topic: postgresql list team/schema team/client topic: postgresql | PostgreSQL lets you create a table with an array as the primary key. Prisma does not let you do that, blocking some use-cases. We should enable all array types on PostgreSQL for `@id`/`@@id` columns, and make sure Query Engine will work properly with these identifiers.

The Schema Team side is done already in t... | 1.0 | PostgreSQL should work with array ids - PostgreSQL lets you create a table with an array as the primary key. Prisma does not let you do that, blocking some use-cases. We should enable all array types on PostgreSQL for `@id`/`@@id` columns, and make sure Query Engine will work properly with these identifiers.

T... | process | postgresql should work with array ids postgresql lets you create a table with an array as the primary key prisma does not let you do that blocking some use cases we should enable all array types on postgresql for id id columns and make sure query engine will work properly with these identifiers t... | 1 |

5,318 | 8,132,382,140 | IssuesEvent | 2018-08-18 11:06:20 | dotnet/corefx | https://api.github.com/repos/dotnet/corefx | closed | [System.Diagnostics] Using LoadUserProfile on Linux: Starting a process with a different identity is not supported on this platform | area-System.Diagnostics.Process bug | **Hello!** I'm new to .NET Core (recently moved my project from .NET Framework for it).

### Story

- I didn't know that RunAs is partially available on Linux, so I used all functionality of it in my code.

- I caught the exception `System.PlatformNotSupportedException: Starting a process with a different identity is ... | 1.0 | [System.Diagnostics] Using LoadUserProfile on Linux: Starting a process with a different identity is not supported on this platform - **Hello!** I'm new to .NET Core (recently moved my project from .NET Framework for it).

### Story

- I didn't know that RunAs is partially available on Linux, so I used all function... | process | using loaduserprofile on linux starting a process with a different identity is not supported on this platform hello i m new to net core recently moved my project from net framework for it story i didn t know that runas is partially available on linux so i used all functionality of it in my c... | 1 |

7,641 | 10,737,503,526 | IssuesEvent | 2019-10-29 13:13:57 | Open-EO/openeo-api | https://api.github.com/repos/Open-EO/openeo-api | closed | Spec for "bands" cube:dimension? | data discovery processes stac / ogc work in progress | For [collection metadata](https://open-eo.github.io/openeo-api/apireference/#tag/EO-Data-Discovery/paths/~1collections~1{collection_id}/get) the spec defines the cube dimensions under `properties/cube:dimensions`. For spatial and temporal dimensions the `type` is well defined (`spatial` and `temporal`), but for "additi... | 1.0 | Spec for "bands" cube:dimension? - For [collection metadata](https://open-eo.github.io/openeo-api/apireference/#tag/EO-Data-Discovery/paths/~1collections~1{collection_id}/get) the spec defines the cube dimensions under `properties/cube:dimensions`. For spatial and temporal dimensions the `type` is well defined (`spatia... | process | spec for bands cube dimension for the spec defines the cube dimensions under properties cube dimensions for spatial and temporal dimensions the type is well defined spatial and temporal but for additional dimension typically for spectral bands i guess it a is free form custom type examp... | 1 |