| Unnamed: 0 (int64) | id (float64) | type (string) | created_at (string) | repo (string) | repo_url (string) | action (string) | title (string) | labels (string) | body (string) | index (string) | text_combine (string) | label (string) | text (string) | binary_label (int64) |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

46,360 | 13,163,815,260 | IssuesEvent | 2020-08-11 01:40:34 | dotnet/runtime | https://api.github.com/repos/dotnet/runtime | closed | dotnet implementation of AES decryption is slower than native call to bcrypt | area-System.Security tenet-performance | ### Description

I've compared 3 different implementations of AES 128 decrypt as a simple benchmark project.

| Method | Time | Ratio |
|-|-|-|
| DecryptAesDotNetTransform | 5.76 µs | 1.00 baseline |
| DecryptAesBCrypt | 4.35 µs | 0.76 |
| DecryptAesDotNetStream | 9.32 µs | 1.62 |

You can find implementation... | True | dotnet implementation of AES decryption is slower than native call to bcrypt - ### Description

I've compared 3 different implementations of AES 128 decrypt as a simple benchmark project.

| Method | Time | Ratio |
|-|-|-|
| DecryptAesDotNetTransform | 5.76 µs | 1.00 baseline |

| DecryptAesBCrypt | 4.35 µs | 0.7... | non_process | dotnet implementation of aes decryption is slower than native call to bcrypt description i ve compared different implementations of aes decrypt as a simple benchmark project method time ratio decryptaesdotnettransform µs baseline decryptaesbcrypt µs ... | 0 |

9,407 | 12,405,672,359 | IssuesEvent | 2020-05-21 17:42:30 | tokio-rs/tokio | https://api.github.com/repos/tokio-rs/tokio | closed | Several AsyncRead impls unnecessarily zero buffers in prepare_uninitialized_buffer | A-tokio C-bug E-easy E-help-wanted M-io M-process T-performance | <!--

Thank you for reporting an issue.

Please fill in as much of the template below as you're able.

-->

## Version

<!--

List the versions of all `tokio` crates you are using. The easiest way to get

this information is using `cargo-tree`.

`cargo install cargo-tree`

(see install here: https://github.com/... | 1.0 | Several AsyncRead impls unnecessarily zero buffers in prepare_uninitialized_buffer - <!--

Thank you for reporting an issue.

Please fill in as much of the template below as you're able.

-->

## Version

<!--

List the versions of all `tokio` crates you are using. The easiest way to get

this information is usin... | process | several asyncread impls unnecessarily zero buffers in prepare uninitialized buffer thank you for reporting an issue please fill in as much of the template below as you re able version list the versions of all tokio crates you are using the easiest way to get this information is usin... | 1 |

113,260 | 17,116,224,415 | IssuesEvent | 2021-07-11 12:10:06 | theHinneh/ha | https://api.github.com/repos/theHinneh/ha | closed | CVE-2020-8203 (High) detected in lodash-4.17.15.tgz, lodash-3.10.1.tgz | security vulnerability | ## CVE-2020-8203 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>lodash-4.17.15.tgz</b>, <b>lodash-3.10.1.tgz</b></p></summary>

<p>

<details><summary><b>lodash-4.17.15.tgz</b></p></s... | True | CVE-2020-8203 (High) detected in lodash-4.17.15.tgz, lodash-3.10.1.tgz - ## CVE-2020-8203 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>lodash-4.17.15.tgz</b>, <b>lodash-3.10.1.tgz<... | non_process | cve high detected in lodash tgz lodash tgz cve high severity vulnerability vulnerable libraries lodash tgz lodash tgz lodash tgz lodash modular utilities library home page a href path to dependency file ha backend package json path to v... | 0 |

34,399 | 7,450,985,989 | IssuesEvent | 2018-03-29 00:09:07 | kerdokullamae/test_koik_issued | https://api.github.com/repos/kerdokullamae/test_koik_issued | closed | Advanced search result | C: AIS P: highest R: fixed T: defect | **Reported by jelenag on 18 Feb 2013 09:34 UTC**

Advanced search -> I enter something in the Person/Organisation field as the search input -> the result is the same for every value and does not seem to be related to the selected Person.

Example,

Person = Allohvitseride Kool

Result -> [= Akadeemiline keemia selts

Result -> [ht... | 1.0 | Advanced search result - **Reported by jelenag on 18 Feb 2013 09:34 UTC**

Advanced search -> I enter something in the Person/Organisation field as the search input -> the result is the same for every value and does not seem to be related to the selected Person.

Example,

Person = Allohvitseride Kool

Result -> [= Akadeemiline ke... | non_process | advanced search result reported by jelenag on feb utc advanced search i enter something in the person organisation field as the search input the result is the same for every value and does not seem to be related to the selected person example person allohvitseride kool result akadeemiline keemia s... | 0 |

122,386 | 12,150,410,057 | IssuesEvent | 2020-04-24 17:55:43 | FraunhoferIOSB/FROST-Server | https://api.github.com/repos/FraunhoferIOSB/FROST-Server | closed | Dead link to Docker Documentation | documentation | Hi,

Just to let you know that the link to the "use Docker" page of the documentation leads to a 404 page.

The correct link seems to be https://fraunhoferiosb.github.io/FROST-Server/deployment/docker.html

The "deployment" part is missing.

Best regards | 1.0 | Dead link to Docker Documentation - Hi,

Just to let you know that the link to the "use Docker" page of the documentation leads to a 404 page.

The correct link seems to be https://fraunhoferiosb.github.io/FROST-Server/deployment/docker.html

The "deployment" part is missing.

Best regards | non_process | dead link to docker documentation hi just to let you know that the link to use docker page of the documentation runs to a page correct link seems to be the deployement part is missing best regards | 0 |

74,120 | 9,754,250,892 | IssuesEvent | 2019-06-04 11:07:42 | verdaccio/website | https://api.github.com/repos/verdaccio/website | closed | Webui wrong scope example in Configuration table | documentation | The webui scope example = `\@myscope` used to work pre v4.0. Specify it this way in >= v4.0 _config.yaml_ will fail the JSON.parse on the client in _index.html_ as illegal escape character.

Sample should read: **`"@myscope"`**

> Note: the sample in the black-box (full _web:_ example) is correct but not in the **... | 1.0 | Webui wrong scope example in Configuration table - The webui scope example = `\@myscope` used to work pre v4.0. Specify it this way in >= v4.0 _config.yaml_ will fail the JSON.parse on the client in _index.html_ as illegal escape character.

Sample should read: **`"@myscope"`**

> Note: the sample in the black-box... | non_process | webui wrong scope example in configuration table the webui scope example myscope used to work pre specify it this way in config yaml will fail the json parse on the client in index html as illegal escape character sample should read myscope note the sample in the black box ... | 0 |

33,736 | 7,214,192,631 | IssuesEvent | 2018-02-08 00:54:56 | jccastillo0007/eFacturaT | https://api.github.com/repos/jccastillo0007/eFacturaT | opened | Electronic payment receipt (REP) - for cash payments, bank account data must not be sent | bug defect | It throws an error.

There are 2 ways to do this:

a) the correct one: if they choose the payment method Efectivo (cash) when entering the REP, entry of the accounts and everything related to them is disabled.

b) the quick-and-dirty one... in addition, if cash is entered as the payment method, the account numbers must not be sent to the XML... | 1.0 | Electronic payment receipt (REP) - for cash payments, bank account data must not be sent - It throws an error.

There are 2 ways to do this:

a) the correct one: if they choose the payment method Efectivo (cash) when entering the REP, entry of the accounts and everything related to them is disabled.

b) the quick-and-dirty one... in add... | non_process | electronic payment receipt rep for cash payments bank account data must not be sent it throws an error there are ways to do this a the correct one if they choose the payment method efectivo cash when entering the rep entry of the accounts and everything related to them is disabled b the quick and dirty one in add... | 0 |

362,580 | 10,729,530,855 | IssuesEvent | 2019-10-28 15:45:41 | steve8x8/geotoad | https://api.github.com/repos/steve8x8/geotoad | closed | GeoToad (3.29.1 and before) randomly reuses text for additional waypoints | Priority-Medium bug fixed | Sometimes, GeoToad does not extract the proper "Note" text from the Additional Waypoints table, and uses the one of the previous entry instead - this can happen multiple times in a row for the same cache (example: GC6GHP4; instructions for some virtual waypoint tasks are missing from the GPX output - fortunately they a... | 1.0 | GeoToad (3.29.1 and before) randomly reuses text for additional waypoints - Sometimes, GeoToad does not extract the proper "Note" text from the Additional Waypoints table, and uses the one of the previous entry instead - this can happen multiple times in a row for the same cache (example: GC6GHP4; instructions for some... | non_process | geotoad and before randomly reuses text for additional waypoints sometimes geotoad does not extract the proper note text from the additional waypoints table and uses the one of the previous entry instead this can happen multiple times in a row for the same cache example instructions for some virtua... | 0 |

14,046 | 16,850,624,492 | IssuesEvent | 2021-06-20 12:37:14 | rladstaetter/LogoRRR | https://api.github.com/repos/rladstaetter/LogoRRR | closed | Move to gluonfx-maven-plugin | release process | client-maven-plugin was renamed to gluonfx-maven-plugin. The necessary changes should be applied to the project. | 1.0 | Move to gluonfx-maven-plugin - client-maven-plugin was renamed to gluonfx-maven-plugin. The necessary changes should be applied to the project. | process | move to gluonfx maven plugin client maven plugin was renamed to gluonfx maven plugin the necessary changes should be applied to the project | 1 |

255,659 | 21,942,722,079 | IssuesEvent | 2022-05-23 19:57:48 | brave/brave-browser | https://api.github.com/repos/brave/brave-browser | closed | Incorrect Pending message for uphold | bug feature/rewards QA/Yes QA/Test-Plan-Specified closed/not-actionable OS/Desktop | <!-- Have you searched for similar issues? Before submitting this issue, please check the open issues and add a note before logging a new issue.

PLEASE USE THE TEMPLATE BELOW TO PROVIDE INFORMATION ABOUT THE ISSUE.

INSUFFICIENT INFO WILL GET THE ISSUE CLOSED. IT WILL ONLY BE REOPENED AFTER SUFFICIENT INFO IS PROV... | 1.0 | Incorrect Pending message for uphold - <!-- Have you searched for similar issues? Before submitting this issue, please check the open issues and add a note before logging a new issue.

PLEASE USE THE TEMPLATE BELOW TO PROVIDE INFORMATION ABOUT THE ISSUE.

INSUFFICIENT INFO WILL GET THE ISSUE CLOSED. IT WILL ONLY BE... | non_process | incorrect pending message for uphold have you searched for similar issues before submitting this issue please check the open issues and add a note before logging a new issue please use the template below to provide information about the issue insufficient info will get the issue closed it will only be... | 0 |

7,133 | 10,278,469,532 | IssuesEvent | 2019-08-25 14:41:33 | nextmoov/nextmoov | https://api.github.com/repos/nextmoov/nextmoov | closed | DX on Sterroids | #Dev Tools & Processes | nextmoov on Sterroids is a huge internal project @ nextmoov, having as a target to become an even more efficient company, despite scaling heavily.

This project touches every part of nextmoov: the way we handle accounting, PM, day-to-day @ HQ and remotely, responsibilities inside the (core) team,...

**DX on Sterr... | 1.0 | DX on Sterroids - nextmoov on Sterroids is a huge internal project @ nextmoov, having as a target to become an even more efficient company, despite scaling heavily.

This project touches every part of nextmoov — the way we handle accounting, PM, day-to-day @ HQ and remotely, responsabilities inside the (core) team,..... | process | dx on sterroids nextmoov on sterroids is a huge internal project nextmoov having as a target to become an even more efficient company despite scaling heavily this project touches every part of nextmoov — the way we handle accounting pm day to day hq and remotely responsabilities inside the core team ... | 1 |

699,151 | 24,006,628,923 | IssuesEvent | 2022-09-14 15:15:24 | COPRS/rs-issues | https://api.github.com/repos/COPRS/rs-issues | closed | [BUG] [PRO] rs-addon s1-l0aiop: tasktable drops all RAW sessions files | bug WERUM dev ivv pro CCB priority:minor | <!--

Note: Please search to see if an issue already exists for the bug you encountered.

Note: A closed bug can be reopened and affected to a new version of the software.

-->

**Environment:**

<!--

- Delivery tag: release/0.1.0

- Platform: IVV Orange Cloud

- Configuration:

-->

- Delivery tag: 1.4.0-rc1

-... | 1.0 | [BUG] [PRO] rs-addon s1-l0aiop: tasktable drops all RAW sessions files - <!--

Note: Please search to see if an issue already exists for the bug you encountered.

Note: A closed bug can be reopened and affected to a new version of the software.

-->

**Environment:**

<!--

- Delivery tag: release/0.1.0

- Platfo... | non_process | rs addon tasktable drops all raw sessions files note please search to see if an issue already exists for the bug you encountered note a closed bug can be reopened and affected to a new version of the software environment delivery tag release platform ivv orange... | 0 |

21,123 | 28,091,451,332 | IssuesEvent | 2023-03-30 13:18:53 | googleapis/nodejs-storage | https://api.github.com/repos/googleapis/nodejs-storage | closed | test: update signed url conformance test cases | type: process api: storage | Please update your conformance test v4_signatures.json file to match with https://github.com/googleapis/conformance-tests/blob/main/storage/v1/v4_signatures.json and make sure tests pass.

PR was made to the conformance test repo adding a test case: https://github.com/googleapis/conformance-tests/pull/75 | 1.0 | test: update signed url conformance test cases - Please update your conformance test v4_signatures.json file to match with https://github.com/googleapis/conformance-tests/blob/main/storage/v1/v4_signatures.json and make sure tests pass.

PR was made to the conformance test repo adding a test case: https://github.com/... | process | test update signed url conformance test cases please update your conformance test signatures json file to match with and make sure tests pass pr was made to the conformance test repo adding a test case | 1 |

42,133 | 22,310,554,069 | IssuesEvent | 2022-06-13 16:35:10 | tstack/lnav | https://api.github.com/repos/tstack/lnav | closed | Load/index files in fragments | performance | Currently, lnav indexes all available file content on startup so you can start working with the full data set. But, this doesn't work well if the files are large since it can take awhile to index and the user is left looking at a blank screen. So, we should look into loading the files in fragments so that the UI pops... | True | Load/index files in fragments - Currently, lnav indexes all available file content on startup so you can start working with the full data set. But, this doesn't work well if the files are large since it can take awhile to index and the user is left looking at a blank screen. So, we should look into loading the files ... | non_process | load index files in fragments currently lnav indexes all available file content on startup so you can start working with the full data set but this doesn t work well if the files are large since it can take awhile to index and the user is left looking at a blank screen so we should look into loading the files ... | 0 |

5,626 | 8,481,858,711 | IssuesEvent | 2018-10-25 16:50:59 | easy-software-ufal/annotations_repos | https://api.github.com/repos/easy-software-ufal/annotations_repos | opened | dotnet/BenchmarkDotNet Benchmark attributed with "HardwareCounters" throws an exception | ADA C# wrong processing | Issue: `https://github.com/dotnet/BenchmarkDotNet/issues/879`

PR: `https://github.com/dotnet/BenchmarkDotNet/pull/878/files` | 1.0 | dotnet/BenchmarkDotNet Benchmark attributed with "HardwareCounters" throws an exception - Issue: `https://github.com/dotnet/BenchmarkDotNet/issues/879`

PR: `https://github.com/dotnet/BenchmarkDotNet/pull/878/files` | process | dotnet benchmarkdotnet benchmark attributed with hardwarecounters throws an exception issue pr | 1 |

221,137 | 16,995,125,036 | IssuesEvent | 2021-07-01 04:55:31 | faridlesosibirsk/TrainingRepository | https://api.github.com/repos/faridlesosibirsk/TrainingRepository | opened | 40.01 List of remote branches | documentation | At the end of the file, write the heading and the answer, then save everything to the file "40. Удаленные ветки.md". | 1.0 | 40.01 List of remote branches - At the end of the file, write the heading and the answer, then save everything to the file "40. Удаленные ветки.md". | non_process | list of remote branches at the end of the file write the heading and the answer then save everything to the file удаленные ветки md | 0 |

126,314 | 12,289,927,842 | IssuesEvent | 2020-05-10 00:23:38 | scanapi/scanapi | https://api.github.com/repos/scanapi/scanapi | opened | Templates Marketplace | documentation reporter | ## Description

Add a section to README.md with examples of report templates and asking for people to send their templates.

Something like danger's plugins section: https://danger.systems/ruby/

| 1.0 | Templates Marketplace - ## Description

Add a section to README.md with examples of report templates and asking for people to send their templates.

Something like danger's plugins section: https://danger.systems/ruby/

passed on rerun on one configuration and failed on the other, suggesting general flakiness. It looks like historically they are slightly flaky. The "true" version is not.

The failure is bec... | 1.0 | Sporadic failure in System.Diagnostics.Tests.ProcessTests.TestEnableRaiseEvents on Linux - Debian.8.Amd64-x64 and Alpine.38.Amd64-x64 today. Previously I see SLES and OpenSUSE.

Each of the two tests (null and false) passed on rerun on one configuration and failed on the other, suggesting general flakiness. It looks ... | process | sporadic failure in system diagnostics tests processtests testenableraiseevents on linux debian and alpine today previously i see sles and opensuse each of the two tests null and false passed on rerun on one configuration and failed on the other suggesting general flakiness it looks like historic... | 1 |

9,588 | 12,539,705,339 | IssuesEvent | 2020-06-05 09:04:37 | GoogleCloudPlatform/dotnet-docs-samples | https://api.github.com/repos/GoogleCloudPlatform/dotnet-docs-samples | closed | Reactivate Sudokumb WebApp tests after fixing Chrome Driver issue. | priority: p1 type: process | We are getting the following error when trying to run Sudokumb WebApp tests which use Selenium.

> This version of chromedriver only supports chrome version 77

I've deactivated these tests (#922) until the issue is fixed. I want to check all other possible failures and get CI as green as possible to be able to mer... | 1.0 | Reactivate Sudukumb WebApp tests after fixing Chrome Driver issue. - We are getting the following error when trying to run Sudokumb WebApp tests which use Selenium.

> This version of chromedriver only supports chrome version 77

I've deactivated these tests (#922) until the issue is fixed. I want to check all othe... | process | reactivate sudukumb webapp tests after fixing chrome driver issue we are getting the following error when trying to run sudokumb webapp tests which use selenium this version of chromedriver only supports chrome version i ve deactivated these tests until the issue is fixed i want to check all other p... | 1 |

99,441 | 30,454,299,379 | IssuesEvent | 2023-07-16 17:37:48 | SigNoz/signoz | https://api.github.com/repos/SigNoz/signoz | closed | Add support for limit on Time series | query-builder customer demand | Currently limit on time series is not supported.

Limit on time series limits on number of series after ordering by Aggregation(key). | 1.0 | Add support for limit on Time series - Currently limit on time series is not supported.

Limit on time series limits on number of series after ordering by Aggregation(key). | non_process | add support for limit on time series currently limit on time series is not supported limit on time series limits on number of series after ordering by aggregation key | 0 |

824,755 | 31,169,367,215 | IssuesEvent | 2023-08-16 23:02:14 | janus-idp/backstage-showcase | https://api.github.com/repos/janus-idp/backstage-showcase | opened | Pygments vulnerable to ReDoS | priority/critical kind/security | ### What is the issue?

Pygments vulnerable to ReDoS

### Is there a CVE Mitre link?

https://quay.io/repository/janus-idp/backstage-showcase/manifest/sha256:97d53948633234ce8111bdd9ba7223da834db83dbf9e845c651839d20ff83515?tab=vulnerabilities

### What is the mitigation if known?

Upgrade from 2.14 to 2.15 | 1.0 | Pygments vulnerable to ReDoS - ### What is the issue?

Pygments vulnerable to ReDoS

### Is there a CVE Mitre link?

https://quay.io/repository/janus-idp/backstage-showcase/manifest/sha256:97d53948633234ce8111bdd9ba7223da834db83dbf9e845c651839d20ff83515?tab=vulnerabilities

### What is the mitigation if known?

... | non_process | pygments vulnerable to redos what is the issue pygments vulnerable to redos is there a cve mitre link what is the mitigation if known upgrade from to | 0 |

2,337 | 5,143,253,307 | IssuesEvent | 2017-01-12 15:33:33 | nodejs/node | https://api.github.com/repos/nodejs/node | closed | child_process execFile / spawn throw non-descript exception on windows if exe requires elevation | child_process libuv windows | * **Version**: v6.5.0

* **Platform**: Windows 10 (x64)

* **Subsystem**: child_process

```
let cp = require('child_process');
let util = require('util');
try {
  cp.execFileSync('SomeTool.exe')
} catch (err) {
  console.log('err', util.inspect(err));
}
```

outputs

```

err { Error: spawnSync SomeTool.... | 1.0 | child_process execFile / spawn throw non-descript exception on windows if exe requires elevation - * **Version**: v6.5.0

* **Platform**: Windows 10 (x64)

* **Subsystem**: child_process

```
let cp = require('child_process');
let util = require('util');
try {
  cp.execFileSync('SomeTool.exe')
} catch (err) {

... | process | child process execfile spawn throw non descript exception on windows if exe requires elevation version platform windows subsystem child process let cp require child process let util require util try cp execfilesync sometool exe catch err con... | 1 |

19,094 | 25,147,995,119 | IssuesEvent | 2022-11-10 07:40:52 | qgis/QGIS | https://api.github.com/repos/qgis/QGIS | closed | Fit Cells/Nodes selector is missing in SAGA tools | Processing Bug | ### What is the bug or the crash?

In QGIS 3.20 Versions with SAGA 7.8.2 implemented I experience that in several Raster algorithms like Features to Raster, Resampling, Multilevel B-Spline-Interpolation the selector "Fit cells" / "Fit nodes" is missing. The result is that "Fit nodes" is selected by default and the calc... | 1.0 | Fit Cells/Nodes selector is missing in SAGA tools - ### What is the bug or the crash?

In QGIS 3.20 Versions with SAGA 7.8.2 implemented I experience that in several Raster algorithms like Features to Raster, Resampling, Multilevel B-Spline-Interpolation the selector "Fit cells" / "Fit nodes" is missing. The result is ... | process | fit cells nodes selector is missing in saga tools what is the bug or the crash in qgis versions with saga implemented i experience that in several raster algorithms like features to raster resampling multilevel b spline interpolation the selector fit cells fit nodes is missing the result is t... | 1 |

16,934 | 22,283,039,942 | IssuesEvent | 2022-06-11 06:52:45 | microsoft/vscode | https://api.github.com/repos/microsoft/vscode | closed | wrong terminal startup folder when open vscode with path param | needs more info confirmation-pending terminal-process |

Issue Type: <b>Bug</b>

When opening VS Code from a terminal with a path,

e.g. `code repo-x`,

the integrated terminal starts in the original folder instead of in `repo-x`.

A typical use case:

```sh
# working on one repo, try to open another repo the first time
# open integrated terminal
repo-a> code ../repo-b
```

... | 1.0 | wrong terminal startup folder when open vscode with path param -

Issue Type: <b>Bug</b>

When opening VS Code from a terminal with a path,

e.g. `code repo-x`,

the integrated terminal starts in the original folder instead of in `repo-x`.

A typical use case:

```sh

# working on one repo, try to open another repo the f... | process | wrong terminal startup folder when open vscode with path param issue type bug when open vscode from terminal with a path e g code repo x the intergrated terminal will start at the original folder instead of from repo x a typical use case sh working on one repo try to open another repo the first ... | 1 |

16,842 | 22,092,067,895 | IssuesEvent | 2022-06-01 07:00:05 | ethereum/EIPs | https://api.github.com/repos/ethereum/EIPs | closed | Introduce more automation | type: EIP1 (Process) | We have the following pieces of automation currently:

1. automerger bot

2. syntax tests

a. eipv ([replaced the old validator not long ago](https://github.com/ethereum/EIPs/pull/2860))

b. spellcheck

c. hmlcheck

3. greeter bot

4. stale bot ([added lately](https://github.com/ethereum/EIPs/pull/2949))

... | 1.0 | Introduce more automation - We have the following pieces of automation currently:

1. automerger bot

2. syntax tests

a. eipv ([replaced the old validator not long ago](https://github.com/ethereum/EIPs/pull/2860))

b. spellcheck

c. hmlcheck

3. greeter bot

4. stale bot ([added lately](https://github.com/... | process | introduce more automation we have the following pieces of automation currently automerger bot syntax tests a eipv preplaced the old validator not long ago b spellcheck c hmlcheck greeter bot stale bot the automerger can automatically merge prs except those changing stat... | 1 |

252,608 | 27,247,532,023 | IssuesEvent | 2023-02-22 04:12:08 | a23au/awe-base-images | https://api.github.com/repos/a23au/awe-base-images | closed | Resolve vulnerability alerts | security | Tracking resolution of the following alerts:

## Vulnerability CVE-2023-23914

- [ ] https://github.com/a23au/awe-base-images/security/code-scanning/50

| Severity | Package | Fixed Version | Link |

| ----- | ----- | ----- | ----- |

| LOW | libcurl | 7.87.0-r2 | [CVE-2023-23914](https://avd.aquasec.com/nvd/cve-20... | True | Resolve vulnerability alerts - Tracking resolution of the following alerts:

## Vulnerability CVE-2023-23914

- [ ] https://github.com/a23au/awe-base-images/security/code-scanning/50

| Severity | Package | Fixed Version | Link |

| ----- | ----- | ----- | ----- |

| LOW | libcurl | 7.87.0-r2 | [CVE-2023-23914](htt... | non_process | resolve vulnerability alerts tracking resolution of the following alerts vulnerability cve severity package fixed version link low libcurl hsts ignored on multiple requests vulnerability cve severity pa... | 0 |

263,679 | 8,300,542,667 | IssuesEvent | 2018-09-21 08:27:47 | prometheus/prometheus | https://api.github.com/repos/prometheus/prometheus | closed | retention not working in version 2.3.1 | component/promql kind/bug low hanging fruit priority/P3 | Hey,

i set prometheus retention to be 1h with flag

--storage.tsdb.retention="1h"

can see it in the ui but still can query data longer than 1h.

i am using the latest version

ui shows 1h:

https://screenshot.net/3dk9riq

more than 1h data:

https://screenshot.net/20plrad

i have been asked to open a bug whe... | 1.0 | retention not working in version 2.3.1 - Hey,

i set prometheus retention to be 1h with flag

--storage.tsdb.retention="1h"

can see it in the ui but still can query data longer than 1h.

i am using the latest version

ui shows 1h:

https://screenshot.net/3dk9riq

more than 1h data:

https://screenshot.net/20plr... | non_process | retention not working in version hey i set prometheus retention to be with flag storage tsdb retention can see it in the ui but still can query data longer than i am using the latest version ui shows more than data i have been asked to open a bug when asked in prometheus ... | 0 |

9,139 | 12,203,187,289 | IssuesEvent | 2020-04-30 10:10:18 | MHRA/products | https://api.github.com/repos/MHRA/products | closed | AUTO BATCH - Support XML Requests | EPIC - Auto Batch Process :oncoming_automobile: HIGH PRIORITY :arrow_double_up: | Accenture require an XML interface to the `doc-index-updater` API, as the Sentinel system is unable to serialize or deserialize JSON.

**Acceptance Criteria**

- The API should check the `Content-Type` or `Accept` headers. If the header is `application/xml`, then we should deserialize body & serialize response assu... | 1.0 | AUTO BATCH - Support XML Requests - Accenture require an XML interface to the `doc-index-updater` API, as the Sentinel system is unable to serialize or deserialize JSON.

**Acceptance Criteria**

- The API should check the `Content-Type` or `Accept` headers. If the header is `application/xml`, then we should deseri... | process | auto batch support xml requests accenture require an xml interface to the doc index updater api as the sentinel system is unable to serialize or deserialize json acceptance criteria the api should check the content type or accept headers if the header is application xml then we should deseri... | 1 |

418,515 | 12,198,907,903 | IssuesEvent | 2020-04-30 00:05:31 | microsoft/terminal | https://api.github.com/repos/microsoft/terminal | closed | Crashes if zoom font size up and down rapidly with ctrl + scroll wheel | Area-Rendering In-PR Issue-Bug Priority-1 Product-Terminal Severity-Crash | # Environment

Win10 1909 x64

Windows Terminal version (if applicable): 0.11.1121.0

# Steps to reproduce

Increase and decrease the font size rapidly in the first tab using ctrl and mouse scroll wheel

# Expected behavior

Not to crash

# Actual behavior

Crashes

---

Crashes every time when I us... | 1.0 | Crashes if zoom font size up and down rapidly with ctrl + scroll wheel - # Environment

Win10 1909 x64

Windows Terminal version (if applicable): 0.11.1121.0

# Steps to reproduce

Increase and decrease the font size rapidly in the first tab using ctrl and mouse scroll wheel

# Expected behavior

Not to cra... | non_process | crashes if zoom font size up and down rapidly with ctrl scroll wheel environment windows terminal version if applicable steps to reproduce increase and decrease the font size rapidly in the first tab using ctrl and mouse scroll wheel expected behavior not to crash actua... | 0 |

50,550 | 7,611,010,183 | IssuesEvent | 2018-05-01 11:45:35 | has2k1/plotnine | https://api.github.com/repos/has2k1/plotnine | closed | Changing limits in scale_y_continuous errors out: | documentation | qplot('currency', 'gini', data=df, geom="bar", stat="identity") +\

scale_y_continuous(range=(0.8, 1)) +\

theme(axis_text_x=element_text(rotation=45))

it looks like range is trying to call "train" on the object.

From a wee df I can provide I think, returns this error:

error here --> self.range.train(x)

Att... | 1.0 | Changing limits in scale_y_continuous errors out: - qplot('currency', 'gini', data=df, geom="bar", stat="identity") +\

scale_y_continuous(range=(0.8, 1)) +\

theme(axis_text_x=element_text(rotation=45))

it looks like range is trying to call "train" on the object.

From a wee df I can provide I think, returns ... | non_process | changing limits in scale y continuous errors out qplot currency gini data df geom bar stat identity scale y continuous range theme axis text x element text rotation it looks like range is trying to call train on the object from a wee df i can provide i think returns t... | 0 |

18,086 | 24,108,196,804 | IssuesEvent | 2022-09-20 09:11:33 | cloudfoundry/korifi | https://api.github.com/repos/cloudfoundry/korifi | closed | [Feature]: Developer can push apps using the top-level `memory` field in the manifest | Top-level process config | ### Background

**As a** developer

**I want** top-level process configuration in manifests to be supported

**So that** I can use shortcut `cf push` flags like `-c`, `-i`, `-m` etc.

### Acceptance Criteria

* **GIVEN** I have the sources of an application (e.g. `tests/smoke/assets/test-node-app`)

**AND** `ma... | 1.0 | [Feature]: Developer can push apps using the top-level `memory` field in the manifest - ### Background

**As a** developer

**I want** top-level process configuration in manifests to be supported

**So that** I can use shortcut `cf push` flags like `-c`, `-i`, `-m` etc.

### Acceptance Criteria

* **GIVEN** I hav... | process | developer can push apps using the top level memory field in the manifest background as a developer i want top level process configuration in manifests to be supported so that i can use shortcut cf push flags like c i m etc acceptance criteria given i have the so... | 1 |

9,440 | 12,425,143,066 | IssuesEvent | 2020-05-24 15:01:53 | jyn514/rcc | https://api.github.com/repos/jyn514/rcc | opened | Missing predefined macros | enhancement preprocessor | [6.10.8.1 Mandatory macros](http://port70.net/~nsz/c/c11/n1570.html#6.10.8.1)

- [ ] `__LINE__` - tricky - can't be passed through to `replace()` literally. This might be as simple as updating `self.definitions` whenever someone calls `line()`?

- [ ] `__COLUMN__` - tricky since we don't keep track of this explicitly... | 1.0 | Missing predefined macros - [6.10.8.1 Mandatory macros](http://port70.net/~nsz/c/c11/n1570.html#6.10.8.1)

- [ ] `__LINE__` - tricky - can't be passed through to `replace()` literally. This might be as simple as updating `self.definitions` whenever someone calls `line()`?

- [ ] `__COLUMN__` - tricky since we don't k... | process | missing predefined macros line tricky can t be passed through to replace literally this might be as simple as updating self definitions whenever someone calls line column tricky since we don t keep track of this explicitly and recalculate it probably needs yet more prepr... | 1 |

179,757 | 30,294,624,249 | IssuesEvent | 2023-07-09 17:51:34 | sars-cov-2-variants/lineage-proposals | https://api.github.com/repos/sars-cov-2-variants/lineage-proposals | closed | EU.1.1 with Orf8: A51V and its sublineage with S:F157L emerging in Germany. | Discussion Mutations outside spike designated | Mutations on top of EU.1.1: Seemingly just Orf8:A51V

Gisaid Inquiry: Spike_I410V,P521S, F486P +C28045T

Sequences: EPI_ISL_17070943, EPI_ISL_17080159, EPI_ISL_17113008,

EPI_ISL_17113070, EPI_ISL_17184064, EPI_ISL_17184110,

EPI_ISL_17184731, EPI_ISL_17186627, EPI_ISL_17189053,

EPI_ISL_17189727, EPI_ISL_17209859, EPI... | 1.0 | EU.1.1 with Orf8: A51V and its sublineage with S:F157L emerging in Germany. - Mutations on top of EU.1.1: Seemingly just Orf8:A51V

Gisaid Inquiry: Spike_I410V,P521S, F486P +C28045T

Sequences: EPI_ISL_17070943, EPI_ISL_17080159, EPI_ISL_17113008,

EPI_ISL_17113070, EPI_ISL_17184064, EPI_ISL_17184110,

EPI_ISL_17184731... | non_process | eu with and its sublineage with s emerging in germany mutations on top of eu seemingly just gisaid inquiry spike sequences epi isl epi isl epi isl epi isl epi isl epi isl epi isl epi isl epi isl epi isl epi isl epi isl epi isl epi isl ep... | 0 |

11,445 | 14,264,499,745 | IssuesEvent | 2020-11-20 15:50:17 | dotnet/runtime | https://api.github.com/repos/dotnet/runtime | closed | StartInfo_TextFile_ShellExecute failing consistently on Server Core | area-System.Diagnostics.Process bug | ERROR: type should be string, got "https://mc.dot.net/#/product/netcore/master/source/official~2Fcorefx~2Fmaster~2F/type/test~2Ffunctional~2Fcli~2F/build/20180521.01/workItem/System.Diagnostics.Process.Tests/analysis/xunit/System.Diagnostics.Tests.ProcessStartInfoTests~2FStartInfo_TextFile_ShellExecute\r\n\r\nApparently Process.Start on foo.txt with UseShellExecute=true is returning a null process object. \r\n\r\n```\r\nCould not start C:\\\\Users\\\\runner\\\\AppData\\\\Local\\\\Temp\\\\ProcessStartInfoTests_x1e54hcw.eri\\\\StartInfo_TextFile_ShellExecute_993_88c6de27.txt UseShellExecute=True\r\nAssociation details for '.txt'\r\n------------------------------\r\nOpen command: C:\\\\Windows\\\\system32\\\\NOTEPAD.EXE %1\r\nProgID: txtfile\r\n\r\nExpected: True\r\nActual: False\r\nStack Trace :\r\n at System.Diagnostics.Tests.ProcessStartInfoTests.StartInfo_TextFile_ShellExecute() in E:\\A\\_work\\63\\s\\corefx\\src\\System.Diagnostics.Process\\tests\\ProcessStartInfoTests.cs:line 990\r\n```" | 1.0 | StartInfo_TextFile_ShellExecute failing consistently on Server Core - https://mc.dot.net/#/product/netcore/master/source/official~2Fcorefx~2Fmaster~2F/type/test~2Ffunctional~2Fcli~2F/build/20180521.01/workItem/System.Diagnostics.Process.Tests/analysis/xunit/System.Diagnostics.Tests.ProcessStartInfoTests~2FStartInfo_Tex... | process | startinfo textfile shellexecute failing consistently on server core apparently process start on foo txt with useshellexecute true is returning a null process object could not start c users runner appdata local temp processstartinfotests eri startinfo textfile shellexecute txt useshellexec... | 1 |

18,490 | 24,550,908,479 | IssuesEvent | 2022-10-12 12:32:40 | GoogleCloudPlatform/fda-mystudies | https://api.github.com/repos/GoogleCloudPlatform/fda-mystudies | closed | [iOS] [Offline indicator] Sign in screen > Empty screen is getting displayed | Bug P1 iOS Process: Fixed Process: Tested QA Process: Tested dev | Steps:

1. Install the app and open the app

2. Click on the 'Get started'

3. Click on the Hamburger menu button

4. Click on 'Signin'

5. Blank screen is getting displayed with a 'You are offline' message

6. Turn on the data and observe

AR: Blank screen is getting displayed even though user turn on their mobile ... | 3.0 | [iOS] [Offline indicator] Sign in screen > Empty screen is getting displayed - Steps:

1. Install the app and open the app

2. Click on the 'Get started'

3. Click on the Hamburger menu button

4. Click on 'Signin'

5. Blank screen is getting displayed with a 'You are offline' message

6. Turn on the data and observe

... | process | sign in screen empty screen is getting displayed steps install the app and open the app click on the get started click on the hamburger menu button click on signin blank screen is getting displayed with a you are offline message turn on the data and observe ar blank screen is... | 1 |

8,104 | 11,299,519,037 | IssuesEvent | 2020-01-17 11:23:39 | qgis/QGIS | https://api.github.com/repos/qgis/QGIS | closed | SAGA Fill sinks (Wang & Liu) gives error in 3.4.13 | Bug Feedback Processing | **Describe the bug**

In QGIS 3.4.13 the SAGA Fill sink (Wang & Liu) processing tool is not working. It gives an error. See screenshot. We tested it against QGIS 3.4.10 where it was still working with the same procedure and files.

gives error in 3.4.13 - **Describe the bug**

In QGIS 3.4.13 the SAGA Fill sink (Wang & Liu) processing tool is not working. It gives an error. See screenshot. We tested it against QGIS 3.4.10 where it was still working with the same procedure and files.

on November 12, 2012 15:57:52_

GO:0006260

Parent: cellular biosynthetic process

\<a href="http://purl.obolibrary.org/obo/GO_0044249" rel="nofollow">http://purl.obolibrary.org/obo/GO_0044249</a>

for this record:

\<a href=... | 1.0 | DNA replication - _From [vasil...@ohsu.edu](https://code.google.com/u/108803237899917466626/) on November 12, 2012 15:57:52_

GO:0006260

Parent: cellular biosynthetic process

\<a href="http://purl.obolibrary.org/obo/GO_0044249" rel="nofollow">http://purl.obolibrary.org/obo/GO_0044249</a>

for this reco... | process | dna replication from on november go parent cellular biosynthetic process for this record original issue | 1 |

13,446 | 15,883,719,332 | IssuesEvent | 2021-04-09 17:48:04 | MicrosoftDocs/azure-devops-docs | https://api.github.com/repos/MicrosoftDocs/azure-devops-docs | closed | Documentation is incorrect for issecret, fails to note secret variables do *not* get set as env vars | Pri1 devops-cicd-process/tech devops/prod doc-enhancement | The documentation mentions:

> This doesn't update the environment variables, but it does make the new variable available to downstream steps within the same job.

>

> When issecret is set to true, the value of the variable will be saved as secret and masked from the log.

> ...

> Subsequent steps will also have the... | 1.0 | Documentation is incorrect for issecret, fails to note secret variables do *not* get set as env vars - The documentation mentions:

> This doesn't update the environment variables, but it does make the new variable available to downstream steps within the same job.

>

> When issecret is set to true, the value of the ... | process | documentation is incorrect for issecret fails to note secret variables do not get set as env vars the documentation mentions this doesn t update the environment variables but it does make the new variable available to downstream steps within the same job when issecret is set to true the value of the ... | 1 |
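
For context, the `issecret` behaviour discussed in this issue is driven by the `task.setvariable` logging command, which a script writes to standard output. The sketch below only builds that command string; the helper name `set_variable_command` is hypothetical. As the issue title notes, a variable set as secret is not exposed as an environment variable automatically, so a later step would need to map it explicitly.

```python
def set_variable_command(name, value, is_secret=False):
    """Build the logging command that sets a pipeline variable."""
    props = f"variable={name}"
    if is_secret:
        # Marks the value as secret so it is masked in the log.
        props += ";issecret=true"
    return f"##vso[task.setvariable {props}]{value}"


if __name__ == "__main__":
    # Writing the command to stdout is what the agent picks up.
    print(set_variable_command("apiToken", "example-value", is_secret=True))
```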

7,568 | 10,683,362,182 | IssuesEvent | 2019-10-22 08:11:35 | prisma/prisma2 | https://api.github.com/repos/prisma/prisma2 | closed | `prisma2 studio` | kind/feature process/candidate | It would be nice to have a CLI command to start Studio out of the context of Watchmode (`prisma2 dev`), so without any possible side effects of migration, the `dev` UI complications and so on.

Possible output:

```

$ prisma2 studio

Prisma Studio is now available at http://localhost:5555/

Ctrl + C to exit

^C

$... | 1.0 | `prisma2 studio` - It would be nice to have a CLI command to start Studio out of the context of Watchmode (`prisma2 dev`), so without any possible side effects of migration, the `dev` UI complications and so on.

Possible output:

```

$ prisma2 studio

Prisma Studio is now available at http://localhost:5555/

Ctrl +... | process | studio it would be nice to have a cli command to start studio out of the context of watchmode dev so without any possible side effects of migration the dev ui complications and so on possible output studio prisma studio is now available at ctrl c to exit c notes ... | 1 |

11,034 | 13,850,497,674 | IssuesEvent | 2020-10-15 01:23:20 | kubeflow/community | https://api.github.com/repos/kubeflow/community | closed | Additional calendar admins needed | kind/process | We need additional admins for the [Kubeflow Calendar](https://calendar.google.com/calendar/embed?src=kubeflow.org_7l5vnbn8suj2se10sen81d9428%40group.calendar.google.com&ctz=America%2FLos_Angeles)

We currently have some partial tooling for managing the calendar. The [script](https://github.com/kubeflow/community/blob... | 1.0 | Additional calendar admins needed - We need additional admins for the [Kubeflow Calendar](https://calendar.google.com/calendar/embed?src=kubeflow.org_7l5vnbn8suj2se10sen81d9428%40group.calendar.google.com&ctz=America%2FLos_Angeles)

We currently have some partial tooling for managing the calendar. The [script](https:... | process | additional calendar admins needed we need additional admins for the we currently have some partial tooling for managing the calendar the configures the calendar based on the script currently needs to be run manually by someone with admin privileges to the calendar expectations for would be volu... | 1 |

21,630 | 30,034,010,939 | IssuesEvent | 2023-06-27 11:32:40 | NationalSecurityAgency/ghidra | https://api.github.com/repos/NationalSecurityAgency/ghidra | closed | dsPIC30F6014 Emulation Error | Feature: Processor/PIC Status: Internal | **Describe the bug**

Attempting to emulate a dsPIC30F6014 file causes:

```

java.lang.IllegalArgumentException: Memory-mapped register PC does not map to space register:

```

**To Reproduce**

Steps to reproduce the behavior:

1. Compile program with the [XC16 compiler](https://www.microchip.com/en-us/tools-resource... | 1.0 | dsPIC30F6014 Emulation Error - **Describe the bug**

Attempting to emulate a dsPIC30F6014 file causes:

```

java.lang.IllegalArgumentException: Memory-mapped register PC does not map to space register:

```

**To Reproduce**

Steps to reproduce the behavior:

1. Compile program with the [XC16 compiler](https://www.mic... | process | emulation error describe the bug attempting to emulate a file causes java lang illegalargumentexception memory mapped register pc does not map to space register to reproduce steps to reproduce the behavior compile program with the c include int main pri... | 1 |

1,869 | 4,697,561,610 | IssuesEvent | 2016-10-12 09:46:27 | nodejs/node | https://api.github.com/repos/nodejs/node | closed | child_process.spawn will either exit or not control stdin when detached and using inherit | child_process os x | This may be multiple issues in node or just discrepancies between what the docs suggest and how it actually operates.

> When using the detached option to start a long-running process, ... If the parent's stdio is inherited, the child will remain attached to the controlling terminal.

### console.log() causes earl... | 1.0 | child_process.spawn will either exit or not control stdin when detached and using inherit - This may be multiple issues in node or just discrepancies between what the docs suggest and how it actually operates.

> When using the detached option to start a long-running process, ... If the parent's stdio is inherited, t... | process | child process spawn will either exit or not control stdin when detached and using inherit this may be multiple issues in node or just discrepancies between what the docs suggest and how it actually operates when using the detached option to start a long running process if the parent s stdio is inherited t... | 1 |

14,252 | 17,188,390,574 | IssuesEvent | 2021-07-16 07:23:10 | medic/cht-core | https://api.github.com/repos/medic/cht-core | opened | Release 3.10.5 | Type: Internal process | # Planning

- [ ] Create an GH Milestone and add this issue to it.

- [ ] Add all the issues to be worked on to the Milestone.

# Development

When development is ready to begin one of the engineers should be nominated as a Release Manager. They will be responsible for making sure the following tasks are complete... | 1.0 | Release 3.10.5 - # Planning

- [ ] Create an GH Milestone and add this issue to it.

- [ ] Add all the issues to be worked on to the Milestone.

# Development

When development is ready to begin one of the engineers should be nominated as a Release Manager. They will be responsible for making sure the following t... | process | release planning create an gh milestone and add this issue to it add all the issues to be worked on to the milestone development when development is ready to begin one of the engineers should be nominated as a release manager they will be responsible for making sure the following tasks ... | 1 |

1,342 | 3,901,423,010 | IssuesEvent | 2016-04-18 10:47:23 | DevExpress/testcafe-hammerhead | https://api.github.com/repos/DevExpress/testcafe-hammerhead | closed | PageProcessor parse binaries as a html page | AREA: server SYSTEM: resource processing TYPE: bug | Reproduces in the playground when try to download a file.

For example reproduced on the http://testcafe.devexpress.com page

Click `Download TestCafe` button.

We have the following `Content-Type` in response: `application/octet-stream`

But `RequestPipelineContext.isPage` is `true` because it depends on request ... | 1.0 | PageProcessor parse binaries as a html page - Reproduces in the playground when try to download a file.

For example reproduced on the http://testcafe.devexpress.com page

Click `Download TestCafe` button.

We have the following `Content-Type` in response: `application/octet-stream`

But `RequestPipelineContext.isP... | process | pageprocessor parse binaries as a html page reproduces in the playground when try to download a file for example reproduced on the page click download testcafe button we have the following content type in response application octet stream but requestpipelinecontext ispage is true because it de... | 1 |

20,916 | 27,754,022,465 | IssuesEvent | 2023-03-15 23:55:36 | dDevTech/tapas-top-frontend | https://api.github.com/repos/dDevTech/tapas-top-frontend | closed | Create register-account-info page 20/03/2023 | pending in process require testing | Create a new package in webapp/app/modules/account named register-account-info

In it, create the files needed to:

Create a new account-info page where the following fields can be entered:

- First name (required)

- Surname 1 text (optional)

- Surname 2 text (optional)

- Attach photo as file... | 1.0 | Create register-account-info page 20/03/2023 - Create a new package in webapp/app/modules/account named register-account-info

In it, create the files needed to:

Create a new account-info page where the following fields can be entered:

- First name (required)

- Surname 1 text (optional)

- Surname ... | process | create register account info page create a new package in webapp app modules account named register account info in it create the files needed to create a new account info page where the following fields can be entered first name required surname text optional surname te... | 1 |

589,116 | 17,690,020,340 | IssuesEvent | 2021-08-24 08:48:24 | magento/magento2 | https://api.github.com/repos/magento/magento2 | closed | Refactor codebase to fix problem of reserved keyword "match" for PHP 8 | Progress: PR in progress Priority: P2 Project: PHP8 Project: Platform Health |

### Description (*)

Magento has places where use keyword "match" that is reserved PHP from version 8 according to [this document](https://www.php.net/manual/en/reserved.keywords.php).

These is classes provided below

app/code/Magento/CustomerSegment/Controller/Adminhtml/Index/Match.php

app/code/Magento/Elasticse... | 1.0 | Refactor codebase to fix problem of reserved keyword "match" for PHP 8 -

### Description (*)

Magento has places where use keyword "match" that is reserved PHP from version 8 according to [this document](https://www.php.net/manual/en/reserved.keywords.php).

These is classes provided below

app/code/Magento/Custome... | non_process | refactor codebase to fix problem of reserved keyword match for php description magento has places where use keyword match that is reserved php from version according to these is classes provided below app code magento customersegment controller adminhtml index match php app code magento el... | 0 |

225,790 | 24,881,366,618 | IssuesEvent | 2022-10-28 01:37:40 | praneethpanasala/linux | https://api.github.com/repos/praneethpanasala/linux | opened | CVE-2022-3621 (High) detected in linuxlinux-4.19.6 | security vulnerability | ## CVE-2022-3621 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linuxlinux-4.19.6</b></p></summary>

<p>

<p>Apache Software Foundation (ASF)</p>

<p>Library home page: <a href=https://m... | True | CVE-2022-3621 (High) detected in linuxlinux-4.19.6 - ## CVE-2022-3621 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linuxlinux-4.19.6</b></p></summary>

<p>

<p>Apache Software Foundat... | non_process | cve high detected in linuxlinux cve high severity vulnerability vulnerable library linuxlinux apache software foundation asf library home page a href found in base branch master vulnerable source files vulnerability details ... | 0 |

92,369 | 26,666,790,146 | IssuesEvent | 2023-01-26 05:22:37 | runatlantis/atlantis | https://api.github.com/repos/runatlantis/atlantis | closed | Add permissions to all github actions | build github-actions | <!--- Please keep this note for the community --->

### Community Note

- Please vote on this issue by adding a 👍 [reaction](https://blog.github.com/2016-03-10-add-reactions-to-pull-requests-issues-and-comments/) to the original issue to help the community and maintainers prioritize this request. Searching for pre... | 1.0 | Add permissions to all github actions - <!--- Please keep this note for the community --->

### Community Note

- Please vote on this issue by adding a 👍 [reaction](https://blog.github.com/2016-03-10-add-reactions-to-pull-requests-issues-and-comments/) to the original issue to help the community and maintainers pr... | non_process | add permissions to all github actions community note please vote on this issue by adding a 👍 to the original issue to help the community and maintainers prioritize this request searching for pre existing feature requests helps us consolidate datapoints for identical requirements into a single plac... | 0 |

126,756 | 12,298,732,535 | IssuesEvent | 2020-05-11 11:03:18 | BA-HanseML/NF_Prj_MIMII_Dataset | https://api.github.com/repos/BA-HanseML/NF_Prj_MIMII_Dataset | closed | MEL and PSD grid picture cards | documentation mi | a nice high-res picture with PSD and MEL per machine part and ID

machine part ID

normal, also normal, abnormal, also abnormal

PSD

MEL

plus mp 3

| 1.0 | MEL and PSD grid picture cards - a nice high-res picture with PSD and MEL per machine part and ID

machine part ID

normal, also normal, abnormal, also abnormal

PSD

MEL

plus mp 3

| non_process | mel and psd grid picture cards a nice high res picture with psd and mel per machine part and id machine part id normal also normal abnormal also abnormal psd mel plus mp | 0 |

18,956 | 24,918,406,616 | IssuesEvent | 2022-10-30 17:23:47 | aiidateam/aiida-core | https://api.github.com/repos/aiidateam/aiida-core | closed | `get_function_source_code` gives `FileNotFoundError` when `calcfunction` is not in a file. | type/bug priority/nice-to-have topic/processes | ### Steps to reproduce

In `verdi shell`:

```python

from aiida.engine import calcfunction

@calcfunction

def my_func(inp):

return Int(4)

res=my_func(Int(1))

res.creator.get_function_source_code()

```

### Expected behavior

Not sure what is the behavior we want here...because the source code ... | 1.0 | `get_function_source_code` gives `FileNotFoundError` when `calcfunction` is not in a file. - ### Steps to reproduce

In `verdi shell`:

```python

from aiida.engine import calcfunction

@calcfunction

def my_func(inp):

return Int(4)

res=my_func(Int(1))

res.creator.get_function_source_code()

```

#... | process | get function source code gives filenotfounderror when calcfunction is not in a file steps to reproduce in verdi shell python from aiida engine import calcfunction calcfunction def my func inp return int res my func int res creator get function source code ... | 1 |
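
The failure mode in this report has a close standard-library analogue: a function defined in an interactive shell has no source file on disk, so source lookup fails. The sketch below is not AiiDA code and does not call `get_function_source_code`; it only uses `inspect.getsource` to show the same situation, with `<shell-input>` as a made-up pseudo-filename and `safe_get_source` as a hypothetical helper that returns `None` instead of raising.

```python
import inspect

# Define a function from a string, roughly as an interactive shell would,
# under a pseudo-filename that does not exist on disk.
source = "def my_func(x):\n    return x + 3\n"
namespace = {}
exec(compile(source, "<shell-input>", "exec"), namespace)
my_func = namespace["my_func"]


def safe_get_source(func):
    """Return the source of func, or None when no source can be located."""
    try:
        return inspect.getsource(func)
    except (OSError, TypeError):
        # OSError: the source file cannot be found or read.
        # TypeError: the object is a builtin with no Python source.
        return None
```

Returning `None` is just one option for the "expected behavior" question raised in the issue; raising a clearer, dedicated error would be another.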

13,125 | 15,526,203,480 | IssuesEvent | 2021-03-13 00:25:28 | googleapis/python-binary-authorization | https://api.github.com/repos/googleapis/python-binary-authorization | opened | Add v1 | type: process | https://github.com/googleapis/googleapis/tree/master/google/cloud/binaryauthorization

There are no v1 protos in googleapis/googleapis. Probably need to ping the API team to sync v1 protos. | 1.0 | Add v1 - https://github.com/googleapis/googleapis/tree/master/google/cloud/binaryauthorization

There are no v1 protos in googleapis/googleapis. Probably need to ping the API team to sync v1 protos. | process | add there are no protos in googleapis googleapis probably need to ping the api team to sync protos | 1 |

36,297 | 17,604,381,813 | IssuesEvent | 2021-08-17 15:18:58 | smith-chem-wisc/Spritz | https://api.github.com/repos/smith-chem-wisc/Spritz | closed | gatk MarkDuplicates Exception in thread "main" java.lang.OutOfMemoryError: GC overhead limit exceeded | Question Performance | Dear Spritz developers,

thank you very much for your amazing work.

We tried to run Spritz for 12 samples on a local machine with 40G RAM and many cores, but computation stopped with GATK MarkDuplicates command.

`INFO 2021-07-20 06:16:14 MarkDuplicates Tracking 65403162 as yet unmatched pairs. 65358276 rec... | True | gatk MarkDuplicates Exception in thread "main" java.lang.OutOfMemoryError: GC overhead limit exceeded - Dear Spritz developers,

thank you very much for your amazing work.

We tried to run Spritz for 12 samples on a local machine with 40G RAM and many cores, but computation stopped with GATK MarkDuplicates command.

... | non_process | gatk markduplicates exception in thread main java lang outofmemoryerror gc overhead limit exceeded dear spritz developers thank you very much for your amazing work we tried to run spritz for samples on a local machine with ram and many cores but computation stopped with gatk markduplicates command i... | 0 |

255,323 | 21,918,159,229 | IssuesEvent | 2022-05-22 06:24:08 | MohistMC/Mohist | https://api.github.com/repos/MohistMC/Mohist | closed | New version not working on linux | 1.16.5 Wait Needs Testing | <!-- ISSUE_TEMPLATE_3 -> IMPORTANT: DO NOT DELETE THIS LINE.-->

<!-- Thank you for reporting ! Please note that issues can take a lot of time to be fixed and there is no eta.-->

<!-- If you don't know where to upload your logs and crash reports, you can use these websites : -->

<!-- https://gist.github.com (reco... | 1.0 | New version not working on linux - <!-- ISSUE_TEMPLATE_3 -> IMPORTANT: DO NOT DELETE THIS LINE.-->

<!-- Thank you for reporting ! Please note that issues can take a lot of time to be fixed and there is no eta.-->

<!-- If you don't know where to upload your logs and crash reports, you can use these websites : -->

... | non_process | new version not working on linux important do not delete this line minecraft version mohist version operating system linux centos logs mod list none plugin list none description of issue download mohist jar from w... | 0 |

325,282 | 27,862,746,811 | IssuesEvent | 2023-03-21 08:03:23 | unifyai/ivy | https://api.github.com/repos/unifyai/ivy | closed | Fix ndarray.test_numpy_instance_ior__ | NumPy Frontend Sub Task Failing Test | | | |

|---|---|

|tensorflow|<a href="https://github.com/unifyai/ivy/actions/runs/4475563861/jobs/7865105584" rel="noopener noreferrer" target="_blank"><img src=https://img.shields.io/badge/-success-success></a>

|torch|<a href="https://github.com/unifyai/ivy/actions/runs/4475563861/jobs/7865105584" rel="noopener ... | 1.0 | Fix ndarray.test_numpy_instance_ior__ - | | |

|---|---|

|tensorflow|<a href="https://github.com/unifyai/ivy/actions/runs/4475563861/jobs/7865105584" rel="noopener noreferrer" target="_blank"><img src=https://img.shields.io/badge/-success-success></a>

|torch|<a href="https://github.com/unifyai/ivy/actions/runs/44... | non_process | fix ndarray test numpy instance ior tensorflow img src torch img src numpy img src jax img src | 0 |

10,641 | 13,446,164,910 | IssuesEvent | 2020-09-08 12:34:18 | MHRA/products | https://api.github.com/repos/MHRA/products | closed | PARs - File upload on every edit | BUG :bug: EPIC - PARs process | ### User want

As a Medical Writer I would like my file to remain uploaded even after editing my upload details

### Acceptance Criteria

**Customer acceptance criteria**

- [ ] Document remains uploaded following edit of details

- [ ] Document remains uploaded following deletion of another PARs document

**T... | 1.0 | PARs - File upload on every edit - ### User want

As a Medical Writer I would like my file to remain uploaded even after editing my upload details

### Acceptance Criteria

**Customer acceptance criteria**

- [ ] Document remains uploaded following edit of details

- [ ] Document remains uploaded following deleti... | process | pars file upload on every edit user want as a medical writer i would like my file to remain uploaded even after editing my upload details acceptance criteria customer acceptance criteria document remains uploaded following edit of details document remains uploaded following deletion o... | 1 |

1,782 | 4,513,786,188 | IssuesEvent | 2016-09-04 13:54:23 | AnalyticalGraphicsInc/cesium | https://api.github.com/repos/AnalyticalGraphicsInc/cesium | opened | Run WebGL tests in CI | dev process | As discussed with @mramato offline:

* Replace all read pixels expectations with a function that can have a no-op expectation when the tests are ran with a "no WebGL" flag, e.g.,

```javascript

expect(scene.renderForSpecs()).toEqual([0, 0, 0, 255]);

```

becomes

```javascript

scene.expectRenderForSpecs([0, 0, 0, ... | 1.0 | Run WebGL tests in CI - As discussed with @mramato offline:

* Replace all read pixels expectations with a function that can have a no-op expectation when the tests are ran with a "no WebGL" flag, e.g.,

```javascript

expect(scene.renderForSpecs()).toEqual([0, 0, 0, 255]);

```

becomes

```javascript

scene.expectR... | process | run webgl tests in ci as discussed with mramato offline replace all read pixels expectations with a function that can have a no op expectation when the tests are ran with a no webgl flag e g javascript expect scene renderforspecs toequal becomes javascript scene expectrenderforspecs... | 1 |

35,856 | 12,393,588,171 | IssuesEvent | 2020-05-20 15:38:16 | wallanpsantos/apache-camel-alura | https://api.github.com/repos/wallanpsantos/apache-camel-alura | opened | CVE-2009-2625 (Medium) detected in xercesImpl-2.8.0.jar | security vulnerability | ## CVE-2009-2625 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>xercesImpl-2.8.0.jar</b></p></summary>

<p>Xerces2 is the next generation of high performance, fully compliant XML par... | True | CVE-2009-2625 (Medium) detected in xercesImpl-2.8.0.jar - ## CVE-2009-2625 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>xercesImpl-2.8.0.jar</b></p></summary>

<p>Xerces2 is the ne... | non_process | cve medium detected in xercesimpl jar cve medium severity vulnerability vulnerable library xercesimpl jar is the next generation of high performance fully compliant xml parsers in the apache xerces family this new version of xerces introduces the xerces native interface ... | 0 |

462 | 2,902,653,419 | IssuesEvent | 2015-06-18 08:34:56 | haskell-distributed/distributed-process | https://api.github.com/repos/haskell-distributed/distributed-process | closed | The SimpleLocalnet backend should take (RemoteTable -> RemoteTable) rather than RemoteTable | Bug distributed-process-simplelocalnet | This avoids the need for Control.Distributed.Process.Node in applications that use it. | 1.0 | The SimpleLocalnet backend should take (RemoteTable -> RemoteTable) rather than RemoteTable - This avoids the need for Control.Distributed.Process.Node in applications that use it. | process | the simplelocalnet backend should take remotetable remotetable rather than remotetable this avoids the need for control distributed process node in applications that use it | 1 |

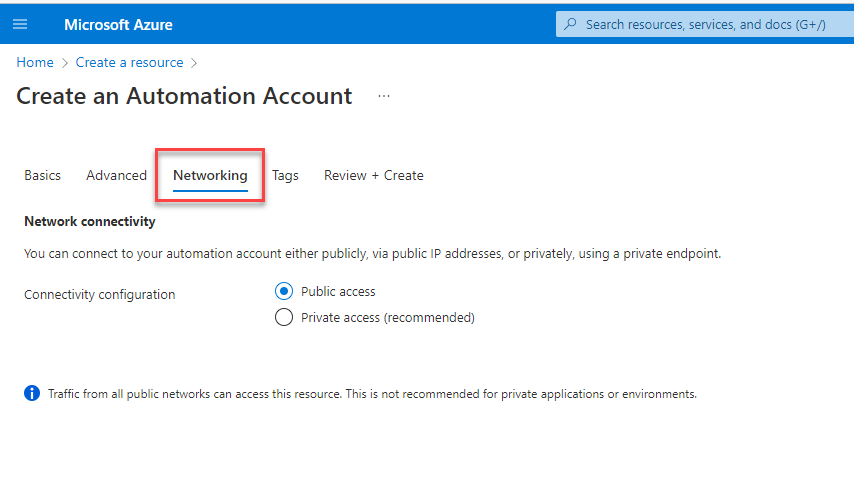

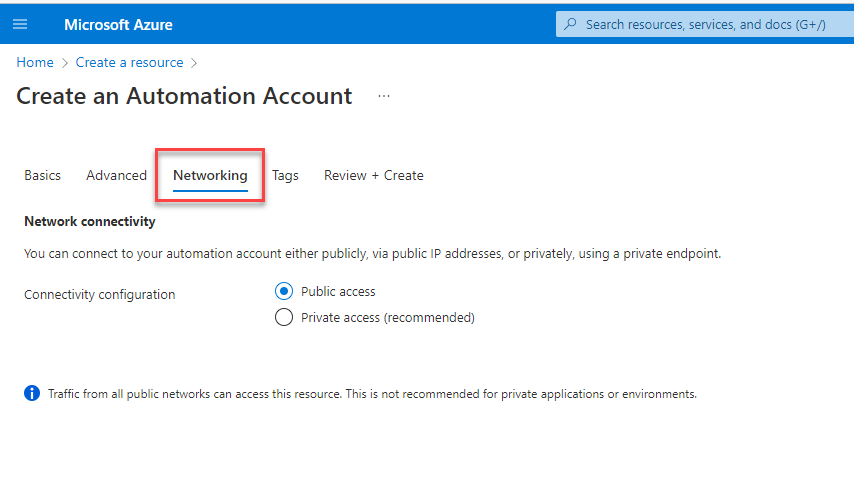

19,382 | 25,518,987,817 | IssuesEvent | 2022-11-28 18:45:18 | MicrosoftDocs/azure-docs | https://api.github.com/repos/MicrosoftDocs/azure-docs | closed | Networking Tab is missing | automation/svc triaged assigned-to-author doc-bug process-automation/subsvc Pri2 | Networking Tab is missing in documentation.

There is no explanation/guideline which option should be selected and their further impacts.

---

#### Document Details

⚠ *Do not edit this section. ... | 1.0 | Networking Tab is missing - Networking Tab is missing in documentation.

There is no explanation/guideline which option should be selected and their further impacts.

---

#### Document Details

⚠... | process | networking tab is missing networking tab is missing in documentation there is no explanation guideline which option should be selected and their further impacts document details ⚠ do not edit this section it is required for docs microsoft com ➟ github issue linking id ... | 1 |

1,180 | 3,681,568,915 | IssuesEvent | 2016-02-24 04:12:09 | 18F/FEC | https://api.github.com/repos/18F/FEC | closed | Positive: Calendar features | processed |

## What were you trying to do and how can we improve it?

I was looking at your new calendar features

## General feedback?

I like the new layout

## Tell us about yourself

I'm new to the FEC website

## Details

* URL: https://fec-proxy.18f.gov/calendar/

* User Agent: Mozilla/5.0 (Macintosh; Intel Mac OS X 10.... | 1.0 | Positive: Calendar features -

## What were you trying to do and how can we improve it?

I was looking at your new calendar features

## General feedback?

I like the new layout

## Tell us about yourself

I'm new to the FEC website

## Details

* URL: https://fec-proxy.18f.gov/calendar/

* User Agent: Mozilla/5.0 ... | process | positive calendar features what were you trying to do and how can we improve it i was looking at your new calendar features general feedback i like the new layout tell us about yourself i m new to the fec website details url user agent mozilla macintosh intel mac os x rv ... | 1 |

18,286 | 24,380,782,985 | IssuesEvent | 2022-10-04 07:38:14 | nedbat/coveragepy | https://api.github.com/repos/nedbat/coveragepy | closed | Some multiprocessing processes not covered (but were covered by pytest-cov 3.0.0) | bug multiprocessing needs triage | **Describe the bug**

I am one of the developers of [qtile](https://github.com/qtile/qtile). We have a fairly large test suite that we ran with `pytest-cov`. Since they dropped `multiprocessing` support in 4.0.0, I have followed [their advice](https://pytest-cov.readthedocs.io/en/latest/changelog.html#id1) to use `cove... | 1.0 | Some multiprocessing processes not covered (but were covered by pytest-cov 3.0.0) - **Describe the bug**

I am one of the developers of [qtile](https://github.com/qtile/qtile). We have a fairly large test suite that we ran with `pytest-cov`. Since they dropped `multiprocessing` support in 4.0.0, I have followed [their ... | process | some multiprocessing processes not covered but were covered by pytest cov describe the bug i am one of the developers of we have a fairly large test suite that we ran with pytest cov since they dropped multiprocessing support in i have followed to use coverage the coveragerc fil... | 1 |

346,948 | 10,422,127,315 | IssuesEvent | 2019-09-16 08:16:45 | zdnscloud/singlecloud | https://api.github.com/repos/zdnscloud/singlecloud | closed | error should return if deleting storagecluster which is used by pod | bug priority: Medium todo | 1. create a storagecluster,

2. create a pod use the storagecluster,

3. delete storagecluser,

the following occurs:

204 NoContent returns and the storagecluster is deleted.

expected behavior:

user can't delete storagecluster if storagecluster is in use. It's better to returns an error. | 1.0 | error should return if deleting storagecluster which is used by pod - 1. create a storagecluster,

2. create a pod use the storagecluster,

3. delete storagecluser,

the following occurs:

204 NoContent returns and the storagecluster is deleted.

expected behavior:

user can't delete storagecluster if storagecluste... | non_process | error should return if deleting storagecluster which is used by pod create a storagecluster create a pod use the storagecluster delete storagecluser the following occurs nocontent returns and the storagecluster is deleted expected behavior user can t delete storagecluster if storagecluster ... | 0 |

8,625 | 7,343,225,893 | IssuesEvent | 2018-03-07 10:37:40 | NationalBankBelgium/stark | https://api.github.com/repos/NationalBankBelgium/stark | opened | developer guide: document how to protect against HTML extraction | comp: developer-guide comp: docs security should type: doc | Goal: review and add to our doc: https://chloe.re/2016/07/19/protect-against-html-extraction/ | True | developer guide: document how to protect against HTML extraction - Goal: review and add to our doc: https://chloe.re/2016/07/19/protect-against-html-extraction/ | non_process | developer guide document how to protect against html extraction goal review and add to our doc | 0 |

20,598 | 27,265,255,776 | IssuesEvent | 2023-02-22 17:31:25 | googleapis/google-cloud-node | https://api.github.com/repos/googleapis/google-cloud-node | closed | Your .repo-metadata.json files have a problem 🤒 | type: process repo-metadata: lint | You have a problem with your .repo-metadata.json files:

Result of scan 📈:

* release_level must be equal to one of the allowed values in packages/gapic-node-templating/templates/bootstrap-templates/.repo-metadata.json

* api_shortname field missing from packages/gapic-node-templating/templates/bootstrap-templates/.rep... | 1.0 | Your .repo-metadata.json files have a problem 🤒 - You have a problem with your .repo-metadata.json files:

Result of scan 📈:

* release_level must be equal to one of the allowed values in packages/gapic-node-templating/templates/bootstrap-templates/.repo-metadata.json

* api_shortname field missing from packages/gapic... | process | your repo metadata json files have a problem 🤒 you have a problem with your repo metadata json files result of scan 📈 release level must be equal to one of the allowed values in packages gapic node templating templates bootstrap templates repo metadata json api shortname field missing from packages gapic... | 1 |

3,117 | 6,149,228,540 | IssuesEvent | 2017-06-27 19:38:07 | dotnet/corefx | https://api.github.com/repos/dotnet/corefx | closed | Reenable the tests ActiveIssue'd on 19909 this in Uap (not UapAot) | area-System.Diagnostics.Process os-mac-os-x-10.13 os-windows-uwp test-run-uwp-coreclr | ```

System.IO.MemoryMappedFiles.Tests -method System.IO.MemoryMappedFiles.Tests.DataShared

System.Net.Primitives.Functional.Tests -method System.Net.Primitives.Functional.Tests.EventSource_EventsRaisedAsExpected

System.Net.Security.Tests -method System.Net.Security.Tests.EventSource_EventsRaisedAsExpected

```

... | 1.0 | Reenable the tests ActiveIssue'd on 19909 this in Uap (not UapAot) - ```

System.IO.MemoryMappedFiles.Tests -method System.IO.MemoryMappedFiles.Tests.DataShared

System.Net.Primitives.Functional.Tests -method System.Net.Primitives.Functional.Tests.EventSource_EventsRaisedAsExpected

System.Net.Security.Tests -metho... | process | reenable the tests activeissue d on this in uap not uapaot system io memorymappedfiles tests method system io memorymappedfiles tests datashared system net primitives functional tests method system net primitives functional tests eventsource eventsraisedasexpected system net security tests method sy... | 1 |

1,901 | 4,117,504,667 | IssuesEvent | 2016-06-08 07:46:38 | deb761/Solstice | https://api.github.com/repos/deb761/Solstice | closed | Create a procedure that returns a students missed problems | Requirement | Create a stored procedure that returns the problems of a given type that a student has missed in the current level. | 1.0 | Create a procedure that returns a students missed problems - Create a stored procedure that returns the problems of a given type that a student has missed in the current level. | non_process | create a procedure that returns a students missed problems create a stored procedure that returns the problems of a given type that a student has missed in the current level | 0 |

21,499 | 29,661,828,611 | IssuesEvent | 2023-06-10 08:50:41 | nextflow-io/nextflow | https://api.github.com/repos/nextflow-io/nextflow | closed | Files (symlinks) not found when using regex and `followLinks: false` | stale lang/processes | Not completely sure if this is a bug:

I am running into an issue when using the option followLinks in the output section and combining it with the regex. Here is the simplest example to showcase the issue. The first example works as expected:

```

#!/usr/bin/env nextflow

process createMetaFiles {

output:

... | 1.0 | Files (symlinks) not found when using regex and `followLinks: false` - Not completely sure if this is a bug:

I am running into an issue when using the option followLinks in the output section and combining it with the regex. Here is the simplest example to showcase the issue. The first example works as expected:

```... | process | files symlinks not found when using regex and followlinks false not completely sure if this is a bug i am running into an issue when using the option followlinks in the output section and combining it with the regex here is the simplest example to showcase the issue the first example works as expected ... | 1 |

19,835 | 26,230,140,757 | IssuesEvent | 2023-01-04 22:59:30 | open-telemetry/opentelemetry-collector-contrib | https://api.github.com/repos/open-telemetry/opentelemetry-collector-contrib | closed | Remove deprecated 'gke' and 'gce' resource detectors after v0.54.0 is released | admin issues comp:google processor/resourcedetection | The replacement is to use the single 'gcp' resource detector. See https://github.com/open-telemetry/opentelemetry-collector-contrib/pull/10347. | 1.0 | Remove deprecated 'gke' and 'gce' resource detectors after v0.54.0 is released - The replacement is to use the single 'gcp' resource detector. See https://github.com/open-telemetry/opentelemetry-collector-contrib/pull/10347. | process | remove deprecated gke and gce resource detectors after is released the replacement is to use the single gcp resource detector see | 1 |

14,490 | 17,603,832,787 | IssuesEvent | 2021-08-17 14:46:50 | qgis/QGIS-Documentation | https://api.github.com/repos/qgis/QGIS-Documentation | closed | Algorithm parameters - use of include and handling advanced parameters | Processing | ## Description

**First**:

We have been "experimenting" with includes for algorithm parameters, and have discovered that the RST `.. include:` has some important limitations. It does not seem to be possible to use it to import a group of elements of a list-table.

Below are some alternatives that we have tested, and... | 1.0 | Algorithm parameters - use of include and handling advanced parameters - ## Description

**First**:

We have been "experimenting" with includes for algorithm parameters, and have discovered that the RST `.. include:` has some important limitations. It does not seem to be possible to use it to import a group of elemen... | process | algorithm parameters use of include and handling advanced parameters description first we have been experimenting with includes for algorithm parameters and have discovered that the rst include has some important limitations it does not seem to be possible to use it to import a group of elemen... | 1 |

126,392 | 12,291,507,803 | IssuesEvent | 2020-05-10 10:22:11 | i-on-project/core | https://api.github.com/repos/i-on-project/core | closed | Docs: Compose API Spec | documentation | Compose a basic documentation of the API. Some topics to include:

- endpoints

- available operations

- properties

- specific errors

- routes

- error handling

- message formats

- [ ] Read API Spec

- [ ] Write API Spec | 1.0 | Docs: Compose API Spec - Compose a basic documentation of the API. Some topics to include:

- endpoints

- available operations

- properties

- specific errors

- routes

- error handling

- message formats

- [ ] Read API Spec

- [ ] Write API Spec | non_process | docs compose api spec compose a basic documentation of the api some topics to include endpoints available operations properties specific errors routes error handling message formats read api spec write api spec | 0 |

69,096 | 17,571,287,217 | IssuesEvent | 2021-08-14 18:56:14 | haskell/cabal | https://api.github.com/repos/haskell/cabal | closed | `new-haddock`'s file monitoring broken | type: bug cabal-install: nix-local-build | Consider the following simple reproduction instructions:

```

$ cabal get sort-1.0.0.1 && cd sort-0.0.0.1/

Unpacking to sort-0.0.0.1/

```

The first invocation of `new-haddock` works as expected:

```

$ cabal new-haddock

Resolving dependencies...