| Unnamed: 0 int64 0 832k | id float64 2.49B 32.1B | type stringclasses 1 value | created_at stringlengths 19 19 | repo stringlengths 7 112 | repo_url stringlengths 36 141 | action stringclasses 3 values | title stringlengths 1 744 | labels stringlengths 4 574 | body stringlengths 9 211k | index stringclasses 10 values | text_combine stringlengths 96 211k | label stringclasses 2 values | text stringlengths 96 188k | binary_label int64 0 1 |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

20,443 | 27,100,574,062 | IssuesEvent | 2023-02-15 08:19:04 | billingran/Newsletter | https://api.github.com/repos/billingran/Newsletter | closed | Afficher un message d’erreur compréhensible pour l'inscription avec la même adresse email. | processing... Brief 2 | - [ ] Afficher un message d’erreur compréhensible pour l'inscription avec la même adresse email. | 1.0 | Afficher un message d’erreur compréhensible pour l'inscription avec la même adresse email. - - [ ] Afficher un message d’erreur compréhensible pour l'inscription avec la même adresse email. | process | afficher un message d’erreur compréhensible pour l inscription avec la même adresse email afficher un message d’erreur compréhensible pour l inscription avec la même adresse email | 1 |

41,058 | 16,617,283,638 | IssuesEvent | 2021-06-02 18:26:11 | microsoft/botframework-solutions | https://api.github.com/repos/microsoft/botframework-solutions | closed | [Docs] Missing " in dispatch add --type "qna" --name "kb-name ` | Bot Services Type: Docs customer-replied-to customer-reported | **Missing " in dispatch add --type "qna" --name "kb-name `**

**https://microsoft.github.io/botframework-solutions/virtual-assistant/tutorials/deploy-assistant/cli/7-create-dispatch-model/**

| 1.0 | [Docs] Missing " in dispatch add --type "qna" --name "kb-name ` - **Missing " in dispatch add --type "qna" --name "kb-name `**

**https://microsoft.github.io/botframework-solutions/virtual-assistant/tutorials/deploy-assistant/cli/7-create-dispatch-model/**

| non_process | missing in dispatch add type qna name kb name missing in dispatch add type qna name kb name | 0 |

20,806 | 27,566,827,980 | IssuesEvent | 2023-03-08 05:01:55 | bazelbuild/bazel | https://api.github.com/repos/bazelbuild/bazel | reopened | Clarify the status of ctx.tokenize | P4 type: process untriaged stale team-Rules-API | It's not documented, but it's still present.

cc @laszlocsomor | 1.0 | Clarify the status of ctx.tokenize - It's not documented, but it's still present.

cc @laszlocsomor | process | clarify the status of ctx tokenize it s not documented but it s still present cc laszlocsomor | 1 |

273,828 | 23,788,438,553 | IssuesEvent | 2022-09-02 12:27:05 | Exawind/nalu-wind | https://api.github.com/repos/Exawind/nalu-wind | closed | edgeHybridFluids test diffing | failing-tests | The edgeHybridFluids test is diffing on the SNL nightly dashboard ([internal link]()) since Aug. 25 at 10pm MDT. The diff seems to not be large, and the fact that it doesn't appear on the NREL dashboard implies that either something has changed slightly in Trilinos develop or it's system noise on the SNL machine.

@... | 1.0 | edgeHybridFluids test diffing - The edgeHybridFluids test is diffing on the SNL nightly dashboard ([internal link]()) since Aug. 25 at 10pm MDT. The diff seems to not be large, and the fact that it doesn't appear on the NREL dashboard implies that either something has changed slightly in Trilinos develop or it's syste... | non_process | edgehybridfluids test diffing the edgehybridfluids test is diffing on the snl nightly dashboard since aug at mdt the diff seems to not be large and the fact that it doesn t appear on the nrel dashboard implies that either something has changed slightly in trilinos develop or it s system noise on the snl... | 0 |

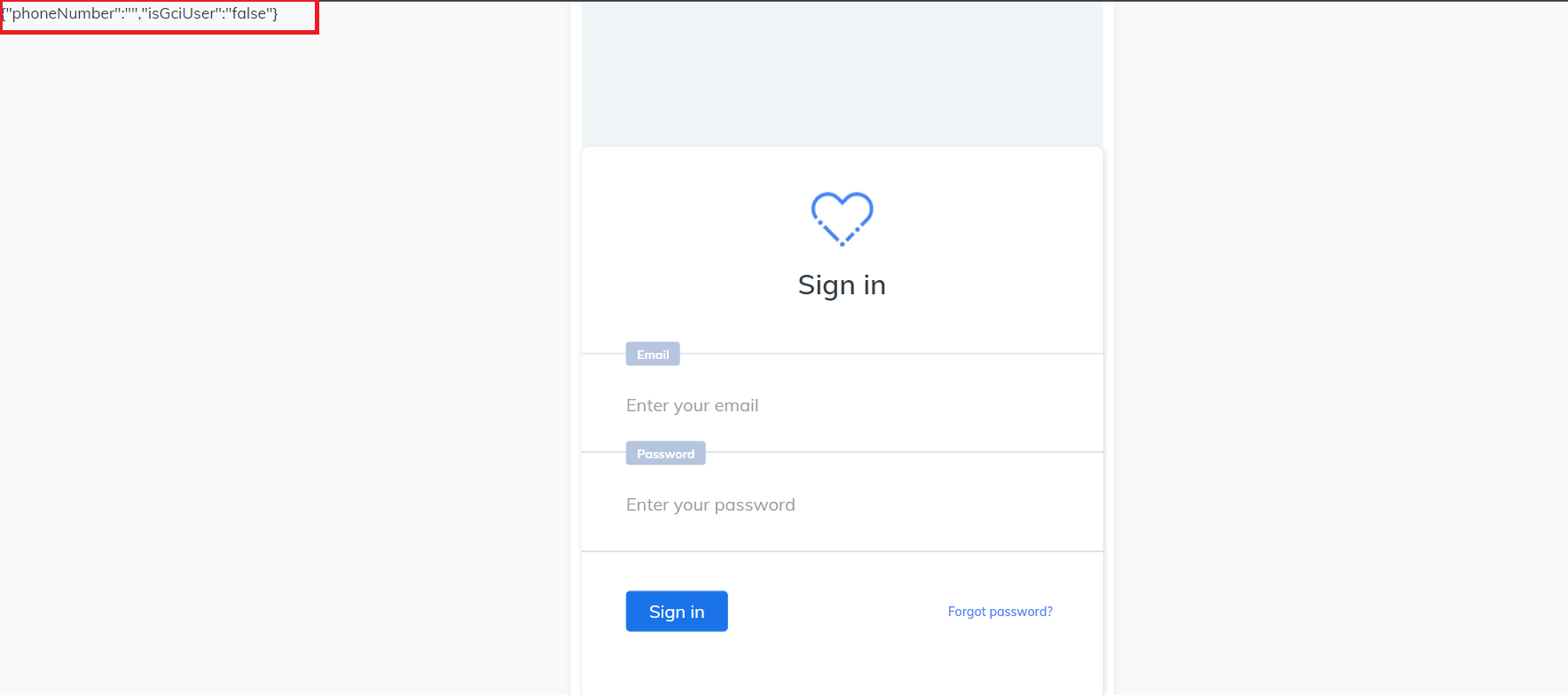

19,286 | 25,466,249,303 | IssuesEvent | 2022-11-25 04:53:40 | GoogleCloudPlatform/fda-mystudies | https://api.github.com/repos/GoogleCloudPlatform/fda-mystudies | closed | [GCI] [PM] UI issue in the sign in screen when entered invalid credentials | Bug P0 Participant manager Process: Fixed Process: Tested QA Process: Tested dev | UI issue is observed in the sign in screen when entered invalid credentials.

| 3.0 | [GCI] [PM] UI issue in the sign in screen when entered invalid credentials - UI issue is observed in the sign in screen when entered invalid credentials.

| process | ui issue in the sign in screen when entered invalid credentials ui issue is observed in the sign in screen when entered invalid credentials | 1 |

20,976 | 27,829,066,721 | IssuesEvent | 2023-03-20 02:00:08 | lizhihao6/get-daily-arxiv-noti | https://api.github.com/repos/lizhihao6/get-daily-arxiv-noti | opened | New submissions for Mon, 20 Mar 23 | event camera white balance isp compression image signal processing image signal process raw raw image events camera color contrast events AWB | ## Keyword: events

### Classification of Primitive Manufacturing Tasks from Filtered Event Data

- **Authors:** Laura Duarte, Pedro Neto

- **Subjects:** Computer Vision and Pattern Recognition (cs.CV); Robotics (cs.RO)

- **Arxiv link:** https://arxiv.org/abs/2303.09558

- **Pdf link:** https://arxiv.org/pdf/2303.0955... | 2.0 | New submissions for Mon, 20 Mar 23 - ## Keyword: events

### Classification of Primitive Manufacturing Tasks from Filtered Event Data

- **Authors:** Laura Duarte, Pedro Neto

- **Subjects:** Computer Vision and Pattern Recognition (cs.CV); Robotics (cs.RO)

- **Arxiv link:** https://arxiv.org/abs/2303.09558

- **Pdf li... | process | new submissions for mon mar keyword events classification of primitive manufacturing tasks from filtered event data authors laura duarte pedro neto subjects computer vision and pattern recognition cs cv robotics cs ro arxiv link pdf link abstract collabor... | 1 |

233,637 | 19,024,888,630 | IssuesEvent | 2021-11-24 01:21:42 | crypto-org-chain/cronos | https://api.github.com/repos/crypto-org-chain/cronos | closed | Problem: hermes is not supported on 'x86_64-darwin' | tests | **Describe the bug**

After pulling the commit 9c3ba89b259f6da54a2c9f6096c8f0dd496efdc4, then

run `nix-shell integration_tests/shell.nix`, it prints out the following error:

```

error: Package ‘hermes’ in /Users/damonchen/Documents/cronos/nix/hermes.nix:4 is not supported on ‘x86_64-darwin’, refusing to evaluate.

... | 1.0 | Problem: hermes is not supported on 'x86_64-darwin' - **Describe the bug**

After pulling the commit 9c3ba89b259f6da54a2c9f6096c8f0dd496efdc4, then

run `nix-shell integration_tests/shell.nix`, it prints out the following error:

```

error: Package ‘hermes’ in /Users/damonchen/Documents/cronos/nix/hermes.nix:4 is no... | non_process | problem hermes is not supported on darwin describe the bug after pulling the commit then run nix shell integration tests shell nix it prints out the following error error package ‘hermes’ in users damonchen documents cronos nix hermes nix is not supported on ‘ darwin’ refusing to e... | 0 |

10,571 | 13,382,952,690 | IssuesEvent | 2020-09-02 09:34:25 | prisma/migrate | https://api.github.com/repos/prisma/migrate | closed | A migration of `@default(cuid())` inserts `null` values to the DB | bug/1-repro-available engines/migration engine kind/bug process/candidate | ## Bug description

Adding a new column with `@default(cuid())` ends up with null values in the new column after migration.

## How to reproduce

Add a new column to an existing table, e.g.:

```

model Folder {

...

uid String? @default(cuid())

...

}

```

Run `prisma migrate save --experimental` fo... | 1.0 | A migration of `@default(cuid())` inserts `null` values to the DB - ## Bug description

Adding a new column with `@default(cuid())` ends up with null values in the new column after migration.

## How to reproduce

Add a new column to an existing table, e.g.:

```

model Folder {

...

uid String? @default(c... | process | a migration of default cuid inserts null values to the db bug description adding a new column with default cuid ends up with null values in the new column after migration how to reproduce add a new column to an existing table e g model folder uid string default c... | 1 |

21,165 | 11,137,730,305 | IssuesEvent | 2019-12-20 20:11:19 | couchbase/sync_gateway | https://api.github.com/repos/couchbase/sync_gateway | closed | Store attachments outside the bucket | enhancement icebox known-issue performance | Currently we store attachments as individual binary docs in the bucket. That's problematic, especially because there's a 20mb size limit.

We should switch to external storage, probably CBFS which was created specifically to solve this problem.

I think CBFS will make it easier to GC attachments too.

| True | Store attachments outside the bucket - Currently we store attachments as individual binary docs in the bucket. That's problematic, especially because there's a 20mb size limit.

We should switch to external storage, probably CBFS which was created specifically to solve this problem.

I think CBFS will make it easier to... | non_process | store attachments outside the bucket currently we store attachments as individual binary docs in the bucket that s problematic especially because there s a size limit we should switch to external storage probably cbfs which was created specifically to solve this problem i think cbfs will make it easier to gc... | 0 |

7,474 | 10,568,580,002 | IssuesEvent | 2019-10-06 14:01:21 | rubberduck-vba/Rubberduck | https://api.github.com/repos/rubberduck-vba/Rubberduck | closed | Mid statement is incorrectly identified as a Function in VBA library (in the Strings module) | bug hacktoberfest navigation parse-tree-processing | While having the same appearance with regard to their names and parameters, the `Mid` statement is part of the `VBA` language, while the `Mid` function is (after aliasing) a function in the `VBA` type library.

RD should be able to add a `SubroutineDeclaration` for `Mid`, and then make the distinction between a state... | 1.0 | Mid statement is incorrectly identified as a Function in VBA library (in the Strings module) - While having the same appearance with regard to their names and parameters, the `Mid` statement is part of the `VBA` language, while the `Mid` function is (after aliasing) a function in the `VBA` type library.

RD should be... | process | mid statement is incorrectly identified as a function in vba library in the strings module while having the same appearance with regard to their names and parameters the mid statement is part of the vba language while the mid function is after aliasing a function in the vba type library rd should be... | 1 |

670,777 | 22,703,657,430 | IssuesEvent | 2022-07-05 12:58:33 | easystats/report | https://api.github.com/repos/easystats/report | closed | Fixing build failures | bug :bug: high priority :runner: | Builds seem to be failing with the following error:

```r

Quitting from lines 105-108 (report.Rmd)

Error: Error: processing vignette 'report.Rmd' failed with diagnostics:

argument is of length zero

--- failed re-building ‘report.Rmd’

SUMMARY: processing the following file failed:

‘report.Rmd’

Error: Error: ... | 1.0 | Fixing build failures - Builds seem to be failing with the following error:

```r

Quitting from lines 105-108 (report.Rmd)

Error: Error: processing vignette 'report.Rmd' failed with diagnostics:

argument is of length zero

--- failed re-building ‘report.Rmd’

SUMMARY: processing the following file failed:

‘rep... | non_process | fixing build failures builds seem to be failing with the following error r quitting from lines report rmd error error processing vignette report rmd failed with diagnostics argument is of length zero failed re building ‘report rmd’ summary processing the following file failed ‘report ... | 0 |

2,264 | 5,095,516,729 | IssuesEvent | 2017-01-03 15:27:35 | MTG/freesound | https://api.github.com/repos/MTG/freesound | closed | Refactor sound processing state handling | Improvement _Processing | The current way in which we handle processing state and processing of sounds does not allow for optimised workflows when we want to reprocess sounds. To improve this, the processing_state field in the sound model should only be used to say that a sound has either failed processing or being successfully processed (simil... | 1.0 | Refactor sound processing state handling - The current way in which we handle processing state and processing of sounds does not allow for optimised workflows when we want to reprocess sounds. To improve this, the processing_state field in the sound model should only be used to say that a sound has either failed proces... | process | refactor sound processing state handling the current way in which we handle processing state and processing of sounds does not allow for optimised workflows when we want to reprocess sounds to improve this the processing state field in the sound model should only be used to say that a sound has either failed proces... | 1 |

67,100 | 16,818,686,674 | IssuesEvent | 2021-06-17 10:25:08 | status-im/StatusQ | https://api.github.com/repos/status-im/StatusQ | opened | Extend sandbox build script to allow for qmake/qt version specification | type: build type: feature | Right now the build script simply uses `qmake` which is provided by whatever Qt version it is linked to. This makes it hard to build sandbox for different Qt versions to test upgrades etc.

Ideally, the script would rely on a global environment variable (if it exists), most likely `QT_PATH`, which should come with a ... | 1.0 | Extend sandbox build script to allow for qmake/qt version specification - Right now the build script simply uses `qmake` which is provided by whatever Qt version it is linked to. This makes it hard to build sandbox for different Qt versions to test upgrades etc.

Ideally, the script would rely on a global environment... | non_process | extend sandbox build script to allow for qmake qt version specification right now the build script simply uses qmake which is provided by whatever qt version it is linked to this makes it hard to build sandbox for different qt versions to test upgrades etc ideally the script would rely on a global environment... | 0 |

22,584 | 31,811,050,055 | IssuesEvent | 2023-09-13 16:51:38 | alphagov/govuk-design-system | https://api.github.com/repos/alphagov/govuk-design-system | reopened | Add Kam to the team page on Design System website | process | ## What

Add Kam to the list of Design System team members on the website.

## Why

To tell everyone that Kam has joined the team.

## Who needs to work on this

Kelly & Kam

## Who needs to review this

Anybody on the team

## Done when

- [ ] created a pull request to add Kam's name to the list

| 1.0 | Add Kam to the team page on Design System website - ## What

Add Kam to the list of Design System team members on the website.

## Why

To tell everyone that Kam has joined the team.

## Who needs to work on this

Kelly & Kam

## Who needs to review this

Anybody on the team

## Done when

- [ ] created a pull ... | process | add kam to the team page on design system website what add kam to the list of design system team members on the website why to tell everyone that kam has joined the team who needs to work on this kelly kam who needs to review this anybody on the team done when created a pull re... | 1 |

22,739 | 32,056,195,084 | IssuesEvent | 2023-09-24 05:19:39 | open-telemetry/opentelemetry-collector-contrib | https://api.github.com/repos/open-telemetry/opentelemetry-collector-contrib | closed | [processor/metricstransform] Include Splitby operation | enhancement Stale processor/metricstransform closed as inactive | ### Component(s)

processor/metricstransform

### Is your feature request related to a problem? Please describe.

In the components current form, it is not possible without some complicated aggregation logic to convert an attribute to a new metric.

### Describe the solution you'd like

What I'd like to do is the follo... | 1.0 | [processor/metricstransform] Include Splitby operation - ### Component(s)

processor/metricstransform

### Is your feature request related to a problem? Please describe.

In the components current form, it is not possible without some complicated aggregation logic to convert an attribute to a new metric.

### Describe ... | process | include splitby operation component s processor metricstransform is your feature request related to a problem please describe in the components current form it is not possible without some complicated aggregation logic to convert an attribute to a new metric describe the solution you d like wh... | 1 |

826,291 | 31,563,938,640 | IssuesEvent | 2023-09-03 15:33:12 | NikkelM/Outlook-Mail-Notes | https://api.github.com/repos/NikkelM/Outlook-Mail-Notes | closed | Export notes to CSV | UI feature Priority: Normal | It would be best if users could decide to export all notes, or just those specific to the current mail, conversation or sender. | 1.0 | Export notes to CSV - It would be best if users could decide to export all notes, or just those specific to the current mail, conversation or sender. | non_process | export notes to csv it would be best if users could decide to export all notes or just those specific to the current mail conversation or sender | 0 |

5,936 | 8,757,456,741 | IssuesEvent | 2018-12-14 21:19:48 | kythe/kythe | https://api.github.com/repos/kythe/kythe | opened | Determine how to collect and serve available build configuration | API post-processing | #3309 discusses how to represent build config in the graph, but does not go into how to post-process and serve this information to make it relevant to users. This should be determined and documented. | 1.0 | Determine how to collect and serve available build configuration - #3309 discusses how to represent build config in the graph, but does not go into how to post-process and serve this information to make it relevant to users. This should be determined and documented. | process | determine how to collect and serve available build configuration discusses how to represent build config in the graph but does not go into how to post process and serve this information to make it relevant to users this should be determined and documented | 1 |

17,855 | 23,800,775,703 | IssuesEvent | 2022-09-03 08:45:14 | RobertCraigie/prisma-client-py | https://api.github.com/repos/RobertCraigie/prisma-client-py | closed | Make the custom `Base64` type compatible with OpenAPI generation | bug/2-confirmed kind/bug process/candidate topic: client level/beginner priority/medium | <!--

Thanks for helping us improve Prisma Client Python! 🙏 Please follow the sections in the template and provide as much information as possible about your problem, e.g. by enabling additional logging output.

See https://prisma-client-py.readthedocs.io/en/stable/reference/logging/ for how to enable additional log... | 1.0 | Make the custom `Base64` type compatible with OpenAPI generation - <!--

Thanks for helping us improve Prisma Client Python! 🙏 Please follow the sections in the template and provide as much information as possible about your problem, e.g. by enabling additional logging output.

See https://prisma-client-py.readthedo... | process | make the custom type compatible with openapi generation thanks for helping us improve prisma client python 🙏 please follow the sections in the template and provide as much information as possible about your problem e g by enabling additional logging output see for how to enable additional logging ... | 1 |

9,776 | 2,615,174,521 | IssuesEvent | 2015-03-01 06:57:31 | chrsmith/reaver-wps | https://api.github.com/repos/chrsmith/reaver-wps | opened | wps locked yes after 20 tryings | auto-migrated Priority-Triage Type-Defect | ```

1. What operating system are you using (Linux is the only supported OS)?

reaver 1.4 backtrack

2. Is your wireless card in monitor mode (yes/no)?

rtl8187 monitor enable on mon0

3. What is the signal strength of the Access Point you are trying to crack?

60%

4. What is the manufacturer and model # of t... | 1.0 | wps locked yes after 20 tryings - ```

1. What operating system are you using (Linux is the only supported OS)?

reaver 1.4 backtrack

2. Is your wireless card in monitor mode (yes/no)?

rtl8187 monitor enable on mon0

3. What is the signal strength of the Access Point you are trying to crack?

60%

4. What is... | non_process | wps locked yes after tryings what operating system are you using linux is the only supported os reaver backtrack is your wireless card in monitor mode yes no monitor enable on what is the signal strength of the access point you are trying to crack what is the manufa... | 0 |

12,743 | 15,106,548,101 | IssuesEvent | 2021-02-08 14:25:58 | bazelbuild/bazel | https://api.github.com/repos/bazelbuild/bazel | closed | Update l.gcr.io/google/bazel:latest bazel docker image to bazel version 4.0.0 | team-XProduct type: process untriaged | > ATTENTION! Please read and follow:

> - if this is a _question_ about how to build / test / query / deploy using Bazel, or a _discussion starter_, send it to bazel-discuss@googlegroups.com

> - if this is a _bug_ or _feature request_, fill the form below as best as you can.

### Description of the problem / feature... | 1.0 | Update l.gcr.io/google/bazel:latest bazel docker image to bazel version 4.0.0 - > ATTENTION! Please read and follow:

> - if this is a _question_ about how to build / test / query / deploy using Bazel, or a _discussion starter_, send it to bazel-discuss@googlegroups.com

> - if this is a _bug_ or _feature request_, fil... | process | update l gcr io google bazel latest bazel docker image to bazel version attention please read and follow if this is a question about how to build test query deploy using bazel or a discussion starter send it to bazel discuss googlegroups com if this is a bug or feature request fil... | 1 |

565,836 | 16,771,123,055 | IssuesEvent | 2021-06-14 14:54:16 | Brevada/brv | https://api.github.com/repos/Brevada/brv | closed | Remove Decimal Point From Data Presentation | enhancement high priority ux | I don't think we need to show the scores with a decimal point - on an 80 point scale its meaningless.

@RobbieGoldfarb @noahnu

| 1.0 | Remove Decimal Point From Data Presentation - I don't think we need to show the scores with a decimal point - on an 80 point scale its meaningless.

@RobbieGoldfarb @noahnu

| non_process | remove decimal point from data presentation i don t think we need to show the scores with a decimal point on an point scale its meaningless robbiegoldfarb noahnu | 0 |

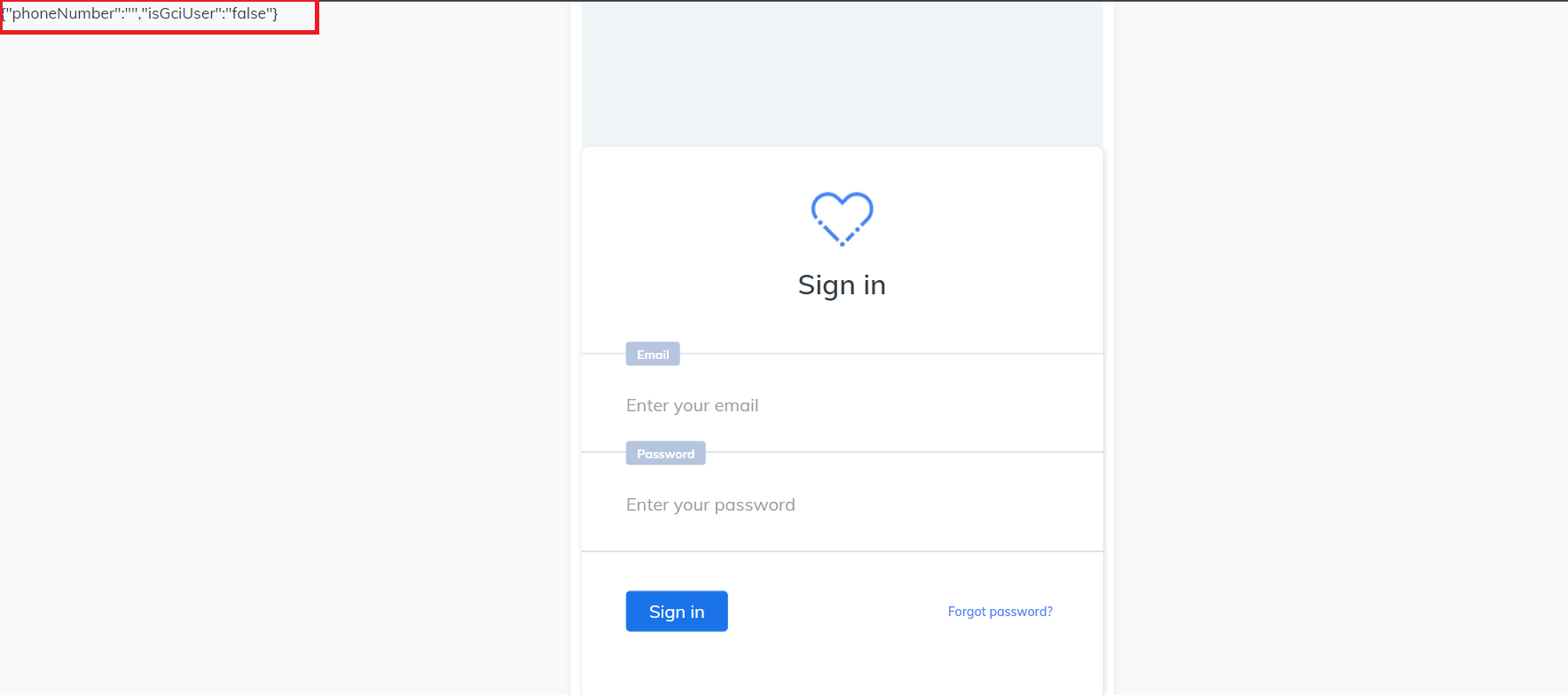

6,019 | 8,822,900,713 | IssuesEvent | 2019-01-02 11:20:21 | linnovate/root | https://api.github.com/repos/linnovate/root | opened | Search: can't delete in multiple select just after refresh/go to other tab | 2.0.6 Process bug bug | open a few items with name of test.

search in the search line : "test".

click on the multiple select and choose all items.

click on delete.

cli... | process | search can t delete in multiple select just after refresh go to other tab open a few items with name of test search in the search line test click on the multiple select and choose all items click on delete the items didn t delete until you refresh or going to other tab | 1 |

80,801 | 10,210,774,378 | IssuesEvent | 2019-08-14 15:26:45 | google/nixery | https://api.github.com/repos/google/nixery | closed | Pin specific nixpkgs checkout | documentation enhancement | For the sake of reproducibility and stability, it would be great if we could specify a specific nixpkgs checkout version that should be used while assembling the container. | 1.0 | Pin specific nixpkgs checkout - For the sake of reproducibility and stability, it would be great if we could specify a specific nixpkgs checkout version that should be used while assembling the container. | non_process | pin specific nixpkgs checkout for the sake of reproducibility and stability it would be great if we could specify a specific nixpkgs checkout version that should be used while assembling the container | 0 |

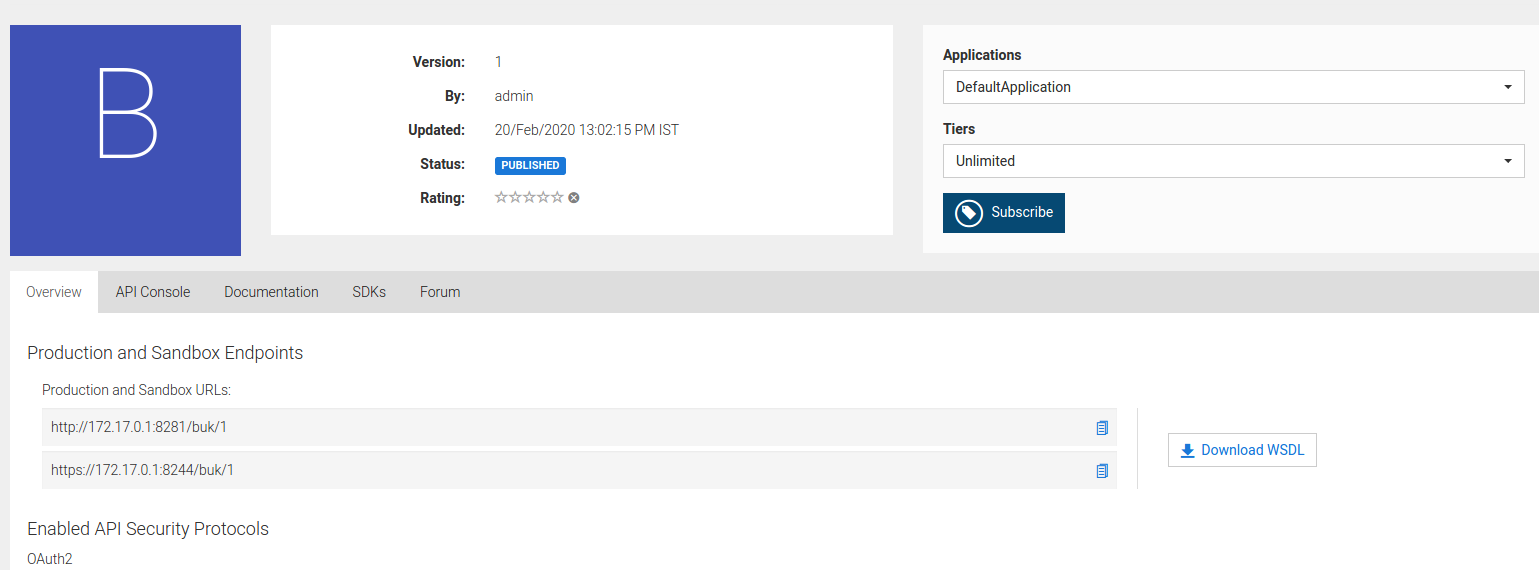

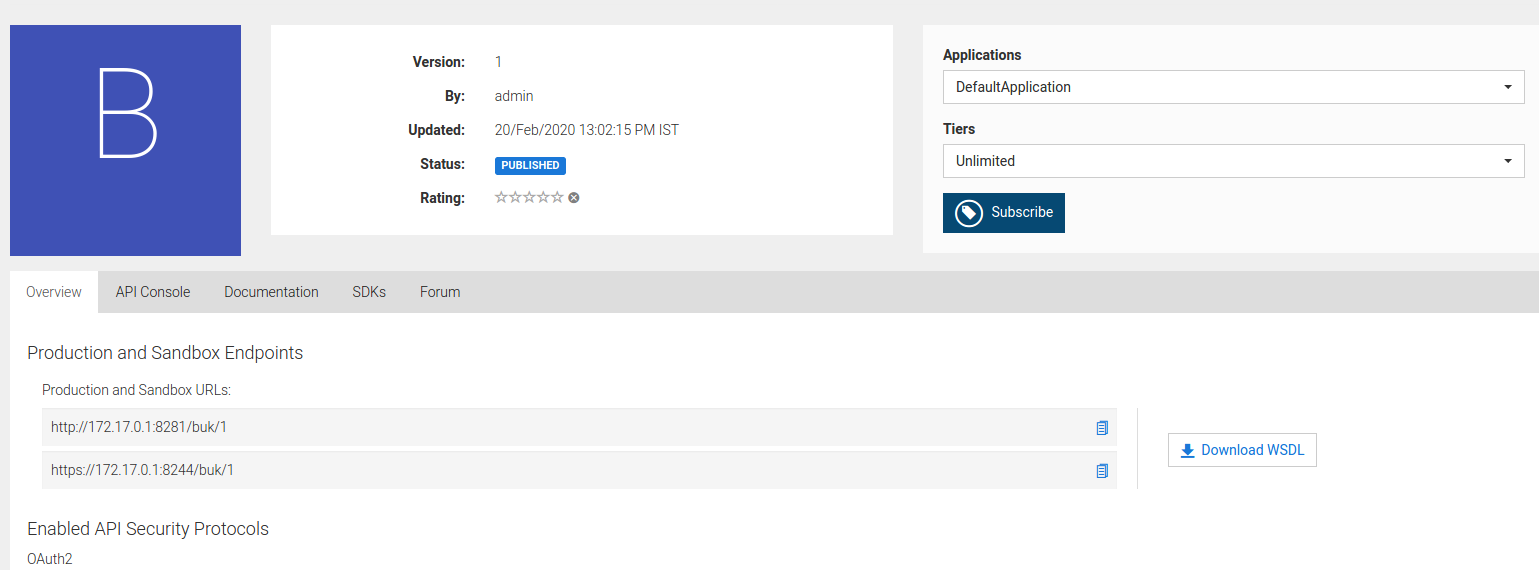

390,914 | 11,565,696,595 | IssuesEvent | 2020-02-20 10:59:32 | wso2/product-apim | https://api.github.com/repos/wso2/product-apim | closed | No way to download WSDL file from Dev Portal | 3.1.0 Priority/Normal Type/Improvement | ### Describe your problem(s)

In 2.6.0, WSDL can be downloaded from Store for the REST APIs generated from SOAP back-end. Refer the following screenshot:

In 3.1.0 Dev Portal, no UI option to download WSDL ... | 1.0 | No way to download WSDL file from Dev Portal - ### Describe your problem(s)

In 2.6.0, WSDL can be downloaded from Store for the REST APIs generated from SOAP back-end. Refer the following screenshot:

In 3... | non_process | no way to download wsdl file from dev portal describe your problem s in wsdl can be downloaded from store for the rest apis generated from soap back end refer the following screenshot in dev portal no ui option to download wsdl file | 0 |

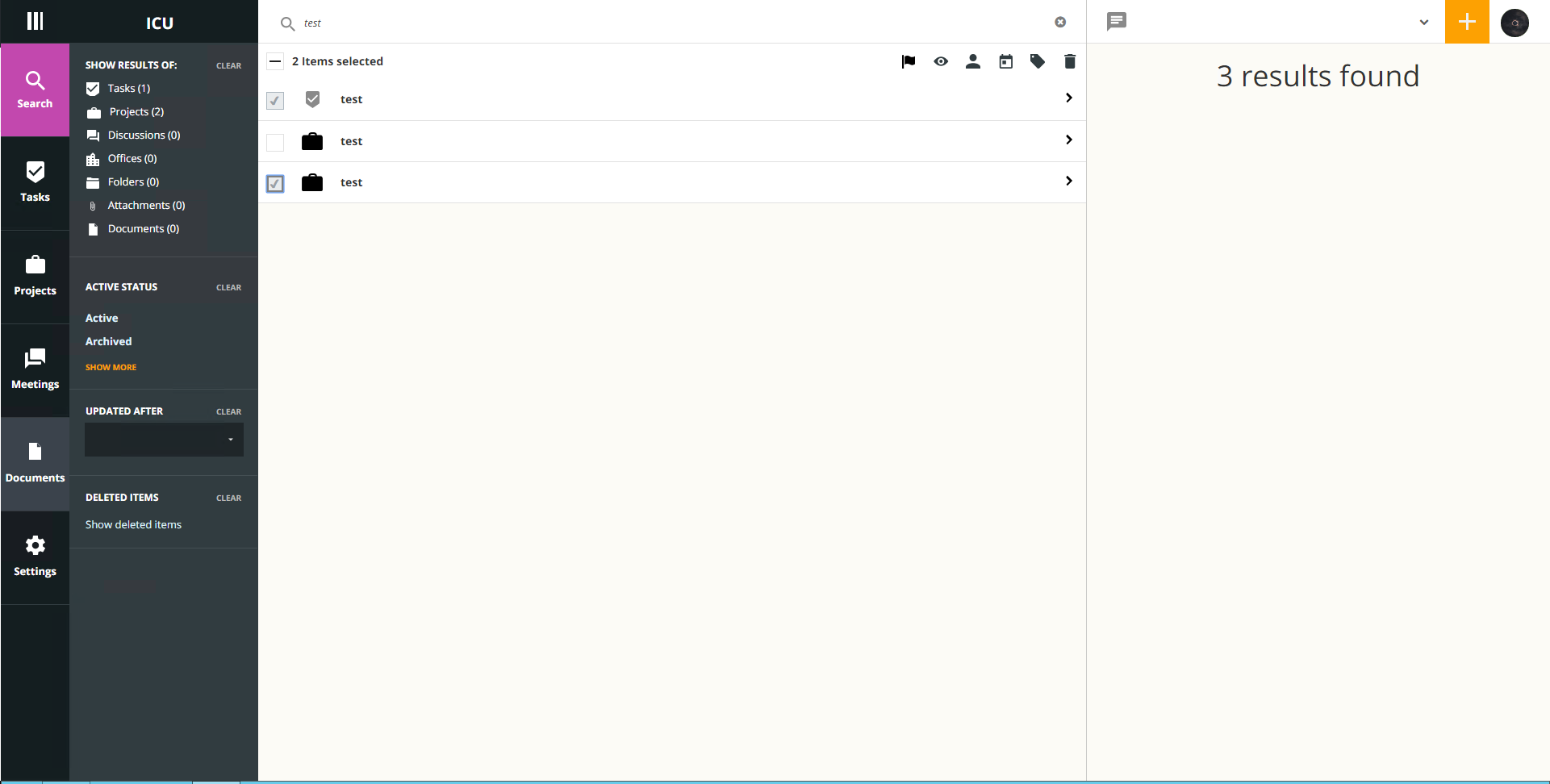

19,306 | 25,466,659,346 | IssuesEvent | 2022-11-25 05:34:03 | GoogleCloudPlatform/fda-mystudies | https://api.github.com/repos/GoogleCloudPlatform/fda-mystudies | closed | [IDP] [PM] Submit button is disabled in the account setup screen | Bug Blocker P0 Participant manager Process: Fixed Process: Tested QA Process: Tested dev | **Steps:**

1. Add admins in the participant manager by entering the value in the phone number field which should be as similar in the format displayed in the UI

2. After getting account setup link in an email, click on that link

3. Complete all the fields and click on Submit button and Verify

**AR:** Submit butto... | 3.0 | [IDP] [PM] Submit button is disabled in the account setup screen - **Steps:**

1. Add admins in the participant manager by entering the value in the phone number field which should be as similar in the format displayed in the UI

2. After getting account setup link in an email, click on that link

3. Complete all the f... | process | submit button is disabled in the account setup screen steps add admins in the participant manager by entering the value in the phone number field which should be as similar in the format displayed in the ui after getting account setup link in an email click on that link complete all the fields a... | 1 |

120,120 | 25,743,610,461 | IssuesEvent | 2022-12-08 08:16:59 | cython/cython | https://api.github.com/repos/cython/cython | closed | Illegal C code created with double complex | defect Code Generation | I am using `double complex` numbers in my code in various places, e.g., as array types, with a custom type defined by

```

ctypedef double complex dcomplex

```

This results in faulty C code:

```

/* Declarations.proto */

#if CYTHON_CCOMPLEX

#ifdef __cplusplus

typedef ::std::complex< npy_float64 > __pyx_t_n... | 1.0 | Illegal C code created with double complex - I am using `double complex` numbers in my code in various places, e.g., as array types, with a custom type defined by

```

ctypedef double complex dcomplex

```

This results in faulty C code:

```

/* Declarations.proto */

#if CYTHON_CCOMPLEX

#ifdef __cplusplus

ty... | non_process | illegal c code created with double complex i am using double complex numbers in my code in various places e g as array types with a custom type defined by ctypedef double complex dcomplex this results in faulty c code declarations proto if cython ccomplex ifdef cplusplus ty... | 0 |

807,971 | 30,028,741,417 | IssuesEvent | 2023-06-27 08:11:38 | microsoft/PowerToys | https://api.github.com/repos/microsoft/PowerToys | closed | powerlauncher preventing powershell 7 uninstall/update | Issue-Bug Product-PowerToys Run Priority-1 Needs-Triage Needs-Team-Response | <!--

**Important: When reporting BSODs or security issues, DO NOT attach memory dumps, logs, or traces to Github issues**.

Instead, send dumps/traces to secure@microsoft.com, referencing this GitHub issue.

-->

## ℹ Computer information

- PowerToys version: 0.33.1

- PowerToy Utility: powerlauncher

- Running P... | 1.0 | powerlauncher preventing powershell 7 uninstall/update - <!--

**Important: When reporting BSODs or security issues, DO NOT attach memory dumps, logs, or traces to Github issues**.

Instead, send dumps/traces to secure@microsoft.com, referencing this GitHub issue.

-->

## ℹ Computer information

- PowerToys versio... | non_process | powerlauncher preventing powershell uninstall update important when reporting bsods or security issues do not attach memory dumps logs or traces to github issues instead send dumps traces to secure microsoft com referencing this github issue ℹ computer information powertoys versio... | 0 |

13,790 | 16,550,848,877 | IssuesEvent | 2021-05-28 08:24:55 | GoogleCloudPlatform/fda-mystudies | https://api.github.com/repos/GoogleCloudPlatform/fda-mystudies | closed | [Mobile] Studies are not displaying in Study list screen in mobile | Blocker Bug P0 Process: Fixed Process: Tested QA Unknown backend | Issue is observed in QA instance

| 2.0 | [Mobile] Studies are not displaying in Study list screen in mobile - Issue is observed in QA instance

| process | studies are not displaying in study list screen in mobile issue is observed in qa instance | 1 |

241,449 | 26,256,798,503 | IssuesEvent | 2023-01-06 01:58:59 | DavidSpek/kubeflow | https://api.github.com/repos/DavidSpek/kubeflow | opened | CVE-2022-0155 (Medium) detected in follow-redirects-1.9.0.tgz | security vulnerability | ## CVE-2022-0155 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>follow-redirects-1.9.0.tgz</b></p></summary>

<p>HTTP and HTTPS modules that follow redirects.</p>

<p>Library home pag... | True | CVE-2022-0155 (Medium) detected in follow-redirects-1.9.0.tgz - ## CVE-2022-0155 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>follow-redirects-1.9.0.tgz</b></p></summary>

<p>HTTP ... | non_process | cve medium detected in follow redirects tgz cve medium severity vulnerability vulnerable library follow redirects tgz http and https modules that follow redirects library home page a href path to dependency file components centraldashboard package json path to vulne... | 0 |

1,415 | 3,979,671,041 | IssuesEvent | 2016-05-06 01:17:01 | neuropoly/spinalcordtoolbox | https://api.github.com/repos/neuropoly/spinalcordtoolbox | opened | minor improvements on EXCEL output | enhancement sct_process_segmentation | - move column F between column A and B

- when "z" is selected, the column "Vertebral level" shows "ALL", which is wrong. Please change for "NaN" | 1.0 | minor improvements on EXCEL output - - move column F between column A and B

- when "z" is selected, the column "Vertebral level" shows "ALL", which is wrong. Please change for "NaN" | process | minor improvements on excel output move column f between column a and b when z is selected the column vertebral level shows all which is wrong please change for nan | 1 |

9,235 | 12,264,075,452 | IssuesEvent | 2020-05-07 03:03:51 | hashicorp/packer | https://api.github.com/repos/hashicorp/packer | closed | vmware-iso builder losing artifact before post-processor can use it | bug post-processor/vsphere | #### Overview of the Issue

I can't put my finger on it, but I have a hunch that the `vmware-iso` builder is losing the `ova` artifact before the `vsphere` post-processor can use it.

I was getting the error `\* Post-processor failed: Error uploading virtual machine: exit status 1`, and saw https://github.com/hashi... | 1.0 | vmware-iso builder losing artifact before post-processor can use it - #### Overview of the Issue

I can't put my finger on it, but I have a hunch that the `vmware-iso` builder is losing the `ova` artifact before the `vsphere` post-processor can use it.

I was getting the error `\* Post-processor failed: Error uploa... | process | vmware iso builder losing artifact before post processor can use it overview of the issue i can t put my finger on it but i have a hunch that the vmware iso builder is losing the ova artifact before the vsphere post processor can use it i was getting the error post processor failed error uploa... | 1 |

339,617 | 10,256,870,340 | IssuesEvent | 2019-08-21 18:42:33 | byu-animation/dccpipe | https://api.github.com/repos/byu-animation/dccpipe | opened | Optimize which departments are shown in lists when publishing | Maya enhancement good first issue priority: medium | We don't want users publishing to an un-used department for a prop, like cfx or fx when they are creating a static prop. | 1.0 | Optimize which departments are shown in lists when publishing - We don't want users publishing to an un-used department for a prop, like cfx or fx when they are creating a static prop. | non_process | optimize which departments are shown in lists when publishing we don t want users publishing to an un used department for a prop like cfx or fx when they are creating a static prop | 0 |

44,273 | 2,902,837,296 | IssuesEvent | 2015-06-18 09:42:37 | OpenDataNode/open-data-node | https://api.github.com/repos/OpenDataNode/open-data-node | opened | CKAN datastore: no view created | priority: High severity: bug | In 2.3 CKAN, there is no view generated in resource detail when using dpus:

* relationalToCkan

* relationalDiffToCkan

The resource is created correctly and data is inserted too, only the recline view isn't generated automatically and has to be added manually. | 1.0 | CKAN datastore: no view created - In 2.3 CKAN, there is no view generated in resource detail when using dpus:

* relationalToCkan

* relationalDiffToCkan

The resource is created correctly and data is inserted too, only the recline view isn't generated automatically and has to be added manually. | non_process | ckan datastore no view created in ckan there is no view generated in resource detail when using dpus relationaltockan relationaldifftockan the resource is created correctly and data is inserted too only the recline view isn t generated automatically and has to be added manually | 0 |

99,184 | 4,049,127,056 | IssuesEvent | 2016-05-23 13:09:51 | duckduckgo/community-platform | https://api.github.com/repos/duckduckgo/community-platform | closed | insert image does not allow upload | Feature Forum Improvement Priority: Low | current forum post `insert image` button prompts for an image url but does not allow a new image to be uploaded to dukgo.com | 1.0 | insert image does not allow upload - current forum post `insert image` button prompts for an image url but does not allow a new image to be uploaded to dukgo.com | non_process | insert image does not allow upload current forum post insert image button prompts for an image url but does not allow a new image to be uploaded to dukgo com | 0 |

13,975 | 16,748,118,543 | IssuesEvent | 2021-06-11 18:23:11 | sysflow-telemetry/sf-docs | https://api.github.com/repos/sysflow-telemetry/sf-docs | closed | Pull and update policies from S3/object store bucket | enhancement sf-processor | Implement a mechanism for pulling and updating policies from S3. Things to consider:

- configurable update/pull checks

- checksum/hash checking during updates

- policy syntax check before updating (use `compile` function from policy engine)

- use secrets wrapper to read S3 access/secret keys from container vault ... | 1.0 | Pull and update policies from S3/object store bucket - Implement a mechanism for pulling and updating policies from S3. Things to consider:

- configurable update/pull checks

- checksum/hash checking during updates

- policy syntax check before updating (use `compile` function from policy engine)

- use secrets wrap... | process | pull and update policies from object store bucket implement a mechanism for pulling and updating policies from things to consider configurable update pull checks checksum hash checking during updates policy syntax check before updating use compile function from policy engine use secrets wrappe... | 1 |

8,079 | 20,822,064,613 | IssuesEvent | 2022-03-18 16:20:30 | RuanScherer/api-health-checker | https://api.github.com/repos/RuanScherer/api-health-checker | closed | Prepare backend base environment | architecture backend | **Describe the solution you'd like**

Prepare the backend development environment using:

- Node.js

- Typescript

- Express.js

- CORS | 1.0 | Prepare backend base environment - **Describe the solution you'd like**

Prepare the backend development environment using:

- Node.js

- Typescript

- Express.js

- CORS | non_process | prepare backend base environment describe the solution you d like prepare the backend development environment using node js typescript express js cors | 0 |

13,571 | 16,109,056,243 | IssuesEvent | 2021-04-27 18:31:47 | hashgraph/hedera-mirror-node | https://api.github.com/repos/hashgraph/hedera-mirror-node | closed | Optimize Timescaledb migration script | P3 database enhancement process | **Problem**

[This PR](https://github.com/hashgraph/hedera-mirror-node/pull/1798) added some fixes to the timescaledb migration script, however some optimization could still be done.

**Solution**

- Find a way to handle the column order mismatch that does not require an explicit column list in the restore script (this ... | 1.0 | Optimize Timescaledb migration script - **Problem**

[This PR](https://github.com/hashgraph/hedera-mirror-node/pull/1798) added some fixes to the timescaledb migration script, however some optimization could still be done.

**Solution**

- Find a way to handle the column order mismatch that does not require an explicit ... | process | optimize timescaledb migration script problem added some fixes to the timescaledb migration script however some optimization could still be done solution find a way to handle the column order mismatch that does not require an explicit column list in the restore script this becomes a maintenance bur... | 1 |

324,187 | 23,987,561,588 | IssuesEvent | 2022-09-13 20:34:41 | sloik/Major.Minor.Patch | https://api.github.com/repos/sloik/Major.Minor.Patch | closed | Expand README.md | documentation good first issue | # What to do

Add more information sections to `README.md`:

## Acceptance Criteria

Sections that are added:

* Installation

* short description about semantic versioning and link to the specification

* some examples of usage | 1.0 | Expand README.md - # What to do

Add more information sections to `README.md`:

## Acceptance Criteria

Sections that are added:

* Installation

* short description about semantic versioning and link to the specification

* some examples of usage | non_process | expand readme md what to do add more information sections to readme md acceptance criteria sections that are added installation short description about semantic versioning and link to the specification some examples of usage | 0 |

162,062 | 20,164,376,493 | IssuesEvent | 2022-02-10 01:46:50 | kapseliboi/dapp | https://api.github.com/repos/kapseliboi/dapp | opened | CVE-2021-3807 (High) detected in multiple libraries | security vulnerability | ## CVE-2021-3807 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>ansi-regex-5.0.0.tgz</b>, <b>ansi-regex-3.0.0.tgz</b>, <b>ansi-regex-4.1.0.tgz</b></p></summary>

<p>

<details><summar... | True | CVE-2021-3807 (High) detected in multiple libraries - ## CVE-2021-3807 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>ansi-regex-5.0.0.tgz</b>, <b>ansi-regex-3.0.0.tgz</b>, <b>ansi-r... | non_process | cve high detected in multiple libraries cve high severity vulnerability vulnerable libraries ansi regex tgz ansi regex tgz ansi regex tgz ansi regex tgz regular expression for matching ansi escape codes library home page a href path to dependen... | 0 |

19,604 | 25,959,130,910 | IssuesEvent | 2022-12-18 16:54:11 | tokio-rs/tokio | https://api.github.com/repos/tokio-rs/tokio | closed | Possible bug in tokio::process::Command | C-bug A-tokio M-process | **Version**

tokio = { version = "1.23.0", features = ["full"] }

**Platform**

Linux 160R 5.19.17-2-MANJARO

**Description**

From documentation of `tokio::process::Command::wait`:

> The stdin handle to the child process, if any, will be closed

> before waiting. This helps avoid deadlock: it ensures that ... | 1.0 | Possible bug in tokio::process::Command - **Version**

tokio = { version = "1.23.0", features = ["full"] }

**Platform**

Linux 160R 5.19.17-2-MANJARO

**Description**

From documentation of `tokio::process::Command::wait`:

> The stdin handle to the child process, if any, will be closed

> before waiting. T... | process | possible bug in tokio process command version tokio version features platform linux manjaro description from documentation of tokio process command wait the stdin handle to the child process if any will be closed before waiting this helps avo... | 1 |

102,243 | 16,550,354,059 | IssuesEvent | 2021-05-28 07:52:17 | Check-den-Fakt/Frontend | https://api.github.com/repos/Check-den-Fakt/Frontend | closed | CVE-2021-24033 (Medium) detected in react-dev-utils-10.2.1.tgz | security vulnerability wontfix | ## CVE-2021-24033 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>react-dev-utils-10.2.1.tgz</b></p></summary>

<p>webpack utilities used by Create React App</p>

<p>Library home page:... | True | CVE-2021-24033 (Medium) detected in react-dev-utils-10.2.1.tgz - ## CVE-2021-24033 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>react-dev-utils-10.2.1.tgz</b></p></summary>

<p>web... | non_process | cve medium detected in react dev utils tgz cve medium severity vulnerability vulnerable library react dev utils tgz webpack utilities used by create react app library home page a href path to dependency file frontend package json path to vulnerable library frontend n... | 0 |

510,640 | 14,813,693,712 | IssuesEvent | 2021-01-14 02:44:35 | google/knative-gcp | https://api.github.com/repos/google/knative-gcp | closed | Evaluate the impact of initial delivery timeout on throughput | area/broker kind/bug lifecycle/stale priority/2 release/2 | Currently, BrokerCell deployments have a default timeout of 10 minutes per event. There is also a cap on the number of concurrent open connections. When there is a mix of fast and slow clients, from throughput perspective, it may not always be optimal to hang on to slow clients for that long. For example, if 10 clients... | 1.0 | Evaluate the impact of initial delivery timeout on throughput - Currently, BrokerCell deployments have a default timeout of 10 minutes per event. There is also a cap on the number of concurrent open connections. When there is a mix of fast and slow clients, from throughput perspective, it may not always be optimal to h... | non_process | evaluate the impact of initial delivery timeout on throughput currently brokercell deployments have a default timeout of minutes per event there is also a cap on the number of concurrent open connections when there is a mix of fast and slow clients from throughput perspective it may not always be optimal to ha... | 0 |

7,911 | 11,092,068,876 | IssuesEvent | 2019-12-15 16:30:37 | qgis/QGIS | https://api.github.com/repos/qgis/QGIS | closed | Single most recently added Custom GDAL "Create options" profile does not work in Processing | Bug Processing | **New description**

Go in options > settings > GDAL

and create 2 or more custom "create options" profiles.

Then try to use them in Processing (GDAL tools) or in the "save as..." dialog (for rasters of course). Only the latter will work, while in the former only the 1st custom profile will work, the others shows em... | 1.0 | Single most recently added Custom GDAL "Create options" profile does not work in Processing - **New description**

Go in options > settings > GDAL

and create 2 or more custom "create options" profiles.

Then try to use them in Processing (GDAL tools) or in the "save as..." dialog (for rasters of course). Only the la... | process | single most recently added custom gdal create options profile does not work in processing new description go in options settings gdal and create or more custom create options profiles then try to use them in processing gdal tools or in the save as dialog for rasters of course only the la... | 1 |

8,266 | 11,428,819,188 | IssuesEvent | 2020-02-04 06:05:06 | kubeflow/manifests | https://api.github.com/repos/kubeflow/manifests | closed | Retag notebook images to v1.0 and update the configmap | area/jupyter feature kind/process priority/p0 | We should retag our existing jupyter images to be v1.0 and then update the configmap that lists them for the jupyter web app.

Rebuilding the docker images I think only makes sense if we are bumping the TF versions and thus the base images. Ideally we would do that but I don't think we should block on it. | 1.0 | Retag notebook images to v1.0 and update the configmap - We should retag our existing jupyter images to be v1.0 and then update the configmap that lists them for the jupyter web app.

Rebuilding the docker images I think only makes sense if we are bumping the TF versions and thus the base images. Ideally we would do ... | process | retag notebook images to and update the configmap we should retag our existing jupyter images to be and then update the configmap that lists them for the jupyter web app rebuilding the docker images i think only makes sense if we are bumping the tf versions and thus the base images ideally we would do th... | 1 |

92,413 | 15,857,075,330 | IssuesEvent | 2021-04-08 03:54:17 | f00b4rb00f/test-project | https://api.github.com/repos/f00b4rb00f/test-project | opened | WS-2020-0042 (High) detected in acorn-5.7.3.tgz | security vulnerability | ## WS-2020-0042 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>acorn-5.7.3.tgz</b></p></summary>

<p>ECMAScript parser</p>

<p>Library home page: <a href="https://registry.npmjs.org/aco... | True | WS-2020-0042 (High) detected in acorn-5.7.3.tgz - ## WS-2020-0042 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>acorn-5.7.3.tgz</b></p></summary>

<p>ECMAScript parser</p>

<p>Library ... | non_process | ws high detected in acorn tgz ws high severity vulnerability vulnerable library acorn tgz ecmascript parser library home page a href path to dependency file test project package json path to vulnerable library test project attacker app node modules acorn package jso... | 0 |

1,145 | 3,632,358,534 | IssuesEvent | 2016-02-11 09:30:24 | DevExpress/testcafe-hammerhead | https://api.github.com/repos/DevExpress/testcafe-hammerhead | closed | Setting up an href attribute for a non-added to DOM "base" tag should not cause urlResolver to update. | AREA: client SYSTEM: URL processing TYPE: bug | see https://testcafe-hhhm.devexpress.com/history?timestamp=1455001095958&siteUrl=http://www.microsoft.com/ru-ru/default.aspx | 1.0 | Setting up an href attribute for a non-added to DOM "base" tag should not cause urlResolver to update. - see https://testcafe-hhhm.devexpress.com/history?timestamp=1455001095958&siteUrl=http://www.microsoft.com/ru-ru/default.aspx | process | setting up an href attribute for a non added to dom base tag should not cause urlresolver to update see | 1 |

1,266 | 3,798,609,854 | IssuesEvent | 2016-03-23 13:17:58 | DynareTeam/dynare | https://api.github.com/repos/DynareTeam/dynare | closed | Be more flexible with calib_smoother command | enhancement preprocessor | Currently, we do not allow ```prefilter```, ```loglinear```, and ```first_obs```. I think they are all valid options that can be easily handled within the current framework. The first step would be to add the preprocessor interface allowing these options. | 1.0 | Be more flexible with calib_smoother command - Currently, we do not allow ```prefilter```, ```loglinear```, and ```first_obs```. I think they are all valid options that can be easily handled within the current framework. The first step would be to add the preprocessor interface allowing these options. | process | be more flexible with calib smoother command currently we do not allow prefilter loglinear and first obs i think they are all valid options that can be easily handled within the current framework the first step would be to add the preprocessor interface allowing these options | 1 |

13,006 | 15,364,691,598 | IssuesEvent | 2021-03-01 22:21:51 | nextgenhealthcare/connect | https://api.github.com/repos/nextgenhealthcare/connect | closed | When reprocessing messages, add a button to clear all the checkboxes for the destinations | checkboxes closed-due-to-inactivity reprocess | I have a number of channels with a large number of destinations. Sometimes I wish to reprocess selected messages to just one or two destinations. The default is that all destination checkboxes are selected. I should like to be able to clear this in one go, and just tick the detinations I need rather than uncheck man... | 1.0 | When reprocessing messages, add a button to clear all the checkboxes for the destinations - I have a number of channels with a large number of destinations. Sometimes I wish to reprocess selected messages to just one or two destinations. The default is that all destination checkboxes are selected. I should like to b... | process | when reprocessing messages add a button to clear all the checkboxes for the destinations i have a number of channels with a large number of destinations sometimes i wish to reprocess selected messages to just one or two destinations the default is that all destination checkboxes are selected i should like to b... | 1 |

42,827 | 2,874,573,734 | IssuesEvent | 2015-06-08 23:31:22 | NuGet/Home | https://api.github.com/repos/NuGet/Home | closed | Inconsistency in the way UI window is launched for projects and solutions. Either only 1 window should show up or multiple windows should show up in all the cases | 2 - Working Area:VS.Client Priority:2 Type:Bug | a. Repro steps

i. Create a console application

ii. Launch the 'Manage NuGet Packages' for solution. It opens a new window

iii. Launch the 'Manage NuGet Packages' for a project.

iv. Expected: It opens a new window

iv. Actual: It selects the 'Manage NuGet Packages' for solution rather than open a new win... | 1.0 | Inconsistency in the way UI window is launched for projects and solutions. Either only 1 window should show up or multiple windows should show up in all the cases - a. Repro steps

i. Create a console application

ii. Launch the 'Manage NuGet Packages' for solution. It opens a new window

iii. Launch the 'Manage NuG... | non_process | inconsistency in the way ui window is launched for projects and solutions either only window should show up or multiple windows should show up in all the cases a repro steps i create a console application ii launch the manage nuget packages for solution it opens a new window iii launch the manage nug... | 0 |

2,554 | 5,310,929,815 | IssuesEvent | 2017-02-13 00:06:46 | csstree/stylelint-validator | https://api.github.com/repos/csstree/stylelint-validator | closed | Ignoring Less variables | enhancement preprocessors | I took this lovely plugin for a whirl today, it correctly discovered some issues where my team had written invalid syntax, however the majority of the errors it reported was the `csstree/validator` rule choking on Less variables, ex:

```

src/Component/Component.less

28:13 ✖ Can't parse value "@link-hover-color" ... | 1.0 | Ignoring Less variables - I took this lovely plugin for a whirl today, it correctly discovered some issues where my team had written invalid syntax, however the majority of the errors it reported was the `csstree/validator` rule choking on Less variables, ex:

```

src/Component/Component.less

28:13 ✖ Can't parse v... | process | ignoring less variables i took this lovely plugin for a whirl today it correctly discovered some issues where my team had written invalid syntax however the majority of the errors it reported was the csstree validator rule choking on less variables ex src component component less ✖ can t parse val... | 1 |

309,841 | 23,307,461,493 | IssuesEvent | 2022-08-08 03:45:30 | stoneatom/stonedb | https://api.github.com/repos/stoneatom/stonedb | closed | bug: Pictures width exceeds content area in docs site. | A-bug A-documentation | ### Describe the problem

for example:

https://stonedb.io/docs/O&M-Guide/monitoring-and-alerting/prometheus+grafana-monitor/

For all the contents in the document that contain pictures, the width of the pictures exceeds the content area.

### Expected behavior

The width of the picture can be automatically adapted.

... | 1.0 | bug: Pictures width exceeds content area in docs site. - ### Describe the problem

for example:

https://stonedb.io/docs/O&M-Guide/monitoring-and-alerting/prometheus+grafana-monitor/

For all the contents in the document that contain pictures, the width of the pictures exceeds the content area.

### Expected behavior... | non_process | bug pictures width exceeds content area in docs site describe the problem for example for all the contents in the document that contain pictures the width of the pictures exceeds the content area expected behavior the width of the picture can be automatically adapted how to reproduce see ... | 0 |

12,175 | 14,741,929,772 | IssuesEvent | 2021-01-07 11:24:23 | kdjstudios/SABillingGitlab | https://api.github.com/repos/kdjstudios/SABillingGitlab | closed | Angel Care 045-W00922 - Billing Issues | anc-process anp-important ant-bug ant-enhancement ant-parent/primary | In GitLab by @kdjstudios on Feb 26, 2019, 15:43

**Submitted by:** "Kimberly Gagner" <kimberly.gagner@answernet.com>

**Helpdesk:** http://www.servicedesk.answernet.com/profiles/ticket/2019-02-26-38195/conversation

**Server:** Internal

**Client/Site:** Orlando

**Account:** Angel Care 045-W00922

**Issue:**

I recei... | 1.0 | Angel Care 045-W00922 - Billing Issues - In GitLab by @kdjstudios on Feb 26, 2019, 15:43

**Submitted by:** "Kimberly Gagner" <kimberly.gagner@answernet.com>

**Helpdesk:** http://www.servicedesk.answernet.com/profiles/ticket/2019-02-26-38195/conversation

**Server:** Internal

**Client/Site:** Orlando

**Account:** An... | process | angel care billing issues in gitlab by kdjstudios on feb submitted by kimberly gagner helpdesk server internal client site orlando account angel care issue i received an inquiry from our client angel care llc regarding the amount of their bill increa... | 1 |

14,962 | 18,452,557,101 | IssuesEvent | 2021-10-15 12:43:03 | mt-ag/apex-flowsforapex | https://api.github.com/repos/mt-ag/apex-flowsforapex | opened | [enhancement]: Add "Start Step" to Manage Flow Instance Step plug-in | enhancement process-plugin | ### Feature description

Ability to select "Start Step" as Action in the plug-in Manage Flow Instance Step.

This action should call the `flow_api_pkg.flow_start_step` procedure.

### Describe alternatives you've considered

_No response_

### Additional context

_No response_ | 1.0 | [enhancement]: Add "Start Step" to Manage Flow Instance Step plug-in - ### Feature description

Ability to select "Start Step" as Action in the plug-in Manage Flow Instance Step.

This action should call the `flow_api_pkg.flow_start_step` procedure.

### Describe alternatives you've considered

_No response_

### Addi... | process | add start step to manage flow instance step plug in feature description ability to select start step as action in the plug in manage flow instance step this action should call the flow api pkg flow start step procedure describe alternatives you ve considered no response additional conte... | 1 |

17,790 | 23,716,175,880 | IssuesEvent | 2022-08-30 11:59:10 | wp-media/wp-rocket | https://api.github.com/repos/wp-media/wp-rocket | closed | PHP warning: Invalid argument supplied for foreach() in wp-background-process.php | type: bug module: preload tool: background process | **Before submitting an issue please check that you’ve completed the following steps:**

- Made sure you’re on the latest version - 3.9.0.5

- Used the search feature to ensure that the bug hasn’t been reported before - yes

**Describe the bug**

WP_DEBUG logs multiple errors of the following content:

`PHP Warning: I... | 1.0 | PHP warning: Invalid argument supplied for foreach() in wp-background-process.php - **Before submitting an issue please check that you’ve completed the following steps:**

- Made sure you’re on the latest version - 3.9.0.5

- Used the search feature to ensure that the bug hasn’t been reported before - yes

**Describe... | process | php warning invalid argument supplied for foreach in wp background process php before submitting an issue please check that you’ve completed the following steps made sure you’re on the latest version used the search feature to ensure that the bug hasn’t been reported before yes describe... | 1 |

378,777 | 26,337,545,349 | IssuesEvent | 2023-01-10 15:21:54 | RandomFractals/geo-data-viewer | https://api.github.com/repos/RandomFractals/geo-data-viewer | closed | Refine documentation sections in README.md | documentation | Update extension description, recommended extensions list, and other sections in docs. | 1.0 | Refine documentation sections in README.md - Update extension description, recommended extensions list, and other sections in docs. | non_process | refine documentation sections in readme md update extension description recommended extensions list and other sections in docs | 0 |

2,487 | 5,266,003,035 | IssuesEvent | 2017-02-04 07:18:45 | mitchellh/packer | https://api.github.com/repos/mitchellh/packer | closed | Support replacing placeholders in vagrantfile with user variables | enhancement post-processor/vagrant | I would really love to have support for replacing variables in my vagrant template file with user variables.

Vagrantfile.template

``` ruby

config.vm.define {{boxname}}

config.vm.box {{boxname}}

config.ssh.forward_agent {{forwardSshAgent}}

```

packer.json

``` json

{

"variables": {

"boxname": ... | 1.0 | Support replacing placeholders in vagrantfile with user variables - I would really love to have support for replacing variables in my vagrant template file with user variables.

Vagrantfile.template

``` ruby

config.vm.define {{boxname}}

config.vm.box {{boxname}}

config.ssh.forward_agent {{forwardSshAgent}}... | process | support replacing placeholders in vagrantfile with user variables i would really love to have support for replacing variables in my vagrant template file with user variables vagrantfile template ruby config vm define boxname config vm box boxname config ssh forward agent forwardsshagent ... | 1 |

19,211 | 25,344,555,139 | IssuesEvent | 2022-11-19 03:42:18 | Ultimate-Hosts-Blacklist/whitelist | https://api.github.com/repos/Ultimate-Hosts-Blacklist/whitelist | closed | [FALSE-POSITIVE?] bkrenergy.ca | whitelisting process | **Domains or links**

<!-- Please list below any domains and links listed here which you believe are a false positive. -->

- bkrenergy.ca 172.64.80.1

**More Information**

1. This must be a new addition, I stopped being able to access my thermostat through my app

2. I then found the IP of the company, found it... | 1.0 | [FALSE-POSITIVE?] bkrenergy.ca - **Domains or links**

<!-- Please list below any domains and links listed here which you believe are a false positive. -->

- bkrenergy.ca 172.64.80.1

**More Information**

1. This must be a new addition, I stopped being able to access my thermostat through my app

2. I then foun... | process | bkrenergy ca domains or links bkrenergy ca more information this must be a new addition i stopped being able to access my thermostat through my app i then found the ip of the company found it in your list tested by disabling the list and trying again and it worked additi... | 1 |

10,445 | 13,224,700,491 | IssuesEvent | 2020-08-17 19:40:07 | jyn514/saltwater | https://api.github.com/repos/jyn514/saltwater | opened | The order of preprocessor stringifying is wrong. | bug preprocessor | Stringify should be applied before doing replacement on the function parameters, but that replacement should occur before passing them to deeper functions (I think). I expect this has been broken since #456 but I will dig deeper.

# Sample Code

```

#define str(a) # a

#define xstr(a) str(a)

#define f(x) [x]

str... | 1.0 | The order of preprocessor stringifying is wrong. - Stringify should be applied before doing replacement on the function parameters, but that replacement should occur before passing them to deeper functions (I think). I expect this has been broken since #456 but I will dig deeper.

# Sample Code

```

#define str(a) #... | process | the order of preprocessor stringifying is wrong stringify should be applied before doing replacement on the function parameters but that replacement should occur before passing them to deeper functions i think i expect this has been broken since but i will dig deeper sample code define str a a... | 1 |

301,465 | 9,220,702,193 | IssuesEvent | 2019-03-11 18:07:07 | python/mypy | https://api.github.com/repos/python/mypy | closed | attr converter with default value - false error | priority-1-normal topic-attrs | When using `attr` attribute with both `converter` and `default`, wrong expectations is applied on `default` type with mypy == 0.670

The valid code

```python

import attr

import typing

def to_int(x: typing.Any) -> int:

return int(x)

@attr.s

class C:

a: int = attr.ib(converter=to_int, default="1")

... | 1.0 | attr converter with default value - false error - When using `attr` attribute with both `converter` and `default`, wrong expectations is applied on `default` type with mypy == 0.670

The valid code

```python

import attr

import typing

def to_int(x: typing.Any) -> int:

return int(x)

@attr.s

class C:

... | non_process | attr converter with default value false error when using attr attribute with both converter and default wrong expectations is applied on default type with mypy the valid code python import attr import typing def to int x typing any int return int x attr s class c a... | 0 |

31,855 | 7,460,097,830 | IssuesEvent | 2018-03-30 18:08:48 | google/shaka-player | https://api.github.com/repos/google/shaka-player | opened | Complete implementation of TS stream generator | code health enhancement | For our integration tests, we have a stream generator that generates DASH-style streams of Mp4 files. With the recent integration test for CEA-708 captions, a stream generator was created for HLS-style streams of TS files, but the implementation is very stripped-down. It makes a "stream" with a single segment.

A more ... | 1.0 | Complete implementation of TS stream generator - For our integration tests, we have a stream generator that generates DASH-style streams of Mp4 files. With the recent integration test for CEA-708 captions, a stream generator was created for HLS-style streams of TS files, but the implementation is very stripped-down. It... | non_process | complete implementation of ts stream generator for our integration tests we have a stream generator that generates dash style streams of files with the recent integration test for cea captions a stream generator was created for hls style streams of ts files but the implementation is very stripped down it mak... | 0 |

5,423 | 8,269,188,675 | IssuesEvent | 2018-09-15 02:28:56 | shirou/gopsutil | https://api.github.com/repos/shirou/gopsutil | closed | [process] get invalid pid by Processes in darwin_amd64 | os:darwin package:process | When I run code:

```

all, err := process.Processes()

if err != nil {

panic(err)

}

for _, p := range all {

fmt.Println("pid", p.Pid)

}

```

It will show some invalid pid, such as

```

pid 1531858944

```

using gopsutil: [ef54649](https://github.com/shirou/gopsutil/commit/ef54649286408053c0620d366d... | 1.0 | [process] get invalid pid by Processes in darwin_amd64 - When I run code:

```

all, err := process.Processes()

if err != nil {

panic(err)

}

for _, p := range all {

fmt.Println("pid", p.Pid)

}

```

It will show some invalid pid, such as

```

pid 1531858944

```

using gopsutil: [ef54649](https://git... | process | get invalid pid by processes in darwin when i run code all err process processes if err nil panic err for p range all fmt println pid p pid it will show some invalid pid such as pid using gopsutil | 1 |

126,503 | 4,996,644,099 | IssuesEvent | 2016-12-09 14:32:57 | HBHWoolacotts/RPii | https://api.github.com/repos/HBHWoolacotts/RPii | closed | "Unconfirm Selected Line" is not available if the sale is on a HP | FIXED - HBH Live Label: General RP Bugs and Support Priority - Low | "Unconfirm Selected Line" is not available if the sale is on a HP

| 1.0 | "Unconfirm Selected Line" is not available if the sale is on a HP - "Unconfirm Selected Line" is not available if the sale is on a HP

| non_process | unconfirm selected line is not available if the sale is on a hp unconfirm selected line is not available if the sale is on a hp | 0 |

3,596 | 6,625,802,318 | IssuesEvent | 2017-09-22 16:50:22 | wpninjas/ninja-forms | https://api.github.com/repos/wpninjas/ninja-forms | closed | Required Fields that have not been focused do not get caught as required | DIFFICULTY: Easy FRONT: Processing PRIORITY: High VALUE: Painless | Any required field that has been conditionally shown (part or individual field) is not being treated as "required" for Form Submission to take place. | 1.0 | Required Fields that have not been focused do not get caught as required - Any required field that has been conditionally shown (part or individual field) is not being treated as "required" for Form Submission to take place. | process | required fields that have not been focused do not get caught as required any required field that has been conditionally shown part or individual field is not being treated as required for form submission to take place | 1 |

3,730 | 6,733,142,355 | IssuesEvent | 2017-10-18 13:58:37 | york-region-tpss/stp | https://api.github.com/repos/york-region-tpss/stp | closed | Contract preparation single item view - Process Data | process workflow | Flush the data in form and collection to the database. | 1.0 | Contract preparation single item view - Process Data - Flush the data in form and collection to the database. | process | contract preparation single item view process data flush the data in form and collection to the database | 1 |

97,207 | 20,194,231,642 | IssuesEvent | 2022-02-11 09:10:39 | Regalis11/Barotrauma | https://api.github.com/repos/Regalis11/Barotrauma | opened | Ballast flora improvements part 2 | Code Design Design pending | Split from the ticket https://github.com/regalis11/barotrauma-development/issues/2992

> Investigate a way in which keeping the organism is a valid tactic, albeit one that requires trimming of the plant from electronics. Consider player incentives. | 1.0 | Ballast flora improvements part 2 - Split from the ticket https://github.com/regalis11/barotrauma-development/issues/2992

> Investigate a way in which keeping the organism is a valid tactic, albeit one that requires trimming of the plant from electronics. Consider player incentives. | non_process | ballast flora improvements part split from the ticket investigate a way in which keeping the organism is a valid tactic albeit one that requires trimming of the plant from electronics consider player incentives | 0 |

14,249 | 17,185,235,782 | IssuesEvent | 2021-07-16 00:06:32 | filecoin-project/lotus | https://api.github.com/repos/filecoin-project/lotus | closed | [Deal Making Issue] | area/markets epic/seal-unseal-process hint/needs-author-input kind/stale | **Basic Information**

Client and Miner side for online retrieval deal.

**Describe the problem**

The client is trying to retrieval an online storage deal and is running into "ERROR: retrieval failed: Retrieve: Retrieval Error: error generated by data transfer: deal data transfer failed: incomplete response".

**V... | 1.0 | [Deal Making Issue] - **Basic Information**

Client and Miner side for online retrieval deal.

**Describe the problem**

The client is trying to retrieval an online storage deal and is running into "ERROR: retrieval failed: Retrieve: Retrieval Error: error generated by data transfer: deal data transfer failed: incomp... | process | basic information client and miner side for online retrieval deal describe the problem the client is trying to retrieval an online storage deal and is running into error retrieval failed retrieve retrieval error error generated by data transfer deal data transfer failed incomplete response ... | 1 |

18,729 | 4,308,328,203 | IssuesEvent | 2016-07-21 12:37:19 | spring-cloud/spring-cloud-netflix | https://api.github.com/repos/spring-cloud/spring-cloud-netflix | reopened | Feign: setting header in feign RequestInterceptor does not work in BRIXTON.SR3 | documentation | We want to switch from spring-cloud-1.0.0-RELEASE to spring-cloud-Brixton.SR3 and and find that calls to @FeignClient annotated services interfaces are executed in an async manner (hystrix command).

This way our registred feign ReceptInterceptors which uses ThreadLocal bound headers to be forwarded to the receiver d... | 1.0 | Feign: setting header in feign RequestInterceptor does not work in BRIXTON.SR3 - We want to switch from spring-cloud-1.0.0-RELEASE to spring-cloud-Brixton.SR3 and and find that calls to @FeignClient annotated services interfaces are executed in an async manner (hystrix command).

This way our registred feign ReceptIn... | non_process | feign setting header in feign requestinterceptor does not work in brixton we want to switch from spring cloud release to spring cloud brixton and and find that calls to feignclient annotated services interfaces are executed in an async manner hystrix command this way our registred feign receptinterc... | 0 |

9,157 | 12,217,314,720 | IssuesEvent | 2020-05-01 16:56:15 | MicrosoftDocs/azure-devops-docs | https://api.github.com/repos/MicrosoftDocs/azure-devops-docs | closed | partially successful/warning condition | Pri1 devops-cicd-process/tech devops/prod doc-bug | If a task fails with continue on error the job is triggered as partially successful. Is there a condition to pick up on this? I tried with failed() which didn't trigger and succeeded() which worked. But i don't really want it to trigger on true succeeded...

---

#### Document Details

⚠ *Do not edit this section. It is... | 1.0 | partially successful/warning condition - If a task fails with continue on error the job is triggered as partially successful. Is there a condition to pick up on this? I tried with failed() which didn't trigger and succeeded() which worked. But i don't really want it to trigger on true succeeded...

---

#### Document De... | process | partially successful warning condition if a task fails with continue on error the job is triggered as partially successful is there a condition to pick up on this i tried with failed which didn t trigger and succeeded which worked but i don t really want it to trigger on true succeeded document de... | 1 |

9,596 | 12,543,313,730 | IssuesEvent | 2020-06-05 15:21:00 | knative/serving | https://api.github.com/repos/knative/serving | closed | Kubernetes 1.16 minimum version | area/API area/test-and-release kind/feature kind/process | /area API

/area test-and-release

/kind process

## Describe the feature

This happens in knative/pkg, but opening this here to track.

This is in accordance with our Release Principles. | 1.0 | Kubernetes 1.16 minimum version - /area API

/area test-and-release

/kind process

## Describe the feature

This happens in knative/pkg, but opening this here to track.

This is in accordance with our Release Principles. | process | kubernetes minimum version area api area test and release kind process describe the feature this happens in knative pkg but opening this here to track this is in accordance with our release principles | 1 |

1,285 | 3,822,507,638 | IssuesEvent | 2016-03-30 01:36:50 | dataproofer/Dataproofer | https://api.github.com/repos/dataproofer/Dataproofer | opened | Read in Excel file data | engine: processing large | Currently the app handles data from a CSV file or a Google Spreadsheet tab. We'd need to add this functionality into the logic in `src/` and some UI into `electron/`. Dataproofer reads spreadsheets in as an array of row objects, so we'll have to convert it if it comes in as an array of row arrays.

## Possible Fix

[... | 1.0 | Read in Excel file data - Currently the app handles data from a CSV file or a Google Spreadsheet tab. We'd need to add this functionality into the logic in `src/` and some UI into `electron/`. Dataproofer reads spreadsheets in as an array of row objects, so we'll have to convert it if it comes in as an array of row arr... | process | read in excel file data currently the app handles data from a csv file or a google spreadsheet tab we d need to add this functionality into the logic in src and some ui into electron dataproofer reads spreadsheets in as an array of row objects so we ll have to convert it if it comes in as an array of row arr... | 1 |

2,931 | 5,917,225,949 | IssuesEvent | 2017-05-22 12:45:16 | intelsdi-x/snap | https://api.github.com/repos/intelsdi-x/snap | closed | Plugin wanted: logs filter | plugin-wishlist/processor | I'd like to have a processor plugin to filter logs (using tags) collected by [snap-plugin-collector-logs](https://github.com/intelsdi-x/snap-plugin-collector-logs). | 1.0 | Plugin wanted: logs filter - I'd like to have a processor plugin to filter logs (using tags) collected by [snap-plugin-collector-logs](https://github.com/intelsdi-x/snap-plugin-collector-logs). | process | plugin wanted logs filter i d like to have a processor plugin to filter logs using tags collected by | 1 |

4,959 | 7,802,863,316 | IssuesEvent | 2018-06-10 17:12:25 | StrikeNP/trac_test | https://api.github.com/repos/StrikeNP/trac_test | closed | Add useful plots and get rid of useless plots on plotgen (Trac #824) | Migrated from Trac bmg2@uwm.edu post_processing task | Plotgen could be improved by adding more useful panels to it. However, that also increases the amount of space that each plotgen ```.maff``` files takes up, and we don't want to go over the attachment limit for Trac. In order to make room for new plots, useless panels should be taken off plotgen. An example of a use... | 1.0 | Add useful plots and get rid of useless plots on plotgen (Trac #824) - Plotgen could be improved by adding more useful panels to it. However, that also increases the amount of space that each plotgen ```.maff``` files takes up, and we don't want to go over the attachment limit for Trac. In order to make room for new ... | process | add useful plots and get rid of useless plots on plotgen trac plotgen could be improved by adding more useful panels to it however that also increases the amount of space that each plotgen maff files takes up and we don t want to go over the attachment limit for trac in order to make room for new pl... | 1 |

1,888 | 2,578,103,259 | IssuesEvent | 2015-02-12 21:05:20 | SCIInstitute/SCIRun | https://api.github.com/repos/SCIInstitute/SCIRun | opened | Unstable streamline computation methods in GenerateStreamLines module | Modules Priority-Medium Testing | Cell walk is definitely unstable (see SCIRun 5's GenerateStreamLines-cellwalk-partial.srn5 vs SCIRun 4's GenerateStreamLines-cellwalk.srn). Other computation methods need testing too. | 1.0 | Unstable streamline computation methods in GenerateStreamLines module - Cell walk is definitely unstable (see SCIRun 5's GenerateStreamLines-cellwalk-partial.srn5 vs SCIRun 4's GenerateStreamLines-cellwalk.srn). Other computation methods need testing too. | non_process | unstable streamline computation methods in generatestreamlines module cell walk is definitely unstable see scirun s generatestreamlines cellwalk partial vs scirun s generatestreamlines cellwalk srn other computation methods need testing too | 0 |

18,362 | 24,492,287,732 | IssuesEvent | 2022-10-10 04:16:50 | phamtanduongtk29/html-css-training | https://api.github.com/repos/phamtanduongtk29/html-css-training | opened | Create and Responsive pricing section | not yet processing | - Estimates; 7h

- Create heading

- Create table price list :

- Price

- Description | 1.0 | Create and Responsive pricing section - - Estimates; 7h

- Create heading