Unnamed: 0 (int64, 0 to 832k) | id (float64, 2.49B to 32.1B) | type (string, 1 class) | created_at (string, length 19) | repo (string, length 7 to 112) | repo_url (string, length 36 to 141) | action (string, 3 classes) | title (string, length 1 to 744) | labels (string, length 4 to 574) | body (string, length 9 to 211k) | index (string, 10 classes) | text_combine (string, length 96 to 211k) | label (string, 2 classes) | text (string, length 96 to 188k) | binary_label (int64, 0 to 1) |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

74,347 | 20,142,018,073 | IssuesEvent | 2022-02-09 00:54:32 | microsoft/PowerToys | https://api.github.com/repos/microsoft/PowerToys | closed | [Build] Build failed: Path to exceeds max length | Issue-Bug Product-PowerToys Run Area-Build Needs-Triage | ### Microsoft PowerToys version

f2a3fa5ec68d1b07ab347beadc8f6e9160069cce

### Running as admin

- [ ] Yes

### Area(s) with issue?

PowerToys Run

### Steps to reproduce

Build Launcher.

### ✔️ Expected Behavior

Build works.

### ❌ Actual Behavior

```

Severity Code Description Project File Line Suppression State

... | 1.0 | [Build] Build failed: Path to exceeds max length - ### Microsoft PowerToys version

f2a3fa5ec68d1b07ab347beadc8f6e9160069cce

### Running as admin

- [ ] Yes

### Area(s) with issue?

PowerToys Run

### Steps to reproduce

Build Launcher.

### ✔️ Expected Behavior

Build works.

### ❌ Actual Behavior

```

Severity Cod... | non_process | build failed path to exceeds max length microsoft powertoys version running as admin yes area s with issue powertoys run steps to reproduce build launcher ✔️ expected behavior build works ❌ actual behavior severity code description project file line suppression sta... | 0 |

9,038 | 12,130,107,934 | IssuesEvent | 2020-04-23 00:30:40 | GoogleCloudPlatform/python-docs-samples | https://api.github.com/repos/GoogleCloudPlatform/python-docs-samples | closed | remove gcp-devrel-py-tools from appengine/standard/endpoints-frameworks-v2/quickstart/requirements-test.txt | priority: p2 remove-gcp-devrel-py-tools type: process | remove gcp-devrel-py-tools from appengine/standard/endpoints-frameworks-v2/quickstart/requirements-test.txt | 1.0 | remove gcp-devrel-py-tools from appengine/standard/endpoints-frameworks-v2/quickstart/requirements-test.txt - remove gcp-devrel-py-tools from appengine/standard/endpoints-frameworks-v2/quickstart/requirements-test.txt | process | remove gcp devrel py tools from appengine standard endpoints frameworks quickstart requirements test txt remove gcp devrel py tools from appengine standard endpoints frameworks quickstart requirements test txt | 1 |

8,150 | 11,354,731,938 | IssuesEvent | 2020-01-24 18:19:40 | googleapis/java-texttospeech | https://api.github.com/repos/googleapis/java-texttospeech | closed | Promote to GA | type: process | Package name: **google-cloud-texttospeech**

Current release: **beta**

Proposed release: **GA**

## Instructions

Check the lists below, adding tests / documentation as required. Once all the "required" boxes are ticked, please create a release and close this issue.

## Required

- [x] 28 days elapsed si... | 1.0 | Promote to GA - Package name: **google-cloud-texttospeech**

Current release: **beta**

Proposed release: **GA**

## Instructions

Check the lists below, adding tests / documentation as required. Once all the "required" boxes are ticked, please create a release and close this issue.

## Required

- [x] 28... | process | promote to ga package name google cloud texttospeech current release beta proposed release ga instructions check the lists below adding tests documentation as required once all the required boxes are ticked please create a release and close this issue required da... | 1 |

9,193 | 12,229,616,961 | IssuesEvent | 2020-05-04 01:07:08 | chfor183/data_science_articles | https://api.github.com/repos/chfor183/data_science_articles | opened | Outliers | Data Preprocessing | ## TL;DR

Yes

## Key Takeaways

- Remove 2% at each end

- Remove values more than 1.5x the interquartile range (IQR = Q3 - Q1) beyond the quartiles

- Study individually

## Useful Code Snippets

```

function test() {

console.log("notice the blank line before this function?");

}

```
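
The interquartile-range takeaway above can be sketched in a few lines of Python. This is a minimal illustration, not code from the issue: it assumes the conventional definition IQR = Q3 - Q1 and the usual 1.5x fences, and the helper names `iqr_bounds` and `drop_outliers` are hypothetical.

```python
import statistics


def iqr_bounds(values, k=1.5):
    """Return (low, high) fences at k * IQR beyond the first and third quartiles."""
    # quantiles(n=4) returns the three cut points Q1, Q2 (median), Q3.
    q1, _median, q3 = statistics.quantiles(values, n=4, method="inclusive")
    iqr = q3 - q1  # assumed definition: IQR = Q3 - Q1
    return q1 - k * iqr, q3 + k * iqr


def drop_outliers(values, k=1.5):
    """Keep only the values that fall inside the IQR fences."""
    low, high = iqr_bounds(values, k)
    return [v for v in values if low <= v <= high]


if __name__ == "__main__":
    sample = [1, 2, 3, 4, 5, 6, 7, 8, 9, 100]
    print(drop_outliers(sample))
```

Whether to drop, cap, or inspect the flagged values individually is a separate decision; the sketch only shows how the fences are computed.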

## Articles/Resources | 1.0 | Outliers - ## TL;DR

Yes

## Key Takeaways

- Remove 2% at each end

- Remove values more than 1.5x the interquartile range (IQR = Q3 - Q1) beyond the quartiles

- Study individually

## Useful Code Snippets

```

function test() {

console.log("notice the blank line before this function?");

}

```

## Articles/Resources | process | outliers tl dr yes key takeaways remove at each end remove the interquartile range study individually useful code snippets function test console log notice the blank line before this function articles resources | 1 |

31,209 | 11,887,383,012 | IssuesEvent | 2020-03-28 01:30:41 | dotnet/runtime | https://api.github.com/repos/dotnet/runtime | closed | mono test failure: System.Security.Cryptography.Csp.Tests | area-System.Security untriaged | Found failure in "Libraries Test Run release mono OSX x64 Debug" on PR https://github.com/dotnet/runtime/pull/34208:

https://dev.azure.com/dnceng/public/_build/results?buildId=577051

```

.packages/microsoft.dotnet.helix.sdk/5.0.0-beta.20171.1/tools/Microsoft.DotNet.Helix.Sdk.MultiQueue.targets(76,5): error : (NETC... | True | mono test failure: System.Security.Cryptography.Csp.Tests - Found failure in "Libraries Test Run release mono OSX x64 Debug" on PR https://github.com/dotnet/runtime/pull/34208:

https://dev.azure.com/dnceng/public/_build/results?buildId=577051

```

.packages/microsoft.dotnet.helix.sdk/5.0.0-beta.20171.1/tools/Micros... | non_process | mono test failure system security cryptography csp tests found failure in libraries test run release mono osx debug on pr packages microsoft dotnet helix sdk beta tools microsoft dotnet helix sdk multiqueue targets error netcore engineering telemetry test work item sys... | 0 |

14,735 | 17,970,651,633 | IssuesEvent | 2021-09-14 01:14:05 | hashgraph/hedera-mirror-node | https://api.github.com/repos/hashgraph/hedera-mirror-node | closed | Add a rosetta helm chart | enhancement P1 process rosetta | **Problem**

Helm chart currently contains sub charts for all modules except rosetta

**Solution**

Add a sub chart that uses the rosetta docker image and exposes customizable configs.

As a chart it can be similar to the REST API chart

**Alternatives**

**Additional Context**

Depends on docker images deploye... | 1.0 | Add a rosetta helm chart - **Problem**

Helm chart currently contains sub charts for all modules except rosetta

**Solution**

Add a sub chart that uses the rosetta docker image and exposes customizable configs.

As a chart it can be similar to the REST API chart

**Alternatives**

**Additional Context**

Depen... | process | add a rosetta helm chart problem helm chart currently contains sub charts for all modules except rosetta solution add a sub chart that uses the rosetta docker image and exposes customizable configs as a chart it can be similar to the rest api chart alternatives additional context depen... | 1 |

6,599 | 9,682,230,970 | IssuesEvent | 2019-05-23 08:43:01 | brandon1roadgears/Interpreter-of-programming-language-of-Turing-Machine | https://api.github.com/repos/brandon1roadgears/Interpreter-of-programming-language-of-Turing-Machine | closed | !!!PLANS ARE CHANGING!!! Working with files | C++ Work in process help wanted | @goldmen4ik little job for u

## For comfortable work with the program, the rules and the source string must be read from a file.

* In the function input_rules, add a loop in which the files will be read.

* Also add reading of the source string from a file in the function input_main_row | 1.0 | !!!PLANS ARE CHANGING!!! Working with files - @goldmen4ik little job for u

## For comfortable work with the program, the rules and the source string must be read from a file.

* In the function input_rules, add a loop in which the files will be read.

* Also add reading of the source string from a file in the func... | process | plans are changing working with files little job for u for comfortable work with the program it is necessary that the rules and the source string are read from a file in the function input rules add a loop in which the files will be read also add reading of the source string from a file in the function input... | 1 |

4,712 | 7,550,041,430 | IssuesEvent | 2018-04-18 15:44:46 | DevExpress/testcafe-hammerhead | https://api.github.com/repos/DevExpress/testcafe-hammerhead | reopened | Remove `integrity` attributes from resources | !IMPORTANT! AREA: client AREA: server SYSTEM: resource processing TYPE: bug | See: http://www.w3.org/TR/SRI/

This attribute is used to avoid MITM attacks. It contains the SHA hash of the desired resource, which doesn't work for us. It is widely used at present, and it breaks e.g. GitHub proxying. I suggest removing this attribute entirely. It applies only to `<script>` and `<link>` elements.
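
The suggestion to drop the attribute can be illustrated with a small Python sketch. This is not testcafe-hammerhead's implementation (that project is written in JavaScript); the function name `strip_integrity` and the regex-based approach are assumptions made purely for illustration, and a regex is not a full HTML parser (for example, it does not handle attribute values that contain `>`).

```python
import re

# Opening <script ...> or <link ...> tags only.
_TAG = re.compile(r"<(script|link)\b[^>]*>", re.IGNORECASE)

# An integrity attribute with a double-quoted, single-quoted, or bare value.
_INTEGRITY = re.compile(
    r"""\s+integrity\s*=\s*(?:"[^"]*"|'[^']*'|[^\s>]+)""",
    re.IGNORECASE,
)


def strip_integrity(html):
    """Remove integrity attributes from <script> and <link> opening tags."""
    return _TAG.sub(lambda match: _INTEGRITY.sub("", match.group(0)), html)


if __name__ == "__main__":
    page = '<script src="a.js" integrity="sha384-abc" crossorigin="anonymous"></script>'
    print(strip_integrity(page))
```

Other elements are left untouched, which matches the note that the attribute applies only to `<script>` and `<link>`.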

| 1.0 | Remove `integrity` attributes from resources - See: http://www.w3.org/TR/SRI/

This attribute is used to avoid MITM attacks. It contains the SHA hash of the desired resource, which doesn't work for us. It is widely used at present, and it breaks e.g. GitHub proxying. I suggest removing this attribute entirely. It appli... | process | remove integrity attributes from resources see this attribute is used to avoid mitm attacks attribute contains sha of the desired resource which doesn t work for us this is widely used currently and it breaks e g github proxying i suggest to remove this attribute entirely it applies only to and e... | 1 |

57,882 | 24,258,072,494 | IssuesEvent | 2022-09-27 19:40:15 | cityofaustin/atd-data-tech | https://api.github.com/repos/cityofaustin/atd-data-tech | closed | [Enhancement] Ability to search by Project ID | Service: Dev Need: 1-Must Have Type: Enhancement Product: Moped Project: Moped v2.0 | Now that we have project IDs rendering everywhere (#9551), users should easily be able to search by project ID with the non-advanced search features.

<img width="1447" alt="Screen Shot 2022-09-21 at 2 24 23 PM" src="https://user-images.githubusercontent.com/14793120/191582332-2e56aa5e-ea30-46f5-a3b9-940f0a269ff8.png... | 1.0 | [Enhancement] Ability to search by Project ID - Now that we have project IDs rendering everywhere (#9551), users should easily be able to search by project ID with the non-advanced search features.

<img width="1447" alt="Screen Shot 2022-09-21 at 2 24 23 PM" src="https://user-images.githubusercontent.com/14793120/19... | non_process | ability to search by project id now that we have project ids rendering everywhere users should easily be able to search by project id with the non advanced search features img width alt screen shot at pm src | 0 |

20,362 | 6,034,229,861 | IssuesEvent | 2017-06-09 10:28:36 | mantidproject/mantid | https://api.github.com/repos/mantidproject/mantid | closed | Clean up code in MultipleFileProperty | Quality: Code Quality | #19826 was merged in time for the release but comments on the code are valid and need to be addressed.

I think other clean ups would be worthwhile such as making some variables, `constexpr` rather than constructing them each time through the methods, e.g. [here](https://github.com/mantidproject/mantid/blob/0488b194c... | 1.0 | Clean up code in MultipleFileProperty - #19826 was merged in time for the release but comments on the code are valid and need to be addressed.

I think other clean ups would be worthwhile such as making some variables, `constexpr` rather than constructing them each time through the methods, e.g. [here](https://github... | non_process | clean up code in multiplefileproperty was merged in time for the release but comments on the code are valid and need to be addressed i think other clean ups would be worthwhile such as making some variables constexpr rather than constructing them each time through the methods e g | 0 |

19,324 | 25,472,107,774 | IssuesEvent | 2022-11-25 11:04:58 | GoogleCloudPlatform/fda-mystudies | https://api.github.com/repos/GoogleCloudPlatform/fda-mystudies | closed | [IDP] [PM] Getting an error message in the edit admin screen | Bug Blocker P0 Participant manager Process: Fixed Process: Tested QA Process: Tested dev | **Pre-condition:** mfa should be disabled in the PM

Getting an error message when edited admin in the application,

**Steps:**

1. Login to PM

2. Click on 'Admins' tab

3. Edit admin in the list

4. Remove the phone number

5. Click on 'Save' button and Verify

**AR:** Getting an error message as attached in ... | 3.0 | [IDP] [PM] Getting an error message in the edit admin screen - **Pre-condition:** mfa should be disabled in the PM

Getting an error message when edited admin in the application,

**Steps:**

1. Login to PM

2. Click on 'Admins' tab

3. Edit admin in the list

4. Remove the phone number

5. Click on 'Save' button a... | process | getting an error message in the edit admin screen pre condition mfa should be disabled in the pm getting an error message when edited admin in the application steps login to pm click on admins tab edit admin in the list remove the phone number click on save button and veri... | 1 |

14,708 | 17,909,920,523 | IssuesEvent | 2021-09-09 02:50:37 | pytorch/pytorch | https://api.github.com/repos/pytorch/pytorch | closed | Cuda Tensor IPC Does Not Work Properly With P2P Access | module: multiprocessing module: cuda triaged | ## 🐛 Bug

<!-- A clear and concise description of what the bug is. -->

CUDA support both memory IPC and P2P Access, and also combination of them, which means If I allocate a block of memory on device A and send it to child process which runs on device B with CUDA IPC API and device A and device B support P2P acces... | 1.0 | Cuda Tensor IPC Does Not Work Properly With P2P Access - ## 🐛 Bug

<!-- A clear and concise description of what the bug is. -->

CUDA support both memory IPC and P2P Access, and also combination of them, which means If I allocate a block of memory on device A and send it to child process which runs on device B with ... | process | cuda tensor ipc does not work properly with access 🐛 bug cuda support both memory ipc and access and also combination of them which means if i allocate a block of memory on device a and send it to child process which runs on device b with cuda ipc api and device a and device b support access chil... | 1 |

29,254 | 14,018,897,184 | IssuesEvent | 2020-10-29 17:25:56 | reima-ecom/reima-theme | https://api.github.com/repos/reima-ecom/reima-theme | closed | Eagerly load above-the-fold images | performance | Pages should load the hero image (first module) eagerly.

Product pages should load the main product picture eagerly.

See README for details. | True | Eagerly load above-the-fold images - Pages should load the hero image (first module) eagerly.

Product pages should load the main product picture eagerly.

See README for details. | non_process | eagerly load above the fold images pages should load the hero image first module eagerly product pages should load the main product picture eagerly see readme for details | 0 |

9,771 | 12,758,656,627 | IssuesEvent | 2020-06-29 03:06:31 | didi/mpx | https://api.github.com/repos/didi/mpx | closed | [Bug report] ByteDance mini program ad: webpack validation error | processing | **Problem description**

When running npm run build:cross, the following message appears:

```

<ad> is not supported in bytedance environment!

```

**Environment information**

Include at least the following:

1. System type (Mac or Windows): mac

2. Mpx dependency versions:

> "@mpxjs/api-proxy": "^2.5.20",

> "@mpxjs/core": "^2.5.28",

> "@mpxjs/fetch": "^2.3.9"

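The ad tag component is already supported: https://microapp.bytedance.com/dev/cn/m... | 1.0 | [Bug report] ByteDance mini program ad: webpack validation error - **Problem description**

When running npm run build:cross, the following message appears: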

```

<ad> is not supported in bytedance environment!

```

**Environment information**

Include at least the following:

1. System type (Mac or Windows): mac

2. Mpx dependency versions:

> "@mpxjs/api-proxy": "^2.5.20",

> "@mpxjs/core": "^2.5.28",

> "@mpxjs/fetch": "^2.3.9"

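The ad tag component is already supported: h... | process | bytedance mini program ad webpack validation error problem description when running npm run build cross the following message appears is not supported in bytedance environment environment information include at least the following system type mac or windows mac mpx dependency versions mpxjs api proxy mpxjs core mpxjs fetch the ad tag component is already supported | 1 |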

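11,944 | 14,708,853,095 | IssuesEvent | 2021-01-05 00:46:58 | yuta252/startlens_react_frontend | https://api.github.com/repos/yuta252/startlens_react_frontend | closed | Implement user profile feature | dev process | ## Overview

Implement a feature to display and edit the user's profile (basic information).

## Changes

- [ ] Display basic profile information

- [ ] Edit basic profile information

- [ ] Display and update the profile image

## Additional tasks

## Issues

---

## References

---

## Notes

-- | 1.0 | Implement user profile feature - ## Overview

Implement a feature to display and edit the user's profile (basic information).

## Changes

- [ ] Display basic profile information

- [ ] Edit basic profile information

- [ ] Display and update the profile image

## Additional tasks

## Issues

---

## References

---

## Notes

-- | process | implement user profile feature overview implement a feature to display and edit the user s profile basic information changes display basic profile information edit basic profile information display and update the profile image additional tasks issues references notes | 1 |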

109,905 | 4,415,288,533 | IssuesEvent | 2016-08-14 00:03:51 | AUV-IITK/auv | https://api.github.com/repos/AUV-IITK/auv | closed | (task_handler_layer) Launch file to launch all nodes together | priority | Also on launch all nodes should be inactive. Only when they get a goal should they start doing image processing or sending motion goals to motion library | 1.0 | (task_handler_layer) Launch file to launch all nodes together - Also on launch all nodes should be inactive. Only when they get a goal should they start doing image processing or sending motion goals to motion library | non_process | task handler layer launch file to launch all nodes together also on launch all nodes should be inactive only when they get a goal should they start doing image processing or sending motion goals to motion library | 0 |

79,976 | 23,080,359,963 | IssuesEvent | 2022-07-26 06:31:49 | elastic/elasticsearch | https://api.github.com/repos/elastic/elasticsearch | closed | Setup elasticsearch dependency monitoring with Snyk | :Delivery/Build Team:Delivery | We want to monitor the elasticsearch dependencies on a regular basis using Snyk to be able to recognise vulnerabilities faster and being able to proactively react on security issues within the production dependencies.

- [x] Setup Gradle plugin for monitoring dependencies in snyk ( [#88036](https://github.com/elasti... | 1.0 | Setup elasticsearch dependency monitoring with Snyk - We want to monitor the elasticsearch dependencies on a regular basis using Snyk to be able to recognise vulnerabilities faster and being able to proactively react on security issues within the production dependencies.

- [x] Setup Gradle plugin for monitoring dep... | non_process | setup elasticsearch dependency monitoring with snyk we want to monitor the elasticsearch dependencies on a regular basis using snyk to be able to recognise vulnerabilities faster and being able to proactively react on security issues within the production dependencies setup gradle plugin for monitoring depen... | 0 |

95,564 | 19,716,916,039 | IssuesEvent | 2022-01-13 11:56:19 | dotnet/runtime | https://api.github.com/repos/dotnet/runtime | closed | Consider special-casing `== ""` in the JIT? | area-CodeGen-coreclr untriaged in pr | I tried enabling CA1820 ("Test for empty strings using string length") and it flagged more than 100 cases in a libraries build. Many of them were of the form `s == ""` or `s != ""`. These have measurably worse codegen than just doing a null and length check, e.g.

https://sharplab.io/#v2:EYLgxg9gTgpgtADwGwBYA0AXEBDAz... | 1.0 | Consider special-casing `== ""` in the JIT? - I tried enabling CA1820 ("Test for empty strings using string length") and it flagged more than 100 cases in a libraries build. Many of them were of the form `s == ""` or `s != ""`. These have measurably worse codegen than just doing a null and length check, e.g.

https:/... | non_process | consider special casing in the jit i tried enabling test for empty strings using string length and it flagged more than cases in a libraries build many of them were of the form s or s these have measurably worse codegen than just doing a null and length check e g c publ... | 0 |

20,073 | 26,564,375,935 | IssuesEvent | 2023-01-20 18:40:58 | darktable-org/darktable | https://api.github.com/repos/darktable-org/darktable | closed | Square is not a square when exported | scope: image processing bug: pending | **Describe the bug/issue**

When exporting an image that has been cropped to aspect ratio 1:1 with the crop the result is not a square, the other side is one pixel off.

**To Reproduce**

1. Open an image

2. Crop image to 1:1, keeping the full height

3. Return to darkroom

4. Export image full sized image

**Expe... | 1.0 | Square is not a square when exported - **Describe the bug/issue**

When exporting an image that has been cropped to aspect ratio 1:1 with the crop the result is not a square, the other side is one pixel off.

**To Reproduce**

1. Open an image

2. Crop image to 1:1, keeping the full height

3. Return to darkroom

4. ... | process | square is not a square when exported describe the bug issue when exporting an image that has been cropped to aspect ratio with the crop the result is not a square the other side is one pixel off to reproduce open an image crop image to keeping the full height return to darkroom ... | 1 |

10,665 | 2,622,179,682 | IssuesEvent | 2015-03-04 00:18:15 | byzhang/leveldb | https://api.github.com/repos/byzhang/leveldb | opened | correct library naming on mac os x | auto-migrated Priority-Medium Type-Defect | ```

Would it be possible to name library as {name}.{version}.{extension} ?

And maybe add install prefix + make target ?

```

Original issue reported on code.google.com by `humdumde...@gmail.com` on 13 Nov 2012 at 2:01 | 1.0 | correct library naming on mac os x - ```

Would it be possible to name library as {name}.{version}.{extension} ?

And maybe add install prefix + make target ?

```

Original issue reported on code.google.com by `humdumde...@gmail.com` on 13 Nov 2012 at 2:01 | non_process | correct library naming on mac os x would it be possible to name library as name version extension and maybe add install prefix make target original issue reported on code google com by humdumde gmail com on nov at | 0 |

12,939 | 15,303,671,873 | IssuesEvent | 2021-02-24 16:03:25 | tdwg/dwc | https://api.github.com/repos/tdwg/dwc | opened | Change term - subgenus | Class - Taxon Process - ready for public comment Term - change | ## Change term

* Submitter: @tucotuco

* Justification (why is this change necessary?): Based on discussions in Issue #176, it was suggested that the examples for subgenus were not strictly correct. The examples were changed to remove the reference to the genus, but the definition of the term still includes "Values... | 1.0 | Change term - subgenus - ## Change term

* Submitter: @tucotuco

* Justification (why is this change necessary?): Based on discussions in Issue #176, it was suggested that the examples for subgenus were not strictly correct. The examples were changed to remove the reference to the genus, but the definition of the te... | process | change term subgenus change term submitter tucotuco justification why is this change necessary based on discussions in issue it was suggested that the examples for subgenus were not strictly correct the examples were changed to remove the reference to the genus but the definition of the term... | 1 |

12,506 | 14,961,860,201 | IssuesEvent | 2021-01-27 08:26:50 | GoogleCloudPlatform/fda-mystudies | https://api.github.com/repos/GoogleCloudPlatform/fda-mystudies | closed | [Audit Logs] Event is not triggered for Response Datastore module in Android and iOS | Bug P1 Participant datastore Process: Reopened | **Event:**

STUDY_METADATA_RECEIVED | 1.0 | [Audit Logs] Event is not triggered for Response Datastore module in Android and iOS - **Event:**

STUDY_METADATA_RECEIVED | process | event is not triggered for response datastore module in android and ios event study metadata received | 1 |

144,771 | 19,301,915,772 | IssuesEvent | 2021-12-13 07:07:26 | SmartBear/soapui | https://api.github.com/repos/SmartBear/soapui | closed | CVE-2021-39152 (High) detected in xstream-1.4.13.jar - autoclosed | security vulnerability | ## CVE-2021-39152 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>xstream-1.4.13.jar</b></p></summary>

<p></p>

<p>Library home page: <a href="http://x-stream.github.io">http://x-stream... | True | CVE-2021-39152 (High) detected in xstream-1.4.13.jar - autoclosed - ## CVE-2021-39152 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>xstream-1.4.13.jar</b></p></summary>

<p></p>

<p>Li... | non_process | cve high detected in xstream jar autoclosed cve high severity vulnerability vulnerable library xstream jar library home page a href path to dependency file soapui soapui pom xml path to vulnerable library canner repository thoughtworks xstream xstream ... | 0 |

17,008 | 23,425,067,088 | IssuesEvent | 2022-08-14 09:06:56 | Sirttas/ElementalCraft | https://api.github.com/repos/Sirttas/ElementalCraft | closed | [Suggestion] Project MMO Compatibility | enhancement Compatibility | [Project MMO](https://www.curseforge.com/minecraft/mc-mods/project-mmo) is a mod where you can level up different skills (agility, combat, farming, cooking, smithing, etc.) and you get better and better perks as you level.

So specifically, it would be great if ElementalCraft furnaces gave smithing/cooking XP dependi... | True | [Suggestion] Project MMO Compatibility - [Project MMO](https://www.curseforge.com/minecraft/mc-mods/project-mmo) is a mod where you can level up different skills (agility, combat, farming, cooking, smithing, etc.) and you get better and better perks as you level.

So specifically, it would be great if ElementalCraft ... | non_process | project mmo compatibility is a mod where you can level up different skills agility combat farming cooking smithing etc and you get better and better perks as you level so specifically it would be great if elementalcraft furnaces gave smithing cooking xp depending on what it s processing also the ... | 0 |

13,330 | 15,790,017,906 | IssuesEvent | 2021-04-02 00:18:03 | e4exp/paper_manager_abstract | https://api.github.com/repos/e4exp/paper_manager_abstract | opened | StyleCLIP: Text-Driven Manipulation of StyleGAN Imagery | GAN Image Synthesis Natural Language Processing Vision-Language | - https://arxiv.org/abs/2103.17249

- 2021

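Inspired by the ability of StyleGAN to generate highly realistic images in a variety of domains, much recent work has focused on understanding how to use the latent space of StyleGAN to manipulate generated and real images.

However, discovering meaningful latent manipulations requires painstaking human examination of the many degrees of freedom, or collecting and annotating images for each desired manipulation.

In this work, we leverage the recently introduced CLIP (Contrastive Language-Image Pre-training) model so that such manual effort is not required for St... | 1.0 | StyleCLIP: Text-Driven Manipulation of StyleGAN Imagery - - https://arxiv.org/abs/2103.17249

- 2021

Inspired by the ability of StyleGAN to generate highly realistic images in a variety of domains, much recent work has focused on understanding how to use the latent space of StyleGAN to manipulate generated and real images.

However, discovering meaningful latent manipulations requires painstaking human examination of the many degrees of freedom, or collecting and annotating images for each desired manipulation.

In this work, the recently introduced CLIP (Contrastive... | process | styleclip text driven manipulation of stylegan imagery inspired by the ability of stylegan to generate highly realistic images in a variety of domains much recent work has focused on understanding how to use the latent space of stylegan to manipulate generated and real images however discovering meaningful latent manipulations requires painstaking human examination of the many degrees of freedom or collecting and annotating images for each desired manipulation in this work the recently introduced clip (contrastive language image pre training) model is lever... | 1 |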

131,204 | 18,233,002,216 | IssuesEvent | 2021-10-01 01:02:39 | jmacwhitesource/cloud-pipeline | https://api.github.com/repos/jmacwhitesource/cloud-pipeline | opened | CVE-2021-23446 (High) detected in handsontable-0.33.0.tgz | security vulnerability | ## CVE-2021-23446 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>handsontable-0.33.0.tgz</b></p></summary>

<p>Spreadsheet-like data grid editor that provides copy/paste functionality ... | True | CVE-2021-23446 (High) detected in handsontable-0.33.0.tgz - ## CVE-2021-23446 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>handsontable-0.33.0.tgz</b></p></summary>

<p>Spreadsheet-l... | non_process | cve high detected in handsontable tgz cve high severity vulnerability vulnerable library handsontable tgz spreadsheet like data grid editor that provides copy paste functionality compatible with excel google docs library home page a href path to dependency file cloud pi... | 0 |

262,254 | 8,257,682,953 | IssuesEvent | 2018-09-13 06:29:34 | webcompat/web-bugs | https://api.github.com/repos/webcompat/web-bugs | closed | www.w3schools.com - see bug description | browser-firefox-mobile priority-important | <!-- @browser: Firefox Mobile 64.0 -->

<!-- @ua_header: Mozilla/5.0 (Android 5.1.1; Mobile; rv:64.0) Gecko/64.0 Firefox/64.0 -->

<!-- @reported_with: mobile-reporter -->

**URL**: https://www.w3schools.com/tags/tryit.asp?filename=tryhtml_form_submit

**Browser / Version**: Firefox Mobile 64.0

**Operating System**: Andr... | 1.0 | www.w3schools.com - see bug description - <!-- @browser: Firefox Mobile 64.0 -->

<!-- @ua_header: Mozilla/5.0 (Android 5.1.1; Mobile; rv:64.0) Gecko/64.0 Firefox/64.0 -->

<!-- @reported_with: mobile-reporter -->

**URL**: https://www.w3schools.com/tags/tryit.asp?filename=tryhtml_form_submit

**Browser / Version**: Fire... | non_process | see bug description url browser version firefox mobile operating system android tested another browser yes problem type something else description a long click doesn t allow pasting text anymore steps to reproduce normally i click long in a textfield and ... | 0 |

68,275 | 9,166,016,701 | IssuesEvent | 2019-03-02 00:07:43 | pkmgarcia/codefoo-9 | https://api.github.com/repos/pkmgarcia/codefoo-9 | opened | Document the front end application | documentation easy front end | ## What's wrong?

There is no documentation (dependencies, how to build, etc.).

## How do I fix it?

Update or create frontend/README.md | 1.0 | Document the front end application - ## What's wrong?

There is no documentation (dependencies, how to build, etc.).

## How do I fix it?

Update or create frontend/README.md | non_process | document the front end application what s wrong there is no documentation dependencies how to build etc how do i fix it update or create frontend readme md | 0 |

3,892 | 6,820,987,226 | IssuesEvent | 2017-11-07 15:31:56 | WormBase/wormbase-pipeline | https://api.github.com/repos/WormBase/wormbase-pipeline | closed | Anatomy function hash text reassignment proposal | MODELS Processing | The conversion of leaf tags that are themselves data with an optional Text field has the consequence of loosing the leaf tag and therefore data. It is proposed to remove the ?Text field in this instance as this appears to be optional additional information about the leaf tag qualifier that has been selected.

Current... | 1.0 | Anatomy function hash text reassignment proposal - The conversion of leaf tags that are themselves data with an optional Text field has the consequence of loosing the leaf tag and therefore data. It is proposed to remove the ?Text field in this instance as this appears to be optional additional information about the le... | process | anatomy function hash text reassignment proposal the conversion of leaf tags that are themselves data with an optional text field has the consequence of loosing the leaf tag and therefore data it is proposed to remove the text field in this instance as this appears to be optional additional information about the le... | 1 |

9,913 | 12,953,235,735 | IssuesEvent | 2020-07-19 23:48:24 | PHPSocialNetwork/phpfastcache | https://api.github.com/repos/PHPSocialNetwork/phpfastcache | closed | Maybe support Memcached::OPT_PREFIX_KEY ? | 7.1 8.0 8.1 >_< Working & Scheduled [-_-] In Process | **Configuration (optional)**

- **PhpFastCache version:** "7.1.1"

- **PhpFastCache API version:** "2.0.4"

- **PHP version:** "7.2"

- **Operating system:** "Ubuntu 18.04"

**My question**

> There are some projects with same memcached in my server. I find the redis config has 'optPrefix' but memcached none.

| 1.0 | Maybe support Memcached::OPT_PREFIX_KEY ? - **Configuration (optional)**

- **PhpFastCache version:** "7.1.1"

- **PhpFastCache API version:** "2.0.4"

- **PHP version:** "7.2"

- **Operating system:** "Ubuntu 18.04"

**My question**

> There are some projects with same memcached in my server. I find the redis config... | process | maybe support memcached opt prefix key configuration optional phpfastcache version phpfastcache api version php version operating system ubuntu my question there are some projects with same memcached in my server i find the redis config h... | 1 |

14,803 | 18,103,473,639 | IssuesEvent | 2021-09-22 16:29:04 | 2i2c-org/team-compass | https://api.github.com/repos/2i2c-org/team-compass | closed | Process for decommissioning short-lived hubs | :label: team-process type: task needs: review | # Summary

<!-- What is the context needed to understand this task -->

For hubs we deploy for a short-term, such as to support workshops and conferences, we should have a decommission process that checks-in with the Community Representative/Hub Admins that removing the hub is ok, any data from NFS servers they'd l... | 1.0 | Process for decommissioning short-lived hubs - # Summary

<!-- What is the context needed to understand this task -->

For hubs we deploy for a short-term, such as to support workshops and conferences, we should have a decommission process that checks-in with the Community Representative/Hub Admins that removing th... | process | process for decommissioning short lived hubs summary for hubs we deploy for a short term such as to support workshops and conferences we should have a decommission process that checks in with the community representative hub admins that removing the hub is ok any data from nfs servers they d like to keep... | 1 |

15,019 | 18,733,374,970 | IssuesEvent | 2021-11-04 02:09:53 | MicrosoftDocs/windows-uwp | https://api.github.com/repos/MicrosoftDocs/windows-uwp | closed | ms-settings app network URI | product-question uwp/prod processes-and-threading/tech | Is there a URI which can be used to trigger the network 'reset now' button?

[Enter feedback here]

---

#### Document Details

⚠ *Do not edit this section. It is required for docs.microsoft.com ➟ GitHub issue linking.*

* ID: 987ec16c-9456-93a4-177a-dbd563be7eb7

* Version Independent ID: f41f0344-f7f6-f092-a6... | 1.0 | ms-settings app network URI - Is there a URI which can be used to trigger the network 'reset now' button?

[Enter feedback here]

---

#### Document Details

⚠ *Do not edit this section. It is required for docs.microsoft.com ➟ GitHub issue linking.*

* ID: 987ec16c-9456-93a4-177a-dbd563be7eb7

* Version Indepen... | process | ms settings app network uri is there a uri which can be used to trigger the network reset now button document details ⚠ do not edit this section it is required for docs microsoft com ➟ github issue linking id version independent id content content s... | 1 |

11,987 | 14,737,151,885 | IssuesEvent | 2021-01-07 01:01:07 | kdjstudios/SABillingGitlab | https://api.github.com/repos/kdjstudios/SABillingGitlab | closed | Towne - posting payments - problem | anc-external anc-ops anc-process anc-ui anp-0.5 ant-bug ant-support | In GitLab by @kdjstudios on Apr 18, 2018, 16:06

**Submitted by:** Deb Crown <dcrown@towneanswering.com>

**Helpdesk:** http://www.servicedesk.answernet.com/profiles/ticket/2018-04-18-12861/conversation

**Server:** External

**Client/Site:** ALL

**Account:** ALL

**Issue:**

Sooooo, I’m not sure why this would be h... | 1.0 | Towne - posting payments - problem - In GitLab by @kdjstudios on Apr 18, 2018, 16:06

**Submitted by:** Deb Crown <dcrown@towneanswering.com>

**Helpdesk:** http://www.servicedesk.answernet.com/profiles/ticket/2018-04-18-12861/conversation

**Server:** External

**Client/Site:** ALL

**Account:** ALL

**Issue:**

Soo... | process | towne posting payments problem in gitlab by kdjstudios on apr submitted by deb crown helpdesk server external client site all account all issue sooooo i’m not sure why this would be happening again but when i am posting payments the same thing is happen... | 1 |

7,028 | 10,188,946,038 | IssuesEvent | 2019-08-11 15:24:42 | nodejs/node | https://api.github.com/repos/nodejs/node | closed | process.title don't change process name | confirmed-bug libuv macos process | <!--

Thank you for reporting a possible bug in Node.js.

Please fill in as much of the template below as you can.

Version: output of `node -v`

Platform: output of `uname -a` (UNIX), or version and 32 or 64-bit (Windows)

Subsystem: if known, please specify the affected core module name

If possible, please pro... | 1.0 | process.title don't change process name - <!--

Thank you for reporting a possible bug in Node.js.

Please fill in as much of the template below as you can.

Version: output of `node -v`

Platform: output of `uname -a` (UNIX), or version and 32 or 64-bit (Windows)

Subsystem: if known, please specify the affected c... | process | process title don t change process name thank you for reporting a possible bug in node js please fill in as much of the template below as you can version output of node v platform output of uname a unix or version and or bit windows subsystem if known please specify the affected cor... | 1 |

199,568 | 15,049,230,280 | IssuesEvent | 2021-02-03 11:12:07 | lutraconsulting/input-manual-tests | https://api.github.com/repos/lutraconsulting/input-manual-tests | closed | Test Execution InputApp 0.7.8 (android) | test execution | ## Test plan for Input manual testing

| Test environment | Value |

|---|---|

| Input Version: | dc69f04fdb2826c549b3304724b16aba9092e529 arm64-v8a |

| Mergin Version: | |

| Mergin URL: <> | |

| QGIS Version: | |

| Mergin plugin Version: | |

| Mobile OS: | Android arm64-v8a |

| Date of Executio... | 1.0 | Test Execution InputApp 0.7.8 (android) - ## Test plan for Input manual testing

| Test environment | Value |

|---|---|

| Input Version: | dc69f04fdb2826c549b3304724b16aba9092e529 arm64-v8a |

| Mergin Version: | |

| Mergin URL: <> | |

| QGIS Version: | |

| Mergin plugin Version: | |

| Mobile OS:... | non_process | test execution inputapp android test plan for input manual testing test environment value input version mergin version mergin url qgis version mergin plugin version mobile os android date of execution ... | 0 |

9,500 | 12,488,909,272 | IssuesEvent | 2020-05-31 16:21:29 | dita-ot/dita-ot | https://api.github.com/repos/dita-ot/dita-ot | closed | Customizing the PDF extension doesn't work | bug plugin/pdf preprocess priority/high | ## Expected Behavior

In the plug-in that extends the capabilities of PDF have to work set through customization.dir.

## Actual Behavior

The standard "org.dita.pdf2" plugin ignores the "customization.dir" setting.

## Steps to Reproduce

1. Copy folder "com.example.print-pdf" from dita-ot-3.5\docsrc\samples\plu... | 1.0 | Customizing the PDF extension doesn't work - ## Expected Behavior

In the plug-in that extends the capabilities of PDF have to work set through customization.dir.

## Actual Behavior

The standard "org.dita.pdf2" plugin ignores the "customization.dir" setting.

## Steps to Reproduce

1. Copy folder "com.example.p... | process | customizing the pdf extension doesn t work expected behavior in the plug in that extends the capabilities of pdf have to work set through customization dir actual behavior the standard org dita plugin ignores the customization dir setting steps to reproduce copy folder com example prin... | 1 |

21,272 | 28,442,176,851 | IssuesEvent | 2023-04-16 02:40:42 | qgis/QGIS | https://api.github.com/repos/qgis/QGIS | closed | OMBB BUG | Feedback stale Processing Bug | ### What is the bug or the crash?

Wrong polygon orientation when running the OMBB algorithm. This is related to QGIS version 3.22.

The algorithm works perfectly on previous versions.

Here is an example

| 1.0 | in documents, adding a file doesnt work when on "files" tab - in documents, adding a file doesnt work when on "files" tab

| process | in documents adding a file doesnt work when on files tab in documents adding a file doesnt work when on files tab | 1 |

15,124 | 18,858,190,007 | IssuesEvent | 2021-11-12 09:29:09 | qgis/QGIS-Documentation | https://api.github.com/repos/qgis/QGIS-Documentation | closed | [FEATURE][needs-docs][processing] add gdal_viewshed algorithm | Automatic new feature Processing Alg 3.12 | Original commit: https://github.com/qgis/QGIS/commit/02fbe42a306a289aa48c6fe39a9c5603d00ac347 by nyalldawson

Unfortunately this naughty coder did not write a description... :-( | 1.0 | [FEATURE][needs-docs][processing] add gdal_viewshed algorithm - Original commit: https://github.com/qgis/QGIS/commit/02fbe42a306a289aa48c6fe39a9c5603d00ac347 by nyalldawson

Unfortunately this naughty coder did not write a description... :-( | process | add gdal viewshed algorithm original commit by nyalldawson unfortunately this naughty coder did not write a description | 1 |

811,601 | 30,293,857,821 | IssuesEvent | 2023-07-09 16:02:11 | matrixorigin/matrixone | https://api.github.com/repos/matrixorigin/matrixone | opened | [Subtask]: avoid conflict between compact block transactions and newly committed deletes happening after the compaction start | priority/p0 kind/subtask 1.0-perf-tp | ### Parent Issue

#10208

### Detail of Subtask

avoid conflict between compact block transactions and newly committed deletes happening after the compaction start

### Describe implementation you've considered

Compaction transactions usually take a long time, and when the transaction is ready to commit, there may be... | 1.0 | [Subtask]: avoid conflict between compact block transactions and newly committed deletes happening after the compaction start - ### Parent Issue

#10208

### Detail of Subtask

avoid conflict between compact block transactions and newly committed deletes happening after the compaction start

### Describe implementatio... | non_process | avoid conflict between compact block transactions and newly committed deletes happening after the compaction start parent issue detail of subtask avoid conflict between compact block transactions and newly committed deletes happening after the compaction start describe implementation you ve con... | 0 |

7,861 | 11,036,096,712 | IssuesEvent | 2019-12-07 18:16:34 | nodejs/node | https://api.github.com/repos/nodejs/node | closed | `childProcess.killed` should be `true` after `process.kill(childProcess.pid)` is called | child_process feature request | **Is your feature request related to a problem? Please describe.**

`childProcess.killed` is inconsistent depending on how the child process was killed:

```js

const { spawn } = require('child_process')

const childProcess = spawn('sleep', [5e6])

childProcess.kill()

console.log(childProcess.killed) // true

```

... | 1.0 | `childProcess.killed` should be `true` after `process.kill(childProcess.pid)` is called - **Is your feature request related to a problem? Please describe.**

`childProcess.killed` is inconsistent depending on how the child process was killed:

```js

const { spawn } = require('child_process')

const childProcess = sp... | process | childprocess killed should be true after process kill childprocess pid is called is your feature request related to a problem please describe childprocess killed is inconsistent depending on how the child process was killed js const spawn require child process const childprocess sp... | 1 |

6,119 | 8,996,228,715 | IssuesEvent | 2019-02-02 00:11:15 | bow-simulation/virtualbow | https://api.github.com/repos/bow-simulation/virtualbow | closed | Evaluate Qbs as an alternative build system to cmake | area: software process prio: normal type: idea | In GitLab by @spfeifer on Nov 25, 2017, 20:27

[http://doc.qt.io/qbs/](http://doc.qt.io/qbs/) | 1.0 | Evaluate Qbs as an alternative build system to cmake - In GitLab by @spfeifer on Nov 25, 2017, 20:27

[http://doc.qt.io/qbs/](http://doc.qt.io/qbs/) | process | evaluate qbs as an alternative build system to cmake in gitlab by spfeifer on nov | 1 |

2,072 | 4,889,337,418 | IssuesEvent | 2016-11-18 09:52:36 | DevExpress/testcafe-hammerhead | https://api.github.com/repos/DevExpress/testcafe-hammerhead | closed | Wrong processing link that was created in non-top window and added to top window. | AREA: client COMPLEXITY: easy SYSTEM: resource processing TYPE: bug | Files to reproduce:

### Index.js

``` javascript

var http = require('http');

var fs = require('fs');

http.createServer(function (req, res) {

var content = '';

if (req.url === '/')

content = fs.readFileSync('index.html');

else if (req.url === '/iframe.html')

content = fs.readFileSync('ifr... | 1.0 | Wrong processing link that was created in non-top window and added to top window. - Files to reproduce:

### Index.js

``` javascript

var http = require('http');

var fs = require('fs');

http.createServer(function (req, res) {

var content = '';

if (req.url === '/')

content = fs.readFileSync('index.htm... | process | wrong processing link that was created in non top window and added to top window files to reproduce index js javascript var http require http var fs require fs http createserver function req res var content if req url content fs readfilesync index htm... | 1 |

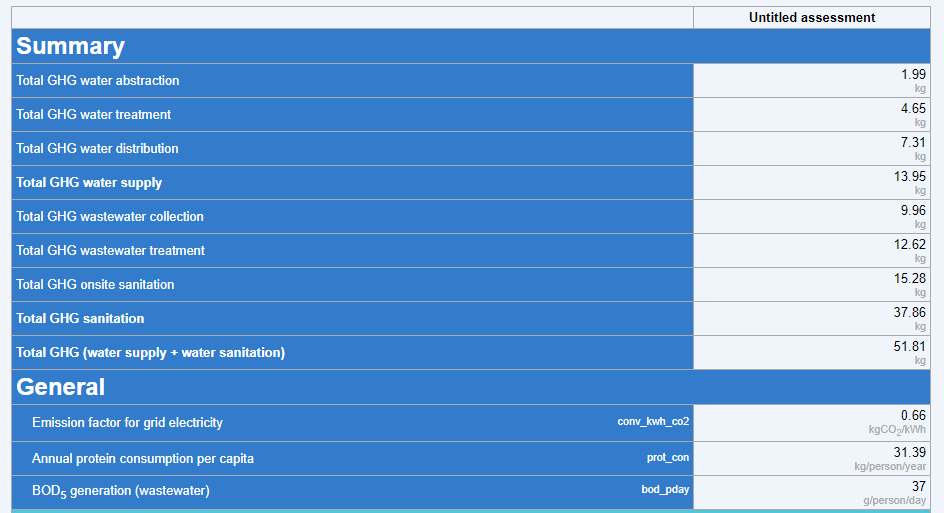

13,513 | 16,049,237,934 | IssuesEvent | 2021-04-22 16:59:04 | icra/ecam | https://api.github.com/repos/icra/ecam | closed | Units in "Compare Assessments" | discussed in process | Hi Lluis, the units might need to get adapted:

It only shows "kg" not even "kgCO2eq". It should be possible to select whether:

It only shows "kg" not even "kgCO2eq". It should be possible to select whether:

```

ERROR: System.Diagnostics.Tests.ProcessTests.TestProcessStartTime [FAIL]

System.NullReferenceException : Object reference not set to an instance of an object.

``` | 1.0 | [S.D.Process] TestProcessStartTime throws NRE on UAP - (Test case will be added soon, creating issue so that I can disable that in the PR)

```

ERROR: System.Diagnostics.Tests.ProcessTests.TestProcessStartTime [FAIL]

System.NullReferenceException : Object reference not set to an instance of an object.

... | process | testprocessstarttime throws nre on uap test case will be added soon creating issue so that i can disable that in the pr error system diagnostics tests processtests testprocessstarttime system nullreferenceexception object reference not set to an instance of an object | 1 |

4,349 | 7,252,832,101 | IssuesEvent | 2018-02-16 01:00:13 | allinurl/goaccess | https://api.github.com/repos/allinurl/goaccess | closed | Problems with referers (KeyPrases from google) | log-processing | Hi Im looking for keyprases from google not empty. Attached are a report in JSON format.

querystrings from google.es and google it are striped it. In the original NGINX log they show.

Thanks in advance for the help.

[goaccess-1517393407555.txt](https://github.com/allinurl/goaccess/files/1681256/goaccess-1517393... | 1.0 | Problems with referers (KeyPrases from google) - Hi Im looking for keyprases from google not empty. Attached are a report in JSON format.

querystrings from google.es and google it are striped it. In the original NGINX log they show.

Thanks in advance for the help.

[goaccess-1517393407555.txt](https://github.com... | process | problems with referers keyprases from google hi im looking for keyprases from google not empty attached are a report in json format querystrings from google es and google it are striped it in the original nginx log they show thanks in advance for the help | 1 |

22,040 | 30,560,058,593 | IssuesEvent | 2023-07-20 14:06:01 | threefoldtech/tfgrid-sdk-ts | https://api.github.com/repos/threefoldtech/tfgrid-sdk-ts | closed | Dashboard raises an error on connecting to the Polkadot extension, also the loading credentials loading forever | process_wontfix type_bug | ### Description

I'm having trouble accessing the explore tab in the dashboard after connecting my account and refreshing the page. The nodes section seems to be stuck in an endless loading loop.

also, I'm seeing an error message that states "Can't get any account information from Polkadot extension. Please ensure... | 1.0 | Dashboard raises an error on connecting to the Polkadot extension, also the loading credentials loading forever - ### Description

I'm having trouble accessing the explore tab in the dashboard after connecting my account and refreshing the page. The nodes section seems to be stuck in an endless loading loop.

also,... | process | dashboard raises an error on connecting to the polkadot extension also the loading credentials loading forever description i m having trouble accessing the explore tab in the dashboard after connecting my account and refreshing the page the nodes section seems to be stuck in an endless loading loop also ... | 1 |

4,622 | 7,468,401,438 | IssuesEvent | 2018-04-02 18:50:51 | dotnet/corefx | https://api.github.com/repos/dotnet/corefx | closed | Test failure: System.Diagnostics.Process.Tests | area-System.Diagnostics.Process | (I'm new, so maybe I'm totally missing something, but I'm following the instructions as best I can and I keep getting test errors.)

So I (successfully) ran the build, made some changes to some libraries and started experiencing some issues. I wanted to get back to a good state, so I did

> git checkout master

> c... | 1.0 | Test failure: System.Diagnostics.Process.Tests - (I'm new, so maybe I'm totally missing something, but I'm following the instructions as best I can and I keep getting test errors.)

So I (successfully) ran the build, made some changes to some libraries and started experiencing some issues. I wanted to get back to a g... | process | test failure system diagnostics process tests i m new so maybe i m totally missing something but i m following the instructions as best i can and i keep getting test errors so i successfully ran the build made some changes to some libraries and started experiencing some issues i wanted to get back to a g... | 1 |

5,943 | 8,767,727,275 | IssuesEvent | 2018-12-17 20:40:02 | googleapis/google-auth-library-nodejs | https://api.github.com/repos/googleapis/google-auth-library-nodejs | closed | Can you do a new release so we can have the fix for #524? Revoke does not work in current release but is fixed in master. | type: process | Can you do a new release so we can have the fix for #524? Revoke does not work in current release but is fixed in master.

| 1.0 | Can you do a new release so we can have the fix for #524? Revoke does not work in current release but is fixed in master. - Can you do a new release so we can have the fix for #524? Revoke does not work in current release but is fixed in master.

| process | can you do a new release so we can have the fix for revoke does not work in current release but is fixed in master can you do a new release so we can have the fix for revoke does not work in current release but is fixed in master | 1 |

146,032 | 5,592,333,748 | IssuesEvent | 2017-03-30 03:54:56 | nus-mtp/nus-oracle | https://api.github.com/repos/nus-mtp/nus-oracle | opened | [Input Validation] Create a component that displays list of input mistakes | high priority UI | * More user friendly to list down validation mistakes | 1.0 | [Input Validation] Create a component that displays list of input mistakes - * More user friendly to list down validation mistakes | non_process | create a component that displays list of input mistakes more user friendly to list down validation mistakes | 0 |

18,853 | 24,767,063,125 | IssuesEvent | 2022-10-22 17:09:58 | fertadeo/ISPC-2do-Cuat-Proyecto | https://api.github.com/repos/fertadeo/ISPC-2do-Cuat-Proyecto | closed | #TK 14.3 Add map and social links to the footer of the Administration view | in process | Add social networks and location map to the footer | 1.0 | #TK 14.3 Add map and social links to the footer of the Administration view - Add social networks and location map to the footer | process | tk add map and social links to the footer of the administration view add social networks and location map to the footer | 1 |

4,481 | 7,343,530,591 | IssuesEvent | 2018-03-07 11:42:06 | fablabbcn/fablabs.io | https://api.github.com/repos/fablabbcn/fablabs.io | opened | Address autocomplete does not work in China | Approval Process bug | When adding a `Lab`, the address autocomplete that uses Google API seems to be not working, see #390. We should either:

- Use a service that works for all countries, including China

- Use Baidu just for Chinese users

- Move the autocomplete server side | 1.0 | Address autocomplete does not work in China - When adding a `Lab`, the address autocomplete that uses Google API seems to be not working, see #390. We should either:

- Use a service that works for all countries, including China

- Use Baidu just for Chinese users

- Move the autocomplete server side | process | address autocomplete does not work in china when adding a lab the address autocomplete that uses google api seems to be not working see we should either use a service that works for all countries including china use baidu just for chinese users move the autocomplete server side | 1 |

31,289 | 11,906,022,862 | IssuesEvent | 2020-03-30 19:37:14 | stefanfreitag/cicd_angular_s3 | https://api.github.com/repos/stefanfreitag/cicd_angular_s3 | opened | CVE-2015-9251 (Medium) detected in jquery-1.7.2.min.js | security vulnerability | ## CVE-2015-9251 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jquery-1.7.2.min.js</b></p></summary>

<p>JavaScript library for DOM operations</p>

<p>Library home page: <a href="htt... | True | CVE-2015-9251 (Medium) detected in jquery-1.7.2.min.js - ## CVE-2015-9251 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jquery-1.7.2.min.js</b></p></summary>

<p>JavaScript library ... | non_process | cve medium detected in jquery min js cve medium severity vulnerability vulnerable library jquery min js javascript library for dom operations library home page a href path to dependency file tmp ws scm cicd angular node modules jmespath index html path to vulnerabl... | 0 |

3,593 | 4,542,049,118 | IssuesEvent | 2016-09-09 19:53:14 | Storj/bridge | https://api.github.com/repos/Storj/bridge | closed | vulnerability: allow weird character in buckets | security | ### Package Versions

Replace the values below using the output from `npm list storj-bridge`.

```

3.1.0

```

```

v4.5.0

```

### Expected Behavior

```

bucket id should error out when invalid

```

### Actual Behavior

```

application allows you to escape bucket id potentially allowing a slew of othe... | True | vulnerability: allow weird character in buckets - ### Package Versions

Replace the values below using the output from `npm list storj-bridge`.

```

3.1.0

```

```

v4.5.0

```

### Expected Behavior

```

bucket id should error out when invalid

```

### Actual Behavior

```

application allows you to es... | non_process | vulnerability allow weird character in buckets package versions replace the values below using the output from npm list storj bridge expected behavior bucket id should error out when invalid actual behavior application allows you to esc... | 0 |

21,036 | 27,979,257,085 | IssuesEvent | 2023-03-26 00:18:59 | darktable-org/darktable | https://api.github.com/repos/darktable-org/darktable | closed | Color reconstruction fails to be applied during export | scope: image processing bug: pending no-issue-activity | **Describe the bug**

I had a photo which included a street lamp. I enabled `color reconstruction` module and disabled `highlight reconstruction`. It fixed the highlight quite well. However on exported image the correction was gone. It looked like the reconstruction module was turned off. Moreover re-opening the imag... | 1.0 | Color reconstruction fails to be applied during export - **Describe the bug**

I had a photo which included a street lamp. I enabled `color reconstruction` module and disabled `highlight reconstruction`. It fixed the highlight quite well. However on exported image the correction was gone. It looked like the reconstru... | process | color reconstruction fails to be applied during export describe the bug i had a photo which included a street lamp i enabled color reconstruction module and disabled highlight reconstruction it fixed the highlight quite well however on exported image the correction was gone it looked like the reconstru... | 1 |

21,115 | 28,078,847,949 | IssuesEvent | 2023-03-30 03:38:20 | open-telemetry/opentelemetry-collector-contrib | https://api.github.com/repos/open-telemetry/opentelemetry-collector-contrib | closed | Renaming metrics does not work on the metrics generated by spanmetrics connector | bug processor/metricstransform | ### Component(s)

processor/metricstransform

### What happened?

## Description

I'm using `metricstranform` processor and `spanmetrics` connector in my otel collector as below. There are two transformations contained in the `metricstranform` processor. The first transformation which contains operations to add label `... | 1.0 | Renaming metrics does not work on the metrics generated by spanmetrics connector - ### Component(s)

processor/metricstransform

### What happened?

## Description

I'm using `metricstranform` processor and `spanmetrics` connector in my otel collector as below. There are two transformations contained in the `metricstra... | process | renaming metrics does not work on the metrics generated by spanmetrics connector component s processor metricstransform what happened description i m using metricstranform processor and spanmetrics connector in my otel collector as below there are two transformations contained in the metricstra... | 1 |

2,936 | 5,920,780,801 | IssuesEvent | 2017-05-22 21:07:57 | dotnet/corefx | https://api.github.com/repos/dotnet/corefx | reopened | Process tests failing in Portable run due to needing elevation | area-System.Diagnostics.Process os-linux test-run-portable | https://mc.dot.net/#/product/netcore/master/source/official~2Fcorefx~2Fmaster~2F/type/test~2Ffunctional~2Fportable~2Fcli~2F/build/20170521.02/workItem/System.Diagnostics.Process.Tests

These tests need elevation. Either the runs need to be elevated, the tests need to elevate themselves, or they need filtering out by trait and covered some other way. | 1.0 | Process tests failing in Portable run due to needing elevation -

https://mc.dot.net/#/product/netcore/master/source/official~2Fcorefx~2Fmaster~2F/type/test~2Ffunctional~2Fportable~2Fcli~2F/build/20170521.02/workItem/System.Diagnostics.Process.Tests

These tests need elevation. Either the runs need to be elevated, t... | process | process tests failing in portable run due to needing elevation these tests need elevation either the runs need to be elevated the tests need to elevate themselves or they need filtering out by trait and covered some other way | 1 |

22,752 | 32,072,426,365 | IssuesEvent | 2023-09-25 08:53:07 | aws/sagemaker-python-sdk | https://api.github.com/repos/aws/sagemaker-python-sdk | closed | TypeError: can only concatenate str (not "list") to str | component: processing | This error appears when you run the ScriptProcessor.run(). The script its at thew same level of my notebook in pagemaker Studio. It doesn't change if I add input, and output. I'm using a custom image on ECR. I also tried to save my script on s3 and pass the s3 path.

```

from sagemaker.processing import Processor

p... | 1.0 | TypeError: can only concatenate str (not "list") to str - This error appears when you run the ScriptProcessor.run(). The script its at thew same level of my notebook in pagemaker Studio. It doesn't change if I add input, and output. I'm using a custom image on ECR. I also tried to save my script on s3 and pass the s3 p... | process | typeerror can only concatenate str not list to str this error appears when you run the scriptprocessor run the script its at thew same level of my notebook in pagemaker studio it doesn t change if i add input and output i m using a custom image on ecr i also tried to save my script on and pass the pat... | 1 |

17,721 | 23,625,566,544 | IssuesEvent | 2022-08-25 03:17:09 | lynnandtonic/nestflix.fun | https://api.github.com/repos/lynnandtonic/nestflix.fun | closed | Add The Rusty Venture Show | suggested title in process | Title:

The Rusty Venture Show

Type (film/tv show):

TV

Film or show in which it appears:

The Venture Bros.

Is the parent film/show streaming anywhere?

HBO Max.

There are also clips on the Adult Swim page: https://www.adultswim.com/videos/the-venture-bros

About when in the parent film/show does it appear... | 1.0 | Add The Rusty Venture Show - Title:

The Rusty Venture Show

Type (film/tv show):

TV

Film or show in which it appears:

The Venture Bros.

Is the parent film/show streaming anywhere?

HBO Max.

There are also clips on the Adult Swim page: https://www.adultswim.com/videos/the-venture-bros

About when in the pa... | process | add the rusty venture show title the rusty venture show type film tv show tv film or show in which it appears the venture bros is the parent film show streaming anywhere hbo max there are also clips on the adult swim page about when in the parent film show does it appear sporadically thr... | 1 |

99,058 | 4,045,339,109 | IssuesEvent | 2016-05-21 23:07:43 | Valenchak/sharpsense | https://api.github.com/repos/Valenchak/sharpsense | closed | RXD, RTS, DTR - Should be driven low before turning off the Sim900 power MOSFET | auto-migrated Category-Firmware Priority-Critical Type-Task | ```

Since GSM_RXD, GSM_RTS, GSM_DTR pins will be pulled up internally to PSoC3 (or

driven strong), before turning off the power to SIM900 (using MOSFET), it has

to be ensured that these three pins are driven low, so that they won't back

power the sim900.

```

Original issue reported on code.google.com by `mysorena... | 1.0 | RXD, RTS, DTR - Should be driven low before turning off the Sim900 power MOSFET - ```

Since GSM_RXD, GSM_RTS, GSM_DTR pins will be pulled up internally to PSoC3 (or

driven strong), before turning off the power to SIM900 (using MOSFET), it has

to be ensured that these three pins are driven low, so that they won't back... | non_process | rxd rts dtr should be driven low before turning off the power mosfet since gsm rxd gsm rts gsm dtr pins will be pulled up internally to or driven strong before turning off the power to using mosfet it has to be ensured that these three pins are driven low so that they won t back power the ... | 0 |

291 | 2,731,419,473 | IssuesEvent | 2015-04-16 20:15:16 | cfpb/hmda-viz-prototype | https://api.github.com/repos/cfpb/hmda-viz-prototype | closed | report_list.json files | enhancement Processing | We're creating MSA-MD folders when no reports exist. The folder has the report_list.json file with just an empty object.

Here is an example:

https://github.com/cfpb/hmda-viz-prototype/tree/gh-pages/aggregate/2013/alabama/albertville

- [ ] don't create the msa-md folder if there are no reports

- [ ] rename the fil... | 1.0 | report_list.json files - We're creating MSA-MD folders when no reports exist. The folder has the report_list.json file with just an empty object.

Here is an example:

https://github.com/cfpb/hmda-viz-prototype/tree/gh-pages/aggregate/2013/alabama/albertville

- [ ] don't create the msa-md folder if there are no repo... | process | report list json files we re creating msa md folders when no reports exist the folder has the report list json file with just an empty object here is an example don t create the msa md folder if there are no reports rename the file to either reports json or report list json with a hyphen to be co... | 1 |

13,781 | 10,455,690,037 | IssuesEvent | 2019-09-19 22:03:14 | OregonDigital/OD2 | https://api.github.com/repos/OregonDigital/OD2 | opened | WC7 - Setup on-premises k8s cluster | Epic Infrastructure Priority - High | During WC7 we need to build out the new on-premises staging environment at OSU.

- [ ] Setup control plane and worker VMs

- [ ] Setup Kubernetes cluster

- [ ] Integrate with OSULP Rancher

- [ ] Update CI/CD build/deploy pipeline

**lib-odcp1-3** (control plane nodes)

- 4 CPU

- 8GB RAM

- 100GB disk

**lib-od... | 1.0 | WC7 - Setup on-premises k8s cluster - During WC7 we need to build out the new on-premises staging environment at OSU.

- [ ] Setup control plane and worker VMs

- [ ] Setup Kubernetes cluster

- [ ] Integrate with OSULP Rancher

- [ ] Update CI/CD build/deploy pipeline

**lib-odcp1-3** (control plane nodes)

- 4 CP... | non_process | setup on premises cluster during we need to build out the new on premises staging environment at osu setup control plane and worker vms setup kubernetes cluster integrate with osulp rancher update ci cd build deploy pipeline lib control plane nodes cpu ram di... | 0 |

28,006 | 22,751,488,864 | IssuesEvent | 2022-07-07 13:28:03 | w3f/polkadot-wiki | https://api.github.com/repos/w3f/polkadot-wiki | closed | Audit local link references for 'Page not Found' errors | Wiki Infrastructure | This issue builds off #3136 which was recently closed. That task involved resolving all the external link urls but did not include references to other local markdown. While scanning the console output for build warnings and errors I noticed the following:

```

[INFO] Docusaurus found broken links!

Please check th... | 1.0 | Audit local link references for 'Page not Found' errors - This issue builds off #3136 which was recently closed. That task involved resolving all the external link urls but did not include references to other local markdown. While scanning the console output for build warnings and errors I noticed the following:

`... | non_process | audit local link references for page not found errors this issue builds off which was recently closed that task involved resolving all the external link urls but did not include references to other local markdown while scanning the console output for build warnings and errors i noticed the following ... | 0 |

15,829 | 20,020,646,742 | IssuesEvent | 2022-02-01 16:06:33 | prisma/prisma | https://api.github.com/repos/prisma/prisma | opened | Implement datamodel parser validations for two-way embedded many-to-many relations on MongoDB | process/candidate team/migrations topic: mongodb | We want to enable relations looking like this:

```

model Book {

...

author_ids String[] @db.ObjectId

authors Author[] @relation(fields: [author_ids], references: id)

}

model Author {

...

book_ids String[] @db.ObjectId

books Book[] @relation(fields: [book_ids], references: id)

}

```

... | 1.0 | Implement datamodel parser validations for two-way embedded many-to-many relations on MongoDB - We want to enable relations looking like this:

```

model Book {

...

author_ids String[] @db.ObjectId

authors Author[] @relation(fields: [author_ids], references: id)

}

model Author {

...

book_ids St... | process | implement datamodel parser validations for two way embedded many to many relations on mongodb we want to enable relations looking like this model book author ids string db objectid authors author relation fields references id model author book ids string db obj... | 1 |

12,168 | 14,741,611,270 | IssuesEvent | 2021-01-07 10:53:26 | kdjstudios/SABillingGitlab | https://api.github.com/repos/kdjstudios/SABillingGitlab | closed | Rockland - TAC-6570 | anc-process anp-important ant-bug ant-enhancement ant-parent/primary has attachment | In GitLab by @kdjstudios on Jan 29, 2019, 09:19

**Submitted by:** Michele Burns <michele.burns@answernet.com>

**Helpdesk:** http://www.servicedesk.answernet.com/profiles/ticket/2019-01-29-24769/conversation

**Server:** Internal

**Client/Site:** Rockland

**Account:** TAC-6570

**Issue:**

Please see the attached ... | 1.0 | Rockland - TAC-6570 - In GitLab by @kdjstudios on Jan 29, 2019, 09:19

**Submitted by:** Michele Burns <michele.burns@answernet.com>

**Helpdesk:** http://www.servicedesk.answernet.com/profiles/ticket/2019-01-29-24769/conversation

**Server:** Internal

**Client/Site:** Rockland

**Account:** TAC-6570

**Issue:**

Pl... | process | rockland tac in gitlab by kdjstudios on jan submitted by michele burns helpdesk server internal client site rockland account tac issue please see the attached ledger for the account listed in the subject the balance on the account in the ledger is bu... | 1 |

20,374 | 27,028,702,062 | IssuesEvent | 2023-02-11 23:19:02 | PHPSocialNetwork/phpfastcache | https://api.github.com/repos/PHPSocialNetwork/phpfastcache | closed | getItemsByTag() - empty after one item has expired | 0_0 Bug [-_-] In Process ~_~ Issue confirmed 8.1 9.1 | ### What type of issue is this?

Incorrect/unexpected/unexplainable behavior

### Operating system + version

Debian 11

### PHP version

8.1

### Connector/Database version (if applicable)

Memcached, Files

### Phpfastcache version

9.1.2 ✅

### Describe the issue you're facing

I create multiple keys with different ... | 1.0 | getItemsByTag() - empty after one item has expired - ### What type of issue is this?

Incorrect/unexpected/unexplainable behavior

### Operating system + version

Debian 11

### PHP version

8.1

### Connector/Database version (if applicable)

Memcached, Files

### Phpfastcache version

9.1.2 ✅

### Describe the issue ... | process | getitemsbytag empty after one item has expired what type of issue is this incorrect unexpected unexplainable behavior operating system version debian php version connector database version if applicable memcached files phpfastcache version ✅ describe the issue y... | 1 |

364,803 | 10,773,460,570 | IssuesEvent | 2019-11-02 20:47:20 | minj/foxtrick | https://api.github.com/repos/minj/foxtrick | reopened | Rewrite the player faces functionality in MatchOrderInterface | MatchOrder NT Priority-Medium accepted enhancement | Possible to use available data to generate faces:

Should fix NT player faces

| 1.0 | Rewrite the player faces functionality in MatchOrderInterface - Possible to use available data to generate faces:

Should fix NT player faces

| non_process | rewrite the player faces functionality in matchorderinterface possible to use available data to generate faces should fix nt player faces | 0 |

434,798 | 12,528,000,988 | IssuesEvent | 2020-06-04 08:52:05 | MLH-Fellowship/CodeVidLive | https://api.github.com/repos/MLH-Fellowship/CodeVidLive | closed | Covid 19 case dataset with geo locations | duplicate priority/high | Crawl news and merge?

Checking out existing Covid Data on Kaggle | 1.0 | Covid 19 case dataset with geo locations - Crawl news and merge?

Checking out existing Covid Data on Kaggle | non_process | covid case dataset with geo locations crawl news and merge checking out existing covid data on kaggle | 0 |

613,413 | 19,089,533,447 | IssuesEvent | 2021-11-29 10:30:26 | hashicorp/terraform-cdk | https://api.github.com/repos/hashicorp/terraform-cdk | closed | Bundle CLI for distribution | enhancement cdktf-cli committed priority/critical-urgent size/medium | <!--- Please keep this note for the community --->

### Community Note

- Please vote on this issue by adding a 👍 [reaction](https://blog.github.com/2016-03-10-add-reactions-to-pull-requests-issues-and-comments/) to the original issue to help the community and maintainers prioritize this request

- Please do not l... | 1.0 | Bundle CLI for distribution - <!--- Please keep this note for the community --->

### Community Note

- Please vote on this issue by adding a 👍 [reaction](https://blog.github.com/2016-03-10-add-reactions-to-pull-requests-issues-and-comments/) to the original issue to help the community and maintainers prioritize t... | non_process | bundle cli for distribution community note please vote on this issue by adding a 👍 to the original issue to help the community and maintainers prioritize this request please do not leave or other comments that do not add relevant new information or questions they generate extra noise for i... | 0 |

14,166 | 17,086,041,061 | IssuesEvent | 2021-07-08 11:59:42 | GoogleCloudPlatform/fda-mystudies | https://api.github.com/repos/GoogleCloudPlatform/fda-mystudies | closed | [SB] Anchor date based custom scheduling > Order of anchor runs is incorrect in GetStudyActivityList API | Bug P1 Process: Fixed Process: Tested dev Study datastore | Steps:

1. Configure a date based source questionnaire

2. Add a questionnaire and choose anchor based custom schedule

3. Configure some runs involving negative X/Y values

4. Publish updates

5. Observe the order in GetStudyActivityList API

Actual: Order is incorrect

Expected: Order should lowest X value run to ... | 2.0 | [SB] Anchor date based custom scheduling > Order of anchor runs is incorrect in GetStudyActivityList API - Steps:

1. Configure a date based source questionnaire

2. Add a questionnaire and choose anchor based custom schedule

3. Configure some runs involving negative X/Y values

4. Publish updates

5. Observe the order... | process | anchor date based custom scheduling order of anchor runs is incorrect in getstudyactivitylist api steps configure a date based source questionnaire add a questionnaire and choose anchor based custom schedule configure some runs involving negative x y values publish updates observe the order in... | 1 |

163,292 | 13,914,694,298 | IssuesEvent | 2020-10-20 22:43:10 | EOSIO/eosjs | https://api.github.com/repos/EOSIO/eosjs | closed | [docs] Suggestion / Change Request | bug documentation | ```

const resp = await rpc.get_table_rows({

json: true, // Get the response as json

code: 'eosio.token', // Contract that we target

scope: 'testacc' // Account that owns the data

table: 'accounts' // Table name

limit: 10, // Maximum number of rows ... | 1.0 | [docs] Suggestion / Change Request - ```

const resp = await rpc.get_table_rows({

json: true, // Get the response as json

code: 'eosio.token', // Contract that we target

scope: 'testacc' // Account that owns the data

table: 'accounts' // Table name

limit: 10, ... | non_process | suggestion change request const resp await rpc get table rows json true get the response as json code eosio token contract that we target scope testacc account that owns the data table accounts table name limit ... | 0 |

21,021 | 27,969,901,368 | IssuesEvent | 2023-03-25 00:16:21 | darktable-org/darktable | https://api.github.com/repos/darktable-org/darktable | closed | [Feature request] Use the one or more selection areas to auto generate the parmetric mask | feature: enhancement difficulty: hard scope: image processing no-issue-activity | If you want to create a parametric mask, you have to move the slider manually for one (or more) channels. Color picker helps to estimate where the desired areas to be selected are located on each channel.

Can color picker area be used to auto generate the parametric mask?

The best way to explain what I mean is to... | 1.0 | [Feature request] Use the one or more selection areas to auto generate the parmetric mask - If you want to create a parametric mask, you have to move the slider manually for one (or more) channels. Color picker helps to estimate where the desired areas to be selected are located on each channel.

Can color picker are... | process | use the one or more selection areas to auto generate the parmetric mask if you want to create a parametric mask you have to move the slider manually for one or more channels color picker helps to estimate where the desired areas to be selected are located on each channel can color picker area be used to aut... | 1 |