Unnamed: 0 int64 0 832k | id float64 2.49B 32.1B | type stringclasses 1 value | created_at stringlengths 19 19 | repo stringlengths 7 112 | repo_url stringlengths 36 141 | action stringclasses 3 values | title stringlengths 1 744 | labels stringlengths 4 574 | body stringlengths 9 211k | index stringclasses 10 values | text_combine stringlengths 96 211k | label stringclasses 2 values | text stringlengths 96 188k | binary_label int64 0 1 |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

592,475 | 17,893,711,185 | IssuesEvent | 2021-09-08 05:13:12 | francheska-vicente/cssweng | https://api.github.com/repos/francheska-vicente/cssweng | opened | Discounts do not reflect when booking for Bridal Family Room | bug priority: high issue: back-end severity: high | ### Summary:

- Only for Bridal Family Room, when inputting discounts, the total cost does not change

### Steps to Reproduce:

- Create Booking (Room 999, Sept 8-10, 2 pax, 2 PWD, 2000PHP discount)

### Visual Proof:

| 1.0 | Use the real Github issues templates - It would be a better integration if you could use the real issue templates provided by GitHub

See: https://help.github.com/articles/about-issue-and-pull-request-templates/#issue-templates

(I discussed this with @deniak) | process | use the real github issues templates it would be a better integration if you could use the real issue templates provided by github see i discussed this with deniak | 1 |

15,178 | 18,951,001,890 | IssuesEvent | 2021-11-18 15:10:19 | googleapis/google-auth-library-python | https://api.github.com/repos/googleapis/google-auth-library-python | closed | Intersphinx URL for 'requests-oauthlib' now 404s | type: process | From [this failed build](https://source.cloud.google.com/results/invocations/56d76e91-941f-45a5-b4e4-6b74a9e86461/targets/cloud-devrel%2Fclient-libraries%2Fpython%2Fgoogleapis%2Fgoogle-auth-library-python%2Fpresubmit%2Fpresubmit/log):

```python

nox > Running session docs

nox > Creating virtual environment (virtual... | 1.0 | Intersphinx URL for 'requests-oauthlib' now 404s - From [this failed build](https://source.cloud.google.com/results/invocations/56d76e91-941f-45a5-b4e4-6b74a9e86461/targets/cloud-devrel%2Fclient-libraries%2Fpython%2Fgoogleapis%2Fgoogle-auth-library-python%2Fpresubmit%2Fpresubmit/log):

```python

nox > Running sessio... | process | intersphinx url for requests oauthlib now from python nox running session docs nox creating virtual environment virtualenv using in nox docs nox python m pip install e nox python m pip install sphinx alabaster recommonmark sphinx docstring typing nox sphinx build t w n b ... | 1 |

441,234 | 12,709,669,513 | IssuesEvent | 2020-06-23 12:44:54 | grpc/grpc | https://api.github.com/repos/grpc/grpc | opened | Invalid TLS handshakes from the grpc cpp client when connecting to a specific IP | kind/bug priority/P2 | ### What version of gRPC and what language are you using?

Latest, C++

### What operating system (Linux, Windows,...) and version?

Linux, Centos 7

### What runtime / compiler are you using (e.g. python version or version of gcc)

n/a

### What did you do?

**Background on the TLS Server Name Indication extensi... | 1.0 | Invalid TLS handshakes from the grpc cpp client when connecting to a specific IP - ### What version of gRPC and what language are you using?

Latest, C++

### What operating system (Linux, Windows,...) and version?

Linux, Centos 7

### What runtime / compiler are you using (e.g. python version or version of gcc)

... | non_process | invalid tls handshakes from the grpc cpp client when connecting to a specific ip what version of grpc and what language are you using latest c what operating system linux windows and version linux centos what runtime compiler are you using e g python version or version of gcc ... | 0 |

485,842 | 14,000,311,017 | IssuesEvent | 2020-10-28 12:08:11 | EyeSeeTea/training-app | https://api.github.com/repos/EyeSeeTea/training-app | closed | Close behavior on tutorial | complexity - low (1 hr) priority - low priority - medium status - blocked type - cosmetic type - feedback | - Pop-up window on shutting down tutorial telling users what is happening and how they can relaunch the tool (or minimize if they prefer) | 2.0 | Close behavior on tutorial - - Pop-up window on shutting down tutorial telling users what is happening and how they can relaunch the tool (or minimize if they prefer) | non_process | close behavior on tutorial pop up window on shutting down tutorial telling users what is happening and how they can relaunch the tool or minimize if they prefer | 0 |

6,027 | 7,468,143,929 | IssuesEvent | 2018-04-02 17:55:45 | acelabini/ibinex-option-one | https://api.github.com/repos/acelabini/ibinex-option-one | closed | [ SERVICES ] Orientation and size of phone icon in nav/header | DESKTOP/WEB NAV/HEADER SERVICES | Tester: Joel Simpao

OS: Windows 10

Browser: Chrome

Expected:

Actual:

: Consistency and clarity

* Proponents (who needs this change): Everyone

Current Term definition: https://dwc.tdwg.org/terms/#dwc:associatedTaxa

Proposed new attributes of the term:

* Term name (in lowerCamelCase): a... | 1.0 | Change term - associatedTaxa - ## Change term

* Submitter: John Wieczorek

* Justification (why is this change necessary?): Consistency and clarity

* Proponents (who needs this change): Everyone

Current Term definition: https://dwc.tdwg.org/terms/#dwc:associatedTaxa

Proposed new attributes of the term:

* T... | process | change term associatedtaxa change term submitter john wieczorek justification why is this change necessary consistency and clarity proponents who needs this change everyone current term definition proposed new attributes of the term term name in lowercamelcase associatedtaxa ... | 1 |

136,796 | 12,734,791,564 | IssuesEvent | 2020-06-25 14:24:56 | graknlabs/graql | https://api.github.com/repos/graknlabs/graql | opened | Slack badge displayed in README | type: documentation | ## Problem to Solve

A Slack badge is displayed in the README.

## Proposed Solution

Replace the Slack badge with a Discord badge, like we have in the `grakn` repo.

| 1.0 | Slack badge displayed in README - ## Problem to Solve

A Slack badge is displayed in the README.

## Proposed Solution

Replace the Slack badge with a Discord badge, like we have in the `grakn` repo.

| non_process | slack badge displayed in readme problem to solve a slack badge is displayed in the readme proposed solution replace the slack badge with a discord badge like we have in the grakn repo | 0 |

640 | 3,098,040,417 | IssuesEvent | 2015-08-28 08:20:42 | deb-sandeep/PHPWebApps | https://api.github.com/repos/deb-sandeep/PHPWebApps | opened | Provide support for multi-choice questions | enhancement jove_notes_db jove_notes_grammar jove_notes_processor jove_notes_server jove_notes_ui | This is an enhancement request for supporting multiple-choice type questions in JoveNotes, which is _sorely_ missing now.

### Definition

A multiple-choice question is a type of interactive question, which has:

1. A question

2. Multiple options

3. One or more right answers

4. Explanation [Optional]

The user... | 1.0 | Provide support for multi-choice questions - This is an enhancement request for supporting multiple-choice type questions in JoveNotes, which is _sorely_ missing now.

### Definition

A multiple-choice question is a type of interactive question, which has:

1. A question

2. Multiple options

3. One or more right a... | process | provide support for multi choice questions this is an enhancement request for supporting multiple choice type questions in jovenotes which is sorely missing now definition a multiple choice question is a type of interactive question which has a question multiple options one or more right a... | 1 |

2,295 | 5,115,485,568 | IssuesEvent | 2017-01-06 21:58:36 | jlm2017/jlm-video-subtitles | https://api.github.com/repos/jlm2017/jlm-video-subtitles | opened | [subtitles] [eng] MÉLENCHON - POURQUOI LE CODE DU TRAVAIL EST GROS ? | Language: English Process: [2] Ready for review (1) | # Video title

MÉLENCHON - POURQUOI LE CODE DU TRAVAIL EST GROS ?

# URL

https://www.youtube.com/watch?v=jnqujbuCNsM

# Youtube subtitles language

Anglais

# Duration

1:58

# Subtitles URL

https://www.youtube.com/timedtext_editor?tab=captions&bl=vmp&lang=en-US&action_mde_edit_form=1&ref=player&v=j... | 1.0 | [subtitles] [eng] MÉLENCHON - POURQUOI LE CODE DU TRAVAIL EST GROS ? - # Video title

MÉLENCHON - POURQUOI LE CODE DU TRAVAIL EST GROS ?

# URL

https://www.youtube.com/watch?v=jnqujbuCNsM

# Youtube subtitles language

Anglais

# Duration

1:58

# Subtitles URL

https://www.youtube.com/timedtext_edit... | process | mélenchon pourquoi le code du travail est gros video title mélenchon pourquoi le code du travail est gros url youtube subtitles language anglais duration subtitles url | 1 |

5,900 | 8,717,581,182 | IssuesEvent | 2018-12-07 17:35:35 | GoogleCloudPlatform/google-cloud-cpp | https://api.github.com/repos/GoogleCloudPlatform/google-cloud-cpp | opened | Decide if we want to support Xcode 7.3. | type: process | We got a bug report, the code fails to compile with [Xcode 7.3](https://en.wikipedia.org/wiki/Xcode#7.x_series), which was released around 2015. The problem is actually in the compiler (e.g. does not support `thread_local`), and will submit a workaround shortly.

We need to decide if the compiler is something we wan... | 1.0 | Decide if we want to support Xcode 7.3. - We got a bug report, the code fails to compile with [Xcode 7.3](https://en.wikipedia.org/wiki/Xcode#7.x_series), which was released around 2015. The problem is actually in the compiler (e.g. does not support `thread_local`), and will submit a workaround shortly.

We need to ... | process | decide if we want to support xcode we got a bug report the code fails to compile with which was released around the problem is actually in the compiler e g does not support thread local and will submit a workaround shortly we need to decide if the compiler is something we want to support long ... | 1 |

458,404 | 13,174,672,593 | IssuesEvent | 2020-08-11 23:09:00 | shimming-toolbox/shimming-toolbox-py | https://api.github.com/repos/shimming-toolbox/shimming-toolbox-py | opened | Improve masking capabilities | Priority: LOW enhancement | ## Context

The initial issue #47 which was partially fixed by PR #60 added some basic capabilities to mask some data (threshold, shape: square, cube). More masking capability could be implemented to segment the brain and spinal cord as well as other shapes.

Algorithms that could help

- SCT: for spinal cord + simpl... | 1.0 | Improve masking capabilities - ## Context

The initial issue #47 which was partially fixed by PR #60 added some basic capabilities to mask some data (threshold, shape: square, cube). More masking capability could be implemented to segment the brain and spinal cord as well as other shapes.

Algorithms that could help

... | non_process | improve masking capabilities context the initial issue which was partially fixed by pr added some basic capabilities to mask some data threshold shape square cube more masking capability could be implemented to segment the brain and spinal cord as well as other shapes algorithms that could help ... | 0 |

15,945 | 20,163,727,854 | IssuesEvent | 2022-02-10 00:47:37 | ooi-data/CE09OSSM-MFD37-01-OPTAAC000-telemetered-optaa_dj_dcl_instrument | https://api.github.com/repos/ooi-data/CE09OSSM-MFD37-01-OPTAAC000-telemetered-optaa_dj_dcl_instrument | opened | 🛑 Processing failed: GroupNotFoundError | process | ## Overview

`GroupNotFoundError` found in `processing_task` task during run ended on 2022-02-10T00:47:36.630149.

## Details

Flow name: `CE09OSSM-MFD37-01-OPTAAC000-telemetered-optaa_dj_dcl_instrument`

Task name: `processing_task`

Error type: `GroupNotFoundError`

Error message: group not found at path ''

<details>

... | 1.0 | 🛑 Processing failed: GroupNotFoundError - ## Overview

`GroupNotFoundError` found in `processing_task` task during run ended on 2022-02-10T00:47:36.630149.

## Details

Flow name: `CE09OSSM-MFD37-01-OPTAAC000-telemetered-optaa_dj_dcl_instrument`

Task name: `processing_task`

Error type: `GroupNotFoundError`

Error messa... | process | 🛑 processing failed groupnotfounderror overview groupnotfounderror found in processing task task during run ended on details flow name telemetered optaa dj dcl instrument task name processing task error type groupnotfounderror error message group not found at path ... | 1 |

208,120 | 15,876,454,812 | IssuesEvent | 2021-04-09 08:23:42 | microsoft/AzureStorageExplorer | https://api.github.com/repos/microsoft/AzureStorageExplorer | closed | No default blob container name shows in the 'Clone' dialog for one local emulator blob container | :beetle: regression :gear: emulator 🧪 testing | **Storage Explorer Version**: 1.19.0-dev

**Build Number**: 20210408.5

**Branch**: main

**Platform/OS**: Windows 10

**Emulator Version**: Azurite 3.11.0/ Microsoft Azure Storage Emulator 5.10.0.0

**Architecture**: ia32

**Regression From**: Previous release (1.18.1)

## Steps to Reproduce ##

1. Install and run A... | 1.0 | No default blob container name shows in the 'Clone' dialog for one local emulator blob container - **Storage Explorer Version**: 1.19.0-dev

**Build Number**: 20210408.5

**Branch**: main

**Platform/OS**: Windows 10

**Emulator Version**: Azurite 3.11.0/ Microsoft Azure Storage Emulator 5.10.0.0

**Architecture**: ia3... | non_process | no default blob container name shows in the clone dialog for one local emulator blob container storage explorer version dev build number branch main platform os windows emulator version azurite microsoft azure storage emulator architecture regressio... | 0 |

19,721 | 26,073,828,975 | IssuesEvent | 2022-12-24 07:06:26 | pyanodon/pybugreports | https://api.github.com/repos/pyanodon/pybugreports | closed | Mod "Packing Tape" incompatible | bug mod:pypostprocessing crash compatibility | ### Mod source

Factorio Mod Portal

### Which mod are you having an issue with?

- [ ] pyalienlife

- [ ] pyalternativeenergy

- [ ] pycoalprocessing

- [ ] pyfusionenergy

- [ ] pyhightech

- [ ] pyindustry

- [ ] pypetroleumhandling

- [X] pypostprocessing

- [ ] pyrawores

### Operating system

>=Windows 10

### What kind ... | 1.0 | Mod "Packing Tape" incompatible - ### Mod source

Factorio Mod Portal

### Which mod are you having an issue with?

- [ ] pyalienlife

- [ ] pyalternativeenergy

- [ ] pycoalprocessing

- [ ] pyfusionenergy

- [ ] pyhightech

- [ ] pyindustry

- [ ] pypetroleumhandling

- [X] pypostprocessing

- [ ] pyrawores

### Operating sy... | process | mod packing tape incompatible mod source factorio mod portal which mod are you having an issue with pyalienlife pyalternativeenergy pycoalprocessing pyfusionenergy pyhightech pyindustry pypetroleumhandling pypostprocessing pyrawores operating system windows ... | 1 |

6,856 | 9,993,166,156 | IssuesEvent | 2019-07-11 14:50:34 | eaudeweb/ozone | https://api.github.com/repos/eaudeweb/ozone | closed | Error when importing process agents | Component: Backend Feature: Data migration Feature: Process Agents Priority: Highest | KeyError: 'UNK'

File "/var/local/ozone/ozone/core/management/commands/import_procagents.py", line 340, in get_or_create_decision

meeting = self.meetings[meeting_id]

caused by ProcAgentEmitLimits.Decision = UNK | 1.0 | Error when importing process agents - KeyError: 'UNK'

File "/var/local/ozone/ozone/core/management/commands/import_procagents.py", line 340, in get_or_create_decision

meeting = self.meetings[meeting_id]

caused by ProcAgentEmitLimits.Decision = UNK | process | error when importing process agents keyerror unk file var local ozone ozone core management commands import procagents py line in get or create decision meeting self meetings caused by procagentemitlimits decision unk | 1 |

1,417 | 3,984,317,443 | IssuesEvent | 2016-05-07 03:36:26 | opattison/olivermakes | https://api.github.com/repos/opattison/olivermakes | closed | Testing: avoid links that only exist on master (html-proofer) | bug content maintenance process | See: https://travis-ci.org/opattison/olivermakes/builds/127293069

The link is only live when the post is published on the master branch, leading to errors in Travis CI that aren’t actually errors.

Either:

- `--url-ignore www.github.com`

- `<a href="http://notareallink" data-proofer-ignore>Not checked.</a>` | 1.0 | Testing: avoid links that only exist on master (html-proofer) - See: https://travis-ci.org/opattison/olivermakes/builds/127293069

The link is only live when the post is published on the master branch, leading to errors in Travis CI that aren’t actually errors.

Either:

- `--url-ignore www.github.com`

- `<a hre... | process | testing avoid links that only exist on master html proofer see the link is only live when the post is published on the master branch leading to errors in travis ci that aren’t actually errors either url ignore not checked | 1 |

193,192 | 22,216,084,211 | IssuesEvent | 2022-06-08 01:54:15 | maddyCode23/linux-4.1.15 | https://api.github.com/repos/maddyCode23/linux-4.1.15 | reopened | CVE-2019-15216 (Medium) detected in linux-stable-rtv4.1.33 | security vulnerability | ## CVE-2019-15216 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linux-stable-rtv4.1.33</b></p></summary>

<p>

<p>Julia Cartwright's fork of linux-stable-rt.git</p>

<p>Library home p... | True | CVE-2019-15216 (Medium) detected in linux-stable-rtv4.1.33 - ## CVE-2019-15216 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linux-stable-rtv4.1.33</b></p></summary>

<p>

<p>Julia C... | non_process | cve medium detected in linux stable cve medium severity vulnerability vulnerable library linux stable julia cartwright s fork of linux stable rt git library home page a href found in head commit a href found in base branch master vulnerable source f... | 0 |

18,615 | 24,579,367,699 | IssuesEvent | 2022-10-13 14:34:16 | GoogleCloudPlatform/fda-mystudies | https://api.github.com/repos/GoogleCloudPlatform/fda-mystudies | closed | [Mobile apps] [FHIR] Enrollment flow is not working in the android mobile apps | Bug Blocker P0 iOS Android Process: Fixed Process: Tested QA Process: Tested dev | **AR:** Enrollment flow is not working in the android mobile apps

**ER:** Participants should be able to enroll in the android mobile apps

| 3.0 | [Mobile apps] [FHIR] Enrollment flow is not working in the android mobile apps - **AR:** Enrollment flow is not working in the android mobile apps

**ER:** Participants should be able to enroll in the android mobile apps

:

```python

_________________ TestV4POSTPolicies.test_get_signed_policy_v4 _________________

... | 1.0 | 'test_get_signed_policy_v4' flakes with 500 - From [this Kokoro build](https://source.cloud.google.com/results/invocations/0499a0e3-d3c1-444e-a1a4-e74e80e39dcf/targets/cloud-devrel%2Fclient-libraries%2Fpython%2Fgoogleapis%2Fpython-storage%2Fpresubmit%2Fpresubmit/log):

```python

_________________ TestV4POSTPolicies.... | process | test get signed policy flakes with from python test get signed policy self def test get signed policy self bucket name post policy unique resource id self assertraises exceptions notfound config client get bucket ... | 1 |

21,242 | 28,366,913,256 | IssuesEvent | 2023-04-12 14:25:54 | Deltares/Ribasim | https://api.github.com/repos/Deltares/Ribasim | opened | allow salt injection as boundary condition | physical process | include salt injection as boundary condition to represent salt influx from the sea | 1.0 | allow salt injection as boundary condition - include salt injection as boundary condition to represent salt influx from the sea | process | allow salt injection as boundary condition include salt injection as boundary condition to represent salt influx from the sea | 1 |

156,523 | 12,313,199,921 | IssuesEvent | 2020-05-12 14:59:12 | cockroachdb/cockroach | https://api.github.com/repos/cockroachdb/cockroach | closed | roachtest: version/mixed/nodes=3 failed | C-test-failure O-roachtest O-robot branch-release-20.1 release-blocker | [(roachtest).version/mixed/nodes=3 failed](https://teamcity.cockroachdb.com/viewLog.html?buildId=1928509&tab=buildLog) on [release-20.1@1affcc3ac8819e133d54668a1ce0fc4a4ce99b4f](https://github.com/cockroachdb/cockroach/commits/1affcc3ac8819e133d54668a1ce0fc4a4ce99b4f):

```

| 579.0s 0 2559.0 ... | 2.0 | roachtest: version/mixed/nodes=3 failed - [(roachtest).version/mixed/nodes=3 failed](https://teamcity.cockroachdb.com/viewLog.html?buildId=1928509&tab=buildLog) on [release-20.1@1affcc3ac8819e133d54668a1ce0fc4a4ce99b4f](https://github.com/cockroachdb/cockroach/commits/1affcc3ac8819e133d54668a1ce0fc4a4ce99b4f):

```

... | non_process | roachtest version mixed nodes failed on write write elapsed errors ops sec inst ops sec cum ms ms ms pmax ms ... | 0 |

33,405 | 15,896,458,729 | IssuesEvent | 2021-04-11 17:30:10 | sp614x/optifine | https://api.github.com/repos/sp614x/optifine | closed | FPS decreasing over time with shaders/in F5/in inventory | 1.16 details needed performance shaders | Without shaders I get 100fps easily, but when I put them on it goes from 70fps to 20fps in ~10 minutes. It used to work well.

Specs :

- Ryzen 7 2700

- 2x8gb ram 3200mhz

- RTX 2070

Temps are very good, I allocated 6-8gb of ram for mc. I use Forge 1.16.1 and the last optifine update | True | FPS decreasing over time with shaders/in F5/in inventory - Without shaders I get 100fps easily, but when I put them on it goes from 70fps to 20fps in ~10 minutes. It used to work well.

Specs :

- Ryzen 7 2700

- 2x8gb ram 3200mhz

- RTX 2070

Temps are very good, I allocated 6-8gb of ram for mc. I use Forge 1.16.1 and... | non_process | fps decreasing over time with shaders in in inventory without shaders i get easily but when i put them on it goes from to in minutes it used to work well specs ryzen ram rtx temps are very good i allocated of ram for mc i use forge and the last optifine update | 0 |

105,996 | 16,663,950,074 | IssuesEvent | 2021-06-06 20:50:28 | AlexRogalskiy/github-action-charts | https://api.github.com/repos/AlexRogalskiy/github-action-charts | opened | CVE-2020-28469 (Medium) detected in glob-parent-5.1.1.tgz | security vulnerability | ## CVE-2020-28469 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>glob-parent-5.1.1.tgz</b></p></summary>

<p>Extract the non-magic parent path from a glob string.</p>

<p>Library home... | True | CVE-2020-28469 (Medium) detected in glob-parent-5.1.1.tgz - ## CVE-2020-28469 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>glob-parent-5.1.1.tgz</b></p></summary>

<p>Extract the n... | non_process | cve medium detected in glob parent tgz cve medium severity vulnerability vulnerable library glob parent tgz extract the non magic parent path from a glob string library home page a href path to dependency file github action charts package json path to vulnerable libr... | 0 |

109,112 | 9,368,522,340 | IssuesEvent | 2019-04-03 08:54:15 | scality/metalk8s | https://api.github.com/repos/scality/metalk8s | closed | Conflict between control plane network IPs when running tests in CI single node env | bug ci tests | In the CI we run the tests, and especially we retry the bootstrap script to see if it's idempotent.

In the CI, in the single node env, we have two IPs on the worker:

1. The one from Openstack: 10.100.A.B

1. The one from the pod network we set: 10.233.C.D

In the CI, we define the control plane network as 10.0.... | 1.0 | Conflict between control plane network IPs when running tests in CI single node env - In the CI we run the tests, and especially we retry the bootstrap script to see if it's idempotent.

In the CI, in the single node env, we have two IPs on the worker:

1. The one from Openstack: 10.100.A.B

1. The one from the pod... | non_process | conflict between control plane network ips when running tests in ci single node env in the ci we run the tests and especially we retry the bootstrap script to see if it s idempotent in the ci in the single node env we have two ips on the worker the one from openstack a b the one from the pod ne... | 0 |

816 | 3,290,958,174 | IssuesEvent | 2015-10-30 04:35:29 | dita-ot/dita-ot | https://api.github.com/repos/dita-ot/dita-ot | closed | Make strict processing mode fail on error messages | enhancement P2 preprocess | Improve processing mode coverage to treat error messages as fatal errors. | 1.0 | Make strict processing mode fail on error messages - Improve processing mode coverage to treat error messages as fatal errors. | process | make strict processing mode fail on error messages improve processing mode coverage to treat error messages as fatal errors | 1 |

281,655 | 8,697,938,816 | IssuesEvent | 2018-12-04 21:45:09 | mplusmuseum/mplusmuseum-collections-explorer | https://api.github.com/repos/mplusmuseum/mplusmuseum-collections-explorer | closed | Inline stylized category text | priority-low question task | Add inline stylized text for categories.

Please see design.

I'm marking this as a low-priority for now until we get everything else done. The simple case of this would be s... | 1.0 | Inline stylized category text - Add inline stylized text for categories.

Please see design.

I'm marking this as a low-priority for now until we get everything else done. Th... | non_process | inline stylized category text add inline stylized text for categories please see design i m marking this as a low priority for now until we get everything else done the simple case of this would be simple but i worry that the edge cases of long text might turn this task into a more difficult one ... | 0 |

17,001 | 22,364,193,203 | IssuesEvent | 2022-06-16 00:59:22 | hashgraph/hedera-json-rpc-relay | https://api.github.com/repos/hashgraph/hedera-json-rpc-relay | opened | Add additional eth_getBlockByNumber acceptance tests | enhancement P2 process | ### Problem

The initial eth_getBlockByNumber coverage was scarce

### Solution

Add move coverage

- [ ] Enhance the already existing `getBlockByNumber` test by adding assertion of the returned object that it has `transactions` array **containing the full transaction** and not only the string of their hash (testing ... | 1.0 | Add additional eth_getBlockByNumber acceptance tests - ### Problem

The initial eth_getBlockByNumber coverage was scarce

### Solution

Add move coverage

- [ ] Enhance the already existing `getBlockByNumber` test by adding assertion of the returned object that it has `transactions` array **containing the full transa... | process | add additional eth getblockbynumber acceptance tests problem the initial eth getblockbynumber coverage was scarce solution add move coverage enhance the already existing getblockbynumber test by adding assertion of the returned object that it has transactions array containing the full transact... | 1 |

16,584 | 21,630,841,257 | IssuesEvent | 2022-05-05 09:32:26 | googleapis/google-cloud-dotnet | https://api.github.com/repos/googleapis/google-cloud-dotnet | closed | Docs release fails for IAM (alpha05) | type: process | Filing as process as it isn't a bug that will affect customers, beyond our ability to release.

The release build for #8423 is failing in the docs build with:

> [22-04-29 01:57:02.625]Warning:[ExtractMetadata](T:/src/github/google-cloud-dotnet/releasebuild/apis/Google.Cloud.Iam.V1/Google.Cloud.Iam.V1/Google.Cloud.... | 1.0 | Docs release fails for IAM (alpha05) - Filing as process as it isn't a bug that will affect customers, beyond our ability to release.

The release build for #8423 is failing in the docs build with:

> [22-04-29 01:57:02.625]Warning:[ExtractMetadata](T:/src/github/google-cloud-dotnet/releasebuild/apis/Google.Cloud.I... | process | docs release fails for iam filing as process as it isn t a bug that will affect customers beyond our ability to release the release build for is failing in the docs build with warning t src github google cloud dotnet releasebuild apis google cloud iam google cloud iam google cloud iam cspr... | 1 |

254,067 | 8,069,590,243 | IssuesEvent | 2018-08-06 06:38:42 | containous/traefik | https://api.github.com/repos/containous/traefik | closed | [acme.dnsChallenge] not working with gandiv5: No or incorrect TXT record | area/acme kind/bug/confirmed priority/P1 | <!--

DO NOT FILE ISSUES FOR GENERAL SUPPORT QUESTIONS.

The issue tracker is for reporting bugs and feature requests only.

For end-user related support questions, please refer to one of the following:

- Stack Overflow (using the "traefik" tag): https://stackoverflow.com/questions/tagged/traefik

- the Traefik co... | 1.0 | [acme.dnsChallenge] not working with gandiv5: No or incorrect TXT record - <!--

DO NOT FILE ISSUES FOR GENERAL SUPPORT QUESTIONS.

The issue tracker is for reporting bugs and feature requests only.

For end-user related support questions, please refer to one of the following:

- Stack Overflow (using the "traefi... | non_process | not working with no or incorrect txt record do not file issues for general support questions the issue tracker is for reporting bugs and feature requests only for end user related support questions please refer to one of the following stack overflow using the traefik tag the traefi... | 0 |

8,028 | 20,611,725,284 | IssuesEvent | 2022-03-07 09:18:48 | dfds/backstage | https://api.github.com/repos/dfds/backstage | closed | Crosplane - Retrospectively add the required role for enabling assuming role in AWS provider | Enhancement Architecture | - [x] Investigate how this is currently done

- [x] Complete #480

- [x] Find and replace the referenced infrastructure-modules version in all capability sub-folders of aws-account-manifests and update to the new infrastructure-modules version

- [x] Submit PR

- [ ] Merge PR | 1.0 | Crosplane - Retrospectively add the required role for enabling assuming role in AWS provider - - [x] Investigate how this is currently done

- [x] Complete #480

- [x] Find and replace the referenced infrastructure-modules version in all capability sub-folders of aws-account-manifests and update to the new infrastruct... | non_process | crosplane retrospectively add the required role for enabling assuming role in aws provider investigate how this is currently done complete find and replace the referenced infrastructure modules version in all capability sub folders of aws account manifests and update to the new infrastructure modu... | 0 |

94,072 | 15,962,327,718 | IssuesEvent | 2021-04-16 01:03:49 | RG4421/terra-dev-site | https://api.github.com/repos/RG4421/terra-dev-site | opened | CVE-2021-23362 (Medium) detected in hosted-git-info-2.8.8.tgz | security vulnerability | ## CVE-2021-23362 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>hosted-git-info-2.8.8.tgz</b></p></summary>

<p>Provides metadata and conversions from repository urls for Github, Bi... | True | CVE-2021-23362 (Medium) detected in hosted-git-info-2.8.8.tgz - ## CVE-2021-23362 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>hosted-git-info-2.8.8.tgz</b></p></summary>

<p>Provi... | non_process | cve medium detected in hosted git info tgz cve medium severity vulnerability vulnerable library hosted git info tgz provides metadata and conversions from repository urls for github bitbucket and gitlab library home page a href path to dependency file terra dev site pa... | 0 |

2,236 | 5,088,589,846 | IssuesEvent | 2016-12-31 22:51:59 | sw4j-org/tool-jpa-processor | https://api.github.com/repos/sw4j-org/tool-jpa-processor | opened | Handle @ForeignKey Annotation | annotation processor task | Handle the `@ForeignKey` annotation.

See [JSR 338: Java Persistence API, Version 2.1](http://download.oracle.com/otn-pub/jcp/persistence-2_1-fr-eval-spec/JavaPersistence.pdf)

- 11.1.19 Foreign Key Annotation

| 1.0 | Handle @ForeignKey Annotation - Handle the `@ForeignKey` annotation.

See [JSR 338: Java Persistence API, Version 2.1](http://download.oracle.com/otn-pub/jcp/persistence-2_1-fr-eval-spec/JavaPersistence.pdf)

- 11.1.19 Foreign Key Annotation

| process | handle foreignkey annotation handle the foreignkey annotation see foreign key annotation | 1 |

54,990 | 13,943,655,069 | IssuesEvent | 2020-10-22 23:42:37 | mlmcd/WebGoat | https://api.github.com/repos/mlmcd/WebGoat | opened | CVE-2019-10747 (High) detected in set-value-2.0.0.tgz, set-value-0.4.3.tgz | security vulnerability | ## CVE-2019-10747 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>set-value-2.0.0.tgz</b>, <b>set-value-0.4.3.tgz</b></p></summary>

<p>

<details><summary><b>set-value-2.0.0.tgz</b></... | True | CVE-2019-10747 (High) detected in set-value-2.0.0.tgz, set-value-0.4.3.tgz - ## CVE-2019-10747 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>set-value-2.0.0.tgz</b>, <b>set-value-0.... | non_process | cve high detected in set value tgz set value tgz cve high severity vulnerability vulnerable libraries set value tgz set value tgz set value tgz create nested values and any intermediaries using dot notation a b c paths library home page ... | 0 |

15,080 | 18,785,546,567 | IssuesEvent | 2021-11-08 11:44:14 | bazelbuild/bazel | https://api.github.com/repos/bazelbuild/bazel | closed | Drop experimental_multi_threaded_digest | type: process untriaged team-Local-Exec | This options defaults to true since a while and should just be removed, likely with the whole `SsdModule`. | 1.0 | Drop experimental_multi_threaded_digest - This options defaults to true since a while and should just be removed, likely with the whole `SsdModule`. | process | drop experimental multi threaded digest this options defaults to true since a while and should just be removed likely with the whole ssdmodule | 1 |

22,171 | 30,721,409,378 | IssuesEvent | 2023-07-27 16:11:08 | SpikeInterface/spikeinterface | https://api.github.com/repos/SpikeInterface/spikeinterface | closed | Questions about the modes "median"/"average" in `remove_artifacts` | question preprocessing | Hi!

I am trying to explore the functionality of [`remove_artifacts`](https://github.com/SpikeInterface/spikeinterface/blob/main/src/spikeinterface/preprocessing/remove_artifacts.py) with mode "median" or "average" and have a few questions:

(1) Just to confirm if my understanding is correct or not: The input argum... | 1.0 | Questions about the modes "median"/"average" in `remove_artifacts` - Hi!

I am trying to explore the functionality of [`remove_artifacts`](https://github.com/SpikeInterface/spikeinterface/blob/main/src/spikeinterface/preprocessing/remove_artifacts.py) with mode "median" or "average" and have a few questions:

(1) J... | process | questions about the modes median average in remove artifacts hi i am trying to explore the functionality of with mode median or average and have a few questions just to confirm if my understanding is correct or not the input argument artifacts gives a set of templates that suggest what ea... | 1 |

155,414 | 24,463,073,836 | IssuesEvent | 2022-10-07 12:54:50 | dccabs/housecallmd | https://api.github.com/repos/dccabs/housecallmd | closed | Contact Us - Redesign with tailwind ui | design upgrade | Please replace the current contact us page with tailwind ui

https://www.housecallmd.org/contact

- Please replace with this component - https://tailwindui.com/components/marketing/sections/contact-sections#component-dec976a631662b509173ba8b848c49cd

- Make sure form still works

- Show form is submitting indicato... | 1.0 | Contact Us - Redesign with tailwind ui - Please replace the current contact us page with tailwind ui

https://www.housecallmd.org/contact

- Please replace with this component - https://tailwindui.com/components/marketing/sections/contact-sections#component-dec976a631662b509173ba8b848c49cd

- Make sure form still w... | non_process | contact us redesign with tailwind ui please replace the current contact us page with tailwind ui please replace with this component make sure form still works show form is submitting indicator please show modal that confirms a message was sent please include some type of error handling ... | 0 |

68,890 | 7,113,054,759 | IssuesEvent | 2018-01-17 19:07:03 | GalaxyTrail/GFR_bugs | https://api.github.com/repos/GalaxyTrail/GFR_bugs | closed | 1-2 eggplant statue house - enemy once got stuck in floor | bug maybe fixed - needs more testing | Submitter: Patrick Murphy

Email: italiangamer97@gmail.com

In the house of eggplant statues in Course 1-2, I noticed that an enemy managed to get stuck in the floor. Unsure of how I triggered it, but I was able to get the enemy out of the floor and defeat it. Perhaps I did something with one of the statues there? | 1.0 | 1-2 eggplant statue house - enemy once got stuck in floor - Submitter: Patrick Murphy

Email: italiangamer97@gmail.com

In the house of eggplant statues in Course 1-2, I noticed that an enemy managed to get stuck in the floor. Unsure of how I triggered it, but I was able to get the enemy out of the floor and defeat it.... | non_process | eggplant statue house enemy once got stuck in floor submitter patrick murphy email gmail com in the house of eggplant statues in course i noticed that an enemy managed to get stuck in the floor unsure of how i triggered it but i was able to get the enemy out of the floor and defeat it perhaps i di... | 0 |

559,706 | 16,569,422,307 | IssuesEvent | 2021-05-30 04:40:41 | gitextensions/gitextensions | https://api.github.com/repos/gitextensions/gitextensions | closed | GE hangs when viewing submodules in diff viewer | :beetle: type: bug :construction: status: in progress :grey_exclamation: priority: high | ## Current behaviour

Regression in master in the last days.

If a submodule is selected in worktree (at least - seem OK for HEAD though), GE hangs and stops responding. Status is never changed from the default. Mouse just spinning.

## Expected behaviour

Submodule is displayed.

## Steps to reproduce

Se... | 1.0 | GE hangs when viewing submodules in diff viewer - ## Current behaviour

Regression in master in the last days.

If a submodule is selected in worktree (at least - seem OK for HEAD though), GE hangs and stops responding. Status is never changed from the default. Mouse just spinning.

## Expected behaviour

Subm... | non_process | ge hangs when viewing submodules in diff viewer current behaviour regression in master in the last days if a submodule is selected in worktree at least seem ok for head though ge hangs and stops responding status is never changed from the default mouse just spinning expected behaviour subm... | 0 |

657,658 | 21,799,590,185 | IssuesEvent | 2022-05-16 02:30:09 | brave/brave-browser | https://api.github.com/repos/brave/brave-browser | closed | Add setting to toggle visibility of Bookmark button in the URL bar | priority/P4 feature/settings OS/Desktop | <!-- Have you searched for similar issues? Before submitting this issue, please check the open issues and add a note before logging a new issue.

PLEASE USE THE TEMPLATE BELOW TO PROVIDE INFORMATION ABOUT THE ISSUE.

INSUFFICIENT INFO WILL GET THE ISSUE CLOSED. IT WILL ONLY BE REOPENED AFTER SUFFICIENT INFO IS PROV... | 1.0 | Add setting to toggle visibility of Bookmark button in the URL bar - <!-- Have you searched for similar issues? Before submitting this issue, please check the open issues and add a note before logging a new issue.

PLEASE USE THE TEMPLATE BELOW TO PROVIDE INFORMATION ABOUT THE ISSUE.

INSUFFICIENT INFO WILL GET THE... | non_process | add setting to toggle visibility of bookmark button in the url bar have you searched for similar issues before submitting this issue please check the open issues and add a note before logging a new issue please use the template below to provide information about the issue insufficient info will get the... | 0 |

731 | 3,214,309,873 | IssuesEvent | 2015-10-07 00:42:40 | broadinstitute/hellbender | https://api.github.com/repos/broadinstitute/hellbender | closed | Add mechanism to generate unique ids for data types (reads, variants, etc.) | Dataflow DataflowPreprocessingPipeline | Needed for GroupByKey, since Java serialization is not deterministic. Initial idea is to create IDs based on the source of each record (eg., URI + file offset or record number). | 1.0 | Add mechanism to generate unique ids for data types (reads, variants, etc.) - Needed for GroupByKey, since Java serialization is not deterministic. Initial idea is to create IDs based on the source of each record (eg., URI + file offset or record number). | process | add mechanism to generate unique ids for data types reads variants etc needed for groupbykey since java serialization is not deterministic initial idea is to create ids based on the source of each record eg uri file offset or record number | 1 |

28,127 | 11,590,028,152 | IssuesEvent | 2020-02-24 05:03:37 | aksiksi/vaulty | https://api.github.com/repos/aksiksi/vaulty | closed | Add support for address whitelisting | security vaulty-mail | Allow users to specify a list of whitelisted sender addresses a Vaulty address under their control.

In addition, it might make sense to allow users to define a "secret word" that **must** be present in the email body or subject to accept the email. The word could be stripped from the email before getting pushed to ... | True | Add support for address whitelisting - Allow users to specify a list of whitelisted sender addresses a Vaulty address under their control.

In addition, it might make sense to allow users to define a "secret word" that **must** be present in the email body or subject to accept the email. The word could be stripped f... | non_process | add support for address whitelisting allow users to specify a list of whitelisted sender addresses a vaulty address under their control in addition it might make sense to allow users to define a secret word that must be present in the email body or subject to accept the email the word could be stripped f... | 0 |

6,410 | 9,488,866,277 | IssuesEvent | 2019-04-22 20:45:24 | material-components/material-components-ios | https://api.github.com/repos/material-components/material-components-ios | closed | [AppBar] Mark AppBar theming extension ready | [AppBar] type:Process | This was filed as an internal issue. If you are a Googler, please visit [b/130716217](http://b/130716217) for more details.

<!-- Auto-generated content below, do not modify -->

---

#### Internal data

- Associated internal bug: [b/130716217](http://b/130716217) | 1.0 | [AppBar] Mark AppBar theming extension ready - This was filed as an internal issue. If you are a Googler, please visit [b/130716217](http://b/130716217) for more details.

<!-- Auto-generated content below, do not modify -->

---

#### Internal data

- Associated internal bug: [b/130716217](http://b/130716217) | process | mark appbar theming extension ready this was filed as an internal issue if you are a googler please visit for more details internal data associated internal bug | 1 |

8,987 | 12,100,407,087 | IssuesEvent | 2020-04-20 13:45:31 | ComposableWeb/poolbase | https://api.github.com/repos/ComposableWeb/poolbase | opened | [💥FEAT] derive color palette from page screenshot | enhancement epic: processing | **Feature request? Please describe.**

For frontend display of pages: Derive colors from screenshot using AI

**Acceptance Criteria - Describe the solution you'd like**

A clear and concise description of what you want to happen in bullet points:

* ...

**Related issues**

#1

And any other context or screenshots ... | 1.0 | [💥FEAT] derive color palette from page screenshot - **Feature request? Please describe.**

For frontend display of pages: Derive colors from screenshot using AI

**Acceptance Criteria - Describe the solution you'd like**

A clear and concise description of what you want to happen in bullet points:

* ...

**Relate... | process | derive color palette from page screenshot feature request please describe for frontend display of pages derive colors from screenshot using ai acceptance criteria describe the solution you d like a clear and concise description of what you want to happen in bullet points related issue... | 1 |

67,843 | 7,065,242,262 | IssuesEvent | 2018-01-06 17:38:28 | pandas-dev/pandas | https://api.github.com/repos/pandas-dev/pandas | closed | `(x + 1) % 1` inside test_multi.py | MultiIndex Testing | #### Code Sample, a copy-pastable example if possible

https://github.com/pandas-dev/pandas/blob/93033151a8d8aaa650a81df9f41347758bf6c393/pandas/tests/indexes/test_multi.py#L170-L171

#### Problem description

I assume this is supposed to shift the labels cyclically by one. Then `% 4` and `% 2` should be used. Ot... | 1.0 | `(x + 1) % 1` inside test_multi.py - #### Code Sample, a copy-pastable example if possible

https://github.com/pandas-dev/pandas/blob/93033151a8d8aaa650a81df9f41347758bf6c393/pandas/tests/indexes/test_multi.py#L170-L171

#### Problem description

I assume this is supposed to shift the labels cyclically by one. Th... | non_process | x inside test multi py code sample a copy pastable example if possible problem description i assume this is supposed to shift the labels cyclically by one then and should be used otherwise its at least weird to write x instead of | 0 |

3,921 | 6,843,043,013 | IssuesEvent | 2017-11-12 10:48:24 | pwittchen/ReactiveNetwork | https://api.github.com/repos/pwittchen/ReactiveNetwork | opened | RxJava1.x: release 0.12.2 | release process | **Initial realese notes**:

- updated project dependencies

- updated Gradle to 3.0.0

**Things to do**:

- [ ] RxJava1.x branch:

- [ ] bump library version

- [ ] upload archives to Maven Central

- [ ] close and release artifact on Maven Central

- [ ] update `CHANGELOG.md` after Maven Sync

- [ ] bump... | 1.0 | RxJava1.x: release 0.12.2 - **Initial realese notes**:

- updated project dependencies

- updated Gradle to 3.0.0

**Things to do**:

- [ ] RxJava1.x branch:

- [ ] bump library version

- [ ] upload archives to Maven Central

- [ ] close and release artifact on Maven Central

- [ ] update `CHANGELOG.md` af... | process | x release initial realese notes updated project dependencies updated gradle to things to do x branch bump library version upload archives to maven central close and release artifact on maven central update changelog md after maven sync b... | 1 |

103,843 | 11,383,025,622 | IssuesEvent | 2020-01-29 04:20:55 | car12lin12/InstaBaddies | https://api.github.com/repos/car12lin12/InstaBaddies | opened | Update readme to clarify goals of the project | Documentation :memo: | Update the readme to show the description / objective / core features of the project.

Core features :

- upload a picture

- follow a user

- receive notifications from that user if they upload a new picture

- comment on a picture | 1.0 | Update readme to clarify goals of the project - Update the readme to show the description / objective / core features of the project.

Core features :

- upload a picture

- follow a user

- receive notifications from that user if they upload a new picture

- comment on a picture | non_process | update readme to clarify goals of the project update the readme to show the description objective core features of the project core features upload a picture follow a user receive notifications from that user if they upload a new picture comment on a picture | 0 |

238,054 | 19,694,490,725 | IssuesEvent | 2022-01-12 10:40:01 | dotnet/sdk | https://api.github.com/repos/dotnet/sdk | closed | With .NET 7 x86 SDK, Testhost process exited with error: It was not possible to find any compatible framework version | Area-DotNet Test untriaged | **--Repro Steps---**

1. Install .NET 7 x86 SDK(https://github.com/dotnet/installer) on Win x64 OS

2. Create a UT project and run it

**--Expected Result --**

1. dotnet test works fine

**--Actual Result--**

1. Testhost process exited with error: It was not possible to find any compatible framework version

The ... | 1.0 | With .NET 7 x86 SDK, Testhost process exited with error: It was not possible to find any compatible framework version - **--Repro Steps---**

1. Install .NET 7 x86 SDK(https://github.com/dotnet/installer) on Win x64 OS

2. Create a UT project and run it

**--Expected Result --**

1. dotnet test works fine

**--Actu... | non_process | with net sdk testhost process exited with error it was not possible to find any compatible framework version repro steps install net sdk on win os create a ut project and run it expected result dotnet test works fine actual result testhost process exited... | 0 |

16,951 | 22,306,072,081 | IssuesEvent | 2022-06-13 13:09:39 | deepset-ai/haystack | https://api.github.com/repos/deepset-ai/haystack | closed | Crawler: can it handle website with contents dynamically loaded by JavaScript? | type:feature good first issue Contributions wanted! good second issue topic:preprocessing journey:intermediate | **Question**

Per captioned, can crawler handle website with contents dynamically loaded by pressing an "expand" button?

**Additional context**

In today's website designs a lot of them display content dynamically through Javascript. On inspecting Page Source they will be shown as loading in on client side. Some ... | 1.0 | Crawler: can it handle website with contents dynamically loaded by JavaScript? - **Question**

Per captioned, can crawler handle website with contents dynamically loaded by pressing an "expand" button?

**Additional context**

In today's website designs a lot of them display content dynamically through Javascript. ... | process | crawler can it handle website with contents dynamically loaded by javascript question per captioned can crawler handle website with contents dynamically loaded by pressing an expand button additional context in today s website designs a lot of them display content dynamically through javascript ... | 1 |

11,179 | 13,957,695,350 | IssuesEvent | 2020-10-24 08:11:31 | alexanderkotsev/geoportal | https://api.github.com/repos/alexanderkotsev/geoportal | opened | PT: Harvesting | Geoportal Harvesting process PT - Portugal | Geoportal team,

Can you please start a harvesting do the Portuguese catalogue?

Thank you! | 1.0 | PT: Harvesting - Geoportal team,

Can you please start a harvesting do the Portuguese catalogue?

Thank you! | process | pt harvesting geoportal team can you please start a harvesting do the portuguese catalogue thank you | 1 |

8,761 | 11,880,979,031 | IssuesEvent | 2020-03-27 11:45:07 | dotnet/runtime | https://api.github.com/repos/dotnet/runtime | closed | Test Failed: Process terminated. Assertion failed. "!_fileHandle.IsClosed" | area-System.Diagnostics.Process os-windows test bug test-corefx | **Detail:**

https://helix.dot.net/api/2019-06-17/jobs/0c15185a-2331-4dcf-978c-cd7c4a4b3189/workitems/System.Diagnostics.Process.Tests/console

**Log:**

```

C:\dotnetbuild\work\0c15185a-2331-4dcf-978c-cd7c4a4b3189\Work\2eeb62f3-774b-43f1-94ac-c844f873d662\Exec>"C:\dotnetbuild\work\0c15185a-2331-4dcf-978c-cd7c4a4b31... | 1.0 | Test Failed: Process terminated. Assertion failed. "!_fileHandle.IsClosed" - **Detail:**

https://helix.dot.net/api/2019-06-17/jobs/0c15185a-2331-4dcf-978c-cd7c4a4b3189/workitems/System.Diagnostics.Process.Tests/console

**Log:**

```

C:\dotnetbuild\work\0c15185a-2331-4dcf-978c-cd7c4a4b3189\Work\2eeb62f3-774b-43f1-9... | process | test failed process terminated assertion failed filehandle isclosed detail log c dotnetbuild work work exec c dotnetbuild work payload dotnet exe exec runtimeconfig system diagnostics process tests runtimeconfig json xunit console dll system diagnostics... | 1 |

10,234 | 13,096,025,883 | IssuesEvent | 2020-08-03 15:02:04 | ZbayApp/zbay | https://api.github.com/repos/ZbayApp/zbay | closed | Automate Windows deploy process | dev process | Automate Windows build, signing with cert if possible. Look into #148 first. | 1.0 | Automate Windows deploy process - Automate Windows build, signing with cert if possible. Look into #148 first. | process | automate windows deploy process automate windows build signing with cert if possible look into first | 1 |

241,155 | 26,256,663,675 | IssuesEvent | 2023-01-06 01:46:12 | EmpoHQ/empo.im | https://api.github.com/repos/EmpoHQ/empo.im | opened | CVE-2022-24999 (High) detected in qs-6.10.1.tgz | security vulnerability | ## CVE-2022-24999 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>qs-6.10.1.tgz</b></p></summary>

<p>A querystring parser that supports nesting and arrays, with a depth limit</p>

<p>Li... | True | CVE-2022-24999 (High) detected in qs-6.10.1.tgz - ## CVE-2022-24999 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>qs-6.10.1.tgz</b></p></summary>

<p>A querystring parser that support... | non_process | cve high detected in qs tgz cve high severity vulnerability vulnerable library qs tgz a querystring parser that supports nesting and arrays with a depth limit library home page a href path to dependency file package json path to vulnerable library node modules qs ... | 0 |

315,663 | 23,590,886,821 | IssuesEvent | 2022-08-23 15:05:59 | arturo-lang/arturo | https://api.github.com/repos/arturo-lang/arturo | closed | [Reflection\complex?] add documentation example | documentation library todo easy | [Reflection\complex?] add documentation example

https://github.com/arturo-lang/arturo/blob/3a89302d1b1f33da097ed6e7bd6dfb05fc338482/src/library/Reflection.nim#L292

```text

builtin "color?",

alias = unaliased,

rule = PrefixPrecedence,

description = "checks if given value is o... | 1.0 | [Reflection\complex?] add documentation example - [Reflection\complex?] add documentation example

https://github.com/arturo-lang/arturo/blob/3a89302d1b1f33da097ed6e7bd6dfb05fc338482/src/library/Reflection.nim#L292

```text

builtin "color?",

alias = unaliased,

rule = PrefixPrecedence,... | non_process | add documentation example add documentation example text builtin color alias unaliased rule prefixprecedence description checks if given value is of type color args value any attrs noa... | 0 |

10,186 | 13,044,162,864 | IssuesEvent | 2020-07-29 03:47:37 | tikv/tikv | https://api.github.com/repos/tikv/tikv | closed | UCP: Migrate scalar function `RoundWithFracReal` from TiDB | challenge-program-2 component/coprocessor difficulty/easy sig/coprocessor |

## Description

Port the scalar function `RoundWithFracReal` from TiDB to coprocessor.

## Score

* 50

## Mentor(s)

* @mapleFU

## Recommended Skills

* Rust programming

## Learning Materials

Already implemented expressions ported from TiDB

- https://github.com/tikv/tikv/tree/master/components/tidb_query/src/r... | 2.0 | UCP: Migrate scalar function `RoundWithFracReal` from TiDB -

## Description

Port the scalar function `RoundWithFracReal` from TiDB to coprocessor.

## Score

* 50

## Mentor(s)

* @mapleFU

## Recommended Skills

* Rust programming

## Learning Materials

Already implemented expressions ported from TiDB

- https:/... | process | ucp migrate scalar function roundwithfracreal from tidb description port the scalar function roundwithfracreal from tidb to coprocessor score mentor s maplefu recommended skills rust programming learning materials already implemented expressions ported from tidb | 1 |

207,244 | 7,126,399,097 | IssuesEvent | 2018-01-20 09:45:55 | wordpress-mobile/AztecEditor-Android | https://api.github.com/repos/wordpress-mobile/AztecEditor-Android | reopened | Repeating characters in title | bug high priority | ### Expected

If I type "ABCD" in the title box, the title should say "ABCD"

### Observed

I type A, it says A. I type B, the title becomes "AAB". I type C, I get "AABABC", etc.

You can see a video and screenshot of the issue in action here:

https://cloudup.com/cE18S9bfbWY

Also, note if I add a space, it rese... | 1.0 | Repeating characters in title - ### Expected

If I type "ABCD" in the title box, the title should say "ABCD"

### Observed

I type A, it says A. I type B, the title becomes "AAB". I type C, I get "AABABC", etc.

You can see a video and screenshot of the issue in action here:

https://cloudup.com/cE18S9bfbWY

Also... | non_process | repeating characters in title expected if i type abcd in the title box the title should say abcd observed i type a it says a i type b the title becomes aab i type c i get aababc etc you can see a video and screenshot of the issue in action here also note if i add a space it re... | 0 |

16,821 | 22,060,943,988 | IssuesEvent | 2022-05-30 17:44:09 | bitPogo/kmock | https://api.github.com/repos/bitPogo/kmock | closed | Relaxation fails for Generics | bug kmock-processor | ## Description

<!--- Provide a detailed introduction to the issue itself, and why you consider it to be a bug -->

Currently Relaxation for Interfaces with generics causes an Compiler Error.

This only applies for inline functions. (The need additional arguments and cannot be inline in this case) | 1.0 | Relaxation fails for Generics - ## Description

<!--- Provide a detailed introduction to the issue itself, and why you consider it to be a bug -->

Currently Relaxation for Interfaces with generics causes an Compiler Error.

This only applies for inline functions. (The need additional arguments and cannot be inline in ... | process | relaxation fails for generics description currently relaxation for interfaces with generics causes an compiler error this only applies for inline functions the need additional arguments and cannot be inline in this case | 1 |

345,270 | 30,794,983,040 | IssuesEvent | 2023-07-31 19:07:10 | boltlabs-inc/tss-ecdsa | https://api.github.com/repos/boltlabs-inc/tss-ecdsa | closed | Write tests for PiSchProof | tests | A `PiSchProof` has two elements and must satisfy one constraint. Some ideas for tests:

- Choose bad secret input `x` (not the dlog of `X`)

- Swap the commitment `A` for a random element

- Swap the response `z` for a random element

- Try bad common input, e.g. where `g` is not a generator of `G`

- Try verifying with di... | 1.0 | Write tests for PiSchProof - A `PiSchProof` has two elements and must satisfy one constraint. Some ideas for tests:

- Choose bad secret input `x` (not the dlog of `X`)

- Swap the commitment `A` for a random element

- Swap the response `z` for a random element

- Try bad common input, e.g. where `g` is not a generator o... | non_process | write tests for pischproof a pischproof has two elements and must satisfy one constraint some ideas for tests choose bad secret input x not the dlog of x swap the commitment a for a random element swap the response z for a random element try bad common input e g where g is not a generator o... | 0 |

57,650 | 7,087,363,575 | IssuesEvent | 2018-01-11 17:33:05 | Nersent/wexond | https://api.github.com/repos/Nersent/wexond | closed | What should be in a new tab page? | design question | What should be in a new tab page? Please give me your suggestions below. I'm wondering if add bookmarks cards or recently visited websites or something different. | 1.0 | What should be in a new tab page? - What should be in a new tab page? Please give me your suggestions below. I'm wondering if add bookmarks cards or recently visited websites or something different. | non_process | what should be in a new tab page what should be in a new tab page please give me your suggestions below i m wondering if add bookmarks cards or recently visited websites or something different | 0 |

317,094 | 23,663,707,592 | IssuesEvent | 2022-08-26 18:18:30 | dockstore/dockstore | https://api.github.com/repos/dockstore/dockstore | closed | Remove 1.13 stuff in documentation stable branch | bug documentation | **Describe the bug**

On our documentation repo, develop got merged/rebased/cherrypicked into the hotfix/1.12.2 branch. There's 99% chance I'm the guy who did this, so I'm going to be the guy who fixes it. The rebase/merge/whatever doesn't break anything, but it does include a few small details about the next release (s... | 1.0 | Remove 1.13 stuff in documentation stable branch - **Describe the bug**

On our documentation repo, develop got merged/rebased/cherrypicked into the hotfix/1.12.2 branch. There's 99% chance I'm the guy who did this, so I'm going to be the guy who fixes it. The rebase/merge/whatever doesn't break anything, but it does in... | non_process | remove stuff in documentation stable branch describe the bug on our documentation repo develop got merged rebased cherrypicked into the hotfix branch there s chance i m the guy who did this so i m going to be the guy who fixes it the rebase merge whatever doesn t break anything but it does inclu... | 0 |

20,148 | 26,695,474,296 | IssuesEvent | 2023-01-27 09:59:22 | ppy/osu-web | https://api.github.com/repos/ppy/osu-web | closed | Error downloading old beatmap | area:beatmap-processing | This map is unable to download: https://osu.ppy.sh/beatmapsets/13758#osu/50686

If you try download from beatmap page, it returns 500 error.

But if you try doing it form beatmap search page it returns this:

```

Warning: sprintf(): Too few arguments in /var/www/html/S3.php on line 742

Warning: Cannot modify he... | 1.0 | Error downloading old beatmap - This map is unable to download: https://osu.ppy.sh/beatmapsets/13758#osu/50686

If you try download from beatmap page, it returns 500 error.

But if you try doing it form beatmap search page it returns this:

```

Warning: sprintf(): Too few arguments in /var/www/html/S3.php on line ... | process | error downloading old beatmap this map is unable to download if you try download from beatmap page it returns error but if you try doing it form beatmap search page it returns this warning sprintf too few arguments in var www html php on line warning cannot modify header information ... | 1 |

79,228 | 15,166,238,917 | IssuesEvent | 2021-02-12 16:06:09 | sourcegraph/sourcegraph | https://api.github.com/repos/sourcegraph/sourcegraph | closed | Fix pings for lsif WAUs | team/code-intelligence | We currently aren't getting code intelligence WAUs.

<img width="1482" alt="Screenshot 2021-02-02 at 16 38 10" src="https://user-images.githubusercontent.com/1657213/106653045-99840d00-6596-11eb-97a7-8562c1feb4ab.png">

| 1.0 | Fix pings for lsif WAUs - We currently aren't getting code intelligence WAUs.

<img width="1482" alt="Screenshot 2021-02-02 at 16 38 10" src="https://user-images.githubusercontent.com/1657213/106653045-99840d00-6596-11eb-97a7-8562c1feb4ab.png">

| non_process | fix pings for lsif waus we currently aren t getting code intelligence waus img width alt screenshot at src | 0 |

416,243 | 28,074,149,565 | IssuesEvent | 2023-03-29 21:35:03 | sujuka99/dotfiles | https://api.github.com/repos/sujuka99/dotfiles | reopened | Document how to use repo | documentation dotfiles | Document:

- [x] Clone repo

- [x] Configure git for bare repository

- [ ] List requirements | 1.0 | Document how to use repo - Document:

- [x] Clone repo

- [x] Configure git for bare repository

- [ ] List requirements | non_process | document how to use repo document clone repo configure git for bare repository list requirements | 0 |

682,863 | 23,360,272,544 | IssuesEvent | 2022-08-10 11:03:33 | microsoft/fluentui | https://api.github.com/repos/microsoft/fluentui | opened | [Feature]: Fluent React v9 Preview Banner 🎉 | Type: Feature Priority 1: High Area: Website Partner Ask | ### Library

React Components / v9 (@fluentui/react-components)

### Describe the feature that you would like added

In mid to late August the Microsite is planned to go live to the public. To let our customers know of the new site we would like to have a top banner added to the existing [Fluent Design System](https://... | 1.0 | [Feature]: Fluent React v9 Preview Banner 🎉 - ### Library

React Components / v9 (@fluentui/react-components)

### Describe the feature that you would like added

In mid to late August the Microsite is planned to go live to the public. To let our customers know of the new site we would like to have a top banner added ... | non_process | fluent react preview banner 🎉 library react components fluentui react components describe the feature that you would like added in mid to late august the microsite is planned to go live to the public to let our customers know of the new site we would like to have a top banner added to the exi... | 0 |

654,114 | 21,638,101,550 | IssuesEvent | 2022-05-05 15:55:14 | internetarchive/openlibrary | https://api.github.com/repos/internetarchive/openlibrary | opened | Enriching Reading Log data exports (more fields) | Theme: Reading Log export Module: Data dumps Priority: 2 Affects: Data Lead: @mekarpeles | * Currently Reading Log dumps are limited to IDs because large Reading Logs (500+ items) can cause performance issues aggregating results in memory and and serving as large files. Currently, Lists impose an export limit if there are more than ~1k items

* The manual work around is to look over each Reading Log entry (e... | 1.0 | Enriching Reading Log data exports (more fields) - * Currently Reading Log dumps are limited to IDs because large Reading Logs (500+ items) can cause performance issues aggregating results in memory and and serving as large files. Currently, Lists impose an export limit if there are more than ~1k items

* The manual wo... | non_process | enriching reading log data exports more fields currently reading log dumps are limited to ids because large reading logs items can cause performance issues aggregating results in memory and and serving as large files currently lists impose an export limit if there are more than items the manual work ... | 0 |

10,482 | 13,252,913,227 | IssuesEvent | 2020-08-20 06:33:10 | tikv/tikv | https://api.github.com/repos/tikv/tikv | closed | Deadline check in table / index scan executor | sig/coprocessor status/discussion | ## Feature Request

Currently we check deadline in DAG next(). However there are many scenarios that this doesn't work, i.e. there is a selection executor that filtered a lot of lines, or an aggregation executor that emits a row only after collecting all rows.

By checking deadline in the innermost executor, i.e. t... | 1.0 | Deadline check in table / index scan executor - ## Feature Request

Currently we check deadline in DAG next(). However there are many scenarios that this doesn't work, i.e. there is a selection executor that filtered a lot of lines, or an aggregation executor that emits a row only after collecting all rows.

By che... | process | deadline check in table index scan executor feature request currently we check deadline in dag next however there are many scenarios that this doesn t work i e there is a selection executor that filtered a lot of lines or an aggregation executor that emits a row only after collecting all rows by che... | 1 |

6,835 | 9,978,102,343 | IssuesEvent | 2019-07-09 18:59:30 | TileDB-Inc/TileDB | https://api.github.com/repos/TileDB-Inc/TileDB | closed | Lock release with multiple contexts | bug process safety vfs | The following program demonstrates a failure to release the lockfile when a local URI is opened from multiple contexts.

<details>

```

import tiledb

import numpy as np

import shutil, os

if os.path.isdir("C:/tmp/foo"):

shutil.rmtree("C:/tmp/foo")

ctx = tiledb.Ctx()

ctx2= tiledb.Ctx()

dom = tiledb.... | 1.0 | Lock release with multiple contexts - The following program demonstrates a failure to release the lockfile when a local URI is opened from multiple contexts.

<details>

```

import tiledb

import numpy as np

import shutil, os

if os.path.isdir("C:/tmp/foo"):

shutil.rmtree("C:/tmp/foo")

ctx = tiledb.Ctx(... | process | lock release with multiple contexts the following program demonstrates a failure to release the lockfile when a local uri is opened from multiple contexts import tiledb import numpy as np import shutil os if os path isdir c tmp foo shutil rmtree c tmp foo ctx tiledb ctx ti... | 1 |

99,060 | 30,268,069,549 | IssuesEvent | 2023-07-07 13:23:20 | cms-sw/cmssw | https://api.github.com/repos/cms-sw/cmssw | closed | Build CMSSW_13_0_10 | release-notes-requested release-announced release-build-request slc7_amd64_gcc11-finished el8_amd64_gcc11-finished el8_aarch64_gcc11-finished el8_ppc64le_gcc11-finished el9_amd64_gcc11-finished | To start the MC production campaign for Run3 2023

The build will go in parallel with the IB tests in CMSSW_13_0_X_2023-07-05-1100, to speed up the procedure: the release will get uploaded only if those tests show no issues. | 1.0 | Build CMSSW_13_0_10 - To start the MC production campaign for Run3 2023

The build will go in parallel with the IB tests in CMSSW_13_0_X_2023-07-05-1100, to speed up the procedure: the release will get uploaded only if those tests show no issues. | non_process | build cmssw to start the mc production campaign for the build will go in parallel with the ib tests in cmssw x to speed up the procedure the release will get uploaded only if those tests show no issues | 0 |

16,624 | 21,678,126,955 | IssuesEvent | 2022-05-09 01:23:15 | lynnandtonic/nestflix.fun | https://api.github.com/repos/lynnandtonic/nestflix.fun | closed | Add Super in America | suggested title in process | Please add as much of the following info as you can:

Title: Super in America

Type (film/tv show): reality TV show

Film or show in which it appears: The Boys

Is the parent film/show streaming anywhere? Yes - Amazon Prime

About when in the parent film/show does it appear? Throughout ep. 1x06; "The Innocent... | 1.0 | Add Super in America - Please add as much of the following info as you can:

Title: Super in America

Type (film/tv show): reality TV show

Film or show in which it appears: The Boys

Is the parent film/show streaming anywhere? Yes - Amazon Prime

About when in the parent film/show does it appear? Throughout ... | process | add super in america please add as much of the following info as you can title super in america type film tv show reality tv show film or show in which it appears the boys is the parent film show streaming anywhere yes amazon prime about when in the parent film show does it appear throughout ... | 1 |

64,212 | 18,279,840,558 | IssuesEvent | 2021-10-05 00:47:17 | microsoft/STL | https://api.github.com/repos/microsoft/STL | opened | P2418R2 Add Support For `std::generator`-like Types To `std::format` | cxx20 format defect report | [P2418R2](https://wg21.link/P2418R2) Add Support For `std::generator`-like Types To `std::format`

This increases the value of `__cpp_lib_format`, also increased by [P2372R3](https://wg21.link/P2372R3) (see #2237). | 1.0 | P2418R2 Add Support For `std::generator`-like Types To `std::format` - [P2418R2](https://wg21.link/P2418R2) Add Support For `std::generator`-like Types To `std::format`

This increases the value of `__cpp_lib_format`, also increased by [P2372R3](https://wg21.link/P2372R3) (see #2237). | non_process | add support for std generator like types to std format add support for std generator like types to std format this increases the value of cpp lib format also increased by see | 0 |

348,012 | 31,392,433,350 | IssuesEvent | 2023-08-26 14:06:54 | void-linux/void-packages | https://api.github.com/repos/void-linux/void-packages | opened | `docker build` fails with "ERROR: http: invalid Host header" with docker-buildx-0.10.3_3 | bug needs-testing | ### Is this a new report?

Yes

### System Info

Void 6.3.13_1 x86_64 AuthenticAMD notuptodate DFFF

### Package(s) Affected

docker-buildx-0.10.3_3

### Does a report exist for this bug with the project's home (upstream) and/or another distro?

https://github.com/moby/moby/issues/45935

### Expected behaviour

work wi... | 1.0 | `docker build` fails with "ERROR: http: invalid Host header" with docker-buildx-0.10.3_3 - ### Is this a new report?

Yes

### System Info

Void 6.3.13_1 x86_64 AuthenticAMD notuptodate DFFF

### Package(s) Affected

docker-buildx-0.10.3_3

### Does a report exist for this bug with the project's home (upstream) and/or ... | non_process | docker build fails with error http invalid host header with docker buildx is this a new report yes system info void authenticamd notuptodate dfff package s affected docker buildx does a report exist for this bug with the project s home upstream and or anothe... | 0 |

1,561 | 2,645,217,029 | IssuesEvent | 2015-03-12 21:20:23 | editorconfig/editorconfig | https://api.github.com/repos/editorconfig/editorconfig | closed | Add support for existing `**` expansion and bash-like `**` expansion | core code core code (C) core code (python) feature request | Tests have been added for this in the [v0.11.0-development](https://github.com/editorconfig/editorconfig-core-test/tree/v0.11.0-development) branch.

In reference to editorconfig/editorconfig-core-js#1

<bountysource-plugin>

---

Want to back this issue? **[Post a bounty on it!](https://www.bountysource.com/issues... | 3.0 | Add support for existing `**` expansion and bash-like `**` expansion - Tests have been added for this in the [v0.11.0-development](https://github.com/editorconfig/editorconfig-core-test/tree/v0.11.0-development) branch.

In reference to editorconfig/editorconfig-core-js#1

<bountysource-plugin>

---

Want to back t... | non_process | add support for existing expansion and bash like expansion tests have been added for this in the branch in reference to editorconfig editorconfig core js want to back this issue we accept bounties via | 0 |

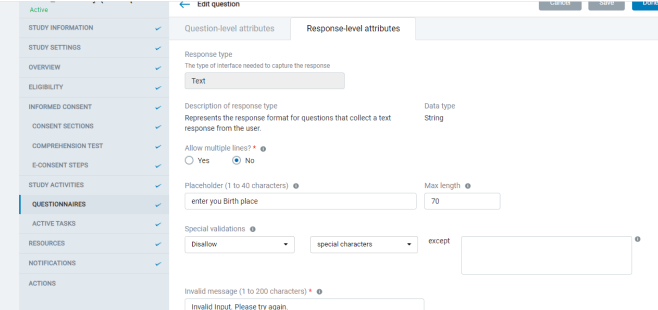

21,420 | 17,038,387,673 | IssuesEvent | 2021-07-05 10:07:18 | indico/indico | https://api.github.com/repos/indico/indico | closed | Timetable: add warning in case of draft mode | enhancement usability | We have a "Draft" switch in the list of contributions, which decides whether the Timetable is published or not.

This is at times confusing for users. One nice improvement would be to add a warning at the to... | True | Timetable: add warning in case of draft mode - We have a "Draft" switch in the list of contributions, which decides whether the Timetable is published or not.

This is at times confusing for users. One nice ... | non_process | timetable add warning in case of draft mode we have a draft switch in the list of contributions which decides whether the timetable is published or not this is at times confusing for users one nice improvement would be to add a warning at the top of the timetable management page something like th... | 0 |

76,667 | 15,496,164,254 | IssuesEvent | 2021-03-11 02:10:43 | jinuem/Shopping-Cart-POC | https://api.github.com/repos/jinuem/Shopping-Cart-POC | opened | CVE-2021-23337 (High) detected in lodash-4.17.11.tgz | security vulnerability | ## CVE-2021-23337 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>lodash-4.17.11.tgz</b></p></summary>

<p>Lodash modular utilities.</p>

<p>Library home page: <a href="https://registry.... | True | CVE-2021-23337 (High) detected in lodash-4.17.11.tgz - ## CVE-2021-23337 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>lodash-4.17.11.tgz</b></p></summary>

<p>Lodash modular utilitie... | non_process | cve high detected in lodash tgz cve high severity vulnerability vulnerable library lodash tgz lodash modular utilities library home page a href path to dependency file shopping cart poc rejsx package json path to vulnerable library shopping cart poc rejsx node modu... | 0 |