Unnamed: 0 (int64, 0 to 832k) | id (float64, 2.49B to 32.1B) | type (stringclasses, 1 value) | created_at (stringlengths, 19 to 19) | repo (stringlengths, 7 to 112) | repo_url (stringlengths, 36 to 141) | action (stringclasses, 3 values) | title (stringlengths, 1 to 744) | labels (stringlengths, 4 to 574) | body (stringlengths, 9 to 211k) | index (stringclasses, 10 values) | text_combine (stringlengths, 96 to 211k) | label (stringclasses, 2 values) | text (stringlengths, 96 to 188k) | binary_label (int64, 0 to 1) |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

81,484

| 30,875,180,005

|

IssuesEvent

|

2023-08-03 13:53:29

|

vector-im/element-web

|

https://api.github.com/repos/vector-im/element-web

|

opened

|

adding a new space just redisplays the add dialog

|

T-Defect

|

### Steps to reproduce

1. Viewing a space's room list, eg

2. Click the Add button

3. Click Add space

4. Fill out Name and Address fields (and optionally image and Description, same result)

5. Click Add button

### Outcome

#### What did you expect?

For this child space to be created and appear in the parent's room list

#### What happened instead?

The add dialog redisplays, showing no error.

### Operating system

macos ventura

### Application version

Element version: 1.11.37 Olm version: 3.2.14

### How did you install the app?

_No response_

### Homeserver

matrix.org

### Will you send logs?

Yes

|

1.0

|

adding a new space just redisplays the add dialog - ### Steps to reproduce

1. Viewing a space's room list, eg

2. Click the Add button

3. Click Add space

4. Fill out Name and Address fields (and optionally image and Description, same result)

5. Click Add button

### Outcome

#### What did you expect?

For this child space to be created and appear in the parent's room list

#### What happened instead?

The add dialog redisplays, showing no error.

### Operating system

macos ventura

### Application version

Element version: 1.11.37 Olm version: 3.2.14

### How did you install the app?

_No response_

### Homeserver

matrix.org

### Will you send logs?

Yes

|

non_process

|

adding a new space just redisplays the add dialog steps to reproduce viewing a space s room list eg click the add button click add space fill out name and address fields and optionally image and description same result click add button outcome what did you expect for this child space to be created and appear in the parent s room list what happened instead the add dialog redisplays showing no error operating system macos ventura application version element version olm version how did you install the app no response homeserver matrix org will you send logs yes

| 0

|

179,074

| 6,621,338,252

|

IssuesEvent

|

2017-09-21 18:47:01

|

coreos/bugs

|

https://api.github.com/repos/coreos/bugs

|

opened

|

Consider using the NOOP IO scheduler in virtualized environments

|

area/performance component/kernel kind/enhancement priority/P2 team/os

|

# Issue Report #

## Feature Request ##

### Environment ###

All virtualized environments.

### Desired Feature ###

Use `elevator=noop` to use the NOOP IO scheduler. This allows the hypervisor to make scheduling decisions instead. This may not be applicable to all environments, but it's at least [recommended on Hyper-V](https://docs.microsoft.com/en-us/windows-server/virtualization/hyper-v/Best-Practices-for-running-Linux-on-Hyper-V).

|

1.0

|

Consider using the NOOP IO scheduler in virtualized environments - # Issue Report #

## Feature Request ##

### Environment ###

All virtualized environments.

### Desired Feature ###

Use `elevator=noop` to use the NOOP IO scheduler. This allows the hypervisor to make scheduling decisions instead. This may not be applicable to all environments, but it's at least [recommended on Hyper-V](https://docs.microsoft.com/en-us/windows-server/virtualization/hyper-v/Best-Practices-for-running-Linux-on-Hyper-V).

|

non_process

|

consider using the noop io scheduler in virtualized environments issue report feature request environment all virtualized environments desired feature use elevator noop to use the noop io scheduler this allows the hypervisor to make scheduling decisions instead this may not be applicable to all environments but it s at least

| 0

|

21,949

| 30,451,926,309

|

IssuesEvent

|

2023-07-16 12:10:45

|

tokio-rs/tokio

|

https://api.github.com/repos/tokio-rs/tokio

|

closed

|

Make `Command::raw_arg` show up in docs

|

C-bug T-docs A-tokio M-process

|

This method was added in #5704, but it does not show up in the documentation because it is windows-only. We need to fix that.

|

1.0

|

Make `Command::raw_arg` show up in docs - This method was added in #5704, but it does not show up in the documentation because it is windows-only. We need to fix that.

|

process

|

make command raw arg show up in docs this method was added in but it does not show up in the documentation because it is windows only we need to fix that

| 1

|

9,539

| 12,507,169,878

|

IssuesEvent

|

2020-06-02 13:44:29

|

tikv/tikv

|

https://api.github.com/repos/tikv/tikv

|

closed

|

UCP: Migrate scalar function `MakeSet` from TiDB

|

challenge-program-2 component/coprocessor difficulty/easy sig/coprocessor

|

## Description

Port the scalar function `MakeSet` from TiDB to coprocessor.

## Score

* 50

## Mentor(s)

* @breeswish

## Recommended Skills

* Rust programming

## Learning Materials

Already implemented expressions ported from TiDB

- https://github.com/tikv/tikv/tree/master/components/tidb_query/src/rpn_expr

- https://github.com/tikv/tikv/tree/master/components/tidb_query/src/expr

|

2.0

|

UCP: Migrate scalar function `MakeSet` from TiDB -

## Description

Port the scalar function `MakeSet` from TiDB to coprocessor.

## Score

* 50

## Mentor(s)

* @breeswish

## Recommended Skills

* Rust programming

## Learning Materials

Already implemented expressions ported from TiDB

- https://github.com/tikv/tikv/tree/master/components/tidb_query/src/rpn_expr

- https://github.com/tikv/tikv/tree/master/components/tidb_query/src/expr

|

process

|

ucp migrate scalar function makeset from tidb description port the scalar function makeset from tidb to coprocessor score mentor s breeswish recommended skills rust programming learning materials already implemented expressions ported from tidb

| 1

|

10,704

| 13,501,851,654

|

IssuesEvent

|

2020-09-13 04:58:54

|

amor71/LiuAlgoTrader

|

https://api.github.com/repos/amor71/LiuAlgoTrader

|

closed

|

expose trade & quote events to strategies

|

in-process

|

including imbalance calculations (which are already done..)

|

1.0

|

expose trade & quote events to strategies - including imbalance calculations (which are already done..)

|

process

|

expose trade quote events to strategies including imbalance calculations which are already done

| 1

|

11,973

| 14,737,017,004

|

IssuesEvent

|

2021-01-07 00:37:59

|

kdjstudios/SABillingGitlab

|

https://api.github.com/repos/kdjstudios/SABillingGitlab

|

closed

|

Keener - not able to run reports

|

anc-external anc-ops anc-process anc-report anp-important ant-bug ant-support

|

In GitLab by @kdjstudios on Apr 10, 2018, 08:09

**Submitted by:** Gaylan Garrett <gaylan@keenercom.net>

**Helpdesk:** http://www.servicedesk.answernet.com/profiles/ticket/2018-04-10-62175/conversation

**Server:** Hosted

**Client/Site:** Keener

**Account:** NA

**Issue:**

I just tried to run the accounts receivables report and I received the error. We’re sorry, but something went wrong.

|

1.0

|

Keener - not able to run reports - In GitLab by @kdjstudios on Apr 10, 2018, 08:09

**Submitted by:** Gaylan Garrett <gaylan@keenercom.net>

**Helpdesk:** http://www.servicedesk.answernet.com/profiles/ticket/2018-04-10-62175/conversation

**Server:** Hosted

**Client/Site:** Keener

**Account:** NA

**Issue:**

I just tried to run the accounts receivables report and I received the error. We’re sorry, but something went wrong.

|

process

|

keener not able to run reports in gitlab by kdjstudios on apr submitted by gaylan garrett helpdesk server hosted client site keener account na issue i just tried to run the accounts receivables report and i received the error we’re sorry but something went wrong

| 1

|

19,274

| 25,463,974,583

|

IssuesEvent

|

2022-11-25 00:38:21

|

devssa/onde-codar-em-salvador

|

https://api.github.com/repos/devssa/onde-codar-em-salvador

|

closed

|

ERP Implementation Analyst at [SANKHYA]

|

SALVADOR COMERCIAL INFRAESTRUTURA SUPORTE TÉCNICO PROCESSOS Stale

|

<!--

==================================================

PLEASE ONLY POST IF THE POSITION IS FOR SALVADOR AND NEIGHBORING CITIES!

Use: "Desenvolvedor Front-end" instead of

"Front-End Developer" \o/

Example: `[JAVASCRIPT] [MYSQL] [NODE.JS] Desenvolvedor Front-End na [NOME DA EMPRESA]`

==================================================

-->

## ERP Implementation Analyst

If you like working with well-structured processes, Sankhya is the place for you!

Come join a company that has been in the ERP market for 30 years, is constantly evolving, and expects 40% growth in 2019.

**Responsibilities**

- Map client processes and suggest improvements where appropriate

- Configure the system according to the defined processes

- Simulate and validate the implemented routines with client users

- Conduct training for client users

- Monitor the system's go-live and its performance after implementation

- Contribute to applying the best management practices used in the client's market/segment, with the goal of supporting the evolution of the client's business and ensuring that the benefits of the solution are noticeable

## Location

Salvador - Bahia

## Benefits

- Health plan

- Dental plan

- Corporate university

- Food or meal allowance

- Mileage / travel expense reimbursement

#### What sets us apart

At Sankhya you will work with one of the most innovative products in the ERP market! You will have opportunities for professional development and broad experience across several business areas, serving prominent clients in different segments.

## Requirements

**Mandatory:**

- Bachelor's degree in Business Administration, Accounting, Information Systems, or related fields;

- Experience with software implementation and configuration;

- Knowledge of administrative, financial, and accounting processes, fiscal and tax rules, among others;

- Own vehicle and availability to travel;

- Reside in Salvador/BA.

## SANKHYA

Operating across the national market since 1989, Sankhya Gestão de Negócios is one of the largest providers of integrated corporate management (ERP) solutions in Brazil. Some figures:

- 29 business units

- 10,000 corporate clients

- More than 100 thousand users

- More than 1,000 direct employees

Sankhya's solutions prepare your company for the future, transforming operational data into management information for safer and more precise decision-making.

Through intensive analysis, knowledge, and identification of the client's real needs, Sankhya sizes the ideal set of systems and services for managing each company.

Sankhya's solutions were developed with modular, flexible, customizable structures, fully web and mobile, to facilitate decision-making and deliver gains in productivity and profitability for your company.

## How to apply

https://sankhya.gupy.io/jobs/55598?cid=16

|

1.0

|

ERP Implementation Analyst at [SANKHYA] - <!--

==================================================

PLEASE ONLY POST IF THE POSITION IS FOR SALVADOR AND NEIGHBORING CITIES!

Use: "Desenvolvedor Front-end" instead of

"Front-End Developer" \o/

Example: `[JAVASCRIPT] [MYSQL] [NODE.JS] Desenvolvedor Front-End na [NOME DA EMPRESA]`

==================================================

-->

## ERP Implementation Analyst

If you like working with well-structured processes, Sankhya is the place for you!

Come join a company that has been in the ERP market for 30 years, is constantly evolving, and expects 40% growth in 2019.

**Responsibilities**

- Map client processes and suggest improvements where appropriate

- Configure the system according to the defined processes

- Simulate and validate the implemented routines with client users

- Conduct training for client users

- Monitor the system's go-live and its performance after implementation

- Contribute to applying the best management practices used in the client's market/segment, with the goal of supporting the evolution of the client's business and ensuring that the benefits of the solution are noticeable

## Location

Salvador - Bahia

## Benefits

- Health plan

- Dental plan

- Corporate university

- Food or meal allowance

- Mileage / travel expense reimbursement

#### What sets us apart

At Sankhya you will work with one of the most innovative products in the ERP market! You will have opportunities for professional development and broad experience across several business areas, serving prominent clients in different segments.

## Requirements

**Mandatory:**

- Bachelor's degree in Business Administration, Accounting, Information Systems, or related fields;

- Experience with software implementation and configuration;

- Knowledge of administrative, financial, and accounting processes, fiscal and tax rules, among others;

- Own vehicle and availability to travel;

- Reside in Salvador/BA.

## SANKHYA

Operating across the national market since 1989, Sankhya Gestão de Negócios is one of the largest providers of integrated corporate management (ERP) solutions in Brazil. Some figures:

- 29 business units

- 10,000 corporate clients

- More than 100 thousand users

- More than 1,000 direct employees

Sankhya's solutions prepare your company for the future, transforming operational data into management information for safer and more precise decision-making.

Through intensive analysis, knowledge, and identification of the client's real needs, Sankhya sizes the ideal set of systems and services for managing each company.

Sankhya's solutions were developed with modular, flexible, customizable structures, fully web and mobile, to facilitate decision-making and deliver gains in productivity and profitability for your company.

## How to apply

https://sankhya.gupy.io/jobs/55598?cid=16

|

process

|

erp implementation analyst at please only post if the position is for salvador and neighboring cities use desenvolvedor front end instead of front end developer o example desenvolvedor front end na erp implementation analyst if you like working with well structured processes sankhya is the place for you come join a company that has been in the erp market for years is constantly evolving and expects growth in responsibilities map client processes and suggest improvements where appropriate configure the system according to the defined processes simulate and validate the implemented routines with client users conduct training for client users monitor the system s go live and its performance after implementation contribute to applying the best management practices used in the client s market segment with the goal of supporting the evolution of the client s business and ensuring that the benefits of the solution are noticeable location salvador bahia benefits health plan dental plan corporate university food or meal allowance mileage travel expense reimbursement what sets us apart at sankhya you will work with one of the most innovative products in the erp market you will have opportunities for professional development and broad experience across several business areas serving prominent clients in different segments requirements mandatory bachelor s degree in business administration accounting information systems or related fields experience with software implementation and configuration knowledge of administrative financial and accounting processes fiscal and tax rules among others own vehicle and availability to travel reside in salvador ba sankhya operating across the national market since sankhya gestão de negócios is one of the largest providers of integrated corporate management erp solutions in brazil some figures business units corporate clients more than thousand users more than direct employees sankhya s solutions prepare your company for the future transforming operational data into management information for safer and more precise decision making through intensive analysis knowledge and identification of the client s real needs sankhya sizes the ideal set of systems and services for managing each company sankhya s solutions were developed with modular flexible customizable structures fully web and mobile to facilitate decision making and deliver gains in productivity and profitability for your company how to apply

| 1

|

7,674

| 10,760,747,784

|

IssuesEvent

|

2019-10-31 19:15:46

|

microsoft/ptvsd

|

https://api.github.com/repos/microsoft/ptvsd

|

closed

|

"pydevdSystemInfo" does not report "ppid" on Python 2.7 on Windows

|

Bug Upstream-pydevd area:Multiprocessing

|

`os.getppid()` was not available on Windows until Python 3.2. Pydevd catches any exceptions from that call, and omits the property from JSON, so this doesn't crash the debugger. But because the adapter doesn't receive "ppid" for the incoming server connection, it doesn't know which of the existing debug sessions is the parent, and doesn't send the subprocess notification to any of them. This effectively breaks multiproc debugging on 2.7.

|

1.0

|

"pydevdSystemInfo" does not report "ppid" on Python 2.7 on Windows - `os.getppid()` was not available on Windows until Python 3.2. Pydevd catches any exceptions from that call, and omits the property from JSON, so this doesn't crash the debugger. But because the adapter doesn't receive "ppid" for the incoming server connection, it doesn't know which of the existing debug sessions is the parent, and doesn't send the subprocess notification to any of them. This effectively breaks multiproc debugging on 2.7.

|

process

|

pydevdsysteminfo does not report ppid on python on windows os getppid was not available on windows until python pydevd catches any exceptions from that call and omits the property from json so this doesn t crash the debugger but because the adapter doesn t receive ppid for the incoming server connection it doesn t know which of the existing debug sessions is the parent and doesn t send the subprocess notification to any of them this effectively breaks multiproc debugging on

| 1

|

725,598

| 24,967,681,082

|

IssuesEvent

|

2022-11-01 20:56:45

|

FlutterFlow/flutterflow-issues

|

https://api.github.com/repos/FlutterFlow/flutterflow-issues

|

opened

|

AudioPlayer not working on web with deep linking enabled for asset audios

|

status: confirmed priority: medium

|

Issue tracker is **ONLY** used for reporting bugs. New feature suggestions and questions should be discussed on Community or submitted through our user feedback form.

Your issue may already be reported! Please search in the [issue tracker](../) before creating one.

Please **thumbs up** this issue if you have also experienced it. You may also add more information if there is something relevant that was not mentioned. However, please refrain from comments that are not constructive, like "I have this problem too", etc.

## Expected behavior (required)

<!-- A clear and concise description of what you expected to happen. -->

Audio player loads "asset" audios.

## Current behavior (required)

<!-- What happens instead of the expected behavior. -->

Audio player can't play asset audios on web in the scenario below.

## To Reproduce (required)

<!-- Please be detailed as possible here so we can help diagnose the issue. Issues cannot be accepted if they are too vague. For example, "project fails to build" could be better reported as:

1. Create new page

2. Add container widget

3. Set width = 123

4. Click Run

5. Observe that project doesn’t build

Code can be included in this section if it is relevant to reproducing the bug.

-->

Steps to reproduce the behavior:

1. Create new project

2. Add new page, e.g. Page2

3. Add link from HomePage to Page2.

4. Add an audio to project assets.

5. On Page2, create an audio player.

6. Select audio type = Asset and the uploaded audio as Asset Audio.

7. Publish to web.

8. Open the published link.

9. Navigate from HomePage to Page2.

10. Observe that the audio widget does not show.

11. Open browser dev tools/network.

12. Observe that it tried to load the audio from `https://<project>/page2/assets/assets/audios/<filename>.mp3`. Expected: `https://<project>.flutterflow.app/assets/assets/audios/<filename>.mp3`

Project: https://app.flutterflow.io/project/audio-asset-63344x

## Context (required)

<!-- How has this issue affected you? What are you trying to accomplish? -->

Can't use audio player on web.

## Screenshots / recordings

<!-- If applicable, add screenshots to help explain your problem. -->

N/A

## Your environment

<!--- Include relevant details about the environment you experienced the bug in -->

* **Bug Report Code:**

* Version of FlutterFlow used: FlutterFlow v3.0, released October 28, 2022

* Platform (e.g. Web, MacOS Desktop): Web

* Browser name and version: Chrome, Version 107.0.5304.87 (Official Build) (x86_64)

* Operating system and version (desktop or mobile): MacOS 12.5.1 Monterey.

|

1.0

|

AudioPlayer not working on web with deep linking enabled for asset audios - Issue tracker is **ONLY** used for reporting bugs. New feature suggestions and questions should be discussed on Community or submitted through our user feedback form.

Your issue may already be reported! Please search in the [issue tracker](../) before creating one.

Please **thumbs up** this issue if you have also experienced it. You may also add more information if there is something relevant that was not mentioned. However, please refrain from comments that are not constructive, like "I have this problem too", etc.

## Expected behavior (required)

<!-- A clear and concise description of what you expected to happen. -->

Audio player loads "asset" audios.

## Current behavior (required)

<!-- What happens instead of the expected behavior. -->

Audio player can't play asset audios on web in the scenario below.

## To Reproduce (required)

<!-- Please be detailed as possible here so we can help diagnose the issue. Issues cannot be accepted if they are too vague. For example, "project fails to build" could be better reported as:

1. Create new page

2. Add container widget

3. Set width = 123

4. Click Run

5. Observe that project doesn’t build

Code can be included in this section if it is relevant to reproducing the bug.

-->

Steps to reproduce the behavior:

1. Create new project

2. Add new page, e.g. Page2

3. Add link from HomePage to Page2.

4. Add an audio to project assets.

5. On Page2, create an audio player.

6. Select audio type = Asset and the uploaded audio as Asset Audio.

7. Publish to web.

8. Open the published link.

9. Navigate from HomePage to Page2.

10. Observe that the audio widget does not show.

11. Open browser dev tools/network.

12. Observe that it tried to load the audio from `https://<project>/page2/assets/assets/audios/<filename>.mp3`. Expected: `https://<project>.flutterflow.app/assets/assets/audios/<filename>.mp3`

Project: https://app.flutterflow.io/project/audio-asset-63344x

## Context (required)

<!-- How has this issue affected you? What are you trying to accomplish? -->

Can't use audio player on web.

## Screenshots / recordings

<!-- If applicable, add screenshots to help explain your problem. -->

N/A

## Your environment

<!--- Include relevant details about the environment you experienced the bug in -->

* **Bug Report Code:**

* Version of FlutterFlow used: FlutterFlow v3.0, released October 28, 2022

* Platform (e.g. Web, MacOS Desktop): Web

* Browser name and version: Chrome, Version 107.0.5304.87 (Official Build) (x86_64)

* Operating system and version (desktop or mobile): MacOS 12.5.1 Monterey.

|

non_process

|

audioplayer not working on web with deep linking enabled for asset audios issue tracker is only used for reporting bugs new feature suggestions and questions should be discussed on community or submitted through our user feedback form your issue may already be reported please search in the before creating one please thumbs up this issue if you have also experienced it you may also add more information if there is something relevant that was not mentioned however please refrain from comments that are not constructive like i have this problem too etc expected behavior required audio player loads asset audios current behavior required audio player can t play asset audios on web in the scenario below to reproduce required please be detailed as possible here so we can help diagnose the issue issues cannot be accepted if they are too vague for example project fails to build could be better reported as create new page add container widget set width click run observe that project doesn’t build code can be included in this section if it is relevant to reproducing the bug steps to reproduce the behavior create new project add new page e g add link from homepage to add an audio to project assets on create an audio player select audio type asset and the uploaded audio as asset audio publish to web open the published link navigate from homepage to observe that the audio widget does not show open browser dev tools network observe that it tried to load the audio from expected project context required can t use audio player on web screenshots recordings n a your environment bug report code version of flutterflow used flutterflow released october platform e g web macos desktop web browser name and version chrome version official build operating system and version desktop or mobile macos monterey

| 0

|

15,331

| 19,457,021,531

|

IssuesEvent

|

2021-12-23 00:55:22

|

googleapis/release-please

|

https://api.github.com/repos/googleapis/release-please

|

closed

|

process: add integration test for regression in #594

|

type: process

|

## Problem

We broke pagination logic when merging #587.

A fix has already landed [here](https://github.com/googleapis/release-please/pull/594).

## Why this is less than ideal

We should add an integration test that actually exercises our graphql queries (unit tests did not catch this issue, because it's an exception thrown by GitHub).

|

1.0

|

process: add integration test for regression in #594 - ## Problem

We broke pagination logic when merging #587.

A fix has already landed [here](https://github.com/googleapis/release-please/pull/594).

## Why this is less than ideal

We should add an integration test that actually exercises our graphql queries (unit tests did not catch this issue, because it's an exception thrown by GitHub).

|

process

|

process add integration test for regression in problem we broke pagination logic when merging a fix has already been landed why this is less than ideal we should add an integration test that actually exercises our graphql queries unit tests did not catch this issue because it s an exception thrown by github

| 1

|

173,505

| 6,525,791,908

|

IssuesEvent

|

2017-08-29 17:09:53

|

wordpress-mobile/AztecEditor-Android

|

https://api.github.com/repos/wordpress-mobile/AztecEditor-Android

|

closed

|

Non-latin characters are copied as HTML-encoded entities

|

bug high priority

|

Reported by @rachelmcr:

> When copying non-Latin text from the beta editor and pasting it elsewhere, the text is encoded. I reproduced this by copying Japanese text from Aztec and pasting it into Keep; the HTML encoded text is what appears in Keep.

The same happens for emoji, in both the main text editor and the title field.

For example:

> Êtest 😊😃

> 测试一个

becomes:

`Êtest 😊😃<br>测试一个`

|

1.0

|

Non-latin characters are copied as HTML-encoded entities - Reported by @rachelmcr:

> When copying non-Latin text from the beta editor and pasting it elsewhere, the text is encoded. I reproduced this by copying Japanese text from Aztec and pasting it into Keep; the HTML encoded text is what appears in Keep.

The same happens for emoji, in both the main text editor and the title field.

For example:

> Êtest 😊😃

> 测试一个

becomes:

`Êtest 😊😃<br>测试一个`

|

non_process

|

non latin characters are copied as html encoded entities reported by rachelmcr when copying non latin text from the beta editor and pasting it elsewhere the text is encoded i reproduced this by copying japanese text from aztec and pasting it into keep the html encoded text is what appears in keep the same happens for emoji in both the main text editor and the title field for example êtest 😊😃 测试一个 becomes test

| 0

|

9,912

| 12,952,554,155

|

IssuesEvent

|

2020-07-19 20:59:28

|

pb866/TrackMatcher.jl

|

https://api.github.com/repos/pb866/TrackMatcher.jl

|

closed

|

Inconsistencies in FlightAware archive

|

check data processing issue

|

Column names vary slightly between FlightAware archive files from different years. Make sure `TrackMatcher` works for all cases.

|

1.0

|

Inconsistencies in FlightAware archive - Column names vary slightly between FlightAware archive files from different years. Make sure `TrackMatcher` works for all cases.

|

process

|

inconsistencies in flightaware archive column names vary slightly in different flightaware archive files for different year make sure trackmatcher works for all cases

| 1

|

1,670

| 4,308,536,426

|

IssuesEvent

|

2016-07-21 13:22:17

|

paperjs/paper.js

|

https://api.github.com/repos/paperjs/paper.js

|

closed

|

incoherent fill-rule used when building CompoundPath

|

cat: path-processing pri: important type: bug

|

The default fill-rule of SVG, 'nonzero', is respected when building a CompoundPath from a single SVG path:

```javascript
var p = new CompoundPath('m 51.428571,89.50504 94.285719,-20 20,91.42857 -102.857147,17.14286 z M 200,130.43865 l 22.85715,82.85714 -97.14286,28.57143 -17.14286,-114.28571 z');
p.fillColor = 'black';
p.windingRule = 'nonzero';
```

[See demo](http://sketch.paperjs.org/#S/VY89T8MwEIb/yslLiuRYtmM3dqtOnZEQjJTBTQyN4pyjkFIpiP+OA2bgtnveR/fxSdANnuzIU+/n5kIoaWK79if8cBOMcAD0NzjGYYxXbB/cfNkUA2jBlDS6FtRYprnmCqxiP8TSUnKQnNrsQCm4ZGukaipqJhLdwgL3SeJUVJypymw1BJC/mqYm+1Da7FNp2EqqNO0PlULknbAUd/sTQq6RvXYhHGOIU7q/OAfX9MW//NZh2+Hb4zX41cCIi59isU//nyfv+jF2OL+T3fPL1zc=).

But when trying to build it from the two sub-paths, the result is totally different:

```javascript

var p = new CompoundPath({

fillColor: 'black',

children: [

new Path('m 20,35.219325 94.28572,-19.999999 20,91.428574 -102.857148,17.14285 z'),

new Path('M 168.57143,46.647895 85.714289,43.790753 54.285715,146.64789 137.14286,158.07646 z')

]

});

```

[See demo](http://sketch.paperjs.org/#S/bVBNa4NAEP0rw15sYDM4+72WnnIuFHpMcjBqUNRdsbaFhvz3upHe+k4zb94HzI2FcmxYwd77ZqlaxlkV67R/lTNM8AKh+YZDHKf4Geq3cmmfbqcAK67dMBziEOcCsstQVn3Gt0PVdkM9N6GA40YkpJSHOxtB5FxqFOSl0OAVCqet4Hvy6B9IAk+oEq9gT7nAdSLlOFmkRMNPtuP/hb8CGYdJLLkyaJR1XoPTaJPPcyXR+txqCXrrJc3pTwgkt3zDSTvMrVEmNW1F51O4757X/1zmpuyn2IXlgxXH8/0X) (the shape of the path isn't the problem here, only the way it's filled)

If the first CompoundPath is exported to JSON and then re-imported in a CompoundPath, the way it is filled will also be different. Before finding a fix for this problem, I'm pretty sure that there is a workaround to achieve the same fill result as CompoundPath built from a single svg path, without actually using an svg path.

|

1.0

|

incoherent fill-rule used when building CompoundPath - The default fill-rule of SVG, 'nonzero', is respected when building a CompoundPath from a single SVG path:

```javascript

var p = new CompoundPath('m 51.428571,89.50504 94.285719,-20 20,91.42857 -102.857147,17.14286 z M 200,130.43865 l 22.85715,82.85714 -97.14286,28.57143 -17.14286,-114.28571 z');

p.fillColor = 'black';

p.windingRule = 'nonzero';

```

[See demo](http://sketch.paperjs.org/#S/VY89T8MwEIb/yslLiuRYtmM3dqtOnZEQjJTBTQyN4pyjkFIpiP+OA2bgtnveR/fxSdANnuzIU+/n5kIoaWK79if8cBOMcAD0NzjGYYxXbB/cfNkUA2jBlDS6FtRYprnmCqxiP8TSUnKQnNrsQCm4ZGukaipqJhLdwgL3SeJUVJypymw1BJC/mqYm+1Da7FNp2EqqNO0PlULknbAUd/sTQq6RvXYhHGOIU7q/OAfX9MW//NZh2+Hb4zX41cCIi59isU//nyfv+jF2OL+T3fPL1zc=).

But when building it from the two sub-paths, the result is totally different:

```javascript

var p = new CompoundPath({

fillColor: 'black',

children: [

new Path('m 20,35.219325 94.28572,-19.999999 20,91.428574 -102.857148,17.14285 z'),

new Path('M 168.57143,46.647895 85.714289,43.790753 54.285715,146.64789 137.14286,158.07646 z')

]

});

```

[See demo](http://sketch.paperjs.org/#S/bVBNa4NAEP0rw15sYDM4+72WnnIuFHpMcjBqUNRdsbaFhvz3upHe+k4zb94HzI2FcmxYwd77ZqlaxlkV67R/lTNM8AKh+YZDHKf4Geq3cmmfbqcAK67dMBziEOcCsstQVn3Gt0PVdkM9N6GA40YkpJSHOxtB5FxqFOSl0OAVCqet4Hvy6B9IAk+oEq9gT7nAdSLlOFmkRMNPtuP/hb8CGYdJLLkyaJR1XoPTaJPPcyXR+txqCXrrJc3pTwgkt3zDSTvMrVEmNW1F51O4757X/1zmpuyn2IXlgxXH8/0X) (the shape of the path isn't the problem here, only the way it's filled)

If the first CompoundPath is exported to JSON and then re-imported into a CompoundPath, it is also filled differently. Until a fix is found for this problem, I'm pretty sure there is a workaround that achieves the same fill result as a CompoundPath built from a single SVG path, without actually using an SVG path.

|

process

|

incoherent fill rule used when building compoundpath the default fill rule of svg nonzero is respected when building a compoundpath from a single svg path javascript var p new compoundpath m z m l z p fillcolor black p windingrule nonzero but when trying to build it from the two sub path the result is totally different javascript var p new compoundpath fillcolor black children new path m z new path m z the shape of the path isn t the problem here only the way it s filled if the first compoundpath is exported to json and then re imported in a compoundpath the way it is filled will also be different before finding a fix for this problem i m pretty sure that there is a workaround to achieve the same fill result as compoundpath built from a single svg path without actually using an svg path

| 1

|

432,912

| 30,299,408,618

|

IssuesEvent

|

2023-07-10 03:54:20

|

dotnet/runtime

|

https://api.github.com/repos/dotnet/runtime

|

opened

|

Port System.Cloud documentation from .NET 8.0 APIs

|

documentation blocking-release

|

Below is the list of APIs that still show up as undocumented in dotnet-api-docs and were introduced in .NET 8.0.

Full porting instructions can be found in the [main issue](https://github.com/dotnet/runtime/issues/88561).

This task needs to be finished before the RC2 snap (September 18th).

| Summary | Parameters | TypeParameters | ReturnValue | API |

|----------|------------|----------------|-------------|------------------------------------------------------------------------------------------------------------------------------------------------------------------------|

| Missing | NA | NA | NA | [N:System.Cloud.DocumentDb](https://github.com/dotnet/dotnet-api-docs/blob/main/xml/ns-System.Cloud.DocumentDb.xml) |

| Missing | NA | NA | NA | [M:System.Cloud.DocumentDb.DatabaseOptions.#ctor](https://github.com/dotnet/dotnet-api-docs/blob/main/xml/System.Cloud.DocumentDb/DatabaseOptions.xml) |

| Missing | NA | NA | NA | [M:System.Cloud.DocumentDb.QueryRequestOptions1.#ctor](https://github.com/dotnet/dotnet-api-docs/blob/main/xml/System.Cloud.DocumentDb/QueryRequestOptions1.xml) |

| Missing | NA | NA | NA | [M:System.Cloud.DocumentDb.RegionalDatabaseOptions.#ctor](https://github.com/dotnet/dotnet-api-docs/blob/main/xml/System.Cloud.DocumentDb/RegionalDatabaseOptions.xml) |

| Missing | NA | NA | NA | [M:System.Cloud.DocumentDb.RequestOptions.#ctor](https://github.com/dotnet/dotnet-api-docs/blob/main/xml/System.Cloud.DocumentDb/RequestOptions.xml) |

| Missing | NA | NA | NA | [M:System.Cloud.DocumentDb.RequestOptions1.#ctor](https://github.com/dotnet/dotnet-api-docs/blob/main/xml/System.Cloud.DocumentDb/RequestOptions1.xml) |

| Missing | NA | NA | NA | [M:System.Cloud.DocumentDb.TableOptions.#ctor](https://github.com/dotnet/dotnet-api-docs/blob/main/xml/System.Cloud.DocumentDb/TableOptions.xml) |

| Missing | NA | NA | NA | [N:System.Cloud.Messaging](https://github.com/dotnet/dotnet-api-docs/blob/main/xml/ns-System.Cloud.Messaging.xml) |

|

1.0

|

Port System.Cloud documentation from .NET 8.0 APIs - Below is the list of APIs that still show up as undocumented in dotnet-api-docs and were introduced in .NET 8.0.

Full porting instructions can be found in the [main issue](https://github.com/dotnet/runtime/issues/88561).

This task needs to be finished before the RC2 snap (September 18th).

| Summary | Parameters | TypeParameters | ReturnValue | API |

|----------|------------|----------------|-------------|------------------------------------------------------------------------------------------------------------------------------------------------------------------------|

| Missing | NA | NA | NA | [N:System.Cloud.DocumentDb](https://github.com/dotnet/dotnet-api-docs/blob/main/xml/ns-System.Cloud.DocumentDb.xml) |

| Missing | NA | NA | NA | [M:System.Cloud.DocumentDb.DatabaseOptions.#ctor](https://github.com/dotnet/dotnet-api-docs/blob/main/xml/System.Cloud.DocumentDb/DatabaseOptions.xml) |

| Missing | NA | NA | NA | [M:System.Cloud.DocumentDb.QueryRequestOptions1.#ctor](https://github.com/dotnet/dotnet-api-docs/blob/main/xml/System.Cloud.DocumentDb/QueryRequestOptions1.xml) |

| Missing | NA | NA | NA | [M:System.Cloud.DocumentDb.RegionalDatabaseOptions.#ctor](https://github.com/dotnet/dotnet-api-docs/blob/main/xml/System.Cloud.DocumentDb/RegionalDatabaseOptions.xml) |

| Missing | NA | NA | NA | [M:System.Cloud.DocumentDb.RequestOptions.#ctor](https://github.com/dotnet/dotnet-api-docs/blob/main/xml/System.Cloud.DocumentDb/RequestOptions.xml) |

| Missing | NA | NA | NA | [M:System.Cloud.DocumentDb.RequestOptions1.#ctor](https://github.com/dotnet/dotnet-api-docs/blob/main/xml/System.Cloud.DocumentDb/RequestOptions1.xml) |

| Missing | NA | NA | NA | [M:System.Cloud.DocumentDb.TableOptions.#ctor](https://github.com/dotnet/dotnet-api-docs/blob/main/xml/System.Cloud.DocumentDb/TableOptions.xml) |

| Missing | NA | NA | NA | [N:System.Cloud.Messaging](https://github.com/dotnet/dotnet-api-docs/blob/main/xml/ns-System.Cloud.Messaging.xml) |

|

non_process

|

port system cloud documentation from net apis below is the list of apis that still show up as undocumented in dotnet api docs and were introduced in net full porting instructions can be found in the this task needs to be finished before the snap september summary parameters typeparameters returnvalue api missing na na na missing na na na missing na na na missing na na na missing na na na missing na na na missing na na na missing na na na

| 0

|

16,922

| 22,267,822,230

|

IssuesEvent

|

2022-06-10 09:13:05

|

camunda/zeebe

|

https://api.github.com/repos/camunda/zeebe

|

closed

|

Collect metrics about started instances with start instructions through Zeebe Analytics

|

team/process-automation

|

Zeebe Analytics should be able to distinguish between process instances started at the root start event and process instances started at other elements. This will allow us to collect data on the usage of the Start Process Instance Anywhere feature.

Blocked by https://github.com/camunda/zeebe/issues/9390

Possibly blocked by https://github.com/camunda/zeebe/issues/9397 and https://github.com/camunda/zeebe/issues/9398 for testing

|

1.0

|

Collect metrics about started instances with start instructions through Zeebe Analytics - Zeebe Analytics should be able to distinguish between process instances started at the root start event and process instances started at other elements. This will allow us to collect data on the usage of the Start Process Instance Anywhere feature.

Blocked by https://github.com/camunda/zeebe/issues/9390

Possibly blocked by https://github.com/camunda/zeebe/issues/9397 and https://github.com/camunda/zeebe/issues/9398 for testing

|

process

|

collect metrics about started instances with start instructions through zeebe analytics zeebe analytics should be able to distinguish between process instances started at the root start event and process instances started at other elements this will allow us to collect data on the usage of the start process instance anywhere feature blocked by possibly blocked by and for testing

| 1

|

15,688

| 19,847,971,243

|

IssuesEvent

|

2022-01-21 09:04:39

|

googleapis/java-shell

|

https://api.github.com/repos/googleapis/java-shell

|

closed

|

Your .repo-metadata.json file has a problem 🤒

|

type: process api: cloudshell repo-metadata: lint

|

You have a problem with your .repo-metadata.json file:

Result of scan 📈:

* api_shortname 'shell' invalid in .repo-metadata.json

☝️ Once you address these problems, you can close this issue.

### Need help?

* [Schema definition](https://github.com/googleapis/repo-automation-bots/blob/main/packages/repo-metadata-lint/src/repo-metadata-schema.json): lists valid options for each field.

* [API index](https://github.com/googleapis/googleapis/blob/master/api-index-v1.json): for gRPC libraries **api_shortname** should match the subdomain of an API's **hostName**.

* Reach out to **go/github-automation** if you have any questions.

|

1.0

|

Your .repo-metadata.json file has a problem 🤒 - You have a problem with your .repo-metadata.json file:

Result of scan 📈:

* api_shortname 'shell' invalid in .repo-metadata.json

☝️ Once you address these problems, you can close this issue.

### Need help?

* [Schema definition](https://github.com/googleapis/repo-automation-bots/blob/main/packages/repo-metadata-lint/src/repo-metadata-schema.json): lists valid options for each field.

* [API index](https://github.com/googleapis/googleapis/blob/master/api-index-v1.json): for gRPC libraries **api_shortname** should match the subdomain of an API's **hostName**.

* Reach out to **go/github-automation** if you have any questions.

|

process

|

your repo metadata json file has a problem 🤒 you have a problem with your repo metadata json file result of scan 📈 api shortname shell invalid in repo metadata json ☝️ once you address these problems you can close this issue need help lists valid options for each field for grpc libraries api shortname should match the subdomain of an api s hostname reach out to go github automation if you have any questions

| 1

|

408,844

| 11,953,174,184

|

IssuesEvent

|

2020-04-03 20:21:05

|

forseti-security/forseti-security

|

https://api.github.com/repos/forseti-security/forseti-security

|

closed

|

[PATCH] Forseti v2.25.1

|

module: inventory priority: p0 triaged: yes

|

Patch Forseti v2.25.0 to fix method calls for organization policies (Issue #3709).

Related to terraform-google-forseti issue [#561](https://github.com/forseti-security/terraform-google-forseti/issues/561)

|

1.0

|

[PATCH] Forseti v2.25.1 - Patch Forseti v2.25.0 to fix method calls for organization policies (Issue #3709).

Related to terraform-google-forseti issue [#561](https://github.com/forseti-security/terraform-google-forseti/issues/561)

|

non_process

|

forseti patch forseti to fix method calls for organization policies issue related to terraform google forseti issue

| 0

|

539,849

| 15,795,828,228

|

IssuesEvent

|

2021-04-02 13:51:53

|

pioneers/runtime

|

https://api.github.com/repos/pioneers/runtime

|

opened

|

[NET_HANDLER] Work with Shepherd to implement heartbeat

|

enhancement high-priority

|

Shepherd currently has some trouble detecting robot disconnects. It relies on Runtime exiting cleanly in an emergency and closing the server-side port. However, in some edge cases (e.g. a networking error or disconnect, or a robot shutdown caused by low voltage), Runtime isn't able to close that socket properly. It would be easier to implement a heartbeat that Runtime sends to Shepherd periodically (maybe once per second or so), which would let Shepherd know that the robot is still alive and well.
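A rough sketch of the receiving side of such a heartbeat, written in plain JavaScript only to illustrate the idea. Everything here is an assumption: the `HeartbeatMonitor` name, the 3-second timeout, and the injected clock are hypothetical and not taken from the Runtime or Shepherd code.

```javascript
// Hypothetical watchdog: the robot counts as alive while heartbeats keep arriving.
class HeartbeatMonitor {
  constructor(timeoutMs, now = Date.now) {
    this.timeoutMs = timeoutMs; // how long silence is tolerated (assumed value)
    this.now = now;             // injectable clock, so the logic can be tested
    this.lastBeat = now();
  }

  // Call this whenever a heartbeat message arrives.
  beat() {
    this.lastBeat = this.now();
  }

  // True while the last heartbeat is recent enough.
  isAlive() {
    return this.now() - this.lastBeat <= this.timeoutMs;
  }
}

// Usage with a fake clock instead of real time.
let fakeTime = 0;
const monitor = new HeartbeatMonitor(3000, () => fakeTime);

fakeTime = 1000;
monitor.beat();                               // heartbeat received at t = 1000 ms

fakeTime = 3500;
const aliveAfterBeat = monitor.isAlive();     // 2500 ms of silence

fakeTime = 4500;
const aliveAfterSilence = monitor.isAlive();  // 3500 ms of silence
```

Allowing a few missed beats before declaring a disconnect (here three, at one beat per second) would avoid false alarms from a single dropped message. The exact tolerance is a design choice to settle with Shepherd.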

|

1.0

|

[NET_HANDLER] Work with Shepherd to implement heartbeat - Shepherd currently has some trouble detecting robot disconnects. It relies on Runtime exiting cleanly in an emergency and closing the server-side port. However, in some edge cases (e.g. a networking error or disconnect, or a robot shutdown caused by low voltage), Runtime isn't able to close that socket properly. It would be easier to implement a heartbeat that Runtime sends to Shepherd periodically (maybe once per second or so), which would let Shepherd know that the robot is still alive and well.

|

non_process

|

work with shepherd to implement hearbeat shepherd currently has some trouble detecting robot disconnects they rely on runtime cleanly exiting in the event of an emergency of some sort and closing the server side port however in some of these edge cases runtime isn t really able to close that socket well ex networking error disconnect robot shutdown because of low voltage etc it would be easier to simply implement a heartbeat that is sent from runtime to shepherd periodically maybe once per second or so which would let shepherd know that the robot is still alive and well

| 0

|

8,911

| 12,016,244,721

|

IssuesEvent

|

2020-04-10 15:41:46

|

dotnet/runtime

|

https://api.github.com/repos/dotnet/runtime

|

closed

|

ServiceController tests fail to start test service

|

area-System.ServiceProcess untriaged

|

These tests verify ServiceBase and ServiceController but are rarely run because you need to specify outerloop AND you have to be elevated. They currently are failing for me -- I tried two machines.

```

C:\git\runtime\src\libraries\System.ServiceProcess.ServiceController\tests>msbuild /t:rebuildandtest /p:outerloop=true

...

...

Discovering: System.ServiceProcess.ServiceController.Tests (method display = ClassAndMethod, method display options

= None)

Discovered: System.ServiceProcess.ServiceController.Tests (found 36 of 37 test cases)

Starting: System.ServiceProcess.ServiceController.Tests (parallel test collections = on, max threads = 4)...

System.InvalidOperationException : Cannot start service '4cb85dca-edbf-430c-afe2-61f5d782f266.Dependent' on com

puter '.'.

---- System.ComponentModel.Win32Exception : Access is denied.

Stack Trace:

C:\git\runtime\src\libraries\System.ServiceProcess.ServiceController\src\System\ServiceProcess\ServiceControl

ler.cs(844,0): at System.ServiceProcess.ServiceController.Start(String[] args)

C:\git\runtime\src\libraries\System.ServiceProcess.ServiceController\src\System\ServiceProcess\ServiceControl

ler.cs(804,0): at System.ServiceProcess.ServiceController.Start()

C:\git\runtime\src\libraries\System.ServiceProcess.ServiceController\tests\System.ServiceProcess.ServiceContr

oller.TestService\TestServiceInstaller.cs(106,0): at System.ServiceProcess.Tests.TestServiceInstaller.Install()

C:\git\runtime\src\libraries\System.ServiceProcess.ServiceController\tests\TestServiceProvider.cs(123,0): at

System.ServiceProcess.Tests.TestServiceProvider.CreateTestServices()

C:\git\runtime\src\libraries\System.ServiceProcess.ServiceController\tests\TestServiceProvider.cs(77,0): at S

ystem.ServiceProcess.Tests.TestServiceProvider..ctor(String serviceName, String userName)

C:\git\runtime\src\libraries\System.ServiceProcess.ServiceController\tests\TestServiceProvider.cs(62,0): at S

ystem.ServiceProcess.Tests.TestServiceProvider..ctor()

C:\git\runtime\src\libraries\System.ServiceProcess.ServiceController\tests\ServiceControllerTests.cs(24,0): a

t System.ServiceProcess.Tests.ServiceControllerTests..ctor()

----- Inner Stack Trace -----

```

This is definitely an elevated prompt. I tried `Debugger.Launch()` in the TestService constructor, and it does not fire. I looked at the Security event log, and it does not have any "failed" messages. "net start <servicename>" gives access denied as well. So it's not the test harness.

@Anipik do you repro this?

|

1.0

|

ServiceController tests fail to start test service - These tests verify ServiceBase and ServiceController but are rarely run because you need to specify outerloop AND you have to be elevated. They currently are failing for me -- I tried two machines.

```

C:\git\runtime\src\libraries\System.ServiceProcess.ServiceController\tests>msbuild /t:rebuildandtest /p:outerloop=true

...

...

Discovering: System.ServiceProcess.ServiceController.Tests (method display = ClassAndMethod, method display options

= None)

Discovered: System.ServiceProcess.ServiceController.Tests (found 36 of 37 test cases)

Starting: System.ServiceProcess.ServiceController.Tests (parallel test collections = on, max threads = 4)...

System.InvalidOperationException : Cannot start service '4cb85dca-edbf-430c-afe2-61f5d782f266.Dependent' on com

puter '.'.

---- System.ComponentModel.Win32Exception : Access is denied.

Stack Trace:

C:\git\runtime\src\libraries\System.ServiceProcess.ServiceController\src\System\ServiceProcess\ServiceControl

ler.cs(844,0): at System.ServiceProcess.ServiceController.Start(String[] args)

C:\git\runtime\src\libraries\System.ServiceProcess.ServiceController\src\System\ServiceProcess\ServiceControl

ler.cs(804,0): at System.ServiceProcess.ServiceController.Start()

C:\git\runtime\src\libraries\System.ServiceProcess.ServiceController\tests\System.ServiceProcess.ServiceContr

oller.TestService\TestServiceInstaller.cs(106,0): at System.ServiceProcess.Tests.TestServiceInstaller.Install()

C:\git\runtime\src\libraries\System.ServiceProcess.ServiceController\tests\TestServiceProvider.cs(123,0): at

System.ServiceProcess.Tests.TestServiceProvider.CreateTestServices()

C:\git\runtime\src\libraries\System.ServiceProcess.ServiceController\tests\TestServiceProvider.cs(77,0): at S

ystem.ServiceProcess.Tests.TestServiceProvider..ctor(String serviceName, String userName)

C:\git\runtime\src\libraries\System.ServiceProcess.ServiceController\tests\TestServiceProvider.cs(62,0): at S

ystem.ServiceProcess.Tests.TestServiceProvider..ctor()

C:\git\runtime\src\libraries\System.ServiceProcess.ServiceController\tests\ServiceControllerTests.cs(24,0): a

t System.ServiceProcess.Tests.ServiceControllerTests..ctor()

----- Inner Stack Trace -----

```

This is definitely an elevated prompt. I tried `Debugger.Launch()` in the TestService constructor, and it does not fire. I looked at the Security event log, and it does not have any "failed" messages. "net start <servicename>" gives access denied as well. So it's not the test harness.

@Anipik do you repro this?

|

process

|

servicecontroller tests fail to start test service these tests verify servicebase and servicecontroller but are rarely run because you need to specify outerloop and you have to be elevated they currently are failing for me i tried two machines c git runtime src libraries system serviceprocess servicecontroller tests msbuild t rebuildandtest p outerloop true discovering system serviceprocess servicecontroller tests method display classandmethod method display options none discovered system serviceprocess servicecontroller tests found of test cases starting system serviceprocess servicecontroller tests parallel test collections on max threads system invalidoperationexception cannot start service edbf dependent on com puter system componentmodel access is denied stack trace c git runtime src libraries system serviceprocess servicecontroller src system serviceprocess servicecontrol ler cs at system serviceprocess servicecontroller start string args c git runtime src libraries system serviceprocess servicecontroller src system serviceprocess servicecontrol ler cs at system serviceprocess servicecontroller start c git runtime src libraries system serviceprocess servicecontroller tests system serviceprocess servicecontr oller testservice testserviceinstaller cs at system serviceprocess tests testserviceinstaller install c git runtime src libraries system serviceprocess servicecontroller tests testserviceprovider cs at system serviceprocess tests testserviceprovider createtestservices c git runtime src libraries system serviceprocess servicecontroller tests testserviceprovider cs at s ystem serviceprocess tests testserviceprovider ctor string servicename string username c git runtime src libraries system serviceprocess servicecontroller tests testserviceprovider cs at s ystem serviceprocess tests testserviceprovider ctor c git runtime src libraries system serviceprocess servicecontroller tests servicecontrollertests cs a t system serviceprocess tests servicecontrollertests 
ctor inner stack trace this is definitely an elevated prompt i tried debugger launch in the testservice constructor and it does not fire i looked at the security event log and it does not have any failed messages net start gives access denied as well so it s not the test harness anipik do you repro this

| 1

|

40,580

| 12,799,575,817

|

IssuesEvent

|

2020-07-02 15:36:31

|

TreyM-WSS/WhiteSource-Demo

|

https://api.github.com/repos/TreyM-WSS/WhiteSource-Demo

|

opened

|

CVE-2020-8840 (High) detected in jackson-databind-2.8.1.jar

|

security vulnerability

|

## CVE-2020-8840 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jackson-databind-2.8.1.jar</b></p></summary>

<p>General data-binding functionality for Jackson: works on core streaming API</p>

<p>Library home page: <a href="http://github.com/FasterXML/jackson">http://github.com/FasterXML/jackson</a></p>

<p>Path to dependency file: /tmp/ws-scm/WhiteSource-Demo/pom.xml</p>

<p>Path to vulnerable library: /home/wss-scanner/.m2/repository/com/fasterxml/jackson/core/jackson-databind/2.8.1/jackson-databind-2.8.1.jar</p>

<p>

Dependency Hierarchy:

- spring-boot-starter-web-1.4.0.RELEASE.jar (Root Library)

- :x: **jackson-databind-2.8.1.jar** (Vulnerable Library)

<p>Found in HEAD commit: <a href="https://api.github.com/repos/TreyM-WSS/WhiteSource-Demo/commits/75659f691fb82d67ecd666ba6076394defeb92d0">75659f691fb82d67ecd666ba6076394defeb92d0</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

FasterXML jackson-databind 2.0.0 through 2.9.10.2 lacks certain xbean-reflect/JNDI blocking, as demonstrated by org.apache.xbean.propertyeditor.JndiConverter.

<p>Publish Date: 2020-02-10

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2020-8840>CVE-2020-8840</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>9.8</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: High

- Integrity Impact: High

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://github.com/FasterXML/jackson-databind/issues/2620">https://github.com/FasterXML/jackson-databind/issues/2620</a></p>

<p>Release Date: 2020-02-10</p>

<p>Fix Resolution: com.fasterxml.jackson.core:jackson-databind:2.9.10.3</p>

</p>

</details>

<p></p>

<!-- <REMEDIATE>{"isOpenPROnVulnerability":true,"isPackageBased":true,"isDefaultBranch":true,"packages":[{"packageType":"Java","groupId":"com.fasterxml.jackson.core","packageName":"jackson-databind","packageVersion":"2.8.1","isTransitiveDependency":true,"dependencyTree":"org.springframework.boot:spring-boot-starter-web:1.4.0.RELEASE;com.fasterxml.jackson.core:jackson-databind:2.8.1","isMinimumFixVersionAvailable":true,"minimumFixVersion":"com.fasterxml.jackson.core:jackson-databind:2.9.10.3"}],"vulnerabilityIdentifier":"CVE-2020-8840","vulnerabilityDetails":"FasterXML jackson-databind 2.0.0 through 2.9.10.2 lacks certain xbean-reflect/JNDI blocking, as demonstrated by org.apache.xbean.propertyeditor.JndiConverter.","vulnerabilityUrl":"https://vuln.whitesourcesoftware.com/vulnerability/CVE-2020-8840","cvss3Severity":"high","cvss3Score":"9.8","cvss3Metrics":{"A":"High","AC":"Low","PR":"None","S":"Unchanged","C":"High","UI":"None","AV":"Network","I":"High"},"extraData":{}}</REMEDIATE> -->

|

True

|

CVE-2020-8840 (High) detected in jackson-databind-2.8.1.jar - ## CVE-2020-8840 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jackson-databind-2.8.1.jar</b></p></summary>

<p>General data-binding functionality for Jackson: works on core streaming API</p>

<p>Library home page: <a href="http://github.com/FasterXML/jackson">http://github.com/FasterXML/jackson</a></p>

<p>Path to dependency file: /tmp/ws-scm/WhiteSource-Demo/pom.xml</p>

<p>Path to vulnerable library: /home/wss-scanner/.m2/repository/com/fasterxml/jackson/core/jackson-databind/2.8.1/jackson-databind-2.8.1.jar</p>

<p>

Dependency Hierarchy:

- spring-boot-starter-web-1.4.0.RELEASE.jar (Root Library)

- :x: **jackson-databind-2.8.1.jar** (Vulnerable Library)

<p>Found in HEAD commit: <a href="https://api.github.com/repos/TreyM-WSS/WhiteSource-Demo/commits/75659f691fb82d67ecd666ba6076394defeb92d0">75659f691fb82d67ecd666ba6076394defeb92d0</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

FasterXML jackson-databind 2.0.0 through 2.9.10.2 lacks certain xbean-reflect/JNDI blocking, as demonstrated by org.apache.xbean.propertyeditor.JndiConverter.

<p>Publish Date: 2020-02-10

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2020-8840>CVE-2020-8840</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>9.8</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: High

- Integrity Impact: High

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://github.com/FasterXML/jackson-databind/issues/2620">https://github.com/FasterXML/jackson-databind/issues/2620</a></p>

<p>Release Date: 2020-02-10</p>

<p>Fix Resolution: com.fasterxml.jackson.core:jackson-databind:2.9.10.3</p>

</p>

</details>

<p></p>

<!-- <REMEDIATE>{"isOpenPROnVulnerability":true,"isPackageBased":true,"isDefaultBranch":true,"packages":[{"packageType":"Java","groupId":"com.fasterxml.jackson.core","packageName":"jackson-databind","packageVersion":"2.8.1","isTransitiveDependency":true,"dependencyTree":"org.springframework.boot:spring-boot-starter-web:1.4.0.RELEASE;com.fasterxml.jackson.core:jackson-databind:2.8.1","isMinimumFixVersionAvailable":true,"minimumFixVersion":"com.fasterxml.jackson.core:jackson-databind:2.9.10.3"}],"vulnerabilityIdentifier":"CVE-2020-8840","vulnerabilityDetails":"FasterXML jackson-databind 2.0.0 through 2.9.10.2 lacks certain xbean-reflect/JNDI blocking, as demonstrated by org.apache.xbean.propertyeditor.JndiConverter.","vulnerabilityUrl":"https://vuln.whitesourcesoftware.com/vulnerability/CVE-2020-8840","cvss3Severity":"high","cvss3Score":"9.8","cvss3Metrics":{"A":"High","AC":"Low","PR":"None","S":"Unchanged","C":"High","UI":"None","AV":"Network","I":"High"},"extraData":{}}</REMEDIATE> -->

|

non_process

|

cve high detected in jackson databind jar cve high severity vulnerability vulnerable library jackson databind jar general data binding functionality for jackson works on core streaming api library home page a href path to dependency file tmp ws scm whitesource demo pom xml path to vulnerable library home wss scanner repository com fasterxml jackson core jackson databind jackson databind jar dependency hierarchy spring boot starter web release jar root library x jackson databind jar vulnerable library found in head commit a href vulnerability details fasterxml jackson databind through lacks certain xbean reflect jndi blocking as demonstrated by org apache xbean propertyeditor jndiconverter publish date url a href cvss score details base score metrics exploitability metrics attack vector network attack complexity low privileges required none user interaction none scope unchanged impact metrics confidentiality impact high integrity impact high availability impact high for more information on scores click a href suggested fix type upgrade version origin a href release date fix resolution com fasterxml jackson core jackson databind isopenpronvulnerability true ispackagebased true isdefaultbranch true packages vulnerabilityidentifier cve vulnerabilitydetails fasterxml jackson databind through lacks certain xbean reflect jndi blocking as demonstrated by org apache xbean propertyeditor jndiconverter vulnerabilityurl

| 0

|

14,006

| 16,813,627,275

|

IssuesEvent

|

2021-06-17 03:18:13

|

qgis/QGIS

|

https://api.github.com/repos/qgis/QGIS

|

closed

|

Data defined parameter not taken into account when editing features "in-place"

|

Bug Processing

|

<!--

Bug fixing and feature development is a community responsibility, and not the responsibility of the QGIS project alone.

If this bug report or feature request is high-priority for you, we suggest engaging a QGIS developer or support organisation and financially sponsoring a fix

Checklist before submitting

- [ ] Search through existing issue reports and gis.stackexchange.com to check whether the issue already exists

- [ ] Test with a [clean new user profile](https://docs.qgis.org/testing/en/docs/user_manual/introduction/qgis_configuration.html?highlight=profile#working-with-user-profiles).

- [ ] Create a light and self-contained sample dataset and project file which demonstrates the issue

-->

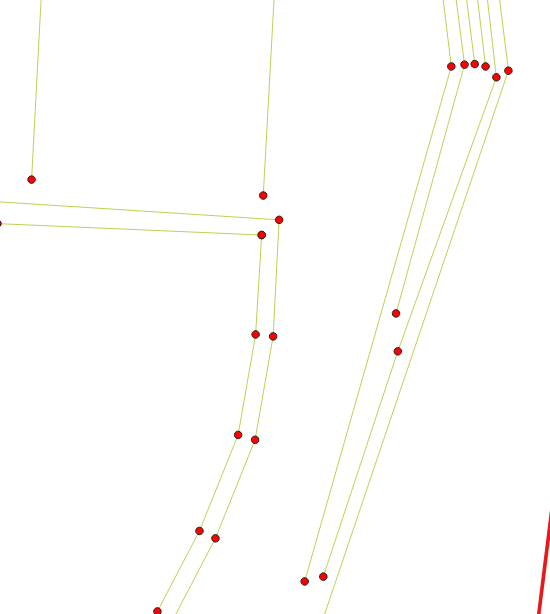

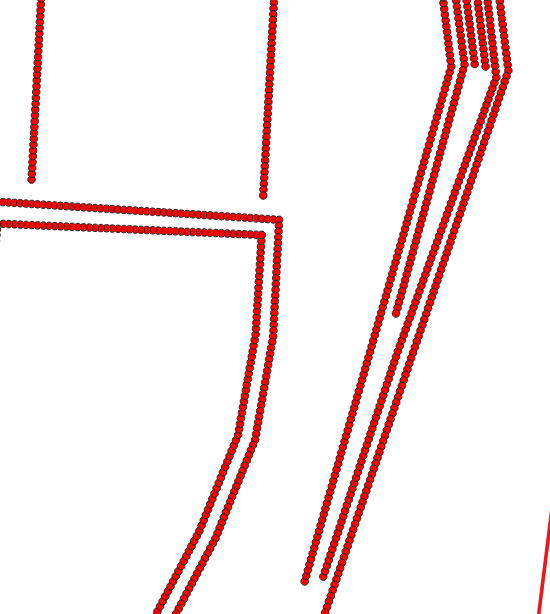

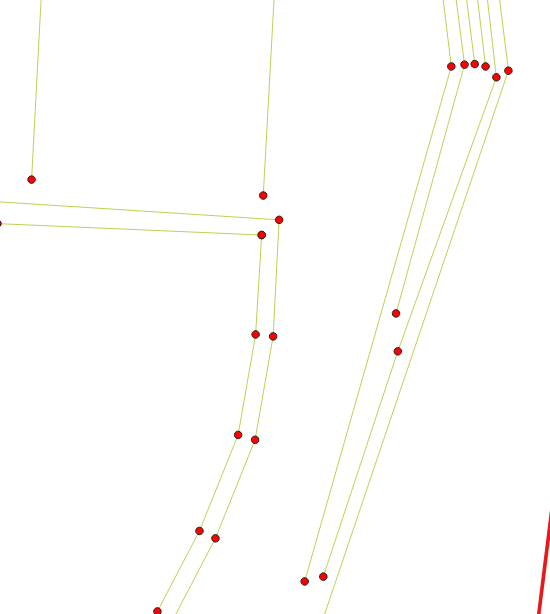

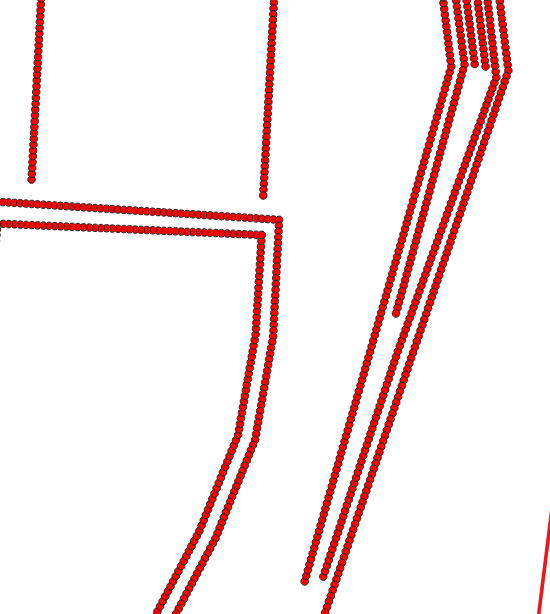

**Describe the bug**

Take for example "densify geometries given an interval".

If I run this alg on a layer with a size attribute, set the interval as data defined by this attribute, and output to a new layer, the data defined parameter is taken into account.

By contrast, if I modify the features "in-place", the data defined interval is not taken into account.

Input layer

Output to a new layer

Edit "in-place"

**How to Reproduce**

<!-- Steps, sample datasets and qgis project file to reproduce the behavior. Screencasts or screenshots welcome -->

1. Download [lignes_contraintes.zip](https://github.com/qgis/QGIS/files/6664102/lignes_contraintes.zip) and load it in QGIS

2. Enable Edit "in-place"

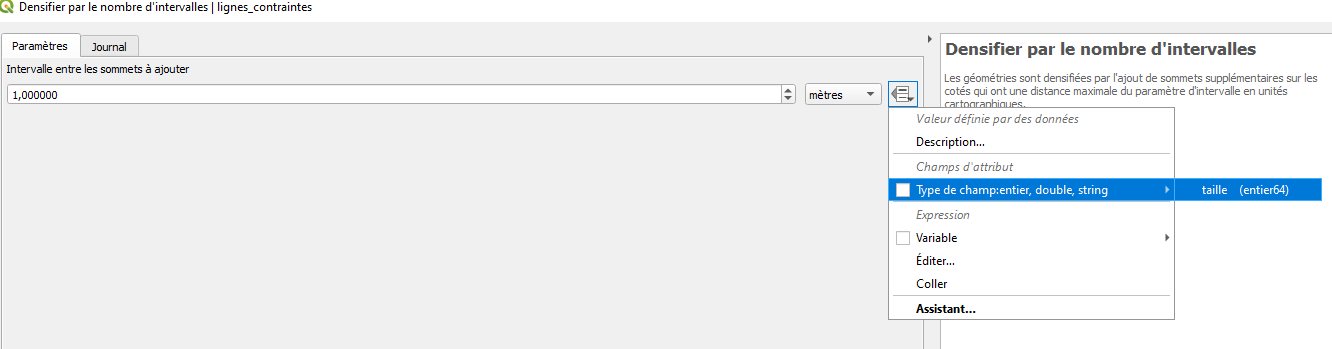

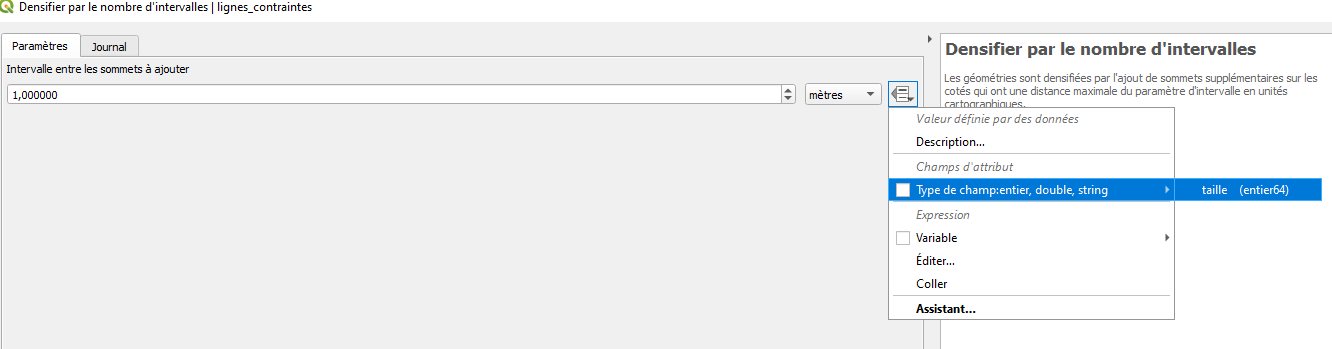

3. Run alg "densify geometries given an interval"

4. Set attribute "taille" as data defined value for Interval

5. Distance between vertices is 1 meter (default value of the alg) instead of 2 or 3 meters.

You can run the same alg but output to a new layer and see that data defined value for interval is taken into account.

**QGIS and OS versions**

3.18.3 OsGeo4W testing

<!-- In the QGIS Help menu -> About, click in the table, Ctrl+A and then Ctrl+C. Finally paste here -->

**Additional context**

I observe a similar issue for at least 2 or 3 other algs. So it seems not specific to "densify geometries given an interval" but a general issue when editing features "in-place".

<!-- Add any other context about the problem here. -->

|

1.0

|

Data defined parameter not taken into account when editing features "in-place" - <!--

Bug fixing and feature development is a community responsibility, and not the responsibility of the QGIS project alone.

If this bug report or feature request is high-priority for you, we suggest engaging a QGIS developer or support organisation and financially sponsoring a fix

Checklist before submitting

- [ ] Search through existing issue reports and gis.stackexchange.com to check whether the issue already exists

- [ ] Test with a [clean new user profile](https://docs.qgis.org/testing/en/docs/user_manual/introduction/qgis_configuration.html?highlight=profile#working-with-user-profiles).

- [ ] Create a light and self-contained sample dataset and project file which demonstrates the issue

-->

**Describe the bug**

Take for example "densify geometries given an interval".

If I run this alg on a layer with a size attribute, set the interval as data defined by this attribute, and output to a new layer, the data defined parameter is taken into account.

Conversely, if I modify the entities "in-place", the data defined interval is not taken into account.

Input layer

Output to a new layer

Edit "in-place"

**How to Reproduce**

<!-- Steps, sample datasets and qgis project file to reproduce the behavior. Screencasts or screenshots welcome -->

1. Download [lignes_contraintes.zip](https://github.com/qgis/QGIS/files/6664102/lignes_contraintes.zip) and load it in QGIS

2. Enable Edit "in-place"

3. Run alg "densify geometries given an interval"

4. Set attribute "taille" as data defined value for Interval

5. Distance between vertices is 1 meter (default value of the alg) instead of 2 or 3 meters.

You can run the same alg but output to a new layer and see that data defined value for interval is taken into account.

**QGIS and OS versions**

3.18.3 OsGeo4W testing

<!-- In the QGIS Help menu -> About, click in the table, Ctrl+A and then Ctrl+C. Finally paste here -->

**Additional context**

I observe a similar issue for at least 2 or 3 other algs. So it seems not specific to "densify geometries given an interval" but a general issue when editing features "in-place".

<!-- Add any other context about the problem here. -->

|

process

|

data defined parameter not taken into account when editing features in place bug fixing and feature development is a community responsibility and not the responsibility of the qgis project alone if this bug report or feature request is high priority for you we suggest engaging a qgis developer or support organisation and financially sponsoring a fix checklist before submitting search through existing issue reports and gis stackexchange com to check whether the issue already exists test with a create a light and self contained sample dataset and project file which demonstrates the issue describe the bug take for example densify geometries given an interval if i run this alg on a layer with a size attribut and set interval as data defined by this attribut and output to a new layer data defined parameter is taken into account on the opposite if i modify the entities in place data defined interval is not taken into account input layer output to a new layer edit in place how to reproduce download and load it in qgis enable edit in place run alg densify geometries given an interval set attribut taille as data defined value for interval distance between vertices is meter default value of the alg instead of or meters you can run the same alg but output to a new layer and see that data defined value for interval is taken into account qgis and os versions testing about click in the table ctrl a and then ctrl c finally paste here additional context i observe a similar issue for at least or other algs so it seems not specific to densify geometries given an interval but a general issue when editing features in place

| 1

|

103,434

| 8,908,940,283

|

IssuesEvent

|

2019-01-18 03:21:24

|

xcat2/xcat2-task-management

|

https://api.github.com/repos/xcat2/xcat2-task-management

|

closed

|

Refine cases to reduce false errors

|

sprint2 test

|

In CI regression, we found that some cases failed because the environment is not clear. Refine the following cases to reduce false errors:

```

rmdef_dynamic_group

lsdef_nics

```

|

1.0

|

Refine cases to reduce false errors - In CI regression, we found that some cases failed because the environment is not clear. Refine the following cases to reduce false errors:

```

rmdef_dynamic_group

lsdef_nics

```

|

non_process

|

refine cases to reduce false errors in ci regression we found some cases failed because the environment is not clear refine following cases to reduce the false error rmdef dynamic group lsdef nics

| 0

|

10,814

| 13,609,290,269

|

IssuesEvent

|

2020-09-23 04:50:33

|

googleapis/java-mediatranslation

|

https://api.github.com/repos/googleapis/java-mediatranslation

|

closed

|

Dependency Dashboard

|

api: mediatranslation type: process

|

This issue contains a list of Renovate updates and their statuses.

## Open

These updates have all been created already. Click a checkbox below to force a retry/rebase of any.

- [ ] <!-- rebase-branch=renovate/com.google.cloud-google-cloud-mediatranslation-0.x -->chore(deps): update dependency com.google.cloud:google-cloud-mediatranslation to v0.2.2

---

- [ ] <!-- manual job -->Check this box to trigger a request for Renovate to run again on this repository

|

1.0

|

Dependency Dashboard - This issue contains a list of Renovate updates and their statuses.

## Open

These updates have all been created already. Click a checkbox below to force a retry/rebase of any.

- [ ] <!-- rebase-branch=renovate/com.google.cloud-google-cloud-mediatranslation-0.x -->chore(deps): update dependency com.google.cloud:google-cloud-mediatranslation to v0.2.2

---

- [ ] <!-- manual job -->Check this box to trigger a request for Renovate to run again on this repository

|

process

|

dependency dashboard this issue contains a list of renovate updates and their statuses open these updates have all been created already click a checkbox below to force a retry rebase of any chore deps update dependency com google cloud google cloud mediatranslation to check this box to trigger a request for renovate to run again on this repository

| 1

|

11,795

| 14,622,679,050

|

IssuesEvent

|

2020-12-23 01:01:42

|

ocaml-batteries-team/batteries-included

|

https://api.github.com/repos/ocaml-batteries-team/batteries-included

|

closed

|

Consider moving to ocaml-community?

|

development process

|

The [ocaml-community](https://github.com/ocaml-community/meta) has recently sprung up as a community group committed to adopting and maintaining OCaml software projects that are not being actively developed by their authors anymore.

I think that it is a natural question to wonder whether batteries-included should migrate to ocaml-community. From the start, batteries-included was designed as a non-hierarchical project built for and by "the ocaml community" at large. I think the people creating the ocaml-batteries-team organization had in mind the same sort of open-ended, non-hierarchical code ownership and evolution that ocaml-community now represents. In practice this vision did not manifest itself through adopting other repositories, but I would say that in a sense Batteries was a pre-github incarnation of the same ideas.