| Unnamed: 0 (int64, 0 to 832k) | id (float64, 2.49B to 32.1B) | type (string, 1 class) | created_at (string, length 19) | repo (string, length 7 to 112) | repo_url (string, length 36 to 141) | action (string, 3 classes) | title (string, length 1 to 744) | labels (string, length 4 to 574) | body (string, length 9 to 211k) | index (string, 10 classes) | text_combine (string, length 96 to 211k) | label (string, 2 classes) | text (string, length 96 to 188k) | binary_label (int64, 0 to 1) |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

20,573

| 27,233,067,994

|

IssuesEvent

|

2023-02-21 14:33:14

|

bazelbuild/bazel

|

https://api.github.com/repos/bazelbuild/bazel

|

closed

|

Build API Roadmap: discussion

|

P3 type: support / not a bug (process) untriaged team-Rules-API

|

This is a non-technical issue just meant as a connection point for discussion, thoughts, questions, concerns, etc. about Bazel's Build API work as prioritized in https://www.bazel.build/roadmaps/build-api.html.

It's also a step toward integrating our project workflow deeper into the Bazel community.

My current thinking is to maintain one of these issues and roadmaps for each year. But I'm open to suggestions for other venues like bazel-dev, etc.

I also want to have a dedicated GitHub issue for each individual roadmap item. As of this writing we're about halfway there. So individual priorities can be discussed on their own threads and this thread can cover big picture stuff.

|

1.0

|

Build API Roadmap: discussion - This is a non-technical issue just meant as a connection point for discussion, thoughts, questions, concerns, etc. about Bazel's Build API work as prioritized in https://www.bazel.build/roadmaps/build-api.html.

It's also a step toward integrating our project workflow deeper into the Bazel community.

My current thinking is to maintain one of these issues and roadmaps for each year. But I'm open to suggestions for other venues like bazel-dev, etc.

I also want to have a dedicated GitHub issue for each individual roadmap item. As of this writing we're about halfway there. So individual priorities can be discussed on their own threads and this thread can cover big picture stuff.

|

process

|

build api roadmap discussion this is a non technical issue just meant as a connection point for discussion thoughts questions concerns etc about bazel s build api work as prioritized in it s also a step toward integrating our project workflow deeper into the bazel community my current thinking is to maintain one of these issues and roadmaps for each year but i m open to suggestions for other venues like bazel dev etc i also want to have a dedicated github issue for each individual roadmap item as of this writing we re about halfway there so individual priorities can be discussed on their own threads and this thread can cover big picture stuff

| 1

|

2,167

| 5,018,592,633

|

IssuesEvent

|

2016-12-14 08:56:22

|

paulkornikov/Pragonas

|

https://api.github.com/repos/paulkornikov/Pragonas

|

closed

|

General blocking of archiving and other processes

|

a-enhancement processus

|

From the web config. See whether an app config is possible.

|

1.0

|

General blocking of archiving and other processes - From the web config. See whether an app config is possible.

|

process

|

general blocking of archiving and other processes from the web config see whether an app config is possible

| 1

|

37,600

| 6,623,267,022

|

IssuesEvent

|

2017-09-22 06:10:43

|

openebs/openebs

|

https://api.github.com/repos/openebs/openebs

|

opened

|

Add Glossary section under Getting Started

|

documentation

|

Add a Glossary section where terms used in documentation are described and how they are used in the documentation.

|

1.0

|

Add Glossary section under Getting Started - Add a Glossary section where terms used in documentation are described and how they are used in the documentation.

|

non_process

|

add glossary section under getting started add a glossary section where terms used in documentation are described and how they are used in the documentation

| 0

|

245,065

| 18,771,715,993

|

IssuesEvent

|

2021-11-07 00:02:22

|

matheus-srego/DroneArara

|

https://api.github.com/repos/matheus-srego/DroneArara

|

closed

|

Research other forms of recognition in AIs

|

documentation

|

1. To recreate the Artificial Intelligence, it is necessary to research other ways of creating and training hand-gesture recognition.

2. Still to be decided: whether we continue recognizing only gestures in Libras or use other gestures.

|

1.0

|

Research other forms of recognition in AIs - 1. To recreate the Artificial Intelligence, it is necessary to research other ways of creating and training hand-gesture recognition.

2. Still to be decided: whether we continue recognizing only gestures in Libras or use other gestures.

|

non_process

|

research other forms of recognition in ais to recreate the artificial intelligence it is necessary to research other ways of creating and training hand gesture recognition still to be decided whether we continue recognizing only gestures in libras or use other gestures

| 0

|

234,537

| 7,722,942,487

|

IssuesEvent

|

2018-05-24 10:49:04

|

geosolutions-it/MapStore2

|

https://api.github.com/repos/geosolutions-it/MapStore2

|

closed

|

Wrong geometry update and center of geodesic circle in query panel

|

Priority: High Project: C028 bug pending review review

|

### Description

Geodesic circle geometry is not updated correctly

### In case of Bug (otherwise remove this paragraph)

*Browser Affected*

(use this site: https://www.whatsmybrowser.org/ for non expert users)

- [ ] Internet Explorer

- [x] Chrome

- [ ] Firefox

- [ ] Safari

*Browser Version Affected*

- Chrome v66

*Steps to reproduce*

- open map with vector layer

- select layer in TOC

- open feature grid

- open query panel

- draw a spatial filter Circle

- edit spatial filter and confirm

*Expected Result*

- the circle's center and radius are updated correctly

*Current Result*

- circle isn't updated correctly

### Other useful information (optional):

It needs the following configuration

```

{
  "name": "FeatureEditor",
  "cfg": {
    "showFilteredObject": true
  }
}

```

```

{
  "name": "QueryPanel",
  "cfg": {
    "activateQueryTool": true,
    "spatialOperations": [
      {"id": "INTERSECTS", "name": "queryform.spatialfilter.operations.intersects"},
      {"id": "BBOX", "name": "queryform.spatialfilter.operations.bbox"},
      {"id": "CONTAINS", "name": "queryform.spatialfilter.operations.contains"},
      {"id": "WITHIN", "name": "queryform.spatialfilter.operations.within"}
    ],
    "spatialMethodOptions": [
      {"id": "Viewport", "name": "queryform.spatialfilter.methods.viewport"},
      {"id": "BBOX", "name": "queryform.spatialfilter.methods.box"},
      {"id": "Circle", "name": "queryform.spatialfilter.methods.circle", "geodesic": true},
      {"id": "Polygon", "name": "queryform.spatialfilter.methods.poly"}
    ]
  }
}

```

|

1.0

|

Wrong geometry update and center of geodesic circle in query panel - ### Description

Geodesic circle geometry is not updated correctly

### In case of Bug (otherwise remove this paragraph)

*Browser Affected*

(use this site: https://www.whatsmybrowser.org/ for non expert users)

- [ ] Internet Explorer

- [x] Chrome

- [ ] Firefox

- [ ] Safari

*Browser Version Affected*

- Chrome v66

*Steps to reproduce*

- open map with vector layer

- select layer in TOC

- open feature grid

- open query panel

- draw a spatial filter Circle

- edit spatial filter and confirm

*Expected Result*

- the circle's center and radius are updated correctly

*Current Result*

- circle isn't updated correctly

### Other useful information (optional):

It needs the following configuration

```

{
  "name": "FeatureEditor",
  "cfg": {
    "showFilteredObject": true
  }
}

```

```

{
  "name": "QueryPanel",
  "cfg": {
    "activateQueryTool": true,
    "spatialOperations": [
      {"id": "INTERSECTS", "name": "queryform.spatialfilter.operations.intersects"},
      {"id": "BBOX", "name": "queryform.spatialfilter.operations.bbox"},
      {"id": "CONTAINS", "name": "queryform.spatialfilter.operations.contains"},
      {"id": "WITHIN", "name": "queryform.spatialfilter.operations.within"}
    ],
    "spatialMethodOptions": [
      {"id": "Viewport", "name": "queryform.spatialfilter.methods.viewport"},
      {"id": "BBOX", "name": "queryform.spatialfilter.methods.box"},
      {"id": "Circle", "name": "queryform.spatialfilter.methods.circle", "geodesic": true},
      {"id": "Polygon", "name": "queryform.spatialfilter.methods.poly"}
    ]
  }
}

```

|

non_process

|

wrong geometry update and center of geodesic circle in query panel description geodesic circle geometry is not updated correctly in case of bug otherwise remove this paragraph browser affected use this site for non expert users internet explorer chrome firefox safari browser version affected chrome steps to reproduce open map with vector layer select layer in toc open feature grid open query panel draw a spatial filter circle edit spatial filter and confirm expected result circle is updated correctly center and radius current result circle isn t update correctly other useful information optional it needed the follow configuration name featureeditor cfg showfilteredobject true name querypanel cfg activatequerytool true spatialoperations id intersects name queryform spatialfilter operations intersects id bbox name queryform spatialfilter operations bbox id contains name queryform spatialfilter operations contains id within name queryform spatialfilter operations within spatialmethodoptions id viewport name queryform spatialfilter methods viewport id bbox name queryform spatialfilter methods box id circle name queryform spatialfilter methods circle geodesic true id polygon name queryform spatialfilter methods poly

| 0

|

525,612

| 15,257,114,539

|

IssuesEvent

|

2021-02-20 23:27:20

|

marbl/MetagenomeScope

|

https://api.github.com/repos/marbl/MetagenomeScope

|

opened

|

Detect paths encoded in GFA files and pass to user interface

|

highpriorityfeature

|

Offshoot of #147. Will depend on #205 being merged in.

The idea here is taking these paths (called "paths" in [GFA1](https://github.com/GFA-spec/GFA-spec/blob/master/GFA1.md), still called "paths" but encoded as O-lines (ordered "groups") in [GFA2](https://github.com/GFA-spec/GFA-spec/blob/master/GFA2.md#group)) from an input GFA file and storing them so that they can be viewed in the interface analogously to how paths in AGP files can be viewed. Fortunately, getting paths from GfaPy [seems pretty simple](https://gfapy.readthedocs.io/en/latest/tutorial/gfa.html) (just using `g.paths`), so the main challenge will just be passing this data to the JS cleanly (and figuring out how to represent it -- should this be independent of the AGP visualization? an alternate set of widgets?)

U-lines (unordered "groups") in GFA2 files might also be usable in the same way, but I suspect that might not be worth the trouble -- the interpretation seems different. Can reevaluate if there is demand.

It would be great to make this very simple for users, but in the worst case scenario (passing the path info is challenging) it should be pretty doable to write some code that converts the paths in a GFA file to AGP, which users can then upload in the interface.

|

1.0

|

Detect paths encoded in GFA files and pass to user interface - Offshoot of #147. Will depend on #205 being merged in.

The idea here is taking these paths (called "paths" in [GFA1](https://github.com/GFA-spec/GFA-spec/blob/master/GFA1.md), still called "paths" but encoded as O-lines (ordered "groups") in [GFA2](https://github.com/GFA-spec/GFA-spec/blob/master/GFA2.md#group)) from an input GFA file and storing them so that they can be viewed in the interface analogously to how paths in AGP files can be viewed. Fortunately, getting paths from GfaPy [seems pretty simple](https://gfapy.readthedocs.io/en/latest/tutorial/gfa.html) (just using `g.paths`), so the main challenge will just be passing this data to the JS cleanly (and figuring out how to represent it -- should this be independent of the AGP visualization? an alternate set of widgets?)

U-lines (unordered "groups") in GFA2 files might also be usable in the same way, but I suspect that might not be worth the trouble -- the interpretation seems different. Can reevaluate if there is demand.

It would be great to make this very simple for users, but in the worst case scenario (passing the path info is challenging) it should be pretty doable to write some code that converts the paths in a GFA file to AGP, which users can then upload in the interface.

|

non_process

|

detect paths encoded in gfa files and pass to user interface offshoot of will depend on being merged in the idea here is taking these paths called paths in still called paths but encoded as o lines ordered groups in from an input gfa file and storing them so that they can be viewed in the interface analogously to how paths in agp files can be viewed fortunately getting paths from gfapy just using g paths so the main challenge will just be passing this data to the js cleanly and figuring out how to represent it should this be independent of the agp visualization an alternate set of widgets u lines unordered groups in files might also be usable in the same way but i suspect that might not be worth the trouble the interpretation seems different can reevaluate if there is demand it would be great to make this very simple for users but in the worst case scenario passing the path info is challenging it should be pretty doable to write some code that converts the paths in a gfa file to agp which users can then upload in the interface

| 0

|

1,542

| 4,152,284,672

|

IssuesEvent

|

2016-06-16 00:02:52

|

onyx-platform/onyx

|

https://api.github.com/repos/onyx-platform/onyx

|

closed

|

Add dependent projects to release process

|

release-process

|

- [x] onyx-starter

- [x] onyx-template

- [x] onyx-examples

- [x] onyx-website

- [x] onyx-cheat-sheet

- [x] onyx-plugin-template

- [x] learn-onyx

There may be others, also.

|

1.0

|

Add dependent projects to release process - - [x] onyx-starter

- [x] onyx-template

- [x] onyx-examples

- [x] onyx-website

- [x] onyx-cheat-sheet

- [x] onyx-plugin-template

- [x] learn-onyx

There may be others, also.

|

process

|

add dependent projects to release process onyx starter onyx template onyx examples onyx website onyx cheat sheet onyx plugin template learn onyx there may be others also

| 1

|

5,892

| 8,709,663,990

|

IssuesEvent

|

2018-12-06 14:34:20

|

Open-EO/openeo-api

|

https://api.github.com/repos/Open-EO/openeo-api

|

closed

|

NoData handling in custom scripts

|

data processing extension udfs

|

Given that a user can provide his own scripts, how should he implement proper nodata handling?

The problem is that actual nodata values can differ, depending on the data layer and the endpoint against which the script is executed.

Options:

1. Nodata value is part of band metadata, developer should get it from there and propagate into his script

2. Nodata values passed along to the user function

3. Nodata 'macros' such as isNoData(x) are provided by the backend, that do the right thing?

This is linked to the question of what datatype will be used for pixel operations? We can standardize on always using doubles (even if the raw data is for instance stored as a byte), which would help in solving these things.

|

1.0

|

NoData handling in custom scripts - Given that a user can provide his own scripts, how should he implement proper nodata handling?

The problem is that actual nodata values can differ, depending on the data layer and the endpoint against which the script is executed.

Options:

1. Nodata value is part of band metadata, developer should get it from there and propagate into his script

2. Nodata values passed along to the user function

3. Nodata 'macros' such as isNoData(x) are provided by the backend, that do the right thing?

This is linked to the question of what datatype will be used for pixel operations? We can standardize on always using doubles (even if the raw data is for instance stored as a byte), which would help in solving these things.

|

process

|

nodata handling in custom scripts given that a user can provide his own scripts how should he implement proper nodata handling the problem is that actual nodata values can differ depending on the data layer and the endpoint against which the script is executed options nodata value is part of band metadata developer should get it from there and propagate into his script nodata values passed along to the user function nodata macros such as isnodata x are provided by the backend that do the right thing this is linked to the question of what datatype will be used for pixel operations we can standardize on always using doubles even if the raw data is for instance stored as a byte which would help in solving these things

| 1

|

16,625

| 21,684,651,644

|

IssuesEvent

|

2022-05-09 10:03:52

|

NixOS/nixpkgs

|

https://api.github.com/repos/NixOS/nixpkgs

|

opened

|

ZERO Hydra Failures 22.05

|

6.topic: release process

|

## Mission

Every time we branch off a release we stabilize the release branch.

Our goal here is to get as few jobs as possible failing on the trunk/master jobsets.

We call this effort "Zero Hydra Failure".

I'd like to highlight that, while it's great to focus on zero as our goal, it's essential to have all deliverables that worked in the previous release work here also.

Please note the changes included in [RFC 85](https://github.com/NixOS/rfcs/blob/master/rfcs/0085-nixos-release-stablization.md).

Most significantly, branch off will occur on 2022 May 22; prior to that date, ZHF will be conducted

on master; after that date, ZHF will be conducted on the release channel using a backport

workflow similar to previous ZHFs.

## Jobsets

[trunk Jobset](https://hydra.nixos.org/jobset/nixpkgs/trunk) (includes linux, darwin, and aarch64-linux builds)

[nixos/combined Jobset](https://hydra.nixos.org/jobset/nixos/trunk-combined) (includes many nixos tests)

<!--[nixos:release-22.05 Jobset](https://hydra.nixos.org/jobset/nixos/release-22.05)

[nixpkgs:nixpkgs-22.05-darwin Jobset](https://hydra.nixos.org/jobset/nixpkgs/nixpkgs-22.05-darwin)-->

## How to help (textual)

1. Select an evaluation of the [trunk jobset](https://hydra.nixos.org/jobset/nixpkgs/trunk)

2. Find a failed job ❌️ , you can use the filter field to scope packages to your platform, or search for packages that are relevant to you.

Note: you can filter for architecture by filtering for it, eg: https://hydra.nixos.org/eval/1719540?filter=x86_64-linux&compare=1719463&full=#tabs-still-fail

3. Search to see if a PR is not already open for the package. If there is one, please help review it.

4. If there is no open PR, troubleshoot why it's failing and fix it.

5. Create a Pull Request with the fix targeting master, wait for it to be merged.

If your PR causes around 500+ rebuilds, it's preferred to target `staging` to avoid compute and storage churn.

6. (after 2022 May 22) Please follow [backporting steps](https://github.com/NixOS/nixpkgs/blob/master/CONTRIBUTING.md#backporting-changes) and target the `release-22.05` branch if the original PR landed in `master` or `staging-22.05` if the PR landed in `staging`. Be sure to do `git cherry-pick -x <rev>` on the commits that landed in unstable. @jonringer created [a video covering the backport process](https://www.youtube.com/watch?v=4Zb3GpIc6vk&t=520s).

Always reference this issue in the body of your PR:

```

ZHF: ##172160

```

Please ping @NixOS/nixos-release-managers on the PR.

If you're unable to because you're not a member of the NixOS org please ping @dasJ, @tomberek, @jonringer, @Mic92

## How can I easily check packages that I maintain?

I have created an experimental website that automatically crawls Hydra and lists packages by maintainer and lists the most important dependencies (failing packages with the most dependants).

You can reach it here: https://zh.fail

If you prefer the command-line way, you can also check failing packages that you maintain by running:

```

# from root of nixpkgs

nix-build maintainers/scripts/build.nix --argstr maintainer <name>

```

## New to nixpkgs?

- [Packaging a basic C application](https://www.youtube.com/watch?v=LiEqN8r-BRw)

- [Python nix packaging](https://www.youtube.com/watch?v=jXd-hkP4xnU)

- [Adding a package to nixpkgs](https://www.youtube.com/watch?v=fvj8H5yUKu8)

- other resources at: https://github.com/nix-community/awesome-nix

- https://nix.dev/tutorials/

## Packages that don't get fixed

The remaining packages will be marked as broken before the release (on the failing platforms).

You can do this like:

```nix

meta = {
  # ref to issue/explanation
  # `true` is for everything
  broken = stdenv.isDarwin;
};

```

## Closing

This is a great way to help NixOS, and it is a great time for new contributors to start their nixpkgs adventure. :partying_face:

cc @NixOS/nixpkgs-committers @NixOS/nixpkgs-maintainers @NixOS/release-engineers

## Related Issues

- Timeline: #165792

- Feature Freeze Items: #167025

|

1.0

|

ZERO Hydra Failures 22.05 - ## Mission

Every time we branch off a release we stabilize the release branch.

Our goal here is to get as few jobs as possible failing on the trunk/master jobsets.

We call this effort "Zero Hydra Failure".

I'd like to highlight that, while it's great to focus on zero as our goal, it's essential to have all deliverables that worked in the previous release work here also.

Please note the changes included in [RFC 85](https://github.com/NixOS/rfcs/blob/master/rfcs/0085-nixos-release-stablization.md).

Most significantly, branch off will occur on 2022 May 22; prior to that date, ZHF will be conducted

on master; after that date, ZHF will be conducted on the release channel using a backport

workflow similar to previous ZHFs.

## Jobsets

[trunk Jobset](https://hydra.nixos.org/jobset/nixpkgs/trunk) (includes linux, darwin, and aarch64-linux builds)

[nixos/combined Jobset](https://hydra.nixos.org/jobset/nixos/trunk-combined) (includes many nixos tests)

<!--[nixos:release-22.05 Jobset](https://hydra.nixos.org/jobset/nixos/release-22.05)

[nixpkgs:nixpkgs-22.05-darwin Jobset](https://hydra.nixos.org/jobset/nixpkgs/nixpkgs-22.05-darwin)-->

## How to help (textual)

1. Select an evaluation of the [trunk jobset](https://hydra.nixos.org/jobset/nixpkgs/trunk)

2. Find a failed job ❌️ , you can use the filter field to scope packages to your platform, or search for packages that are relevant to you.

Note: you can filter for architecture by filtering for it, eg: https://hydra.nixos.org/eval/1719540?filter=x86_64-linux&compare=1719463&full=#tabs-still-fail

3. Search to see if a PR is not already open for the package. If there is one, please help review it.

4. If there is no open PR, troubleshoot why it's failing and fix it.

5. Create a Pull Request with the fix targeting master, wait for it to be merged.

If your PR causes around 500+ rebuilds, it's preferred to target `staging` to avoid compute and storage churn.

6. (after 2022 May 22) Please follow [backporting steps](https://github.com/NixOS/nixpkgs/blob/master/CONTRIBUTING.md#backporting-changes) and target the `release-22.05` branch if the original PR landed in `master` or `staging-22.05` if the PR landed in `staging`. Be sure to do `git cherry-pick -x <rev>` on the commits that landed in unstable. @jonringer created [a video covering the backport process](https://www.youtube.com/watch?v=4Zb3GpIc6vk&t=520s).

Always reference this issue in the body of your PR:

```

ZHF: ##172160

```

Please ping @NixOS/nixos-release-managers on the PR.

If you're unable to because you're not a member of the NixOS org please ping @dasJ, @tomberek, @jonringer, @Mic92

## How can I easily check packages that I maintain?

I have created an experimental website that automatically crawls Hydra and lists packages by maintainer and lists the most important dependencies (failing packages with the most dependants).

You can reach it here: https://zh.fail

If you prefer the command-line way, you can also check failing packages that you maintain by running:

```

# from root of nixpkgs

nix-build maintainers/scripts/build.nix --argstr maintainer <name>

```

## New to nixpkgs?

- [Packaging a basic C application](https://www.youtube.com/watch?v=LiEqN8r-BRw)

- [Python nix packaging](https://www.youtube.com/watch?v=jXd-hkP4xnU)

- [Adding a package to nixpkgs](https://www.youtube.com/watch?v=fvj8H5yUKu8)

- other resources at: https://github.com/nix-community/awesome-nix

- https://nix.dev/tutorials/

## Packages that don't get fixed

The remaining packages will be marked as broken before the release (on the failing platforms).

You can do this like:

```nix

meta = {
  # ref to issue/explanation
  # `true` is for everything
  broken = stdenv.isDarwin;
};

```

## Closing

This is a great way to help NixOS, and it is a great time for new contributors to start their nixpkgs adventure. :partying_face:

cc @NixOS/nixpkgs-committers @NixOS/nixpkgs-maintainers @NixOS/release-engineers

## Related Issues

- Timeline: #165792

- Feature Freeze Items: #167025

|

process

|

zero hydra failures mission every time we branch off a release we stabilize the release branch our goal here is to get as little as possible jobs failing on the trunk master jobsets we call this effort zero hydra failure i d like to heighten while it s great to focus on zero as our goal it s essentially to have all deliverables that worked in the previous release work here also please note the changes included in most significantly branch off will occur on may prior to that date zhf will be conducted on master after that date zhf will be conducted on the release channel using a backport workflow similar to previous zhfs jobsets includes linux darwin and linux builds includes many nixos tests how to help textual select an evaluation of the find a failed job ❌️ you can use the filter field to scope packages to your platform or search for packages that are relevant to you note you can filter for architecture by filtering for it eg search to see if a pr is not already open for the package it there is one please help review it if there is no open pr troubleshoot why it s failing and fix it create a pull request with the fix targeting master wait for it to be merged if your pr causes around rebuilds it s preferred to target staging to avoid compute and storage churn after may please follow and target the release branch if the original pr landed in master or staging if the pr landed in staging be sure to do git cherry pick x on the commits that landed in unstable jonringer created always reference this issue in the body of your pr zhf please ping nixos nixos release managers on the pr if you re unable to because you re not a member of the nixos org please ping dasj tomberek jonringer how can i easily check packages that i maintain i have created an experimental website that automatically crawls hydra and lists packages by maintainer and lists the most important dependencies failing packages with the most dependants you can reach it here if you prefer the command line way 
you can also check failing packages that you maintain by running from root of nixpkgs nix build maintainers scripts build nix argstr maintainer new to nixpkgs other resources at packages that don t get fixed the remaining packages will be marked as broken before the release on the failing platforms you can do this like nix meta ref to issue explanation true is for everything broken stdenv isdarwin closing this is a great way to help nixos and it is a great time for new contributors to start their nixpkgs adventure partying face cc nixos nixpkgs committers nixos nixpkgs maintainers nixos release engineers related issues timeline feature freeze items

| 1

|

757,779

| 26,528,024,141

|

IssuesEvent

|

2023-01-19 10:15:56

|

pytorch/pytorch

|

https://api.github.com/repos/pytorch/pytorch

|

closed

|

RuntimeError: could not construct a memory descriptor using a format tag

|

high priority triage review oncall: jit module: mkldnn

|

### 🐛 Describe the bug

Hi! When optimizing the model by `optimize_for_inference`, I encountered this `RuntimeError`. The model works fine in eager mode, and also could be traced. But when using `optimize_for_inference` after tracing, it leads to an error.

```python

import torch
import torch.nn as nn

class MyModule(nn.Module):
    def __init__(self):
        super().__init__()
        self.conv1 = nn.Conv2d(
            1, 2, kernel_size=(509,2), stride=3, padding=255, dilation=(1, 1014),
        )

    def forward(self, i0, i1):
        x = torch.max(i0, i1)
        y = self.conv1(x)
        return y

i0 = torch.zeros((1,1,2,505), dtype=torch.float32)
i1 = torch.zeros((1,2,505), dtype=torch.float32)

mod = MyModule()

out = mod(i0, i1)
print(f'eager: out = {out}') # <-- works fine

exported = torch.jit.trace(mod, [i0, i1])
exported = torch.jit.optimize_for_inference(exported) # <-- RuntimeError: could not construct a memory descriptor using a format tag

eout = exported(i0, i1)
print(f'JIT: eout = {eout}')

assert torch.allclose(out, eout)

```

Logs:

```python

eager: out = tensor([[[[-0.0269],

[-0.0269]],

[[-0.0094],

[-0.0094]]]], grad_fn=<ConvolutionBackward0>)

Traceback (most recent call last):
  File "/home/colin/code/bug.py", line 25, in <module>
    exported = torch.jit.optimize_for_inference(exported) # <-- RuntimeError: could not construct a memory descriptor using a format tag
  File "/home/colin/miniconda3/envs/py39/lib/python3.9/site-packages/torch/jit/_freeze.py", line 218, in optimize_for_inference
    torch._C._jit_pass_optimize_for_inference(mod._c, other_methods)
RuntimeError: could not construct a memory descriptor using a format tag

```

### Versions

```python

PyTorch version: 1.12.1+cu116

Is debug build: False

CUDA used to build PyTorch: 11.6

ROCM used to build PyTorch: N/A

OS: Ubuntu 22.04.1 LTS (x86_64)

GCC version: (Ubuntu 11.2.0-19ubuntu1) 11.2.0

Clang version: Could not collect

CMake version: version 3.22.1

Libc version: glibc-2.35

Python version: 3.9.13 (main, Aug 25 2022, 23:26:10) [GCC 11.2.0] (64-bit runtime)

Python platform: Linux-5.15.0-48-generic-x86_64-with-glibc2.35

Is CUDA available: True

CUDA runtime version: 11.6.124

GPU models and configuration:

GPU 0: NVIDIA GeForce RTX 3090

GPU 1: NVIDIA GeForce RTX 3090

GPU 2: NVIDIA GeForce RTX 3090

Nvidia driver version: 515.65.01

cuDNN version: Could not collect

HIP runtime version: N/A

MIOpen runtime version: N/A

Is XNNPACK available: True

Versions of relevant libraries:

[pip3] numpy==1.23.3

[pip3] torch==1.12.1+cu116

[pip3] torchaudio==0.12.1+cu116

[pip3] torchvision==0.13.1+cu116

[conda] numpy 1.23.3 pypi_0 pypi

[conda] torch 1.12.1+cu116 pypi_0 pypi

[conda] torchaudio 0.12.1+cu116 pypi_0 pypi

[conda] torchvision 0.13.1+cu116 pypi_0 pypi

```

cc @ezyang @gchanan @zou3519 @gujinghui @PenghuiCheng @XiaobingSuper @jianyuh @VitalyFedyunin

|

1.0

|

RuntimeError: could not construct a memory descriptor using a format tag - ### 🐛 Describe the bug

Hi! When optimizing the model by `optimize_for_inference`, I encountered this `RuntimeError`. The model works fine in eager mode, and also could be traced. But when using `optimize_for_inference` after tracing, it leads to an error.

```python

import torch
import torch.nn as nn

class MyModule(nn.Module):
    def __init__(self):
        super().__init__()
        self.conv1 = nn.Conv2d(
            1, 2, kernel_size=(509,2), stride=3, padding=255, dilation=(1, 1014),
        )

    def forward(self, i0, i1):
        x = torch.max(i0, i1)
        y = self.conv1(x)
        return y

i0 = torch.zeros((1,1,2,505), dtype=torch.float32)
i1 = torch.zeros((1,2,505), dtype=torch.float32)

mod = MyModule()

out = mod(i0, i1)
print(f'eager: out = {out}') # <-- works fine

exported = torch.jit.trace(mod, [i0, i1])
exported = torch.jit.optimize_for_inference(exported) # <-- RuntimeError: could not construct a memory descriptor using a format tag

eout = exported(i0, i1)
print(f'JIT: eout = {eout}')

assert torch.allclose(out, eout)

```
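
For reference, the output shape of this convolution can be worked out by hand with the output-size formula from the `nn.Conv2d` documentation. This is only a sanity-check sketch: the helper `conv_out_size` is a name introduced here, not a PyTorch function, and the arithmetic does not explain the error by itself. It merely suggests that the configuration is a valid edge case in which the dilated kernel spans exactly the padded input width.

```python
import math


def conv_out_size(size, kernel, stride, padding, dilation):
    """Output length along one dimension (formula from the nn.Conv2d docs)."""
    return math.floor((size + 2 * padding - dilation * (kernel - 1) - 1) / stride + 1)


# torch.max broadcasts (1,1,2,505) with (1,2,505), so x has H=2 and W=505.
height = conv_out_size(2, kernel=509, stride=3, padding=255, dilation=1)
width = conv_out_size(505, kernel=2, stride=3, padding=255, dilation=1014)

# Effective kernel width is dilation * (kernel - 1) + 1 = 1015,
# which equals the padded input width 505 + 2 * 255 = 1015.
print(height, width)  # 2 1
```

The 2 x 1 spatial output agrees with the shape of the eager-mode tensor printed in the logs.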

Logs:

```python

eager: out = tensor([[[[-0.0269],

[-0.0269]],

[[-0.0094],

[-0.0094]]]], grad_fn=<ConvolutionBackward0>)

Traceback (most recent call last):

File "/home/colin/code/bug.py", line 25, in <module>

exported = torch.jit.optimize_for_inference(exported) # <-- RuntimeError: could not construct a memory descriptor using a format tag

File "/home/colin/miniconda3/envs/py39/lib/python3.9/site-packages/torch/jit/_freeze.py", line 218, in optimize_for_inference

torch._C._jit_pass_optimize_for_inference(mod._c, other_methods)

RuntimeError: could not construct a memory descriptor using a format tag

```

### Versions

```python

PyTorch version: 1.12.1+cu116

Is debug build: False

CUDA used to build PyTorch: 11.6

ROCM used to build PyTorch: N/A

OS: Ubuntu 22.04.1 LTS (x86_64)

GCC version: (Ubuntu 11.2.0-19ubuntu1) 11.2.0

Clang version: Could not collect

CMake version: version 3.22.1

Libc version: glibc-2.35

Python version: 3.9.13 (main, Aug 25 2022, 23:26:10) [GCC 11.2.0] (64-bit runtime)

Python platform: Linux-5.15.0-48-generic-x86_64-with-glibc2.35

Is CUDA available: True

CUDA runtime version: 11.6.124

GPU models and configuration:

GPU 0: NVIDIA GeForce RTX 3090

GPU 1: NVIDIA GeForce RTX 3090

GPU 2: NVIDIA GeForce RTX 3090

Nvidia driver version: 515.65.01

cuDNN version: Could not collect

HIP runtime version: N/A

MIOpen runtime version: N/A

Is XNNPACK available: True

Versions of relevant libraries:

[pip3] numpy==1.23.3

[pip3] torch==1.12.1+cu116

[pip3] torchaudio==0.12.1+cu116

[pip3] torchvision==0.13.1+cu116

[conda] numpy 1.23.3 pypi_0 pypi

[conda] torch 1.12.1+cu116 pypi_0 pypi

[conda] torchaudio 0.12.1+cu116 pypi_0 pypi

[conda] torchvision 0.13.1+cu116 pypi_0 pypi

```

cc @ezyang @gchanan @zou3519 @gujinghui @PenghuiCheng @XiaobingSuper @jianyuh @VitalyFedyunin

|

non_process

|

runtimeerror could not construct a memory descriptor using a format tag 🐛 describe the bug hi when optimizing the model by optimize for inference i encountered this runtimeerror the model works fine in eager mode and also could be traced but when using optimize for inference after tracing it leads to an error python import torch import torch nn as nn class mymodule nn module def init self super init self nn kernel size stride padding dilation def forward self x torch max y self x return y torch zeros dtype torch torch zeros dtype torch mod mymodule out mod print f eager out out works fine exported torch jit trace mod exported torch jit optimize for inference exported runtimeerror could not construct a memory descriptor using a format tag eout exported print f jit eout eout assert torch allclose out eout logs python eager out tensor grad fn traceback most recent call last file home colin code bug py line in exported torch jit optimize for inference exported runtimeerror could not construct a memory descriptor using a format tag file home colin envs lib site packages torch jit freeze py line in optimize for inference torch c jit pass optimize for inference mod c other methods runtimeerror could not construct a memory descriptor using a format tag versions python pytorch version is debug build false cuda used to build pytorch rocm used to build pytorch n a os ubuntu lts gcc version ubuntu clang version could not collect cmake version version libc version glibc python version main aug bit runtime python platform linux generic with is cuda available true cuda runtime version gpu models and configuration gpu nvidia geforce rtx gpu nvidia geforce rtx gpu nvidia geforce rtx nvidia driver version cudnn version could not collect hip runtime version n a miopen runtime version n a is xnnpack available true versions of relevant libraries numpy torch torchaudio torchvision numpy pypi pypi torch pypi pypi torchaudio pypi pypi torchvision pypi pypi cc ezyang gchanan gujinghui 
penghuicheng xiaobingsuper jianyuh vitalyfedyunin

| 0

|

825,453

| 31,390,725,302

|

IssuesEvent

|

2023-08-26 09:59:40

|

sosyal-app/frontend

|

https://api.github.com/repos/sosyal-app/frontend

|

closed

|

Login screen > Code

|

priority feature

|

Login function: verify that it works.

Process and store the JWT tokens returned during login.

|

1.0

|

Login screen > Code - Login function: verify that it works.

Process and store the JWT tokens returned during login.

|

non_process

|

login screen code login function verify that it works process and store the jwt tokens returned during login

| 0

|

79,262

| 22,685,177,724

|

IssuesEvent

|

2022-07-04 13:29:13

|

hannobraun/Fornjot

|

https://api.github.com/repos/hannobraun/Fornjot

|

closed

|

Check documentation in CI build

|

good first issue type: development topic: build

|

The rustdoc documentation had accumulated a few warnings that I fixed (https://github.com/hannobraun/Fornjot/pull/270). This should be checked in the CI build. A check can be added to [`workflows/test.yml`](https://github.com/hannobraun/Fornjot/blob/main/.github/workflows/test.yml).

Labeling https://github.com/hannobraun/Fornjot/labels/good%20first%20issue, as this is a self-contained change that doesn't require knowledge of Fornjot.

|

1.0

|

Check documentation in CI build - The rustdoc documentation had accumulated a few warnings that I fixed (https://github.com/hannobraun/Fornjot/pull/270). This should be checked in the CI build. A check can be added to [`workflows/test.yml`](https://github.com/hannobraun/Fornjot/blob/main/.github/workflows/test.yml).

Labeling https://github.com/hannobraun/Fornjot/labels/good%20first%20issue, as this is a self-contained change that doesn't require knowledge of Fornjot.

|

non_process

|

check documentation in ci build the rustdoc documentation had accumulated a few warnings that i fixed this should be checked in the ci build a check can be added to labeling as this is a self contained change that doesn t require knowledge of fornjot

| 0

|

228,880

| 17,483,539,505

|

IssuesEvent

|

2021-08-09 07:55:48

|

LimeChain/hedera-services

|

https://api.github.com/repos/LimeChain/hedera-services

|

closed

|

Matrix of APIs pros/cons whether we should implement it

|

documentation research

|

Document the pros & cons of the APIs and decide whether to implement it.

|

1.0

|

Matrix of APIs pros/cons whether we should implement it - Document the pros & cons of the APIs and decide whether to implement it.

|

non_process

|

matrix of apis pros cons whether we should implement it document the pros cons of the apis and decide whether to implement it

| 0

|

418,921

| 28,132,466,892

|

IssuesEvent

|

2023-04-01 02:15:16

|

harrisont/fastbuild-vscode

|

https://api.github.com/repos/harrisont/fastbuild-vscode

|

closed

|

Improve documentation

|

documentation

|

* Update the contributor-docs with a high-level architecture (lexer, parser, evaluator).

* Update the contributor-docs with a file-layout overview.

|

1.0

|

Improve documentation - * Update the contributor-docs with a high-level architecture (lexer, parser, evaluator).

* Update the contributor-docs with a file-layout overview.

|

non_process

|

improve documentation update the contributor docs with a high level architecture lexer parser evaluator update the contributor docs with a file layout overview

| 0

|

21,188

| 28,180,221,327

|

IssuesEvent

|

2023-04-04 01:25:26

|

ssytnt/papers

|

https://api.github.com/repos/ssytnt/papers

|

opened

|

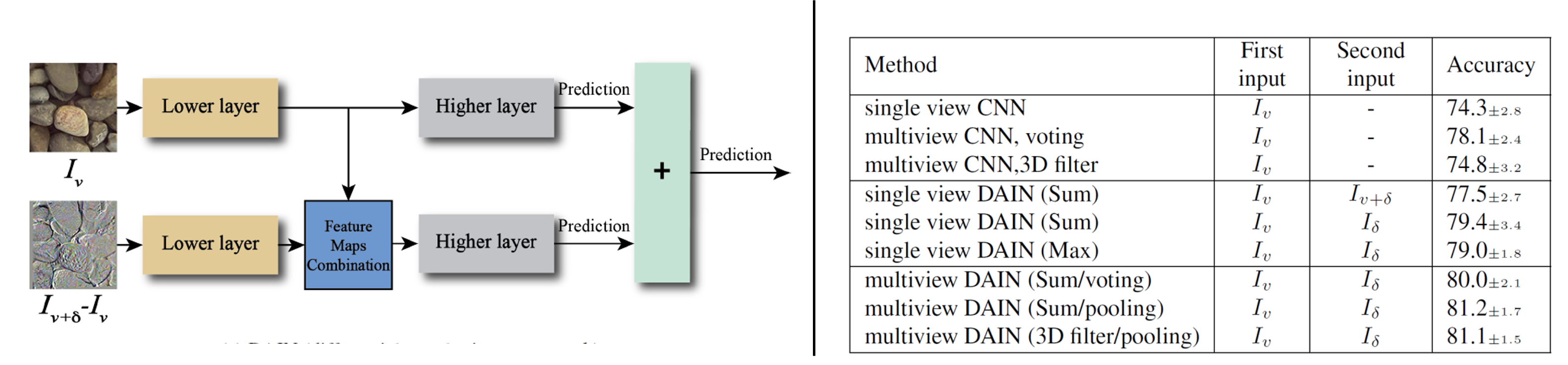

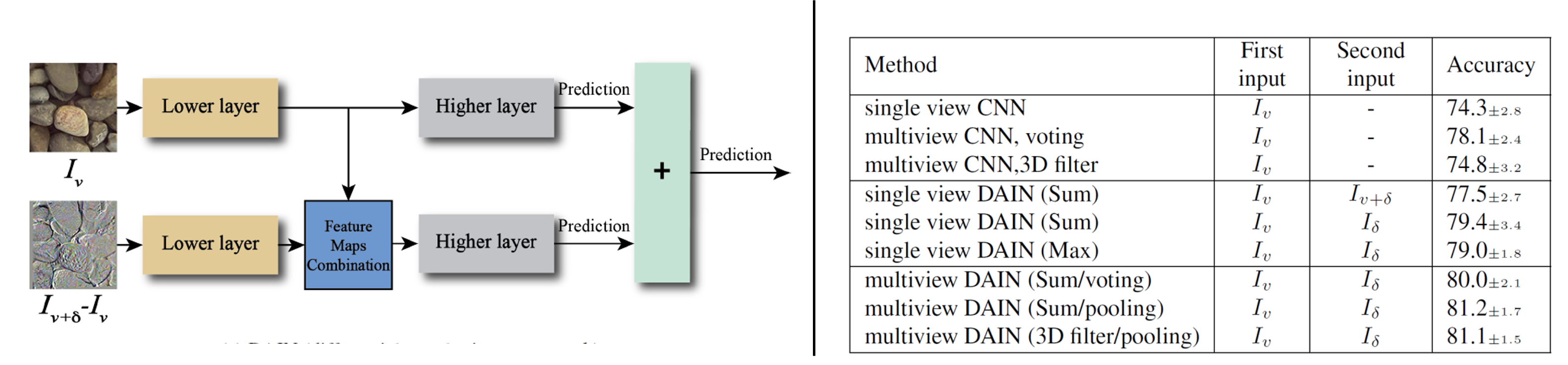

Differential Angular Imaging for Material Recognition[Xue+(Rutgers Univ.), CVPR2017]

|

ImageProcessing Imaging

|

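## Overview

Material estimation from (local) RGB images captured from multiple viewpoints.

## Method

- Uses a two-stream model, an architecture that has produced strong results in action recognition.

- Images were acquired outdoors in 5° steps using a robot equipped with a robot arm.

## Results

- Adding the differential image to the input improves accuracy. Taking the difference explicitly is especially effective when the amount of data is insufficient.

- A single differential image gives better results than using multiple RGB images as input.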

|

1.0

|

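Differential Angular Imaging for Material Recognition[Xue+(Rutgers Univ.), CVPR2017] - ## Overview

Material estimation from (local) RGB images captured from multiple viewpoints.

## Method

- Uses a two-stream model, an architecture that has produced strong results in action recognition.

- Images were acquired outdoors in 5° steps using a robot equipped with a robot arm.

## Results

- Adding the differential image to the input improves accuracy. Taking the difference explicitly is especially effective when the amount of data is insufficient.

- A single differential image gives better results than using multiple RGB images as input.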

|

process

|

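differential angular imaging for material recognition overview material estimation from local rgb images captured from multiple viewpoints method uses a two stream model an architecture that has produced strong results in action recognition images were acquired outdoors in ° steps using a robot equipped with a robot arm results adding the differential image to the input improves accuracy taking the difference explicitly is especially effective when the amount of data is insufficient rather than using multiple rgb images as input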

| 1

|

4,440

| 7,312,884,057

|

IssuesEvent

|

2018-02-28 22:32:01

|

MicrosoftDocs/azure-docs

|

https://api.github.com/repos/MicrosoftDocs/azure-docs

|

closed

|

Edge support

|

active-directory cxp in-process triaged

|

Hello

When will you add support for Edge?

We have Edge as default browser in our Windows 10 setup.

We cannot deploy this needed feature before Edge is supported.

This has been a problem for almost 6 months now. It makes no sense to me. What is going on?

---

#### Document Details

⚠ *Do not edit this section. It is required for docs.microsoft.com ➟ GitHub issue linking.*

* ID: 3e1db2d3-8e7d-0c60-f5c5-827b6a673505

* Version Independent ID: f28d55d1-f974-7767-6c69-56b74f14ff50

* [Content](https://docs.microsoft.com/en-us/azure/active-directory/connect/active-directory-aadconnect-sso)

* [Content Source](https://github.com/Microsoft/azure-docs/blob/master/articles/active-directory/connect/active-directory-aadconnect-sso.md)

* Service: active-directory

|

1.0

|

Edge support - Hello

When will you add support for Edge?

We have Edge as default browser in our Windows 10 setup.

We cannot deploy this needed feature before Edge is supported.

This has been a problem for almost 6 months now. It makes no sense to me. What is going on?

---

#### Document Details

⚠ *Do not edit this section. It is required for docs.microsoft.com ➟ GitHub issue linking.*

* ID: 3e1db2d3-8e7d-0c60-f5c5-827b6a673505

* Version Independent ID: f28d55d1-f974-7767-6c69-56b74f14ff50

* [Content](https://docs.microsoft.com/en-us/azure/active-directory/connect/active-directory-aadconnect-sso)

* [Content Source](https://github.com/Microsoft/azure-docs/blob/master/articles/active-directory/connect/active-directory-aadconnect-sso.md)

* Service: active-directory

|

process

|

edge support hello when will you add support for edge we have edge as default browser in our windows setup we cannot deploy this needed feature before edge is supported this has been a problem for almost month now it makes no sense to me what is going on document details ⚠ do not edit this section it is required for docs microsoft com ➟ github issue linking id version independent id service active directory

| 1

|

42,868

| 23,020,866,788

|

IssuesEvent

|

2022-07-22 04:34:03

|

keras-team/keras

|

https://api.github.com/repos/keras-team/keras

|

closed

|

NASNet error using custom input shape without top layer

|

type:bug/performance

|

https://github.com/keras-team/keras/blob/07e13740fd181fc3ddec7d9a594d8a08666645f6/keras/applications/nasnet.py#L174-L180

Shouldn't the `require_flatten` parameter use the value of `include_top` instead of the constant `True`?

Passing `True` makes a custom input shape invalid whenever its size differs from `default_size` and `include_top = False`.

I checked several other models, such as efficientnet_v2 and xception; they all pass `include_top` rather than `True`.
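
To illustrate the concern, here is a simplified sketch of the kind of shape validation involved. It is not the actual Keras code: `check_input_shape` is a hypothetical stand-in, it handles only channels-last shapes, and the sizes below are example values.

```python
def check_input_shape(input_shape, default_size, require_flatten, weights="imagenet"):
    """Simplified, hypothetical stand-in for the input-shape validation."""
    default_shape = (default_size, default_size, 3)
    if weights == "imagenet" and require_flatten:
        # With a flattening top, only the default shape is accepted.
        if input_shape is not None and tuple(input_shape) != default_shape:
            raise ValueError(f"input_shape should be {default_shape}")
        return default_shape
    # Without the top, an explicitly given shape passes through in this sketch.
    return tuple(input_shape) if input_shape is not None else (None, None, 3)


custom = (256, 256, 3)

# Tying the flag to include_top=False accepts the custom shape.
accepted = check_input_shape(custom, default_size=331, require_flatten=False)
print(accepted)

# Hard-coding True rejects it, even though no top layer is requested.
try:
    check_input_shape(custom, default_size=331, require_flatten=True)
    rejected = False
except ValueError as exc:
    rejected = True
    print("rejected:", exc)
```

Under these assumptions, passing `include_top` through as `require_flatten` is what lets a headless model accept a non-default input size.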

|

True

|

NASNet error using custom input shape without top layer - https://github.com/keras-team/keras/blob/07e13740fd181fc3ddec7d9a594d8a08666645f6/keras/applications/nasnet.py#L174-L180

Shouldn't the `require_flatten` parameter use the value of `include_top` instead of the constant `True`?

Passing `True` makes a custom input shape invalid whenever its size differs from `default_size` and `include_top = False`.

I checked several other models, such as efficientnet_v2 and xception; they all pass `include_top` rather than `True`.

|

non_process

|

nasnet error using custom input shape without top layer shouldn t the parameter require flatten uses include top value instead of constant true using true makes custom shaped input invalid when using size that isn t the default size with include top false checked several other models such as efficientnet and xception they all use include top as value instead of true

| 0

|

176,135

| 14,564,456,177

|

IssuesEvent

|

2020-12-17 05:09:26

|

sanskrit-lexicon/COLOGNE

|

https://api.github.com/repos/sanskrit-lexicon/COLOGNE

|

closed

|

pywork - segregate same and separate files

|

Documentation

|

A cursory look with `diff` shows the following differences between the files actually used in the `pywork` folders of the VCP and MD dictionaries.

# Same files

```

pywork/hw.py

pywork/hw0.py

pywork/hw2.py

pywork/hwparse.py # Only `dictcode = 'vcp'` line is different. This can be handled easily.

pywork/parseheadline.py

pywork/updateByLine.py # Except the `try except` in md

```

# Different

```

pywork/headword.py

pywork/hw1.py

pywork/hw_page.py

pywork/make_xml.py

pywork/update.py # Not seen in vcp/pywork.

```

@funderburkjim, could you confirm whether this classification is correct?

*Question 1* - If the classification is correct, we can keep the SAME files in one place only, so that any update applies to all the dictionaries at once.

*Question 2* - Are the files in the DIFFERENT list so different that a unified version is not possible? Some parts will genuinely differ. Can we move those parts into some form of config file and make the scripts generic?

If both questions are answered satisfactorily, we can move toward a unified code base (as far as possible).
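
As a rough sketch of what "generic" could mean for a file such as `hwparse.py`, where only the `dictcode` line differs, the dictionary code could be passed in rather than hard-coded. The function name `parse_args` and the command-line layout are assumptions for illustration, not the existing scripts.

```python
import argparse


def parse_args(argv=None):
    """Read the dictionary code from the command line instead of hard-coding it."""
    parser = argparse.ArgumentParser(description="Shared pywork script (sketch)")
    parser.add_argument("dictcode", help="dictionary code, e.g. vcp or md")
    return parser.parse_args(argv)


# The same script then serves every dictionary.
args = parse_args(["vcp"])
dictcode = args.dictcode.lower()
print(dictcode)
```

Per-dictionary differences beyond the code itself would still need a config file or similar, as raised in Question 2.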

|

1.0

|

pywork - segregate same and separate files - A cursory look with `diff` shows the following differences between the files actually used in the `pywork` folders of the VCP and MD dictionaries.

# Same files

```

pywork/hw.py

pywork/hw0.py

pywork/hw2.py

pywork/hwparse.py # Only `dictcode = 'vcp'` line is different. This can be handled easily.

pywork/parseheadline.py

pywork/updateByLine.py # Except the `try except` in md

```

# Different

```

pywork/headword.py

pywork/hw1.py

pywork/hw_page.py

pywork/make_xml.py

pywork/update.py # Not seen in vcp/pywork.

```

@funderburkjim, could you confirm whether this classification is correct?

*Question 1* - If the classification is correct, we can keep the SAME files in one place only, so that any update applies to all the dictionaries at once.

*Question 2* - Are the files in the DIFFERENT list so different that a unified version is not possible? Some parts will genuinely differ. Can we move those parts into some form of config file and make the scripts generic?

If both questions are answered satisfactorily, we can move toward a unified code base (as far as possible).

|

non_process

|

pywork segregate same and separate files a cursory look with diff shows the following differences between the actually used files of pywork folder between vcp and md dictionaries same files pywork hw py pywork py pywork py pywork hwparse py only dictcode vcp line is different this can be handled easily pywork parseheadline py pywork updatebyline py except the try except in md different pywork headword py pywork py pywork hw page py pywork make xml py pywork update py not seen in vcp pywork funderburkjim may like to tell whether the classification is ok or not question if the classification is ok we can keep the same files at one place only so that any update is directly applied to all the dictionaries at one go question are the files in the different list so different that we may not have their unified version there would be really different items at some place can we put them in some form of config file and make the scripts generic if these two items are answered satisfactorily we can move ahead to unified code base as far as possible

| 0

|

344,866

| 30,768,181,697

|

IssuesEvent

|

2023-07-30 15:09:21

|

oh-dab/server-api

|

https://api.github.com/repos/oh-dab/server-api

|

opened

|

[Feat] Textbook detail lookup (retrieve mistake notes for all students): controller test & implementation

|

feat test MISTAKENOTE

|

### WHAT-TO-DO

<!-- List the tasks to be carried out so that the work to do is clearly understood. -->

- [ ] controller unit tests

- [ ] controller implementation

|

1.0

|

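[Feat] Textbook detail lookup (retrieve mistake notes for all students): controller test & implementation - ### WHAT-TO-DO

<!-- List the tasks to be carried out so that the work to do is clearly understood. -->

- [ ] controller unit tests

- [ ] controller implementation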

|

non_process

|

textbook detail lookup retrieve mistake notes for all students controller test implementation what to do controller unit tests controller implementation

| 0

|

15,656

| 19,846,876,032

|

IssuesEvent

|

2022-01-21 07:45:56

|

ooi-data/RS03AXPS-PC03A-06-VADCPA301-streamed-vadcp_velocity_beam_5

|

https://api.github.com/repos/ooi-data/RS03AXPS-PC03A-06-VADCPA301-streamed-vadcp_velocity_beam_5

|

opened

|

🛑 Processing failed: ValueError

|

process

|

## Overview

`ValueError` found in `processing_task` task during run ended on 2022-01-21T07:45:56.012083.

## Details

Flow name: `RS03AXPS-PC03A-06-VADCPA301-streamed-vadcp_velocity_beam_5`

Task name: `processing_task`

Error type: `ValueError`

Error message: not enough values to unpack (expected 3, got 0)

<details>

<summary>Traceback</summary>

```

Traceback (most recent call last):

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/ooi_harvester/processor/pipeline.py", line 165, in processing

final_path = finalize_data_stream(

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/ooi_harvester/processor/__init__.py", line 84, in finalize_data_stream

append_to_zarr(mod_ds, final_store, enc, logger=logger)

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/ooi_harvester/processor/__init__.py", line 357, in append_to_zarr

_append_zarr(store, mod_ds)

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/ooi_harvester/processor/utils.py", line 187, in _append_zarr

existing_arr.append(var_data.values)

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/xarray/core/variable.py", line 519, in values

return _as_array_or_item(self._data)

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/xarray/core/variable.py", line 259, in _as_array_or_item

data = np.asarray(data)

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/dask/array/core.py", line 1541, in __array__

x = self.compute()

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/dask/base.py", line 288, in compute

(result,) = compute(self, traverse=False, **kwargs)

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/dask/base.py", line 571, in compute

results = schedule(dsk, keys, **kwargs)

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/dask/threaded.py", line 79, in get

results = get_async(

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/dask/local.py", line 507, in get_async

raise_exception(exc, tb)

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/dask/local.py", line 315, in reraise

raise exc

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/dask/local.py", line 220, in execute_task

result = _execute_task(task, data)

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/dask/core.py", line 119, in _execute_task

return func(*(_execute_task(a, cache) for a in args))

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/dask/array/core.py", line 116, in getter

c = np.asarray(c)

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/xarray/core/indexing.py", line 357, in __array__

return np.asarray(self.array, dtype=dtype)

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/xarray/core/indexing.py", line 551, in __array__

self._ensure_cached()

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/xarray/core/indexing.py", line 548, in _ensure_cached

self.array = NumpyIndexingAdapter(np.asarray(self.array))

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/xarray/core/indexing.py", line 521, in __array__

return np.asarray(self.array, dtype=dtype)

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/xarray/core/indexing.py", line 422, in __array__

return np.asarray(array[self.key], dtype=None)

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/xarray/coding/variables.py", line 70, in __array__

return self.func(self.array)

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/xarray/coding/variables.py", line 137, in _apply_mask

data = np.asarray(data, dtype=dtype)

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/xarray/core/indexing.py", line 422, in __array__

return np.asarray(array[self.key], dtype=None)

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/xarray/backends/zarr.py", line 73, in __getitem__

return array[key.tuple]

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/zarr/core.py", line 673, in __getitem__

return self.get_basic_selection(selection, fields=fields)

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/zarr/core.py", line 798, in get_basic_selection

return self._get_basic_selection_nd(selection=selection, out=out,

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/zarr/core.py", line 841, in _get_basic_selection_nd

return self._get_selection(indexer=indexer, out=out, fields=fields)

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/zarr/core.py", line 1135, in _get_selection

lchunk_coords, lchunk_selection, lout_selection = zip(*indexer)

ValueError: not enough values to unpack (expected 3, got 0)

```

</details>
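
The last frame of the traceback unpacks `zip(*indexer)` into three names. The sketch below reproduces that failure mode in plain Python. It assumes, which the log does not confirm, that the indexer produced no chunk selections at all (for example because the selection was empty). The helper name `unpack_chunk_selections` is hypothetical.

```python
def unpack_chunk_selections(indexer):
    """Mirror the unpacking pattern seen in the final traceback frame."""
    lchunk_coords, lchunk_selection, lout_selection = zip(*indexer)
    return lchunk_coords, lchunk_selection, lout_selection


# One (chunk_coords, chunk_selection, out_selection) triple unpacks fine.
ok = unpack_chunk_selections([((0,), slice(0, 2), slice(0, 2))])

# An empty indexer makes zip() yield nothing, so the unpacking fails.
try:
    unpack_chunk_selections([])
    message = None
except ValueError as exc:
    message = str(exc)
    print(message)
```

If this assumption holds, checking whether the variable being appended is empty before reading its values would be one place to look.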

|

1.0

|

🛑 Processing failed: ValueError - ## Overview

`ValueError` found in `processing_task` task during run ended on 2022-01-21T07:45:56.012083.

## Details

Flow name: `RS03AXPS-PC03A-06-VADCPA301-streamed-vadcp_velocity_beam_5`

Task name: `processing_task`

Error type: `ValueError`

Error message: not enough values to unpack (expected 3, got 0)

<details>

<summary>Traceback</summary>

```

Traceback (most recent call last):

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/ooi_harvester/processor/pipeline.py", line 165, in processing

final_path = finalize_data_stream(

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/ooi_harvester/processor/__init__.py", line 84, in finalize_data_stream

append_to_zarr(mod_ds, final_store, enc, logger=logger)

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/ooi_harvester/processor/__init__.py", line 357, in append_to_zarr

_append_zarr(store, mod_ds)

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/ooi_harvester/processor/utils.py", line 187, in _append_zarr

existing_arr.append(var_data.values)

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/xarray/core/variable.py", line 519, in values

return _as_array_or_item(self._data)

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/xarray/core/variable.py", line 259, in _as_array_or_item

data = np.asarray(data)

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/dask/array/core.py", line 1541, in __array__

x = self.compute()

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/dask/base.py", line 288, in compute

(result,) = compute(self, traverse=False, **kwargs)

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/dask/base.py", line 571, in compute

results = schedule(dsk, keys, **kwargs)

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/dask/threaded.py", line 79, in get

results = get_async(

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/dask/local.py", line 507, in get_async

raise_exception(exc, tb)

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/dask/local.py", line 315, in reraise

raise exc

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/dask/local.py", line 220, in execute_task

result = _execute_task(task, data)

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/dask/core.py", line 119, in _execute_task

return func(*(_execute_task(a, cache) for a in args))

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/dask/array/core.py", line 116, in getter

c = np.asarray(c)

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/xarray/core/indexing.py", line 357, in __array__

return np.asarray(self.array, dtype=dtype)

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/xarray/core/indexing.py", line 551, in __array__

self._ensure_cached()

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/xarray/core/indexing.py", line 548, in _ensure_cached

self.array = NumpyIndexingAdapter(np.asarray(self.array))

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/xarray/core/indexing.py", line 521, in __array__

return np.asarray(self.array, dtype=dtype)

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/xarray/core/indexing.py", line 422, in __array__

return np.asarray(array[self.key], dtype=None)

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/xarray/coding/variables.py", line 70, in __array__

return self.func(self.array)

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/xarray/coding/variables.py", line 137, in _apply_mask

data = np.asarray(data, dtype=dtype)

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/xarray/core/indexing.py", line 422, in __array__

return np.asarray(array[self.key], dtype=None)

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/xarray/backends/zarr.py", line 73, in __getitem__

return array[key.tuple]

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/zarr/core.py", line 673, in __getitem__

return self.get_basic_selection(selection, fields=fields)

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/zarr/core.py", line 798, in get_basic_selection

return self._get_basic_selection_nd(selection=selection, out=out,

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/zarr/core.py", line 841, in _get_basic_selection_nd

return self._get_selection(indexer=indexer, out=out, fields=fields)

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/zarr/core.py", line 1135, in _get_selection

lchunk_coords, lchunk_selection, lout_selection = zip(*indexer)

ValueError: not enough values to unpack (expected 3, got 0)

```

</details>

|

process

|

🛑 processing failed valueerror overview valueerror found in processing task task during run ended on details flow name streamed vadcp velocity beam task name processing task error type valueerror error message not enough values to unpack expected got traceback traceback most recent call last file srv conda envs notebook lib site packages ooi harvester processor pipeline py line in processing final path finalize data stream file srv conda envs notebook lib site packages ooi harvester processor init py line in finalize data stream append to zarr mod ds final store enc logger logger file srv conda envs notebook lib site packages ooi harvester processor init py line in append to zarr append zarr store mod ds file srv conda envs notebook lib site packages ooi harvester processor utils py line in append zarr existing arr append var data values file srv conda envs notebook lib site packages xarray core variable py line in values return as array or item self data file srv conda envs notebook lib site packages xarray core variable py line in as array or item data np asarray data file srv conda envs notebook lib site packages dask array core py line in array x self compute file srv conda envs notebook lib site packages dask base py line in compute result compute self traverse false kwargs file srv conda envs notebook lib site packages dask base py line in compute results schedule dsk keys kwargs file srv conda envs notebook lib site packages dask threaded py line in get results get async file srv conda envs notebook lib site packages dask local py line in get async raise exception exc tb file srv conda envs notebook lib site packages dask local py line in reraise raise exc file srv conda envs notebook lib site packages dask local py line in execute task result execute task task data file srv conda envs notebook lib site packages dask core py line in execute task return func execute task a cache for a in args file srv conda envs notebook lib site packages dask array core py 
line in getter c np asarray c file srv conda envs notebook lib site packages xarray core indexing py line in array return np asarray self array dtype dtype file srv conda envs notebook lib site packages xarray core indexing py line in array self ensure cached file srv conda envs notebook lib site packages xarray core indexing py line in ensure cached self array numpyindexingadapter np asarray self array file srv conda envs notebook lib site packages xarray core indexing py line in array return np asarray self array dtype dtype file srv conda envs notebook lib site packages xarray core indexing py line in array return np asarray array dtype none file srv conda envs notebook lib site packages xarray coding variables py line in array return self func self array file srv conda envs notebook lib site packages xarray coding variables py line in apply mask data np asarray data dtype dtype file srv conda envs notebook lib site packages xarray core indexing py line in array return np asarray array dtype none file srv conda envs notebook lib site packages xarray backends zarr py line in getitem return array file srv conda envs notebook lib site packages zarr core py line in getitem return self get basic selection selection fields fields file srv conda envs notebook lib site packages zarr core py line in get basic selection return self get basic selection nd selection selection out out file srv conda envs notebook lib site packages zarr core py line in get basic selection nd return self get selection indexer indexer out out fields fields file srv conda envs notebook lib site packages zarr core py line in get selection lchunk coords lchunk selection lout selection zip indexer valueerror not enough values to unpack expected got

| 1

|

1,877

| 4,704,239,962

|

IssuesEvent

|

2016-10-13 10:44:36

|

dita-ot/dita-ot

|

https://api.github.com/repos/dita-ot/dita-ot

|

opened

|

Adjust table row span after filtering

|

feature P2 preprocess

|

The DITA source has tables with row spans and profiling attributes on rows. When publishing, the filter strips out some rows and the original `@morerows` value is no longer valid. Right now this fails with an error because XSLT cannot deal with invalid tables like these.

One solution would be to write the custom table coordinate attributes during the initial parse *and* replace `@morerows` with `@dita:rowspan-end`. This is similar to what CALS does with column spans, which specify the span's end column name instead of the span length. After filtering, this could be converted back to `@morerows` by calculating the length from the current coordinates.

This would also work when conref push is used to push new rows into a row span.
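
The conversion could be sketched as follows, in Python for brevity rather than in the toolkit's own Java/XSLT. The function names and the use of zero-based original row indices as coordinates are assumptions made for illustration, and the sketch assumes the row where the span starts survives filtering.

```python
def to_rowspan_end(start_row, morerows):
    """During the initial parse: record the last row the cell covers."""
    return start_row + morerows


def recompute_morerows(start_row, rowspan_end, kept_rows):
    """After filtering: count the surviving rows below the start row that
    still fall inside the span, giving the new morerows value."""
    return sum(1 for row in kept_rows if start_row < row <= rowspan_end)


# A cell in row 0 with morerows=3 originally covers rows 0..3.
end = to_rowspan_end(0, 3)

# Filtering removes row 2; rows 0, 1, 3 and 4 remain.
new_morerows = recompute_morerows(0, end, kept_rows=[0, 1, 3, 4])
print(new_morerows)  # 2
```

Rows pushed into the span by conref push would simply appear as extra kept rows between the start and end coordinates, so the same count applies.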

|

1.0

|

Adjust table row span after filtering - The DITA source has tables with row spans and profiling attributes on rows. When publishing, the filter strips out some rows and the original `@morerows` value is no longer valid. Right now this fails with an error because XSLT cannot deal with invalid tables like these.

One solution would be to write the custom table coordinate attributes during the initial parse *and* replace `@morerows` with `@dita:rowspan-end`. This is similar to what CALS does with column spans, which specify the span's end column name instead of the span length. After filtering, this could be converted back to `@morerows` by calculating the length from the current coordinates.

This would also work when conref push is used to push new rows into a row span.

|

process

|

adjust table row span after filtering dita source has tables with row spans and profiling attributes on rows when publishing the filter strips out some rows and the original morerows is no longer valid right now this will fail in error because xslt cannot deal with invalid tables like these one solution to fix this would be to write the custom table coordinate attributes during initial parse and replace the morerows with dita rowspan end this is similar to what cals does with column span specifying span end column name instead of span length after filtering this could be converted back to morerows by calculating the length with the current coordinates this would also work when conref push is used to push new rows into a rows span

| 1

|

15,457

| 19,669,288,801

|

IssuesEvent

|

2022-01-11 04:21:17

|

q191201771/lal

|

https://api.github.com/repos/q191201771/lal

|

closed

|

ffmpeg pushes RTMP to lal; HLS pull playback stalls

|

#Bug *In process

|

1. ffmpeg.exe -i http://devimages.apple.com.edgekey.net/streaming/examples/bipbop_4x3/gear1/prog_index.m3u8 -c:v copy -an -f flv rtmp://127.0.0.1/live/test110

2. ffplay.exe -i rtmp://127.0.0.1/live/test110 (plays normally)

3. ffplay.exe -i rtmp://127.0.0.1:8080/hls/test110.m3u8 (stalls after playing for a while)

The log from HLS playback up to the stall is as follows:

```

2022/01/09 12:03:01.260854 DEBUG group size=1 - server_manager.go:242

2022/01/09 12:03:01.260854 DEBUG {"stream_name":"test110","audio_codec":"","video_codec":"H264","video_width":400,"video_height":300,"pub":{"protocol":"RTMP","session_id":"RTMPPUBSUB1","remote_addr":"127.0.0.1:52571","start_time":"2022-01-09 12:00:34.993","read_bytes_sum":44299697,"wrote_bytes_sum":3500,"bitrate":2822,"read_bitrate":2822,"write_bitrate":0},"subs":null,"pull":{"protocol":"","session_id":"","remote_addr":"","start_time":"","read_bytes_sum":0,"wrote_bytes_sum":0,"bitrate":0,"read_bitrate":0,"write_bitrate":0}} - server_manager.go:245

2022/01/09 12:03:18.917768 ERROR [STREAMER1] invalid video message length. header={Csid:6 MsgLen:5 MsgTypeId:9 MsgStreamId:1 TimestampAbs:1800029}, payload=00000000 17 02 00 00 00 |.....|

- streamer.go:101

2022/01/09 12:03:18.919773 DEBUG [RTMPPUBSUB1] read command message, ignore it. cmd=FCUnpublish, header={Csid:3 MsgLen:34 MsgTypeId:20 MsgStreamId:0 TimestampAbs:0}, b=23, hex=00000000 02 00 0b 46 43 55 6e 70 75 62 6c 69 73 68 00 40 |...FCUnpublish.@|

00000010 18 00 00 00 00 00 00 05 02 00 07 74 65 73 74 31 |...........test1|

00000020 31 30 |10|

- server_session.go:323

2022/01/09 12:03:18.920767 DEBUG [RTMPPUBSUB1] read command message, ignore it. cmd=deleteStream, header={Csid:3 MsgLen:34 MsgTypeId:20 MsgStreamId:0 TimestampAbs:0}, b=24, hex=00000000 02 00 0c 64 65 6c 65 74 65 53 74 72 65 61 6d 00 |...deleteStream.|

00000010 40 1c 00 00 00 00 00 00 05 00 3f f0 00 00 00 00 |@.........?.....|

00000020 00 00 |..|

- server_session.go:323

2022/01/09 12:03:18.928763 DEBUG [NAZACONN1] close once. err=EOF - connection.go:504

2022/01/09 12:03:18.931767 INFO [RTMPPUBSUB1] rtmp loop done. err=EOF - server.go:69

2022/01/09 12:03:18.933762 DEBUG [GROUP1] [RTMPPUBSUB1] del rtmp PubSession from group. - group.go:652

2022/01/09 12:03:18.934761 INFO [HLSMUXER1] lifecycle dispose hls muxer. - muxer.go:129

2022/01/09 12:03:19.260662 INFO erase empty group. [GROUP1] - server_manager.go:230

2022/01/09 12:03:19.260662 INFO [GROUP1] lifecycle dispose group. - group.go:262

2022/01/09 12:03:31.260661 DEBUG group size=0 - server_manager.go:242

2022/01/09 12:04:01.261023 DEBUG group size=0 - server_manager.go:242

```

|

1.0

|

ffmpeg pushes RTMP to lal; HLS pull playback stalls - 1. ffmpeg.exe -i http://devimages.apple.com.edgekey.net/streaming/examples/bipbop_4x3/gear1/prog_index.m3u8 -c:v copy -an -f flv rtmp://127.0.0.1/live/test110

2. ffplay.exe -i rtmp://127.0.0.1/live/test110 (plays normally)

3. ffplay.exe -i rtmp://127.0.0.1:8080/hls/test110.m3u8 (stalls after playing for a while)

The log from HLS playback up to the stall is as follows:

```

2022/01/09 12:03:01.260854 DEBUG group size=1 - server_manager.go:242

2022/01/09 12:03:01.260854 DEBUG {"stream_name":"test110","audio_codec":"","video_codec":"H264","video_width":400,"video_height":300,"pub":{"protocol":"RTMP","session_id":"RTMPPUBSUB1","remote_addr":"127.0.0.1:52571","start_time":"2022-01-09 12:00:34.993","read_bytes_sum":44299697,"wrote_bytes_sum":3500,"bitrate":2822,"read_bitrate":2822,"write_bitrate":0},"subs":null,"pull":{"protocol":"","session_id":"","remote_addr":"","start_time":"","read_bytes_sum":0,"wrote_bytes_sum":0,"bitrate":0,"read_bitrate":0,"write_bitrate":0}} - server_manager.go:245

2022/01/09 12:03:18.917768 ERROR [STREAMER1] invalid video message length. header={Csid:6 MsgLen:5 MsgTypeId:9 MsgStreamId:1 TimestampAbs:1800029}, payload=00000000 17 02 00 00 00 |.....|

- streamer.go:101

2022/01/09 12:03:18.919773 DEBUG [RTMPPUBSUB1] read command message, ignore it. cmd=FCUnpublish, header={Csid:3 MsgLen:34 MsgTypeId:20 MsgStreamId:0 TimestampAbs:0}, b=23, hex=00000000 02 00 0b 46 43 55 6e 70 75 62 6c 69 73 68 00 40 |...FCUnpublish.@|

00000010 18 00 00 00 00 00 00 05 02 00 07 74 65 73 74 31 |...........test1|

00000020 31 30 |10|

- server_session.go:323

2022/01/09 12:03:18.920767 DEBUG [RTMPPUBSUB1] read command message, ignore it. cmd=deleteStream, header={Csid:3 MsgLen:34 MsgTypeId:20 MsgStreamId:0 TimestampAbs:0}, b=24, hex=00000000 02 00 0c 64 65 6c 65 74 65 53 74 72 65 61 6d 00 |...deleteStream.|

00000010 40 1c 00 00 00 00 00 00 05 00 3f f0 00 00 00 00 |@.........?.....|

00000020 00 00 |..|

- server_session.go:323

2022/01/09 12:03:18.928763 DEBUG [NAZACONN1] close once. err=EOF - connection.go:504

2022/01/09 12:03:18.931767 INFO [RTMPPUBSUB1] rtmp loop done. err=EOF - server.go:69

2022/01/09 12:03:18.933762 DEBUG [GROUP1] [RTMPPUBSUB1] del rtmp PubSession from group. - group.go:652

2022/01/09 12:03:18.934761 INFO [HLSMUXER1] lifecycle dispose hls muxer. - muxer.go:129

2022/01/09 12:03:19.260662 INFO erase empty group. [GROUP1] - server_manager.go:230

2022/01/09 12:03:19.260662 INFO [GROUP1] lifecycle dispose group. - group.go:262

2022/01/09 12:03:31.260661 DEBUG group size=0 - server_manager.go:242

2022/01/09 12:04:01.261023 DEBUG group size=0 - server_manager.go:242

```

|

process

|

ffmpeg pushes rtmp to lal hls pull playback stalls ffmpeg exe i c v copy an f flv rtmp live ffplay exe i rtmp live plays normally ffplay exe i rtmp hls stalls after playing for a while the log from hls playback up to the stall is as follows debug group size server manager go debug stream name audio codec video codec video width video height pub protocol rtmp session id remote addr start time read bytes sum wrote bytes sum bitrate read bitrate write bitrate subs null pull protocol session id remote addr start time read bytes sum wrote bytes sum bitrate read bitrate write bitrate server manager go error invalid video message length header csid msglen msgtypeid msgstreamid timestampabs payload streamer go debug read command message ignore it cmd fcunpublish header csid msglen msgtypeid msgstreamid timestampabs b hex fcunpublish server session go debug read command message ignore it cmd deletestream header csid msglen msgtypeid msgstreamid timestampabs b hex deletestream server session go debug close once err eof connection go info rtmp loop done err eof server go debug del rtmp pubsession from group group go info lifecycle dispose hls muxer muxer go info erase empty group server manager go info lifecycle dispose group group go debug group size server manager go debug group size server manager go

| 1

|

9,260

| 8,576,294,771

|

IssuesEvent

|

2018-11-12 19:55:42

|

MicrosoftDocs/azure-docs

|

https://api.github.com/repos/MicrosoftDocs/azure-docs

|

closed

|

Not sure if you intended to give two links to the same article above?

|

cxp doc-provided in-progress service-fabric/svc triaged

|

The link below is listed twice above. It makes sense, but I wanted to make sure that was your intent.

https://docs.microsoft.com/en-us/azure/service-fabric/service-fabric-application-upgrade-parameters

---

#### Document Details

⚠ *Do not edit this section. It is required for docs.microsoft.com ➟ GitHub issue linking.*

* ID: 273ff0d0-2b07-0157-f090-fe6a4c8da21b

* Version Independent ID: 0039fbc1-0774-8a6b-0222-07df80ce216a

* Content: [Configure the upgrade of a Service Fabric application](https://docs.microsoft.com/en-us/azure/service-fabric/service-fabric-visualstudio-configure-upgrade#parameters-needed-to-upgrade)

* Content Source: [articles/service-fabric/service-fabric-visualstudio-configure-upgrade.md](https://github.com/Microsoft/azure-docs/blob/master/articles/service-fabric/service-fabric-visualstudio-configure-upgrade.md)

* Service: **service-fabric**

* GitHub Login: @MikkelHegn

* Microsoft Alias: **mikkelhegn**

|

1.0

|