| Unnamed: 0 | id | type | created_at | repo | repo_url | action | title | labels | body | index | text_combine | label | text | binary_label |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

4,066

| 6,996,181,679

|

IssuesEvent

|

2017-12-15 22:50:28

|

P0cL4bs/WiFi-Pumpkin

|

https://api.github.com/repos/P0cL4bs/WiFi-Pumpkin

|

closed

|

Redirect Traffic from all domain doesn't working

|

enhancement in process priority solved

|

## Redirect traffic from all domains doesn't work?

Hi, I use DNS Spoofer with the Windows Update Attack Generator.

In DNS Spoofer I enable "Redirect traffic from all domains", but when I browse to any website through the Wi-Fi AP I get a no-connection screen. If I disable "Redirect traffic from all domains" and manually add "google.com", redirection works for that domain, and only for that domain.

Thanks for helping me (screenshot of my tool config).

* Wireless adapter name: Don't know the name, but it works fine.

* Tool version used: latest update

* Virtual machine: No

* Operating system and version: Kali Linux 2017.2

|

1.0

|

Redirect Traffic from all domain doesn't working - ## Redirect traffic from all domains doesn't work?

Hi, I use DNS Spoofer with the Windows Update Attack Generator.

In DNS Spoofer I enable "Redirect traffic from all domains", but when I browse to any website through the Wi-Fi AP I get a no-connection screen. If I disable "Redirect traffic from all domains" and manually add "google.com", redirection works for that domain, and only for that domain.

Thanks for helping me (screenshot of my tool config).

* Wireless adapter name: Don't know the name, but it works fine.

* Tool version used: latest update

* Virtual machine: No

* Operating system and version: Kali Linux 2017.2

|

process

|

redirect traffic from all domain doesn t working redirect traffic from all domain doesn t working hi i use dns spoofer with windows update attack generator in dns spoofer i activate redirect traffic from all domains but when on ap wifi i go on any website i have no connection screen but if i disable redirect traffic from all domains and add manualy google com its working on this and only on this thanks for helping me screen of my tool config card wireless adapters name don t know the name but working good version used tool last update virtual machine no operating system and version kali linux

| 1

|

397,227

| 27,155,924,072

|

IssuesEvent

|

2023-02-17 07:48:04

|

WordPress/hosting-handbook

|

https://api.github.com/repos/WordPress/hosting-handbook

|

closed

|

Security page changes (6): Cache (Opcache)

|

documentation WCEU

|

Some changes, but more or less the same.

ACTUAL TEXT:

### OpCache Security

PHP opcode caching can significantly improve the performance of PHP processing for WordPress websites, as outlined in the Performance section of the WordPress Hosting Handbook. However, when improperly configured, PHP opcode caching can allow users to access other users' PHP files without authorization. There are important PHP configuration options for opcode caching that mitigate vulnerabilities such as unauthorized file access.

#### Validate permission

The following setting makes PHP check that the current user has the necessary permissions to access the cached file. It should be enabled at the root php.ini configuration level to prevent users from accessing other users' cached files.

`opcache.validate_permission = On`

This setting is not enabled by default. It is also only available as of PHP 7.0.14.

#### Validate root

The following setting prevents PHP users from accessing files outside of the chroot'd directory to which they normally would not have access. It should also be added to the root php.ini configuration level to prevent unauthorized access to files.

`opcache.validate_root = On`

This setting is not enabled by default. It is also only available as of PHP 7.0.14.

#### Restrict API

Normally, any PHP user can access the opcache API for viewing the currently cached files and for managing the PHP opcode cache. With some PHP configurations, however, the PHP opcode cache shares the same memory between all users on the server. Sharing the PHP opcode cache between all users means all users can view and access the PHP opcode cache and can access other users' cached PHP files. Restricting the Opcache API prevents PHP scripts run in unauthorized directories from viewing cached files and interacting with the PHP opcode cache manually from within PHP scripts. The following setting defines the directory path PHP scripts must start with to be able to access the Opcache API.

`opcache.restrict_api = '/some/folder/path'`

The default value for the setting is `''`, which means there are no restrictions on which PHP scripts can access the Opcache API. This setting should be defined in the root php.ini for your PHP configuration in order to prevent users from overriding it.

NEW PROPOSAL:

### Opcache Security

PHP opcode caching can significantly improve the performance of PHP processing; however, when misconfigured, it can allow users to access other users' PHP files without authorization. There are important PHP configuration options for opcode caching that mitigate vulnerabilities such as unauthorized file access.

#### Access validation

The following configuration causes PHP to check that the current user has the necessary permissions to access the cached file. It must be enabled at the root level of php.ini to prevent users from accessing other users' cached files.

```ini
opcache.validate_permission = On
```

This setting is disabled by default and is available as of PHP 7.0.14.

#### Root validation

The following configuration prevents PHP users from accessing files outside the chrooted directory that they would not normally have access to. It should also be added to the root level of php.ini to prevent unauthorized access to files.

```ini
opcache.validate_root = On
```

This setting is disabled by default and is available as of PHP 7.0.14.

#### API Restriction

Normally, any PHP user can access the Opcache API to view the currently cached files and to manage the PHP opcode cache. However, with some PHP configurations, the PHP opcode cache shares the same memory among all users on the server, which means all users can view the cache and access other users' cached PHP files.

Restricting the Opcache API prevents PHP scripts running in unauthorized directories from viewing cached files and from interacting with the PHP opcode cache manually from within PHP scripts. The following configuration defines the directory path that a PHP script's path must start with in order to access the Opcache API.

```ini
opcache.restrict_api = '/some/folder/path'
```

The default value for this configuration is `''` (an empty string), which means that there are no restrictions on which PHP scripts can access the Opcache API. This setting must be defined in the root php.ini of your PHP configuration to prevent users from overriding it.
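The three settings above can be checked mechanically. Below is a minimal Python sketch that audits a php.ini fragment for them. `parse_ini` and `audit_opcache` are hypothetical helper names, the parser is deliberately naive (no inline comments, no included files), and treating `1`, `true` and `yes` like `On` is an assumption about PHP's boolean ini values rather than something stated above.

```python
# Flags the text above recommends enabling (expected value: On).
RECOMMENDED_ON = ("opcache.validate_permission", "opcache.validate_root")

# Spellings treated as boolean true in php.ini (assumption for this sketch).
TRUTHY = {"on", "1", "true", "yes"}


def parse_ini(text):
    """Naive php.ini reader (hypothetical helper): returns {directive: value}.

    Skips blank lines, ';' comments and [section] headers, strips quotes around
    values, and lets later occurrences override earlier ones. Inline comments
    and included files are not handled.
    """
    settings = {}
    for raw in text.splitlines():
        line = raw.strip()
        if not line or line.startswith(";") or line.startswith("["):
            continue
        if "=" not in line:
            continue
        key, value = line.split("=", 1)
        settings[key.strip().lower()] = value.strip().strip("'\"")
    return settings


def audit_opcache(text):
    """Return human-readable problems with the opcache hardening settings."""
    settings = parse_ini(text)
    problems = []
    for key in RECOMMENDED_ON:
        value = settings.get(key)
        if value is None or value.lower() not in TRUTHY:
            problems.append(f"{key} should be On (found {value!r})")
    # The default restrict_api is '' (empty): any script may use the Opcache API.
    if not settings.get("opcache.restrict_api"):
        problems.append("opcache.restrict_api is empty: any script can use the Opcache API")
    return problems


if __name__ == "__main__":
    sample = """
[opcache]
opcache.validate_permission = On
opcache.validate_root = Off
"""
    for problem in audit_opcache(sample):
        print(problem)
```

A real audit would more reliably read the effective values from the running PHP (for example via `ini_get()`), since per-directory overrides can differ from the root php.ini.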

|

1.0

|

Security page changes (6): Cache (Opcache) - Some changes, but more or less the same.

ACTUAL TEXT:

### OpCache Security

PHP opcode caching can significantly improve the performance of PHP processing for WordPress websites, as outlined in the Performance section of the WordPress Hosting Handbook. However, when improperly configured, PHP opcode caching can allow users to access other users' PHP files without authorization. There are important PHP configuration options for opcode caching that mitigate vulnerabilities such as unauthorized file access.

#### Validate permission

The following setting makes PHP check that the current user has the necessary permissions to access the cached file. It should be enabled at the root php.ini configuration level to prevent users from accessing other users' cached files.

`opcache.validate_permission = On`

This setting is not enabled by default. It is also only available as of PHP 7.0.14.

#### Validate root

The following setting prevents PHP users from accessing files outside of the chroot'd directory to which they normally would not have access. It should also be added to the root php.ini configuration level to prevent unauthorized access to files.

`opcache.validate_root = On`

This setting is not enabled by default. It is also only available as of PHP 7.0.14.

#### Restrict API

Normally, any PHP user can access the opcache API for viewing the currently cached files and for managing the PHP opcode cache. With some PHP configurations, however, the PHP opcode cache shares the same memory between all users on the server. Sharing the PHP opcode cache between all users means all users can view and access the PHP opcode cache and can access other users' cached PHP files. Restricting the Opcache API prevents PHP scripts run in unauthorized directories from viewing cached files and interacting with the PHP opcode cache manually from within PHP scripts. The following setting defines the directory path PHP scripts must start with to be able to access the Opcache API.

`opcache.restrict_api = '/some/folder/path'`

The default value for the setting is `''`, which means there are no restrictions on which PHP scripts can access the Opcache API. This setting should be defined in the root php.ini for your PHP configuration in order to prevent users from overriding it.

NEW PROPOSAL:

### Opcache Security

PHP opcode caching can significantly improve the performance of PHP processing; however, when misconfigured, it can allow users to access other users' PHP files without authorization. There are important PHP configuration options for opcode caching that mitigate vulnerabilities such as unauthorized file access.

#### Access validation

The following configuration causes PHP to check that the current user has the necessary permissions to access the cached file. It must be enabled at the root level of php.ini to prevent users from accessing other users' cached files.

```ini
opcache.validate_permission = On
```

This setting is disabled by default and is available as of PHP 7.0.14.

#### Root validation

The following configuration prevents PHP users from accessing files outside the chrooted directory that they would not normally have access to. It should also be added to the root level of php.ini to prevent unauthorized access to files.

```ini
opcache.validate_root = On
```

This setting is disabled by default and is available as of PHP 7.0.14.

#### API Restriction

Normally, any PHP user can access the Opcache API to view the currently cached files and to manage the PHP opcode cache. However, with some PHP configurations, the PHP opcode cache shares the same memory among all users on the server, which means all users can view the cache and access other users' cached PHP files.

Restricting the Opcache API prevents PHP scripts running in unauthorized directories from viewing cached files and from interacting with the PHP opcode cache manually from within PHP scripts. The following configuration defines the directory path that a PHP script's path must start with in order to access the Opcache API.

```ini
opcache.restrict_api = '/some/folder/path'
```

The default value for this configuration is `''` (an empty string), which means that there are no restrictions on which PHP scripts can access the Opcache API. This setting must be defined in the root php.ini of your PHP configuration to prevent users from overriding it.

|

non_process

|

security page changes cache opcache some changes but more or less the same actual text opcache security php opcode caching can significantly improve the performance of php processing for wordpress websites as outlined in the performance section of the wordpress hosting handbook however when improperly configured php opcode caching can enable users to access other users php files without authorization there are important php configuration options for opcode caching that mitigate vulnerabilities such as accessing files without authorization validate permission the following setting makes php check that the current user has the necessary permissions to access the cached file it should be enabled at the root php ini configuration level to prevent users from accessing other users cached files opcache validate permission on this setting is not enabled by default it is also only available as of php validate root the following setting prevents php users from accessing files outside of the chroot d directory to which they normally would not have access it should also be added to the root php ini configuration level to prevent unauthorized access to files opcache validate root on this setting is not enabled by default it is also only available as of php restrict api normally any php user can access the opcache api for viewing the currently cached files and for managing the php opcode cache with some php configurations however the php opcode cache shares the same memory between all users on the server sharing the php opcode cache between all users means all users can view and access the php opcode cache and can access other users cached php files restricting the opcache api prevents php scripts run in unauthorized directories from viewing cached files and interacting with the php opcode cache manually from within php scripts the following setting defines the directory path php scripts must start with to be able to access the opcache api opcache restrict api some folder path 
the default value for the setting is which means there are no restrictions on which php scripts can access the opcache api this setting should be defined in the root php ini for your php configuration in order to prevent users from overriding it new proposal opcache security the php opcode can significantly improve the performance of php processing however when misconfigured it can allow users to access other users php files without authorization there are important php configuration options for opcode caching that mitigate vulnerabilities such as unauthorized file access access validation the following configuration causes php to check that the current user has the necessary permissions to access the cache file it must be enabled at the root level of php ini to prevent users from accessing other users cached files opcache validate permission on this setting is not activated by default available as of php root validation the following configuration prevents php users from accessing files outside the chrooted directory that they would not normally have access to it should also be added to the root level of php ini to prevent unauthorized access to files opcache validate root on this setting is not activated by default available as of php api restriction normally any php user can access the opcache api to view the currently cached files and to manage the php opcode cache however with some php configurations the php opcode cache shares the same memory among all users on the server restricting the opcache api prevents php scripts from running in directories that are not authorized to view cached files and interact with the php opcode cache manually from within the php scripts the following configuration defines the directory path with which php scripts must start in order to access the opcache api opcache restrict api some folder path the default value for the configuration is nothing which means that there are no restrictions on which php scripts can access the 
opcache api this setting must be defined in the php ini root of your php configuration to prevent users from overriding it

| 0

|

2,065

| 4,876,031,751

|

IssuesEvent

|

2016-11-16 11:27:52

|

sysown/proxysql

|

https://api.github.com/repos/sysown/proxysql

|

closed

|

Consider setting transaction_persistent=1 by default.

|

ADMIN CONNECTION POOL QUERY PROCESSOR ROUTING

|

I've seen the comments that explain why `transaction_persistent=0` is the default (https://github.com/sysown/proxysql/issues/653).

This issue is for discussing that: after reading the comment, I still think `transaction_persistent=1` should be the default.

### why `0` is good

Yes, I understand that some connectors, applications, frameworks and many other tools set `autocommit=0` by default (that is, they disable autocommit) or wrap everything inside a transaction.

So yes, having `transaction_persistent=1` by default could make query routing feel less flexible than an unknowing, inexperienced user would expect: someone who is just adding some users, or copy-pasting something from a blog post found on the internet, and then finds out that the `proxysql` tool did not send those darn SELECTs to a replica.

### why `1` is good

However, that exact same inexperienced DBA/sysadmin might actually create major problems in an application with the current default of `transaction_persistent=0`. Suddenly, after setting up ProxySQL, `select` statements within a transaction go to a slave, and that inexperienced person might be very happy after checking the statistics and seeing that the queries went to the slave.

But in turn, several of these `select`s start to return different results than they would have inside the transaction, because a `select` may read data that is being changed in that transaction, and the application might then make wrong decisions about what to `insert` next.

This problem is similar to sending all (or many) reads to asynchronous slaves.

Even in that case, I am very careful before telling my customers, for example in the healthcare and financial sectors, to just send those reads to slaves.

### plea for `1` default!

To summarise: because we are dealing with data, big and small, I think we should put safety and expected behaviour first, and only when we really need that flexibility should we set `transaction_persistent=0`.

Let's make ProxySQL proper-expected-transactional-behavior Default (and Great) Again!!
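To make the stale-read scenario concrete, here is a toy Python model of the routing decision. The rules, hostgroup labels and the `route` function are assumptions for illustration, not ProxySQL's code; in ProxySQL itself `transaction_persistent` is a per-user column of the `mysql_users` table.

```python
WRITER, READER = "writer", "reader"  # hypothetical hostgroup labels


def route(statements, transaction_persistent):
    """Return (statement, hostgroup) pairs under a toy rule set.

    Assumed rules, a simplification and not ProxySQL's implementation:
    BEGIN opens a transaction on the writer; outside a persistent transaction,
    SELECTs go to the reader and everything else to the writer; with
    transaction_persistent on, every statement inside an open transaction
    stays on the hostgroup where that transaction began.
    """
    routed = []
    txn_hostgroup = None  # hostgroup the open transaction is pinned to, if any
    for sql in statements:
        verb = sql.split()[0].upper()
        if verb == "BEGIN":
            txn_hostgroup = WRITER
            hostgroup = WRITER
        elif transaction_persistent and txn_hostgroup is not None:
            hostgroup = txn_hostgroup
        elif verb == "SELECT":
            hostgroup = READER
        else:
            hostgroup = WRITER
        if verb in ("COMMIT", "ROLLBACK"):
            txn_hostgroup = None  # transaction finished
        routed.append((sql, hostgroup))
    return routed


if __name__ == "__main__":
    txn = [
        "BEGIN",
        "UPDATE accounts SET balance = 0 WHERE id = 1",
        "SELECT balance FROM accounts WHERE id = 1",  # must see the UPDATE above
        "COMMIT",
    ]
    for persistent in (False, True):
        print(f"transaction_persistent={int(persistent)}:",
              [hostgroup for _, hostgroup in route(txn, persistent)])
```

With `transaction_persistent=0` the SELECT in the middle of the transaction is sent to the reader, which cannot see the uncommitted UPDATE; with `1` the whole transaction stays on the writer.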

|

1.0

|

Consider setting transaction_persistent=1 by default. - I've seen the comments that explain why `transaction_persistent=0` is the default (https://github.com/sysown/proxysql/issues/653).

This issue is for discussing that: after reading the comment, I still think `transaction_persistent=1` should be the default.

### why `0` is good

Yes, I understand that some connectors, applications, frameworks and many other tools set `autocommit=0` by default (that is, they disable autocommit) or wrap everything inside a transaction.

So yes, having `transaction_persistent=1` by default could make query routing feel less flexible than an unknowing, inexperienced user would expect: someone who is just adding some users, or copy-pasting something from a blog post found on the internet, and then finds out that the `proxysql` tool did not send those darn SELECTs to a replica.

### why `1` is good

However, that exact same inexperienced DBA/sysadmin might actually create major problems in an application with the current default of `transaction_persistent=0`. Suddenly, after setting up ProxySQL, `select` statements within a transaction go to a slave, and that inexperienced person might be very happy after checking the statistics and seeing that the queries went to the slave.

But in turn, several of these `select`s start to return different results than they would have inside the transaction, because a `select` may read data that is being changed in that transaction, and the application might then make wrong decisions about what to `insert` next.

This problem is similar to sending all (or many) reads to asynchronous slaves.

Even in that case, I am very careful before telling my customers, for example in the healthcare and financial sectors, to just send those reads to slaves.

### plea for `1` default!

To summarise: because we are dealing with data, big and small, I think we should put safety and expected behaviour first, and only when we really need that flexibility should we set `transaction_persistent=0`.

Let's make ProxySQL proper-expected-transactional-behavior Default (and Great) Again!!

|

process

|

consider setting transaction persistent by default i ve seen comments which explains why transaction persistent by default this issue here is to discuss this after reading the comment i still think transaction persistent should be default why is good yes i understand that it is possible that some connectors applications frameworks and many other tools disable autocommit by default or wrap everything inside a transaction so yes having transaction persistent by default could indeed lead the query routing behaviour to not work and feel not flexible as an unknowing inexperienced human would expect when s he s just adding some users or just copy pastes something from a blogpost found on the internet and then figures out that that proxysql tool did not send those darn selects to a replica why is good however that exact same inexperienced person who is a dba sysadmin might actually create some major problems in an application with the current default behaviour of transaction persistent suddenly after setting up proxysql select statements within the transaction go to a slave and that inexperienced person might become very happy after checking his statistics seeing that the query went to the slave but in turn several of these select s actually start to show different results as it would have when executed within the transaction as maybe the select read some data that is being changed in that transaction and it might make wrong decisions for the application to see what to perform as insert as next query this problem is similar to sending all a lot of reads to asynchronous slaves even in this case i am very careful before i tell my customers in for example healthcare and financial sectors to just send those reads to slaves plea for default to summarise i think that because we are dealing with data big and small we should aim at keeping safety expected behavior first only when we really want it we can have that flexibility and set transaction persistent when it is really 
required let s make proxysql proper expected transactional behavior default and great again

| 1

|

16,543

| 21,568,582,415

|

IssuesEvent

|

2022-05-02 04:16:06

|

jmacost5/CPP-528-Project

|

https://api.github.com/repos/jmacost5/CPP-528-Project

|

closed

|

Add file names and file types to the .gitignore file to prevent them from syncing to the team repository

|

TEAM PROCESS

|

Use the R-specific .gitignore file provided by GitHub.

|

1.0

|

Add file names and file types to the .gitignore file to prevent them from syncing to the team repository - Use the R-specific .gitignore file provided by GitHub.

|

process

|

add file names and file types to the gitignore file to prevent them from syncing to the team repository use the r specific gitignore file courtesy of github

| 1

|

682

| 3,163,302,786

|

IssuesEvent

|

2015-09-20 05:03:39

|

spootTheLousy/saguaro

|

https://api.github.com/repos/spootTheLousy/saguaro

|

closed

|

Captcha doesn't disable for admin form

|

Admin Function Bug: Minor Post/text processing

|

Probably an issue with `regist`. Should be a simple fix using `valid()`.

|

1.0

|

Captcha doesn't disable for admin form - Probably an issue with `regist`. Should be a simple fix using `valid()`.

|

process

|

captcha doesn t disable for admin form probably an issue with regist should be a simple fix using valid

| 1

|

2,782

| 5,716,439,801

|

IssuesEvent

|

2017-04-19 15:08:54

|

allinurl/goaccess

|

https://api.github.com/repos/allinurl/goaccess

|

closed

|

Filter request url from into log file

|

log-processing question

|

Hi, I want to know if it's possible to filter the request URLs in a log file, i.e. whether I can tell from the URL which functionality of my application the user used.
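As a starting point for this kind of analysis, independent of GoAccess's own features, here is a minimal Python sketch that extracts the request path from access-log lines and counts requests per path, optionally restricted to a URL prefix. It assumes the Common/Combined Log Format request field (`"GET /path HTTP/1.1"`); `count_paths` and the sample prefix are hypothetical.

```python
import re
from collections import Counter

# Matches the quoted request field of Common/Combined Log Format lines,
# e.g. "GET /orders/42?x=1 HTTP/1.1" (format assumption).
REQUEST_RE = re.compile(r'"(?P<method>[A-Z]+) (?P<url>\S+) HTTP/[\d.]+"')


def count_paths(lines, prefix=None):
    """Count requests per URL path (query string removed), optionally keeping
    only paths that start with `prefix`."""
    counts = Counter()
    for line in lines:
        match = REQUEST_RE.search(line)
        if not match:
            continue  # skip lines without a recognizable request field
        path = match.group("url").split("?", 1)[0]
        if prefix is None or path.startswith(prefix):
            counts[path] += 1
    return counts


if __name__ == "__main__":
    log = [
        '127.0.0.1 - - [19/Apr/2017:15:08:54 +0000] "GET /orders/42?x=1 HTTP/1.1" 200 512 "-" "curl/7.52"',
        '127.0.0.1 - - [19/Apr/2017:15:09:01 +0000] "POST /login HTTP/1.1" 302 0 "-" "curl/7.52"',
    ]
    print(count_paths(log, prefix="/orders"))
```

Grouping by path prefix is one rough way to map URLs to application features; it only works if the application's routes are structured that way.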

|

1.0

|

Filter request url from into log file - Hi, I want to know if it's possible to filter the request URLs in a log file, i.e. whether I can tell from the URL which functionality of my application the user used.

|

process

|

filter request url from into log file hi i want to know if it s possible to filter the request url from a log file that s means if i could know what functionality the user use of my application since the url

| 1

|

14,862

| 18,267,411,270

|

IssuesEvent

|

2021-10-04 10:03:29

|

googleapis/java-logging

|

https://api.github.com/repos/googleapis/java-logging

|

closed

|

blocking batch flow control belies async logging

|

type: process api: logging lang: java

|

google-cloud-logging enables the blocking flow controller in the GAX batching library, going to lengths to modify the [default gapic behavior](https://github.com/googleapis/googleapis/blob/ee3c7eb3401f8de84348046b71fba7b1ac2215cf/google/logging/v2/logging_gapic.yaml#L22): https://github.com/googleapis/java-logging/blob/7b3928e1df1986bfd6fdd1197e22bcc00ce96a6d/google-cloud-logging/src/main/java/com/google/cloud/logging/spi/v2/GrpcLoggingRpc.java#L168-L181

The trouble with the blocking batch flow controller is that it can block `LoggingImpl.writeAsync` and thus any application code that happens to log after the flow controller is full. (That can happen either because of too much application logging or because of a slowdown in the logging service.) The preferable behavior would be to drop the log messages that cannot be uploaded and leave a note about the dropped messages in the next batch or on stderr.

(This issue is notwithstanding a recent regression described in #632. That regression occludes the problematic behavior described here by removing the GAX batcher.)
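The drop-instead-of-block behavior proposed above can be sketched as a bounded buffer whose `write` never waits. This is a hypothetical Python illustration of the policy only; `DroppingBatcher` and its methods are not part of GAX or google-cloud-logging, and the note text is made up.

```python
import threading
from collections import deque


class DroppingBatcher:
    """Bounded, non-blocking log buffer (hypothetical; not a real library API).

    write() never blocks: when the buffer is full the entry is discarded and
    counted. The next batch handed to the uploader starts with a note
    reporting how many entries were dropped since the previous batch.
    """

    def __init__(self, max_entries):
        self._max = max_entries
        self._buf = deque()
        self._dropped = 0
        self._lock = threading.Lock()

    def write(self, entry):
        """Buffer an entry; return False (and count a drop) if the buffer is full."""
        with self._lock:
            if len(self._buf) >= self._max:
                self._dropped += 1
                return False
            self._buf.append(entry)
            return True

    def take_batch(self):
        """Drain the buffer for upload, prefixing a dropped-entries note if needed."""
        with self._lock:
            batch = list(self._buf)
            self._buf.clear()
            dropped, self._dropped = self._dropped, 0
        if dropped:
            batch.insert(0, f"[logging] dropped {dropped} entries since last batch")
        return batch


if __name__ == "__main__":
    batcher = DroppingBatcher(max_entries=2)
    for i in range(5):
        batcher.write(f"msg {i}")  # returns immediately even when full
    print(batcher.take_batch())
```

Putting the note at the head of the next batch is one option; the issue also mentions writing it to stderr instead.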

|

1.0

|

blocking batch flow control belies async logging - google-cloud-logging enables the blocking flow controller in the GAX batching library, going to lengths to modify the [default gapic behavior](https://github.com/googleapis/googleapis/blob/ee3c7eb3401f8de84348046b71fba7b1ac2215cf/google/logging/v2/logging_gapic.yaml#L22): https://github.com/googleapis/java-logging/blob/7b3928e1df1986bfd6fdd1197e22bcc00ce96a6d/google-cloud-logging/src/main/java/com/google/cloud/logging/spi/v2/GrpcLoggingRpc.java#L168-L181

The trouble with the blocking batch flow controller is that it can block `LoggingImpl.writeAsync` and thus any application code that happens to log after the flow controller is full. (That can happen either because of too much application logging or because of a slowdown in the logging service.) The preferable behavior would be to drop the log messages that cannot be uploaded and leave a note about the dropped messages in the next batch or on stderr.

(This issue is notwithstanding a recent regression described in #632. That regression occludes the problematic behavior described here by removing the GAX batcher.)

|

process

|

blocking batch flow control belies async logging google cloud logging enables the blocking flow controller in the gax batching library going to lengths to modify the the trouble with the blocking batch flow controller is that it can block loggingimpl writeasync and thus any application code that happens to log after the flow controller is full that can happen either because of too much application logging or because of a slow down in the logging service preferable behavior would be dropping the log messages that cannot be uploaded and leaving a note about dropped messages in the next batch or stderr this issue is notwithstanding a recent regression described in that regression occludes the problematic behavior described here by removing the gax batcher

| 1

|

609

| 3,078,014,780

|

IssuesEvent

|

2015-08-21 07:05:10

|

maraujop/django-crispy-forms

|

https://api.github.com/repos/maraujop/django-crispy-forms

|

closed

|

"Not a registered tag library" against Django Pre-1.9 master

|

Testing/Process

|

Getting an error running against latest Django master:

`TemplateSyntaxError: 'crispy_forms_tags' is not a registered tag library. Must be one of:`

(Note there's literally nothing in the output after the `:` there — i.e. it looks like a list is expected but missing.)

[See here for an example build](https://travis-ci.org/maraujop/django-crispy-forms/builds/75753379)

|

1.0

|

"Not a registered tag library" against Django Pre-1.9 master - Getting an error running against latest Django master:

`TemplateSyntaxError: 'crispy_forms_tags' is not a registered tag library. Must be one of:`

(Note there's literally nothing in the output after the `:` there — i.e. it looks like a list is expected but missing.)

[See here for an example build](https://travis-ci.org/maraujop/django-crispy-forms/builds/75753379)

|

process

|

not a registered tag library against django pre master getting an error running against latest django master templatesyntaxerror crispy forms tags is not a registered tag library must be one of note there s literally nothing in the output after the there — i e it looks like a list is expected but missing

| 1

|

17,081

| 22,586,510,273

|

IssuesEvent

|

2022-06-28 15:40:13

|

metallb/metallb

|

https://api.github.com/repos/metallb/metallb

|

closed

|

Issues with helm release process

|

process helm

|

I just published v0.10.0, which is our first release since including the new helm chart. Unfortunately the helm release portion of the release process didn't work correctly.

https://github.com/metallb/metallb/runs/2767270351?check_suite_focus=true

We have the chart-releaser github action set to run against tags, with the idea that we would publish new helm chart releases in parallel with metallb releases. The chart-releaser action determined that no chart release was necessary, though.

Based on the [docs of the action](https://github.com/helm/chart-releaser-action/), it sounds like running against the `main` branch is the common way this action is used. We either need to figure out what changes are necessary to make this work against tags, or adjust our process to release the helm chart from `main`.

If the helm chart is *only* released from main when we update the `charts/metallb/Chart.yaml` file with a new version, then that is probably OK.

|

1.0

|

Issues with helm release process - I just published v0.10.0, which is our first release since including the new helm chart. Unfortunately the helm release portion of the release process didn't work correctly.

https://github.com/metallb/metallb/runs/2767270351?check_suite_focus=true

We have the chart-releaser github action set to run against tags, with the idea that we would publish new helm chart releases in parallel with metallb releases. The chart-releaser action determined that no chart release was necessary, though.

Based on the [docs of the action](https://github.com/helm/chart-releaser-action/), it sounds like running against the `main` branch is the common way this action is used. We either need to figure out what changes are necessary to make this work against tags, or adjust our process to release the helm chart from `main`.

If the helm chart is *only* released from main when we update the `charts/metallb/Chart.yaml` file with a new version, then that is probably OK.

|

process

|

issues with helm release process i just published which is our first release since including the new helm chart unfortunately the helm release portion of the release process didn t work correctly we have the chart releaser github action set to run against tags with the idea that we would publish new helm chart releases in parallel with metallb releases the chart releaser action determined that no chart release was necessary though based on the it sounds like running against the main branch is the common way this action is used we either need to figure out what changes are necessary to make this work against tags or adjust our process to release the helm chart from main if the helm chart is only released from main when we update the charts metallb chart yaml file with a new version then that is probably ok

| 1

|

3,003

| 5,997,235,639

|

IssuesEvent

|

2017-06-03 21:51:32

|

alexrj/Slic3r

|

https://api.github.com/repos/alexrj/Slic3r

|

closed

|

Perform Background Processing also while the preset editor dialog is open

|

Background Processing Feature request

|

Fresh build on April 2, 2017: 1.2.9-901-g90623c4

OS: Debian Stable

Description:

Background Processing is enabled, but changes in the Print Settings window do not update until the Print Settings window is closed.

|

1.0

|

Perform Background Processing also while the preset editor dialog is open - Fresh build on April 2, 2017: 1.2.9-901-g90623c4

OS: Debian Stable

Description:

Background Processing is enabled, but changes in the Print Settings window do not update until the Print Settings window is closed.

|

process

|

perform background processing also while the preset editor dialog is open fresh build on april os debian stable description background processing is enabled but changes in the print settings window do not update until the print settings window is closed

| 1

|

21,060

| 28,007,608,601

|

IssuesEvent

|

2023-03-27 16:13:17

|

varabyte/kobweb

|

https://api.github.com/repos/varabyte/kobweb

|

opened

|

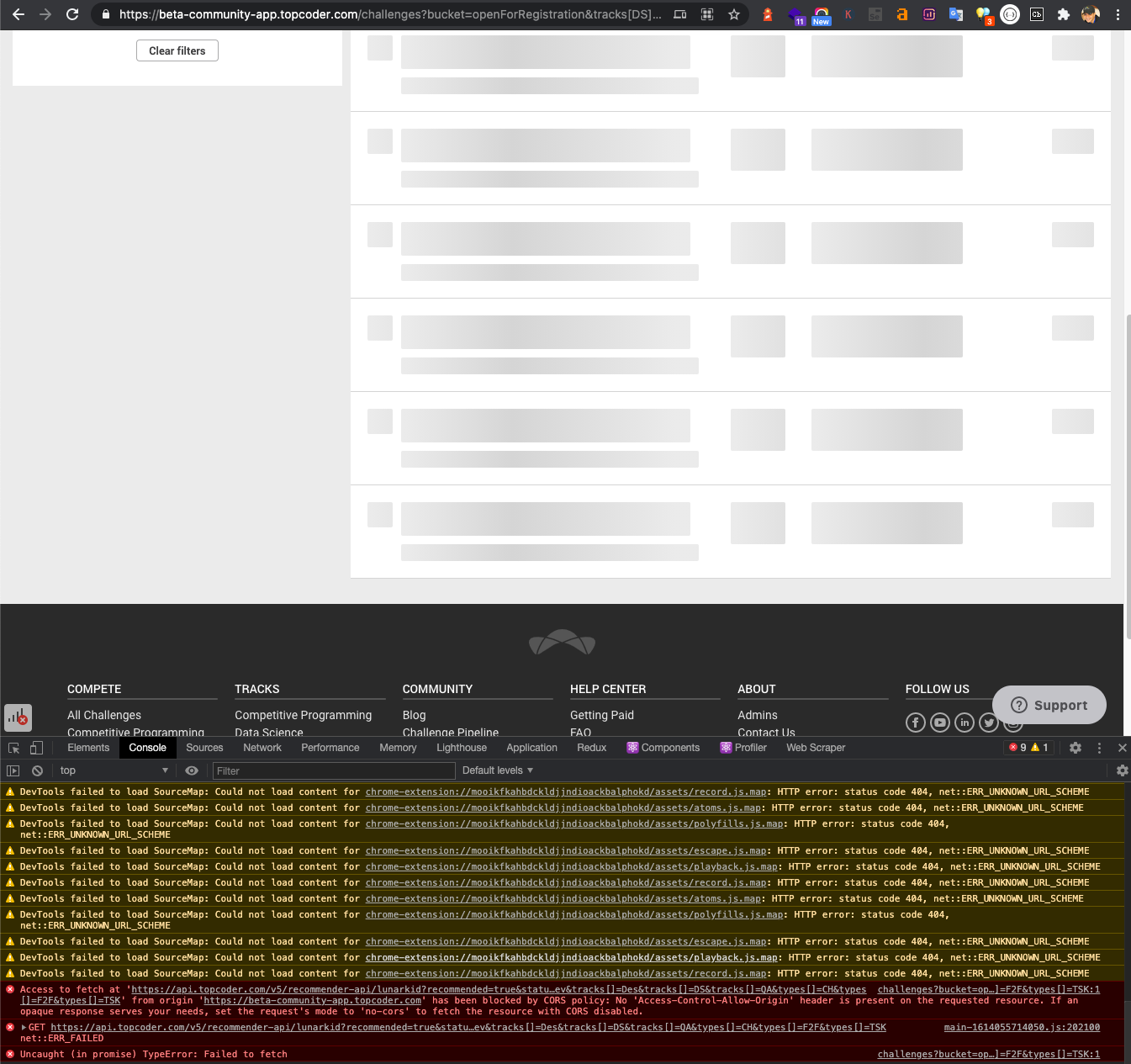

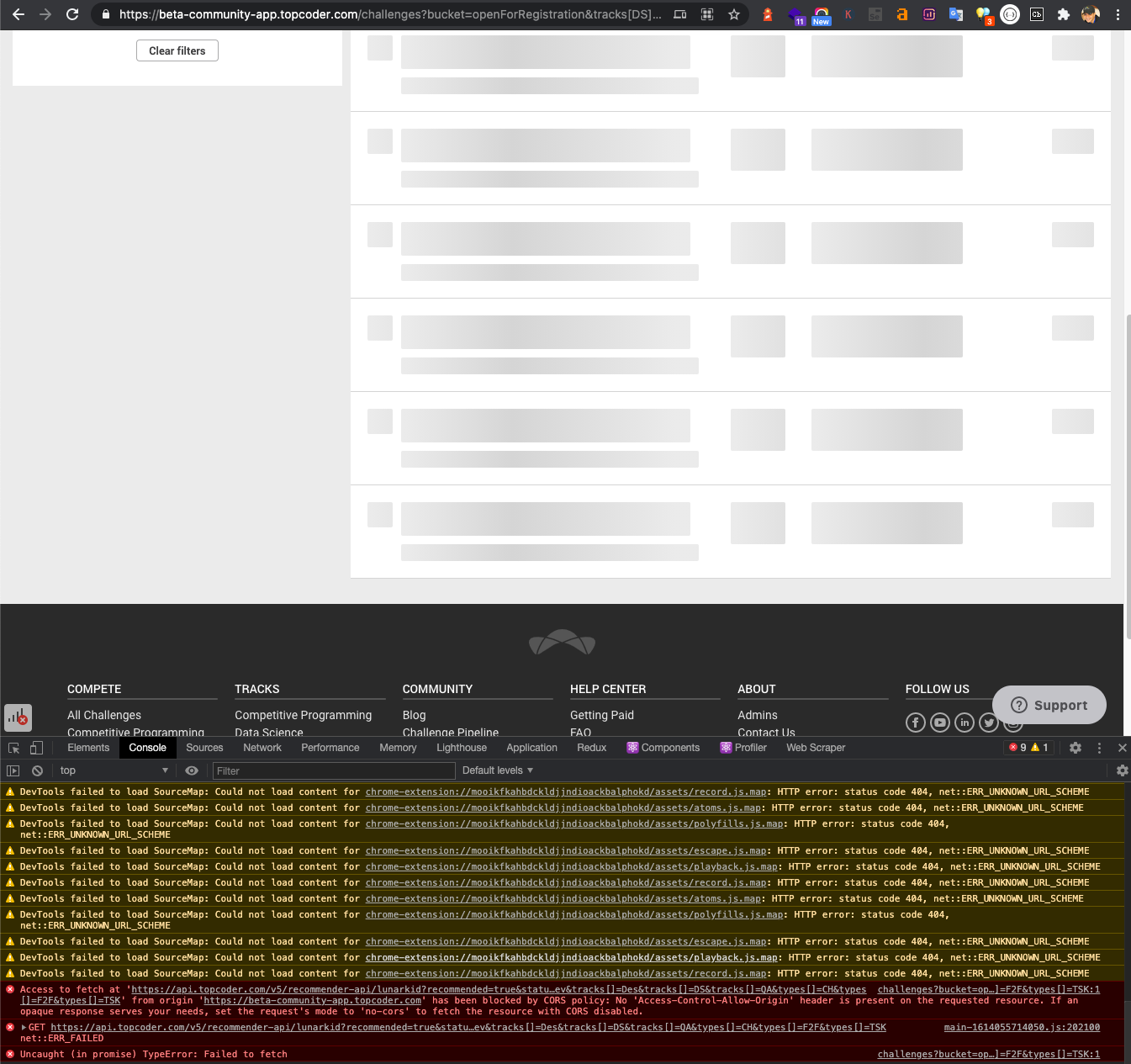

Lighthouse investigation

|

process

|

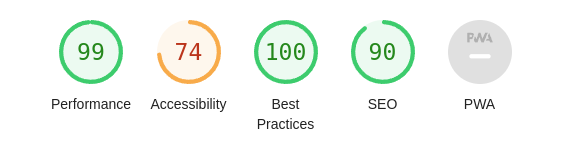

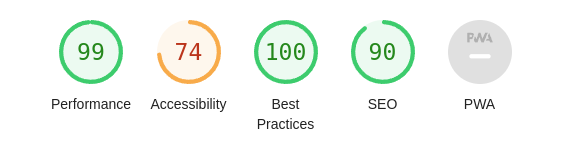

Run some [Lighthouse analysis](https://github.com/GoogleChrome/lighthouse/blob/HEAD/docs/user-flows.md) across several pages rendered with Kobweb to make sure that what's being generated is generally healthy.

This bug can be used to document the list of things worth revisiting to improve Lighthouse scores.

For example, here's the current score for https://bitspittle.dev at the time of creating this issue:

Accessibility (a11y) looks like it needs some digging into. I'm not sure yet whether that's a Kobweb issue or an issue with how I made that page.
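One way to track these scores across pages is to save Lighthouse's JSON report (for example with `lighthouse https://bitspittle.dev --output=json --output-path=report.json`) and pull the numbers out with a small script. This sketch assumes the report's top-level `categories` object maps IDs such as `accessibility` to objects whose `score` is between 0 and 1 (or null when not computed); the sample report and the threshold of 90 are hypothetical.

```python
import json
from typing import Dict, List


def category_scores(report_json: str) -> Dict[str, int]:
    """Map each Lighthouse category ID to its score on a 0-100 scale.

    Categories whose score is null (not computed) are skipped.
    """
    report = json.loads(report_json)
    scores = {}
    for category_id, category in report.get("categories", {}).items():
        score = category.get("score")
        if score is not None:
            scores[category_id] = round(score * 100)
    return scores


def needs_attention(scores: Dict[str, int], threshold: int = 90) -> List[str]:
    """Category IDs scoring below the (arbitrary) threshold, sorted by name."""
    return sorted(cid for cid, score in scores.items() if score < threshold)


# Hypothetical report excerpt; a real report contains many more fields.
sample = '{"categories": {"performance": {"score": 0.98}, "accessibility": {"score": 0.74}, "pwa": {"score": null}}}'
scores = category_scores(sample)
print(scores)                   # {'performance': 98, 'accessibility': 74}
print(needs_attention(scores))  # ['accessibility']
```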

|

1.0

|

Lighthouse investigation - Run some [Lighthouse analysis](https://github.com/GoogleChrome/lighthouse/blob/HEAD/docs/user-flows.md) across several pages rendered with Kobweb to make sure that what's being generated is generally healthy.

This bug can be used to document the list of things worth revisiting to improve Lighthouse scores.

For example, here's the current score for https://bitspittle.dev at the time of creating this issue:

Accessibility (a11y) looks like it needs some digging into. I'm not sure yet whether that's a Kobweb issue or an issue with how I made that page.

|

process

|

lighthouse investigation do some across several pages that were rendering with kobweb make sure what s being generated is generally healthy this bug can be used to document the list of things worth visiting to improve lighthouse scores for example here s the current score for at the time of creating this issue looks like it needs some digging into not sure yet if that s a kobweb issue or an issue with how i made that page

| 1

|

11,382

| 17,019,102,129

|

IssuesEvent

|

2021-07-02 16:00:52

|

samoody2/wiki

|

https://api.github.com/repos/samoody2/wiki

|

closed

|

search suggestion

|

project requirement

|

If the query does not match the name of an encyclopedia entry, the user should instead be taken to a search results page that displays a list of all encyclopedia entries that have the query as a substring. For example, if the search query were ytho, then Python should appear in the search results.
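The substring fallback can be sketched in a few lines of Python. This assumes a plain list of entry titles and case-insensitive matching (both assumptions; the entry names below are hypothetical): an exact title match is returned on its own, and otherwise every title containing the query is returned for the results page.

```python
from typing import List, Optional, Tuple


def search(query: str, titles: List[str]) -> Tuple[Optional[str], List[str]]:
    """Return (exact_match, substring_matches) for a case-insensitive query.

    If some title equals the query, it is returned as the exact match so the
    caller can show that entry directly; otherwise every title containing the
    query as a substring is returned for a search results page.
    """
    needle = query.strip().casefold()
    for title in titles:
        if title.casefold() == needle:
            return title, []
    matches = [title for title in titles if needle in title.casefold()]
    return None, sorted(matches, key=str.casefold)


entries = ["CSS", "Django", "Git", "HTML", "Python"]  # hypothetical entry list
print(search("ytho", entries))    # (None, ['Python'])
print(search("python", entries))  # ('Python', [])
```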

|

1.0

|

search suggestion - If the query does not match the name of an encyclopedia entry, the user should instead be taken to a search results page that displays a list of all encyclopedia entries that have the query as a substring. For example, if the search query were ytho, then Python should appear in the search results.

|

non_process

|

search suggestion if the query does not match the name of an encyclopedia entry the user should instead be taken to a search results page that displays a list of all encyclopedia entries that have the query as a substring for example if the search query were ytho then python should appear in the search results

| 0

|

547,246

| 16,040,703,826

|

IssuesEvent

|

2021-04-22 07:30:03

|

googleapis/java-aiplatform

|

https://api.github.com/repos/googleapis/java-aiplatform

|

reopened

|

aiplatform.CreateBatchPredictionJobTextEntityExtractionSampleTest: testCreateBatchPredictionJobTextEntityExtractionSample failed

|

api: aiplatform flakybot: flaky flakybot: issue priority: p1 type: bug

|

This test failed!

To configure my behavior, see [the Flaky Bot documentation](https://github.com/googleapis/repo-automation-bots/tree/master/packages/flakybot).

If I'm commenting on this issue too often, add the `flakybot: quiet` label and I will stop commenting.

---

commit: 8cfdab67f373cbd7aa3f139a82c1d63502a360fa

buildURL: [Build Status](https://source.cloud.google.com/results/invocations/109b6dd4-4ffe-453b-8e53-5e15c4a70504), [Sponge](http://sponge2/109b6dd4-4ffe-453b-8e53-5e15c4a70504)

status: failed

<details><summary>Test output</summary><br><pre>java.lang.ArrayIndexOutOfBoundsException: 1

at aiplatform.CreateBatchPredictionJobTextEntityExtractionSampleTest.tearDown(CreateBatchPredictionJobTextEntityExtractionSampleTest.java:71)

at sun.reflect.NativeMethodAccessorImpl.invoke0(Native Method)

at sun.reflect.NativeMethodAccessorImpl.invoke(NativeMethodAccessorImpl.java:62)

at sun.reflect.DelegatingMethodAccessorImpl.invoke(DelegatingMethodAccessorImpl.java:43)

at java.lang.reflect.Method.invoke(Method.java:498)

at org.junit.runners.model.FrameworkMethod$1.runReflectiveCall(FrameworkMethod.java:59)

at org.junit.internal.runners.model.ReflectiveCallable.run(ReflectiveCallable.java:12)

at org.junit.runners.model.FrameworkMethod.invokeExplosively(FrameworkMethod.java:56)

at org.junit.internal.runners.statements.RunAfters.invokeMethod(RunAfters.java:46)

at org.junit.internal.runners.statements.RunAfters.evaluate(RunAfters.java:33)

at org.junit.runners.ParentRunner$3.evaluate(ParentRunner.java:306)

at org.junit.runners.BlockJUnit4ClassRunner$1.evaluate(BlockJUnit4ClassRunner.java:100)

at org.junit.runners.ParentRunner.runLeaf(ParentRunner.java:366)

at org.junit.runners.BlockJUnit4ClassRunner.runChild(BlockJUnit4ClassRunner.java:103)

at org.junit.runners.BlockJUnit4ClassRunner.runChild(BlockJUnit4ClassRunner.java:63)

at org.junit.runners.ParentRunner$4.run(ParentRunner.java:331)

at org.junit.runners.ParentRunner$1.schedule(ParentRunner.java:79)

at org.junit.runners.ParentRunner.runChildren(ParentRunner.java:329)

at org.junit.runners.ParentRunner.access$100(ParentRunner.java:66)

at org.junit.runners.ParentRunner$2.evaluate(ParentRunner.java:293)

at org.junit.internal.runners.statements.RunBefores.evaluate(RunBefores.java:26)

at org.junit.runners.ParentRunner$3.evaluate(ParentRunner.java:306)

at org.junit.runners.ParentRunner.run(ParentRunner.java:413)

at org.apache.maven.surefire.junit4.JUnit4Provider.execute(JUnit4Provider.java:364)

at org.apache.maven.surefire.junit4.JUnit4Provider.executeWithRerun(JUnit4Provider.java:272)

at org.apache.maven.surefire.junit4.JUnit4Provider.executeTestSet(JUnit4Provider.java:237)

at org.apache.maven.surefire.junit4.JUnit4Provider.invoke(JUnit4Provider.java:158)

at org.apache.maven.surefire.booter.ForkedBooter.runSuitesInProcess(ForkedBooter.java:428)

at org.apache.maven.surefire.booter.ForkedBooter.execute(ForkedBooter.java:162)

at org.apache.maven.surefire.booter.ForkedBooter.run(ForkedBooter.java:562)

at org.apache.maven.surefire.booter.ForkedBooter.main(ForkedBooter.java:548)

</pre></details>

|

1.0

|

aiplatform.CreateBatchPredictionJobTextEntityExtractionSampleTest: testCreateBatchPredictionJobTextEntityExtractionSample failed - This test failed!

To configure my behavior, see [the Flaky Bot documentation](https://github.com/googleapis/repo-automation-bots/tree/master/packages/flakybot).

If I'm commenting on this issue too often, add the `flakybot: quiet` label and I will stop commenting.

---

commit: 8cfdab67f373cbd7aa3f139a82c1d63502a360fa

buildURL: [Build Status](https://source.cloud.google.com/results/invocations/109b6dd4-4ffe-453b-8e53-5e15c4a70504), [Sponge](http://sponge2/109b6dd4-4ffe-453b-8e53-5e15c4a70504)

status: failed

<details><summary>Test output</summary><br><pre>java.lang.ArrayIndexOutOfBoundsException: 1

at aiplatform.CreateBatchPredictionJobTextEntityExtractionSampleTest.tearDown(CreateBatchPredictionJobTextEntityExtractionSampleTest.java:71)

at sun.reflect.NativeMethodAccessorImpl.invoke0(Native Method)

at sun.reflect.NativeMethodAccessorImpl.invoke(NativeMethodAccessorImpl.java:62)

at sun.reflect.DelegatingMethodAccessorImpl.invoke(DelegatingMethodAccessorImpl.java:43)

at java.lang.reflect.Method.invoke(Method.java:498)

at org.junit.runners.model.FrameworkMethod$1.runReflectiveCall(FrameworkMethod.java:59)

at org.junit.internal.runners.model.ReflectiveCallable.run(ReflectiveCallable.java:12)

at org.junit.runners.model.FrameworkMethod.invokeExplosively(FrameworkMethod.java:56)

at org.junit.internal.runners.statements.RunAfters.invokeMethod(RunAfters.java:46)

at org.junit.internal.runners.statements.RunAfters.evaluate(RunAfters.java:33)

at org.junit.runners.ParentRunner$3.evaluate(ParentRunner.java:306)

at org.junit.runners.BlockJUnit4ClassRunner$1.evaluate(BlockJUnit4ClassRunner.java:100)

at org.junit.runners.ParentRunner.runLeaf(ParentRunner.java:366)

at org.junit.runners.BlockJUnit4ClassRunner.runChild(BlockJUnit4ClassRunner.java:103)

at org.junit.runners.BlockJUnit4ClassRunner.runChild(BlockJUnit4ClassRunner.java:63)

at org.junit.runners.ParentRunner$4.run(ParentRunner.java:331)

at org.junit.runners.ParentRunner$1.schedule(ParentRunner.java:79)

at org.junit.runners.ParentRunner.runChildren(ParentRunner.java:329)

at org.junit.runners.ParentRunner.access$100(ParentRunner.java:66)

at org.junit.runners.ParentRunner$2.evaluate(ParentRunner.java:293)

at org.junit.internal.runners.statements.RunBefores.evaluate(RunBefores.java:26)

at org.junit.runners.ParentRunner$3.evaluate(ParentRunner.java:306)

at org.junit.runners.ParentRunner.run(ParentRunner.java:413)

at org.apache.maven.surefire.junit4.JUnit4Provider.execute(JUnit4Provider.java:364)

at org.apache.maven.surefire.junit4.JUnit4Provider.executeWithRerun(JUnit4Provider.java:272)

at org.apache.maven.surefire.junit4.JUnit4Provider.executeTestSet(JUnit4Provider.java:237)

at org.apache.maven.surefire.junit4.JUnit4Provider.invoke(JUnit4Provider.java:158)

at org.apache.maven.surefire.booter.ForkedBooter.runSuitesInProcess(ForkedBooter.java:428)

at org.apache.maven.surefire.booter.ForkedBooter.execute(ForkedBooter.java:162)

at org.apache.maven.surefire.booter.ForkedBooter.run(ForkedBooter.java:562)

at org.apache.maven.surefire.booter.ForkedBooter.main(ForkedBooter.java:548)

</pre></details>

|

non_process

|

aiplatform createbatchpredictionjobtextentityextractionsampletest testcreatebatchpredictionjobtextentityextractionsample failed this test failed to configure my behavior see if i m commenting on this issue too often add the flakybot quiet label and i will stop commenting commit buildurl status failed test output java lang arrayindexoutofboundsexception at aiplatform createbatchpredictionjobtextentityextractionsampletest teardown createbatchpredictionjobtextentityextractionsampletest java at sun reflect nativemethodaccessorimpl native method at sun reflect nativemethodaccessorimpl invoke nativemethodaccessorimpl java at sun reflect delegatingmethodaccessorimpl invoke delegatingmethodaccessorimpl java at java lang reflect method invoke method java at org junit runners model frameworkmethod runreflectivecall frameworkmethod java at org junit internal runners model reflectivecallable run reflectivecallable java at org junit runners model frameworkmethod invokeexplosively frameworkmethod java at org junit internal runners statements runafters invokemethod runafters java at org junit internal runners statements runafters evaluate runafters java at org junit runners parentrunner evaluate parentrunner java at org junit runners evaluate java at org junit runners parentrunner runleaf parentrunner java at org junit runners runchild java at org junit runners runchild java at org junit runners parentrunner run parentrunner java at org junit runners parentrunner schedule parentrunner java at org junit runners parentrunner runchildren parentrunner java at org junit runners parentrunner access parentrunner java at org junit runners parentrunner evaluate parentrunner java at org junit internal runners statements runbefores evaluate runbefores java at org junit runners parentrunner evaluate parentrunner java at org junit runners parentrunner run parentrunner java at org apache maven surefire execute java at org apache maven surefire executewithrerun java at org apache maven surefire 
executetestset java at org apache maven surefire invoke java at org apache maven surefire booter forkedbooter runsuitesinprocess forkedbooter java at org apache maven surefire booter forkedbooter execute forkedbooter java at org apache maven surefire booter forkedbooter run forkedbooter java at org apache maven surefire booter forkedbooter main forkedbooter java

| 0

|

183

| 2,588,444,240

|

IssuesEvent

|

2015-02-18 01:24:19

|

GsDevKit/gsDevKitHome

|

https://api.github.com/repos/GsDevKit/gsDevKitHome

|

closed

|

`Do not clone as root` ...

|

in process

|

It is worth mentioning that the initial clone does not need to be run as root, and that the repository should be cloned so that the normal user has write permissions; `sudo` is used where and when `root` operations are performed.

|

1.0

|

`Do not clone as root` ... - It is worth mentioning that the initial clone does not need to be run as root, and that the repository should be cloned so that the normal user has write permissions; `sudo` is used where and when `root` operations are performed.

|

process

|

do not clone as root it is worth mentioning that when the initial clone is made there is no need to run the clone as root and that it should be cloned such that the normal user has write permissions sudo is used where and when root operations are performed

| 1

|

29,945

| 8,444,315,766

|

IssuesEvent

|

2018-10-18 18:04:55

|

gimli-org/gimli

|

https://api.github.com/repos/gimli-org/gimli

|

closed

|

Fix install script for Mac OS

|

building and distribution

|

We should test the curl installer and give additional instructions on the website for manual compilation on macOS. Any experiences and help from Mac users are welcome.

|

1.0

|

Fix install script for Mac OS - We should test the curl installer and give additional instructions on the website for manual compilation on macOS. Any experiences and help from Mac users are welcome.

|

non_process

|

fix install script for mac os we should test the curl installer and give additional instructions on the website for manual compilation on macos any experiences help from mac users is welcome

| 0

|

261,468

| 8,233,709,340

|

IssuesEvent

|

2018-09-08 04:35:30

|

Sakuten/backend

|

https://api.github.com/repos/Sakuten/backend

|

opened

|

Use `app.logger.warning` instead of `app.logger.warn`

|

low priority refactoring small

|

<!-- This is only a template, so not every item needs to be filled in. -->

Step 1: Purpose
============

* `The 'warn' method is deprecated, use 'warning' instead`

Step 2: Overview
============

* Use `app.logger.warning` instead of `app.logger.warn`
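Flask's `app.logger` is a standard `logging.Logger`, so this is the usual stdlib change: `warn` is a deprecated alias of `warning` that emits the `DeprecationWarning` quoted above and then logs at the same level. A self-contained sketch; the logger name and message are hypothetical, and output goes to an in-memory buffer so the result is visible.

```python
import io
import logging


def make_logger(stream: io.StringIO) -> logging.Logger:
    """Build a logger that writes 'LEVEL message' lines to `stream`."""
    logger = logging.getLogger("backend.example")  # hypothetical name
    logger.handlers.clear()
    handler = logging.StreamHandler(stream)
    handler.setFormatter(logging.Formatter("%(levelname)s %(message)s"))
    logger.addHandler(handler)
    logger.setLevel(logging.INFO)
    logger.propagate = False
    return logger


buf = io.StringIO()
log = make_logger(buf)

# Preferred spelling: `warning`. Calling `log.warn(...)` would produce the same
# record but also raise "The 'warn' method is deprecated, use 'warning' instead".
log.warning("lottery %s is already closed", 42)
print(buf.getvalue(), end="")  # WARNING lottery 42 is already closed
```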

|

1.0

|

Use `app.logger.warning` instead of `app.logger.warn` - <!-- This is only a template, so not every item needs to be filled in. -->

Step 1: Purpose
============

* `The 'warn' method is deprecated, use 'warning' instead`

Step 2: Overview
============

* Use `app.logger.warning` instead of `app.logger.warn`

|

non_process

|

use app logger warning instead of app logger warn step purpose the warn method is deprecated use warning instead step overview use app logger warning instead of app logger warn

| 0

|

60,924

| 14,596,421,154

|

IssuesEvent

|

2020-12-20 15:46:59

|

billmcchesney1/superagent

|

https://api.github.com/repos/billmcchesney1/superagent

|

opened

|

CVE-2020-11023 (Medium) detected in jquery-1.7.1.min.js

|

security vulnerability

|

## CVE-2020-11023 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jquery-1.7.1.min.js</b></p></summary>

<p>JavaScript library for DOM operations</p>

<p>Library home page: <a href="https://cdnjs.cloudflare.com/ajax/libs/jquery/1.7.1/jquery.min.js">https://cdnjs.cloudflare.com/ajax/libs/jquery/1.7.1/jquery.min.js</a></p>

<p>Path to dependency file: superagent/node_modules/watchify/node_modules/vm-browserify/example/run/index.html</p>

<p>Path to vulnerable library: superagent/node_modules/watchify/node_modules/vm-browserify/example/run/index.html</p>

<p>

Dependency Hierarchy:

- :x: **jquery-1.7.1.min.js** (Vulnerable Library)

<p>Found in HEAD commit: <a href="https://github.com/billmcchesney1/superagent/commit/77fefdaffd4ef3cef2e5b252e165b5f40fae61d5">77fefdaffd4ef3cef2e5b252e165b5f40fae61d5</a></p>

<p>Found in base branch: <b>master</b></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

In jQuery versions greater than or equal to 1.0.3 and before 3.5.0, passing HTML containing <option> elements from untrusted sources - even after sanitizing it - to one of jQuery's DOM manipulation methods (i.e. .html(), .append(), and others) may execute untrusted code. This problem is patched in jQuery 3.5.0.

<p>Publish Date: 2020-04-29

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2020-11023>CVE-2020-11023</a></p>

</p>

</details>
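The affected range above (at least 1.0.3 and before 3.5.0) can be checked with a small helper. A minimal sketch that assumes plain numeric dotted versions; pre-release suffixes such as `-rc1` are not handled.

```python
from typing import Tuple


def parse_version(version: str) -> Tuple[int, ...]:
    """Parse a plain dotted version such as '1.7.1' into (1, 7, 1)."""
    return tuple(int(part) for part in version.split("."))


AFFECTED_FROM = parse_version("1.0.3")  # first affected release per the advisory
FIXED_IN = parse_version("3.5.0")       # first patched release per the advisory


def is_affected(version: str) -> bool:
    """True if 1.0.3 <= version < 3.5.0 (tuples compare element by element)."""
    return AFFECTED_FROM <= parse_version(version) < FIXED_IN


print(is_affected("1.7.1"))  # True: the bundled copy flagged above
print(is_affected("3.5.0"))  # False: patched
```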

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>6.1</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: Required

- Scope: Changed

- Impact Metrics:

- Confidentiality Impact: Low

- Integrity Impact: Low

- Availability Impact: None

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>
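The 6.1 base score can be reproduced from the metrics listed above with the CVSS v3.0 base equations (the version the linked calculator implements). A minimal sketch using the v3.0 specification weights; v3.1 changes both the Scope-Changed impact formula and the rounding function, so this is not a v3.1 calculator.

```python
import math

# CVSS v3.0 metric weights.
AV = {"N": 0.85, "A": 0.62, "L": 0.55, "P": 0.2}
AC = {"L": 0.77, "H": 0.44}
UI = {"N": 0.85, "R": 0.62}
CIA = {"H": 0.56, "L": 0.22, "N": 0.0}
# Privileges Required weights depend on Scope: U(nchanged) or C(hanged).
PR = {"U": {"N": 0.85, "L": 0.62, "H": 0.27}, "C": {"N": 0.85, "L": 0.68, "H": 0.5}}


def roundup(x: float) -> float:
    """Smallest value with one decimal place that is >= x (v3.0 Roundup)."""
    return math.ceil(x * 10) / 10


def base_score(av, ac, pr, ui, scope, c, i, a) -> float:
    isc_base = 1 - (1 - CIA[c]) * (1 - CIA[i]) * (1 - CIA[a])
    if scope == "U":
        impact = 6.42 * isc_base
    else:
        impact = 7.52 * (isc_base - 0.029) - 3.25 * (isc_base - 0.02) ** 15
    exploitability = 8.22 * AV[av] * AC[ac] * PR[scope][pr] * UI[ui]
    if impact <= 0:
        return 0.0
    if scope == "U":
        return roundup(min(impact + exploitability, 10))
    return roundup(min(1.08 * (impact + exploitability), 10))


# AV:N / AC:L / PR:N / UI:R / S:C / C:L / I:L / A:N, as listed above.
print(base_score("N", "L", "N", "R", "C", "L", "L", "N"))  # 6.1
```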

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2020-11023">https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2020-11023</a></p>

<p>Release Date: 2020-04-29</p>

<p>Fix Resolution: jquery - 3.5.0</p>

</p>

</details>

<p></p>

<!-- <REMEDIATE>{"isOpenPROnVulnerability":true,"isPackageBased":true,"isDefaultBranch":true,"packages":[{"packageType":"JavaScript","packageName":"jquery","packageVersion":"1.7.1","isTransitiveDependency":false,"dependencyTree":"jquery:1.7.1","isMinimumFixVersionAvailable":true,"minimumFixVersion":"jquery - 3.5.0"}],"vulnerabilityIdentifier":"CVE-2020-11023","vulnerabilityDetails":"In jQuery versions greater than or equal to 1.0.3 and before 3.5.0, passing HTML containing \u003coption\u003e elements from untrusted sources - even after sanitizing it - to one of jQuery\u0027s DOM manipulation methods (i.e. .html(), .append(), and others) may execute untrusted code. This problem is patched in jQuery 3.5.0.","vulnerabilityUrl":"https://vuln.whitesourcesoftware.com/vulnerability/CVE-2020-11023","cvss3Severity":"medium","cvss3Score":"6.1","cvss3Metrics":{"A":"None","AC":"Low","PR":"None","S":"Changed","C":"Low","UI":"Required","AV":"Network","I":"Low"},"extraData":{}}</REMEDIATE> -->

|

True

|

CVE-2020-11023 (Medium) detected in jquery-1.7.1.min.js - ## CVE-2020-11023 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jquery-1.7.1.min.js</b></p></summary>

<p>JavaScript library for DOM operations</p>

<p>Library home page: <a href="https://cdnjs.cloudflare.com/ajax/libs/jquery/1.7.1/jquery.min.js">https://cdnjs.cloudflare.com/ajax/libs/jquery/1.7.1/jquery.min.js</a></p>

<p>Path to dependency file: superagent/node_modules/watchify/node_modules/vm-browserify/example/run/index.html</p>

<p>Path to vulnerable library: superagent/node_modules/watchify/node_modules/vm-browserify/example/run/index.html</p>

<p>

Dependency Hierarchy:

- :x: **jquery-1.7.1.min.js** (Vulnerable Library)

<p>Found in HEAD commit: <a href="https://github.com/billmcchesney1/superagent/commit/77fefdaffd4ef3cef2e5b252e165b5f40fae61d5">77fefdaffd4ef3cef2e5b252e165b5f40fae61d5</a></p>

<p>Found in base branch: <b>master</b></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

In jQuery versions greater than or equal to 1.0.3 and before 3.5.0, passing HTML containing <option> elements from untrusted sources - even after sanitizing it - to one of jQuery's DOM manipulation methods (i.e. .html(), .append(), and others) may execute untrusted code. This problem is patched in jQuery 3.5.0.

<p>Publish Date: 2020-04-29

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2020-11023>CVE-2020-11023</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>6.1</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: Required

- Scope: Changed

- Impact Metrics:

- Confidentiality Impact: Low

- Integrity Impact: Low

- Availability Impact: None

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2020-11023">https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2020-11023</a></p>

<p>Release Date: 2020-04-29</p>

<p>Fix Resolution: jquery - 3.5.0</p>

</p>

</details>

<p></p>

<!-- <REMEDIATE>{"isOpenPROnVulnerability":true,"isPackageBased":true,"isDefaultBranch":true,"packages":[{"packageType":"JavaScript","packageName":"jquery","packageVersion":"1.7.1","isTransitiveDependency":false,"dependencyTree":"jquery:1.7.1","isMinimumFixVersionAvailable":true,"minimumFixVersion":"jquery - 3.5.0"}],"vulnerabilityIdentifier":"CVE-2020-11023","vulnerabilityDetails":"In jQuery versions greater than or equal to 1.0.3 and before 3.5.0, passing HTML containing \u003coption\u003e elements from untrusted sources - even after sanitizing it - to one of jQuery\u0027s DOM manipulation methods (i.e. .html(), .append(), and others) may execute untrusted code. This problem is patched in jQuery 3.5.0.","vulnerabilityUrl":"https://vuln.whitesourcesoftware.com/vulnerability/CVE-2020-11023","cvss3Severity":"medium","cvss3Score":"6.1","cvss3Metrics":{"A":"None","AC":"Low","PR":"None","S":"Changed","C":"Low","UI":"Required","AV":"Network","I":"Low"},"extraData":{}}</REMEDIATE> -->

|

non_process

|

cve medium detected in jquery min js cve medium severity vulnerability vulnerable library jquery min js javascript library for dom operations library home page a href path to dependency file superagent node modules watchify node modules vm browserify example run index html path to vulnerable library superagent node modules watchify node modules vm browserify example run index html dependency hierarchy x jquery min js vulnerable library found in head commit a href found in base branch master vulnerability details in jquery versions greater than or equal to and before passing html containing elements from untrusted sources even after sanitizing it to one of jquery s dom manipulation methods i e html append and others may execute untrusted code this problem is patched in jquery publish date url a href cvss score details base score metrics exploitability metrics attack vector network attack complexity low privileges required none user interaction required scope changed impact metrics confidentiality impact low integrity impact low availability impact none for more information on scores click a href suggested fix type upgrade version origin a href release date fix resolution jquery isopenpronvulnerability true ispackagebased true isdefaultbranch true packages vulnerabilityidentifier cve vulnerabilitydetails in jquery versions greater than or equal to and before passing html containing elements from untrusted sources even after sanitizing it to one of jquery dom manipulation methods i e html append and others may execute untrusted code this problem is patched in jquery vulnerabilityurl

| 0

|

1,822

| 4,579,701,103

|

IssuesEvent

|

2016-09-18 10:40:18

|

edovio/OpenStudentBook

|

https://api.github.com/repos/edovio/OpenStudentBook

|

closed

|

Task 2: Identify all the Faculties

|

Help wanted - Bisogno di Aiuto Processing - In Lavorazione

|

Identify all the Faculties to insert in this guide

Identify all the faculties or fields of study to include in the guide

|

1.0

|

Task 2: Identify all the Faculties - Identify all the Faculties to insert in this guide

Identify all the faculties or fields of study to include in the guide

|

process

|

task identify all the faculties identify all the faculties to insert in this guide identify all the faculties or fields of study to include in the guide

| 1

|

22,011

| 30,515,200,812

|

IssuesEvent

|

2023-07-19 02:00:10

|

lizhihao6/get-daily-arxiv-noti

|

https://api.github.com/repos/lizhihao6/get-daily-arxiv-noti

|

opened

|

New submissions for Wed, 19 Jul 23

|

event camera white balance isp compression image signal processing image signal process raw raw image events camera color contrast events AWB

|

## Keyword: events

### Online Self-Supervised Thermal Water Segmentation for Aerial Vehicles

- **Authors:** Connor Lee, Jonathan Gustafsson Frennert, Lu Gan, Matthew Anderson, Soon-Jo Chung

- **Subjects:** Computer Vision and Pattern Recognition (cs.CV); Robotics (cs.RO)

- **Arxiv link:** https://arxiv.org/abs/2307.09027

- **Pdf link:** https://arxiv.org/pdf/2307.09027

- **Abstract**

We present a new method to adapt an RGB-trained water segmentation network to target-domain aerial thermal imagery using online self-supervision by leveraging texture and motion cues as supervisory signals. This new thermal capability enables current autonomous aerial robots operating in near-shore environments to perform tasks such as visual navigation, bathymetry, and flow tracking at night. Our method overcomes the problem of scarce and difficult-to-obtain near-shore thermal data that prevents the application of conventional supervised and unsupervised methods. In this work, we curate the first aerial thermal near-shore dataset, show that our approach outperforms fully-supervised segmentation models trained on limited target-domain thermal data, and demonstrate real-time capabilities onboard an Nvidia Jetson embedded computing platform. Code and datasets used in this work will be available at: https://github.com/connorlee77/uav-thermal-water-segmentation.

### Plug the Leaks: Advancing Audio-driven Talking Face Generation by Preventing Unintended Information Flow

- **Authors:** Dogucan Yaman, Fevziye Irem Eyiokur, Leonard Bärmann, Hazim Kemal Ekenel, Alexander Waibel

- **Subjects:** Computer Vision and Pattern Recognition (cs.CV)

- **Arxiv link:** https://arxiv.org/abs/2307.09368

- **Pdf link:** https://arxiv.org/pdf/2307.09368

- **Abstract**

Audio-driven talking face generation is the task of creating a lip-synchronized, realistic face video from given audio and reference frames. This involves two major challenges: overall visual quality of generated images on the one hand, and audio-visual synchronization of the mouth part on the other hand. In this paper, we start by identifying several problematic aspects of synchronization methods in recent audio-driven talking face generation approaches. Specifically, this involves unintended flow of lip and pose information from the reference to the generated image, as well as instabilities during model training. Subsequently, we propose various techniques for obviating these issues: First, a silent-lip reference image generator prevents leaking of lips from the reference to the generated image. Second, an adaptive triplet loss handles the pose leaking problem. Finally, we propose a stabilized formulation of synchronization loss, circumventing aforementioned training instabilities while additionally further alleviating the lip leaking issue. Combining the individual improvements, we present state-of-the art performance on LRS2 and LRW in both synchronization and visual quality. We further validate our design in various ablation experiments, confirming the individual contributions as well as their complementary effects.

## Keyword: event camera

There is no result

## Keyword: events camera

There is no result

## Keyword: white balance

There is no result

## Keyword: color contrast

There is no result

## Keyword: AWB

### EVIL: Evidential Inference Learning for Trustworthy Semi-supervised Medical Image Segmentation

- **Authors:** Yingyu Chen, Ziyuan Yang, Chenyu Shen, Zhiwen Wang, Yang Qin, Yi Zhang

- **Subjects:** Computer Vision and Pattern Recognition (cs.CV); Artificial Intelligence (cs.AI)

- **Arxiv link:** https://arxiv.org/abs/2307.08988

- **Pdf link:** https://arxiv.org/pdf/2307.08988

- **Abstract**

Recently, uncertainty-aware methods have attracted increasing attention in semi-supervised medical image segmentation. However, current methods usually suffer from the drawback that it is difficult to balance the computational cost, estimation accuracy, and theoretical support in a unified framework. To alleviate this problem, we introduce the Dempster-Shafer Theory of Evidence (DST) into semi-supervised medical image segmentation, dubbed Evidential Inference Learning (EVIL). EVIL provides a theoretically guaranteed solution to infer accurate uncertainty quantification in a single forward pass. Trustworthy pseudo labels on unlabeled data are generated after uncertainty estimation. The recently proposed consistency regularization-based training paradigm is adopted in our framework, which enforces the consistency on the perturbed predictions to enhance the generalization with few labeled data. Experimental results show that EVIL achieves competitive performance in comparison with several state-of-the-art methods on the public dataset.

## Keyword: ISP

### RepViT: Revisiting Mobile CNN From ViT Perspective

- **Authors:** Ao Wang, Hui Chen, Zijia Lin, Hengjun Pu, Guiguang Ding

- **Subjects:** Computer Vision and Pattern Recognition (cs.CV)

- **Arxiv link:** https://arxiv.org/abs/2307.09283

- **Pdf link:** https://arxiv.org/pdf/2307.09283

- **Abstract**

Recently, lightweight Vision Transformers (ViTs) demonstrate superior performance and lower latency compared with lightweight Convolutional Neural Networks (CNNs) on resource-constrained mobile devices. This improvement is usually attributed to the multi-head self-attention module, which enables the model to learn global representations. However, the architectural disparities between lightweight ViTs and lightweight CNNs have not been adequately examined. In this study, we revisit the efficient design of lightweight CNNs and emphasize their potential for mobile devices. We incrementally enhance the mobile-friendliness of a standard lightweight CNN, specifically MobileNetV3, by integrating the efficient architectural choices of lightweight ViTs. This ends up with a new family of pure lightweight CNNs, namely RepViT. Extensive experiments show that RepViT outperforms existing state-of-the-art lightweight ViTs and exhibits favorable latency in various vision tasks. On ImageNet, RepViT achieves over 80% top-1 accuracy with nearly 1ms latency on an iPhone 12, which is the first time for a lightweight model, to the best of our knowledge. Our largest model, RepViT-M3, obtains 81.4% accuracy with only 1.3ms latency. The code and trained models are available at https://github.com/jameslahm/RepViT.

## Keyword: image signal processing

There are no results.

## Keyword: image signal process

There are no results.

## Keyword: compression

### UPSCALE: Unconstrained Channel Pruning

- **Authors:** Alvin Wan, Hanxiang Hao, Kaushik Patnaik, Yueyang Xu, Omer Hadad, David Güera, Zhile Ren, Qi Shan

- **Subjects:** Computer Vision and Pattern Recognition (cs.CV)

- **Arxiv link:** https://arxiv.org/abs/2307.08771

- **Pdf link:** https://arxiv.org/pdf/2307.08771

- **Abstract**

As neural networks grow in size and complexity, inference speeds decline. To combat this, one of the most effective compression techniques -- channel pruning -- removes channels from weights. However, for multi-branch segments of a model, channel removal can introduce inference-time memory copies. In turn, these copies increase inference latency -- so much so that the pruned model can be slower than the unpruned model. As a workaround, pruners conventionally constrain certain channels to be pruned together. This fully eliminates memory copies but, as we show, significantly impairs accuracy. We now have a dilemma: Remove constraints but increase latency, or add constraints and impair accuracy. In response, our insight is to reorder channels at export time, (1) reducing latency by reducing memory copies and (2) improving accuracy by removing constraints. Using this insight, we design a generic algorithm UPSCALE to prune models with any pruning pattern. By removing constraints from existing pruners, we improve ImageNet accuracy for post-training pruned models by 2.1 points on average -- benefiting DenseNet (+16.9), EfficientNetV2 (+7.9), and ResNet (+6.2). Furthermore, by reordering channels, UPSCALE improves inference speeds by up to 2x over a baseline export.
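To make the memory-copy issue concrete: if a pruner keeps a non-contiguous set of channels, exporting the pruned tensor needs a gather (a copy), whereas a contiguous block can be taken as a cheap slice. The toy sketch below ranks output channels by L1 norm (a common pruning criterion, assumed here; the abstract says UPSCALE works with any pruning pattern) and builds a channel permutation that moves the kept channels to a contiguous prefix. It is not the UPSCALE algorithm, which per the abstract handles reordering across multi-branch segments; all names are hypothetical.

```python
from typing import List, Tuple


def l1_keep(weights: List[List[float]], k: int) -> List[int]:
    """Indices of the k output channels with the largest L1 norm, in original order."""
    norms = [sum(abs(w) for w in row) for row in weights]
    ranked = sorted(range(len(weights)), key=lambda c: norms[c], reverse=True)
    return sorted(ranked[:k])


def is_contiguous(idx: List[int]) -> bool:
    """True if the kept indices form one slice, so no gather (memory copy) is needed."""
    return all(b - a == 1 for a, b in zip(idx, idx[1:]))


def reorder(num_channels: int, keep: List[int]) -> Tuple[List[int], List[int]]:
    """Permutation placing kept channels first; returns (perm, new positions of kept channels)."""
    keep_set = set(keep)
    perm = keep + [c for c in range(num_channels) if c not in keep_set]
    return perm, list(range(len(keep)))
```

The same permutation must also be applied to the input channels of every consumer of this tensor so that the network computes the same function; keeping that consistent when several branches share one tensor is what makes the multi-branch case hard.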

### In Defense of Clip-based Video Relation Detection

- **Authors:** Meng Wei, Long Chen, Wei Ji, Xiaoyu Yue, Roger Zimmermann

- **Subjects:** Computer Vision and Pattern Recognition (cs.CV)

- **Arxiv link:** https://arxiv.org/abs/2307.08984

- **Pdf link:** https://arxiv.org/pdf/2307.08984

- **Abstract**

Video Visual Relation Detection (VidVRD) aims to detect visual relationship triplets in videos using spatial bounding boxes and temporal boundaries. Existing VidVRD methods can be broadly categorized into bottom-up and top-down paradigms, depending on their approach to classifying relations. Bottom-up methods follow a clip-based approach where they classify relations of short clip tubelet pairs and then merge them into long video relations. On the other hand, top-down methods directly classify long video tubelet pairs. While recent video-based methods utilizing video tubelets have shown promising results, we argue that the effective modeling of spatial and temporal context plays a more significant role than the choice between clip tubelets and video tubelets. This motivates us to revisit the clip-based paradigm and explore the key success factors in VidVRD. In this paper, we propose a Hierarchical Context Model (HCM) that enriches the object-based spatial context and relation-based temporal context based on clips. We demonstrate that using clip tubelets can achieve superior performance compared to most video-based methods. Additionally, using clip tubelets offers more flexibility in model designs and helps alleviate the limitations associated with video tubelets, such as the challenging long-term object tracking problem and the loss of temporal information in long-term tubelet feature compression. Extensive experiments conducted on two challenging VidVRD benchmarks validate that our HCM achieves new state-of-the-art performance, highlighting the effectiveness of incorporating advanced spatial and temporal context modeling within the clip-based paradigm.
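The bottom-up paradigm described above classifies relations per clip and then merges them into video-level relations. A minimal greedy merge could look like the sketch below. It assumes each clip prediction is a `(subject_id, predicate, object_id, start_frame, end_frame)` tuple, and that predictions with the same triplet are joined when their intervals overlap or lie within `gap` frames of each other. It illustrates only this generic association step, not HCM's context modelling; the tuple layout and the `gap` parameter are hypothetical.

```python
from typing import List, Tuple

# (subject_id, predicate, object_id, start_frame, end_frame)
Relation = Tuple[int, str, int, int, int]


def merge_clip_relations(preds: List[Relation], gap: int = 0) -> List[Relation]:
    """Greedily merge clip-level relation instances with identical triplets into longer ones."""
    # Sort so instances of the same triplet are adjacent and ordered by start frame.
    preds = sorted(preds, key=lambda r: (r[0], r[1], r[2], r[3]))
    merged: List[Relation] = []
    for s, p, o, start, end in preds:
        if merged:
            ms, mp, mo, mstart, mend = merged[-1]
            # Same triplet, and the new interval begins within `gap` frames of the current one.
            if (ms, mp, mo) == (s, p, o) and start <= mend + gap:
                merged[-1] = (s, p, o, mstart, max(mend, end))
                continue
        merged.append((s, p, o, start, end))
    return merged
```

For example, two back-to-back `ride` clips covering frames 0-30 and 30-60 become one relation spanning 0-60, while a third `ride` clip at 90-120 stays separate unless `gap` is at least 30.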

### Knowledge Distillation for Object Detection: from generic to remote sensing datasets

- **Authors:** Hoàng-Ân Lê, Minh-Tan Pham

- **Subjects:** Computer Vision and Pattern Recognition (cs.CV)

- **Arxiv link:** https://arxiv.org/abs/2307.09264

- **Pdf link:** https://arxiv.org/pdf/2307.09264

- **Abstract**

Knowledge distillation, a well-known model compression technique, is an active research area in both the computer vision and remote sensing communities. In this paper, we evaluate, in a remote sensing context, various off-the-shelf object detection knowledge distillation methods that were originally developed on generic computer vision datasets such as Pascal VOC. In particular, methods covering both logit mimicking and feature imitation approaches are applied to vehicle detection on well-known benchmarks such as the xView and VEDAI datasets. Extensive experiments are performed to compare the relative performance and interrelationships of the methods. Experimental results show high variations and confirm the importance of result aggregation and cross validation on remote sensing datasets.
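Of the two families mentioned, logit mimicking is the simpler to write down. The sketch below implements the classic temperature-scaled distillation loss of Hinton et al. for a single classification output: the KL divergence from the softened teacher distribution to the softened student distribution, scaled by t². It is assumed here only as a representative of the family; the evaluated detectors apply such losses to detection-head outputs, and the default temperature of 4.0 is an arbitrary choice.

```python
import math
from typing import List


def softmax(logits: List[float], t: float = 1.0) -> List[float]:
    """Temperature-scaled softmax, shifted by the max logit for numerical stability."""
    m = max(logits)
    exps = [math.exp((z - m) / t) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


def kd_logit_loss(student: List[float], teacher: List[float], t: float = 4.0) -> float:
    """KL(teacher || student) on temperature-softened distributions, scaled by t^2."""
    p_t = softmax(teacher, t)
    p_s = softmax(student, t)
    kl = sum(pt * math.log(pt / ps) for pt, ps in zip(p_t, p_s) if pt > 0)
    return (t ** 2) * kl
```

Feature imitation would instead penalise a distance (for example L2) between intermediate student and teacher feature maps, usually through an adaptation layer when their shapes differ.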

## Keyword: RAW

### EVIL: Evidential Inference Learning for Trustworthy Semi-supervised Medical Image Segmentation

- **Authors:** Yingyu Chen, Ziyuan Yang, Chenyu Shen, Zhiwen Wang, Yang Qin, Yi Zhang

- **Subjects:** Computer Vision and Pattern Recognition (cs.CV); Artificial Intelligence (cs.AI)

- **Arxiv link:** https://arxiv.org/abs/2307.08988

- **Pdf link:** https://arxiv.org/pdf/2307.08988

- **Abstract**

Recently, uncertainty-aware methods have attracted increasing attention in semi-supervised medical image segmentation. However, current methods usually find it difficult to balance computational cost, estimation accuracy, and theoretical support in a unified framework. To alleviate this problem, we introduce the Dempster-Shafer Theory of Evidence (DST) into semi-supervised medical image segmentation, dubbed Evidential Inference Learning (EVIL). EVIL provides a theoretically guaranteed solution to infer accurate uncertainty quantification in a single forward pass. Trustworthy pseudo labels on unlabeled data are generated after uncertainty estimation. The recently proposed consistency regularization-based training paradigm is adopted in our framework, which enforces consistency on perturbed predictions to improve generalization when labeled data are scarce. Experimental results show that EVIL achieves competitive performance in comparison with several state-of-the-art methods on the public dataset.
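The abstract does not give EVIL's equations. Evidential deep learning commonly maps Dempster-Shafer theory onto a Dirichlet distribution (subjective logic). In that formulation, non-negative per-class evidence e_k gives α_k = e_k + 1 and the Dirichlet strength is S = Σα_k. The belief in each class is b_k = e_k / S and the overall uncertainty is u = K / S, so the beliefs and the uncertainty sum to 1. The sketch below assumes that formulation plus a hypothetical uncertainty threshold for keeping pseudo labels; it is not taken from the paper.

```python
from typing import List, Optional, Tuple


def evidential_opinion(evidence: List[float]) -> Tuple[List[float], float]:
    """Map non-negative per-class evidence to (beliefs, uncertainty) via a Dirichlet."""
    if any(e < 0 for e in evidence):
        raise ValueError("evidence must be non-negative")
    k = len(evidence)
    alpha = [e + 1.0 for e in evidence]  # Dirichlet parameters alpha_k = e_k + 1
    s = sum(alpha)                       # Dirichlet strength S
    beliefs = [e / s for e in evidence]  # b_k = e_k / S
    return beliefs, k / s                # u = K / S


def pseudo_label(evidence: List[float], max_uncertainty: float = 0.2) -> Optional[int]:
    """Return the highest-belief class if uncertainty is low enough, else None (ignored)."""
    beliefs, u = evidential_opinion(evidence)
    if u > max_uncertainty:
        return None
    return max(range(len(beliefs)), key=beliefs.__getitem__)
```

In a network, the evidence is typically a non-negative activation (e.g. ReLU or softplus) of the per-pixel segmentation logits, which is why this kind of uncertainty comes out of the same single forward pass.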

### Visual Validation versus Visual Estimation: A Study on the Average Value in Scatterplots

- **Authors:** Daniel Braun, Ashley Suh, Remco Chang, Michael Gleicher, Tatiana von Landesberger

- **Subjects:** Computer Vision and Pattern Recognition (cs.CV); Graphics (cs.GR)

- **Arxiv link:** https://arxiv.org/abs/2307.09330

- **Pdf link:** https://arxiv.org/pdf/2307.09330

- **Abstract**

We investigate the ability of individuals to visually validate statistical models in terms of their fit to the data. While visual model estimation has been studied extensively, visual model validation remains under-investigated. It is unknown how well people are able to visually validate models, and how their performance compares to visual and computational estimation. As a starting point, we conducted a study across two populations (crowdsourced and volunteers). Participants had to both visually estimate (i.e., draw) and visually validate (i.e., accept or reject) the frequently studied model of averages. Across both populations, the level of accuracy of the models that were considered valid was lower than the accuracy of the estimated models. We find that participants' validation and estimation were unbiased. Moreover, their natural critical point between accepting and rejecting a given mean value is close to the boundary of its 95% confidence interval, indicating that the visually perceived confidence interval corresponds to a common statistical standard. Our work contributes to the understanding of visual model validation and opens new research opportunities.
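For reference, the statistical standard that the participants' criterion is compared against is a 95% confidence interval for a mean. The sketch below uses the normal approximation, mean ± 1.96·s/√n; a t critical value would give a slightly wider interval for small n. The helper that accepts a candidate mean inside the interval is a hypothetical illustration of "validating" a drawn average, not the study's procedure.

```python
import math
import statistics
from typing import Sequence, Tuple


def mean_ci95(values: Sequence[float]) -> Tuple[float, float]:
    """Normal-approximation 95% confidence interval for the mean of `values`."""
    n = len(values)
    if n < 2:
        raise ValueError("need at least two values")
    m = statistics.mean(values)
    half_width = 1.96 * statistics.stdev(values) / math.sqrt(n)  # z * standard error
    return m - half_width, m + half_width


def accept_mean(values: Sequence[float], candidate: float) -> bool:
    """Accept a candidate average if it lies within the 95% confidence interval."""
    lo, hi = mean_ci95(values)
    return lo <= candidate <= hi
```

For the sample `[2, 4, 4, 4, 5, 5, 7, 9]` (mean 5), the interval is roughly 3.52 to 6.48, so a drawn average of 3.6 would be accepted and one of 7.0 rejected.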

## Keyword: raw image

There are no results.


New submissions for Wed, 19 Jul 23

## Keyword: events

### Online Self-Supervised Thermal Water Segmentation for Aerial Vehicles

- **Authors:** Connor Lee, Jonathan Gustafsson Frennert, Lu Gan, Matthew Anderson, Soon-Jo Chung

- **Subjects:** Computer Vision and Pattern Recognition (cs.CV); Robotics (cs.RO)

- **Arxiv link:** https://arxiv.org/abs/2307.09027

- **Pdf link:** https://arxiv.org/pdf/2307.09027

- **Abstract**

We present a new method to adapt an RGB-trained water segmentation network to target-domain aerial thermal imagery using online self-supervision by leveraging texture and motion cues as supervisory signals. This new thermal capability enables current autonomous aerial robots operating in near-shore environments to perform tasks such as visual navigation, bathymetry, and flow tracking at night. Our method overcomes the problem of scarce and difficult-to-obtain near-shore thermal data that prevents the application of conventional supervised and unsupervised methods. In this work, we curate the first aerial thermal near-shore dataset, show that our approach outperforms fully-supervised segmentation models trained on limited target-domain thermal data, and demonstrate real-time capabilities onboard an Nvidia Jetson embedded computing platform. Code and datasets used in this work will be available at: https://github.com/connorlee77/uav-thermal-water-segmentation.
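As a rough illustration of using texture as a supervisory signal, assume (hypothetically) that water appears as low-texture regions in thermal imagery. The sketch below computes the local variance in a 3×3 window and turns it into pseudo labels: 1 (water) below a low threshold, 0 (not water) above a high threshold, and -1 (ignored) in between. The thresholds and label convention are assumptions for illustration, and the paper's actual cues, including motion, are not reproduced here.

```python
from typing import List


def local_variance(img: List[List[float]], r: int = 1) -> List[List[float]]:
    """Variance of each pixel's (2r+1)x(2r+1) neighbourhood, clipped at the image border."""
    h, w = len(img), len(img[0])
    out = [[0.0] * w for _ in range(h)]
    for y in range(h):
        for x in range(w):
            vals = [img[j][i]
                    for j in range(max(0, y - r), min(h, y + r + 1))
                    for i in range(max(0, x - r), min(w, x + r + 1))]
            mean = sum(vals) / len(vals)
            out[y][x] = sum((v - mean) ** 2 for v in vals) / len(vals)
    return out


def texture_pseudo_labels(img: List[List[float]], low: float, high: float) -> List[List[int]]:
    """1 = likely water (smooth), 0 = likely not water (textured), -1 = ignore."""
    var = local_variance(img)
    return [[1 if v < low else 0 if v > high else -1 for v in row] for row in var]
```

Leaving the ambiguous middle band unlabeled is a common way to keep noisy self-supervision out of the loss; only the confidently smooth or textured pixels would then be used to adapt the segmentation network.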

### Plug the Leaks: Advancing Audio-driven Talking Face Generation by Preventing Unintended Information Flow

- **Authors:** Dogucan Yaman, Fevziye Irem Eyiokur, Leonard Bärmann, Hazim Kemal Ekenel, Alexander Waibel

- **Subjects:** Computer Vision and Pattern Recognition (cs.CV)

- **Arxiv link:** https://arxiv.org/abs/2307.09368

- **Pdf link:** https://arxiv.org/pdf/2307.09368

- **Abstract**