Unnamed: 0 (int64, 0 to 832k) | id (float64, 2.49B to 32.1B) | type (string, 1 class) | created_at (string, length 19) | repo (string, length 7 to 112) | repo_url (string, length 36 to 141) | action (string, 3 classes) | title (string, length 1 to 744) | labels (string, length 4 to 574) | body (string, length 9 to 211k) | index (string, 10 classes) | text_combine (string, length 96 to 211k) | label (string, 2 classes) | text (string, length 96 to 188k) | binary_label (int64, 0 to 1) |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

3,339

| 6,473,237,849

|

IssuesEvent

|

2017-08-17 15:29:58

|

syndesisio/syndesis-ui

|

https://api.github.com/repos/syndesisio/syndesis-ui

|

closed

|

Documentation for UI

|

dev process research

|

Automatically generated documentation could be very useful for encouraging contribution, as we are using many third party dependencies, and many developers are new to Angular 2 on its own. For instance, we are using Angular CLI, and just going through its dependencies, as well as how Webpack works, etc. To facilitate this, it makes sense to have some type of technical overview along with automatically generated documentation.

|

1.0

|

Documentation for UI - Automatically generated documentation could be very useful for encouraging contribution, as we are using many third party dependencies, and many developers are new to Angular 2 on its own. For instance, we are using Angular CLI, and just going through its dependencies, as well as how Webpack works, etc. To facilitate this, it makes sense to have some type of technical overview along with automatically generated documentation.

|

process

|

documentation for ui automatically generated documentation could be very useful for encouraging contribution as we are using many third party dependencies and many developers are new to angular on its own for instance we are using angular cli and just going through its dependencies as well as how webpack works etc to facilitate this it makes sense to have some type of technical overview along with automatically generated documentation

| 1

|

12,309

| 14,859,802,579

|

IssuesEvent

|

2021-01-18 19:12:44

|

neuropoly/ukbiobank-spinalcord-csa

|

https://api.github.com/repos/neuropoly/ukbiobank-spinalcord-csa

|

closed

|

No use of manual disc label for T2w in process_data.sh

|

process_data

|

In `process_data.sh`, the manual C2-C3 disc label is only used in the function `label_if_does_not_exist` called for T1w disc labeling :

https://github.com/sandrinebedard/Projet3/blob/5bf91fb4531cc7e4543e281b53825333e5b8d8ee/process_data.sh#L32-L41

But if the subject moved between T1w et T2w as discussed in issue #13 , we will add manual identification of the disc for T2w. The problem is that file manual_label for T2w will never be used in `process_data.sh` as it is right now, the function `label_if_does_not_exist` is not used here:

https://github.com/sandrinebedard/Projet3/blob/5bf91fb4531cc7e4543e281b53825333e5b8d8ee/process_data.sh#L145-L151

It will only use labeling from T1w `label_T1w/template/PAM50_levels.nii.gz` even if T2w manual disc label exists.

|

1.0

|

No use of manual disc label for T2w in process_data.sh - In `process_data.sh`, the manual C2-C3 disc label is only used in the function `label_if_does_not_exist` called for T1w disc labeling :

https://github.com/sandrinebedard/Projet3/blob/5bf91fb4531cc7e4543e281b53825333e5b8d8ee/process_data.sh#L32-L41

But if the subject moved between T1w et T2w as discussed in issue #13 , we will add manual identification of the disc for T2w. The problem is that file manual_label for T2w will never be used in `process_data.sh` as it is right now, the function `label_if_does_not_exist` is not used here:

https://github.com/sandrinebedard/Projet3/blob/5bf91fb4531cc7e4543e281b53825333e5b8d8ee/process_data.sh#L145-L151

It will only use labeling from T1w `label_T1w/template/PAM50_levels.nii.gz` even if T2w manual disc label exists.

|

process

|

no use of manual disc label for in process data sh in process data sh the manual disc label is only used in the function label if does not exist called for disc labeling but if the subject moved between et as discussed in issue we will add manual identification of the disc for the problem is that file manual label for will never be used in process data sh as it is right now the function label if does not exist is not used here it will only use labeling from label template levels nii gz even if manual disc label exists

| 1

|

21,380

| 29,202,228,868

|

IssuesEvent

|

2023-05-21 00:37:00

|

devssa/onde-codar-em-salvador

|

https://api.github.com/repos/devssa/onde-codar-em-salvador

|

closed

|

[Hibrido / Barueri, São Paulo, Brazil] Database Consultant (Oracle) na Coodesh

|

SALVADOR BANCO DE DADOS MYSQL SQL REQUISITOS Telecomunicações FIREWALL PROCESSOS GITHUB BACKUP SEGURANÇA UMA C QUALIDADE NEGÓCIOS MONITORAMENTO ALOCADO Stale

|

## Descrição da vaga:

Esta é uma vaga de um parceiro da plataforma Coodesh, ao candidatar-se você terá acesso as informações completas sobre a empresa e benefícios.

Fique atento ao redirecionamento que vai te levar para uma url [https://coodesh.com](https://coodesh.com/vagas/database-consultant-oracle-175423911?utm_source=github&utm_medium=devssa-onde-codar-em-salvador&modal=open) com o pop-up personalizado de candidatura. 👋

<p>A <strong>Grupo Telefônica</strong> está em busca de <strong><ins>Database Consultant (Oracle)</ins></strong> para compor seu time!</p>

<p></p>

<p>A Unidade de Negócio de Cloud da Telefónica Tech chega ao Brasil para habilitar as empresas com soluções de cloud híbrida e multi-cloud através de um portfólio de serviços ágeis e inovadores. </p>

<p></p>

<p><strong>Responsabilidades:</strong></p>

<ul>

<li>Atendimento de incidentes, câmbios e requisições de serviço;</li>

<li>Instalação, configuração, upgrade e migração de versões de BD;</li>

<li>Aplicação de patch em BD;</li>

<li>Será responsável pela realização de análises preventivas e corretivas de bancos de dados relacionais, especialmente em banco de dados Oracle e SQL Server;</li>

<li>Planejar e estruturar o banco de dados, garantindo a melhor arquitetura, integridade dos dados, segurança de acesso, identificando oportunidades de melhoria na construção e utilização;</li>

<li>Efetuar monitoramento de banco de dados, análise e tunning de performance;</li>

<li>Administração replicas via dataguard e golden gate;</li>

<li>Auxílio em pontos de contenção ou consumo excessivo de recursos;</li>

<li>Apoiar/melhorar rotinas de backup/restore;</li>

<li>Análise de infra-estrutura e capacity planning;</li>

<li>Habilidade com tunning de queries;</li>

<li>Atuar em ambientes de alta disponibilidade;</li>

<li>Criação e automatizar a criação de relatórios técnicos para compartilhar com nossos clientes.</li>

</ul>

## Telefônica :

<p>Somos uma empresa do Grupo Telefônica, líder em telecomunicações no Brasil. Trabalhamos com o propósito de Digitalizar para Aproximar pessoas, negócios e toda sociedade, construindo uma nação mais conectada e transformando a vida dos brasileiros. </p>

<p>Buscamos ampliar a autonomia, a personalização e as escolhas em tempo real dos nossos clientes, colocando-os no comando da sua vida digital, com segurança e confiabilidade – tudo isso com a qualidade que só a Vivo tem.</p><a href='https://coodesh.com/empresas/telefonica'>Veja mais no site</a>

## Habilidades:

- Oracle

- Microsoft SQL Server

- Banco de dados relacionais (SQL)

## Local:

Barueri, São Paulo, Brazil

## Requisitos:

- Experiência sólida com ambientes produtivos críticos e de alta disponibilidade;

- Experiência e vivência comprovada com engines de bancos de dados Oracle, SQL Server e MySQL;

- Sólidos conhecimentos em performance tunning;

- Perfil Analítico, Hands on e Proativo;

- Conhecimento e experiência com processos Ágeis;

- Conhecimento na maioria das ferramentas: Dataguard, Golden Gate, Real Application Test (RAT), RMAN, Enterprise Manager, Database Firewall, Data Masking;

- Superior Completo - Certificado Conclusão.

## Diferenciais:

- Certificações Oracle e SQL serão diferenciais.

## Como se candidatar:

Candidatar-se exclusivamente através da plataforma Coodesh no link a seguir: [Database Consultant (Oracle) na Telefônica ](https://coodesh.com/vagas/database-consultant-oracle-175423911?utm_source=github&utm_medium=devssa-onde-codar-em-salvador&modal=open)

Após candidatar-se via plataforma Coodesh e validar o seu login, você poderá acompanhar e receber todas as interações do processo por lá. Utilize a opção **Pedir Feedback** entre uma etapa e outra na vaga que se candidatou. Isso fará com que a pessoa **Recruiter** responsável pelo processo na empresa receba a notificação.

## Labels

#### Alocação

Alocado

#### Regime

CLT

#### Categoria

Banco de Dados

|

1.0

|

[Hibrido / Barueri, São Paulo, Brazil] Database Consultant (Oracle) na Coodesh - ## Descrição da vaga:

Esta é uma vaga de um parceiro da plataforma Coodesh, ao candidatar-se você terá acesso as informações completas sobre a empresa e benefícios.

Fique atento ao redirecionamento que vai te levar para uma url [https://coodesh.com](https://coodesh.com/vagas/database-consultant-oracle-175423911?utm_source=github&utm_medium=devssa-onde-codar-em-salvador&modal=open) com o pop-up personalizado de candidatura. 👋

<p>A <strong>Grupo Telefônica</strong> está em busca de <strong><ins>Database Consultant (Oracle)</ins></strong> para compor seu time!</p>

<p></p>

<p>A Unidade de Negócio de Cloud da Telefónica Tech chega ao Brasil para habilitar as empresas com soluções de cloud híbrida e multi-cloud através de um portfólio de serviços ágeis e inovadores. </p>

<p></p>

<p><strong>Responsabilidades:</strong></p>

<ul>

<li>Atendimento de incidentes, câmbios e requisições de serviço;</li>

<li>Instalação, configuração, upgrade e migração de versões de BD;</li>

<li>Aplicação de patch em BD;</li>

<li>Será responsável pela realização de análises preventivas e corretivas de bancos de dados relacionais, especialmente em banco de dados Oracle e SQL Server;</li>

<li>Planejar e estruturar o banco de dados, garantindo a melhor arquitetura, integridade dos dados, segurança de acesso, identificando oportunidades de melhoria na construção e utilização;</li>

<li>Efetuar monitoramento de banco de dados, análise e tunning de performance;</li>

<li>Administração replicas via dataguard e golden gate;</li>

<li>Auxílio em pontos de contenção ou consumo excessivo de recursos;</li>

<li>Apoiar/melhorar rotinas de backup/restore;</li>

<li>Análise de infra-estrutura e capacity planning;</li>

<li>Habilidade com tunning de queries;</li>

<li>Atuar em ambientes de alta disponibilidade;</li>

<li>Criação e automatizar a criação de relatórios técnicos para compartilhar com nossos clientes.</li>

</ul>

## Telefônica :

<p>Somos uma empresa do Grupo Telefônica, líder em telecomunicações no Brasil. Trabalhamos com o propósito de Digitalizar para Aproximar pessoas, negócios e toda sociedade, construindo uma nação mais conectada e transformando a vida dos brasileiros. </p>

<p>Buscamos ampliar a autonomia, a personalização e as escolhas em tempo real dos nossos clientes, colocando-os no comando da sua vida digital, com segurança e confiabilidade – tudo isso com a qualidade que só a Vivo tem.</p><a href='https://coodesh.com/empresas/telefonica'>Veja mais no site</a>

## Habilidades:

- Oracle

- Microsoft SQL Server

- Banco de dados relacionais (SQL)

## Local:

Barueri, São Paulo, Brazil

## Requisitos:

- Experiência sólida com ambientes produtivos críticos e de alta disponibilidade;

- Experiência e vivência comprovada com engines de bancos de dados Oracle, SQL Server e MySQL;

- Sólidos conhecimentos em performance tunning;

- Perfil Analítico, Hands on e Proativo;

- Conhecimento e experiência com processos Ágeis;

- Conhecimento na maioria das ferramentas: Dataguard, Golden Gate, Real Application Test (RAT), RMAN, Enterprise Manager, Database Firewall, Data Masking;

- Superior Completo - Certificado Conclusão.

## Diferenciais:

- Certificações Oracle e SQL serão diferenciais.

## Como se candidatar:

Candidatar-se exclusivamente através da plataforma Coodesh no link a seguir: [Database Consultant (Oracle) na Telefônica ](https://coodesh.com/vagas/database-consultant-oracle-175423911?utm_source=github&utm_medium=devssa-onde-codar-em-salvador&modal=open)

Após candidatar-se via plataforma Coodesh e validar o seu login, você poderá acompanhar e receber todas as interações do processo por lá. Utilize a opção **Pedir Feedback** entre uma etapa e outra na vaga que se candidatou. Isso fará com que a pessoa **Recruiter** responsável pelo processo na empresa receba a notificação.

## Labels

#### Alocação

Alocado

#### Regime

CLT

#### Categoria

Banco de Dados

|

process

|

database consultant oracle na coodesh descrição da vaga esta é uma vaga de um parceiro da plataforma coodesh ao candidatar se você terá acesso as informações completas sobre a empresa e benefícios fique atento ao redirecionamento que vai te levar para uma url com o pop up personalizado de candidatura 👋 a grupo telefônica está em busca de database consultant oracle para compor seu time a unidade de negócio de cloud da telefónica tech chega ao brasil para habilitar as empresas com soluções de cloud híbrida e multi cloud através de um portfólio de serviços ágeis e inovadores nbsp responsabilidades atendimento de incidentes câmbios e requisições de serviço instalação configuração upgrade e migração de versões de bd aplicação de patch em bd será responsável pela realização de análises preventivas e corretivas de bancos de dados relacionais especialmente em banco de dados oracle e sql server planejar e estruturar o banco de dados garantindo a melhor arquitetura integridade dos dados segurança de acesso identificando oportunidades de melhoria na construção e utilização efetuar monitoramento de banco de dados análise e tunning de performance administração replicas via dataguard e golden gate auxílio em pontos de contenção ou consumo excessivo de recursos apoiar melhorar rotinas de backup restore análise de infra estrutura e capacity planning habilidade com tunning de queries atuar em ambientes de alta disponibilidade criação e automatizar a criação de relatórios técnicos para compartilhar com nossos clientes telefônica somos uma empresa do grupo telefônica líder em telecomunicações no brasil trabalhamos com o propósito de digitalizar para aproximar pessoas negócios e toda sociedade construindo uma nação mais conectada e transformando a vida dos brasileiros nbsp buscamos ampliar a autonomia a personalização e as escolhas em tempo real dos nossos clientes colocando os no comando da sua vida digital com segurança e confiabilidade – tudo isso com a qualidade que só a vivo tem 
habilidades oracle microsoft sql server banco de dados relacionais sql local barueri são paulo brazil requisitos experiência sólida com ambientes produtivos críticos e de alta disponibilidade experiência e vivência comprovada com engines de bancos de dados oracle sql server e mysql sólidos conhecimentos em performance tunning perfil analítico hands on e proativo conhecimento e experiência com processos ágeis conhecimento na maioria das ferramentas dataguard golden gate real application test rat rman enterprise manager database firewall data masking superior completo certificado conclusão diferenciais certificações oracle e sql serão diferenciais como se candidatar candidatar se exclusivamente através da plataforma coodesh no link a seguir após candidatar se via plataforma coodesh e validar o seu login você poderá acompanhar e receber todas as interações do processo por lá utilize a opção pedir feedback entre uma etapa e outra na vaga que se candidatou isso fará com que a pessoa recruiter responsável pelo processo na empresa receba a notificação labels alocação alocado regime clt categoria banco de dados

| 1

|

308,751

| 9,449,471,994

|

IssuesEvent

|

2019-04-16 02:03:53

|

PMEAL/OpenPNM

|

https://api.github.com/repos/PMEAL/OpenPNM

|

closed

|

scaling on plot_connections is messed up in 2D

|

Priority - Low bug

|

When plotting coordinates in 2D, it scale from 0,0 but when plotting connections, it zooms in to 0.5, 0.5

|

1.0

|

scaling on plot_connections is messed up in 2D - When plotting coordinates in 2D, it scale from 0,0 but when plotting connections, it zooms in to 0.5, 0.5

|

non_process

|

scaling on plot connections is messed up in when plotting coordinates in it scale from but when plotting connections it zooms in to

| 0

|

203,906

| 15,890,724,780

|

IssuesEvent

|

2021-04-10 16:28:27

|

veg-share/frontend-ui

|

https://api.github.com/repos/veg-share/frontend-ui

|

closed

|

Dev Issue : Initial SetUp

|

Developer Phase 1 documentation set-up

|

**What is the issue?**

> Set up initial build of React app

install updated dependencies

establish local/remote repository connections for all members

-[ ] https://www.npmjs.com/package/react-modal

|

1.0

|

Dev Issue : Initial SetUp - **What is the issue?**

> Set up initial build of React app

install updated dependencies

establish local/remote repository connections for all members

-[ ] https://www.npmjs.com/package/react-modal

|

non_process

|

dev issue initial setup what is the issue set up initial build of react app install updated dependencies establish local remote repository connections for all members

| 0

|

759,494

| 26,597,801,969

|

IssuesEvent

|

2023-01-23 13:46:29

|

stratosphererl/stratosphere

|

https://api.github.com/repos/stratosphererl/stratosphere

|

opened

|

[SPIKE] - Consolidating CSS and Components for a unified and responsive design system

|

type: spike priority: medium work: complicated [2] area: infra

|

# Context

To improve the maintainability and consistency of our design system, we want to consolidate our CSS and components and make them responsive. Our current system uses tailwind components, but there are issues with code smell and lack of generic components. We want to research options for unifying the design and feel, such as using a component library or creating an in-house solution.

# Timebox

3 days

# Tasks

- [ ] Research and evaluate different CSS and component consolidation techniques and tools

- [ ] Audit the current CSS and components, identifying areas for improvement and consolidation

- [ ] Research and evaluate different responsive design techniques and tools

- [ ] Research and evaluate different component libraries and in-house solutions for creating a unified design system

- [ ] Create a plan for consolidating the CSS and components and making them responsive

# Notes

- Storybook is a good component testing library that isolates components.

|

1.0

|

[SPIKE] - Consolidating CSS and Components for a unified and responsive design system - # Context

To improve the maintainability and consistency of our design system, we want to consolidate our CSS and components and make them responsive. Our current system uses tailwind components, but there are issues with code smell and lack of generic components. We want to research options for unifying the design and feel, such as using a component library or creating an in-house solution.

# Timebox

3 days

# Tasks

- [ ] Research and evaluate different CSS and component consolidation techniques and tools

- [ ] Audit the current CSS and components, identifying areas for improvement and consolidation

- [ ] Research and evaluate different responsive design techniques and tools

- [ ] Research and evaluate different component libraries and in-house solutions for creating a unified design system

- [ ] Create a plan for consolidating the CSS and components and making them responsive

# Notes

- Storybook is a good component testing library that isolates components.

|

non_process

|

consolidating css and components for a unified and responsive design system context to improve the maintainability and consistency of our design system we want to consolidate our css and components and make them responsive our current system uses tailwind components but there are issues with code smell and lack of generic components we want to research options for unifying the design and feel such as using a component library or creating an in house solution timebox days tasks research and evaluate different css and component consolidation techniques and tools audit the current css and components identifying areas for improvement and consolidation research and evaluate different responsive design techniques and tools research and evaluate different component libraries and in house solutions for creating a unified design system create a plan for consolidating the css and components and making them responsive notes storybook is a good component testing library that isolates components

| 0

|

20,725

| 27,425,740,748

|

IssuesEvent

|

2023-03-01 20:08:32

|

ORNL-AMO/AMO-Tools-Desktop

|

https://api.github.com/repos/ORNL-AMO/AMO-Tools-Desktop

|

opened

|

Cooling Fan Power Updates

|

bug Calculator Process Cooling

|

* move Water Flow Rate and rename to "Rated Cooling Tower Water Flow Rate" (if name is too long - just go with "Rated Water Flow Rate")

* Y-axis label unit is hp not %

* Add to end of calculator help text (like the note in Process heating's "O2 Enrichment"):

``` NOTE: This calculator assumes the cooling tower has a constant water flow rate and cannot estimate the fan power for variable water flow conditions.```

|

1.0

|

Cooling Fan Power Updates - * move Water Flow Rate and rename to "Rated Cooling Tower Water Flow Rate" (if name is too long - just go with "Rated Water Flow Rate")

* Y-axis label unit is hp not %

* Add to end of calculator help text (like the note in Process heating's "O2 Enrichment"):

``` NOTE: This calculator assumes the cooling tower has a constant water flow rate and cannot estimate the fan power for variable water flow conditions.```

|

process

|

cooling fan power updates move water flow rate and rename to rated cooling tower water flow rate if name is too long just go with rated water flow rate y axis label unit is hp not add to end of calculator help text like the note in process heating s enrichment note this calculator assumes the cooling tower has a constant water flow rate and cannot estimate the fan power for variable water flow conditions

| 1

|

106,435

| 4,272,326,717

|

IssuesEvent

|

2016-07-13 14:13:11

|

chocolatey/choco

|

https://api.github.com/repos/chocolatey/choco

|

closed

|

64bit 7z.exe on 32bit system in chocolatey\tools

|

3 - Done Bug Priority_HIGH

|

I'm working on a 32bit system. If I want to install a zip package, choco install fails with the following message:

`ERROR: Exception calling "Start" with "0" argument(s): "The specified executable is not a valid application for this OS platform."`

I checked the C:\ProgramData\chocolatey\tools\7z.exe and it is really a 64bit version.

If I change it to a 32bit build, the install works well.

### Output Log

```

2016-06-28 09:07:36,817 [INFO ] - ============================================================

2016-06-28 09:07:36,818 [INFO ] - Chocolatey v0.9.10.3

2016-06-28 09:07:36,822 [DEBUG] - Chocolatey is running on Windows v 6.1.7601.65536

2016-06-28 09:07:36,824 [DEBUG] - Attempting to delete file "C:/ProgramData/chocolatey/choco.exe.old".

2016-06-28 09:07:36,827 [DEBUG] - Attempting to delete file "C:\ProgramData\chocolatey\choco.exe.old".

2016-06-28 09:07:36,836 [DEBUG] - Command line: "C:\ProgramData\chocolatey\choco.exe" install vim-tux.portable -fdv -s C:\ProgramData\chocolatey\lib-bad\vim-tux.portable

2016-06-28 09:07:36,838 [DEBUG] - Received arguments: install vim-tux.portable -fdv -s C:\ProgramData\chocolatey\lib-bad\vim-tux.portable

2016-06-28 09:07:36,875 [DEBUG] - RemovePendingPackagesTask is now ready and waiting for PreRunMessage.

2016-06-28 09:07:36,879 [DEBUG] - Sending message 'PreRunMessage' out if there are subscribers...

2016-06-28 09:07:36,883 [DEBUG] - [Pending] Removing all pending packages that should not be considered installed...

2016-06-28 09:07:36,936 [DEBUG] - The source 'C:\ProgramData\chocolatey\lib-bad\vim-tux.portable' evaluated to a 'normal' source type

2016-06-28 09:07:36,939 [DEBUG] -

NOTE: Hiding sensitive configuration data! Please double and triple

check to be sure no sensitive data is shown, especially if copying

output to a gist for review.

2016-06-28 09:07:36,945 [DEBUG] - Configuration: CommandName='install'|

CacheLocation='C:\Users\xxxxx\AppData\Local\Temp\chocolatey'|

ContainsLegacyPackageInstalls='True'|

CommandExecutionTimeoutSeconds='2700'|WebRequestTimeoutSeconds='30'|

Sources='C:\ProgramData\chocolatey\lib-bad\vim-tux.portable'|

SourceType='normal'|Debug='True'|Verbose='True'|Force='True'|

Noop='False'|HelpRequested='False'|RegularOutput='True'|

QuietOutput='False'|PromptForConfirmation='False'|

AcceptLicense='False'|

AllowUnofficialBuild='False'|Input='vim-tux.portable'|

AllVersions='False'|SkipPackageInstallProvider='False'|

PackageNames='vim-tux.portable'|Prerelease='False'|ForceX86='False'|

OverrideArguments='False'|NotSilent='False'|IgnoreDependencies='False'|

AllowMultipleVersions='False'|AllowDowngrade='False'|

ForceDependencies='False'|Information.PlatformType='Windows'|

Information.PlatformVersion='6.1.7601.65536'|

Information.PlatformName='Windows 7'|

Information.ChocolateyVersion='0.9.10.3'|

Information.ChocolateyProductVersion='0.9.10.3'|

Information.FullName='choco, Version=0.9.10.3, Culture=neutral, PublicKeyToken=79d02ea9cad655eb'|

Information.Is64Bit='False'|Information.IsInteractive='True'|

Information.IsUserAdministrator='True'|

Information.IsProcessElevated='True'|

Information.IsLicensedVersion='False'|Features.AutoUninstaller='True'|

Features.CheckSumFiles='True'|Features.FailOnAutoUninstaller='False'|

Features.FailOnStandardError='False'|Features.UsePowerShellHost='True'|

Features.LogEnvironmentValues='False'|Features.VirusCheck='False'|

Features.FailOnInvalidOrMissingLicense='False'|

Features.IgnoreInvalidOptionsSwitches='True'|

Features.UsePackageExitCodes='True'|

Features.UseFipsCompliantChecksums='False'|

ListCommand.LocalOnly='False'|

ListCommand.IncludeRegistryPrograms='False'|ListCommand.PageSize='25'|

ListCommand.Exact='False'|ListCommand.ByIdOnly='False'|

ListCommand.IdStartsWith='False'|ListCommand.OrderByPopularity='False'|

ListCommand.ApprovedOnly='False'|

ListCommand.DownloadCacheAvailable='False'|

ListCommand.NotBroken='False'|UpgradeCommand.FailOnUnfound='False'|

UpgradeCommand.FailOnNotInstalled='False'|

UpgradeCommand.NotifyOnlyAvailableUpgrades='False'|

NewCommand.AutomaticPackage='False'|

NewCommand.UseOriginalTemplate='False'|SourceCommand.Command='unknown'|

SourceCommand.Priority='0'|FeatureCommand.Command='unknown'|

ConfigCommand.Command='unknown'|PinCommand.Command='unknown'|

...

Executing command ['C:\ProgramData\chocolatey\tools\7z.exe' x -aoa -bd -bb1 -o"C:\ProgramData\chocolatey\lib\vim-tux.portable\tools\vim74" -y "C:\Users\xxxxx\AppData\Local\Temp\chocolatey\vim-tux.portable\7.4.1949\complete-x86.7z"]

ERROR: Exception calling "Start" with "0" argument(s): "The specified executable is not a valid application for this OS platform."

at Get-ChocolateyUnzip, C:\ProgramData\chocolatey\helpers\functions\Get-ChocolateyUnzip.ps1: line 159

```

|

1.0

|

64bit 7z.exe on 32bit system in chocolatey\tools - I'm working on a 32bit system. If I want to install a zip package, choco install fails with the following message:

`ERROR: Exception calling "Start" with "0" argument(s): "The specified executable is not a valid application for this OS platform."`

I checked the C:\ProgramData\chocolatey\tools\7z.exe and it is really a 64bit version.

If I change it to a 32bit build, the install works well.

### Output Log

```

2016-06-28 09:07:36,817 [INFO ] - ============================================================

2016-06-28 09:07:36,818 [INFO ] - Chocolatey v0.9.10.3

2016-06-28 09:07:36,822 [DEBUG] - Chocolatey is running on Windows v 6.1.7601.65536

2016-06-28 09:07:36,824 [DEBUG] - Attempting to delete file "C:/ProgramData/chocolatey/choco.exe.old".

2016-06-28 09:07:36,827 [DEBUG] - Attempting to delete file "C:\ProgramData\chocolatey\choco.exe.old".

2016-06-28 09:07:36,836 [DEBUG] - Command line: "C:\ProgramData\chocolatey\choco.exe" install vim-tux.portable -fdv -s C:\ProgramData\chocolatey\lib-bad\vim-tux.portable

2016-06-28 09:07:36,838 [DEBUG] - Received arguments: install vim-tux.portable -fdv -s C:\ProgramData\chocolatey\lib-bad\vim-tux.portable

2016-06-28 09:07:36,875 [DEBUG] - RemovePendingPackagesTask is now ready and waiting for PreRunMessage.

2016-06-28 09:07:36,879 [DEBUG] - Sending message 'PreRunMessage' out if there are subscribers...

2016-06-28 09:07:36,883 [DEBUG] - [Pending] Removing all pending packages that should not be considered installed...

2016-06-28 09:07:36,936 [DEBUG] - The source 'C:\ProgramData\chocolatey\lib-bad\vim-tux.portable' evaluated to a 'normal' source type

2016-06-28 09:07:36,939 [DEBUG] -

NOTE: Hiding sensitive configuration data! Please double and triple

check to be sure no sensitive data is shown, especially if copying

output to a gist for review.

2016-06-28 09:07:36,945 [DEBUG] - Configuration: CommandName='install'|

CacheLocation='C:\Users\xxxxx\AppData\Local\Temp\chocolatey'|

ContainsLegacyPackageInstalls='True'|

CommandExecutionTimeoutSeconds='2700'|WebRequestTimeoutSeconds='30'|

Sources='C:\ProgramData\chocolatey\lib-bad\vim-tux.portable'|

SourceType='normal'|Debug='True'|Verbose='True'|Force='True'|

Noop='False'|HelpRequested='False'|RegularOutput='True'|

QuietOutput='False'|PromptForConfirmation='False'|

AcceptLicense='False'|

AllowUnofficialBuild='False'|Input='vim-tux.portable'|

AllVersions='False'|SkipPackageInstallProvider='False'|

PackageNames='vim-tux.portable'|Prerelease='False'|ForceX86='False'|

OverrideArguments='False'|NotSilent='False'|IgnoreDependencies='False'|

AllowMultipleVersions='False'|AllowDowngrade='False'|

ForceDependencies='False'|Information.PlatformType='Windows'|

Information.PlatformVersion='6.1.7601.65536'|

Information.PlatformName='Windows 7'|

Information.ChocolateyVersion='0.9.10.3'|

Information.ChocolateyProductVersion='0.9.10.3'|

Information.FullName='choco, Version=0.9.10.3, Culture=neutral, PublicKeyToken=79d02ea9cad655eb'|

Information.Is64Bit='False'|Information.IsInteractive='True'|

Information.IsUserAdministrator='True'|

Information.IsProcessElevated='True'|

Information.IsLicensedVersion='False'|Features.AutoUninstaller='True'|

Features.CheckSumFiles='True'|Features.FailOnAutoUninstaller='False'|

Features.FailOnStandardError='False'|Features.UsePowerShellHost='True'|

Features.LogEnvironmentValues='False'|Features.VirusCheck='False'|

Features.FailOnInvalidOrMissingLicense='False'|

Features.IgnoreInvalidOptionsSwitches='True'|

Features.UsePackageExitCodes='True'|

Features.UseFipsCompliantChecksums='False'|

ListCommand.LocalOnly='False'|

ListCommand.IncludeRegistryPrograms='False'|ListCommand.PageSize='25'|

ListCommand.Exact='False'|ListCommand.ByIdOnly='False'|

ListCommand.IdStartsWith='False'|ListCommand.OrderByPopularity='False'|

ListCommand.ApprovedOnly='False'|

ListCommand.DownloadCacheAvailable='False'|

ListCommand.NotBroken='False'|UpgradeCommand.FailOnUnfound='False'|

UpgradeCommand.FailOnNotInstalled='False'|

UpgradeCommand.NotifyOnlyAvailableUpgrades='False'|

NewCommand.AutomaticPackage='False'|

NewCommand.UseOriginalTemplate='False'|SourceCommand.Command='unknown'|

SourceCommand.Priority='0'|FeatureCommand.Command='unknown'|

ConfigCommand.Command='unknown'|PinCommand.Command='unknown'|

...

Executing command ['C:\ProgramData\chocolatey\tools\7z.exe' x -aoa -bd -bb1 -o"C:\ProgramData\chocolatey\lib\vim-tux.portable\tools\vim74" -y "C:\Users\xxxxx\AppData\Local\Temp\chocolatey\vim-tux.portable\7.4.1949\complete-x86.7z"]

ERROR: Exception calling "Start" with "0" argument(s): "The specified executable is not a valid application for this OS platform."

at Get-ChocolateyUnzip, C:\ProgramData\chocolatey\helpers\functions\Get-ChocolateyUnzip.ps1: line 159

```
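The "not a valid application for this OS platform" error usually points to an architecture mismatch between the executable and the operating system, and the log above reports `Information.Is64Bit='False'`. A minimal sketch for checking which architecture a Windows executable targets, by reading the machine field of its PE header, could look like this. The helper name `pe_machine_type` is hypothetical and is not part of Chocolatey.

```python
import struct

# Machine field values from the PE/COFF file header.
MACHINE_TYPES = {0x014C: "x86 (32-bit)", 0x8664: "x64 (64-bit)"}


def pe_machine_type(path):
    """Return a label for the CPU architecture a PE executable targets."""
    with open(path, "rb") as f:
        # Every PE file starts with the DOS "MZ" signature.
        if f.read(2) != b"MZ":
            raise ValueError("missing MZ header, not a PE executable")
        # Offset 0x3C holds the file offset of the PE signature.
        f.seek(0x3C)
        (pe_offset,) = struct.unpack("<I", f.read(4))
        f.seek(pe_offset)
        if f.read(4) != b"PE\0\0":
            raise ValueError("missing PE signature")
        # The 16-bit machine field follows the signature directly.
        (machine,) = struct.unpack("<H", f.read(2))
    return MACHINE_TYPES.get(machine, hex(machine))


# Example call (path taken from the log above):
# pe_machine_type(r"C:\ProgramData\chocolatey\tools\7z.exe")
```

If the reported type does not match the platform, replacing the tool with a build for the right architecture is the workaround described in this report.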

|

non_process

|

exe on system in chocolatey tools i m working on a system if i want to install a zip package choco install fails with the following message error exception calling start with argument s the specified executable is not a valid application for this os platform i checked the c programdata chocolatey tools exe and it is really a version if i change it to a build the install works well output log chocolatey chocolatey is running on windows v attempting to delete file c programdata chocolatey choco exe old attempting to delete file c programdata chocolatey choco exe old command line c programdata chocolatey choco exe install vim tux portable fdv s c programdata chocolatey lib bad vim tux portable received arguments install vim tux portable fdv s c programdata chocolatey lib bad vim tux portable removependingpackagestask is now ready and waiting for prerunmessage sending message prerunmessage out if there are subscribers removing all pending packages that should not be considered installed the source c programdata chocolatey lib bad vim tux portable evaluated to a normal source type note hiding sensitive configuration data please double and triple check to be sure no sensitive data is shown especially if copying output to a gist for review configuration commandname install cachelocation c users xxxxx appdata local temp chocolatey containslegacypackageinstalls true commandexecutiontimeoutseconds webrequesttimeoutseconds sources c programdata chocolatey lib bad vim tux portable sourcetype normal debug true verbose true force true noop false helprequested false regularoutput true quietoutput false promptforconfirmation false acceptlicense false allowunofficialbuild false input vim tux portable allversions false skippackageinstallprovider false packagenames vim tux portable prerelease false false overridearguments false notsilent false ignoredependencies false allowmultipleversions false allowdowngrade false forcedependencies false information platformtype windows information 
platformversion information platformname windows information chocolateyversion information chocolateyproductversion information fullname choco version culture neutral publickeytoken information false information isinteractive true information isuseradministrator true information isprocesselevated true information islicensedversion false features autouninstaller true features checksumfiles true features failonautouninstaller false features failonstandarderror false features usepowershellhost true features logenvironmentvalues false features viruscheck false features failoninvalidormissinglicense false features ignoreinvalidoptionsswitches true features usepackageexitcodes true features usefipscompliantchecksums false listcommand localonly false listcommand includeregistryprograms false listcommand pagesize listcommand exact false listcommand byidonly false listcommand idstartswith false listcommand orderbypopularity false listcommand approvedonly false listcommand downloadcacheavailable false listcommand notbroken false upgradecommand failonunfound false upgradecommand failonnotinstalled false upgradecommand notifyonlyavailableupgrades false newcommand automaticpackage false newcommand useoriginaltemplate false sourcecommand command unknown sourcecommand priority featurecommand command unknown configcommand command unknown pincommand command unknown executing command error exception calling start with argument s the specified executable is not a valid application for this os platform at get chocolateyunzip c programdata chocolatey helpers functions get chocolateyunzip line

| 0

|

4,115

| 7,058,976,002

|

IssuesEvent

|

2018-01-04 22:44:39

|

Southclaws/pawn

|

https://api.github.com/repos/Southclaws/pawn

|

closed

|

Recursive relative include doesn't change CWD when using forward slash

|

state: stale type: pre-processor

|

Assume a matryoshka of sorts:

```

- test.pwn

- level_1

-- test.inc

-- level_2

--- test.inc

--- level_3

---- test.inc

```

When a backslash is used to specify the path to an included file, everything works as expected. However, only the following setup works:

_test.pwn_

```

#include "level_1/test"

```

_level_1/test.inc_

```

#include "level_1\level_2/test"

```

_level_2/test.inc_

```

#include "level_1\level_2\level_3/test"

```

Is this intended behaviour?
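For comparison, the behaviour the title expects (each include resolved relative to the directory of the file that contains it, whichever slash is used) can be sketched as follows. This is an illustration only and is not taken from the Pawn compiler; `resolve_include` is a hypothetical helper, and appending `.inc` is an assumption based on the file names above.

```python
import posixpath


def resolve_include(including_file, include_path):
    """Resolve include_path relative to the directory of including_file.

    Both separators are normalised to '/' first, so the result does not
    depend on which slash was typed in the #include directive.
    """
    base_dir = posixpath.dirname(including_file.replace("\\", "/"))
    target = include_path.replace("\\", "/")
    return posixpath.normpath(posixpath.join(base_dir, target)) + ".inc"


print(resolve_include("test.pwn", "level_1/test"))
print(resolve_include("level_1/test.inc", "level_2\\test"))
print(resolve_include("level_1/level_2/test.inc", "level_3/test"))
```

Under this scheme each nested file would only need to name the next level, for example `#include "level_2/test"` inside `level_1/test.inc`.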

|

1.0

|

Recursive relative include doesn't change CWD when using forward slash - Assume a matryoshka of sorts:

```

- test.pwn

- level_1

-- test.inc

-- level_2

--- test.inc

--- level_3

---- test.inc

```

When a backslash is used to specify the path to an included file, everything works as expected. However, only the following setup works:

_test.pwn_

```

#include "level_1/test"

```

_level_1/test.inc_

```

#include "level_1\level_2/test"

```

_level_2/test.inc_

```

#include "level_1\level_2\level_3/test"

```

Is this intended behaviour?

|

process

|

recursive relative include doesn t change cwd when using forward slash assume a matryoshka of kinds test pwn level test inc level test inc level test inc when using backslash to specify path to included file everything is nice however only that setup will work test pwn include level test level test inc include level level test level test inc include level level level test is this intended behaviour

| 1

|

13,438

| 15,882,013,135

|

IssuesEvent

|

2021-04-09 15:29:29

|

GoogleCloudPlatform/fda-mystudies

|

https://api.github.com/repos/GoogleCloudPlatform/fda-mystudies

|

closed

|

[Auth][UI] Sign in screen > Error messages are overlapping with the screen elements

|

Auth server Bug P2 Process: Fixed Process: Tested QA Process: Tested dev

|

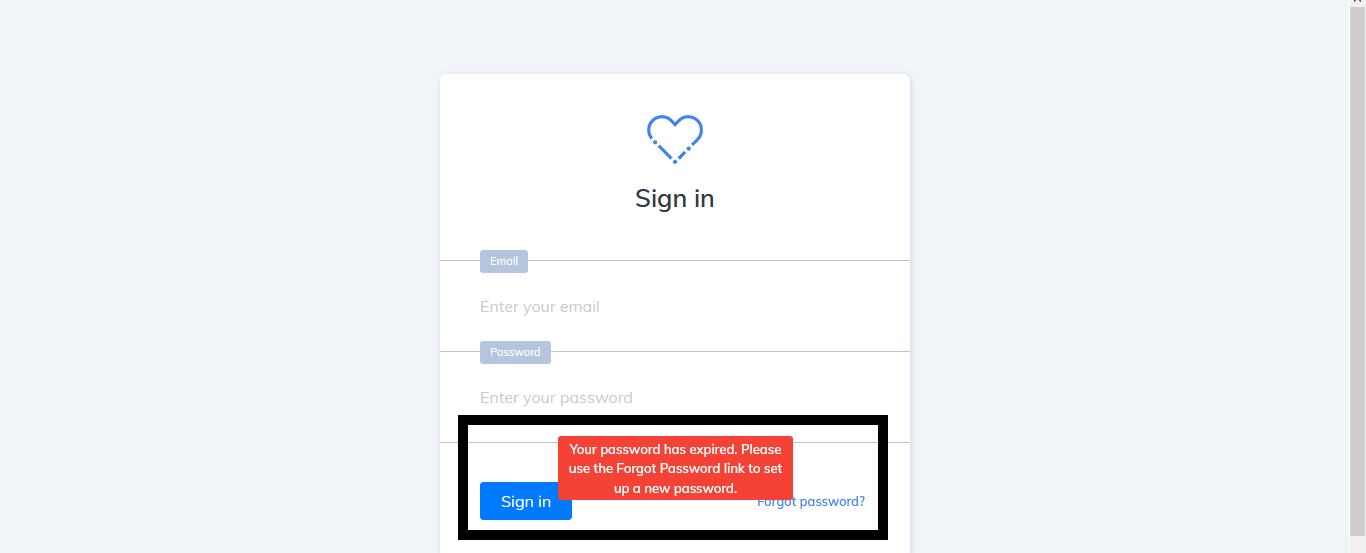

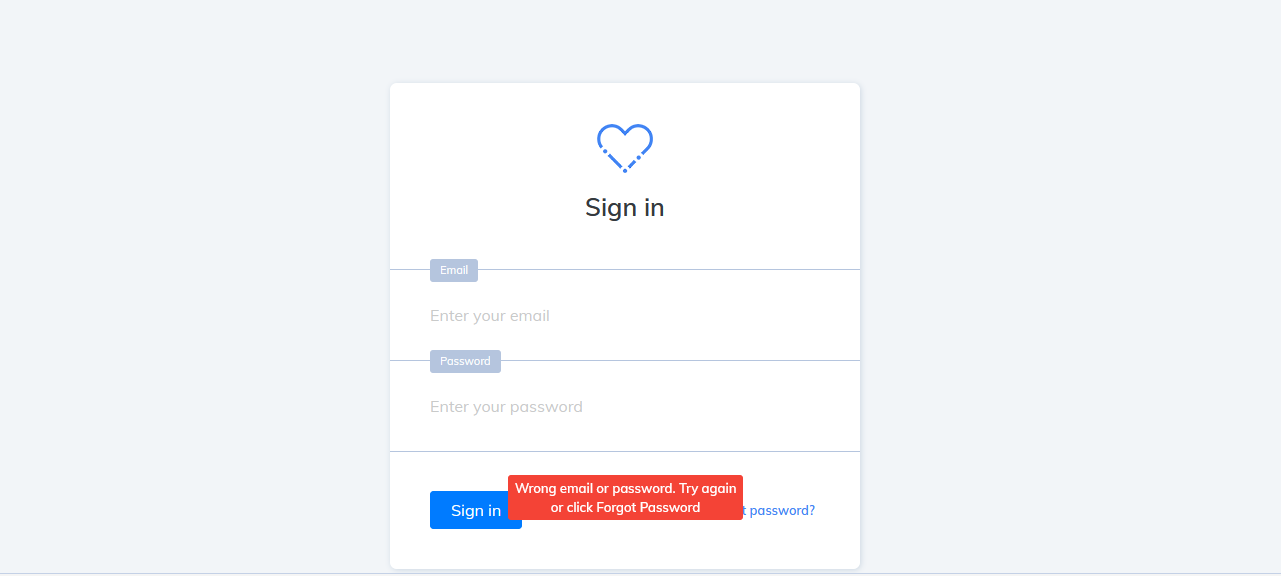

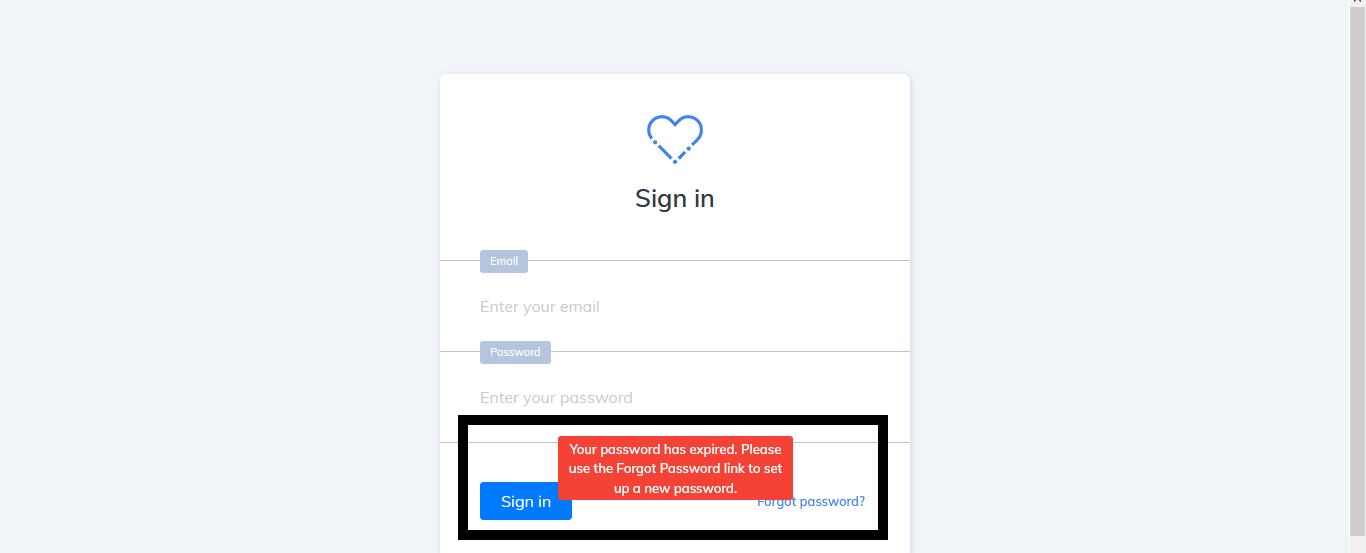

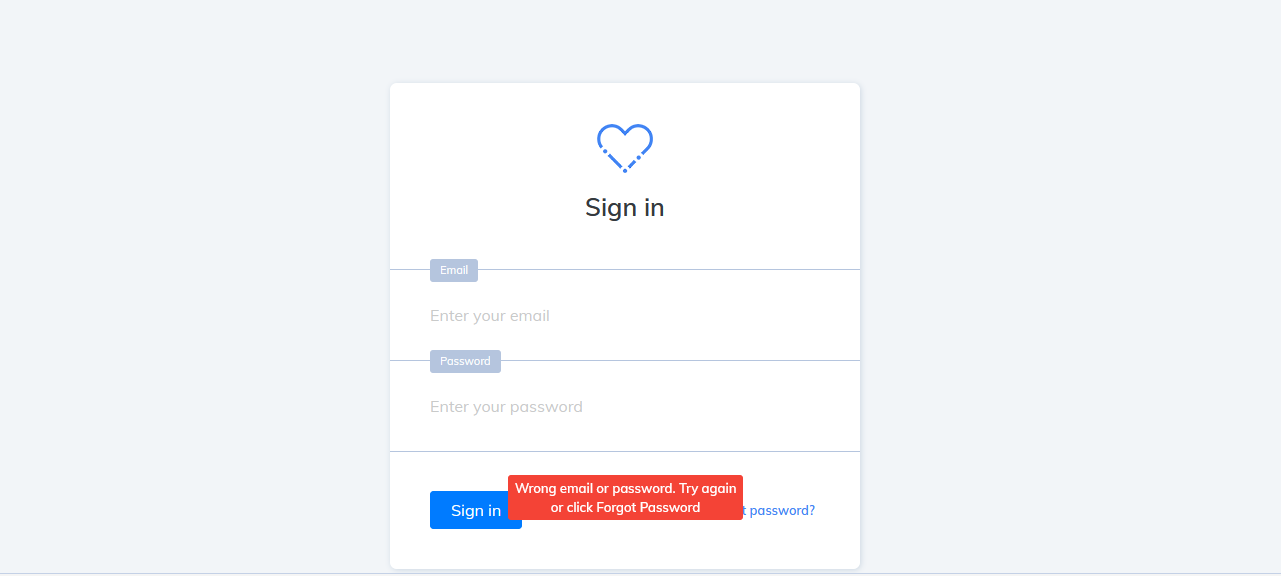

Sign in screen > Error messages are overlapping with the screen elements

[Note: Issue should be fixed for all the error messages]

|

3.0

|

[Auth][UI] Sign in screen > Error messages are overlapping with the screen elements - Sign in screen > Error messages are overlapping with the screen elements

[Note: Issue should be fixed for all the error messages]

|

process

|

sign in screen error messages are overlapping with the screen elements sign in screen error messages are overlapping with the screen elements

| 1

|

81,449

| 15,729,646,611

|

IssuesEvent

|

2021-03-29 15:04:18

|

danmar/testissues

|

https://api.github.com/repos/danmar/testissues

|

opened

|

"The scope of the variable XXX can be limited" not detected when variable is initilialized during declaration (Trac #272)

|

Improve check Incomplete Migration Migrated from Trac enhancement php-coderrr

|

Migrated from https://trac.cppcheck.net/ticket/272

```json

{

"status": "closed",

"changetime": "2009-08-16T19:17:41",

"description": "cppcheck is raising a warning style with the code above.\n\ncppcheck -q -a -s .\n[./main.c:8]: (style) The scope of the variable var can be limited\n\nBut when the variable \"var\" is initialized during declaration the warning is no more raised\n\n{{{\n#include <stdio.h>\n#include <stdlib.h>\n\nint main(void)\n{\n int j =0;\n int i;\n int var;\n int condition = 0;\n puts(\"!!! Hello World in C !!!\");\n if (condition) {\n puts(\"!!!Not possible!!!\");\n } else {\n do\n {\n for (var = 0; var < 10; ++var) {\n i++;\n }\n } while (0);\n }\n printf(\"\\n ===> i = %d\\n\", i);\n return EXIT_SUCCESS;\n}\n\n}}}\n",

"reporter": "paskalad",

"cc": "",

"resolution": "fixed",

"_ts": "1250450261000000",

"component": "Improve check",

"summary": "\"The scope of the variable XXX can be limited\" not detected when variable is initilialized during declaration",

"priority": "",

"keywords": "",

"time": "2009-05-01T08:31:52",

"milestone": "1.36",

"owner": "php-coderrr",

"type": "enhancement"

}

```

|

1.0

|

"The scope of the variable XXX can be limited" not detected when variable is initilialized during declaration (Trac #272) - Migrated from https://trac.cppcheck.net/ticket/272

```json

{

"status": "closed",

"changetime": "2009-08-16T19:17:41",

"description": "cppcheck is raising a warning style with the code above.\n\ncppcheck -q -a -s .\n[./main.c:8]: (style) The scope of the variable var can be limited\n\nBut when the variable \"var\" is initialized during declaration the warning is no more raised\n\n{{{\n#include <stdio.h>\n#include <stdlib.h>\n\nint main(void)\n{\n int j =0;\n int i;\n int var;\n int condition = 0;\n puts(\"!!! Hello World in C !!!\");\n if (condition) {\n puts(\"!!!Not possible!!!\");\n } else {\n do\n {\n for (var = 0; var < 10; ++var) {\n i++;\n }\n } while (0);\n }\n printf(\"\\n ===> i = %d\\n\", i);\n return EXIT_SUCCESS;\n}\n\n}}}\n",

"reporter": "paskalad",

"cc": "",

"resolution": "fixed",

"_ts": "1250450261000000",

"component": "Improve check",

"summary": "\"The scope of the variable XXX can be limited\" not detected when variable is initilialized during declaration",

"priority": "",

"keywords": "",

"time": "2009-05-01T08:31:52",

"milestone": "1.36",

"owner": "php-coderrr",

"type": "enhancement"

}

```

|

non_process

|

the scope of the variable xxx can be limited not detected when variable is initilialized during declaration trac migrated from json status closed changetime description cppcheck is raising a warning style with the code above n ncppcheck q a s n style the scope of the variable var can be limited n nbut when the variable var is initialized during declaration the warning is no more raised n n n include n include n nint main void n n int j n int i n int var n int condition n puts hello world in c n if condition n puts not possible n else n do n n for var var i d n i n return exit success n n n n reporter paskalad cc resolution fixed ts component improve check summary the scope of the variable xxx can be limited not detected when variable is initilialized during declaration priority keywords time milestone owner php coderrr type enhancement

| 0

|

43,179

| 12,970,495,281

|

IssuesEvent

|

2020-07-21 09:25:18

|

logzio/apollo

|

https://api.github.com/repos/logzio/apollo

|

closed

|

WS-2019-0331 (Medium) detected in handlebars-2.0.0.tgz

|

security vulnerability

|

## WS-2019-0331 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>handlebars-2.0.0.tgz</b></p></summary>

<p>Handlebars provides the power necessary to let you build semantic templates effectively with no frustration</p>

<p>Library home page: <a href="https://registry.npmjs.org/handlebars/-/handlebars-2.0.0.tgz">https://registry.npmjs.org/handlebars/-/handlebars-2.0.0.tgz</a></p>

<p>Path to dependency file: /tmp/ws-scm/apollo/ui/package.json</p>

<p>Path to vulnerable library: /tmp/ws-scm/apollo/ui/node_modules/handlebars/package.json</p>

<p>

Dependency Hierarchy:

- grunt-google-cdn-0.4.3.tgz (Root Library)

- google-cdn-0.7.0.tgz

- bower-1.3.12.tgz

- :x: **handlebars-2.0.0.tgz** (Vulnerable Library)

<p>Found in HEAD commit: <a href="https://github.com/logzio/apollo/commit/656f895f3b072d3da92ac4b9ec9c5c938f1e1c71">656f895f3b072d3da92ac4b9ec9c5c938f1e1c71</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

Arbitrary Code Execution vulnerability found in handlebars before 4.5.2. The lookup helper fails to validate templates. An attacker may submit templates that execute arbitrary JavaScript in the system.

<p>Publish Date: 2019-12-05

<p>URL: <a href=https://github.com/wycats/handlebars.js/commit/d54137810a49939fd2ad01a91a34e182ece4528e>WS-2019-0331</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 2 Score Details (<b>5.0</b>)</summary>

<p>

Base Score Metrics not available</p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://www.npmjs.com/advisories/1316">https://www.npmjs.com/advisories/1316</a></p>

<p>Release Date: 2019-12-05</p>

<p>Fix Resolution: handlebars - 4.5.2</p>

</p>

</details>

<p></p>

|

True

|

WS-2019-0331 (Medium) detected in handlebars-2.0.0.tgz - ## WS-2019-0331 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>handlebars-2.0.0.tgz</b></p></summary>

<p>Handlebars provides the power necessary to let you build semantic templates effectively with no frustration</p>

<p>Library home page: <a href="https://registry.npmjs.org/handlebars/-/handlebars-2.0.0.tgz">https://registry.npmjs.org/handlebars/-/handlebars-2.0.0.tgz</a></p>

<p>Path to dependency file: /tmp/ws-scm/apollo/ui/package.json</p>

<p>Path to vulnerable library: /tmp/ws-scm/apollo/ui/node_modules/handlebars/package.json</p>

<p>

Dependency Hierarchy:

- grunt-google-cdn-0.4.3.tgz (Root Library)

- google-cdn-0.7.0.tgz

- bower-1.3.12.tgz

- :x: **handlebars-2.0.0.tgz** (Vulnerable Library)

<p>Found in HEAD commit: <a href="https://github.com/logzio/apollo/commit/656f895f3b072d3da92ac4b9ec9c5c938f1e1c71">656f895f3b072d3da92ac4b9ec9c5c938f1e1c71</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

Arbitrary Code Execution vulnerability found in handlebars before 4.5.2. The lookup helper fails to validate templates. An attacker may submit templates that execute arbitrary JavaScript in the system.

<p>Publish Date: 2019-12-05

<p>URL: <a href=https://github.com/wycats/handlebars.js/commit/d54137810a49939fd2ad01a91a34e182ece4528e>WS-2019-0331</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 2 Score Details (<b>5.0</b>)</summary>

<p>

Base Score Metrics not available</p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://www.npmjs.com/advisories/1316">https://www.npmjs.com/advisories/1316</a></p>

<p>Release Date: 2019-12-05</p>

<p>Fix Resolution: handlebars - 4.5.2</p>

</p>

</details>

<p></p>

|

non_process

|

ws medium detected in handlebars tgz ws medium severity vulnerability vulnerable library handlebars tgz handlebars provides the power necessary to let you build semantic templates effectively with no frustration library home page a href path to dependency file tmp ws scm apollo ui package json path to vulnerable library tmp ws scm apollo ui node modules handlebars package json dependency hierarchy grunt google cdn tgz root library google cdn tgz bower tgz x handlebars tgz vulnerable library found in head commit a href vulnerability details arbitrary code execution vulnerability found in handlebars before lookup helper fails to validate templates attack may submit templates that execute arbitrary javascript in the system publish date url a href cvss score details base score metrics not available suggested fix type upgrade version origin a href release date fix resolution handlebars

| 0

|

79,721

| 15,256,698,238

|

IssuesEvent

|

2021-02-20 21:22:47

|

cornell-dti/campus-density-android

|

https://api.github.com/repos/cornell-dti/campus-density-android

|

closed

|

Consider app architecture

|

big code in progress

|

We should try to refactor our code base so that it is more consistent with industry standards in terms of app architecture: https://developer.android.com/jetpack/docs/guide

### Overall Goals

The ultimate goal of this issue is to make our code easier to reason about, which will allow us to scale more effectively.

Implement:

- MVVM architecture

- reactive programming principles

- single source of truth for data

|

1.0

|

Consider app architecture - We should try to refactor our code base so that it is more consistent with industry standards in terms of app architecture: https://developer.android.com/jetpack/docs/guide

### Overall Goals

The ultimate goal of this issue is to make our code easier to reason about, which will allow us to scale more effectively.

Implement:

- MVVM architecture

- reactive programming principles

- single source of truth for data

|

non_process

|

consider app architecture we should try to refactor our code base so that it is more consistent with industry standards in terms of app architecture overall goals the ultimate goal of this issue is to make our code easier to reason about which will allow us to scale more effectively implement mvvm architecture reactive programming principles single source of truth for data

| 0

|

296,045

| 22,286,896,001

|

IssuesEvent

|

2022-06-11 19:28:48

|

thegooddocsproject/chronologue

|

https://api.github.com/repos/thegooddocsproject/chronologue

|

opened

|

Chronologue docs: Troubleshooting Framework

|

documentation

|

## Summary

"Troubleshooting Framework" is a *Troubleshooting* document that provides *technicians* with the general steps to prepare for a troubleshooting task.

### Research

- [ ] Determine user goals.

- [ ] Determine prerequisites.

- [ ] Determine what steps the user has to take.

### Writing/Testing

- [ ] Create a new branch.

- [ ] Create a draft.

- [ ] Write a commentary in the document to highlight best practices.

- [ ] Take feedback notes for the template group.

- [ ] Create a PR.

### Review

- [ ] Grammatical review.

- [ ] Technical review.

### Publication

- [ ] Merge PR to `docs`.

### Resources

**Source file**: INSERT LINK IF SOURCE FILE ALREADY EXISTS

**Template**: INSERT LINK FOR THE TEMPLATE. All templates are located in: https://github.com/thegooddocsproject/templates/

**Feedback form**: INSERT LINK

|

1.0

|

Chronologue docs: Troubleshooting Framework - ## Summary

"Troubleshooting Framework" is a *Troubleshooting* document that provides *technicians* with the general steps to prepare for a troubleshooting task.

### Research

- [ ] Determine user goals.

- [ ] Determine prerequisites.

- [ ] Determine what steps the user has to take.

### Writing/Testing

- [ ] Create a new branch.

- [ ] Create a draft.

- [ ] Write a commentary in the document to highlight best practices.

- [ ] Take feedback notes for the template group.

- [ ] Create a PR.

### Review

- [ ] Grammatical review.

- [ ] Technical review.

### Publication

- [ ] Merge PR to `docs`.

### Resources

**Source file**: INSERT LINK IF SOURCE FILE ALREADY EXISTS

**Template**: INSERT LINK FOR THE TEMPLATE. All templates are located in: https://github.com/thegooddocsproject/templates/

**Feedback form**: INSERT LINK

|

non_process

|

chronologue docs troubleshooting framework summary troubleshooting framework is a troubleshooting that provides technicians with the general steps to prepare for a troubleshooting task research determine user goals determine prerequisites determine what steps the user has to take writing testing create a new branch create a draft write a commentary in the document to highlight best practices take feedback notes for the template group create a pr review grammatical review technical review publication merge pr to docs resources source file insert link if source file already exists template insert link for the template all templates are located in feedback form insert link

| 0

|

170,816

| 14,271,007,191

|

IssuesEvent

|

2020-11-21 10:16:26

|

livelyapps/pluploader

|

https://api.github.com/repos/livelyapps/pluploader

|

closed

|

Add .gifs to README

|

documentation todo

|

As we want to show the capabilities of the pluploader, we should include gifs into the README.

Minimum:

- [ ] Add gif in the header section to show main features of pluploader (upload, list, info, etc...)

Optional:

- [ ] Add gif to every heading to show full capabilities

|

1.0

|

Add .gifs to README - As we want to show the capabilities of the pluploader, we should include gifs into the README.

Minimum:

- [ ] Add gif in the header section to show main features of pluploader (upload, list, info, etc...)

Optional:

- [ ] Add gif to every heading to show full capabilities

|

non_process

|

add gifs to readme as we want to show the capabilities of the pluploader we should include gifs into the readme minimum add gif in the header section to show main features of pluploader upload list info etc optional add gif to every heading to show full capabilities

| 0

|

23,094

| 3,992,588,022

|

IssuesEvent

|

2016-05-10 02:46:48

|

dotnet/corefx

|

https://api.github.com/repos/dotnet/corefx

|

closed

|

X509 ExportMultiplePrivateKeys test failed in CI

|

2 - In Progress System.Security test bug Windows

|

http://dotnet-ci.cloudapp.net/job/dotnet_corefx_windows_debug_prtest/3248/console

```

Discovering: System.Security.Cryptography.X509Certificates.Tests

Discovered: System.Security.Cryptography.X509Certificates.Tests

Starting: System.Security.Cryptography.X509Certificates.Tests

System.Security.Cryptography.X509Certificates.Tests.CollectionTests.ExportMultiplePrivateKeys [FAIL]

System.Security.Cryptography.CryptographicException : Error occurred during a cryptographic operation.

Stack Trace:

d:\j\workspace\dotnet_corefx_windows_debug_prtest\src\System.Security.Cryptography.X509Certificates\src\Internal\Cryptography\Pal.Windows\StorePal.Export.cs(82,0): at Internal.Cryptography.Pal.StorePal.Export(X509ContentType contentType, String password)

d:\j\workspace\dotnet_corefx_windows_debug_prtest\src\System.Security.Cryptography.X509Certificates\src\System\Security\Cryptography\X509Certificates\X509Certificate2Collection.cs(123,0): at System.Security.Cryptography.X509Certificates.X509Certificate2Collection.Export(X509ContentType contentType, String password)

d:\j\workspace\dotnet_corefx_windows_debug_prtest\src\System.Security.Cryptography.X509Certificates\src\System\Security\Cryptography\X509Certificates\X509Certificate2Collection.cs(116,0): at System.Security.Cryptography.X509Certificates.X509Certificate2Collection.Export(X509ContentType contentType)

d:\j\workspace\dotnet_corefx_windows_debug_prtest\src\System.Security.Cryptography.X509Certificates\tests\CollectionTests.cs(482,0): at System.Security.Cryptography.X509Certificates.Tests.CollectionTests.ExportMultiplePrivateKeys()

Finished: System.Security.Cryptography.X509Certificates.Tests

```

|

1.0

|

X509 ExportMultiplePrivateKeys test failed in CI - http://dotnet-ci.cloudapp.net/job/dotnet_corefx_windows_debug_prtest/3248/console

```

Discovering: System.Security.Cryptography.X509Certificates.Tests

Discovered: System.Security.Cryptography.X509Certificates.Tests

Starting: System.Security.Cryptography.X509Certificates.Tests

System.Security.Cryptography.X509Certificates.Tests.CollectionTests.ExportMultiplePrivateKeys [FAIL]

System.Security.Cryptography.CryptographicException : Error occurred during a cryptographic operation.

Stack Trace:

d:\j\workspace\dotnet_corefx_windows_debug_prtest\src\System.Security.Cryptography.X509Certificates\src\Internal\Cryptography\Pal.Windows\StorePal.Export.cs(82,0): at Internal.Cryptography.Pal.StorePal.Export(X509ContentType contentType, String password)

d:\j\workspace\dotnet_corefx_windows_debug_prtest\src\System.Security.Cryptography.X509Certificates\src\System\Security\Cryptography\X509Certificates\X509Certificate2Collection.cs(123,0): at System.Security.Cryptography.X509Certificates.X509Certificate2Collection.Export(X509ContentType contentType, String password)

d:\j\workspace\dotnet_corefx_windows_debug_prtest\src\System.Security.Cryptography.X509Certificates\src\System\Security\Cryptography\X509Certificates\X509Certificate2Collection.cs(116,0): at System.Security.Cryptography.X509Certificates.X509Certificate2Collection.Export(X509ContentType contentType)

d:\j\workspace\dotnet_corefx_windows_debug_prtest\src\System.Security.Cryptography.X509Certificates\tests\CollectionTests.cs(482,0): at System.Security.Cryptography.X509Certificates.Tests.CollectionTests.ExportMultiplePrivateKeys()

Finished: System.Security.Cryptography.X509Certificates.Tests

```

|

non_process

|

exportmultipleprivatekeys test failed in ci discovering system security cryptography tests discovered system security cryptography tests starting system security cryptography tests system security cryptography tests collectiontests exportmultipleprivatekeys system security cryptography cryptographicexception error occurred during a cryptographic operation stack trace d j workspace dotnet corefx windows debug prtest src system security cryptography src internal cryptography pal windows storepal export cs at internal cryptography pal storepal export contenttype string password d j workspace dotnet corefx windows debug prtest src system security cryptography src system security cryptography cs at system security cryptography export contenttype string password d j workspace dotnet corefx windows debug prtest src system security cryptography src system security cryptography cs at system security cryptography export contenttype d j workspace dotnet corefx windows debug prtest src system security cryptography tests collectiontests cs at system security cryptography tests collectiontests exportmultipleprivatekeys finished system security cryptography tests

| 0

|

631,545

| 20,153,649,164

|

IssuesEvent

|

2022-02-09 14:40:30

|

brave/brave-browser

|

https://api.github.com/repos/brave/brave-browser

|

closed

|

Apostrophe not being unescaped in YouTube channel name

|

bug feature/rewards priority/P3 QA/Yes release-notes/exclude greaselion OS/Desktop

|

## Description

Apostrophe is appearing as `#39;` instead of an apostrophe `'` in YouTube channel name.

Example: https://www.youtube.com/c/LolasLifeLessons
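A minimal sketch of the unescaping step, assuming the channel name arrives with the numeric HTML entity `&#39;` in place of the apostrophe. The function name and the sample channel name are hypothetical and are not taken from the Brave code base.

```python
import html


def display_channel_name(raw_name):
    """Decode HTML entities such as &#39; before showing a channel name."""
    return html.unescape(raw_name)


# Hypothetical raw value, as it might appear in scraped page markup.
print(display_channel_name("Lola&#39;s Life Lessons"))
```

Names without entities pass through unchanged, so the decoding can be applied unconditionally.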

|

1.0

|

Apostrophe not being unescaped in YouTube channel name - ## Description

Apostrophe is appearing as `#39;` instead of an apostrophe `'` in YouTube channel name.

Example: https://www.youtube.com/c/LolasLifeLessons

|

non_process

|

apostrophe not being unescaped in youtube channel name description apostrophe is appearing as instead of an apostrophe in youtube channel name example

| 0

|

111,600

| 24,157,714,423

|

IssuesEvent

|

2022-09-22 09:04:00

|

alibaba/nacos

|

https://api.github.com/repos/alibaba/nacos

|

closed

|

IV initial vector size is 16 bytes, take the first 16 bytes of secret Key

|

kind/code quality

|

<!-- Here is for bug reports and feature requests ONLY!

If you're looking for help, please check our mail list、WeChat group and the Gitter room.

Please try to use English to describe your issue, or at least provide a snippet of English translation.

我们鼓励使用英文,如果不能直接使用,可以使用翻译软件,您仍旧可以保留中文原文。

-->

## Issue Description

Type: *bug report* or *feature request*

### Describe what happened (or what feature you want)

### Describe what you expected to happen

### How to reproduce it (as minimally and precisely as possible)

1.

2.

3.

### Tell us your environment

### Anything else we need to know?

|

1.0

|

IV initial vector size is 16 bytes, take the first 16 bytes of secret Key -

<!-- Here is for bug reports and feature requests ONLY!

If you're looking for help, please check our mail list、WeChat group and the Gitter room.

Please try to use English to describe your issue, or at least provide a snippet of English translation.

我们鼓励使用英文,如果不能直接使用,可以使用翻译软件,您仍旧可以保留中文原文。

-->

## Issue Description

Type: *bug report* or *feature request*

### Describe what happened (or what feature you want)

### Describe what you expected to happen

### How to reproduce it (as minimally and precisely as possible)

1.

2.

3.

### Tell us your environment

### Anything else we need to know?

|

non_process

|

iv initial vector size is bytes take the first bytes of secret key here is for bug reports and feature requests only if you re looking for help please check our mail list、wechat group and the gitter room please try to use english to describe your issue or at least provide a snippet of english translation 我们鼓励使用英文,如果不能直接使用,可以使用翻译软件,您仍旧可以保留中文原文。 issue description type bug report or feature request describe what happened or what feature you want describe what you expected to happen how to reproduce it as minimally and precisely as possible tell us your environment anything else we need to know

| 0

|

17,140

| 22,678,312,039

|

IssuesEvent

|

2022-07-04 07:35:20

|

camunda/zeebe

|

https://api.github.com/repos/camunda/zeebe

|

closed

|

Record log does not list correct starting before elements

|

kind/bug severity/low team/process-automation

|

**Describe the bug**

<!-- A clear and concise description of what the bug is. -->

In the `PROC_INST_CREATION` record we log the elements at which the process was started. If a process starts at 2 different elements, this record logs the same id twice instead of logging both ids.

**To Reproduce**

<!--

Steps to reproduce the behavior

If possible add a minimal reproducer code sample

- when using the Java client: https://github.com/zeebe-io/zeebe-test-template-java

-->

Create a process instance. Start it at 2 different elements. When you look at the creation record it will say it started at the same element twice.

**Expected behavior**

<!-- A clear and concise description of what you expected to happen. -->

The record log shows the correct elements at which the process was started.

**Log/Stacktrace**

<!-- If possible add the full stacktrace or Zeebe log which contains the issue. -->

```

C PROC_INST_CREATION CREATE - #006-> -1 -1 - new <process "process_id_27"> (starting before elements: fork_id_18, fork_id_18) with variables: {boundary_timer_id_25=PT8760H, boundary_timer_id_23=PT8760H, correlationKey=default_correlation_key}

...

C PROC_INST ACTIVATE - #012->#006 K008 - RECEIVE_TASK "id_16" in <process "process_id_27"[K004]>

C PROC_INST ACTIVATE - #013->#006 K009 - PARALLEL_GATEWAY "fork_id_18" in <process "process_id_27"[K004]>

E PROC_INST_CREATION CREATED - #014->#006 K010 - new <process "process_id_27"> (starting before elements: fork_id_18, fork_id_18) with variables: {boundary_timer_id_25=PT8760H, boundary_timer_id_23=PT8760H, correlationKey=default_correlation_key}

```

This shows we started at `fork_id_18` twice, but the activate commands show that we actually started at `fork_id_18` and `id_16`.
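The difference between the observed and the expected summary can be sketched as follows. This is a hedged illustration and not Zeebe's code: `StartInstruction` and both functions are hypothetical, and the buggy variant shows only one way such a duplicate could arise, since the report does not state the actual cause.

```python
from dataclasses import dataclass


@dataclass
class StartInstruction:
    element_id: str


def buggy_summary(instructions):
    # Hypothetical bug: every entry reuses the first instruction's id.
    return ", ".join(instructions[0].element_id for _ in instructions)


def expected_summary(instructions):
    # Each entry contributes its own element id.
    return ", ".join(i.element_id for i in instructions)


instructions = [StartInstruction("fork_id_18"), StartInstruction("id_16")]
print(buggy_summary(instructions))
print(expected_summary(instructions))
```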

**Environment:**

- OS: <!-- [e.g. Linux] -->

- Zeebe Version: <!-- [e.g. 0.20.0] -->

- Configuration: <!-- [e.g. exporters etc.] -->

\cc @korthout

|

1.0

|

Record log does not list correct starting before elements - **Describe the bug**

<!-- A clear and concise description of what the bug is. -->

In the `PROC_INST_CREATION` record we log the elements at which the process was started. If a process starts at 2 different elements, this record logs the same id twice instead of logging both ids.

**To Reproduce**

<!--

Steps to reproduce the behavior

If possible add a minimal reproducer code sample

- when using the Java client: https://github.com/zeebe-io/zeebe-test-template-java

-->

Create a process instance. Start it at 2 different elements. When you look at the creation record it will say it started at the same element twice.

**Expected behavior**

<!-- A clear and concise description of what you expected to happen. -->

The record log shows the correct elements at which the process was started.

**Log/Stacktrace**

<!-- If possible add the full stacktrace or Zeebe log which contains the issue. -->

```

C PROC_INST_CREATION CREATE - #006-> -1 -1 - new <process "process_id_27"> (starting before elements: fork_id_18, fork_id_18) with variables: {boundary_timer_id_25=PT8760H, boundary_timer_id_23=PT8760H, correlationKey=default_correlation_key}

...

C PROC_INST ACTIVATE - #012->#006 K008 - RECEIVE_TASK "id_16" in <process "process_id_27"[K004]>

C PROC_INST ACTIVATE - #013->#006 K009 - PARALLEL_GATEWAY "fork_id_18" in <process "process_id_27"[K004]>

E PROC_INST_CREATION CREATED - #014->#006 K010 - new <process "process_id_27"> (starting before elements: fork_id_18, fork_id_18) with variables: {boundary_timer_id_25=PT8760H, boundary_timer_id_23=PT8760H, correlationKey=default_correlation_key}

```

This shows we started at `fork_id_18` twice, but when looking at the activate commands we can see that we actually started at `fork_id_18` and `id_16`.

**Environment:**

- OS: <!-- [e.g. Linux] -->

- Zeebe Version: <!-- [e.g. 0.20.0] -->

- Configuration: <!-- [e.g. exporters etc.] -->

\cc @korthout

|

process

|

record log does not list correct starting before elements describe the bug in the proc inst creation record we will log the elements which the process started at if a process starts at different element this record will log the same id twice instead of logging both ids to reproduce steps to reproduce the behavior if possible add a minimal reproducer code sample when using the java client create a process instance start it at different elements when you look at the creation record it will say it started at the same element twice expected behavior the records logs shows the correct elements that were started at log stacktrace c proc inst creation create new starting before elements fork id fork id with variables boundary timer id boundary timer id correlationkey default correlation key c proc inst activate receive task id in c proc inst activate parallel gateway fork id in e proc inst creation created new starting before elements fork id fork id with variables boundary timer id boundary timer id correlationkey default correlation key this shows we started at fork id twice but when looking at the activate commands we can see that we actually started at fork id and id environment os zeebe version configuration cc korthout

| 1

|

443,064

| 30,872,686,753

|

IssuesEvent

|

2023-08-03 12:27:42

|

cbyrohl/scida

|

https://api.github.com/repos/cbyrohl/scida

|

closed

|

info() does not exist for series

|

documentation enhancement

|

```

>>> from scida import load

>>> ds = load('/virgotng/universe/IllustrisTNG/TNG100-3/', units=True)

>>> ds.info()

```

as in the tutorial, gives an error:

```AttributeError: 'ArepoSimulation' object has no attribute 'info'```

|

1.0

|

info() does not exist for series - ```

>>> from scida import load

>>> ds = load('/virgotng/universe/IllustrisTNG/TNG100-3/', units=True)

>>> ds.info()

```

as in the tutorial, gives an error:

```AttributeError: 'ArepoSimulation' object has no attribute 'info'```

|

non_process

|

info does not exist for series from scida import load ds load virgotng universe illustristng units true ds info as in the tutorial gives an error attributeerror areposimulation object has no attribute info

| 0

|

3,194

| 6,261,026,027

|

IssuesEvent

|

2017-07-14 22:24:03

|

saguaroib/saguaro

|

https://api.github.com/repos/saguaroib/saguaro

|

closed

|

Chmod images after creation

|

Bug: Minor Image processing

|

First of all, when I run test.php it seems to work, but the page remains blank.

Second:

When I try to make a post, a bunch of errors appear.

Any solution?

|

1.0

|

Chmod images after creation - First of all, when I run test.php it seems to work, but the page remains blank.

Second:

When I try to make a post, a bunch of errors appear.

Any solution?

|

process

|

chmod images after creation first of all when i run test php it seems to work but it remain a blank page second when i try to make a post a bunch of errors appear any solution

| 1

|

85,496

| 16,670,103,669

|

IssuesEvent

|

2021-06-07 09:46:26

|

pytorch/vision

|

https://api.github.com/repos/pytorch/vision

|

closed

|

Port test/test_models.py to pytest

|

code quality good first issue module: models module: tests

|

Currently, most tests in [test/test_models.py](https://github.com/pytorch/vision/blob/master/test/test_models.py) rely on `unittest.TestCase`. Now that we support `pytest`, we want to remove the use of the `unittest` module.

For a similar issue: see https://github.com/pytorch/vision/issues/3945 and #3956

### Instructions

There are many tests in this file, but it should be possible to port all of them in one PR as they're all pretty straightforward. If you're interested in this issue, please comment below to indicate that you started working on a PR. Look below for some porting tips, and please don't hesitate to ask for help. Thanks!

In this file there are already some test functions like:

```py

def test_classification_model(model_name, dev):

    ModelTester()._test_classification_model(model_name, dev)

```

For those, we should just copy the body of `_test_classification_model` into `test_classification_model` so that we can get rid of the `ModelTester` class altogether.

`@pytest.mark.parametrize('dev', _devs)` should be changed into `@pytest.mark.parametrize('dev', cpu_and_gpu())` where `cpu_and_gpu()` is in `common_utils`.

The tests that need cuda (e.g. `test_fasterrcnn_switch_devices`) should use the `@needs_cuda` decorator, also from `common_utils`. The tests that *don't* need cuda should use the `@cpu_only` decorator.

### How to port a test to pytest

Porting a test from `unittest` to pytest is usually fairly straightforward. For a typical example, see https://github.com/pytorch/vision/pull/3907/files:

- take the test method out of the `Tester(unittest.TestCase)` class and just declare it as a function

- Replace `@unittest.skipIf` with `pytest.mark.skipif(cond, reason=...)`

- remove any use of `self.assertXYZ`.

- Typically `assertEqual(a, b)` can be replaced by `assert a == b` when a and b are pure python objects (scalars, tuples, lists), and otherwise we can rely on `assert_equal` which is already used in the file.

- `self.assertRaises` should be replaced with the `pytest.raises(Exp, match=...):` context manager, as done in https://github.com/pytorch/vision/pull/3907/files. Same for warnings with `pytest.warns`

- `self.assertTrue` should be replaced with a plain `assert`

- When a function uses for loops to test multiple parameter values, one should use `pytest.mark.parametrize` instead, as done e.g. in https://github.com/pytorch/vision/pull/3907/files.
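
The porting tips above could look roughly like the following. This is only a sketch: `scale` is a hypothetical helper invented for illustration, not something from torchvision or `test/test_models.py`, and the commented-out "before" class is likewise made up.

```python
import pytest


def scale(values, factor):
    """Hypothetical function under test (not part of torchvision)."""
    if factor < 0:
        raise ValueError("factor must be non-negative")
    return [v * factor for v in values]


# Before (unittest style, shown as a comment for comparison):
#
# class Tester(unittest.TestCase):
#     def test_scale(self):
#         for factor in (0, 1, 2):
#             self.assertEqual(scale([1, 2], factor), [factor, 2 * factor])
#         with self.assertRaises(ValueError):
#             scale([1], -1)


# After: a plain function, with the for loop replaced by parametrization
# and assertEqual replaced by a bare assert on pure Python objects.
@pytest.mark.parametrize("factor", [0, 1, 2])
def test_scale(factor):
    assert scale([1, 2], factor) == [factor, 2 * factor]


# After: assertRaises replaced by the pytest.raises context manager.
def test_scale_rejects_negative_factor():
    with pytest.raises(ValueError, match="non-negative"):
        scale([1], -1)
```

Splitting the error case into its own function keeps each parametrized case independent, so a failure for one `factor` value is reported separately from the others.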

cc @pmeier

|

1.0

|

Port test/test_models.py to pytest - Currently, most tests in [test/test_models.py](https://github.com/pytorch/vision/blob/master/test/test_models.py) rely on `unittest.TestCase`. Now that we support `pytest`, we want to remove the use of the `unittest` module.

For a similar issue: see https://github.com/pytorch/vision/issues/3945 and #3956

### Instructions

There are many tests in this file, but it should be possible to port all of them in one PR as they're all pretty straightforward. If you're interested in this issue, please comment below to indicate that you started working on a PR. Look below for some porting tips, and please don't hesitate to ask for help. Thanks!

In this file there are already some test functions like:

```py

def test_classification_model(model_name, dev):

    ModelTester()._test_classification_model(model_name, dev)

```

For those, we should just copy the body of `_test_classification_model` into `test_classification_model` so that we can get rid of the `ModelTester` class altogether.

`@pytest.mark.parametrize('dev', _devs)` should be changed into `@pytest.mark.parametrize('dev', cpu_and_gpu())` where `cpu_and_gpu()` is in `common_utils`.

The tests that need cuda (e.g. `test_fasterrcnn_switch_devices`) should use the `@needs_cuda` decorator, also from `common_utils`. The tests that *don't* need cuda should use the `@cpu_only` decorator.

### How to port a test to pytest

Porting a test from `unittest` to pytest is usually fairly straightforward. For a typical example, see https://github.com/pytorch/vision/pull/3907/files:

- take the test method out of the `Tester(unittest.TestCase)` class and just declare it as a function

- Replace `@unittest.skipIf` with `pytest.mark.skipif(cond, reason=...)`

- remove any use of `self.assertXYZ`.

- Typically `assertEqual(a, b)` can be replaced by `assert a == b` when a and b are pure python objects (scalars, tuples, lists), and otherwise we can rely on `assert_equal` which is already used in the file.

- `self.assertRaises` should be replaced with the `pytest.raises(Exp, match=...):` context manager, as done in https://github.com/pytorch/vision/pull/3907/files. Same for warnings with `pytest.warns`