Unnamed: 0 | id | type | created_at | repo | repo_url | action | title | labels | body | index | text_combine | label | text | binary_label |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

75,696 | 3,471,088,742 | IssuesEvent | 2015-12-23 13:13:00 | mantidproject/mantid | https://api.github.com/repos/mantidproject/mantid | closed | REFM instrument definition | Component: Reflectometry Misc: SSC Priority: High | This issue was originally [TRAC 11308](http://trac.mantidproject.org/mantid/ticket/11308)

Allow better sharing of reflectometry tools

- - - -

Keywords: SSC, 2015, SNS reflOther

| 1.0 | REFM instrument definition - This issue was originally [TRAC 11308](http://trac.mantidproject.org/mantid/ticket/11308)

Allow better sharing of reflectometry tools

- - - -

Keywords: SSC, 2015, SNS reflOther

| non_process | refm instrument definition this issue was originally allow better sharing of reflectometry tools keywords ssc sns reflother | 0 |

5,969 | 8,790,937,355 | IssuesEvent | 2018-12-21 10:46:09 | tinyMediaManager/tinyMediaManager | https://api.github.com/repos/tinyMediaManager/tinyMediaManager | closed | Removing or moving extras | bug processing | __What TMM version are you using?__

2.9.11

__release, pre-release, nightly, or directly from GitHub/branch?__

release

__What is the actual behaviour?__

Rename/cleanup move extras to ".deletedByTMM" or move from ./MovieFolder/extras to ./MovieFolder/

I have an empty FileName pattern in settings because I want l... | 1.0 | Removing or moving extras - __What TMM version are you using?__

2.9.11

__release, pre-release, nightly, or directly from GitHub/branch?__

release

__What is the actual behaviour?__

Rename/cleanup move extras to ".deletedByTMM" or move from ./MovieFolder/extras to ./MovieFolder/

I have empty FileName pattern ... | process | removing or moving extras what tmm version are you using release pre release nightly or directly from github branch release what is the actual behaviour rename cleanup move extras to deletedbytmm or move from moviefolder extras to moviefolder i have empty filename pattern i... | 1 |

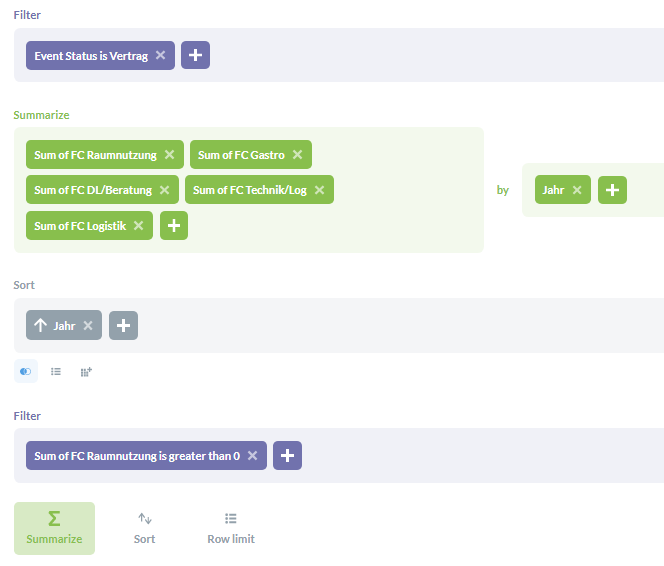

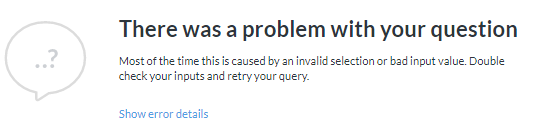

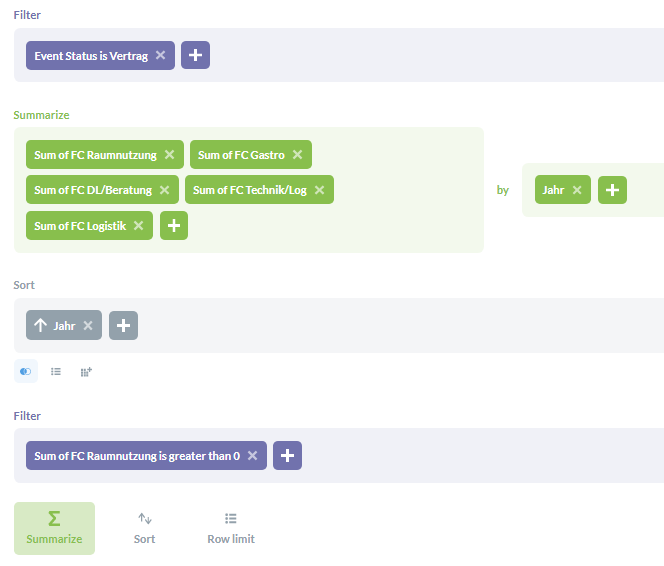

18,288 | 24,387,412,330 | IssuesEvent | 2022-10-04 12:53:40 | metabase/metabase | https://api.github.com/repos/metabase/metabase | closed | Cannot drill-through "View these ..." when aggregated results are filtered | Type:Bug Priority:P2 Querying/Processor .Reproduced | **Describe the bug**

This setup

Throws this error

When Viewing (drilling down to) Details:

... | 1.0 | Cannot drill-through "View these ..." when aggregated results are filtered - **Describe the bug**

This setup

Throws this error

on June 29, 2009 12:37:35_

Several times it was observed that when a new search is started,

specifically while contacting the hub, the program hangs completely, i.e. the load on

the CPU drops to 0 and all activity stops entirely.

After ... | 1.0 | Program hangs with a large number of search threads - _From [a.rain...@gmail.com](https://code.google.com/u/117892482479228821242/) on June 29, 2009 12:37:35_

Several times it was observed that when a new search is started,

specifically while contacting the hub, the program hangs completely, i.e. the load on

the CPU ... | non_process | program hangs with a large number of search threads from on june several times it was observed that when a new search is started specifically while contacting the hub the program hangs completely i e the load on the cpu drops to and all activity stops entirely after the timeout... | 0 |

140,235 | 31,861,979,903 | IssuesEvent | 2023-09-15 11:37:23 | kamilsk/dotfiles | https://api.github.com/repos/kamilsk/dotfiles | closed | command: add books as the same as obsidian | type: feature scope: code impact: medium effort: easy | **Motivation:** review disk usage, now I have 27G audiobooks.

```bash

$ books

# cd ~/Library/Containers/com.apple.BKAgentService/Data/Documents/iBooks

# du -h * | sort -h

``` | 1.0 | command: add books as the same as obsidian - **Motivation:** review disk usage, now I have 27G audiobooks.

```bash

$ books

# cd ~/Library/Containers/com.apple.BKAgentService/Data/Documents/iBooks

# du -h * | sort -h

``` | non_process | command add books as the same as obsidian motivation review disk usage now i have audiobooks bash books cd library containers com apple bkagentservice data documents ibooks du h sort h | 0 |

17,149 | 22,699,177,960 | IssuesEvent | 2022-07-05 09:06:24 | anitsh/til | https://api.github.com/repos/anitsh/til | opened | Information Technology Infrastructure Library (ITIL) | wip process | The Information Technology Infrastructure Library (ITIL) is a set of detailed practices for IT activities such as [IT service management](https://en.wikipedia.org/wiki/IT_service_management) (ITSM) and [IT asset management](https://en.wikipedia.org/wiki/IT_asset_management) (ITAM) that focus on aligning IT services wit... | 1.0 | Information Technology Infrastructure Library (ITIL) - The Information Technology Infrastructure Library (ITIL) is a set of detailed practices for IT activities such as [IT service management](https://en.wikipedia.org/wiki/IT_service_management) (ITSM) and [IT asset management](https://en.wikipedia.org/wiki/IT_asset_ma... | process | information technology infrastructure library itil the information technology infrastructure library itil is a set of detailed practices for it activities such as itsm and itam that focus on aligning it services with the needs of business itil describes processes procedures tasks and checklists... | 1 |

789,142 | 27,780,500,557 | IssuesEvent | 2023-03-16 20:38:37 | SunnyDaye/github-issues-template | https://api.github.com/repos/SunnyDaye/github-issues-template | opened | Site Terms link is not working | bug Severity 1 Priority 1 | **Describe the bug**

The Site Terms link leads to a 404 page not found error.

**To Reproduce**

Steps to reproduce the behavior:

1. Hover over 'resources'

2. Click on 'Site Terms'

**Expected behavior**

The Site Terms link should lead to a page with the terms of each tour site.

**Desktop (please complete th... | 1.0 | Site Terms link is not working - **Describe the bug**

The Site Terms link leads to a 404 page not found error.

**To Reproduce**

Steps to reproduce the behavior:

1. Hover over 'resources'

2. Click on 'Site Terms'

**Expected behavior**

The Site Terms link should lead to a page with the terms of each tour site.... | non_process | site terms link is not working describe the bug the site terms link leads to a page not found error to reproduce steps to reproduce the behavior hover over resources click on site terms expected behavior the site terms link should lead to a page with the terms of each tour site ... | 0 |

8,191 | 11,391,981,909 | IssuesEvent | 2020-01-30 00:50:29 | knative/serving | https://api.github.com/repos/knative/serving | closed | Revisit TestScaleTo50 SLO after Istio 1.1 | area/networking area/test-and-release kind/feature kind/process | ## In what area(s)?

<!-- Remove the '> ' to select -->

/area networking

/area test-and-release

<!--

Other classifications:

/kind process

-->

## Describe the feature

In https://github.com/knative/serving/issues/2850 we saw that our SLO can't be very high yet. This appears to be an issue with the servic... | 1.0 | Revisit TestScaleTo50 SLO after Istio 1.1 - ## In what area(s)?

<!-- Remove the '> ' to select -->

/area networking

/area test-and-release

<!--

Other classifications:

/kind process

-->

## Describe the feature

In https://github.com/knative/serving/issues/2850 we saw that our SLO can't be very high yet.... | process | revisit slo after istio in what area s to select area networking area test and release other classifications kind process describe the feature in we saw that our slo can t be very high yet this appears to be an issue with the service mesh in istio x since... | 1 |

2,744 | 5,651,694,594 | IssuesEvent | 2017-04-08 07:14:03 | facebook/osquery | https://api.github.com/repos/facebook/osquery | opened | Audit events can cause instability if a backlog_wait_time is not set to 1 | Linux process auditing wishlist | As part of the osquery audit configuration, the audit setting for `backlog_wait_time` is set to 1. This is the clock delay applied when the audit backlog is filled. This queue may fill if lots of events are queued and the owner of the userland Netlink socket cannot dequeue fast enough.

Example of a default configurat... | 1.0 | Audit events can cause instability if a backlog_wait_time is not set to 1 - As part of the osquery audit configuration, the audit setting for `backlog_wait_time` is set to 1. This is the clock delay applied when the audit backlog is filled. This queue may fill if lots of events are queued and the owner of the userland N... | process | audit events can cause instability if a backlog wait time is not set to as part of the osquery audit configuration the audit setting for backlog wait time is set to this is the clock delay applied when the audit backlog is filled this queue may fill if lots of events are queued and the owner of the userland n... | 1 |

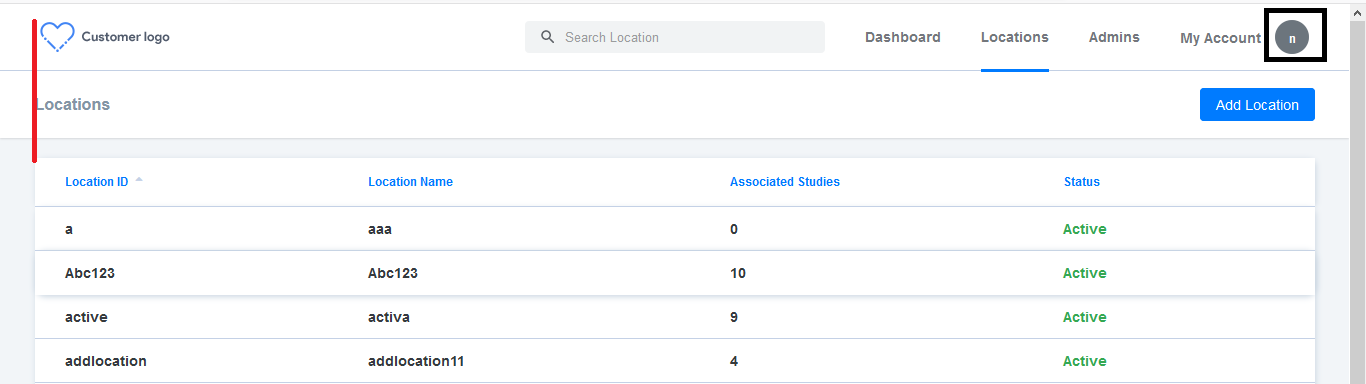

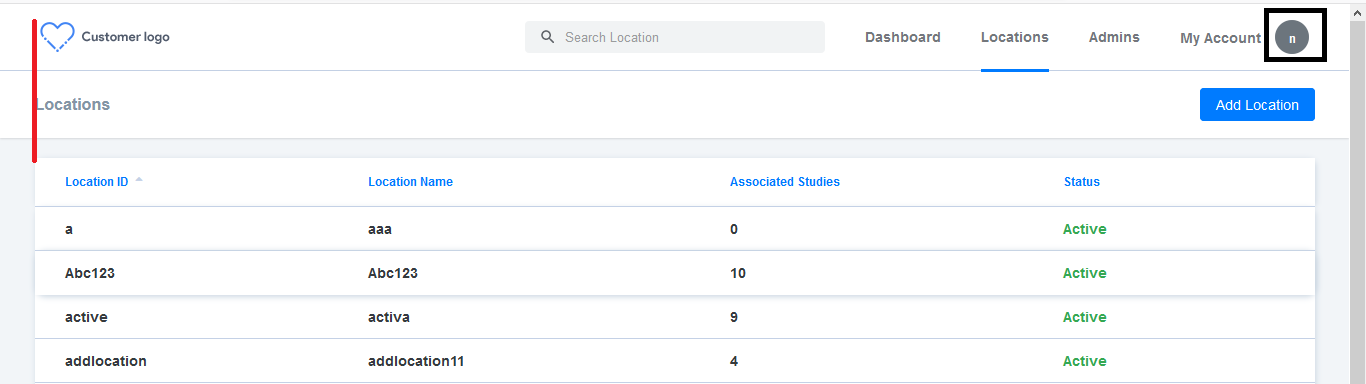

12,463 | 14,937,186,442 | IssuesEvent | 2021-01-25 14:20:32 | GoogleCloudPlatform/fda-mystudies | https://api.github.com/repos/GoogleCloudPlatform/fda-mystudies | closed | Customer logo > Alignment issue | Bug P2 Participant manager Process: Fixed Process: Tested dev | 1. Customer logo > Alignment issue

2. My Account circle icon text is not centered

[Note : Issue should be fixed in all screens]

| 2.0 | Customer logo > Alignment issue - 1. Customer logo > Alignment issue

2. My Account circle icon text is not centered

[Note : Issue should be fixed in all screens]

| process | customer logo alignment issue customer logo alignment issue my account circle icon text is not centered | 1 |

4,970 | 7,806,853,410 | IssuesEvent | 2018-06-11 15:09:32 | sysown/proxysql | https://api.github.com/repos/sysown/proxysql | closed | Error while importing a dump | CONNECTION POOL PROTOCOL QUERY PROCESSOR bug | ```

root@galera-n3:~# cat talkyoo_preview_dump.sql | mysql -uroot -pXXX -h 10.0.0.219 -P6033 talkyoo_preview

ERROR 1231 (#4200) at line 101: Variable 'character_set_client' can't be set to the value of 'NULL'

```

| 1.0 | Error while importing a dump - ```

root@galera-n3:~# cat talkyoo_preview_dump.sql | mysql -uroot -pXXX -h 10.0.0.219 -P6033 talkyoo_preview

ERROR 1231 (#4200) at line 101: Variable 'character_set_client' can't be set to the value of 'NULL'

```

| process | error while importing a dump root galera cat talkyoo preview dump sql mysql uroot pxxx h talkyoo preview error at line variable character set client can t be set to the value of null | 1 |

323,092 | 23,933,305,637 | IssuesEvent | 2022-09-10 21:50:24 | cloudflare/cloudflare-docs | https://api.github.com/repos/cloudflare/cloudflare-docs | opened | Clarify how to create an API token for running Wrangler in CI/CD | documentation content:edit | ### Which Cloudflare product does this pertain to?

Workers

### Existing documentation URL(s)

https://developers.cloudflare.com/workers/wrangler/ci-cd/

### Section that requires update

Cloudflare API token

### What needs to change?

It's not really clear to me (a) how and where to get an API token, and (b) what to... | 1.0 | Clarify how to create an API token for running Wrangler in CI/CD - ### Which Cloudflare product does this pertain to?

Workers

### Existing documentation URL(s)

https://developers.cloudflare.com/workers/wrangler/ci-cd/

### Section that requires update

Cloudflare API token

### What needs to change?

It's not really... | non_process | clarify how to create an api token for running wrangler in ci cd which cloudflare product does this pertain to workers existing documentation url s section that requires update cloudflare api token what needs to change it s not really clear to me a how and where to get an api token and ... | 0 |

6,463 | 9,546,597,581 | IssuesEvent | 2019-05-01 20:24:58 | openopps/openopps-platform | https://api.github.com/repos/openopps/openopps-platform | closed | Remove Go to Saved from Create Internship Opportunity | Apply Process Approved Opportunity Create Requirements Ready State Dept. | Who: Interns

What: Viewing internship - should not see Saved internships section

Why: Old design

Remove the Saved internships section from the Create internship opportunity view. This was part of an old mock up and was removed.

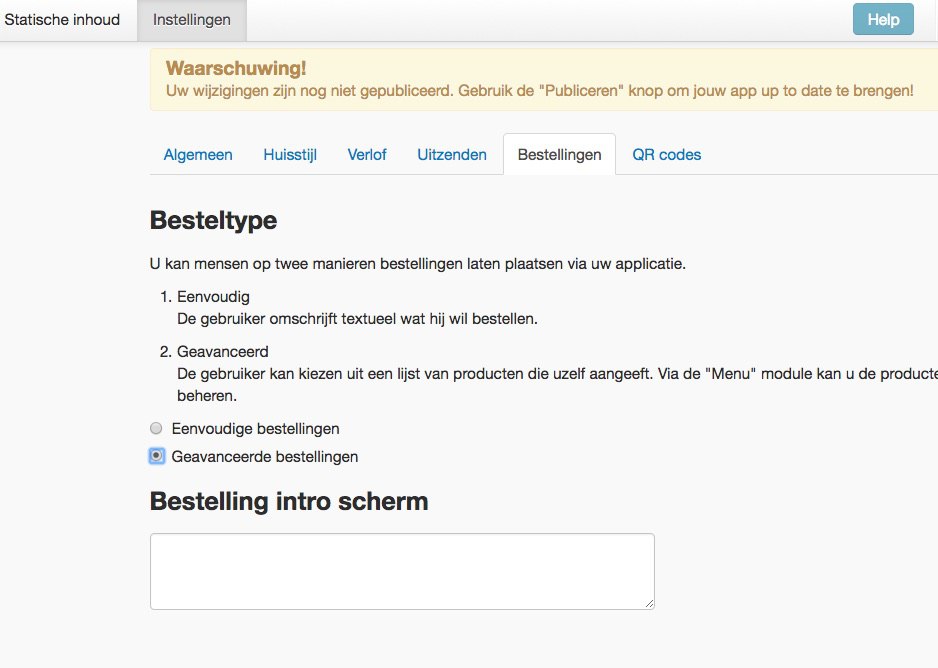

| 1.0 | Not possible to change from simple to advanced order in services in the city dashboard -

. | bug P2 preprocess | I uploaded a zip here:

http://www.oxygenxml.com/forum/files/ug.zip

If the usermanual DITA Map gets published to plain XHTML the "eppo-installation-linux" HTML file should contain inside it the content from the two subtopics but it no longer does that.

The used chunk method is:

<topicref href="topics/e... | 1.0 | Problem with chunking (DITA OT 2.x). - I uploaded a zip here:

http://www.oxygenxml.com/forum/files/ug.zip

If the usermanual DITA Map gets published to plain XHTML the "eppo-installation-linux" HTML file should contain inside it the content from the two subtopics but it no longer does that.

The used chunk metho... | process | problem with chunking dita ot x i uploaded a zip here if the usermanual dita map gets published to plain xhtml the eppo installation linux html file should contain inside it the content from the two subtopics but it no longer does that the used chunk method is ... | 1 |

14,235 | 17,154,705,232 | IssuesEvent | 2021-07-14 04:29:07 | GoogleCloudPlatform/fda-mystudies | https://api.github.com/repos/GoogleCloudPlatform/fda-mystudies | opened | Provision to configure app-specific email addresses | Android P1 Participant datastore Process: Enhancement iOS | Add the ability to configure app-specific email addresses (based on App ID) for the following types of emails

1. Feedback, Contact Us 'To' addresses

2. The 'from' address for all outgoing emails to app users

3. The 'support email' displayed in outgoing mails. Outgoing mails refer to the Welcome/Registration, Forg... | 1.0 | Provision to configure app-specific email addresses - Add the ability to configure app-specific email addresses (based on App ID) for the following types of emails

1. Feedback, Contact Us 'To' addresses

2. The 'from' address for all outgoing emails to app users

3. The 'support email' displayed in outgoing mails. ... | process | provision to configure app specific email addresses add the ability to configure app specific email addresses based on app id for the following types of emails feedback contact us to addresses the from address for all outgoing emails to app users the support email displayed in outgoing mails ... | 1 |

1,196 | 3,697,266,488 | IssuesEvent | 2016-02-27 15:17:29 | sysown/proxysql | https://api.github.com/repos/sysown/proxysql | closed | enforce_autocommit_on_reads | ADMIN CONNECTION POOL MYSQL PROTOCOL QUERY PROCESSOR | ## WHY

Since 3449ab0f598db7bd537f1065983a0594927122d8 ( #438 ) ProxySQL tracks the value of autocommit and enforces it in database connection.

This implementation had the drawback that if a client sends `set autocommit=0` , and read/write split is implemented too, transactions are opened on slaves as pointed in #469 ... | 1.0 | enforce_autocommit_on_reads - ## WHY

Since 3449ab0f598db7bd537f1065983a0594927122d8 ( #438 ) ProxySQL tracks the value of autocommit and enforces it in database connection.

This implementation had the drawback that if a client sends `set autocommit=0` , and read/write split is implemented too, transactions are opened... | process | enforce autocommit on reads why since proxysql tracks the value of autocommit and enforces it in database connection this implementation had the drawback that if a client sends set autocommit and read write split is implemented too transactions are opened on slaves as pointed in and therefo... | 1 |

107,464 | 23,417,717,812 | IssuesEvent | 2022-08-13 07:37:54 | creativecommons/commoners | https://api.github.com/repos/creativecommons/commoners | opened | [Bug] Site navigation broken after CCID login | 🟧 priority: high 🚦 status: awaiting triage 🛠 goal: fix 💻 aspect: code | ## Description

After logging in to the CCGN site, one is redirected back to the CCGN site, and menu items don't lead to expected links.

## Reproduction

1. Visit: https://network.creativecommons.org/ [must not yet be logged in]

2. Click on "Members" in top navigation menu

3. Get redirected to https://login.creati... | 1.0 | [Bug] Site navigation broken after CCID login - ## Description

After logging in to the CCGN site, one is redirected back to the CCGN site, and menu items don't lead to expected links.

## Reproduction

1. Visit: https://network.creativecommons.org/ [must not yet be logged in]

2. Click on "Members" in top navigation... | non_process | site navigation broken after ccid login description after logging in to the ccgn site one is redirected back to the ccgn site and menu items don t lead to expected links reproduction visit click on members in top navigation menu get redirected to to log in because members is a pro... | 0 |

16,540 | 21,566,062,086 | IssuesEvent | 2022-05-01 21:48:11 | fmnas/fmnas-site | https://api.github.com/repos/fmnas/fmnas-site | closed | Change application response text | form processor x-small (<1h) | remove "applications are reviewed Sunday through Thursday" and add:

If you have not already done so, please submit a few photos of home (inside and outside, wherever your pets are allowed to go)/yard/fence/current pets, which can be emailed as .jpg attachments. Since we are unable to do pre-adoption home visits for ... | 1.0 | Change application response text - remove "applications are reviewed Sunday through Thursday" and add:

If you have not already done so, please submit a few photos of home (inside and outside, wherever your pets are allowed to go)/yard/fence/current pets, which can be emailed as .jpg attachments. Since we are unable ... | process | change application response text remove applications are reviewed sunday through thursday and add if you have not already done so please submit a few photos of home inside and outside wherever your pets are allowed to go yard fence current pets which can be emailed as jpg attachments since we are unable ... | 1 |

16,381 | 21,104,130,243 | IssuesEvent | 2022-04-04 17:01:21 | bazelbuild/bazel | https://api.github.com/repos/bazelbuild/bazel | closed | Release 5.1 - March 2022 | P1 type: process team-OSS | # Status of Bazel 5.1

- Expected release date: 2022-03-24

- [Release blockers milestone](https://github.com/bazelbuild/bazel/milestone/35)

To report a release-blocking bug, please add a comment with the text `@bazel-io flag` to the issue. A release manager will triage it and add it to the milestone.

To cherry... | 1.0 | Release 5.1 - March 2022 - # Status of Bazel 5.1

- Expected release date: 2022-03-24

- [Release blockers milestone](https://github.com/bazelbuild/bazel/milestone/35)

To report a release-blocking bug, please add a comment with the text `@bazel-io flag` to the issue. A release manager will triage it and add it to ... | process | release march status of bazel expected release date to report a release blocking bug please add a comment with the text bazel io flag to the issue a release manager will triage it and add it to the milestone to cherry pick a mainline commit into simply send a pr again... | 1 |

240,072 | 7,800,375,519 | IssuesEvent | 2018-06-09 08:39:13 | tine20/Tine-2.0-Open-Source-Groupware-and-CRM | https://api.github.com/repos/tine20/Tine-2.0-Open-Source-Groupware-and-CRM | closed | 0008960:

more than one list (group membership) filter breaks contact search | Addressbook Bug Mantis high priority | **Reported by pschuele on 26 Sep 2013 12:30**

**Version:** Collin (2013.10.1~beta1)

more than one list (group membership) filter breaks contact search

**Steps to reproduce:** "filters": [

                {

                    "field": "list"... | 1.0 | 0008960:

more than one list (group membership) filter breaks contact search - **Reported by pschuele on 26 Sep 2013 12:30**

**Version:** Collin (2013.10.1~beta1)

more than one list (group membership) filter breaks contact search

**Steps to reproduce:** "filters": [

... | non_process | more than one list group membership filter breaks contact search reported by pschuele on sep version collin more than one list group membership filter breaks contact search steps to reproduce quot filters quot ... | 0 |

5,605 | 8,468,001,841 | IssuesEvent | 2018-10-23 18:30:05 | icra/ecam | https://api.github.com/repos/icra/ecam | closed | Add missing descriptions | in process | Please see the table below. I am still waiting for the French translation.

Variable | English | Spanish | French | Thai

-- | -- | -- | -- | --

fsc_cont_emp | Fraction of produced faecal sludge that is emptied from containments during the assessment period. If only partial emptying is done it should be refle... | 1.0 | Add missing descriptions - Please see the table below. I am still waiting for the French translation.

Variable | English | Spanish | French | Thai

-- | -- | -- | -- | --

fsc_cont_emp | Fraction of produced faecal sludge that is emptied from containments during the assessment period. If only partial emptying ... | process | add missing descriptions please see the table below i am still waiting for the french translation variable english spanish french thai fsc cont emp fraction of produced faecal sludge that is emptied from containments during the assessment period if only partial emptying ... | 1 |

394,813 | 11,648,983,359 | IssuesEvent | 2020-03-01 23:44:22 | ayumi-cloud/oc-security-module | https://api.github.com/repos/ayumi-cloud/oc-security-module | opened | Add Reddit bot to whitelist rules | Add to Whitelist Firewall Priority: Medium enhancement in-progress | ### Enhancement idea

- [ ] Add Reddit bot to whitelist rules.

| 1.0 | Add Reddit bot to whitelist rules - ### Enhancement idea

- [ ] Add Reddit bot to whitelist rules.

| non_process | add reddit bot to whitelist rules enhancement idea add reddit bot to whitelist rules | 0 |

22,392 | 19,277,408,480 | IssuesEvent | 2021-12-10 13:30:39 | MPMG-DCC-UFMG/C01 | https://api.github.com/repos/MPMG-DCC-UFMG/C01 | closed | Adjust the ordering of the collector list | usabilidade Desenvolvimento | ## Expected Behavior

Collectors with running instances, or ones modified recently, stay at the top of the list

## Current Behavior

Collectors are ordered only by creation order, so if an older collector is in use, it is necessary to scroll and find it in the list, which can... | True | Adjust the ordering of the collector list - ## Expected Behavior

Collectors with running instances, or ones modified recently, stay at the top of the list

## Current Behavior

Collectors are ordered only by creation order, so if an older collector is in use, it is necessary to... | non_process | adjust the ordering of the collector list expected behavior collectors with running instances or ones modified recently stay at the top of the list current behavior collectors are ordered only by creation order so if an older collector is in use it is necessary to... | 0 |

285 | 2,725,124,832 | IssuesEvent | 2015-04-14 21:44:52 | neuropoly/spinalcordtoolbox | https://api.github.com/repos/neuropoly/spinalcordtoolbox | opened | compute_csa should receive instance 'param' as a parameter in order to be callable from another script | priority: medium sct_process_segmentation | In order to call the function from a different script the instance 'param' should always be passed as an argument. Original 'param' will then be applied in every script that is being called. | 1.0 | compute_csa should receive instance 'param' as a parameter in order to be callable from another script - In order to call the function from a different script the instance 'param' should always be passed as an argument. Original 'param' will then be applied in every script that is being called. | process | compute csa should receive instance param as a parameter in order to be callable from another script in order to call the function from a different script the instance param should always be passed as an argument original param will then be applied in every script that is being called | 1 |

368,279 | 25,785,471,166 | IssuesEvent | 2022-12-09 19:56:26 | ray-project/kuberay | https://api.github.com/repos/ray-project/kuberay | closed | [HelmChart] No documentation how to use customized image (for development) in HelmChart instruction | bug documentation P1 | ### Search before asking

- [X] I searched the [issues](https://github.com/ray-project/kuberay/issues) and found no similar issues.

### KubeRay Component

ray-operator

### What happened + What you expected to happen

I have built my own image in my own docker hub, but not able to find instruction where to update the... | 1.0 | [HelmChart] No documentation how to use customized image (for development) in HelmChart instruction - ### Search before asking

- [X] I searched the [issues](https://github.com/ray-project/kuberay/issues) and found no similar issues.

### KubeRay Component

ray-operator

### What happened + What you expected to happe... | non_process | no documentation how to use customized image for development in helmchart instruction search before asking i searched the and found no similar issues kuberay component ray operator what happened what you expected to happen i have built my own image in my own docker hub but not able ... | 0 |

396,968 | 11,716,523,131 | IssuesEvent | 2020-03-09 15:46:16 | lukeparser/lukeparser | https://api.github.com/repos/lukeparser/lukeparser | opened | Command to list arguments of another command | Area: View Priority: Normal Type: Feature | For example:

```

\listargs[

# blub

]

```

shall list all arguments that can be provided for a section. | 1.0 | Command to list arguments of another command - For example:

```

\listargs[

# blub

]

```

shall list all arguments that can be provided for a section. | non_process | command to list arguments of another command for example listargs blub shall list all arguments that can be provided for a section | 0 |

3,600 | 6,630,924,434 | IssuesEvent | 2017-09-25 03:34:31 | sysown/proxysql | https://api.github.com/repos/sysown/proxysql | closed | Issue of temporary table in call procedure | ADMIN CONNECTION POOL enhancement QUERY PROCESSOR | When calling a procedure from PHP, the procedure creates a temporary table that is used in another line of code to display information (same connection). But it seems that between the two lines of code the temporary table doesn't exist anymore (probably not the same connection in the back-end for the two lines of code, even... | 1.0 | Issue of temporary table in call procedure - When calling a procedure from PHP, the procedure creates a temporary table that is used in another line of code to display information (same connection). But it seems that between the two lines of code the temporary table doesn't exist anymore (probably not the same connection in... | process | issue of temporary table in call procedure when calling a procedure from php the procedure creates a temporary table that is used in another line of code to display information same connection but it seems that between the two lines of code the temporary table doesn t exist anymore probably not the same connection in... | 1 |

16,464 | 21,390,659,125 | IssuesEvent | 2022-04-21 06:42:36 | zammad/zammad | https://api.github.com/repos/zammad/zammad | closed | parsing incoming mails breaks on this line "Reply-To: <>" | enhancement verified prioritised by payment mail processing | <!--

Hi there - thanks for filing an issue. Please ensure the following things before creating an issue - thank you! 🤓

Since november 15th we handle all requests, except real bugs, at our community board.

Full explanation: https://community.zammad.org/t/major-change-regarding-github-issues-community-board/21

P... | 1.0 | parsing incoming mails breaks on this line "Reply-To: <>" - <!--

Hi there - thanks for filing an issue. Please ensure the following things before creating an issue - thank you! 🤓

Since november 15th we handle all requests, except real bugs, at our community board.

Full explanation: https://community.zammad.org/t/... | process | parsing incoming mails breaks on this line reply to hi there thanks for filing an issue please ensure the following things before creating an issue thank you 🤓 since november we handle all requests except real bugs at our community board full explanation please post feature reques... | 1 |

14,537 | 17,643,826,562 | IssuesEvent | 2021-08-20 01:00:17 | open-telemetry/opentelemetry-collector | https://api.github.com/repos/open-telemetry/opentelemetry-collector | closed | Merge include/exclude logic from span package | area:processor | With https://github.com/open-telemetry/opentelemetry-collector/pull/537 the config for include and exclude are under object. This issue tracks having the Matcher object(or some logical name) that checks both the include and exclude properties for the processors. It would remove functions like https://github.com/open-te... | 1.0 | Merge include/exclude logic from span package - With https://github.com/open-telemetry/opentelemetry-collector/pull/537 the config for include and exclude are under object. This issue tracks having the Matcher object(or some logical name) that checks both the include and exclude properties for the processors. It would ... | process | merge include exclude logic from span package with the config for include and exclude are under object this issue tracks having the matcher object or some logical name that checks both the include and exclude properties for the processors it would remove functions like | 1 |

17,629 | 23,445,647,301 | IssuesEvent | 2022-08-15 19:19:52 | apache/arrow-datafusion | https://api.github.com/repos/apache/arrow-datafusion | closed | Remove outdated license text left over from arrow repo | enhancement development-process | I was reviewing code and noticed that https://github.com/apache/arrow-datafusion/blob/master/LICENSE.txt was a copy/paste from https://github.com/apache/arrow/blob/master/LICENSE.txt when we split out this repo. Thus it contains many incorrect and irrelevant references

| 1.0 | Remove outdated license text left over from arrow repo - I was reviewing code and noticed that https://github.com/apache/arrow-datafusion/blob/master/LICENSE.txt was a copy/paste from https://github.com/apache/arrow/blob/master/LICENSE.txt when we split out this repo. Thus it contains many incorrect and irrelevant refe... | process | remove outdated license text left over from arrow repo i was reviewing code and noticed that was a copy paste from when we split out this repo thus it contains many incorrect and irrelevant references | 1 |

161,832 | 12,577,233,118 | IssuesEvent | 2020-06-09 09:12:37 | WoWManiaUK/Redemption | https://api.github.com/repos/WoWManiaUK/Redemption | closed | Can't Filter on Cloth Chest Pieces in AH | Fix - Tester Confirmed | **Links:**

**What is Happening:**

If you filter on Cloth hands, feet or other items it works. But if you filter on chestpieces you get all cloth items.

**What Should happen:**

If you filter on cloth chest pieces, it should show only those.

| 1.0 | Can't Filter on Cloth Chest Pieces in AH - **Links:**

**What is Happening:**

If you filter on Cloth hands, feet or other items it works. But if you filter on chestpieces you get all cloth items.

**What Should happen:**

If you filter on cloth chest pieces, it should show only those.

| non_process | can t filter on cloth chest pieces in ah links what is happening if you filter on cloth hands feet or other items it works but if you filter on chestpieces you get all cloth items what should happen if you filter on cloth chest pieces it should show only those | 0 |

11,493 | 14,366,720,973 | IssuesEvent | 2020-12-01 05:08:52 | carp-lang/Carp | https://api.github.com/repos/carp-lang/Carp | opened | Release scripts broken | bug process | Seems like the release scripts broke (I noticed this when trying to add one for Windows... now none of them work).

Seems to be a mixture of Stack problems and some changes to Github actions? | 1.0 | Release scripts broken - Seems like the release scripts broke (I noticed this when trying to add one for Windows... now none of them work).

Seems to be a mixture of Stack problems and some changes to Github actions? | process | release scripts broken seems like the release scripts broke i noticed this when trying to add one for windows now none of them work seems to be a mixture of stack problems and some changes to github actions | 1 |

204,689 | 15,527,911,412 | IssuesEvent | 2021-03-13 08:27:28 | eclipse/che | https://api.github.com/repos/eclipse/che | closed | Nightly Eclipse Che E2E devfile tests are failing on creation of workspace | area/qe e2e-test/failure kind/bug severity/P1 | ### Describe the bug

Nightly Eclipse Che E2E devfile tests are failing on creation of workspace on minikube:

https://codeready-workspaces-jenkins.rhev-ci-vms.eng.rdu2.redhat.com/job/basic-MultiUser-Che-check-e2e-tests-against-k8s/3231/console

```

14:12:03 + docker run --shm-size=1g --net=host --ipc=host -p 5920:... | 1.0 | Nightly Eclipse Che E2E devfile tests are failing on creation of workspace - ### Describe the bug

Nightly Eclipse Che E2E devfile tests are failing on creation of workspace on minikube:

https://codeready-workspaces-jenkins.rhev-ci-vms.eng.rdu2.redhat.com/job/basic-MultiUser-Che-check-e2e-tests-against-k8s/3231/conso... | non_process | nightly eclipse che devfile tests are failing on creation of workspace describe the bug nightly eclipse che devfile tests are failing on creation of workspace on minikube docker run shm size net host ipc host p e ts selenium base url e ts selenium log level debug e ts s... | 0 |

13,787 | 16,549,426,065 | IssuesEvent | 2021-05-28 06:42:19 | rladies/rladiesguide | https://api.github.com/repos/rladies/rladiesguide | closed | add info on dead name updates for directory | improvements needed :point_up: rladies processes :bullettrain_side: | draft text:

## Updating Dead Names

When updating an existing R-Ladies member directory profile, we usually edit the entry but this will not fix the URL. As a result, the dead name will still appear in the URL for the directory entry. Therefore, when requested to update a dead name in an existing directory entry, we... | 1.0 | add info on dead name updates for directory - draft text:

## Updating Dead Names

When updating an existing R-Ladies member directory profile, we usually edit the entry but this will not fix the URL. As a result, the dead name will still appear in the URL for the directory entry. Therefore, when requested to update ... | process | add info on dead name updates for directory draft text updating dead names when updating an existing r ladies member directory profile we usually edit the entry but this will not fix the url as a result the dead name will still appear in the url for the directory entry therefore when requested to update ... | 1 |

7,722 | 10,826,179,250 | IssuesEvent | 2019-11-09 20:52:41 | dotnet/corefx | https://api.github.com/repos/dotnet/corefx | closed | Windows service crash when stop it | area-System.ServiceProcess | _From @dospat07 on Wednesday, September 25, 2019 2:18:37 PM_

### To Reproduce

Steps to reproduce the behavior:

1. Using this version of ASP.NET Core 3.0 and Visual Studio 2019 version 16.3

2. Create new ASP.NET core API web application

3. Add Microsoft.Extensions.Hosting.WindowsServices

4. Change metho... | 1.0 | Windows service crash when stop it - _From @dospat07 on Wednesday, September 25, 2019 2:18:37 PM_

### To Reproduce

Steps to reproduce the behavior:

1. Using this version of ASP.NET Core 3.0 and Visual Studio 2019 version 16.3

2. Create new ASP.NET core API web application

3. Add Microsoft.Extensions.Hos... | process | windows service crash when stop it from on wednesday september pm to reproduce steps to reproduce the behavior using this version of asp net core and visual studio version create new asp net core api web application add microsoft extensions hosting windowsservi... | 1 |

406,856 | 27,585,348,322 | IssuesEvent | 2023-03-08 19:17:42 | kokkos/kokkos | https://api.github.com/repos/kokkos/kokkos | closed | Do ScatterViews support subviews? | Documentation InDevelop | Simple question: Do ScatterViews support subviews? If not, consider this a feature request. See #1393. | 1.0 | Do ScatterViews support subviews? - Simple question: Do ScatterViews support subviews? If not, consider this a feature request. See #1393. | non_process | do scatterviews support subviews simple question do scatterviews support subviews if not consider this a feature request see | 0 |

6,384 | 9,449,377,661 | IssuesEvent | 2019-04-16 01:37:56 | googleapis/google-auth-library-nodejs | https://api.github.com/repos/googleapis/google-auth-library-nodejs | closed | Add a test to cover #605 | type: process | There's a PR over in #605 to fix a bug with not throwing on refreshAccessToken when no refresh_token is set. We... should have a test to cover that. | 1.0 | Add a test to cover #605 - There's a PR over in #605 to fix a bug with not throwing on refreshAccessToken when no refresh_token is set. We... should have a test to cover that. | process | add a test to cover there s a pr over in to fix a bug with not throwing on refreshaccesstoken when no refresh token is set we should have a test to cover that | 1 |

7,237 | 10,384,749,738 | IssuesEvent | 2019-09-10 12:42:39 | googleapis/google-cloud-python | https://api.github.com/repos/googleapis/google-cloud-python | closed | Synthesis failed for phishingprotection | api: phishingprotection autosynth failure type: process | Hello! Autosynth couldn't regenerate phishingprotection. :broken_heart:

Here's the output from running `synth.py`:

```

Cloning into 'working_repo'...

Switched to branch 'autosynth-phishingprotection'

Running synthtool

['/tmpfs/src/git/autosynth/env/bin/python3', '-m', 'synthtool', 'synth.py', '--']

synthtool > Execut... | 1.0 | Synthesis failed for phishingprotection - Hello! Autosynth couldn't regenerate phishingprotection. :broken_heart:

Here's the output from running `synth.py`:

```

Cloning into 'working_repo'...

Switched to branch 'autosynth-phishingprotection'

Running synthtool

['/tmpfs/src/git/autosynth/env/bin/python3', '-m', 'syntht... | process | synthesis failed for phishingprotection hello autosynth couldn t regenerate phishingprotection broken heart here s the output from running synth py cloning into working repo switched to branch autosynth phishingprotection running synthtool synthtool executing tmpfs src git autosynth working r... | 1 |

22,742 | 32,056,366,254 | IssuesEvent | 2023-09-24 05:49:35 | subspace/status | https://api.github.com/repos/subspace/status | closed | 🛑 Gemini-3f block explorer squid Processor service is down | status gemini-3f-block-explorer-squid-processor-service | In [`36994fc`](https://github.com/subspace/status/commit/36994fc98b99a80cabd122c01df81ce173f23532

), Gemini-3f block explorer squid Processor service (https://squid.gemini-3f.subspace.network/processor-health) was **down**:

- HTTP code: 0

- Response time: 0 ms

| 1.0 | 🛑 Gemini-3f block explorer squid Processor service is down - In [`36994fc`](https://github.com/subspace/status/commit/36994fc98b99a80cabd122c01df81ce173f23532

), Gemini-3f block explorer squid Processor service (https://squid.gemini-3f.subspace.network/processor-health) was **down**:

- HTTP code: 0

- Response time: 0 ... | process | 🛑 gemini block explorer squid processor service is down in gemini block explorer squid processor service was down http code response time ms | 1 |

248,993 | 26,870,972,579 | IssuesEvent | 2023-02-04 13:06:04 | MatBenfield/news | https://api.github.com/repos/MatBenfield/news | closed | [SecurityWeek] Exploitation of Oracle E-Business Suite Vulnerability Starts After PoC Publication | SecurityWeek Stale |

**Exploitation attempts targeting a critical-severity Oracle E-Business Suite vulnerability have been observed shortly after proof-of-concept (PoC) code was published.**

One of the major Oracle product lines, the E-Business Suite is a set of enterprise applications that help organizations automate processes such as ... | True | [SecurityWeek] Exploitation of Oracle E-Business Suite Vulnerability Starts After PoC Publication -

**Exploitation attempts targeting a critical-severity Oracle E-Business Suite vulnerability have been observed shortly after proof-of-concept (PoC) code was published.**

One of the major Oracle product lines, the E-Bu... | non_process | exploitation of oracle e business suite vulnerability starts after poc publication exploitation attempts targeting a critical severity oracle e business suite vulnerability have been observed shortly after proof of concept poc code was published one of the major oracle product lines the e business suite ... | 0 |

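9,911 | 12,950,613,526 | IssuesEvent | 2020-07-19 13:52:35 | cyfile/Matlab-miscellanies | https://api.github.com/repos/cyfile/Matlab-miscellanies | opened | Use ipcam to receive a desktop stream provided by ffmpeg | Image Processing | ipcam is a command provided by the MATLAB Support Package for IP Cameras (a MATLAB Add-On)

First, download ffmpeg on the PC and add it to cmd.

Then use it to capture the desktop and push the stream in a camera format.

Use ipcam from the MATLAB command line to receive it | 1.0 | Use ipcam to receive a desktop stream provided by ffmpeg - ipcam is a command provided by the MATLAB Support Package for IP Cameras (a MATLAB Add-On)

First, download ffmpeg on the PC and add it to cmd.

Then use it to capture the desktop and push the stream in a camera format.

Use ipcam from the MATLAB command line to receive it | process | use ipcam to receive a desktop stream provided by ffmpeg ipcam is a command provided by the matlab support package for ip cameras a matlab add on first download ffmpeg on the pc and add it to cmd then use it to capture the desktop and push the stream in a camera format use ipcam from the matlab command line to receive it | 1 |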

367,748 | 25,760,137,333 | IssuesEvent | 2022-12-08 19:45:12 | skeletonlabs/skeleton | https://api.github.com/repos/skeletonlabs/skeleton | closed | Docs: set CLI as the new SvelteKit onboarding default | documentation | ### Link to the Page

https://www.skeleton.dev/guides/install

### Describe the Issue

Let's update and tailor the onboarding documentation to recommend the CLI by default. We'll want to clearly indicate it's in beta, however, we've had zero issues reported since it went live. It seems to be doing everything we need it... | 1.0 | Docs: set CLI as the new SvelteKit onboarding default - ### Link to the Page

https://www.skeleton.dev/guides/install

### Describe the Issue

Let's update and tailor the onboarding documentation to recommend the CLI by default. We'll want to clearly indicate it's in beta, however, we've had zero issues reported since ... | non_process | docs set cli as the new sveltekit onboarding default link to the page describe the issue let s update and tailor the onboarding documentation to recommend the cli by default we ll want to clearly indicate it s in beta however we ve had zero issues reported since it went live it seems to be doing eve... | 0 |

137,973 | 11,171,890,361 | IssuesEvent | 2019-12-29 00:04:24 | HRSEVESOSK/cide-ionic-app | https://api.github.com/repos/HRSEVESOSK/cide-ionic-app | opened | ROLE_CIDE_COORDINATOR Upload of documents | testing | Only one document can be uploaded in “Zapisnik”. At least three were required at the meeting on 29th of May 2019.

Note 14.6.2019. Information required if three documents can be uploaded.

| 1.0 | ROLE_CIDE_COORDINATOR Upload of documents - Only one document can be uploaded in “Zapisnik”. At least three were required at the meeting on 29th of May 2019.

Note 14.6.2019. Information required if... | non_process | role cide coordinator upload of documents only one document can be uploaded in “zapisnik” at least three were required at the meeting on of may note information required if three documents can be uploaded | 0 |

1,484 | 4,058,960,195 | IssuesEvent | 2016-05-25 07:42:07 | e-government-ua/iBP | https://api.github.com/repos/e-government-ua/iBP | closed | Netishyn, Khmelnytskyi oblast - Permit to develop a land management project for the allocation of a land plot | In process of testing in work |

They will be processed by the same person - the head of the CNAP (administrative services center), Halyna Kushta

Halyna Kushta - head of the CNAP (their work email)

cnap_netishyn@ukr.net

neteshin_user1 - login and password

Olena Matrosova (manager from iGov)

olena.boichuk@gmail.com

neteshin_user2 - login and password

Ruslan Rudomskyi (manager from iGov... | 1.0 | Netishyn, Khmelnytskyi oblast - Permit to develop a land management project for the allocation of a land plot -

They will be processed by the same person - the head of the CNAP (administrative services center), Halyna Kushta

Halyna Kushta - head of the CNAP (their work email)

cnap_netishyn@ukr.net

neteshin_user1 - login and password

Olena Matrosova (manager from iGov) ... | process | netishyn khmelnytskyi oblast permit to develop a land management project for the allocation of a land plot they will be processed by the same person the head of the cnap administrative services center halyna kushta halyna kushta head of the cnap their work email cnap netishyn ukr net neteshin login and password olena matrosova manager from igov ol... | 1 |

742,979 | 25,881,432,103 | IssuesEvent | 2022-12-14 11:32:48 | thoth-station/opendatahub-cnbi | https://api.github.com/repos/thoth-station/opendatahub-cnbi | closed | Make the deployment compatible with ODH and ArgoCD for op1st / OSC cluster | kind/feature sig/devsecops thoth/group-programming wg/cre priority/critical-urgent | ## Problem statement

<!-- Is your feature request related to a problem? Please provide a clear and concise description of what the problem is.

Ex. I'm always frustrated when [...]

This should be a user story! -->

Continuation of https://github.com/open-services-group/scrum/issues/37

As a BYON/CNBi developer,

... | 1.0 | Make the deployment compatible with ODH and ArgoCD for op1st / OSC cluster - ## Problem statement

<!-- Is your feature request related to a problem? Please provide a clear and concise description of what the problem is.

Ex. I'm always frustrated when [...]

This should be a user story! -->

Continuation of https://... | non_process | make the deployment compatible with odh and argocd for osc cluster problem statement is your feature request related to a problem please provide a clear and concise description of what the problem is ex i m always frustrated when this should be a user story continuation of as a byon c... | 0 |

508,329 | 14,698,523,277 | IssuesEvent | 2021-01-04 06:39:21 | teamforus/general | https://api.github.com/repos/teamforus/general | closed | Provider signup: add back button in beginning (after info steps) | Approval: Granted Priority: Must have Scope: Small Status: Planned project-100 | Learn more about change requests here: https://bit.ly/39CWeEE

### Requested by:

Jamal

### Change description

As a provider I would like to go back to read the information again after clicking next on the info pages.

**Sidenote:** Being "stuck" on this page if you quickly skipped over the information but are... | 1.0 | Provider signup: add back button in beginning (after info steps) - Learn more about change requests here: https://bit.ly/39CWeEE

### Requested by:

Jamal

### Change description

As a provider I would like to go back to read the information again after clicking next on the info pages.

**Sidenote:** Being "stuc... | non_process | provider signup add back button in beginning after info steps learn more about change requests here requested by jamal change description as a provider i would like to go back to read the information again after clicking next on the info pages sidenote being stuck on this page if yo... | 0 |

12,878 | 15,268,227,031 | IssuesEvent | 2021-02-22 11:06:27 | alphagov/govuk-design-system | https://api.github.com/repos/alphagov/govuk-design-system | opened | Send contributions to working group for February / March review | process 🕔 days | ## What

Send updated link styles and hover states to working group for review.

## Why

To get feedback on iterations.

## Who needs to know about this

Designer, Community Manager

## Done when

- [ ] Contribution prepared

- [ ] Contribution sent to working group

- [ ] Folder created for November review and s... | 1.0 | Send contributions to working group for February / March review - ## What

Send updated link styles and hover states to working group for review.

## Why

To get feedback on iterations.

## Who needs to know about this

Designer, Community Manager

## Done when

- [ ] Contribution prepared

- [ ] Contribution sen... | process | send contributions to working group for february march review what send updated link styles and hover states to working group for review why to get feedback on iterations who needs to know about this designer community manager done when contribution prepared contribution sent to... | 1 |

2,083 | 4,912,478,581 | IssuesEvent | 2016-11-23 09:14:08 | CERNDocumentServer/cds | https://api.github.com/repos/CERNDocumentServer/cds | closed | webhooks: faster transcoded files commit | avc_processing enhancement in progress | The `video_transcode` task generates the transcoded videos, but they are committed to the database only when the task completes. Therefore, the UI cannot get hold of them until then, so we need to commit in the middle of the task. | 1.0 | webhooks: faster transcoded files commit - The `video_transcode` task generates the transcoded videos, but they are committed to the database only when the task completes. Therefore, the UI cannot get hold of them until then, so we need to commit in the middle of the task. | process | webhooks faster transcoded files commit the video transcode task generates the transcoded videos but they are committed to the database only when the task completes therefore the ui cannot get hold of them until then so we need to commit in the middle of the task | 1 |

12,761 | 15,115,943,489 | IssuesEvent | 2021-02-09 05:43:14 | bitia-ru/gekkon | https://api.github.com/repos/bitia-ru/gekkon | opened | Upload a photo by clicking the plus (when there is no photo) | enhancement frontend-desktop frontend-mobile processed | Check whether this ticket applies to mobile. | 1.0 | Upload a photo by clicking the plus (when there is no photo) - Check whether this ticket applies to mobile. | process | upload a photo by clicking the plus when there is no photo check whether this ticket applies to mobile | 1 |

2,243 | 5,088,645,007 | IssuesEvent | 2016-12-31 23:55:00 | sw4j-org/tool-jpa-processor | https://api.github.com/repos/sw4j-org/tool-jpa-processor | opened | Handle @MapKeyColumn Annotation | annotation processor task | Handle the `@MapKeyColumn` annotation for a property or field.

See [JSR 338: Java Persistence API, Version 2.1](http://download.oracle.com/otn-pub/jcp/persistence-2_1-fr-eval-spec/JavaPersistence.pdf)

- 11.1.33 MapKeyColumn Annotation

| 1.0 | Handle @MapKeyColumn Annotation - Handle the `@MapKeyColumn` annotation for a property or field.

See [JSR 338: Java Persistence API, Version 2.1](http://download.oracle.com/otn-pub/jcp/persistence-2_1-fr-eval-spec/JavaPersistence.pdf)

- 11.1.33 MapKeyColumn Annotation

| process | handle mapkeycolumn annotation handle the mapkeycolumn annotation for a property or field see mapkeycolumn annotation | 1 |

131,997 | 12,496,276,283 | IssuesEvent | 2020-06-01 14:35:07 | go-bdd/gobdd | https://api.github.com/repos/go-bdd/gobdd | closed | Add a short gif of using the lib to readme | documentation good first issue | The goal is to add a short gif where we show how the library works. It's a good developer-experience and more developer-friendly :) | 1.0 | Add a short gif of using the lib to readme - The goal is to add a short gif where we show how the library works. It's a good developer-experience and more developer-friendly :) | non_process | add a short gif of using the lib to readme the goal is to add a short gif where we show how the library works it s a good developer experience and more developer friendly | 0 |

4,171 | 7,107,919,811 | IssuesEvent | 2018-01-16 21:46:05 | 18F/product-guide | https://api.github.com/repos/18F/product-guide | closed | SECTION UPDATE (Project Comms) - Product storytellers | help wanted process change | Product storytellers are something we may be implementing to handle project communications. It has not been fully socialized yet, but if we move forward with it, we will want to add to and perhaps edit the Project Communications section of the guide to fold this in.

| 1.0 | SECTION UPDATE (Project Comms) - Product storytellers - Product storytellers are something we may be implementing to handle project communications. It has not been fully socialized yet, but if we move forward with it, we will want to add to and perhaps edit the Project Communications section of the guide to fold this i... | process | section update project comms product storytellers product storytellers are something we may be implementing to handle project communications it has not been fully socialized yet but if we move forward with it we will want to add to and perhaps edit the project communications section of the guide to fold this i... | 1 |

12,834 | 15,214,381,342 | IssuesEvent | 2021-02-17 13:08:19 | prisma/prisma | https://api.github.com/repos/prisma/prisma | closed | Some exported types no longer available in Prisma namespace in 2.17 | process/candidate team/client tech/typescript | <!--

Thanks for helping us improve Prisma! 🙏 Please follow the sections in the template and provide as much information as possible about your problem, e.g. by setting the `DEBUG="*"` environment variable and enabling additional logging output in Prisma Client.

Learn more about writing proper bug reports here: htt... | 1.0 | Some exported types no longer available in Prisma namespace in 2.17 - <!--

Thanks for helping us improve Prisma! 🙏 Please follow the sections in the template and provide as much information as possible about your problem, e.g. by setting the `DEBUG="*"` environment variable and enabling additional logging output in P... | process | some exported types no longer available in prisma namespace in thanks for helping us improve prisma 🙏 please follow the sections in the template and provide as much information as possible about your problem e g by setting the debug environment variable and enabling additional logging output in pr... | 1 |

14,335 | 17,366,342,055 | IssuesEvent | 2021-07-30 07:51:55 | tokio-rs/tokio | https://api.github.com/repos/tokio-rs/tokio | closed | How can I get signal from ExitStatus | A-tokio C-question M-process | I want to get the **signal** from `ExitStatus`, as `std::process::ExitStatus` provides.

| 1.0 | How can I get signal from ExitStatus - I want to get the **signal** from `ExitStatus`, as `std::process::ExitStatus` provides.

| process | how can i get signal from exitstatus i want to get the signal from exitstatus as std process exitstatus provides | 1 |

9,256 | 12,291,991,262 | IssuesEvent | 2020-05-10 12:44:04 | Ultimate-Hosts-Blacklist/whitelist | https://api.github.com/repos/Ultimate-Hosts-Blacklist/whitelist | opened | [FALSE-POSITIVE?] | whitelisting process | **Domains or links**

pochta.ru

**More Information**

I use hosts file, and when I tried to check delivery status on pochta.ru, name was not resolved.

**Have you requested removal from other sources?**

No

**Additional context**

This is a Russian Post web site.

| 1.0 | [FALSE-POSITIVE?] - **Domains or links**

pochta.ru

**More Information**

I use hosts file, and when I tried to check delivery status on pochta.ru, name was not resolved.

**Have you requested removal from other sources?**

No

**Additional context**

This is a Russian Post web site.

| process | domains or links pochta ru more information i use hosts file and when i tried to check delivery status on pochta ru name was not resolved have you requested removal from other sources no additional context this is a russian post web site | 1 |

268 | 2,699,134,537 | IssuesEvent | 2015-04-03 14:41:06 | appsgate2015/appsgate | https://api.github.com/repos/appsgate2015/appsgate | opened | Consistency of NotificationMsg | appsgate-server P1 PROCESSING | For some devices that override CoreNotficationMsg or implement NotificationMsg (CoreObjectSpec).

The state returned by the JSON (objectId, varName, value, oldValue) -> used by the client

is not consistent with the corresponding methods of the Java object (respectively getSource, getVarName, get... | 1.0 | Consistency of NotificationMsg - For some devices that override CoreNotficationMsg or implement NotificationMsg (CoreObjectSpec).

The state returned by the JSON (objectId, varName, value, oldValue) -> used by the client

is not consistent with the corresponding methods of the Java object (respectiv... | process | consistency of notificationmsg for some devices that override corenotficationmsg or implement notificationmsg coreobjectspec the state returned by the json objectid varname value oldvalue used by the client is not consistent with the corresponding methods of the java object respectiv... | 1 |

348,344 | 10,441,307,581 | IssuesEvent | 2019-09-18 10:31:39 | gardener/etcd-backup-restore | https://api.github.com/repos/gardener/etcd-backup-restore | opened | Optimise the graceful deletion time in case of active snapshot upload | area/performance component/etcd-backup-restore exp/intermediate kind/enhancement platform/all priority/normal status/accepted | **Motivation (Why is this needed?):**

Currently, on receiving a stop signal, the snapshotter waits until the current snapshot upload is finished. Specifically, in the case of a full snapshot upload, it takes too much time to end the etcd-backup-restore process gracefully.

Same with processing initialisation request. Initialisation requ... | 1.0 | Optimise the graceful deletion time in case of active snapshot upload - **Motivation (Why is this needed?):**

Currently, on receiving a stop signal, the snapshotter waits until the current snapshot upload is finished. Specifically, in the case of a full snapshot upload, it takes too much time to end the etcd-backup-restore process gracefu... | non_process | optimise the graceful deletion time in case of active snapshot upload motivation why is this needed currently on receiving a stop signal the snapshotter waits until the current snapshot upload is finished specifically in the case of a full snapshot upload it takes too much time to end the etcd backup restore process gracefu... | 0 |

7,996 | 11,188,128,428 | IssuesEvent | 2020-01-02 02:55:36 | 52ABP/Documents | https://api.github.com/repos/52ABP/Documents | opened | ASP.NET Core 进程内(InProcess)托管 | 52ABP官方技术文档与博客 | ASP.NET Core 进程内(InProcess)托管 | 52ABP官方技术文档与博客 Gitalk | https://docs.52abp.com/mvc/6-In-ProcessHosting.html

旨在打造新手小白从入门到实战的学习网站,内容涵盖:ASP.NET Core、Angular 、.NET Core、52ABP、等企业级解决方案等 | 1.0 | ASP.NET Core 进程内(InProcess)托管 | 52ABP官方技术文档与博客 - https://docs.52abp.com/mvc/6-In-ProcessHosting.html

旨在打造新手小白从入门到实战的学习网站,内容涵盖:ASP.NET Core、Angular 、.NET Core、52ABP、等企业级解决方案等 | process | asp net core 进程内 inprocess 托管 旨在打造新手小白从入门到实战的学习网站,内容涵盖:asp net core、angular 、 net core、 、等企业级解决方案等 | 1 |

44,849 | 7,132,881,400 | IssuesEvent | 2018-01-22 15:52:33 | capistrano/bundler | https://api.github.com/repos/capistrano/bundler | closed | Explain that .bundle should be added to linked_dirs | documentation you can help! | As explained in #95, the `bundle check` behavior used by this gem depends on `.bundle/config` from previous deployments. If this directory is not linked, `bundle check` will be ineffective and a slower `bundle install` will be used. This unnecessarily slows down deployments.

We should add this explanation to the REA... | 1.0 | Explain that .bundle should be added to linked_dirs - As explained in #95, the `bundle check` behavior used by this gem depends on `.bundle/config` from previous deployments. If this directory is not linked, `bundle check` will be ineffective and a slower `bundle install` will be used. This unnecessarily slows down dep... | non_process | explain that bundle should be added to linked dirs as explained in the bundle check behavior used by this gem depends on bundle config from previous deployments if this directory is not linked bundle check will be ineffective and a slower bundle install will be used this unnecessarily slows down depl... | 0 |

814,262 | 30,497,522,833 | IssuesEvent | 2023-07-18 11:55:57 | Polarts/feel-tracker | https://api.github.com/repos/Polarts/feel-tracker | closed | Day Selector | high priority | # Requirements:

The day selector consists of two components:

1. Day Item - a single day item that represents a calendar date the user can pick.

2. Day Carousel - a carousel displaying 7 days at a time.

- [ ] Add a `DayItem` component to `src/components/day`. The component should have the following states:

... | 1.0 | Day Selector - # Requirements:

The day selector consists of two components:

1. Day Item - a single day item that represents a calendar date the user can pick.

2. Day Carousel - a carousel displaying 7 days at a time.

- [ ] Add a `DayItem` component to `src/components/day`. The component should have the followi... | non_process | day selector requirements the day selector consists of two components day item a single day item that represents a calendar date the user can pick day carousel a carousel displaying days at a time add a dayitem component to src components day the component should have the following... | 0 |

66,210 | 3,251,173,214 | IssuesEvent | 2015-10-19 08:18:14 | cs2103aug2015-w14-3j/main | https://api.github.com/repos/cs2103aug2015-w14-3j/main | closed | As a user, I can view all my schedule in a planner/calendar | priority.low type.story | so that I can find empty slots easily | 1.0 | As a user, I can view all my schedule in a planner/calendar - so that I can find empty slots easily | non_process | as a user i can view all my schedule in a planner calendar so that i can find empty slots easily | 0 |

54,850 | 13,933,381,176 | IssuesEvent | 2020-10-22 08:37:38 | jinuem/PDFJsAnnotations | https://api.github.com/repos/jinuem/PDFJsAnnotations | opened | CVE-2018-14042 (Medium) detected in bootstrap-4.0.0.min.js | security vulnerability | ## CVE-2018-14042 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>bootstrap-4.0.0.min.js</b></p></summary>

<p>The most popular front-end framework for developing responsive, mobile f... | True | CVE-2018-14042 (Medium) detected in bootstrap-4.0.0.min.js - ## CVE-2018-14042 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>bootstrap-4.0.0.min.js</b></p></summary>

<p>The most po... | non_process | cve medium detected in bootstrap min js cve medium severity vulnerability vulnerable library bootstrap min js the most popular front end framework for developing responsive mobile first projects on the web library home page a href path to dependency file pdfjsannotati... | 0 |

12,149 | 14,741,397,920 | IssuesEvent | 2021-01-07 10:33:33 | kdjstudios/SABillingGitlab | https://api.github.com/repos/kdjstudios/SABillingGitlab | closed | Edit Account - Rebillibles and Vendors permission bug | anc-process anp-1 ant-bug ant-enhancement | In GitLab by @kdjstudios on Jan 16, 2019, 10:38

**Submitted by:** @amishra

**Helpdesk:** NA

**Server:** All

**Client/Site:** ALL

**Account:** ALL

**Issue:**

While testing we found another issue in rebillable section under account **Edit Page.**

- suppose a user has security level "NCMS3" or "NCSM5".

- goto a... | 1.0 | Edit Account - Rebillibles and Vendors permission bug - In GitLab by @kdjstudios on Jan 16, 2019, 10:38

**Submitted by:** @amishra

**Helpdesk:** NA

**Server:** All

**Client/Site:** ALL

**Account:** ALL

**Issue:**

While testing we found another issue in rebillable section under account **Edit Page.**

- suppose... | process | edit account rebillibles and vendors permission bug in gitlab by kdjstudios on jan submitted by amishra helpdesk na server all client site all account all issue while testing we found another issue in rebillable section under account edit page suppose a use... | 1 |

3,850 | 6,808,544,516 | IssuesEvent | 2017-11-04 04:22:31 | Great-Hill-Corporation/quickBlocks | https://api.github.com/repos/Great-Hill-Corporation/quickBlocks | reopened | --dollar not correct for getTokenBal. | status-inprocess tools-getTokenBal type-bug | getTokenBal needs a price list for the wei / token conversion to dollars. This is not correct currently because it reports dollars as a conversion at the eth/dollar rate.

Also-- dollars should be swapped out of 'fiat' and the user should be able to specify the price source in any denomination they want. | 1.0 | --dollar not correct for getTokenBal. - getTokenBal needs a price list for the wei / token conversion to dollars. This is not correct currently because it reports dollars as a conversion at the eth/dollar rate.

Also-- dollars should be swapped out of 'fiat' and the user should be able to specify the price source ... | process | dollar not correct for gettokenbal gettokenbal needs a price list for the wei token conversion to dollars this is not correct currently because it reports dollars as a conversion at the eth dollar rate also dollars should be swapped out of fiat and the user should be able to specify the price source ... | 1 |

13,015 | 15,371,038,267 | IssuesEvent | 2021-03-02 09:32:25 | googleapis/google-cloud-dotnet | https://api.github.com/repos/googleapis/google-cloud-dotnet | closed | Diff detection PR step is failing | priority: p1 type: process | This started today - I wonder whether it's because the "ubuntu-latest" GitHub action environment has updated to 20.04.

Will try updating the version of LibGit2Sharp we use. | 1.0 | Diff detection PR step is failing - This started today - I wonder whether it's because the "ubuntu-latest" GitHub action environment has updated to 20.04.

Will try updating the version of LibGit2Sharp we use. | process | diff detection pr step is failing this started today i wonder whether it s because the ubuntu latest github action environment has updated to will try updating the version of we use | 1 |

11,943 | 14,707,933,025 | IssuesEvent | 2021-01-04 22:32:12 | qgis/QGIS | https://api.github.com/repos/qgis/QGIS | closed | Batch processing "Select files..." in Autofill raise python error with QgsProcessingParameterFile | Bug Processing | <!--

Bug fixing and feature development is a community responsibility, and not the responsibility of the QGIS project alone.

If this bug report or feature request is high-priority for you, we suggest engaging a QGIS developer or support organisation and financially sponsoring a fix

Checklist before submitting

-... | 1.0 | Batch processing "Select files..." in Autofill raise python error with QgsProcessingParameterFile - <!--

Bug fixing and feature development is a community responsibility, and not the responsibility of the QGIS project alone.

If this bug report or feature request is high-priority for you, we suggest engaging a QGIS de... | process | batch processing select files in autofill raise python error with qgsprocessingparameterfile bug fixing and feature development is a community responsibility and not the responsibility of the qgis project alone if this bug report or feature request is high priority for you we suggest engaging a qgis de... | 1 |

15,418 | 19,605,488,624 | IssuesEvent | 2022-01-06 08:56:27 | plazi/community | https://api.github.com/repos/plazi/community | opened | to be processed | process request | here is one more new species from the CAS press release

please process, including holotype and GBIF transfer

[ichtyhology&herpetology.109.3.806-835.pdf](https://github.com/plazi/community/files/7820575/ichtyhology.herpetology.109.3.806-835.pdf)

| 1.0 | to be processed - here is one more new species from the CAS press release

please process, including holotype and GBIF transfer

[ichtyhology&herpetology.109.3.806-835.pdf](https://github.com/plazi/community/files/7820575/ichtyhology.herpetology.109.3.806-835.pdf)

| process | to be processed here is one more new species from the cas press release please process including holotype and gbif transfer | 1 |

129,576 | 10,578,576,363 | IssuesEvent | 2019-10-07 23:10:39 | MicrosoftDocs/visualstudio-docs | https://api.github.com/repos/MicrosoftDocs/visualstudio-docs | closed | xUnit Test Generator extension not updated for VS 2019 | Pri1 doc-bug visual-studio-windows/prod vs-ide-test/tech | There is another one at https://marketplace.visualstudio.com/items?itemName=YowkoTsai.xUnitnetTestGenerator. Perhaps should be linked as well.

---

#### Document Details

⚠ *Do not edit this section. It is required for docs.microsoft.com ➟ GitHub issue linking.*

* ID: 30b8edaf-7667-a3e6-38dc-371c53bcd386

* Version In... | 1.0 | xUnit Test Generator extension not updated for VS 2019 - There is another one at https://marketplace.visualstudio.com/items?itemName=YowkoTsai.xUnitnetTestGenerator. Perhaps should be linked as well.

---

#### Document Details

⚠ *Do not edit this section. It is required for docs.microsoft.com ➟ GitHub issue linking.*... | non_process | xunit test generator extension not updated for vs there is another one at perhaps should be linked as well document details ⚠ do not edit this section it is required for docs microsoft com ➟ github issue linking id version independent id content content source ... | 0 |

3,570 | 6,612,058,473 | IssuesEvent | 2017-09-20 01:12:57 | allinurl/goaccess | https://api.github.com/repos/allinurl/goaccess | closed | Date and Time filter in GoAccess | duplicate log-processing question | Hi,

I have configured a GoAccess dashboard with an HTML page.

/usr/local/bin/goaccess -a -f /tmp/dis2.log -o /var/www/html/example.com.html

Is there any way to filter by date and time in that dashboard?

Thanks,

Bipin Bahuguna

| 1.0 | Date and Time filter in GoAccess - Hi,

I have configured a GoAccess dashboard with an HTML page.

/usr/local/bin/goaccess -a -f /tmp/dis2.log -o /var/www/html/example.com.html

Is there any way to filter by date and time in that dashboard?

Thanks,

Bipin Bahuguna

| process | date and time filter in goaccess hi i have configured go access dashboard with html page usr local bin goaccess a f tmp log o var www html example com html is there any way to filter date and time in that dashboard thanks bipin bahuguna | 1 |

56,965 | 13,958,823,832 | IssuesEvent | 2020-10-24 13:47:48 | yungyuc/turgon | https://api.github.com/repos/yungyuc/turgon | closed | Configure github codespaces for the turgon repository | build | GitHub Codespaces: https://github.com/features/codespaces

Codespaces document: https://docs.github.com/en/free-pro-team@latest/github/developing-online-with-codespaces | 1.0 | Configure github codespaces for the turgon repository - GitHub Codespaces: https://github.com/features/codespaces

Codespaces document: https://docs.github.com/en/free-pro-team@latest/github/developing-online-with-codespaces | non_process | configure github codespaces for the turgon repository github codespaces codespaces document | 0 |

20,027 | 5,964,776,314 | IssuesEvent | 2017-05-30 09:45:33 | elastic/logstash | https://api.github.com/repos/elastic/logstash | closed | ensure api code is compatible with the existence of multiple pipelines | api code cleanup | There needs to be an investigation and fix of any obstacles that stop logstash from working properly with multi pipelines.

At least one problem has been detected: currently there are two explicit references to the id "main" of the pipelines in the api code:

```

logstash-core/lib/logstash/api/commands/node.rb: ... | 1.0 | ensure api code is compatible with the existence of multiple pipelines - There needs to be an investigation and fix of any obstacles that stop logstash from working properly with multi pipelines.

At least one problem has been detected: currently there are two explicit references to the id "main" of the pipelines in ... | non_process | ensure api code is compatible with the existence of multiple pipelines there needs to be an investigation and fix of any obstacles that stop logstash from working properly with multi pipelines at least one problem has been detected currently there are two explicit references to the id main of the pipelines in ... | 0 |

130,849 | 10,667,553,275 | IssuesEvent | 2019-10-19 13:20:06 | libigl/libigl | https://api.github.com/repos/libigl/libigl | closed | Reveal name of failing mesh during unit test | question unit-tests | In a unit test that applies the pattern:

```

TEST_CASE("my test", "[igl]")

{

const auto test_case = [](const std::string &param)

{

...

};

test_common::run_test_cases(test_common::all_meshes(), test_case);

}

```

If I run `ctest -R "my test"` I see the output for a failure as:

```

ctest -R ... | 1.0 | Reveal name of failing mesh during unit test - In a unit test that applies the pattern:

```

TEST_CASE("my test", "[igl]")

{

const auto test_case = [](const std::string &param)

{

...

};

test_common::run_test_cases(test_common::all_meshes(), test_case);

}

```

If I run `ctest -R "my test"` I see... | non_process | reveal name of failing mesh during unit test in a unit test that applies the pattern test case my test const auto test case const std string param test common run test cases test common all meshes test case if i run ctest r my test i see the ... | 0 |

9,488 | 12,480,345,113 | IssuesEvent | 2020-05-29 20:07:14 | sct-pipeline/spine-generic | https://api.github.com/repos/sct-pipeline/spine-generic | closed | Make sure the spineGeneric single and multi subjects are 100% BIDS compatible | processing urgent | Ultimately they should pass the BIDS validator of OpenNeuro | 1.0 | Make sure the spineGeneric single and multi subjects are 100% BIDS compatible - Ultimately they should pass the BIDS validator of OpenNeuro | process | make sure the spinegeneric single and multi subjects are bids compatible ultimately they should pass the bids validator of openneuro | 1 |

248,635 | 21,047,763,573 | IssuesEvent | 2022-03-31 17:40:44 | searchspring/snap | https://api.github.com/repos/searchspring/snap | closed | Testing: snap-controller | testing open source critical | Current 63.67% get over 80%.

Test without network connection to ensure that mock data is always being used. | 1.0 | Testing: snap-controller - Current 63.67% get over 80%.

Test without network connection to ensure that mock data is always being used. | non_process | testing snap controller current get over test without network connection to ensure that mock data is always being used | 0 |

241,833 | 7,834,886,023 | IssuesEvent | 2018-06-16 19:53:32 | DarkPacks/SevTech-Ages | https://api.github.com/repos/DarkPacks/SevTech-Ages | closed | Using "To the Bat Poles" on a server forces all players into third-person mode. | Category: Mod Priority: Low Status: Reported To Mod Status: Stale Type: Bug | <!-- Instructions on how to do issues like your boy darkosto -->

<!-- NOTE: If you have other mods installed or you have changed versions; please revert to a clean install

of the modpack and try to replicate the crash/issue otherwise we can ignore the crash due to a "modded" pack.

-->

<!-- Before an... | 1.0 | Using "To the Bat Poles" on a server forces all players into third-person mode. - <!-- Instructions on how to do issues like your boy darkosto -->

<!-- NOTE: If you have other mods installed or you have changed versions; please revert to a clean install

of the modpack and try to replicate the crash/issu... | non_process | using to the bat poles on a server forces all players into third person mode note if you have other mods installed or you have changed versions please revert to a clean install of the modpack and try to replicate the crash issue otherwise we can ignore the crash due to a modded pack ... | 0 |

64,885 | 26,901,397,659 | IssuesEvent | 2023-02-06 15:53:25 | hashicorp/terraform-provider-azurerm | https://api.github.com/repos/hashicorp/terraform-provider-azurerm | closed | azurerm_function_app_function doesn't support multiple files | enhancement service/functions | ### Is there an existing issue for this?

- [X] I have searched the existing issues

### Community Note

<!--- Please keep this note for the community --->