Unnamed: 0 (int64, 0 to 832k) | id (float64, 2.49B to 32.1B) | type (string, 1 class) | created_at (string, length 19) | repo (string, length 7 to 112) | repo_url (string, length 36 to 141) | action (string, 3 classes) | title (string, length 1 to 744) | labels (string, length 4 to 574) | body (string, length 9 to 211k) | index (string, 10 classes) | text_combine (string, length 96 to 211k) | label (string, 2 classes) | text (string, length 96 to 188k) | binary_label (int64, 0 to 1) |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

21,616 | 30,022,518,007 | IssuesEvent | 2023-06-27 01:34:13 | bazelbuild/bazel | https://api.github.com/repos/bazelbuild/bazel | closed | Include error messages in incompatible change process | P2 type: process team-OSS stale | ### Description of the problem / feature request:

The [incompatible change process](https://bazel.build/breaking-changes-guide.html) is centered on github labels/issues for a proactive testing workflow. Good error messages are needed to complement this, to address anyone doing _reactive_ detection of bazel breakages... | 1.0 | Include error messages in incompatible change process - ### Description of the problem / feature request:

The [incompatible change process](https://bazel.build/breaking-changes-guide.html) is centered on github labels/issues for a proactive testing workflow. Good error messages are needed to complement this, to addr... | process | include error messages in incompatible change process description of the problem feature request the is centered on github labels issues for a proactive testing workflow good error messages are needed to complement this to address anyone doing reactive detection of bazel breakages aka trying a new ... | 1 |

52,294 | 6,608,339,607 | IssuesEvent | 2017-09-19 10:34:35 | SleipnerTechnologies/saftens-bekaempelse | https://api.github.com/repos/SleipnerTechnologies/saftens-bekaempelse | closed | Front page design | design feature | Build the front page for the project.

It should have a large CTA at the top, with sign-up as a collector, followed by a little general info about bladder cancer... not too much... that is what we have the info page for.

#5 #2 | 1.0 | Front page design - Build the front page for the project.

It should have a large CTA at the top, with sign-up as a collector, followed by a little general info about bladder cancer... not too much... that is what we have the info page for.

#5 #2 | non_process | front page design build the front page for the project it should have a large cta at the top with sign up as a collector followed by a little general info about bladder cancer not too much that is what we have the info page for | 0 |

167,029 | 12,980,598,152 | IssuesEvent | 2020-07-22 05:45:00 | cockroachdb/cockroach | https://api.github.com/repos/cockroachdb/cockroach | opened | roachtest: gossip/chaos/nodes=9 failed | C-test-failure O-roachtest O-robot branch-provisional_202007220233_v20.2.0-alpha.2 release-blocker | [(roachtest).gossip/chaos/nodes=9 failed](https://teamcity.cockroachdb.com/viewLog.html?buildId=2107811&tab=buildLog) on [provisional_202007220233_v20.2.0-alpha.2@d3119926d33d808c6384cf3e99a7f7435f395489](https://github.com/cockroachdb/cockroach/commits/d3119926d33d808c6384cf3e99a7f7435f395489):

```

The test failed on... | 2.0 | roachtest: gossip/chaos/nodes=9 failed - [(roachtest).gossip/chaos/nodes=9 failed](https://teamcity.cockroachdb.com/viewLog.html?buildId=2107811&tab=buildLog) on [provisional_202007220233_v20.2.0-alpha.2@d3119926d33d808c6384cf3e99a7f7435f395489](https://github.com/cockroachdb/cockroach/commits/d3119926d33d808c6384cf3e9... | non_process | roachtest gossip chaos nodes failed on the test failed on branch provisional alpha cloud gce test artifacts and logs in home agent work go src github com cockroachdb cockroach artifacts gossip chaos nodes run gossip go gossip go gossip go gossip go test runner go gossip ... | 0 |

22,623 | 31,847,110,905 | IssuesEvent | 2023-09-14 20:52:46 | KASHO7/project2 | https://api.github.com/repos/KASHO7/project2 | opened | Handling template-processor.js (problem 2) | Issue | Template Processor | Hayden will be working on creating the template processor file. | 1.0 | Handling template-processor.js (problem 2) - Hayden will be working on creating the template processor file. | process | handling template processor js problem hayden will be working on creating the template processor file | 1 |

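795,066 | 28,059,788,263 | IssuesEvent | 2023-03-29 11:53:27 | KDT-Final-Team4/backend | https://api.github.com/repos/KDT-Final-Team4/backend | closed | [Feat] Implement notice visibility feature | For : API Priority : Low Status : In progress Type : Feature | ## 🔨Feature to develop

- Implement notice visibility feature

## 🧩 Detailed features

Write a detailed plan for this feature (e.g. -[ ] accept ID and password input at login)

- [x] Notice status change feature (public/private)

## 📖 Notes

| 1.0 | [Feat] Implement notice visibility feature - ## 🔨Feature to develop

- Implement notice visibility feature

## 🧩 Detailed features

Write a detailed plan for this feature (e.g. -[ ] accept ID and password input at login)

- [x] Notice status change feature (public/private)

## 📖 Notes

| non_process | implement notice visibility feature 🔨feature to develop implement notice visibility feature 🧩 detailed features write a detailed plan for this feature e g accept id and password input at login notice status change feature public private 📖 notes | 0 |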

4,893 | 5,376,069,190 | IssuesEvent | 2017-02-23 07:51:29 | symfony/symfony | https://api.github.com/repos/symfony/symfony | closed | circular reference on security.access.decision_manager | Bug Security Status: Needs Review Unconfirmed | | Q | A

| ---------------- | -----

| Bug report? | yes (possibly)

| Feature request? | no

| BC Break report? | unsure

| RFC? | no

| Symfony version | 2.8.15

While creating a custom voter as per [these instructions](http://symfony.com/doc/2.8/security/voters.html) I'm getting th... | True | circular reference on security.access.decision_manager - | Q | A

| ---------------- | -----

| Bug report? | yes (possibly)

| Feature request? | no

| BC Break report? | unsure

| RFC? | no

| Symfony version | 2.8.15

While creating a custom voter as per [these instructions](http:/... | non_process | circular reference on security access decision manager q a bug report yes possibly feature request no bc break report unsure rfc no symfony version while creating a custom voter as per i m getting the followin... | 0 |

32,354 | 8,838,953,533 | IssuesEvent | 2019-01-05 23:13:07 | inexorgame/inexor-core | https://api.github.com/repos/inexorgame/inexor-core | closed | Create new release first as a draft, publish when packages uploaded | cat:BUG feat:build environment org:needs validation/discussion/decision | Right now we have dead download links for ca. 20 - 40 minutes after every merge into master.

This is because our CI first creates a new GitHub release and instantly publish it.

However, the building process for Windows and Linux is taking much longer than this and it takes time till these packages are successfully ... | 1.0 | Create new release first as a draft, publish when packages uploaded - Right now we have dead download links for ca. 20 - 40 minutes after every merge into master.

This is because our CI first creates a new GitHub release and instantly publish it.

However, the building process for Windows and Linux is taking much lo... | non_process | create new release first as a draft publish when packages uploaded right now we have dead download links for ca minutes after every merge into master this is because our ci first creates a new github release and instantly publish it however the building process for windows and linux is taking much long... | 0 |

2,861 | 5,824,385,008 | IssuesEvent | 2017-05-07 12:33:07 | qgis/QGIS-Documentation | https://api.github.com/repos/qgis/QGIS-Documentation | closed | [FEATURE][processing] New algorithm for merging connected lines | HackFest needs-backport Processing Text User Manual | Original commit: https://github.com/qgis/QGIS/commit/299037a0bfcaf0251b2d4b763f5a8b9c1b8176b1 by nyalldawson

This algorithm joins all connected parts of MultiLineString

geometries into single LineString geometries.

If any parts of the input MultiLineString geometries are not

connected, the resultant geometry will be ... | 1.0 | [FEATURE][processing] New algorithm for merging connected lines - Original commit: https://github.com/qgis/QGIS/commit/299037a0bfcaf0251b2d4b763f5a8b9c1b8176b1 by nyalldawson

This algorithm joins all connected parts of MultiLineString

geometries into single LineString geometries.

If any parts of the input MultiLineSt... | process | new algorithm for merging connected lines original commit by nyalldawson this algorithm joins all connected parts of multilinestring geometries into single linestring geometries if any parts of the input multilinestring geometries are not connected the resultant geometry will be a multilinestring containing... | 1 |

7,261 | 10,420,651,110 | IssuesEvent | 2019-09-16 01:55:37 | qgis/QGIS | https://api.github.com/repos/qgis/QGIS | closed | Not possible to toggle use selected features in the Processing layer combobox | Bug Processing Regression | <!--

Bug fixing and feature development is a community responsibility, and not the responsibility of the QGIS project alone.

If this bug report or feature request is high-priority for you, we suggest engaging a QGIS developer or support organisation and financially sponsoring a fix

Checklist before submitting

-... | 1.0 | Not possible to toggle use selected features in the Processing layer combobox - <!--

Bug fixing and feature development is a community responsibility, and not the responsibility of the QGIS project alone.

If this bug report or feature request is high-priority for you, we suggest engaging a QGIS developer or support o... | process | not possible to toggle use selected features in the processing layer combobox bug fixing and feature development is a community responsibility and not the responsibility of the qgis project alone if this bug report or feature request is high priority for you we suggest engaging a qgis developer or support o... | 1 |

53,023 | 13,781,975,310 | IssuesEvent | 2020-10-08 16:56:54 | ioana-nicolae/first | https://api.github.com/repos/ioana-nicolae/first | opened | CVE-2016-10735 (Medium) detected in bootstrap-3.3.7.tgz, bootstrap-3.1.1.tgz | security vulnerability | ## CVE-2016-10735 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>bootstrap-3.3.7.tgz</b>, <b>bootstrap-3.1.1.tgz</b></p></summary>

<p>

<details><summary><b>bootstrap-3.3.7.tgz</b>... | True | CVE-2016-10735 (Medium) detected in bootstrap-3.3.7.tgz, bootstrap-3.1.1.tgz - ## CVE-2016-10735 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>bootstrap-3.3.7.tgz</b>, <b>bootstra... | non_process | cve medium detected in bootstrap tgz bootstrap tgz cve medium severity vulnerability vulnerable libraries bootstrap tgz bootstrap tgz bootstrap tgz the most popular front end framework for developing responsive mobile first projects on the web ... | 0 |

11,063 | 13,894,765,695 | IssuesEvent | 2020-10-19 15:03:40 | cncf/cnf-conformance | https://api.github.com/repos/cncf/cnf-conformance | opened | Implementation: Move build process to CircleCI from Travis CI | process sprint18 | ### Implementation: Move build process to CircleCI from Travis CI

Short Description:

- PoC was completed in #428

- this issue will cover the remaining implementation steps to start using CircleCI w/ the cnf-conformance GitHub repo

### Documentation Tasks:

- [ ] Add comment suggesting updates as needed for:... | 1.0 | Implementation: Move build process to CircleCI from Travis CI - ### Implementation: Move build process to CircleCI from Travis CI

Short Description:

- PoC was completed in #428

- this issue will cover the remaining implementation steps to start using CircleCI w/ the cnf-conformance GitHub repo

### Documenta... | process | implementation move build process to circleci from travis ci implementation move build process to circleci from travis ci short description poc was completed in this issue will cover the remaining implementation steps to start using circleci w the cnf conformance github repo documentati... | 1 |

306,393 | 9,392,635,104 | IssuesEvent | 2019-04-07 03:04:06 | tra38/Paranoia_Super_Mission_Generator | https://api.github.com/repos/tra38/Paranoia_Super_Mission_Generator | opened | Create a machine learning algorithm to generate missions | low-priority | **User Story (MVP)** - Use should be able to run a neural network trained on "synthetic data" (missions generated by PARANOIA Super Mission Generator) to generate human-readable missions.

**Post-MVP** - Neural network is to be trained on published PARANOIA missions instead of "synthetic data". Doing this will requir... | 1.0 | Create a machine learning algorithm to generate missions - **User Story (MVP)** - Use should be able to run a neural network trained on "synthetic data" (missions generated by PARANOIA Super Mission Generator) to generate human-readable missions.

**Post-MVP** - Neural network is to be trained on published PARANOIA m... | non_process | create a machine learning algorithm to generate missions user story mvp use should be able to run a neural network trained on synthetic data missions generated by paranoia super mission generator to generate human readable missions post mvp neural network is to be trained on published paranoia m... | 0 |

7,036 | 10,196,724,175 | IssuesEvent | 2019-08-12 21:33:30 | metabase/metabase | https://api.github.com/repos/metabase/metabase | closed | Critical Turkish translation SQL failure | Database/Postgres Priority:P2 Query Processor Type:Bug | - Chrome Version 71.0.3578.98 (Official Build) (64-bit)

- Ubuntu 18.04 up-to-date

- Postgres 10

- Metabase v0.31.2

- Docker

- Postgres 10 for Internal Database (Separate server from other postgres)

- All system locales, containers locales are default to en_us, even the docker host

Reproduce steps;

1. Do ini... | 1.0 | Critical Turkish translation SQL failure - - Chrome Version 71.0.3578.98 (Official Build) (64-bit)

- Ubuntu 18.04 up-to-date

- Postgres 10

- Metabase v0.31.2

- Docker

- Postgres 10 for Internal Database (Separate server from other postgres)

- All system locales, containers locales are default to en_us, even the d... | process | critical turkish translation sql failure chrome version official build bit ubuntu up to date postgres metabase docker postgres for internal database separate server from other postgres all system locales containers locales are default to en us even the docker host ... | 1 |

6,434 | 9,534,549,726 | IssuesEvent | 2019-04-30 02:09:16 | de-ai/designengine.ai | https://api.github.com/repos/de-ai/designengine.ai | closed | Missing Sketch Fonts | Good First Issue New feature Processing Render | Determine from Sketch plugin if any fonts used aren't installed. Notify on render page for author to upload font files.

- [x] Figure out how to identify missing fonts in plugin

- [x] Send list of missing font names to db

- [x] Notify user during processing that x fonts are missing

- [ ] Upload drop + API call to ... | 1.0 | Missing Sketch Fonts - Determine from Sketch plugin if any fonts used aren't installed. Notify on render page for author to upload font files.

- [x] Figure out how to identify missing fonts in plugin

- [x] Send list of missing font names to db

- [x] Notify user during processing that x fonts are missing

- [ ] Upl... | process | missing sketch fonts determine from sketch plugin if any fonts used aren t installed notify on render page for author to upload font files figure out how to identify missing fonts in plugin send list of missing font names to db notify user during processing that x fonts are missing upload drop... | 1 |

16,589 | 21,638,659,002 | IssuesEvent | 2022-05-05 16:25:19 | shirou/gopsutil | https://api.github.com/repos/shirou/gopsutil | closed | question: `(*process.Process).CPUPercent()` returning values above 100, what does this number represent? | question package:process | when printing `(*process.Process).CPUPercent()` of a process that is pretty heavy i get numbers in the range of 500 or 600 (sometimes more). _(When the tested process finishes the heavy calculations, this number returns to around 2 or 3.)_

Why is that? should't it be a precentage with maximum value of 100? | 1.0 | question: `(*process.Process).CPUPercent()` returning values above 100, what does this number represent? - when printing `(*process.Process).CPUPercent()` of a process that is pretty heavy i get numbers in the range of 500 or 600 (sometimes more). _(When the tested process finishes the heavy calculations, this number r... | process | question process process cpupercent returning values above what does this number represent when printing process process cpupercent of a process that is pretty heavy i get numbers in the range of or sometimes more when the tested process finishes the heavy calculations this number returns... | 1 |

142,360 | 5,474,514,149 | IssuesEvent | 2017-03-11 01:18:23 | dgore7/knowledge-management | https://api.github.com/repos/dgore7/knowledge-management | closed | Automatic Summarization of Text Documents | enhancement low priority | Provide a system which parses text documents and calls a relevant function which summarizes them. Algorithm commonly used is SMMRY. Information on that/relevant API can be found here: [http://smmry.com/about](http://smmry.com/about).

Key points:

- Summarizes documents and stores that "value" in the file database in... | 1.0 | Automatic Summarization of Text Documents - Provide a system which parses text documents and calls a relevant function which summarizes them. Algorithm commonly used is SMMRY. Information on that/relevant API can be found here: [http://smmry.com/about](http://smmry.com/about).

Key points:

- Summarizes documents and... | non_process | automatic summarization of text documents provide a system which parses text documents and calls a relevant function which summarizes them algorithm commonly used is smmry information on that relevant api can be found here key points summarizes documents and stores that value in the file database in a... | 0 |

4,178 | 7,111,637,933 | IssuesEvent | 2018-01-17 14:48:47 | itsyouonline/identityserver | https://api.github.com/repos/itsyouonline/identityserver | closed | Arrived back at step 1 after registration (Safari on iOS 8) | process_wontfix type_bug | I went through steps 1, 2 and 3. Clicked login and ended back at step 1.

Happened on iPhone 5S simulator with iOS 8.1

<img width="432" alt="screen shot 2017-10-07 at 17 38 09" src="https://user-images.githubusercontent.com/17762105/31309442-85fd9742-ab86-11e7-9ce0-d7ecc42a2b3d.png">

| 1.0 | Arrived back at step 1 after registration (Safari on iOS 8) - I went through steps 1, 2 and 3. Clicked login and ended back at step 1.

Happened on iPhone 5S simulator with iOS 8.1

<img width="432" alt="screen shot 2017-10-07 at 17 38 09" src="https://user-images.githubusercontent.com/17762105/31309442-85fd9742-ab... | process | arrived back at step after registration safari on ios i went through steps and clicked login and ended back at step happened on iphone simulator with ios img width alt screen shot at src | 1 |

212,971 | 16,505,908,419 | IssuesEvent | 2021-05-25 19:16:43 | giridhar196/pm-notes | https://api.github.com/repos/giridhar196/pm-notes | closed | Write notes for Class 05/13/2021 | documentation good first issue | Gather notes for today's class and submit them under the documents after the class. | 1.0 | Write notes for Class 05/13/2021 - Gather notes for today's class and submit them under the documents after the class. | non_process | write notes for class gather notes for today s class and submit them under the documents after the class | 0 |

11,364 | 14,175,779,640 | IssuesEvent | 2020-11-12 22:12:32 | MicrosoftDocs/azure-devops-docs | https://api.github.com/repos/MicrosoftDocs/azure-devops-docs | closed | How to trigger CD after CI | Pri1 devops-cicd-process/tech devops/prod doc-enhancement | I follow the document to create two pipelines, build and release. But whenever I commit change to a branch, it triggers both pipelines. How i can trigger release only after build pipeline completed?

---

#### Document Details

⚠ *Do not edit this section. It is required for docs.microsoft.com ➟ GitHub issue linking.*

... | 1.0 | How to trigger CD after CI - I follow the document to create two pipelines, build and release. But whenever I commit change to a branch, it triggers both pipelines. How i can trigger release only after build pipeline completed?

---

#### Document Details

⚠ *Do not edit this section. It is required for docs.microsoft.c... | process | how to trigger cd after ci i follow the document to create two pipelines build and release but whenever i commit change to a branch it triggers both pipelines how i can trigger release only after build pipeline completed document details ⚠ do not edit this section it is required for docs microsoft c... | 1 |

114,312 | 24,583,615,506 | IssuesEvent | 2022-10-13 17:39:37 | trezor/trezor-suite | https://api.github.com/repos/trezor/trezor-suite | closed | suite-native: Dependencies - upgrade React native | dependencies code mobile-app | According to this [issue](https://github.com/trezor/trezor-suite/issues/6414) (upgrade React in the whole monorepo to version 18) and [PR](https://github.com/trezor/trezor-suite/pull/6437) - we want to upgrade RN to the newest version. | 1.0 | suite-native: Dependencies - upgrade React native - According to this [issue](https://github.com/trezor/trezor-suite/issues/6414) (upgrade React in the whole monorepo to version 18) and [PR](https://github.com/trezor/trezor-suite/pull/6437) - we want to upgrade RN to the newest version. | non_process | suite native dependencies upgrade react native according to this upgrade react in the whole monorepo to version and we want to upgrade rn to the newest version | 0 |

572 | 3,037,319,673 | IssuesEvent | 2015-08-06 16:25:42 | pwittchen/prefser | https://api.github.com/repos/pwittchen/prefser | closed | Review & Update README.md | release process | Review & Update README.md after changes in the library functionality and its API. | 1.0 | Review & Update README.md - Review & Update README.md after changes in the library functionality and its API. | process | review update readme md review update readme md after changes in the library functionality and its api | 1 |

22,028 | 30,543,376,868 | IssuesEvent | 2023-07-20 00:20:50 | ReMobidyc/ReMobidyc | https://api.github.com/repos/ReMobidyc/ReMobidyc | closed | modelpath should not contain the ":" char | bug processor | The ":" char is used for the drive letter delimiter on the Windows platform.

We have to avoid using it. | 1.0 | modelpath should not contain the ":" char - The ":" char is used for the drive letter delimiter on the Windows platform.

We have to avoid using it. | process | modelpath should not contain the char the char is used for the drive letter delimiter on the windows platform we have to avoid using it | 1 |

517,599 | 15,016,823,132 | IssuesEvent | 2021-02-01 10:04:09 | wso2/product-is | https://api.github.com/repos/wso2/product-is | closed | Sending access_token through /oauth2/userinfo API Body in WSO2 5.7.0 | Affected/5.7.0 Complexity/Medium Component/Kernel Priority/Highest Severity/Critical WUM bug | In IS-5.3.0 we can send "access_token" through API Body for "/oauth2/userinfo" API. But in IS-5.7.0 if we enabled the correlation value as follows then sending access_token through API body will fail.

```

<Valve className="org.wso2.carbon.tomcat.ext.valves.RequestCorrelationIdValve"

headerT... | 1.0 | Sending access_token through /oauth2/userinfo API Body in WSO2 5.7.0 - In IS-5.3.0 we can send "access_token" through API Body for "/oauth2/userinfo" API. But in IS-5.7.0 if we enabled the correlation value as follows then sending access_token through API body will fail.

```

<Valve className="org.wso2.carbon.tomcat... | non_process | sending access token through userinfo api body in in is we can send access token through api body for userinfo api but in is if we enabled the correlation value as follows then sending access token through api body will fail valve classname org carbon tomcat ext valves requ... | 0 |

22,702 | 32,017,867,651 | IssuesEvent | 2023-09-22 00:13:17 | TableRise/tablerise-backend | https://api.github.com/repos/TableRise/tablerise-backend | closed | Build the user entity | test-process | Now that we have access to the user's information, we will build the entity to be persisted in the database.

Example object (user)

```

{

"email": "some@email.com",

"password": "oauth",

"nickname": "holder",

"tag": "1447",

"picture": "http://imgur.com",

"createdAt": "timestamp",

"updated... | 1.0 | Build the user entity - Now that we have access to the user's information, we will build the entity to be persisted in the database.

Example object (user)

```

{

"email": "some@email.com",

"password": "oauth",

"nickname": "holder",

"tag": "1447",

"picture": "http://imgur.com",

"created... | process | build the user entity now that we have access to the user s information we will build the entity to be persisted in the database example object user email some email com password oauth nickname holder tag picture createdat timestamp ... | 1 |

78,324 | 3,509,571,461 | IssuesEvent | 2016-01-08 23:32:21 | OregonCore/OregonCore | https://api.github.com/repos/OregonCore/OregonCore | opened | [warden] Making character disconnects client (BB #1096) | Category: Client Freeze migrated Priority: Medium Type: Bug | This issue was migrated from bitbucket.

**Original Reporter:** Dikkedeur

**Original Date:** 14.08.2015 18:13:14 GMT+0000

**Original Priority:** major

**Original Type:** bug

**Original State:** new

**Direct Link:** https://bitbucket.org/oregon/oregoncore/issues/1096

<hr>

Rev: c07fe354bafbd8c689bf67de1106c8c31ca1ef93

... | 1.0 | [warden] Making character disconnects client (BB #1096) - This issue was migrated from bitbucket.

**Original Reporter:** Dikkedeur

**Original Date:** 14.08.2015 18:13:14 GMT+0000

**Original Priority:** major

**Original Type:** bug

**Original State:** new

**Direct Link:** https://bitbucket.org/oregon/oregoncore/issues/1... | non_process | making character disconnects client bb this issue was migrated from bitbucket original reporter dikkedeur original date gmt original priority major original type bug original state new direct link rev problem when somebody makes a new character they get b... | 0 |

749 | 3,222,820,084 | IssuesEvent | 2015-10-09 05:11:08 | nodejs/node | https://api.github.com/repos/nodejs/node | closed | The process never ends when piping the stdin to a child process | child_process process | Initially I asked this on [StackOverflow](http://stackoverflow.com/q/31716784/1420197), but looks like a bug (https://github.com/joyent/node/issues/9190). Post follows:

---

I'm using `spawn` to create a child process and pipe data:

child process | parent process (main)

------------------------------... | 2.0 | The process never ends when piping the stdin to a child process - Initially I asked this on [StackOverflow](http://stackoverflow.com/q/31716784/1420197), but looks like a bug (https://github.com/joyent/node/issues/9190). Post follows:

---

I'm using `spawn` to create a child process and pipe data:

child pro... | process | the process never ends when piping the stdin to a child process initially i asked this on but looks like a bug post follows i m using spawn to create a child process and pipe data child process parent process main stdout ... | 1 |

8,163 | 11,385,316,181 | IssuesEvent | 2020-01-29 10:50:52 | geneontology/go-ontology | https://api.github.com/repos/geneontology/go-ontology | closed | remove comment GO:0080185 | multi-species process |

GO:0080185 JSON

effector-mediated induction of plant hypersensitive response by symbiont

A symbiont process whereby a molecule secreted by the symbiont activates plant effector-triggered immunity (ETI)...

has the comment:

Note that this term should probably be only used to annotate plant pathogens. Evidence ... | 1.0 | remove comment GO:0080185 -

GO:0080185 JSON

effector-mediated induction of plant hypersensitive response by symbiont

A symbiont process whereby a molecule secreted by the symbiont activates plant effector-triggered immunity (ETI)...

has the comment:

Note that this term should probably be only used to annotat... | process | remove comment go go json effector mediated induction of plant hypersensitive response by symbiont a symbiont process whereby a molecule secreted by the symbiont activates plant effector triggered immunity eti has the comment note that this term should probably be only used to annotate plant path... | 1 |

417,101 | 12,155,849,045 | IssuesEvent | 2020-04-25 14:54:53 | wso2/product-is | https://api.github.com/repos/wso2/product-is | opened | [ISSUE] Password grant doesn't return configured sub claim in acces token | Affected/5.11.0-m12 Component/OIDC Priority/Highest Severity/Blocker Type/Bug | **Describe the issue:**

With password grant, a self-contained access token doesn't return the confgured custom claim but id token contains the correct custom claim as the sub claim.

**Reproduce steps**

1. Add a service provider with OIDC protocol, select JWT token issuer

2. Create a custom claim under local cla... | 1.0 | [ISSUE] Password grant doesn't return configured sub claim in acces token - **Describe the issue:**

With password grant, a self-contained access token doesn't return the confgured custom claim but id token contains the correct custom claim as the sub claim.

**Reproduce steps**

1. Add a service provider with OIDC... | non_process | password grant doesn t return configured sub claim in acces token describe the issue with password grant a self contained access token doesn t return the confgured custom claim but id token contains the correct custom claim as the sub claim reproduce steps add a service provider with oidc proto... | 0 |

445,904 | 31,334,944,983 | IssuesEvent | 2023-08-24 04:52:17 | bghira/SimpleTuner | https://api.github.com/repos/bghira/SimpleTuner | closed | Multi consumer GPU | documentation enhancement help wanted question | Have you had results with 2x cards that have 24G of vram, would the batch size be the same as using 48G A6000? | 1.0 | Multi consumer GPU - Have you had results with 2x cards that have 24G of vram, would the batch size be the same as using 48G A6000? | non_process | multi consumer gpu have you had results with cards that have of vram would the batch size be the same as using | 0 |

20,468 | 27,129,277,122 | IssuesEvent | 2023-02-16 08:36:01 | bazelbuild/bazel | https://api.github.com/repos/bazelbuild/bazel | closed | Remove objc_proto_library rule | P3 type: process team-Rules-Server stale | `objc_proto_library` is not usable in Bazel. The code is present because it may be used inside Google. We should remove the rule from Bazel and from its documentation (https://docs.bazel.build/versions/master/be/objective-c.html#objc_proto_library).

(tracking bug in Google: b/123888674) | 1.0 | Remove objc_proto_library rule - `objc_proto_library` is not usable in Bazel. The code is present because it may be used inside Google. We should remove the rule from Bazel and from its documentation (https://docs.bazel.build/versions/master/be/objective-c.html#objc_proto_library).

(tracking bug in Google: b/12388... | process | remove objc proto library rule objc proto library is not usable in bazel the code is present because it may be used inside google we should remove the rule from bazel and from its documentation tracking bug in google b | 1 |

14,026 | 16,825,724,196 | IssuesEvent | 2021-06-17 18:17:09 | darktable-org/darktable | https://api.github.com/repos/darktable-org/darktable | closed | Color Calibration Channel Mixer produces black patch | scope: image processing | **Describe the bug/issue**

Here is a gradient created and embedded with linear rec 2020 color space, saved as 16 bit tiff.

[gradient chart l rec 2020.tif.txt](https://github.com/darktable-org/darktable/files/6554086/gradient.chart.l.rec.2020.tif.txt)

I attempt to invert colours using the channel mixer part of co... | 1.0 | Color Calibration Channel Mixer produces black patch - **Describe the bug/issue**

Here is a gradient created and embedded with linear rec 2020 color space, saved as 16 bit tiff.

[gradient chart l rec 2020.tif.txt](https://github.com/darktable-org/darktable/files/6554086/gradient.chart.l.rec.2020.tif.txt)

I attem... | process | color calibration channel mixer produces black patch describe the bug issue here is a gradient created and embedded with linear rec color space saved as bit tiff i attempt to invert colours using the channel mixer part of color calibration module with the following settings cat adaptation ... | 1 |

16,047 | 20,192,792,408 | IssuesEvent | 2022-02-11 07:44:42 | soederpop/active-mdx-software-project-test-repo | https://api.github.com/repos/soederpop/active-mdx-software-project-test-repo | closed | A customer should be able to pay with paypal | story-created epic-payment-processing |

# A customer should be able to pay with paypal

As a customer I want to be able to pay with paypal so I can complete my order

| 1.0 | A customer should be able to pay with paypal -

# A customer should be able to pay with paypal

As a customer I want to be able to pay with paypal so I can complete my order

| process | a customer should be able to pay with paypal a customer should be able to pay with paypal as a customer i want to be able to pay with paypal so i can complete my order | 1 |

10,248 | 13,103,196,438 | IssuesEvent | 2020-08-04 08:08:59 | zammad/zammad | https://api.github.com/repos/zammad/zammad | closed | unprocessible mail "FrozenError: can't modify frozen String" | bug mail processing prioritized by payment verified | <!--

Hi there - thanks for filing an issue. Please ensure the following things before creating an issue - thank you! 🤓

Since november 15th we handle all requests, except real bugs, at our community board.

Full explanation: https://community.zammad.org/t/major-change-regarding-github-issues-community-board/21

P... | 1.0 | unprocessible mail "FrozenError: can't modify frozen String" - <!--

Hi there - thanks for filing an issue. Please ensure the following things before creating an issue - thank you! 🤓

Since november 15th we handle all requests, except real bugs, at our community board.

Full explanation: https://community.zammad.org... | process | unprocessible mail frozenerror can t modify frozen string hi there thanks for filing an issue please ensure the following things before creating an issue thank you 🤓 since november we handle all requests except real bugs at our community board full explanation please post feature re... | 1 |

21,531 | 29,820,135,675 | IssuesEvent | 2023-06-17 00:58:33 | bitfocus/companion-module-requests | https://api.github.com/repos/bitfocus/companion-module-requests | opened | Module for Shure MV7 | NOT YET PROCESSED | - [ ] **I have researched the list of existing Companion modules and requests and have determined this has not yet been requested**

The name of the device, hardware, or software you would like to control:

Shure MV7

What you would like to be able to make it do from Companion:

Change mic gain, headphone volume, e... | 1.0 | Module for Shure MV7 - - [ ] **I have researched the list of existing Companion modules and requests and have determined this has not yet been requested**

The name of the device, hardware, or software you would like to control:

Shure MV7

What you would like to be able to make it do from Companion:

Change mic ga... | process | module for shure i have researched the list of existing companion modules and requests and have determined this has not yet been requested the name of the device hardware or software you would like to control shure what you would like to be able to make it do from companion change mic gain he... | 1 |

16,673 | 21,776,852,381 | IssuesEvent | 2022-05-13 14:34:36 | ESMValGroup/ESMValCore | https://api.github.com/repos/ESMValGroup/ESMValCore | opened | Adding more operators to `depth_integration` | enhancement preprocessor | **Is your feature request related to a problem? Please describe.**

A while back, Javi worked on generalising the `depth_integration` preprocessor to accept other operators. It would be useful to have these changes officially in the core.

Additionally, it could be an opportunity to improve some lines to make the pre... | 1.0 | Adding more operators to `depth_integration` - **Is your feature request related to a problem? Please describe.**

A while back, Javi worked on generalising the `depth_integration` preprocessor to accept other operators. It would be useful to have these changes officially in the core.

Additionally, it could be an op... | process | adding more operators to depth integration is your feature request related to a problem please describe a while back javi worked on generalising the depth integration preprocessor to accept other operators it would be useful to have this changes officially in the core additionally it could be an op... | 1 |

20,258 | 26,875,838,154 | IssuesEvent | 2023-02-05 02:00:09 | lizhihao6/get-daily-arxiv-noti | https://api.github.com/repos/lizhihao6/get-daily-arxiv-noti | opened | New submissions for Fri, 3 Feb 23 | event camera white balance isp compression image signal processing image signal process raw raw image events camera color contrast events AWB | ## Keyword: events

### Cooperative Saliency-based Obstacle Detection and AR Rendering for Increased Situational Awareness

- **Authors:** Gerasimos Arvanitis, Nikolaos Stagakis, Evangelia I. Zacharaki, Konstantinos Moustakas

- **Subjects:** Computer Vision and Pattern Recognition (cs.CV); Image and Video Processing (... | 2.0 | New submissions for Fri, 3 Feb 23 - ## Keyword: events

### Cooperative Saliency-based Obstacle Detection and AR Rendering for Increased Situational Awareness

- **Authors:** Gerasimos Arvanitis, Nikolaos Stagakis, Evangelia I. Zacharaki, Konstantinos Moustakas

- **Subjects:** Computer Vision and Pattern Recognition ... | process | new submissions for fri feb keyword events cooperative saliency based obstacle detection and ar rendering for increased situational awareness authors gerasimos arvanitis nikolaos stagakis evangelia i zacharaki konstantinos moustakas subjects computer vision and pattern recognition ... | 1 |

3,559 | 6,593,461,498 | IssuesEvent | 2017-09-15 01:20:53 | dotnet/corefx | https://api.github.com/repos/dotnet/corefx | closed | Check if we can open the directory on Linux when UseShellExecute is set | area-System.Diagnostics.Process enhancement os-linux up-for-grabs | I am creating this issue to track if we can open the directory on Linux when UseShellExecute is set.

- Ideally we would want to throw exception only when UseShellExecute option was false.

- In OSX, the program "open" successfully opens folder using "finder".

- But Linux needs extra effort to be able to... | 1.0 | Check if we can open the directory on Linux when UseShellExecute is set - I am creating this issue to track if we can open the directory on Linux when having ShellExecute is set.

- Ideally we would want to throw exception only when UseShellExecute option was false.

- In OSX, the program "open" successfully open... | process | check if we can open the directory on linux when useshellexecute is set i am creating this issue to track if we can open the directory on linux when having shellexecute is set ideally we would want to throw exception only when useshellexecute option was false in osx the program open successfully open... | 1 |

291,563 | 25,155,732,802 | IssuesEvent | 2022-11-10 13:25:32 | Uuvana-Studios/longvinter-windows-client | https://api.github.com/repos/Uuvana-Studios/longvinter-windows-client | closed | Can't place items on sand | Bug Not Tested | A path of sand appeared through my land after the new update. I cannot place anything on these parts leaving me unable to close off my fence. It says it is overlapping with something.

to the original issue to help the community and maintainers prioritize this request

* Please do not l... | 1.0 | Support for creating child DNS-zone - <!--- Please keep this note for the community --->

### Community Note

* Please vote on this issue by adding a 👍 [reaction](https://blog.github.com/2016-03-10-add-reactions-to-pull-requests-issues-and-comments/) to the original issue to help the community and maintainers prio... | non_process | support for creating child dns zone community note please vote on this issue by adding a 👍 to the original issue to help the community and maintainers prioritize this request please do not leave or me too comments they generate extra noise for issue followers and do not help prioritize t... | 0 |

6,750 | 9,879,973,225 | IssuesEvent | 2019-06-24 11:25:39 | qgis/QGIS | https://api.github.com/repos/qgis/QGIS | closed | Specifying cell size instead of size when reprojecting and resampling a raster | Feature Request Processing | Author Name: **Mattias Lindman** (Mattias Lindman)

Original Redmine Issue: [14510](https://issues.qgis.org/issues/14510)

Redmine category:processing/gdal

---

When using Raster/Projections/Warp(Reproject) in order to reproject a raster to a different projection it would be convenient to specify the desired cell size ... | 1.0 | Specifying cell size instead of size when reprojecting and resampling a raster - Author Name: **Mattias Lindman** (Mattias Lindman)

Original Redmine Issue: [14510](https://issues.qgis.org/issues/14510)

Redmine category:processing/gdal

---

When using Raster/Projections/Warp(Reproject) in order to reproject a raster t... | process | specifying cell size instead of size when reprojecting and resampling a raster author name mattias lindman mattias lindman original redmine issue redmine category processing gdal when using raster projections warp reproject in order to reproject a raster to a different projection it would be conve... | 1 |

142,902 | 19,122,596,882 | IssuesEvent | 2021-12-01 01:17:21 | Tim-sandbox/webgoat-trng | https://api.github.com/repos/Tim-sandbox/webgoat-trng | opened | CVE-2021-22096 (Medium) detected in spring-web-5.2.2.RELEASE.jar | security vulnerability | ## CVE-2021-22096 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>spring-web-5.2.2.RELEASE.jar</b></p></summary>

<p>Spring Web</p>

<p>Library home page: <a href="https://github.com/s... | True | CVE-2021-22096 (Medium) detected in spring-web-5.2.2.RELEASE.jar - ## CVE-2021-22096 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>spring-web-5.2.2.RELEASE.jar</b></p></summary>

<p... | non_process | cve medium detected in spring web release jar cve medium severity vulnerability vulnerable library spring web release jar spring web library home page a href path to dependency file webgoat trng webgoat integration tests pom xml path to vulnerable library home wss s... | 0 |

41,290 | 2,868,994,330 | IssuesEvent | 2015-06-05 22:26:43 | dart-lang/pub-dartlang | https://api.github.com/repos/dart-lang/pub-dartlang | closed | Supply stats for pub authors | enhancement MovedToGithub Priority-Medium | <a href="https://github.com/sethladd"><img src="https://avatars.githubusercontent.com/u/5479?v=3" align="left" width="96" height="96"hspace="10"></img></a> **Issue by [sethladd](https://github.com/sethladd)**

_Originally opened as dart-lang/sdk#5424_

----

A pub author will be interested in their own stats for their p... | 1.0 | Supply stats for pub authors - <a href="https://github.com/sethladd"><img src="https://avatars.githubusercontent.com/u/5479?v=3" align="left" width="96" height="96"hspace="10"></img></a> **Issue by [sethladd](https://github.com/sethladd)**

_Originally opened as dart-lang/sdk#5424_

----

A pub author will be interested... | non_process | supply stats for pub authors issue by originally opened as dart lang sdk a pub author will be interested in their own stats for their pub packages some stats would include page views downloads per version updates if we track those differently from initial downloads ideally pub ... | 0 |

12,388 | 14,908,341,709 | IssuesEvent | 2021-01-22 05:39:19 | ncbo/bioportal-project | https://api.github.com/repos/ncbo/bioportal-project | opened | MedDRA concept tree doesn't display | bug ontology processing problem | Since UMLS 2020AB upload, the MedDRA ontology isn't displaying its concept tree, the issue being that no root concepts were identified. Misha and I have done some early investigation, and learned the following.

No other UMLS concept trees in production have this issue; all the other ontologies not display a tree are... | 1.0 | MedDRA concept tree doesn't display - Since UMLS 2020AB upload, the MedDRA ontology isn't displaying its concept tree, the issue being that no root concepts were identified. Misha and I have done some early investigation, and learned the following.

No other UMLS concept trees in production have this issue; all the o... | process | meddra concept tree doesn t display since umls upload the meddra ontology isn t displaying its concept tree the issue being that no root concepts were identified misha and i have done some early investigation and learned the following no other umls concept trees in production have this issue all the other ... | 1 |

59,214 | 14,539,801,766 | IssuesEvent | 2020-12-15 12:25:17 | angular/angular-cli | https://api.github.com/repos/angular/angular-cli | closed | `--hmr` with `enableProdMode()` breaks HMR | comp: devkit/build-angular devkit/build-angular: dev-server freq1: low severity1: confusing type: bug/fix | <!--🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅

Oh hi there! 😄

To expedite issue processing please search open and closed issues before submitting a new one.

Existing issues often contain information about workarounds, resolution, or progress updates.

🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅... | 2.0 | `--hmr` with `enableProdMode()` breaks HMR - <!--🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅

Oh hi there! 😄

To expedite issue processing please search open and closed issues before submitting a new one.

Existing issues often contain information about workarounds, resolution, or progress updat... | non_process | hmr with enableprodmode breaks hmr 🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅 oh hi there 😄 to expedite issue processing please search open and closed issues before submitting a new one existing issues often contain information about workarounds resolution or progress updat... | 0 |

16,816 | 22,060,927,478 | IssuesEvent | 2022-05-30 17:42:39 | bitPogo/kmock | https://api.github.com/repos/bitPogo/kmock | closed | Move UnitRelaxer Logic into ProxyFactory | enhancement kmock kmock-processor | ## Description

<!--- Provide a detailed introduction to the issue itself, and why you consider it to be a bug -->

Currently the Processor hardwires all the Relaxers into the Mocks. At least the UnitRelaxer can be invoked with the ProxyFactory.

Acceptance Criteria:

At least `relaxVoidFunction` is wired by the Prox... | 1.0 | Move UnitRelaxer Logic into ProxyFactory - ## Description

<!--- Provide a detailed introduction to the issue itself, and why you consider it to be a bug -->

Currently the Processor hardwires all the Relaxers into the Mocks. At least the UnitRelaxer can be invoked with the ProxyFactory.

Acceptance Criteria:

At lea... | process | move unitrelaxer logic into proxyfactory description currently the processor hardwires all the relaxers into the mocks at least the unitrelaxer can be invoked with the proxyfactory acceptance criteria at least relaxvoidfunction is wired by the proxyfactory optional find a way to make that for al... | 1 |

235,344 | 19,338,989,467 | IssuesEvent | 2021-12-15 00:42:47 | wuespace/telestion-client | https://api.github.com/repos/wuespace/telestion-client | closed | Update Storybook packages and configuration | :hammer: enhancement :link: dependencies :eyeglasses: tests | Please update the Storybook packages/components and their configuration.

Some parts of storybook have changed drastically, e.g. Create React App is now in a separate thread and the default PostCSS plugin is deprecated. | 1.0 | Update Storybook packages and configuration - Please update the Storybook packages/components and their configuration.

Some parts of storybook have changed drastically, e.g. Create React App is now in a separate thread and the default PostCSS plugin is deprecated. | non_process | update storybook packages and configuration please update the storybook packages components and their configuration some parts of storybook have changed drastically e g create react app is now in a separate thread and the default postcss plugin is deprecated | 0 |

9,356 | 12,366,368,880 | IssuesEvent | 2020-05-18 10:19:39 | DiSSCo/user-stories | https://api.github.com/repos/DiSSCo/user-stories | opened | an automated identification tool | 1. NH museum 4. Data processing ICEDIG-SURVEY Research Specimen level | As a Citizen Scientist I want to identify a specimen so that I can know which organism I have encountered for this I need an automated identification tool | 1.0 | an automated identification tool - As a Citizen Scientist I want to identify a specimen so that I can know which organism I have encountered for this I need an automated identification tool | process | an automated identification tool as a citizen scientist i want to identify a specimen so that i can know which organism i have encountered for this i need an automated identification tool | 1 |

22,190 | 30,744,080,720 | IssuesEvent | 2023-07-28 13:45:53 | bazelbuild/bazel | https://api.github.com/repos/bazelbuild/bazel | closed | Status of Bazel 7.0.0-pre.20230710.5 | P1 type: process release team-OSS |

- Expected release date: 2023-07-27

Task list:

- [x] Pick release baseline: [7845acae](https://github.com/bazelbuild/bazel/commit/7845acae9769a72dc507dc2f57c4e032ebf429d3) with cherrypicks [d9e2f918](https://github.com/bazelbuild/bazel/commit/d9e2f9181f8fa283e3986ee3b261e610c41cf61b) [da23370d](https://github.com/ba... | 1.0 | Status of Bazel 7.0.0-pre.20230710.5 -

- Expected release date: 2023-07-27

Task list:

- [x] Pick release baseline: [7845acae](https://github.com/bazelbuild/bazel/commit/7845acae9769a72dc507dc2f57c4e032ebf429d3) with cherrypicks [d9e2f918](https://github.com/bazelbuild/bazel/commit/d9e2f9181f8fa283e3986ee3b261e610c41... | process | status of bazel pre expected release date task list pick release baseline with cherrypicks create release candidate post submit push the release update the | 1 |

163,897 | 12,749,751,187 | IssuesEvent | 2020-06-27 00:08:57 | mozilla-mobile/fenix | https://api.github.com/repos/mozilla-mobile/fenix | opened | Intermittent test failure - FenixSearchEngineProviderTest - sharedprefs contains installed engines when installedSearchEngineIdentifiers | intermittent-test | ### Test Run:

https://firefoxci.taskcluster-artifacts.net/eh0VCU3UQLaXUooAI5QEuw/0/public/reports/test/testGeckoNightlyDebugUnitTest/classes/org.mozilla.fenix.components.searchengine.FenixSearchEngineProviderTest.html#GIVEN%20sharedprefs%20contains%20installed%20engines%20WHEN%20installedSearchEngineIdentifiers%20THEN... | 1.0 | Intermittent test failure - FenixSearchEngineProviderTest - sharedprefs contains installed engines when installedSearchEngineIdentifiers - ### Test Run:

https://firefoxci.taskcluster-artifacts.net/eh0VCU3UQLaXUooAI5QEuw/0/public/reports/test/testGeckoNightlyDebugUnitTest/classes/org.mozilla.fenix.components.searchengi... | non_process | intermittent test failure fenixsearchengineprovidertest sharedprefs contains installed engines when installedsearchengineidentifiers test run stacktrace java lang illegalstateexception this job has not completed yet at kotlinx coroutines jobsupport getcompletionexceptionornull jobsupport ... | 0 |

79,491 | 7,717,717,395 | IssuesEvent | 2018-05-23 14:26:08 | openshift/origin | https://api.github.com/repos/openshift/origin | opened | TestOAuthServiceAccountClientEvent is timing sensitive | kind/test-flake | in reference to https://github.com/openshift/origin/pull/16850, https://github.com/openshift/origin/issues/16940, and https://github.com/openshift/origin/pull/19790

Is one of the integration tests that still appears to be flaky

https://openshift-gce-devel.appspot.com/build/origin-ci-test/pr-logs/pull/19759/test_pul... | 1.0 | TestOAuthServiceAccountClientEvent is timing sensitive - in reference to https://github.com/openshift/origin/pull/16850, https://github.com/openshift/origin/issues/16940, and https://github.com/openshift/origin/pull/19790

Is one of the integration tests that still appears to be flaky

https://openshift-gce-devel.app... | non_process | testoauthserviceaccountclientevent is timing sensitive in reference to and is one of the integration tests that still appears to be flaky assigning as he appears to be the last one touching the test cc enj bparees | 0 |

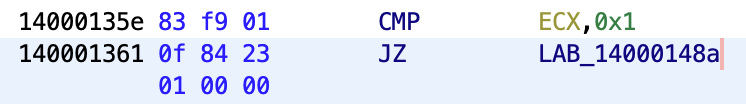

17,638 | 23,460,975,111 | IssuesEvent | 2022-08-16 13:06:05 | NationalSecurityAgency/ghidra | https://api.github.com/repos/NationalSecurityAgency/ghidra | closed | Context Dependent Decoding of Jump Instructions | Feature: Processor/x86 Status: Prioritize | **Is your feature request related to a problem? Please describe.**

*Ghidra* decodes `0F 84` as `JZ` following a comparison with a non-zero value.

**Describe the solution you'd like**

Wouldn't it b... | 1.0 | Context Dependent Decoding of Jump Instructions - **Is your feature request related to a problem? Please describe.**

*Ghidra* decodes `0F 84` as `JZ` following a comparison with a non-zero value.

**D... | process | context dependent decoding of jump instructions is your feature request related to a problem please describe ghidra decodes as jz following a comparison with a non zero value describe the solution you d like wouldn t it be nicer to decode as je environment os window... | 1 |

94,993 | 10,863,361,223 | IssuesEvent | 2019-11-14 15:00:00 | quarkusio/quarkus | https://api.github.com/repos/quarkusio/quarkus | closed | Make an explicit section on Hibernate ORM in production with the different defaults | component:documentation enhancement | The default import.sql file is not executed when running in Native mode. According to the docs, Hibernate ORM, upon boot, will read and execute the SQL statements in the /import.sql file (if present).

That works in JVM Mode. In native mode the script is not executed. As workaround, you have to set explicitly the prope... | 1.0 | Make an explicit section on Hibernate ORM in production with the different defaults - The default import.sql file is not executed when running in Native mode. According to the docs, Hibernate ORM, upon boot, will read and execute the SQL statements in the /import.sql file (if present).

That works in JVM Mode. In nativ... | non_process | make an explicit section on hibernate orm in production with the different defaults the default import sql file is not executed when running in native mode according to the docs hibernate orm upon boot will read and execute the sql statements in the import sql file if present that works in jvm mode in nativ... | 0 |

9,785 | 12,801,170,542 | IssuesEvent | 2020-07-02 18:35:12 | solid/process | https://api.github.com/repos/solid/process | closed | Test Suite not really a Panel | process proposal | The test suite is in a separate repository from the specification so it could be interpreted as falling outside of the editorial process.

Although there is a test suite panel, there is no corresponding editorial assignment so it is unclear who would review proposals from the test suite panel.

One solution could... | 1.0 | Test Suite not really a Panel - The test suite is in a separate repository from the specification so it could be interpreted as falling outside of the editorial process.

Although there is a test suite panel, there is no corresponding editorial assignment so it is unclear who would review proposals from the test su... | process | test suite not really a panel the test suite is in a separate repository from the specification so it could be interpreted as falling outside of the editorial process although there is a test suite panel there is no corresponding editorial assignment so it is unclear who would review proposals from the test su... | 1 |

345,690 | 24,870,690,426 | IssuesEvent | 2022-10-27 15:01:26 | kubernetes-client/python | https://api.github.com/repos/kubernetes-client/python | opened | Body is not acccepted as None for patch_certificate_signing_request_approval | kind/documentation | **Link to the issue (please include a link to the specific documentation or example)**:

https://github.com/kubernetes-client/python/blob/master/kubernetes/docs/CertificatesV1Api.md#patch_certificate_signing_request_approval

**Description of the issue (please include outputs or screenshots if possible)**:

```/home/... | 1.0 | Body is not acccepted as None for patch_certificate_signing_request_approval - **Link to the issue (please include a link to the specific documentation or example)**:

https://github.com/kubernetes-client/python/blob/master/kubernetes/docs/CertificatesV1Api.md#patch_certificate_signing_request_approval

**Description... | non_process | body is not acccepted as none for patch certificate signing request approval link to the issue please include a link to the specific documentation or example description of the issue please include outputs or screenshots if possible home cloud user cnsbc site packages requests init ... | 0 |

7,973 | 11,161,944,967 | IssuesEvent | 2019-12-26 15:50:00 | pytorch/xla | https://api.github.com/repos/pytorch/xla | closed | Training is sooooooooo slow | multiprocessing needs reproduction perf stale transformers | Hello, I'm trying to train the transformer model based on PyTorch's nn.Transformer using multiprocessing with 8 TPUs.

However, the forward is super slow (even the first iteration, with 10 batch size). Each iteration takes a few minutes.

here are logs:

===============================================

2019... | 1.0 | Training is sooooooooo slow - Hello, I'm trying to train the transformer model based on PyTorch's nn.Transformer using multiprocessing with 8 TPUs.

However, the forward is super slow (even the first iteration, with 10 batch size). Each iteration takes a few minutes.

here are logs:

=========================... | process | training is sooooooooo slow hello i m trying to train the transformer model based on pytorch s nn transformer using multiprocessing with tpus however the forward is super slow even the first iteration with batch size each iteration takes a few minutes here are logs ... | 1 |

3,193 | 3,833,126,424 | IssuesEvent | 2016-04-01 01:00:36 | anholt/linux | https://api.github.com/repos/anholt/linux | closed | vc4: Queue V3D bin/render jobs in parallel | performance | Varad has been working on taking my anholt/vc4-kms-v3d-rpi2-binrendersubmit-2 branch and fixing it. Hopefully done soon. | True | vc4: Queue V3D bin/render jobs in parallel - Varad has been working on taking my anholt/vc4-kms-v3d-rpi2-binrendersubmit-2 branch and fixing it. Hopefully done soon. | non_process | queue bin render jobs in parallel varad has been working on taking my anholt kms binrendersubmit branch and fixing it hopefully done soon | 0 |

38,374 | 8,468,424,006 | IssuesEvent | 2018-10-23 19:42:24 | pnp/pnpjs | https://api.github.com/repos/pnp/pnpjs | closed | Question: Setting property bag values (e.g. on folders) - possible at all via REST? | area: code status: answered type: question | Is it at all possible to update the properties (in the property bag, not object properties or item fields) of folders/items/webs via REST? A MERGE fails. | 1.0 | Question: Setting property bag values (e.g. on folders) - possible at all via REST? - Is it at all possible to update the properties (in the property bag, not object properties or item fields) of folders/items/webs via REST? A MERGE fails. | non_process | question setting property bag values e g on folders possible at all via rest is it at all possible to update the properties in the property bag not object properties or item fields of folders items webs via rest a merge fails | 0 |

16,535 | 21,563,635,512 | IssuesEvent | 2022-05-01 14:40:41 | MartinBruun/P6 | https://api.github.com/repos/MartinBruun/P6 | opened | (CI) Add the user that creates a Pull Request as the Assignee | 3: Should have Need grooming Process | Make an addition to labels-on-pr.yml (or a new) workflow in the .github/workflows folder, which adds the current user as the assignee when the person creates a pull request. Makes it easier to see who created what. | 1.0 | (CI) Add the user that creates a Pull Request as the Assignee - Make an addition to labels-on-pr.yml (or a new) workflow in the .github/workflows folder, which adds the current user as the assignee when the person creates a pull request. Makes it easier to see who created what. | process | ci add the user that creates a pull request as the assignee make an addition to labels on pr yml or a new workflow in the github workflows folder which adds the current user as the assignee when the person creates a pull request makes it easier to see who created what | 1 |

722,722 | 24,872,669,547 | IssuesEvent | 2022-10-27 16:21:38 | AY2223S1-CS2103T-W16-3/tp | https://api.github.com/repos/AY2223S1-CS2103T-W16-3/tp | closed | Editing upcoming appointment deletes all past appointments | priority.High type.Bug severity.High | This is due to a bug with the `EditPersonDescriptor` class within the `EditCommand` class, where past appointments are taken from `editPersonDescriptor` instead of `personToEdit`. | 1.0 | Editing upcoming appointment deletes all past appointments - This is due to a bug with the `EditPersonDescriptor` class within the `EditCommand` class, where past appointments are taken from `editPersonDescriptor` instead of `personToEdit`. | non_process | editing upcoming appointment deletes all past appointments this is due to a bug with the editpersondescriptor class within the editcommand class where past appointments are taken from editpersondescriptor instead of persontoedit | 0 |

11,771 | 14,601,071,344 | IssuesEvent | 2020-12-21 08:03:38 | GoogleCloudPlatform/fda-mystudies | https://api.github.com/repos/GoogleCloudPlatform/fda-mystudies | closed | [PM] [Dev] Multiple entries are found in sites tab for the same study | Bug P1 Participant manager Process: Fixed Process: Tested dev | Steps:

1. Login to PM as superadmin

2. Navigate to sites tab

3. Observe that multiple entries are recorded

A/R: Multiple entries are found in sites tab for the same study

E/R: Only single record should be available

| 1.0 | How do we want to start? - Hi @flancast90 , I'm writing this first issue to get an overview how we want to start this little project :) | process | how do we want to start hi i m writing this first issue to get an overview how we want to start this little project | 1 |

19,482 | 25,792,866,014 | IssuesEvent | 2022-12-10 08:29:26 | COPIM/open-book-collective | https://api.github.com/repos/COPIM/open-book-collective | closed | Allow initiatives to access subscribers' data | userstory development membership management (pillar 4) organisational process | As an open access book publisher (OABP) or an open access infrastructure provider (OAIP) ...

I want a mechanism for receiving and storing member library data (eg IP addresses, contact points) ...

... so that I am able to provide membership services.

| 1.0 | Allow initiatives to access subscribers' data - As an open access book publisher (OABP) or an open access infrastructure provider (OAIP) ...

I want a mechanism for receiving and storing member library data (eg IP addresses, contact points) ...

... so that I am able to provide membership services.

| process | allow initiatives to access subscribers data as an open access book publisher oabp or an open access infrastructure provider oaip i want a mechanism for receiving and storing member library data eg ip addresses contact points so that i am able to provide membership services | 1 |

13,107 | 15,497,098,241 | IssuesEvent | 2021-03-11 03:57:03 | 2i2c-org/team-compass | https://api.github.com/repos/2i2c-org/team-compass | closed | Tech Team Update: March 2021 | team-process | Hey @2i2c-org/tech-team - time to fill in some updates about what you've been up to the last couple of weeks!

Can folks fill out the [HackMD](https://hackmd.io/i2Siurp1TkmPYgn3ZgxFQw) with their own updates? ✨

- **Updates HackMD**: https://hackmd.io/i2Siurp1TkmPYgn3ZgxFQw

- **Team Sync history**: https://2i2c.org... | 1.0 | Tech Team Update: March 2021 - Hey @2i2c-org/tech-team - time to fill in some updates about what you've been up to the last couple of weeks!

Can folks fill out the [HackMD](https://hackmd.io/i2Siurp1TkmPYgn3ZgxFQw) with their own updates? ✨

- **Updates HackMD**: https://hackmd.io/i2Siurp1TkmPYgn3ZgxFQw

- **Team S... | process | tech team update march hey org tech team time to fill in some updates about what you ve been up to the last couple of weeks can folks fill out the with their own updates ✨ updates hackmd team sync history todo clean up the for this update ping the team member... | 1 |

19,008 | 25,007,167,784 | IssuesEvent | 2022-11-03 12:47:31 | googleapis/gapic-generator-csharp | https://api.github.com/repos/googleapis/gapic-generator-csharp | closed | Consider removing unit test generation | priority: p2 type: process | This should probably only happen after we've got integration tests working with show case, but our unit test generation is frustrating:

- Generating test projects means keeping more dependencies up to date

- Adding a generator feature often means modifying test generation too

- These tests don't actually add value... | 1.0 | Consider removing unit test generation - This should probably only happen after we've got integration tests working with show case, but our unit test generation is frustrating:

- Generating test projects means keeping more dependencies up to date

- Adding a generator feature often means modifying test generation to... | process | consider removing unit test generation this should probably only happen after we ve got integration tests working with show case but our unit test generation is frustrating generating test projects means keeping more dependencies up to date adding a generator feature often means modifying test generation to... | 1 |

145,037 | 13,132,761,061 | IssuesEvent | 2020-08-06 19:32:51 | Qiskit/qiskit-tutorials | https://api.github.com/repos/Qiskit/qiskit-tutorials | closed | 1_getting_started_with_qiskit.ipynb should improve markdown for rst conversion | documentation | ## Target file

tutorials/circuits/1_getting_started_with_qiskit.ipynb.

## Improvement points

### 1. rst constraints

Markdown can use emphasis and link at the same time, but rst cannot. It should be modified to a notation that can be converted to rst.

The following is the converted statement.

> The fundamen... | 1.0 | 1_getting_started_with_qiskit.ipynb should improve markdown for rst conversion - ## Target file

tutorials/circuits/1_getting_started_with_qiskit.ipynb.

## Improvement points

### 1. rst constraints

Markdown can use emphasis and link at the same time, but rst cannot. It should be modified to a notation that can be ... | non_process | getting started with qiskit ipynb should improve markdown for rst conversion target file tutorials circuits getting started with qiskit ipynb improvement points rst constraints markdown can use emphasis and link at the same time but rst cannot it should be modified to a notation that can be ... | 0 |

767,751 | 26,938,836,831 | IssuesEvent | 2023-02-07 23:27:02 | phetsims/chipper | https://api.github.com/repos/phetsims/chipper | closed | Locale info is still a "ROUGH DRAFT". Make it production ready. | priority:2-high | While working on https://github.com/phetsims/chipper/issues/1374, I was examining commits for localeInfoModule.js, the primary source for PhET locale information that is displayed in Preferences and elsewhere.

There is a single comment by @jonathanolson about this file in https://github.com/phetsims/chipper/issues... | 1.0 | Locale info is still a "ROUGH DRAFT". Make it production ready. - While working on https://github.com/phetsims/chipper/issues/1374, I was examining commits for localeInfoModule.js, the primary source for PhET locale information that is displayed in Preferences and elsewhere.

There is a single comment by @jonathano... | non_process | locale info is still a rough draft make it production ready while working on i was examining commits for localeinfomodule js the primary source for phet locale information that is displayed in preferences and elsewhere there is a single comment by jonathanolson about this file in added a very rough... | 0 |

682,837 | 23,359,190,035 | IssuesEvent | 2022-08-10 10:06:45 | exeGesIS-SDM/NetworkDesignTools_Feb21 | https://api.github.com/repos/exeGesIS-SDM/NetworkDesignTools_Feb21 | closed | Create property count layer for properties in a polygon | Priority 1 New feature | Request user selects a PN (primary node) polygon (which covers multiple buildings)

Select all addresses in the polygon

Add a single point for each building on a property summary layer

Set the property ref

Count the number of properties in the building(ie with the same property ID or at the same point) and save in ... | 1.0 | Create property count layer for properties in a polygon - Request user selects a PN (primary node) polygon (which covers multiple buildings)

Select all addresses in the polygon

Add a single point for each building on a property summary layer

Set the property ref

Count the number of properties in the building(ie wi... | non_process | create property count layer for properties in a polygon request user selects a pn primary node polygon which covers multiple buildings select all addresses in the polygon add a single point for each building on a property summary layer set the property ref count the number of properties in the building ie wi... | 0 |
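
The steps in the row above (select addresses inside a polygon, keep one point per building, count properties per building) can be sketched in plain Python. This is only an illustration under stated assumptions: the address records are assumed to be dictionaries with hypothetical `x`, `y` and `property_ref` keys, the polygon is a simple list of vertices, and no GIS library is used, so the point-in-polygon test is a basic ray-casting check rather than whatever the real tool relies on.

```python
from collections import defaultdict


def point_in_polygon(x, y, polygon):
    """Ray-casting test; polygon is a list of (x, y) vertices."""
    inside = False
    n = len(polygon)
    for i in range(n):
        x1, y1 = polygon[i]
        x2, y2 = polygon[(i + 1) % n]
        # Only edges that straddle the horizontal line through y can be crossed.
        if (y1 > y) != (y2 > y):
            x_cross = x1 + (y - y1) * (x2 - x1) / (y2 - y1)
            if x < x_cross:
                inside = not inside
    return inside


def summarise_properties(addresses, polygon):
    """Return one summary record per building inside the polygon."""
    counts = defaultdict(int)
    points = {}
    for addr in addresses:
        x, y = addr["x"], addr["y"]
        if not point_in_polygon(x, y, polygon):
            continue
        # Same building = same property ref, or the same point when no ref exists.
        key = addr.get("property_ref") or (x, y)
        counts[key] += 1
        points.setdefault(key, (x, y))
    return [
        {"building_key": key, "point": points[key], "count": counts[key]}
        for key in counts
    ]


square = [(0, 0), (10, 0), (10, 10), (0, 10)]
addresses = [
    {"x": 1, "y": 1, "property_ref": "B1"},
    {"x": 1, "y": 1, "property_ref": "B1"},
    {"x": 5, "y": 5, "property_ref": None},
    {"x": 20, "y": 20, "property_ref": "B2"},
]
summary = summarise_properties(addresses, square)
```

Here the two `B1` addresses collapse into one summary point with a count of 2, the unreferenced address at (5, 5) is keyed by its coordinates, and the address outside the square is ignored.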

325,061 | 27,845,629,376 | IssuesEvent | 2023-03-20 15:19:47 | QubesOS/updates-status | https://api.github.com/repos/QubesOS/updates-status | closed | video-companion v1.0.1-1 (r4.1) | r4.1-buster-cur-test r4.1-dom0-cur-test r4.1-bullseye-cur-test r4.1-centos-stream8-cur-test r4.1-bookworm-cur-test r4.1-fc36-cur-test r4.1-fc37-cur-test r4.1-fc38-cur-test | Update of video-companion to v1.0.1-1 for Qubes r4.1, see comments below for details.

Built from: https://github.com/QubesOS/qubes-video-companion/commit/7b568f15cffe8ec0fde5aab37f5afe3d62ce6885

[Changes since previous version](https://github.com/QubesOS/qubes-video-companion/compare/v2.0.0...v1.0.1-1):

QubesOS/qubes... | 8.0 | video-companion v1.0.1-1 (r4.1) - Update of video-companion to v1.0.1-1 for Qubes r4.1, see comments below for details.

Built from: https://github.com/QubesOS/qubes-video-companion/commit/7b568f15cffe8ec0fde5aab37f5afe3d62ce6885

[Changes since previous version](https://github.com/QubesOS/qubes-video-companion/compare... | non_process | video companion update of video companion to for qubes see comments below for details built from qubesos qubes video companion version qubesos qubes video companion pylint disable consider using f string qubesos qubes video companion tests webcam test is supposed t... | 0 |

388,465 | 26,767,299,128 | IssuesEvent | 2023-01-31 11:35:57 | nuxt/nuxt | https://api.github.com/repos/nuxt/nuxt | closed | docs: markdown badly rendered | documentation 3.x upstream | ### Environment

all

### Reproduction

https://nuxt.com/docs/api/composables/use-fetch

https://nuxt.com/docs/api/composables/use-async-data

### Describe the bug

A lot of characters are not rendered in the code block for useFetch and useAsyncData type

### Additional context

\o/ nuxt 3 finally released \o/ thank ... | 1.0 | docs: markdown badly rendered - ### Environment

all

### Reproduction

https://nuxt.com/docs/api/composables/use-fetch

https://nuxt.com/docs/api/composables/use-async-data

### Describe the bug

A lot of characters are not rendered in the code block for useFetch and useAsyncData type

### Additional context

\o/ nu... | non_process | docs markdown badly rendered environment all reproduction describe the bug a lot of characters are not rendered in the code block for usefetch and useasyncdata type additional context o nuxt finally released o thank you for all your hard work o logs no response | 0 |

393,463 | 11,616,176,440 | IssuesEvent | 2020-02-26 15:19:18 | webcompat/web-bugs | https://api.github.com/repos/webcompat/web-bugs | closed | www.theguardian.com - see bug description | browser-fenix engine-gecko priority-important | <!-- @browser: Firefox Mobile 75.0 -->

<!-- @ua_header: Mozilla/5.0 (Android 10; Mobile; rv:75.0) Gecko/75.0 Firefox/75.0 -->

<!-- @reported_with: -->

<!-- @public_url: https://github.com/webcompat/web-bugs/issues/49016 -->

<!-- @extra_labels: browser-fenix -->

**URL**: https://www.theguardian.com/politics/2020/feb/2... | 1.0 | www.theguardian.com - see bug description - <!-- @browser: Firefox Mobile 75.0 -->

<!-- @ua_header: Mozilla/5.0 (Android 10; Mobile; rv:75.0) Gecko/75.0 Firefox/75.0 -->

<!-- @reported_with: -->

<!-- @public_url: https://github.com/webcompat/web-bugs/issues/49016 -->

<!-- @extra_labels: browser-fenix -->

**URL**: htt... | non_process | see bug description url browser version firefox mobile operating system android tested another browser no problem type something else description every page loads somewhere in the middle never at the top beginning of an article was the same on bbc com and sz d... | 0 |

84,743 | 24,404,916,181 | IssuesEvent | 2022-10-05 07:00:35 | godotengine/godot-proposals | https://api.github.com/repos/godotengine/godot-proposals | closed | Musl libc support, scons detection for libexecinfo | topic:buildsystem topic:porting platform:linuxbsd | <!--

Please fill in *all* the questions below and don't remove any of them.

Proposals not following the template below will be closed immediately.

-->

### Describe the project you are working on

Musl support for Godot

On distributions like Alpine Linux or Gentoo Linux musl support seems to be already feature-... | 1.0 | Musl libc support, scons detection for libexecinfo - <!--

Please fill in *all* the questions below and don't remove any of them.

Proposals not following the template below will be closed immediately.

-->

### Describe the project you are working on

Musl support for Godot

On distributions like Alpine Linux or G... | non_process | musl libc support scons detection for libexecinfo please fill in all the questions below and don t remove any of them proposals not following the template below will be closed immediately describe the project you are working on musl support for godot on distributions like alpine linux or g... | 0 |

8,136 | 11,339,552,995 | IssuesEvent | 2020-01-23 02:28:45 | dotnet/corefx | https://api.github.com/repos/dotnet/corefx | reopened | [1.0.0] TestUserCredentialsPropertiesOnWindows failing on Windows (v1.0 only) | area-System.Diagnostics.Process blocked bug disabled-test | ```

System.Diagnostics.Tests.ProcessStartInfoTests.TestUserCredentialsPropertiesOnWindows [FAIL]

System.InvalidOperationException : No process is associated with this object.

Stack Trace:

D:\j\workspace\outerloop_win---2155886e\src\System.Diagnostics.Process\src\System\Diagnostics\Process.cs(... | 1.0 | [1.0.0] TestUserCredentialsPropertiesOnWindows failing on Windows (v1.0 only) - ```

System.Diagnostics.Tests.ProcessStartInfoTests.TestUserCredentialsPropertiesOnWindows [FAIL]

System.InvalidOperationException : No process is associated with this object.

Stack Trace:

D:\j\workspace\outerloop_... | process | testusercredentialspropertiesonwindows failing on windows only system diagnostics tests processstartinfotests testusercredentialspropertiesonwindows system invalidoperationexception no process is associated with this object stack trace d j workspace outerloop win src ... | 1 |

792,251 | 27,952,616,819 | IssuesEvent | 2023-03-24 10:00:27 | nimblehq/android-templates | https://api.github.com/repos/nimblehq/android-templates | opened | Add new sample yaml file for setting up CI/CD with CodeMagic | type : feature status : approved priority : normal | ## Why

[Original RFC](https://github.com/nimblehq/android-templates/discussions/414)

As of now, we are using [CodeMagic](https://codemagic.io/start/) to execute CI/CD pipelines on some projects, there is a lack of a sample yaml file for quick setup when initializing a new project ([.cicdtemplate](https://github.c... | 1.0 | Add new sample yaml file for setting up CI/CD with CodeMagic - ## Why

[Original RFC](https://github.com/nimblehq/android-templates/discussions/414)