Unnamed: 0 (int64, 0 to 832k) | id (float64, 2.49B to 32.1B) | type (string, 1 class) | created_at (string, length 19) | repo (string, length 7 to 112) | repo_url (string, length 36 to 141) | action (string, 3 classes) | title (string, length 1 to 744) | labels (string, length 4 to 574) | body (string, length 9 to 211k) | index (string, 10 classes) | text_combine (string, length 96 to 211k) | label (string, 2 classes) | text (string, length 96 to 188k) | binary_label (int64, 0 to 1) |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

6,557 | 9,648,670,630 | IssuesEvent | 2019-05-17 16:54:30 | googleapis/sloth | https://api.github.com/repos/googleapis/sloth | closed | chore(release): proposal for next release | release-candidate type: process | _:robot: Here's what the next release of **@justinbeckwith/sloth** would look like._

---

### [4.0.1](https://www.github.com/googleapis/sloth/compare/v4.0.0...v4.0.1) (2019-05-17)

### Bug Fixes

* remove spurious log ([#230](https://www.github.com/googleapis/sloth/issues/230)) ([cf361b0](https://www.github.com/googl... | 1.0 | chore(release): proposal for next release - _:robot: Here's what the next release of **@justinbeckwith/sloth** would look like._

---

### [4.0.1](https://www.github.com/googleapis/sloth/compare/v4.0.0...v4.0.1) (2019-05-17)

### Bug Fixes

* remove spurious log ([#230](https://www.github.com/googleapis/sloth/issues/2... | process | chore release proposal for next release robot here s what the next release of justinbeckwith sloth would look like bug fixes remove spurious log use the drift prod endpoint deps update dependency update notifier to ... | 1 |

8,817 | 11,935,721,490 | IssuesEvent | 2020-04-02 09:04:46 | pwittchen/ReactiveNetwork | https://api.github.com/repos/pwittchen/ReactiveNetwork | closed | Release 3.0.8 | release process | **release notes**:

- updated project dependencies

- update gradle version

- fixed bug #422 and #415 (changed port for default host for checking internet connectivity from https to http)

**things to do:**

- [x] update javadocs

- [x] update docs

- [x] bump version

- [x] release library

- [x] update changelog

... | 1.0 | Release 3.0.8 - **release notes**:

- updated project dependencies

- update gradle version

- fixed bug #422 and #415 (changed port for default host for checking internet connectivity from https to http)

**things to do:**

- [x] update javadocs

- [x] update docs

- [x] bump version

- [x] release library

- [x] up... | process | release release notes updated project dependencies update gradle version fixed bug and changed port for default host for checking internet connectivity from https to http things to do update javadocs update docs bump version release library update changelog... | 1 |

3,347 | 6,486,519,983 | IssuesEvent | 2017-08-19 20:29:21 | geneontology/go-ontology | https://api.github.com/repos/geneontology/go-ontology | closed | add synonym GO:0071630 ubiquitin-dependent catabolism of misfolded proteins by nucleus-associated proteasome | cellular processes editors-discussion | add

exact:

nuclear protein quality control by the ubiquitin-proteasome system

broad:protein quality control (PQC)

to

GO:0071630 ubiquitin-dependent catabolism of misfolded proteins by nucleus-associated proteasome

PMID: 21324894 pombe

PMID: 21211726 cerevisiae

I'm angling for a general "protein qual... | 1.0 | add synonym GO:0071630 ubiquitin-dependent catabolism of misfolded proteins by nucleus-associated proteasome - add

exact:

nuclear protein quality control by the ubiquitin-proteasome system

broad:protein quality control (PQC)

to

GO:0071630 ubiquitin-dependent catabolism of misfolded proteins by nucleus-associ... | process | add synonym go ubiquitin dependent catabolism of misfolded proteins by nucleus associated proteasome add exact nuclear protein quality control by the ubiquitin proteasome system broad protein quality control pqc to go ubiquitin dependent catabolism of misfolded proteins by nucleus associated proteas... | 1 |

196,234 | 6,926,097,659 | IssuesEvent | 2017-11-30 17:58:08 | minio/minio | https://api.github.com/repos/minio/minio | closed | On Ubuntu 16.04, Minio service fails to start - tls: bad certificate error | priority: medium | <!--- Provide a general summary of the issue in the Title above -->

## Expected Behavior

After setting up Minio with a Let's Encrypt certificate, the service should start successfully.

## Current Behavior

Instead, Minio fails to start, giving out the following error:

```

level=error msg="Error in reading fr... | 1.0 | On Ubuntu 16.04, Minio service fails to start - tls: bad certificate error - <!--- Provide a general summary of the issue in the Title above -->

## Expected Behavior

After setting up Minio with a Let's Encrypt certificate, the service should start successfully.

## Current Behavior

Instead, Minio fails to start,... | non_process | on ubuntu minio service fails to start tls bad certificate error expected behavior after setting up minio with a let s encrypt certificate the service should start successfully current behavior instead minio fails to start giving out the following error level error msg error in r... | 0 |

53,042 | 7,804,365,467 | IssuesEvent | 2018-06-11 07:09:07 | minishift/minishift | https://api.github.com/repos/minishift/minishift | reopened | Minishift fails to start using xhyve if broken VBox files are on path | component/documentation priority/minor status/pinned | ### General information

* Minishift version: 1.13.1+75352e5

* OS: macOS

* Hypervisor: xhyve

### Steps to reproduce

1. Install virtualbox

2. Remove virtualbox but leave its files in /usr/local/bin

3. Clean ~/.minishift and ~/.kube

4. Start minishift with xhyve as hypervisor

### Expected

... | 1.0 | Minishift fails to start using xhyve if broken VBox files are on path - ### General information

* Minishift version: 1.13.1+75352e5

* OS: macOS

* Hypervisor: xhyve

### Steps to reproduce

1. Install virtualbox

2. Remove virtualbox but leave its files in /usr/local/bin

3. Clean ~/.minishift and... | non_process | minishift fails to start using xhyve if broken vbox files are on path general information minishift version os macos hypervisor xhyve steps to reproduce install virtualbox remove virtualbox but leave its files in usr local bin clean minishift and kub... | 0 |

20,731 | 27,430,261,001 | IssuesEvent | 2023-03-02 00:23:02 | bazelbuild/bazel | https://api.github.com/repos/bazelbuild/bazel | closed | new java_tools release required to fix @remote_java_tools on Windows | type: process team-Rules-Java | Compiling `@remote_java_tools//:ijar_cc_binary` fails on Windows since 9f2c62aa489394ae882e07fb97eefb0556075944:

```

undeclared inclusion(s) in rule '@remote_java_tools//:ijar_cc_binary':

this rule is missing dependency declarations for the following files included by 'java_tools/ijar/classfile.cc':

'external/baz... | 1.0 | new java_tools release required to fix @remote_java_tools on Windows - Compiling `@remote_java_tools//:ijar_cc_binary` fails on Windows since 9f2c62aa489394ae882e07fb97eefb0556075944:

```

undeclared inclusion(s) in rule '@remote_java_tools//:ijar_cc_binary':

this rule is missing dependency declarations for the follo... | process | new java tools release required to fix remote java tools on windows compiling remote java tools ijar cc binary fails on windows since undeclared inclusion s in rule remote java tools ijar cc binary this rule is missing dependency declarations for the following files included by java tools ijar... | 1 |

12,198 | 14,742,473,457 | IssuesEvent | 2021-01-07 12:21:24 | kdjstudios/SABillingGitlab | https://api.github.com/repos/kdjstudios/SABillingGitlab | closed | 054- Fair Oaks PO # not going on invoice | Parant:896 | anc-process anp-0.5 ant-bug has attachment | In GitLab by @kdjstudios on Apr 23, 2019, 08:34

**Submitted by:** "vanessa salamanca" <vanessa.salamanca@answernet.com>

**Helpdesk:** http://www.servicedesk.answernet.com/profiles/ticket/2019-04-22-55412

**Second HD:** http://www.servicedesk.answernet.com/profiles/ticket/8823322

**Server:** Internal (All?)

**Clien... | 1.0 | 054- Fair Oaks PO # not going on invoice | Parant:896 - In GitLab by @kdjstudios on Apr 23, 2019, 08:34

**Submitted by:** "vanessa salamanca" <vanessa.salamanca@answernet.com>

**Helpdesk:** http://www.servicedesk.answernet.com/profiles/ticket/2019-04-22-55412

**Second HD:** http://www.servicedesk.answernet.com/profi... | process | fair oaks po not going on invoice parant in gitlab by kdjstudios on apr submitted by vanessa salamanca helpdesk second hd server internal all client site account issue i have a client who requires each invoice to be sent to their ap depar... | 1 |

7,974 | 11,163,764,104 | IssuesEvent | 2019-12-27 00:56:11 | LLNL/axom | https://api.github.com/repos/LLNL/axom | closed | Remove AXOM_USE_CXX11 compiler define | Software process | Axom requires a ``C++11`` compiler, so we should remove all compiler guards that provide fallbacks for cases where `C++11` was not available (e.g. `#ifdef AXOM_USE_CXX11`). | 1.0 | Remove AXOM_USE_CXX11 compiler define - Axom requires a ``C++11`` compiler, so we should remove all compiler guards that provide fallbacks for cases where `C++11` was not available (e.g. `#ifdef AXOM_USE_CXX11`). | process | remove axom use compiler define axom requires a c compiler so we should remove all compiler guards that provide fallbacks for cases where c was not available e g ifdef axom use | 1 |

1,004 | 3,470,288,631 | IssuesEvent | 2015-12-23 06:54:47 | t3kt/vjzual2 | https://api.github.com/repos/t3kt/vjzual2 | closed | simple redux module | enhancement video processing | no multiple masked layers, etc.

also, do it in a shader. it's not complicated. | 1.0 | simple redux module - no multiple masked layers, etc.

also, do it in a shader. it's not complicated. | process | simple redux module no multiple masked layers etc also do it in a shader it s not complicated | 1 |

4,399 | 7,295,805,369 | IssuesEvent | 2018-02-26 08:35:35 | UKHomeOffice/dq-aws-transition | https://api.github.com/repos/UKHomeOffice/dq-aws-transition | opened | Turn On S4 AWS Feed from WFTP01 on Sungard | Production S4 Processing | Turn On S4 AWS Feed from WFTP01 on Sungard.

- [ ] Verify Windows Ingest -> Greenplum jobs in-place and running

- [ ] Ticket Raised on Yellow Board for Sungard Change

- [ ] Change made and verified | 1.0 | Turn On S4 AWS Feed from WFTP01 on Sungard - Turn On S4 AWS Feed from WFTP01 on Sungard.

- [ ] Verify Windows Ingest -> Greenplum jobs in-place and running

- [ ] Ticket Raised on Yellow Board for Sungard Change

- [ ] Change made and verified | process | turn on aws feed from on sungard turn on aws feed from on sungard verify windows ingest greenplum jobs in place and running ticket raised on yellow board for sungard change change made and verified | 1 |

93,202 | 19,100,987,623 | IssuesEvent | 2021-11-29 22:31:22 | google/android-fhir | https://api.github.com/repos/google/android-fhir | opened | Use datastore 1.0.0 library instead of 1.0.0-rc02 | code health | **Describe the Issue**

The latest version of datastore library is [1.0.0](https://developer.android.com/topic/libraries/architecture/datastore#datastore-typed). Let's use that instead of 1.0.0-rc02

**Would you like to work on the issue?**

Yes | 1.0 | Use datastore 1.0.0 library instead of 1.0.0-rc02 - **Describe the Issue**

The latest version of datastore library is [1.0.0](https://developer.android.com/topic/libraries/architecture/datastore#datastore-typed). Let's use that instead of 1.0.0-rc02

**Would you like to work on the issue?**

Yes | non_process | use datastore library instead of describe the issue the latest version of datastore library is let s use that instead of would you like to work on the issue yes | 0 |

284,384 | 21,416,579,492 | IssuesEvent | 2022-04-22 11:27:58 | opentelekomcloud/vault-plugin-secrets-openstack | https://api.github.com/repos/opentelekomcloud/vault-plugin-secrets-openstack | closed | README reference command inconsistency | documentation | I believe this

``` vault read /os/creds/example-role ```

should be:

``` vault read /openstack/creds/example-role ```

to be aligned with the previous commands.

https://github.com/opentelekomcloud/vault-plugin-secrets-openstack/blob/e56d944faeea6de3e601342395858182748e5c37/README.md?plain=1#L81 | 1.0 | README reference command inconsistency - I believe this

``` vault read /os/creds/example-role ```

should be:

``` vault read /openstack/creds/example-role ```

to be aligned with the previous commands.

https://github.com/opentelekomcloud/vault-plugin-secrets-openstack/blob/e56d944faeea6de3e601342395858182748... | non_process | readme reference command inconsistency i believe this vault read os creds example role should be vault read openstack creds example role to be aligned with the previous commands | 0 |

718,018 | 24,700,515,432 | IssuesEvent | 2022-10-19 14:59:13 | PrefectHQ/prefect | https://api.github.com/repos/PrefectHQ/prefect | closed | Tasks do not support callable objects | bug priority:medium status:in-progress | ### First check

- [X] I added a descriptive title to this issue.

- [X] I used the GitHub search to find a similar issue and didn't find it.

- [X] I searched the Prefect documentation for this issue.

- [X] I checked that this issue is related to Prefect and not one of its dependencies.

### Bug summary

As the... | 1.0 | Tasks do not support callable objects - ### First check

- [X] I added a descriptive title to this issue.

- [X] I used the GitHub search to find a similar issue and didn't find it.

- [X] I searched the Prefect documentation for this issue.

- [X] I checked that this issue is related to Prefect and not one of its de... | non_process | tasks do not support callable objects first check i added a descriptive title to this issue i used the github search to find a similar issue and didn t find it i searched the prefect documentation for this issue i checked that this issue is related to prefect and not one of its dependenci... | 0 |

7,729 | 10,852,889,237 | IssuesEvent | 2019-11-13 13:43:23 | NationalSecurityAgency/ghidra | https://api.github.com/repos/NationalSecurityAgency/ghidra | closed | PowerPC VLE offset sometimes incorrect (e_add2i, e_add2is, e_mull2i, e_cmp16i, e_cmph16i) | Feature: Processor/PowerPC Type: Bug | **Description:**

Certain PowerPC VLE instructions may have an incorrect signed 16 bit immediate value (SIMM16) if one of the instruction fields (SIMM_0_10_VLE) is negative.

**To reproduce:**

1. Create binary file holding bytes "72 C6 96 7D"

2. Load binary as PowerPC PowerISA-VLE-64-32addr

3. Make sure vle bi... | 1.0 | PowerPC VLE offset sometimes incorrect (e_add2i, e_add2is, e_mull2i, e_cmp16i, e_cmph16i) - **Description:**

Certain PowerPC VLE instructions may have an incorrect signed 16 bit immediate value (SIMM16) if one of the instruction fields (SIMM_0_10_VLE) is negative.

**To reproduce:**

1. Create binary file holdin... | process | powerpc vle offset sometimes incorrect e e e e e description certain powerpc vle instructions may have an incorrect signed bit immediate value if one of the instruction fields simm vle is negative to reproduce create binary file holding bytes load binar... | 1 |
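
The sign-handling problem described in the PowerPC VLE issue above can be sketched in a few lines. This is only an illustration, not Ghidra's SLEIGH code: it assumes the 16-bit immediate is split into a 5-bit high field and an 11-bit low field, and the function names and example values are hypothetical. The point is that only the combined 16-bit value should be sign-extended; treating the low field as signed on its own corrupts the result whenever its top bit is set.

```python
def sign_extend(value: int, bits: int) -> int:
    """Interpret the low `bits` bits of `value` as a two's-complement number."""
    value &= (1 << bits) - 1
    sign_bit = 1 << (bits - 1)
    return (value ^ sign_bit) - sign_bit


def combine_simm16(high5: int, low11: int) -> int:
    """Join the two fields as unsigned bit patterns, then sign-extend once.

    The 5/11 split is an assumption made for this sketch.
    """
    raw = ((high5 & 0x1F) << 11) | (low11 & 0x7FF)
    return sign_extend(raw, 16)


def combine_simm16_buggy(high5: int, low11: int) -> int:
    """Hypothetical faulty variant: the low field is sign-extended by itself."""
    return sign_extend(((high5 & 0x1F) << 11) + sign_extend(low11, 11), 16)


# Illustrative field values where the low field has its top bit set.
good = combine_simm16(22, 0x67D)
bad = combine_simm16_buggy(22, 0x67D)
print(hex(good & 0xFFFF), hex(bad & 0xFFFF))
```

With a low field whose top bit is clear, both variants agree, which would explain why such a defect only shows up for some encodings.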

135,219 | 12,678,678,413 | IssuesEvent | 2020-06-19 10:12:56 | kai687/sphinxawesome-theme | https://api.github.com/repos/kai687/sphinxawesome-theme | closed | Include instruction about sampdirective | documentation enhancement | If you want to use it in the documentation, you also need to add it to the extension list.

I could think of a way to automatically add it, since we will always install it anyway.

If import fails for some reason (someone uninstalled it, etc.) I can skip it but it should

work from within the theme. | 1.0 | Include instruction about sampdirective - If you want to use it in the documentation, you also need to add it to the extension list.

I could think of a way to automatically add it, since we will always install it anyway.

If import fails for some reason (someone uninstalled it, etc.) I can skip it but it should

wo... | non_process | include instruction about sampdirective if you want to use it in the documentation you also need to add it to the extension list i could think of a way to automatically add it since we will always install it anyway if import fails for some reason someone uninstalled it etc i can skip it but it should wo... | 0 |

10,422 | 13,214,171,003 | IssuesEvent | 2020-08-16 16:25:21 | jyn514/saltwater | https://api.github.com/repos/jyn514/saltwater | closed | Missing predefined macros | enhancement preprocessor | [6.10.8.1 Mandatory macros](http://port70.net/~nsz/c/c11/n1570.html#6.10.8.1)

- [x] `__LINE__` - tricky, can't be passed through to `replace()` literally. This might be as simple as updating `self.definitions` whenever someone calls `line()`?

- [ ] `__COLUMN__` - tricky since we don't keep track of this explicitly ... | 1.0 | Missing predefined macros - [6.10.8.1 Mandatory macros](http://port70.net/~nsz/c/c11/n1570.html#6.10.8.1)

- [x] `__LINE__` - tricky, can't be passed through to `replace()` literally. This might be as simple as updating `self.definitions` whenever someone calls `line()`?

- [ ] `__COLUMN__` - tricky since we don't ke... | process | missing predefined macros line tricky can t be passed through to replace literally this might be as simple as updating self definitions whenever someone calls line column tricky since we don t keep track of this explicitly and recalculate it probably needs yet more prepro... | 1 |

20,353 | 27,013,260,494 | IssuesEvent | 2023-02-10 17:02:35 | NCAR/comp-pipeline | https://api.github.com/repos/NCAR/comp-pipeline | closed | First pass at reprocessing pre-December 2012 | level 1 process | Need to get the raw data from the archive, reprocess, and do some analysis on the results to see if they are consistent with the rest of the mission.

The sub-tasks are:

- [x] download pre-December 2012 from Campaign Storage

- [x] untar

- [x] reprocess level 1 and 2

- [x] update flat plot

- [x] update velocity... | 1.0 | First pass at reprocessing pre-December 2012 - Need to get the raw data from the archive, reprocess, and do some analysis on the results to see if they are consistent with the rest of the mission.

The sub-tasks are:

- [x] download pre-December 2012 from Campaign Storage

- [x] untar

- [x] reprocess level 1 and 2... | process | first pass at reprocessing pre december need to get the raw data from the archive reprocess and do some analysis on the results to see if they are consistent with the rest of the mission the sub tasks are download pre december from campaign storage untar reprocess level and update... | 1 |

58,468 | 16,548,303,894 | IssuesEvent | 2021-05-28 04:38:11 | Questie/Questie | https://api.github.com/repos/Questie/Questie | opened | [Classic ERA] minimap button can't be hidden | Type - Defect | ## Bug description

It came back after each reload.

## Questie version

3.3.13 | 1.0 | [Classic ERA] minimap button can't be hidden - ## Bug description

It came back after each reload.

## Questie version

3.3.13 | non_process | minimap button can t be hidden bug description it came back after each reload questie version | 0 |

133,829 | 5,215,367,937 | IssuesEvent | 2017-01-26 04:29:47 | biocore/gneiss | https://api.github.com/repos/biocore/gneiss | opened | RegressionModel.fit() issues | bug high priority | - [ ] The RegressionModel object will add more variables if `fit` is called multiple times

- [ ] `fit` should return the updated RegressionModel object as output | 1.0 | RegressionModel.fit() issues - - [ ] The RegressionModel object will add more variables if `fit` is called multiple times

- [ ] `fit` should return the updated RegressionModel object as output | non_process | regressionmodel fit issues the regressionmodel object will add more variables if fit is called multiple times fit should return the updated regressionmodel object as output | 0 |
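
The two checklist items in the `RegressionModel.fit()` issue above can be sketched with a toy class. This is not gneiss's implementation; `SketchModel`, its `terms` attribute, and the placeholder "fitting" step are all hypothetical. It only shows the two behaviors requested: `fit` rebuilds its state on each call instead of appending to it, and it returns the model itself.

```python
class SketchModel:
    """Toy stand-in for a regression model (hypothetical, not the gneiss API)."""

    def __init__(self, terms):
        self.terms = list(terms)
        self.results = []

    def fit(self):
        # Rebuild the results from scratch so repeated calls do not accumulate.
        self.results = [f"fitted:{term}" for term in self.terms]
        # Return the updated model so calls can be chained.
        return self


model = SketchModel(["ph", "depth"])
model.fit().fit()
print(len(model.results))
```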

8,063 | 11,223,812,678 | IssuesEvent | 2020-01-07 23:55:14 | mendezc1/GenderMagRecordersAssistant | https://api.github.com/repos/mendezc1/GenderMagRecordersAssistant | closed | Change of facet name : "Willingness to Tinker" to "Learning: by Process vs. by Tinkering" | Good First Issue Information Processing Style Learning by Process vs. by Tinkering Medium Priority | * **What Operating System?**

System-wide issue

* **What steps will reproduce the issue?**

Start a GenderMag tool and you will find "Willingness to Tinker" under facets list

* **What would you expect to be the outcome?**

"Learning: by Process vs. by Tinkering" instead of "Willingness to Tinker" based on fou... | 2.0 | Change of facet name : "Willingness to Tinker" to "Learning: by Process vs. by Tinkering" - * **What Operating System?**

System-wide issue

* **What steps will reproduce the issue?**

Start a GenderMag tool and you will find "Willingness to Tinker" under facets list

* **What would you expect to be the outcome?*... | process | change of facet name willingness to tinker to learning by process vs by tinkering what operating system system wide issue what steps will reproduce the issue start a gendermag tool and you will find willingness to tinker under facets list what would you expect to be the outcome ... | 1 |

16,606 | 21,659,420,681 | IssuesEvent | 2022-05-06 17:29:32 | NationalSecurityAgency/ghidra | https://api.github.com/repos/NationalSecurityAgency/ghidra | closed | Incorrect Disassembly of MIPS Function Prologue (Big Endian) | Type: Bug Feature: Processor/MIPS | **Describe the bug**

Ghidra disassembles the function prologue as follows:

```

.text:004fad64 lui gp,0x57

assume t9 = <UNKNOWN>

assume gp = <UNKNOWN>

.text:004fad68 addiu gp,gp,0xfcc

.text:004fad6c addu gp,gp,t9

.text:... | 1.0 | Incorrect Disassembly of MIPS Function Prologue (Big Endian) - **Describe the bug**

Ghidra disassembles the function prologue as follows:

```

.text:004fad64 lui gp,0x57

assume t9 = <UNKNOWN>

assume gp = <UNKNOWN>

.text:004fad68 addiu gp,gp,0xfcc... | process | incorrect disassembly of mips function prologue big endian describe the bug ghidra disassembles the function prologue as follows text lui gp assume assume gp text addiu gp gp text addu ... | 1 |

642,484 | 20,905,180,466 | IssuesEvent | 2022-03-24 00:48:37 | JustArchiNET/ArchiSteamFarm | https://api.github.com/repos/JustArchiNET/ArchiSteamFarm | closed | zh-CH global setting not fully effective | 🐛 Bug ✔️ Confirmed 🟢 Low priority | ### Checklist

- [X] I read and understood ASF's **[Contributing guidelines](https://github.com/JustArchiNET/ArchiSteamFarm/blob/main/.github/CONTRIBUTING.md)**

- [X] I also read **[Setting-up](https://github.com/JustArchiNET/ArchiSteamFarm/wiki/Setting-up)** and **[FAQ](https://github.com/JustArchiNET/ArchiSteamFarm/w... | 1.0 | zh-CH global setting not fully effective - ### Checklist

- [X] I read and understood ASF's **[Contributing guidelines](https://github.com/JustArchiNET/ArchiSteamFarm/blob/main/.github/CONTRIBUTING.md)**

- [X] I also read **[Setting-up](https://github.com/JustArchiNET/ArchiSteamFarm/wiki/Setting-up)** and **[FAQ](https... | non_process | zh ch global setting not fully effective checklist i read and understood asf s i also read and i don t need this is a bug report i don t own more than i m not using this is not a this is not a this is not asf version latest s... | 0 |

277,992 | 24,116,863,570 | IssuesEvent | 2022-09-20 15:20:07 | redpanda-data/redpanda | https://api.github.com/repos/redpanda-data/redpanda | closed | Failure in `EndToEndShadowIndexingTestWithDisruptions.test_write_with_node_failures` (Failed to consume up to offsets) | kind/bug area/tests ci-failure area/shadow-indexing ci-disabled-test | https://buildkite.com/redpanda/redpanda/builds/9910#0ad95b8f-ad64-45eb-bd6d-081ecbbd9f81

```

test_id: rptest.tests.e2e_shadow_indexing_test.EndToEndShadowIndexingTestWithDisruptions.test_write_with_node_failures

status: FAIL

run time: 2 minutes 47.183 seconds

TimeoutError("Consumer failed to con... | 2.0 | Failure in `EndToEndShadowIndexingTestWithDisruptions.test_write_with_node_failures` (Failed to consume up to offsets) - https://buildkite.com/redpanda/redpanda/builds/9910#0ad95b8f-ad64-45eb-bd6d-081ecbbd9f81

```

test_id: rptest.tests.e2e_shadow_indexing_test.EndToEndShadowIndexingTestWithDisruptions.test_write... | non_process | failure in endtoendshadowindexingtestwithdisruptions test write with node failures failed to consume up to offsets test id rptest tests shadow indexing test endtoendshadowindexingtestwithdisruptions test write with node failures status fail run time minutes seconds timeout... | 0 |

1,566 | 4,164,978,824 | IssuesEvent | 2016-06-19 06:20:05 | sysown/proxysql | https://api.github.com/repos/sysown/proxysql | opened | Set custom wait_timeout | ADMIN CONNECTION POOL MYSQL PROTOCOL QUERY PROCESSOR | When ProxySQL connects to a backend it should define a custom wait_timeout. | 1.0 | Set custom wait_timeout - When ProxySQL connects to a backend it should define a custom wait_timeout. | process | set custom wait timeout when proxysql connects to a backend it should define a custom wait timeout | 1 |

15,456 | 19,669,069,241 | IssuesEvent | 2022-01-11 03:57:13 | q191201771/lal | https://api.github.com/repos/q191201771/lal | closed | Pushing an RTMP stream to lal may cause a panic | #Bug *In process | When an RTMP stream is pushed to lal from a source like the following, lal panics:

ffmpeg.exe -i http://:8800/hls/0/index.m3u8 -c copy -f flv rtmp://127.0.0.1:1935/live/test110

```

panic: runtime error: invalid memory address or nil pointer dereference

[signal 0xc0000005 code=0x0 addr=0x1 pc=0x13ea02a]

goroutine 34903 [running]:

github.com/q191201771/lal/pkg... | 1.0 | Pushing an RTMP stream to lal may cause a panic - When an RTMP stream is pushed to lal from a source like the following, lal panics:

ffmpeg.exe -i http://:8800/hls/0/index.m3u8 -c copy -f flv rtmp://127.0.0.1:1935/live/test110

```

panic: runtime error: invalid memory address or nil pointer dereference

[signal 0xc0000005 code=0x0 addr=0x1 pc=0x13ea02a]

goroutine 34903 [running]:

gith... | process | pushing an rtmp stream to lal may cause a panic when an rtmp stream is pushed to lal from a source like the following lal panics ffmpeg exe i c copy f flv rtmp live panic runtime error invalid memory address or nil pointer dereference goroutine github com lal pkg aac asccontext getsamplingfrequency volumes extssd chef git lal p... | 1 |

636 | 3,092,139,715 | IssuesEvent | 2015-08-26 16:19:58 | e-government-ua/iBP | https://api.github.com/repos/e-government-ua/iBP | opened | TsNAP (administrative services center), Ternopil - Approval of operating hours for retail and consumer-service establishments in the city of Ternopil | in process of creating | Description

https://drive.google.com/file/d/0B-TXzbaEvbw9MFUxQUlaMzVaZVE/view?usp=sharing

| 1.0 | TsNAP (administrative services center), Ternopil - Approval of operating hours for retail and consumer-service establishments in the city of Ternopil - Description

https://drive.google.com/file/d/0B-TXzbaEvbw9MFUxQUlaMzVaZVE/view?usp=sharing

| process | tsnap administrative services center ternopil approval of operating hours for retail and consumer service establishments in the city of ternopil description | 1 |

231,767 | 7,643,117,001 | IssuesEvent | 2018-05-08 11:36:15 | containous/traefik | https://api.github.com/repos/containous/traefik | closed | Keep AccessLog entries based on retry attempts | area/logs kind/enhancement priority/P2 | ### Do you want to request a *feature* or report a *bug*?

*feature*

### Description

We want to keep all access logs that either responded with a 5xx or where at least one retry attempt happened. While the former is perfectly possible now with the access log filters for status codes, we can't achieve the second... | 1.0 | Keep AccessLog entries based on retry attempts - ### Do you want to request a *feature* or report a *bug*?

*feature*

### Description

We want to keep all access logs that either responded with a 5xx or where at least one retry attempt happened. While the former is perfectly possible now with the access log filt... | non_process | keep accesslog entries based on retry attempts do you want to request a feature or report a bug feature description we want to keep all access logs that either responded with a or where at least one retry attempt happened while the former is perfectly possible now with the access log filter... | 0 |

20,580 | 27,242,212,981 | IssuesEvent | 2023-02-21 21:34:33 | biocodellc/localcontexts_db | https://api.github.com/repos/biocodellc/localcontexts_db | opened | Login page: add a 'forgot username?' function | registration process | Users have been asking for their usernames in order to log in, so we need to add functionality that helps a user recover their username. | 1.0 | Login page: add a 'forgot username?' function - Users have been asking for their usernames in order to log in, so we need to add functionality that helps a user recover their username. | process | login page add a forgot username function users have been asking for their usernames in order to log in so we need to add functionality that helps a user recover their username | 1 |

99,345 | 8,698,043,613 | IssuesEvent | 2018-12-04 22:03:56 | Microsoft/vscode | https://api.github.com/repos/Microsoft/vscode | closed | Test: storage (global) | testplan-item | Refs: https://github.com/Microsoft/vscode/issues/58957

Complexity: 5

- [x] macOS: @RMacfarlane

- [x] Windows: @alexr00

- [x] Linux: @Tyriar

**Setup**

You need to set an environment variable (best from the console) to be able to test this item because while the code is in, it will not be enabled by defaul... | 1.0 | Test: storage (global) - Refs: https://github.com/Microsoft/vscode/issues/58957

Complexity: 5

- [x] macOS: @RMacfarlane

- [x] Windows: @alexr00

- [x] Linux: @Tyriar

**Setup**

You need to set an environment variable (best from the console) to be able to test this item because while the code is in, it will... | non_process | test storage global refs complexity macos rmacfarlane windows linux tyriar setup you need to set an environment variable best from the console to be able to test this item because while the code is in it will not be enabled by default in the next stable release m... | 0 |

5,861 | 8,681,910,613 | IssuesEvent | 2018-12-02 01:21:21 | lightningWhite/weatherLearning | https://api.github.com/repos/lightningWhite/weatherLearning | closed | Consolidate the data | dataProcessing to do | Since we will probably be presenting the net with data that we extrapolated from the original data (instead of using the original data directly), it might be easier to create a new file containing everything we want, with the data for all of the cities consolidated into one file.

Currently, there is a separate file for each attribute,... | 1.0 | Consolidate the data - Since we will probably be presenting data to the net that we extrapolated from the original data (instead of actually using the data), it might be easier to create a new file containing everything we want with all of the cities' datas consolidated into one file.

Currently, there is a separate f... | process | consolidate the data since we will probably be presenting the net with data that we extrapolated from the original data instead of using the original data directly it might be easier to create a new file containing everything we want with the data for all of the cities consolidated into one file currently there is a separate f... | 1 |

149,166 | 23,440,267,444 | IssuesEvent | 2022-08-15 14:15:47 | department-of-veterans-affairs/va.gov-cms | https://api.github.com/repos/department-of-veterans-affairs/va.gov-cms | opened | Events near me: Build test-ready prototypes | Design Needs refining ⭐️ Public Websites | ## Description

Using sketched concepts, build out prototypes that are ready for testing with editors.

## Acceptance Criteria

- [ ] At least 2 prototypes are built and ready to test

### CMS Team

Please check the team(s) that will do this work.

- [ ] `Program`

- [ ] `Platform CMS Team`

- [ ] `Sitewide Crew`... | 1.0 | Events near me: Build test-ready prototypes - ## Description

Using sketched concepts, build out prototypes that are ready for testing with editors.

## Acceptance Criteria

- [ ] At least 2 prototypes are built and ready to test

### CMS Team

Please check the team(s) that will do this work.

- [ ] `Program`

- ... | non_process | events near me build test ready prototypes description using sketched concepts build out prototypes that are ready for testing with editors acceptance criteria at least prototypes are built and ready to test cms team please check the team s that will do this work program p... | 0 |

86,833 | 17,089,840,401 | IssuesEvent | 2021-07-08 15:59:33 | eclipse/eclipse.jdt.ls | https://api.github.com/repos/eclipse/eclipse.jdt.ls | closed | 'Create method' code action for method reference | code-actions enhancement upstream | in code block

```

list.map(this::cutPrefix);

```

would be nice to have suggested code action 'Create method cutPrefix' if method is absent | 1.0 | 'Create method' code action for method reference - in code block

```

list.map(this::cutPrefix);

```

would be nice to have suggested code action 'Create method cutPrefix' if method is absent | non_process | create method code action for method reference in code block list map this cutprefix would be nice to have suggested code action create method cutprefix if method is absent | 0 |

161,232 | 12,534,357,851 | IssuesEvent | 2020-06-04 19:16:55 | Azure-Samples/Cognitive-Services-Voice-Assistant | https://api.github.com/repos/Azure-Samples/Cognitive-Services-Voice-Assistant | closed | Use common naming convention for variable across samples | Devices Console Client (C++) UWP Voice Assistant (C# UWP) Voice Assistant Test Tool (C# .NET Core) Windows Voice Assistant Client (C# WPF) | We should use a common convention for variables across all samples. We could use Win32: https://docs.microsoft.com/en-us/windows/win32/stg/coding-style-conventions

or come up with our own, but we should be consistent

I imagine this will result in an issue for each client to follow the decided convention.

### T... | 1.0 | Use common naming convention for variable across samples - We should use a common convention for variables across all samples. We could use Win32: https://docs.microsoft.com/en-us/windows/win32/stg/coding-style-conventions

or come up with our own, but we should be consistent

I imagine this will result in an issue... | non_process | use common naming convention for variable across samples we should use a common convention for variables across all samples we could use or come up with our own but we should be consistent i imagine this will result in an issue for each client to follow the decided convention this issue is for a ... | 0 |

285,272 | 8,756,769,632 | IssuesEvent | 2018-12-14 18:52:11 | kotekan/kotekan | https://api.github.com/repos/kotekan/kotekan | opened | Double counting RFI data sample loss (over normalization) | bug high priority | In the `2018.12` deployment it was discovered that when packets are lost due to network/system load levels, and there was RFI flagged on the same time period in which the packet loss happened, then the number of lost samples is double counted. This means the amount of renormalization applied ends up being too high.

... | 1.0 | Double counting RFI data sample loss (over normalization) - In the `2018.12` deployment it was discovered that when packets are lost due to network/system load levels, and there was RFI flagged on the same time period in which the packet loss happened, then the number of lost samples is double counted. This means the ... | non_process | double counting rfi data sample loss over normalization in the deployment it was discovered that when packets are lost due to network system load levels and there was rfi flagged on the same time period in which the packet loss happened then the number of lost samples is double counted this means the amou... | 0 |

120,648 | 25,836,680,307 | IssuesEvent | 2022-12-12 20:14:30 | Clueless-Community/fintech-api | https://api.github.com/repos/Clueless-Community/fintech-api | closed | Create an endpoint to calculate Purchasing Power | issue:3 codepeak 22 | ### What bug or feature do you want to report?

Create an endpoint to calculate Purchasing Power of an entity. | 1.0 | Create an endpoint to calculate Purchasing Power - ### What bug or feature do you want to report?

Create an endpoint to calculate Purchasing Power of an entity. | non_process | create an endpoint to calculate purchasing power what bug or feature do you want to report create an endpoint to calculate purchasing power of an entity | 0 |

12,683 | 15,048,569,885 | IssuesEvent | 2021-02-03 10:21:13 | qgis/QGIS-Documentation | https://api.github.com/repos/qgis/QGIS-Documentation | closed | [processing][feature] New algorithm "Flatten Relationship" | 3.16 Automatic new feature Processing Alg | Original commit: https://github.com/qgis/QGIS/commit/d45cf980d8bfa35ea902444d6eec34043b337d33 by nyalldawson

This algorithm flattens all relationships for a vector layer,

exporting a single layer containing one master feature per

related feature. This master feature contains all the

attributes for the related features... | 1.0 | [processing][feature] New algorithm "Flatten Relationship" - Original commit: https://github.com/qgis/QGIS/commit/d45cf980d8bfa35ea902444d6eec34043b337d33 by nyalldawson

This algorithm flattens all relationships for a vector layer,

exporting a single layer containing one master feature per

related feature. This master... | process | new algorithm flatten relationship original commit by nyalldawson this algorithm flattens all relationships for a vector layer exporting a single layer containing one master feature per related feature this master feature contains all the attributes for the related features it s designed as a quick way t... | 1 |

18,241 | 24,313,060,962 | IssuesEvent | 2022-09-30 01:47:27 | benthosdev/benthos | https://api.github.com/repos/benthosdev/benthos | closed | How to execute sql statement in a loop? | question processors | I want to insert data from an array into a database in a loop, like this:

```yaml

input:

generate:

mapping: |

root = {"sqldata":["delete from b.tax where month_of_salary='202208'","delete from a.tax where month_of_salary='202208'"]}

interval: 0s

count: 1

pipeline:

processors:

- while:

... | 1.0 | How to execute sql statement in a loop? - I want to insert data from an array into a database in a loop, like this:

```yaml

input:

generate:

mapping: |

root = {"sqldata":["delete from b.tax where month_of_salary='202208'","delete from a.tax where month_of_salary='202208'"]}

interval: 0s

count: 1

... | process | how to execute sql statement in a loop i want to insert data from an array into a database in a loop like this yaml input generate mapping root sqldata interval count pipeline processors while at least once false max loops che... | 1 |

21,189 | 28,180,676,806 | IssuesEvent | 2023-04-04 02:00:10 | lizhihao6/get-daily-arxiv-noti | https://api.github.com/repos/lizhihao6/get-daily-arxiv-noti | opened | New submissions for Tue, 4 Apr 23 | event camera white balance isp compression image signal processing image signal process raw raw image events camera color contrast events AWB | ## Keyword: events

### LivePose: Online 3D Reconstruction from Monocular Video with Dynamic Camera Poses

- **Authors:** Noah Stier, Baptiste Angles, Liang Yang, Yajie Yan, Alex Colburn, Ming Chuang

- **Subjects:** Computer Vision and Pattern Recognition (cs.CV)

- **Arxiv link:** https://arxiv.org/abs/2304.00054

- ... | 2.0 | New submissions for Tue, 4 Apr 23 - ## Keyword: events

### LivePose: Online 3D Reconstruction from Monocular Video with Dynamic Camera Poses

- **Authors:** Noah Stier, Baptiste Angles, Liang Yang, Yajie Yan, Alex Colburn, Ming Chuang

- **Subjects:** Computer Vision and Pattern Recognition (cs.CV)

- **Arxiv link:**... | process | new submissions for tue apr keyword events livepose online reconstruction from monocular video with dynamic camera poses authors noah stier baptiste angles liang yang yajie yan alex colburn ming chuang subjects computer vision and pattern recognition cs cv arxiv link ... | 1 |

1,320 | 3,870,871,039 | IssuesEvent | 2016-04-11 07:12:26 | opentrials/opentrials | https://api.github.com/repos/opentrials/opentrials | closed | Migrate collectors, processors to Docker Cloud and PaperTrail | Collectors enhancement Processors | @roll

It looks like the same old Tutum. I suppose it's just a matter of changing a few hosts.

https://support.tutum.co/support/solutions/articles/13000003644-tutum-to-docker-cloud-migration-guide

# Tasks

* [x] Migrate to Docker Cloud

* [x] Drain logs to PaperTrail (@pwalsh has creds) | 1.0 | Migrate collectors, processors to Docker Cloud and PaperTrail - @roll

It looks like the same old Tutum. I suppose it's just a matter of changing a few hosts.

https://support.tutum.co/support/solutions/articles/13000003644-tutum-to-docker-cloud-migration-guide

# Tasks

* [x] Migrate to Docker Cloud

* [x] Drain logs to... | process | migrate collectors processors to docker cloud and papertrail roll it looks like the same old tutum i suppose it s just a matter of changing a few hosts tasks migrate to docker cloud drain logs to papertrail pwalsh has creds | 1 |

17,940 | 23,937,356,195 | IssuesEvent | 2022-09-11 12:26:59 | OpenDataScotland/the_od_bods | https://api.github.com/repos/OpenDataScotland/the_od_bods | closed | Add Spatial Hub as a data source | research data processing | Add https://data.spatialhub.scot/dataset/ as a source

We think this might be possible through the existing CKAN API.

Some datasets on the Spatial Hub are already published by local authorities in their individual open data portals which has the potential to cause duplicates. For example, Angus Council’s polling d... | 1.0 | Add Spatial Hub as a data source - Add https://data.spatialhub.scot/dataset/ as a source

We think this might be possible through the existing CKAN API.

Some datasets on the Spatial Hub are already published by local authorities in their individual open data portals which has the potential to cause duplicates. For... | process | add spatial hub as a data source add as a source we think this might be possible through the existing ckan api some datasets on the spatial hub are already published by local authorities in their individual open data portals which has the potential to cause duplicates for example angus council’s polling di... | 1 |

207,791 | 23,495,761,191 | IssuesEvent | 2022-08-18 01:04:26 | LingalaShalini/openjpeg-2.3.0_before_fix | https://api.github.com/repos/LingalaShalini/openjpeg-2.3.0_before_fix | closed | CVE-2022-0908 (Medium) detected in openjpegv2.3.0 - autoclosed | security vulnerability | ## CVE-2022-0908 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>openjpegv2.3.0</b></p></summary>

<p>

<p>Official repository of the OpenJPEG project</p>

<p>Library home page: <a href... | True | CVE-2022-0908 (Medium) detected in openjpegv2.3.0 - autoclosed - ## CVE-2022-0908 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>openjpegv2.3.0</b></p></summary>

<p>

<p>Official rep... | non_process | cve medium detected in autoclosed cve medium severity vulnerability vulnerable library official repository of the openjpeg project library home page a href found in base branch master vulnerable source files thirdparty libtiff tif dirre... | 0 |

21,728 | 30,240,655,413 | IssuesEvent | 2023-07-06 13:19:31 | EBIvariation/eva-opentargets | https://api.github.com/repos/EBIvariation/eva-opentargets | closed | Manual curation for 2023.09 release | Processing | Should be done after #379 but before 30 June to ensure handover goes smoothly and SOPs are up to date.

Refer to [documentation](https://github.com/EBIvariation/eva-opentargets/tree/master/docs/manual-curation) for full description of steps.

**Checklist:**

- [x] Step 1 — Process

- [x] Step 2 — Curate

- [x] ... | 1.0 | Manual curation for 2023.09 release - Should be done after #379 but before 30 June to ensure handover goes smoothly and SOPs are up to date.

Refer to [documentation](https://github.com/EBIvariation/eva-opentargets/tree/master/docs/manual-curation) for full description of steps.

**Checklist:**

- [x] Step 1 — Proc... | process | manual curation for release should be done after but before june to ensure handover goes smoothly and sops are up to date refer to for full description of steps checklist step — process step — curate curation review review step — export ... | 1 |

7,941 | 11,137,385,066 | IssuesEvent | 2019-12-20 19:11:34 | kubeflow/testing | https://api.github.com/repos/kubeflow/testing | closed | install tektoncd/pipeline in our test clusters | area/testing kind/process priority/p1 | It might be valuable to support [tektoncd/pipeline](https://rubygems.pkg.github.com/tektoncd/pipeline/blob/master/docs/install.md) for writing E2E tests.

One of the nice things about pipelines is that pipelines are split into reusable tasks. So we could have common tasks for things like deploying kubeflow and then c... | 1.0 | install tektoncd/pipeline in our test clusters - It might be valuable to support [tektoncd/pipeline](https://rubygems.pkg.github.com/tektoncd/pipeline/blob/master/docs/install.md) for writing E2E tests.

One of the nice things about pipelines is that pipelines are split into reusable tasks. So we could have common ta... | process | install tektoncd pipeline in our test clusters it might be valuable to support for writing tests one of the nice things about pipelines is that pipelines are split into reusable tasks so we could have common tasks for things like deploying kubeflow and then combine these tasks into tests installing i... | 1 |

400,748 | 27,298,388,288 | IssuesEvent | 2023-02-23 22:35:11 | Azure/azure-functions-java-worker | https://api.github.com/repos/Azure/azure-functions-java-worker | closed | Spring Cloud Functions in Azure Functions | Documentation | Quick start and developer guide

Sample code for top triggers and bindings | 1.0 | Spring Cloud Functions in Azure Functions - Quick start and developer guide

Sample code for top triggers and bindings | non_process | spring cloud functions in azure functions quick start and developer guide sample code for top triggers and bindings | 0 |

17,436 | 23,255,647,979 | IssuesEvent | 2022-08-04 09:01:45 | goblint/analyzer | https://api.github.com/repos/goblint/analyzer | opened | Polish `__goblint_check` | cleanup testing preprocessing | In #278 all the `assert`s in tests were changed to `__goblint_check`, which is declared by a `goblint.h` header that we forcefully include into every analyzed file via `-include`. This makes the programs normally uncompilable and most of them still include `assert.h`, which now is excessive.

This situation should be... | 1.0 | Polish `__goblint_check` - In #278 all the `assert`s in tests were changed to `__goblint_check`, which is declared by a `goblint.h` header that we forcefully include into every analyzed file via `-include`. This makes the programs normally uncompilable and most of them still include `assert.h`, which now is excessive.

... | process | polish goblint check in all the assert s in tests were changed to goblint check which is declared by a goblint h header that we forcefully include into every analyzed file via include this makes the programs normally uncompilable and most of them still include assert h which now is excessive ... | 1 |

9,186 | 12,228,624,864 | IssuesEvent | 2020-05-03 20:14:03 | chfor183/data_science_articles | https://api.github.com/repos/chfor183/data_science_articles | opened | Data Preprocessing Concepts | Data Preprocessing | ## TL;DR

1. Load data

2. Look at the data shape and type

3. Drop duplicates

4. Look at the few first and last rows

5. Remove special characters from numeric columns

6. Remove special characters from categorical columns

7. Handling missing values

8. Look at the data distribution

9. Handling of outliers

10. D... | 1.0 | Data Preprocessing Concepts - ## TL;DR

1. Load data

2. Look at the data shape and type

3. Drop duplicates

4. Look at the few first and last rows

5. Remove special characters from numeric columns

6. Remove special characters from categorical columns

7. Handling missing values

8. Look at the data distribution

... | process | data preprocessing concepts tl dr load data look at the data shape and type drop duplicates look at the few first and last rows remove special characters from numeric columns remove special characters from categorical columns handling missing values look at the data distribution ... | 1 |

7,430 | 10,548,713,310 | IssuesEvent | 2019-10-03 06:48:17 | Altinn/altinn-studio | https://api.github.com/repos/Altinn/altinn-studio | closed | Create a workflow with conditional steps | altinn-studio process | As a service developer I should be able to create a workflow with conditional steps | 1.0 | Create a workflow with conditional steps - As a service developer I should be able to create a workflow with conditional steps | process | create a workflow with conditional steps as a service developer i should be able to create a workflow with conditional steps | 1 |

2,676 | 5,495,567,821 | IssuesEvent | 2017-03-15 05:02:04 | rubberduck-vba/Rubberduck | https://api.github.com/repos/rubberduck-vba/Rubberduck | closed | False positive of "extraneous input" due to RaiseEvent | bug critical parse-tree-processing | I have a complex procedure that is causing a parser error.

The offending line is simply this:

mFrmQueue.RecordSource = strSQL

and it reports `extraneous input 'strSQL' expecting {')', WS, LINE_CONTINUATION}`.

Few things that raise red flags:

It reports the error being located at the line 313,... | 1.0 | False positive of "extraneous input" due to RaiseEvent - I have a complex procedure that is causing a parser error.

The offending line is simply this:

mFrmQueue.RecordSource = strSQL

and it reports `extraneous input 'strSQL' expecting {')', WS, LINE_CONTINUATION}`.

Few things that raise red flag... | process | false positive of extraneous input due to raiseevent i have a complex procedure that is causing a parser error the offending line is simply this mfrmqueue recordsource strsql and it reports extraneous input strsql expecting ws line continuation few things that raise red flag... | 1 |

30,093 | 5,726,222,892 | IssuesEvent | 2017-04-20 18:26:20 | stormpath/stormpath-sdk-dotnet | https://api.github.com/repos/stormpath/stormpath-sdk-dotnet | closed | Add examples of token creation code | documentation wontfix | This page currently lacks examples of code for each type of token generation strategy: https://docs.stormpath.com/csharp/product-guide/latest/auth_n.html#generating-an-oauth-2-0-access-token | 1.0 | Add examples of token creation code - This page currently lacks examples of code for each type of token generation strategy: https://docs.stormpath.com/csharp/product-guide/latest/auth_n.html#generating-an-oauth-2-0-access-token | non_process | add examples of token creation code this page currently lacks examples of code for each type of token generation strategy | 0 |

10,311 | 13,156,649,694 | IssuesEvent | 2020-08-10 11:13:15 | hashicorp/packer | https://api.github.com/repos/hashicorp/packer | closed | vsphere-template post-processor -> Failed - the task was cancelled by a user | post-processor/vsphere-template regression waiting-reply | Here's our post-processors chain:

> "post-processors": [

> [

> {

> "cluster": "{{ user `vsphere_cluster` }}",

> "datacenter": "{{ user `vsphere_datacenter` }}",

> "datastore": "{{ user `vsphere_datastore` }}",

> "disk_mode": "{{ user `vsphere_disk_mod... | 1.0 | vsphere-template post-processor -> Failed - the task was cancelled by a user - Here's our post-processors chain:

> "post-processors": [

> [

> {

> "cluster": "{{ user `vsphere_cluster` }}",

> "datacenter": "{{ user `vsphere_datacenter` }}",

> "datastore": "{{ user ... | process | vsphere template post processor failed the task was cancelled by a user here s our post processors chain post processors cluster user vsphere cluster datacenter user vsphere datacenter datastore user ... | 1 |

19,886 | 26,330,435,258 | IssuesEvent | 2023-01-10 10:22:52 | deepset-ai/haystack | https://api.github.com/repos/deepset-ai/haystack | closed | `PreProcessor` `FileExistsError` with parallel pipeline instantiation on same machine (noisy neighbor) | type:bug topic:preprocessing | **Describe the bug**

When a `PreProcessor` is initialized, it downloads the `punkt` sentence tokenizer ([see code here](https://github.com/deepset-ai/haystack/blob/fc077992067263a1a2c2d8bdf3ccfad02ea87ee6/haystack/nodes/preprocessor/preprocessor.py#L99)). When this is done by multiple processes in parallel then it can... | 1.0 | `PreProcessor` `FileExistsError` with parallel pipeline instantiation on same machine (noisy neighbor) - **Describe the bug**

When a `PreProcessor` is initialized, it downloads the `punkt` sentence tokenizer ([see code here](https://github.com/deepset-ai/haystack/blob/fc077992067263a1a2c2d8bdf3ccfad02ea87ee6/haystack/... | process | preprocessor fileexistserror with parallel pipeline instantiation on same machine noisy neighbor describe the bug when a preprocessor is initialized it downloads the punkt sentence tokenizer when this is done by multiple processes in parallel then it can happen that both don t find the model data... | 1 |

46,972 | 6,035,117,521 | IssuesEvent | 2017-06-09 13:07:37 | geetsisbac/WK2XQXCBCXIVMLBGXSPVU5EB | https://api.github.com/repos/geetsisbac/WK2XQXCBCXIVMLBGXSPVU5EB | reopened | aM3WrkmVCjZRWlNDoR92bv6UcoTRvywEAK+V6gANgqRDDcICwpIcCCE2nHK420T/Ycnv2tijxWAoDyXJXSAnU5MUXVlk9yFf91miza+U/dkE6q5JaJlxNl7XsZldMgca6OOqBdXqnn8whgzdeCaie4Q3/F/fjdhpP128xe5nGl0= | design | y50q32r6Y2e0mDbJ7oz5wbFQ31DXp+o8QTm/eSfvVdBfNKBIhtb8D/Bf/cFg8K+NCQLdp3zhZjYjxvWP6IR2UaQ7XZOWjQbj+BwYz8hlxeJtf3cPydKNrGl5X8ZKRPUMEdCGj9RYDKZvxXVTftXU1y8+n+XuaJW66hyNgdA/W3yxuNx7KINBYkrgv7zJpemAWnree1jDNbBExXDf3K7M7xE1J2Iea7gwPx/HWGj8QYOH00ULtTfhfWe4yuYHE/m3xFF22qzOn8o80CEiKE4Rcp6mxHaTrko/9P2dljFWM++ct167VCuNhP3Uta+hKcft... | 1.0 | aM3WrkmVCjZRWlNDoR92bv6UcoTRvywEAK+V6gANgqRDDcICwpIcCCE2nHK420T/Ycnv2tijxWAoDyXJXSAnU5MUXVlk9yFf91miza+U/dkE6q5JaJlxNl7XsZldMgca6OOqBdXqnn8whgzdeCaie4Q3/F/fjdhpP128xe5nGl0= - y50q32r6Y2e0mDbJ7oz5wbFQ31DXp+o8QTm/eSfvVdBfNKBIhtb8D/Bf/cFg8K+NCQLdp3zhZjYjxvWP6IR2UaQ7XZOWjQbj+BwYz8hlxeJtf3cPydKNrGl5X8ZKRPUMEdCGj9RYDKZvxXVTf... | non_process | u f bf n b p nmjn eqx cyrvuswcwccqcngjk hk | 0 |

19,492 | 25,801,009,632 | IssuesEvent | 2022-12-11 01:26:05 | LLazyEmail/nomoretogo_email_template | https://api.github.com/repos/LLazyEmail/nomoretogo_email_template | closed | Think about how to add multiple text values | todo in process | https://github.com/LLazyEmail/nomoretogo_email_template/blob/16a0b7def2f80d35a05e53e7c2d257aeabc84b8c/src/components/instructionComponent.js#L13

```javascript

// Create instruction component
const INSTRUCTION_COMPONENT_ERROR = (variable) =>
  `Empty ${variable} in instructionComponent`;

const createTitle = (title) => {
  return `<p style="margin-top: 0px; margin-bottom: 10px; line-height: 150%;"><strong>${title}</strong></p>`;
};

const createText = (text) => {
  return `<p style="margin-top: 0px; margin-bottom: 10px; line-height: 150%;">${text}</p>`;
};

// TODO: need to think about how to add multiple text values
const mainBlock = (params) => {
  var { title, text, title2, text2 } = params;
  return `<table align="center" border="0" bgcolor="#ffffff" class="mlContentTable mlContentTableDefault" cellpadding="0" cellspacing="0" width="640">
  <tbody><tr>
    <td class="mlContentTableCardTd">
      <table align="center" bgcolor="#ffffff" border="0" cellpadding="0" cellspacing="0" class="mlContentTable ml-default" style="width: 640px; min-width: 640px;" width="640">
        <tbody><tr>
          <td>
            <table role="presentation" cellpadding="0" cellspacing="0" border="0" align="center" width="640" style="width: 640px; min-width: 640px;" class="mlContentTable">
              <tbody><tr>
                <td height="20" class="spacingHeight-20" style="line-height: 20px; min-height: 20px;"></td>
              </tr>
            </tbody></table>
            <table role="presentation" cellpadding="0" cellspacing="0" border="0" align="center" width="640" style="width: 640px; min-width: 640px;" class="mlContentTable">
              <tbody><tr>
                <td align="center" style="padding: 0px 40px;" class="mlContentOuter">
                  <table role="presentation" cellpadding="0" cellspacing="0" border="0" align="center" width="100%">
                    <tbody><tr>
                      <td class="bodyTitle" id="bodyText-34" style="font-family: 'Poppins', sans-serif; font-size: 14px; line-height: 150%; color: #6f6f6f;">
                        <p style="margin-top: 0px; margin-bottom: 10px; line-height: 150%; text-align: center;"></p>
                        <p style="margin-top: 0px; margin-bottom: 10px; line-height: 150%;"><strong></strong></p>
                        ${createTitle(title)}
                        ${createText(text)}
                        ${createTitle(title2)}
                        ${createText(text2)}
                        <p style="margin-top: 0px; margin-bottom: 10px; line-height: 150%;">Slice and Dice: Cut the vegetables and store in zippered bags or divided containers.</p>
                        <p style="margin-top: 0px; margin-bottom: 0px; line-height: 150%;">Make Ahead and Refrigerate: Make the sauce; Cook the noodles; Make the dressing; Make the spaetzle; Cook the rice.<br><br><br><br><strong></strong><br><strong></strong><strong></strong></p>
                      </td>
                    </tr>
                  </tbody></table>
                </td>
              </tr>
            </tbody></table>
            <table role="presentation" cellpadding="0" cellspacing="0" border="0" align="center" width="640" style="width: 640px; min-width: 640px;" class="mlContentTable">
              <tbody><tr>
                <td height="20" class="spacingHeight-20" style="line-height: 20px; min-height: 20px;"></td>
              </tr>
            </tbody></table>
          </td>
        </tr>
      </tbody></table>
    </td>
  </tr>
</tbody></table>`;
};

// we are throwing an error with the same constant 10 times.
function searchForErrors(params) {
  var { title, text, title2, text2 } = params;

  if (title == '') {
    throw new Error(INSTRUCTION_COMPONENT_ERROR('title'));
  }
  if (text == '') {
    throw new Error(INSTRUCTION_COMPONENT_ERROR('text'));
  }
  if (title2 == '') {
    throw new Error(INSTRUCTION_COMPONENT_ERROR('title2'));
  }
  if (text2 == '') {
    throw new Error(INSTRUCTION_COMPONENT_ERROR('text2'));
  }
}

export default function (data) {
  searchForErrors(data);
  return mainBlock(data);
}

``` | 1.0 | Think about how to add multiple text values - https://github.com/LLazyEmail/nomoretogo_email_template/blob/16a0b7def2f80d35a05e53e7c2d257aeabc84b8c/src/components/instructionComponent.js#L13

```javascript

// Create instruction component

const INSTRUCTION_COMPONENT_ERROR = (variable) =>

`Empty ${variable} in instructionC... | process | think about how to add multiple text values javascript create instruction component const instruction component error variable empty variable in instructioncomponent const createtitle title return title const createtext text return text todo ну... | 1 |

611,284 | 18,950,927,218 | IssuesEvent | 2021-11-18 15:06:39 | ARMmbed/mbed-os | https://api.github.com/repos/ARMmbed/mbed-os | closed | Export to uvision failing with missing context fault handler | priority: untriaged component: untriaged | <!--

************************************** WARNING **************************************

The ciarcom bot parses this header automatically. Any deviation from the

template may cause the bot to automatically correct this header or may result in a

warning message, requesting updates.

Please e... | 1.0 | Export to uvision failing with missing context fault handler - <!--

************************************** WARNING **************************************

The ciarcom bot parses this header automatically. Any deviation from the

template may cause the bot to automatically correct this header or may resul... | non_process | export to uvision failing with missing context fault handler warning the ciarcom bot parses this header automatically any deviation from the template may cause the bot to automatically correct this header or may resul... | 0 |

1,944 | 4,769,527,120 | IssuesEvent | 2016-10-26 12:53:03 | Lever-age/leverage | https://api.github.com/repos/Lever-age/leverage | closed | Separate repos for data pipeline and analysis? | process/administration question ready for review | The data pipeline will scrape donation records from the BoE, clean it, and load it into the database. The analysis subproject looks at new ways of classifying the donations in the DB. People who are working on the public-facing application probably don't need to clone all of this code. | 1.0 | Separate repos for data pipeline and analysis? - The data pipeline will scrape donation records from the BoE, clean it, and load it into the database. The analysis subproject looks at new ways of classifying the donations in the DB. People who are working on the public-facing application probably don't need to clone al... | process | separate repos for data pipeline and analysis the data pipeline will scrape donation records from the boe clean it and load it into the database the analysis subproject looks at new ways of classifying the donations in the db people who are working on the public facing application probably don t need to clone al... | 1 |

18,641 | 24,580,825,857 | IssuesEvent | 2022-10-13 15:29:17 | GoogleCloudPlatform/fda-mystudies | https://api.github.com/repos/GoogleCloudPlatform/fda-mystudies | closed | [Consent API] Cloud storage > Unsigned document > Issue related to consent version in the naming convention of the unsigned document | Bug P2 Process: Fixed Process: Tested dev | Publish the study for the fourth time in the study builder and Verify the naming convention of unsigned document in the cloud storage.

**AR:** Consent version is getting displayed as "1.3000001" in cloud storage

**ER:** Consent version should be displayed as "1.3" in cloud storage

**Note:**

1. Issue is observe... | 2.0 | [Consent API] Cloud storage > Unsigned document > Issue related to consent version in the naming convention of the unsigned document - Publish the study for the fourth time in the study builder and Verify the naming convention of unsigned document in the cloud storage.

**AR:** Consent version is getting displayed as... | process | cloud storage unsigned document issue related to consent version in the naming convention of the unsigned document publish the study for the fourth time in the study builder and verify the naming convention of unsigned document in the cloud storage ar consent version is getting displayed as in cl... | 1 |

411,137 | 12,015,007,798 | IssuesEvent | 2020-04-10 12:59:49 | brave/brave-browser | https://api.github.com/repos/brave/brave-browser | closed | Tipping banner does not scale properly based on viewport size | feature/rewards priority/P5 | **Issue:** Tipping banner does not scale properly based on viewport size. If viewport size is smaller than usual because of, e.g., docked dev tools, the tipping banner will appear shorter horizontally, and users will have to scroll side to side inside it to use it.

Even when the developer tools are later closed, the... | 1.0 | Tipping banner does not scale properly based on viewport size - **Issue:** Tipping banner does not scale properly based on viewport size. If viewport size is smaller than usual because of, e.g., docked dev tools, the tipping banner will appear shorter horizontally, and users will have to scroll side to side inside it ... | non_process | tipping banner does not scale properly based on viewport size issue tipping banner does not scale properly based on viewport size if viewport size is smaller than usual because of e g docked dev tools the tipping banner will appear shorter horizontally and users will have to scroll side to side inside it ... | 0 |

22,165 | 30,712,785,490 | IssuesEvent | 2023-07-27 10:58:30 | ipfs/kubo | https://api.github.com/repos/ipfs/kubo | closed | Patch Releases | topic/process | Currently, all go-ipfs releases are "patch" releases. IIRC, this was because we decided to bump to 0.5.0 when we hit beta. Unfortunately, this means we can't issue _actual_ patch releases and means that issues like https://github.com/ipfs/go-ipfs/issues/6254.

Proposal:

1. Start cutting 0.x.0 releases starting wit... | 1.0 | Patch Releases - Currently, all go-ipfs releases are "patch" releases. IIRC, this was because we decided to bump to 0.5.0 when we hit beta. Unfortunately, this means we can't issue _actual_ patch releases and means that issues like https://github.com/ipfs/go-ipfs/issues/6254.

Proposal:

1. Start cutting 0.x.0 rele... | process | patch releases currently all go ipfs releases are patch releases iirc this was because we decided to bump to when we hit beta unfortunately this means we can t issue actual patch releases and means that issues like proposal start cutting x releases starting with create patch ... | 1 |

108,465 | 9,308,371,826 | IssuesEvent | 2019-03-25 14:26:12 | xcat2/xcat-core | https://api.github.com/repos/xcat2/xcat-core | closed | synclist: EXECUTEALWAYS error when another line in the synclist references the same destination node | status:pending test:testcase_requested | Hi,

This one is a bit involved, but it's still a perfectly reproducible bug :)

### Description

When an `EXECUTEALWAYS` directive in a `syncfile` refers to a destination node that is already referenced in a previous file copy directive, `updatenode -F` returns a `Not an ARRAY reference` error

### Steps to re... | 2.0 | synclist: EXECUTEALWAYS error when another line in the synclist references the same destination node - Hi,

This one is a bit involved, but it's still a perfectly reproducible bug :)

### Description

When an `EXECUTEALWAYS` directive in a `syncfile` refers to a destination node that is already referenced in a pr... | non_process | synclist executealways error when another line in the synclist references the same destination node hi this one is a bit involved but it s still a perfectly reproducible bug description when an executealways directive in a syncfile refers to a destination node that is already referenced in a pr... | 0 |

5,983 | 8,799,683,750 | IssuesEvent | 2018-12-24 15:59:33 | syauqiahmd/project_e-commerce | https://api.github.com/repos/syauqiahmd/project_e-commerce | closed | Reports | on process | - [x] Sales transaction report per period (offline/online)

- [x] Items-sold report per period (sold offline/online)

- [x] brand master

- [x] item master

- [x] user master (public/admin) | 1.0 | Reports - - [x] Sales transaction report per period (offline/online)

- [x] Items-sold report per period (sold offline/online)

- [x] brand master

- [x] item master

- [x] user master (public/admin) | process | reports sales transaction report per period offline online items sold report per period sold offline online brand master item master user master public admin | 1 |

366,032 | 10,807,898,087 | IssuesEvent | 2019-11-07 09:26:56 | ballerina-platform/ballerina-lang | https://api.github.com/repos/ballerina-platform/ballerina-lang | closed | Review public API's (jBallerina runtime value classes) | Area/jBallerina Points/2 Priority/High Type/Task | **Description:**

Do an API review of the user-facing public methods of the jBallerina runtime value classes.

**Steps to reproduce:**

**Affected Versions:**

**OS, DB, other environment details and versions:**

**Related Issues (optional):**

<!-- Any related issues such as sub tasks, issues reported in other r... | 1.0 | Review public API's (jBallerina runtime value classes) - **Description:**

Do an API review of the user-facing public methods of the jBallerina runtime value classes.

**Steps to reproduce:**

**Affected Versions:**

**OS, DB, other environment details and versions:**

**Related Issues (optional):**

<!-- Any rel... | non_process | review public api s jballerina runtime value classes description do a api review on the user facing public methods from jballerina runtime value classes steps to reproduce affected versions os db other environment details and versions related issues optional sugge... | 0 |

340,007 | 10,265,622,775 | IssuesEvent | 2019-08-22 19:19:13 | deep-learning-indaba/Baobab | https://api.github.com/repos/deep-learning-indaba/Baobab | closed | Attendance Admin: Unconfirmed Registration for Industry Professionals to Appear on Attendance list with an Alert | High Priority back-end front-end | Industry professionals with unconfirmed registration to appear on attendance list, but can't be marked as attending.

The interface must display a message/alert to go pay fee at the special circumstances desk. The special circumstances desk can then confirm the registration and then the individual will appear on the... | 1.0 | Attendance Admin: Unconfirmed Registration for Industry Professionals to Appear on Attendance list with an Alert - Industry professionals with unconfirmed registration to appear on attendance list, but can't be marked as attending.

The interface must display a message/alert to go pay fee at the special circumstance... | non_process | attendance admin unconfirmed registration for industry professionals to appear on attendance list with an alert industry professionals with unconfirmed registration to appear on attendance list but can t be marked as attending the interface must display a message alert to go pay fee at the special circumstance... | 0 |

12,181 | 14,742,019,987 | IssuesEvent | 2021-01-07 11:33:34 | kdjstudios/SABillingGitlab | https://api.github.com/repos/kdjstudios/SABillingGitlab | closed | 054-Fair Oaks Issue account XTC7995 | anc-process anp-0.5 ant-support | In GitLab by @kdjstudios on Mar 6, 2019, 09:05

**Submitted by:** "Vanessa Salamanca" <vanessa.salamanca@answernet.com>

**Helpdesk:** http://www.servicedesk.answernet.com/profiles/ticket/7360476

**Server:** Internal

**Client/Site:** Fair Oaks

**Account:** XTC7995

**Issue:**

Terminated account is throwing out a c... | 1.0 | 054-Fair Oaks Issue account XTC7995 - In GitLab by @kdjstudios on Mar 6, 2019, 09:05

**Submitted by:** "Vanessa Salamanca" <vanessa.salamanca@answernet.com>

**Helpdesk:** http://www.servicedesk.answernet.com/profiles/ticket/7360476

**Server:** Internal

**Client/Site:** Fair Oaks

**Account:** XTC7995

**Issue:**

... | process | fair oaks issue account in gitlab by kdjstudios on mar submitted by vanessa salamanca helpdesk server internal client site fair oaks account issue terminated account is throwing out a charge of on a terminated account from they have no usage and h... | 1 |

43,468 | 23,252,264,413 | IssuesEvent | 2022-08-04 05:44:12 | dotnet/runtime | https://api.github.com/repos/dotnet/runtime | closed | [Perf] Regressions in System.Buffers.Tests.RentReturnArrayPoolTests<Byte> | area-System.Threading tenet-performance tenet-performance-benchmarks refs/heads/main RunKind=micro Regression CoreClr arm64 ubuntu 20.04 | ### Run Information

Architecture | arm64

-- | --

OS | ubuntu 20.04

Baseline | [3d74b00659fec817506e2888f87936518556e01c](https://github.com/dotnet/runtime/commit/3d74b00659fec817506e2888f87936518556e01c)

Compare | [0c3d5ad05754be529e470d7b0399f40a2bc8087d](https://github.com/dotnet/runtime/commit/0c3d5ad05754be5... | True | [Perf] Regressions in System.Buffers.Tests.RentReturnArrayPoolTests<Byte> - ### Run Information

Architecture | arm64

-- | --

OS | ubuntu 20.04

Baseline | [3d74b00659fec817506e2888f87936518556e01c](https://github.com/dotnet/runtime/commit/3d74b00659fec817506e2888f87936518556e01c)

Compare | [0c3d5ad05754be529e470d... | non_process | regressions in system buffers tests rentreturnarraypooltests run information architecture os ubuntu baseline compare diff regressions in system buffers tests rentreturnarraypooltests lt byte gt benchmark baseline test test base test quality edg... | 0 |

158,363 | 20,024,301,124 | IssuesEvent | 2022-02-01 19:30:47 | Recidiviz/supervision-success-component | https://api.github.com/repos/Recidiviz/supervision-success-component | closed | Security Alert - Package: color-string; Severity: MODERATE | Subject: Security Subject: Vulnerability Severity: MODERATE |

---

due: 2022-03-26

---

Affected package: color-string

Ecosystem: NPM

Affected version range: < 1.5.5

Summary: Regular Expression Denial of Service (ReDOS)

Description: In the npm package `color-string`, there is a ReDos (Regular Expression Denial of Service) vulnerabili... | True | Security Alert - Package: color-string; Severity: MODERATE -

---

due: 2022-03-26

---

Affected package: color-string

Ecosystem: NPM

Affected version range: < 1.5.5

Summary: Regular Expression Denial of Service (ReDOS)

Description: In the npm package `color-string`, there i... | non_process | security alert package color string severity moderate due affected package color string ecosystem npm affected version range summary regular expression denial of service redos description in the npm package color string there is a ... | 0 |

9,313 | 12,324,035,775 | IssuesEvent | 2020-05-13 13:08:31 | tikv/tikv | https://api.github.com/repos/tikv/tikv | closed | UCP: Migrate scalar function `TruncateInt` from TiDB | challenge-program-2 component/coprocessor difficulty/easy sig/coprocessor |

## Description

Port the scalar function `TruncateInt` from TiDB to coprocessor.

## Score

* 50

## Mentor(s)

* @andylokandy

## Recommended Skills

* Rust programming

## Learning Materials

Already implemented expressions ported from TiDB

- https://github.com/tikv/tikv/tree/master/components/tidb_query/src/rpn... | 2.0 | UCP: Migrate scalar function `TruncateInt` from TiDB -

## Description

Port the scalar function `TruncateInt` from TiDB to coprocessor.

## Score

* 50

## Mentor(s)

* @andylokandy

## Recommended Skills

* Rust programming

## Learning Materials

Already implemented expressions ported from TiDB

- https://github.... | process | ucp migrate scalar function truncateint from tidb description port the scalar function truncateint from tidb to coprocessor score mentor s andylokandy recommended skills rust programming learning materials already implemented expressions ported from tidb | 1 |
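For the `TruncateInt` record above, the behaviour being ported can be sketched as follows. This assumes the function follows MySQL's `TRUNCATE(X, D)` semantics for an integer `X`: a non-negative `D` leaves the value unchanged, and a negative `D` zeroes the last `-D` digits, truncating toward zero. The name `truncate_int` is hypothetical, and this is neither the TiDB nor the TiKV code; it ignores unsigned inputs and 64-bit overflow handling.

```python
def truncate_int(x: int, d: int) -> int:
    """Sketch of MySQL-style TRUNCATE(x, d) for an integer x."""
    if d >= 0:
        # An integer has no fractional digits to drop.
        return x
    shift = 10 ** (-d)
    # Truncate toward zero; Python's // floors, so work on the magnitude.
    magnitude = abs(x) // shift * shift
    return magnitude if x >= 0 else -magnitude


print(truncate_int(122, -2))   # 100
print(truncate_int(-155, -1))  # -150
print(truncate_int(42, 3))     # 42
```

A Rust port would additionally need to decide what happens when `10^(-d)` no longer fits in an `i64`; in this sketch Python's unbounded integers simply yield `0` in that case.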

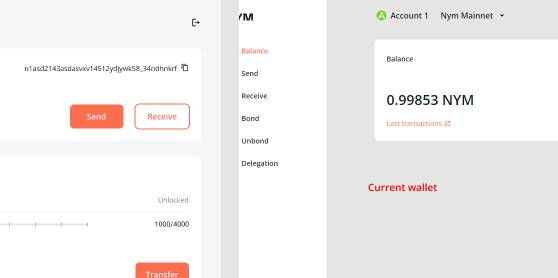

147,821 | 23,278,970,456 | IssuesEvent | 2022-08-05 10:02:51 | nymtech/team-product | https://api.github.com/repos/nymtech/team-product | closed | Wallet - Menu, highlights, global background colors | desktop wallet design | - We are using wrong background color in light version of wallet

- Spacing in leftside menu

So between each position in menu we have 32px of spacing, but each position is in block/group which height is 2... | 1.0 | Wallet - Menu, highlights, global background colors - - We are using wrong background color in light version of wallet

- Spacing in leftside menu

So between each position in menu we have 32px of spacing,... | non_process | wallet menu highlights global background colors we are using wrong background color in light version of wallet spacing in leftside menu so between each position in menu we have of spacing but each position is in block group which height is because we don t have this space menu still looks clut... | 0 |

46,598 | 24,618,132,531 | IssuesEvent | 2022-10-15 15:22:10 | gtadigital/grp-userfeedback | https://api.github.com/repos/gtadigital/grp-userfeedback | opened | Load Time | performance | [Feedback Gregorio]

The gta Digital page takes a very long time to load (but perhaps this is inevitable, given the complexity and richness of the results). | True | Load Time - [Feedback Gregorio]

The gta Digital page takes a very long time to load (but perhaps this is inevitable, given the complexity and richness of the results). | non_process | load time the gta digital page takes a very long time to load but perhaps this is inevitable given the complexity and richness of the results | 0 |

6,282 | 9,260,474,808 | IssuesEvent | 2019-03-18 05:51:42 | nodejs/node | https://api.github.com/repos/nodejs/node | closed | child_process, process: possibly confusing error message | child_process errors process | * **Version**: all?

* **Platform**: all?

* **Subsystem**: child_process, process

`parent.js`:

```js
'use strict';

const subprocess = require('child_process').fork('subprocess.js');

try {
  subprocess.send(Symbol());
} catch (err) {
  console.error('PARENT error:', err);
}
```

`subprocess.js`:

```js

... | 2.0 | child_process, process: possibly confusing error message - * **Version**: all?

* **Platform**: all?

* **Subsystem**: child_process, process

`parent.js`:

```js

'use strict';