Unnamed: 0 (int64, 0 to 832k) | id (float64, 2.49B to 32.1B) | type (string, 1 class) | created_at (string, length 19) | repo (string, length 7 to 112) | repo_url (string, length 36 to 141) | action (string, 3 classes) | title (string, length 1 to 744) | labels (string, length 4 to 574) | body (string, length 9 to 211k) | index (string, 10 classes) | text_combine (string, length 96 to 211k) | label (string, 2 classes) | text (string, length 96 to 188k) | binary_label (int64, 0 to 1) |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

21,367 | 29,194,080,693 | IssuesEvent | 2023-05-20 00:31:50 | devssa/onde-codar-em-salvador | https://api.github.com/repos/devssa/onde-codar-em-salvador | closed | [Remote] QA Analyst at Coodesh | SALVADOR TESTE PJ JAVASCRIPT RUBY REQUISITOS SELENIUM CUCUMBER REMOTO CYPRESS PROCESSOS GITHUB UMA QUALIDADE VENDAS QA TESTES AUTOMATIZADOS METODOLOGIAS ÁGEIS RSPEC AUTOMAÇÃO DE TESTES Stale | ## Job description:

This is an opening from a partner of the Coodesh platform; by applying, you will get access to the complete information about the company and its benefits.

Watch for the redirect that will take you to a URL [https://coodesh.com](https://coodesh.com/vagas/qa-analyst-202942193?utm_source=githu... | 1.0 | [Remote] QA Analyst at Coodesh - ## Job description:

This is an opening from a partner of the Coodesh platform; by applying, you will get access to the complete information about the company and its benefits.

Watch for the redirect that will take you to a URL [https://coodesh.com](https://coodesh.com/vagas/qa-a... | process | qa analyst at coodesh job description this is an opening from a partner of the coodesh platform by applying you will get access to the complete information about the company and its benefits watch for the redirect that will take you to a url with the customized application pop up 👋 a bala... | 1 |

7,259 | 10,420,471,777 | IssuesEvent | 2019-09-16 00:42:00 | qgis/QGIS | https://api.github.com/repos/qgis/QGIS | closed | In place processing multipart to singleparts does not handle unique constraints | Bug Processing | Provider errors are generated due to failure of UNIQUE constraint when you try to save after running Multipart to singleparts in a geopackage layer.

**How to Reproduce**

1. Download and open a copy of ‘Multi_to_Singleparts_FID_Bug.qgz’ (zip attached below)

2. Open the Attribute Table for the ‘Multi_to_Singlepart... | 1.0 | In place processing multipart to singleparts does not handle unique constraints - Provider errors are generated due to failure of UNIQUE constraint when you try to save after running Multipart to singleparts in a geopackage layer.

**How to Reproduce**

1. Download and open a copy of ‘Multi_to_Singleparts_FID_Bug.q... | process | in place processing multipart to singleparts does not handle unique constraints provider errors are generated due to failure of unique constraint when you try to save after running multipart to singleparts in a geopackage layer how to reproduce download and open a copy of ‘multi to singleparts fid bug q... | 1 |

71,721 | 3,367,617,921 | IssuesEvent | 2015-11-22 10:19:05 | music-encoding/music-encoding | https://api.github.com/repos/music-encoding/music-encoding | closed | The element head is not allowed in projectDesc | Priority: Medium | _From [siggelun...@gmail.com](https://code.google.com/u/110461478002540803867/) on December 19, 2013 03:06:13_

What steps will reproduce the problem? 1. 2. 3. What is the expected output? What do you see instead? What version of the product are you using? On what operating system? Please provide any additional informa... | 1.0 | The element head is not allowed in projectDesc - _From [siggelun...@gmail.com](https://code.google.com/u/110461478002540803867/) on December 19, 2013 03:06:13_

What steps will reproduce the problem? 1. 2. 3. What is the expected output? What do you see instead? What version of the product are you using? On what operat... | non_process | the element head is not allowed in projectdesc from on december what steps will reproduce the problem what is the expected output what do you see instead what version of the product are you using on what operating system please provide any additional information below in my view an... | 0 |

17,141 | 22,686,040,208 | IssuesEvent | 2022-07-04 14:11:13 | camunda/zeebe | https://api.github.com/repos/camunda/zeebe | closed | Enhance randomized process tests with start process instance anywhere ability | team/process-automation | To comprehensively test the start process instance anywhere feature, we'll need to enhance the randomized process tests with the ability to start the process instance anywhere.

Blocked by #9390 #9391

## Out of scope

- starting the process instance inside a multi-instance

- starting the process instance inside a... | 1.0 | Enhance randomized process tests with start process instance anywhere ability - To comprehensively test the start process instance anywhere feature, we'll need to enhance the randomized process tests with the ability to start the process instance anywhere.

Blocked by #9390 #9391

## Out of scope

- starting the pr... | process | enhance randomized process tests with start process instance anywhere ability to comprehensively test the start process instance anywhere feature we ll need to enhance the randomized process tests with the ability to start the process instance anywhere blocked by out of scope starting the process ... | 1 |

7,406 | 10,525,900,731 | IssuesEvent | 2019-09-30 15:56:15 | Python-Markdown/markdown | https://api.github.com/repos/Python-Markdown/markdown | reopened | Python Version Support Timeline | process | Just noting this so I can find it later. Python defines the status of its versions [here][1].

The following end-of life cycles are currently scheduled:

* <del>Python 3.4 2019-03-16</del>

* Python 2.7 2020-01-01

* Python 3.5 2020-09-13

* Python 3.6 2021-12-23

* Python 3.7 2023-06-27

* Python 3.8 2024-10

Al... | 1.0 | Python Version Support Timeline - Just noting this so I can find it later. Python defines the status of its versions [here][1].

The following end-of life cycles are currently scheduled:

* <del>Python 3.4 2019-03-16</del>

* Python 2.7 2020-01-01

* Python 3.5 2020-09-13

* Python 3.6 2021-12-23

* Python 3.7 2023... | process | python version support timeline just noting this so i can find it later python defines the status of its versions the following end of life cycles are currently scheduled python python python python python python also of inter... | 1 |

18,085 | 24,107,887,533 | IssuesEvent | 2022-09-20 08:58:04 | googleapis/dotnet-spanner-nhibernate | https://api.github.com/repos/googleapis/dotnet-spanner-nhibernate | closed | Dependency Dashboard | type: process api: spanner | This issue lists Renovate updates and detected dependencies. Read the [Dependency Dashboard](https://docs.renovatebot.com/key-concepts/dashboard/) docs to learn more.

## Open

These updates have all been created already. Click a checkbox below to force a retry/rebase of any.

- [ ] <!-- rebase-branch=renovate/benchma... | 1.0 | Dependency Dashboard - This issue lists Renovate updates and detected dependencies. Read the [Dependency Dashboard](https://docs.renovatebot.com/key-concepts/dashboard/) docs to learn more.

## Open

These updates have all been created already. Click a checkbox below to force a retry/rebase of any.

- [ ] <!-- rebase-... | process | dependency dashboard this issue lists renovate updates and detected dependencies read the docs to learn more open these updates have all been created already click a checkbox below to force a retry rebase of any pull pull pull pull pull ... | 1 |

691,291 | 23,691,105,218 | IssuesEvent | 2022-08-29 10:52:49 | googleapis/python-pubsub | https://api.github.com/repos/googleapis/python-pubsub | closed | tests.system.TestStreamingPull: test_streaming_pull_ack_deadline failed | api: pubsub type: bug priority: p2 flakybot: issue flakybot: flaky | This test failed!

To configure my behavior, see [the Flaky Bot documentation](https://github.com/googleapis/repo-automation-bots/tree/main/packages/flakybot).

If I'm commenting on this issue too often, add the `flakybot: quiet` label and

I will stop commenting.

---

commit: b48a5a5dc43c95ce8c466686e568e72c583de2f4

b... | 1.0 | tests.system.TestStreamingPull: test_streaming_pull_ack_deadline failed - This test failed!

To configure my behavior, see [the Flaky Bot documentation](https://github.com/googleapis/repo-automation-bots/tree/main/packages/flakybot).

If I'm commenting on this issue too often, add the `flakybot: quiet` label and

I will... | non_process | tests system teststreamingpull test streaming pull ack deadline failed this test failed to configure my behavior see if i m commenting on this issue too often add the flakybot quiet label and i will stop commenting commit buildurl status failed test output self publisher s... | 0 |

6,901 | 10,053,667,243 | IssuesEvent | 2019-07-21 18:43:56 | tokio-rs/tokio | https://api.github.com/repos/tokio-rs/tokio | opened | process: Investigate using job objects and IOCP on Windows | tokio-process | Originally reported in alexcrichton/tokio-process#11:

> While reading the MSDN docs on job objects for something, I came across this page:

https://msdn.microsoft.com/en-us/library/windows/desktop/ms684141(v=vs.85).aspx

>

> If you create a job object, you can associate an IOCP with it. If you then create processes... | 1.0 | process: Investigate using job objects and IOCP on Windows - Originally reported in alexcrichton/tokio-process#11:

> While reading the MSDN docs on job objects for something, I came across this page:

https://msdn.microsoft.com/en-us/library/windows/desktop/ms684141(v=vs.85).aspx

>

> If you create a job object, yo... | process | process investigate using job objects and iocp on windows originally reported in alexcrichton tokio process while reading the msdn docs on job objects for something i came across this page if you create a job object you can associate an iocp with it if you then create processes in that job gene... | 1 |

654,767 | 21,662,157,185 | IssuesEvent | 2022-05-06 20:36:28 | bounswe/bounswe2022group7 | https://api.github.com/repos/bounswe/bounswe2022group7 | closed | [Database] Implement "ArtItem", "DiscussionPost", and "Comment" models | Status: Completed Priority: Medium Difficulty: Medium Type: Implementation | [\_\_init.py\_\_](https://github.com/bounswe/bounswe2022group7/blob/practice-app/database-base/practice-app/website/__init__.py) and [models.py](https://github.com/bounswe/bounswe2022group7/blob/practice-app/database-base/practice-app/website/models.py) files are created with fundamental functions.

Given those, I s... | 1.0 | [Database] Implement "ArtItem", "DiscussionPost", and "Comment" models - [\_\_init.py\_\_](https://github.com/bounswe/bounswe2022group7/blob/practice-app/database-base/practice-app/website/__init__.py) and [models.py](https://github.com/bounswe/bounswe2022group7/blob/practice-app/database-base/practice-app/website/mode... | non_process | implement artitem discussionpost and comment models and files are created with fundamental functions given those i shall implement artitem discussionpost and comment models implement mentioned models push the updates to the branch deadline reviewer ... | 0 |

443,781 | 12,799,552,447 | IssuesEvent | 2020-07-02 15:34:20 | bitnami-labs/kubewatch | https://api.github.com/repos/bitnami-labs/kubewatch | opened | Support watching arbitrary custom resources | enhancement priority-P1 | Currently kubewatch supports only watching a built-in list of resource kinds.

Nowadays end users consume many custom resources (CRs) and also new k8s versions keep adding new resources and we shouldn't need to update kubewatch every time such a new resource pops up. | 1.0 | Support watching arbitrary custom resources - Currently kubewatch supports only watching a built-in list of resource kinds.

Nowadays end users consume many custom resources (CRs) and also new k8s versions keep adding new resources and we shouldn't need to update kubewatch every time such a new resource pops up. | non_process | support watching arbitrary custom resources currently kubewatch supports only watching a built in list of resource kinds nowadays end users consume many custom resources crs and also new versions keep adding new resources and we shouldn t need to update kubewatch every time such a new resource pops up | 0 |

16,021 | 20,188,228,740 | IssuesEvent | 2022-02-11 01:19:51 | savitamittalmsft/WAS-SEC-TEST | https://api.github.com/repos/savitamittalmsft/WAS-SEC-TEST | opened | Develop a security training program | WARP-Import WAF FEB 2021 Security Performance and Scalability Capacity Management Processes Operational Model & DevOps Roles & Responsibilities | <a href="https://www.microsoft.com/itshowcase/blog/how-microsoft-is-transforming-its-approach-to-security-training/">Develop a security training program</a>

<p><b>Why Consider This?</b></p>

Cybersecurity threats are always evolving and therefore those responsible for organizational information security require ... | 1.0 | Develop a security training program - <a href="https://www.microsoft.com/itshowcase/blog/how-microsoft-is-transforming-its-approach-to-security-training/">Develop a security training program</a>

<p><b>Why Consider This?</b></p>

Cybersecurity threats are always evolving and therefore those responsible for organi... | process | develop a security training program why consider this cybersecurity threats are always evolving and therefore those responsible for organizational information security require specialized continual and relevant training to ensure staff maintains the level of competency required to protect detect an... | 1 |

143,276 | 19,177,907,851 | IssuesEvent | 2021-12-04 00:04:02 | samq-ghdemo/js-monorepo | https://api.github.com/repos/samq-ghdemo/js-monorepo | opened | CVE-2014-10064 (High) detected in qs-0.6.6.tgz | security vulnerability | ## CVE-2014-10064 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>qs-0.6.6.tgz</b></p></summary>

<p>querystring parser</p>

<p>Library home page: <a href="https://registry.npmjs.org/qs/... | True | CVE-2014-10064 (High) detected in qs-0.6.6.tgz - ## CVE-2014-10064 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>qs-0.6.6.tgz</b></p></summary>

<p>querystring parser</p>

<p>Library h... | non_process | cve high detected in qs tgz cve high severity vulnerability vulnerable library qs tgz querystring parser library home page a href path to dependency file js monorepo nodegoat package json path to vulnerable library js monorepo nodegoat node modules zaproxy node modul... | 0 |

17,067 | 22,505,126,751 | IssuesEvent | 2022-06-23 14:54:14 | dotnet/runtime | https://api.github.com/repos/dotnet/runtime | closed | [API Proposal]: `Process.Architecture` or `Process.Is64Bit` | api-suggestion area-System.Diagnostics.Process | ### Background and motivation

Working a lot with external process memory, I find it a little tedious having to use [`IsWow64Process`](https://docs.microsoft.com/en-us/windows/win32/api/wow64apiset/nf-wow64apiset-iswow64process) each time I want to infer the architecture of a target process. Not to mention, that functi... | 1.0 | [API Proposal]: `Process.Architecture` or `Process.Is64Bit` - ### Background and motivation

Working a lot with external process memory, I find it a little tedious having to use [`IsWow64Process`](https://docs.microsoft.com/en-us/windows/win32/api/wow64apiset/nf-wow64apiset-iswow64process) each time I want to infer the... | process | process architecture or process background and motivation working a lot with external process memory i find it a little tedious having to use each time i want to infer the architecture of a target process not to mention that function s out parameter is heaps confusing it would be a lot ni... | 1 |

433,331 | 12,505,688,934 | IssuesEvent | 2020-06-02 11:12:10 | gitcoinco/web | https://api.github.com/repos/gitcoinco/web | closed | Wonky layout in the "x new funded issues" mails | bug priority: backlog | **Describe the bug**

Layout between the "funder" and the text is all over the place.

**To Reproduce**

Receive e-mail, read e-mail

**Expected behavior**

A clear line between "owner"/"tasker"/"requester" and text

**Screenshots**

that makes shapes for the DY CR in the doub... | 1.0 | [PROC] [SHAPE] Prepare Latinos Hands-On tutorial - The tutorial should be composed of two parts, both working with UL 2018v9:

1. Using processor, define two reasonable workflows for a double muon dataset and DY NLO

2. Using shape analysis create another example folder that works with latinos root files (as it is for 20... | process | prepare latinos hands on tutorial the tutorial should be composed of two parts both working with ul using processor define two reasonable workflows for a double muon dataset and dy nlo using shape analysis create another example folder that works with latinos root files as it is for that makes shap... | 1 |

108,761 | 9,331,088,699 | IssuesEvent | 2019-03-28 08:56:31 | elastic/elasticsearch | https://api.github.com/repos/elastic/elasticsearch | closed | org.elasticsearch.action.search.SearchResponseMergerTests.testMergeSearchHits Test failure | :Search/Search >test-failure v7.1.0 v8.0.0 | Fails reliably on master and 7.x (possibly other branches)

```

./gradlew :server:unitTest -Dtests.seed=6AD7B08D850920A6 -Dtests.class=org.elasticsearch.action.search.SearchResponseMergerTests -Dtests.method="testMergeSearchHits" -Dtests.security.manager=true -Dtests.locale=ar-SY -Dtests.timezone=SystemV/MST7 -Dcomp... | 1.0 | org.elasticsearch.action.search.SearchResponseMergerTests.testMergeSearchHits Test failure - Fails reliably on master and 7.x (possibly other branches)

```

./gradlew :server:unitTest -Dtests.seed=6AD7B08D850920A6 -Dtests.class=org.elasticsearch.action.search.SearchResponseMergerTests -Dtests.method="testMergeSearch... | non_process | org elasticsearch action search searchresponsemergertests testmergesearchhits test failure fails reliably on master and x possibly other branches gradlew server unittest dtests seed dtests class org elasticsearch action search searchresponsemergertests dtests method testmergesearchhits dtests s... | 0 |

12,609 | 15,012,974,343 | IssuesEvent | 2021-02-01 03:07:08 | topcoder-platform/community-app | https://api.github.com/repos/topcoder-platform/community-app | closed | Recommended checkbox functionality | FE ShapeupProcess challenge- recommender-tool | Recommended checkbox must not be part of the challenge type group.

It must be a standalone checkbox

<img width="436" alt="Screenshot 2021-01-27 at 3 37 03 PM" src="https://user-images.githubusercontent.com/58783823/105976274-f757b700-60b5-11eb-89ae-5fcd8b17a4e6.png">

At present checking the recommended checkbox, d... | 1.0 | Recommended checkbox functionality - Recommended checkbox must not be part of the challenge type group.

It must be a standalone checkbox

<img width="436" alt="Screenshot 2021-01-27 at 3 37 03 PM" src="https://user-images.githubusercontent.com/58783823/105976274-f757b700-60b5-11eb-89ae-5fcd8b17a4e6.png">

At present... | process | recommended checkbox functionality recommended checkbox must not be part of the challenge type group it must be a standalone checkbox img width alt screenshot at pm src at present checking the recommended checkbox deselects the challenge type checkboxes this must not be the case | 1 |

480,008 | 13,821,856,508 | IssuesEvent | 2020-10-13 03:31:55 | StrangeLoopGames/EcoIssues | https://api.github.com/repos/StrangeLoopGames/EcoIssues | closed | No voting for removing someone from office | Category: Laws Priority: Medium Type: Feature | It seems like the supreme court that can remove a president from office can not do so by a majority vote of the three, but the members are only able to remove him from office by themselves - each one can alone. | 1.0 | No voting for removing someone from office - It seems like the supreme court that can remove a president from office can not do so by a majority vote of the three, but the members are only able to remove him from office by themselves - each one can alone. | non_process | no voting for removing someone from office it seems like the supreme court that can remove a president from office can not do so by a majority vote of the three but the members are only able to remove him from office by themselves each one can alone | 0 |

5,476 | 27,363,850,307 | IssuesEvent | 2023-02-27 17:37:51 | aws/aws-sam-cli | https://api.github.com/repos/aws/aws-sam-cli | closed | Bug: samconfig.toml values don't override default values in sam template | blocked/more-info-needed blocked/close-if-inactive maintainer/need-followup | ### Description:

Running a .NET Core 3.1 project containing Lambdas, trying to deploy using sam build and sam deploy. Build is successful, but Deploy fails in AWS because parameter overrides specified in samconfig.toml are not used, even though log for both sam build and sam deploy states that they are found correctly... | True | Bug: samconfig.toml values don't override default values in sam template - ### Description:

Running a .NET Core 3.1 project containing Lambdas, trying to deploy using sam build and sam deploy. Build is successful, but Deploy fails in AWS because parameter overrides specified in samconfig.toml are not used, even though... | non_process | bug samconfig toml values don t override default values in sam template description running a net core project containing lambdas trying to deploy using sam build and sam deploy build is successful but deploy fails in aws because parameter overrides specified in samconfig toml are not used even though... | 0 |

275,299 | 23,904,726,369 | IssuesEvent | 2022-09-08 22:44:55 | handsontable/hyperformula | https://api.github.com/repos/handsontable/hyperformula | closed | Run performance benchmark automatically for each PR | Chore Tests Released Performance Impact: Medium | ### Description

<!--- [mandatory] Describe the actual behavior and expected behavior -->

Run performance benchmark automatically for each PR. Something similar is done in Spreadsheet Viewer repo. It might be helpful

### Links

- https://github.com/handsontable/hyperformula/pull/938#issuecomment-1090052862

- htt... | 1.0 | Run performance benchmark automatically for each PR - ### Description

<!--- [mandatory] Describe the actual behavior and expected behavior -->

Run performance benchmark automatically for each PR. Something similar is done in Spreadsheet Viewer repo. It might be helpful

### Links

- https://github.com/handsontabl... | non_process | run performance benchmark automatically for each pr description run performance benchmark automatically for each pr something similar is done in spreadsheet viewer repo it might be helpful links internal discussion accessible by sequba | 0 |

169,901 | 13,164,828,075 | IssuesEvent | 2020-08-11 04:57:04 | mozilla-mobile/fenix | https://api.github.com/repos/mozilla-mobile/fenix | closed | FNX-12780 ⁃ Intermittent UI test failure - defaultDesktopBookmarksFoldersTest | eng:ui-test intermittent-test | ### Firebase Test Run:

https://console.firebase.google.com/u/0/project/moz-fenix/testlab/histories/bh.66b7091e15d53d45/matrices/7976327018966179987/executions/bs.58e6f78a50568c44/testcases/1/test-cases

https://console.firebase.google.com/u/0/project/moz-fenix/testlab/histories/bh.66b7091e15d53d45/matrices/761890037839... | 2.0 | FNX-12780 ⁃ Intermittent UI test failure - defaultDesktopBookmarksFoldersTest - ### Firebase Test Run:

https://console.firebase.google.com/u/0/project/moz-fenix/testlab/histories/bh.66b7091e15d53d45/matrices/7976327018966179987/executions/bs.58e6f78a50568c44/testcases/1/test-cases

https://console.firebase.google.com/u... | non_process | fnx ⁃ intermittent ui test failure defaultdesktopbookmarksfolderstest firebase test run stacktrace androidx test espresso base defaultfailurehandler assertionfailedwithcauseerror is displayed on the screen to the user doesn t match the selected view expected is displayed on the screen to the... | 0 |

12,480 | 14,949,074,403 | IssuesEvent | 2021-01-26 10:59:17 | cmux/koot | https://api.github.com/repos/cmux/koot | closed | New lifecycle hooks beforeBuild & afterBuild | bundling process enhancement | ```koot.config.js

module.exports = {

// ...

beforeBuild: async (fullConfig) => {},

afterBuild: async (fullConfig) => {},

// ...

};

``` | 1.0 | New lifecycle hooks beforeBuild & afterBuild - ```koot.config.js

module.exports = {

// ...

beforeBuild: async (fullConfig) => {},

afterBuild: async (fullConfig) => {},

// ...

};

``` | process | new lifecycle hooks beforebuild afterbuild koot config js module exports beforebuild async fullconfig afterbuild async fullconfig | 1 |

14,351 | 17,374,572,807 | IssuesEvent | 2021-07-30 18:49:41 | MicrosoftDocs/azure-devops-docs | https://api.github.com/repos/MicrosoftDocs/azure-devops-docs | closed | Unlink functionality actually seems to apply to release pipelines | Pri2 devops-cicd-process/tech devops/prod doc-enhancement |

> The Link and Unlink functionality applies to build pipelines only. It does not apply to release pipelines.

The "Unlink all" button does exist on "Releases" Pipelines, and is referenced on [another doc](https://docs.microsoft.com/en-us/azure/devops/pipelines/library/task-groups?view=azure-devops). I personally do... | 1.0 | Unlink functionality actually seems to apply to release pipelines -

> The Link and Unlink functionality applies to build pipelines only. It does not apply to release pipelines.

The "Unlink all" button does exist on "Releases" Pipelines, and is referenced on [another doc](https://docs.microsoft.com/en-us/azure/devo... | process | unlink functionality actually seems to apply to release pipelines the link and unlink functionality applies to build pipelines only it does not apply to release pipelines the unlink all button does exist on releases pipelines and is referenced on i personally don t see it on a pipelines pipeline ... | 1 |

9,667 | 12,675,347,071 | IssuesEvent | 2020-06-19 01:23:21 | MicrosoftDocs/azure-devops-docs | https://api.github.com/repos/MicrosoftDocs/azure-devops-docs | closed | Still don't know where Run (build) number is set in classic pipelines | Pri1 devops-cicd-process/tech devops/prod | I came to this page to find out where the "Build Number" option moved since I can no longer find it to edit in our classic pipelines. After reading this page, I still have no idea where the actual field is (despite a note saying that you can leave it blank).

If it's gone, it should be documented here. If it's suppos... | 1.0 | Still don't know where Run (build) number is set in classic pipelines - I came to this page to find out where the "Build Number" option moved since I can no longer find it to edit in our classic pipelines. After reading this page, I still have no idea where the actual field is (despite a note saying that you can leave ... | process | still don t know where run build number is set in classic pipelines i came to this page to find out where the build number option moved since i can no longer find it to edit in our classic pipelines after reading this page i still have no idea where the actual field is despite a note saying that you can leave ... | 1 |

77,781 | 7,603,563,912 | IssuesEvent | 2018-04-29 15:48:58 | OregonStateRocketry/30k2018-CS-Capstone | https://api.github.com/repos/OregonStateRocketry/30k2018-CS-Capstone | closed | Avionics - rocket is using payload's state machine | before practice launch #1 required tests | Right now mainRocket is using the state machine designed for mainPayload. This will not work because the main rocket does not go through the same phases. Many stages are the same but others are not. It might be confusing to use logic to separate them.

I think we should probably make sure it does what we want it t... | 1.0 | Avionics - rocket is using payload's state machine - Right now mainRocket is using the state machine designed for mainPayload. This will not work because the main rocket does not go through the same phases. Many stages are the same but others are not. It might be confusing to use logic to separate them.

I think w... | non_process | avionics rocket is using payload s state machine right now mainrocket is using the state machine designed for mainpayload this will not work because the main rocket does not go through the same phases many stages are the same but others are not it might be confusing to use logic to separate them i think w... | 0 |

593,945 | 18,020,551,057 | IssuesEvent | 2021-09-16 18:50:16 | google/shaka-player | https://api.github.com/repos/google/shaka-player | closed | Networking engine does not provide response HTTP status code | enhancement contributions welcome Why didn't we catch this sooner? priority:P3 | **Have you read the [FAQ](https://bit.ly/ShakaFAQ) and checked for duplicate open issues?**

Yes

**What version of Shaka Player are you using?**

3.2.0

**Can you reproduce the issue with our latest release version?**

Yes

**Can you reproduce the issue with the latest code from `master`?**

Yes

**Are you u... | 1.0 | Networking engine does not provide response HTTP status code - **Have you read the [FAQ](https://bit.ly/ShakaFAQ) and checked for duplicate open issues?**

Yes

**What version of Shaka Player are you using?**

3.2.0

**Can you reproduce the issue with our latest release version?**

Yes

**Can you reproduce the ... | non_process | networking engine does not provide response http status code have you read the and checked for duplicate open issues yes what version of shaka player are you using can you reproduce the issue with our latest release version yes can you reproduce the issue with the latest code ... | 0 |

8,769 | 11,886,378,192 | IssuesEvent | 2020-03-27 21:46:34 | MicrosoftDocs/vsts-docs | https://api.github.com/repos/MicrosoftDocs/vsts-docs | closed | Step condition doesn't work | Pri1 devops-cicd-process/tech devops/prod support-request | After https://github.com/Pr0methean/BetterRandom/commit/abd1a4353829ee3725527cd2b4734c686b678597, I still don't get any artifacts after the previous step fails. Why not?

---

#### Document Details

⚠ *Do not edit this section. It is required for docs.microsoft.com ➟ GitHub issue linking.*

* ID: 3f151218-9a11-0078-e038... | 1.0 | Step condition doesn't work - After https://github.com/Pr0methean/BetterRandom/commit/abd1a4353829ee3725527cd2b4734c686b678597, I still don't get any artifacts after the previous step fails. Why not?

---

#### Document Details

⚠ *Do not edit this section. It is required for docs.microsoft.com ➟ GitHub issue linking.*

... | process | step condition doesn t work after i still don t get any artifacts after the previous step fails why not document details ⚠ do not edit this section it is required for docs microsoft com ➟ github issue linking id version independent id content content source ... | 1 |

13,810 | 16,569,500,969 | IssuesEvent | 2021-05-30 05:05:02 | trpo2021/cw-ip-011_keyboardninja | https://api.github.com/repos/trpo2021/cw-ip-011_keyboardninja | opened | merging branches | in process | need to carefully merge the branches of the test2 graphics and main, along the way, correct the tests and makefile | 1.0 | merging branches - need to carefully merge the branches of the test2 graphics and main, along the way, correct the tests and makefile | process | merging branches need to carefully merge the branches of the graphics and main along the way correct the tests and makefile | 1 |

160,877 | 25,248,533,241 | IssuesEvent | 2022-11-15 13:02:38 | Sun-Mountain/lettuceMeetApp | https://api.github.com/repos/Sun-Mountain/lettuceMeetApp | closed | Feature - Make nav bar collapsable when viewed on a smaller screen | content-design description-needed | <!-- e.g.

Title should be describing the story/feature in one sentences:

- As a team user, I want to be able to move a student from one roster to another.

- Create Title Case component and use for all Nav Items, Section Titles, and Table Headers -->

**Description and related issues -**

<!-- Describe the feature here a... | 1.0 | Feature - Make nav bar collapsable when viewed on a smaller screen - <!-- e.g.

Title should be describing the story/feature in one sentences:

- As a team user, I want to be able to move a student from one roster to another.

- Create Title Case component and use for all Nav Items, Section Titles, and Table Headers -->

... | non_process | feature make nav bar collapsable when viewed on a smaller screen e g title should be describing the story feature in one sentences as a team user i want to be able to move a student from one roster to another create title case component and use for all nav items section titles and table headers ... | 0 |

303,226 | 26,194,127,000 | IssuesEvent | 2023-01-03 11:52:57 | navikt/tiltaksgjennomforing-prosess | https://api.github.com/repos/navikt/tiltaksgjennomforing-prosess | closed | Build of testing-dokumentfordeling | deploy dev-fss testing-dokumentfordeling | Comment with

>/deploy testing-dokumentfordeling

to deploy to dev-fss.

Commit: 494723237ef34f1de33270cc3c1b78943f51fb48 | 1.0 | Build of testing-dokumentfordeling - Comment with

>/deploy testing-dokumentfordeling

to deploy to dev-fss.

Commit: 494723237ef34f1de33270cc3c1b78943f51fb48 | non_process | build of testing dokumentfordeling comment with deploy testing dokumentfordeling to deploy to dev fss commit | 0 |

16,043 | 20,190,730,255 | IssuesEvent | 2022-02-11 05:01:06 | MicrosoftDocs/azure-docs | https://api.github.com/repos/MicrosoftDocs/azure-docs | closed | Create runbook step 2 incorrectly indicates to select Powershell instead of PowerShell Workflow | automation/svc triaged cxp doc-enhancement process-automation/subsvc Pri2 |

[Enter feedback here]

Step 2 of the creating a Workbook indicates to create a PowerShell workbook and should be a PowerShell Workflow

---

#### Document Details

⚠ *Do not edit this section. It is required for docs.microsoft.com ➟ GitHub issue linking.*

* ID: 3632c749-8963-f5ed-55ec-28af005780bd

* Version ... | 1.0 | Create runbook step 2 incorrectly indicates to select Powershell instead of PowerShell Workflow -

[Enter feedback here]

Step 2 of the creating a Workbook indicates to create a PowerShell workbook and should be a PowerShell Workflow

---

#### Document Details

⚠ *Do not edit this section. It is required for doc... | process | create runbook step incorrectly indicates to select powershell instead of powershell workflow step of the creating a workbook indicates to create a powershell workbook and should be a powershell workflow document details ⚠ do not edit this section it is required for docs microsoft com ➟ gi... | 1 |

12,995 | 15,359,205,502 | IssuesEvent | 2021-03-01 15:36:32 | edwardsmarc/CASFRI | https://api.github.com/repos/edwardsmarc/CASFRI | opened | Create a DropConstraints.sql script | blocker enhancement post-translation process translation | So constraints can be dropped before retranslating an inventory (should make translation faster) or before translating a new one which does not fulfill all the constraints. | 1.0 | Create a DropConstraints.sql script - So constraints can be dropped before retranslating an inventory (should make translation faster) or before translating a new one which does not fulfill all the constraints. | process | create a dropconstraints sql script so constraints can be dropped before retranslating an inventory should make translation faster or before translating a new one which does not fulfill all the constraints | 1 |

12,300 | 14,856,300,329 | IssuesEvent | 2021-01-18 13:58:37 | prisma/prisma | https://api.github.com/repos/prisma/prisma | closed | Grouping by required fields should be not have nullable output type | bug/2-confirmed kind/bug process/candidate team/client topic: groupBy | ## Problem

```prisma

type User {

id Int @id

name String

}

```

```ts

const user = prisma.user.groupBy({

by: ['name']

})

user[0].name // string | null

```

## Suggested solution

If `name` can't be null in the schema, then the name field in the result set can't be null. It should be:

```ts

... | 1.0 | Grouping by required fields should be not have nullable output type - ## Problem

```prisma

type User {

id Int @id

name String

}

```

```ts

const user = prisma.user.groupBy({

by: ['name']

})

user[0].name // string | null

```

## Suggested solution

If `name` can't be null in the schema, then t... | process | grouping by required fields should be not have nullable output type problem prisma type user id int id name string ts const user prisma user groupby by user name string null suggested solution if name can t be null in the schema then the name f... | 1 |

11,950 | 14,713,115,234 | IssuesEvent | 2021-01-05 09:53:59 | nestauk/sg_covid_impact | https://api.github.com/repos/nestauk/sg_covid_impact | closed | Load and process BRES and IDBR data | processing | We use getters to load and process BRES and IDBR data into division level. | 1.0 | Load and process BRES and IDBR data - We use getters to load and process BRES and IDBR data into division level. | process | load and process bres and idbr data we use getters to load and process bres and idbr data into division level | 1 |

18,259 | 24,341,438,806 | IssuesEvent | 2022-10-01 19:05:32 | OpenDataScotland/the_od_bods | https://api.github.com/repos/OpenDataScotland/the_od_bods | closed | Tidy filetypes for datasets | good first issue data processing back end | Filetypes for datasets are getting a bit out of hand. Currently we display them directly as provided by publisher, but inconsistencies are starting to show (e.g. "ZIP" and ".ZIP")

This should be a relatively easy solution to apply and it would be quite similar to how we tidy licensing and category information alread... | 1.0 | Tidy filetypes for datasets - Filetypes for datasets are getting a bit out of hand. Currently we display them directly as provided by publisher, but inconsistencies are starting to show (e.g. "ZIP" and ".ZIP")

This should be a relatively easy solution to apply and it would be quite similar to how we tidy licensing a... | process | tidy filetypes for datasets filetypes for datasets are getting a bit out of hand currently we display them directly as provided by publisher but inconsistencies are starting to show e g zip and zip this should be a relatively easy solution to apply and it would be quite similar to how we tidy licensing a... | 1 |
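A minimal sketch of the kind of tidy-up this issue describes, assuming the fix is a simple normalisation step applied to each publisher-supplied label. The helper name `normalise_filetype` and the alias table are hypothetical and are not taken from the project's code.

```python
def normalise_filetype(raw: str) -> str:
    """Return a canonical upper-case file type label (hypothetical helper)."""
    # Drop surrounding whitespace and any leading dots, so ".ZIP" and "zip" agree.
    cleaned = raw.strip().lstrip(".").upper()
    # Hypothetical alias table; the project may prefer different canonical names.
    aliases = {"JPG": "JPEG"}
    return aliases.get(cleaned, cleaned)
```

With this sketch, "ZIP" and ".ZIP" both map to the same label, which is the inconsistency the issue mentions.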

15,652 | 19,846,793,876 | IssuesEvent | 2022-01-21 07:38:47 | ooi-data/CE09OSSM-RID26-01-ADCPTC000-recovered_host-adcp_velocity_earth | https://api.github.com/repos/ooi-data/CE09OSSM-RID26-01-ADCPTC000-recovered_host-adcp_velocity_earth | opened | 🛑 Processing failed: ValueError | process | ## Overview

`ValueError` found in `processing_task` task during run ended on 2022-01-21T07:38:46.523700.

## Details

Flow name: `CE09OSSM-RID26-01-ADCPTC000-recovered_host-adcp_velocity_earth`

Task name: `processing_task`

Error type: `ValueError`

Error message: not enough values to unpack (expected 3, got 0)

<detai... | 1.0 | 🛑 Processing failed: ValueError - ## Overview

`ValueError` found in `processing_task` task during run ended on 2022-01-21T07:38:46.523700.

## Details

Flow name: `CE09OSSM-RID26-01-ADCPTC000-recovered_host-adcp_velocity_earth`

Task name: `processing_task`

Error type: `ValueError`

Error message: not enough values to ... | process | 🛑 processing failed valueerror overview valueerror found in processing task task during run ended on details flow name recovered host adcp velocity earth task name processing task error type valueerror error message not enough values to unpack expected got tra... | 1 |
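The traceback for this failure is truncated above, so the failing line is not known. As a general illustration only, Python raises this kind of `ValueError` when a statement unpacks into three names but the right-hand side yields a different number of items. The helper `unpack_three` below is hypothetical and shows one way to guard such an unpack.

```python
def unpack_three(values):
    """Unpack exactly three items, or return None when the count is wrong."""
    try:
        first, second, third = values
    except ValueError:
        # Raised when the iterable does not yield exactly three items,
        # for example when it is empty.
        return None
    return first, second, third
```

Whether returning `None` is the right recovery depends on the pipeline; the sketch only shows where the error comes from.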

147,373 | 19,520,315,274 | IssuesEvent | 2021-12-29 17:10:34 | mregen/UA-.NetStandardLibrary | https://api.github.com/repos/mregen/UA-.NetStandardLibrary | closed | CVE-2019-1302 (High) detected in microsoft.netcore.app.2.1.0.nupkg | security vulnerability | ## CVE-2019-1302 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>microsoft.netcore.app.2.1.0.nupkg</b></p></summary>

<p>A set of .NET API's that are included in the default .NET Core a... | True | CVE-2019-1302 (High) detected in microsoft.netcore.app.2.1.0.nupkg - ## CVE-2019-1302 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>microsoft.netcore.app.2.1.0.nupkg</b></p></summary>... | non_process | cve high detected in microsoft netcore app nupkg cve high severity vulnerability vulnerable library microsoft netcore app nupkg a set of net api s that are included in the default net core application model library home page a href path to dependency file test... | 0 |

594,453 | 18,046,020,883 | IssuesEvent | 2021-09-18 22:56:59 | python/mypy | https://api.github.com/repos/python/mypy | closed | Crash in proper_plugin.py when one argument passed to `isinstance` | crash priority-2-low | **Crash Report**

When using the `misc/proper_plugin.py` plugin, mypy crashes when only one argument is passed to `isinstance`

**Traceback**

```

test.py:1: error: Too few arguments for "isinstance"

test.py:1: error: INTERNAL ERROR -- Please try using mypy master on Github:

https://mypy.readthedocs.io/en/stab... | 1.0 | Crash in proper_plugin.py when one argument passed to `isinstance` - **Crash Report**

When using the `misc/proper_plugin.py` plugin, mypy crashes when only one argument is passed to `isinstance`

**Traceback**

```

test.py:1: error: Too few arguments for "isinstance"

test.py:1: error: INTERNAL ERROR -- Please ... | non_process | crash in proper plugin py when one argument passed to isinstance crash report when using the misc proper plugin py plugin mypy crashes when only one argument is passed to isinstance traceback test py error too few arguments for isinstance test py error internal error please ... | 0 |

8,538 | 11,713,944,699 | IssuesEvent | 2020-03-09 11:18:49 | kazuwjnlab/cvpaper | https://api.github.com/repos/kazuwjnlab/cvpaper | opened | [cvpaper] CVPR2019 #71 Label Efficient Semi-Supervised Learning via Graph Filtering | graph graph convolutional neural network graph signal processing semi-supervised learning | ## \#71 [Label Efficient Semi-Supervised Learning via Graph Filtering](http://openaccess.thecvf.com/content_CVPR_2019/papers/Li_Label_Efficient_Semi-Supervised_Learning_via_Graph_Filtering_CVPR_2019_paper.pdf)

Qimai Li, Xiao-Ming Wu, Han Liu, Xiaotong Zhang, Zhichao Guan

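### What kind of paper is it?

Graph-based semi-supervised learning can use connectivity information between labels, and in this respect, compared with other semi-su... | 1.0 | [cvpaper] CVPR2019 #71 Label Efficient Semi-Supervised Learning via Graph Filtering - ## \#71 [Label Efficient Semi-Supervised Learning via Graph Filtering](http://openaccess.thecvf.com/content_CVPR_2019/papers/Li_Label_Efficient_Semi-Supervised_Learning_via_Graph_Filtering_CVPR_2019_paper.pdf)

Qimai Li, Xiao-Ming Wu, ... | process | label efficient semi supervised learning via graph filtering qimai li xiao ming wu han liu xiaotong zhang zhichao guan what kind of paper is it? graph-based semi-supervised learning has an advantage over other semi-supervised learning methods in that it can use connectivity information between labels, but it suffered from poor label efficiency, because the label propagation methods were classical ones or because nn-based methods require large amounts of labeled data. in this paper, label propagation is regarded as signal propagation on a graph, and feature extraction is performed with graph filtering... | 1 |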

7,051 | 10,210,693,024 | IssuesEvent | 2019-08-14 15:17:27 | pelias/pelias | https://api.github.com/repos/pelias/pelias | closed | Support multiple boundary.country parameters in a request | enhancement good first issue help wanted processed | #### Here's what I did :innocent:

This came in through support (see desk 882). The user is looking for a way to filter by multiple countries with autocomplete, although this applies to other endpoints as well.

Example query: https://mapzen.com/search/explorer/?query=search&text=ymca&boundary.country=GBR%2CIRL&foc... | 1.0 | Support multiple boundary.country parameters in a request - #### Here's what I did :innocent:

This came in through support (see desk 882). The user is looking for a way to filter by multiple countries with autocomplete, although this applies to other endpoints as well.

Example query: https://mapzen.com/search/exp... | process | support multiple boundary country parameters in a request here s what i did innocent this came in through support see desk the user is looking for a way to filter by multiple countries with autocomplete although this applies to other endpoints as well example query here s what i go... | 1 |

11,399 | 14,235,002,051 | IssuesEvent | 2020-11-18 14:18:44 | ORNL-AMO/AMO-Tools-Desktop | https://api.github.com/repos/ORNL-AMO/AMO-Tools-Desktop | opened | Icons for PH calcs | Calculator Process Heating | Let me know if they still have the white background and I'll send them in slack

Flue Gas

Wall

Wall

detected in junit-4.12.jar | security vulnerability | ## CVE-2020-15250 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>junit-4.12.jar</b></p></summary>

<p>JUnit is a unit testing framework for Java, created by Erich Gamma and Kent Beck... | True | CVE-2020-15250 (Medium) detected in junit-4.12.jar - ## CVE-2020-15250 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>junit-4.12.jar</b></p></summary>

<p>JUnit is a unit testing fra... | non_process | cve medium detected in junit jar cve medium severity vulnerability vulnerable library junit jar junit is a unit testing framework for java created by erich gamma and kent beck library home page a href path to dependency file gson build gradle path to vulnerable library... | 0 |

4,978 | 7,808,418,722 | IssuesEvent | 2018-06-11 20:07:25 | GoogleCloudPlatform/google-cloud-cpp | https://api.github.com/repos/GoogleCloudPlatform/google-cloud-cpp | closed | Run integration tests against emulator | storage testing type: process | To get code coverage statistics that make any sense we need to run the integration tests against some kind of emulator. Maybe we can reuse the python stuff we did for httpbin and add other handlers for the emulator. All we need is to keep buckets and objects in memory.

| 1.0 | Run integration tests against emulator - To get code coverage statistics that make any sense we need to run the integration tests against some kind of emulator. Maybe we can reuse the python stuff we did for httpbin and add other handlers for the emulator. All we need is to keep buckets and objects in memory.

| process | run integration tests against emulator to get code coverage statistics that make any sense we need to run the integration tests against some kind of emulator maybe we can reuse the python stuff we did for httpbin and add other handlers for the emulator all we need is to keep buckets and objects in memory | 1 |

640,728 | 20,797,615,564 | IssuesEvent | 2022-03-17 10:49:26 | slsdetectorgroup/slsDetectorPackage | https://api.github.com/repos/slsdetectorgroup/slsDetectorPackage | closed | Receiver: disabled ports write files in 10g | action - Bug priority - High status - resolved | <!-- Preview changes before submitting -->

<!-- Please fill out everything with an *, as this report will be discarded otherwise -->

<!-- This is a comment, the syntax is a bit different from c++ or bash -->

##### *Distribution:

<!-- RHEL7, RHEL6, Fedora, etc -->

##### *Detector type:

<!-- If applicable, ... | 1.0 | Receiver: disabled ports write files in 10g - <!-- Preview changes before submitting -->

<!-- Please fill out everything with an *, as this report will be discarded otherwise -->

<!-- This is a comment, the syntax is a bit different from c++ or bash -->

##### *Distribution:

<!-- RHEL7, RHEL6, Fedora, etc -->

... | non_process | receiver disabled ports write files in distribution detector type receiverv software package version priority high describe the bug disabled ports write files expected behavior to reproduce sc... | 0 |

2,332 | 5,142,636,883 | IssuesEvent | 2017-01-12 13:55:36 | jimbrown75/Permit-Vision-Enhancements | https://api.github.com/repos/jimbrown75/Permit-Vision-Enhancements | opened | Include Template Verifier and Authoriser signatures in permit created from Template | bug Medium Priority Should Fix Verified by PTW Process Lead | When creating a permit from a template the template verifier and authoriser should be included on the Permit signatures (electronic and printed) | 1.0 | Include Template Verifier and Authoriser signatures in permit created from Template - When creating a permit from a template the template verifier and authoriser should be included on the Permit signatures (electronic and printed) | process | include template verifier and authoriser signatures in permit created from template when creating a permit from a template the template verifier and authoriser should be included on the permit signatures electronic and printed | 1 |

129,104 | 10,563,213,043 | IssuesEvent | 2019-10-04 20:21:24 | rancher/rancher | https://api.github.com/repos/rancher/rancher | closed | Kubernetes 1.15 as officially supported on Rancher 2.2.x | [zube]: To Test team/ca | Currently k8s 1.15 is experimental on 2.2.x. We have to enable official support for it, and drop support for k8s 1.12 with that change. | 1.0 | Kubernetes 1.15 as officially supported on Rancher 2.2.x - Currently k8s 1.15 is experimental on 2.2.x. We have to enable official support for it, and drop support for k8s 1.12 with that change. | non_process | kubernetes as officially supported on rancher x currently is experimental on x we have to enable official support for it and drop support for with that change | 0 |

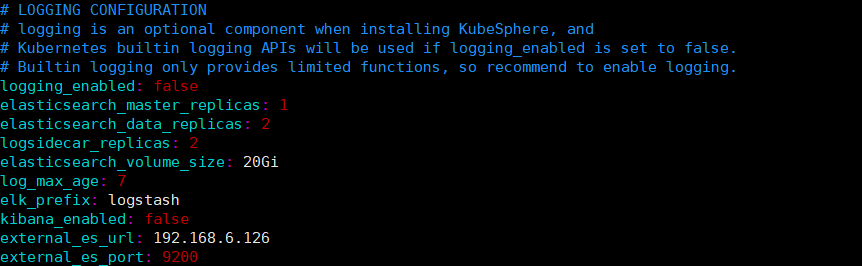

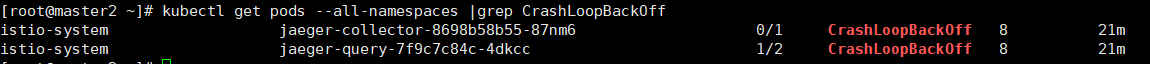

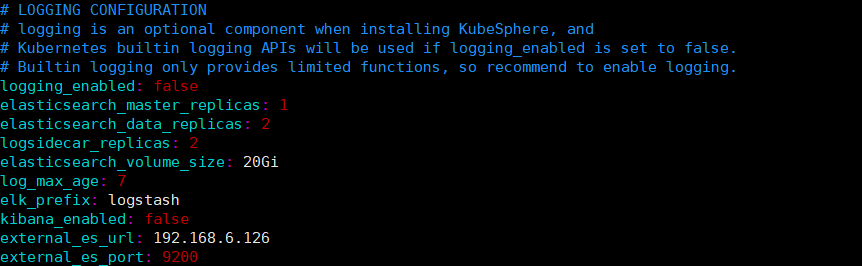

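19,155 | 11,156,698,344 | IssuesEvent | 2019-12-25 08:37:23 | kubesphere/kubesphere | https://api.github.com/repos/kubesphere/kubesphere | closed | KubeSphere installed with an external ES configured and the logging system not enabled: some pods fail to start after installation | area/logging area/microservice | Installation environment:

4 cores, 4 GB * 3

centos7

The logging system is not enabled; the configuration is as follows:

After installation the other components are normal, but two Istio components are currently abnormal

The error message is as follows: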

```

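[roo... | 1.0 | KubeSphere installed with an external ES configured and the logging system not enabled: some pods fail to start after installation - Installation environment:

4 cores, 4 GB * 3

centos7

The logging system is not enabled; the configuration is as follows:

After installation the other components are normal, but two Istio components are currently abnormal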

| Priority: Medium | Changed from "Add New" string to "Add New" button. Needs styling.

| 1.0 | Organization "Add new" button (CSS) - Changed from "Add New" string to "Add New" button. Needs styling.

| non_process | organization add new button css changed from add new string to add new button needs styling | 0 |

142,019 | 19,012,457,231 | IssuesEvent | 2021-11-23 10:48:02 | Yann-dv/_Pekocko | https://api.github.com/repos/Yann-dv/_Pekocko | opened | CVE-2020-7608 (Medium) detected in yargs-parser-7.0.0.tgz, yargs-parser-10.1.0.tgz | security vulnerability | ## CVE-2020-7608 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>yargs-parser-7.0.0.tgz</b>, <b>yargs-parser-10.1.0.tgz</b></p></summary>

<p>

<details><summary><b>yargs-parser-7.0.... | True | CVE-2020-7608 (Medium) detected in yargs-parser-7.0.0.tgz, yargs-parser-10.1.0.tgz - ## CVE-2020-7608 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>yargs-parser-7.0.0.tgz</b>, <b>... | non_process | cve medium detected in yargs parser tgz yargs parser tgz cve medium severity vulnerability vulnerable libraries yargs parser tgz yargs parser tgz yargs parser tgz the mighty option parser used by yargs library home page a href path to depen... | 0 |

19,337 | 4,383,043,706 | IssuesEvent | 2016-08-07 08:58:24 | zcash/zcash | https://api.github.com/repos/zcash/zcash | closed | Finish the list of consensus changes for security auditors | consensus protocol documentation SECURITY | The security auditors need the [list of consensus changes](https://github.com/zcash/zcash/wiki/Security-Auditor-Quick-Start#list-of-consensus-changes) to be finished before the audit can begin. It would also be nice if those bullet points linked to relevant code (in the security-review-frozen version). | 1.0 | Finish the list of consensus changes for security auditors - The security auditors need the [list of consensus changes](https://github.com/zcash/zcash/wiki/Security-Auditor-Quick-Start#list-of-consensus-changes) to be finished before the audit can begin. It would also be nice if those bullet points linked to relevant c... | non_process | finish the list of consensus changes for security auditors the security auditors need the to be finished before the audit can begin it would also be nice if those bullet points linked to relevant code in the security review frozen version | 0 |

2,645 | 5,425,336,484 | IssuesEvent | 2017-03-03 05:37:46 | FujiXeroxNZ-Wellington/Indigo | https://api.github.com/repos/FujiXeroxNZ-Wellington/Indigo | closed | js Auto validate cannot validate elements in List group | 0-4-Contract Processing 0-Contract Management bug v1.0 | Error: Angular-auto-validate: invalid bs3 form structure elements must be wrapped by a form-group class

the above error is thrown when validating the form fields using js-autovalidate library | 1.0 | js Auto validate cannot validate elements in List group - Error: Angular-auto-validate: invalid bs3 form structure elements must be wrapped by a form-group class

the above error is thrown when validating the form fields using js-autovalidate library | process | js auto validate cannot validate elements in list group error angular auto validate invalid form structure elements must be wrapped by a form group class the above error is thrown when validating the form fields using js autovalidate library | 1 |

156,230 | 19,831,499,138 | IssuesEvent | 2022-01-20 12:29:48 | Dima2021/SecurityShepherd | https://api.github.com/repos/Dima2021/SecurityShepherd | closed | CVE-2020-9493 (High) detected in log4j-1.2.7.jar | security vulnerability | ## CVE-2020-9493 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>log4j-1.2.7.jar</b></p></summary>

<p></p>

<p>Path to dependency file: /pom.xml</p>

<p>Path to vulnerable library: /tory... | True | CVE-2020-9493 (High) detected in log4j-1.2.7.jar - ## CVE-2020-9493 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>log4j-1.2.7.jar</b></p></summary>

<p></p>

<p>Path to dependency file... | non_process | cve high detected in jar cve high severity vulnerability vulnerable library jar path to dependency file pom xml path to vulnerable library tory jar target owaspsecurityshepherd web inf lib jar dependency hierarchy x ja... | 0 |

11,510 | 14,394,750,249 | IssuesEvent | 2020-12-03 02:02:31 | A01551343/4a | https://api.github.com/repos/A01551343/4a | closed | complete_size_estimating_template | process-dashboard | - complete the LOC estimation form with the actual values obtained | 1.0 | complete_size_estimating_template - - complete the LOC estimation form with the actual values obtained | process | complete size estimating template complete the loc estimation form with the actual values obtained | 1 |

140,628 | 18,905,973,963 | IssuesEvent | 2021-11-16 09:08:01 | VerdantSparks/vuetify_ts_aspnetcore_starter | https://api.github.com/repos/VerdantSparks/vuetify_ts_aspnetcore_starter | closed | CVE-2018-8292 (High) detected in microsoft.netcore.app.2.0.0.nupkg, system.net.http.4.3.0.nupkg | security vulnerability | ## CVE-2018-8292 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>microsoft.netcore.app.2.0.0.nupkg</b>, <b>system.net.http.4.3.0.nupkg</b></p></summary>

<p>

<details><summary><b>micr... | True | CVE-2018-8292 (High) detected in microsoft.netcore.app.2.0.0.nupkg, system.net.http.4.3.0.nupkg - ## CVE-2018-8292 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>microsoft.netcore.ap... | non_process | cve high detected in microsoft netcore app nupkg system net http nupkg cve high severity vulnerability vulnerable libraries microsoft netcore app nupkg system net http nupkg microsoft netcore app nupkg a set of net api s that are included in the d... | 0 |

67,236 | 8,114,933,365 | IssuesEvent | 2018-08-15 03:36:11 | the-tale/the-tale | https://api.github.com/repos/the-tale/the-tale | closed | Cards of Fate: fix the cards that grant influence directly | comp_cards comp_politics cont_game_designe est_simple type_improvement | Extend the description with the list of modifiers that should affect the influence granted.

Add tests that verify this.

Should any modifiers at all affect this value? At the moment it turns out that there are cards for influence in a quest, which are affected by all bonuses and penalties. And there are cards ... | 1.0 | Cards of Fate: fix the cards that grant influence directly - Extend the description with the list of modifiers that should affect the influence granted.

Add tests that verify this.

Should any modifiers at all affect this value? At the moment it turns out that there are cards for influence in a quest... | non_process | cards of fate fix the cards that grant influence directly extend the description with the list of modifiers that should affect the influence granted add tests that verify this should any modifiers at all affect this value at the moment it turns out that there are cards for influence in a quest... | 0 |

135,257 | 30,274,098,750 | IssuesEvent | 2023-07-07 17:55:12 | WordPress/openverse | https://api.github.com/repos/WordPress/openverse | closed | Use Elasticsearch 8 locally | 🟧 priority: high 💻 aspect: code 🧰 goal: internal improvement 🧱 stack: api 🔧 tech: elasticsearch | ## Problem

<!-- Describe a problem solved by this feature; or delete the section entirely. -->

We need to make sure Openverse will work with ElasticSearch 8 prior to upgrading our existing cluster.

## Description

<!-- Describe the feature and how it solves the problem. -->

Update the root docker compose ... | 1.0 | Use Elasticsearch 8 locally - ## Problem

<!-- Describe a problem solved by this feature; or delete the section entirely. -->

We need to make sure Openverse will work with ElasticSearch 8 prior to upgrading our existing cluster.

## Description

<!-- Describe the feature and how it solves the problem. -->

U... | non_process | use elasticsearch locally problem we need to make sure openverse will work with elasticsearch prior to upgrading our existing cluster description update the root docker compose to use version of elasticsearch or whatever the latest version is at the time of experimentation ... | 0 |

376,908 | 26,222,514,223 | IssuesEvent | 2023-01-04 15:53:27 | SandraScherer/EntertainmentInfothek | https://api.github.com/repos/SandraScherer/EntertainmentInfothek | closed | Add sound mix information to series | documentation enhancement database program | - [x] Add table Series_SoundMix to database

- [x] Add/adapt Series class in EntertainmentDB.dll

- [x] Add tests to EntertainmentDB.Tests

- [x] Add/adapt ContentCreator classes in WikiPageCreator

- [x] Add tests to WikiPageCreator.Tests

- [x] Update documentation

- [x] EntertainmentInfothek_Database.vpp

- [... | 1.0 | Add sound mix information to series - - [x] Add table Series_SoundMix to database

- [x] Add/adapt Series class in EntertainmentDB.dll

- [x] Add tests to EntertainmentDB.Tests

- [x] Add/adapt ContentCreator classes in WikiPageCreator

- [x] Add tests to WikiPageCreator.Tests

- [x] Update documentation

- [x] Ente... | non_process | add sound mix information to series add table series soundmix to database add adapt series class in entertainmentdb dll add tests to entertainmentdb tests add adapt contentcreator classes in wikipagecreator add tests to wikipagecreator tests update documentation entertainmentinfot... | 0 |

15,408 | 19,598,022,471 | IssuesEvent | 2022-01-05 20:27:44 | opensearch-project/data-prepper | https://api.github.com/repos/opensearch-project/data-prepper | opened | Create an Aggregate Processor | plugin - processor | **Is your feature request related to a problem? Please describe.**

Data Prepper users need a processor that can be utilized for generic stateful aggregation

**Describe the solution you'd like**

A solution has already been proposed in RFC #699 | 1.0 | Create an Aggregate Processor - **Is your feature request related to a problem? Please describe.**

Data Prepper users need a processor that can be utilized for generic stateful aggregation

**Describe the solution you'd like**

A solution has already been proposed in RFC #699 | process | create an aggregate processor is your feature request related to a problem please describe data prepper users need a processor that can be utilized for generic stateful aggregation describe the solution you d like a solution has already been proposed in rfc | 1 |

46,008 | 18,924,532,155 | IssuesEvent | 2021-11-17 08:02:01 | Azure/azure-powershell | https://api.github.com/repos/Azure/azure-powershell | closed | Remove-AzSqlDatabaseSecondary Throws Object reference not set to an instance of an object | SQL Service Attention question customer-reported needs-author-feedback no-recent-activity | <!--

- Make sure you are able to reproduce this issue on the latest released version of Az

- https://www.powershellgallery.com/packages/Az

- Please search the existing issues to see if there has been a similar issue filed

- For issue related to importing a module, please refer to our troubleshooting guide:

... | 1.0 | Remove-AzSqlDatabaseSecondary Throws Object reference not set to an instance of an object - <!--

- Make sure you are able to reproduce this issue on the latest released version of Az

- https://www.powershellgallery.com/packages/Az

- Please search the existing issues to see if there has been a similar issue fil... | non_process | remove azsqldatabasesecondary throws object reference not set to an instance of an object make sure you are able to reproduce this issue on the latest released version of az please search the existing issues to see if there has been a similar issue filed for issue related to importing a modul... | 0 |

9,923 | 12,963,288,340 | IssuesEvent | 2020-07-20 18:32:26 | MicrosoftDocs/azure-devops-docs | https://api.github.com/repos/MicrosoftDocs/azure-devops-docs | closed | Passing parameters between triggering pipelines | Pri2 devops-cicd-process/tech devops/prod support-request | How do I pass (or receive) parameters between pipelines? My triggered pipeline has input parameters that need to be set from the 'build' pipeline that triggers it.

---

#### Document Details

⚠ *Do not edit this section. It is required for docs.microsoft.com ➟ GitHub issue linking.*

* ID: 86285f72-9e28-da97-5... | 1.0 | Passing parameters between triggering pipelines - How do I pass (or receive) parameters between pipelines? My triggered pipeline has input parameters that need to be set from the 'build' pipeline that triggers it.

---

#### Document Details

⚠ *Do not edit this section. It is required for docs.microsoft.com ➟ Gi... | process | passing parameters between triggering pipelines how do i pass or receive parameters between pipelines my triggered pipeline has input parameters that need to be set from the build pipeline that triggers it document details ⚠ do not edit this section it is required for docs microsoft com ➟ gi... | 1 |

4,410 | 7,299,077,731 | IssuesEvent | 2018-02-26 18:58:08 | UKHomeOffice/dq-aws-transition | https://api.github.com/repos/UKHomeOffice/dq-aws-transition | closed | Finalise Windows Data Ingest Prod server | DQ Data Ingest Production S4 Processing | Finalise Windows Data Ingest Prod server

- [x] Terraform Deployment

- [x] Post-Deployment Tasks

- [x] SEQ check running

- [x] Data transfer tasks running

- [x] Swapped in vars

## Acceptance criteria

- [x] Data is flowing in from S3

- [x] Data is being processed by SEQ job

- [x] WS is launching jobs

... | 1.0 | Finalise Windows Data Ingest Prod server - Finalise Windows Data Ingest Prod server

- [x] Terraform Deployment

- [x] Post-Deployment Tasks

- [x] SEQ check running

- [x] Data transfer tasks running

- [x] Swapped in vars

## Acceptance criteria

- [x] Data is flowing in from S3

- [x] Data is being process... | process | finalise windows data ingest prod server finalise windows data ingest prod server terraform deployment post deployment tasks seq check running data transfer tasks running swapped in vars acceptance criteria data is flowing in from data is being processed by seq job ... | 1 |

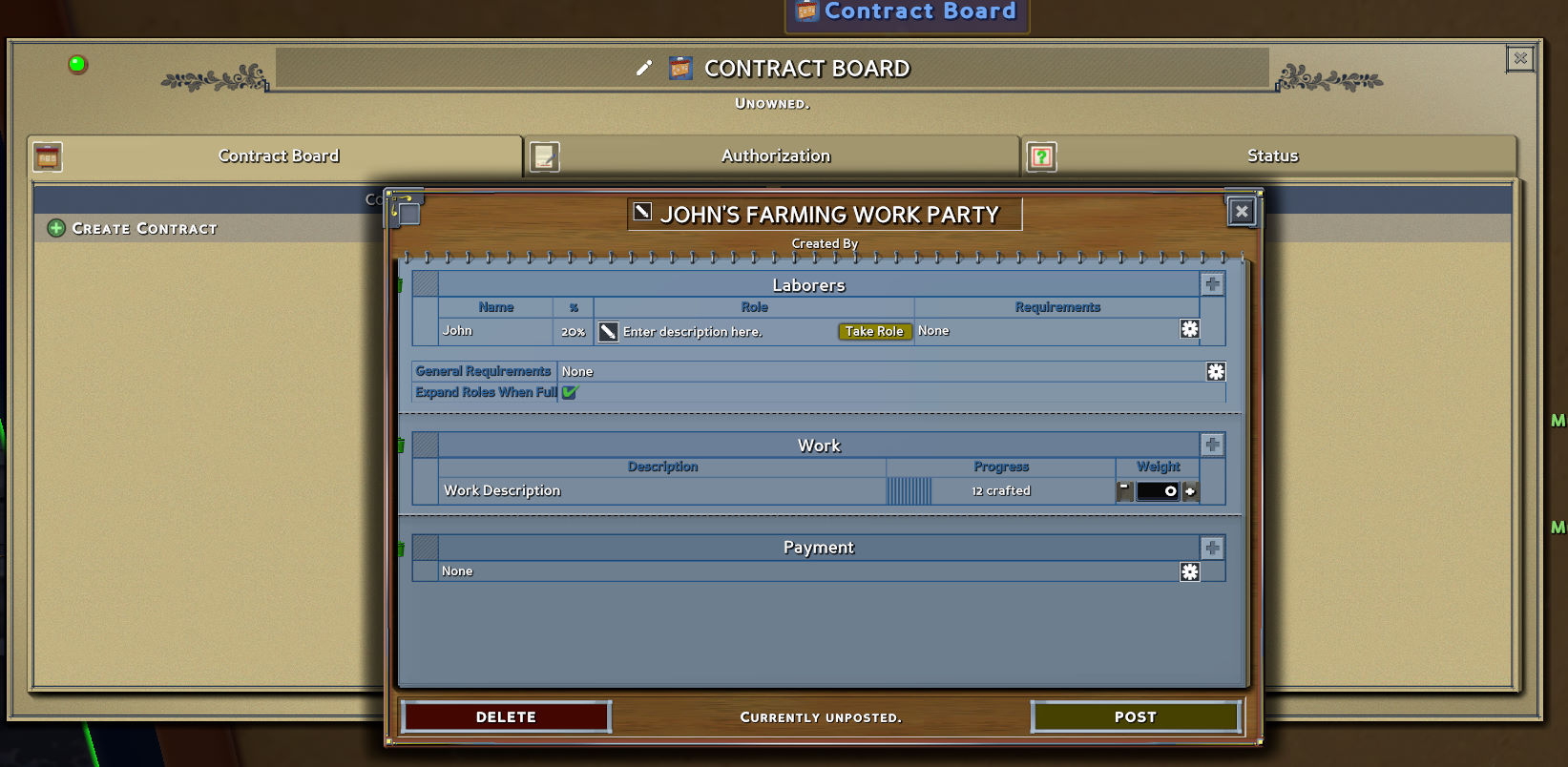

418,698 | 12,202,203,356 | IssuesEvent | 2020-04-30 08:34:35 | StrangeLoopGames/EcoIssues | https://api.github.com/repos/StrangeLoopGames/EcoIssues | closed | [0.9.0 staging-1528] Work party: strange template for the first party | Priority: Medium Status: Fixed | 1. Make a new server

2. Place a workbench and make 10 hewn log order

3. Place a contract board and start to create workparty

You will see this strange template

| 1.0 | [0.9.0 staging-1528] Work party: strange template for the first party - 1. Make a new server

2. Place a workbench and make 10 hewn log order

3. Place a contract board and start to create workparty

You will see this strange template

but is back again in Witch PU.

| 1.0 | UI Overlap When Gold Appears in Combat - This was fixed [here](https://github.com/TerryCavanagh/diceydungeons.com/issues/755) but is back again in Witch PU.

| non_process | ui overlap when gold appears in combat this was fixed but is back again in witch pu | 0 |

32,969 | 13,994,311,777 | IssuesEvent | 2020-10-28 00:20:57 | Azure/azure-sdk-for-java | https://api.github.com/repos/Azure/azure-sdk-for-java | closed | [Service Bus] Fix module-info.java | Client Service Bus blocking-release | `module-info.java` in Service Bus has many `opens` statements exposing multiple packages. These `opens` statements have to be removed before GA. | 1.0 | [Service Bus] Fix module-info.java - `module-info.java` in Service Bus has many `opens` statements exposing multiple packages. These `opens` statements have to be removed before GA. | non_process | fix module info java module info java in service bus has many opens statements exposing multiple packages these opens statements have to be removed before ga | 0 |

43,343 | 11,628,285,965 | IssuesEvent | 2020-02-27 18:02:10 | google/flogger | https://api.github.com/repos/google/flogger | closed | Flag --incompatible_load_java_rules_from_bzl will break Flogger in Bazel 1.2.1 | P3 type=defect | Incompatible flag --incompatible_load_java_rules_from_bzl will break Flogger once Bazel 1.2.1 is released.

Please see the following CI builds for more information:

* [:ubuntu: 16.04 (OpenJDK 8)](<a href="https://buildkite.com/bazel/bazelisk-plus-incompatible-flags/builds/342#7fdfdbb2-c0ac-49f7-8fb4-4af9267c90e6" targ... | 1.0 | Flag --incompatible_load_java_rules_from_bzl will break Flogger in Bazel 1.2.1 - Incompatible flag --incompatible_load_java_rules_from_bzl will break Flogger once Bazel 1.2.1 is released.

Please see the following CI builds for more information:

* [:ubuntu: 16.04 (OpenJDK 8)](<a href="https://buildkite.com/bazel/bazel... | non_process | flag incompatible load java rules from bzl will break flogger in bazel incompatible flag incompatible load java rules from bzl will break flogger once bazel is released please see the following ci builds for more information ubuntu openjdk questions please file an issue in ... | 0 |

7,619 | 10,727,398,402 | IssuesEvent | 2019-10-28 11:36:08 | prisma/photonjs | https://api.github.com/repos/prisma/photonjs | closed | Filtering `null` relationships is not possible | bug/2-confirmed kind/bug process/candidate | ## Schema

```prisma

model User {

id Int @id

address Address?

}

model Address {

id Int @id

}

```

## Code

```ts

const usersWithoutAddress = await photon.users.findMany({

where: {

address: null

}

})

```

This generates an incorrect query under the hood with querying `address: {}` inste... | 1.0 | Filtering `null` relationships is not possible - ## Schema

```prisma

model User {

id Int @id

address Address?

}

model Address {

id Int @id

}

```

## Code

```ts

const usersWithoutAddress = await photon.users.findMany({

where: {

address: null

}

})

```

This generates an incorrect query... | process | filtering null relationships is not possible schema prisma model user id int id address address model address id int id code ts const userswithoutaddress await photon users findmany where address null this generates an incorrect query... | 1 |

17,560 | 23,372,954,891 | IssuesEvent | 2022-08-10 21:54:33 | MicrosoftDocs/azure-devops-docs | https://api.github.com/repos/MicrosoftDocs/azure-devops-docs | closed | Tip: You can create an empty environment and reference it from deployment jobs | devops/prod cba Pri1 devops-cicd-process/tech | The document contains this tip:

"Tip: You can create an empty environment and reference it from deployment jobs"

I would like to do that, meaning I would like to run a deployment job that does not actually move any files but just records the deployment.

However, when I try, I get the error:

"No resource wer... | 1.0 | Tip: You can create an empty environment and reference it from deployment jobs - The document contains this tip:

"Tip: You can create an empty environment and reference it from deployment jobs"

I would like to do that, meaning I would like to run a deployment job that does not actually move any files but just rec... | process | tip you can create an empty environment and reference it from deployment jobs the document contains this tip tip you can create an empty environment and reference it from deployment jobs i would like to do that meaning i would like to run a deployment job that does not actually move any files but just rec... | 1 |

283,148 | 30,889,604,890 | IssuesEvent | 2023-08-04 02:58:43 | maddyCode23/linux-4.1.15 | https://api.github.com/repos/maddyCode23/linux-4.1.15 | reopened | CVE-2020-8649 (Medium) detected in linux-stable-rtv4.1.33 | Mend: dependency security vulnerability | ## CVE-2020-8649 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linux-stable-rtv4.1.33</b></p></summary>

<p>

<p>Julia Cartwright's fork of linux-stable-rt.git</p>

<p>Library home pa... | True | CVE-2020-8649 (Medium) detected in linux-stable-rtv4.1.33 - ## CVE-2020-8649 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linux-stable-rtv4.1.33</b></p></summary>

<p>

<p>Julia Car... | non_process | cve medium detected in linux stable cve medium severity vulnerability vulnerable library linux stable julia cartwright s fork of linux stable rt git library home page a href found in head commit a href found in base branch master vulnerable source f... | 0 |

280,432 | 21,260,072,498 | IssuesEvent | 2022-04-13 02:31:42 | mikezimm/SecureScript7 | https://api.github.com/repos/mikezimm/SecureScript7 | closed | Add Debugging code tips in prop pane setup | documentation enhancement Complete | It's hard to debug. Add a tips with lessons learned in the web part. | 1.0 | Add Debugging code tips in prop pane setup - It's hard to debug. Add a tips with lessons learned in the web part. | non_process | add debugging code tips in prop pane setup it s hard to debug add a tips with lessons learned in the web part | 0 |

87,780 | 25,209,199,648 | IssuesEvent | 2022-11-14 01:02:52 | OpenBB-finance/OpenBBTerminal | https://api.github.com/repos/OpenBB-finance/OpenBBTerminal | closed | [Bug] Windows Installer Broken with Poetry Install | bug build installer | **Describe the bug**

Windows installer is broken. [Link](https://github.com/OpenBB-finance/OpenBBTerminal/actions/runs/3286347083/jobs/5414352853)

<img width="1340" alt="Screen Shot 2022-10-20 at 2 10 09 PM" src="https://user-images.githubusercontent.com/53658028/197025636-54437cb9-7ae1-4934-a4e2-96ae52db3a49.png">... | 1.0 | [Bug] Windows Installer Broken with Poetry Install - **Describe the bug**

Windows installer is broken. [Link](https://github.com/OpenBB-finance/OpenBBTerminal/actions/runs/3286347083/jobs/5414352853)

<img width="1340" alt="Screen Shot 2022-10-20 at 2 10 09 PM" src="https://user-images.githubusercontent.com/53658028... | non_process | windows installer broken with poetry install describe the bug windows installer is broken img width alt screen shot at pm src | 0 |

10,640 | 13,446,159,186 | IssuesEvent | 2020-09-08 12:33:48 | MHRA/products | https://api.github.com/repos/MHRA/products | closed | PARS - Design review for search results display | EPIC - PARs process NEW :new: | TASK

Design tweaks needed to the search results display, so that they are in line with SPC / PILs

### Acceptance Criteria

- [ ] The commas are removed from the between product name, dose, strength etc

- [ ] There is a dash between the name and the PL number (example...... Ibuprofen 200mg Tablets (Ibuprofen) - PL ... | 1.0 | PARS - Design review for search results display - TASK

Design tweaks needed to the search results display, so that they are in line with SPC / PILs

### Acceptance Criteria

- [ ] The commas are removed from the between product name, dose, strength etc

- [ ] There is a dash between the name and the PL number (examp... | process | pars design review for search results display task design tweaks needed to the search results display so that they are in line with spc pils acceptance criteria the commas are removed from the between product name dose strength etc there is a dash between the name and the pl number example ... | 1 |

2,635 | 5,412,522,136 | IssuesEvent | 2017-03-01 14:45:38 | DynareTeam/dynare | https://api.github.com/repos/DynareTeam/dynare | closed | create fortran preprocessor output | enhancement preprocessor | dynamic and static files each as independent functions

model as a fortran module

| 1.0 | create fortran preprocessor output - dynamic and static files each as independent functions

model as a fortran module

| process | create fortran preprocessor output dynamic and static files each as independent functions model as a fortran module | 1 |

93,849 | 19,346,556,203 | IssuesEvent | 2021-12-15 11:25:55 | Onelinerhub/onelinerhub | https://api.github.com/repos/Onelinerhub/onelinerhub | closed | Short solution needed: "How to list docker tags" (docker) | help wanted good first issue code docker | Please help us write most modern and shortest code solution for this issue:

**How to list docker tags** (technology: [docker](https://onelinerhub.com/docker))

### Fast way

Just write the code solution in the comments.

### Prefered way

1. Create pull request with a new code file inside [inbox folder](https://github.co... | 1.0 | Short solution needed: "How to list docker tags" (docker) - Please help us write most modern and shortest code solution for this issue:

**How to list docker tags** (technology: [docker](https://onelinerhub.com/docker))

### Fast way

Just write the code solution in the comments.

### Prefered way

1. Create pull request ... | non_process | short solution needed how to list docker tags docker please help us write most modern and shortest code solution for this issue how to list docker tags technology fast way just write the code solution in the comments prefered way create pull request with a new code file inside don... | 0 |

249,185 | 7,953,975,332 | IssuesEvent | 2018-07-12 05:11:51 | StrangeLoopGames/EcoIssues | https://api.github.com/repos/StrangeLoopGames/EcoIssues | closed | Placed items disappear but block placement | Medium Priority | **Version:** 0.7.4.5 beta

**Expected behavior:** When placing an item (so far tried ramp, chair), it should appear.

**Actual behavior:** The item disappears immediately. I can walk through it, but I cant place anything else there (blocks or items). The item can be deleted with the Dev Tool (ramps need to be delet... | 1.0 | Placed items disappear but block placement - **Version:** 0.7.4.5 beta