Unnamed: 0 int64 0 832k | id float64 2.49B 32.1B | type stringclasses 1 value | created_at stringlengths 19 19 | repo stringlengths 7 112 | repo_url stringlengths 36 141 | action stringclasses 3 values | title stringlengths 1 744 | labels stringlengths 4 574 | body stringlengths 9 211k | index stringclasses 10 values | text_combine stringlengths 96 211k | label stringclasses 2 values | text stringlengths 96 188k | binary_label int64 0 1 |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

5,816 | 8,651,268,682 | IssuesEvent | 2018-11-27 02:18:38 | google/codeworld | https://api.github.com/repos/google/codeworld | closed | Automatic requirement checker for exercises | enhancement in process | Idea from class today, that @marcianx and I plan to work on this evening.

When students are completing an exercise in class, there are usually some requirements for success. For example, in class today, we wanted students to redefine three variables to be applications of the same function. Enforcing this by hand i... | 1.0 | Automatic requirement checker for exercises - Idea from class today, that @marcianx and I plan to work on this evening.

When students are completing an exercise in class, there are usually some requirements for success. For example, in class today, we wanted students to redefine three variables to be applications o... | process | automatic requirement checker for exercises idea from class today that marcianx and i plan to work on this evening when students are completing an exercise in class there are usually some requirements for success for example in class today we wanted students to redefine three variables to be applications o... | 1 |

12,855 | 15,240,065,186 | IssuesEvent | 2021-02-19 05:56:41 | gfx-rs/naga | https://api.github.com/repos/gfx-rs/naga | closed | Analysis doesn't process Call statement correctly | area: processing kind: bug | It should follow the same logic as `Expression::Call`, but currently it's doing less than that. | 1.0 | Analysis doesn't process Call statement correctly - It should follow the same logic as `Expression::Call`, but currently it's doing less than that. | process | analysis doesn t process call statement correctly it should follow the same logic as expression call but currently it s doing less than that | 1 |

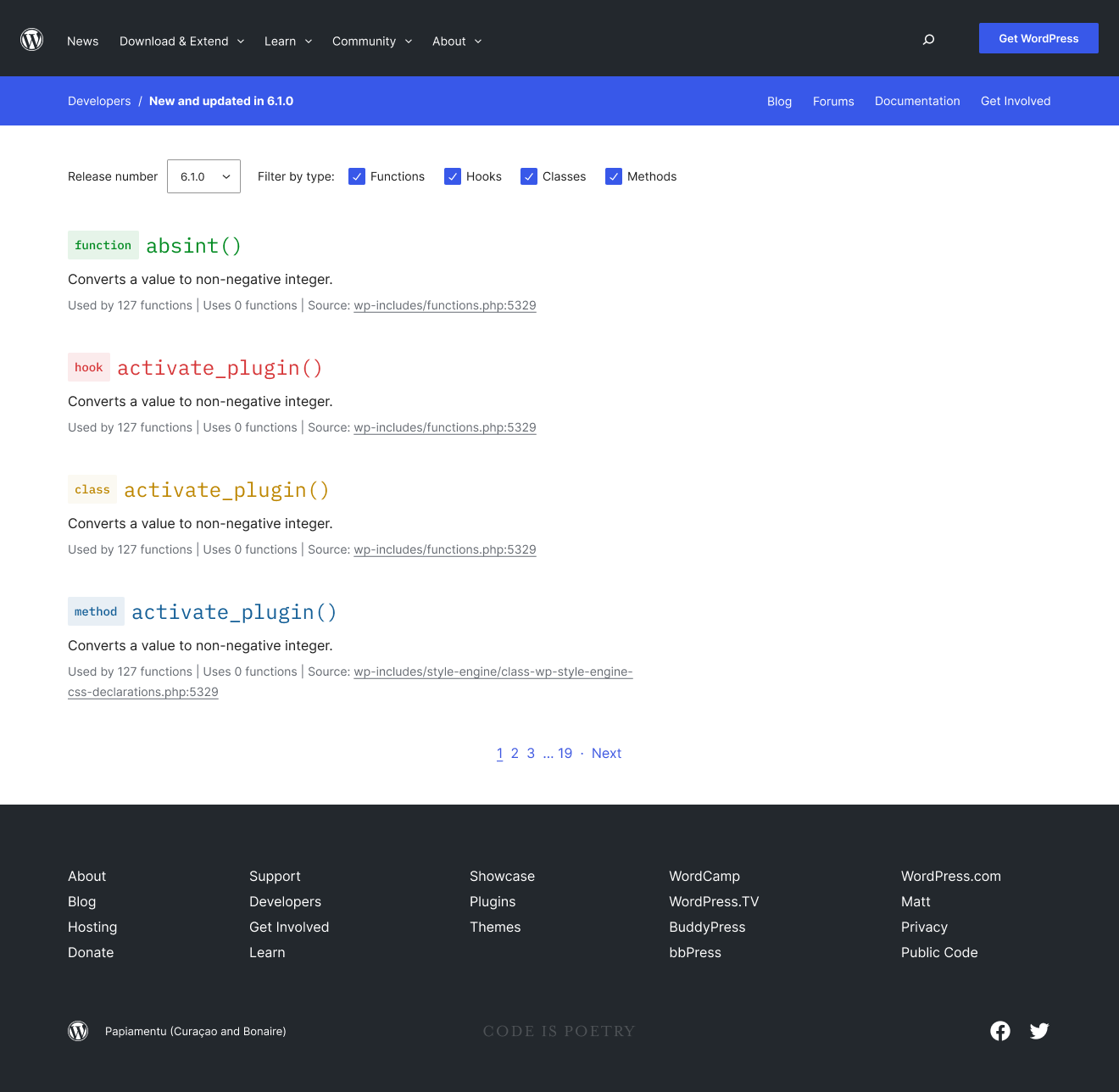

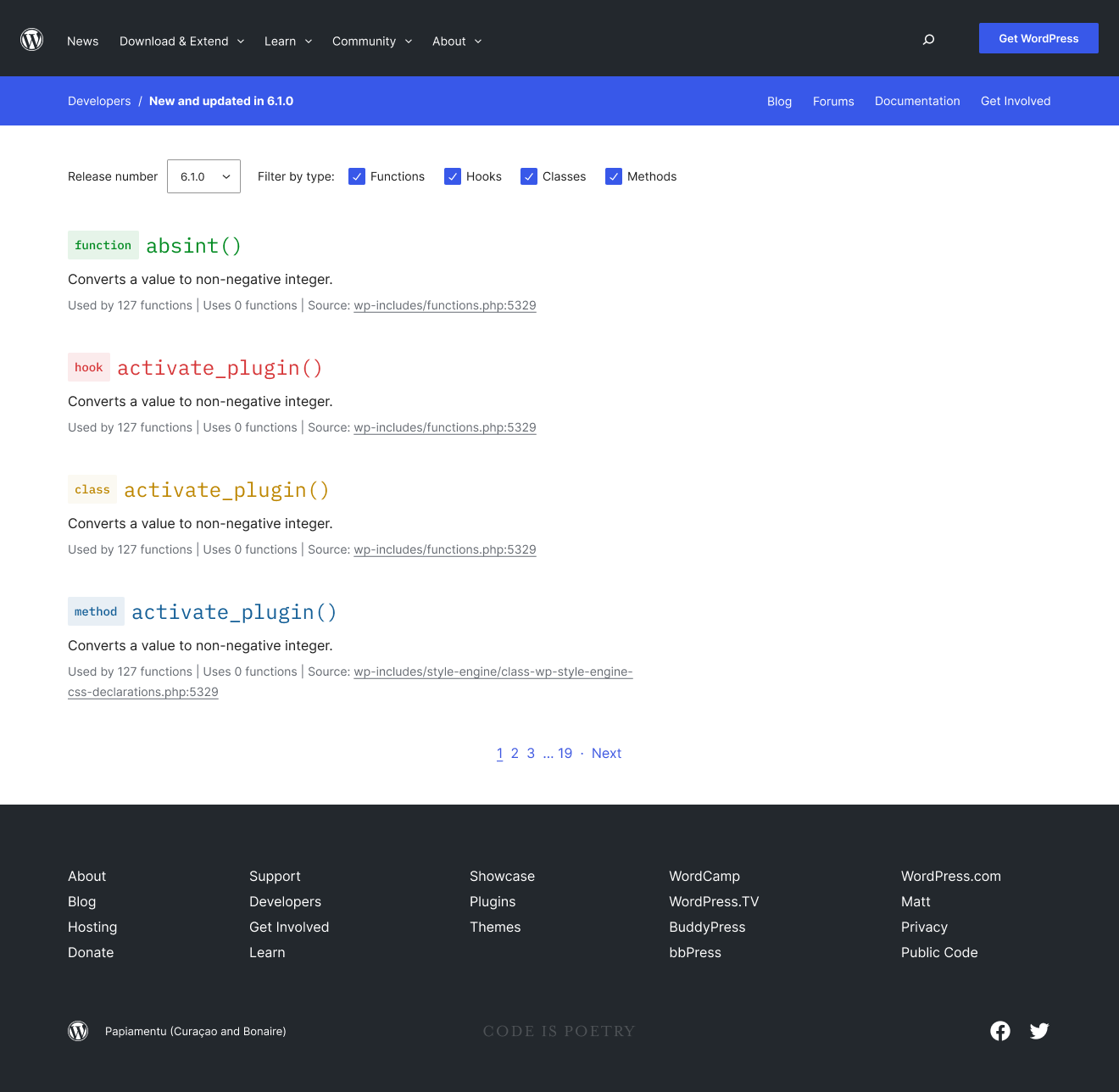

171,838 | 27,186,110,280 | IssuesEvent | 2023-02-19 08:17:32 | WordPress/wporg-developer | https://api.github.com/repos/WordPress/wporg-developer | closed | Implement WP version archive view. | Redesign | Users should be able to view the code changes between wp versions. This page also contains filters and a unique update to the breadcrumb.

## Screenshot

[Figma](https://www.figma.com/file/2WxlJFzMJvqPf... | 1.0 | Implement WP version archive view. - Users should be able to view the code changes between wp versions. This page also contains filters and a unique update to the breadcrumb.

## Screenshot

[Figma](htt... | non_process | implement wp version archive view users should be able to view the code changes between wp versions this page also contains filters and a unique update to the breadcrumb screenshot | 0 |

21,648 | 30,083,376,504 | IssuesEvent | 2023-06-29 06:35:47 | inmanta/web-console | https://api.github.com/repos/inmanta/web-console | opened | Dump server and agent log when e2e tests fail | process | The e2e tests use an Inmanta server created by the local-setup tool. When a test case fails, it's very useful to have access to the server and agent logs to investigate what went wrong. This information goes lost when `yarn kill-server` is executed at the end of the Jenkins pipeline. It would be good to archive this in... | 1.0 | Dump server and agent log when e2e tests fail - The e2e tests use an Inmanta server created by the local-setup tool. When a test case fails, it's very useful to have access to the server and agent logs to investigate what went wrong. This information goes lost when `yarn kill-server` is executed at the end of the Jenki... | process | dump server and agent log when tests fail the tests use an inmanta server created by the local setup tool when a test case fails it s very useful to have access to the server and agent logs to investigate what went wrong this information goes lost when yarn kill server is executed at the end of the jenkins p... | 1 |

6,942 | 9,219,359,200 | IssuesEvent | 2019-03-11 15:16:08 | LLK/scratch-blocks | https://api.github.com/repos/LLK/scratch-blocks | closed | Blocks in "Echo of the Light - prototype" do not show up | bug compatibility | ### Expected Behavior

The blocks show up (when you have the "Pen Text Engine" sprite selected), looking something like this:

### Actual... | True | Blocks in "Echo of the Light - prototype" do not show up - ### Expected Behavior

The blocks show up (when you have the "Pen Text Engine" sprite selected), looking something like this:

2. See the quote at the bottom listed as "Chetster, you little fruit loop. You’re done!"

**Expected behavior**

The correct... | 1.0 | Typo/misquote for Invitation to Love - **Describe the bug**

The quote in Invitation to Love is incorrect.

**To Reproduce**

Steps to reproduce the behavior:

1. Go to the listing for Invitation to Love (as seen in Twin Peaks)

2. See the quote at the bottom listed as "Chetster, you little fruit loop. You’re done!... | process | typo misquote for invitation to love describe the bug the quote in invitation to love is incorrect to reproduce steps to reproduce the behavior go to the listing for invitation to love as seen in twin peaks see the quote at the bottom listed as chetster you little fruit loop you’re done ... | 1 |

190,194 | 14,537,283,334 | IssuesEvent | 2020-12-15 08:57:04 | microsoft/azure-pipelines-tasks | https://api.github.com/repos/microsoft/azure-pipelines-tasks | closed | Visual Studio Test (V 2.*) Task: How to retrieve TestRunId when Test Selection uses Test Plan. | Area: Test question | **Question, Bug, or Feature?**

*Type*: Question

**Enter Task Name**: Visual Studio Test V2

## Environment

Azure Pipelines

## Issue Description

Hi Team,

We are using Visual Studio Test (V 2.*) Task to execute Automated Tests under given Test plan. In Automated Test methods , we have custo... | 1.0 | Visual Studio Test (V 2.*) Task: How to retrieve TestRunId when Test Selection uses Test Plan. - **Question, Bug, or Feature?**

*Type*: Question

**Enter Task Name**: Visual Studio Test V2

## Environment

Azure Pipelines

## Issue Description

Hi Team,

We are using Visual Studio Test (V 2.*)... | non_process | visual studio test v task how to retrieve testrunid when test selection uses test plan question bug or feature type question enter task name visual studio test environment azure pipelines issue description hi team we are using visual studio test v ... | 0 |

620,950 | 19,573,887,893 | IssuesEvent | 2022-01-04 13:20:31 | literakl/mezinamiridici | https://api.github.com/repos/literakl/mezinamiridici | opened | Service worker does not update obsolete index file | type: bug priority: P1 | Index page has the correct expiration metadata though it is serves cached content even on new page view. Manual refresh was neccessary. | 1.0 | Service worker does not update obsolete index file - Index page has the correct expiration metadata though it is serves cached content even on new page view. Manual refresh was neccessary. | non_process | service worker does not update obsolete index file index page has the correct expiration metadata though it is serves cached content even on new page view manual refresh was neccessary | 0 |

367,387 | 10,853,158,545 | IssuesEvent | 2019-11-13 14:13:39 | WordPress/gutenberg | https://api.github.com/repos/WordPress/gutenberg | opened | Motion again super slow in Safari | Browser Issues [Priority] High | **Describe the bug**

Previously: #17383.

For me this issue makes the editor **very** hard to use. If I'm editing a post quick block movement is perpetually delayed. :(

**To reproduce**

* Undo or redo blocks OR

* Paste blocks OR

* Delete a block

I think it becomes more obvious as there are more blocks p... | 1.0 | Motion again super slow in Safari - **Describe the bug**

Previously: #17383.

For me this issue makes the editor **very** hard to use. If I'm editing a post quick block movement is perpetually delayed. :(

**To reproduce**

* Undo or redo blocks OR

* Paste blocks OR

* Delete a block

I think it becomes mor... | non_process | motion again super slow in safari describe the bug previously for me this issue makes the editor very hard to use if i m editing a post quick block movement is perpetually delayed to reproduce undo or redo blocks or paste blocks or delete a block i think it becomes more ob... | 0 |

424,535 | 29,144,612,086 | IssuesEvent | 2023-05-18 00:57:38 | jrsteensen/OpenHornet | https://api.github.com/repos/jrsteensen/OpenHornet | opened | Generate MFG Files: OH1A8A1-1 - ASSY, FWD UFC | Type: Documentation "Category: MCAD Priority: Normal" | Generate the manufacturing files for Generate MFG Files: OH1A8A1-1 - ASSY, FWD UFC.

__Check off each item in issue as you complete it.__

### File generation

- [OH Wiki HOWTO Link](https://github.com/jrsteensen/OpenHornet/wiki/HOWTO:-Generating-Fusion360-Manufacturing-Files)

- [ ] Generate SVG files (if required.)

- ... | 1.0 | Generate MFG Files: OH1A8A1-1 - ASSY, FWD UFC - Generate the manufacturing files for Generate MFG Files: OH1A8A1-1 - ASSY, FWD UFC.

__Check off each item in issue as you complete it.__

### File generation

- [OH Wiki HOWTO Link](https://github.com/jrsteensen/OpenHornet/wiki/HOWTO:-Generating-Fusion360-Manufacturing-F... | non_process | generate mfg files assy fwd ufc generate the manufacturing files for generate mfg files assy fwd ufc check off each item in issue as you complete it file generation generate svg files if required generate files if required generate step files if required c... | 0 |

95,267 | 3,941,413,440 | IssuesEvent | 2016-04-27 07:36:17 | raml-org/raml-js-parser-2 | https://api.github.com/repos/raml-org/raml-js-parser-2 | closed | types are getting parsed as a whole, not individually anymore | bug priority:normal | Using the following RAML:

```yaml

#%RAML 1.0

title: API

types:

TypeA:

type: object

properties:

a: string[]

TypeB:

type: object

properties:

b:

type: string[]

TypeC:

type: object

properties:

d:

type: array

items:

typ... | 1.0 | types are getting parsed as a whole, not individually anymore - Using the following RAML:

```yaml

#%RAML 1.0

title: API

types:

TypeA:

type: object

properties:

a: string[]

TypeB:

type: object

properties:

b:

type: string[]

TypeC:

type: object

propertie... | non_process | types are getting parsed as a whole not individually anymore using the following raml yaml raml title api types typea type object properties a string typeb type object properties b type string typec type object properties ... | 0 |

7,465 | 10,563,144,519 | IssuesEvent | 2019-10-04 20:10:10 | nodejs/security-wg | https://api.github.com/repos/nodejs/security-wg | closed | HackerOne Support team ideas | process | Some ideas on further improving the triage process with the help of the HackerOne team which has been valuable from the recent reports I've seen (thanks a lot Alek, Megan and Reed).

1. We currently have a `Node.js WG triage team` bucket for everything that has been triaged. Quite a bit of the reports are still for l... | 1.0 | HackerOne Support team ideas - Some ideas on further improving the triage process with the help of the HackerOne team which has been valuable from the recent reports I've seen (thanks a lot Alek, Megan and Reed).

1. We currently have a `Node.js WG triage team` bucket for everything that has been triaged. Quite a bit... | process | hackerone support team ideas some ideas on further improving the triage process with the help of the hackerone team which has been valuable from the recent reports i ve seen thanks a lot alek megan and reed we currently have a node js wg triage team bucket for everything that has been triaged quite a bit... | 1 |

19,783 | 26,163,381,744 | IssuesEvent | 2022-12-31 23:47:56 | RobertCraigie/prisma-client-py | https://api.github.com/repos/RobertCraigie/prisma-client-py | closed | Required array fields cannot be used in type safe raw queries | bug/2-confirmed topic: types kind/bug process/candidate level/advanced priority/high topic: crash topic: raw queries | <!--

Thanks for helping us improve Prisma Client Python! 🙏 Please follow the sections in the template and provide as much information as possible about your problem, e.g. by enabling additional logging output.

See https://prisma-client-py.readthedocs.io/en/stable/reference/logging/ for how to enable additional log... | 1.0 | Required array fields cannot be used in type safe raw queries - <!--

Thanks for helping us improve Prisma Client Python! 🙏 Please follow the sections in the template and provide as much information as possible about your problem, e.g. by enabling additional logging output.

See https://prisma-client-py.readthedocs.... | process | required array fields cannot be used in type safe raw queries thanks for helping us improve prisma client python 🙏 please follow the sections in the template and provide as much information as possible about your problem e g by enabling additional logging output see for how to enable additional loggin... | 1 |

146,812 | 11,757,819,698 | IssuesEvent | 2020-03-13 14:21:50 | CIMDBORG/CIMMigrationProject | https://api.github.com/repos/CIMDBORG/CIMMigrationProject | opened | Code Clean-Up | Testing enhancement | [codechanges.txt](https://github.com/CIMDBORG/CIMMigrationProject/files/4330006/codechanges.txt)

The attached document is a very informal list of what needs to be looked at in the database. These small clean-ups will make the application look and run smoother. | 1.0 | Code Clean-Up - [codechanges.txt](https://github.com/CIMDBORG/CIMMigrationProject/files/4330006/codechanges.txt)

The attached document is a very informal list of what needs to be looked at in the database. These small clean-ups will make the application look and run smoother. | non_process | code clean up the attached document is a very informal list of what needs to be looked at in the database these small clean ups will make the application look and run smoother | 0 |

12,036 | 14,738,645,232 | IssuesEvent | 2021-01-07 05:20:58 | kdjstudios/SABillingGitlab | https://api.github.com/repos/kdjstudios/SABillingGitlab | closed | Invoice summary email | anc-process anp-2.5 ant-child/secondary ant-enhancement grt-billing | In GitLab by @kdjstudios on Jul 5, 2018, 09:44

Hello team,

While working on #937; it became apparent that not always is the user who is running the billing cycle have access to the email address used at the site level. Which is where the email/fax summaries will be sent. how difficult would it be to have this email b... | 1.0 | Invoice summary email - In GitLab by @kdjstudios on Jul 5, 2018, 09:44

Hello team,

While working on #937; it became apparent that not always is the user who is running the billing cycle have access to the email address used at the site level. Which is where the email/fax summaries will be sent. how difficult would it... | process | invoice summary email in gitlab by kdjstudios on jul hello team while working on it became apparent that not always is the user who is running the billing cycle have access to the email address used at the site level which is where the email fax summaries will be sent how difficult would it be to ... | 1 |

13,255 | 15,725,719,140 | IssuesEvent | 2021-03-29 10:20:12 | hashicorp/packer-plugin-amazon | https://api.github.com/repos/hashicorp/packer-plugin-amazon | opened | amazon-import - improvement | enhancement post-processor/amazon-import | _This issue was originally opened by @Roxyrob as hashicorp/packer#9582. It was migrated here as a result of the [Packer plugin split](https://github.com/hashicorp/packer/issues/8610#issuecomment-770034737). The original body of the issue is below._

<hr>

#### Feature Description

amazon-import supporting "import-sna... | 1.0 | amazon-import - improvement - _This issue was originally opened by @Roxyrob as hashicorp/packer#9582. It was migrated here as a result of the [Packer plugin split](https://github.com/hashicorp/packer/issues/8610#issuecomment-770034737). The original body of the issue is below._

<hr>

#### Feature Description

amazon... | process | amazon import improvement this issue was originally opened by roxyrob as hashicorp packer it was migrated here as a result of the the original body of the issue is below feature description amazon import supporting import snapshot ami import passing through snapshot use case s ... | 1 |

22,167 | 3,940,523,602 | IssuesEvent | 2016-04-27 01:26:42 | extnet/Ext.NET | https://api.github.com/repos/extnet/Ext.NET | closed | Support multiple axes per position in a chart | 2.x 3.x 4.x feature fixed-in-latest-extjs sencha | http://forums.ext.net/showthread.php?26496

http://www.sencha.com/forum/showthread.php?275032

**Update:** Allegedly fixed in a _pending_ release at the time of 6.0.1 release. | 1.0 | Support multiple axes per position in a chart - http://forums.ext.net/showthread.php?26496

http://www.sencha.com/forum/showthread.php?275032

**Update:** Allegedly fixed in a _pending_ release at the time of 6.0.1 release. | non_process | support multiple axes per position in a chart update allegedly fixed in a pending release at the time of release | 0 |

6,380 | 9,429,859,405 | IssuesEvent | 2019-04-12 07:30:11 | googleapis/google-cloud-node | https://api.github.com/repos/googleapis/google-cloud-node | closed | use `process.execSync` in favor of `execa` in tests | type: process | `process.execSync` is great for writing unit tests, it's what we use in Node.js itself.

I think that `execa`, `cross-spawn`, etc., address some historic inconsistencies in the Node.js API that we shouldn't be bumping into in simple assertions (and I'm not sure what, if any of the issues, continue to exist on Node.js... | 1.0 | use `process.execSync` in favor of `execa` in tests - `process.execSync` is great for writing unit tests, it's what we use in Node.js itself.

I think that `execa`, `cross-spawn`, etc., address some historic inconsistencies in the Node.js API that we shouldn't be bumping into in simple assertions (and I'm not sure wh... | process | use process execsync in favor of execa in tests process execsync is great for writing unit tests it s what we use in node js itself i think that execa cross spawn etc address some historic inconsistencies in the node js api that we shouldn t be bumping into in simple assertions and i m not sure wh... | 1 |

175,155 | 13,536,866,458 | IssuesEvent | 2020-09-16 09:39:06 | Muhammad-Mamduh/Muhammad-Mamduh | https://api.github.com/repos/Muhammad-Mamduh/Muhammad-Mamduh | opened | ali | Taggg (a) Taggggggg (b) Taggggggg (c) test tag0 test tag1 test tag2 test tag3 test tag4 test tag5 test tag6 test tag7 test tag8 test tag9 | # :clipboard: Bug Details

>ali

key | value

--|--

Reported At | 2020-09-16 09:38:45 UTC

Email | test@example.com

Categories | Report a bug

Tags | test tag0, test tag1, test tag2, test tag3, test tag4, test tag5, test tag6, test tag7, test tag8, test tag9, Taggg (a), Taggggggg (b), Taggggggg (c)

App Version... | 10.0 | ali - # :clipboard: Bug Details

>ali

key | value

--|--

Reported At | 2020-09-16 09:38:45 UTC

Email | test@example.com

Categories | Report a bug

Tags | test tag0, test tag1, test tag2, test tag3, test tag4, test tag5, test tag6, test tag7, test tag8, test tag9, Taggg (a), Taggggggg (b), Taggggggg (c)

App V... | non_process | ali clipboard bug details ali key value reported at utc email test example com categories report a bug tags test test test test test test test test test test taggg a taggggggg b taggggggg c app version kotlin session durat... | 0 |

60,764 | 8,461,218,227 | IssuesEvent | 2018-10-22 21:07:31 | droidkfx/Yet-Another-Productivity-App | https://api.github.com/repos/droidkfx/Yet-Another-Productivity-App | closed | document new features | documentation | - [ ] Logout needs to be documented

- [ ] Document update to yapa-api - added authentication, bugfix multithread access to task | 1.0 | document new features - - [ ] Logout needs to be documented

- [ ] Document update to yapa-api - added authentication, bugfix multithread access to task | non_process | document new features logout needs to be documented document update to yapa api added authentication bugfix multithread access to task | 0 |

16,545 | 21,568,598,940 | IssuesEvent | 2022-05-02 04:17:56 | lynnandtonic/nestflix.fun | https://api.github.com/repos/lynnandtonic/nestflix.fun | closed | Add The Cooper Clan | suggested title in process | Title: The Cooper Clan

Type (film/tv show): TV show

Show in which it appears: Monk

Is the parent show streaming anywhere? Amazon Prime and Peacock

About when in the parent show does it appear? Season 8, Episode 1, "Mr. Monk's Favorite Show"

Actual footage of the show can be seen (yes/no)? Yes

Cast:

C... | 1.0 | Add The Cooper Clan - Title: The Cooper Clan

Type (film/tv show): TV show

Show in which it appears: Monk

Is the parent show streaming anywhere? Amazon Prime and Peacock

About when in the parent show does it appear? Season 8, Episode 1, "Mr. Monk's Favorite Show"

Actual footage of the show can be seen (ye... | process | add the cooper clan title the cooper clan type film tv show tv show show in which it appears monk is the parent show streaming anywhere amazon prime and peacock about when in the parent show does it appear season episode mr monk s favorite show actual footage of the show can be seen ye... | 1 |

184,476 | 14,981,394,459 | IssuesEvent | 2021-01-28 14:47:44 | google/jsonnet | https://api.github.com/repos/google/jsonnet | reopened | Document -S (--string) in language reference (and maybe tutorial, too). | documentation | Not much else to say.

```sh

$ jsonnet --version && cat test.jsonnet && echo && jsonnet test.jsonnet

Jsonnet commandline interpreter v0.16.0

local wrapper = [

{

some_prop: true

}

];

std.manifestYamlDoc(wrapper)

"- \"some_prop\": true"

``` | 1.0 | Document -S (--string) in language reference (and maybe tutorial, too). - Not much else to say.

```sh

$ jsonnet --version && cat test.jsonnet && echo && jsonnet test.jsonnet

Jsonnet commandline interpreter v0.16.0

local wrapper = [

{

some_prop: true

}

];

std.manifestYamlDoc(wrapper)

"- \"some_pr... | non_process | document s string in language reference and maybe tutorial too not much else to say sh jsonnet version cat test jsonnet echo jsonnet test jsonnet jsonnet commandline interpreter local wrapper some prop true std manifestyamldoc wrapper some prop... | 0 |

20,367 | 27,024,939,008 | IssuesEvent | 2023-02-11 13:28:47 | firebase/firebase-cpp-sdk | https://api.github.com/repos/firebase/firebase-cpp-sdk | closed | [C++] Nightly Integration Testing Report for Firestore | type: process nightly-testing |

<hidden value="integration-test-status-comment"></hidden>

### ✅ [build against repo] Integration test succeeded!

Requested by @sunmou99 on commit b1a5444b10c751c4c039a29bb9fe531b39d1b9ad

Last updated: Sat Feb 11 04:02 PST 2023

**[View integration test log & download artifacts](https://github.com/firebase/fire... | 1.0 | [C++] Nightly Integration Testing Report for Firestore -

<hidden value="integration-test-status-comment"></hidden>

### ✅ [build against repo] Integration test succeeded!

Requested by @sunmou99 on commit b1a5444b10c751c4c039a29bb9fe531b39d1b9ad

Last updated: Sat Feb 11 04:02 PST 2023

**[View integration test l... | process | nightly integration testing report for firestore ✅ nbsp integration test succeeded requested by on commit last updated sat feb pst ❌ nbsp integration test failed requested by firebase workflow trigger on commit last updated fri feb pst failu... | 1 |

54,299 | 6,378,250,202 | IssuesEvent | 2017-08-02 12:15:57 | kubernetes/kubernetes | https://api.github.com/repos/kubernetes/kubernetes | closed | TestClientGoCustomResourceExample flakes | kind/flake priority/failing-test sig/api-machinery | flaking on HEAD:

https://storage.googleapis.com/k8s-gubernator/triage/index.html?pr=1&job=ci-kubernetes-test-go&test=TestClientGoCustomResourceExample

https://k8s-gubernator.appspot.com/build/kubernetes-jenkins/logs/ci-kubernetes-test-go/8135#k8siokubernetesvendork8sioapiextensions-apiservertestintegration-testclie... | 1.0 | TestClientGoCustomResourceExample flakes - flaking on HEAD:

https://storage.googleapis.com/k8s-gubernator/triage/index.html?pr=1&job=ci-kubernetes-test-go&test=TestClientGoCustomResourceExample

https://k8s-gubernator.appspot.com/build/kubernetes-jenkins/logs/ci-kubernetes-test-go/8135#k8siokubernetesvendork8sioapie... | non_process | testclientgocustomresourceexample flakes flaking on head kubernetes sig api machinery test failures | 0 |

22,745 | 32,062,153,572 | IssuesEvent | 2023-09-24 19:33:11 | bitfocus/companion-module-requests | https://api.github.com/repos/bitfocus/companion-module-requests | opened | ENTTEC S-Play | NOT YET PROCESSED | - [x] **I have researched the list of existing Companion modules and requests and have determined this has not yet been requested**

The name of the device, hardware, or software you would like to control:

ENTTEC S-PLAY via the http api

https://www.enttec.com/product/lighting-show-recorder/smart-light-show-controll... | 1.0 | ENTTEC S-Play - - [x] **I have researched the list of existing Companion modules and requests and have determined this has not yet been requested**

The name of the device, hardware, or software you would like to control:

ENTTEC S-PLAY via the http api

https://www.enttec.com/product/lighting-show-recorder/smart-lig... | process | enttec s play i have researched the list of existing companion modules and requests and have determined this has not yet been requested the name of the device hardware or software you would like to control enttec s play via the http api s play has osc support but it s very limited what you wou... | 1 |

120,156 | 12,060,360,654 | IssuesEvent | 2020-04-15 21:03:12 | SAP/openui5 | https://api.github.com/repos/SAP/openui5 | closed | Tooltips still not satisfactory | documentation | OpenUI5 version: 1.68.1

I have found this issue https://github.com/SAP/openui5/issues/1612 and I have to say I agree and conclude it is not fixed still.

1. All Controls show a tooltip aggregation and the documentation keeps sending you in circles. For example, the https://openui5.hana.ondemand.com/#/api/sap.f.Car... | 1.0 | Tooltips still not satisfactory - OpenUI5 version: 1.68.1

I have found this issue https://github.com/SAP/openui5/issues/1612 and I have to say I agree and conclude it is not fixed still.

1. All Controls show a tooltip aggregation and the documentation keeps sending you in circles. For example, the https://openui5... | non_process | tooltips still not satisfactory version i have found this issue and i have to say i agree and conclude it is not fixed still all controls show a tooltip aggregation and the documentation keeps sending you in circles for example the has a tooltip aggregation it tells you the option to use the... | 0 |

381,726 | 11,286,759,318 | IssuesEvent | 2020-01-16 01:49:07 | Radarr/Radarr | https://api.github.com/repos/Radarr/Radarr | closed | Update cleanReleaseGroupRegEx parsing | confirmed done aphrodite enhancement parser priority:low sonarr upstream | Scene name gets messed up on a repost/xpost/scrambled post-fix.

The Sonarr code was updated, so should be easy fix.

https://github.com/Radarr/Radarr/blob/develop/src/NzbDrone.Core/Parser/Parser.cs#L113

Added strings for movies would be xpost/Scrambled, but for usability ideally make it user configurable. | 1.0 | Update cleanReleaseGroupRegEx parsing - Scene name gets messed up on a repost/xpost/scrambled post-fix.

The Sonarr code was updated, so should be easy fix.

https://github.com/Radarr/Radarr/blob/develop/src/NzbDrone.Core/Parser/Parser.cs#L113

Added strings for movies would be xpost/Scrambled, but for usability ... | non_process | update cleanreleasegroupregex parsing scene name gets messed up on a repost xpost scrambled post fix the sonarr code was updated so should be easy fix added strings for movies would be xpost scrambled but for usability ideally make it user configurable | 0 |

9,421 | 12,416,849,919 | IssuesEvent | 2020-05-22 19:09:36 | NationalSecurityAgency/ghidra | https://api.github.com/repos/NationalSecurityAgency/ghidra | closed | M68000 decompiler: Read of volatile region ignored if followed by one or more writes | Feature: Processor/68000 Type: Bug | **Describe the bug**

When a read of a volatile memory region is followed by a sequence of zero-writes, the read is silently dropped from the decompiled output.

**To Reproduce**

Steps to reproduce the behavior:

1. Load the code segment included below as M68020 Big Endian code.

2. Define a volatile read-write regi... | 1.0 | M68000 decompiler: Read of volatile region ignored if followed by one or more writes - **Describe the bug**

When a read of a volatile memory region is followed by a sequence of zero-writes, the read is silently dropped from the decompiled output.

**To Reproduce**

Steps to reproduce the behavior:

1. Load the code ... | process | decompiler read of volatile region ignored if followed by one or more writes describe the bug when a read of a volatile memory region is followed by a sequence of zero writes the read is silently dropped from the decompiled output to reproduce steps to reproduce the behavior load the code segme... | 1 |

68,657 | 14,941,691,911 | IssuesEvent | 2021-01-25 20:08:23 | MicrosoftDocs/azure-docs | https://api.github.com/repos/MicrosoftDocs/azure-docs | closed | "SQL Information Protection" is not available under "Security Center --> Pricing & Settings" | Pri2 cxp doc-enhancement security-center/svc triaged | The option "SQL Information Protection" is not available under "Security Center --> Pricing & Settings"

[Enter feedback here]

---

#### Document Details

⚠ *Do not edit this section. It is required for docs.microsoft.com ➟ GitHub issue linking.*

* ID: 4ecc186b-cbfd-1c4e-fc2e-165883dc0a02

* Version Independe... | True | "SQL Information Protection" is not available under "Security Center --> Pricing & Settings" - The option "SQL Information Protection" is not available under "Security Center --> Pricing & Settings"

[Enter feedback here]

---

#### Document Details

⚠ *Do not edit this section. It is required for docs.microsoft.... | non_process | sql information protection is not available under security center pricing settings the option sql information protection is not available under security center pricing settings document details ⚠ do not edit this section it is required for docs microsoft com ➟ github issue l... | 0 |

19,486 | 25,795,378,490 | IssuesEvent | 2022-12-10 13:53:47 | vesoft-inc/nebula-studio | https://api.github.com/repos/vesoft-inc/nebula-studio | closed | connect a non-existent address timeout and get 500 code | process/fixed severity/major affects/v3.2 type/bug | **Describe the bug (__must be provided__)**

A clear and concise description of what the bug is.

**Your Environments (__must be provided__)**

* OS: `Mac OS 12.3.1`

* Node-version: `18.7.0`

* Studio-version: `all`

**How To Reproduce(__must be provided__)**

Steps to reproduce the behavior:

1. input ... | 1.0 | connect a non-existent address timeout and get 500 code - **Describe the bug (__must be provided__)**

A clear and concise description of what the bug is.

**Your Environments (__must be provided__)**

* OS: `Mac OS 12.3.1`

* Node-version: `18.7.0`

* Studio-version: `all`

**How To Reproduce(__must be provid... | process | connect a non existent address timeout and get code describe the bug must be provided a clear and concise description of what the bug is your environments must be provided os mac os node version studio version all how to reproduce must be provided ... | 1 |

304,995 | 26,354,230,353 | IssuesEvent | 2023-01-11 08:26:15 | OffchainLabs/arb-token-lists | https://api.github.com/repos/OffchainLabs/arb-token-lists | closed | CI integration tests | Type: Tests | Test "arbify":

- Fetch public arbified list (i.e., CMC); we'll call it L

- arbify it locally; i.e., check that arbifying its corresponding layer 1 list with L as the previous list still (deep)-equals L

Test "update":

- Fetch public arbified list (i.e., CMC); we'll call it L

- Updating L should still equal L

... | 1.0 | CI integration tests - Test "arbify":

- Fetch public arbified list (i.e., CMC); we'll call it L

- arbify it locally; i.e., check that arbifying its corresponding layer 1 list with L as the previous list still (deep)-equals L

Test "update":

- Fetch public arbified list (i.e., CMC); we'll call it L

- Updating L... | non_process | ci integration tests test arbify fetch public arbified list i e cmc we ll call it l aribify it locally i e check that arbifying its corresponding layer list with l as the previous list still deep equals l test update fetch public arbified list i e cmc we ll call it l updating l... | 0 |

13,313 | 15,783,613,299 | IssuesEvent | 2021-04-01 14:11:55 | ooi-data/CE04OSPS-SF01B-3C-PARADA102-streamed-parad_sa_sample | https://api.github.com/repos/ooi-data/CE04OSPS-SF01B-3C-PARADA102-streamed-parad_sa_sample | opened | 🛑 Processing failed: OSError | process | ## Overview

`OSError` found in `processing_task` task during run ended on 2021-04-01T14:11:54.836710.

## Details

Flow name: `CE04OSPS-SF01B-3C-PARADA102-streamed-parad_sa_sample`

Task name: `processing_task`

Error type: `OSError`

Error message: [Errno 16] Please reduce your request rate.

<details>

<summary>Traceba... | 1.0 | 🛑 Processing failed: OSError - ## Overview

`OSError` found in `processing_task` task during run ended on 2021-04-01T14:11:54.836710.

## Details

Flow name: `CE04OSPS-SF01B-3C-PARADA102-streamed-parad_sa_sample`

Task name: `processing_task`

Error type: `OSError`

Error message: [Errno 16] Please reduce your request ra... | process | 🛑 processing failed oserror overview oserror found in processing task task during run ended on details flow name streamed parad sa sample task name processing task error type oserror error message please reduce your request rate traceback traceback most rec... | 1 |

75,804 | 21,001,817,879 | IssuesEvent | 2022-03-29 18:13:37 | cse-sim/cse | https://api.github.com/repos/cse-sim/cse | opened | Add economizer to RSYS | enhancement building science 2 - medium priority | - Add input for minimum OA fraction with hourly/subhourly variability

- Add inputs for economizer controls (see [EnergyPlus documentation](https://bigladdersoftware.com/epx/docs/9-6/input-output-reference/group-controllers.html#controlleroutdoorair))

- Maximum OA flow/fraction?

- Control type (Fixed/differential... | 1.0 | Add economizer to RSYS - - Add input for minimum OA fraction with hourly/subhourly variability

- Add inputs for economizer controls (see [EnergyPlus documentation](https://bigladdersoftware.com/epx/docs/9-6/input-output-reference/group-controllers.html#controlleroutdoorair))

- Maximum OA flow/fraction?

- Control... | non_process | add economizer to rsys add input for minimum oa fraction with hourly subhourly variability add inputs for economizer controls see maximum oa flow fraction control type fixed differential drybulb enthalpy lockout some controls can probably be set based on expressions others may need c... | 0 |

164,114 | 25,921,480,784 | IssuesEvent | 2022-12-15 22:28:02 | coder/coder | https://api.github.com/repos/coder/coder | closed | Long metadata strings get truncated | site design waiting-for-info | Can we have a way to specify that a particular metadata string not be truncated even if it's too long?

<img width="826" alt="Screen Shot 2022-10-25 at 9 41 29 pm" src="https://user-images.githubusercontent.com/21701128/197753151-e89e7778-8f67-4470-a77e-6fd3b74acb9d.png">

| 1.0 | Long metadata strings get truncated - Can we have a way to specify that a particular metadata string not be truncated even if it's too long?

<img width="826" alt="Screen Shot 2022-10-25 at 9 41 29 pm" src="https://user-images.githubusercontent.com/21701128/197753151-e89e7778-8f67-4470-a77e-6fd3b74acb9d.png">

| non_process | long metadata strings get truncated can we have a way to specify that a particular metadata string not be truncated even if its too long img width alt screen shot at pm src | 0 |

4,064 | 6,995,525,398 | IssuesEvent | 2017-12-15 19:40:42 | syndesisio/syndesis | https://api.github.com/repos/syndesisio/syndesis | opened | Improve Development Process | cat/discussion cat/process cat/retro | I personally think this should be an Epic, as it's so broad and there are a lot of areas we can improve on here. This was a card in the retrospective that drew my attention. The general comment on it was that we need time to accommodate for QE, UXD, and Docs review after implementing specific features, and that this wa... | 1.0 | Improve Development Process - I personally think this should be an Epic, as it's so broad and there are a lot of areas we can improve on here. This was a card in the retrospective that drew my attention. The general comment on it was that we need time to accommodate for QE, UXD, and Docs review after implementing speci... | process | improve development process i personally think this should be an epic as it s so broad and there are a lot of areas we can improve on here this was a card in the retrospective that drew my attention the general comment on it was that we need time to accommodate for qe uxd and docs review after implementing speci... | 1 |

265,546 | 28,297,918,719 | IssuesEvent | 2023-04-10 01:16:19 | hshivhare67/platform_external_tcpdump_AOSP10_r33_4.9.2 | https://api.github.com/repos/hshivhare67/platform_external_tcpdump_AOSP10_r33_4.9.2 | closed | CVE-2020-8037 (High) detected in platform_external_tcpdumpandroid-mainline-12.0.0_r17 - autoclosed | Mend: dependency security vulnerability | ## CVE-2020-8037 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>platform_external_tcpdumpandroid-mainline-12.0.0_r17</b></p></summary>

<p>

<p>Library home page: <a href=https://github... | True | CVE-2020-8037 (High) detected in platform_external_tcpdumpandroid-mainline-12.0.0_r17 - autoclosed - ## CVE-2020-8037 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>platform_external_t... | non_process | cve high detected in platform external tcpdumpandroid mainline autoclosed cve high severity vulnerability vulnerable library platform external tcpdumpandroid mainline library home page a href found in head commit a href found in base branch master ... | 0 |

148,952 | 19,560,750,033 | IssuesEvent | 2022-01-03 15:54:15 | shaimael/Webgoat | https://api.github.com/repos/shaimael/Webgoat | opened | CVE-2017-7957 (High) detected in xstream-1.4.5.jar | security vulnerability | ## CVE-2017-7957 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>xstream-1.4.5.jar</b></p></summary>

<p>XStream is a serialization library from Java objects to XML and back.</p>

<p>Pat... | True | CVE-2017-7957 (High) detected in xstream-1.4.5.jar - ## CVE-2017-7957 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>xstream-1.4.5.jar</b></p></summary>

<p>XStream is a serialization ... | non_process | cve high detected in xstream jar cve high severity vulnerability vulnerable library xstream jar xstream is a serialization library from java objects to xml and back path to dependency file webgoat lessons vulnerable components pom xml path to vulnerable library reposi... | 0 |

258,894 | 8,180,602,948 | IssuesEvent | 2018-08-28 20:00:29 | MultiPoolMiner/MultiPoolMiner | https://api.github.com/repos/MultiPoolMiner/MultiPoolMiner | closed | WIP: Algorithms.txt with all new Equihash and CryptoNight algorithms | priority workaround | ### Important!!!

**Algorithms.txt in the download zip file is broken - please use the file in this thread!**

Due to the quick changes in the CryptoNight and Equihash ecosystem we are struggling to keep Algorithms.txt up-to-date.

Should you encounter issues with CryptoNight or Equihash miners then make sure you... | 1.0 | WIP: Algorithms.txt with all new Equihash and CryptoNight algorithms - ### Important!!!

**Algorithms.txt in the download zip file is broken - please use the file in this thread!**

Due to the quick changes in the CryptoNight and Equihash ecosystem we are struggling to keep Algorithms.txt up-to-date.

Should you ... | non_process | wip algorithms txt with all new equihash and cryptonight algorithms important algorithms txt in the download zip file is broken please use the file in this thread due to the quick changes in the cryptonight and equihash ecosystem we are struggling to keep algorithms txt up to date should you ... | 0 |

254 | 2,677,662,878 | IssuesEvent | 2015-03-26 02:10:45 | meteor/meteor | https://api.github.com/repos/meteor/meteor | closed | Release 1.0.5 | Project:Release Process | This issue tracks the release of Meteor 1.0.5. Have any concerns? Mention them here. Finding that the RCs are working great? Mention that here. We will keep this top-level description updated with known issues.

The current RC is `1.0.5-rc.0`. Update to the release candidate with: `meteor update --release 1.0.5-rc.0`... | 1.0 | Release 1.0.5 - This issue tracks the release of Meteor 1.0.5. Have any concerns? Mention them here. Finding that the RCs are working great? Mention that here. We will keep this top-level description updated with known issues.

The current RC is `1.0.5-rc.0`. Update to the release candidate with: `meteor update --rel... | process | release this issue tracks the release of meteor have any concerns mention them here finding that the rcs are working great mention that here we will keep this top level description updated with known issues the current rc is rc update to the release candidate with meteor update rel... | 1 |

54,447 | 13,348,768,024 | IssuesEvent | 2020-08-29 20:20:28 | cosmos/cosmos-sdk | https://api.github.com/repos/cosmos/cosmos-sdk | closed | go mod tidy fails with crypto/secp256k1/internal/secp256k1: no matching versions for query "latest" | build | When you run `go mod tidy`, the process exits with error:

```bash

alessio@phoenix:~/work/cosmos-sdk$ go mod tidy

go: finding module for package github.com/cosmos/cosmos-sdk/crypto/secp256k1/internal/secp256k1

github.com/cosmos/cosmos-sdk/crypto/keys/secp256k1 imports

github.com/cosmos/cosmos-sdk/crypto/secp256k... | 1.0 | go mod tidy fails with crypto/secp256k1/internal/secp256k1: no matching versions for query "latest" - When you run `go mod tidy`, the process exits with error:

```bash

alessio@phoenix:~/work/cosmos-sdk$ go mod tidy

go: finding module for package github.com/cosmos/cosmos-sdk/crypto/secp256k1/internal/secp256k1

git... | non_process | go mod tidy fails with crypto internal no matching versions for query latest when you run go mod tidy the process exits with error bash alessio phoenix work cosmos sdk go mod tidy go finding module for package github com cosmos cosmos sdk crypto internal github com cosmos cosmos sdk crypto... | 0 |

180,415 | 6,649,520,876 | IssuesEvent | 2017-09-28 13:31:33 | tootsuite/mastodon | https://api.github.com/repos/tootsuite/mastodon | closed | Ability to add alt text to images/media | a11y enhancement new user experience priority - high | Images must have text alternatives that describe the information or function represented by the images. This ensures that images can be used by people with various disabilities. The main technique to add alt text is via the `alt` attribute.

Twitter added the ability to add alt text to images earlier this year. Somet... | 1.0 | Ability to add alt text to images/media - Images must have text alternatives that describe the information or function represented by the images. This ensures that images can be used by people with various disabilities. The main technique to add alt text is via the `alt` attribute.

Twitter added the ability to add a... | non_process | ability to add alt text to images media images must have text alternatives that describe the information or function represented by the images this ensures that images can be used by people with various disabilities the main technique to add alt text is via the alt attribute twitter added the ability to add a... | 0 |

4,450 | 7,315,757,361 | IssuesEvent | 2018-03-01 12:16:26 | rubberduck-vba/Rubberduck | https://api.github.com/repos/rubberduck-vba/Rubberduck | closed | comment seen as code | antlr bug difficulty-02-ducky feature-inspections parse-tree-processing up-for-grabs | Error: Local variable 'testbatch' is not declared - (XLTest163a.xlsm) XLTest.Informations, line 227

```

Sub testAudit() '@notrace testautotest testbatch

``` | 1.0 | comment seen as code - Error: Local variable 'testbatch' is not declared - (XLTest163a.xlsm) XLTest.Informations, line 227

```

Sub testAudit() '@notrace testautotest testbatch

``` | process | comment seen as code error local variable testbatch is not declared xlsm xltest informations line sub testaudit notrace testautotest testbatch | 1 |

13,058 | 15,394,228,219 | IssuesEvent | 2021-03-03 17:38:45 | geneontology/go-ontology | https://api.github.com/repos/geneontology/go-ontology | closed | Obsolete 'detection of stimulus involved in cell cycle checkpoint' branch | cell cycle and DNA processes obsoletion | Dear all,

The proposal has been made to obsolete the 'detection of stimulus involved in cell cycle checkpoint' branch. The reason for obsoletion is that these terms correspond to molecular functions. The terms impacted are:

GO:0090429 detection of endogenous biotic stimulus

GO:0072394 detection of stimulus inv... | 1.0 | Obsolete 'detection of stimulus involved in cell cycle checkpoint' branch - Dear all,

The proposal has been made to obsolete the 'detection of stimulus involved in cell cycle checkpoint' branch. The reason for obsoletion is that these terms correspond to molecular functions. The terms impacted are:

GO:0090429 d... | process | obsolete detection of stimulus involved in cell cycle checkpoint branch dear all the proposal has been made to obsolete the detection of stimulus involved in cell cycle checkpoint branch the reason for obsoletion is that these terms correspond to molecular functions the terms impacted are go detecti... | 1 |

13,814 | 5,467,265,993 | IssuesEvent | 2017-03-10 00:31:53 | mitchellh/packer | https://api.github.com/repos/mitchellh/packer | closed | build -force should remove existing AWS AMI | bug builder/amazon | According to the docs, -force should remove existing artifacts before building, however this seems to work for QEMU but not for amazon-ebs builder:

# packer build -force template.json

[...]

==> amazon-ebs: Error: name conflicts with an existing AMI: ami-0f160a63

Build 'amazon-ebs' errored: Error: name conflicts with... | 1.0 | build -force should remove existing AWS AMI - According to the docs, -force should remove existing artifacts before building, however this seems to work for QEMU but not for amazon-ebs builder:

# packer build -force template.json

[...]

==> amazon-ebs: Error: name conflicts with an existing AMI: ami-0f160a63

Build 'a... | non_process | build force should remove existing aws ami according to the docs force should remove existing artifacts before building however this seems to work for qemu but not for amazon ebs builder packer build force template json amazon ebs error name conflicts with an existing ami ami build amazon ebs ... | 0 |

135,309 | 18,678,892,678 | IssuesEvent | 2021-11-01 01:02:30 | benchmarkdebricked/kubernetes | https://api.github.com/repos/benchmarkdebricked/kubernetes | opened | CVE-2020-15113 (High) detected in mobyv17.03.2-ce, kubernetesv1.16.0-alpha.0 | security vulnerability | ## CVE-2020-15113 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>mobyv17.03.2-ce</b>, <b>kubernetesv1.16.0-alpha.0</b></p></summary>

<p>

</p>

</details>

<p></p>

<details><summary><... | True | CVE-2020-15113 (High) detected in mobyv17.03.2-ce, kubernetesv1.16.0-alpha.0 - ## CVE-2020-15113 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>mobyv17.03.2-ce</b>, <b>kubernetesv1.1... | non_process | cve high detected in ce alpha cve high severity vulnerability vulnerable libraries ce alpha vulnerability details in etcd before versions and certain directory paths are created etcd data directory and the directory path whe... | 0 |

668,369 | 22,580,879,121 | IssuesEvent | 2022-06-28 11:31:36 | Mezzanine-UI/mezzanine | https://api.github.com/repos/Mezzanine-UI/mezzanine | closed | Add support for React v18 and server side render | feature Priority: HIGH react | ### Is your feature request related to a problem? Please describe.

1. mezzanine `createNotifier` uses `render` and `unmountComponentAtNode`, which come from the `react-dom` package and are already marked as DEPRECATED in React v18.

2. In SSR mode, document will be undefined.

### Describe the solution you'd like

See h... | 1.0 | Add support for React v18 and server side render - ### Is your feature request related to a problem? Please describe.

1. mezzanine `createNotifier` uses `render` and `unmountComponentAtNode`, which come from the `react-dom` package and are already marked as DEPRECATED in React v18.

2. In SSR mode, document will be undefi... | non_process | add support for react and server side render is your feature request related to a problem please describe mezzanine createnotifier use render and unmountcomponentatnode which comes from react dom package and is already marked as deprecated in react in ssr mode document will be undefined ... | 0 |

12,004 | 14,738,160,194 | IssuesEvent | 2021-01-07 03:56:16 | kdjstudios/SABillingGitlab | https://api.github.com/repos/kdjstudios/SABillingGitlab | closed | 001 NCSM - NCSM 1st cycle accounts | anc-ops anc-process anp-important ant-bug ant-support | In GitLab by @kdjstudios on May 9, 2018, 12:17

**Submitted by:** "Jesus Corchado" <jesus.corchado@answernet.com>

**Helpdesk:** http://www.servicedesk.answernet.com/profiles/ticket/2018-05-09-47848/conversation

**Server:** Internal

**Client/Site:** Multiple

**Account:** NA

**Issue:**

Sorry, we found another item... | 1.0 | 001 NCSM - NCSM 1st cycle accounts - In GitLab by @kdjstudios on May 9, 2018, 12:17

**Submitted by:** "Jesus Corchado" <jesus.corchado@answernet.com>

**Helpdesk:** http://www.servicedesk.answernet.com/profiles/ticket/2018-05-09-47848/conversation

**Server:** Internal

**Client/Site:** Multiple

**Account:** NA

**Is... | process | ncsm ncsm cycle accounts in gitlab by kdjstudios on may submitted by jesus corchado helpdesk server internal client site multiple account na issue sorry we found another item that we need fixed i processed the billing on the outbound sites for the ncsm ... | 1 |

174,454 | 14,483,172,461 | IssuesEvent | 2020-12-10 14:51:51 | nilearn/nilearn | https://api.github.com/repos/nilearn/nilearn | closed | Improve Documentation of masker family | Documentation | Perhaps the docstrings for the following classes could be improved:

```

- NiftiMasker

- NiftiLabelsMasker

- NiftiMapsMasker

- MultiNiftiMasker

```

More specifically, at the top of the respective class docstrings, one might add

- a more generous explanation of the purpose of the class

- its commonalities and differenc... | 1.0 | Improve Documentation of masker family - Perhaps the docstrings for the following classes could be improved:

```

- NiftiMasker

- NiftiLabelsMasker

- NiftiMapsMasker

- MultiNiftiMasker

```

More specifically, at the top of the respective class docstrings, one might add

- a more generous explanation of the purpose of th... | non_process | improve documentation of masker family perhaps the docstrings for the following classes could be improved niftimasker niftilabelsmasker niftimapsmasker multiniftimasker more specifically at the top of the respective class docstrings one might add a more generous explanation of the purpose of th... | 0 |

6,838 | 9,979,560,449 | IssuesEvent | 2019-07-09 23:24:03 | GroceriStar/food-static-files-generator | https://api.github.com/repos/GroceriStar/food-static-files-generator | closed | fileSystem methods separation | enhancement good first issue help wanted in-process | **Is your feature request related to a problem? Please describe.**

https://github.com/GroceriStar/food-static-files-generator/blob/master/src/fileSystem.js

https://github.com/GroceriStar/food-static-files-generator/issues/72

**Describe the solution you'd like**

move write and read methods to a separated file. it ... | 1.0 | fileSystem methods separation - **Is your feature request related to a problem? Please describe.**

https://github.com/GroceriStar/food-static-files-generator/blob/master/src/fileSystem.js

https://github.com/GroceriStar/food-static-files-generator/issues/72

**Describe the solution you'd like**

move write and read ... | process | filesystem methods separation is your feature request related to a problem please describe describe the solution you d like move write and read methods to a separated file it will help to make writefile js less complicated and shorter describe alternatives you ve considered a clear and co... | 1 |

92,993 | 10,764,432,758 | IssuesEvent | 2019-11-01 08:15:47 | tiuweehan/ped | https://api.github.com/repos/tiuweehan/ped | opened | Sort command is not valid (and supposed to come in v1.3) | severity.Medium type.DocumentationBug | Sort is not a valid command in the application and UG says `coming in 1.3` even though the version is 1.3

| 1.0 | Sort command is not valid (and supposed to come in v1.3) - Sort is not a valid command in the application and UG says `coming in 1.3` even though the version is 1.3

| non_process | sort command is not valid and supposed to come in sort is not a valid command in the application and ug says coming in even though the version is | 0 |

10,663 | 13,453,185,035 | IssuesEvent | 2020-09-09 00:11:26 | zaimoni/Cataclysm | https://api.github.com/repos/zaimoni/Cataclysm | opened | local fork: PDCurses | process refactor | For tile support. Also the reference curses implementation for Windows. catacurse.cpp/h is a hand-rolled re-implementation. License is public domain (ok). | 1.0 | local fork: PDCurses - For tile support. Also the reference curses implementation for Windows. catacurse.cpp/h is a hand-rolled re-implementation. License is public domain (ok). | process | local fork pdcurses for tile support also the reference curses implementation for windows catacurse cpp h is a hand rolled re implementation license is public domain ok | 1 |

437,847 | 12,603,364,566 | IssuesEvent | 2020-06-11 13:22:21 | EBISPOT/goci | https://api.github.com/repos/EBISPOT/goci | closed | Beta not imported into curation interface from deposition | Priority: High Type: Bug | Beta was entered in the submission template but was not imported into the curation interface - Example Buchwald J 32157176.

| 1.0 | Beta not imported into curation interface from deposition - Beta was entered in the submission template but was not imported into the curation interface - Example Buchwald J 32157176.

| non_process | beta not imported into curation interface from deposition beta was entered in the submission template but was not imported into the curation interface example buchwald j | 0 |

256,862 | 19,474,118,292 | IssuesEvent | 2021-12-24 08:47:43 | dxc-technology/halstack-style-guide | https://api.github.com/repos/dxc-technology/halstack-style-guide | reopened | [Docs] Component review and design improvements | category: documentation :notebook: task: epic :pushpin: | ## Component reviews

- [x] #190

- [x] #224

- [x] #225

- [x] #443

- [x] #477

- [x] #478

- [x] #497

- [x] #499

- [x] #530

- [x] #542

- [x] #619

## Fixes

- [x] #322

- [x] #323

- [x] #437

- [x] #460

- [x] #474

- [x] #547

- [x] #572

- [x] #588

- [x] #645

- [x] #646

- [ ] #656

## Design ... | 1.0 | [Docs] Component review and design improvements - ## Component reviews

- [x] #190

- [x] #224

- [x] #225

- [x] #443

- [x] #477

- [x] #478

- [x] #497

- [x] #499

- [x] #530

- [x] #542

- [x] #619

## Fixes

- [x] #322

- [x] #323

- [x] #437

- [x] #460

- [x] #474

- [x] #547

- [x] #572

- [x] #588

... | non_process | component review and design improvements component reviews fixes design improvements | 0 |

530,639 | 15,435,333,606 | IssuesEvent | 2021-03-07 08:22:29 | magento/magento2 | https://api.github.com/repos/magento/magento2 | closed | Page layouts are hard-coded in Magento\Widget\Block\Adminhtml\Widget\Instance\Edit\Chooser\Container | Issue: Confirmed Issue: ready for confirmation Priority: P2 Progress: PR in progress Reported on 2.3.5 Reproduced on 2.4.x Severity: S3 Triage: Dev.Experience | ### Preconditions (*)

Magento 2.3.5

### Steps to reproduce (*)

Have a look at the code source of `Magento\Widget\Block\Adminhtml\Widget\Instance\Edit\Chooser\Container`.

### Expected result (*)

The function `getPageLayouts()` should return the actual list of page layouts declared by the different modules... | 1.0 | Page layouts are hard-coded in Magento\Widget\Block\Adminhtml\Widget\Instance\Edit\Chooser\Container - ### Preconditions (*)

Magento 2.3.5

### Steps to reproduce (*)

Have a look at the code source of `Magento\Widget\Block\Adminhtml\Widget\Instance\Edit\Chooser\Container`.

### Expected result (*)

The func... | non_process | page layouts are hard coded in magento widget block adminhtml widget instance edit chooser container preconditions magento steps to reproduce have a look at the code source of magento widget block adminhtml widget instance edit chooser container expected result the func... | 0 |

17,778 | 23,704,366,931 | IssuesEvent | 2022-08-29 22:33:47 | googleapis/gapic-showcase | https://api.github.com/repos/googleapis/gapic-showcase | opened | switch docs to reference "latest" release assets instead of specific version | type: process | We should change all of the examples in the README to use the "latest" release version, specifically when downloading release assets. See https://docs.github.com/en/repositories/releasing-projects-on-github/linking-to-releases.

As part of this, we'd need to exclude the release version from the asset names e.g. `gapi... | 1.0 | switch docs to reference "latest" release assets instead of specific version - We should change all of the examples in the README to use the "latest" release version, specifically when downloading release assets. See https://docs.github.com/en/repositories/releasing-projects-on-github/linking-to-releases.

As part of... | process | switch docs to reference latest release assets instead of specific version we should change all of the examples in the readme to use the latest release version specifically when downloading release assets see as part of this we d need to exclude the release version from the asset names e g gapic showcas... | 1 |

5,571 | 8,407,883,919 | IssuesEvent | 2018-10-11 22:34:58 | HumanCellAtlas/dcp-community | https://api.github.com/repos/HumanCellAtlas/dcp-community | opened | Add recommended format for Slack Channels | charter-process | Adopt the best practice that @lauraclarke created in the Ingest Support charter:

Recommended format for Slack Channels

`[HumanCellAtlas/channel](https://humancellatlas.slack.com/messages/channel)`

- [ ] Update charter template to include sub-sections for Mailing Lists and Slack Channels now that we have mailing... | 1.0 | Add recommended format for Slack Channels - Adopt the best practice that @lauraclarke created in the Ingest Support charter:

Recommended format for Slack Channels

`[HumanCellAtlas/channel](https://humancellatlas.slack.com/messages/channel)`

- [ ] Update charter template to include sub-sections for Mailing Lists... | process | add recommended format for slack channels adopt the best practice that lauraclarke created in the ingest support charter recommended format for slack channels update charter template to include sub sections for mailing lists and slack channels now that we have mailing lists update existing ch... | 1 |

3,213 | 6,274,320,909 | IssuesEvent | 2017-07-18 01:37:39 | gaocegege/Processing.R | https://api.github.com/repos/gaocegege/Processing.R | opened | Add compiler or related tech to solve call back functions | community/processing difficulty/high priority/p1 size/no-idea status/to-be-claimed type/bug | related issues: #166

There are some functions which will be defined in R but it is called in Java. Actually we have limited implementation for these functions, for example, draw and settings. But some libraries and some other built-in functions need to be supported.

Built-in functions includes: mouse related fun... | 1.0 | Add compiler or related tech to solve call back functions - related issues: #166

There are some functions which will be defined in R but it is called in Java. Actually we have limited implementation for these functions, for example, draw and settings. But some libraries and some other built-in functions need to be ... | process | add compiler or related tech to solve call back functions related issues there are some functions which will be defined in r but it is called in java actually we have limited implementation for these functions for example draw and settings but some libraries and some other built in functions need to be su... | 1 |

13,316 | 15,784,065,090 | IssuesEvent | 2021-04-01 14:42:52 | edwardsmarc/CASFRI | https://api.github.com/repos/edwardsmarc/CASFRI | opened | Create one version of TT_RowIsValid() and TT_HasPrecedence() for each process that requires it | enhancement high post-translation process | TT_RowIsValid() and TT_HasPrecedence() are two functions that have to be overwritten according to the context they are used. They only require to be passed the same kind of argument and to return a boolean.

They are used in many contexts:

- in the basic geohistory test

- in the real data geohistory test

- in t... | 1.0 | Create one version of TT_RowIsValid() and TT_HasPrecedence() for each process that requires it - TT_RowIsValid() and TT_HasPrecedence() are two functions that have to be overwritten according to the context they are used. They only require to be passed the same kind of argument and to return a boolean.

They are use... | process | create one version of tt rowisvalid and tt hasprecedence for each process that requires it tt rowisvalid and tt hasprecedence are two functions that have to be overwritten according to the context they are used they only require to be passed the same king of argument and to return a boolean they are use... | 1 |

6,584 | 9,661,896,557 | IssuesEvent | 2019-05-20 19:18:33 | bazelbuild/bazel | https://api.github.com/repos/bazelbuild/bazel | opened | Ensure Python tests run under Windows | P1 team-Rules-Python type: process | We [currently disable](https://github.com/bazelbuild/bazel/blob/bdc6c10b674757df38982e71752aab8eddab47a0/src/test/shell/bazel/BUILD#L724-L725) some Python tests under Windows because until recently our CI machines didn't have both Python 2 and Python 3 available. Now that that's resolved, we should enable them.

P1 b... | 1.0 | Ensure Python tests run under Windows - We [currently disable](https://github.com/bazelbuild/bazel/blob/bdc6c10b674757df38982e71752aab8eddab47a0/src/test/shell/bazel/BUILD#L724-L725) some Python tests under Windows because until recently our CI machines didn't have both Python 2 and Python 3 available. Now that that's ... | process | ensure python tests run under windows we some python tests under windows because until recently our ci machines didn t have both python and python available now that that s resolved we should enable them because this blocks fixing which in turn is needed to enable toolchains on windows separat... | 1 |

4,857 | 7,745,937,541 | IssuesEvent | 2018-05-29 19:59:47 | GoogleCloudPlatform/google-cloud-python | https://api.github.com/repos/GoogleCloudPlatform/google-cloud-python | reopened | [BigQuery] cannot be used with Python 3.7 (Pandas) | api: bigquery testing type: process | Pandas/Cython doesn't currently support 3.7. Once it does we need to enable 3.7 testing on BigQuery.

Collecting pandas>=0.17.1 (from google-cloud-monitoring==0.29.0)

Using cached https://files.pythonhosted.org/packages/08/01/803834bc8a4e708aedebb133095a88a4dad9f45bbaf5ad777d2bea543c7e/pandas-0.22.0.tar.gz

Could no... | 1.0 | [BigQuery] cannot be used with Python 3.7 (Pandas) - Pandas/Cython doesn't currently support 3.7. Once it does we need to enable 3.7 testing on BigQuery.

Collecting pandas>=0.17.1 (from google-cloud-monitoring==0.29.0)

Using cached https://files.pythonhosted.org/packages/08/01/803834bc8a4e708aedebb133095a88a4dad9f4... | process | cannot be used with python pandas pandas cython doesn t currently support once it does we need to enable testing on bigquery collecting pandas from google cloud monitoring using cached could not find a version that satisfies the requirement cython from versions no matchin... | 1 |

8,563 | 11,736,676,482 | IssuesEvent | 2020-03-11 13:28:55 | MicrosoftDocs/azure-docs | https://api.github.com/repos/MicrosoftDocs/azure-docs | closed | Mapping for South Africa | Pri2 automation/svc awaiting-product-team-response cxp process-automation/subsvc product-question triaged | Hi, what is the mapping for South Africa? Are solutions like Update Management not available in that region?

---

#### Document Details

⚠ *Do not edit this section. It is required for docs.microsoft.com ➟ GitHub issue linking.*

* ID: f8f86bd0-7555-9be2-1015-76e3ab88062f

* Version Independent ID: 1d310402-fadf-d602-04... | 1.0 | Mapping for South Africa - Hi, what is the mapping for South Africa? Are solutions like Update Management not available in that region?

---

#### Document Details

⚠ *Do not edit this section. It is required for docs.microsoft.com ➟ GitHub issue linking.*

* ID: f8f86bd0-7555-9be2-1015-76e3ab88062f

* Version Independen... | process | mapping for south africa hi what is the mapping for south africa are solutions like update management not available in that region document details ⚠ do not edit this section it is required for docs microsoft com ➟ github issue linking id version independent id fadf conten... | 1 |

3,208 | 6,264,803,417 | IssuesEvent | 2017-07-16 11:54:55 | nodejs/node | https://api.github.com/repos/nodejs/node | closed | n.kill(19) throws error | child_process doc good first contribution process | On versions 6 and 7 of Node.js

when I started a child process with `const n = require('child_process').spawn`

if I try,

`n.kill(19)`, I get an error saying the signal is not recognized

but 19 is a valid signal

http://stackoverflow.com/questions/9951556/why-number-9-in-kill-9-command-in-unix

I would expe... | 2.0 | n.kill(19) throws error - On versions 6 and 7 of Node.js

when I started a child process with `const n = require('child_process').spawn`

if I try,

`n.kill(19)`, I get an error saying the signal is not recognized

but 19 is a valid signal

http://stackoverflow.com/questions/9951556/why-number-9-in-kill-9-comma... | process | n kill throws error on versions and of node js when i started a child process with const n require child process spawn if i try n kill i get an error saying the signal is not recognized but is a valid signal i would expect it to work if i do n kill sigstop it seems to... | 1 |

12,529 | 14,972,166,098 | IssuesEvent | 2021-01-27 22:21:36 | BootBlock/FileSieve | https://api.github.com/repos/BootBlock/FileSieve | opened | Decouple Profile processing from the UI | backend-core processing ui | To allow the simultaneous processing of multiple profiles and to allow the Trigger system to fire for more than one profile at a time, profile processing will need to be decoupled from the user interface.

Requires some thought, especially when it comes to showing the fact that multiple profiles are being processed a... | 1.0 | Decouple Profile processing from the UI - To allow the simultaneous processing of multiple profiles and to allow the Trigger system to fire for more than one profile at a time, profile processing will need to be decoupled from the user interface.

Requires some thought, especially when it comes to showing the fact th... | process | decouple profile processing from the ui to allow the simultaneous processing of multiple profiles and to allow the trigger system to fire for more than one profile at a time profile processing will need to be decoupled from the user interface requires some thought especially when it comes to showing the fact th... | 1 |

2,226 | 3,575,814,867 | IssuesEvent | 2016-01-27 17:12:44 | allo-/firefox-profilemaker | https://api.github.com/repos/allo-/firefox-profilemaker | closed | Migrate all forms to DynamicConfigForm | Infrastructure | All Forms should use an options dictionary and be created by ``create_configform``. Then the view can be adapted to create forms from a list of ``(id, name, options)`` tuples instead of Form objects. | 1.0 | Migrate all forms to DynamicConfigForm - All Forms should use an options dictionary and be created by ``create_configform``. Then the view can be adapted to create forms from a list of ``(id, name, options)`` tuples instead of Form objects. | non_process | migrate all forms to dynamicconfigform all forms should use an options dictionary and be created by create configform then the view can be adapted to create forms from a list of id name options tuples instead of form objects | 0 |

20,122 | 26,659,350,410 | IssuesEvent | 2023-01-25 19:36:47 | pb866/Kimai.jl | https://api.github.com/repos/pb866/Kimai.jl | opened | Allow comments in input files | enhancement data processing | Allow the possibility to add comments in the input data files of vacation and sick leave by specifying a character, e.g. `#`, in `CSV.read`. | 1.0 | Allow comments in input files - Allow the possibility to add comments in the input data files of vacation and sick leave by specifying a character, e.g. `#`, in `CSV.read`. | process | allow comments in input files allow the possibility to add comments in the input data files of vacation and sick leave by specifying a character e g in csv read | 1 |

17,476 | 23,298,447,210 | IssuesEvent | 2022-08-07 00:19:06 | mdsreq-fga-unb/2022.1-GDS | https://api.github.com/repos/mdsreq-fga-unb/2022.1-GDS | closed | Risks | Planejamento Processo de Desenvolvimento | **Description**

When will risk management be carried out? At what point in the process?

That is, when and how will the team review and update the project and product risks? | 1.0 | Risks - **Description**

When will risk management be carried out? At what point in the process?

That is, when and how will the team review and update the project and product risks? | process | risks description when will risk management be carried out at what point in the process that is when and how will the team review and update the project and product risks | 1 |

9,879 | 12,886,461,010 | IssuesEvent | 2020-07-13 09:31:17 | prisma/prisma | https://api.github.com/repos/prisma/prisma | closed | Improve tests in `cli` (currently using Mocha) | kind/improvement process/candidate team/typescript topic: internal topic: tests | Tests in https://github.com/prisma/prisma/tree/master/src/packages/cli/src/__tests__/

They run on Mocha while we are using Jest everywhere else.

Each new Mocha test now requires a manual edit of the package.json.

Maybe it makes sense to keep Mocha for the integration tests but we should use Jest for the rest.

... | 1.0 | Improve tests in `cli` (currently using Mocha) - Tests in https://github.com/prisma/prisma/tree/master/src/packages/cli/src/__tests__/

They run on Mocha while we are using Jest everywhere else.

Each new Mocha test now requires a manual edit of the package.json.

Maybe it makes sense to keep Mocha for the integrati... | process | improve tests in cli currently using mocha tests in they run on mocha while we are using jest everywhere else each new mocha test now requires a manual edit of the package json maybe it makes sense to keep mocha for the integration tests but we should use jest for the rest todo adding jest alon... | 1 |

15,276 | 19,257,181,218 | IssuesEvent | 2021-12-09 12:36:46 | km4ack/pi-build | https://api.github.com/repos/km4ack/pi-build | closed | EES post to outbox on Bullseye and Pat 0.12 not working | bug in process | I initially thought this was a CRONJOB issue of not posting the EES emails to the PAT outbox but I believe it is a bug in the movetopi.html script referencing a bad location for the outbox.

The EES email is created in /var/www/html/emails

The mv command to post to the PAT outbox is referencing a folder that... | 1.0 | EES post to outbox on Bullseye and Pat 0.12 not working - I initially thought this was a CRONJOB issue of not posting the EES emails to the PAT outbox but I believe it is a bug in the movetopi.html script referencing a bad location for the outbox.

The EES email is created in /var/www/html/emails

The mv comm... | process | ees post to outbox on bullseye and pat not working i initially thought this was a cronjob issue of not posting the ees emails to the pat outbox but i believe it is a bug in the movetopi html script referencing a bad location for the outbox the ees email is created in var www html emails the mv comma... | 1 |

790,151 | 27,817,435,632 | IssuesEvent | 2023-03-18 21:06:53 | qutebrowser/qutebrowser | https://api.github.com/repos/qutebrowser/qutebrowser | closed | Crashes in QStyleSheetStyle::styleHint | qt priority: 1 - middle bug: segfault/crash/hang | **Version info**:

```

qutebrowser v1.10.2

Git commit:

Backend: QtWebEngine (Chromium 77.0.3865.129)

Qt: 5.14.2

CPython: 3.8.2

PyQt: 5.14.2

sip: 5.1.2

colorama: 0.4.3

pypeg2: 2.15

jinja2: 2.11.2

pygments: 2.6.1

yaml: 5.3.1

cssutils: no

attr: 19.3.0

PyQt5.QtWebEngineWidgets: yes

PyQt5.QtWebEngine:... | 1.0 | Crashes in QStyleSheetStyle::styleHint - **Version info**:

```

qutebrowser v1.10.2

Git commit:

Backend: QtWebEngine (Chromium 77.0.3865.129)

Qt: 5.14.2

CPython: 3.8.2

PyQt: 5.14.2

sip: 5.1.2

colorama: 0.4.3

pypeg2: 2.15

jinja2: 2.11.2

pygments: 2.6.1

yaml: 5.3.1

cssutils: no

attr: 19.3.0

PyQt5.Qt... | non_process | crashes in qstylesheetstyle stylehint version info qutebrowser git commit backend qtwebengine chromium qt cpython pyqt sip colorama pygments yaml cssutils no attr qtwebenginewidgets yes qt... | 0 |

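346,815 | 31,025,942,689 | IssuesEvent | 2023-08-10 09:08:38 | YeolJyeongKong/fittering-BE | https://api.github.com/repos/YeolJyeongKong/fittering-BE | opened | Fix login feature | 🐞 BugFix ✅ Test | ### Login

- When no user matches the input, `null` should be returned, but only an exception is thrown, which causes a problem

+ A user is found for the entered email

- Wrong password: `일치하는 유저 정보가 없습니다.` ("No matching user information.")

- Correct password: `200` OK

+ No user is found for the entered email

- `500`: **needs fixing!!**

- Need to check whether a **JWT is issued** to users who log in via OAuth | 1.0 | Fix login feature - ### Login

- When no user matches the input, `null` should be returned, but only an exception is thrown, which causes a problem

+ A user is found for the entered email

- Wrong password: `일치하는 유저 정보가 없습니다.` ("No matching user information.")

- Correct password: `200` OK

+ No user is found for the entered email

- `500`: **needs fixing!!**

- Need to check whether a **JWT is issued** to users who log in via OAuth | non_process | fix login feature login when no user matches the input null should be returned but only an exception is thrown which causes a problem a user is found for the entered email wrong password 일치하는 유저 정보가 없습니다 no matching user information correct password ok no user is found for the entered email needs fixing need to check whether a jwt is issued to users who log in via oauth | 0 |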