Unnamed: 0 int64 0 832k | id float64 2.49B 32.1B | type stringclasses 1 value | created_at stringlengths 19 19 | repo stringlengths 7 112 | repo_url stringlengths 36 141 | action stringclasses 3 values | title stringlengths 1 744 | labels stringlengths 4 574 | body stringlengths 9 211k | index stringclasses 10 values | text_combine stringlengths 96 211k | label stringclasses 2 values | text stringlengths 96 188k | binary_label int64 0 1 |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

5,184 | 2,910,456,310 | IssuesEvent | 2015-06-21 19:32:54 | Benestar/asparagus | https://api.github.com/repos/Benestar/asparagus | closed | Add a more complex example to README | documentation | We need a more complex usage example in README.md to show how filters, optionals and unions work. | 1.0 | Add a more complex example to README - We need a more complex usage example in README.md to show how filters, optionals and unions work. | non_process | add a more complex example to readme we need a more complex usage example in readme md to show how filters optionals and unions work | 0 |

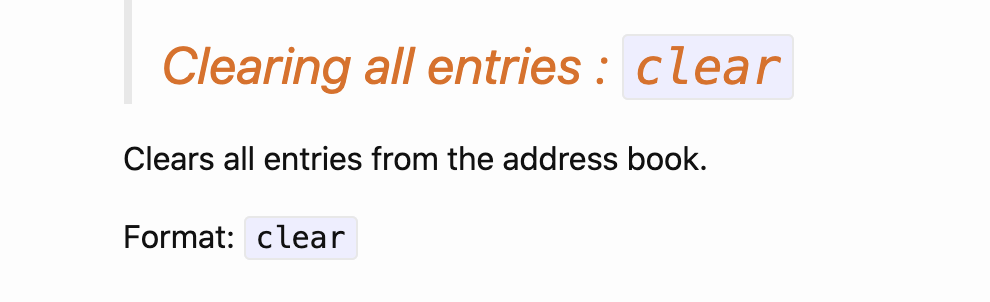

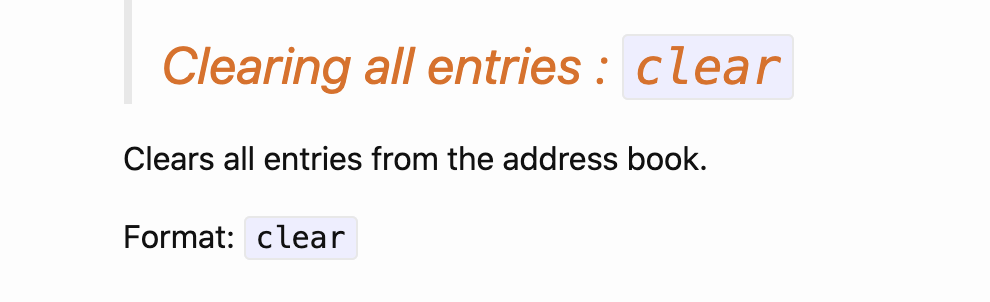

201,194 | 15,802,021,808 | IssuesEvent | 2021-04-03 07:39:08 | rachelljt/ped | https://api.github.com/repos/rachelljt/ped | opened | typos in UG | severity.VeryLow type.DocumentationBug |

Small issue here but i realised there were many instances of address book being mentioned. I think it would be best to change to Module Planner which is the name of the app. ... | 1.0 | typos in UG -

Small issue here but i realised there were many instances of address book being mentioned. I think it would be best to change to Module Planner which is the nam... | non_process | typos in ug small issue here but i realised there were many instances of address book being mentioned i think it would be best to change to module planner which is the name of the app | 0 |

601 | 3,074,419,123 | IssuesEvent | 2015-08-20 07:06:49 | dita-ot/dita-ot | https://api.github.com/repos/dita-ot/dita-ot | closed | Support multiple scope names in a single keyscope attribute | DITA 1.3 feature P2 preprocess | I publish the following DITA Map which has key scopes and references in it to XHTML:

http://www.oxygenxml.com/forum/files/dot-bugs-testKSNPE.zip

and I obtain at some point:

BUILD FAILED

D:\projects\eXml\frameworks\dita\DITA-OT2.x\build.xml:41: The following error occurred while executi... | 1.0 | Support multiple scope names in a single keyscope attribute - I publish the following DITA Map which has key scopes and references in it to XHTML:

http://www.oxygenxml.com/forum/files/dot-bugs-testKSNPE.zip

and I obtain at some point:

BUILD FAILED

D:\projects\eXml\frameworks\dita\DITA-... | process | support multiple scope names in a single keyscope attribute i publish the following dita map which has key scopes and references in it to xhtml and i obtain at some point build failed d projects exml frameworks dita dita x build xml the following error occurred while execut... | 1 |

286,182 | 31,335,075,886 | IssuesEvent | 2023-08-24 05:02:11 | istio/istio | https://api.github.com/repos/istio/istio | closed | Re-enable dualuse workload cert generation (CommonName identical to the SAN) | kind/enhancement area/security lifecycle/stale | **Describe the feature request**

***Historical background***

There used to be a feature to instrument `node-agent` to generate workload certificates with a non-empty Subject. Today, Istio's generated workload certificates have an empty subject.

```

openssl x509 -in istio-sidecar-cert.pem -text -noout

Cer... | True | Re-enable dualuse workload cert generation (CommonName identical to the SAN) - **Describe the feature request**

***Historical background***

There used to be a feature to instrument `node-agent` to generate workload certificates with a non-empty Subject. Today, Istio's generated workload certificates have an empty... | non_process | re enable dualuse workload cert generation commonname identical to the san describe the feature request historical background there used to be a feature to instrument node agent to generate workload certificates with a non empty subject today istio s generated workload certificates have an empty... | 0 |

84,626 | 15,724,730,485 | IssuesEvent | 2021-03-29 09:09:10 | crouchr/learnage | https://api.github.com/repos/crouchr/learnage | opened | CVE-2013-2765 (Medium) detected in mod-securitymodsecurity-apache_2.5.11 | security vulnerability | ## CVE-2013-2765 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>mod-securitymodsecurity-apache_2.5.11</b></p></summary>

<p>

<p>Library home page: <a href=https://sourceforge.net/pro... | True | CVE-2013-2765 (Medium) detected in mod-securitymodsecurity-apache_2.5.11 - ## CVE-2013-2765 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>mod-securitymodsecurity-apache_2.5.11</b></... | non_process | cve medium detected in mod securitymodsecurity apache cve medium severity vulnerability vulnerable library mod securitymodsecurity apache library home page a href found in head commit a href found in base branch master vulnerable source files ... | 0 |

8,828 | 11,940,092,970 | IssuesEvent | 2020-04-02 16:09:42 | GoogleCloudPlatform/dotnet-docs-samples | https://api.github.com/repos/GoogleCloudPlatform/dotnet-docs-samples | opened | Spanner: TestDataTypes timing out on CI | api: spanner priority: p1 type: process | After 10 minutes. (Locally it passes after around 2 minutes.)

I've skipped it in #1001 but it should be looked at.

One thing would be to separate out in single tests. At least we could isolate the culprit easier.

@skuruppu feel free to assign to someone from your team that can take care of it. | 1.0 | Spanner: TestDataTypes timing out on CI - After 10 minutes. (Locally it passes after around 2 minutes.)

I've skipped it in #1001 but it should be looked at.

One thing would be to separate out in single tests. At least we could isolate the culprit easier.

@skuruppu feel free to assign to someone from your team that... | process | spanner testdatatypes timing out on ci after minutes locally it passes after around minutes i ve skipped it in but it should be looked at one thing would be to separate out in single tests at least we could isolate the culprit easier skuruppu feel free to assign to someone from your team that can... | 1 |

22,546 | 11,743,727,138 | IssuesEvent | 2020-03-12 05:35:14 | MicrosoftDocs/azure-docs | https://api.github.com/repos/MicrosoftDocs/azure-docs | closed | Calls Between App Service Environments | Pri2 app-service/svc cxp product-question triaged | As I understand, the "Calls Between App Service Environments" section describes situation when "ASE Two" is external ASE. But what if it's an ILB ASE? In this case, it won't be an "Internet" call, right? The ILB address will be used as destination and "ASE One" subnet address - as a source, correct?

---

#### Document... | 1.0 | Calls Between App Service Environments - As I understand, the "Calls Between App Service Environments" section describes situation when "ASE Two" is external ASE. But what if it's an ILB ASE? In this case, it won't be an "Internet" call, right? The ILB address will be used as destination and "ASE One" subnet address - ... | non_process | calls between app service environments as i understand the calls between app service environments section describes situation when ase two is external ase but what if it s an ilb ase in this case it won t be an internet call right the ilb address will be used as destination and ase one subnet address ... | 0 |

9,887 | 12,889,437,717 | IssuesEvent | 2020-07-13 14:32:09 | MicrosoftDocs/azure-devops-docs | https://api.github.com/repos/MicrosoftDocs/azure-devops-docs | closed | Stages is no longer in-preview | Pri2 devops-cicd-process/tech devops/prod doc-enhancement |

The page has a note about stages being in-preview, they are GA so the note can be removed.

---

#### Document Details

⚠ *Do not edit this section. It is required for docs.microsoft.com ➟ GitHub issue linking.*

* ID: 4266f72c-c774-0046-4593-d01eb775d3c3

* Version Independent ID: f20827aa-a6c5-96a8-5969-e57... | 1.0 | Stages is no longer in-preview -

The page has a note about stages being in-preview, they are GA so the note can be removed.

---

#### Document Details

⚠ *Do not edit this section. It is required for docs.microsoft.com ➟ GitHub issue linking.*

* ID: 4266f72c-c774-0046-4593-d01eb775d3c3

* Version Independen... | process | stages is no longer in preview the page has a note about stages being in preview they are ga so the note can be removed document details ⚠ do not edit this section it is required for docs microsoft com ➟ github issue linking id version independent id content ... | 1 |

7,375 | 10,513,808,742 | IssuesEvent | 2019-09-27 21:42:00 | googlemaps/google-maps-services-java | https://api.github.com/repos/googlemaps/google-maps-services-java | closed | Include package-list file in Javadoc | help wanted priority: p3 type: process | Please include the `package-list` file when publishing Javadocs so that we can link to it when generating our own Javadocs. For example, I would expect to find it at the below URL, but a 404 is returned.

https://googlemaps.github.io/google-maps-services-java/latest/javadoc/package-list | 1.0 | Include package-list file in Javadoc - Please include the `package-list` file when publishing Javadocs so that we can link to it when generating our own Javadocs. For example, I would expect to find it at the below URL, but a 404 is returned.

https://googlemaps.github.io/google-maps-services-java/latest/javadoc/pac... | process | include package list file in javadoc please include the package list file when publishing javadocs so that we can link to it when generating our own javadocs for example i would expect to find it at the below url but a is returned | 1 |

20,825 | 27,580,477,537 | IssuesEvent | 2023-03-08 15:53:39 | zephyrproject-rtos/zephyr | https://api.github.com/repos/zephyrproject-rtos/zephyr | closed | Process: support tiers for hardware for Zephyr v3.3 and later | Process | This issue covers a process discussion for introducing support tiers for boards with upstream Zephyr project support.

The goal of this issue is to define a high level process for defining the support level for different Zephyr boards. This will aid users in knowing which platforms are well tested and debugged. For a... | 1.0 | Process: support tiers for hardware for Zephyr v3.3 and later - This issue covers a process discussion for introducing support tiers for boards with upstream Zephyr project support.

The goal of this issue is to define a high level process for defining the support level for different Zephyr boards. This will aid user... | process | process support tiers for hardware for zephyr and later this issue covers a process discussion for introducing support tiers for boards with upstream zephyr project support the goal of this issue is to define a high level process for defining the support level for different zephyr boards this will aid users... | 1 |

5,291 | 8,074,398,662 | IssuesEvent | 2018-08-06 23:05:15 | rancher/rancher | https://api.github.com/repos/rancher/rancher | closed | Enhance EKS driver | area/cluster process/needs-ui status/reopened status/resolved status/to-test version/2.0 | Add these additional features if requested:

> 1. Amazon suggests using a reusable service role for creating clusters. Right now we are creating a new service role for each EKS cluster we spin up. We could change Rancher to use an existing role that the user specifics, or have Rancher only create the service role i... | 1.0 | Enhance EKS driver - Add these additional features if requested:

> 1. Amazon suggests using a reusable service role for creating clusters. Right now we are creating a new service role for each EKS cluster we spin up. We could change Rancher to use an existing role that the user specifics, or have Rancher only crea... | process | enhance eks driver add these additional features if requested amazon suggests using a reusable service role for creating clusters right now we are creating a new service role for each eks cluster we spin up we could change rancher to use an existing role that the user specifics or have rancher only crea... | 1 |

10,183 | 13,044,162,858 | IssuesEvent | 2020-07-29 03:47:37 | tikv/tikv | https://api.github.com/repos/tikv/tikv | closed | UCP: Migrate scalar function `TruncateUint` from TiDB | challenge-program-2 component/coprocessor difficulty/easy sig/coprocessor |

## Description

Port the scalar function `TruncateUint` from TiDB to coprocessor.

## Score

* 50

## Mentor(s)

* @mapleFU

## Recommended Skills

* Rust programming

## Learning Materials

Already implemented expressions ported from TiDB

- https://github.com/tikv/tikv/tree/master/components/tidb_query/src/rpn_ex... | 2.0 | UCP: Migrate scalar function `TruncateUint` from TiDB -

## Description

Port the scalar function `TruncateUint` from TiDB to coprocessor.

## Score

* 50

## Mentor(s)

* @mapleFU

## Recommended Skills

* Rust programming

## Learning Materials

Already implemented expressions ported from TiDB

- https://github.co... | process | ucp migrate scalar function truncateuint from tidb description port the scalar function truncateuint from tidb to coprocessor score mentor s maplefu recommended skills rust programming learning materials already implemented expressions ported from tidb | 1 |

13,512 | 8,247,838,271 | IssuesEvent | 2018-09-11 16:37:43 | ampproject/amphtml | https://api.github.com/repos/ampproject/amphtml | closed | Build module/nomodule scripts for v0.js | Category: Runtime Related to: Performance | AMP should be outputting v0-module.js and v0-nmodule.js in order to run these scripts inside `<script type=module` or `<script nomodule` for the same.

These outputs will be identical in build except the perf.tick and perf.addEnabledExperiment parameters as shown here: https://github.com/ampproject/amphtml/blob/maste... | True | Build module/nomodule scripts for v0.js - AMP should be outputting v0-module.js and v0-nmodule.js in order to run these scripts inside `<script type=module` or `<script nomodule` for the same.

These outputs will be identical in build except the perf.tick and perf.addEnabledExperiment parameters as shown here: https:... | non_process | build module nomodule scripts for js amp should be outputting module js and nmodule js in order to run these scripts inside script type module or script nomodule for the same these outputs will be identical in build except the perf tick and perf addenabledexperiment parameters as shown here ... | 0 |

16,529 | 21,557,297,025 | IssuesEvent | 2022-04-30 16:40:10 | CynthiaChuang/CynthiaChuang.github.io | https://api.github.com/repos/CynthiaChuang/CynthiaChuang.github.io | closed | Typo in /HedgeDoc-a-New-Fork-of-CodiMD | Non Issue Processing Typo | https://cynthiachuang.github.io/HedgeDoc-a-New-Fork-of-CodiMD/

For these various reasons, the community edition of CodiMD was later renamed HedgeDoc, and its `log` was replaced with a cute little hedgehog. | 1.0 | Typo in /HedgeDoc-a-New-Fork-of-CodiMD - https://cynthiachuang.github.io/HedgeDoc-a-New-Fork-of-CodiMD/

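For these various reasons, the community edition of CodiMD was later renamed HedgeDoc, and its `log` was replaced with a cute little hedgehog. | process | typo in hedgedoc a new fork of codimd for these various reasons the community edition of codimd was later renamed hedgedoc and its log was replaced with a cute little hedgehog | 1 |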

55,120 | 30,593,549,104 | IssuesEvent | 2023-07-21 19:23:55 | nexB/scancode-workbench | https://api.github.com/repos/nexB/scancode-workbench | closed | Blank space on BarChart view | bug D3 performance should have | <img width="1988" alt="screen shot 2018-08-24 at 6 30 18 pm" src="https://user-images.githubusercontent.com/7485204/44613682-de06fe00-a7cb-11e8-8eca-65b551e039a9.png">

As you can see from the above picture, there is a large blank space that shows up occasionally on the Bar Chart view. It seems the more items on the ... | True | Blank space on BarChart view - <img width="1988" alt="screen shot 2018-08-24 at 6 30 18 pm" src="https://user-images.githubusercontent.com/7485204/44613682-de06fe00-a7cb-11e8-8eca-65b551e039a9.png">

As you can see from the above picture, there is a large blank space that shows up occasionally on the Bar Chart view. ... | non_process | blank space on barchart view img width alt screen shot at pm src as you can see from the above picture there is a large blank space that shows up occasionally on the bar chart view it seems the more items on the bar chart the more blank space there is ultimately we need to git rid of... | 0 |

16,770 | 21,944,735,764 | IssuesEvent | 2022-05-23 22:26:25 | qgis/QGIS | https://api.github.com/repos/qgis/QGIS | closed | Batch Nominatim Geocoder output directly saved to file has no CRS | Processing Bug Projections/Transformations | ### What is the bug or the crash?

When batch-geocoding a table with the Batch Nominatim Geocoder and saving directly to file, the loaded layer does not have a CRS. It should have EPSG:4326.

Both GPKG and SHP exports fail at storing the right CRS. However, _not_ saving as file but loading a temporary scratch layer d... | 1.0 | Batch Nominatim Geocoder output directly saved to file has no CRS - ### What is the bug or the crash?

When batch-geocoding a table with the Batch Nominatim Geocoder and saving directly to file, the loaded layer does not have a CRS. It should have EPSG:4326.

Both GPKG and SHP exports fail at storing the right CRS. H... | process | batch nominatim geocoder output directly saved to file has no crs what is the bug or the crash when batch geocoding a table with the batch nominatim geocoder and saving directly to file the loaded layer does not have a crs it should have epsg both gpkg and shp exports fail at storing the right crs howe... | 1 |

98,804 | 4,031,464,799 | IssuesEvent | 2016-05-18 17:15:48 | giantotter/giantotter_public | https://api.github.com/repos/giantotter/giantotter_public | opened | Bot can execute actions on behalf of other NPCs | backend: AI priority: A | Sometimes the action of the bot or the user results in another NPC taking an action. The slackbot should execute these actions for other NPCs.

For example, the chef prepares a steak after asking him for steak. | 1.0 | Bot can execute actions on behalf of other NPCs - Sometimes the action of the bot or the user results in another NPC taking an action. The slackbot should execute these actions for other NPCs.

For example, the chef prepares a steak after asking him for steak. | non_process | bot can execute actions on behalf of other npcs sometimes the action of the bot or the user results in another npc taking an action the slackbot should execute these actions for other npcs for example the chef prepares a steak after asking him for steak | 0 |

300,853 | 25,999,830,020 | IssuesEvent | 2022-12-20 14:30:57 | keycloak/keycloak | https://api.github.com/repos/keycloak/keycloak | closed | Flaky test: org.keycloak.testsuite.federation.kerberos.KerberosLdapTest#validatePasswordPolicyTest | kind/bug area/ci flaky-test | ## org.keycloak.testsuite.federation.kerberos.KerberosLdapTest#validatePasswordPolicyTest

[Run (pull_request)](https://github.com/keycloak/keycloak/actions/runs/3740969966)

### Errors

| 1.0 | Flaky test: org.keycloak.testsuite.federation.kerberos.KerberosLdapTest#validatePasswordPolicyTest - ## org.keycloak.testsuite.federation.kerberos.KerberosLdapTest#validatePasswordPolicyTest

[Run (pull_request)](https://github.com/keycloak/keycloak/actions/runs/3740969966)

### Errors

| non_process | flaky test org keycloak testsuite federation kerberos kerberosldaptest validatepasswordpolicytest org keycloak testsuite federation kerberos kerberosldaptest validatepasswordpolicytest errors | 0 |

814,813 | 30,522,967,310 | IssuesEvent | 2023-07-19 09:18:40 | zephyrproject-rtos/zephyr | https://api.github.com/repos/zephyrproject-rtos/zephyr | closed | [Coverity CID: 322061] Unchecked return value in drivers/input/input_xpt2046.c | bug priority: medium Coverity area: Input |

Static code scan issues found in file:

https://github.com/zephyrproject-rtos/zephyr/tree/18636af6d6658cf66e5f055901ffe2515116d329/drivers/input/input_xpt2046.c

Category: Error handling issues

Function: `xpt2046_work_handler`

Component: Drivers

CID: [322061](https://scan9.scan.coverity.com/reports.htm#v29726/p12996/m... | 1.0 | [Coverity CID: 322061] Unchecked return value in drivers/input/input_xpt2046.c -

Static code scan issues found in file:

https://github.com/zephyrproject-rtos/zephyr/tree/18636af6d6658cf66e5f055901ffe2515116d329/drivers/input/input_xpt2046.c

Category: Error handling issues

Function: `xpt2046_work_handler`

Component: ... | non_process | unchecked return value in drivers input input c static code scan issues found in file category error handling issues function work handler component drivers cid details please fix or provide comments in coverity using the link for more information about the violation check the ... | 0 |

154,538 | 5,920,827,545 | IssuesEvent | 2017-05-22 21:15:17 | infolab-csail/WikipediaBase | https://api.github.com/repos/infolab-csail/WikipediaBase | opened | Consider what to do with "duplicate" attributes in duplicate infoboxes | priority/medium question | E.g., [USS Powhatan (ID-3013)](https://en.wikipedia.org/wiki/USS_Powhatan_(ID-3013)) has two instantiations of the `ship-career` infobox template. `get-attributes` returns two instances of the attribute, one per infobox:

```

$ telnet <host> 8023

(get-attributes "wikipedia-ship-career" "USS Powhatan (ID-3013)")

(..... | 1.0 | Consider what to do with "duplicate" attributes in duplicate infoboxes - E.g., [USS Powhatan (ID-3013)](https://en.wikipedia.org/wiki/USS_Powhatan_(ID-3013)) has two instantiations of the `ship-career` infobox template. `get-attributes` returns two instances of the attribute, one per infobox:

```

$ telnet <host> 802... | non_process | consider what to do with duplicate attributes in duplicate infoboxes e g has two instantiations of the ship career infobox template get attributes returns two instances of the attribute one per infobox telnet get attributes wikipedia ship career uss powhatan id code s... | 0 |

147,350 | 23,203,221,973 | IssuesEvent | 2022-08-02 00:47:25 | antrea-io/antrea | https://api.github.com/repos/antrea-io/antrea | closed | Containerized Network Function (CNF) use case support on Antrea | kind/design lifecycle/stale | **Describe what you are trying to solve**

SD-WAN/Telco CNF (Containerized Network Function) support on Antrea. Establishing network function virtualization (network application data plane) as a micro service orchestration in K8s environment, requires the below functionalities to be supported by CNI plugin (vital to su... | 1.0 | Containerized Network Function (CNF) use case support on Antrea - **Describe what you are trying to solve**

SD-WAN/Telco CNF (Containerized Network Function) support on Antrea. Establishing network function virtualization (network application data plane) as a micro service orchestration in K8s environment, requires th... | non_process | containerized network function cnf use case support on antrea describe what you are trying to solve sd wan telco cnf containerized network function support on antrea establishing network function virtualization network application data plane as a micro service orchestration in environment requires the ... | 0 |

29,008 | 2,712,810,752 | IssuesEvent | 2015-04-09 15:45:08 | mavoine/tarsius | https://api.github.com/repos/mavoine/tarsius | closed | time distribution graph | auto-migrated Priority-Medium Type-Enhancement | ```

a time distribution graph like that of F-Spot

```

Original issue reported on code.google.com by `avoin...@gmail.com` on 11 Dec 2009 at 6:23 | 1.0 | time distribution graph - ```

a time distribution graph like that of F-Spot

```

Original issue reported on code.google.com by `avoin...@gmail.com` on 11 Dec 2009 at 6:23 | non_process | time distribution graph a time distribution graph like that of f spot original issue reported on code google com by avoin gmail com on dec at | 0 |

48,186 | 10,220,389,841 | IssuesEvent | 2019-08-15 21:11:46 | EdenServer/community | https://api.github.com/repos/EdenServer/community | closed | NPC Rondipur N. San d'Oria - displaying quest dialogue when he shouldn't | in-code-review | ### Checklist

<!--

Don't edit or delete this section, but tick the boxes after you have submitted your issue.

If there are unticked boxes a developer may not address the issue.

Make sure you comply with the checklist and then start writing in the details section below.

-->

- [x] I have searched for... | 1.0 | NPC Rondipur N. San d'Oria - displaying quest dialogue when he shouldn't - ### Checklist

<!--

Don't edit or delete this section, but tick the boxes after you have submitted your issue.

If there are unticked boxes a developer may not address the issue.

Make sure you comply with the checklist and then st... | non_process | npc rondipur n san d oria displaying quest dialogue when he shouldn t checklist don t edit or delete this section but tick the boxes after you have submitted your issue if there are unticked boxes a developer may not address the issue make sure you comply with the checklist and then st... | 0 |

19,000 | 24,995,253,603 | IssuesEvent | 2022-11-02 23:08:08 | hashgraph/hedera-mirror-node | https://api.github.com/repos/hashgraph/hedera-mirror-node | closed | Update OS packages in image | enhancement security process | ### Problem

The container images frequently contains out of date packages from the base image that may contain vulnerabilities. We should keep them up to date even if there's not an updated base image to consume.

### Solution

* Update base image for all mirror node components

* Update postgresql chart and postgres-r... | 1.0 | Update OS packages in image - ### Problem

The container images frequently contains out of date packages from the base image that may contain vulnerabilities. We should keep them up to date even if there's not an updated base image to consume.

### Solution

* Update base image for all mirror node components

* Update p... | process | update os packages in image problem the container images frequently contains out of date packages from the base image that may contain vulnerabilities we should keep them up to date even if there s not an updated base image to consume solution update base image for all mirror node components update p... | 1 |

36,534 | 9,819,930,580 | IssuesEvent | 2019-06-14 00:02:51 | tensorflow/tensorflow | https://api.github.com/repos/tensorflow/tensorflow | closed | Can't import tensorflow | stat:awaiting response subtype:windows type:build/install |

**System information**

- OS Platform and Distribution (Windows 7):

- TensorFlow installed from (source or binary):

- TensorFlow version: 1.13.1

- Python version: 3.6

- Installed using virtualenv? installed using pip

- Bazel version (if compiling from source):

- GCC/Compiler version (if compiling from source)... | 1.0 | Can't import tensorflow -

**System information**

- OS Platform and Distribution (Windows 7):

- TensorFlow installed from (source or binary):

- TensorFlow version: 1.13.1

- Python version: 3.6

- Installed using virtualenv? installed using pip

- Bazel version (if compiling from source):

- GCC/Compiler version ... | non_process | can t import tensorflow system information os platform and distribution windows tensorflow installed from source or binary tensorflow version python version installed using virtualenv installed using pip bazel version if compiling from source gcc compiler version ... | 0 |

334,684 | 24,431,315,513 | IssuesEvent | 2022-10-06 08:20:43 | hashicorp/terraform-provider-aws | https://api.github.com/repos/hashicorp/terraform-provider-aws | opened | [Docs]: aws_identitystore_group example | documentation needs-triage | ### Documentation Link

https://registry.terraform.io/providers/hashicorp/aws/latest/docs/resources/identitystore_group

### Description

The attribute `identity_store_id ` must be set with an id and not the arn of the identity store.

```

resource "aws_identitystore_group" "this" {

display_name = "Example g... | 1.0 | [Docs]: aws_identitystore_group example - ### Documentation Link

https://registry.terraform.io/providers/hashicorp/aws/latest/docs/resources/identitystore_group

### Description

The attribute `identity_store_id ` must be set with an id and not the arn of the identity store.

```

resource "aws_identitystore_group" ... | non_process | aws identitystore group example documentation link description the attribute identity store id must be set with an id and not the arn of the identity store resource aws identitystore group this display name example group description example description ide... | 0 |

19,082 | 25,127,778,003 | IssuesEvent | 2022-11-09 13:05:16 | prisma/prisma-engines | https://api.github.com/repos/prisma/prisma-engines | opened | Rethink PartialEq implementation for sql-schema-describer::ColumnTypeFamily | process/candidate kind/tech team/schema | It compares IDs, which we absolutely do not want in the migration engine differ, but is harmless everywhere else. Should we break up ColumnTypeFamily variants into scalar and enum and unsupported variants? Remove the PartialEq impl?

https://github.com/prisma/prisma-engines/pull/3372 | 1.0 | Rethink PartialEq implementation for sql-schema-describer::ColumnTypeFamily - It compares IDs, which we absolutely do not want in the migration engine differ, but is harmless everywhere else. Should we break up ColumnTypeFamily variants into scalar and enum and unsupported variants? Remove the PartialEq impl?

https:... | process | rethink partialeq implementation for sql schema describer columntypefamily it compares ids which we absolutely do not want in the migration engine differ but is harmless everywhere else should we break up columntypefamily variants into scalar and enum and unsupported variants remove the partialeq impl | 1 |

16,853 | 22,112,506,769 | IssuesEvent | 2022-06-01 22:47:22 | googleapis/repo-automation-bots | https://api.github.com/repos/googleapis/repo-automation-bots | closed | infra: bot template/config for running on Cloud Run | type: process | In the future, we will want extra dependencies available to our bot. We should still be able to run our probot-based bots easily with our credentials and tasks shims in `gcf-utils` within a Docker container on Cloud Run.

- [x] Figure out how to run in Cloud Build

- [x] Configure canary-bot to run on Cloud Build

- ... | 1.0 | infra: bot template/config for running on Cloud Run - In the future, we will want extra dependencies available to our bot. We should still be able to run our probot-based bots easily with our credentials and tasks shims in `gcf-utils` within a Docker container on Cloud Run.

- [x] Figure out how to run in Cloud Build... | process | infra bot template config for running on cloud run in the future we will want extra dependencies available to our bot we should still be able to run our probot based bots easily with our credentials and tasks shims in gcf utils within a docker container on cloud run figure out how to run in cloud build ... | 1 |

226,718 | 18,043,955,427 | IssuesEvent | 2021-09-18 14:55:43 | logicmoo/logicmoo_workspace | https://api.github.com/repos/logicmoo/logicmoo_workspace | opened | logicmoo.base.examples.fol.SANITY_EXISTS_03 JUnit | Test_9999 logicmoo.base.examples.fol unit_test SANITY_EXISTS_03 | (cd /var/lib/jenkins/workspace/logicmoo_workspace/packs_sys/logicmoo_base/t/examples/fol ; timeout --foreground --preserve-status -s SIGKILL -k 10s 10s lmoo-clif sanity_exists_03.pfc.pl)

GH_MASTER_ISSUE_FINFO=

ISSUE_SEARCH: https://github.com/logicmoo/logicmoo_workspace/issues?q=is%3Aissue+label%3ASANITY_EXISTS_03

... | 2.0 | logicmoo.base.examples.fol.SANITY_EXISTS_03 JUnit - (cd /var/lib/jenkins/workspace/logicmoo_workspace/packs_sys/logicmoo_base/t/examples/fol ; timeout --foreground --preserve-status -s SIGKILL -k 10s 10s lmoo-clif sanity_exists_03.pfc.pl)

GH_MASTER_ISSUE_FINFO=

ISSUE_SEARCH: https://github.com/logicmoo/logicmoo_work... | non_process | logicmoo base examples fol sanity exists junit cd var lib jenkins workspace logicmoo workspace packs sys logicmoo base t examples fol timeout foreground preserve status s sigkill k lmoo clif sanity exists pfc pl gh master issue finfo issue search gitlab latest this build git... | 0 |

12,671 | 15,038,812,360 | IssuesEvent | 2021-02-02 17:53:15 | w3c/aria-at | https://api.github.com/repos/w3c/aria-at | opened | Proposed Patterns for Test Plan Development (Q1/Q2 2021) | Agenda+ process tests | Issue #318 outlines the initial 13 patterns to be worked on by members of the PAC team. We are approaching the completion point on those patterns (status outlined below), and want to take input from the community group on which patterns to tackle next.

#### Current Status

* Pull requests have been submitted for ... | 1.0 | Proposed Patterns for Test Plan Development (Q1/Q2 2021) - Issue #318 outlines the initial 13 patterns to be worked on by members of the PAC team. We are approaching the completion point on those patterns (status outlined below), and want to take input from the community group on which patterns to tackle next.

####... | process | proposed patterns for test plan development issue outlines the initial patterns to be worked on by members of the pac team we are approaching the completion point on those patterns status outlined below and want to take input from the community group on which patterns to tackle next current... | 1 |

5,853 | 8,679,088,597 | IssuesEvent | 2018-11-30 22:15:03 | googleapis/google-cloud-python | https://api.github.com/repos/googleapis/google-cloud-python | closed | [Firestore] Query start/end at/after accepts snapshots, doesn't properly apply implicit ordering. | api: firestore triaged for GA type: process | Query.start_at()/start_after()/end_at()/end_after() accepts DocumentSnapshots, but doesn’t properly apply the implicit ordering.

| 1.0 | [Firestore] Query start/end at/after accepts snapshots, doesn't properly apply implicit ordering. - Query.start_at()/start_after()/end_at()/end_after() accepts DocumentSnapshots, but doesn’t properly apply the implicit ordering.

| process | query start end at after accepts snapshots doesn t properly apply implicit ordering query start at start after end at end after accepts documentsnapshots but doesn’t properly apply the implicit ordering | 1 |

21,038 | 27,979,267,458 | IssuesEvent | 2023-03-26 00:20:53 | darktable-org/darktable | https://api.github.com/repos/darktable-org/darktable | closed | Manage border for non-convex and self-intersecting path mask | feature: enhancement difficulty: hard scope: UI scope: image processing no-issue-activity | Just a reminder in case someone wants to tackle this difficult task.

Currently, the algorithm for drawing path masks doesn't properly handle:

1. self-intersecting paths: it fails to recognize the outer side of the path

[VID:80:src/Users.js:100] | VeracodeFlaw: Medium Veracode Pipeline Scan | **Filename:** src/Users.js

**Line:** 100

**CWE:** 80 (Improper Neutralization of Script-Related HTML Tags in a Web Page (Basic XSS))

<span>This call to jQueryResult.replaceWith() contains a cross-site scripting (XSS) flaw. The application populates the HTTP response with untrusted input, allowing an attacker to emb... | 2.0 | Improper Neutralization of Script-Related HTML Tags in a Web Page (Basic XSS) [VID:80:src/Users.js:100] - **Filename:** src/Users.js

**Line:** 100

**CWE:** 80 (Improper Neutralization of Script-Related HTML Tags in a Web Page (Basic XSS))

<span>This call to jQueryResult.replaceWith() contains a cross-site scripting ... | non_process | improper neutralization of script related html tags in a web page basic xss filename src users js line cwe improper neutralization of script related html tags in a web page basic xss this call to jqueryresult replacewith contains a cross site scripting xss flaw the application pop... | 0 |

249,241 | 18,858,174,858 | IssuesEvent | 2021-11-12 09:28:07 | pss-coder/pe | https://api.github.com/repos/pss-coder/pe | opened | Storage component UI diagram Date and Frequency | severity.High type.DocumentationBug | Where do your Frequency and Date classes come from? From your code it seems that your Frequency class comes from the Model component, and your Date comes from the built-in Java class, which isn't necessary to add in.

**Symfony version(s) affected**:

4.3-dev

**Description**

When reading from a process producing rapid output, it's necessary to periodically clear the process output stream buffer to avoid memory exhaustion. Between consecutive calls to getIncrementalOutput() and clearOutput(), additional output may be asynchron... | 1.0 | [Process] Cannot safely clear output - **Symfony version(s) affected**:

4.3-dev

**Description**

When reading from a process producing rapid output, it's necessary to periodically clear the process output stream buffer to avoid memory exhaustion. Between consecutive calls to getIncrementalOutput() and clearOutpu... | process | cannot safely clear output symfony version s affected dev description when reading from a process producing rapid output it s necessary to periodically clear the process output stream buffer to avoid memory exhaustion between consecutive calls to getincrementaloutput and clearoutput add... | 1 |
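Note on the record above: the race it describes (output arriving between reading the buffer and clearing it) can be avoided by making "read and clear" one atomic step. The following is a minimal Python sketch of that idea, not Symfony's API; `DrainableBuffer`, `append`, and `drain` are hypothetical names chosen for illustration.

```python
import threading


class DrainableBuffer:
    """Hypothetical output buffer whose read-and-clear step is atomic."""

    def __init__(self):
        self._chunks = []
        self._lock = threading.Lock()

    def append(self, data: str) -> None:
        # Called by the producer (e.g. the thread reading the child's pipe).
        with self._lock:
            self._chunks.append(data)

    def drain(self) -> str:
        # Return everything buffered so far and clear it under the same lock,
        # so no chunk can arrive between the read and the clear.
        with self._lock:
            data = "".join(self._chunks)
            self._chunks.clear()
        return data
```

A consumer that only ever calls `drain()` sees each chunk exactly once, and the buffer never grows beyond what was produced since the previous call.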

16,778 | 21,961,290,554 | IssuesEvent | 2022-05-24 16:02:23 | google/ground-android | https://api.github.com/repos/google/ground-android | closed | [Cloud Build] Save gradle cache only if dependencies have been updated | type: process priority: p2 | Currently, we zip and copy gradle cache to GCS with every successful trigger. This takes ~ 40 seconds and doesn't add any value if the dependencies haven't been updated in the current pull request.

Ideas:

* Generate hash (md5) for `build.gradle` files (both project and app level)

* Run `Compress gradle build cac... | 1.0 | [Cloud Build] Save gradle cache only if dependencies have been updated - Currently, we zip and copy gradle cache to GCS with every successful trigger. This takes ~ 40 seconds and doesn't add any value if the dependencies haven't been updated in the current pull request.

Ideas:

* Generate hash (md5) for `build.grad... | process | save gradle cache only if dependencies have been updated currently we zip and copy gradle cache to gcs with every successful trigger this takes seconds and doesn t add any value if the dependencies haven t been updated in the current pull request ideas generate hash for build gradle files both... | 1 |
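Note on the record above: the first idea it lists (hash the `build.gradle` files and only save the cache when the hash changes) could look roughly like the Python sketch below. The function names and the way the previous fingerprint is stored are assumptions for illustration; the issue does not specify them.

```python
import hashlib
from pathlib import Path


def dependency_fingerprint(paths):
    """Return one MD5 hex digest covering the given build files."""
    digest = hashlib.md5()
    # Sort so the result does not depend on the order the files are listed in.
    for path in sorted(Path(p) for p in paths):
        digest.update(str(path).encode("utf-8"))
        digest.update(path.read_bytes())
    return digest.hexdigest()


def cache_needs_upload(paths, previous_fingerprint):
    """True when the build files changed since the fingerprint was recorded."""
    return dependency_fingerprint(paths) != previous_fingerprint
```

In a build pipeline, the previous fingerprint would be stored next to the cached archive, and the compress-and-copy step would run only when `cache_needs_upload` returns `True`.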

11,313 | 14,116,333,413 | IssuesEvent | 2020-11-08 02:23:44 | kubeflow/testing | https://api.github.com/repos/kubeflow/testing | closed | kubeflow-testing old GPU nodes | area/engprod kind/bug kind/process lifecycle/frozen lifecycle/stale priority/p2 | Our kubeflow-testing cluster has a GPU node pool with 6 nodes.

I don't think we have many tests actually using that. We should have autoscaling enabled.

I suspect it's not getting downscaled because K8s isn't smart enough to not schedule workloads on that node-pool. | 1.0 | kubeflow-testing old GPU nodes - Our kubeflow-testing cluster has a GPU node pool with 6 nodes.

I don't think we have many tests actually using that. We should have autoscaling enabled.

I suspect it's not getting downscaled because K8s isn't smart enough to not schedule workloads on that node-pool. | process | kubeflow testing old gpu nodes our kubeflow testing cluster has a gpu node pool with nodes i don t think we have many tests actually using that we should have autoscaling enabled i suspect its not getting downscaled because isn t smart enough to not schedule workloads on that node pool | 1 |

4,294 | 7,192,432,840 | IssuesEvent | 2018-02-03 03:31:59 | amaster507/ifbmt | https://api.github.com/repos/amaster507/ifbmt | closed | Data Import | calendar idea importing data process | # There should be a way to import existing user data.

Of course the easiest way to import data is from a CSV (excel) file and sanitizing the input and mapping the columns to the correct database fields. But, what other formats are data in that everyone is using that may need to be imported?

Automatic importing ma... | 1.0 | Data Import - # There should be a way to import existing user data.

Of course the easiest way to import data is from a CSV (excel) file and sanitizing the input and mapping the columns to the correct database fields. But, what other formats are data in that everyone is using that may need to be imported?

Automati... | process | data import there should be a way to import existing user data of course the easiest way to import data is from a csv excel file and sanitizing the input and mapping the columns to the correct database fields but what other formats are data in that everyone is using that may need to be imported automati... | 1 |
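Note on the record above: the CSV route it describes (sanitize the input, then map spreadsheet columns to database fields) can be sketched in Python as follows. The column names in `COLUMN_MAP` and the sanitizing rules are assumptions made up for this example; the issue does not define the real schema.

```python
import csv
import io

# Hypothetical mapping from spreadsheet headers to database field names.
COLUMN_MAP = {"Full Name": "name", "E-mail": "email"}


def sanitize(value):
    """Drop non-printable characters and surrounding whitespace."""
    printable = "".join(ch for ch in (value or "") if ch.isprintable())
    return printable.strip()


def import_rows(csv_text, column_map=COLUMN_MAP):
    """Parse CSV text and keep only the mapped, sanitized columns."""
    reader = csv.DictReader(io.StringIO(csv_text))
    rows = []
    for raw in reader:
        # Unmapped columns are ignored; missing ones become empty strings.
        rows.append({field: sanitize(raw.get(header))
                     for header, field in column_map.items()})
    return rows
```

Each returned dictionary uses database field names as keys, so it can be passed to whatever insert routine the application already has.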

8,393 | 11,564,244,840 | IssuesEvent | 2020-02-20 08:13:33 | qgis/QGIS | https://api.github.com/repos/qgis/QGIS | closed | Processing : Perform SQL query on a PostGIS database, add the ability to select schema | Feature Request Processing | **Feature description.**

Add the ability to select schema and not only the database name.

**Additional context**

See screenshot on a standard version :

Add an other dropdown menu to select schema will b... | 1.0 | Processing : Perform SQL query on a PostGIS database, add the ability to select schema - **Feature description.**

Add the ability to select schema and not only the database name.

**Additional context**

See screenshot on a standard version :

Since sometimes you want to use a subset of all peaks, add a parameter to various SCD-type algorithms to use only some of the peaks.

| 1.0 | Add parameter for peaks indices in things - This issue was originally [TRAC 7275](http://trac.mantidproject.org/mantid/ticket/7275)

Since sometimes you want to use a subset of all peaks, add a parameter to various SCD-type algorithms to use only some of the peaks.

| non_process | add parameter for peaks indices in things this issue was originally since sometimes you want to use a subset of all peaks add a parameter to various scd type algorithms to use only some of the peaks | 0 |

146 | 2,577,590,520 | IssuesEvent | 2015-02-12 17:55:26 | cfpb/hmda-viz-prototype | https://api.github.com/repos/cfpb/hmda-viz-prototype | opened | MSA name and State name | Processing | Create MSA name and State name

json file - non numeric, non abbreviated | 1.0 | MSA name and State name - Create MSA name and State name

json file - non numeric, non abbreviated | process | msa name and state name create msa name and state name json file non numeric non abbreviated | 1 |

1,626 | 4,238,827,101 | IssuesEvent | 2016-07-06 06:41:16 | nodejs/node | https://api.github.com/repos/nodejs/node | closed | When I write áéíóú, process.stdout.write in Windows English version, process.stdout is closed | process windows | When I write áéíóú, process.stdout.write in Windows English version, process.stdout is closed

E.g.

```javascript

process.stdout.write('Aplicación') //print nothing

process.stdout.write('xxxx') // Error: this.socket.is closed

```

* **Version**: node v5.9.1

* **Platform**: Windows, 7 32 bit (English Language... | 1.0 | When I write áéíóú, process.stdout.write in Windows English version, process.stdout is closed - When I write áéíóú, process.stdout.write in Windows English version, process.stdout is closed

E.g.

```javascript

process.stdout.write('Aplicación') //print nothing

process.stdout.write('xxxx') // Error: this.socket.is... | process | when i write áéíóú process stdout write in windows english version process stdout is closed when i write áéíóú process stdout write in windows english version process stdout is closed e g javascript process stdout write aplicación print nothing process stdout write xxxx error this socket is... | 1 |

95,899 | 12,059,242,491 | IssuesEvent | 2020-04-15 18:55:07 | palantir/blueprint | https://api.github.com/repos/palantir/blueprint | closed | Add resizing options to Core Kit Sketch | Domain: design | #### Environment

- __Package version(s)__: 3.5.1

- __Browser and OS versions__: N/A

#### Feature request

Having the Sketch file in the repo is super helpful for developing against Blueprint!

It would be very helpful to have the `Core Kit.sketch` file have the correct resize options set, i.e., fix height/wi... | 1.0 | Add resizing options to Core Kit Sketch - #### Environment

- __Package version(s)__: 3.5.1

- __Browser and OS versions__: N/A

#### Feature request

Having the Sketch file in the repo is super helpful for developing against Blueprint!

It would be very helpful to have the `Core Kit.sketch` file have the corre... | non_process | add resizing options to core kit sketch environment package version s browser and os versions n a feature request having the sketch file in the repo is super helpful for developing against blueprint it would be very helpful to have the core kit sketch file have the corre... | 0 |

116,615 | 4,704,317,996 | IssuesEvent | 2016-10-13 11:01:51 | PowerlineApp/powerline-mobile | https://api.github.com/repos/PowerlineApp/powerline-mobile | closed | Facebook Registration - Address Entry | bug P2 - Medium Priority | When a user registers with Facebook for the first time, they still need to provide their address. The second screen of the e-mail registration flow (showing address + DOB + phone number fields) should be displayed to user.

On iOS, the user is clicking the Register with Facebook button and is being brought to their ... | 1.0 | Facebook Registration - Address Entry - When a user registers with Facebook for the first time, they still need to provide their address. The second screen of the e-mail registration flow (showing address + DOB + phone number fields) should be displayed to user.

On iOS, the user is clicking the Register with Facebo... | non_process | facebook registration address entry when a user registers with facebook for the first time they still need to provide their address the second screen of the e mail registration flow showing address dob phone number fields should be displayed to user on ios the user is clicking the register with facebo... | 0 |

245,804 | 26,567,520,995 | IssuesEvent | 2023-01-20 21:56:44 | kubecost/cost-analyzer-helm-chart | https://api.github.com/repos/kubecost/cost-analyzer-helm-chart | closed | kiwigrid/k8s-sidecar:1.15.4 impacted by 7 Critical CVEs | bug security stale | **Describe the bug**

kiwigrid/k8s-sidecar:1.15.4 contains 20 CVEs -- 1 Critical CVE affecting busybox, 1 Critical affecting ssl_client, and 5 Critical affecting expat.

The following image tags do not contain these or any other Medium/High/Critical vulnerabilities:

- kiwigrid/k8s-sidecar:1.16.0

- kiwigrid/k8s-sidecar:1... | True | kiwigrid/k8s-sidecar:1.15.4 impacted by 7 Critical CVEs - **Describe the bug**

kiwigrid/k8s-sidecar:1.15.4 contains 20 CVEs -- 1 Critical CVE affecting busybox, 1 Critical affecting ssl_client, and 5 Critical affecting expat.

The following image tags do not contain these or any other Medium/High/Critical vulnerabiliti... | non_process | kiwigrid sidecar impacted by critical cves describe the bug kiwigrid sidecar contains cves critical cve affecting busybox critical affecting ssl client and critical affecting expat the following image tags do not contain these or any other medium high critical vulnerabilities k... | 0 |

11,412 | 14,241,651,069 | IssuesEvent | 2020-11-18 23:53:29 | googleapis/python-logging | https://api.github.com/repos/googleapis/python-logging | closed | `from google.cloud import logging` collides with `logging` from standard library | api: logging priority: p2 type: process | Also, an issue with `from google.cloud import logging` is that it collides with the `logging` standard library, and they are often [going to be used together](https://cloud.google.com/logging/docs/setup/python#connecting_the_library_to_python_logging). Right now we tell users to import as `import google.cloud.logging` ... | 1.0 | `from google.cloud import logging` collides with `logging` from standard library - Also, an issue with `from google.cloud import logging` is that it collides with the `logging` standard library, and they are often [going to be used together](https://cloud.google.com/logging/docs/setup/python#connecting_the_library_to_p... | process | from google cloud import logging collides with logging from standard library also an issue with from google cloud import logging is that it collides with the logging standard library and they are often right now we tell users to import as import google cloud logging and use the full path in their code... | 1 |

513,135 | 14,916,406,163 | IssuesEvent | 2021-01-22 18:09:30 | xwikisas/application-mocca-calendar | https://api.github.com/repos/xwikisas/application-mocca-calendar | closed | Warnings for deprecated usage of method when using Calendar Pro | Priority: Major Type: Bug | STEPS TO REPRODUCE

Environment: Windows 10 Pro 64bit, Chrome 87, using an instance of XWiki 12.10.2 on PostgreSQL 13, Tomcat 9.0.41

While using Calendar Pro 2.9.2, in XWiki console there are many warnings displayed related to deprecated usage of method, like:

`2021-01-19 12:14:58,119 [http-nio-1115-exec-8 - ht... | 1.0 | Warnings for deprecated usage of method when using Calendar Pro - STEPS TO REPRODUCE

Environment: Windows 10 Pro 64bit, Chrome 87, using an instance of XWiki 12.10.2 on PostgreSQL 13, Tomcat 9.0.41

While using Calendar Pro 2.9.2, in XWiki console there are many warnings displayed related to deprecated usage of me... | non_process | warnings for deprecated usage of method when using calendar pro steps to reproduce environment windows pro chrome using an instance of xwiki on postgresql tomcat while using calendar pro in xwiki console there are many warnings displayed related to deprecated usage of method like... | 0 |

69,515 | 13,259,899,525 | IssuesEvent | 2020-08-20 17:23:10 | coghex/abridgefaraway | https://api.github.com/repos/coghex/abridgefaraway | opened | dynamic texture loading from lua | new code | change the number of loaded images whenever a texture is added live | 1.0 | dynamic texture loading from lua - change the number of loaded images whenever a texture is added live | non_process | dynamic texture loading from lua change the number of loaded images whenever a texture is added live | 0 |

13,281 | 15,761,259,412 | IssuesEvent | 2021-03-31 09:48:23 | prisma/quaint | https://api.github.com/repos/prisma/quaint | closed | Run CI on GitHub Actions | kind/tech process/candidate | Now we can't run tests from 3rd party pull requests. We could move our test runs to GitHub actions, which would make it easier for people to write pull requests. And, additionally, we could run the tests on platforms such as Windows and macOS. | 1.0 | Run CI on GitHub Actions - Now we can't run tests from 3rd party pull requests. We could move our test runs to GitHub actions, which would make it easier for people to write pull requests. And, additionally, we could run the tests on platforms such as Windows and macOS. | process | run ci on github actions now we can t run tests from party pull requests we could move our test runs to github actions which would make it easier for people to write pull requests and additionally we could run the tests on platforms such as windows and macos | 1 |

139,955 | 11,300,003,325 | IssuesEvent | 2020-01-17 12:37:28 | kyma-project/kyma | https://api.github.com/repos/kyma-project/kyma | opened | Mockice instances is CBS integration tests has a low resources | area/core-and-supporting bug test-failing | <!-- Thank you for your contribution. Before you submit the issue:

1. Search open and closed issues for duplicates.

2. Read the contributing guidelines.

-->

**Description**

Sometimes integration tests on CBS fail due by problem with provisioning pod of Mockice instances in tests. Probably this is a problem wit... | 1.0 | Mockice instances is CBS integration tests has a low resources - <!-- Thank you for your contribution. Before you submit the issue:

1. Search open and closed issues for duplicates.

2. Read the contributing guidelines.

-->

**Description**

Sometimes integration tests on CBS fail due by problem with provisioning ... | non_process | mockice instances is cbs integration tests has a low resources thank you for your contribution before you submit the issue search open and closed issues for duplicates read the contributing guidelines description sometimes integration tests on cbs fail due by problem with provisioning ... | 0 |

1,404 | 3,968,812,007 | IssuesEvent | 2016-05-03 20:59:41 | dotnet/corefx | https://api.github.com/repos/dotnet/corefx | closed | ServiceControllerTests.PauseAndContinue test failed in CI | blocking-clean-ci System.ServiceProcess | http://dotnet-ci.cloudapp.net/job/dotnet_corefx/job/outerloop_win10_debug/142/consoleFull

```

18:55:59 System.ServiceProcess.Tests.ServiceControllerTests.PauseAndContinue [FAIL]

18:55:59 Assert.Equal() Failure

18:55:59 Expected: Running

18:55:59 Actual: StartPending

18:55:59 ... | 1.0 | ServiceControllerTests.PauseAndContinue test failed in CI - http://dotnet-ci.cloudapp.net/job/dotnet_corefx/job/outerloop_win10_debug/142/consoleFull

```

18:55:59 System.ServiceProcess.Tests.ServiceControllerTests.PauseAndContinue [FAIL]

18:55:59 Assert.Equal() Failure

18:55:59 Expected: Runnin... | process | servicecontrollertests pauseandcontinue test failed in ci system serviceprocess tests servicecontrollertests pauseandcontinue assert equal failure expected running actual startpending stack trace d j workspace o... | 1 |

18,839 | 24,744,471,235 | IssuesEvent | 2022-10-21 08:34:28 | fadeoutsoftware/WASDI | https://api.github.com/repos/fadeoutsoftware/WASDI | closed | Runtime error: mosaic_tile_checker failed due to code issue | bug P2 libraries app / processor | Execution of mosaic_tile_checker failed because Exception: : 'NoneType' object is not iterable | 1.0 | Runtime error: mosaic_tile_checker failed due to code issue - Execution of mosaic_tile_checker failed because Exception: : 'NoneType' object is not iterable | process | runtime error mosaic tile checker failed due to code issue execution of mosaic tile checker failed because exception nonetype object is not iterable | 1 |

84,470 | 10,540,790,987 | IssuesEvent | 2019-10-02 09:14:05 | pydata/sparse | https://api.github.com/repos/pydata/sparse | closed | Cython for radix argsort and fast indexing? | design decision good first issue help wanted type:performance | Since our main bottleneck is sorting, I was considering adding Cython as a dev dependency to create a

- Custom radix sort solution.

- Blazing fast indexing (#60)

I was considering this for 0.3. | 1.0 | Cython for radix argsort and fast indexing? - Since our main bottleneck is sorting, I was considering adding Cython as a dev dependency to create a

- Custom radix sort solution.

- Blazing fast indexing (#60)

I was considering this for 0.3. | non_process | cython for radix argsort and fast indexing since our main bottleneck is sorting i was considering adding cython as a dev dependency to create a custom radix sort solution blazing fast indexing i was considering this for | 0 |

354 | 2,794,158,344 | IssuesEvent | 2015-05-11 15:16:53 | Graylog2/graylog2-server | https://api.github.com/repos/Graylog2/graylog2-server | closed | Grok Extractor, Converter Support | processing | Hi,

when parsing metrics out of our logs, using a Grok extractor is really convenient. However, there's currently no way to apply converters to extracted fields?

It'd be very useful to, for example, be able to attach converters to either fields in the extractor or individual Grok patterns (even better!). | 1.0 | Grok Extractor, Converter Support - Hi,

when parsing metrics out of our logs, using a Grok extractor is really convenient. However, there's currently no way to apply converters to extracted fields?

It'd be very useful to, for example, be able to attach converters to either fields in the extractor or individual Gr... | process | grok extractor converter support hi when parsing metrics out of our logs using a grok extractor is really convenient however there s currently no way to apply converters to extracted fields it d be very useful to for example be able to attach converters to either fields in the extractor or individual gr... | 1 |

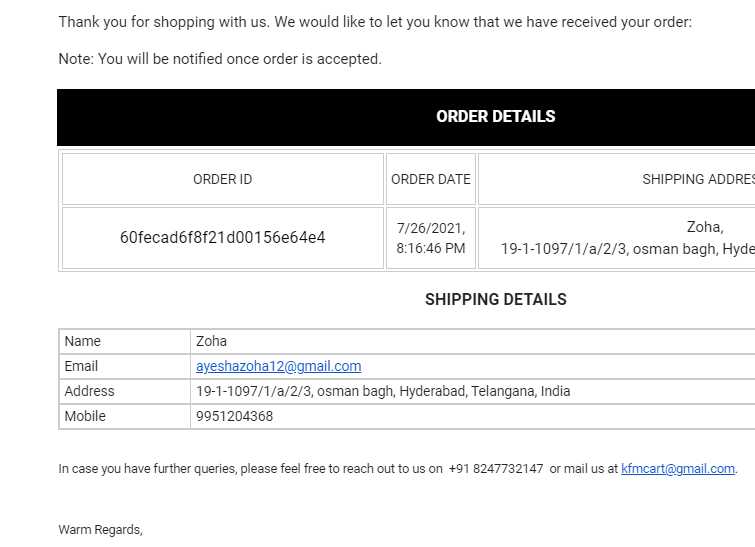

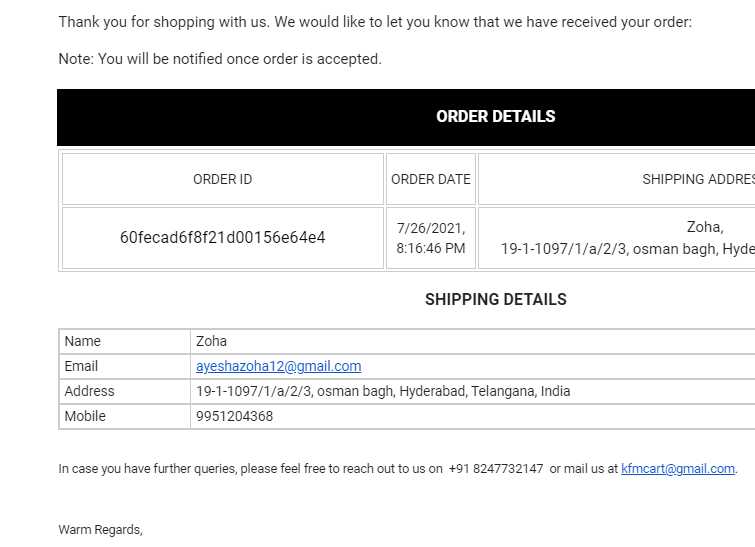

224,529 | 17,754,769,129 | IssuesEvent | 2021-08-28 14:34:53 | MuzaffarMohammed/kfmenterprises-ecommerce | https://api.github.com/repos/MuzaffarMohammed/kfmenterprises-ecommerce | closed | Order details page redirection in order placed mail. | enhancement good first issue Priority Tested - Dev In production |

Acceptance Criteria: User should have an option to view order details in application by navigation.

1. Make order Id as a clickable link to order detail page in application.

2. A button to view order t... | 1.0 | Order details page redirection in order placed mail. -

Acceptance Criteria: User should have an option to view order details in application by navigation.

1. Make order Id as a clickable link to order de... | non_process | order details page redirection in order placed mail acceptance criteria user should have an option to view order details in application by navigation make order id as a clickable link to order detail page in application a button to view order to same order detail page | 0 |

362,561 | 25,381,495,573 | IssuesEvent | 2022-11-21 17:55:48 | gnosischain/documentation | https://api.github.com/repos/gnosischain/documentation | closed | Validators - Rewards & Penalties | documentation validators | Current Page:

https://docs.gnosischain.com/node/incentives

## Tasks

- [ ] Check validity of information

### Rewards

- [ ] Calculate and show the current Yield

- [ ] Embed key information: https://dune.xyz/maxaleks/Gnosis-Beacon-Chain-(Deposits)

### Penalties

- [ ] Penalties

- [ ] Link to Penalties docs (?)

- [ ]... | 1.0 | Validators - Rewards & Penalties - Current Page:

https://docs.gnosischain.com/node/incentives

## Tasks

- [ ] Check validity of information

### Rewards

- [ ] Calculate and show the current Yield

- [ ] Embed key information: https://dune.xyz/maxaleks/Gnosis-Beacon-Chain-(Deposits)

### Penalties

- [ ] Penalties

- [... | non_process | validators rewards penalties current page tasks check validity of information rewards calculate and show the current yield embed key information penalties penalties link to penalties docs emphasize slashing emphasize inactivity leaks including mass inactivi... | 0 |

1,270 | 3,799,502,479 | IssuesEvent | 2016-03-23 16:05:53 | SIMEXP/niak | https://api.github.com/repos/SIMEXP/niak | closed | shortcut for quitting in the QC of fMRI preproc | enhancement preprocessing quality control | illdopejake wrote:

It would be cool if there was a keyboard shortcut to Quit. (Maybe there is and I just don't know about it?) | 1.0 | shortcut for quitting in the QC of fMRI preproc - illdopejake wrote:

It would be cool if there was a keyboard shortcut to Quit. (Maybe there is and I just don't know about it?) | process | shortcut for quitting in the qc of fmri preproc illdopejake wrote it would be cool if there was a keyboard shortcut to quit maybe there is and i just don t know about it | 1 |

19,599 | 25,952,353,300 | IssuesEvent | 2022-12-17 19:26:31 | RobotComponents/RobotComponents | https://api.github.com/repos/RobotComponents/RobotComponents | closed | Mod: Make controller utility base class | modification in process | Make a controller utility base class and all functionality we have to this base class.

Everything is now written in the component classes. We should centralize this to avoid duplicate codes, and to make it accessible through the API of the base class. | 1.0 | Mod: Make controller utility base class - Make a controller utility base class and all functionality we have to this base class.

Everything is now written in the component classes. We should centralize this to avoid duplicate codes, and to make it accessible through the API of the base class. | process | mod make controller utility base class make a controller utility base class and all functionality we have to this base class everything is now written in the component classes we should centralize this to avoid duplicate codes and to make it accessible through the api of the base class | 1 |

154,205 | 19,710,829,880 | IssuesEvent | 2022-01-13 05:00:27 | CanarysPlayground/Demo02063984 | https://api.github.com/repos/CanarysPlayground/Demo02063984 | closed | CVE-2020-7693 (Medium) detected in sockjs-0.3.19.tgz | security vulnerability | ## CVE-2020-7693 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>sockjs-0.3.19.tgz</b></p></summary>

<p>SockJS-node is a server counterpart of SockJS-client a JavaScript library that... | True | CVE-2020-7693 (Medium) detected in sockjs-0.3.19.tgz - ## CVE-2020-7693 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>sockjs-0.3.19.tgz</b></p></summary>

<p>SockJS-node is a server... | non_process | cve medium detected in sockjs tgz cve medium severity vulnerability vulnerable library sockjs tgz sockjs node is a server counterpart of sockjs client a javascript library that provides a websocket like object in the browser sockjs gives you a coherent cross browser javascrip... | 0 |

417,156 | 12,156,235,365 | IssuesEvent | 2020-04-25 16:27:56 | dev-protocol/stakes.social | https://api.github.com/repos/dev-protocol/stakes.social | opened | Mobile support: ButtonCard | Priority: Medium Type: Feature | On a mobile device, it would be a good look if it looked like this:

| 1.0 | Mobile support: ButtonCard - On a mobile device, it would be a good look if it looked like this:

| non_process | mobile support buttoncard on a mobile device it would be a good look if it looked like this | 0 |

21,462 | 29,498,383,903 | IssuesEvent | 2023-06-02 19:07:38 | metabase/metabase | https://api.github.com/repos/metabase/metabase | closed | [MLv2] Port annotate middleware to MLv2 part 1: port aggregation/expression metadata fns | Querying/Processor .Backend .metabase-lib .Team/QueryProcessor :hammer_and_wrench: | The `annotate` middleware code to generate metadata for aggregations and expressions overlaps heavily with the stuff in MLv2 (and with the JS stuff ported in https://github.com/metabase/metabase/issues/28882), we should rework things so it uses MLv2 like everybody else does. This will be both a proof-of-concept for usi... | 2.0 | [MLv2] Port annotate middleware to MLv2 part 1: port aggregation/expression metadata fns - The `annotate` middleware code to generate metadata for aggregations and expressions overlaps heavily with the stuff in MLv2 (and with the JS stuff ported in https://github.com/metabase/metabase/issues/28882), we should rework th... | process | port annotate middleware to part port aggregation expression metadata fns the annotate middleware code to generate metadata for aggregations and expressions overlaps heavily with the stuff in and with the js stuff ported in we should rework things so it uses like everybody else does this will be both... | 1 |

756 | 3,236,931,634 | IssuesEvent | 2015-10-14 09:00:36 | DynareTeam/dynare | https://api.github.com/repos/DynareTeam/dynare | closed | Discuss allowed use of endogenous variables outside of model-block | decision preprocessor | Consider the mod-file

```

var y, c, k, a, h, b;

varexo e, u;

parameters beta, rho, alpha, delta, theta, psi, tau test;

alpha = 0.36;

rho = 0.95;

tau = 0.025;

beta = 0.99;

delta = 0.025;

psi = 0;

theta = 2.95;

phi = 0.1;

test=y*beta;

model;

c*theta*h^(1+psi)=(1-alpha)*y;

k = beta*(((exp(... | 1.0 | Discuss allowed use of endogenous variables outside of model-block - Consider the mod-file

```

var y, c, k, a, h, b;

varexo e, u;

parameters beta, rho, alpha, delta, theta, psi, tau test;

alpha = 0.36;

rho = 0.95;

tau = 0.025;

beta = 0.99;

delta = 0.025;

psi = 0;

theta = 2.95;

phi = 0.1;

tes... | process | discuss allowed use of endogenous variables outside of model block consider the mod file var y c k a h b varexo e u parameters beta rho alpha delta theta psi tau test alpha rho tau beta delta psi theta phi test y beta... | 1 |

22,222 | 30,771,725,737 | IssuesEvent | 2023-07-31 00:37:06 | devssa/onde-codar-em-salvador | https://api.github.com/repos/devssa/onde-codar-em-salvador | closed | [BackEnd Developer / SRE Google Infra] - Remote - HunterHunter | BACK-END COMERCIAL INFRAESTRUTURA MONGODB JAVASCRIPT SQL TYPESCRIPT NODE.JS DOCKER KUBERNETES REQUISITOS REMOTO GOOGLE CLOUD PROCESSOS BACKEND INGLÊS SEGURANÇA UMA C CLEAN R DOCUMENTAÇÃO MODELAGEM DE DADOS TERRAFORM HELP WANTED ESPECIALISTA TESTES DE PERFORMANCE MONITORAMENTO SRE Stale | [BackEnd Developer / SRE Google Infra] - Remote. R$ 8 thousand CLT. With one trip to São Paulo every 3 months, during business hours.

Relevant technical skills:

More than 2 years of experience

Terraform (mandatory)

Google Cloud with more than 2 years of experience (mandatory)

Javascript / Typescript (mandatory)

... | 1.0 | [BackEnd Developer / SRE Google Infra] - Remote - HunterHunter - [BackEnd Developer / SRE Google Infra] - Remote. R$ 8 thousand CLT. With one trip to São Paulo every 3 months, during business hours.

Relevant technical skills:

More than 2 years of experience

Terraform (mandatory)

Google Cloud with more than 2 years... | process | remote hunterhunter remote r thousand clt with one trip to são paulo every months during business hours relevant technical skills more than years of experience terraform mandatory google cloud with more than years of experience mandatory javascript typescript mandatory microservice... | 1 |

2,282 | 5,108,136,168 | IssuesEvent | 2017-01-05 16:50:08 | jlm2017/jlm-video-subtitles | https://api.github.com/repos/jlm2017/jlm-video-subtitles | closed | [Subtitles] [FR] La revue de la semaine n°2 : Syrie, médias, Orwell, CETA, lobbies, agriculture | Language: French Process: Someone is working on this issue Process: [3] Review (1) in progress | # Video title

La revue de la semaine n°2 : Syrie, médias, Orwell, CETA, lobbies, agriculture

# URL

https://www.youtube.com/watch?v=YxhxvRoCATY

# Youtube subtitles language

Français

# Duration

24:14

# Subtitles URL

https://www.youtube.com/timedtext_editor?lang=fr&bl=vmp&ui=hd&v=YxhxvRoCATY&tab=captions&... | 2.0 | [Subtitles] [FR] La revue de la semaine n°2 : Syrie, médias, Orwell, CETA, lobbies, agriculture - # Video title

La revue de la semaine n°2 : Syrie, médias, Orwell, CETA, lobbies, agriculture

# URL

https://www.youtube.com/watch?v=YxhxvRoCATY

# Youtube subtitles language

Français

# Duration

24:14

# Subtit... | process | la revue de la semaine n° syrie médias orwell ceta lobbies agriculture video title la revue de la semaine n° syrie médias orwell ceta lobbies agriculture url youtube subtitles language français duration subtitles url | 1 |

21,592 | 29,993,306,536 | IssuesEvent | 2023-06-26 01:49:56 | global-healthy-liveable-cities/global-indicators | https://api.github.com/repos/global-healthy-liveable-cities/global-indicators | opened | Configuration needs to be simpler | enhancement user process to document | Configuration is currently the most difficult and involving part of the spatial indicator calculation process. This involves specifying a range of data sources and parameters required to run analysis and document metadata for reporting. While this has been simplified from previous iterations, users still report diffi... | 1.0 | Configuration needs to be simpler - Configuration is currently the most difficult and involving part of the spatial indicator calculation process. This involves specifying a range of data sources and parameters required to run analysis and document metadata for reporting. While this has been simplified from previous ... | process | configuration needs to be simpler configuration is currently the most difficult and involving part of the spatial indicator calculation process this involves specifying a range of data sources and parameters required to run analysis and document metadata for reporting while this has been simplified from previous ... | 1 |

17,388 | 23,206,870,558 | IssuesEvent | 2022-08-02 06:32:17 | pyanodon/pybugreports | https://api.github.com/repos/pyanodon/pybugreports | closed | postprocess fail: item/fluid has no source with 5dims new mining | mod:pypostprocessing postprocess-fail compatibility | ### Mod source

PyAE Beta

### Which mod are you having an issue with?

- [ ] pyalienlife

- [ ] pyalternativeenergy

- [ ] pycoalprocessing

- [ ] pyfusionenergy

- [ ] pyhightech

- [ ] pyindustry

- [ ] pypetroleumhandling

- [X] pypostprocessing

- [ ] pyrawores

### Operating system

>=Windows 10

### What kind of issue i... | 2.0 | postprocess fail: item/fluid has no source with 5dims new mining - ### Mod source

PyAE Beta

### Which mod are you having an issue with?

- [ ] pyalienlife

- [ ] pyalternativeenergy

- [ ] pycoalprocessing

- [ ] pyfusionenergy

- [ ] pyhightech

- [ ] pyindustry

- [ ] pypetroleumhandling

- [X] pypostprocessing

- [ ] pyra... | process | postprocess fail item fluid has no source with new mining mod source pyae beta which mod are you having an issue with pyalienlife pyalternativeenergy pycoalprocessing pyfusionenergy pyhightech pyindustry pypetroleumhandling pypostprocessing pyrawores operating s... | 1 |

75,893 | 26,127,060,269 | IssuesEvent | 2022-12-28 20:15:56 | scipy/scipy | https://api.github.com/repos/scipy/scipy | closed | BUG: Deprecation warning says to use non-existent symbols | defect scipy.optimize needs-decision deprecated | ### Describe your issue.

About a year ago (commit 6b08e087171479b30fc2dc015d2074a24844789e) the ``optimize.tnc`` module gained some deprecation warnings, indicating that symbols of the form ``optimize.tnc.<SYM>`` were deprecated, use instead ``optimize.<SYM>``. Since none of these symbols exist in optimize directly, d... | 1.0 | BUG: Deprecation warning says to use non-existent symbols - ### Describe your issue.

About a year ago (commit 6b08e087171479b30fc2dc015d2074a24844789e) the ``optimize.tnc`` module gained some deprecation warnings, indicating that symbols of the form ``optimize.tnc.<SYM>`` were deprecated, use instead ``optimize.<SYM>``... | non_process | bug deprecation warning says to use non existent symbols describe your issue about a year ago commit the optimize tnc module gained some deprecation warnings indicating that symbols of the form optimize tnc were deprecated use instead optimize since none of these symbols exist in optimiz... | 0 |
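
The problem reported in this row, a deprecation warning that recommends a replacement which does not exist, can be illustrated with a small stand-alone sketch. Everything here is hypothetical: `public`, `solve`, `STATUS_OK`, and `deprecated_lookup` are made-up names for illustration and do not reflect how SciPy implements its deprecation shims.

```python
import types
import warnings

# Hypothetical public namespace: only `solve` is re-exported here.
public = types.SimpleNamespace(solve=lambda: "solved")


def deprecated_lookup(name, private_impl):
    """Return private_impl[name], warning with a replacement hint only if one exists."""
    if name not in private_impl:
        raise AttributeError(name)
    if hasattr(public, name):
        hint = f"use `public.{name}` instead"
    else:
        # Avoid pointing users at a symbol that is not actually available.
        hint = "it has no public replacement"
    warnings.warn(
        f"`legacy.{name}` is deprecated; {hint}.",
        DeprecationWarning,
        stacklevel=2,
    )
    return private_impl[name]


# Hypothetical contents of the deprecated module.
legacy_impl = {"solve": public.solve, "STATUS_OK": 0}

with warnings.catch_warnings(record=True) as caught:
    warnings.simplefilter("always")
    deprecated_lookup("solve", legacy_impl)
    deprecated_lookup("STATUS_OK", legacy_impl)

for entry in caught:
    print(entry.message)
```

The design point is that the hint is derived from what the public namespace really exposes at lookup time, so the message cannot drift out of sync with the available symbols.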

12,172 | 14,741,848,264 | IssuesEvent | 2021-01-07 11:16:24 | kdjstudios/SABillingGitlab | https://api.github.com/repos/kdjstudios/SABillingGitlab | closed | News - Connection Timeout | anc-process anp-urgent ant-bug has attachment | In GitLab by @kdjstudios on Feb 18, 2019, 10:16

**Submitted by:** Kyle

**Helpdesk:**

- Deb: http://www.servicedesk.answernet.com/profiles/ticket/7152314

- Gaylan: http://www.servicedesk.answernet.com/profiles/ticket/2019-02-21-72387/conversation

- Richard and Gary: http://www.servicedesk.answernet.com/profiles/tick... | 1.0 | News - Connection Timeout - In GitLab by @kdjstudios on Feb 18, 2019, 10:16

**Submitted by:** Kyle

**Helpdesk:**

- Deb: http://www.servicedesk.answernet.com/profiles/ticket/7152314

- Gaylan: http://www.servicedesk.answernet.com/profiles/ticket/2019-02-21-72387/conversation

- Richard and Gary: http://www.servicedesk... | process | news connection timeout in gitlab by kdjstudios on feb submitted by kyle helpdesk deb gaylan richard and gary grant server internal client site na account na issue while attempting to update the news sections in sa billing today i kept encounte... | 1 |

20,988 | 10,565,011,058 | IssuesEvent | 2019-10-05 07:35:04 | plan-player-analytics/Plan | https://api.github.com/repos/plan-player-analytics/Plan | closed | XSS Attack possible with Bungee-Bukkit connection system [4.1.0 -> 4.8.7] | Security Vulnerability p: 25 server: Networks | ### How to reproduce the vulnerability

Version 4.5.2

- Find a BungeeCord server that has Plan on it

- ~~Find a Bukkit/Sponge server UUID from the /debug page~~ Snoop on the HTTP traffic between Bungee and Bukkit servers for a server UUID

- Send a valid CacheAnalysisPageRequest to /info/cacheanalysispagerequest ... | True | XSS Attack possible with Bungee-Bukkit connection system [4.1.0 -> 4.8.7] - ### How to reproduce the vulnerability

Version 4.5.2

- Find a BungeeCord server that has Plan on it

- ~~Find a Bukkit/Sponge server UUID from the /debug page~~ Snoop on the HTTP traffic between Bungee and Bukkit servers for a server UUID... | non_process | xss attack possible with bungee bukkit connection system how to reproduce the vulnerability version find a bungeecord server that has plan on it find a bukkit sponge server uuid from the debug page snoop on the http traffic between bungee and bukkit servers for a server uuid send a vali... | 0 |

113,132 | 14,370,337,201 | IssuesEvent | 2020-12-01 10:57:57 | openaustralia/righttoknow | https://api.github.com/repos/openaustralia/righttoknow | closed | Version 0.31.0.4 | design enhancement | # 0.31.0.4

## Highlighted Features

* Updated translations from Transifex (Liz Conlan)

# 0.31.0.3

## Highlighted Features

* Fix broken translation string (Gareth Rees)

# 0.31.0.2

## Highlighted Features

* Remove obsolete pro msgids (Gareth Rees)

# 0.31.0.1

## Highlighted Features

* Updat... | 1.0 | Version 0.31.0.4 - # 0.31.0.4

## Highlighted Features

* Updated translations from Transifex (Liz Conlan)

# 0.31.0.3

## Highlighted Features

* Fix broken translation string (Gareth Rees)

# 0.31.0.2

## Highlighted Features

* Remove obsolete pro msgids (Gareth Rees)

# 0.31.0.1

## Highlighted ... | non_process | version highlighted features updated translations from transifex liz conlan highlighted features fix broken translation string gareth rees highlighted features remove obsolete pro msgids gareth rees highlighted featu... | 0 |

19,064 | 25,083,667,851 | IssuesEvent | 2022-11-07 21:37:39 | googleapis/gaxios | https://api.github.com/repos/googleapis/gaxios | closed | Your .repo-metadata.json file has a problem 🤒 | type: process repo-metadata: lint | You have a problem with your .repo-metadata.json file:

Result of scan 📈:

* must have required property 'library_type' in .repo-metadata.json

* must have required property 'release_level' in .repo-metadata.json

* must have required property 'client_documentation' in .repo-metadata.json

☝️ Once you address these pro... | 1.0 | Your .repo-metadata.json file has a problem 🤒 - You have a problem with your .repo-metadata.json file:

Result of scan 📈:

* must have required property 'library_type' in .repo-metadata.json

* must have required property 'release_level' in .repo-metadata.json

* must have required property 'client_documentation' in .r... | process | your repo metadata json file has a problem 🤒 you have a problem with your repo metadata json file result of scan 📈 must have required property library type in repo metadata json must have required property release level in repo metadata json must have required property client documentation in r... | 1 |

7,855 | 11,029,633,772 | IssuesEvent | 2019-12-06 14:17:14 | prisma/photonjs | https://api.github.com/repos/prisma/photonjs | closed | UniqueConstraintViolation error data as JSON object | kind/improvement process/next-milestone | Hello!

Do you have any plans to support returning the UniqueConstraintViolation error data as JSON object which contains the name of the field with duplicate value? At this moment this info is returned as a string which I need to parse. | 1.0 | UniqueConstraintViolation error data as JSON object - Hello!

Do you have any plans to support returning the UniqueConstraintViolation error data as JSON object which contains the name of the field with duplicate value? At this moment this info is returned as a string which I need to parse. | process | uniqueconstraintviolation error data as json object hello do you have any plans to support returning the uniqueconstraintviolation error data as json object which contains the name of the field with duplicate value at this moment this info is returned as a string which i need to parse | 1 |
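
The request in this row contrasts parsing a field name out of an error string with receiving it as structured data. A minimal sketch of the difference is shown below. The message format, the regular expression, and the `to_structured_error` helper are all assumptions made for illustration and are not taken from the library discussed in the issue.

```python
import re

# Hypothetical message format; the real library's wording may differ.
_FIELD_PATTERN = re.compile(r"field:\s*`(?P<field>\w+)`")


def to_structured_error(message):
    """Wrap a raw error string in a dict that exposes the offending field directly."""
    match = _FIELD_PATTERN.search(message)
    return {
        "code": "UniqueConstraintViolation",
        "field": match.group("field") if match else None,
        "message": message,
    }


error = to_structured_error("Unique constraint failed on the field: `email`")
print(error["field"])
```

If the error were delivered in a shape like this from the start, callers could read `error["field"]` without depending on the exact wording of the message.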

765,531 | 26,850,545,770 | IssuesEvent | 2023-02-03 10:40:41 | kartoza/ckanext-dalrrd-emc-dcpr | https://api.github.com/repos/kartoza/ckanext-dalrrd-emc-dcpr | opened | Make json the default on the server | priority:low | The current EMC STAC API root endpoint is https://csw-testing.emc.kartoza.com/, but accessing this returns an html page which isn't readable in the STAC Browser QGIS plugin.
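
One way to read "make JSON the default" in the row above is as content negotiation: serve HTML only when a client explicitly prefers it, and fall back to JSON otherwise. The sketch below illustrates that idea under stated assumptions. `pick_format` and its list of supported media types are hypothetical and do not describe the server's actual configuration.

```python
def pick_format(accept_header, default="application/json"):
    """Choose a response media type, falling back to JSON when nothing specific is requested."""
    supported = ("application/json", "text/html")
    if not accept_header:
        return default

    # Parse entries such as "text/html;q=0.9" into (quality, media type) pairs.
    choices = []
    for part in accept_header.split(","):
        fields = part.strip().split(";")
        media = fields[0].strip().lower()
        quality = 1.0
        for field in fields[1:]:
            key, _, value = field.strip().partition("=")
            if key.strip() == "q":
                try:
                    quality = float(value)
                except ValueError:
                    quality = 0.0
        choices.append((quality, media))

    # Highest quality first; sorted() is stable, so ties keep header order.
    for quality, media in sorted(choices, key=lambda choice: -choice[0]):
        if quality <= 0:
            continue
        if media in supported:
            return media
        if media in ("*/*", "application/*"):
            return default
    return default


print(pick_format(None))
print(pick_format("text/html,application/xhtml+xml;q=0.9,*/*;q=0.8"))
```

With this rule a browser that sends `text/html` first still gets the HTML page, while a client that sends no `Accept` header or only `*/*` receives JSON.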