| Unnamed: 0 (int64, 0 to 832k) | id (float64, 2.49B to 32.1B) | type (stringclasses, 1 value) | created_at (stringlengths, 19) | repo (stringlengths, 7 to 112) | repo_url (stringlengths, 36 to 141) | action (stringclasses, 3 values) | title (stringlengths, 1 to 744) | labels (stringlengths, 4 to 574) | body (stringlengths, 9 to 211k) | index (stringclasses, 10 values) | text_combine (stringlengths, 96 to 211k) | label (stringclasses, 2 values) | text (stringlengths, 96 to 188k) | binary_label (int64, 0 to 1) |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

20,153 | 11,402,220,711 | IssuesEvent | 2020-01-31 02:18:18 | Azure/azure-sdk-for-net | https://api.github.com/repos/Azure/azure-sdk-for-net | closed | 7000 Failed AcceptMessageSession dependencies in AppInsight per hour | Bug Client Service Attention Service Bus customer-reported | Crossposting original bug from [https://github.com/Azure/azure-service-bus-dotnet/issues/588](url)

> **Actual Behavior**

> Ensure AppInisight is configured and dependency tracking is enabled.

> Construct a SubscriptionClient for a topic with sessions enabled.

> Call RegisterSessionHandler on the client.

> (When ... | 2.0 | 7000 Failed AcceptMessageSession dependencies in AppInsight per hour - Crossposting original bug from [https://github.com/Azure/azure-service-bus-dotnet/issues/588](url)

> **Actual Behavior**

> Ensure AppInisight is configured and dependency tracking is enabled.

> Construct a SubscriptionClient for a topic with se... | non_process | failed acceptmessagesession dependencies in appinsight per hour crossposting original bug from url actual behavior ensure appinisight is configured and dependency tracking is enabled construct a subscriptionclient for a topic with sessions enabled call registersessionhandler on the client ... | 0 |

15,301 | 19,340,492,192 | IssuesEvent | 2021-12-15 03:29:52 | alexrp/system-terminal | https://api.github.com/repos/alexrp/system-terminal | closed | Figure out a `System.Diagnostics.Process` story | type: feature state: blocked area: drivers area: processes | Right now, executing a `System.Diagnostics.Process` will mess up the `termios` state on Unix systems. We need to figure out a way of dealing with this. It's not clear what the right thing to do actually *is* given that we could be in raw mode, while a child process expects cooked mode.

Further, on Windows, we need t... | 1.0 | Figure out a `System.Diagnostics.Process` story - Right now, executing a `System.Diagnostics.Process` will mess up the `termios` state on Unix systems. We need to figure out a way of dealing with this. It's not clear what the right thing to do actually *is* given that we could be in raw mode, while a child process expe... | process | figure out a system diagnostics process story right now executing a system diagnostics process will mess up the termios state on unix systems we need to figure out a way of dealing with this it s not clear what the right thing to do actually is given that we could be in raw mode while a child process expe... | 1 |

8,333 | 11,493,902,016 | IssuesEvent | 2020-02-12 00:09:19 | xatkit-bot-platform/xatkit-runtime | https://api.github.com/repos/xatkit-bot-platform/xatkit-runtime | opened | Xatkit shouldn't crash if a processor failed his initialization | Bug Processors | This is particularly true for processors like Stanford NLP ones that require external libraries. If the library cannot be found we should log an error, but Xatkit should still start. | 1.0 | Xatkit shouldn't crash if a processor failed his initialization - This is particularly true for processors like Stanford NLP ones that require external libraries. If the library cannot be found we should log an error, but Xatkit should still start. | process | xatkit shouldn t crash if a processor failed his initialization this is particularly true for processors like stanford nlp ones that require external libraries if the library cannot be found we should log an error but xatkit should still start | 1 |

17,228 | 22,915,757,825 | IssuesEvent | 2022-07-17 00:09:26 | kubernetes/minikube | https://api.github.com/repos/kubernetes/minikube | closed | Append Changelog to KIC Base Image build and ISO Image build process | help wanted priority/important-longterm lifecycle/rotten kind/process | each time we comment ok-to-build-iso or ok-to-build-image our Bots creates an Docker image and ISO image

we should add a changelog.txt to file both ISO and KIC image with PR number and Commit number

so we see and audit of all merged things in that ISO / Kic Image

This task can be done by two PRs one for KIC and ... | 1.0 | Append Changelog to KIC Base Image build and ISO Image build process - each time we comment ok-to-build-iso or ok-to-build-image our Bots creates an Docker image and ISO image

we should add a changelog.txt to file both ISO and KIC image with PR number and Commit number

so we see and audit of all merged things in th... | process | append changelog to kic base image build and iso image build process each time we comment ok to build iso or ok to build image our bots creates an docker image and iso image we should add a changelog txt to file both iso and kic image with pr number and commit number so we see and audit of all merged things in th... | 1 |

207,550 | 23,458,623,516 | IssuesEvent | 2022-08-16 11:10:28 | Gal-Doron/Baragon-test-6 | https://api.github.com/repos/Gal-Doron/Baragon-test-6 | opened | async-http-client-1.9.38.jar: 1 vulnerabilities (highest severity is: 9.1) | security vulnerability | <details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>async-http-client-1.9.38.jar</b></p></summary>

<p></p>

<p>Path to dependency file: /BaragonService/pom.xml</p>

<p>Path to vulnerable library: /home/wss-scanner/.m2/re... | True | async-http-client-1.9.38.jar: 1 vulnerabilities (highest severity is: 9.1) - <details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>async-http-client-1.9.38.jar</b></p></summary>

<p></p>

<p>Path to dependency file: /Barag... | non_process | async http client jar vulnerabilities highest severity is vulnerable library async http client jar path to dependency file baragonservice pom xml path to vulnerable library home wss scanner repository io netty netty final netty final jar found in head co... | 0 |

6,934 | 10,101,619,498 | IssuesEvent | 2019-07-29 09:10:28 | CurtinFRC/ModularVisionTracking | https://api.github.com/repos/CurtinFRC/ModularVisionTracking | opened | add priority starting threads in the vision map | Processes Threading enhancement visionMap | This allows to user to prioritise which threads start up first and/or which functions from that thread, e.g tape tracking is number 1, then ball tracking is number 2.

The hopes is that if we want to track a retro reflective ball, you want the retro tape tracking before the circular tracking.

i dunno just a though... | 1.0 | add priority starting threads in the vision map - This allows to user to prioritise which threads start up first and/or which functions from that thread, e.g tape tracking is number 1, then ball tracking is number 2.

The hopes is that if we want to track a retro reflective ball, you want the retro tape tracking befo... | process | add priority starting threads in the vision map this allows to user to prioritise which threads start up first and or which functions from that thread e g tape tracking is number then ball tracking is number the hopes is that if we want to track a retro reflective ball you want the retro tape tracking befo... | 1 |

639,985 | 20,770,589,469 | IssuesEvent | 2022-03-16 03:56:45 | wso2/product-apim | https://api.github.com/repos/wso2/product-apim | closed | [Improvement] Refactor Solace Developer Portal Related implementation | Type/Improvement Priority/Normal APIM - 4.1.0 | ### Describe your problem(s)

The new feature of Solace broker integration with WSO2 API Manager was introduced in the APIM 4.1.0 release. For maintainability, most of the Solace implementations were written in extension mode to support decoupling. But due to some UI complications, the Developer portal related Solace i... | 1.0 | [Improvement] Refactor Solace Developer Portal Related implementation - ### Describe your problem(s)

The new feature of Solace broker integration with WSO2 API Manager was introduced in the APIM 4.1.0 release. For maintainability, most of the Solace implementations were written in extension mode to support decoupling.... | non_process | refactor solace developer portal related implementation describe your problem s the new feature of solace broker integration with api manager was introduced in the apim release for maintainability most of the solace implementations were written in extension mode to support decoupling but due to som... | 0 |

297,914 | 22,408,198,970 | IssuesEvent | 2022-06-18 09:50:05 | Lu1z-Gust4v0/Fup-Final_Project | https://api.github.com/repos/Lu1z-Gust4v0/Fup-Final_Project | closed | Refactor args_parser file | bug documentation Story | Propose to refactor of args_parser file because of extra shit parsing and some logic errors

@Lu1z-Gust4v0

- [ ] Change parse_value fn condition to or

- [ ] Remove line arg in check fn and pass the hint_counter on the order hand

- [ ] Pass hint_counter as line number for motive line

- [ ] Document each fn to a b... | 1.0 | Refactor args_parser file - Propose to refactor of args_parser file because of extra shit parsing and some logic errors

@Lu1z-Gust4v0

- [ ] Change parse_value fn condition to or

- [ ] Remove line arg in check fn and pass the hint_counter on the order hand

- [ ] Pass hint_counter as line number for motive line

-... | non_process | refactor args parser file propose to refactor of args parser file because of extra shit parsing and some logic errors change parse value fn condition to or remove line arg in check fn and pass the hint counter on the order hand pass hint counter as line number for motive line document eac... | 0 |

18,893 | 5,730,214,458 | IssuesEvent | 2017-04-21 08:49:04 | gaymers-discord/DiscoBot | https://api.github.com/repos/gaymers-discord/DiscoBot | closed | !role command doesn't accept roles unless they are specifically named | bug code-improvement pr-submitted | !role command doesn't accept roles unless they are specifically named, usually in Title Case. Recently happened with the creation of the `CS:GO` role that can't be added due to the way we transform the role name.

We should refactor how roles are looked up from Discord so we can avoid this. | 1.0 | !role command doesn't accept roles unless they are specifically named - !role command doesn't accept roles unless they are specifically named, usually in Title Case. Recently happened with the creation of the `CS:GO` role that can't be added due to the way we transform the role name.

We should refactor how roles ar... | non_process | role command doesn t accept roles unless they are specifically named role command doesn t accept roles unless they are specifically named usually in title case recently happened with the creation of the cs go role that can t be added due to the way we transform the role name we should refactor how roles ar... | 0 |

7,709 | 10,818,264,558 | IssuesEvent | 2019-11-08 11:37:33 | prisma/prisma2 | https://api.github.com/repos/prisma/prisma2 | closed | prisma2 init flow is throwing "File name too long" | bug/2-confirmed kind/regression process/candidate | After installing preview 16, after following the following init flow:

1. prisma2 init

2. Blank project

3. SQLite

4. Selecting both Photon and Lift > Confirm

5. Typescript

6. Demo script

It is throwing the this Rust panic:

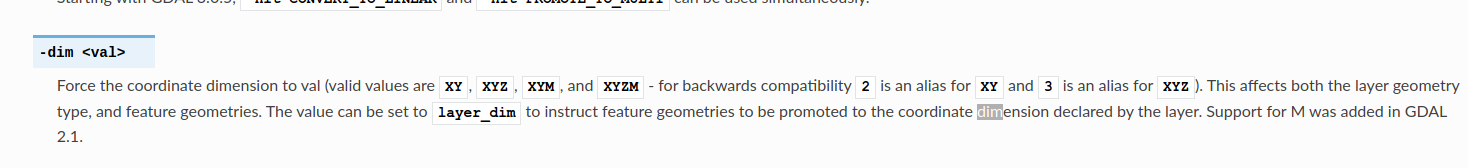

However when running the processing algorithm `Export to PostgreSQL (Available Connections)` It only shows two ... | 1.0 | Expose XYZM dimension with Ogr2ogr export to PostgreSQL algorithms - # Problem

The documentation of https://gdal.org/programs/ogr2ogr.html clearly states that :

However when running the processing algor... | process | expose xyzm dimension with export to postgresql algorithms problem the documentation of clearly states that however when running the processing algorithm export to postgresql available connections it only shows two options this prevents users from being able to import data types ... | 1 |

3,304 | 6,401,348,672 | IssuesEvent | 2017-08-05 20:01:11 | facebook/osquery | https://api.github.com/repos/facebook/osquery | closed | Linux Audit publisher could implement a fast-dequeue thread | Linux process auditing wishlist | The current Linux Audit implementation dequeues from the Audit Netlink socket, parses, broadcasts to all subscribers, then writes JSON to RocksDB synchronously. This leads to queue drops and backlog stalls for >2k events/s. You can simulate Audit queue drops using:

```

./tools/analysis/system_stress.py -n 10 -i lo0

... | 1.0 | Linux Audit publisher could implement a fast-dequeue thread - The current Linux Audit implementation dequeues from the Audit Netlink socket, parses, broadcasts to all subscribers, then writes JSON to RocksDB synchronously. This leads to queue drops and backlog stalls for >2k events/s. You can simulate Audit queue drops... | process | linux audit publisher could implement a fast dequeue thread the current linux audit implementation dequeues from the audit netlink socket parses broadcasts to all subscribers then writes json to rocksdb synchronously this leads to queue drops and backlog stalls for events s you can simulate audit queue drops ... | 1 |

4,405 | 7,298,729,405 | IssuesEvent | 2018-02-26 17:50:18 | jamesfulford/fulford.data | https://api.github.com/repos/jamesfulford/fulford.data | opened | Use "from Queue import Queue" instead of Trickle | .processing | Maybe more efficient in that it won't clutter threads with sleeping for 0.05 seconds. Probably better implementation of asynchronous queueing. | 1.0 | Use "from Queue import Queue" instead of Trickle - Maybe more efficient in that it won't clutter threads with sleeping for 0.05 seconds. Probably better implementation of asynchronous queueing. | process | use from queue import queue instead of trickle maybe more efficient in that it won t clutter threads with sleeping for seconds probably better implementation of asynchronous queueing | 1 |

217,710 | 24,348,945,452 | IssuesEvent | 2022-10-02 17:50:29 | venkateshreddypala/NeverNote | https://api.github.com/repos/venkateshreddypala/NeverNote | opened | CVE-2022-38750 (Medium) detected in snakeyaml-1.25.jar | security vulnerability | ## CVE-2022-38750 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>snakeyaml-1.25.jar</b></p></summary>

<p>YAML 1.1 parser and emitter for Java</p>

<p>Library home page: <a href="http... | True | CVE-2022-38750 (Medium) detected in snakeyaml-1.25.jar - ## CVE-2022-38750 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>snakeyaml-1.25.jar</b></p></summary>

<p>YAML 1.1 parser and... | non_process | cve medium detected in snakeyaml jar cve medium severity vulnerability vulnerable library snakeyaml jar yaml parser and emitter for java library home page a href path to dependency file pom xml path to vulnerable library home wss scanner repository org yaml snak... | 0 |

12,962 | 15,341,585,710 | IssuesEvent | 2021-02-27 12:39:03 | nodejs/node | https://api.github.com/repos/nodejs/node | closed | AbortSignal doesn't abort child_process.spawn | child_process | * **Version**: v15.5.0, v15.8.0 (works fine on v15.6, v15.7)

* **Platform**: macOS 10.15.7

* **Subsystem**: child_process

### What steps will reproduce the bug?

Create a child process using spawn, and abort the controller. The signal doesn't abort child process.

### How often does it reproduce? Is there a re... | 1.0 | AbortSignal doesn't abort child_process.spawn - * **Version**: v15.5.0, v15.8.0 (works fine on v15.6, v15.7)

* **Platform**: macOS 10.15.7

* **Subsystem**: child_process

### What steps will reproduce the bug?

Create a child process using spawn, and abort the controller. The signal doesn't abort child process.

... | process | abortsignal doesn t abort child process spawn version works fine on platform macos subsystem child process what steps will reproduce the bug create a child process using spawn and abort the controller the signal doesn t abort child process how ... | 1 |

11,595 | 14,448,621,216 | IssuesEvent | 2020-12-08 06:37:16 | A01731346/5a | https://api.github.com/repos/A01731346/5a | closed | fill_size_estimating_template | process-dashboard | - Llenado de template de estimación de líneas de código en process dashboard

- Correr el PROBE wizard | 1.0 | fill_size_estimating_template - - Llenado de template de estimación de líneas de código en process dashboard

- Correr el PROBE wizard | process | fill size estimating template llenado de template de estimación de líneas de código en process dashboard correr el probe wizard | 1 |

10,593 | 13,401,061,950 | IssuesEvent | 2020-09-03 16:43:05 | w3c/webauthn | https://api.github.com/repos/w3c/webauthn | opened | need "how to install bikeshed in one's local webauthn repo clone" instructions | type:process | I was attempting to run the ./update-bikeshed-cache.sh on my local webauthn repo clone (following the directions here: https://github.com/w3c/webauthn#updating-copies-of-bikeshed-data-files-stored-in-this-repo) and this is what I got:

```

$ ./update-bikeshed-cache.sh \

&& git add .spec-data .bikeshed-include \

... | 1.0 | need "how to install bikeshed in one's local webauthn repo clone" instructions - I was attempting to run the ./update-bikeshed-cache.sh on my local webauthn repo clone (following the directions here: https://github.com/w3c/webauthn#updating-copies-of-bikeshed-data-files-stored-in-this-repo) and this is what I got:

```... | process | need how to install bikeshed in one s local webauthn repo clone instructions i was attempting to run the update bikeshed cache sh on my local webauthn repo clone following the directions here and this is what i got update bikeshed cache sh git add spec data bikeshed include git co... | 1 |

153,388 | 13,504,255,864 | IssuesEvent | 2020-09-13 17:10:44 | nextjs-starter/nextjs-webapp-starter | https://api.github.com/repos/nextjs-starter/nextjs-webapp-starter | opened | Update repo documentation files to reflect changes in project's documentation | type: documentation | The structure of the project's documentation has changed, which include content changes navigation structure and page links. The in-repo documentation files such as the README will therefore need to be updated. | 1.0 | Update repo documentation files to reflect changes in project's documentation - The structure of the project's documentation has changed, which include content changes navigation structure and page links. The in-repo documentation files such as the README will therefore need to be updated. | non_process | update repo documentation files to reflect changes in project s documentation the structure of the project s documentation has changed which include content changes navigation structure and page links the in repo documentation files such as the readme will therefore need to be updated | 0 |

440,829 | 30,760,934,747 | IssuesEvent | 2023-07-29 17:37:32 | oksana-mlynska/homepage | https://api.github.com/repos/oksana-mlynska/homepage | closed | Скласти інтро | documentation BSA-hometask-level4 | Currently, I work as a CRM tester and want to develop further in the field of QA engineering. I like to plan everything, learn about technology. I want to get more practical skills and learn best practices in testing from professionals. I know that in IT sphere continuous learning is very important, that is why I am ha... | 1.0 | Скласти інтро - Currently, I work as a CRM tester and want to develop further in the field of QA engineering. I like to plan everything, learn about technology. I want to get more practical skills and learn best practices in testing from professionals. I know that in IT sphere continuous learning is very important, tha... | non_process | скласти інтро currently i work as a crm tester and want to develop further in the field of qa engineering i like to plan everything learn about technology i want to get more practical skills and learn best practices in testing from professionals i know that in it sphere continuous learning is very important tha... | 0 |

11,949 | 14,712,698,303 | IssuesEvent | 2021-01-05 09:18:25 | panther-labs/panther | https://api.github.com/repos/panther-labs/panther | opened | Support AWS Network Firewall logs | story team:data processing | ### Description

Add support for AWS Network Firewall logs: https://docs.aws.amazon.com/network-firewall/latest/developerguide/firewall-logging.html

### Acceptance Criteria

- Users can select AWS Network Firewall logs when onboarding a new S3 source

- Users can write rules for AWS Network Firewall logs

| 1.0 | Support AWS Network Firewall logs - ### Description

Add support for AWS Network Firewall logs: https://docs.aws.amazon.com/network-firewall/latest/developerguide/firewall-logging.html

### Acceptance Criteria

- Users can select AWS Network Firewall logs when onboarding a new S3 source

- Users can write rules for AWS ... | process | support aws network firewall logs description add support for aws network firewall logs acceptance criteria users can select aws network firewall logs when onboarding a new source users can write rules for aws network firewall logs | 1 |

1,214 | 3,420,384,068 | IssuesEvent | 2015-12-08 14:37:44 | NAFITH/IraqWeb | https://api.github.com/repos/NAFITH/IraqWeb | opened | Manifest Duplication, specify new rule to the duplicate manifests | Critical Missing Requirement question | The system was deciding if the new manifest already exist in the database by checking the following values :

• Voyage number

• Manifest number

• Shipping line

If the values above (together) matches the values of an active manifest, then the system will not allow the user to save it and a notification message w... | 1.0 | Manifest Duplication, specify new rule to the duplicate manifests - The system was deciding if the new manifest already exist in the database by checking the following values :

• Voyage number

• Manifest number

• Shipping line

If the values above (together) matches the values of an active manifest, then th... | non_process | manifest duplication specify new rule to the duplicate manifests the system was deciding if the new manifest already exist in the database by checking the following values • voyage number • manifest number • shipping line if the values above together matches the values of an active manifest then th... | 0 |

21,084 | 6,130,484,158 | IssuesEvent | 2017-06-24 05:43:45 | Tilana/Classification | https://api.github.com/repos/Tilana/Classification | closed | remove Collection.py class | bug code refactoring | lda/Collection.py is outdated as a pandas dataframe is used to store a collection of documents.

remove class and dependencies | 1.0 | remove Collection.py class - lda/Collection.py is outdated as a pandas dataframe is used to store a collection of documents.

remove class and dependencies | non_process | remove collection py class lda collection py is outdated as a pandas dataframe is used to store a collection of documents remove class and dependencies | 0 |

29,547 | 11,759,834,319 | IssuesEvent | 2020-03-13 18:06:10 | 01binary/elevator | https://api.github.com/repos/01binary/elevator | opened | WS-2018-0347 (Medium) detected in eslint-4.10.0.tgz | security vulnerability | ## WS-2018-0347 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>eslint-4.10.0.tgz</b></p></summary>

<p>An AST-based pattern checker for JavaScript.</p>

<p>Library home page: <a href=... | True | WS-2018-0347 (Medium) detected in eslint-4.10.0.tgz - ## WS-2018-0347 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>eslint-4.10.0.tgz</b></p></summary>

<p>An AST-based pattern chec... | non_process | ws medium detected in eslint tgz ws medium severity vulnerability vulnerable library eslint tgz an ast based pattern checker for javascript library home page a href path to dependency file tmp ws scm elevator clientapp package json path to vulnerable library tmp w... | 0 |

21,646 | 30,083,029,195 | IssuesEvent | 2023-06-29 06:13:41 | bitfocus/companion-module-requests | https://api.github.com/repos/bitfocus/companion-module-requests | opened | Xilica Audio Processors | NOT YET PROCESSED | - [x] **I have researched the list of existing Companion modules and requests and have determined this has not yet been requested**

The name of the device, hardware, or software you would like to control:

Xilica Solaro

What you would like to be able to make it do from Companion:

Control audio functions such... | 1.0 | Xilica Audio Processors - - [x] **I have researched the list of existing Companion modules and requests and have determined this has not yet been requested**

The name of the device, hardware, or software you would like to control:

Xilica Solaro

What you would like to be able to make it do from Companion:

Co... | process | xilica audio processors i have researched the list of existing companion modules and requests and have determined this has not yet been requested the name of the device hardware or software you would like to control xilica solaro what you would like to be able to make it do from companion cont... | 1 |

2,914 | 5,905,106,158 | IssuesEvent | 2017-05-19 11:50:52 | rubberduck-vba/Rubberduck | https://api.github.com/repos/rubberduck-vba/Rubberduck | closed | Parser Error when method has 0 or >1 parameters in parentheses on continued line | bug parse-tree-processing | MCVEs:

```vb

'This causes Parse Error, because 0 parameter)

Debug.Print Now _

()

'This causes Parse Error, because > 1 parameter)

Debug.Print Round _

(1, 2)

```

I'm unsure if passing 1 parameter is resolving the parentheses as Value cast of the parameter?

```vb

'This parses because exactly 1 parameter,... | 1.0 | Parser Error when method has 0 or >1 parameters in parentheses on continued line - MCVEs:

```vb

'This causes Parse Error, because 0 parameter)

Debug.Print Now _

()

'This causes Parse Error, because > 1 parameter)

Debug.Print Round _

(1, 2)

```

I'm unsure if passing 1 parameter is resolving the parenthese... | process | parser error when method has or parameters in parentheses on continued line mcves vb this causes parse error because parameter debug print now this causes parse error because parameter debug print round i m unsure if passing parameter is resolving the parenthese... | 1 |

13,156 | 15,574,657,520 | IssuesEvent | 2021-03-17 10:06:20 | googleapis/gax-dotnet | https://api.github.com/repos/googleapis/gax-dotnet | opened | Update gRPC dependencies before releasing 3.3.0 | type: process | We need to check whether Grpc.Core 2.36.1 has any known deployment issues - there have been a few changes there. | 1.0 | Update gRPC dependencies before releasing 3.3.0 - We need to check whether Grpc.Core 2.36.1 has any known deployment issues - there have been a few changes there. | process | update grpc dependencies before releasing we need to check whether grpc core has any known deployment issues there have been a few changes there | 1 |

722,609 | 24,868,984,411 | IssuesEvent | 2022-10-27 13:59:28 | enjoythecode/scrum-wizards-cs321 | https://api.github.com/repos/enjoythecode/scrum-wizards-cs321 | closed | SUPER ADMIN: Change users read permissions | high priority @Super Admin | **As a**

Super Admin,

**I want to be able to**

Change the read permissions of any C-CAMS user, giving or revoking permission to read and view certain data.

**so that**

I can control the privacy of this system, which leverages massive amounts of data. | 1.0 | SUPER ADMIN: Change users read permissions - **As a**

Super Admin,

**I want to be able to**

Change the read permissions of any C-CAMS user, giving or revoking permission to read and view certain data.

**so that**

I can control the privacy of this system, which leverages massive amounts of data. | non_process | super admin change users read permissions as a super admin i want to be able to change the read permissions of any c cams user giving or revoking permission to read and view certain data so that i can control the privacy of this system which leverages massive amounts of data | 0 |

4,662 | 5,221,841,837 | IssuesEvent | 2017-01-27 04:07:53 | dotnet/corefx | https://api.github.com/repos/dotnet/corefx | closed | [arm32/Linux] Make clang 3.6 the default toolset for cross arm32 build | area-Infrastructure arm32 os-linux | CoreCLR repo specifies clang 3.6 for cross builds (https://github.com/dotnet/coreclr/blob/master/build.sh#L747) but CoreFX does not (see https://github.com/dotnet/corefx/blob/master/src/Native/build-native.sh).

CC @hqueue @hseok-oh @jyoungyun | 1.0 | [arm32/Linux] Make clang 3.6 the default toolset for cross arm32 build - CoreCLR repo specifies clang 3.6 for cross builds (https://github.com/dotnet/coreclr/blob/master/build.sh#L747) but CoreFX does not (see https://github.com/dotnet/corefx/blob/master/src/Native/build-native.sh).

CC @hqueue @hseok-oh @jyoungyun | non_process | make clang the default toolset for cross build coreclr repo specifies clang for cross builds but corefx does not see cc hqueue hseok oh jyoungyun | 0 |

395,725 | 27,084,339,780 | IssuesEvent | 2023-02-14 15:59:44 | supabase/supabase | https://api.github.com/repos/supabase/supabase | closed | Add docs on Testing + Debugging Postgres Functions | documentation good first issue | Note this is a similar request to [#7311](https://github.com/supabase/supabase/issues/7311)

## Context

I'm getting a postgres error for a function that is triggered whenever a new auth user is created

## The issue

I'm finding it frustrating to debug the issue. Currently it's very difficult to debug postgres fun... | 1.0 | Add docs on Testing + Debugging Postgres Functions - Note this is a similar request to [#7311](https://github.com/supabase/supabase/issues/7311)

## Context

I'm getting a postgres error for a function that is triggered whenever a new auth user is created

## The issue

I'm finding it frustrating to debug the issue... | non_process | add docs on testing debugging postgres functions note this is a similar request to context i m getting a postgres error for a function that is triggered whenever a new auth user is created the issue i m finding it frustrating to debug the issue currently it s very difficult to debug postgres func... | 0 |

22,452 | 31,199,747,035 | IssuesEvent | 2023-08-18 01:15:07 | open-telemetry/opentelemetry-collector-contrib | https://api.github.com/repos/open-telemetry/opentelemetry-collector-contrib | closed | [processor/k8sattributes] Allow specifying that all labels/annotations should be copied | enhancement processor/k8sattributes | ### Component(s)

processor/k8sattributes

### Is your feature request related to a problem? Please describe.

Today the processor allows configuring which specific labels and annotations should be added as resource attributes. For users who want all the labels and/or annotations, this requires them to:

1. Know ... | 1.0 | [processor/k8sattributes] Allow specifying that all labels/annotations should be copied - ### Component(s)

processor/k8sattributes

### Is your feature request related to a problem? Please describe.

Today the processor allows configuring which specific labels and annotations should be added as resource attribut... | process | allow specifying that all labels annotations should be copied component s processor is your feature request related to a problem please describe today the processor allows configuring which specific labels and annotations should be added as resource attributes for users who want all the label... | 1 |

322,131 | 23,892,961,523 | IssuesEvent | 2022-09-08 12:54:41 | tidyverse/purrr | https://api.github.com/repos/tidyverse/purrr | closed | Add article for row-oriented workflow (or variations thereupon) | documentation tidy-dev-day :nerd_face: | Since this doesn't really fit in any one tidyverse repo:

https://community.rstudio.com/t/missing-workflow-in-tidyverse/20578 | 1.0 | Add article for row-oriented workflow (or variations thereupon) - Since this doesn't really fit in any one tidyverse repo:

https://community.rstudio.com/t/missing-workflow-in-tidyverse/20578 | non_process | add article for row oriented workflow or variations thereupon since this doesn t really fit in any one tidyverse repo | 0 |

14,688 | 17,798,493,642 | IssuesEvent | 2021-09-01 03:09:18 | lynnandtonic/nestflix.fun | https://api.github.com/repos/lynnandtonic/nestflix.fun | closed | Caged Heat | suggested title in process | Please add as much of the following info as you can:

Title: Caged Heat

Type (film/tv show): TV Show

Film or show in which it appears: All Hail the King (Marvel Short)

Is the parent film/show streaming anywhere? Yes

About when in the parent film/show does it appear? 6:10

Actual footage of the film/show... | 1.0 | Caged Heat - Please add as much of the following info as you can:

Title: Caged Heat

Type (film/tv show): TV Show

Film or show in which it appears: All Hail the King (Marvel Short)

Is the parent film/show streaming anywhere? Yes

About when in the parent film/show does it appear? 6:10

Actual footage of ... | process | caged heat please add as much of the following info as you can title caged heat type film tv show tv show film or show in which it appears all hail the king marvel short is the parent film show streaming anywhere yes about when in the parent film show does it appear actual footage of t... | 1 |

325,544 | 9,932,741,615 | IssuesEvent | 2019-07-02 10:32:56 | xwikisas/application-googleapps | https://api.github.com/repos/xwikisas/application-googleapps | closed | Automatically import user photo from google account | Priority: Major Type: Improvement | When logging in with Google account a wiki account is created but the user photo is not added from it. | 1.0 | Automatically import user photo from google account - When logging in with Google account a wiki account is created but the user photo is not added from it. | non_process | automatically import user photo from google account when logging in with google account a wiki account is created but the user photo is not added from it | 0 |

10,779 | 13,607,803,978 | IssuesEvent | 2020-09-23 00:30:17 | googleapis/google-auth-library-java | https://api.github.com/repos/googleapis/google-auth-library-java | closed | Security review on Google Client_id and Client_secret | type: process | *[Following this discussion](https://github.com/googleapis/google-auth-library-java/pull/469#discussion_r479640965)*

To allow the library to generate an Id_token based on the User Credential, I reuse the client_id and the client_secret provided by the gcloud SDK. *I got them like this*

```

gcloud config set log_... | 1.0 | Security review on Google Client_id and Client_secret - *[Following this discussion](https://github.com/googleapis/google-auth-library-java/pull/469#discussion_r479640965)*

To allow the library to generate an Id_token based on the User Credential, I reuse the client_id and the client_secret provided by the gcloud SD... | process | security review on google client id and client secret to allow the library to generate an id token based on the user credential i reuse the client id and the client secret provided by the gcloud sdk i got them like this gcloud config set log http redact token false gcloud auth print identity toke... | 1 |

99,543 | 16,447,550,048 | IssuesEvent | 2021-05-20 21:41:25 | turkdevops/prism | https://api.github.com/repos/turkdevops/prism | reopened | CVE-2020-7608 (Medium) detected in yargs-parser-5.0.0.tgz | security vulnerability | ## CVE-2020-7608 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>yargs-parser-5.0.0.tgz</b></p></summary>

<p>the mighty option parser used by yargs</p>

<p>Library home page: <a href=... | True | CVE-2020-7608 (Medium) detected in yargs-parser-5.0.0.tgz - ## CVE-2020-7608 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>yargs-parser-5.0.0.tgz</b></p></summary>

<p>the mighty op... | non_process | cve medium detected in yargs parser tgz cve medium severity vulnerability vulnerable library yargs parser tgz the mighty option parser used by yargs library home page a href path to dependency file prism package json path to vulnerable library prism node modules yarg... | 0 |

7,928 | 11,103,420,908 | IssuesEvent | 2019-12-17 03:47:14 | swoft-cloud/swoft | https://api.github.com/repos/swoft-cloud/swoft | closed | 无效的调试代码 t.txt | bug: fixed swoft: process | 在Swoft\Process\Swoole下的WorkerStopListener代码里有一段测试代码建议去掉。

ile_put_contents('t.txt', 'stop');

| 1.0 | 无效的调试代码 t.txt - 在Swoft\Process\Swoole下的WorkerStopListener代码里有一段测试代码建议去掉。

ile_put_contents('t.txt', 'stop');

| process | 无效的调试代码 t txt 在swoft process swoole下的workerstoplistener代码里有一段测试代码建议去掉。 ile put contents t txt stop | 1 |

257,898 | 8,148,375,211 | IssuesEvent | 2018-08-22 05:26:46 | MyMICDS/MyMICDS-v2 | https://api.github.com/repos/MyMICDS/MyMICDS-v2 | opened | Part of default schedule not underlaid | bug effort: medium priority: urgent work length: medium | This is the current schedule:

It says Block B first on the schedule even though it is a free period (as expected), but lunch nor collaborative show from 11:50 to 1:10. This should be there.

| 1.0 | Part of default schedule not underlaid - This is the current schedule:

It says Block B first on the schedule even though it is a free period (as expected), but lunch nor collaborative show from 11:50 to... | non_process | part of default schedule not underlaid this is the current schedule it says block b first on the schedule even though it is a free period as expected but lunch nor collaborative show from to this should be there | 0 |

137,169 | 12,747,077,138 | IssuesEvent | 2020-06-26 17:10:32 | streamnative/pulsar | https://api.github.com/repos/streamnative/pulsar | closed | ISSUE-6927: Add C# client documentation | area/documentation component/documentation component/website size: 1 triage/week-19 type/feature workflow::in-review | Original Issue: apache/pulsar#6927

---

**Is your feature request related to a problem? Please describe.**

Since we have official c# client https://github.com/apache/pulsar-dotpulsar, we'd better add the C# client documentation in the client documentation.

or just hanging if I tried to use `read_to_e... | 1.0 | Can't shutdown stdin of process - ## Version

0.2.11

## Platform

Ubuntu 18.04 x64

## Subcrates

process, io

## Description

So I'm piping into and out of a process using tokio process. And the input goes fine, and the output pipe was getting to the last byte then hanging (when I did it in chunks) or just ha... | process | can t shutdown stdin of process version platform ubuntu subcrates process io description so i m piping into and out of a process using tokio process and the input goes fine and the output pipe was getting to the last byte then hanging when i did it in chunks or just hanging... | 1 |

17,759 | 23,676,288,167 | IssuesEvent | 2022-08-28 05:54:28 | Tencent/tdesign-miniprogram | https://api.github.com/repos/Tencent/tdesign-miniprogram | closed | tdesign-小程序 主题颜色怎么改? | good first issue Stale in process | ### tdesign 版本

微信小程序 0.13.2

### 重现链接

_No response_

### 重现步骤

_No response_

### 期望结果

_No response_

### 实际结果

_No response_

### 框架版本

_No response_

### 浏览器版本

_No response_

### 系统版本

_No response_

### Node版本

_No response_

### 补充说明

_No response_ | 1.0 | tdesign-小程序 主题颜色怎么改? - ### tdesign 版本

微信小程序 0.13.2

### 重现链接

_No response_

### 重现步骤

_No response_

### 期望结果

_No response_

### 实际结果

_No response_

### 框架版本

_No response_

### 浏览器版本

_No response_

### 系统版本

_No response_

### Node版本

_No response_

### 补充说明

_No response_ | process | tdesign 小程序 主题颜色怎么改? tdesign 版本 微信小程序 重现链接 no response 重现步骤 no response 期望结果 no response 实际结果 no response 框架版本 no response 浏览器版本 no response 系统版本 no response node版本 no response 补充说明 no response | 1 |

5,242 | 3,911,776,935 | IssuesEvent | 2016-04-20 07:47:09 | elastic/rally | https://api.github.com/repos/elastic/rally | opened | Allow to specify the target host(s) when using the "benchmark-only" pipeline | :Usability enhancement | The (rather dangerous) "benchmark-only" pipeline allows to run benchmarks against clusters which were not provisioned by Rally. However, we bolted this on a bit and it only allows to target localhost:9200 so far. We should allow a user to define a list of target hosts and ports that we pass then to the client driver. | True | Allow to specify the target host(s) when using the "benchmark-only" pipeline - The (rather dangerous) "benchmark-only" pipeline allows to run benchmarks against clusters which were not provisioned by Rally. However, we bolted this on a bit and it only allows to target localhost:9200 so far. We should allow a user to de... | non_process | allow to specify the target host s when using the benchmark only pipeline the rather dangerous benchmark only pipeline allows to run benchmarks against clusters which were not provisioned by rally however we bolted this on a bit and it only allows to target localhost so far we should allow a user to defin... | 0 |

14,024 | 16,824,076,689 | IssuesEvent | 2021-06-17 16:11:14 | w3c/webauthn | https://api.github.com/repos/w3c/webauthn | closed | need "how to install bikeshed in one's local webauthn repo clone" instructions | priority:low type:process | I was attempting to run the `./update-bikeshed-cache.sh` on my local webauthn repo clone (following the directions here: https://github.com/w3c/webauthn#updating-copies-of-bikeshed-data-files-stored-in-this-repo) and this is what I got:

```

$ ./update-bikeshed-cache.sh \

&& git add .spec-data .bikeshed-include \

... | 1.0 | need "how to install bikeshed in one's local webauthn repo clone" instructions - I was attempting to run the `./update-bikeshed-cache.sh` on my local webauthn repo clone (following the directions here: https://github.com/w3c/webauthn#updating-copies-of-bikeshed-data-files-stored-in-this-repo) and this is what I got:

`... | process | need how to install bikeshed in one s local webauthn repo clone instructions i was attempting to run the update bikeshed cache sh on my local webauthn repo clone following the directions here and this is what i got update bikeshed cache sh git add spec data bikeshed include git ... | 1 |

43,731 | 5,696,645,195 | IssuesEvent | 2017-04-16 14:05:18 | pandas-dev/pandas | https://api.github.com/repos/pandas-dev/pandas | closed | API: add testing functions to public API ? | API Design Docs Testing | A lot of other projects that use pandas will (like to) use pandas testing functionality like `assert_frame_equal` in their test suite. Although the pandas testing functions are available in the namespace (#6188), they are not really 'officially' labeled as public API that other projects can use (and rely upon).

Numpy ... | 1.0 | API: add testing functions to public API ? - A lot of other projects that use pandas will (like to) use pandas testing functionality like `assert_frame_equal` in their test suite. Although the pandas testing functions are available in the namespace (#6188), they are not really 'officially' labeled as public API that ot... | non_process | api add testing functions to public api a lot of other projects that use pandas will like to use pandas testing functionality like assert frame equal in their test suite although the pandas testing functions are available in the namespace they are not really officially labeled as public api that other... | 0 |

133,692 | 12,551,084,335 | IssuesEvent | 2020-06-06 13:32:34 | svsticky/static-sticky | https://api.github.com/repos/svsticky/static-sticky | closed | Manual needs update | documentation | Explain the steps for setting up the site better and in more detail. The current format gives the impression that the users need to make a new contentful account. | 1.0 | Manual needs update - Explain the steps for setting up the site better and in more detail. The current format gives the impression that the users need to make a new contentful account. | non_process | manual needs update explain the steps for setting up the site better and in more detail the current format gives the impression that the users need to make a new contentful account | 0 |

9,244 | 12,270,574,925 | IssuesEvent | 2020-05-07 15:42:30 | googleapis/google-cloud-cpp | https://api.github.com/repos/googleapis/google-cloud-cpp | closed | Move Issues from old repo to new with library-specific label | api: spanner type: process | Move all the ISSUES in the old repo to -cpp with the correct `api: ???` label. https://help.github.com/en/github/managing-your-work-on-github/transferring-an-issue-to-another-repository | 1.0 | Move Issues from old repo to new with library-specific label - Move all the ISSUES in the old repo to -cpp with the correct `api: ???` label. https://help.github.com/en/github/managing-your-work-on-github/transferring-an-issue-to-another-repository | process | move issues from old repo to new with library specific label move all the issues in the old repo to cpp with the correct api label | 1 |

20,219 | 26,809,763,528 | IssuesEvent | 2023-02-01 21:17:24 | ESMValGroup/ESMValCore | https://api.github.com/repos/ESMValGroup/ESMValCore | opened | Possibly running out of memory when using a lot of preprocessors. | help wanted preprocessor | Hi all,

In the July 2022 Meeting, I raised an issue, that I can't process the data with a lot of preprocessors (see https://github.com/ESMValGroup/Community/discussions/33#discussioncomment-3121419). When I tried it back there, it all seemed to work just fine, however, I finally ran into a problem.

I was runni... | 1.0 | Possibly running out of memory when using a lot of preprocessors. - Hi all,

In the July 2022 Meeting, I raised an issue, that I can't process the data with a lot of preprocessors (see https://github.com/ESMValGroup/Community/discussions/33#discussioncomment-3121419). When I tried it back there, it all seemed to wor... | process | possibly running out of memory when using a lot of preprocessors hi all in the july meeting i raised an issue that i can t process the data with a lot of preprocessors see when i tried it back there it all seemed to work just fine however i finally ran into a problem i was running it looks... | 1 |

80,009 | 3,549,528,513 | IssuesEvent | 2016-01-20 18:22:08 | GalliumOS/galliumos-distro | https://api.github.com/repos/GalliumOS/galliumos-distro | closed | Notifications Behind Full Screen Applications | bug priority:medium | Notifications for volume, brightness and battery are not visible when running applications in full screen. I found this xfce bug report and was wondering if the patch could be included with galliumos.

https://bugzilla.xfce.org/show_bug.cgi?id=7928 | 1.0 | Notifications Behind Full Screen Applications - Notifications for volume, brightness and battery are not visible when running applications in full screen. I found this xfce bug report and was wondering if the patch could be included with galliumos.

https://bugzilla.xfce.org/show_bug.cgi?id=7928 | non_process | notifications behind full screen applications notifications for volume brightness and battery are not visible when running applications in full screen i found this xfce bug report and was wondering if the patch could be included with galliumos | 0 |

76,857 | 15,496,218,048 | IssuesEvent | 2021-03-11 02:16:30 | hiucimon/react-hooks-redux-template | https://api.github.com/repos/hiucimon/react-hooks-redux-template | opened | CVE-2019-10744 (High) detected in multiple libraries | security vulnerability | ## CVE-2019-10744 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>lodash.template-4.4.0.tgz</b>, <b>lodash-es-4.17.11.tgz</b>, <b>lodash-4.17.11.tgz</b></p></summary>

<p>

<details><s... | True | CVE-2019-10744 (High) detected in multiple libraries - ## CVE-2019-10744 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>lodash.template-4.4.0.tgz</b>, <b>lodash-es-4.17.11.tgz</b>, <... | non_process | cve high detected in multiple libraries cve high severity vulnerability vulnerable libraries lodash template tgz lodash es tgz lodash tgz lodash template tgz the lodash method template exported as a module library home page a href path to ... | 0 |

165,374 | 12,839,200,237 | IssuesEvent | 2020-07-07 18:53:05 | kubernetes/kubernetes | https://api.github.com/repos/kubernetes/kubernetes | closed | Kubernetes failed to start after installation throwing errors | kind/failing-test sig/node triage/support | <!-- Please only use this template for submitting reports about continuously failing tests or jobs in Kubernetes CI -->

**Which jobs are failing**:

kubelet.service - kubelet: The Kubernetes Node Agent

Loaded: loaded (/usr/lib/systemd/system/kubelet.service; enabled; vendor preset: disabled)

Drop-In: /usr/li... | 1.0 | Kubernetes failed to start after installation throwing errors - <!-- Please only use this template for submitting reports about continuously failing tests or jobs in Kubernetes CI -->

**Which jobs are failing**:

kubelet.service - kubelet: The Kubernetes Node Agent

Loaded: loaded (/usr/lib/systemd/system/kubele... | non_process | kubernetes failed to start after installation throwing errors which jobs are failing kubelet service kubelet the kubernetes node agent loaded loaded usr lib systemd system kubelet service enabled vendor preset disabled drop in usr lib systemd system kubelet service d └─ kub... | 0 |

390,629 | 11,551,087,199 | IssuesEvent | 2020-02-19 00:19:37 | googleapis/java-spanner-jdbc | https://api.github.com/repos/googleapis/java-spanner-jdbc | closed | Synthesis failed for java-spanner-jdbc | api: spanner autosynth failure priority: p1 type: bug | Hello! Autosynth couldn't regenerate java-spanner-jdbc. :broken_heart:

Here's the output from running `synth.py`:

```

Cloning into 'working_repo'...

Switched to branch 'autosynth'

Running synthtool

['/tmpfs/src/git/autosynth/env/bin/python3', '-m', 'synthtool', 'synth.py', '--']

synthtool > Executing /tmpfs/src/git/a... | 1.0 | Synthesis failed for java-spanner-jdbc - Hello! Autosynth couldn't regenerate java-spanner-jdbc. :broken_heart:

Here's the output from running `synth.py`:

```

Cloning into 'working_repo'...

Switched to branch 'autosynth'

Running synthtool

['/tmpfs/src/git/autosynth/env/bin/python3', '-m', 'synthtool', 'synth.py', '--... | non_process | synthesis failed for java spanner jdbc hello autosynth couldn t regenerate java spanner jdbc broken heart here s the output from running synth py cloning into working repo switched to branch autosynth running synthtool synthtool executing tmpfs src git autosynth working repo synth py on bran... | 0 |

14,257 | 17,192,666,123 | IssuesEvent | 2021-07-16 13:15:09 | qgis/QGIS-Documentation | https://api.github.com/repos/qgis/QGIS-Documentation | closed | [FEATURE][processing] Add algorithms for raising warnings and exceptions from models | 3.14 Automatic new feature Processing Alg | Original commit: https://github.com/qgis/QGIS/commit/5f533e561c903e37fb6d6498d62278a3ee3b9669 by nyalldawson

These algorithms raise either a custom warning in the processing log, OR raise

an exception which causes the model execution to terminate.

An optional condition expression can be specified to control whether o... | 1.0 | [FEATURE][processing] Add algorithms for raising warnings and exceptions from models - Original commit: https://github.com/qgis/QGIS/commit/5f533e561c903e37fb6d6498d62278a3ee3b9669 by nyalldawson

These algorithms raise either a custom warning in the processing log, OR raise

an exception which causes the model executio... | process | add algorithms for raising warnings and exceptions from models original commit by nyalldawson these algorithms raise either a custom warning in the processing log or raise an exception which causes the model execution to terminate an optional condition expression can be specified to control whether or not t... | 1 |

13,463 | 15,950,007,931 | IssuesEvent | 2021-04-15 08:09:01 | 2020mt93213/Pune_BusRoutes | https://api.github.com/repos/2020mt93213/Pune_BusRoutes | closed | Feature: Buses in motion : Need different filename for distinct timestamps | enhancement process | ## Feature: Buses in motion

Image names should be appended with timestamp.

This will help in sequential arrangements of source images to generate a GIF output

### Sample -

1. for 03 April 2021 01:51:00AM filename should be 20210403015100.png

| 1.0 | Feature: Buses in motion : Need different filename for distinct timestamps - ## Feature: Buses in motion

Image names should be appended with timestamp.

This will help in sequential arrangements of source images to generate a GIF output

### Sample -

1. for 03 April 2021 01:51:00AM filename should be 202104030151... | process | feature buses in motion need different filename for distinct timestamps feature buses in motion image names should be appended with timestamp this will help in sequential arrangements of source images to generate a gif output sample for april filename should be png | 1 |

85,223 | 10,432,703,679 | IssuesEvent | 2019-09-17 11:57:09 | vtex-apps/io-documentation | https://api.github.com/repos/vtex-apps/io-documentation | closed | vtex-apps/store-component-template has no documentation yet | no-documentation | [vtex-apps/store-component-template](https://github.com/vtex-apps/store-component-template) hasn't created any README file yet or is not using Docs Builder | 1.0 | vtex-apps/store-component-template has no documentation yet - [vtex-apps/store-component-template](https://github.com/vtex-apps/store-component-template) hasn't created any README file yet or is not using Docs Builder | non_process | vtex apps store component template has no documentation yet hasn t created any readme file yet or is not using docs builder | 0 |

11,045 | 13,864,863,122 | IssuesEvent | 2020-10-16 02:40:49 | aws-cloudformation/cloudformation-cli | https://api.github.com/repos/aws-cloudformation/cloudformation-cli | closed | "Model name conflict" when embedded objects share same base name | enhancement schema processing | When subobjects within an object share the same parent name, their values conflict when the rewriting occurs. This should ideally generate a definition name that doesn't conflict with the other (perhaps with an iterator?).

Example debug log and schema attached.

[debug-log.txt](https://github.com/aws-cloudformation/... | 1.0 | "Model name conflict" when embedded objects share same base name - When subobjects within an object share the same parent name, their values conflict when the rewriting occurs. This should ideally generate a definition name that doesn't conflict with the other (perhaps with an iterator?).

Example debug log and schem... | process | model name conflict when embedded objects share same base name when subobjects within an object share the same parent name their values conflict when the rewriting occurs this should ideally generate a definition name that doesn t conflict with the other perhaps with an iterator example debug log and schem... | 1 |

544,525 | 15,894,326,182 | IssuesEvent | 2021-04-11 09:55:27 | AY2021S2-CS2103T-T12-3/tp | https://api.github.com/repos/AY2021S2-CS2103T-T12-3/tp | closed | Refactor code to not use SortedFilteredPersonsList | priority.High type.Bug | View is using `SortedFilteredPersonsList` whereas commands are operating on `FilteredPersonsList` | 1.0 | Refactor code to not use SortedFilteredPersonsList - View is using `SortedFilteredPersonsList` whereas commands are operating on `FilteredPersonsList` | non_process | refactor code to not use sortedfilteredpersonslist view is using sortedfilteredpersonslist whereas commands are operating on filteredpersonslist | 0 |

17,912 | 3,013,586,259 | IssuesEvent | 2015-07-29 09:52:48 | yawlfoundation/yawl | https://api.github.com/repos/yawlfoundation/yawl | closed | InterfaceX updateWorkitemData Problem | auto-migrated Priority-Medium Type-Defect | ```

What steps will reproduce the problem?

1. develop a custom service

2. override the handleWorkItemStatusChangeEvent method and update the workitem

data when the status of workitem changes from Fired to Executing.

What is the expected output? What do you see instead?

I expect that the workitem could be started aft... | 1.0 | InterfaceX updateWorkitemData Problem - ```

What steps will reproduce the problem?

1. develop a custom service

2. override the handleWorkItemStatusChangeEvent method and update the workitem

data when the status of workitem changes from Fired to Executing.

What is the expected output? What do you see instead?

I expec... | non_process | interfacex updateworkitemdata problem what steps will reproduce the problem develop a custom service override the handleworkitemstatuschangeevent method and update the workitem data when the status of workitem changes from fired to executing what is the expected output what do you see instead i expec... | 0 |

19,711 | 26,053,749,345 | IssuesEvent | 2022-12-22 21:50:03 | opensearch-project/data-prepper | https://api.github.com/repos/opensearch-project/data-prepper | closed | Provide a type conversion / cast processor | enhancement plugin - processor | **Is your feature request related to a problem? Please describe.**

Some pipelines have Event values in one type (e.g. string), but want to convert them to another type (e.g. integer).

**Describe the solution you'd like**

Provide a new convert processor along with the other Mutate Event Processors.

```

proc... | 1.0 | Provide a type conversion / cast processor - **Is your feature request related to a problem? Please describe.**

Some pipelines have Event values in one type (e.g. string), but want to convert them to another type (e.g. integer).

**Describe the solution you'd like**

Provide a new convert processor along with th... | process | provide a type conversion cast processor is your feature request related to a problem please describe some pipelines have event values in one type e g string but want to convert them to another type e g integer describe the solution you d like provide a new convert processor along with th... | 1 |

1,762 | 4,469,240,142 | IssuesEvent | 2016-08-25 12:25:16 | pelias/text-analyzer | https://api.github.com/repos/pelias/text-analyzer | closed | functions declared during execution | processed | I just noticed that we are declaring a bunch of functions inline during execution:

```javascript

module.exports.parse = function parse(query) {

var getAdminPartsBySplittingOnDelim = function(queryParts) {

...

var getAddressParts = function(query) {

...

```

It would be cleaner/more performant to decl... | 1.0 | functions declared during execution - I just noticed that we are declaring a bunch of functions inline during execution:

```javascript

module.exports.parse = function parse(query) {

var getAdminPartsBySplittingOnDelim = function(queryParts) {

...

var getAddressParts = function(query) {

...

```

It wo... | process | functions declared during execution i just noticed that we are declaring a bunch of functions inline during execution javascript module exports parse function parse query var getadminpartsbysplittingondelim function queryparts var getaddressparts function query it wo... | 1 |

6,390 | 9,473,795,278 | IssuesEvent | 2019-04-19 03:58:24 | hackcambridge/hack-cambridge-website | https://api.github.com/repos/hackcambridge/hack-cambridge-website | opened | Set up Heroku Postgres instances for staging / PR apps | Epic: Dev process | This will allow us to run these apps with a DB, and also means we can run the full release script. | 1.0 | Set up Heroku Postgres instances for staging / PR apps - This will allow us to run these apps with a DB, and also means we can run the full release script. | process | set up heroku postgres instances for staging pr apps this will allow us to run these apps with a db and also means we can run the full release script | 1 |

20,990 | 27,853,684,959 | IssuesEvent | 2023-03-20 20:48:57 | keras-team/keras-cv | https://api.github.com/repos/keras-team/keras-cv | opened | Support segmentation masks in MixUp layer | contribution-welcome preprocessing augmentation | This should follow the same structure as segmentation mask augmentation in our other preprocessing layers.

Treating masks like 1-channel images for the purpose of applying MixUp should be a simple and effective approach for this. | 1.0 | Support segmentation masks in MixUp layer - This should follow the same structure as segmentation mask augmentation in our other preprocessing layers.

Treating masks like 1-channel images for the purpose of applying MixUp should be a simple and effective approach for this. | process | support segmentation masks in mixup layer this should follow the same structure as segmentation mask augmentation in our other preprocessing layers treating masks like channel images for the purpose of applying mixup should be a simple and effective approach for this | 1 |

22,655 | 31,895,827,985 | IssuesEvent | 2023-09-18 01:32:00 | tdwg/dwc | https://api.github.com/repos/tdwg/dwc | closed | Change term - latestGeochronologicalEra | Term - change Class - GeologicalContext normative Task Group - Material Sample Process - complete | ## Term change

* Submitter: [Material Sample Task Group](https://www.tdwg.org/community/osr/material-sample/)

* Efficacy Justification (why is this change necessary?): Create consistency of terms for material in Darwin Core.

* Demand Justification (if the change is semantic in nature, name at least two organizatio... | 1.0 | Change term - latestGeochronologicalEra - ## Term change

* Submitter: [Material Sample Task Group](https://www.tdwg.org/community/osr/material-sample/)

* Efficacy Justification (why is this change necessary?): Create consistency of terms for material in Darwin Core.

* Demand Justification (if the change is semanti... | process | change term latestgeochronologicalera term change submitter efficacy justification why is this change necessary create consistency of terms for material in darwin core demand justification if the change is semantic in nature name at least two organizations that independently need this term... | 1 |

19,699 | 26,049,472,494 | IssuesEvent | 2022-12-22 17:11:11 | usdigitalresponse/usdr-gost | https://api.github.com/repos/usdigitalresponse/usdr-gost | closed | [Process] New user signup request. | process signup request | A partner has asked either for access to the Demo tenant, or for a new Tenant to be set up for their government. Please set this up within a week.

See https://www.jotform.com/tables/222236011470138 for details.

| 1.0 | [Process] New user signup request. - A partner has asked either for access to the Demo tenant, or for a new Tenant to be set up for their government. Please set this up within a week.

See https://www.jotform.com/tables/222236011470138 for details.

| process | new user signup request a partner has asked either for access to the demo tenant or for a new tenant to be set up for their government please set this up within a week see for details | 1 |

3,753 | 6,733,154,208 | IssuesEvent | 2017-10-18 14:00:40 | york-region-tpss/stp | https://api.github.com/repos/york-region-tpss/stp | closed | Load previous year's pricing data | process workflow | Load previous year's pricing data into this year's `Unit Price (LY)`.

Procedure should directly update the database column. | 1.0 | Load previous year's pricing data - Load previous year's pricing data into this year's `Unit Price (LY)`.

Procedure should directly update the database column. | process | load previous year s pricing data load previous year s pricing data into this year s unit price ly procedure should directly update the database column | 1 |

455,471 | 13,127,697,595 | IssuesEvent | 2020-08-06 10:51:59 | webcompat/web-bugs | https://api.github.com/repos/webcompat/web-bugs | closed | m.alibaba.com - see bug description | browser-firefox-mobile engine-gecko priority-important | <!-- @browser: Firefox Mobile 68.0 -->

<!-- @ua_header: Mozilla/5.0 (Android 6.0; Mobile; rv:68.0) Gecko/20100101 Firefox/68.0 -->

<!-- @reported_with: mobile-reporter -->

<!-- @public_url: https://github.com/webcompat/web-bugs/issues/56264 -->

**URL**: https://m.alibaba.com/myalibaba.htm?templateName=wap2-my-alibaba&... | 1.0 | m.alibaba.com - see bug description - <!-- @browser: Firefox Mobile 68.0 -->

<!-- @ua_header: Mozilla/5.0 (Android 6.0; Mobile; rv:68.0) Gecko/20100101 Firefox/68.0 -->

<!-- @reported_with: mobile-reporter -->

<!-- @public_url: https://github.com/webcompat/web-bugs/issues/56264 -->

**URL**: https://m.alibaba.com/myali... | non_process | m alibaba com see bug description url browser version firefox mobile operating system android tested another browser yes opera problem type something else description child porn being sold human trafficking steps to reproduce reported child abuse fbi cia ... | 0 |

75,675 | 9,308,545,802 | IssuesEvent | 2019-03-25 14:46:05 | brymut/quotet.co.ke | https://api.github.com/repos/brymut/quotet.co.ke | opened | Develop API | design enhancement | Development of API for data delivery from database & admin console to visitor side of the website. | 1.0 | Develop API - Development of API for data delivery from database & admin console to visitor side of the website. | non_process | develop api development of api for data delivery from database admin console to visitor side of the website | 0 |

19,889 | 26,335,285,374 | IssuesEvent | 2023-01-10 13:55:44 | firebase/firebase-cpp-sdk | https://api.github.com/repos/firebase/firebase-cpp-sdk | reopened | [C++] Nightly Integration Testing Report for Firestore | type: process nightly-testing |

<hidden value="integration-test-status-comment"></hidden>

### ✅ [build against repo] Integration test succeeded!

Requested by @sunmou99 on commit e61d6bb264633c720b1ce857717f4e9638f40279

Last updated: Tue Jan 10 03:54 PST 2023

**[View integration test log & download artifacts](https://github.com/firebase/fire... | 1.0 | [C++] Nightly Integration Testing Report for Firestore -

<hidden value="integration-test-status-comment"></hidden>

### ✅ [build against repo] Integration test succeeded!

Requested by @sunmou99 on commit e61d6bb264633c720b1ce857717f4e9638f40279

Last updated: Tue Jan 10 03:54 PST 2023

**[View integration test l... | process | nightly integration testing report for firestore ✅ nbsp integration test succeeded requested by on commit last updated tue jan pst ✅ nbsp integration test succeeded requested by firebase workflow trigger on commit last updated mon jan pst ... | 1 |

10,071 | 13,044,161,896 | IssuesEvent | 2020-07-29 03:47:27 | tikv/tikv | https://api.github.com/repos/tikv/tikv | closed | UCP: Migrate scalar function `UTCTimeWithArg` from TiDB | challenge-program-2 component/coprocessor difficulty/easy sig/coprocessor |

## Description

Port the scalar function `UTCTimeWithArg` from TiDB to coprocessor.

## Score

* 50

## Mentor(s)

* @breeswish

## Recommended Skills

* Rust programming

## Learning Materials

Already implemented expressions ported from TiDB

- https://github.com/tikv/tikv/tree/master/components/tidb_query/src/rp... | 2.0 | UCP: Migrate scalar function `UTCTimeWithArg` from TiDB -

## Description

Port the scalar function `UTCTimeWithArg` from TiDB to coprocessor.

## Score

* 50

## Mentor(s)

* @breeswish

## Recommended Skills

* Rust programming

## Learning Materials

Already implemented expressions ported from TiDB

- https://git... | process | ucp migrate scalar function utctimewitharg from tidb description port the scalar function utctimewitharg from tidb to coprocessor score mentor s breeswish recommended skills rust programming learning materials already implemented expressions ported from tidb | 1 |

606,666 | 18,767,427,788 | IssuesEvent | 2021-11-06 06:47:08 | PokemonAutomation/ComputerControl | https://api.github.com/repos/PokemonAutomation/ComputerControl | opened | Investigate non-shiny hunts. | enhancement P4 - Low Priority | Moved From: https://github.com/PokemonAutomation/SwSh-Arduino/issues/11

Which of these are possible and can we do them?

- [x] Poipole and Cosmog: Stat hunting

- [ ] Keldeo: Stat and mark hunting

- [ ] Galar Birds: Stat and mark hunting

- [x] Calyrex: Stat hunting

- [x] Regis: Stat hunting

| 1.0 | Investigate non-shiny hunts. - Moved From: https://github.com/PokemonAutomation/SwSh-Arduino/issues/11

Which of these are possible and can we do them?

- [x] Poipole and Cosmog: Stat hunting

- [ ] Keldeo: Stat and mark hunting

- [ ] Galar Birds: Stat and mark hunting

- [x] Calyrex: Stat hunting

- [x] Regis: St... | non_process | investigate non shiny hunts moved from which of these are possible and can we do them poipole and cosmog stat hunting keldeo stat and mark hunting galar birds stat and mark hunting calyrex stat hunting regis stat hunting | 0 |

165,464 | 26,175,512,985 | IssuesEvent | 2023-01-02 09:13:47 | zitadel/zitadel | https://api.github.com/repos/zitadel/zitadel | closed | Helpvideos ZITADEL | category: design | The idea is, to provide short videos with how-to's for specific features/workflows in zitadel (console).

for example:

- [ ] User management

- [ ] Setting up a project

- [ ] Setting up an application

- [ ] Handling 'Actions'

- [ ] How to manage Authorizations

- [ ] Setup ZITADEL with your corporate design/brand

etc. | 1.0 | Helpvideos ZITADEL - The idea is, to provide short videos with how-to's for specific features/workflows in zitadel (console).

for example:

- [ ] User management

- [ ] Setting up a project

- [ ] Setting up an application

- [ ] Handling 'Actions'

- [ ] How to manage Authorizations

- [ ] Setup ZITADEL with your corporate... | non_process | helpvideos zitadel the idea is to provide short videos with how to s for specific features workflows in zitadel console for example user management setting up a project setting up an application handling actions how to manage authorizations setup zitadel with your corporate design bran... | 0 |

259,463 | 27,621,909,964 | IssuesEvent | 2023-03-10 01:20:51 | nidhi7598/linux-3.0.35 | https://api.github.com/repos/nidhi7598/linux-3.0.35 | closed | CVE-2017-10911 (Medium) detected in linuxlinux-3.0.40 - autoclosed | Mend: dependency security vulnerability | ## CVE-2017-10911 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linuxlinux-3.0.40</b></p></summary>

<p>

<p>Apache Software Foundation (ASF)</p>

<p>Library home page: <a href=https:... | True | CVE-2017-10911 (Medium) detected in linuxlinux-3.0.40 - autoclosed - ## CVE-2017-10911 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linuxlinux-3.0.40</b></p></summary>

<p>

<p>Apac... | non_process | cve medium detected in linuxlinux autoclosed cve medium severity vulnerability vulnerable library linuxlinux apache software foundation asf library home page a href found in head commit a href found in base branch master vulnerable source files ... | 0 |

13,731 | 16,488,337,189 | IssuesEvent | 2021-05-24 21:42:53 | DSpace/DSpace | https://api.github.com/repos/DSpace/DSpace | closed | Index-Discovery commands not listed | bug e/2 interface: command-line medium priority tools: processes | **Describe the bug**

It seams `index-discovery` is missing or omitted from the CLI list.

**To Reproduce**

Steps to reproduce the behavior:

1. When executing `[/dspace]/bin/dspace ` a list is displayed

**Expected behavior**

In the displayed list, it's missing `index-discovery`, but regardless, if you try to ex... | 1.0 | Index-Discovery commands not listed - **Describe the bug**

It seams `index-discovery` is missing or omitted from the CLI list.

**To Reproduce**

Steps to reproduce the behavior:

1. When executing `[/dspace]/bin/dspace ` a list is displayed

**Expected behavior**

In the displayed list, it's missing `index-discov... | process | index discovery commands not listed describe the bug it seams index discovery is missing or omitted from the cli list to reproduce steps to reproduce the behavior when executing bin dspace a list is displayed expected behavior in the displayed list it s missing index discovery bu... | 1 |

327,510 | 28,068,011,620 | IssuesEvent | 2023-03-29 16:51:46 | ossf/scorecard-action | https://api.github.com/repos/ossf/scorecard-action | closed | Failing e2e tests - scorecard-latest-release on ossf-tests/scorecard-action-branch-protection-e2e | e2e automated-tests | Matrix: null

Repo: https://github.com/ossf-tests/scorecard-action-branch-protection-e2e/tree/main

Run: https://github.com/ossf-tests/scorecard-action-branch-protection-e2e/actions/runs/3511112490

Workflow name: scorecard-latest-release

Workflow file: https://github.com/ossf-tests/scorecard-action-branch-protection-e2e/... | 1.0 | Failing e2e tests - scorecard-latest-release on ossf-tests/scorecard-action-branch-protection-e2e - Matrix: null

Repo: https://github.com/ossf-tests/scorecard-action-branch-protection-e2e/tree/main

Run: https://github.com/ossf-tests/scorecard-action-branch-protection-e2e/actions/runs/3511112490

Workflow name: scorecard... | non_process | failing tests scorecard latest release on ossf tests scorecard action branch protection matrix null repo run workflow name scorecard latest release workflow file trigger schedule branch main | 0 |

16,161 | 20,599,209,613 | IssuesEvent | 2022-03-06 01:18:29 | qgis/QGIS | https://api.github.com/repos/qgis/QGIS | opened | 'Join attributes by field value' throws error because of field types (when there is a field name conflict) | Processing Bug | ### What is the bug or the crash?

It seems field values are shifted during a 'join attributes by field value' operation.

If by chance two consecutive fields have types that don't match, QGIS can easily throw a value error, like this:

```

Feature could not be written to Joined_layer_5d3154a1_0be4_4c9d_9ce7_fef6aa... | 1.0 | 'Join attributes by field value' throws error because of field types (when there is a field name conflict) - ### What is the bug or the crash?

It seems field values are shifted during a 'join attributes by field value' operation.

If by chance two consecutive fields have types that don't match, QGIS can easily throw... | process | join attributes by field value throws error because of field types when there is a field name conflict what is the bug or the crash it seems field values are shifted during a join attributes by field value operation if by chance two consecutive fields have types that don t match qgis can easily throw... | 1 |

11,079 | 13,920,474,064 | IssuesEvent | 2020-10-21 10:29:29 | qgis/QGIS | https://api.github.com/repos/qgis/QGIS | opened | Python error when using "translate (convert format)" and input is a VRT file | Bug Processing | QGIS 3.10.10 on Ubuntu 20.04

```

QGIS version: 3.10.10-A Coruña

QGIS code revision: 1869829378

Qt version: 5.12.8

GDAL version: 3.0.4

GEOS version: 3.8.0-CAPI-1.13.1

PROJ version: Rel. 6.3.1, February 10th, 2020

Processing algorithm…

Algorithm 'Translate (convert format)' starting…

Input parameters:

{ '... | 1.0 | Python error when using "translate (convert format)" and input is a VRT file - QGIS 3.10.10 on Ubuntu 20.04

```

QGIS version: 3.10.10-A Coruña

QGIS code revision: 1869829378

Qt version: 5.12.8

GDAL version: 3.0.4

GEOS version: 3.8.0-CAPI-1.13.1

PROJ version: Rel. 6.3.1, February 10th, 2020