Unnamed: 0 int64 0 832k | id float64 2.49B 32.1B | type stringclasses 1 value | created_at stringlengths 19 19 | repo stringlengths 7 112 | repo_url stringlengths 36 141 | action stringclasses 3 values | title stringlengths 1 744 | labels stringlengths 4 574 | body stringlengths 9 211k | index stringclasses 10 values | text_combine stringlengths 96 211k | label stringclasses 2 values | text stringlengths 96 188k | binary_label int64 0 1 |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

6,138 | 9,009,231,675 | IssuesEvent | 2019-02-05 08:20:31 | cypress-io/cypress | https://api.github.com/repos/cypress-io/cypress | closed | CLI unit tests often break - and it is flake | CI: circle process: tests | When building CLI on Circle the same 5 unit tests break, but when rerunning them pass

example build (renovate PR)

https://circleci.com/gh/cypress-io/cypress/43807 from workflow https://circleci.com/workflow-run/842085ba-85aa-4b7d-a20f-2b3dd58d4409

<img width="1343" alt="screen shot 2018-12-27 at 10 15 32 am" s... | 1.0 | CLI unit tests often break - and it is flake - When building CLI on Circle the same 5 unit tests break, but when rerunning them pass

example build (renovate PR)

https://circleci.com/gh/cypress-io/cypress/43807 from workflow https://circleci.com/workflow-run/842085ba-85aa-4b7d-a20f-2b3dd58d4409

<img width="1343... | process | cli unit tests often break and it is flake when building cli on circle the same unit tests break but when rerunning them pass example build renovate pr from workflow img width alt screen shot at am src all errors come from lib tasks verify seems the error message ge... | 1 |

289,406 | 8,870,079,497 | IssuesEvent | 2019-01-11 08:21:49 | Radarr/Radarr | https://api.github.com/repos/Radarr/Radarr | reopened | Memory Leak | bug confirmed help wanted priority:medium under investigation | **Description:**

Radarr appears to have a memory leak. I have started getting warnings in the past few weeks about used SWAP space, and I had narrowed it down to being one of my Docker containers. Whenever I restarted all my containers, the usage dropped substantially. Today I went through and found that Radarr was ... | 1.0 | Memory Leak - **Description:**

Radarr appears to have a memory leak. I have started getting warnings in the past few weeks about used SWAP space, and I had narrowed it down to being one of my Docker containers. Whenever I restarted all my containers, the usage dropped substantially. Today I went through and found th... | non_process | memory leak description radarr appears to have a memory leak i have started getting warnings in the past few weeks about used swap space and i had narrowed it down to being one of my docker containers whenever i restarted all my containers the usage dropped substantially today i went through and found th... | 0 |

18,451 | 4,273,043,220 | IssuesEvent | 2016-07-13 16:07:18 | hyperspy/hyperspy | https://api.github.com/repos/hyperspy/hyperspy | reopened | Archlinux installation instructions are old | documentation fix-submitted | As the title says, the archlinux installation instructions are old / deprecated (written for python2 as well). As it stands now, they should be at least removed from the user guide, preferably updated with a working set of steps. | 1.0 | Archlinux installation instructions are old - As the title says, the archlinux installation instructions are old / deprecated (written for python2 as well). As it stands now, they should be at least removed from the user guide, preferably updated with a working set of steps. | non_process | archlinux installation instructions are old as the title says the archlinux installation instructions are old deprecated written for as well as it stands now they should be at least removed from the user guide preferably updated with a working set of steps | 0 |

22,016 | 30,521,492,976 | IssuesEvent | 2023-07-19 08:23:43 | bazelbuild/bazel | https://api.github.com/repos/bazelbuild/bazel | closed | [Mirror] Please add mirrors for Zulu JDK 11.0.20 LTS | P2 type: process team-OSS mirror request | ### Please list the URLs of the archives you'd like to mirror:

* Linux x64: https://cdn.azul.com/zulu/bin/zulu11.66.15-ca-jdk11.0.20-linux_x64.tar.gz

* Linux aarch64: https://cdn.azul.com/zulu/bin/zulu11.66.15-ca-jdk11.0.20-linux_aarch64.tar.gz

* MacOS x64: https://cdn.azul.com/zulu/bin/zulu11.66.15-ca-jdk11.0.20-m... | 1.0 | [Mirror] Please add mirrors for Zulu JDK 11.0.20 LTS - ### Please list the URLs of the archives you'd like to mirror:

* Linux x64: https://cdn.azul.com/zulu/bin/zulu11.66.15-ca-jdk11.0.20-linux_x64.tar.gz

* Linux aarch64: https://cdn.azul.com/zulu/bin/zulu11.66.15-ca-jdk11.0.20-linux_aarch64.tar.gz

* MacOS x64: htt... | process | please add mirrors for zulu jdk lts please list the urls of the archives you d like to mirror linux linux macos macos windows | 1 |

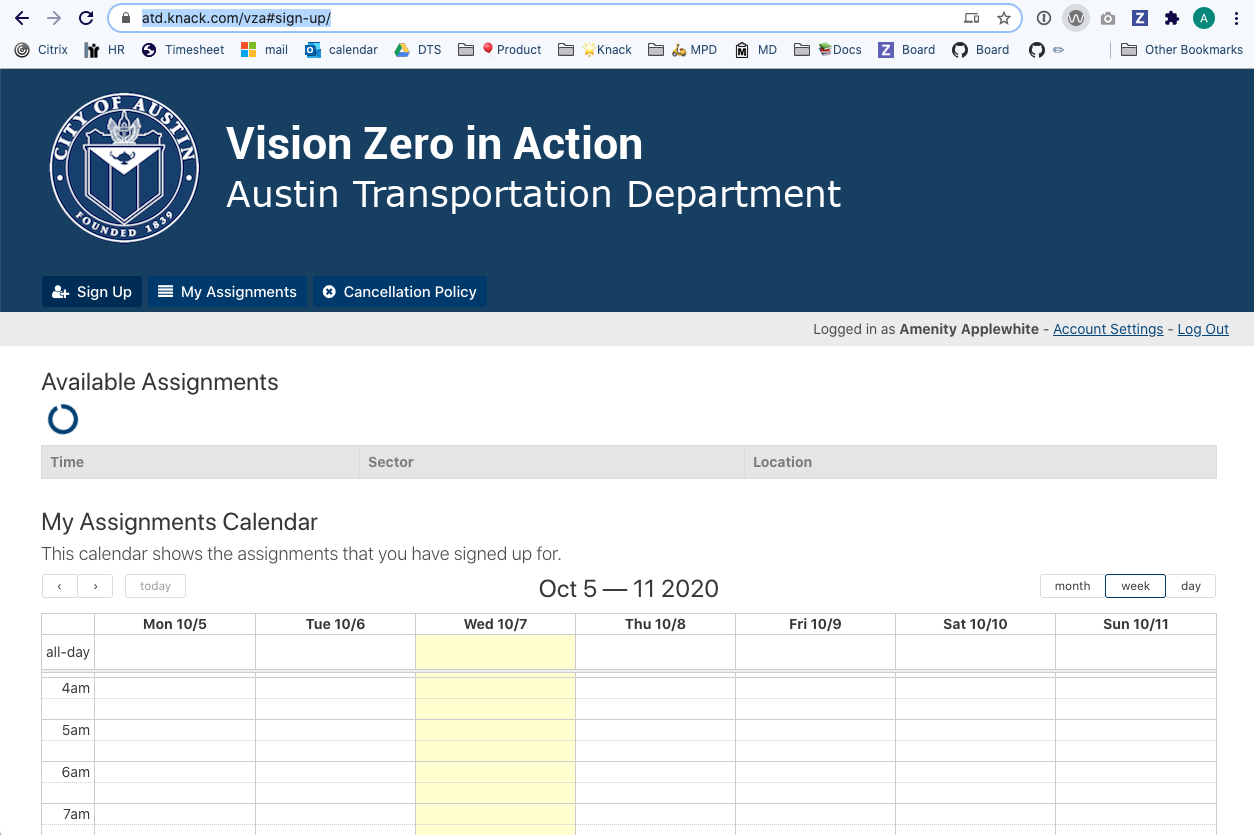

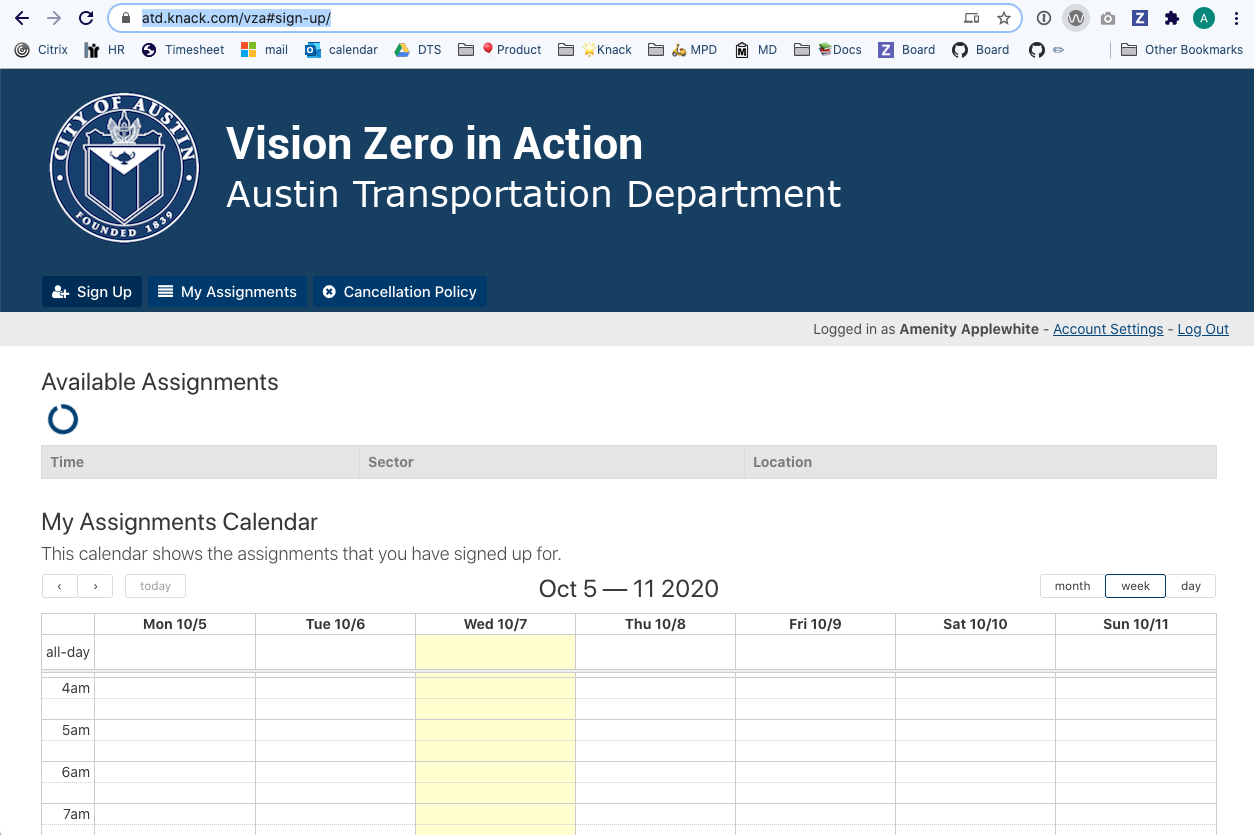

32,209 | 13,781,184,237 | IssuesEvent | 2020-10-08 15:50:42 | cityofaustin/atd-data-tech | https://api.github.com/repos/cityofaustin/atd-data-tech | closed | [BUG] "All Assignments" not loading on Officers' "Sign Up" page | Product: Vision Zero in Action Service: Apps Type: Bug Report | "All Assignments" table never loads.

**URL**

https://atd.knack.com/vza#sign-up/

**Device**

I'm seeing this in desktop, OSX, Chrome. Matt (Albert) A. is also experiencing... | 1.0 | [BUG] "All Assignments" not loading on Officers' "Sign Up" page - "All Assignments" table never loads.

**URL**

https://atd.knack.com/vza#sign-up/

**Device**

I'm seeing ... | non_process | all assignments not loading on officers sign up page all assignments table never loads url device i m seeing this in desktop osx chrome matt albert a is also experiencing it on his iphone | 0 |

21,513 | 29,799,856,152 | IssuesEvent | 2023-06-16 07:14:23 | phuocduong-agilityio/internship-huy-dao | https://api.github.com/repos/phuocduong-agilityio/internship-huy-dao | closed | Implement filter the `Brand` button products and process the filter results. | In-process | ### Filter by `Brand`

- [x] Add product filtering based on selected brand types

- [x] Add `type` for object button images

- [x] Using `useState()` to change the status

- [x] Filter by 1 or more brands at once

### Design

- **See more details:** [Link](https://docs.google.com/document/d/1iTrT9cdKrMCjUc6aDLS514gEk7... | 1.0 | Implement filter the `Brand` button products and process the filter results. - ### Filter by `Brand`

- [x] Add product filtering based on selected brand types

- [x] Add `type` for object button images

- [x] Using `useState()` to change the status

- [x] Filter by 1 or more brands at once

### Design

- **See more ... | process | implement filter the brand button products and process the filter results filter by brand add product filtering based on selected brand types add type for object button images using usestate to change the status filter by or more brands at once design see more details ... | 1 |

128,405 | 18,048,206,601 | IssuesEvent | 2021-09-19 09:09:17 | jinhogate/pizza_angular | https://api.github.com/repos/jinhogate/pizza_angular | opened | CVE-2019-6284 (Medium) detected in node-sass-4.9.3.tgz | security vulnerability | ## CVE-2019-6284 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>node-sass-4.9.3.tgz</b></p></summary>

<p>Wrapper around libsass</p>

<p>Library home page: <a href="https://registry.n... | True | CVE-2019-6284 (Medium) detected in node-sass-4.9.3.tgz - ## CVE-2019-6284 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>node-sass-4.9.3.tgz</b></p></summary>

<p>Wrapper around libs... | non_process | cve medium detected in node sass tgz cve medium severity vulnerability vulnerable library node sass tgz wrapper around libsass library home page a href dependency hierarchy build angular tgz root library x node sass tgz vulnerable librar... | 0 |

60,575 | 17,023,461,775 | IssuesEvent | 2021-07-03 02:09:11 | tomhughes/trac-tickets | https://api.github.com/repos/tomhughes/trac-tickets | closed | Potlatch moves nodes without touching them | Component: potlatch (flash editor) Priority: major Resolution: wontfix Type: defect | **[Submitted to the original trac issue database at 12.53pm, Saturday, 15th August 2009]**

While editing country names using the Multilingual Country-List*, it's becoming apparent that Potlatch moves nodes ever so slightly, without even touching the node. It's apparent because using the JOSM link on that page centers ... | 1.0 | Potlatch moves nodes without touching them - **[Submitted to the original trac issue database at 12.53pm, Saturday, 15th August 2009]**

While editing country names using the Multilingual Country-List*, it's becoming apparent that Potlatch moves nodes ever so slightly, without even touching the node. It's apparent beca... | non_process | potlatch moves nodes without touching them while editing country names using the multilingual country list it s becoming apparent that potlatch moves nodes ever so slightly without even touching the node it s apparent because using the josm link on that page centers on the exact coordinates it last saw the... | 0 |

18,824 | 24,721,882,449 | IssuesEvent | 2022-10-20 11:20:54 | hermes-hmc/workflow | https://api.github.com/repos/hermes-hmc/workflow | opened | Implement "new" step: validation | enhancement 2️ process/validate | After processing, the theoretically unified dataset should be validated, to a specific (configurable?) standard of valid metadata.

This probably needs a specific entrypoint.

In this step, things like adherence to a sensible default should be asserted. | 1.0 | Implement "new" step: validation - After processing, the theoretically unified dataset should be validated, to a specific (configurable?) standard of valid metadata.

This probably needs a specific entrypoint.

In this step, things like adherence to a sensible default should be asserted. | process | implement new step validation after processing the theoretically unified dataset should be validated to a specific configurable standard of valid metadata this probably needs a specific entrypoint in this step things like adherence to a sensible default should be asserted | 1 |

234,699 | 7,725,065,980 | IssuesEvent | 2018-05-24 16:46:46 | test4gloirin/m | https://api.github.com/repos/test4gloirin/m | closed | 0001032:

update 0.27 is not working on installation with ldap account backend | Addressbook bug high priority | **Reported by pschuele on 5 May 2009 15:26**

update 0.27 is not working on installation with ldap account backend

- error when creating addressbook_image table with foreign key

| 1.0 | 0001032:

update 0.27 is not working on installation with ldap account backend - **Reported by pschuele on 5 May 2009 15:26**

update 0.27 is not working on installation with ldap account backend

- error when creating addressbook_image table with foreign key

| non_process | update is not working on installation with ldap account backend reported by pschuele on may update is not working on installation with ldap account backend error when creating addressbook image table with foreign key | 0 |

85,305 | 15,736,682,396 | IssuesEvent | 2021-03-30 01:11:53 | vlaship/build-docker-image | https://api.github.com/repos/vlaship/build-docker-image | opened | CVE-2019-17563 (High) detected in tomcat-embed-core-9.0.26.jar | security vulnerability | ## CVE-2019-17563 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>tomcat-embed-core-9.0.26.jar</b></p></summary>

<p>Core Tomcat implementation</p>

<p>Library home page: <a href="https:... | True | CVE-2019-17563 (High) detected in tomcat-embed-core-9.0.26.jar - ## CVE-2019-17563 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>tomcat-embed-core-9.0.26.jar</b></p></summary>

<p>Cor... | non_process | cve high detected in tomcat embed core jar cve high severity vulnerability vulnerable library tomcat embed core jar core tomcat implementation library home page a href path to dependency file build docker image build gradle path to vulnerable library root gradle ca... | 0 |

259,298 | 27,621,798,088 | IssuesEvent | 2023-03-10 01:12:25 | nidhi7598/linux-3.0.35_CVE-2018-13405 | https://api.github.com/repos/nidhi7598/linux-3.0.35_CVE-2018-13405 | opened | CVE-2023-1078 (High) detected in linuxlinux-3.0.40 | Mend: dependency security vulnerability | ## CVE-2023-1078 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linuxlinux-3.0.40</b></p></summary>

<p>

<p>Apache Software Foundation (ASF)</p>

<p>Library home page: <a href=https://m... | True | CVE-2023-1078 (High) detected in linuxlinux-3.0.40 - ## CVE-2023-1078 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linuxlinux-3.0.40</b></p></summary>

<p>

<p>Apache Software Foundat... | non_process | cve high detected in linuxlinux cve high severity vulnerability vulnerable library linuxlinux apache software foundation asf library home page a href found in head commit a href found in base branch master vulnerable source files ... | 0 |

130,232 | 18,055,103,696 | IssuesEvent | 2021-09-20 07:05:41 | opensrp/web | https://api.github.com/repos/opensrp/web | closed | User management Design QA issues | Bug Report Design | - [x] Remove gear icon

- [x] Set search text 14px

- [x] in the admin menu, when a subsection is open but you are in a different section, the subsection is still white. Is this default Ant? Update the submenu to show selection even when in a different section but under the same submenu

- [x] Remove the `Required Acti... | 1.0 | User management Design QA issues - - [x] Remove gear icon

- [x] Set search text 14px

- [x] in the admin menu, when a subsection is open but you are in a different section, the subsection is still white. Is this default Ant? Update the submenu to show selection even when in a different section but under the same subme... | non_process | user management design qa issues remove gear icon set search text in the admin menu when a subsection is open but you are in a different section the subsection is still white is this default ant update the submenu to show selection even when in a different section but under the same submenu r... | 0 |

176,733 | 6,564,450,879 | IssuesEvent | 2017-09-08 01:40:18 | HAS-CRM/IssueTracker | https://api.github.com/repos/HAS-CRM/IssueTracker | opened | Prepare UAT Server for Vietnam Testing | Priority.High Status.Ongoing Type.ChangeRequest | Background:

Irene will want to let user at Vietnam try out at CRM Test Server. | 1.0 | Prepare UAT Server for Vietnam Testing - Background:

Irene will want to let user at Vietnam try out at CRM Test Server. | non_process | prepare uat server for vietnam testing background irene will want to let user at vietnam try out at crm test server | 0 |

413,535 | 27,957,105,737 | IssuesEvent | 2023-03-24 13:12:19 | cloudscape-design/components | https://api.github.com/repos/cloudscape-design/components | opened | [Documentation]: How should help panels integrate with the app layout? | documentation | ### Description

I'm struggling to understand the documentation relating to the help panels.

https://cloudscape.design/patterns/general/help-system/ says "Info links are the triggers that opens the help panel and display the corresponding content." and "An info link should always be anchored to headers." ... but tha... | 1.0 | [Documentation]: How should help panels integrate with the app layout? - ### Description

I'm struggling to understand the documentation relating to the help panels.

https://cloudscape.design/patterns/general/help-system/ says "Info links are the triggers that opens the help panel and display the corresponding conte... | non_process | how should help panels integrate with the app layout description i m struggling to understand the documentation relating to the help panels says info links are the triggers that opens the help panel and display the corresponding content and an info link should always be anchored to headers bu... | 0 |

267,278 | 20,198,141,900 | IssuesEvent | 2022-02-11 12:40:08 | wagtail/wagtail | https://api.github.com/repos/wagtail/wagtail | reopened | Add banner to docs (for Wagtail Space or in general) | type:Enhancement Documentation | We want to add a banner to the docs to advertise Wagtail Space.

We're referencing #4986, and we could just do the same as it, but for the upcoming Wagtail Space. One potential difficulty is getting it into a tag that will show in the stable version of the docs.

Another option would be to add a banner page to [wa... | 1.0 | Add banner to docs (for Wagtail Space or in general) - We want to add a banner to the docs to advertise Wagtail Space.

We're referencing #4986, and we could just do the same as it, but for the upcoming Wagtail Space. One potential difficulty is getting it into a tag that will show in the stable version of the docs.... | non_process | add banner to docs for wagtail space or in general we want to add a banner to the docs to advertise wagtail space we re referencing and we could just do the same as it but for the upcoming wagtail space one potential difficulty is getting it into a tag that will show in the stable version of the docs ... | 0 |

15,338 | 19,480,234,642 | IssuesEvent | 2021-12-25 04:58:19 | emily-writes-poems/emily-writes-poems-processing | https://api.github.com/repos/emily-writes-poems/emily-writes-poems-processing | closed | add poem dropdown selector for creating new feature | processing refinement | also rework the function for creating new feature - since we will now provide a list of poem ids to choose from, we don't need to check that the poem exists already...

now, update it so the function receives both id and title and uses those to create the feature. | 1.0 | add poem dropdown selector for creating new feature - also rework the function for creating new feature - since we will now provide a list of poem ids to choose from, we don't need to check that the poem exists already...

now, update it so the function receives both id and title and uses those to create the feature. | process | add poem dropdown selector for creating new feature also rework the function for creating new feature since we will now provide a list of poem ids to choose from we don t need to check that the poem exists already now update it so the function receives both id and title and uses those to create the feature | 1 |

15,239 | 19,161,813,189 | IssuesEvent | 2021-12-03 01:41:29 | streamnative/pulsar-flink | https://api.github.com/repos/streamnative/pulsar-flink | closed | [FEATURE] pulsar-flink-sql-connector_2.11 support flink 1.12.2 | type/bug platform/data-processing | **Is your feature request related to a problem? Please describe.**

pulsar-flink-sql-connector_2.11 not support flink 1.12.2

when I use flink 1.12.2 and io.streamnative.connectors:pulsar-flink-sql-connector_2.11:1.12.4.2

it will tips Unrecognized field "readername"

**Describe the solution you'd like**

support... | 1.0 | [FEATURE] pulsar-flink-sql-connector_2.11 support flink 1.12.2 - **Is your feature request related to a problem? Please describe.**

pulsar-flink-sql-connector_2.11 not support flink 1.12.2

when I use flink 1.12.2 and io.streamnative.connectors:pulsar-flink-sql-connector_2.11:1.12.4.2

it will tips Unrecognized fi... | process | pulsar flink sql connector support flink is your feature request related to a problem please describe pulsar flink sql connector not support flink when i use flink and io streamnative connectors pulsar flink sql connector it will tips unrecognized field readername... | 1 |

5,966 | 8,787,894,422 | IssuesEvent | 2018-12-20 20:12:57 | AnotherCodeArtist/CEPWare | https://api.github.com/repos/AnotherCodeArtist/CEPWare | closed | EmptyString Error in Flink-Task | WP6 - Complex Event Processing bug | One of the tasks fails when being submitted through the python-script, but works when submitted manually. | 1.0 | EmptyString Error in Flink-Task - One of the tasks fails when being submitted through the python-script, but works when submitted manually. | process | emptystring error in flink task one of the tasks fails when being submitted through the python script but works when submitted manually | 1 |

10,555 | 2,622,173,264 | IssuesEvent | 2015-03-04 00:15:32 | byzhang/leveldb | https://api.github.com/repos/byzhang/leveldb | closed | afdfsdfsdfsdf | auto-migrated Priority-Medium Type-Defect | ```

sdfsdfsfd

```

Original issue reported on code.google.com by `wpx...@gmail.com` on 13 May 2011 at 10:13 | 1.0 | afdfsdfsdfsdf - ```

sdfsdfsfd

```

Original issue reported on code.google.com by `wpx...@gmail.com` on 13 May 2011 at 10:13 | non_process | afdfsdfsdfsdf sdfsdfsfd original issue reported on code google com by wpx gmail com on may at | 0 |

297,365 | 22,352,473,213 | IssuesEvent | 2022-06-15 13:13:26 | Deltares/HYDROLIB-core | https://api.github.com/repos/Deltares/HYDROLIB-core | opened | Update "First steps" tutorial to notebook and binder | documentation | **What is the need for this task.**

The HYDROLIB team strives to create notebooks as tutorial that can be launched in binder

**What is the task?**

- improve first steps tutorial

- create notebook

- include binder option to documentation

**Additional context**

Add any other context or screenshots about the ... | 1.0 | Update "First steps" tutorial to notebook and binder - **What is the need for this task.**

The HYDROLIB team strives to create notebooks as tutorial that can be launched in binder

**What is the task?**

- improve first steps tutorial

- create notebook

- include binder option to documentation

**Additional con... | non_process | update first steps tutorial to notebook and binder what is the need for this task the hydrolib team strives to create notebooks as tutorial that can be launched in binder what is the task improve first steps tutorial create notebook include binder option to documentation additional con... | 0 |

8,558 | 11,731,084,018 | IssuesEvent | 2020-03-10 23:00:30 | AcademySoftwareFoundation/OpenCue | https://api.github.com/repos/AcademySoftwareFoundation/OpenCue | closed | label "good first issue" to issues where applicable | process triaged | ASWF is trying to standardize this across projects.

Section being added to TAC README in https://github.com/AcademySoftwareFoundation/tac/pull/45 | 1.0 | label "good first issue" to issues where applicable - ASWF is trying to standardize this across projects.

Section being added to TAC README in https://github.com/AcademySoftwareFoundation/tac/pull/45 | process | label good first issue to issues where applicable aswf is trying to standardize this across projects section being added to tac readme in | 1 |

15,839 | 20,028,184,605 | IssuesEvent | 2022-02-02 00:26:31 | googleapis/java-translate | https://api.github.com/repos/googleapis/java-translate | closed | com.example.translate.BatchTranslateTextTests: testBatchTranslateText failed | priority: p2 type: process api: translate flakybot: issue flakybot: flaky | Note: #709 was also for this test, but it was closed more than 10 days ago. So, I didn't mark it flaky.

----

commit: 8c1ae20536844353ffa329473c35e8a054a69d53

buildURL: [Build Status](https://source.cloud.google.com/results/invocations/98a4e9bf-3fc6-49e1-afb6-db7c642449bc), [Sponge](http://sponge2/98a4e9bf-3fc6-49e1-a... | 1.0 | com.example.translate.BatchTranslateTextTests: testBatchTranslateText failed - Note: #709 was also for this test, but it was closed more than 10 days ago. So, I didn't mark it flaky.

----

commit: 8c1ae20536844353ffa329473c35e8a054a69d53

buildURL: [Build Status](https://source.cloud.google.com/results/invocations/98a4... | process | com example translate batchtranslatetexttests testbatchtranslatetext failed note was also for this test but it was closed more than days ago so i didn t mark it flaky commit buildurl status failed test output java util concurrent executionexception com google api gax rpc invalidarg... | 1 |

75,423 | 9,854,021,970 | IssuesEvent | 2019-06-19 15:54:36 | kubernetes-sigs/cluster-api | https://api.github.com/repos/kubernetes-sigs/cluster-api | closed | Add a new use case for cluster health check | kind/bug kind/documentation priority/backlog | /kind bug

**What steps did you take and what happened:**

[A clear and concise description of what the bug is.]

**What did you expect to happen:**

This is a follow up action for https://github.com/kubernetes-sigs/cluster-api/pull/903#discussion_r277693810 , I will create a PR for the use case of cluster healt... | 1.0 | Add a new use case for cluster health check - /kind bug

**What steps did you take and what happened:**

[A clear and concise description of what the bug is.]

**What did you expect to happen:**

This is a follow up action for https://github.com/kubernetes-sigs/cluster-api/pull/903#discussion_r277693810 , I will... | non_process | add a new use case for cluster health check kind bug what steps did you take and what happened what did you expect to happen this is a follow up action for i will create a pr for the use case of cluster health check assign fyi vincepri jichenjc detiber | 0 |

19,663 | 26,026,579,981 | IssuesEvent | 2022-12-21 16:51:28 | ConnorBaker/BSRT | https://api.github.com/repos/ConnorBaker/BSRT | closed | data_processing: Create separate module | enhancement module: data_processing | The `data-processing` module should be refactored into its own, standalone module outside the BSRT module. It offers a number of utility functions, like creating RAW bursts from a single image, which are very useful. | 1.0 | data_processing: Create separate module - The `data-processing` module should be refactored into its own, standalone module outside the BSRT module. It offers a number of utility functions, like creating RAW bursts from a single image, which are very useful. | process | data processing create separate module the data processing module should be refactored into its own standalone module outside the bsrt module it offers a number of utility functions like creating raw bursts from a single image which are very useful | 1 |

181,644 | 30,719,826,838 | IssuesEvent | 2023-07-27 15:12:09 | WordPress/gutenberg | https://api.github.com/repos/WordPress/gutenberg | opened | Allow large interactive areas | Needs Design Feedback | ## What problem does this address?

<!--

Please describe if this feature or enhancement is related to a current problem

or pain point. For example, "I'm always frustrated when ..." or "It is currently

difficult to ...".

-->

Sometimes we want a whole group of related visual items to have the same interaction (cli... | 1.0 | Allow large interactive areas - ## What problem does this address?

<!--

Please describe if this feature or enhancement is related to a current problem

or pain point. For example, "I'm always frustrated when ..." or "It is currently

difficult to ...".

-->

Sometimes we want a whole group of related visual items t... | non_process | allow large interactive areas what problem does this address please describe if this feature or enhancement is related to a current problem or pain point for example i m always frustrated when or it is currently difficult to sometimes we want a whole group of related visual items t... | 0 |

106,403 | 11,486,924,912 | IssuesEvent | 2020-02-11 10:53:29 | fahlke/golibs | https://api.github.com/repos/fahlke/golibs | closed | Add documentation for Pearson hashing | documentation | - [x] adjust `README.md`

- [x] add examples to `doc.go`

- [x] add package description to `doc.go`

- [x] document sources and tests | 1.0 | Add documentation for Pearson hashing - - [x] adjust `README.md`

- [x] add examples to `doc.go`

- [x] add package description to `doc.go`

- [x] document sources and tests | non_process | add documentation for pearson hashing adjust readme md add examples to doc go add package description to doc go document sources and tests | 0 |

16,549 | 21,568,599,087 | IssuesEvent | 2022-05-02 04:17:56 | lynnandtonic/nestflix.fun | https://api.github.com/repos/lynnandtonic/nestflix.fun | closed | Add Today In Fashion | suggested title in process | Please add as much of the following info as you can:

Title: Today In Fashion

Type (film/tv show): tv reality

Film or show in which it appears: Mars Attacks!

Is the parent film/show streaming anywhere? HBO MAX

About when in the parent film/show does it appear? First 20 mins

Actual footage of the film/... | 1.0 | Add Today In Fashion - Please add as much of the following info as you can:

Title: Today In Fashion

Type (film/tv show): tv reality

Film or show in which it appears: Mars Attacks!

Is the parent film/show streaming anywhere? HBO MAX

About when in the parent film/show does it appear? First 20 mins

Actu... | process | add today in fashion please add as much of the following info as you can title today in fashion type film tv show tv reality film or show in which it appears mars attacks is the parent film show streaming anywhere hbo max about when in the parent film show does it appear first mins actua... | 1 |

3,894 | 6,821,024,178 | IssuesEvent | 2017-11-07 15:37:39 | syndesisio/syndesis-ui | https://api.github.com/repos/syndesisio/syndesis-ui | opened | Standardize the code linting flow for all contributors | dev process enhancement in progress Priority - Low | With more developers coming on board we should strive to keep consistency in our code in regards of format, style and syntax. Applying a linting phase bound to the transpilation/versioning phases of development seems a good start.

The idea is to ensure that all developers get their code linted regardless the IDE in ... | 1.0 | Standardize the code linting flow for all contributors - With more developers coming on board we should strive to keep consistency in our code in regards of format, style and syntax. Applying a linting phase bound to the transpilation/versioning phases of development seems a good start.

The idea is to ensure that al... | process | standardize the code linting flow for all contributors with more developers coming on board we should strive to keep consistency in our code in regards of format style and syntax applying a linting phase bound to the transpilation versioning phases of development seems a good start the idea is to ensure that al... | 1 |

14,218 | 17,138,079,088 | IssuesEvent | 2021-07-13 06:16:44 | GoogleCloudPlatform/fda-mystudies | https://api.github.com/repos/GoogleCloudPlatform/fda-mystudies | closed | [QA] Push notifications not triggered from SB | Bug P0 Process: Fixed Unknown backend | **Steps:**

1. Send a manual app level/study level notification

2. Observe the status in 'Notifications' screen

**Actual:** Push notifications not triggered from SB and status is 'Sending'

**Expected:** Push notifications should be triggered

Note: Issue tested in QA

Issue also observed for automated push not... | 1.0 | [QA] Push notifications not triggered from SB - **Steps:**

1. Send a manual app level/study level notification

2. Observe the status in 'Notifications' screen

**Actual:** Push notifications not triggered from SB and status is 'Sending'

**Expected:** Push notifications should be triggered

Note: Issue tested i... | process | push notifications not triggered from sb steps send a manual app level study level notification observe the status in notifications screen actual push notifications not triggered from sb and status is sending expected push notifications should be triggered note issue tested in q... | 1 |

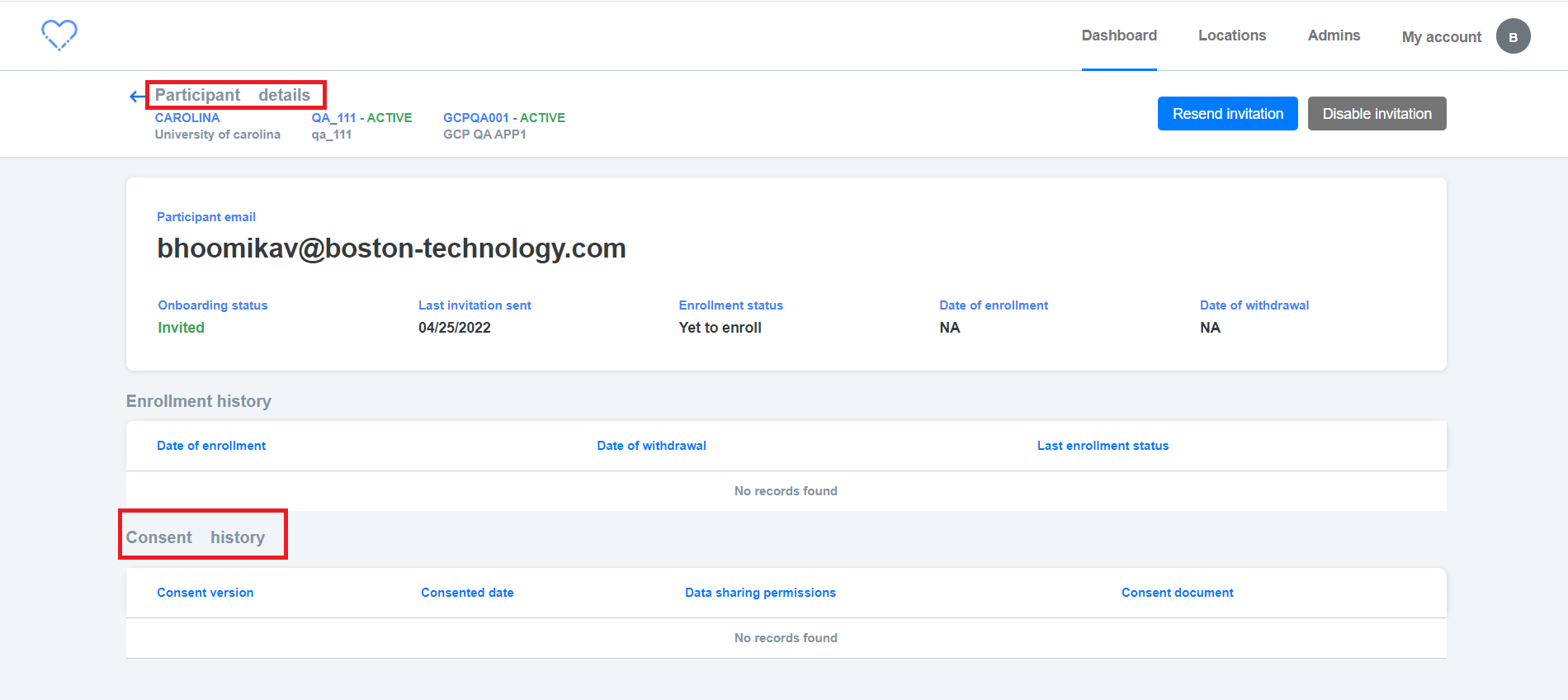

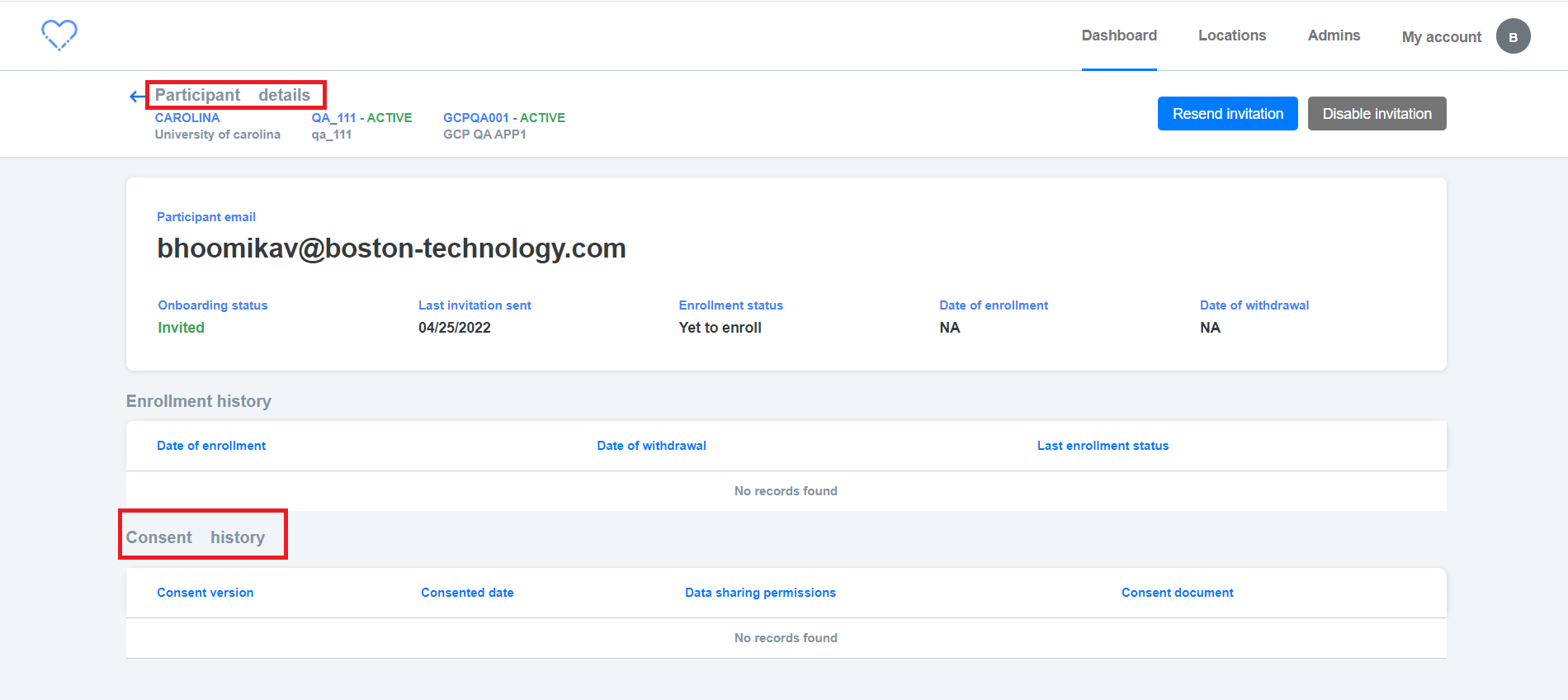

18,455 | 24,548,599,278 | IssuesEvent | 2022-10-12 10:44:33 | GoogleCloudPlatform/fda-mystudies | https://api.github.com/repos/GoogleCloudPlatform/fda-mystudies | closed | [PM] [QA] Dashboard > Sites / Studies > UI Issue is observed in Participants details screen | Bug P2 Participant manager Process: Fixed Process: Tested dev | UI issue is observed in ,

Sites / Studies > Participants details screen

| 2.0 | [PM] [QA] Dashboard > Sites / Studies > UI Issue is observed in Participants details screen - UI issue is observed in ,

Sites / Studies > Participants details screen

| process | dashboard sites studies ui issue is observed in participants details screen ui issue is observed in sites studies participants details screen | 1 |

8,344 | 11,498,511,672 | IssuesEvent | 2020-02-12 12:09:27 | ESMValGroup/ESMValCore | https://api.github.com/repos/ESMValGroup/ESMValCore | closed | `extract_month` not functioning properly | bug preprocessor | **Describe the bug**

The preprocessor `extract_month` does not work when the input cube does not yet have the auxiliary coordinate `month_number`. It needs to be added first if it is not there yet.

Example recipe:

```

CMIP5_landcover: &CMIP5_landcover

additional_datasets:

- {dataset: MPI-ESM-LR, project... | 1.0 | `extract_month` not functioning properly - **Describe the bug**

The preprocessor `extract_month` does not work when the input cube does not yet have the auxiliary coordinate `month_number`. It needs to be added first if it is not there yet.

Example recipe:

```

CMIP5_landcover: &CMIP5_landcover

additional_dat... | process | extract month not functioning properly describe the bug the preprocessor extract month does not work when the input cube does not yet have the auxiliary coordinate month number it needs to be added first if it is not there yet example recipe landcover landcover additional datasets ... | 1 |

167,760 | 13,041,582,735 | IssuesEvent | 2020-07-28 20:40:05 | apple/servicetalk | https://api.github.com/repos/apple/servicetalk | closed | ZipkinPublisherTest.testThriftRoundTrip test failure | flaky tests | https://ci.servicetalk.io/job/servicetalk-java8-prb/904/testReport/junit/io.servicetalk.opentracing.zipkin.publisher/ZipkinPublisherTest/testThriftRoundTrip/

```

Error Message

org.junit.runners.model.TestTimedOutException: test timed out after 90000 milliseconds

Stacktrace

org.junit.runners.model.TestTimedOutE... | 1.0 | ZipkinPublisherTest.testThriftRoundTrip test failure - https://ci.servicetalk.io/job/servicetalk-java8-prb/904/testReport/junit/io.servicetalk.opentracing.zipkin.publisher/ZipkinPublisherTest/testThriftRoundTrip/

```

Error Message

org.junit.runners.model.TestTimedOutException: test timed out after 90000 millisecon... | non_process | zipkinpublishertest testthriftroundtrip test failure error message org junit runners model testtimedoutexception test timed out after milliseconds stacktrace org junit runners model testtimedoutexception test timed out after milliseconds at sun misc unsafe park native method at java util con... | 0 |

19,190 | 25,315,074,487 | IssuesEvent | 2022-11-17 20:50:54 | carbon-design-system/ibm-cloud-cognitive | https://api.github.com/repos/carbon-design-system/ibm-cloud-cognitive | closed | Inline edit v2 release review | type: process improvement component: InlineEdit | ## Review for release

### Readiness

- [x] One or more scenarios for a design pattern have been identified as a

useful unit of functionality to publish.

- [x] A functioning component or components delivering those scenarios have been

delivered and merged to main.

- [x] Design maintainer has approved the im... | 1.0 | Inline edit v2 release review - ## Review for release

### Readiness

- [x] One or more scenarios for a design pattern have been identified as a

useful unit of functionality to publish.

- [x] A functioning component or components delivering those scenarios have been

delivered and merged to main.

- [x] Desig... | process | inline edit release review review for release readiness one or more scenarios for a design pattern have been identified as a useful unit of functionality to publish a functioning component or components delivering those scenarios have been delivered and merged to main design maint... | 1 |

7,048 | 10,208,530,512 | IssuesEvent | 2019-08-14 10:20:38 | MicrosoftDocs/azure-docs | https://api.github.com/repos/MicrosoftDocs/azure-docs | closed | Update Access Token | Pri2 assigned-to-author automation/svc process-automation/subsvc product-feedback triaged | There is no way to update the access token after it expires.

---

#### Document Details

⚠ *Do not edit this section. It is required for docs.microsoft.com ➟ GitHub issue linking.*

* ID: 83c90e64-b615-711f-a53d-fc76606e2ecd

* Version Independent ID: 2d164036-6886-4440-50f7-369f99f41cea

* Content: [Source Control integ... | 1.0 | Update Access Token - There is no way to update the access token after it expires.

---

#### Document Details

⚠ *Do not edit this section. It is required for docs.microsoft.com ➟ GitHub issue linking.*

* ID: 83c90e64-b615-711f-a53d-fc76606e2ecd

* Version Independent ID: 2d164036-6886-4440-50f7-369f99f41cea

* Content:... | process | update access token there is no way to update the access token after it expires document details ⚠ do not edit this section it is required for docs microsoft com ➟ github issue linking id version independent id content content source service automation ... | 1 |

221,723 | 17,366,826,698 | IssuesEvent | 2021-07-30 08:29:45 | elastic/kibana | https://api.github.com/repos/elastic/kibana | closed | Failing test: X-Pack Accessibility Tests.x-pack/test/accessibility/apps/ml·ts - ml for user with full ML access with data loaded anomaly detection Anomaly Explorer page | :ml failed-test | A test failed on a tracked branch

```

Error: expected testSubject(mlPageAnomalyExplorer) to exist

at TestSubjects.existOrFail (/dev/shm/workspace/parallel/17/kibana/test/functional/services/common/test_subjects.ts:45:13)

at Object.openAnomalyExplorer (test/functional/services/ml/single_metric_viewer.ts:124:7)

... | 1.0 | Failing test: X-Pack Accessibility Tests.x-pack/test/accessibility/apps/ml·ts - ml for user with full ML access with data loaded anomaly detection Anomaly Explorer page - A test failed on a tracked branch

```

Error: expected testSubject(mlPageAnomalyExplorer) to exist

at TestSubjects.existOrFail (/dev/shm/workspac... | non_process | failing test x pack accessibility tests x pack test accessibility apps ml·ts ml for user with full ml access with data loaded anomaly detection anomaly explorer page a test failed on a tracked branch error expected testsubject mlpageanomalyexplorer to exist at testsubjects existorfail dev shm workspac... | 0 |

94,580 | 15,987,536,852 | IssuesEvent | 2021-04-19 00:55:54 | dotnet/aspnetcore | https://api.github.com/repos/dotnet/aspnetcore | closed | 'Correlation failed.' on Google Authorization when using Docker - Cookie not found. | area-security | ### Describe the bug

I am getting a `Correlation failed.` exception when using the external Google authentication service in ASP.NET Core. I head to my Index page, and the Google account select box comes up. Then I select my account, and the exception happens. The message also says something about a missing cookie... | True | 'Correlation failed.' on Google Authorization when using Docker - Cookie not found. - ### Describe the bug

I am getting a `Correlation failed.` exception when using the external Google authentication service in ASP.NET Core. I head to my Index page, and the Google account select box comes up. Then I select my account,... | non_process | correlation failed on google authorization when using docker cookie not found describe the bug i am getting a correlation failed exception when using the external google authentication service in asp net core i head to my index page and the google account select box comes up then i select my account ... | 0 |

79,933 | 15,586,248,655 | IssuesEvent | 2021-03-18 01:30:41 | hiucimon/ClamScanService | https://api.github.com/repos/hiucimon/ClamScanService | opened | CVE-2020-24616 (High) detected in jackson-databind-2.8.5.jar | security vulnerability | ## CVE-2020-24616 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jackson-databind-2.8.5.jar</b></p></summary>

<p>General data-binding functionality for Jackson: works on core streamin... | True | CVE-2020-24616 (High) detected in jackson-databind-2.8.5.jar - ## CVE-2020-24616 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jackson-databind-2.8.5.jar</b></p></summary>

<p>General... | non_process | cve high detected in jackson databind jar cve high severity vulnerability vulnerable library jackson databind jar general data binding functionality for jackson works on core streaming api library home page a href path to dependency file clamscanservice build gradle ... | 0 |

5,152 | 7,931,506,221 | IssuesEvent | 2018-07-07 00:57:27 | ncbo/bioportal-project | https://api.github.com/repos/ncbo/bioportal-project | closed | ONTONEO: last 4 submissions failed to parse | ontology processing problem | The latest 4 submissions for [ONTONEO](http://bioportal.bioontology.org/ontologies/ONTONEO) failed to parse. Error from parsing.log file:

```

E, [2017-08-03T19:13:05.004447 #2608] ERROR -- : ["Exception: Rapper cannot parse rdfxml file at /srv/ncbo/repository/ONTONEO/10/owlapi.xrdf:

rapper: Parsing URI file:///s... | 1.0 | ONTONEO: last 4 submissions failed to parse - The latest 4 submissions for [ONTONEO](http://bioportal.bioontology.org/ontologies/ONTONEO) failed to parse. Error from parsing.log file:

```

E, [2017-08-03T19:13:05.004447 #2608] ERROR -- : ["Exception: Rapper cannot parse rdfxml file at /srv/ncbo/repository/ONTONEO/1... | process | ontoneo last submissions failed to parse the lastest submissions for failed to parse error from parsing log file e error exception rapper cannot parse rdfxml file at srv ncbo repository ontoneo owlapi xrdf rapper parsing uri file srv ncbo repository ontoneo owlapi xrdf with p... | 1 |

20,052 | 6,808,668,294 | IssuesEvent | 2017-11-04 06:31:53 | nasa/europa | https://api.github.com/repos/nasa/europa | closed | Symbol not found when launching europa. | Component-Build | #### Configuration:

* OpenJDK 1.8

* ftjam 2.5.3

* GCC 7.2.0

* libantlr3c 3.5.2

I am trying to follow the quick start guide (using official binaries); When launching `ant` I have the following error:

```

Buildfile: /home/gandre/WIP/Aeroport/Planning/Light/build.xml

init:

compile:

run:

[echo]... | 1.0 | Symbol not found when launching europa. - #### Configuration:

* OpenJDK 1.8

* ftjam 2.5.3

* GCC 7.2.0

* libantlr3c 3.5.2

I am trying to follow the quick start guide (using official binaries); When launching `ant` I have the following error:

```

Buildfile: /home/gandre/WIP/Aeroport/Planning/Light/build.xml... | non_process | symbol not found when launching europa configuration openjdk ftjam gcc i am trying to follow the quick start guide using official binaries when launching ant i have the following error buildfile home gandre wip aeroport planning light build xml init ... | 0 |

314,486 | 23,524,953,295 | IssuesEvent | 2022-08-19 09:53:06 | joshtom/josh-folio | https://api.github.com/repos/joshtom/josh-folio | opened | Working code for splitting Js in Next Js | documentation | https://github.com/shshaw/Splitting/issues/80#issuecomment-1220441652

import "splitting/dist/splitting.css";

import "splitting/dist/splitting-cells.css";

```js

const Component = () => {

let target;

setTimeout(() => {

if ( window && document && target ) {

const Splitting = requi... | 1.0 | Working code for splitting Js in Next Js - https://github.com/shshaw/Splitting/issues/80#issuecomment-1220441652

import "splitting/dist/splitting.css";

import "splitting/dist/splitting-cells.css";

```js

const Component = () => {

let target;

setTimeout(() => {

if ( window && document && targ... | non_process | working code for splitting js in next js import splitting dist splitting css import splitting dist splitting cells css js const component let target settimeout if window document target const splitting require splitting ... | 0 |

7,764 | 10,887,658,963 | IssuesEvent | 2019-11-18 14:56:09 | geneontology/go-ontology | https://api.github.com/repos/geneontology/go-ontology | opened | Merge GO:0060154 cellular process regulating host cell cycle in response to virus into GO:0060153 modulation by virus of host cell cycle | multi-species process | Merge GO:0060154 cellular process regulating host cell cycle in response to virus (Any cellular process that modulates the rate or extent of progression through the cell cycle in response to a virus.) into GO:0060153 modulation by virus of host cell cycle (Any viral process that modulates the rate or extent of progress... | 1.0 | Merge GO:0060154 cellular process regulating host cell cycle in response to virus into GO:0060153 modulation by virus of host cell cycle - Merge GO:0060154 cellular process regulating host cell cycle in response to virus (Any cellular process that modulates the rate or extent of progression through the cell cycle in re... | process | merge go cellular process regulating host cell cycle in response to virus into go modulation by virus of host cell cycle merge go cellular process regulating host cell cycle in response to virus any cellular process that modulates the rate or extent of progression through the cell cycle in response to a virus ... | 1 |

3,532 | 6,570,779,307 | IssuesEvent | 2017-09-10 04:24:44 | dotnet/corefx | https://api.github.com/repos/dotnet/corefx | closed | How to start the system default browser. | area-System.Diagnostics.Process bug up-for-grabs | The following line doesn't work:

Process.Start("http://localhost:5000");

Microsoft said that that was the official way to start a new browser, a long time ago, at:

https://support.microsoft.com/en-us/kb/305703

Is there any multiplatform way to do this now? Will it ever be?

| 1.0 | How to start the system default browser. - The following line doesn't work:

Process.Start("http://localhost:5000");

Microsoft said that that was the official way to start a new browser, a long time ago, at:

https://support.microsoft.com/en-us/kb/305703

Is there any multiplatform way to do this now? Will it ever be... | process | how to start the system default browser the following line doesn t work process start microsoft said that that was the official way to start a new browser a long time ago at is there any multiplatform way to do this now will it ever be | 1 |

76,128 | 3,481,800,847 | IssuesEvent | 2015-12-29 18:34:24 | phetsims/tasks | https://api.github.com/repos/phetsims/tasks | opened | Test Java version of Isotopes and Atomic mass | Medium Priority QA | @bryo5363 can you test the Java version of Isotopes and Atomic Mass. We are porting this sim, and it would be good to identify any existing bugs. | 1.0 | Test Java version of Isotopes and Atomic mass - @bryo5363 can you test the Java version of Isotopes and Atomic Mass. We are porting this sim, and it would be good to identify any existing bugs. | non_process | test java version of isotopes and atomic mass can you test the java version of isotopes and atomic mass we are porting this sim and it would be good to identify any existing bugs | 0 |

30,451 | 8,551,722,782 | IssuesEvent | 2018-11-07 18:53:33 | servo/servo | https://api.github.com/repos/servo/servo | closed | Taskcluster builds fail in mozjs bindgen build step | A-build A-infrastructure | ```

error: unknown argument: '-fno-sized-deallocation', err: true

```

Maybe we need to have a newer version of clang in our taskcluster setup? | 1.0 | Taskcluster builds fail in mozjs bindgen build step - ```

error: unknown argument: '-fno-sized-deallocation', err: true

```

Maybe we need to have a newer version of clang in our taskcluster setup? | non_process | taskcluster builds fail in mozjs bindgen build step error unknown argument fno sized deallocation err true maybe we need to have a newer version of clang in our taskcluster setup | 0 |

324,062 | 9,883,342,285 | IssuesEvent | 2019-06-24 19:09:21 | d2r2/go-dht | https://api.github.com/repos/d2r2/go-dht | closed | Decimal part of the temperature / humidity value for the DHT11 | Priority: Medium Status: Pending Type: Maintenance | in the line 184, 185 of dht.go. why don't add the decimal part to the value? Based on the datasheet of DHT11, it supports the decimal part. | 1.0 | Decimal part of the temperature / humidity value for the DHT11 - in the line 184, 185 of dht.go. why don't add the decimal part to the value? Based on the datasheet of DHT11, it supports the decimal part. | non_process | decimal part of the temperature humidity value for the in the line of dht go why don t add the decimal part to the value based on the datasheet of it supports the decimal part | 0 |
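
For context on the question in this record: a DHT11 frame is commonly described as five bytes (humidity integer, humidity decimal, temperature integer, temperature decimal, checksum). The following is a minimal Python sketch of how the decimal bytes could be folded into the result. It is not the go-dht implementation; the helper name `decode_dht11` is hypothetical, and the assumption that each decimal byte holds tenths follows a common reading of the datasheet and may not hold for every sensor revision. Negative temperatures are not handled.

```python
def decode_dht11(frame):
    """Decode a 5-byte DHT11 frame into (temperature_c, humidity_percent).

    Hypothetical helper for illustration; assumes the decimal bytes hold tenths.
    """
    if len(frame) != 5:
        raise ValueError("expected exactly 5 bytes")
    hum_int, hum_dec, temp_int, temp_dec, checksum = frame
    # The checksum is the low 8 bits of the sum of the four data bytes.
    if (hum_int + hum_dec + temp_int + temp_dec) & 0xFF != checksum:
        raise ValueError("checksum mismatch")
    humidity = hum_int + hum_dec / 10.0
    temperature = temp_int + temp_dec / 10.0
    return temperature, humidity
```

Many DHT11 units always report zero in the decimal bytes, in which case including them changes nothing; whether a given sensor populates them is something to check against real readings.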

7,426 | 10,545,409,578 | IssuesEvent | 2019-10-02 19:04:20 | openopps/openopps-platform | https://api.github.com/repos/openopps/openopps-platform | opened | DoS: Update text on next steps page | Apply Process State Dept. |

We have already pulled USAJOBS data by now to remove that reference | 1.0 | DoS: Update text on next steps page -

We have already pulled USAJOBS data by now to remove that reference | process | dos update text on next steps page we have already pulled usajobs data by now to remove that reference | 1 |

7,696 | 10,780,639,921 | IssuesEvent | 2019-11-04 13:25:30 | radis/radis | https://api.github.com/repos/radis/radis | closed | remove medium | interface post-process refactor | combination of `nm` + medium='air' or 'vacuum' is confusing

I suggest discarding the `medium` parameter and using, as wavespace:

`cm-1`

`nm_air`

`nm_vacuum`

Question remains: what would the default `nm` points to? | 1.0 | remove medium - combination of `nm` + medium='air' or 'vacuum' is confusing

I suggest discarding the `medium` parameter and using, as wavespace:

`cm-1`

`nm_air`

`nm_vacuum`

Question remains: what would the default `nm` points to? | process | remove medium combination of nm medium air or vacuum is confusing i suggest discarding the medium parameter and using as wavespace cm nm air nm vacuum question remains what would the default nm points to | 1 |

16,266 | 20,862,996,560 | IssuesEvent | 2022-03-22 02:09:26 | streamnative/flink | https://api.github.com/repos/streamnative/flink | closed | [Feature] Support Pulsar schema evolution in PulsarSink | compute/data-processing type/feature | Pulsar has a built-in schema and supports schema evolution out of the box. We should add this support in the Pulsar sink. | 1.0 | [Feature] Support Pulsar schema evolution in PulsarSink - Pulsar has a built-in schema and supports schema evolution out of the box. We should add this support in the Pulsar sink. | process | support pulsar schema evolution in pulsarsink pulsar has a built in schema and supports schema evolution out of the box we should add this support in the pulsar sink | 1 |

208,358 | 15,887,115,901 | IssuesEvent | 2021-04-10 00:48:59 | backend-br/vagas | https://api.github.com/repos/backend-br/vagas | closed | [REMOTE] Python Developer @ Instruct Soluções em Tecnologia | CLT Django Docker Git GraphQL Linux Python Remoto Rest SQL Stale Testes Unitários | ## Job description

Here at Instruct our teams are multidisciplinary, and we value the exchange of experience among employees across different areas. We know that results depend on teamwork!

We value an environment that is friendly, inclusive, and safe, and we are always open and willing to contribute... | 1.0 | [REMOTE] Python Developer @ Instruct Soluções em Tecnologia - ## Job description

Here at Instruct our teams are multidisciplinary, and we value the exchange of experience among employees across different areas. We know that results depend on teamwork!

We value an environment that is friendly,... | non_process | python developer instruct soluções em tecnologia job description here at instruct our teams are multidisciplinary and we value the exchange of experience among employees across different areas we know that results depend on teamwork we value an environment that is friendly inclus... | 0 |

17,361 | 23,185,583,565 | IssuesEvent | 2022-08-01 08:06:11 | streamnative/flink | https://api.github.com/repos/streamnative/flink | closed | [SQL Connector] fix the TimeoutException of PulsarSourceITCase | compute/data-processing | PulsarSourceITCase>SourceTestSuiteBase.testSavepoint:236->SourceTestSuiteBase.restartFromSavepoint:388->SourceTestSuiteBase.checkResultWithSemantic:744 is failing, we can see if increase the timeout value would fix the problem.

https://github.com/streamnative/streamnative-ci/runs/7378238879?check_suite_focus=true | 1.0 | [SQL Connector] fix the TimeoutException of PulsarSourceITCase - PulsarSourceITCase>SourceTestSuiteBase.testSavepoint:236->SourceTestSuiteBase.restartFromSavepoint:388->SourceTestSuiteBase.checkResultWithSemantic:744 is failing, we can see if increase the timeout value would fix the problem.

https://github.com/stream... | process | fix the timeoutexception of pulsarsourceitcase pulsarsourceitcase sourcetestsuitebase testsavepoint sourcetestsuitebase restartfromsavepoint sourcetestsuitebase checkresultwithsemantic is failing we can see if increase the timeout value would fix the problem | 1 |

448,510 | 12,951,848,795 | IssuesEvent | 2020-07-19 18:18:27 | rtc-focus-org/focus_issues | https://api.github.com/repos/rtc-focus-org/focus_issues | closed | Firefox needs dropdown to choose sharing type | enhancement priority screenshare | _From @rtc-focus on June 19, 2018 18:30_

Firefox needs to be told, before screen sharing is requested, whether the full screen or a window is to be shared. So, to support Firefox properly, we need to present this choice after the user clicks the screen share button.

_Copied from original issue: rtc-focus-org/focus#1_ | 1.0 | Firefox needs dropdown to choose sharing type - _From @rtc-focus on June 19, 2018 18:30_

Firefox needs to be told, before screen sharing is requested, whether the full screen or a window is to be shared. So, to support Firefox properly, we need to present this choice after the user clicks the screen share button.

_Copied from ori... | non_process | firefox needs dropdown to choose sharing type from rtc focus on june firefox needs to be told in advance of requesting screen sharing whether full screen or a window is to shared so to support firefox properly we need to present this choice after user clicks screen share button copied from original ... | 0 |

72,528 | 7,300,672,537 | IssuesEvent | 2018-02-27 00:52:52 | littlevgl/lvgl | https://api.github.com/repos/littlevgl/lvgl | closed | Input pointer drag on empty container causes invalidation/refresh | bug need test | Easiest way to see and replicate this issue is to run the theme demo.

Go to Tab 3 - everything on this page fits on screen, so there is no vertical scrollbar.

If you drag in any direction in the empty area to the right of the floating windows (in the area where there are no objects to interact with) the result is ess... | 1.0 | Input pointer drag on empty container causes invalidation/refresh - Easiest way to see and replicate this issue is to run the theme demo.

Go to Tab 3 - everything on this page fits on screen, so there is no vertical scrollbar.

If you drag in any direction in the empty area to the right of the floating windows (in the... | non_process | input pointer drag on empty container causes invalidation refresh easiest way to see and replicate this issue is to run the theme demo go to tab everything on this page fits on screen so there is no vertical scrollbar if you drag in any direction in the empty area to the right of the floating windows in the... | 0 |

11,197 | 13,957,702,554 | IssuesEvent | 2020-10-24 08:13:38 | alexanderkotsev/geoportal | https://api.github.com/repos/alexanderkotsev/geoportal | opened | MT - MITA: Harvest | Geoportal Harvesting process MT - Malta | Good Morning Angelo,

Kindly can you perform a harvest on the Maltese CSW please as we did some changes and would like to see the result.

Thanks,

Rene | 1.0 | MT - MITA: Harvest - Good Morning Angelo,

Kindly can you perform a harvest on the Maltese CSW please as we did some changes and would like to see the result.

Thanks,

Rene | process | mt mita harvest good morning angelo kindly can you perform a harvest on the maltese csw please as we did some changes and would like to see the result thanks rene | 1 |

5,285 | 8,071,955,383 | IssuesEvent | 2018-08-06 14:38:44 | dita-ot/dita-ot | https://api.github.com/repos/dita-ot/dita-ot | closed | NPE in TopicFragmentFilter module [DITA OT 2.x] | P2 bug preprocess preprocess/conref | DITA Map:

``` xml

<!DOCTYPE map PUBLIC "-//OASIS//DTD DITA Map//EN" "map.dtd">

<map>

<title>DITA Topic Map</title>

<keydef href="zuzu.dita" keys="zuzu"/>

<topicref href="test.dita"/>

</map>

```

zuzu.dita:

``` xml

<!DOCTYPE dita PUBLIC "-//OASIS//DTD DITA Composite//EN" "ditabase.dtd">

<dita>

<topic id="a">

... | 2.0 | NPE in TopicFragmentFilter module [DITA OT 2.x] - DITA Map:

``` xml

<!DOCTYPE map PUBLIC "-//OASIS//DTD DITA Map//EN" "map.dtd">

<map>

<title>DITA Topic Map</title>

<keydef href="zuzu.dita" keys="zuzu"/>

<topicref href="test.dita"/>

</map>

```

zuzu.dita:

``` xml

<!DOCTYPE dita PUBLIC "-//OASIS//DTD DITA Composite... | process | npe in topicfragmentfilter module dita map xml dita topic map zuzu dita xml a a test dita xml aaa npe when publishing build failed d projects exml frameworks dita dita x build xml the following ... | 1 |

565 | 3,024,104,411 | IssuesEvent | 2015-08-02 07:59:13 | HazyResearch/dd-genomics | https://api.github.com/repos/HazyResearch/dd-genomics | closed | Modify XML parser code so as to pick up years for titles | Preprocessing | Pull *all* sections of a document before outputting objects.

(@Colossus- I could do a join as you suggested to compensate for the titles all having null year, but seems like this will be better long term solution to fix it here upstream / probably will take net similar amount of time. If not this should still be op... | 1.0 | Modify XML parser code so as to pick up years for titles - Pull *all* sections of a document before outputting objects.

(@Colossus- I could do a join as you suggested to compensate for the titles all having null year, but seems like this will be better long term solution to fix it here upstream / probably will take ... | process | modify xml parser code so as to pick up years for titles pull all sections of a document before outputting objects colossus i could do a join as you suggested to compensate for the titles all having null year but seems like this will be better long term solution to fix it here upstream probably will take ... | 1 |

61,778 | 14,640,709,879 | IssuesEvent | 2020-12-25 03:21:37 | fu1771695yongxie/pm | https://api.github.com/repos/fu1771695yongxie/pm | opened | CVE-2018-14732 (High) detected in webpack-dev-server-1.16.5.tgz | security vulnerability | ## CVE-2018-14732 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>webpack-dev-server-1.16.5.tgz</b></p></summary>

<p>Serves a webpack app. Updates the browser on changes.</p>

<p>Librar... | True | CVE-2018-14732 (High) detected in webpack-dev-server-1.16.5.tgz - ## CVE-2018-14732 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>webpack-dev-server-1.16.5.tgz</b></p></summary>

<p>S... | non_process | cve high detected in webpack dev server tgz cve high severity vulnerability vulnerable library webpack dev server tgz serves a webpack app updates the browser on changes library home page a href path to dependency file pm package json path to vulnerable library pm ... | 0 |

16,701 | 21,802,251,838 | IssuesEvent | 2022-05-16 06:59:02 | NationalSecurityAgency/ghidra | https://api.github.com/repos/NationalSecurityAgency/ghidra | closed | Win x64 calling convention: wrong XMM registers assignment | Feature: Processor/x86 | Register assignment is incorrect for Windows x64 calling convention when a function accepts arguments of type `double` or `float`.

Here is the minimal example showing the problem:

```cpp

__declspec(noinline) __declspec(dllexport)

int func3(int a, double b, int c, float d) {

return a * b + (int)(b / d);

}

... | 1.0 | Win x64 calling convention: wrong XMM registers assignment - Register assignment is incorrect for Windows x64 calling convention when a function accepts arguments of type `double` or `float`.

Here is the minimal example showing the problem:

```cpp

__declspec(noinline) __declspec(dllexport)

int func3(int a, doub... | process | win calling convention wrong xmm registers assignment register assignment is incorrect for windows calling convention when a function accepts arguments of type double or float here is the minimal example showing the problem cpp declspec noinline declspec dllexport int int a double b in... | 1 |
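
As background for this record: under the Microsoft x64 calling convention, the first four arguments are assigned by position. Slot *i* uses RCX, RDX, R8, or R9 for an integer argument and XMM0 to XMM3 for a floating-point one, and a slot is consumed whichever register class is used. The sketch below applies that rule to the `func3` signature quoted in the report. The helper `win64_arg_registers` is hypothetical and only models simple scalar arguments; it is not Ghidra code.

```python
# Register slots for the first four arguments, indexed by argument position.
INT_REGS = ["RCX", "RDX", "R8", "R9"]
FLOAT_REGS = ["XMM0", "XMM1", "XMM2", "XMM3"]


def win64_arg_registers(arg_types):
    """Map a list of scalar C type names to their Win x64 argument locations."""
    locations = []
    for index, arg_type in enumerate(arg_types):
        if index >= 4:
            # The fifth and later arguments are passed on the stack.
            locations.append("stack")
        elif arg_type in ("float", "double"):
            # Floating-point arguments use the XMM register of the same slot.
            locations.append(FLOAT_REGS[index])
        else:
            locations.append(INT_REGS[index])
    return locations


# func3(int a, double b, int c, float d)
print(win64_arg_registers(["int", "double", "int", "float"]))
# -> ['RCX', 'XMM1', 'R8', 'XMM3']
```

So for `func3`, `b` is expected in XMM1 and `d` in XMM3, not in XMM0 and XMM1 as a purely sequential assignment of XMM registers would suggest. A 32-bit `int` occupies the low half of its slot register (ECX, R8D).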

524,421 | 15,213,309,342 | IssuesEvent | 2021-02-17 11:36:45 | nf-core/tools | https://api.github.com/repos/nf-core/tools | closed | Shields.io badge not recognised | linting low-priority | From https://shields.io/

> We support .svg and .json. The default is .svg, which can be omitted from the URL.

So such a badge works:

```Markdown

[](http://bioconda.github.io/)

```

[](http://bioconda.github.io/)

```

[![i... | non_process | shields io badge not recognised from we support svg and json the default is svg which can be omitted from the url so such a badge works markdown but it s not recognised by linting i m guessing we re checking for the exact existence of this line markdown ... | 0 |

18,045 | 24,055,926,521 | IssuesEvent | 2022-09-16 16:52:11 | GregTechCEu/gt-ideas | https://api.github.com/repos/GregTechCEu/gt-ideas | opened | UDMH and Rocket Fuel Production | processing chain | ## Details

This processing chain is used for producing UDMH, Unsymmetrical Dimethylhydrazine (aka 1,1-dimethylhydrazine), which is used as rocket fuel.

## Products

Main Product: UDMH

Side Product(s): None

## Steps

2 Chlorine (g) + 6 Sodium Hydroxide (s) + Water (l) -> 1000L Salt Water (l) + 1000L Bleach (l)

... | 1.0 | UDMH and Rocket Fuel Production - ## Details

This processing chain is used for producing UDMH, Unsymmetrical Dimethylhydrazine (aka 1,1-dimethylhydrazine), which is used as rocket fuel.

## Products

Main Product: UDMH

Side Product(s): None

## Steps

2 Chlorine (g) + 6 Sodium Hydroxide (s) + Water (l) -> 1000L S... | process | udmh and rocket fuel production details this processing chain is used for producing udmh unsymmetrical dimethylhydrazine aka dimethylhydrazine which is used as rocket fuel products main product udmh side product s none steps chlorine g sodium hydroxide s water l salt ... | 1 |

4,063 | 6,995,392,342 | IssuesEvent | 2017-12-15 19:06:15 | syndesisio/syndesis | https://api.github.com/repos/syndesisio/syndesis | opened | Solidify Definitions and Expectations of Labels and Statuses | cat/discussion cat/process cat/retro | We discussed a few issues that are related to the agile methodology and how we follow it, what the expectations are, how we label items, etc.

Topics of interest:

1. Large undertakings that are not necessarily features, such as solving critical architectural problems, but improve the sustainability of the project,... | 1.0 | Solidify Definitions and Expectations of Labels and Statuses - We discussed a few issues that are related to the agile methodology and how we follow it, what the expectations are, how we label items, etc.

Topics of interest:

1. Large undertakings that are not necessarily features, such as solving critical archite... | process | solidify definitions and expectations of labels and statuses we discussed a few issues that are related to the agile methodology and how we follow it what the expectations are how we label items etc topics of interest large undertakings that are not necessarily features such as solving critical archite... | 1 |

330,918 | 24,283,370,136 | IssuesEvent | 2022-09-28 19:31:21 | chartjs/Chart.js | https://api.github.com/repos/chartjs/Chart.js | closed | Samples in Documentation are small and tabs are not working | type: bug type: documentation | ### Expected behavior

The charts in the documentation should be big enough that you can see what is going on in them.

When the charts are in tabs, when you switch to another tab it should show the chart

### Current behavior

The charts are extremely small so that the information and customization is almost not vi... | 1.0 | Samples in Documentation are small and tabs are not working - ### Expected behavior

The charts in the documentation should be big enough that you can see what is going on in them.

When the charts are in tabs, when you switch to another tab it should show the chart

### Current behavior

The charts are extremely sm... | non_process | samples in documentation are small and tabs are not working expected behavior the charts in the documentation should be big enough that you can see what is going on in them when the charts are in tabs when you switch to another tab it should show the chart current behavior the charts are extremely sm... | 0 |

17,147 | 22,693,563,808 | IssuesEvent | 2022-07-05 01:43:39 | Carlosmtp/DomuzSGI | https://api.github.com/repos/Carlosmtp/DomuzSGI | closed | Creation of new columns | Enhancement Medium Process Management | - [x] Create a goal column in the process indicators

- [x] Create the goal column in the process

- [x] Function to create a record in periodic_records

- [x] Function to return the periodic_records for a given year. Ex "get/periodic_records/",2021 (Not sure whether the database can hold a **date** ... | 1.0 | Creation of new columns - - [x] Create a goal column in the process indicators

- [x] Create the goal column in the process

- [x] Function to create a record in periodic_records

- [x] Function to return the periodic_records for a given year. Ex "get/periodic_records/",2021 (Not sure whether the database ... | process | creation of new columns create a goal column in the process indicators create the goal column in the process function to create a record in periodic records function to return the periodic records for a given year ex get periodic records not sure whether the database can ... | 1 |

22,308 | 30,861,130,129 | IssuesEvent | 2023-08-03 03:11:32 | metabase/metabase | https://api.github.com/repos/metabase/metabase | closed | [MLv2] Tweaks to `join-lhs-display-name` method | .metabase-lib .Team/QueryProcessor :hammer_and_wrench: | Apparently, the `join-lhs-display-name` logic is a bit more comprehensive when adding a new join. Scenarios:

### 1. Adding a join when there're no joins

When adding a new join and there're no other joins, we should use the source table/model/question name as the LHS table display name (even before the RHS table i... | 1.0 | [MLv2] Tweaks to `join-lhs-display-name` method - Apparently, the `join-lhs-display-name` logic is a bit more comprehensive when adding a new join. Scenarios:

### 1. Adding a join when there're no joins

When adding a new join and there're no other joins, we should use the source table/model/question name as the L... | process | tweaks to join lhs display name method apparently the join lhs display name logic is a bit more comprehensive when adding a new join scenarios adding a join when there re no joins when adding a new join and there re no other joins we should use the source table model question name as the lhs ta... | 1 |

19,813 | 26,202,927,834 | IssuesEvent | 2023-01-03 19:20:02 | zammad/zammad | https://api.github.com/repos/zammad/zammad | opened | mail processing not possible if reply-to header is faulty | bug verified mail processing | ### Used Zammad Version

5.3 / stable

### Environment

- Installation method: any

- Operating system (if you're unsure: `cat /etc/os-release` ): any

- Database + version: any

- Elasticsearch version: any

- Browser + version: any

### Actual behaviour

Zammad cannot process emails that contain invalid email addr... | 1.0 | mail processing not possible if reply-to header is faulty - ### Used Zammad Version

5.3 / stable

### Environment

- Installation method: any

- Operating system (if you're unsure: `cat /etc/os-release` ): any

- Database + version: any

- Elasticsearch version: any

- Browser + version: any

### Actual behaviour

... | process | mail processing not possible if reply to header is faulty used zammad version stable environment installation method any operating system if you re unsure cat etc os release any database version any elasticsearch version any browser version any actual behaviour ... | 1 |

2,789 | 5,721,829,510 | IssuesEvent | 2017-04-20 07:53:12 | openvstorage/volumedriver | https://api.github.com/repos/openvstorage/volumedriver | closed | Integrate Alba with block cache | process_wontfix type_enhancement | API change: new `block_path` field in `struct alba::statistics::RoraCounter`. | 1.0 | Integrate Alba with block cache - API change: new `block_path` field in `struct alba::statistics::RoraCounter`. | process | integrate alba with block cache api change new block path field in struct alba statistics roracounter | 1 |

795,806 | 28,086,938,096 | IssuesEvent | 2023-03-30 10:24:05 | GoogleCloudPlatform/python-docs-samples | https://api.github.com/repos/GoogleCloudPlatform/python-docs-samples | closed | asset.snippets.quickstart_getfeed_test: test_get_feed failed | priority: p1 type: bug api: cloudasset samples flakybot: issue | Note: #9021 was also for this test, but it was closed more than 10 days ago. So, I didn't mark it flaky.

----

commit: a0b5ecff8961110ffaa3fad9cf013c7268779e19

buildURL: [Build Status](https://source.cloud.google.com/results/invocations/a7f4b84c-bd62-4b1f-bc1c-e447bc4457f9), [Sponge](http://sponge2/a7f4b84c-bd62-4b1f-... | 1.0 | asset.snippets.quickstart_getfeed_test: test_get_feed failed - Note: #9021 was also for this test, but it was closed more than 10 days ago. So, I didn't mark it flaky.

----

commit: a0b5ecff8961110ffaa3fad9cf013c7268779e19

buildURL: [Build Status](https://source.cloud.google.com/results/invocations/a7f4b84c-bd62-4b1f-... | non_process | asset snippets quickstart getfeed test test get feed failed note was also for this test but it was closed more than days ago so i didn t mark it flaky commit buildurl status failed test output traceback most recent call last file workspace asset snippets nox py lib s... | 0 |

8,108 | 11,300,888,030 | IssuesEvent | 2020-01-17 14:32:44 | pytorch/pytorch | https://api.github.com/repos/pytorch/pytorch | opened | Problem with multiprocessing, custom __getstate__ with Tensors and forkserver | module: multiprocessing module: serialization | ## 🐛 Bug

**TL;DR**: multiprocessing forkserver / spawn + custom class with `__getstate__` returning tensors give

```python

RuntimeError: unable to resize file <filename not specified> to the right size

```

### Context

Suppose the user created a custom class which holds a (large) list of torch tensors insid... | 1.0 | Problem with multiprocessing, custom __getstate__ with Tensors and forkserver - ## 🐛 Bug

**TL;DR**: multiprocessing forkserver / spawn + custom class with `__getstate__` returning tensors give

```python

RuntimeError: unable to resize file <filename not specified> to the right size

```

### Context

Suppose t... | process | problem with multiprocessing custom getstate with tensors and forkserver 🐛 bug tl dr multiprocessing forkserver spawn custom class with getstate returning tensors give python runtimeerror unable to resize file to the right size context suppose the user created a custo... | 1 |

17,320 | 23,141,162,271 | IssuesEvent | 2022-07-28 18:37:53 | benthosdev/benthos | https://api.github.com/repos/benthosdev/benthos | closed | Processing Avro OCF | enhancement processors inputs | Hi!

Am I right that it is currently not possible to process Avro OCF (Object Container Files, http://avro.apache.org/docs/current/spec.html#Object+Container+Files) with benthos?

The main difference here is that the schema is in the header and can be used to decode the message.

Cheers

Armin

| 1.0 | Processing Avro OCF - Hi!

Am I right that it is currently not possible to process Avro OCF (Object Container Files, http://avro.apache.org/docs/current/spec.html#Object+Container+Files) with benthos?

The main difference here is that the schema is in the header and can be used to decode the message.

Cheers

Ar... | process | processing avro ocf hi am i right that currently it is not possible to process avro ocf object container files with benthos the main difference here is that the schema is within the header an can be used to decode the message cheers armin | 1 |

597,588 | 18,167,053,718 | IssuesEvent | 2021-09-27 15:36:17 | zephyrproject-rtos/zephyr | https://api.github.com/repos/zephyrproject-rtos/zephyr | closed | The coap_server sample is missing the actual send in the retransmit routine | bug priority: medium area: Networking | **Describe the bug**

If a device can't reach its destination, it enters `retransmit_request` several times to decrement its retries, but no actual send is performed, which makes the retransmit request useless.

**To Reproduce**

Steps to reproduce the behavior:

1. Build the coap_server sample

2. Obs... | 1.0 | The coap_server sample is missing the actual send in the retransmit routine - **Describe the bug**

If a device can't reach its destination, it enters `retransmit_request` several times to decrement its retries, but no actual send is performed, which makes the retransmit request useless.

**To Reproduc... | non_process | the coap server sample is missing the actual send in the retransmit routine describe the bug if a device can t reach it s destination it will get into retransmit request for several times to decrement its retries however no actual send is performed which makes the retransmit request useless to reproduc... | 0 |

4,604 | 7,451,846,824 | IssuesEvent | 2018-03-29 05:41:40 | TEAMMATES/teammates | https://api.github.com/repos/TEAMMATES/teammates | closed | Instructor: edit session: multiple choice questions: support generating options for all other teams | a-Process d.Contributors e.4 f-Questions | Similar to #8412 but for 'all other teams' instead of 'all other students'

| 1.0 | Instructor: edit session: multiple choice questions: support generating options for all other teams - Similar to #8412 but for 'all other teams' instead of 'all other students'

| process | instructor edit session multiple choice questions support generating options for all other teams similar to but for all other teams instead of all other students | 1 |

338,563 | 24,590,729,329 | IssuesEvent | 2022-10-14 01:46:23 | nvaccess/nvda | https://api.github.com/repos/nvaccess/nvda | closed | The delete profile dialog should name the profile that is going to be deleted | component/documentation feature/configuration-profiles p4 good first issue triaged | ### Steps to reproduce:

1. Open the NVDA menu.

2. Select `Configuration profiles`.

3. Choose a profile.

4. Press `delete`.

### Actual behavior:

Receive a dialog including the following text:

>This profile will be permanently deleted. This action cannot be undone.

>OK Cancel

### Expected behavior:

... | 1.0 | The delete profile dialog should name the profile that is going to be deleted - ### Steps to reproduce:

1. Open the NVDA menu.

2. Select `Configuration profiles`.

3. Choose a profile.

4. Press `delete`.

### Actual behavior:

Receive a dialog including the following text:

>This profile will be permanently ... | non_process | the delete profile dialog should name the profile that is going to be deleted steps to reproduce open the nvda menu select configuration profiles choose a profile press delete actual behavior receive a dialog including the following text this profile will be permanently ... | 0 |

17,410 | 23,225,296,537 | IssuesEvent | 2022-08-02 23:00:50 | hashgraph/hedera-mirror-node | https://api.github.com/repos/hashgraph/hedera-mirror-node | closed | Release checklist 0.61 | enhancement P1 process | ### Problem