| Unnamed: 0 (int64, 0 to 832k) | id (float64, 2.49B to 32.1B) | type (stringclasses, 1 value) | created_at (stringlengths, 19 to 19) | repo (stringlengths, 7 to 112) | repo_url (stringlengths, 36 to 141) | action (stringclasses, 3 values) | title (stringlengths, 1 to 744) | labels (stringlengths, 4 to 574) | body (stringlengths, 9 to 211k) | index (stringclasses, 10 values) | text_combine (stringlengths, 96 to 211k) | label (stringclasses, 2 values) | text (stringlengths, 96 to 188k) | binary_label (int64, 0 to 1) |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

1,044

| 3,511,845,757

|

IssuesEvent

|

2016-01-10 16:13:29

|

pwittchen/ReactiveNetwork

|

https://api.github.com/repos/pwittchen/ReactiveNetwork

|

opened

|

Release 0.1.5

|

release process

|

**Initial release notes**:

TBD.

**Things to do**:

- [ ] bump library version to 0.1.5

- [ ] upload Archives to Maven Central Repository

- [ ] bump library version in `README.md` after Maven Sync

- [ ] update `CHANGELOG.md` after Maven Sync

- [ ] create new GitHub release

|

1.0

|

Release 0.1.5 - **Initial release notes**:

TBD.

**Things to do**:

- [ ] bump library version to 0.1.5

- [ ] upload Archives to Maven Central Repository

- [ ] bump library version in `README.md` after Maven Sync

- [ ] update `CHANGELOG.md` after Maven Sync

- [ ] create new GitHub release

|

process

|

release initial release notes tbd things to do bump library version to upload archives to maven central repository bump library version in readme md after maven sync update changelog md after maven sync create new github release

| 1

|

30,716

| 5,842,090,417

|

IssuesEvent

|

2017-05-10 04:11:26

|

KSP-KOS/KOS

|

https://api.github.com/repos/KSP-KOS/KOS

|

closed

|

unable to differentiate similar-named part modules

|

documentation enhancement

|

As seen in the attached screenshot, I have three part modules all named ModuleScienceExperiment, and I am only able to call the first one on the list. Given how you can get a List of part module names, perhaps it would be better to just reference them by index rather than a string? Or at least in addition to a string?
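
The difference between name-based and index-based lookup can be sketched as follows. This is a minimal illustration in Python, not kOS code: the list contents and both helper functions are hypothetical and only model the behaviour described above, where a lookup by name stops at the first match.

```python
# Hypothetical list of part module names on a single part (illustrative only).
modules = [
    "ModuleScienceExperiment",
    "ModuleScienceExperiment",
    "ModuleScienceExperiment",
    "ModuleCommand",
]


def first_by_name(names, wanted):
    """Name-based lookup: always resolves to the first matching entry."""
    return names.index(wanted)


def all_by_name(names, wanted):
    """Index-based view: every position that carries the wanted name."""
    return [i for i, name in enumerate(names) if name == wanted]


print(first_by_name(modules, "ModuleScienceExperiment"))
print(all_by_name(modules, "ModuleScienceExperiment"))
```

With duplicate names, only the index distinguishes the second and third modules, which is why an index-based accessor (alone or alongside the name) would resolve the ambiguity.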

|

1.0

|

unable to differentiate similar-named part modules - As seen in the attached screenshot, I have three part modules all named ModuleScienceExperiment, and I am only able to call the first one on the list. Given how you can get a List of part module names, perhaps it would be better to just reference them by index rather than a string? Or at least in addition to a string?

|

non_process

|

unable to differentiate similar named part modules as seen in the attached screenshot i have three part modules all named modulescienceexperiment and i am only able to call the first one on the list given how you can get a list of part module names perhaps it would be better to just reference them by index rather than a string or at least in addition to a string

| 0

|

784,357

| 27,567,578,276

|

IssuesEvent

|

2023-03-08 06:01:33

|

njtierney/conmat

|

https://api.github.com/repos/njtierney/conmat

|

closed

|

How to effectively compare age groups

|

Priority 2

|

Currently, within `apply_vaccination`, we only check that certain dimensions match. I think it would be better to check that the age breaks are the same. In this case I think they are, but I'm not sure what method we would use to compare them: `80+` is the same as `c(80, Inf)`, but is `0-5, 5-10` the same as `0-4, 5-11`?

``` r

library(conmat)

ngm_nsw <- generate_ngm_oz(

state_name = "NSW",

age_breaks = c(seq(0, 80, by = 5), Inf),

R_target = 1.5

)

age_breaks(ngm_nsw$home)

#> [1] "[0,5)" "[5,10)" "[10,15)" "[15,20)" "[20,25)" "[25,30)"

#> [7] "[30,35)" "[35,40)" "[40,45)" "[45,50)" "[50,55)" "[55,60)"

#> [13] "[60,65)" "[65,70)" "[70,75)" "[75,80)" "[80,Inf)"

vaccination_effect_example_data

#> # A tibble: 17 × 4

#> age_band coverage acquisition transmission

#> <chr> <dbl> <dbl> <dbl>

#> 1 0-4 0 0 0

#> 2 5-11 0.782 0.583 0.254

#> 3 12-15 0.997 0.631 0.295

#> 4 16-19 0.965 0.786 0.469

#> 5 20-24 0.861 0.774 0.453

#> 6 25-29 0.997 0.778 0.458

#> 7 30-34 0.998 0.803 0.493

#> 8 35-39 0.998 0.829 0.533

#> 9 40-44 0.999 0.841 0.551

#> 10 45-49 0.993 0.847 0.562

#> 11 50-54 0.999 0.857 0.579

#> 12 55-59 0.996 0.864 0.591

#> 13 60-64 0.998 0.858 0.581

#> 14 65-69 0.999 0.864 0.591

#> 15 70-74 0.999 0.867 0.597

#> 16 75-79 0.999 0.866 0.595

#> 17 80+ 0.999 0.844 0.556

# these two data sources ^^ are used in `apply_vaccination` below:

# Apply vaccination effect to next generation matrices

ngm_nsw_vacc <- apply_vaccination(

ngm = ngm_nsw,

data = vaccination_effect_example_data,

coverage_col = coverage,

acquisition_col = acquisition,

transmission_col = transmission

)

```

<sup>Created on 2023-01-17 with [reprex v2.0.2](https://reprex.tidyverse.org)</sup>

<details style="margin-bottom:10px;">

<summary>

Session info

</summary>

``` r

sessioninfo::session_info()

#> ─ Session info ───────────────────────────────────────────────────────────────

#> setting value

#> version R version 4.2.1 (2022-06-23)

#> os macOS Monterey 12.3.1

#> system aarch64, darwin20

#> ui X11

#> language (EN)

#> collate en_US.UTF-8

#> ctype en_US.UTF-8

#> tz Australia/Brisbane

#> date 2023-01-17

#> pandoc 2.19.2 @ /Applications/RStudio.app/Contents/Resources/app/quarto/bin/tools/ (via rmarkdown)

#>

#> ─ Packages ───────────────────────────────────────────────────────────────────

#> package * version date (UTC) lib source

#> assertthat 0.2.1 2019-03-21 [1] CRAN (R 4.2.0)

#> cli 3.4.1 2022-09-23 [1] CRAN (R 4.2.0)

#> codetools 0.2-18 2020-11-04 [1] CRAN (R 4.2.1)

#> colorspace 2.0-3 2022-02-21 [1] CRAN (R 4.2.0)

#> conmat * 0.0.1.9000 2023-01-16 [1] local

#> countrycode 1.4.0 2022-05-04 [1] CRAN (R 4.2.0)

#> curl 4.3.3 2022-10-06 [1] CRAN (R 4.2.0)

#> data.table 1.14.6 2022-11-16 [1] CRAN (R 4.2.0)

#> DBI 1.1.3 2022-06-18 [1] CRAN (R 4.2.0)

#> digest 0.6.30 2022-10-18 [1] CRAN (R 4.2.0)

#> dotCall64 1.0-2 2022-10-03 [1] CRAN (R 4.2.0)

#> dplyr 1.0.10 2022-09-01 [1] CRAN (R 4.2.0)

#> ellipsis 0.3.2 2021-04-29 [1] CRAN (R 4.2.0)

#> evaluate 0.18 2022-11-07 [1] CRAN (R 4.2.0)

#> fansi 1.0.3 2022-03-24 [1] CRAN (R 4.2.0)

#> fastmap 1.1.0 2021-01-25 [1] CRAN (R 4.2.0)

#> fields 14.1 2022-08-12 [1] CRAN (R 4.2.0)

#> fs 1.5.2 2021-12-08 [1] CRAN (R 4.2.0)

#> furrr 0.3.1 2022-08-15 [1] CRAN (R 4.2.0)

#> future 1.29.0 2022-11-06 [1] CRAN (R 4.2.0)

#> generics 0.1.3 2022-07-05 [1] CRAN (R 4.2.0)

#> ggplot2 3.4.0 2022-11-04 [1] CRAN (R 4.2.0)

#> globals 0.16.2 2022-11-21 [1] CRAN (R 4.2.1)

#> glue 1.6.2 2022-02-24 [1] CRAN (R 4.2.0)

#> gridExtra 2.3 2017-09-09 [1] CRAN (R 4.2.0)

#> gtable 0.3.1 2022-09-01 [1] CRAN (R 4.2.0)

#> highr 0.9 2021-04-16 [1] CRAN (R 4.2.0)

#> hms 1.1.2 2022-08-19 [1] CRAN (R 4.2.0)

#> htmltools 0.5.3 2022-07-18 [1] CRAN (R 4.2.0)

#> httr 1.4.4 2022-08-17 [1] CRAN (R 4.2.0)

#> jsonlite 1.8.3 2022-10-21 [1] CRAN (R 4.2.0)

#> knitr 1.41 2022-11-18 [1] CRAN (R 4.2.0)

#> lattice 0.20-45 2021-09-22 [1] CRAN (R 4.2.1)

#> lifecycle 1.0.3 2022-10-07 [1] CRAN (R 4.2.0)

#> listenv 0.8.0 2019-12-05 [1] CRAN (R 4.2.0)

#> lubridate 1.9.0 2022-11-06 [1] CRAN (R 4.2.0)

#> magrittr 2.0.3 2022-03-30 [1] CRAN (R 4.2.0)

#> maps 3.4.1 2022-10-30 [1] CRAN (R 4.2.0)

#> Matrix 1.5-3 2022-11-11 [1] CRAN (R 4.2.0)

#> mgcv 1.8-41 2022-10-21 [1] CRAN (R 4.2.0)

#> munsell 0.5.0 2018-06-12 [1] CRAN (R 4.2.0)

#> nlme 3.1-160 2022-10-10 [1] CRAN (R 4.2.0)

#> oai 0.4.0 2022-11-10 [1] CRAN (R 4.2.0)

#> parallelly 1.32.1 2022-07-21 [1] CRAN (R 4.2.0)

#> pillar 1.8.1 2022-08-19 [1] CRAN (R 4.2.0)

#> pkgconfig 2.0.3 2019-09-22 [1] CRAN (R 4.2.0)

#> plyr 1.8.8 2022-11-11 [1] CRAN (R 4.2.0)

#> purrr * 0.3.5 2022-10-06 [1] CRAN (R 4.2.0)

#> R.cache 0.16.0 2022-07-21 [1] CRAN (R 4.2.0)

#> R.methodsS3 1.8.2 2022-06-13 [1] CRAN (R 4.2.0)

#> R.oo 1.25.0 2022-06-12 [1] CRAN (R 4.2.0)

#> R.utils 2.12.2 2022-11-11 [1] CRAN (R 4.2.0)

#> R6 2.5.1 2021-08-19 [1] CRAN (R 4.2.0)

#> Rcpp 1.0.9 2022-07-08 [1] CRAN (R 4.2.0)

#> readr 2.1.3 2022-10-01 [1] CRAN (R 4.2.0)

#> reprex 2.0.2 2022-08-17 [1] CRAN (R 4.2.0)

#> rlang 1.0.6 2022-09-24 [1] CRAN (R 4.2.0)

#> rmarkdown 2.18 2022-11-09 [1] CRAN (R 4.2.0)

#> rstudioapi 0.14 2022-08-22 [1] CRAN (R 4.2.0)

#> scales 1.2.1 2022-08-20 [1] CRAN (R 4.2.0)

#> sessioninfo 1.2.2 2021-12-06 [1] CRAN (R 4.2.0)

#> socialmixr 0.2.0 2022-10-27 [1] CRAN (R 4.2.0)

#> spam 2.9-1 2022-08-07 [1] CRAN (R 4.2.0)

#> stringi 1.7.8 2022-07-11 [1] CRAN (R 4.2.0)

#> stringr 1.5.0 2022-12-02 [1] CRAN (R 4.2.0)

#> styler 1.8.1 2022-11-07 [1] CRAN (R 4.2.0)

#> tibble 3.1.8 2022-07-22 [1] CRAN (R 4.2.0)

#> tidyr 1.2.1 2022-09-08 [1] CRAN (R 4.2.0)

#> tidyselect 1.2.0 2022-10-10 [1] CRAN (R 4.2.0)

#> timechange 0.1.1 2022-11-04 [1] CRAN (R 4.2.0)

#> tzdb 0.3.0 2022-03-28 [1] CRAN (R 4.2.0)

#> utf8 1.2.2 2021-07-24 [1] CRAN (R 4.2.0)

#> vctrs 0.5.1 2022-11-16 [1] CRAN (R 4.2.0)

#> viridis 0.6.2 2021-10-13 [1] CRAN (R 4.2.0)

#> viridisLite 0.4.1 2022-08-22 [1] CRAN (R 4.2.0)

#> withr 2.5.0 2022-03-03 [1] CRAN (R 4.2.0)

#> wpp2017 1.2-3 2020-02-10 [1] CRAN (R 4.2.0)

#> xfun 0.35 2022-11-16 [1] CRAN (R 4.2.0)

#> xml2 1.3.3 2021-11-30 [1] CRAN (R 4.2.0)

#> yaml 2.3.6 2022-10-18 [1] CRAN (R 4.2.0)

#>

#> [1] /Library/Frameworks/R.framework/Versions/4.2-arm64/Resources/library

#>

#> ──────────────────────────────────────────────────────────────────────────────

```

</details>
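
One possible way to compare the two labelling schemes is to normalise every label to a half-open interval before comparing. The sketch below is in Python rather than R and is not part of conmat; `parse_age_band` and `same_age_breaks` are hypothetical helpers. It assumes that a label such as `5-11` means whole years 5 through 11 inclusive, i.e. `[5,12)`. That convention is an assumption, and under a different reading the answer changes.

```python
import math
import re


def parse_age_band(label):
    """Return (lower, upper) with an exclusive upper bound.

    Assumption: "a-b" labels are inclusive whole years, so "0-4" is [0, 5).
    """
    label = label.strip()
    # Interval notation as printed by age_breaks(), e.g. "[80,Inf)"
    m = re.fullmatch(r"\[(\d+),\s*(\d+|Inf)\)", label)
    if m:
        upper = math.inf if m.group(2) == "Inf" else int(m.group(2))
        return (int(m.group(1)), upper)
    # Open-ended band, e.g. "80+"
    m = re.fullmatch(r"(\d+)\+", label)
    if m:
        return (int(m.group(1)), math.inf)
    # Inclusive range, e.g. "5-11"
    m = re.fullmatch(r"(\d+)-(\d+)", label)
    if m:
        return (int(m.group(1)), int(m.group(2)) + 1)
    raise ValueError(f"unrecognised age band: {label!r}")


def same_age_breaks(a, b):
    """True when both label vectors describe identical intervals in order."""
    return [parse_age_band(x) for x in a] == [parse_age_band(x) for x in b]


ngm_bands = ["[0,5)", "[5,10)", "[80,Inf)"]
vacc_bands = ["0-4", "5-11", "80+"]
print(parse_age_band("80+") == parse_age_band("[80,Inf)"))  # True
print(same_age_breaks(ngm_bands, vacc_bands))  # False: [5,10) vs [5,12)
```

Under this convention `80+` and `c(80, Inf)` agree, while `5-11` and `[5,10)` do not, so a check on dimensions alone would let mismatched age groups through.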

|

1.0

|

How to effectively compare age groups - Currently, within `apply_vaccination`, we only check that certain dimensions match. I think it would be better to check that the age breaks are the same. In this case I think they are, but I'm not sure what method we would use to compare them: `80+` is the same as `c(80, Inf)`, but is `0-5, 5-10` the same as `0-4, 5-11`?

``` r

library(conmat)

ngm_nsw <- generate_ngm_oz(

state_name = "NSW",

age_breaks = c(seq(0, 80, by = 5), Inf),

R_target = 1.5

)

age_breaks(ngm_nsw$home)

#> [1] "[0,5)" "[5,10)" "[10,15)" "[15,20)" "[20,25)" "[25,30)"

#> [7] "[30,35)" "[35,40)" "[40,45)" "[45,50)" "[50,55)" "[55,60)"

#> [13] "[60,65)" "[65,70)" "[70,75)" "[75,80)" "[80,Inf)"

vaccination_effect_example_data

#> # A tibble: 17 × 4

#> age_band coverage acquisition transmission

#> <chr> <dbl> <dbl> <dbl>

#> 1 0-4 0 0 0

#> 2 5-11 0.782 0.583 0.254

#> 3 12-15 0.997 0.631 0.295

#> 4 16-19 0.965 0.786 0.469

#> 5 20-24 0.861 0.774 0.453

#> 6 25-29 0.997 0.778 0.458

#> 7 30-34 0.998 0.803 0.493

#> 8 35-39 0.998 0.829 0.533

#> 9 40-44 0.999 0.841 0.551

#> 10 45-49 0.993 0.847 0.562

#> 11 50-54 0.999 0.857 0.579

#> 12 55-59 0.996 0.864 0.591

#> 13 60-64 0.998 0.858 0.581

#> 14 65-69 0.999 0.864 0.591

#> 15 70-74 0.999 0.867 0.597

#> 16 75-79 0.999 0.866 0.595

#> 17 80+ 0.999 0.844 0.556

# these two data sources ^^ are used in `apply_vaccination` below:

# Apply vaccination effect to next generation matrices

ngm_nsw_vacc <- apply_vaccination(

ngm = ngm_nsw,

data = vaccination_effect_example_data,

coverage_col = coverage,

acquisition_col = acquisition,

transmission_col = transmission

)

```

<sup>Created on 2023-01-17 with [reprex v2.0.2](https://reprex.tidyverse.org)</sup>

<details style="margin-bottom:10px;">

<summary>

Session info

</summary>

``` r

sessioninfo::session_info()

#> ─ Session info ───────────────────────────────────────────────────────────────

#> setting value

#> version R version 4.2.1 (2022-06-23)

#> os macOS Monterey 12.3.1

#> system aarch64, darwin20

#> ui X11

#> language (EN)

#> collate en_US.UTF-8

#> ctype en_US.UTF-8

#> tz Australia/Brisbane

#> date 2023-01-17

#> pandoc 2.19.2 @ /Applications/RStudio.app/Contents/Resources/app/quarto/bin/tools/ (via rmarkdown)

#>

#> ─ Packages ───────────────────────────────────────────────────────────────────

#> package * version date (UTC) lib source

#> assertthat 0.2.1 2019-03-21 [1] CRAN (R 4.2.0)

#> cli 3.4.1 2022-09-23 [1] CRAN (R 4.2.0)

#> codetools 0.2-18 2020-11-04 [1] CRAN (R 4.2.1)

#> colorspace 2.0-3 2022-02-21 [1] CRAN (R 4.2.0)

#> conmat * 0.0.1.9000 2023-01-16 [1] local

#> countrycode 1.4.0 2022-05-04 [1] CRAN (R 4.2.0)

#> curl 4.3.3 2022-10-06 [1] CRAN (R 4.2.0)

#> data.table 1.14.6 2022-11-16 [1] CRAN (R 4.2.0)

#> DBI 1.1.3 2022-06-18 [1] CRAN (R 4.2.0)

#> digest 0.6.30 2022-10-18 [1] CRAN (R 4.2.0)

#> dotCall64 1.0-2 2022-10-03 [1] CRAN (R 4.2.0)

#> dplyr 1.0.10 2022-09-01 [1] CRAN (R 4.2.0)

#> ellipsis 0.3.2 2021-04-29 [1] CRAN (R 4.2.0)

#> evaluate 0.18 2022-11-07 [1] CRAN (R 4.2.0)

#> fansi 1.0.3 2022-03-24 [1] CRAN (R 4.2.0)

#> fastmap 1.1.0 2021-01-25 [1] CRAN (R 4.2.0)

#> fields 14.1 2022-08-12 [1] CRAN (R 4.2.0)

#> fs 1.5.2 2021-12-08 [1] CRAN (R 4.2.0)

#> furrr 0.3.1 2022-08-15 [1] CRAN (R 4.2.0)

#> future 1.29.0 2022-11-06 [1] CRAN (R 4.2.0)

#> generics 0.1.3 2022-07-05 [1] CRAN (R 4.2.0)

#> ggplot2 3.4.0 2022-11-04 [1] CRAN (R 4.2.0)

#> globals 0.16.2 2022-11-21 [1] CRAN (R 4.2.1)

#> glue 1.6.2 2022-02-24 [1] CRAN (R 4.2.0)

#> gridExtra 2.3 2017-09-09 [1] CRAN (R 4.2.0)

#> gtable 0.3.1 2022-09-01 [1] CRAN (R 4.2.0)

#> highr 0.9 2021-04-16 [1] CRAN (R 4.2.0)

#> hms 1.1.2 2022-08-19 [1] CRAN (R 4.2.0)

#> htmltools 0.5.3 2022-07-18 [1] CRAN (R 4.2.0)

#> httr 1.4.4 2022-08-17 [1] CRAN (R 4.2.0)

#> jsonlite 1.8.3 2022-10-21 [1] CRAN (R 4.2.0)

#> knitr 1.41 2022-11-18 [1] CRAN (R 4.2.0)

#> lattice 0.20-45 2021-09-22 [1] CRAN (R 4.2.1)

#> lifecycle 1.0.3 2022-10-07 [1] CRAN (R 4.2.0)

#> listenv 0.8.0 2019-12-05 [1] CRAN (R 4.2.0)

#> lubridate 1.9.0 2022-11-06 [1] CRAN (R 4.2.0)

#> magrittr 2.0.3 2022-03-30 [1] CRAN (R 4.2.0)

#> maps 3.4.1 2022-10-30 [1] CRAN (R 4.2.0)

#> Matrix 1.5-3 2022-11-11 [1] CRAN (R 4.2.0)

#> mgcv 1.8-41 2022-10-21 [1] CRAN (R 4.2.0)

#> munsell 0.5.0 2018-06-12 [1] CRAN (R 4.2.0)

#> nlme 3.1-160 2022-10-10 [1] CRAN (R 4.2.0)

#> oai 0.4.0 2022-11-10 [1] CRAN (R 4.2.0)

#> parallelly 1.32.1 2022-07-21 [1] CRAN (R 4.2.0)

#> pillar 1.8.1 2022-08-19 [1] CRAN (R 4.2.0)

#> pkgconfig 2.0.3 2019-09-22 [1] CRAN (R 4.2.0)

#> plyr 1.8.8 2022-11-11 [1] CRAN (R 4.2.0)

#> purrr * 0.3.5 2022-10-06 [1] CRAN (R 4.2.0)

#> R.cache 0.16.0 2022-07-21 [1] CRAN (R 4.2.0)

#> R.methodsS3 1.8.2 2022-06-13 [1] CRAN (R 4.2.0)

#> R.oo 1.25.0 2022-06-12 [1] CRAN (R 4.2.0)

#> R.utils 2.12.2 2022-11-11 [1] CRAN (R 4.2.0)

#> R6 2.5.1 2021-08-19 [1] CRAN (R 4.2.0)

#> Rcpp 1.0.9 2022-07-08 [1] CRAN (R 4.2.0)

#> readr 2.1.3 2022-10-01 [1] CRAN (R 4.2.0)

#> reprex 2.0.2 2022-08-17 [1] CRAN (R 4.2.0)

#> rlang 1.0.6 2022-09-24 [1] CRAN (R 4.2.0)

#> rmarkdown 2.18 2022-11-09 [1] CRAN (R 4.2.0)

#> rstudioapi 0.14 2022-08-22 [1] CRAN (R 4.2.0)

#> scales 1.2.1 2022-08-20 [1] CRAN (R 4.2.0)

#> sessioninfo 1.2.2 2021-12-06 [1] CRAN (R 4.2.0)

#> socialmixr 0.2.0 2022-10-27 [1] CRAN (R 4.2.0)

#> spam 2.9-1 2022-08-07 [1] CRAN (R 4.2.0)

#> stringi 1.7.8 2022-07-11 [1] CRAN (R 4.2.0)

#> stringr 1.5.0 2022-12-02 [1] CRAN (R 4.2.0)

#> styler 1.8.1 2022-11-07 [1] CRAN (R 4.2.0)

#> tibble 3.1.8 2022-07-22 [1] CRAN (R 4.2.0)

#> tidyr 1.2.1 2022-09-08 [1] CRAN (R 4.2.0)

#> tidyselect 1.2.0 2022-10-10 [1] CRAN (R 4.2.0)

#> timechange 0.1.1 2022-11-04 [1] CRAN (R 4.2.0)

#> tzdb 0.3.0 2022-03-28 [1] CRAN (R 4.2.0)

#> utf8 1.2.2 2021-07-24 [1] CRAN (R 4.2.0)

#> vctrs 0.5.1 2022-11-16 [1] CRAN (R 4.2.0)

#> viridis 0.6.2 2021-10-13 [1] CRAN (R 4.2.0)

#> viridisLite 0.4.1 2022-08-22 [1] CRAN (R 4.2.0)

#> withr 2.5.0 2022-03-03 [1] CRAN (R 4.2.0)

#> wpp2017 1.2-3 2020-02-10 [1] CRAN (R 4.2.0)

#> xfun 0.35 2022-11-16 [1] CRAN (R 4.2.0)

#> xml2 1.3.3 2021-11-30 [1] CRAN (R 4.2.0)

#> yaml 2.3.6 2022-10-18 [1] CRAN (R 4.2.0)

#>

#> [1] /Library/Frameworks/R.framework/Versions/4.2-arm64/Resources/library

#>

#> ──────────────────────────────────────────────────────────────────────────────

```

</details>

|

non_process

|

how to effectively compare age groups currently within apply vaccination we just check that certain dimensions match i think it is better to check that the age breaks are the same but for this case i think that these are the same but i m not sure what sort of method we would use to compare them is the same as c inf and is the same as r library conmat ngm nsw generate ngm oz state name nsw age breaks c seq by inf r target age breaks ngm nsw home inf vaccination effect example data a tibble × age band coverage acquisition transmission these two data sources are used in apply vaccination below apply vaccination effect to next generation matrices ngm nsw vacc apply vaccination ngm ngm nsw data vaccination effect example data coverage col coverage acquisition col acquisition transmission col transmission created on with session info r sessioninfo session info ─ session info ─────────────────────────────────────────────────────────────── setting value version r version os macos monterey system ui language en collate en us utf ctype en us utf tz australia brisbane date pandoc applications rstudio app contents resources app quarto bin tools via rmarkdown ─ packages ─────────────────────────────────────────────────────────────────── package version date utc lib source assertthat cran r cli cran r codetools cran r colorspace cran r conmat local countrycode cran r curl cran r data table cran r dbi cran r digest cran r cran r dplyr cran r ellipsis cran r evaluate cran r fansi cran r fastmap cran r fields cran r fs cran r furrr cran r future cran r generics cran r cran r globals cran r glue cran r gridextra cran r gtable cran r highr cran r hms cran r htmltools cran r httr cran r jsonlite cran r knitr cran r lattice cran r lifecycle cran r listenv cran r lubridate cran r magrittr cran r maps cran r matrix cran r mgcv cran r munsell cran r nlme cran r oai cran r parallelly cran r pillar cran r pkgconfig cran r plyr cran r purrr cran r r cache cran r r cran r r oo cran r r utils 
cran r cran r rcpp cran r readr cran r reprex cran r rlang cran r rmarkdown cran r rstudioapi cran r scales cran r sessioninfo cran r socialmixr cran r spam cran r stringi cran r stringr cran r styler cran r tibble cran r tidyr cran r tidyselect cran r timechange cran r tzdb cran r cran r vctrs cran r viridis cran r viridislite cran r withr cran r cran r xfun cran r cran r yaml cran r library frameworks r framework versions resources library ──────────────────────────────────────────────────────────────────────────────

| 0

|

13,989

| 16,763,408,421

|

IssuesEvent

|

2021-06-14 05:02:55

|

qgis/QGIS

|

https://api.github.com/repos/qgis/QGIS

|

closed

|

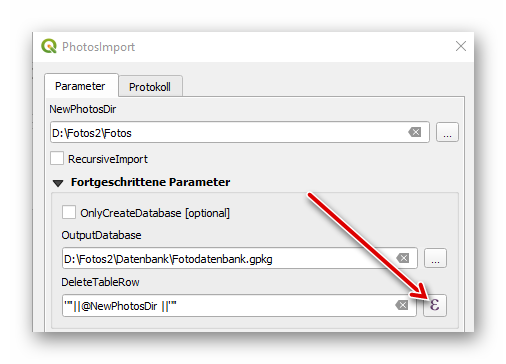

QGIS crash after click on expression button of model input

|

Bug Crash/Data Corruption Processing

|

<!--

Bug fixing and feature development is a community responsibility, and not the responsibility of the QGIS project alone.

If this bug report or feature request is high-priority for you, we suggest engaging a QGIS developer or support organisation and financially sponsoring a fix

Checklist before submitting

- [ ] Search through existing issue reports and gis.stackexchange.com to check whether the issue already exists

- [ ] Test with a [clean new user profile](https://docs.qgis.org/testing/en/docs/user_manual/introduction/qgis_configuration.html?highlight=profile#working-with-user-profiles).

- [ ] Create a light and self-contained sample dataset and project file which demonstrates the issue

-->

**Describe the bug**

QGIS crash after click on expression button of model input.

## Report Details

**Crash ID**: [3fee5ff78a3e1a8843b5c9ef31dc3a80c00865a7](https://github.com/qgis/QGIS/search?q=3fee5ff78a3e1a8843b5c9ef31dc3a80c00865a7&type=Issues)

**Stack Trace**

<pre>

QListData::begin :

QgsExpressionContext::QgsExpressionContext :

QgsExpressionLineEdit::editExpression :

QMetaObject::activate :

QAbstractButton::clicked :

QAbstractButton::click :

QAbstractButton::mouseReleaseEvent :

QToolButton::mouseReleaseEvent :

QWidget::event :

QApplicationPrivate::notify_helper :

QApplication::notify :

QgsApplication::notify :

QCoreApplication::notifyInternal2 :

QApplicationPrivate::sendMouseEvent :

QSizePolicy::QSizePolicy :

QSizePolicy::QSizePolicy :

QApplicationPrivate::notify_helper :

QApplication::notify :

QgsApplication::notify :

QCoreApplication::notifyInternal2 :

QGuiApplicationPrivate::processMouseEvent :

QWindowSystemInterface::sendWindowSystemEvents :

QEventDispatcherWin32::processEvents :

UserCallWinProcCheckWow :

DispatchMessageWorker :

QEventDispatcherWin32::processEvents :

qt_plugin_query_metadata :

QEventLoop::exec :

QCoreApplication::exec :

main :

BaseThreadInitThunk :

RtlUserThreadStart :

</pre>

**QGIS Info**

QGIS Version: 3.18.1-Zürich

QGIS code revision: 202f1bf7e5

Compiled against Qt: 5.11.2

Running against Qt: 5.11.2

Compiled against GDAL: 3.1.4

Running against GDAL: 3.1.4

**System Info**

CPU Type: x86_64

Kernel Type: winnt

Kernel Version: 10.0.17763

**How to Reproduce**

<!-- Steps, sample datasets and qgis project file to reproduce the behavior. Screencasts or screenshots welcome -->

1. Execute QGIS model [import-photos.zip](https://github.com/qgis/QGIS/files/6638695/import-photos.zip) via QGIS Browser

2. Click expression button (DeleteTableRow input field)

3. QGIS crashes

|

1.0

|

QGIS crash after click on expression button of model input - <!--

Bug fixing and feature development is a community responsibility, and not the responsibility of the QGIS project alone.

If this bug report or feature request is high-priority for you, we suggest engaging a QGIS developer or support organisation and financially sponsoring a fix

Checklist before submitting

- [ ] Search through existing issue reports and gis.stackexchange.com to check whether the issue already exists

- [ ] Test with a [clean new user profile](https://docs.qgis.org/testing/en/docs/user_manual/introduction/qgis_configuration.html?highlight=profile#working-with-user-profiles).

- [ ] Create a light and self-contained sample dataset and project file which demonstrates the issue

-->

**Describe the bug**

QGIS crash after click on expression button of model input.

## Report Details

**Crash ID**: [3fee5ff78a3e1a8843b5c9ef31dc3a80c00865a7](https://github.com/qgis/QGIS/search?q=3fee5ff78a3e1a8843b5c9ef31dc3a80c00865a7&type=Issues)

**Stack Trace**

<pre>

QListData::begin :

QgsExpressionContext::QgsExpressionContext :

QgsExpressionLineEdit::editExpression :

QMetaObject::activate :

QAbstractButton::clicked :

QAbstractButton::click :

QAbstractButton::mouseReleaseEvent :

QToolButton::mouseReleaseEvent :

QWidget::event :

QApplicationPrivate::notify_helper :

QApplication::notify :

QgsApplication::notify :

QCoreApplication::notifyInternal2 :

QApplicationPrivate::sendMouseEvent :

QSizePolicy::QSizePolicy :

QSizePolicy::QSizePolicy :

QApplicationPrivate::notify_helper :

QApplication::notify :

QgsApplication::notify :

QCoreApplication::notifyInternal2 :

QGuiApplicationPrivate::processMouseEvent :

QWindowSystemInterface::sendWindowSystemEvents :

QEventDispatcherWin32::processEvents :

UserCallWinProcCheckWow :

DispatchMessageWorker :

QEventDispatcherWin32::processEvents :

qt_plugin_query_metadata :

QEventLoop::exec :

QCoreApplication::exec :

main :

BaseThreadInitThunk :

RtlUserThreadStart :

</pre>

**QGIS Info**

QGIS Version: 3.18.1-Zürich

QGIS code revision: 202f1bf7e5

Compiled against Qt: 5.11.2

Running against Qt: 5.11.2

Compiled against GDAL: 3.1.4

Running against GDAL: 3.1.4

**System Info**

CPU Type: x86_64

Kernel Type: winnt

Kernel Version: 10.0.17763

**How to Reproduce**

<!-- Steps, sample datasets and qgis project file to reproduce the behavior. Screencasts or screenshots welcome -->

1. Execute QGIS model [import-photos.zip](https://github.com/qgis/QGIS/files/6638695/import-photos.zip) via QGIS Browser

2. Click expression button (DeleteTableRow input field)

3. QGIS crashes

|

process

|

qgis crash after click on expression button of model input bug fixing and feature development is a community responsibility and not the responsibility of the qgis project alone if this bug report or feature request is high priority for you we suggest engaging a qgis developer or support organisation and financially sponsoring a fix checklist before submitting search through existing issue reports and gis stackexchange com to check whether the issue already exists test with a create a light and self contained sample dataset and project file which demonstrates the issue describe the bug qgis crash after click on expression button of model input report details crash id stack trace qlistdata begin qgsexpressioncontext qgsexpressioncontext qgsexpressionlineedit editexpression qmetaobject activate qabstractbutton clicked qabstractbutton click qabstractbutton mousereleaseevent qtoolbutton mousereleaseevent qwidget event qapplicationprivate notify helper qapplication notify qgsapplication notify qcoreapplication qapplicationprivate sendmouseevent qsizepolicy qsizepolicy qsizepolicy qsizepolicy qapplicationprivate notify helper qapplication notify qgsapplication notify qcoreapplication qguiapplicationprivate processmouseevent qwindowsysteminterface sendwindowsystemevents processevents usercallwinproccheckwow dispatchmessageworker processevents qt plugin query metadata qeventloop exec qcoreapplication exec main basethreadinitthunk rtluserthreadstart qgis info qgis version z�rich qgis code revision compiled against qt running against qt compiled against gdal running against gdal system info cpu type kernel type winnt kernel version how to reproduce execute qgis model via qgis browser click expression button deletetablerow input field qgis crashes

| 1

|

11,454

| 14,274,147,284

|

IssuesEvent

|

2020-11-22 01:57:21

|

tdwg/chrono

|

https://api.github.com/repos/tdwg/chrono

|

opened

|

Update Term List Abstract

|

Process - under public review

|

Following recommendation by @gkampmeier in issue #15, update abstract on term list document to say:

"The Chronometric Age Vocabulary is standard for transmitting information about chronometric ages - the processes and results of an assay used to determine the age of a sample. This document lists all terms in namespaces currently used in the vocabulary."

|

1.0

|

Update Term List Abstract - Following recommendation by @gkampmeier in issue #15, update abstract on term list document to say:

"The Chronometric Age Vocabulary is standard for transmitting information about chronometric ages - the processes and results of an assay used to determine the age of a sample. This document lists all terms in namespaces currently used in the vocabulary."

|

process

|

update term list abstract following recommendation by gkampmeier in issue update abstract on term list document to say the chronometric age vocabulary is standard for transmitting information about chronometric ages the processes and results of an assay used to determine the age of a sample this document lists all terms in namespaces currently used in the vocabulary

| 1

|

14,858

| 18,262,943,610

|

IssuesEvent

|

2021-10-04 03:13:25

|

quark-engine/quark-engine

|

https://api.github.com/repos/quark-engine/quark-engine

|

closed

|

Methods from the "Extended Class"

|

work-in-progress issue-processing-state-06

|

Hi all, my friends (@Dil3mm3 and @3aglew0) and I are implementing new Quark rules (version 21.3.2) for a university semester project (our supervisor is @cryptax). We were analyzing the BrazKing malware (SHA256 hash be3d8500df167b9aaf21c5f76df61c466808b8fdf60e4a7da8d6057d476282b6; let us know if you want the sample).

In a nutshell, the problem we encountered is the following: *Quark does not detect all API calls made on an object whose class extends a known Android class. The root cause is that the API signature in the smali code carries the name of the child class.*

To illustrate the problem, consider the following example: `Acessibilidade` is a custom BrazKing class that extends the Android `AccessibilityService` class.

```

package com.gservice.autobot;

...

public class Acessibilidade extends AccessibilityService{

...

}

```

`Acessibilidade` is widely used by this malware to perform accessibility service actions, such as the one below:

```

public void Clicar_Pos(int i, int i2) {

Acessibilidade acessibilidade = this.Contexto;

if (acessibilidade != null) {

try {

Clica(i, i2, acessibilidade.getRootInActiveWindow(), 0);

} catch (Exception unused) {

}

}

}

```

The relevant line is the call to `getRootInActiveWindow`, which appears in the smali code as follows:

`invoke-virtual {v0}, Lcom/gservice/autobot/Acessibilidade;->getRootInActiveWindow()Landroid/view/accessibility/AccessibilityNodeInfo;`

In the context of Quark rules, it makes sense to pair this API with the `performAction` API of the `AccessibilityNodeInfo` class:

```

public void Clica(int i, int i2, AccessibilityNodeInfo accessibilityNodeInfo, int i3) {

...

accessibilityNodeInfo.performAction(16);

...

}

```

Finally, we created the following rule (note that the first API uses the signature of the `AccessibilityService` class rather than `Acessibilidade`, since it would not make sense to write a rule specific to a single malware sample):

```

{

"crime": "Use accessibility service to perform action getting root in active window",

"permission": [],

"api": [

{

"class": "Landroid/accessibilityservice/AccessibilityService;",

"method": "getRootInActiveWindow",

"descriptor": "()Landroid/view/accessibility/AccessibilityNodeInfo;"

},

{

"class": "Landroid/view/accessibility/AccessibilityNodeInfo;",

"method": "performAction",

"descriptor": "(I)Z"

}

],

"score": 1,

"label": [

"accessibility service",

"perform action"

]

}

```

This behaviour may affect Quark's detection results: when we run this rule against the BrazKing malware, the score we obtain is 40% because the first API is not caught.

To sum up: if a class extends an Android class (as in the case of BrazKing for the accessibility service, see class *Acessibilidade* that extends *AccessibilityService*) and a method **M** of the superclass is called, the call appears in the smali code with the signature of the custom class. If we define a Quark rule with that method **M**, then from what we have observed, Quark does not detect that **M** is actually a method of the superclass.

Our concern is the following: if a malware author writes a class that extends a general Android class (as BrazKing does with `AccessibilityService`), Quark will never detect calls to the superclass methods, because in the smali code they appear with the signature of the child class.
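
To make the mismatch concrete, here is a minimal Python sketch of the kind of lookup that would catch such calls. It is not Quark's implementation: the `SUPERCLASS` map, `parse_invoke_target`, and `matches_rule` are hypothetical names, and the map is assumed to have been recovered from the APK's class definitions beforehand.

```python
# Hypothetical child -> parent map, assumed to be extracted from the APK.
SUPERCLASS = {
    "Lcom/gservice/autobot/Acessibilidade;":
        "Landroid/accessibilityservice/AccessibilityService;",
}


def parse_invoke_target(target):
    """Split 'Lcls;->name(args)ret' into (class, method, descriptor)."""
    cls, rest = target.split("->", 1)
    name, paren, tail = rest.partition("(")
    return cls, name, paren + tail


def matches_rule(target, rule_api, superclass=SUPERCLASS):
    """Match a rule API against the invoked class or any of its ancestors."""
    cls, name, descriptor = parse_invoke_target(target)
    if (name, descriptor) != (rule_api["method"], rule_api["descriptor"]):
        return False
    # Walk up the hierarchy until the rule's class is found or we run out.
    while cls is not None:
        if cls == rule_api["class"]:
            return True
        cls = superclass.get(cls)
    return False


rule_api = {
    "class": "Landroid/accessibilityservice/AccessibilityService;",
    "method": "getRootInActiveWindow",
    "descriptor": "()Landroid/view/accessibility/AccessibilityNodeInfo;",
}
call = (
    "Lcom/gservice/autobot/Acessibilidade;->getRootInActiveWindow()"
    "Landroid/view/accessibility/AccessibilityNodeInfo;"
)
print(matches_rule(call, rule_api))  # True
```

One caveat of this sketch: if the child class overrides **M**, the call no longer reaches the superclass implementation directly, so a real check would also need to look at whether the child defines the method itself.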

Thanks in advance, we hope to hear from you soon.

|

1.0

|

Methods from the "Extended Class" - Hi all, my friends (@Dil3mm3 and @3aglew0) and I are implementing new Quark rules (version 21.3.2) for a university semester project (our supervisor is @cryptax). We were analyzing the BrazKing malware (SHA256 hash be3d8500df167b9aaf21c5f76df61c466808b8fdf60e4a7da8d6057d476282b6; let us know if you want the sample).

In a nutshell, the problem we encountered is the following: *Quark does not detect all API calls made on an object whose class extends a known Android class. The root cause is that the API signature in the smali code carries the name of the child class.*

To illustrate the problem, consider the following example: `Acessibilidade` is a custom BrazKing class that extends the Android `AccessibilityService` class.

```

package com.gservice.autobot;

...

public class Acessibilidade extends AccessibilityService{

...

}

```

`Acessibilidade` is widely used by this malware to perform accessibility service actions, such as the one below:

```

public void Clicar_Pos(int i, int i2) {

Acessibilidade acessibilidade = this.Contexto;

if (acessibilidade != null) {

try {

Clica(i, i2, acessibilidade.getRootInActiveWindow(), 0);

} catch (Exception unused) {

}

}

}

```

The problematic line of code is the one that calls the method `getRootInActiveWindow`, which appears in the smali code as follows:

`invoke-virtual {v0}, Lcom/gservice/autobot/Acessibilidade;->getRootInActiveWindow()Landroid/view/accessibility/AccessibilityNodeInfo;`

In the context of Quark rules, it makes sense to link this API with the `performAction` API of the AccessibilityNodeInfo class:

```

public void Clica(int i, int i2, AccessibilityNodeInfo accessibilityNodeInfo, int i3) {

...

accessibilityNodeInfo.performAction(16);

...

}

```

Finally, we created the following rule (note that the first API is written with the signature of the AccessibilityService class and not Acessibilidade, since it would not make sense to write a rule so specific to a single malware):

```

{

"crime": "Use accessibility service to perform action getting root in active window",

"permission": [],

"api": [

{

"class": "Landroid/accessibilityservice/AccessibilityService;",

"method": "getRootInActiveWindow",

"descriptor": "()Landroid/view/accessibility/AccessibilityNodeInfo;"

},

{

"class": "Landroid/view/accessibility/AccessibilityNodeInfo;",

"method": "performAction",

"descriptor": "(I)Z"

}

],

"score": 1,

"label": [

"accessibility service",

"perform action"

]

}

```

This behaviour may affect Quark's functionality: running this rule against the BrazKing malware yields a score of only 40%, since the first API is not caught.

To sum up: if a class extends an Android class (as BrazKing's *Acessibilidade* extends *AccessibilityService*) and a method **M** of the superclass is called on it, the call appears in the smali code with the signature of the custom class. If we define a Quark rule with method **M**, Quark, from what we have seen, does not detect that **M** is actually a method of the superclass.

Our worry is the following: if a malware author writes a class that extends a general Android class (as BrazKing does with AccessibilityService), Quark will never detect calls to the superclass's methods, since in the smali code they appear with the signature of the child class.

Thanks in advance, hope to hear from you soon

|

process

|

methods from the extended class hi all my friends and and i are working to implement new quark rules version for a university semester project our supervisor is cryptax we were analyzing brazking malware hash let us know if you want the sample in a nutshell the problem we have encountered is the following we noticed quark is not able to detect all the api called from an object of a class which extends a noticed android class the root of cause comes from the signature of the api that in the smali code appears with the name of the child class to explain better this problem we provide the following example acessibilidade class is a custom brazking class which extends android accesibilityservice class package com gservice autobot public class acessibilidade extends accessibilityservice acessibilidade is widely used by this malware to perform accessibilty service actions as the one below public void clicar pos int i int acessibilidade acessibilidade this contexto if acessibilidade null try clica i acessibilidade getrootinactivewindow catch exception unused the incriminant line of code is the one where it is called the method getrootinactionwindow which in the smali code appears as following invoke virtual lcom gservice autobot acessibilidade getrootinactivewindow landroid view accessibility accessibilitynodeinfo in the context of quark rules it makes sense link this api with the performaction api of accessibilitynodeinfo class public void clica int i int accessibilitynodeinfo accessibilitynodeinfo int accessibilitynodeinfo performaction finally we have created the following rule note that the first api is written with the signature of accessibilityservice class and not acessibilidade since it would not have sense make a rule so specific for a single malware crime use accessibility service to perform action getting root in active window permission api class landroid accessibilityservice accessibilityservice method getrootinactivewindow descriptor landroid view 
accessibility accessibilitynodeinfo class landroid view accessibility accessibilitynodeinfo method performaction descriptor i z score label accessibility service perform action this behaviour may have some repercussions on the functionalities of quark launching this rule over brazking malware the score we obtain is since the first api is not caught to sum up we think that if a class extends another android class as in the case of brazking for the accessibility service see class acessibilidade that extends accessibilityservice and a method m of the super class is called it appears in the smali code with the signature of the custom class if we defined in quark a rule with that method m from what we have noticed quark is not able to detect that m is actually a method of the super class our worry is the following if the malware author writes a class which extends an android general class as it happens in brazking for accessibilityservice quark will never detect all the methods of the super class since in the smali code they appear with the signature of the child class thanks in advance hope to hear you soon

| 1

|

226,147

| 17,313,930,347

|

IssuesEvent

|

2021-07-27 01:32:19

|

microsoft/MixedRealityToolkit-Unity

|

https://api.github.com/repos/microsoft/MixedRealityToolkit-Unity

|

closed

|

Using AR Foundation documentation needs to be updated

|

Documentation

|

## Describe the issue

Bug #6646 no longer affects building on macOS Big Sur with Xcode 12. The step for unchecking the 'Strip Engine Code' check box in Project Settings > Player > Other Settings > Optimization is no longer necessary to successfully build the MRTK for iOS.

## Feature area

iOS/AR Kit

## Existing doc link

https://github.com/microsoft/MixedRealityToolkit-Unity/blob/mrtk_development/Documentation/CrossPlatform/UsingARFoundation.md

## Additional context

The section on enabling the Unity AR camera settings provider should also be updated to include removing the WMR camera settings provider when the profile in use has one.

|

1.0

|

Using AR Foundation documentation needs to be updated - ## Describe the issue

Bug #6646 no longer affects building on macOS Big Sur with Xcode 12. The step for unchecking the 'Strip Engine Code' check box in Project Settings > Player > Other Settings > Optimization is no longer necessary to successfully build the MRTK for iOS.

## Feature area

iOS/AR Kit

## Existing doc link

https://github.com/microsoft/MixedRealityToolkit-Unity/blob/mrtk_development/Documentation/CrossPlatform/UsingARFoundation.md

## Additional context

The section on enabling the Unity AR camera settings provider should also be updated to include removing the WMR camera settings provider when the profile in use has one.

|

non_process

|

using ar foundation documentation needs to be updated describe the issue bug no longer effects building on macos big sur with xcode the step for un checking the strip engine code check box in project settings player other settings optimization is no longer necessary to successfully build the mrtk for ios feature area ios ar kit existing doc link additional context enabling the unity ar camera settings provider should also be updated to include removing the wmr camera settings provider if using a profile that has that camera settings provider

| 0

|

16,551

| 21,568,599,171

|

IssuesEvent

|

2022-05-02 04:17:57

|

lynnandtonic/nestflix.fun

|

https://api.github.com/repos/lynnandtonic/nestflix.fun

|

closed

|

Diamond Jim

|

suggested title in process

|

Please add as much of the following info as you can:

Title: Bonjour, Diamond Jim

Type (film/tv show): Film

Film or show in which it appears: Tim and Eric's Billion Dollar Movie

Is the parent film/show streaming anywhere? Yes

About when in the parent film/show does it appear? Start

Actual footage of the film/show can be seen (yes/no)? Yes

https://www.youtube.com/watch?v=nUpvDOxg8as

|

1.0

|

Diamond Jim - Please add as much of the following info as you can:

Title: Bonjour, Diamond Jim

Type (film/tv show): Film

Film or show in which it appears: Tim and Eric's Billion Dollar Movie

Is the parent film/show streaming anywhere? Yes

About when in the parent film/show does it appear? Start

Actual footage of the film/show can be seen (yes/no)? Yes

https://www.youtube.com/watch?v=nUpvDOxg8as

|

process

|

diamond jim please add as much of the following info as you can title bonjour diamond jim type film tv show film film or show in which it appears tim and eric s billion dollar movie is the parent film show streaming anywhere yes about when in the parent film show does it appear start actual footage of the film show can be seen yes no yes

| 1

|

20,393

| 27,050,747,754

|

IssuesEvent

|

2023-02-13 13:05:07

|

bitfocus/companion-module-requests

|

https://api.github.com/repos/bitfocus/companion-module-requests

|

opened

|

Thomann t.racks 8x8 Matrix

|

NOT YET PROCESSED

|

- [X ] **I have researched the list of existing Companion modules and requests and have determined this has not yet been requested**

The name of the device, hardware, or software you would like to control:

Thomann's the t.racks 8x8 Matrix

What you would like to be able to make it do from Companion:

Routing inputs to defined outputs, switching inputs/outputs on/off (muting them)

Direct links or attachments to the ethernet control protocol or API:

https://images.static-thomann.de/pics/atg/atgdata/document/manual/490507_c_490507_r1_en_online.pdf

Sadly, they only document RS232, but it seems to be just RS232 over an Ethernet/TCP bridge.

|

1.0

|

Thomann t.racks 8x8 Matrix - - [X ] **I have researched the list of existing Companion modules and requests and have determined this has not yet been requested**

The name of the device, hardware, or software you would like to control:

Thomann's the t.racks 8x8 Matrix

What you would like to be able to make it do from Companion:

Routing inputs to defined outputs, switching inputs/outputs on/off (muting them)

Direct links or attachments to the ethernet control protocol or API:

https://images.static-thomann.de/pics/atg/atgdata/document/manual/490507_c_490507_r1_en_online.pdf

Sadly, they only document RS232, but it seems to be just RS232 over an Ethernet/TCP bridge.

|

process

|

thomann t racks matrix i have researched the list of existing companion modules and requests and have determined this has not yet been requested the name of the device hardware or software you would like to control thomann s the t racks matrix what you would like to be able to make it do from companion routing inputs to defines outputs swithcing in outputs on off muting them direct links or attachments to the ethernet control protocol or api sadly they just document but it seems like it s just via ethernet tcp bridge

| 1

|

6,609

| 9,694,244,392

|

IssuesEvent

|

2019-05-24 18:22:38

|

openopps/openopps-platform

|

https://api.github.com/repos/openopps/openopps-platform

|

closed

|

Bug: Applicant able to select save and continue without having 3 selections

|

Apply Process State Dept.

|

Environment: UAT

Browser: Chrome

Issue: During the apply process, where the user selects 3 internships, Save and continue shouldn’t be active until 3 choices are selected. Also, if I select only 2 and click Save and continue, I get a red bar on the 3rd selection but no error message.

Steps to Reproduce:

1) Select apply on an internship

2) Select a second internship to apply to but not a third

3) Select Save and continue

- Red bar displays with no error message

Resolution:

- Disable save & continue until 3 options are selected

Issue found during 5/9/19 bug bash with State
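
The proposed resolution could be sketched roughly as follows. This is only an illustration of the rule, not the platform's actual code: the function name, the message wording, and the use of Python are all assumptions.

```python
# Hypothetical sketch of the "3 selections required" rule from this issue.
REQUIRED_SELECTIONS = 3


def save_and_continue_state(selected, required=REQUIRED_SELECTIONS):
    """Return (enabled, message) for the Save and continue button.

    `selected` is a list of chosen internships; empty slots are None.
    """
    chosen = [item for item in selected if item]
    missing = required - len(chosen)
    if missing > 0:
        # Button stays disabled and the user is told why.
        return False, f"Select {missing} more internship(s) to continue."
    return True, ""


print(save_and_continue_state(["Internship A", "Internship B", None]))
```

Returning a message together with the disabled state would also cover the second half of the issue, where the red bar appears without any explanation.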

|

1.0

|

Bug: Applicant able to select save and continue without having 3 selections - Environment: UAT

Browser: Chrome

Issue: During the apply process where user selects 3 internships, Save and continue shouldn’t be active until 3 choices are selected AND if I only select 2 and click save and continue, I get a red bar on the 3rd selection but no error message.

Steps to Reproduce:

1) Select apply on an internship

2) Select a second internship to apply to but not a third

3) Select Save and continue

- Red bar displays with no error message

Resolution:

- Disable save & continue until 3 options are selected

Issue found during 5/9/19 bug bash with State

|

process

|

bug applicant able to select save and continue without having selections environment uat browser chrome issue during the apply process where user selects internships save and continue shouldn’t be active until choices are selected and if i only select and click save and continue i get a red bar on the selection but no error message steps to reproduce select apply on an internship select a second internship to apply to but not a third select save and continue red bar displays with no error message resolution disable save continue until options are selected issue found during bug bash with state

| 1

|

14,922

| 18,359,528,586

|

IssuesEvent

|

2021-10-09 01:45:38

|

DevExpress/testcafe-hammerhead

|

https://api.github.com/repos/DevExpress/testcafe-hammerhead

|

closed

|

Cannot switch to a rewritten iframe

|

TYPE: bug AREA: client FREQUENCY: level 1 SYSTEM: iframe processing STATE: Stale

|

<!--

If you have all reproduction steps with a complete sample app, please share as many details as possible in the sections below.

Make sure that you tried using the latest Hammerhead version (https://github.com/DevExpress/testcafe-hammerhead/releases), where this behavior might have been already addressed.

Before submitting an issue, please check existing issues in this repository (https://github.com/DevExpress/testcafe-hammerhead/issues) in case a similar issue exists or was already addressed. This may save your time (and ours).

-->

### What is your Scenario?

Execute actions in iframe rewritten via `document.open`.

### What is the Current behavior?

An `Iframe content is not loaded` error is shown when trying to switch to an iframe.

### What is the Expected behavior?

TestCafe should switch to an iframe and execute test actions.

### What is your public web site URL?

<!-- Share a public accessible link to your web site or provide a simple app which we can run. -->

Your website URL (or attach your complete example):

<details>

<summary>Your complete app code (or attach your test files):</summary>

<!-- Paste your app code here: -->

```js

const http = require('http');

http

.createServer((req, res) => {

switch (req.url) {

case '/':

res.writeHead(200, { 'content-type': 'text/html' });

res.end(`<iframe src="http://localhost:2201/"></iframe>`);

break;

default:

res.end();

}

})

.listen(2200);

http

.createServer((req, res) => {

switch (req.url) {

case '/':

res.writeHead(200, { 'content-type': 'text/html' });

res.end(`

<iframe src="/iframe"></iframe>

<script>

var iframe = document.querySelector('iframe');

iframe.contentDocument.open();

iframe.contentDocument.write('<div id="helloworld">Hello world</div>');

iframe.contentDocument.close();

</script>

`);

break;

case '/iframe':

res.writeHead(200, { 'content-type': 'text/html' });

res.end(``);

break;

default:

res.end();

}

})

.listen(2201);

```

</details>

<details>

<summary>Test:</summary>

<!-- If applicable, add screenshots to help explain the issue. -->

```js

fixture`fixture`

.page('http://localhost:2200/');

import { Selector } from 'testcafe';

test('test', async t => {

await t

.switchToIframe('iframe');

await t.debug(1000);

await t.switchToIframe(() => document.querySelector('iframe'));

const selector = Selector('div');

await selector();

console.log("This is never logged as it's stuck");

});

```

</details>

### Steps to Reproduce:

<!-- Describe what we should do to reproduce the behavior you encountered. -->

1. Go to: ...

2. Execute this command: ...

3. See the error: ...

### Your Environment details:

* node.js version: 12.14.1

* browser name and version: Chrome 79

* platform and version: Windows 10 1909

* other:

|

1.0

|

Cannot switch to a rewritten iframe - <!--

If you have all reproduction steps with a complete sample app, please share as many details as possible in the sections below.

Make sure that you tried using the latest Hammerhead version (https://github.com/DevExpress/testcafe-hammerhead/releases), where this behavior might have been already addressed.

Before submitting an issue, please check existing issues in this repository (https://github.com/DevExpress/testcafe-hammerhead/issues) in case a similar issue exists or was already addressed. This may save your time (and ours).

-->

### What is your Scenario?

Execute actions in iframe rewritten via `document.open`.

### What is the Current behavior?

An `Iframe content is not loaded` error is shown when trying to switch to an iframe.

### What is the Expected behavior?

TestCafe should switch to an iframe and execute test actions.

### What is your public web site URL?

<!-- Share a public accessible link to your web site or provide a simple app which we can run. -->

Your website URL (or attach your complete example):

<details>

<summary>Your complete app code (or attach your test files):</summary>

<!-- Paste your app code here: -->

```js

const http = require('http');

http

.createServer((req, res) => {

switch (req.url) {

case '/':

res.writeHead(200, { 'content-type': 'text/html' });

res.end(`<iframe src="http://localhost:2201/"></iframe>`);

break;

default:

res.end();

}

})

.listen(2200);

http

.createServer((req, res) => {

switch (req.url) {

case '/':

res.writeHead(200, { 'content-type': 'text/html' });

res.end(`

<iframe src="/iframe"></iframe>

<script>

var iframe = document.querySelector('iframe');

iframe.contentDocument.open();

iframe.contentDocument.write('<div id="helloworld">Hello world</div>');

iframe.contentDocument.close();

</script>

`);

break;

case '/iframe':

res.writeHead(200, { 'content-type': 'text/html' });

res.end(``);

break;

default:

res.end();

}

})

.listen(2201);

```

</details>

<details>

<summary>Test:</summary>

<!-- If applicable, add screenshots to help explain the issue. -->

```js

fixture`fixture`

.page('http://localhost:2200/');

import { Selector } from 'testcafe';

test('test', async t => {

await t

.switchToIframe('iframe');

await t.debug(1000);

await t.switchToIframe(() => document.querySelector('iframe'));

const selector = Selector('div');

await selector();

console.log("This is never logged as it's stuck");

});

```

</details>

### Steps to Reproduce:

<!-- Describe what we should do to reproduce the behavior you encountered. -->

1. Go to: ...

2. Execute this command: ...

3. See the error: ...

### Your Environment details:

* node.js version: 12.14.1

* browser name and version: Chrome 79

* platform and version: Windows 10 1909

* other:

|

process

|

cannot switch to an rewritten iframe if you have all reproduction steps with a complete sample app please share as many details as possible in the sections below make sure that you tried using the latest hammerhead version where this behavior might have been already addressed before submitting an issue please check existing issues in this repository in case a similar issue exists or was already addressed this may save your time and ours what is your scenario execute actions in iframe rewritten via document open what is the current behavior an iframe content is not loaded error shown when trying to switch to an iframe what is the expected behavior testcafe should switch to an iframe and execute test actions what is your public web site url your website url or attach your complete example your complete app code or attach your test files js const http require http http createserver req res switch req url case res writehead content type text html res end iframe src break default res end listen http createserver req res switch req url case res writehead content type text html res end var iframe document queryselector iframe iframe contentdocument open iframe contentdocument write hello world iframe contentdocument close break case iframe res writehead content type text html res end break default res end listen test js fixture fixture page import selector from testcafe test test async t await t switchtoiframe iframe await t debug await t switchtoiframe document queryselector iframe const selector selector div await s console log this is never logged as it s stuck steps to reproduce go to execute this command see the error your environment details node js version browser name and version chrome platform and version windows other

| 1

|

268,793

| 20,361,469,425

|

IssuesEvent

|

2022-02-20 18:51:42

|

ChristopherSzczyglowski/python_package_template

|

https://api.github.com/repos/ChristopherSzczyglowski/python_package_template

|

closed

|

Move python package management to `pipenv`

|

documentation enhancement

|

I like the functionality of the `Pipfile` and `Pipfile.lock` - let's bring it in.

This is made necessary by the fact that CI was hanging because it could not resolve my dependencies, so I had to use `pip freeze`. Once you have a frozen pip file you may as well go the whole hog and use a `Pipfile.lock`.

Tasks

* [ ] Need to update `README.md` with instructions on how to install `pipenv`

* [ ] Convert `requirements.txt` and `requirements-dev.txt` into `Pipfile`

* [ ] Update recipes in `Makefile`

* [ ] Update `install-deps` job in CI pipeline

|

1.0

|

Move python package management to `pipenv` - I like the functionality of the `Pipfile` and `Pipfile.lock` - let's bring it in.

This is made necessary by the fact that CI was hanging because it could not resolve my dependencies, so I had to use `pip freeze`. Once you have a frozen pip file you may as well go the whole hog and use a `Pipfile.lock`.

Tasks

* [ ] Need to update `README.md` with instructions on how to install `pipenv`

* [ ] Convert `requirements.txt` and `requirements-dev.txt` into `Pipfile`

* [ ] Update recipes in `Makefile`

* [ ] Update `install-deps` job in CI pipeline

|

non_process

|

move python package management to pipenv i like the functionality of the pipfile and pipfile lock let s bring it in this is made necessary by the fact that ci was hanging because it could not resolve my dependencies so i had to use pip freeze once you have a frozen pip file you may as well got the whole hog and use a pipfile lock tasks need to update readme md with instructions on how to install pipenv convert requirements txt and requirements dev txt into pipfile update recipes in makefile update install deps job in ci pipeline

| 0

|

7,691

| 10,778,934,184

|

IssuesEvent

|

2019-11-04 09:27:31

|

lutraconsulting/qgis-crayfish-plugin

|

https://api.github.com/repos/lutraconsulting/qgis-crayfish-plugin

|

closed

|

Create contours (area/line) from mesh

|

enhancement processing

|

Adding support (like old Crayfish) to generate contour (areas or lines)

|

1.0

|

Create contours (area/line) from mesh - Adding support (like old Crayfish) to generate contour (areas or lines)

|

process

|

create contours area line from mesh adding support like old crayfish to generate contour areas or lines

| 1

|

18,081

| 24,096,568,872

|

IssuesEvent

|

2022-09-19 19:19:18

|

ankidroid/Anki-Android

|

https://api.github.com/repos/ankidroid/Anki-Android

|

closed

|

[Bug] Publish / release script can partially publish

|

Bug Keep Open Dev Release process

|

###### Reproduction Steps

1. Attempt to publish (using the release script called by the publish.yml workflow) while there is a translation error that will fail on lintRelease - https://github.com/ankidroid/Anki-Android/runs/2108464284?check_suite_focus=true

2. Try to publish again after fixing the build error (it will fail because the first publish did not fail completely, it only failed partially) https://github.com/ankidroid/Anki-Android/runs/2109306966?check_suite_focus=true

###### Expected Result

A clean failure, no artifacts published anywhere, no git commits

###### Actual Result

It actually will publish to Google Play (thus consuming the version code because Google Play will now have APKs with the new version code) but it will then fail on the lintRelease error and not actually commit the new `AnkiDroid/build.gradle` edit with the incremented version code

The only resolution at that point is to manually bump the version information in build.gradle; then you can publish again once you sort out the build error

This is obviously a serious failure in the automated release process but at the same time it has an easy fix and should not have happened in the first place (I rushed an alpha publish, my fault), so it is not a super high priority

But the next time I go into the GitHub workflows to polish them (something I do every month or so to add a new feature), I'll clean this up if no one beats me to it
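
A rough sketch of the ordering that would give the clean failure described under Expected Result: run every check that can fail before any step that cannot be undone. This is not the actual release script; the step names and the `run_release` helper are hypothetical, and Python is used only for illustration.

```python
# Hypothetical sketch: validate everything first, publish only afterwards.

def run_release(checks, irreversible_steps):
    """Run all checks; only if every one passes, run the irreversible steps.

    Both arguments are lists of (name, callable) pairs. Checks return
    True on success. Returns a short status string.
    """
    for name, check in checks:
        if not check():
            return f"aborted: {name} failed; nothing published"
    done = []
    for name, step in irreversible_steps:
        step()
        done.append(name)
    return "published: " + ", ".join(done)


published = []

checks = [
    ("lintRelease", lambda: False),  # simulate the translation error
]
irreversible_steps = [
    ("upload to Google Play", lambda: published.append("play")),
    ("commit version bump", lambda: published.append("git")),
]

print(run_release(checks, irreversible_steps))
print(published)  # stays empty because the check failed first
```

With this ordering a lint failure costs nothing: no version code is consumed and no commit is made, so the next attempt can reuse the same version.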

###### Debug info

Refer to the [support page](https://ankidroid.org/docs/help.html) if you are unsure where to get the "debug info".

###### Research

*Enter an [x] character to confirm the points below:*

- [ ] I have read the [support page](https://ankidroid.org/docs/help.html) and am reporting a bug or enhancement request specific to AnkiDroid

- [ ] I have checked the [manual](https://ankidroid.org/docs/manual.html) and the [FAQ](https://github.com/ankidroid/Anki-Android/wiki/FAQ) and could not find a solution to my issue

- [ ] I have searched for similar existing issues here and on the user forum

- [ ] (Optional) I have confirmed the issue is not resolved in the latest alpha release ([instructions](https://docs.ankidroid.org/manual.html#betaTesting))

|

1.0

|

[Bug] Publish / release script can partially publish - ###### Reproduction Steps

1. Attempt to publish (using the release script called by the publish.yml workflow) while there is a translation error that will fail on lintRelease - https://github.com/ankidroid/Anki-Android/runs/2108464284?check_suite_focus=true

2. Try to publish again after fixing the build error (it will fail because the first publish did not fail completely, it only failed partially) https://github.com/ankidroid/Anki-Android/runs/2109306966?check_suite_focus=true

###### Expected Result

A clean failure, no artifacts published anywhere, no git commits

###### Actual Result

It actually will publish to Google Play (thus consuming the version code because Google Play will now have APKs with the new version code) but it will then fail on the lintRelease error and not actually commit the new `AnkiDroid/build.gradle` edit with the incremented version code

The only resolution at that point is to manually bump the version information in build.gradle; then you can publish again once you sort out the build error

This is obviously a serious failure in the automated release process but at the same time it has an easy fix and should not have happened in the first place (I rushed an alpha publish, my fault), so it is not a super high priority

But the next time I go into the GitHub workflows to polish them (something I do every month or so to add a new feature), I'll clean this up if no one beats me to it

###### Debug info

Refer to the [support page](https://ankidroid.org/docs/help.html) if you are unsure where to get the "debug info".

###### Research

*Enter an [x] character to confirm the points below:*

- [ ] I have read the [support page](https://ankidroid.org/docs/help.html) and am reporting a bug or enhancement request specific to AnkiDroid

- [ ] I have checked the [manual](https://ankidroid.org/docs/manual.html) and the [FAQ](https://github.com/ankidroid/Anki-Android/wiki/FAQ) and could not find a solution to my issue

- [ ] I have searched for similar existing issues here and on the user forum

- [ ] (Optional) I have confirmed the issue is not resolved in the latest alpha release ([instructions](https://docs.ankidroid.org/manual.html#betaTesting))

|

process

|

publish release script can partially publish reproduction steps attempt to publish using the release script called by the publish yml workflow while there is a translation error that will fail on lintrelease try to publish again after fixing the build error it will fail because the first publish did not fail completely it only failed partially expected result a clean failure no artifacts published anywhere no git commits actual result it actually will publish to google play thus consuming the version code because google play will now have apks with the new version code but it will then fail on the lintrelease error and not actually commit the new ankidroid build gradle edit with the incremented version code the only resolution at that point is to manually bump the version information in build gradle then you can publish again once you sort out the build error this is obviously a serious failure in the automated release process but at the same time it has an easy fix and should not have happened in the first place i rushed an alpha publish my fault so it is not a super high priority but the next time i go into the github workflows to polish something i do every month or so to add a new feature to them i ll clean this up if no one beats me to it debug info refer to the if you are unsure where to get the debug info research enter an character to confirm the points below i have read the and am reporting a bug or enhancement request specific to ankidroid i have checked the and the and could not find a solution to my issue i have searched for similar existing issues here and on the user forum optional i have confirmed the issue is not resolved in the latest alpha release

| 1

|

17,810

| 23,738,783,174

|

IssuesEvent

|

2022-08-31 10:28:29

|

Tencent/tdesign-miniprogram

|

https://api.github.com/repos/Tencent/tdesign-miniprogram

|

closed

|

z-index issue with the overlay component inside the dialog component

|

enhancement good first issue in process

|

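### tdesign-miniprogram version

0.18.0

### Reproduction link

_No response_

### Steps to reproduce

When the popup and dialog components are used together and a z-index is set on the dialog, the overlay component inside the dialog still uses the default z-index.

### Expected result

The z-index of the overlay inside the component should follow the dialog's z-index.

### Actual result

_No response_

### Framework version

_No response_

### Browser version

_No response_

### System version

_No response_

### Node version

_No response_

### Additional notes

_No response_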

|

1.0

|

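z-index issue with the overlay component inside the dialog component - ### tdesign-miniprogram version

0.18.0

### Reproduction link

_No response_

### Steps to reproduce

When the popup and dialog components are used together and a z-index is set on the dialog, the overlay component inside the dialog still uses the default z-index.

### Expected result

The z-index of the overlay inside the component should follow the dialog's z-index.

### Actual result

_No response_

### Framework version

_No response_

### Browser version

_No response_

### System version

_No response_

### Node version

_No response_

### Additional notes

_No response_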

|

process

|

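z index issue with the overlay component inside the dialog component tdesign miniprogram version reproduction link no response steps to reproduce when the popup and dialog components are used together and a z index is set on the dialog the overlay component inside the dialog still uses the default z index expected result the z index of the overlay inside the component should follow the dialog s z index actual result no response framework version no response browser version no response system version no response node version no response additional notes no response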

| 1

|

39,555

| 5,102,400,775

|

IssuesEvent

|

2017-01-04 18:11:38

|

18F/crime-data-api

|

https://api.github.com/repos/18F/crime-data-api

|

closed

|

Consolidate Visual Design Direction

|

design

|

- [x] Review existing visual design directions from discovery sprints and pick out best attributes and components to move forward with

- [x] Gather opinions/general feeling on existing directions from team (which and why)

- [ ] Combine selected attributes into one style

|

1.0

|

Consolidate Visual Design Direction - - [x] Review existing visual design directions from discovery sprints and pick out best attributes and components to move forward with

- [x] Gather opinions/general feeling on existing directions from team (which and why)

- [ ] Combine selected attributes into one style

|

non_process

|

consolidate visual design direction review existing visual design directions from discovery sprints and pick out best attributes and components to move forward with gather opinions general feeling on existing directions from team which and why combine selected attributes into one style

| 0

|

71,731

| 23,778,392,412

|

IssuesEvent

|

2022-09-02 00:04:28

|

CorfuDB/CorfuDB

|

https://api.github.com/repos/CorfuDB/CorfuDB

|

closed

|

Get rid of LogData deserialization dependency on CorfuRuntime

|

defect

|

## Overview

It seems we used to serialize the CorfuObject to the global log data, which introduced a dependency on CorfuRuntime when deserializing the payload from log data. We need to remove this dependency, as we no longer serialize the whole CorfuObject.

|

1.0

|

Get rid of LogData deserialization dependency on CorfuRuntime - ## Overview

It seems we used to serialize the CorfuObject to the global log data, which introduced a dependency on CorfuRuntime when deserializing the payload from log data. We need to remove this dependency, as we no longer serialize the whole CorfuObject.

|

non_process

|

get rid of logdata deserialization dependency on corfuruntime overview seems like we used to serialize the corfuobject to the global log data which introduced a dependency on corfuruntime when deserializing the payload from log data we need to remove this dependency as we re not serialize the whole corfuobject now

| 0

|

12,445

| 14,934,361,360

|

IssuesEvent

|

2021-01-25 10:25:57

|

prisma/prisma

|

https://api.github.com/repos/prisma/prisma

|

closed

|

Implicit many-to-many relations don't work with native types

|

bug/2-confirmed kind/bug process/candidate team/migrations topic: migrate topic: native database types

|

Hi Prisma Team! Prisma Migrate just crashed.

## Versions

| Name | Version |

|-------------|--------------------|

| Platform | darwin |

| Node | v14.3.0 |

| Prisma CLI | 2.15.0 |

| Binary | e51dc3b5a9ee790a07104bec1c9477d51740fe54|

## Error

```

Error: Error in migration engine.

Reason: [migration-engine/connectors/sql-migration-connector/src/sql_schema_calculator.rs:290:41] not yet implemented

Please create an issue in the migrate repo with

your `schema.prisma` and the prisma command you tried to use 🙏:

https://github.com/prisma/prisma/issues/new

```

|

1.0

|

Implicit many-to-many relations don't work with native types - Hi Prisma Team! Prisma Migrate just crashed.

## Versions

| Name | Version |

|-------------|--------------------|

| Platform | darwin |

| Node | v14.3.0 |

| Prisma CLI | 2.15.0 |

| Binary | e51dc3b5a9ee790a07104bec1c9477d51740fe54|

## Error

```

Error: Error in migration engine.

Reason: [migration-engine/connectors/sql-migration-connector/src/sql_schema_calculator.rs:290:41] not yet implemented

Please create an issue in the migrate repo with

your `schema.prisma` and the prisma command you tried to use 🙏:

https://github.com/prisma/prisma/issues/new

```

|

process

|

implicit many to many relations don t work with native types hi prisma team prisma migrate just crashed versions name version platform darwin node prisma cli binary error error error in migration engine reason not yet implemented please create an issue in the migrate repo with your schema prisma and the prisma command you tried to use 🙏

| 1

|

7,956

| 7,161,042,363

|

IssuesEvent

|

2018-01-28 09:21:38

|

aseba-community/aseba

|

https://api.github.com/repos/aseba-community/aseba

|

opened

|

Create a minimalistic web site

|

Infrastructure Wish

|

We need a minimalistic web site for aseba.io. Some months ago @davidjsherman and I discussed a plan for a full-featured web site. While I still think it is a good target, I would like to take an intermediate step so that the identity of Aseba can be clarified and people can easily understand what it is.

Therefore I propose the following structure:

* An image, we could use [this one](https://github.com/aseba-community/aseba/blob/master/docsource/wiki/aseba-studio-overlay.xcf) as a start before we have better.

* A selection of languages.

* A first paragraph describing Aseba, taking from what we already have.

* A second paragraph giving a brief history and linking to the author list.

* A set of three boxes, each with one link:

* Download installers (link to a download page)

* Read the documentation (link to the user manual on Read the Docs)

* Compile the source code (link to https://github.com/aseba-community/aseba#supported-platforms)

For me the main uncertainty is the download page. @davidjsherman and I discussed some time ago the idea of creating GitHub releases for nightly builds and tags, and uploading the binaries for at least Windows and macOS there. I contacted GitHub's team and they confirmed that it is the right way of doing it. @davidjsherman found a [script to upload releases on GitHub](https://github.com/aktau/github-release). The question remains for Linux and embedded hosts, so we might want a separate download page that provides the following links:

- Latest and official GitHub releases with Windows and macOS binaries

- Information for Linux hosts (PPA for Ubuntu, official packages for Debian, Suse farm's builds for RPM, etc.)

We could put these on the main page, but I worry about clutter. We could also try to detect the host system and provide a link for that system by default, but we would still need a page for alternative downloads. In any case, the requirements should be:

- Easy to use for the user

- Easy to maintain for us and contributors

Technically, I suggest using [Jekyll](https://jekyllrb.com/), as it is natively supported by GitHub.

|

1.0

|

Create a minimalistic web site - We need a minimalistic web site for aseba.io. Some months ago @davidjsherman and I discussed a plan for a full-featured web site. While I still think it is a good target, I would like to take an intermediate step so that the identity of Aseba can be clarified and people can easily understand what it is.

Therefore I propose the following structure:

* An image, we could use [this one](https://github.com/aseba-community/aseba/blob/master/docsource/wiki/aseba-studio-overlay.xcf) as a start before we have better.

* A selection of languages.

* A first paragraph describing Aseba, taking from what we already have.

* A second paragraph giving a brief history and linking to the author list.

* A set of three boxes, each with one link:

* Download installers (link to a download page)

* Read the documentation (link to the user manual on Read the Docs)

* Compile the source code (link to https://github.com/aseba-community/aseba#supported-platforms)

For me the main uncertainty is the download page. @davidjsherman and I discussed some time ago the idea of creating GitHub releases for nightly builds and tags, and uploading the binaries for at least Windows and macOS there. I contacted GitHub's team and they confirmed that it is the right way of doing it. @davidjsherman found a [script to upload releases on GitHub](https://github.com/aktau/github-release). The question remains for Linux and embedded hosts, so we might want a separate download page that provides the following links:

- Latest and official GitHub releases with Windows and macOS binaries

- Information for Linux hosts (PPA for Ubuntu, official packages for Debian, Suse farm's builds for RPM, etc.)

We could put these on the main page, but I worry about clutter. We could also try to detect the host system and provide a link for that system by default, but we would still need a page for alternative downloads. In any case, the requirements should be:

- Easy to use for the user

- Easy to maintain for us and contributors