| Unnamed: 0 (int64, 0 to 832k) | id (float64, 2.49B to 32.1B) | type (string, 1 class) | created_at (string, length 19) | repo (string, length 7 to 112) | repo_url (string, length 36 to 141) | action (string, 3 classes) | title (string, length 1 to 744) | labels (string, length 4 to 574) | body (string, length 9 to 211k) | index (string, 10 classes) | text_combine (string, length 96 to 211k) | label (string, 2 classes) | text (string, length 96 to 188k) | binary_label (int64, 0 to 1) |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

176,138

| 6,556,836,588

|

IssuesEvent

|

2017-09-06 15:20:36

|

strongloop/loopback-component-oauth2

|

https://api.github.com/repos/strongloop/loopback-component-oauth2

|

closed

|

Unable to identify when login is failed

|

feature needs-priority stale

|

Hi,

When the component must ensure that the user is logged, depending on the grant method, we generally reach the following line of code inside `oauth2-loopback.js` :

```

login.ensureLoggedIn({ redirectTo: options.loginPage || '/login' }),

```

If we send back false as user, the component redirect the user to exactly the same _loginPage_ and there's no way to indicate to the user that he does not give the right login/password.

May you make this configurable so we can set a GET param to indicate that the login did not go well ?

Cheers.

Max

|

1.0

|

Unable to identify when login is failed - Hi,

When the component must ensure that the user is logged, depending on the grant method, we generally reach the following line of code inside `oauth2-loopback.js` :

```

login.ensureLoggedIn({ redirectTo: options.loginPage || '/login' }),

```

If we send back false as user, the component redirect the user to exactly the same _loginPage_ and there's no way to indicate to the user that he does not give the right login/password.

May you make this configurable so we can set a GET param to indicate that the login did not go well ?

Cheers.

Max

|

non_process

|

unable to identify when login is failed hi when the component must ensure that the user is logged depending on the grant method we generally reach the following line of code inside loopback js login ensureloggedin redirectto options loginpage login if we send back false as user the component redirect the user to exactly the same loginpage and there s no way to indicate to the user that he does not give the right login password may you make this configurable so we can set a get param to indicate that the login did not go well cheers max

| 0

|

14,760

| 18,041,411,187

|

IssuesEvent

|

2021-09-18 05:12:31

|

ooi-data/CE04OSPD-DP01B-01-CTDPFL105-recovered_inst-dpc_ctd_instrument_recovered

|

https://api.github.com/repos/ooi-data/CE04OSPD-DP01B-01-CTDPFL105-recovered_inst-dpc_ctd_instrument_recovered

|

opened

|

🛑 Processing failed: TypeError

|

process

|

## Overview

`TypeError` found in `processing_task` task during run ended on 2021-09-18T05:12:30.549031.

## Details

Flow name: `CE04OSPD-DP01B-01-CTDPFL105-recovered_inst-dpc_ctd_instrument_recovered`

Task name: `processing_task`

Error type: `TypeError`

Error message: 'NoneType' object is not subscriptable

<details>

<summary>Traceback</summary>

```

Traceback (most recent call last):

File "/srv/conda/envs/notebook/lib/python3.8/site-packages/ooi_harvester/processor/pipeline.py", line 101, in processing

final_path = finalize_zarr(

File "/srv/conda/envs/notebook/lib/python3.8/site-packages/ooi_harvester/processor/__init__.py", line 359, in finalize_zarr

source_store.fs.delete(source_store.root, recursive=True)

File "/srv/conda/envs/notebook/lib/python3.8/site-packages/fsspec/spec.py", line 1187, in delete

return self.rm(path, recursive=recursive, maxdepth=maxdepth)

File "/srv/conda/envs/notebook/lib/python3.8/site-packages/fsspec/asyn.py", line 88, in wrapper

return sync(self.loop, func, *args, **kwargs)

File "/srv/conda/envs/notebook/lib/python3.8/site-packages/fsspec/asyn.py", line 69, in sync

raise result[0]

File "/srv/conda/envs/notebook/lib/python3.8/site-packages/fsspec/asyn.py", line 25, in _runner

result[0] = await coro

File "/srv/conda/envs/notebook/lib/python3.8/site-packages/s3fs/core.py", line 1677, in _rm

await asyncio.gather(

File "/srv/conda/envs/notebook/lib/python3.8/site-packages/s3fs/core.py", line 1657, in _bulk_delete

await self._call_s3("delete_objects", kwargs, Bucket=bucket, Delete=delete_keys)

File "/srv/conda/envs/notebook/lib/python3.8/site-packages/s3fs/core.py", line 267, in _call_s3

err = translate_boto_error(err)

File "/srv/conda/envs/notebook/lib/python3.8/site-packages/s3fs/errors.py", line 142, in translate_boto_error

code = error.response["Error"].get("Code")

TypeError: 'NoneType' object is not subscriptable

```

</details>

|

1.0

|

🛑 Processing failed: TypeError - ## Overview

`TypeError` found in `processing_task` task during run ended on 2021-09-18T05:12:30.549031.

## Details

Flow name: `CE04OSPD-DP01B-01-CTDPFL105-recovered_inst-dpc_ctd_instrument_recovered`

Task name: `processing_task`

Error type: `TypeError`

Error message: 'NoneType' object is not subscriptable

<details>

<summary>Traceback</summary>

```

Traceback (most recent call last):

File "/srv/conda/envs/notebook/lib/python3.8/site-packages/ooi_harvester/processor/pipeline.py", line 101, in processing

final_path = finalize_zarr(

File "/srv/conda/envs/notebook/lib/python3.8/site-packages/ooi_harvester/processor/__init__.py", line 359, in finalize_zarr

source_store.fs.delete(source_store.root, recursive=True)

File "/srv/conda/envs/notebook/lib/python3.8/site-packages/fsspec/spec.py", line 1187, in delete

return self.rm(path, recursive=recursive, maxdepth=maxdepth)

File "/srv/conda/envs/notebook/lib/python3.8/site-packages/fsspec/asyn.py", line 88, in wrapper

return sync(self.loop, func, *args, **kwargs)

File "/srv/conda/envs/notebook/lib/python3.8/site-packages/fsspec/asyn.py", line 69, in sync

raise result[0]

File "/srv/conda/envs/notebook/lib/python3.8/site-packages/fsspec/asyn.py", line 25, in _runner

result[0] = await coro

File "/srv/conda/envs/notebook/lib/python3.8/site-packages/s3fs/core.py", line 1677, in _rm

await asyncio.gather(

File "/srv/conda/envs/notebook/lib/python3.8/site-packages/s3fs/core.py", line 1657, in _bulk_delete

await self._call_s3("delete_objects", kwargs, Bucket=bucket, Delete=delete_keys)

File "/srv/conda/envs/notebook/lib/python3.8/site-packages/s3fs/core.py", line 267, in _call_s3

err = translate_boto_error(err)

File "/srv/conda/envs/notebook/lib/python3.8/site-packages/s3fs/errors.py", line 142, in translate_boto_error

code = error.response["Error"].get("Code")

TypeError: 'NoneType' object is not subscriptable

```

</details>

|

process

|

🛑 processing failed typeerror overview typeerror found in processing task task during run ended on details flow name recovered inst dpc ctd instrument recovered task name processing task error type typeerror error message nonetype object is not subscriptable traceback traceback most recent call last file srv conda envs notebook lib site packages ooi harvester processor pipeline py line in processing final path finalize zarr file srv conda envs notebook lib site packages ooi harvester processor init py line in finalize zarr source store fs delete source store root recursive true file srv conda envs notebook lib site packages fsspec spec py line in delete return self rm path recursive recursive maxdepth maxdepth file srv conda envs notebook lib site packages fsspec asyn py line in wrapper return sync self loop func args kwargs file srv conda envs notebook lib site packages fsspec asyn py line in sync raise result file srv conda envs notebook lib site packages fsspec asyn py line in runner result await coro file srv conda envs notebook lib site packages core py line in rm await asyncio gather file srv conda envs notebook lib site packages core py line in bulk delete await self call delete objects kwargs bucket bucket delete delete keys file srv conda envs notebook lib site packages core py line in call err translate boto error err file srv conda envs notebook lib site packages errors py line in translate boto error code error response get code typeerror nonetype object is not subscriptable

| 1

|

49,975

| 7,549,130,628

|

IssuesEvent

|

2018-04-18 13:27:41

|

pburtchaell/redux-promise-middleware

|

https://api.github.com/repos/pburtchaell/redux-promise-middleware

|

opened

|

Fix 404 Error in Complex Example

|

documentation good-first-issue

|

The complex example is throwing a 404 error. It's probably best to redevelop this example to use some fun public API—like the [Cat API](http://thecatapi.com/).

|

1.0

|

Fix 404 Error in Complex Example - The complex example is throwing a 404 error. It's probably best to redevelop this example to use some fun public API—like the [Cat API](http://thecatapi.com/).

|

non_process

|

fix error in complex example the complex example is throwing a error it s probably best to redevelop this example to use some fun public api—like the

| 0

|

1,685

| 4,328,565,518

|

IssuesEvent

|

2016-07-26 14:25:43

|

CGAL/cgal

|

https://api.github.com/repos/CGAL/cgal

|

closed

|

Add light display on point sets with normals in Polyhedron demo

|

CGAL 3D demo feature request Pkg::Point_set_processing

|

When point sets have normals, a better and clearer display can be done using light (similarly to a polyhedron).

(@maxGimeno If you add this to your todo-list, this is [the commit](https://github.com/CGAL/cgal-dev/commit/455843e1bf01faf87eda7994b4c715d7ea173b6d) where we worked on it together last time, although you can't use it directly because it modifies other unrelated files – sorry about that, I should have separated it.)

|

1.0

|

Add light display on point sets with normals in Polyhedron demo - When point sets have normals, a better and clearer display can be done using light (similarly to a polyhedron).

(@maxGimeno If you add this to your todo-list, this is [the commit](https://github.com/CGAL/cgal-dev/commit/455843e1bf01faf87eda7994b4c715d7ea173b6d) where we worked on it together last time, although you can't use it directly because it modifies other unrelated files – sorry about that, I should have separated it.)

|

process

|

add light display on point sets with normals in polyhedron demo when point sets have normals a better and clearer display can be done using light similarly to a polyhedron maxgimeno if you add this to your todo list this is where we worked on it together last time although you can t use it directly because it modifies other unrelated files – sorry about that i should have separated it

| 1

|

15,064

| 18,764,637,536

|

IssuesEvent

|

2021-11-05 21:18:14

|

ORNL-AMO/AMO-Tools-Desktop

|

https://api.github.com/repos/ORNL-AMO/AMO-Tools-Desktop

|

closed

|

PHAST flue gas property suite error

|

bug Process Heating

|

Reproduce

- Need to remove save() method if condition around line 200 flue-gas-losses-form-mass for bug to appear. This was a temprorary fix

- Go to PHAST --> flue gas --> switching from gas to solid/liquid

See last comments of #435 for problems related to this

|

1.0

|

PHAST flue gas property suite error - Reproduce

- Need to remove save() method if condition around line 200 flue-gas-losses-form-mass for bug to appear. This was a temprorary fix

- Go to PHAST --> flue gas --> switching from gas to solid/liquid

See last comments of #435 for problems related to this

|

process

|

phast flue gas property suite error reproduce need to remove save method if condition around line flue gas losses form mass for bug to appear this was a temprorary fix go to phast flue gas switching from gas to solid liquid see last comments of for problems related to this

| 1

|

1,529

| 4,118,762,887

|

IssuesEvent

|

2016-06-08 12:48:45

|

World4Fly/Interface-for-Arduino

|

https://api.github.com/repos/World4Fly/Interface-for-Arduino

|

closed

|

Control Tetris from application

|

enhancement process

|

- [ ] Create mind concept (interaction between tabs and accessing Arduino information)

|

1.0

|

Control Tetris from application - - [ ] Create mind concept (interaction between tabs and accessing Arduino information)

|

process

|

control tetris from application create mind concept interaction between tabs and accessing arduino information

| 1

|

367,876

| 10,862,443,149

|

IssuesEvent

|

2019-11-14 13:19:36

|

mozilla/voice-web

|

https://api.github.com/repos/mozilla/voice-web

|

opened

|

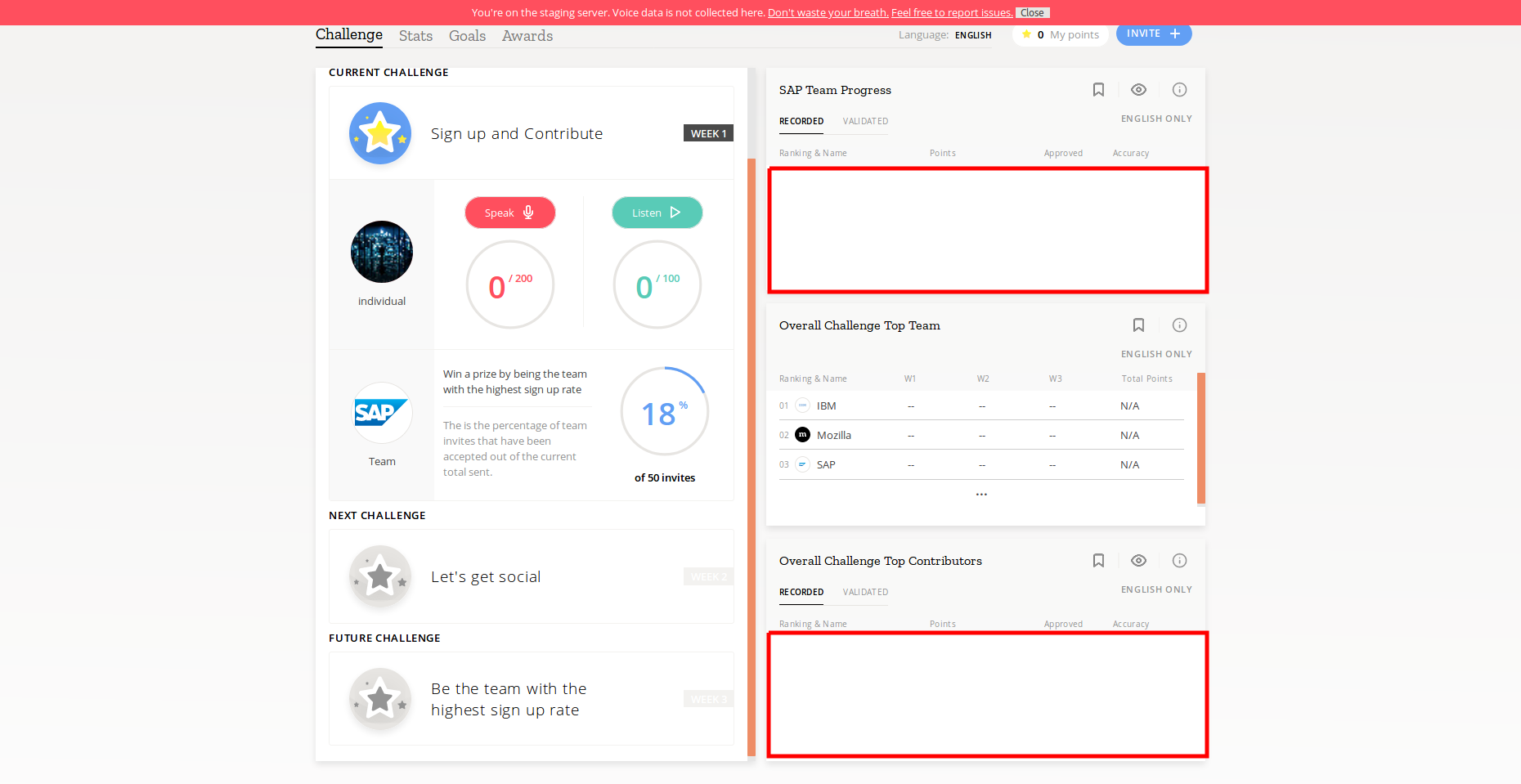

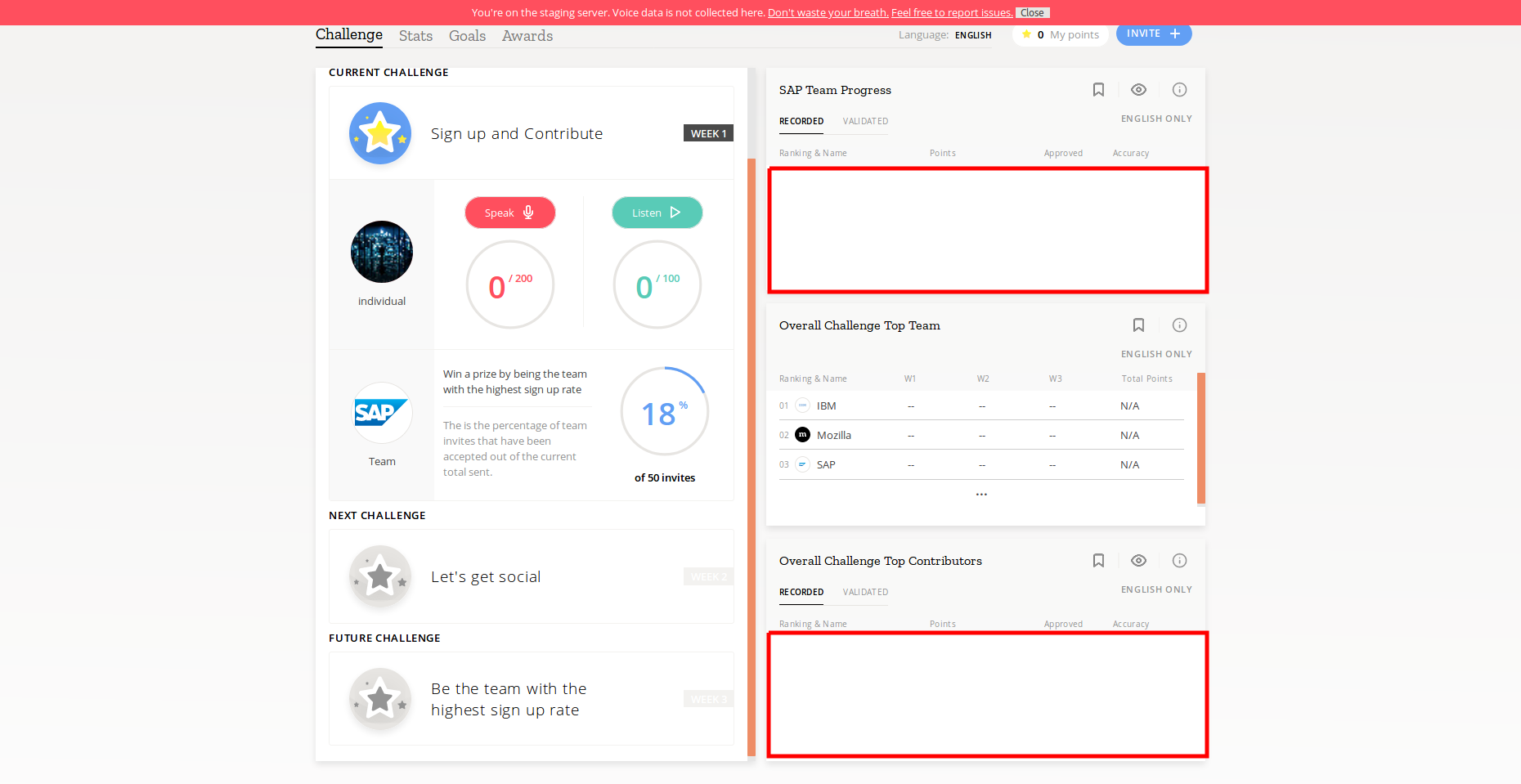

"Team Progress" and "Top Contributors" dashboards are empty, even though people signed up and contributed to the challenge

|

Priority: P0 Type: Bug voice-challenge

|

I used several test accounts to sign up for the challenge in the SAP team

I also contributed with some of those accounts.

Though, the "SAP Team Progress" and "Overall Challenge Top Contributors" dashboards are still empty. Users who accepted the SAP team invite to the challenge should be displayed here.

|

1.0

|

"Team Progress" and "Top Contributors" dashboards are empty, even though people signed up and contributed to the challenge - I used several test accounts to sign up for the challenge in the SAP team

I also contributed with some of those accounts.

Though, the "SAP Team Progress" and "Overall Challenge Top Contributors" dashboards are still empty. Users who accepted the SAP team invite to the challenge should be displayed here.

|

non_process

|

team progress and top contributors dashboards are empty even though people signed up and contributed to the challenge i used several test accounts to sign up for the challenge in the sap team i also contributed with some of those accounts though the sap team progress and overall challenge top contributors dashboards are still empty users who accepted the sap team invite to the challenge should be displayed here

| 0

|

13,027

| 15,380,470,353

|

IssuesEvent

|

2021-03-02 21:09:05

|

Ultimate-Hosts-Blacklist/whitelist

|

https://api.github.com/repos/Ultimate-Hosts-Blacklist/whitelist

|

closed

|

[FALSE-POSITIVE?] fossbytes.com

|

waiting for Mitch whitelisting process

|

I feel `fossbytes.com` shouldn't be blocked. It's just a technology website / blog.

|

1.0

|

[FALSE-POSITIVE?] fossbytes.com - I feel `fossbytes.com` shouldn't be blocked. It's just a technology website / blog.

|

process

|

fossbytes com i feel fossbytes com shouldn t be blocked it s just a technology website blog

| 1

|

1,407

| 3,971,515,885

|

IssuesEvent

|

2016-05-04 12:16:43

|

opentrials/opentrials

|

https://api.github.com/repos/opentrials/opentrials

|

closed

|

Processors: improvements

|

enhancement Processors

|

### Base

- [x] remove `OPENTRIALS_` prefix from env vars?

- [x] check `facts` search uses index

- [x] add time checks for `mapper` stack - map only updated/created items (https://github.com/opentrials/opentrials/issues/105)

- [x] improve papertrail logging formatter (https://github.com/opentrials/opentrials/issues/86)

- [x] remove `trials_trialrecords` table because it's not a `m2m` relationship?

- [x] use some kind of `slug` to improve `Finder` system (now it uses `person.name` for example)

- [x] refactor `Finder`

- [x] move column creation to `api` migration

- [x] adjust extractor (registers) priorities

- [x] merge `links` and `facts`?

- [x] use `facts` analogue for things like `person.name` (and:`primary_facts` + or:`secondary_facts`)?

- [x] don't md5 while slugifying scientific_titles?

- [x] ~~add periodic logger.info like scrapy does: `Translated 50 trials (at 50 trials/min)` (speed is missing)~~

- [x] merge records on entity entries? (https://github.com/opentrials/opentrials/issues/103)

### Concrete

- [x] all - fix `extractors` todos with additional `scraper` work (https://github.com/opentrials/opentrials/issues/104)

|

1.0

|

Processors: improvements - ### Base

- [x] remove `OPENTRIALS_` prefix from env vars?

- [x] check `facts` search uses index

- [x] add time checks for `mapper` stack - map only updated/created items (https://github.com/opentrials/opentrials/issues/105)

- [x] improve papertrail logging formatter (https://github.com/opentrials/opentrials/issues/86)

- [x] remove `trials_trialrecords` table because it's not a `m2m` relationship?

- [x] use some kind of `slug` to improve `Finder` system (now it uses `person.name` for example)

- [x] refactor `Finder`

- [x] move column creation to `api` migration

- [x] adjust extractor (registers) priorities

- [x] merge `links` and `facts`?

- [x] use `facts` analogue for things like `person.name` (and:`primary_facts` + or:`secondary_facts`)?

- [x] don't md5 while slugifying scientific_titles?

- [x] ~~add periodic logger.info like scrapy does: `Translated 50 trials (at 50 trials/min)` (speed is missing)~~

- [x] merge records on entity entries? (https://github.com/opentrials/opentrials/issues/103)

### Concrete

- [x] all - fix `extractors` todos with additional `scraper` work (https://github.com/opentrials/opentrials/issues/104)

|

process

|

processors improvements base remove opentrials prefix from env vars check facts search uses index add time checks for mapper stack map only updated created items improve papertrail logging formatter remove trials trialrecords table because it s not a relationship use some kind of slug to improve finder system now it uses person name for example refactor finder move column creation to api migration adjust extractor registers priorities merge links and facts use facts analogue for things like person name and primary facts or secondary facts don t while slugifying scientific titles add periodic logger info like scrapy does translated trials at trials min speed is missing merge records on entity entries concrete all fix extractors todos with additional scraper work

| 1

|

165,273

| 12,835,820,835

|

IssuesEvent

|

2020-07-07 13:28:55

|

softmatterlab/Braph-2.0-Matlab

|

https://api.github.com/repos/softmatterlab/Braph-2.0-Matlab

|

closed

|

ComparisonDTI

|

analysis test

|

**Branch from and merge to gv-analysis-comparison**

- [ ] ComparisonDTI

- [ ] test_ComparisonDTI

Use as reference ComparisonMRI

Double-check with @egolol, see issue #525

|

1.0

|

ComparisonDTI - **Branch from and merge to gv-analysis-comparison**

- [ ] ComparisonDTI

- [ ] test_ComparisonDTI

Use as reference ComparisonMRI

Double-check with @egolol, see issue #525

|

non_process

|

comparisondti branch from and merge to gv analysis comparison comparisondti test comparisondti use as reference comparisonmri double check with egolol see issue

| 0

|

48,708

| 6,102,612,459

|

IssuesEvent

|

2017-06-20 16:52:27

|

mozilla/network-pulse

|

https://api.github.com/repos/mozilla/network-pulse

|

closed

|

Form: identify if submitter is creator

|

design

|

Let's add a field to the form for submitters to identify whether they own the thing or not.

|

1.0

|

Form: identify if submitter is creator - Let's add a field to the form for submitters to identify whether they own the thing or not.

|

non_process

|

form identify if submitter is creator let s add a field to the form for submitters to identify whether they own the thing or not

| 0

|

2,123

| 4,963,797,915

|

IssuesEvent

|

2016-12-03 12:39:01

|

jlm2017/jlm-video-subtitles

|

https://api.github.com/repos/jlm2017/jlm-video-subtitles

|

closed

|

[subtitles] [FR] Revue de la semaine #9

|

Language: French Process: [6] Approved

|

Video title

RDLS #9 - YOUTUBE, PROGRAMME, FILLON, ANIMAUX POLLINISATEURS EN DANGER

URL

https://www.youtube.com/watch?v=ekBMfrb14xA

Youtube subtitles language

Francais

Duration

23:20

Subtitles URL

https://www.youtube.com/timedtext_editor?lang=fr&tab=captions&v=ekBMfrb14xA&ui=hd&action_mde_edit_form=1&ref=player&bl=vmp

|

1.0

|

[subtitles] [FR] Revue de la semaine #9 - Video title

RDLS #9 - YOUTUBE, PROGRAMME, FILLON, ANIMAUX POLLINISATEURS EN DANGER

URL

https://www.youtube.com/watch?v=ekBMfrb14xA

Youtube subtitles language

Francais

Duration

23:20

Subtitles URL

https://www.youtube.com/timedtext_editor?lang=fr&tab=captions&v=ekBMfrb14xA&ui=hd&action_mde_edit_form=1&ref=player&bl=vmp

|

process

|

revue de la semaine video title rdls youtube programme fillon animaux pollinisateurs en danger url youtube subtitles language francais duration subtitles url

| 1

|

3,886

| 6,818,128,042

|

IssuesEvent

|

2017-11-07 03:18:55

|

Great-Hill-Corporation/quickBlocks

|

https://api.github.com/repos/Great-Hill-Corporation/quickBlocks

|

closed

|

Improvement at getTokenBal when token or address does not exist

|

status-inprocess tools-getTokenBal type-enhancement

|

It will be a nice improvement if we can detect when the user made a mistake and either the token or the account does not exist.

When I run test cases using fake values with valid format like the following, at the first example the token is just an arbitrary value, on the second both are made up values:

./getTokenBal 0xd26114cd6EE289AccF82350c8d84870000000000 0x5e44c3e467a49c9ca0296a9f130fc43304000000

./getTokenBal 0xd26114cd6EE289AccF82350c8d84870000000000 0x5e44c3e467a49c9ca0296a9f130fc43304000000

In these scenarios we report that it is a 0 balance when maybe we can warn the user that they do not exist. I do not know if this is feasible, but would help to detect when you made at typo entering an invalid digit at your address or token.

|

1.0

|

Improvement at getTokenBal when token or address does not exist - It will be a nice improvement if we can detect when the user made a mistake and either the token or the account does not exist.

When I run test cases using fake values with valid format like the following, at the first example the token is just an arbitrary value, on the second both are made up values:

./getTokenBal 0xd26114cd6EE289AccF82350c8d84870000000000 0x5e44c3e467a49c9ca0296a9f130fc43304000000

./getTokenBal 0xd26114cd6EE289AccF82350c8d84870000000000 0x5e44c3e467a49c9ca0296a9f130fc43304000000

In these scenarios we report that it is a 0 balance when maybe we can warn the user that they do not exist. I do not know if this is feasible, but would help to detect when you made at typo entering an invalid digit at your address or token.

|

process

|

improvement at gettokenbal when token or address does not exist it will be a nice improvement if we can detect when the user made a mistake and either the token or the account does not exist when i run test cases using fake values with valid format like the following at the first example the token is just an arbitrary value on the second both are made up values gettokenbal gettokenbal in these scenarios we report that it is a balance when maybe we can warn the user that they do not exist i do not know if this is feasible but would help to detect when you made at typo entering an invalid digit at your address or token

| 1

|

10,180

| 13,044,162,852

|

IssuesEvent

|

2020-07-29 03:47:37

|

tikv/tikv

|

https://api.github.com/repos/tikv/tikv

|

closed

|

UCP: Migrate scalar function `LeastReal` from TiDB

|

challenge-program-2 component/coprocessor difficulty/easy sig/coprocessor

|

## Description

Port the scalar function `LeastReal` from TiDB to coprocessor.

## Score

* 50

## Mentor(s)

* @mapleFU

## Recommended Skills

* Rust programming

## Learning Materials

Already implemented expressions ported from TiDB

- https://github.com/tikv/tikv/tree/master/components/tidb_query/src/rpn_expr)

- https://github.com/tikv/tikv/tree/master/components/tidb_query/src/expr)

|

2.0

|

UCP: Migrate scalar function `LeastReal` from TiDB -

## Description

Port the scalar function `LeastReal` from TiDB to coprocessor.

## Score

* 50

## Mentor(s)

* @mapleFU

## Recommended Skills

* Rust programming

## Learning Materials

Already implemented expressions ported from TiDB

- https://github.com/tikv/tikv/tree/master/components/tidb_query/src/rpn_expr)

- https://github.com/tikv/tikv/tree/master/components/tidb_query/src/expr)

|

process

|

ucp migrate scalar function leastreal from tidb description port the scalar function leastreal from tidb to coprocessor score mentor s maplefu recommended skills rust programming learning materials already implemented expressions ported from tidb

| 1

|

51,145

| 13,190,289,230

|

IssuesEvent

|

2020-08-13 09:55:47

|

ESA-VirES/WebClient-Framework

|

https://api.github.com/repos/ESA-VirES/WebClient-Framework

|

opened

|

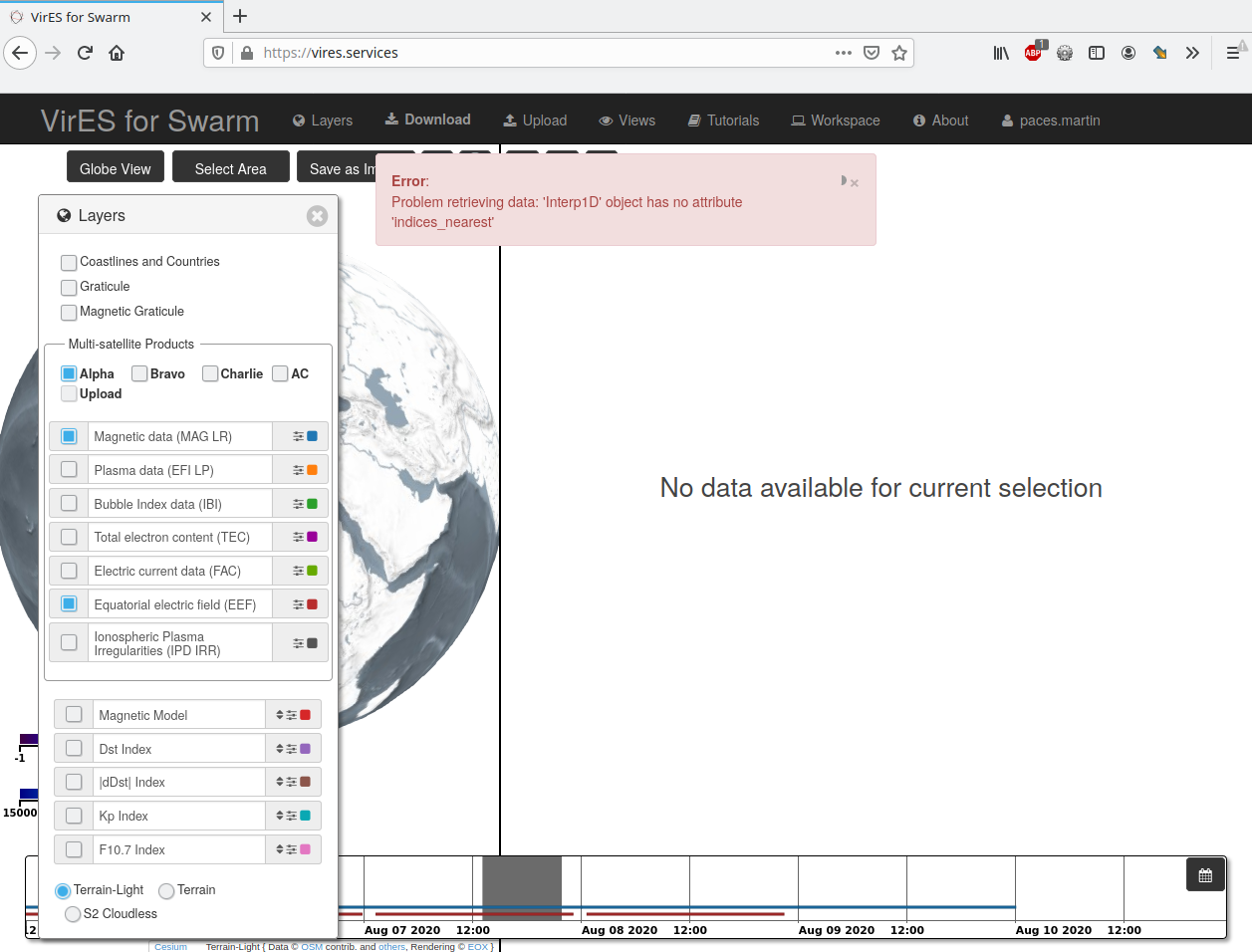

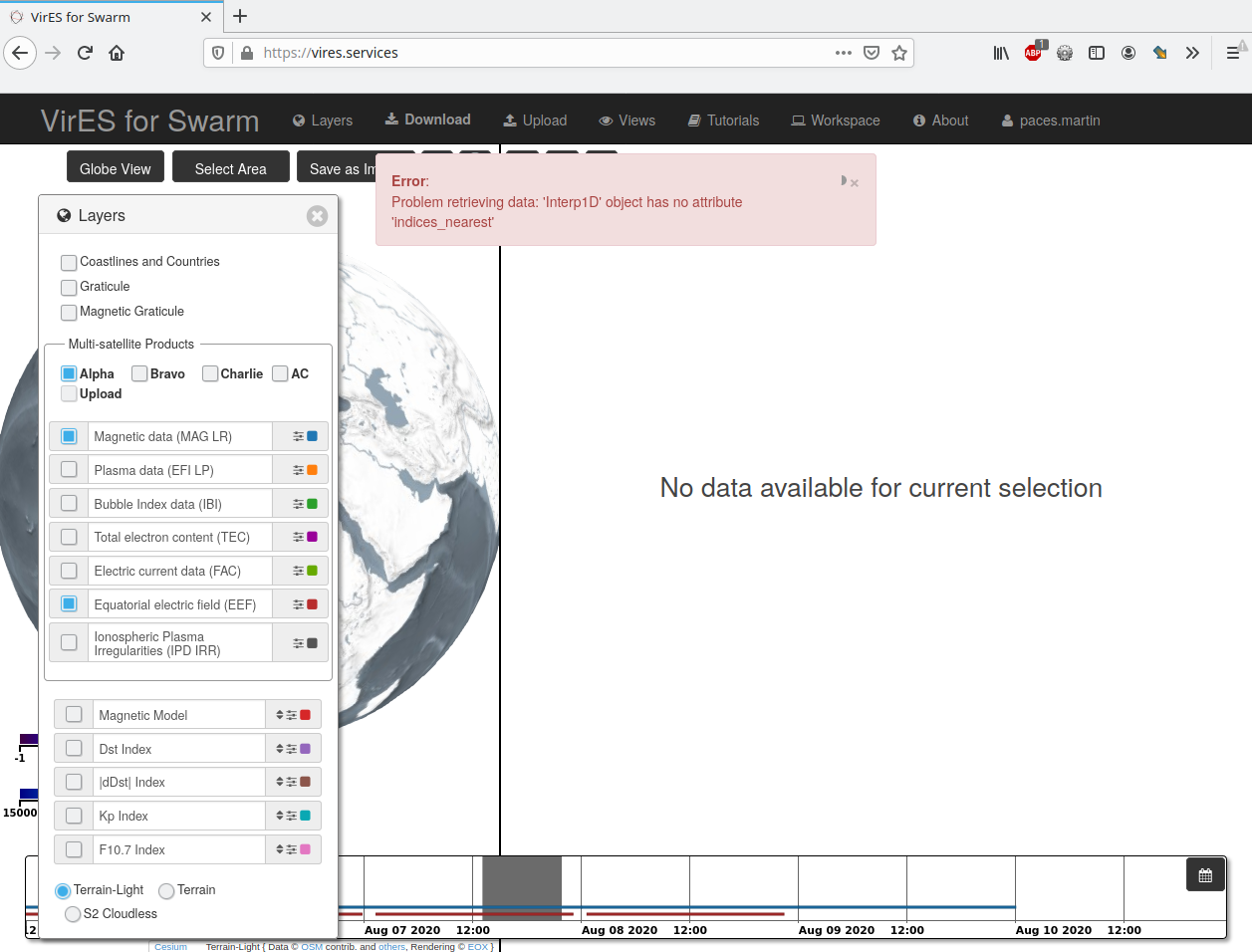

Broken server-side interpolation of the EEF data.

|

defect

|

When selecting MAG and EEF data the server responds with following error:

```

Error: Problem retrieving data: 'Interp1D' object has no attribute 'indices_nearest'

```

This is a regression introduces in v3.3.0.

Observed on the production instance.

Already fixed on staging.

FAO @lmar76

|

1.0

|

Broken server-side interpolation of the EEF data. - When selecting MAG and EEF data the server responds with following error:

```

Error: Problem retrieving data: 'Interp1D' object has no attribute 'indices_nearest'

```

This is a regression introduces in v3.3.0.

Observed on the production instance.

Already fixed on staging.

FAO @lmar76

|

non_process

|

broken server side interpolation of the eef data when selecting mag and eef data the server responds with following error error problem retrieving data object has no attribute indices nearest this is a regression introduces in observed on the production instance already fixed on staging fao

| 0

|

14,494

| 17,604,292,545

|

IssuesEvent

|

2021-08-17 15:13:32

|

qgis/QGIS-Documentation

|

https://api.github.com/repos/qgis/QGIS-Documentation

|

closed

|

port more Processing algorithms to C++ (Request in QGIS)

|

Processing Alg 3.14

|

### Request for documentation

From pull request QGIS/qgis#36372

Author: @alexbruy

QGIS version: 3.14

**port more Processing algorithms to C++**

### PR Description:

## Description

Port some Processing algorithms to C++:

- Split Vector Layer

- PostGIS Execute SQL

- SpatiaLite Execute SQL

- Polygonize

- Snap Geometries

### Commits tagged with [need-docs] or [FEATURE]

* [needs-docs] Add optional parameter for output file type to the vector split algorithm" (#5508)

* "[processing][feature] Add algorithm for executing SQL queries against registered SpatiaLite databases" (#5509)

|

1.0

|

port more Processing algorithms to C++ (Request in QGIS) - ### Request for documentation

From pull request QGIS/qgis#36372

Author: @alexbruy

QGIS version: 3.14

**port more Processing algorithms to C++**

### PR Description:

## Description

Port some Processing algorithms to C++:

- Split Vector Layer

- PostGIS Execute SQL

- SpatiaLite Execute SQL

- Polygonize

- Snap Geometries

### Commits tagged with [need-docs] or [FEATURE]

* [needs-docs] Add optional parameter for output file type to the vector split algorithm" (#5508)

* "[processing][feature] Add algorithm for executing SQL queries against registered SpatiaLite databases" (#5509)

|

process

|

port more processing algorithms to c request in qgis request for documentation from pull request qgis qgis author alexbruy qgis version port more processing algorithms to c pr description description port some processing algorithms to c split vector layer postgis execute sql spatialite execute sql polygonize snap geometries commits tagged with or add optional parameter for output file type to the vector split algorithm add algorithm for executing sql queries against registered spatialite databases

| 1

|

5,916

| 8,736,243,043

|

IssuesEvent

|

2018-12-11 18:59:33

|

ipfs/go-ipfs

|

https://api.github.com/repos/ipfs/go-ipfs

|

opened

|

Improving work tracking and prioritization

|

process

|

## Goal

Based on voting in #5819. We want to track ongoing and upcoming work in a way that makes progress and priorities clear to everyone - team members and the broader community. And it should make managing priorities easy for technical leads. In this issue we're going to discuss (and decide on) something to try first.

## Summary

*eingenito* - I wish we had a place where we could easily track progress on our highest priority initiatives. [mentions 'meta' issues]

*eingenito* - I wish I understood which issues are the ones that should be worked on.

*hannahhoward* - I wish we marked issues as "good first time issue" for first time contributors [we do have difficulty:easy which might be the same thing]

*DonaldTsang* - [mentions Kanban or similar for tracking work]

*eingenito* - I wish I knew what to do with all the old issues in go-ipfs. [they slow down waffle boards]

|

1.0

|

Improving work tracking and prioritization - ## Goal

Based on voting in #5819. We want to track ongoing and upcoming work in a way that makes progress and priorities clear to everyone - team members and the broader community. And it should make managing priorities easy for technical leads. In this issue we're going to discuss (and decide on) something to try first.

## Summary

*eingenito* - I wish we had a place where we could easily track progress on our highest priority initiatives. [mentions 'meta' issues]

*eingenito* - I wish I understood which issues are the ones that should be worked on.

*hannahhoward* - I wish we marked issues as "good first time issue" for first time contributors [we do have difficulty:easy which might be the same thing]

*DonaldTsang* - [mentions Kanban or similar for tracking work]

*eingenito* - I wish I knew what to do with all the old issues in go-ipfs. [they slow down waffle boards]

|

process

|

improving work tracking and prioritization goal based on voting in we want to track ongoing and upcoming work in a way that makes progress and priorities clear to everyone team members and the broader community and it should make managing priorities easy for technical leads in this issue we re going to discuss and decide on something to try first summary eingenito i wish we had a place where we could easily track progress on our highest priority initiatives eingenito i wish i understood which issues are the ones that should be worked on hannahhoward i wish we marked issues as good first time issue for first time contributors donaldtsang eingenito i wish i knew what to do with all the old issues in go ipfs

| 1

|

15,292

| 19,296,163,005

|

IssuesEvent

|

2021-12-12 16:19:32

|

varabyte/kobweb

|

https://api.github.com/repos/varabyte/kobweb

|

closed

|

Audit how API routes work when users don't explicitly do anything

|

process

|

```

@Api

fun someRoute(ctx) {

// Do nothing

}

```

right now, this sends an empty "200" response, the idea being that the user defined the endpoint, so that's enough. But I'm not sure if that's intuitive, or if instead we should require the user do _something_:

```

@Api

fun someRoute(ctx) {

ctx.res.status = 200 // body automatically set to "" if not already set

}

```

or

```

@Api

fun someRoute(ctx) {

ctx.res.body = "..." // status automatically set to 200 if not already set

}

```

This way, we can write code like:

```

@Api

fun someRoute(ctx) {

if (ctx.req.method == GET) {

ctx.res.body = ...

}

}

```

and other methods will return a 400

|

1.0

|

Audit how API routes work when users don't explicitly do anything - ```

@Api

fun someRoute(ctx) {

// Do nothing

}

```

right now, this sends an empty "200" response, the idea being that the user defined the endpoint, so that's enough. But I'm not sure if that's intuitive, or if instead we should require the user do _something_:

```

@Api

fun someRoute(ctx) {

ctx.res.status = 200 // body automatically set to "" if not already set

}

```

or

```

@Api

fun someRoute(ctx) {

ctx.res.body = "..." // status automatically set to 200 if not already set

}

```

This way, we can write code like:

```

@Api

fun someRoute(ctx) {

if (ctx.req.method == GET) {

ctx.res.body = ...

}

}

```

and other methods will return a 400

|

process

|

audit how api routes work when users don t explicitly do anything api fun someroute ctx do nothing right now this sends an empty response the idea being that the user defined the endpoint so that s enough but i m not sure if that s intuitive or if instead we should require the user do something api fun someroute ctx ctx res status body automatically set to if not already set or api fun someroute ctx ctx res body status automatically set to if not already set this way we can write code like api fun someroute ctx if ctx req method get ctx res body and other methods will return a

| 1

|

10,044

| 13,044,161,638

|

IssuesEvent

|

2020-07-29 03:47:24

|

tikv/tikv

|

https://api.github.com/repos/tikv/tikv

|

closed

|

UCP: Migrate scalar function `TimeFormat` from TiDB

|

challenge-program-2 component/coprocessor difficulty/easy sig/coprocessor

|

## Description

Port the scalar function `TimeFormat` from TiDB to coprocessor.

## Score

* 50

## Mentor(s)

* @sticnarf

## Recommended Skills

* Rust programming

## Learning Materials

Already implemented expressions ported from TiDB

- https://github.com/tikv/tikv/tree/master/components/tidb_query/src/rpn_expr

- https://github.com/tikv/tikv/tree/master/components/tidb_query/src/expr

|

2.0

|

UCP: Migrate scalar function `TimeFormat` from TiDB -

## Description

Port the scalar function `TimeFormat` from TiDB to coprocessor.

## Score

* 50

## Mentor(s)

* @sticnarf

## Recommended Skills

* Rust programming

## Learning Materials

Already implemented expressions ported from TiDB

- https://github.com/tikv/tikv/tree/master/components/tidb_query/src/rpn_expr

- https://github.com/tikv/tikv/tree/master/components/tidb_query/src/expr

|

process

|

ucp migrate scalar function timeformat from tidb description port the scalar function timeformat from tidb to coprocessor score mentor s sticnarf recommended skills rust programming learning materials already implemented expressions ported from tidb

| 1

|

792,988

| 27,979,318,684

|

IssuesEvent

|

2023-03-26 00:30:32

|

zephyrproject-rtos/zephyr

|

https://api.github.com/repos/zephyrproject-rtos/zephyr

|

closed

|

Annoying Slirp Message console output from qemu_x86 board target

|

bug priority: low area: QEMU Stale

|

**Describe the bug**

The easiest way to use networking with QEMU is user mode with Slirp, where QEMU provides the (TCP|UDP)/IP stack. Zephyr works like a charm; however, the QEMU version shipped with the Zephyr SDK litters the console output with `qemu-system-i386: Slirp: Failed to send packet, ret: -1` messages. As far as I could trace this output back, it does not originate from Zephyr RTOS but from QEMU itself, and it is a false alarm: QEMU was able to send the packet, and I can see the response from the server.

**To Reproduce**

Steps to reproduce the behavior:

1. cd samples/net/dhcpv4_client/

2. mkdir boards

3. save file qemu_x86.conf here (see below)

4. mkdir build; cd build

5. cmake -GNinja -DBOARD=qemu\_x86

6. ninja run

7. See console output

**Expected behavior**

QEMU should remain silent: there is no error. The IP packet is transmitted by QEMU user mode just fine.

**Impact**

Each IP packet results in one `qemu-system-i386: Slirp: Failed to send packet, ret: -1` line on the console output. Debugging output of UDP applications is annoying, while debugging IP-packet-heavy TCP traffic from multiple connections is tedious.

**Logs and console output**

```

$ ninja run

[1/183] Preparing syscall dependency handling

[2/183] Generating include/generated/version.h

-- Zephyr version: 3.2.99 (/home/mark/zephyrproject/zephyr), build: zephyr-v3.2.0-1869-g9ebb9abab7b0

[168/183] Linking C executable zephyr/zephyr_pre0.elf

[172/183] Linking C executable zephyr/zephyr_pre1.elf

[182/183] Linking C executable zephyr/zephyr.elf

Memory region Used Size Region Size %age Used

RAM: 184352 B 3 MB 5.86%

IDT_LIST: 0 GB 2 KB 0.00%

[182/183] To exit from QEMU enter: 'CTRL+a, x'[QEMU] CPU: qemu32,+nx,+pae

SeaBIOS (version zephyr-v1.0.0-0-g31d4e0e-dirty-20200714_234759-fv-az50-zephyr)

iPXE (http://ipxe.org) 00:02.0 CA00 PCI2.10 PnP PMM+00392120+002F2120 CA00

Booting from ROM..

*** Booting Zephyr OS build zephyr-v3.2.0-1869-g9ebb9abab7b0 ***

[00:00:00.000,000] <inf> net_dhcpv4_client_sample: Run dhcpv4 client

uart:~$ qemu-system-i386: Slirp: Failed to send packet, ret: -1

qemu-system-i386: Slirp: Failed to send packet, ret: -1

[00:00:07.010,000] <inf> net_dhcpv4: Received: 10.0.2.15

[00:00:07.010,000] <inf> net_dhcpv4_client_sample: Your address: 10.0.2.15

[00:00:07.010,000] <inf> net_dhcpv4_client_sample: Lease time: 86400 seconds

[00:00:07.010,000] <inf> net_dhcpv4_client_sample: Subnet: 255.255.255.0

[00:00:07.010,000] <inf> net_dhcpv4_client_sample: Router: 10.0.2.2

uart:~$ qemu-system-i386: Slirp: Failed to send packet, ret: -1

```

**Environment (please complete the following information):**

- OS: Ubuntu 22.04

- Toolchain: Zephyr SDK

- Version: 9ebb9abab7b0d662e0d5379367fb7f052959c545

**Additional context**

qemu_x86.conf:

```

CONFIG_FPU=y

CONFIG_PCIE=y

CONFIG_NET_L2_ETHERNET=y

CONFIG_NET_QEMU_ETHERNET=y

CONFIG_NET_QEMU_USER=y

CONFIG_NET_CONFIG_PEER_IPV4_ADDR="10.0.2.2"

```

This config tells `ninja run` to use the QEMU-internal virtual network instead of the more complicated tap0 network (with ifconfig, dnsmasq and/or dhcpd), which has to be set up manually and is more error-prone than the virtual QEMU-internal network.

|

1.0

|

Annoying Slirp Message console output from qemu_x86 board target - **Describe the bug**

The easiest way to use networking with QEMU is user mode with Slirp, where QEMU provides the (TCP|UDP)/IP stack. Zephyr works like a charm; however, the QEMU version shipped with the Zephyr SDK litters the console output with `qemu-system-i386: Slirp: Failed to send packet, ret: -1` messages. As far as I could trace this output back, it does not originate from Zephyr RTOS but from QEMU itself, and it is a false alarm: QEMU was able to send the packet, and I can see the response from the server.

**To Reproduce**

Steps to reproduce the behavior:

1. cd samples/net/dhcpv4_client/

2. mkdir boards

3. save file qemu_x86.conf here (see below)

4. mkdir build; cd build

5. cmake -GNinja -DBOARD=qemu\_x86

6. ninja run

7. See console output

**Expected behavior**

QEMU should remain silent: there is no error. The IP packet is transmitted by QEMU user mode just fine.

**Impact**

Each IP packet results in one `qemu-system-i386: Slirp: Failed to send packet, ret: -1` line on the console output. Debugging output of UDP applications is annoying, while debugging IP-packet-heavy TCP traffic from multiple connections is tedious.

**Logs and console output**

```

$ ninja run

[1/183] Preparing syscall dependency handling

[2/183] Generating include/generated/version.h

-- Zephyr version: 3.2.99 (/home/mark/zephyrproject/zephyr), build: zephyr-v3.2.0-1869-g9ebb9abab7b0

[168/183] Linking C executable zephyr/zephyr_pre0.elf

[172/183] Linking C executable zephyr/zephyr_pre1.elf

[182/183] Linking C executable zephyr/zephyr.elf

Memory region Used Size Region Size %age Used

RAM: 184352 B 3 MB 5.86%

IDT_LIST: 0 GB 2 KB 0.00%

[182/183] To exit from QEMU enter: 'CTRL+a, x'[QEMU] CPU: qemu32,+nx,+pae

SeaBIOS (version zephyr-v1.0.0-0-g31d4e0e-dirty-20200714_234759-fv-az50-zephyr)

iPXE (http://ipxe.org) 00:02.0 CA00 PCI2.10 PnP PMM+00392120+002F2120 CA00

Booting from ROM..

*** Booting Zephyr OS build zephyr-v3.2.0-1869-g9ebb9abab7b0 ***

[00:00:00.000,000] <inf> net_dhcpv4_client_sample: Run dhcpv4 client

uart:~$ qemu-system-i386: Slirp: Failed to send packet, ret: -1

qemu-system-i386: Slirp: Failed to send packet, ret: -1

[00:00:07.010,000] <inf> net_dhcpv4: Received: 10.0.2.15

[00:00:07.010,000] <inf> net_dhcpv4_client_sample: Your address: 10.0.2.15

[00:00:07.010,000] <inf> net_dhcpv4_client_sample: Lease time: 86400 seconds

[00:00:07.010,000] <inf> net_dhcpv4_client_sample: Subnet: 255.255.255.0

[00:00:07.010,000] <inf> net_dhcpv4_client_sample: Router: 10.0.2.2

uart:~$ qemu-system-i386: Slirp: Failed to send packet, ret: -1

```

**Environment (please complete the following information):**

- OS: Ubuntu 22.04

- Toolchain: Zephyr SDK

- Version: 9ebb9abab7b0d662e0d5379367fb7f052959c545

**Additional context**

qemu_x86.conf:

```

CONFIG_FPU=y

CONFIG_PCIE=y

CONFIG_NET_L2_ETHERNET=y

CONFIG_NET_QEMU_ETHERNET=y

CONFIG_NET_QEMU_USER=y

CONFIG_NET_CONFIG_PEER_IPV4_ADDR="10.0.2.2"

```

This config tells `ninja run` to use the QEMU-internal virtual network instead of the more complicated tap0 network (with ifconfig, dnsmasq and/or dhcpd), which has to be set up manually and is more error-prone than the virtual QEMU-internal network.

|

non_process

|

annoying slirp message console output from qemu board target describe the bug the easiest way to use networking with qemu is in user mode with slirp here qemu provides the tcp udp ip stack zephyr works just like a charme however the qemu version zephyr sdk ships litters the console output with qemu system slirp failed to send packet ret messages as far as i could trace this output back it does not originate from zephyr rtos but from qemu itself and is false alarm qemu was able to send the packet i can see the response from the server to reproduce steps to reproduce the behavior cd samples net client mkdir boards save file qemu conf here see below mkdir build cd build cmake gninja dboard qemu ninja run see console output expected behavior qemu shall remain silent there is no error the ip packet gets transmitted by the qemu user mode just fine impact each ip packet results in one qemu system slirp failed to send packet ret line on the console output debugging output of udp applications is annoying while debugging ip packet heavy tcp traffic from multiple connections is tedious logs and console output ninja run preparing syscall dependency handling generating include generated version h zephyr version home mark zephyrproject zephyr build zephyr linking c executable zephyr zephyr elf linking c executable zephyr zephyr elf linking c executable zephyr zephyr elf memory region used size region size age used ram b mb idt list gb kb to exit from qemu enter ctrl a x cpu nx pae seabios version zephyr dirty fv zephyr ipxe pnp pmm booting from rom booting zephyr os build zephyr net client sample run client uart qemu system slirp failed to send packet ret qemu system slirp failed to send packet ret net received net client sample your address net client sample lease time seconds net client sample subnet net client sample router uart qemu system slirp failed to send packet ret environment please complete the following information os ubuntu toolchain zephyr sdk version additional 
context qemu conf config fpu y config pcie y config net ethernet y config net qemu ethernet y config net qemu user y config net config peer addr this config advises ninja run to use the qemu internal virtual network instead of the more complicated network with ifconfig dnsmasq and or dhcpd which has to be set up manually and is more error prone than the virtual qemu internal network

| 0

|

10,550

| 13,338,592,475

|

IssuesEvent

|

2020-08-28 11:20:12

|

prisma/prisma

|

https://api.github.com/repos/prisma/prisma

|

opened

|

Move full `blog-env-postgresql` to e2e tests

|

kind/improvement process/candidate size/XS team/typescript

|

In our integration test suite, we have a test called `blog-env-postgresql`.

That test goes over the timeouts we have built-in and therefore takes a long time to succeed (about 30s).

We should move this full test instead into the e2e tests, which run async from the normal publish process, so we can iterate faster.

It's still valuable to have a minimal postgres test in the integration tests, so this test can be shortened.

|

1.0

|

Move full `blog-env-postgresql` to e2e tests - In our integration test suite, we have a test called `blog-env-postgresql`.

That test goes over the timeouts we have built-in and therefore takes a long time to succeed (about 30s).

We should move this full test instead into the e2e tests, which run async from the normal publish process, so we can iterate faster.

It's still valuable to have a minimal postgres test in the integration tests, so this test can be shortened.

|

process

|

move full blog env postgresql to tests in our integration test suite we have a test called blog env postgresql that test goes over the timeouts we have built in and therefore takes a long time to succeed about we should move this full test instead into the tests which run async from the normal publish process so we can iterate faster it s still valuable to have a minimal postgres test in the integration tests so this test can be shortened

| 1

|

15,441

| 19,656,243,773

|

IssuesEvent

|

2022-01-10 12:50:06

|

asam-ev/asam-project-guide

|

https://api.github.com/repos/asam-ev/asam-project-guide

|

opened

|

Add template for rules for Editorial Guide

|

processes

|

- [ ] Add template for rules to https://github.com/asam-ev/asam-project-guide/tree/main/doc/modules/compendium/examples.

- [ ] Add process to use template to https://github.com/asam-ev/asam-project-guide/blob/main/doc/modules/compendium/pages/writing_guidelines/documentation_processes.adoc.

|

1.0

|

Add template for rules for Editorial Guide - - [ ] Add template for rules to https://github.com/asam-ev/asam-project-guide/tree/main/doc/modules/compendium/examples.

- [ ] Add process to use template to https://github.com/asam-ev/asam-project-guide/blob/main/doc/modules/compendium/pages/writing_guidelines/documentation_processes.adoc.

|

process

|

add template for rules for editorial guide add template for rules to add process to use template to

| 1

|

8

| 2,496,223,929

|

IssuesEvent

|

2015-01-06 17:56:26

|

tinkerpop/tinkerpop3

|

https://api.github.com/repos/tinkerpop/tinkerpop3

|

opened

|

[Proposal] OrderMap for Gremlin3

|

enhancement process

|

In Gremlin2 we have `OrderMapStep` which orders the maps flowing through the step. However, what about if you had lists flowing? `OrderListStep`, `OrderStringStep`, `OrderArrayStep`, etc.

Here is a generalization:

```groovy

g.V().out().groupCount().by('age').cap().orderObject().by(incr)

```

What sucks is that we have `order()` for stream ordering and `orderObject()` for per object ordering. This is very similar to the problem with `local()`. Sometimes you want the process local to the object. As such, do we simply promote this:

```groovy

g.V().out().groupCount().by('age').cap().map{it.get().sort()}

```

But that looks lame...and uses Groovy `sort()` where we want something general to Java and Groovy.

```groovy

g.V().out().groupCount().by('age').cap().local(Order.incr)

```

Where `LocalStep` takes not just a `Traversal` but also a `Function<Traverser,Traverser>` where `Order.incr` is a Function.................

Grasping for straws here....ideas?

@mbroecheler @dkuppitz @spmallette @bryncooke

|

1.0

|

[Proposal] OrderMap for Gremlin3 - In Gremlin2 we have `OrderMapStep` which orders the maps flowing through the step. However, what about if you had lists flowing? `OrderListStep`, `OrderStringStep`, `OrderArrayStep`, etc.

Here is a generalization:

```groovy

g.V().out().groupCount().by('age').cap().orderObject().by(incr)

```

What sucks is that we have `order()` for stream ordering and `orderObject()` for per object ordering. This is very similar to the problem with `local()`. Sometimes you want the process local to the object. As such, do we simply promote this:

```groovy

g.V().out().groupCount().by('age').cap().map{it.get().sort()}

```

But that looks lame...and uses Groovy `sort()` where we want something general to Java and Groovy.

```groovy

g.V().out().groupCount().by('age').cap().local(Order.incr)

```

Where `LocalStep` takes not just a `Traversal` but also a `Function<Traverser,Traverser>` where `Order.incr` is a Function.................

Grasping for straws here....ideas?

@mbroecheler @dkuppitz @spmallette @bryncooke

|

process

|

ordermap for in we have ordermapstep which orders the maps flowing through the step however what about if you had lists flowing orderliststep orderstringstep orderarraystep etc here is a generalization groovy g v out groupcount by age cap orderobject by incr what sucks is that we have order for stream ordering and orderobject for per object ordering this is very similar to the problem with local sometimes you want the process local to the object as such do we simply promote this groovy g v out groupcount by age cap map it get sort but that looks lame and uses groovy sort where we want something general to java and groovy groovy g v out groupcount by age cap local order incr where localstep takes not just a traversal but also a function where order incr is a function grasping for straws here ideas mbroecheler dkuppitz spmallette bryncooke

| 1

|

70,393

| 15,085,562,400

|

IssuesEvent

|

2021-02-05 18:52:32

|

mthbernardes/shaggy-rogers

|

https://api.github.com/repos/mthbernardes/shaggy-rogers

|

reopened

|

CVE-2019-16942 (High) detected in jackson-databind-2.9.6.jar

|

security vulnerability

|

## CVE-2019-16942 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jackson-databind-2.9.6.jar</b></p></summary>

<p>General data-binding functionality for Jackson: works on core streaming API</p>

<p>Library home page: <a href="http://github.com/FasterXML/jackson">http://github.com/FasterXML/jackson</a></p>

<p>Path to dependency file: shaggy-rogers/pom.xml</p>

<p>Path to vulnerable library: /home/wss-scanner/.m2/repository/com/fasterxml/jackson/core/jackson-databind/2.9.6/jackson-databind-2.9.6.jar</p>

<p>

Dependency Hierarchy:

- pantomime-2.11.0.jar (Root Library)

- tika-parsers-1.19.1.jar

- :x: **jackson-databind-2.9.6.jar** (Vulnerable Library)

<p>Found in HEAD commit: <a href="https://github.com/mthbernardes/shaggy-rogers/commit/f72a5cb259e01c0ac208ba3a95eee5232c30fe6c">f72a5cb259e01c0ac208ba3a95eee5232c30fe6c</a></p>

<p>Found in base branch: <b>master</b></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

A Polymorphic Typing issue was discovered in FasterXML jackson-databind 2.0.0 through 2.9.10. When Default Typing is enabled (either globally or for a specific property) for an externally exposed JSON endpoint and the service has the commons-dbcp (1.4) jar in the classpath, and an attacker can find an RMI service endpoint to access, it is possible to make the service execute a malicious payload. This issue exists because of org.apache.commons.dbcp.datasources.SharedPoolDataSource and org.apache.commons.dbcp.datasources.PerUserPoolDataSource mishandling.

<p>Publish Date: 2019-10-01

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2019-16942>CVE-2019-16942</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>9.8</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: High

- Integrity Impact: High

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2019-16942">https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2019-16942</a></p>

<p>Release Date: 2019-10-01</p>

<p>Fix Resolution: com.fasterxml.jackson.core:jackson-databind:2.6.7.3,2.7.9.7,2.8.11.5,2.9.10.1</p>

</p>

</details>

<p></p>

<!-- <REMEDIATE>{"isOpenPROnVulnerability":false,"isPackageBased":true,"isDefaultBranch":true,"packages":[{"packageType":"Java","groupId":"com.fasterxml.jackson.core","packageName":"jackson-databind","packageVersion":"2.9.6","packageFilePaths":["/pom.xml"],"isTransitiveDependency":true,"dependencyTree":"com.novemberain:pantomime:2.11.0;org.apache.tika:tika-parsers:1.19.1;com.fasterxml.jackson.core:jackson-databind:2.9.6","isMinimumFixVersionAvailable":true,"minimumFixVersion":"com.fasterxml.jackson.core:jackson-databind:2.6.7.3,2.7.9.7,2.8.11.5,2.9.10.1"}],"baseBranches":["master"],"vulnerabilityIdentifier":"CVE-2019-16942","vulnerabilityDetails":"A Polymorphic Typing issue was discovered in FasterXML jackson-databind 2.0.0 through 2.9.10. When Default Typing is enabled (either globally or for a specific property) for an externally exposed JSON endpoint and the service has the commons-dbcp (1.4) jar in the classpath, and an attacker can find an RMI service endpoint to access, it is possible to make the service execute a malicious payload. This issue exists because of org.apache.commons.dbcp.datasources.SharedPoolDataSource and org.apache.commons.dbcp.datasources.PerUserPoolDataSource mishandling.","vulnerabilityUrl":"https://vuln.whitesourcesoftware.com/vulnerability/CVE-2019-16942","cvss3Severity":"high","cvss3Score":"9.8","cvss3Metrics":{"A":"High","AC":"Low","PR":"None","S":"Unchanged","C":"High","UI":"None","AV":"Network","I":"High"},"extraData":{}}</REMEDIATE> -->

|

True

|

CVE-2019-16942 (High) detected in jackson-databind-2.9.6.jar - ## CVE-2019-16942 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jackson-databind-2.9.6.jar</b></p></summary>

<p>General data-binding functionality for Jackson: works on core streaming API</p>

<p>Library home page: <a href="http://github.com/FasterXML/jackson">http://github.com/FasterXML/jackson</a></p>

<p>Path to dependency file: shaggy-rogers/pom.xml</p>

<p>Path to vulnerable library: /home/wss-scanner/.m2/repository/com/fasterxml/jackson/core/jackson-databind/2.9.6/jackson-databind-2.9.6.jar</p>

<p>

Dependency Hierarchy:

- pantomime-2.11.0.jar (Root Library)

- tika-parsers-1.19.1.jar

- :x: **jackson-databind-2.9.6.jar** (Vulnerable Library)

<p>Found in HEAD commit: <a href="https://github.com/mthbernardes/shaggy-rogers/commit/f72a5cb259e01c0ac208ba3a95eee5232c30fe6c">f72a5cb259e01c0ac208ba3a95eee5232c30fe6c</a></p>

<p>Found in base branch: <b>master</b></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

A Polymorphic Typing issue was discovered in FasterXML jackson-databind 2.0.0 through 2.9.10. When Default Typing is enabled (either globally or for a specific property) for an externally exposed JSON endpoint and the service has the commons-dbcp (1.4) jar in the classpath, and an attacker can find an RMI service endpoint to access, it is possible to make the service execute a malicious payload. This issue exists because of org.apache.commons.dbcp.datasources.SharedPoolDataSource and org.apache.commons.dbcp.datasources.PerUserPoolDataSource mishandling.

<p>Publish Date: 2019-10-01

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2019-16942>CVE-2019-16942</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>9.8</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: High

- Integrity Impact: High

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2019-16942">https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2019-16942</a></p>

<p>Release Date: 2019-10-01</p>

<p>Fix Resolution: com.fasterxml.jackson.core:jackson-databind:2.6.7.3,2.7.9.7,2.8.11.5,2.9.10.1</p>

</p>

</details>

<p></p>

<!-- <REMEDIATE>{"isOpenPROnVulnerability":false,"isPackageBased":true,"isDefaultBranch":true,"packages":[{"packageType":"Java","groupId":"com.fasterxml.jackson.core","packageName":"jackson-databind","packageVersion":"2.9.6","packageFilePaths":["/pom.xml"],"isTransitiveDependency":true,"dependencyTree":"com.novemberain:pantomime:2.11.0;org.apache.tika:tika-parsers:1.19.1;com.fasterxml.jackson.core:jackson-databind:2.9.6","isMinimumFixVersionAvailable":true,"minimumFixVersion":"com.fasterxml.jackson.core:jackson-databind:2.6.7.3,2.7.9.7,2.8.11.5,2.9.10.1"}],"baseBranches":["master"],"vulnerabilityIdentifier":"CVE-2019-16942","vulnerabilityDetails":"A Polymorphic Typing issue was discovered in FasterXML jackson-databind 2.0.0 through 2.9.10. When Default Typing is enabled (either globally or for a specific property) for an externally exposed JSON endpoint and the service has the commons-dbcp (1.4) jar in the classpath, and an attacker can find an RMI service endpoint to access, it is possible to make the service execute a malicious payload. This issue exists because of org.apache.commons.dbcp.datasources.SharedPoolDataSource and org.apache.commons.dbcp.datasources.PerUserPoolDataSource mishandling.","vulnerabilityUrl":"https://vuln.whitesourcesoftware.com/vulnerability/CVE-2019-16942","cvss3Severity":"high","cvss3Score":"9.8","cvss3Metrics":{"A":"High","AC":"Low","PR":"None","S":"Unchanged","C":"High","UI":"None","AV":"Network","I":"High"},"extraData":{}}</REMEDIATE> -->

|

non_process

|

cve high detected in jackson databind jar cve high severity vulnerability vulnerable library jackson databind jar general data binding functionality for jackson works on core streaming api library home page a href path to dependency file shaggy rogers pom xml path to vulnerable library home wss scanner repository com fasterxml jackson core jackson databind jackson databind jar dependency hierarchy pantomime jar root library tika parsers jar x jackson databind jar vulnerable library found in head commit a href found in base branch master vulnerability details a polymorphic typing issue was discovered in fasterxml jackson databind through when default typing is enabled either globally or for a specific property for an externally exposed json endpoint and the service has the commons dbcp jar in the classpath and an attacker can find an rmi service endpoint to access it is possible to make the service execute a malicious payload this issue exists because of org apache commons dbcp datasources sharedpooldatasource and org apache commons dbcp datasources peruserpooldatasource mishandling publish date url a href cvss score details base score metrics exploitability metrics attack vector network attack complexity low privileges required none user interaction none scope unchanged impact metrics confidentiality impact high integrity impact high availability impact high for more information on scores click a href suggested fix type upgrade version origin a href release date fix resolution com fasterxml jackson core jackson databind isopenpronvulnerability false ispackagebased true isdefaultbranch true packages istransitivedependency true dependencytree com novemberain pantomime org apache tika tika parsers com fasterxml jackson core jackson databind isminimumfixversionavailable true minimumfixversion com fasterxml jackson core jackson databind basebranches vulnerabilityidentifier cve vulnerabilitydetails a polymorphic typing issue was discovered in fasterxml jackson databind 
through when default typing is enabled either globally or for a specific property for an externally exposed json endpoint and the service has the commons dbcp jar in the classpath and an attacker can find an rmi service endpoint to access it is possible to make the service execute a malicious payload this issue exists because of org apache commons dbcp datasources sharedpooldatasource and org apache commons dbcp datasources peruserpooldatasource mishandling vulnerabilityurl

| 0

|

4,322

| 7,227,831,261

|

IssuesEvent

|

2018-02-11 01:21:54

|

technovus-sfu/rembot

|

https://api.github.com/repos/technovus-sfu/rembot

|

closed

|

Generate rcode array from image array

|

processing

|

### One proposed method

- All pixels to be drawn are white(255) in the code

- When there is a change in values (0 -> 255 i.e. black to white)

- read the first white pixel then until the next change (255 -> 0, i.e white to black )

- read the last white pixel

- The code generated could then be `R01 x5 X100 y0 Y0`

```python

# Command list to draw two lines across an image of width 100:

# one from 5 to the end, the next from 100 back to 5.

# Assuming that, with margins, the offset is 20x20.

x_offset = 20

y_offset = 20

cmd = [f"R01 x{5 + x_offset} X{100 + x_offset} y{0 + y_offset} Y{0 + y_offset}",

       f"R01 x{100 + x_offset} X{5 + x_offset} y{1 + y_offset} Y{1 + y_offset}"]

```
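
The scanning steps listed above could be sketched roughly as follows. This is only an illustration under assumptions: the image is taken to be a list of rows holding 0 (black) or 255 (white), the helper names `white_runs` and `image_to_rcode` are made up here, and drawing odd rows right-to-left is inferred from the two-line example rather than stated as a requirement.

```python
WHITE = 255


def white_runs(row):
    """Yield (first, last) column indices for each run of white pixels in a row."""
    start = None
    for col, value in enumerate(row):
        if value == WHITE and start is None:
            start = col                # black -> white: first white pixel
        elif value != WHITE and start is not None:
            yield start, col - 1       # white -> black: last white pixel
            start = None
    if start is not None:
        yield start, len(row) - 1      # run reaches the right edge


def image_to_rcode(image, x_offset=0, y_offset=0):
    """Turn a 2D pixel array into a list of R01 line commands."""
    commands = []
    for y, row in enumerate(image):
        runs = list(white_runs(row))
        if y % 2 == 1:
            # Assumption: odd rows are drawn right-to-left (serpentine path).
            runs = [(last, first) for first, last in reversed(runs)]
        for x_from, x_to in runs:
            commands.append(
                f"R01 x{x_from + x_offset} X{x_to + x_offset} "
                f"y{y + y_offset} Y{y + y_offset}"
            )
    return commands
```

Each run of white pixels becomes one command, so a row with gaps produces several short lines instead of one long one.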

|

1.0

|

Generate rcode array from image array - ### One proposed method

- All pixels to be drawn are white(255) in the code

- When there is a change in values (0 -> 255 i.e. black to white)

- read the first white pixel then until the next change (255 -> 0, i.e white to black )

- read the last white pixel

- The code generated could then be `R01 x5 X100 y0 Y0`

```python

# Command list to draw two lines across an image of width 100:

# one from 5 to the end, the next from 100 back to 5.

# Assuming that, with margins, the offset is 20x20.

x_offset = 20

y_offset = 20

cmd = [f"R01 x{5 + x_offset} X{100 + x_offset} y{0 + y_offset} Y{0 + y_offset}",

       f"R01 x{100 + x_offset} X{5 + x_offset} y{1 + y_offset} Y{1 + y_offset}"]

```

|

process

|

generate rcode array from image array one proposed method all pixels to be drawn are white in the code when there is a change in values i e black to white read the first white pixel then until the next change i e white to black read the last white pixel the code generated could then be python command list to draw two lines across image of width one from to the end the next from to the middle of assuming with margins the offset is x offset y offset cmd x x offset x x offset y y offset y y offset x x offset x x offset y y offset y y offset

| 1

|

26

| 2,497,007,735

|

IssuesEvent

|

2015-01-07 00:11:40

|

tinkerpop/tinkerpop3

|

https://api.github.com/repos/tinkerpop/tinkerpop3

|

closed

|

New thoughts on looping constructs in Gremlin3.

|

enhancement process

|

Now that we have "mastered" internal traversals and we know how to compile them into a linear form for OLAP execution, we can re-think the looping construct if we want.

Below are two examples demonstrating a proposal for "while/do" and "do/while", respectively. Moreover, for a more "graph feel", we could rename `until` to `loop`.

```java

// in Gremlin-Java

g.V().until(t -> t.get().value("name").equals("marko"), g.of().out())

g.V().until(g.of().out(), t -> t.get().value("name").equals("marko"))

```

```groovy

// in Gremlin-Groovy with Sugar

g.V.until({it.name == 'marko'}, g.of().out)

g.V.until(g.of().out){it.name == 'marko'}

// with the emit predicate

g.V.until({it.name == 'marko'}, g.of().out){true}

g.V.until(g.of().out){it.name == 'marko'}{true}

```
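To make the intended difference concrete, here is a toy sketch in plain Python (not Gremlin). It assumes that the predicate-first form tests before each step and the traversal-first form steps before each test; the graph, the function names and the `budget` cut-off are all made up for illustration.

```python
# Toy directed graph: vertex name -> outgoing neighbours (hypothetical data).
GRAPH = {"marko": ["josh"], "josh": ["lop"], "lop": []}


def out(vertex):
    """Stand-in for an out() traversal on the toy graph."""
    return GRAPH[vertex]


def while_do(vertex, done, step, budget=10):
    """Test first, then step: a vertex already satisfying `done` is returned unmoved."""
    if done(vertex):
        return [vertex]
    if budget == 0:
        return []
    results = []
    for nxt in step(vertex):
        results.extend(while_do(nxt, done, step, budget - 1))
    return results


def do_while(vertex, step, done, budget=10):
    """Step first, then test: every traverser moves at least once."""
    if budget == 0:
        return []
    results = []
    for nxt in step(vertex):
        if done(nxt):
            results.append(nxt)
        else:
            results.extend(do_while(nxt, step, done, budget - 1))
    return results
```

With a predicate such as `lambda v: v in ("marko", "lop")`, `while_do("marko", ...)` stops immediately at the start vertex, while `do_while("marko", ...)` has to move first and only stops further along the path.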

/////////////

A few extra thoughts:

* Rules of sideEffect scoping. If internal traversals are always "linearized" for OLAP, then we may want to make the sideEffect scope be the global scope. That is, no nested sideEffect structures.

* What I like about this model is that there is no need for `as()` and the section of what is being looped is being made apparent by `(`. This is what I like about `local()` as well. `( )` is the delimiter of the construct.

* We still need to keep `jump()` as the `goto` construct necessary for arbitrary jumping, but it would be used primarily by the strategies (not by users). In fact, we could probably remove `GraphTraversal.jump()` and there would simply be a `JumpStep` available for usage by strategies. We could even rename `JumpStep` to `GoToStep`. Though, to keep with the graph-theme, "jump" may be more appropriate -- analogous to "loop" vs. "until".

@dkuppitz @mbroecheler @BrynCooke @joshsh

|

1.0

|

New thoughts on looping constructs in Gremlin3. - Now that we have "mastered" internal traversals and we know how to compile them into a linear form for OLAP execution, we can re-think the looping construct if we want.

Below are two examples demonstrating a proposal for "while/do" and "do/while", respectively. Moreover, for a more "graph feel", we can call `until` `loop`.

```java

// in Gremlin-Java

g.V().until(t -> t.get().value('name').equals('marko'), g.of().out())

g.V().until(g.of().out(), t -> t.get().value('name').equals('marko'))

```

```groovy

// in Gremlin-Groovy with Sugar

g.V.until({it.name == 'marko'}, g.of().out)

g.V.until(g.of().out){it.name == 'marko'}

// with the emit predicate

g.V.until({it.name == 'marko'}, g.of().out){true}

g.V.until(g.of().out){it.name == 'marko'}{true}

```

/////////////

A few extra thoughts:

* Rules of sideEffect scoping. If internal traversals are always "linearized" for OLAP, then we may want to make the sideEffect scope be the global scope. That is, no nested sideEffect structures.

* What I like about this model is that there is no need for `as()` and the section of what is being looped is being made apparent by `(`. This is what I like about `local()` as well. `( )` is the delimiter of the construct.

* We still need to keep `jump()` as the `goto` construct necessary for arbitrary jumping, but it would be used primarily by the strategies (not by users). In fact, we could probably remove `GraphTraversal.jump()` and there would simply be a `JumpStep` available for usage by strategies. We could even rename `JumpStep` to `GoToStep`. Though, to keep with the graph-theme, "jump" may be more appropriate -- analogous to "loop" vs. "until".

@dkuppitz @mbroecheler @BrynCooke @joshsh

|

process

|

new thoughts on looping constructs in now that we have mastered internal traversals and we know how to compile them into a linear form for olap execution we can re think the looping construct if we want below are two examples demonstrating a proposal for while do and do while respectively moreover for a more graph feel we can call until loop java in gremlin java g v until t t get value name equals marko g of out g v until g of out t t get value name equals marko groovy in gremlin groovy with sugar g v until it name marko g of out g v until g of out it name marko with the emit predicate g v until it name marko g of out true g v until g of out it name marko true a few extra thoughts rules of sideeffect scoping if internal traversals are always linearized for olap then we may want to make the sideeffect scope be the global scope that is no nested sideeffect structures what i like about this model is that there is no need for as and the section of what is being looped is being made apparent by this is what i like about local as well is the delimiter of the construct we we still need to keep jump as the goto construct necessary for arbitrary jumping but it would be used primarily by the strategies not by users in fact we could probably remove graphtraversal jump and there would simply be a jumpstep available for usage by strategies in fact we could renamed jumpstep to gotostep though to keep with the graph theme jump may be more appropriate analogous to loop vs until dkuppitz mbroecheler bryncooke joshsh

| 1

|

36,885

| 2,813,344,479

|

IssuesEvent

|

2015-05-18 14:21:14

|

georgjaehnig/serchilo-drupal

|

https://api.github.com/repos/georgjaehnig/serchilo-drupal

|

closed

|

Shortcut edit, fieldgroups: Change jQuery styles to Bootstrap styles

|

Priority: could Social: help wanted Type: enhancement

|

In the Shortcut edit form, the CSS of the fieldgroups should look like Bootstrap (and not like jQuery).

|

1.0

|

Shortcut edit, fieldgroups: Change jQuery styles to Bootstrap styles - In the Shortcut edit form, the CSS of the fieldgroups should look like Bootstrap (and not like jQuery).

|

non_process

|

shortcut edit fieldgroups change jquery styles to bootstrap styles in the shortcut edit form the css of the fieldgroups should look like bootstrap and not like jquery

| 0

|

8,291

| 11,457,195,270

|

IssuesEvent

|

2020-02-06 23:02:07

|

qgis/QGIS

|

https://api.github.com/repos/qgis/QGIS

|

closed

|

Oriented Minimum Bounding Box strange behavior

|

Bug Processing

|

<!--

Bug fixing and feature development is a community responsibility, and not the responsibility of the QGIS project alone.

If this bug report or feature request is high-priority for you, we suggest engaging a QGIS developer or support organisation and financially sponsoring a fix

Checklist before submitting

- [ x ] Search through existing issue reports and gis.stackexchange.com to check whether the issue already exists

- [ x ] Test with a [clean new user profile](https://docs.qgis.org/testing/en/docs/user_manual/introduction/qgis_configuration.html?highlight=profile#working-with-user-profiles).

- [ x ] Create a light and self-contained sample dataset and project file which demonstrates the issue

If the issue concerns a **third party plugin**, then it **cannot** be fixed by the QGIS team. Please raise your issue in the dedicated bug tracker for that specific plugin (as listed in the plugin's description). -->

**Describe the bug**

When running the "Oriented Minimum Bounding Box" algorithm from the Processing Toolbox on a polygon vector layer, in some (seemingly random) cases the result is not as expected: it is visually obvious that an oriented bounding box of smaller area could be generated.

If a buffer with a very small radius is first applied to the geometries of the problematic features, running the Oriented Minimum Bounding Box algorithm on the buffered geometry gives the expected result.

**How to Reproduce**

1. Create a feature with the following geometry:

`Polygon ((264 -525, 248 -521, 244 -519, 233 -508, 231 -504, 210 -445, 196 -396, 180 -332, 178 -322, 176 -310, 174 -296, 174 -261, 176 -257, 178 -255, 183 -251, 193 -245, 197 -243, 413 -176, 439 -168, 447 -166, 465 -164, 548 -164, 552 -166, 561 -175, 567 -187, 602 -304, 618 -379, 618 -400, 616 -406, 612 -414, 606 -420, 587 -430, 575 -436, 547 -446, 451 -474, 437 -478, 321 -511, 283 -521, 275 -523, 266 -525, 264 -525))`

(Tested in a layer defined in CRS = EPSG:3857, with no projection applied to the project canvas. Although I think it doesn't matter.)

2. Run the Oriented Minimum Bounding Box algorithm on that feature.

The output box attributes are: _width = 361.00_, _height = 444.00_, _angle = 90.00_.

3. Create a Buffer of _Radius = 0.001_ from the original feature.

4. Run the Oriented Minimum Bounding Box algorithm on the buffered feature.

The output box attributes are: _width = 417.63_, _height = 298.02_, _angle = 162.68_.

The area of the box returned by the buffered geometry feature is smaller than that returned by the original one.
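As an independent cross-check, the minimum-area box can be estimated outside QGIS with a small brute-force script. This is only a sketch, not the QGIS implementation: it relies on the standard result that a minimum-area enclosing rectangle has one side collinear with a convex hull edge, and the function names are my own.

```python
import math

# Vertices copied from the WKT above (the closing duplicate is harmless).
POLYGON = [
    (264, -525), (248, -521), (244, -519), (233, -508), (231, -504),
    (210, -445), (196, -396), (180, -332), (178, -322), (176, -310),
    (174, -296), (174, -261), (176, -257), (178, -255), (183, -251),
    (193, -245), (197, -243), (413, -176), (439, -168), (447, -166),
    (465, -164), (548, -164), (552, -166), (561, -175), (567, -187),
    (602, -304), (618, -379), (618, -400), (616, -406), (612, -414),
    (606, -420), (587, -430), (575, -436), (547, -446), (451, -474),
    (437, -478), (321, -511), (283, -521), (275, -523), (266, -525),
    (264, -525),
]


def convex_hull(points):
    """Andrew's monotone chain; returns hull vertices in counter-clockwise order."""
    pts = sorted(set(points))

    def cross(o, a, b):
        return (a[0] - o[0]) * (b[1] - o[1]) - (a[1] - o[1]) * (b[0] - o[0])

    lower, upper = [], []
    for p in pts:
        while len(lower) >= 2 and cross(lower[-2], lower[-1], p) <= 0:
            lower.pop()
        lower.append(p)
    for p in reversed(pts):
        while len(upper) >= 2 and cross(upper[-2], upper[-1], p) <= 0:
            upper.pop()
        upper.append(p)
    return lower[:-1] + upper[:-1]


def min_area_box(points):
    """Try each hull edge as a box direction; return (area, side_a, side_b)."""
    hull = convex_hull(points)
    best = None
    for i in range(len(hull)):
        (x1, y1), (x2, y2) = hull[i], hull[(i + 1) % len(hull)]
        length = math.hypot(x2 - x1, y2 - y1)
        ux, uy = (x2 - x1) / length, (y2 - y1) / length
        along = [x * ux + y * uy for x, y in hull]     # projection on the edge
        across = [-x * uy + y * ux for x, y in hull]   # projection on its normal
        side_a = max(along) - min(along)
        side_b = max(across) - min(across)
        if best is None or side_a * side_b < best[0]:
            best = (side_a * side_b, side_a, side_b)
    return best
```

If `min_area_box(POLYGON)` returns an area below 361 × 444 = 160284, the box returned for the unbuffered feature is not the minimum one.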

**QGIS and OS versions**

QGIS version 3.10.1-A Coruña

Windows 10, 64bit (OSGeo4W installation).

|

1.0

|

Oriented Minimum Bounding Box strange behavior - <!--