| Unnamed: 0 (int64, 0 to 832k) | id (float64, 2.49B to 32.1B) | type (stringclasses, 1 value) | created_at (stringlengths, 19 to 19) | repo (stringlengths, 7 to 112) | repo_url (stringlengths, 36 to 141) | action (stringclasses, 3 values) | title (stringlengths, 1 to 744) | labels (stringlengths, 4 to 574) | body (stringlengths, 9 to 211k) | index (stringclasses, 10 values) | text_combine (stringlengths, 96 to 211k) | label (stringclasses, 2 values) | text (stringlengths, 96 to 188k) | binary_label (int64, 0 to 1) |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

66,451

| 8,925,643,406

|

IssuesEvent

|

2019-01-21 23:51:02

|

softlayer/softlayer.github.io

|

https://api.github.com/repos/softlayer/softlayer.github.io

|

closed

|

Feedback for go - get_unattached_portal_storages.go

|

documentation

|

Feedback regarding: https://softlayer.github.io/go/get_unattached_portal_storages.go/

I am trying this and I am getting:

```

go run get_unattached_portal_storages.go

Unable to retrieve Portable Storages:

- SOAP-ENV:Client: Bad Request (HTTP 200)

```

I made a tiny modification:

```

/*
Get unattached portal storages.

The script gets all unattached portal storages in the account.

Important manual pages:
http://sldn.softlayer.com/reference/services/SoftLayer_Account
http://sldn.softlayer.com/reference/services/SoftLayer_Account/getPortableStorageVolumes
http://sldn.softlayer.com/reference/datatypes/SoftLayer_Virtual_Disk_Image

@License: http://sldn.softlayer.com/article/License
@Author: SoftLayer Technologies, Inc. <sldn@softlayer.com>
*/
package main

import (
	"encoding/json"
	"fmt"
	"os"

	"github.com/softlayer/softlayer-go/datatypes"
	"github.com/softlayer/softlayer-go/services"
	"github.com/softlayer/softlayer-go/session"
)

func main() {
	// SoftLayer API username and key
	// username := "set me"
	// apikey := "set me"
	username := os.Getenv("SL_USERNAME")
	apikey := os.Getenv("SL_APIKEY")

	// Create a session
	sess := session.New(username, apikey)

	// Get SoftLayer_Account service
	service := services.GetAccountService(sess)

	// Use masks in order to get Guests of StorageRepositories
	mask := "storageRepository[guests]"

	// All unattached storage objects will be saved here.
	unattachedStorages := []datatypes.Virtual_Disk_Image{}

	// Get all portable storage volumes
	portableStorages, err := service.Mask(mask).GetPortableStorageVolumes()
	if err != nil {
		fmt.Printf("\n Unable to retrieve Portable Storages:\n - %s\n", err)
		return
	}

	// Search and save all unattached storages
	for _, storage := range portableStorages {
		if storage.StorageRepository != nil {
			if len(storage.StorageRepository.Guests) == 0 {
				unattachedStorages = append(unattachedStorages, storage)
			}
		}
	}

	// Following helps to print the result in json format.
	for _, storage := range unattachedStorages {
		jsonFormat, jsonErr := json.Marshal(storage)
		if jsonErr != nil {
			fmt.Println(jsonErr)
			return
		}
		fmt.Println(string(jsonFormat))
	}
}

```

The error is confusing (HTTP 200 and error?)

|

1.0

|

Feedback for go - get_unattached_portal_storages.go - Feedback regarding: https://softlayer.github.io/go/get_unattached_portal_storages.go/

I am trying this and I am getting:

```

go run get_unattached_portal_storages.go

Unable to retrieve Portable Storages:

- SOAP-ENV:Client: Bad Request (HTTP 200)

```

I made a tiny modification:

```

/*
Get unattached portal storages.

The script gets all unattached portal storages in the account.

Important manual pages:
http://sldn.softlayer.com/reference/services/SoftLayer_Account
http://sldn.softlayer.com/reference/services/SoftLayer_Account/getPortableStorageVolumes
http://sldn.softlayer.com/reference/datatypes/SoftLayer_Virtual_Disk_Image

@License: http://sldn.softlayer.com/article/License
@Author: SoftLayer Technologies, Inc. <sldn@softlayer.com>
*/
package main

import (
	"encoding/json"
	"fmt"
	"os"

	"github.com/softlayer/softlayer-go/datatypes"
	"github.com/softlayer/softlayer-go/services"
	"github.com/softlayer/softlayer-go/session"
)

func main() {
	// SoftLayer API username and key
	// username := "set me"
	// apikey := "set me"
	username := os.Getenv("SL_USERNAME")
	apikey := os.Getenv("SL_APIKEY")

	// Create a session
	sess := session.New(username, apikey)

	// Get SoftLayer_Account service
	service := services.GetAccountService(sess)

	// Use masks in order to get Guests of StorageRepositories
	mask := "storageRepository[guests]"

	// All unattached storage objects will be saved here.
	unattachedStorages := []datatypes.Virtual_Disk_Image{}

	// Get all portable storage volumes
	portableStorages, err := service.Mask(mask).GetPortableStorageVolumes()
	if err != nil {
		fmt.Printf("\n Unable to retrieve Portable Storages:\n - %s\n", err)
		return
	}

	// Search and save all unattached storages
	for _, storage := range portableStorages {
		if storage.StorageRepository != nil {
			if len(storage.StorageRepository.Guests) == 0 {
				unattachedStorages = append(unattachedStorages, storage)
			}
		}
	}

	// Following helps to print the result in json format.
	for _, storage := range unattachedStorages {
		jsonFormat, jsonErr := json.Marshal(storage)
		if jsonErr != nil {
			fmt.Println(jsonErr)
			return
		}
		fmt.Println(string(jsonFormat))
	}
}

```

The error is confusing (HTTP 200 and error?)

|

non_process

|

feedback for go get unattached portal storages go feedback regarding i am trying this and i am getting go run get unattached portal storages go unable to retrieve portable storages soap env client bad request http i made a tiny modification get unattached portal storages the script gets all unattached portal storages in the account important manual pages license author softlayer technologies inc package main import os fmt github com softlayer softlayer go datatypes github com softlayer softlayer go services github com softlayer softlayer go session encoding json func main softlayer api username and key username set me apikey set me username os getenv sl username apikey os getenv sl apikey create a session sess session new username apikey get softlayer account service service services getaccountservice sess use masks in order to get guests of storagerepositories mask storagerepository all unattached storage objects will be saved here unattachedstorages datatypes virtual disk image get all portable storage volumes portablestorages err service mask mask getportablestoragevolumes if err nil fmt printf n unable to retrieve portable storages n s n err return search and save all unattached storages for storage range portablestorages if storage storagerepository nil if len storage storagerepository guests unattachedstorages append unattachedstorages storage following helps to print the result in json format for storage range unattachedstorages jsonformat jsonerr json marshal storage if jsonerr nil fmt println jsonerr return fmt println string jsonformat the error is confusing http and error

| 0

|

18,865

| 24,792,838,614

|

IssuesEvent

|

2022-10-24 14:56:27

|

hashgraph/hedera-mirror-node

|

https://api.github.com/repos/hashgraph/hedera-mirror-node

|

closed

|

web3 module fails to build due to missing dependency

|

bug process

|

### Description

hedera-mirror-web3 fails to build because the transitive dependency `com.swirlds:swirlds-common:jar:0.31.0-alpha.1` cannot be found.

```

[INFO] -------------------< com.hedera:hedera-mirror-web3 >--------------------

[INFO] Building Hedera Mirror Node Web3 0.68.0-SNAPSHOT [3/3]

[INFO] --------------------------------[ jar ]---------------------------------

[WARNING] The POM for com.swirlds:swirlds-common:jar:0.31.0-alpha.1 is missing, no dependency information available

[INFO] ------------------------------------------------------------------------

[INFO] Reactor Summary for Hedera Mirror Node 0.68.0-SNAPSHOT:

[INFO]

[INFO] Hedera Mirror Node ................................. SUCCESS [ 1.007 s]

[INFO] Hedera Mirror Node Common .......................... SUCCESS [ 4.399 s]

[INFO] Hedera Mirror Node Web3 ............................ FAILURE [ 0.384 s]

[INFO] ------------------------------------------------------------------------

[INFO] BUILD FAILURE

[INFO] ------------------------------------------------------------------------

[INFO] Total time: 6.346 s (Wall Clock)

[INFO] Finished at: 2022-10-21T15:46:48-05:00

[INFO] ------------------------------------------------------------------------

[ERROR] Failed to execute goal on project hedera-mirror-web3: Could not resolve dependencies for project com.hedera:hedera-mirror-web3:jar:0.68.0-SNAPSHOT: com.swirlds:swirlds-common:jar:0.31.0-alpha.1 was not found in https://hyperledger.jfrog.io/artifactory/besu-maven/ during a previous attempt. This failure was cached in the local repository and resolution is not reattempted until the update interval of besu-repository has elapsed or updates are forced -> [Help 1]

```

### Steps to reproduce

as the description

### Additional context

_No response_

### Hedera network

other

### Version

v0.68.0-SNAPSHOT

### Operating system

_No response_

|

1.0

|

web3 module fails to build due to missing dependency - ### Description

hedera-mirror-web3 fails to build because the transitive dependency `com.swirlds:swirlds-common:jar:0.31.0-alpha.1` cannot be found.

```

[INFO] -------------------< com.hedera:hedera-mirror-web3 >--------------------

[INFO] Building Hedera Mirror Node Web3 0.68.0-SNAPSHOT [3/3]

[INFO] --------------------------------[ jar ]---------------------------------

[WARNING] The POM for com.swirlds:swirlds-common:jar:0.31.0-alpha.1 is missing, no dependency information available

[INFO] ------------------------------------------------------------------------

[INFO] Reactor Summary for Hedera Mirror Node 0.68.0-SNAPSHOT:

[INFO]

[INFO] Hedera Mirror Node ................................. SUCCESS [ 1.007 s]

[INFO] Hedera Mirror Node Common .......................... SUCCESS [ 4.399 s]

[INFO] Hedera Mirror Node Web3 ............................ FAILURE [ 0.384 s]

[INFO] ------------------------------------------------------------------------

[INFO] BUILD FAILURE

[INFO] ------------------------------------------------------------------------

[INFO] Total time: 6.346 s (Wall Clock)

[INFO] Finished at: 2022-10-21T15:46:48-05:00

[INFO] ------------------------------------------------------------------------

[ERROR] Failed to execute goal on project hedera-mirror-web3: Could not resolve dependencies for project com.hedera:hedera-mirror-web3:jar:0.68.0-SNAPSHOT: com.swirlds:swirlds-common:jar:0.31.0-alpha.1 was not found in https://hyperledger.jfrog.io/artifactory/besu-maven/ during a previous attempt. This failure was cached in the local repository and resolution is not reattempted until the update interval of besu-repository has elapsed or updates are forced -> [Help 1]

```

### Steps to reproduce

as the description

### Additional context

_No response_

### Hedera network

other

### Version

v0.68.0-SNAPSHOT

### Operating system

_No response_

|

process

|

module fails to build due to missing dependency description hedera mirror fails to build due to the transient dependency com swirlds swirlds common jar alpha not found building hedera mirror node snapshot the pom for com swirlds swirlds common jar alpha is missing no dependency information available reactor summary for hedera mirror node snapshot hedera mirror node success hedera mirror node common success hedera mirror node failure build failure total time s wall clock finished at failed to execute goal on project hedera mirror could not resolve dependencies for project com hedera hedera mirror jar snapshot com swirlds swirlds common jar alpha was not found in during a previous attempt this failure was cached in the local repository and resolution is not reattempted until the update interval of besu repository has elapsed or updates are forced steps to reproduce as the description additional context no response hedera network other version snapshot operating system no response

| 1

|

12,501

| 14,961,499,082

|

IssuesEvent

|

2021-01-27 07:52:15

|

GoogleCloudPlatform/fda-mystudies

|

https://api.github.com/repos/GoogleCloudPlatform/fda-mystudies

|

closed

|

[Audit logs] [Consent] Event is not triggered for open study

|

Bug P1 Participant datastore Process: Fixed

|

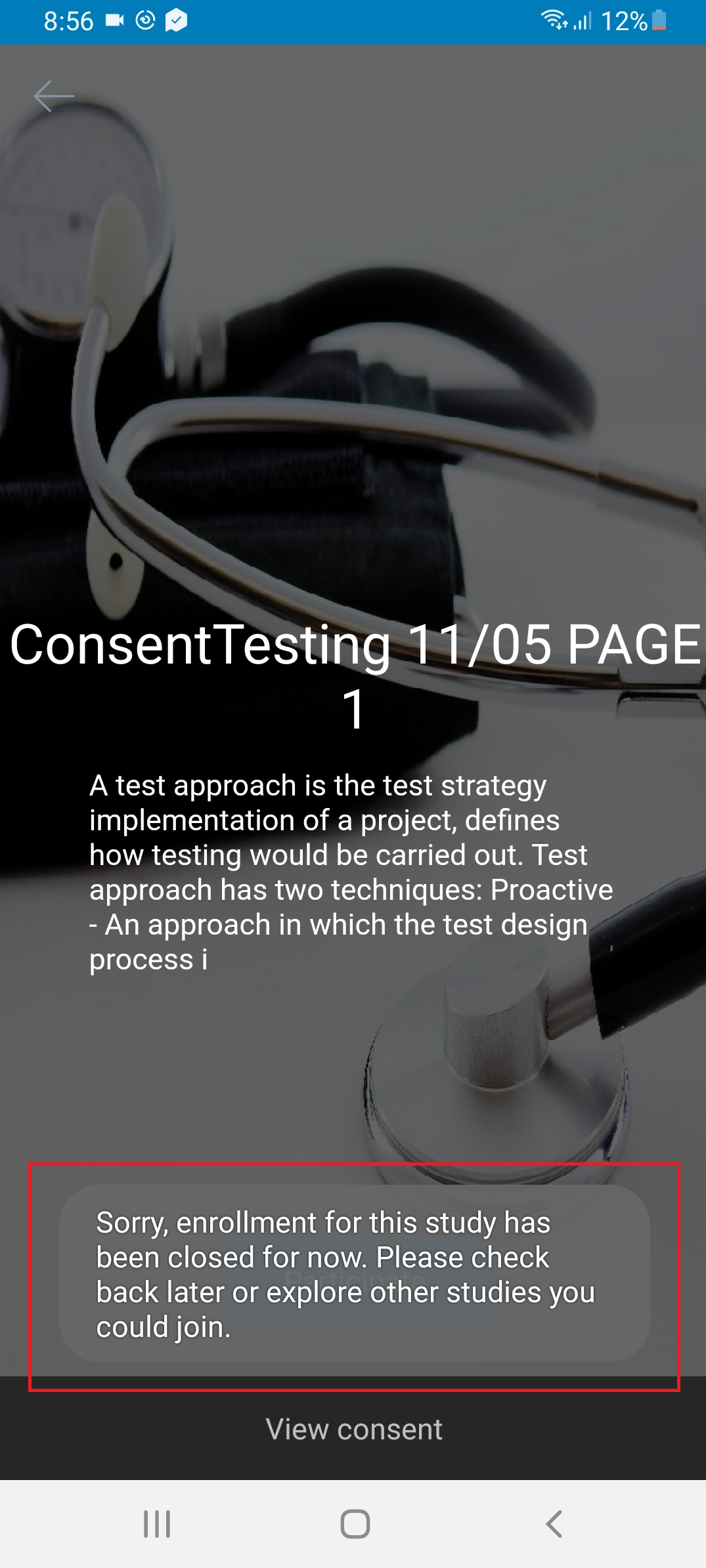

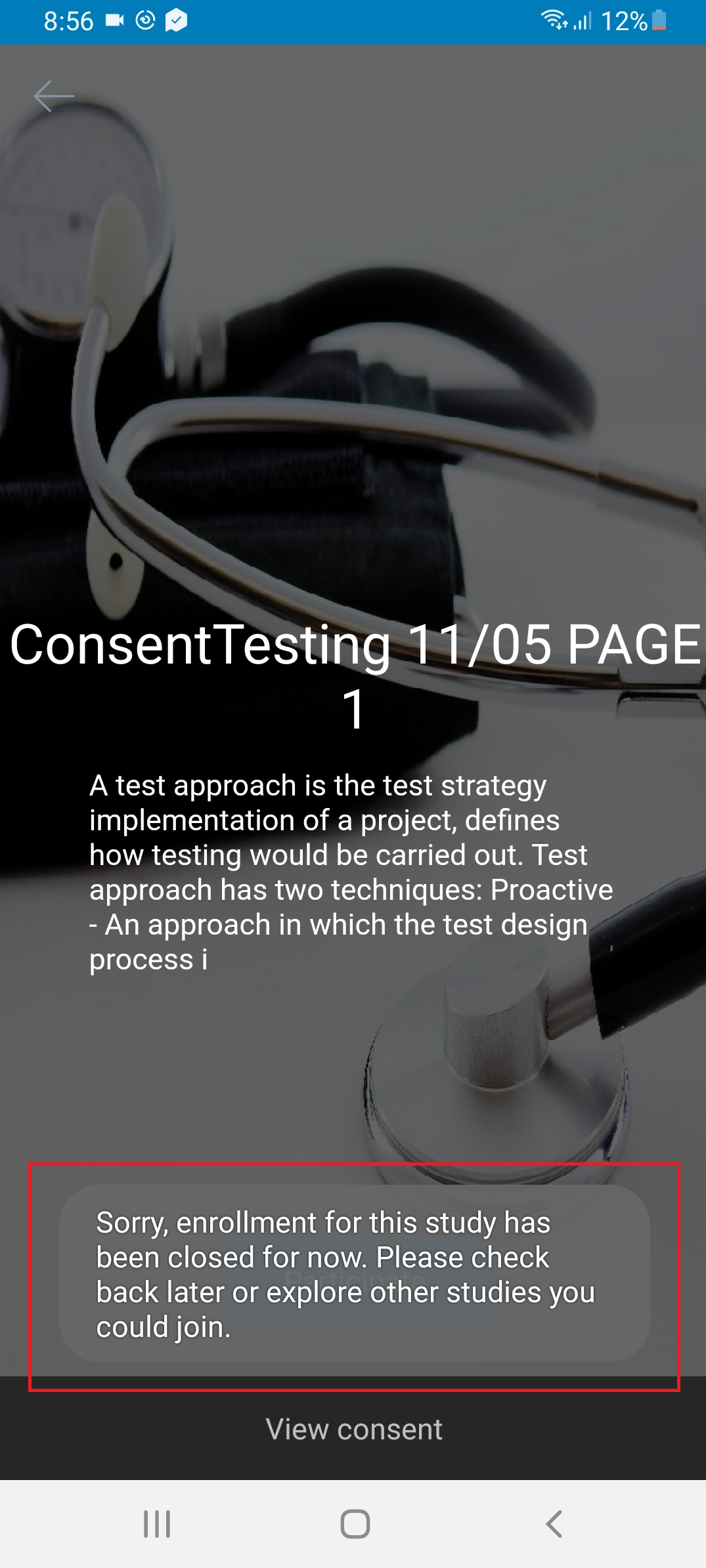

**Event:**

USER_ENROLLED_INTO_STUDY

Note: Issue not observed for closed study and closed study having eligibility test

|

1.0

|

[Audit logs] [Consent] Event is not triggered for open study - **Event:**

USER_ENROLLED_INTO_STUDY

Note: Issue not observed for closed study and closed study having eligibility test

|

process

|

event is not triggered for open study event user enrolled into study note issue not observed for closed study and closed study having eligibility test

| 1

|

19,595

| 25,946,093,472

|

IssuesEvent

|

2022-12-17 01:22:40

|

google/fhir-data-pipes

|

https://api.github.com/repos/google/fhir-data-pipes

|

opened

|

Evaluate and possibly integrate an IPython notebook environment in the single machine package

|

enhancement good first issue P2:should process

|

Currently, our single machine deployment package does not include any notebook or SQL environment. Instead we provide the Thrift server and clients can connect to it through JDBC. Once we start to rely more on [FHIR views](https://github.com/google/fhir-py) for query needs, having a python/notebook environment becomes even more important. We should evaluate different options and possibly integrate one. There are some options [here](https://jupyter-docker-stacks.readthedocs.io/en/latest/using/selecting.html).

|

1.0

|

Evaluate and possibly integrate an IPython notebook environment in the single machine package - Currently, our single machine deployment package does not include any notebook or SQL environment. Instead we provide the Thrift server and clients can connect to it through JDBC. Once we start to rely more on [FHIR views](https://github.com/google/fhir-py) for query needs, having a python/notebook environment becomes even more important. We should evaluate different options and possibly integrate one. There are some options [here](https://jupyter-docker-stacks.readthedocs.io/en/latest/using/selecting.html).

|

process

|

evaluate and possibly integrate an ipython notebook environment in the single machine package currently our single machine deployment package does not include any notebook or sql environment instead we provide the thrift server and clients can connect to it through jdbc once we start to rely more on for query needs having a python notebook environment becomes even more important we should evaluate different options and possibly integrate one there are some options

| 1

|

4,487

| 7,345,940,894

|

IssuesEvent

|

2018-03-07 19:02:48

|

dotnet/corefx

|

https://api.github.com/repos/dotnet/corefx

|

opened

|

TestChildProcessCleanupAfterDispose(true) failed in CI on Linux

|

area-System.Diagnostics.Process

|

https://mc.dot.net/#/user/stephentoub/pr~2Fjenkins~2Fdotnet~2Fcorefx~2Fmaster~2F/test~2Ffunctional~2Fcli~2F/ed9ac7bd3de570b921d74473ded3a06ba9f2baee/workItem/System.Diagnostics.Process.Tests/analysis/xunit/System.Diagnostics.Tests.ProcessTests~2FTestChildProcessCleanupAfterDispose(shortProcess:%20False,%20enableEvents:%20True)

```

Debian.87.Amd64.Open-Release-x64

Get Repro environment

Unhandled Exception of Type Xunit.Sdk.TrueException

Message :

Assert.True() Failure

Expected: True

Actual: False

```

cc: @tmds

|

1.0

|

TestChildProcessCleanupAfterDispose(true) failed in CI on Linux - https://mc.dot.net/#/user/stephentoub/pr~2Fjenkins~2Fdotnet~2Fcorefx~2Fmaster~2F/test~2Ffunctional~2Fcli~2F/ed9ac7bd3de570b921d74473ded3a06ba9f2baee/workItem/System.Diagnostics.Process.Tests/analysis/xunit/System.Diagnostics.Tests.ProcessTests~2FTestChildProcessCleanupAfterDispose(shortProcess:%20False,%20enableEvents:%20True)

```

Debian.87.Amd64.Open-Release-x64

Get Repro environment

Unhandled Exception of Type Xunit.Sdk.TrueException

Message :

Assert.True() Failure

Expected: True

Actual: False

```

cc: @tmds

|

process

|

testchildprocesscleanupafterdispose true failed in ci on linux debian open release get repro environment unhandled exception of type xunit sdk trueexception message assert true failure expected true actual false cc tmds

| 1

|

31,536

| 11,952,951,806

|

IssuesEvent

|

2020-04-03 19:53:31

|

keeweb/keeweb

|

https://api.github.com/repos/keeweb/keeweb

|

closed

|

Implement CSP

|

enhancement security

|

**Is your feature request related to a problem? Please describe.**

We should define a CSP for the webapp and desktop apps to minimize the risk of data leakage and RCE's.

**Describe the solution you'd like**

The webapp should limit connections and script execution. It's also possible that it comes in two versions:

- default option: with strict CSP

- webdav-enabled option: relaxed CSP that allows connecting to external hosts

**Additional context**

It's not clear if WebAssembly works well with CSP now, see https://github.com/WebAssembly/content-security-policy/issues/7

|

True

|

Implement CSP - **Is your feature request related to a problem? Please describe.**

We should define a CSP for the webapp and desktop apps to minimize the risk of data leakage and RCE's.

**Describe the solution you'd like**

The webapp should limit connections and script execution. It's also possible that it comes in two versions:

- default option: with strict CSP

- webdav-enabled option: relaxed CSP that allows connecting to external hosts

**Additional context**

It's not clear if WebAssembly works well with CSP now, see https://github.com/WebAssembly/content-security-policy/issues/7

|

non_process

|

implement csp is your feature request related to a problem please describe we should define a csp for the webapp and desktop apps to minimize the risk of data leakage and rce s describe the solution you d like the webapp should limit connections and script execution it s also possible that it comes in two versions default option with strict csp webdav enabled option relaxed csp that allows connecting to external hosts additional context it s not clear if webassembly works well with csp now see

| 0

|

12,079

| 14,739,972,504

|

IssuesEvent

|

2021-01-07 08:17:02

|

kdjstudios/SABillingGitlab

|

https://api.github.com/repos/kdjstudios/SABillingGitlab

|

closed

|

VCC Activities Needed For Chicago

|

anc-process anp-urgent ant-support has attachment

|

In GitLab by @kdjstudios on Oct 2, 2018, 17:14

**Submitted by:** "Tobey McInally" <tobey.mcinally@answernet.com>

**Helpdesk:** http://www.servicedesk.answernet.com/profiles/ticket/5780052

**Server:** Internal

**Client/Site:** Chicago

**Account:** NA

**Issue:**

We are in need of the below in order to close out billing for our Chicago location:

1. Add all VCC Activities needed to the site.

2. Import posting script needs to be switched over to handle the VCC export file.

3. All accounts need to be updated to use the new VCC Activities.

4. All old activities at the site level need to be deactivated.

Attached is the file we will be using to process Chicago billing. We had to remove all Allentown accounts while we worked on merging the billing for both locations.

[chicago+billing.csv](/uploads/c9bd5cf8efec68812fdf8f666936da15/chicago+billing.csv)

|

1.0

|

VCC Activities Needed For Chicago - In GitLab by @kdjstudios on Oct 2, 2018, 17:14

**Submitted by:** "Tobey McInally" <tobey.mcinally@answernet.com>

**Helpdesk:** http://www.servicedesk.answernet.com/profiles/ticket/5780052

**Server:** Internal

**Client/Site:** Chicago

**Account:** NA

**Issue:**

We are in need of the below in order to close out billing for our Chicago location:

1. Add all VCC Activities needed to the site.

2. Import posting script needs to be switched over to handle the VCC export file.

3. All accounts need to be updated to use the new VCC Activities.

4. All old activities at the site level need to be deactivated.

Attached is the file we will be using to process Chicago billing. We had to remove all Allentown accounts while we worked on merging the billing for both locations.

[chicago+billing.csv](/uploads/c9bd5cf8efec68812fdf8f666936da15/chicago+billing.csv)

|

process

|

vcc activities needed for chicago in gitlab by kdjstudios on oct submitted by tobey mcinally helpdesk server internal client site chicago account na issue we are in need of the below in order to close out billing for our chicago location add all vcc activities need to the site import posting script needs to be switched over to handle the vcc export file all accounts need to be updated to use the new vcc activities all old activities at the site level need to be deactivated attached is the file we will be using to process chicago billing we had to remove all allentown accounts while we worked on merging the billing for both locations uploads chicago billing csv

| 1

|

11,810

| 14,628,759,465

|

IssuesEvent

|

2020-12-23 14:43:03

|

MicrosoftDocs/azure-devops-docs

|

https://api.github.com/repos/MicrosoftDocs/azure-devops-docs

|

closed

|

`resources.triggeringAlias` variable is not available (on premises)

|

Pri2 devops-cicd-process/tech devops/prod doc-bug

|

The variable `resources.triggeringAlias` is not present in my builds. I made sure my builds are triggered automatically from another build, which is listed under `resources:`. All of that is working fine.

I checked with bash `env | sort` and I see pipeline-specific variables correctly set but there is no `resources.triggeringAlias`

The problem is that I have no way to know which of the pipelines listed under `resources:` triggered my build. This makes it impossible for me to handle release-branch packaging of different artifacts from different repos.

---

#### Document Details

⚠ *Do not edit this section. It is required for docs.microsoft.com ➟ GitHub issue linking.*

* ID: ee4ec9d0-e0d5-4fb4-7c3e-b84abfa290c2

* Version Independent ID: 3e2b80d9-30e5-0c48-49f0-4fcdfedf5eee

* Content: [Resources - Azure Pipelines](https://docs.microsoft.com/en-us/azure/devops/pipelines/process/resources?view=azure-devops&tabs=schema)

* Content Source: [docs/pipelines/process/resources.md](https://github.com/MicrosoftDocs/azure-devops-docs/blob/master/docs/pipelines/process/resources.md)

* Product: **devops**

* Technology: **devops-cicd-process**

* GitHub Login: @juliakm

* Microsoft Alias: **jukullam**

|

1.0

|

`resources.triggeringAlias` variable is not available (on premises) - The variable `resources.triggeringAlias` is not present in my builds. I made sure my builds are triggered automatically from another build, which is listed under `resources:`. All of that is working fine.

I checked with bash `env | sort` and I see pipeline-specific variables correctly set but there is no `resources.triggeringAlias`

The problem is that I have no way to know which of the pipelines listed under `resources:` triggered my build. This makes it impossible for me to handle release-branch packaging of different artifacts from different repos.

---

#### Document Details

⚠ *Do not edit this section. It is required for docs.microsoft.com ➟ GitHub issue linking.*

* ID: ee4ec9d0-e0d5-4fb4-7c3e-b84abfa290c2

* Version Independent ID: 3e2b80d9-30e5-0c48-49f0-4fcdfedf5eee

* Content: [Resources - Azure Pipelines](https://docs.microsoft.com/en-us/azure/devops/pipelines/process/resources?view=azure-devops&tabs=schema)

* Content Source: [docs/pipelines/process/resources.md](https://github.com/MicrosoftDocs/azure-devops-docs/blob/master/docs/pipelines/process/resources.md)

* Product: **devops**

* Technology: **devops-cicd-process**

* GitHub Login: @juliakm

* Microsoft Alias: **jukullam**

|

process

|

resources triggeringalias variable is not available on premises the variable resources triggeringalias is not present in my builds i made sure my builds are triggered automatically from another build which is listed under resources all of that is working file i checked with bash env sort and i see pipeline specific variables correctly set but there is no resources triggeringalias the problem with this is that i have no way to know which one of the pipeline listed under resources triggered my build and this makes it impossible for me to handle release branches packaging of different artifacts from different repos document details ⚠ do not edit this section it is required for docs microsoft com ➟ github issue linking id version independent id content content source product devops technology devops cicd process github login juliakm microsoft alias jukullam

| 1

|

74,847

| 9,126,835,284

|

IssuesEvent

|

2019-02-25 00:41:32

|

vector-im/riot-web

|

https://api.github.com/repos/vector-im/riot-web

|

closed

|

Clicking on Search icon again should collapse search

|

redesign

|

### Description

Clicking on the search icon after search is already open should collapse it again.

|

1.0

|

Clicking on Search icon again should collapse search - ### Description

Clicking on the search icon after search is already open should collapse it again.

|

non_process

|

clicking on search icon again should collapse search description clicking on the search icon after search is already open should collapse it again

| 0

|

319,149

| 27,352,852,305

|

IssuesEvent

|

2023-02-27 10:47:12

|

italia/design-angular-kit

|

https://api.github.com/repos/italia/design-angular-kit

|

closed

|

Add docs and tests for component Notification

|

next docs need for tests

|

## Description

Add docs and tests for component `Notification` following the vanilla JS implementation present in [Bootstrap Italia documentation](https://italia.github.io/bootstrap-italia/docs/componenti/notifiche/).

## Checklist

- [ ] Verify and update, markup and classes of the resulting DOM template, compared to Bootstrap Italia 2

- [ ] Check the rendering of the component (CSS application including spacing, dimensions, typography, ...), compared to Bootstrap Italia and the new UI kit

- [ ] Check the behavior of the component (JavaScript, user interaction, states, keyboard interaction for accessibility, ...), compared to Bootstrap Italia 2

- [ ] Verify the accessibility of the component, including automatic tests and manual evaluations by a11y experts, if possible

- [ ] Evaluate the need to supplement documentation with more detailed information.

- [ ] Write tests for this component

- [ ] Write documentation for this component

<!-- If you need help: Developers Italia Slack (https://developersitalia.slack.com/messages/C7VPAUVB3)! -->

|

1.0

|

Add docs and tests for component Notification - ## Description

Add docs and tests for component `Notification` following the vanilla JS implementation present in [Bootstrap Italia documentation](https://italia.github.io/bootstrap-italia/docs/componenti/notifiche/).

## Checklist

- [ ] Verify and update, markup and classes of the resulting DOM template, compared to Bootstrap Italia 2

- [ ] Check the rendering of the component (CSS application including spacing, dimensions, typography, ...), compared to Bootstrap Italia and the new UI kit

- [ ] Check the behavior of the component (JavaScript, user interaction, states, keyboard interaction for accessibility, ...), compared to Bootstrap Italia 2

- [ ] Verify the accessibility of the component, including automatic tests and manual evaluations by a11y experts, if possible

- [ ] Evaluate the need to supplement documentation with more detailed information.

- [ ] Write tests for this component

- [ ] Write documentation for this component

<!-- If you need help: Developers Italia Slack (https://developersitalia.slack.com/messages/C7VPAUVB3)! -->

|

non_process

|

add docs and tests for component notification description add docs and tests for component notification following the vanilla js implementation present in checklist verify and update markup and classes of the resulting dom template compared to bootstrap italia check the rendering of the component css application including spacing dimensions typography compared to bootstrap italia and the new ui kit check the behavior of the component javascript user interaction states keyboard interaction for accessibility compared to bootstrap italia verify the accessibility of the component including automatic tests and manual evaluations by experts if possible evaluate the need to supplement documentation with more detailed information write tests for this component write documentation for this component

| 0

|

624,330

| 19,694,562,363

|

IssuesEvent

|

2022-01-12 10:44:12

|

ceph/ceph-csi

|

https://api.github.com/repos/ceph/ceph-csi

|

closed

|

Allow bigger size restore/clone for CephFS

|

enhancement priority-4 component/cephfs keepalive

|

# Describe the bug #

The CSI spec allows a user to create bigger volumes on the restore/clone path. Ideally we should enable it in our driver too. This issue tracks the requirement for CephFS.

Even though it's allowed in today's code, we have to revisit this path with the fix present in CephFS for snapshot size.

|

1.0

|

Allow bigger size restore/clone for CephFS - # Describe the bug #

The CSI spec allows a user to create bigger volumes on the restore/clone path. Ideally we should enable it in our driver too. This issue tracks the requirement for CephFS.

Even though it's allowed in today's code, we have to revisit this path with the fix present in CephFS for snapshot size.

|

non_process

|

allow bigger size restore clone for cephfs describe the bug csi spec allows a user to create bigger volumes at restore clone path ideally we should enable it in our driver too this issue tracks the requirement for cephfs eventhough its allowed in today s code we have to revisit this path with the fix present in cephfs for snapshot size

| 0

|

14,950

| 18,434,127,098

|

IssuesEvent

|

2021-10-14 11:04:37

|

qgis/QGIS-Documentation

|

https://api.github.com/repos/qgis/QGIS-Documentation

|

closed

|

gdal batch processing algorithms - need to document how to set multiple creation options

|

Processing

|

The documentation includes this for a number of the gdal processing algorithms:

`- For adding one or more creation options that control the raster to be created...`

If you want to run as a batch process with more than one creation option you need to separate the options with a pipe character |.

This is totally non-obvious, and unless there's something I've missed, it isn't documented anywhere.

If the user is lucky they will find it out at https://gis.stackexchange.com/questions/317700/adding-multiple-additional-options-to-qgis-batch-processing

I guess ideally this would be documented with a screenshot like the one there. But I am not sure where it should be documented. Perhaps on the index page for the gdal provider? Or just expand that text above, something like this:

`- For adding one or more creation options (if using batch processing, separate multiple options with a pipe character |) that control the raster to be created...`

Are there any cases other than the gdal creation options where you can enter multiple options separated by pipes?

|

1.0

|

gdal batch processing algorithms - need to document how to set multiple creation options - The documentation includes this for a number of the gdal processing algorithms:

`- For adding one or more creation options that control the raster to be created...`

If you want to run as a batch process with more than one creation option you need to separate the options with a pipe character |.

This is totally non-obvious, and unless there's something I've missed, it isn't documented anywhere.

If the user is lucky they will find it out at https://gis.stackexchange.com/questions/317700/adding-multiple-additional-options-to-qgis-batch-processing

I guess ideally this would be documented with a screenshot like the one there. But I am not sure where it should be documented. Perhaps on the index page for the gdal provider? Or just expand that text above, something like this:

`- For adding one or more creation options (if using batch processing, separate multiple options with a pipe character |) that control the raster to be created...`

Are there any cases other than the gdal creation options where you can enter multiple options separated by pipes?

|

process

|

gdal batch processing algorithms need to document how to set multiple creation options the documentation includes this for a number of the gdal processing algorithms for adding one or more creation options that control the raster to be created if you want to run as a batch process with more than one creation option you need to separate the options with a pipe character this is totally non obvious and unless there s something i ve missed it isn t documented anywhere if the user is lucky they will find it out at i guess ideally this would be documented with a screenshot like the one there but i am not sure where it should be documented perhaps on the index page for the gdal provider or just expand that text above something like this for adding one or more creation options if using batch processing separate multiple options with a pipe character that control the raster to be created are there any cases other the gdal creation options where you can enter multiple options separated by pipes

| 1

|

12,890

| 15,280,836,428

|

IssuesEvent

|

2021-02-23 07:06:46

|

topcoder-platform/community-app

|

https://api.github.com/repos/topcoder-platform/community-app

|

opened

|

Filter by track and type issue when all filters are disabled

|

P2 ShapeupProcess challenge- recommender-tool

|

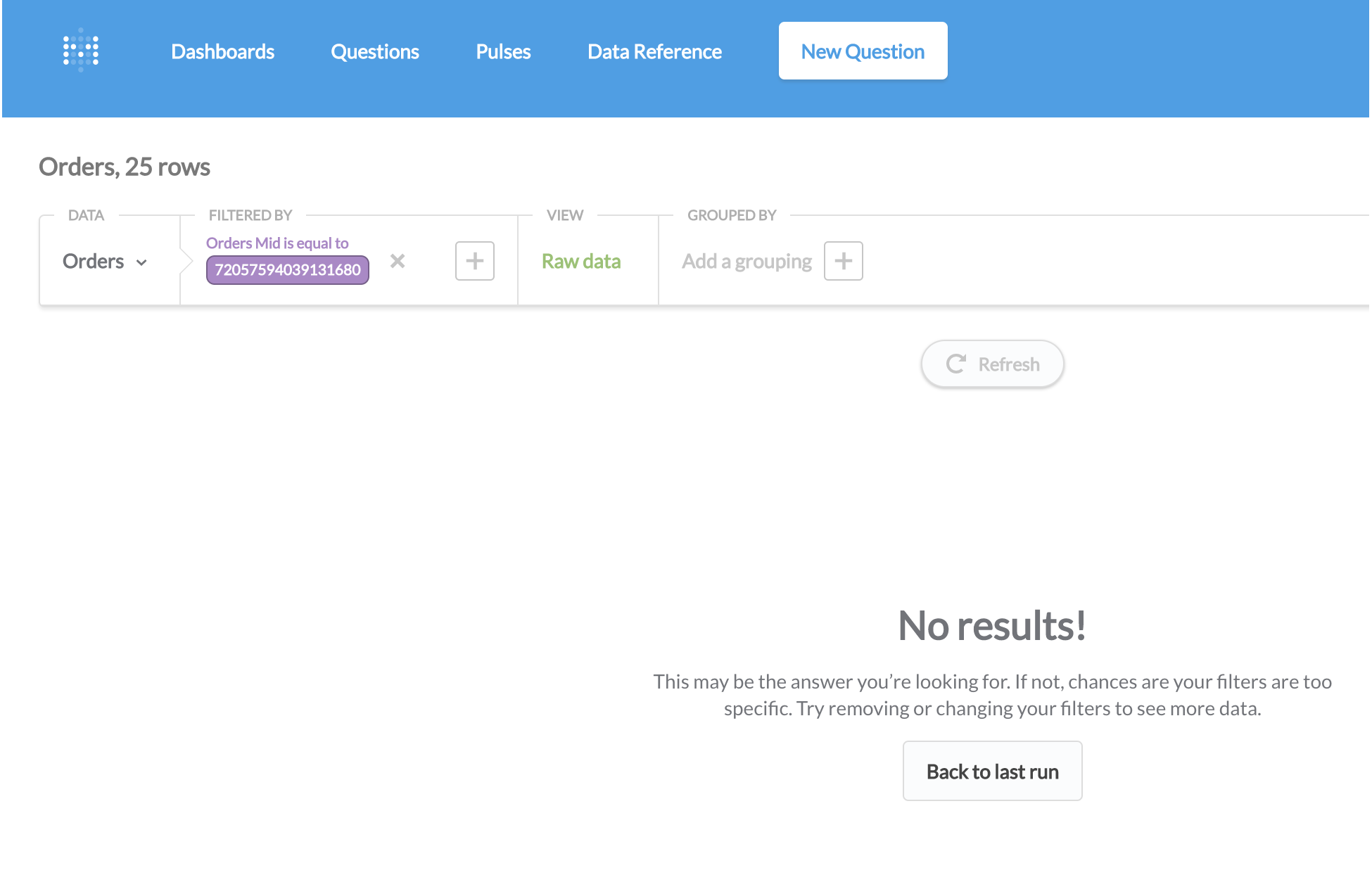

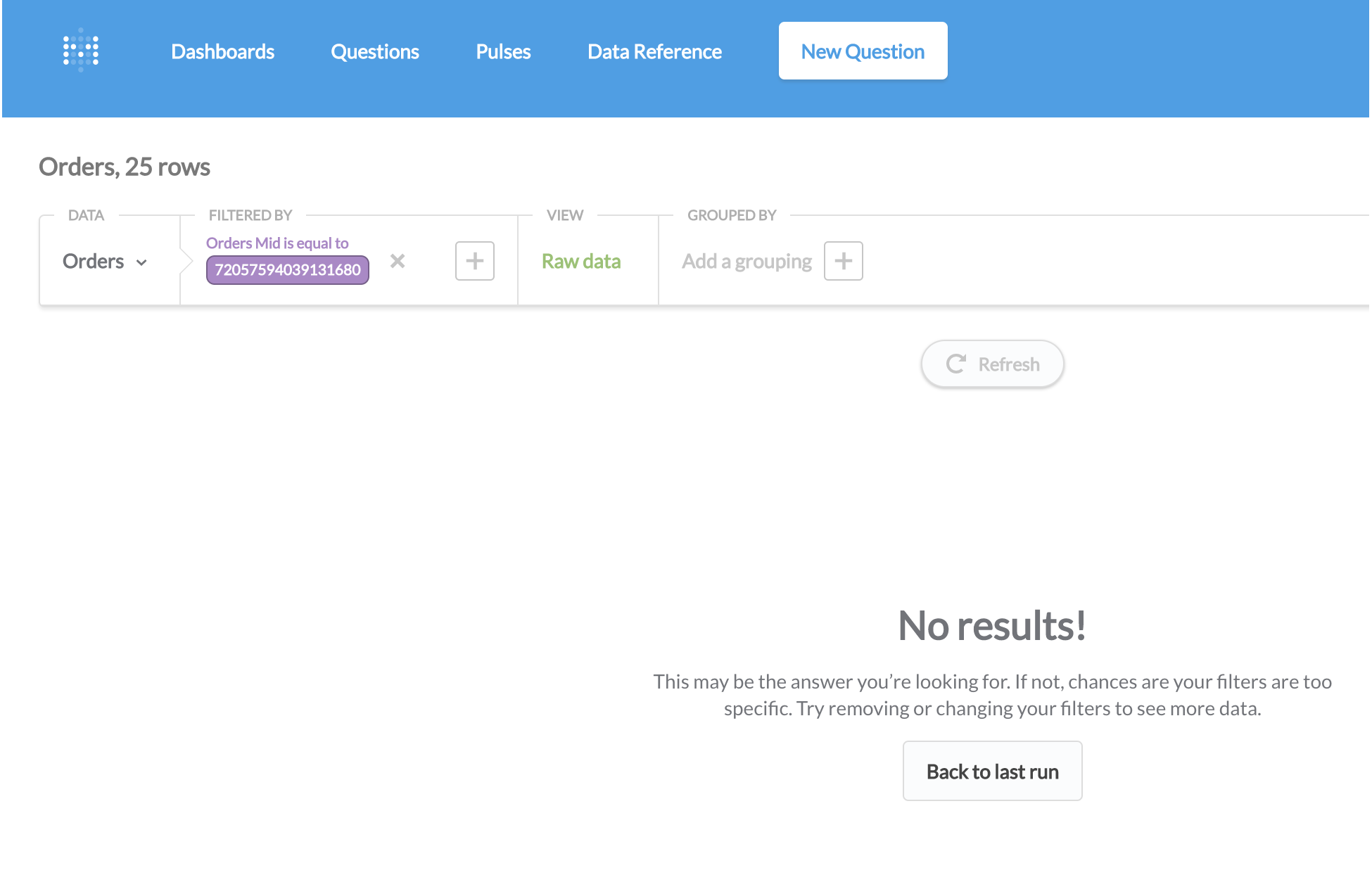

When all track filters are switched off or when all type filters are switched off, then the page keeps loading.

<img width="1440" alt="Screenshot 2021-02-23 at 12 33 56 PM" src="https://user-images.githubusercontent.com/58783823/108811708-bcbd3d80-75d3-11eb-8498-08a5577e8058.png">

<img width="1440" alt="Screenshot 2021-02-23 at 12 35 07 PM" src="https://user-images.githubusercontent.com/58783823/108811721-c21a8800-75d3-11eb-8a15-9c3f7252850f.png">

|

1.0

|

Filter by track and type issue when all filters are disabled - When all track filters are switched off or when all type filters are switched off, then the page keeps loading.

<img width="1440" alt="Screenshot 2021-02-23 at 12 33 56 PM" src="https://user-images.githubusercontent.com/58783823/108811708-bcbd3d80-75d3-11eb-8498-08a5577e8058.png">

<img width="1440" alt="Screenshot 2021-02-23 at 12 35 07 PM" src="https://user-images.githubusercontent.com/58783823/108811721-c21a8800-75d3-11eb-8a15-9c3f7252850f.png">

|

process

|

filter by track and type issue when all filters are disabled when all track filters are switched off or when all type filters are switched off then the page keeps loading img width alt screenshot at pm src img width alt screenshot at pm src

| 1

|

189,121

| 22,046,985,302

|

IssuesEvent

|

2022-05-30 03:39:37

|

madhans23/linux-4.1.15

|

https://api.github.com/repos/madhans23/linux-4.1.15

|

closed

|

CVE-2019-15216 (Medium) detected in linux-stable-rtv4.1.33 - autoclosed

|

security vulnerability

|

## CVE-2019-15216 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linux-stable-rtv4.1.33</b></p></summary>

<p>

<p>Julia Cartwright's fork of linux-stable-rt.git</p>

<p>Library home page: <a href=https://git.kernel.org/pub/scm/linux/kernel/git/julia/linux-stable-rt.git>https://git.kernel.org/pub/scm/linux/kernel/git/julia/linux-stable-rt.git</a></p>

<p>Found in HEAD commit: <a href="https://github.com/madhans23/linux-4.1.15/commit/f9d19044b0eef1965f9bc412d7d9e579b74ec968">f9d19044b0eef1965f9bc412d7d9e579b74ec968</a></p>

<p>Found in base branch: <b>master</b></p></p>

</details>

</p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Source Files (2)</summary>

<p></p>

<p>

<img src='https://s3.amazonaws.com/wss-public/bitbucketImages/xRedImage.png' width=19 height=20> <b>/drivers/usb/misc/yurex.c</b>

<img src='https://s3.amazonaws.com/wss-public/bitbucketImages/xRedImage.png' width=19 height=20> <b>/drivers/usb/misc/yurex.c</b>

</p>

</details>

<p></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

An issue was discovered in the Linux kernel before 5.0.14. There is a NULL pointer dereference caused by a malicious USB device in the drivers/usb/misc/yurex.c driver.

<p>Publish Date: 2019-08-19

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2019-15216>CVE-2019-15216</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>4.6</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Physical

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: None

- Integrity Impact: None

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2019-15216">https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2019-15216</a></p>

<p>Release Date: 2019-09-03</p>

<p>Fix Resolution: v5.1</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with WhiteSource [here](https://www.whitesourcesoftware.com/full_solution_bolt_github)

|

True

|

CVE-2019-15216 (Medium) detected in linux-stable-rtv4.1.33 - autoclosed - ## CVE-2019-15216 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linux-stable-rtv4.1.33</b></p></summary>

<p>

<p>Julia Cartwright's fork of linux-stable-rt.git</p>

<p>Library home page: <a href=https://git.kernel.org/pub/scm/linux/kernel/git/julia/linux-stable-rt.git>https://git.kernel.org/pub/scm/linux/kernel/git/julia/linux-stable-rt.git</a></p>

<p>Found in HEAD commit: <a href="https://github.com/madhans23/linux-4.1.15/commit/f9d19044b0eef1965f9bc412d7d9e579b74ec968">f9d19044b0eef1965f9bc412d7d9e579b74ec968</a></p>

<p>Found in base branch: <b>master</b></p></p>

</details>

</p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Source Files (2)</summary>

<p></p>

<p>

<img src='https://s3.amazonaws.com/wss-public/bitbucketImages/xRedImage.png' width=19 height=20> <b>/drivers/usb/misc/yurex.c</b>

<img src='https://s3.amazonaws.com/wss-public/bitbucketImages/xRedImage.png' width=19 height=20> <b>/drivers/usb/misc/yurex.c</b>

</p>

</details>

<p></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

An issue was discovered in the Linux kernel before 5.0.14. There is a NULL pointer dereference caused by a malicious USB device in the drivers/usb/misc/yurex.c driver.

<p>Publish Date: 2019-08-19

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2019-15216>CVE-2019-15216</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>4.6</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Physical

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: None

- Integrity Impact: None

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2019-15216">https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2019-15216</a></p>

<p>Release Date: 2019-09-03</p>

<p>Fix Resolution: v5.1</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with WhiteSource [here](https://www.whitesourcesoftware.com/full_solution_bolt_github)

|

non_process

|

cve medium detected in linux stable autoclosed cve medium severity vulnerability vulnerable library linux stable julia cartwright s fork of linux stable rt git library home page a href found in head commit a href found in base branch master vulnerable source files drivers usb misc yurex c drivers usb misc yurex c vulnerability details an issue was discovered in the linux kernel before there is a null pointer dereference caused by a malicious usb device in the drivers usb misc yurex c driver publish date url a href cvss score details base score metrics exploitability metrics attack vector physical attack complexity low privileges required none user interaction none scope unchanged impact metrics confidentiality impact none integrity impact none availability impact high for more information on scores click a href suggested fix type upgrade version origin a href release date fix resolution step up your open source security game with whitesource

| 0

|

11,256

| 14,021,469,119

|

IssuesEvent

|

2020-10-29 21:20:29

|

nion-software/nionswift

|

https://api.github.com/repos/nion-software/nionswift

|

opened

|

Mapped sum/average do not work if no mask is present

|

f - filtering/masking f - processing level - easy type - bug

|

Mapped sum/average work on 2d x 2d data to sum a region on each 2d datum and produce a map in collection dimensions. Making a graphic and then using Add to Mask menu item and then mapping the mask works. Running with no mask selected does not work. In addition, does the user expect that making a graphic, selecting it, and mapping should produce a map? Maybe.

|

1.0

|

Mapped sum/average do not work if no mask is present - Mapped sum/average work on 2d x 2d data to sum a region on each 2d datum and produce a map in collection dimensions. Making a graphic and then using Add to Mask menu item and then mapping the mask works. Running with no mask selected does not work. In addition, does the user expect that making a graphic, selecting it, and mapping should produce a map? Maybe.

|

process

|

mapped sum average do not work if no mask is present mapped sum average work on x data to sum a region on each datum and produce a map in collection dimensions making a graphic and then using add to mask menu item and then mapping the mask works running with no mask selected does not work in addition does the user expect that making a graphic selecting it and mapping should produce a map maybe

| 1

|

100,331

| 12,515,944,450

|

IssuesEvent

|

2020-06-03 08:34:36

|

canonical-web-and-design/build.snapcraft.io

|

https://api.github.com/repos/canonical-web-and-design/build.snapcraft.io

|

closed

|

"My repos" dashboard: Visually highlight changes in the table

|

Design: Required Priority: Medium

|

When a user visits the "my repos" dashboard, any changes since the last visit (whether they be changed table cells or new rows) should be visually highlighted. The look of this still needs to be designed.

Do we also highlight when a row has been deleted? This could be very useful for organisation members.

|

1.0

|

"My repos" dashboard: Visually highlight changes in the table - When a user visits the "my repos" dashboard, any changes since the last visit (whether they be changed table cells or new rows) should be visually highlighted. The look of this still needs to be designed.

Do we also highlight when a row has been deleted? This could be very useful for organisation members.

|

non_process

|

my repos dashboard visually highlight changes in the table when a user visits the my repos dashboard any changes since the last visit whether they be changed table cells or new rows should be visually highlighted the look of this still needs to be designed do we also highlight when a row has been deleted this could be very useful for organisation members

| 0

|

13,880

| 16,654,718,147

|

IssuesEvent

|

2021-06-05 10:02:19

|

GoogleCloudPlatform/fda-mystudies

|

https://api.github.com/repos/GoogleCloudPlatform/fda-mystudies

|

closed

|

[PM] Responsive issues in My account screen > UI issue

|

Bug P2 Participant manager Process: Fixed Process: Tested dev

|

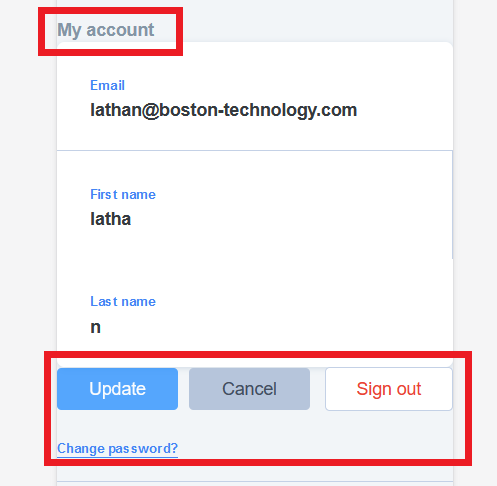

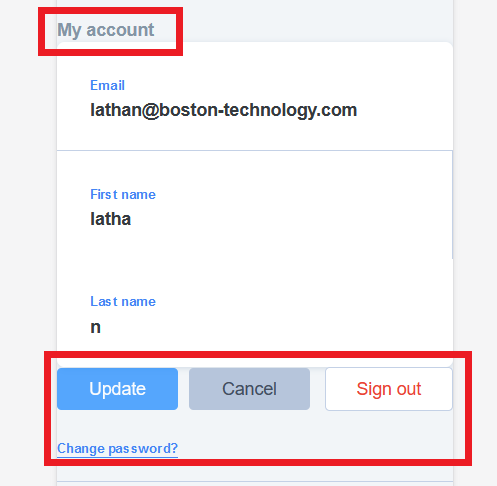

Responsive issues in My account screen > Contents are wrapping up with the margins and above frame (All the buttons)

|

2.0

|

[PM] Responsive issues in My account screen > UI issue - Responsive issues in My account screen > Contents are wrapping up with the margins and above frame (All the buttons)

|

process

|

responsive issues in my account screen ui issue responsive issues in my account screen contents are wrapping up with the margins and above frame all the buttons

| 1

|

44,059

| 17,791,600,943

|

IssuesEvent

|

2021-08-31 16:50:01

|

hashicorp/terraform-provider-azurerm

|

https://api.github.com/repos/hashicorp/terraform-provider-azurerm

|

reopened

|

AKS cluster with PSP enabled should not be blocked

|

question service/kubernetes-cluster

|

<!---

Please note the following potential times when an issue might be in Terraform core:

* [Configuration Language](https://www.terraform.io/docs/configuration/index.html) or resource ordering issues

* [State](https://www.terraform.io/docs/state/index.html) and [State Backend](https://www.terraform.io/docs/backends/index.html) issues

* [Provisioner](https://www.terraform.io/docs/provisioners/index.html) issues

* [Registry](https://registry.terraform.io/) issues

* Spans resources across multiple providers

If you are running into one of these scenarios, we recommend opening an issue in the [Terraform core repository](https://github.com/hashicorp/terraform/) instead.

--->

<!--- Please keep this note for the community --->

### Community Note

* Please vote on this issue by adding a 👍 [reaction](https://blog.github.com/2016-03-10-add-reactions-to-pull-requests-issues-and-comments/) to the original issue to help the community and maintainers prioritize this request

* Please do not leave "+1" or "me too" comments, they generate extra noise for issue followers and do not help prioritize the request

* If you are interested in working on this issue or have submitted a pull request, please leave a comment

<!--- Thank you for keeping this note for the community --->

### Terraform (and AzureRM Provider) Version

<!--- Please run `terraform -v` to show the Terraform core version and provider version(s). If you are not running the latest version of Terraform or the provider, please upgrade because your issue may have already been fixed. [Terraform documentation on provider versioning](https://www.terraform.io/docs/configuration/providers.html#provider-versions). --->

### Affected Resource(s)

<!--- Please list the affected resources and data sources. --->

* `azurerm_kubernetes_cluster`

### Terraform Configuration Files

<!--- Information about code formatting: https://help.github.com/articles/basic-writing-and-formatting-syntax/#quoting-code --->

```hcl

# Copy-paste your Terraform configurations here - for large Terraform configs,

# please use a service like Dropbox and share a link to the ZIP file. For

# security, you can also encrypt the files using our GPG public key: https://keybase.io/hashicorp

```

### Debug Output

<!---

Please provide a link to a GitHub Gist containing the complete debug output. Please do NOT paste the debug output in the issue; just paste a link to the Gist.

To obtain the debug output, see the [Terraform documentation on debugging](https://www.terraform.io/docs/internals/debugging.html).

--->

### Panic Output

<!--- If Terraform produced a panic, please provide a link to a GitHub Gist containing the output of the `crash.log`. --->

### Expected Behaviour

Expect to see AKS cluster created with PSP enabled

<!--- What should have happened? --->

### Actual Behaviour

AKS cluster has a client side check to block PSP creation.

https://github.com/terraform-providers/terraform-provider-azurerm/blob/e4ff2ccc529c7c15317987d667001b51ea42fd8c/azurerm/internal/services/containers/kubernetes_cluster_resource.go#L891

The AKS deprecation of PSP has been delayed until PSP is removed in K8s v1.25

https://docs.microsoft.com/en-us/azure/aks/use-pod-security-policies

<!--- What actually happened? --->

### Steps to Reproduce

<!--- Please list the steps required to reproduce the issue. --->

1. `terraform apply`

### Important Factoids

<!--- Are there anything atypical about your accounts that we should know? For example: Running in a Azure China/Germany/Government? --->

### References

<!---

Information about referencing Github Issues: https://help.github.com/articles/basic-writing-and-formatting-syntax/#referencing-issues-and-pull-requests

Are there any other GitHub issues (open or closed) or pull requests that should be linked here? Such as vendor documentation?

--->

* #0000

|

1.0

|

AKS cluster with PSP enabled should not be blocked - <!---

Please note the following potential times when an issue might be in Terraform core:

* [Configuration Language](https://www.terraform.io/docs/configuration/index.html) or resource ordering issues

* [State](https://www.terraform.io/docs/state/index.html) and [State Backend](https://www.terraform.io/docs/backends/index.html) issues

* [Provisioner](https://www.terraform.io/docs/provisioners/index.html) issues

* [Registry](https://registry.terraform.io/) issues

* Spans resources across multiple providers

If you are running into one of these scenarios, we recommend opening an issue in the [Terraform core repository](https://github.com/hashicorp/terraform/) instead.

--->

<!--- Please keep this note for the community --->

### Community Note

* Please vote on this issue by adding a 👍 [reaction](https://blog.github.com/2016-03-10-add-reactions-to-pull-requests-issues-and-comments/) to the original issue to help the community and maintainers prioritize this request

* Please do not leave "+1" or "me too" comments, they generate extra noise for issue followers and do not help prioritize the request

* If you are interested in working on this issue or have submitted a pull request, please leave a comment

<!--- Thank you for keeping this note for the community --->

### Terraform (and AzureRM Provider) Version

<!--- Please run `terraform -v` to show the Terraform core version and provider version(s). If you are not running the latest version of Terraform or the provider, please upgrade because your issue may have already been fixed. [Terraform documentation on provider versioning](https://www.terraform.io/docs/configuration/providers.html#provider-versions). --->

### Affected Resource(s)

<!--- Please list the affected resources and data sources. --->

* `azurerm_kubernetes_cluster`

### Terraform Configuration Files

<!--- Information about code formatting: https://help.github.com/articles/basic-writing-and-formatting-syntax/#quoting-code --->

```hcl

# Copy-paste your Terraform configurations here - for large Terraform configs,

# please use a service like Dropbox and share a link to the ZIP file. For

# security, you can also encrypt the files using our GPG public key: https://keybase.io/hashicorp

```

### Debug Output

<!---

Please provide a link to a GitHub Gist containing the complete debug output. Please do NOT paste the debug output in the issue; just paste a link to the Gist.

To obtain the debug output, see the [Terraform documentation on debugging](https://www.terraform.io/docs/internals/debugging.html).

--->

### Panic Output

<!--- If Terraform produced a panic, please provide a link to a GitHub Gist containing the output of the `crash.log`. --->

### Expected Behaviour

Expect to see AKS cluster created with PSP enabled

<!--- What should have happened? --->

### Actual Behaviour

AKS cluster has a client side check to block PSP creation.

https://github.com/terraform-providers/terraform-provider-azurerm/blob/e4ff2ccc529c7c15317987d667001b51ea42fd8c/azurerm/internal/services/containers/kubernetes_cluster_resource.go#L891

The AKS deprecation of PSP has been delayed until PSP is removed in K8s v1.25

https://docs.microsoft.com/en-us/azure/aks/use-pod-security-policies

<!--- What actually happened? --->

### Steps to Reproduce

<!--- Please list the steps required to reproduce the issue. --->

1. `terraform apply`

### Important Factoids

<!--- Are there anything atypical about your accounts that we should know? For example: Running in a Azure China/Germany/Government? --->

### References

<!---

Information about referencing Github Issues: https://help.github.com/articles/basic-writing-and-formatting-syntax/#referencing-issues-and-pull-requests

Are there any other GitHub issues (open or closed) or pull requests that should be linked here? Such as vendor documentation?

--->

* #0000

|

non_process

|

aks cluster with psp enabled should not be blocked please note the following potential times when an issue might be in terraform core or resource ordering issues and issues issues issues spans resources across multiple providers if you are running into one of these scenarios we recommend opening an issue in the instead community note please vote on this issue by adding a 👍 to the original issue to help the community and maintainers prioritize this request please do not leave or me too comments they generate extra noise for issue followers and do not help prioritize the request if you are interested in working on this issue or have submitted a pull request please leave a comment terraform and azurerm provider version affected resource s azurerm kubernetes cluster terraform configuration files hcl copy paste your terraform configurations here for large terraform configs please use a service like dropbox and share a link to the zip file for security you can also encrypt the files using our gpg public key debug output please provide a link to a github gist containing the complete debug output please do not paste the debug output in the issue just paste a link to the gist to obtain the debug output see the panic output expected behaviour expect to see aks cluster created with psp enabled actual behaviour aks cluster has a client side check to block psp creation aks psp deprecation has been removed delayed until removal of psp until steps to reproduce terraform apply important factoids references information about referencing github issues are there any other github issues open or closed or pull requests that should be linked here such as vendor documentation

| 0

|

1,083

| 3,547,769,353

|

IssuesEvent

|

2016-01-20 11:17:12

|

orbisgis/orbisgis

|

https://api.github.com/repos/orbisgis/orbisgis

|

opened

|

New maven project for rendering test

|

Processing and analysis Rendering & cartography

|

Create a new Maven project that runs only the rendering from an .ows file and returns information about the rendering performance (running time ...).

|

1.0

|

New maven project for rendering test - Create a new Maven project that runs only the rendering from an .ows file and returns information about the rendering performance (running time ...).

|

process

|

new maven project for rendering test creates a new maven project which run only the rendering from an ows file and return informations about the rendering performance running time

| 1

|

11,231

| 14,007,593,912

|

IssuesEvent

|

2020-10-28 21:51:48

|

RIOT-OS/RIOT

|

https://api.github.com/repos/RIOT-OS/RIOT

|

closed

|

Misleading API in netdev driver

|

Area: drivers Area: network Process: API change State: stale

|

# Problem Description

The `int (*recv )(netdev_t *dev, void *buf, size_t len, void *info)` member in `netdev_driver_t` ([see Doxygen](http://api.riot-os.org/structnetdev__driver.html#ae2c8cad80067e3b1f9979931ddb3cc8b)) is a textbook example of a misleading API:

1. The function does three completely different things depending on arguments:

- Receive the message and return its size, if `buf != NULL`

- Returns the message size of the incoming message if `(buf == NULL) && (len == 0)`

- Drops the incoming message if `(buf == NULL) && (len != 0)`

2. The function name only reflects one of the three cases

3. One of the three cases is a corner case (the drop packet case only occurs under high load), so a bug there can go unnoticed for quite some time
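To make the three modes concrete, here is a minimal sketch of how a single `recv` callback ends up dispatching on its arguments. It is not RIOT code: `mock_dev_t` and `mock_recv()` are hypothetical stand-ins, and the return value in the drop case and the "buffer too small" error are assumptions made for illustration.

```c
#include <assert.h>
#include <stddef.h>
#include <string.h>

/* Hypothetical stand-in for a device holding one pending frame.
 * This is NOT RIOT's netdev_t. */
typedef struct {
    unsigned char frame[16]; /* pending frame data */
    size_t frame_len;        /* 0 means nothing is pending */
} mock_dev_t;

/* One function, three behaviours, selected only by (buf, len). */
static int mock_recv(mock_dev_t *dev, void *buf, size_t len, void *info)
{
    (void)info;

    if (buf == NULL) {
        if (len == 0) {
            /* Mode 2: size query, the frame stays pending. */
            return (int)dev->frame_len;
        }
        /* Mode 3: drop the pending frame (return value is an assumption). */
        int dropped = (int)dev->frame_len;
        dev->frame_len = 0;
        return dropped;
    }

    /* Mode 1: copy the frame out and return its size. */
    if (len < dev->frame_len) {
        return -1; /* assumed error path for a too-small buffer */
    }
    size_t n = dev->frame_len;
    memcpy(buf, dev->frame, n);
    dev->frame_len = 0;
    return (int)n;
}
```

An implementation that forgets the inner `len == 0` check still passes every test that only receives frames, which is why the drop path is the one that tends to break.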

# Symptoms

This misleading API has already led to a bug: https://github.com/RIOT-OS/RIOT/issues/9784. I predict similar bugs will show up in the future if the API is not changed. Update: many similar bugs were found, see https://github.com/RIOT-OS/RIOT/pull/9832

# Suggestions to Address the Problem

1. Split the function into three functions (e.g. `recv()`, `get_size()` and `drop()`). This would be the cleanest API, but the ROM size of the implementation is likely to increase, as all three will share some common code. (I assume the compiler will inline the common code when implemented in separate functions, so even when no duplicate C code is present, the ROM size will likely grow.)

- Pros:

- Cleanest API: Reviewers and implementers will no longer easily forget about the size/drop features

- Checking for missing size/drop implementations possible by an assert

- No increase in runtime overhead or RAM (barely measurable speedup expected by no longer needing conditional jumps)

- Biggest increase in code readability (control flow simplified, functions get shorter, function names match their intention, header parsing will be moved to short static functions)

- Cons:

- Biggest ROM increase compared to other approaches expected

- Biggest increase in lines of code

2. Rename the function, e.g. to `recv_or_size_or_drop()`. While this name is very clumsy, at least it makes it very obvious that this function has to implement three different things.

- Pro:

- No increase in RAM/ROM usage and runtime overhead

- Cons:

- Only cosmetic, does not really address the problem

- Unclean API

3. Add an additional parameter (e.g. an `enum`) to specify which of the three things the function should do. This would be much more obvious to the programmer, even though a bit wasteful

- Pros:

- No increase in ROM usage (and likely in RAM usage, if increased stack usage does not result in higher stack sizes being used)

- Compiler helps implementer if both size and drop are forgotten (unused argument)

- Cons:

- Unclean API

- Slight increase in runtime (barely measurable)

4. Like 1., but only split off drop and keep size in recv

- Pros:

- Only change that part of the API that was affected by bugs so far

- Less ROM overhead and lines of code than 1.

- Cons:

- Still only slightly less unclean API

- Compared to 1., most of the ROM / lines of code increase has already been paid, so why not pay the rest and get a clean API?

5. Add `static inline` functions to increase readability: in the upper layers, add wrappers to call the size and drop features of the recv function more explicitly; in the lower layers, add helpers to test for the drop/size/recv mode more readably

- Pros:

- No increase in RAM/ROM usage and runtime overhead

- Correct recv implementations and upper layer code get more readable

- Cons:

- Unclean API

- The problem is (mostly) about incorrect lower layer implementations, this change does not help much here
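As a rough illustration of suggestion 5, the sketch below shows what such `static inline` helpers could look like. All names (`recv_fn_t`, `recv_get_size()`, `recv_drop()`, `recv_is_size_query()`, `recv_is_drop()`, `fake_recv()`) are hypothetical and not part of RIOT; the callback type is a simplified stand-in for the `recv` member, and passing `len = 1` to request a drop is just one way to satisfy `len != 0`.

```c
#include <assert.h>
#include <stddef.h>

/* Simplified stand-in for the recv callback; not RIOT's real typedef. */
typedef int (*recv_fn_t)(void *dev, void *buf, size_t len, void *info);

/* Upper-layer wrappers: say what is meant instead of passing magic arguments. */
static inline int recv_get_size(recv_fn_t recv, void *dev)
{
    return recv(dev, NULL, 0, NULL);
}

static inline int recv_drop(recv_fn_t recv, void *dev)
{
    return recv(dev, NULL, 1, NULL); /* any len != 0 requests a drop */
}

/* Lower-layer helpers: name the mode checks inside a driver's recv. */
static inline int recv_is_size_query(const void *buf, size_t len)
{
    return (buf == NULL) && (len == 0);
}

static inline int recv_is_drop(const void *buf, size_t len)
{
    return (buf == NULL) && (len != 0);
}

/* Fake driver used only to exercise the helpers: 0 = recv, 1 = size, 2 = drop. */
static int last_mode;

static int fake_recv(void *dev, void *buf, size_t len, void *info)
{
    (void)dev;
    (void)info;
    if (recv_is_size_query(buf, len)) {
        last_mode = 1;
        return 42; /* pretend a 42-byte frame is pending */
    }
    if (recv_is_drop(buf, len)) {
        last_mode = 2;
        return 0;
    }
    last_mode = 0;
    return 0;
}
```

The helpers cost nothing at runtime once inlined, but a driver author can still write a `recv` that never calls them, which is the weakness noted in the cons above.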

|

1.0

|

Misleading API in netdev driver - # Problem Description

The `int (*recv )(netdev_t *dev, void *buf, size_t len, void *info)` member in `netdev_driver_t` ([see Doxygen](http://api.riot-os.org/structnetdev__driver.html#ae2c8cad80067e3b1f9979931ddb3cc8b)) is a textbook example of a misleading API:

1. The function does three completely different things depending on arguments:

- Receive the message and return its size, if `buf != NULL`

- Returns the message size of the incoming message if `(buf == NULL) && (len == 0)`

- Drops the incoming message if `(buf == NULL) && (len != 0)`

2. The function name only reflects one of the three cases

3. One of the three cases is a corner case (the drop packet case only occurs under high load), so a bug there can go unnoticed for quite some time

# Symptoms

This misleading API has already led to a bug: https://github.com/RIOT-OS/RIOT/issues/9784. I predict similar bugs will show up in the future if the API is not changed. Update: many similar bugs were found, see https://github.com/RIOT-OS/RIOT/pull/9832

# Suggestions to Address the Problem

1. Split the function into three functions (e.g. `recv()`, `get_size()` and `drop()`). This would be the cleanest API, but the ROM size of the implementation is likely to increase, as all three will share some common code. (I assume the compiler will inline the common code when implemented in separate functions, so even when no duplicate C code is present, the ROM size will likely grow.)

- Pros:

- Cleanest API: Reviewers and implementers will no longer easily forget about the size/drop features

- Checking for missing size/drop implementations possible by an assert

- No increase in runtime overhead or RAM (barely measurable speedup expected by no longer needing conditional jumps)

- Biggest increase in code readability (control flow simplified, functions get shorter, function names match their intention, header parsing will be moved to short static functions)

- Cons:

- Biggest ROM increase compared to other approaches expected

- Biggest increase in lines of code

2. Rename the function, e.g. to `recv_or_size_or_drop()`. While this name is very clumsy, at least it makes it very obvious that this function has to implement three different things.

- Pro:

- No increase in RAM/ROM usage and runtime overhead

- Cons:

- Only cosmetic, does not really address the problem

- Unclean API

3. Add an additional parameter (e.g. an `enum`) to specify which of the three things the function should do. This would be much more obvious to the programmer, even though a bit wasteful

- Pros:

- No increase in ROM usage (and likely in RAM usage, if increased stack usage does not result in higher stack sizes being used)

- Compiler helps implementer if both size and drop are forgotten (unused argument)

- Cons:

- Unclean API

- Slight increase in runtime (barely measurable)

4. Like 1., but only split off drop and keep size in recv

- Pros:

- Only change that part of the API that was affected by bugs so far

- Less ROM overhead and lines of code than 1.

- Cons:

- Still only slightly less unclean API

- Compared to 1., most of the ROM / lines of code increase has already been paid, so why not pay the rest and get a clean API?

5. Add `static inline` functions to increase readability: in the upper layers, add wrappers to call the size and drop features of the recv function more explicitly; in the lower layers, add helpers to test for the drop/size/recv mode more readably

- Pros:

- No increase in RAM/ROM usage and runtime overhead

- Correct recv implementations and upper layer code become more readable

- Cons:

- Unclean API

- The problem is (mostly) about incorrect lower layer implementations, this change does not help much here
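
To make suggestion 5 more concrete, here is a minimal sketch of what the upper layer wrappers could look like. It is not the actual netdev code: `toy_dev_t`, `toy_recv()`, `toy_get_size()` and `toy_drop()` are hypothetical names, the device is a toy that holds at most one frame, and the mode encoding (`buf == NULL` with `len == 0` for size, `buf == NULL` with `len != 0` for drop) and the return values are assumptions made for illustration only.

```c
#include <assert.h>
#include <stddef.h>
#include <string.h>

/* Hypothetical toy device that holds at most one pending frame. */
typedef struct {
    unsigned char frame[32];
    size_t frame_len;           /* 0 means no frame is pending */
} toy_dev_t;

/* Combined function with three modes (assumed encoding, see lead-in):
 *   buf != NULL            -> copy the frame, return its size, consume it
 *   buf == NULL, len == 0  -> return the frame size, keep the frame
 *   buf == NULL, len != 0  -> drop the frame, return 0
 */
static int toy_recv(toy_dev_t *dev, void *buf, size_t len)
{
    assert(dev != NULL);
    if (buf == NULL) {
        if (len == 0) {
            return (int)dev->frame_len;     /* size mode */
        }
        dev->frame_len = 0;                 /* drop mode */
        return 0;
    }
    if (len < dev->frame_len) {
        return -1;                          /* buffer too small (toy error code) */
    }
    int size = (int)dev->frame_len;
    memcpy(buf, dev->frame, dev->frame_len);
    dev->frame_len = 0;                     /* frame is consumed */
    return size;
}

/* Upper layer wrappers: each name states the intended mode explicitly. */
static inline int toy_get_size(toy_dev_t *dev)
{
    return toy_recv(dev, NULL, 0);
}

static inline int toy_drop(toy_dev_t *dev)
{
    return toy_recv(dev, NULL, 1);
}
```

Callers would then write `toy_get_size(&dev)` or `toy_drop(&dev)` instead of passing magic `NULL`/length combinations. As noted in the cons above, this only helps the calling side: a driver that forgets the drop branch inside `toy_recv()` is still broken.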

|

process

|

misleading api in netdev driver problem description the int recv netdev t dev void buf size t len void info member in netdev driver t is a textbook example of a misleading api the function does three completely different things depending on arguments receive the message and return its size if buf null returns the message size of the incoming message if buf null len drops the incoming message if buf null len the function name only reflects one of the three cases one of the three cases is a corner case the drop packet case only occurs under high load so a bug is going to be unnoticed for quite some time symptopms this misleading api already lead to a bug i predict similar bugs will show up in the future if the api is not changed update many similar bugs were found see suggestions to address the problem split the function into three functions e g recv get size and drop this would be the cleanest api but the rom size of the implementation is likely to increase as all three will share some common code i assume the compiler will inline the common code when implemented in separate functions so even when no duplicate c code is present the rom size will likely grow pros cleanest api reviewers and implementers will no longer easily forget about the size drop features checking for missing size drop implementations possible by an assert no increase in runtime overhead or ram barely measurable speedup expected by not longer needing conditional jumps biggest increase in code readability control flow simplified functions get shorter function names match their intention header parsing will be moved to short static functions cons biggest rom increase compared to other approaches expected biggest increase in lines of code rename the function e g to recv or size or drop while this name is very clumsy at least it is very obvious that this functions has to implement three different things pro no increase in ram rom usage and runtime overhead cons only cosmetic does not really address 
the problem unclean api add an additional parameter e g an enum to specify which of the three things the function should do this would be much more obvious to the programmer even though a bit wasteful pros no increase in rom usage and likely in ram usage if increased stack usage does not result in higher stack sizes being used compiler helps implementer if both size and drop is forgotten unused argument cons unclean api slight increase in runtime barely measurable like but only split off drop and keep size in recv pros only change that part of the api that was affected by bugs so far less rom overhead and lines of code than cons still only slightly less unclean api compared to most of the rom lines of code increase is already been paid so why not pay the rest and get a clean api add static inline functions to increase readability in the upper layers add wrappers to call the size and drop feature od the recv function more explicitly in the lower layers to test for the drop size recv mode more readable pros no increase in ram rom usage and runtime overhead correct recv implementations and upper layer code gets more readible cons unclean api the problem is mostly about incorrect lower layer implementations this change does not help much here

| 1

|

17,768

| 23,698,617,418

|

IssuesEvent

|

2022-08-29 16:47:14

|

hashgraph/hedera-json-rpc-relay

|

https://api.github.com/repos/hashgraph/hedera-json-rpc-relay

|

closed

|

Update chart secret resource to use stringData

|

enhancement P2 process

|

### Problem

Currently the secret resource utilizes `data`.

This results in multiple extra base64 conversions.

### Solution

Use `stringData` instead of `data` to avoid all the extra base64 conversions.

### Alternatives

_No response_

|

1.0

|

Update chart secret resource to use stringData - ### Problem

Currently the secret resource utilizes `data`.

This results in multiple extra base64 conversions.

### Solution

Use `stringData` instead of `data` to avoid all the extra base64 conversions.

### Alternatives

_No response_

|

process

|

update chart secret resource to use stringdata problem currently the secret resource utilizes data this results in multiple extra conversions solution use stringdata instead of data to avoid all the extra conversions alternatives no response

| 1

|

26,057

| 12,343,361,954

|

IssuesEvent

|

2020-05-15 03:45:56

|

Azure/azure-sdk-for-net

|

https://api.github.com/repos/Azure/azure-sdk-for-net

|

opened

|

Rename Peek methods to Browse

|

Client Service Bus

|

In our UX studies, users were confused between the concepts of Peek and ReceiveMode.PeekLock. We considered updating the ReceiveMode enum to be called something like ReceiveAndLock (which would align nicely with ReceiveAndDelete), but due to all of the existing documentation out there referring to PeekLock, it was determined the inertia was too great to move away from this name. In order to reduce the confusion, and highlight the differences in the concepts, we would like to rename the PeekAsync/PeekBatchAsync/PeekAtAsync/PeekBatchAtAsync to BrowseAsync/BrowseBatchAsync/BrowseAtAsync/BrowseBatchAtAsync.

|

1.0

|

Rename Peek methods to Browse - In our UX studies, users were confused between the concepts of Peek and ReceiveMode.PeekLock. We considered updating the ReceiveMode enum to be called something like ReceiveAndLock (which would align nicely with ReceiveAndDelete), but due to all of the existing documentation out there referring to PeekLock, it was determined the inertia was too great to move away from this name. In order to reduce the confusion, and highlight the differences in the concepts, we would like to rename the PeekAsync/PeekBatchAsync/PeekAtAsync/PeekBatchAtAsync to BrowseAsync/BrowseBatchAsync/BrowseAtAsync/BrowseBatchAtAsync.

|

non_process

|

rename peek methods to browse in our ux studies users were confused between the concepts of peek and receivemode peeklock we considered updating the receivemode enum to be called something like receiveandlock which would align nicely with receiveanddelete but due to all of the existing documentation out there referring to peeklock it was determined the inertia was too great to move away from this name in order to reduce the confusion and highlight the differences in the concepts we would like to rename the peekasync peekbatchasync peekatasync peekbatchatasync to browseasync browsebatchasync browseatasync browsebatchatasync

| 0

|

234,860

| 19,272,580,436

|

IssuesEvent

|

2021-12-10 08:01:15

|

zephyrproject-rtos/test_results

|

https://api.github.com/repos/zephyrproject-rtos/test_results

|

closed

|

tests-ci : portability: posix: fs.newlib test Build failure

|

bug area: Tests

|

**Describe the bug**

fs.newlib test is Build failure on v2.7.99-2147-g183328a4fbdb on mimxrt685_evk_cm33

see logs for details

**To Reproduce**

1.

```

scripts/twister --device-testing --device-serial /dev/ttyACM0 -p mimxrt685_evk_cm33 --sub-test portability.posix

```

2. See error

**Expected behavior**

test pass

**Impact**

**Logs and console output**

```

None

```

**Environment (please complete the following information):**

- OS: (e.g. Linux )

- Toolchain (e.g Zephyr SDK)

- Commit SHA or Version used: v2.7.99-2147-g183328a4fbdb

|

1.0

|

tests-ci : portability: posix: fs.newlib test Build failure

-

**Describe the bug**

fs.newlib test is Build failure on v2.7.99-2147-g183328a4fbdb on mimxrt685_evk_cm33

see logs for details

**To Reproduce**

1.

```

scripts/twister --device-testing --device-serial /dev/ttyACM0 -p mimxrt685_evk_cm33 --sub-test portability.posix

```

2. See error

**Expected behavior**

test pass

**Impact**

**Logs and console output**

```

None

```

**Environment (please complete the following information):**

- OS: (e.g. Linux )

- Toolchain (e.g Zephyr SDK)

- Commit SHA or Version used: v2.7.99-2147-g183328a4fbdb

|

non_process

|

tests ci portability posix fs newlib test build failure describe the bug fs newlib test is build failure on on evk see logs for details to reproduce scripts twister device testing device serial dev p evk sub test portability posix see error expected behavior test pass impact logs and console output none environment please complete the following information os e g linux toolchain e g zephyr sdk commit sha or version used

| 0

|

8,829

| 11,940,463,005

|

IssuesEvent

|

2020-04-02 16:45:26

|

AlmuraDev/SGCraft

|

https://api.github.com/repos/AlmuraDev/SGCraft

|

closed

|

Cannot break blocks

|

in process

|