| Unnamed: 0 (int64, 0 to 832k) | id (float64, 2.49B to 32.1B) | type (stringclasses, 1 value) | created_at (stringlengths, 19 to 19) | repo (stringlengths, 7 to 112) | repo_url (stringlengths, 36 to 141) | action (stringclasses, 3 values) | title (stringlengths, 1 to 744) | labels (stringlengths, 4 to 574) | body (stringlengths, 9 to 211k) | index (stringclasses, 10 values) | text_combine (stringlengths, 96 to 211k) | label (stringclasses, 2 values) | text (stringlengths, 96 to 188k) | binary_label (int64, 0 to 1) |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

91,124

| 8,290,247,196

|

IssuesEvent

|

2018-09-19 16:47:07

|

flutter/flutter

|

https://api.github.com/repos/flutter/flutter

|

closed

|

Make flutter_tools tests work concurrently (without -j1)

|

a: tests

|

One thing that should speed up the bots (#20036) a little is running tests concurrently. We currently use `-j1` because many tests have race conditions when run concurrently (for example many set the currentDirectory on the filesystem).

This issue is to track discussions/fixes for this.

Tests that seem to be setting `fs.currentDirectory` that may need fixing:

- [x] `flutter_tester_test` (#21119)

- [x] `asset_bundle_package_font_test` (#21114)

- [x] `asset_bundle_package_test` (#21427)

- [x] `asset_bundle_variant_test` (#21426)

- [x] `asset_bundle_test` (#21425)

I've also opened #22037 with a change to make tests throw if they try setting `fs.currentDirectory`.

|

1.0

|

Make flutter_tools tests work concurrently (without -j1) - One thing that should speed up the bots (#20036) a little is running tests concurrently. We currently use `-j1` because many tests have race conditions when run concurrently (for example many set the currentDirectory on the filesystem).

This issue is to track discussions/fixes for this.

Tests that seem to be setting `fs.currentDirectory` that may need fixing:

- [x] `flutter_tester_test` (#21119)

- [x] `asset_bundle_package_font_test` (#21114)

- [x] `asset_bundle_package_test` (#21427)

- [x] `asset_bundle_variant_test` (#21426)

- [x] `asset_bundle_test` (#21425)

I've also opened #22037 with a change to make tests throw if they try setting `fs.currentDirectory`.

|

non_process

|

make flutter tools tests work concurrently without one thing that should speed up the bots a little is running tests concurrently we currently use because many tests have race conditions when run concurrently for example many set the currentdirectory on the filesystem this issue is to track discussions fixes for this tests that seem to be setting fs currentdirectory that may need fixing flutter tester test asset bundle package font test asset bundle package test asset bundle variant test asset bundle test i ve also opened with a change to make tests throw if they try setting fs currentdirectory

| 0

|

14,938

| 18,365,858,921

|

IssuesEvent

|

2021-10-10 02:55:10

|

varabyte/kobweb

|

https://api.github.com/repos/varabyte/kobweb

|

opened

|

Gradle plugin: Throw error if a user puts an index.html file in their public/ folder

|

process

|

This will complete with the one we will autogenerate. So they shouldn't do that!

|

1.0

|

Gradle plugin: Throw error if a user puts an index.html file in their public/ folder - This will complete with the one we will autogenerate. So they shouldn't do that!

|

process

|

gradle plugin throw error if a user puts an index html file in their public folder this will complete with the one we will autogenerate so they shouldn t do that

| 1

|

11,198

| 13,957,702,683

|

IssuesEvent

|

2020-10-24 08:13:40

|

alexanderkotsev/geoportal

|

https://api.github.com/repos/alexanderkotsev/geoportal

|

opened

|

MT: Harvest

|

Geoportal Harvesting process MT - Malta

|

Good Morning Angelo,

Kindly can you perform a harvest on the Malta CSW as we need to check some changes we performed.

Regards,

Rene

|

1.0

|

MT: Harvest - Good Morning Angelo,

Kindly can you perform a harvest on the Malta CSW as we need to check some changes we performed.

Regards,

Rene

|

process

|

mt harvest good morning angelo kindly can you perform a harvest on the malta csw as we need to check some changes we performed regards rene

| 1

|

10,299

| 13,152,016,452

|

IssuesEvent

|

2020-08-09 19:49:44

|

GoogleCloudPlatform/stackdriver-sandbox

|

https://api.github.com/repos/GoogleCloudPlatform/stackdriver-sandbox

|

closed

|

OpenTelemetry Tracing for ProductCatalogService

|

lang: go priority: p2 type: process

|

Subtask of #132

Use OpenTelemetry for tracing in the productcatalog service.

|

1.0

|

OpenTelemetry Tracing for ProductCatalogService - Subtask of #132

Use OpenTelemetry for tracing in the productcatalog service.

|

process

|

opentelemetry tracing for productcatalogservice subtask of use opentelemetry for tracing in the productcatalog service

| 1

|

603,975

| 18,675,015,554

|

IssuesEvent

|

2021-10-31 12:10:13

|

siteorigin/siteorigin-panels

|

https://api.github.com/repos/siteorigin/siteorigin-panels

|

closed

|

Add separate setting for panel bottom margin in Page Builder general options

|

enhancement priority-2

|

Hi there, this is a feature request.

I really enjoy working with SOPB however I often have to override via specific CSS rule the widget/panel's bottom margin to make it less than the row's bottom margin. Currently, the widget bottom margin is equal to the row's margin bottom.

I think it would be great to add a separate general setting for the widget/panel bottom margin in Settings > Page Builder.

Setting different margins would great for any website's UX because of basic design/element perception principles. The elements (panels) within a row would be closer to each other, meaning they're correlated one another. This perception would be comunicated at a first sight to the user, leading to a better experience and page readability. That's a basic gestalt psychology grouping mechanism (law of proximity).

What do you think?

|

1.0

|

Add separate setting for panel bottom margin in Page Builder general options - Hi there, this is a feature request.

I really enjoy working with SOPB however I often have to override via specific CSS rule the widget/panel's bottom margin to make it less than the row's bottom margin. Currently, the widget bottom margin is equal to the row's margin bottom.

I think it would be great to add a separate general setting for the widget/panel bottom margin in Settings > Page Builder.

Setting different margins would great for any website's UX because of basic design/element perception principles. The elements (panels) within a row would be closer to each other, meaning they're correlated one another. This perception would be comunicated at a first sight to the user, leading to a better experience and page readability. That's a basic gestalt psychology grouping mechanism (law of proximity).

What do you think?

|

non_process

|

add separate setting for panel bottom margin in page builder general options hi there this is a feature request i really enjoy working with sopb however i often have to override via specific css rule the widget panel s bottom margin to make it less than the row s bottom margin currently the widget bottom margin is equal to the row s margin bottom i think it would be great to add a separate general setting for the widget panel bottom margin in settings page builder setting different margins would great for any website s ux because of basic design element perception principles the elements panels within a row would be closer to each other meaning they re correlated one another this perception would be comunicated at a first sight to the user leading to a better experience and page readability that s a basic gestalt psychology grouping mechanism law of proximity what do you think

| 0

|

72,916

| 31,779,113,550

|

IssuesEvent

|

2023-09-12 16:08:56

|

openstreetmap/operations

|

https://api.github.com/repos/openstreetmap/operations

|

closed

|

Rename openstreetmap-fastly-processed-logs AWS S3 bucket

|

service:tiles location:aws

|

This bucket is not for processed logs, but used as an Athena workspace which saves query results, temp tables, etc. Its name should reflect the real usage.

|

1.0

|

Rename openstreetmap-fastly-processed-logs AWS S3 bucket - This bucket is not for processed logs, but used as an Athena workspace which saves query results, temp tables, etc. Its name should reflect the real usage.

|

non_process

|

rename openstreetmap fastly processed logs aws bucket this bucket is not for processed logs but used as an athena workspace which saves query results temp tables etc its name should reflect the real usage

| 0

|

7,986

| 10,147,122,056

|

IssuesEvent

|

2019-08-05 09:47:57

|

PG85/OpenTerrainGenerator

|

https://api.github.com/repos/PG85/OpenTerrainGenerator

|

closed

|

Primal core: endless loop! issue (otg.generator.resource.TreeGen.spawnInChunk)

|

Bug Forge Mod Compatibility Resource Spawning

|

World generator triggering an endless loop.

OpenTerrainGenerator-1.12.2 - v6

Biome_Bundle-1.12.2-v6.1

> Stacktrace:

at com.pg85.otg.generator.resource.TreeGen.spawnInChunk(TreeGen.java:153)

at com.pg85.otg.generator.resource.Resource.process(Resource.java:152)

at com.pg85.otg.generator.ObjectSpawner.populate(ObjectSpawner.java:259)

at com.pg85.otg.forge.generator.OTGChunkGenerator.func_185931_b(OTGChunkGenerator.java:203)

at net.minecraft.world.chunk.Chunk.func_186034_a(Chunk.java:1016)

at net.minecraft.world.chunk.Chunk.func_186030_a(Chunk.java:988)

at net.minecraft.world.gen.ChunkProviderServer.func_186025_d(ChunkProviderServer.java:157)

at net.minecraft.world.World.func_72964_e(World.java:309)

at net.minecraft.world.World.func_175726_f(World.java:304)

at net.minecraft.world.World.func_180495_p(World.java:910)

at net.minecraft.world.World.func_190529_b(World.java:580)

at net.minecraft.world.World.func_190522_c(World.java:478)

at net.minecraft.world.World.markAndNotifyBlock(World.java:389)

at net.minecraft.world.World.func_180501_a(World.java:360)

at nmd.primal.core.common.world.feature.GenMinableSubOre.func_180709_b(GenMinableSubOre.java:95)

at nmd.primal.core.common.world.WorldGenCommon.generate(WorldGenCommon.java:82)

at nmd.primal.core.common.world.generators.PrimalWorld.generate(PrimalWorld.java:41)

at net.minecraftforge.fml.common.registry.GameRegistry.generateWorld(GameRegistry.java:167)

at net.minecraft.world.chunk.Chunk.func_186034_a(Chunk.java:1017)

at net.minecraft.world.chunk.Chunk.func_186030_a(Chunk.java:988)

at net.minecraft.world.gen.ChunkProviderServer.func_186025_d(ChunkProviderServer.java:157)

at net.minecraft.server.management.PlayerChunkMapEntry.func_187268_a(PlayerChunkMapEntry.java:126)

at net.minecraft.server.management.PlayerChunkMap.func_72693_b(SourceFile:147)

at net.minecraft.world.WorldServer.func_72835_b(WorldServer.java:227)

>

|

True

|

Primal core: endless loop! issue (otg.generator.resource.TreeGen.spawnInChunk) - World generator triggering an endless loop.

OpenTerrainGenerator-1.12.2 - v6

Biome_Bundle-1.12.2-v6.1

> Stacktrace:

at com.pg85.otg.generator.resource.TreeGen.spawnInChunk(TreeGen.java:153)

at com.pg85.otg.generator.resource.Resource.process(Resource.java:152)

at com.pg85.otg.generator.ObjectSpawner.populate(ObjectSpawner.java:259)

at com.pg85.otg.forge.generator.OTGChunkGenerator.func_185931_b(OTGChunkGenerator.java:203)

at net.minecraft.world.chunk.Chunk.func_186034_a(Chunk.java:1016)

at net.minecraft.world.chunk.Chunk.func_186030_a(Chunk.java:988)

at net.minecraft.world.gen.ChunkProviderServer.func_186025_d(ChunkProviderServer.java:157)

at net.minecraft.world.World.func_72964_e(World.java:309)

at net.minecraft.world.World.func_175726_f(World.java:304)

at net.minecraft.world.World.func_180495_p(World.java:910)

at net.minecraft.world.World.func_190529_b(World.java:580)

at net.minecraft.world.World.func_190522_c(World.java:478)

at net.minecraft.world.World.markAndNotifyBlock(World.java:389)

at net.minecraft.world.World.func_180501_a(World.java:360)

at nmd.primal.core.common.world.feature.GenMinableSubOre.func_180709_b(GenMinableSubOre.java:95)

at nmd.primal.core.common.world.WorldGenCommon.generate(WorldGenCommon.java:82)

at nmd.primal.core.common.world.generators.PrimalWorld.generate(PrimalWorld.java:41)

at net.minecraftforge.fml.common.registry.GameRegistry.generateWorld(GameRegistry.java:167)

at net.minecraft.world.chunk.Chunk.func_186034_a(Chunk.java:1017)

at net.minecraft.world.chunk.Chunk.func_186030_a(Chunk.java:988)

at net.minecraft.world.gen.ChunkProviderServer.func_186025_d(ChunkProviderServer.java:157)

at net.minecraft.server.management.PlayerChunkMapEntry.func_187268_a(PlayerChunkMapEntry.java:126)

at net.minecraft.server.management.PlayerChunkMap.func_72693_b(SourceFile:147)

at net.minecraft.world.WorldServer.func_72835_b(WorldServer.java:227)

>

|

non_process

|

primal core endless loop issue otg generator resource treegen spawninchunk world generator triggering an endless loop openterraingenerator biome bundle stacktrace at com otg generator resource treegen spawninchunk treegen java at com otg generator resource resource process resource java at com otg generator objectspawner populate objectspawner java at com otg forge generator otgchunkgenerator func b otgchunkgenerator java at net minecraft world chunk chunk func a chunk java at net minecraft world chunk chunk func a chunk java at net minecraft world gen chunkproviderserver func d chunkproviderserver java at net minecraft world world func e world java at net minecraft world world func f world java at net minecraft world world func p world java at net minecraft world world func b world java at net minecraft world world func c world java at net minecraft world world markandnotifyblock world java at net minecraft world world func a world java at nmd primal core common world feature genminablesubore func b genminablesubore java at nmd primal core common world worldgencommon generate worldgencommon java at nmd primal core common world generators primalworld generate primalworld java at net minecraftforge fml common registry gameregistry generateworld gameregistry java at net minecraft world chunk chunk func a chunk java at net minecraft world chunk chunk func a chunk java at net minecraft world gen chunkproviderserver func d chunkproviderserver java at net minecraft server management playerchunkmapentry func a playerchunkmapentry java at net minecraft server management playerchunkmap func b sourcefile at net minecraft world worldserver func b worldserver java

| 0

|

17,558

| 10,082,742,412

|

IssuesEvent

|

2019-07-25 12:04:14

|

senthilbalakrishnanfull/testing

|

https://api.github.com/repos/senthilbalakrishnanfull/testing

|

opened

|

CVE-2018-11695 (High) detected in opennms-opennms-source-23.0.0-1

|

security vulnerability

|

## CVE-2018-11695 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>opennmsopennms-source-23.0.0-1</b></p></summary>

<p>

<p>A Java based fault and performance management system</p>

<p>Library home page: <a href=https://sourceforge.net/projects/opennms/>https://sourceforge.net/projects/opennms/</a></p>

<p>Found in HEAD commit: <a href="https://github.com/senthilbalakrishnanfull/testing/commit/b01154f4f2a0d62cb86b20c539e5c9514f09efac">b01154f4f2a0d62cb86b20c539e5c9514f09efac</a></p>

</p>

</details>

</p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Library Source Files (68)</summary>

<p></p>

<p> * The source files were matched to this source library based on a best effort match. Source libraries are selected from a list of probable public libraries.</p>

<p>

- /testing/node_modules/node-sass/src/libsass/src/expand.hpp

- /testing/node_modules/node-sass/src/libsass/src/expand.cpp

- /testing/node_modules/node-sass/src/sass_types/factory.cpp

- /testing/node_modules/node-sass/src/sass_types/boolean.cpp

- /testing/node_modules/node-sass/src/libsass/src/util.hpp

- /testing/node_modules/node-sass/src/sass_types/value.h

- /testing/node_modules/node-sass/src/libsass/src/emitter.hpp

- /testing/node_modules/node-sass/src/libsass/src/lexer.cpp

- /testing/node_modules/node-sass/src/callback_bridge.h

- /testing/node_modules/node-sass/src/libsass/src/file.cpp

- /testing/node_modules/node-sass/src/libsass/src/sass.cpp

- /testing/node_modules/node-sass/src/libsass/src/operation.hpp

- /testing/node_modules/node-sass/src/libsass/src/operators.hpp

- /testing/node_modules/node-sass/src/libsass/src/constants.hpp

- /testing/node_modules/node-sass/src/libsass/src/error_handling.hpp

- /testing/node_modules/node-sass/src/libsass/src/ast_fwd_decl.cpp

- /testing/node_modules/node-sass/src/custom_importer_bridge.cpp

- /testing/node_modules/node-sass/src/libsass/src/parser.hpp

- /testing/node_modules/node-sass/src/libsass/src/constants.cpp

- /testing/node_modules/node-sass/src/sass_types/list.cpp

- /testing/node_modules/node-sass/src/libsass/src/cssize.cpp

- /testing/node_modules/node-sass/src/libsass/src/functions.hpp

- /testing/node_modules/node-sass/src/libsass/src/util.cpp

- /testing/node_modules/node-sass/src/custom_function_bridge.cpp

- /testing/node_modules/node-sass/src/custom_importer_bridge.h

- /testing/node_modules/node-sass/src/libsass/src/bind.cpp

- /testing/node_modules/node-sass/src/libsass/src/eval.hpp

- /testing/node_modules/node-sass/src/libsass/src/backtrace.cpp

- /testing/node_modules/node-sass/src/libsass/src/extend.cpp

- /testing/node_modules/node-sass/src/sass_context_wrapper.h

- /testing/node_modules/node-sass/src/sass_types/sass_value_wrapper.h

- /testing/node_modules/node-sass/src/libsass/src/error_handling.cpp

- /testing/node_modules/node-sass/src/libsass/src/node.cpp

- /testing/node_modules/node-sass/src/libsass/src/debugger.hpp

- /testing/node_modules/node-sass/src/libsass/src/emitter.cpp

- /testing/node_modules/node-sass/src/sass_types/number.cpp

- /testing/node_modules/node-sass/src/sass_types/color.h

- /testing/node_modules/node-sass/src/libsass/src/sass_values.cpp

- /testing/node_modules/node-sass/src/libsass/src/ast.hpp

- /testing/node_modules/node-sass/src/libsass/src/output.cpp

- /testing/node_modules/node-sass/src/libsass/src/check_nesting.cpp

- /testing/node_modules/node-sass/src/sass_types/null.cpp

- /testing/node_modules/node-sass/src/libsass/src/ast_def_macros.hpp

- /testing/node_modules/node-sass/src/libsass/src/functions.cpp

- /testing/node_modules/node-sass/src/libsass/src/cssize.hpp

- /testing/node_modules/node-sass/src/libsass/src/prelexer.cpp

- /testing/node_modules/node-sass/src/libsass/src/ast.cpp

- /testing/node_modules/node-sass/src/libsass/src/to_c.cpp

- /testing/node_modules/node-sass/src/libsass/src/to_value.hpp

- /testing/node_modules/node-sass/src/libsass/src/ast_fwd_decl.hpp

- /testing/node_modules/node-sass/src/libsass/src/inspect.hpp

- /testing/node_modules/node-sass/src/sass_types/color.cpp

- /testing/node_modules/node-sass/src/libsass/src/values.cpp

- /testing/node_modules/node-sass/src/sass_context_wrapper.cpp

- /testing/node_modules/node-sass/src/sass_types/list.h

- /testing/node_modules/node-sass/src/libsass/src/memory/SharedPtr.hpp

- /testing/node_modules/node-sass/src/libsass/src/check_nesting.hpp

- /testing/node_modules/node-sass/src/libsass/src/to_c.hpp

- /testing/node_modules/node-sass/src/sass_types/map.cpp

- /testing/node_modules/node-sass/src/libsass/src/to_value.cpp

- /testing/node_modules/node-sass/src/libsass/src/context.cpp

- /testing/node_modules/node-sass/src/libsass/src/listize.hpp

- /testing/node_modules/node-sass/src/sass_types/string.cpp

- /testing/node_modules/node-sass/src/libsass/src/sass_context.cpp

- /testing/node_modules/node-sass/src/libsass/src/prelexer.hpp

- /testing/node_modules/node-sass/src/libsass/src/context.hpp

- /testing/node_modules/node-sass/src/sass_types/boolean.h

- /testing/node_modules/node-sass/src/libsass/src/eval.cpp

</p>

</details>

<p></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

An issue was discovered in LibSass through 3.5.2. A NULL pointer dereference was found in the function Sass::Expand::operator which could be leveraged by an attacker to cause a denial of service (application crash) or possibly have unspecified other impact.

<p>Publish Date: 2018-06-04

<p>URL: <a href=https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2018-11695>CVE-2018-11695</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>8.8</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: Required

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: High

- Integrity Impact: High

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with WhiteSource [here](https://www.whitesourcesoftware.com/full_solution_bolt_github)

|

True

|

CVE-2018-11695 (High) detected in opennms-opennms-source-23.0.0-1 - ## CVE-2018-11695 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>opennmsopennms-source-23.0.0-1</b></p></summary>

<p>

<p>A Java based fault and performance management system</p>

<p>Library home page: <a href=https://sourceforge.net/projects/opennms/>https://sourceforge.net/projects/opennms/</a></p>

<p>Found in HEAD commit: <a href="https://github.com/senthilbalakrishnanfull/testing/commit/b01154f4f2a0d62cb86b20c539e5c9514f09efac">b01154f4f2a0d62cb86b20c539e5c9514f09efac</a></p>

</p>

</details>

</p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Library Source Files (68)</summary>

<p></p>

<p> * The source files were matched to this source library based on a best effort match. Source libraries are selected from a list of probable public libraries.</p>

<p>

- /testing/node_modules/node-sass/src/libsass/src/expand.hpp

- /testing/node_modules/node-sass/src/libsass/src/expand.cpp

- /testing/node_modules/node-sass/src/sass_types/factory.cpp

- /testing/node_modules/node-sass/src/sass_types/boolean.cpp

- /testing/node_modules/node-sass/src/libsass/src/util.hpp

- /testing/node_modules/node-sass/src/sass_types/value.h

- /testing/node_modules/node-sass/src/libsass/src/emitter.hpp

- /testing/node_modules/node-sass/src/libsass/src/lexer.cpp

- /testing/node_modules/node-sass/src/callback_bridge.h

- /testing/node_modules/node-sass/src/libsass/src/file.cpp

- /testing/node_modules/node-sass/src/libsass/src/sass.cpp

- /testing/node_modules/node-sass/src/libsass/src/operation.hpp

- /testing/node_modules/node-sass/src/libsass/src/operators.hpp

- /testing/node_modules/node-sass/src/libsass/src/constants.hpp

- /testing/node_modules/node-sass/src/libsass/src/error_handling.hpp

- /testing/node_modules/node-sass/src/libsass/src/ast_fwd_decl.cpp

- /testing/node_modules/node-sass/src/custom_importer_bridge.cpp

- /testing/node_modules/node-sass/src/libsass/src/parser.hpp

- /testing/node_modules/node-sass/src/libsass/src/constants.cpp

- /testing/node_modules/node-sass/src/sass_types/list.cpp

- /testing/node_modules/node-sass/src/libsass/src/cssize.cpp

- /testing/node_modules/node-sass/src/libsass/src/functions.hpp

- /testing/node_modules/node-sass/src/libsass/src/util.cpp

- /testing/node_modules/node-sass/src/custom_function_bridge.cpp

- /testing/node_modules/node-sass/src/custom_importer_bridge.h

- /testing/node_modules/node-sass/src/libsass/src/bind.cpp

- /testing/node_modules/node-sass/src/libsass/src/eval.hpp

- /testing/node_modules/node-sass/src/libsass/src/backtrace.cpp

- /testing/node_modules/node-sass/src/libsass/src/extend.cpp

- /testing/node_modules/node-sass/src/sass_context_wrapper.h

- /testing/node_modules/node-sass/src/sass_types/sass_value_wrapper.h

- /testing/node_modules/node-sass/src/libsass/src/error_handling.cpp

- /testing/node_modules/node-sass/src/libsass/src/node.cpp

- /testing/node_modules/node-sass/src/libsass/src/debugger.hpp

- /testing/node_modules/node-sass/src/libsass/src/emitter.cpp

- /testing/node_modules/node-sass/src/sass_types/number.cpp

- /testing/node_modules/node-sass/src/sass_types/color.h

- /testing/node_modules/node-sass/src/libsass/src/sass_values.cpp

- /testing/node_modules/node-sass/src/libsass/src/ast.hpp

- /testing/node_modules/node-sass/src/libsass/src/output.cpp

- /testing/node_modules/node-sass/src/libsass/src/check_nesting.cpp

- /testing/node_modules/node-sass/src/sass_types/null.cpp

- /testing/node_modules/node-sass/src/libsass/src/ast_def_macros.hpp

- /testing/node_modules/node-sass/src/libsass/src/functions.cpp

- /testing/node_modules/node-sass/src/libsass/src/cssize.hpp

- /testing/node_modules/node-sass/src/libsass/src/prelexer.cpp

- /testing/node_modules/node-sass/src/libsass/src/ast.cpp

- /testing/node_modules/node-sass/src/libsass/src/to_c.cpp

- /testing/node_modules/node-sass/src/libsass/src/to_value.hpp

- /testing/node_modules/node-sass/src/libsass/src/ast_fwd_decl.hpp

- /testing/node_modules/node-sass/src/libsass/src/inspect.hpp

- /testing/node_modules/node-sass/src/sass_types/color.cpp

- /testing/node_modules/node-sass/src/libsass/src/values.cpp

- /testing/node_modules/node-sass/src/sass_context_wrapper.cpp

- /testing/node_modules/node-sass/src/sass_types/list.h

- /testing/node_modules/node-sass/src/libsass/src/memory/SharedPtr.hpp

- /testing/node_modules/node-sass/src/libsass/src/check_nesting.hpp

- /testing/node_modules/node-sass/src/libsass/src/to_c.hpp

- /testing/node_modules/node-sass/src/sass_types/map.cpp

- /testing/node_modules/node-sass/src/libsass/src/to_value.cpp

- /testing/node_modules/node-sass/src/libsass/src/context.cpp

- /testing/node_modules/node-sass/src/libsass/src/listize.hpp

- /testing/node_modules/node-sass/src/sass_types/string.cpp

- /testing/node_modules/node-sass/src/libsass/src/sass_context.cpp

- /testing/node_modules/node-sass/src/libsass/src/prelexer.hpp

- /testing/node_modules/node-sass/src/libsass/src/context.hpp

- /testing/node_modules/node-sass/src/sass_types/boolean.h

- /testing/node_modules/node-sass/src/libsass/src/eval.cpp

</p>

</details>

<p></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

An issue was discovered in LibSass through 3.5.2. A NULL pointer dereference was found in the function Sass::Expand::operator which could be leveraged by an attacker to cause a denial of service (application crash) or possibly have unspecified other impact.

<p>Publish Date: 2018-06-04

<p>URL: <a href=https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2018-11695>CVE-2018-11695</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>8.8</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: Required

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: High

- Integrity Impact: High

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with WhiteSource [here](https://www.whitesourcesoftware.com/full_solution_bolt_github)

|

non_process

|

cve high detected in opennms opennms source cve high severity vulnerability vulnerable library opennmsopennms source a java based fault and performance management system library home page a href found in head commit a href library source files the source files were matched to this source library based on a best effort match source libraries are selected from a list of probable public libraries testing node modules node sass src libsass src expand hpp testing node modules node sass src libsass src expand cpp testing node modules node sass src sass types factory cpp testing node modules node sass src sass types boolean cpp testing node modules node sass src libsass src util hpp testing node modules node sass src sass types value h testing node modules node sass src libsass src emitter hpp testing node modules node sass src libsass src lexer cpp testing node modules node sass src callback bridge h testing node modules node sass src libsass src file cpp testing node modules node sass src libsass src sass cpp testing node modules node sass src libsass src operation hpp testing node modules node sass src libsass src operators hpp testing node modules node sass src libsass src constants hpp testing node modules node sass src libsass src error handling hpp testing node modules node sass src libsass src ast fwd decl cpp testing node modules node sass src custom importer bridge cpp testing node modules node sass src libsass src parser hpp testing node modules node sass src libsass src constants cpp testing node modules node sass src sass types list cpp testing node modules node sass src libsass src cssize cpp testing node modules node sass src libsass src functions hpp testing node modules node sass src libsass src util cpp testing node modules node sass src custom function bridge cpp testing node modules node sass src custom importer bridge h testing node modules node sass src libsass src bind cpp testing node modules node sass src libsass src eval hpp testing node modules 
node sass src libsass src backtrace cpp testing node modules node sass src libsass src extend cpp testing node modules node sass src sass context wrapper h testing node modules node sass src sass types sass value wrapper h testing node modules node sass src libsass src error handling cpp testing node modules node sass src libsass src node cpp testing node modules node sass src libsass src debugger hpp testing node modules node sass src libsass src emitter cpp testing node modules node sass src sass types number cpp testing node modules node sass src sass types color h testing node modules node sass src libsass src sass values cpp testing node modules node sass src libsass src ast hpp testing node modules node sass src libsass src output cpp testing node modules node sass src libsass src check nesting cpp testing node modules node sass src sass types null cpp testing node modules node sass src libsass src ast def macros hpp testing node modules node sass src libsass src functions cpp testing node modules node sass src libsass src cssize hpp testing node modules node sass src libsass src prelexer cpp testing node modules node sass src libsass src ast cpp testing node modules node sass src libsass src to c cpp testing node modules node sass src libsass src to value hpp testing node modules node sass src libsass src ast fwd decl hpp testing node modules node sass src libsass src inspect hpp testing node modules node sass src sass types color cpp testing node modules node sass src libsass src values cpp testing node modules node sass src sass context wrapper cpp testing node modules node sass src sass types list h testing node modules node sass src libsass src memory sharedptr hpp testing node modules node sass src libsass src check nesting hpp testing node modules node sass src libsass src to c hpp testing node modules node sass src sass types map cpp testing node modules node sass src libsass src to value cpp testing node modules node sass src libsass src context cpp 
testing node modules node sass src libsass src listize hpp testing node modules node sass src sass types string cpp testing node modules node sass src libsass src sass context cpp testing node modules node sass src libsass src prelexer hpp testing node modules node sass src libsass src context hpp testing node modules node sass src sass types boolean h testing node modules node sass src libsass src eval cpp vulnerability details an issue was discovered in libsass through a null pointer dereference was found in the function sass expand operator which could be leveraged by an attacker to cause a denial of service application crash or possibly have unspecified other impact publish date url a href cvss score details base score metrics exploitability metrics attack vector network attack complexity low privileges required none user interaction required scope unchanged impact metrics confidentiality impact high integrity impact high availability impact high for more information on scores click a href step up your open source security game with whitesource

| 0

|

22,750

| 3,794,419,563

|

IssuesEvent

|

2016-03-22 16:51:43

|

flutter/flutter

|

https://api.github.com/repos/flutter/flutter

|

opened

|

Double shadow flash when moving component list underneath app bar

|

affects: material design customer: gallery ⚠ bug

|

Scrolling the component list up under the app bar flashes the shadow at the border between the app bar and the component list.

Movie: https://dl.dropboxusercontent.com/u/316685/RECORDING.mp4

|

1.0

|

Double shadow flash when moving component list underneath app bar - Scrolling the component list up under the app bar flashes the shadow at the border between the app bar and the component list.

Movie: https://dl.dropboxusercontent.com/u/316685/RECORDING.mp4

|

non_process

|

double shadow flash when moving component list underneath app bar scrolling the component list up under the app bar flashes the shadow at the border between the app bar and the component list movie

| 0

|

449,898

| 31,877,440,185

|

IssuesEvent

|

2023-09-16 01:44:49

|

ossf/scorecard

|

https://api.github.com/repos/ossf/scorecard

|

closed

|

Feature: Improve docs on using package manager flags

|

documentation enhancement no-issue-activity

|

**Is your feature request related to a problem? Please describe.**

Scorecard can receive as input the name of a package from the `npm`, `pypi` and `rubygems` ecosystems, as per [the documentation](https://github.com/ossf/scorecard/blob/4cd5446862ea4c470810fea81fc7f45a36d04dec/README.md?plain=1#L423-L427). It is unclear to me whether using such flags makes the evaluation more specific to the given ecosystem, or whether they are only used to find the source repository through the package manager. It is also unclear, until an error occurs, that such flags cannot be used together with the `--repo` flag.

**Describe the solution you'd like**

I would like the documentation section `Using a Package manager` to explain whether using the package ecosystem flags affects the final evaluation, and to state that such flags cannot be used along with the `--repo` flag.

**Describe alternatives you've considered**

None.

**Additional context**

None.

|

1.0

|

Feature: Improve docs on using package manager flags - **Is your feature request related to a problem? Please describe.**

Scorecard can receive as input the name of a package from the `npm`, `pypi` and `rubygems` ecosystems, as per [the documentation](https://github.com/ossf/scorecard/blob/4cd5446862ea4c470810fea81fc7f45a36d04dec/README.md?plain=1#L423-L427). It is unclear to me whether using such flags makes the evaluation more specific to the given ecosystem, or whether they are only used to find the source repository through the package manager. It is also unclear, until an error occurs, that such flags cannot be used together with the `--repo` flag.

**Describe the solution you'd like**

I would like the documentation section `Using a Package manager` to explain whether using the package ecosystem flags affects the final evaluation, and to state that such flags cannot be used along with the `--repo` flag.

**Describe alternatives you've considered**

None.

**Additional context**

None.

|

non_process

|

feature improve docs on using package manager flags is your feature request related to a problem please describe scorecard can receive as input the name of the package from npm pypi and rubygems ecosystems as per it is unclear to me if using such flags changes the evaluation of the package to be more specific to xyz ecosystem or if it s only used to find the repository source through the package manager and it s also unclear that such flags cannot be used along with repo flag before getting an error describe the solution you d like i would like the documentation section using a package manager to explain if using the package ecosystem flags affect the final evaluation or not and that such flags cannot be used along with repo flag describe alternatives you ve considered none additional context none

| 0

|

40,817

| 10,168,215,486

|

IssuesEvent

|

2019-08-07 20:14:14

|

USDepartmentofLabor/OCIO-DOLSafety-iOS

|

https://api.github.com/repos/USDepartmentofLabor/OCIO-DOLSafety-iOS

|

closed

|

Functional - Resources Screen - Address Punctuation Needs Fixes

|

Fixed defect

|

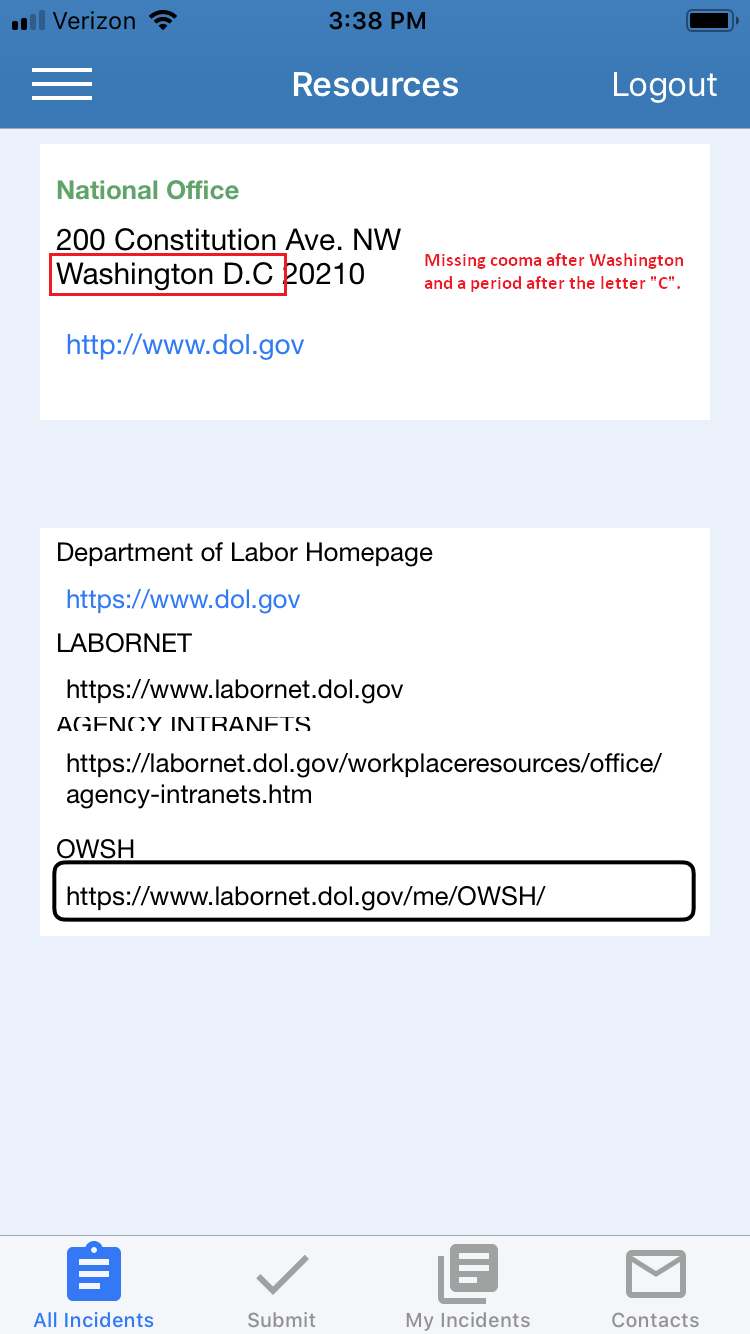

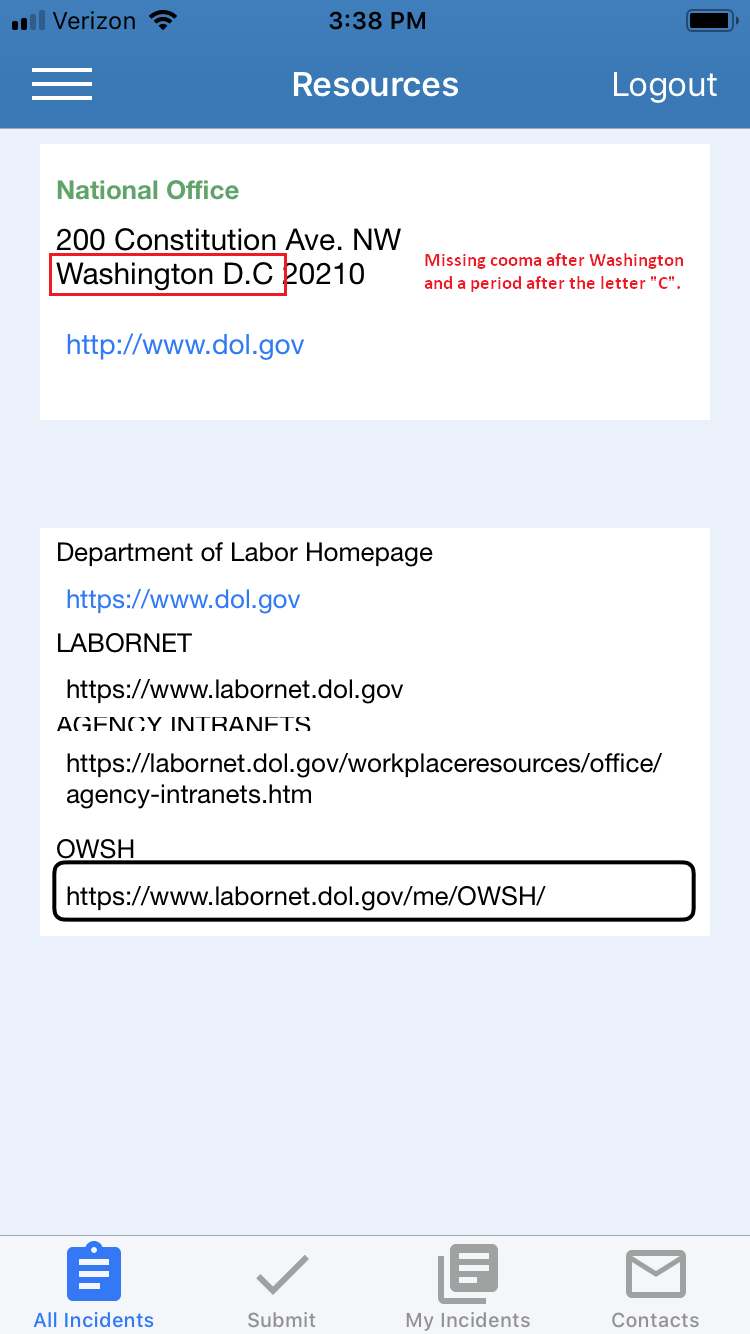

On the second line of the address, a comma is needed after “Washington”, and “D.C” needs either a period after the “C” or the first period removed.

Please see the attached screenshot.

|

1.0

|

Functional - Resources Screen - Address Punctuation Needs Fixes - On the second line of the address, a comma is needed after “Washington”, and “D.C” needs either a period after the “C” or the first period removed.

Please see the attached screenshot.

|

non_process

|

functional resources screen address punctuation needs fixes for the second line of the address a comma is needed after “washington” along with a period after in the “d c” or remove the first one please see the attached screenshot

| 0

|

105,154

| 16,624,262,132

|

IssuesEvent

|

2021-06-03 07:35:38

|

Thanraj/OpenSSL_1.0.1q

|

https://api.github.com/repos/Thanraj/OpenSSL_1.0.1q

|

opened

|

CVE-2016-0797 (High) detected in opensslOpenSSL_1_0_1q, opensslOpenSSL_1_0_1q

|

security vulnerability

|

## CVE-2016-0797 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>opensslOpenSSL_1_0_1q</b>, <b>opensslOpenSSL_1_0_1q</b></p></summary>

<p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

Multiple integer overflows in OpenSSL 1.0.1 before 1.0.1s and 1.0.2 before 1.0.2g allow remote attackers to cause a denial of service (heap memory corruption or NULL pointer dereference) or possibly have unspecified other impact via a long digit string that is mishandled by the (1) BN_dec2bn or (2) BN_hex2bn function, related to crypto/bn/bn.h and crypto/bn/bn_print.c.

<p>Publish Date: 2016-03-03

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2016-0797>CVE-2016-0797</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>7.5</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: None

- Integrity Impact: None

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://nvd.nist.gov/vuln/detail/CVE-2016-0797">https://nvd.nist.gov/vuln/detail/CVE-2016-0797</a></p>

<p>Release Date: 2016-03-03</p>

<p>Fix Resolution: 1.0.1s,1.0.2g</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with WhiteSource [here](https://www.whitesourcesoftware.com/full_solution_bolt_github)

|

True

|

CVE-2016-0797 (High) detected in opensslOpenSSL_1_0_1q, opensslOpenSSL_1_0_1q - ## CVE-2016-0797 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>opensslOpenSSL_1_0_1q</b>, <b>opensslOpenSSL_1_0_1q</b></p></summary>

<p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

Multiple integer overflows in OpenSSL 1.0.1 before 1.0.1s and 1.0.2 before 1.0.2g allow remote attackers to cause a denial of service (heap memory corruption or NULL pointer dereference) or possibly have unspecified other impact via a long digit string that is mishandled by the (1) BN_dec2bn or (2) BN_hex2bn function, related to crypto/bn/bn.h and crypto/bn/bn_print.c.

<p>Publish Date: 2016-03-03

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2016-0797>CVE-2016-0797</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>7.5</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: None

- Integrity Impact: None

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://nvd.nist.gov/vuln/detail/CVE-2016-0797">https://nvd.nist.gov/vuln/detail/CVE-2016-0797</a></p>

<p>Release Date: 2016-03-03</p>

<p>Fix Resolution: 1.0.1s,1.0.2g</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with WhiteSource [here](https://www.whitesourcesoftware.com/full_solution_bolt_github)

|

non_process

|

cve high detected in opensslopenssl opensslopenssl cve high severity vulnerability vulnerable libraries opensslopenssl opensslopenssl vulnerability details multiple integer overflows in openssl before and before allow remote attackers to cause a denial of service heap memory corruption or null pointer dereference or possibly have unspecified other impact via a long digit string that is mishandled by the bn or bn function related to crypto bn bn h and crypto bn bn print c publish date url a href cvss score details base score metrics exploitability metrics attack vector network attack complexity low privileges required none user interaction none scope unchanged impact metrics confidentiality impact none integrity impact none availability impact high for more information on scores click a href suggested fix type upgrade version origin a href release date fix resolution step up your open source security game with whitesource

| 0

|

631,320

| 20,150,489,013

|

IssuesEvent

|

2022-02-09 11:53:12

|

pulibrary/orangelight

|

https://api.github.com/repos/pulibrary/orangelight

|

closed

|

Downcase what we get from cas

|

high-priority

|

We notice that if we submit a net id with a capitalized letter through CAS, CAS will not downcase it.
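
A minimal sketch of the normalization described above, written in TypeScript purely for illustration. The helper name `normalizeNetId` is hypothetical and is not part of CAS or of the application; it only shows lowercasing the identifier before it is used for lookups.

```typescript
// Hypothetical helper (not an existing API): lowercases the net id returned
// by CAS so that "JDoe1" and "jdoe1" resolve to the same user record.
function normalizeNetId(rawNetId: string): string {
    return rawNetId.toLowerCase();
}

// Example: both spellings map to the same key.
const fromCas = normalizeNetId("JDoe1");
const stored = normalizeNetId("jdoe1");
console.log(fromCas === stored);
```

Applying the normalization at the single point where the CAS response is read would keep the rest of the code free of case handling.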

|

1.0

|

Downcase what we get from cas - We notice that if we submit a net id with a capitalized letter through CAS, CAS will not downcase it.

|

non_process

|

downcase what we get from cas we notice that if we submit a net id with a capitalized letter through cas cas will not downcase it

| 0

|

72,446

| 9,593,376,269

|

IssuesEvent

|

2019-05-09 11:22:02

|

bounswe/bounswe2019group9

|

https://api.github.com/repos/bounswe/bounswe2019group9

|

closed

|

Evaluate requirements and mockups

|

status : Not Started Yet type : documentation urgency : high

|

Evaluate it in a few sentences (or bullet points) and write it under the related subtitle in the "Evaluation of Deliverables" part of the milestone report.

- [ ] Halit

- [x] Emirhan

- [ ] Egemen

- [x] Ali Ramazan

|

1.0

|

Evaluate requirements and mockups - Evaluate it in a few sentences (or bullet points) and write it under the related subtitle in the "Evaluation of Deliverables" part of the milestone report.

- [ ] Halit

- [x] Emirhan

- [ ] Egemen

- [x] Ali Ramazan

|

non_process

|

evaluate requirements and mockups evaluate it in a few points sentences and write it to the related subtitle in the evaluation of deliverables part in milestone report halit emirhan egemen ali ramazan

| 0

|

11,542

| 8,407,106,924

|

IssuesEvent

|

2018-10-11 19:55:57

|

mycelium-com/wallet-android

|

https://api.github.com/repos/mycelium-com/wallet-android

|

opened

|

Encrypted backup of non-masterseed derived data

|

enhancement security

|

Currently, migrating from one phone to another with only the 12-word backup comes at a loss of metadata and, absent some extra work, even at the loss of accounts. In line with BIP44, Mycelium does not explore accounts, so users are left to (re)create them on the new device, and the same goes for the accounts covered by the masterseed in extension to BIP44, namely the Coinapult accounts. Lastly, on top of that metadata, there are unrelated accounts that are not covered by the 12-word backup. The current "solution" of [BIP38](https://github.com/bitcoin/bips/blob/master/bip-0038.mediawiki) encrypted keys is cumbersome, and users can too easily lose these backups or make them incorrectly. They are required to create a PDF, **print it**, **write a key on it** and never lose it.

If we trust our cryptography, we should be able to do better. In order to have no security degradation I propose to use the same primitives as in BIP38 but with a symmetric key derived from the masterseed to store the necessary encrypted data at a place of the user's choice (just like the legacy backup pdf) or propose to store it on our servers or other services (google drive, dropbox, ...).

Things to store:

* address book

* transaction labels

* BIP70 payment requests

* list of activated/archived account indices

* account labels

* unrelated xpriv accounts

* unrelated single key accounts

* state of Coinapult activation

* (date of backup)

Workflow

=======

Format

---------

JSON
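
As a rough illustration of what such a JSON document could look like, here is a sketch in TypeScript that mirrors the "Things to store" list above. All field names and the `WalletBackup` type are hypothetical, not an agreed format, and the sketch covers only serialization: the resulting string would still have to be encrypted with the masterseed-derived key before it is stored anywhere.

```typescript
// Hypothetical payload shape; every field name here is an assumption made
// for illustration and simply follows the "Things to store" list.
interface WalletBackup {
    backupDate: string;                          // ISO 8601 timestamp
    addressBook: { [address: string]: string };  // address -> label
    transactionLabels: { [txid: string]: string };
    paymentRequests: string[];                   // BIP70 payment requests, encoded as strings
    activeAccountIndices: number[];
    archivedAccountIndices: number[];
    accountLabels: { [accountId: string]: string };
    unrelatedXprivAccounts: string[];
    unrelatedSingleKeyAccounts: string[];
    coinapultActivated: boolean;
}

// Serializes the payload to JSON. Encryption is intentionally out of scope.
function serializeBackup(backup: WalletBackup): string {
    return JSON.stringify(backup);
}

// Parses a previously serialized (and already decrypted) payload.
function parseBackup(json: string): WalletBackup {
    return JSON.parse(json) as WalletBackup;
}

const exampleBackup: WalletBackup = {
    backupDate: "2018-10-11T19:55:57Z",
    addressBook: { "example-address": "Alice" },
    transactionLabels: { "example-txid": "Rent" },
    paymentRequests: [],
    activeAccountIndices: [0, 1],
    archivedAccountIndices: [2],
    accountLabels: { "0": "Savings" },
    unrelatedXprivAccounts: [],
    unrelatedSingleKeyAccounts: [],
    coinapultActivated: false,
};

const restoredBackup = parseBackup(serializeBackup(exampleBackup));
console.log(restoredBackup.accountLabels["0"]);
```

Keeping the payload as one flat object is the simplest option; splitting it into several smaller documents, as suggested under "Further thoughts", would change only how these fields are grouped.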

Backup

----------

* A user who never did this kind of backup should be presented a list of options of where to store backups.

Services that can then work without further user interaction after an initial setup should be "recommended"

* Trigger the backup mechanism every time any of the backupable data changes.

* If a service that can work automatically was selected, store backup.

* Else, show missing backup warning.

Restore

----------

* When a user restores an account from his 12 words backup, ask him if he might have a backup.

* Allow users to load backups from settings menu, in case they remember later.

Further thoughts

----------------------

* Users paying BIP70 invoices a lot might need to store more data than others and frequently.

* We might want to speed backup up by splitting the backup into many smaller files.

Related issues

===========

* #124 is about exporting things for use in Excel or do bookkeeping.

* #298 is about exporting/importing non-private key material in unencrypted form.

|

True

|

Encrypted backup of non-masterseed derived data - Currently, migrating from one phone to another with only the 12-word backup comes at a loss of metadata and, absent some extra work, even at the loss of accounts. In line with BIP44, Mycelium does not explore accounts, so users are left to (re)create them on the new device, and the same goes for the accounts covered by the masterseed in extension to BIP44, namely the Coinapult accounts. Lastly, on top of that metadata, there are unrelated accounts that are not covered by the 12-word backup. The current "solution" of [BIP38](https://github.com/bitcoin/bips/blob/master/bip-0038.mediawiki) encrypted keys is cumbersome, and users can too easily lose these backups or make them incorrectly. They are required to create a PDF, **print it**, **write a key on it** and never lose it.

If we trust our cryptography, we should be able to do better. In order to have no security degradation I propose to use the same primitives as in BIP38 but with a symmetric key derived from the masterseed to store the necessary encrypted data at a place of the user's choice (just like the legacy backup pdf) or propose to store it on our servers or other services (google drive, dropbox, ...).

Things to store:

* address book

* transaction labels

* BIP70 payment requests

* list of activated/archived account indices

* account labels

* unrelated xpriv accounts

* unrelated single key accounts

* state of Coinapult activation

* (date of backup)

Workflow

=======

Format

---------

JSON

Backup

----------

* A user who never did this kind of backup should be presented a list of options of where to store backups.

Services that can then work without further user interaction after an initial setup should be "recommended"

* Trigger the backup mechanism every time any of the backupable data changes.

* If a service that can work automatically was selected, store backup.

* Else, show missing backup warning.

Restore

----------

* When a user restores an account from his 12 words backup, ask him if he might have a backup.

* Allow users to load backups from settings menu, in case they remember later.

Further thoughts

----------------------

* Users paying BIP70 invoices a lot might need to store more data than others and frequently.

* We might want to speed backup up by splitting the backup into many smaller files.

Related issues

===========

* #124 is about exporting things for use in Excel or do bookkeeping.

* #298 is about exporting/importing non-private key material in unencrypted form.

|

non_process

|

encrypted backup of non masterseed derived data currently migrating from one phone to another with only the word backup comes at a loss of metadata and absent of some extra work even at the loss of accounts in line with mycelium does not explore accounts so users are left to re create them on the new device and same goes with the accounts covered by the masterseed in extension to namely the coinapult accounts lastly on top of that meta data there are unrelated accounts that are not covered by the words backup the current solution of encrypted keys is cumbersome and users can lose these backups or do them wrongly too easily they are required to create a pdf print it write a key on it and never lose it if we trust our cryptography we should be able to do better in order to have no security degradation i propose to use the same primitives as in but with a symmetric key derived from the masterseed to store the necessary encrypted data at a place of the user s choice just like the legacy backup pdf or propose to store it on our servers or other services google drive dropbox things to store address book transaction labels payment requests list of activated archived account indices account labels unrelated xpriv accounts unrelated single key accounts state of coinapult activation date of backup workflow format json backup a user who never did this kind of backup should be presented a list of options of where to store backups services that can then work without further user interaction after an initial setup should be recommended trigger the backup mechanism every time any of the backupable data changes if a service that can work automatically was selected store backup else show missing backup warning restore when a user restores an account from his words backup ask him if he might have a backup allow users to load backups from settings menu in case they remember later further thoughts users paying invoices a lot might need to store more data than others and frequently we 
might want to speed backup up by splitting the backup into many smaller files related issues is about exporting things for use in excel or do bookkeeping is about exporting importing non private key material in unencrypted form

| 0

|

36,914

| 6,557,447,818

|

IssuesEvent

|

2017-09-06 17:28:14

|

CoraleStudios/Colore

|

https://api.github.com/repos/CoraleStudios/Colore

|

closed

|

List of games using Colore

|

Documentation Idea In progress

|

We should actively list and promote the games which use the Colore library.

The ones I know so far are:

[](http://store.steampowered.com/app/290000/) [](http://store.steampowered.com/app/459090/) [](http://store.steampowered.com/app/342260/)

[](http://store.steampowered.com/app/529590/) [](http://store.steampowered.com/app/318970/) [](http://store.steampowered.com/app/423590/)

And yes, that took longer than i thought making it all pretty.

|

1.0

|

List of games using Colore - We should actively list and promote the games which use the Colore library.

The ones I know so far are:

[](http://store.steampowered.com/app/290000/) [](http://store.steampowered.com/app/459090/) [](http://store.steampowered.com/app/342260/)

[](http://store.steampowered.com/app/529590/) [](http://store.steampowered.com/app/318970/) [](http://store.steampowered.com/app/423590/)

And yes, that took longer than i thought making it all pretty.

|

non_process

|

list of games using colore we should actively list and promote the games which use the colore library the ones i know so far are and yes that took longer than i thought making it all pretty

| 0

|

32,270

| 6,756,696,827

|

IssuesEvent

|

2017-10-24 08:10:12

|

primefaces/primeng

|

https://api.github.com/repos/primefaces/primeng

|

closed

|

MegaMenu doesn't compile with TypeScript 2.4

|

confirmed defect

|

**I'm submitting a ...**

```

[X] bug report

```

**Test case**

You can use the following demo app as test case:

https://github.com/ova2/angular-development-with-primeng/tree/master/chapter7/megamenu

**Current behavior**

If you run the showcase for the MegaMenu with TypeScript 2.4 or run the demo app linked above, you will get a compilation error like

```

Type '{ label: string; items: { label: string; }[]; }[]' has no properties in common with type 'MenuItem'.

```

**Expected behavior**

There should be no compilation error, as when compiling with TypeScript 2.3.

**Minimal reproduction of the problem with instructions**

* Install the above app

* or install the current master of PrimeNG and change the requirements in package.json to Angular 4.3, Angular-Cli 1.3, TypeScript 2.4 (lower Angular/Cli versions require TypeScript 2.3, so you need to test with Angular 4.3 and Cli 1.3)

* Run `npm install` and `npm start` and check the MegaMenu

* **Angular version:** 4.3.3

* **PrimeNG version:** 4.1.2

* **Browser:** all

* **Language:** TypeScript 2.4

* **Node (for AoT issues):** 8.1.4

* **Analysis of the problem:**

In the `MenuItem` interface, the `items` property is defined as of type `MenuItem[]`. But in the MegaMenu, you can have arrays of arrays of MenuItems as items, not just arrays of MenuItems.

In TypeScript 2.4, it’s now an error to assign anything to a weak type when there’s no overlap in properties (see [here](https://www.typescriptlang.org/docs/handbook/release-notes/typescript-2-4.html#weak-type-detection)).

Note that in the `MenuItem` interface all properties are marked as optional. Therefore, this is considered a "weak type".

* **Proposed solution:**

The `items` property in the `MenuItem` interface should be defined as follows:

```

items?: MenuItem[]|MenuItem[][];

```
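
The effect of the proposed union type can be sketched as follows. The `MenuItem` interface below is a simplified, hypothetical stand-in and not PrimeNG's actual declaration; `columnCount` is likewise an illustrative helper. With `items` typed only as `MenuItem[]`, the nested arrays in `megaMenuItems` would trigger the weak-type error quoted above, while the union accepts both shapes.

```typescript
// Simplified, hypothetical stand-in for the MenuItem interface.
// All properties are optional, which makes it a "weak type" in TypeScript 2.4+.
interface MenuItem {
    label?: string;
    icon?: string;
    // Proposed union: either a flat list of items or a list of columns.
    items?: MenuItem[] | MenuItem[][];
}

// MegaMenu-style data: the top-level entry holds columns, each an array of items.
const megaMenuItems: MenuItem[] = [
    {
        label: "TV",
        items: [
            [{ label: "Submenu 1", items: [{ label: "Submenu 1.1" }] }],
            [{ label: "Submenu 2", items: [{ label: "Submenu 2.1" }] }],
        ],
    },
];

// Illustrative helper: number of columns in an entry, treating a flat
// list of items as a single column and a missing list as zero columns.
function columnCount(item: MenuItem): number {
    const items = item.items;
    if (!items || items.length === 0) {
        return 0;
    }
    return Array.isArray(items[0]) ? items.length : 1;
}

console.log(columnCount(megaMenuItems[0]));
```

Code that consumes `items` then has to distinguish the two shapes at run time, for example with `Array.isArray` as in `columnCount`.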

|

1.0

|

MegaMenu doesn't compile with TypeScript 2.4 - **I'm submitting a ...**

```

[X] bug report

```

**Test case**

You can use the following demo app as test case:

https://github.com/ova2/angular-development-with-primeng/tree/master/chapter7/megamenu

**Current behavior**

If you run the showcase for the MegaMenu with TypeScript 2.4 or run the demo app linked above, you will get a compilation error like

```

Type '{ label: string; items: { label: string; }[]; }[]' has no properties in common with type 'MenuItem'.

```

**Expected behavior**

There should be no compilation error, as when compiling with TypeScript 2.3.

**Minimal reproduction of the problem with instructions**

* Install the above app

* or install the current master of PrimeNG and change the requirements in package.json to Angular 4.3, Angular-Cli 1.3, TypeScript 2.4 (lower Angular/Cli versions require TypeScript 2.3, so you need to test with Angular 4.3 and Cli 1.3)

* Run `npm install` and `npm start` and check the MegaMenu

* **Angular version:** 4.3.3

* **PrimeNG version:** 4.1.2

* **Browser:** all

* **Language:** TypeScript 2.4

* **Node (for AoT issues):** 8.1.4

* **Analysis of the problem:**

In the `MenuItem` interface, the `items` property is defined as of type `MenuItem[]`. But in the MegaMenu, you can have arrays of arrays of MenuItems as items, not just arrays of MenuItems.

In TypeScript 2.4, it’s now an error to assign anything to a weak type when there’s no overlap in properties (see [here](https://www.typescriptlang.org/docs/handbook/release-notes/typescript-2-4.html#weak-type-detection)).

Note that in the `MenuItem` interface all properties are marked as optional. Therefore, this is considered a "weak type".

* **Proposed solution:**

The `items` property in the `MenuItem` interface should be defined as follows:

```

items?: MenuItem[]|MenuItem[][];

```

|

non_process

|

megamenu doesn t compile with typescript i m submitting a bug report test case you can use the following demo app as test case current behavior if you run the showcase for the megamenu with typescript or run the demo app linked above you will get a compilation error like type label string items label string has no properties in common with type menuitem expected behavior there should be no compilation error as when compiling with typescript minimal reproduction of the problem with instructions install the above app or install the current master of primeng and change the requirements in package json to angular angular cli typescript lower angular cli versions require typescript so you need to test with angular and cli run npm install and npm start and check the megamenu angular version primeng version browser all language typescript node for aot issues analysis of the problem in the menuitem interface the items property is defined as of type menuitem but in the megamenu you can have arrays of arrays of menuitems as items not just arrays of menuitems in typescript it’s now an error to assign anything to a weak type when there’s no overlap in properties see note that in the menuitem interface all properties are marked as optional therefore this is considered a weak type proposed solution the items property in the menutitem interface should be defined as follows items menuitem menuitem

| 0

|

1,499

| 4,075,958,399

|

IssuesEvent

|

2016-05-29 15:33:29

|

alexrj/Slic3r

|

https://api.github.com/repos/alexrj/Slic3r

|

closed

|

Issue: Max extrusions speed has preference over retract speed

|

Fixable with post-process script Not a bug

|

This happens at least with Repetier firmware in Slic3r 1.2.9

In eeprom I can set a maximum extrusion speed (something like 2mm/s on 3mm abs on my K8200)

Retraction speed is set at 40mm/s, but it will retract at no greater speed than what I set for max extrusion, so very, very slowly.

Automatic extrusion in repetier firmware is set to off, because that has another bug, it will not retract at all, just push, but, at the proper speed :-(

Reasonably speaking this is a bug of slic3r.

|

1.0

|

Issue: Max extrusions speed has preference over retract speed - This happens at least with Repetier firmware in Slic3r 1.2.9

In eeprom I can set a maximum extrusion speed (something like 2mm/s on 3mm abs on my K8200)

Retraction speed is set at 40mm/s, but it will retract at no greater speed than what I set for max extrusion, so very, very slowly.

Automatic extrusion in repetier firmware is set to off, because that has another bug, it will not retract at all, just push, but, at the proper speed :-(

Reasonably speaking this is a bug of slic3r.

|

process

|

issue max extrusions speed has preference over retract speed this happens at least with repetier firmware in in eeprom i can set a maximum extrusion speed something like s on abs on my retraction speed is set at s but it will retract at no greater speed then what i set for max extrusion so very very slow automatic extrusion in repetier firmware is set to off because that has another bug it will not retract at all just push but at the proper speed reasonably speaking this is a bug of

| 1

|

187,066

| 14,426,956,557

|

IssuesEvent

|

2020-12-06 01:00:40

|

kalexmills/github-vet-tests-dec2020

|

https://api.github.com/repos/kalexmills/github-vet-tests-dec2020

|

closed

|

giantswarm/kvm-operator-node-controller: vendor/k8s.io/kubernetes/plugin/pkg/scheduler/factory/factory_test.go; 5 LoC

|

fresh test tiny vendored

|

Found a possible issue in [giantswarm/kvm-operator-node-controller](https://www.github.com/giantswarm/kvm-operator-node-controller) at [vendor/k8s.io/kubernetes/plugin/pkg/scheduler/factory/factory_test.go](https://github.com/giantswarm/kvm-operator-node-controller/blob/7146561e54142d4f986daee0206336ebee3ceb18/vendor/k8s.io/kubernetes/plugin/pkg/scheduler/factory/factory_test.go#L551-L555)

Below is the message reported by the analyzer for this snippet of code. Beware that the analyzer only reports the first

issue it finds, so please do not limit your consideration to the contents of the below message.

> function call which takes a reference to node at line 552 may start a goroutine

[Click here to see the code in its original context.](https://github.com/giantswarm/kvm-operator-node-controller/blob/7146561e54142d4f986daee0206336ebee3ceb18/vendor/k8s.io/kubernetes/plugin/pkg/scheduler/factory/factory_test.go#L551-L555)

<details>

<summary>Click here to show the 5 line(s) of Go which triggered the analyzer.</summary>

```go

for _, node := range nodeList.Items {

if nodeFunc(&node) {

nodeNames = append(nodeNames, node.Name)

}

}

```

</details>

Leave a reaction on this issue to contribute to the project by classifying this instance as a **Bug** :-1:, **Mitigated** :+1:, or **Desirable Behavior** :rocket:

See the descriptions of the classifications [here](https://github.com/github-vet/rangeclosure-findings#how-can-i-help) for more information.

commit ID: 7146561e54142d4f986daee0206336ebee3ceb18

|

1.0

|

giantswarm/kvm-operator-node-controller: vendor/k8s.io/kubernetes/plugin/pkg/scheduler/factory/factory_test.go; 5 LoC -

Found a possible issue in [giantswarm/kvm-operator-node-controller](https://www.github.com/giantswarm/kvm-operator-node-controller) at [vendor/k8s.io/kubernetes/plugin/pkg/scheduler/factory/factory_test.go](https://github.com/giantswarm/kvm-operator-node-controller/blob/7146561e54142d4f986daee0206336ebee3ceb18/vendor/k8s.io/kubernetes/plugin/pkg/scheduler/factory/factory_test.go#L551-L555)

Below is the message reported by the analyzer for this snippet of code. Beware that the analyzer only reports the first

issue it finds, so please do not limit your consideration to the contents of the below message.

> function call which takes a reference to node at line 552 may start a goroutine

[Click here to see the code in its original context.](https://github.com/giantswarm/kvm-operator-node-controller/blob/7146561e54142d4f986daee0206336ebee3ceb18/vendor/k8s.io/kubernetes/plugin/pkg/scheduler/factory/factory_test.go#L551-L555)

<details>

<summary>Click here to show the 5 line(s) of Go which triggered the analyzer.</summary>

```go

for _, node := range nodeList.Items {

if nodeFunc(&node) {

nodeNames = append(nodeNames, node.Name)

}

}

```

</details>

Leave a reaction on this issue to contribute to the project by classifying this instance as a **Bug** :-1:, **Mitigated** :+1:, or **Desirable Behavior** :rocket:

See the descriptions of the classifications [here](https://github.com/github-vet/rangeclosure-findings#how-can-i-help) for more information.

commit ID: 7146561e54142d4f986daee0206336ebee3ceb18

|

non_process

|

giantswarm kvm operator node controller vendor io kubernetes plugin pkg scheduler factory factory test go loc found a possible issue in at below is the message reported by the analyzer for this snippet of code beware that the analyzer only reports the first issue it finds so please do not limit your consideration to the contents of the below message function call which takes a reference to node at line may start a goroutine click here to show the line s of go which triggered the analyzer go for node range nodelist items if nodefunc node nodenames append nodenames node name leave a reaction on this issue to contribute to the project by classifying this instance as a bug mitigated or desirable behavior rocket see the descriptions of the classifications for more information commit id

| 0

|

238,267

| 26,087,070,396

|

IssuesEvent

|

2022-12-26 05:15:39

|

SmartBear/git-en-boite

|

https://api.github.com/repos/SmartBear/git-en-boite

|

closed

|

WS-2021-0638 (High) detected in mocha-10.0.0.tgz

|

wontfix security vulnerability

|

## WS-2021-0638 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>mocha-10.0.0.tgz</b></p></summary>

<p>simple, flexible, fun test framework</p>

<p>Library home page: <a href="https://registry.npmjs.org/mocha/-/mocha-10.0.0.tgz">https://registry.npmjs.org/mocha/-/mocha-10.0.0.tgz</a></p>

<p>Path to dependency file: /package.json</p>

<p>Path to vulnerable library: /node_modules/mocha/package.json</p>

<p>

Dependency Hierarchy:

- git-en-boite-smoke-tests-0.0.0.tgz (Root Library)

- :x: **mocha-10.0.0.tgz** (Vulnerable Library)

<p>Found in base branch: <b>main</b></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

There is a Regular Expression Denial of Service (ReDoS) vulnerability in mocha.

It allows an attacker to cause a denial of service when stripping a crafted invalid function definition from strs.

<p>Publish Date: 2021-09-18

<p>URL: <a href=https://github.com/mochajs/mocha/commit/61b4b9209c2c64b32c8d48b1761c3b9384d411ea>WS-2021-0638</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>7.5</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: None

- Integrity Impact: None

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Release Date: 2021-09-18</p>

<p>Fix Resolution: mocha - 10.1.0</p>

</p>

</details>

<p></p>

|

True

|

WS-2021-0638 (High) detected in mocha-10.0.0.tgz - ## WS-2021-0638 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>mocha-10.0.0.tgz</b></p></summary>

<p>simple, flexible, fun test framework</p>

<p>Library home page: <a href="https://registry.npmjs.org/mocha/-/mocha-10.0.0.tgz">https://registry.npmjs.org/mocha/-/mocha-10.0.0.tgz</a></p>

<p>Path to dependency file: /package.json</p>

<p>Path to vulnerable library: /node_modules/mocha/package.json</p>

<p>

Dependency Hierarchy:

- git-en-boite-smoke-tests-0.0.0.tgz (Root Library)

- :x: **mocha-10.0.0.tgz** (Vulnerable Library)

<p>Found in base branch: <b>main</b></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

There is a Regular Expression Denial of Service (ReDoS) vulnerability in mocha.

It allows an attacker to cause a denial of service when stripping a crafted invalid function definition from strs.

<p>Publish Date: 2021-09-18

<p>URL: <a href=https://github.com/mochajs/mocha/commit/61b4b9209c2c64b32c8d48b1761c3b9384d411ea>WS-2021-0638</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>7.5</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: None

- Integrity Impact: None

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Release Date: 2021-09-18</p>

<p>Fix Resolution: mocha - 10.1.0</p>

</p>

</details>

<p></p>

|

non_process

|

ws high detected in mocha tgz ws high severity vulnerability vulnerable library mocha tgz simple flexible fun test framework library home page a href path to dependency file package json path to vulnerable library node modules mocha package json dependency hierarchy git en boite smoke tests tgz root library x mocha tgz vulnerable library found in base branch main vulnerability details there is regular expression denial of service redos vulnerability in mocha it allows cause a denial of service when stripping crafted invalid function definition from strs publish date url a href cvss score details base score metrics exploitability metrics attack vector network attack complexity low privileges required none user interaction none scope unchanged impact metrics confidentiality impact none integrity impact none availability impact high for more information on scores click a href suggested fix type upgrade version release date fix resolution mocha

| 0

|

256,123

| 22,039,925,926

|

IssuesEvent

|

2022-05-29 07:23:51

|

tgstation/tgstation

|

https://api.github.com/repos/tgstation/tgstation

|

closed

|

Custom say emote issues.

|

Test Merge Bug

|

Reporting client version: 514.1583

<!-- Write **BELOW** The Headers and **ABOVE** The comments else it may not be viewable -->

## Round ID:

[183960](https://scrubby.melonmesa.com/round/183960)

<!--- **INCLUDE THE ROUND ID**

If you discovered this issue from playing tgstation hosted servers:

[Round ID]: # (It can be found in the Status panel or retrieved from https://sb.atlantaned.space/rounds ! The round id lets us look up valuable information and logs for the round in which the bug happened.)-->

## Testmerges:

- [The Humanening](https://www.github.com/tgstation/tgstation/pull/67298)

- [Optimizes Runechat](https://www.github.com/tgstation/tgstation/pull/65791)

- [Adds Cargorilla](https://www.github.com/tgstation/tgstation/pull/67003)

<!-- If you're certain the issue is caused by a test merge [OOC tab -> Show Server Revision], report it in the pull request's comment section rather than on the tracker (if you're unsure, you can refer to the issue number by prefixing said number with #. The issue number can be found beside the title after submitting it to the tracker). If no testmerges are active, feel free to remove this section. -->

## Reproduction:

Custom say emotes force you to say "An interesting thing to say" in runechat if no second argument is given.

Example:

`screams in agony!*`