Unnamed: 0 (int64, 0 to 832k) | id (float64, 2.49B to 32.1B) | type (stringclasses, 1 value) | created_at (stringlengths, 19 to 19) | repo (stringlengths, 7 to 112) | repo_url (stringlengths, 36 to 141) | action (stringclasses, 3 values) | title (stringlengths, 1 to 744) | labels (stringlengths, 4 to 574) | body (stringlengths, 9 to 211k) | index (stringclasses, 10 values) | text_combine (stringlengths, 96 to 211k) | label (stringclasses, 2 values) | text (stringlengths, 96 to 188k) | binary_label (int64, 0 to 1) |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

15,697

| 19,848,206,225

|

IssuesEvent

|

2022-01-21 09:19:18

|

ooi-data/CE06ISSM-RID16-07-NUTNRB000-recovered_host-suna_dcl_recovered

|

https://api.github.com/repos/ooi-data/CE06ISSM-RID16-07-NUTNRB000-recovered_host-suna_dcl_recovered

|

opened

|

🛑 Processing failed: ValueError

|

process

|

## Overview

`ValueError` found in `processing_task` task during run ended on 2022-01-21T09:19:17.461091.

## Details

Flow name: `CE06ISSM-RID16-07-NUTNRB000-recovered_host-suna_dcl_recovered`

Task name: `processing_task`

Error type: `ValueError`

Error message: not enough values to unpack (expected 3, got 0)

<details>

<summary>Traceback</summary>

```

Traceback (most recent call last):

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/ooi_harvester/processor/pipeline.py", line 165, in processing

final_path = finalize_data_stream(

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/ooi_harvester/processor/__init__.py", line 84, in finalize_data_stream

append_to_zarr(mod_ds, final_store, enc, logger=logger)

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/ooi_harvester/processor/__init__.py", line 357, in append_to_zarr

_append_zarr(store, mod_ds)

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/ooi_harvester/processor/utils.py", line 187, in _append_zarr

existing_arr.append(var_data.values)

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/xarray/core/variable.py", line 519, in values

return _as_array_or_item(self._data)

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/xarray/core/variable.py", line 259, in _as_array_or_item

data = np.asarray(data)

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/dask/array/core.py", line 1541, in __array__

x = self.compute()

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/dask/base.py", line 288, in compute

(result,) = compute(self, traverse=False, **kwargs)

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/dask/base.py", line 571, in compute

results = schedule(dsk, keys, **kwargs)

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/dask/threaded.py", line 79, in get

results = get_async(

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/dask/local.py", line 507, in get_async

raise_exception(exc, tb)

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/dask/local.py", line 315, in reraise

raise exc

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/dask/local.py", line 220, in execute_task

result = _execute_task(task, data)

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/dask/core.py", line 119, in _execute_task

return func(*(_execute_task(a, cache) for a in args))

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/dask/array/core.py", line 116, in getter

c = np.asarray(c)

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/xarray/core/indexing.py", line 357, in __array__

return np.asarray(self.array, dtype=dtype)

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/xarray/core/indexing.py", line 551, in __array__

self._ensure_cached()

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/xarray/core/indexing.py", line 548, in _ensure_cached

self.array = NumpyIndexingAdapter(np.asarray(self.array))

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/xarray/core/indexing.py", line 521, in __array__

return np.asarray(self.array, dtype=dtype)

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/xarray/core/indexing.py", line 422, in __array__

return np.asarray(array[self.key], dtype=None)

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/xarray/coding/variables.py", line 70, in __array__

return self.func(self.array)

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/xarray/coding/variables.py", line 137, in _apply_mask

data = np.asarray(data, dtype=dtype)

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/xarray/core/indexing.py", line 422, in __array__

return np.asarray(array[self.key], dtype=None)

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/xarray/backends/zarr.py", line 73, in __getitem__

return array[key.tuple]

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/zarr/core.py", line 673, in __getitem__

return self.get_basic_selection(selection, fields=fields)

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/zarr/core.py", line 798, in get_basic_selection

return self._get_basic_selection_nd(selection=selection, out=out,

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/zarr/core.py", line 841, in _get_basic_selection_nd

return self._get_selection(indexer=indexer, out=out, fields=fields)

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/zarr/core.py", line 1135, in _get_selection

lchunk_coords, lchunk_selection, lout_selection = zip(*indexer)

ValueError: not enough values to unpack (expected 3, got 0)

```

</details>
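
The last traceback frame can be reproduced in isolation. The sketch below is not zarr code: `unpack_chunk_triples` is a hypothetical helper that only mimics the three-way unpacking of `zip(*indexer)`, and it assumes the indexer yielded no chunk projections at all, which is what "got 0" suggests. Why the indexer was empty in this run is not stated in the report.

```python
def unpack_chunk_triples(indexer):
    """Mimic the unpacking done in the final traceback frame (hypothetical helper)."""
    # zip(*indexer) transposes an iterable of 3-tuples into three tuples.
    # When the iterable is empty, zip() yields nothing and the unpack fails.
    lchunk_coords, lchunk_selection, lout_selection = zip(*indexer)
    return lchunk_coords, lchunk_selection, lout_selection


# A non-empty indexer unpacks cleanly into three parallel tuples.
triples = [
    ((0,), slice(0, 2), slice(0, 2)),
    ((1,), slice(0, 1), slice(2, 3)),
]
coords, chunk_sel, out_sel = unpack_chunk_triples(triples)

# An empty indexer raises the ValueError reported in the issue.
try:
    unpack_chunk_triples([])
except ValueError as exc:
    message = str(exc)
    print(message)
```

If this is what happened, a check for an empty selection before the append would be the place to look, though that is a guess and not a confirmed fix.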

|

1.0

|

🛑 Processing failed: ValueError - ## Overview

`ValueError` found in `processing_task` task during run ended on 2022-01-21T09:19:17.461091.

## Details

Flow name: `CE06ISSM-RID16-07-NUTNRB000-recovered_host-suna_dcl_recovered`

Task name: `processing_task`

Error type: `ValueError`

Error message: not enough values to unpack (expected 3, got 0)

<details>

<summary>Traceback</summary>

```

Traceback (most recent call last):

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/ooi_harvester/processor/pipeline.py", line 165, in processing

final_path = finalize_data_stream(

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/ooi_harvester/processor/__init__.py", line 84, in finalize_data_stream

append_to_zarr(mod_ds, final_store, enc, logger=logger)

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/ooi_harvester/processor/__init__.py", line 357, in append_to_zarr

_append_zarr(store, mod_ds)

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/ooi_harvester/processor/utils.py", line 187, in _append_zarr

existing_arr.append(var_data.values)

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/xarray/core/variable.py", line 519, in values

return _as_array_or_item(self._data)

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/xarray/core/variable.py", line 259, in _as_array_or_item

data = np.asarray(data)

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/dask/array/core.py", line 1541, in __array__

x = self.compute()

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/dask/base.py", line 288, in compute

(result,) = compute(self, traverse=False, **kwargs)

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/dask/base.py", line 571, in compute

results = schedule(dsk, keys, **kwargs)

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/dask/threaded.py", line 79, in get

results = get_async(

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/dask/local.py", line 507, in get_async

raise_exception(exc, tb)

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/dask/local.py", line 315, in reraise

raise exc

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/dask/local.py", line 220, in execute_task

result = _execute_task(task, data)

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/dask/core.py", line 119, in _execute_task

return func(*(_execute_task(a, cache) for a in args))

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/dask/array/core.py", line 116, in getter

c = np.asarray(c)

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/xarray/core/indexing.py", line 357, in __array__

return np.asarray(self.array, dtype=dtype)

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/xarray/core/indexing.py", line 551, in __array__

self._ensure_cached()

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/xarray/core/indexing.py", line 548, in _ensure_cached

self.array = NumpyIndexingAdapter(np.asarray(self.array))

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/xarray/core/indexing.py", line 521, in __array__

return np.asarray(self.array, dtype=dtype)

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/xarray/core/indexing.py", line 422, in __array__

return np.asarray(array[self.key], dtype=None)

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/xarray/coding/variables.py", line 70, in __array__

return self.func(self.array)

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/xarray/coding/variables.py", line 137, in _apply_mask

data = np.asarray(data, dtype=dtype)

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/xarray/core/indexing.py", line 422, in __array__

return np.asarray(array[self.key], dtype=None)

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/xarray/backends/zarr.py", line 73, in __getitem__

return array[key.tuple]

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/zarr/core.py", line 673, in __getitem__

return self.get_basic_selection(selection, fields=fields)

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/zarr/core.py", line 798, in get_basic_selection

return self._get_basic_selection_nd(selection=selection, out=out,

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/zarr/core.py", line 841, in _get_basic_selection_nd

return self._get_selection(indexer=indexer, out=out, fields=fields)

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/zarr/core.py", line 1135, in _get_selection

lchunk_coords, lchunk_selection, lout_selection = zip(*indexer)

ValueError: not enough values to unpack (expected 3, got 0)

```

</details>

|

process

|

🛑 processing failed valueerror overview valueerror found in processing task task during run ended on details flow name recovered host suna dcl recovered task name processing task error type valueerror error message not enough values to unpack expected got traceback traceback most recent call last file srv conda envs notebook lib site packages ooi harvester processor pipeline py line in processing final path finalize data stream file srv conda envs notebook lib site packages ooi harvester processor init py line in finalize data stream append to zarr mod ds final store enc logger logger file srv conda envs notebook lib site packages ooi harvester processor init py line in append to zarr append zarr store mod ds file srv conda envs notebook lib site packages ooi harvester processor utils py line in append zarr existing arr append var data values file srv conda envs notebook lib site packages xarray core variable py line in values return as array or item self data file srv conda envs notebook lib site packages xarray core variable py line in as array or item data np asarray data file srv conda envs notebook lib site packages dask array core py line in array x self compute file srv conda envs notebook lib site packages dask base py line in compute result compute self traverse false kwargs file srv conda envs notebook lib site packages dask base py line in compute results schedule dsk keys kwargs file srv conda envs notebook lib site packages dask threaded py line in get results get async file srv conda envs notebook lib site packages dask local py line in get async raise exception exc tb file srv conda envs notebook lib site packages dask local py line in reraise raise exc file srv conda envs notebook lib site packages dask local py line in execute task result execute task task data file srv conda envs notebook lib site packages dask core py line in execute task return func execute task a cache for a in args file srv conda envs notebook lib site packages dask array core 
py line in getter c np asarray c file srv conda envs notebook lib site packages xarray core indexing py line in array return np asarray self array dtype dtype file srv conda envs notebook lib site packages xarray core indexing py line in array self ensure cached file srv conda envs notebook lib site packages xarray core indexing py line in ensure cached self array numpyindexingadapter np asarray self array file srv conda envs notebook lib site packages xarray core indexing py line in array return np asarray self array dtype dtype file srv conda envs notebook lib site packages xarray core indexing py line in array return np asarray array dtype none file srv conda envs notebook lib site packages xarray coding variables py line in array return self func self array file srv conda envs notebook lib site packages xarray coding variables py line in apply mask data np asarray data dtype dtype file srv conda envs notebook lib site packages xarray core indexing py line in array return np asarray array dtype none file srv conda envs notebook lib site packages xarray backends zarr py line in getitem return array file srv conda envs notebook lib site packages zarr core py line in getitem return self get basic selection selection fields fields file srv conda envs notebook lib site packages zarr core py line in get basic selection return self get basic selection nd selection selection out out file srv conda envs notebook lib site packages zarr core py line in get basic selection nd return self get selection indexer indexer out out fields fields file srv conda envs notebook lib site packages zarr core py line in get selection lchunk coords lchunk selection lout selection zip indexer valueerror not enough values to unpack expected got

| 1

|

9,413

| 12,406,993,168

|

IssuesEvent

|

2020-05-21 20:12:40

|

MicrosoftDocs/azure-devops-docs

|

https://api.github.com/repos/MicrosoftDocs/azure-devops-docs

|

closed

|

runtime expression $[variables.var] renders as is when not found

|

Pri1 devops-cicd-process/tech devops/prod doc-enhancement

|

while the doc says it renders as an empty string.

Example pipeline:

```yml

steps:

- bash: echo '$[variables.var]'

```

---

#### Document Details

⚠ *Do not edit this section. It is required for docs.microsoft.com ➟ GitHub issue linking.*

* ID: dd7e0bd3-1f7d-d7b6-cc72-5ef63c31b46a

* Version Independent ID: dae87abd-b73d-9120-bcdb-6097d4b40f2a

* Content: [Define variables - Azure Pipelines](https://docs.microsoft.com/en-us/azure/devops/pipelines/process/variables?view=azure-devops&tabs=yaml%2Cbatch#feedback)

* Content Source: [docs/pipelines/process/variables.md](https://github.com/MicrosoftDocs/azure-devops-docs/blob/master/docs/pipelines/process/variables.md)

* Product: **devops**

* Technology: **devops-cicd-process**

* GitHub Login: @juliakm

* Microsoft Alias: **jukullam**

|

1.0

|

runtime expression $[variables.var] renders as is when not found - while the doc says it renders empty string.

Example pipeline:

```yml

steps:

- bash: echo '$[variables.var]'

```

---

#### Document Details

⚠ *Do not edit this section. It is required for docs.microsoft.com ➟ GitHub issue linking.*

* ID: dd7e0bd3-1f7d-d7b6-cc72-5ef63c31b46a

* Version Independent ID: dae87abd-b73d-9120-bcdb-6097d4b40f2a

* Content: [Define variables - Azure Pipelines](https://docs.microsoft.com/en-us/azure/devops/pipelines/process/variables?view=azure-devops&tabs=yaml%2Cbatch#feedback)

* Content Source: [docs/pipelines/process/variables.md](https://github.com/MicrosoftDocs/azure-devops-docs/blob/master/docs/pipelines/process/variables.md)

* Product: **devops**

* Technology: **devops-cicd-process**

* GitHub Login: @juliakm

* Microsoft Alias: **jukullam**

|

process

|

runtime expression renders as is when not found while the doc says it renders empty string example pipeline yml steps bash echo document details ⚠ do not edit this section it is required for docs microsoft com ➟ github issue linking id version independent id bcdb content content source product devops technology devops cicd process github login juliakm microsoft alias jukullam

| 1

|

14,291

| 17,264,841,937

|

IssuesEvent

|

2021-07-22 12:37:56

|

GoogleCloudPlatform/fda-mystudies

|

https://api.github.com/repos/GoogleCloudPlatform/fda-mystudies

|

closed

|

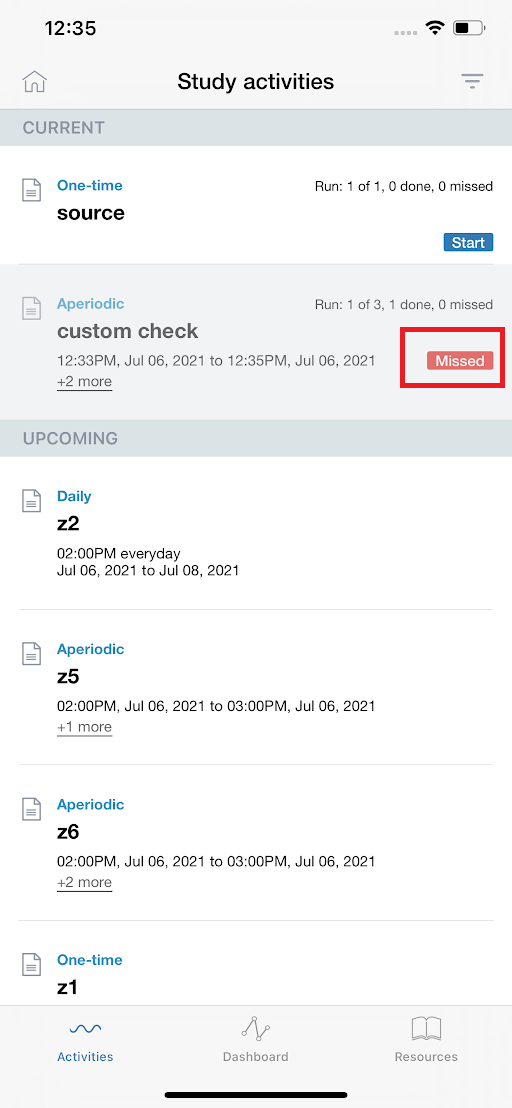

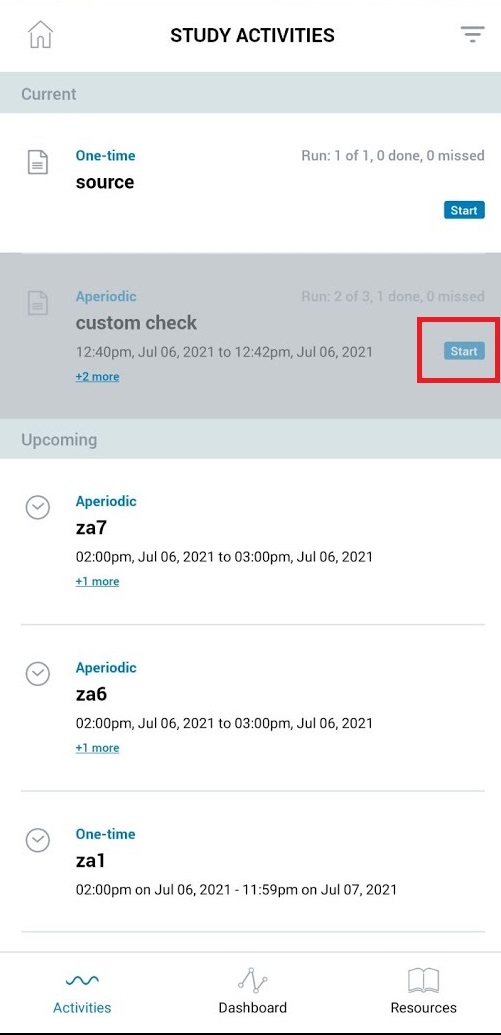

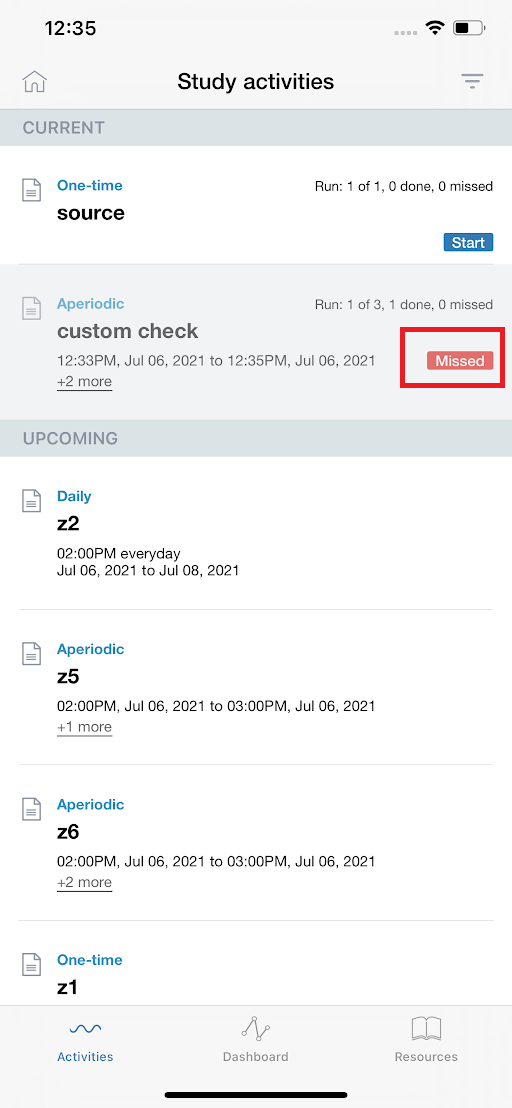

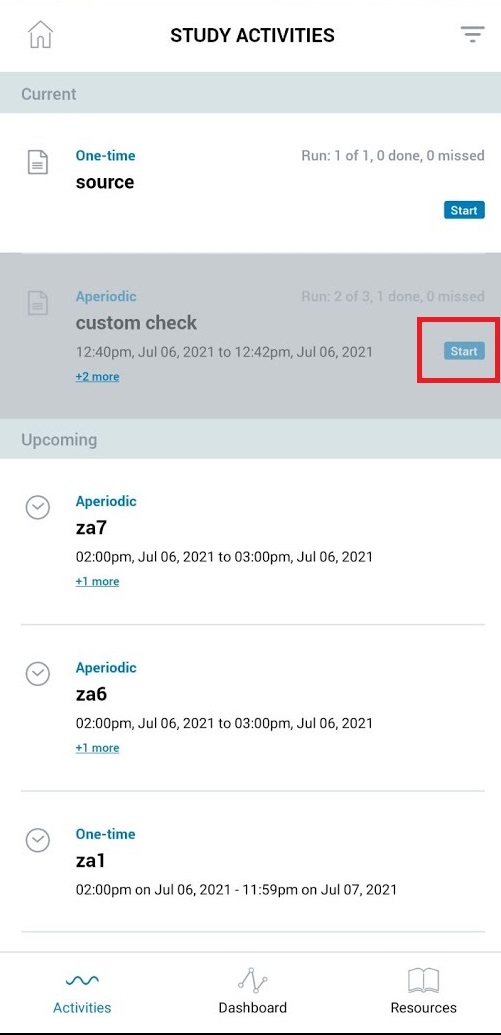

[iOS] Custom schedule > Incorrect label 'Missed' is shown when participant completed previous run and next run yet to start

|

Bug P2 Process: Fixed Process: Tested QA Process: Tested dev iOS

|

Steps:

1. Configure a custom schedule regular/anchor based having multiple runs

2. Let 1st run start from 1PM 07/06 to 2PM 07/06

3. Let 2nd run start from 3PM 07/06 to 4PM 07/06

4. Participant completes the 1st run successfully

5. Let the 1st run expire

6. Observe the activity 'Label' between the 1st run and the 2nd run, i.e. from 2:01 PM to 2:59 PM

Actual: Label 'Missed' is shown

Expected: Label 'Start' should be shown (Refer android screenshot)

Issue not observed for other scheduling frequencies

Issue should be fixed for questionnaires and active tasks

Issue should be fixed for Regular and Anchor based custom scheduling

iOS:

Android (for reference):

|

3.0

|

[iOS] Custom schedule > Incorrect label 'Missed' is shown when participant completed previous run and next run yet to start - Steps:

1. Configure a custom schedule regular/anchor based having multiple runs

2. Let 1st run start from 1PM 07/06 to 2PM 07/06

3. Let 2nd run start from 3PM 07/06 to 4PM 07/06

4. Participant completes the 1st run successfully

5. Let the 1st run expire

6. Observe the activity 'Label' between the 1st run and the 2nd run, i.e. from 2:01 PM to 2:59 PM

Actual: Label 'Missed' is shown

Expected: Label 'Start' should be shown (Refer android screenshot)

Issue not observed for other scheduling frequencies

Issue should be fixed for questionnaires and active tasks

Issue should be fixed for Regular and Anchor based custom scheduling

iOS:

Android (for reference):

|

process

|

custom schedule incorrect label missed is shown when participant completed previous run and next run yet to start steps configure a custom schedule regular anchor based having multiple runs let run start from to let run start from to participant completes the run successfully let run gets expired observe the activity label in between run and run i e from pm to pm actual label missed is shown expected label start should be shown refer android screenshot issue not observed for other scheduling frequencies issue should be fixed for questionnaires and active tasks issue should be fixed for regular and anchor based custom scheduling ios android for reference

| 1

|

5,195

| 7,973,978,025

|

IssuesEvent

|

2018-07-17 02:31:07

|

pelias/pelias

|

https://api.github.com/repos/pelias/pelias

|

closed

|

handle OA records with no HASH property

|

processed

|

it seems like a bunch of the OA records still don't have a `HASH` property, eg:

```bash

$ head /data/oa/us/nj/statewide.csv

LON,LAT,NUMBER,STREET,UNIT,CITY,DISTRICT,REGION,POSTCODE,ID,HASH

-74.4226095,39.3689364,729,LEXINGTON AVE,,,,,,,

-74.4147457,39.3689073,29,N VERMONT AVE,,,,,,,

-74.4227321,39.3688336,219,N DELAWARE AVE,,,,,,,

-74.4220756,39.3648725,901,ATLANTIC AVE,,,,,,,

-74.4345249,39.3666268,400,N KENTUCKY AVE,,,,,,,

-74.415292,39.3684783,19,IRVING AVE,,,,,,,

-74.4330455,39.3606627,1729,ATLANTIC AVE RR,,,,,,,

-74.4646897,39.3464363,1,S PLAZA PL,,,,,,,

-74.4637452,39.3456241,4601,ATLANTIC AVE,,,,,,,

```

(that's from a fresh download of `openaddr-collected-global.zip` within an hour of writing this).

this means that search results don't actually generate GID values correctly for these records, eg:

```

"properties": {

"id": "us/nj/statewide:0",

"gid": "openaddresses:address:us/nj/statewide:0",

"layer": "address",

"source": "openaddresses",

"source_id": "us/nj/statewide:0",

"name": "729 Lexington Ave",

"housenumber": "729",

"street": "Lexington Ave",

```

cc/ @iandees
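
The number of affected records can be checked with a short script. This is a minimal sketch, not part of the Pelias importer: `count_missing_hash` is a hypothetical helper, and `SAMPLE` holds only the header and the first three rows quoted above.

```python
import csv
import io

# Header plus the first three rows from the statewide.csv excerpt in this issue.
SAMPLE = """LON,LAT,NUMBER,STREET,UNIT,CITY,DISTRICT,REGION,POSTCODE,ID,HASH
-74.4226095,39.3689364,729,LEXINGTON AVE,,,,,,,
-74.4147457,39.3689073,29,N VERMONT AVE,,,,,,,
-74.4227321,39.3688336,219,N DELAWARE AVE,,,,,,,
"""


def count_missing_hash(csv_text):
    """Return (rows_without_hash, total_rows) for OpenAddresses-style CSV text."""
    reader = csv.DictReader(io.StringIO(csv_text))
    total = 0
    missing = 0
    for row in reader:
        total += 1
        # Treat an absent column, an empty value, or whitespace as "no HASH".
        if not (row.get("HASH") or "").strip():
            missing += 1
    return missing, total


missing, total = count_missing_hash(SAMPLE)
print(f"{missing} of {total} rows have no HASH")
```

For a real file, the same function could be fed the file's text in place of `SAMPLE`.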

|

1.0

|

handle OA records with no HASH property - it seems like a bunch of the OA records still don't have a `HASH` property, eg:

```bash

$ head /data/oa/us/nj/statewide.csv

LON,LAT,NUMBER,STREET,UNIT,CITY,DISTRICT,REGION,POSTCODE,ID,HASH

-74.4226095,39.3689364,729,LEXINGTON AVE,,,,,,,

-74.4147457,39.3689073,29,N VERMONT AVE,,,,,,,

-74.4227321,39.3688336,219,N DELAWARE AVE,,,,,,,

-74.4220756,39.3648725,901,ATLANTIC AVE,,,,,,,

-74.4345249,39.3666268,400,N KENTUCKY AVE,,,,,,,

-74.415292,39.3684783,19,IRVING AVE,,,,,,,

-74.4330455,39.3606627,1729,ATLANTIC AVE RR,,,,,,,

-74.4646897,39.3464363,1,S PLAZA PL,,,,,,,

-74.4637452,39.3456241,4601,ATLANTIC AVE,,,,,,,

```

(that's from a fresh download of `openaddr-collected-global.zip` within an hour of writing this).

this means that search results don't actually generate GID values correctly for these records, eg:

```

"properties": {

"id": "us/nj/statewide:0",

"gid": "openaddresses:address:us/nj/statewide:0",

"layer": "address",

"source": "openaddresses",

"source_id": "us/nj/statewide:0",

"name": "729 Lexington Ave",

"housenumber": "729",

"street": "Lexington Ave",

```

cc/ @iandees

|

process

|

handle oa records with no hash property it seems like a bunch of the oa records still don t have a hash property eg bash head data oa us nj statewide csv lon lat number street unit city district region postcode id hash lexington ave n vermont ave n delaware ave atlantic ave n kentucky ave irving ave atlantic ave rr s plaza pl atlantic ave that s from a fresh download of openaddr collected global zip within an hour of writing this this means that search results don t actually generate gid values correctly for these records eg properties id us nj statewide gid openaddresses address us nj statewide layer address source openaddresses source id us nj statewide name lexington ave housenumber street lexington ave cc iandees

| 1

|

289,108

| 31,931,181,359

|

IssuesEvent

|

2023-09-19 07:32:27

|

Trinadh465/linux-4.1.15_CVE-2023-4128

|

https://api.github.com/repos/Trinadh465/linux-4.1.15_CVE-2023-4128

|

opened

|

CVE-2022-28356 (Medium) detected in linuxlinux-4.6

|

Mend: dependency security vulnerability

|

## CVE-2022-28356 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linuxlinux-4.6</b></p></summary>

<p>

<p>The Linux Kernel</p>

<p>Library home page: <a href=https://mirrors.edge.kernel.org/pub/linux/kernel/v4.x/?wsslib=linux>https://mirrors.edge.kernel.org/pub/linux/kernel/v4.x/?wsslib=linux</a></p>

<p>Found in HEAD commit: <a href="https://github.com/Trinadh465/linux-4.1.15_CVE-2023-4128/commit/0c6c8d8c809f697cd5fc581c6c08e9ad646c55a8">0c6c8d8c809f697cd5fc581c6c08e9ad646c55a8</a></p>

<p>Found in base branch: <b>main</b></p></p>

</details>

</p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Source Files (2)</summary>

<p></p>

<p>

<img src='https://s3.amazonaws.com/wss-public/bitbucketImages/xRedImage.png' width=19 height=20> <b>/net/llc/af_llc.c</b>

<img src='https://s3.amazonaws.com/wss-public/bitbucketImages/xRedImage.png' width=19 height=20> <b>/net/llc/af_llc.c</b>

</p>

</details>

<p></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png?' width=19 height=20> Vulnerability Details</summary>

<p>

In the Linux kernel before 5.17.1, a refcount leak bug was found in net/llc/af_llc.c.

<p>Publish Date: 2022-04-02

<p>URL: <a href=https://www.mend.io/vulnerability-database/CVE-2022-28356>CVE-2022-28356</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>5.5</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Local

- Attack Complexity: Low

- Privileges Required: Low

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: None

- Integrity Impact: None

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://www.linuxkernelcves.com/cves/CVE-2022-28356">https://www.linuxkernelcves.com/cves/CVE-2022-28356</a></p>

<p>Release Date: 2022-04-02</p>

<p>Fix Resolution: v4.9.309,v4.14.274,v4.19.237,v5.4.188,v5.10.109,v5.15.32,v5.16.18,v5.17.1</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with Mend [here](https://www.whitesourcesoftware.com/full_solution_bolt_github)

|

True

|

CVE-2022-28356 (Medium) detected in linuxlinux-4.6 - ## CVE-2022-28356 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linuxlinux-4.6</b></p></summary>

<p>

<p>The Linux Kernel</p>

<p>Library home page: <a href=https://mirrors.edge.kernel.org/pub/linux/kernel/v4.x/?wsslib=linux>https://mirrors.edge.kernel.org/pub/linux/kernel/v4.x/?wsslib=linux</a></p>

<p>Found in HEAD commit: <a href="https://github.com/Trinadh465/linux-4.1.15_CVE-2023-4128/commit/0c6c8d8c809f697cd5fc581c6c08e9ad646c55a8">0c6c8d8c809f697cd5fc581c6c08e9ad646c55a8</a></p>

<p>Found in base branch: <b>main</b></p></p>

</details>

</p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Source Files (2)</summary>

<p></p>

<p>

<img src='https://s3.amazonaws.com/wss-public/bitbucketImages/xRedImage.png' width=19 height=20> <b>/net/llc/af_llc.c</b>

<img src='https://s3.amazonaws.com/wss-public/bitbucketImages/xRedImage.png' width=19 height=20> <b>/net/llc/af_llc.c</b>

</p>

</details>

<p></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png?' width=19 height=20> Vulnerability Details</summary>

<p>

In the Linux kernel before 5.17.1, a refcount leak bug was found in net/llc/af_llc.c.

<p>Publish Date: 2022-04-02

<p>URL: <a href=https://www.mend.io/vulnerability-database/CVE-2022-28356>CVE-2022-28356</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>5.5</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Local

- Attack Complexity: Low

- Privileges Required: Low

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: None

- Integrity Impact: None

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://www.linuxkernelcves.com/cves/CVE-2022-28356">https://www.linuxkernelcves.com/cves/CVE-2022-28356</a></p>

<p>Release Date: 2022-04-02</p>

<p>Fix Resolution: v4.9.309,v4.14.274,v4.19.237,v5.4.188,v5.10.109,v5.15.32,v5.16.18,v5.17.1</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with Mend [here](https://www.whitesourcesoftware.com/full_solution_bolt_github)

|

non_process

|

cve medium detected in linuxlinux cve medium severity vulnerability vulnerable library linuxlinux the linux kernel library home page a href found in head commit a href found in base branch main vulnerable source files net llc af llc c net llc af llc c vulnerability details in the linux kernel before a refcount leak bug was found in net llc af llc c publish date url a href cvss score details base score metrics exploitability metrics attack vector local attack complexity low privileges required low user interaction none scope unchanged impact metrics confidentiality impact none integrity impact none availability impact high for more information on scores click a href suggested fix type upgrade version origin a href release date fix resolution step up your open source security game with mend

| 0

|

12,074

| 14,739,925,035

|

IssuesEvent

|

2021-01-07 08:11:33

|

GoogleCloudPlatform/fda-mystudies

|

https://api.github.com/repos/GoogleCloudPlatform/fda-mystudies

|

closed

|

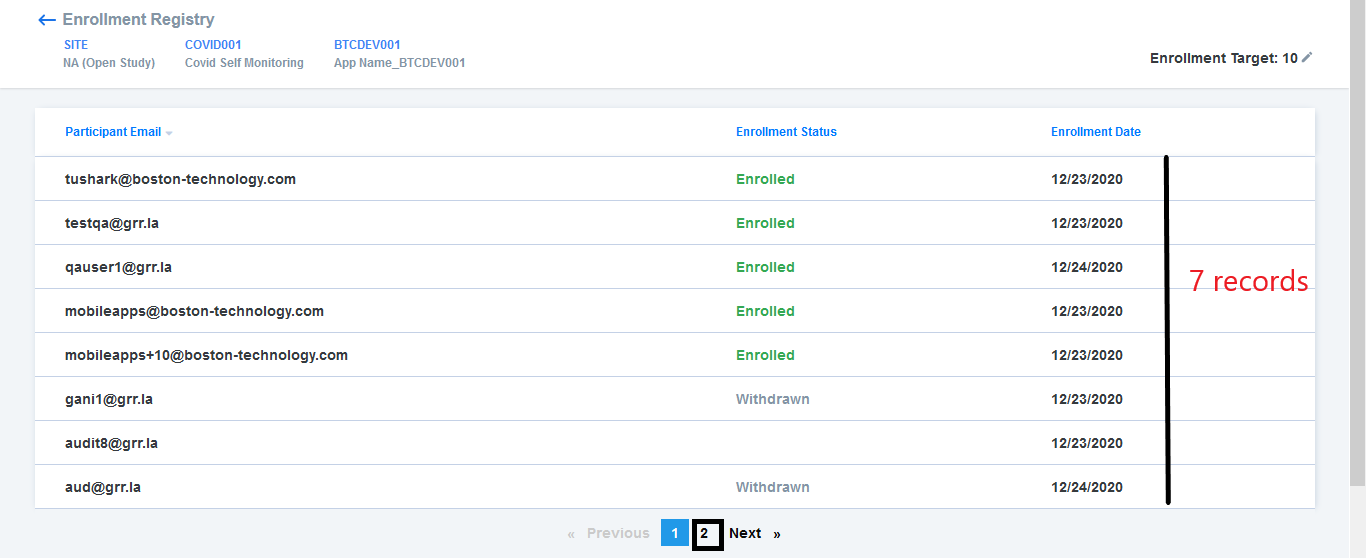

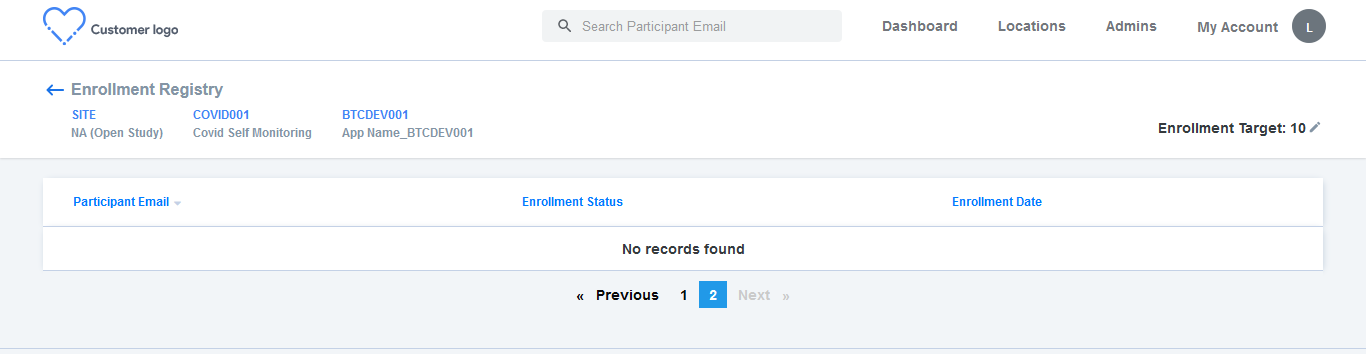

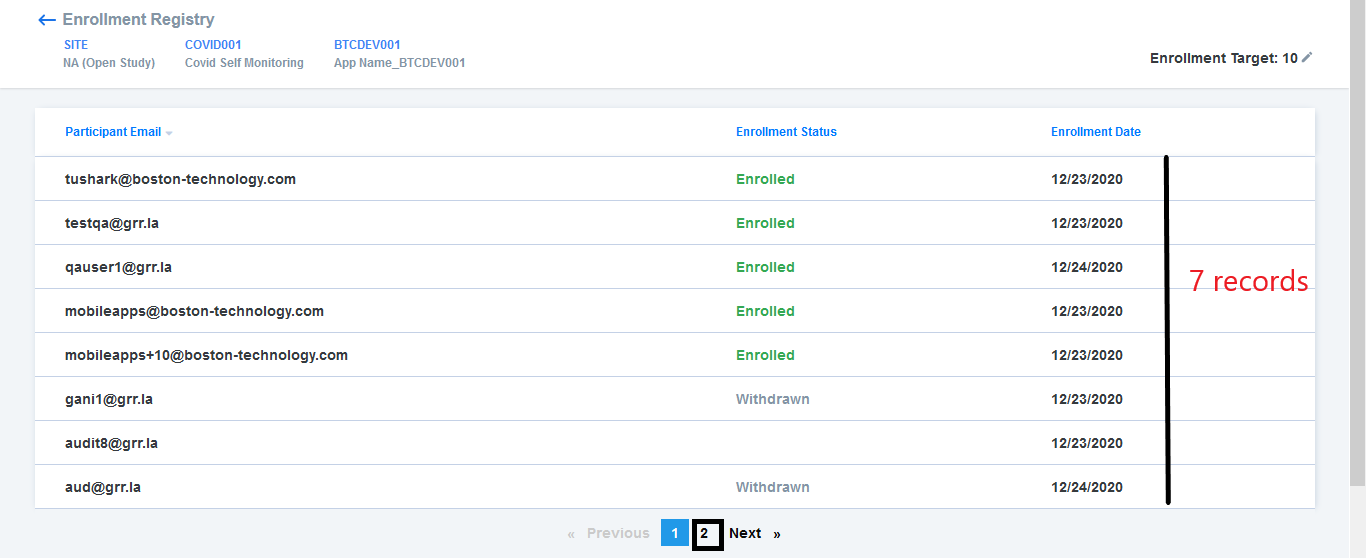

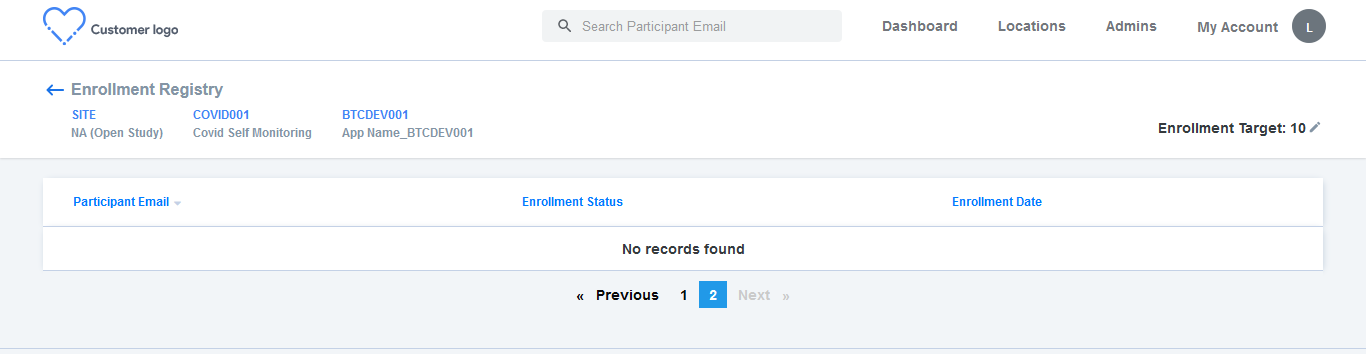

[PM] [Dev] Open study > Enrollment registry > Pagination issue

|

Bug P1 Participant manager Process: Dev Process: Tested dev

|

AR : 2nd page is displayed even though the study has 10 or fewer participant records

ER : If the study has 10 or fewer participant records, the 2nd page should not be displayed
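
The expected result amounts to a ceiling division. The sketch below is an illustration only, not the Participant manager code: `page_count` and `should_show_second_page` are hypothetical names, and the page size of 10 is inferred from the threshold in this report.

```python
import math


def page_count(total_records, page_size=10):
    """Number of pages needed to list total_records (page size of 10 is assumed)."""
    if total_records < 0 or page_size <= 0:
        raise ValueError("total_records must be >= 0 and page_size must be > 0")
    # Always show at least one page, even when the registry is empty.
    return max(1, math.ceil(total_records / page_size))


def should_show_second_page(total_records, page_size=10):
    """A second page is needed only when the records overflow the first page."""
    return page_count(total_records, page_size) > 1


for n in (0, 10, 11):
    print(n, page_count(n), should_show_second_page(n))
```

A plausible cause of the reported behaviour is an off-by-one at exactly 10 records, but the report does not confirm this.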

|

2.0

|

[PM] [Dev] Open study > Enrollment registry > Pagination issue - AR : 2nd page is displayed even though the study has 10 or fewer participant records

ER : If the study has 10 or fewer participant records, the 2nd page should not be displayed

|

process

|

open study enrollment registry pagination issue ar page is getting displayed eventhough a study has less than or equal to participant records er if the study has less than or equal to participant records then page should not be displayed

| 1

|

128,406

| 5,064,777,928

|

IssuesEvent

|

2016-12-23 08:45:27

|

praekeltfoundation/gem-bbb-indo

|

https://api.github.com/repos/praekeltfoundation/gem-bbb-indo

|

closed

|

Missed Deadline text on Goal view

|

enhancement priority - medium

|

Target date copy changes from **"TARGET DATE"** to **"TARGET DATE MISSED"** when a Goal is overdue.
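
The copy change could be sketched as below. This is an assumption-laden illustration, not the app's code: `target_date_label` is a hypothetical name, and "overdue" is taken to mean that today is later than the target date.

```python
from datetime import date


def target_date_label(target_date, today):
    """Pick the Goal view copy; 'overdue' is assumed to mean today > target_date."""
    if today > target_date:
        return "TARGET DATE MISSED"
    return "TARGET DATE"


print(target_date_label(date(2016, 12, 1), today=date(2016, 12, 23)))
print(target_date_label(date(2017, 1, 15), today=date(2016, 12, 23)))
```

Whether a Goal that was reached before its target date should still show the missed copy is not covered by this issue.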

|

1.0

|

Missed Deadline text on Goal view - Target date copy changes from **"TARGET DATE"** to **"TARGET DATE MISSED"** when a Goal is overdue.

|

non_process

|

missed deadline text on goal view target date copy changes from target date to target date missed when a goal is overdue

| 0

|

339,341

| 30,442,451,905

|

IssuesEvent

|

2023-07-15 08:21:11

|

sonic-net/sonic-mgmt

|

https://api.github.com/repos/sonic-net/sonic-mgmt

|

closed

|

show snmp counters unavailable failing test_ft_snmp_trap_counter

|

Spytest

|

Hello,

In spytest/apis/system/snmp.py, we have a method called show_snmp_counters (and clear snmp counters) which is being called by test_ft_snmp_trap_counter and test_ft_snmp_basic_counters.

show_snmp_counters tries to run "show snmp counters" on the DUT. However, there is no "show snmp counters" command in the klish CLI on the DUT. What could be missing?

The DUT is running:

SONiC Software Version: SONiC.master.565-e0781f46

Distribution: Debian 10.6

Kernel: 4.19.0-9-2-amd64

Build commit: e0781f46

Build date: Mon Nov 23 08:32:04 UTC 2020

Built by: johnar@jenkins-worker-12

Platform: x86_64-kvm_x86_64-r0

HwSKU: Force10-S6000

ASIC: vs

Serial Number: 000000

Uptime: 03:01:08 up 1 day, 4:44, 1 user, load average: 0.45, 0.81, 0.67

Docker images:

REPOSITORY TAG IMAGE ID SIZE

docker-gbsyncd-vs latest fc2bcaf7dc91 447MB

docker-gbsyncd-vs master.565-e0781f46 fc2bcaf7dc91 447MB

docker-syncd-vs latest a599f4b6a7c9 447MB

docker-syncd-vs master.565-e0781f46 a599f4b6a7c9 447MB

docker-snmp latest 72953533ec0e 477MB

docker-snmp master.565-e0781f46 72953533ec0e 477MB

docker-teamd latest 0392627a102f 483MB

docker-teamd master.565-e0781f46 0392627a102f 483MB

docker-nat latest 61210a29630f 486MB

docker-nat master.565-e0781f46 61210a29630f 486MB

docker-router-advertiser latest dcc0e15c5463 441MB

docker-router-advertiser master.565-e0781f46 dcc0e15c5463 441MB

docker-platform-monitor latest 053ce63c0b8f 563MB

docker-platform-monitor master.565-e0781f46 053ce63c0b8f 563MB

docker-lldp latest c68597acb666 514MB

docker-lldp master.565-e0781f46 c68597acb666 514MB

docker-dhcp-relay latest badb3fb27c4e 448MB

docker-dhcp-relay master.565-e0781f46 badb3fb27c4e 448MB

docker-database latest d92f5b4cb6e0 441MB

docker-database master.565-e0781f46 d92f5b4cb6e0 441MB

docker-orchagent latest d9c215c333d9 497MB

docker-orchagent master.565-e0781f46 d9c215c333d9 497MB

docker-sonic-telemetry latest 26941527084a 511MB

docker-sonic-telemetry master.565-e0781f46 26941527084a 511MB

docker-sonic-mgmt-framework latest d92d56dac3a5 598MB

docker-sonic-mgmt-framework master.565-e0781f46 d92d56dac3a5 598MB

docker-fpm-frr latest 6c139d86ef63 500MB

docker-fpm-frr master.565-e0781f46 6c139d86ef63 500MB

docker-sflow latest 883128578286 484MB

docker-sflow master.565-e0781f46 883128578286 484MB

|

1.0

|

show snmp counters unavailable failing test_ft_snmp_trap_counter - Hello,

In spytest/apis/system/snmp.py, we have a method called show_snmp_counters (and clear snmp counters) which is being called by test_ft_snmp_trap_counter and test_ft_snmp_basic_counters.

show_snmp_counters tries to run "show snmp counters" on the DUT. However, on the DUT there is no "show snmp counters" in klish CLI. What could be missing?

The DUT is running :

SONiC Software Version: SONiC.master.565-e0781f46

Distribution: Debian 10.6

Kernel: 4.19.0-9-2-amd64

Build commit: e0781f46

Build date: Mon Nov 23 08:32:04 UTC 2020

Built by: johnar@jenkins-worker-12

Platform: x86_64-kvm_x86_64-r0

HwSKU: Force10-S6000

ASIC: vs

Serial Number: 000000

Uptime: 03:01:08 up 1 day, 4:44, 1 user, load average: 0.45, 0.81, 0.67

Docker images:

REPOSITORY TAG IMAGE ID SIZE

docker-gbsyncd-vs latest fc2bcaf7dc91 447MB

docker-gbsyncd-vs master.565-e0781f46 fc2bcaf7dc91 447MB

docker-syncd-vs latest a599f4b6a7c9 447MB

docker-syncd-vs master.565-e0781f46 a599f4b6a7c9 447MB

docker-snmp latest 72953533ec0e 477MB

docker-snmp master.565-e0781f46 72953533ec0e 477MB

docker-teamd latest 0392627a102f 483MB

docker-teamd master.565-e0781f46 0392627a102f 483MB

docker-nat latest 61210a29630f 486MB

docker-nat master.565-e0781f46 61210a29630f 486MB

docker-router-advertiser latest dcc0e15c5463 441MB

docker-router-advertiser master.565-e0781f46 dcc0e15c5463 441MB

docker-platform-monitor latest 053ce63c0b8f 563MB

docker-platform-monitor master.565-e0781f46 053ce63c0b8f 563MB

docker-lldp latest c68597acb666 514MB

docker-lldp master.565-e0781f46 c68597acb666 514MB

docker-dhcp-relay latest badb3fb27c4e 448MB

docker-dhcp-relay master.565-e0781f46 badb3fb27c4e 448MB

docker-database latest d92f5b4cb6e0 441MB

docker-database master.565-e0781f46 d92f5b4cb6e0 441MB

docker-orchagent latest d9c215c333d9 497MB

docker-orchagent master.565-e0781f46 d9c215c333d9 497MB

docker-sonic-telemetry latest 26941527084a 511MB

docker-sonic-telemetry master.565-e0781f46 26941527084a 511MB

docker-sonic-mgmt-framework latest d92d56dac3a5 598MB

docker-sonic-mgmt-framework master.565-e0781f46 d92d56dac3a5 598MB

docker-fpm-frr latest 6c139d86ef63 500MB

docker-fpm-frr master.565-e0781f46 6c139d86ef63 500MB

docker-sflow latest 883128578286 484MB

docker-sflow master.565-e0781f46 883128578286 484MB

|

non_process

|

show snmp counters unavailable failing test ft snmp trap counter hello in spytest apis system snmp py we have a method called show snmp counters and clear snmp counters which is being called by test ft snmp trap counter and test ft snmp basic counters show snmp counters tries to run show snmp counters on the dut however on the dut there is no show snmp counters in klish cli what could be missing the dut is running sonic software version sonic master distribution debian kernel build commit build date mon nov utc built by johnar jenkins worker platform kvm hwsku asic vs serial number uptime up day user load average docker images repository tag image id size docker gbsyncd vs latest docker gbsyncd vs master docker syncd vs latest docker syncd vs master docker snmp latest docker snmp master docker teamd latest docker teamd master docker nat latest docker nat master docker router advertiser latest docker router advertiser master docker platform monitor latest docker platform monitor master docker lldp latest docker lldp master docker dhcp relay latest docker dhcp relay master docker database latest docker database master docker orchagent latest docker orchagent master docker sonic telemetry latest docker sonic telemetry master docker sonic mgmt framework latest docker sonic mgmt framework master docker fpm frr latest docker fpm frr master docker sflow latest docker sflow master

| 0

|

13,209

| 15,652,604,302

|

IssuesEvent

|

2021-03-23 11:37:20

|

GoogleCloudPlatform/dotnet-docs-samples

|

https://api.github.com/repos/GoogleCloudPlatform/dotnet-docs-samples

|

closed

|

Clouddemo: Failed to build, but only transiently.

|

priority: p3 samples type: process

|

See CI logs [here](https://source.cloud.google.com/results/invocations/73312485-45fb-41eb-b505-bd2bc8c09b77/targets/github%2Fdotnet-docs-samples%2Fapplications%2Fclouddemo%2Fnetcore/tests). Not much information there, unfortunately.

Might be another manifestation of #1278

@jpassing I understand this might be just a one-off. But it would be great if you could take a quick look.

I'm also creating the issue to be able to track this from the start. Even if we close it now as a one-off, we can always reopen later if it continues to happen. Thanks

|

1.0

|

Clouddemo: Failed to build, but only transiently. - See CI logs [here](https://source.cloud.google.com/results/invocations/73312485-45fb-41eb-b505-bd2bc8c09b77/targets/github%2Fdotnet-docs-samples%2Fapplications%2Fclouddemo%2Fnetcore/tests). Not much information there, unfortunately.

Might be another manifestation of #1278

@jpassing I understand this might be just a one-off. But it would be great if you could take a quick look.

I'm also creating the issue to be able to track this from the start. Even if we close it now as a one-off, we can always reopen later if it continues to happen. Thanks

|

process

|

clouddemo failed to build but only transiently see ci logs not much information there unfortunately might be another manifestation of jpassing i understand this might be just a one off but it would be great if you could take a quick look i m also creating the issue to be able to track this from the start even if we close it now as a one off we can always reopen later if it continues to happen thanks

| 1

|

49,859

| 13,466,498,350

|

IssuesEvent

|

2020-09-09 23:05:38

|

wrbejar/JavaVulnerableLab

|

https://api.github.com/repos/wrbejar/JavaVulnerableLab

|

opened

|

CVE-2017-3523 (High) detected in mysql-connector-java-5.1.26.jar

|

security vulnerability

|

## CVE-2017-3523 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>mysql-connector-java-5.1.26.jar</b></p></summary>

<p>MySQL JDBC Type 4 driver</p>

<p>Library home page: <a href="http://dev.mysql.com/doc/connector-j/en/">http://dev.mysql.com/doc/connector-j/en/</a></p>

<p>Path to dependency file: /tmp/ws-ua_20200909230431_TXNAGL/archiveExtraction_JAXWNN/20200909230432/ws-scm_depth_0/JavaVulnerableLab/target/JavaVulnerableLab/META-INF/maven/org.cysecurity/JavaVulnerableLab/pom.xml</p>

<p>Path to vulnerable library: canner/.m2/repository/mysql/mysql-connector-java/5.1.26/mysql-connector-java-5.1.26.jar,_depth_0/JavaVulnerableLab/target/JavaVulnerableLab/WEB-INF/lib/mysql-connector-java-5.1.26.jar,canner/.m2/repository/mysql/mysql-connector-java/5.1.26/mysql-connector-java-5.1.26.jar,_depth_0/JavaVulnerableLab/bin/target/JavaVulnerableLab/WEB-INF/lib/mysql-connector-java-5.1.26.jar,/JavaVulnerableLab/target/JavaVulnerableLab/WEB-INF/lib/mysql-connector-java-5.1.26.jar,canner/.m2/repository/mysql/mysql-connector-java/5.1.26/mysql-connector-java-5.1.26.jar,_depth_0/JavaVulnerableLab/bin/target/JavaVulnerableLab/META-INF/maven/org.cysecurity/JavaVulnerableLab/target/JavaVulnerableLab/WEB-INF/lib/mysql-connector-java-5.1.26.jar,_depth_0/JavaVulnerableLab/target/JavaVulnerableLab/META-INF/maven/org.cysecurity/JavaVulnerableLab/target/JavaVulnerableLab/WEB-INF/lib/mysql-connector-java-5.1.26.jar,/JavaVulnerableLab/bin/target/JavaVulnerableLab/WEB-INF/lib/mysql-connector-java-5.1.26.jar</p>

<p>

Dependency Hierarchy:

- :x: **mysql-connector-java-5.1.26.jar** (Vulnerable Library)

<p>Found in HEAD commit: <a href="https://github.com/wrbejar/JavaVulnerableLab/commit/b2c9e600cc70c6f238a3ff941d0e23ea9e816a2a">b2c9e600cc70c6f238a3ff941d0e23ea9e816a2a</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

Vulnerability in the MySQL Connectors component of Oracle MySQL (subcomponent: Connector/J). Supported versions that are affected are 5.1.40 and earlier. Difficult to exploit vulnerability allows low privileged attacker with network access via multiple protocols to compromise MySQL Connectors. While the vulnerability is in MySQL Connectors, attacks may significantly impact additional products. Successful attacks of this vulnerability can result in takeover of MySQL Connectors. CVSS 3.0 Base Score 8.5 (Confidentiality, Integrity and Availability impacts). CVSS Vector: (CVSS:3.0/AV:N/AC:H/PR:L/UI:N/S:C/C:H/I:H/A:H).

<p>Publish Date: 2017-04-24

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2017-3523>CVE-2017-3523</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>8.5</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: High

- Privileges Required: Low

- User Interaction: None

- Scope: Changed

- Impact Metrics:

- Confidentiality Impact: High

- Integrity Impact: High

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://www.oracle.com/technetwork/security-advisory/cpuapr2017-3236618.html">https://www.oracle.com/technetwork/security-advisory/cpuapr2017-3236618.html</a></p>

<p>Release Date: 2017-04-24</p>

<p>Fix Resolution: 5.1.41</p>

</p>

</details>

<p></p>

***

:rescue_worker_helmet: Automatic Remediation is available for this issue

<!-- <REMEDIATE>{"isOpenPROnVulnerability":true,"isPackageBased":true,"isDefaultBranch":true,"packages":[{"packageType":"Java","groupId":"mysql","packageName":"mysql-connector-java","packageVersion":"5.1.26","isTransitiveDependency":false,"dependencyTree":"mysql:mysql-connector-java:5.1.26","isMinimumFixVersionAvailable":true,"minimumFixVersion":"5.1.41"}],"vulnerabilityIdentifier":"CVE-2017-3523","vulnerabilityDetails":"Vulnerability in the MySQL Connectors component of Oracle MySQL (subcomponent: Connector/J). Supported versions that are affected are 5.1.40 and earlier. Difficult to exploit vulnerability allows low privileged attacker with network access via multiple protocols to compromise MySQL Connectors. While the vulnerability is in MySQL Connectors, attacks may significantly impact additional products. Successful attacks of this vulnerability can result in takeover of MySQL Connectors. CVSS 3.0 Base Score 8.5 (Confidentiality, Integrity and Availability impacts). CVSS Vector: (CVSS:3.0/AV:N/AC:H/PR:L/UI:N/S:C/C:H/I:H/A:H).","vulnerabilityUrl":"https://vuln.whitesourcesoftware.com/vulnerability/CVE-2017-3523","cvss3Severity":"high","cvss3Score":"8.5","cvss3Metrics":{"A":"High","AC":"High","PR":"Low","S":"Changed","C":"High","UI":"None","AV":"Network","I":"High"},"extraData":{}}</REMEDIATE> -->

|

True

|

CVE-2017-3523 (High) detected in mysql-connector-java-5.1.26.jar - ## CVE-2017-3523 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>mysql-connector-java-5.1.26.jar</b></p></summary>

<p>MySQL JDBC Type 4 driver</p>

<p>Library home page: <a href="http://dev.mysql.com/doc/connector-j/en/">http://dev.mysql.com/doc/connector-j/en/</a></p>

<p>Path to dependency file: /tmp/ws-ua_20200909230431_TXNAGL/archiveExtraction_JAXWNN/20200909230432/ws-scm_depth_0/JavaVulnerableLab/target/JavaVulnerableLab/META-INF/maven/org.cysecurity/JavaVulnerableLab/pom.xml</p>

<p>Path to vulnerable library: canner/.m2/repository/mysql/mysql-connector-java/5.1.26/mysql-connector-java-5.1.26.jar,_depth_0/JavaVulnerableLab/target/JavaVulnerableLab/WEB-INF/lib/mysql-connector-java-5.1.26.jar,canner/.m2/repository/mysql/mysql-connector-java/5.1.26/mysql-connector-java-5.1.26.jar,_depth_0/JavaVulnerableLab/bin/target/JavaVulnerableLab/WEB-INF/lib/mysql-connector-java-5.1.26.jar,/JavaVulnerableLab/target/JavaVulnerableLab/WEB-INF/lib/mysql-connector-java-5.1.26.jar,canner/.m2/repository/mysql/mysql-connector-java/5.1.26/mysql-connector-java-5.1.26.jar,_depth_0/JavaVulnerableLab/bin/target/JavaVulnerableLab/META-INF/maven/org.cysecurity/JavaVulnerableLab/target/JavaVulnerableLab/WEB-INF/lib/mysql-connector-java-5.1.26.jar,_depth_0/JavaVulnerableLab/target/JavaVulnerableLab/META-INF/maven/org.cysecurity/JavaVulnerableLab/target/JavaVulnerableLab/WEB-INF/lib/mysql-connector-java-5.1.26.jar,/JavaVulnerableLab/bin/target/JavaVulnerableLab/WEB-INF/lib/mysql-connector-java-5.1.26.jar</p>

<p>

Dependency Hierarchy:

- :x: **mysql-connector-java-5.1.26.jar** (Vulnerable Library)

<p>Found in HEAD commit: <a href="https://github.com/wrbejar/JavaVulnerableLab/commit/b2c9e600cc70c6f238a3ff941d0e23ea9e816a2a">b2c9e600cc70c6f238a3ff941d0e23ea9e816a2a</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

Vulnerability in the MySQL Connectors component of Oracle MySQL (subcomponent: Connector/J). Supported versions that are affected are 5.1.40 and earlier. Difficult to exploit vulnerability allows low privileged attacker with network access via multiple protocols to compromise MySQL Connectors. While the vulnerability is in MySQL Connectors, attacks may significantly impact additional products. Successful attacks of this vulnerability can result in takeover of MySQL Connectors. CVSS 3.0 Base Score 8.5 (Confidentiality, Integrity and Availability impacts). CVSS Vector: (CVSS:3.0/AV:N/AC:H/PR:L/UI:N/S:C/C:H/I:H/A:H).

<p>Publish Date: 2017-04-24

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2017-3523>CVE-2017-3523</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>8.5</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: High

- Privileges Required: Low

- User Interaction: None

- Scope: Changed

- Impact Metrics:

- Confidentiality Impact: High

- Integrity Impact: High

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://www.oracle.com/technetwork/security-advisory/cpuapr2017-3236618.html">https://www.oracle.com/technetwork/security-advisory/cpuapr2017-3236618.html</a></p>

<p>Release Date: 2017-04-24</p>

<p>Fix Resolution: 5.1.41</p>

</p>

</details>

<p></p>

***

:rescue_worker_helmet: Automatic Remediation is available for this issue

<!-- <REMEDIATE>{"isOpenPROnVulnerability":true,"isPackageBased":true,"isDefaultBranch":true,"packages":[{"packageType":"Java","groupId":"mysql","packageName":"mysql-connector-java","packageVersion":"5.1.26","isTransitiveDependency":false,"dependencyTree":"mysql:mysql-connector-java:5.1.26","isMinimumFixVersionAvailable":true,"minimumFixVersion":"5.1.41"}],"vulnerabilityIdentifier":"CVE-2017-3523","vulnerabilityDetails":"Vulnerability in the MySQL Connectors component of Oracle MySQL (subcomponent: Connector/J). Supported versions that are affected are 5.1.40 and earlier. Difficult to exploit vulnerability allows low privileged attacker with network access via multiple protocols to compromise MySQL Connectors. While the vulnerability is in MySQL Connectors, attacks may significantly impact additional products. Successful attacks of this vulnerability can result in takeover of MySQL Connectors. CVSS 3.0 Base Score 8.5 (Confidentiality, Integrity and Availability impacts). CVSS Vector: (CVSS:3.0/AV:N/AC:H/PR:L/UI:N/S:C/C:H/I:H/A:H).","vulnerabilityUrl":"https://vuln.whitesourcesoftware.com/vulnerability/CVE-2017-3523","cvss3Severity":"high","cvss3Score":"8.5","cvss3Metrics":{"A":"High","AC":"High","PR":"Low","S":"Changed","C":"High","UI":"None","AV":"Network","I":"High"},"extraData":{}}</REMEDIATE> -->

|

non_process

|

cve high detected in mysql connector java jar cve high severity vulnerability vulnerable library mysql connector java jar mysql jdbc type driver library home page a href path to dependency file tmp ws ua txnagl archiveextraction jaxwnn ws scm depth javavulnerablelab target javavulnerablelab meta inf maven org cysecurity javavulnerablelab pom xml path to vulnerable library canner repository mysql mysql connector java mysql connector java jar depth javavulnerablelab target javavulnerablelab web inf lib mysql connector java jar canner repository mysql mysql connector java mysql connector java jar depth javavulnerablelab bin target javavulnerablelab web inf lib mysql connector java jar javavulnerablelab target javavulnerablelab web inf lib mysql connector java jar canner repository mysql mysql connector java mysql connector java jar depth javavulnerablelab bin target javavulnerablelab meta inf maven org cysecurity javavulnerablelab target javavulnerablelab web inf lib mysql connector java jar depth javavulnerablelab target javavulnerablelab meta inf maven org cysecurity javavulnerablelab target javavulnerablelab web inf lib mysql connector java jar javavulnerablelab bin target javavulnerablelab web inf lib mysql connector java jar dependency hierarchy x mysql connector java jar vulnerable library found in head commit a href vulnerability details vulnerability in the mysql connectors component of oracle mysql subcomponent connector j supported versions that are affected are and earlier difficult to exploit vulnerability allows low privileged attacker with network access via multiple protocols to compromise mysql connectors while the vulnerability is in mysql connectors attacks may significantly impact additional products successful attacks of this vulnerability can result in takeover of mysql connectors cvss base score confidentiality integrity and availability impacts cvss vector cvss av n ac h pr l ui n s c c h i h a h publish date url a href cvss score details base 
score metrics exploitability metrics attack vector network attack complexity high privileges required low user interaction none scope changed impact metrics confidentiality impact high integrity impact high availability impact high for more information on scores click a href suggested fix type upgrade version origin a href release date fix resolution rescue worker helmet automatic remediation is available for this issue isopenpronvulnerability true ispackagebased true isdefaultbranch true packages vulnerabilityidentifier cve vulnerabilitydetails vulnerability in the mysql connectors component of oracle mysql subcomponent connector j supported versions that are affected are and earlier difficult to exploit vulnerability allows low privileged attacker with network access via multiple protocols to compromise mysql connectors while the vulnerability is in mysql connectors attacks may significantly impact additional products successful attacks of this vulnerability can result in takeover of mysql connectors cvss base score confidentiality integrity and availability impacts cvss vector cvss av n ac h pr l ui n s c c h i h a h vulnerabilityurl

| 0

|

656,562

| 21,767,902,765

|

IssuesEvent

|

2022-05-13 05:35:31

|

COS301-SE-2022/Twitter-Summariser

|

https://api.github.com/repos/COS301-SE-2022/Twitter-Summariser

|

closed

|

Create mockups of at least 3 use cases

|

priority:high status:not-ready scope:frontend role:frontend-engineer role:team-lead role:backend-engineer

|

List of use cases to mockup:

- [x] Explore published reports

- [x] Search for topic to generate

- [x] Generate report

|

1.0

|

Create mockups of at least 3 use cases - List of use cases to mockup:

- [x] Explore published reports

- [x] Search for topic to generate

- [x] Generate report

|

non_process

|

create mockups of at least use cases list of use cases to mockup explore published reports search for topic to generate generate report

| 0

|

11,167

| 13,957,694,437

|

IssuesEvent

|

2020-10-24 08:11:15

|

alexanderkotsev/geoportal

|

https://api.github.com/repos/alexanderkotsev/geoportal

|

opened

|

RO: Harvesting request

|

Geoportal Harvesting process RO - Romania

|

Dear Angelo,

Yesterday, during the harvesting process from the National Geoportal, we had an incident on it. Therefore not all metadata files were visible at that time.

So, can you please start again a harvesting process for the Romanian Geoportal ?

Best regards,

Simona Bunea

|

1.0

|

RO: Harvesting request - Dear Angelo,

Yesterday, during the harvesting process from the National Geoportal, we had an incident on it. Therefore not all metadata files were visible at that time.

So, can you please start again a harvesting process for the Romanian Geoportal ?

Best regards,

Simona Bunea

|

process

|

ro harvesting request dear angelo yesterday during the harvesting process from the national geoportal we had an incident on it therefore not all metadata files were visible at that time so can you please start again a harvesting process for the romanian geoportal best regards simona bunea

| 1

|

17,789

| 23,716,078,268

|

IssuesEvent

|

2022-08-30 11:54:23

|

cloudfoundry/korifi

|

https://api.github.com/repos/cloudfoundry/korifi

|

opened

|

[Feature]: Developer can push apps using the top-level `health-check-http-endpoint` field in the manifest

|

Top-level process config

|

### Blockers/Dependencies

_No response_

### Background

**As a** developer

**I want** top-level process configuration in manifests to be supported

**So that** I can use shortcut `cf push` flags like `-c`, `-i`, `-m` etc.

### Acceptance Criteria

* **GIVEN** I have the following node app:

```js

var http = require('http');

http.createServer(function (request, response) {

if (request.url == '/health') {

response.writeHead(200, {'Content-Type': 'text/plain'});

response.end('ok');

} else {

response.writeHead(500, {'Content-Type': 'text/plain'});

response.end('no');

}

}).listen(process.env.PORT);

```

with the following `manifest.yml`:

```yaml

---

applications:

- name: my-app

health-check-http-endpoint: /health

```

**WHEN I** `cf push`

**THEN I** see the push succeeds with an output similar to this:

```

name: test

requested state: started

routes: test.vcap.me

last uploaded: Mon 29 Aug 16:28:36 UTC 2022

stack: cflinuxfs3

buildpacks:

name version detect output buildpack name

nodejs_buildpack 1.7.61 nodejs nodejs

type: web

sidecars:

instances: 3/3

memory usage: 256M

start command: npm start

state since cpu memory disk details

#0 running 2022-08-29T16:28:54Z 1.6% 42.3M of 256M 115.7M of 1G

```

* **GIVEN** I have the same app with the following manifest:

**AND** `manifest.yml` looks like this:

```yaml

---

applications:

- name: my-app

health-check-http-endpoint: /wrong

processes:

- type: web

health-check-http-endpoint: /health

```

**WHEN I** `cf push`

**THEN I** see the push succeeds with the same output as above
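
The second scenario implies a precedence rule: a `health-check-http-endpoint` set on the `web` entry under `processes` wins over the top-level value. A minimal Python sketch of that resolution, assuming the manifest has already been parsed into a dict. The function name `effective_health_endpoint` is hypothetical and this is not Korifi's implementation:

```python
def effective_health_endpoint(app, process_type="web"):
    """Return the health check endpoint that applies to one process type."""
    key = "health-check-http-endpoint"
    # A matching entry under `processes` takes precedence when it sets the key.
    for process in app.get("processes", []):
        if process.get("type") == process_type and key in process:
            return process[key]
    # Assumption: top-level process settings apply to the web process only.
    if process_type == "web":
        return app.get(key)
    return None


# The two manifests from the acceptance criteria, as parsed dicts.
top_level_only = {"name": "my-app", "health-check-http-endpoint": "/health"}
overridden = {
    "name": "my-app",
    "health-check-http-endpoint": "/wrong",
    "processes": [{"type": "web", "health-check-http-endpoint": "/health"}],
}

print(effective_health_endpoint(top_level_only))
print(effective_health_endpoint(overridden))
```

Under this assumption both manifests resolve to `/health`, which matches the expectation that both pushes succeed.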

### Dev Notes

_No response_

|

1.0

|

[Feature]: Developer can push apps using the top-level `health-check-http-endpoint` field in the manifest - ### Blockers/Dependencies

_No response_

### Background

**As a** developer

**I want** top-level process configuration in manifests to be supported

**So that** I can use shortcut `cf push` flags like `-c`, `-i`, `-m` etc.

### Acceptance Criteria

* **GIVEN** I have the following node app:

```js

var http = require('http');

http.createServer(function (request, response) {

if (request.url == '/health') {

response.writeHead(200, {'Content-Type': 'text/plain'});

response.end('ok');

} else {

response.writeHead(500, {'Content-Type': 'text/plain'});

response.end('no');

}

}).listen(process.env.PORT);

```

with the following `manifest.yml`:

```yaml

---

applications:

- name: my-app

health-check-http-endpoint: /health

```

**WHEN I** `cf push`

**THEN I** see the push succeeds with an output similar to this:

```

name: test

requested state: started

routes: test.vcap.me

last uploaded: Mon 29 Aug 16:28:36 UTC 2022

stack: cflinuxfs3

buildpacks:

name version detect output buildpack name

nodejs_buildpack 1.7.61 nodejs nodejs

type: web

sidecars:

instances: 3/3

memory usage: 256M

start command: npm start

state since cpu memory disk details

#0 running 2022-08-29T16:28:54Z 1.6% 42.3M of 256M 115.7M of 1G

```

* **GIVEN** I have the same app with the following manifest:

**AND** `manifest.yml` looks like this:

```yaml

---

applications:

- name: my-app

health-check-http-endpoint: /wrong

processes:

- type: web

health-check-http-endpoint: /health

```

**WHEN I** `cf push`

**THEN I** see the push succeeds with the same output as above

### Dev Notes

_No response_

|

process

|

developer can push apps using the top level health check http endpoint field in the manifest blockers dependencies no response background as a developer i want top level process configuration in manifests to be supported so that i can use shortcut cf push flags like c i m etc acceptance criteria given i have the following node app js var http require http http createserver function request response if request url health response writehead content type text plain response end ok else response writehead content type text plain response end no listen process env port with the following manifest yml yaml applications name my app health check http endpoint health when i cf push then i see the push succeeds with an output similar to this name test requested state started routes test vcap me last uploaded mon aug utc stack buildpacks name version detect output buildpack name nodejs buildpack nodejs nodejs type web sidecars instances memory usage start command npm start state since cpu memory disk details running of of given i have the same app with the following manifest and manifest yml looks like this yaml applications name my app health check http endpoint wrong processes type web health check http endpoint health when i cf push then i see the push succeeds with the same output as above dev notes no response

| 1

|

18,192

| 24,240,172,304

|

IssuesEvent

|

2022-09-27 05:43:35

|

geneontology/go-ontology

|

https://api.github.com/repos/geneontology/go-ontology

|

closed

|

GO:0044662 disruption by virus of host cell membrane

|

ready multi-species process

|

From @ValWood in #18692

(this point was not dealt with):

* **GO:0044662 disruption by virus of host cell membrane**

A process by which a virus has a negative effect on the functioning of a host cellular membrane.

I don't understand this one at all what is "negative effect on the functioning of a host cellular membrane"?

5 annotations CACAO

but looking at the paper it should probably merge into

GO:0044659 cytolysis by virus of host cell

Definition (GO:0044659 GONUTS page)

The killing by a virus of a cell by means of the rupture of cell membranes and the loss of cytoplasm. PMID:26728778

it's another way of saying the same thing

i.e I think most of the high level "disruption terms" can be removed as most of the meaningful terms have alternative sensible parents...

* There is one child term, **GO:0090680 disruption by virus of host outer membrane**

@dsiegele Can the CACAO annotations be re-housed?

Thanks, Pascale

|

1.0

|

GO:0044662 disruption by virus of host cell membrane - From @ValWood in #18692

(this point was not dealt with):

* **GO:0044662 disruption by virus of host cell membrane**

A process by which a virus has a negative effect on the functioning of a host cellular membrane.

I don't understand this one at all what is "negative effect on the functioning of a host cellular membrane"?

5 annotations CACAO

but looking at the paper it should probably merge into

GO:0044659 cytolysis by virus of host cell

Definition (GO:0044659 GONUTS page)

The killing by a virus of a cell by means of the rupture of cell membranes and the loss of cytoplasm. PMID:26728778

it's another way of saying the same thing

i.e I think most of the high level "disruption terms" can be removed as most of the meaningful terms have alternative sensible parents...

* There is one child term, **GO:0090680 disruption by virus of host outer membrane**

@dsiegele Can the CACAO annotations be re-housed?

Thanks, Pascale

|

process

|

go disruption by virus of host cell membrane from valwood in this point was not dealt with go disruption by virus of host cell membrane a process by which a virus has a negative effect on the functioning of a host cellular membrane i don t understand this one at all what is negative effect on the functioning of a host cellular membrane annotations cacao but looking at the paper it should probably merge into go cytolysis by virus of host cell definition go gonuts page the killing by a virus of a cell by means of the rupture of cell membranes and the loss of cytoplasm pmid it s another way of saying the same thing i e i think most of the high level disruption terms can be removed as most of the meaningful terms have alternative sensible parents there is one child term go disruption by virus of host outer membrane dsiegele can the cacao annotations be re housed thanks pascale

| 1

|

19,332

| 25,472,559,240

|

IssuesEvent

|

2022-11-25 11:29:00

|

GoogleCloudPlatform/fda-mystudies

|

https://api.github.com/repos/GoogleCloudPlatform/fda-mystudies

|

closed

|

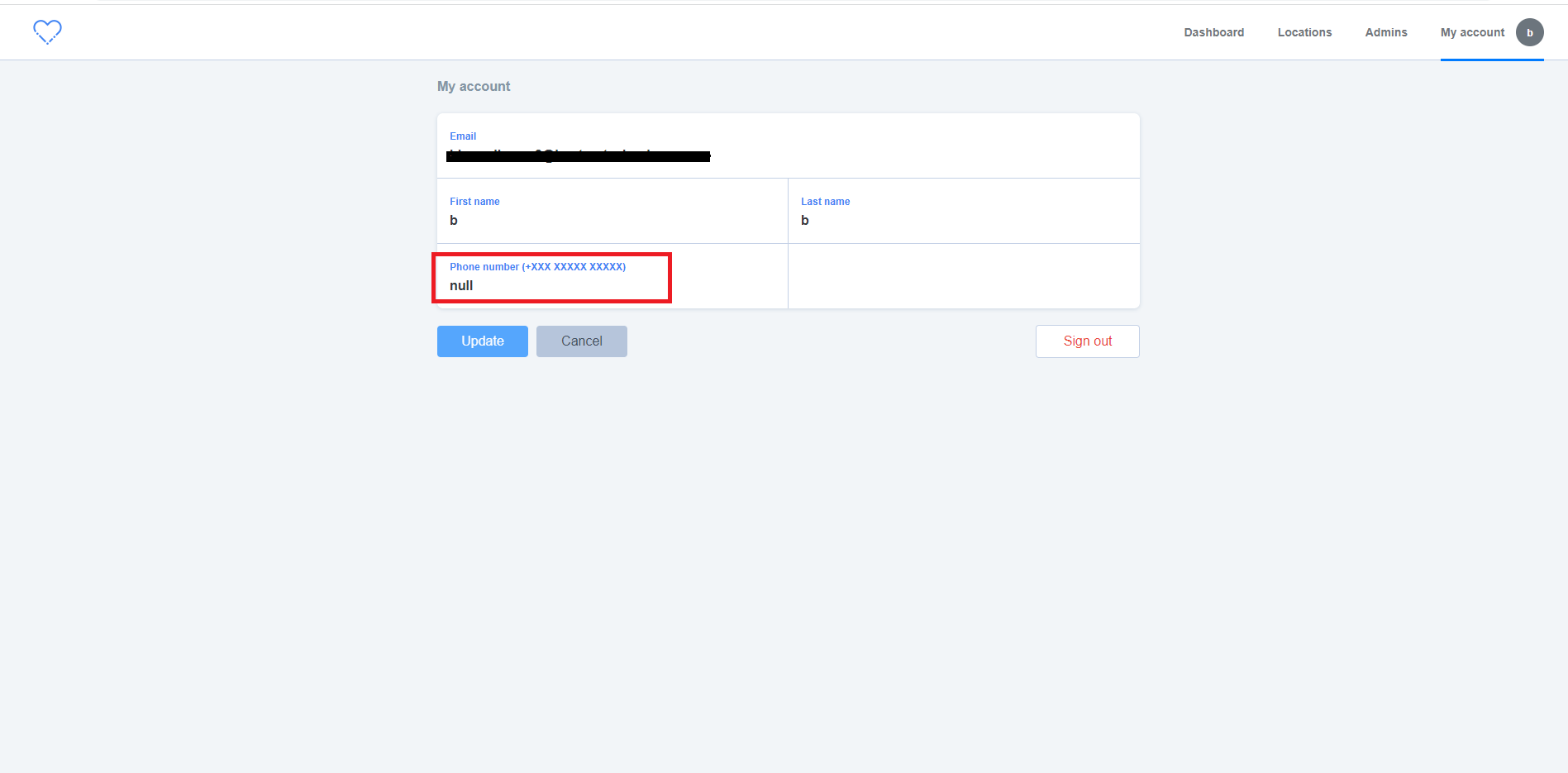

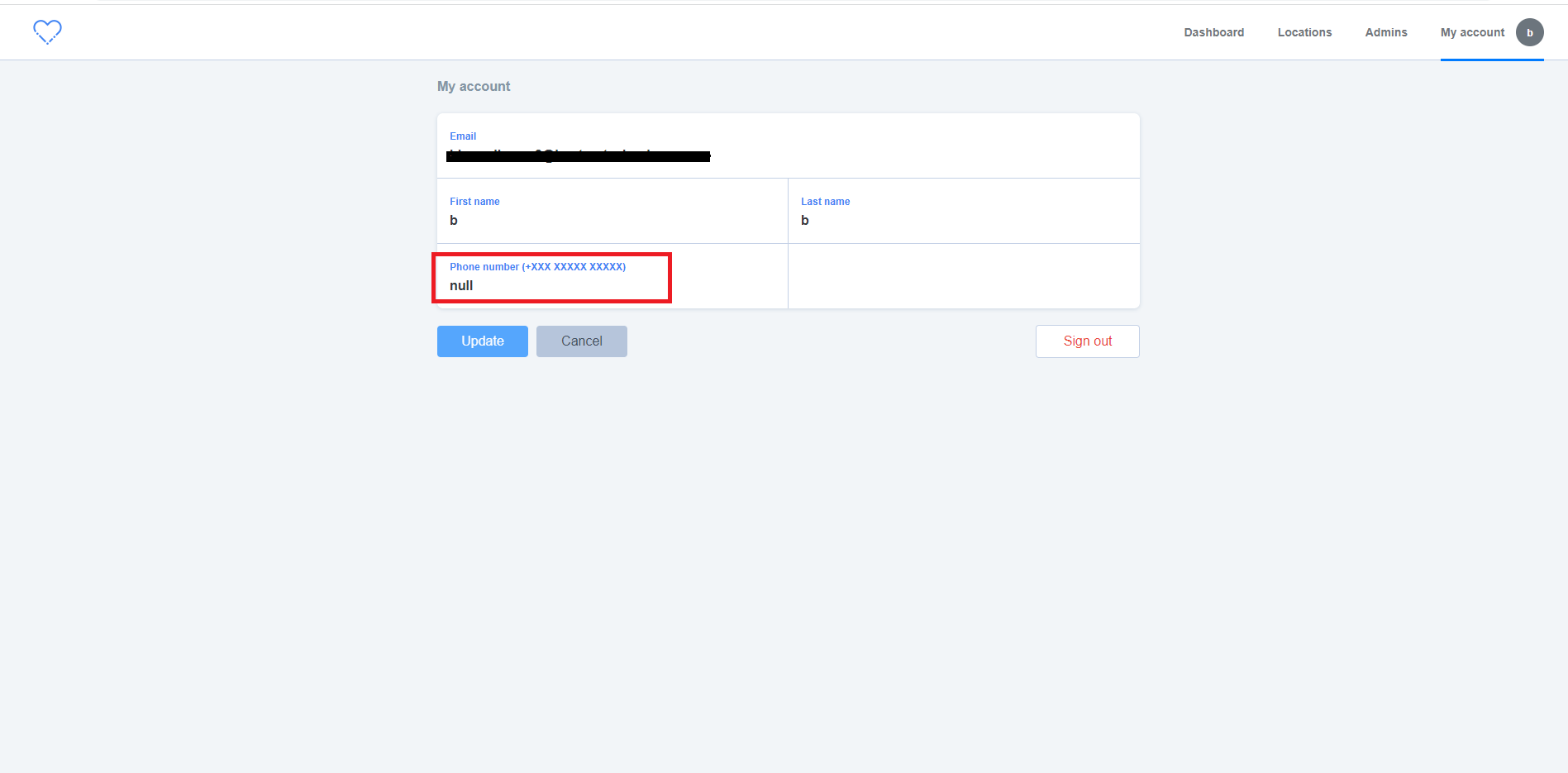

[IDP] [PM] Null value is getting displayed in the phone number field in the following scenario

|

Bug P1 Participant manager Process: Fixed Process: Tested QA Process: Tested dev

|

**Pre-condition:** mfa should be disabled in the PM

**Steps:**

1. Login to PM

2. Add admin in the application

3. Click on account activation link of added admin

4. Set up account without 'Phone number'

5. Login as added admin

6. Navigate to 'My account' screen and Verify

**AR:** Null value is getting displayed in the phone number field in the following scenario

**ER:** Phone number field should be empty without any value

|

3.0

|

[IDP] [PM] Null value is getting displayed in the phone number field in the following scenario - **Pre-condition:** mfa should be disabled in the PM

**Steps:**

1. Login to PM

2. Add admin in the application

3. Click on account activation link of added admin

4. Set up account without 'Phone number'

5. Login as added admin

6. Navigate to 'My account' screen and Verify

**AR:** Null value is getting displayed in the phone number field in the following scenario

**ER:** Phone number field should be empty without any value

|

process

|

null value is getting displayed in the phone number field in the following scenario pre condition mfa should be disabled in the pm steps login to pm add admin in the application click on account activation link of added admin set up account without phone number login as added admin navigate to my account screen and verify ar null value is getting displayed in the phone number field in the following scenario er phone number field should be empty without any value

| 1

|

83,661

| 24,116,302,282

|

IssuesEvent

|

2022-09-20 14:58:08

|

veracruz-project/veracruz

|

https://api.github.com/repos/veracruz-project/veracruz

|

opened

|

Compilation artifacts are not reproducible due to everchanging build ID

|

bug build-process

|

**Describe the bug**

Veracruz binaries (client, server, attestation, runtime manager) contain a build ID (`.note.gnu.build-id` section) in their ELF headers. This build ID is a hash determined by rustc and/or the linker.

However, for unknown reasons (timestamp somewhere? source of randomness?), the build ID changes every time the crate is cargo cleaned then rebuilt.

This results in binaries that are functionally equivalent but have different overall hashes, which gives different Docker images.

**To Reproduce**

* Build Veracruz, e.g. `make nitro`

* Hash e.g. `veracruz-server`: `sha256sum nitro-host/target/debug/veracruz-server`

* Clean: `make clean`

* Build Veracruz again: `make nitro`

* Hash `veracruz-server`: `sha256sum nitro-host/target/debug/veracruz-server`

* The two hashes are different because the build ID is different: `objdump --section=.note.gnu.build-id --full-contents nitro-host/target/release/veracruz-server`
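The comparison in the steps above can be scripted. This is a minimal sketch, not part of the Veracruz tooling: it assumes the binary from the first build was copied aside before `make clean`, and the helper names `sha256_of` and `same_artifact` are made up for illustration.

```python
import hashlib


def sha256_of(path):
    """Return the hex SHA-256 digest of the file at `path`, read in chunks."""
    digest = hashlib.sha256()
    with open(path, "rb") as handle:
        # Read fixed-size chunks so large binaries are not loaded at once.
        for chunk in iter(lambda: handle.read(65536), b""):
            digest.update(chunk)
    return digest.hexdigest()


def same_artifact(first, second):
    """Return True when both files have the same SHA-256 digest."""
    return sha256_of(first) == sha256_of(second)
```

Calling `same_artifact` on the saved copy and on the freshly rebuilt `veracruz-server` would return `False` for as long as the behaviour described in this issue persists.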

**Expected behaviour**

It's not clear to me how the build ID is determined, but I would expect it to stay constant between builds.

**Solutions**

Strip build ID from binaries or fix build ID generation

|

1.0

|

Compilation artifacts are not reproducible due to everchanging build ID - **Describe the bug**

Veracruz binaries (client, server, attestation, runtime manager) contain a build ID (`.note.gnu.build-id` section) in their ELF headers. This build ID is a hash determined by rustc and/or the linker.

However, for unknown reasons (timestamp somewhere? source of randomness?), the build ID changes every time the crate is cargo cleaned then rebuilt.

This results in binaries that are functionally equivalent but have different overall hashes, which gives different Docker images.

**To Reproduce**

* Build Veracruz, e.g. `make nitro`

* Hash e.g. `veracruz-server`: `sha256sum nitro-host/target/debug/veracruz-server`

* Clean: `make clean`

* Build Veracruz again: `make nitro`

* Hash `veracruz-server`: `sha256sum nitro-host/target/debug/veracruz-server`

* The two hashes are different because the build ID is different: `objdump --section=.note.gnu.build-id --full-contents nitro-host/target/release/veracruz-server`

**Expected behaviour**

It's not clear to me how the build ID is determined, but I would expect it to stay constant between builds.

**Solutions**

Strip build ID from binaries or fix build ID generation

|

non_process

|

compilation artifacts are not reproducible due to everchanging build id describe the bug veracruz binaries client server attestation runtime manager contain a build id note gnu build id section in their elf headers this build id is a hash determined by rustc and or the linker however for unknown reasons timestamp somewhere source of randomness the build id changes every time the crate is cargo cleaned then rebuilt this results in binaries that are functionally equivalent but have different overall hashes which gives different docker images to reproduce build veracruz e g make nitro hash e g veracruz server nitro host target debug veracruz server clean make clean build veracruz again make nitro hash veracruz server nitro host target debug veracruz server the two hashes are different because the build id is different objdump section note gnu build id full contents nitro host target release veracruz server expected behaviour it s not clear to me how the build id is determined but i would expect it to stay constant between builds solutions strip build id from binaries or fix build id generation

| 0

|

12,583

| 9,694,148,374

|

IssuesEvent

|

2019-05-24 18:06:15

|

MicrosoftDocs/azure-docs

|

https://api.github.com/repos/MicrosoftDocs/azure-docs

|

closed

|

Update to reflect new Public IP option for SQL Database Managed Instance

|

assigned-to-author azure-analysis-services/svc doc-enhancement triaged

|

This text should be updated: “ 2 - Azure SQL Database Managed Instance is supported. Because a managed instance runs within Azure VNet with a private IP address, an On-premises Data Gateway is required.”

---

#### Document Details

⚠ *Do not edit this section. It is required for docs.microsoft.com ➟ GitHub issue linking.*

* ID: ea45d88b-212b-51ae-b902-6efd5873f6c5

* Version Independent ID: 6f86e171-7553-3d01-00fa-e0865d8d817b

* Content: [Data sources supported in Azure Analysis Services](https://docs.microsoft.com/en-us/azure/analysis-services/analysis-services-datasource#azsqlmanaged)

* Content Source: [articles/analysis-services/analysis-services-datasource.md](https://github.com/Microsoft/azure-docs/blob/master/articles/analysis-services/analysis-services-datasource.md)

* Service: **azure-analysis-services**

* GitHub Login: @Minewiskan

* Microsoft Alias: **owend**

|

1.0

|

Update to reflect new Public IP option for SQL Database Managed Instance - This text should be updated: “ 2 - Azure SQL Database Managed Instance is supported. Because a managed instance runs within Azure VNet with a private IP address, an On-premises Data Gateway is required.”

---

#### Document Details

⚠ *Do not edit this section. It is required for docs.microsoft.com ➟ GitHub issue linking.*

* ID: ea45d88b-212b-51ae-b902-6efd5873f6c5

* Version Independent ID: 6f86e171-7553-3d01-00fa-e0865d8d817b

* Content: [Data sources supported in Azure Analysis Services](https://docs.microsoft.com/en-us/azure/analysis-services/analysis-services-datasource#azsqlmanaged)

* Content Source: [articles/analysis-services/analysis-services-datasource.md](https://github.com/Microsoft/azure-docs/blob/master/articles/analysis-services/analysis-services-datasource.md)

* Service: **azure-analysis-services**

* GitHub Login: @Minewiskan

* Microsoft Alias: **owend**

|

non_process

|

update to reflect new public ip option for sql database managed instance this text should be updated “ azure sql database managed instance is supported because a managed instance runs within azure vnet with a private ip address an on premises data gateway is required ” document details ⚠ do not edit this section it is required for docs microsoft com ➟ github issue linking id version independent id content content source service azure analysis services github login minewiskan microsoft alias owend

| 0

|

132,214

| 18,545,258,378

|

IssuesEvent

|

2021-10-21 21:10:04

|

mozilla/foundation.mozilla.org

|

https://api.github.com/repos/mozilla/foundation.mozilla.org

|

opened

|

[PNI Website] Design support & reviews for dev work cont. IV

|

design Buyer's Guide 🛍

|

Continuing support and reviews from #7584

- support and review PRs for devs

|

1.0

|

[PNI Website] Design support & reviews for dev work cont. IV - Continuing support and reviews from #7584

- support and review PRs for devs

|

non_process

|

design support reviews for dev work cont iv continuing support and reviews from support and review prs for devs

| 0

|

447,343

| 12,887,664,267

|

IssuesEvent

|

2020-07-13 11:39:17

|

crestic-urca/remotelabz

|

https://api.github.com/repos/crestic-urca/remotelabz

|

closed

|

Unlink security roles and groups (classes)

|

feature enhancement normal priority

|

In GitLab by @jhubert on Nov 21, 2018, 17:07

Groups as implemented in previous versions are redundant with Symfony’s

roles. They shouldn’t be overloaded by a custom class. Despite that,

we could create a group class which aims to implement teacher’s

“classes” (or whatever they are called).

*(from redmine: issue id 279, created on 2018-11-21)*

|

1.0

|

Unlink security roles and groups (classes) - In GitLab by @jhubert on Nov 21, 2018, 17:07

Groups as implemented in previous versions are redundant with Symfony’s

roles. They shouldn’t be overloaded by a custom class. Despite that,