| Unnamed: 0 (int64, 0 to 832k) | id (float64, 2.49B to 32.1B) | type (string, 1 class) | created_at (string, length 19) | repo (string, length 7 to 112) | repo_url (string, length 36 to 141) | action (string, 3 classes) | title (string, length 1 to 744) | labels (string, length 4 to 574) | body (string, length 9 to 211k) | index (string, 10 classes) | text_combine (string, length 96 to 211k) | label (string, 2 classes) | text (string, length 96 to 188k) | binary_label (int64, 0 to 1) |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

84,275

| 24,267,200,999

|

IssuesEvent

|

2022-09-28 07:25:08

|

appsmithorg/appsmith

|

https://api.github.com/repos/appsmithorg/appsmith

|

closed

|

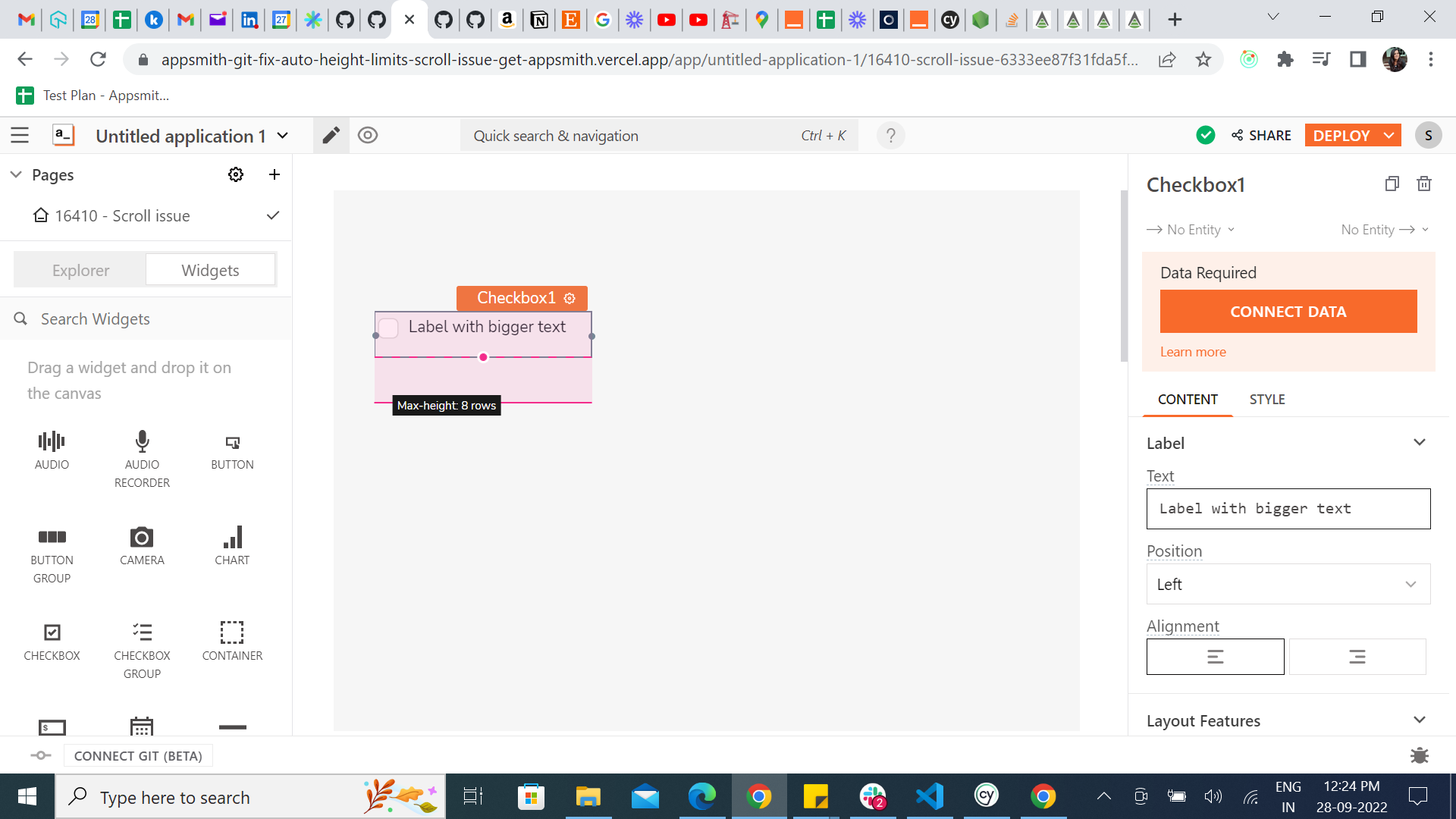

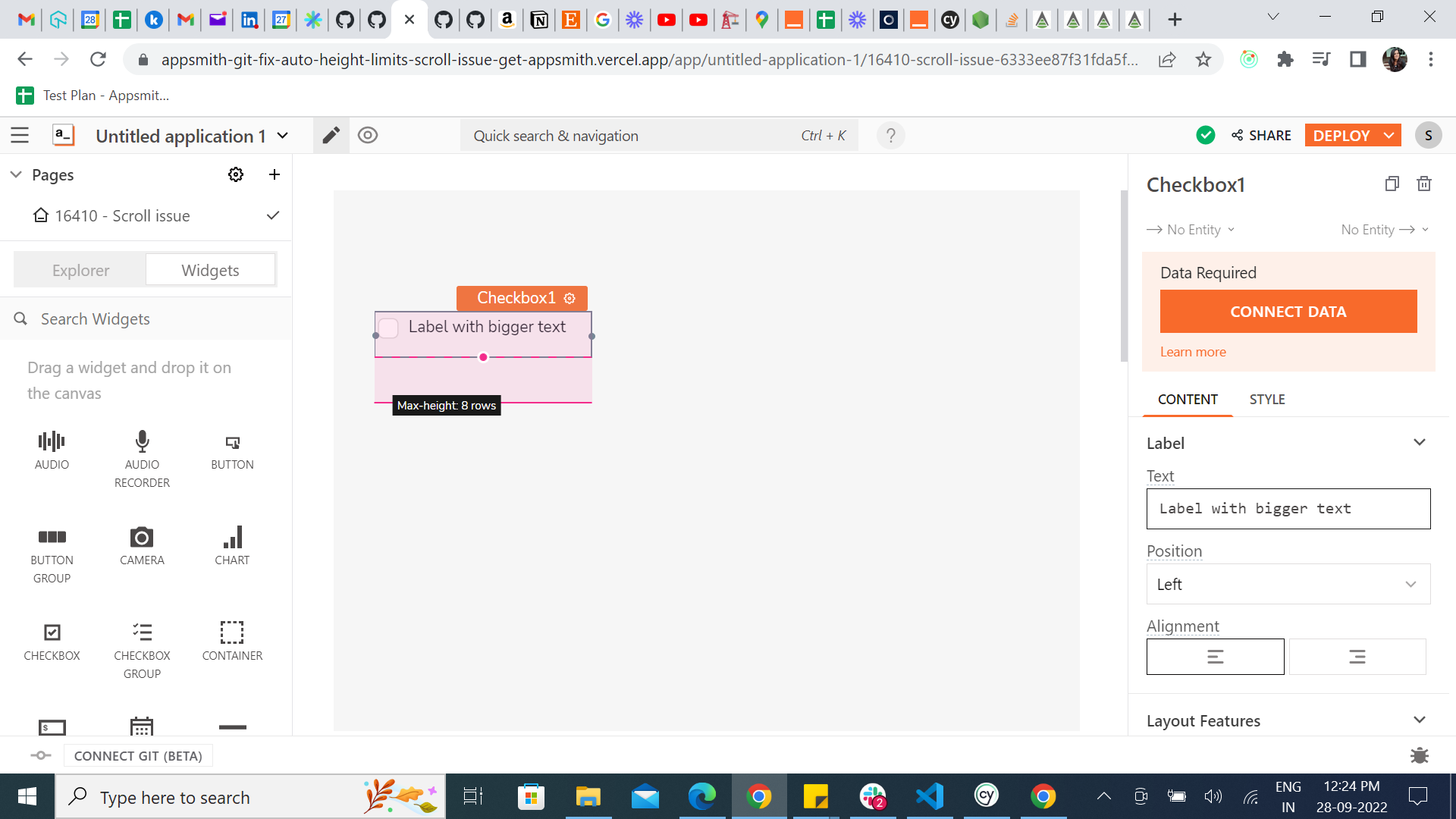

[Bug]: Max height tooltip appears without hover - doesn't disappear

|

Bug Low Needs Triaging UI Builders Pod Dynamic Height

|

### Is there an existing issue for this?

- [X] I have searched the existing issues

### Description

When we D&D a widget with Auto height, choose Auto height with limits, and click on the widget, the Min height tooltip appears only when we hover over the Min height signifier. The Max height tooltip, however, appears whenever we select or click on the widget (without hovering) and doesn't disappear.

### Steps To Reproduce

1. D&D a widget with Auto height enabled

2. Select Auto height with limits in the height property

3. Click on the widget

4. Observe that the Min height signifier tooltip disappears once we move the mouse elsewhere, but the Max height signifier tooltip doesn't disappear on its own; it stays until we deselect the widget.

https://appsmith-git-fix-auto-height-limits-scroll-issue-get-appsmith.vercel.app/

### Public Sample App

_No response_

### Version

Deploy preview

|

1.0

|

[Bug]: Max height tooltip appears without hover - doesn't disappear - ### Is there an existing issue for this?

- [X] I have searched the existing issues

### Description

When we D&D a widget with Auto height, choose Auto height with limits, and click on the widget, the Min height tooltip appears only when we hover over the Min height signifier. The Max height tooltip, however, appears whenever we select or click on the widget (without hovering) and doesn't disappear.

### Steps To Reproduce

1. D&D a widget with Auto height enabled

2. Select Auto height with limits in the height property

3. Click on the widget

4. Observe that the Min height signifier tooltip disappears once we move the mouse elsewhere, but the Max height signifier tooltip doesn't disappear on its own; it stays until we deselect the widget.

https://appsmith-git-fix-auto-height-limits-scroll-issue-get-appsmith.vercel.app/

### Public Sample App

_No response_

### Version

Deploy preview

|

non_process

|

max height tooltip appears without hover doesn t disappear is there an existing issue for this i have searched the existing issues description when we d d a widget with auto height and choose auto height with limits and click on the widget min height tooltip appears only when we hover over the min height signifier but in case of max height it appears whenever we select or click on the widget without hovering and doesn t disappear steps to reproduce d d a widget with auto height enabled select auto height with limits in the height property click on the widget observe the min height signifies tooltip disappears once we move the mouse to somewhere but the max height signifier tooltip doesn t disappear in its own and it stays until we unselect the widget public sample app no response version deploy preview

| 0

|

8,151

| 11,354,735,928

|

IssuesEvent

|

2020-01-24 18:20:13

|

googleapis/nodejs-logging-winston

|

https://api.github.com/repos/googleapis/nodejs-logging-winston

|

closed

|

lw.express.makeMiddleware is not a function

|

type: process

|

Following the instructions in the ReadMe for the express middleware:

```javascript

module.exports = async function(){

const {mylogger, mw} = await lw.express.makeMiddleware(logger);

return mw;

}

```

But it says makeMiddleware doesn't exist?

`UnhandledPromiseRejectionWarning: TypeError: lw.express.makeMiddleware is not a function`

|

1.0

|

lw.express.makeMiddleware is not a function - Following the instructions in the ReadMe for the express middleware:

```javascript

module.exports = async function(){

const {mylogger, mw} = await lw.express.makeMiddleware(logger);

return mw;

}

```

But it says makeMiddleware doesn't exist?

`UnhandledPromiseRejectionWarning: TypeError: lw.express.makeMiddleware is not a function`

|

process

|

lw express makemiddleware is not a function following the instructions in the readme for the express middleware javascript module exports async function const mylogger mw await lw express makemiddleware logger return mw but it says makemiddlware doesn t exist unhandledpromiserejectionwarning typeerror lw express makemiddleware is not a function

| 1

|

56,614

| 8,101,913,171

|

IssuesEvent

|

2018-08-12 19:07:19

|

FreeUKGen/MyopicVicar

|

https://api.github.com/repos/FreeUKGen/MyopicVicar

|

closed

|

Changing my Profile email address has not changed the my Transcriber email value in some batches (was #1319)

|

documentation ready

|

Documentation needs to explain that the email address in a batch header is a feature that was needed in FR1 (when we did not have user IDs). Now we have user IDs, it does not matter what is in this field.

|

1.0

|

Changing my Profile email address has not changed the my Transcriber email value in some batches (was #1319) - Documentation needs to explain that the email address in a batch header is a feature that was needed in FR1 (when we did not have user IDs). Now we have user IDs, it does not matter what is in this field.

|

non_process

|

changing my profile email address has not changed the my transcriber email value in some batches was documentation needs to explain that the email address in a batch header is a feature that was needed in when we did not have user ids now we have user ids it does not matter what is in this field

| 0

|

54,715

| 11,296,038,714

|

IssuesEvent

|

2020-01-17 00:17:47

|

aws-amplify/amplify-cli

|

https://api.github.com/repos/aws-amplify/amplify-cli

|

reopened

|

How do I update src/graphql/schema.json

|

code-gen pending-triage

|

**Which Category is your question related to?**

Updating `src/graphql/schema.json`.

None of the commands I've seen such as `amplify push` do that, and older commands like `amplify code generate --download` no longer work.

**Amplify CLI Version**

4.11.0.

**What AWS Services are you utilizing?**

AppSync + Aurora.

My `.graphconfig` (regenerated with latest):

```

projects:

TestAPI:

schemaPath: amplify/backend/api/TestAPI/build/schema.graphql

includes:

- src/graphql/**/*.js

excludes:

- ./amplify/**

extensions:

amplify:

codeGenTarget: javascript

generatedFileName: ''

docsFilePath: src/graphql

region: eu-west-1

apiId: null

maxDepth: 2

extensions:

amplify:

version: 3

```

|

1.0

|

How do I update src/graphql/schema.json - **Which Category is your question related to?**

Updating `src/graphql/schema.json`.

None of the commands I've seen such as `amplify push` do that, and older commands like `amplify code generate --download` no longer work.

**Amplify CLI Version**

4.11.0.

**What AWS Services are you utilizing?**

AppSync + Aurora.

My `.graphconfig` (regenerated with latest):

```

projects:

TestAPI:

schemaPath: amplify/backend/api/TestAPI/build/schema.graphql

includes:

- src/graphql/**/*.js

excludes:

- ./amplify/**

extensions:

amplify:

codeGenTarget: javascript

generatedFileName: ''

docsFilePath: src/graphql

region: eu-west-1

apiId: null

maxDepth: 2

extensions:

amplify:

version: 3

```

|

non_process

|

how do i update src graphql schema json which category is your question related to updating src graphql schema json none of the commands i ve seen such as amplify push do that and older commands like amplify code generate download no longer work amplify cli version what aws services are you utilizing appsync aurora my graphconfig regenerated with latest projects testapi schemapath amplify backend api testapi build schema graphql includes src graphql js excludes amplify extensions amplify codegentarget javascript generatedfilename docsfilepath src graphql region eu west apiid null maxdepth extensions amplify version

| 0

|

17,448

| 23,268,927,961

|

IssuesEvent

|

2022-08-04 20:25:53

|

ppy/osu-web

|

https://api.github.com/repos/ppy/osu-web

|

closed

|

Allow for selectable difficulty icons

|

proposal area:beatmap-discussions area:beatmap-processing

|

Potentially, this could take the form of a box on the beatmap listing where you can choose one of the icons which would appear when hovering a difficulty icon. The ones that aren't selected would be darker/grayed out and colored on hover/selection.

This would be good because icons are often inaccurate to the intended difficulty level, especially on edge cases like 3.6* insanes or 2.3* normals. Users can already set the name of the difficulty, so being able to set the icon to what difficulty it is meant to be would make sense.

With this it'd be easier to see at a glance on the beatmap listing what the spread of a map is actually like, such as ENHI instead of seeing NNHH - which makes it look like there's two normals and two hards when that's not the case at all.

This would be changeable by:

- the mapper, when a mapset is in pending/wip/graveyard

- QAT and higher when a mapset is in any state.

By default, icons would be set automatically by star rating the same way they currently are.

|

1.0

|

Allow for selectable difficulty icons - Potentially, this could take the form of a box on the beatmap listing where you can choose one of the icons which would appear when hovering a difficulty icon. The ones that aren't selected would be darker/grayed out and colored on hover/selection.

This would be good because icons are often inaccurate to the intended difficulty level, especially on edge cases like 3.6* insanes or 2.3* normals. Users can already set the name of the difficulty, so being able to set the icon to what difficulty it is meant to be would make sense.

With this it'd be easier to see at a glance on the beatmap listing what the spread of a map is actually like, such as ENHI instead of seeing NNHH - which makes it look like there's two normals and two hards when that's not the case at all.

This would be changeable by:

- the mapper, when a mapset is in pending/wip/graveyard

- QAT and higher when a mapset is in any state.

By default, icons would be set automatically by star rating the same way they currently are.

|

process

|

allow for selectable difficulty icons potentially this could take the form of a box on the beatmap listing where you can choose one of the icons which would appear when hovering a difficulty icon the ones that aren t selected would be darker grayed out and colored on hover selection this would be good because icons are often inaccurate to the intended difficulty level especially on edge cases like insanes or normals users can already set the name of the difficulty so being able to set the icon to what difficulty it is meant to be would make sense with this it d be easier to see at a glance on the beatmap listing what the spread of a map is actually like such as enhi instead of seeing nnhh which makes it look like there s two normals and two hards when that s not the case at all this would be changeable by the mapper when a mapset is in pending wip graveyard qat and higher when a mapset is in any state by default icons would be set automatically by star rating the same way they currently are

| 1

|

124,590

| 10,317,157,271

|

IssuesEvent

|

2019-08-30 11:59:12

|

alan-turing-institute/MLJLinearModels.jl

|

https://api.github.com/repos/alan-turing-institute/MLJLinearModels.jl

|

closed

|

Either remove or test (F)ADMM on simpler problem

|

tests

|

Could not get it to work well on LAD but maybe can make it work on something else like LASSO and can test the rest of the implementation on the go.

In the meantime, maybe isolate the code in another branch, as it makes code coverage look bad.

|

1.0

|

Either remove or test (F)ADMM on simpler problem - Could not get it to work well on LAD but maybe can make it work on something else like LASSO and can test the rest of the implementation on the go.

In the meantime, maybe isolate the code in another branch, as it makes code coverage look bad.

|

non_process

|

either remove or test f admm on simpler problem could not get it to work well on lad but maybe can make it work on something else like lasso and can test the rest of the implementation on the go in the mean time maybe isolate the code in another branch as it makes code cov look bad

| 0

|

15,849

| 20,029,314,308

|

IssuesEvent

|

2022-02-02 02:18:40

|

fluent/fluent-bit

|

https://api.github.com/repos/fluent/fluent-bit

|

closed

|

Benchmark

|

work-in-process Stale

|

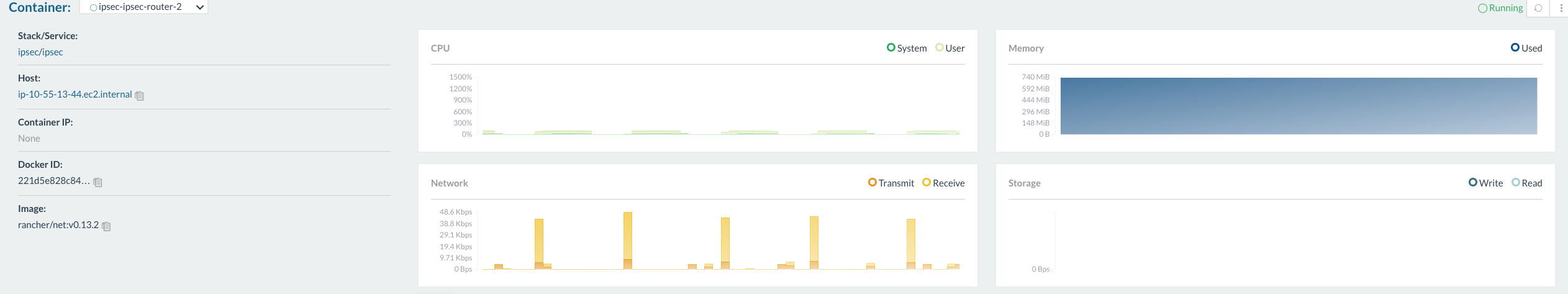

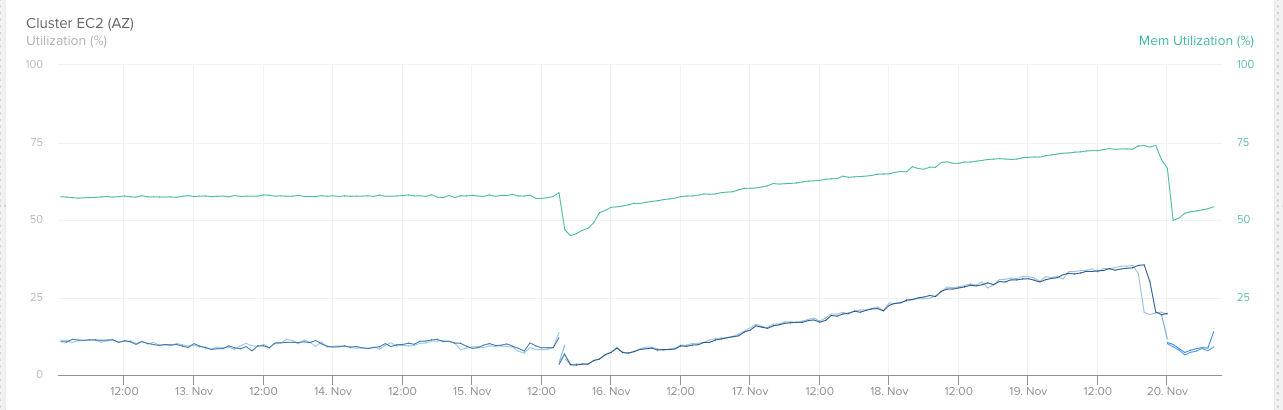

In order for users to assess Fluent Bit, it would be desirable to have a benchmark that covers performance and resource usage. This benchmark could include throughput for various payloads and protocols (HTTP/TCP) as well as resource usage (CPU/MEM, compare with what we did in [Centralized Container Logging with Fluent Bit](https://aws.amazon.com/blogs/opensource/centralized-container-logging-fluent-bit/)). In addition, the benchmark could be used to compare different Fluent Bit releases.

|

1.0

|

Benchmark - In order for users to assess Fluent Bit, it would be desirable to have a benchmark that covers performance and resource usage. This benchmark could include throughput for various payloads and protocols (HTTP/TCP) as well as resource usage (CPU/MEM, compare with what we did in [Centralized Container Logging with Fluent Bit](https://aws.amazon.com/blogs/opensource/centralized-container-logging-fluent-bit/)). In addition, the benchmark could be used to compare different Fluent Bit releases.

|

process

|

benchmark in order for users to assess fluent bit it would be desirable to have a benchmark that covers performance and resource usage this benchmark could include throughput for various payloads and protocols http tcp as well as resource usage cpu mem compare with what we did in in addition the benchmark could be used to compare different fluent bit releases

| 1

|

4,565

| 7,393,767,531

|

IssuesEvent

|

2018-03-17 01:33:12

|

MicrosoftDocs/azure-docs

|

https://api.github.com/repos/MicrosoftDocs/azure-docs

|

closed

|

Useless Link to Windows PowerShell cmdlets for Azure API Management API

|

api-management cxp doc-bug in-process triaged

|

The link given to Windows PowerShell cmdlets for Azure API Management API goes to a useless page. It would be better to send readers to the page for the actual module where the commands are here: https://docs.microsoft.com/en-us/powershell/module/azurerm.apimanagement/?view=azurermps-5.5.0#api_management.

Otherwise the link is a dead-end.

---

#### Document Details

⚠ *Do not edit this section. It is required for docs.microsoft.com ➟ GitHub issue linking.*

* ID: 9329324a-cc58-0824-6ad7-a0e5323f68f8

* Version Independent ID: 82200310-9caa-6d03-e02c-7edcdb2bee61

* Content: [Manage Azure API Management using Azure Automation | Microsoft Docs](https://docs.microsoft.com/en-us/azure/api-management/automation-manage-api-management)

* Content Source: [articles/api-management/automation-manage-api-management.md](https://github.com/Microsoft/azure-docs/blob/master/articles/api-management/automation-manage-api-management.md)

* Service: **api-management**

* GitHub Login: @vladvino

* Microsoft Alias: **apimpm**

|

1.0

|

Useless Link to Windows PowerShell cmdlets for Azure API Management API - The link given to Windows PowerShell cmdlets for Azure API Management API goes to a useless page. It would be better to send readers to the page for the actual module where the commands are here: https://docs.microsoft.com/en-us/powershell/module/azurerm.apimanagement/?view=azurermps-5.5.0#api_management.

Otherwise the link is a dead-end.

---

#### Document Details

⚠ *Do not edit this section. It is required for docs.microsoft.com ➟ GitHub issue linking.*

* ID: 9329324a-cc58-0824-6ad7-a0e5323f68f8

* Version Independent ID: 82200310-9caa-6d03-e02c-7edcdb2bee61

* Content: [Manage Azure API Management using Azure Automation | Microsoft Docs](https://docs.microsoft.com/en-us/azure/api-management/automation-manage-api-management)

* Content Source: [articles/api-management/automation-manage-api-management.md](https://github.com/Microsoft/azure-docs/blob/master/articles/api-management/automation-manage-api-management.md)

* Service: **api-management**

* GitHub Login: @vladvino

* Microsoft Alias: **apimpm**

|

process

|

useless link to windows powershell cmdlets for azure api management api the link given to windows powershell cmdlets for azure api management api goes to a useless page it would be better to send readers to the page for the actual module where the commands are here otherwise the link is a dead end document details ⚠ do not edit this section it is required for docs microsoft com ➟ github issue linking id version independent id content content source service api management github login vladvino microsoft alias apimpm

| 1

|

316,220

| 27,146,178,756

|

IssuesEvent

|

2023-02-16 20:08:50

|

hashicorp/terraform-provider-google

|

https://api.github.com/repos/hashicorp/terraform-provider-google

|

closed

|

Failing test(s): TestAccFirebaseAppleApp_*

|

size/s priority/1 test failure

|

### Affected Resource(s)

<!--- Please list the affected resources and data sources. --->

* google_firebase_apple_app

<!--- This is a template for reporting test failures on nightly builds. It should only be used by core contributors who have access to our CI/CD results. --->

<!-- i.e. "Consistently since X date" or "X% failure in MONTH" -->

Failure rate: 100% since 2022-11-22

<!-- List all impacted tests for searchability. The title of the issue can instead list one or more groups of tests, or describe the overall root cause. -->

Impacted tests:

- TestAccFirebaseAppleApp_firebaseAppleAppBasicExample

- TestAccFirebaseAppleApp_update

- TestAccFirebaseAppleApp_firebaseAppleAppFullExample

<!-- Link to the nightly build(s), ideally with one impacted test opened -->

Nightly builds:

- https://ci-oss.hashicorp.engineering/buildConfiguration/GoogleCloudBeta_ProviderGoogleCloudBetaGoogleProject/360401?buildTab=tests&expandedTest=-7026834308340857772

<!-- The error message that displays in the tests tab, for reference -->

Message:

```

Skip deleting App "projects/ci-test-project-188019/iosApps/1:1067888929963:ios:68211e93cd713a4644dbee" due to deletion_policy: ""

testing_new.go:84: Error running post-test destroy, there may be dangling resources: FirebaseAppleApp still exists

```

```

Error: Error creating AppleApp: googleapi: Error 409: Requested entity already exists

```

|

1.0

|

Failing test(s): TestAccFirebaseAppleApp_* - ### Affected Resource(s)

<!--- Please list the affected resources and data sources. --->

* google_firebase_apple_app

<!--- This is a template for reporting test failures on nightly builds. It should only be used by core contributors who have access to our CI/CD results. --->

<!-- i.e. "Consistently since X date" or "X% failure in MONTH" -->

Failure rate: 100% since 2022-11-22

<!-- List all impacted tests for searchability. The title of the issue can instead list one or more groups of tests, or describe the overall root cause. -->

Impacted tests:

- TestAccFirebaseAppleApp_firebaseAppleAppBasicExample

- TestAccFirebaseAppleApp_update

- TestAccFirebaseAppleApp_firebaseAppleAppFullExample

<!-- Link to the nightly build(s), ideally with one impacted test opened -->

Nightly builds:

- https://ci-oss.hashicorp.engineering/buildConfiguration/GoogleCloudBeta_ProviderGoogleCloudBetaGoogleProject/360401?buildTab=tests&expandedTest=-7026834308340857772

<!-- The error message that displays in the tests tab, for reference -->

Message:

```

Skip deleting App "projects/ci-test-project-188019/iosApps/1:1067888929963:ios:68211e93cd713a4644dbee" due to deletion_policy: ""

testing_new.go:84: Error running post-test destroy, there may be dangling resources: FirebaseAppleApp still exists

```

```

Error: Error creating AppleApp: googleapi: Error 409: Requested entity already exists

```

|

non_process

|

failing test s testaccfirebaseappleapp affected resource s google firebase apple app failure rate since impacted tests testaccfirebaseappleapp firebaseappleappbasicexample testaccfirebaseappleapp update testaccfirebaseappleapp firebaseappleappfullexample nightly builds message skip deleting app projects ci test project iosapps ios due to deletion policy testing new go error running post test destroy there may be dangling resources firebaseappleapp still exists error error creating appleapp googleapi error requested entity already exists

| 0

|

8,042

| 11,217,766,486

|

IssuesEvent

|

2020-01-07 09:58:42

|

prisma/prisma2

|

https://api.github.com/repos/prisma/prisma2

|

closed

|

CLI tries to download npm packages using `npm add`

|

bug/2-confirmed kind/bug process/candidate

|

https://github.com/prisma/prisma2/blob/34f5e82d201fc5ceaad6041bf670838161188f1b/cli/sdk/src/predefinedGeneratorResolvers.ts#L88-L90

It tries to download packages using `npm add` even though npm packages are installed using `npm install`

|

1.0

|

CLI tries to download npm packages using `npm add` - https://github.com/prisma/prisma2/blob/34f5e82d201fc5ceaad6041bf670838161188f1b/cli/sdk/src/predefinedGeneratorResolvers.ts#L88-L90

It tries to download packages using `npm add` even though npm packages are installed using `npm install`

|

process

|

cli tries to download npm packages using npm add it tries to download packages using npm add even though npm packages are installed using npm install

| 1

|

7,665

| 10,756,857,286

|

IssuesEvent

|

2019-10-31 12:08:08

|

kubeflow/kubeflow

|

https://api.github.com/repos/kubeflow/kubeflow

|

opened

|

Qualify 0.7.0 with kfctl_k8s_istio.yaml

|

area/kfctl kind/process priority/p0

|

We are now on 0.7.0RC7 for kfctl

https://github.com/kubeflow/kubeflow/releases

There are currently no known P0 issues.

https://github.com/orgs/kubeflow/projects/22?card_filter_query=label%3Apriority%2Fp0

Opening this bug to track qualifying the `kfctl_k8s_istio.yaml` config.

Ideally we'd like to finalize 0.7.0 today, so it would be great to do a run-through of the deployment and identify and fix any issues that come up.

There was a bug #4415 with the registration flow in the case with no identity.

We'd like to verify that issue has been fixed.

Related to: #4249

/assign @krishnadurai

|

1.0

|

Qualify 0.7.0 with kfctl_k8s_istio.yaml - We are now on 0.7.0RC7 for kfctl

https://github.com/kubeflow/kubeflow/releases

There are currently no known P0 issues.

https://github.com/orgs/kubeflow/projects/22?card_filter_query=label%3Apriority%2Fp0

Opening this bug to track qualifying the `kfctl_k8s_istio.yaml` config.

Ideally we'd like to finalize 0.7.0 today, so it would be great to do a run-through of the deployment and identify and fix any issues that come up.

There was a bug #4415 with the registration flow in the case with no identity.

We'd like to verify that issue has been fixed.

Related to: #4249

/assign @krishnadurai

|

process

|

qualify with kfctl istio yaml we are now on for kfctl there are currently no known issues opening this bug to track qualifying the kfctl istio yaml config ideally we d like to aim to finalize today so it would be great to do run through the deployment and identify and fix any issues that come up there was a bug with the registration flow in the case with no identity we d like to verify that issue has been fixed related to assign krishnadurai

| 1

|

16,687

| 21,791,068,442

|

IssuesEvent

|

2022-05-14 22:53:25

|

lynnandtonic/nestflix.fun

|

https://api.github.com/repos/lynnandtonic/nestflix.fun

|

closed

|

Ask Mr. Lizard from Jim Henson's Dinosaurs

|

suggested title in process

|

Please add as much of the following info as you can:

Title: Ask Mr. Lizard

Type (film/tv show): TV show

Film or show in which it appears: Jim Henson's Dinosaurs

Is the parent film/show streaming anywhere? DISNEY+

About when in the parent film/show does it appear? Episode 201, 215 and 413.

Actual footage of the film/show can be seen (yes/no)?

Yes. The running joke is that the little dinosaur child Timmy always dies and Mr Lizard tells the crew "We're gonna need another Timmy"

|

1.0

|

Ask Mr. Lizard from Jim Henson's Dinosaurs - Please add as much of the following info as you can:

Title: Ask Mr. Lizard

Type (film/tv show): TV show

Film or show in which it appears: Jim Henson's Dinosaurs

Is the parent film/show streaming anywhere? DISNEY+

About when in the parent film/show does it appear? Episode 201, 215 and 413.

Actual footage of the film/show can be seen (yes/no)?

Yes. The running joke is that the little dinosaur child Timmy always dies and Mr Lizard tells the crew "We're gonna need another Timmy"

|

process

|

ask mr lizard from jim henson s dinosaurs please add as much of the following info as you can title ask mr lizard type film tv show tv show film or show in which it appears jim henson s dinosaurs is the parent film show streaming anywhere disney about when in the parent film show does it appear episode and actual footage of the film show can be seen yes no yes the running joke is that the little dinosaur child timmy always dies and mr lizard tells the crew we re gonna need another timmy

| 1

|

11,434

| 9,367,510,782

|

IssuesEvent

|

2019-04-03 05:55:02

|

MicrosoftDocs/azure-docs

|

https://api.github.com/repos/MicrosoftDocs/azure-docs

|

closed

|

There is a missing line in the program list.

|

assigned-to-author cognitive-services/svc doc-bug triaged

|

@linyixian commented on [Sun Mar 17 2019](https://github.com/MicrosoftDocs/azure-docs.ja-jp/issues/2223)

The part that assigns the file names when loading the model and labels is missing.

filename = "model.pb"

labels_filename = "labels.txt"

---

#### Document Details

⚠ *Do not edit this section. It is required for docs.microsoft.com ➟ GitHub issue linking.*

* ID: 754dd7d5-dd8b-4a75-5d79-0ba621a847cd

* Version Independent ID: 3cca3535-1c16-2da1-46c6-421cbf808bc3

* Content: [Tutorial: Run a TensorFlow model in Python - Custom Vision Service](https://docs.microsoft.com/ja-jp/azure/cognitive-services/custom-vision-service/export-model-python#feedback)

* Content Source: [articles/cognitive-services/Custom-Vision-Service/export-model-python.md](https://github.com/MicrosoftDocs/azure-docs.ja-jp/blob/live/articles/cognitive-services/Custom-Vision-Service/export-model-python.md)

* Service: **cognitive-services**

* GitHub Login: @areddish

* Microsoft Alias: **areddish**

|

1.0

|

There is a missing line in the program list. - @linyixian commented on [Sun Mar 17 2019](https://github.com/MicrosoftDocs/azure-docs.ja-jp/issues/2223)

The part that assigns the file names when loading the model and labels is missing.

filename = "model.pb"

labels_filename = "labels.txt"

---

#### Document Details

⚠ *Do not edit this section. It is required for docs.microsoft.com ➟ GitHub issue linking.*

* ID: 754dd7d5-dd8b-4a75-5d79-0ba621a847cd

* Version Independent ID: 3cca3535-1c16-2da1-46c6-421cbf808bc3

* Content: [Tutorial: Run a TensorFlow model in Python - Custom Vision Service](https://docs.microsoft.com/ja-jp/azure/cognitive-services/custom-vision-service/export-model-python#feedback)

* Content Source: [articles/cognitive-services/Custom-Vision-Service/export-model-python.md](https://github.com/MicrosoftDocs/azure-docs.ja-jp/blob/live/articles/cognitive-services/Custom-Vision-Service/export-model-python.md)

* Service: **cognitive-services**

* GitHub Login: @areddish

* Microsoft Alias: **areddish**

|

non_process

|

there is a missing line in the program list linyixian commented on the part that assigns the file names when loading the model and labels is missing filename model pb labels filename labels txt document details ⚠ do not edit this section it is required for docs microsoft com ➟ github issue linking id version independent id content content source service cognitive services github login areddish microsoft alias areddish

| 0

|

727,681

| 25,044,031,678

|

IssuesEvent

|

2022-11-05 02:39:47

|

PMEAL/OpenPNM

|

https://api.github.com/repos/PMEAL/OpenPNM

|

closed

|

Remove the py module

|

high priority maintenance

|

This is causing our nightly builds to fail, no sign of what went wrong but while digging I see that the devs [discourage](https://github.com/pytest-dev/py) its use

|

1.0

|

Remove the py module - This is causing our nightly builds to fail, no sign of what went wrong but while digging I see that the devs [discourage](https://github.com/pytest-dev/py) its use

|

non_process

|

remove the py module this is causing our nightly builds to fail no sign of what went wrong but while digging i see that the devs its use

| 0

|

4,242

| 7,005,164,884

|

IssuesEvent

|

2017-12-19 00:23:26

|

websharks/comet-cache

|

https://api.github.com/repos/websharks/comet-cache

|

opened

|

create_function() is deprecated in PHP 7.2

|

compatibility

|

> Seen with WP4.9.1 running on a PHP7.2.0 server:

PHP Deprecated: Function create_function() is deprecated in …\wp-content\plugins\comet-cache\src\includes\traits\Shared\FsUtils.php on line 33

Reported here: https://wordpress.org/support/topic/version-170220-function-create_function-is-deprecated-in-php-7-2/

|

True

|

create_function() is deprecated in PHP 7.2 - > Seen with WP4.9.1 running on a PHP7.2.0 server:

PHP Deprecated: Function create_function() is deprecated in …\wp-content\plugins\comet-cache\src\includes\traits\Shared\FsUtils.php on line 33

Reported here: https://wordpress.org/support/topic/version-170220-function-create_function-is-deprecated-in-php-7-2/

|

non_process

|

create function is deprecated in php seen with running on a server php deprecated function create function is deprecated in … wp content plugins comet cache src includes traits shared fsutils php on line reported here

| 0

|

595

| 3,070,997,438

|

IssuesEvent

|

2015-08-19 09:13:08

|

Graylog2/graylog2-server

|

https://api.github.com/repos/Graylog2/graylog2-server

|

closed

|

Add JSON converter

|

feature processing

|

For extracting information from log messages which contain JSON and might appear in logged query URLs and similar data, a JSON extractor would be useful.
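As a rough illustration of what such an extractor might do, here is a minimal sketch in JavaScript. It is not Graylog's implementation; the function names `extractJsonFields` and `flatten`, the underscore key separator, and the "first `{` to last `}`" heuristic are all assumptions made for the example.

```javascript
// Hypothetical sketch, not Graylog's implementation.
// Flattens nested objects into "parent_child" keys; arrays are kept as values.
function flatten(value, prefix, out) {
  if (value !== null && typeof value === 'object' && !Array.isArray(value)) {
    for (const [key, child] of Object.entries(value)) {
      flatten(child, prefix ? `${prefix}_${key}` : key, out);
    }
  } else {
    out[prefix] = value;
  }
  return out;
}

// Assumption: the JSON payload spans from the first "{" to the last "}".
function extractJsonFields(message) {
  const start = message.indexOf('{');
  const end = message.lastIndexOf('}');
  if (start === -1 || end <= start) return {};
  try {
    return flatten(JSON.parse(message.slice(start, end + 1)), '', {});
  } catch (err) {
    return {}; // not valid JSON: extract nothing
  }
}
```

A call such as `extractJsonFields('GET /q 200 {"user":{"id":7},"ok":true}')` would yield the fields `user_id` and `ok`; messages without parseable JSON yield no fields.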

|

1.0

|

Add JSON converter - For extracting information from log messages which contain JSON and might appear in logged query URLs and similar data, a JSON extractor would be useful.

|

process

|

add json converter for extracting information from log messages which contain json and might appear in logged query urls and similar data a json extractor would be useful

| 1

|

1,843

| 4,647,114,221

|

IssuesEvent

|

2016-10-01 09:09:48

|

AllenFang/react-bootstrap-table

|

https://api.github.com/repos/AllenFang/react-bootstrap-table

|

closed

|

TableHeaderColumn does not accept function as className attribute

|

enhancement inprocess

|

According to the [docs](http://allenfang.github.io/react-bootstrap-table/docs.html), the TableHeaderColumn should accept passing a function to the className attribute.

However, the current implementation restricts the PropType to String:

```
TableHeaderColumn.propTypes = {
...
className: PropTypes.string,
...
```
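One possible way to accept both forms is sketched below. With the `prop-types` package, the usual declaration would be `PropTypes.oneOfType([PropTypes.string, PropTypes.func])`; the dependency-free version here only mirrors that idea. The names `resolveClassName` and `stringOrFunction` are hypothetical, and which arguments the library would pass to a `className` function is an assumption, not something the issue states.

```javascript
// Hypothetical helper: accept either a string or a function for className.
// The extra arguments forwarded to the function are an assumption.
function resolveClassName(className, ...args) {
  if (typeof className === 'function') {
    return String(className(...args));
  }
  return typeof className === 'string' ? className : '';
}

// Custom validator in the (props, propName, componentName) shape that
// prop-types accepts; returns an Error when the value is neither a string
// nor a function, and null otherwise.
function stringOrFunction(props, propName, componentName) {
  const value = props[propName];
  if (value == null || typeof value === 'string' || typeof value === 'function') {
    return null;
  }
  return new Error(
    `Invalid prop \`${propName}\` supplied to \`${componentName}\`: expected a string or function.`
  );
}
```

The validator could stand in for `PropTypes.string` in the declaration quoted above, while `resolveClassName` would be called wherever the header cell's class is computed.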

|

1.0

|

TableHeaderColumn does not accept function as className attribute - According to the [docs](http://allenfang.github.io/react-bootstrap-table/docs.html), the TableHeaderColumn should accept passing a function to the className attribute.

However, the current implementation restricts the PropType to String:

`

TableHeaderColumn.propTypes = {

...

className: PropTypes.string,

...

`

|

process

|

tableheadercolumn does not accept function as classname attribute according to the the tableheadercolumn should accept passing a function to the classname attribute however the current implementation restricts the proptype to string tableheadercolumn proptypes classname proptypes string

| 1

|

19,503

| 25,812,450,721

|

IssuesEvent

|

2022-12-12 00:08:13

|

nkdAgility/azure-devops-migration-tools

|

https://api.github.com/repos/nkdAgility/azure-devops-migration-tools

|

closed

|

Issue Migrating Pipeline with Service Connection

|

no-issue-activity Pipeline Processor

|

## Describe your issue:

When trying to migrate a pipeline that references a service connection from one AzDO Project/Org to another AzDO Project/Org, I get the following error on the service connection migration:

```

[08:57:34 ERR] Error migrating ServiceConnection: Artifactory. Please migrate it manually.

Url: POST https://dev.azure.com/TargetOrg/Test/_apis/serviceendpoint/endpoints/

{"$id":"1","innerException":null,"message":"At least one project reference required to create an endpoint.","typeName":"System.ArgumentException, mscorlib","typeKey":"ArgumentException","errorCode":0,"eventId":0}

```

Then when the migration tool tries to migrate the pipeline that uses the service connection I receive this error:

```

[08:57:40 ERR] Error migrating BuildDefinition: Test-CI. Please migrate it manually.

Url: POST https://dev.azure.com/TargetOrg/Test/_apis/build/definitions/

{"$id":"1","innerException":null,"message":"The pipeline is not valid. Job Job_1: Step input artifactoryService references service connection 6696c25f-ac9a-4745-bd11-949dfba9c2cc which could not be found. The service connection does not exist or has not been authorized for use. For authorization details, refer to https://aka.ms/yamlauthz.","typeName":"Microsoft.TeamFoundation.DistributedTask.Pipelines.PipelineValidationException, Microsoft.TeamFoundation.DistributedTask.WebApi","typeKey":"PipelineValidationException","errorCode":0,"eventId":3000}

```

Even if I create the service connection in the target project I get the above error message.

Please see the below config json:

```

{

"ChangeSetMappingFile":null,

"Endpoints":{

"AzureDevOpsEndpoints":[

{

"Name":"Source",

"$type":"AzureDevOpsEndpointOptions",

"Organisation":"https://dev.azure.com/SourceOrg",

"Project":"Test",

"ReflectedWorkItemIDFieldName":"Custom.ReflectedWorkItemId",

"AuthenticationMode":"AccessToken",

"AccessToken":"token",

"EndpointEnrichers":null

},

{

"Name":"Target",

"$type":"AzureDevOpsEndpointOptions",

"Organisation":"https://dev.azure.com/TargetOrg",

"Project":"Test",

"ReflectedWorkItemIDFieldName":"TfsMigrationTool.ReflectedWorkItemId",

"AuthenticationMode":"AccessToken",

"AccessToken":"token",

"EndpointEnrichers":null

}

]

},

"LogLevel":"Debug",

"Processors":[

{

"$type":"AzureDevOpsPipelineProcessorOptions",

"Enabled":true,

"MigrateBuildPipelines":true,

"MigrateReleasePipelines":true,

"MigrateTaskGroups":true,

"MigrateVariableGroups":true,

"MigrateServiceConnections":true,

"BuildPipelines":null,

"ReleasePipelines":null,

"RefName":null,

"SourceName":"Source",

"TargetName":"Target",

"RepositoryNameMaps":{

"Test":"Test"

}

}

],

"Version":"12.0",

"Endpoints":{

"InMemoryWorkItemEndpoints":[

{

"Name":"Source",

"EndpointEnrichers":null

},

{

"Name":"Target",

"EndpointEnrichers":null

}

]

}

}

```

## Source Details

Azure DevOps Services

## Target Details

Azure DevOps Services

[test.txt](https://github.com/nkdAgility/azure-devops-migration-tools/files/9769048/test.txt)

|

1.0

|

Issue Migrating Pipeline with Service Connection - ## Describe your issue:

When trying to migrate a pipeline that references a service connection from one AzDO Project/Org to another AzDO Project/Org, I get the following error on the service connection migration:

```

[08:57:34 ERR] Error migrating ServiceConnection: Artifactory. Please migrate it manually.

Url: POST https://dev.azure.com/TargetOrg/Test/_apis/serviceendpoint/endpoints/

{"$id":"1","innerException":null,"message":"At least one project reference required to create an endpoint.","typeName":"System.ArgumentException, mscorlib","typeKey":"ArgumentException","errorCode":0,"eventId":0}

```

Then when the migration tool tries to migrate the pipeline that uses the service connection I receive this error:

```

[08:57:40 ERR] Error migrating BuildDefinition: Test-CI. Please migrate it manually.

Url: POST https://dev.azure.com/TargetOrg/Test/_apis/build/definitions/

{"$id":"1","innerException":null,"message":"The pipeline is not valid. Job Job_1: Step input artifactoryService references service connection 6696c25f-ac9a-4745-bd11-949dfba9c2cc which could not be found. The service connection does not exist or has not been authorized for use. For authorization details, refer to https://aka.ms/yamlauthz.","typeName":"Microsoft.TeamFoundation.DistributedTask.Pipelines.PipelineValidationException, Microsoft.TeamFoundation.DistributedTask.WebApi","typeKey":"PipelineValidationException","errorCode":0,"eventId":3000}

```

Even if I create the service connection in the target project I get the above error message.

Please see the below config json:

```

{

"ChangeSetMappingFile":null,

"Endpoints":{

"AzureDevOpsEndpoints":[

{

"Name":"Source",

"$type":"AzureDevOpsEndpointOptions",

"Organisation":"https://dev.azure.com/SourceOrg",

"Project":"Test",

"ReflectedWorkItemIDFieldName":"Custom.ReflectedWorkItemId",

"AuthenticationMode":"AccessToken",

"AccessToken":"token",

"EndpointEnrichers":null

},

{

"Name":"Target",

"$type":"AzureDevOpsEndpointOptions",

"Organisation":"https://dev.azure.com/TargetOrg",

"Project":"Test",

"ReflectedWorkItemIDFieldName":"TfsMigrationTool.ReflectedWorkItemId",

"AuthenticationMode":"AccessToken",

"AccessToken":"token",

"EndpointEnrichers":null

}

]

},

"LogLevel":"Debug",

"Processors":[

{

"$type":"AzureDevOpsPipelineProcessorOptions",

"Enabled":true,

"MigrateBuildPipelines":true,

"MigrateReleasePipelines":true,

"MigrateTaskGroups":true,

"MigrateVariableGroups":true,

"MigrateServiceConnections":true,

"BuildPipelines":null,

"ReleasePipelines":null,

"RefName":null,

"SourceName":"Source",

"TargetName":"Target",

"RepositoryNameMaps":{

"Test":"Test"

}

}

],

"Version":"12.0",

"Endpoints":{

"InMemoryWorkItemEndpoints":[

{

"Name":"Source",

"EndpointEnrichers":null

},

{

"Name":"Target",

"EndpointEnrichers":null

}

]

}

}

```

## Source Details

Azure DevOps Services

## Target Details

Azure DevOps Services

[test.txt](https://github.com/nkdAgility/azure-devops-migration-tools/files/9769048/test.txt)

|

process

|

issue migrating pipeline with service connection describe your issue when trying to migrate a pipeline that references a service connection from one azdo project org to another azdo project org i get the following error on the service connection migration error migrating serviceconnection artifactory please migrate it manually url post id innerexception null message at least one project reference required to create an endpoint typename system argumentexception mscorlib typekey argumentexception errorcode eventid then when the migration tool tries to migrate the pipeline that uses the service connection i receive this error error migrating builddefinition test ci please migrate it manually url post id innerexception null message the pipeline is not valid job job step input artifactoryservice references service connection which could not be found the service connection does not exist or has not been authorized for use for authorization details refer to microsoft teamfoundation distributedtask webapi typekey pipelinevalidationexception errorcode eventid even if i create the service connection in the target project i get the above error message please see the below config json changesetmappingfile null endpoints azuredevopsendpoints name source type azuredevopsendpointoptions organisation project test reflectedworkitemidfieldname custom reflectedworkitemid authenticationmode accesstoken accesstoken token endpointenrichers null name target type azuredevopsendpointoptions organisation project test reflectedworkitemidfieldname tfsmigrationtool reflectedworkitemid authenticationmode accesstoken accesstoken token endpointenrichers null loglevel debug processors type azuredevopspipelineprocessoroptions enabled true migratebuildpipelines true migratereleasepipelines true migratetaskgroups true migratevariablegroups true migrateserviceconnections true buildpipelines null releasepipelines null refname null sourcename source targetname target repositorynamemaps test test version 
endpoints inmemoryworkitemendpoints name source endpointenrichers null name target endpointenrichers null source details azure devops services target details azure devops services

| 1

|

2,948

| 5,930,207,519

|

IssuesEvent

|

2017-05-24 00:24:43

|

ncbo/bioportal-project

|

https://api.github.com/repos/ncbo/bioportal-project

|

opened

|

CHEAR: latest submission failed to parse

|

ontology processing problem

|

The latest submission of the [CHEAR ontology](http://bioportal.bioontology.org/ontologies/CHEAR) failed to parse (status shows "Error Rdf") in the BioPortal UI.

Parsing logs indicate that the ontology was successfully parsed by the OWL API, but failed the secondary rapper parsing step:

```

I, [2017-05-23T07:57:20.760933 #3345] INFO -- : ["OWLAPI Java command: parsing finished successfully."]

I, [2017-05-23T07:57:20.761117 #3345] INFO -- : ["Output size 11890550 in `/srv/ncbo/repository/CHEAR/3/owlapi.xrdf`"]

E, [2017-05-23T07:57:21.540084 #3345] ERROR -- : ["Exception: Rapper cannot parse rdfxml file at /srv/ncbo/repository/CHEAR/3/owlapi.xrdf:

rapper: Parsing URI file:///srv/ncbo/repository/CHEAR/3/owlapi.xrdf with parser rdfxml

rapper: Serializing with serializer ntriples

rapper: Error - URI file:///srv/ncbo/repository/CHEAR/3/owlapi.xrdf:169752 - Illegal rdf:nodeID value '_:genid8154'

```

Manually running the rapper command confirms that rapper is unable to serialize the content of the owlapi.xrdf file:

```

[ncbo-deployer@ncbo-prd-app-25 3]$ rapper -i rdfxml -o ntriples owlapi.xrdf > data.triples

rapper: Parsing URI file:///srv/ncbo/share/env/production/repository/CHEAR/3/owlapi.xrdf with parser rdfxml

rapper: Serializing with serializer ntriples

rapper: Error - URI file:///srv/ncbo/share/env/production/repository/CHEAR/3/owlapi.xrdf:169752 - Illegal rdf:nodeID value '_:genid8154'

rapper: Failed to parse file owlapi.xrdf rdfxml content

rapper: Parsing returned 104863 triples

```
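
A likely explanation for the error above, offered as a sketch rather than a confirmed diagnosis: RDF/XML requires `rdf:nodeID` values to match the XML NCName production, and an NCName cannot contain a colon, so `_:genid8154` is rejected. The check below uses an ASCII-only approximation of NCName; the helper name `is_valid_node_id` is hypothetical and is not part of rapper or the OWL API.

```python
import re

# ASCII-only approximation of the XML NCName production (an assumption made
# for brevity; the full production also admits many non-ASCII characters).
_NCNAME_ASCII = re.compile(r"[A-Za-z_][A-Za-z0-9._-]*")


def is_valid_node_id(value: str) -> bool:
    """Return True if value is usable as an rdf:nodeID under the ASCII approximation."""
    return _NCNAME_ASCII.fullmatch(value) is not None


print(is_valid_node_id("_:genid8154"))  # False: the colon is not allowed in an NCName
print(is_valid_node_id("genid8154"))    # True
```

If this is the cause, the blank node labels written into owlapi.xrdf would need to drop the `_:` prefix before rapper can parse the file.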

|

1.0

|

CHEAR: latest submission failed to parse - The latest submission of the [CHEAR ontology](http://bioportal.bioontology.org/ontologies/CHEAR) failed to parse (status shows "Error Rdf") in the BioPortal UI.

Parsing logs indicate that the ontology was successfully parsed by the OWL API, but failed the secondary rapper parsing step:

```

I, [2017-05-23T07:57:20.760933 #3345] INFO -- : ["OWLAPI Java command: parsing finished successfully."]

I, [2017-05-23T07:57:20.761117 #3345] INFO -- : ["Output size 11890550 in `/srv/ncbo/repository/CHEAR/3/owlapi.xrdf`"]

E, [2017-05-23T07:57:21.540084 #3345] ERROR -- : ["Exception: Rapper cannot parse rdfxml file at /srv/ncbo/repository/CHEAR/3/owlapi.xrdf:

rapper: Parsing URI file:///srv/ncbo/repository/CHEAR/3/owlapi.xrdf with parser rdfxml

rapper: Serializing with serializer ntriples

rapper: Error - URI file:///srv/ncbo/repository/CHEAR/3/owlapi.xrdf:169752 - Illegal rdf:nodeID value '_:genid8154'

```

Manually running the rapper command confirms that rapper is unable to serialize the content of the owlapi.xrdf file:

```

[ncbo-deployer@ncbo-prd-app-25 3]$ rapper -i rdfxml -o ntriples owlapi.xrdf > data.triples

rapper: Parsing URI file:///srv/ncbo/share/env/production/repository/CHEAR/3/owlapi.xrdf with parser rdfxml

rapper: Serializing with serializer ntriples

rapper: Error - URI file:///srv/ncbo/share/env/production/repository/CHEAR/3/owlapi.xrdf:169752 - Illegal rdf:nodeID value '_:genid8154'

rapper: Failed to parse file owlapi.xrdf rdfxml content

rapper: Parsing returned 104863 triples

```

|

process

|

chear latest submission failed to parse the latest submission of the failed to parse status shows error rdf in the bioportal ui parsing logs indicate that the ontology was successfully parsed by the owl api but failed the secondary rapper parsing step i info i info e error exception rapper cannot parse rdfxml file at srv ncbo repository chear owlapi xrdf rapper parsing uri file srv ncbo repository chear owlapi xrdf with parser rdfxml rapper serializing with serializer ntriples rapper error uri file srv ncbo repository chear owlapi xrdf illegal rdf nodeid value manually running rapper command confirms that rapper is unable to serialize the content in the owlapi xrdf file rapper i rdfxml o ntriples owlapi xrdf data triples rapper parsing uri file srv ncbo share env production repository chear owlapi xrdf with parser rdfxml rapper serializing with serializer ntriples rapper error uri file srv ncbo share env production repository chear owlapi xrdf illegal rdf nodeid value rapper failed to parse file owlapi xrdf rdfxml content rapper parsing returned triples

| 1

|

100,028

| 4,075,711,373

|

IssuesEvent

|

2016-05-29 12:02:46

|

HabitRPG/habitrpg

|

https://api.github.com/repos/HabitRPG/habitrpg

|

closed

|

"Error request entity too large" - can't join / leave / edit / end challenge or guild

|

API bug Memorable priority - critical

|

```

2013-09-07T23:05:25.959760+00:00 app[web.1]: Error: Request Entity Too Large

2013-09-07T23:05:25.959760+00:00 app[web.1]: at Object.exports.error (/app/node_modules/express/node_modules/connect/lib/utils.js:62:13)

```

----

_edit by admin_:

# What to do if you see "request entity too large" from any action involving a challenge or a guild:

**This problem occurs when guilds and challenges have many players in them.**

**It can be a temporary error, so try again a few hours later.**

**If you are trying to join a challenge, use [this "Join Challenge" form](https://jsfiddle.net/robcthegeek/qnc4g5g6/embedded/result/). It is likely to work in many cases but sometimes might also produce an error (due to the challenge being too large or popular, not due to a bug in the form).**

**If you're still unable to join the challenge, please comment here and include your User ID (see step 6 of the Help -> Report a Bug instructions), the URL of the challenge or guild (the URL will have a long string of random characters in it, such as https://habitica.com/#/options/groups/challenges/3b4f33e9-727b-4b9e-b41c-babfbcdb7ca4), and the action you were trying to take (e.g., join, leave, etc).**

**Especially comment if you are the challenge or guild owner and are trying to edit it but can't. Tell us what changes you need to have made.**

**If you are trying to close a challenge and cannot because of this error, make a note of the challenge's name, its URL, and the User ID and name of the person who is the winner. Add that information here and an admin will help you award the challenge achievement and prize.**

This is due to #5830

<!---

@huboard:{"order":85.21591186523438}

-->

<bountysource-plugin>

---

Want to back this issue? **[Post a bounty on it!](https://www.bountysource.com/issues/965314-error-request-entity-too-large-can-t-join-leave-edit-end-challenge-or-guild?utm_campaign=plugin&utm_content=tracker%2F68393&utm_medium=issues&utm_source=github)** We accept bounties via [Bountysource](https://www.bountysource.com/?utm_campaign=plugin&utm_content=tracker%2F68393&utm_medium=issues&utm_source=github).

</bountysource-plugin>

|

1.0

|

"Error request entity too large" - can't join / leave / edit / end challenge or guild - ```

2013-09-07T23:05:25.959760+00:00 app[web.1]: Error: Request Entity Too Large

2013-09-07T23:05:25.959760+00:00 app[web.1]: at Object.exports.error (/app/node_modules/express/node_modules/connect/lib/utils.js:62:13)

```

----

_edit by admin_:

# What to do if you see "request entity too large" from any action involving a challenge or a guild:

**This problem occurs when guilds and challenges have many players in them.**

**It can be a temporary error, so try again a few hours later.**

**If you are trying to join a challenge, use [this "Join Challenge" form](https://jsfiddle.net/robcthegeek/qnc4g5g6/embedded/result/). It is likely to work in many cases but sometimes might also produce an error (due to the challenge being too large or popular, not due to a bug in the form).**

**If you're still unable to join the challenge, please comment here and include your User ID (see step 6 of the Help -> Report a Bug instructions), the URL of the challenge or guild (the URL will have a long string of random characters in it, such as https://habitica.com/#/options/groups/challenges/3b4f33e9-727b-4b9e-b41c-babfbcdb7ca4), and the action you were trying to take (e.g., join, leave, etc).**

**Especially comment if you are the challenge or guild owner and are trying to edit it but can't. Tell us what changes you need to have made.**

**If you are trying to close a challenge and cannot because of this error, make a note of the challenge's name, its URL, and the User ID and name of the person who is the winner. Add that information here and an admin will help you award the challenge achievement and prize.**

This is due to #5830

<!---

@huboard:{"order":85.21591186523438}

-->

<bountysource-plugin>

---

Want to back this issue? **[Post a bounty on it!](https://www.bountysource.com/issues/965314-error-request-entity-too-large-can-t-join-leave-edit-end-challenge-or-guild?utm_campaign=plugin&utm_content=tracker%2F68393&utm_medium=issues&utm_source=github)** We accept bounties via [Bountysource](https://www.bountysource.com/?utm_campaign=plugin&utm_content=tracker%2F68393&utm_medium=issues&utm_source=github).

</bountysource-plugin>

|

non_process

|

error request entity too large can t join leave edit end challenge or guild app error request entity too large app at object exports error app node modules express node modules connect lib utils js edit by admin what to do if you see request entity too large from any action involving a challenge or a guild this problem occurs when guilds and challenges have many players in them it can be a temporary error so try again a few hours later if you are trying to join a challenge use it is likely to work in many cases but sometimes might also produce an error due to the challenge being too large or popular not due to a bug in the form if you re still unable to join the challenge please comment here and include your user id see step of the help report a bug instructions the url of the challenge or guild the url will have a long string of random characters in it such as and the action you were trying to take e g join leave etc especially comment if you are the challenge or guild owner and are trying to edit it but can t tell us what changes you need to have made if you are trying to close a challenge and cannot because of this error make a note of the challenge s name its url and the user id and name of the person who is the winner add that information here and an admin will help you award the challenge achievement and prize this is due to huboard order want to back this issue we accept bounties via

| 0

|

7,822

| 10,987,928,466

|

IssuesEvent

|

2019-12-02 10:11:58

|

qgis/QGIS-Documentation

|

https://api.github.com/repos/qgis/QGIS-Documentation

|

closed

|

Add new parameter types for documenting algorithms (file, matrix and layer)

|

Guidelines Processing Alg backport release_3.4 enhancement

|

## Description

<!--

Cleaning the queue is a process done by project maintainers, mostly on a volunteer basis.

We try to keep the overhead as small as possible and appreciate if you help us to do so by completing the following items.

Include sentences with details describing the issue you have encountered (e.g., actual behavior, expected behavior, steps to reproduce).

-->

Goal: Add new parameter types for the documentation of algorithms.

* ``file`` (for ``QgsProcessingParameterFileDestination`` - used for instance

in the QGIS "Download file" algorithm)

* ``matrix`` (for ``QgsProcessingParameterMatrix`` - used for instance in the

QGIS "Reclassify by table" algorithm)

Page URL: https://docs.qgis.org/testing/en/docs/documentation_guidelines/writing.html

|

1.0

|

Add new parameter types for documenting algorithms (file, matrix and layer) - ## Description

<!--

Cleaning the queue is a process done by project maintainers, mostly on a volunteer basis.

We try to keep the overhead as small as possible and appreciate if you help us to do so by completing the following items.

Include sentences with details describing the issue you have encountered (e.g., actual behavior, expected behavior, steps to reproduce).

-->

Goal: Add new parameter types for the documentation of algorithms.

* ``file`` (for ``QgsProcessingParameterFileDestination`` - used for instance

in the QGIS "Download file" algorithm)

* ``matrix`` (for ``QgsProcessingParameterMatrix`` - used for instance in the

QGIS "Reclassify by table" algorithm)

Page URL: https://docs.qgis.org/testing/en/docs/documentation_guidelines/writing.html

|

process

|

add new parameter types for documenting algorithms file matrix and layer description cleaning the queue is a process done by project maintainers mostly on a volunteer basis we try to keep the overhead as small as possible and appreciate if you help us to do so by completing the following items include sentences with details describing the issue you have encountered e g actual behavior expected behavior steps to reproduce goal add new parameter types for the documentation of algorithms file for qgsprocessingparameterfiledestination used for instance in the qgis download file algorithm matrix for qgsprocessingparametermatrix used for instance in the qgis reclassify by table algorithm page url

| 1

|

11,507

| 14,383,016,813

|

IssuesEvent

|

2020-12-02 08:30:16

|

carp-lang/Carp

|

https://api.github.com/repos/carp-lang/Carp

|

closed

|

Style guide

|

haskell process under discussion

|

I'm opening a separate issue (from PR #874) for the discussion of a style guide.

Here are some existing style guides for Haskell, as references (thanks @hellerve)

https://kowainik.github.io/posts/2019-02-06-style-guide

https://wiki.haskell.org/Programming_guidelines

They both look pretty good as a basis for ours! Please add more if you know of some great ones.

I'm fine with just adopting the first one (or any similar one) wholesale, with just two clarifications:

(1) Aligning things like imports and tokens like `=`, `->`, etc., should be avoided or left up to the programmer. Even though we can have this "for free" (through automation) I think it slows you down unnecessarily when writing the code and it sometimes looks really weird (if the code on the left-hand side varies wildly in length). I think it makes sense to let this be up to the programmer in each instance (the first of the style guides above even says so). If we want a hard and fast rule I'd err on the side of not having it.

(2) I'd like to stick to a "lispier" style when it comes to the use of `$`. This is personal preference, but I think it has some merit (and a lot of the code is already using this style). The simple rule is that `$` should *only* be used to avoid parentheses across multiple lines, like here:

```haskell

foo = blah $ do

a

b

c

```

which admittedly reads much nicer than

```haskell

foo = blah (do

a

b

c)

```

In all other instances I'd much prefer the lisp-inspired

```haskell

foo x y z = f x (g y (h z))

```

rather than

```haskell

foo x y z = f x $ g y $ h z

```

and the like.

This does *not* mean always avoiding useful operators like `<*>` when those make sense; it just makes the grouping of code clearer and easier to manipulate with structural editing tools (like Paredit).

|

1.0

|

Style guide - I'm opening a separate issue (from PR #874) for the discussion of a style guide.

Here are some existing style guides for Haskell, as references (thanks @hellerve)

https://kowainik.github.io/posts/2019-02-06-style-guide

https://wiki.haskell.org/Programming_guidelines

They both look pretty good as a basis for ours! Please add more if you know of some great ones.

I'm fine with just adopting the first one (or any similar one) wholesale, with just two clarifications:

(1) Aligning things like imports and tokens like `=`, `->`, etc., should be avoided or left up to the programmer. Even though we can have this "for free" (through automation) I think it slows you down unnecessarily when writing the code and it sometimes looks really weird (if the code on the left-hand side varies wildly in length). I think it makes sense to let this be up to the programmer in each instance (the first of the style guides above even says so). If we want a hard and fast rule I'd err on the side of not having it.

(2) I'd like to stick to a "lispier" style when it comes to the use of `$`. This is personal preference, but I think it has some merit (and a lot of the code is already using this style). The simple rule is that `$` should *only* be used to avoid parentheses across multiple lines, like here:

```haskell

foo = blah $ do

a

b

c

```

which admittedly reads much nicer than

```haskell

foo = blah (do

a

b

c)

```

In all other instances I'd much prefer the lisp-inspired

```haskell

foo x y z = f x (g y (h z))

```

rather than

```haskell

foo x y z = f x $ g y $ h z

```

and the like.

This does *not* mean always avoiding useful operators like `<*>` when those make sense; it just makes the grouping of code clearer and easier to manipulate with structural editing tools (like Paredit).

|

process

|

style guide i m opening a separate issue from pr for the discussion of a style guide here are some existing style guides for haskell as references thanks hellerve they both look pretty good as a basis for ours please add more if you know of some great ones i m fine with just adopting the first one or any similar one wholesale with just two clarifications aligning things like imports and tokens like etc should be avoided or left up to the programmer even though we can have this for free through automation i think it slows you down unnecessarily when writing the code and it sometimes looks really weird if the code on the left hand side varies wildly in length i think it makes sense to let this be up to the programmer in each instance the first of the style guides above even say so if we want a hard and fast rule i d err on not having it i d like to stick to a lispier style when it comes to the use of this is personal preference but i think it has some merit and a lot of the code is already using this style the simple rule is that should only be used to avoid parenthesis across multiple lines like here haskell foo blah do a b c which admittedly reads much nicer than haskell foo blah do a b c in all other instances i d much prefer the lisp inspired haskell foo x y z f x g y h z rather than haskell foo x y z f x g y h z and the like this does not mean to always avoid useful operators like when those make sense it just makes grouping of code more clear and easy to manipulate with structural editing tools like paredit

| 1

|

228,227

| 17,439,446,773

|

IssuesEvent

|

2021-08-05 01:17:53

|

amzn/selling-partner-api-docs

|

https://api.github.com/repos/amzn/selling-partner-api-docs

|

opened

|

sortOrder not working for purchaseOrders

|

bug documentation

|

endpoint: /vendor/orders/v1/purchaseOrders&sortOrder=DESC

It does not seem to sort the purchase orders by creation date, whether ASC or DESC is given.

|

1.0

|

sortOrder not working for purchaseOrders - endpoint: /vendor/orders/v1/purchaseOrders&sortOrder=DESC

It does not seem to sort the purchase orders by creation date, whether ASC or DESC is given.

|

non_process

|

sortorder not working for purchaseorders endpoint vendor orders purchaseorders sortorder desc it does not seem to be sorting asc or desc the purchase orders by creation date

| 0

|

21,806

| 30,316,364,218

|

IssuesEvent

|

2023-07-10 15:50:15

|

tdwg/dwc

|

https://api.github.com/repos/tdwg/dwc

|

closed

|

Change term - county

|

Term - change Class - Location non-normative Process - complete

|

Submitter: John Wieczorek (following issue raised by Ian Engelbrecht @ianengelbrecht Issue #221 and tdwg/dwc-qa#141)

Justification (why is this change necessary?): Clarity

Proponents (who needs this change): Everyone

Current Term definition: https://dwc.tdwg.org/list/#dwc_county

Proposed new attributes of the term:

Usage comments (recommendations regarding content, etc.): Recommended best practice is to use a controlled vocabulary such as the Getty Thesaurus of Geographic Names. Recommended best practice is to leave this field blank if the Location spans multiple entities at this administrative level or if the Location might be in one or another of multiple possible entities at this level. Multiplicity and uncertainty of the geographic entity can be captured either in the term higherGeography or in the term locality, or both.

Replaces (identifier of the existing term that would be deprecated and replaced by this term, if applicable): http://rs.tdwg.org/dwc/terms/version/county-2017-10-06

|

1.0

|

Change term - county - Submitter: John Wieczorek (following issue raised by Ian Engelbrecht @ianengelbrecht Issue #221 and tdwg/dwc-qa#141)

Justification (why is this change necessary?): Clarity

Proponents (who needs this change): Everyone

Current Term definition: https://dwc.tdwg.org/list/#dwc_county

Proposed new attributes of the term:

Usage comments (recommendations regarding content, etc.): Recommended best practice is to use a controlled vocabulary such as the Getty Thesaurus of Geographic Names. Recommended best practice is to leave this field blank if the Location spans multiple entities at this administrative level or if the Location might be in one or another of multiple possible entities at this level. Multiplicity and uncertainty of the geographic entity can be captured either in the term higherGeography or in the term locality, or both.

Replaces (identifier of the existing term that would be deprecated and replaced by this term, if applicable): http://rs.tdwg.org/dwc/terms/version/county-2017-10-06

|

process

|

change term county submitter john wieczorek following issue raised by ian engelbrecht ianengelbrecht issue and tdwg dwc qa justification why is this change necessary clarity proponents who needs this change everyone current term definition proposed new attributes of the term usage comments recommendations regarding content etc recommended best practice is to use a controlled vocabulary such as the getty thesaurus of geographic names recommended best practice is to leave this field blank if the location spans multiple entities at this administrative level or if the location might be in one or another of multiple possible entities at this level multiplicity and uncertainty of the geographic entity can be captured either in the term highergeography or in the term locality or both replaces identifier of the existing term that would be deprecated and replaced by this term if applicable

| 1

|

1,638

| 4,188,118,621

|

IssuesEvent

|

2016-06-23 19:40:02

|

ForestryMC/ForestryMC

|

https://api.github.com/repos/ForestryMC/ForestryMC

|

closed

|

[1.10] - Caught exception from forestry java.lang.NoSuchFieldError: field_180413_ao

|

Incompatibility

|

Using Forge 1981 for Minecraft 1.10

Forestry: *forestry_1.9.4-5.0.23.170*

Crashlog:

http://paste.ee/p/tddVJ

And before anyone who doesn't have the correct information comments on this with "You're using 1.10 stupid, forestry is for 1.9.4".

1.9.4 mods in MOST cases will work on 1.10. This was confirmed yesterday/today by a tweeted picture from Cpw as well as by internal testing within the modding community.

For example of a working modpack:

http://i.imgur.com/8rFNiOY.png

|

True

|

[1.10] - Caught exception from forestry java.lang.NoSuchFieldError: field_180413_ao - Using Forge 1981 for Minecraft 1.10

Forestry: *forestry_1.9.4-5.0.23.170*

Crashlog:

http://paste.ee/p/tddVJ

And before anyone who doesn't have the correct information comments on this with "You're using 1.10 stupid, forestry is for 1.9.4".

1.9.4 mods in MOST cases will work on 1.10. This was confirmed yesterday/today by a tweeted picture from Cpw as well as by internal testing within the modding community.

For example of a working modpack:

http://i.imgur.com/8rFNiOY.png

|

non_process

|

caught exception from forestry java lang nosuchfielderror field ao using forge for minecraft forestry forestry crashlog and before anyone who doesn t have the correct information comments on this with you re using stupid forestry is for mods in most cases will work on this has been confirmed yesterday today by a tweeted picture from cpw aswell as internal testing within the modding community for example of a working modpack

| 0

|

125,416

| 10,341,790,530

|

IssuesEvent

|

2019-09-04 03:45:22

|

rancher/rke

|

https://api.github.com/repos/rancher/rke

|

closed

|

selinux-enabled nodes have hyperkube components receive permission denied for files from service-sidekick

|

[zube]: To Test internal kind/bug team/ca

|

**RKE version:** 0.2.6

**Docker version: (`docker version`,`docker info` preferred)** 18.09.8

**Operating system and kernel: (`cat /etc/os-release`, `uname -r` preferred)** CentOS Linux release 7.6.1810 (Core)

**Type/provider of hosts: (VirtualBox/Bare-metal/AWS/GCE/DO)** N/A, doesn't matter

**cluster.yml file:**

```
nodes:
  - address: 172.16.134.48
    user: centos
    role: [etcd, controlplane, worker]
```

**Steps to Reproduce:**

Start CentOS 7 or RHEL 7 with

```

[root@ck-selinux-test _data]# cat /etc/docker/daemon.json

{
  "selinux-enabled": true
}

[root@ck-selinux-test ~]# sestatus

SELinux status: enabled

SELinuxfs mount: /sys/fs/selinux

SELinux root directory: /etc/selinux

Loaded policy name: targeted

Current mode: enforcing

Mode from config file: enforcing

Policy MLS status: enabled

Policy deny_unknown status: allowed

Max kernel policy version: 31

[root@ck-selinux-test ~]#

```

and try to run `rke up` from another machine that has SSH access to the node.

**Results:**

```

Endeavor:ck-selinux-test chriskim$ rke up

INFO[0000] Initiating Kubernetes cluster

INFO[0000] [dialer] Setup tunnel for host [172.16.134.48]

INFO[0003] [state] Pulling image [rancher/rke-tools:v0.1.34] on host [172.16.134.48]

INFO[0015] [state] Successfully pulled image [rancher/rke-tools:v0.1.34] on host [172.16.134.48]

INFO[0016] [state] Successfully started [cluster-state-deployer] container on host [172.16.134.48]

INFO[0017] [certificates] Generating CA kubernetes certificates

INFO[0017] [certificates] Generating Kubernetes API server aggregation layer requestheader client CA certificates

INFO[0017] [certificates] Generating Kubernetes API server proxy client certificates

INFO[0017] [certificates] Generating Kubernetes API server certificates

INFO[0017] [certificates] Generating Service account token key

INFO[0017] [certificates] Generating Kube Controller certificates

INFO[0017] [certificates] Generating Kube Scheduler certificates

INFO[0018] [certificates] Generating Kube Proxy certificates

INFO[0018] [certificates] Generating Node certificate

INFO[0018] [certificates] Generating admin certificates and kubeconfig

INFO[0018] [certificates] Generating etcd-172.16.134.48 certificate and key

INFO[0018] Successfully Deployed state file at [./cluster.rkestate]

INFO[0018] Building Kubernetes cluster

INFO[0018] [dialer] Setup tunnel for host [172.16.134.48]

INFO[0020] [network] Deploying port listener containers

INFO[0022] [network] Successfully started [rke-etcd-port-listener] container on host [172.16.134.48]

INFO[0023] [network] Successfully started [rke-cp-port-listener] container on host [172.16.134.48]

INFO[0025] [network] Successfully started [rke-worker-port-listener] container on host [172.16.134.48]

INFO[0025] [network] Port listener containers deployed successfully

INFO[0025] [network] Running control plane -> etcd port checks

INFO[0026] [network] Successfully started [rke-port-checker] container on host [172.16.134.48]

INFO[0027] [network] Running control plane -> worker port checks

INFO[0028] [network] Successfully started [rke-port-checker] container on host [172.16.134.48]

INFO[0028] [network] Running workers -> control plane port checks

INFO[0030] [network] Successfully started [rke-port-checker] container on host [172.16.134.48]

INFO[0030] [network] Checking KubeAPI port Control Plane hosts

INFO[0031] [network] Removing port listener containers

INFO[0031] [remove/rke-etcd-port-listener] Successfully removed container on host [172.16.134.48]

INFO[0032] [remove/rke-cp-port-listener] Successfully removed container on host [172.16.134.48]

INFO[0033] [remove/rke-worker-port-listener] Successfully removed container on host [172.16.134.48]

INFO[0033] [network] Port listener containers removed successfully

INFO[0033] [certificates] Deploying kubernetes certificates to Cluster nodes

INFO[0040] [reconcile] Rebuilding and updating local kube config

INFO[0040] Successfully Deployed local admin kubeconfig at [./kube_config_cluster.yml]

INFO[0040] [certificates] Successfully deployed kubernetes certificates to Cluster nodes

INFO[0040] [reconcile] Reconciling cluster state

INFO[0040] [reconcile] This is newly generated cluster

INFO[0040] Pre-pulling kubernetes images

INFO[0040] [pre-deploy] Pulling image [rancher/hyperkube:v1.14.3-rancher1] on host [172.16.134.48]

INFO[0101] [pre-deploy] Successfully pulled image [rancher/hyperkube:v1.14.3-rancher1] on host [172.16.134.48]

INFO[0101] Kubernetes images pulled successfully

INFO[0101] [etcd] Building up etcd plane..

INFO[0101] [etcd] Pulling image [rancher/coreos-etcd:v3.3.10-rancher1] on host [172.16.134.48]

INFO[0106] [etcd] Successfully pulled image [rancher/coreos-etcd:v3.3.10-rancher1] on host [172.16.134.48]

INFO[0107] [etcd] Successfully started [etcd] container on host [172.16.134.48]

INFO[0107] [etcd] Saving snapshot [etcd-rolling-snapshots] on host [172.16.134.48]

INFO[0109] [etcd] Successfully started [etcd-rolling-snapshots] container on host [172.16.134.48]

INFO[0116] [certificates] Successfully started [rke-bundle-cert] container on host [172.16.134.48]

INFO[0116] Waiting for [rke-bundle-cert] container to exit on host [172.16.134.48]

INFO[0116] [certificates] successfully saved certificate bundle [/opt/rke/etcd-snapshots//pki.bundle.tar.gz] on host [172.16.134.48]

INFO[0118] [etcd] Successfully started [rke-log-linker] container on host [172.16.134.48]

INFO[0119] [remove/rke-log-linker] Successfully removed container on host [172.16.134.48]

INFO[0119] [etcd] Successfully started etcd plane.. Checking etcd cluster health

INFO[0121] [controlplane] Building up Controller Plane..

INFO[0123] [controlplane] Successfully started [kube-apiserver] container on host [172.16.134.48]

INFO[0123] [healthcheck] Start Healthcheck on service [kube-apiserver] on host [172.16.134.48]

FATA[0193] [controlPlane] Failed to bring up Control Plane: [Failed to verify healthcheck: Failed to check https://localhost:6443/healthz for service [kube-apiserver] on host [172.16.134.48]: Get https://localhost:6443/healthz: Unable to access the service on localhost:6443. The service might be still starting up. Error: ssh: rejected: connect failed (Connection refused), log: /bin/bash: /opt/rke-tools/entrypoint.sh: Permission denied]

Endeavor:ck-selinux-test chriskim$

```

When you inspect the `service-sidekick` container, you see that it has a volume under `/var/lib/docker/volumes` that is then shared into the various Kubernetes component containers.
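The inspection described above could be scripted roughly as follows. This is only a sketch, not part of RKE: it assumes the standard JSON array printed by `docker inspect`, and the helper names (`volume_mounts`, `inspect_container`) as well as the sample volume name and mount paths are hypothetical, made up for illustration.

```python
import json
import subprocess
from typing import List, Tuple


def volume_mounts(inspect_json: str) -> List[Tuple[str, str]]:
    """Return (source, destination) pairs for every mount of type "volume"."""
    containers = json.loads(inspect_json)  # `docker inspect` prints a JSON array
    pairs = []
    for container in containers:
        for mount in container.get("Mounts", []):
            if mount.get("Type") == "volume":
                pairs.append((mount["Source"], mount["Destination"]))
    return pairs


def inspect_container(name: str) -> str:
    """Run `docker inspect <name>` on the node and return the raw JSON output."""
    result = subprocess.run(
        ["docker", "inspect", name], check=True, capture_output=True, text=True
    )
    return result.stdout


# Hypothetical sample shaped like `docker inspect` output.
# The volume name and paths are assumptions, not taken from a real node.
SAMPLE = json.dumps([{
    "Name": "/service-sidekick",
    "Mounts": [
        {"Type": "volume", "Name": "example",
         "Source": "/var/lib/docker/volumes/example/_data",
         "Destination": "/opt/rke-tools"},
        {"Type": "bind",
         "Source": "/etc/kubernetes",
         "Destination": "/etc/kubernetes"},
    ],
}])

if __name__ == "__main__":
    for source, destination in volume_mounts(SAMPLE):
        print(source, "->", destination)
```

On an affected node, `volume_mounts(inspect_container("service-sidekick"))` would list the host paths backing the volume; running `ls -Z` on those paths then shows the SELinux labels the files carry.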