| Column | Type | Values / length range |
|---|---|---|
| Unnamed: 0 | int64 | 0–832k |
| id | float64 | 2.49B–32.1B |
| type | string (classes) | 1 value |
| created_at | string | length 19 |
| repo | string | length 7–112 |
| repo_url | string | length 36–141 |
| action | string (classes) | 3 values |
| title | string | length 1–744 |
| labels | string | length 4–574 |
| body | string | length 9–211k |
| index | string (classes) | 10 values |
| text_combine | string | length 96–211k |
| label | string (classes) | 2 values |
| text | string | length 96–188k |
| binary_label | int64 | 0–1 |

Each record below lists these fields in the column order above, with cells separated by `|`.

75,601

| 7,478,228,801

|

IssuesEvent

|

2018-04-04 10:54:06

|

EyeSeeTea/malariapp

|

https://api.github.com/repos/EyeSeeTea/malariapp

|

closed

|

Pressing back after selecting to share the obs&act plan produces unexpected results

|

HNQIS complexity - med (1-5hr) priority - medium testing type - bug

|

Bug for H1.2 maintennace but happening in H1.3 and not in H1.2

|

1.0

|

Pressing back after selecting to share the obs&act plan produces unexpected results - Bug for H1.2 maintennace but happening in H1.3 and not in H1.2

|

non_process

|

pressing back after selecting to share the obs act plan produces unexpected results bug for maintennace but happening in and not in

| 0

|

5,297

| 8,120,460,031

|

IssuesEvent

|

2018-08-16 02:53:29

|

nodejs/node

|

https://api.github.com/repos/nodejs/node

|

closed

|

spawnSync's SyncProcessRunner::CopyJsStringArray segfaults with bad getter

|

child_process

|

<!--

Thank you for reporting an issue.

This issue tracker is for bugs and issues found within Node.js core.

If you require more general support please file an issue on our help

repo. https://github.com/nodejs/help

Please fill in as much of the template below as you're able.

Version: output of `node -v`

Platform: output of `uname -a` (UNIX), or version and 32 or 64-bit (Windows)

Subsystem: if known, please specify affected core module name

If possible, please provide code that demonstrates the problem, keeping it as

simple and free of external dependencies as you are able.

-->

* **Version**:

* **Platform**:

* **Subsystem**:

<!-- Enter your issue details below this comment. -->

Similar to #9820, the underlying binding code that is used by spawnSync can

segfault when called with objects/array that have "evil" getters/setters. The

following code shows an example of this:

```javascript

const spawn_sync = process.binding('spawn_sync');

// compute envPairs as done by child_process

let envPairs = [];

for (var key in process.env) {

envPairs.push(key + '=' + process.env[key]);

}

// mess with args

const args = [ '-a' ];

Object.defineProperty(args, 1, {

get: () => {

return 3; // causes StringBytes::Write in spawn_sync.cc:986 to segfault since it's not a string

},

set: () => {

// override so Set after Clone will do nothing because of this

},

enumerable: true

});

const options = {

file: 'ls',

args: args,

envPairs: envPairs,

stdio: [

{ type: 'pipe', readable: true, writable: false },

{ type: 'pipe', readable: false, writable: true },

{ type: 'pipe', readable: false, writable: true }

]

};

spawn_sync.spawn(options);

```

May be worth again ensuring that all arguments are strings before calling into

the binding code.

+ @mlfbrown for working on this with me.

|

1.0

|

spawnSync's SyncProcessRunner::CopyJsStringArray segfaults with bad getter - <!--

Thank you for reporting an issue.

This issue tracker is for bugs and issues found within Node.js core.

If you require more general support please file an issue on our help

repo. https://github.com/nodejs/help

Please fill in as much of the template below as you're able.

Version: output of `node -v`

Platform: output of `uname -a` (UNIX), or version and 32 or 64-bit (Windows)

Subsystem: if known, please specify affected core module name

If possible, please provide code that demonstrates the problem, keeping it as

simple and free of external dependencies as you are able.

-->

* **Version**:

* **Platform**:

* **Subsystem**:

<!-- Enter your issue details below this comment. -->

Similar to #9820, the underlying binding code that is used by spawnSync can

segfault when called with objects/array that have "evil" getters/setters. The

following code shows an example of this:

```javascript

const spawn_sync = process.binding('spawn_sync');

// compute envPairs as done by child_process

let envPairs = [];

for (var key in process.env) {

envPairs.push(key + '=' + process.env[key]);

}

// mess with args

const args = [ '-a' ];

Object.defineProperty(args, 1, {

get: () => {

return 3; // causes StringBytes::Write in spawn_sync.cc:986 to segfault since it's not a string

},

set: () => {

// override so Set after Clone will do nothing because of this

},

enumerable: true

});

const options = {

file: 'ls',

args: args,

envPairs: envPairs,

stdio: [

{ type: 'pipe', readable: true, writable: false },

{ type: 'pipe', readable: false, writable: true },

{ type: 'pipe', readable: false, writable: true }

]

};

spawn_sync.spawn(options);

```

May be worth again ensuring that all arguments are strings before calling into

the binding code.

+ @mlfbrown for working on this with me.

|

process

|

spawnsync s syncprocessrunner copyjsstringarray segfaults with bad getter thank you for reporting an issue this issue tracker is for bugs and issues found within node js core if you require more general support please file an issue on our help repo please fill in as much of the template below as you re able version output of node v platform output of uname a unix or version and or bit windows subsystem if known please specify affected core module name if possible please provide code that demonstrates the problem keeping it as simple and free of external dependencies as you are able version platform subsystem similar to the underlying binding code that is used by spawnsync can segfault when called with objects array that have evil getters setters the following code shows an example of this javascript const spawn sync process binding spawn sync compute envpairs as done by child process let envpairs for var key in process env envpairs push key process env mess with args const args object defineproperty args get return causes stringbytes write in spawn sync cc to segfault since it s not a string set override so set after clone will do nothing because of this enumerable true const options file ls args args envpairs envpairs stdio type pipe readable true writable false type pipe readable false writable true type pipe readable false writable true spawn sync spawn options may be worth again ensuring that all arguments are strings before calling into the binding code mlfbrown for working on this with me

| 1

|

171,005

| 6,476,338,424

|

IssuesEvent

|

2017-08-17 22:37:45

|

semperfiwebdesign/all-in-one-seo-pack

|

https://api.github.com/repos/semperfiwebdesign/all-in-one-seo-pack

|

closed

|

Some notification messages are missing a CSS rule

|

Bug Priority | Medium

|

Notification messages that don't have a Dismiss link are appearing thinner than they should. See screenshot below.

This is because the content in the notification div is usually wrapped in a paragraph tag so that the following CSS gets applied:

.form-table td .notice p, .notice p, .notice-title, div.error p, div.updated p {

margin: .5em 0;

padding: 2px;

}

Currently this CSS rule isn't getting applied because the content inside these messages is not contained in a paragraph. Wrapping the text in a paragraph tag will resolve this issue.

|

1.0

|

Some notification messages are missing a CSS rule - Notification messages that don't have a Dismiss link are appearing thinner than they should. See screenshot below.

This is because the content in the notification div is usually wrapped in a paragraph tag so that the following CSS gets applied:

.form-table td .notice p, .notice p, .notice-title, div.error p, div.updated p {

margin: .5em 0;

padding: 2px;

}

Currently this CSS rule isn't getting applied because the content inside these messages is not contained in a paragraph. Wrapping the text in a paragraph tag will resolve this issue.

|

non_process

|

some notification messages are missing a css rule notification messages that don t have a dismiss link are appearing thinner than they should see screenshot below this is because the content in the notification div is usually wrapped in a paragraph tag so that the following css gets applied form table td notice p notice p notice title div error p div updated p margin padding currently this css rule isn t getting applied because the content inside these messages is not contained in a paragraph wrapping the text in a paragraph tag will resolve this issue

| 0

|

278,827

| 24,180,119,340

|

IssuesEvent

|

2022-09-23 08:05:11

|

MohistMC/Mohist

|

https://api.github.com/repos/MohistMC/Mohist

|

closed

|

[1.18.2] Unable to use Immersive Portal

|

Wait Needs Testing

|

<!-- ISSUE_TEMPLATE_1 -> IMPORTANT: DO NOT DELETE THIS LINE.-->

**Minecraft Version :** 1.18.2

**Mohist Version :** 1.18.2-90

**Operating System :** Ubuntu 22.04

**Concerned mod / plugin** : [Immersive Portal for Forge](https://www.curseforge.com/minecraft/mc-mods/immersive-portals-for-forge)

**Logs :**

[latest.log](https://github.com/MohistMC/Mohist/files/9618917/latest.log)

**Steps to Reproduce :**

1. Start a 1.18.2 server with Immersive Portal.

**Description of issue :** Unable to use Immersive Portal

|

1.0

|

[1.18.2] Unable to use Immersive Portal - <!-- ISSUE_TEMPLATE_1 -> IMPORTANT: DO NOT DELETE THIS LINE.-->

**Minecraft Version :** 1.18.2

**Mohist Version :** 1.18.2-90

**Operating System :** Ubuntu 22.04

**Concerned mod / plugin** : [Immersive Portal for Forge](https://www.curseforge.com/minecraft/mc-mods/immersive-portals-for-forge)

**Logs :**

[latest.log](https://github.com/MohistMC/Mohist/files/9618917/latest.log)

**Steps to Reproduce :**

1. Start a 1.18.2 server with Immersive Portal.

**Description of issue :** Unable to use Immersive Portal

|

non_process

|

unable to use immersive portal important do not delete this line minecraft version mohist version operating system ubuntu concerned mod plugin logs steps to reproduce start a server with immersive portal description of issue unable to use immersive portal

| 0

|

698,902

| 23,996,159,989

|

IssuesEvent

|

2022-09-14 07:43:59

|

younginnovations/iatipublisher

|

https://api.github.com/repos/younginnovations/iatipublisher

|

closed

|

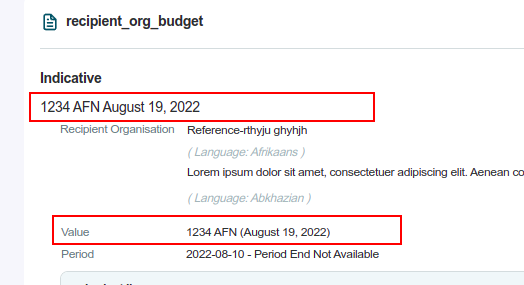

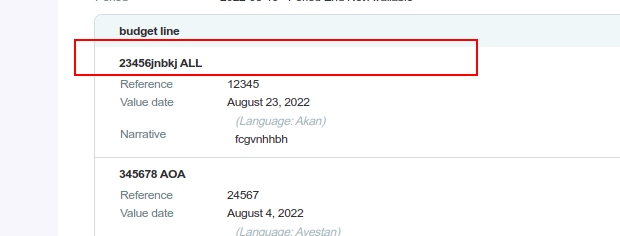

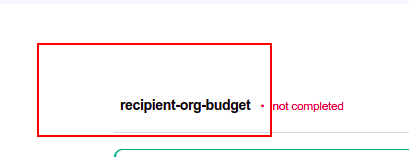

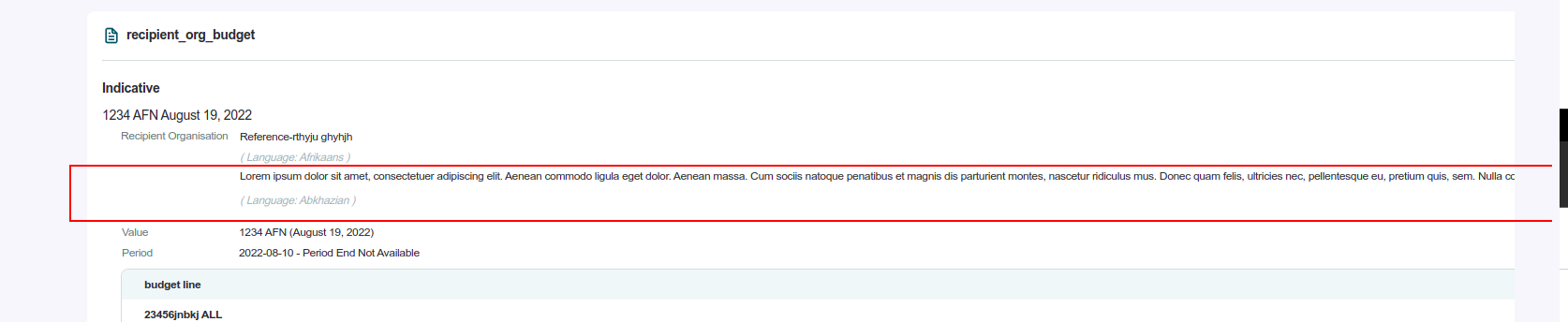

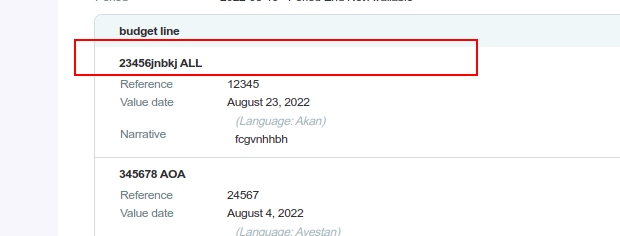

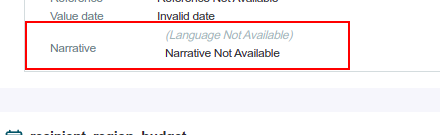

Bug :Organization Detail>>recipient-org-budget

|

type: bug priority: high

|

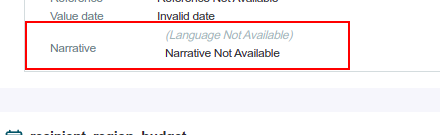

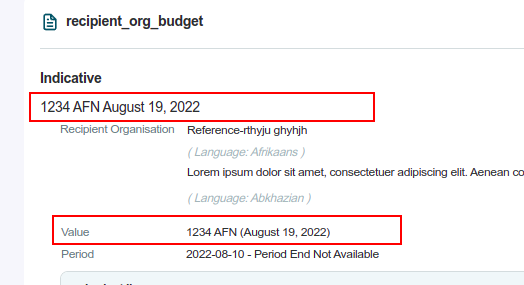

Context

- Desktop

- Chrome 102.0.5005.61

Precondition

- https://stage.iatipublisher.yipl.com.np/

- Username: Publisher 3

- Password: test1234

- for created activity

- [x] **Issue 1 : Icon is missing**

Actual Result

Expected Result

- Icon should be present

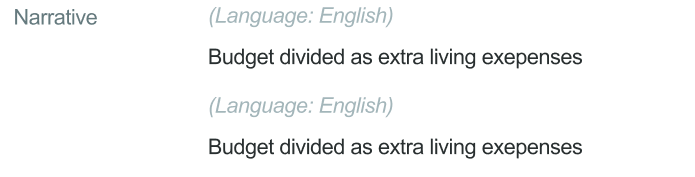

- [x] **Issue 2: The same data has been displayed twice**

Actual Result

Expected Result

- Data should be displayed only once on the org detail page

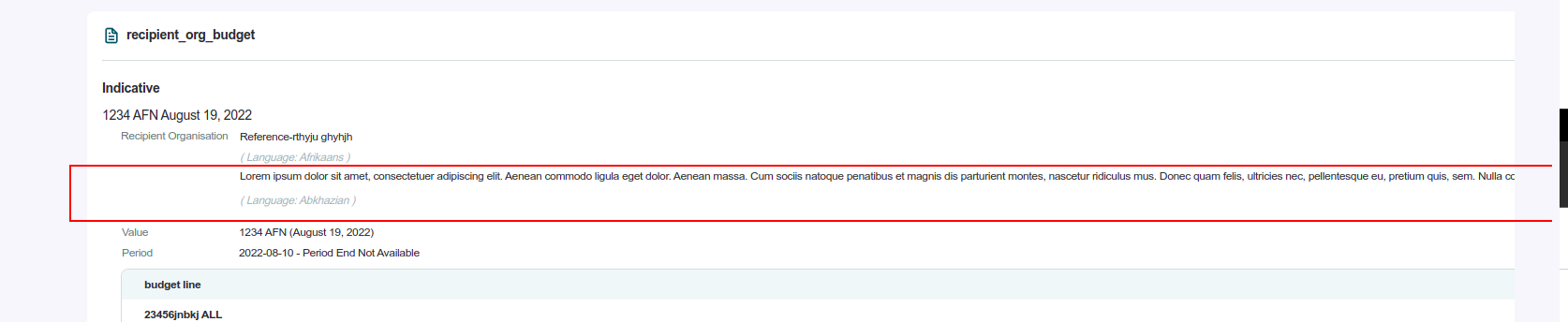

- [x] **Issue 3: When you enter a long text in the narrative page get an overflow**

Actual Result

Expected Result

- The page should not overflow

- [x] **Issue 4: IATI standard has not been followed **

Steps

- Enter period-start(@iso-date 06/23/2025)

- period-end (@iso-date 06/23/2021)

- save

Actual Result

- page gets saved

Expected Result

- The budget Period must not be longer than one year.

- The start of the period must be before the end of the period.

- [ ] **Issue 5:End period date is not displayed on the org detail page.**

Actual Result

Expected Result

- The end period date should be displayed on the org detail page

- [x] **Issue 6: No validation for amount**

Actual Result

Expected Result

- A proper validation should be in the form

- [x] **Issue 7: Inappropriate way to display Narrative**

Actual Result

Excepted Result

|

1.0

|

Bug :Organization Detail>>recipient-org-budget - Context

- Desktop

- Chrome 102.0.5005.61

Precondition

- https://stage.iatipublisher.yipl.com.np/

- Username: Publisher 3

- Password: test1234

- for created activity

- [x] **Issue 1 : Icon is missing**

Actual Result

Expected Result

- Icon should be present

- [x] **Issue 2: The same data has been displayed twice**

Actual Result

Expected Result

- Data should be displayed only once on the org detail page

- [x] **Issue 3: When you enter a long text in the narrative page get an overflow**

Actual Result

Expected Result

- The page should not overflow

- [x] **Issue 4: IATI standard has not been followed **

Steps

- Enter period-start(@iso-date 06/23/2025)

- period-end (@iso-date 06/23/2021)

- save

Actual Result

- page gets saved

Expected Result

- The budget Period must not be longer than one year.

- The start of the period must be before the end of the period.

- [ ] **Issue 5:End period date is not displayed on the org detail page.**

Actual Result

Expected Result

- The end period date should be displayed on the org detail page

- [x] **Issue 6: No validation for amount**

Actual Result

Expected Result

- A proper validation should be in the form

- [x] **Issue 7: Inappropriate way to display Narrative**

Actual Result

Excepted Result

|

non_process

|

bug organization detail recipient org budget context desktop chrome precondition username publisher password for created activity issue icon is missing actual result expected result icon should be present issue the same data has been displayed twice actual result expected result data should be displayed only once on the org detail page issue when you enter a long text in the narrative page get an overflow actual result expected result the page should not overflow issue iati standard has not been followed steps enter period start iso date period end iso date save actual result page gets saved expected result the budget period must not be longer than one year the start of the period must be before the end of the period issue end period date is not displayed on the org detail page actual result expected result the end period date should be displayed on the org detail page issue no validation for amount actual result expected result a proper validation should be in the form issue inappropriate way to display narrative actual result excepted result

| 0

|

17,053

| 23,521,010,266

|

IssuesEvent

|

2022-08-19 05:52:47

|

safing/portmaster

|

https://api.github.com/repos/safing/portmaster

|

opened

|

Where does portmaster place itself relative to distro firewalls (firewalld/ufw)?

|

in/compatibility

|

Hello Safing team! ❤️ Thank you so much for creating Portmaster. I haven't been this excited about new software in years. It is fantastic.

I am curious how the portmaster rules execute in relation to the OS/distro firewall rules on Linux?

For example, this is how firewalld works on Fedora Workstation:

- Deny all incoming connections.

- Allow incoming on ports 1025-65535.

- Allow certain incoming services such as mdns and ssh.

What happens when Portmaster is installed on a system that uses `firewalld`?

My **guess (or at least hope)** is this:

- Portmaster places itself at the top as the highest priority rule.

- Unmarked connections are forwarded to the Portmaster user space for classification.

- All packets for that connection are then marked as either accept or drop, by the flag that Portmaster decided.

- Any packet that is marked as accept then goes to the remaining rules which `firewalld` created, which block all incoming connections but then allow specific service ports (ssh, 1025-65535, etc).

If this is what happens, then I would be able to set Portmaster as "allow all incoming" to basically just forward everything to the rules that are already set up by Fedora in their `firewalld`. That would let me use Portmaster for my intended purpose: Per app blocking of outgoing connections. I would also be able to block specific app"s incoming connections to get extra granularity.

In short if it works as I hope it does, then it would be thr best of all worlds.

|

True

|

Where does portmaster place itself relative to distro firewalls (firewalld/ufw)? - Hello Safing team! ❤️ Thank you so much for creating Portmaster. I haven't been this excited about new software in years. It is fantastic.

I am curious how the portmaster rules execute in relation to the OS/distro firewall rules on Linux?

For example, this is how firewalld works on Fedora Workstation:

- Deny all incoming connections.

- Allow incoming on ports 1025-65535.

- Allow certain incoming services such as mdns and ssh.

What happens when Portmaster is installed on a system that uses `firewalld`?

My **guess (or at least hope)** is this:

- Portmaster places itself at the top as the highest priority rule.

- Unmarked connections are forwarded to the Portmaster user space for classification.

- All packets for that connection are then marked as either accept or drop, by the flag that Portmaster decided.

- Any packet that is marked as accept then goes to the remaining rules which `firewalld` created, which block all incoming connections but then allow specific service ports (ssh, 1025-65535, etc).

If this is what happens, then I would be able to set Portmaster as "allow all incoming" to basically just forward everything to the rules that are already set up by Fedora in their `firewalld`. That would let me use Portmaster for my intended purpose: Per app blocking of outgoing connections. I would also be able to block specific app"s incoming connections to get extra granularity.

In short if it works as I hope it does, then it would be thr best of all worlds.

|

non_process

|

where does portmaster place itself relative to distro firewalls firewalld ufw hello safing team ❤️ thank you so much for creating portmaster i haven t been this excited about new software in years it is fantastic i am curious how the portmaster rules execute in relation to the os distro firewall rules on linux for example this is how firewalld works on fedora workstation deny all incoming connections allow incoming on ports allow certain incoming services such as mdns and ssh what happens when portmaster is installed on a system that uses firewalld my guess or at least hope is this portmaster places itself at the top as the highest priority rule unmarked connections are forwarded to the portmaster user space for classification all packets for that connection are then marked as either accept or drop by the flag that portmaster decided any packet that is marked as accept then goes to the remaining rules which firewalld created which block all incoming connections but then allow specific service ports ssh etc if this is what happens then i would be able to set portmaster as allow all incoming to basically just forward everything to the rules that are already set up by fedora in their firewalld that would let me use portmaster for my intended purpose per app blocking of outgoing connections i would also be able to block specific app s incoming connections to get extra granularity in short if it works as i hope it does then it would be thr best of all worlds

| 0

|

9,676

| 12,678,943,759

|

IssuesEvent

|

2020-06-19 10:44:14

|

KratosMultiphysics/Kratos

|

https://api.github.com/repos/KratosMultiphysics/Kratos

|

closed

|

DEM always prints number_of_neighbours_histogram.txt

|

Post Process

|

can this be made optional? or is it already possible to disable this?

Thx

(discovered in CoSim tests)

|

1.0

|

DEM always prints number_of_neighbours_histogram.txt - can this be made optional? or is it already possible to disable this?

Thx

(discovered in CoSim tests)

|

process

|

dem always prints number of neighbours histogram txt can this be made optional or is it already possible to disable this thx discovered in cosim tests

| 1

|

20,859

| 27,638,803,830

|

IssuesEvent

|

2023-03-10 16:22:12

|

camunda/issues

|

https://api.github.com/repos/camunda/issues

|

opened

|

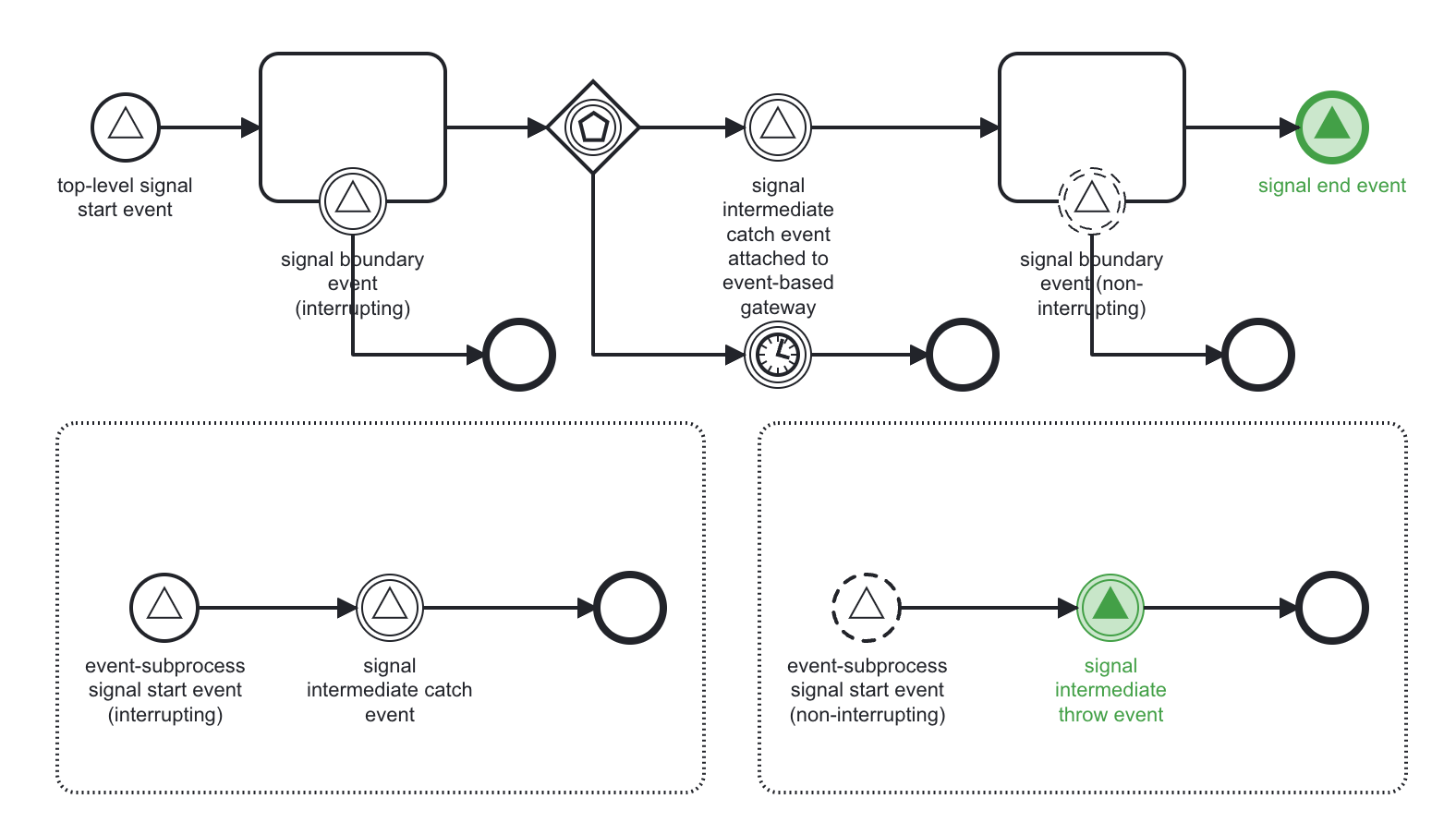

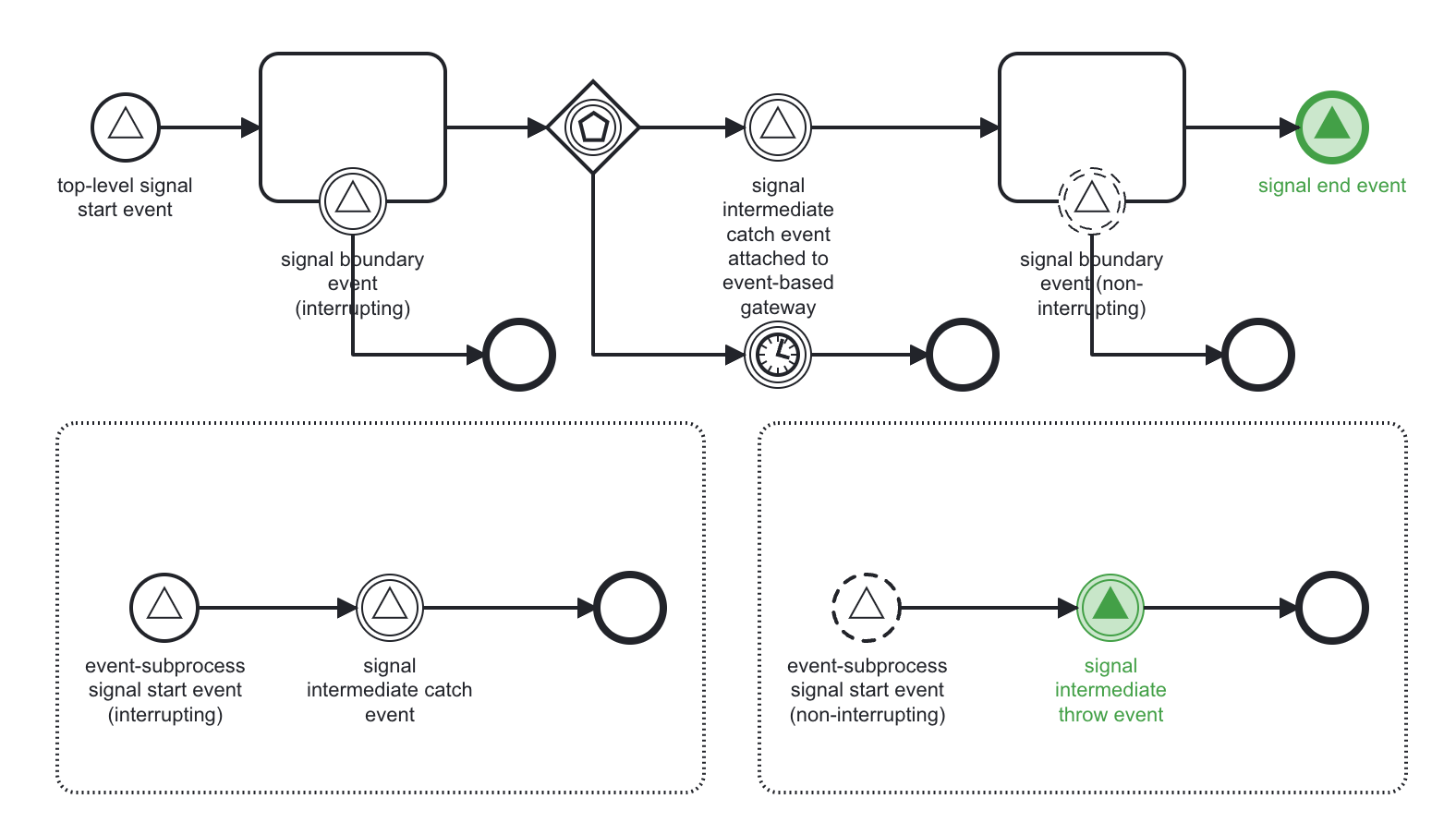

BPMN Signal Events(3): Broadcast signal event using throw signal event

|

component:desktopModeler component:operate component:optimize component:webModeler component:zeebe-process-automation public kind:epic feature-parity

|

### Value Proposition Statement

Use BPMN Throw Signal Events to easily start or continue instances that wait for a signal - without any coding.

### User Problem

Users can use BPMN Catch Signal Events (e.g. Start Event or Intermediate Events), but they have to be triggered via gRPC or one of our Clients. This means using Signal Events requires writing code and also different BPMN symbols have to be used than signals for throwing signals (e.g. I cannot use Signal Throw Events and attach a job worker, but I would have to use a Service Task instead).

### User Stories

I can model signal end event and intermediate signal throw events and linting works correctly.

I can deploy the models with such symbols to the engine and the engine triggers all signals correctly without me having to use the API.

I can see the symbols in other tools like Operate, Optimize.

### Implementation Notes

In the third stage, we'll increase support to all BPMN signal symbols. Specifically, it adds support for the Signal Intermediate Throw Event, and the Signal End Event.

Model highlighting that all the signal events will be supported at this stage, including the signal throw events

When a process instance arrives at a signal throw event, we'll broadcast a signal in the same way as the gateway can broadcast a signal: write a Signal:Broadcast command with relaying to the current partition. The implementation of stage 1 will then relay the command to other partitions, and all signal (start event) subscriptions will be triggered, without additional implementation efforts needed.

### Breakdown

- https://github.com/camunda/zeebe/issues/11918

#### Discovery phase ##

<!-- Example: link to "Conduct customer interview with xyz" -->

#### Define phase ##

<!-- Consider: UI, UX, technical design, documentation design -->

<!-- Example: link to "Define User-Journey Flow" or "Define target architecture" -->

Design Planning

* Reviewed by design: {date}

* Designer assigned: {Yes, No Design Necessary, or No Designer Available}

* Assignee:

* Design Brief - {link to design brief }

* Research Brief - {link to research brief }

Design Deliverables

* {Deliverable Name} {Link to GH Issue}

Documentation Planning

<!-- Complex changes must be reviewed during the Define phase by the DRI of Documentation or technical writer. -->

<!-- Briefly describe the anticipated impact to documentation. -->

<!-- Example: "Creates structural changes in docs as UX is reworked." _Add docs reviewer to Epic for feedback._ -->

Risk Management <!-- add link to risk management issue -->

* Risk Class: <!-- e.g. very low | low | medium | high | very high -->

* Risk Treatment: <!-- e.g. avoid | mitigate | transfer | accept -->

#### Implement phase ##

<!-- Example: link to "Implement User Story xyz". Should not only include core implementation, but also documentation. -->

#### Validate phase ##

<!-- Example: link to "Evaluate usage data of last quarter" -->

### Links to additional collateral

<!-- Example: link to relevant support cases -->

|

1.0

|

BPMN Signal Events(3): Broadcast signal event using throw signal event - ### Value Proposition Statement

Use BPMN Throw Signal Events to easily start or continue instances that wait for a signal - without any coding.

### User Problem

Users can use BPMN Catch Signal Events (e.g. Start Event or Intermediate Events), but they have to be triggered via gRPC or one of our Clients. This means using Signal Events requires writing code and also different BPMN symbols have to be used than signals for throwing signals (e.g. I cannot use Signal Throw Events and attach a job worker, but I would have to use a Service Task instead).

### User Stories

I can model signal end event and intermediate signal throw events and linting works correctly.

I can deploy the models with such symbols to the engine and the engine triggers all signals correctly without me having to use the API.

I can see the symbols in other tools like Operate, Optimize.

### Implementation Notes

In the third stage, we'll increase support to all BPMN signal symbols. Specifically, it adds support for the Signal Intermediate Throw Event, and the Signal End Event.

Model highlighting that all the signal events will be supported at this stage, including the signal throw events

When a process instance arrives at a signal throw event, we'll broadcast a signal in the same way as the gateway can broadcast a signal: write a Signal:Broadcast command with relaying to the current partition. The implementation of stage 1 will then relay the command to other partitions, and all signal (start event) subscriptions will be triggered, without additional implementation efforts needed.

### Breakdown

- https://github.com/camunda/zeebe/issues/11918

#### Discovery phase ##

<!-- Example: link to "Conduct customer interview with xyz" -->

#### Define phase ##

<!-- Consider: UI, UX, technical design, documentation design -->

<!-- Example: link to "Define User-Journey Flow" or "Define target architecture" -->

Design Planning

* Reviewed by design: {date}

* Designer assigned: {Yes, No Design Necessary, or No Designer Available}

* Assignee:

* Design Brief - {link to design brief }

* Research Brief - {link to research brief }

Design Deliverables

* {Deliverable Name} {Link to GH Issue}

Documentation Planning

<!-- Complex changes must be reviewed during the Define phase by the DRI of Documentation or technical writer. -->

<!-- Briefly describe the anticipated impact to documentation. -->

<!-- Example: "Creates structural changes in docs as UX is reworked." _Add docs reviewer to Epic for feedback._ -->

Risk Management <!-- add link to risk management issue -->

* Risk Class: <!-- e.g. very low | low | medium | high | very high -->

* Risk Treatment: <!-- e.g. avoid | mitigate | transfer | accept -->

#### Implement phase ##

<!-- Example: link to "Implement User Story xyz". Should not only include core implementation, but also documentation. -->

#### Validate phase ##

<!-- Example: link to "Evaluate usage data of last quarter" -->

### Links to additional collateral

<!-- Example: link to relevant support cases -->

|

process

|

bpmn signal events broadcast signal event using throw signal event value proposition statement use bpmn throw signal events to easily start or continue instances that wait for a signal without any coding user problem users can use bpmn catch signal events e g start event or intermediate events but they have to be triggered via grpc or one of our clients this means using signal events requires writing code and also different bpmn symbols have to be used than signals for throwing signals e g i cannot use signal throw events and attach a job worker but i would have to use a service task instead user stories i can model signal end event and intermediate signal throw events and linting works correctly i can deploy the models with such symbols to the engine and the engine triggers all signals correctly without me having to use the api i can see the symbols in other tools like operate optimize implementation notes in the third stage we ll increase support to all bpmn signal symbols specifically it adds support for the signal intermediate throw event and the signal end event model highlighting that all the signal events will be supported at this stage including the signal throw events when a process instance arrives at a signal throw event we ll broadcast a signal in the same way as the gateway can broadcast a signal write a signal broadcast command with relaying to the current partition the implementation of stage will then relay the command to other partitions and all signal start event subscriptions will be triggered without additional implementation efforts needed breakdown discovery phase define phase design planning reviewed by design date designer assigned yes no design necessary or no designer available assignee design brief link to design brief research brief link to research brief design deliverables deliverable name link to gh issue documentation planning risk management risk class risk treatment implement phase validate phase links to additional collateral

| 1

|

1,039

| 3,509,552,712

|

IssuesEvent

|

2016-01-08 23:25:12

|

kerubistan/kerub

|

https://api.github.com/repos/kerubistan/kerub

|

opened

|

concurrency bug in the host assigner

|

bug component:data processing priority: high

|

```assignControllers(com.github.K0zka.kerub.host.ControllerAssignerImplTest): java.util.concurrent.ExecutionException: java.util.ConcurrentModificationException: java.util.ConcurrentModificationException```

|

1.0

|

concurrency bug in the host assigner -

```assignControllers(com.github.K0zka.kerub.host.ControllerAssignerImplTest): java.util.concurrent.ExecutionException: java.util.ConcurrentModificationException: java.util.ConcurrentModificationException```

|

process

|

concurrency bug in the host assigner assigncontrollers com github kerub host controllerassignerimpltest java util concurrent executionexception java util concurrentmodificationexception java util concurrentmodificationexception

| 1

|

22,574

| 31,799,346,653

|

IssuesEvent

|

2023-09-13 10:03:18

|

SpikeInterface/spikeinterface

|

https://api.github.com/repos/SpikeInterface/spikeinterface

|

closed

|

spyking circus2 crashes when silence periods

|

bug preprocessing

|

Hello,

I've added silence periods with "spikeinterface.preprocessing.silence_periods" to my recording to reject artefact periods, but this makes spyking circus2 crash with the following error.

I'm using version 0.98.2 of SI.

```

Error running spykingcircus2

---------------------------------------------------------------------------

SpikeSortingError Traceback (most recent call last)

Cell In[32], line 2

1 output_path = r"D:\01_IR-ICM\donnees\Analyses\Epilepsy\testSI\SI_pos_test_silence"

----> 2 sorting = ss.run_sorters(sorter_list,

3 recordings_split,

4 working_folder=output_path,

5 mode_if_folder_exists="overwrite",

6 sorter_params=params,

7 verbose=True)

File ~\AppData\Local\anaconda3\envs\spikeinterface\Lib\site-packages\spikeinterface\sorters\launcher.py:295, in run_sorters(sorter_list, recording_dict_or_list, working_folder, sorter_params, mode_if_folder_exists, engine, engine_kwargs, verbose, with_output, docker_images, singularity_images)

292 if engine == "loop":

293 # simple loop in main process

294 for task_args in task_args_list:

--> 295 _run_one(task_args)

297 elif engine == "joblib":

298 from joblib import Parallel, delayed

File ~\AppData\Local\anaconda3\envs\spikeinterface\Lib\site-packages\spikeinterface\sorters\launcher.py:46, in _run_one(arg_list)

43 # because we won't want the loop/worker to break

44 raise_error = False

---> 46 run_sorter(

47 sorter_name,

48 recording,

49 output_folder=output_folder,

50 remove_existing_folder=remove_existing_folder,

51 delete_output_folder=delete_output_folder,

52 verbose=verbose,

53 raise_error=raise_error,

54 docker_image=docker_image,

55 singularity_image=singularity_image,

56 with_output=with_output,

57 **sorter_params,

58 )

File ~\AppData\Local\anaconda3\envs\spikeinterface\Lib\site-packages\spikeinterface\sorters\runsorter.py:148, in run_sorter(sorter_name, recording, output_folder, remove_existing_folder, delete_output_folder, verbose, raise_error, docker_image, singularity_image, delete_container_files, with_output, **sorter_params)

141 container_image = singularity_image

142 return run_sorter_container(

143 container_image=container_image,

144 mode=mode,

145 **common_kwargs,

146 )

--> 148 return run_sorter_local(**common_kwargs)

File ~\AppData\Local\anaconda3\envs\spikeinterface\Lib\site-packages\spikeinterface\sorters\runsorter.py:176, in run_sorter_local(sorter_name, recording, output_folder, remove_existing_folder, delete_output_folder, verbose, raise_error, with_output, **sorter_params)

174 SorterClass.run_from_folder(output_folder, raise_error, verbose)

175 if with_output:

--> 176 sorting = SorterClass.get_result_from_folder(output_folder)

177 else:

178 sorting = None

File ~\AppData\Local\anaconda3\envs\spikeinterface\Lib\site-packages\spikeinterface\sorters\basesorter.py:289, in BaseSorter.get_result_from_folder(cls, output_folder)

286 log = json.load(f)

288 if bool(log["error"]):

--> 289 raise SpikeSortingError(

290 f"Spike sorting error trace:\n{log['error_trace']}\n"

291 f"Spike sorting failed. You can inspect the runtime trace in {output_folder}/spikeinterface_log.json."

292 )

294 if sorter_output_folder.is_dir():

295 sorting = cls._get_result_from_folder(sorter_output_folder)

SpikeSortingError: Spike sorting error trace:

Traceback (most recent call last):

File "C:\Users\katia.lehongre\AppData\Local\anaconda3\envs\spikeinterface\Lib\site-packages\spikeinterface\sorters\basesorter.py", line 234, in run_from_folder

SorterClass._run_from_folder(sorter_output_folder, sorter_params, verbose)

File "C:\Users\katia.lehongre\AppData\Local\anaconda3\envs\spikeinterface\Lib\site-packages\spikeinterface\sorters\internal\spyking_circus2.py", line 73, in _run_from_folder

recording_f = zscore(recording_f, dtype="float32")

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "C:\Users\katia.lehongre\AppData\Local\anaconda3\envs\spikeinterface\Lib\site-packages\spikeinterface\preprocessing\normalize_scale.py", line 271, in __init__

random_data = get_random_data_chunks(recording, **random_chunk_kwargs)

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "C:\Users\katia.lehongre\AppData\Local\anaconda3\envs\spikeinterface\Lib\site-packages\spikeinterface\core\recording_tools.py", line 64, in get_random_data_chunks

segment_trace_chunk = [

^

File "C:\Users\katia.lehongre\AppData\Local\anaconda3\envs\spikeinterface\Lib\site-packages\spikeinterface\core\recording_tools.py", line 65, in <listcomp>

recording.get_traces(

File "C:\Users\katia.lehongre\AppData\Local\anaconda3\envs\spikeinterface\Lib\site-packages\spikeinterface\core\baserecording.py", line 278, in get_traces

traces = rs.get_traces(start_frame=start_frame, end_frame=end_frame, channel_indices=channel_indices)

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "C:\Users\katia.lehongre\AppData\Local\anaconda3\envs\spikeinterface\Lib\site-packages\spikeinterface\core\channelslice.py", line 93, in get_traces

traces = self._parent_recording_segment.get_traces(start_frame, end_frame, parent_indices)

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "C:\Users\katia.lehongre\AppData\Local\anaconda3\envs\spikeinterface\Lib\site-packages\spikeinterface\preprocessing\silence_periods.py", line 106, in get_traces

lower_index = np.searchsorted(self.periods[:, 1], new_interval[0])

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "<__array_function__ internals>", line 200, in searchsorted

File "C:\Users\katia.lehongre\AppData\Local\anaconda3\envs\spikeinterface\Lib\site-packages\numpy\core\fromnumeric.py", line 1413, in searchsorted

return _wrapfunc(a, 'searchsorted', v, side=side, sorter=sorter)

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "C:\Users\katia.lehongre\AppData\Local\anaconda3\envs\spikeinterface\Lib\site-packages\numpy\core\fromnumeric.py", line 57, in _wrapfunc

return bound(*args, **kwds)

^^^^^^^^^^^^^^^^^^^^

ValueError: object too deep for desired array

Spike sorting failed. You can inspect the runtime trace in D:\01_IR-ICM\donnees\Analyses\Epilepsy\testSI\SI_pos_test_silence\1\spykingcircus2/spikeinterface_log.json.

```
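The innermost frame fails at `np.searchsorted(self.periods[:, 1], new_interval[0])`. NumPy's `searchsorted` requires its first argument to be 1-D and raises `ValueError: object too deep for desired array` when it is 2-D or deeper. One plausible (unconfirmed) cause is that the periods for a segment end up with an extra level of nesting (a 3-D array), so `periods[:, 1]` is still 2-D. The sketch below reproduces the error with plain NumPy; `normalize_periods` is a hypothetical helper, not part of the SpikeInterface API, and the `(n_periods, 2)` shape it produces is an assumption about what the lookup expects.

```python
import numpy as np

# Periods as (start_frame, end_frame) pairs, sorted by start frame.
good = np.array([[100, 200], [500, 800]])        # shape (2, 2)
too_deep = np.array([[[100, 200], [500, 800]]])  # shape (1, 2, 2): one extra nesting level

# Works: good[:, 1] is 1-D ([200, 800]), so searchsorted can run on it.
print(np.searchsorted(good[:, 1], 150))  # -> 0

# Fails: too_deep[:, 1] has shape (1, 2), which is 2-D.
try:
    np.searchsorted(too_deep[:, 1], 150)
except ValueError as err:
    print("ValueError:", err)


def normalize_periods(periods):
    """Hypothetical helper: coerce periods to a (n_periods, 2) int array sorted by start."""
    arr = np.asarray(periods, dtype=np.int64).reshape(-1, 2)
    return arr[np.argsort(arr[:, 0])]


fixed = normalize_periods(too_deep)
print(fixed.shape)  # -> (2, 2)
print(np.searchsorted(fixed[:, 1], 150))  # -> 0
```

If extra nesting is indeed the cause, it may be worth checking how the periods are passed to `silence_periods`, assuming `list_periods` is meant to hold one list of `(start_frame, end_frame)` pairs per segment (worth confirming against the 0.98.2 docstring), e.g. `[[(100, 200), (500, 800)]]` for a single-segment recording.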

Thanks!

|

1.0

|

spyking circus2 crashes when silence periods - Hello,

I've added silence periods to my recording with `spikeinterface.preprocessing.silence_periods` to reject artefact periods, but this makes Spyking Circus 2 crash with the following error:

I'm using version 0.98.2 of SI.

```

Error running spykingcircus2

---------------------------------------------------------------------------

SpikeSortingError Traceback (most recent call last)

Cell In[32], line 2

1 output_path = r"D:\01_IR-ICM\donnees\Analyses\Epilepsy\testSI\SI_pos_test_silence"

----> 2 sorting = ss.run_sorters(sorter_list,

3 recordings_split,

4 working_folder=output_path,

5 mode_if_folder_exists="overwrite",

6 sorter_params=params,

7 verbose=True)

File ~\AppData\Local\anaconda3\envs\spikeinterface\Lib\site-packages\spikeinterface\sorters\launcher.py:295, in run_sorters(sorter_list, recording_dict_or_list, working_folder, sorter_params, mode_if_folder_exists, engine, engine_kwargs, verbose, with_output, docker_images, singularity_images)

292 if engine == "loop":

293 # simple loop in main process

294 for task_args in task_args_list:

--> 295 _run_one(task_args)

297 elif engine == "joblib":

298 from joblib import Parallel, delayed

File ~\AppData\Local\anaconda3\envs\spikeinterface\Lib\site-packages\spikeinterface\sorters\launcher.py:46, in _run_one(arg_list)

43 # because we won't want the loop/worker to break

44 raise_error = False

---> 46 run_sorter(

47 sorter_name,

48 recording,

49 output_folder=output_folder,

50 remove_existing_folder=remove_existing_folder,

51 delete_output_folder=delete_output_folder,

52 verbose=verbose,

53 raise_error=raise_error,

54 docker_image=docker_image,

55 singularity_image=singularity_image,

56 with_output=with_output,

57 **sorter_params,

58 )

File ~\AppData\Local\anaconda3\envs\spikeinterface\Lib\site-packages\spikeinterface\sorters\runsorter.py:148, in run_sorter(sorter_name, recording, output_folder, remove_existing_folder, delete_output_folder, verbose, raise_error, docker_image, singularity_image, delete_container_files, with_output, **sorter_params)

141 container_image = singularity_image

142 return run_sorter_container(

143 container_image=container_image,

144 mode=mode,

145 **common_kwargs,

146 )

--> 148 return run_sorter_local(**common_kwargs)

File ~\AppData\Local\anaconda3\envs\spikeinterface\Lib\site-packages\spikeinterface\sorters\runsorter.py:176, in run_sorter_local(sorter_name, recording, output_folder, remove_existing_folder, delete_output_folder, verbose, raise_error, with_output, **sorter_params)

174 SorterClass.run_from_folder(output_folder, raise_error, verbose)

175 if with_output:

--> 176 sorting = SorterClass.get_result_from_folder(output_folder)

177 else:

178 sorting = None

File ~\AppData\Local\anaconda3\envs\spikeinterface\Lib\site-packages\spikeinterface\sorters\basesorter.py:289, in BaseSorter.get_result_from_folder(cls, output_folder)

286 log = json.load(f)

288 if bool(log["error"]):

--> 289 raise SpikeSortingError(

290 f"Spike sorting error trace:\n{log['error_trace']}\n"

291 f"Spike sorting failed. You can inspect the runtime trace in {output_folder}/spikeinterface_log.json."

292 )

294 if sorter_output_folder.is_dir():

295 sorting = cls._get_result_from_folder(sorter_output_folder)

SpikeSortingError: Spike sorting error trace:

Traceback (most recent call last):

File "C:\Users\katia.lehongre\AppData\Local\anaconda3\envs\spikeinterface\Lib\site-packages\spikeinterface\sorters\basesorter.py", line 234, in run_from_folder

SorterClass._run_from_folder(sorter_output_folder, sorter_params, verbose)

File "C:\Users\katia.lehongre\AppData\Local\anaconda3\envs\spikeinterface\Lib\site-packages\spikeinterface\sorters\internal\spyking_circus2.py", line 73, in _run_from_folder

recording_f = zscore(recording_f, dtype="float32")

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "C:\Users\katia.lehongre\AppData\Local\anaconda3\envs\spikeinterface\Lib\site-packages\spikeinterface\preprocessing\normalize_scale.py", line 271, in __init__

random_data = get_random_data_chunks(recording, **random_chunk_kwargs)

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "C:\Users\katia.lehongre\AppData\Local\anaconda3\envs\spikeinterface\Lib\site-packages\spikeinterface\core\recording_tools.py", line 64, in get_random_data_chunks

segment_trace_chunk = [

^

File "C:\Users\katia.lehongre\AppData\Local\anaconda3\envs\spikeinterface\Lib\site-packages\spikeinterface\core\recording_tools.py", line 65, in <listcomp>

recording.get_traces(

File "C:\Users\katia.lehongre\AppData\Local\anaconda3\envs\spikeinterface\Lib\site-packages\spikeinterface\core\baserecording.py", line 278, in get_traces

traces = rs.get_traces(start_frame=start_frame, end_frame=end_frame, channel_indices=channel_indices)

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "C:\Users\katia.lehongre\AppData\Local\anaconda3\envs\spikeinterface\Lib\site-packages\spikeinterface\core\channelslice.py", line 93, in get_traces

traces = self._parent_recording_segment.get_traces(start_frame, end_frame, parent_indices)

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "C:\Users\katia.lehongre\AppData\Local\anaconda3\envs\spikeinterface\Lib\site-packages\spikeinterface\preprocessing\silence_periods.py", line 106, in get_traces

lower_index = np.searchsorted(self.periods[:, 1], new_interval[0])

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "<__array_function__ internals>", line 200, in searchsorted

File "C:\Users\katia.lehongre\AppData\Local\anaconda3\envs\spikeinterface\Lib\site-packages\numpy\core\fromnumeric.py", line 1413, in searchsorted

return _wrapfunc(a, 'searchsorted', v, side=side, sorter=sorter)

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "C:\Users\katia.lehongre\AppData\Local\anaconda3\envs\spikeinterface\Lib\site-packages\numpy\core\fromnumeric.py", line 57, in _wrapfunc

return bound(*args, **kwds)

^^^^^^^^^^^^^^^^^^^^

ValueError: object too deep for desired array

Spike sorting failed. You can inspect the runtime trace in D:\01_IR-ICM\donnees\Analyses\Epilepsy\testSI\SI_pos_test_silence\1\spykingcircus2/spikeinterface_log.json.

```

Thanks!

|

process

|

spyking crashes when silence periods hello i ve added silence periods with spikeinterface preprocessing silence periods to my recording to reject artefact periods but this makes spyking crash with the following error i m using version of si error running spikesortingerror traceback most recent call last cell in line output path r d ir icm donnees analyses epilepsy testsi si pos test silence sorting ss run sorters sorter list recordings split working folder output path mode if folder exists overwrite sorter params params verbose true file appdata local envs spikeinterface lib site packages spikeinterface sorters launcher py in run sorters sorter list recording dict or list working folder sorter params mode if folder exists engine engine kwargs verbose with output docker images singularity images if engine loop simple loop in main process for task args in task args list run one task args elif engine joblib from joblib import parallel delayed file appdata local envs spikeinterface lib site packages spikeinterface sorters launcher py in run one arg list because we won t want the loop worker to break raise error false run sorter sorter name recording output folder output folder remove existing folder remove existing folder delete output folder delete output folder verbose verbose raise error raise error docker image docker image singularity image singularity image with output with output sorter params file appdata local envs spikeinterface lib site packages spikeinterface sorters runsorter py in run sorter sorter name recording output folder remove existing folder delete output folder verbose raise error docker image singularity image delete container files with output sorter params container image singularity image return run sorter container container image container image mode mode common kwargs return run sorter local common kwargs file appdata local envs spikeinterface lib site packages spikeinterface sorters runsorter py in run sorter local sorter name recording 
output folder remove existing folder delete output folder verbose raise error with output sorter params sorterclass run from folder output folder raise error verbose if with output sorting sorterclass get result from folder output folder else sorting none file appdata local envs spikeinterface lib site packages spikeinterface sorters basesorter py in basesorter get result from folder cls output folder log json load f if bool log raise spikesortingerror f spike sorting error trace n log n f spike sorting failed you can inspect the runtime trace in output folder spikeinterface log json if sorter output folder is dir sorting cls get result from folder sorter output folder spikesortingerror spike sorting error trace traceback most recent call last file c users katia lehongre appdata local envs spikeinterface lib site packages spikeinterface sorters basesorter py line in run from folder sorterclass run from folder sorter output folder sorter params verbose file c users katia lehongre appdata local envs spikeinterface lib site packages spikeinterface sorters internal spyking py line in run from folder recording f zscore recording f dtype file c users katia lehongre appdata local envs spikeinterface lib site packages spikeinterface preprocessing normalize scale py line in init random data get random data chunks recording random chunk kwargs file c users katia lehongre appdata local envs spikeinterface lib site packages spikeinterface core recording tools py line in get random data chunks segment trace chunk file c users katia lehongre appdata local envs spikeinterface lib site packages spikeinterface core recording tools py line in recording get traces file c users katia lehongre appdata local envs spikeinterface lib site packages spikeinterface core baserecording py line in get traces traces rs get traces start frame start frame end frame end frame channel indices channel indices file c users katia lehongre appdata local envs spikeinterface lib site packages 
spikeinterface core channelslice py line in get traces traces self parent recording segment get traces start frame end frame parent indices file c users katia lehongre appdata local envs spikeinterface lib site packages spikeinterface preprocessing silence periods py line in get traces lower index np searchsorted self periods new interval file line in searchsorted file c users katia lehongre appdata local envs spikeinterface lib site packages numpy core fromnumeric py line in searchsorted return wrapfunc a searchsorted v side side sorter sorter file c users katia lehongre appdata local envs spikeinterface lib site packages numpy core fromnumeric py line in wrapfunc return bound args kwds valueerror object too deep for desired array spike sorting failed you can inspect the runtime trace in d ir icm donnees analyses epilepsy testsi si pos test silence spikeinterface log json thanks

| 1

|

12,195

| 14,742,383,280

|

IssuesEvent

|

2021-01-07 12:12:08

|

kdjstudios/SABillingGitlab

|

https://api.github.com/repos/kdjstudios/SABillingGitlab

|

closed

|

Hays - FW: Senior Dental Invoice

|

anc-process anp-1 ant-support has attachment

|

In GitLab by @kdjstudios on Apr 11, 2019, 11:38

**Submitted by:** "Jessica Fischer" <jessica.fischer@answernet.com>

**Helpdesk:** http://www.servicedesk.answernet.com/profiles/ticket/2019-04-11-77714

**Server:** Internal

**Client/Site:** Hays

**Account:** 121-20180702

**Issue:**

I am inquiring about how this client could have a direct withdrawal. I have never done this with this client; I have always invoiced him manually, and he pays his bill by check. Can you please look into this and see what happened?

Full Email thread: [original_message__3_.html](/uploads/1e14ce40a7431dcc6616cabd988e059e/original_message__3_.html)

|

1.0

|

Hays - FW: Senior Dental Invoice - In GitLab by @kdjstudios on Apr 11, 2019, 11:38

**Submitted by:** "Jessica Fischer" <jessica.fischer@answernet.com>

**Helpdesk:** http://www.servicedesk.answernet.com/profiles/ticket/2019-04-11-77714

**Server:** Internal

**Client/Site:** Hays

**Account:** 121-20180702

**Issue:**

I am inquiring about how this client could have a direct withdrawal. I have never done this with this client; I have always invoiced him manually, and he pays his bill by check. Can you please look into this and see what happened?

Full Email thread: [original_message__3_.html](/uploads/1e14ce40a7431dcc6616cabd988e059e/original_message__3_.html)

|

process

|

hays fw senior dental invoice in gitlab by kdjstudios on apr submitted by jessica fischer helpdesk server internal client site hays account issue i am inquiring on how this client would have a direct withdrawal i have never done this with this client i have always manually invoiced and he pays his bill via check can you please look into this and see what happened full email thread uploads original message html

| 1

|

19,006

| 25,006,542,072

|

IssuesEvent

|

2022-11-03 12:19:25

|

Tencent/tdesign-miniprogram

|

https://api.github.com/repos/Tencent/tdesign-miniprogram

|

closed

|

[button] Could a property be added to directly change the button's background color?

|

enhancement good first issue in process

|

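### What problem does this feature solve?

Changing the color via t-class is a bit annoying, mainly because it only takes effect with `!important`.

### What solution do you suggest?

Add something like a `color` property, or make it changeable via CSS Variables.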

|

1.0

|

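[button] Could a property be added to directly change the button's background color? - ### What problem does this feature solve?

Changing the color via t-class is a bit annoying, mainly because it only takes effect with `!important`.

### What solution do you suggest?

Add something like a `color` property, or make it changeable via CSS Variables.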

|

process

|

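could a property be added to directly change the button s background color what problem does this feature solve changing the color via t class is a bit annoying mainly because it only takes effect with important what solution do you suggest add something like a color property or make it changeable via css variables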

| 1

|

20,148

| 11,401,800,302

|

IssuesEvent

|

2020-01-31 00:43:27

|

Azure/azure-rest-api-specs

|

https://api.github.com/repos/Azure/azure-rest-api-specs

|

closed

|

Azure DNS REST API cannot create long TXT records (though the portal can)

|

Network - DNS Service Attention

|

# Azure DNS REST API cannot create long TXT records (though the portal can)

I'm trying to use the Azure REST API to create a long TXT record (with a value longer than 255 characters). This is possible through the portal, but not through the REST API.

# Example

This issue blocks https://github.com/terraform-providers/terraform-provider-azurerm/issues/2826 and is also related to https://github.com/terraform-providers/terraform-provider-azurerm/issues/5547

See the full reproduction script at https://gist.github.com/bbkane/f1c8e0e0dd6cf9f4734cb5baed062f35

This bash script defines some constants and functions to create and view a DNS record via the REST API:

```

#!/bin/bash

readonly resourceGroupName="B16_repro_long_txt_record"

readonly zoneName="bbkane.com"

readonly recordType="TXT"

get_txt_record() {

local -r relativeRecordSetName="$1"

az rest \

--method get \

--uri "https://management.azure.com/subscriptions/{subscriptionId}/resourceGroups/$resourceGroupName/providers/Microsoft.Network/dnsZones/$zoneName/$recordType/$relativeRecordSetName?api-version=2018-05-01"

}

make_txt_record_one_value() {

local -r relativeRecordSetName="$1"

local -r value="$2"

cat > "tmp_$relativeRecordSetName.json" << EOF

{

"properties": {

"TTL": 3600,

"TXTRecords": [

{

"value": [

"$value"

]

}

]

}

}

EOF

az rest \

--method put \

--uri "https://management.azure.com/subscriptions/{subscriptionId}/resourceGroups/$resourceGroupName/providers/Microsoft.Network/dnsZones/$zoneName/$recordType/$relativeRecordSetName?api-version=2018-05-01" \

--body "@tmp_$relativeRecordSetName.json"

}

```

## Creating a TXT record with a value less than 255 characters is possible with the API:

```

make_txt_record_one_value not-long-value "$(perl -E "print 'a' x 250")"

```

Prints:

```

{

"etag": "f7037316-05f6-4e50-a93c-61b06cbfaa70",

"id": "/subscriptions/a9396794-7c83-412f-92c6-1e89857f8d96/resourceGroups/B16_repro_long_txt_record/providers/Microsoft.Network/dnszones/bbkane.com/TXT/not-long-value",

"name": "not-long-value",

"properties": {

"TTL": 3600,

"TXTRecords": [

{

"value": [

"aaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaa"

]

}

],

"fqdn": "not-long-value.bbkane.com.",

"provisioningState": "Succeeded",

"targetResource": {}

},

"resourceGroup": "B16_repro_long_txt_record",

"type": "Microsoft.Network/dnszones/TXT"

}

```

## Creating a TXT record with a value greater than 255 characters is not possible with the API:

```

make_txt_record_one_value long-value "$(perl -E "print 'a' x 250, 'b' x 250")"

```

```

Bad Request({"code":"BadRequest","message":"The value 'aaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaabbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbb' for the TXT record is not valid."})

```

## Creating a TXT record with a value greater than 255 characters is possible via the portal and reachable via the API and `dig`:

```

get_txt_record long-value-portal

```

```

{

"etag": "492ddb37-52a9-4336-b94a-24422d0235e8",

"id": "/subscriptions/a9396794-7c83-412f-92c6-1e89857f8d96/resourceGroups/B16_repro_long_txt_record/providers/Microsoft.Network/dnszones/bbkane.com/TXT/long-value-portal",

"name": "long-value-portal",

"properties": {

"TTL": 3600,

"TXTRecords": [

{

"value": [

"aaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaabbbbb",

"bbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbb"

]

}

],

"fqdn": "long-value-portal.bbkane.com.",

"provisioningState": "Succeeded",

"targetResource": {}

},

"resourceGroup": "B16_repro_long_txt_record",

"type": "Microsoft.Network/dnszones/TXT"

}

```

```

$ dig +short +noshort @ns1-03.azure-dns.com long-value-portal.bbkane.com TXT

long-value-portal.bbkane.com. 3600 IN TXT "aaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaabbbbb" "bbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbb"

```
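The portal-created record above stores the 500-character value as two strings in the `value` array (255 + 245 characters), which matches the DNS limit of 255 octets per TXT character-string (RFC 1035). A possible workaround, assuming the REST API accepts the same multi-string `value` array on a PUT (not verified here), is to split the value client-side before building the request body. In the Python sketch below, `split_txt_value` and `build_txt_body` are hypothetical helpers that mirror the JSON written by `make_txt_record_one_value`.

```python
import json

MAX_TXT_STRING = 255  # maximum length of a single DNS TXT character-string


def split_txt_value(value, size=MAX_TXT_STRING):
    """Split a TXT value into consecutive chunks of at most `size` characters.

    Assumes ASCII input, where characters and octets coincide; non-ASCII
    values would need to be split on their encoded bytes instead.
    """
    return [value[i:i + size] for i in range(0, len(value), size)] or [""]


def build_txt_body(value, ttl=3600):
    """Hypothetical helper: build the same PUT body as make_txt_record_one_value,
    but with the value spread across several strings, as the portal does."""
    return {
        "properties": {
            "TTL": ttl,
            "TXTRecords": [{"value": split_txt_value(value)}],
        }
    }


long_value = "a" * 250 + "b" * 250
body = build_txt_body(long_value)
chunks = body["properties"]["TXTRecords"][0]["value"]
print([len(c) for c in chunks])  # -> [255, 245]
print(json.dumps(body)[:80])
```

The resulting body could be written to a file and passed to `az rest --body "@file.json"` in the same way as the script above; whether the API then accepts it is the open question of this issue.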

|

1.0

|

Azure DNS REST API cannot create long TXT records (though the portal can) - # Azure DNS REST API cannot create long TXT records (though the portal can)

I'm trying to use the Azure REST API to create a long TXT record (with a value longer than 255 characters). This is possible through the portal, but not through the REST API.

# Example

This issue blocks https://github.com/terraform-providers/terraform-provider-azurerm/issues/2826 and is also related to https://github.com/terraform-providers/terraform-provider-azurerm/issues/5547

See the full reproduction script at https://gist.github.com/bbkane/f1c8e0e0dd6cf9f4734cb5baed062f35

This bash script defines some constants and functions to create and view a DNS record via the REST API:

```

#!/bin/bash

readonly resourceGroupName="B16_repro_long_txt_record"

readonly zoneName="bbkane.com"

readonly recordType="TXT"

get_txt_record() {

local -r relativeRecordSetName="$1"

az rest \

--method get \

--uri "https://management.azure.com/subscriptions/{subscriptionId}/resourceGroups/$resourceGroupName/providers/Microsoft.Network/dnsZones/$zoneName/$recordType/$relativeRecordSetName?api-version=2018-05-01"

}

make_txt_record_one_value() {

local -r relativeRecordSetName="$1"

local -r value="$2"

cat > "tmp_$relativeRecordSetName.json" << EOF

{

"properties": {

"TTL": 3600,

"TXTRecords": [

{

"value": [

"$value"

]

}

]

}

}

EOF

az rest \

--method put \

--uri "https://management.azure.com/subscriptions/{subscriptionId}/resourceGroups/$resourceGroupName/providers/Microsoft.Network/dnsZones/$zoneName/$recordType/$relativeRecordSetName?api-version=2018-05-01" \

--body "@tmp_$relativeRecordSetName.json"

}

```

## Creating a TXT record with a value less than 255 characters is possible with the API:

```

make_txt_record_one_value not-long-value "$(perl -E "print 'a' x 250")"

```

Prints:

```

{

"etag": "f7037316-05f6-4e50-a93c-61b06cbfaa70",

"id": "/subscriptions/a9396794-7c83-412f-92c6-1e89857f8d96/resourceGroups/B16_repro_long_txt_record/providers/Microsoft.Network/dnszones/bbkane.com/TXT/not-long-value",

"name": "not-long-value",

"properties": {

"TTL": 3600,

"TXTRecords": [

{

"value": [

"aaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaa"

]

}

],

"fqdn": "not-long-value.bbkane.com.",

"provisioningState": "Succeeded",

"targetResource": {}

},

"resourceGroup": "B16_repro_long_txt_record",

"type": "Microsoft.Network/dnszones/TXT"

}

```

## Creating a TXT record with a value greater than 255 characters is not possible with the API:

```

make_txt_record_one_value long-value "$(perl -E "print 'a' x 250, 'b' x 250")"

```

```

Bad Request({"code":"BadRequest","message":"The value 'aaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaabbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbb' for the TXT record is not valid."})

```

## Creating a TXT record with a value greater than 255 characters is possible via the portal and reachable via the API and `dig`:

```

get_txt_record long-value-portal

```

```

{

"etag": "492ddb37-52a9-4336-b94a-24422d0235e8",

"id": "/subscriptions/a9396794-7c83-412f-92c6-1e89857f8d96/resourceGroups/B16_repro_long_txt_record/providers/Microsoft.Network/dnszones/bbkane.com/TXT/long-value-portal",

"name": "long-value-portal",

"properties": {

"TTL": 3600,

"TXTRecords": [

{

"value": [

"aaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaabbbbb",

"bbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbb"

]

}

],

"fqdn": "long-value-portal.bbkane.com.",

"provisioningState": "Succeeded",

"targetResource": {}

},

"resourceGroup": "B16_repro_long_txt_record",

"type": "Microsoft.Network/dnszones/TXT"

}

```

```

$ dig +short +noshort @ns1-03.azure-dns.com long-value-portal.bbkane.com TXT

long-value-portal.bbkane.com. 3600 IN TXT "aaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaabbbbb" "bbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbb"

```

|

non_process

|

azure dns rest api cannot create long txt records though the portal can azure dns rest api cannot create long txt records though the portal can i m trying to use the azure rest api to create a long txt record with a value characters this is doable through the portal but not through the rest api example this issue blocks and is also related to see the full reproduction script at this bash script defines some constants and functions to create and view a dns record via the rest api bin bash readonly resourcegroupname repro long txt record readonly zonename bbkane com readonly recordtype txt get txt record local r relativerecordsetname az rest method get uri make txt record one value local r relativerecordsetname local r value cat tmp relativerecordsetname json eof properties ttl txtrecords value value eof az rest method put uri body tmp relativerecordsetname json creating a txt record with a value less than characters is possible with the api make txt record one value not long value perl e print a x prints etag id subscriptions resourcegroups repro long txt record providers microsoft network dnszones bbkane com txt not long value name not long value properties ttl txtrecords value aaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaa fqdn not long value bbkane com provisioningstate succeeded targetresource resourcegroup repro long txt record type microsoft network dnszones txt creating a txt record with a value greater than characters is not make txt record one value long value perl e print a x b x bad request code badrequest message the value 
aaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaabbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbb for the txt record is not valid creating a txt record with a value greater than characters is possible via the portal and reachable via the api and dig get txt record long value portal etag id subscriptions resourcegroups repro long txt record providers microsoft network dnszones bbkane com txt long value portal name long value portal properties ttl txtrecords value aaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaabbbbb bbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbb fqdn long value portal bbkane com provisioningstate succeeded targetresource resourcegroup repro long txt record type microsoft network dnszones txt dig short noshort azure dns com long value portal bbkane com txt long value portal bbkane com in txt aaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaabbbbb 
bbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbb

| 0

|

11,107

| 13,956,361,563

|

IssuesEvent

|

2020-10-24 00:47:31

|

allinurl/goaccess

|

https://api.github.com/repos/allinurl/goaccess

|

closed

|

Need some improvement in keep-last algorithm

|

bug log-processing

|

Hello, @allinurl.

I have a medium-sized log to process: about _1.8 million_ records, to be more precise.

However, due to my carelessness, it has a peculiar time distribution.

It should cover only a 1-day interval, i.e. `15/Sep`.

Instead, it contains the following dates, in the order they appear:

* First, from `12/Aug` to `15/Sep`.

* Then it goes back to `29/Aug` and runs to `15/Sep`.

* Finally, it goes back to `09/Sep` and runs to `15/Sep`.

Truth be told, it is a concatenation of other logs, which is why it has this particular shape.

Only _12,000_ records do _not_ belong to `15/Sep`.

When running GoAccess with the option `keep-last == 1`, processing slows down from `20,000/sec` to `10/sec` when it reaches `15/Sep` for the first time.

While debugging, I found that most of the time is spent in the `clean_old_data_by_date` routine.

For comparison, the processing times are:

* `09":15'` with the option `keep-last == 1`;

* `02":14'` without the restriction (`keep-last == 0`);

* `02":08'` with an `awk` filter selecting only `15/Sep` (the restriction does not matter here).

|

1.0

|

Need some improvement in keep-last algorithm - Hello, @allinurl.

I have a medium-sized log to process: about _1.8 million_ records, to be more precise.

However, due to my carelessness, it has a peculiar time distribution.

It should cover only a 1-day interval, i.e. `15/Sep`.

Instead, it contains the following dates, in the order they appear:

* First, from `12/Aug` to `15/Sep`.

* Then it goes back to `29/Aug` and runs to `15/Sep`.

* Finally, it goes back to `09/Sep` and runs to `15/Sep`.

Truth be told, it is a concatenation of other logs, which is why it has this particular shape.

Only _12,000_ records do _not_ belong to `15/Sep`.

When running GoAccess with the option `keep-last == 1`, processing slows down from `20,000/sec` to `10/sec` when it reaches `15/Sep` for the first time.

While debugging, I found that most of the time is spent in the `clean_old_data_by_date` routine.

For comparison, the processing times are:

* `09":15'` with the option `keep-last == 1`;

* `02":14'` without the restriction (`keep-last == 0`);

* `02":08'` with an `awk` filter selecting only `15/Sep` (the restriction does not matter here).

|

process

|

need some improvement in keep last algorithm hello allinurl i have a medium log to process about million of records more accurately however it has percularity time distribution due to my carelessness and it should only have day of interval i e sep it has the dates in chronological order first from aug until sep soon after it returns to aug until sep and in third it returns to sep until sep truly speaking it is the union of other logs and therefore have this particular feature and only of records do not belong to sep running goaccess with the option keep last the program slows down processing from sec to sec when it reaches sep at first time debbuging i found that long time is spended with clean old data by date routine to compare the processing time is with the option keep last without restriction keep last with awk filter choosing only sep

| 1

|

20,433

| 27,098,810,748

|

IssuesEvent

|

2023-02-15 06:40:57

|

bazelbuild/bazel

|

https://api.github.com/repos/bazelbuild/bazel

|

closed

|

Bad incremental build after APT update: undeclared inclusions

|

P4 type: support / not a bug (process) team-ExternalDeps team-Rules-CPP stale

|

### Description of the problem / feature request:

Building a tree that worked fine yesterday now fails with this error:

```

ERROR: /HOMEDIR/.cache/bazel/_bazel_wchargin/52a95bbdd50941251730eb33b7476a66/external/zlib_archive/BUILD.bazel:5:1: undeclared inclusion(s) in rule '@zlib_archive//:zlib':

this rule is missing dependency declarations for the following files included by 'external/zlib_archive/inffast.c':

'/usr/lib/gcc/x86_64-linux-gnu/8/include/stddef.h'

'/usr/lib/gcc/x86_64-linux-gnu/8/include-fixed/limits.h'

'/usr/lib/gcc/x86_64-linux-gnu/8/include-fixed/syslimits.h'

'/usr/lib/gcc/x86_64-linux-gnu/8/include/stdarg.h'

Target //tensorboard/components/tf_backend/test:test_chromium failed to build

```

These four include files are provided by `libgcc-8-dev:amd64`, which was

updated this morning between the successful build and the failing build.

This seems like the probable culprit.

A coworker of mine, @caisq, encountered the exact same failure

yesterday, with the same undeclared inclusions (`@zlib_archive//:zlib`).

He ran `bazel clean --expunge`, which resolved the error. But my

understanding per [the `bazel clean` docs][clean] is that any case in which

`bazel clean` changes the output of a build is considered a high-priority bug.

[clean]: https://docs.bazel.build/versions/0.28.0/user-manual.html#the-clean-command

### Feature requests: what underlying problem are you trying to solve with this feature?

N/A

### Bugs: what's the simplest, easiest way to reproduce this bug? Please provide a minimal example if possible.

This is a cache poisoning bug. I cannot reproduce it from a clean state.

If I run `bazel clean --expunge`, the problem will go away. Cloning the

repository into a fresh temp directory and building there works fine.

With my cache, running `bazel build //tensorboard` is sufficient to

trigger the above error.

### What operating system are you running Bazel on?

gLinux (like Debian)

### What's the output of `bazel info release`?

release 0.28.1

### If `bazel info release` returns "development version" or "(@non-git)", tell us how you built Bazel.

N/A

### What's the output of `git remote get-url origin ; git rev-parse master ; git rev-parse HEAD` ?

```

git@github.com:tensorflow/tensorboard.git

master

fatal: ambiguous argument 'master': unknown revision or path not in the working tree.

Use '--' to separate paths from revisions, like this:

'git <command> [<revision>...] -- [<file>...]'

841bdde5ab75cd0a68fdf6b9573190e8a82fc1de

```

(I don’t use a `master` branch; I work mostly in detached HEAD states.)

### Have you found anything relevant by searching the web?

I’ve found various related issues:

- <https://github.com/tensorflow/tensorflow/issues/3939>

- <https://stackoverflow.com/questions/43230143/tensorflow-build-issue-with-bazel#comment73708719_43230143>

…but none with an actual solution. Modifying an upstream CROSSTOOL file

clearly isn’t the answer, and the others just seem to suggest running

`bazel clean`.

### Any other information, logs, or outputs that you want to share?

This project uses `--incompatible_use_python_toolchains=false`, but the

builds from yesterday and today were in the same virtualenv with the

same packages. (Also, this doesn’t look like a Python problem.)

|

1.0

|

Bad incremental build after APT update: undeclared inclusions - ### Description of the problem / feature request:

Building a tree that worked fine yesterday now fails with this error:

```

ERROR: /HOMEDIR/.cache/bazel/_bazel_wchargin/52a95bbdd50941251730eb33b7476a66/external/zlib_archive/BUILD.bazel:5:1: undeclared inclusion(s) in rule '@zlib_archive//:zlib':

this rule is missing dependency declarations for the following files included by 'external/zlib_archive/inffast.c':

'/usr/lib/gcc/x86_64-linux-gnu/8/include/stddef.h'

'/usr/lib/gcc/x86_64-linux-gnu/8/include-fixed/limits.h'

'/usr/lib/gcc/x86_64-linux-gnu/8/include-fixed/syslimits.h'

'/usr/lib/gcc/x86_64-linux-gnu/8/include/stdarg.h'

Target //tensorboard/components/tf_backend/test:test_chromium failed to build

```

These four include files are provided by `libgcc-8-dev:amd64`, which was

updated this morning between the successful build and the failing build.

This seems like the probable culprit.

A coworker of mine, @caisq, encountered the exact same failure

yesterday, with the same undeclared inclusions (`@zlib_archive//:zlib`).

He ran `bazel clean --expunge`, which resolved the error. But my

understanding per [the `bazel clean` docs][clean] is that any case in which

`bazel clean` changes the output of a build is considered a high-priority bug.

[clean]: https://docs.bazel.build/versions/0.28.0/user-manual.html#the-clean-command

### Feature requests: what underlying problem are you trying to solve with this feature?

N/A

### Bugs: what's the simplest, easiest way to reproduce this bug? Please provide a minimal example if possible.

This is a cache poisoning bug. I cannot reproduce it from a clean state.

If I run `bazel clean --expunge`, the problem will go away. Cloning the

repository into a fresh temp directory and building there works fine.